## Compute and Energy Consumption Trends in Deep Learning Inference

## Radosvet Desislavov

VRAIN. Universitat Polit` ecnica de Val` encia, Spain radegeo@inf.upv.es

## Fernando Mart´ ınez-Plumed

European Commission, Joint Research Centre fernando.martinez-plumed@ec.europa.eu

VRAIN. Universitat Polit` ecnica de Val` encia, Spain fmartinez@dsic.upv.es

Jos´ e Hern´ andez-Orallo

VRAIN. Universitat Polit` ecnica de Val` encia, Spain jorallo@upv.es

## Abstract

The progress of some AI paradigms such as deep learning is said to be linked to an exponential growth in the number of parameters. There are many studies corroborating these trends, but does this translate into an exponential increase in energy consumption? In order to answer this question we focus on inference costs rather than training costs, as the former account for most of the computing effort, solely because of the multiplicative factors. Also, apart from algorithmic innovations, we account for more specific and powerful hardware (leading to higher FLOPS) that is usually accompanied with important energy efficiency optimisations. We also move the focus from the first implementation of a breakthrough paper towards the consolidated version of the techniques one or two year later. Under this distinctive and comprehensive perspective, we study relevant models in the areas of computer vision and natural language processing: for a sustained increase in performance we see a much softer growth in energy consumption than previously anticipated. The only caveat is, yet again, the multiplicative factor, as future AI increases penetration and becomes more pervasive.

## Introduction

As Deep Neural Networks (DNNs) become more widespread in all kinds of devices and situations, what is the associated energy cost? In this work we explore the evolution of different metrics of deep learning models, paying particular attention to inference computational cost and its associated energy consumption. The full impact, and its final carbon footprint, not only depends on the internalities (hardware and software directly involved in their operation) but also on the externalities (all social and economic activities around it). From the AI research community, we have more to say and do about the former. Accordingly, more effort is needed, within AI, to better account for the internalities, as we do in this paper.

For a revised version and its published version refer to:

Desislavov, Radosvet, Fernando Mart´ ınez-Plumed, and Jos´ e Hern´ andez-Orallo. ' Trends in AI inference energy consumption: Beyond the performance-vs-parameter laws of deep learning ' Sustainable Computing: Informatics and Systems , Volume 38, April 2023. (DOI: https://doi.org/10.1016/j.suscom.2023.100857)

In our study, we differentiate between training and inference. At first look it seems that training cost is higher. However, for deployed systems, inference costs exceed training costs, because of the multiplicative factor of using the system many times [Martinez-Plumed et al., 2018]. Training, even if it involves repetitions, is done once but inference is done repeatedly. It is estimated that inference accounts for up to 90% of the costs [Thomas, 2020]. There are several studies about training computation and its environmental impact [Amodei and Hernandez, 2018, Gholami et al., 2021a, Canziani et al., 2017, Li et al., 2016, Anthony et al., 2020, Thompson et al., 2020] but there are very few focused on inference costs and their associated energy consumption.

DNNs are deployed almost everywhere [Balas et al., 2019], from smartphones to automobiles, all having their own compute, temperature and battery limitations. Precisely because of this, there has been a pressure to build DNNs that are less resource demanding, even if larger DNNs usually outperform smaller ones. Alternatively to this in-device use, many larger DNNs are run on data centres, with people accessing them repeated in a transparent way, e.g., when using social networks [Park et al., 2018]. Millions of requests imply millions of inferences over the same DNN.

Many studies report that the size of neural networks is growing exponentially [Xu et al., 2018, Bianco et al., 2018]. However, this does not necessarily imply that the cost is also growing exponentially, as more weights could be implemented with the same amount of energy, mostly due to hardware specialisation but especially as the energy consumption per unit of compute is decreasing. Also, there is the question of whether the changing costs of energy and their carbon footprint [EEA, 2021] should be added to the equation. Finally, many studies focus on the state-of-the-art (SOTA) or the cutting-edge methods according to a given metric of performance, but many algorithmic improvements usually come in the months or few years after a new technique is introduced, in the form of general use implementations having similar results with much lower compute requirements. All these elements have been studied separately, but a more comprehensive and integrated analysis is necessary to properly evaluate whether the impact of AI on energy consumption and its carbon footprint is alarming or simply worrying, in order to calibrate the measures to be taken in the following years and estimate the effect in the future.

For conducting our analysis we chose two representative domains: Computer Vision (CV) and Natural Language Processing (NLP). For CV we analysed image classification models, and ImageNet [Russakovsky et al., 2015] more specifically, because there is a great quantity of historical data in this area and many advances in this domain are normally brought to other computer vision tasks, such as object detection, semantic segmentation, action recognition, or video classification, among others. For NLP we analysed results for the General Language Understanding Evaluation (GLUE) benchmark [Wang et al., 2019], since language understanding is a core task in NLP.

We focus our analysis on inference FLOPs (Floating Point Operations) required to process one input item (image or text fragment). We collect inference FLOPs for many different DNNs architectures following a comprehensive literature review. Since hardware manufacturers have been working on specific chips for DNN, adapting the hardware to a specific case of use leads to performance and efficiency improvements. We collect hardware data over the recent years, and estimate how many FLOPs can be obtained with one Joule with each chip. Having all this data we finally estimate how much energy is needed to perform one inference step with a given DNN. Our main objective is to study the evolution of the required energy for one prediction over the years.

The main findings and contributions of this paper are to (1) showcase that better results for DNN models are in part attributable to algorithmic improvements and not only to more computing power; (2) determine how much hardware improvements and specialisation is decreasing DNNs energy consumption; (3) report that, while energy consumption is still increasing exponentially for new cutting-edge models, DNN inference energy consumption could be maintained low for increasing performance if the efficient models that come relatively soon after the breakthrough are selected.

We provide all collected data and performed estimations as a data set, publicly available in the appendixes and as a GitHub repository 1 . The rest of the paper covers the background, introduces the methodology and presents the analysis of hardware and energy consumption of DNN models and expounds on some forecasts. Discussion and future work close the paper.

1 Temporary copy in: https://bit.ly/3DTHvFC

## Background

In line with other areas of computer science, there is some previous work that analyses compute and its cost for AI, and DNNs more specifically. Recently, OpenAI carried out a detailed analysis about AI efficiency [Hernandez and Brown, 2020], focusing on the amount of compute used to train models with the ImageNet dataset. They show that 44 times less compute was required in 2020 to train a network with the performance AlexNet achieved seven years before.

However, a demand for better task performance, linked with more complex DNNs and larger volumes of data to be processed, the growth in demand for AI compute is still growing fast. [Thompson et al., 2020] reports the computational demands of several Deep Learning applications, showing that progress in them is strongly reliant on increases in computing power. AI models have doubled the computational power used every 3.4 months since 2012 [Amodei and Hernandez, 2018]. The study [Gholami et al., 2021a] declare similar scaling rates for AI training compute to [Amodei and Hernandez, 2018] and they forecast that DNNs memory requirements will soon become a problem. This exponential trend seems to impose a limit on how far we can improve performance in the future without a paradigm change.

Compared to training costs, there are fewer studies on inference costs, despite using a far more representative share of compute and energy. Canziani et al. (2017) study accuracy, memory footprint, parameters, operations count, inference time and power consumption of 14 ImageNet models. To measure the power consumption they execute the DNNs on a NVIDIA Jetson TX1 board. A similar study [Li et al., 2016] measures energy efficiency, Joules per image, for a single forward and backward propagation iteration (a training step). This study benchmarks 4 Convolutional Neural Networks (CNNs) on CPUs and GPUs on different frameworks. Their work shows that GPUs are more efficient than CPUs for the CNNs analysed. Both publications analyse model efficiency, but they do this for very concrete cases. We analyse a greater number of DNNs and hardware components in a longer time frame.

These and other papers are key in helping society and AI researchers realise the issues about efficiency and energy consumption. Strubell et al. (2019) estimate the energy consumption, the cost and CO2 emissions of training various of the most popular NLP models. Henderson et al. (2020) performs a systematic reporting of the energy and carbon footprints of reinforcement learning algorithms. Bommasani et al. (2021) (section 5.3) seek to identify assumptions that shape the calculus of environmental impact for foundation models. Schwartz et al. (2019) analyse training costs and propose that researchers should put more attention on efficiency and they should report always the number of FLOPs. These studies contribute to a better assessment of the problem and more incentives for their solution. For instance, new algorithms and architectures such as EfficientNet [Tan and Le, 2020] and EfficientNetV2 [Tan and Le, 2021] have aimed at this reduction in compute.

When dealing about computing effort and computing speed (hardware performance), terminology is usually confusing. For instance, the term 'compute' is used ambiguously, sometimes applied to the number of operations or the number of operations per second. However, it is important to clarify what kind of operations and the acronyms for them. In this regard, we will use the acronym FLOPS to measure hardware performance, by referring to the number of floating point operations per second , as standardised in the industry, while FLOPs will be applied to the amount of computation for a given task (e.g., a prediction or inference pass), by referring to the number of operations, counting a multiply-add operation pair as two operations. An extended discussion about this can be found in the appendix.

## Methodology

We collect most of our information directly from research papers that report results, compute and other data for one or more newly introduced techniques for the benchmarks and metrics we cover in this work. We manually read and inspected the original paper and frequently explored the official GitHub repository, if exists. However, often there is missing information in these sources, so we need to get the data from other sources, namely:

- Related papers : usually the authors of another paper that introduces a new model compare it with previously existing models, providing further information.

- Model implementations : PyTorch [Paszke et al., 2016] contains many (pre-trained) models, and their performance is reported. Other projects do the same (see, e.g., [Cadene, 2016, S´ emery, 2019]).

- Existing data compilations : there are some projects and public databases collecting information about deep learning architectures and their benchmarks, e.g., [Albanie, 2016, Coleman et al., 2017, Mattson et al., 2020, Gholami et al., 2021b, Stojnic and Taylor, 2021].

- Measuring tools : when no other source was available or reliable, we used the ptflops library [Sovrasov, 2020] or similar tools to calculate the model's FLOPs and parameters (when the implementation is available).

Given this general methodology, we now discuss in more detail how we made the selection of CV and NLP models, and the information about hardware.

## CV Models Data Compilation

There is a huge number of models for image classification, so we selected models based on two criteria: popularity and accuracy. For popularity we looked at the times that the paper presenting the model is cited on Google Scholar and whether the model appears mentioned in other papers (e.g., for comparative analyses). We focused on model's accuracy as well because having the best models per year in terms of accuracy is necessary for analysing progress. To achieve this we used existing compilations [Stojnic and Taylor, 2021] and filtered by year and accuracy. For our selection, accuracy was more important than popularity for recent models, as they are less cited than the older ones because they have been published for a shorter time. Once we selected the sources for image classification models, we collected the following information: Top-1 accuracy on ImageNet, number of parameters, FLOPs per forward pass, release date and training dataset. Further details about model selection, FLOPs estimation, image cropping [Krizhevsky et al., 2012] and resolution [Simonyan and Zisserman, 2015, Zhai et al., 2021] can be found in the Appendix (and Table 2).

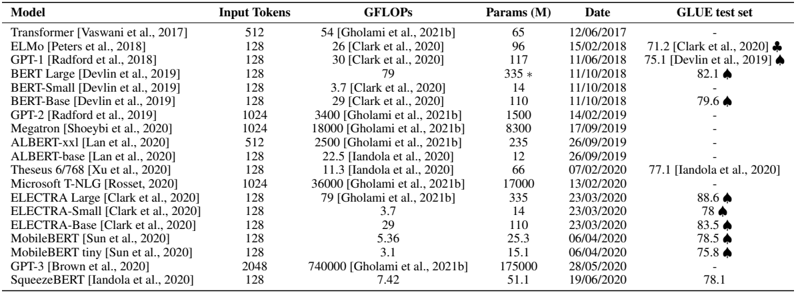

## NLP Models Data Compilation

For NLP models we noted that there is much less information about inference (e.g., FLOPs) and the number of models for which we can get the required information is smaller than for CV. We chose GLUE for being sufficiently representative and its value determined for a good number of architectures. To keep the numbers high we just included all the models since 2017 for which we found inference compute estimation [Clark et al., 2020]. Further details about FLOPs estimation and counting can be found in the Appendix (selected models in in Table 7).

## Hardware Data Compilation

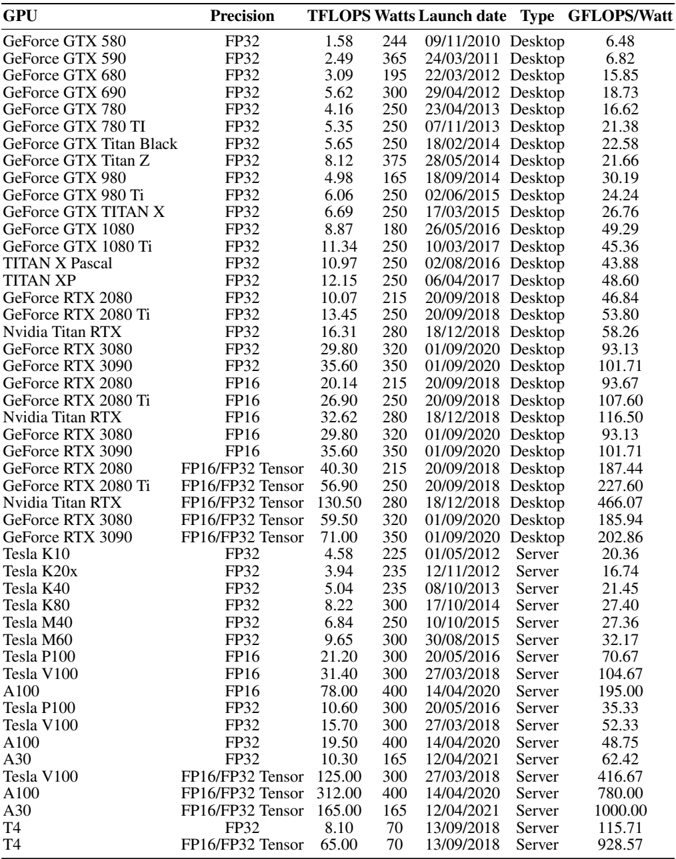

Regarding hardware evolution, we collected data for Nvidia GPUs 2 . Wechose Nvidia GPUs because they represent one of the most efficient hardware platforms for DNN 3 and they have been used for Deep Learning in the last 10 years, so we have a good temporal window for exploration. In particular, we collected GPU data for Nvidia GPUs from 2010 to 2021. The collected data is: FLOPS, memory size, power consumption (reported as Thermal Design Power, TDP) and launch date. As explained before, FLOPS is a measure of computer performance. From the FLOPS and power consumption we calculate the efficiency, dividing FLOPS by Watts. We use TDP and the reported peak FLOPS to calculate efficiency. This means we are considering the efficiency (GLOPS/Watt) when the GPU is at full utilisation. In practice the efficiency may vary depending on the workload, but we consider this estimate ('peak FLOPS'/TDP) accurate enough for analysing the trends and for giving an approximation of energy consumption. In our compilation there are desktop GPUs and server GPUs. We pay special attention to server GPUs released in the last years, because they are more common for AI, and DNNs in particular. A discussion about discrepancies between theoretical and real FLOPS as well as issues regarding Floating Point (FP) precision operations can be found in the Appendix.

2 https://developer.nvidia.com/deep-learning

3 We considered Google's TPUs (https://cloud.google.com/tpu?hl=en) for the analysis but there is not enough public information about them, as they are not sold but only available as a service.

## Computer Vision Analysis

In this section, we analyse the evolution of ImageNet [Deng et al., 2009] (one pass inference) according to performance and compute. Further details in the Appendix.

## Number of Parameters and FLOPs

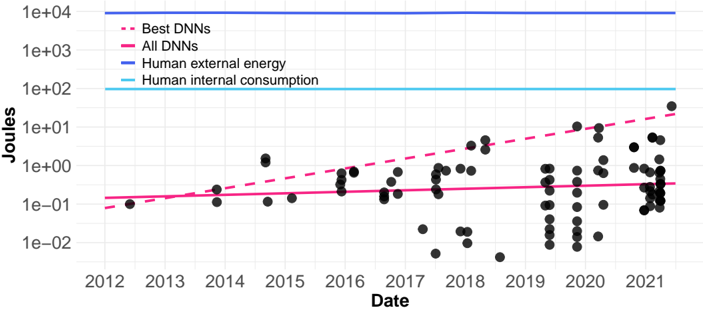

The number of parameters is usually reported, but it is not directly proportional to compute. For instance, in CNNs, convolution operations dominate the computation: if d , w and r represent the network's depth, widith and input resolution, the FLOPs grow following the relation [Tan and Le, 2020]:

$$F L O P s \, \infty \, d + w ^ { 2 } + r ^ { 2 }$$

This means that FLOPs do not directly depend on the number of parameters. Parameters affect network depth ( d ) or width ( w ), but distributing the same number of parameters in different ways will result in different numbers of FLOPs. Moreover, the resolution ( r ) does not depend on the number of parameters directly, because the input resolution can be increased without increasing network size.

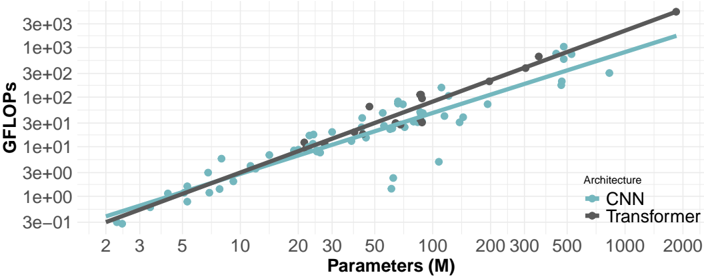

Figure 1: Relation between the number of parameters and FLOPs (both axes are logarithmic).

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Scatter Plot: GFLOPS vs. Parameters for CNN and Transformer Architectures

### Overview

This image presents a scatter plot comparing the computational cost (GFLOPS) of Convolutional Neural Networks (CNNs) and Transformer architectures as a function of their number of parameters (in millions). Two regression lines are overlaid on the data to show the general trend for each architecture.

### Components/Axes

* **X-axis:** Parameters (M) - Scale is logarithmic, ranging from approximately 2 to 2000. Tick marks are present at 2, 3, 5, 10, 20, 30, 50, 100, 200, 300, 500, and 1000.

* **Y-axis:** GFLOPS - Scale is logarithmic, ranging from approximately 3e-01 to 3e+03. Tick marks are present at 3e-01, 1e+00, 3e+00, 1e+01, 3e+01, 1e+02, 3e+02, 1e+03, and 3e+03.

* **Legend:** Located in the top-right corner.

* "Architecture" label.

* CNN: Represented by light blue circles.

* Transformer: Represented by dark brown diamonds.

* **Data Points:** Scatter plot of individual CNN and Transformer models.

* **Regression Lines:** Two lines representing the trend for each architecture. The CNN line is light blue, and the Transformer line is dark brown. Shaded areas around the lines indicate confidence intervals.

### Detailed Analysis

**CNN Data (Light Blue Circles):**

The CNN data points generally follow an upward trend, indicating that as the number of parameters increases, the GFLOPS also increase. The trend is approximately linear on this log-log scale.

* At approximately 2M parameters, GFLOPS is around 0.3.

* At approximately 5M parameters, GFLOPS is around 1.

* At approximately 10M parameters, GFLOPS is around 3.

* At approximately 20M parameters, GFLOPS is around 8.

* At approximately 50M parameters, GFLOPS is around 20.

* At approximately 100M parameters, GFLOPS is around 50.

* At approximately 200M parameters, GFLOPS is around 120.

* At approximately 500M parameters, GFLOPS is around 250.

* At approximately 1000M parameters, GFLOPS is around 600.

There is some scatter around the regression line, indicating variability in GFLOPS for CNNs with similar parameter counts.

**Transformer Data (Dark Brown Diamonds):**

The Transformer data points also exhibit an upward trend, but appear to have a steeper slope than the CNN data.

* At approximately 2M parameters, GFLOPS is around 0.5.

* At approximately 5M parameters, GFLOPS is around 2.

* At approximately 10M parameters, GFLOPS is around 6.

* At approximately 20M parameters, GFLOPS is around 15.

* At approximately 50M parameters, GFLOPS is around 40.

* At approximately 100M parameters, GFLOPS is around 100.

* At approximately 200M parameters, GFLOPS is around 250.

* At approximately 500M parameters, GFLOPS is around 700.

* At approximately 1000M parameters, GFLOPS is around 1500.

The Transformer data also shows some scatter, but appears more tightly clustered around its regression line than the CNN data.

**Regression Lines:**

The regression lines visually confirm the upward trends for both architectures. The Transformer line has a noticeably steeper slope, indicating a faster increase in GFLOPS with increasing parameters compared to CNNs.

### Key Observations

* Transformers generally require more GFLOPS than CNNs for a given number of parameters.

* Both architectures exhibit a roughly linear relationship between parameters and GFLOPS on this log-log scale.

* There is variability within each architecture, as evidenced by the scatter of data points around the regression lines.

* The confidence intervals around the regression lines suggest some uncertainty in the estimated trends.

### Interpretation

The data suggests that Transformers are computationally more expensive than CNNs, particularly as the model size (number of parameters) increases. This is likely due to the attention mechanism inherent in Transformers, which requires more computations than the convolutional operations used in CNNs. The linear relationship on the log-log scale indicates that the computational cost scales approximately polynomially with the number of parameters for both architectures. The scatter in the data suggests that other factors, such as network depth, layer types, and specific implementation details, also influence the GFLOPS. The steeper slope of the Transformer line implies that the computational cost increases more rapidly with parameter count for Transformers, potentially limiting their scalability compared to CNNs. This information is valuable for researchers and engineers designing and deploying deep learning models, as it helps to understand the trade-offs between model size, computational cost, and performance.

</details>

Despite this, Fig. 1 shows a linear relation between FLOPs and parameters. We attribute this to the balanced scaling of w , d and r . These dimensions are usually scaled together with bigger CNNs using higher resolution. Note that recent transformer models [Vaswani et al., 2017] do not follow the growth relation presented above. However, the correlation between the number of parameters and FLOPs for CNNs is 0.772 and the correlation for transformers is 0.994 (Fig. 1). This suggests that usually in both architectures parameters and FLOPs scale in tandem. We will use FLOPs, as they allow us to estimate the needed energy relating hardware FLOPS with required FLOPs for a model [Hollemans, 2018, Clark et al., 2020].

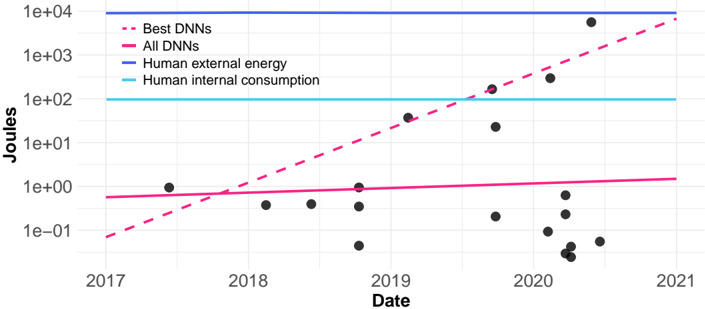

## Performance and Compute

There has been very significant progress for ImageNet. In 2012, AlexNet achieved 56% Top-1 accuracy (single model, one crop). In 2021, Meta Pseudo Labels (EfficientNet-L2) achieved 90.2% Top-1 accuracy (single model, one crop). However, this increase in accuracy comes with an increase in the required FLOPs for a forward pass. A forward pass for AlexNet is 1.42 GFLOPs while for EfficientNet-L2 is 1040 GFLOPs (details in the appendix).

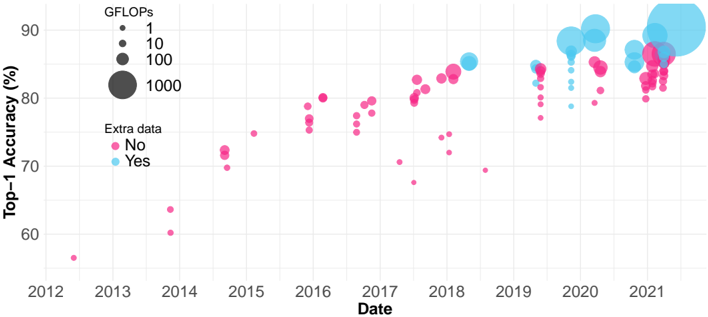

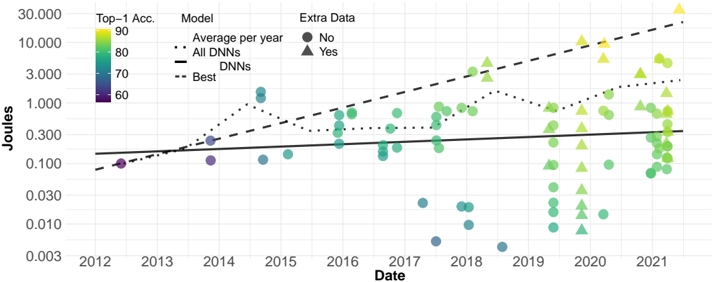

Fig. 2 shows the evolution from 2012 to 2021 in ImageNet accuracy (with the size of the bubbles representing the FLOPs of one forward pass). In recent papers some researchers began using more data than those available in ImageNet1k for training the models. However, using extra data only affects training FLOPs, but does not affect the computational cost for inferring each classification (forward pass).

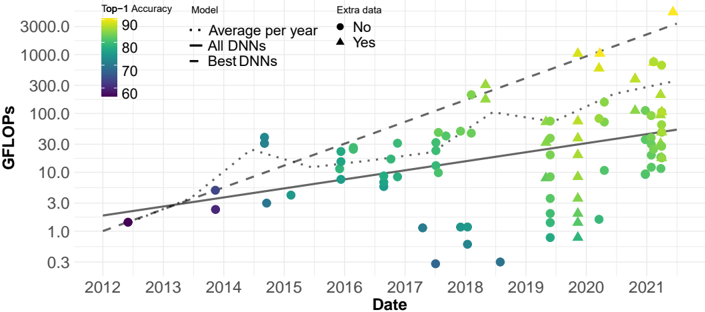

If we only look at models with the best accuracy for each year we can see an exponential growth in compute (measured in FLOPs). This can be observed clearly in Fig. 3: the dashed line represents an exponential growth (shown as a linear fit since the y -axis is logarithmic). The line is fitted with

Figure 2: Accuracy evolution over the years. The size of the balls represent the GFLOPs of one forward pass.

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Scatter Plot: Top-1 Accuracy vs. Date for Machine Learning Models

### Overview

This scatter plot visualizes the relationship between the date a machine learning model was released and its Top-1 accuracy, with the size of the data point representing the model's GFLOPs (Giga Floating Point Operations). The color of the data point indicates whether the model was trained with "Extra data" or not.

### Components/Axes

* **X-axis:** Date, ranging from approximately 2012 to 2021.

* **Y-axis:** Top-1 Accuracy (%), ranging from approximately 58% to 92%.

* **Legend 1 (Top-Left):** GFLOPs, with corresponding circle sizes:

* 1 (Smallest circle)

* 10 (Medium circle)

* 100 (Large circle)

* 1000 (Largest circle)

* **Legend 2 (Center-Left):** Extra data:

* No (Pink circles)

* Yes (Cyan circles)

* **Data Points:** Scatter plot points representing individual machine learning models.

### Detailed Analysis

The plot shows a general upward trend in Top-1 accuracy over time. The size of the circles (GFLOPs) also generally increases with time and accuracy, though there is significant variation.

**Data Point Analysis (Approximate values, based on visual estimation):**

* **2012-2014:** Predominantly pink points (No extra data) with small circle sizes (1-10 GFLOPs). Accuracy ranges from approximately 58% to 75%.

* **2015-2017:** A mix of pink and cyan points, with increasing accuracy. Circle sizes begin to increase, ranging from 10 to 100 GFLOPs. Accuracy ranges from approximately 68% to 82%.

* **2018-2019:** More cyan points (Yes extra data) appear, and the circle sizes continue to increase, reaching up to 100 GFLOPs. Accuracy ranges from approximately 75% to 88%.

* **2020-2021:** Predominantly cyan points with larger circle sizes (100-1000 GFLOPs). Accuracy is generally high, ranging from approximately 82% to 92%. There is a cluster of points around 80-85% accuracy with varying GFLOPs.

**Specific Data Points (Approximate):**

* **2013:** Pink point, ~60% accuracy, 1 GFLOP.

* **2014:** Pink point, ~72% accuracy, 10 GFLOP.

* **2016:** Cyan point, ~78% accuracy, 100 GFLOP.

* **2018:** Cyan point, ~85% accuracy, 100 GFLOP.

* **2019:** Cyan point, ~88% accuracy, 100 GFLOP.

* **2020:** Cyan point, ~90% accuracy, 1000 GFLOP.

* **2021:** Cyan point, ~92% accuracy, 1000 GFLOP.

* **2021:** Pink point, ~82% accuracy, 100 GFLOP.

### Key Observations

* Models trained with "Extra data" (cyan points) generally achieve higher accuracy than those trained without (pink points), especially after 2018.

* There is a strong correlation between GFLOPs and Top-1 accuracy. Larger models (higher GFLOPs) tend to have higher accuracy.

* The rate of accuracy improvement appears to be accelerating over time, particularly in the 2020-2021 period.

* There are some outliers: a few pink points in 2020-2021 with relatively high accuracy, suggesting that models without "Extra data" can still achieve good performance.

### Interpretation

The data suggests that advancements in machine learning model accuracy are driven by both increased computational resources (GFLOPs) and the use of larger datasets ("Extra data"). The upward trend in accuracy over time reflects the ongoing progress in the field. The increasing size of the circles (GFLOPs) indicates that more powerful models are being developed. The shift towards cyan points (models with "Extra data") suggests that data augmentation and larger datasets are becoming increasingly important for achieving state-of-the-art performance. The outliers suggest that model architecture and training techniques also play a significant role, as some models without "Extra data" can still achieve competitive results. The acceleration of accuracy improvement in recent years may be due to breakthroughs in model architectures (e.g., Transformers) and training algorithms.

</details>

Figure 3: GFLOPs over the years. The dashed line is a linear fit (note the logarithmic y -axis) for the models with highest accuracy per year. The solid line includes all points.

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Scatter Plot: DNN Performance Over Time

### Overview

This image presents a scatter plot illustrating the relationship between the date (from 2012 to 2021) and GFLOPS (floating point operations per second) for Deep Neural Networks (DNNs). The plot also incorporates Top-1 Accuracy as a color-coded attribute of each data point. Three lines are overlaid to represent trends: Average per year, All DNNs, and Best DNNs. The plot also differentiates data points based on whether they have "Extra data" associated with them.

### Components/Axes

* **X-axis:** Date, ranging from 2012 to 2021.

* **Y-axis:** GFLOPS, on a logarithmic scale from 0.3 to 3000.

* **Color Scale (Top-1 Accuracy):** A gradient from yellow (90) to blue (60), representing Top-1 Accuracy.

* **Legend:** Located at the top-right of the plot.

* `Average per year`: Represented by a dotted gray line.

* `All DNNs`: Represented by a solid black line.

* `Best DNNs`: Represented by a dashed gray line.

* `No`: Represented by a solid blue circle.

* `Yes`: Represented by a green triangle.

### Detailed Analysis

The plot shows a general upward trend in GFLOPS over time for all DNNs. Let's analyze each trend line and data series:

* **Average per year (dotted gray line):** This line shows a slow, relatively steady increase in GFLOPS from approximately 1.5 GFLOPS in 2012 to around 30 GFLOPS in 2021.

* **All DNNs (solid black line):** This line exhibits a more pronounced upward trend, starting at approximately 1.5 GFLOPS in 2012 and reaching around 60 GFLOPS in 2021.

* **Best DNNs (dashed gray line):** This line shows the most rapid increase, starting at approximately 2 GFLOPS in 2012 and reaching over 1000 GFLOPS in 2021.

**Data Point Analysis (Color-coded by Top-1 Accuracy):**

* **2012:** A few data points (blue circles) around 1-2 GFLOPS, with Top-1 Accuracy around 60-70.

* **2013-2016:** Data points (blue circles) are scattered, generally below 10 GFLOPS, with Top-1 Accuracy ranging from 60-80.

* **2017-2018:** An increase in data points (blue circles and green triangles) between 10-100 GFLOPS, with Top-1 Accuracy ranging from 60-90.

* **2019-2021:** A significant increase in data points (green triangles and yellow circles) ranging from 30-3000 GFLOPS, with Top-1 Accuracy ranging from 70-90. The highest GFLOPS values (above 1000) are associated with yellow data points (Top-1 Accuracy of 90).

* **"Extra data" differentiation:** Green triangles (Yes) generally appear at higher GFLOPS values than blue circles (No), particularly from 2018 onwards.

**Approximate Data Points (based on visual estimation):**

| Year | All DNNs (GFLOPS) | Best DNNs (GFLOPS) | Average per year (GFLOPS) |

|---|---|---|---|

| 2012 | 1.5 | 2 | 1.5 |

| 2015 | 5 | 10 | 5 |

| 2018 | 20 | 200 | 15 |

| 2021 | 60 | 1200 | 30 |

### Key Observations

* There is a clear exponential growth in the performance (GFLOPS) of DNNs over the period 2012-2021.

* The "Best DNNs" consistently outperform the average and all DNNs.

* Top-1 Accuracy generally increases with GFLOPS, suggesting a correlation between computational power and model accuracy.

* Data points with "Extra data" tend to have higher GFLOPS values, indicating that additional data may contribute to improved performance.

* The logarithmic scale on the Y-axis emphasizes the rapid growth in GFLOPS, especially in the later years.

### Interpretation

The data demonstrates the rapid advancement of DNN technology over the past decade. The increasing GFLOPS values indicate a significant increase in computational power, which is likely driven by advancements in hardware (e.g., GPUs) and algorithmic improvements. The correlation between GFLOPS and Top-1 Accuracy suggests that increasing computational power leads to more accurate models. The differentiation based on "Extra data" suggests that the quality and quantity of training data also play a crucial role in model performance. The divergence between the "All DNNs" and "Best DNNs" lines highlights the impact of research and development efforts in pushing the boundaries of DNN capabilities. The plot provides a compelling visual representation of the progress in the field of deep learning and its potential for future advancements. The use of a logarithmic scale is important to understand the magnitude of the growth, as a linear scale would compress the earlier data points and obscure the exponential trend.

</details>

the models with highest accuracy for each year. However not all models released in the latest years need so much compute. This is reflected by the solid line, which includes all points. We also see that for the same number of FLOPs we have models with increasing accuracy as time goes by.

In Table 1 there is a list of models having similar number of FLOPs as AlexNet. In 2019 we have a model (EfficientNet-B1) with the same number of operations as AlexNet achieving a Top-1 accuracy of 79.1% without using extra data, and a model (NoisyStudent-B1) achieving Top-1 accuracy of 81.5% using extra data. In a period of 7 years, we have models with similar computation with much higher accuracy. We observe that when a SOTA model is released it usually has a huge number of FLOPs, and therefore consumes a large amount of energy, but in a couple of years there is a model with similar accuracy but with much lower number of FLOPs. These models are usually those that become popular in many industry applications. This observation confirms that better results for DNN models of general use are in part attributable to algorithmic improvements and not only to the use of more computing power.

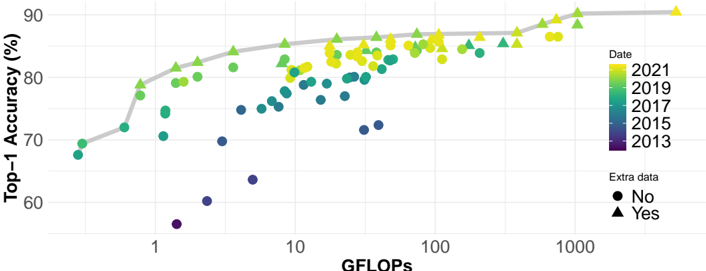

Finally, Fig. 4 shows that the Pareto frontier (in grey) is composed of new models (in yellow and green), whereas old models (in purple and dark blue) are relegated below the Pareto. As expected, the models which use extra data are normally those forming the Pareto frontier. Let us note again that extra training data does not affect inference GFLOPs.

| Model | Top-1 Accuracy | GFLOPs | Year |

|----------------------------------------|------------------|----------|--------|

| AlexNet [Krizhevsky et al., 2012] | 56.52 | 1.42 | 2012 |

| ZFNet [Zeiler and Fergus, 2013] | 60.21 | 2.34 | 2013 |

| GoogleLeNet [Szegedy et al., 2014] | 69.77 | 3 | 2014 |

| MobileNet [Howard et al., 2017] | 70.6 | 1.14 | 2017 |

| MobileNetV2 1.4 [Sandler et al., 2019] | 74.7 | 1.18 | 2018 |

| EfficientNet-B1 [Tan and Le, 2020] | 79.1 | 1.4 | 2019 |

| NoisyStudent-B1 [Xie et al., 2020] | 81.5 | 1.4 | 2019 |

Table 1: Results for several DNNs with a similar number of FLOPs as AlexNet.

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Scatter Plot: Top-1 Accuracy vs. GFLOPS

### Overview

This image presents a scatter plot illustrating the relationship between GFLOPS (floating point operations per second) and Top-1 Accuracy (in percentage) for machine learning models across different years. The data points are color-coded by year and shaped by whether or not they include "extra data". A grey line represents a trendline through the data.

### Components/Axes

* **X-axis:** GFLOPS, ranging from approximately 0.5 to 1000, on a logarithmic scale. Axis label: "GFLOPS".

* **Y-axis:** Top-1 Accuracy (%), ranging from approximately 65% to 92%. Axis label: "Top-1 Accuracy (%)".

* **Color Legend (Top-Right):** Represents the year of the data point.

* 2021: Yellow

* 2019: Light Green

* 2017: Green

* 2015: Teal

* 2013: Purple

* **Shape Legend (Bottom-Right):** Indicates whether the data point includes "extra data".

* No: Circle

* Yes: Triangle

### Detailed Analysis

The plot shows a general trend of increasing Top-1 Accuracy with increasing GFLOPS. The grey trendline confirms this, sloping upwards from the bottom-left to the top-right.

**Data Point Analysis (Approximate values based on visual estimation):**

* **2013 (Purple):**

* Around 1 GFLOPS: ~70% Accuracy

* Around 10 GFLOPS: ~74% Accuracy

* Around 100 GFLOPS: ~78% Accuracy

* **2015 (Teal):**

* Around 1 GFLOPS: ~72% Accuracy

* Around 10 GFLOPS: ~78% Accuracy

* Around 100 GFLOPS: ~82% Accuracy

* **2017 (Green):**

* Around 1 GFLOPS: ~75% Accuracy

* Around 10 GFLOPS: ~80% Accuracy

* Around 100 GFLOPS: ~84% Accuracy

* **2019 (Light Green):**

* Around 1 GFLOPS: ~78% Accuracy

* Around 10 GFLOPS: ~82% Accuracy

* Around 100 GFLOPS: ~86% Accuracy

* **2021 (Yellow):**

* Around 10 GFLOPS: ~84% Accuracy

* Around 100 GFLOPS: ~88% Accuracy

* Around 1000 GFLOPS: ~91% Accuracy

**Shape Analysis:**

* **Circles (No Extra Data):** Predominantly represent data from earlier years (2013-2019). There is a cluster of circles around 10 GFLOPS with accuracy ranging from 74% to 82%.

* **Triangles (Yes Extra Data):** Primarily represent data from later years (2019-2021). Triangles generally exhibit higher accuracy for a given GFLOPS value compared to circles.

### Key Observations

* The trendline suggests diminishing returns: the increase in accuracy slows down as GFLOPS increase.

* Models with "extra data" (triangles) consistently achieve higher accuracy than those without (circles) for the same computational cost (GFLOPS).

* Accuracy has improved significantly over time, even for models with the same GFLOPS.

* There is a noticeable gap in data points between approximately 100 and 1000 GFLOPS, particularly for earlier years.

### Interpretation

The data demonstrates a clear correlation between computational power (GFLOPS) and model accuracy. However, the diminishing returns observed at higher GFLOPS values suggest that simply increasing computational resources is not a sustainable path to continuous improvement. The inclusion of "extra data" appears to be a significant factor in boosting accuracy, indicating the importance of data quality and quantity. The temporal trend shows that advancements in algorithms and model architectures, alongside increased computational power, have led to substantial gains in accuracy over the years. The gap in data points at higher GFLOPS values could indicate a limitation in the availability or cost of running models at that scale, or a point of diminishing returns where further increases in GFLOPS yield only marginal improvements in accuracy. The visualization suggests that the field is approaching a point where algorithmic innovation and data optimization are becoming more crucial than simply scaling up computational resources.

</details>

GFLOPs

Figure 4: Relation between accuracy and GFLOPs.

## Natural Language Analysis

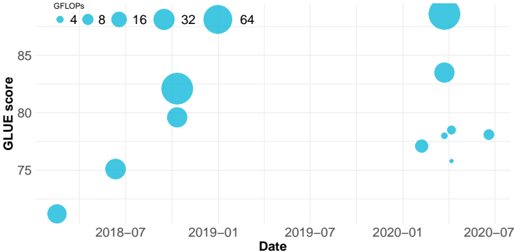

In this section, we analyse the trends in performance and inference compute for NLP models. To analyse performance we use GLUE, which is a popular benchmark for natural language understanding, one key task in NLP. The GLUE benchmark 4 is composed of nine sentence understanding tasks, which cover a broad range of domains. The description of each task can be found in [Wang et al., 2019].

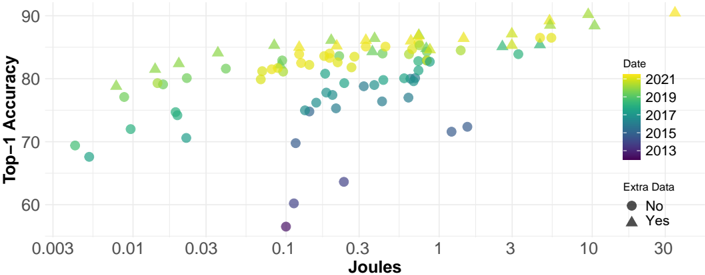

## Performance and Compute

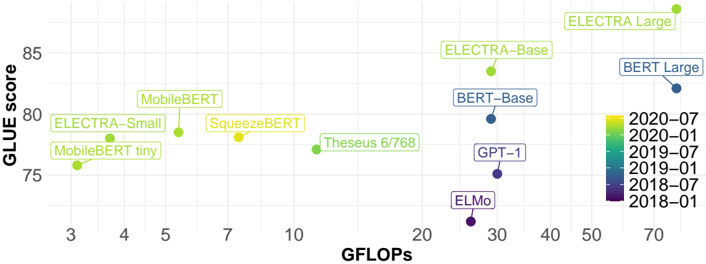

We represent the improvement on the GLUE score in relation to GFLOPs over the years in Fig. 5 (and in Fig. 15 in the Appendix). GFLOPs are for single input of length 128, which is a reasonable sequence length for many use cases, being able to fit text messages or short emails. We can observe a very similar evolution to the evolution observed in ImageNet: SOTA models require a large number of FLOPs, but in a short period of time other models appear, which require much fewer FLOPs to reach the same score. There are many models that focus on being efficient instead of reaching high score, and this is reflected in their names too (e.g., MobileBERT [Sun et al., 2020] and SqueezeBERT [Iandola et al., 2020]). We note that the old models become inefficient (they have lower score with higher number of GLOPs) compared to the new ones, as it happens in CV models.

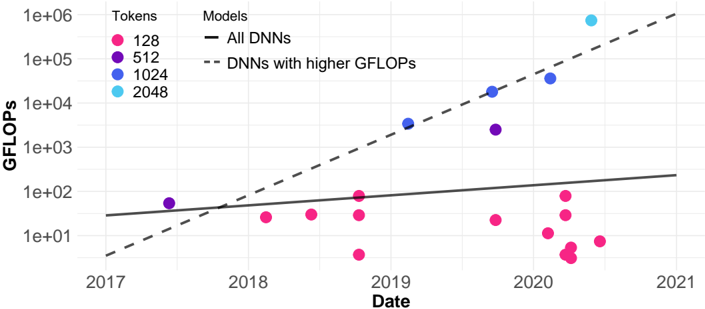

## Compute Trend

In Fig. 6 we include all models (regardless of having performance results) for which we found inference FLOPs estimation. The dashed line adjusts to the models with higher GFLOPs (models that, when released, become the most demanding model) and the solid line to all NLP models. In this plot we indicate the input sequence length, because in this plot we represent models with different input sequence lengths. We observe a similar trend as in CV: the GFLOPS of the most cutting-edge models have a clear exponential growth, while the general trend, i.e., considering all models, does not scale so aggressively. Actually, there is a good pocket of low-compute models in the last year.

4 Many recent models are evaluated on SUPERGLUE, but we choose GLUE to have a temporal window for our analysis.

Figure 5: GFLOPs per token analysis for NLP models.

<details>

<summary>Image 5 Details</summary>

### Visual Description

\n

## Scatter Plot: GLUE Score vs. GFLOPs for Language Models

### Overview

This image presents a scatter plot comparing the performance (GLUE score) of various language models against their computational cost (GFLOPs - Giga Floating Point Operations per second). Each point on the plot represents a specific language model. The color of each point indicates the date of the model's release.

### Components/Axes

* **X-axis:** GFLOPs (ranging from approximately 3 to 70).

* **Y-axis:** GLUE score (ranging from approximately 74 to 86).

* **Data Points:** Represent individual language models.

* **Legend:** Located in the bottom-right corner, color-coded by release date:

* 2020-07 (Yellow)

* 2020-01 (Light Green)

* 2019-07 (Green)

* 2019-01 (Blue-Green)

* 2018-07 (Blue)

* 2018-01 (Dark Purple)

* **Models Labeled:** ELECTRA Large, ELECTRA-Base, BERT Large, BERT-Base, GPT-1, ELMo, Theseus 6/768, SqueezeBERT, MobileBERT, ELECTRA-Small, MobileBERT tiny.

### Detailed Analysis

The plot shows a general trend of higher GLUE scores correlating with higher GFLOPs, but with significant variation.

Here's a breakdown of the approximate data points, cross-referencing with the legend for color accuracy:

* **MobileBERT tiny:** Approximately (4 GFLOPs, 75 GLUE score) - Yellow (2020-07)

* **MobileBERT:** Approximately (5 GFLOPs, 79 GLUE score) - Light Green (2020-01)

* **ELECTRA-Small:** Approximately (6 GFLOPs, 77 GLUE score) - Yellow (2020-07)

* **SqueezeBERT:** Approximately (7 GFLOPs, 80 GLUE score) - Yellow (2020-07)

* **Theseus 6/768:** Approximately (10 GFLOPs, 79 GLUE score) - Light Green (2020-01)

* **ELECTRA-Base:** Approximately (22 GFLOPs, 83 GLUE score) - Green (2019-07)

* **BERT-Base:** Approximately (30 GFLOPs, 82 GLUE score) - Blue-Green (2019-01)

* **GPT-1:** Approximately (30 GFLOPs, 78 GLUE score) - Blue-Green (2019-01)

* **ELMo:** Approximately (30 GFLOPs, 75 GLUE score) - Blue (2018-07)

* **BERT Large:** Approximately (70 GFLOPs, 82 GLUE score) - Blue (2018-07)

* **ELECTRA Large:** Approximately (50 GFLOPs, 85 GLUE score) - Yellow (2020-07)

The trend for ELECTRA models is generally upward as the model size increases (tiny -> small -> base -> large). BERT models also show an increase in GLUE score with increased GFLOPs (Base -> Large).

### Key Observations

* **Release Date Correlation:** Newer models (released in 2020) tend to achieve higher GLUE scores for a given number of GFLOPs, suggesting improvements in model architecture or training techniques.

* **ELMo Outlier:** ELMo, released in 2018, has a relatively low GLUE score compared to models released in later years with similar GFLOPs.

* **ELECTRA Large Performance:** ELECTRA Large achieves the highest GLUE score in the dataset.

* **GPT-1 Performance:** GPT-1 has a relatively low GLUE score compared to other models with similar GFLOPs.

### Interpretation

The data suggests a trade-off between model performance (GLUE score) and computational cost (GFLOPs). While increasing the number of GFLOPs generally leads to higher performance, the efficiency of models has improved over time. Newer models, like ELECTRA, achieve better performance with fewer GFLOPs than older models like ELMo. This indicates advancements in model design and training methodologies.

The plot highlights the importance of considering both performance and efficiency when selecting a language model for a specific application. The release date provides a temporal context, showing the evolution of language models and the progress made in the field. The outliers, such as ELMo and GPT-1, suggest that factors beyond GFLOPs influence performance, such as model architecture and training data. The clustering of models around certain GFLOP ranges suggests potential sweet spots for performance-cost trade-offs.

</details>

Figure 6: GFLOPs per token analysis for NLP models.

<details>

<summary>Image 6 Details</summary>

### Visual Description

## Scatter Plot: GFLOPS vs. Date for DNN Models

### Overview

This image presents a scatter plot illustrating the relationship between GFLOPS (Gigafloating point operations per second) and Date for different Deep Neural Network (DNN) models, categorized by the number of tokens used. Two trend lines are included: one for all DNNs and another for DNNs with higher GFLOPS.

### Components/Axes

* **X-axis:** Date, ranging from approximately 2017 to 2021.

* **Y-axis:** GFLOPS, displayed on a logarithmic scale from 1e+00 (1) to 1e+06 (1,000,000).

* **Legend:** Located in the top-right corner, categorizes data points by the number of tokens:

* 128 (Pink)

* 512 (Magenta)

* 1024 (Blue)

* 2048 (Cyan)

* **Lines:**

* "All DNNs" - Solid black line.

* "DNNs with higher GFLOPS" - Dashed grey line.

### Detailed Analysis

The plot shows scattered data points representing individual DNN models. The data points are color-coded based on the number of tokens used.

**Data Point Analysis (Approximate Values):**

* **128 Tokens (Pink):**

* 2017: ~10 GFLOPS

* 2018: ~20 GFLOPS

* 2019: ~10 GFLOPS

* 2020: ~5 GFLOPS, ~10 GFLOPS, ~20 GFLOPS

* **512 Tokens (Magenta):**

* 2018: ~50 GFLOPS

* 2019: ~100 GFLOPS

* 2020: ~50 GFLOPS

* **1024 Tokens (Blue):**

* 2019: ~1000 GFLOPS

* 2020: ~2000 GFLOPS, ~5000 GFLOPS

* **2048 Tokens (Cyan):**

* 2020: ~100000 GFLOPS

**Trend Line Analysis:**

* **"All DNNs" (Black Line):** The line exhibits a slight upward slope, indicating a gradual increase in GFLOPS over time. It starts at approximately 10 GFLOPS in 2017 and ends at approximately 100 GFLOPS in 2021.

* **"DNNs with higher GFLOPS" (Grey Dashed Line):** This line shows a much steeper upward slope, indicating a rapid increase in GFLOPS over time. It starts at approximately 10 GFLOPS in 2017 and ends at approximately 100000 GFLOPS in 2021.

### Key Observations

* There's a clear positive correlation between date and GFLOPS, especially for models with higher GFLOPS.

* The number of tokens appears to be related to GFLOPS, with models using more tokens generally exhibiting higher GFLOPS.

* The spread of data points for 128 tokens is wider than for other token counts, suggesting more variability in GFLOPS for these models.

* The "DNNs with higher GFLOPS" trend line significantly outpaces the "All DNNs" trend line, indicating that the most powerful models are growing in computational demand at a faster rate.

### Interpretation

The data suggests a trend of increasing computational requirements for DNN models over time. The steeper slope of the "DNNs with higher GFLOPS" line indicates that the most advanced models are driving this trend. The correlation between tokens and GFLOPS suggests that model size (as measured by the number of tokens) is a key factor in determining computational demand. The variability in GFLOPS for models with 128 tokens could be due to differences in architecture, training data, or other factors.

The plot highlights the growing need for more powerful hardware to support the development and deployment of increasingly complex DNN models. The divergence between the two trend lines suggests that the gap between the computational requirements of standard models and cutting-edge models is widening, potentially creating challenges for researchers and developers. The logarithmic scale on the Y-axis emphasizes the exponential growth in GFLOPS, particularly for the higher-performing models.

</details>

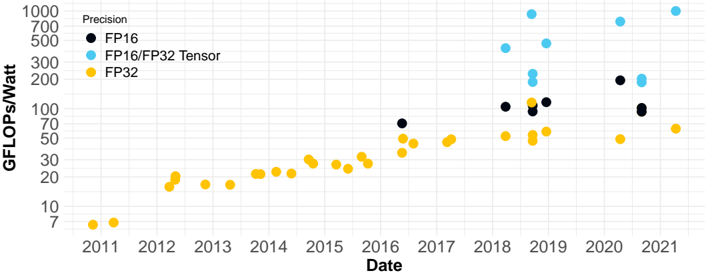

## Hardware Progress

We use FLOPS as a measure of hardware performance and FLOPS/Watt as a measure of hardware efficiency. We collected performance for different precision formats and tensor cores for a wide range of GPUs. The results are shown in Fig. 7. Note that the y -axis is in logarithmic scale. Theoretical FLOPS for tensor cores are very high in the plot. However, the actual performance for inference using tensor cores is not so high, if we follow a more realistic estimation for the Nvidia GPUs (V100, A100 and T4 5 ). The details of this estimation are shown in Table 3 in the appendix.

Figure 7: Theoretical Nvidia GPUs GFLOPS per Watt. Data in Table 8 in the appendix.

<details>

<summary>Image 7 Details</summary>

### Visual Description

## Scatter Plot: Performance Over Time by Precision

### Overview

This image presents a scatter plot illustrating the performance (GFLOPS/Watt) of computing hardware over time (2011-2021) for different precision levels: FP16, FP16/FP32 Tensor, and FP32. The plot shows how performance has evolved for each precision type over the decade.

### Components/Axes

* **X-axis:** Date, ranging from 2011 to 2021. The axis is labeled "Date".

* **Y-axis:** GFLOPS/Watt, ranging from 0 to 1000. The axis is labeled "GFLOPS/Watt".

* **Legend:** Located in the top-left corner, defining the color-coding for each precision type:

* Black: FP16

* Light Blue: FP16/FP32 Tensor

* Yellow: FP32

### Detailed Analysis

The plot contains data points for each precision type across the specified date range.

**FP32 (Yellow):**

The FP32 data series shows a generally upward trend, starting at approximately 5 GFLOPS/Watt in 2011 and reaching around 70 GFLOPS/Watt by 2021. There is some fluctuation, but the overall trend is positive.

* 2011: ~5 GFLOPS/Watt

* 2012: ~15 GFLOPS/Watt

* 2013: ~20 GFLOPS/Watt

* 2014: ~25 GFLOPS/Watt

* 2015: ~30 GFLOPS/Watt

* 2016: ~40 GFLOPS/Watt

* 2017: ~45 GFLOPS/Watt

* 2018: ~50 GFLOPS/Watt

* 2019: ~60 GFLOPS/Watt

* 2020: ~65 GFLOPS/Watt

* 2021: ~70 GFLOPS/Watt

**FP16/FP32 Tensor (Light Blue):**

This series exhibits the most significant performance gains. It starts at a lower value than FP32 around 2018, but quickly surpasses it.

* 2018: ~100 GFLOPS/Watt

* 2019: ~500 GFLOPS/Watt

* 2020: ~700 GFLOPS/Watt

* 2021: ~600 GFLOPS/Watt

**FP16 (Black):**

The FP16 data series appears later in the timeline, starting around 2016. It shows a more erratic pattern, with some significant jumps in performance.

* 2016: ~75 GFLOPS/Watt

* 2017: ~100 GFLOPS/Watt

* 2018: ~100 GFLOPS/Watt

* 2019: ~80 GFLOPS/Watt

* 2020: ~200 GFLOPS/Watt

* 2021: ~80 GFLOPS/Watt

### Key Observations

* FP16/FP32 Tensor precision demonstrates the most substantial performance improvement over the decade, significantly outpacing FP32 and FP16.

* FP32 shows a steady, but less dramatic, increase in performance.

* FP16 performance is variable, with a large jump in 2020, followed by a decrease in 2021.

* The data suggests a trend towards higher performance with lower precision (FP16/FP32 Tensor).

### Interpretation

The data illustrates the evolution of computing performance across different precision levels. The dominance of FP16/FP32 Tensor in recent years suggests a shift towards utilizing these precision levels for improved efficiency and performance, likely driven by the demands of machine learning and AI workloads. The relatively stable growth of FP32 indicates its continued relevance, while the fluctuating performance of FP16 might be due to variations in hardware implementations or specific application optimizations. The plot highlights the trade-offs between precision and performance, and how advancements in hardware and software are enabling higher performance at lower precision levels. The decrease in FP16 performance in 2021 could indicate a temporary setback or a change in focus for hardware manufacturers.

</details>

5 Specifications in: https://www.nvidia.com/en-us/data-center/.

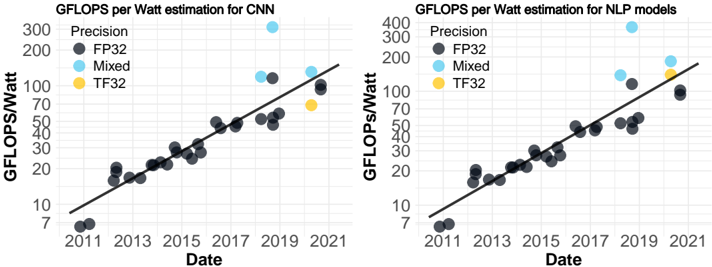

With these estimations we obtained good linear fits (with the y -axis in logarithmic scale) to each data set, one for CV and another for NLP, as shown by the solid lines in Fig. 8. Notice that there is a particular point in Fig. 8 for year 2018 that stands out among the others by a large margin. This corresponds to T4 using mixed precision, a GPU specifically designed for inference, and this is the reason why it is so efficient for this task.

Figure 8: Nvidia GPU GFLOPS per Watt adapted for CV (CNNs) and NLP models. Data in Table 9 in the appendix.

<details>

<summary>Image 8 Details</summary>

### Visual Description

## Scatter Plots: GFLOPS per Watt Estimation for CNN and NLP Models

### Overview

The image presents two scatter plots, side-by-side. The left plot shows GFLOPS per Watt estimation for Convolutional Neural Networks (CNN), and the right plot shows the same for Natural Language Processing (NLP) models. Both plots display data points over time (Date) and use different colors to represent different precision levels (FP32, Mixed, TF32). A black line represents a trendline for each plot.

### Components/Axes

Both plots share the following components:

* **X-axis:** Date, ranging from approximately 2010 to 2022, with markers at 2011, 2013, 2015, 2017, 2019, and 2021.

* **Y-axis:** GFLOPS/Watt, ranging from approximately 7 to 300 (left plot) and 7 to 400 (right plot).

* **Legend (Top-Left of each plot):**

* Precision: FP32 (Dark Gray)

* Precision: Mixed (Light Blue)

* Precision: TF32 (Yellow)

* **Trendline:** A black solid line representing the general trend of the data.

### Detailed Analysis or Content Details

**Left Plot (CNN):**

* **FP32 (Dark Gray):** The data points generally follow an upward trend.

* Approximate data points: (2011, 8), (2012, 12), (2013, 16), (2014, 20), (2015, 25), (2016, 30), (2017, 40), (2018, 50), (2019, 60), (2020, 80), (2021, 100).

* **Mixed (Light Blue):** Fewer data points are present.

* Approximate data points: (2017, 150), (2019, 200), (2021, 300).

* **TF32 (Yellow):** Only one data point is visible.

* Approximate data point: (2018, 45).

**Right Plot (NLP):**

* **FP32 (Dark Gray):** The data points generally follow an upward trend.

* Approximate data points: (2011, 7), (2012, 12), (2013, 18), (2014, 24), (2015, 30), (2016, 40), (2017, 50), (2018, 60), (2019, 70), (2020, 90), (2021, 110).

* **Mixed (Light Blue):** Fewer data points are present.

* Approximate data points: (2017, 180), (2019, 220), (2021, 320).

* **TF32 (Yellow):** Only one data point is visible.

* Approximate data point: (2018, 80).

In both plots, the trendlines slope upwards, indicating an increasing trend in GFLOPS per Watt over time.

### Key Observations

* Both CNN and NLP models show a consistent increase in GFLOPS per Watt over the period from 2011 to 2021.

* Mixed precision consistently outperforms FP32 precision in both CNN and NLP models, showing significantly higher GFLOPS/Watt.

* TF32 precision has limited data points, but appears to offer performance between FP32 and Mixed precision.

* The rate of improvement appears to be accelerating in recent years (2019-2021) for all precision levels.

### Interpretation

The data suggests a significant improvement in the efficiency of both CNN and NLP models over the past decade. This improvement is likely due to advancements in hardware (e.g., GPUs, TPUs) and software (e.g., model architectures, optimization techniques). The superior performance of Mixed precision indicates that utilizing lower precision data types can substantially increase computational throughput without significant loss of accuracy. The limited data for TF32 suggests it is a relatively newer precision format, but shows promise. The accelerating trend in recent years suggests that the pace of innovation in this field is increasing. The difference between the two charts could be due to the inherent computational demands of CNNs versus NLP models, or the different optimization strategies employed for each. The plots demonstrate the ongoing drive for more efficient machine learning models, which is crucial for reducing energy consumption and enabling deployment on resource-constrained devices.

</details>

## Energy Consumption Analysis

Once we have estimated the inference FLOPs for a range of models and the GFLOPS per Watt for different GPUs, we can estimate the energy (in Joules) consumed in one inference. We do this by dividing the FLOPs for the model by FLOPS per Watt for the GPU. But how can we choose the FLOPS per Watt that correspond to the model? We use the models presented in Fig. 8 to obtain an estimation of GLOPS per Watt for the model's release date . In this regard, Henderson et al. (2020) report that FLOPs for DNNs can be misleading sometimes, due to underlying optimisations at the firmware, frameworks, memory and hardware that can influence energy efficiency. They show that energy and FLOPs are highly correlated for the same architecture, but the correlation decreases when different architectures are mixed. We consider that this low correlation does not affect our estimations significantly as we analyse the trends through the years and we fit in the exponential scale, where dispersion is reduced. To perform a more precise analysis it would be necessary to measure power consumption for each network with the original hardware and software, as unfortunately the required energy per (one) inference is rarely reported.

<details>

<summary>Image 9 Details</summary>

### Visual Description

\n

## Scatter Plot: Energy Consumption of DNN Models Over Time

### Overview

This image presents a scatter plot illustrating the energy consumption (in Joules) of different Deep Neural Network (DNN) models over time (from 2012 to 2021). The plot differentiates models based on their Top-1 Accuracy and whether they were trained with "Extra Data". Three lines represent average energy consumption trends: "All DNNs", "DNNs", and "Best" models. The y-axis is on a logarithmic scale.

### Components/Axes

* **X-axis:** Date (ranging from 2012 to 2021).

* **Y-axis:** Joules (logarithmic scale, ranging from 0.003 to 30.000).

* **Legend:**

* **Model:**

* All DNNs (dotted line)

* DNNs (solid line)

* Best (dashed line)

* **Extra Data:**

* No (triangle markers)

* Yes (circle markers)

* **Color Scale:** Top-1 Accuracy (ranging from 60 to 90, with a gradient from blue to yellow).

* **Markers:** Triangle and Circle markers are used to indicate whether extra data was used.

### Detailed Analysis

The plot shows three trend lines representing the average energy consumption of different model types. The data points are colored based on Top-1 Accuracy, with blue representing lower accuracy and yellow representing higher accuracy.

**All DNNs (dotted line):** This line shows an upward trend, increasing from approximately 0.03 Joules in 2012 to around 10 Joules in 2021.

* 2012: ~0.03 Joules

* 2014: ~0.1 Joules

* 2016: ~0.3 Joules

* 2018: ~1.0 Joules

* 2020: ~3.0 Joules

* 2021: ~10 Joules

**DNNs (solid line):** This line is relatively flat, with a slight upward trend. It starts at approximately 0.05 Joules in 2012 and increases to around 0.3 Joules in 2021.

* 2012: ~0.05 Joules

* 2014: ~0.08 Joules

* 2016: ~0.15 Joules

* 2018: ~0.2 Joules

* 2020: ~0.25 Joules

* 2021: ~0.3 Joules

**Best (dashed line):** This line shows a significant upward trend, starting at approximately 0.05 Joules in 2012 and increasing to around 20 Joules in 2021.

* 2012: ~0.05 Joules

* 2014: ~0.2 Joules

* 2016: ~0.7 Joules

* 2018: ~2.0 Joules

* 2020: ~7.0 Joules

* 2021: ~20 Joules

**Data Points:**

* **Extra Data = No (Triangles):** These points are scattered throughout the plot, with a concentration in the lower energy consumption range (below 1 Joule) in earlier years (2012-2017). In later years (2018-2021), they are more spread out, with some points reaching higher energy consumption levels (up to 10 Joules). The color of the triangles varies from blue (lower accuracy) to yellow (higher accuracy).

* **Extra Data = Yes (Circles):** These points are also scattered, but generally show a higher concentration in the higher energy consumption range (above 1 Joule) in later years (2018-2021). The color of the circles also varies from blue to yellow.

### Key Observations

* The energy consumption of the "Best" models has increased dramatically over time, far exceeding the energy consumption of "All DNNs" and "DNNs".

* Models trained with "Extra Data" tend to consume more energy, particularly in recent years.

* There is a positive correlation between Top-1 Accuracy and energy consumption, as indicated by the color gradient. Higher accuracy models generally consume more energy.

* The "DNNs" line remains relatively stable, suggesting that the average energy consumption of these models has not increased significantly over time.

### Interpretation

The data suggests that achieving higher accuracy in DNN models requires significantly more energy, especially for the "Best" performing models. The use of "Extra Data" also contributes to increased energy consumption. This trend raises concerns about the environmental impact of increasingly complex AI models. The relatively stable energy consumption of "DNNs" might indicate that these models have reached a plateau in terms of performance gains per unit of energy. The logarithmic scale of the y-axis emphasizes the exponential growth in energy consumption for the "Best" models. The plot highlights the trade-off between model accuracy and energy efficiency, and the need for research into more energy-efficient AI algorithms and hardware. The data points show a wide range of energy consumption within each category, indicating that model architecture, training methods, and other factors also play a significant role.

</details>

Extra Data

No

Yes

Top-1 Accuracy

Lines

Average per year

Model all DNNs

Model best DNNs

Figure 9: Estimated Joules of a forward pass (CV). The dashed line is a linear fit (logarithmic y -axis) for the models with highest accuracy per year. The solid line fits all models.

We can express the efficiency metric FLOPS per Watt as FLOPs per Joule, as shown in Eq. 1. Having this equivalence we can use it to divide the FLOPs needed for a forward pass and obtain the required Joules, see Eq. 2. Doing this operation we obtain the consumed energy in Joules.

Figure 10: Estimated Joules of a forward pass (NLP). Same interpretation as in Fig. 9.

<details>

<summary>Image 10 Details</summary>

### Visual Description

\n

## Scatter Plot: Energy Consumption vs. Model Size Over Time

### Overview

This image presents a scatter plot illustrating the relationship between energy consumption (in Joules) and time (Date) for different model sizes (measured in Tokens). Two trend lines are overlaid to show the growth of GFLOPs for all models and for models with higher GFLOPs. The data points are color-coded based on the number of tokens.

### Components/Axes

* **X-axis:** Date, ranging from approximately 2017 to 2021.

* **Y-axis:** Joules, displayed on a logarithmic scale from 1e-01 to 1e+04.

* **Legend:** Located in the top-right corner, defining the color-coding for the number of tokens:

* Pink: 128 Tokens

* Purple: 512 Tokens

* Blue: 1024 Tokens

* Cyan: 2048 Tokens

* **Lines:**

* Solid Black Line: Growth GFLOPs all models

* Dashed Gray Line: Growth GFLOPs of models with higher GFLOPs

### Detailed Analysis

The plot contains scattered data points representing energy consumption for different models at various dates. The solid black line represents the overall trend of GFLOPs growth for all models, while the dashed gray line represents the trend for models with higher GFLOPs.

**Data Point Analysis (Approximate Values):**

* **128 Tokens (Pink):**

* 2017: ~0.03 Joules

* 2018: ~0.05 Joules

* 2019: ~0.1 Joules

* 2020: ~0.08 Joules (with significant variation between ~0.02 and ~0.2 Joules)

* **512 Tokens (Purple):**

* 2017: ~0.5 Joules

* 2018: ~0.7 Joules

* 2019: ~1.5 Joules

* 2020: ~1.2 Joules (with variation between ~0.5 and ~2 Joules)

* **1024 Tokens (Blue):**

* 2019: ~2 Joules

* 2020: ~3 Joules

* **2048 Tokens (Cyan):**

* 2020: ~10 Joules

**Trend Lines:**

* **Solid Black Line (Growth GFLOPs all models):** The line is relatively flat, indicating a slow and steady growth in GFLOPs for all models. It starts at approximately 0.1 Joules in 2017 and reaches approximately 1 Joule in 2021.

* **Dashed Gray Line (Growth GFLOPs of models with higher GFLOPs):** This line slopes upward more steeply than the solid black line, indicating a faster growth in GFLOPs for models with higher GFLOPs. It starts at approximately 0.05 Joules in 2017 and reaches approximately 5 Joules in 2021.

### Key Observations

* Energy consumption generally increases with the number of tokens.

* The growth in energy consumption for models with higher GFLOPs is significantly faster than for all models combined.

* There is considerable variation in energy consumption for models with 128 and 512 tokens in 2020.

* The logarithmic scale on the Y-axis emphasizes the exponential growth in energy consumption for larger models.

### Interpretation

The data suggests that as models grow in size (number of tokens), their energy consumption increases. The steeper growth curve for models with higher GFLOPs indicates that more powerful models require significantly more energy. This highlights the growing energy demands of increasingly complex AI models. The variation in energy consumption for smaller models in 2020 could be due to differences in model architecture, training methods, or hardware used. The logarithmic scale is crucial for visualizing the large differences in energy consumption between smaller and larger models. The plot demonstrates a clear trade-off between model performance (GFLOPs) and energy efficiency. The divergence of the two trend lines suggests that the energy cost of increasing model complexity is accelerating. This has implications for the sustainability of AI development and the need for more energy-efficient algorithms and hardware.

</details>

$$E \text {efficiency} & = \frac { \text {HW Perf. } } { \text {Power} } \text { in units: } \frac { \ F L O P S } { W a t t } = \frac { \ F L O P s / s } { J o u l e s / s } = \frac { \ F L O P s } { J o u l e } & ( 1 ) \\ E \text {energy} & = \frac { \text {Fwd. Pass } } { \text {Efficiency} } \text { in units: } \frac { \ F L O P s } { \ F L O p s / J o u l e } = J o u l e$$

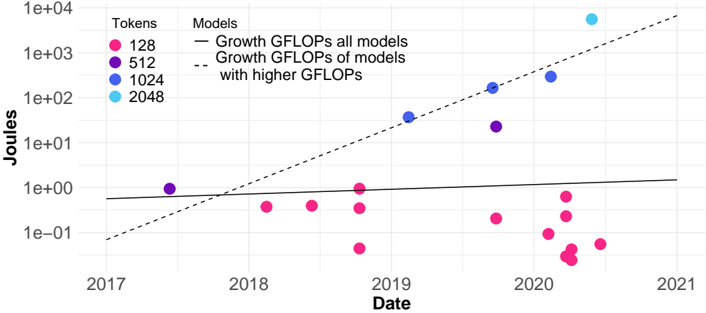

Applying this calculation to all collected models we obtain Fig. 9 for CV. The dashed line represents an exponential trend (a linear fit as the y -axis is logarithmic), adjusted to the models with highest accuracy for each year, like in Fig. 2, and the dotted line represent the average Joules for each year. By comparing both plots we can see that hardware progress softens the growth observed for FLOPs, but the growth is still clearly exponential for the models with high accuracy. The solid line is almost horizontal, but in a logarithmic scale this may be interpreted as having an exponential growth with a small base or a linear fit on the semi log plot that is affected by the extreme points. In Fig. 10 we do the same for NLP models and we see a similar picture.

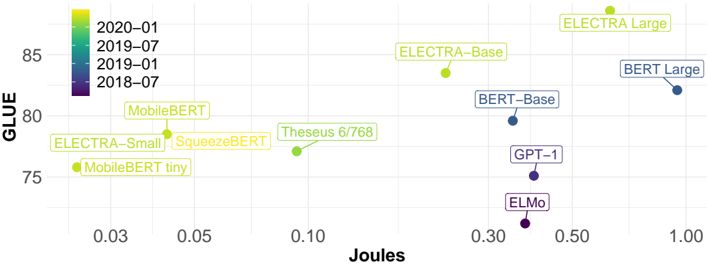

Fig. 11 shows the relation between Top-1 Accuracy and Joules. Joules are calculated in the same way as in Fig. 9. The relation is similar as the observed in Fig. 4, but in Fig. 11 the older models are not only positioned further down in the y -axis (performance) but they tend to cluster on the bottom right part of the plot (high Joules), so their position on the y -axis is worse than for Fig. 4 due to the evolution in hardware. This is even more clear for NLP, as seen in Fig. 12.

Figure 11: Relation between Joules and Top-1 Accuracy over the years (CV, ImageNet).

<details>

<summary>Image 11 Details</summary>

### Visual Description

\n

## Scatter Plot: Top-1 Accuracy vs. Joules

### Overview

This image presents a scatter plot visualizing the relationship between Joules and Top-1 Accuracy, with data points colored by Date and shaped by whether or not Extra Data was used. The plot appears to demonstrate how accuracy improves with increasing Joules, and how this relationship changes over time.

### Components/Axes

* **X-axis:** Joules, ranging from approximately 0.003 to 30. The scale is logarithmic, with markers at 0.003, 0.01, 0.03, 0.1, 0.3, 1, 3, and 10.

* **Y-axis:** Top-1 Accuracy, ranging from approximately 60 to 90. The scale is linear, with markers at 60, 70, 80, and 90.

* **Legend 1 (Top-Right):** Date, with the following color mapping:

* 2021: Yellow (#FFDA63)

* 2019: Light Green (#98FF98)

* 2017: Green (#32CD32)

* 2015: Cyan (#00FFFF)

* 2013: Purple (#8A2BE2)

* **Legend 2 (Bottom-Right):** Extra Data, with the following shape mapping:

* No: Circle (Blue)

* Yes: Triangle (Green)

### Detailed Analysis

The plot contains numerous data points, each representing a specific experiment or configuration. The data can be analyzed by considering both the Date and Extra Data dimensions.

**Data Trends by Date:**

* **2013 (Purple):** Data points are clustered towards the lower left of the plot, indicating low Joules and low Top-1 Accuracy. Accuracy ranges from approximately 60 to 75, with Joules ranging from 0.003 to 0.3.

* **2015 (Cyan):** Data points show a slight improvement in accuracy compared to 2013, with accuracy ranging from approximately 65 to 80 and Joules ranging from 0.01 to 1.

* **2017 (Green):** Data points continue to show improvement, with accuracy ranging from approximately 70 to 85 and Joules ranging from 0.03 to 3.

* **2019 (Light Green):** Data points show further improvement, with accuracy ranging from approximately 75 to 90 and Joules ranging from 0.1 to 10.

* **2021 (Yellow):** Data points are generally clustered towards the upper right of the plot, indicating high Joules and high Top-1 Accuracy. Accuracy ranges from approximately 80 to 90, with Joules ranging from 0.3 to 30.

**Data Trends by Extra Data:**

* **No (Circles - Blue):** The majority of data points fall within this category. The trend shows that as Joules increase, Top-1 Accuracy generally increases. There are some outliers, particularly at higher Joules values (around 1-3), where accuracy plateaus or even slightly decreases.

* **Yes (Triangles - Green):** Data points in this category generally exhibit higher Top-1 Accuracy for a given Joules value compared to the "No" category. The trend is similar, with accuracy increasing with Joules, but the improvement is more pronounced.

**Specific Data Points (Approximate):**

* (0.003, 65) - Purple Circle (2013, No Extra Data)

* (0.01, 75) - Green Triangle (2017, Yes Extra Data)

* (0.1, 70) - Cyan Circle (2015, No Extra Data)

* (0.3, 80) - Light Green Triangle (2019, Yes Extra Data)

* (1, 85) - Yellow Circle (2021, No Extra Data)

* (3, 88) - Yellow Triangle (2021, Yes Extra Data)

* (10, 89) - Yellow Triangle (2021, Yes Extra Data)

* (30, 87) - Yellow Circle (2021, No Extra Data)

### Key Observations

* There is a clear positive correlation between Joules and Top-1 Accuracy.

* The use of Extra Data generally leads to higher Top-1 Accuracy for a given Joules value.

* Accuracy has improved significantly over time (from 2013 to 2021).

* The rate of accuracy improvement appears to be slowing down in recent years (2019-2021).

* There are some outliers, suggesting that other factors besides Joules and Extra Data may influence Top-1 Accuracy.

### Interpretation

The data suggests that increasing computational resources (represented by Joules) generally leads to improved model accuracy. The consistent improvement in accuracy over time likely reflects advancements in model architecture, training techniques, and data quality. The benefit of using "Extra Data" indicates that more comprehensive datasets can further enhance model performance. The plateauing of accuracy at higher Joules values suggests diminishing returns – at some point, increasing computational resources yields only marginal improvements. This could be due to limitations in the model architecture or the inherent complexity of the task. The outliers suggest that other variables, not captured in this visualization, play a role in determining model accuracy. Further investigation could explore the impact of these additional factors. The logarithmic scale on the x-axis indicates that the relationship between Joules and accuracy is not linear, and that the initial increase in Joules has a more significant impact on accuracy than subsequent increases.

</details>

## Forecasting and Multiplicative Effect

In our analysis we see that DNNs as well as hardware are improving their efficiency and do not show symptoms of standstill. This is consistent with most studies in the literature: performance will

Figure 12: Relation between Joules and GLUE score over the years (NLP, GLUE).

<details>

<summary>Image 12 Details</summary>

### Visual Description

## Scatter Plot: Model Performance (GLUE vs. Joules)

### Overview

This image presents a scatter plot comparing the performance of various language models on the GLUE benchmark against their energy consumption in Joules. Each point represents a different model, and the color of the point indicates the date of the model's release.

### Components/Axes

* **X-axis:** Joules (Energy Consumption). Scale ranges from approximately 0.00 to 1.00, with markers at 0.03, 0.05, 0.10, 0.30, 0.50, and 1.00.

* **Y-axis:** GLUE (General Language Understanding Evaluation) score. Scale ranges from approximately 74 to 86, with markers at 75, 80, 85.

* **Legend:** Located in the top-left corner, the legend maps colors to dates:

* 2020-01 (Light Green)

* 2019-07 (Medium Green)

* 2019-01 (Light Blue)

* 2018-07 (Dark Purple)

* **Data Points:** Each point represents a language model, labeled with its name.

### Detailed Analysis

Here's a breakdown of the data points, their approximate coordinates, and corresponding release dates:

* **ELECTRA Large:** (0.95, 85.5). Color: Light Green (2020-01)

* **BERT Large:** (1.00, 82.5). Color: Light Blue (2019-01)

* **ELECTRA-Base:** (0.85, 84.5). Color: Light Green (2020-01)

* **BERT-Base:** (0.75, 81.5). Color: Light Blue (2019-01)

* **Theseus 6/768:** (0.12, 79.5). Color: Medium Green (2019-07)

* **SqueezeBERT:** (0.07, 77.5). Color: Medium Green (2019-07)

* **MobileBERT:** (0.04, 80.5). Color: Medium Green (2019-07)

* **ELECTRA-Small:** (0.06, 76.5). Color: Light Green (2020-01)

* **MobileBERT tiny:** (0.03, 75.5). Color: Light Green (2020-01)

* **GPT-1:** (0.40, 76.0). Color: Dark Purple (2018-07)

* **ELMo:** (0.30, 74.5). Color: Dark Purple (2018-07)

**Trends:**

* Generally, models released in 2020-01 (light green) tend to have higher GLUE scores for a given energy consumption (Joules) compared to models released earlier.

* There's a positive correlation between energy consumption and GLUE score, but it's not strictly linear. Some models achieve high GLUE scores with relatively low energy consumption.

* The older models (2018-07, dark purple) generally have lower GLUE scores and lower energy consumption.

### Key Observations

* ELECTRA Large achieves the highest GLUE score, but also has the highest energy consumption.

* MobileBERT and MobileBERT tiny are notable for their relatively high GLUE scores given their very low energy consumption.

* GPT-1 and ELMo are the oldest models and have the lowest GLUE scores.

* There is a cluster of models released in 2019-07 (medium green) that occupy a middle ground in terms of both GLUE score and energy consumption.

### Interpretation

The data suggests a trade-off between model performance (as measured by GLUE) and energy consumption. Newer models (2020-01) demonstrate improved efficiency, achieving higher GLUE scores with comparable or even lower energy consumption than older models. This indicates progress in model architecture and training techniques.