## Practical Timing Side Channel Attacks on Memory Compression

Martin Schwarzl

Graz University of Technology martin.schwarzl@iaik.tugraz.at

Pietro Borrello

Sapienza University of Rome borrello@diag.uniroma1.it

Michael Schwarz

CISPA Helmholtz Center for Information Security michael.schwarz@cispa.saarland

Gururaj Saileshwar

Georgia Tech, Graz University of Technology gururaj.s@gatech.edu

Hanna Müller

Graz University of Technology h.mueller@student.tugraz.at

Daniel Gruss

Graz University of Technology daniel.gruss@iaik.tugraz.at

Abstract. Compression algorithms are widely used as they save memory without losing data. However, elimination of redundant symbols and sequences in data leads to a compression side channel. So far, compression attacks have only focused on the compression-ratio side channel, i.e., the size of compressed data, and largely targeted HTTP traffic and website content.

In this paper, we present the first memory compression attacks exploiting timing side channels in compression algorithms, targeting a broad set of applications using compression. Our work systematically analyzes different compression algorithms and demonstrates timing leakage in each. We present Comprezzor, an evolutionary fuzzer which finds memory layouts that lead to amplified latency differences for decompression and therefore enable remote attacks. We demonstrate a remote covert channel exploiting small local timing differences, transmitting on average 643.25 bit/h over 14 hops over the internet. We also demonstrate memory compression attacks that can leak secrets bytewise as well as in dictionary attacks in three different case studies. First, we show that an attacker can disclose secrets co-located and compressed with attacker data in PHP applications using Memcached. Second, we present an attack that leaks database records from PostgreSQL, managed by a Python-Flask application, over the internet. Third, we demonstrate an attack that leaks secrets from transparently compressed pages with ZRAM, the memory compression module in Linux. We conclude that memory-compression attacks are a practical threat.

## I. INTRODUCTION

Data compression plays a vital role for performance and efficiency. For example, compression leads to smaller data footprints, allowing more data to be stored in memory. Memory accesses are typically faster than retrieving data from slower media such as hard disks or solid-state disks. As a result, both Microsoft [63] and Apple [3] rely on memory compression in their operating systems (OSs) to better utilize memory. Similarly, memory compression is also used in databases [46] or key-value stores [45]. Compression can also increase performance when storing or transferring data on slow media. Hence, data compression is also widely used for HTTP traffic [41], [15] and file-system compression [6].

While compression has many advantages, it is problematic in scenarios where sensitive data is compressed [49], [20], [4], [58], [57], [32]. Attacks such as CRIME [49], BREACH [20], TIME [4], or HEIST [58] exploit the combination of encryption and compression in TLS-encrypted HTTP traffic. Karakostas et al. [32] extended BREACH to commonly used ciphers, such as AES. These attacks demonstrated that an attacker could learn secrets when they are compressed together with attacker-controlled input. However, all these attacks focused on web traffic and only exploited differences in the compressed size of data. The size of compressed data is either accessed directly [49], e.g., in a man-in-the-middle (MITM) attack, or indirectly by observing the impact of the compressed size on the transmission time [4].

Although attacks exploiting the compression ratio were first described by Kelsey [33] in 2002, most attacks thus far have focused on compressed web traffic. Surprisingly, security implications of compression in other settings, such as virtual memory and file systems, have not been studied thoroughly. Also, compression fundamentally provides a reduction in size of data at the expense of additional time required for compression and decompression. However, so far, only the result of the compression, i.e., the compressed size, has been exploited to leak data but not the time consumed by the process of compression or decompression itself.

In this paper, we demonstrate that the decompression time also leaks information about the compressed data. Instead of focussing on the final compressed data, we measure the time it takes to decompress data. Hence, we do not need to observe the transport of the compressed data. Decompression timing can be measured in several scenarios, e.g., a simple read access to the data can suffice for compressed virtual memory or file systems. Moreover, decompression timings can be observed remotely, even when the compressed data never leaves the victim system.

We systematically analyzed six compression algorithms, including widely-used algorithms such as DEFLATE (in zlib), PGLZ (in PostgreSQL), and zstd (by Facebook). We observe that the decompression time not only correlates with the entropy of the uncompressed data, but also with various other aspects, such as the relative position or alignment of compressible data. In general, these timing differences arise due to the design of the compression algorithm and its implementation.

To explore these parameters in an automated fashion, we introduce Comprezzor, an evolutionary fuzzer. In line with previous attacks on compression, Comprezzor assumes that parts of the compressed data are controlled by an attacker. Based on this assumption and a target secret, Comprezzor searches for a memory layout that maximizes the timing differences for decompression timing attacks. As a result, Comprezzor discovers memory layouts for compression algorithms that lead to decompression timing attacks with latency differences up to three orders of magnitude higher than manually discovered layouts.

Based on the results of Comprezzor, we present three case studies demonstrating that these timing differences can be exploited in realistic scenarios to leak sensitive data. We demonstrate that these timing differences can be exploited even in remote attacks on an in-memory database system without executing code on the victim machine and without observing the victim's network traffic. Hence, our case studies show that compressing sensitive data poses a security risk in any scenario using compression and not just for web traffic.

In the first case study, we build a remote covert channel which works across 14 hops on the internet, abusing the memory compression of Memcached, an in-memory object caching system. By measuring the execution time of a public PHP API of an application using Memcached, we can covertly transfer data between two computers at an average transmission rate of 643.25 bit/h with an error rate of only 0.93%. In a similar setup, we also demonstrate the capability of leaking a secret bytewise co-located with data compressed by a PHP application into Memcached, in 31.95 min (n = 20, σ = 8.48%) over the internet. Using a dictionary with 100 guesses, we leak a 6 B secret in 14.99 min.

In the second case study, we exploit the transparent database compression of PostgreSQL. Our exploit leaks database records from an inaccessible database table of a remote web app. We show that as long as an attacker can co-locate data with a secret, it is possible for the attacker to influence and observe decompression times when accessing the data, thus leaking secret data. Such a scenario might arise if structured data, e.g., JSON documents, are stored with attacker-controlled fields in a single cell. Using an attack that leaks a secret bytewise, we leak a 6 B secret in 7.85 min. Our dictionary attack runs in 8.28 min on average over the internet for 100 guesses.

In the third case study, we exploit ZRAM, the memory compression module in Linux. We demonstrate that even if the application itself or the file system does not compress data, the OS's memory compression can transparently introduce timing side channels. We demonstrate that a dictionary attack with 100 guesses on ZRAM decompression can leak a 6 B secret co-located with attacker data in a page within 2.25 min on average.

Our fuzzer shows that most if not all compression algorithms are susceptible to timing side channels when observing the decompression of data. With our case studies, we demonstrate that data leakage is not only a concern for data in transit but also for data at rest. With this work, we seek to highlight the importance of evaluating the trade-off between compressing data, and leaking side-channel information about the compressed data, for any system adopting compression.

Contributions. The main contributions of this work are:

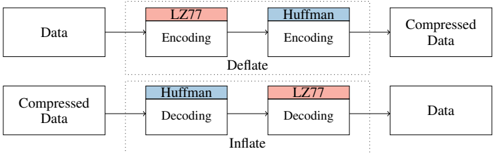

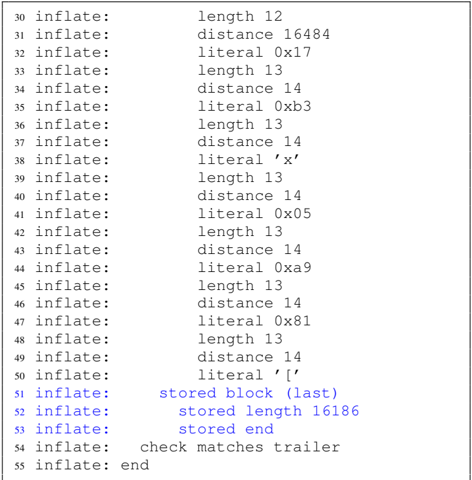

Fig. 1: DEFLATE algorithm has two parts: LZ77 part to compress sequences and a Huffman part to compress symbols.

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Diagram: Deflate and Inflate Data Compression

### Overview

The image illustrates the processes of data compression (deflate) and decompression (inflate) using LZ77 and Huffman encoding/decoding. It shows the flow of data through these processes, highlighting the order of encoding and decoding steps.

### Components/Axes

* **Data:** Represents the original, uncompressed data.

* **Compressed Data:** Represents the data after compression.

* **LZ77 Encoding/Decoding:** Indicates the LZ77 encoding or decoding process. The blocks are colored light red.

* **Huffman Encoding/Decoding:** Indicates the Huffman encoding or decoding process. The blocks are colored light blue.

* **Deflate:** The overall compression process, enclosed in a dotted box.

* **Inflate:** The overall decompression process, enclosed in a dotted box.

* **Arrows:** Indicate the direction of data flow.

### Detailed Analysis

**Deflate (Compression):**

1. **Input:** Data enters the Deflate process.

2. **LZ77 Encoding:** The data first undergoes LZ77 encoding.

3. **Huffman Encoding:** The output of LZ77 encoding is then processed by Huffman encoding.

4. **Output:** The result is Compressed Data.

**Inflate (Decompression):**

1. **Input:** Compressed Data enters the Inflate process.

2. **Huffman Decoding:** The compressed data first undergoes Huffman decoding.

3. **LZ77 Decoding:** The output of Huffman decoding is then processed by LZ77 decoding.

4. **Output:** The result is Data, which should be the same as the original data before compression.

### Key Observations

* The Deflate process involves LZ77 encoding followed by Huffman encoding.

* The Inflate process involves Huffman decoding followed by LZ77 decoding, reversing the order of the encoding process.

* The diagram clearly shows the flow of data from uncompressed to compressed and back to uncompressed.

### Interpretation

The diagram illustrates a common data compression technique where two algorithms, LZ77 and Huffman coding, are used in sequence. The "Deflate" process compresses data by first using LZ77 to remove redundancy by replacing repeated sequences with references to earlier occurrences of the same sequence. Then, Huffman coding is applied to further compress the data by assigning shorter codes to more frequent symbols. The "Inflate" process reverses these steps to decompress the data back to its original form. The order of operations is crucial for successful compression and decompression. The diagram highlights the relationship between these two processes and the flow of data through them.

</details>

1. We present a systematic analysis of timing leakage for several lossless data-compression algorithms.

2. We demonstrate an evolutionary fuzzer to automatically search for memory layouts causing large timing differences for timing side channels on memory compression.

3. We show a remote covert channel exploiting the in-memory compression of PHP-Memcached.

4. We demonstrate that compression-based timing side channels can be introduced by compression in applications, databases, or the system's memory compression.

5. We leak secrets from Memcached, PostgreSQL, and ZRAM within minutes.

Outline. In Section II, we provide background. In Section III, we present the attack model and building blocks. In Section IV, we systematically analyze compression algorithms. In Section V, we present Comprezzor. In Section VI, we demonstrate local and remote attacks exploiting decompression timing. We discuss mitigations in Section VII and conclude in Section VIII.

We responsibly disclosed our findings to the developers and will open-source our fuzzing tool and attacks on GitHub.

## II. BACKGROUND AND RELATED WORK

In this section, we provide background on data compression algorithms, prior side-channel attacks on data compression, and the use of fuzzing to discover side channels.

## A. Data Compression Algorithms

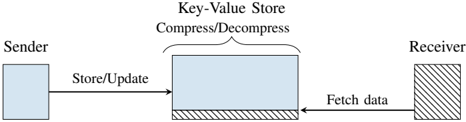

Lossless compression reduces the size of data without losing information. One of the most popular algorithms is the DEFLATE compression algorithm, which is used in gzip (zlib). The DEFLATE compression algorithm [12] consists of two main parts, LZ77 and Huffman encoding and decoding, as shown in Figure 1. The Lempel-Ziv (LZ77) part scans for the longest repeating sequence within a sliding window and replaces repeated sequences with a reference to the first occurrence [8]. This reference stores the distance and length of the occurrence. The Huffman-coding part tries to reduce the redundancy of symbols. When compressing data, DEFLATE first performs LZ77 encoding and then Huffman encoding [8]. When decompressing data (inflate), the two steps are performed in reverse order. The algorithm provides different compression levels to optimize for compression speed or compression ratio. The smallest possible sequence has a length of 3 B [12].
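To make the sequence-compression step concrete, the following is a minimal sketch of a greedy LZ77-style encoder and decoder. It is a toy illustration rather than the zlib implementation: the token format and the brute-force match search are assumptions made for readability, and no Huffman stage is included. The window size, maximum match length, and minimum match length follow the DEFLATE format.

```python
from typing import List, Tuple, Union

# A token is either a literal byte (int) or a back-reference (distance, length).
Token = Union[int, Tuple[int, int]]

MIN_MATCH = 3        # shortest sequence that is replaced by a reference
MAX_MATCH = 258      # longest match length in DEFLATE
WINDOW = 32 * 1024   # sliding-window size in DEFLATE


def lz77_encode(data: bytes) -> List[Token]:
    """Greedy encoder: at each position, take the longest earlier match."""
    tokens: List[Token] = []
    i = 0
    while i < len(data):
        best_len, best_dist = 0, 0
        for j in range(max(0, i - WINDOW), i):
            length = 0
            while (length < MAX_MATCH and i + length < len(data)
                   and data[j + length] == data[i + length]):
                length += 1
            if length > best_len:  # strict '>' keeps the earliest occurrence
                best_len, best_dist = length, i - j
        if best_len >= MIN_MATCH:
            tokens.append((best_dist, best_len))
            i += best_len
        else:
            tokens.append(data[i])
            i += 1
    return tokens


def lz77_decode(tokens: List[Token]) -> bytes:
    """Replay literals and copy referenced sequences from the output so far."""
    out = bytearray()
    for tok in tokens:
        if isinstance(tok, tuple):
            dist, length = tok
            for _ in range(length):
                out.append(out[-dist])  # byte-wise copy handles overlapping matches
        else:
            out.append(tok)
    return bytes(out)


print(lz77_encode(b"abcabcabc"))  # [97, 98, 99, (3, 6)]
```

The decoder does less work per output byte for long references than for long runs of literals, which hints at why the token mix of a compressed page can influence decompression time.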

Other algorithms provide different design points for compressibility and speed. Zstd, designed by Facebook [10] for modern CPUs, improves both compression ratio and speed, and is used for compression in file systems (e.g., btrfs, squashfs) and databases (e.g., AWS Redshift, RocksDB). LZ4 and LZO are other algorithms used in file systems, which are optimized for compression and decompression speed. LZ4, in particular, gains its performance by using a sequence compression stage (LZ77) without the symbol encoding stage (Huffman) like in DEFLATE. FastLZ, similar to LZ4, is a fast compression algorithm implementing LZ77. PGLZ is a fast LZ-family compression algorithm used in PostgreSQL for varying-length data in the database [46].

## B. Prior Data Compression Attacks

In 2002, Kelsey [33] first showed that any compression algorithm is susceptible to information leakage based on the compression-ratio side channel. Duong and Rizzo [49] applied this idea to steal web cookies with the CRIME attack by exploiting TLS compression. In the CRIME attack, the attacker adds additional sequences in the HTTP request, which act as guesses for possible cookie values, and observes the request packet length, i.e., the compression ratio of the HTTP header injected by the browser. If the guess is correct, the LZ77 part in gzip compresses the sequence, making the compression ratio higher, thus allowing the secret to be discovered. To perform CRIME, the attacker needs to be able to spy on the packet length, and the secret needs to have a known prefix such as sessionid= or cookie=. To mitigate CRIME, TLS-level compression was disabled for requests [4], [20].
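The compression-ratio side channel behind CRIME can be illustrated with a short sketch. The request layout, the cookie value, and the helper name `compressed_len` are hypothetical and only serve the illustration; the sketch uses Python's `zlib` module and compares the compressed size for a matching and a non-matching guess.

```python
import zlib

# Hypothetical secret header that the browser adds to every request.
SECRET_HEADER = b"Cookie: sessionid=7f3a9c0e4b1d2a68\r\n"


def compressed_len(guess: bytes) -> int:
    """Size of the compressed request when the attacker injects `guess`."""
    request = b"GET /?q=" + guess + b" HTTP/1.1\r\n" + SECRET_HEADER
    return len(zlib.compress(request, 9))


correct = compressed_len(b"sessionid=7f3a9c0e4b1d2a68")
wrong = compressed_len(b"sessionid=XQZWYKJVPLMNGHTU")

# The matching guess lets LZ77 replace the whole cookie with one reference,
# so the compressed request is shorter than for the non-matching guess.
print(correct, wrong, correct < wrong)
```

In this sketch the attacker needs to observe the compressed size directly, which is exactly the requirement that timing-based attacks try to avoid.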

The BREACH attack [20] revived the CRIME attack by attacking HTTP responses instead of requests and leaking secrets in the HTTP responses such as cross-site-request-forgery tokens. The TIME attack [4] uses the time of a response as a proxy for the compression ratio, as it can be measured even via JavaScript. To reliably amplify the signal, the attacker chooses the size of the payload such that additional bytes, due to changes in compressibility, cross a boundary and cause significantly higher delays in the round-trip time (RTT). TIME exploits the compression ratio to amplify timing differences via TCP windows and does not exploit timing differences in the underlying compression algorithm itself.

A malicious site can perform a TIME attack on its visitors to break the same-origin policy or leak data from sites that reflect user input in the response, such as a search engine [4]. Vanhoef and Van Goethem [58] showed with HEIST that HTTP/2 features can also be used to determine the size of cross-origin responses and to exploit BREACH using the information. Van Goethem et al. [57] similarly showed that compression can be exploited to determine the exact size of any resource in browsers. Karakostas and Zindros [32] presented Rupture, extending BREACH attacks to web apps using block ciphers such as AES.

Tsai et al. [55] demonstrated cache timing attacks on compressed caches, which can leak a secret key in under 10 ms.

Common Theme. All of the prior attacks primarily exploit the compression-ratio side channel. However, the time taken by the underlying compression algorithm for compression or decompression is not analyzed or exploited as side channels. Additionally, these attacks largely target the HTTP traffic and website content, and do not focus on the broader applications

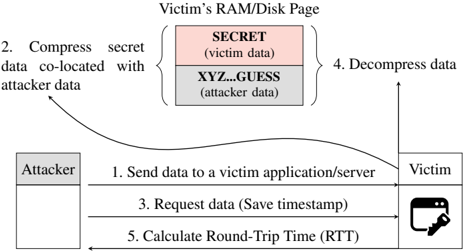

Fig. 2: Overview of a memory compression attack exploiting a timing side channel.

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Diagram: Data Compression Attack

### Overview

The image illustrates a data compression attack targeting a victim's RAM/Disk page. The diagram shows the steps involved, starting with the attacker sending data to the victim, compressing secret data co-located with attacker data, requesting data, decompressing data, and calculating the round-trip time.

### Components/Axes

* **Title:** Victim's RAM/Disk Page

* **Attacker:** A gray box on the left side of the diagram.

* **Victim:** A representation of a computer screen with a key icon in the bottom-right corner, located on the right side of the diagram.

* **Memory Block:** A stacked block representing the victim's RAM/Disk page, consisting of two sections:

* **SECRET (victim data):** Top section, colored light red.

* **XYZ...GUESS (attacker data):** Bottom section, colored light gray.

* **Steps:** Numbered steps indicating the flow of the attack.

### Detailed Analysis

1. **Send data to a victim application/server:** A horizontal arrow points from the "Attacker" to the "Victim".

2. **Compress secret data co-located with attacker data:** A curved arrow points from the "Attacker" to the memory block, specifically targeting both the "SECRET" and "XYZ...GUESS" sections.

3. **Request data (Save timestamp):** A horizontal arrow points from the "Victim" to the "Attacker".

4. **Decompress data:** A vertical arrow points upwards from the memory block.

5. **Calculate Round-Trip Time (RTT):** A horizontal arrow points from the "Attacker" to the "Victim".

### Key Observations

* The attacker manipulates the victim's memory by co-locating their data with the victim's secret data.

* The attack involves compressing and decompressing data, likely to exploit vulnerabilities in the compression algorithm or the way the data is handled.

* The round-trip time is calculated, suggesting that timing analysis is part of the attack strategy.

### Interpretation

The diagram depicts a sophisticated attack where the attacker attempts to extract secret information from the victim's memory by manipulating data compression. The attacker first sends data to the victim, then influences the compression process by co-locating their data with the victim's secret data. By requesting the data and measuring the round-trip time, the attacker can potentially infer information about the compressed data and, ultimately, the secret data itself. This type of attack exploits vulnerabilities related to data compression and timing analysis to compromise the victim's system.

</details>

of compression such as memory-compression, databases, file systems, and others, that we target in this paper.

## C. Fuzzing to Discover Side Channels

Historically, fuzzing has been used to discover bugs in applications [64], [16]. Typically, it involves feedback based on novelty search, executing inputs, and collecting ones that cover new program paths in the hope of triggering bugs. Other fuzzing proposals use genetic algorithms to direct input generation towards interesting paths [48], [54]. Recently, fuzzing has also been used to discover side channels both in software and in the microarchitecture [59], [21], [40], [18]. ct-fuzz [27] used fuzzing to discover timing side channels in cryptographic implementations. Nilizadeh et al. [42] used differential fuzzing to detect compression-ratio side channels that enable the CRIME attack. In this work, we build on these approaches and use fuzzing to discover timing channels in compression algorithms.

## III. HIGH-LEVEL OVERVIEW

In this section, we discuss the high-level overview of memory compression attacks and the attack model.

## A. Attack Model & Attack Overview

Most prior attacks discussed in Section II-B focused on the compression-ratio side channel. Even the TIME attack and its variants by Vanhoef and Van Goethem et al. [58], [57] only exploited timing differences due to TCP. None of these exploited or analyzed timing differences due to the compression algorithm itself, which is the focus of our attack.

We assume that the attacker can co-locate arbitrary data with secret data. This co-location can be given, e.g., via a memory storage API like Memcached or a shared database between the attacker and the victim. Once the attacker data is compressed with the secret, the attacker only needs to measure the latency of a subsequent access to its data. Furthermore, we assume no software vulnerabilities, e.g., memory corruption vulnerabilities, in the application itself.

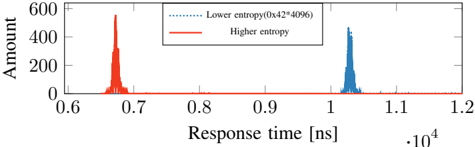

Figure 2 illustrates an overview of a memory compression attack in five steps. The victim application can be a web server with a database or software cache, or a filesystem that compresses stored files. First, the attacker sends its data to be stored to the victim's application. Second, the victim application compresses the attacker-controlled data, together with some co-located secret data, and stores the compressed data. The attacker-controlled data contains a partial guess of the co-located victim's data SECRET or, in the case where a prefix is known, prefix=SECRET. The guess can be performed bytewise to reduce the guessing entropy. If the partial guess is correct, e.g., SECR, the compressed data not only has a higher compression ratio, but its decompression time also changes. Third, after the compression has happened, the attacker requests the content of the stored data again and takes a timestamp. Fourth, the victim application decompresses the input data and responds with the requested data. Fifth, the attacker takes another timestamp when the application responds and computes the RTT as the difference between the two timestamps. Based on the RTT, which depends on the decompression latency of the algorithm, the attacker infers whether the guess was correct or not and thus infers the secret data. Thus, the attack relies on the timing differences of the compression algorithm itself, which we characterize next.
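The five steps can be sketched with a small in-process model. The class `VictimStore` and the function `probe` are hypothetical stand-ins for a real victim application and a network client, and zlib is only an example algorithm. In a real attack the RTT would additionally include network latency, so many more requests per guess would be needed.

```python
import statistics
import time
import zlib


class VictimStore:
    """Hypothetical victim that compresses a secret together with attacker data."""

    def __init__(self, secret: bytes) -> None:
        self._secret = secret
        self._blob = zlib.compress(secret)

    def put(self, attacker_data: bytes) -> None:
        # Step 2: secret and attacker data end up in the same compressed blob.
        self._blob = zlib.compress(self._secret + attacker_data)

    def get(self) -> bytes:
        # Step 4: decompress the blob and return only the attacker's part.
        page = zlib.decompress(self._blob)
        return page[len(self._secret):]


def probe(store: VictimStore, guess: bytes, requests: int = 100) -> float:
    """Return the median RTT in nanoseconds observed for one guess."""
    store.put(guess)                                  # step 1: send data
    rtts = []
    for _ in range(requests):
        start = time.perf_counter_ns()                # step 3: request, first timestamp
        store.get()
        rtts.append(time.perf_counter_ns() - start)   # step 5: second timestamp, RTT
    return statistics.median(rtts)


store = VictimStore(b"prefix=SECRET")
for guess in (b"prefix=SECR", b"prefix=XQZW"):
    print(guess, probe(store, guess))
```

The attacker never sees the compressed blob or its size; the only observable is the time between request and response.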

## IV. SYSTEMATIC STUDY: COMPRESSION ALGORITHMS

In this section, we provide a systematic analysis of timing leakage in compression algorithms. We choose six popular compression algorithms (zlib, zstd, LZ4, LZO, PGLZ, and FastLZ), and evaluate the compression and decompression times based on the entropy of the input data. Zlib (which implements the DEFLATE algorithm) is the most popular as a standard for compressing files and is used in gzip. Zstd is Facebook's alternative to zlib. PGLZ is used in the popular relational database management system PostgreSQL. LZ4, FastLZ, and LZO were built to increase compression speeds. For each of the algorithms, we observe timing differences in the range of hundreds to thousands of nanoseconds based on the content of the input data (a 4 kB page).

## A. Experimental Setup

We conducted the experiments on an Intel i7-6700K (Ubuntu 20.04, kernel 5.4.0) with a fixed frequency of 4 GHz. We evaluate the latency of each compression algorithm with three different input values, each 4 kB in size. The first input is the same byte repeated 4096 times, which should be fully compressible. The second input is partly compressible and a hybrid of the two other inputs: half random bytes and half compressible repeated bytes. The third input consists of random bytes, which are theoretically incompressible. With these, we show that compression algorithms can have different timings dependent on the compressibility of the input.
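The three inputs can be generated as in the following sketch. The function name `make_inputs`, the fill byte, the ordering of the two halves in the hybrid input, and the fixed seed are assumptions for illustration; zlib is used only to show how differently the three pages compress.

```python
import random
import zlib

PAGE_SIZE = 4096  # each input is one 4 kB page


def make_inputs(seed: int = 0) -> dict:
    """Build the fully, partially, and non-compressible test pages."""
    rng = random.Random(seed)  # fixed seed so the pages are reproducible

    def rand(n: int) -> bytes:
        return bytes(rng.getrandbits(8) for _ in range(n))

    return {
        "fully_compressible": b"A" * PAGE_SIZE,
        "partially_compressible": rand(PAGE_SIZE // 2) + b"A" * (PAGE_SIZE // 2),
        "incompressible": rand(PAGE_SIZE),
    }


# Compressed size per input, as a quick check of the intended compressibility.
sizes = {name: len(zlib.compress(page)) for name, page in make_inputs().items()}
for name, size in sizes.items():
    print(f"{name}: {size} B")
```

The compressed sizes increase from the first to the third input, which confirms that the inputs cover the intended range of compressibility.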

## B. Timing Differences for Different Inputs

For each algorithm and input, we measure the decompression and compression time over 100 000 repetitions and compute the mean values and standard deviations.

Decompression. Table I lists the decompression latencies for all evaluated compression algorithms. Depending on the entropy of the input data, there is considerable variation in the decompression time. All algorithms incur a higher latency for decompressing a fully compressible page compared to an incompressible page, leading to a timing difference of a few hundred to a few thousand nanoseconds for different algorithms. This is because, for incompressible data, algorithms can augment the raw data with additional metadata to identify such cases and perform simple memory copy operations to 'decompress' the data, as is the case for zlib, where the decompression of an incompressible page is 5375.93 ns faster than that of a fully compressible page. For decompression of partially compressible pages, some algorithms (FastLZ, LZ4, LZO, zstd) have lower latency than for fully compressible pages, while others (zlib and PGLZ) have a higher latency than for fully compressible pages. This shows the existence of even algorithm-specific variations in timings. PGLZ does not produce compressed output in the case of an incompressible input, and hence we do not measure its latency for this input.

TABLE I: Different compression algorithms yield distinguishable timing differences when decompressing content with a different entropy (n = 100000).

| Algorithm | Fully Compressible (ns) | Partially Compressible (ns) | Incompressible (ns) |
|-----------|-------------------------|-----------------------------|---------------------|
| FastLZ | 7257.88 (0.23%) | 4264.56 (2.27%) | 1155.57 (0.92%) |
| LZ4 | 605.79 (1.02%) | 218.68 (1.76%) | 107.90 (2.49%) |
| LZO | 2115.65 (2.05%) | 1220.07 (3.64%) | 309.44 (6.27%) |
| PGLZ | 813.75 (0.71%) | 5340.47 (0.38%) | - |
| zlib | 7016.02 (0.33%) | 13212.53 (0.35%) | 1640.09 (1.51%) |
| zstd | 941.05 (0.94%) | 772.55 (0.77%) | 370.59 (2.87%) |

Compression. For compression, we observed a trend in the other direction (Table IV in Appendix A lists compression latencies for different algorithms). The latencies for the three different inputs are again clearly distinguishable, differing by multiple hundreds to thousands of nanoseconds. Thus, timing side channels from compression might also be used to exploit compression of attacker-controlled memory co-located with secret memory. However, attacks using the compression side channel might be harder to perform in practice as the compression of data might be performed in a separate task (in the background), and the latency might not be easily observable for a user. Hence, our work focuses on attacks exploiting the decompression timing side channel.

Handling of Corner Cases. For incompressible pages, the 'compressed' data can be larger than the original size with the additional compression metadata. Additionally, it is slower to access after compression than raw uncompressed data. Hence, this corner case with incompressible data may be handled in an implementation-specific manner, which can itself lead to additional side channels. For example, a threshold for the compression ratio can decide when a page is stored in a raw format or in a compressed state, like in PHP-Memcached [45]. Alternatively, PGLZ, the algorithm used in the PostgreSQL database, which computes the maximum acceptable output size for an input by checking the input size and the strategy compression rate, could simply fail to compress inputs in such corner cases.
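A threshold-based handling of this corner case could look like the following sketch. The names `store_value` and `load_value` and the value of `MIN_RATIO` are hypothetical and do not reproduce the logic of any particular library; the sketch only shows that the read path differs depending on whether the value was stored raw or compressed.

```python
import os
import zlib

MIN_RATIO = 1.3  # hypothetical threshold; real systems choose their own value


def store_value(value: bytes, min_ratio: float = MIN_RATIO):
    """Keep the compressed form only if it saves enough space."""
    compressed = zlib.compress(value)
    if len(value) >= min_ratio * len(compressed):
        return True, compressed   # stored compressed, read needs decompression
    return False, value           # stored raw, read is a plain copy


def load_value(is_compressed: bool, payload: bytes) -> bytes:
    """Read path: decompress only if the value was stored compressed."""
    return zlib.decompress(payload) if is_compressed else payload


flag_a, blob_a = store_value(b"A" * 4096)       # highly compressible
flag_b, blob_b = store_value(os.urandom(4096))  # random, incompressible
print(flag_a, len(blob_a), flag_b, len(blob_b))
```

Because one read path runs the decompressor and the other does not, an input that pushes the ratio across the threshold changes the access latency in a way that depends on the co-located data.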

In Section VI, we show how real-world applications like Memcached, PostgreSQL, and ZRAM deal with such corner cases and demonstrate attacks on each of them.

## C. Leaking Secrets via Timing Side Channel

Thus far, we analyzed timing differences for decompressing different inputs, which in itself is not a security issue. In this section, we demonstrate Decomp+Time to leak secrets from compressed pages using these timing differences. We focus on sequence compression, i.e., LZ77 in DEFLATE.

1) Building Blocks for Decomp+Time: Decomp+Time consists of three building blocks: sequence compression that we use to modulate the compressibility of an input, co-location of attacker data and secrets, and timing variation for decompression depending on the change in compressibility of the input.

Sequence compression: Sequence compression, i.e., LZ77, tries to reduce the redundancy of repeated sequences in an input by replacing each occurrence with a pointer to the first occurrence. This results in a higher compression ratio if redundant sequences are present in the input and a lower ratio if no such sequences are present. This compressibility side channel can leak information about the compressed data.

Co-location of attacker data and secrets: If the attacker can control a part of the data that is compressed with a secret, as described in Figure 2, then the attacker can place a guess about the secret co-located with the secret to exploit sequence compression. If the compression ratio increases, the attacker can infer that the guess matches the secret. While the CRIME attack [49] previously used a similar setup and observed the compressed size of HTTP requests to steal secrets like HTTP cookies, we target a more general attack and do not require observability of compressed sizes.

Timing Variation in Decompression: We infer the change in compressibility via its influence on decompression timing. We observe that even sequence compression can cause variation in the decompression timing based on compressibility of inputs (for all algorithms in Section IV-B). If the sequence compression reduces redundant symbols in the input and increases the compression ratio, we observe faster decompression due to fewer symbols. Otherwise, with a lower compression ratio and a larger number of symbols, decompression is slower. Hence, the attacker can infer the compressibility changes for different guesses by observing differences in decompression time due to sequence compression. For a correct guess, the guess and the secret are compressed together and the decompression time is faster due to fewer symbols. Otherwise, it is slower for incorrect guesses with more symbols.

2) Launching Decomp+Time: Using the building blocks described above, we set up the attack with an artificial victim program that has a 6 B secret string (SECRET) embedded in a 4 kB page. The page also contains attacker-controlled data that is compressed together with the secret, like the scenario shown in Figure 2. The attacker can update its own data in place, allowing it to make multiple guesses. The attacker can also read this data, which triggers a decompression of the page and allows the attacker to measure the decompression time. A correct guess that matches the secret results in faster decompression.

We perform the attack on the zlib library (1.2.11) and use 8 different guesses, including the correct guess. For each guess, a single string is placed 512 B away from the secret value; other data in the page is initialized with dummy values (repeated number sequence from 0 to 16). To measure the execution time, we use the rdtsc instruction.
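
The setup above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the measurement harness used in the experiment: the secret offset (`SECRET_OFFSET`) is hypothetical, the guess list is taken from the axis labels of Fig. 3, and `time.perf_counter_ns` stands in for the `rdtsc` instruction, so differences of tens of nanoseconds will usually be hidden by interpreter noise.

```python
import time
import zlib

PAGE_SIZE = 4096
SECRET = b"SECRET"
SECRET_OFFSET = 1024                 # hypothetical position of the secret
GUESS_OFFSET = SECRET_OFFSET + 512   # the guess is placed 512 B away
GUESSES = [b"FOOBAR", b"SECRET", b"PYTHON", b"123456",
           b"COOKIE", b"SOMEDA", b"ADMINI", b"NOPENO"]


def build_page(guess: bytes) -> bytes:
    """Return a 4 kB page of dummy values holding the secret and one guess."""
    dummy = bytes(range(17))  # repeated number sequence from 0 to 16
    page = bytearray((dummy * (PAGE_SIZE // len(dummy) + 1))[:PAGE_SIZE])
    page[SECRET_OFFSET:SECRET_OFFSET + len(SECRET)] = SECRET
    page[GUESS_OFFSET:GUESS_OFFSET + len(guess)] = guess
    return bytes(page)


def min_decompress_ns(compressed: bytes, repetitions: int = 1000) -> int:
    """Minimum wall-clock decompression time over several repetitions."""
    best = None
    for _ in range(repetitions):
        start = time.perf_counter_ns()
        zlib.decompress(compressed)
        elapsed = time.perf_counter_ns() - start
        best = elapsed if best is None else min(best, elapsed)
    return best


def measure_all(repetitions: int = 1000) -> dict:
    """Map each guess to its minimum decompression time in nanoseconds."""
    return {guess: min_decompress_ns(zlib.compress(build_page(guess)), repetitions)
            for guess in GUESSES}
```

Calling `measure_all()` returns one minimum timing per guess. Taking the minimum rather than the mean is a common way to suppress scheduling and cache noise in such measurements.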

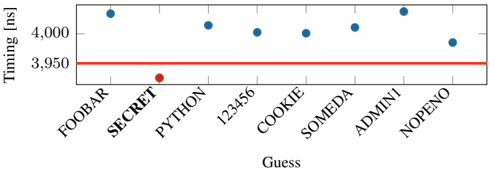

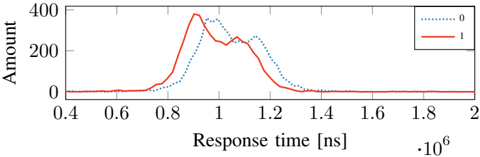

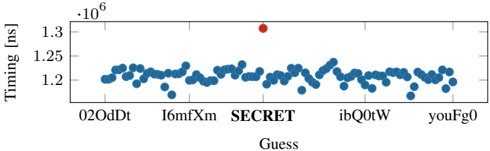

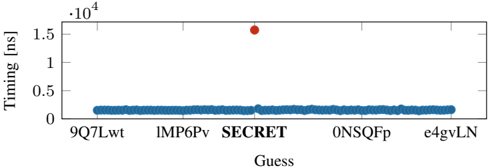

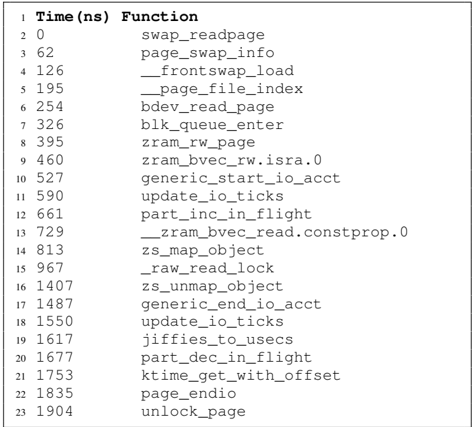

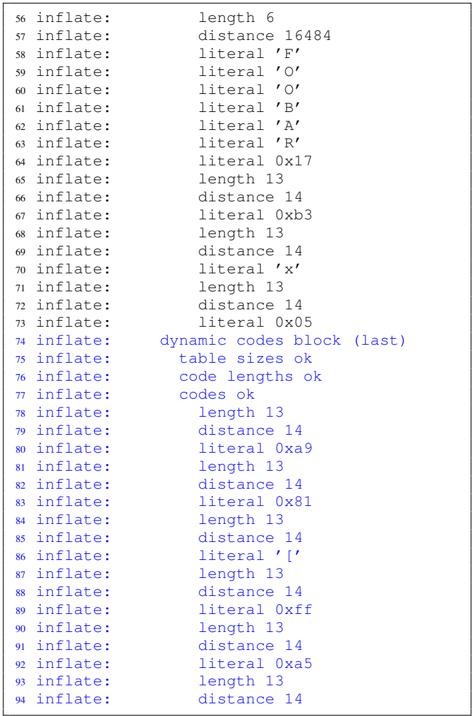

Fig. 3: Decompression time with Decomp+Time for a dictionary attack. There is a clear threshold (line) separating the correct from wrong secrets.

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Timing Attack Chart: Guess Timing Analysis

### Overview

The image is a scatter plot showing the timing (in nanoseconds) for different "Guess" values. The x-axis represents the "Guess" values, and the y-axis represents the "Timing [ns]". A horizontal red line is present at 3950 ns. Most data points are blue, except for "PYTHON" which is red.

### Components/Axes

* **X-axis:** "Guess" - Categorical values: FOOBAR, SECRET, PYTHON, 123456, COOKIE, SOMEDA, ADMINI, NOPENO

* **Y-axis:** "Timing [ns]" - Numerical scale with markers at 3950 and 4000.

* **Horizontal Line:** A red line at Timing = 3950 ns.

* **Data Points:** Blue circles, except for PYTHON which is a red circle.

### Detailed Analysis

* **FOOBAR:** Timing is approximately 4030 ns (blue).

* **SECRET:** Timing is approximately 4030 ns (blue).

* **PYTHON:** Timing is approximately 3930 ns (red).

* **123456:** Timing is approximately 4020 ns (blue).

* **COOKIE:** Timing is approximately 4000 ns (blue).

* **SOMEDA:** Timing is approximately 4010 ns (blue).

* **ADMINI:** Timing is approximately 4030 ns (blue).

* **NOPENO:** Timing is approximately 3980 ns (blue).

### Key Observations

* The timing for "PYTHON" is significantly lower than the other "Guess" values. It is also the only data point colored red.

* All other "Guess" values have timings clustered around 4000-4030 ns.

* The red horizontal line at 3950 ns serves as a visual reference point.

### Interpretation

The chart likely represents the results of a timing attack. The "Guess" values are attempts to guess a secret. The timing of each guess is measured. The significantly lower timing for "PYTHON" suggests that this guess might be correct or have a different execution path, making it distinguishable from the other guesses. The red color highlights this anomaly. The horizontal line at 3950 ns could represent a threshold or a baseline timing value. The data suggests that "PYTHON" is a potential vulnerability.

</details>

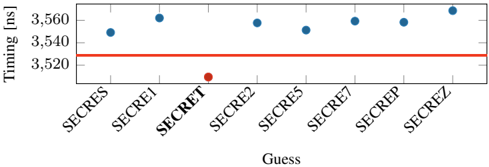

Fig. 4: Leaking the sixth character of the secret bytewise. The correct guess is still distinguishable from the wrong guesses, and a clear threshold separates it from the incorrect guesses.

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Scatter Plot: Timing vs. Guess

### Overview

The image is a scatter plot showing the timing (in nanoseconds) for different "guesses." The x-axis represents the guesses, and the y-axis represents the timing. A horizontal red line is present at approximately 3528 ns. Most guesses have a timing around 3550 ns, except for one guess labeled "SECRET" which has a significantly lower timing.

### Components/Axes

* **X-axis:** "Guess" with categories: SECRES, SECRE1, SECRET, SECRE2, SECRE5, SECRE7, SECREP, SECREZ.

* **Y-axis:** "Timing [ns]" with scale from 3520 to 3560 ns. Axis markers are present at 3520, 3540, and 3560 ns.

* **Horizontal Line:** A red horizontal line is present at approximately 3528 ns.

### Detailed Analysis

* **SECRES:** Timing is approximately 3546 ns (blue dot).

* **SECRE1:** Timing is approximately 3559 ns (blue dot).

* **SECRET:** Timing is approximately 3512 ns (red dot).

* **SECRE2:** Timing is approximately 3557 ns (blue dot).

* **SECRE5:** Timing is approximately 3551 ns (blue dot).

* **SECRE7:** Timing is approximately 3557 ns (blue dot).

* **SECREP:** Timing is approximately 3558 ns (blue dot).

* **SECREZ:** Timing is approximately 3559 ns (blue dot).

### Key Observations

* The timing for the "SECRET" guess is significantly lower than all other guesses.

* All other guesses have timings clustered between 3546 ns and 3559 ns.

* The red horizontal line is positioned at approximately 3528 ns.

### Interpretation

The scatter plot suggests that the "SECRET" guess results in a significantly faster timing compared to the other guesses. This could indicate that the "SECRET" guess is the correct one, or that it triggers a different code path that executes more quickly. The red line could represent a threshold or a target timing value. The fact that the "SECRET" guess falls below this line while all other guesses are above it further emphasizes its distinct behavior.

</details>

Evaluation. Our evaluation was performed on an Intel i7-6700K (Ubuntu 20.04, kernel 5.4.0) with a fixed frequency of 4 GHz. To get stable results, we repeat the decompression step with each guess 10 000 times and repeat the entire attack 100 times. For each guess, we take the minimum decompression time and choose the guess with the global minimum as the correct guess. Figure 3 illustrates the minimum decompression times. With zlib, we observe that the correct guess is faster on average by 71.5 ns (n = 100, σ = 27.91%) compared to the second-fastest guess. Our attack correctly guessed the secret in all 100 repetitions of the attack.
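
The selection rule described above (per-guess minimum, then global minimum) can be written as a short helper. The function name `pick_guess` and the example timings are illustrative assumptions; the numbers only mimic the scale of the plotted values.

```python
def pick_guess(min_times_ns: dict) -> tuple:
    """Return (best_guess, margin_ns) from per-guess minimum timings.

    margin_ns is the gap between the fastest and the second-fastest guess.
    """
    ranked = sorted(min_times_ns.items(), key=lambda item: item[1])
    (best, best_time), (_, runner_up_time) = ranked[0], ranked[1]
    return best, runner_up_time - best_time


# Illustrative values only, not measured data.
example = {"SECRES": 3546, "SECRE1": 3559, "SECRET": 3512, "SECRE2": 3557}
guess, margin = pick_guess(example)
```

The margin is useful as a confidence signal: a small gap between the two fastest guesses suggests that more repetitions are needed.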

While we used a 6 B secret, our experiment also works for smaller secrets down to a length of 4 B . We also observe the attack is faster if the secret has a known prefix with a length of 3 B or more.

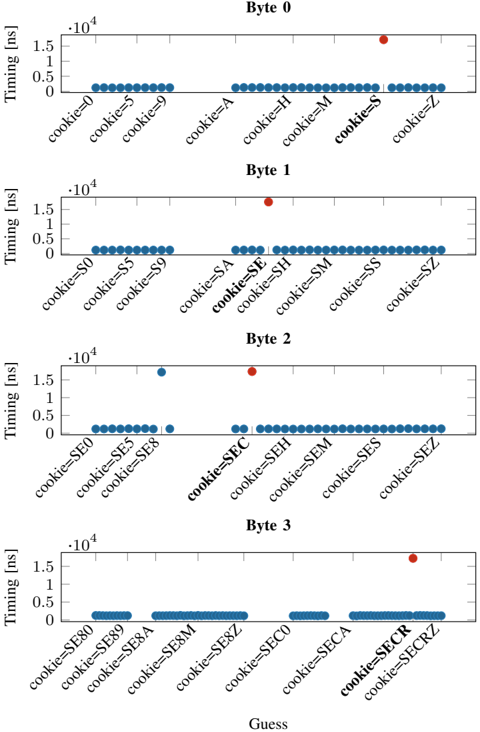

Bytewise leakage. If the attacker manages to guess or know the first three bytes of the secret, the subsequent bytes can even be leaked bytewise using our attack. Both CRIME and BREACH assume a known prefix such as cookie= . Similar to CRIME and BREACH [49], [20], [32], we try to perform a bytewise attack by modifying our simple layout. We use the first 5 characters of SECRET as a prefix "SECRE" and guess the last byte with 7 different guesses. On average, the latency difference between the correct guess and the second-fastest guess is 28.37 ns (n = 100, σ = 65.78%). Figure 4 illustrates the minimum decompression times for the different guesses. However, we observe an error rate of 8 % for this experiment, which might be caused by the Huffman-decoding part in DEFLATE.

While techniques like the Two-Tries method [49], [20], [32] have been proposed to overcome the effects of Huffman coding in DEFLATE to improve the fidelity of bytewise attacks exploiting compression ratio, we seek to explore whether bytewise leakage can be reliably performed via timing only, by amplifying the timing differences.

3) Challenge Of Amplifying Timing: While the decompression timing side channels can be directly used in attacks as demonstrated, the timing differences are still quite small for practical exploits on real-world applications. For example, the timing differences we observed for the correct guess are in tens of nanoseconds, while most practical use-cases of compression such as in a Memcached server accessed over the network, or PostgreSQL database accessed from a filesystem could have access latencies of milliseconds.

Amplification. To enable memory compression attacks via the network or on data stored in file systems, we need to amplify the timing difference between correct and incorrect guesses. However, it is impractical to manually identify inputs that could amplify the timing differences, as each compression algorithm has a different implementation that is often highly optimized. Moreover, various input parameters could influence the timing of decompression, such as the frequency of sequences, the alignment of the secret and attacker-controlled data, the size of the input, the entropy of the input, and the different compression levels provided by algorithms. As an additional factor, the underlying hardware microarchitecture might also have undesirable influences. Therefore, to automate this process in a systematic manner, we develop an evolutionary fuzzer, Comprezzor, to find input corner cases that amplify the timing difference between correct and incorrect guesses for different compression algorithms.

## V. EVOLUTIONARY COMPRESSION-TIME FUZZER

Compression algorithms are highly optimized, complex and even though most of them are open-source, their internals are only partially documented. Hence, we introduce Comprezzor, an evolutionary fuzzer to discover attacker-controlled inputs for compression algorithms that maximize differences in decompression times for certain guesses enabling both bytewise leakage and dictionary attacks.

Comprezzor employs genetic algorithms to amplify decompression side channels. It treats the decompression process of a compression algorithm as an opaque box and mutates inputs to the compression while trying to maximize the output, i.e., timing differences for decompression with different guesses. The mutation process in Comprezzor focuses not only on the entropy of the data, but also on the memory layout and alignment that end up triggering optimizations and slow paths in the implementation that are not easily detectable manually.

While previous approaches used fuzzing to detect timing side channels [42], [27], Comprezzor is specialized for compression algorithms: by varying parameters such as the input size, layout, and entropy that affect the decompression time, it can dramatically amplify timing differences. The inputs discovered by Comprezzor can amplify timing differences to such an extent that they are even observable remotely.

## A. Design of Comprezzor

In this section, we describe the key components of our fuzzer: Input Generation, Fitness Function, Input Mutation and Input Evolution.

Input Generation. Comprezzor generates memory layouts for Decomp+Time by maximizing the timing differences on decompression of the correct guess compared to incorrect ones. Comprezzor creates layouts with sizes in the range of 1 kB to 64 kB. It uses a helper program that takes the memory layout configuration as input, builds the requested memory layout for each guess, compresses them using the target compression algorithm, and reports the observed timing differences in the decompression times among the guesses. A memory layout configuration is composed of a file to start from, the offset of the secret in the file, the offset of guesses, how often the guesses are repeated in the layout, the compression level (i.e., between 1 and 9 for zlib), and a modulus for entropy reduction that reduces the range of the random values. The fuzzer can be used both when a prefix is known and when it is unknown.
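
To make the configuration fields concrete, the following sketch models a layout configuration and a builder for it. It is an illustration under assumptions rather than Comprezzor's actual code: the names `LayoutConfig`, `random_config`, and `build_layout` are hypothetical, a random seed stands in for the "file to start from", and repeated guesses are assumed to be placed back to back.

```python
import random
from dataclasses import dataclass


@dataclass
class LayoutConfig:
    size: int             # layout size in bytes (1 kB to 64 kB)
    secret_offset: int    # offset of the secret in the layout
    guess_offset: int     # offset of the first copy of the guess
    guess_repeats: int    # how often the guess is repeated
    level: int            # compression level (1 to 9 for zlib)
    entropy_modulus: int  # filler bytes are drawn from range(entropy_modulus)
    seed: int             # assumption: replaces the "file to start from"


def random_config(rng: random.Random, item_len: int = 13) -> LayoutConfig:
    """Draw a random configuration; item_len bounds secret and guess length."""
    size = rng.randrange(1024, 64 * 1024 + 1)
    return LayoutConfig(
        size=size,
        secret_offset=rng.randrange(0, size - item_len),
        guess_offset=rng.randrange(0, size - item_len),
        guess_repeats=rng.randint(1, 8),
        level=rng.randint(1, 9),
        entropy_modulus=rng.randint(1, 256),
        seed=rng.getrandbits(32),
    )


def build_layout(cfg: LayoutConfig, secret: bytes, guess: bytes) -> bytes:
    """Build the memory layout described by cfg for one guess."""
    filler = random.Random(cfg.seed)
    buf = bytearray(filler.randrange(cfg.entropy_modulus) for _ in range(cfg.size))
    pos = cfg.guess_offset
    for _ in range(cfg.guess_repeats):
        if pos + len(guess) > cfg.size:
            break  # stop once a further copy would not fit
        buf[pos:pos + len(guess)] = guess
        pos += len(guess)
    # The secret is written last so that guess copies never overwrite it.
    assert cfg.secret_offset + len(secret) <= cfg.size
    buf[cfg.secret_offset:cfg.secret_offset + len(secret)] = secret
    return bytes(buf)
```

A smaller `entropy_modulus` narrows the range of filler values and therefore makes the surrounding data more compressible, which is the role the text assigns to the modulus.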

Fitness Function. The evolutionary algorithm of Comprezzor starts from a random population of candidate layouts (samples) and takes as feedback the difference in time between decompression of the generated memory containing the correct guess and the incorrect ones. Comprezzor uses the timing difference between the correct guess and the second-fastest guess as the fitness score for a candidate.

The fitness function is evaluated using a helper program performing an attack on the same setup as in Section IV-A. The program performs 100 iterations per guess and reports the minimum decompression time per guess to reduce the impact of noise. This minimum decompression time is the output of the fitness function for Comprezzor.
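
One possible reading of this fitness score is sketched below. The function name `fitness` and the convention of returning `None` for samples to be discarded are assumptions; the rule that a sample is only kept when the correct guess is the fastest or the slowest follows the description of the selection step later in this section.

```python
def fitness(min_times_ns: dict, correct: str):
    """Score a layout from per-guess minimum decompression times.

    Returns the gap between the correct guess and the nearest incorrect
    guess, or None when the correct guess is neither the fastest nor the
    slowest guess (such a sample would be discarded).
    """
    correct_time = min_times_ns[correct]
    others = [t for guess, t in min_times_ns.items() if guess != correct]
    if correct_time < min(others):
        return min(others) - correct_time
    if correct_time > max(others):
        return correct_time - max(others)
    return None
```

Measuring the gap to the nearest incorrect guess, rather than to the average, rewards layouts in which every incorrect guess is clearly separated from the correct one.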

Input Mutation. Comprezzor is able to amplify timing differences thanks to its set of mutations over the sample space, specifically designed for data compression algorithms. Data compression algorithms leverage input patterns and entropy to shrink the input into a compressed form. For performance reasons, their ability to search for patterns in the input is limited by different internal parameters, like lookback windows, lookahead buffers, and history table sizes [46], [8]. We designed the mutations that affect the sample generation process to focus on input characteristics that directly impact compression algorithm strategies and limitations towards corner cases.

Comprezzor mutations randomize the entropy and size of the samples that are generated. This has an effect on the overall compressibility of sequences and literals in the sample [8]. Moreover, the mutator varies the number of repeated guesses and their position in the resulting sample stressing the capability of the compression algorithm to find redundant sequences over different parts of the input. This affects the sequence compression and can lead to corner cases, i.e. , where subsequent blocks to be compressed are directly marked as incompressible, as we will later show for zlib in Section V-B.

All of these factors together contribute to Comprezzor's ability to amplify timing differences.

Input Evolution. Comprezzor follows an evolutionary approach to generate better inputs that maximize timing differences. It generates and mutates candidate layout configurations for the attack. Each configuration is forwarded to the helper program that builds the requested layout, inserts the candidate guess, compresses the memory, and returns the decompression time. Algorithm 1 shows the pseudocode of the evolutionary algorithm used by Comprezzor.


Algorithm 1: Comprezzor evolutionary pseudo-code

TABLE II: Timing differences between correct and incorrect guesses found by Comprezzor and the corresponding runtime.

| Algorithm | Max difference for correct guess (ns) | Runtime (h) |
|-----------|---------------------------------------|-------------|
| PGLZ | 109233 | 2.09 |
| zlib | 71514.8 | 2.46 |
| zstd | 4239.25 | 1.73 |
| LZ4 | 2530.5 | 1.64 |

Comprezzor iterates through different generations, with each sample having a probability of survival to the new generation that depends on its fitness score, where the fitness score is the time difference between the correct guess and the nearest incorrect one. Comprezzor discards all the samples where the correct guess is not the fastest or slowest. A retention factor decides the percentage of samples selected to survive among the best ones in the old generation ( 5 % by default).

The population for each new generation is initialized with the samples that survived the selection process and is extended by random mutations of these samples, steering evolution towards locally optimal solutions. By default, 70 % of the new population is generated by mutating the best samples from the previous generation. However, to avoid trapping the fuzzer in locally optimal solutions, a percentage of completely random new samples is injected into each new generation. Comprezzor runs until the maximum number of generations is evaluated, and the best candidate layouts found are returned.
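
The generation loop described in the two paragraphs above can be sketched as follows. This is a simplified illustration, not the paper's Algorithm 1: the function `evolve` and its callback parameters are hypothetical, and deterministic truncation selection (keep the top 5 %) replaces the fitness-dependent survival probability mentioned in the text.

```python
import random


def evolve(random_sample, mutate, score, generations=50, population=1000,
           retention=0.05, mutated_share=0.70, rng=None):
    """Return the retained (score, sample) pairs of the last scored generation."""
    rng = rng or random.Random()
    keep = max(1, round(retention * population))
    n_mutated = round(mutated_share * population)
    samples = [random_sample(rng) for _ in range(population)]
    best = []
    for _ in range(generations):
        # Score every sample; a score of None marks a discarded sample.
        scored = [(score(s), s) for s in samples]
        scored = [pair for pair in scored if pair[0] is not None]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        best = scored[:keep]
        if not best:  # nothing usable: restart from a random population
            samples = [random_sample(rng) for _ in range(population)]
            continue
        parents = [sample for _, sample in best]
        children = [mutate(rng.choice(parents), rng) for _ in range(n_mutated)]
        # The remainder is filled with completely random samples.
        n_random = population - len(parents) - n_mutated
        fresh = [random_sample(rng) for _ in range(n_random)]
        samples = parents + children + fresh
    return best
```

With the defaults, each generation of 1000 samples consists of 50 survivors, 700 mutated children, and 250 fresh random samples. Because the survivors are carried over unchanged, the best score cannot decrease between generations as long as `score` is deterministic; with noisy timing measurements this only holds approximately.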

## B. Results: Fuzzing Compression Algorithms

Evaluation. Our test system has an Intel i7-6700K (Ubuntu 20.04, kernel 5.4.0) with a fixed frequency of 4 GHz . We run Comprezzor on four compression algorithms: zlib (1.2.11), Facebook's Zstd (1.5.0), LZ4 (v1.9.3), and PGLZ in PostgreSQL (v12.7). Comprezzor can support new algorithms by just adding compression and decompression functions.

We run Comprezzor with 50 epochs and a population of 1000 samples per epoch. We set the retention factor to 5 %, selecting the best 50 samples in each generation of 1000 samples. We randomly mutate the selected samples to generate 70 % of the children and add 25 % of completely randomly generated layouts in the new generation. The runtime of Comprezzor to finish all 50 epochs was 2.46 h for zlib, 1.73 h for zstd, 1.64 h for LZ4, and 2.09 h for PGLZ.

Table II lists the maximum timing differences found for Decomp+Time on the four compression algorithms, all of which use sequence compression like LZ77. Particularly, for zlib and PGLZ, the fuzzer discovers cases with timing differences of the scale of microseconds between correct and incorrect guesses, that is, orders of magnitude higher than the manually discovered differences in Section IV. Since zlib is one of the more popular algorithms, we analyze its high latency difference in more detail below.

Zlib. Using Comprezzor, we found a corner case in zlib where all incorrect guesses lead to a slow code path, and the correct guess leads to a significantly faster execution time. We ran Comprezzor with a known prefix, i.e., cookie. We observe a high timing difference of 71 514.75 ns between correct and incorrect guesses, which is 3 orders of magnitude higher than the latency difference found manually in Section IV-C. This memory layout also leads to similarly high timing differences across all compression levels of zlib. To rule out micro-architectural effects, we re-run the experiment on different systems equipped with an Intel i5-8250U, AMD Ryzen Threadripper 1920X, and Intel Xeon Silver 4208, and observe similar timing differences.

On further analysis, we observe that the corner case identified by the fuzzer is due to incompressible data. The initial data in the page, drawn from a uniform distribution, is primarily incompressible. For such incompressible blocks, the DEFLATE algorithm can store them as raw data blocks, also called stored blocks [12]. Such blocks have fast decompression times, as only a single memcpy operation is needed on decompression instead of the actual DEFLATE process. In this particular corner case, the fuzzer discovered that the correct guess results in such an incompressible stored block, which is faster, while an incorrect guess results in a partly compressible input, which is slower, as we describe below.
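
Whether zlib emitted a stored block can be checked from the compressed stream itself. The sketch below reads the block header of the first DEFLATE block, where the lowest bit is BFINAL and the next two bits are BTYPE (0 for a stored block). The helper name `first_block_type` is hypothetical, and the code assumes a zlib stream without a preset dictionary, so that the DEFLATE data starts after the 2-byte zlib header.

```python
import random
import zlib


def first_block_type(zlib_stream: bytes) -> int:
    """Return BTYPE of the first DEFLATE block (0 stored, 1 fixed, 2 dynamic)."""
    first_block_byte = zlib_stream[2]      # skip the 2-byte zlib header
    return (first_block_byte >> 1) & 0b11  # bit 0 is BFINAL, bits 1-2 are BTYPE


rng = random.Random(0)
incompressible = bytes(rng.getrandbits(8) for _ in range(4096))  # uniform bytes
compressible = b"\x42" * 4096                                    # one repeated value

stored_type = first_block_type(zlib.compress(incompressible))
huffman_type = first_block_type(zlib.compress(compressible))
```

For uniformly random input, zlib is expected to fall back to a stored block, whereas the repeated-value page is Huffman coded. Only the first block is inspected here; a full analysis of a fuzzer-generated layout would have to walk all blocks of the stream.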

Correct Guess. On a compression for the case where the guess matches the secret, the entire guess string, i.e. , cookie=SECRET , is compressed with the secret string. All subsequent data in the input is incompressible and treated as a stored block and decompressed with a single memcpy operation, which is fast without any Huffman or LZ77 decoding.

Incorrect Guess. On a compression for the case where the guess does not match the secret, only the prefix of the guess, i.e. , cookie= , is compressed with the prefix of the secret, while another longer sequence i.e. , cookie=FOOBAR leads to forming a new block. On a decompression, this block must now undergo the Huffman decoding (and LZ77), which results in several table lookups, memory accesses, and higher latency.

Thus, the timing differences for the correct and incorrect guesses are amplified by the layout that Comprezzor discovered. We provide more details about this layout in Figure 14 in Appendix C and also provide listings of the debug trace from zlib for the decompression with the correct and incorrect guesses, to illustrate the root cause of the amplified timing differences with this layout.

Takeaway: We showed that it is possible to amplify timing differences for decompression timing attacks. With Comprezzor, we presented an approach to automatically find high timing differences in compression algorithms. The high latencies can be used for stable remote attacks.

<details>

<summary>Image 5 Details</summary>

### Visual Description

## Data Flow Diagram: Key-Value Store

### Overview

The image is a data flow diagram illustrating the interaction between a sender, a key-value store, and a receiver. The diagram shows data being stored/updated from the sender to the key-value store, where it is compressed/decompressed, and then fetched by the receiver.

### Components/Axes

* **Sender:** A rectangular box on the left side of the diagram.

* **Key-Value Store:** A larger rectangular box in the center, labeled "Key-Value Store Compress/Decompress". The bottom portion of this box is shaded with diagonal lines.

* **Receiver:** A rectangular box on the right side of the diagram, shaded with diagonal lines.

* **Store/Update:** An arrow pointing from the Sender to the Key-Value Store, labeled "Store/Update".

* **Fetch data:** An arrow pointing from the Key-Value Store to the Receiver, labeled "Fetch data".

### Detailed Analysis

1. **Sender:** The sender is represented as a simple rectangular box.

2. **Key-Value Store:** The key-value store is a larger rectangular box, indicating its role as a storage component. The "Compress/Decompress" label suggests that data is compressed when stored and decompressed when retrieved. The bottom shaded portion of the box likely represents the storage medium or a specific layer within the key-value store.

3. **Receiver:** The receiver is represented as a shaded rectangular box, indicating that it receives data.

4. **Data Flow:**

* The "Store/Update" arrow indicates that the sender sends data to the key-value store for storage or updating existing data.

* The "Fetch data" arrow indicates that the receiver retrieves data from the key-value store.

### Key Observations

* The diagram illustrates a unidirectional data flow from the sender to the key-value store and then to the receiver.

* The key-value store acts as an intermediary, providing storage and compression/decompression capabilities.

### Interpretation

The diagram depicts a common data storage and retrieval pattern. The sender transmits data to the key-value store, which manages the data, potentially compressing it for efficient storage. The receiver then retrieves the data from the key-value store, which decompresses it before sending it to the receiver. This architecture is often used in distributed systems and cloud computing environments where data needs to be stored and accessed efficiently. The compression/decompression step is crucial for optimizing storage space and network bandwidth.

</details>

Fig. 5: Covert communication via a key-value store using memory compression.

<details>

<summary>Image 6 Details</summary>

### Visual Description

## Histogram: Response Time vs. Amount for Different Entropy Levels

### Overview

The image is a histogram comparing the distribution of response times for two different entropy levels: "Lower entropy" and "Higher entropy". The x-axis represents response time in nanoseconds (ns), scaled by 10^4, and the y-axis represents the amount or frequency of occurrence.

### Components/Axes

* **X-axis:** Response time [ns] * 10^4, ranging from 0.6 to 1.2 with increments of 0.1.

* **Y-axis:** Amount, ranging from 0 to 600 with increments of 200.

* **Legend (Top-Right):**

* Blue dotted line: Lower entropy (0x42*4096)

* Red solid line: Higher entropy

### Detailed Analysis

* **Higher Entropy (Red):** The distribution is concentrated around 0.67 × 10^4 ns. The peak is approximately at an amount of 550.

* **Lower Entropy (Blue):** The distribution is concentrated around 1.04 × 10^4 ns. The peak is approximately at an amount of 450.

### Key Observations

* The response times for higher entropy are significantly lower than those for lower entropy.

* The distribution for higher entropy appears to be slightly more concentrated than the distribution for lower entropy.

### Interpretation

The histogram suggests that higher entropy levels are associated with faster response times. The two distinct peaks indicate that the entropy level has a clear impact on the response time distribution. The difference in response times could be due to the computational complexity or data processing requirements associated with different entropy levels. The data suggests that systems with lower entropy may experience longer processing times, potentially due to factors such as increased data redundancy or less efficient algorithms.

</details>

Fig. 6: Timing when decompressing a single zlib-compressed 4 kB page with a high-entropy in comparison to a page with a low-entropy.

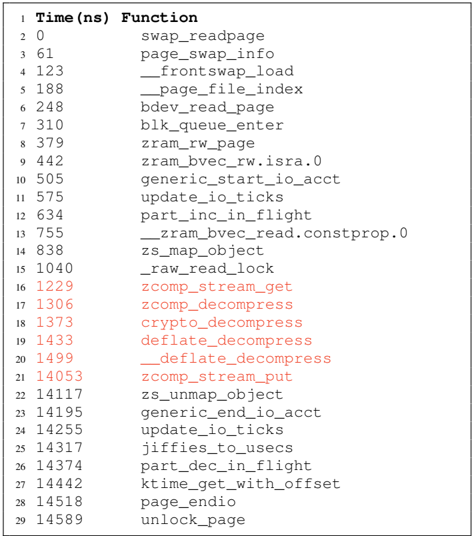

## VI. CASE STUDIES

In this section, we present three case studies showing the security impact of the timing side channel. In Section VI-A, we present a local covert channel that allows hidden communication via the decompression latency. Furthermore, we present a remote covert channel that exploits the decompression of memory objects in a PHP application using Memcached. We demonstrate Decomp+Time on a PHP application that compresses secret data together with attacker data to leak the secret bytewise. In Section VI-D, we leak inaccessible values from a database, exploiting the internal compression of PostgreSQL. In Section VI-E, we show that OS-based memory compression also has timing side channels that can leak secrets.

## A. Covert channel

To evaluate the transmission capacity of memory-compression attacks, we evaluate the transmission rate for a covert channel, where the attacker controls both the sending and receiving end. First, we evaluate a cross-core covert channel that relies on shared memory. This is in line with state-of-the-art work to measure the maximum capacity of the memory compression side channel [24], [35], [37], [62], [23], [25]. The maximum capacity poses a leakage rate limit for other attacks that use memory compression as a side channel. Our local covert channel achieves a capacity of 448 bit/s (n = 100, σ = 0.001%).

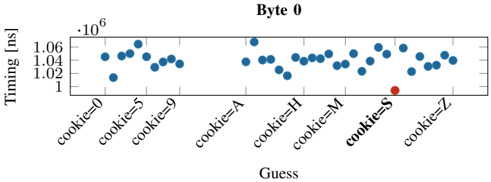

Setup. Figure 5 illustrates the covert communication between a sender and receiver via shared memory. We create a simple key-value store that communicates via UNIX sockets. The store takes input from a client and stores it on a 4 kB-aligned page. The sender inserts a key and value into the first page to communicate with the server. The receiver inserts a small key and value as well, which should be placed on the same 4 kB page. If the 4 kB page is fully written, the key-value store compresses the whole page. Compressing full 4 kB pages separately also occurs in filesystems like BTRFS [6].

Sender and receiver agree on a time frame to send and read content. The basic idea is to exploit the observation that, with zlib, memory with low entropy, e.g., 4096 times the same value, requires more time to decompress than pages with a higher entropy, e.g., a repeating number sequence from 0 to 255. Figure 6 shows the histogram of the latency when decompression is triggered for both cases in the key-value store on an Intel i7-6700K running at 4 GHz.

On average, we observe a timing difference of 3566.22 ns (14 264.88 cycles, n = 100 000). These two timings are easy to distinguish and enable covert communication.
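
The two page contents and the conversion from nanoseconds to cycles can be reproduced with a short sketch. It measures zlib directly instead of going through the key-value store, and it uses `time.perf_counter_ns`, so absolute numbers will differ from the reported ones; the helper names are hypothetical.

```python
import time
import zlib
from statistics import mean

PAGE_SIZE = 4096
LOW_ENTROPY = b"\x42" * PAGE_SIZE                        # 4096 times the same value
HIGHER_ENTROPY = bytes(range(256)) * (PAGE_SIZE // 256)  # sequence 0..255, repeated


def mean_decompress_ns(page: bytes, repetitions: int = 10_000) -> float:
    """Mean wall-clock time to decompress the zlib-compressed page."""
    compressed = zlib.compress(page)
    samples = []
    for _ in range(repetitions):
        start = time.perf_counter_ns()
        zlib.decompress(compressed)
        samples.append(time.perf_counter_ns() - start)
    return mean(samples)


def ns_to_cycles(nanoseconds: float, frequency_ghz: float) -> float:
    """Convert a duration to CPU cycles at a fixed clock frequency."""
    return nanoseconds * frequency_ghz
```

At the fixed frequency of 4 GHz, `ns_to_cycles(3566.22, 4.0)` gives the 14 264.88 cycles quoted above.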

Transmission. We evaluate our cross-core covert channel by generating and sending random content from /dev/urandom through the memory compression timing side channel. The sender transmits a '1'-bit by storing 4095 B of higher-entropy data in the key-value store. Conversely, to transmit a '0'-bit, the sender compresses a low-entropy 4 kB page. To trigger the compression, the receiver also stores a key-value pair in the page, whereby 1 B fills the remainder of the page. The key-value store will now compress the full 4 kB page, as it is fully used. The receiver performs a fetch request from the key-value store, which triggers a decompression of the 4 kB page. To distinguish bits, the receiver measures the mean RTT of the fetch request.

Evaluation. Our test machine is equipped with an Intel Core i7-6700K (Ubuntu 20.04, kernel 5.4.0), and all cores are running on a fixed CPU frequency of 4 GHz. We repeat the transmission 50 times and send 640 B per run. To reduce the error rate, the receiver fetches the receiver-controlled data 50 times and compares the average response time against the threshold. Our cross-core covert channel achieves an average transmission rate of 448 bit/s (n = 100, σ = 0.007%) with an error rate of 0.082 % (n = 100, σ = 2.85%). This capacity of the unoptimized covert channel is comparable to other state-of-the-art microarchitectural cross-core covert channels that do not rely on shared memory [60], [36], [14], [44], [37], [53], [47], [59].
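
The receiver side of this protocol reduces to comparing a mean fetch time against a threshold. The following sketch shows one way to decode bits and reassemble bytes. The function names, the threshold value, and the most-significant-bit-first ordering are assumptions made for illustration.

```python
from statistics import mean


def decode_bit(fetch_times_ns, threshold_ns) -> int:
    """Higher-entropy page ('1') decompresses faster than low-entropy ('0')."""
    return 1 if mean(fetch_times_ns) < threshold_ns else 0


def decode_bytes(per_bit_times, threshold_ns) -> bytes:
    """Decode one list of fetch times per bit into bytes, MSB first."""
    bits = [decode_bit(times, threshold_ns) for times in per_bit_times]
    out = bytearray()
    for i in range(0, len(bits) - len(bits) % 8, 8):
        byte = 0
        for bit in bits[i:i + 8]:
            byte = (byte << 1) | bit
        out.append(byte)
    return bytes(out)


# Illustrative timings only: 50 fetches per bit, encoding 0b01000001 ("A").
fast, slow = [6700] * 50, [10400] * 50
received = decode_bytes([slow, fast, slow, slow, slow, slow, slow, fast], 8500)
```

Averaging over 50 fetches per bit trades transmission rate for a lower error rate, which matches the trade-off described in the evaluation above.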

## B. Remote Covert Channel

We extend the scope of our covert channel to a remote covert channel. In the remote scenario, we rely on Memcached on a web server for memory compression and decompression.

Memcached. Memcached is a widely used caching system for web sites [38]. Memcached is a simple key-value store that internally uses a slab allocator. A slab is a fixed unit of contiguous physical memory, typically a 1 MB region, that is assigned to a certain slab class [29]. Certain programming languages such as PHP or Python offer the option to compress memory before it is stored in Memcached [45], [5]. For PHP, memory compression is enabled by default if Memcached is used [45]. PHP-Memcached has a threshold that decides at which size data is compressed, with the default value at 2000 B. Furthermore, PHP-Memcached compares the compression ratio to a compression factor and decides whether it stores the data compressed or uncompressed in Memcached. The default value for the compression factor is 1.3, i.e., 23 % of the space needs to be saved from the original data size to store it compressed [45].
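
The store-compressed decision can be approximated as follows. This sketch only mirrors the behavior as described in the paragraph above and is not PHP-Memcached's implementation: the function names, the exact comparison operators at the boundaries, and the use of Python's zlib are assumptions.

```python
import zlib

COMPRESSION_THRESHOLD = 2000  # bytes, default according to the text
COMPRESSION_FACTOR = 1.3      # default according to the text


def stored_compressed(payload: bytes) -> bool:
    """Return True if the payload would be stored in compressed form."""
    if len(payload) < COMPRESSION_THRESHOLD:  # boundary handling is assumed
        return False
    compressed = zlib.compress(payload)
    # Keep the compressed form only if it is smaller by at least the factor.
    return len(payload) > COMPRESSION_FACTOR * len(compressed)


def min_saving(factor: float) -> float:
    """Fraction of the original size that must be saved for a given factor."""
    return 1 - 1 / factor
```

For the default factor, `min_saving(1.3)` is about 0.23, which is the 23 % mentioned above. Since incompressible payloads are stored uncompressed, the decision itself already depends on the data, which is why the attack later forces compression.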

Fig. 7: Distribution of HTTP response times for zlib-decompressed pages stored in Memcached, encoding a '1'-bit and a '0'-bit.

<details>

<summary>Image 7 Details</summary>

### Visual Description

## Chart: Response Time Distribution by Category

### Overview

The image is a line chart comparing the distribution of response times for two categories, labeled "0" and "1". The x-axis represents response time in nanoseconds (ns), scaled by 10^6, and the y-axis represents the amount or frequency of each response time.

### Components/Axes

* **X-axis:** Response time [ns] * 10^6. Scale ranges from 0.4 to 2.0, with increments of 0.2.

* **Y-axis:** Amount. Scale ranges from 0 to 400, with increments of 200.

* **Legend (Top-Right):**

* Dotted Blue Line: "0"

* Solid Red Line: "1"

### Detailed Analysis

* **Category "0" (Dotted Blue Line):**

* The line starts near 0 at x=0.4.

* It rises to a peak around x=0.9, with a value of approximately 300.

* It then decreases, reaching approximately 250 at x=1.0.

* The line continues to decrease, reaching approximately 150 at x=1.2.

* The line approaches 0 after x=1.4.

* **Category "1" (Solid Red Line):**

* The line starts near 0 at x=0.4.

* It rises to a peak around x=0.95, with a value of approximately 380.

* It then decreases, reaching approximately 240 at x=1.1.

* The line continues to decrease, reaching approximately 20 at x=1.4.

* The line approaches 0 after x=1.6.

### Key Observations

* Both categories exhibit a unimodal distribution, with a single peak.

* Category "1" has a higher peak amount (approximately 380) compared to category "0" (approximately 300).

* Category "1" peaks slightly later (around 0.95) than category "0" (around 0.9).

* Both categories have a similar spread, with response times concentrated between 0.6 and 1.4.

### Interpretation

The chart compares the distribution of response times for two distinct categories, "0" and "1". The data suggests that category "1" generally has a higher frequency of responses around its peak compared to category "0". While both categories have similar response time ranges, the slight shift in peak and the higher peak amount for category "1" indicate a difference in the underlying processes or conditions that generate these response times. The data could be used to understand performance differences or identify potential bottlenecks in a system where these categories represent different states or operations.

</details>

Bypassing the Compression Factor. While the compression factor already introduces a timing side channel, we focus on scenarios where data is always compressed. This is later useful for leakage on co-located data. Intuitively, it should suffice to prepend highly compressible data to enforce compression. However, we found that only prepending and adapting the offsets for secret repetitions, as for zlib, also influenced the corner case we found and the large timing difference. We integrate prepending of compressible pages into Comprezzor and also add the compression factor constraint to automatically discover inputs that fulfill the constraint and lead to large latency differences between a correct and an incorrect guess.

Transmission. We use the page found by Comprezzor that triggers a significantly lower decompression time to encode a '1'-bit. Conversely, for a '0'-bit, we choose content that triggers a significantly higher decompression time. The sender places one key-value pair per bit index into PHP-Memcached, all at once. The receiver sends GET requests to the resource, causing decompression of the data that contains the sender's content. The timing difference of the decompression is reflected in the RTT of the HTTP request. Hence, we measure the time between sending the HTTP request and receiving the first response packet.
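The sender side of this encoding can be sketched as follows. The key naming scheme (`bit0`, `bit1`, ...) and the function names are hypothetical, and `fast_page` and `slow_page` stand for the two payloads with low and high decompression time; how the pairs are uploaded is left out.

```python
from typing import Dict


def message_to_bits(message: bytes) -> str:
    """Expand a message into a string of '0'/'1' characters, MSB first."""
    return "".join(f"{byte:08b}" for byte in message)


def build_key_value_pairs(
    message: bytes,
    fast_page: bytes,
    slow_page: bytes,
    key_prefix: str = "bit",  # hypothetical key naming scheme
) -> Dict[str, bytes]:
    """Map every bit index to the payload whose decompression time encodes it.

    A '1'-bit uses the fast-decompressing page, a '0'-bit the slow one.
    """
    pairs = {}
    for index, bit in enumerate(message_to_bits(message)):
        pairs[f"{key_prefix}{index}"] = fast_page if bit == "1" else slow_page
    return pairs
```

An 8 B message yields 64 key-value pairs, which the receiver can then probe one key at a time.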

Evaluation. Our sender and receiver are equipped with an Intel i7-6700K (Ubuntu 20.04, kernel 5.4.0) and connected to the internet with a 10 Gbit/s connection. For the web server, we use a dedicated server in the Equinix [13] cloud, 14 hops away from our network (over 1000 km physical distance) with a 10 Gbit/s connection. The victim server is equipped with an Intel Xeon E3-1240 v5 (Ubuntu 20.04, kernel 5.4.0). Our server runs a PHP (version 7.4) website, which allows storing and retrieving data, backed by Memcached. We installed Memcached 1.5.22, the default version on Ubuntu 20.04. The PHP website is hosted on Nginx 1.18.0 with PHP-FPM.

In a simple test, we perform 5000 HTTP requests to a PHP site that stores zlib-compressed memory in Memcached. Figure 7 illustrates the timing difference between a '0'-bit and a '1'-bit. The difference between the mean values for a '0'-bit and a '1'-bit is 61 622.042 ns.

Figure 7 shows that the two distributions overlap to a certain extent. We call this overlap the critical section. We implement an early-stopping mechanism for bits in the covert channel that clearly belong to one of the two regions below or above the critical section. After 25 requests, we start to classify the RTT per bit.

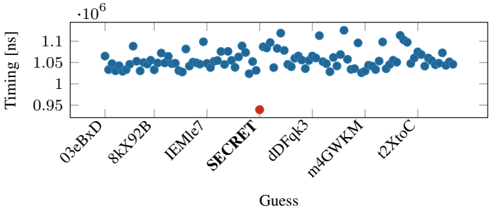

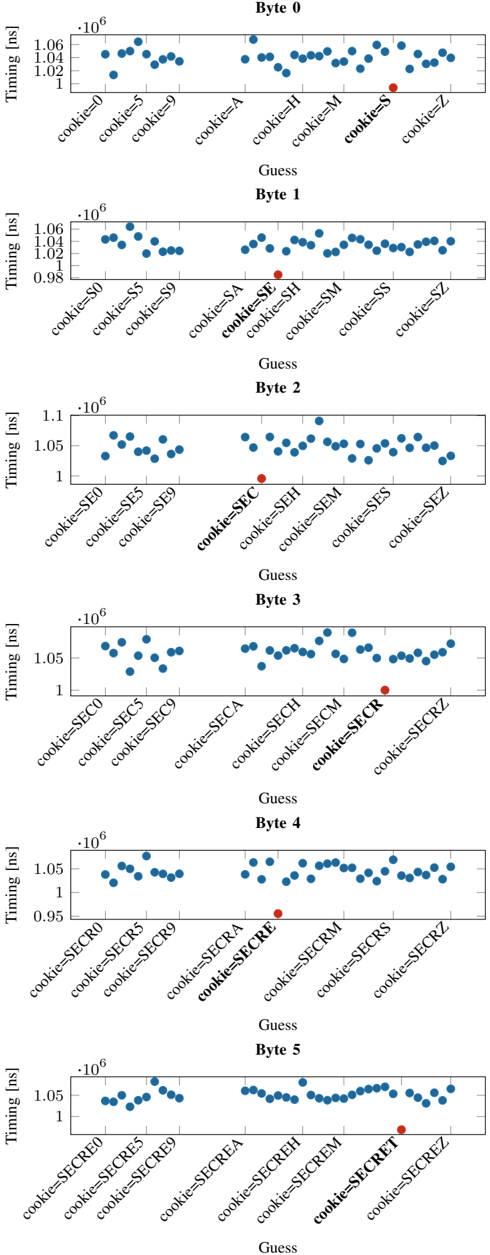

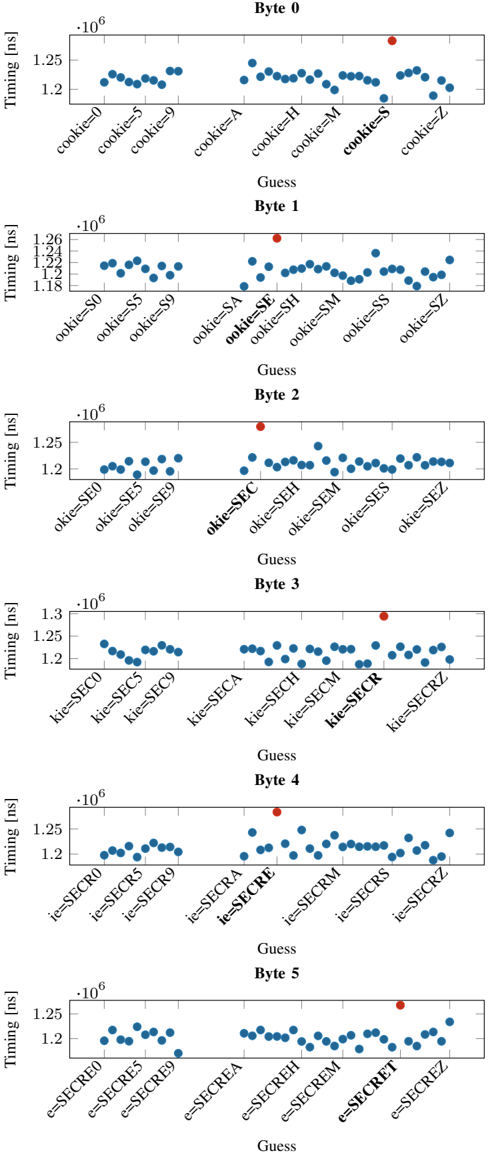

Fig. 8: Distribution of the response time for the correct guess (S) and the incorrect guesses (0-9, A-R, T-Z) for the first byte leaked in the byte-wise leakage of the secret from PHP-Memcached. Similar trends are observed for subsequent bytes leaked, as shown in Appendix D.

<details>

<summary>Image 8 Details</summary>

### Visual Description

## Scatter Plot: Timing vs. Guess for Byte 0

### Overview

The image is a scatter plot showing the timing in nanoseconds (ns) against different "Guess" values, which represent cookie values. The plot appears to be analyzing the timing behavior for "Byte 0" based on different cookie values. Most data points are blue, but one is red.

### Components/Axes

* **Title:** Byte 0

* **Y-axis:**

* Label: Timing [ns]

* Scale: 1 x 10^6 to 1.06 x 10^6 ns, with tick marks at 1 x 10^6, 1.02 x 10^6, 1.04 x 10^6, and 1.06 x 10^6.

* **X-axis:**

* Label: Guess

* Categories: cookie=0, cookie=5, cookie=9, cookie=A, cookie=H, cookie=M, cookie=S, cookie=Z

### Detailed Analysis

The data points are scattered across the x-axis categories. The y-axis represents timing in nanoseconds, and the x-axis represents different cookie values.

* **cookie=0:** Timing values are approximately 1.01 x 10^6 ns to 1.06 x 10^6 ns.

* **cookie=5:** Timing values are approximately 1.04 x 10^6 ns to 1.06 x 10^6 ns.

* **cookie=9:** Timing values are approximately 1.04 x 10^6 ns to 1.05 x 10^6 ns.

* **cookie=A:** Timing values are approximately 1.04 x 10^6 ns.

* **cookie=H:** Timing values are approximately 1.02 x 10^6 ns to 1.05 x 10^6 ns.

* **cookie=M:** Timing values are approximately 1.04 x 10^6 ns to 1.05 x 10^6 ns.

* **cookie=S:** A single data point, colored red, is at approximately 1.00 x 10^6 ns.

* **cookie=Z:** Timing values are approximately 1.04 x 10^6 ns to 1.06 x 10^6 ns.

### Key Observations

* The timing values for most cookie values are clustered between 1.02 x 10^6 ns and 1.06 x 10^6 ns.

* The "cookie=S" value has a significantly lower timing value (approximately 1.00 x 10^6 ns) compared to the other cookie values. This data point is also highlighted in red.

### Interpretation

The scatter plot suggests that the "cookie=S" value results in a different timing behavior for "Byte 0" compared to other cookie values. The significantly lower timing value for "cookie=S" could indicate a successful guess or a different processing path within the system. The red color highlights this anomaly, suggesting it is of particular interest. The data suggests a potential vulnerability or exploitable behavior related to the "cookie=S" value.

</details>

At most, we perform 200 HTTP requests per bit. If there are still unclassified bits, we compare the number of requests classified as '0'-bit with the number classified as '1'-bit and decide on the side with more elements. We transmit a series of different messages of 8 B over the internet. Our simple remote covert channel achieves an average transmission rate of 643.25 bit/h (n = 20, σ = 6.66 %) at an average error rate of 0.93 %. Our covert channel outperforms the one by Schwarz et al. [52] and Gruss [22], although our attack works with HTTP instead of the more lightweight UDP sockets. Other remote timing attacks usually do not evaluate their capacity with a remote covert channel [65], [30], [2], [51], [1], [2], [56].
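The per-bit classification with early stopping can be sketched as below. The text does not state which statistic is compared against the critical section; this sketch assumes the running median of the RTT samples, and it assumes the final vote splits samples at the midpoint of the critical section. The function name and the `measure_rtt` callback are hypothetical.

```python
import statistics
from typing import Callable


def classify_bit(
    measure_rtt: Callable[[], float],
    critical_low: float,
    critical_high: float,
    min_requests: int = 25,
    max_requests: int = 200,
) -> int:
    """Classify one transmitted bit from repeated RTT measurements.

    Stops early once the running median (an assumption of this sketch)
    lies clearly below or above the critical section.
    """
    samples = []
    for _ in range(max_requests):
        samples.append(measure_rtt())
        if len(samples) < min_requests:
            continue  # collect a minimum number of samples first
        median = statistics.median(samples)
        if median < critical_low:
            return 1  # fast decompression encodes a '1'-bit
        if median > critical_high:
            return 0  # slow decompression encodes a '0'-bit
    # Still undecided: vote per request and take the side with more elements.
    midpoint = (critical_low + critical_high) / 2
    ones = sum(1 for sample in samples if sample < midpoint)
    return 1 if ones > len(samples) - ones else 0
```

With the defaults, a clearly separated bit costs 25 requests, while a bit whose samples stay inside the critical section costs the full 200.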

## C. Remote Attack on PHP-Memcached

Using our building blocks to perform Decomp+Time and the remote covert channel, we perform a remote attack on PHP-Memcached to leak secret data from a server over the internet. We assume a memory layout where secret memory is co-located with attacker-controlled memory, and the overall memory region is compressed.

Attack Setup. We use the same PHP API as in Section VI-B, which allows storing and retrieving data. We run the attack using the same server setup as for the remote covert channel, attacking a dedicated server in the Equinix [13] cloud 14 hops away from our network. We define a 6 B long secret (SECRET) with a 7 B long prefix (cookie=) and prepend it to the stored data of users. PHP-Memcached compresses the data before storing it in Memcached and decompresses it when accessing it again. For each guess, the PHP application stores the uploaded data to a certain location in Memcached. On each data fetch, the PHP application decompresses the secret data together with the co-located attacker-controlled data and then responds with only the attacker-controlled data. The attacker measures the RTT per guess and distinguishes the timing differences between the guesses. In the case of zlib, the guess with the fastest response time is the correct guess. We demonstrate a byte-wise leakage of an arbitrary secret and also a dictionary attack with a dictionary size of 100 guesses.

Evaluation. For the byte-wise attack, we assume each byte of the secret is from 'A-Z,0-9' (36 different options). For each of the 6 bytes to be leaked, we generate a separate memory layout using Comprezzor that maximizes the latency difference between the guesses. We repeat the experiment 20 times. On average, our attack leaks the entire 6 B secret string in 31.95 min (n

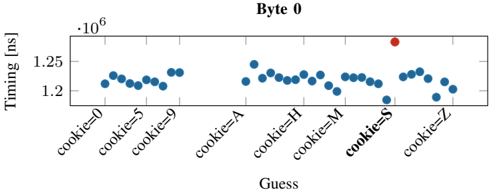

Fig. 9: Distribution of the response time for the correct guess (SECRET) and the 99 other incorrect guesses in dictionary attack on PHP-Memcached. Note that we only label SECRET and 6 out of 99 incorrect guesses on the X-axis for brevity.

<details>

<summary>Image 9 Details</summary>

### Visual Description

## Scatter Plot: Timing vs. Guess

### Overview

The image is a scatter plot showing the timing (in nanoseconds) for different guesses. The x-axis represents the "Guess", and the y-axis represents the "Timing [ns]". Most guesses have a timing around 1.05 * 10^6 ns, while one guess labeled "SECRET" has a significantly lower timing.

### Components/Axes

* **X-axis:** "Guess" with labels: "03eBxD", "8kX92B", "IEMle7", "SECRET", "dDFqk3", "m4GWKM", "t2XtoC".

* **Y-axis:** "Timing [ns]" with scale markers: 0.95 * 10^6, 1 * 10^6, 1.05 * 10^6, 1.1 * 10^6.

### Detailed Analysis

* **Blue Data Points:** The blue data points represent the timing for each guess, except for the "SECRET" guess. The blue points are clustered around 1.04 * 10^6 ns to 1.12 * 10^6 ns.

* **03eBxD:** Timing values range from approximately 1.03 * 10^6 ns to 1.07 * 10^6 ns.

* **8kX92B:** Timing values range from approximately 1.04 * 10^6 ns to 1.06 * 10^6 ns.

* **IEMle7:** Timing values range from approximately 1.04 * 10^6 ns to 1.09 * 10^6 ns.

* **dDFqk3:** Timing values range from approximately 1.04 * 10^6 ns to 1.12 * 10^6 ns.

* **m4GWKM:** Timing values range from approximately 1.03 * 10^6 ns to 1.12 * 10^6 ns.

* **t2XtoC:** Timing values range from approximately 1.04 * 10^6 ns to 1.08 * 10^6 ns.

* **Red Data Point:** The red data point represents the timing for the "SECRET" guess. It has a timing value of approximately 0.94 * 10^6 ns.

### Key Observations

* The timing for the "SECRET" guess is significantly lower than the timing for other guesses.

* The timing values for the other guesses are relatively consistent, ranging from approximately 1.03 * 10^6 ns to 1.12 * 10^6 ns.

### Interpretation

The scatter plot suggests that the "SECRET" guess has a significantly different timing profile compared to the other guesses. This could indicate that the "SECRET" guess triggers a different code path or algorithm, resulting in a faster execution time. The consistent timing values for the other guesses suggest that they might be processed similarly. The plot highlights the "SECRET" guess as an outlier, potentially revealing information about the system's behavior when the correct secret is provided.

</details>

= 20, σ = 8.48 %). Since the latency differences between a correct and an incorrect guess are in the microsecond range, we do not observe false positives with our approach. Figure 8 shows the median response time for each guess in the first iteration (leaking the first byte) as a representative example. The response time for the correct guess (S) for the first byte is significantly lower than for the incorrect guesses. We observe similar results for the remaining bytes (E, C, R, E, T).
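The byte-wise leakage loop can be sketched as follows, as a simplified illustration and not the authors' implementation. The callback `measure_rtt` is hypothetical: it is assumed to upload a guess inside a suitable memory layout, fetch it, and return one RTT sample. The sketch also uses a fixed number of samples per guess instead of early stopping.

```python
import statistics
import string
from typing import Callable

# 36 candidates per byte: 'A'-'Z' and '0'-'9'.
ALPHABET = string.ascii_uppercase + string.digits


def leak_bytewise(
    measure_rtt: Callable[[str], float],  # hypothetical: one RTT sample per call
    prefix: str = "cookie=",
    secret_len: int = 6,
    samples_per_guess: int = 25,
) -> str:
    """Recover the secret one byte at a time via decompression timing.

    For zlib, the candidate with the fastest (median) response is taken
    as the correct extension of the already-known bytes.
    """
    known = ""
    for _ in range(secret_len):
        medians = {}
        for candidate in ALPHABET:
            guess = prefix + known + candidate
            medians[candidate] = statistics.median(
                measure_rtt(guess) for _ in range(samples_per_guess)
            )
        known += min(medians, key=medians.get)
    return known
```

With 36 candidates and 6 bytes, the loop evaluates 216 guesses in total, compared with 36^6 for guessing the whole secret at once.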

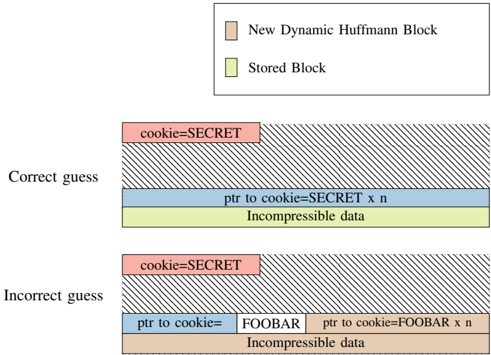

Dictionary attack. While byte-wise leakage is the more generic and practical approach for decompression timing attacks, there may be use cases where a dictionary attack is also applicable. For instance, the attacker could try the top n most common usernames or passwords, which are publicly available. Another use case for dictionary attacks could be to profile whether certain images are forbidden in a database used for digital rights management.

We perform a dictionary attack over the network using 100 randomly generated guesses, including the correct guess. We repeat the experiment 20 times. For each run, we regenerate the guesses and randomly shuffle the order of the guesses per request iteration to avoid a potential bias. On average, our attack leaks the secret string in 14.99 min (n = 20, σ = 16.27 %). To classify the correct guess, we use the same early-stopping technique as for the covert channel. Since the timing difference is in the microsecond range, we do not observe false positives with our approach. Figure 9 illustrates the median of the response times for each guess from one iteration. Figure 15 (in Appendix D) shows the latencies for all leaked bytes. The response time for the correct guess (SECRET) is clearly and significantly lower.
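A minimal sketch of the dictionary attack with per-iteration shuffling is given below. It is a simplification: it uses a fixed number of iterations in place of the early-stopping technique, and `measure_rtt` is again a hypothetical callback returning one RTT sample for a guess.

```python
import random
import statistics
from typing import Callable, Optional, Sequence


def dictionary_attack(
    measure_rtt: Callable[[str], float],  # hypothetical: one RTT sample per call
    guesses: Sequence[str],
    iterations: int = 25,
    rng: Optional[random.Random] = None,
) -> str:
    """Return the guess with the lowest median response time."""
    rng = rng or random.Random()
    timings = {guess: [] for guess in guesses}
    for _ in range(iterations):
        # Shuffle the probing order in every iteration so that drift in
        # network conditions does not systematically favour one guess.
        order = list(guesses)
        rng.shuffle(order)
        for guess in order:
            timings[guess].append(measure_rtt(guess))
    return min(timings, key=lambda guess: statistics.median(timings[guess]))
```

Taking the median per guess is a choice of this sketch; it mirrors the median response times plotted per guess.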

Takeaway: We show that a PHP application using Memcached to cache blobs hosted on Nginx enables covert communication with a transmission rate of 643.25 bit/h. If secret data is stored together with attacker-controlled data, we demonstrate a remote memory-compression attack on Memcached leaking a 6 B secret byte-wise in 31.95 min. We further demonstrated that the attack can also be used for dictionary attacks.

## D. Leaking Data from Compressed Database

In this section, we show that an attacker can also exploit compression in databases to leak inaccessible information, i.e., from the internal database compression of PostgreSQL.

PostgreSQL Data Compression. PostgreSQL is a widespread open-source relational database system using the SQL standard. PostgreSQL maintains tuples saved on disk using a fixed page size of commonly 8 kB, storing larger fields compressed and possibly split across multiple pages.

By default, variable-length fields that may produce large values, e.g., TEXT fields, are stored compressed. PostgreSQL's transparent compression is known as TOAST (The Oversized-Attribute Storage Technique) and uses a fast LZ-family compression, PGLZ [46]. By default, data in a cell is only stored compressed if the compressed form saves at least 25 % of the uncompressed size, to avoid wasting decompression time when it is not worth it. Indeed, data stored uncompressed is accessed faster than data stored compressed since the decompression algorithm is not executed. Moreover, the decompression time depends on the compressibility of the underlying data. A potential attack scenario for structured text in a cell is one where JSON documents are stored and compressed within a single cell, and the attacker controls a specific field within the document. While our focus is restricted to cell-level compression, note that compressed columnar storage [9], [39], [7], a non-default extension available in PostgreSQL, may also be vulnerable to decompression timing attacks.
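The 25 % rule can be restated as a minimum compression ratio. Writing $u$ for the uncompressed size and $c$ for the compressed size of a cell (symbols introduced here for illustration), the cell is stored compressed if

$$
\frac{u - c}{u} \ge 0.25 \iff c \le 0.75\,u \iff \frac{u}{c} \ge \frac{4}{3} \approx 1.33 .
$$

For example, a 2000 B value is stored compressed only if PGLZ reduces it to 1500 B or less. Data that falls just above or below this boundary thus takes a different access path, with or without decompression.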