# Geometry-Aware Multi-Task Learning for Binaural Audio Generation from Video

**Authors**: Rishabh Garg, Ruohan Gao, Kristen Grauman

## Geometry-Aware Multi-Task Learning for Binaural Audio Generation from Video

Rishabh Garg 1

rishabh@cs.utexas.edu

Ruohan Gao 2

rhgao@cs.stanford.edu

Kristen Grauman 1,3

grauman@cs.utexas.edu

1 The University of Texas at Austin

2 Stanford University

3 Facebook AI Research

## Abstract

Binaural audio provides human listeners with an immersive spatial sound experience, but most existing videos lack binaural audio recordings. We propose an audio spatialization method that draws on visual information in videos to convert their monaural (singlechannel) audio to binaural audio. Whereas existing approaches leverage visual features extracted directly from video frames, our approach explicitly disentangles the geometric cues present in the visual stream to guide the learning process. In particular, we develop a multi-task framework that learns geometry-aware features for binaural audio generation by accounting for the underlying room impulse response, the visual stream's coherence with the sound source(s) positions, and the consistency in geometry of the sounding objects over time. Furthermore, we introduce a new large video dataset with realistic binaural audio simulated for real-world scanned environments. On two datasets, we demonstrate the efficacy of our method, which achieves state-of-the-art results.

## Introduction

Both sight and sound are key drivers of the human perceptual experience, and both convey essential spatial information. For example, a car driving past us is audible-and spatially trackable-even before it crosses our field of view; a bird singing high in the trees helps us spot it with binoculars; a chamber music quartet performance sounds spatially rich, with the instruments' layout on stage affecting our listening experience.

Spatial hearing is possible thanks to the binaural audio received by our two ears. The Interaural Level Difference (ILD) and the Interaural Time Difference (ITD) between the sounds reaching each ear, as well as the shape of the outer ears themselves, all provide spatial effects [42]. Meanwhile, the reflections and reverberations of sound in the environment are a function of the room acoustics-the geometry of the room, its major surfaces, and their materials. For example, we perceive the same audio differently in a long corridor versus a large room, or a room with heavy carpet versus a smooth marble floor.

Videos or other media with binaural audio imitate that rich audio experience for a user, making the media feel more real and immersive. This immersion is important for virtual

©2021. The copyright of this document resides with its authors.

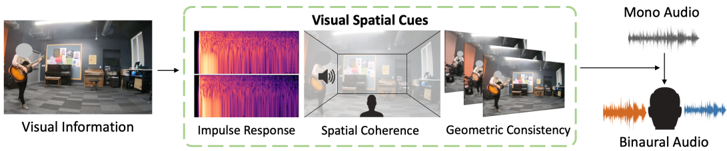

Figure 1: To generate accurate binaural audio from monaural audio, the visuals provide significant cues that can be learnt jointly with audio prediction. Our approach learns to extract spatial information (e.g., the guitar player is on the left), geometric consistency of the position of the sound sources over time, and cues from the inferred binaural impulse response from the surrounding room.

<details>

<summary>Image 1 Details</summary>

### Visual Description

\n

## Diagram: Visual Spatial Cues Processing Pipeline

### Overview

The image depicts a diagram illustrating a pipeline for converting visual information into binaural audio. It shows how visual cues are extracted from a scene and used to generate spatial audio. The process involves stages like impulse response estimation, spatial coherence analysis, and geometric consistency checks before ultimately producing binaural audio for a listener.

### Components/Axes

The diagram is structured as a flow chart with the following components:

* **Visual Information:** An image of a person playing a guitar in a room.

* **Visual Spatial Cues:** A green-bordered box containing four sub-components:

* **Impulse Response:** Represented by a heatmap-like image with purple and yellow hues.

* **Spatial Coherence:** A 3D rendering of a room with a speaker icon in the center.

* **Geometric Consistency:** Multiple images of the same scene from slightly different perspectives.

* **Mono Audio:** A waveform representation of monaural audio.

* **Binaural Audio:** A waveform representation of binaural audio, split into orange and blue channels, directed towards a head silhouette.

### Detailed Analysis or Content Details

The diagram illustrates a sequential process:

1. **Visual Information Input:** The process begins with visual information captured as an image of a person playing a guitar in a room.

2. **Visual Spatial Cues Extraction:** This visual information is then processed to extract visual spatial cues.

* **Impulse Response:** The impulse response is visualized as a heatmap. The colors range from deep purple to bright yellow, suggesting varying levels of intensity or magnitude.

* **Spatial Coherence:** A 3D room rendering with a speaker indicates the analysis of spatial coherence. The speaker is positioned centrally within the room.

* **Geometric Consistency:** Multiple views of the scene are used to ensure geometric consistency. The images show the same scene from slightly different angles.

3. **Audio Conversion:** The extracted visual spatial cues are used to convert mono audio into binaural audio.

4. **Binaural Audio Output:** The final output is binaural audio, represented by two waveforms (orange and blue) directed towards a head silhouette, indicating left and right ear channels.

### Key Observations

The diagram highlights the importance of visual information in generating realistic spatial audio. The use of multiple visual cues (impulse response, spatial coherence, geometric consistency) suggests a robust approach to spatial audio rendering. The conversion from mono to binaural audio indicates the goal of creating a more immersive listening experience.

### Interpretation

This diagram demonstrates a method for creating spatial audio from visual data. The pipeline suggests that by analyzing the visual environment, it's possible to estimate the acoustic properties of the space and generate audio that accurately reflects the perceived location of sound sources. The inclusion of impulse response, spatial coherence, and geometric consistency suggests a sophisticated approach that aims to overcome the limitations of traditional mono audio. The final output of binaural audio, directed towards a head, implies the intention to create a personalized and immersive audio experience for the listener. The diagram is a conceptual illustration of a system, and does not provide specific numerical data or performance metrics. It focuses on the *process* rather than the *results*.

</details>

reality and augmented reality applications, where the user should feel transported to another place and perceive it as such. However, collecting binaural audio data is a challenge. Presently, spatial audio is collected with an array of microphones or specialized dummy rig that imitates the human ears and head. The collection process is therefore less accessible and more costly compared to standard single-channel monaural audio captured with ease from today's ubiquitous mobile devices.

Recent work explores how monaural audio can be upgraded to binaural audio by leveraging the visual stream in videos [23, 34, 63]. The premise is that the visual context provides hints for how to spatialize the sound due to the visible sounding objects and room geometry. While inspiring, existing models are nonetheless limited to extracting generic visual cues that only implicitly infer spatial characteristics.

Our idea is to explicitly model the spatial phenomena in video that influence the associated binaural sound. Going beyond generic visual features, our approach guides binauralization with those geometric cues from the object and environment that dictate how a listener receives the sound in the real world. In particular, we introduce a multi-task learning framework that accounts for three key factors (Fig. 1). First, we require the visual features to be predictive of the room impulse response (RIR), which is the transfer function between the sound sources, 3D environment, and camera/microphone position. Second, we require the visual features to be spatially coherent with the sound, i.e., they can understand the difference when audio is aligned with the visuals and when it is not. Third, we enforce the geometric consistency of objects over time in the video. Whereas existing methods treat audio and visual frame pairs as independent samples, our approach represents the spatio-temporal smoothness of objects in video, which generally do not have dramatic instantaneous changes in their layout.

The main contributions of this work are as follows. Firstly, we propose a novel multitask approach to convert a video's monaural sound to binaural sound by learning audio-visual representations that leverage geometric characteristics of the environment and the spatial and temporal cues from videos. Second, to facilitate binauralization research, we create SimBinaural, a large-scale dataset of simulated videos with binaural sound in photo-realistic 3D indoor scene environments. This new dataset facilitates both learning and quantitative evaluation, allows us to explore the impact of particular parameters in a controlled manner, and even benefits learning in real videos. Finally, we show the efficacy of our method via extensive experiments in generating realistic binaural audio, achieving state-of-the-art results.

## 2 Related Work

Visually-Guided Audio Spatialization Recent work uses video frames to provide a form of self-supervision to implicitly infer the relative positions of sound-making objects. They formulate the problem as an upmixing task from mono to binaural using the visual information. Morgado et al . [34] use 360 videos from YouTube to predict first order ambisonic sound useful for 360 viewing, while Lu et al . [32] use a self-supervised audio spatialization network using visual frames and optical flow. Whereas [32] uses correspondence to learn audio synthesizer ratio masks, which does not necessitate understanding of sound making objects, we enforce understanding of the sound location via spatial coherence in the visual features. For speech synthesis, using the ground truth position and orientation of the source and receiver instead of a video is also explored [43].

More closely related to our problem, the 2.5D visual sound approach by Gao and Grauman generates binaural audio from video [23]. Building on those ideas, Zhou et al . [63] propose an associative pyramid network (APNet) architecture to fuse the modalities and jointly train on audio spatialization and source separation task. Concurrent to our work, Xu et al . [57] propose to generate binaural audio for training from mono audio by using spherical harmonics. In contrast to these methods, we explore a novel framework for learning geometric representations, and we introduce a large-scale photo-realistic video dataset with acoustically accurate binaural information (which will be shared publicly). We outperform the existing methods and show that the new dataset can be used to augment performance.

Audio and 3D Spaces Recent work exploits the complementary nature of audio and the characteristics of the environment in which it is heard or recorded. Prior methods estimate the acoustic properties of materials [47], estimate reverberation time and equalization of the room using an actual 3D model of a room [50], and learn audio-visual correspondence from video [58]. Chen et al . [7] introduce the SoundSpaces audio platform to perform audio-visual navigation in scanned 3D environments, using binaural audio to guide policy learning. Ongoing work continues to explore audio-visual navigation models for embodied agents [8, 9, 14, 21, 33]. Other work predicts depth maps using spatial audio [11] and learns representations via interaction using echoes recorded in indoor 3D simulated environments [25]. In contrast to all of the above, we are interested in a different problem of generating accurate spatial binaural sound from videos. We do not use it for navigation nor to explicitly estimate information about the environment. Rather, the output of our model is spatial sound to provide a human listener with an immersive audio-visual experience.

Audio-Visual Learning Audio-visual learning has a long history, and has enjoyed a resurgence in the vision community in recent years. Cross-modal learning is explored to understand the natural synchronisation between visuals and the audio [3, 5, 39]. Audio-visual data is leveraged for audio-visual speech recognition [12, 28, 59, 62], audio-visual event localization [51, 52, 55], sound source localization [4, 29, 45, 49, 51, 60], self-supervised representation learning [25, 31, 35, 37, 39], generating sounds from video [10, 19, 38, 64], and audio-visual source separation for speech [1, 2, 13, 16, 18, 37], music [20, 22, 56, 60, 61], and objects [22, 24, 53]. In contrast to all these methods, we perform a different task: to produce binaural two-channel audio from a monaural audio clip using a video's visual stream.

## 3 Approach

Our goal is to generate binaural audio from videos with monaural audio. In this section, we first formally describe the problem (Section 3.1). Then we introduce our proposed multi-task

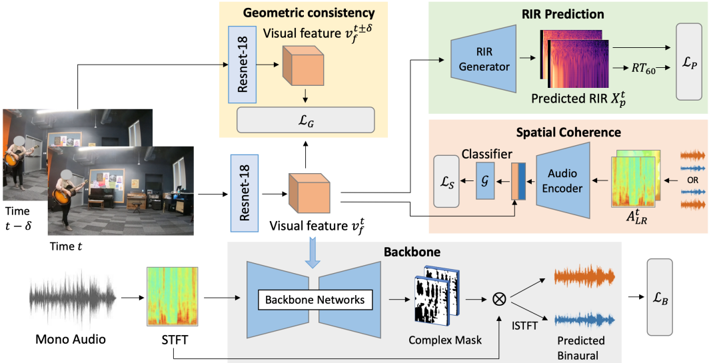

Figure 2: Proposed network. The network takes the visual frames and monaural audio as input. The ResNet-18 visual features v t f are trained in a multi-task setting. The features v t f are used to directly predict the RIR via a decoder (top right). Audio features from binaural audio, which might have flipped channels, are combined with v t f and used to train a spatial coherence classifier G (middle right). Two temporally adjacent frames are also used to ensure geometric consistency (top center). The features v t f are jointly trained with the backbone network (bottom) to predict the final binaural audio output.

<details>

<summary>Image 2 Details</summary>

### Visual Description

\n

## Diagram: Audio-Visual Scene Analysis and Sound Event Localization

### Overview

This diagram illustrates a system for predicting Room Impulse Responses (RIR) and localizing sound events within a visual scene. The system takes mono audio and visual input (frames at time t-δ and t) and processes them through several modules to estimate RIRs and predict binaural audio. The diagram is structured into three main processing branches: Geometric Consistency, RIR Prediction, and Spatial Coherence.

### Components/Axes

The diagram consists of the following key components:

* **Input:** Mono Audio, Visual Frames (Time t-δ, Time t)

* **Modules:** ResNet-18 (x2), RIR Generator, Classifier, Audio Encoder, Backbone Networks, ISTFT

* **Outputs:** Predicted RIR (X<sub>p</sub><sup>RT60</sup>), Predicted Binaural Audio

* **Loss Functions:** L<sub>C</sub>, L<sub>S</sub>, L<sub>B</sub>

* **Intermediate Representations:** Visual Feature (v<sub>t</sub><sup>δ</sup>, v<sub>t</sub>), STFT, Complex Mask, A<sub>LR</sub>

### Detailed Analysis or Content Details

**1. Visual Processing (Geometric Consistency & Spatial Coherence):**

* Two visual frames, labeled "Time t-δ" and "Time t", are input into separate ResNet-18 networks.

* The ResNet-18 networks extract "Visual Feature" representations, denoted as v<sub>t</sub><sup>δ</sup> and v<sub>t</sub> respectively. These are represented as cube-shaped blocks.

* The difference between these visual features (v<sub>t</sub><sup>δ</sup> and v<sub>t</sub>) is fed into a loss function L<sub>C</sub>, representing Geometric Consistency.

**2. RIR Prediction:**

* The visual feature v<sub>t</sub><sup>δ</sup> is input into an "RIR Generator".

* The RIR Generator outputs a "Predicted RIR" (X<sub>p</sub><sup>RT60</sup>), visualized as a spectrogram-like image with purple and red hues.

* The Predicted RIR is then passed through a block labeled "RT60" and then "p".

**3. Spatial Coherence:**

* The visual feature v<sub>t</sub> is input into a "Classifier".

* The Classifier outputs a representation labeled "L<sub>S</sub>" and "g".

* The visual feature v<sub>t</sub> is also input into an "Audio Encoder".

* The Audio Encoder outputs a representation labeled "A<sub>LR</sub>".

</details>

setting (Section 3.2). Next we describe the training and inference method (Section 3.3), and finally we describe the proposed SimBinaural dataset (Section 3.4).

## 3.1 Problem Formulation

Our objective is to map the monaural sound from a given video to spatial binaural audio. The input video may have one or more sound sources, and neither their positions in the 3D scene nor their positions in the 2D video frame are given.

For a video V with frames { v 1 ... v T } and monaural audio a t M , we aim to predict a two channel binaural audio output { a t L , a t R } . Whereas a single-channel audio a t M lacks spatial characteristics, two-channel binaural audio { a t L , a t R } conveys two distinct waveforms to the left and right ears separately and hence provides spatial effects to the listener. By coupling the monaural audio with the visual stream, we aim to leverage the spatial cues from the pixels to infer how to spatialize the sound. We first transfer the input audio waveforms into the time-frequency domain using the Short-Time Fourier Transformation (STFT). We aim to predict the binaural audio spectrograms {A t L , A t R } from the input mono spectrogram A t M , where A t X = STFT ( a t X ) , conditioned on visual features v t f from the video frames at time t .

## 3.2 Geometry-Aware Multi-Task Binauralization Network

Our approach has four main components: the backbone for converting mono audio to binaural by injecting the visual information, the spatial coherence module that learns the relative alignment of the spatial sound and frame, an RIR prediction module that requires the room impulse response to be predictable from the video frames, and the geometric consistency module that enforces consistency of objects over time.

Backbone Loss First, we define the backbone loss within our multi-task framework (Fig. 2, bottom). This backbone network is used to transform the input monaural spectrogram A t M to binaural ones. During training, the mono audio is obtained by averaging the two channels a t M =( a t L + a t R ) / 2 and hence the spatial information is lost. Rather than directly predict the two channels of binaural output, we predict the difference of the two channels, following [23]. This better captures the subtle distinction of the channels and avoids collapse to the easy case of predicting the same output for both channels. We predict a complex mask M t D , which, multiplied with the original audio spectrogram A t M , gives the predicted difference spectrogram A t D ( pred ) = M t D · A t M . The true difference spectrogram of the training input A t D is the STFT of a t L -a t R . We minimize the distance between these two spectrograms: ‖A t D -A t D ( pred ) ‖ 2 2 . We also predict the two channels via two complex masks M t L and M t R , one for each channel, to obtain the predicted channel spectrograms A t L ( pred ) and A t R ( pred ) like above. This gives us the overall backbone objective:

<!-- formula-not-decoded -->

Spatial Coherence We encourage the visual features to have geometric understanding of the relative positions of the sound source and receiver via an audio-visual feature alignment prediction term. This loss requires the predicted audio to correctly capture which channel is left and right with respect to the visual information. This is crucial to achieve the proper spatial effect while watching videos, as the audio needs to match the observed visuals' layout.

In particular, we incorporate a classifier to identify whether the visual input is aligned with the audio. The classifier G combines the binaural audio A LR = {A t L , A t R } and the visual features v t f to classify if the audio and visuals agree. In this way, the visual features are forced to reason about the relative positions of the sound sources and learn to find the cues in the visual frames which dictate the direction of sound heard. During training, the original ground truth samples are aligned, and we create misaligned samples by flipping the two channels in the ground truth audio to get A LR = {A t R , A t L } . We calculate the binary cross entropy (BCE) loss for the classifier's prediction of whether the audio is flipped or not, c = G ( A LR , v t f ) , and the indicator ˆ c denoting if the audio is flipped, yielding the spatial coherence loss:

<!-- formula-not-decoded -->

Room Impulse Response and Reverberation Time Prediction The third component of our multi-task model trains the visual features to be predictive of the room impulse response (RIR). An impulse response gives a concise acoustic description of the environment, consisting of the initial direct sound, the early reflections from the surfaces of the room, and a reverberant tail from the subsequent higher order reflections between the source and receiver. The visual frames convey information like the layout of the room and the sound source with respect to the receiver, which in part form the basis of the RIR. Since we want our audio-visual feature to be a latent representation of the geometry of the room and the source-receiver position pair, we introduce an auxiliary task to predict the room IR directly from the visual frames via a generator on the visual features. Furthermore, we require the features to be predictive of the reverberation time RT 60 metric, which is the time it takes the energy of the impulse to decay 60dB, and can be calculated from the energy decay curve of the IR [48]. The RT 60 is commonly used to characterize the sound properties of a room; we employ it as a low-dimensional target here to guide feature learning alongside the highdimensional RIR spectrogram prediction.

We convert the ground truth binaural impulse response signal { rL , rR } to the frequency domain using the STFT and obtain magnitude spectrograms X for each channel. The IR prediction network consists of a generator which performs upconvolutions on the visual features v t f to obtain a predicted magnitude spectrogram X t ( pred ) . We minimize the euclidean distance between the predicted RIR X t ( pred ) , and the ground truth X t gt . Additionally, we obtain the RIR waveform from the predicted spectrogram X t ( pred ) via the Griffin-Lim algorithm [26, 41] and compute the RT 60 ( pred ) . We minimize the L1 distance between the predicted RT 60 ( pred ) and the ground truth RT 60 ( gt ) . Thus, the overall RIR prediction loss is:

<!-- formula-not-decoded -->

Geometric Consistency Since the videos are continuous samples over time rather than individual frames, our fourth and final loss regularizes the visual features by requiring them to have spatio-temporal geometric consistency. The position of the source(s) of sound and the position of the camera-as well as the physical environment where the video is recordeddo not typically change instantaneously in videos. Therefore, there is a natural coherence between the sound in a video observed at two points that are temporally close. Since visual features are used to condition our binaural prediction, we encourage our visual features to learn a latent representation that is coherent across short intervals of time. Specifically, the visual features v t f and v t ± d f should be relatively similar to each other to produce audio with fairly similar spatial effects. Specifically, the geometric consistency loss is:

<!-- formula-not-decoded -->

where a is the margin allowed between two visual features. We select a random frame ± 1 second from t , so -1 ≤ d ≤ 1. This ensures that similar placements of the camera with respect to the audio source should be represented with similar features, while the margin allows room for dissimilarity for the changes due to time. Since the underlying visual features are regularized to be similar, the predicted audio conditioned on these visual features is also encouraged to be temporally consistent.

## 3.3 Training and Inference

During training, the mono audio is obtained by taking the mean of the two channels of the ground truth audio a t m =( a t L + a t R ) / 2. The visual features v t f are reduced in dimension, tiled, and concatenated with the output of the audio encoder to fuse the information from the audio and visual streams. The overall multi-task loss is a combination of the losses (Equations 1-4) described earlier:

<!-- formula-not-decoded -->

where l B , l S , l G and l P are the scalar weights used to determine the effect of each loss during training, set using validation data. To generate audio at test time, we only require the mono audio and visual frames. The predicted spectrograms are used to obtain the predicted difference signal a t D ( pred ) and two-channel audio { a t L , a t R } via an inverse Short-Time Fourier Transformation (ISTFT) operation.

## 3.4 SimBinaural Dataset

Weexperiment with both real world video (FAIR-Play [23]) and video from scanned environments with high quality simulated audio. For the latter, to facilitate large-scale experimentationand to augment learning from real videos-we create a new dataset called SimBinaural of

Figure 3: Example frames from the SimBinaural dataset.

<details>

<summary>Image 3 Details</summary>

### Visual Description

\n

## Photographs: Interior Room Views

### Overview

The image consists of four photographs arranged horizontally, depicting interior views of what appear to be luxury residences or high-end commercial spaces. The photographs showcase different rooms and architectural styles. There is no quantitative data or charts present; the information is purely visual and descriptive.

### Components/Axes

There are no axes, legends, or scales present in the image. The components are the rooms themselves, with their architectural features and furnishings.

### Detailed Analysis or Content Details

**Image 1 (Leftmost):**

This image shows a room with dark wood beams on the ceiling and a light-colored floor. A purple wall is visible, along with what appears to be artwork or a mirror. A green sofa is partially visible. The room has large windows.

**Image 2:**

This image depicts a room with arched doorways and ornate architectural details. There are comfortable-looking chairs and sofas, and a lamp is visible. The room appears to be well-lit and spacious.

**Image 3:**

This image shows a doorway leading into a dining room. A long table is visible, set for a meal. There are chairs around the table, and artwork on the walls. The room has a neutral color scheme. A large orange floral arrangement is visible.

**Image 4 (Rightmost):**

This image shows a kitchen or dining area with wooden cabinets and countertops. There is a green plant and a chandelier. The room appears to be well-lit and modern.

### Key Observations

The photographs showcase a consistent theme of luxury and high-end design. The architectural styles vary, but all rooms appear to be well-maintained and aesthetically pleasing. There is no numerical data or measurable information present.

### Interpretation

The images likely serve as examples of interior design or architectural projects. They could be used in a portfolio, a real estate listing, or a design magazine. The lack of quantitative data suggests that the primary purpose of the images is to convey a sense of style and quality, rather than to present specific measurements or statistics. The images suggest a focus on comfort, elegance, and attention to detail. The variety of rooms suggests a comprehensive design approach, encompassing living spaces, dining areas, and kitchens. The consistent high quality suggests a target audience with discerning tastes and a willingness to invest in luxury.

</details>

Table 1: A comparison of the data in FAIR-Play and the large scale data we generated.

| Dataset | #Videos | Length (hrs) | #Rooms | RIR |

|----------------|-----------|----------------|----------|-------|

| FAIR-Play [23] | 1,871 | 5.2 | 1 | No |

| SimBinaural | 21,737 | 116.1 | 1,020 | Yes |

simulated videos in photo-realistic 3D indoor scene environments. 1 The generated videos, totalling over 100 hours, resemble real-world audio recordings and are sampled from 1,020 rooms in 80 distinct environments; each environment is a multi-room home. Using the publicly available SoundSpaces 2 audio simulations [7] together with the Habitat simulator [46], we create realistic videos with binaural sounds for publicly available 3D environments in Matterport3D [6]. See Fig. 3 and Supp. video. Our resulting dataset is much larger and more diverse than the widely used FAIR-Play dataset [23] which is real video but is limited to 5 hours of recordings in one room (Table 1).

To construct the dataset, we insert diverse 3D models from poly.google.com of various instruments like guitar, violin, flute etc. and other sound-making objects like phones and clocks into the scene. To generate realistic binaural sound in the environment as if it is coming from the source location and heard at the camera position, we convolve the appropriate SoundSpaces [7] room impulse response with an anechoic audio waveform (e.g., a guitar playing for an inserted guitar 3D object). Using this setup, we capture videos with simulated binaural sound. The virtual camera and attached microphones are moved along trajectories such that the object remains in view, leading to diversity in views of the object and locations within each video clip. Please see Supp. for details.

## 4 Experiments

We validate our approach on both FAIR-Play [23] (an existing real video benchmark) and our new SimBinaural dataset. We compare to the following baselines:

- Flipped-Visual: We flip the visual frame horizontally to provide incorrect visual information while testing. The other settings are the same as our method.

- Audio Only: We provide only monaural audio as input, with no visual frames, to verify if the visual information is essential to learning.

- Mono-Mono: Both channels have the same input monaural audio repeated as the twochannel output to verify if we are actually distinguishing between the channels.

- Mono2Binaural [23] : A state-of-the-art 2.5D visual sound model for this task. We use the authors' code to evaluate in the settings as ours.

- APNet [63] : A state-of-the-art model that handles both binauralization and audio source separation. We use the APNet network from their method and train only on binaural data (rather than stereo audio). We use the authors' code.

1 The SimBinaural dataset was constructed at, and will be released by, The University of Texas at Austin.

2 SoundSpaces [7] provides room impulse responses at a spatial resolution of 1 meter. These state-of-the-art RIRs capture how sound from each source propagates and interacts with the surrounding geometry and materials, modeling all of the major real-world features of the RIR: direct sounds, early specular/diffuse reflections, reverberations, binaural spatialization, and frequency dependent effects from materials and air absorption.

Table 2: Binaural audio prediction errors on the FAIR-Play and SimBinaural datasets. For both metrics, lower is better.

| | FAIR-Play | FAIR-Play | SimBinaural | SimBinaural | SimBinaural | SimBinaural |

|--------------------|-------------|-------------|---------------|---------------|----------------|----------------|

| | | | Scene-Split | Scene-Split | Position-Split | Position-Split |

| | STFT | ENV | STFT | ENV | STFT | ENV |

| Mono-Mono | 1.215 | 0.157 | 1.356 | 0.163 | 1.348 | 0.168 |

| Audio-Only | 1.102 | 0.145 | 0.973 | 0.135 | 0.932 | 0.130 |

| Flipped-Visual | 1.134 | 0.152 | 1.082 | 0.142 | 1.075 | 0.141 |

| Mono2Binaural [23] | 0.927 | 0.142 | 0.874 | 0.129 | 0.805 | 0.124 |

| APNet [63] | 0.904 | 0.138 | 0.857 | 0.127 | 0.773 | 0.122 |

| Backbone+IR Pred | n/a | n/a | 0.801 | 0.124 | 0.713 | 0.117 |

| Backbone+Spatial | 0.873 | 0.134 | 0.837 | 0.126 | 0.756 | 0.120 |

| Backbone+Geom | 0.874 | 0.135 | 0.828 | 0.125 | 0.731 | 0.118 |

| Our Full Model | 0.869 | 0.134 | 0.795 | 0.123 | 0.691 | 0.116 |

- PseudoBinaural [57] : A state-of-the-art model that uses additional data to augment training. We use the authors' public pre-trained model.

We evaluate two standard metrics, following [23, 34, 63]: 1) STFT Distance , the euclidean distance between the predicted and ground truth STFT spectrograms, which directly measures how accurate is our produced spectrogram, 2) Envelope Distance (ENV) which measures the euclidean distance between the envelopes of the predicted raw audio signal and the ground truth and can further capture the perceptual similarity.

Implementation details All networks are written in PyTorch [40]. The backbone network is based upon the networks used for 2.5D visual sound [23] and APNet [63]. The audio network consists of a U-Net [44] type architecture while the RIR generator is adapted from GANSynth [15]. To preprocess both datasets, we follow the standard steps from [23]. We resample all the audio to 16kHz and for training the backbone, we use 0.63s clips of the 10s audio and the corresponding frame. Frames are extracted at 10fps. The visual frames are randomly cropped to 448 × 224. For testing, we use a sliding window of 0.1s to compute the binaural audio for all methods. Please see Supp. for more details.

SimBinaural results We evaluate on two data splits: 1) Scene-Split , where the train and test set have disjoint scenes from Matterport3D [6] and hence the room of the videos at test time has not been seen before; and 2) Position-Split , where the splits may share the same Matterport3D scene/room but the exact configuration of the source object and receiver position is not seen before.

Table 2 (right) shows the results. The table also ablates the parts of our model. Our model outperforms all the baselines, including the two state-of-the-art prior methods. In addition, Table 2 confirms that Scene-Split is a fundamentally harder task. This is because we must predict the sound, as well as other characteristics like the IR, from visuals distinct from those we have observed before. This forces the model to generalize its encoding to generic visual properties (wall orientations, major furniture, etc.) that have intra-class variations and geometry changes compared to the training scenes.

The ablations shed light on the impact of each of the proposed losses in our multi-task framework. The full model uses all the losses as in Eqn 5. This outperforms other methods significantly on both splits. It also outperforms using each of the losses individually, which demonstrates the losses can combine to jointly learn better visual features for generating spatial audio.

FAIR-Play results Table 2 (left) shows the results on the real video benchmark FAIR-Play using the standard split. Here, we omit the IR prediction network for our method, since FAIR-Play lacks ground truth impulse responses (which we need for training). The Backbone+Spatial and Backbone+Geom are the same as above. Both

Table 3: Results on FAIR-Play when additional data is used for training.

| Method | STFT | ENV |

|---------------------|--------|-------|

| APNet [63] | 1.291 | 0.162 |

| PseudoBinaural [57] | 1.268 | 0.161 |

| Ours | 1.234 | 0.16 |

| Ours+SimBinaural | 1.175 | 0.154 |

variants of our method outperform the state-of-the-art. Therefore, enforcing the geometric and spatial constraints is beneficial to the binaural audio generation task. We get the best results when we combine both the losses in our framework.

To further evaluate the utility of our SimBinaural dataset, we next jointly train with both SimBinaural and FAIR-Play, then test on a challenging split of FAIR-Play in which the test scenes are non overlapping, as proposed in [57]. We compare our method with AugmentPseudoBinaural [57] 3 which also uses additional generated training data. Our method with SimBinaural outperforms other methods (Table 3). This is an important result, as it demonstrates that SimBinaural can be leveraged to improve performance on real video.

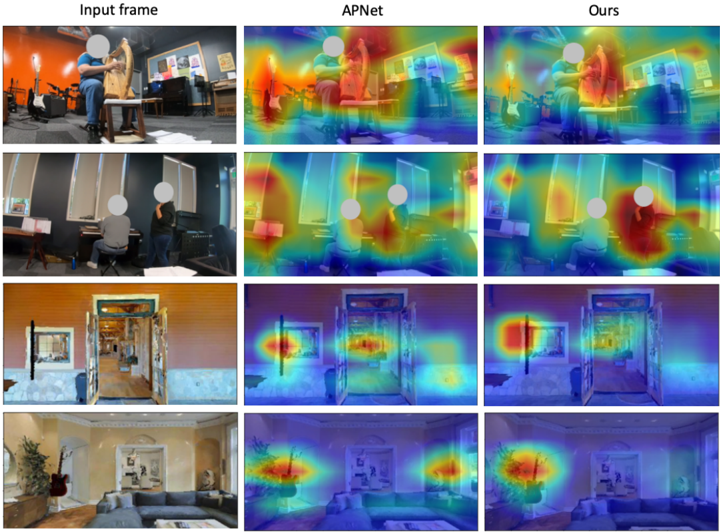

User study Next, we present two user studies to validate whether the predicted binaural sound does indeed provide an immersive and spatially accurate experience for human listeners. Twenty participants with normal hearing were presented with 20 videos from the test set of the two datasets. They were asked to rate the quality in two ways: 1) users were given only the audio and asked to choose from which di-

<details>

<summary>Image 4 Details</summary>

### Visual Description

\n

## Bar Charts: Sound Localization and Matching Performance

### Overview

The image presents two bar charts side-by-side. The left chart displays "Sound Localization" accuracy in percentage, comparing different methods: Mono, APNet, Ours, and Ground Truth. The right chart shows "Matching" performance in percentage, comparing Mono, APNet, and Ours. Each bar includes error bars representing variance.

### Components/Axes

**Left Chart (Sound Localization):**

* **Title:** Sound Localization

* **X-axis Label:** Methods (Mono, APNet, Ours, Ground Truth)

* **Y-axis Label:** Accuracy (%)

* **Y-axis Scale:** 0 to 100, with increments of 10.

**Right Chart (Matching):**

* **Title:** Matching

* **X-axis Label:** Methods (Mono, APNet, Ours)

* **Y-axis Label:** Percentage (%)

* **Y-axis Scale:** 0 to 90, with increments of 10.

### Detailed Analysis or Content Details

**Sound Localization Chart:**

* **Mono:** The bar is red, and its height is approximately 15% ± 5%. The bar slopes upward.

* **APNet:** The bar is green, and its height is approximately 52% ± 5%. The bar slopes upward.

* **Ours:** The bar is blue, and its height is approximately 68% ± 5%. The bar slopes upward.

* **Ground Truth:** The bar is orange, and its height is approximately 82% ± 5%. The bar slopes upward.

**Matching Chart:**

* **Mono:** The bar is red, and its height is approximately 25% ± 5%. The bar slopes upward.

* **APNet:** The bar is green, and its height is approximately 62% ± 5%. The bar slopes upward.

* **Ours:** The bar is blue, and its height is approximately 74% ± 5%. The bar slopes upward.

### Key Observations

* In both charts, the "Ours" method consistently outperforms "Mono" and "APNet".

* The "Ground Truth" in the Sound Localization chart represents the highest achievable accuracy.

* The error bars suggest some variability in the results for each method.

* The performance gap between "Mono" and the other methods is substantial.

### Interpretation

The data suggests that the proposed method ("Ours") significantly improves both sound localization accuracy and matching performance compared to the baseline methods ("Mono" and "APNet"). The "Ground Truth" serves as an upper bound for sound localization accuracy, indicating that there is still room for improvement even with the "Ours" method. The consistent upward trend of the bars indicates a clear positive correlation between the method and the performance metric. The error bars indicate that the results are not deterministic, and there is some variance in the performance of each method. The large difference between "Mono" and the other methods suggests that the improvements made in "APNet" and "Ours" are substantial and meaningful. The two charts are related in that they both evaluate the performance of the same methods, but on different tasks (sound localization and matching). This suggests that the "Ours" method is generally effective for both tasks.

</details>

Truth

Figure 4: User study results. See text for details.

rection (left/right/center) they heard the audio; 2) given a pair of audios and a reference frame, the users were asked to choose which audio gives a binaural experience closer to the provided ground truth. As can be seen in Fig. 4, users preferred our method both for the accuracy of the direction of sound (left) and binaural audio quality (right).

Visualization Figure 5 shows the t-SNE projections [54] of the visual features from SimBinaural colored by the RT 60 of the audio clip. While the features from our method (left) can infer the RT 60 characteristics, the ones from APNet [63] (center) are randomly distributed. Simultaneously, our features also accurately capture the angle of the object from the center (right). Fig. 6 shows the activation maps of the visual network. While APNet produces more diffuse maps, our method localizes the object better within the image. This indicates that the visual features in our method are better at identifying the regions which might be emitting sound to generate more accurate binaural audio.

## 5 Conclusion

We presented a multi-task approach to learn geometry-aware visual features for mono to binaural audio conversion in videos. Our method exploits the inherent room and object

3 The pre-trained model provided by PseudoBinaural [57] is trained on a different split instead of the standard split from [23] and hence it is not directly comparable in Table 2. We evaluate on the new split in Table 3.

Figure 5: t-SNE of visual features colored by RT 60 for our method (left) and APNet (center); and colored by angle of the object from the center (right).

<details>

<summary>Image 5 Details</summary>

### Visual Description

## Scatter Plots: RT60 vs. Angle with Color Gradient

### Overview

The image presents three scatter plots arranged horizontally. Each plot displays a relationship between two variables, RT60 and an Angle (in degrees), with a third variable represented by the color of each data point. The plots appear to visualize data from a single experiment or dataset, potentially showing how the angle and RT60 relate to each other under varying conditions.

### Components/Axes

Each plot shares the following components:

* **X-axis:** Labeled "RT60", with a scale ranging from approximately 0.2 to 1.0, with increments of 0.2.

* **Y-axis:** Labeled "Angle (deg)", with a scale ranging from approximately -40 to -10 degrees, with increments of 10.

* **Color Scale (Legend):** Positioned to the right of each plot. The scale represents a third variable, with colors ranging from purple (approximately 0.2) to yellow (approximately 1.0). The scale is labeled "RT60" despite being used to color the points.

* **Data Points:** Each plot contains a cloud of colored dots, representing individual data points.

### Detailed Analysis or Content Details

**Plot 1 (Leftmost):**

* The data points are distributed in a roughly circular pattern.

* The color gradient appears relatively uniform across the circle, with a slight concentration of purple points at the bottom and yellow points at the top.

* Approximate data points (estimated from visual inspection):

* RT60 ≈ 0.3, Angle ≈ -30 (Purple)

* RT60 ≈ 0.7, Angle ≈ -10 (Yellow)

* RT60 ≈ 0.5, Angle ≈ -20 (Green)

**Plot 2 (Center):**

* The data points are elongated vertically, forming a roughly teardrop shape.

* The color gradient shows a clear trend: points with lower RT60 values (purple) are concentrated at the bottom, while points with higher RT60 values (yellow) are concentrated at the top.

* Approximate data points:

* RT60 ≈ 0.3, Angle ≈ -35 (Purple)

* RT60 ≈ 0.8, Angle ≈ -10 (Yellow)

* RT60 ≈ 0.6, Angle ≈ -25 (Green)

**Plot 3 (Rightmost):**

* The data points are more clustered than in the previous plots, forming a roughly elliptical shape.

* The color gradient is similar to Plot 2, with lower RT60 values (purple) at the bottom and higher RT60 values (yellow) at the top.

* Approximate data points:

* RT60 ≈ 0.3, Angle ≈ -40 (Purple)

* RT60 ≈ 0.9, Angle ≈ -10 (Yellow)

* RT60 ≈ 0.7, Angle ≈ -20 (Green)

### Key Observations

* All three plots show a positive correlation between RT60 and Angle. As RT60 increases, the Angle tends to increase (become less negative).

* The distribution of data points varies significantly between the plots, suggesting different underlying conditions or groupings within the dataset.

* The color gradient consistently reflects the RT60 value, providing an additional dimension of information.

### Interpretation

The data suggests a relationship between RT60, Angle, and the color-coded variable (which is also RT60). RT60, often used in acoustics, represents the reverberation time of a space. The Angle, likely representing an incident or reflection angle, could be related to the direction of sound or a surface. The positive correlation indicates that as the reverberation time increases, the angle also increases.

The differences in the distribution of data points across the three plots could be due to:

* **Different experimental conditions:** Each plot might represent data collected under different settings (e.g., different room sizes, materials, or sound sources).

* **Subgroups within the data:** The data might be divided into distinct groups based on other factors not shown in the plots.

* **Noise or outliers:** Some plots might contain more noise or outliers than others, affecting the overall distribution.

The consistent color gradient reinforces the relationship between RT60 and the color variable, suggesting that the color is a reliable indicator of RT60 value. The plots provide a visual representation of how these variables interact, potentially aiding in the understanding of acoustic behavior in different environments.

</details>

Figure 6: Qualitative visualization of the activation maps for the visual network for APNet [63] and ours. We can see that while the activation maps for APNet [63] are diffused and focusing on nonessential parts like objects in the background, our method focuses more on the object/region producing the sound and its location.

<details>

<summary>Image 6 Details</summary>

### Visual Description

\n

## Image Analysis: Heatmap Comparison of Attention Mechanisms

### Overview

The image presents a comparative analysis of attention mechanisms applied to four different input images. Three columns display the results: "Input frame" (the original image), "APNet" (attention map generated by the APNet model), and "Ours" (attention map generated by a different, unnamed model). Each row represents a different scene. The attention maps are visualized as heatmaps overlaid on the original images, with warmer colors (red, orange, yellow) indicating higher attention and cooler colors (blue, purple) indicating lower attention.

### Components/Axes

The image is structured as a 4x3 grid.

- **Columns:**

- "Input frame": Original image.

- "APNet": Attention map generated by the APNet model.

- "Ours": Attention map generated by the proposed model.

- **Rows:** Four distinct scenes are presented.

- **Heatmap Color Scale:** The heatmap uses a gradient from blue (low attention) to red (high attention), passing through purple, indigo, green, yellow, and orange. There are no explicit numerical values associated with the color scale.

### Detailed Analysis or Content Details

The image does not contain numerical data. The analysis focuses on the visual distribution of attention in each heatmap.

**Row 1:**

- **Input frame:** Shows a person working at a computer in an office setting.

- **APNet:** Highlights the person's head and upper body, with some attention on the computer screen.

- **Ours:** Highlights the person's head and upper body more distinctly, with a more focused attention on the face and hands.

**Row 2:**

- **Input frame:** Shows a person sitting at a desk, possibly reading or writing.

- **APNet:** Highlights the person's head and upper body, with some attention on the desk area.

- **Ours:** Highlights the person's head and upper body with greater clarity, and also focuses attention on the hands and the objects on the desk.

**Row 3:**

- **Input frame:** Shows a doorway and a hallway.

- **APNet:** Highlights the doorway and the area immediately around it.

- **Ours:** Highlights the doorway and the hallway more broadly, with a more even distribution of attention.

**Row 4:**

- **Input frame:** Shows a living room with a sofa and a wall with decorations.

- **APNet:** Highlights the sofa and the wall decorations.

- **Ours:** Highlights the sofa and the wall decorations with a more focused attention on the central area of the sofa.

### Key Observations

- The "Ours" model consistently produces more focused and detailed attention maps compared to the "APNet" model.

- Both models tend to focus attention on people when they are present in the scene.

- The "Ours" model appears to be better at identifying and highlighting specific objects within a scene.

- The attention maps are not simply highlighting the brightest or most visually salient areas of the image; they seem to be focusing on semantically relevant regions.

### Interpretation

The image demonstrates a comparison of two attention mechanisms, "APNet" and "Ours," in terms of their ability to focus on relevant regions of an image. The heatmaps suggest that the "Ours" model is more effective at capturing the salient features of a scene and directing attention to the most important objects or regions. This could be due to differences in the model architecture, training data, or optimization techniques. The consistent improvement of "Ours" across different scenes suggests a more robust and generalizable attention mechanism. The fact that attention is focused on people and objects, rather than just bright areas, indicates that the models are learning to understand the semantic content of the images. This is crucial for tasks such as object detection, image captioning, and visual question answering. The image provides qualitative evidence that the "Ours" model is a promising approach for improving attention mechanisms in computer vision.

</details>

geometry and spatial information encoded in the visual frames to generate rich binaural audio. We also generated a large-scale video dataset with binaural audio in photo-realistic environments to better understand and learn the relation between visuals and binaural audio. This dataset will be made publicly available to support further research in this direction. Our state-of-the-art results on two datasets demonstrate the efficacy of our proposed formulation. In future work we plan to explore how semantic models of object categories' sounds could benefit the spatialization task.

Acknowledgements Thanks to Changan Chen for help with experiments, Tushar Nagarajan for feedback on paper drafts, and the UT Austin vision group for helpful discussions. UT Austin is supported by NSF CNS 2119115 and a gift from Google. Ruohan Gao was supported by a Google PhD Fellowship.

## References

- [1] Triantafyllos Afouras, Joon Son Chung, and Andrew Zisserman. The conversation: Deep audio-visual speech enhancement. In Interspeech , 2018.

- [2] Triantafyllos Afouras, Joon Son Chung, and Andrew Zisserman. My lips are concealed: Audio-visual speech enhancement through obstructions. In ICASSP , 2019.

- [3] Relja Arandjelovic and Andrew Zisserman. Look, listen and learn. In ICCV , 2017.

- [4] Relja Arandjelovi´ c and Andrew Zisserman. Objects that sound. In ECCV , 2018.

- [5] Yusuf Aytar, Carl Vondrick, and Antonio Torralba. Soundnet: Learning sound representations from unlabeled video. In NeurIPS , 2016.

- [6] Angel Chang, Angela Dai, Thomas Funkhouser, Maciej Halber, Matthias Niessner, Manolis Savva, Shuran Song, Andy Zeng, and Yinda Zhang. Matterport3d: Learning from RGB-D data in indoor environments. International Conference on 3D Vision (3DV) , 2017. MatterPort3D dataset license available at: http://kaldir.vc.in. tum.de/matterport/MP\_TOS.pdf .

- [7] Changan Chen, Unnat Jain, Carl Schissler, Sebastia Vicenc Amengual Gari, Ziad AlHalah, Vamsi Krishna Ithapu, Philip Robinson, and Kristen Grauman. Soundspaces: Audio-visual navigation in 3d environments. In ECCV , 2020.

- [8] Changan Chen, Sagnik Majumder, Ziad Al-Halah, Ruohan Gao, Santhosh Kumar Ramakrishnan, and Kristen Grauman. Learning to set waypoints for audio-visual navigation. In ICLR , 2020.

- [9] Changan Chen, Ziad Al-Halah, and Kristen Grauman. Semantic audio-visual navigation. In CVPR , 2021.

- [10] Peihao Chen, Yang Zhang, Mingkui Tan, Hongdong Xiao, Deng Huang, and Chuang Gan. Generating visually aligned sound from videos. IEEE TIP , 2020.

- [11] Jesper Haahr Christensen, Sascha Hornauer, and X Yu Stella. Batvision: Learning to see 3d spatial layout with two ears. In ICRA , 2020.

- [12] Joon Son Chung, Andrew Senior, Oriol Vinyals, and Andrew Zisserman. Lip reading sentences in the wild. In CVPR , 2017.

- [13] Soo-Whan Chung, Soyeon Choe, Joon Son Chung, and Hong-Goo Kang. Facefilter: Audio-visual speech separation using still images. In INTERSPEECH , 2020.

- [14] Victoria Dean, Shubham Tulsiani, and Abhinav Gupta. See, hear, explore: Curiosity via audio-visual association. In NeurIPS , 2020.

- [15] Jesse Engel, Kumar Krishna Agrawal, Shuo Chen, Ishaan Gulrajani, Chris Donahue, and Adam Roberts. Gansynth: Adversarial neural audio synthesis. In ICLR , 2019.

- [16] Ariel Ephrat, Inbar Mosseri, Oran Lang, Tali Dekel, Kevin Wilson, Avinatan Hassidim, William T Freeman, and Michael Rubinstein. Looking to listen at the cocktail party: A speaker-independent audio-visual model for speech separation. In SIGGRAPH , 2018.

- [17] Frederic Font, Gerard Roma, and Xavier Serra. Freesound technical demo. In Proceedings of the 21st ACM International Conference on Multimedia , 2013.

- [18] Aviv Gabbay, Asaph Shamir, and Shmuel Peleg. Visual speech enhancement. In INTERSPEECH , 2018.

- [19] Chuang Gan, Deng Huang, Peihao Chen, Joshua B Tenenbaum, and Antonio Torralba. Foley music: Learning to generate music from videos. In ECCV , 2020.

- [20] Chuang Gan, Deng Huang, Hang Zhao, Joshua B Tenenbaum, and Antonio Torralba. Music gesture for visual sound separation. In CVPR , 2020.

- [21] Chuang Gan, Yiwei Zhang, Jiajun Wu, Boqing Gong, and Joshua B Tenenbaum. Look, listen, and act: Towards audio-visual embodied navigation. In ICRA , 2020.

- [22] Ruohan Gao and Kristen Grauman. Co-separating sounds of visual objects. In ICCV , 2019.

- [23] Ruohan Gao and Kristen Grauman. 2.5d visual sound. In CVPR , 2019.

- [24] Ruohan Gao, Rogerio Feris, and Kristen Grauman. Learning to separate object sounds by watching unlabeled video. In ECCV , 2018.

- [25] Ruohan Gao, Changan Chen, Ziad Al-Halab, Carl Schissler, and Kristen Grauman. Visualechoes: Spatial image representation learning through echolocation. In ECCV , 2020.

- [26] Daniel Griffin and Jae Lim. Signal estimation from modified short-time fourier transform. IEEE Transactions on Acoustics, Speech, and Signal Processing , 1984.

- [27] Kaiming He, Xiangyu Zhang, Shaoqing Ren, and Jian Sun. Deep residual learning for image recognition. In CVPR , 2016.

- [28] Di Hu, Xuelong Li, et al. Temporal multimodal learning in audiovisual speech recognition. In CVPR , 2016.

- [29] Di Hu, Rui Qian, Minyue Jiang, Xiao Tan, Shilei Wen, Errui Ding, Weiyao Lin, and Dejing Dou. Discriminative sounding objects localization via self-supervised audiovisual matching. In NeurIPS , 2020.

- [30] Diederik Kingma and Jimmy Ba. Adam: A method for stochastic optimization. In ICLR , 2015.

- [31] Bruno Korbar, Du Tran, and Lorenzo Torresani. Co-training of audio and video representations from self-supervised temporal synchronization. In NeurIPS , 2018.

- [32] Yu-Ding Lu, Hsin-Ying Lee, Hung-Yu Tseng, and Ming-Hsuan Yang. Self-supervised audio spatialization with correspondence classifier. In ICIP , 2019.

- [33] Sagnik Majumder, Ziad Al-Halah, and Kristen Grauman. Move2Hear: Active audiovisual source separation. In ICCV , 2021.

- [34] Pedro Morgado, Nono Vasconcelos, Timothy Langlois, and Oliver Wang. Selfsupervised generation of spatial audio for 360 ◦ video. In NeurIPS , 2018.

- [35] Pedro Morgado, Yi Li, and Nuno Nvasconcelos. Learning representations from audiovisual spatial alignment. In NeurIPS , 2020.

- [36] Damian T Murphy and Simon Shelley. Openair: An interactive auralization web resource and database. In Audio Engineering Society Convention 129 . Audio Engineering Society, 2010.

- [37] Andrew Owens and Alexei A Efros. Audio-visual scene analysis with self-supervised multisensory features. In ECCV , 2018.

- [38] Andrew Owens, Phillip Isola, Josh McDermott, Antonio Torralba, Edward H Adelson, and William T Freeman. Visually indicated sounds. In CVPR , 2016.

- [39] Andrew Owens, Jiajun Wu, Josh H McDermott, William T Freeman, and Antonio Torralba. Ambient sound provides supervision for visual learning. In ECCV , 2016.

- [40] Adam Paszke, Sam Gross, Francisco Massa, Adam Lerer, James Bradbury, Gregory Chanan, Trevor Killeen, Zeming Lin, Natalia Gimelshein, Luca Antiga, Alban Desmaison, Andreas Kopf, Edward Yang, Zachary DeVito, Martin Raison, Alykhan Tejani, Sasank Chilamkurthy, Benoit Steiner, Lu Fang, Junjie Bai, and Soumith Chintala. Pytorch: An imperative style, high-performance deep learning library. In NeurIPS . 2019.

- [41] Nathanaël Perraudin, Peter Balazs, and Peter L Søndergaard. A fast griffin-lim algorithm. In WASPAA , 2013.

- [42] Lord Rayleigh. On our perception of the direction of a source of sound. Proceedings of the Musical Association , 1875.

- [43] Alexander Richard, Dejan Markovic, Israel D Gebru, Steven Krenn, Gladstone Butler, Fernando de la Torre, and Yaser Sheikh. Neural synthesis of binaural speech from mono audio. In ICLR , 2021.

- [44] Olaf Ronneberger, Philipp Fischer, and Thomas Brox. U-net: Convolutional networks for biomedical image segmentation. In International Conference on Medical image computing and computer-assisted intervention , 2015.

- [45] Andrew Rouditchenko, Hang Zhao, Chuang Gan, Josh McDermott, and Antonio Torralba. Self-supervised audio-visual co-segmentation. In ICASSP , 2019.

- [46] Manolis Savva, Abhishek Kadian, Oleksandr Maksymets, Yili Zhao, Erik Wijmans, Bhavana Jain, Julian Straub, Jia Liu, Vladlen Koltun, Jitendra Malik, et al. Habitat: A platform for embodied ai research. In ICCV , 2019.

- [47] Carl Schissler, Christian Loftin, and Dinesh Manocha. Acoustic classification and optimization for multi-modal rendering of real-world scenes. IEEE Transactions on Visualization and Computer Graphics , 2017.

- [48] Manfred R Schroeder. New method of measuring reverberation time. The Journal of the Acoustical Society of America , 1965.

- [49] Arda Senocak, Tae-Hyun Oh, Junsik Kim, Ming-Hsuan Yang, and In So Kweon. Learning to localize sound source in visual scenes. In CVPR , 2018.

- [50] Zhenyu Tang, Nicholas J Bryan, Dingzeyu Li, Timothy R Langlois, and Dinesh Manocha. Scene-aware audio rendering via deep acoustic analysis. IEEE Transactions on Visualization and Computer Graphics , 2020.

- [51] Y. Tian, J. Shi, B. Li, Z. Duan, and C. Xu. Audio-visual event localization in unconstrained videos. In ECCV , 2018.

- [52] Yapeng Tian, Dingzeyu Li, and Chenliang Xu. Unified multisensory perception: Weakly-supervised audio-visual video parsing. In ECCV , 2020.

- [53] Efthymios Tzinis, Scott Wisdom, Aren Jansen, Shawn Hershey, Tal Remez, Daniel PW Ellis, and John R Hershey. Into the wild with audioscope: Unsupervised audio-visual separation of on-screen sounds. In ICLR , 2021.

- [54] Laurens Van der Maaten and Geoffrey Hinton. Visualizing data using t-sne. JMLR , 2008.

- [55] Yu Wu, Linchao Zhu, Yan Yan, and Yi Yang. Dual attention matching for audio-visual event localization. In ICCV , 2019.

- [56] Xudong Xu, Bo Dai, and Dahua Lin. Recursive visual sound separation using minusplus net. In ICCV , 2019.

- [57] Xudong Xu, Hang Zhou, Ziwei Liu, Bo Dai, Xiaogang Wang, and Dahua Lin. Visually informed binaural audio generation without binaural audios. In CVPR , 2021.

- [58] Karren Yang, Bryan Russell, and Justin Salamon. Telling left from right: Learning spatial correspondence of sight and sound. In CVPR , 2020.

- [59] Jianwei Yu, Shi-Xiong Zhang, Jian Wu, Shahram Ghorbani, Bo Wu, Shiyin Kang, Shansong Liu, Xunying Liu, Helen Meng, and Dong Yu. Audio-visual recognition of overlapped speech for the lrs2 dataset. In ICASSP , 2020.

- [60] Hang Zhao, Chuang Gan, Andrew Rouditchenko, Carl Vondrick, Josh McDermott, and Antonio Torralba. The sound of pixels. In ECCV , 2018.

- [61] Hang Zhao, Chuang Gan, Wei-Chiu Ma, and Antonio Torralba. The sound of motions. In ICCV , 2019.

- [62] Hang Zhou, Yu Liu, Ziwei Liu, Ping Luo, and Xiaogang Wang. Talking face generation by adversarially disentangled audio-visual representation. In AAAI , 2019.

- [63] Hang Zhou, Xudong Xu, Dahua Lin, Xiaogang Wang, and Ziwei Liu. Sep-stereo: Visually guided stereophonic audio generation by associating source separation. In ECCV , 2020.

- [64] Yipin Zhou, Zhaowen Wang, Chen Fang, Trung Bui, and Tamara L Berg. Visual to sound: Generating natural sound for videos in the wild. In CVPR , 2018.

## Appendix

## A Supplementary Video

In our supplementary video 4 , we show (a) examples of our SimBinaural dataset; (b) example results of the binaural audio prediction task on both SimBinaural and FAIR-Play datasets; and (c) examples of the interface for the user studies.

## B RIR Prediction Case Study

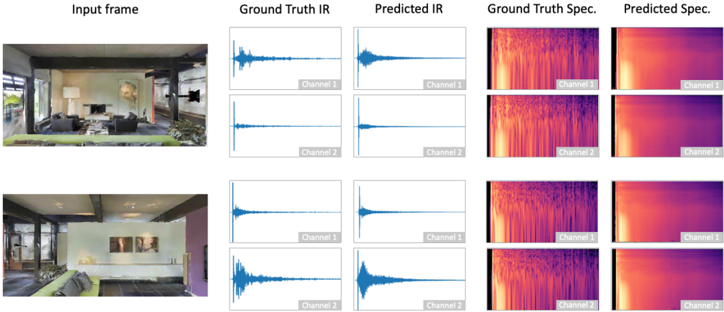

Figure 7: IR Prediction: The first column is the input frame to the encoder. The second column depicts the ground truth IR for the frame and the fourth column is the corresponding spectrogram of this IR. The third and fifth columns show the predicted IR waveform and spectrogram, respectively. This predicted IR waveform is estimated from the spectrogram generated by our network.

<details>

<summary>Image 7 Details</summary>

### Visual Description

\n

## Image Analysis: Infrared Prediction from Visual Frames

### Overview

The image presents a comparison of ground truth and predicted infrared (IR) data, alongside their corresponding spectrograms, derived from two input visual frames of an indoor scene. The image is structured as a 2x5 grid, with columns representing "Input frame", "Ground Truth IR", "Predicted IR", "Ground Truth Spec.", and "Predicted Spec.". Each row represents a different input frame.

### Components/Axes

The image consists of the following components:

* **Input Frame:** Visual representation of the indoor scene.

* **Ground Truth IR:** Actual infrared data for the scene. Labeled "Channel 1" and "Channel 2".

* **Predicted IR:** Infrared data predicted from the input frame. Labeled "Channel 1" and "Channel 2".

* **Ground Truth Spec.:** Spectrogram of the ground truth IR data. Labeled "Channel 1" and "Channel 2".

* **Predicted Spec.:** Spectrogram of the predicted IR data. Labeled "Channel 1" and "Channel 2".

There are no explicit axes or scales visible on the IR data or spectrograms, only visual representations of the signal.

### Detailed Analysis or Content Details

**Row 1:**

* **Input Frame:** Shows a living room with furniture, a painting on the wall, and a view through an opening to another room.

* **Ground Truth IR (Channel 1):** A waveform with a relatively low amplitude for the first ~75% of its duration, followed by a sharp increase in amplitude and a rapid decay.

* **Ground Truth IR (Channel 2):** A waveform with a similar shape to Channel 1, but with a slightly delayed and less pronounced peak.

* **Predicted IR (Channel 1):** A waveform that closely mirrors the shape of the Ground Truth IR (Channel 1), with a similar initial low amplitude and a subsequent sharp peak and decay.

* **Predicted IR (Channel 2):** A waveform that closely mirrors the shape of the Ground Truth IR (Channel 2), with a similar initial low amplitude and a subsequent sharp peak and decay.

* **Ground Truth Spec. (Channel 1):** A spectrogram with a predominantly red color, indicating high intensity, concentrated in the later portion of the time axis.

* **Ground Truth Spec. (Channel 2):** A spectrogram with a predominantly red color, indicating high intensity, concentrated in the later portion of the time axis, but slightly offset in time compared to Channel 1.

* **Predicted Spec. (Channel 1):** A spectrogram that closely resembles the Ground Truth Spec. (Channel 1), with a similar red color and concentration of intensity.

* **Predicted Spec. (Channel 2):** A spectrogram that closely resembles the Ground Truth Spec. (Channel 2), with a similar red color and concentration of intensity.

**Row 2:**

* **Input Frame:** Shows a similar living room scene, but with a different arrangement of artwork on the wall.

* **Ground Truth IR (Channel 1):** A waveform with a relatively low amplitude for the first ~50% of its duration, followed by a sharp increase in amplitude and a rapid decay.

* **Ground Truth IR (Channel 2):** A waveform with a similar shape to Channel 1, but with a slightly delayed and less pronounced peak.

* **Predicted IR (Channel 1):** A waveform that closely mirrors the shape of the Ground Truth IR (Channel 1), with a similar initial low amplitude and a subsequent sharp peak and decay.

* **Predicted IR (Channel 2):** A waveform that closely mirrors the shape of the Ground Truth IR (Channel 2), with a similar initial low amplitude and a subsequent sharp peak and decay.

* **Ground Truth Spec. (Channel 1):** A spectrogram with a predominantly red color, indicating high intensity, concentrated in the later portion of the time axis.

* **Ground Truth Spec. (Channel 2):** A spectrogram with a predominantly red color, indicating high intensity, concentrated in the later portion of the time axis, but slightly offset in time compared to Channel 1.

* **Predicted Spec. (Channel 1):** A spectrogram that closely resembles the Ground Truth Spec. (Channel 1), with a similar red color and concentration of intensity.

* **Predicted Spec. (Channel 2):** A spectrogram that closely resembles the Ground Truth Spec. (Channel 2), with a similar red color and concentration of intensity.

### Key Observations

* The predicted IR data and spectrograms closely match the ground truth data in both rows.

* Both Channel 1 and Channel 2 exhibit a similar waveform shape, suggesting a correlated signal.

* The spectrograms show a concentration of high-intensity frequencies towards the end of the time axis, indicating a transient event or signal.

* The color gradient in the spectrograms (red to blue) likely represents signal intensity, with red indicating higher intensity.

### Interpretation

The image demonstrates the capability of a system to predict infrared data from visual frames. The close correspondence between the ground truth and predicted data suggests a high degree of accuracy in the prediction process. The waveforms and spectrograms provide a visual representation of the infrared signal, highlighting the temporal characteristics and frequency content. The consistent pattern observed in both rows indicates the robustness of the prediction model across different input scenes. The system appears to be effectively capturing the thermal characteristics of the scene and translating them into accurate infrared representations. The two channels likely represent different aspects of the infrared signal, potentially capturing different wavelengths or spatial regions. The transient event indicated by the concentrated intensity in the spectrograms could correspond to a heat source or a change in thermal conditions within the scene.

</details>

We perform a case study on the task of predicting the binaural IR directly from a single visual frame. This helps us evaluate if it is feasible to learn this information just from a visual frame, so that it can be then used for our task as in Sec. 3.2 of the main paper. We predict the acoustic properties of the room by looking at one snapshot of the scene. We predict the magnitude spectrogram of the IR for the two channels. We also obtain the predicted waveform of the IR using the Griffin-Lim algorithm [26]. Figure 7 shows qualitative examples of predictions from the test set. It can be seen that we can get a fairly accurate general idea of the IR, and the difference between the response in each channel is also captured.

To evaluate if we capture the materials and geometry effectively, we train another task to predict the reverberation time RT 60 of the IR from the visual frame. A more accurate prediction of RT 60 means that our network understands how the wave will interact with the room and materials and whether it takes more or less time to decay. We formulate this as a classification task and discretize the range of the RT 60 into 10 classes, each with roughly equal number of samples. We then use a classifier to predict this range class of RT 60 using only the visual frame as input. The classifier, consisting of a ResNet-18, has a test accuracy of 61.5% which demonstrates the networks' ability to estimate the RT 60 range quite well.

4 http://vision.cs.utexas.edu/projects/geometry-aware-binaural

## C SimBinaural dataset details

To construct the dataset, we insert diverse 3D models of various instruments like guitar, violin, flute etc. and other sound-making objects like phones and clocks into the scene. Each object has multiple models of that class for diversity, so we do not associate a sound with a particular 3D model. We have a total of 35 objects from 11 classes.

To generate realistic binaural sound in the environment as if it is coming from the source location and heard at the camera position, we convolve the appropriate SoundSpaces [7] room impulse response with an anechoic audio waveform (e.g., a guitar playing for an inserted guitar 3D object). We use sounds recorded in anechoic environments, so there is no existing reverberations to affect the data. The sounds are obtained from Freesound [17] and OpenAIR data [36] to form a set of 127 different sound clips spanning the 11 distinct object categories. Using this setup, we capture videos with simulated binaural sound.

The virtual camera and attached microphones are moved along trajectories such that the object remains in view, leading to diversity in views of the object and locations within each video clip. Using ray tracing, we ensure that the object is in view of the camera, and the source positions are densely sampled from the 3D environments. For a particular video, we use a fixed source position and the agent traverses a random path. The view of the object changes throughout the video as the camera moves and rotates, so we get diverse orientations of the object and positions within a video frame, for each video. The camera moves to a new position every 5 seconds and has a small translational motion during the five-second interval. The videos are generated at 5 frames per second, the average length of the videos in the dataset is 30.3s and the median length is 20s.

## D Implementation Details

All networks are written in PyTorch [40]. The backbone network is based upon the networks used for 2.5D visual sound [23] and APNet [63]. The visual network is a ResNet-18 [27] with the pooling and fully connected layers removed. The U-Net consists of 5 convolution layers for downsampling and 5 upconvolution layers in the upsampling network and include skip connections. The encoder for spatial coherence follows the same architecture as the U-Net encoder for the audio feature extraction. The classifier combines the audio and visual features and uses a fully connected layer for prediction. The generator network is adapted from GANSynth [15], modified to fit the required dimensions of the audio spectrogram.

To preprocess both datasets, we follow the standard steps from [23]. We resampled all the audio to 16kHz and computed the STFT using a FFT size of 512, window size of 400, and hop length of 160. For training the backbone, we use 0.63s clips of the 10s audio and the corresponding frame. Frames are extracted at 10fps. The visual frames are randomly cropped to 448 × 224. For testing, we use a sliding window of 0.1s to compute the binaural audio for all methods.

We use the Adam optimizer [30] and a batch size of 64. The initial learning rates are 0.001 for the audio and fusion networks, and 0.0001 for all other networks. We trained the FAIR-Play dataset for 1000 epochs and SimBinaural for 100 epochs. We train the RIR prediction separately and use the weights for initialization while training jointly. The d for choice of frame is set to 1s and the l 's used are set based on validation set performance to l B = 10 , l S = 1 , l G = 0 . 01 , l P = 1.

Table 4: Results on SimBinaural Position-Split with different combinations of constraints.

| Method | STFT | ENV |

|-------------------|--------|-------|

| Spatial+Geometric | 0.724 | 0.118 |

| IR Pred+Geometric | 0.707 | 0.117 |

| IR Pred+Spatial | 0.702 | 0.117 |

## E Additional Ablations

Table 2 in the main paper illustrates that adding each component of our method individually to the visual features helps improve the binaural audio quality performance. Table 4 provides additional analysis to evaluate the combination of different constraints with the backbone for the SimBinaural Position-Split. The constraints complement each other to learn better visual features, leading to better audio performance.