# Neuro-Symbolic Inductive Logic Programming with Logical Neural Networks

**Authors**: Prithviraj Sen, Breno W. S. R. de Carvalho, Ryan Riegel, Alexander Gray

## Neuro-Symbolic Inductive Logic Programming with Logical Neural Networks

Prithviraj Sen, Breno W. S. R. de Carvalho, Ryan Riegel, Alexander Gray

IBM Research of substituting logical connectives besides learning the rules themselves. Neural logic machine (NLM) (Dong et al. 2019) achieves this but at the cost of interpretability. More precisely, it models propositional formulae (consisting of conjunctions, disjunctions and/or negations) with multi-layer perceptrons (MLP). Once trained, it may not be possible to interpret NLM as rules since there exists no standard translation from MLP to logic. What is needed is an extension of classical logic with ties strong enough to be amenable to interpretation and can learn not only rules but also the logical connectives using gradient-based optimization.

## Abstract

Recent work on neuro-symbolic inductive logic programming has led to promising approaches that can learn explanatory rules from noisy, real-world data. While some proposals approximate logical operators with differentiable operators from fuzzy or real-valued logic that are parameter-free thus diminishing their capacity to fit the data, other approaches are only loosely based on logic making it difficult to interpret the learned 'rules'. In this paper, we propose learning rules with the recently proposed logical neural networks (LNN). Compared to others, LNNs offer strong connection to classical Boolean logic thus allowing for precise interpretation of learned rules while harboring parameters that can be trained with gradient-based optimization to effectively fit the data. We extend LNNs to induce rules in first-order logic. Our experiments on standard benchmarking tasks confirm that LNN rules are highly interpretable and can achieve comparable or higher accuracy due to their flexible parameterization.

## 1 Introduction

Inductive logic programming (ILP) (Muggleton 1996) has been of long-standing interest where the goal is to learn logical rules from labeled data. Since rules are explicitly symbolic, they provide certain advantages over black box models. For instance, learned rules can be inspected, understood and verified forming a convenient means of storing learned knowledge. Consequently, a number of approaches have been proposed to address ILP including, but not limited to, statistical relational learning (Getoor and Taskar 2007) and more recently, neuro-symbolic methods.

Since logical operators such as conjunction and disjunction are not differentiable, one issue that most neuro-symbolic ILP techniques have to address is how to learn rules using gradient-based optimization. A popular solution is to employ extensions from fuzzy or real-valued logic that are either differentiable or have subgradients available. For instance, NeuralLP (Yang, Yang, and Cohen 2017) substitutes logical conjunction with product t -norm ( x ∧ y ≡ xy ) and logic tensor networks (Donadello, Serafini, and d'Avila Garcez 2017) with Łukasiewicz t -norm ( x ∧ y ≡ max(0 , x + y -1) ). In an interesting experiment, Evans and Grefenstette (2018) show that among the various options, product t -norm seems to lead to the best ILP result. This indicates that the learning approach, rather than the user, should be in charge

In this paper, we propose ILP with the recently proposed logical neural nerworks (LNN) (Riegel et al. 2020). Instead of forcing the user to choose a function that mimics a logical connective, LNNs employ constraints to ensure that neurons behave like conjunctions or disjunctions. By decoupling neuron activation from the mechanism to ensure that it behaves like a logical connective, LNNs offer tremendous flexibility in how to parameterize neurons thus ensuring that they fit the data better while maintaining close connections with classical Boolean logic which, in turn, facilitates principled interpretation. We propose first-order extensions of LNNs that can tackle ILP. Since vanilla backpropagation is insufficient for constraint optimization, we propose flexible learning algorithms capable of handling a variety of (linear) inequality and equality constraints. We experiment with diverse benchmarks for ILP including gridworld and knowledge base completion (KBC) that call for learning of different kinds of rules and show how our approach can tackle both effectively. In fact, our KBC results represents a 4 -16% relative improvement (in terms of mean reciprocal rank) upon the current best rule-based KBC results on popular KBC benchmarks. Additional, we show that joint learning of rules and logical connectives leads to LNN rules that are easier to interpret vs. other neuro-symbolic ILP approaches.

## 2 Related Work

∂ ILP (Evans and Grefenstette 2018) is another neurosymbolic ILP technique whose main parameter is a tensor with one cell per candidate logic program. Since the number of candidates is exponential in both the number of available predicates and the number of constituent rules in the program, ∂ ILP's complexity is exponential making it impractical for anything but the smallest learning task.

To reign in the complexity, ∂ ILP asks the user to specify the ILP task using a template consisting of two rules each containing a maximum of two predicates. In reality, most neuro-symbolic ILP approaches ask the user to specify a template. NeuralLP's (Yang, Yang, and Cohen 2017) template is meant for link prediction in incomplete knowledge bases (sometimes called open path or chain rule) which only includes binary predicates and is of the form T ( X 1 , X n ) ← R 1 ( X 1 , X 2 ) ∧ R 2 ( X 2 , X 3 ) ∧ . . . R n -1 ( X n -1 , X n ) positing that the head predicate T ( X 1 , X n ) can be modeled as a path from X 1 to X n . NLM (Dong et al. 2019) is restricted to learning rules where the head and body predicates each contain the same set of variables. For instance, to model the rule learned by NeuralLP, NLM would first need to add arguments to R 1 so that the new predicate contains all variables including X 3 , . . . X n . In contrast, our approach can use more flexible templates that can express programs beyond two rules, allows the use of n -ary predicates ( n possibly > 2 ), and allows the head to have fewer variables than the body thus going beyond all of the above mentioned approaches.

Neural theorem provers (NTP) (Rockt¨ aschel and Riedel 2017) generalize the notion of unification by embedding logical constants into a high-dimensional latent space. NTPs can achieve ILP by learning the embedding for the unknown predicate which forms part of the rule, and subsequently comparing with embeddings of known predicates. NTPs can have difficulty scaling to real-world tasks since the decision to unify two constants is no longer Boolean-valued leading to an explosion of proof paths that need to be explored. To improve scalability, recent proposals either greedily choose (GNTP (Minervini et al. 2020a)) or learn to choose (CTP (Minervini et al. 2020b)) most promising proof paths. We compare against CTP in Section 5.

Lifted relational neural networks (LRNN) (Sourek et al. 2017) model conjunctions using (generalized) sigmoid but fix certain parameters to ensure that it behaves similar to Łukasiewicz t -norm. This limits how well LRNN can model the data, which is contrary to our goal as stated in the previous section. While other combinations of logic and neural networks exist, e.g. logic tensor networks (Donadello, Serafini, and d'Avila Garcez 2017), RelNN (Kazemi and Poole 2018), DeepProbLog (Manhaeve et al. 2018), to the best of our knowledge, none of these learn rules to address ILP.

## 3 Generalized Propositional Logic with Logical Neural Networks

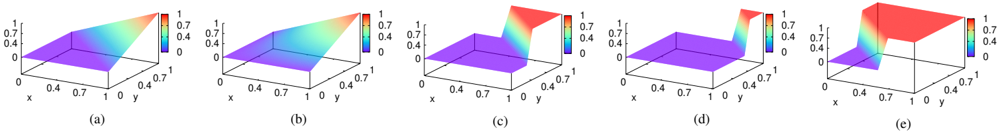

Logical neural networks (LNN) (Riegel et al. 2020) allow the use of almost any parameterized function as a logical connective. We illustrate how LNNs generalize conjunction ( ∧ ). Let 0 denote false and 1 denote true . Let a, b ∈ { 0 , 1 } and x, y ∈ [0 , 1] denote Boolean-valued and continuous-valued variables, respectively. While Boolean logic defines the output of ∧ when x, y attain the extremities of their permissible domains (shown in Figure 1 (a)), to fully define real-valued logic's ∧ we need to also extend its definition to x, y ∈ (0 , 1) . Intuitively, the characteristic shape of ∧ is to produce a 1) low output when either input is low , and 2) high output when both inputs are high . A simple way to capture low vs. high is

Figure 1: (a) Truth table for ∧ in Boolean logic, and (b) Shape of ∧ extended to real-valued logic.

| a | b | a ∧ b | x | y | x ∧ y |

|-----|-------|---------|--------------|--------------|--------------|

| 0 | 0 | 0 | [0 , 1 - α ] | [0 , 1 - α ] | [0 , 1 - α ] |

| 0 | 1 | 0 | [0 , 1 - α ] | (1 - α, 1] | [0 , 1 - α ] |

| 1 | 0 | 0 | (1 - α, 1] | [0 , 1 - α ] | [0 , 1 - α ] |

| 1 | 1 (a) | 1 | [ α, 1] | [ α, 1] (b) | [ α, 1] |

via a user-defined hyperparameter α ∈ ( 1 2 , 1] : x ∈ [0 , 1 -α ] constitutes low and x ∈ [ α, 1] constitutes high . Figure 1 (b) expresses ∧ for real-valued logic in terms of α .

LNNs propose constraints to enforce the shape of ∧ . Let f : [0 , 1] × [0 , 1] → [0 , 1] denote a monotonically increasing function (in both inputs). In other words, f ( x, y ′ ) ≥ f ( x, y ) ∀ y ′ ≥ y and f ( x ′ , y ) ≥ f ( x, y ) ∀ x ′ ≥ x . In accordance with figure 1 (b), LNNs enforce the following constraints:

$$f ( x , y ) \leq 1 - a , \forall x , y \in [0 , 1 - a ] f ( x , y ) \leq 1 - a , \forall x \in [0 , 1 - a ] , \forall y \in ( 1 - a , 1 ] f ( x , y ) \geq a , \forall x , y \in [a , 1]$$

Since f is monotonically increasing, we can move all constraints to their corresponding extremities and eliminate the first constraint since it is redundant given the second and third.

$$f ( 1 - \alpha , 1 ) \leq 1 - \alpha , f ( 1 , 1 - \alpha )$$

Further simplifications may be obtained for specific choice of f . For inspiration, we look towards triangular norm ( t -norm) (Esteva and Godo 2001) which is defined as a symmetric, associative and non-decreasing function T : [0 , 1] 2 → [0 , 1] satisfying boundary condition T (1 , x ) = x, ∀ x ∈ [0 , 1] . Popular t -norms include product t -norm, xy , and Łukasiewicz t -norm, max(0 , x + y -1) . We extend the latter to define LNN-∧ (other t -norms may also be extended similarly):

$$\begin{array}{ll}

LNN- \lambda ( x , y ; \beta , w _ { 1 } , w _ { 2 } ) = \\

\{ 0 & if \beta - w _ { 1 } ( 1 - x ) - w _ { 2 } ( 1 - y ) < 0 \\

\{ 1 & if \beta - w _ { 1 } ( 1 - x ) - w _ { 2 } ( 1 - y ) > 1 \\

\{ \beta - w _ { 1 } ( 1 - x ) - w _ { 2 } ( 1 - y ) \text{ otherwise}

\end{array}$$

where β, w 1 , w 2 denote learnable parameters subject to the following constraints translated from above 1 :

$$the$$

To ensure that LNN-∧ is monotonically increasing, we also need to enforce non-negativity of w 1 , w 2 . It is easy to extend LNN-∧ to an n -ary conjunction ( n ≥ 2 ):

$$\underline{LNN- \lambda ( x ; \beta , w ) = } \text{re} \{ u _ { 1 } ( \beta - w ^ { T } ( 1 - x ) ) , \beta - a w \leq ( 1 - a ) 1 ^ { T } w \geq a ^ { ( 1 ) } \}$$

1 We remove the upper and lower clamps since they do not apply in the active region of the constraints.

Figure 2: (a) Lukasiewicz t -norm vs. (b) Product t -norm vs. LNN-∧ with (c) α = 0 . 7 , (d) α = 0 . 9 . (e) LNN-∨ ( α = 0 . 7 ).

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Surface Plots: Parameter Variation

### Overview

The image contains five 3D surface plots, labeled (a) through (e), each depicting a function's output over a 2D input space. The x and y axes range from 0 to 1. The color of the surface represents the function's value, ranging from 0 (blue/purple) to 1 (red), as indicated by the color bar legend. The plots show how the function's behavior changes across the five different parameter settings.

### Components/Axes

* **X-axis:** Ranges from 0 to 1, labeled "x".

* **Y-axis:** Ranges from 0 to 1, labeled "y".

* **Z-axis (Implied):** Represents the function's output value, indicated by the color of the surface.

* **Color Bar Legend:** Located to the right of plots (a), (b), and (e). It shows a gradient from blue (value 0) to red (value 1), with intermediate values of 0.4 and 0.7 marked.

* **Plot Labels:** (a), (b), (c), (d), and (e) are located below each respective plot.

### Detailed Analysis

* **(a):** The surface slopes upward from the bottom-left corner (x=0, y=0) to the top-right corner (x=1, y=1). The color transitions from purple/blue to red, indicating an increasing function value as both x and y increase. The value at (0,0) is approximately 0, and the value at (1,1) is approximately 1.

* **(b):** Similar to (a), the surface slopes upward from the bottom-left to the top-right. However, the gradient appears steeper, suggesting a more rapid increase in the function's value. The value at (0,0) is approximately 0, and the value at (1,1) is approximately 1.

* **(c):** The surface is mostly flat and purple/blue, indicating a value close to 0. There is a sharp vertical rise along the y=0 axis, where the color changes abruptly to red, indicating a value of 1. The function is approximately 0 for y > 0 and approximately 1 for y = 0.

* **(d):** The surface is entirely flat and purple/blue, indicating a constant value of approximately 0 across the entire input space.

* **(e):** The surface is mostly flat and purple/blue, indicating a value close to 0. There is a sharp vertical rise along the y=0 axis for x values greater than approximately 0.6, where the color changes abruptly to red, indicating a value of 1. The function is approximately 0 for y > 0 or x < 0.6, and approximately 1 for y = 0 and x > 0.6.

### Key Observations

* Plots (a) and (b) show a gradual increase in the function's value with increasing x and y.

* Plots (c), (d), and (e) exhibit step-like behavior, with abrupt changes in the function's value.

* Plot (d) represents a constant function with a value of 0.

* The transition from (a) to (e) shows a shift from a continuous, gradual function to a discontinuous, step-like function.

### Interpretation

The image illustrates how different parameter settings can drastically alter the behavior of a function. Plots (a) and (b) might represent a function that is linearly dependent on x and y, while plots (c), (d), and (e) might represent functions with threshold-based behavior. The progression from (a) to (e) suggests a change in the function's parameters that introduces a discontinuity or a sharp threshold. The plots could represent the output of a machine learning model under different training conditions or with different hyperparameter settings. The step-like behavior in (c) and (e) could indicate a decision boundary or a classification rule.

</details>

where relu1 ( x ) denotes max(0 , min(1 , x )) (Krizhevsky 2010) and, x , w and 1 denote vectors of continuous-valued inputs, weights, and 1 s, respectively.

Note that, Figure 1 (b) does not enforce constraints on 1 -α < x,y < α . Essentially, α acts as a tunable knob that controls the size of this unconstrained region where we can learn LNN operators without impedance which is an arguably better approach than choosing a parameter-less t -norm that would arbitrarily interpolate from Boolean-logic's ∧ to real-valued logic's ∧ . Figure 2 illustrates how Łukasiewicz (Figure 2 (a)) and product (Figure 2 (b)) t -norms differ from LNN-∧ learned by fitting to the four rows in Figure 1 (a)'s truth-table. Even pictorially, LNN-∧ looks distinctly conjunction-like, i.e., when either x or y is low it produces a value close to 0 while rising quickly to 1 when both x, y are high. When α = 0 . 9 (Figure 2 (d)), the region devoid of constraints is larger than at α = 0 . 7 (Figure 2 (c)) ( ∵ [0 . 1 , 0 . 9] ⊃ [0 . 3 , 0 . 7] ), so the curve can rise later to provide a better fit . In contrast, Łukasiewicz t -norm remains at 0 until the x + y = 1 line, post which it increases linearly. Product t -norm is similar, adding a slight, upward curve.

Other propositional logic operators include negation ( ¬ ) and disjunction ( ∨ ). LNN negation is given by 1 -x and LNN disjunction, LNN-∨ , is defined in terms of LNN-∧ :

$$\begin{array}{ll}

L N - \lambda ( 1 - x ; \beta , w ) = 1 \\

L N - \lambda ( 1 - x ; \beta , w ) = 0

\end{array}$$

where constraints defined in Equation 1 apply. Figure 2 (e) pictorially depicts LNN-∨ (with α = 0 . 7 ). In contrast to Figure 2 (c), it clearly shows how maximum output is achieved for smaller values of x, y , as a disjunction operator should.

## 4 Learning First-Order LNNs

Following previous work (Yang, Yang, and Cohen 2017; Evans and Grefenstette 2018; Dong et al. 2019), we also utilize program templates expressed in higher-order logic to be fed by the user to guide the learning in the right direction. Our definition of a program template draws inspiration from metainterpretive learning (Muggleton et al. 2014). In contrast to previous work on neuro-symbolic AI however, our definition of a program template is more general and includes as special cases the templates utilized by Evans and Grefenstette (considers only rules whose body contains up to 2 predicates), Yang, Yang, and Cohen (considers only binary predicates) and Dong et al. (considers only rules whose head includes all variables contained in the body). After introducing our logic program template, we then describe how to combine it with data to construct a neural network that may then be trained to learn the logic program of interest.

Let pred ( X 1 , . . . X n ) denote an n -ary predicate which returns true ( 1 ) or false ( 0 ) for every possible joint assignment of X 1 , . . . X n to constants in the knowledge base. The main construct in first-order logic (FOL) is a rule or clause :

$$1 k - 6 h A \cdot \ldots b m$$

where b 1 , . . . b m denote predicates in its body and h denotes the head predicate. If the conjunction of all predicates in the body is true then the head is also true. The head h in a clause may contain fewer logical variables than the body b 1 , . . . b m which means that there must exist an assignment to the missing variables in the head for it to hold. More precisely, if B = b 1 , . . . b m denotes the body and is defined over logical variables Y then h ( X ) , such that X ⊆ Y , is true if ∃ Y \ X : B ( Y ) is true . Assignments that lead to the predicate being true are also called facts .

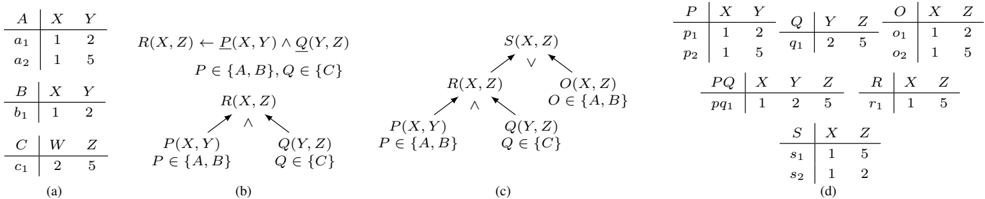

Figure 3 (a) introduces a toy knowledge base (KB) which will serve as a running example. The KB contains three binary predicates, each containing their respective facts along with a unique identifier for easy reference. Thus, A ( X,Y ) 's facts are A (1 , 2) (denoted a 1 ) and A (1 , 5) (denoted a 2 ). Figure 3 (b) shows a template for learning R ( X,Z ) with three crucial pieces of information: 1) the first predicate in its body P is binary and while we do not know the identity of this predicate we know its domain Dom ( P ) is { A,B } , 2) to keep the example simple, the second predicate in the body Q has a singleton domain ( { C } ), and lastly 3) the second argument in the first predicate should be equal to the first argument in the second predicate indicated by repeated use of Y . Figure 3 (b) (bottom) expresses the same template as a tree where P ( X,Y ) and Q ( Y, Z ) are annotated with their respective domains forming children of R ( X,Z ) whose label ∧ indicates that the predicates in its body are to be conjuncted together.

Figure 3 (c) shows a more complex template that disjuncts R ( X,Z ) with O ( X,Z ) , such that Dom ( O ) = { A,B } , to produce S ( X,Z ) . Figure 3 (d) shows generated facts (with unknown truth-values) that can be possibly produced when this template is combined with the KB from Figure 3 (a). P, Q and O contain the union of all facts included in the predicates in their respective domains. Since p 1 and q 1 are the only two facts that agree on value of Y , PQ , the predicate produced by the body of R , contains only one generated fact. R is obtained by dropping Y , which is then unioned with O to produce S . By comparing generated facts in S with labels in the training data, it is possible to learn a full program which in this case constitutes learning: 1) which predicates to replace P, Q and O with, and 2) the logical connectives, LNN-∧ and LNN-∨ , used to model R and S with, respectively. We next state a more formal problem definition.

Figure 3: (a) A toy KB. (b) An example rule template (top) and its tree form (bottom). (c) A more complex program template. (d) Generated facts for our running example.

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Relational Algebra and Dependency Diagrams

### Overview

The image presents a series of relational algebra expressions and corresponding dependency diagrams, along with example data tables. It illustrates how relations can be derived from other relations using logical operations like conjunction (AND) and disjunction (OR).

### Components/Axes

* **(a)**: Initial Data Tables:

* Table A: Columns X, Y; Rows (a1: 1, 2), (a2: 1, 5)

* Table B: Columns X, Y; Rows (b1: 1, 2)

* Table C: Columns W, Z; Rows (c1: 2, 5)

* **(b)**: Dependency Diagram 1:

* Expression: R(X, Z) ← P(X, Y) ∧ Q(Y, Z), where P ∈ {A, B}, Q ∈ {C}

* Diagram: R(X, Z) depends on P(X, Y) and Q(Y, Z)

* **(c)**: Dependency Diagram 2:

* Expression: S(X, Z) depends on R(X, Z) ∨ O(X, Z), where O ∈ {A, B}

* Diagram: S(X, Z) depends on R(X, Z) and O(X, Z); R(X, Z) depends on P(X, Y) and Q(Y, Z)

* **(d)**: Derived Data Tables:

* Table P: Columns X, Y; Rows (p1: 1, 2), (p2: 1, 5)

* Table Q: Columns Y, Z; Rows (q1: 2, 5)

* Table O: Columns X, Z; Rows (o1: 1, 2), (o2: 1, 5)

* Table PQ: Columns X, Y, Z; Rows (pq1: 1, 2, 5)

* Table R: Columns X, Z; Rows (r1: 1, 5)

* Table S: Columns X, Z; Rows (s1: 1, 5), (s2: 1, 2)

### Detailed Analysis or ### Content Details

**Section (a): Initial Data Tables**

* **Table A:**

* Column X: Contains the value 1 in both rows.

* Column Y: Contains the values 2 and 5.

* **Table B:**

* Column X: Contains the value 1.

* Column Y: Contains the value 2.

* **Table C:**

* Column W: Contains the value 2.

* Column Z: Contains the value 5.

**Section (b): Dependency Diagram 1**

* The expression `R(X, Z) ← P(X, Y) ∧ Q(Y, Z)` indicates that relation R is derived from the conjunction (AND) of relations P and Q.

* P can be either relation A or B, and Q must be relation C.

* The diagram visually represents this dependency, with R(X, Z) at the top and P(X, Y) and Q(Y, Z) as its dependencies.

**Section (c): Dependency Diagram 2**

* The expression for S(X, Z) involves a disjunction (OR) with R(X, Z) and O(X, Z), where O can be either A or B.

* The diagram shows S(X, Z) depending on R(X, Z) and O(X, Z). R(X, Z) further depends on P(X, Y) and Q(Y, Z), similar to diagram (b).

**Section (d): Derived Data Tables**

* **Table P:**

* Column X: Contains the value 1 in both rows.

* Column Y: Contains the values 2 and 5.

* **Table Q:**

* Column Y: Contains the value 2.

* Column Z: Contains the value 5.

* **Table O:**

* Column X: Contains the value 1 in both rows.

* Column Z: Contains the values 2 and 5.

* **Table PQ:**

* Column X: Contains the value 1.

* Column Y: Contains the value 2.

* Column Z: Contains the value 5.

* **Table R:**

* Column X: Contains the value 1.

* Column Z: Contains the value 5.

* **Table S:**

* Column X: Contains the value 1 in both rows.

* Column Z: Contains the values 5 and 2.

### Key Observations

* The diagrams illustrate how complex relations can be built from simpler ones using logical operations.

* The data tables provide concrete examples of the relations and their contents.

* The choice of P and Q in the expressions affects the resulting data in R and S.

### Interpretation

The image demonstrates the fundamental concepts of relational algebra and data dependencies. It shows how new relations can be derived from existing ones using logical operations like AND and OR. The dependency diagrams provide a visual representation of these relationships, making it easier to understand the flow of data and the dependencies between relations. The data tables provide concrete examples of the relations and their contents, illustrating how the logical operations affect the resulting data. The example shows how the relations are built up from the base relations A, B, and C.

</details>

Let T = ( V , E , L ) denote a tree-structured program template where V denotes the set of nodes, E denotes the set of edges and L denotes a mapping from V to node labels. L maps T 's leaves to the corresponding domain of predicates in the KB. In the worst case, the domain can be the subset of predicates in the KB that agrees with the arity of the leaf. L maps internal nodes to a logical operator {∧ , ∨ , ¬} . The ILP task can then be stated as, given T , a knowledge base KB, and truth-values corresponding to generated facts in the root of T , to learn all logical connectives involved along with selecting predicates for each leaf in T .

In the remainder of this section, we describe how to achieve the above ILP task given ground truth values for generated facts belonging to the root of the template. Let ψ ( v ) denote the truth value associated with (generated) fact v . Our strategy is to build a neural network that connects the truth values of generated facts from the root of the template to other (generated) facts in its lineage right down to the facts in the base KB whose truth values are defined to be 1 . The whole neural network can then be trained end-to-end using backpropagation. Let V ( X ) denote any node in T whose predicate is defined over variables X and whose children in T is denoted by N ( V ) . Also, let V ( x ) denote a fact obtained by the substitution X = x and F ( V ) denote all facts of V .

## 4.1 Combining Base Facts

Let V ( X ) denote a leaf in T with domain L ( V ) then F ( V ) is given by ⋃ P ∈L ( V ) F ( P ) . Computing ψ ( V ( X = x )) corresponding to X = x requires truth values of all facts corresponding to the same substitution. We provide two options that associate parameters with predicates in L ( V ) : 1) attention (Yang, Yang, and Cohen 2017) and 2) our proposed LNNpred operator:

$$\begin{array}{ll}

1) \psi ( V ( x ) ) = \sum _ { P \in C ( V ) } w p ( P ( x ) ) \\

2) \psi ( V ( x ) ) = 1 - relu ( \beta - \sum _ { P \in C ( V ) } w p ( P ( x ) ) )

\end{array}$$

where β , w P denote learnable parameters. One issue with attention is that it may lack sparsity assigning a majority of predicates in L ( V ) non-zero weights thus hampering interpretability. To address this, we propose an alternate parameterization, LNNpred , that is a simpler version of LNN-∨

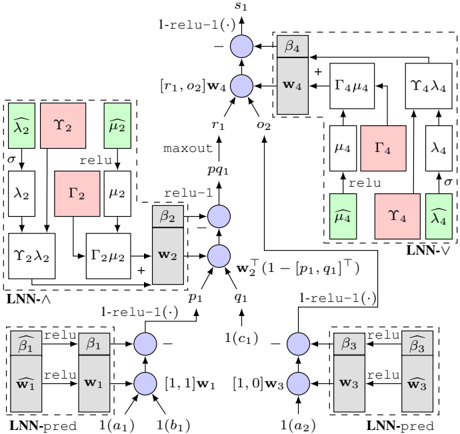

and lacks all constraints except for non-negativity of w P (since we do not require disjunctive semantics). As an example, the lower left corner of Figure 4 shows how to compute ψ ( p 1 ) from ψ ( a 1 ) , ψ ( b 1 ) since a 1 , b 1 form the lineage for p 1 (Figure 3). Here, β 1 , w 1 denote LNNpred 's parameters.

## 4.2 Combining Facts with Conjunction

We handle conjunctions in two steps: 1) first construct the result of the body of the clause, and then 2) construct the head, dropping variables if needed. Let V ( Y ) in T be such that L ( V ) = ∧ and N ( V ) denote its children. Also, let I ( X ) denote the intermediate predicate produced from the body of V ( Y ) potentially containing additional variables such that X ⊇ Y . We use LNN-∧ to compute ψ of I ( x ) :

$$\sum _ { s \in S } \varphi ( I ( x ) ) = r e l u 1 ( b - \sum _ { P \in P ( X _ { var } ( P ) ) } w p ( 1 - v ) ( P ( X _ { var } ( P ) ) ) )$$

where var ( P ) denotes predicate P 's arguments and x var denotes the substitution restricted to variables in var. When X ⊃ Y , multiple facts from F ( I ) may combine to produce a fact in V ( Y ) and we use maxout for this:

$$V : y ( V ( y ) ) = \maxout ( y ( I )$$

where maxout ( { x 1 , . . . } ) (Goodfellow et al. 2013) returns the maximum of the set. Figure 4 shows how ψ ( pq 1 ) is computed from ψ ( p 1 ) , ψ ( q 1 ) where β 2 , w 2 denotes LNN-∧ 's parameters. Since pq 1 is the only intermediate fact leading to r 1 , we do not need maxout in this case. However, if that was not the case, Figure 4 shows where maxout would appear.

## 4.3 Combining Facts with Disjunction

Given V ( X ) in T such that L ( V ) = ∨ , ψ ( V ( x )) can be computed using LNN-∨ :

$$\phi ( V ( x ) ) = 1 - \text{relu} ( \beta - \sum _ { P \in N ( V ) } w p ^{\psi}( P ( x ) )$$

In Figure 4 shows how ψ ( s 1 ) is computed from ψ ( r 1 ) and ψ ( o 2 ) where β r , w 4 denotes LNN-∨ 's parameters.

## 4.4 Other Operations and Extensions

Implementing negation is more involved since it requires that we consider assignments that may lead to facts in V but are

Figure 4: Neural network constructed for s 1 ∈ F ( S ) . σ denotes softmax .

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Neural Network Diagram: Logical Neural Network Architecture

### Overview

The image presents a detailed diagram of a Logical Neural Network (LNN) architecture. It illustrates the flow of data and operations between different components, including LNN-pred, LNN-∧, and LNN-∨ modules. The diagram uses nodes, blocks, and arrows to represent computations, parameters, and data dependencies.

### Components/Axes

* **Nodes:** Represented as blue circles, indicating computational units.

* **Blocks:** Represented as gray or colored rectangles, indicating parameters or intermediate values.

* **Arrows:** Indicate the direction of data flow and dependencies.

* **Labels:** Text labels are used to denote variables, parameters, and operations.

* **Modules:** Dashed boxes enclose specific LNN modules (LNN-pred, LNN-∧, LNN-∨).

**Specific Labels and Components:**

* **Top:**

* `s1`: Output node at the top.

* `1-relu-1(.)`: Operation applied to the output of the top node.

* `[r1, o2]w4`: Input to the top node.

* `β4`: Parameter block connected to the top node.

* `w4`: Parameter block connected to the top node.

* `Γ4μ4`: Intermediate value.

* `Υ4λ4`: Intermediate value.

* `μ4`: Intermediate value.

* `Γ4`: Intermediate value.

* `λ4`: Intermediate value.

* `μ̂4`: Intermediate value (green).

* `Υ4`: Intermediate value (red).

* `λ̂4`: Intermediate value (green).

* `LNN-∨`: Module label for the top-right section.

* **Middle:**

* `r1`: Input to the top node.

* `o2`: Input to the top node.

* `maxout`: Operation label.

* `pq1`: Intermediate value.

* `β2`: Parameter block connected to the middle node.

* `w2`: Parameter block connected to the middle node.

* `p1`: Input to the middle node.

* `q1`: Input to the middle node.

* `w2^T (1-[p1, q1]^T)`: Operation label.

* `λ̂2`: Intermediate value (green).

* `Υ2`: Intermediate value (red).

* `μ̂2`: Intermediate value (green).

* `λ2`: Intermediate value.

* `Γ2`: Intermediate value (red).

* `μ2`: Intermediate value.

* `Υ2λ2`: Intermediate value.

* `Γ2μ2`: Intermediate value.

* `LNN-∧`: Module label for the left-middle section.

* **Bottom:**

* `1-relu-1(.)`: Operation applied to the output of the bottom nodes.

* `β̂1`: Parameter block connected to the bottom-left node.

* `ŵ1`: Parameter block connected to the bottom-left node.

* `β1`: Parameter block connected to the bottom-left node.

* `w1`: Parameter block connected to the bottom-left node.

* `[1,1]w1`: Input to the bottom-left node.

* `1(a1)`: Input to the bottom-left node.

* `1(b1)`: Input to the bottom-left node.

* `β̂3`: Parameter block connected to the bottom-right node.

* `ŵ3`: Parameter block connected to the bottom-right node.

* `β3`: Parameter block connected to the bottom-right node.

* `w3`: Parameter block connected to the bottom-right node.

* `[1,0]w3`: Input to the bottom-right node.

* `1(a2)`: Input to the bottom-right node.

* `1(c1)`: Input to the middle node.

* `LNN-pred`: Module label for the bottom-left and bottom-right sections.

### Detailed Analysis or ### Content Details

The diagram illustrates a complex neural network architecture with multiple interconnected modules.

* **LNN-pred Modules (Bottom):** These modules take inputs `1(a1)`, `1(b1)` and `1(a2)`, `1(c1)` respectively. They involve parameter blocks `w1`, `ŵ1`, `β1`, `β̂1` and `w3`, `ŵ3`, `β3`, `β̂3`. The output of these modules feeds into the middle node.

* **Middle Node:** This node receives inputs `p1` and `q1` from the LNN-pred modules. It also receives an input from the LNN-∨ module. The operation `w2^T (1-[p1, q1]^T)` is performed.

* **LNN-∧ Module (Left-Middle):** This module involves intermediate values `λ̂2`, `Υ2`, `μ̂2`, `λ2`, `Γ2`, `μ2`, `Υ2λ2`, and `Γ2μ2`.

* **LNN-∨ Module (Top-Right):** This module involves intermediate values `μ4`, `Γ4`, `λ4`, `μ̂4`, `Υ4`, and `λ̂4`.

* **Top Node:** This node receives inputs `r1` and `o2` and performs the operation `1-relu-1(.)` to produce the final output `s1`.

### Key Observations

* The diagram shows a hierarchical structure with LNN-pred modules feeding into a central node, which then connects to LNN-∧ and LNN-∨ modules.

* The use of `relu` and `1-relu-1(.)` operations suggests the network is designed for non-linear function approximation.

* The presence of parameter blocks `w1`, `w2`, `w3`, `w4` and `β1`, `β2`, `β3`, `β4` indicates that these are learnable parameters of the network.

* The green and red blocks likely represent different types of intermediate values or features.

### Interpretation

The diagram illustrates a Logical Neural Network architecture designed for complex reasoning and decision-making tasks. The LNN-pred modules likely perform initial feature extraction or prediction, while the LNN-∧ and LNN-∨ modules implement logical operations or relationships between features. The central node integrates information from these modules to produce a final output. The use of learnable parameters allows the network to adapt to specific tasks and datasets. The architecture appears to be designed to mimic logical inference processes within a neural network framework.

</details>

not present in its child predicate P . Given a universe of all possible assignments U , we express ψ ( V ( x )) as:

$$\frac { 1 } { 2 } \times 1 = 1 - \frac { 1 } { 2 } \times 1 = \frac { 1 } { 2 }$$

where ψ ( P ( x )) is defined as 0 if P ( x ) / ∈ F ( P ) .

Note that, any LNN operator introduced for V ( X ) is shared across all V ( x ) ∈ F ( V ) since the result of ILP should be agnostic of individual facts. Thus, even though the (sub)network constructed for V ( x ) may differ from V ( x ′ ) 's, e.g., s 2 's neural network (not shown) is simpler than s 1 's, gradient updates flow to the same LNN parameters. For simplicity, we only discussed templates comprising a tree of Horn clauses but the ideas presented here can easily extend to DAG-structured templates and going beyond equality conditions in the body of a clause, e.g., R ( X,Z ) ← P ( X,Y ) ∧ Q ( Y ′ , Z ) ∧ Y > Y ′ .

## 4.5 Learning Constrained Activations

So far, we have shown how to construct a neural network from a KB and template comprising parameters of LNN operators but we have not addressed how to enforce constraints on said parameters. More precisely, β i , w i , ∀ i = 1 , . . . 4 in Figure 4 need to satisfy the respective constraints associated with the LNN operators they form parameters for (as described in Section 3). Since backpropagation does not handle constraints, we propose to apply a recently proposed approach to 'fold' in a system of linear constraints as layers into the neural network (Frerix, Nießner, and Cremers 2020). We note that Riegel et al. (2020) also devise a training algorithm for learning LNN operators but this is tightly connected to a specific kind of LNN operator called tailored activation. For the small systems of inequality constraints introduced by LNN-∧ , LNN-∨ and LNNpred , the approach presented here conveniently allows learning all (constrained) parameters of LNNs using vanilla backpropagation alone.

Frerix, Nießner, and Cremers (2020) recently showed how to handle a system of linear inequality constraints of the form A z ≤ b where A denotes a matrix containing coefficients in the constraints, z denotes the parameters (in our case, some concatenation of β and w ), and b denotes the constants in the constraints. We begin with the Minkowski-Weyl theorem:

Theorem 4.1. A set C = { z | A z ≤ b } is a convex polyhedron if and only if:

<!-- formula-not-decoded -->

where Υ and Γ contain a finite number of rays and vertices, respectively.

which states that there exists a translation from A , b to Υ , Γ obtained via the double-description method (Motzkin et al. 1953). Assuming we can generate non-negative vectors µ, λ and additionally ensure that λ sums to 1 , then one can access a point z = [ β, w ] from the feasible set C by computing Υ µ +Γ λ . Sampling vectors µ, λ can be achieved, for instance, by using relu (Nair and Hinton 2010) and softmax :

<!-- formula-not-decoded -->

Additionally, these operations can be easily included into any neural network as additional layers. For instance, Figure 4 contains in dashed boxes the above set of layers needed to generate w i , β i , ∀ i = 1 , . . . 4 . The resulting neural network is self-contained, and can be trained by vanilla backpropagation end-to-end thus ensuring that the learned LNN parameters satisfy their respective constraints.

## 5 Experiments

Our experiments compare ILP with LNN against other neurosymbolic ILP approaches on standard benchmarks. We evaluate rules in terms of application-specific goodness metrics and interpretability.

## 5.1 Gridworld

The goal in Gridworld is to learn rules that can help an agent move across an N × N regular grid. Some of the cells on the grid are deemed obstacles that the agent cannot step onto, and the agent's goal is to arrive at the cell which has been deemed the destination.

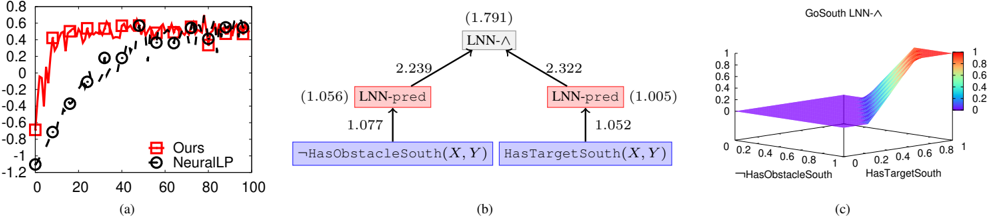

Predicates, Template and Rewards : We include two kinds of base predicates to describe the grid 1) HasObstacle -dir ( X,Y ) is true if the cell next to X,Y in direction dir contains an obstacle, 2) HasTarget -dir ( X,Y ) is true if the destination lies in direction dir from cell X,Y . There are four directions, North, South, East, and West, and including their negated counterparts, ¬ HasObstacle -dir ( X,Y ) and ¬ HasTarget -dir ( X,Y ) , brings the total number of base predicates to 8 . The template is S ( X,Y ) ← P ( X,Y ) ∧ Q ( X,Y ) where P 's domain includes all HasObstacle -dir predicates and their negated counterparts, and Q 's domain includes all HasTarget -dir predicates and their negated counterparts. We set α = 0 . 8 and use a simple reward mechanism: +1 for moving towards the destination, -1 for moving

Figure 5: Gridworld: (a) Avg. Rewards vs. Training Grids. (b) LNN Rule and (c) LNN-∧ for GoSouth(X,Y).

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Composite Figure: Performance Chart, Logic Diagram, and 3D Surface Plot

### Overview

The image presents a composite figure consisting of three sub-figures labeled (a), (b), and (c). Sub-figure (a) is a line chart comparing the performance of two methods, "Ours" and "NeuralLP," over a range of iterations. Sub-figure (b) is a logic diagram illustrating the relationships between different logical components. Sub-figure (c) is a 3D surface plot visualizing the output of "GoSouth LNN-^" as a function of "HasObstacleSouth" and "HasTargetSouth."

### Components/Axes

**Sub-figure (a): Performance Chart**

* **Title:** Implicitly, a performance comparison chart.

* **X-axis:** Iterations, ranging from 0 to 100 in increments of 20.

* **Y-axis:** Performance metric, ranging from -1.2 to 0.8 in increments of 0.2.

* **Legend:** Located in the bottom-right corner.

* "Ours": Represented by a red line with square markers.

* "NeuralLP": Represented by a black dashed line with circle markers.

**Sub-figure (b): Logic Diagram**

* **Nodes:**

* Top: "LNN-^" (with a value of 1.791 in parentheses) - gray box

* Left: "LNN-pred" (with a value of 1.056 in parentheses) - red box

* Right: "LNN-pred" (with a value of 1.005 in parentheses) - red box

* Bottom-Left: "¬HasObstacleSouth(X, Y)" - blue box

* Bottom-Right: "HasTargetSouth(X, Y)" - blue box

* **Edges:** Arrows indicating logical relationships with associated numerical values.

* "¬HasObstacleSouth(X, Y)" to "LNN-pred": 1.077

* "HasTargetSouth(X, Y)" to "LNN-pred": 1.052

* "LNN-pred" (left) to "LNN-^": 2.239

* "LNN-pred" (right) to "LNN-^": 2.322

**Sub-figure (c): 3D Surface Plot**

* **Title:** "GoSouth LNN-^"

* **X-axis:** "¬HasObstacleSouth", ranging from 0 to 1.

* **Y-axis:** "HasTargetSouth", ranging from 0 to 1.

* **Z-axis:** Output value, ranging from 0 to 1, with a color gradient from blue (0) to red (1).

### Detailed Analysis

**Sub-figure (a): Performance Chart**

* **"Ours" (Red line with square markers):**

* Starts at approximately -0.7 at iteration 0.

* Increases sharply to approximately 0.4 by iteration 10.

* Fluctuates around 0.5-0.6 for the remaining iterations.

* **"NeuralLP" (Black dashed line with circle markers):**

* Starts at approximately -1.1 at iteration 0.

* Increases steadily to approximately 0.4 by iteration 80.

* Appears to plateau around 0.4 for the remaining iterations.

**Sub-figure (b): Logic Diagram**

* The diagram illustrates a hierarchical logical structure.

* The bottom-level nodes, "¬HasObstacleSouth(X, Y)" and "HasTargetSouth(X, Y)", feed into "LNN-pred" nodes.

* The "LNN-pred" nodes then feed into the top-level "LNN-^" node.

* The numerical values associated with the edges likely represent weights or strengths of the logical connections.

**Sub-figure (c): 3D Surface Plot**

* The surface plot shows the output of "GoSouth LNN-^" as a function of two input variables.

* When both "¬HasObstacleSouth" and "HasTargetSouth" are low (near 0), the output is also low (near 0, blue).

* As "HasTargetSouth" increases (towards 1), the output rapidly increases (towards 1, red), especially when "¬HasObstacleSouth" is also high.

* The surface is relatively flat when "HasTargetSouth" is low, indicating that the output is not significantly affected by "¬HasObstacleSouth" in this region.

### Key Observations

* In sub-figure (a), the "Ours" method initially performs worse than "NeuralLP" but quickly surpasses it and maintains a higher performance level.

* In sub-figure (b), the weights associated with the connections between nodes vary, suggesting different levels of importance or influence.

* In sub-figure (c), the output of "GoSouth LNN-^" is highly sensitive to "HasTargetSouth" and less sensitive to "¬HasObstacleSouth" when "HasTargetSouth" is low.

### Interpretation

The composite figure presents a comparison of two methods ("Ours" and "NeuralLP") in terms of performance, along with a logical representation of a system ("GoSouth LNN-^"). The performance chart suggests that the "Ours" method is superior to "NeuralLP" in this context. The logic diagram provides insight into the structure and relationships between different components of the "GoSouth LNN-^" system. The 3D surface plot visualizes the behavior of the "GoSouth LNN-^" system, demonstrating how its output is influenced by the input variables "¬HasObstacleSouth" and "HasTargetSouth." The data suggests that the presence of a target to the south ("HasTargetSouth") is a critical factor in determining the output of the system, while the absence of an obstacle to the south ("¬HasObstacleSouth") has a less significant impact when there is no target.

</details>

away, and -2 for stepping on an obstacle. The learning objective is to maximize rewards on randomly generated 5 × 5 grids with 3 obstacles sampled uniformly at random. We test the learned rules on grids with 12 obstacles.

Results : We compare our approach based on LNNpred and LNN-∧ , against NeuralLP which uses attention and product t -norm. Figure 5 (a) shows mean rewards averaged across all cells of 50 test grids produced by the learned rules on the y-axis vs. number of training grids observed on the x-axis. NeuralLP requires a lot more grids before it can learn the desired rules whereas we learn almost perfect rules after observing as few as 20 training grids. Essentially, in comparison to product t -norm, due to the extra learnable parameters in LNN-∧ , we can learn with fewer learning iterations. Figure 5 (b) shows the weights for the LNN rule for GoSouth ( X,Y ) on the edges and biases in parenthesis. This rule allows the agent to go South if 1) target is in that direction and, 2) there is no obstacle to the immediate South of the current cell. Despite the leaves of the template containing 8 predicates each in their respective domains, the learned LNNpred s are quite sparse and thus highly interpretable. The left LNNpred assigns 1 non-zero weight to ¬ HasObstacleSouth out of all HasObstacle -dir predicates and their negated counterparts, and the right LNNpred assigns 1 non-zero weight to HasTargetSouth out of all HasTarget -dir predicates and their negated counterparts. This may be due to the use of relu1 in LNNpred whose sparsity-inducing properties have been noted before (Krizhevsky 2010). In Figure 5 (c), we also plot GoSouth ( X,Y ) 's learned LNN-∧ .

## 5.2 Knowledge Base Completion

Knowledge base completion (KBC) is a standard benchmark for ILP. We experiment with publicly available KBC datasets Kinship, UMLS (Kok and Domingos 2007), WN18RR (Dettmers et al. 2018), and FB15K-237 (Toutanova and Chen 2015) 2 (see Table 1 for statistics). We compare against a host of rule-based KBC approaches: NeuralLP (Yang, Yang, and Cohen 2017), DRUM (Sadeghian et al. 2019), CTP (Minervini et al. 2020b) which is an improvement on neural theorem provers (Rockt¨ aschel and Riedel 2017), and the recently proposed RNNLogic 3 (Qu et al. 2021).

2 All available at github.com/shehzaadzd/MINERVA

3 We compare with rules-only RNNLogic ('w/o embd.'), since using embeddings is out of scope of this work.

Table 1: Statistics of KBC datasets.

| Name | Vertices | Predicates | Facts | Queries |

|-----------|------------|--------------|---------|-----------|

| UMLS | 135 | 49 | 5216 | 661 |

| Kinship | 104 | 26 | 10686 | 1074 |

| WN18RR | 40945 | 11 | 86835 | 3134 |

| FB15K-237 | 14505 | 237 | 272115 | 20466 |

Task Description and Template : A popular abstraction of the KBC task is to complete edges or triples missing from the knowledge graph (KG). More precisely, given a query 〈 h, r, ? 〉 , where h denotes a source vertex and r denotes a relation from the KG, most KBC approaches provide a ranked list of destination vertices. Most of the aforementioned rulebased KBC approaches exclusively focus on learning chain FOL rules as discussed in Section 2. There are at least two prevalent approaches to learn chain rules for KBC. The first approach (Yang, Yang, and Cohen 2017; Sadeghian et al. 2019) represents each predicate in the body as a mixture of relations present in the KG, and subsequently combines these via a conjunction operator. Figure 3 (c)'s template captures this where the subtree rooted at R defines chain rules of length 2 predicates and O captures length 1 thus enabling learning of chain rules capturing multi-hop paths of length up to 2 . The only change we need to make to this template is to include all relations in the KG into the domains of the leaves. It is also easy to extend the template to learn longer chain rules. A different approach, pioneered by MINERVA (Das et al. 2018) and RNNLogic (Qu et al. 2021), is to define the chain rule as a mixture over all possible paths that can exist in the KG. This latter approach leads to more effective KBC and thus we report results by expressing it in our LNN framework as follows: 1) Express each possible multi-hop path as a base relation, and 2) Use one LNN-pred operator to express a mixture over all multi-hop paths.

Metrics and Methodology : We learn a chain rule per relation present in the KG. Following previous work (Yang, Yang, and Cohen 2017), we also add inverse relations to the KG which switches the source and destination vertices. We also include inverse triples into our test set. We compute filtered ranks for destinations (Bordes et al. 2013), which removes all true triples ranked above, and compute the following metrics based on Sun et al. (2020)'s suggestions. Let n denote the number of destinations that have a score strictly greater than

Table 2: KBC Results: Bold font denotes best in row. CTP does not scale to WN18RR, FB15K-237. * indicates results copied from original paper.

| | | NeuralLP | DRUM | CTP | RNNLogic | Ours |

|---------|---------|------------|--------|-------|------------|--------|

| Kinship | Hits@10 | 89.1 | 86.1 | 93.9 | 91.1 | 98.4 |

| Kinship | Hits@3 | 63 | 48.2 | 79.7 | 72.9 | 89.3 |

| Kinship | MRR | 48.8 | 40 | 70.3 | 64.5 | 81.9 |

| | Hits@10 | 93 | 97.9 | 97.0 | 91.1 | 99.4 |

| | Hits@3 | 75.4 | 91.2 | 91.0 | 82.1 | 98.3 |

| | MRR | 55.3 | 61.8 | 80.1 | 71.0 | 90 |

| | Hits@10 | 50.2 | 52.1 | - | 53.1 ∗ | 55.5 |

| | Hits@3 | 43.3 | 44.6 | - | 47.5 ∗ | 49.7 |

| | MRR | 33.7 | 34.8 | - | 45.5 ∗ | 47.3 |

| | Hits@10 | 32.8 | 33.1 | - | 44.5 ∗ | 47 |

| | Hits@3 | 19.8 | 20 | - | 31.5 ∗ | 34.2 |

| | MRR | 17.4 | 17.5 | - | 28.8 ∗ | 30.7 |

destination t 's and let the number of destinations assigned the same score as t 's be denoted by m (including t ), then we compute t 's mean reciprocal rank (MRR) and Hits@K as:

<!-- formula-not-decoded -->

where δ () denotes the Dirac delta function. For each method, we report averages across all test set triples. We learn chain rules containing up to 3 predicates for Kinship and UMLS, 4 for FB15K-237, and 5 for WN18RR in the body of the rule. We provide additional details including the training algorithm used and hyperparameter tuning in Appendix A.

Results : Table 2 reports results for all methods. While CTP improves upon the efficiency of neural theorem provers (Rockt¨ aschel and Riedel 2017), it still does not scale beyond Kinship and UMLS (indicated by -). Also, we copy previously published results for RNNLogic on WN18RR and FB15K-237 4 (indicated by ∗ ). On the smaller datasets, CTP is the best baseline but our results are significantly better producing 16 . 5% and 12 . 4% relative improvements in MRR on Kinship and UMLS, respectively. On the larger datasets, RNNLogic is the current state-of-the-art within rule-based KBC and we outperform it producing 4% and 6 . 6% relative improvements in MRR on WN18RR and FB15K-237, respectively. Despite both learning a mixture over relation sequences appearing on KG paths, one reason for our relative success could be that RNNLogic uses an inexact training algorithm (Qu et al. 2021), relying on expectation-maximization, ELBO bound, whereas we employ no such approximations. Learned Rules for FB15K-237 : Table 3 presents a few rules learned from FB15K-237. Rule 1 in Table 3 infers the language a person speaks by exploiting knowledge of the language spoken in her/his country of nationality. In terms of multi-hop path, this looks like: P ( person ) nationality -→ N ( nation ) spoken in -→ L ( language ) . Similarly, Rule 2 uses the film country relation instead of nationality to infer the language used in a film. Besides spoken in, FB15K-237 contains

4 Despite exchanging multiple emails with its authors, we were unable to run RNNLogic code on the larger KBC datasets.

Table 3: Learned rules from FB15K-237.

| 1) | person language ( P,L ) ← | nationality ( P,N ) ∧ spoken in ( L,N ) |

|----------------------------------|----------------------------------|---------------------------------------------------------------------------------------|

| 2) | film language ( F,L ) ← | film country ( F,C ) ∧ spoken in ( L,C ) |

| 3) tv program language ( P,L ) ← | 3) tv program language ( P,L ) ← | country of tv program ( P,N ) ∧ official language ( N,L ) |

| 4) | burial place ( P,L ) ← | nationality ( P,N ) ∧ located in ( L,N ) |

| 5) | tv program country ( P,N ) ← | tv program actor ( P,A ) ∧ born in ( A,L ) ∧ located in ( L,N ) |

| 6) | film release region ( F,R ) ← | film crew ( F,P ) ∧ marriage location ( P,L ) ∧ located in ( L,R ) |

| 7) | marriage location ( P,L ) ← | celebrity friends ( P,F ) ∧ marriage location ( F,L ′ ) ∧ location adjoins ( L ′ ,L ) |

other relations that can be utilized to infer language such as the official language spoken in a country. Rule 3 uses this relation to infer the language spoken in a TV program by first exploiting knowledge of its country of origin. Rules 5, 6 and 7 are longer rules containing 3 relations each in their body. Rule 5 infers a TV program's country by first exploiting knowledge of one of its actor's birth place and then determining which country the birth place belongs to. Rule 6 is similar but uses a film crew member's marriage location instead to infer the region where the film was released. Lastly, Rule 7 infers the marriage location of a celebrity by exploiting knowledge of where their friends got married.

Additional KBC Results : Due to space constraints, in Appendix B we report results on the Countries dataset (Bouchard, Singh, and Trouillon 2015) for which ground truth rules are known. On Countries, our KBC accuracy is comparable to other approaches and the learned LNN rules form a close match with the ground truth rules specified in Nickel, Rosasco, and Poggio (2016).

## 6 Conclusion

Our experiments show that learning rules and logical connectives jointly is not only possible but leads to more accurate rules than other neuro-symbolic ILP approaches. Templates provide a flexible way to express a wide range of ILP tasks. The templates used for Gridworld and KBC are distinct, yet we outperformed baselines in both cases. LNN rules use weights sparingly and are eminently interpretable while LNN operators' constraint formulation ensures close ties to classical logic's precise semantics compared to other approaches (e.g., NLM). While our neural network requires grounding the KB, our approach is still scalable enough to tackle the larger KBC benchmarks whereas others are not (e.g., CTP). In terms of future work, we aim to combine the ideas presented here with embedding of predicates and constants in a high-dimensional latent space to hopefully further improve performance. We would also like to extend our approach to learn more general Prolog-style rules.

## References

Bordes, A.; Usunier, N.; Garcia-Duran, A.; Weston, J.; and Yakhnenko, O. 2013. Translating embeddings for modeling multi-relational data. In NeurIPS .

Bouchard, G.; Singh, S.; and Trouillon, T. 2015. On Approx- imate Reasoning Capabilities of Low-Rank Vector Spaces. In AAAI .

Das, R.; Dhuliawala, S.; Zaheer, M.; Vilnis, L.; Durugkar, I.; Krishnamurthy, A.; Smola, A.; and McCallum, A. 2018. Go for a Walk and Arrive at the Answer: Reasoning Over Paths in Knowledge Bases using Reinforcement Learning. In ICLR .

Dettmers, T.; Minervini, P.; Stenetorp, P.; and Riedel, S. 2018. Convolutional 2d knowledge graph embeddings. In Thirtysecond AAAI conference on artificial intelligence .

Donadello, I.; Serafini, L.; and d'Avila Garcez, A. S. 2017. Logic Tensor Networks for Semantic Image Interpretation. In IJCAI .

Dong, H.; Mao, J.; Lin, T.; Wang, C.; Li, L.; and Zhou, D. 2019. Neural Logic Machines. In ICLR .

Esteva, F.; and Godo, L. 2001. Monoidal t-norm based logic: Towards a logic for left-continuous t-norms. Fuzzy Sets and Systems .

Evans, R.; and Grefenstette, E. 2018. Learning Explanatory Rules from Noisy Data. JAIR .

Frerix, T.; Nießner, M.; and Cremers, D. 2020. Homogeneous Linear Inequality Constraints for Neural Network Activations. In CVPR Workshops .

Getoor, L.; and Taskar, B. 2007. Introduction to Statistical Relational Learning (Adaptive Computation and Machine Learning) . The MIT Press.

Goodfellow, I.; Warde-Farley, D.; Mirza, M.; Courville, A.; and Bengio, Y. 2013. Maxout Networks. In ICML .

Kazemi, S. M.; and Poole, D. 2018. RelNN: A Deep Neural Model for Relational Learning. In AAAI .

Kok, S.; and Domingos, P. 2007. Statistical predicate invention. In Proceedings of the 24th international conference on Machine learning , 433-440.

Krizhevsky, A. 2010. Convolutional deep belief networks on CIFAR-10. Unpublished Manuscript.

Manhaeve, R.; Dumancic, S.; Kimmig, A.; Demeester, T.; and Raedt, L. D. 2018. DeepProbLog: Neural Probabilistic Logic Programming. CoRR .

Minervini, P.; Bosnjak, M.; Rockt¨ aschel, T.; Riedel, S.; and Grefenstette, E. 2020a. Differentiable reasoning on large knowledge bases and natural language. In AAAI .

Minervini, P.; Riedel, S.; Stenetorp, P.; Grefenstette, E.; and Rockt¨ aschel, T. 2020b. Learning Reasoning Strategies in End-to-End Differentiable Proving. In ICML .

Motzkin, T. S.; Raiffa, H.; Thompson, G. L.; and Thrall, R. M. 1953. The double description method. Contributions to the Theory of Games .

Muggleton, S. 1996. Learning from positive data. In Worshop on ILP .

Muggleton, S. H.; Lin, D.; Pahlavi, N.; and TamaddoniNezhad, A. 2014. Meta-interpretive Learning: Application to Grammatical Inference. Machine Learning .

Nair, V.; and Hinton, G. 2010. Rectified Linear Units Improve Restricted Boltzmann Machines. In ICML .

Nickel, M.; Rosasco, L.; and Poggio, T. 2016. Holographic Embeddings of Knowledge Graphs. In AAAI .

Qu, M.; Chen, J.; Xhonneux, L.-P.; Bengio, Y.; and Tang, J. 2021. { RNNL } ogic: Learning Logic Rules for Reasoning on Knowledge Graphs. In ICLR .

Riegel, R.; Gray, A.; Luus, F.; Khan, N.; Makondo, N.; Akhalwaya, I. Y.; Qian, H.; Fagin, R.; Barahona, F.; Sharma, U.; Ikbal, S.; Karanam, H.; Neelam, S.; Likhyani, A.; and Srivastava, S. 2020. Logical Neural Networks. CoRR .

Rockt¨ aschel, T.; and Riedel, S. 2017. End-to-End Differentiable Proving. In NeurIPS .

Sadeghian, A.; Armandpour, M.; Ding, P.; and Wang, D. Z. 2019. DRUM: End-To-End Differentiable Rule Mining On Knowledge Graphs. In NeurIPS .

Sourek, G.; Svatos, M.; Zelezny, F.; Schockaert, S.; and Kuzelka, O. 2017. Stacked Structure Learning for Lifted Relational Neural Networks. In International Conference on Inductive Logic Programming .

Sun, Z.; Vashishth, S.; Sanyal, S.; Talukdar, P.; and Yang, Y. 2020. A Re-evaluation of Knowledge Graph Completion Methods. In ACL .

Toutanova, K.; and Chen, D. 2015. Observed versus latent features for knowledge base and text inference. In Proceedings of the 3rd workshop on continuous vector space models and their compositionality , 57-66.

Yang, F.; Yang, Z.; and Cohen, W. W. 2017. Differentiable Learning of Logical Rules for Knowledge Base Reasoning. In NeurIPS .

## A Implementation Details for KBC Experiments

Given a training knowledge graph G = 〈V , R , E〉 whose vertices are given by V , edges are given by E and relations are given by R , we learn a chain FOL rule for each r ∈ R independently. Each edge in E is given by a triple 〈 h, r, t 〉 where h, t ∈ V denote source and destination vertices, and r ∈ R denotes a relation. Following previous work (Yang, Yang, and Cohen 2017), we also add inverse relations. In other words, for each r ∈ R we introduce a new relation r -1 by adding for each 〈 h, r, t 〉 ∈ E a new triple 〈 t, r -1 , h 〉 to G . Learning to predict destinations for a given relation r in the augmented set of relations is essentially a binary classification task where 〈 h, r, t 〉 ∈ E and 〈 h, r, t 〉 / ∈ E , ∀ h, t ∈ V , denote positive and negative examples, respectively.

Training Algorithm & Hyperparameter Settings : In each iteration, we sample uniformly at random a mini-batch of positive triples B + from 〈 h, r, t 〉 ∈ E and negative triples B -from 〈 h, r, t 〉 / ∈ E , ∀ h, t ∈ V , such that | B + | = | B -| to minimize the following loss:

<!-- formula-not-decoded -->

where γ denotes the margin hyperparameter. We use Adagrad ( ? ) with step size ∈ { 0 . 1 , 1 . 0 } , margin γ ∈ { 0 . 1 , 0 . 5 , 1 . 0 , 2 . 0 } and batch size | B + | = | B -| = 8 . We use the validation set to perform hyperparameter tuning and

## NeuralLP

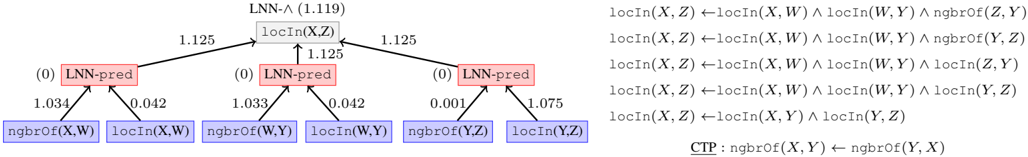

Figure 6: LNN-rule (left) vs. NeuralLP's (5) rules (top right) vs. CTP's rule (bottom right) for Countries-S3.

<details>

<summary>Image 5 Details</summary>

### Visual Description

## Diagram: LNN-Λ Derivation Tree

### Overview

The image presents a derivation tree for a logical expression, likely within the context of a knowledge representation or reasoning system. The tree illustrates how a complex expression `locIn(X, Z)` can be derived from simpler expressions using logical rules. The diagram also includes associated numerical values, possibly representing confidence scores or weights. The right side of the image contains logical rules.

### Components/Axes

* **Nodes:** The diagram consists of rectangular nodes representing logical expressions or predicates.

* Top node: `LNN-Λ (1.119)` containing `locIn(X, Z)`

* Middle nodes: Three `LNN-pred` nodes, each labeled with `(0)`

* Bottom nodes: Six nodes representing base predicates: `ngbrof(X, W)`, `locIn(X, W)`, `ngbrof(W, Y)`, `locIn(W, Y)`, `ngbrof(Y, Z)`, `locIn(Y, Z)`

* **Edges:** Arrows connect the nodes, indicating the derivation flow. Numerical values are associated with these edges.

* **Colors:**

* Top node: Light gray

* Middle nodes: Light red

* Bottom nodes: Light blue

* **Logical Rules:** A set of logical rules is listed on the right side of the diagram.

* **CTP:** A Common-sense Transitivity Principle is defined.

### Detailed Analysis

**Tree Structure and Values:**

* **Top Node:** The root node is labeled `LNN-Λ (1.119)` and contains the expression `locIn(X, Z)`. The value 1.119 is associated with this node.

* **Middle Nodes:** Three `LNN-pred` nodes are connected to the top node. Each `LNN-pred` node is labeled with `(0)`.

* The edges connecting the left `LNN-pred` node to the top node have a value of 1.125.

* The edges connecting the center `LNN-pred` node to the top node have a value of 1.125.

* The edges connecting the right `LNN-pred` node to the top node have a value of 1.125.

* **Bottom Nodes:** Each `LNN-pred` node is connected to two base predicate nodes.

* Left `LNN-pred` node:

* `ngbrof(X, W)` with an edge value of 1.034.

* `locIn(X, W)` with an edge value of 0.042.

* Center `LNN-pred` node:

* `ngbrof(W, Y)` with an edge value of 1.033.

* `locIn(W, Y)` with an edge value of 0.042.

* Right `LNN-pred` node:

* `ngbrof(Y, Z)` with an edge value of 0.001.

* `locIn(Y, Z)` with an edge value of 1.075.

**Logical Rules (Right Side):**

The following logical rules are presented:

1. `locIn(X, Z) ← locIn(X, W) ∧ locIn(W, Y) ∧ ngbrof(Z, Y)`

2. `locIn(X, Z) ← locIn(X, W) ∧ locIn(W, Y) ∧ ngbrof(Y, Z)`

3. `locIn(X, Z) ← locIn(X, W) ∧ locIn(W, Y) ∧ locIn(Z, Y)`

4. `locIn(X, Z) ← locIn(X, W) ∧ locIn(W, Y) ∧ locIn(Y, Z)`

5. `locIn(X, Z) ← locIn(X, Y) ∧ locIn(Y, Z)`

**CTP (Common-sense Transitivity Principle):**

`CTP: ngbrof(X, Y) ← ngbrof(Y, X)`

### Key Observations

* The diagram illustrates a hierarchical derivation process, starting from base predicates and building up to the target expression `locIn(X, Z)`.

* The numerical values associated with the edges likely represent confidence scores or weights, indicating the strength of the derivation steps.

* The logical rules on the right side provide the formal basis for the derivations shown in the tree.

* The CTP rule suggests a symmetry or transitivity property for the `ngbrof` predicate.

### Interpretation

The diagram demonstrates a knowledge representation and reasoning system's ability to derive complex logical expressions from simpler ones. The derivation tree shows how the `locIn(X, Z)` predicate can be inferred from combinations of `locIn` and `ngbrof` predicates involving intermediate variables (W, Y). The numerical values associated with the edges likely represent the system's confidence in each derivation step, with higher values indicating stronger inferences. The logical rules provide the underlying axioms that govern the derivation process. The CTP rule highlights a specific property of the `ngbrof` predicate, which could be used to further refine the reasoning process. The low value of 0.001 associated with the edge between the right `LNN-pred` node and `ngbrof(Y, Z)` suggests that this particular inference path is considered weak or unreliable by the system.

</details>

Table 4: AUC-PR Results on Countries

| | NLM | NTP- λ | CTP | NeuralLP | Ours |

|----|-------------|--------------|------------|------------|----------|

| S1 | 58.06 ± 2.4 | 100 ± 0 | 99.6 ± 0.5 | 100 ± 0 | 100 ± 0 |

| S2 | 40.57 ± 5.3 | 93.04 ± 0.4 | 92.4 ± 1.7 | 75.1 ± 0.3 | 92.3 ± 0 |

| S3 | 53.37 ± 2.8 | 77.26 ± 17.0 | 55.4 ± 3.5 | 92.2 ± 0.2 | 91.3 ± 0 |

learn rules of length up to 4 for FB15K-237, 5 for WN18RR, and 3 for Kinship, UMLS.

## B Additional KBC Experiments

Besides the experiments presented in Section 5, we also experiment with the Countries dataset (Bouchard, Singh, and Trouillon 2015) which contains 272 vertices, 1158 facts and 2 predicates locatedIn ( X,Y ) (abbreviated locIn ) and neighborOf ( X,Y ) (abbreviated ngbrOf ). Following previous work (Das et al. 2018), we report area under the precision-recall curve (AUC-PR).

Nickel, Rosasco, and Poggio (2016) permute the facts in this dataset to pose 3 learning tasks { S 1 , S 2 , S 3 } each corresponding to learning a different rule. S1 and S2's rules contain 2 predicates in their bodies, whereas S3's body contains 3 . We use S ( X,Z ) ← P ( X,Y ) ∧ Q ( Y, Z ) as template for S1 and S2, and S ( X,Z ) ← P ( X,W ) ∧ Q ( W,Y ) ∧ O ( Y, Z ) for S3, where Dom ( P ) = Dom ( Q ) = Dom ( O ) = { locIn , ngbrOf } . We compare against NeuralLP 5 (Yang, Yang, and Cohen 2017), neural theorem provers 6 (NTPλ ) (Rockt¨ aschel and Riedel 2017), conditional theorem provers 7 (CTP) (Minervini et al. 2020b) and neural logic machines 8 (NLM) (Dong et al. 2019).

Table 4 reports averages across 3 runs. The NLM implementation we report results with is quite pessimistic almost never predicting a link. All other approaches achieve perfect AUC-PR on S1. We outperform NeuralLP on S2 and NTP shows high variance on S3 9 perhaps due to its excessive parameterization (NTP learns embeddings for predicates and vertices). CTP performs well on the first two tasks but fails on S3. To find out why, we take a close look at the learned rules next.

5 github.com/fanyangxyz/Neural-LP

6 github.com/uclnlp/ntp

7 github.com/uclnlp/ctp

8 github.com/google/neural-logic-machines

9 NTP's high variance on S2 is also noted in Das et al. (2018).

The goal in S2 and S3 (Countries) is to learn the following rules (Nickel, Rosasco, and Poggio 2016):

<!-- formula-not-decoded -->

We compare the rules learned by our approach, NeuralLP and CTP for Countries-S3. NeuralLP produces a weighted list of 5 rules (see Figure 6 top right, weights omitted) none of which capture the correct rule shown above. CTP learns that ngbrOf is a symmetric relation (Minervini et al. 2020b) which makes sense (Figure 6 bottom right). Unfortunately however, all facts that need to be proved in the test set of Countries-S3 pertain to locIn predicate which explains why CTP's AUC-PR for Countries-S3 is so poor (Table 4). In contrast, the learned LNN-rule shown in Figure 6 (left) is a near perfect translation of the correct logical rule into real-valued logic. Note that, the leftmost and middle LNNpred s place a large weight on ngbrOf vs. a small weight on locIn while the rightmost LNNpred does the opposite, which lines up perfectly with the rule to be learned.

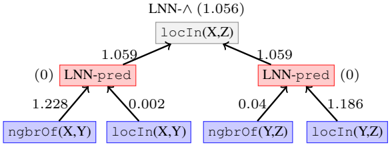

We also compared the rules learned for S2. Just as in the case of S3, for S2, the learned LNN rule's (shown in Figure 7) left LNNpred places a large weight on ngbrOf while placing a small weight on locIn whereas the right LNNpred does the opposite, closely matching the correct FOL rule shown above. The list of rules learned by NeuralLP (weight depicted in parenthesis) is shown below:

<!-- formula-not-decoded -->

none of which match the correct rule to be learned for S2. CTP learns the following rule (Minervini et al. 2020b):

Figure 7: LNN rule learned from Countries-S2

<details>

<summary>Image 6 Details</summary>

### Visual Description

## Diagram: LNN Structure

### Overview

The image presents a diagram illustrating the structure of a Logical Neural Network (LNN). It shows the relationships between different components, including LNN-pred nodes and locIn/ngbrof nodes, along with associated numerical values representing weights or probabilities.

### Components/Axes

* **Nodes:**

* `LNN-^ (1.056)`: Top-level node, likely representing a conjunction (AND) operation in the LNN. The value 1.056 is associated with this node.

* `locIn(X,Z)`: A node representing the location of X in Z. It is connected to the `LNN-^` node.

* `LNN-pred`: Two nodes labeled "LNN-pred" with associated values of (0). These are likely prediction nodes within the LNN.

* `ngbrof(X,Y)`: A node representing the neighborhood of X in Y.

* `locIn(X,Y)`: A node representing the location of X in Y.

* `ngbrof(Y,Z)`: A node representing the neighborhood of Y in Z.

* `locIn(Y,Z)`: A node representing the location of Y in Z.

* **Edges:** Arrows indicate the flow of information or dependencies between nodes.

* **Weights/Values:** Numerical values are associated with the edges, representing weights or probabilities.

### Detailed Analysis

* **Top Node:** The `LNN-^ (1.056)` node is at the top of the diagram.

* **locIn(X,Z) Node:** Directly below the top node is the `locIn(X,Z)` node.

* **LNN-pred Nodes:** Two `LNN-pred` nodes are positioned below and to the left and right of the `locIn(X,Z)` node. Each has a value of (0).

* **Bottom Nodes:** Four nodes are at the bottom: `ngbrof(X,Y)`, `locIn(X,Y)`, `ngbrof(Y,Z)`, and `locIn(Y,Z)`.

* **Edge Values:**

* `ngbrof(X,Y)` to left `LNN-pred`: 1.228

* `locIn(X,Y)` to left `LNN-pred`: 0.002

* `ngbrof(Y,Z)` to right `LNN-pred`: 0.04

* `locIn(Y,Z)` to right `LNN-pred`: 1.186

* left `LNN-pred` to `locIn(X,Z)`: 1.059

* right `LNN-pred` to `locIn(X,Z)`: 1.059

### Key Observations

* The diagram represents a hierarchical structure, with information flowing from the bottom nodes to the top node.

* The `LNN-pred` nodes appear to aggregate information from the `ngbrof` and `locIn` nodes.

* The values on the edges likely represent the strength or importance of the connections between nodes.

### Interpretation

The diagram illustrates a specific configuration of an LNN, likely used for reasoning about spatial relationships. The `LNN-^` node suggests a conjunctive query or rule. The `locIn` and `ngbrof` nodes represent spatial predicates, and the `LNN-pred` nodes likely perform intermediate computations or predictions based on these predicates. The numerical values associated with the edges indicate the weights or probabilities assigned to these relationships, influencing the final outcome of the LNN. The structure suggests a logical inference process where the location and neighborhood relationships between entities (X, Y, Z) are used to derive a higher-level conclusion.

</details>

```

```

Not only does this rule not match the correct rule to be learned, it is also not very useful from the perspective of proving facts in the test set which pertain to predicate locIn exclusively.