## Gödel's Incompleteness Theorem

Serafim Batzoglou

December 14, 2021

## Abstract

I present the proof of Gödel's First Incompleteness theorem in an intuitive manner assuming college-level computational background, while covering all technically challenging steps. I also discuss Gödel's Second Incompleteness theorem, their connection to Gödel's Completeness theorem, and conclude with brief remarks on implications for mathematics, computation, theory of mind and AI.

## 1 Introduction

I learned about Gödel's Incompleteness theorem as a teenager reading Douglas Hofstadter's "Gödel, Escher, Bach", a unique book that inspired my curiosity for computer science, logic, and philosophy of mind. The theorem has a special place in the history of mathematics. In a popular list of "top 100" theorems of all time, it ranks 6 th after the irrationality of √ 2 , Gauss's fundamental theorem of algebra, the countability of the rationals, the Pythagorean theorem and the prime number theorem. And for good reason: to those expecting a foundation of mathematics on a defined collection of axioms from which all theorems are derived, the result must have been as startling as the discovery of √ 2 by the Pythagoreans who believed that all numbers are rational. It has been discussed widely among philosophers and in popular culture for its implications for mathematical foundations, nature of mind and artificial intelligence.

Despite the theorem's significance, most applied computer scientists are not versed in the theorem's proof or implications; logic today is much less popular than statistics and machine learning. In my opinion, the theorem cannot be appreciated unless its proof is understood at a fairly technical level. Informal descriptions, even if well written and precise, leave the reader unclear about the theorem's scope and implications for mathematics and AI.

There are many excellent presentations of the proof (Smith 2013, Smullyan 1991, Nagel and Newman 2001, lecture notes such as by B. Kim). Here, besides having fun writing on the topic, my aim is to present the proof intuitively to computer scientists, assuming minimal college-level background. I omit or push to footnotes many boring details, but cover all technically challenging steps and elements that are pertinent to the theorem's implications. I also discuss briefly Gödel's Second Incompleteness theorem, and the often confusing relation of these theorems to Gödel's Completeness theorem. I conclude with my brief remarks on implications for mathematics, computation, and theory of mind.

## 2 Preliminaries

## 2.1 Formal axiomatic systems and Peano Arithmetic

The theorem concerns formal axiomatic systems for mathematics. Since the time of Euclid, mathematicians have sought to craft formal lists of axioms from which all mathematical truths can be derived. Axiomatic systems usually focus on a specific domain like Euclidean geometry, number theory, or group theory, although some axiomatic systems, such as Zermelo-Fraenkel set theory with the Axiom of Choice, aspire to be rich enough to serve as the foundation for all mathematics. Gödel's Incompleteness theorem says, in lay language, that any axiomatic system rich enough to capture basic number theory is incomplete. The proof is constructive: it is an algorithm that takes an axiomatic system L as input, and produces a

specific statement σ that is either true and unprovable in L , or provable and false, in which case L is nonsensical.

A formal axiomatic system consists of a language of symbols and syntactic rules with which two types of objects can be composed: terms , which refer to objects in the domain and can be thought of as words of the language, and formulas , which are mathematical assertions and can be thought of as sentences of the language. Terms and formulas may contain free (input) variables, so their value can vary: terms with no free variables evaluate to a single item in the domain and formulas with no free variables the statements - evaluate to true or false. 1 A finite collection of axioms or axiom schemes (templates that capture a family of axioms) specify all the formulas that can be taken for granted. Then, logical rules specify how to derive a new formula from previous formulas.

For example, Peano Arithmetic (PA) is a simplified language for nonnegative integer arithmetic, with domain N . 2 The symbols for numbers are just 0 and S , and the number n is written as n S 's followed by 0 , also denoted as S ( n ) 0 . 3 There is a countably infinite set of variable symbols, x 0 , x 1 , x 2 ,. . . . The binary operations + , ∗ , binary predicates = , < , > and logical operations ∨ , ∧ , ¬ , ∀ , ∃ are symbols with their usual meanings. PA's alphabet is the collection of all above symbols, Σ = { 0 , S, + , ∗ , = , <, >, ¬ , ∧ , ∨ , → , ∀ , ∃ , ( , ) , x 0 , x 1 , x 2 , ... } . By convention, we denote variables by x, y, z, ... ; those are not symbols of Σ .

Terms are defined recursively to be either the basic term 0 , any variable x , or St , s + t , and s ∗ t where s, t are terms. Formulas are defined recursively to be either s = t, s < t, s > t where s, t are terms, or ¬ φ, φ ∨ ψ, φ ∧ ψ, ∀ xφ, ∃ xφ , where x is a variable and φ, ψ are formulas. Parentheses ( , ) are used to specify the order in which the above compositions are taken. By convention we denote terms by s, t, u, ... and formulas by φ, χ, ψ, ... ; those are not symbols of Σ .

The above syntax defines legal terms and formulas of the language. It is simple to distinguish algorithmically between syntactically valid terms, such as SS 0 , x +( y ∗ Sx ) , syntactically valid formulas, such as S 0 + S 0 = SS 0 , 4 S 0 + S 0 > SSS 0 , 5 ∀ y ( x ∗ Sx > y ) ∧ ( x < S 0) , and syntactically invalid strings such as )( xy ∀ S 0 S .

PA's axioms come in two groups: (1) domain-specific axioms that specify the properties of natural numbers, addition ( + ), multiplication ( ∗ ), and the relationships = , <, > ; (2) logical axioms, which represent general logical truths.

Formulas can be derived from axioms or previously derived formulas using three inference rules: (1) from φ and φ → ψ we can derive ψ ; this principle is known as modus ponens (2) from φ we can derive ∀ xφ for any variable x , whether it appears in φ or not; this is universal generalization 6 (3) from φ we can derive any φ ′ that constitutes a renaming of the variables of φ . 7 A proof of a formula φ k is simply a list of formulas, φ 1 , ..., φ k such that each φ i either is an axiom or is derived from previous formulas in the sequence through an inference rule. A formula φ is a theorem of PA, denoted PA φ when there is a proof [ φ 1 , ..., φ k = φ ] of φ .

The list of PA axioms, logical axioms, and a proof of the statement S 0 + S 0 = SS 0 are provided in a long footnote. 8 The key point to understand is that the property of a sequence φ 1 , ..., φ k being a proof is syntactic: it can be reduced to simple string manipulation involving the axioms and rules of

1 For example, with R as the domain + , · as binary operators and >,<, = as binary predicates, 2 . 718 , 2 πr , and ( x + y ) 2 are examples of terms. 1 + 1 = 2 , x > y , ∀ x ∀ y ( x + y ) 2 = x 2 +2 xy + y 2 , x < x +1 are examples of formulas. The first and third are true statements, the second is a formula whose truth depends on x and y , and the fourth is a true formula with free variable x.

2 See the section on Completeness N is the minimal domain of PA, but larger domains exist.

3 S formally is a unitary operation whose domain are the terms of the language.

4 True, as it says 1 + 1 = 2 .

5 False, as it says 1 + 1 > 3 , but syntactically valid.

6 In some systems it needs to be restricted to variables not free in φ , depending on how it combines with other axioms and inference rules to potentially lead to contradictions.

7 A renaming ρ of variables is such that ρ ( x ) = ρ ( y ) iff x and y are different variables. This avoids disasters like renaming x < y →∃ z ( x + z = y ) to a < b →∃ b ( a + b = b ).

8 There are many equivalent axiomatizations of PA. To simplify things a bit, let us restrict notation to fewer symbols. First, we can do away with the logical symbols ∧ , ∨ , ↔ , ∃ by defining them in terms of → , ¬ , ∀ , as: φ ∧ ψ := ¬ ( φ →¬ ψ ) ; φ ∨ ψ := ¬ φ → ψ ; ∃ xφ := ¬∀ x ¬ φ . Then, we can do away with >,< : x > y := ¬∀ z ( x = y + z → z = 0) . A list of axioms that define PA follows (following Weaver, 2014); other possible axiomatizations exist, and studying the axiomatization in detail is not required to understand the main ideas in the incompleteness proof:

inference, and as we will see, it can be represented with a PA formula. The collection of theorems of PA is recursively enumerable: it is not hard to write a computer program that mechanically goes through every possible proof and lists all possible theorems of PA.

## 2.2 Recursive functions and their representation in Peano Arithmetic

## 2.2.1 Exponentiation in Peano Arithmetic

This simple language is powerful enough to express number theory. For example, let's write down Fermat's Last Theorem in PA. Recall that the theorem says x n + y n = z n for all integers n > 2 and x, y, z > 0 . However, there is no exponentiation primitive in PA. How do we write x n ?

Notational convention: PA uses unary representation for numbers. Therefore, 1+3 = 3+1 is written as S 0 + SSS 0 = SSS 0 + S 0 . In literature, special notation is introduced to distinguish between a number n and its PA representation n = S ( n ) 0 = S....S 0 . I think it is OK to follow the convention that a number within a PA formula automatically switches to unary representation. After all, this is also true for decimal: the number 5 is not the letter ′ 5 ′ . A number written on paper automatically switches to a decimal string.

Going back to exponentiation, we want a formula φ EXP ( x, n, y ) that is true if and only if ( iff ) y = x n . This is tricky. We want something like φ EXP ( x, n, y ) := ( n = 0 ∧ y = 1) ∨ ∃ m ∃ z ( n = Sm ∧ ( y = x ∗ z ∧ φ EXP ( x, m, z )) . Unfortunately, we cannot use φ EXP in its own definition.

The trick is to pack all of the intermediate exponents x, x 2 , ..., x n into a single big number N . Then we can devise a formula φ EXP that "talks" about N having the property of packing all the intermediate exponents of x . In principle it is no surprise that we can pack an arbitrary list of numbers into a single big number. In practice it is tricky to do so with the limited syntax of PA.

The ability to pack a list of numbers k 1 , ..., k n into a single number is a "subroutine" of the incompleteness proof, and is the key insight allowing PA to represent recursive functions, as described in the next section. The original way Gödel accomplished this was using the ancient Chinese Remainder Theorem, and is still one of the cleanest ways:

The Chinese Remainder Theorem: Given k 1 , . . . k n ∈ N and pairwise coprime m 1 , . . . , m n ∈ N with m i > k i , 1 ≤ i ≤ n , there is a unique N < m 1 m 2 . . . m n such that for all 1 ≤ i ≤ n , k i ≡ N mod m i .

The theorem seems made-to-order for packing lists into a single integer. Given any k 1 , ..., k n , we can pack them into a single N by fixing a list of coprime m i > k i , finding the unique N < m 1 m 2 . . . m n that "works", and then retrieving any k i by taking N mod m i . One important subtlety is that the m i have to be describable in a compact way - say, with at most a single additional integer on top of N . Let's say we

1. Equality: x = x ; x = y → y = x ; x = y → ( y = z → x = z ) .

2. Successor: ¬ ( Sx = 0) ; Sx = Sy ↔ x = y .

3. Addition: x +0 = x ; x + Sy = S ( x + y ) .

4. Multiplication: x ∗ 0 = 0 ; x ∗ Sy = x + x ∗ y

5. Induction: ( φ (0) ∧ ∀ x ( φ ( x ) → φ ( Sx ))) →∀ xφ ( x )

Notice that the latter is the familiar induction scheme, and is actually an axiom template from which an infinite collection of axioms derive: for any formula φ , if we show that φ (0) holds and for every n , φ ( n ) implies φ ( n +1) , then we know that φ ( n ) holds for any n . Then, the logical axiom schemas comprise:

6. φ → ( ψ → φ

7. ( φ → ( ψ → χ )) → (( φ → ψ ) → ( φ → χ ))

8. ( ¬ φ →¬ ψ ) → ( ψ → φ

9. ( ∀ x ( φ → ψ )) → ( φ →∀ xψ ) , where φ is a formula that does not contain any free occurrences of x .

10. ∀ x ( φ ( x )) → φ ( t ) where t is a term that does not share any variables with φ .

```

\phi)

\to \chi)) ) -> (( ( \phi ->

) -> ( \psi -> \phi)

```

The above axioms are templates that generate infinitely many axiom instances by replacing the formula, term and variable placeholders with any formulas, terms, and variables respectively. Now we are ready to prove 1+1 = 2 : (1) S 0+ S 0 = S ( S 0+0) (axiom 3); (2) S 0 + 0 = S 0 (axiom 3); (3) S ( S 0 + 0) = SS 0 ↔ S 0 + 0 = S 0 (axiom 2); (4) S ( S 0 + 0) = SS 0 (by (2), (3) and modus ponens); (5) S 0 + S 0 = S ( S 0 + 0) → ( S ( S 0 + 0) = SS 0 → S 0 + S 0 = SS 0) (axiom 1); (6) S ( S 0 + 0) = SS 0 → S 0 + S 0 = SS 0 (by (1), (5) and modus ponens); (7) S 0 + S 0 = SS 0 (by (4), (6) and modus ponens).

can do that. Then, intuitively, there are m 1 m 2 . . . m n possible remainder lists [ k 1 , ..., k n ] of dividing a number N by each of the m i in order. Because the m i are pairwise coprime, by letting N cycle through all values N = 1 , ..., m 1 m 2 . . . m n , all possible such remainder lists are visited. 9

To implement this in PA, first we define the mod function c ≡ a mod b : φ MOD ( a, b, c ) := ∃ n ( a = b ∗ n + c ∧ c < b ) .

Next, we need a way to pick specific m i > k i to implement the packing. The m i have to be pairwise coprime and collectively describable with a single number. We can accomplish that by picking a suitable b > max { k 1 , ..., k n } , and letting m i = 1 + ib . As long as every number among 2 , ..., n -1 divides b , the m i are guaranteed to be pairwise coprime. 10

Let b be a multiple of n ! that is greater than max { k 1 , ..., k n } . Let m i = 1 + ib . Let N be the unique integer < m 1 m 2 . . . m n such that k i is the remainder of N over 1 + ib for all 1 ≤ i ≤ n . N and b are computable given any k 1 , ..., k n .

Then, given the packed number N and also b, i , we can extract k i as the remainder of N/ (1 + ib ) .

Finally we are ready to define exponentiation by packing x, x 2 , ..., x n -1 into N . In the "code" below notice the use of the "subroutine" φ POW -N , to ensure that N is minimal. 11

$$\phi _ { P O W - N } ( N , x , n , y ) \colon = & \ \{ n = 0 \land y = S 0 \} \vee \{ \exists m \, ( n = S m ) \land \\ [ \exists b \, \forall i < n \, \exists j \, ( b = i * j ) \land \phi _ { M O D } ( N , S b , x ) \land \phi _ { M O D } ( N , S ( n * b ) , y ) \land \\ ( \forall i < m \, \forall z \, \phi _ { M O D } ( N , S ( Si * b ) , z ) \to \phi _ { M O D } ( N , S ( S S i * b ) , x * z ) ) ] \} \\ \phi _ { P O W } ( x , n , y ) \colon = & \ \exists N \, \phi _ { P O W - N } ( N , x , n , y ) \land \forall M \left ( \phi _ { P O W - N } ( M , x , n , y ) \to N \leq M \right )$$

In the above formula, {} , [] are used instead of () just for clarity; these are not symbols of PA. Also, " ∀ x ( x < y ) → φ " is abbreviated to ∀ x < y φ . The formula is a mouthful, but once we have it we can use it as a subroutine.

Fermat's Last Theorem can be expressed as follows:

∀ n ( n > SS 0) → [ ∀ x > 0 ∀ y > 0 ∀ z > 0 ¬∃ a ∃ b ∃ c ( a + b = c ∧ φ POW ( x, n, a ) ∧ φ POW ( y, n, b ) ∧ φ POW ( z, n, c ))]

## 2.2.2 Recursive Functions

Exponentiation is an example of a recursive function, the class of functions that can be calculated by an effective method, which are precisely the functions computable by Turing machines according to the Church-Turing thesis. Recursive functions defined on every input on N are the functions computable by a Turing machine that is guaranteed to halt. PA is rich enough to represent these functions. In particular:

$$f \colon \mathbb { N } \to \mathbb { N } i s r e p e r s e n t d y b y f o r m u l a \, \phi ( x , y ) \ i f f o r a l l x \in \mathbb { N } , P A \vdash \forall y \, \phi ( x , y ) \leftrightarrow y = f ( x ) .$$

Representing a function f is a strong statement: not only we want a formula φ ( x, y ) that is true iff y = f ( x ) , but additionally, we want the statement ∀ y φ ( x, y ) ↔ y = f ( x ) to be derivable in PA.

A couple of things to note: first, in the above definition, f ( x ) is a numerical value represented by S ( f ( x )) 0 ; there is no symbol for f in PA and a separate such statement is derived for every x . Second, the definition generalizes to functions f : N k → N represented by formulas φ ( x 1 , ..., x k , y ) .

Recursive functions are defined inductively on N k → N starting from a number of basic functions that can be combined through a few composition rules. There are many alternative, equivalent definitions, and excellent references are available online (Dean W, 2020).

Basic recursive functions. The basic recursive functions are:

- The zero function on N k → N , 0 k ( x 1 , ..., x k ) = 0 .

9 The cyclic group { 1 , . . . , m 1 m 2 . . . m n } under multiplication is isomorphic to the product of cyclic groups { 1 , . . . m 1 } × · · · × { 1 , . . . , m n } as long as the m i are pairwise coprime.

10 Proof: Let p | 1 + ib , p | 1 + jb , 1 ≤ i < j ≤ n . Then p | ( j -i ) b so p | j -i or p | b . Also, j -i | b by assumption, therefore p | b . Then p | 1 + ib implies p | 1 so p = 1 .

11 This is not strictly needed: the definition would still be valid without ensuring that N is minimal.

- The successor function on N → N , ∫ ( x ) = x +1 .

- The projection functions on N k → N , 1 ≤ i ≤ k , π i ( x 1 , ..., x k ) = x i .

A function f : N k → N is primitive recursive if it is obtained from the primitive functions by finitely many applications of the following two compositional rules:

- Composition : f ( x 1 , ..., x k ) = g ( h 1 ( x 1 , ..., x k ) , ..., h l ( x 1 , ..., x k )) , where g : N l → N , h i : N k → N are functions constructed at a previous stage.

- Primitive Recursion :

$$& f ( x _ { 1 } , \dots , x _ { k - 1 } , 0 ) = h ( x _ { 1 } , \dots , x _ { k - 1 } ) \\ & f ( x _ { 1 } , \dots , x _ { k - 1 } , n + 1 ) = g ( x _ { 1 } , \dots , x _ { k - 1 } , n , f ( x _ { 1 } , \dots , x _ { k - 1 } , n ) ) , \\ & \text {where $g\colon\mathbb{N}^{k+1}\to\mathbb{N}$, $h\colon\mathbb{N}^{k-1}\to\mathbb{N}$ are functions constructed at a previous stage.}$$

A rich collection of functions can be constructed with the above primitives. For example, constant functions, addition, multiplication and exponentiation can be defined through primitive recursion:

- Constant K : K ( x ) = S ... S (0 1 ( x ) ... ) ( K times)

- Addition: +( x, 0) = x ; +( x, y +1) = S (+( x, y ))

- Multiplication: ∗ ( x, 0) = x ; ∗ ( x, y +1) = +( x, ∗ ( x, y ))

- Power: x 0 = Pow ( x, 0) = 1 ; x y +1 = Pow ( x, y +1) = ∗ ( x, P ow ( x, y ))

However, this does not exhaust all computable functions. We need one additional operation, that of minimization. Consider a computable function g ( x, y ) such that for every x there is some y such that g ( x, y ) = 0 . Then, we can find the minimum such y by computing g ( x, y ) for all values y = 0 , 1 , 2 , ... until we hit g ( x, y ) = 0 . Therefore we want to say that minimizing for y this way is also computable:

- Minimization: f ( x ) = µ y g ( x, y ) := min y s.t. g ( x, y ) = 0

$$\text {where } g \colon \mathbb { N } ^ { 2 } \to \mathbb { N } \, i s \, r e c u r s i v e \ a n d \ \forall x \, \exists y \, g ( x , y ) = 0$$

A function f is recursive if it is obtained from the primitive functions through finite applications of composition, primitive recursion and minimization. If f is defined on all inputs (guaranteed if the minimization applications are defined on all inputs) then it is total recursive .

The recursive functions are precisely the functions computable by a Turing machine. The total recursive functions are precisely the functions computable by a Turing machine that halts on all inputs. There exist total recursive functions that are not primitive recursive such as the Ackermann function, but they are hard to come up with.

## 2.2.3 Representing recursive functions in PA

Peano Arithmetic is rich enough to represent recursive functions. Recall that a function f ( x ) is represented by φ ( x, y ) if for all x , ∀ y φ ( x, y ) ↔ y = f ( x ) . The notion readily generalizes to functions of more than one argument. To show that PA can represent recursive functions, we can go case-by-case in the definition above. Most cases are easy to show, and formal proofs will be omitted here:

- Zero: φ 0 ( x 1 , ..., x k , y ) := y = 0 .

- Successor: φ S ( x, y ) := y = S ( x ) .

- Projection: φ π i ( x 1 , ..., x k , y ) := y = x i .

- Composition: assume ψ i ( x 1 , ..., x k , y ) represents h i for 1 ≤ i ≤ l and χ ( x 1 , ..., x k , y ) represents g , then

$$\phi _ { g \circ [ h _ { 1 } , \dots , h _ { i } ] } ( x _ { 1 } , \dots , x _ { k } , y ) \colon = \exists y _ { 1 } \dots \exists y _ { k } ( \psi _ { 1 } ( x _ { 1 } , \dots , x _ { k } , y _ { 1 } ) \wedge \dots \wedge \psi _ { k } ( x _ { 1 } , \dots , x _ { k } , y _ { k } ) \wedge \chi ( y _ { 1 } , \dots , y _ { k } , y ) )$$

represents f .

- Minimization: assume ψ ( x, y, z ) represents g , then φ µ ( x, y ) := ψ ( x, y, 0) ∧ ∀ w ( w < y → ¬ ψ ( x, w, 0)) represents f ( x ) = µ y g ( x, y ) .

The difficult case is primitive recursion. Consider a function f defined in terms of recursive functions g, h by f ( x, 0) = h ( x ) and f ( x, n +1) = g ( x, n, f ( x, n )) . 12 How do we represent it with a formula?

What we would want is: y = f ( x, n ) ↔ ∃ y 0 ... ∃ y n y 0 = h ( x ) ∧ y 1 = g ( x, 0 , y 0 ) ∧ ... ∧ y n = g ( x, n -1 , y n -1 ) ∧ y = y n . But this is not a valid formula of PA.

So, what we really want is to be able to refer within a formula to an entire sequence of values, y 0 , ..., y n in a way that relates them to one another. We have already encountered this situation in defining φ POW , which is representing the recursive function x y = x ∗ x y -1 , whereby we needed to represent 1 , x, x 2 , ..., x y within a single number. The same trick works in the general case of primitive recursion.

We first define a function β ( N,i ) representable in PA, which encodes arbitrary sequences of numbers n 1 , ..., n k into a single number N , whereby β ( N,i ) = n i for 1 ≤ i ≤ k .

The β -function lemma. There is a function β : N 2 → N representable in PA s.t. for any N,i , β ( N,i ) < N , and for any sequence n 1 , ..., n k ∈ N there exists N ∈ N such that β ( N,i ) = n i for all 1 ≤ i ≤ k .

This function appears in the original proof by Gödel. It is implemented through the Chinese Remainder Theorem similarly to φ POW above; I am pushing details to a footnote. 13

All the above functions can be represented in PA using the previously defined recursion formulas (zero, successor, projection, composition and minimization). The details are omitted (see for instance lecture notes by B. Kim).

- Primitive Recursion: let φ g , φ h represent g and h , and φ β ( N,i, y ) represent β ( N,i ) ; then f ( x, n ) is represented with φ REC ( x, n, y ) :

$$\begin{array} { r l } & { \phi _ { R E C - N } ( N , x , n , y ) \colon = ( \exists y _ { 0 } \, \phi _ { \beta } ( N , 0 , y _ { 0 } ) \wedge \phi _ { h } ( x , y _ { 0 } ) ) \wedge \forall i \, [ i < n \to } \\ & { \exists y _ { i } \, \exists y _ { i + 1 } \, \phi _ { \beta } ( N , i , y _ { i } ) \wedge \phi _ { \beta } ( N , S i , y _ { i + 1 } ) \wedge \phi _ { g } ( x , i , y _ { i } ) \wedge \phi _ { g } ( x , S i , y _ { i + 1 } ) ] \wedge \phi _ { \beta } ( N , n , y ) } \\ & { \phi _ { R E C } ( x , n , y ) \colon = \exists N \, \phi _ { R E C - N } ( N , x , n , y ) \wedge \forall M \, ( \phi _ { R E C - N } ( M , x , n , y ) \to N \leq M ) } \end{array}$$

Notice the "subroutine" φ REC -N within φ REC so as to ensure that N is minimal - this condition is actually not needed for the definition to work, but it's cleaner this way. Once the above formulas are laid out, it is tedious to demonstrate that in each case it can be derived within PA that y = f ( ... ) iff φ ( ..., y ) , i.e., to prove PA ∀ y ( φ ( ..., y ) ↔ y = f ( ... )) . This ability of PA is at the heart of the incompleteness theorem. This makes PA as expressive as recursive functions or general Turing machines. Then, the incompleteness theorem follows just as surely as the undecidability of the halting problem does for Turing machines. It is instructive to ask what is a ( the ) key property of PA that makes it so. In fact, it is the combination of addition and multiplication. Presburger arithmetic is essentially PA without multiplication, and Skolem arithmetic is PA without addition; both are complete and decidable. 14 One

12 This easily generalizes to multiple variables; see also:

http://www.michaelbeeson.com/teaching/StanfordLogic/Lecture11Slides.pdf.

13 The β function is defined so as to ensure that for any n 1 , ..., n k there is an N such that β ( N,i ) = n i for 1 ≤ i ≤ k . Without loss of generality let n 0 = k be the length of the list.

Let c = max { n 0 , ..., n k } . let b = c ! and observe that b +1 , ..., ( k +1) b +1 are relatively prime. By the Chinese Remainder Theorem, there is a unique n < Π k +1 i =1 ( ib +1) such that n ≡ n i mod ( ib +1) for all 1 ≤ i ≤ k +1 .

Now from n, b we can retrieve k = n mod ( b +1) and then retrieve all remaining n 1 , ..., n k . To further compress to a function β of two arguments as specified by the lemma, define a pairing function π : N 2 → N and two projection functions π L , π R : N → N such that for any i, j ∈ N we have π L ( π ( i, j )) = i and π R ( π ( i, j )) = j , with π, π L , π R primitive recursive. The specific implementation is not important, but π ( i, j ) = ( i + j ) 2 + i +1 works because it is injective and easy to represent in PA.

Define N := π ( n, b ) and β ( N,i ) := π L ( N ) mod (( i +1) π R ( N ) + 1) = n i for 0 ≤ i ≤ k . To define primitive recursion, the assertion will be that ∃ N such that β ( N,i ) satisfy the recursive definition for k, n 1 , ..., n k , and moreover that such N is the minimum satisfying the definition.

14 There is a doubly exponential procedure for Presburger arithmetic and a triply exponential procedure for Skolem arithmetic, which determine whether a formula is a theorem. It is notable that even slightly more power turns the theories undecidable. For example, extending Skolem arithmetic with the successor operator S enables implementing addition; extending with the < predicate allows implementing the successor operator, S ( x ) := y > x ∧ ∀ z > x ( z = y ∨ z > y ) and therefore also renders the resulting theory undecidable.

can see why: key to the ability to pack an arbitrary list of numbers [ n 1 , ..., n k ] into a single number is the combination of addition and multiplication. In the particular implementation of the β function as described here, the Chinese Remainder Theorem is used. However, this is just an implementation choice: other choices are possible, such as picking the largest prime number p > max i { n 1 , ..., n k } and packing all numbers into the pair ( n, p ) where n = Σ k i =1 n i p i -1 . Regardless, without both addition and multiplication, this can't take place. 15

## 3 Theorem Statement

The technical statement of the theorem and its slight variants can be a bit cumbersome. Informally, the theorem states:

Any reasonable extension L of PA is either inconsistent or incomplete. Specifically, there exist a statement σ such that either σ is true and L σ , or L ⊥ .

The above statement is blatantly informal. First, what is a "reasonable" extension of PA? That would be a language L that includes all of PA, plus at most countably many additional symbols, and a recursive set of additional axioms or axiom templates, so that checking the validity of a proof in L is a primitive recursive task. Any theorem of PA would be a theorem of L .

A few remarks:

- In the original proof, an additional requirement was that the axiomatic system is ω -consistent : that there is no formula φ ( n ) such that for any n , L φ ( n ) , while simultaneously L ∃ n ¬ φ ( n ) . However, ω -consistency is too strong an assumption. Soon after Gödel's original proof, Rosser strengthened it by introducing a trick explained below that removes this assumption. This newer version is what is also known as the Gödel-Rosser theorem.

- This is the first of two famous incompleteness theorems. The Second Incompleteness theorem informally states that L cannot prove its own consistency.

- Technically different versions of the theorem can be proven through computability theory, relating to the Halting problem, or through Kolmogorov complexity, relating to the smallest possible program that outputs a given string. I will discuss these angles briefly below.

## 4 Proof Outline

## 4.1 Main idea - arithmetization and diagonalization

The main idea of the proof is based on the liar's paradox "this sentence is false", modified within PA to say "this statement is not provable". Self-referential statements are well known troublemakers in foundational mathematics, logic and philosophy. The most famous example is perhaps Russel's paradox in naïve set theory of the set x of all sets that are not members of themselves. Is x a member of itself? It can neither be, nor not be. Or Russel's barber " who shaves all those, and those only, who do not shave themselves ". Does the barber shave himself?

Similarly here, the statement σ := "this statement is not provable" is either true and not provable, or provable and false. That implies that PA is either incomplete or inconsistent. The proof is an algorithm: starting from the symbols and axioms of PA, it generates a statement σ such that " σ is true if and only if σ is not provable" is a theorem of PA. The algorithm is general enough to be applicable to any reasonable extension of PA. How can such a σ be constructed? Through two key steps in the proof: arithmetization and diagonalization .

15 Weaker systems than PA that exhibit incompleteness are possible. Primitive Recursive Arithmetic (PRA) is a well-known weaker system that differs from PA in the following ways: (1) there are no quantifiers; (2) instead, there is a separate symbol for each primitive recursive function, which is now needed because of lack of quantifiers; (3) there are only two inference rules, modus ponens and variable substitution - the quantifier rule is dropped. Successor, addition and multiplication are preserved. PRA, and even slightly weaker subsystems allow the incompleteness proof to go through. Another example is the theory of hereditarily finite sets (Swierkowsky 2003).

Arithmetization. If we could devise a PA formula φ PROVABLE that applies to other formulas, and a statement σ such that σ ↔¬ φ PROVABLE ( σ ) is a theorem of PA, we would be done. Unfortunately, PA's domain is numbers and not formulas. And here comes the proof's first major ingenuity: define a function that arithmetizes formulas and proofs of formulas. Seen as strings of letters, formulas and proofs can be encoded into numbers in a variety of ways such as ASCII. Today this may seem obvious; in 1931 when the theorem was proven, it was brilliant.

Having encoded formulas into numbers, σ is mapped to a number, call it σ ∈ N . Lists of formulas are also mapped to numbers, [ φ 1 , ..., φ k ] ∈ N . A proof of σ in PA is simply a list [ φ 1 , ..., φ k = σ ] such that each φ i is either an axiom or a consequence of preceding formulas under an inference rule. The latter is a syntactic property: it can be performed on the proof text by a well defined procedure in finite steps. The same procedure translates to arithmetic operations on a number m representing the proof [ φ 1 , ..., φ k = σ ] . This can represented in PA, as we will see below, with φ PROOF -OF ( m, σ ) . 16 Then, the formula φ PROVABLE ( σ ) is expressed as ∃ mφ PROOF -OF ( m, σ ) . 17 If we can now devise a σ such that σ ↔¬ φ PROVABLE ( σ ) , we are done. This is accomplished through diagonalization .

Diagonalization. Gödel described two different diagonalization methods in his original paper. An intuitive, informal one, and a rigorous one in full formal detail. The intuitive method goes as follows. Consider all formulas of PA with a single free variable (plus optionally other bound variables), and moreover require the free variable to be specifically x 0 . These formulas can be ordered lexicographically φ 1 ( x 0 ) , φ 2 ( x 0 ) , φ 3 ( x 0 ) ,... in a manner that is computable thus representable in PA. Therefore we can talk in PA about the n th such formula, φ n ( x 0 ) , as long as n itself is definable. For any definable n, m ∈ N , the statement φ n ( m ) is definable in PA, contains no free variables and is either true or false. Consider now the formula φ NOT -PROVABLE -SELF ( x 0 ) := "the statement φ x 0 ( x 0 ) is not provable". 18 This is definable in PA and states that the x th 0 formula in the list, with number x 0 as argument is not provable. The formula φ NOT -PROVABLE -SELF ( x 0 ) itself has the single free variable x 0 , and therefore is on the list say at position M . 19 Now consider what is the value of φ NOT -PROVABLE -SELF ( M ) . This states "the statement φ NOT -PROVABLE -SELF ( M ) is not provable". If it is true, then the statement is not provable, therefore PA is incomplete. If it is false, then the statement is provable and false, so PA is inconsistent. The argument is called "diagonalization" because we apply the M th formula to the argument M . In the proof that follows, a similar argument is used except with a different arithmetization, similar to Gödel's rigorous arithmetization.

## 4.2 Outline of main steps

The proof takes the following main steps:

1. Encode formulas into numbers. First, a function G is defined that maps strings into numbers in N . Any formula φ maps to G ( φ ) , denoted as φ ∈ N , from which φ can be retrieved. This way, formulas can "talk" about other formulas. For example, we can write a formula φ FORMULA ( n ) = " n is the encoding of a syntactically valid formula".

2. Extend the map to lists of formulas. The mapping G extends to ordered lists of formulas, G ([ φ 1 , ..., φ k ]) = [ φ 1 , ..., φ k ] ∈ N . Given a number n = [ φ 1 , ..., φ k ] , the list of formulas can be retrieved.

3. Express the notion of a provable formula. A proof is just an ordered list of formulas with a special property: every formula in the list is either an axiom or a consequence of previous formulas through one of the three derivation rules. This is a syntactic property, which is easily seen to be computable and is now captured in a formula φ PROOF -OF ( m,n ) = " m encodes a proof of the formula encoded by n ", which is true and derivable in PA iff m = [ φ 1 , ..., φ k ] , n = φ k , and [ φ 1 , ..., φ k ] is a proof of φ k . Then the notion of a provable formula can be captured in a formula: φ PROVABLE ( n ) = " n encodes a provable formula" = ∃ m φ PROOF -OF ( m,n ) .

16 Note that the arguments of all these formulas are numbers and are actually represented in unary S...S 0 within PA.

17 This latter property is not decidable; it is the only non-decidable property defined in PA in the incompleteness proof.

18 To construct this step-wise, first define φ NOT -PROVABLE ( x 0 , x 1 ) := "the statement φ x 1 ( x 0 ) is not provable". This is a formula of two variables. Letting x 0 = m,x 1 = n , it says that the n th formula in the lexicographic list of single-variable formulas, when given input m becomes a statement that is not provable. Then define φ NOT -PROVABLE -SELF ( x 0 ) :=

φ NOT -PROVABLE ( x 0 , x 0 ) .

19 M is definable in PA as it can be calculated from the syntax of φ NOT -PROVABLE -SELF .

4. Devise a self-referential formula by a variable-substitution trick. Then, a rather complex formula σ is expressed, and it is shown within PA that σ ↔¬ φ PROVABLE ( σ ) . This is known as the "fixed point lemma" and is a tricky and fun part of the proof. First, the "diagnonalization" D of a formula is defined as a procedure that takes a number m , and if m = φ , substitutes the zero or more occurrences of the specific variable x 0 within φ with the number m (expressed S ( m ) 0 ): φ ( x 0 , y, z, ... ) turns into φ ( m,y,z,... ) . Then, D returns φ ( m,y,z,... ) . This recursive function is represented in PA with a formula ψ DIAG ( x, y ) , and using this formula, statement σ is constructed (the punchline will be reserved for the respective section below).

5. Conclude that the resulting formula is either true and not provable, or false and provable. σ is a numerical statement of no free variables, and is either true or false in N . If σ is provable in PA, then PA ¬ φ PROVABLE ( σ ) , a contradiction. 20 If on the other hand ¬ σ is provable, then PA φ PROVABLE ( σ ) . Then, assuming that PA is ω -consistent (i.e., it cannot derive ψ ( n ) for every n ∈ N and simultaneously derive ∃ n ¬ ψ ( n ) ), this is a contradiction. This latter assumption of ω -consistency is a subtle point: it can be removed by slightly modifying the above proof as will be discussed below, with a simple trick devised by J. Barkley Rosser after the original proof appeared. The conclusion is that σ is true and not provable in PA, therefore PA is incomplete.

What is needed for the proof to go through. Let us list a few key elements needed for the above proof to go through for an axiomatic system L , which in our case is PA:

1. L 's domain has to be rich enough for L 's formulas to be encoded unambiguously to the domain, so that L can "talk" about its own formulas.

2. L has to be expressive enough to express the notion of "provable formula" with a formula, φ PROVABLE and also the diagonalization procedure that involves variable substitution. Generally, what is needed is a system that can represent all computable functions.

3. L 's axioms have to be powerful enough for steps 4 and 5 to go through. A proof of σ in L , call it [ φ 1 , ..., φ k = σ ] will only lead to a contradiction with the assertion ¬ φ PROVABLE ( σ ) if φ PROOF -OF ( [ φ 1 , ..., φ k ] , φ k ) is guaranteed to be provable in L whenever [ φ 1 , ..., φ k ] is a valid proof. That is to say, L 's axioms should be powerful enough to check the validity of a fully spelled-out proof. Again, what we need here is the power to represent computable functions.

Note that the notion "provable" is not decidable. One cannot construct a program that takes a formula as input, churns away, and always returns a correct "yay" or "nay" as a result. Such a program would solve the halting problem. However, given a proof of a formula in sufficient detail, it is computable to check that the proof is correct. Therefore, a proof can be encoded by G into a number m and utilized to derive φ PROOF -OF ( m, σ ) , which immediately implies φ PROVABLE ( σ ) .

These key elements can be satisfied in multiple ways. The language does not have to be PA. There is great freedom in designing the arithmetization. The self-referential formula can be changed substantially. The proof can be thought of as a pseudocode implementation of a more general idea.

## 4.3 More details on main steps

## 4.3.1 Step 1: Arithmetize formulas

The formulas of PA are a countable, primitive recursive collection of strings of letters that can be mapped 1-1 to N . There are many possible mappings, such as for example ASCII.

Gödel provided a clever mapping before the time of ASCII, based on exponentiating prime numbers to powers that codified letters in the alphabet of PA. First, codify each symbol of the alphabet: { 0 : 1 , S : 2 , + : 3 , ∗ : 4 , =: 5 , ¬ : 6 , ∧ : 7 , ∨ : 8 , → : 9 , ∀ : 10 , (: 11 , ) : 12 , x 0 : 13 , x 1 : 17 , x 2 : 19 , ... } . Then, convert a string s = s 1 s 2 ...s k of length k to a list of numbers [ n 1 , ..., n k ] using the above code. Then pick the first k prime numbers in N , 2 , 3 , 5 , ..., p k , and calculate the number 2 n 1 3 n 2 . . . p n k k that uniquely represents s , from which s can be retrieved using prime factorization. For example, S 0 + S 0 = SS 0 is converted to

20 A proof of σ in PA can be encoded with into a number m ∗ to derive φ PROOF -OF ( m ∗ , σ ) in PA, which implies φ PROVABLE ( σ ) .

[3 , 1 , 5 , 3 , 1 , 9 , 3 , 3 , 1] and then to 2 3 3 1 5 5 7 3 11 1 13 9 17 3 19 3 23 1 . It is a big number, but since nobody will actually ever implement this algorithm, big numbers are cheap.

Given any formula φ , we can thus compute a unique φ ∈ N . Conversely, given any number n , we can compute whether n is the Gödel number φ of a syntactically valid formula φ .

## 4.3.2 Step 2: Arithmetize lists of formulas

Sequences of formulas can be encoded by using the β function: [ φ 1 , ..., φ k ] is defined for any list of numbers to be the minimum N such that β ( N, 0) = k and ∀ 1 ≤ i ≤ k β ( N,i ) = φ i . 21

A proof of a formula φ is a finite list of formulas, [ φ 1 , . . . , φ k = φ ] , such that every φ i for 1 ≤ i ≤ k is either an axiom or is derived from φ 1 , . . . , φ i -1 using PA's rules of inference. Given n ∈ N , we can compute whether n encodes a syntactically valid sequence of formulas, and whether the sequence constitutes a valid proof in PA: we just need to check that every φ i is either an axiom 22 or a consequence of some preceding φ j , φ l , j, l < i in the sequence through one of the three rules of inference.

## 4.3.3 Step 3: Express the notion of a provable formula

Now that formulas, sequences of formulas and proofs are mapped into numbers, PA can deal with them. Every recursive function is representable in PA, therefore any computable property P ( x ) where x is a formula or a proof can be first mapped to the indicator function f P ( x ) and then represented in PA with a formula φ f ( x , y ) such that the statement y = f P ( x ) ↔ φ f ( x , y ) is a theorem of PA. Since y takes values 0 or 1 in this case, we might as well let φ ′ f ( x ) := φ f ( x , S 0) , which is true iff P ( x ) .

Let's see some examples:

The property P ( x ) could be " x is a syntactically valid formula in PA". This is certainly a computable property. We can turn this into a PA formula that checks syntactic validity of encoded formulas:

φ FORMULA ( n ) := " n = ψ for some syntactically valid formula ψ of PA"

Also, checking whether a formula has a single, specific free variable x is computable:

φ SV F -x ( n ) := " φ FORMULA ( n ) , n = ψ , and ψ = ψ ( x ) has the single free variable x " 23

Checking whether a sequence of formulas is a valid, complete proof within PA of the last formula of the sequence is computable:

$$\phi _ { P R O O F - O F } ( m , n ) \colon = " m = [ [ \psi _ { 1 } , \dots , \psi _ { k } ] ] \text { for a valid proof of } \psi _ { k } \text { in PA, and } n = [ \psi _ { k } ] "$$

Finally, we are ready to define within PA the formula

φ PROVABLE ( n ) := " ∃ mφ PROOF -OF ( m,n ) "

Importantly, φ PROVABLE does not represent a decidable function. However, whenever a proof of a formula ψ is provided, the proof can readily be encoded in some m , and then φ PROOF -OF ( m, ψ ) is shown in PA, from which φ PROVABLE ( ψ ) follows. This property will suffice to prove the theorem.

## 4.3.4 Step 4. Devise a self-referential formula through a variable substitution trick

The following variable substitution procedure, defined as a function D : N → N , is a key device in the proof. D takes as input φ , and returns φ D where φ D := φ [ x 0 : φ ] . The variable x 0 is the lexicographically first variable in the alphabet Σ , and φ may contain zero or more occurrences of x 0 . All these occurrences are replaced by S ( φ ) 0 , and the resulting formula is encoded by . 24

21 Terms and formulas can also be arithmetized thus according to their structure. For example, s = t becomes [ = , s , t ] , ∀ xφ becomes [ ∀ , x , φ ] and so on. This encoding makes it much easier to write "code" in this language. See lecture notes by B. Kim.

22 This can be checked syntactically through a finite number of substitutions of variables within the axioms with terms appearing in φ i .

23 We can define such a formula for any specific x , or a formula checking for any single variable, or for two variables, etc. All these syntactic properties are easily shown to be computable and therefore representable in PA.

24 As a reminder, we are abusing notation when we say φ [ x 0 : φ ] : x 0 is not replaced by the number φ but instead, by S...S 0 ( φ many S 's).

Diagonalization: D : N → N : Given n ∈ N ,

- If ¬ φ FORMULA ( n ) , return 0 .

- Else, let n = φ .

- Construct the string s = S ( n ) 0 .

- Substitute all occurrences of x 0 in φ with s , to obtain φ D := φ [ x 0 : s ] .

$$B y \ a b u s e \ o f n o t a t i o n , \, \phi ^ { \mathcal { D } } = \phi [ x _ { 0 } \colon \lceil \phi \rceil ] .$$

- Return φ D .

The procedure above is recursive, and therefore it is representable in a formula of PA, let's call it φ DIAG , where

$$\vdash \forall y \left ( \phi _ { D I A G } ( n , y ) \leftrightarrow y = \mathcal { D } ( n ) \right )$$

Now comes a tricky lemma at the heart of the proof.

Lemma (Gödel's fixed-point lemma or diagonalization lemma): given a formula φ ( x ) in PA, of a single free variable x , a sentence σ can be constructed such that PA σ ↔ φ ( σ ) .

Proof: Consider the formula φ ∗ ( x 0 ) = ∃ y ( φ DIAG ( x 0 , y ) ∧ φ ( y )) . This formula is true whenever x 0 = ψ , for some formula ψ that may have 0 or more occurrences of variable x 0 (those occurrences inside ψ are not to be confused by the outer x 0 = ψ ), y is equal to D ( x 0 ) = D ( ψ ) = ψ [ x 0 : ψ ] , and moreover φ ( y ) holds where φ is the starting formula in the Lemma we are seeking to prove. Therefore, φ ∗ ( x 0 ) is equivalent to φ ( ψ [ x 0 : ψ ] ) .

Now let n = φ ∗ ( x 0 ) . Define σ , a sentence of no free variables:

$$\sigma \, \colon = \, \exists y \left ( \phi _ { D I A G } ( n , y ) \wedge \phi ( y ) \right )$$

Then, by the definition of φ DIAG , we get σ ↔∃ y ( y = D ( n ) ∧ φ ( y )) .

What is D ( n ) in this latter expression? it is D ( φ ∗ ( x 0 ) ) = φ ∗ [ x 0 : n ] . Let's write this down explicitly:

$$\phi _ { * } [ x _ { 0 } \colon n ] = \exists y \left ( \phi _ { D I A G } ( n , y ) \wedge \phi ( y ) \right ) = \sigma$$

Therefore, we have get

$$\vdash \sigma \, \leftrightarrow \, \exists y \left ( y = { \mathcal { D } } ( n ) \land \phi ( y ) \right ) \, \leftrightarrow \, \exists y \left ( y = \lceil \sigma \rceil \land \phi ( y ) \right ) \, \leftrightarrow \, \phi ( \lceil \sigma \rceil )$$

which is what we wanted to prove.

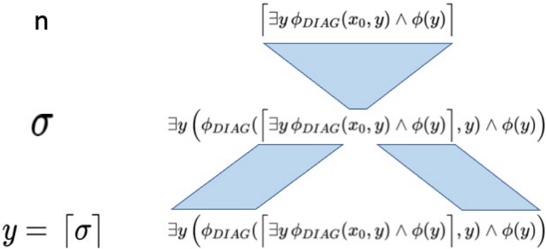

In the above proof, it is perhaps tricky at first to see that φ DIAG ( ∃ y ( φ DIAG ( x 0 , y ) ∧ φ ( y )) , y ) assigns to y precisely σ . Figure 1 makes this clear.

## 4.3.5 Step 5: conclude the proof

Therefore, given any formula φ of a single free variable, we can construct a sentence σ of no free variables, such that PA σ ↔ φ ( σ ) . This is a powerful lemma! The rest of the proof is easy.

First, an important result follows immediately: Tarski's inexistence of a truth definition.

Tarski's inexistence of Truth definition. Imagine that we had a table, or a computable function Truth : N → N , such that given any sentence τ as input, Truth ( τ ) returns the truth value of τ .

Use the above lemma with φ ( x ) = ¬ Truth ( x ) to construct σ such that σ ↔ φ ( σ ) ↔ ¬ Truth ( σ ) .

Is σ true? Well, if it is, then ¬ Truth ( σ ) is true, in which case Truth ( σ ) is false, therefore σ is false. And vice versa. We conclude that Truth ( x ) cannot be a computable function.

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Diagram: Logical Flow

### Overview

The image presents a diagram illustrating a logical flow or derivation process. It uses symbols and mathematical notation to represent steps or transformations. The diagram consists of three levels, each associated with a symbol (n, σ, y=[σ]) and connected by geometric shapes containing logical expressions.

### Components/Axes

* **Left Column:** Contains the symbols 'n', 'σ', and 'y = [σ]' arranged vertically.

* **Right Side:** Contains logical expressions enclosed within geometric shapes.

* **Geometric Shapes:** A triangle at the top, and two parallelograms at the bottom. These shapes contain logical expressions.

### Detailed Analysis or ### Content Details

* **Top Level:**

* Symbol: 'n'

* Shape: An inverted triangle.

* Expression inside the triangle: "∃y φ<sub>DIAG</sub>(x<sub>0</sub>, y) ∧ φ(y)"

* **Middle Level:**

* Symbol: 'σ'

* Shape: The triangle from the top level splits into two parallelograms.

* Expression inside the parallelograms: "∃y (φ<sub>DIAG</sub>([∃y φ<sub>DIAG</sub>(x<sub>0</sub>, y) ∧ φ(y)], y) ∧ φ(y))"

* **Bottom Level:**

* Symbol: 'y = [σ]'

* Shape: The two parallelograms from the middle level continue.

* Expression inside the parallelograms: "∃y (φ<sub>DIAG</sub>([∃y φ<sub>DIAG</sub>(x<sub>0</sub>, y) ∧ φ(y)], y) ∧ φ(y))"

### Key Observations

* The diagram appears to represent a derivation or transformation process, where 'n' is transformed into 'σ', and then 'σ' is transformed into 'y = [σ]'.

* The logical expressions become more complex as the process progresses from top to bottom.

* The triangle splits into two parallelograms, suggesting a branching or parallel processing of the logical expression.

* The expressions in the two parallelograms at the bottom level are identical.

### Interpretation

The diagram likely illustrates a logical derivation or transformation process. The initial state 'n' is transformed into 'σ' through a logical operation represented by the expression in the triangle. This operation then branches into two parallel paths, both applying the same logical operation to arrive at the final state 'y = [σ]'. The use of existential quantifiers (∃y) and the function φ<sub>DIAG</sub> suggests that the process involves finding a 'y' that satisfies certain conditions related to 'x<sub>0</sub>' and the function φ. The branching could represent different possible derivations or scenarios leading to the same final state. The diagram is abstract and requires further context to fully understand the specific meaning of the symbols and functions involved.

</details>

y=[a]

Figure 1: Construction of sentence σ in the diagonalization lemma . The formula φ DIAG implements diagonalization: given n and y , φ DIAG asserts that y is the encoding of a formula obtained as follows. First, assert that n = φ . Next, find all occurrences of x 0 within φ and replace them with S ( n ) . Finally, encode the result in an integer and assert y is that integer. Now, if we construct σ as depicted, φ DIAG will replace x 0 with n and the result will be - voila! -σ again so y becomes σ .

Now let's turn to the incompleteness theorem. Assume PA is complete so that given any true sentence τ , there is a PA proof of τ . We use the lemma above with φ = ¬ φ PROVABLE ( x ) . Let σ be such that σ ↔¬ φ PROVABLE ( σ ) . Either σ or ¬ σ is true and therefore provable in PA.

Case1. σ is provable. Let [ ψ 1 , ..., ψ k = σ ] be a proof, and let m ∗ = [ ψ 1 , ..., ψ k ] . By σ ↔ ¬ φ PROVABLE ( σ ) we get ¬∃ mφ PROOF -OF ( m, σ ) and simultaneously we have φ PROOF -OF ( m ∗ , σ ) , therefore PA is inconsistent.

Case 2. ¬ σ is provable. Then φ PROVABLE ( σ ) = ∃ mφ PROOF -OF ( m, σ ) follows. From the proof of ¬ σ , it follows that for any given m ∈ N , ¬ φ PROOF -OF ( m, σ ) otherwise PA would derive σ , a contradiction. Here, in his original proof Gödel assumed ω -consistency of arithmetic:

ω -consistency: there is no formula ψ ( x ) such that for every m , ¬ ψ ( m ) and also ∃ mψ ( m ) .

We now have PA ¬ σ therefore for every m , ¬ φ PROOF -OF ( m, σ ) , and also ∃ mφ PROOF -OF ( m, σ ) , contradicting ω -consistency and concluding the proof.

However, ω -consistency is a strong assumption. For example, we could augment PA with the axiom ¬ σ . The resulting theory would be ω -inconsistent but still not inconsistent in the sense of proving a contradiction.

## 4.3.6 Rosser's trick and the Gödel-Rosser Incompleteness Theorem

Fortunately, we can fix the above proof with what is known as Rosser's trick, a simple modification to the formula φ PROVABLE that makes the theorem a lot more powerful.

We alter the definition of φ PROOF -OF ( m,n ) . First, let φ NOT ( m,n ) := ∃ ψ m = ψ ∧ n = ¬ ψ .

## Rosser's trick:

$$\phi _ { P R O O F - O F } ^ { R } \left ( m , n \right ) \colon = \, \phi _ { P R O O F - O F } ( m , n ) \wedge \neg \left ( \exists k \leq m \left ( \exists l \left ( \phi _ { N O T } ( n , l ) \wedge \phi _ { P R O O F - O F } ( k , l ) \right ) \right ) \right )$$

Basically, φ R PROOF -OF ( m,n ) says that m is the encoding of a proof of a formula encoded by n , and there is no k ≤ m that is the encoding of a proof of the negation of the formula encoded by n . Intuitively,

φ R PROOF -OF ( m,n ) := " m encodes a proof of the formula encoded by n , and there is no shorter proof of this formula's negation"

Now we can proceed as before and define φ R PROVABLE ( n ) = ∃ mφ R PROOF -OF ( m,n ) , and use the lemma with φ R = ¬ φ R PROVABLE ( x ) . Let σ R be such that σ R ↔ ¬ φ R PROVABLE ( σ R ) .

Let's go back to the problematic case where ¬ σ was provable, which required ω -consistency to get a contradiction. Let ¬ σ R be provable and let [ φ 1 , ..., φ k = ¬ σ R ] be a proof. If PA is consistent, this implies there is no shorter proof of σ R , something that can be checked in a primitive recursive manner, therefore φ R PROVABLE ( ¬ σ R ) . By diagonalization we also have φ R PROVABLE ( σ R ) , which asserts that there is no shorter proof of ¬ σ R . Both statements cannot be true simultaneously: φ R PROVABLE ( σ R ) can be checked to be false by searching for any proof of σ R that is shorter than the proof of ¬ σ R .

## 4.3.7 A two-sentence liar's paradox

The incompleteness theorem is a version of the liar's paradox "this sentence is not true", modified to "this statement is not provable". Other versions of the liar's paradox exist, such as the two-sentence version:

A: The following statement is false.

- B: The preceding statement is true.

Can we devise an incompleteness proof based on this version? The following lemma constructs two sentences that "talk" about each other. 25

Lemma: Two-Sentence Liar's Paradox. Given a formula φ ( x ) in PA, of a single free variable x , sentences σ and τ can be constructed such that PA σ ↔ φ ( τ ) and PA τ ↔¬ φ ( σ ) .

Proof: First, we define a function R : N → N that will play a role analogous to D of the diagonalization lemma.

Reversal: R : N → N : Given n ∈ N ,

1. If ¬ φ FORMULA ( n ) , return 0 ; else, let n = φ .

2. If φ = ∃ y ψ ( x 0 , y ) ∧ χ ( y ) , where ψ has at most x 0 , y free, x 0 is the specific first variable in Σ , and χ has at most y free, return 0 .

3. If χ = ¬ χ ′ , then let χ R = χ ′ else let χ R = ¬ χ .

4. Let n ′ = ∃ y ψ ( x 0 , y ) ∧ χ R .

5. Construct the string s = S ( n ′ ) 0 .

6. Substitute all occurrences of x 0 in ψ with s , to obtain ψ R := ψ [ x 0 : s ] .

7. Construct the formula φ R = ∃ y ψ R ( y ) ∧ χ R ( y )

8. Return φ R .

The procedure above is (primitive) recursive, and therefore is representable with a formula in PA, let's call it φ REV , where

$$\vdash \forall y \left ( \phi _ { R E V } ( n , y ) \leftrightarrow y = \mathcal { R } ( n ) \right )$$

Starting with φ in the lemma statement, let y be a variable of Σ not appearing within φ or φ REV . Let n = ∃ y φ REV ( x 0 , y ) ∧ φ ( y ) and let m = ∃ y φ REV ( x 0 , y ) ∧ ¬ φ ( y ) , unless φ = ¬ φ ′ in which case we let m = ∃ y φ REV ( x 0 , y ) ∧ φ ′ ( y ) . Let σ = ∃ y φ REV ( n, y ) ∧ φ ( y ) and τ = ∃ y φ REV ( m,y ) ∧ ¬ φ ( y ) , unless φ = ¬ φ ′ , in which case we let τ = ∃ y φ REV ( m,y ) ∧ φ ′ ( y ) .

Calculating σ , we get:

$$\sigma = \exists y \, \phi _ { R E V } ( \lceil \exists y \, \phi _ { R E V } ( x _ { 0 } , y ) \wedge \phi ( y ) \rceil , y ) \wedge \phi ( y ) \leftrightarrow$$

(the outer φ REV ( n, y ) will set n ′ = ∃ yφ REV ( x 0 , y ) ∧¬ φ ( y ) in step 5, and set y to φ R = ∃ yφ REV ( n ′ , y ) ∧ ¬ φ ( y ) ).

$$\exists y \left ( y = \lceil \exists y \, \phi _ { R E V } ( \lceil \exists y \, \phi _ { R E V } ( x _ { 0 } , y ) \wedge \neg \phi ( y ) \rceil , y ) \wedge \neg \phi ( y ) \rceil \wedge \phi ( y ) \leftrightarrow$$

25 When I wrote this section and the next, I thought the results were new and was not aware of previous generalizations of diagonalization (see Buldt 2014). Still, my constructions are clean ways to generalize diagonalization so I keep them here.

$$\phi ( \lceil \exists y \, \phi _ { R E V } ( \lceil \exists y \, \phi _ { R E V } ( x _ { 0 } , y ) \wedge \neg \phi ( y ) \rceil ) , y ) \wedge \neg \phi ( y ) \rceil ) \leftrightarrow \phi ( \lceil \tau \rceil )$$

Then, calculating τ we get:

$$\tau = \exists y & \, \phi _ { R E V } ( m , y ) \wedge \neg \phi ( y ) = \exists y \, \phi _ { R E V } ( \lceil \exists y \, \phi _ { R E V } ( x _ { 0 } , y ) \wedge \neg \phi ( y ) \rceil , y ) \wedge \neg \phi ( y ) \leftrightarrow \\ & \exists y \, ( y = \lceil \exists y \, \phi _ { R E V } ( \lceil \exists y \, \phi _ { R E V } ( x _ { 0 } , y ) \wedge \phi ( y ) \rceil , y ) \wedge \phi ( y ) \rceil ) \wedge \neg \phi ( y ) \leftrightarrow \\ & \quad \neg \phi ( \lceil \exists y \, \phi _ { R E V } ( \lceil \exists y \, \phi _ { R E V } ( x _ { 0 } , y ) \wedge \phi ( y ) \rceil , y ) \wedge \phi ( y ) \rceil ) \leftrightarrow \neg \phi ( [ \sigma ] )$$

This concludes the proof of the lemma.

Now the theorem follows from the two-sentence lemma by letting φ ( x ) = φ R PROVABLE ( x ) . We construct σ, τ such that σ ↔ φ R PROVABLE ( τ ) and τ ↔ ¬ φ R PROVABLE ( σ ) . Let σ . Then φ R PROVABLE ( σ ) , therefore ¬ τ and simultaneously φ R PROVABLE ( τ ) , inducing a contradiction. Otherwise, let ¬ σ : then ¬ φ R PROVABLE ( τ ) . If τ we get a contradiction, whereas if ¬ τ we get φ R PROVABLE ( σ ) , a contradiction. Therefore, neither σ nor ¬ σ is provable.

## 4.3.8 Further generalization to multi-sentence liar's paradox

There are multi-sentence versions of the liar's paradox, such as circular constructions of the form:

A 1 : Sentence A 2 is false.

A 2 : Sentence A 3 is false.

...

A k : Sentence A 1 is false.

This leads to a paradox whenever k is odd: if A 1 is true then A k is true, therefore A 1 is false and so on. We can further generalize the diagonalization lemma to cover these cases and more. We will construct sentences σ 1 , ..., σ k , whereby each σ i ↔ ψ i ( σ f ( i ) ) is a theorem of PA, where f is any function on { 1 , ..., k } and the ψ i s are any formulas.

Lemma: Generalized Diagonalization. Given single-variable formulas ψ 1 , ..., ψ k in PA and function f : { 1 , ..., k } → { 1 , ..., k } , sentences σ 1 , ..., σ k can be constructed such that ∀ 1 ≤ i ≤ k PA σ i ↔ ψ i ( σ f ( i ) ) .

Proof: Fix single-variable formulas ψ 1 , ..., ψ k and function f : { 1 , ..., k } → { 1 , ..., k } . For example we could have f ( i ) := i +1 mod k and ψ i ( y ) = ¬ φ ( y ) , to produce a circular liar's paradox whenever k is odd.

First we define GD : N → N , a generalized version of the diagonalization function.

Generalized Diagonalization: GD : N → N : Given n ∈ N ,

1. If n = [ ∃ y φ GD ( x 0 , y ) ∧ ψ i ( y ) , i, φ ( y )] , return 0 .

2. Let n ′ = [ ∃ y φ GD ( x 0 , y ) ∧ ψ f ( i ) , f ( i ) , φ ( y )] .

3. Construct the string s = S ( n ′ ) 0 .

4. Substitute all occurrences of x 0 in φ GD with s , to obtain φ GD ( s, y ) .

5. Return φ GD ( s, y ) ∧ ψ f ( i ) .

The procedure above is (primitive) recursive, and therefore is representable with a formula in PA, let's call it φ GD , where

$$\vdash \forall y \left ( \phi _ { G D } ( n , y ) \leftrightarrow y = \mathcal { G D } ( n ) \right )$$

For 1 ≤ i ≤ k let n i = [ ∃ y φ GD ( x 0 , y ) ∧ ψ i ( y ) , i ] , and let σ i = ∃ y φ GD ( n i , y ) ∧ ψ i ( y ) , where y is a variable not appearing within φ GD or any ψ i . Then, by calculating the return value of GD ( n i ) , we get:

$$\sigma _ { i } & \leftrightarrow \exists y \, \phi _ { G D } ( [ [ \exists y \, \phi _ { G D } ( x _ { 0 } , y ) \wedge \psi _ { i } ( y ) , i ] ] , y ) \wedge \psi _ { i } ( y ) \leftrightarrow \\ ( y & = [ \exists y \, \phi _ { G D } ( [ [ \exists y \, \phi _ { G D } ( x _ { 0 } , y ) \wedge \psi _ { f ( i ) } , f ( i ) ] ] , y ) \wedge \psi _ { f ( i ) } ( y ) ] ) \wedge \psi _ { i } ( y ) \leftrightarrow \\ & ( y = [ \sigma _ { f ( i ) } ] \wedge \psi _ { i } ( y ) ) \, \leftrightarrow \, \psi _ { i } ( [ \sigma _ { f ( i ) } ] )$$

This concludes the proof of the generalized lemma.

## 4.3.9 Direct self-reference

Let's examine the statement σ constructed by the diagonalization lemma:

$$\sigma \, \colon = \, \exists y \, \phi _ { D I A G } ( \lceil \exists y \, \phi _ { D I A G } ( x _ { 0 } , y ) \wedge \neg \phi _ { P R O V A B L E } ( y ) \rceil , y ) \wedge \neg \phi _ { P R O V A B L E } ( y )$$

In English, "there is a number y such that there is a formula encoded by y , which is the result of substituting within the formula encoded by the number [ there is a number y such that there is a formula encoded by y , which is the result of substituting within the formula encoded by the number x 0 all occurrences of the character ' x 0 ' with the number x 0 , 26 and this resulting formula is not provable ] all occurrences of the character ' x 0 ' with the number [ there is a number y such that there is a formula encoded by y , which is the result of substituting within the formula encoded by the number x 0 all occurrences of the character ' x 0 ' with the number x 0 , and this resulting formula is not provable ] , and this resulting formula is not provable".

Whether the above paragraph is English is debatable. What is clear is that the formula is not directly self-referential: it employs a laborious trick of variable substitution (represented by φ DIAG ) to be able to refer to itself. Is there a way for a formula to directly refer to itself without some faulty circularity?

In a short, elegant paper, Saul Kripke describes a few different ways for deploying direct reference (Kripke 2021). Kripke attributes the following way to Raymond Smullyan through personal communication (no reference provided):

First, let x 0 be the lexicographically first variable of Σ . Let A 1 ( x 0 ) , A 2 ( x 0 ) , ... be an enumeration of all formulas that contain no free variables other than x 0 . Let the original encoding be such that the smallest prime number employed is 2 , resulting in Gödel numbers that are always odd. Define ∗ to be a new encoding that coincides with except as follows. For each n , let k n = ∃ x 0 ( x 0 = n ∧ A n ( x 0 )) . Now the following formula gets a special encoding:

$$\lceil \exists x _ { 0 } \left ( x _ { 0 } = 2 k _ { n } \wedge A _ { n } ( x _ { 0 } ) \right ) \rceil ^ { * } \colon = 2 k _ { n }$$

In this manner, every formula A n ( x 0 ) has an instance as above, which gets the encoding 2 k n , asserting that its own encoding satisfies A n (2 k n ) . Then, the theorem proceeds as before with A n ( x 0 ) being ¬ φ PROVABLE ( x 0 ) or the Rosser version ¬ φ R PROVABLE ( x 0 ) .

## 5 Other ways to prove the incompleteness theorem

I outline informally two other strategies to prove Gödel's Incompleteness theorem: through Kolmogorov complexity and through Turing machines.

Kolmogorov complexity. The Kolmogorov complexity K ( n ) of a number n ∈ N is the shortest computer program that outputs n and terminates. The language can be fixed - say C or Python - and the program length can be in characters or in bits. For every L ∈ N , there are numbers n ∈ N such that K ( n ) > L . The simple way to see this is that there are at most 2 L programs of length L . Therefore, some numbers > 2 L are not the output of a program of length ≤ L . Let's fix an axiomatic language L that is rich enough to represent proofs of statements " K ( n ) > m ". Consider now the following program PRINT ( L ) := "go through all proofs of L lexicographically, find the first number n proven to have K ( n ) > L , print n and terminate". Let's assume that the length of PRINT () is L PRINT bits. Now let's run PRINT ( L PRINT ) . It will run until it finds n with K ( n ) > L PRINT , print n and terminate. Therefore K ( n ) ≤ L PRINT , a contradiction. The conclusion is that PRINT ( L PRINT ) will loop forever, and consequently L has no proof of the form K ( n ) > L PRINT . This is Chaitin's theorem: for any rich enough system L there is an L such that no n can be proven to have K ( n ) > L .

26 The occurrences of x 0 within the "formula encoded by (outer) x 0 " should not be confused with the outer occurrence, which is bound. This is an important point, so an example is in order. Say x 0 = x 0 = SS 0 . This is perfectly legit. The outer x 0 is bound to a number, specifically the encoding of the formula x 0 = SS 0 , whereby the inner x 0 is simply a letter of Σ within that formula. Then, when we are substituting within the formula encoded by x 0 all occurrences of ' x 0 ' with the number x 0 , we are substituting within " x 0 = SS 0 " all occurrences of ' x 0 ' with the number x 0 = SS 0 , so the inner formula turns into S ( x 0 = SS 0 ) 0 = SS 0 . Kripke uses special terminology for this situation: the outer occurrence of x 0 is "used" and the inner occurrences are "mentioned".

Turing Machines. The proof of incompleteness through the halting problem in Turing machines is well known. A proof that incorporates the Rosser improvement of not requiring ω -consistency is sketched by Scott Aaronson in his superb blog [1]. First, define the following variant of the halting problem:

The Consistent Guessing Problem. Given a description of a Turing machine M ,

1. If M accepts on a blank tape, accept.

2. If M rejects on a blank tape, reject.

3. If M loops forever, accept and halt.

It is easy to argue that there is no Turing machine that solves this problem: let P be such a machine. Modify P to obtain Q : "given M , if M ( M ) 27 accepts, reject; if M ( M ) rejects, accept; otherwise accept and halt". Now ask what does Q ( Q ) yield? Well, reading from the description above, "given M = Q , if Q ( Q ) accepts, reject; if Q ( Q ) rejects, accept..." Now we are in trouble: on input Q , Q will do the opposite of what Q does on input Q , contradiction. Therefore P does not exist.

Now assume L is rich enough to formalize Turing machines and is complete and consistent. Then, solve the Consistent Guessing Problem above as follows: given M , enumerate lexicographically all possible proofs of L until a proof of " M rejects on a blank tape" or " M does not reject on a blank tape" appears; if the former, reject and if the latter, accept. Therefore, such a complete and consistent L is impossible.

Both of these strategies are excellent for introducing the theorem to computational people. However, they both mask the complicated task of formalizing computation in axiomatic systems.

## 6 Gödel's Second Incompleteness Theorem

Gödel's Second Incompleteness theorem informally states that a rich enough axiomatic system cannot prove its own consistency. This theorem follows easily from a combination of the first theorem and the following property of φ PROVABLE (which in this section we abbreviate to φ PR ): φ PR ( φ ) → φ PR ( φ PR ( φ )) . The latter property is technically hard to prove and beyond the scope of this manuscript. For a complete exposition see for instance Swierczkowski (2003). 28

More formally, if L is rich enough to express provability, φ PR , then L ¬ φ PR ( S 0 = 0) . Consistency is expressed with the notion that the absurdity 1 = 0 is not provable in the system. The proof is by contradiction: assuming that L proves ¬ φ PR ( S 0 = 0) , it follows that L also proves S 0 = 0 . Therefore either L is inconsistent, or it cannot prove ¬ φ PR ( S 0 = 0) .

The truth predicate φ PR satisfies the following conditions, known as the Hilbert-Bernays conditions, which are what is needed for the second incompleteness proof to go through:

## The Hilbert-Bernays provability conditions:

- (i) If L φ then L φ PR ( φ ) .

- (ii) L φ PR ( φ ) → φ PR ( φ PR ( φ ) ) .

- (iii) L φ PR ( φ ) ∧ φ PR ( φ → ψ ) → φ PR ( ψ ) .

## Theorem (Gödel's Second Incompleteness Theorem. L ¬ φ PR ( S 0 = 0) .

In the proof below, all derivations are within L and L in front of every derived formula is implied.

Proof: The goal is to show that ¬ φ PR ( S 0 = 0) leads to a contradiction. We might as well start where the first theorem left off: using diagonalization, let σ be a sentence such that σ ↔¬ φ PR ( σ ) . Deriving σ (or ¬ σ ) leads to a contradiction, so it suffices to show ¬ φ PR ( S 0 = 0) → σ .

27 The standard notation is M ( 〈 M 〉 ) to denote the description of a machine M by 〈 M 〉 . There is no confusion in abusing notation with M ( M ) : whenever a machine is an input to a machine, it turns into its syntactically valid description.

28 It is technically messy to show that for any Σ -formula φ , if all free variables in its encoding φ are handled properly, then φ → φ PR ( φ ) . Because φ PR is a Σ formula, the result then follows. As an example of disasters that occur when variables are not properly handled, consider the Σ -formula x 0 = x 1 . If it were the case that x 0 = x 1 → φ PR ( x 0 = x 1 ) then since x 0 , x 1 do not occur in φ PR ( x 0 = x 1 ) (the latter being simply φ PR ( n ) for a specific n ∈ N with no free variables), it would follow that φ PR ( x 0 = x 1 ) (simply replace x 0 with 0 and x 1 with 0 to obtain true → φ PR ( x 0 = x 1 ) ). Finally, from φ PR ( x 0 = x 1 ) we conclude x 0 = x 1 , a contradiction if we now let x 0 = S 0 , x 1 = 0 .

This will be accomplished by deriving φ PR ( σ ) → φ PR ( ¬ σ ) as an intermediate step: starting from the diagonalization lemma, φ PR ( σ ) → ¬ σ , by (i) φ PR ( φ PR ( σ ) → ¬ σ ) . By (iii), this yields φ PR ( φ PR ( σ )) → φ PR ( ¬ σ ) . Using (ii) this yields φ PR ( σ ) → φ PR ( ¬ σ ) (*).

Then φ PR ( σ ) → ( φ PR ( ¬ σ ) ∧ φ PR ( σ ) follows, and by definition of φ PR we have φ PR ( φ ) ∧ φ PR ( ψ ) → φ PR ( φ ∧ ψ ) from which we get φ PR ( σ ) → ( φ PR ( ¬ σ ∧ σ ) which is equivalent to φ PR ( σ ) → φ PR ( S 0 = 0) .

Reversing the direction of implication, ¬ φ PR ( S 0 = 0) →¬ φ PR ( σ ) , which by σ ↔¬ φ PR ( σ ) yields ¬ φ PR ( S 0 = 0) → σ .

## 7 Incompleteness and Completeness

Gödel's incompleteness theorems introduce the notion of a proposition that is independent of a language: the statement σ cannot be proved or disproved, and is called independent of PA. Another famous theorem courtesy of Gödel is the Completeness theorem for axiomatic systems of first-order logic with equality roughly, systems like PA with domain-specific axioms, logical axioms, equality axioms, and quantifiers ∀ , ∃ whose variables range over "first-order" objects: the members of the domain. 29 Informally, the theorem states that any necessarily true statement in a first-order theory is provable.

At first sight, completeness and incompleteness seem contradictory. Discussing completeness of first-order logic is beyond the scope of this manuscript. Instead, I will briefly outline the notions necessary to address and clarify the seeming contradiction.

Necessarily true involves the notion of a model for the axiomatic theory. Briefly, a model M consists of a domain for the terms and an interpretation of the function and relation symbols of the theory, such that all axioms are true under that interpretation. In the case of PA the standard model is N with standard interpretation of S, <, >, = , + , ∗ . However, this is not the only possible model. 30 For instance, the domain could contain additional elements that are not in the successor chain of 0 . The Completeness theorem says that given a consistent first-order theory L , a sentence τ is provable iff τ is true in every possible model of L . Consequently, if a sentence such as σ is neither provable nor refutable in PA, then there exist models M and M ′ with σ true in M and ¬ σ true in M ′ . Moreover, we can extend PA to PA + { σ } and to PA + {¬ σ } corresponding to these two models, respectively, and both resulting theories are consistent assuming that PA is consistent.

How can this be possible? Let's take a look at the two extensions. In PA + { σ } , we would have σ and σ ↔¬ φ PROVABLE ( σ ) therefore we get ¬ φ PROVABLE ( σ ) which seems to contradict σ . It actually doesn't. Recall that ¬ φ PROVABLE ( σ ) := ¬∃ mφ PROOF -OF ( m,σ ) states that σ is not provable using PA's axioms, and does not state that σ is not provable in PA + { σ } . Hence there is no contradiction.

OK, how about PA + {¬ σ } ? Then we get ¬ σ and φ PROVABLE ( σ ) , i.e., ∃ mφ PROOF -OF ( m,σ ) . Wouldn't that be a contradiction? Not really, because the resulting theory would be ω -inconsistent but still consistent. How about the Rosser version of the theorem, leading to ∃ mφ R PROOF -OF ( m,σ ) ? Recall that now m is asserted to be smaller than any n such as φ R PROOF -OF ( n, ¬ σ ) , and we do have n = [ ¬ σ ] because ¬ σ is now an axiom therefore [ ¬ σ ] is a proof. Here is where the nonstandard nature of the model M ′ shows up: m will be a number not in the chain of successors of 0 . Such an m would satisfy φ R PROOF -OF ( m,σ ) without encoding an actual proof of σ . Generally, m will be a new constant in the domain postulated to satisfy all the required properties. For more details, reading the Henkin proof of the Completeness theorem would be instructive (Arjona and Alonso 2014).

Actually, any consistent first-order theory L can be extended to a complete theory L ′ where every sentence τ is either provable or disprovable! This is simple to show: (1) lexicographically order all

29 Quantifiers cannot range over second-order or higher objects, such as properties of members of the domain. Discussing second-order logic is beyond the scope here.

30 In fact, the "upward" Löwenheim-Skolem theorem states that for every enumerable language L , for every model M of L of cardinality κ , and for every cardinality λ > κ , we can obtain a model N ⊃ M of cardinality λ such that the truth of any proposition φ according to the two models agrees, as long as all variables of φ range over the smaller model. This means that starting from N we can extend to models of PA of arbitrarily large cardinality. A theory is categorical if it has only one model up to isomorphism. The Löwenheim-Skolem theorem implies that a first-order theory with an infinite model, such as PA with N , can never be categorical.

sentences, σ 1 , σ 2 , ... of L ; (2) find the first independent sentence σ i and ¬ σ i , and define this to be τ 1 := σ i ; (3) let L 1 be L∪ τ 1 , extending L with τ 1 as axiom; (4) proceed to define τ 2 , L 2 , τ 3 , L 3 , ...τ n , L n , ... for all n ; (5) let L ′ = ∪L i . It is easy to show that L ′ is consistent and complete, assuming L is consistent. How come this does not contradict the incompleteness theorem? The key observation here is that { τ 1 , τ 2 , ... } is not a recursive collection of sentences. Because of that, φ PROVABLE cannot be written down: provability cannot be represented in L ′ .

The upshot is that in the standard model N , σ is true but not provable in PA. However, due to the Completeness theorem we can extend PA either with σ or with ¬ σ and maintain consistency. In the first case the new theory is consistent because the φ PROVABLE predicate does not include the new axiom σ . In the second case we get ω -inconsistency but consistency, or in the Rosser version we necessarily get an extended domain beyond N that includes new non-numerical constants that make the statement φ PROVABLE ( σ ) true without a numerical encoding of an actual proof being produced. Finally, theoretically PA can be extended to a complete theory (in infinitely many ways, actually), but the new infinite collection of axioms will not be computable. In this case the incompleteness theorem does not apply, however such a theory is useless because it can never be written down.

## 8 Discussion

The broader implications of Gödel's Incompleteness theorems on foundational mathematics, logic, philosophy, computer science, physics, and the nature of the human mind, have been debated extensively. Here, I state some of my personal positions as succinctly as I can.