# Mathematical Runtime Analysis for the Non-Dominated Sorting Genetic Algorithm II (NSGA-II)††thanks: Extended version of a paper that appeared at AAAI 2022 [ZLD22], and that was conducted when the first author was with Southern University of Science and Technology. This version contains all proofs, many of them revised and improved. In particular, the runtime result for the NSGA-II with tournament selection on LeadingOnesTrailingZeroes now holds for N≥4(n+1)𝑁4𝑛1N\geq 4(n+1)italic_N ≥ 4 ( italic_n + 1 ) instead of N≥5(n+1)𝑁5𝑛1N\geq 5(n+1)italic_N ≥ 5 ( italic_n + 1 ). In addition, in all upper bounds we now also regard binary tournament selection as in Deb’s implementation of the NSGA-II (building the N𝑁Nitalic_N tournaments from two permutations of the population). We added tail bounds for the runtime guarantees. The experimental section was extended as well.

**Authors**:

- Weijie Zheng (International Research Institute for Artificial Intelligence)

- Shenzhen, China

- Benjamin Doerr

- Laboratoire d’Informatique (LIX)

- École Polytechnique, CNRS (Institute Polytechnique de Paris)

- Palaiseau, France

> Corresponding author.

Abstract

The non-dominated sorting genetic algorithm II (NSGA-II) is the most intensively used multi-objective evolutionary algorithm (MOEA) in real-world applications. However, in contrast to several simple MOEAs analyzed also via mathematical means, no such study exists for the NSGA-II so far. In this work, we show that mathematical runtime analyses are feasible also for the NSGA-II. As particular results, we prove that with a population size four times larger than the size of the Pareto front, the NSGA-II with two classic mutation operators and four different ways to select the parents satisfies the same asymptotic runtime guarantees as the SEMO and GSEMO algorithms on the basic OneMinMax and LeadingOnesTrailingZeroes benchmarks. However, if the population size is only equal to the size of the Pareto front, then the NSGA-II cannot efficiently compute the full Pareto front: for an exponential number of iterations, the population will always miss a constant fraction of the Pareto front. Our experiments confirm the above findings.

1 Introduction

Many real-world problems need to optimize multiple conflicting objectives simultaneously, see [ZQL ${}^{+}$ 11] for a discussion of the different areas in which such problems arise. Instead of computing a single good solution, a common approach to such multi-objective optimization problems is to compute a set of interesting solutions so that a decision maker can select the most desirable one from these. Multi-objective evolutionary algorithms (MOEAs) are a natural choice for such problems due to their population-based nature. Such multi-objective evolutionary algorithms (MOEAs) have been successfully used in many real-world applications [ZQL ${}^{+}$ 11].

Unfortunately, the theoretical understanding of MOEAs falls far behind their success in practice, and this discrepancy is even larger than in single-objective evolutionary computation, where the last twenty years have seen some noteworthy progress on the theory side [NW10, AD11, Jan13, DN20]. After some early theoretical works on convergence properties, e.g., [Rud98], the first mathematical runtime analysis of an MOEA was conducted by Laumanns et al. [LTZ ${}^{+}$ 02, LTZ04]. They analyzed the runtime of the simple evolutionary multi-objective optimizer (SEMO), a multi-objective counterpart of the randomized local search heuristic, on the CountingOnesCountingZeroes and LeadingOnesTrailingZeroes benchmarks, which are bi-objective analogues of the classic (single-objective) OneMax and LeadingOnes benchmark. Around the same time, Giel [Gie03] analyzed the global SEMO (GSEMO), the multi-objective counterpart of the $(1+1)$ EA, on the LeadingOnesTrailingZeroes function.

Subsequent theoretical works majorly focused on variants of these algorithms and analyzed their runtime on the CountingOnesCountingZeroes and LeadingOnesTrailingZeroes benchmarks, on variants of them, on new benchmarks, and on combinatorial optimization problems [QYZ13, BQT18, RNNF19, QBF20, BFQY20, DZ21]. We note that the (G)SEMO algorithm keeps all non-dominated solutions in the population and discards all others, which can lead to impractically large population sizes. There are three theory works [BFN08, NSN15, DGN16] on the runtime of a simple hypervolume-based MOEA called ( $\mu+1$ ) simple indicator-based evolutionary algorithm (( $\mu+1$ )-SIBEA), regarding both classic benchmarks and problems designed to highlight particular strengths and weaknesses of this algorithm. As the SEMO and GSEMO, the $(\mu+1)$ SIBEA also creates a single offspring per generation; different from the former, it works with a fixed population size $\mu$ .

Recently, also decomposition-based multi-objective evolutionary algorithms were analyzed [LZZZ16, HZCH19, HZ20]. These algorithms decompose the multi-objective problem into several related single-objective problems and then solve the single-objective problems in a co-evolutionary manner. This direction is fundamentally different from the above works and our research. Since not primarily focused on multi-objective optimization, we also do not discuss further the successful line of works that solve constrained single-objective problems by turning the constraint violation into a second objective, see, e.g., [NW06, FHH ${}^{+}$ 10, NRS11, FN15, QYZ15, QSYT17, QYT ${}^{+}$ 19, Cra19, DDN ${}^{+}$ 20, Cra21].

Unfortunately, most of the algorithms discussed in these theoretical works are far from the MOEAs used in practice. As pointed out in the survey [ZQL ${}^{+}$ 11], the majority of the MOEAs used in research and applications builds on the framework of the non-dominated sorting genetic algorithm II (NSGA-II) [DPAM02]. This algorithm works with a population of fixed size $N$ . It uses a complete order defined by the non-dominated sorting and the crowding distance to compare individuals. In each generation, $N$ offspring are generated from the parent population and the $N$ best individuals (according to the complete order) are selected as the new parent population. This approach is thus substantially different from the (G)SEMO algorithm and hypervolume-based approaches (and naturally completely different from decomposition-based methods), see the features of these algorithms described in the above two paragraphs.

Both the predominance in practice and the fundamentally different working principles ask for a rigorous understanding of the NSGA-II. However, to the best of our knowledge so far no mathematical runtime analysis for the NSGA-II has appeared. By mathematical runtime analysis, we mean the question of how many function evaluations a black-box algorithm takes to achieve a certain goal. The computational complexity of the operators used by the NSGA-II, in particular, how to most efficiently implement the non-dominated sorting routine, is a different question (and one that is well-understood [DPAM02]). We note that the runtime analysis in [COGNS20] considers a (G)SEMO algorithm that uses the crowding distance as one of several diversity measures used in the selection of the single parent creating an offspring, but due to the differences of the basic algorithms, none of the arguments used there appear helpful in the analysis of the NSGA-II.

Our Contributions. This paper conducts the first mathematical runtime analysis of the NSGA-II. We regard the NSGA-II with four different parent selection strategies (choosing each individual as a parent once, choosing parents independently and uniformly at random, and two ways of choosing the parents via binary tournaments) and with two classic mutation operators (one-bit mutation and standard bit-wise mutation), but in this first work without crossover (we remark that crossover is very little understood from the runtime perspective in MOEAs, the only works prior to ours we are aware of are [NT10, QYZ13, HZCH19]). As previous theoretical works, we analyze how long the NSGA-II takes to cover the full Pareto front, that is, we estimate the number of iterations until the parent population contains an individual for each objective value of the Pareto front.

When trying to determine the runtime of the NSGA-II, we first note that the selection mechanism of the NSGA-II may remove all individuals with some fixed objective value on the front. In other words, the fact that a certain objective value on the Pareto front was found in some iteration does not mean that this is not lost in some later iteration. This is one of the substantial differences to the (G)SEMO algorithm. We prove that if the population size $N$ is at least four times larger than the size of the Pareto front, then both for the OneMinMax and the LeadingOnesTrailingZeroes benchmarks, such a loss of Pareto front points cannot occur. With this insight, we then show that each of these eight variants of the NSGA-II computes the full Pareto front of the OneMinMax benchmark in an expected number of $O(n\log n)$ iterations (Theorems 2 and 6) and the front of the LeadingOnesTrailingZeroes benchmark in $O(n^{2})$ iterations (Theorems 8 and 9). When $N=\Theta(n)$ , the corresponding runtime guarantees in terms of fitness evaluations, $O(Nn\log n)=O(n^{2}\log n)$ and $O(Nn^{2})=O(n^{3})$ , have the same asymptotic order as those proven previously for the SEMO, GSEMO, and $(\mu+1)$ SIBEA (when $\mu≥ n+1$ and when $\mu=\Theta(n)$ for the $(\mu+1)$ SIBEA). We note that the benchmarks OneMinMax and LeadingOnesTrailingZeroes are the two most intensively studied benchmarks in the runtime analysis of MOEAs. In this first runtime analysis work on the NSGA-II, we therefore concentrated on these two benchmarks to allow a good comparison with the known performance of other MOEAs.

Using a population size larger than the size of the Pareto front is necessary. We prove that if the population size is equal to the size of the Pareto front, then the NSGA-II (applying one-bit mutation once to each parent) regularly loses points on the Pareto front of OneMinMax. This effect is strong enough so that with high probability for an exponential time each generation of the NSGA-II does not cover a constant fraction of the Pareto front of OneMinMax.

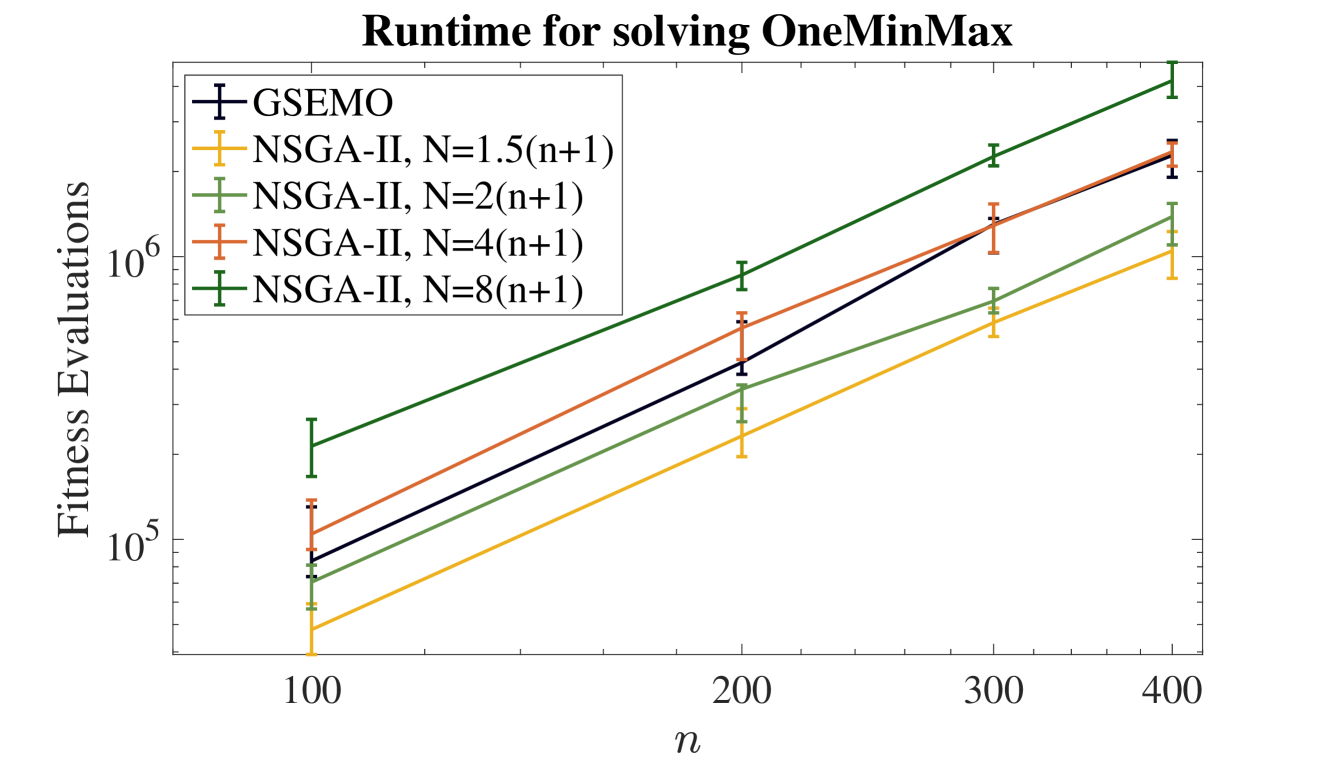

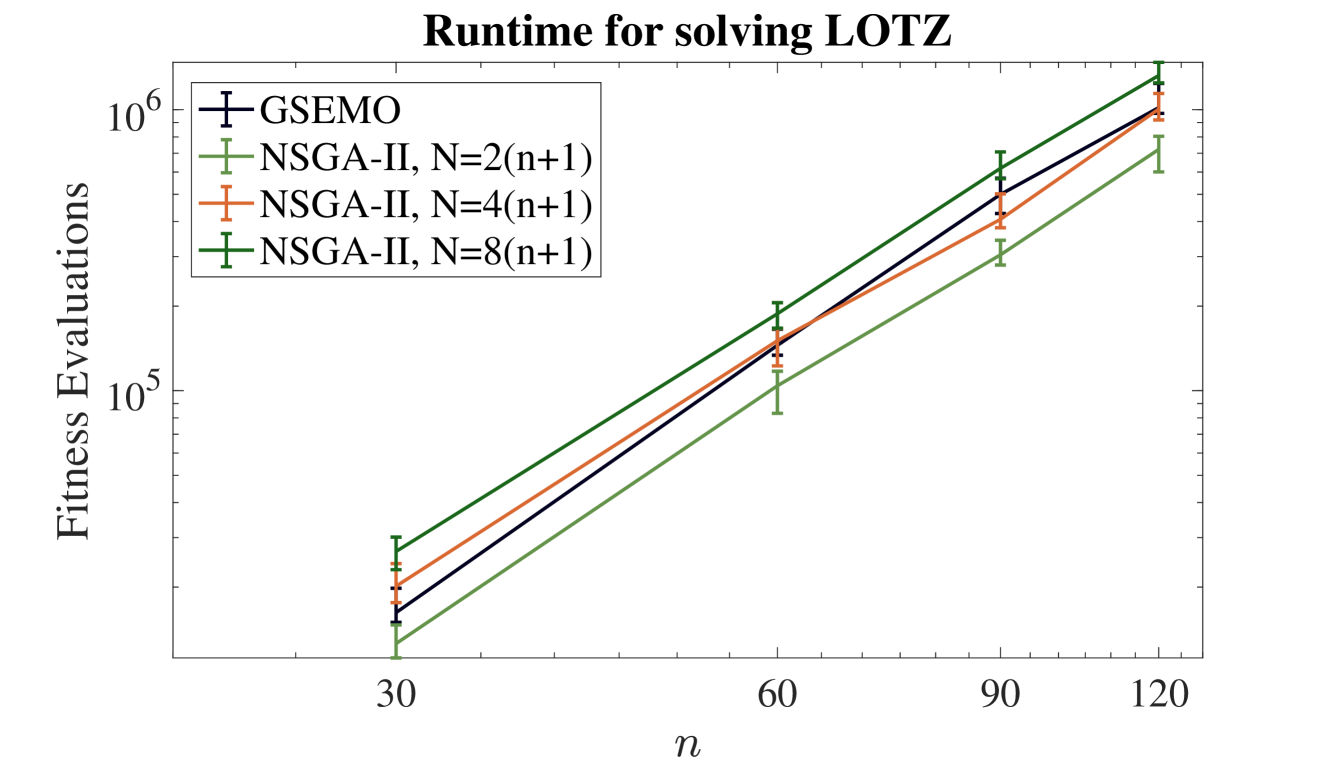

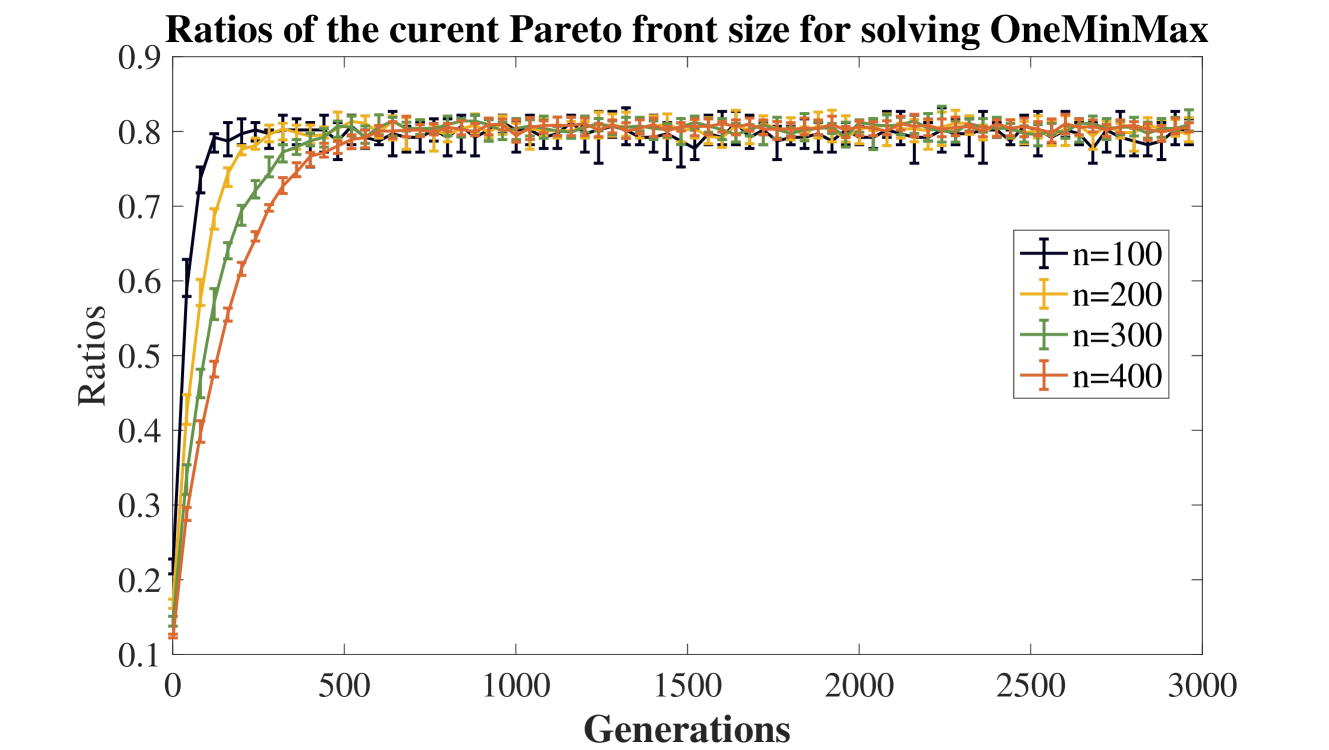

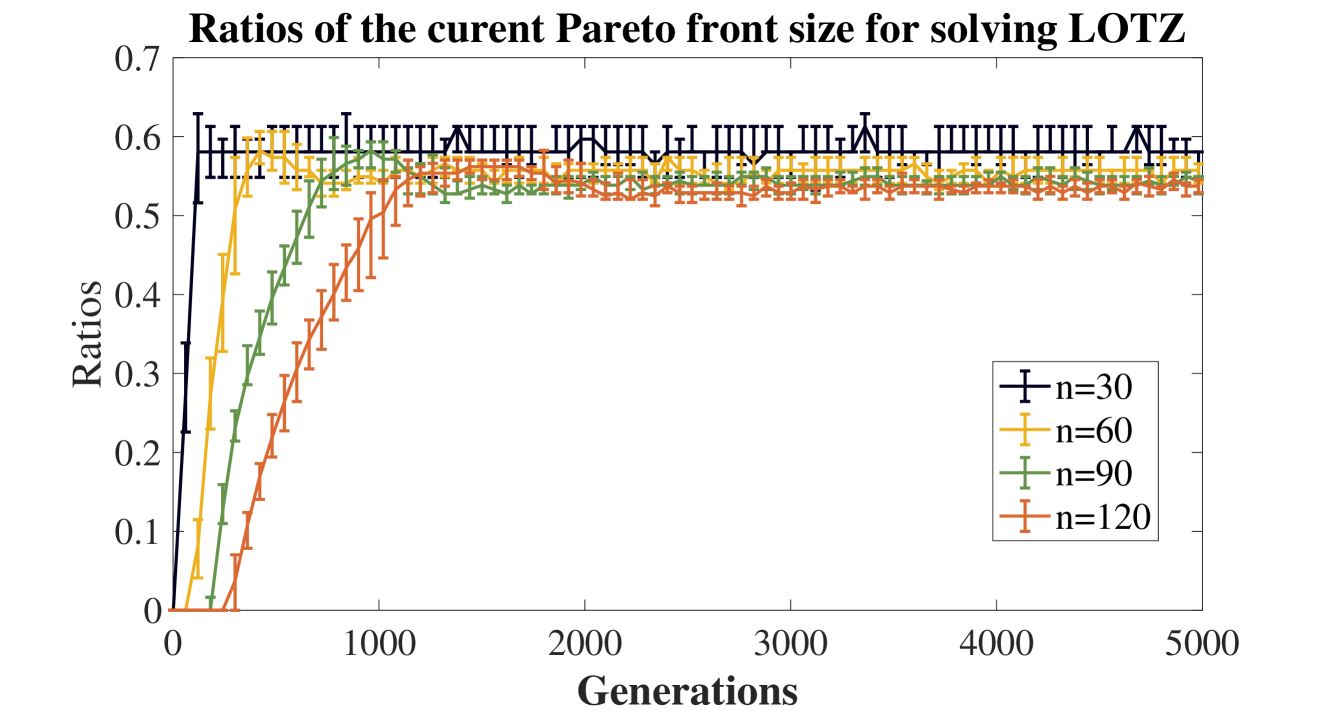

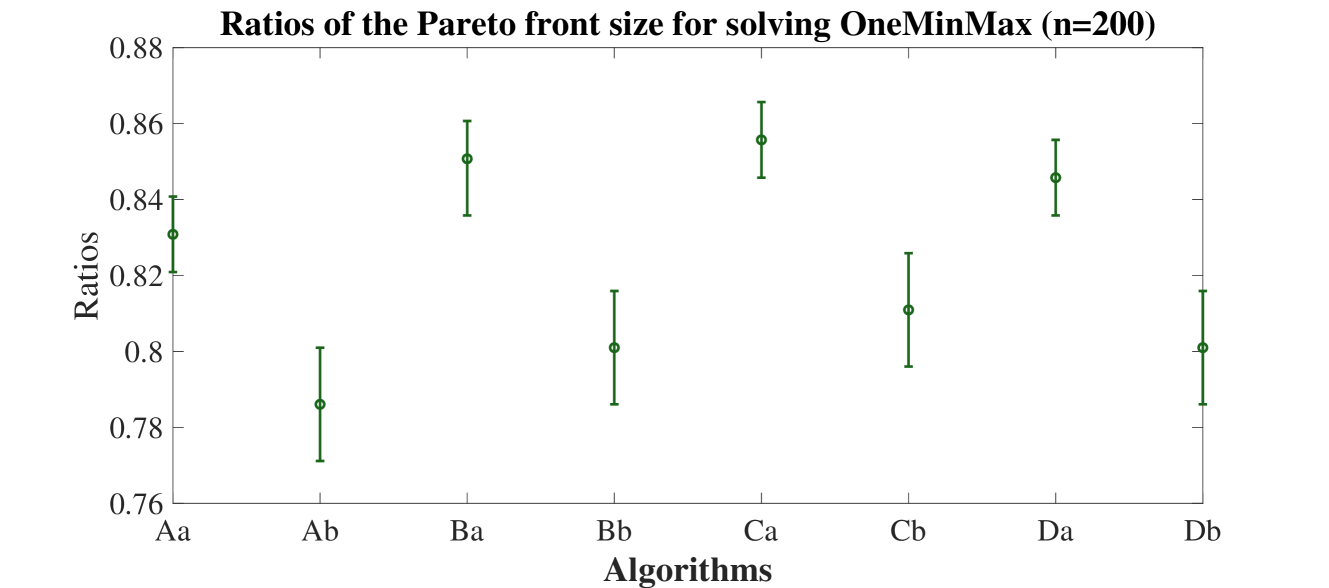

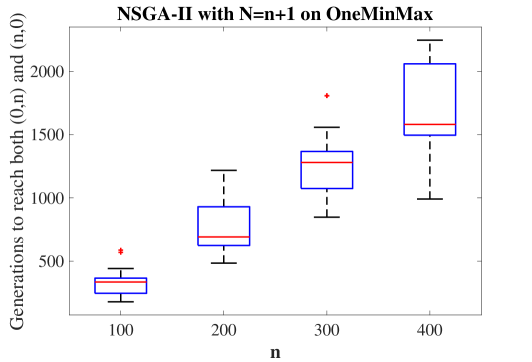

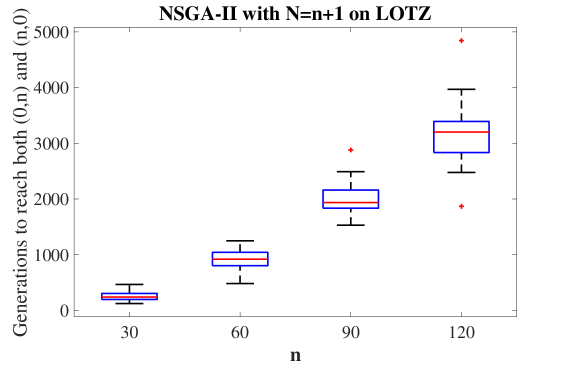

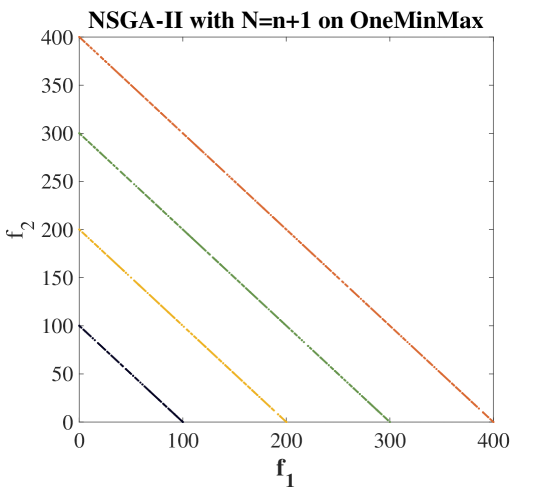

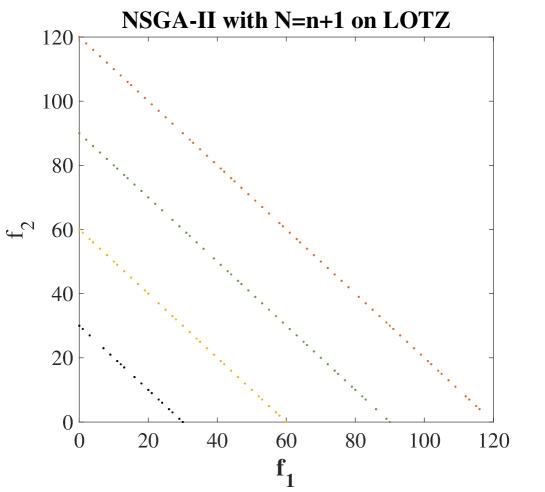

Our short experimental analysis confirms these findings and gives some quantitative estimates for which mathematical analyses are not precise enough. For example, we observe that also with population sizes smaller than what is required for our theoretical analysis (four times the size of the Pareto front), the NSGA-II efficiently covered the Pareto front of the OneMinMax and LeadingOnesTrailingZeroes benchmarks. With suitable population sizes, the NSGA-II beats the GSEMO algorithm on these benchmarks. Complementing our negative result, we observe that the fraction of the Pareto front not covered when using a population size equal to the front size is around 20% for OneMinMax and 40% for LeadingOnesTrailingZeroes. Also without covering the full Pareto front, MOEAs can serve their purpose of proposing to a decision maker a set of interesting solutions. With this perspective, we also regard experimentally the sets of solutions evolved by the NSGA-II when the population size is only equal to the size of the Pareto front. For both benchmarks, we observe that after a moderate runtime, the population contains the two extremal solutions and covers in a very evenly manner the rest of the Pareto front.

Overall, this work shows that the NSGA-II despite its higher complexity (parallel generation of offspring, selection based on non-dominated sorting and crowding distance) admits mathematical runtime analyses in a similar fashion as done before for simpler MOEAs, which hopefully will lead to a deeper understanding of the working principles of this important algorithm.

Subsequent works: We note that the conference version [ZLD22] of this work has already inspired a substantial amount of subsequent research. We briefly describe these results now. In [ZD22a], the performance of the NSGA-II with small population size was analyzed. The main result is that the problem that Pareto front points can be lost can be significantly reduced with a small modification of the selection procedure that was previously analyzed experimentally [KD06], namely to remove individuals in the selection of the next population sequentially, recomputing the crowding distance after each removal. For this setting, an $O(n/N)$ approximation guarantee was proven. In [BQ22], the first runtime analysis of the NSGA-II with crossover was conducted, however, no speed-ups from crossover could be shown. Also, significant speed-ups were shown when using larger tournaments than binary tournaments. In [DQ23a], the performance of the NSGA-II on the multimodal benchmark OneJumpZeroJump benchmark [DZ21] was analyzed. This work shows that the NSGA-II also on this multimodal benchmark has a performance asymptotically at least as good as the GSEMO algorithm (when the population size is at least four times the size of the Pareto front). A matching lower bound for this and our result on OneMinMax was proven in [DQ23b]. This work in particular shows that the NSGA-II in these settings does not profit from population sizes larger than the minimum required population size. Two recent works showed significant performance gains from crossover, one on the OneJumpZeroJump benchmark [DQ23c] and one on an artificial problem [DOSS23b]. The first runtime analysis of the NSGA-II on a combinatorial problem, namely the bi-objective minimum spanning tree problem previously regarded in [Neu07, NW22], was conducted in [CDH ${}^{+}$ 23]. The first runtime analysis of the NSGA-II for noisy optimization appeared in [DOSS23a]. The first runtime analysis of the SMS-EMOA [BNE07] (a variant of the NSGA-II building on the hyper-volume) was conducted in [BZLQ23]. All these works regard bi-objective problems. For the OneMinMax problem in three or more objectives, it was shown in [ZD22b] that the NSGA-II cannot find the full Pareto front in polynomial time and even has difficulties in approximating it. It was shown in [WD23] that the NSGA-III does not experience these problems, at least in three objectives. With this recent development, we are confident to claim that our first mathematical runtime analysis for the NSGA-II has started a fruitful direction of research.

The remainder of the paper is organized as follows. The NSGA-II framework is briefly introduced in Section 2. Sections 3 and 4 separately show our runtime results of the NSGA-II with large enough population size on the OneMinMax and LeadingOnesTrailingZeroes functions. Section 5 proves the exponential runtime of the NSGA with population size equal to the size of the Pareto front. Our experimental results are discussed in Section 6. Section 7 concludes this work.

2 Preliminaries

In this section, we give a brief introduction to multi-objective optimization and to the NSGA-II framework. For the simplicity of presentation, we shall concentrate on two objectives, both of which have to be maximized. A bi-objective objective function on some search space $\Omega$ is a pair $f=(f_{1},f_{2})$ where $f_{i}:\Omega→\mathbb{R}$ . We write $f(x)=(f_{1}(x),f_{2}(x))$ for all $x∈\Omega$ . We shall always assume that we have a bit-string representation, that is, that $S=\{0,1\}^{n}$ for some $n∈\mathbb{N}$ . The challenge in multi-objective optimization is that usually there is no solution that maximizes both $f_{1}$ and $f_{2}$ and thus is at least as good as all other solutions.

More precisely, in bi-objective maximization, we say $x$ weakly dominates $y$ , denoted by $x\succeq y$ , if and only if $f_{1}(x)≥ f_{1}(y)$ and $f_{2}(x)≥ f_{2}(y)$ . We say $x$ strictly dominates $y$ , denoted by $x\succ y$ , if and only if $f_{1}(x)≥ f_{1}(y)$ and $f_{2}(x)≥ f_{2}(y)$ and at least one of the inequalities is strict. We say that a solution is Pareto-optimal if it is not strictly dominated by any other solution. The set of objective values of all Pareto optima is called the Pareto front of $f$ . With this language, the aim in multi-objective optimization is to compute a small set $P$ of Pareto optima such that $f(P)=\{f(x)\mid x∈ P\}$ is the Pareto front or is at least a diverse subset of it. Consider an algorithm $A$ optimizing a multi-objective problem $f$ with Pareto front $M$ . Let $P_{t}$ be the population at iteration $t$ and $G_{t}$ be the number of function evaluations till iteration $t$ , then the time complexity or running time in this paper is the random variable $T_{A}(f)=∈f\{G_{t}\mid f(P_{t})⊃eq M\}$ . Usually, we discuss the expected runtime or the runtime with some probability.

The NSGA-II

When working with a fixed population size, an MOEA must select the next parent population from the combined parent and offspring population by discarding some of these individuals. For this, a complete order on the combined parent and offspring population could be used so that the next parent population is taken in a greedy manner according to this order. Since dominance is only a partial order, the NSGA-II [DPAM02] extends the dominance relation to the following complete order.

In a given population $P⊂eq\{0,1\}^{n}$ , each individual $x$ has both a rank and a crowding distance. The ranks are defined recursively based on the dominance relation. All individuals that are not strictly dominated by another one have rank one. Given that the ranks $1,...,k$ are already defined, the individuals of rank $k+1$ are those among the remaining individuals that are not strictly dominated except by individuals of rank $k$ or smaller. This defines a partition of $P$ into sets $F_{1},F_{2},...$ such that $F_{i}$ contains all individuals with rank $i$ . As shown in [DPAM02], this partition can be computed more efficiently than what the above recursive description suggests, namely in quadratic time, see Algorithm 1 for details. It is clear that individuals with lower rank are more interesting, so when comparing two individuals of different ranks, the one with lower rank is preferred.

To compare individuals in the same rank class $F_{i}$ , the crowding distance of these individuals (in $F_{i}$ ) is computed, and the individual with larger distance is preferred. Ties are broken randomly.

1: Input: $S=\{S_{1},...,S_{|S|}\}$ : the set of individuals

2: Output: $F_{1},F_{2},...$

3: for $i=1,...,|S|$ do

4: $\operatorname{ND}(S_{i})=0$ % number of individuals strictly dominating $S_{i}$

5: $\operatorname{SD}(S_{i})=\emptyset$ % set of individuals strictly dominated by $S_{i}$

6: end for

7: for $i=1,...,|S|$ do % compute $\operatorname{ND}$ and $\operatorname{SD}$

8: for $j=1,...,|S|$ do

9: if $S_{i}\prec S_{j}$ then

10: $\operatorname{ND}(S_{i})=\operatorname{ND}(S_{i})+1$

11: $\operatorname{SD}(S_{j})=\operatorname{SD}(S_{j})\cup\{S_{i}\}$

12: end if

13: end for

14: end for

15: $F_{1}=\{S_{i}\mid\operatorname{ND}(S_{i})=0,i=1,2,...,|S|\}$

16: $k=1$

17: while $F_{k}≠\emptyset$ do

18: $F_{k+1}=\emptyset$

19: for any $s∈ F_{k}$ do % discount $F_{k}$ from $\operatorname{ND}$ and $\operatorname{SD}$

20: for any $s^{\prime}∈\operatorname{SD}(s)$ do

21: $\operatorname{ND}(s^{\prime})=\operatorname{ND}(s^{\prime})-1$

22: if $\operatorname{ND}(s^{\prime})=0$ then

23: $F_{k+1}=F_{k+1}\cup\{s^{\prime}\}$

24: end if

25: end for

26: end for

27: $k=k+1$

28: end while

Algorithm 1 fast-non-dominated-sort(S)

Algorithm 2 shows how the crowding distance in a given set $S$ is computed. The crowding distance of some $x∈ S$ is the sum of the crowding distances $x$ has with respect to each objective function $f_{i}$ . For a given $f_{i}$ , the individuals in $S$ are sorted in order of ascending $f_{i}$ value (for equal values, a tie-breaking mechanism is needed, but we shall not make any assumption on this, that is, our mathematical results are valid regardless of how these ties are broken). The first individual and the last individual in the sorted list have an infinite crowding distance. For other individuals in the sorted list, their crowding distance with respect to $f_{i}$ is the difference of the objective values of its left and right neighbor in the sorted list, normalized by the difference between the first and the last.

1 Input: $S=\{S_{1},...,S_{|S|}\}$ : the set of individuals

Output: $\operatorname{cDis}(S)=(\operatorname{cDis}(S_{1}),...,\operatorname{cDis}(S%

_{|S|}))$ , the vector of crowding distances of the individuals in $S$

1: $\operatorname{cDis}(S)=(0,...,0)$

2: for each objective function $f_{i}$ do

3: Sort $S$ in order of ascending $f_{i}$ value: $S_{i.1},...,S_{i.{|S|}}$

4: $\operatorname{cDis}(S_{i.1})=+∞,\operatorname{cDis}(S_{i.{|S|}})=+∞$

5: for $j=2,...,|S|-1$ do

6: $\operatorname{cDis}(S_{i.j})=\operatorname{cDis}(S_{i.j})+\frac{f_{i}(S_{i.{j+%

1}})-f_{i}(S_{i.{j-1}})}{f_{i}(S_{i.{|S|}})-f_{i}(S_{i.1})}$

7: end for

8: end for

Algorithm 2 crowding-distance( $S$ )

The whole NSGA-II framework is shown in Algorithm 3. After the random initialization of the population of size $N$ , the main loop starts with the generation of $N$ offspring (the precise way how this is done is not part of the NSGA-II framework and is mostly left as a design choice to the algorithm user in [DPAM02], although it is suggested to select parents via binary tournaments based the total order described above). Then the total order based on rank and crowding distance is used to remove the worst $N$ individuals in the union of the parent and offspring population. The remaining individuals form the parent population of the next iteration.

1: Uniformly at random generate the initial population $P_{0}=\{x_{1},x_{2},...,x_{N}\}$ with $x_{i}∈\{0,1\}^{n},i=1,2,...,N.$

2: for $t=0,1,2,...$ do

3: Generate the offspring population $Q_{t}$ with size $N$

4: Use Algorithm 1 to divide $R_{t}=P_{t}\cup Q_{t}$ into $F_{1},F_{2},...$

5: Find $i^{*}≥ 1$ such that $\sum_{i=1}^{i^{*}-1}|F_{i}|<N$ and $\sum_{i=1}^{i^{*}}|F_{i}|≥ N$

6: Use Algorithm 2 to separately calculate the crowding distance of each individual in $F_{1},...,F_{i^{*}}$

7: Let $\tilde{F}_{i^{*}}$ be the $N-\sum_{i=0}^{i^{*}-1}|F_{i}|$ individuals in $F_{i^{*}}$ with largest crowding distance, chosen at random in case of a tie

8: $P_{t+1}=\mathopen{}\mathclose{{}\left(\bigcup_{i=1}^{i^{*}-1}F_{i}}\right)\cup%

\tilde{F}_{i^{*}}$

9: end for

Algorithm 3 NSGA-II

3 Runtime of the NSGA-II on OneMinMax

In this section, we analyze the runtime of the NSGA-II on the OneMinMax benchmark proposed first by Giel and Lehre [GL10] as a bi-objective analogue of the classic OneMax benchmark. It is the function $f:\{0,1\}^{n}→\mathbb{N}×\mathbb{N}$ defined by

$$

f(x)=\big{(}f_{1}(x),f_{2}(x)\big{)}=\big{(}n-\sum_{i=1}^{n}x_{i},\sum_{i=1}^{%

n}x_{i}\big{)}

$$

for all $x=(x_{1},...,x_{n})∈\{0,1\}^{n}$ . The aim is to maximize both objectives in $f$ . We immediately note that for this benchmark problem, any solution lies on the Pareto front. It is hence a good example to study how an MOEA explores the Pareto front when already some Pareto optima were found.

Giel and Lehre [GL10] showed that the simple SEMO algorithm finds the full Pareto front of OneMinMax in $O(n^{2}\log n)$ iterations and fitness evaluations. Their proof can easily be extended to the GSEMO algorithm. For the SEMO, a (matching) lower bound of $\Omega(n^{2}\log n)$ was shown in [COGNS20]. An upper bound of $O(\mu n\log n)$ was shown for the hypervolume-based $(\mu+1)$ SIBEA with $\mu≥ n+1$ [NSN15]. When the SEMO or GSEMO is enriched with a diversity mechanism (strong enough so that solutions that can create a new point on the Pareto front are chosen with constant probability), then the runtime of these algorithms reduces to $O(n\log n)$ [COGNS20].

In contrast to the SEMO and GSEMO as well as the $(\mu+1)$ SIBEA with population size $\mu≥ n+1$ , the NSGA-II can lose all solutions covering a point of the Pareto front. In the following lemma, central to our runtime analyses on OneMinMax, we show that this cannot happen when the population size is large enough, namely at least four times the size of the Pareto front. Besides, we shall use $[i..j],i≤ j$ , to denote the set $\{i,i+1,...,j\}$ in this paper.

**Lemma 1**

*Consider one iteration of the NSGA-II with population size $N≥ 4(n+1)$ optimizing the OneMinMax function. Assume that in some iteration $t$ the combined parent and offspring population $R_{t}=P_{t}\cup Q_{t}$ contains a solution $x$ with objective value $(k,n-k)$ for some $k∈[0..n]$ . Then also the next parent population $P_{t+1}$ contains an individual $y$ with $f(y)=(k,n-k)$ .*

* Proof*

It is not difficult to see that for any $x,y∈\{0,1\}^{n}$ , we have $x\nprec y$ and $y\nprec x$ . Hence, all individuals in $R_{t}$ have rank one in the non-dominated sorting of $R_{t}$ , that is, after Step 4 in Algorithm 3. Thus, in the notation of the algorithm, $F_{1}=R_{t}$ and $i^{*}=1$ . We calculate the crowding distance of each individual of $R_{t}$ . Let $k∈[0..n].$ Assume that there is at least one individual $x∈ R_{t}$ such that $f(x)=(k,n-k)$ . We recall from Algorithm 2 that $S_{1.1},...,S_{1.{2N}}$ and $S_{2.1},...,S_{2.{2N}}$ are the sorted populations based on $f_{1}$ and $f_{2}$ respectively. Since the individuals with the same objective value will continuously appear in the sorted list w.r.t. this objective value, we know that there exist $a≤ b$ and $a^{\prime}≤ b^{\prime}$ such that $[a..b]=\{i\mid f_{1}(S_{1.i})=k\}$ and $[a^{\prime}..b^{\prime}]=\{i\mid f_{2}(S_{2.i})=n-k\}$ . From the crowding distance calculation in Algorithm 2, we know that $\operatorname{cDis}(S_{1.a})≥\frac{f_{1}\mathopen{}\mathclose{{}\left(S_{1.%

{a+1}}}\right)-f_{1}\mathopen{}\mathclose{{}\left(S_{1.{a-1}}}\right)}{f_{1}%

\mathopen{}\mathclose{{}\left(S_{1.{|S|}}}\right)-f_{1}\mathopen{}\mathclose{{%

}\left(S_{1.1}}\right)}≥\frac{f_{1}\mathopen{}\mathclose{{}\left(S_{1.{a}}}%

\right)-f_{1}\mathopen{}\mathclose{{}\left(S_{1.{a-1}}}\right)}{f_{1}\mathopen%

{}\mathclose{{}\left(S_{1.{|S|}}}\right)-f_{1}\mathopen{}\mathclose{{}\left(S_%

{1.1}}\right)}>0$ since $f_{1}\mathopen{}\mathclose{{}\left(S_{1.{a}}}\right)-f_{1}\mathopen{}%

\mathclose{{}\left(S_{a-1}}\right)>0$ by the definition of $a$ . Similarly, we have $\operatorname{cDis}(S_{1.b})>0$ , $\operatorname{cDis}(S_{2.a^{\prime}})>0$ and $\operatorname{cDis}(S_{2.b^{\prime}})>0$ . For all $j∈[a+1..b-1]$ with $S_{1.j}∉\{S_{2.a^{\prime}},S_{2.b^{\prime}}\}$ , we know $f_{1}(S_{1.{j-1}})=f_{1}(S_{1.{j+1}})=k$ and $f_{2}(S_{1.{j-1}})=f_{2}(S_{1.{j+1}})=n-k$ from the definitions of $a,b,a^{\prime}$ and $b^{\prime}$ . Hence, we have $\operatorname{cDis}(S_{1.j})=\frac{f_{1}(S_{1.{j+1}})-f_{1}(S_{1.{j-1})}}{f_{1%

}\mathopen{}\mathclose{{}\left(S_{1.{|S|}}}\right)-f_{1}\mathopen{}\mathclose{%

{}\left(S_{1.1}}\right)}+\frac{f_{2}(S_{2.{j^{\prime}+1}})-f_{2}(S_{2.{j^{%

\prime}-1}})}{f_{2}\mathopen{}\mathclose{{}\left(S_{2.{|S|}}}\right)-f_{2}%

\mathopen{}\mathclose{{}\left(S_{2.1}}\right)}=0$ . This shows that the individuals with objective value $(k,n-k)$ and positive crowding distance are exactly $S_{1.a}$ , $S_{1.b}$ , $S_{2.a^{\prime}}$ and $S_{2.b^{\prime}}$ . Hence, for each $(k,n-k)$ , there are at most four solutions $x$ with $f(x)=(k,n-k)$ and $\operatorname{cDis}(x)>0$ . Noting that the Pareto front size for OneMinMax is $n+1$ , the number of individuals with positive crowding distance is at most $4(n+1)≤ N$ . Since Step 7 in Algorithm 3 keeps $N$ individuals with largest crowding distance, we know that all individuals with positive crowding distance will be kept. Thus, $y=S_{1.a}∈ P_{t+1}$ , proving our claim. ∎

Since Lemma 1 ensures that objective values on the Pareto front will not be lost in the future, we can estimate the runtime of the NSGA-II via the sum of the waiting times for finding a new Pareto solution. Apart from the fact that the NSGA-II generates $N$ solutions per iteration (which requires some non-trivial arguments in the case of binary tournament selection), this analysis resembles the known analysis of the simpler SEMO algorithm [GL10]. For $N=O(n)$ , we also obtain the same runtime estimate (in terms of fitness evaluations).

We start with the easier case that parents are chosen uniformly at random or that each parent creates one offspring.

**Theorem 2**

*Consider optimizing the OneMinMax function via the NSGA-II with one of the following four ways to generate the offspring population in Step 3 in Algorithm 3, namely applying one-bit mutation or standard bit-wise mutation once to each parent or $N$ times choosing a parent uniformly at random and applying one-bit mutation or standard bit-wise mutation to it. If the population size $N$ is at least $4(n+1)$ , then the expected runtime is at most $\frac{2e^{2}}{e-1}n(\ln n+1)$ iterations and at most $\frac{2e^{2}}{e-1}Nn(\ln n+1)$ fitness evaluations. Besides, let $T$ be the number of iterations to reach the full Pareto front, then $\Pr[T≥\tfrac{2e^{2}(1+\delta)}{e-1}n\ln n]≤ 2n^{-\delta}$ holds for any $\delta≥ 0$ .*

* Proof*

Let $x∈ P_{t}$ and $f(x)=(k,n-k)$ for some $k∈[0..n]$ . Let $p$ denote the probability that $x$ is chosen as parent to be mutated. Conditional on that, let $p_{k}^{+}$ denote the probability of generating from $x$ an offspring $y_{+}$ with $f(y_{+})=(k+1,n-k-1)$ (when $k<n$ ) and $p_{k}^{-}$ denote the probability of generating from $x$ an offspring $y_{-}$ with $f(y_{-})=(k-1,n-k+1)$ (when $k>0$ ). Consequently, the probability that $R_{t}$ contains an individual $y_{+}$ with objective value $(k+1,n-k-1)$ is at least $pp_{k}^{+}$ , and the probability that $R_{t}$ contains an individual $y_{-}$ with objective value $(k-1,n-k+1)$ is at least $pp_{k}^{-}$ . Since Lemma 1 implies that any existing OneMinMax objective value will be kept in the iterations afterwards, we know that the expected number of iterations to obtain $y_{+}$ (resp. $y_{-}$ ) once $x$ is in the population is at most $\frac{1}{pp_{k}^{+}}$ (resp. $\frac{1}{pp_{k}^{-}}$ ). Assume that the initial population of the Algorithm 3 contains an $x$ with $f(x)=(k_{0},n-k_{0})$ . Then the expected number of iterations to obtain individuals containing objective values $(k_{0},n-k_{0}),(k_{0}+1,n-k_{0}-1),...,(n,0)$ is at most $\sum_{i=k_{0}}^{n-1}\frac{1}{pp_{i}^{+}}$ . Similarly, the expected number of iterations to obtain individuals containing objective values $(k_{0}-1,n-k_{0}+1),(k_{0}-2,n-k_{0}-2),...,(0,n)$ is at most $\sum_{i=1}^{k_{0}}\frac{1}{pp_{i}^{-}}$ . Consequently, the expected number of iterations to cover the whole Pareto front is at most $\sum_{i=k_{0}}^{n-1}\frac{1}{pp_{i}^{+}}+\sum_{i=1}^{k_{0}}\frac{1}{pp_{i}^{-}}$ . Now we calculate $p$ for the different ways of selecting parents and $p_{k}^{+}$ and $p_{k}^{-}$ for the different mutation operations. If we apply mutation once to each parent in $P_{t}$ , we apparently have $p=1$ . If we choose the parents independently at random from $P_{t}$ , then $p=1-(1-\frac{1}{N})^{N}≥ 1-\frac{1}{e}$ . For one-bit mutation, we have $p_{k}^{+}=\frac{n-k}{n}$ and $p_{k}^{-}=\frac{k}{n}$ . For standard bit-wise mutation, we have $p_{k}^{+}≥\frac{n-k}{n}(1-\frac{1}{n})^{n-1}≥\frac{n-k}{en}$ and $p_{k}^{-}≥\frac{k}{n}(1-\frac{1}{n})^{n-1}≥\frac{k}{en}$ . From these estimates and the fact that the Harmonic number $H_{n}=\sum_{i=1}^{n}\frac{1}{i}$ satisfies $H_{n}<\ln n+1$ , it is not difficult to see that all cases lead to an expected runtime of at most

| | $\displaystyle\sum_{i=0}^{n-1}$ | $\displaystyle{}\frac{1}{pp_{i}^{+}}+\sum_{i=1}^{n}\frac{1}{pp_{i}^{-}}≤\sum%

_{i=0}^{n-1}\frac{1}{(1-\frac{1}{e})\frac{n-i}{en}}+\sum_{i=1}^{n}\frac{1}{(1-%

\frac{1}{e})\frac{i}{en}}$ | |

| --- | --- | --- | --- |

iterations, hence at most $\frac{2e^{2}}{e-1}Nn(\ln n+1)$ fitness evaluations. Now we will prove the concentration result. Let $X^{+}_{k}$ and $X^{-}_{k}$ be independent geometric random variables with success probabilities of $(1-\frac{1}{e})\frac{n-k}{en}$ and $(1-\frac{1}{e})\frac{k}{en}$ , respectively. Let $T$ be the number of iterations to cover the full Pareto front, and let $Z^{+}=\sum_{k=0}^{n-1}X^{+}_{k}$ and $Z^{-}=\sum_{k=1}^{n}X^{-}_{k}$ . Then from the above discussion, we know that $Z:=Z^{+}+Z^{-}$ stochastically dominates $T$ (see [Doe19] for a detailed discussion of how to use stochastic domination arguments in the analysis of evolutionary algorithms). Let the success probabilities of $X^{+}_{n-1},X^{+}_{n-2},...,X^{+}_{0}$ be $q_{1}^{+},...,q_{n}^{+}$ , and let $q_{1}^{-},...,q_{n}^{-}$ denote the success probabilities of $X^{-}_{1},X^{-}_{2},...,X^{-}_{n}$ . Then we have $q_{i}^{+}≥(1-\frac{1}{e})\frac{1}{e}\frac{i}{n}$ and $q_{i}^{-}≥(1-\frac{1}{e})\frac{1}{e}\frac{i}{n}$ for all $i∈[1..n]$ . From a Chernoff bound for a sum of such geometric random variables ([DD18, Lemma 4], also found in [Doe20, Theorem 1.10.35]), we have that for any $\delta≥ 0$ ,

$$

\Pr\mathopen{}\mathclose{{}\left[Z^{+}\geq(1+\delta)\frac{e^{2}}{e-1}n\ln n}%

\right]\leq n^{-\delta}

$$

and

$$

\Pr\mathopen{}\mathclose{{}\left[Z^{-}\geq(1+\delta)\frac{e^{2}}{e-1}n\ln n}%

\right]\leq n^{-\delta}.

$$

Hence, we have

$$

\Pr\mathopen{}\mathclose{{}\left[Z\geq(1+\delta)\frac{2e^{2}}{e-1}n\ln n}%

\right]\leq 2n^{-\delta}.

$$

Since $Z$ stochastically dominates $T$ , we obtain $\Pr[T≥\tfrac{2e^{2}(1+\delta)}{e-1}n\ln n]≤ 2n^{-\delta}.$ ∎

We now analyze the performance of the NSGA-II on OneMinMax when selecting the parents via binary tournaments, which is the selection method suggested in the original NSGA-II paper [DPAM02]. We regard two variants of this selection method. The most natural one, discussed for example in [GD90], is to conduct $N$ independent tournaments. Here the offspring population $Q_{t}$ is generated by $N$ times independently performing the following sequence of actions: (i) Select two different individuals $x^{\prime},x^{\prime\prime}$ uniformly at random from $P_{t}$ . (ii) Select $x$ as the better of these two, that is, the one with smaller rank in $P_{t}$ or, in case of equality, the one with larger crowding distance in $P_{t}$ (breaking ties randomly). (iii) Generate an offspring by mutating $x$ . We note that in some definitions of tournament selection the better individual in a tournament is chosen as winner only with some probability $p>0.5$ , but we do not regard this case any further. We note though that all our mathematical results would remain true in this setting. We also note that sometimes the participants of a tournament are selected “with replacement”. Again, this would not change our results, but we do not discuss this case any further.

A closer look in Deb’s implementation of the NSGA-II (see Revision 1.1.6 available at [Deb]), and we are thankful for Maxim Buzdalov (Aberystwyth University) for pointing this out to us, shows that here a different way of selecting the parents is used. In this two-permutation tournament selection scheme, two random permutations $\pi_{1}$ and $\pi_{2}$ of $P_{t}$ are generated and then a binary tournament is conducted between $\pi_{j}(2i-1)$ and $\pi_{j}(2i)$ for all $i∈[1..\frac{N}{2}]$ and $j∈\{1,2\}$ (we assume here that $N$ is even). Of course, this is nothing else than saying that twice a random matching on $P_{t}$ is generated and the end vertices of each matching edge conduct a tournament. Different from independent tournaments, this selection operator cannot be implemented in parallel. On the positive side, it ensures that each individual takes part in exactly two tournaments, so it treats the individuals in a fairer manner. Also, if there is a unique best individual, then this will surely be selected. As above, in our setting where we do not use crossover, each tournament winner is mutated and these $N$ individuals form the offspring population $Q_{t}$ .

In the case of binary tournament selection, the analysis is slightly more involved since we need to argue that a desired parent is chosen for mutation with constant probability in one iteration. This is easy to see for a parent at the boundary of the front as its crowding distance is infinite, but less obvious for parents not at the boundary. We note that we need to be able to select such parents since we cannot ensure that the population intersects the Pareto front in a contiguous interval (as can be seen, e.g., from the random initial population). We solve this difficulty in the following three lemmas.

We use the following notation. Consider some iteration $t$ . For $i=1,2$ , let

| | $\displaystyle v_{i}^{\min}$ | $\displaystyle=\min\{f_{i}(x)\mid x∈ R_{t}\},$ | |

| --- | --- | --- | --- |

denote the extremal objective values in the combined parent and offspring population. Let $V=f(R_{t})=\{(f_{1}(x),f_{2}(x))\mid x∈ R_{t}\}$ denote the set of objective values of the solutions in the combined parent and offspring population $R_{t}$ . We define the set of values such that also the right (left) neighbor on the Pareto front is covered by

| | $\displaystyle V_{\operatorname{in}}^{+}={}$ | $\displaystyle\{(v_{1},v_{2})∈ V\mid∃ y∈ R_{t}:(f_{1}(y),f_{2}(y))=(v%

_{1}+1,v_{2}-1)\},$ | |

| --- | --- | --- | --- |

**Lemma 3**

*For any $(v_{1},v_{2})∈ V\setminus(V_{\operatorname{in}}^{+}\cap V_{\operatorname{in}%

}^{-})$ , there is at least one individual $x∈ R_{t}$ with $f(x)=(v_{1},v_{2})$ and $\operatorname{cDis}(x)≥\frac{2}{v_{1}^{\max}-v_{1}^{\min}}$ .*

* Proof*

Let $(v_{1},v_{2})∈ V\setminus(V_{\operatorname{in}}^{+}\cap V_{\operatorname{in}%

}^{-})$ , let $S_{1.1},...,S_{1.{2N}}$ be the sorting of $R_{t}$ according to $f_{1}$ , and let $[a..b]=\{i∈[1..2N]\mid f_{1}(S_{1.i})=v_{1}\}$ . If $v_{1}∈\{v_{1}^{\max},v_{1}^{\min}\}$ , then by definition of the crowding distance, one individual in $f^{-1}((v_{1},v_{2}))$ has an infinite crowding distance. Otherwise, if $(v_{1},v_{2})∈ V\setminus V_{\operatorname{in}}^{-}$ , then we have $f_{1}\mathopen{}\mathclose{{}\left(S_{1.{a+1}}}\right)-f_{1}\mathopen{}%

\mathclose{{}\left(S_{1.{a-1}}}\right)≥ f_{1}\mathopen{}\mathclose{{}\left(%

S_{1.{a}}}\right)-f_{1}\mathopen{}\mathclose{{}\left(S_{1.{a-1}}}\right)≥ 2$ and thus $\operatorname{cDis}(S_{1.a})≥\frac{f_{1}\mathopen{}\mathclose{{}\left(S_{1.%

{a+1}}}\right)-f_{1}\mathopen{}\mathclose{{}\left(S_{1.{a-1}}}\right)}{v_{1}^{%

\max}-v_{1}^{\min}}≥\frac{2}{v_{1}^{\max}-v_{1}^{\min}}$ . Similarly, if $(v_{1},v_{2})∈ V\setminus V_{\operatorname{in}}^{+}$ , then $f_{1}\mathopen{}\mathclose{{}\left(S_{1.{b+1}}}\right)-f_{1}\mathopen{}%

\mathclose{{}\left(S_{1.{b-1}}}\right)≥ f_{1}\mathopen{}\mathclose{{}\left(%

S_{1.{b+1}}}\right)-f_{1}\mathopen{}\mathclose{{}\left(S_{1.{b}}}\right)≥ 2$ and $\operatorname{cDis}(S_{1.b})≥\frac{f_{1}\mathopen{}\mathclose{{}\left(S_{1.%

{b+1}}}\right)-f_{1}\mathopen{}\mathclose{{}\left(S_{1.{b-1}}}\right)}{v_{1}^{%

\max}-v_{1}^{\min}}≥\frac{2}{v_{1}^{\max}-v_{1}^{\min}}$ . ∎

**Lemma 4**

*For any $(v_{1},v_{2})∈ V_{\operatorname{in}}^{+}\cap V_{\operatorname{in}}^{-}$ , there are at most two individuals in $R_{t}$ with objective value $(v_{1},v_{2})$ and crowding distance at least $\frac{2}{v_{1}^{\max}-v_{1}^{\min}}$ .*

* Proof*

Let $(v_{1},v_{2})∈ V_{\operatorname{in}}^{+}\cap V_{\operatorname{in}}^{-}$ , $[a..b]=\{i∈[1..2N]\mid f_{1}(S_{1.i})=v_{1}\}$ , and $[a^{\prime}..b^{\prime}]=\{j∈[1..2N]\mid f_{2}(S_{2.j})=v_{2}\}$ . Let $C=\{S_{1.a},S_{1.b}\}\cup\{S_{2.a^{\prime}},S_{2.b^{\prime}}\}$ . If $(R_{t}\cap f^{-1}((v_{1},v_{2})))\setminus C$ is not empty, then for any $x∈(R_{t}\cap f^{-1}((v_{1},v_{2})))\setminus C$ , there exist $i∈[a+1..b-1]$ and $j∈[a^{\prime}+1..b^{\prime}-1]$ such that $x=S_{1.i}=S_{2.j}$ . Hence $\operatorname{cDis}(x)=\frac{f_{1}\mathopen{}\mathclose{{}\left(S_{1.{i+1}}}%

\right)-f_{1}\mathopen{}\mathclose{{}\left(S_{1.{i-1}}}\right)}{v_{1}^{\max}-v%

_{1}^{\min}}+\frac{f_{2}\mathopen{}\mathclose{{}\left(S_{2.{j+1}}}\right)-f_{2%

}\mathopen{}\mathclose{{}\left(S_{2.{j-1}}}\right)}{v_{2}^{\max}-v_{2}^{\min}}=0$ . We thus know that any individual with crowding distance at least $\frac{2}{v_{1}^{\max}-v_{1}^{\min}}$ lies in $C$ . For any $x∈ C\setminus(\{S_{1.a},S_{1.b}\}\cap\{S_{2.a^{\prime}},S_{2.b^{\prime}}\})$ , we have $\operatorname{cDis}(x)=\frac{1}{v_{1}^{\max}-v_{1}^{\min}}$ or $\operatorname{cDis}(x)=\frac{1}{v_{2}^{\max}-v_{2}^{\min}}$ . We note that $v_{1}^{\max}-v_{1}^{\min}=v_{2}^{\max}-v_{2}^{\min}$ since $v_{1}^{\max}=n-v_{2}^{\min}$ and $v_{1}^{\min}=n-v_{2}^{\max}$ . Hence $\operatorname{cDis}(x)<\frac{2}{v_{1}^{\max}-v_{1}^{\min}}$ . Let now $x∈\{S_{1.a},S_{1.b}\}\cap\{S_{2.a^{\prime}},S_{2.b^{\prime}}\}$ . If $|C|=1$ , then $\operatorname{cDis}(x)=\frac{2}{v_{1}^{\max}-v_{1}^{\min}}+\frac{2}{v_{2}^{%

\max}-v_{2}^{\min}}=\frac{4}{v_{1}^{\max}-v_{1}^{\min}}$ . Otherwise, $\operatorname{cDis}(x)=\frac{1}{v_{1}^{\max}-v_{1}^{\min}}+\frac{1}{v_{2}^{%

\max}-v_{2}^{\min}}=\frac{2}{v_{1}^{\max}-v_{1}^{\min}}$ . Therefore, the number of individuals in $R_{t}\cap f^{-1}((v_{1},v_{2}))$ with crowding distance at least $\frac{2}{v_{1}^{\max}-v_{1}^{\min}}$ is $|\{S_{1.a},S_{1.b}\}\cap\{S_{2.a^{\prime}},S_{2.b^{\prime}}\}|$ , which is at most $2$ . ∎

**Lemma 5**

*Let $N≥ 4(n+1)$ . Let $P$ be a parent population in a run of the NSGA-II using independent or two-permutation binary tournament selection optimizing OneMinMax. Let $v=(v_{1},n-v_{1})∉ f(P)$ be a point on the Pareto front that is not covered by $P$ , but a neighbor of $v$ on the front is covered by $P$ , that is, there is a $y∈ P$ such that $\|f(y)-v\|_{∞}=1$ . In the case of independent tournaments, each of the $N$ tournaments with probability at least $\frac{1}{N}(\frac{1}{6}-\frac{3.5}{N-1})$ selects an individual $x$ with $f(x)=f(y)$ . In the case of two-permutation selection, there are two stochastically independent tournaments each of which with probability at least $\frac{1}{6}-\frac{2.5}{N-1}$ selects an individual $x$ with $f(x)=f(y)$ .*

* Proof*

By Lemma 3, there is an individual $x^{\prime}∈ P$ with $f(x^{\prime})=f(y)$ and crowding distance at least $\frac{2}{v_{1}^{\max}-v_{1}^{\min}}$ . We estimate the probability that $x^{\prime}$ is the winner of a tournament. We start with the case of independent tournaments and we regard a particular one of these. With probability $\frac{1}{N}$ , the individual $x^{\prime}$ is chosen as the first participant of the tournament. We condition on this and regard the second individual $x^{\prime\prime}$ of the tournament, which is chosen uniformly at random from the remaining $N-1$ individuals. We shall argue that with good probability, it has a smaller crowding distance, and thus loses the tournament. To this aim, we estimate the number of element $z∈ P$ that have a crowding distance of $\frac{2}{v_{1}^{\max}-v_{1}^{\min}}$ or more (“large crowding distance”). We treat the individuals differently according to their objective value $w=f(z)$ . If $w∈ V_{\operatorname{in}}^{+}\cap V_{\operatorname{in}}^{-}$ , then by Lemma 4 at most two individuals with this objective value have a large crowding distance. For other objective values $w$ , we use the general estimate from the proof of Lemma 1 that at most four individuals have this objective value and positive crowding distance. This gives an upper bound of $2|V_{\operatorname{in}}^{+}\cap V_{\operatorname{in}}^{-}|+4(|f(P)|-|V_{%

\operatorname{in}}^{+}\cap V_{\operatorname{in}}^{-}|)=2|f(P)|+2|f(P)\setminus%

(V_{\operatorname{in}}^{+}\cap V_{\operatorname{in}}^{-})|$ individuals with large crowding distance. We note that out of each consecutive three elements $(w_{1},n-w_{1}),(w_{1}+1,n-w_{1}-1),(w_{1}+2,n-w_{1}-2)$ of the Pareto front, at most two can be in $f(P)\setminus(V_{\operatorname{in}}^{+}\cap V_{\operatorname{in}}^{-})$ – if all three were in $f(P)$ , then the middle one would necessarily be in $V_{\operatorname{in}}^{+}\cap V_{\operatorname{in}}^{-}$ . Consequently, $|f(P)\setminus(V_{\operatorname{in}}^{+}\cap V_{\operatorname{in}}^{-})|≤ 2%

\lceil\frac{n+1}{3}\rceil$ . With this estimate, our upper bound on the number of individuals with large crowding distance becomes at most $2(n+1)+4\lceil\frac{n+1}{3}\rceil≤\frac{10}{3}(n+1)+\frac{8}{3}$ , and then excluding the first-chosen individual $x^{\prime}$ , we know that the upper bound estimate for the probability that $x^{\prime\prime}$ has large crowding distance becomes $\frac{1}{N-1}(\frac{10}{3}(n+1)+\frac{8}{3}-1)=\frac{1}{N-1}(\frac{10}{3}(n+%

\frac{3}{4})+\frac{10}{3}\frac{1}{4}+\frac{5}{3})≤\frac{5}{6}+\frac{1}{N-1}%

(\frac{10}{3}\frac{1}{4}+\frac{5}{3})$ . Consequently, the probability that $x^{\prime}$ is selected as first participant of the tournament and it wins the tournament is at least

$$

\frac{1}{N}\mathopen{}\mathclose{{}\left(\frac{1}{6}-\frac{2.5}{N-1}}\right).

$$

For the case of two-permutation tournament selection, we note that there are two independent tournaments (stemming from different permutations) in which $x^{\prime}$ participates. In both, the partner $x^{\prime\prime}$ of $x^{\prime}$ is distributed uniformly in $P_{t}\setminus\{x^{\prime}\}$ . Hence the above arguments can be applied and we see that with probability at least $\frac{1}{6}-\frac{2.5}{N-1}$ , the second participant loses against $x^{\prime}$ . ∎

With Lemma 5, we can now easily argue that in a given iteration $t$ , we have a constant probability of choosing at least once a parent that is a neighbor of an empty spot on the Pareto front. This allows to re-use the main arguments of the simpler analyses for the cases that the parents were choosing randomly or that each parent creates one offspring. We note that in the following result, as in any other result in this work, we did not try to optimize the leading constant.

**Theorem 6**

*Let $n≥ 4$ . Consider optimizing the OneMinMax function via the NSGA-II which creates the offspring population by selecting parents via independent binary tournaments or via the two-permutation approach and applying one-bit or standard bit-wise mutation to these. If the population size $N$ is at least $4(n+1)$ , then the expected runtime is at most $\tfrac{200e}{3}n(\ln n+1)$ iterations and at most $\tfrac{200e}{3}Nn(\ln n+1)$ fitness evaluations. Besides, let $T$ be the number of iterations to reach the full Pareto front. Then we further have that $\Pr[T≥\tfrac{200e}{3}(1+\delta)n\ln n]≤ 2n^{-\delta}$ holds for any $\delta≥ 0$ .*

* Proof*

Thanks to Lemma 5, we can essentially follow the arguments of the proof of Theorem 2. Let $y∈ P_{t}$ be such that $f(y)$ is a neighbor of a point on the Pareto front that is not in $f(P_{t})$ . For independent tournaments, by Lemma 5 a single tournament will select a parent $x$ with $f(x)=f(y)$ , that is, also a neighbor of this uncovered point, with probability at least $\frac{1}{N}(\frac{1}{6}-\frac{2.5}{N-1})$ . Hence the probability that at least one such parent is selected in this iteration is

| | $\displaystyle p=1-\mathopen{}\mathclose{{}\left(1-\tfrac{1}{N}(\tfrac{1}{6}-%

\tfrac{2.5}{N-1})}\right)^{N}≥ 1-\exp\mathopen{}\mathclose{{}\left(-\tfrac{%

1}{6}+\tfrac{2.5}{N-1}}\right)>0.03,$ | |

| --- | --- | --- |

where the last inequality uses $N≥ 20$ from $n≥ 4$ and $N≥ 4(n+1)$ . For two-permutation tournament selection, again by Lemma 5, with probability at least $p=1-(1-(\frac{1}{6}-\frac{2.5}{N-1}))^{2}>0.03$ (since $N≥ 20$ ) a parent $x$ with $f(x)=f(y)$ is selected. With these values of $p$ , the proof of Theorem 2 extends to the two cases of tournament selection, and we know that the expected iterations to cover the full Pareto front is at most

| | $\displaystyle\sum_{i=0}^{n-1}$ | $\displaystyle{}\frac{1}{pp_{i}^{+}}+\sum_{i=1}^{n}\frac{1}{pp_{i}^{-}}≤\sum%

_{i=0}^{n-1}\frac{1}{0.03\frac{n-i}{en}}+\sum_{i=1}^{n}\frac{1}{0.03\frac{i}{%

en}}$ | |

| --- | --- | --- | --- |

We now discuss the concentration result. With the same arguments as in the proof of Theorem 2, but using the success probabilities $0.03\frac{n-k}{en}$ and $0.03\frac{k}{en}$ for $X^{+}_{k}$ and $X^{-}_{k}$ respectively and estimating $q_{i}≥\frac{0.03}{e}\frac{i}{n}$ , we obtain that for any $\delta≥ 0$ , we have $\Pr[T≥\tfrac{200e}{3}(1+\delta)n\ln n]≤ 2n^{-\delta}.$ ∎

4 Runtime of the NSGA-II on LeadingOnesTrailingZeroes

We proceed with analyzing the runtime of the NSGA-II on the benchmark LeadingOnesTrailingZeroes proposed by Laumanns, Thiele, and Zitzler [LTZ04]. This is the function $f:\{0,1\}^{n}→\mathbb{N}×\mathbb{N}$ defined by

$$

f(x)=\big{(}f_{1}(x),f_{2}(x)\big{)}=\big{(}\sum_{i=1}^{n}\prod_{j=1}^{i}x_{j}%

,\sum_{i=1}^{n}\prod_{j=i}^{n}(1-x_{j})\big{)}

$$

for all $x∈\{0,1\}^{n}$ . Here the first objective is the so-called LeadingOnes function, counting the number of (contiguous) leading ones of the bit string, and the second objective counts in an analogous fashion the number of trailing zeros. Again, the aim is to maximize both objectives. Different from OneMinMax, here many solutions exist that are not Pareto optimal. The known runtimes for this benchmark are $\Theta(n^{3})$ for the SEMO [LTZ04], $O(n^{3})$ for the GSEMO [Gie03], and $O(\mu n^{2})$ for the $(\mu+1)$ SIBEA with population size $\mu≥ n+1$ [BFN08].

Similar to OneMinMax, we can show that when the population size is large enough, an objective value on the Pareto front stays in the population from the point on when it is discovered.

**Lemma 7**

*Consider one iteration of the NSGA-II with population size $N≥ 4(n+1)$ optimizing the LeadingOnesTrailingZeroes function. Assume that in some iteration $t$ the combined parent and offspring population $R_{t}=P_{t}\cup Q_{t}$ contains a solution $x$ with rank one. Then also the next parent population $P_{t+1}$ contains an individual $y$ with $f(y)=f(x)$ . In particular, once the parent population contains an individual with objective value $(k,n-k)$ , it will do so for all future generations.*

* Proof*

Let $F_{1}$ be the set of solutions of rank one, that is, the set of solutions in $R_{t}$ that are not dominated by any other individual in $R_{t}$ . By definition of dominance, for each $v_{1}∈\{f_{1}(x)\mid x∈ F_{1}\}$ , there exists a unique $v_{2}$ such that $(v_{1},v_{2})∈\{f(x)\mid x∈ F_{1}\}$ . Therefore, $|f(F_{1})|$ is at most $n+1$ . We now reuse the argument from the proof of Lemma 1 for OneMinMax that for each objective value, there are at most $4$ individuals with this objective value and positive crowding distance. Thus the number of individuals in $F_{1}$ with positive crowding distance is at most $4(n+1)≤ N$ . Since the NSGA-II keeps $N$ individuals with smallest rank and largest crowding distance in case of a tie, we know that the individuals with rank one and positive crowding distance will all be kept. This shows the first claim. For the second claim, let $x∈ P_{t}$ with $f(x)=(k,n-k)$ for some $k$ . Since $x$ lies on the Pareto front of LeadingOnesTrailingZeroes, the rank of $x$ in $R_{t}$ is necessarily one. Hence by the first claim, a $y$ with $f(y)=f(x)$ will be contained in $P_{t+1}$ . A simple induction extends this finding to all future generations. ∎

Since not all individuals are on the Pareto front, the runtime analysis for LeadingOnesTrailingZeroes function is slightly more complex than for OneMinMax. We analyze the process in two stages: the first stage lasts until we have found both extremal solutions of the Pareto front. In this phase, we argue that the first (resp. second) objective value increases by one every (expected) $O(n)$ iterations. Consequently, after an expected number of $O(n^{2})$ iterations, we have an individual $x$ in the population with $f_{1}(x)=n$ (resp. $f_{2}(x)=n$ ), which are the desired extremal individuals. The second stage, where we complete the Pareto front from existing Pareto solutions, can be analyzed in a similar manner as for OneMinMax in Theorem 2, noting of course the different probabilities to generate a new solution on the Pareto front. We start with the two easier parent selections and discuss tournament selection separately in Theorem 9.

**Theorem 8**

*Consider optimizing the LeadingOnesTrailingZeroes function via the NSGA-II with one of the following four ways to generate the offspring population in Step 3 in Algorithm 3, namely applying one-bit mutation or standard bit-wise mutation once to each parent or $N$ times choosing a parent uniformly at random and applying one-bit mutation or standard bit-wise mutation to it. If the population size $N$ is at least $4(n+1)$ , then the expected runtime is $\frac{2e^{2}}{e-1}n^{2}$ iterations and $\frac{2e^{2}}{e-1}Nn^{2}$ fitness evaluations. Besides, let $T$ be the number of iterations to reach the full Pareto front. Then

| | $\displaystyle\Pr\mathopen{}\mathclose{{}\left[T≥\frac{2e^{2}(1+\delta)}{e-1%

}n^{2}}\right]≤\exp\mathopen{}\mathclose{{}\left(-\frac{\delta^{2}}{2(1+%

\delta)}(2n-1)}\right)$ | |

| --- | --- | --- |

holds for any $\delta≥ 0$ .*

* Proof*

Consider one iteration $t$ of the first stage, that is, we have $P_{t}^{p}=\{x∈ P_{t}\mid f_{1}(x)+f_{2}(x)=n\}=\emptyset$ . Let $v_{t}=\max\{f_{1}(x)\mid x∈ P_{t}\}$ and let $P_{t}^{*}=\{x∈ P_{t}\mid f_{1}(x)=v_{t}\}$ . Note that by Lemma 7, $v_{t}$ is non-decreasing over time. Let $x∈ P_{t}^{*}$ . Let $p$ denote the probability that $x$ is chosen as a parent to be mutated (note that this probability is independent of $x$ for the two selection schemes regarded here). Conditional on that, let $p^{*}$ be a lower bound (independent of $x$ ) on the probability that $x$ generates a solution with a larger $f_{1}$ -value. Then the expected number of iterations to obtain a solution with better $f_{1}$ -value is at most $\frac{1}{pp^{*}}$ . Consequently, the expected number of iterations to obtain a $f_{1}$ -value of $n$ , thus a solution on the Pareto front, is at most $(n-k_{0})\frac{1}{pp^{*}}≤ n\frac{1}{pp^{*}}$ , where $k_{0}$ is the maximum LeadingOnes value in the initial population. For the second stage, let $x∈ P_{t}^{p}$ be such that a neighbor of $f(x)$ on the front is not yet covered by $P_{t}$ . Let $p^{\prime}$ denote the probability that $x$ is chosen as a parent to be mutated. Conditional on that, let $p^{**}$ denote a lower bound (independent of $x$ ) for the probability to generate a particular neighbor of $x$ on the front. Consequently, the probability that $R_{t}$ covers an extra element of the Pareto front is at least $p^{\prime}p^{**}$ . Since Lemma 7 implies that any existing LeadingOnesTrailingZeroes value on the front will be kept in the following iterations, we know that the expected number of iterations for this progress is at most $\frac{1}{p^{\prime}p^{**}}$ . Since $n$ such progresses are sufficient to cover the full Pareto front, the expected number of iterations to cover the whole Pareto front is at most $n\frac{1}{p^{\prime}p^{**}}$ . Therefore, the expected total runtime is at most $n\frac{1}{pp^{*}}+n\frac{1}{p^{\prime}p^{**}}$ iterations. We recall from Theorem 2 that we have $p=p^{\prime}=1$ when selecting each parent once and we have $p=p^{\prime}=1-(1-\frac{1}{N})^{N}≥ 1-\frac{1}{e}$ when choosing parents randomly. To estimate $p^{*}$ and $p^{**}$ , we note that the desired progress can always be obtained by flipping one particular bit. Hence for one-bit mutation, we have $p^{*}=p^{**}=\frac{1}{n}$ . For standard bit-wise mutation, $\frac{1}{n}(1-\frac{1}{n})^{n-1}≥\frac{1}{en}$ is a valid lower bound for $p^{*}$ and $p^{**}$ . With these estimates, we obtain in all cases an expected runtime of at most

| | $\displaystyle n\frac{1}{pp^{*}}+n\frac{1}{p^{\prime}p^{**}}≤ n\frac{1}{(1-%

\frac{1}{e})\frac{1}{en}}+n\frac{1}{(1-\frac{1}{e})\frac{1}{en}}=\frac{2e^{2}n%

^{2}}{e-1}$ | |

| --- | --- | --- |

iterations, hence $\frac{2e^{2}}{e-1}Nn^{2}$ fitness evaluations. Now we will prove the concentration result. The time to cover the full Pareto front is divided into two stages as discussed before. It is not difficult to see that the first stage is to reach a Pareto optimum for the first time, and the corresponding runtime is dominated by the sum of $n$ independent geometric random variables with success probabilities of $(1-\frac{1}{e})\frac{1}{en}$ . The second stage is to cover the full Pareto front, and the corresponding runtime is dominated by the sum of another $n$ such independent geometric random variables. Formally, let $X_{1},...,X_{2n}$ be independent geometric random variables with success probabilities of $(1-\frac{1}{e})\frac{1}{en}$ , and let $T$ be the number of iterations to cover the full Pareto front. Then $Z:=\sum_{i=1}^{2n}X_{i}$ stochastically dominates $T$ , and $E[Z]=\frac{2e^{2}n^{2}}{e-1}$ . From a Chernoff bound for sums of independent identically distributed geometric random variables [Doe20, (1.10.46) in Theorem 1.10.32], we have that for any $\delta≥ 0$ ,

$$

\Pr\mathopen{}\mathclose{{}\left[Z\geq(1+\delta)\frac{2e^{2}n^{2}}{e-1}}\right%

]\leq\exp\mathopen{}\mathclose{{}\left(-\frac{\delta^{2}}{2}\frac{2n-1}{1+%

\delta}}\right).

$$

Since $Z$ dominates $T$ , we have proven this theorem. ∎

We now study the runtime of the NSGA-II using binary tournament selection. Compared to OneMinMax, we face the additional difficulty that now rank one solutions can exist which are not on the Pareto front. Due to their low rank, they could perform well in the selection, but being possibly far from the front, they are not interesting as parents. We need a sightly different general proof outline to nevertheless argue that sufficiently often a parent on the Pareto front generates a new neighbor on the front. Also, since not all individuals are on the Pareto front, we do not have anymore the property that the difference between the maximum and minimum value is the same for both objectives. We overcome this by first showing the NSGA-II finds the two extremal points of the Pareto front in reasonable time (then the maximum values are both $n$ and the minimum values are both $0 0$ ).

**Theorem 9**

*Consider optimizing the LeadingOnesTrailingZeroes function via the NSGA-II. Assume that the parents for variation are chosen either via $N$ independent random tournaments between different individuals or via the two-permutation implementation of binary tournaments. Assume that these parents are mutated via one-bit or standard bit-wise mutation. If the population size $N$ is at least $4(n+1)$ , then the expected runtime is at most $15en^{2}$ iterations and at most $15eNn^{2}$ fitness evaluations. Besides, let $T$ be the number of iterations to reach the full Pareto front, then

| | $\displaystyle\Pr\mathopen{}\mathclose{{}\left[T≥\frac{(1+\delta)100e}{3}n^{%

2}}\right]≤\exp\mathopen{}\mathclose{{}\left(-\frac{\delta^{2}}{2(1+\delta)%

}(3n-1)}\right)$ | |

| --- | --- | --- |

holds for any $\delta≥ 0$ .*

* Proof*

We first argue that, regardless of the initial state of the NSGA-II, it takes $O(n^{2})$ iterations until the extremal point $(1,...,1)$ , which is the unique maximum of $f_{1}$ , is in $P_{t}$ . To this aim, let $X_{t}:=\max\{f_{1}(x)\mid x∈ P_{t}\}$ denote the maximum $f_{1}$ value in the parent population. We note that any $x∈ P_{t}$ with $f_{1}(x)=X_{t}$ lies on the first front $F_{1}$ and that there is a $y∈ P_{t}$ with infinite crowding distance and $f(y)=f(x)$ , in particular, $f_{1}(y)=X_{t}$ . If parents are chosen via independent tournaments, such a $y$ has a $\frac{2}{N}$ chance of being one of the two individuals of a fixed tournament. It then wins the tournament with at least 50% chance (where the 50% show up only in the rare case that the other individual also lies on the first front and has an infinite crowding distance). Hence the probability that this $y$ is chosen at least once as a parent to be mutated is at least $p=1-(1-\frac{1}{2}\frac{2}{N})^{N}≥ 1-\frac{1}{e}$ . When the two-permutation implementation of tournament selection is used, then $y$ appears in both permutations and has a random partner in both. Again, this partner with probability at most $\frac{1}{2}$ wins the tournament. Hence the probability that $y$ is selected as a parent at least once is at least $p=1-(\frac{1}{2})^{2}=\frac{3}{4}$ . Conditional on $y$ being chosen at least once, let us regard a fixed mutation step in which $y$ was selected as a parent. To mutate $y$ into an individual with higher $f_{1}$ value, it suffices to flip a particular single bit (namely the first zero after the initial contiguous segment of ones). The probability for this is $p^{*}=\frac{1}{n}$ for one-bit mutation and $p^{*}=\frac{1}{n}(1-\frac{1}{n})^{n-1}≥\frac{1}{en}$ for standard bit-wise mutation. Denoting by $Y_{t}:=\max\{f_{1}(x)\mid x∈ R_{t}\}$ the maximum $f_{1}$ value in the combined parent and offspring population, we have just shown that $\Pr[Y_{t}≥ X_{t}+1]≥ pp^{*}=\Omega(1/n)$ whenever $X_{t}<n$ . We note that any $x∈ R_{t}$ with $f_{1}(x)=Y_{t}$ lies on the first front $F_{1}$ of $R_{t}$ and that there is a $y∈ R_{t}$ with infinite crowding distance and $f(y)=f(x)$ , in particular, $f_{1}(y)=Y_{t}$ . Consequently, such a $y$ will be kept in the next parent population $P_{t+1}$ (note that there are at most $4$ individuals in $F_{1}$ with infinite crowding distance – since $N≥ 4$ , they will all be included in $P_{t+1}$ ). This shows that we also have $\Pr[X_{t+1}≥ X_{t}+1]≥ pp^{*}$ whenever $X_{t}<n$ . By adding the expected waiting times for an increase of the $X_{t}$ value, we see that the expected time to have $X_{t}=n$ , that is, to have $(1,...,1)∈ P_{t}$ , is at most

| | $\displaystyle\frac{n}{pp^{*}}≤\frac{n}{(1-\frac{1}{e})\frac{1}{en}}=\frac{e%

^{2}n^{2}}{e-1}$ | |

| --- | --- | --- |

iterations. By a symmetric argument, we see that after another at most $\frac{e^{2}}{e-1}n^{2}$ iterations, also the other extremal point $(0,...,0)$ is in the population (and remains there forever by Lemma 7). With now both extremal points of the Pareto front covered, we analyze the remaining time until the Pareto front is fully covered. We note that by Lemma 7, the number of Pareto front points covered cannot decrease. Hence it suffices to prove a lower bound for the probability that the coverage increases in one iteration. This is what we do now. Assume that the Pareto front is not yet fully covered. Since we have some Pareto optimal individuals, there also is a Pareto optimal individual $x∈ P_{t}$ such that $f(x)$ is a neighbor of a point $v$ on the Pareto front that is not covered. Since we have both extremal points in the Pareto front, the differences between the maximum and minimum value are the same for both objectives (namely $n$ ). Consequently, in the same way as in the proof of Lemma 3, we know that there is also such a $y$ with $f(y)=f(x)$ and with crowding distance at least $\frac{2}{n}$ . We estimate the number of individuals in $P_{t}\setminus\{y\}$ which could win a tournament against this $y$ . Clearly, these can only be individuals in the first front $F_{1}$ of the non-dominated sorting. Assume first that $|f(F_{1})|≤ 0.8(n+1)$ . We note that, just by the definition of crowding distance and in a similar fashion as in the proof of Lemma 1, for each $v∈ f(F_{1})$ there are at most four individuals with $f$ value equal to $v$ and positive crowding distance. All other individuals in $F_{1}$ have a crowding distance of zero (and thus lose the tournament against $y$ ), as do all individuals not in $F_{1}$ . Consequently, there are at least $N_{0}=N-4· 0.8(n+1)$ individuals other than $y$ that would lose a tournament against $y$ . Assume now that $m:=|f(F_{1})|>0.8(n+1)$ . Since $F_{1}$ consists of pair-wise incomparable solutions or solutions with identical objective value (which we may ignore for the following argument), we have $|f_{1}(F_{1})|=|f_{2}(F_{1})|=m$ . For any $v=(v_{1},v_{2})$ , let $v^{+}:=(v_{1}+1,v_{2}-1)$ and $v^{-}:=(v_{1}-1,v_{2}+1)$ . Then we divide $f(F_{1})$ into two disjoint sets $U_{1}=\{v∈ f(F_{1})\cap[1..n-1]^{2}\mid v^{+}∉ f(F_{1})\text{ or }v^{-}%

∉ f(F_{1})\}$ and $U_{2}=f(F_{1})\setminus U_{1}$ . Since both $f_{1}(F_{1})$ and $f_{2}(F_{1})$ are subsets of $[0..n]$ , which has $n+1$ elements, we see that less than $0.2(n+1)$ of the values in $[0..n]$ are missing in $f_{1}(F_{1})$ , and analogously in $f_{2}(F_{1})$ . Since each value missing in $f_{1}(F_{1})$ or $f_{2}(F_{1})$ leads to at most two values in $U_{1}$ , we have $|U_{1}|<4· 0.2(n+1)=0.8(n+1)$ . For the values in $U_{1}$ , we use the blunt estimate from above that at most $4$ individuals with this objective value and positive crowding distance exist. For the values $v∈ U_{2}$ , we are in the same situation as in Lemma 4, and thus there are at most two individuals $x∈ F_{1}$ with $f(x)=v$ and crowding distance at least $\frac{2}{n}$ (this was not formally proven in Lemma 4 for the case that $v∈\{(0,n),(n,0)\}$ and the unique neighbor of $v$ is in $f(F_{1})$ , but it is easy to see that in this case only the at most two $x$ with $f(x)=v$ and infinite crowding distance can have a crowding distance of at least $\frac{2}{n}$ ). Consequently, there are more than

| | $\displaystyle N-4|U_{1}|-2|U_{2}|$ | $\displaystyle={}N-4|U_{1}|-2(m-|U_{1}|)=N-2|U_{1}|-2m$ | |

| --- | --- | --- | --- |

individuals in $P_{t}\setminus\{y\}$ that would lose against $y$ . Note that this bound is weaker than the one from the first case, so it is valid in both cases. From this, we now estimate the probability that $y$ is selected as a parent at least once. We first regard the case of independent tournaments. The probability that $y$ is the winner of a fixed tournament is at least the probability that it is chosen as the first contestant times the probability that one of the at least $N-3.6(n+1)$ sure losers is chosen as the second contestant. This probability is at least $\frac{1}{N}·\frac{N-3.6(n+1)}{N-1}≥\frac{1}{N}\frac{0.4(n+1)}{4n+3}≥

0%

.1\frac{1}{N}$ . Hence the probability $p$ that $y$ is chosen at least once as a parent for mutation is at least $p≥ 1-(1-0.1\frac{1}{N})^{N}≥ 1-\exp(-0.1)≥ 0.09$ . For the two-permutation implementation of tournament selection, $y$ appears in both permutations and has a random partner in each of them. Hence the probability that $y$ wins at least one of these two tournaments is at least $p≥ 1-(1-\frac{N-3.6(n+1)}{N-1})^{2}≥ 1-(1-0.1)^{2}=0.19$ . Conditional on $y$ being selected at least once, we regard a mutation step in which $y$ is selected. The probability $p^{*}$ that the Pareto optimal $y$ is mutated into the unique Pareto optimal bit string $z$ with $f(z)=v$ is $p^{*}=\frac{1}{n}$ for one-bit mutation and $p^{*}=\frac{1}{n}(1-\frac{1}{n})^{n-1}≥\frac{1}{en}$ for standard bit-wise mutation. Consequently, the probability that one iteration generates the missing Pareto front value $v$ is at least $pp^{*}$ , the expected waiting time for this is at most $\frac{1}{pp^{*}}$ iterations, and the expected time to create all missing Pareto front values is at most

| | $\displaystyle\frac{n}{pp^{*}}≤\frac{n}{0.09\frac{1}{en}}=\frac{100en^{2}}{9}$ | |

| --- | --- | --- |

iterations. Hence, the runtime for the full coverage of the Pareto front starting from the initial population is at most

| | $\displaystyle\tfrac{e^{2}}{e-1}n^{2}+\tfrac{e^{2}}{e-1}n^{2}+\tfrac{100e}{9}n^%

{2}<15en^{2}$ | |

| --- | --- | --- |

iterations, which is at most $15eNn^{2}$ fitness evaluations. Now we will prove the concentration result. Note that in this proof, we consider three phases, the first phase to reach the extremal point $(1,...,1)$ , the second phase to reach $(0,...,0)$ , and the third phase to cover the full Pareto front. The runtime for each phase is dominated by the sum of $n$ independent geometric random variables with success probabilities of $\frac{0.09}{en}$ . Formally, let $X_{1},...,X_{3n}$ be independent geometric random variables with success probabilities of $\frac{0.09}{en}$ , and let $T$ be the number of iterations to cover the full Pareto front. Then we have $Z:=\sum_{i=1}^{3n}X_{i}$ stochastically dominates $T$ , and $E[Z]=\frac{3en^{2}}{0.09}$ . From the Chernoff bound [Doe20, (1.10.46) in Theorem 1.10.32], we have that for any $\delta≥ 0$ ,

$$

\Pr\mathopen{}\mathclose{{}\left[Z\geq(1+\delta)\frac{100e}{3}n^{2}}\right]%

\leq\exp\mathopen{}\mathclose{{}\left(-\frac{\delta^{2}}{2}\frac{3n-1}{1+%

\delta}}\right).

$$

Since $Z$ dominates $T$ , this shows the theorem. ∎

5 An Exponential Lower Bound for Small Population Size

In this section, we prove a lower bound for a small population size. Since lower bound proofs can be quite complicated – recall for example that there are matching upper and lower bounds for the runtime of the SEMO (using one-bit mutation) on OneMinMax and LeadingOnesTrailingZeroes, but not for the GSEMO (using bit-wise mutation) – we restrict ourselves to the simplest variant using each parent once to generate one offspring via one-bit mutation. From the proofs, though, we are optimistic that our results, with different implicit constants, can also be shown for all other variants of the NSGA-II regarded in this work. Our experiments support this believe, see Figure 3 in Section 6.

Our main result is that this NSGA-II takes an exponential time to find the whole Pareto front (of size $n+1$ ) of OneMinMax when the population size is $n+1$ . This is different from the SEMO and GSEMO algorithms (which have no fixed population size, but which will never store a population larger than $n+1$ when optimizing OneMinMax) and the $(\mu+1)$ SIBEA with population size $\mu=n+1$ . Even stronger, we show that there is a constant $\varepsilon>0$ such that when the current population $P_{t}$ covers at least $|f(P_{t})|≥(1-\varepsilon)(n+1)$ points on the Pareto front of OneMinMax, then with probability $1-\exp(-\Theta(n))$ , the next population $P_{t+1}$ will cover at most $|f(P_{t+1})|≤(1-\varepsilon)(n+1)$ points on the front. Hence when a population covers a large fraction of the Pareto front, then with very high probability the next population will cover fewer points on the front. When the coverage is smaller, that is, $|f(P_{t})|≤(1-\varepsilon)(n+1)$ , then with probability $1-\exp(-\Theta(n))$ the combined parent and offspring population $R_{t}$ will miss a constant fraction of the Pareto front. From these two statements, it is easy to see that there is a constant $\delta$ such that with probability $1-\exp(-\Omega(n))$ , in none of the first $\exp(\Omega(n))$ iterations the combined parent and offspring population covers more than $(1-\delta)(n+1)$ points of the Pareto front.

Since it is the technically easier one, we start with proving the latter statement that a constant fraction of the front not covered by $P_{t}$ implies also a constant fraction not covered by $R_{t}$ . Before stating the formal result and proof, let us explain the reason behind this result. With a constant fraction of the front not covered by $P_{t}$ , also a constant fraction that is $\Omega(n)$ away from the boundary points $(0,n)$ and $(n,0)$ is not covered. These values have the property that from an individual corresponding to either of their neighboring positions, an individual with this objective value can only be generated with constant probability via one-bit mutation. Again a constant fraction of these values have only a constant number of individuals on neighboring positions. These values thus have a (small) constant probability of not being generated in this iteration. This shows that in expectation, we are still missing a constant fraction of the Pareto front in $R_{t}$ . Via the method of bounded differences (exploiting that each mutation operation can change the number of missing elements by at most one), we turn this expectation into a bound that holds with probability $1-\exp(-\Omega(n))$ .

**Lemma 10**

*Let $\varepsilon∈(0,1)$ be a sufficiently small constant. Consider optimizing the OneMinMax benchmark via the NSGA-II applying one-bit mutation once to each parent individual. Let the population size be $N=n+1$ . Assume that $|f(P_{t})|≤(1-\varepsilon)(n+1)$ . Then with probability at least $1-\exp(-\Omega(n))$ , we have $|f(R_{t})|≤(1-\frac{1}{10}\varepsilon(\tfrac{1}{5}\varepsilon-\tfrac{2}{n})%

^{5/\varepsilon})(n+1)$ .*

* Proof*

Let $F=\{(v,n-v)\mid v∈[0..n]\}$ be the Pareto front of OneMinMax. For a value $(v,n-v)∈ F$ , we say that $(v-1,n-v+1)$ and $(v+1,n-v-1)$ are neighbors of $(v,n-v)$ provided that they are in $[0..n]^{2}$ . We write $(a,b)\sim(u,v)$ to denote that $(a,b)$ and $(u,v)$ are neighbors. Let $\Delta=\lceil\frac{5}{\varepsilon}\rceil-1$ and let $F^{\prime}$ be the set of values in $F$ such that more than $\Delta$ individuals in $P_{t}$ have a function value that is a neighbor of this value, that is,

$$

F^{\prime}=\mathopen{}\mathclose{{}\left\{(v,n-v)\in F\,\big{|}\,|\{x\in P_{t}%

\mid f(x)\sim(v,n-v)\}|\geq\Delta+1}\right\}.

$$

Then $|F^{\prime}|≤\frac{2}{\Delta+1}(n+1)≤\frac{2}{5}\varepsilon(n+1)$ as otherwise the number of individuals in our population could be bounded from below by

$$

|F^{\prime}|\tfrac{1}{2}(\Delta+1)>\tfrac{2}{\Delta+1}(n+1)\cdot\tfrac{1}{2}(%

\Delta+1)=n+1,

$$

which contradicts our assumption $N=n+1$ (note that the factor of $\tfrac{1}{2}$ accounts for the fact that we may count each individual twice). Let $M=F\setminus f(P_{t})$ be the set of Pareto front values not covered by the current population. By assumption, $|M|≥\varepsilon(n+1)$ . Let

$$

M_{1}=\mathopen{}\mathclose{{}\left\{(v,n-v)\in M\,\middle|\,v\in\mathopen{}%

\mathclose{{}\left[\lfloor\tfrac{1}{5}\varepsilon(n+1)\rfloor..n-\lfloor\tfrac%

{1}{5}\varepsilon(n+1)\rfloor}\right]}\right\}\setminus F^{\prime}.

$$

Then $|M_{1}|≥|M|-2\lfloor\tfrac{1}{5}\varepsilon(n+1)\rfloor-|F^{\prime}|≥%

\tfrac{1}{5}\varepsilon(n+1)$ . We now argue that a constant fraction of the values in $M_{1}$ is not generated in the current generation. We note that via one-bit mutation, a given $(v,n-v)∈ F$ can only be generated from an individual $x$ with $f(x)\sim(v,n-v)$ . Let $(v,n-v)∈ M_{1}$ . Since $v∈[\lfloor\tfrac{1}{5}\varepsilon(n+1)\rfloor..n-\lfloor\tfrac{1}{5}%

\varepsilon(n+1)\rfloor]$ , the probability that a given parent $x$ is mutated to some individual $y$ with $f(y)=(v,n-v)$ is at most

| | $\displaystyle\frac{n-\lfloor\tfrac{1}{5}\varepsilon(n+1)\rfloor+1}{n}≤ 1-%

\frac{1}{5}\varepsilon+\frac{2}{n}$ | |

| --- | --- | --- |

since there are at most $n-\lfloor\tfrac{1}{5}\varepsilon(n+1)\rfloor+1$ bit positions such that flipping them creates the desired value. Since $v∉ F^{\prime}$ , the probability that $Q_{t}$ (and thus $R_{t}$ ) contains no individual $y$ with $f(y)=(v,n-v)$ , is at least

| | $\displaystyle\mathopen{}\mathclose{{}\left(1-\mathopen{}\mathclose{{}\left(1-%

\tfrac{1}{5}\varepsilon+\tfrac{2}{n}}\right)}\right)^{\Delta}≥(\tfrac{1}{5}%

\varepsilon-\tfrac{2}{n})^{5/\varepsilon}:=p.$ | |

| --- | --- | --- |

Let $X=|F\setminus f(R_{t})|$ denote the number of Pareto front values not covered by $R_{t}$ . We have $E[X]≥|M_{1}|p≥\frac{1}{5}\varepsilon p(n+1)$ . The random variable $X$ is functionally dependent on the $N=n+1$ random decisions of the $N$ mutation operations, which are stochastically independent. Changing the outcome of a single mutation operation changes $X$ by at most $1$ . Consequently, $X$ satisfies the assumptions of the method of bounded differences [McD89] (also to be found in [Doe20, Theorem 1.10.27]). Hence the classic additive Chernoff bound applies to $X$ as if it was a sum of $N$ independent random variables taking values in an interval of length $1$ . In particular, the probability that $X≤\frac{1}{10}\varepsilon p(n+1)≤\frac{1}{2}E[X]$ is at most $\exp(-\Omega(n))$ . ∎

We now turn to the other main argument, which is that when the current population covers the Pareto front to a large extent, then the selection procedure of the NSGA-II will remove individuals in such a way from $R_{t}$ that at least some constant fraction of the Pareto front is not covered by $P_{t+1}$ . The key arguments to show this claim are the following. When a large part of the front is covered by $P_{t}$ , then many points are only covered by a single individual (since the population size equals the size of the front). With some careful counting, we derive from this that close to two thirds of the positions on the front are covered exactly twice in the combined parent and offspring population $R_{t}$ and that the corresponding individuals have the same crowding distance. Since these are roughly $\frac{4}{3}(n+1)$ individuals appearing equally preferable in the selection, a random set of at least roughly $\frac{1}{3}(n+1)$ of them will be removed in the selection step. In expectation, this will remove both individuals from a constant fraction of the points on the Pareto front. Again, the method of bounded differences turns this expectation into a statement with probability $1-\exp(-\Omega(n))$ .

**Lemma 11**

*Let $\varepsilon>0$ be a sufficiently small constant. Consider optimizing the OneMinMax benchmark via the NSGA-II applying one-bit mutation once to each individual. Let the population size be $N=n+1$ . Assume that the current population $P_{t}$ covers $|f(P_{t})|≥(1-\varepsilon)(n+1)$ elements of the Pareto front. Then with probability at least $1-\exp(-\Omega(n))$ , the next population $P_{t+1}$ covers less than $(1-0.01)(n+1)$ elements of the Pareto front.*

* Proof*

Let $U$ be the set of Pareto front values that have exactly one corresponding individual in $P_{t}$ , that is, for any $(v,n-v)∈ U$ , there exists only one $x∈ P_{t}$ with $f(x)=(v,n-v)$ . We first note that $|U|≥(1-2\varepsilon)(n+1)$ as otherwise there would be at least

| | $\displaystyle 2$ | $\displaystyle{}\mathopen{}\mathclose{{}\left(|f(P_{t})|-|U|}\right)+|U|=2|f(P_%

{t})|-|U|$ | |

| --- | --- | --- | --- |

individuals in $P_{t}$ , which contradicts our assumption $N=n+1$ . Let $U^{\prime}$ denote the set of values in $U$ which have all their neighbors also in $U$ . Since each value not in $U$ can prevent at most two values in $U$ from being in $U^{\prime}$ , we have

$$

\begin{split}|U^{\prime}|&\geq{}|U|-2(n+1-|U|)=3|U|-2(n+1)\\

&\geq{}3(1-2\varepsilon)(n+1)-2(n+1)=\mathopen{}\mathclose{{}\left(1-6%

\varepsilon}\right)(n+1).\end{split}

$$