# Long Story Short: Omitted Variable Bias in Causal Machine Learning

**Authors**: Victor Chernozhukov, Carlos Cinelli, Whitney Newey, Amit Sharma, Vasilis Syrgkanis

## Abstract

We develop a general theory of omitted variable bias for a wide range of common causal parameters, including (but not limited to) averages of potential outcomes, average treatment effects, average causal derivatives, and policy effects from covariate shifts. Our theory applies to nonparametric models, while naturally allowing for (semi-)parametric restrictions (such as partial linearity) when such assumptions are made. We show how simple plausibility judgments on the maximum explanatory power of omitted variables are sufficient to bound the magnitude of the bias, thus facilitating sensitivity analysis in otherwise complex, nonlinear models. Finally, we provide flexible and efficient statistical inference methods for the bounds, which can leverage modern machine learning algorithms for estimation. These results allow empirical researchers to perform sensitivity analyses in a flexible class of machine-learned causal models using very simple, and interpretable, tools. We demonstrate the utility of our approach with two empirical examples.

Keywords: sensitivity analysis, short regression, long regression, omitted variable bias, Riesz representation, omitted confounders, causal models, machine learning, confidence bounds.

† Dept. of Economics, Massachusetts Institute of Technology, Cambridge, MA, USA. Email: vchern@mit.edu

* Dept. of Statistics, University of Washington, Seattle, WA, USA. Email: cinelli@uw.edu

‡ Dept. of Economics, Massachusetts Institute of Technology, Cambridge, MA, USA. Email: wnewey@mit.edu

$\|$ Microsoft Research India, Bangalore, India. Email: amshar@microsoft.com

§ Dept. of Mgmt Science and Engineering, Stanford University, Stanford, CA, USA. Email: vsyrgk@stanford.edu

Date: May 26, 2024. First ArXiv version: December 2021. This is an extended version of an earlier paper prepared for the NeurIPS-21 Workshop “Causal Inference & Machine Learning: Why now?”. We thank Isaiah Andrews, Elias Bareinboim, Ben Deaner, David Green, Judith Lok, Esfandiar Maasoumi, Steve Lehrer, Richard Nickl, Anna Mikusheva, Jack Porter, James Poterba, Eric Tchetgen Tchetgen, Ingrid Van Keilegom, and also participants of the Chamberlain seminar, Canadian Economic Association, the Institute for Nonparametric, and Uncertainty in Artificial Intelligence meetings, and seminars at Harvard-MIT, Wisconsin, Emory, Berkeley Methods Workshop, and BU Causal Seminar for very helpful comments. We are grateful to Jack Porter for suggesting the “long story short” title. This work was partially funded by the Royalty Research Fund at the University of Washington. An R package for the methods developed in this paper is available at https://github.com/carloscinelli/dml.sensemakr. R and Python implementations are also available via the DoubleML ecosystem at https://docs.doubleml.org/stable/index.html.

## 1. Introduction

Unmeasured confounding is a pervasive issue in studies that aim to draw causal inferences from observational data. Such studies typically rely on a conditional ignorability (also known as unconfoundedness) assumption, which states that the treatment assignment is independent of potential outcomes given a set of observed covariates (Rosenbaum and Rubin, 1983a; Pearl, 2009; Angrist and Pischke, 2009; Imbens and Rubin, 2015). This assumption, however, requires that there are no unobserved confounders influencing both the treatment and the outcome. When such variables are omitted from the analysis, empirical estimates may differ from the true causal effect of interest, giving rise to what is now commonly known as “omitted variable bias.”

The omitted variable bias (OVB) problem is one of the most significant threats to the identification of causal effects. In the context of linear models, this bias amounts to the difference between the coefficients of the treatment variable from two distinct outcome regressions: one that controls only for observed covariates (the “short” regression) and another that would additionally control for unobserved variables (the “long” regression). Formulas characterizing this difference play a foundational role in statistics, econometrics, and related fields (see, e.g., discussions in classical and modern textbooks, such as Goldberger, 1991; Angrist and Pischke, 2009 and Wooldridge, 2010). Such results allow empirical analysts to understand and bound the maximum size of the bias, by making plausibility judgments on the magnitude of parameters that comprise the OVB formula.

But while linear models are widely used in applied work, they are often overly restrictive. For example, in the binary treatment case, using linear models when treatment effects are heterogeneous may yield unintuitive or even misleading estimates of the causal effects of interest (Aronow and Samii, 2016; Słoczyński, 2022). To address these limitations, many empirical analysts have turned their attention to more flexible nonlinear or nonparametric models, often leveraging modern machine learning techniques for estimation and inference (Van der Laan and Rose, 2011; Belloni et al., 2013; Chernozhukov et al., 2018a; Athey et al., 2019). These tools offer the flexibility to capture complex relationships between variables, avoiding stringent functional form assumptions in causal effect estimation. Yet, we currently lack general OVB results for nonlinear models (whether parametric or nonparametric), as we have for the linear case. Our work provides such results.

In this paper we develop a general theory of omitted variable bias for a wide range of common causal parameters that can be identified as linear functionals of the conditional expectation function (CEF) of the outcome. Such functionals encompass many (if not most) of the traditional targets of investigation in causal inference studies, such as averages of potential outcomes, average treatment effects, average causal derivatives, and policy effects from covariate shifts. We allow for arbitrary treatment (e.g., continuous or binary) and outcome variables. Our theory applies to general nonparametric models, while naturally allowing for (semi-)parametric restrictions (such as partial linearity) when such assumptions are made. Our formulation recovers well-known and familiar OVB results for linear models as a special case, and it can be seen as their natural generalization to nonlinear models. Importantly, we show that the general nonparametric bounds on the bias still have a simple and interpretable form.

More specifically, we first formalize the OVB problem in the nonparametric setting. Paralleling the linear case, we define the OVB as the difference between the “short” and “long” functionals of the outcome regression, where the former omits and the latter includes the latent variables. To derive the OVB, our construction then leverages the Riesz-Frechet representation of the target functionals, which allows us to rewrite the parameters of interest as weighted averages of the outcome regression, with weights given by the Riesz representers (RRs). We show that the OVB arises as a by-product of confounders introducing systematic errors in both the outcome regression and in the RRs for the parameter of interest. Furthermore, the bound on the bias has a simple characterization, depending only on the additional variation that latent variables create in the outcome regression and in the RRs. As a result, plausibility judgments on the maximum explanatory power of latent variables suffice to place overall bounds on the bias, simplifying the task of sensitivity analysis even when using nonparametric or otherwise complex models.

Although these general results may initially seem abstract to those not familiar with Riesz representation theory, in many leading examples the RRs in fact correspond to quantities that are well-known to empirical researchers. For instance, when estimating the average treatment effect in a partially linear model, the RR is the (variance scaled) residualized treatment, after “partialling out” the control covariates. Or, when estimating an average treatment effect in a general nonparametric model with a binary treatment, the RR is now given by another familiar quantity—the inverse probability of treatment weights (IPTW). In such cases, we show that the bounds on the bias can be reparameterized in terms of simple percentage gains in variance explained (or precision) in the treatment and the outcome regression due to unmeasured confounders, again facilitating the interpretation and use of the OVB formulas in practice. We further help analysts make plausibility judgments on the magnitude of sensitivity parameters by means of comparison of the relative strength of unobserved confounders against the strength of observed covariates.

Finally, we provide statistical inference for these bounds using debiased machine learning (DML) and auto-DML (Chernozhukov et al., 2018a, b, 2020, 2022c). Our construction makes it possible to use modern machine learning methods for estimating the identifiable components of the bounds, including regression functions, Riesz representers, the norm of regression residuals, and the norm of Riesz representers. These results enable flexible and efficient statistical inference on the bounds, allowing researchers to perform sensitivity analyses against unmeasured confounding in a flexible class of machine-learned causal models using simple and interpretable tools. Here we provide DML-based statistical inference on the bounds, but we note that our approach can also be used with classical parametric and nonparametric estimation methods.

### Related Literature

Our work is most closely related to the literature that derives OVB formulas for linear models, such as those found in traditional textbooks and recent extensions (Goldberger, 1991; Angrist and Pischke, 2009; Wooldridge, 2010; Frank, 2000; Oster, 2019; Cinelli and Hazlett, 2020). We advance this literature by providing analogous, easily explainable OVB formulas for a broad and rich class of causal parameters, all for general nonlinear models, with or without further parametric restrictions. Importantly, we provide a single unifying framework that covers all these cases, and that can be easily specialized depending on the target parameter and on whether additional parametric assumptions (if any) are made. We further advance the OVB literature by providing flexible and efficient statistical inference methods, leveraging modern machine learning algorithms with debiased machine learning.

More broadly, our work is related to the extensive literature on sensitivity analysis against unmeasured confounders. Here we highlight the key differences between our approach and existing methods, while relegating a more detailed review to the Appendix, Section D. First, many prior works on sensitivity analysis either focus exclusively on binary treatments (e.g., Rosenbaum, 2002; Tan, 2006; Masten and Poirier, 2018; Kallus et al., 2019; Zhao et al., 2019; Bonvini and Kennedy, 2021), target a single estimand of interest, such as a causal risk ratio (Ding and VanderWeele, 2016; VanderWeele and Ding, 2017), or impose parametric assumptions on the observed data or on the nature of unobserved confounding (Rosenbaum and Rubin, 1983b; Imbens, 2003; Dorie et al., 2016; Cinelli et al., 2019). Our approach differs from these in that (i) it is not limited to binary treatments, (ii) it covers a broader range of target parameters, such as average causal derivatives and average policy effects from covariate shifts, and (iii) it does not require parametric assumptions on the observed data nor on the nature of confounding.

Even if we focus solely on the important special case of estimating an average treatment effect (ATE) with a binary treatment, our OVB results usefully complement other seminal approaches to this problem, such as those of Rosenbaum (2002) or the marginal sensitivity models of Tan (2006). Whereas such approaches limit the strength of confounding through its impact on the worst-case change that confounders could cause in the odds ratio of treatment assignment (a quantity economists rarely focus on), our approach limits the strength of confounding through its impact on the gains in precision in the treatment regression, a measure of explanatory power similar in nature to a simple $R^{2}$ in a linear model. Moreover, even in stylized models of treatment assignment (e.g., a logistic model with a Gaussian latent confounder), worst-case approaches such as the ones in Rosenbaum (2002) and Tan (2006) have a naturally unbounded sensitivity parameter, no matter how small the actual degree of confounding is, whereas our approach does not suffer from this problem (see Section E of the Appendix for an example).

Our OVB-based approach also differs from traditional sensitivity analyses in that it derives the exact OVB formula for the target parameters we cover. For example, our results show that the bias of the ATE in the binary treatment case is not determined by deviations on the odds of treatment; rather, it is determined by three quantities: (i) the maximum explanatory power of confounders in the treatment regression, as given by gains in precision, (ii) the maximum explanatory power of confounders in the outcome regression, as given by gains in variance explained, and (iii) the correlation of errors in the regression function and the IPTW. Therefore, beyond being a tool for sensitivity analysis, OVB results such as ours provide a precise characterization of the bias, and reveal that any alternative approach that parameterizes deviations from unconfoundedness in a different way can only affect the bias insofar as it constrains these three quantities.

### Overview of the paper

Section 2 presents our method in the simpler context of partially linear models. The results in that section serve not only as an accessible introduction to the main ideas of our general framework, but are also important in their own right, since partially linear models are widely used in applied work. Section 3 derives the main results of the paper—we characterize and bound the omitted variable bias for continuous linear functionals of the conditional expectation function of the outcome, based on their Riesz representations, all for general, nonparametric causal models. In Section 4 we construct high-quality inference methods for the bounds on the target parameters by leveraging recent advances in debiased machine learning with Riesz representers. Section 5 demonstrates the use of our tools to assess the robustness of causal claims in a detailed empirical example that estimates the average treatment effect of 401(k) eligibility on net financial assets. Section 6 concludes with suggestions for possible extensions. The Appendix contains all proofs, provides a more extensive literature review, as well as an additional empirical example that illustrates sensitivity analyses for average causal derivatives with continuous treatments.

### Notation.

All random vectors are defined on the probability space with probability measure ${\mathrm{P}}$ . We consider a random vector $Z=(Y,W)$ with distribution $P$ taking values $z$ in its support $\mathcal{Z}$ ; we use $P_{V}$ to denote the probability law of any subvector $V$ and $\mathcal{V}$ to denote its support. We use $\|f\|_{P,q}=\|f(Z)\|_{P,q}$ to denote the $L^{q}(P)$ norm of a measurable function $f:\mathcal{Z}\to\mathbb{R}$ and also the $L^{q}(P)$ norm of the random variable $f(Z)$ . For a differentiable map $x\mapsto g(x)$ , from $\mathbb{R}^{d}$ to $\mathbb{R}^{k}$ , $\partial_{x^{\prime}}g$ abbreviates the partial derivatives $(\partial/\partial x^{\prime})g(x)$ , and $\partial_{x^{\prime}}g(x_{0})$ means $\partial_{x^{\prime}}g(x)\mid_{x=x_{0}}$ . We use $x^{\prime}$ to denote the transpose of a column vector $x$ ; we use $R^{2}_{U\sim V}$ to denote the $R^{2}$ from the orthogonal linear projection of a scalar random variable $U$ on a random vector $V$ . We use the conventional notation $dL/dP$ to denote the Radon-Nikodym derivative of measure $L$ with respect to $P$ .

## 2. Warm-Up: Omitted Variable Bias in Partially Linear Models

To fix ideas, we begin our discussion in the context of partially linear models (PLM). These results not only provide the key intuitions and the building blocks for the general case of nonseparable, nonparametric models of Section 3, but they are also important in their own right, as these models are widely used in applied work.

### 2.1. Problem set-up

Consider the partially linear regression model of the form

$$

Y=\theta D+f(X,A)+\epsilon. \tag{1}

$$

Here $Y$ denotes a real-valued outcome, $D$ a real-valued treatment, $X$ an observed vector of covariates, and $A$ an unobserved vector of covariates. We refer to $W:=(D,X,A)$ as the “long” list of regressors, and to equation (1) as the “long” regression. For exposition purposes, we assume the error term $\epsilon$ obeys ${\mathrm{E}}[\epsilon|D,X,A]=0$ and thus ${\mathrm{E}}[Y|D,X,A]=\theta D+f(X,A)$ , though we note this assumption is not necessary. We can also consider the case where $\theta D+f(X,A)$ is the projection of the CEF on the space of functions that are partially linear in $D$ .

Under the traditional assumption of conditional ignorability (along with consistency and the usual regularity conditions), we have that the regression coefficient $\theta$ identifies the average treatment effect of a unit increase of $D$ on the outcome $Y$ ,

$$

{\mathrm{E}}[Y(d+1)-Y(d)]={\mathrm{E}}[{\mathrm{E}}[Y|D=d+1,X,A]-{\mathrm{E}}[Y|D=d,X,A]]=\theta,

$$

where $Y(d)$ denotes the potential outcome of $Y$ when the treatment $D$ is experimentally set to $d$ . The problem, however, is that $A$ is not observed, and thus neither the long regression nor the regression coefficient $\theta$ can be computed from the available data.

Since the latent variables $A$ are not measured, an alternative route to obtain an approximate estimate of $\theta$ is to consider the partially linear projection of $Y$ on the “short” list of observed regressors $W^{s}:=(D,X)$ , as in,

$$

Y=\theta_{s}D+f_{s}(X)+\epsilon_{s}, \tag{2}

$$

where here we do not make the assumption that the regression is correctly specified, and thus the error term simply obeys the orthogonality condition ${\mathrm{E}}[\epsilon_{s}(D-{\mathrm{E}}[D\mid X])]=0$ . Following convention, we call equation (2) the “short regression.” We can then use the “short” regression parameter $\theta_{s}$ as a proxy for $\theta$ .

Evidently, in general $\theta_{s}$ is not equal to $\theta$ , and this naturally leads to the question of how far our “proxy” $\theta_{s}$ can deviate from the true inferential target $\theta$ . Our goal is, thus, to analyze the difference between the short and long parameters—the omitted variable bias (OVB):

$$

\theta_{s}-\theta,

$$

and perform inference on this bias under various hypotheses on the strength of the latent confounders $A$ .

### 2.2. OVB as the covariance of approximation errors

Recall that, using a Frisch-Waugh-Lovell partialling out argument, one can express the long and short regression parameters, $\theta$ and $\theta_{s}$ , as the linear projection coefficients of $Y$ on the residuals $D-{\mathrm{E}}[D\mid X,A]$ and $D-{\mathrm{E}}[D\mid X]$ , respectively. That is,

$$

\theta={\mathrm{E}}Y\alpha(W),\quad\quad\theta_{s}={\mathrm{E}}Y\alpha_{s}(W^{s}); \tag{3}

$$

where here we define

$$

\alpha(W):=\frac{D-{\mathrm{E}}[D\mid X,A]}{{\mathrm{E}}(D-{\mathrm{E}}[D\mid X,A])^{2}},\quad\alpha_{s}(W^{s}):=\frac{D-{\mathrm{E}}[D\mid X]}{{\mathrm{E}}(D-{\mathrm{E}}[D\mid X])^{2}}.

$$

For reasons that will become clear in the next section, we can refer to $\alpha(W)$ and $\alpha_{s}(W^{s})$ as the “long” and “short” Riesz representers (RR). We are deliberately introducing Riesz representers in this section to smooth the transition to the general case. The formulation in terms of Riesz representers is a key innovation of this paper and it has not appeared in previous works on omitted variable bias.
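As a rough numerical illustration of equation (3), the sketch below simulates a partially linear model in which the conditional means of the treatment are known in closed form, so that both Riesz representers can be written down directly instead of being estimated. The data-generating process, the parameter values, and all variable names are assumptions made for this illustration only; they are not taken from the paper.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 200_000

# Hypothetical design (illustration only): A is a latent confounder.
theta, gamma, delta = 1.0, 1.0, 1.0
X = rng.normal(size=n)
A = rng.normal(size=n)            # unobserved, independent of X
v = rng.normal(size=n)            # treatment noise with variance 1
D = X + delta * A + v             # E[D|X,A] = X + delta*A and E[D|X] = X
Y = theta * D + np.sin(X) + gamma * A + rng.normal(size=n)

# Long and short residualized treatments, using the oracle conditional means.
res_long = D - (X + delta * A)
res_short = D - X

# Riesz representers: each residual divided by its second moment.
alpha = res_long / np.mean(res_long**2)
alpha_s = res_short / np.mean(res_short**2)

theta_long = np.mean(Y * alpha)       # sample analogue of theta   = E[Y alpha]
theta_short = np.mean(Y * alpha_s)    # sample analogue of theta_s = E[Y alpha_s]

# Population value of theta_s in this design: theta + gamma*delta / (delta^2 + 1).
theta_s_pop = theta + gamma * delta / (delta**2 + 1.0)
print(theta_long, theta_short, theta_s_pop)
```

In this design the long representer is infeasible in practice because it depends on $A$; it is computed here only because the simulation reveals the latent variable.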

Now let $g(W):={\mathrm{E}}[Y\mid D,X,A]$ and $g_{s}(W^{s}):=\theta_{s}D+f_{s}(X)$ denote the long and short regressions, respectively. Using the orthogonality conditions in (1) and (2), we can further express $\theta$ and $\theta_{s}$ as

$$

{\mathrm{E}}Y\alpha(W)={\mathrm{E}}g(W)\alpha(W),\quad\quad{\mathrm{E}}Y\alpha_{s}(W^{s})={\mathrm{E}}g_{s}(W^{s})\alpha_{s}(W^{s}). \tag{4}

$$

Our first characterization of the OVB is thus as follows, where we use the shorthand notation: $g=g(W)$ , $g_{s}=g_{s}(W^{s})$ , $\alpha=\alpha(W)$ , and $\alpha_{s}=\alpha_{s}(W^{s})$ .

**Theorem 1 (OVB and Sharp Bounds—PLM)**

*Assume that $Y$ and $D$ are square integrable with:

$$

{\mathrm{E}}(D-{\mathrm{E}}[D\mid X,A])^{2}>0.

$$

Then the OVB for the partially linear model of equations (1) - (2) is given by

$$

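\theta_{s}-\theta=-{\mathrm{E}}(g_{s}-g)(\alpha_{s}-\alpha),

$$

that is, it is the negative of the covariance between the regression error and the RR error (expanding the product and using ${\mathrm{E}}g\alpha_{s}={\mathrm{E}}g_{s}\alpha={\mathrm{E}}g_{s}\alpha_{s}=\theta_{s}$ gives ${\mathrm{E}}(g_{s}-g)(\alpha_{s}-\alpha)=\theta-\theta_{s}$ ). Furthermore, the squared bias can be bounded as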

$$

|\theta_{s}-\theta|^{2}=\rho^{2}B^{2}\leq B^{2},

$$

where

$$

B^{2}:={\mathrm{E}}(g-g_{s})^{2}{\mathrm{E}}(\alpha-\alpha_{s})^{2},\quad\rho^{2}:=\mathrm{Cor}^{2}(g-g_{s},\alpha-\alpha_{s}).

$$

The bound $B^{2}$ is the product of additional variations that omitted confounders generate in the regression function and in the RR. This bound is sharp in the sense that maximizing $\rho^{2}$ over $\alpha$ and $g$ , subject to fixing $B^{2}$ and ${\mathrm{E}}(g-g_{s})^{2}\leq{\mathrm{E}}(Y-g_{s})^{2}$ , gives value 1.*

This result for partially linear models is new and it naturally generalizes the traditional OVB formula for linear models. It is worth noting that the proof of Theorem 1 does not rely on the assumption that the long regression is partially linear, even though this assumption was made for expository purposes. In general, if we define both $g$ and $g_{s}$ to be projections of $Y$ onto the space of functions that are partially linear in $D$ , the results of the theorem still hold.
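The squared-bias statement of Theorem 1 can be checked numerically. The following is a minimal sketch under an assumed Gaussian design with two latent variables, chosen so that $\rho^{2}<1$; the design, the parameter values, and the variable names are hypothetical. It compares the squared bias with the squared covariance of the two approximation errors, with $\rho^{2}B^{2}$, and with the bound $B^{2}$.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 200_000

# Hypothetical design (illustration only): A1 confounds D and Y, A2 only shifts Y.
theta, gamma1, gamma2, delta = 1.0, 1.0, 1.0, 1.0
X = rng.normal(size=n)
A1 = rng.normal(size=n)
A2 = rng.normal(size=n)
v = rng.normal(size=n)                       # treatment noise, Var(v) = 1
D = X + delta * A1 + v

# Population short coefficient implied by this design.
theta_s = theta + gamma1 * delta / (delta**2 + 1.0)

# Long regression g = E[Y|D,X,A] and short projection g_s = theta_s*D + f_s(X),
# where f_s(X) = E[Y - theta_s*D | X] = (theta - theta_s)*X + sin(X).
g = theta * D + np.sin(X) + gamma1 * A1 + gamma2 * A2
g_s = theta_s * D + (theta - theta_s) * X + np.sin(X)

# Long and short Riesz representers with population second moments plugged in.
alpha = v / 1.0
alpha_s = (D - X) / (delta**2 + 1.0)

err_g = g - g_s
err_a = alpha - alpha_s

cov = np.mean(err_g * err_a)                       # cross moment of the two errors
B2 = np.mean(err_g**2) * np.mean(err_a**2)         # bound B^2
rho2 = np.corrcoef(err_g, err_a)[0, 1] ** 2        # rho^2
bias2 = (theta_s - theta) ** 2                     # squared OVB

print(bias2, cov**2, rho2 * B2, B2)
```

Because `A2` adds variation to the regression error that is unrelated to the RR error, the bound is not attained in this design: the squared bias is strictly below `B2`.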

### 2.3. Further characterization of the bias

Sensitivity analysis requires making plausibility judgments on the values of the sensitivity parameters. Therefore, it is important that such parameters be well-understood, and easily interpretable in applied settings. Here we show how the bias of Theorem 1 can be reparameterized in terms of conventional $R^{2}$ s.

Recall that, when the CEF is not linear, a natural measure of the strength of relationship between some variable $W$ and another variable $V$ is the nonparametric $R^{2}$ —also known as Pearson’s correlation ratio (Pearson, 1905; Doksum and Samarov, 1995):

$$

\eta^{2}_{V\sim W}:=R^{2}_{V\sim{\mathrm{E}}[V|W]}=\operatorname{Var}({\mathrm{E}}[V|W])/\operatorname{Var}(V)=\frac{\operatorname{Var}(V)-{\mathrm{E}}[\operatorname{Var}(V|W)]}{\operatorname{Var}(V)}.

$$

Further, the nonparametric partial $R^{2}$ of a variable $V$ with another variable $A$ given $X$ measures the additional gain in the explanatory power that $A$ provides, beyond what is already explained by $X$ . This also equals the relative decrease in the average residual variance:

$$

\eta^{2}_{V\sim A\mid X}:=\frac{\eta^{2}_{V\sim AX}-\eta^{2}_{V\sim X}}{1-\eta^{2}_{V\sim X}}=\frac{{\mathrm{E}}[\operatorname{Var}(V|X)]-{\mathrm{E}}[\operatorname{Var}(V|X,A)]}{{\mathrm{E}}[\operatorname{Var}(V|X)]}. \tag{5}

$$
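To make the two expressions in (5) concrete, the sketch below computes them for discrete variables, where conditional means reduce to group averages. It assumes integer-coded $X$ and $A$; the helper names `mean_cond_var` and `eta2` and the simulated design are hypothetical and serve only as an illustration.

```python
import numpy as np

def mean_cond_var(v, labels):
    """Sample analogue of E[Var(V | labels)] for integer group labels."""
    _, inv = np.unique(labels, return_inverse=True)
    counts = np.bincount(inv)
    means = np.bincount(inv, weights=v) / counts
    return np.mean((v - means[inv]) ** 2)

def eta2(v, labels):
    """Nonparametric R^2: share of Var(V) explained by E[V | labels]."""
    return 1.0 - mean_cond_var(v, labels) / np.var(v)

rng = np.random.default_rng(2)
n = 50_000
X = rng.integers(0, 3, size=n)             # observed covariate with 3 levels
A = rng.integers(0, 2, size=n)             # latent covariate with 2 levels
V = 1.0 * X + 2.0 * A * (X == 1) + rng.normal(size=n)

XA = X * 2 + A                             # joint label for the cell (X, A)

# First expression in (5): gain in eta^2 relative to what X leaves unexplained.
lhs = (eta2(V, XA) - eta2(V, X)) / (1.0 - eta2(V, X))
# Second expression in (5): relative drop in the average residual variance.
rhs = (mean_cond_var(V, X) - mean_cond_var(V, XA)) / mean_cond_var(V, X)
print(lhs, rhs)
```

With group means used for the conditional expectations, the two expressions agree up to floating-point error in any sample, since both reduce to the same ratio of average residual variances.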

We are now ready to rewrite the bound of Theorem 1.

**Corollary 1 (Interpreting OVB Bounds in Terms of $R^{2}$—PLM)**

*Under the conditions of Theorem 1, we can further express the bound $B^{2}$ as

$$

B^{2}=C^{2}_{Y}C^{2}_{D}S^{2},\quad S^{2}:={\mathrm{E}}(Y-g_{s})^{2}{\mathrm{E}}\alpha_{s}^{2}, \tag{6}

$$

where

$$

C^{2}_{Y}:=R^{2}_{Y-g_{s}\sim g-g_{s}};\quad C^{2}_{D}:=\frac{1-R^{2}_{\alpha\sim\alpha_{s}}}{R^{2}_{\alpha\sim\alpha_{s}}}, \tag{7}

$$

and $1-R^{2}_{\alpha\sim\alpha_{s}}=\eta^{2}_{D\sim A\mid X}$ . Furthermore, if ${\mathrm{E}}[Y|D,X]=\theta_{s}D+f_{s}(X)$ , then $R^{2}_{Y-g_{s}\sim g-g_{s}}=\eta^{2}_{Y\sim A\mid DX}.$*

The bound is the product of the term $S^{2}$ , which is directly identifiable (and thus estimable) from the observed distribution of $(Y,D,X)$ , and the term $C^{2}_{Y}C^{2}_{D}$ , which is not identifiable from the data, and needs to be restricted through hypotheses that limit the strength of confounding. The factors $C^{2}_{Y}$ and $C^{2}_{D}$ measure the strength of confounding that the omitted variables generate in the outcome and treatment regressions. More precisely,

- $R^{2}_{Y-g_{s}\sim g-g_{s}}$ ( $=\eta^{2}_{Y\sim A\mid DX}$ under partial linearity of the short regression) in $C^{2}_{Y}$ measures the proportion of residual variation of the outcome explained by latent confounders; and,

- $1-R^{2}_{\alpha\sim\alpha_{s}}=\eta^{2}_{D\sim A\mid X}$ in $C^{2}_{D}$ measures the proportion of residual variation of the treatment explained by latent confounders.

Note how this parameterization simplifies the complexity of plausibility judgments. Researchers now need only to reason about the maximum explanatory power that unobserved confounders have in explaining treatment and outcome variation, as given by familiar $R^{2}$ measures, in order to place bounds on the size of the bias. Finally, in practice, both $\theta_{s}$ and $S^{2}$ need to be estimated from finite samples. This can be readily done using debiased machine learning, as we discuss in Section 4.
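The arithmetic behind equations (6) and (7) is simple enough to sketch directly. The function below maps hypothesized values of the two sensitivity parameters and an estimate of $S^{2}$ into the bound $B$, and then into an interval for $\theta$ implied by $|\theta_{s}-\theta|\leq B$. The function name `ovb_bound` and the numerical inputs are hypothetical, and the sketch ignores sampling uncertainty in $\theta_{s}$ and $S^{2}$.

```python
import math

def ovb_bound(S2, r2_y, r2_d):
    """Return B = sqrt(C_Y^2 * C_D^2 * S^2), following equations (6)-(7).

    S2   : identifiable scale S^2 = E(Y - g_s)^2 * E(alpha_s^2)
    r2_y : hypothesized R^2 of (Y - g_s) on (g - g_s), which is C_Y^2
    r2_d : hypothesized eta^2_{D ~ A | X} = 1 - R^2_{alpha ~ alpha_s}
    """
    if not (0.0 <= r2_y <= 1.0 and 0.0 <= r2_d < 1.0):
        raise ValueError("need 0 <= r2_y <= 1 and 0 <= r2_d < 1")
    c2_y = r2_y
    c2_d = r2_d / (1.0 - r2_d)      # (1 - R^2) / R^2 with 1 - R^2 = r2_d
    return math.sqrt(c2_y * c2_d * S2)

# Hypothetical inputs, for illustration only.
theta_s_hat, S2 = 1.5, 4.0
B = ovb_bound(S2, r2_y=0.25, r2_d=0.2)
lower, upper = theta_s_hat - B, theta_s_hat + B
print(B, lower, upper)
```

Note that $C^{2}_{D}$ grows without bound as the hypothesized share of residual treatment variation explained by the latent variables approaches one, which is why the sketch rejects `r2_d = 1`.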

## 3. Main Results: Omitted Variable Bias in Nonparametric Causal Models

We now derive the main results of the paper, and construct sharp bounds on the size of the omitted variable bias for a broad class of causal parameters that can be identified as linear functionals of the conditional expectation function of the outcome. Although more abstract, the presentation of this section largely parallels the special case of partially linear models given in Section 2.

### 3.1. Problem set-up

As a motivating example, consider the following nonparametric structural equation model (SEM):

$$

Y=g_{Y}(D,X,A,\epsilon_{Y}),

$$

where $Y$ is an outcome variable, $D$ is a treatment variable, $X$ is a vector-valued observed confounder variable, $A$ is a vector-valued latent confounder variable, and $\epsilon_{Y},\epsilon_{D},\epsilon_{A}$ are vector-valued structural disturbances that are mutually independent. This model has an associated Directed Acyclic Graph (DAG) (Pearl, 2009) as shown in Figure 1(a).

The SEM above induces the potential outcome $Y(d)$ under the intervention that sets $D$ experimentally to $d$ ,

$$

Y(d):=g_{Y}(d,X,A,\epsilon_{Y}).

$$

The structural model also encodes a consistency assumption between observed and potential outcomes, $Y=Y(D)$ . Additionally, the independence of the structural disturbances implies the following conditional ignorability condition:

$$

Y(d)\perp\!\!\!\!\perp D\mid\{X,A\}, \tag{8}

$$

which states that the realized treatment $D$ is independent of the potential outcomes, conditionally on $X$ and $A$ . More generally, we can work with any causal inference framework that implies the existence of potential outcomes, the consistency of observed and potential outcomes, and such that the conditional ignorability assumption (8) holds (Angrist and Pischke, 2009; Pearl, 2009; Imbens and Rubin, 2015). There are many structural models that satisfy the conditional ignorability assumption (8); see e.g. Pearl (2009) and Figure 1 for concrete examples.

Under this set-up and when $d$ is in the support of $D$ given $X$ , $A$ , we then have the following (well-known) identification result

$$

{\mathrm{E}}[Y(d)\mid X,A]={\mathrm{E}}[Y(d)\mid D=d,X,A]={\mathrm{E}}[Y\mid D=d,X,A]=:g(d,X,A),

$$

that is, the conditional average potential outcome coincides with the “long” regression function of $Y$ on $D$ , $X$ , and $A$ . Therefore, we can identify various causal parameters—functionals of the average potential outcome—from the regression function. Important examples include: (i) the average treatment effect (ATE)

$$

\theta={\mathrm{E}}[Y(1)-Y(0)]={\mathrm{E}}[g(1,X,A)-g(0,X,A)],

$$

for the case of a binary treatment $D$ ; and, (ii) the average causal derivative (ACD)

$$

\theta={\mathrm{E}}\left[\partial_{d}{\mathrm{E}}[Y(D)\mid X,A]\right]={\mathrm{E}}[\partial_{d}g(D,X,A)],

$$

for the case of a continuous treatment $D$ .

[Figure 1 image: four DAG panels, (a) through (d), over the nodes $D$ , $Y$ , $X$ , and $A$ ; panel (c) splits $A$ into $A_{1}$ and $A_{2}$ , and panel (d) splits $X$ into $X_{1}$ and $X_{2}$ .]

Figure 1. Examples of different DAGs that imply $Y(d)\perp\!\!\!\!\perp D\mid\{X,A\}$ .

Note: Examples of DAGs (nonparametric SEMs) that imply the conditional ignorability condition (8). Latent nodes are circled. DAGs (1(a)) and (1(b)) represent opposite directions $X\to A$ and $A\to X$ , respectively, while yielding the same conditional ignorability condition. DAG (1(c)) shows a special case of (1(b)) by setting $A=(A_{1},A_{2})$ . DAG (1(d)) illustrates the case where we only observe the “negative controls” $X_{1}$ and $X_{2}$ , which are proxies of $A$ . The conditional ignorability condition (8) still holds in this case.

In fact, our framework is considerably more general, and it covers any target parameter of the following form.

**Assumption 1 (Target “Long” Parameter)**

*The target parameter $\theta$ is a continuous linear functional of the long regression:

$$

\theta:={\mathrm{E}}m(W,g); \tag{9}

$$

where the mapping $f\mapsto m(w,f)$ is linear in $f\in L^{2}(P_{W})$ , and the mapping $f\mapsto{\mathrm{E}}m(W,f)$ is continuous in $f$ with respect to the $L^{2}(P_{W})$ norm.*

This formulation covers the two previous examples with scores $m(W,g)=g(1,X,A)-g(0,X,A)$ for the ATE and $m(W,g)=\partial_{d}g(D,X,A)$ for the ACD. The continuity condition holds under the regularity conditions provided in the remark below. We discuss many other examples of this form later in Section 3.4.

**Remark 1 (Regularity Conditions for ATE and ACD)**

*As regularity conditions for the ATE we assume ${\mathrm{E}}Y^{2}<\infty$ and the weak overlap condition:

$$

{\mathrm{E}}[P(D=1\mid X,A)^{-1}P(D=0\mid X,A)^{-1}]<\infty.

$$

As regularity conditions for the ACD we assume ${\mathrm{E}}Y^{2}<\infty$ , that the conditional density $d\mapsto f(d|x,a)$ is continuously differentiable on its support $\mathcal{D}_{x,a}$ , the regression function $d\mapsto g(d,x,a)$ is continuously differentiable on $\mathcal{D}_{x,a}$ , and we have that $f(d|x,a)$ vanishes whenever $d$ is on the boundary of $\mathcal{D}_{x,a}$ . The above needs to hold for all values $x$ and $a$ in the support of $(X,A)$ . We also impose the bounded information assumption:

$$

{\mathrm{E}}(\partial_{d}\log f(D\mid X,A))^{2}<\infty.

$$

These conditions imply that Assumption 1 holds, by Theorem 3 given in Section 3.4. ∎*

The key problem is that we do not observe $A$ . Therefore we can only identify the “short” conditional expectation of $Y$ given $D$ and $X$ , i.e.

$$

g_{s}(D,X):={\mathrm{E}}[Y\mid D,X].

$$

With the short regression in hand, we can compute proxies (or approximations) $\theta_{s}$ for $\theta$ . In particular, for the ATE, the short parameter consists of

$$

\theta_{s}={\mathrm{E}}[g_{s}(1,X)-g_{s}(0,X)],

$$

and for the ACD,

$$

\theta_{s}={\mathrm{E}}[\partial_{d}g_{s}(D,X)].

$$

In this general framework, the proxy parameter can also be expressed as the same linear functional applied to the short regression, $g_{s}(W^{s})$ .

**Assumption 2 (Proxy “Short” Parameter)**

*The proxy parameter $\theta_{s}$ is defined by replacing the long regression $g$ with the short regression $g_{s}$ in the definition of the target parameter:

$$

\theta_{s}:={\mathrm{E}}m(W,g_{s}).

$$

We require $m(W,g_{s})=m(W^{s},g_{s})$ , i.e., the score depends only on $W^{s}$ when evaluated at $g_{s}$ .*

In the two working examples this assumption is satisfied, since $m(W,g_{s})=m(W^{s},g_{s})=g_{s}(1,X)-g_{s}(0,X)$ for the ATE and $m(W,g_{s})=m(W^{s},g_{s})=\partial_{d}g_{s}(D,X)$ for the ACD. Section 3.4 verifies this assumption for other examples.

Our goal is to characterize and provide bounds on the omitted variable bias (OVB), i.e., the difference between the “short” and “long” functionals,

$$

\theta_{s}-\theta,

$$

under assumptions that limit the strength of confounding, and perform statistical inference on its size.

### 3.2. Omitted variable bias for linear functionals of the CEF

The key to bounding the bias is the following lemma that characterizes the target parameters and their proxies as inner products of regression functions with terms called Riesz representers (RR).

**Lemma 1 (Riesz Representation)**

*There exist unique square integrable random variables $\alpha(W)$ and $\alpha_{s}(W^{s})$ , the long and short Riesz representers, such that

$$

\theta={\mathrm{E}}m(W,g)={\mathrm{E}}g(W)\alpha(W),\quad\theta_{s}={\mathrm{E}}m(W^{s},g_{s})={\mathrm{E}}g_{s}(W^{s})\alpha_{s}(W^{s}),

$$

for all square-integrable $g$ ’s and $g_{s}$ . Furthermore, $\alpha_{s}(W^{s})$ is the projection of $\alpha$ in the sense that

$$

\alpha_{s}(W^{s})={\mathrm{E}}[\alpha(W)\mid W^{s}].

$$*

In the case of the ATE with a binary treatment, the representers are just the classical inverse probability of treatment (Horvitz-Thompson) weights:

$$

\alpha(W)=\frac{D}{P(D=1\mid X,A)}-\frac{1-D}{P(D=0\mid X,A)},\quad\alpha_{s}(W^{s})=\frac{D}{P(D=1\mid X)}-\frac{1-D}{P(D=0\mid X)}.

$$

This follows from change of measure arguments. While it may not be immediately obvious that $\alpha_{s}={\mathrm{E}}[\alpha\mid D,X]$ , one can easily show this by applying Bayes’ rule.
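To spell out the Bayes' rule step for the treated arm (the control arm is symmetric), write $f(a\mid\cdot)$ for a conditional density of $A$ ; this notation is introduced only for this sketch and assumes that such a density exists. On the event $D=1$ we have $\alpha(W)=1/P(D=1\mid X,A)$ , so

$$

{\mathrm{E}}[\alpha(W)\mid D=1,X]=\int\frac{f(a\mid D=1,X)}{P(D=1\mid X,a)}\,da=\int\frac{1}{P(D=1\mid X,a)}\cdot\frac{P(D=1\mid X,a)f(a\mid X)}{P(D=1\mid X)}\,da=\frac{1}{P(D=1\mid X)},

$$

which is the value of the short representer on the event $D=1$ .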

In the case of the ACD with a continuous treatment, using integration by parts we can verify that the representers are logarithmic derivatives of the conditional densities:

$$

\alpha(W)=-\partial_{d}\log f(D\mid X,A),\quad\alpha_{s}(W^{s})=-\partial_{d}\log f(D\mid X).

$$

We give more involved examples in the next section.

Using this lemma, we obtain the following characterization of the OVB and bounds on its size.

**Theorem 2 (OVB and Sharp Bounds)**

*Consider the long and short parameters $\theta$ and $\theta_{s}$ as given by Assumptions 1 and 2. We then have that the OVB is

$$

\theta_{s}-\theta=-{\mathrm{E}}(g-g_{s})(\alpha-\alpha_{s}),

$$

that is, it is minus the covariance between the regression error and the RR error. Therefore, the squared bias can be bounded as

$$

|\theta_{s}-\theta|^{2}=\rho^{2}B^{2}\leq B^{2},

$$

where

$$

B^{2}:={\mathrm{E}}(g-g_{s})^{2}{\mathrm{E}}(\alpha-\alpha_{s})^{2},\quad\rho^{2}:=\mathrm{Cor}^{2}(g-g_{s},\alpha-\alpha_{s}).

$$

The bound $B^{2}$ is the product of additional variations that omitted confounders generate in the regression function and in the RR. This bound is sharp in the sense that maximizing $\rho^{2}$ over $\alpha$ and $g$ subject to fixing $B^{2}$ and ${\mathrm{E}}(g-g_{s})^{2}\leq{\mathrm{E}}(Y-g_{s})^{2}$ gives value 1.*

This is the main conceptual result of the paper, and it is new. It covers a rich variety of causal estimands of interest, as long as they can be written as linear functionals of the long regression. We analyze further examples of this class of estimands in Section 3.4.
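The squared-bias identity of Theorem 2 can be checked by exact enumeration in a small discrete model. The sketch below is only an illustration under assumed values: a binary treatment $D$ , a binary latent confounder $A$ , no observed covariates, and made-up probabilities and regression values that do not come from the paper.

```python
import numpy as np

# Hypothetical discrete model (not from the paper): binary D, binary A, no X.
p_a = np.array([0.6, 0.4])        # P(A = a)
p_d1 = np.array([0.3, 0.7])       # P(D = 1 | A = a)
g = np.array([[1.0, 2.5],         # long regression g(d, a); rows index d, columns index a
              [2.0, 4.5]])

p_da = np.vstack([(1 - p_d1) * p_a, p_d1 * p_a])   # joint P(D = d, A = a)
p_d = p_da.sum(axis=1)                             # marginal P(D = d)

# Long and short Riesz representers for the ATE (inverse probability weights).
alpha = np.vstack([-1 / (1 - p_d1), 1 / p_d1])
alpha_s = np.array([-1 / p_d[0], 1 / p_d[1]])

# Short regression g_s(d) = E[g(d, A) | D = d].
g_s = (g * p_da).sum(axis=1) / p_d

theta = np.sum(p_a * (g[1] - g[0]))   # long ATE
theta_s = g_s[1] - g_s[0]             # short ATE


def expect(f):
    """Expectation of a function tabulated on the (d, a) grid."""
    return float(np.sum(p_da * f))


dg = g - g_s[:, None]             # regression error g - g_s
da = alpha - alpha_s[:, None]     # RR error alpha - alpha_s
B2 = expect(dg**2) * expect(da**2)
rho2 = expect(dg * da) ** 2 / B2
bias2 = (theta_s - theta) ** 2

print(f"bias^2 = {bias2:.6f}, rho^2 * B^2 = {rho2 * B2:.6f}, B^2 = {B2:.6f}")
```

Because both errors have mean zero, the squared correlation is computed from raw second moments. Changing the assumed tables changes the size of the bias, but the relation between the squared bias, $\rho^{2}$ and $B^{2}$ should continue to hold.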

### 3.3. Characterization of the OVB bounds

In the same spirit of Section 2, we can further derive useful characterizations of the bounds.

**Corollary 2 (Interpreting OVB Bounds in Terms of $R^{2}$)**

*The bound of Theorem 2 can be re-expressed as

$$

B^{2}=C^{2}_{Y}C^{2}_{D}S^{2},\quad S^{2}:={\mathrm{E}}(Y-g_{s})^{2}{\mathrm{E}}\alpha_{s}^{2}, \tag{10}

$$

where

$$

C^{2}_{Y}:=\frac{{\mathrm{E}}(g-g_{s})^{2}}{{\mathrm{E}}(Y-g_{s})^{2}}=R^{2}_{Y-g_{s}\sim g-g_{s}},\quad C^{2}_{D}:=\frac{{\mathrm{E}}\alpha^{2}-{\mathrm{E}}\alpha^{2}_{s}}{{\mathrm{E}}\alpha^{2}_{s}}=\frac{1-R^{2}_{\alpha\sim\alpha_{s}}}{R^{2}_{\alpha\sim\alpha_{s}}}.

$$*

This generalizes the result of Corollary 1 to fully nonlinear models, and general target parameters defined as linear functionals of the long regression. As before, the bound is the product of the term $S^{2}$ , which is directly identifiable from the observed distribution of $(Y,D,X)$ , and the term $C^{2}_{Y}C^{2}_{D}$ , which is not identifiable, and needs to be restricted through hypotheses that limit strength of confounding.

Here, again, the terms $C^{2}_{Y}$ and $C^{2}_{D}$ generally measure the strength of confounding that the omitted variables generate in the outcome regression and in the treatment:

- $R^{2}_{Y-g_{s}\sim g-g_{s}}$ in the first factor measures the proportion of residual variance in the outcome explained by confounders;

- $1-R^{2}_{\alpha\sim\alpha_{s}}$ in the second factor measures the proportion of residual variation of the long RR generated by latent confounders.

Likewise, we have the same useful interpretation of $C^{2}_{Y}$ as the nonparametric partial $R^{2}$ of $A$ with $Y$ , given $D$ and $X$ , namely, $C^{2}_{Y}=\eta^{2}_{Y\sim A\mid D,X}$ . The interpretation of $1-R^{2}_{\alpha\sim\alpha_{s}}$ can be further specialized for different cases, as follows.

**Remark 2 (Interpretation of $1-R^{2}_{\alpha\sim\alpha_{s}}$ for the ATE with a Binary Treatment)**

*For the ATE example, we have that

$$

1-R^{2}_{\alpha\sim\alpha_{s}}=\frac{{\mathrm{E}}[1/\text{Var}(D|X,A)]-{\mathrm{E}}[1/\text{Var}(D|X)]}{{\mathrm{E}}[1/\text{Var}(D|X,A)]}\in[0,1]. \tag{11}

$$

That is, $1-R^{2}_{\alpha\sim\alpha_{s}}$ measures the relative gain in the average precision of the treatment model due to $A$ . Precision is the inverse of the variance. Thus, the interpretation of $1-R^{2}_{\alpha\sim\alpha_{s}}$ for the ATE with a binary treatment parallels that of the partially linear model (compare it to equation (5)), with the sole distinction being that, here, gains in predictive power are measured by the relative increase in precision rather than the relative decrease in variance. This connection can be strengthened by considering a latent Gaussian confounder model $D=1(D^{*}>0)$ , where $D^{*}=g(X)-\mu A-\sqrt{1-\mu^{2}}\epsilon_{D},$ with $\epsilon_{D}$ and $A$ both mutually independent standard Gaussian, and also independent of $X$ . Note that $g(X)$ is identified from the relation ${\mathrm{E}}[D\mid X]=\Phi(g(X))$ . Then $P[D=1\mid X,A]=\Phi((g(X)-\mu A)/\sqrt{1-\mu^{2}})$ , and $P[D=1\mid X]=\Phi(g(X))$ , from which the relative gain in precision can be computed. Then here the gain in precision is a monotone function of $\mu^{2}=\eta^{2}_{D^{*}\sim A|X}$ , the $R^{2}$ in the latent regression of $D^{*}$ on $A$ , after adjusting for $X$ . This connection may be useful for empirical work. ∎*
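As a rough numerical illustration of the latent Gaussian confounder model, the sketch below evaluates the relative gain in precision of equation (11) at a single covariate value, integrating over $A$ on a grid. The function names, the grid, and the choice $g(x)=0$ are assumptions of this sketch. It also restricts attention to $\mu^{2}<1/2$ , where the average long precision is finite under this Gaussian design.

```python
import math
import numpy as np


def norm_cdf(z):
    """Standard normal CDF via erfc, which stays accurate in the tails."""
    return 0.5 * math.erfc(-z / math.sqrt(2.0))


def precision_gain(mu, g0=0.0, grid=np.linspace(-10.0, 10.0, 4001)):
    """Relative gain in average precision, equation (11), at one covariate
    value with g(x) = g0, in the latent Gaussian confounder model."""
    if not 0.0 <= mu**2 < 0.5:
        raise ValueError("this sketch assumes mu^2 < 1/2")
    # Normalized N(0, 1) weights for the confounder A on the grid.
    w = np.exp(-0.5 * grid**2)
    w = w / w.sum()
    s = math.sqrt(1.0 - mu**2)
    long_prec = 0.0
    for a, wa in zip(grid, w):
        z = (g0 - mu * a) / s
        # Var(D | X, A) = p (1 - p), with p = Phi(z) and 1 - p = Phi(-z).
        long_prec += wa / (norm_cdf(z) * norm_cdf(-z))
    # Var(D | X) = Phi(g0) (1 - Phi(g0)).
    short_prec = 1.0 / (norm_cdf(g0) * norm_cdf(-g0))
    return (long_prec - short_prec) / long_prec


for mu in (0.0, 0.2, 0.4, 0.6):
    print(f"mu^2 = {mu**2:.2f}: 1 - R^2 = {precision_gain(mu):.4f}")
```

A table of this kind can help translate a judgment about the latent $R^{2}$ , $\mu^{2}$ , into a value for $1-R^{2}_{\alpha\sim\alpha_{s}}$ ; with covariates, the two precisions would additionally be averaged over the distribution of $X$ .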

And an analogous interpretation applies for average causal derivatives.

**Remark 3 (Interpretation of $1-R^{2}_{\alpha\sim\alpha_{s}}$ for Average Causal Derivatives)**

*For the ACD example,

$$

1-R^{2}_{\alpha\sim\alpha_{s}}=\frac{{\mathrm{E}}[(\partial_{d}\log f(D\mid X,A))^{2}]-{\mathrm{E}}[(\partial_{d}\log f(D\mid X))^{2}]}{{\mathrm{E}}[(\partial_{d}\log f(D\mid X,A))^{2}]}\in[0,1], \tag{12}

$$

which can be interpreted as the relative gain in information that the confounder $A$ provides about the location of $D$ . Furthermore, if $D$ is homoscedastic Gaussian, conditional on both $X$ and $(X,A)$ , we then have

$$

\partial_{d}\log f(D\mid X,A)=-\frac{D-{\mathrm{E}}[D\mid X,A]}{{\mathrm{E}}(D-{\mathrm{E}}[D\mid X,A])^{2}},\quad\partial_{d}\log f(D\mid X)=-\frac{D-{\mathrm{E}}[D\mid X]}{{\mathrm{E}}(D-{\mathrm{E}}[D\mid X])^{2}},

$$

so that $1-R^{2}_{\alpha\sim\alpha_{s}}$ simplifies to the nonparametric $R^{2}$ of the latent variable with the treatment, similarly to the partially linear model, i.e., $1-R^{2}_{\alpha\sim\alpha_{s}}=\eta^{2}_{D\sim A|X}$ . ∎*
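The simplification in the homoscedastic Gaussian case can be traced in two steps. Write $\sigma^{2}_{\ell}:={\mathrm{E}}(D-{\mathrm{E}}[D\mid X,A])^{2}$ and $\sigma^{2}_{s}:={\mathrm{E}}(D-{\mathrm{E}}[D\mid X])^{2}$ for the long and short residual variances of the treatment; these two symbols are introduced only for this sketch. Squaring the Gaussian scores and taking expectations gives

$$

{\mathrm{E}}[(\partial_{d}\log f(D\mid X,A))^{2}]=\frac{\sigma^{2}_{\ell}}{\sigma^{4}_{\ell}}=\frac{1}{\sigma^{2}_{\ell}},\quad{\mathrm{E}}[(\partial_{d}\log f(D\mid X))^{2}]=\frac{1}{\sigma^{2}_{s}},

$$

and substituting into equation (12) yields

$$

1-R^{2}_{\alpha\sim\alpha_{s}}=\frac{1/\sigma^{2}_{\ell}-1/\sigma^{2}_{s}}{1/\sigma^{2}_{\ell}}=\frac{\sigma^{2}_{s}-\sigma^{2}_{\ell}}{\sigma^{2}_{s}}=\eta^{2}_{D\sim A|X}.

$$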

Beyond making direct plausibility judgments on the strength of confounding using the above quantities, analysts can also leverage judgments of relative importance of variables to bound the size of the bias (see, e.g., Imbens, 2003; Cinelli and Hazlett, 2020). For instance, if one has reasons to believe that $A$ would not generate as large a gain in explanatory power as certain key observed covariates $X_{j}$ , this can be used to formally place bounds on the strength of confounding due to $A$ . This allows one to assess, for instance, whether confounders as strong as or stronger than observed covariates would be sufficient to overturn an empirical result. We elaborate the benchmarking procedure formally in Section F of the appendix and illustrate its use in the empirical example. These results extend previous benchmarking ideas for linear regression models to the general case.

### 3.4. Theoretical details for leading causal estimands

We now provide theoretical details for a wide variety of interesting and important causal estimands. Recall that we use $W=(D,X,A)$ to denote the “long” set of regressors and $W^{s}=(D,X)$ to denote the “short” list of regressors.

Let us start with examples for the binary treatment case, with the understanding that finitely discrete treatments can be analyzed similarly.

**Example 1 (Weighted Average Potential Outcome)**

*Let $D\in\{0,1\}$ be the indicator of the receipt of the treatment. Define the long parameter as

$$

\theta={\mathrm{E}}[g(\bar{d},X,A)\ell(W^{s})],

$$

where $w^{s}\mapsto\ell(w^{s})$ is a bounded non-negative weighting function and $\bar{d}$ is a fixed value in $\{0,1\}$ . We define the short parameter as

$$

\theta_{s}={\mathrm{E}}[g_{s}(\bar{d},X)\ell(W^{s})].

$$

We assume ${\mathrm{E}}Y^{2}<\infty$ and the weak overlap condition

$$

{\mathrm{E}}[\ell^{2}(W^{s})/P(D=\bar{d}\mid X,A)]<\infty.

$$*

The long parameter is a weighted average potential outcome (PO) when we set the treatment to $\bar{d}$ , under the standard conditional ignorability assumption (8). The short parameter is a statistical approximation based on the short regression. In this example, setting

- $\ell(w^{s})=1$ gives the average PO in the entire population;

- $\ell(w^{s})=1(x\in{\mathcal{N}})/P(X\in\mathcal{N})$ the average PO for group $\mathcal{N}$ ;

- $\ell(w^{s})=1(d=1)/P(D=1)$ the average PO for the treated.

Above we can consider $\mathcal{N}$ as small regions shrinking in volume with the sample size, to make the averages local, as in Chernozhukov et al. (2018b), but for simplicity we take them as fixed in this paper.

**Example 2 (Weighted Average Treatment Effects)**

*In the setting of the previous example, define the long parameter

$$

\theta={\mathrm{E}}[(g(1,X,A)-g(0,X,A))\ell(W^{s})],

$$

and the short parameter as

$$

\theta_{s}={\mathrm{E}}[(g_{s}(1,X)-g_{s}(0,X))\ell(W^{s})].

$$

We further assume ${\mathrm{E}}Y^{2}<\infty$ and the weak overlap condition

$$

{\mathrm{E}}[\ell^{2}(W^{s})/\{P(D=0\mid X,A)P(D=1\mid X,A)\}]<\infty.

$$*

The long parameter is a weighted average treatment effect under the standard conditional ignorability assumption. In this example, setting

- $\ell(w^{s})=1$ gives ATE in the entire population;

- $\ell(w^{s})=1(x\in\mathcal{N})/P(X\in\mathcal{N})$ the ATE for group $\mathcal{N}$ ;

- $\ell(w^{s})=1(d=1)/P(D=1)$ the ATE for the treated;

- $\ell(x)=\pi(x)$ the average value of policy (APV) $\pi$ ,

where the policy $\pi$ assigns a fraction $0\leq\pi(x)\leq 1$ of the subpopulation with observed covariate value $x$ to receive the treatment.

In what follows $D$ does not need to be binary. We next consider a weighted average effect of changing observed covariates $W^{s}$ according to a transport map $w^{s}\mapsto T(w^{s})$ , where $T$ is a deterministic measurable map from $\mathcal{W}^{s}$ to $\mathcal{W}^{s}$ . For example, the policy

$$

(D,X,A)\mapsto(D+1,X,A)

$$

adds a unit to the treatment $D$ , that is $T(W^{s})=(D+1,X)$ . This has a causal interpretation if the policy induces the equivariant change in the regression function, namely the counterfactual outcome $\tilde{Y}$ under the policy obeys ${\mathrm{E}}[\tilde{Y}|X,A]=g(T(W^{s}),A)$ , and the counterfactual covariates are given by $\tilde{W}=(T(W^{s}),A)$ .

**Example 3 (Average Policy Effect from Transporting $W^{s}$)**

*For a bounded weighting function $w^{s}\mapsto\ell(w^{s})$ , the long parameter is given by

$$

\theta={\mathrm{E}}[\{g(T(W^{s}),A)-g(W^{s},A)\}\ell(W^{s})].

$$

The short form of this parameter is

$$

\theta_{s}={\mathrm{E}}[\{g_{s}(T(W^{s}))-g_{s}(W^{s})\}\ell(W^{s})].

$$

As the regularity conditions we require that the support of $P_{\tilde{W}}=\mathrm{Law}(T(W^{s}),A)$ is included in the support of $P_{W}$ , and require the weak overlap condition

$$

{\mathrm{E}}[(\ell(dP_{\tilde{W}}-dP_{W})/dP_{W})^{2}]<\infty.

$$*

We now turn to examples with continuous treatments $D$ taking values in $\mathbb{R}^{k}$ . Consider the average causal effect of the policy that shifts the distribution of covariates via the map $W=(D,X,A)\mapsto(T(W^{s}),A)=(D+rt(W^{s}),X,A)$ weighted by $\ell(W^{s})$ , keeping the long regression function invariant. The following long parameter $\theta$ is an approximation to $1/r$ times this average causal effect for small values of $r$ . This example is a differential version of the previous example.

**Example 4 (Weighted Average Incremental Effects)**

*Consider the long parameter taking the form of the average directional derivative:

$$

\theta={\mathrm{E}}[\ell(W^{s})t(W^{s})^{\prime}\partial_{d}g(D,X,A)],

$$

where $\ell$ is a bounded weighting function and $t$ is a bounded direction function. The short form of this parameter is

$$

\theta_{s}={\mathrm{E}}[\ell(W^{s})t(W^{s})^{\prime}\partial_{d}g_{s}(D,X)].

$$

As regularity conditions, we suppose that ${\mathrm{E}}Y^{2}<\infty$ . Further for each $(x,a)$ in the support of $(X,A)$ , and each $d$ in $\mathcal{D}_{x,a}$ , the support of $D$ given $(X,A)=(x,a)$ , the derivative maps $d\mapsto\partial_{d}g(d,x,a)$ and $d\mapsto g(w)\omega(w)$ , for $\omega(w):=\ell(d,x)t(d,x)f(d|x,a)$ , are continuously differentiable; the set $\mathcal{D}_{x,a}$ is bounded, and its boundary is piecewise-smooth; and $\omega(w)$ vanishes for each $d$ in this boundary. Moreover, we assume the weak overlap:

$$

{\mathrm{E}}[(\mathrm{div}_{d}\omega(W)/f(D|X,A))^{2}]<\infty.

$$*

Another example is that of a policy that shifts the entire distribution of observed covariates, independently of $A$ . The following long parameter corresponds to the average causal contrast of two policies that set the distribution of observed covariates $W^{s}$ to $F_{0}$ and $F_{1}$ , independently of $A$ . Note that this example is different from the transport example, since here the dependence between $A$ and $W^{s}$ is eliminated under the interventions.

**Example 5 (Policy Effect from Changing Distribution of $W^{s}$)**

*Define the long parameter as

$$

\theta=\int\left[\int g(w^{s},a)dP_{A}(a)\right]\ell(w^{s})d\mu(w^{s});\quad\mu(w^{s})=F_{1}(w^{s})-F_{0}(w^{s}),

$$

where $\ell$ is a bounded weight function, and the short parameter as

$$

\theta_{s}=\int g_{s}(w^{s})\ell(w^{s})d\mu(w^{s});\quad\mu(w^{s})=F_{1}(w^{s})-F_{0}(w^{s}).

$$

As the regularity conditions we require that the supports of $F_{0}$ and $F_{1}$ are contained in the support of $W^{s}$ , and that the measure $dP_{A}\times dF_{k}$ is absolutely continuous with respect to the measure $dP_{W}$ on $\mathcal{A}\times\text{support}(\ell)$ . We further assume that ${\mathrm{E}}Y^{2}<\infty$ and the weak overlap:

$$

{\mathrm{E}}[(\ell[dP_{A}\times d(F_{1}-F_{0})]/dP)^{2}]<\infty.

$$*

The following result establishes the validity of the OVB formulas and bounds for all examples.

**Theorem 3 (OVB Validity in Examples 1-5)**

*Under the conditions stated in Examples 1,2,3,5, Assumptions 1 and 2 are satisfied. Under conditions stated in Example 4, Assumptions 1 and 2 are satisfied for the Hahn-Banach extension of the mapping $g\mapsto{\mathrm{E}}m(W,g)$ to the entire $L^{2}(P_{W})$ , given by $g\mapsto{\mathrm{E}}g(W)\alpha(W)$ . The scores for Examples 1-5 are given by: The long RR and corresponding short RR are given by: where above we used the notations: $\bar{\ell}(X,A):={\mathrm{E}}[\ell(W^{s})|X,A],\bar{\ell}(X):={\mathrm{E}}[ \ell(W^{s})|X]$ , $p(d\mid x,a):={\mathrm{P}}(D=d|X=x,A=a),p(d\mid x):={\mathrm{P}}(D=d|X=x).$ In Examples 1-2, when the weight function only depends on $X$ , namely $\ell(W^{s})=\ell(X)$ , we have the simplifications $\bar{\ell}(X,A)=\bar{\ell}(X)=\ell(X).$*

As we have seen in Remarks 2 and 3, it may be useful to further specialize the interpretation of the sensitivity parameters $1-R^{2}_{\alpha\sim\alpha_{s}}$ for the many cases encompassed by the examples of Theorem 3. As this would be an extensive task, we leave such specializations to future work.

## 4. Statistical Inference on the Bounds

The bounds for the target parameter $\theta$ take the form

$$

\theta_{\pm}=\theta_{s}\pm|\rho|C_{Y}C_{D}S,\quad S^{2}={\mathrm{E}}(Y-g_{s})^{2}{\mathrm{E}}\alpha^{2}_{s}.

$$

The components $C_{Y}$ , $C_{D}$ are set through hypotheses on the maximum explanatory power of omitted variables. Without further assumptions on the data generating process, $|\rho|$ is set to its upper bound of $|\rho|=1$ , which is the most conservative scenario. Researchers may also investigate less conservative scenarios for $|\rho|$ based on, for example, empirical benchmarking, as we illustrate in the empirical example. The estimable components of the bounds are $S$ and $\theta_{s}$ . We can estimate these components via debiased machine learning (DML), which is a form of the classical “one-step” semi-parametric correction (Levit, 1975; Hasminskii and Ibragimov, 1978; Pfanzagl and Wefelmeyer, 1985; Bickel et al., 1993; Newey, 1994; Chernozhukov et al., 2018a, 2022a) based on the Neyman orthogonal scores we give for these components, combined with cross-fitting, an efficient form of data-splitting.

For debiased machine learning of $\theta_{s}$ , we exploit the representation

$$

\theta_{s}={\mathrm{E}}[m(W^{s},g_{s})+(Y-g_{s})\alpha_{s}],

$$

as in Chernozhukov et al. (2022c, 2021). This representation is Neyman orthogonal with respect to perturbations of $(g_{s},\alpha_{s})$ , which is a key property required for DML. Another component to be estimated is

$$

{\mathrm{E}}(Y-g_{s})^{2}=:\sigma^{2}_{s},

$$

which is also Neyman-orthogonal with respect to $g_{s}$ . The final component to be estimated is ${\mathrm{E}}\alpha^{2}_{s}$ . For this we explore the following formulation:

$$

{\mathrm{E}}\alpha^{2}_{s}=2{\mathrm{E}}m(W^{s},\alpha_{s})-{\mathrm{E}}\alpha^{2}_{s}=:\nu^{2}_{s},

$$

where the latter parameterization is Neyman-orthogonal. Specifically Neyman orthogonality refers to the property:

$$

\partial_{g,\alpha}{\mathrm{E}}[m(W^{s},g)+(Y-g)\alpha]\Big|_{\alpha=\alpha_{s},g=g_{s}}=0,

$$

where $\partial$ is the Gateaux (pathwise derivative) operator over directions $h\in L^{2}(P_{W^{s}})$ .
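The formulation of $\nu^{2}_{s}$ given earlier in this section, and its orthogonality, can be verified in two short steps; the direction $h$ and the scalar $t$ below are notation used only for this sketch. Applying the Riesz representation of Lemma 1 to the function $\alpha_{s}$ itself gives ${\mathrm{E}}m(W^{s},\alpha_{s})={\mathrm{E}}\alpha^{2}_{s}$ , so that

$$

2{\mathrm{E}}m(W^{s},\alpha_{s})-{\mathrm{E}}\alpha^{2}_{s}=2{\mathrm{E}}\alpha^{2}_{s}-{\mathrm{E}}\alpha^{2}_{s}={\mathrm{E}}\alpha^{2}_{s}.

$$

Perturbing $\alpha_{s}$ in a direction $h\in L^{2}(P_{W^{s}})$ and using linearity of $m$ in its second argument,

$$

\frac{d}{dt}\Big\{2{\mathrm{E}}m(W^{s},\alpha_{s}+th)-{\mathrm{E}}(\alpha_{s}+th)^{2}\Big\}\Big|_{t=0}=2{\mathrm{E}}m(W^{s},h)-2{\mathrm{E}}\alpha_{s}h=0,

$$

where the last equality is again the Riesz representation property.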

Application of DML theory in Chernozhukov et al. (2018a) and the delta-method gives the statistical properties of the estimated bounds under the condition that machine learning of $g_{s}$ and $\alpha_{s}$ is of sufficiently high quality, with learning rate faster than $n^{-1/4}$ . The estimation relies on the following generic algorithm.

**Definition 1 (DML($\psi$))**

*Input the Neyman-orthogonal score $\psi(Z;\beta,\eta)$ , where $\eta=(g,\alpha)$ . Then (1), given a sample $(Z_{i}:=(Y_{i},D_{i},X_{i}))_{i=1}^{n}$ , randomly partition the sample into folds $(I_{\ell})_{\ell=1}^{L}$ of approximately equal size. Denote by $I_{\ell}^{c}$ the complement of $I_{\ell}$ . (2) For each $\ell$ , estimate $\widehat{\eta}_{\ell}=(\widehat{g}_{\ell},\widehat{\alpha}_{\ell})$ from observations in $I_{\ell}^{c}$ . (3) Estimate $\beta$ as a root of: $0=n^{-1}\sum_{\ell=1}^{L}\sum_{i\in I_{\ell}}\psi(Z_{i};\beta,\widehat{\eta}_{\ell}).$ Output $\widehat{\beta}$ and the estimated scores $\widehat{\psi}^{o}(Z_{i})=\psi(Z_{i};\widehat{\beta},\widehat{\eta}_{\ell})$ for each $i\in I_{\ell}$ and each $\ell$ .*
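The three steps of this definition can be sketched in a few lines for scores that are linear in $\beta$ , as all scores in this section are. The code below is a minimal illustration, not the implementation in the packages cited in the paper: the function names, the simulated design with a binary covariate, and the cell-mean "learners" are all assumptions made for the example, and in practice the nuisance step would use a machine learning method.

```python
import numpy as np


def dml_linear_score(Z, fit_nuisance, score_part, n_folds=5, seed=0):
    """Cross-fitted root of a score of the form psi = a(Z; eta) - beta."""
    n = len(Z["Y"])
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(n), n_folds)      # step (1)
    a_hat = np.empty(n)
    for idx in folds:
        train = np.setdiff1d(np.arange(n), idx)               # complement of the fold
        eta_hat = fit_nuisance({k: v[train] for k, v in Z.items()})   # step (2)
        a_hat[idx] = score_part({k: v[idx] for k, v in Z.items()}, eta_hat)
    beta_hat = a_hat.mean()                                    # step (3)
    return beta_hat, a_hat - beta_hat                          # estimate, estimated scores


def fit_cells(train):
    # Cell-mean learners; adequate only because X is binary in this toy design.
    D, X, Y = train["D"], train["X"], train["Y"]
    g = np.array([[Y[(D == d) & (X == x)].mean() for x in (0, 1)] for d in (0, 1)])
    p = np.array([D[X == x].mean() for x in (0, 1)])
    return g, p


def ate_score_part(test, eta):
    # m(W^s, g) + (Y - g) * alpha for the short ATE.
    g, p = eta
    D, X, Y = test["D"], test["X"], test["Y"]
    alpha = D / p[X] - (1 - D) / (1 - p[X])    # short Riesz representer
    return g[1, X] - g[0, X] + (Y - g[D, X]) * alpha


# Simulated data without omitted confounders; the true ATE is 2.
rng = np.random.default_rng(1)
n = 4000
X = rng.integers(0, 2, n)
D = rng.binomial(1, np.where(X == 1, 0.7, 0.3))
Y = 2.0 * D + 1.0 * X + rng.normal(size=n)

theta_hat, psi_hat = dml_linear_score({"Y": Y, "D": D, "X": X}, fit_cells, ate_score_part)
se = psi_hat.std(ddof=1) / np.sqrt(n)
print(f"theta_s estimate: {theta_hat:.3f} (se {se:.3f})")
```

Returning the estimated scores alongside the point estimate is what makes the later variance and covariance calculations straightforward.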

Therefore the estimators are defined as

$$

\widehat{\theta}_{s}:=\mathrm{DML}(\psi_{\theta});\quad\widehat{\sigma}^{2}_{s}:=\mathrm{DML}(\psi_{\sigma^{2}});\quad\widehat{\nu}^{2}_{s}:=\mathrm{DML}(\psi_{\nu^{2}});

$$

for the scores

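$$

\psi_{\theta}(Z;\theta,g,\alpha):=m(W^{s},g)+(Y-g(W^{s}))\alpha(W^{s})-\theta;

$$

$$

\psi_{\sigma^{2}}(Z;\sigma^{2},g):=(Y-g(W^{s}))^{2}-\sigma^{2};

$$

$$

\psi_{\nu^{2}}(Z;\nu^{2},\alpha):=2m(W^{s},\alpha)-\alpha^{2}(W^{s})-\nu^{2}.

$$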

We say that an estimator $\hat{\beta}$ of $\beta$ is asymptotically linear and Gaussian with the centered influence function $\psi^{o}(Z)$ if

$$

\sqrt{n}(\hat{\beta}-\beta)=\frac{1}{\sqrt{n}}\sum_{i=1}^{n}\psi^{o}(Z_{i})+o_{{\mathrm{P}}}(1)\leadsto N(0,{\mathrm{E}}\psi^{o2}(Z)). \tag{1}

$$

The application of the results in Chernozhukov et al. (2018a) for linear score functions yields the following result.

**Lemma 2 (DML for Bound Components)**

*Suppose that each of the $\psi$ ’s listed above and the machine learners $\hat{\eta}_{\ell}=(\hat{g}_{\ell},\hat{\alpha}_{\ell})$ of $\eta_{0}=(g_{s},\alpha_{s})$ in $L^{2}(P_{W^{s}})$ obey Assumptions 3.1 and 3.2 in Chernozhukov et al. (2018a); in particular, the rate of learning $\eta_{0}$ in the $L^{2}(P_{W^{s}})$ norm needs to be $o_{P}(n^{-1/4})$ . Then the estimators are asymptotically linear and Gaussian with influence functions:

$$

\psi^{o}_{\theta}(Z):=\psi_{\theta}(Z;\theta_{s},g_{s},\alpha_{s});\quad\psi^{o}_{\sigma^{2}}(Z):=\psi_{\sigma^{2}}(Z;\sigma^{2}_{s},g_{s});\quad\psi^{o}_{\nu^{2}}(Z):=\psi_{\nu^{2}}(Z;\nu^{2}_{s},\alpha_{s}).

$$

The covariance matrix of these influence functions can be estimated by the empirical covariance of the estimated scores.*

The resulting plug-in estimator for the bounds is then:

$$

\widehat{\theta}_{\pm}=\widehat{\theta}_{s}\pm|\rho|C_{Y}C_{D}\widehat{S},\quad\widehat{S}^{2}=\widehat{\sigma}^{2}_{s}\widehat{\nu}^{2}_{s}.

$$

Confidence bounds for the bounds can be constructed using the following result.

**Theorem 4 (DML Confidence Bounds for Bounds)**

*Under the conditions of Lemma 2, the plug-in estimator $\widehat{\theta}_{\pm}$ is also asymptotically linear and Gaussian with the influence function:

$$

\varphi^{o}_{\pm}(Z)=\psi^{o}_{\theta}(Z)\pm\frac{|\rho|}{2}\frac{C_{Y}C_{D}}{S}(\sigma^{2}_{s}\psi^{o}_{\nu^{2}}(Z)+\nu_{s}^{2}\psi^{o}_{\sigma^{2}}(Z)).

$$

Therefore, the confidence bound

$$

[\ell,u]=\left[\widehat{\theta}_{-}-\Phi^{-1}(1-a)\sqrt{\frac{{\mathrm{E}}\varphi^{o2}_{-}}{n}},\ \widehat{\theta}_{+}+\Phi^{-1}(1-a)\sqrt{\frac{{\mathrm{E}}\varphi^{o2}_{+}}{n}}\right]

$$

has the one-sided covering property, namely

$$

{\mathrm{P}}(\theta_{-}\geq\ell)\to 1-a\text{ and }{\mathrm{P}}(\theta_{+}\leq u)\to 1-a.

$$

The same results continue to hold if ${\mathrm{E}}\varphi^{o2}_{\pm}$ is replaced by the empirical analogue

$$

\frac{1}{n}\sum_{\ell=1}^{L}\sum_{i\in I_{\ell}}\hat{\varphi}^{o2}_{\pm}(Z_{i}).

$$*
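Given point estimates and estimated scores for the three components, the plug-in bounds and the confidence bound of Theorem 4 reduce to a few array operations. The sketch below is a hedged illustration: the function name `sensitivity_bounds` and its interface are hypothetical, and the inputs in the usage part are synthetic placeholders rather than estimates from any data set.

```python
import numpy as np
from statistics import NormalDist


def sensitivity_bounds(theta_s, sigma2, nu2, psi_theta, psi_sigma2, psi_nu2,
                       c_y, c_d, rho=1.0, a=0.05):
    """Plug-in bounds theta_-, theta_+ and the confidence limits l, u."""
    n = len(psi_theta)
    S = np.sqrt(sigma2 * nu2)
    k = abs(rho) * c_y * c_d
    # Delta-method influence function of S = sqrt(sigma2 * nu2).
    psi_S = (sigma2 * psi_nu2 + nu2 * psi_sigma2) / (2.0 * S)
    z = NormalDist().inv_cdf(1.0 - a)
    out = {}
    for sign, name in ((-1.0, "lower"), (1.0, "upper")):
        phi = psi_theta + sign * k * psi_S          # influence function of theta_+/-
        se = np.sqrt(np.mean(phi**2) / n)
        bound = theta_s + sign * k * S
        out[name] = (bound, bound + sign * z * se)  # (plug-in bound, confidence limit)
    return out


# Synthetic placeholder inputs (not results from the paper).
rng = np.random.default_rng(0)
n = 2000
psi_theta = rng.normal(scale=60.0, size=n)
psi_sigma2 = rng.normal(scale=8.0, size=n)
psi_nu2 = rng.normal(scale=0.4, size=n)

# Hypothesized confounding strengths, converted as in Corollary 2.
eta2_y, one_minus_r2 = 0.04, 0.03
c_y = np.sqrt(eta2_y)
c_d = np.sqrt(one_minus_r2 / (1.0 - one_minus_r2))

res = sensitivity_bounds(8.0, 4.0, 1.0, psi_theta, psi_sigma2, psi_nu2, c_y, c_d)
print(res)
```

Setting `rho=1.0` corresponds to the most conservative scenario discussed at the start of this section; smaller values give tighter bounds.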

We focus on the one-sided covering property stated in the theorem, since in applications the relevant hypotheses are typically one-sided. We can use further adjustments of Stoye (2009) to construct uniformly valid two-sided intervals.

The following remark discusses learning the regression function $g_{s}$ and the Riesz representer $\alpha_{s}$ .

**Remark 4 (Machine Learning of $\alpha_{s}$ and $g_{s}$)**

*Estimation of the short regression $g_{s}$ is standard and a variety of modern methods can be used (neural networks, random forests, penalized regressions). Estimation of the short RR $\alpha_{s}$ can proceed in one of the following ways. First, we can use analytical formulas for $\alpha_{s}$ (see e.g., Chernozhukov et al. (2018a); Semenova and Chernozhukov (2021), and references therein, for practical details). Second, we can use a variational characterization of $\alpha_{s}$ :

$$

\alpha_{s}=\arg\min_{\alpha\in\mathcal{A}}{\mathrm{E}}[\alpha^{2}(W^{s})-2m(W^{s},\alpha)],

$$

where $\mathcal{A}$ is the parameter space for $\alpha_{s}$ , as proposed in Chernozhukov et al. (2021, 2022c). This avoids inverting propensity scores or conditional densities, as usually required when using analytical formulas. This approach is motivated by the first-order-conditions of the variational characterization:

$$

{\mathrm{E}}\alpha_{s}g={\mathrm{E}}m(W^{s},g)\quad\text{ for all $g$ in $\mathcal{G}$, }

$$

which is the definition of the RR. Neural network (RieszNet) and random forest (ForestRiesz) implementations of this approach are given in Chernozhukov et al. (2022b), and the Lasso implementation in Chernozhukov et al. (2022c). A third option is to use a minimax (adversarial) characterization of $\alpha_{s}$ , as in Chernozhukov et al. (2018b, 2020): $\alpha_{s}=\arg\min_{\alpha\in\mathcal{A}}\max_{g\in\mathcal{G}}|{\mathrm{E}}m (W^{s},g)-{\mathrm{E}}\alpha g|,$ where $\mathcal{A}$ is the parameter space for $\alpha_{s}$ . The Dantzig selector implementation of this approach is given in Chernozhukov et al. (2018b). The neural network implementation of this approach is given in Chernozhukov et al. (2020). ∎*
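To make the variational characterization concrete, the sketch below minimizes its empirical version over a small linear dictionary for the ATE score $m(W^{s},g)=g(1,X)-g(0,X)$ . This is a toy illustration and not one of the RieszNet, ForestRiesz, or Lasso implementations cited above: the saturated dictionary for a binary $D$ and a binary $X$ , the function names, and the simulated data are all assumptions of the example. With a linear dictionary $\alpha=b^{\prime}r$ , the first-order condition is the linear system ${\mathrm{E}}[bb^{\prime}]r={\mathrm{E}}m(W^{s},b)$ .

```python
import numpy as np


def basis(D, X):
    # Saturated dictionary b(d, x) for binary D and X (an assumption of this sketch).
    return np.column_stack([D * (X == 0), D * (X == 1),
                            (1 - D) * (X == 0), (1 - D) * (X == 1)]).astype(float)


def riesz_ate_linear(D, X):
    """Minimize the empirical E[alpha^2 - 2 m(W^s, alpha)] over alpha = b' r."""
    b = basis(D, X)
    # ATE score applied to each dictionary element: b(1, X) - b(0, X).
    m_b = basis(np.ones_like(D), X) - basis(np.zeros_like(D), X)
    G = b.T @ b / len(D)          # empirical E[b b']
    M = m_b.mean(axis=0)          # empirical E[m(W^s, b)]
    r = np.linalg.solve(G, M)     # first-order condition G r = M
    return b @ r


rng = np.random.default_rng(0)
X = rng.integers(0, 2, 2000)
D = rng.binomial(1, np.where(X == 1, 0.7, 0.3))
alpha_hat = riesz_ate_linear(D, X)

# For comparison only: inverse probability weights from cell propensities.
p_hat = np.array([D[X == x].mean() for x in (0, 1)])
alpha_ipw = D / p_hat[X] - (1 - D) / (1 - p_hat[X])
print(np.max(np.abs(alpha_hat - alpha_ipw)))
```

In this saturated case the minimizer coincides with the inverse probability weights, yet the fitting step never forms a propensity score explicitly, which is the practical appeal of the variational approach with richer dictionaries or learners.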

## 5. Omitted Firm Characteristics in Evaluating the Effects of 401(k) Plan.

In this section we demonstrate the utility of our approach in an empirical example that estimates the average treatment effect of 401(k) eligibility on net financial assets (Poterba et al., 1994, 1995; Chernozhukov et al., 2018a). Our goal is to determine whether prior conclusions, reached under the assumption of conditional ignorability, are robust to plausible scenarios of unmeasured confounding. This example illustrates our bounding approach for the ATE in a partially linear model and in a nonparametric model with a binary treatment. In the Appendix we provide an additional example that estimates the price elasticity of gasoline demand (Blundell et al., 2012, 2017; Chetverikov and Wilhelm, 2017) and illustrates bounds for the average causal derivative with a continuous treatment.

### 5.1. Estimates under conditional ignorability.

A 401(k) plan is an employer-sponsored tax-deferred savings option that allows individuals to deduct contributions from their taxable income, and accrue tax-free interest on investments within the plan. These plans were introduced in the early 1980s as an incentive to increase individual savings for retirement, and an important question in the savings literature is to quantify the causal impact of 401(k) eligibility on net financial assets. Indeed, a naive comparison of net financial assets between those individuals with and without 401(k) eligibility suggests a positive and large impact: using data from the 1991 Survey of Income and Program Participation (SIPP), this difference amounts to $19,559.

The problem with this naive comparison, however, is that 401(k) plans can be obtained only by those individuals who work for a firm that offers such a savings option, and employment decisions are far from randomized. As an attempt to overcome this lack of random assignment, Poterba et al. (1994), Poterba et al. (1995), and more recently Chernozhukov et al. (2018a), leveraged the 1991 SIPP data to adjust for potential confounding factors between 401(k) eligibility and the financial assets of an individual. As explained in Poterba et al. (1994), at least around the time 401(k) plans initially became available, people were unlikely to make employment decisions based on whether an employer offered a 401(k) plan; instead, their main focus was on salary and other aspects of the job. Thus, as a first approximation, whether one is eligible for a 401(k) plan could be taken as ignorable once we condition on income and other covariates related to job choice.

Figure 2. Two possible causal DAGs for the 401(k) example. (a) Ignorability holds conditional on $X$ only. (b) Ignorability holds conditional on $X$ and $A$ .

It is useful to think about causal diagrams (Pearl, 2009) that represent this identification strategy. One possible model is shown in Figure 2(a). Here the outcome variable, $Y$ , consists of net financial assets (defined as the sum of IRA balances, 401(k) balances, checking accounts, U.S. savings bonds, other interest-earning accounts in banks and other financial institutions, other interest-earning assets (such as bonds held personally), stocks, and mutual funds, less non-mortgage debt); the treatment variable, $D$ , is an indicator for being eligible to enroll in a 401(k) plan; finally, the vector of observed covariates, $X$ , consists of: (i) age; (ii) income; (iii) family size; (iv) years of education; (v) a binary variable indicating marital status; (vi) a “two-earner” status indicator; (vii) an IRA participation indicator; and (viii) a home ownership indicator. We consider that the decision to work for a firm that offers a 401(k) plan depends not only on the observed covariates $X$ but also on latent firm characteristics, denoted by $A$ ; moreover, $X$ , $A$ , and $D$ are jointly affected by a set of latent factors $U$ . Most importantly, note the assumed absence of direct arrows from both $A$ and $U$ to $Y$ . Under this assumption, conditional ignorability holds adjusting for $X$ only. The story represented by the DAG of Figure 2(a) is one way of rationalizing the identification strategy used in earlier papers.

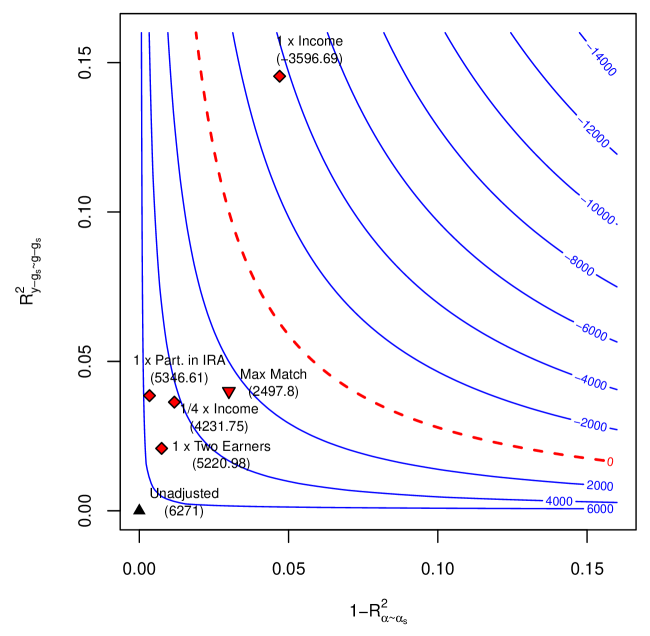

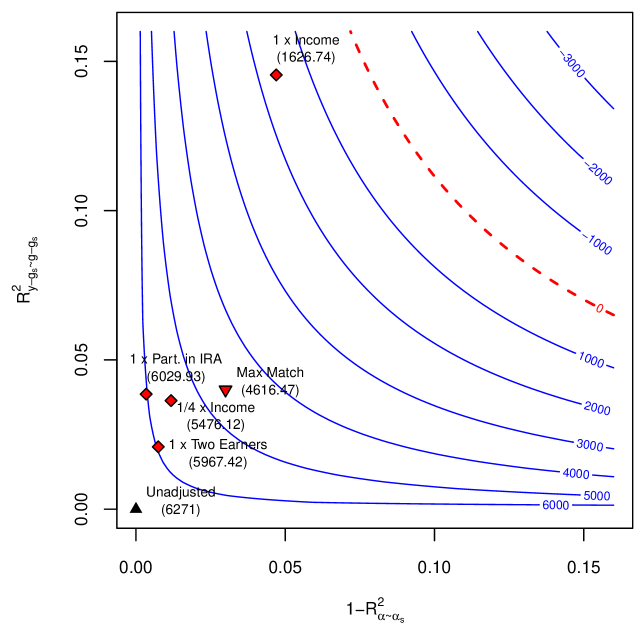

| Model | Short Estimate | Std. Error | Confidence Bounds | Robustness Value $\text{RV}_{\theta=0,~a=0.05}$ |
| --- | --- | --- | --- | --- |
| Partially Linear | 9,002 | 1,394 | [6,271; 11,733] | 5.4% |
| Nonparametric | 7,949 | 1,245 | [5,509; 10,388] | 4.5% |

Table 1. Minimal sensitivity reporting. Significance level of 5%.

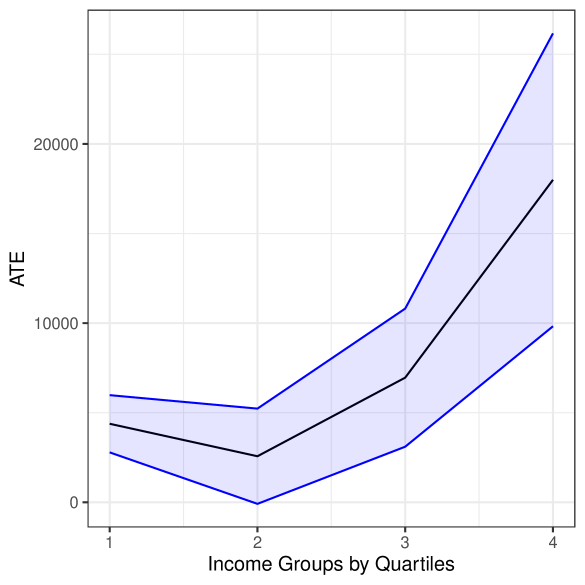

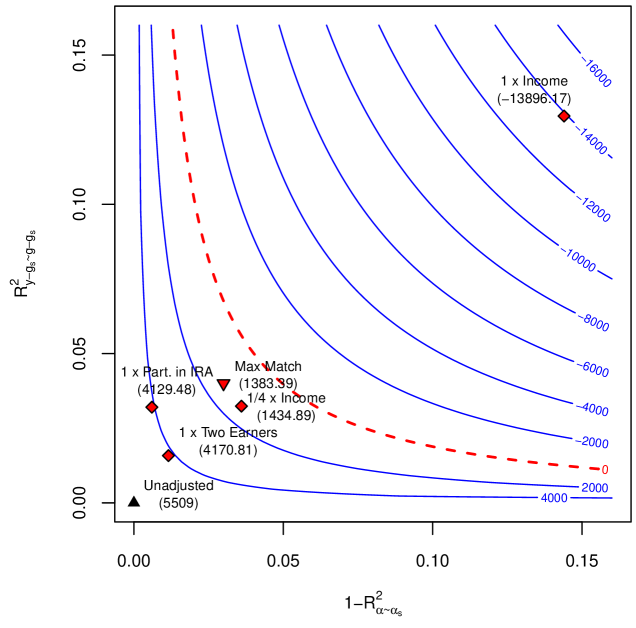

The first three columns of Table 1 show the estimates for the average treatment effect (ATE) of 401(k) eligibility on net financial assets under this conditional ignorability assumption. For these estimates, we follow the same strategy used in Chernozhukov et al. (2018a), and we estimate the ATE using DML with Random Forests, considering both a partially linear model (PLM), and a nonparametric model (NPM). We use Random Forests for both the outcome and treatment regressions and estimate the parameters using DML with 5-fold cross-fitting. In order to reduce the variance that stems from sample splitting, we repeat the procedure 5 times. Estimates are then combined using the median as the final estimate, incorporating variation across experiments into the standard error as described in Chernozhukov et al. (2018a). As we can see, after flexibly taking into account observed confounding factors, although the estimates of the effect of 401(k) eligibility on net financial assets are substantially attenuated, they are still large, positive and statistically significant (approximately $9,000 for the PLM and $8,000 for the NPM). With the nonparametric model, we further explore heterogeneous treatment effects, by analyzing the ATE within income quartile groups. The results are shown in Figure 3(a). We see that the ATE varies substantially across groups, with effects ranging from approximately $4,000 (first quartile) to almost $18,000 (last quartile).

[Figure: x1.png. Line chart of the ATE (vertical axis, "ATE") across income quartiles 1 to 4 (horizontal axis, "Income Groups by Quartiles"), showing the point estimate (black) with confidence bounds (blue).]

(a) Estimates under no confounding.

[Figure: x2.png. Line chart of the ATE (vertical axis, "ATE") across income quartiles 1 to 4 (horizontal axis, "Income Groups by Quartiles"), showing the point estimate (black), bounds (red), and confidence bounds (blue).]

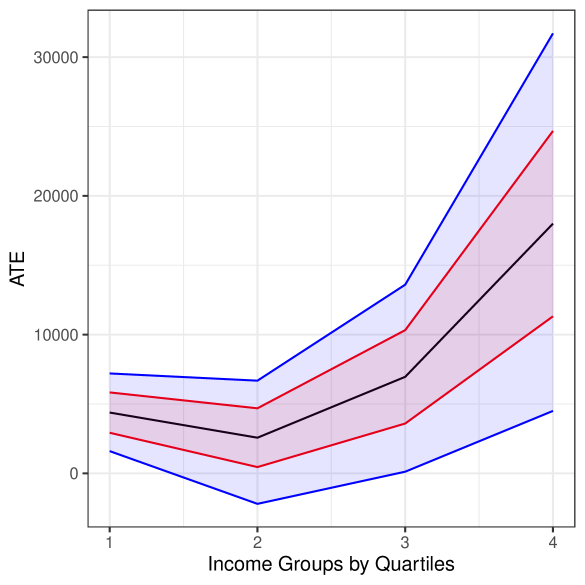

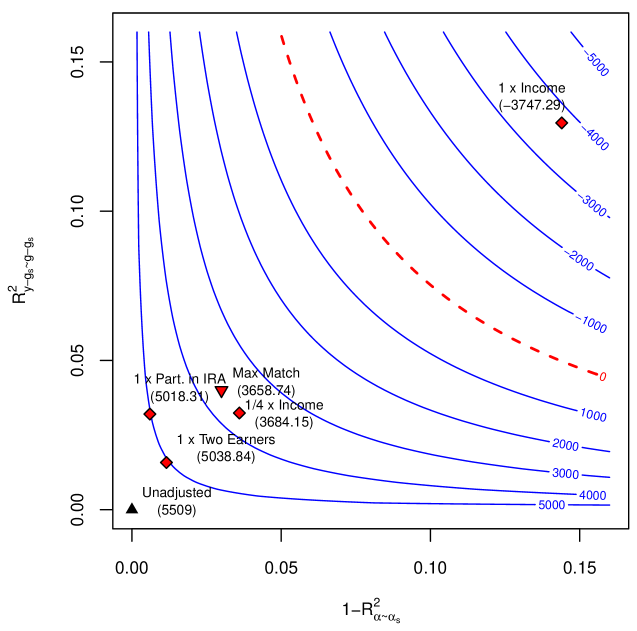

(b) Bounds under posited confounding.

Figure 3. Estimate (black), bounds (red), and confidence bounds (blue) for the ATE by income quartiles. Confounding scenario: $\rho^{2}=1$; $C^{2}_{Y}\approx 0.04$; $C^{2}_{D}\approx 0.03$. Significance level of 5%.
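As a rough reading of this confounding scenario, assume the bias bound takes the form $|\rho|\,S\,C_{Y}C_{D}$ with $C^{2}_{D}=(1-R^{2}_{\alpha\sim\alpha_{s}})/R^{2}_{\alpha\sim\alpha_{s}}$, as in the earlier sections; the scale factor $S$ is defined there and is not restated here. Under that assumption, the posited values translate to:

$$
|\rho|\,C_{Y}C_{D}\approx\sqrt{1\times 0.04\times 0.03}\approx 0.035,
\qquad
1-R^{2}_{\alpha\sim\alpha_{s}}=\frac{C^{2}_{D}}{1+C^{2}_{D}}\approx\frac{0.03}{1.03}\approx 0.029 .
$$

That is, the red bounds would sit roughly $0.035\,S$ on either side of the short estimate in each income group, and the posited confounders would account for about 2.9% of the variation in the long Riesz representer.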

### 5.2. Sensitivity analysis

It is now useful to consider scenarios in which conditional ignorability fails. Figure 2(b) presents one such scenario, where a violation of conditional ignorability is credible. We note that Figure 2(b) is just one example, and our sensitivity analysis results hold for any model in which conditional ignorability holds given observed variables and latent confounders. Employers often offer a benefit in which they “match” a proportion of an employee’s contribution to their 401(k), up to 5% of the employee’s salary. The model in Figure 2(b) allows this “matched amount,” denoted by $M$, to be determined by unobserved firm characteristics $A$, observed worker characteristics $X$, and 401(k) eligibility $D$. In this model, adjustment for $X$ alone is not sufficient to control for confounding. Instead, we now need to condition on both the observed covariates $X$ and the latent confounders $A$ for ignorability to hold. Note that in this case the average treatment effect is still defined as ${\mathrm{E}}[Y(1)-Y(0)]$. The relevant counterfactuals $Y(d)$ are obtained by setting $D=d$ for all descendants of $D$, that is, $Y(d)=g_{Y}(d,M(d),X,\epsilon_{Y})$, where $M(d)=g_{M}(d,A,X,\epsilon_{M})$. How strong would the omitted firm characteristics $A$ have to be in order to overturn our previous conclusions? And how plausible are the strengths revealed to be problematic? In what follows, we use our sensitivity analysis results to address these questions.
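To make the role of the latent firm characteristics $A$ concrete, the following toy simulation mimics the structure of Figure 2(b). It is only a sketch under strong assumptions: the structural functions `g_M` and `g_Y`, their coefficients, the binary $X$ and $A$, and the eligibility probabilities are all hypothetical and are not taken from the 401(k) data. Counterfactuals are computed by setting $D=d$ in all descendants of $D$ while holding the noise terms fixed.

```python
import random
from collections import defaultdict


def g_M(d, a, x, eps_m):
    # Hypothetical structural equation for the matched amount M.
    return 1.0 * d + 1.0 * a + 0.5 * x + eps_m


def g_Y(d, m, x, eps_y):
    # Hypothetical structural equation for the outcome Y.
    # There is no direct arrow from A to Y: A acts only through M.
    return 2.0 * d + 1.0 * m + 1.0 * x + eps_y


def simulate(n=40_000, seed=0):
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        x = rng.randint(0, 1)  # observed covariate
        a = rng.randint(0, 1)  # latent firm characteristic
        d = 1 if rng.random() < 0.2 + 0.2 * x + 0.4 * a else 0
        eps_m, eps_y = rng.gauss(0, 1), rng.gauss(0, 1)
        # Counterfactuals Y(d) = g_Y(d, M(d), X, eps_Y) with shared noise.
        y1 = g_Y(1, g_M(1, a, x, eps_m), x, eps_y)
        y0 = g_Y(0, g_M(0, a, x, eps_m), x, eps_y)
        rows.append({"x": x, "a": a, "d": d,
                     "y": y1 if d else y0, "effect": y1 - y0})
    return rows


def stratified_ate(rows, keys):
    """Average the treated-minus-control gap over strata defined by keys."""
    cells = defaultdict(lambda: {0: [], 1: []})
    for r in rows:
        cells[tuple(r[k] for k in keys)][r["d"]].append(r["y"])
    total = 0.0
    for groups in cells.values():
        gap = (sum(groups[1]) / len(groups[1])
               - sum(groups[0]) / len(groups[0]))
        total += gap * (len(groups[0]) + len(groups[1])) / len(rows)
    return total


rows = simulate()
true_ate = sum(r["effect"] for r in rows) / len(rows)
short_est = stratified_ate(rows, ["x"])       # adjusts for X only
long_est = stratified_ate(rows, ["x", "a"])   # adjusts for X and A
print(f"true={true_ate:.2f}, short={short_est:.2f}, long={long_est:.2f}")
```

In this toy model, the estimate that adjusts for $X$ only overstates the effect, while adjusting for both $X$ and $A$ recovers it up to sampling noise. Sensitivity analysis asks how large such a gap could be when $A$ is not observed.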

#### 5.2.1. Minimal sensitivity reporting.

In reporting empirical results, the following definition will be useful.

**Definition 2 (Robustness Values)**

*The robustness value $\text{RV}_{\theta,a}$ is the minimum upper bound $\text{RV}$ on both sensitivity parameters, $R^{2}_{y-g_{s}\sim g-g_{s}}\leq\text{RV}$ and $1-R^{2}_{\alpha\sim\alpha_{s}}\leq\text{RV}$, such that the confidence bound $[l,u]$ of Theorem 4 includes $\theta$ at the significance level $a$.*

Whereas standard errors, t-values, or p-values communicate how robust the short estimate is to sampling errors, the idea of robustness values is to quickly communicate how robust the short estimate is to systematic errors due to residual confounding. For example, $\text{RV}_{\theta=0,a=0.05}$ measures the minimal common strength of both sensitivity parameters such that the estimated confidence bound for the ATE would include zero, at the 5% significance level.
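The search behind a robustness value can be sketched with a simple bisection. The sketch below is a simplification and not the procedure of Theorem 4: it ignores sampling uncertainty, so it targets the point bound rather than the confidence bound; it assumes the bias bound has the form $|\rho|\,S\,C_{Y}C_{D}$ with $C^{2}_{Y}=\text{RV}$ and $C^{2}_{D}=\text{RV}/(1-\text{RV})$; and the scale value passed as `scale` is made up purely for illustration.

```python
import math


def lower_bound(theta_s, scale, rv, rho=1.0):
    """Lower point bound when both sensitivity parameters equal rv.

    Assumed form: theta_s - |rho| * S * C_Y * C_D, with C_Y^2 = rv and
    C_D^2 = rv / (1 - rv).
    """
    c2_y = rv
    c2_d = rv / (1.0 - rv)
    return theta_s - abs(rho) * scale * math.sqrt(c2_y * c2_d)


def robustness_value(theta_s, scale, target=0.0, tol=1e-10):
    """Smallest rv in [0, 1) at which the lower bound reaches target.

    Assumes theta_s > target, so the lower bound decreases through
    target as rv increases.
    """
    lo, hi = 0.0, 1.0 - 1e-12
    if lower_bound(theta_s, scale, lo) <= target:
        return 0.0
    while hi - lo > tol:
        mid = (lo + hi) / 2.0
        if lower_bound(theta_s, scale, mid) <= target:
            hi = mid
        else:
            lo = mid
    return hi


# Short estimate from Table 1 (PLM); the scale S = 100000 is hypothetical.
rv = robustness_value(theta_s=9002.0, scale=100_000.0)
print(f"point-bound robustness value: {rv:.3f}")
```

A version that accounts for estimation error would replace `lower_bound` with the lower confidence bound of Theorem 4, which is monotone in the same way, and would therefore return a smaller robustness value.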