## Bayesian sense of time in biological and artificial brains

Zafeirios Fountas 1 & Alexey Zakharov 2

To appear in: Time and Science (ed. R. Lestienne & P. Harris)

1 Wellcome Centre for Human Neuroimaging, University College London,

12 Queen Square, London WC1N 3AR, UK

z.fountas@ucl.ac.uk

2 Department of Computing, Imperial College London, 180 Queen's Gate, London SW7 2RH, UK

az519@imperial.ac.uk

Draft compiled: January 17, 2022

## Abstract

Enquiries concerning the underlying mechanisms and the emergent properties of a biological brain have a long history of theoretical postulates and experimental findings. Today, the scientific community tends to converge on a single interpretation of the brain's cognitive underpinnings - that it is a Bayesian inference machine. This contemporary view has naturally been a strong driving force in recent developments in computational and cognitive neuroscience. Of particular interest is the brain's ability to process the passage of time - one of the fundamental dimensions of our experience. How can we explain empirical data on human time perception using the Bayesian brain hypothesis? Can we replicate human estimation biases using Bayesian models? What insights can agent-based machine learning models provide for the study of this subject? In this chapter, we review some of the recent advancements in the field of time perception and discuss the role of Bayesian processing in the construction of temporal models.

## 1 Introduction

More than 150 years ago, the German polymath Hermann von Helmholtz postulated that perception is realised by means of 'unconscious inferences', which are learned over time rather than acquired at birth (Von Helmholtz 1867/1906). Although this view was thoroughly criticised by the nativists of his time, it anticipated scientific developments by more than a century and inspired the contemporary interpretations of the human brain's cognitive underpinnings. In particular, Helmholtz's view on perception is often cited as the earliest account of the brain being regarded as an inference machine and as one of the inspirations for the influential studies on generative models in the early 1990s (Dayan et al. 1995, Friston 2012).

An illustrative example of this is a seminal paper by Dayan et al. (1995), which begins with: 'Following Helmholtz, we view the human perceptual system as a statistical inference engine whose function is to infer the probable causes of sensory input.' This idea gained traction in the second half of the 20th century, flourishing from the works on perception as hypothesis testing (Gregory 1968, Kersten et al. 2004), and establishing a strong case for the role of Bayesian probability theory in the fields of computational neuroscience and cognitive science (Wolpert et al. 1995, Knill & Richards 1996, Körding & Wolpert 2004). The elegant amalgamation of generative models and Bayes' rule ultimately resulted in what is now known as the 'Bayesian brain' hypothesis (Knill & Pouget 2004). The hypothesis views the brain as a statistical machine equipped with a causal model of the environment, where perception is a consequence of Bayesian model inversion (probabilistic inference) given new sensory observations, and the causal model is updated according to Bayes' rule (Bubić et al. 2010, Knill & Pouget 2004).

Following these developments, a prominent theory of the brain's inner workings has emerged - the free-energy principle (Friston 2010). At its core, the principle is simply a mathematical description underwriting the behaviour of any dynamical system separated by a Markov blanket from its environment (Friston 2019). In particular, it states that 'the dynamics of persistent, bounded systems may be framed as inferential processes', where 'states internal to a boundary appear to infer the states outside of it' (Parr et al. 2019). Attentive readers may notice the resemblance between this description and the Helmholtz-inspired research on generative models and the past interpretations of the brain as an inference machine. Indeed, one of the more ambitious goals of the free-energy principle is to provide a unifying theory for the brain's operations by explaining the fundamental tendencies of self-organising systems. Specifically, under the principle, the brain is a probabilistic (Bayesian) generative model, the objective of which is to minimise an information-theoretic quantity called the variational free energy, an upper bound on surprisal (the negative log marginal likelihood of observations) (Friston 2010). Given its generality, the free-energy principle is consistent with a number of prominent research directions in fields such as computational neuroscience (e.g. predictive coding) or machine learning (e.g. model-based reinforcement learning). With Bayesian mechanics at the core of the free-energy principle, the 'Bayesian brain' hypothesis is similarly well accommodated within the boundaries of this grand theory.
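The relation between variational free energy and surprisal can be written out in its standard textbook form. The symbols here are our own notational assumptions and do not appear in the text above: o denotes observations, s hidden states, p the generative model and q an approximate posterior over hidden states.

$$
F[q] = \mathbb{E}_{q(s)}\big[\ln q(s) - \ln p(o, s)\big] = D_{\mathrm{KL}}\big[q(s)\,\|\,p(s \mid o)\big] - \ln p(o) \;\ge\; -\ln p(o)
$$

Because the Kullback-Leibler divergence is non-negative, minimising F with respect to q brings q closer to the true posterior p(s | o), while the value of F itself bounds the surprisal -ln p(o) from above.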

If the brain is a Bayesian generative model, it is reasonable to assume that it must exhibit particular properties pertaining to its probabilistic nature - as a corollary of Bayesian updating, the beliefs it embodies, and the predictions it makes based on them. Indeed, it has been observed that the brain can deal with uncertainty in a nearly optimal manner (Clark 2015), while Bayesian inference seems to have significant explanatory power for magnitude estimation by humans (Petzschner et al. 2015). Magnitude estimation, in particular, is very telling as a conspicuous phenomenon that can be actively scrutinised through psychological experiments and computational models, in order to probe the hypothesis of Bayesian processing in the brain.

Time, being one such magnitude susceptible to human estimation, has naturally become a subject of active research in the fields of computational neuroscience, cognitive science, and even agent-based machine learning. Since time is one of the most basic dimensions of our everyday existence, the means by which our brains represent, perceive and predict its passage remain an intriguing direction of work, particularly in light of the fairly recent theoretical and practical developments concerning the 'Bayesian brain' hypothesis. In this chapter, we discuss how time perception can be formalised and studied from the Bayesian perspective using the latest literature on time perception models. Further, we examine the state-of-the-art models proposed within the field of artificial intelligence, exploring a variety of ideas pertaining to how time can be represented in an artificial agent capable of intelligent behaviour.

## 2 Interval timing

Typically, the subjective perception of time is examined via interval timing tasks which, as the name suggests, assess the ability of humans (and other animals) to process the duration of (or between) events. Processing here refers to the ability to measure, estimate, produce or discriminate either isolated intervals or more complex temporal patterns (Hardy & Buonomano 2016). Although biological organisms have developed the ability to process time across multiple timescales, spanning more than 10 orders of magnitude (Buhusi & Meck 2005), experiments on interval timing commonly focus on the range of milliseconds to minutes, due to the high tractability of this range in an experimental setting and its association with short-term brain dynamics and various aspects of behaviour, such as decision-making and motor control.

There is a wide variety of tasks that have been used to investigate this fundamental aspect of cognition. Typical tasks in the literature of interval timing concern intervals that subjects have previously experienced between a sequence of events. Some require the use of motor skills (e.g. via the reproduction of an interval between sensory cues or actions), where motor timing is involved (Paton & Buonomano 2018), while some tasks focus only on the perception of these intervals (i.e. sensory timing tasks). In the latter category, subjects can discriminate intervals in a variety of ways, such as by estimating the elapsed time in human-readable units, categorization, bisection, or by comparison between multiple intervals. Finally, in all cases, cues can involve individual sensory modalities (e.g. visual or auditory) or a combination of more than one (Ellinghaus et al. 2021).

These rigorous and highly simplified experimental settings have led psychophysical studies to unravel consistent patterns of bias that the brain exhibits in different statistical contexts. Since the duration of an interval is a magnitude, it is not surprising that the most well-established biases include the contextual effects observed in other types of magnitude judgements, such as loudness or distance (Petzschner et al. 2015). First, subjects have an overall tendency to regress duration judgements to the mean duration of stimuli already presented to them, which is referred to as the central-tendency effect (Jazayeri & Shadlen 2010), or Vierordt's law, after the German physiologist Karl von Vierordt (1868) who first presented evidence for this effect. This central tendency is present across different timing tasks, timescales, sensory modalities and age groups (Lejeune & Wearden 2009), although evidence suggests that it can be alleviated via training (e.g. in expert musicians (Cicchini et al. 2012)). Second, as in other dimensions of stimulus perception, the smallest detectable change in duration, and more generally the variability of duration judgements, grows in direct proportion to the actual duration (Gibbon 1977). This is known as the scalar property of interval timing, or Weber's law.

In addition, the order in which two stimuli of variable durations are presented has been shown to affect the reported duration judgements (Dyjas et al. 2012). This is attributed to a 'local' contextual bias, manifested as a tendency of current estimates to be pulled towards the preceding stimuli, as opposed to the 'global' bias captured by Vierordt's law (Dyjas et al. 2012, Shi et al. 2013, de Jong et al. 2021). Finally, other contextual effects in interval timing include deviations in duration judgements when subjects experience increases in cognitive load (Block et al. 2010), as well as distinct biases across different scene types when subjects watch videos (Roseboom et al. 2019).

The first step in understanding the nature of these contextual biases is to identify the sources of information that the brain uses to process durations. This is less straightforward than for other measurable dimensions of perception, such as the height of an object, since duration constitutes an intrinsic property of experience that does not necessarily depend on any particular cue or sensory modality. Nonetheless, this very omnipresence points to the wide repertoire of information sources that the brain is able to employ in order to improve its performance in interval timing tasks. Following Occam's razor (Feldman 2016), the brain should learn to exploit the simplest source that is sufficiently reliable for a given task and range of durations. For instance, when trying to accurately estimate a blank interval of up to a few seconds in a simple experimental setting, it is reasonable to assume that the brain would employ any kind of neural dynamics that exhibit periodicity (such as cortical oscillations) or other rhythmic physiological signals (such as the heartbeat) as a frame of reference. Indeed, evidence shows that, in simple tasks, information related to the cardiac cycle is relevant for perception and reproduction of time intervals in the range of 2-25 seconds (Meissner & Wittmann 2011, Pollatos et al. 2014), while it has been shown that other interoceptive signals (such as hunger - see Vicario et al. (2019)) might also play an important role.

On the other hand, it is more reasonable to assume that estimations of duration in everyday phenomenological experience draw evidence predominantly from exteroceptive signals, which provide a far richer source of temporal information (Ahrens & Sahani 2011). This was examined by Suárez-Pinilla et al. (2019), who showed that supra-second duration judgements vary systematically with perceptual content, rather than physiological signals, in an experiment where participants viewed natural videos.

Over the years, a substantial number of different theoretical proposals have attempted to explain human behaviour in interval timing tasks. A comprehensive taxonomy of interval timing models is beyond the scope of this chapter; for an extensive overview of theoretical models, the reader is directed to the existing reviews on this topic, such as Addyman et al. (2016) or Basgol et al. (2021).

Perhaps the most influential type of such models involves a pacemaker and an accumulator which together constitute the main components of an internal clock mechanism (Van Rijn et al. 2014). Formally proposed by Gibbon (1977) as scalar expectancy (or timing) theory in order to model behaviour governed by time, this internal clock can be viewed as a part of a larger cognitive architecture involving clock, memory and decision stages (Treisman 1963, Church 1984). Matell & Meck (2004) later proposed the striatal beat-frequency model, a neurobiologically plausible implementation of the clock stage in scalar timing theory (Van Rijn et al. 2014), which relies on oscillatory properties of cortical neurons that project to the basal ganglia to create a pulse. In a leading alternative theory of interval timing, Karmarkar & Buonomano (2007) proposed that time can be tracked by stochastic neural processing dynamics within any given state-dependent network, without the need for dedicated clocks.

## 2.1 Bayesian approaches to interval timing

The aforementioned context effects in duration estimation point to the existence of a Bayesian mechanism for the integration of representations in memory and new sensory information, in line with other types of magnitude (Petzschner et al. 2015). Under this framework, previously acquired (prior) information about intervals is integrated with noisy sensory input (likelihood), weighted by their relative uncertainty, to produce a statistically optimal posterior estimate. Consequently, the central tendency effect can be explained as a bias towards prior expectations, in cases of disagreement between memory and current physical stimulus.
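The uncertainty-weighted integration described above can be illustrated with the simplest textbook case, in which both the prior and the likelihood are Gaussian. This is a minimal sketch rather than any specific model from the cited studies; the function name and the numerical values are illustrative assumptions.

```python
def gaussian_posterior(prior_mean, prior_sd, measurement, sensory_sd):
    """Combine a Gaussian prior with a Gaussian likelihood (conjugate update).

    Each source is weighted by its precision (inverse variance), so the
    less certain source contributes less to the posterior mean.
    """
    prior_precision = 1.0 / prior_sd ** 2
    sensory_precision = 1.0 / sensory_sd ** 2
    post_var = 1.0 / (prior_precision + sensory_precision)
    post_mean = post_var * (prior_precision * prior_mean
                            + sensory_precision * measurement)
    return post_mean, post_var ** 0.5


# Illustrative values (assumptions): remembered durations centre on 1.0 s,
# and the current noisy measurement is 1.4 s.
post_mean, post_sd = gaussian_posterior(prior_mean=1.0, prior_sd=0.2,
                                        measurement=1.4, sensory_sd=0.2)
print(post_mean, post_sd)
```

With equally reliable sources the estimate falls halfway between the remembered mean and the measurement, which is the central-tendency bias in miniature. Increasing `sensory_sd` pulls the estimate further towards the prior.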

This idea was explored by Jazayeri & Shadlen (2010), who proposed the use of a Bayesian observer to model human perception and reported time estimates. In their model, also

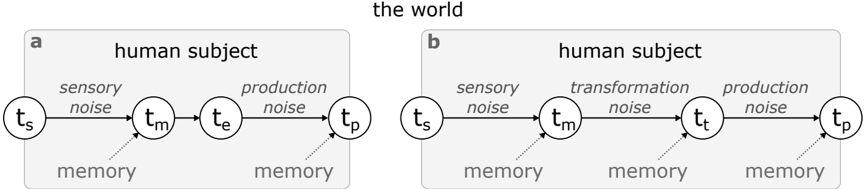

Figure 1: Bayesian observer-actor model by Jazayeri & Shadlen (2010) ( a ) and a more general version by Remington et al. (2018) ( b ). The graph shows causal relationships between random variables. Inference involves integrating new information (horizontal arrows) with the corresponding prior statistics found in memory. In (a), t s represents the set of external stimuli, t m the likelihood distribution of measured duration of the event, given the memory contents, t e the result of applying to the later distribution a Bayes estimator, and t p the reproduced duration. In (b), t t represent the distribution of the estimated duration, taking into account more noise steps in the computation.

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Diagram: Human Subject Processing Models

### Overview

The image presents two diagrams, labeled 'a' and 'b', illustrating models of human subject processing. Both models depict a sequence of stages represented by circles, connected by arrows indicating the flow of information. Each stage is associated with a time point (t) and a type of noise. Model 'a' includes sensory noise and production noise, while model 'b' includes sensory noise, transformation noise, and production noise. Memory is referenced at multiple stages in both models.

### Components/Axes

* **Diagram Labels:** 'a' (top-left), 'b' (top-right)

* **Container Labels:** "human subject" (top of each diagram)

* **Stage Labels:**

* t\_s (sensory input)

* t\_m (memory)

* t\_e (execution - diagram a only)

* t\_t (transformation - diagram b only)

* t\_p (production/output)

* **Noise Labels:**

* sensory noise

* production noise

* transformation noise (diagram b only)

* **Memory Labels:** "memory" (associated with t\_m, t\_e/t\_t, and t\_p)

* **Overall Context:** "the world" (centered at the top of the image)

### Detailed Analysis

**Diagram a:**

* **Stages:** t\_s -> t\_m -> t\_e -> t\_p

* **Noise:** sensory noise (between t\_s and t\_m), production noise (between t\_e and t\_p)

* **Memory:** Associated with t\_m and t\_p

**Diagram b:**

* **Stages:** t\_s -> t\_m -> t\_t -> t\_p

* **Noise:** sensory noise (between t\_s and t\_m), transformation noise (between t\_m and t\_t), production noise (between t\_t and t\_p)

* **Memory:** Associated with t\_m, t\_t, and t\_p

### Key Observations

* Both diagrams model a sequence of processing stages.

* Diagram 'b' includes an additional "transformation" stage (t\_t) compared to diagram 'a', which has an "execution" stage (t\_e).

* Both diagrams include "sensory noise" and "production noise."

* Memory is referenced at multiple stages in both models, indicated by dotted arrows pointing from the memory label to the stage.

### Interpretation

The diagrams represent two different models of how a human subject processes information. Model 'a' might represent a simpler processing pathway, while model 'b' includes a transformation stage, suggesting a more complex cognitive process. The presence of "noise" at different stages indicates potential sources of error or variability in the processing. The memory references suggest that memory plays a role at various stages of processing, influencing how information is perceived, transformed, and produced. The "world" context suggests that these models are intended to represent how humans interact with and process information from their environment.

</details>

shown in Figure 1a, the process of inferring the measured duration ( t m ) given an external sample ( t s ) is performed using a Bayes estimator (outputting t e ). The likelihood of a measurement is expressed as a probability distribution that parametrises any noise originating from the process of sensing via different modalities, while the prior is set to be a fixed uniform distribution within the range of all earlier observed durations. Then, the reproduced duration by human subjects ( t p ) is expressed as a Normal distribution centred at t e and with a standard deviation that captures any motor noise. Importantly, both sensory and motor noise factors in this model were conceptualized to grow linearly with the corresponding means, a property that was crucial to capture Weber's law. This model was shown to fit well with the behaviour of subjects that are tasked to reproduce durations sampled by different distributions matching the model priors, especially when the estimation of the posterior duration is performed using the Bayes least-squares method.
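The observer of Figure 1a, as described above, can be sketched numerically: a uniform prior over the sampled range, measurement noise whose standard deviation scales with the true duration, a Bayes least-squares (posterior mean) estimate, and scalar production noise. This is a hedged illustration, not the authors' implementation; the prior range, the two Weber fractions, the grid approximation and all function names are assumptions chosen for readability.

```python
import numpy as np


def bls_estimate(t_m, prior_lo, prior_hi, weber_m, n_grid=2000):
    """Posterior mean of the sample duration given a measurement t_m.

    Assumes a uniform prior on [prior_lo, prior_hi] and Gaussian measurement
    noise with standard deviation weber_m * t_s (scalar variability).
    """
    t_s = np.linspace(prior_lo, prior_hi, n_grid)   # candidate durations
    sd = weber_m * t_s
    likelihood = np.exp(-0.5 * ((t_m - t_s) / sd) ** 2) / (sd * np.sqrt(2 * np.pi))
    posterior = likelihood / likelihood.sum()       # uniform prior cancels on the grid
    return float(np.sum(t_s * posterior))


def simulate_reproduction(t_s, rng, prior_lo=0.5, prior_hi=0.9,
                          weber_m=0.1, weber_p=0.05):
    """One trial: t_s -> noisy t_m -> estimate t_e -> noisy reproduction t_p."""
    t_m = rng.normal(t_s, weber_m * t_s)
    t_e = bls_estimate(t_m, prior_lo, prior_hi, weber_m)
    return rng.normal(t_e, weber_p * t_e)


rng = np.random.default_rng(0)
for t_s in (0.5, 0.7, 0.9):
    mean_t_p = np.mean([simulate_reproduction(t_s, rng) for _ in range(2000)])
    print(f"t_s = {t_s:.2f} s -> mean t_p = {mean_t_p:.3f} s")
```

In this sketch the shortest samples are, on average, over-reproduced and the longest under-reproduced, because every estimate is confined to the prior range. That regression towards the middle of the range is the central-tendency effect discussed earlier.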

Jazayeri & Shadlen (2010) also formulated a Bayesian observer-actor model where the process involves optimization of all steps: from sensing a duration to motor reproduction. This model accounts for uncertainty (or noise) minimization at all stages of a time interval task, including motor control. Furthermore, a general Bayesian observer-actor model might involve more complex transformations of time measurements to behaviourally meaningful variables. Such transformations can be viewed as any kind of downstream computation that is necessary for the accomplishment of a task using general representations of time and can be expressed as a mapping t m → t t (Figure 1b). For instance, when asked to verbally report the duration of a video, human subjects have to map their inner sense of time to units that can be expressed in a human language, such as seconds. Similarly, when asked to reproduce the same duration by pressing a button twice, subjects have to map the same inner sense to a representation that is relevant to the dynamics of the motor system, related to the part of the body that controls the button. Finally, in the case of reporting durations using a visual analogue scale, it is necessary to also take into account the visual perception of space. Arguably, the three types of transformations involved in our examples would produce different levels of expected noise, whose effect, in turn, would require different sets of skills from memory to be mitigated.

In a later iteration of this Bayesian observer-actor model for interval timing, Remington et al. (2018) investigated these aspects and suggested that the brain is able not only to apply inference in mental transformations t m → t t, but also to 'delay' early-stage inference in sensory measurements t s → t m in order to account for both types of expected noise (sensory and transformation) together.

Although the basic form of the general Bayesian observer-actor model is very useful as a backbone architecture of the computations involved in magnitude perception, specifications of individual components are often defined arbitrarily, which has drawn substantial criticism (Jones & Love 2011, Bowers & Davis 2012, Wei & Stocker 2015). Hence, it is worth considering some individual components used in interval timing models in more depth. Two of the most crucial components are the likelihood and the prior of the sensory measurements. The likelihood, modelled as simple Gaussian noise in the studies shown in Figure 1, can be thought of as the basic 'sense' of time that is combined with new sensory information from different sources, as mentioned in the previous section. Depending on the sources taken into account, studies incorporate completely different versions of parametrised probability distributions to model the likelihood.

In addition, there is a wide variety of proposals for modelling prior statistical knowledge as a probability distribution of intervals. Notable examples of densities include Uniform (Jazayeri & Shadlen 2010), Gaussian (Cicchini et al. 2012), non-parametric (Acerbi et al. 2012) and, most recently, a mixture of lognormals (Maaß et al. 2021). These densities are either considered fixed across experiment trials or dynamic, with parameters being updated using a learning rule. The mechanism used for updating memory representations is a crucial aspect of Bayesian observer models and it determines whether or not both global and local contextual biases can be captured. de Jong et al. (2021) recently showed that a linear recursive Bayesian estimator, in particular a Kalman filter, is better at capturing human global and local biases than the alternative non-Bayesian approaches. This points to the existence of a single dynamic representation of prior information in the brain that is responsible for both types of biases. Corroborating this view, Ellinghaus et al. (2021) recently showed evidence indicating that a general dynamic prior for duration judgements integrates temporal information from different sensory modalities.
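To make the idea of a dynamic prior concrete, the following is a minimal scalar Kalman filter in which the running estimate plays the role of the prior mean and is updated after every trial. It is a sketch under simplifying assumptions (one-dimensional state, fixed noise variances, hypothetical parameter values) and is not the model fitted by de Jong et al. (2021).

```python
def kalman_duration_estimates(measurements, process_var, sensory_var,
                              init_mean, init_var):
    """Recursive Bayesian estimate of duration with a trial-by-trial prior.

    mean/var describe the current prior; each measurement is fused with it,
    and the resulting posterior becomes the prior for the next trial.
    """
    mean, var = init_mean, init_var
    estimates = []
    for m in measurements:
        var += process_var                  # predict: prior uncertainty grows
        gain = var / (var + sensory_var)    # weight given to the new measurement
        mean = mean + gain * (m - mean)     # update: shift towards the measurement
        var = (1.0 - gain) * var
        estimates.append(mean)
    return estimates


# The same 0.6 s test interval preceded by long or by short intervals
# (all values in seconds and chosen for illustration only).
params = dict(process_var=0.01, sensory_var=0.02, init_mean=0.7, init_var=0.04)
after_long = kalman_duration_estimates([1.0, 1.0, 1.0, 0.6], **params)
after_short = kalman_duration_estimates([0.4, 0.4, 0.4, 0.6], **params)
print(after_long[-1], after_short[-1])
```

In this toy example the final estimate is drawn towards the preceding stimuli (a local bias), while over many trials the running mean settles near the average of the presented durations (a global bias), so a single updating rule yields both effects.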

Finally, although the basic sense of time is more closely related to individual measurements, it can be argued that at least some aspects of the human experience correspond to the inferred posterior durations that integrate multi-modal information with memory (Von Helmholtz 1867/1906, Seth 2015, Neemeh & Gallagher 2020). In particular, studies of the phenomenology of time have suggested a separation that comprises implicit (or lived) and explicit time (Fuchs 2013). The former describes the experience of 'being inside' time since it is associated with self-awareness and intentionality and requires a continuous flow of experience. In contrast, explicit time refers to the measurable time that we can process in the past, present and future. According to Fuchs, explicit time emerges when the flow of implicit temporality is interrupted. The difference in the types of temporal experience is further illustrated by Droit-Volet & Wearden (2016), who showed that the perception of the passage of time is mediated through a different mechanism than the perception of duration.

## 3 Sense of time as perceptual change

A plethora of both classical philosophical work (Hume 1739/1964) and behavioural studies (Block 1974, Ornstein 1975, Poynter & Homa 1983) suggests that the perception of durations is driven by changes in the contents of sensory experiences. This idea contrasts with the predominant approaches mentioned earlier, which rely on the existence of rhythmic neural processes to act as pacemakers (Treisman 1963, Matell & Meck 2004, Van Rijn et al. 2014). Recently, Roseboom et al. (2019) proposed a computational model that implements this idea using the popular large-scale artificial neural network AlexNet by Krizhevsky et al. (2012).

The connectivity of this neural network is organized using convolutions, which have been shown to result in a hierarchical structure and representations similar to those of the primate cortex, including regions associated with vision (Cadena et al. 2019, Kriegeskorte 2015, Khaligh-Razavi & Kriegeskorte 2014). AlexNet is trained to classify static, high-resolution images into 1000 different categories of everyday objects and animals. In the model of Roseboom et al. (2019), AlexNet received consecutive frames of videos in order for its neuron values to be analogous to biological neuron states and reflect dynamic latent representations in the human visual perception system. To capture salient perceptual changes, the Euclidean distance between consecutive neuron states across the network's hierarchy was calculated and compared to a threshold value, resulting in a salient event detection mechanism. The events in a video were then accumulated and used as the inner sense of time, similar to the measurement t m in Figure 1b. Finally, a regression algorithm was used to map accumulated events to seconds, corresponding to the mental transformation t t of the same figure.
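The pipeline described above (distance between consecutive network states, thresholding, accumulation, regression to seconds) can be sketched in a few lines. This is a simplified illustration under stated assumptions and not the published implementation: synthetic random-walk vectors stand in for AlexNet activations, a single layer and a fixed threshold are used, and an ordinary least-squares line replaces the regression algorithm of the original study. All names and values are hypothetical.

```python
import numpy as np


def count_salient_events(states, threshold):
    """Count frames whose Euclidean distance to the previous frame exceeds threshold.

    states: array of shape (n_frames, n_units), one activation vector per frame.
    """
    distances = np.linalg.norm(np.diff(states, axis=0), axis=1)
    return int(np.sum(distances > threshold))


rng = np.random.default_rng(0)
FPS = 10  # assumed frame rate of the synthetic "videos"


def fake_video_states(seconds, n_units=64):
    """Stand-in for network activations: a random walk in feature space."""
    steps = rng.normal(0.0, 1.0, size=(seconds * FPS, n_units))
    return np.cumsum(steps, axis=0)


# Accumulate salient events for videos of known duration (the analogue of t m).
durations = np.array([2, 4, 8, 16, 32])
counts = np.array([count_salient_events(fake_video_states(d), threshold=8.0)
                   for d in durations])

# Map accumulated events to seconds (the analogue of the transformation to t t).
slope, intercept = np.polyfit(counts, durations, deg=1)
new_count = count_salient_events(fake_video_states(12), threshold=8.0)
print("estimated duration (s):", slope * new_count + intercept)
```

Because the number of detected events depends on how much the input changes, two clips of equal length but different content would generally yield different estimates in such a scheme, which is the property the model exploits to reproduce content-dependent biases.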

Interestingly, this computational model was found to fit well the behavioural data of humans judging the durations of videos of natural scenes that lasted between 1 and 64 seconds, exhibiting crucial patterns of bias examined before, such as Vierordt's and Weber's laws, among others. Notably, this was despite the fact that the mapping t m → t t was trained to capture the objective duration of the videos, as well as the fact that the neural architecture was trained on a completely different domain and for a different purpose than this task.

More recently, Sherman et al. (2020) ran the same experiment as Roseboom et al. (2019) while recording the subjects' brain activity using functional magnetic resonance imaging (fMRI) and further verified the core suggestions of the original study. Analysis of these recordings showed that blood flow changes in the visual cortex were sufficient to reconstruct trial-by-trial human biases, while this was not the case in other perceptual regions of the cortex that were not involved in processing the video stimulus.

Although the theoretical model by Roseboom and colleagues is not defined as a Bayesian observer model, it follows the same principles and could be adapted to the backbone architecture by Remington et al. (2018). Importantly, it shows that the measurement likelihood of these models does not require a Gaussian noise component: noise that is arbitrarily increased as a linear function of the mean can be replaced by accumulated salient changes, which need no parameters beyond a pre-trained perceptual system and remain consistent with Weber's law.

## 3.1 Time, predictive coding and episodic memory

As pointed out in Roseboom et al. (2019), the Euclidean distance between successive neuron activations in the model of this study can be viewed as a proxy for the error between the prior prediction of latent states and the corresponding posterior. This suggests a hierarchical architecture where representations of all neuron layers are predicted and updated, via the process of probabilistic inference. This approach to perception is known as predictive coding and was popularised as a computational model of perception by Rao & Ballard (1999).

There are multiple associations between this framework and the perception of time. Hohwy et al. (2016) proposed that the hierarchical nature of predictive coding is what creates the phenomenology of temporal flow. While the brain's model of the environment predicts changes in the current state of affairs, it begins distrusting the perceived present in anticipation of these changes, thus creating the illusion that the present moves forward. Kent et al. (2019) extended this theory and proposed that distrusting the future can explain the severe experience of time dilation in depression and hopelessness.

Finally, predictive coding has been used to explain the neural mechanism that underlies the perception of duration. In a recent study by Fountas et al. (2021), based on the same fundamental assumptions as Roseboom et al. (2019), models of attention, episodic memory and visual perceptual processing were integrated under the framework of predictive coding. In a large-scale experiment involving nearly 13,000 participants, the resulting system was able to reconstruct key human biases in duration judgements regardless of the stimulus content, whether the participants focused on this task or were distracted, or even when judgements were requested retrospectively, forcing participants to rely solely on memory recall. The success of this model was crucial since prospective and retrospective human duration judgements are known to show inverse patterns of bias (Block et al. 2010), which are notoriously hard to capture together in a single theoretical framework (Basgol et al. 2021).

## 4 Time and Artificial Intelligence

The ability to perceive and estimate time may well be one of the central elements of an intelligent biological agent equipped with a model of its environment. Indeed, it would be of limited use for an organism's survival to learn the causal physics of the world without its underlying temporal dynamics and structure. As such, if one takes the view that an agent's internal generative model of the environment is its primary guide to behaviour, the ability to learn representations of time and employ them for action selection is an important consideration for building intelligent artificial agents. In this section, we wish to consider time perception as it pertains to artificial intelligence and from the perspective of a researcher whose primary objective is to create agents capable of intelligent interaction with an environment.

## 4.1 Model-based reinforcement learning

## 4.1.1 Overview

Within the field of artificial intelligence, a large community of researchers is focused on studying reinforcement learning (RL) - a research area concerned with how agents ought to behave to maximise rewards in an environment. Since the wide adoption of deep learning, popularised by the success of DeepMind (Mnih et al. 2013, 2015), RL has enjoyed a renaissance, with an increasing number of works demonstrating its efficacy.

Today, a large number of agent architectures have been proposed that were shown to solve complex tasks in a wide variety of environments (Silver et al. 2014, Schulman et al. 2015, Lillicrap et al. 2016, Mnih et al. 2016, Blundell et al. 2016, Racanière et al. 2017, Ha & Schmidhuber 2018a, Haarnoja et al. 2018). Crucially, current prominent RL architectures are able to solve many tasks involving temporal dependencies and interval timing (Hung et al. 2019, Wayne et al. 2018, Jaderberg et al. 2019, Arjona-Medina et al. 2019) but still struggle when it comes to long-term planning and credit assignment. The challenge of temporal learning is a growing and important area of research in the field, with a simple question underlying its foundations: do agents learn time?

Such questions, however, are bound to fail given their intrinsic equivocacy. Similar to the study of time in humans, learning time in RL can be viewed through different lenses given the grand scale of problems and research branches this field encapsulates. In particular, it can be viewed more specifically as the ability to estimate time (interval timing) (Deverett et al. 2019), as the ability to learn the temporal dynamics of the environment (transition function) (Sutton 1990, Watter et al. 2015, Deisenroth & Rasmussen 2011, Ha & Schmidhuber 2018b), or as the ability to perform accurate credit assignment to events substantially separated in the temporal domain (Hung et al. 2019, Ke et al. 2019). As such, a better question to ask is: how do the currently proposed RL architectures learn time, and how do these methods fit into the conception of a Bayesian brain capable of representing it?

To address these questions, it is important to acknowledge that RL is commonly divided into two categories that relate to the two basic approaches to building agents - model-free and model-based.

The model-free approach encompasses a range of well-known methods (Mnih et al. 2015, Silver et al. 2014, Schulman et al. 2015, Lillicrap et al. 2016, Mnih et al. 2016, Haarnoja et al. 2018) and involves learning of a mapping between states (or observations) and actions without constructing an internal model of the environment. Most commonly, model-free algorithms rely on the idea of a value function - the expected cumulative reward associated with a state of an environment - and act according to maximising the action-value pair in any given state. On the other hand, modelbased RL comprises methods that equip an agent with a learnable model of its environment, which can be used for deliberate ac-

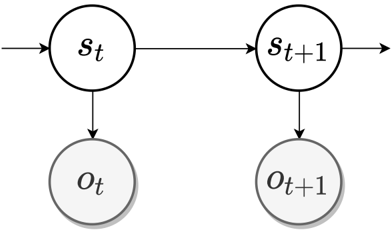

Figure 2: Graphical model of a partially observable Markov decision processes (POMDP) generative model. Latent state s t is the current state of the environment at time t with the corresponding observation o t available to the agent.

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Diagram: Hidden Markov Model (HMM)

### Overview

The image depicts a simplified Hidden Markov Model (HMM) diagram, illustrating the relationships between hidden states and observed states at two consecutive time steps, *t* and *t+1*. The diagram shows the flow of information and dependencies within the model.

### Components/Axes

* **Nodes:**

* Two white circles labeled *s<sub>t</sub>* and *s<sub>t+1</sub>*, representing hidden states at time *t* and *t+1*, respectively.

* Two gray circles labeled *o<sub>t</sub>* and *o<sub>t+1</sub>*, representing observed states at time *t* and *t+1*, respectively.

* **Edges:**

* A horizontal arrow pointing from left to right, entering the left side of *s<sub>t</sub>*, connecting *s<sub>t</sub>* to *s<sub>t+1</sub>*, and exiting the right side of *s<sub>t+1</sub>*, representing the transition between hidden states over time.

* A downward arrow from *s<sub>t</sub>* to *o<sub>t</sub>*, representing the emission probability from the hidden state *s<sub>t</sub>* to the observed state *o<sub>t</sub>*.

* A downward arrow from *s<sub>t+1</sub>* to *o<sub>t+1</sub>*, representing the emission probability from the hidden state *s<sub>t+1</sub>* to the observed state *o<sub>t+1</sub>*.

### Detailed Analysis

* **Hidden States:** The white circles *s<sub>t</sub>* and *s<sub>t+1</sub>* represent the hidden states of the system at times *t* and *t+1*. The horizontal arrow connecting them indicates the Markov property, where the next state depends only on the current state.

* **Observed States:** The gray circles *o<sub>t</sub>* and *o<sub>t+1</sub>* represent the observed states at times *t* and *t+1*. These are the states that are directly measurable.

* **Transitions:** The horizontal arrow indicates the transition probabilities between hidden states.

* **Emissions:** The downward arrows indicate the emission probabilities, which define the probability of observing a particular state given the current hidden state.

### Key Observations

* The diagram illustrates a basic two-time-step HMM.

* The Markov property is visually represented by the arrow connecting the hidden states.

* The emission probabilities link the hidden states to the observed states.

### Interpretation

The diagram represents a Hidden Markov Model, a statistical model used to model systems where the state is partially observable. The model assumes that the system's state is hidden, but we can observe some output that depends on the hidden state. The HMM is useful for modeling sequential data, such as speech recognition, handwriting recognition, and bioinformatics. The diagram shows how the hidden states evolve over time and how they influence the observed states. The arrows represent the probabilities of transitioning between hidden states and emitting observed states.

</details>

tion selection by means of planning. Importantly, this category of agents is conceptually closer to the biological intelligence realised in humans, given that the contemporary view of the brain is that of a Bayesian generative model. It is for this reason that we will focus our discussion on model-based RL.

Most of the model-based RL agents are Bayesian, in a sense that they possess probabilistic generative models and update their beliefs via Bayes' rule. These generative models are most commonly formulated as partially observable Markov decision processes (POMDP) - a generalisation of MDP (Bellman 1957) for environments where the true state cannot be directly observed (Fig. 2) 1 . In such environments, agents hold beliefs over states, p ( s ),

1 Please note that we exclude actions from the formulation of a generative model to be more consistent

which are iteratively updated upon receiving new information in a form of environment observables, o . Importantly, as the agent interacts with the environment, its state changes over time, as do the agent's beliefs. This belief dynamics is typically modelled by an explicit component called a transition dynamics function, expressed as p ( s t +1 | s t ) (or p ( s t +1 | s t , a t ) including actions - see footnote 1). As such, in its simplest form, an internal model of an agent constitutes a set of beliefs it holds about the state of the world (inferences) and a parametrised transition function modelling the dynamics of its own beliefs (and by consequence of the environment).

As we discuss the state of the art in Bayesian model-based RL, we want to draw the reader's attention to a plausible interpretation of what a generative model represents with respect to temporal learning. Apart from its unambiguous function of being an agent's computational cognitive model, the contents (i.e. learned representations of the world) of a generative model tell a story about the agent's subjective experiences of the environment. In particular, the emergent properties implicit into the components of its generative model can be viewed as the agent's means of perceiving and estimating time, and are therefore of great interest for the community.

## 4.1.2 Time and internal models

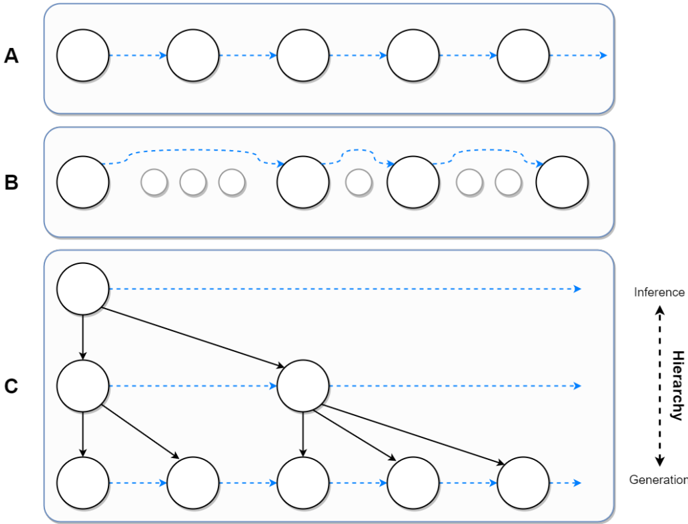

Traditional models Earlier works on deep model-based RL paid little consideration to learning temporal properties, focusing instead on the basic Markovian (or feed-forward) transition models (Sutton 1990, Deisenroth & Rasmussen 2011, Nagabandi et al. 2018, Feinberg et al. 2018). Markovian transition models are trained to map a belief state to its temporal successor, p ( s t +1 | s t ), but not beyond, adhering strictly to the POMDP structure (see Fig. 3A). As such, they are myopic and hold no explicit internal information about the past. In considering the consequences of their myopic nature, it becomes evident that feedforward agents have little access to the temporal information of the environment and must therefore rely on other sources for their representation and estimation. Indeed, Deverett et al. (2019) demonstrated that feed-forward agents gather temporal information from the environment, exhibiting autostigmergic behaviour 2 in which the agent's interactions with the environment are used as an external source of memory about the elapsed time. Similarly, the agent's perception of the environment's temporal dynamics is formed exclusively by the pairs of the inferred states and the change that separates them in the belief space. Despite

with the models discussed in Section 4.2. However, for RL, actions are naturally required for defining a model. For instance, a transition dynamics model is typically written as p ( s t +1 | s t , a t ), where a t is an action made by an agent at timestep t . Nevertheless, we thought it would be superfluous to incorporate actions into the discussion focused on the generative models underlying agents' perception and temporal learning.

2 Autostigmergic behaviour refers to situations in which an agent's actions result in the formation of traces in the environment that is perceived by the agent in the future as an incentive to further develop the action undertaken.

the fact that Markovian models have generally been shown to be inferior in designing agent generative models, Deverett et al. (2019) suggest that the method by which the temporal properties of the environment are gathered and the emergent estimation biases demonstrated in the work (scalar variability and mean-directed bias at the tails) resemble those found in primate experiments.
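The belief updating and Markovian transition described above can be illustrated with a minimal discrete sketch. The two-state world, the matrices `transition` and `likelihood`, and the function names are assumptions made purely for illustration; they are not taken from any of the cited agents, which use learned neural models rather than fixed tables.

```python
import numpy as np

def predict(belief, transition):
    """Propagate a belief one step: p(s_{t+1}) = sum_s p(s_{t+1} | s_t) p(s_t)."""
    return transition.T @ belief

def update(prior, likelihood, obs):
    """Bayes' rule: the posterior is proportional to p(o | s) * prior(s)."""
    unnorm = likelihood[:, obs] * prior
    return unnorm / unnorm.sum()

# Hypothetical two-state world; row i of `transition` is p(s_{t+1} | s_t = i).
transition = np.array([[0.9, 0.1],
                       [0.2, 0.8]])
# Row i of `likelihood` is p(o | s = i) for two possible observations.
likelihood = np.array([[0.8, 0.2],
                       [0.3, 0.7]])

belief = np.array([0.5, 0.5])  # initial belief p(s)
for obs in [0, 0, 1]:          # an illustrative observation sequence
    belief = update(predict(belief, transition), likelihood, obs)
```

Note that the transition step only ever sees the current belief, which is the sense in which such a model is myopic: nothing in `belief` records how many steps have elapsed.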

More recent and prominent model-based architectures demonstrate superior performance to their earlier feed-forward counterparts with the use of autoregressive (recurrent) transition models (deep learning models such as LSTM (Hochreiter & Schmidhuber 1997) or GRU (Cho et al. 2014)) (Hafner et al. 2020, 2021, Schrittwieser et al. 2020). For instance, the Dreamer agent (Hafner et al. 2020) is equipped with a recurrent state-space model (RSSM), in which the transition dynamics is an autoregressive model that incorporates internal information about previously processed states, $p(s_{t+1} \mid s_{\leq t})$. Notice that, compared to a feed-forward transition model, an autoregressive model predicts the next timestep $s_{t+1}$ conditioned on all previously inferred states of the world $s_{\leq t}$. This causal structure of the model allows it to possess information about the elapsed time in the form of a clockwork, stored in the patterns of its neural activations (Deverett et al. 2019). As such, it has been demonstrated that recurrent models are not subject to the biases found in time estimation experiments with humans and are computationally superior by virtue of being capable of using more information about the past.

Despite the apparent effectiveness of the latest autoregressive agents driven by the idea of attaining more rewards, the ideas behind their design touch little upon temporal learning. For instance, consider again the two kinds of transition models (feed-forward and autoregressive), as well as the definition of a POMDP, that we presented earlier. A key indexing unit involved is that of the physical time of the environment, $t$, referring to the objective succession of time - independent of any one observer. Could modelling the objective timescale of the environment be called subjective perception of time? Does time perception remain intact regardless of the context? And, more practically, is the objective timescale optimal for planning and action selection?

**Variable-timescale models.** It seems that these questions, at least in part, have motivated a number of works that went beyond the classical definitions of internal models and considered the timescale as an indirectly learnable component - an ambition which may be viewed as an unintentional attempt to build computational models of time perception in RL. Indeed, the common overarching theme of these works is defining a more suitable timescale for agent transition models (see Fig. 3B). The methods generally differ in the criteria by which the timescales are determined and may be viewed as distinct approaches to the implementation of time perception in computational cognitive models.

For instance, Neitz et al. (2018) and Jayaraman et al. (2018) define a model that learns transitions between the most predictable states. The resultant property of such a model is the ability to predict the future (and thus perform planning) with respect to the most anticipated events in a potential sequence. Although these models are not probabilistic, their mechanisms can be viewed from the Bayesian perspective - as taking a prediction path of least belief update. Specifically, under this approach, a probabilistic transition model, $p_\theta$, is optimal if its parameters minimise the belief update between the model's prediction from the current timestep $t$ and some future timestep $k$,

$$
\theta^{*} = \arg\min_{\theta} \min_{k \in T} D_{KL}\left[ p(s_k \mid o_k) \,\|\, p_\theta(\bar{s} \mid s_t) \right],
$$

where $p(s_k \mid o_k)$ is a posterior latent state at some timestep $k \in T$ that minimises the Kullback-Leibler divergence under the predicted prior distribution, $p_\theta(\bar{s} \mid s_t)$, and $T$ is a set of timesteps later than $t$. Recall that the presented quantity is also often called Bayesian surprise, more generally written as $D_{KL}[q \,\|\, p]$. As such, the predictability criteria used in the two works relate directly to this quantity when translated into the language of Bayesian belief updating, and correspond to its minimisation in the characterisation of a timescale. Such an interpretation of the approach is important as it allows for a direct comparison with other works in the Bayesian framework.
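As a rough illustration of the "path of least belief update", the sketch below picks, among candidate future timesteps, the one whose posterior is closest in KL divergence to a predicted prior. Diagonal Gaussian beliefs, the function names, and the toy numbers are all assumptions made for this example; the cited works use learned (and non-probabilistic) predictors rather than this closed-form comparison.

```python
import numpy as np

def kl_diag_gauss(mu_q, var_q, mu_p, var_p):
    """D_KL[q || p] between two diagonal Gaussians."""
    return 0.5 * np.sum(
        np.log(var_p / var_q) + (var_q + (mu_q - mu_p) ** 2) / var_p - 1.0
    )

def least_surprising_step(posteriors, prior):
    """Return (k, surprise) for the candidate posterior closest to the prior.

    posteriors: dict mapping a timestep offset k to (mean, variance)
    prior:      (mean, variance) of the predicted state
    """
    surprises = {k: kl_diag_gauss(mu, var, *prior)
                 for k, (mu, var) in posteriors.items()}
    k_best = min(surprises, key=surprises.get)
    return k_best, surprises[k_best]

# Hypothetical predicted prior and posteriors at three future timesteps.
unit_var = np.ones(2)
prior = (np.array([1.0, 0.0]), unit_var)
posteriors = {
    1: (np.array([0.0, 0.0]), unit_var),
    2: (np.array([0.9, 0.1]), unit_var),
    3: (np.array([2.0, 1.0]), unit_var),
}
k_best, surprise = least_surprising_step(posteriors, prior)
```

In a trained model the prior itself would be optimised so that this minimum becomes as small as possible; here it is held fixed to keep the selection step visible.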

In particular, Zakharov, Crosby & Fountas (2021) introduced subjective-timescale models (STMs) that similarly rely on the Bayesian surprise for defining the timescale an agent would be trained to reproduce. However, unlike the approaches by Neitz et al. (2018) and Jayaraman et al. (2018), STMs select states whose Bayesian surprise exceeds a certain threshold - a fundamentally different approach in which a model tends to take the prediction path of most belief update,

$$
\theta^{*} = \arg\min_{\theta} D_{KL}\left[ p(s_k \mid o_k) \,\|\, p_\theta(\bar{s} \mid s_t) \right] \quad \text{for } k \text{ iff}
$$

$$
D_{KL}\left[ p(s_k \mid o_k) \,\|\, p_\psi(s_k \mid s_{k-1}) \right] > \epsilon, \tag{3}
$$

where $\epsilon$ is a Bayesian surprise threshold. Here, the STM ($p_\theta$) is trained to transition a belief state to a future state that caused the inequality in Eq. 3 to be satisfied. Notably, Bayesian surprise in Eq. 3 is computed using the physical-timescale transition model, $p_\psi(s_k \mid s_{k-1})$. The authors hypothesise that the surprise quantity represents the visual salience of the agent's sensory inputs (Zakharov, Crosby & Fountas 2021). This work speaks to the ideas from an earlier discussed computational model of time perception that views saliency (Bayesian surprise) as the basic metric underlying the subjective experience of time (Fountas et al. 2021). More saliency means the distance between states in a physical timescale reduces, while its absence stretches this distance out. By using a number of perceptually complex datasets, Zakharov, Crosby & Fountas (2021) demonstrated the presence of this emergent property in a generative model of a trained RL agent. Similarly, when applied to a well-established model-based agent, Dreamer (Hafner et al. 2020), STM significantly improved its performance, thus indicating its practical utility for planning and action selection.
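The surprise-threshold rule can be sketched as a simple filter over a sequence: a timestep is kept as part of the subjective timescale only if the divergence between its posterior and the physical-timescale prediction exceeds a threshold. Categorical beliefs, the function names, the threshold value and the toy distributions below are assumptions for illustration and do not reproduce the authors' implementation.

```python
import numpy as np

def kl_categorical(q, p, tiny=1e-12):
    """D_KL[q || p] between two categorical distributions."""
    q = np.asarray(q, dtype=float)
    p = np.asarray(p, dtype=float)
    return float(np.sum(q * (np.log(q + tiny) - np.log(p + tiny))))

def select_salient_steps(posteriors, priors, threshold):
    """Indices k whose surprise D_KL[posterior_k || prior_k] exceeds the threshold."""
    return [k for k, (q, p) in enumerate(zip(posteriors, priors))
            if kl_categorical(q, p) > threshold]

# Hypothetical one-step predictions (priors) and observation-informed posteriors.
priors = [[0.5, 0.5], [0.5, 0.5], [0.5, 0.5]]
posteriors = [[0.5, 0.5], [0.9, 0.1], [0.55, 0.45]]
salient = select_salient_steps(posteriors, priors, threshold=0.1)
```

Only the selected indices would then serve as training targets for the subjective-timescale transition model, so stretches of unsurprising input collapse into a single transition.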

Other works have concentrated on the 'informativeness' of states in defining the timescales. Pertsch et al. (2020) introduce a two-level hierarchical generative model that learns to transition between the so-called 'keyframes' of a video sequence in the top level of its hierarchy (with bottom level states being conditioned on pairs of the keyframes above). The keyframes are picked out by a parametrised stochastic model and therefore define the relevant timescale of a video sequence. Importantly, the keyframing model is a component of an agent's generative model and is thus trained in conjunction with the rest of the model by means of minimising the joint lower bound on the marginal likelihood. The informativeness of the states is considered implicitly - if a particular predicted keyframe is useful for the maximisation of the likelihood of a predicted sequence, it is more likely to be picked as a keyframe.

This work represents a fundamentally different approach to defining a more useful subjective timescale for an agent perceiving its environment. First, the integration of a separate timescale-constructing model into the agent's generative model draws an interesting connection with biological time perception hypotheses of internal clocks and dedicated models (Treisman 1963, Gibbon 1977, Church 1984). In particular, this method considers the existence of a separate model for determining the rate at which the subjective time flows (by means of keyframes). Second, it contrasts with the previously discussed approaches where timescales are defined using non-parametric techniques and which rely solely on the reactive properties of the agent's generative model. There, the timescale-defining quantity is a form of Bayesian surprise, computed by the synthesis of sensory input with a prediction made by an agent's internal model - a technique that may be viewed as being more in line with predictive coding.

## 4.2 Hierarchical generative models

As explained, model-based RL is a particularly attractive area for exploring computational models of temporal learning given its conceptual similarity to the contemporary view of the brain as a statistical machine. Furthermore, it deals with agency and thus a perception-action loop that ultimately results in a unique sequence of observations and internal representations experienced and formed by an agent. However, the field of RL, though intricately connected to the areas of representation learning and generative modelling, has not fully explored some of the more advanced research performed in these areas. One such instance is that of hierarchical generative models. The role of hierarchical processing in the brain has been demonstrated in numerous works through the years, including hierarchical processing of time. In particular, empirical evidence from neuroimaging studies suggests that the human brain employs its hierarchy to segment continuous data (deducing its discretised structure in the form of event sequences). Across the nested hierarchy of its cortex, the brain represents timescales ranging from milliseconds to minutes (Baldassano et al. 2017), with an increasing level of temporal abstraction along its computational layers. This makes the branch of hierarchical generative modelling very attractive for studying how models, akin to our brains, could be built to exhibit similar properties of temporal learning and abstraction.

Figure 3: Types of generative models for temporal learning in the field of AI. Circles represent latent belief states, while arrows represent the generative process. A. Traditional Markovian transition models. B. Variable-timescale transition models that transition over a distinct timescale as opposed to the physical timescale. C. Hierarchical generative models with nested timescales.

Previously, we have discussed one such work by Pertsch et al. (2020), in which a two-level hierarchical model has been employed to reconstruct video sequences with the use of a parametrised keyframe detector. Another work by Kim et al. (2019) incorporates a similar technique into its two-level generative model. Although the formulation of the graphical model in Kim et al. (2019) is different to Pertsch et al. (2020), in both models the physical-timescale variables are conditioned on the second-level 'keyframes' (or boundary states). A simplified version of a temporal hierarchical generative model, in which lower-level states are conditioned on the higher-level states, is shown in Figure 3C. Here, it is pertinent to ask: apart from the aforementioned biological plausibility, what is the practical and conceptual merit of hierarchies, particularly for temporal learning?

The temporal hierarchy of latent variables forces a generative model to exhibit certain properties that would otherwise be difficult, and perhaps even impossible, to acquire. First, nested hierarchies (such as the one shown in Fig. 3C) naturally result in increasing temporal abstraction across layers, as higher-level states correspond to a larger number of physical-timescale states and are employed during the generative process to produce lower-level states. Similarly, higher-level states are inferred less often, promoting the representation of increasingly slower spatiotemporal features in those levels. Second, hierarchies lead to segmentation of sequential data by means of higher-level latent variables (also called contexts) that govern the generation of the states below and serve as boundaries between lower-level sequences. As discussed, segmentation of events is an evident component of the human brain's perception machinery (Hard et al. 2006, Kurby & Zacks 2008), making computational cognitive models with this capability important for understanding how it could be realised in the brain.

Still, generative models with nested hierarchies present a number of practical challenges since it is unclear how they should be constructed. In particular, when should the contexts be updated and how could this be realised within a Bayesian model? Perhaps for this reason, a number of works have proposed fixed-interval models, in which each hierarchical level of a model is assigned its own manually-defined rate of update (Lotter et al. 2016, Koutnik et al. 2014, Saxena et al. 2021). Although fixed-interval models are capable of distributing abstract spatiotemporal features across their levels, as shown in Saxena et al. (2021), the emergent representations are not satisfactory with respect to the temporal factors of variation in sequential data (Zakharov, Guo & Fountas 2021). In particular, event segmentation, and thus an optimal discretisation of a sequence, is simply not possible within this framework, as the temporal structure of the generative model is pre-determined regardless of the incoming sensory input. Furthermore, this leaves little room for the presence of subjective time perception in the manner presented previously (Zakharov, Crosby & Fountas 2021) - a phenomenon that has been observed in human experiments.
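The limitation of fixed-interval models can be made concrete with a small sketch of such an update schedule. The function name and the intervals `(1, 2, 4)` are hypothetical and chosen only to illustrate the idea; the cited architectures each define their own rates.

```python
def fixed_interval_updates(num_steps, intervals):
    """For each timestep, list the hierarchical levels scheduled to update.

    intervals[n] is the manually chosen update period of level n.
    """
    return [[n for n, every in enumerate(intervals) if t % every == 0]
            for t in range(num_steps)]

# Hypothetical three-level hierarchy updating every 1, 2 and 4 steps.
schedule = fixed_interval_updates(8, intervals=(1, 2, 4))
```

The schedule depends only on the timestep index and never on the observations, which is why the temporal structure of such a model cannot adapt to where events actually occur in a sequence.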

Accordingly, some authors proposed models with adaptive (flexible) timescales across the hierarchical levels of a generative model. Chung et al. (2017) introduced Hierarchical Multiscale Recurrent Neural Networks (HM-RNNs) that employed a discrete-space long short-term memory (LSTM) model with a parametrised boundary detector. The authors demonstrated that a hierarchical LSTM can discover an underlying spatiotemporal structure in sequential data, facilitating further research into this area. Notably, HM-RNNs were not probabilistic models and therefore did not operate over Bayesian belief states. This led Kim et al. (2019) to extend HM-RNNs to stochastic models (though only possessing two hierarchical levels) with a conceptually analogous approach of accommodating a parametrised boundary detector as part of the generative model.

More recently, Zakharov, Guo & Fountas (2021) presented a Variational Predictive Routing model (VPR) that employs a non-parametric event boundary detection mechanism at every level of its latent hierarchy. The detection mechanism is primarily used to enforce a structure of subjective nested timescales that update only with respect to the features represented in their respective layers. As evident from the results demonstrated in the work, VPR has an elegant interpretation with respect to the potential cognitive mechanisms involved in its functionality and is hypothesised by the authors to be an effective time perception model.

VPR's boundary detection system employs Bayesian belief update quantities to decide whether a hierarchical state should be updated. Specifically, the authors claim that the model is capable of capturing expected (predictable) and unexpected (surprising) events in every level of its hierarchy using two criteria. Upon detecting an event at level $n$, the model replaces its belief state $s^n$ with a newly inferred posterior - resulting in a generative model structure similar to the one shown in Figure 3C. Notice the nested structure of the graphical model, in which the higher-level states are allowed to update only if all lower-level states have similarly been updated.

Whether to infer a new hierarchical posterior state is decided using the aforementioned event detector. Unexpected events are identified by considering the inequality,

$$
D_{KL}\left[ q_{st} \,\|\, p_{st} \right] > \gamma,
$$

where $p_{st} = p(s_{\tau+1} \mid o_\tau, s_{<\tau})$ is the model's prior over the next belief state signifying the assumption that the belief will not change (static assumption), $q_{st} = q(s_{\tau+1} \mid o_{\tau+1}, s_{<\tau})$ is the inferred posterior given the new observation $o_{\tau+1}$, and $\gamma$ denotes a threshold beyond which an unexpected event is regarded as detected. For simplicity, the hierarchical levels of belief states are not included in the notation.

Notice that under the so-called static assumption, the state of the generative model remains intact (i.e. the latent variables employed during inference, $s_{<\tau}$, stay constant), allowing for a clear interpretation of the divergence. In particular, the two distributions are identical if the observations have not changed over time, $p_{st} = q_{st}$ iff $o_\tau = o_{\tau+1}$. As such, this Bayesian surprise quantity can be interpreted as a measure of how much the model's beliefs about the encoded features have changed over time. The threshold thus serves to identify changes that are sufficiently significant to be classified as an event.
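A minimal sketch of the unexpected-event criterion combined with the nested update rule might look as follows. It assumes diagonal Gaussian beliefs and a single fixed threshold shared by all levels; the function names, the threshold value and the toy inputs are hypothetical and are not taken from the VPR implementation.

```python
import numpy as np

def kl_diag_gauss(mu_q, var_q, mu_p, var_p):
    """D_KL[q || p] between two diagonal Gaussians."""
    return 0.5 * np.sum(
        np.log(var_p / var_q) + (var_q + (mu_q - mu_p) ** 2) / var_p - 1.0
    )

def detect_updates(posteriors, static_priors, threshold):
    """Return the levels that update, scanning the hierarchy bottom-up.

    posteriors[n] and static_priors[n] are (mean, variance) pairs for level n.
    The scan stops at the first level without an event, so a higher level can
    update only if every level below it has also updated.
    """
    updated = []
    for n, ((mu_q, var_q), (mu_p, var_p)) in enumerate(zip(posteriors, static_priors)):
        if kl_diag_gauss(mu_q, var_q, mu_p, var_p) <= threshold:
            break  # no event at level n: all higher levels keep their states
        updated.append(n)
    return updated

# Hypothetical three-level hierarchy with one-dimensional unit-variance beliefs.
one = np.array([1.0])
static_priors = [(np.array([0.0]), one)] * 3
posteriors = [(np.array([1.0]), one),   # clear change at level 0
              (np.array([0.1]), one),   # negligible change at level 1
              (np.array([2.0]), one)]   # large change at level 2, but blocked
updated = detect_updates(posteriors, static_priors, threshold=0.1)
```

In this toy case only level 0 updates: the large divergence at level 2 is ignored because level 1 did not register an event, which mirrors the nesting constraint described above.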

The second criterion employed by VPR is for the detection of expected events,

<!-- formula-not-decoded -->

where $p_{ch} = p(s_{\tau+1} \mid s_\tau, s_{<\tau})$ is the transition model's prior belief over the state it expects to observe at the next timestep (hence, it is the change assumption), and $q_{ch} = q(s_{\tau+1} \mid o_{\tau+1}, s_\tau, s_{<\tau})$ is the inferred posterior state given a new observation $o_{\tau+1}$. It is similarly intuitive to interpret this quantity: if a prediction made by the model, $p(s_{\tau+1} \mid s_\tau, s_{<\tau})$, is consistent with a newly inferred posterior state, $q(s_{\tau+1} \mid o_{\tau+1}, s_\tau, s_{<\tau})$, then an expected (predictable) event has occurred.

VPR has been shown to exhibit the desired properties pertaining to a hierarchical cognitive model - the emergence of nested subjective timescales and segmentation of sequential signal into semantically coherent and hierarchically structured sub-sequences. Specifically, VPR is able to represent progressively 'slower' features in the higher levels of its latent hierarchy, thus preserving the temporal organisation of the dataset's factors of variation. As such, this work explores an interesting avenue of research that is intriguingly connected to the field of time perception. In particular, the unexpected event criterion can be regarded as a measure of visual salience of sensory signal which has been linked to human duration judgments (Sherman et al. 2020, Fountas et al. 2021), while both of the criteria (in their function of detecting anticipated and unanticipated events) seem to draw a connection with the neuromodulatory attention mechanisms in the brain (Yu & Dayan 2005).

## References

- Acerbi, L., Wolpert, D. M. & Vijayakumar, S. (2012), 'Internal representations of temporal statistics and feedback calibrate motor-sensory interval timing', PLoS computational biology 8 (11), e1002771.

- Addyman, C., French, R. M. & Thomas, E. (2016), 'Computational models of interval timing', Current Opinion in Behavioral Sciences 8 , 140-146.

- Ahrens, M. B. & Sahani, M. (2011), 'Observers exploit stochastic models of sensory change to help judge the passage of time', Current Biology 21 (3), 200-206.

- Arjona-Medina, J. A., Gillhofer, M., Widrich, M., Unterthiner, T. & Hochreiter, S. (2019), 'Rudder: Return decomposition for delayed rewards', ArXiv abs/1806.07857 .

- Baldassano, C., Chen, J., Zadbood, A., Pillow, J. W., Hasson, U. & Norman, K. A. (2017), 'Discovering event structure in continuous narrative perception and memory', Neuron 95 (3), 709-721.

- Basgol, H., Ayhan, I. & Ugur, E. (2021), 'Time perception: A review on psychological, computational and robotic models', IEEE Transactions on Cognitive and Developmental Systems .

- Bellman, R. (1957), 'A markovian decision process', Indiana University Mathematics Journal 6 , 679-684.

- Block, R. A. (1974), 'Memory and the experience of duration in retrospect', Memory & Cognition 2 (1), 153-160.

- Block, R. A., Hancock, P. A. & Zakay, D. (2010), 'How cognitive load affects duration judgments: A meta-analytic review', Acta psychologica 134 (3), 330-343.

- Blundell, C., Uria, B., Pritzel, A., Li, Y., Ruderman, A., Leibo, J. Z., Rae, J. W., Wierstra, D. & Hassabis, D. (2016), 'Model-free episodic control', ArXiv abs/1606.04460 .

- Bowers, J. S. & Davis, C. J. (2012), 'Bayesian just-so stories in psychology and neuroscience.', Psychological bulletin 138 (3), 389.

- Bubić, A., von Cramon, D. Y. & Schubotz, R. I. (2010), 'Prediction, cognition and the brain', Frontiers in Human Neuroscience 4 .

- Buhusi, C. V. & Meck, W. H. (2005), 'What makes us tick? functional and neural mechanisms of interval timing', Nature reviews neuroscience 6 (10), 755-765.

- Cadena, S. A., Denfield, G. H., Walker, E. Y., Gatys, L. A., Tolias, A. S., Bethge, M. & Ecker, A. S. (2019), 'Deep convolutional models improve predictions of macaque v1 responses to natural images', PLoS computational biology 15 (4), e1006897.

- Cho, K., van Merrienboer, B., Gülçehre, Ç., Bahdanau, D., Bougares, F., Schwenk, H. & Bengio, Y. (2014), Learning phrase representations using rnn encoder-decoder for statistical machine translation, in 'EMNLP'.

- Chung, J., Ahn, S. & Bengio, Y. (2017), Hierarchical multiscale recurrent neural networks, in 'International Conference on Learning Representations, ICLR'.

- Church, R. M. (1984), 'Properties of the internal clock', Annals of the New York Academy of Sciences 423 .

- Cicchini, G. M., Arrighi, R., Cecchetti, L., Giusti, M. & Burr, D. C. (2012), 'Optimal encoding of interval timing in expert percussionists', Journal of Neuroscience 32 (3), 1056-1060.

- Clark, A. (2015), Surfing uncertainty: Prediction, action, and the embodied mind , Oxford University Press.

- Dayan, P., Hinton, G. E., Neal, R. M. & Zemel, R. S. (1995), 'The helmholtz machine', Neural Computation 7 , 889-904.

- de Jong, J., Akyürek, E. G. & van Rijn, H. (2021), 'A common dynamic prior for time in duration discrimination', Psychonomic Bulletin & Review pp. 1-8.

- Deisenroth, M. P. & Rasmussen, C. E. (2011), Pilco: A model-based and data-efficient approach to policy search, in 'ICML'.

- Deverett, B., Faulkner, R., Fortunato, M., Wayne, G. & Leibo, J. Z. (2019), 'Interval timing in deep reinforcement learning agents', Advances in Neural Information Processing Systems, pp. 6686-6695 .

- Droit-Volet, S. & Wearden, J. (2016), 'Passage of time judgments are not duration judgments: Evidence from a study using experience sampling methodology', Frontiers in Psychology 7 , 176.

- Dyjas, O., Bausenhart, K. M. & Ulrich, R. (2012), 'Trial-by-trial updating of an internal reference in discrimination tasks: Evidence from effects of stimulus order and trial sequence', Attention, Perception, & Psychophysics 74 (8), 1819-1841.

- Ellinghaus, R., Giel, S., Ulrich, R. & Bausenhart, K. M. (2021), 'Humans integrate duration information across sensory modalities: Evidence for an amodal internal reference of time.', Journal of Experimental Psychology: Learning, Memory, and Cognition .

- Feinberg, V., Wan, A., Stoica, I., Jordan, M. I., Gonzalez, J. E. & Levine, S. (2018), Model-based value expansion for efficient model-free reinforcement learning.

- Feldman, J. (2016), 'The simplicity principle in perception and cognition', Wiley Interdisciplinary Reviews: Cognitive Science 7 (5), 330-340.

- Fountas, Z., Sylaidi, A., Nikiforou, K., Seth, A. K., Shanahan, M. & Roseboom, W. (2021), 'A predictive processing model of episodic memory and time perception', bioRxiv pp. 2020-02.

- Friston, K. (2010), 'The free-energy principle: a unified brain theory?', Nature reviews neuroscience 11 (2), 127-138.

- Friston, K. J. (2012), 'The history of the future of the bayesian brain', Neuroimage 62 (2), 1230-1233.

- Friston, K. J. (2019), 'A free energy principle for a particular physics', arXiv: Neurons and Cognition .

- Fuchs, T. (2013), 'Temporality and psychopathology', Phenomenology and the cognitive sciences 12 (1), 75-104.

- Gibbon, J. (1977), 'Scalar expectancy theory and weber's law in animal timing.', Psychological Review 84 , 279-325.

- Gregory, R. L. (1968), 'Perceptual illusions and brain models', Proceedings of the Royal Society of London. Series B. Biological Sciences 171 , 279-296.

- Ha, D. R. & Schmidhuber, J. (2018a), Recurrent world models facilitate policy evolution, in 'NeurIPS'.

- Ha, D. R. & Schmidhuber, J. (2018b), Recurrent world models facilitate policy evolution, in 'NeurIPS'.

- Haarnoja, T., Zhou, A., Abbeel, P. & Levine, S. (2018), Soft actor-critic: Off-policy maximum entropy deep reinforcement learning with a stochastic actor, in 'ICML'.

- Hafner, D., Lillicrap, T. P., Ba, J. & Norouzi, M. (2020), 'Dream to control: Learning behaviors by latent imagination', ArXiv abs/1912.01603 .

- Hafner, D., Lillicrap, T. P., Norouzi, M. & Ba, J. (2021), 'Mastering atari with discrete world models', ArXiv abs/2010.02193 .

- Hard, B. M., Tversky, B. & Lang, D. S. (2006), 'Making sense of abstract events: Building event schemas', Memory & cognition 34 (6), 1221-1235.

- Hardy, N. F. & Buonomano, D. V. (2016), 'Neurocomputational models of interval and pattern timing', Current Opinion in Behavioral Sciences 8 , 250-257.

- Hochreiter, S. & Schmidhuber, J. (1997), 'Long short-term memory', Neural Computation 9 , 1735-1780.

- Hohwy, J., Paton, B. & Palmer, C. (2016), 'Distrusting the present', Phenomenology and the Cognitive Sciences 15 (3), 315-335.

- Hume, D. (1739/1964), A Treatise of Human Nature by David Hume , Vol. 2, Oxford: Clarendon Press.

- Hung, C.-C., Lillicrap, T. P., Abramson, J., Wu, Y., Mirza, M., Carnevale, F., Ahuja, A. & Wayne, G. (2019), 'Optimizing agent behavior over long time scales by transporting value', Nature Communications 10 .

- Jaderberg, M., Czarnecki, W. M., Dunning, I., Marris, L., Lever, G., Castañeda, A. G., Beattie, C., Rabinowitz, N. C., Morcos, A. S., Ruderman, A., Sonnerat, N., Green, T., Deason, L., Leibo, J. Z., Silver, D., Hassabis, D., Kavukcuoglu, K. & Graepel, T. (2019), 'Human-level performance in 3d multiplayer games with population-based reinforcement learning', Science 364 , 859-865.

- Jayaraman, D., Ebert, F., Efros, A. A. & Levine, S. (2018), 'Time-agnostic prediction: Predicting predictable video frames', arXiv preprint arXiv:1808.07784 .

- Jazayeri, M. & Shadlen, M. N. (2010), 'Temporal context calibrates interval timing', Nature neuroscience 13 (8), 1020-1026.

- Jones, M. & Love, B. C. (2011), 'Bayesian fundamentalism or enlightenment? on the explanatory status and theoretical contributions of bayesian models of cognition', Behavioral and brain sciences 34 (4), 169.

- Karmarkar, U. R. & Buonomano, D. V. (2007), 'Timing in the absence of clocks: encoding time in neural network states', Neuron 53 (3), 427-438.

- Ke, N. R., Singh, A., Touati, A., Goyal, A., Bengio, Y., Parikh, D. & Batra, D. (2019), 'Learning dynamics model in reinforcement learning by incorporating the long term future', ArXiv abs/1903.01599 .

- Kent, L., van Doorn, G., Hohwy, J. & Klein, B. (2019), 'Bayes, time perception, and relativity: The central role of hopelessness', Consciousness and cognition 69 , 70-80.

- Kersten, D. J., Mamassian, P. & Yuille, A. L. (2004), 'Object perception as bayesian inference.', Annual review of psychology 55 , 271-304.

- Khaligh-Razavi, S.-M. & Kriegeskorte, N. (2014), 'Deep supervised, but not unsupervised, models may explain IT cortical representation', PLoS computational biology 10 (11), e1003915.

- Kim, T., Ahn, S. & Bengio, Y. (2019), 'Variational temporal abstraction', Advances in Neural Information Processing Systems 32 , 11570-11579.

- Knill, D. C. & Pouget, A. (2004), 'The bayesian brain: the role of uncertainty in neural coding and computation', Trends in Neurosciences 27 , 712-719.

- Knill, D. C. & Richards, W. (1996), Perception as Bayesian inference , Cambridge University Press.

- Körding, K. P. & Wolpert, D. M. (2004), 'Bayesian integration in sensorimotor learning', Nature 427 (6971), 244-247.

- Koutnik, J., Greff, K., Gomez, F. & Schmidhuber, J. (2014), A clockwork rnn, in 'International Conference on Machine Learning', PMLR, pp. 1863-1871.

- Kriegeskorte, N. (2015), 'Deep neural networks: a new framework for modeling biological vision and brain information processing', Annual review of vision science 1 , 417-446.

- Krizhevsky, A., Sutskever, I. & Hinton, G. E. (2012), 'Imagenet classification with deep convolutional neural networks', Advances in neural information processing systems 25 , 1097-1105.

- Kurby, C. A. & Zacks, J. M. (2008), 'Segmentation in the perception and memory of events', Trends in cognitive sciences 12 (2), 72-79.

- Lejeune, H. & Wearden, J. H. (2009), 'Vierordt's the experimental study of the time sense (1868) and its legacy', European Journal of Cognitive Psychology 21 (6), 941-960.

- Lillicrap, T. P., Hunt, J. J., Pritzel, A., Heess, N. M. O., Erez, T., Tassa, Y., Silver, D. & Wierstra, D. (2016), 'Continuous control with deep reinforcement learning', CoRR abs/1509.02971 .

- Lotter, W., Kreiman, G. & Cox, D. (2016), 'Deep predictive coding networks for video prediction and unsupervised learning', arXiv preprint arXiv:1605.08104 .

- Maaß, S. C., de Jong, J., van Maanen, L. & van Rijn, H. (2021), 'Conceptually plausible bayesian inference in interval timing', Royal Society Open Science 8 (8), 201844.

- Matell, M. S. & Meck, W. H. (2004), 'Cortico-striatal circuits and interval timing: coincidence detection of oscillatory processes', Cognitive brain research 21 (2), 139-170.

- Meissner, K. & Wittmann, M. (2011), 'Body signals, cardiac awareness, and the perception of time', Biological psychology 86 (3), 289-297.

- Mnih, V., Badia, A. P., Mirza, M., Graves, A., Lillicrap, T. P., Harley, T., Silver, D. & Kavukcuoglu, K. (2016), Asynchronous methods for deep reinforcement learning, in 'ICML'.

- Mnih, V., Kavukcuoglu, K., Silver, D., Graves, A., Antonoglou, I., Wierstra, D. & Riedmiller, M. A. (2013), 'Playing atari with deep reinforcement learning', ArXiv abs/1312.5602 .

- Mnih, V., Kavukcuoglu, K., Silver, D., Rusu, A. A., Veness, J., Bellemare, M. G., Graves, A., Riedmiller, M. A., Fidjeland, A., Ostrovski, G., Petersen, S., Beattie, C., Sadik, A., Antonoglou, I., King, H., Kumaran, D., Wierstra, D., Legg, S. & Hassabis, D. (2015), 'Human-level control through deep reinforcement learning', Nature 518 , 529-533.

- Nagabandi, A., Kahn, G., Fearing, R. S. & Levine, S. (2018), 'Neural network dynamics for model-based deep reinforcement learning with model-free fine-tuning', 2018 IEEE International Conference on Robotics and Automation (ICRA) pp. 7559-7566.

- Neemeh, Z. A. & Gallagher, S. (2020), 'The phenomenology and predictive processing of time in depression', The Philosophy and Science of Predictive Processing p. 187.

- Neitz, A., Parascandolo, G., Bauer, S. & Schölkopf, B. (2018), 'Adaptive skip intervals: Temporal abstraction for recurrent dynamical models', arXiv preprint arXiv:1808.04768 .

- Ornstein, R. E. (1975), On the experience of time , London. Penguin books.

- Parr, T., Costa, L. D. & Friston, K. J. (2019), 'Markov blankets, information geometry and stochastic thermodynamics', Philosophical transactions. Series A, Mathematical, physical, and engineering sciences 378 .

- Paton, J. J. & Buonomano, D. V. (2018), 'The neural basis of timing: distributed mechanisms for diverse functions', Neuron 98 (4), 687-705.

- Pertsch, K., Rybkin, O., Yang, J., Zhou, S., Derpanis, K., Daniilidis, K., Lim, J. & Jaegle, A. (2020), Keyframing the future: Keyframe discovery for visual prediction and planning, in 'Learning for Dynamics and Control', PMLR, pp. 969-979.

- Petzschner, F. H., Glasauer, S. & Stephan, K. E. (2015), 'A bayesian perspective on magnitude estimation', Trends in cognitive sciences 19 (5), 285-293.

- Pollatos, O., Yeldesbay, A., Pikovsky, A. & Rosenblum, M. (2014), 'How much time has passed? ask your heart', Frontiers in neurorobotics 8 , 15.

- Poynter, W. D. & Homa, D. (1983), 'Duration judgment and the experience of change', Perception & Psychophysics 33 (6), 548-560.

- Racanière, S., Weber, T., Reichert, D. P., Buesing, L., Guez, A., Rezende, D. J., Badia, A. P., Vinyals, O., Heess, N. M. O., Li, Y., Pascanu, R., Battaglia, P. W., Hassabis, D., Silver, D. & Wierstra, D. (2017), Imagination-augmented agents for deep reinforcement learning, in 'Advances in Neural Information Processing Systems 30 (NIPS 2017)'.

- Rao, R. P. & Ballard, D. H. (1999), 'Predictive coding in the visual cortex: a functional interpretation of some extra-classical receptive-field effects', Nature neuroscience 2 (1), 79-87.

- Remington, E. D., Parks, T. V. & Jazayeri, M. (2018), 'Late bayesian inference in mental transformations', Nature communications 9 (1), 1-13.

- Roseboom, W., Fountas, Z., Nikiforou, K., Bhowmik, D., Shanahan, M. & Seth, A. K. (2019), 'Activity in perceptual classification networks as a basis for human subjective time perception', Nature communications 10 (1), 1-9.

- Saxena, V., Ba, J. & Hafner, D. (2021), 'Clockwork variational autoencoders', arXiv preprint arXiv:2102.09532 .

- Schrittwieser, J., Antonoglou, I., Hubert, T., Simonyan, K., Sifre, L., Schmitt, S., Guez, A., Lockhart, E., Hassabis, D., Graepel, T., Lillicrap, T. P. & Silver, D. (2020), 'Mastering atari, go, chess and shogi by planning with a learned model', Nature 588 (7839), 604-609.

- Schulman, J., Levine, S., Abbeel, P., Jordan, M. I. & Moritz, P. (2015), 'Trust region policy optimization', ArXiv abs/1502.05477 .

- Seth, A. K. (2015), The cybernetic bayesian brain, 'Open MIND': MIND Group, Frankfurt am Main, chapter 35(T).

- Sherman, M. T., Fountas, Z., Seth, A. K. & Roseboom, W. (2020), 'Accumulation of salient events in sensory cortex activity predicts subjective time', bioRxiv .