## Computational Metacognition

Michael T. Cox Zahiduddin Mohammad Sravya Kondrakunta

MICHAEL.COX@WRIGHT.EDU

MOHAMMAD.48@ WRIGHT.EDU

KONDRAKUNTA.2@WRIGHT.EDU

Ventaksampath Raja Gogineni

GOGINENI.14@WRIGHT.EDU

Computer Science and Engineering, Wright State University, Dayton, OH 45435 USA

Dustin Dannenhauer Othalia Larue

DUSTIN.DANNENHAUER@PARALLAXRESEARCH.ORG

OTHALIA.LARUE@PARALLAXRESEARCH.ORG

Autonomy Research Group, Parallax Advanced Research, Beavercreek, OH 45431 USA

## Abstract

Computational metacognition represents a cognitive systems perspective on high-order reasoning in integrated artificial systems that seeks to leverage ideas from human metacognition and from metareasoning approaches in artificial intelligence. The key characteristic is to declaratively represent and then monitor traces of cognitive activity in an intelligent system in order to manage the performance of cognition itself. Improvements in cognition then lead to improvements in behavior and thus performance. We illustrate these concepts with an agent implementation in a cognitive architecture called MIDCA and show the value of metacognition in problem-solving. The results illustrate how computational metacognition improves performance by changing cognition through meta-level goal operations and learning.

## 1. Introduction

The computational metacognition process is analogous to an action-perception cycle in an intelligent agent (Cox, 2005). But, instead of perceiving the environment and acting in the world, metacognition monitors cognition and acts to control cognitive activity. In humans, such introspective processes can prove beneficial, as in a student's proper regulation of classroom learning (Price, Hertzog, & Dunlosky, 2009) or in a game show contestant's judgment of knowing answers to questions posed by the show's host (Reder & Ritter, 1992). Yet given the significant overhead and complexity it exhibits (Conitzer, 2011), metacognition is not a panacea for all computational tasks nor is it always beneficial in human performance (Norman, 2020; Wilson & Schooler, 1991). In this paper, we will examine the kinds of metacognitive activities that can improve a cognitive system's performance, and we will demonstrate these concepts in an implemented problem-solving domain.

In some sense, metacognition is an add-on to a cognitive system. If a cognitive system has a certain behavior, then a metacognitive system should be able to observe that behavior and improve the performance of that system by changing its behavior given some parameters under its control. But here we are speaking of cognitive behavior (i.e., problem solving) rather than the physical behavior of the system in the world. The impact on the actual behavior of the joint system is indirect

and improves performance by improving thinking. As such, metacognition is one of the characteristics of intelligence that separates humans from mere reinforcement machines. This paper presents a computational approach to metacognition that seeks to clarify the manner in which such indirect levers can exert such influential effects on performance. Its contribution is to specify the mechanism of metacognition in computational terms within the context of an existing cognitive architecture and to evaluate these concepts in planning problems. Previous work has discussed the process of detecting metacognitive expectation failures; the paper here discusses the processes of meta-level explanation and goal generation as well as meta-level planning and learning. This is our first fully complete implementation of the metacognitive cycle.

Section 2 describes our concept of computational metacognition consisting of explanatory, immediate and anticipatory metacognition. Subsequently, Section 3 shows how a cognitive architecture instantiates many of these ideas computationally and illustrates the principles with examples. Section 4 then evaluates this approach empirically in a simple, plant-protection domain. Finally, Section 5 enumerates related research, and Section 6 summarizes and concludes.

## 2. Computational Metacognition

Though the concept of computational metacognition has many variations in the literature, having both broad and narrow interpretations, virtually all theories divide it into some kind of introspective monitoring and meta-level control . In the broader sense, any self-directed process can be included under its umbrella; whereas, the stricter definition of meta-x as 'x about x' (see Hayes-Roth, Waterman, & Lenat, 1983) constrains the subject to cognition about cognition. This more narrow definition excludes related concepts such as meta-knowledge (i.e., knowledge about knowledge) which is not a cognitive process per se (Cox, 2011). But broadly speaking in all its interpretations across the literature, metacognition is surely 'the many-headed monster of obscure parentage' of which Brown (1987) speaks; it is not a single monolithic construct. Instead, we claim that metacognition has three fundamental forms (see Table 1).

Table 1 . Types of Computational Metacognition

| Explanatory | Immediate | Anticipatory |

|---------------|---------------|----------------|

| Past | Present | Future |

| Hindsight | Insight | Foresight |

| Retrospective | Introspective | Predictive |

Explanatory metacognition is a reflective process triggered by failures in previous cognitive operations and thus represents a process akin to hindsight. Immediate metacognition represents introspective run-time control of cognition, analogous to physical eye-hand coordination. 1 Anticipatory metacognition is a reflective judgement of future cognitive performance and hence represents self-directed foresight. This paper has little room to discuss all three forms. Instead, it will focus on the explanatory category of metacognition and briefly examine the anticipatory one.

1 This is also related to decision-theoretic metareasoning applied to partial computations (Horvitz, 1990) and to bounded rationality decisions for anytime-planning (Zilberstein, 2011). In contrast, our approach is symbolic and non-statistical.

## COMPUTATIONAL METACOGNITION

## 2.1 Explanatory Metacognition

Expectations play important functional roles in cognition such as the monitoring of plan execution (Dannenhauer & Munoz-Avila, 2015; Pettersson, 2005; Schank, 1982), managing comprehension, especially natural language understanding (Schank & Owens, 1987), and influencing emotion (Langley, 2017). Expectations are knowledge artifacts that enable cognitive systems to verify their behavior is working as intended. The agent checks if discrepancies exist between its expectations and its observations of the state of the world. When such a discrepancy is detected, an expectation failure is said to occur. Different means of addressing expectation failures have been proposed including plan adaptation (i.e., modifying a plan to be executed) (Munoz-Avila & Cox, 2008), learning (i.e., acquiring a new piece of knowledge) (Munoz-Avila, 2018; Ram & Leake, 1995a), and goal reasoning (i.e., changing the goals pursued or formulating new goals to be achieved) (Aha, 2018; Roberts, Borrajo, Cox, & Yorke-Smith, 2018). Expectation failures are signals that a problem may have arisen. Explanations of the problem then provide the basis for goal formulation and thus facilitate problem-solving. Note that we are referring to a kind of internal explanation or selfdiagnosis process rather than an external explanation to another agent.

Similarly, metacognitive expectations (Dannenhauer, Cox, & Munoz-Avila, 2018) play an analogous role to the expectations discussed above. But here the expectation concerns the outcome of cognitive processes rather than events in the world. As such, it relates the mental states immediately before and resulting from a specific mental process or action. An individual mental state 𝑠𝑠 𝑀𝑀 = ( 𝑣𝑣1 , … , 𝑣𝑣 𝑛𝑛 ) is a vector of variables; whereas, a mental action 𝛼𝛼 𝑀𝑀 performs reads from or updates to variables in a mental state. The metacognitive expectation then is represented as the triple ( 𝑠𝑠 𝑖𝑖 𝑀𝑀 , 𝛼𝛼 𝑖𝑖 𝑀𝑀 , 𝑠𝑠 𝑖𝑖+1 𝑀𝑀 ) where 𝛼𝛼 𝑖𝑖 𝑀𝑀 is the current cognitive process and each 𝑠𝑠 𝑀𝑀 represents the system's mental state immediately preceding and following from it. 2 That is, for a given cognitive process, the expectation specifies constraints on memory associated with the execution of the process.

For example, one might expect commitment to a current goal before a planning process and a plan to be in memory after planning finishes. That is, the expectation would be represented as the triple ( 𝑔𝑔 𝑐𝑐 ∈ 𝑠𝑠 𝑖𝑖 𝑀𝑀 , 𝑃𝑃𝑃𝑃𝑃𝑃𝑃𝑃 , 𝜋𝜋 ∈ 𝑠𝑠 𝑖𝑖+1 𝑀𝑀 ) . If a plan does not result after planning executes, then something went wrong in cognition (Cox & Dannenhauer, 2016). The reasoning from such metacognitive expectation failures is in hindsight, because it happens after a mistake occurs. The function of metacognition is to explain what caused the cognitive-level failure and to formulate a meta-level goal to mitigate the problem. For example, changing the goal minimally to something easier but serving the same end may enable successful planning when physical resources are scarce (see Cox & Veloso, 1998).

Multiple meta-level goals are available to affect cognition. Currently, we have implemented three different meta-level goal types. The first aims to change cognition directly, while the other two represents indirect change.

1. To change the reasoning method (e.g., change from state-space planning to case-based planning) (Cox & Dannenhauer, 2016);

2. To change cognition by changing the goals of the system (Cox, Dannenhauer, & Kondrakunta, 2017);

3. To change cognition by learning new knowledge structures (Mohammad, 2021; Mohammad, Cox, & Molineaux, 2020).

2 Dannenhauer, et al. (2018) defined metacognitive expectations as a Boolean function taking such a triple as input. But, the differences are unimportant for the purposes of this paper.

This paper will focus on the third type, that is, learning-goals (i.e., an explicit meta-level goal to learn a specific piece of knowledge) (Cox, 1997; Cox & Ram, 1999; Ram & Leake, 1995b) as a metacognitive response, but the others are equally as important. Plans can be generated at the metalevel composed of 'actions' such as performing learning in pursuit of a learning goal or executing different goal operations (Cox, et al., 2017; Kondrakunta, Gogineni, & Cox, in press) such as goal change in pursuit of the second type above. But, the goal delegation operation has a particular role in anticipatory metacognition which spans both cognition and metacognition.

## 2.2 Anticipatory Metacognition

The concept of anticipatory metacognition is forward looking. Humans demonstrate foresight concerning their cognitive prowess or the lack thereof on a regular basis. Likewise, cognitive systems can benefit from an ability to predict whether or not they can achieve their goals as opposed to exhaustively trying all possible solutions before acquiescing. Unlike anticipatory thinking (Amos-Binks & Dannenhauer, 2019) that predicts plan execution failures and seeks to mitigate the vulnerability of a plan after it is generated, our approach is to anticipate a failure given the goal but before planning is performed. Making such a prediction, an agent can simply delegate some of its goals to another agent willing to help it and thus mitigate the amount of work to be done.

In contrast to explanatory metacognition, which is triggered by metacognitive expectation failures, anticipatory metacognition is triggered by the presence of suspended goals . Suspended goals are ones that were part of an agent's current goal set but were determined to be problematic given physical resource limitations for example. This condition suggests that planning or other reasoning will fail in the future. As a result, metacognition can generate a meta-level goal to change the cognitive-level goal. In this case, it is a change from the agent's goal to another agent's goal. Thus, the goal delegation operation is partially performed at the cognitive level and partially at the meta-level. Suspending the goal is cognitive; whereas, reasoning about another agent's knowledge, skills and goals in relation to the agent's own goals is associated with Theory of Mind (Goldman, 2006; Gopnik, 2012; Wellman, 1990) and with metacognition. This allows the system to target a good candidate agent for the delegation. Finally, if the agent decides to delegate one or more of its goals and determines a candidate to achieve them, the agent still needs to make an actual request, explain the need for the request, and negotiate the favor. Such reasoning is once again situated at the cognitive level and in the speech acts (Searle, 1969) executed in the world. Gogineni, Kondrakunta, and Cox (in press) provide a complete example of this hybrid process with experimental evidence supporting the benefits of this type of metacognitive activity.

Given space limitations, this paper cannot but sketch the mechanisms behind anticipatory metacognition. Instead, we will describe the detailed process of explanatory metacognition and show how it is implemented in a particular cognitive architecture. Specifically, we will explain how it manifests as a combination of introspective monitoring and meta-level control. With these details in hand, we will then provide an empirical evaluation that demonstrates the benefit of computational metacognition for cognitive systems.

## 3. Architectural Structure and Implementation

The metacognitive integrated dual-cycle architecture (MIDCA) is a cognitive architecture that models both cognition and metacognition for intelligent agents (Cox et al., 2016; Cox, Oates, & Perlis, 2011; Paisner, Cox, Maynord, & Perlis, 2014) and focusses upon various goal operations including goal change, goal formulation and goal delegation (Cox & Dannenhauer, 2017; Cox, et

## COMPUTATIONAL METACOGNITION

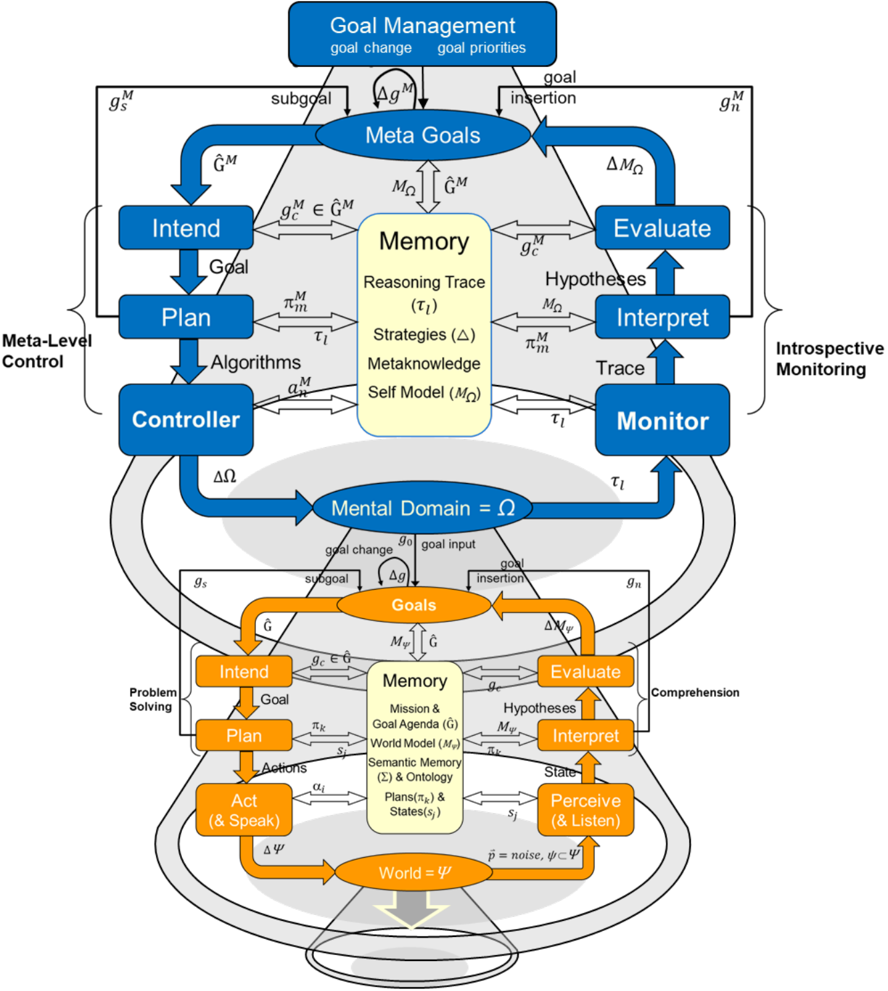

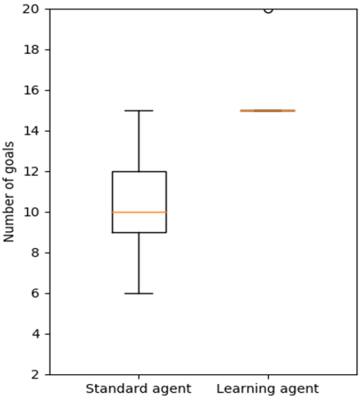

al., 2017). 3 It consists of 'action-perception' cycles at both the cognitive level and the metacognitive level (see Figure 1). In general, a cycle performs problem-solving to achieve its goals and then comprehends the results of its behavior and interactions with other agents in its environment. The problem-solving portion of each cycle consists of three phases: intention; planning; and the action-execution/control phase. The comprehension portion consists of perception/monitoring, interpretation, and goal evaluation.

The representations in MIDCA and our formal notation borrow much from the AI planning community (e.g., Ghallab, Nau, Traverso, 2004). We rely on the notion of a state transition system Σ = ( 𝑆𝑆 , 𝐴𝐴 , 𝛾𝛾 ) for representing a planning problem. In Σ , 𝑆𝑆 is the set of all possible world states, 𝐴𝐴 is the set of actions the agent can take, and 𝛾𝛾 is the successor function 𝛾𝛾 : 𝑆𝑆 × 𝐴𝐴 ⇢ 𝑆𝑆 . A planning problem is represented as 𝒫𝒫 = ( Σ , 𝑠𝑠0 , 𝑔𝑔 ) where the initial state 𝑠𝑠0 ∈ 𝑆𝑆 and the goal 𝑔𝑔 ∈ 𝐺𝐺 ⊂ 𝑆𝑆 . A plan 𝜋𝜋 = 〈𝛼𝛼1 , 𝛼𝛼2 , … , 𝛼𝛼 𝑛𝑛 〉 is a sequence of actions 𝛼𝛼 𝑖𝑖 ∈ 𝐴𝐴 . The plan 𝜋𝜋 represents a solution to 𝒫𝒫 by achieving 𝑔𝑔 if and only if its iterative execution from 𝑠𝑠0 results in a final state 𝛾𝛾 ( 𝑠𝑠 𝑛𝑛-1 , 𝛼𝛼 𝑛𝑛 ) that entails the goal expression. Note however, that a problem need not always start from some arbitrary initial state but instead may arise during the planning or the plan execution related to some previous problem (Cox, 2020). 4

At the cognitive level, comprehension starts with observations in terms of percepts ( 𝑝𝑝 ⃑ ) of the world ( 𝛹𝛹 ) whereby the Perceive phase infers the objects in the environment and the relationships between them. The Interpret phase takes as input the resulting relational state ( 𝑠𝑠 𝑗𝑗 ) and the expectations in memory to determine whether it is making sufficient progress. It is here that a model of the world ( 𝑀𝑀Ψ ) is inferred from its observations, and new goals ( 𝑔𝑔 𝑛𝑛 ) are generated when the model indicates problems or opportunities. Interpret adds any new goals to the goal agenda ( 𝐺𝐺 � = { 𝑔𝑔1 , 𝑔𝑔2 , … 𝑔𝑔 𝑛𝑛 } ). The Evaluate phase incorporates the concepts inferred from Interpret and checks whether the current goal set ( 𝑔𝑔 𝑐𝑐 ∈ 𝐺𝐺 � ) is achieved, i.e., whether 𝑠𝑠 𝑗𝑗 entails 𝑔𝑔 𝑐𝑐 . If so, 𝑔𝑔 𝑐𝑐 is removed from the agenda and set to the empty set (i.e., 𝐺𝐺 � ← 𝐺𝐺 � / 𝑔𝑔 𝑐𝑐 and 𝑔𝑔 𝑐𝑐 ← {} ).

In cognitive-level problem solving, the Intend phase commits to a new goal set 𝑔𝑔 𝑐𝑐 from those available in 𝐺𝐺 � if the old goal set is empty. The Plan phase then generates a sequence of actions (i.e., the current plan, 𝜋𝜋 𝑘𝑘 = 〈𝛼𝛼1 , 𝛼𝛼2 , … 𝛼𝛼 𝑛𝑛 〉 ) to perform in pursuit of its goals. The Act phase executes the steps of the plan ( 𝛼𝛼 𝑖𝑖 ) one at a time to change the environment through their effects. MIDCA will then use expectations about these actions in subsequent cycles to evaluate the execution of the plan. At the end of each cognitive phase, MIDCA performs an entire metacognitive cycle as explained below.

Like cognition, MIDCA partitions metacognition into introspective monitoring (analogous to cognitive-level comprehension) and meta-level control (analogous to problem-solving). Introspective monitoring detects metacognitive expectation failures and formulates goals to change cognition in response. Meta-level control generates and executes plans to achieve these goals and thus improve cognitive performance. See Dannenhauer, et al. (2018) for a more formal specification of the two-cycle MIDCA mechanism. Here we give enough detail to capture the metacognitive process computationally and allow the reader to follow the examples and understand the evaluation.

3 MIDCA version 1.5 is open-source and runs on python 3.8. The source code and documentation are publicly available at https://github.com/COLAB2/midca. See also www.midca-arch.org .

4 We actually assume a somewhat different problem representation 𝒫𝒫 𝑐𝑐 = ( 𝑠𝑠 𝑐𝑐 , 𝑠𝑠 𝑒𝑒 , 𝐵𝐵𝐵𝐵 , 𝐻𝐻 𝑐𝑐 ) where 𝑠𝑠 𝑐𝑐 and 𝑠𝑠 𝑒𝑒 represent the currently observed and expected world states, 𝐵𝐵𝐵𝐵 is the background knowledge and 𝐻𝐻 𝑐𝑐 is the episodic problemsolving history of the agent (see Cox, 2020). But the differences are not important for the purposes of this paper and may be ignored by the reader.

Figure 1 . The metacognitive integrated dual-cycle architecture and the flow of knowledge between computational phases. The lower (orange) cycle represents cognition, receiving stimuli in the form of percepts and acting upon the environment, thus changing the world state. The upper (blue) cycle represents metacognition, receiving an introspective trace of cognition and controlling the cognitive level through goal operations and learning. Note that all knowledge structures 𝐵𝐵 that are metacognitive have the superscript 𝐵𝐵 𝑀𝑀 .

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Diagram: Cognitive Architecture for Goal-Driven Systems

### Overview

This diagram illustrates a hierarchical cognitive architecture designed for goal-driven systems, integrating meta-level control, memory, and problem-solving processes. It emphasizes the interplay between high-level goal management, memory systems, and real-time perception/action loops. The diagram uses color-coded components (blue for meta-level, orange for problem-solving) and directional arrows to represent information flow and control mechanisms.

### Components/Axes

1. **Top Section (Meta-Level Control)**:

- **Goal Management**: Manages goal changes and priorities.

- **Meta Goals**: Contains subgoals (`g_s^M`, `g_n^M`) and goal insertion.

- **Intend**: Connects to "Goal" and "Plan" via arrows labeled "Goal" and "Algorithms."

- **Plan**: Links to "Controller" and "Algorithms."

- **Controller**: Receives input from "Mental Domain = Ω" and outputs to "Monitor."

- **Evaluate/Interpret/Monitor**: Forms a feedback loop with "Hypotheses" and "Trace."

2. **Central Section (Memory)**:

- **Memory**: Contains subcomponents:

- **Reasoning Trace (τ_l)**

- **Strategies (Δ)**

- **Metaknowledge**

- **Self Model (M_Ω)**

- **Memory Trace (τ_l)** and **Hypotheses** are connected to "Evaluate" and "Interpret."

3. **Bottom Section (Problem-Solving/World Modeling)**:

- **Goals**: Includes "Intend," "Plan," "Act (& Speak)," and "Perceive (& Listen)."

- **World = ψ**: Represents the environment model, with inputs from "Perceive (& Listen)" and outputs to "Act (& Speak)."

- **Problem Solving**: Connects to "Goals" via "Goal Agenda (Ĝ)" and "World Model (M_ψ)."

4. **Arrows and Labels**:

- **Blue Arrows**: Represent meta-level control (e.g., "goal change," "goal input").

- **Orange Arrows**: Represent problem-solving and perception-action loops (e.g., "Δg," "Δψ").

- **Mathematical Notations**:

- **Mental Domain = Ω** (central hub).

- **World = ψ** (environment model).

- **Δg, ΔΩ, Δψ**: Indicate changes in goals, mental domain, and world state.

5. **Legends and Colors**:

- **Blue**: Meta-level components (e.g., "Goal Management," "Meta Goals").

- **Orange**: Problem-solving components (e.g., "Goals," "World = ψ").

- **Yellow**: Memory subcomponents (e.g., "Reasoning Trace," "Metaknowledge").

### Detailed Analysis

- **Goal Management**: Positioned at the top, it governs goal changes and priorities, feeding into "Meta Goals" and "Intend."

- **Memory**: Acts as a central repository for reasoning traces, strategies, and self-models, enabling adaptive decision-making.

- **Controller**: Bridges meta-level goals and problem-solving by processing inputs from the "Mental Domain = Ω" and directing outputs to "Monitor."

- **Problem-Solving Loop**: The orange section forms a closed loop between "Goals," "World = ψ," and "Perceive (& Listen)/Act (& Speak)," emphasizing real-time adaptation.

- **Feedback Mechanisms**: Arrows like "Evaluate," "Interpret," and "Monitor" create a cyclical process for hypothesis testing and state evaluation.

### Key Observations

- **Hierarchical Structure**: The diagram separates high-level goal management (blue) from low-level problem-solving (orange), with memory as the integrator.

- **Dynamic Adaptation**: The use of "Δg," "ΔΩ," and "Δψ" suggests the system continuously adjusts to changes in goals, mental states, and the environment.

- **Integration of Memory**: The "Memory" block is critical for storing and retrieving strategies, traces, and self-models, enabling long-term learning.

- **Color-Coded Flow**: Blue arrows (meta-level) and orange arrows (problem-solving) visually distinguish between strategic and operational processes.

### Interpretation

This architecture demonstrates a **goal-driven, adaptive system** where meta-level control (e.g., goal prioritization) and real-time problem-solving (e.g., perception-action loops) are tightly coupled through memory. The "Mental Domain = Ω" and "World = ψ" represent abstract and concrete layers of processing, respectively. The feedback loops (e.g., "Evaluate" → "Interpret" → "Monitor") ensure the system refines its strategies based on outcomes.

The diagram highlights the importance of **memory as a bridge** between abstract goals and concrete actions, enabling the system to learn from experience and adjust its behavior. The separation of meta-level and problem-solving components suggests a modular design, allowing for scalability and flexibility in complex environments.

**Notable Trends**:

- The central role of "Memory" underscores its importance in maintaining coherence between high-level goals and low-level actions.

- The bidirectional flow between "World = ψ" and "Goals" indicates a dynamic interaction between the environment and the system's objectives.

- The use of mathematical notations (e.g., "Δg") implies a formal framework for modeling goal changes and system dynamics.

</details>

## 3.1 Introspective Monitoring

At the meta-level, introspective monitoring starts with a trace ( 𝜏𝜏 𝑙𝑙 ) of the mental domain, that is, of the activity at the cognitive level (again see Figure 1). In support of this knowledge structure, Dannenhauer, et al., (2018) defines an agent's self-model (i.e., a model of the cognitive level) as Ω = ( 𝑆𝑆 𝑀𝑀 , 𝐴𝐴 𝑀𝑀 , 𝜔𝜔 ) where each 𝑠𝑠 𝑖𝑖 𝑀𝑀 ∈ 𝑆𝑆 𝑀𝑀 is a possible mental state, each 𝛼𝛼 𝑖𝑖 𝑀𝑀 ∈ 𝐴𝐴 𝑀𝑀 is a mental action, and 𝜔𝜔 is a cognitive transition function. 5 In MIDCA, a mental state is represented as a vector of length 𝑃𝑃 = 7 where 𝑠𝑠 𝑖𝑖 𝑀𝑀 = ( 𝑔𝑔 𝑐𝑐 , 𝐺𝐺 � , 𝜋𝜋 𝑘𝑘 , 𝑀𝑀Ψ , 𝐷𝐷 , 𝐸𝐸 , 𝛼𝛼 𝑖𝑖 ) . That is, it consists of the current goal, the goal agenda, the current plan, the current world state, discrepancies detected, explanations generated, and the last action executed in the world. MIDCA employs the following mental actions: Perceive, Detect Discrepancies, Explanation, Goal Insertion (i.e., updates the set of goals 𝐺𝐺 � to be achieved), Evaluate, Intend, Plan, and Act. Each of these represent either one of the six phases at the cognitive level or a subprocess within Interpret. The cognitive transition function is defined as 𝜔𝜔 : 𝑆𝑆 𝑀𝑀 × 𝐴𝐴 𝑀𝑀 ⟶𝑆𝑆 𝑀𝑀 . That is, given a current mental state, 𝜔𝜔 provides the successive mental state resulting from the execution of that mental action and hence is a source of metacognitive expectations. Finally, the trace is an interleaved sequence of mental states and mental actions. More specifically, 𝜏𝜏 𝑙𝑙 = 〈𝑠𝑠0 𝑀𝑀 , 𝛼𝛼1 𝑀𝑀 , 𝑠𝑠1 𝑀𝑀 , 𝛼𝛼2 𝑀𝑀 , … , 𝛼𝛼 𝑛𝑛 𝑀𝑀 , 𝑠𝑠 𝑛𝑛 𝑀𝑀 〉 where 𝑠𝑠0 𝑀𝑀 , … , 𝑠𝑠 𝑛𝑛 𝑀𝑀 ∈ 𝑆𝑆 𝑀𝑀 and 𝛼𝛼1 𝑀𝑀 , … , 𝛼𝛼 𝑛𝑛 𝑀𝑀 ∈ 𝐴𝐴 𝑀𝑀 .

The Monitor phase takes the recorded trace and places it in memory for the meta-level Interpret phase to examine. As with the cognitive level phase, meta-level Interpret seeks to detect and explain discrepancies between specific metacognitive expectations and its 'observations' in segments of the cognitive trace. As mentioned in Section 2.1 these expectations consist of the memory states before and after a given cognitive phase or phase component. If a discrepancy exists, then Interpret attempts to explain the discrepancy and formulate a meta-level goal.

Consider an agent that is learning to take care of a garden having goals such as the preservation of native plants and the removal of invasive ones. Its knowledge of plant care, such as the correct application of herbicide, may be flawed, and therefore it may make mistakes in both the execution of solutions (i.e., its behavior in the world) and in the derivation of those solutions (i.e., its reasoning to solve problems). For example, the agent's model of the spray action may lack the knowledge that spraying herbicide will kill plants in locations adjacent to those it targets. When the goal of native plant preservation is violated after spraying invasive ones close to the natives, a normal discrepancy occurs. However, when the Interpret phase fails to explain this discrepancy, a metacognitive expectation fails. That is, the cognitive trace 𝜏𝜏 𝑙𝑙 does not adhere to the expectation ( 𝑑𝑑𝑑𝑑𝑠𝑠𝑑𝑑𝑑𝑑𝑑𝑑𝑝𝑝𝑃𝑃𝑃𝑃𝑑𝑑𝑑𝑑 ∈ 𝑠𝑠 𝑖𝑖 𝑀𝑀 , Explain , 𝑑𝑑𝑒𝑒𝑝𝑝𝑃𝑃𝑃𝑃𝑃𝑃𝑃𝑃𝑒𝑒𝑑𝑑𝑒𝑒𝑃𝑃 ∈ 𝑠𝑠 𝑖𝑖+1 𝑀𝑀 ) since the Explain component of the Interpret phase failed to produce the expected explanation. 6 Now the discrepancy at the metalevel must be explained.

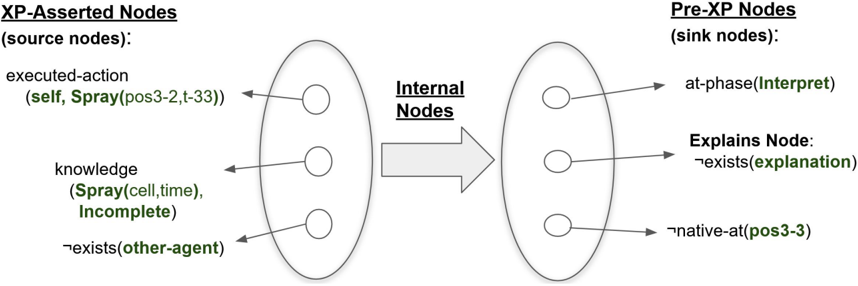

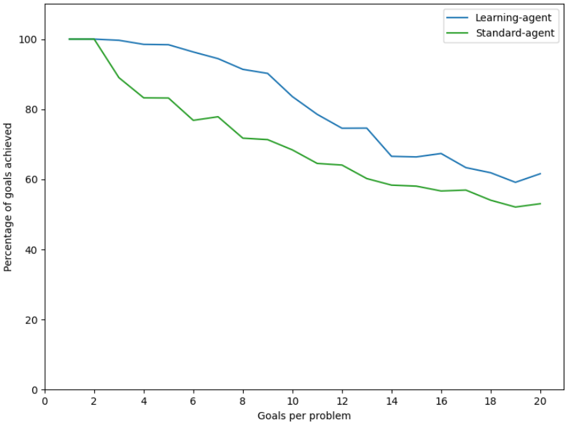

At the meta-level Interpret phase, the idea is to detect discrepancies, explain what caused the discrepancy, and then generate a goal to remove the cause. Metacognitive explanation is a casebased process that reuses old explanations from a library of meta-explanation patterns (Meta-XPs) in a similar manner to Cox and Ram (1999; Ram & Cox, 1994). Figure 2 shows a Meta-XP applied to the gardening example. MIDCA retrieves an explanation and then checks for applicability by inspecting the Meta-XP's pre-XP nodes. These sink nodes in the graph of the knowledge structure represent those conditions that need to hold for the XP to be relevant. A distinguished node among them called the Explains node is the concept being explained. As such, the XP provides a causal

5 The self-model Ω is similar to the state transition system Σ defined previously but is a meta-level knowledge structure.

6 Note that if another gardener was observed concurrently spraying herbicide on the adjacent native plant, then an explanation as to why the native plant was killed could be produced and a goal to stop the gardener from repeating that in the future formulated. If this actually was the case, then no metacognitive expectation failure would occur.

## COMPUTATIONAL METACOGNITION

Figure 2 . Meta-explanation pattern for failed action execution due to a poor action model. An explanation pattern (Schank, 1986) is a causal knowledge structure representing prior experience. It maps the causes of the explains node (i.e., what is being explained) from the antecedents (i.e., the XP-asserted nodes) through the internal nodes to the consequents (i.e., the pre-XP nodes).

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Diagram: XP-Asserted Nodes and Pre-XP Nodes Flow

### Overview

The diagram illustrates a system architecture with two primary components: **XP-Asserted Nodes (source nodes)** and **Pre-XP Nodes (sink nodes)**, connected via **Internal Nodes**. Arrows indicate directional relationships and dependencies between nodes, with labels specifying actions, knowledge states, and existential conditions.

### Components/Axes

#### Left Oval: XP-Asserted Nodes (source nodes)

- **executed-action**:

- Label: `(self, Spray(pos3-2,t-33))`

- Position: Top-left node within the oval.

- **knowledge**:

- Label: `(Spray(cell,time), Incomplete)`

- Position: Middle node within the oval.

- **exists(other-agent)**:

- Label: `¬exists(other-agent)`

- Position: Bottom node within the oval.

#### Right Oval: Pre-XP Nodes (sink nodes)

- **at-phase(Interpret)**:

- Label: `at-phase(Interpret)`

- Position: Top node within the oval.

- **Explains Node**:

- Label: `¬exists(explanation)`

- Position: Middle node within the oval.

- **native-at(pos3-3)**:

- Label: `¬native-at(pos3-3)`

- Position: Bottom node within the oval.

#### Arrows and Internal Nodes

- **Internal Nodes**:

- Label: `Internal Nodes`

- Position: Central arrow connecting the two ovals.

- **Directionality**:

- Arrows flow from XP-Asserted Nodes (left) to Pre-XP Nodes (right).

### Detailed Analysis

#### XP-Asserted Nodes (Source Nodes)

1. **executed-action**:

- Represents an action performed by the system (`self`) with parameters `Spray(pos3-2,t-33)`.

- Positioned at the top of the left oval, suggesting it is the initial or primary action.

2. **knowledge**:

- Indicates a knowledge state involving `Spray(cell,time)` with an `Incomplete` status.

- Positioned centrally, implying it is a transitional or intermediate state.

3. **exists(other-agent)**:

- A negation (`¬`) indicates the absence of another agent.

- Positioned at the bottom, possibly representing a boundary condition.

#### Pre-XP Nodes (Sink Nodes)

1. **at-phase(Interpret)**:

- Represents a phase labeled `Interpret`, likely part of a processing or analysis step.

- Positioned at the top of the right oval, suggesting it is the first step in the sink nodes.

2. **Explains Node**:

- A negation (`¬`) indicates the absence of an explanation.

- Positioned centrally, possibly reflecting a dependency or unresolved state.

3. **native-at(pos3-3)**:

- A negation (`¬`) indicates the absence of a native state at position `pos3-3`.

- Positioned at the bottom, suggesting a final or terminal condition.

#### Internal Nodes

- The central arrow labeled `Internal Nodes` connects the two ovals, acting as a mediator for data or state transitions.

### Key Observations

- **Directional Flow**: All arrows point from XP-Asserted Nodes to Pre-XP Nodes, indicating a unidirectional process.

- **Negations**: Multiple nodes use `¬` (negation), suggesting constraints or missing states (e.g., `¬exists(explanation)`, `¬native-at(pos3-3)`).

- **Incomplete Knowledge**: The `knowledge` node explicitly states `Incomplete`, highlighting a gap in the system’s understanding.

- **Positional Hierarchy**: Nodes are arranged vertically within each oval, with top nodes likely representing initial states and bottom nodes representing terminal states.

### Interpretation

This diagram models a system where **XP-Asserted Nodes** (source nodes) generate or assert actions and knowledge, which are then processed through **Internal Nodes** to produce **Pre-XP Nodes** (sink nodes). Key insights:

1. **Knowledge Gaps**: The `Incomplete` status of the `knowledge` node and the absence of explanations (`¬exists(explanation)`) suggest unresolved dependencies or missing data.

2. **Agent Constraints**: The negation `¬exists(other-agent)` implies the system operates in isolation or lacks external agents.

3. **Phase Dependency**: The `at-phase(Interpret)` node indicates a critical phase in the sink nodes, possibly requiring interpretation of prior actions.

4. **Structural Rigidity**: The unidirectional flow and fixed node positions suggest a deterministic process with no feedback loops.

### Notes on Data and Uncertainty

- **No Numerical Data**: The diagram lacks quantitative values, focusing instead on logical relationships and states.

- **Textual Labels**: All information is textual, with no visual data points or trends to analyze.

- **Color Coding**: No explicit legend is present, but labels are highlighted in green (e.g., `Spray`, `Interpret`, `explanation`), possibly for emphasis.

This diagram serves as a conceptual model for understanding the flow of actions, knowledge, and constraints within a system, emphasizing dependencies and unresolved states.

</details>

chain from the XP-asserted nodes (i.e., the XP's antecedents) to the explains node. In the running example, the Meta-XP of Figure 2 explains why the cognitive level Interpret phase failed to generate an explanation when MIDCA was currently at the Interpret phase and a native-plant was not preserved. If that is the case, then the XP says that this is caused when the spray action was executed, no other agent exists nearby, and knowledge of the spray action is incomplete.

The meta-level Interpret phase then takes this knowledge structure and generates a goal from the set of XP-asserted nodes. Here the goal is to negate the middle node, that is, to make the knowledge of the spray operator not incomplete (i.e., to learn a better action model). The specific form output to the meta-level goal agenda is (learned spray, 𝑠𝑠 𝑖𝑖+1 ) . See Gogineni, Kondrakunta, Molineaux, & Cox (2018) for further details concerning XP retrieval, selection and application at the cognitive level. The same techniques are used at the meta-level.

## 3.2 Meta-level Control

Like problem-solving at the cognitive level, meta-level control consists of an Intend phase, a metalevel Plan phase, and a Controller. Intend takes the meta-level goal agenda ( 𝐺𝐺 � 𝑀𝑀 ) and chooses a subset as the current meta-level goal set ( 𝑔𝑔 𝑐𝑐 𝑀𝑀 ). Details are available in Kondrakunta and Cox (in press; Kondrakunta, 2017) for the decision mechanism. In our running example, only the single learning goal exists in the agenda, and so Intend selects it as the current goal set.

The Plan phase of the meta-level then takes the current goals and generates a plan 𝜋𝜋 𝑚𝑚 𝑀𝑀 to achieve it using the fast-downward stone soup planner (Helmert, 2006; Helmert, Roger, & Karpas, 2011). Unlike normal planners that generate sequences of actions in the world to achieve environmental states, MIDCA uses fast downward to achieve meta-level goals. Given the learning goal (learned spray 𝑠𝑠 𝑖𝑖+1 ) , Fast Downward must generate a sequence of learning steps that achieves the goal if executed. To perform this task, MIDCA has a number of operators represented in the Planning Domain Definition Language (PDDL) 2.2 (Edelkamp & Hoffmann, 2004), one of which is shown in Table 2. To select the perform-learning action, the agent checks whether it has a

Table 2 . Action model of the meta-level operator perform-learning . Specified in PDDL 2.2, this primitive, planning operator achieves the learning goal (learned ?op ?current-state) .

```

```

discrepancy in the current state, it has an outdated operator, and the same operator caused the discrepancy. If all the preconditions are met, then it results in the learning goal.

The Controller then attempts to execute the plan one step 𝑃𝑃 𝑛𝑛 𝑀𝑀 at a time. In the example, the plan has a single step to perform learning. MIDCA uses the First-Order Inductive Learner (FOIL) (Quinlan, 1990) for this paper. FOIL induces function-free Horn clauses from a set of positive and negative concept examples and some background knowledge represented as a set of first-order logical predicates. It performs hill climbing with an information theoretical function and generates a rule that covers all the examples.

The FOIL algorithm is given positive or negative examples experienced during the execution of failed plans. For example, the spray action may have killed a plant in a location directly north of the intended cell. If so, FOIL will learn the following general Horn clause.

spray (pos1, time2) :- spray (pos0, time1), adj\_time (time2, time1), adj\_north (pos0, pos1)

That is, if the agent sprays a location at time t1, it will be as if it sprays the adjacent location due north at t2. Doing so will then kill all plants in the cell north of the spray. MIDCA then compares such rules generated by the learning procedure with the agent's knowledge of the operator. As a result, it changes the spray operator by adding conditional effects.

```

```

After the agent's memory is updated with the modified operator, the metacognitive cycle ends. Once metacognition completes, MIDCA continues with the subsequent cognitive phase. Given an

incrementally improved operator as with our example or otherwise with some other positive change to cognition, performance will improve. The following section examines this claim empirically and shows specific benefits to cognitive systems that reason about themselves.

## 4. Empirical Evaluation

We evaluate the claim that metacognition can improve the performance of cognitive systems with a relatively simple planning task. The experiments we perform use the plant protection domain developed at the Naval Research Laboratory.

## 4.1 The Plant Protection Domain

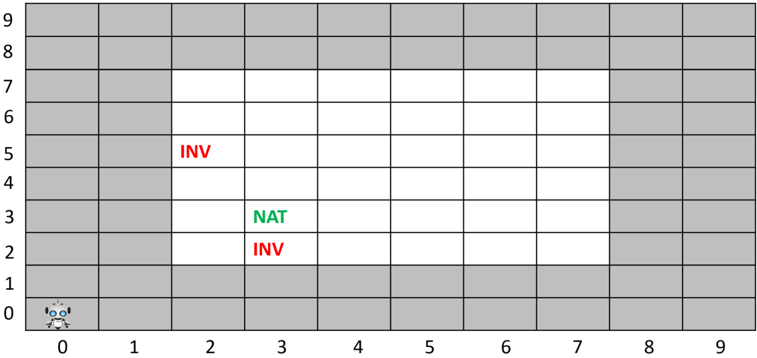

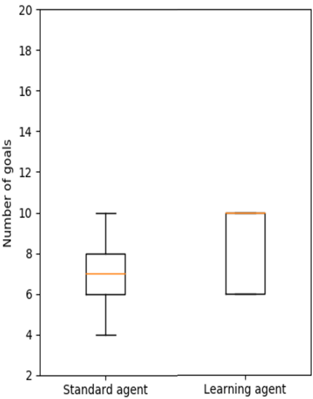

The plant protection domain consists of harmful invasive plants, endangered native plants, a human supervisor, and an agent which navigates to a target cell and deploys herbicides (Boggs, Dannenhauer, Floyd, & Aha, 2018). When an agent deploys herbicides, it will affect the neighboring cells as well. The world in which agents act is a map grid of size 10 ⨉ 10, as shown in Figure 3. It consists of tiles where a plant occupies a single tile, and the tiles contain at most one plant. Plants are static fixtures that cannot be moved or replanted. The garden area where plants exist lies between (2,2) and (7,7), i.e., the white rectangle. In this domain, two key action models exist: a move operator and a spray operator. The walkway around the planting area exists for the ease of agent movement.

Figure 3 visually depicts a very simple problem with a gardening agent at location (0,0). The goals of the agent are to preserve all native plants and to remove all invasive ones, i.e., nativeat(pos3-3) ∧ ¬invasive-at(pos2-5) ∧ ¬invasive-at(pos3-2) . Here, the Perceive phase of the cognitive cycle inputs the requested expression for the goals, Interpret adds these goals to the goal agenda, and Intend makes all goals current. Finally, the Plan phase takes the current goal set and generates a plan to move to location (3,2), spray the invasive plant, move to location (2,5), and spray again. Given that the native plant already exists at location (3,3) in the initial state, nothing needs to be done to achieve the goal to have a native plant at (3,3). However, the agent does not realize that spraying in one cell will kill plants in all adjacent cells. Thus, the native plant in (3,3) is also killed.

## 4.2 Experimental Design

Two experiments provide a baseline for determining how metacognition with learning affects the performance of an agent in the plant protection domain. Our tests were run in the 10 × 10 world (as discussed above) with a standard planning agent and a metacognitive learning agent. The standard agent does not perform goal reasoning or learning. It just executes plans to remove all invasives. The learning agent learns a more accurate model of its actions over the range of goals given and can reject harmful goals accordingly. We randomly place invasive and native plants in the map grid to form problems of increasing difficulty (i.e., larger amounts of invasive plants and hence more goals to solve in a problem).

## COMPUTATIONAL METACOGNITION

Figure 3 . A simple problem in the plant protection domain. The central rectangular area in white indicates the garden with native and invasive plants represented by the symbols NAT and INV respectively. The gardening agent at the origin needs to remove invasives and preserve native plants.

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Grid Matrix: Sparse Data Distribution

### Overview

The image depicts a 10x10 grid (rows 0-9, columns 0-9) with sparse annotations. A robot icon is positioned at the origin (0,0). Three cells contain text labels: two marked "INV" (red) and one marked "NAT" (green). The grid lacks explicit axis titles, legends, or numerical values beyond positional coordinates.

### Components/Axes

- **Rows**: Labeled 0 (bottom) to 9 (top), left-aligned.

- **Columns**: Labeled 0 (left) to 9 (right), top-aligned.

- **Annotations**:

- Red text "INV" at (5,2) and (2,2).

- Green text "NAT" at (3,2).

- **Legend**: Implicit color coding (red = "INV", green = "NAT"). No explicit legend box present.

### Detailed Analysis

- **Robot Icon**: Positioned at (0,0), stylized with blue eyes and antennae.

- **Colored Cells**:

- (5,2): Red "INV" (row 5, column 2).

- (3,2): Green "NAT" (row 3, column 2).

- (2,2): Red "INV" (row 2, column 2).

- **Empty Cells**: 97/100 cells unmarked.

### Key Observations

1. **Sparse Data**: Only 3/100 cells contain annotations.

2. **Vertical Clustering**: Two "INV" labels occupy adjacent rows (2 and 5) in column 2.

3. **Color Coding**: Red dominates annotations (2 instances), green appears once.

4. **Robot Proximity**: All annotations are ≥2 columns away from the robot icon.

### Interpretation

The grid likely represents a spatial distribution system (e.g., inventory tracking, resource mapping). The robot’s position at (0,0) suggests a reference point or autonomous agent. The "INV" labels may indicate inventory locations, while "NAT" could denote natural resources or nodes. The lack of numerical values or explicit context limits quantitative analysis. The vertical proximity of "INV" labels in column 2 might imply a localized cluster, but this requires further data to confirm.

**Note**: No explicit trends or numerical relationships can be derived from the sparse annotations. Additional metadata (e.g., grid purpose, color legend definitions) is required for deeper analysis.

</details>

For the first experiment, we set the ratio of native to invasive plants at 75:25 and then in another set of trials we set it at 60:40. We also ensure that no two plants are in the same map grid and that all plants are situated between location (2,2) and location (7,7). Experiments were carried out by varying problems with the number of goals ranging from 1 to 20. At each fixed number of goals, we generate 100 random trials thus leading to a total of 2,000 random trials for each experiment. The density of the invasive and native plants on the grid changes in every set of trials as the number of goals increase. Initial goals for this domain will be to remove all the invasive plants and preserve all native plants.

## 4.3 Empirical Results

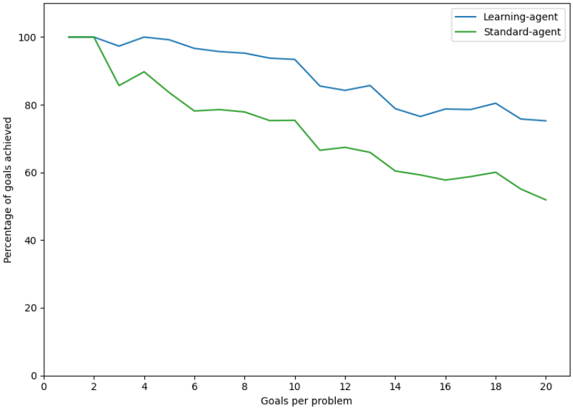

In Figure 4, the blue line represents the learning agent, and the green line represents the standard agent. From these results, it is clear that the learning agent performs significantly better than the standard agent because a standard agent removes all invasive plants regardless of any plants in adjacent cells. The learning agent outperforms because, after a given number of trials, it improves its use of herbicide. As the spray operator is gradually fixed, the learning agent generates better plans to remove invasive plants while preserving the native ones.

For two different points along the x-axis of Figure 4, Figures 5 and 6 show further details using box plots. A box plot is a standardized way of displaying data such as minimum, first quartile, median, third quartile and maximum. The box is drawn from first quartile to third quartile. The lower line below the box is the minimum value (first quartile value -1.5 * interquartile range) and the upper line is the maximum value (first quartile value +1.5 * interquartile range).

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Line Graph: Performance Comparison of Learning-Agent vs Standard-Agent

### Overview

The image is a line graph comparing the performance of two agents ("Learning-agent" and "Standard-agent") across varying numbers of goals per problem. The y-axis represents the percentage of goals achieved, while the x-axis represents the number of goals per problem (ranging from 0 to 20). The graph shows distinct trends for both agents, with the learning-agent consistently outperforming the standard-agent as the complexity (goals per problem) increases.

### Components/Axes

- **X-axis**: "Goals per problem" (0 to 20, integer increments).

- **Y-axis**: "Percentage of goals achieved" (0 to 100, integer increments).

- **Legend**: Located in the top-right corner, with:

- **Blue line**: Learning-agent.

- **Green line**: Standard-agent.

### Detailed Analysis

1. **Learning-agent (Blue Line)**:

- Starts at **100%** when goals per problem = 0.

- Experiences a slight dip to ~95% at 2 goals per problem.

- Fluctuates between ~90% and ~98% for 3–10 goals per problem.

- Declines gradually to ~75% at 20 goals per problem.

- Shows minor volatility but maintains a relatively stable performance.

2. **Standard-agent (Green Line)**:

- Starts at **100%** when goals per problem = 0.

- Drops sharply to ~85% at 2 goals per problem.

- Declines steadily to ~65% at 10 goals per problem.

- Further decreases to ~50% at 20 goals per problem.

- Exhibits a consistent downward trend with no recovery.

### Key Observations

- The learning-agent maintains a **~15–20% higher performance** than the standard-agent across all goal complexities.

- The standard-agent’s performance degrades **nonlinearly**, with a steep drop after 2 goals per problem.

- The learning-agent’s performance stabilizes after an initial dip, suggesting adaptability to increased complexity.

### Interpretation

The data demonstrates that the learning-agent is more robust in handling problems with higher goal complexity compared to the standard-agent. The standard-agent’s performance deteriorates significantly as the number of goals per problem increases, indicating potential limitations in scalability or adaptability. The learning-agent’s ability to maintain higher performance suggests it may incorporate mechanisms (e.g., dynamic prioritization, reinforcement learning) to manage complex tasks effectively. The initial dip in the learning-agent’s performance at 2 goals per problem could reflect a transitional phase before optimization, but it recovers and sustains superiority. This trend highlights the importance of adaptive algorithms in multi-goal environments.

</details>

Figure 4 . Experiment 1 performance as a function of problem complexity. The ratio of native to invasive plants is 75:25 in each of the 2,000 trials. As problems increase in complexity given ever more goals to achieve, performance decreases as a percentage of goals achieved. But, the metacognitive agent that learns a better action model outperforms a standard planning agent that uses no metacognition or learning.

Figure 5 . Experiment 1 box plots for standard and learning agents in 10-goal problems.

<details>

<summary>Image 5 Details</summary>

### Visual Description

## Box Plot: Number of Goals by Agent Type

### Overview

The image displays a comparative box plot analyzing the distribution of goals scored by two types of agents: "Standard agent" and "Learning agent". The y-axis represents the agent types, while the x-axis quantifies the "Number of goals" scored. The plot uses orange boxes to represent interquartile ranges (IQRs), black lines for medians and whiskers, and black circles for outliers.

### Components/Axes

- **X-axis (Horizontal)**:

- Label: "Number of goals"

- Scale: 2 to 20 (in increments of 2)

- Position: Bottom of the plot

- **Y-axis (Vertical)**:

- Categories: "Standard agent" (left) and "Learning agent" (right)

- Position: Left side of the plot

- **Legend**:

- No explicit legend present. Colors are inferred:

- Orange: Boxes (IQRs)

- Black: Medians, whiskers, and outliers

### Detailed Analysis

1. **Standard Agent**:

- **Median**: ~8 goals (black line within the orange box).

- **IQR**: 6 to 9 goals (orange box).

- **Whiskers**: Extend from ~6 (lower) to ~9 (upper).

- **Outliers**: Two data points outside the whiskers:

- Lower outlier: ~4 goals (black circle).

- Upper outlier: ~10 goals (black circle).

2. **Learning Agent**:

- **Median**: ~10 goals (black line within the orange box).

- **IQR**: 8 to 10 goals (orange box).

- **Whiskers**: Extend from ~8 (lower) to ~10 (upper).

- **Outliers**: None observed.

### Key Observations

- The **Learning agent** demonstrates a higher median goal count (~10) compared to the **Standard agent** (~8).

- The **Standard agent** exhibits greater variability, with a wider IQR (6–9) and outliers at 4 and 10 goals.

- The **Learning agent** shows a tighter distribution, with no outliers and a narrower IQR (8–10).

### Interpretation

The data suggests that the **Learning agent** consistently outperforms the **Standard agent** in goal-scoring, with a higher median and reduced variability. The **Standard agent**'s outliers (4 and 10 goals) indicate occasional anomalies, possibly due to unpredictable performance or edge cases. The absence of outliers in the Learning agent implies more stable and reliable performance. This could reflect the Learning agent's adaptive capabilities or optimized decision-making processes compared to the static nature of the Standard agent.

</details>

Figure 6 . Experiment 1 box plots for standard and learning agents in 20-goal problems.

<details>

<summary>Image 6 Details</summary>

### Visual Description

## Box Plot: Number of Goals by Agent Type

### Overview

The image displays a comparative box plot analyzing the distribution of goals scored by two types of agents: "Standard agent" and "Learning agent." The y-axis represents the "Number of goals" (ranging from 2 to 20), while the x-axis categorizes the agents. The plot uses orange for median lines and black for box plot components.

### Components/Axes

- **X-axis (Categories)**:

- "Standard agent" (left)

- "Learning agent" (right)

- **Y-axis (Scale)**:

- Labeled "Number of goals"

- Range: 2 to 20 (in increments of 2)

- **Legend**: Not explicitly present, but colors are defined:

- **Orange**: Median line (central horizontal line in each box)

- **Black**: Box plot boundaries (whiskers, quartiles, and outliers)

### Detailed Analysis

1. **Standard Agent**:

- **Median**: ~10 (orange line within the box).

- **Interquartile Range (IQR)**: 8 (lower quartile) to 12 (upper quartile).

- **Whiskers**: Extend from 6 (minimum) to 14 (maximum), excluding outliers.

- **Outliers**: None visible.

2. **Learning Agent**:

- **Median**: ~14.5 (orange line).

- **Interquartile Range (IQR)**: Collapsed to a single line at ~14.5, indicating no variability in the middle 50% of data.

- **Whiskers**: Extend from ~14.5 to 20 (maximum), with an outlier at 20.

- **Outliers**: One data point at 20 (marked as a circle above the whisker).

### Key Observations

- The **Learning agent** consistently scores more goals than the Standard agent, with a higher median (~14.5 vs. ~10).

- The **Standard agent** shows moderate variability (IQR: 8–12), while the Learning agent’s performance is tightly clustered around 14.5.

- The **outlier at 20** for the Learning agent suggests an exceptional case, potentially skewing the distribution.

### Interpretation

The data suggests that the Learning agent outperforms the Standard agent in goal-scoring tasks, with a higher central tendency and reduced variability. However, the outlier at 20 for the Learning agent warrants investigation—it could indicate an anomaly or a rare high-performing scenario. The Standard agent’s broader IQR implies less consistency, possibly due to less adaptive learning mechanisms. The absence of a legend complicates direct color-to-label mapping, but the orange median lines and black box components are visually distinct. This plot highlights the Learning agent’s superiority but raises questions about the outlier’s validity and the Standard agent’s reliability.

</details>

## COMPUTATIONAL METACOGNITION

In Figures 5 and 6, the orange line represents the median of the distribution. Note that the y-axis reports the number of goals achieved instead of the percentage of goals achieved as in Figure 4. The black circles represent the outliers for the distribution of the data. In Figure 5, it is clear that the median of the standard agent is much less than the median of the learning agent. In Figure 6, the median of the learning agent is significantly greater than the median of the standard agent, and the variance is very low in contrast to the standard agent. By the time 20-goal problems are introduced, the learning agent has learned a complete operator. However, many cases will exist where goals cannot be achieved even with a perfect action model, so performance is less than 20 achievements out of 20 goals. For instance, consider again the example in Figure 3. Because herbicide cannot be sprayed outside the garden itself (e.g., at location (3,1)), the invasive at (3,2) cannot be killed without also killing the native plant.

In a second experiment with a 60:40 ratio of native to invasive plants, the learning agent still outperforms the standard agent but with less differences in performance (see Figure 7). The reason for this reduced outcome is that fewer examples of native plants being adjacent to invasive ones randomly occur within a 60:40 mix. Box plots are shown in Figures 8 and 9 to provide further details about the variance and significance of the results.

## 5. Related Research

As mentioned, computational metacognition has many interpretations in the literature. The cognitive psychology literature includes judgements of learning, feelings of knowing, tip of the tongue states and metamemory phenomena (Dunlosky & Thiede, 2013). But in some work, the definition is so broad as to blur the distinction between cognition and metacognition. For example, Kralik et al. (2018) defines metacognition as any decision process that takes input or output from another decision process. As such, they include planning as a metacognitive process as well as supervisory and arbitration processes. However, to argue that planning and simulation is metacognitive stretches the use of the term and provides little apparent value.

The recent work on computational approaches to metacognition are surprisingly thin. Most are either older papers or focus on human psychology rather than artificial intelligence. There is a paper on metacognition applied to neural networks (Babu & Suresh, 2012) and another applied to machine learning (Loeckx, 2017). Neither of these concern high-level reasoning and cognitive systems.

Similar to introspective monitoring and meta-level control, the Artificial Cognitive Neural Framework of Crowder, Friess, & Ncc (2011) splits computational metacognition into metacognitive experiences and metacognitive regulation. However, they also add a third component they call metacognitive knowledge or what a cognitive system knows about itself as a cognitive processor. The details of this framework are mainly conceptual, however, and no evaluation exists.

A few examples of metareasoning exist in the cognitive systems community such as the work of Martie, Alam, Zhang, & Anderson (2019). Their work on symbolic mirroring learns associations between neural network image classifiers and symbolic abstractions. They use meta-points to reflect on executed and unexecuted sections of the cognitive system itself by passing them to higher-level processes termed meta-operations. Although like MIDCA, the meta-operations examine and modify lower-level processes and involve explanation, much of the work is specific to vision tasks and less general.

<details>

<summary>Image 7 Details</summary>

### Visual Description

## Line Graph: Performance Comparison of Learning-Agent and Standard-Agent

### Overview

The image depicts a line graph comparing the performance of two agents—**Learning-agent** (blue line) and **Standard-agent** (green line)—across varying numbers of goals per problem. The y-axis represents the percentage of goals achieved (0–100%), while the x-axis represents the number of goals per problem (0–20). Both lines show a downward trend, but the Learning-agent consistently outperforms the Standard-agent.

---

### Components/Axes

- **X-axis (Horizontal)**: "Goals per problem" (0–20, increments of 2).

- **Y-axis (Vertical)**: "Percentage of goals achieved" (0–100, increments of 20).

- **Legend**: Located in the top-right corner.

- **Blue line**: Learning-agent.

- **Green line**: Standard-agent.

---

### Detailed Analysis

1. **Learning-agent (Blue Line)**:

- Starts at **100%** for 0–2 goals per problem.

- Declines gradually: ~98% at 4 goals, ~95% at 6 goals, ~90% at 8 goals, ~85% at 10 goals, ~75% at 12 goals, ~70% at 14 goals, ~68% at 16 goals, ~62% at 18 goals, and ~60% at 20 goals.

- Maintains a relatively stable slope with minor fluctuations.

2. **Standard-agent (Green Line)**:

- Starts at **100%** for 0–2 goals per problem.

- Drops sharply: ~85% at 4 goals, ~78% at 6 goals, ~70% at 8 goals, ~65% at 10 goals, ~60% at 12 goals, ~58% at 14 goals, ~56% at 16 goals, ~52% at 18 goals, and ~50% at 20 goals.

- Exhibits a steeper and more erratic decline compared to the Learning-agent.

---

### Key Observations

- Both agents achieve **100% goal completion** for 0–2 goals per problem.

- The performance gap between the agents narrows as the number of goals increases (e.g., ~15% difference at 20 goals vs. ~30% at 4 goals).

- The Learning-agent’s decline is smoother, while the Standard-agent’s performance deteriorates more abruptly.

---

### Interpretation

The data suggests that the **Learning-agent** is more adaptable to increasing problem complexity (higher goals per problem), maintaining higher efficiency even as performance declines. The Standard-agent’s steeper drop indicates it struggles with scalability, likely due to a lack of adaptive mechanisms. The narrowing gap at higher goal counts implies that while the Learning-agent’s advantage persists, the relative performance difference diminishes as problem difficulty rises. This could reflect the Learning-agent’s ability to optimize strategies dynamically, whereas the Standard-agent relies on static, less flexible approaches.

No textual content or additional data tables are present in the image. All values are approximate, derived from visual estimation of the graph’s trends.

</details>

Figure 7. Experiment 2 performance as a function of problem complexity. The ratio of native to invasive plants is 60:40 in each of the 2,000 trials. As problems increase in complexity given ever more goals to achieve, performance goes down in terms of the percentage of goals achieved.

Figure 8. Experiment 2 box plots for standard and learning agents in 10-goal problems.

<details>

<summary>Image 8 Details</summary>

### Visual Description

## Box Plot: Number of Goals by Agent Type

### Overview

The image displays a comparative box plot analyzing the distribution of goals scored by two types of agents: "Standard agent" and "Learning agent." The y-axis represents the "Number of goals" (ranging from 2 to 20), while the x-axis categorizes the agents. The plot uses black boxes with orange median lines to represent data distributions.

### Components/Axes

- **Y-axis**: "Number of goals" (scale: 2 to 20, increments of 2).

- **X-axis**: Two categories: "Standard agent" (left) and "Learning agent" (right).

- **Legend**: No explicit legend is present. Colors are directly embedded in the plot (black boxes, orange median lines).

- **Whiskers**: Extend from the boxes to represent data range (minimum to maximum values excluding outliers).

### Detailed Analysis

1. **Standard Agent**:

- **Median**: Approximately 7 (orange line within the box).

- **Interquartile Range (IQR)**: Box spans from 6 (Q1) to 8 (Q3).

- **Whiskers**: Extend from 4 (minimum) to 10 (maximum).

- **Outliers**: None visible.

2. **Learning Agent**:

- **Median**: Approximately 8 (orange line within the box).

- **Interquartile Range (IQR)**: Box spans from 6 (Q1) to 10 (Q3).

- **Whiskers**: Extend from 6 (minimum) to 10 (maximum).

- **Outliers**: None visible.

### Key Observations

- The **Learning agent** exhibits a higher median goal count (8 vs. 7) and a larger IQR (4 vs. 2), indicating greater variability in performance.

- The **Standard agent** shows a tighter distribution, with data concentrated between 6 and 8 goals.

- Both agents share identical whisker ranges (4–10 for Standard; 6–10 for Learning), suggesting similar extreme values but differing central tendencies.

### Interpretation

The data suggests that the **Learning agent** outperforms the Standard agent on average, achieving a higher median goal count. However, the Learning agent’s performance is less consistent, as evidenced by its broader IQR. The Standard agent, while less effective overall, demonstrates more predictable outcomes. The absence of outliers in both groups implies stable performance under the tested conditions. This could indicate that the Learning agent’s adaptive mechanisms improve average results but introduce variability, whereas the Standard agent’s fixed strategy prioritizes consistency over optimization.

</details>

Figure 9. Experiment 2 box plots for standard and learning agents in 20-goal problems.

<details>

<summary>Image 9 Details</summary>

### Visual Description

## Box Plot: Number of Goals by Agent Type

### Overview

The image displays a box plot comparing the distribution of "Number of goals" between two agent types: "Standard agent" and "Learning agent." The y-axis ranges from 2 to 20, while the x-axis categorizes the two agent types. The plot uses distinct colors for each agent type, with no explicit legend present.

### Components/Axes

- **X-axis**: Labeled "Standard agent" (left) and "Learning agent" (right).

- **Y-axis**: Labeled "Number of goals," with a scale from 2 to 20 in increments of 2.

- **Colors**:

- **Standard agent**: Orange (box and median line).

- **Learning agent**: Black (horizontal line).

- **Legend**: Not explicitly labeled, but color coding is consistent with the x-axis labels.

### Detailed Analysis

1. **Standard agent**:

- **Box**: Median line at approximately 10.

- **Interquartile range (IQR)**: 9 to 11.

- **Whiskers**: Extend from 8 (minimum) to 15 (maximum).

- **Outlier**: A single data point at 19, marked as a black dot above the whisker.

2. **Learning agent**:

- **Horizontal line**: Positioned at 12, indicating no variability (no box or whiskers).

### Key Observations

- The **Standard agent** exhibits significant variability in performance, with a wide IQR and an outlier at 19.

- The **Learning agent** demonstrates consistent performance, with all data points clustered tightly around 12.

- The outlier in the Standard agent’s distribution suggests an anomalous result that may require further investigation.

### Interpretation

The data suggests that the **Learning agent** achieves a more stable and predictable outcome (12 goals) compared to the **Standard agent**, which shows higher variability (median ~10, range 8–15, with an outlier at 19). The outlier in the Standard agent’s performance could indicate either an exceptional case or a potential flaw in the data collection process. The Learning agent’s consistency implies improved reliability or optimization in its design, while the Standard agent’s variability may reflect less efficient or less adaptive behavior.

</details>

## COMPUTATIONAL METACOGNITION

Human metacognition has been studied in the field of cognitive architectures: notably in ACT-R (Anderson, 2009; Larue, Hough, & Juvina, 2018), CLARION (Sun, 2016), and LIDA (Franklin et al., 2007). In ACT-R, Anderson and Fincham (2014) explored how reflective functions supported by metacognition can consciously assesses what one knows and how to extend it to solve a problem. More specifically, metacognition enabled the architecture to reflect on declarative representations of cognitive procedures, allowing for the modification or replacement of elements in the procedures used in mathematical problem-solving. More recent work explored metacognitive trigger mechanisms and more particularly the feeling of rightness (Wang & Thompson, 2019) metacognitive experience, and more particularly how feeling of rightness determines the depth of inner simulation of possible scenarios and hypothesis testing.

Metacognition is a key element of CLARION's hybrid (i.e., symbolic and subsymbolic) architecture. Following Flavell's (1976) original definition of metacognition, it supports the active monitoring and regulation of cognitive processes. Metacognitive mechanisms determine how much symbolic and sub-symbolic processing will be involved in decision making and can also dynamically change the ratio of symbolic and sub-symbolic processing and how they interact (e.g., which learning method(s) or reasoning mechanism(s) to apply). Internal parameters (e.g., learning rates, thresholds, and utilities) are also modified through metacognition.

In contrast to CLARION and MIDCA, LIDA has no specific metacognitive module. Higherlevel cognitive processes are implemented as collections of behavior streams. Metacognitive processes specifically are a collection of behavior streams (i.e., sequences of actions) that control deliberation through internal actions (e.g., strategy regulation, resource allocation, and the interruption of strategies that have persisted too long in favor of an alternative). We have described metacognition as a kind of add-on to a cognitive system and implemented it as a separate software module in MIDCA. Yet this is not necessarily required computationally. Indeed, metacognition may be a self-reflective aspect of cognition itself. Only further research will clarify the implications of one approach as opposed to the other.

## 6. Conclusion

This paper briefly sketches a theory of computational metacognition analogous to a cognitive action-perception cycle and illustrates how it can be implemented in a cognitive architecture. We divide metacognition into three categories: explanatory, immediate and anticipatory metacognition. We discuss the first category in detail and briefly explain the last. Immediate metacognition remains for future research. Finally, we provide an empirical evaluation of explanatory metacognition and show how it improves system performance by progressively learning better action models. Previous publications (Cox & Dannenhauer, 2016; Cox, et al., 2017; Dannenhauer, et al., 2018) report similar results in alternative domains and therefore support the generality of the approach. However, this work is the first time the full metacognitive cycle in MIDCA has been described and demonstrated. Earlier publications focus on specific portions of the meta-level process such as the detection of metacognitive expectation failures, and they make large assumptions about other aspects of the meta-level computation.

Yet numerous limitations still exist with respect to the implementation and the theory. As the introduction admits, metacognition is not always the best choice for an agent or for humans. For example, if a system is under severe time constraints, reasoning about reasoning may waste valuable computational resources better spent on cognitive-level problem-solving or action

execution. Although future research will provide a greater understanding of the trade-offs involved (see Norman, 2020, for some interesting heuristics that can potentially mitigate this problem), this paper clearly illustrates the positive effect computational metacognition can offer advanced cognitive systems.

Furthermore, some of the assumptions in this work remain implicit and underexplored. Although (in machines) statistical reinforcement learning (Sutton & Barto, 1999) or (in humans) operant conditioning (Skinner, 1938, 1957) may be at play at the lower levels of cognition, we view deliberate, goal-driven learning (Cox & Ram, 1999; Ram & Leake, 1995a) as a metacognitive activity. The agent needs to consider why reasoning fails and make decisions to achieve explicit goals to learn and hence (indirectly) improve performance. This perspective conflicts with standard formulations of the learning problem (e.g., Mitchell, 1997) even within the cognitive systems community (e.g., Langley, 2021; Mohan & Laird, 2014). As such, this makes the comparison to and contrast with other learning approaches difficult. Thus, an empirical comparison between an agent that learns with metacognition and one that learns without metacognition is problematic from our point of view. Again, future research will attempt to elaborate upon and evaluate this position and to clarify other assumptions less obvious in this paper.

Finally, as the examples shown here demonstrate, MIDCA implements relatively simple solutions for the problem of realizing computational metacognition. At this time, MIDCA's metalevel manages only singleton goal sets, and meta-level plans are currently very short and basic. Interactions between multiple meta-level goals and complex planning alternatives also remain for future work. But previous results shown at the cognitive level with MIDCA together with the robust state of the art in the planning community suggest that solutions to more complicated problems at the meta-level will be relatively straight forward and within practical reach. Rather than focus on sophisticated problems sets that rarely occur, we have instead put forth a fundamental approach to metacognition that promises to change the way we think about what makes a cognitive system intelligent and learning effective.

## Acknowledgements

This research is supported in part by the National Science Foundation through grant S&AS1849131 and by the Office of Naval Research (ONR) through grant N00014-18-1-2009. We also thank the anonymous reviewers for their comments, insights, and suggestions and Matt Molineaux for suggesting the use of FOIL as a learning mechanism and his overall technical critique. Finally, we gratefully thank Paul Bello (a previous program manager at ONR) for taking a risk and funding the MIDCA project when it was merely a proposed concept ten years ago.

## References

- Aha, D. W. (2018). Goal reasoning: Foundations, emerging applications, and prospects. AI Magazine, 39, 3-24.

- Amos-Binks, A., Dannenhauer, D. (2019). Anticipatory thinking: A metacognitive capability. In Working Notes of the 7th Goal Reasoning Workshop . Cambridge, MA. Advances in Cognitive Systems 2019.

## COMPUTATIONAL METACOGNITION

- Anderson, J. R. (2009). How can the human mind occur in the physical universe? Oxford, UK: Oxford University Press.

- Anderson, J. R., & Fincham, J.M. (2014). Extending problem-solving procedures through reflection. Cognitive psychology 74, 1-34.

- Babu, G., & Suresh, S. (2012). Meta-cognitive neural network for classification problems in a sequential learning framework. Neurocomputing 81. 86-96. 10.1016/j.neucom.2011.12.001.

- Boggs, J., Dannenhauer, D., Floyd, M. W., & Aha, D. W. (2018). The ideal rebellion: Maximizing task performance in rebel agents. In Proceedings of the 6th Goal Reasoning Workshop , held at IJCAI/FAIM-2018.

- Brown, A. (1987). Metacognition, executive control, self-regulation, and other more mysterious mechanisms. In F. E. Weinert & R. H. Kluwe (Eds.), Metacognition, motivation, and understanding (pp. 65-116). Hillsdale, NJ: Lawrence Erlbaum Associates.

- Conitzer, V. (2011). Metareasoning as a formal computational problem. In M. T. Cox & A. Raja (Eds.) Metareasoning: Thinking about thinking (pp. 121-127). Cambridge, MA: MIT Press.

- Cox, M. T. (1997). Loose coupling of failure explanation and repair: Using learning goals to sequence learning methods. In D. B. Leake & E. Plaza (Eds.), Case-Based Reasoning Research and Development: Second International Conference on Case-Based Reasoning (pp. 425-434). Berlin: Springer-Verlag.

- Cox, M. T. (2005). Metacognition in computation: A selected research review. Artificial Intelligence 169(2), 104-141.

- Cox, M. T. (2011). Metareasoning, monitoring, and self-explanation. In M. T. Cox & A. Raja (Eds.) Metareasoning: Thinking about thinking (pp. 131-149). Cambridge, MA: MIT Press.

- Cox, M. T. (2020). The problem with problems. In Proceedings of the Eighth Annual Conference on Advances in Cognitive Systems . Palo Alto, CA: Cognitive Systems Foundation.

- Cox, M. T., Alavi, Z., Dannenhauer, D., Eyorokon, V., Munoz-Avila, H., & Perlis, D. (2016). MIDCA: A metacognitive, integrated dual cycle architecture for self regulated autonomy. In Proceedings of the Thirtieth AAAI Conference on Artificial Intelligence , Vol. 5 (pp. 3712-3718). Palo Alto, CA: AAAI Press.

- Cox, M. T., & Dannenhauer, D. (2016). Goal transformation and goal reasoning. In Proceedings of the 4 th Workshop on Goal Reasoning . New York, IJCAI-16.

- Cox, M. T., & Dannenhauer, Z. A. (2017). Perceptual goal monitors for cognitive agents in changing environments. In Proceedings of the Fifth Annual Conference on Advances in Cognitive Systems, Poster Collection (pp. 1-16). Palo Alto, CA: Cognitive Systems Foundation.

- Cox, M. T., Dannenhauer, D., & Kondrakunta, S. (2017). Goal operations for cognitive systems. In Proceedings of the Thirty-first AAAI Conference on Artificial Intelligence (pp. 4385-4391). Palo Alto, CA: AAAI Press.

- Cox, M. T., Oates, T., & Perlis, D. (2011). Toward an integrated metacognitive architecture. In P. Langley (Ed.), Advances in Cognitive Systems: Papers from the 2011 AAAI Fall Symposium (pp. 74-81). Technical Report FS-11-01. Menlo Park, CA: AAAI Press.

- Cox, M. T., & Ram, A. (1999). Introspective multistrategy learning: On the construction of learning strategies. Artificial Intelligence, 112, 1-55.

- Cox, M. T., & Veloso, M. M. (1998). Goal transformations in continuous planning. In M. desJardins (Ed.), Proceedings of the 1998 AAAI Fall Symposium on Distributed Continual Planning (pp. 23-30). Menlo Park, CA: AAAI Press / The MIT Press.

- Crowder, J., Friess, S., & Ncc, M. (2011). Metacognition and metamemory concepts for AI systems. In Proceedings of the 2011 World Congress in Computer Science, Computer Engineering, & Applied Computing: The 2011 International Conference on Artificial Intelligence . CSREA Press.

- Dannenhauer, D., Cox, M. T., & Munoz-Avila, H. (2018). Declarative metacognitive expectations for high-level cognition. Advances in Cognitive Systems , 6, 231-250.

- Dannenhauer, D., & Munoz-Avila, H. (2015). Raising expectations in GDA agents acting in dynamic environments. In Proceedings of the Twenty-Fourth International Joint Conference on Artificial Intelligence (pp. 2241-2247). Menlo Park, CA: AAAI Press.

- Dunlosky, J., & Thiede, K. W. (2013). Metamemory. In D. Reisberg (Ed.), The Oxford handbook of cognitive psychology (pp. 283-298). Oxford, UK: Oxford University Press.

- Edelkamp, S., & Hoffmann, J. (2004). PDDL2.2: The language for the classical part of the 4th international planning competition . Technical Report 195, University of Freiburg.

- Flavell, J. H. (1976). Metacognitive aspects of problem solving. In A. Resnick (Ed.), The nature of intelligence (pp. 231-235). Hillsdale, NJ: LEA.

- Franklin, S., Ramamurthy, U., D'Mello, S., McCauley, L., Negatu, A., Silva R., & Datla, V. (2007). LIDA: A computational model of global workspace theory and developmental learning. In AAAI Fall Symposium on AI and Consciousness: Theoretical Foundations and Current Approaches . Menlo Park, CA: AAAI Press.

- Ghallab, M., Nau, D., & Traverso, P. (2004). Automated planning: Theory and practice . San Francisco: Morgan Kaufmann.

- Gogineni, V. R., Kondrakunta, S., & Cox, M. T. (in press). Multi-agent goal delegation. To appear in Proceedings of the 9 th Goal Reasoning Workshop . Advances in Cognitive Systems 2021.