# A Survey of Multi-Agent Deep Reinforcement Learning with Communication

**Authors**:

- Changxi Zhu (Department of Information and Computing Sciences)

- &Mehdi Dastani (Department of Information and Computing Sciences)

- Shihan Wang (Department of Information and Computing Sciences)

## Abstract

Communication is an effective mechanism for coordinating the behaviors of multiple agents, broadening their views of the environment, and to support their collaborations. In the field of multi-agent deep reinforcement learning (MADRL), agents can improve the overall learning performance and achieve their objectives by communication. Agents can communicate various types of messages, either to all agents or to specific agent groups, or conditioned on specific constraints. With the growing body of research work in MADRL with communication (Comm-MADRL), there is a lack of a systematic and structural approach to distinguish and classify existing Comm-MADRL approaches. In this paper, we survey recent works in the Comm-MADRL field and consider various aspects of communication that can play a role in designing and developing multi-agent reinforcement learning systems. With these aspects in mind, we propose 9 dimensions along which Comm-MADRL approaches can be analyzed, developed, and compared. By projecting existing works into the multi-dimensional space, we discover interesting trends. We also propose some novel directions for designing future Comm-MADRL systems through exploring possible combinations of the dimensions.

K eywords Multi-Agent Reinforcement Learning $·$ Deep Reinforcement Learning $·$ Communication $·$ Survey

## 1 Introduction

Many real-world scenarios, such as autonomous driving [1], sensor networks [2], robotics [3] and game-playing [4, 5], can be modeled as multi-agent systems. Such multi-agent systems can be designed and developed using multi-agent reinforcement learning (MARL) techniques to learn the behavior of individual agents, which can be cooperative, competitive, or a mixture of them. As agents are often distributed in the environment where they only have access to their local observations rather than the complete state of the environment, partial observability becomes an essential assumption in MARL [6, 7, 8]. Moreover, MARL suffers from the non-stationary issue [9], since each agent faces a dynamic environment that can be influenced by the changing and adapting policies of other agents. Communication has been viewed as a vital means to tackle the problems of partial observability and non-stationary in MARL. Agents can communicate individual information, e.g., observations, intentions, experiences, or derived features, to have a broader view of the environment, which in turn allows them to make well-informed decisions [9, 10].

Due to the recent success of deep learning [11] and its application to reinforcement learning [12], multi-agent deep reinforcement learning (MADRL) has witnessed great achievements in recent years, where agents can process high-dimensional data and have generalization ability in large state and action spaces [7, 8]. We notice that a large number of research works focus on learning tasks with communication, which aim at learning to solve domain-specific tasks, such as navigation, traffic, and video games, by communicating and sharing information. To the best of our knowledge, there is a lack of survey literature that can cover recent works on learning tasks with communication in multi-agent deep reinforcement learning (Comm-MADRL). Early surveys consider the role of communication in MARL but assume it to be predefined rather than a subject of learning [13, 14, 15]. Most Comm-MADRL surveys cover only a small number of research works without proposing a fine-grained classification system to compare and analyze them. We provide a detailed comparison of recent surveys on MADRL which involves communication in Section 2.3. In cooperative scenarios, Hernandez-Leal et al. [16] use learning communication to denote the area of learning communication protocols to promote the cooperation of agents. In our survey, we extend the concept of learning communication to general multi-agent tasks and use the term learning tasks with communication to emphasize that the primary goal of recent research, which is centered on solving specific domain tasks through the use of communication. The only survey that we found classifying some early works in Comm-MADRL is from Gronauer and Diepold [17], which is based on distinguishing whether messages are received by all agents, a set of agents, or a network of agents. However, other aspects of Comm-MADRL, such as the type of messages and training paradigms, which are essential for communication and can help characterize existing communication protocols, are ignored. As a result, the reviewed papers in recent surveys regarding learning tasks with communication are rather limited and the proposed categorizations are too narrow to distinguish existing works in Comm-MADRL. On the other hand, there is a closely related research area, emergent language/communication, which also considers learning communication through various reinforcement learning techniques [18]. Different from Comm-MADRL, the primary goal of emergent language studies is to learn a symbolic language. In the literature, emergent language and emergent communication are used interchangeably. In our survey, we use emergent language for referring to both terms. However, a subset of emergent language research works pursues an additional goal to leverage learnable symbolic language to enhance task-level performance. Notably, these research works have not been encompassed within existing Comm-MADRL surveys but included in our survey, referred to learning tasks with emergent language. In summary, our survey overlaps in scope with surveys of emergent language (i.e., in learning tasks with emergent language), but our survey focuses on different primary goals (i.e., achieving domain-specific tasks rather than learning a symbolic language). We further clarify the differences between learning tasks with communication and emergent language in Section 2.2.

In our survey paper, we review the Comm-MADRL literature by focusing on how communication can be utilized to improve the performance of multi-agent deep reinforcement learning techniques. Specifically, we focus on learnable communication protocols, which are aligned with recent works that emphasize the development of dynamic and adaptive communication, including learning when, how, and what to communicate with deep reinforcement learning techniques. Through a comprehensive review of recent Comm-MADRL literature, we propose a systematic and structured classification methodology designed to differentiate and categorize various Comm-MADRL approaches. Such a methodology will also provide guidance for the design and advancement of new Comm-MADRL systems. Suppose we plan to develop a Comm-MADRL system for a domain task at hand. Starting with the questions of when, how, and what to communicate, the system can be characterized from various aspects. Agents need to learn when to communicate, with whom to communicate, what information to convey, how to integrate received information, and, lastly, what learning objectives can be achieved through communication. We propose 9 dimensions that correspond to unique aspects of Comm-MADRL systems: Controlled Goals, Communication Constraints, Communicatee Type, Communication Policy, Communicated Messages, Message Combination, Inner Integration, Learning Methods, and Training Schemes. These dimensions, which form the skeleton of a Comm-MADRL system, can be used to analyze and gain insights into designed Comm-MADRL approaches thoroughly. By mapping recent Comm-MADRL approaches into this multi-dimensional structure, we not only provide insight into the current state of the art in this field but also determine some important directions for designing future Comm-MADRL systems.

The remaining sections of this paper are organized as follows. In Section 2 the preliminaries of multi-agent RL are discussed, together with existing extensions regarding communication and a detailed comparison of recent surveys. In Section 3, we present our proposed dimensions, explaining how we group the recent works in the categories of each dimension. In Section 4, we discuss the trends that we found in the literature, and, driven by the proposed dimensions, we propose possible research directions in this research area. We finalize the paper with some conclusions in Section 5.

## 2 Background

In this section, we first provide the necessary background on multi-agent reinforcement learning. Then, we show how multi-agent reinforcement learning can be extended to consider communication between agents. Finally, we present and compare recent surveys involving communication, from which we can directly see our motivations to fill the gaps among existing surveys.

### 2.1 Multi-agent Reinforcement Learning

Real-world applications often contain more than one agent that operate in the environment. Agents are generally assumed to be autonomous and required to learn their strategies for achieving their goals. A multi-agent environment can be formalized in several ways [19], depending on whether the environment is fully observable, how agents’ goals are correlated, etc. Among them, the Partially Observable Stochastic Game (POSG) [20, 21] is one of the most flexible formalizations. A POSG is defined by a tuple $≤ft⟨I,S,ρ^0,≤ft\{A_i\right\},P, ≤ft\{O_i\right\},O,≤ft\{R_i\right\}\right⟩$ , where $I$ is a (finite) set of agents indexed as $\{1,...,n\}$ , $S$ is a set of environment states, $ρ^0$ is the initial state distribution over state space $S$ , $A_i$ is a set of actions available to agent $i$ , and $O_i$ is a set of observations of agent $i$ . We denote a joint action space as $\boldsymbol{A}=×_i∈IA_i$ and a joint observation space of agents as $\boldsymbol{O}=×_i∈IO_i$ . Therefore, $P:S×\boldsymbol{A}→Δ(S)$ denotes the transition probability from a state $s∈S$ to a new state $s^\prime∈S$ given agents’ joint action $\vec{a}=⟨ a_1,...,a_n⟩$ , where $\vec{a}∈\boldsymbol{A}$ . With the environment transitioning to the new state $s^\prime$ , the probability of observing a joint observation $\vec{o}=⟨ o_1,...,o_n⟩$ (where $\vec{o}∈\boldsymbol{O}$ ) given the joint action $\vec{a}$ is determined according to the observation probability function $O:S×\boldsymbol{A}→Δ(\boldsymbol{ O})$ . Each agent then receives an immediate reward according to their own reward functions $R_i:S×\boldsymbol{A}×S→ ℝ$ . Similar to the joint action and observation, we could denote $\vec{r}=⟨ r_1,...,r_n⟩$ as a joint reward. If agents’ reward functions happen to be the same, i.e., they have identical goals, then $r_1=r_2=...=r_n$ holds for every time step. In this setting, the POSG is reduced to a Dec-POMDP [19]. If at every time step the state is uniquely determined from the current set of observations of agents, i.e., $s≡\vec{o}$ , the Dec-POMDP is reduced to a Dec-MDP. If each agent knows what the true environment state is, the Dec-MDP is reduced to a Multi-agent MDP. If there is only one single agent in the set of agents, i.e., $I=\{1\}$ , then the Multi-agent MDP is reduced to an MDP and the Dec-POMDP is reduced to a POMDP. Due to the partial observability, MARL methods often use the observation-action history $τ_i,t=\{o_i,0,a_i,0,o_i,1,...,o_i,t\}$ up to time step $t$ for each agent to approximate the environment state. Note that time step $t$ is often omitted for the sake of simplification.

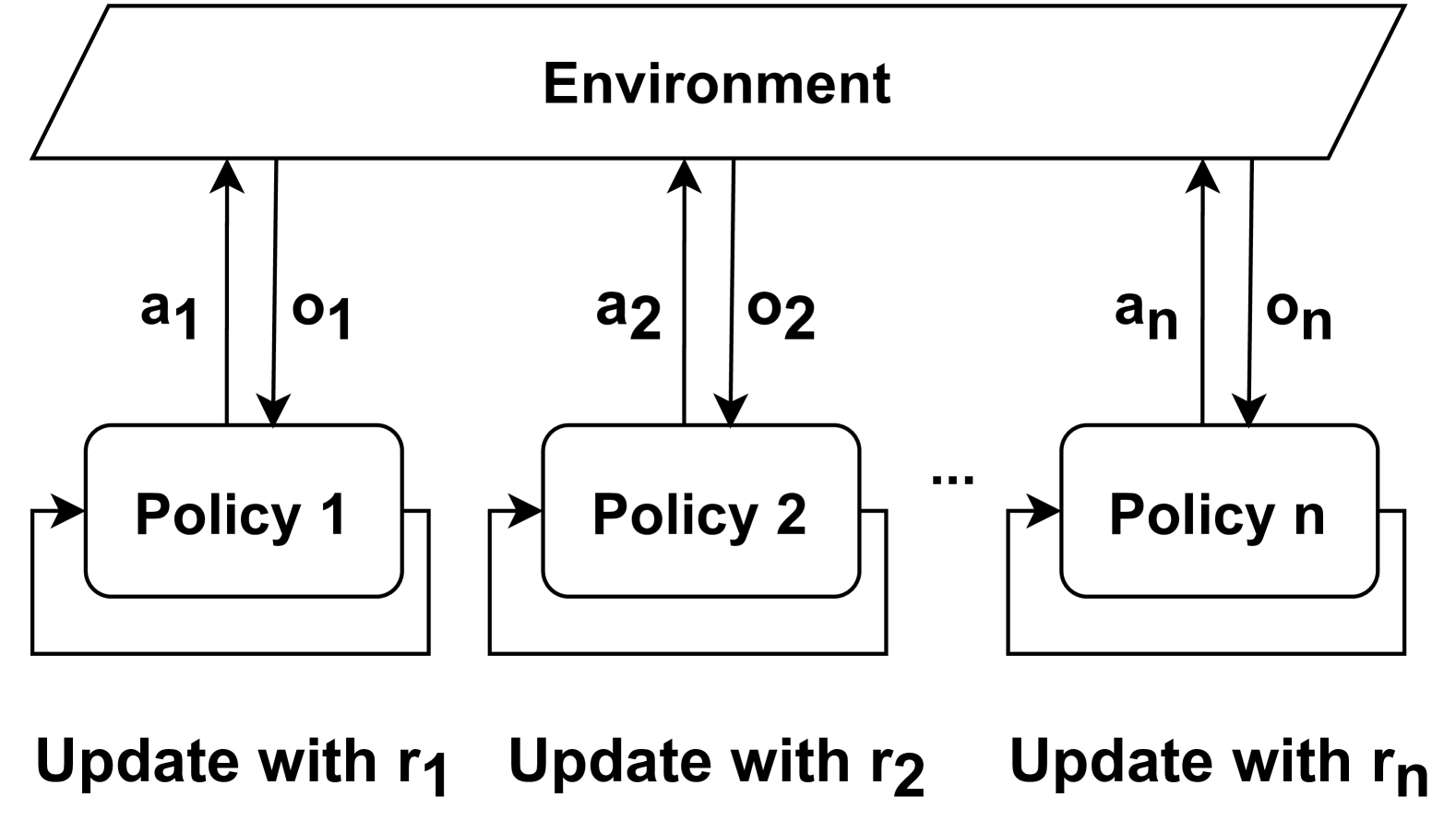

In the multi-agent reinforcement learning setting, agents can learn their policies in either a decentralized or a centralized fashion. In decentralized learning (e.g., decentralized Q-learning [22, 23]), an $n$ -agent MARL problem is decomposed into $n$ decentralized single-agent problems where each agent learns its own policy by considering all other agents as a part of the environment [24, 25]. In such a decentralized setting, the learned policy of each agent is conditioned on its local observation and history. A major problem with decentralized learning is the so-called non-stationarity of the environment, i.e., the fact that each agent learns in an environment where other agents are simultaneously exploring and learning. Centralized learning enables the training of either a single joint policy for all agents or a centralized value function to facilitate the learning of $n$ decentralized policies. While centralized (joint) learning removes or mitigates issues of partial observability and non-stationarity, it faces the challenge of joint action (and observation) spaces that expand exponentially with the number of agents and their actions. For a deeper dive into various training schemes used in MARL, we recommend the comprehensive survey by [17], which offers valuable insights into the training and execution of policies. Based on whether policies are derived from value functions or directly learned, multi-agent reinforcement learning methods can be categorized into value-based and policy-based methods. Both methods have been largely utilized in Comm-MADRL.

Value-based

Value-based methods in the multi-agent case borrow considerable ideas from the single-agent case. As one of the most popular value-based algorithms, the decentralized Q-learning learns a local Q-function for each agent. In the cooperative setting where agents share a common reward, the update rule for agent $i$ is as follows:

$$

Q_i(s,a_i)← Q_i(s,a_i)+α(\underbrace{r+γ\max_a^

\prime_iQ_i(s^\prime,a^\prime_i)}_new estimate-

\underbrace{\vphantom{≤ft(\frac{a^0.3}{b}\right)}Q_i(s,a_i)}_

current estimate) \tag{1}

$$

where $r$ is the shared reward, and $a^\prime_i$ is the action with the highest Q-value in the next state $s^\prime$ . In partially observable environments, the environment state is not fully observable and is usually replaced by the individual observation or history of each agent. The Q-values for each state-action pair are incrementally updated according to the TD error. This error, i.e., $r+γ\max_a^\prime_iQ_i(s^\prime,a^\prime_i)-Q_i(s,a_i)$ , represents the difference between a new estimate (i.e., $r+γ\max_a^\prime_iQ_i(s^\prime,a^\prime_i)$ ) and the current estimate (i.e., $Q_i(s,a_i)$ ) based on the Bellman equation [26]. As the state and action space could be too large to be encountered frequently for accurate estimation, function approximation methods, like deep neural networks, have become popular for endowing value or policy models with generalization abilities across both discrete and continuous states and actions [12]. For example, the Deep Q-network (DQN) [12] minimizes the difference between the new estimate calculated from sampled rewards and the current estimate of a parameterized Q-function. In DQN-based methods, the Q-function in Equation 1 is notated as $Q_i(s,a_i;θ_i)$ , which depends on learnable parameters $θ_i$ . On the other hand, centralized learning in value-based methods learns a joint Q-function $Q(s,\vec{a};θ)$ with parameters $θ$ . However, this approach can be challenging to scale with an increasing number of agents. Value decomposition methods [27, 28, 29, 30] are popular MARL methods that decompose a joint Q-function to enable efficient training. These methods are also widely employed in research works in Comm-MADRL [31, 32, 33]. In partially observable environments, linear value decomposition methods decompose history-based joint Q-functions as follows:

$$

Q^joint(\vec{τ},\vec{a})=∑_i^nw_iQ^i(τ_i,a_i) \tag{2}

$$

where the joint Q-function is based on the joint history of all agents and is decomposed into local Q-functions based on individual histories. The weight $w_i$ can either be a fixed value [27, 29] or a learnable parameter subject to certain constraints [30]. Advantage functions can also replace the Q-function in the above equation to reduce variance [34].

Policy-based

Policy-based methods directly search over the policy space instead of obtaining the policy through value functions implicitly. The policy gradient theorem [26] provides an analytical expression of the gradients for a stochastic policy with learnable parameters in single-agent cases. In the multi-agent case with centralized learning, the policy gradient theorem is expressed as follows:

$$

∇_θJ(θ)=E_\vec{a∼π(·\mid s),s∼ρ^

π}[∇_θ\logπ(\vec{a}\mid s;θ)Q^π(s,\vec{a})] \tag{3}

$$

where $J(θ)$ represents the learning objective, and $π(\vec{a}\mid s;θ)$ denotes a stochastic policy parameterized by $θ$ (abbreviated as $π$ ). Additionally, $ρ^π$ signifies the state distribution under the policy $π$ , and $∇_θJ(θ)$ represents the expected gradient with respect to all possible actions and states. Due to the computational intractability of the expected gradient, stochastic gradient ascent can be applied to update the parameters $θ$ at every learning step $l$ as follows:

$$

θ_l+1=θ_l+α\widehat{∇_θJ(θ)}

$$

where $α$ is the learning rate, and $\widehat{∇_θJ(θ)}$ is an estimate of the expected gradient based on sampled actions and states. Moreover, the Q-function in Equation 3 can be replaced by average returns over episodes to form REINFORCE algorithms [26], or by an estimated value function to form actor-critic algorithms [35, 36]. In actor-critic methods, the policy and value function are termed the actor and the critic, respectively. The critic will, therefore, guide the learning of the actor.

Actor-critic methods have undergone various adaptations for multi-agent environments [7, 8, 37, 38]. A typical extension is the multi-agent deep deterministic policy gradient (MADDPG) [7]. In MADDPG, the critic is a centralized Q-function designed to capture global information and coordinate learning signals. Meanwhile, the actors are local policies, ensuring decentralized execution. MADDPG assumes deterministic actors with continuous actions, allowing for the backpropagation of gradients from the value function to the policies. The gradient of each parameterized actor $μ_θ_{i}(a_i\mid o_i)$ with learnable parameters $θ_i$ , abbreviated as $μ_i$ , is defined as follows:

$$

∇_θ_{i}J≤ft(θ_i\right)=E_\vec{o,\vec{a}∼

D}≤ft[∇_θ_{i}μ_i≤ft(a_i\mid o_i\right)∇

_a_{i}Q_i^μ≤ft(\vec{o},a_1,…,a_N\right)\mid_a_{i=μ_i

≤ft(o_i\right)}\right]

$$

where $D$ is the experience buffer that contains joint observation-action tuples $⟨\vec{o},\vec{a},\vec{r},\vec{o^\prime}⟩$ . Each agent’s Q-function, denoted as $Q_i^μ(\vec{o},a_1,…,a_N)$ , takes joint observations and actions as inputs, while decentralized actors use local observations as inputs. Contrary to Equation 3, gradients with respect to the current action of agent $i$ (specifically, $μ_i(o_i)$ ) are utilized to guide the update of the policy parameter $θ_i$ . Both MADDPG and its single-agent counterpart, DDPG, have seen widespread application in Comm-MADRL [39, 40, 41, 42, 43].

### 2.2 Extensions with Communication

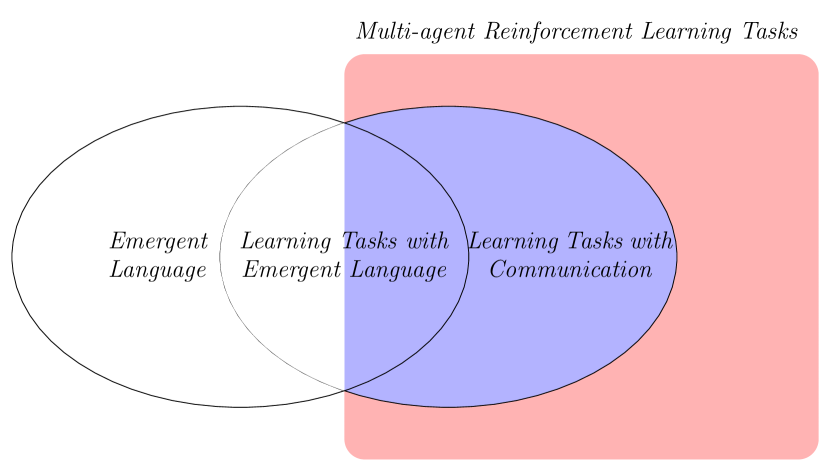

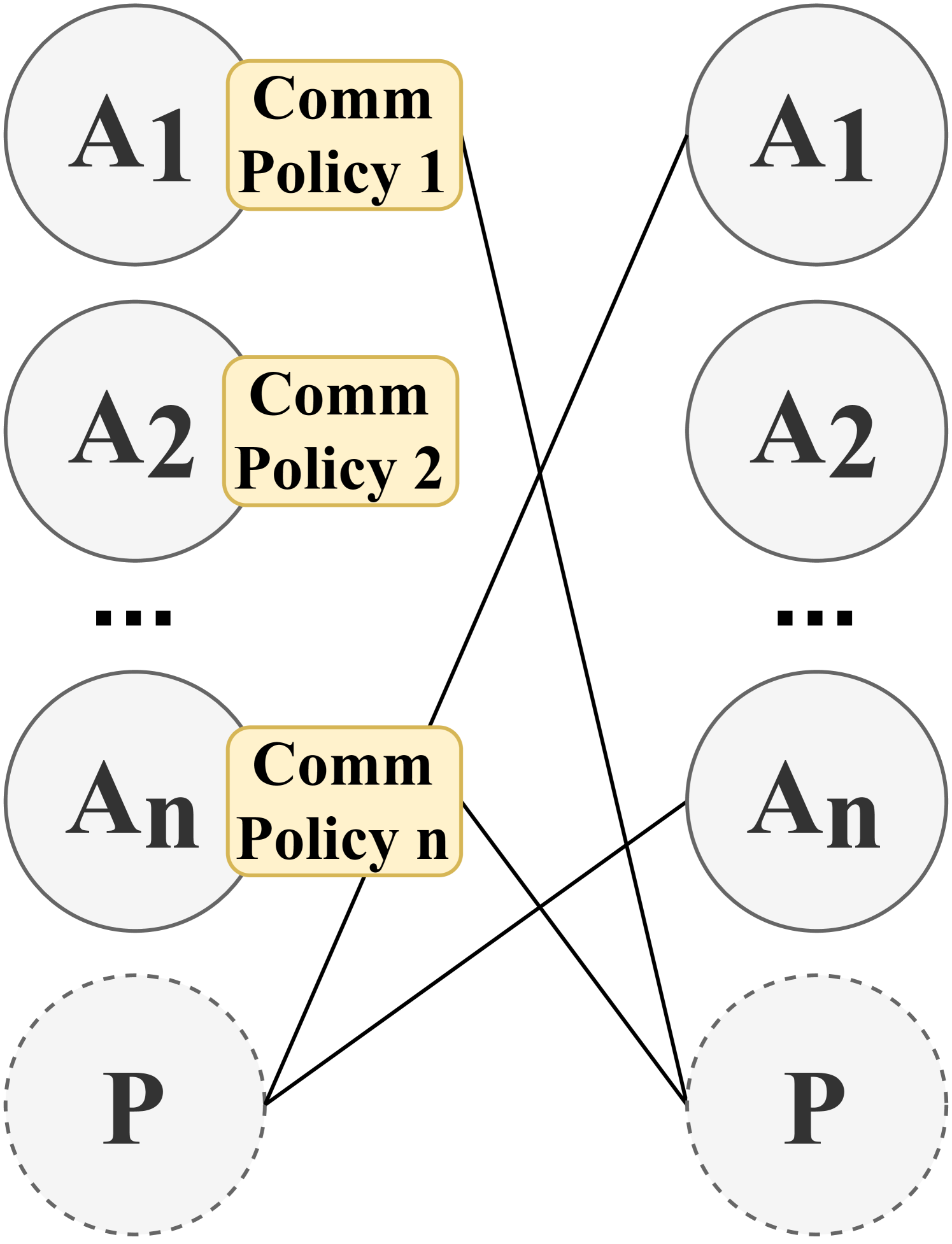

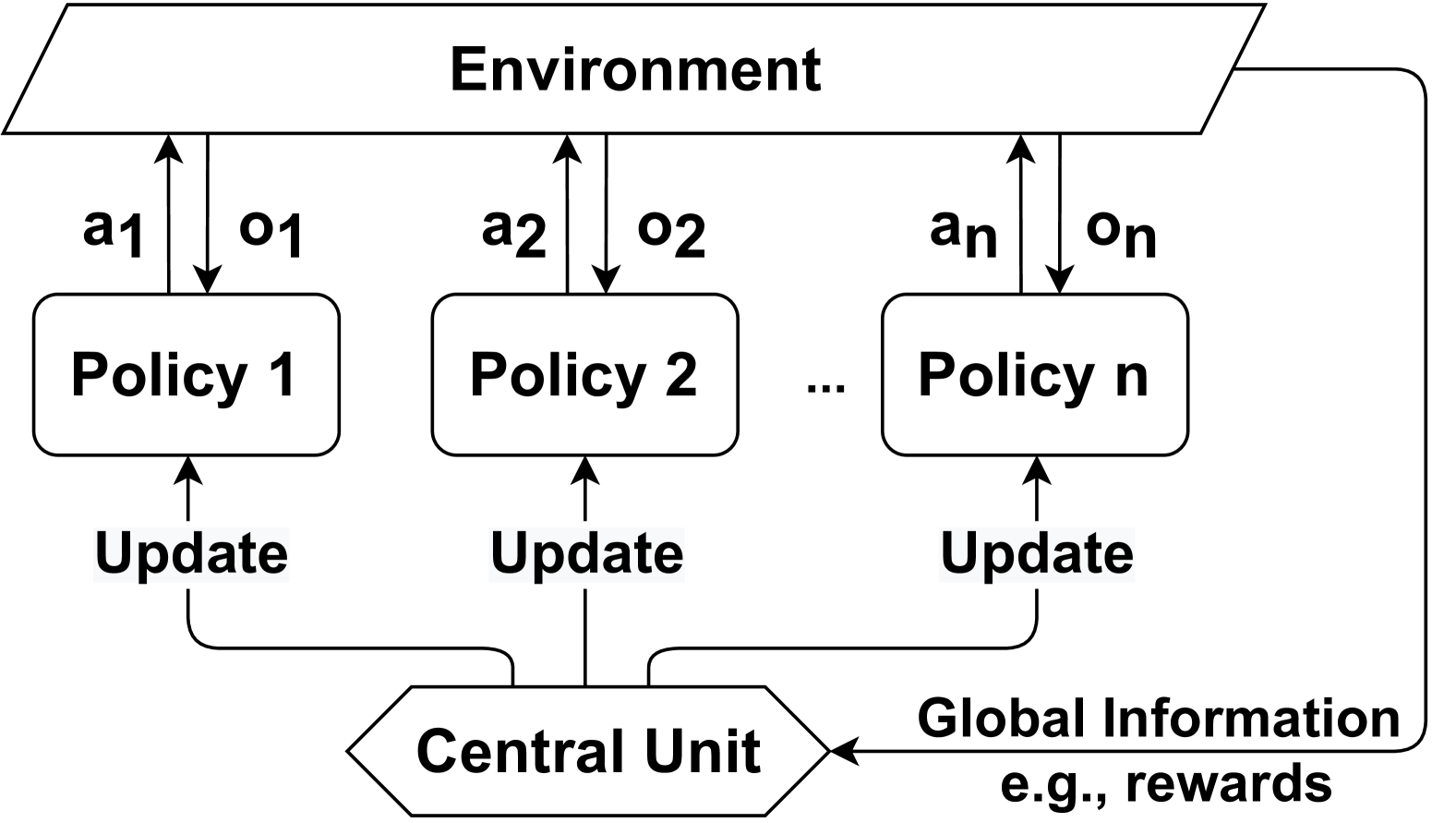

In the MADRL literature where communication is used, we notice two closely related research areas, which we will refer to with the terms emergent language and learning tasks with communication. The emergent language research area [18, 44, 45, 46, 47] aims at learning a language grounded on symbols in communities of interacting/communicating agents. This line of research tries to understand the evolution of the language in agents equipped with neural networks. On the other hand, learning tasks with communication [16, 48, 49, 50] focuses primarily on solving multi-agent reinforcement learning tasks with the aid of communication. Communication is often regarded as information exchange rather than learning a (human-like) language. Despite the distinction, when using MADRL techniques on specific domain tasks, languages might emerge, which can potentially enhance the learning system’s explainability in accomplishing those tasks. We illustrate the research areas, emergent language and learning tasks with communication, along with their intersection learning tasks with emergent language in Figure 1. Notably, our survey focuses on learning tasks with communication in multi-agent deep reinforcement learning, including the intersection with emergent language. Throughout the remainder of our survey, Comm-MADRL will be used to specifically refer to the areas of our focus. Within this focus, multiple agents often operate in partially observable environments and learn to share information encoded through neural networks. Furthermore, communication protocols, determining when and with whom to communicate, often leverage deep learning models to find the optimal choices that minimize communication overhead and yield more targeted communication. A multitude of works have been proposed to handle these subproblems inherent in Comm-MADRL. Most research works model only one or a few aspects of Comm-MADRL while selecting a default approach for other aspects. Given that the common goal of Comm-MADRL approaches is to design an effective and efficient communication protocol to improve agents’ learning performance in the environment, the proposed Comm-MADRL approaches inevitably share similarities to some extent. Consequently, establishing a classification system for Comm-MADRL becomes crucial. Such a system would aid in categorizing critical elements like contributions, targeted problems, and learning objectives, from which we can compare and analyse existing Comm-MADRL approaches.

<details>

<summary>x1.png Details</summary>

### Visual Description

\n

## Venn Diagram: Multi-agent Reinforcement Learning Tasks

### Overview

The image is a conceptual Venn diagram illustrating the relationships between different categories of tasks in the field of multi-agent reinforcement learning. It uses overlapping shapes and color coding to show set relationships and intersections.

### Components/Axes

The diagram consists of three primary geometric shapes, each with a distinct color and label:

1. **Gray Circle (Left):** Positioned on the left side of the diagram. It is labeled **"Emergent Language"**.

2. **Blue Circle (Right):** Positioned on the right side, overlapping with the gray circle. It is labeled **"Learning Tasks with Communication"**.

3. **Pink Rectangle (Background):** A large, rounded rectangle that encompasses the entire blue circle and the overlapping region between the two circles. It is labeled at the top-right as **"Multi-agent Reinforcement Learning Tasks"**.

The overlapping region between the gray and blue circles is labeled **"Learning Tasks with Emergent Language"**.

### Detailed Analysis

The diagram defines three distinct but related conceptual sets:

* **Set A (Gray Circle):** Represents the domain of "Emergent Language." This is a standalone concept.

* **Set B (Blue Circle):** Represents "Learning Tasks with Communication." This is a broader category that includes tasks where communication is a designed or given component.

* **Intersection (A ∩ B):** The area where the gray and blue circles overlap is explicitly labeled "Learning Tasks with Emergent Language." This signifies that tasks in this intersection are a subset of both Emergent Language and Learning Tasks with Communication. They are tasks where communication protocols are not pre-defined but emerge during the learning process.

* **Superset (Pink Rectangle):** The "Multi-agent Reinforcement Learning Tasks" rectangle acts as a superset. It fully contains the blue circle ("Learning Tasks with Communication") and the intersection ("Learning Tasks with Emergent Language"). It does *not* fully contain the gray circle ("Emergent Language"), indicating that not all emergent language research falls under the specific umbrella of multi-agent RL tasks as defined by this diagram.

### Key Observations

1. **Hierarchical Containment:** The diagram establishes a clear hierarchy. "Learning Tasks with Communication" is a subset of "Multi-agent Reinforcement Learning Tasks." "Learning Tasks with Emergent Language" is a further subset of both.

2. **Partial Overlap of "Emergent Language":** A significant portion of the "Emergent Language" circle lies outside the pink rectangle. This visually argues that the study of emergent language is a broader field that extends beyond the specific context of multi-agent reinforcement learning tasks.

3. **Color-Coded Regions:** The blue circle is filled with a semi-transparent blue, the overlapping region is a blend of blue and gray, and the pink rectangle provides a background context. This color coding helps distinguish the sets and their intersections.

### Interpretation

This diagram serves as a conceptual map to clarify terminology and scope within a research area. It makes several key arguments:

* **Communication vs. Emergent Language:** It distinguishes between tasks where communication is an engineered component ("Learning Tasks with Communication") and the more specific phenomenon where language-like protocols arise spontaneously from agent interactions ("Emergent Language").

* **Scope of Multi-agent RL:** It posits that the field of multi-agent reinforcement learning explicitly includes the study of communication and, by extension, the study of emergent language within that communication framework.

* **Broader Context of Emergent Language:** By placing part of the "Emergent Language" circle outside the multi-agent RL rectangle, the diagram acknowledges that emergent language is a topic studied in other disciplines (e.g., linguistics, complex systems, evolutionary biology) and is not solely the domain of RL.

The diagram is likely used to frame a research paper or presentation, helping the audience understand where the authors' work on "Learning Tasks with Emergent Language" fits within the larger landscape of related concepts. It emphasizes that this work is at the intersection of two fields and is a specific instance of multi-agent RL.

</details>

Figure 1: An illustration depicting the scope of this survey. The focus of our survey is represented by the blue part.

In the emergent language literature, numerous works employ various forms of the Lewis game, often referred to as referential games and operate under a cheap-talk setting [51], as highlighted in several surveys [10, 18]. In the emergent language research area, research works that do not adopt the cheap-talk setting but communicate through observable (domain-level) actions, are not included in our survey. Our survey focuses on explicit message transfer between agents. In these games, a goal, often represented as a target location, an image, or a semantic concept, is given to a sender agent but remains unrevealed from a receiver agent. The receiver agent must then either identify the correct goal based on the sender’s signaling [52, 53, 54, 47, 55, 56, 57, 58] or accomplish its single-agent task using the received signals (messages) [59, 60]. Research works in learning tasks with emergent language are grounded in a multi-agent environment where the joint actions of both sender and receiver agents impact environment transitions. Consequently, the learning tasks with emergent language literature considers multi-agent domain tasks [61, 62, 63, 64, 65], building on foundational concepts from MARL such as Dec-POMDPs or POSGs.

We further distinguish explicit versus non-explicit communication [19] in the literature of MADRL with communication. Explicit communication refers to communication through a set of messages separate from domain-level actions. Here, agents’ action policies are influenced by both their observations and the messages they receive. Such messages, crucial for supporting agents’ decision-making, are essential in both the training and execution phases. MADRL frameworks without explicit communication can still allow for communication through domain-level actions, such as the act of influencing the observations of one agent through the actions of another. Furthermore, without explicit communication, agents can transmit gradient signals, which facilitate centralized training (and decentralized execution) but are not utilized during execution phases. Specifically, in our survey, we focus on explicit and learnable communication.

Dec-POMDPs and POSGs are often extended to accommodate explicit communication. The communication can be integrated into the action set, adding a collection of communication acts alongside domain-level actions. Alternatively, a Dec-POMDP or a POSG can be extended to explicitly include a set of messages [19]. For instance, the POSG can be expanded with a (shared) message space $M$ , resulting in a POSG-Comm, defined as $≤ft⟨I,S,ρ^0,≤ft\{A_i\right\},P, ≤ft\{O_i\right\},O,≤ft\{R_i\right\},M\right⟩$ , where all components remain unchanged except for the added message space $M$ . A Dec-POMDP-Comm can be defined as similar to the POSG-Comm with shared rewards. In both POSG-Comm and Dec-POMDP-Comm, action policies take into account both environmental observations and inter-agent messages. Research works in Comm-MADRL that expand upon a POSG or a Dec-POMDP can be seen in references such as [61, 63, 66, 65, 67].

### 2.3 Communication in Recent Surveys

Communication has attracted much attention in the field of multi-agent reinforcement learning (MARL). Previous surveys mentioning communication in MARL primarily focus on providing an overview of MARL’s development. These surveys view communication as a subfield in MARL, and no extensive and substantial progress is reported. In an early survey, Stone and Veloso [13] classify MARL based on whether agents communicate and whether agents are homogeneous or not. Homogeneous agents have the same internal structure including goals, domain knowledge, and possible actions. They view learnable communication as a future research opportunity. Busoniu et al. [15] consider communication as a means to negotiate action choices and select equilibrium in the research direction of explicit coordination, without further classifying communication. With the advancement of deep learning, MARL has gradually incorporated deep neural networks such that recent developments are dominated by multi-agent deep reinforcement learning (MADRL). In the MADRL context, Hernandez-Leal et al. [16], Nguyen et al. [68], and Papoudakis et al. [9] briefly review early Comm-MADRL methods, which have now become baselines in many recent works. Specifically, Hernandez-Leal et al. [16] use learning communication to denote a new branch in MADRL. Papoudakis et al. [9] consider communication as an approach to handle the non-stationary problem in MADRL, as agents can exchange information to stabilize their training. Compared to the aforementioned surveys, OroojlooyJadid and Hajinezhad [37] provide a more detailed review of Comm-MADRL, covering a significant number of existing works. They view communication as a way to solve cooperative MADRL problems but did not propose a categorization model for Comm-MADRL. Zhang et al. [69] and Yang et al. [21] review communication from a theoretical perspective. Their primary focus is on communication within networked multi-agent systems. In these systems, agents share information through a time-varying network, aiming to reach consensus on learned value functions or policies. Despite this, no further classification of communication is made.

Two more recent surveys in MADRL, proposed by Gronauer and Diepold [17] and Wong et al. [70], focus on classifying existing works on communication. Gronauer and Diepold classify early research works in Comm-MADRL into Broadcasting, Targeted, and Networked communication, based on whether messages are received from all agents, a subset of agents, or a network of agents. Wong et al., similar to the survey of Papoudakis et al. [9], view communication as a method to address the issues of non-stationarity and partial observability. In the survey of Wong et al., research works on communication are categorized into three groups from a high-level perspective: communication as the primary learning goal, communication as an instrument to learn a specific task, and peer-to-peer teaching. However, they do not delve into how agents utilize communication to enhance learning. These surveys focus on limited aspects of communication, making their categorizations too narrow to distinguish recent works effectively, given the fact that many existing works share similar assumptions and conditions. To the best of our knowledge, only one survey [71] exclusively focuses on communication issues in MADRL. They review algorithms for communication and cooperation, including efforts to interpret languages developed through communication. Despite this, their survey mainly covers early models without proposing a categorization framework.

The literature has investigated communication from other perspectives. Shoham and Leyton-Brown [72] investigate communication from a game-theoretic perspective. They introduce several theories of communication in multi-agent systems, with the particular concern that agents can be self-motivated to convey information, driven by underlying incentives (e.g., the knowledge of game structure), or communicate in a pragmatic way analogous to human communication. Deep neural networks and deep reinforcement learning techniques have greatly widened the scope of language development in multi-agent systems. Lazaridou and Baroni [18] provide an extensive survey focused on emergent language, aiming to establish effective human-machine communication. As highlighted in section 2.2, the primary goal of emergent language research is to learn a human-like language from scratch. The goal of our survey is, however, to classify the literature on learning tasks with communication that aims at exploiting communication to accomplish multi-agent tasks.

In summary, existing surveys in Comm-MADRL lack coverage of the latest developments. These surveys also do not elaborate on the fact that communication itself is a combinatorial problem. Importantly, communication models engage with MADRL algorithms across various processes, including learning and decision-making. To effectively distinguish between existing Comm-MADRL approaches, it is crucial to analyze and classify them from a wider range of perspectives. In the following section, we delve into the field of Comm-MADRL through multiple dimensions, each linked to a unique research question pertinent to system design. These dimensions allow us to provide a fine-grained classification, highlighting the differences between Comm-MADRL approaches even within similar domains.

## 3 Learning Tasks with Communication in MADRL

In our survey, we consider explicit communication where action policies of agents are conditioned on communication that is learnable and dynamic, rather than static and predefined. Therefore, both the content of the messages and the chances of communication occurrences are subject to learning. As agents engage in multi-agent tasks, they learn domain-specific action policies and their communication protocols concurrently. As a result, learning tasks with communication becomes a joint learning challenge, where agents employ reinforcement learning to maximize environmental rewards and simultaneously utilize various machine learning techniques to develop efficient and effective communication protocols.

Learning tasks with communication in multi-agent deep reinforcement learning (Comm-MADRL) is a significant research problem, particularly as communication can lead to higher rewards. Numerous studies have emerged, developing effective and efficient Comm-MADRL systems, often sharing similarities. Our review begins with the seminal works such as DIAL [73], RIAL [73], and CommNet [48], and then expands to include the most relevant research works presented at major AI conferences and journals like AAMAS, AAAI, NeurIPS, and ICML, totaling 41 models in Comm-MADRL. To better distinguish among these models, we propose classifying them based on several dimensions in Comm-MADRL system design. These dimensions aim to comprehensively cover the current literature, allowing us to project the research works into a space where their similarities and differences become clear. We start by focusing on three key components of Comm-MADRL systems: problem settings, communication processes, and training processes. Problem settings encompass both communication-specific settings (e.g., communication constraints) and non-communication-specified settings (e.g., reward structures). Communication processes include common communication procedures, such as deciding whether to communicate and what messages to communicate. Training processes cover the learning of both agents and communication within MADRL. Based on the three key components, we identify and summarize 9 research questions that commonly arise in Comm-MADRL system design, corresponding to 9 dimensions as detailed in Table 1. These research questions and dimensions are designed to capture various aspects of Comm-MADRL, covering the learning objectives of agents and communication, the processes by which messages are generated, transmitted, integrated, and learned within the MADRL framework. We outline a systematic procedure for providing a guideline to effectively navigate through these dimensions when developing Comm-MADRL systems. The procedure allows us to organize the dimensions, demonstrate their relevance in system design, and guide the creation of customized Comm-MADRL systems in a step-by-step manner.

Table 1: Proposed dimensions and associated research questions.

| Key Components | Target Questions | Dimensions | Index |

| --- | --- | --- | --- |

| Problem Settings | What kind of behaviors are desired to emerge with communication? | Controlled Goals | ① |

| How to fulfill realistic requirements? | Communication Constraints | ② | |

| Which type of agents to communicate with? | Communicatee Type | ③ | |

| Communication Processes | When and how to build communication links among agents? | Communication Policy | ④ |

| Which piece of information to share? | Communicated Messages | ⑤ | |

| How to combine received messages? | Message Combination | ⑥ | |

| How to integrate combined messages into learning models? | Inner Integration | ⑦ | |

| Training Processes | How to train and improve communication? | Learning Methods | ⑧ |

| How to utilize collected experience from agents? | Training Schemes | ⑨ | |

As outlined in Procedure 1, $N$ reinforcement learning agents employ communication throughout their learning and decision-making. Initially, the learning objective for the $N$ agents is set, defining rewards that induce cooperative, competitive, or mixed behaviors, as captured by dimension 1. We then consider potential communication-specified settings like limited resources, addressing the need for realistic scenarios as described in dimension 2. Dimension 3 identifies potential communicatees, determining the agents for messages to be received, which varies across domains. At each time step, agents decide when and with whom to communicate, as highlighted in dimension 4. The patterns of communication occurrences are structured like a graph, where links, either undirected or directed, aid information exchange. Subsequently, messages that encapsulate agents’ understanding of the environment are generated and shared, relating to dimension 5. Given that agents often receive multiple messages, they must decide on how to combine these messages effectively. This process, crucial for integrating messages into their policies or value functions, is captured in dimensions 6 and 7. In cases of Comm-MADRL studies focusing on emergent language (i.e., learning tasks with emergent language), where messages are modeled as communicative acts emitted alongside domain-level actions, a specific rearrangement of the procedure is required. Here, messages are not observed by other agents until the next time step. Therefore, the processes outlined in dimensions 6 and 7 (lines 8 and 9) are moved to the front of those in dimension 4 (line 6). This rearrangement allows agents to combine and integrate messages from the previous time step before initiating new communication. As a result, agents make decisions and perform actions in the environment based not only on their environmental observations but also on information obtained from other agents (lines 10 and 11). During the training phase, experiences from both environmental interactions and inter-agent communication are utilized to train how agents will behave and communicate, i.e., agents’ policies, value functions, and communication processes, as characterized in dimensions 8 and 9 (line 14).

In the following sections, we make an extensive survey on Comm-MADRL based on each dimension and classify the literature when we focus on a specific dimension. We finally provide a comprehensive table to frame recent works with the aid of the 9 dimensions.

Procedure 1 A guideline of Comm-MADRL systems

1: $N$ reinforcement learning agents

2: Set goals for reinforcement learning agents $\triangleright$ Dimension ①

3: Set possible communication constraints $\triangleright$ Dimension ②

4: Set the type of communicatees $\triangleright$ Dimension ③

5: for $episode=1,2,...$ do

6: for every environment step do

7: Decide with whom and whether to communicate $\triangleright$ Dimension ④

8: Decide which piece of information to share $\triangleright$ Dimension ⑤

9: Combine received information shared from others $\triangleright$ Dimension ⑥

10: Integrate messages into agents’ internal models $\triangleright$ Dimension ⑦

11: Select actions based on communication

12: Perform in the environment (and store experiences)

13: end for

14: if training is enablled then

15: Update agents’ policies, value function, and communication processes $\triangleright$ Dimensions ⑧ & ⑨

16: end if

17: end for

Procedure 1: A guideline of Comm-MADRL systems. The guideline positions dimensions where communication influences interaction with the environment and training phases.

### 3.1 Controlled Goal

Table 2: The category of controlled goals.

| Types | Configurations | Methods |

| --- | --- | --- |

| Cooperative | Global Rewards | DIAL [73]; RIAL [73]; CommNet [48]; GCL [61]; MAGNet-SA-GS-MG [40]; MADDPG-M [41]; SchedNet [43]; Agent-Entity Graph [74]; VBC [31]; NDQ [75]; IMAC [66]; Gated-ACML [76]; Bias [63]; LSC [77]; Diff Discrete [78]; I2C [79]; TMC [32]; GAXNet [80]; DCSS [64]; MAIC [33]; |

| Local Rewards | BiCNet [50]; DGN [81]; IC3Net [49]; MD-MADDPG [42]; DCC-MD [82]; GA-Comm [83]; NeurComm [84]; IP [85]; ETCNet [86]; Variable-length Coding [87]; AE-Comm [65]; | |

| Global or Local Rewards | MS-MARL-GCM [88]; ATOC [39]; TarMAC [89]; IS [90]; HAMMER [91]; MAGIC [92]; FlowComm [93]; FCMNet [94]; | |

| Competitive | Conflict Rewards | IC3Net [49]; R-MACRL [95]; |

| Mixed | Self-interested Rewards | IC [62]; DGN [81]; TarMAC [89]; IC3Net [49]; NDQ [75]; LSC [77]; MAGIC [92]; |

With a given reward configuration, reinforcement learning agents are guided to achieve their designated goals and interests. As agents communicate in order to obtain higher rewards, the goal of communication and the goal of achieving domain-specific tasks are inherently aligned. The emergent behaviors of agents can be summarized into three types: cooperative, competitive, and mixed [96, 23], each corresponding to different reward configurations and goals. Notably, some Comm-MADRL methods have been tested in more than one benchmark environment to show their flexibility and scalability, where the reward configurations may vary [49, 81, 89, 77, 92]. Furthermore, a multi-agent environment may consist of both fixed opponents and teammates, which typically do not participate in communication. Therefore, we exclude fixed agents when identifying reward configurations. Consequently, we focus on (learnable) agents involved in communication and classify their behaviors that are desired to emerge, aligning them with associated reward configurations (summarized in Table 2).

Cooperative

In cooperative scenarios, agents have the incentive to communicate to achieve better team performance. Cooperative settings can be characterized by either a global reward that all agents share or a sum of local rewards that could be different among agents. Communication is usually used to promote cooperation as a team. Thus, in the literature, a team of agents can receive a global reward [73, 48, 61, 88, 39, 89, 40, 41, 43, 74, 31, 75, 66, 76, 63, 77, 78, 79, 90, 32, 91, 92, 93, 80, 64, 33, 94], which does not account for the contribution of each agent. The agents can also receive local rewards, with designs to make the reward depend on teammates’ collective performance [50, 88, 81, 49, 42, 82, 83, 87, 91], to penalize collisions [39, 81, 82, 83, 90, 86, 92, 93], or to share the reward with other agents for encouraging mutual cooperation [84, 85, 65].

There are a variety of cooperative environments where communication has shown performance improvements, from small-scale games to complex video games. In early works, Foerster et al. [73] developed two simple games, named Switch Riddle and MNIST Games, for their proposed models, DIAL and RIAL. Sukhbaatar et al. [48] used Traffic Junction for evaluating CommNet, which has become a popular testbed in recent works [88, 89, 49, 83, 79, 90, 92]. Among them, MAGIC [92] achieved higher performance on Traffic Junction with local rewards compared to two early works, CommNet [48], IC3Net [49], and one recent work, GA-Comm [83]. StarCraft [97, 98, 99] is another benchmark environment in cooperative MARL with relatively flexible settings. BiCNet [50] and MS-MARL-GCM [88] are evaluated on an early version of StarCraft [97]. Then, a new version of StarCraft, SMAC, has become popular in recent works [31, 75, 66, 32, 33, 94]. By controlling a team of agents, the cooperative goal in SMAC is to defeat enemies on easy, hard, and super hard maps. FCMNet [94] and MAIC are two recent works that surpass multiple communication methods and value decomposition methods (e.g., QMIX) on different maps. Google research football [100] is an even more challenging game with a physics-based 3D soccer simulator. Only MAGIC has reported performance on this platform with communication, and more investigations on this environment are needed. Compared to the above approaches in Comm-MADRL, ATOC [39] has been examined using a significantly larger number of learning agents in the predator-prey domain. Predator-prey is a grid world game with a long history in MARL. It has been developed with several versions [101, 102, 7], while still viewed as a standard test environment due to its flexibility and customizability. ATOC reports performance on this platform with continuous state and action spaces. In the subfield learning tasks with emergent language, cooperative scenarios are popularly used. They are mostly based on grid world or particle environments and have explicit role assignments, e.g., senders and receivers [61, 63, 65, 64].

Competitive

In case agents need to compete with each other to occupy limited resources, they are assigned competitive learning objectives. In some competitive games, such as zero-sum games, one player wins and the others lose and therefore rational agents do not have the incentive to communicate. Nevertheless, in other competitive scenarios where agents compete for long-term goals, communication can allow for low-level cooperation among agents before the (long-term) goals are achieved. Based on our observations, only one work, IC3Net [49], tests competitive settings and enables agents to compete for rewards. IC3Net has been tested in several settings, including cooperative, competitive, and mixed scenarios, with different reward configurations. IC3Net shows that competitive agents communicate only when it is profitable, e.g., before catching prey in the predator-prey domain. $\mathfrak{R}$ -MACRL [95] considers communication from malicious agents to improve the worst-case performance. In $\mathfrak{R}$ -MACRL, the whole environment is cooperative while agents learn to defend against malicious messages. Although the environment is cooperative, we classify this work under the competitive category as the learning goal between malicious agents and other agents is competitive.

Mixed

For a MAS where we care about self-interest agents, individual rewards can be designed and distributed to each agent [81, 89, 49, 75, 77, 92, 94]. Therefore, cooperative and competitive behaviors coexist during learning, which may show more complex communication patterns. Specifically, DGN [81] considers a game where each agent gets positive rewards by eating food but gets higher rewards by attacking other agents. However, being attacked will get a high punishment. With communication, agents can learn to share resources collaboratively rather than attacking each other. IC3Net [49], TarMAC [89] and MAGIC [92] are evaluated on a mixed version of Predator-prey, and agents learn to communicate only when necessary. NDQ [75] is examined in an independent search scenario, where two agents are rewarded according to their own goals, and shows that agents learn to not communicate in independent scenarios. IC [62] considers a scenario in which sender and receiver agents have different abilities to complete the goal. The sender agents have more vision but cannot clean obstacles, while receiver agents have limited vision but are able to clear obstacles. With communication, agents show collaborative behaviors to get higher rewards.

### 3.2 Communication Constraints

Practical concerns such as communication cost and noisy environment impair Comm-MADRL systems from embracing realistic applications more than simulations. This dimension, Communication Constraints, determines which type of communication concerns are handled in a Comm-MADRL system. We categorize recent works on this dimension into the following categories (summarized in Table 3).

Unconstrained Communication

In this category, communication processes, including communication channels, the content and transmission of messages, and the decisions of whether to communicate or not, are not explicitly restricted. In principle, agents can communicate as much as information they can without any decision to disallow communication in order to prevent communication overhead [48, 50, 88, 81, 89, 40, 42, 90, 91, 80, 94]. Specifically, several works consider blocking communication through predefined or learnable decisions of whether to communicate or not, while aiming to differentiate useful communicated information [39, 41, 49, 82, 74, 83, 77, 84, 85, 79, 92, 93]. We also put those works under this category as they do not explicitly assume that communication is limited by cost.

Constrained Communication

In this category, communication processes are explicitly constrained by cost or noise. Thus, agents need to utilize communication resources efficiently to promote learning. We further identify two practical concerns that have been considered in the literature.

Table 3: The category of communication constraints.

| Types | Subtypes | Methods |

| --- | --- | --- |

| Unconstrained Communication | | CommNet [48]; BiCNet [50]; MS-MARL-GCM [88]; ATOC [39]; DGN [81]; TarMAC [89]; MAGNet-SA-GS-MG [40]; MADDPG-M [41]; IC3Net [49]; MD-MADDPG [42]; DCC-MD [82]; Agent-Entity Graph [74]; GA-Comm [83]; LSC [77]; NeurComm [84]; IP [85]; I2C [79]; IS [90]; HAMMER [91]; MAGIC [92]; FlowComm [93]; GAXNet [80]; FCMNet [94]; |

| Constrained Communication | Limited Bandwidth | RIAL [73]; DIAL [73]; GCL [61]; IC [62]; SchedNet [43]; VBC [31]; NDQ [75]; IMAC [66]; Gated-ACML [76]; Bias [63]; ETCNet [86]; Variable-length Coding [87]; TMC [32]; AE-Comm [65]; MAIC [33]; |

| Corrupted Messages | DIAL [73]; Diff Discrete [78]; DCSS [64]; $\mathfrak{R}$ -MACRL [95]; | |

- Limited Bandwidth. In this category, communication bandwidth is limited by channel capacity. Thus, communication needs to be used more efficiently, both in the number of times that agents can communicate and the size of communicated information. Early works focus on transmitting succinct messages to avoid communication overhead. RIAL and DIAL [73] are proposed to communicate very little information (i.e., a binary value or a real number) at every time step to reduce the bandwidth needed. MD-MADDPG [42] considers a fixed-size memory, which is shared by all agents. Agents communicate through the shared memory instead of ad hoc channels. VBC [31] and TMC [32] reduce communication costs by using predefined thresholds to filter unnecessary communication, and both show lower communication overhead. NDQ [75] cuts 80% of messages by ordering the distributions of messages according to their means and drops accordingly to prevent meaningless messages. MAIC [33] also cuts messages by examining several message pruning rates. In MAIC, messages are encoded to consider their respective importance. Sent messages are ordered and then pruned with a given pruning rate. IMAC [66] explicitly models bandwidth limitation as a constraint to optimization. An upper bound of the mutual information between messages and observations is derived according to bandwidth constraint, which turns out to minimize the entropy of messages. Then agents learn not only to maximize cumulative rewards but also to generate low-entropy messages. The number of agents to communicate can also be restricted to reduce the total amount of communication. SchedNet [43] considers a scenario of a shared channel together with limited bandwidth. Only a subset of agents are chosen to convey their messages according to their importance. Gated-ACML [76] learns a probabilistic gate unit to block messages transmitting between each agent and a centralized message coordinator, with the extra cost of learning optimal gates. Inspired by Gated-ACML and IMAC, ETCNet [86] puts constraints on the behaviors of deciding whether to send messages or not. A penalty term is added to the environment rewards, and an additional reinforcement learning algorithm is used to optimize the sending behaviors. Variable-length Coding [87] also utilizes a penalty term while encouraging short messages. When learning tasks with emergent language, symbolic languages are acquired for communication through a limited number of tokens. Therefore, we classify those works under limited bandwidth [61, 62, 63, 65].

- Corrupted Messages. In this category, messages transmitted among agents can be corrupted due to environmental noise or malicious intentions. DIAL [73] shows that during training, adding Gaussian noise to the communication channel can push the distribution of messages into two modes to convey different types of information. Diff Discrete [78] considers how to backpropagate gradients through a discrete communication channel (between 2 agents) with unknown noise. An encoder/channel/decoder system is modeled, where the encoder is used to discretize a real-valued signal into a discrete message to pass through the discrete communication channel, and the decoder is used to compute an approximation of the original signal. Later they show that the encoder/channel/decoder system is equivalent to an analog communication channel with additive noise. With the additional assumption that training is centralized, the gradient of the receiver with respect to real-value messages from the sender can be computed to allow backpropagation. DCSS [64] also considers a noisy setting. They prove that representing messages as one-hot vectors may not be optimal when the environment becomes noisy. Inspired by word embedding in the NLP field, they propose to generate a semantic representation of discrete tokens that are communicated among agents. The results show that such representation is robust in noisy environments and benefits human understanding of communication. Different from noisy environments, $\mathfrak{R}$ -MACRL [95] assumes that an agent holds a malicious messaging policy, producing adversarial messages that can mislead other agents’ action selections. Therefore, other agents need to prevent being exploited by learning a defense policy in order to filter the messages.

### 3.3 Communicatee Type

Communicatee Type determines which type of agents are assumed to receive messages in a Comm-MADRL system. We found that in the literature, communicatee type can be classified into the following categories based on whether agents in the environment communicate with each other directly or not.

Agents in the MAS

In this category, the set of communicatees consists of agents in the environment, and they directly communicate with each other. Nevertheless, due to partial observability, agents may not be able to communicate with every agent in the MAS, and thus we further distinguish the types of communicatees as follows:

- Nearby Agents. In many Comm-MADRL systems, communication is only allowed between neighbors. Nearby agents can be defined as observable agents [80], agents within a certain distance [81, 74, 77] or neighboring agents on a graph [84]. GAXNet [80] labels observable agents and enables communication between them. DGN [81] limits communication within 3 closest neighbors while using a distance metric to find them. Agent-Entity Graph [74] also uses distance to measure whether agents are nearby or not. As long as two agents are close to each other, they will be allowed to communicate. LSC [77] enables agents within a cluster radius to decide whether to become a leader agent. Then all non-leader agents in the same cluster will only communicate with the leader agent. NeurComm [84] and IP [85] preset a graph structure among agents built upon networked multi-agent systems. In both NeurComm and IP, communicatees are restricted to neighbors on the graph. MAGNet-SA-GS-MG [40] uses a pre-trained graph to limit communication and restricts communication on neighboring agents. Neighboring agents can also emerge during learning instead of being predetermined, as proposed in GA-Comm [83], MAGIC [92] and FlowComm [93], which explicitly learn a graph structure among agents. Specifically, in GA-Comm [83] and MAGIC [92], a central unit (e.g., GNN) learns a graph inside and coordinates messages based on the (complete) graph simultaneously. In this case, agents do not communicate with each other directly; instead, they communicate through a virtual agent who does not affect the environment. Therefore, we categorize these two works into the proxy category.

- Other (Learning) Agents. If nearby agents are not identified, the set of communicatees typically consists of other (learning) agents. Specifically, IC3Net [49] enables communication between learning agents and their opponents. Experiments indicate that these opponents eventually learn to not communicate to avoid being exploited. Some works assume explicit role assignments, i.e., senders and receivers. The role of the receiver can be taken by a disjoint set of agents separate from the senders [62, 63, 64] or by all other agents in the environment [61, 65]. In both cases, agents communicate with each other directly.

Table 4: The category of communicatee type.

| Types | Subtypes | Methods |

| --- | --- | --- |

| Agents in the MAS | Nearby Agents | DGN [81]; MAGNet-SA-GS-MG [40]; Agent-Entity Graph [74]; LSC [77]; NeurComm [84]; IP [85]; FlowComm [93]; GAXNet [80]; |

| Other Agents | DIAL [73]; RIAL [73]; CommNet [48]; GCL [61]; BiCNet [50]; IC [62]; TarMAC [89]; MADDPG-M [41]; IC3Net [49]; SchedNet [43]; DCC-MD [82]; VBC [31]; NDQ [75]; Bias [63]; Diff Discrete [78]; I2C [79]; IS [90]; ETCNet [86]; Variable-length Coding [87]; TMC [32]; AE-Comm [65]; DCSS [64]; R-MACRL [95]; MAIC [33]; FCMNet [94]; | |

| Proxy | | MS-MARL-GCM [88]; ATOC [39]; MD-MADDPG [42]; IMAC [66]; GA-Comm [83]; Gated-ACML [76]; HAMMER [91]; MAGIC [92]; |

Proxy

A proxy is a virtual agent that plays an essential role (e.g., as a medium) in facilitating communication but does not directly affect the environment. Using a proxy as the communicatee means that agents will not directly communicate with each other, instead viewing the proxy as a medium, coordinating and transforming messages for specific purposes. MS-MARL-GCM [88] utilizes a master agent that collects local observations and hidden states from agents in the environment and sends a common message back to each of them. Similarly, HAMMER [91] employs a central proxy that gathers local observations from agents and sends a private message to each agent. MD-MADDPG [42] maintains a shared memory among agents, learning to selectively store and retrieve local observations from the memory. IMAC [66] defines a scheduler that aggregates encoded information from all agents and sends individual messages to each agent. These works primarily focus on how to encode messages through the proxy without determining whether to send or receive messages. By contrast, ATOC [39], Gated-ACML [103], GA-Comm [83] and MAGIC [92] are all designed for agents to decide whether to communicate with a message coordinator. In ATOC and Gated-ACML, each agent’s decisions are made locally based on individual observations, with messages aggregated from nearby agents and from the entire MAS, respectively. Both GA-Comm and MAGIC develop a global communication graph, coupled with a graph neural network (GNN) to aggregate messages by weights and send new messages back to each agent, informing action selection in the environment.

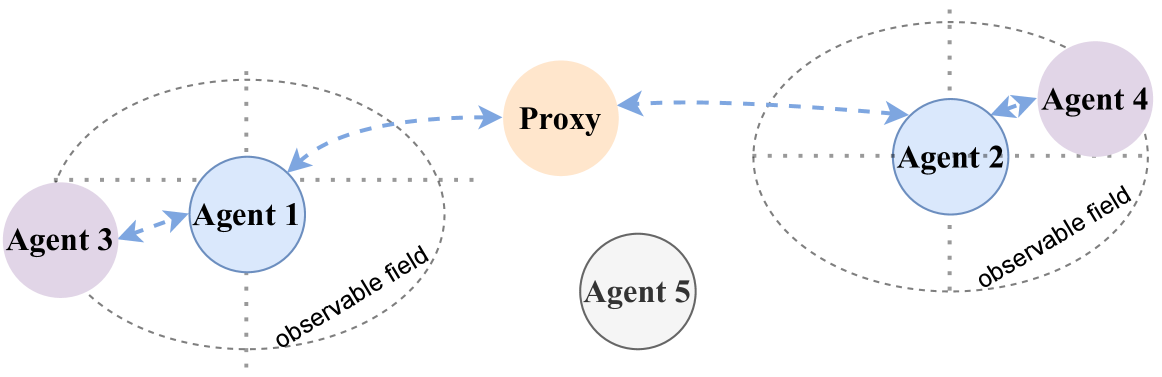

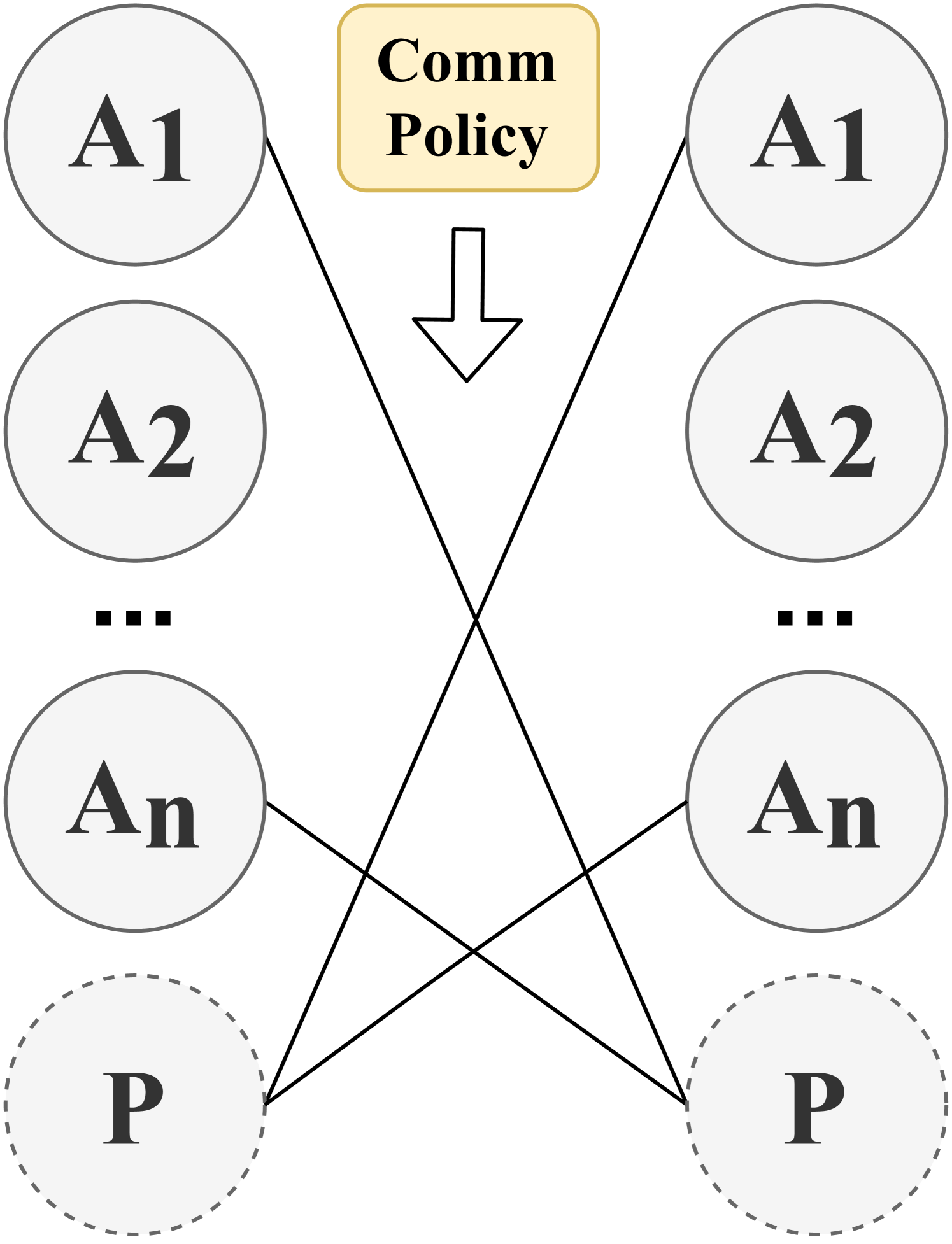

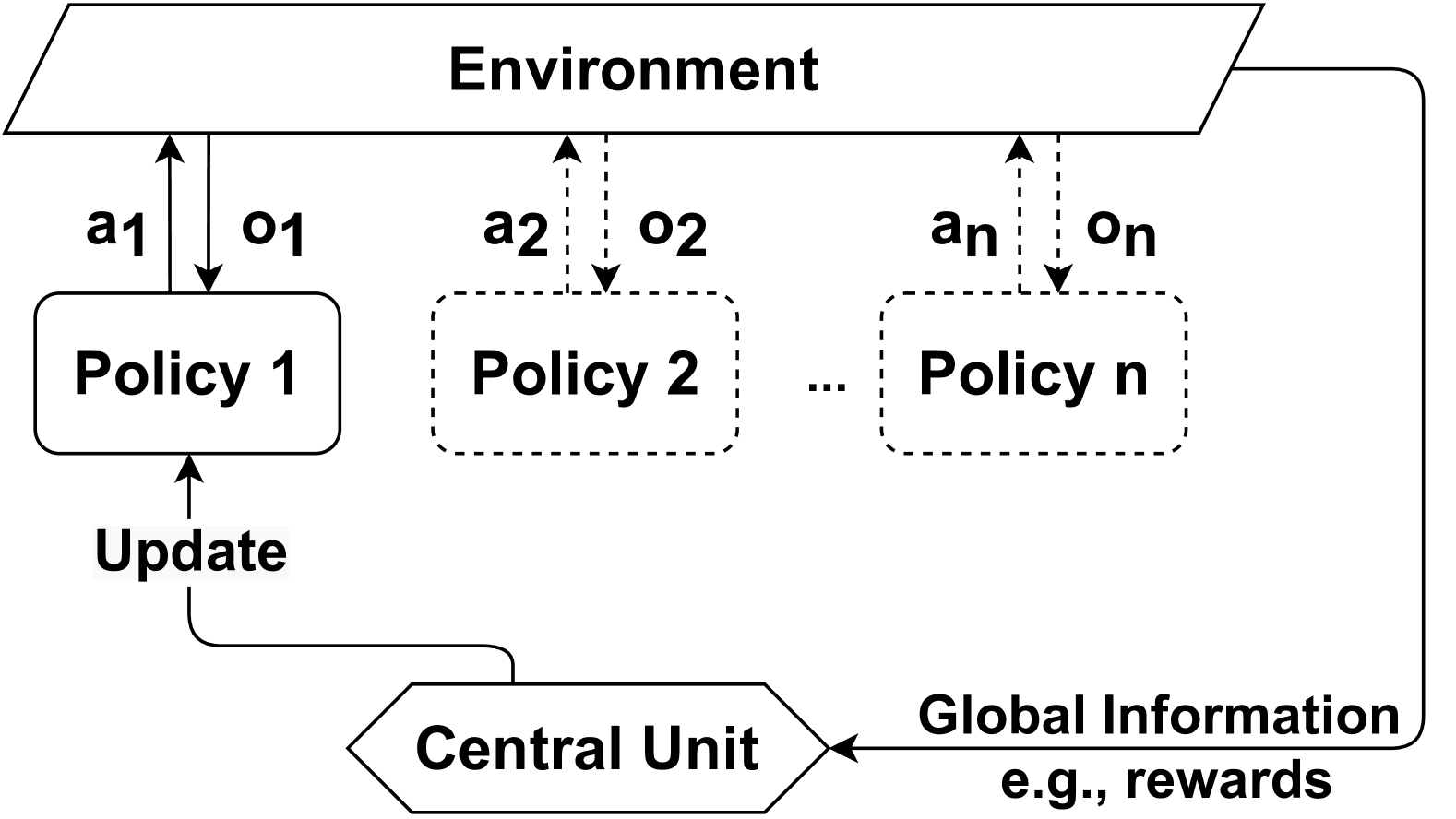

Table 4 summarizes recent works on communication types in MAS. To illustrate these categories, we present an example of different communication methods used in a Comm-MADRL system in Figure 2. The system consists of five agents and one proxy. Agent 3 is the nearby agent of Agent 1, while Agent 4 is the nearby agent of Agent 2. Agent 5 is out of the view range of Agents 1 and 2. If communication is limited to nearby agents, Agent 1 will communicate only with Agent 3, and Agent 2 will communicate only with Agent 4. However, if communication involves a proxy, all agents can send their messages to the proxy and receive coordinated messages.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: Multi-Agent Communication Network

### Overview

The image is a schematic diagram illustrating a network of five agents (Agent 1 through Agent 5) and a central "Proxy" node. It depicts communication pathways and observational boundaries within a multi-agent system. The diagram uses color-coded circles for entities and dashed arrows to represent directional communication links.

### Components/Axes

**Entities (Circles):**

* **Agent 1:** Light blue circle, positioned in the left-center region.

* **Agent 2:** Light blue circle, positioned in the right-center region.

* **Agent 3:** Light purple circle, positioned to the left of Agent 1.

* **Agent 4:** Light purple circle, positioned to the right of Agent 2.

* **Agent 5:** White circle with a black outline, positioned below and between the Proxy and Agent 2.

* **Proxy:** Light orange circle, positioned centrally between the two main agent clusters.

**Communication Links (Dashed Blue Arrows):**

* A bidirectional arrow connects **Agent 3** and **Agent 1**.

* A unidirectional arrow points from **Agent 1** to the **Proxy**.

* A unidirectional arrow points from the **Proxy** to **Agent 2**.

* A bidirectional arrow connects **Agent 2** and **Agent 4**.

**Observable Fields (Dashed Ellipses):**

* A large dashed ellipse encircles **Agent 1** and **Agent 3**. The label "observable field" is written along its lower-right curve.

* A second large dashed ellipse encircles **Agent 2** and **Agent 4**. The label "observable field" is written along its lower-right curve.

**Background Grid:**

* Faint, dashed gray lines form a crosshair or grid pattern behind the elements, suggesting a coordinate system or spatial reference frame.

### Detailed Analysis

**Spatial Layout & Relationships:**

* The diagram is organized into two primary clusters separated by the central Proxy.

* **Left Cluster:** Contains Agent 3 (leftmost) and Agent 1, enclosed within an "observable field." Agent 3 communicates directly with Agent 1.

* **Central Mediator:** The Proxy sits between the two clusters. It receives information from Agent 1 (left cluster) and sends information to Agent 2 (right cluster).

* **Right Cluster:** Contains Agent 2 and Agent 4 (rightmost), enclosed within a separate "observable field." Agent 2 communicates directly with Agent 4.

* **Isolated Agent:** Agent 5 is positioned outside both observable fields and has no depicted communication links, suggesting it is either inactive, in a different scope, or an observer.

**Flow Direction:**

The communication flow is asymmetric. Information appears to originate in the left cluster (Agent 3 -> Agent 1), pass through the Proxy for mediation or translation, and then be disseminated to the right cluster (Agent 2 -> Agent 4). The bidirectional links within each cluster suggest local, reciprocal communication.

### Key Observations

1. **Proxy as a Bridge:** The Proxy is the sole connection point between the two agent clusters, indicating a hub-and-spoke or mediated communication architecture.

2. **Separation of Observability:** The two "observable field" ellipses explicitly define the perceptual or operational boundaries for each cluster. Agents within a field can likely perceive each other directly.

3. **Color Coding:** Agents are color-coded by role or type: light blue for primary cluster nodes (Agent 1, Agent 2), light purple for peripheral nodes (Agent 3, Agent 4), and white for the isolated node (Agent 5). The Proxy has a unique color (light orange).

4. **Agent 5's Isolation:** Agent 5's lack of connections and placement outside the defined fields is a significant anomaly, implying it is not integrated into the current communication process.

### Interpretation

This diagram models a **decentralized yet partitioned multi-agent system**. The key insight is the use of a **Proxy to facilitate controlled, indirect communication between two otherwise isolated groups of agents**. Each group operates within its own "observable field," meaning agents within a group have direct awareness of each other, but cross-group awareness is mediated.

The structure suggests a design for scalability, security, or specialization. For example:

* The Proxy could be a gateway translating protocols or filtering information between different sub-networks.

* The observable fields might represent physical proximity, network subnets, or task-specific domains.

* Agent 5's isolation could represent a monitoring agent, a new agent not yet integrated, or a node that has lost connectivity.

The overall system prioritizes modularity and controlled interaction over fully connected, flat communication. The flow from left to right (Agent 3 -> Agent 1 -> Proxy -> Agent 2 -> Agent 4) may indicate a specific data pipeline or command chain within this snapshot of the system's operation.

</details>

Figure 2: Three communicatee types in the same system.

### 3.4 Communication Policy

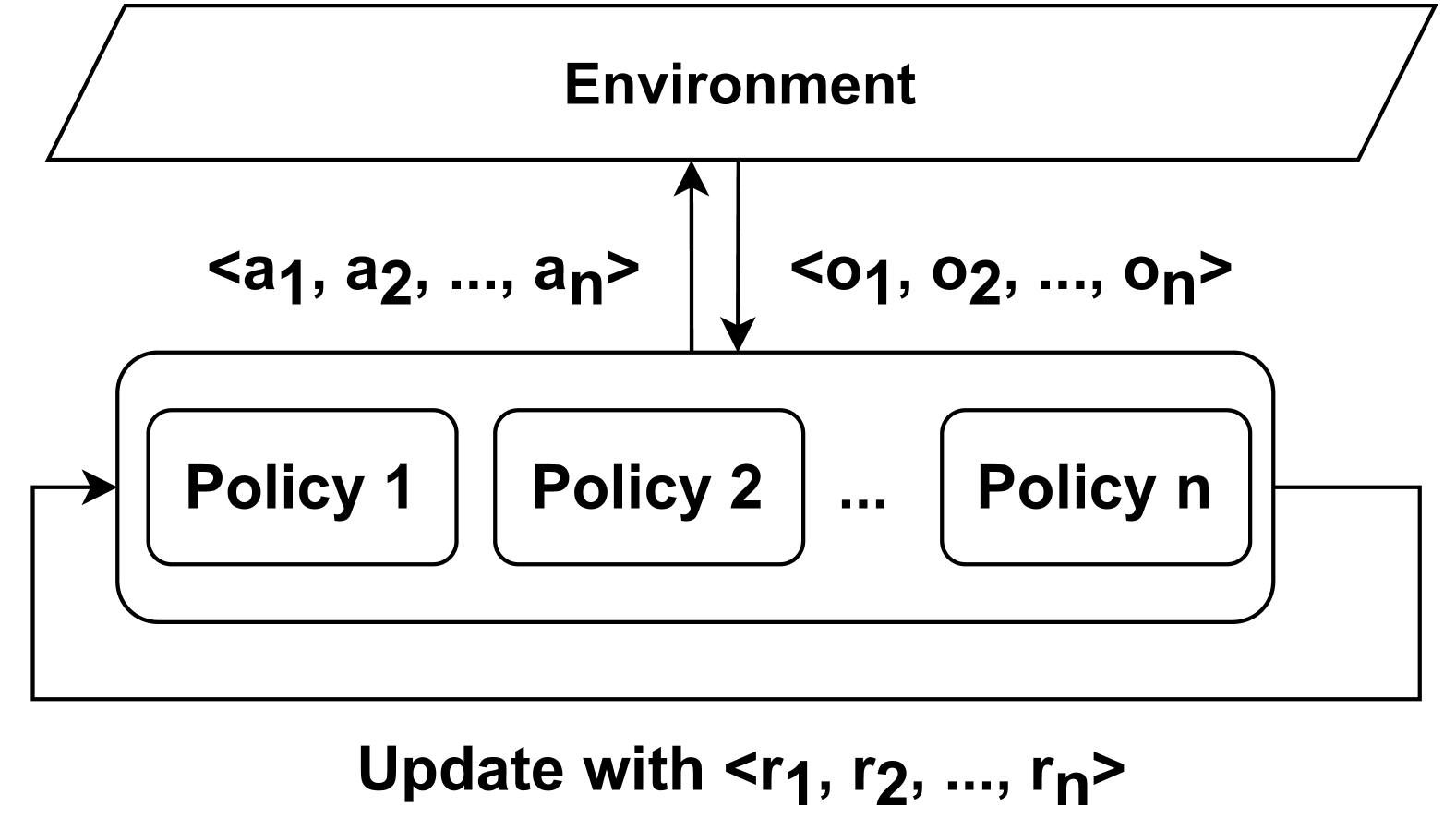

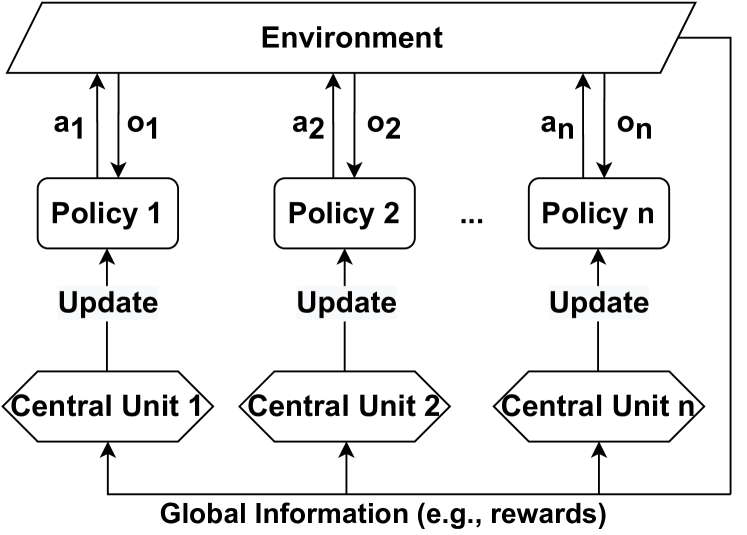

Communication Policy determines when and with which agents (i.e., communicatees) to communicate in order to enable message transmission. A Communication Policy defines a set of communication actions, which can be modeled in different ways. For example, a communication action can be represented as a vector of binary values, where each value indicates whether communication with one of the other agents is allowed at a certain time step. These actions form communication links between pairs of agents, which can be represented as a communication graph among agents. In the literature, communication policies can be either predefined or learned, allowing communication with all other agents or only a subset of agents. Furthermore, communication policies can be centralized, controlling communication among all agents, or decentralized, enabling individual agents to control whether to communicate. Therefore, we first categorize the literature based on whether communication policies are predefined or learned. We find that in predefined communication policies, the literature often uses either full communication among agents, where the communication graph becomes complete, or a partial graph structure to incorporate constraints on communication policies. On the other hand, in learnable communication policies, we identify two distinct categories: individual control and global control. In individual control, communication policies are learned by each agent independently, whereas in global control, these policies are learned and implemented centrally, applying to all agents in Comm-MADRL systems. As a result, we have identified four subcategories within the dimension of communication policy: Full Communication, (Predefined) Partial Structure, Individual Control, and Global Control. These categorizations are summarized in Table 5.

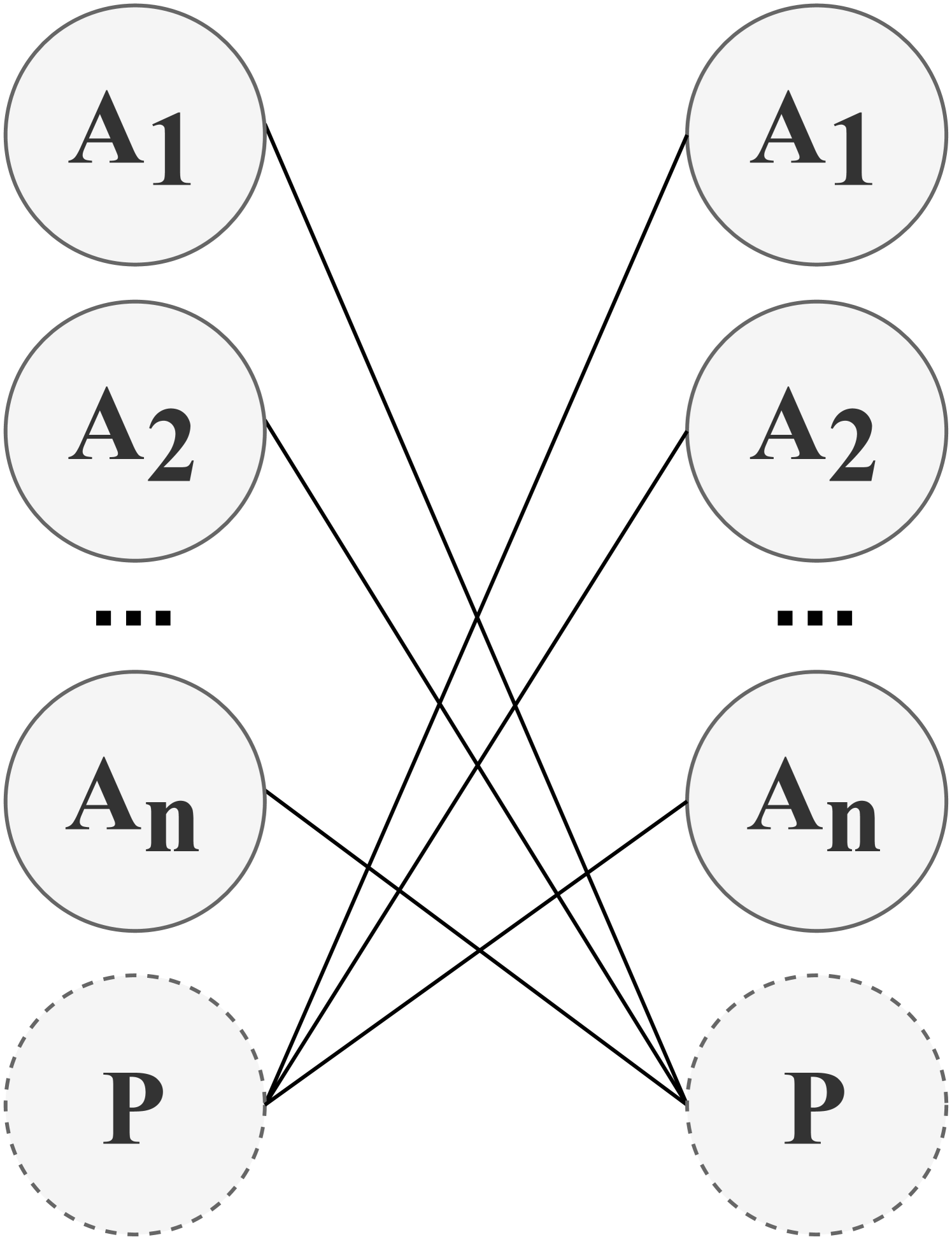

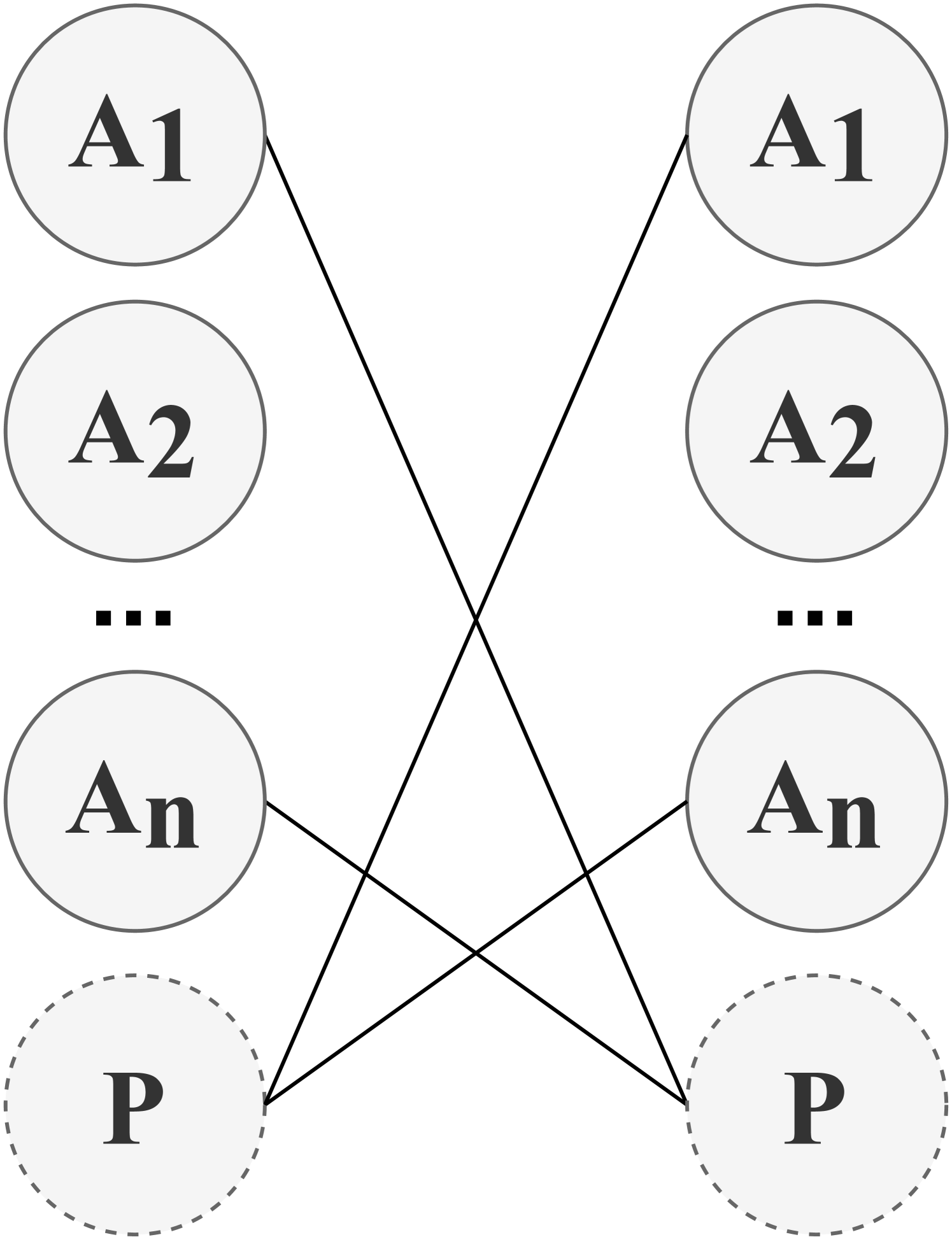

We present examples of how agents form communication links in the four categories of communication policy, as illustrated in Figure 3. Both Full Communication and Partial Structure rely on a predefined communication policy to determine communication actions. In contrast, Individual Control and Global Control involve the learning of a local communication policy and a global communication policy, respectively, to establish communication links between agents or a potential proxy. If a proxy is involved, it coordinates messages from agents choosing to communicate through this proxy. The categories and their associated research works are introduced as follows:

Table 5: The category of communication policy

| Types | Subtypes | Methods |

| --- | --- | --- |

| Predefined | Full Communication | DIAL [73]; RIAL [73]; CommNet [48]; GCL [61]; BiCNet [50]; MS-MARL-GCM [88]; TarMAC [89]; MD-MADDPG [42]; DCC-MD [82]; IMAC [66]; Diff Discrete [78]; IS [90]; Variable-length Coding [87]; HAMMER [91]; AE-Comm [65]; R-MACRL [95]; FCMNet [94]; |

| Partial Structure | IC [62]; DGN [81]; MAGNet-SA-GS-MG [40]; Agent-Entity Graph [74]; VBC [31]; NDQ [75]; Bias [63]; NeurComm [84]; IP [85]; TMC [32]; GAXNet [80]; DCSS [64]; MAIC [33]; | |

| Learnable | Individual Control | ATOC [39]; MADDPG-M [41]; IC3Net [49]; Gated-ACML [76]; LSC [77]; I2C [79]; ETCNet [86]; |

| Global Control | SchedNet [43]; GA-Comm [83]; MAGIC [92]; FlowComm [93]; | |

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: Bipartite Mapping Network

### Overview

The image displays a schematic diagram representing a bipartite graph or mapping between two distinct sets of nodes arranged in two vertical columns. The diagram illustrates a many-to-many relationship or connection pattern between elements of the left set and elements of the right set.

### Components/Axes

**Structure:** Two parallel vertical columns of circular nodes.

**Left Column Nodes (Top to Bottom):**

1. A solid-outlined circle labeled **A₁**.

2. A solid-outlined circle labeled **A₂**.

3. An ellipsis (**...**), indicating a sequence of intermediate nodes.

4. A solid-outlined circle labeled **Aₙ**.

5. A dashed-outlined circle labeled **P**.

**Right Column Nodes (Top to Bottom):**

1. A solid-outlined circle labeled **A₁**.

2. A solid-outlined circle labeled **A₂**.

3. An ellipsis (**...**), indicating a sequence of intermediate nodes.

4. A solid-outlined circle labeled **Aₙ**.

5. A dashed-outlined circle labeled **P**.

**Connections:** Straight black lines connect nodes from the left column to nodes in the right column. The connections are not one-to-one but form a crisscrossing network.

### Detailed Analysis

**Node Labels and Types:**

* **A₁, A₂, ..., Aₙ:** These labels use subscript notation (₁, ₂, ₙ) to denote a sequence or series of similar entities. The ellipsis confirms the sequence extends from index 2 to index n.

* **P:** This label is distinct, appearing in a circle with a dashed outline, suggesting it may represent a different type of entity (e.g., a placeholder, a special node, or a different category) compared to the "A" nodes.

**Connection Pattern (Visible Lines):**

The diagram shows a partial but illustrative set of connections. Tracing the visible lines reveals the following mappings:

* Left **A₁** connects to Right **A₂** and Right **P**.

* Left **A₂** connects to Right **A₁** and Right **P**.

* Left **Aₙ** connects to Right **A₁** and Right **A₂**.

* Left **P** connects to Right **A₁**, Right **A₂**, and Right **Aₙ**.

**Spatial Grounding:** The legend (node labels) is integrated directly into the diagram within each node. The connections are drawn as straight lines crossing the central space between the two columns.

### Key Observations

1. **Symmetry and Repetition:** The two columns are identical in their composition and labeling, suggesting a mapping between two instances of the same set or a reflection.

2. **Dense Interconnection:** The visible lines show that each node on the left is connected to multiple nodes on the right, and vice-versa. This indicates a complex, non-hierarchical relationship.

3. **Special Status of 'P':** The node labeled 'P' is visually distinct (dashed outline) and appears to be highly connected, linking to multiple 'A' nodes in the opposite column.

4. **Ellipsis Implication:** The ellipses (...) signify that the diagram is a generalized representation. The actual number of 'A' nodes (n) is variable and could be large, making the full connection pattern potentially very dense.

### Interpretation

This diagram is a conceptual model, not a data chart. It visually communicates the architecture of a system where two groups of entities (the left and right columns) interact.

* **What it Demonstrates:** It illustrates a **bipartite graph** where connections only exist between nodes of different columns, not within the same column. This is common in modeling relationships like users-to-permissions, inputs-to-outputs, or clients-to-services.

* **Relationship Between Elements:** The crisscrossing lines emphasize that the mapping is arbitrary and complex. There is no implied order or hierarchy; any 'A' or 'P' node on one side can potentially connect to any node on the other side.

* **Role of 'P':** The dashed outline and central connectivity suggest 'P' may represent a **proxy, a processor, a shared resource, or a special gateway node** that interacts with all or many of the standard 'A' entities. Its distinct styling marks it as functionally different.

* **Generalization:** The use of 'Aₙ' and ellipses makes this a template diagram. It is meant to be applicable to any system with this bipartite structure, regardless of the specific number of 'A' components. The specific connections shown are for illustrative purposes only; the actual connectivity in a real system would be defined by its rules.

**In essence, the image provides a structural blueprint for a networked system characterized by two mirrored sets of components and a complex, many-to-many connection scheme between them, with a special component 'P' playing a highly interconnected role.**

</details>

<details>

<summary>x4.png Details</summary>

### Visual Description

\n

## Diagram: Bipartite Mapping with Cross-Connections

### Overview

The image displays a schematic diagram representing a bipartite relationship or mapping between two sets of nodes. The diagram is composed of two vertical columns of circular nodes, with lines indicating specific connections between nodes from the left column to the right column. The visual style is minimal, using black lines and text on a light gray background.

### Components/Axes

* **Structure:** Two parallel vertical columns of nodes.

* **Left Column Nodes (Top to Bottom):**

* A solid-outlined circle labeled **A1**.

* A solid-outlined circle labeled **A2**.

* An ellipsis (**...**) indicating a sequence of omitted nodes.

* A solid-outlined circle labeled **An**.

* A dashed-outlined circle labeled **P**.

* **Right Column Nodes (Top to Bottom):**

* A solid-outlined circle labeled **A1**.

* A solid-outlined circle labeled **A2**.

* An ellipsis (**...**) indicating a sequence of omitted nodes.

* A solid-outlined circle labeled **An**.

* A dashed-outlined circle labeled **P**.

* **Connections (Lines):** Four straight black lines connect specific nodes between the columns:

1. From left **A1** to right **An**.

2. From left **An** to right **A1**.

3. From left **P** to right **A2**.

4. From right **P** to left **A2**.

### Detailed Analysis

The diagram defines a specific, non-sequential mapping between two identical sets of elements {A1, A2, ..., An, P}. The connections form a cross-pattern:

* The first element (A1) of the left set maps to the last 'A' element (An) of the right set.

* The last 'A' element (An) of the left set maps to the first element (A1) of the right set.

* The special element P on the left maps to the second element (A2) on the right.

* The special element P on the right maps to the second element (A2) on the left.

The ellipsis (...) between A2 and An in both columns signifies that this is a generalized diagram for an arbitrary number 'n' of 'A'-type elements. The dashed outline for the 'P' nodes visually distinguishes them from the solid-outlined 'A' nodes, suggesting 'P' may represent a different category, a placeholder, or a parameter.

### Key Observations

1. **Symmetry and Inversion:** The connections between A1 and An are perfectly inverted (left A1 → right An, left An → right A1).

2. **Asymmetric Role of P:** The 'P' nodes are involved in a different connection pattern (to/from A2) compared to the 'A' nodes.

3. **Visual Distinction:** The use of a dashed outline for 'P' is a critical visual cue indicating a different status or property.

4. **Generalized Structure:** The ellipsis confirms the diagram is a template, not a depiction of a fixed, small set.

### Interpretation

This diagram is an abstract representation of a **specific permutation or mapping rule** between two ordered sets. It is likely used in contexts such as: