## From implicit learning to explicit representations

Naomi Chaix-Eichel Inria Bordeaux Sud-Ouest Univ. Bordeaux, CNRS

Snigdha Dagar

Inria Bordeaux Sud-Ouest Univ. Bordeaux, CNRS

Thomas Boraud Univ. Bordeaux, CNRS

Quentin Lanneau

Inria Bordeaux Sud-Ouest Univ. Bordeaux, CNRS

Frédéric Alexandre Inria Bordeaux Sud-Ouest Univ. Bordeaux, CNRS

Abstract - Using the reservoir computing framework, we demonstrate how a simple model can solve an alternation task without an explicit working memory. To do so, a simple bot equipped with sensors navigates inside an 8-shaped maze and turns alternately right and left at the same intersection in the maze. The analysis of the model's internal activity reveals that the memory is actually encoded inside the dynamics of the network. However, such dynamic working memory is not directly accessible, so it cannot be used to bias the behavior toward one of the two attractors (left and right). To achieve this, external cues are fed to the bot so that it can follow arbitrary sequences, as instructed by the cues. This model highlights the idea that procedural learning and its internal representation can be dissociated. While the former is enough to produce behavior, it is not sufficient to allow for an explicit and fine-grained manipulation.

Index Terms - reservoir computing, robotics, simulation, working memory, procedural learning, implicit representation, explicit representation.

## I. INTRODUCTION

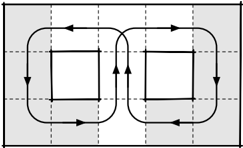

Suppose you want to study how an animal, when presented with two options A and B, can learn to alternately choose A, then B, then A, and so on. One typical lab setup to study such an alternating decision task is the T-maze environment, where the animal is confronted with a left or right turn and can subsequently be trained to display an alternating choice behavior. This task can be easily formalized using a block world, as is regularly done in the computational literature. Using such a formalization, a simple solution is to logically negate a one-bit memory each time the model reaches A or B such that, when located at the choice point, the model only has to read the value of this memory in order to decide whether to go to A or B. However, as simple as it is, this abstract formalization entails the elaboration of an explicit internal representation keeping track of the recent behavior, implemented in a working memory that can be updated when needed. But then, what could be the alternative? Let us consider a slightly different setup where the T-maze is transformed into a closed 8-maze (see figure 1-Left). Suppose that you can only observe the white area while the animal is moving along the arrowed line (both in observable and non-observable areas). From the observer's point of view, the animal turns left one time out of two and right one time out of two. Said differently, the observer can infer an alternating behavior because of its

Karen Sobriel

Inria Bordeaux Sud-Ouest

Univ. Bordeaux, CNRS

Nicolas Rougier Inria Bordeaux Sud-Ouest Univ. Bordeaux, CNRS

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Diagram: 8-Shaped Maze with Arrowed Path

### Overview

The image is a diagram of a path around two squares. The path is drawn as a black line with arrowheads indicating the direction of travel. The squares are centered within a grid and the background is shaded gray.

### Components/Axes

* **Squares:** Two identical squares, positioned side-by-side.

* **Path:** A continuous black line with arrowheads that loops around both squares.

* **Grid:** A grid of dashed lines provides a spatial reference.

* **Background:** The background is shaded gray.

### Detailed Analysis

The path curves around the left square in a counter-clockwise direction, crosses over between the squares, and curves around the right square in a clockwise direction, tracing a figure-eight.

### Interpretation

In the context of Fig. 1 (Left), the two squares are the blocks of the 8-maze and the arrowed line is the trajectory followed by the animal or bot, which passes through the central corridor between the two blocks.

</details>

Fig. 1. Left: An expanded view of a T-Maze. An observer can infer an alternating behavior because of her partial view (white area) of the system. Right: 8-maze with cues. A cue (left or right) is given only when the bot is present inside the yellow area.

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Diagram: 8-Maze with Cue Area

### Overview

The image is a diagram of two rectangular blocks placed side-by-side with a yellow highlighted region between them. Curved arrows indicate the direction of travel around the blocks.

### Components/Axes

* **Blocks:** Two rectangular objects, each drawn with two circular elements on one side.

* **Arrows:** Curved arrows looping around each block.

* **Yellow Region:** A yellow highlighted rectangular area between the two blocks.

### Interpretation

In the context of Fig. 1 (Right), the diagram shows the 8-maze with cues: the yellow region is the area in which a left or right cue is given to the bot, and the arrows show the paths around the two blocks.

</details>

partial view of the system. The question is: does the animal really implement an explicit alternating behavior, or is it merely following a mildly complex dynamic path? This is not a rhetorical question because, depending on your hypothesis, you may search for neural correlates that do not actually exist. Furthermore, if the animal is following such a mildly complex dynamic path, does this mean that it has no explicit access to (not to say no consciousness of) its own alternating behavior?

This question is tightly linked to the distinction between implicit learning (generally presented as sub-symbolic, associative and statistics-based) and explicit learning (symbolic, declarative and rule-based). Implicit learning refers to the nonconscious effects that prior information processing may exert on subsequent behavior [1]. It is implemented in associative sensorimotor procedural learning and also in model-free reinforcement learning, with biological counterparts in the motor and premotor cortex and in the basal ganglia. Explicit learning is associated with consciousness or awareness, and with the idea of building explicit mental representations [2] that can be used for flexible behavior, involving the prefrontal cortex and the hippocampus. This is what is proposed in model-based reinforcement learning and in other symbolic approaches for planning and reasoning. These learning strategies are not independent, but their relations and interdependencies remain unclear. Explicit learning is often observed in the early stages of learning whereas implicit learning appears in the long run, which can be explained as a way to decrease the cognitive load. But there is also a body of evidence, for example in sequence learning [3] or artificial grammar learning studies [4], suggesting that explicit learning is not a mandatory early step and that improvements in task performance are not necessarily accompanied by the ability to express the acquired knowledge explicitly [5].

Coming back to the task mentioned above, it is consequently not clear whether rules can be learned without awareness and, if so, to what extent such implicit learning can translate into performance in an unconscious way. Furthermore, without turning these implicit rules into an explicit mental representation, is it possible to manipulate the rules, which is a fundamental hallmark of flexible, adaptable control of behavior?

Using the reservoir computing framework, generally considered as a way to implement implicit learning, we first propose that a simple alternation or sequence learning task can be solved without an explicit pre-encoded representation of memory. However, to then be able to generate a new sequence or manipulate the learnt rule, we explain that explicit cues need to be inserted in the decision process. In a second series of experiments, we provide a proof of concept, still using the reservoir computing framework, for the hypothesis that the recurrent network forms contextual representations from implicitly acquired rules over time. We then show that these representations can be considered explicit and are necessary to manipulate behaviour in a flexible manner.

To provide a preliminary interpretation of what is observed here, recall that recurrent networks, particularly models using the reservoir computing framework, are suitable candidates for modeling the prefrontal cortex [6], which is also characterized by local and recurrent connections. Their inherent sensitivity to temporal structure also makes these networks well suited to sequence learning. This approach has been used to model complex sensorimotor couplings [7] from the egocentric view of an agent (or animal) that is situated in its environment and can autonomously demonstrate reactive behaviour from its sensory space [8], as we also do in the first series of experiments, where sensorimotor couplings are learned by demonstration, or imitation. In the second series of experiments, we propose that the prefrontal cortex is the place where explicit representations can be elaborated when flexible behaviors are required.

## II. METHODS AND TASK

The objective is the creation of a reservoir computing network of type Echo State Network (ESN) that controls the movement of a robot [8], [9], which has to solve a decision-making task (alternately going right and left at an intersection) in the maze presented in figure 1.

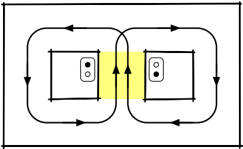

## A. Model Architecture : Echo State Network

An ESN is a recurrent neural network (called reservoir) with randomly connected units, associated with an input layer and an output layer, in which only the output (also called readout) neurons are trained. The neurons have the following dynamics:

$$x[n] = (1 - \alpha)\, x[n-1] + \alpha\, \tilde{x}[n] \quad (1)$$

$$\tilde{x}[n] = \tanh\left( W x[n-1] + W_{in} [1; u[n]] \right) \quad (2)$$

$$y[n] = f\left( W_{out} [1; \tilde{x}[n]] \right) \quad (3)$$

where $x[n]$ is the vector of neuron activations, $\tilde{x}[n]$ its update, and $u[n]$ and $y[n]$ are respectively the input and output vectors, all at time $n$. $W$, $W_{in}$ and $W_{out}$ are respectively the reservoir, input and output weight matrices. The notation $[\,\cdot\,;\,\cdot\,]$ stands for the concatenation of two vectors, $\alpha$ is the leak rate, $\tanh$ is the hyperbolic tangent function and $f$ is a linear or piecewise-linear function.

The values in $W$, $W_{in}$ and $W_{out}$ are initially chosen at random. While $W$ and $W_{in}$ are kept fixed, the output weights $W_{out}$ are the only plastic ones (red arrows in figure 2). In this model, the output weights are learnt with the ridge regression method (also known as Tikhonov regularization):

$$W_{out} = Y^{target} X^{T} \left( X X^{T} + \beta I \right)^{-1} \quad (4)$$

where $Y^{target}$ is the target signal to approximate, $X$ is the concatenation of 1, the input and the neuron activation vectors, $[1; u(n); x(n)]$, $\beta$ is the regularization coefficient and $I$ is the identity matrix.
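The leaky update and the ridge readout described above can be sketched in a few lines of dependency-free Python. This is only an illustration under stated assumptions, not the authors' ReservoirPy model: the function names (`esn_step`, `ridge_readout`, `run_demo`), the tiny reservoir size, the sine-wave prediction task and the row-sum scaling of $W$ (a crude stand-in for spectral radius scaling) are all hypothetical choices, $f$ is taken to be the identity, and the readout features follow the concatenation $[1; u(n); x(n)]$ given with equation (4).

```python
import math
import random

def esn_step(x, u, W, W_in, alpha):
    """One leaky update of the reservoir state, following eqs. (1)-(2)."""
    ext = [1.0] + list(u)                              # [1; u[n]]
    new_x = []
    for i in range(len(x)):
        pre = sum(W[i][j] * x[j] for j in range(len(x)))
        pre += sum(W_in[i][k] * ext[k] for k in range(len(ext)))
        new_x.append((1 - alpha) * x[i] + alpha * math.tanh(pre))
    return new_x

def solve(A, b):
    """Solve A w = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(M[r][c]))
        M[c], M[p] = M[p], M[c]
        for r in range(c + 1, n):
            f = M[r][c] / M[c][c]
            for k in range(c, n + 1):
                M[r][k] -= f * M[c][k]
    w = [0.0] * n
    for r in range(n - 1, -1, -1):
        w[r] = (M[r][n] - sum(M[r][k] * w[k] for k in range(r + 1, n))) / M[r][r]
    return w

def ridge_readout(features, targets, beta):
    """Eq. (4) for a scalar output: solve (X X^T + beta I) w = X y."""
    d = len(features[0])
    A = [[sum(f[i] * f[j] for f in features) + (beta if i == j else 0.0)
          for j in range(d)] for i in range(d)]
    b = [sum(f[i] * y for f, y in zip(features, targets)) for i in range(d)]
    return solve(A, b)

def run_demo(n_units=30, steps=400, alpha=0.3, beta=1e-4, seed=0):
    """Train a toy ESN to predict the next sample of a sine wave; return the training MSE."""
    rng = random.Random(seed)
    W = [[rng.uniform(-1, 1) if rng.random() < 0.2 else 0.0
          for _ in range(n_units)] for _ in range(n_units)]
    # Assumption: scale by the max absolute row sum, which upper-bounds the spectral radius.
    norm = max(sum(abs(v) for v in row) for row in W) or 1.0
    W = [[0.9 * v / norm for v in row] for row in W]
    W_in = [[rng.uniform(-1, 1) for _ in range(2)] for _ in range(n_units)]
    u_seq = [math.sin(0.1 * n) for n in range(steps + 1)]
    x = [0.0] * n_units
    feats, targets = [], []
    for n in range(steps):
        x = esn_step(x, [u_seq[n]], W, W_in, alpha)
        if n >= 50:                                    # discard the initial transient
            feats.append([1.0, u_seq[n]] + x)          # [1; u(n); x(n)]
            targets.append(u_seq[n + 1])
    w_out = ridge_readout(feats, targets, beta)
    preds = [sum(wi * fi for wi, fi in zip(w_out, f)) for f in feats]
    return sum((p - t) ** 2 for p, t in zip(preds, targets)) / len(targets)
```

Only `w_out` is fitted; `W` and `W_in` stay at their random initial values, which is the defining property of the ESN training scheme described in this section.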

## B. Experiment 1 : Uncued sequence learning

The class of tasks called spatial alternation has been widely used to study hippocampal and working memory functions [10]. For the purpose of our investigation, we simulated a continuous version of the same task, wherein the agent needs to alternate its choice at a decision point and, after the decision, is led back to the central corridor, in essence following an 8-shaped trace while moving (see figure 1-Left). This alternation task is widely believed to require a working memory in order to remember the previous choice and alternate from it. Here we show that the ESN previously described is sufficient to learn the task without an explicit representation of the memory.

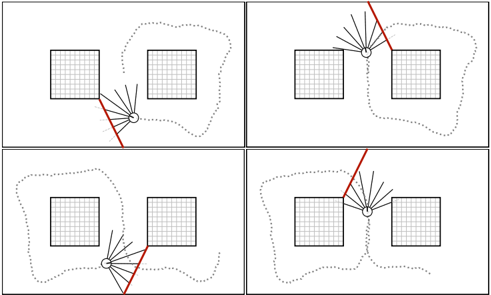

1) Tutor model: In order to generate data for learning, we implemented a simple Braitenberg vehicle in which the robot moves automatically at a constant speed and changes its orientation according to the values of its sensors. At each time step, the sensors measure the distance to the walls and the bot turns so as to avoid them. At each time step, the position of the bot is updated as follows:

$$\theta(n) = \sum_{i} \alpha_i\, s_i(n) \quad (5)$$

$$p(n) = p(n-1) + 2 \begin{pmatrix} \cos\theta(n) \\ \sin\theta(n) \end{pmatrix} \quad (6)$$

where $p(n-1)$ and $p(n)$ are the positions of the robot at time steps $n-1$ and $n$, and $\theta(n)$ is the orientation of the robot, calculated as the weighted sum (with weights $\alpha_i$) of the values of the sensors $s_i$. The norm of the movement is kept constant and fixed at 2.
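As a rough illustration of the tutor update, the sketch below computes the orientation as a weighted sum of sensor values and then moves the bot by a step of length 2 along that heading. It reads the position update as a two-dimensional step of constant norm, which is an interpretation of the text above. The function name `braitenberg_step` and the example weights are hypothetical, since the actual sensor weights are not given in the text.

```python
import math

def braitenberg_step(position, sensors, weights, speed=2.0):
    """Return (new_position, theta) after one time step of the tutor model.

    theta is the weighted sum of the sensor values; the bot then advances
    by a step of constant norm `speed` along that orientation.
    """
    theta = sum(a * s for a, s in zip(weights, sensors))
    x, y = position
    new_position = (x + speed * math.cos(theta), y + speed * math.sin(theta))
    return new_position, theta

# Example with 8 sensors and hypothetical uniform weights.
position, theta = braitenberg_step((100.0, 50.0), [0.0] * 8, [0.1] * 8)
```

Whatever the sensor readings, the displacement between two consecutive positions keeps the same norm, which matches the constant-speed assumption of the tutor model.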

Fig. 2. Model Architecture with 8 sensor inputs, and a motor output (orientation). The black arrows are fixed while the red arrows are plastic and are trained. The reservoir states are used as the input to a classifier which is trained to make a prediction about the decision (going left or right) of the robot. A left (L) and right (R) cue can be fed to the model depending on the experiment (see Methods).

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Diagram: Neural Network for Motor Control

### Overview

The image depicts a neural network architecture designed for motor control, potentially for a robotic or biological system. It shows the flow of information from sensors through a recurrent neural network to a motor output, with additional prediction outputs using SVM/KNN classifiers.

### Components/Axes

* **Sensors:** A column of sensor inputs labeled S[0], S[i], and S[n], enclosed in a dashed blue box.

* **Recurrent Neural Network (RNN):** A large circle containing smaller circles representing neurons, with recurrent connections indicated by curved arrows. The RNN is shaded light blue and has a dashed blue border. The weight matrix within the RNN is labeled as "w".

* **Noise:** An input labeled "Noise" with the symbol "ξ" (xi).

* **Motor:** A single circle labeled "θ" (theta), representing the motor output.

* **Weights:** Input weights "w_in" and output weights "w_out".

* **Prediction (Left or Right):** Two identical blocks, each consisting of an SVM/KNN classifier and a "Prediction (Left or Right)" output.

* **Inputs L and R:** Two dashed circles labeled "L" and "R" at the top, connected to the RNN with dashed lines.

### Detailed Analysis

* **Sensors:** The sensor inputs are represented as S[0], S[i], and S[n], suggesting a range of sensor values from 0 to n. These sensors feed into the RNN via the input weights "w_in".

* **RNN:** The RNN is the central processing unit. It receives inputs from the sensors, noise, and potentially external signals "L" and "R". The recurrent connections within the RNN allow it to maintain a state and process temporal information.

* **Noise:** The noise input "ξ" introduces randomness into the network, which can help with exploration and robustness.

* **Motor Output:** The motor output "θ" is generated from the RNN via the output weights "w_out". This output likely represents a motor command, such as an angle or velocity.

* **Prediction:** The outputs of the RNN are also fed into two SVM/KNN classifiers. These classifiers are used to predict a binary outcome, "Left or Right".

* **Inputs L and R:** The inputs L and R are connected to the RNN with dashed lines.

### Key Observations

* The diagram highlights the flow of information from sensors to motor output through a recurrent neural network.

* The inclusion of noise and recurrent connections suggests that the network is designed to handle noisy and temporal data.

* The SVM/KNN classifiers provide an additional layer of processing, potentially for decision-making or classification tasks.

* The presence of "L" and "R" inputs suggests that the system might be involved in a left-right decision-making process.

### Interpretation

The diagram illustrates a neural network architecture for motor control that integrates sensory information, internal state, and external signals. The RNN acts as a central processing unit, learning to map sensor inputs to motor outputs. The SVM/KNN classifiers provide an additional layer of processing, potentially for decision-making or classification tasks. The "L" and "R" inputs suggest that the system might be involved in a left-right decision-making process. This architecture could be used in a variety of applications, such as robotics, prosthetics, or autonomous vehicles. The diagram suggests a system capable of learning complex motor control tasks from sensory input, with the ability to make predictions about the environment.

</details>

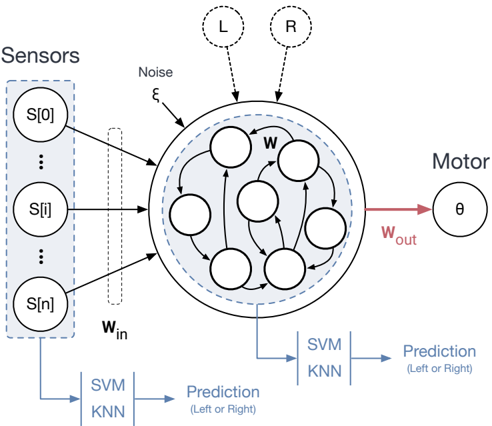

Fig. 3. Generation of the 8-shape pathway with the addition of walls at the intersection points

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Diagram: Robot Navigation Scenarios

### Overview

The image presents four scenarios of a robot navigating a space with two square obstacles. Each scenario shows the robot's position, its sensor readings (represented by lines radiating from the robot), a red line indicating a collision risk, and a dotted line representing the robot's path.

### Components/Axes

* **Robot:** Represented by a small circle with a face.

* **Obstacles:** Two square blocks with a grid pattern inside.

* **Sensor Readings:** Black lines radiating from the robot, indicating the robot's perception of its surroundings.

* **Collision Risk:** A red line indicating a potential collision path.

* **Robot Path:** A dotted gray line showing the robot's movement trajectory.

### Detailed Analysis

The image is divided into four quadrants, each depicting a different scenario:

* **Top-Left:** The robot is positioned between the two obstacles, closer to the left obstacle. The red line indicates a collision risk with the left obstacle. The robot's path starts from the bottom-right and curves around the obstacles.

* **Top-Right:** The robot is positioned between the two obstacles, closer to the right obstacle. The red line indicates a collision risk with the right obstacle. The robot's path starts from the bottom-left and curves around the obstacles.

* **Bottom-Left:** The robot is positioned between the two obstacles, closer to the left obstacle. The red line indicates a collision risk with the left obstacle. The robot's path starts from the top-right and curves around the obstacles.

* **Bottom-Right:** The robot is positioned between the two obstacles, closer to the right obstacle. The red line indicates a collision risk with the right obstacle. The robot's path starts from the top-left and curves around the obstacles.

In each scenario, the robot's sensors detect the obstacles, and the red line highlights the direction where a collision is most likely if the robot continues on its current trajectory. The dotted line shows how the robot avoids the obstacles by adjusting its path.

### Key Observations

* The robot consistently avoids collisions by altering its path based on sensor data.

* The red line accurately predicts potential collision paths.

* The robot's path is smooth and avoids sharp turns.

### Interpretation

The diagram illustrates a basic collision avoidance system for a robot navigating a simple environment. The robot uses its sensors to detect obstacles and adjusts its path to avoid collisions. The red line serves as a visual indicator of potential dangers, allowing the robot to make informed decisions about its movement. The scenarios demonstrate the robot's ability to navigate different configurations of obstacles, highlighting the robustness of the collision avoidance system.

</details>

2) Training data: The ESN is trained using supervised learning on data containing samples from the desired 8-shaped trajectory. Since the Braitenberg algorithm only aims to avoid obstacles, the robot is forced onto the desired trajectory by adding walls at the intersection points, as shown in figure 3. After generating the right pathway, the added walls are removed and the true sensor values are gathered as input. Gaussian noise is added to the position values of the robot at every time step in order to make the training more robust. Approximately 50,000 time steps were generated (equivalent to 71 complete 8-loops) and separated into training and testing sets.

3) Hyper-parameter tuning: The ESN was built with the Python library ReservoirPy [11] with the hyper-parameters presented in table I, column 'Without context'. The order

| Parameter              | Without context | With context                |
|------------------------|-----------------|-----------------------------|
| Input size             | 8               | 10                          |
| Output size            | 1               | 1                           |
| Number of units        | 1400            | 1400                        |
| Input connectivity     | 0.2             | 0.2                         |
| Reservoir connectivity | 0.19            | 0.19                        |
| Reservoir noise        | 0.01            | 0.01                        |
| Input scaling          | 1               | 1 (sensors), 10.4695 (cues) |
| Spectral radius        | 1.4             | 1.505                       |
| Leak rate              | 0.0181          | 0.06455                     |
| Regularization         | 4.1e-08         | 1e-3                        |

TABLE I

PARAMETER CONFIGURATION FOR THE ESN

of magnitude of the hyper-parameters was first found using the Hyperopt Python library [12], and the values were then fine-tuned manually. The ESN receives as input the values of the 8 sensors and outputs the next orientation.

4) Model evaluation: The performance of the ESN was measured with the Normalized Root Mean Squared Error ($NRMSE$) and the R-squared ($R^2$) metrics, defined as follows:

$$NRMSE = \frac{\sqrt{\frac{\sum_{i=1}^{n} (y_i - \hat{y}_i)^2}{n}}}{\sigma} \quad (7)$$

$$R^2 = 1 - \frac{\sum (y_i - \hat{y}_i)^2}{\sum (y_i - \bar{y})^2} \quad (8)$$

where $y_i$, $\hat{y}_i$ and $\bar{y}$ are respectively the desired output, the predicted output and the mean of the desired output.
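The two metrics can be written directly from their definitions, as in the minimal sketch below. The normalization term σ is not defined in the text, so the code assumes it is the (population) standard deviation of the desired output; other conventions, such as normalizing by the range of the target, would give different values. The function names are illustrative.

```python
import math

def nrmse(y_true, y_pred):
    """Root mean squared error divided by sigma.

    Assumption: sigma is the population standard deviation of y_true.
    """
    n = len(y_true)
    mean = sum(y_true) / n
    rmse = math.sqrt(sum((y - p) ** 2 for y, p in zip(y_true, y_pred)) / n)
    sigma = math.sqrt(sum((y - mean) ** 2 for y in y_true) / n)
    return rmse / sigma

def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((y - p) ** 2 for y, p in zip(y_true, y_pred))
    ss_tot = sum((y - mean) ** 2 for y in y_true)
    return 1 - ss_res / ss_tot

# Toy orientation values (hypothetical data, not from the experiments).
desired = [1.0, 2.0, 3.0, 4.0]
predicted = [1.1, 1.9, 3.2, 3.8]
scores = (nrmse(desired, predicted), r_squared(desired, predicted))
```

Under this particular choice of σ, the two scores are tied by NRMSE² = 1 − R², so they carry the same information; with another normalization they do not.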

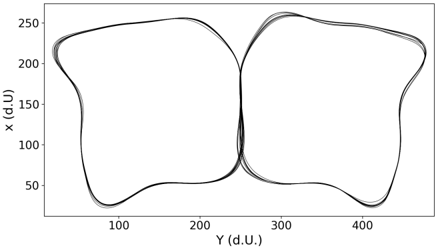

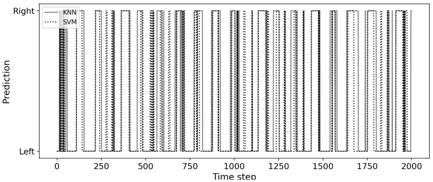

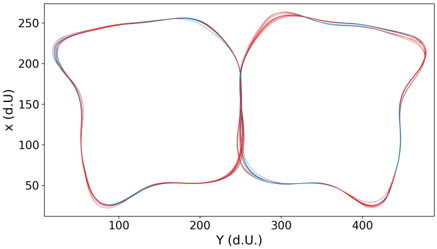

5) Reservoir state analysis: In this section, the reservoir states are analysed in order to inspect to what extent they form an internal and hidden representation of the memory. To do so, we use Principal Component Analysis (PCA), a dimensionality reduction method that enables the identification of patterns and important features in the processed data. PCA is carried out on the reservoir states for each position of the robot during the 8-shaped trajectory. We continued the analysis by classifying the reservoir states. We assumed that it is possible to know the future direction of the robot by observing the internal states of the reservoir. This implies that the reservoir states can be divided into two classes: one related to the prediction of going left and the other related to the prediction of going right. Two standard classifiers, KNN (K-Nearest Neighbors) and SVM (Support Vector Machine), were used. They take as input the reservoir state at each position of the bot while it executes the 8-shape and predict the decision the robot will take at the next intersection (see figure 2). Since the classifiers are trained using supervised learning, the training data were generated in the central corridor of the maze (yellow area in figure 1-Right), assuming that this is where the reservoir is in a state configuration in which it already knows which direction it will take at the next intersection. 900 data points were generated and separated into training and testing sets.
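To make the classification step concrete, here is a minimal K-Nearest Neighbors sketch that labels a state vector as "L" or "R" by majority vote among its closest training states. It is a toy illustration only: the two-dimensional vectors below are hypothetical stand-ins for the high-dimensional reservoir states, and the function name `knn_predict` and the choice k = 3 are assumptions, not the settings used in the experiments.

```python
import math
from collections import Counter

def knn_predict(train_states, train_labels, query, k=3):
    """Predict 'L' or 'R' for `query` by majority vote of its k nearest states."""
    by_distance = sorted(
        (math.dist(state, query), label)
        for state, label in zip(train_states, train_labels)
    )
    votes = Counter(label for _, label in by_distance[:k])
    return votes.most_common(1)[0][0]

# Hypothetical 2-D stand-ins for reservoir states recorded in the central corridor.
states = [(0.9, 0.1), (1.0, 0.0), (0.8, 0.2), (-0.9, 0.1), (-1.0, 0.0), (-0.8, -0.1)]
labels = ["R", "R", "R", "L", "L", "L"]

next_turn = knn_predict(states, labels, (0.7, 0.0))
```

If the states recorded before a left turn and before a right turn occupy distinct regions of the state space, even such a simple distance-based rule separates them.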

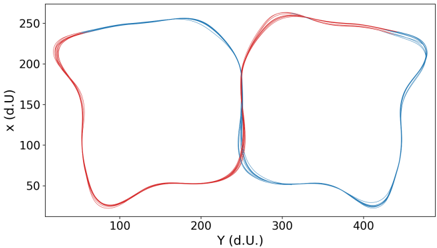

Fig. 4. The trajectory of the robot following the 8-trace in the Cartesian map.

<details>

<summary>Image 5 Details</summary>

### Visual Description

## Scatter Plot: Trajectory Data

### Overview

The image is a scatter plot displaying trajectory data. It shows the relationship between two variables, X and Y, both measured in arbitrary units (d.U.). Several overlapping trajectories are plotted, creating a visual representation of movement or change over time. The trajectories form a figure-eight-like pattern.

### Components/Axes

* **X-axis:** Labeled "Y (d.U.)", with a scale ranging from approximately 50 to 450 in increments of 100.

* **Y-axis:** Labeled "x (d.U)", with a scale ranging from 50 to 250 in increments of 50.

* **Data Series:** Multiple overlapping trajectories plotted in black.

### Detailed Analysis

The data series consist of several overlapping black lines, indicating multiple instances or repetitions of a similar trajectory. The trajectories trace a figure-eight-like pattern.

* **Left Loop:** The trajectories start from approximately (50, 50), rise to approximately (230, 50), move to approximately (230, 100), then move to approximately (70, 100), then move to approximately (70, 50), and finally return to approximately (50, 50).

* **Right Loop:** The trajectories start from approximately (250, 50), rise to approximately (430, 50), move to approximately (430, 100), then move to approximately (270, 100), then move to approximately (270, 50), and finally return to approximately (250, 50).

* **Intersection:** The trajectories intersect at approximately (200, 70) and (300, 70).

### Key Observations

* The trajectories are tightly clustered, suggesting a high degree of consistency in the underlying process.

* The figure-eight pattern indicates a cyclical or repetitive movement between two distinct regions.

* There is some variation in the trajectories, as indicated by the slight spread of the lines.

### Interpretation

The scatter plot visualizes the movement or change of an object or system in a two-dimensional space. The figure-eight pattern suggests a cyclical process with two distinct states or locations. The consistency of the trajectories implies a controlled or predictable system, while the slight variations indicate some degree of randomness or external influence. The data could represent various phenomena, such as the movement of a robot, the behavior of a biological system, or the fluctuations of a financial market.

</details>

## C. Experiment 2 : 8 Maze Task with Contextual Inputs

In this experiment, we fed the reservoir with two additional inputs that represent the next decision, one related to a right turn (R) and the other to a left turn (L) (see figure 2). They are binary values, set to 1 only when the bot is known to take the corresponding direction. We thus built a second ESN with the hyper-parameters presented in table I, column 'With context'. The network is similar to the previous one, except that the contextual inputs are added with a different input scaling from the one used for the sensor inputs. During data generation, the two additional inputs are set to 0 everywhere in the maze, except in the central corridor.
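The construction of the 10-dimensional input can be sketched as follows: the 8 sensor values are extended with two binary cues that are non-zero only inside the central corridor. The function name `build_input`, the ordering of the two cues (R first, then L) and the `"LLRR"` schedule are assumptions made for illustration; the input scaling applied to the cues by the network is left out.

```python
from itertools import cycle, islice

def build_input(sensors, in_corridor, next_turn):
    """Return the sensor values followed by the (R, L) cue pair.

    Both cues are 0 outside the central corridor; inside it, the cue
    matching the upcoming turn ('R' or 'L') is set to 1.
    """
    cue_r = 1.0 if in_corridor and next_turn == "R" else 0.0
    cue_l = 1.0 if in_corridor and next_turn == "L" else 0.0
    return list(sensors) + [cue_r, cue_l]

# A hypothetical cue schedule: the turn to signal on each successive lap.
schedule = list(islice(cycle("LLRR"), 6))

# Same sensor reading inside and outside the corridor, upcoming turn 'L'.
inside = build_input([0.5] * 8, True, "L")
outside = build_input([0.5] * 8, False, "L")
```

Because the cue is supplied from outside rather than read from the reservoir dynamics, changing the schedule is enough to request a different sequence of turns.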

## III. RESULTS

## A. Motor sequence learning

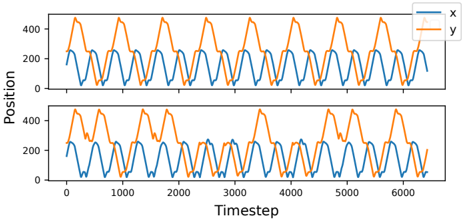

We first show that a recurrent neural network like the ESN can learn a rule-based trajectory in continuous space, without an explicit memory or feedback connections. The score of the ESN is shown in table II, and the results for the trajectory predicted by the ESN are presented in figure 4 and in the top panel of figure 8. Approximately every 350 steps, a behavior or decision switch takes place, which is evident from the crests and troughs in the y-axis coordinates. It can be seen that the ESN correctly predicts the repeated alternating choice in the central arm of the maze. In addition to switching between the left and right loops, the robot also moves through the environment without colliding with obstacles.

Performance of the ESN for 50 simulations:

| Metric | Mean   | Variance   |
|--------|--------|------------|
| NRMSE  | 0.0171 | 5.4466e-06 |
| R 2    | 0.9962 | 1.0192e-06 |

TABLE II

NRMSE AND R 2 SCORE OF THE ESN WITH 8 INPUTS

## B. Reservoir State Prediction

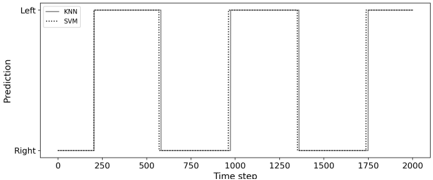

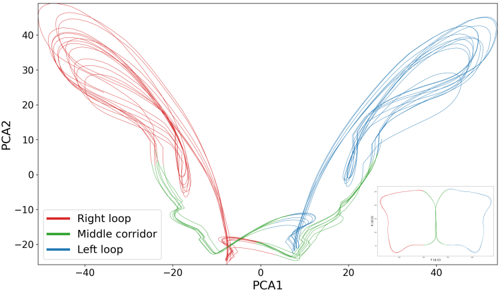

Next, we show that even a simple classifier such as an SVM or KNN can observe the internal states of the reservoir and learn to predict the decision of the network (whether to go left or right). The predictions are presented in the top part of figure 6. As expected, the predicted choice is periodic and in line with the position of the bot in the maze, showing that the classification is relevant. At each time step, both classifiers output the same prediction, up to a small discrepancy in time. The accuracy score obtained for both classifiers is 1. In the bottom part of figure 6, we can observe that the robot knows quite early which decision it will take at the next loop, whereas we could have expected it to take its decision in the yellow corridor of figure 1. Here, we see that if the robot has just turned right, the reservoir switches its internal state to go left next time only a few dozen time steps later.

We then trained the same classifiers on the sensor values instead of the reservoir states. The results are shown in figure 5. As expected, the classification fails, with an accuracy score of 0.57 for SVM and 0.43 for KNN; this randomness can be seen in both parts of the figure. Thus, we showed that by simply observing the internal states of the reservoir, it is possible to predict its next decision. In essence, this is a proof of concept showing that second-order or observer networks, mimicking the role of the regions of the prefrontal cortex that implement contextual rules, can consolidate information linking sensory information to motor actions in order to develop relevant contextual representations.

Since the state space of the dynamic reservoir is high-dimensional, we applied Principal Component Analysis (PCA) to the states to investigate whether subspace attractors can be observed. The result of the PCA is presented in figure 7, where PCA was applied over 5000 time steps, which corresponds to seven 8-loops. The figure shows two symmetric sub-attractors, which are linearly separable and correspond to the two parts of the 8-shaped trajectory.
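As an illustration of the projection step, the following is a minimal sketch of PCA computed through a singular value decomposition. The state matrix used here is a synthetic stand-in (a figure-eight latent signal embedded in 100 dimensions), not the recorded reservoir states; the names `pca_project`, `period` and `n_units`, as well as the noise level, are assumptions made for this example only.

```python
import numpy as np

def pca_project(states, n_components=2):
    """Project the rows of `states` onto their leading principal components."""
    centered = states - states.mean(axis=0)
    # Rows of vt are the principal directions, ordered by decreasing variance.
    _, singular_values, vt = np.linalg.svd(centered, full_matrices=False)
    explained = singular_values**2 / np.sum(singular_values**2)
    return centered @ vt[:n_components].T, explained[:n_components]

# Hypothetical stand-in for reservoir states: a two-dimensional periodic
# signal (a Lissajous figure-eight) mixed into 100 noisy dimensions.
rng = np.random.default_rng(0)
n_steps, n_units, period = 5000, 100, 700
t = np.arange(n_steps)
latent = np.column_stack([np.sin(2 * np.pi * t / period),
                          np.sin(4 * np.pi * t / period)])
mixing = rng.normal(size=(2, n_units))
states = latent @ mixing + 0.01 * rng.normal(size=(n_steps, n_units))

projected, explained = pca_project(states)
print(projected.shape, explained.sum())
```

Plotting the first column of `projected` against the second gives a two-dimensional view of the same kind as figure 7; with real reservoir states, the share of variance captured by two components may be much lower than in this toy case.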

## C. Explicit rules with contextual inputs

Finally, we demonstrate that although the ESN can learn a motor sequence without contextual inputs, its internal representation limits its ability to learn more complex sequences, which may require a longer memory. Adding contextual or explicit information about the rule (which we propose are representations developed by the prefrontal cortex over time) can then bias the ESN to follow any arbitrary trajectory, as in figure 8. With the additional contextual inputs, the ESN is able to reproduce the standard 8 sequence (the performance is shown in table III) but can also achieve more complex tasks when it is sent the proper contextual inputs. One example can be seen in figure 8: the top graph shows the positions of the bot while making the standard 8 sequence [ABABABAB...], and the bottom one shows that the bot was able to achieve a more complex sequence [AABBAABBAABB...].
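To make the idea of contextual inputs concrete, here is a minimal sketch of how a requested loop sequence could be turned into two extra input channels and appended to the sensor readings. The one-hot encoding, the fixed `steps_per_loop`, the number of sensors and the function name `build_context_inputs` are all assumptions for illustration; the actual cue timing and encoding may differ.

```python
import numpy as np

def build_context_inputs(sequence, steps_per_loop):
    """Return an (n_steps, 2) array of one-hot cues, one row per time step.

    Column 0 is active while loop 'A' is requested, column 1 while loop 'B' is.
    """
    codes = {"A": (1.0, 0.0), "B": (0.0, 1.0)}
    per_loop = np.array([codes[symbol] for symbol in sequence])
    # Hold each cue for the (assumed constant) duration of one loop.
    return np.repeat(per_loop, steps_per_loop, axis=0)

# Hypothetical example: 4 loops of 3 time steps each and 8 placeholder sensors.
sequence = "AABB"
steps_per_loop = 3
sensors = np.zeros((len(sequence) * steps_per_loop, 8))
cues = build_context_inputs(sequence, steps_per_loop)

# The network input at each step is the sensor vector followed by the two cues.
esn_inputs = np.hstack([sensors, cues])
print(esn_inputs.shape)
```

Changing `sequence` to any other string of `A` and `B` symbols changes the instructed trajectory without touching the sensor part of the input.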

## IV. DISCUSSION

Using a simple reservoir model that learns to follow a specific path, we have shown how the resulting behavior could be

<details>

<summary>Image 6 Details</summary>

### Visual Description

## Line Chart: KNN vs SVM Prediction Over Time

### Overview

The image is a line chart comparing the predictions of two machine learning models, KNN (K-Nearest Neighbors) and SVM (Support Vector Machine), over a series of time steps. The chart displays the predicted output (either "Left" or "Right") for each model at each time step. The x-axis represents the time step, ranging from 0 to 2000. The y-axis represents the prediction, with "Left" at the bottom and "Right" at the top.

### Components/Axes

* **X-axis:** Time step, ranging from 0 to 2000, with markers at 0, 250, 500, 750, 1000, 1250, 1500, 1750, and 2000.

* **Y-axis:** Prediction, labeled "Prediction", with "Left" at the bottom and "Right" at the top.

* **Legend:** Located in the top-left corner.

* KNN: Solid gray line.

* SVM: Dotted gray line.

### Detailed Analysis

* **KNN (Solid Gray Line):** The KNN model's prediction alternates between "Left" and "Right" in a step-wise fashion. The transitions between "Left" and "Right" appear to be relatively consistent in duration.

* **SVM (Dotted Gray Line):** The SVM model's prediction also alternates between "Left" and "Right" in a step-wise fashion. The transitions between "Left" and "Right" appear to be relatively consistent in duration.

* **Comparison:** The KNN and SVM models appear to have very similar prediction patterns, with the SVM model's transitions slightly lagging behind the KNN model's transitions in some instances.

### Key Observations

* Both models exhibit a clear pattern of alternating predictions between "Left" and "Right."

* The SVM model's predictions closely follow the KNN model's predictions, with minor timing differences.

* The frequency of switching between "Left" and "Right" appears relatively consistent throughout the time series.

### Interpretation

The chart suggests that both the KNN and SVM models are effectively capturing the underlying pattern in the data, which involves alternating between two states ("Left" and "Right"). The slight lag observed in the SVM model's predictions compared to the KNN model could be due to differences in the model's internal mechanisms or training data. The consistent frequency of state transitions suggests a periodic or rhythmic pattern in the data being predicted. The close agreement between the two models indicates that both are learning similar representations of the underlying data structure.

</details>

<details>

<summary>Image 7 Details</summary>

### Visual Description

## Trajectory Plot: Overlaid Paths

### Overview

The image is a 2D trajectory plot showing several overlaid paths. The plot visualizes movement or position data in two dimensions, with the x-axis representing the vertical position (d.U) and the y-axis representing the horizontal position (d.U). Multiple paths are plotted, with most appearing in red and a few in blue, indicating possible variations or groupings in the data. The paths trace a figure-eight-like pattern.

### Components/Axes

* **X-axis (Vertical):** Labeled "x (d.U.)", with a scale ranging from approximately 50 to 250.

* **Y-axis (Horizontal):** Labeled "Y (d.U.)", with a scale ranging from approximately 50 to 450.

* **Paths:** Multiple overlaid paths, primarily in red, with a few in blue. There is no explicit legend.

### Detailed Analysis

* **X-Axis:**

* 50

* 100

* 150

* 200

* 250

* **Y-Axis:**

* 100

* 200

* 300

* 400

* **Red Paths:** The majority of the paths are red. They follow a figure-eight pattern, with loops centered around (100, 200) and (400, 200). The paths are tightly clustered, indicating consistent movement patterns.

* **Blue Paths:** A few paths are blue, also following the figure-eight pattern. They appear to be slightly offset from the red paths in certain regions, suggesting minor variations in the movement.

### Key Observations

* The paths form a clear figure-eight pattern.

* The red paths are more numerous and tightly clustered.

* The blue paths show some deviation from the red paths.

### Interpretation

The plot likely represents the trajectories of an object or subject moving in a figure-eight pattern. The red paths could represent a standard or average movement, while the blue paths might indicate variations or deviations from this standard. The consistency of the red paths suggests a well-defined and repeatable movement pattern. The slight variations in the blue paths could be due to external factors, individual differences, or experimental manipulations. The data suggests a controlled experiment where the movement pattern is largely consistent, but with some degree of variability.

</details>

Fig. 5. Prediction from sensors during 2000 time steps. Top figure shows the predictions of the KNN and SVM classifiers. Bottom figure shows the SVM prediction along the trajectory.

Performance of the ESN for 50 runs

|          | NRMSE      | R²         |
|----------|------------|------------|
| Mean     | 0.0050     | 0.9997     |
| Variance | 1.1994e-07 | 2.0220e-09 |

TABLE III

NRMSE AND R² SCORE OF THE ESN WITH THE TWO ADDITIONAL CONTEXTUAL INPUTS
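For reference, the two scores reported in the table can be computed as in the sketch below. The normalization of the RMSE by the range of the target is an assumption (other conventions divide by the standard deviation or the mean), and the target signal, noise level and seeds are synthetic placeholders rather than the data behind the table.

```python
import numpy as np

def nrmse(y_true, y_pred):
    """Root mean squared error divided by the range of the target (assumed convention)."""
    rmse = np.sqrt(np.mean((y_true - y_pred) ** 2))
    return rmse / (y_true.max() - y_true.min())

def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - residual sum of squares / total sum of squares."""
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return 1.0 - ss_res / ss_tot

# Hypothetical evaluation over 50 runs on a toy target with noisy predictions.
n_runs = 50
target = np.sin(np.linspace(0.0, 4.0 * np.pi, 1000))
nrmse_scores, r2_scores = [], []
for seed in range(n_runs):
    rng = np.random.default_rng(seed)
    prediction = target + 0.02 * rng.normal(size=target.shape)
    nrmse_scores.append(nrmse(target, prediction))
    r2_scores.append(r2_score(target, prediction))

print("NRMSE mean/variance:", np.mean(nrmse_scores), np.var(nrmse_scores))
print("R2 mean/variance:", np.mean(r2_scores), np.var(r2_scores))
```

Reporting both the mean and the variance across runs, as in the table, indicates how sensitive the scores are to the random initialization of each run.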

interpreted as an alternating behavior by an external observer. However, we have also shown that from the point of view of the model, and in the absence of associated cues, this behavior cannot be interpreted as such. Instead, the behavior results from the internal dynamics of the reservoir (and the learning procedure we implemented). Without external cues, the model is unable to escape its own behavior and is trapped inside an attractor. Only the cues can provide the model with the necessary and explicit information that in turn allows it to bias its behavior in favor of option A or option B.

From a neuroscience perspective, as developed in more detail in [13], it can be proposed that the reservoir model in the first experiment implements the premotor cortex learning sensorimotor associations in the anterior cortex. In the first experiment, this is done by supervised learning, in a process of learning by imitation. In a different protocol, this can also classically be done by reinforcement learning, involving another region of the anterior cortex, the anterior cingulate cortex,

<details>

<summary>Image 8 Details</summary>

### Visual Description

## Chart: KNN vs SVM Prediction Over Time

### Overview

The image is a line chart comparing the predictions of two machine learning models, KNN (K-Nearest Neighbors) and SVM (Support Vector Machine), over a series of time steps. The chart shows how the predictions of these models alternate between two states, labeled "Left" and "Right".

### Components/Axes

* **X-axis:** "Time step", ranging from 0 to 2000 in increments of 250.

* **Y-axis:** "Prediction", with two categorical values: "Left" at the top and "Right" at the bottom.

* **Legend:** Located in the top-left corner.

* "KNN": Represented by a solid black line.

* "SVM": Represented by a dotted black line.

### Detailed Analysis

* **KNN:** The solid black line alternates between the "Left" and "Right" prediction states.

* It starts at "Right" from time step 0 to approximately 250.

* It switches to "Left" at approximately 250 and remains there until approximately 750.

* It switches back to "Right" at approximately 750 and remains there until approximately 1250.

* It switches to "Left" at approximately 1250 and remains there until approximately 1750.

* It switches back to "Right" at approximately 1750 and remains there until 2000.

* **SVM:** The dotted black line mirrors the behavior of the KNN line, indicating identical predictions at each time step.

* It starts at "Right" from time step 0 to approximately 250.

* It switches to "Left" at approximately 250 and remains there until approximately 750.

* It switches back to "Right" at approximately 750 and remains there until approximately 1250.

* It switches to "Left" at approximately 1250 and remains there until approximately 1750.

* It switches back to "Right" at approximately 1750 and remains there until 2000.

### Key Observations

* The KNN and SVM models produce identical predictions throughout the entire time series.

* The predictions alternate between "Left" and "Right" states in a periodic manner.

* The duration of each state ("Left" or "Right") is approximately 500 time steps.

### Interpretation

The chart demonstrates that, for this particular dataset and task, the KNN and SVM models are in complete agreement on their predictions. The periodic switching between "Left" and "Right" suggests a cyclical pattern in the underlying data that both models are able to capture effectively. The consistent agreement between the models could indicate that the decision boundary between the "Left" and "Right" classes is relatively simple and easily learned by both algorithms. It could also suggest that the models were trained on the same dataset and hyperparameters, leading to similar performance.

</details>

Fig. 6. Prediction from reservoir state during 2000 time steps. Top figure shows the predictions of the KNN and SVM classifier. Bottom figure shows the SVM prediction along the trajectory.
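The contrast between figures 5 and 6 can be reproduced in spirit with the toy sketch below. It uses a hand-written k-nearest-neighbour vote (a stand-in for the KNN classifier, not the authors' code) on two synthetic feature sets: "sensors" that repeat every loop, and "states" that repeat only every two loops. The labels mark which half of the figure-eight cycle the bot is in, used here as a proxy for the upcoming decision. All signals, the loop length, the noise level and the 70/30 split are assumptions.

```python
import numpy as np

def knn_predict(train_x, train_y, test_x, k=5):
    """Majority vote among the k nearest training points (Euclidean distance)."""
    dists = np.linalg.norm(test_x[:, None, :] - train_x[None, :, :], axis=2)
    nearest = np.argsort(dists, axis=1)[:, :k]
    votes = train_y[nearest].mean(axis=1)
    return (votes > 0.5).astype(int)

rng = np.random.default_rng(0)
n_steps, loop_len = 2000, 250
t = np.arange(n_steps)
labels = (t // loop_len) % 2  # 0 = left loop, 1 = right loop

# "Sensors" repeat every loop, so both sides of the maze look alike.
sensors = np.column_stack([np.cos(2 * np.pi * t / loop_len),
                           np.sin(2 * np.pi * t / loop_len)])
# "States" repeat only every two loops, so they carry the missing context.
states = np.column_stack([np.cos(np.pi * t / loop_len),
                          np.sin(np.pi * t / loop_len)])
sensors = sensors + 0.02 * rng.normal(size=sensors.shape)
states = states + 0.02 * rng.normal(size=states.shape)

# Random 70/30 split of the time steps into training and test sets.
order = rng.permutation(n_steps)
train, test = order[:1400], order[1400:]

def accuracy(features):
    pred = knn_predict(features[train], labels[train], features[test])
    return float(np.mean(pred == labels[test]))

acc_states = accuracy(states)
acc_sensors = accuracy(sensors)
print("accuracy from states:", acc_states)
print("accuracy from sensors:", acc_sensors)
```

In this toy setting the state-based classifier is close to perfect while the sensor-based one stays near chance, because the sensor features are, by construction, identical in both loops.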

<details>

<summary>Image 9 Details</summary>

### Visual Description

## Trajectory Plot: Figure-Eight Movement

### Overview

The image is a trajectory plot showing movement patterns in a figure-eight shape. The plot displays the x-coordinate versus the y-coordinate, both in arbitrary units (d.U.). There are two distinct trajectories, one in red and one in blue, each forming a loop of the figure eight. Multiple lines of each color suggest multiple trials or repetitions of the movement.

### Components/Axes

* **X-axis:** Labeled "Y (d.U.)", ranging from approximately 50 to 450.

* **Y-axis:** Labeled "x (d.U)", ranging from 0 to 250.

* **Data Series:** Two data series are plotted: one in red and one in blue. Each series consists of multiple overlapping lines, indicating multiple trials.

### Detailed Analysis

* **Red Trajectory:**

* Starts at approximately (Y=50, x=20).

* Moves upwards and to the right, reaching a peak of approximately (Y=150, x=240).

* Then moves downwards and to the right, reaching a minimum of approximately (Y=200, x=190).

* Then moves downwards and to the left, reaching a minimum of approximately (Y=100, x=10).

* Finally, moves upwards and to the right, returning to the starting point.

* **Blue Trajectory:**

* Starts at approximately (Y=250, x=200).

* Moves upwards and to the right, reaching a peak of approximately (Y=350, x=250).

* Then moves downwards and to the right, reaching a minimum of approximately (Y=420, x=230).

* Then moves downwards and to the left, reaching a minimum of approximately (Y=350, x=10).

* Finally, moves upwards and to the left, returning to the starting point.

### Key Observations

* The trajectories form a figure-eight pattern.

* The red trajectory is located on the left side of the figure eight, while the blue trajectory is on the right side.

* There is some variability in the trajectories, as indicated by the multiple overlapping lines.

* The two trajectories intersect at approximately (Y=250, x=200).

### Interpretation

The plot likely represents the movement of a subject performing a figure-eight task. The red and blue trajectories could represent different conditions, subjects, or trials. The variability in the trajectories suggests that the movement is not perfectly consistent. The intersection point represents the point where the subject transitions from one loop of the figure eight to the other. The data suggests that the subject is able to perform the figure-eight task, but there is some variability in their performance. Further analysis could be performed to quantify the variability and to compare the performance under different conditions.

</details>

Fig. 7. The first two principal components of the reservoir state space after applying PCA on the reservoir states. On the bottom right is the corresponding map of the positions of the robot in the maze.

<details>

<summary>Image 10 Details</summary>

### Visual Description

## Chart Type: Scatter Plot with Trajectories

### Overview

The image is a scatter plot displaying trajectories in a two-dimensional space defined by PCA1 and PCA2. Three distinct trajectories are plotted, each represented by a different color: red ("Right loop"), green ("Middle corridor"), and blue ("Left loop"). The plot shows the movement or evolution of data points within this PCA space, with each trajectory forming a loop-like structure. An inset plot in the bottom-right corner provides a simplified overview of the three trajectories.

### Components/Axes

* **X-axis (PCA1):** Ranges from approximately -40 to 40. Axis markers are present at -40, -20, 0, 20, and 40.

* **Y-axis (PCA2):** Ranges from approximately -20 to 40. Axis markers are present at -20, -10, 0, 10, 20, 30, and 40.

* **Legend:** Located in the bottom-left corner, it identifies the three trajectories:

* Red: "Right loop"

* Green: "Middle corridor"

* Blue: "Left loop"

* **Inset Plot:** Located in the bottom-right corner, it shows a simplified version of the three trajectories. The axes are not labeled in the inset plot.

### Detailed Analysis

* **Right loop (Red):**

* Trend: Starts near PCA1 = -25 and PCA2 = -15, loops upwards and to the left, reaching a maximum PCA2 value of approximately 40 at PCA1 = -30, then curves back down and towards the center, ending near PCA1 = -10 and PCA2 = -20.

* The red line forms multiple overlapping loops on the left side of the graph.

* **Middle corridor (Green):**

* Trend: Stays relatively close to the PCA1 axis (PCA2 values between -20 and 0), connecting the "Right loop" and "Left loop" trajectories. It forms a relatively straight path along the bottom of the graph.

* The green line is mostly concentrated between PCA1 values of -20 and 20.

* **Left loop (Blue):**

* Trend: Starts near PCA1 = 10 and PCA2 = -20, loops upwards and to the right, reaching a maximum PCA2 value of approximately 40 at PCA1 = 30, then curves back down and towards the center, ending near PCA1 = 25 and PCA2 = -15.

* The blue line forms multiple overlapping loops on the right side of the graph.

### Key Observations

* The "Right loop" and "Left loop" trajectories are roughly symmetrical about the PCA2 axis.

* The "Middle corridor" trajectory acts as a bridge between the two loops, suggesting a transition or connection between the states represented by the loops.

* The trajectories show complex, cyclical behavior within the PCA space.

* The inset plot provides a clear, simplified overview of the overall structure of the trajectories.

### Interpretation

The plot visualizes the dynamics of a system in a reduced-dimensional space (PCA1 and PCA2). The three trajectories ("Right loop", "Middle corridor", and "Left loop") likely represent different states or behaviors of the system. The cyclical nature of the loops suggests that the system oscillates between these states. The "Middle corridor" trajectory indicates a transition path between the "Right loop" and "Left loop" states. The symmetry between the "Right loop" and "Left loop" might indicate similar underlying processes or symmetrical conditions. The PCA analysis likely aims to reduce the dimensionality of the original data while preserving the key features that distinguish these three states. The plot is useful for understanding the overall dynamics and relationships between these states.

</details>

Fig. 8. The coordinates of the agent for 7000 timesteps in the prediction phase. The plots in blue show the x axis coordinates while the ones in red show the y axis coordinates. The figure on top shows the results for the standard 8 sequence [ABABAB..], the figure at the bottom shows the results for a randomly generated sequence [AABAABBBABBAABAB], where 'A' is the left loop and 'B' is the right loop.

<details>

<summary>Image 11 Details</summary>

### Visual Description

## Time Series Chart: Position vs. Timestep

### Overview

The image presents two time series charts, one above the other, displaying the relationship between "Position" and "Timestep". Each chart plots two data series, labeled 'x' and 'y', with 'x' represented by a blue line and 'y' by an orange line. Both 'x' and 'y' exhibit cyclical patterns, but with different amplitudes and phase relationships in the two charts.

### Components/Axes

* **X-axis (Timestep):** The horizontal axis represents the timestep, ranging from 0 to approximately 6000.

* **Y-axis (Position):** The vertical axis represents the position, ranging from 0 to 400.

* **Legend (Top-Right):**

* Blue line: 'x'

* Orange line: 'y'

* **Chart 1 (Top):** Displays the first set of 'x' and 'y' data.

* **Chart 2 (Bottom):** Displays the second set of 'x' and 'y' data.

### Detailed Analysis

**Chart 1 (Top):**

* **Blue Line (x):** The 'x' series oscillates between approximately 50 and 250. The peaks are relatively consistent.

* Timestep ~500: Position ~200

* Timestep ~1000: Position ~100

* Timestep ~1500: Position ~200

* Timestep ~2000: Position ~100

* Timestep ~2500: Position ~200

* Timestep ~3000: Position ~100

* Timestep ~3500: Position ~200

* Timestep ~4000: Position ~100

* Timestep ~4500: Position ~200

* Timestep ~5000: Position ~100

* Timestep ~5500: Position ~200

* Timestep ~6000: Position ~100

* **Orange Line (y):** The 'y' series oscillates between approximately 250 and 450. The peaks are relatively consistent.

* Timestep ~250: Position ~450

* Timestep ~750: Position ~250

* Timestep ~1250: Position ~450

* Timestep ~1750: Position ~250

* Timestep ~2250: Position ~450

* Timestep ~2750: Position ~250

* Timestep ~3250: Position ~450

* Timestep ~3750: Position ~250

* Timestep ~4250: Position ~450

* Timestep ~4750: Position ~250

* Timestep ~5250: Position ~450

* Timestep ~5750: Position ~250

**Chart 2 (Bottom):**

* **Blue Line (x):** The 'x' series oscillates between approximately 0 and 250. The peaks are relatively consistent.

* Timestep ~500: Position ~200

* Timestep ~1000: Position ~50

* Timestep ~1500: Position ~200

* Timestep ~2000: Position ~50

* Timestep ~2500: Position ~200

* Timestep ~3000: Position ~50

* Timestep ~3500: Position ~200

* Timestep ~4000: Position ~50

* Timestep ~4500: Position ~200

* Timestep ~5000: Position ~50

* Timestep ~5500: Position ~200

* Timestep ~6000: Position ~50

* **Orange Line (y):** The 'y' series oscillates between approximately 100 and 500. The peaks are less consistent than in the first chart.

* Timestep ~250: Position ~450

* Timestep ~750: Position ~100

* Timestep ~1250: Position ~450

* Timestep ~1750: Position ~100

* Timestep ~2250: Position ~450

* Timestep ~2750: Position ~100

* Timestep ~3250: Position ~450

* Timestep ~3750: Position ~100

* Timestep ~4250: Position ~450

* Timestep ~4750: Position ~100

* Timestep ~5250: Position ~450

* Timestep ~5750: Position ~100

### Key Observations

* Both charts show cyclical patterns for 'x' and 'y', but the amplitude and phase relationship differ.

* In the top chart, 'x' and 'y' appear to be roughly out of phase.

* In the bottom chart, the 'y' series has more variability in its peak values compared to the top chart.

* The 'x' series in the bottom chart has a lower minimum value (close to 0) compared to the top chart (around 50).

### Interpretation

The charts likely represent the position of two variables, 'x' and 'y', over time. The cyclical nature suggests some form of periodic motion or oscillation. The differences between the two charts could indicate different initial conditions, external influences, or system parameters affecting the 'x' and 'y' variables. The variability in the 'y' series in the bottom chart might indicate a less stable or more perturbed system compared to the top chart. Without further context, it's difficult to determine the exact nature of the system being represented.

</details>

manipulating prediction of the outcome. Whereas both regions of the anterior cortex are present in mammals, [13] reports that another region, the lateral prefrontal cortex, is unique to primates and has developed to implement the learning of contextual rules and possibly to act in a hierarchical way in the control of the other regions. We have proposed an elementary implementation of the lateral prefrontal cortex in the second experiment, adding explicit contextual inputs as a basis to form contextual rules. It was accordingly very important to observe that it was then possible to explicitly manipulate the rules and form flexible behavior, whereas in the previous case, rules were implicitly present in the memory but could not be manipulated.

This simple model shows that the interpretation of the behavior by an observer and the actual behavior might greatly differ, even when we can make accurate predictions about the behavior. Such predictions can be incidentally true without actually revealing the true nature of the underlying mechanisms. Based on the reservoir computing framework, which can be invoked for both premotor and prefrontal regions, we have implemented models which are structurally similar (as is the case for those regions), and we have shown that a simple difference related to their inputs can orient them toward implicit or explicit learning, as respectively observed in the premotor and lateral prefrontal regions. It will be important in future work to see how these regions are associated to combine both modes of learning and switch from one to the other depending on the complexity of the task.

## REFERENCES

- [1] A. Cleeremans, 'Implicit learning and implicit memory,' Encyclopedia of Consciousness , pp. 369-381, 2009. [Online]. Available: http://dx.doi.org/10.1016/B978-012373873-8.00047-5

- [2] A. Cleeremans, B. Timmermans, and A. Pasquali, 'Consciousness and metarepresentation: A computational sketch,' Neural Networks , vol. 20, no. 9, pp. 1032-1039, 2007.

- [3] B. A. Clegg, G. J. DiGirolamo, and S. W. Keele, 'Sequence learning,' Trends in cognitive sciences , vol. 2, no. 8, pp. 275-281, 1998.

- [4] A. S. Reber, Implicit Learning and Tacit Knowledge . Oxford University Press, Sep 1996. [Online]. Available: http://dx.doi.org/10.1093/acprof:oso/9780195106589.001.0001

- [5] Z. Dienes and D. Berry, 'Implicit learning: Below the subjective threshold,' Psychonomic bulletin & review , vol. 4, no. 1, pp. 3-23, 1997.

- [6] P. Dominey, M. Arbib, and J.-P. Joseph, 'A model of corticostriatal plasticity for learning oculomotor associations and sequences,' Journal of cognitive neuroscience , vol. 7, no. 3, pp. 311-336, 1995.

- [7] J. Tani and S. Nolfi, 'Learning to perceive the world as articulated: an approach for hierarchical learning in sensory-motor systems,' Neural Networks , vol. 12, no. 7-8, pp. 1131-1141, 1999.

- [8] E. Aislan Antonelo and B. Schrauwen, 'On learning navigation behaviors for small mobile robots with reservoir computing architectures,' IEEE Transactions on Neural Networks and Learning Systems , vol. 26, no. 4, pp. 763-780, Apr 2015. [Online]. Available: http://dx.doi.org/10.1109/TNNLS.2014.2323247

- [9] E. Antonelo and B. Schrauwen, 'Learning slow features with reservoir computing for biologically-inspired robot localization,' Neural Networks , vol. 25, pp. 178-190, Jan 2012. [Online]. Available: http://dx.doi.org/10.1016/j.neunet.2011.08.004

- [10] L. M. Frank, E. N. Brown, and M. Wilson, 'Trajectory encoding in the hippocampus and entorhinal cortex,' Neuron , vol. 27, no. 1, pp. 169-178, 2000.

- [11] N. Trouvain, L. Pedrelli, T. T. Dinh, and X. Hinaut, 'ReservoirPy: An efficient and user-friendly library to design echo state networks,' in Artificial Neural Networks and Machine Learning - ICANN 2020 . Springer International Publishing, 2020, pp. 494-505. [Online]. Available: https://doi.org/10.1007/978-3-030-61616-8_40

- [12] J. Bergstra, B. Komer, C. Eliasmith, D. Yamins, and D. D. Cox, 'Hyperopt: a python library for model selection and hyperparameter optimization,' Computational Science & Discovery , vol. 8, no. 1, p. 014008, 2015.

- [13] E. Koechlin, 'An evolutionary computational theory of prefrontal executive function in decision-making,' Philosophical transactions of the Royal Society of London. Series B, Biological sciences , vol. 369, no. 1655, p. 20130474, Nov. 2014. [Online]. Available: http://dx.doi.org/10.1098/rstb.2013.0474