# Matryoshka Representation Learning

> Equal contribution – AK led the project with extensive support from GB and AR for experimentation.

## Abstract

Learned representations are a central component in modern ML systems, serving a multitude of downstream tasks. When training such representations, it is often the case that computational and statistical constraints for each downstream task are unknown. In this context, rigid fixed-capacity representations can be either over or under-accommodating to the task at hand. This leads us to ask: can we design a flexible representation that can adapt to multiple downstream tasks with varying computational resources? Our main contribution is

<details>

<summary>x1.png Details</summary>

### Visual Description

Icon/Small Image (28x28)

</details>

${\rm Matryoshka~Representation~Learning}$ ( ${\rm MRL}$ ) which encodes information at different granularities and allows a single embedding to adapt to the computational constraints of downstream tasks. ${\rm MRL}$ minimally modifies existing representation learning pipelines and imposes no additional cost during inference and deployment. ${\rm MRL}$ learns coarse-to-fine representations that are at least as accurate and rich as independently trained low-dimensional representations. The flexibility within the learned ${\rm Matryoshka~Representations}$ offer: (a) up to $\mathbf{14}\times$ smaller embedding size for ImageNet-1K classification at the same level of accuracy; (b) up to $\mathbf{14}\times$ real-world speed-ups for large-scale retrieval on ImageNet-1K and 4K; and (c) up to $\mathbf{2}$ % accuracy improvements for long-tail few-shot classification, all while being as robust as the original representations. Finally, we show that ${\rm MRL}$ extends seamlessly to web-scale datasets (ImageNet, JFT) across various modalities – vision (ViT, ResNet), vision + language (ALIGN) and language (BERT). ${\rm MRL}$ code and pretrained models are open-sourced at https://github.com/RAIVNLab/MRL.

## 1 Introduction

Learned representations [57] are fundamental building blocks of real-world ML systems [66, 91]. Trained once and frozen, $d$ -dimensional representations encode rich information and can be used to perform multiple downstream tasks [4]. The deployment of deep representations has two steps: (1) an expensive yet constant-cost forward pass to compute the representation [29] and (2) utilization of the representation for downstream applications [50, 89]. Compute costs for the latter part of the pipeline scale with the embedding dimensionality as well as the data size ( $N$ ) and label space ( $L$ ). At web-scale [15, 85] this utilization cost overshadows the feature computation cost. The rigidity in these representations forces the use of high-dimensional embedding vectors across multiple tasks despite the varying resource and accuracy constraints that require flexibility.

Human perception of the natural world has a naturally coarse-to-fine granularity [28, 32]. However, perhaps due to the inductive bias of gradient-based training [84], deep learning models tend to diffuse “information” across the entire representation vector. The desired elasticity is usually enabled in the existing flat and fixed representations either through training multiple low-dimensional models [29], jointly optimizing sub-networks of varying capacity [9, 100] or post-hoc compression [38, 60]. Each of these techniques struggle to meet the requirements for adaptive large-scale deployment either due to training/maintenance overhead, numerous expensive forward passes through all of the data, storage and memory cost for multiple copies of encoded data, expensive on-the-fly feature selection or a significant drop in accuracy. By encoding coarse-to-fine-grained representations, which are as accurate as the independently trained counterparts, we learn with minimal overhead a representation that can be deployed adaptively at no additional cost during inference.

We introduce

<details>

<summary>x2.png Details</summary>

### Visual Description

Icon/Small Image (28x28)

</details>

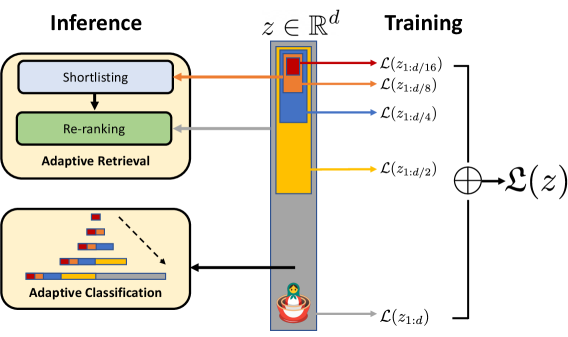

${\rm Matryoshka~Representation~Learning}$ ( ${\rm MRL}$ ) to induce flexibility in the learned representation. ${\rm MRL}$ learns representations of varying capacities within the same high-dimensional vector through explicit optimization of $O(\log(d))$ lower-dimensional vectors in a nested fashion, hence the name ${\rm Matryoshka}$ . ${\rm MRL}$ can be adapted to any existing representation pipeline and is easily extended to many standard tasks in computer vision and natural language processing. Figure 1 illustrates the core idea of ${\rm Matryoshka~Representation~Learning}$ ( ${\rm MRL}$ ) and the adaptive deployment settings of the learned ${\rm Matryoshka~Representations}$ .

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: Adaptive Retrieval and Classification System with Hierarchical Training

### Overview

The diagram illustrates a two-phase system: **Inference** (top) and **Training** (right). The Inference phase includes components for shortlisting, re-ranking, adaptive retrieval, and adaptive classification. The Training phase depicts a hierarchical loss structure with decreasing granularity (e.g., `L(z1:d/16)`, `L(z1:d/8)`, etc.) and a final aggregated loss `L(z)`. A circular element with a cross and a stylized figure (red base, yellow top) connects the phases.

---

### Components/Axes

1. **Inference Phase**:

- **Shortlisting**: Initial candidate selection (orange arrow).

- **Re-ranking**: Refines shortlisted candidates (gray arrow).

- **Adaptive Retrieval**: Processes re-ranked candidates (blue arrow).

- **Adaptive Classification**: Outputs hierarchical confidence scores (bar chart with red, blue, yellow segments).

2. **Training Phase**:

- **Hierarchical Loss Stack**: Vertical column with labeled loss functions:

- `L(z1:d/16)` (red)

- `L(z1:d/8)` (orange)

- `L(z1:d/4)` (blue)

- `L(z1:d/2)` (yellow)

- `L(z1:d)` (gray)

- **Aggregated Loss**: Final loss `L(z)` (circle with cross).

- **Agent/Model**: Stylized figure (red base, yellow top) at the bottom, likely representing the model being trained.

---

### Detailed Analysis

- **Inference Flow**:

- Shortlisting → Re-ranking → Adaptive Retrieval → Adaptive Classification.

- Adaptive Classification outputs a bar chart with diminishing segment sizes (red > blue > yellow), suggesting confidence scores or feature weights.

- **Training Hierarchy**:

- Loss functions are labeled with decreasing fractions (`1/16` to `1/d`), implying multi-scale or hierarchical training.

- The circular `L(z)` with a cross may represent a loss aggregation or optimization step (e.g., gradient descent).

- **Connections**:

- A dashed arrow links Adaptive Classification to the Training phase, indicating feedback from classification to training losses.

- The agent/model at the bottom receives input from the aggregated loss `L(z)`.

---

### Key Observations

1. **Hierarchical Training**: The loss functions (`L(z1:d/16)` to `L(z1:d)`) suggest a multi-resolution training strategy, where finer-grained losses (`1/16`, `1/8`) are prioritized early, and coarser losses (`1/d`) dominate later.

2. **Adaptive Feedback Loop**: The dashed arrow implies that Adaptive Classification’s output influences the training process, enabling dynamic adjustment of the model.

3. **Symbolism**: The circular `L(z)` with a cross may symbolize a loss minimization objective, while the agent/model’s design (red base, yellow top) could represent stability (red) and adaptability (yellow).

---

### Interpretation

This diagram represents a **self-improving system** where inference and training are tightly coupled. The hierarchical loss structure in training likely enables the model to learn at multiple scales, while the adaptive classification feedback loop ensures the model refines its predictions iteratively. The stylized agent at the bottom symbolizes the model’s evolving capabilities, shaped by the aggregated loss `L(z)`. The use of color-coded arrows and segmented bars emphasizes modularity and adaptability in both phases.

</details>

Figure 1:

<details>

<summary>x5.png Details</summary>

### Visual Description

Icon/Small Image (28x28)

</details>

${\rm Matryoshka~Representation~Learning}$ is adaptable to any representation learning setup and begets a ${\rm Matryoshka~Representation}$ $z$ by optimizing the original loss $\mathcal{L}(.)$ at $O(\log(d))$ chosen representation sizes. ${\rm Matryoshka~Representation}$ can be utilized effectively for adaptive deployment across environments and downstream tasks.

The first $m$ -dimensions, $m\in[d]$ , of the ${\rm Matryoshka~Representation}$ is an information-rich low-dimensional vector, at no additional training cost, that is as accurate as an independently trained $m$ -dimensional representation. The information within the ${\rm Matryoshka~Representation}$ increases with the dimensionality creating a coarse-to-fine grained representation, all without significant training or additional deployment overhead. ${\rm MRL}$ equips the representation vector with the desired flexibility and multifidelity that can ensure a near-optimal accuracy-vs-compute trade-off. With these advantages, ${\rm MRL}$ enables adaptive deployment based on accuracy and compute constraints.

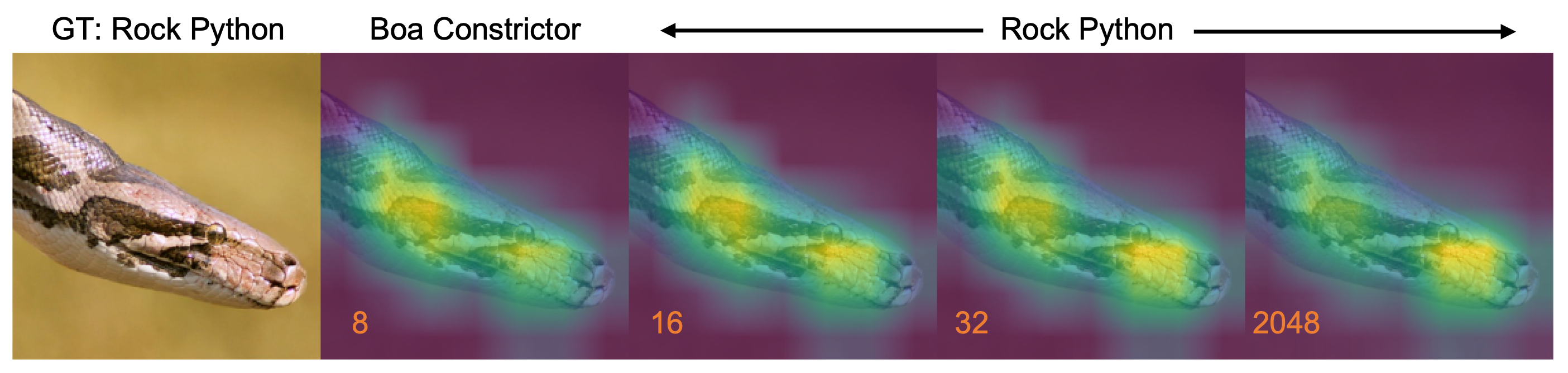

The ${\rm Matryoshka~Representations}$ improve efficiency for large-scale classification and retrieval without any significant loss of accuracy. While there are potentially several applications of coarse-to-fine ${\rm Matryoshka~Representations}$ , in this work we focus on two key building blocks of real-world ML systems: large-scale classification and retrieval. For classification, we use adaptive cascades with the variable-size representations from a model trained with ${\rm MRL}$ , significantly reducing the average dimension of embeddings needed to achieve a particular accuracy. For example, on ImageNet-1K, ${\rm MRL}$ + adaptive classification results in up to a $14\times$ smaller representation size at the same accuracy as baselines (Section 4.2.1). Similarly, we use ${\rm MRL}$ in an adaptive retrieval system. Given a query, we shortlist retrieval candidates using the first few dimensions of the query embedding, and then successively use more dimensions to re-rank the retrieved set. A simple implementation of this approach leads to $128\times$ theoretical (in terms of FLOPS) and $14\times$ wall-clock time speedups compared to a single-shot retrieval system that uses a standard embedding vector; note that ${\rm MRL}$ ’s retrieval accuracy is comparable to that of single-shot retrieval (Section 4.3.1). Finally, as ${\rm MRL}$ explicitly learns coarse-to-fine representation vectors, intuitively it should share more semantic information among its various dimensions (Figure 5). This is reflected in up to $2\$ accuracy gains in long-tail continual learning settings while being as robust as the original embeddings. Furthermore, due to its coarse-to-fine grained nature, ${\rm MRL}$ can also be used as method to analyze hardness of classification among instances and information bottlenecks.

We make the following key contributions:

1. We introduce

<details>

<summary>x6.png Details</summary>

### Visual Description

Icon/Small Image (28x28)

</details>

${\rm Matryoshka~Representation~Learning}$ ( ${\rm MRL}$ ) to obtain flexible representations ( ${\rm Matryoshka~Representations}$ ) for adaptive deployment (Section 3).

1. Up to $14\times$ faster yet accurate large-scale classification and retrieval using ${\rm MRL}$ (Section 4).

1. Seamless adaptation of ${\rm MRL}$ across modalities (vision - ResNet & ViT, vision + language - ALIGN, language - BERT) and to web-scale data (ImageNet-1K/4K, JFT-300M and ALIGN data).

1. Further analysis of ${\rm MRL}$ ’s representations in the context of other downstream tasks (Section 5).

## 2 Related Work

Representation Learning.

Large-scale datasets like ImageNet [16, 76] and JFT [85] enabled the learning of general purpose representations for computer vision [4, 98]. These representations are typically learned through supervised and un/self-supervised learning paradigms. Supervised pretraining [29, 51, 82] casts representation learning as a multi-class/label classification problem, while un/self-supervised learning learns representation via proxy tasks like instance classification [97] and reconstruction [31, 63]. Recent advances [12, 30] in contrastive learning [27] enabled learning from web-scale data [21] that powers large-capacity cross-modal models [18, 46, 71, 101]. Similarly, natural language applications are built [40] on large language models [8] that are pretrained [68, 75] in a un/self-supervised fashion with masked language modelling [19] or autoregressive training [70].

<details>

<summary>x7.png Details</summary>

### Visual Description

Icon/Small Image (28x28)

</details>

${\rm Matryoshka~Representation~Learning}$ ( ${\rm MRL}$ ) is complementary to all these setups and can be adapted with minimal overhead (Section 3). ${\rm MRL}$ equips representations with multifidelity at no additional cost which enables adaptive deployment based on the data and task (Section 4).

Efficient Classification and Retrieval.

Efficiency in classification and retrieval during inference can be studied with respect to the high yet constant deep featurization costs or the search cost which scales with the size of the label space and data. Efficient neural networks address the first issue through a variety of algorithms [25, 54] and design choices [39, 53, 87]. However, with a strong featurizer, most of the issues with scale are due to the linear dependence on number of labels ( $L$ ), size of the data ( $N$ ) and representation size ( $d$ ), stressing RAM, disk and processor all at the same time.

The sub-linear complexity dependence on number of labels has been well studied in context of compute [3, 43, 69] and memory [20] using Approximate Nearest Neighbor Search (ANNS) [62] or leveraging the underlying hierarchy [17, 55]. In case of the representation size, often dimensionality reduction [77, 88], hashing techniques [14, 52, 78] and feature selection [64] help in alleviating selective aspects of the $O(d)$ scaling at a cost of significant drops in accuracy. Lastly, most real-world search systems [11, 15] are often powered by large-scale embedding based retrieval [10, 66] that scales in cost with the ever increasing web-data. While categorization [89, 99] clusters similar things together, it is imperative to be equipped with retrieval capabilities that can bring forward every instance [7]. Approximate Nearest Neighbor Search (ANNS) [42] makes it feasible with efficient indexing [14] and traversal [5, 6] to present the users with the most similar documents/images from the database for a requested query. Widely adopted HNSW [62] ( $O(d\log(N))$ ) is as accurate as exact retrieval ( $O(dN)$ ) at the cost of a graph-based index overhead for RAM and disk [44].

${\rm MRL}$ tackles the linear dependence on embedding size, $d$ , by learning multifidelity ${\rm Matryoshka~Representations}$ . Lower-dimensional ${\rm Matryoshka~Representations}$ are as accurate as independently trained counterparts without the multiple expensive forward passes. ${\rm Matryoshka~Representations}$ provide an intermediate abstraction between high-dimensional vectors and their efficient ANNS indices through the adaptive embeddings nested within the original representation vector (Section 4). All other aforementioned efficiency techniques are complementary and can be readily applied to the learned ${\rm Matryoshka~Representations}$ obtained from ${\rm MRL}$ .

Several works in efficient neural network literature [9, 93, 100] aim at packing neural networks of varying capacity within the same larger network. However, the weights for each progressively smaller network can be different and often require distinct forward passes to isolate the final representations. This is detrimental for adaptive inference due to the need for re-encoding the entire retrieval database with expensive sub-net forward passes of varying capacities. Several works [23, 26, 65, 59] investigate the notions of intrinsic dimensionality and redundancy of representations and objective spaces pointing to minimum description length [74]. Finally, ordered representations proposed by Rippel et al. [73] use nested dropout in the context of autoencoders to learn nested representations. ${\rm MRL}$ differentiates itself in formulation by optimizing only for $O(\log(d))$ nesting dimensions instead of $O(d)$ . Despite this, ${\rm MRL}$ diffuses information to intermediate dimensions interpolating between the optimized ${\rm Matryoshka~Representation}$ sizes accurately (Figure 5); making web-scale feasible.

## 3

<details>

<summary>x8.png Details</summary>

### Visual Description

Icon/Small Image (28x28)

</details>

${\rm Matryoshka~Representation~Learning}$

For $d\in\mathbb{N}$ , consider a set $\mathcal{M}\subset[d]$ of representation sizes. For a datapoint $x$ in the input domain $\mathcal{X}$ , our goal is to learn a $d$ -dimensional representation vector $z\in\mathbb{R}^{d}$ . For every $m\in\mathcal{M}$ , ${\rm Matryoshka~Representation~Learning}$ ( ${\rm MRL}$ ) enables each of the first $m$ dimensions of the embedding vector, $z_{1:m}\in\mathbb{R}^{m}$ to be independently capable of being a transferable and general purpose representation of the datapoint $x$ . We obtain $z$ using a deep neural network $F(\,\cdot\,;\theta_{F})\colon\mathcal{X}\rightarrow\mathbb{R}^{d}$ parameterized by learnable weights $\theta_{F}$ , i.e., $z\coloneqq F(x;\theta_{F})$ . The multi-granularity is captured through the set of the chosen dimensions $\mathcal{M}$ , that contains less than $\log(d)$ elements, i.e., $\lvert\mathcal{M}\rvert\leq\left\lfloor\log(d)\right\rfloor$ . The usual set $\mathcal{M}$ consists of consistent halving until the representation size hits a low information bottleneck. We discuss the design choices in Section 4 for each of the representation learning settings.

For the ease of exposition, we present the formulation for fully supervised representation learning via multi-class classification. ${\rm Matryoshka~Representation~Learning}$ modifies the typical setting to become a multi-scale representation learning problem on the same task. For example, we train ResNet50 [29] on ImageNet-1K [76] which embeds a $224\times 224$ pixel image into a $d=2048$ representation vector and then passed through a linear classifier to make a prediction, $\hat{y}$ among the $L=1000$ labels. For ${\rm MRL}$ , we choose $\mathcal{M}=\{8,16,\ldots,1024,2048\}$ as the nesting dimensions.

Suppose we are given a labelled dataset $\mathcal{D}=\{(x_{1},y_{1}),\ldots,(x_{N},y_{N})\}$ where $x_{i}\in\mathcal{X}$ is an input point and $y_{i}\in[L]$ is the label of $x_{i}$ for all $i\in[N]$ . ${\rm MRL}$ optimizes the multi-class classification loss for each of the nested dimension $m\in\mathcal{M}$ using standard empirical risk minimization using a separate linear classifier, parameterized by $\mathbf{W}^{(m)}\in\mathbb{R}^{L\times m}$ . All the losses are aggregated after scaling with their relative importance $\left(c_{m}\geq 0\right)_{m\in\mathcal{M}}$ respectively. That is, we solve

$$

\min_{\left\{\mathbf{W}^{(m)}\right\}_{m\in\mathcal{M}},\ \theta_{F}}\frac{1}{N}\sum_{i\in[N]}\sum_{m\in\mathcal{M}}c_{m}\cdot{\cal L}\left(\mathbf{W}^{(m)}\cdot F(x_{i};\theta_{F})_{1:m}\ ;\ y_{i}\right)\ , \tag{1}

$$

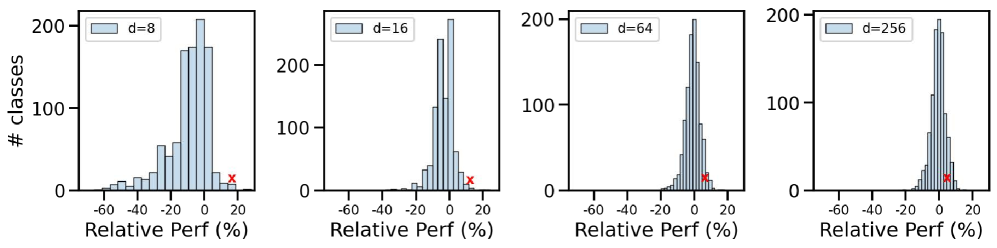

where ${\cal L}\colon\mathbb{R}^{L}\times[L]\to\mathbb{R}_{+}$ is the multi-class softmax cross-entropy loss function. This is a standard optimization problem that can be solved using sub-gradient descent methods. We set all the importance scales, $c_{m}=1$ for all $m\in\mathcal{M}$ ; see Section 5 for ablations. Lastly, despite only optimizing for $O(\log(d))$ nested dimensions, ${\rm MRL}$ results in accurate representations, that interpolate, for dimensions that fall between the chosen granularity of the representations (Section 4.2).

We call this formulation as ${\rm Matryoshka~Representation~Learning}$ ( ${\rm MRL}$ ). A natural way to make this efficient is through weight-tying across all the linear classifiers, i.e., by defining $\mathbf{W}^{(m)}=\mathbf{W}_{1:m}$ for a set of common weights $\mathbf{W}\in\mathbb{R}^{L\times d}$ . This would reduce the memory cost due to the linear classifiers by almost half, which would be crucial in cases of extremely large output spaces [89, 99]. This variant is called Efficient ${\rm Matryoshka~Representation~Learning}$ ( ${\rm MRL\text{--}E}$ ). Refer to Alg 1 and Alg 2 in Appendix A for the building blocks of ${\rm Matryoshka~Representation~Learning}$ ( ${\rm MRL}$ ).

Adaptation to Learning Frameworks.

${\rm MRL}$ can be adapted seamlessly to most representation learning frameworks at web-scale with minimal modifications (Section 4.1). For example, ${\rm MRL}$ ’s adaptation to masked language modelling reduces to ${\rm MRL\text{--}E}$ due to the weight-tying between the input embedding matrix and the linear classifier. For contrastive learning, both in context of vision & vision + language, ${\rm MRL}$ is applied to both the embeddings that are being contrasted with each other. The presence of normalization on the representation needs to be handled independently for each of the nesting dimension for best results (see Appendix C for more details).

## 4 Applications

In this section, we discuss ${\rm Matryoshka~Representation~Learning}$ ( ${\rm MRL}$ ) for a diverse set of applications along with an extensive evaluation of the learned multifidelity representations. Further, we showcase the downstream applications of the learned ${\rm Matryoshka~Representations}$ for flexible large-scale deployment through (a) Adaptive Classification (AC) and (b) Adaptive Retrieval (AR).

<details>

<summary>x9.png Details</summary>

### Visual Description

## Line Graph: Top-1 Accuracy vs. Representation Size

### Overview

The image is a line graph comparing the Top-1 Accuracy (%) of six different methods (MRL, MRL-E, FF, SVD, Slim. Net, Rand. LP) across varying Representation Sizes (8 to 2048). The graph shows how accuracy improves or plateaus as representation size increases.

### Components/Axes

- **X-axis (Horizontal)**: Representation Size (logarithmic scale: 8, 16, 32, 64, 128, 256, 512, 1024, 2048).

- **Y-axis (Vertical)**: Top-1 Accuracy (%) (linear scale: 40% to 80%).

- **Legend**: Located in the top-right corner, mapping colors/symbols to methods:

- **Blue (solid line with circles)**: MRL

- **Orange (dashed line with triangles)**: MRL-E

- **Green (dotted line with triangles)**: FF

- **Red (dash-dot line with hexagons)**: SVD

- **Purple (dash-dot line with crosses)**: Slim. Net

- **Brown (solid line with crosses)**: Rand. LP

### Detailed Analysis

1. **MRL (Blue)**: Starts at ~65% accuracy at size 8, rises steadily to ~75% by size 16, and plateaus near 75% for larger sizes.

2. **MRL-E (Orange)**: Begins at ~55% at size 8, increases sharply to ~70% by size 16, and plateaus near 70% for larger sizes.

3. **FF (Green)**: Starts at ~60% at size 8, rises to ~70% by size 16, and plateaus near 70% for larger sizes.

4. **SVD (Red)**: Begins at ~50% at size 8, rises steeply to ~70% by size 128, and plateaus near 70% for larger sizes.

5. **Slim. Net (Purple)**: Starts at ~45% at size 8, increases gradually to ~70% by size 1024, and plateaus near 70% for larger sizes.

6. **Rand. LP (Brown)**: Starts at ~40% at size 8, rises sharply to ~70% by size 1024, and plateaus near 70% for larger sizes.

### Key Observations

- **Performance Trends**: All methods improve accuracy with larger representation sizes, but MRL and MRL-E achieve the highest initial accuracy and plateau earlier.

- **Steepest Growth**: Rand. LP and SVD show the most significant improvement as representation size increases, though they start from lower baselines.

- **Plateaus**: Most methods plateau near 70–75% accuracy, suggesting diminishing returns at larger sizes.

- **Outliers**: Slim. Net and Rand. LP lag behind others initially but catch up at larger sizes.

### Interpretation

The graph demonstrates that **MRL and MRL-E** are the most efficient methods, achieving high accuracy even at smaller representation sizes. In contrast, **Rand. LP** and **SVD** require larger representations to reach comparable performance, indicating they may be less efficient but potentially more scalable. The plateauing trends suggest that increasing representation size beyond a certain point yields minimal accuracy gains for most methods. This could inform trade-offs between computational cost and performance in practical applications.

</details>

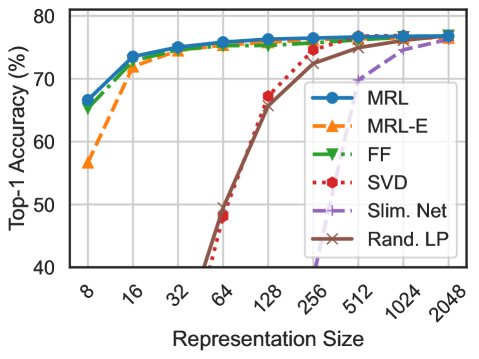

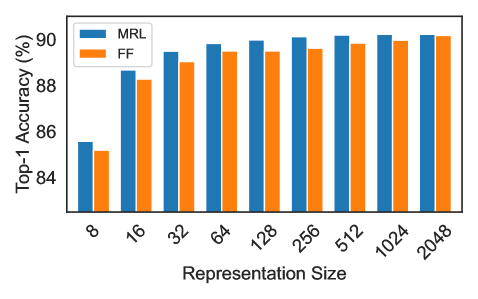

Figure 2: ImageNet-1K linear classification accuracy of ResNet50 models. ${\rm MRL}$ is as accurate as the independently trained FF models for every representation size.

<details>

<summary>x10.png Details</summary>

### Visual Description

## Line Graph: 1-NN Accuracy vs. Representation Size

### Overview

The image is a line graph comparing the 1-NN (1-Nearest Neighbor) accuracy of various algorithms as a function of representation size. The x-axis represents representation size (in powers of 2: 8, 16, 32, ..., 2048), and the y-axis represents accuracy percentage (40% to 70%). Six algorithms are compared: MRL, MRL-E, FF, SVD, Slim. Net, and Rand. FS. Each algorithm is represented by a distinct line style and color.

---

### Components/Axes

- **X-axis (Representation Size)**:

- Labels: 8, 16, 32, 64, 128, 256, 512, 1024, 2048.

- Scale: Logarithmic (powers of 2).

- **Y-axis (1-NN Accuracy (%))**:

- Labels: 40%, 50%, 60%, 70%.

- Scale: Linear (0% to 70%).

- **Legend**:

- Position: Right side of the graph.

- Entries:

- **MRL**: Solid blue line with circles.

- **MRL-E**: Dashed orange line with triangles.

- **FF**: Dotted green line with triangles.

- **SVD**: Dash-dot red line with hexagons.

- **Slim. Net**: Dash-dot purple line with crosses.

- **Rand. FS**: Solid brown line with crosses.

---

### Detailed Analysis

1. **MRL (Solid Blue)**:

- Starts at ~62% accuracy at 8x representation size.

- Increases steadily to ~70% by 32x, then plateaus.

- Maintains ~70% accuracy for larger sizes (64x–2048x).

2. **MRL-E (Dashed Orange)**:

- Starts at ~58% at 8x.

- Rises to ~68% by 32x, then plateaus.

- Slightly lower than MRL for all sizes.

3. **FF (Dotted Green)**:

- Starts at ~59% at 8x.

- Increases to ~69% by 32x, then plateaus.

- Similar performance to MRL but slightly lower.

4. **SVD (Dash-Dot Red)**:

- Starts at ~45% at 8x.

- Sharp increase to ~65% by 32x, then plateaus.

- Highest initial growth rate but lower plateau than MRL/FF.

5. **Slim. Net (Dash-Dot Purple)**:

- Starts at ~55% at 8x.

- Increases to ~67% by 32x, then plateaus.

- Moderate growth rate and lower plateau than MRL/FF.

6. **Rand. FS (Solid Brown)**:

- Starts at ~50% at 8x.

- Rapid increase to ~70% by 32x, then plateaus.

- Matches MRL/FF performance at larger sizes despite slow start.

---

### Key Observations

- **Trend Verification**:

- All algorithms show **increasing accuracy** with larger representation sizes, but the rate of improvement varies.

- **SVD** and **Rand. FS** exhibit the steepest initial growth, while **MRL** and **FF** achieve the highest plateau.

- **MRL-E** and **Slim. Net** have moderate growth and lower plateaus.

- **Notable Patterns**:

- **MRL** and **FF** consistently outperform others at larger sizes.

- **SVD** and **Rand. FS** show strong scalability but require larger representation sizes to reach peak performance.

- **Slim. Net** and **MRL-E** lag behind in both growth and final accuracy.

---

### Interpretation

The data suggests that **MRL** and **FF** are the most effective algorithms for 1-NN tasks, achieving high accuracy even at smaller representation sizes. **SVD** and **Rand. FS** demonstrate strong scalability but require larger data representations to match the performance of MRL/FF. The **Slim. Net** and **MRL-E** algorithms show moderate performance, indicating potential inefficiencies in their design or training. The sharp initial growth of **SVD** and **Rand. FS** implies they may be particularly sensitive to representation size, possibly due to their reliance on specific features or dimensionality reduction techniques. Overall, the graph highlights the trade-off between representation size and algorithmic efficiency in 1-NN tasks.

</details>

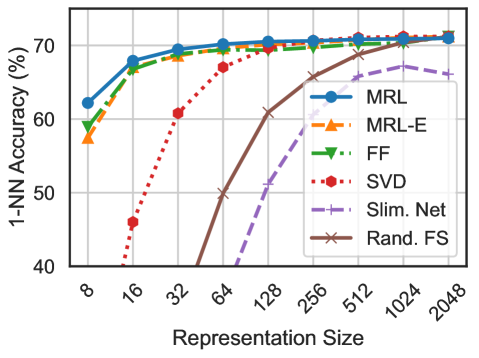

Figure 3: ImageNet-1K 1-NN accuracy of ResNet50 models measuring the representation quality for downstream task. ${\rm MRL}$ outperforms all the baselines across all representation sizes.

### 4.1 Representation Learning

We adapt ${\rm Matryoshka~Representation~Learning}$ ( ${\rm MRL}$ ) to various representation learning setups (a) Supervised learning for vision: ResNet50 [29] on ImageNet-1K [76] and ViT-B/16 [22] on JFT-300M [85], (b) Contrastive learning for vision + language: ALIGN model with ViT-B/16 vision encoder and BERT language encoder on ALIGN data [46] and (c) Masked language modelling: BERT [19] on English Wikipedia and BooksCorpus [102]. Please refer to Appendices B and C for details regarding the model architectures, datasets and training specifics.

We do not search for best hyper-parameters for all ${\rm MRL}$ experiments but use the same hyper-parameters as the independently trained baselines. ResNet50 outputs a $2048$ -dimensional representation while ViT-B/16 and BERT-Base output $768$ -dimensional embeddings for each data point. We use $\mathcal{M}=\{8,16,32,64,128,256,512,1024,2048\}$ and $\mathcal{M}=\{12,24,48,96,192,384,768\}$ as the explicitly optimized nested dimensions respectively. Lastly, we extensively compare the ${\rm MRL}$ and ${\rm MRL\text{--}E}$ models to independently trained low-dimensional (fixed feature) representations (FF), dimensionality reduction (SVD), sub-net method (slimmable networks [100]) and randomly selected features of the highest capacity FF model.

In section 4.2, we evaluate the quality and capacity of the learned representations through linear classification/probe (LP) and 1-nearest neighbour (1-NN) accuracy. Experiments show that ${\rm MRL}$ models remove the dependence on $|\mathcal{M}|$ resource-intensive independently trained models for the coarse-to-fine representations while being as accurate. Lastly, we show that despite optimizing only for $|\mathcal{M}|$ dimensions, ${\rm MRL}$ models diffuse the information, in an interpolative fashion, across all the $d$ dimensions providing the finest granularity required for adaptive deployment.

### 4.2 Classification

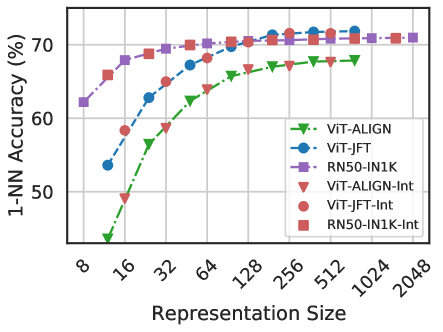

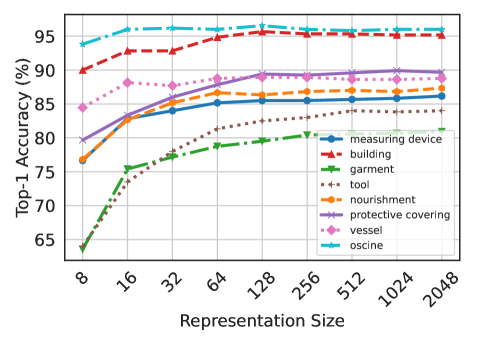

Figure 3 compares the linear classification accuracy of ResNet50 models trained and evaluated on ImageNet-1K. ResNet50– ${\rm MRL}$ model is at least as accurate as each FF model at every representation size in $\mathcal{M}$ while ${\rm MRL\text{--}E}$ is within $1\$ starting from $16$ -dim. Similarly, Figure 3 showcases the comparison of learned representation quality through 1-NN accuracy on ImageNet-1K (trainset with 1.3M samples as the database and validation set with 50K samples as the queries). ${\rm Matryoshka~Representations}$ are up to $2\$ more accurate than their fixed-feature counterparts for the lower-dimensions while being as accurate elsewhere. 1-NN accuracy is an excellent proxy, at no additional training cost, to gauge the utility of learned representations in the downstream tasks.

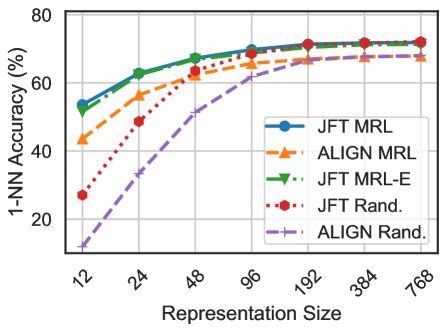

We also evaluate the quality of the representations from training ViT-B/16 on JFT-300M alongside the ViT-B/16 vision encoder of the ALIGN model – two web-scale setups. Due to the expensive nature of these experiments, we only train the highest capacity fixed feature model and choose random features for evaluation in lower-dimensions. Web-scale is a compelling setting for ${\rm MRL}$ due to its relatively inexpensive training overhead while providing multifidelity representations for downstream tasks. Figure 5, evaluated with 1-NN on ImageNet-1K, shows that all the ${\rm MRL}$ models for JFT and ALIGN are highly accurate while providing an excellent cost-vs-accuracy trade-off at lower-dimensions. These experiments show that ${\rm MRL}$ seamlessly scales to large-scale models and web-scale datasets while providing the otherwise prohibitively expensive multi-granularity in the process. We also have similar observations when pretraining BERT; please see Appendix D.2 for more details.

<details>

<summary>x11.png Details</summary>

### Visual Description

## Line Graph: 1-NN Accuracy vs. Representation Size

### Overview

The image is a line graph comparing the 1-NN (1-Nearest Neighbor) classification accuracy of five different methods across varying representation sizes. The x-axis represents representation size (logarithmically scaled), and the y-axis shows accuracy percentage. Five data series are plotted with distinct line styles and markers, each corresponding to a method in the legend.

### Components/Axes

- **X-axis (Representation Size)**: Logarithmic scale with markers at 12, 24, 48, 96, 192, 384, and 768.

- **Y-axis (1-NN Accuracy %)**: Linear scale from 0% to 80% in 20% increments.

- **Legend**: Located in the top-right corner, with five entries:

- **JFT MRL**: Solid blue line with circle markers.

- **ALIGN MRL**: Dashed orange line with triangle markers.

- **JFT MRL-E**: Dotted green line with arrow markers.

- **JFT Rand.**: Dotted red line with hexagon markers.

- **ALIGN Rand.**: Dashed purple line with plus markers.

### Detailed Analysis

1. **JFT MRL (Blue)**:

- Starts at ~50% accuracy at 12 representation size.

- Increases steadily to ~70% at 768.

- Slope is consistent, with minimal fluctuation.

2. **ALIGN MRL (Orange)**:

- Begins at ~40% at 12, rising to ~65% at 768.

- Slope is slightly steeper than JFT MRL but plateaus near 65% after 192.

3. **JFT MRL-E (Green)**:

- Similar trend to JFT MRL but consistently ~5% lower.

- Starts at ~45% at 12, reaching ~65% at 768.

4. **JFT Rand. (Red)**:

- Starts at ~25% at 12, rising sharply to ~60% at 768.

- Slope is steeper than JFT MRL-E but less consistent.

5. **ALIGN Rand. (Purple)**:

- Begins at ~10% at 12, increasing to ~60% at 768.

- Slope is the steepest among all series but shows variability.

### Key Observations

- **Performance Trends**: All methods improve with larger representation sizes, but the rate of improvement diminishes as size increases.

- **JFT MRL vs. ALIGN MRL**: JFT MRL consistently outperforms ALIGN MRL across all sizes, though the gap narrows at larger sizes.

- **Random Baselines**: Both JFT Rand. and ALIGN Rand. start significantly lower but converge with non-random methods at larger sizes (~60% at 768).

- **JFT MRL-E**: Underperforms JFT MRL but outperforms all random methods.

### Interpretation

The data suggests that **JFT MRL** is the most robust method, maintaining high accuracy across representation sizes. **ALIGN MRL** and **JFT MRL-E** show comparable performance to JFT MRL but with slight trade-offs in consistency or magnitude. The random methods (JFT Rand. and ALIGN Rand.) demonstrate that structured approaches (MRL) significantly outperform random baselines, especially at smaller representation sizes. The convergence of random methods at larger sizes implies diminishing returns for structured methods as representation complexity increases. This could indicate that larger representations inherently capture more discriminative features, reducing the need for sophisticated alignment or error-correction mechanisms.

</details>

Figure 4: ImageNet-1K 1-NN accuracy for ViT-B/16 models trained on JFT-300M & as part of ALIGN. ${\rm MRL}$ scales seamlessly to web-scale with minimal training overhead.

<details>

<summary>x12.png Details</summary>

### Visual Description

## Line Graph: 1-NN Accuracy vs. Representation Size

### Overview

The image is a line graph comparing the 1-NN accuracy of different models across varying representation sizes. The x-axis represents representation size (8 to 2048), and the y-axis shows accuracy percentage (50% to 70%). Six data series are plotted with distinct line styles and markers, each corresponding to a model or variant.

### Components/Axes

- **X-axis (Representation Size)**: Logarithmic scale from 8 to 2048 (values: 8, 16, 32, 64, 128, 256, 512, 1024, 2048).

- **Y-axis (1-NN Accuracy %)**: Linear scale from 50% to 70%.

- **Legend**: Located in the bottom-right corner, mapping colors/markers to models:

- **Green dashed line with triangles**: ViT-ALIGN

- **Blue dashed line with circles**: ViT-JFT

- **Purple dashed line with squares**: RN50-IN1K

- **Green dashed line with triangles**: ViT-ALIGN-Int

- **Blue dashed line with circles**: ViT-JFT-Int

- **Purple dashed line with squares**: RN50-IN1K-Int

### Detailed Analysis

1. **ViT-ALIGN** (green dashed line with triangles):

- Starts at ~50% accuracy at 8 representation size.

- Gradually increases to ~65% at 2048.

- Slowest growth among all series.

2. **ViT-JFT** (blue dashed line with circles):

- Begins at ~55% at 8.

- Rises sharply to ~70% by 128.

- Plateaus near 70% for larger sizes.

3. **RN50-IN1K** (purple dashed line with squares):

- Starts at ~60% at 8.

- Increases to ~70% by 32.

- Remains stable at ~70% for larger sizes.

4. **ViT-ALIGN-Int** (green dashed line with triangles):

- Identical trend to ViT-ALIGN (same color/marker).

- Starts at ~50% and reaches ~65% at 2048.

5. **ViT-JFT-Int** (blue dashed line with circles):

- Matches ViT-JFT trend (same color/marker).

- Starts at ~55% and plateaus at ~70%.

6. **RN50-IN1K-Int** (purple dashed line with squares):

- Mirrors RN50-IN1K (same color/marker).

- Starts at ~60% and stabilizes at ~70%.

### Key Observations

- **Trend Consistency**: All models show increasing accuracy with larger representation sizes, but the rate of improvement varies.

- **Performance Hierarchy**: RN50-IN1K variants outperform ViT models, which outperform ViT-ALIGN variants.

- **Legend Ambiguity**: The "Int" variants (e.g., ViT-ALIGN-Int) share identical line styles and trends with their non-"Int" counterparts, suggesting potential labeling errors or redundancy.

- **Plateau Effect**: RN50-IN1K models plateau earlier (by 32) compared to ViT-JFT (128) and ViT-ALIGN (2048).

### Interpretation

The data demonstrates that larger representation sizes improve 1-NN accuracy across all models, with RN50-IN1K achieving the highest performance. The "Int" variants appear to replicate the trends of their base models, raising questions about their distinctiveness. The steepest growth is observed in ViT-JFT, suggesting it benefits most from increased representation size. The RN50-IN1K models’ early plateau indicates diminishing returns at smaller sizes, while ViT-ALIGN’s gradual improvement highlights its sensitivity to representation scale. The legend’s duplication of styles for "Int" variants warrants verification, as it may obscure meaningful distinctions between model configurations.

</details>

Figure 5: Despite optimizing ${\rm MRL}$ only for $O(\log(d))$ dimensions for ResNet50 and ViT-B/16 models; the accuracy in the intermediate dimensions shows interpolating behaviour.

Our experiments also show that post-hoc compression (SVD), linear probe on random features, and sub-net style slimmable networks drastically lose accuracy compared to ${\rm MRL}$ as the representation size decreases. Finally, Figure 5 shows that, while ${\rm MRL}$ explicitly optimizes $O(\log(d))$ nested representations – removing the $O(d)$ dependence [73] –, the coarse-to-fine grained information is interpolated across all $d$ dimensions providing highest flexibility for adaptive deployment.

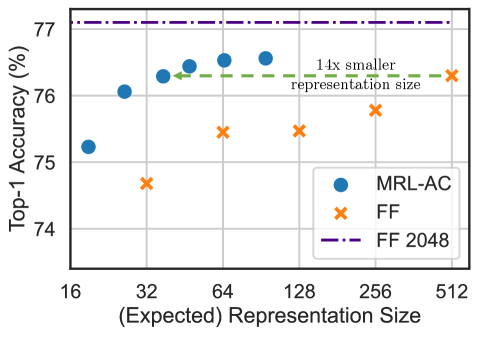

#### 4.2.1 Adaptive Classification

The flexibility and coarse-to-fine granularity within ${\rm Matryoshka~Representations}$ allows model cascades [90] for Adaptive Classification (AC) [28]. Unlike standard model cascades [95], ${\rm MRL}$ does not require multiple expensive neural network forward passes. To perform AC with an ${\rm MRL}$ trained model, we learn thresholds on the maximum softmax probability [33] for each nested classifier on a holdout validation set. We then use these thresholds to decide when to transition to the higher dimensional representation (e.g $8\to 16\to 32$ ) of the ${\rm MRL}$ model. Appendix D.1 discusses the implementation and learning of thresholds for cascades used for adaptive classification in detail.

Figure 7 shows the comparison between cascaded ${\rm MRL}$ representations ( ${\rm MRL}$ –AC) and independently trained fixed feature (FF) models on ImageNet-1K with ResNet50. We computed the expected representation size for ${\rm MRL}$ –AC based on the final dimensionality used in the cascade. We observed that ${\rm MRL}$ –AC was as accurate, $76.30\$ , as a 512-dimensional FF model but required an expected dimensionality of $\sim 37$ while being only $0.8\$ lower than the 2048-dimensional FF baseline. Note that all ${\rm MRL}$ –AC models are significantly more accurate than the FF baselines at comparable representation sizes. ${\rm MRL}$ –AC uses up to $\sim 14\times$ smaller representation size for the same accuracy which affords computational efficiency as the label space grows [89]. Lastly, our results with ${\rm MRL}$ –AC indicate that instances and classes vary in difficulty which we analyze in Section 5 and Appendix J.

### 4.3 Retrieval

Nearest neighbour search with learned representations powers a plethora of retrieval and search applications [15, 91, 11, 66]. In this section, we discuss the image retrieval performance of the pretrained ResNet50 models (Section 4.1) on two large-scale datasets ImageNet-1K [76] and ImageNet-4K. ImageNet-1K has a database size of $\sim$ 1.3M and a query set of 50K samples uniformly spanning 1000 classes. We also introduce ImageNet-4K which has a database size of $\sim$ 4.2M and query set of $\sim$ 200K samples uniformly spanning 4202 classes (see Appendix B for details). A single forward pass on ResNet50 costs 4 GFLOPs while exact retrieval costs 2.6 GFLOPs per query for ImageNet-1K. Although retrieval overhead is $40\$ of the total cost, retrieval cost grows linearly with the size of the database. ImageNet-4K presents a retrieval benchmark where the exact search cost becomes the computational bottleneck ( $8.6$ GFLOPs per query). In both these settings, the memory and disk usage are also often bottlenecked by the large databases. However, in most real-world applications exact search, $O(dN)$ , is replaced with an approximate nearest neighbor search (ANNS) method like HNSW [62], $O(d\log(N))$ , with minimal accuracy drop at the cost of additional memory overhead.

The goal of image retrieval is to find images that belong to the same class as the query using representations obtained from a pretrained model. In this section, we compare retrieval performance using mean Average Precision @ 10 (mAP@ $10$ ) which comprehensively captures the setup of relevant image retrieval at scale. We measure the cost per query using exact search in MFLOPs. All embeddings are unit normalized and retrieved using the L2 distance metric. Lastly, we report an extensive set of metrics spanning mAP@ $k$ and P@ $k$ for $k=\{10,25,50,100\}$ and real-world wall-clock times for exact search and HNSW. See Appendices E and F for more details.

<details>

<summary>x13.png Details</summary>

### Visual Description

## Line Chart: Top-1 Accuracy vs. Representation Size

### Overview

The chart compares the Top-1 Accuracy (%) of two models (MRL-AC and FF) across varying representation sizes, with annotations for a baseline (FF 2048) and a reference point ("14x smaller representation size"). The x-axis represents representation size, and the y-axis shows accuracy.

### Components/Axes

- **X-axis (Horizontal)**: "(Expected) Representation Size" with values: 16, 32, 64, 128, 256, 512.

- **Y-axis (Vertical)**: "Top-1 Accuracy (%)" with values from 74% to 77%.

- **Legend**: Located in the bottom-right corner, associating:

- Blue circles: MRL-AC

- Orange crosses: FF

- Purple dashed line: FF 2048

- **Annotations**:

- Green dashed line labeled "14x smaller representation size" at x=32.

- Purple dashed line labeled "FF 2048" at y=77%.

### Detailed Analysis

- **MRL-AC (Blue Circles)**:

- Data points: (16, 75.2%), (32, 76.1%), (64, 76.3%), (128, 76.4%), (256, 76.5%), (512, 76.6%).

- Trend: Slight upward trajectory as representation size increases.

- **FF (Orange Crosses)**:

- Data points: (32, 74.8%), (64, 75.3%), (128, 75.5%), (256, 75.7%), (512, 75.9%).

- Trend: Gradual upward trend but consistently below MRL-AC.

- **FF 2048 (Purple Dashed Line)**: Horizontal line at 77%, above all data points.

- **Green Dashed Line**: Vertical reference at x=32, labeled "14x smaller representation size."

### Key Observations

1. **MRL-AC outperforms FF** across all representation sizes, with a maximum accuracy of 76.6% vs. FF's 75.9%.

2. **FF 2048 baseline** (77%) is unattained by either model, suggesting it represents an ideal or theoretical limit.

3. **14x smaller representation size** at x=32 aligns with MRL-AC's 76.1% accuracy, indicating efficiency at reduced scale.

4. **FF's performance** improves with larger representation sizes but remains inferior to MRL-AC.

### Interpretation

The data demonstrates that MRL-AC achieves higher accuracy than FF across all tested representation sizes, with a consistent gap of ~0.3–0.5%. The FF 2048 line (77%) acts as a ceiling, implying potential for further optimization. The "14x smaller representation size" annotation at x=32 highlights MRL-AC's efficiency, maintaining strong performance even at reduced scale. This suggests MRL-AC may be more robust or optimized for resource-constrained scenarios compared to FF.

</details>

Figure 6: Adaptive classification on ${\rm MRL}$ ResNet50 using cascades results in $14\times$ smaller representation size for the same level of accuracy on ImageNet-1K ( $\sim 37$ vs $512$ dims for $76.3\$ ).

<details>

<summary>x14.png Details</summary>

### Visual Description

## Line Graph: mAP@10% Performance vs. Representation Size

### Overview

The graph compares the mean Average Precision at 10% (mAP@10%) performance of six different methods across varying representation sizes (8 to 2048). The y-axis ranges from 40% to 65%, while the x-axis uses logarithmic scaling (powers of 2). All lines show distinct trends, with some plateauing early and others improving significantly with larger representations.

### Components/Axes

- **X-axis (Representation Size)**: Logarithmic scale from 8 to 2048 (steps: 8, 16, 32, 64, 128, 256, 512, 1024, 2048).

- **Y-axis (mAP@10%)**: Linear scale from 40% to 65%.

- **Legend**: Located in the top-right corner, mapping colors and line styles to methods:

- **MRL**: Blue solid line with circles.

- **MRL-E**: Orange dashed line with triangles.

- **FF**: Green dash-dot line with downward triangles.

- **SVD**: Red dotted line with hexagons.

- **Slim. Net**: Purple dash-dot line with crosses.

- **Rand. FS**: Brown solid line with crosses.

### Detailed Analysis

1. **MRL (Blue)**: Starts at ~57% at 8, rises sharply to ~64% by 16, then plateaus. Maintains ~64% across all larger sizes.

2. **MRL-E (Orange)**: Begins at ~52% at 8, increases to ~63% by 16, then plateaus. Slightly below MRL but stable.

3. **FF (Green)**: Starts at ~54% at 8, peaks at ~63% at 16, then dips slightly (~62%) by 2048.

4. **SVD (Red)**: Begins at ~50% at 8, rises to ~62% by 128, then dips to ~60% by 2048.

5. **Slim. Net (Purple)**: Starts at ~53% at 8, peaks at ~61% at 128, then declines to ~58% by 2048.

6. **Rand. FS (Brown)**: Starts at ~40% at 8, rises sharply to ~63% by 2048, showing the steepest improvement.

### Key Observations

- **Early Performance**: MRL and MRL-E dominate at smaller representation sizes (8–16), achieving ~64% mAP@10%.

- **Scalability**: Rand. FS improves dramatically with larger representations, suggesting it benefits from increased data.

- **Dips**: SVD and Slim. Net show performance declines after 128, possibly due to overfitting or inefficiency at higher sizes.

- **Consistency**: MRL and MRL-E maintain stable performance across all sizes, indicating robustness.

### Interpretation

The data suggests that **MRL and MRL-E** are the most efficient methods for small-to-medium representation sizes, while **Rand. FS** excels with larger datasets. The decline in SVD and Slim. Net at higher sizes may indicate suboptimal scaling or overfitting. The logarithmic x-axis highlights that performance gains are most pronounced in the early stages for most methods, except Rand. FS, which shows linear improvement. This could imply that Rand. FS is better suited for high-dimensional data, whereas MRL/MRL-E are optimized for compact representations.

</details>

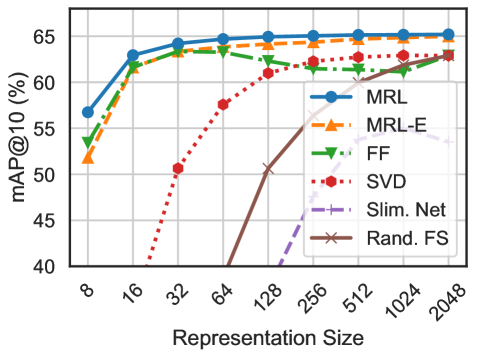

Figure 7: mAP@ $10$ for Image Retrieval on ImageNet-1K with ResNet50. ${\rm MRL}$ consistently produces better retrieval performance over the baselines across all the representation sizes.

Figure 7 compares the mAP@ $10$ performance of ResNet50 representations on ImageNet-1K across dimensionalities for ${\rm MRL}$ , ${\rm MRL\text{--}E}$ , FF, slimmable networks along with post-hoc compression of vectors using SVD and random feature selection. ${\rm Matryoshka~Representations}$ are often the most accurate while being up to $3\$ better than the FF baselines. Similar to classification, post-hoc compression and slimmable network baselines suffer from significant drop-off in retrieval mAP@ $10$ with $\leq 256$ dimensions. Appendix E discusses the mAP@ $10$ of the same models on ImageNet-4K.

${\rm MRL}$ models are capable of performing accurate retrieval at various granularities without the additional expense of multiple model forward passes for the web-scale databases. FF models also generate independent databases which become prohibitively expense to store and switch in between. ${\rm Matryoshka~Representations}$ enable adaptive retrieval (AR) which alleviates the need to use full-capacity representations, $d=2048$ , for all data and downstream tasks. Lastly, all the vector compression techniques [60, 45] used as part of the ANNS pipelines are complimentary to ${\rm Matryoshka~Representations}$ and can further improve the efficiency-vs-accuracy trade-off.

#### 4.3.1 Adaptive Retrieval

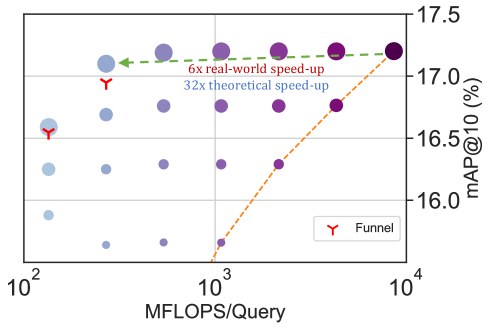

We benchmark ${\rm MRL}$ in the adaptive retrieval setting (AR) [50]. For a given query image, we obtained a shortlist, $K=200$ , of images from the database using a lower-dimensional representation, e.g. $D_{s}=16$ followed by reranking with a higher capacity representation, e.g. $D_{r}=2048$ . In real-world scenarios where top ranking performance is the key objective, measured with mAP@ $k$ where k covers a limited yet crucial real-estate, AR provides significant compute and memory gains over single-shot retrieval with representations of fixed dimensionality. Finally, the most expensive part of AR, as with any retrieval pipeline, is the nearest neighbour search for shortlisting. For example, even naive re-ranking of 200 images with 2048 dimensions only costs 400 KFLOPs. While we report exact search cost per query for all AR experiments, the shortlisting component of the pipeline can be sped-up using ANNS (HNSW). Appendix I has a detailed discussion on compute cost for exact search, memory overhead of HNSW indices and wall-clock times for both implementations. We note that using HNSW with 32 neighbours for shortlisting does not decrease accuracy during retrieval.

|

<details>

<summary>x15.png Details</summary>

### Visual Description

## Scatter Plot: mAP@10 vs. MFLOPS/Query with Speed-Up Trends

### Overview

The image is a scatter plot comparing **mAP@10 (%)** (mean Average Precision at 10 results) against **MFLOPS/Query** (millions of floating-point operations per query). It includes two trend lines representing theoretical and real-world speed-up factors, along with annotated data points and a "Funnel" marker.

---

### Components/Axes

- **X-axis**: "MFLOPS/Query" (logarithmic scale: 10² to 10³).

- **Y-axis**: "mAP@10 (%)" (linear scale: 64.9 to 65.3).

- **Legend**:

- **Red "Y"**: Labeled "Funnel" (positioned at the lower-left cluster of data points).

- **Dashed green line**: "128x theoretical speed-up" (top-left to bottom-right).

- **Dotted orange line**: "14x real-world speed-up" (bottom-left to top-right).

---

### Detailed Analysis

1. **Data Points**:

- **Blue dots**: Clustered at lower MFLOPS/Query values (10² to ~10².5), with mAP@10 ranging from 65.0 to 65.2.

- **Purple dots**: Spread across higher MFLOPS/Query values (10².5 to 10³), with mAP@10 between 65.0 and 65.2.

- **Red "Y" markers**: Located at the lowest MFLOPS/Query (10²) and highest mAP@10 (65.2), suggesting a focal point for the "Funnel" model.

2. **Trend Lines**:

- **Green dashed line ("128x theoretical speed-up")**:

- Starts at ~65.3 mAP@10 (10² MFLOPS/Query) and slopes downward to ~65.1 at 10³ MFLOPS/Query.

- Indicates a **theoretical degradation** in performance as computational power increases.

- **Orange dotted line ("14x real-world speed-up")**:

- Begins at ~65.0 mAP@10 (10² MFLOPS/Query) and rises to ~65.2 at 10³ MFLOPS/Query.

- Shows a **real-world improvement** in performance with higher MFLOPS/Query.

3. **Annotations**:

- A green arrow points to the highest mAP@10 value (65.3) at the lowest MFLOPS/Query (10²), emphasizing the theoretical peak.

- The red "Y" marker is explicitly labeled "Funnel," likely representing a specific optimization or benchmark.

---

### Key Observations

- **Theoretical vs. Real-World Divergence**:

The green line (theoretical) predicts a **128x speed-up** but shows a **decline in mAP@10** as MFLOPS/Query increases, while the orange line (real-world) demonstrates a **14x speed-up** with **improving mAP@10**.

- **Funnel Marker**: The red "Y" at (10² MFLOPS/Query, 65.2 mAP@10) may represent an optimal balance between computational efficiency and performance.

- **Data Point Distribution**:

- Lower MFLOPS/Query values (10²) cluster around higher mAP@10 (65.2–65.3).

- Higher MFLOPS/Query values (10³) show a mix of mAP@10 values, suggesting diminishing returns or trade-offs.

---

### Interpretation

The chart highlights a **discrepancy between theoretical and real-world performance gains**. While the "128x theoretical speed-up" line assumes ideal conditions, the "14x real-world speed-up" reflects practical constraints (e.g., algorithmic inefficiencies, hardware limitations). The "Funnel" marker (red "Y") likely signifies a critical threshold where computational resources are optimally allocated to maximize mAP@10. The downward trend of the green line suggests that theoretical models may overestimate performance gains, while the upward orange line underscores the importance of real-world validation. The data implies that increasing MFLOPS/Query does not always linearly improve mAP@10, emphasizing the need for balanced system design.

</details>

|

<details>

<summary>x16.png Details</summary>

### Visual Description

## Scatter Plot: Comparison of D_s and D_r Values

### Overview

The image displays a scatter plot comparing two datasets, **D_s** (left column) and **D_r** (right column), with numerical values increasing exponentially from 8 to 2048. Dots represent data points, with size and color gradients corresponding to values. The legend maps colors to specific values, and dot sizes vary between columns.

---

### Components/Axes

- **Axes**:

- **Left Column (D_s)**: Labeled with values 8, 16, 32, 64, 128, 256, 512, 1024, 2048 (y-axis).

- **Right Column (D_r)**: Same numerical values as D_s (y-axis).

- **Legend**:

- Positioned on the right side of the plot.

- Colors range from light gray (8) to dark purple (2048), with intermediate shades for intermediate values.

- Each color corresponds to a specific value (e.g., light blue = 16, dark blue = 2048).

- **Dot Sizes**:

- D_s dots are significantly larger than D_r dots for equivalent values.

- No explicit scale for dot size is provided.

---

### Detailed Analysis

- **D_s Column**:

- Values increase exponentially (powers of 2: 8, 16, 32, ..., 2048).

- Dots are large and densely packed, with colors transitioning from light gray (8) to dark purple (2048).

- Example: The dot for 2048 is the largest and darkest purple.

- **D_r Column**:

- Identical numerical values to D_s but represented by smaller dots.

- Colors follow the same gradient as D_s but are less saturated due to smaller dot size.

- Example: The 2048 value in D_r is a small, dark blue dot.

- **Legend Placement**:

- Located to the right of both columns, aligned vertically.

- Colors are mapped directly to values, with no overlap or ambiguity.

---

### Key Observations

1. **Dot Size Discrepancy**: D_s dots are ~5–10x larger than D_r dots for equivalent values, suggesting a visual emphasis on D_s.

2. **Color Consistency**: Colors in both columns align perfectly with the legend, confirming accurate value representation.

3. **Exponential Scale**: Values double sequentially, indicating a focus on logarithmic or exponential relationships.

4. **No Overlap**: D_s and D_r dots occupy separate vertical columns, preventing direct spatial comparison.

---

### Interpretation

- **Purpose**: The plot likely compares two datasets (D_s and D_r) with identical values but differing magnitudes, as indicated by dot size. D_s may represent a primary metric, while D_r could be a scaled or secondary measurement.

- **Trend**: Both datasets follow an exponential growth pattern, with values doubling at each step. The consistent color gradient across both columns reinforces this trend.

- **Anomalies**: No outliers are present; all values are evenly spaced and follow the expected progression.

- **Design Choice**: The use of dot size to differentiate D_s and D_r (rather than a third axis) simplifies the visualization but may obscure quantitative differences in magnitude.

---

### Technical Notes

- **Language**: English (no non-English text present).

- **Data Structure**:

- Two columns (D_s, D_r) with shared y-axis values.

- Legend acts as a value-to-color mapping.

- **Spatial Grounding**:

- D_s: Left column, large dots.

- D_r: Right column, small dots.

- Legend: Right-aligned, vertical orientation.

</details>

|

<details>

<summary>x17.png Details</summary>

### Visual Description

## Line Chart: Performance Speed-Up Analysis

### Overview

The chart compares real-world and theoretical speed-ups in computational performance, measured by MFLOPS/Query (x-axis) and mAP@10% (y-axis). Two data series are represented: a green dashed line for "6x real-world speed-up" and an orange dashed line for "32x theoretical speed-up." Purple dots labeled "Funnel" are plotted along the green line, while blue dots appear below it. Red "Y" markers highlight specific points.

### Components/Axes

- **X-axis**: "MFLOPS/Query" (log scale: 10² to 10⁴).

- **Y-axis**: "mAP@10 (%)" (linear scale: 16.0 to 17.5).

- **Legend**: Located in the bottom-right corner.

- Red "Y": "Funnel" (data points).

- Green dashed line: "6x real-world speed-up."

- Orange dashed line: "32x theoretical speed-up."

- **Annotations**:

- "6x real-world speed-up" (green dashed line).

- "32x theoretical speed-up" (orange dashed line).

### Detailed Analysis

- **X-axis markers**: 10², 10³, 10⁴.

- **Y-axis markers**: 16.0, 16.5, 17.0, 17.5.

- **Data series**:

- **Green dashed line**: Flat, indicating constant "6x real-world speed-up" across MFLOPS/Query values.

- **Orange dashed line**: Starts at ~10³ MFLOPS/Query, rising steeply to ~10⁴, showing increasing "32x theoretical speed-up."

- **Purple dots ("Funnel")**: Positioned along the green line at MFLOPS/Query values of ~10³ and ~10⁴, with mAP@10% values of ~17.0 and ~17.5.

- **Blue dots**: Located below the green line, with MFLOPS/Query values ranging from ~10² to ~10³ and mAP@10% values between ~16.0 and ~16.5.

- **Red "Y" markers**: Highlight specific points on the green line (e.g., ~10³ MFLOPS/Query, ~17.0 mAP@10%) and below it (e.g., ~10² MFLOPS/Query, ~16.0 mAP@10%).

### Key Observations

1. The green line ("6x real-world speed-up") remains flat, suggesting consistent performance across MFLOPS/Query.

2. The orange line ("32x theoretical speed-up") shows a sharp upward trend, indicating higher theoretical gains at higher MFLOPS/Query.

3. "Funnel" points (purple dots) align with the green line, implying these represent optimal real-world performance.

4. Blue dots (lower mAP@10%) may represent suboptimal or alternative configurations.

5. Red "Y" markers emphasize critical data points, possibly thresholds or benchmarks.

### Interpretation

The chart highlights a disparity between real-world and theoretical performance. The "6x real-world speed-up" (green line) is stable, while the "32x theoretical speed-up" (orange line) suggests significant potential for improvement at higher computational loads. The "Funnel" points on the green line may indicate scenarios where real-world performance matches theoretical expectations, possibly due to optimized configurations. The blue dots below the green line could represent less efficient setups or edge cases. The red "Y" markers likely denote key evaluation points, such as baseline performance or target thresholds. This analysis underscores the gap between theoretical models and practical implementation, emphasizing the need for further optimization to bridge this gap.

</details>

|

| --- | --- | --- |

| (a) ImageNet-1K | | (b) ImageNet-4K |

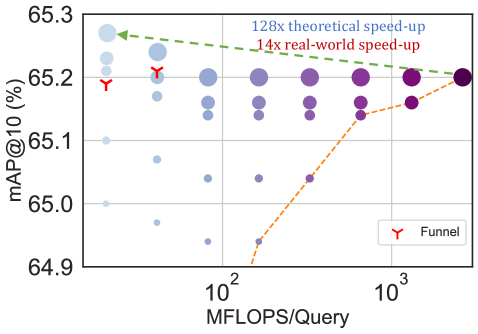

Figure 8: The trade-off between mAP@ $10$ vs MFLOPs/Query for Adaptive Retrieval (AR) on ImageNet-1K (left) and ImageNet-4K (right). Every combination of $D_{s}$ & $D_{r}$ falls above the Pareto line (orange dots) of single-shot retrieval with a fixed representation size while having configurations that are as accurate while being up to $14\times$ faster in real-world deployment. Funnel retrieval is almost as accurate as the baseline while alleviating some of the parameter choices of Adaptive Retrieval.

Figure 8 showcases the compute-vs-accuracy trade-off for adaptive retrieval using ${\rm Matryoshka~Representations}$ compared to single-shot using fixed features with ResNet50 on ImageNet-1K. We observed that all AR settings lied above the Pareto frontier of single-shot retrieval with varying representation sizes. In particular for ImageNet-1K, we show that the AR model with $D_{s}=16$ & $D_{r}=2048$ is as accurate as single-shot retrieval with $d=2048$ while being $\mathbf{\sim 128\times}$ more efficient in theory and $\mathbf{\sim 14\times}$ faster in practice (compared using HNSW on the same hardware). We show similar trends with ImageNet-4K, but note that we require $D_{s}=64$ given the increased difficulty of the dataset. This results in $\sim 32\times$ and $\sim 6\times$ theoretical and in-practice speedups respectively. Lastly, while $K=200$ works well for our adaptive retrieval experiments, we ablated over the shortlist size $k$ in Appendix K.2 and found that the accuracy gains stopped after a point, further strengthening the use-case for ${\rm Matryoshka~Representation~Learning}$ and adaptive retrieval.

Even with adaptive retrieval, it is hard to determine the choice of $D_{s}$ & $D_{r}$ . In order to alleviate this issue to an extent, we propose Funnel Retrieval, a consistent cascade for adaptive retrieval. Funnel thins out the initial shortlist by a repeated re-ranking and shortlisting with a series of increasing capacity representations. Funnel halves the shortlist size and doubles the representation size at every step of re-ranking. For example on ImageNet-1K, a funnel with the shortlist progression of $200\to 100\to 50\to 25\to 10$ with the cascade of $16\to 32\to 64\to 128\to 256\to 2048$ representation sizes within ${\rm Matryoshka~Representation}$ is as accurate as the single-shot 2048-dim retrieval while being $\sim 128\times$ more efficient theoretically (see Appendix F for more results). All these results showcase the potential of ${\rm MRL}$ and AR for large-scale multi-stage search systems [15].

## 5 Further Analysis and Ablations

Robustness.

We evaluate the robustness of the ${\rm MRL}$ models trained on ImageNet-1K on out-of-domain datasets, ImageNetV2/R/A/Sketch [72, 34, 35, 94], and compare them to the FF baselines. Table 17 in Appendix H demonstrates that ${\rm Matryoshka~Representations}$ for classification are at least as robust as the original representation while improving the performance on ImageNet-A by $0.6\$ – a $20\$ relative improvement. We also study the robustness in the context of retrieval by using ImageNetV2 as the query set for ImageNet-1K database. Table 9 in Appendix E shows that ${\rm MRL}$ models have more robust retrieval compared to the FF baselines by having up to $3\$ higher mAP@ $10$ performance. This observation also suggests the need for further investigation into robustness using nearest neighbour based classification and retrieval instead of the standard linear probing setup. We also find that the zero-shot robustness of ALIGN- ${\rm MRL}$ (Table 18 in Appendix H) agrees with the observations made by Wortsman et al. [96]. Lastly, Table 6 in Appendix D.2 shows that ${\rm MRL}$ also improves the cosine similarity span between positive and random image-text pairs.

Few-shot and Long-tail Learning.

We exhaustively evaluated few-shot learning on ${\rm MRL}$ models using nearest class mean [79]. Table 15 in Appendix G shows that that representations learned through ${\rm MRL}$ perform comparably to FF representations across varying shots and number of classes.

${\rm Matryoshka~Representations}$ realize a unique pattern while evaluating on FLUID [92], a long-tail sequential learning framework. We observed that ${\rm MRL}$ provides up to $2\$ accuracy higher on novel classes in the tail of the distribution, without sacrificing accuracy on other classes (Table 16 in Appendix G). Additionally we find the accuracy between low-dimensional and high-dimensional representations is marginal for pretrain classes. We hypothesize that the higher-dimensional representations are required to differentiate the classes when few training examples of each are known. This results provides further evidence that different tasks require varying capacity based on their difficulty.

| (a) (b) (c) |

<details>

<summary>TabsNFigs/images/gradcam-annotated-1.png Details</summary>

### Visual Description

## Diagram: Attention Visualization for Object Recognition

### Overview

The image depicts a sequence of attention heatmaps visualizing a model's focus progression across iterations. It includes a ground truth (GT) image of a person holding a plastic bag, followed by four heatmaps labeled with numbers (8, 16, 32, 2048) representing computational steps or iterations. Arrows indicate a conceptual flow from "Shower Cap" to "Plastic Bag," suggesting a correction in attention focus over time.

### Components/Axes

- **Left Panel**: Ground truth (GT) image labeled "GT: Plastic Bag," showing a person holding a white plastic bag.

- **Right Panels**: Four attention heatmaps with progressive numbers (8, 16, 32, 2048) in orange text at the bottom of each panel.

- **Labels**:

- "Shower Cap" (leftmost label, purple text)

- "Plastic Bag" (rightmost label, black text)

- **Arrows**: Two black arrows connecting "Shower Cap" → "Plastic Bag," indicating directional flow.

- **Heatmap Colors**: Gradient from purple (low attention) to yellow (high attention), with no explicit legend.

### Detailed Analysis

1. **GT Image**:

- Person wearing a brown jacket, red bag, and white hat, holding a white plastic bag.

- Background includes pedestrians and urban elements (flowers, buildings).

2. **Heatmaps**:

- **8**: Faint yellow glow around the plastic bag, indicating initial but weak focus.

- **16**: Slightly stronger attention on the bag, with residual focus on the person's upper body.

- **32**: Concentrated yellow highlight on the plastic bag, with reduced attention on the person.

- **2048**: Dominant yellow focus on the bag, minimal attention elsewhere.

3. **Textual Elements**:

- Numbers (8, 16, 32, 2048) are positioned at the bottom center of each heatmap in orange.

- Labels "Shower Cap" and "Plastic Bag" are placed at the far left and right of the diagram, respectively.

### Key Observations

- The heatmaps show a clear progression from diffuse attention (early iterations) to precise focus on the plastic bag (later iterations).

- The "Shower Cap" label is spatially isolated from the heatmaps, suggesting it represents an initial misclassification or distracting element.

- The numbers increase exponentially (8 → 2048), implying computational complexity or depth in the model's processing.

### Interpretation

The diagram demonstrates how an attention mechanism refines object recognition over iterations. Initially, the model may misattribute focus to irrelevant elements (e.g., "Shower Cap"), but with increased computational steps, it prioritizes the ground truth object ("Plastic Bag"). The exponential growth in iteration numbers (8 → 2048) highlights the trade-off between precision and computational cost. The absence of a legend for heatmap colors suggests a standardized intensity scale (e.g., 0–1), with yellow representing maximum attention. This visualization underscores the importance of iterative refinement in attention-based models for accurate object localization.

</details>

<details>

<summary>TabsNFigs/images/gradcam-annotated-2.png Details</summary>

### Visual Description

## Heatmap Visualization: Snake Species Discrimination Progression

### Overview

The image presents a comparative analysis of snake species discrimination using heatmap visualizations. It shows a progression from Boa Constrictor representations to a Rock Python ground truth (GT), with four intermediate stages labeled 8, 16, 32, and 2048. Each stage displays a heatmap overlay on a snake silhouette, with color gradients indicating activation intensity.

### Components/Axes

- **Left Panel**: Ground Truth (GT) image of a Rock Python with natural patterning on a yellow background.

- **Central Panels**: Four sequential heatmaps labeled 8, 16, 32, and 2048, showing:

- **X-axis**: Implicit progression from Boa Constrictor (left) to Rock Python (right) via arrows.

- **Y-axis**: Not explicitly labeled, but implied vertical dimension for heatmap distribution.

- **Color Legend**:

- Purple (low activation)

- Green (moderate activation)

- Yellow (high activation)

- **Text Elements**:

- "GT: Rock Python" (top-left)

- "Boa Constrictor" (center-left)

- "Rock Python" (center-right)

- Numerical labels (8, 16, 32, 2048) in orange at bottom of each heatmap.

### Detailed Analysis

1. **Heatmap Progression**:

- **8**: Broad activation across the snake's body, with diffuse green/yellow regions.

- **16**: Increased focus on the head region, with concentrated yellow areas.

- **32**: Further refinement, with distinct yellow patches along the dorsal ridge.

- **2048**: Highly localized activation, with intense yellow concentrated on the head and ocular region.

2. **Color Distribution**:

- All heatmaps show a gradient from purple (background) to yellow (peak activation).

- Yellow regions correlate with anatomical features critical for species identification (head shape, scale patterns).

3. **Spatial Relationships**:

- Arrows indicate a left-to-right progression from Boa Constrictor to Rock Python.

- Heatmap intensity increases correlate with the numerical labels (8→2048).

### Key Observations

- **Activation Concentration**: Higher numerical values (2048) show significantly more focused activation than lower values (8).

- **Species Discrimination**: The rightmost heatmap (2048) most closely resembles the GT Rock Python's anatomical features.

- **Color Consistency**: Yellow regions consistently align with the head/eye area across all stages.

### Interpretation

This visualization demonstrates how increasing model complexity (represented by the numerical labels) improves feature discrimination between Boa Constrictors and Rock Pythons. The heatmaps likely represent:

1. **Attention Mechanisms**: Higher values show focused attention on diagnostic features (head shape, ocular region).

2. **Model Capacity**: The progression from 8→2048 suggests exponential scaling of model parameters/layers.

3. **Biological Relevance**: The final heatmap (2048) aligns with human-identifiable features of Rock Pythons, validating the model's discriminative capability.

The data implies that model performance improves non-linearly with increased capacity, with the 2048-stage achieving near-ground-truth discrimination. This pattern is critical for optimizing computational resources in biological image classification tasks.

</details>

<details>

<summary>TabsNFigs/images/gradcam-annotated-3.png Details</summary>

### Visual Description

## Image Sequence: Visualization of Attention Maps

### Overview

The image displays a sequence of five visualizations of a doll wearing a yellow sweatshirt, with progressive changes in visual effects. The sequence includes a ground truth (GT) image, followed by four heatmap-style representations labeled with numbers (8, 16, 32, 2048). Arrows indicate relationships between "GT: Sweatshirt," "Sunglasses," and "Sweatshirt," suggesting a progression or transformation process.

### Components/Axes

- **Labels**:

- "GT: Sweatshirt" (ground truth reference)

- "Sunglasses" (left arrow)

- "Sweatshirt" (right arrow)

- **Numbers**:

- 8, 16, 32, 2048 (exponentially increasing values, likely representing scale or intensity)

- **Color Gradient**:

- Blue (low intensity) → Green (medium) → Yellow (high intensity), indicating attention or activation levels.

### Detailed Analysis

1. **GT: Sweatshirt (Leftmost Image)**:

- A doll with short blonde hair, large eyes, and a yellow sweatshirt against a blue background.

- No visual effects; serves as a baseline reference.

2. **Sunglasses (Second Image, Number 8)**:

- Overlay of green/yellow heatmap on the doll’s face and sweatshirt.

- Brightest intensity on the sunglasses area (yellow), fading to green on the sweatshirt.

3. **Sweatshirt (Third Image, Number 16)**:

- Similar heatmap but with broader coverage.

- Sweatshirt area shows stronger yellow intensity, while sunglasses remain localized.

4. **Sweatshirt (Fourth Image, Number 32)**:

- Heatmap expands further, with yellow dominating the sweatshirt and fading to green on the face.

- Background transitions to purple, suggesting reduced attention to non-target regions.

5. **Sweatshirt (Fifth Image, Number 2048)**:

- Most intense heatmap, with yellow concentrated on the sweatshirt and minimal green on the face.

- Background remains purple, emphasizing focus on the target object.

### Key Observations

- **Exponential Scaling**: Numbers (8, 16, 32, 2048) follow powers of 2, suggesting logarithmic scaling of attention or model parameters.

- **Attention Localization**:

- Lower values (8, 16) show mixed focus on sunglasses and sweatshirt.

- Higher values (32, 2048) concentrate attention on the sweatshirt, aligning with the "Sweatshirt" label.

- **Color Correlation**:

- Yellow corresponds to highest intensity (target regions).

- Green indicates moderate attention (secondary regions).

- Blue/Purple represents low/no attention (background).

### Interpretation

The sequence visualizes how an attention mechanism in a computational model (e.g., neural network) prioritizes specific regions (sunglasses and sweatshirt) as the scale increases. The ground truth (GT) establishes the target object (sweatshirt), while the heatmaps demonstrate the model’s ability to isolate and focus on this object. The exponential increase in numbers (8 → 2048) likely reflects iterative refinement or parameter adjustments, with higher values enabling sharper localization. The transition from mixed attention (sunglasses/sweatshirt) to singular focus (sweatshirt) suggests the model learns to prioritize the GT label over distractors. This could relate to tasks like object segmentation, where attention maps guide the model to distinguish foreground from background.

</details>

|

| --- | --- |