# BAST: Binaural Audio Spectrogram Transformer for Binaural Sound Localization

**Authors**: Sheng Kuang, Jie Shi, Kiki van der Heijden, Siamak Mehrkanoon

> Department of Information and Computing Sciences, Utrecht University, Utrecht, The Netherlands; Department of Data Science and Knowledge Engineering, Maastricht University, The Netherlands; Donders Institute for Brain, Cognition and Behavior, Radboud University, Nijmegen, The Netherlands

## Abstract

Accurate sound localization in a reverberant environment is essential for human auditory perception. Recently, Convolutional Neural Networks (CNNs) have been used to model the binaural human auditory pathway. However, CNNs have difficulty capturing global acoustic features. To address this issue, we propose a novel end-to-end Binaural Audio Spectrogram Transformer (BAST) model to predict the sound azimuth in both anechoic and reverberant environments. Two implementations are explored: BAST-SP and BAST-NSP, corresponding to the BAST model with shared and non-shared parameters, respectively. Our model with subtraction interaural integration and hybrid loss achieves an angular distance of 1.29 degrees and a Mean Square Error of 1e-3 across all azimuths, significantly surpassing CNN-based models. An exploratory analysis of BAST's performance on the left and right hemifields and in anechoic and reverberant environments demonstrates its generalization ability and the feasibility of binaural Transformers for sound localization. Furthermore, an analysis of the attention maps provides additional insight into the localization process in a natural reverberant environment.

Keywords: Transformer, Sound localization, Binaural integration

## 1 Introduction

Sound source localization is a fundamental ability in everyday life. Accurately and precisely localizing incoming auditory streams is required for auditory perception and social communication. In past decades, the biological basis and neural mechanisms of sound localization have been extensively explored [1, 2, 3, 4]. Normal-hearing listeners extract horizontal acoustic cues by relying mainly on the interaural level differences (ILD) and interaural time differences (ITD) of the auditory input. These cues are encoded along the human auditory subcortical pathway, in which auditory structures in the brainstem integrate the binaural signals from the cochleae and convey them to the auditory cortex [2, 4]. However, sound localization is frequently affected by noise and reverberation in complex real-world environments, which distort the spatial cues of the sound source of interest [5]. Yet, it is still unclear how the human brain extracts the spatial position of acoustic signals in complex listening environments.

Recently, Deep Learning (DL) [6] has been proposed to model auditory processing and has achieved great success. These approaches allow auditory models to be optimized for real-life auditory environments [7, 8, 9, 10]. Early attempts combined DL methods with conventional feature engineering to deal with noise and reverberation [7, 11]. For instance, in [7], binaural spectral and spatial features were extracted separately, providing complementary information for a two-layer Deep Neural Network (DNN). Similarly, in [8, 12, 13], deep neural networks were used to de-noise and de-reverberate complex sound stimuli. In a CNN-based azimuth estimation approach, researchers used a Cascade of Asymmetric Resonators with Fast-Acting Compression to analyze sound signals and onsite-generated correlograms to eliminate echo interference [14]. However, most of these approaches depend heavily on feature selection. To reduce this dependence, an end-to-end Deep Residual Network (DRN) was proposed [15, 16]. Instead of relying on features selected from the acoustic signal, the DRN in [16] predicts azimuth from raw spectrograms, and it was shown to be robust even in the presence of unknown noise interference at low signal-to-noise ratios. Subsequently, [9] proposed a pure attention-based Audio Spectrogram Transformer (AST) that achieved state-of-the-art results for audio classification on multiple datasets. Although these DL-based methods have yielded promising results, their architectures do not resemble the human binaural auditory pathway, so they may not reflect the neural processing of sound localization.

To study the neural mechanisms underlying sound localization, the performance of deep learning methods is commonly compared to human sound localization behavior [17, 18, 19]. For instance, [19] systematically explored how well a CNN localizes binaural sound clips in a real-life listening environment; however, its architecture does not resemble the structure of the human auditory pathway. This issue was addressed by a hierarchical, neurobiologically inspired CNN (NI-CNN) that models the binaural characteristics of human spatial hearing [17]. Its hierarchical design models the binaural signal integration process and has been shown to produce brain-like latent feature representations. However, NI-CNN [17] is not an end-to-end model, because it relies on a cochlear model to generate auditory nerve representations as model input. Furthermore, given the wide frequency range of sound input, the convolution operations in NI-CNN mainly extract local features and may therefore be limited in extracting global features from the acoustic time-frequency spectrogram.

In this study, we build on the successes and limitations of previous deep neural networks for sound localization to develop an end-to-end Transformer-based model for human sound localization that captures global acoustic features from auditory spectrograms. We aim to (i) investigate the performance of a pure Transformer-based hierarchical binaural neural network on real-life human sound localization; (ii) explore the effect of different loss functions and binaural integration methods on localization acuity at different azimuths; and (iii) visualize the attention flow of the proposed model to illustrate the localization process.

<details>

<summary>x1.png Details</summary>

### Visual Description


## Diagram: Binaural Sound Source Localization System

### Overview

This diagram illustrates the BAST architecture for binaural sound source localization. It has three parts: (a) the full processing pipeline for the left and right ear spectrograms, (b) a single Transformer encoder, and (c) the methods for interaural integration. The model estimates the 2D coordinates (x, y) of a sound source from the two ear inputs.

### Components/Axes

The diagram is divided into three sections labeled (a), (b), and (c).

* **(a) Left/Right Processing Pipeline:** This section shows the processing steps for the left and right ear signals. Key components include "Overlapped Patch Generation", "Linear Projection", "Patch Embeddings + Position Embeddings", "Transformer Encoder-L/R", "Interaural Integration", "Transformer Encoder-C", "Average over Patches", and "Linear Layer".

* **(b) Transformer Encoder:** This section details the internal structure of the transformer encoder used in the system. It includes "Patch Embeddings", "Layer Norm", "Multi-Head Attention", "MLP", and "Output Sequence".

* **(c) Interaural Integration:** This section presents three methods for combining the information from the left and right ears: "Concatenation", "Addition", and "Subtraction".

The symbols $N_H$, $N_T$ and $D$ appear in the diagram. $N_H$ and $N_T$ are the numbers of patches along the frequency and time axes, and $D$ is the embedding dimension. The output of the linear layer is specified as $(x, y) \in \mathbb{R}^2$, a 2D coordinate.

### Detailed Analysis or Content Details

**(a) Left/Right Processing Pipeline:**

* **Overlapped Patch Generation:** Input spectrograms are divided into overlapping patches, forming an $N_H \times N_T$ grid of patches.

* **Linear Projection:** The patches are projected into an embedding space.

* **Patch Embeddings + Position Embeddings:** Patch embeddings are combined with positional embeddings.

* **Transformer Encoder-L/R:** The combined embeddings are processed by a transformer encoder for the left and right ears respectively.

* **Interaural Integration:** The outputs of the left and right transformer encoders are integrated using one of the methods described in (c).

* **Transformer Encoder-C:** The integrated representation is further processed by another transformer encoder.

* **Average over Patches:** The output of the second transformer encoder is averaged over all patches.

* **Linear Layer:** The averaged representation is passed through a linear layer to produce the final 2D coordinates (x, y).

**(b) Transformer Encoder:**

* **Patch Embeddings:** Input to the transformer encoder.

* **Layer Norm:** Normalization layer applied to the embeddings.

* **Multi-Head Attention:** Attention mechanism to capture relationships between patches.

* **Addition:** Residual connection, adding the input to the output of the attention mechanism.

* **Layer Norm:** Another normalization layer.

* **MLP:** Multi-Layer Perceptron for further processing.

* **Addition:** Residual connection, adding the input to the output of the MLP.

* **Output Sequence:** The final output of the transformer encoder.

**(c) Interaural Integration:**

* **Method 1: Concatenation:** The left and right ear representations (each of size $D \times N_H N_T$) are concatenated along the feature dimension, giving a combined representation of size $2D \times N_H N_T$.

* **Method 2: Addition:** The left and right ear representations are added element-wise, giving a combined representation of size $D \times N_H N_T$.

* **Method 3: Subtraction:** The left representation is subtracted element-wise from the right representation, giving a combined representation of size $D \times N_H N_T$.

### Key Observations

* The system utilizes a dual-path architecture, processing left and right ear signals independently before integrating them.

* Transformer encoders are used extensively for feature extraction and representation learning.

* Three interaural integration methods are examined, offering different ways of combining the information from the two ears.

* The final output is a 2D coordinate, suggesting the system aims to localize the sound source in a plane.

* The overlapping patches are extracted from a time-frequency representation (a spectrogram) of each ear's signal.

### Interpretation

This diagram describes the BAST architecture for binaural sound source localization. The Transformer encoders can capture global dependencies across spectrogram patches, which the paper argues convolution-based models struggle to extract. The three interaural integration methods are alternative ways of combining the left and right feature maps, and they are intended to simulate binaural integration along the auditory pathway. The final linear layer maps the patch-averaged representation to a 2D coordinate, giving a spatial estimate of the sound source. The diagram does not provide information about the training data or the hyper-parameters of the Transformer encoders.

</details>

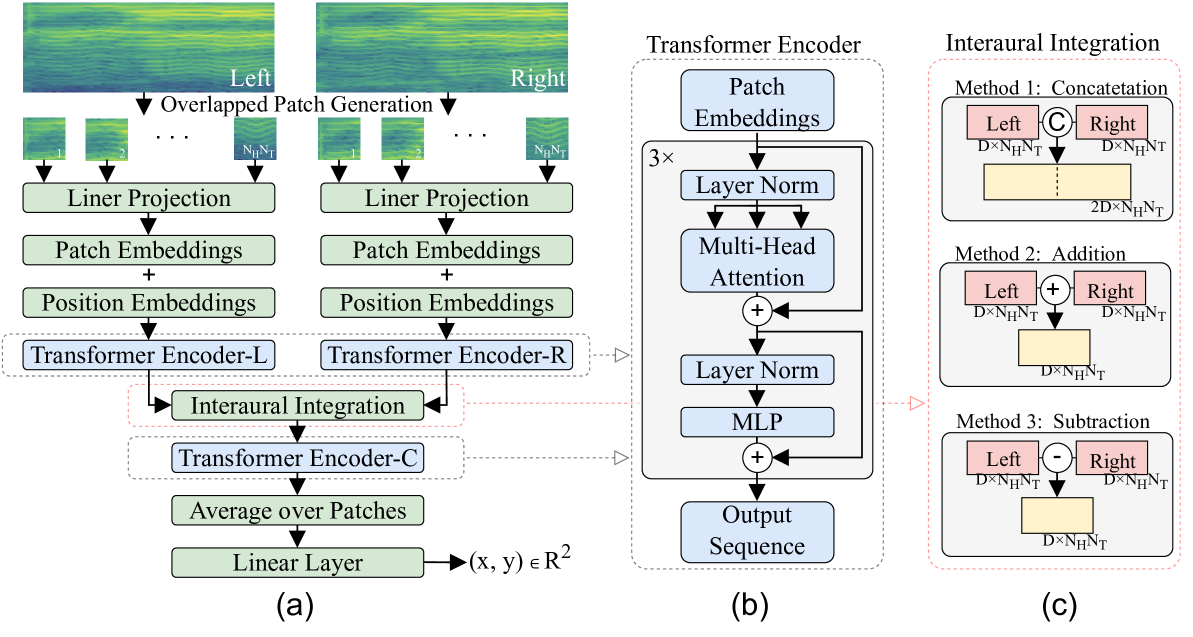

Figure 1: Architecture of the proposed Binaural Audio Spectrogram Transformer (BAST). (a) The architecture of the proposed model (there are $N_{H}N_{T}$ patches). (b) The architecture of a single Transformer encoder. (c) The three examined interaural integration methods: concatenation, addition and subtraction.

## 2 Related Works

Binaural auditory models use head-related transfer functions to apply the characteristics of human binaural hearing to monaural sound clips, thereby simulating human spatial hearing. Conventional methods for sound source localization (SSL) are based on microphone arrays and can be categorized into steered beamforming, high-resolution spectral estimation, and time-difference-of-arrival techniques [20]. These conventional signal processing techniques are often used as baselines or for input feature extraction in DL-based SSL methods. The Short-Time Fourier Transform (STFT) [21] converts the time-domain signal from each microphone into the time-frequency domain. This representation shows how the frequency content of the signal evolves over time. The Gaussian Mixture Model (GMM), commonly used in machine-learning-based studies, models the probability distribution of the source location in reverberant environments [22]. Gaussian mixture regression (GMR) was later extended to localize multiple sound sources [23]. Subsequently, model-based methods were used to extract ILD and ITD cues for DNN training [7]. Compressive sensing and sparse recovery techniques are widely applied in acoustics. Sparse Bayesian learning (SBL), which integrates the Bayesian framework with sparse representations and compressive sensing, has been used for SSL [24, 25, 26, 27]. However, the performance of these hybrid techniques remains unstable, because the feature extraction routine varies across datasets.

Advances in deep learning have led to convolutional neural network (CNN) based methods for sound source localization. The CNN designed by [28] uses multichannel STFT phase spectrograms to predict the azimuths of multiple speakers in reverberant environments. The model consists of three convolutional layers with 64 filters of size $2\times 1$, which consider neighboring frequency bands and microphones. Some deeper CNN architectures [29, 30, 31, 32] estimate both azimuth and elevation. Several three-dimensional convolutional networks [33, 34] report that convolving over time, frequency and channel can achieve better accuracy than 2D convolutions. For binaural audio-visual localization, the Binaural Audio-Visual Network (BAVNet) [35] performs pixel-level sound source localization from binaural recordings and videos. It significantly improves performance over traditional monaural audio methods, especially when the quality of visual information is limited. As a data-driven DL method, NI-CNN learns latent features for azimuth prediction from human auditory nerve representations [17]. These studies highlight the importance of advanced neural network architectures and feature extraction methods for improving the accuracy and resolution of sound source localization systems.

The Transformer was initially proposed in natural language processing to handle long-range dependencies [36, 37]. It was later successfully applied to computer vision by casting images into sequences of patch embeddings [38, 39]. In audio processing, many hybrid models combine the Transformer with a CNN or Recurrent Neural Network (RNN). Some studies directly embed attention blocks into a CNN or RNN to capture global features in a parameter-efficient way [40, 41, 42, 43]. Transformer-based models for sound source and sound event localization have gained significant attention in recent years. [44] uses a Transformer encoder with residual connections and evaluates various configurations for handling multiple sound events. PILOT [45] is a Transformer-based framework for sound event localization. It captures temporal dependencies through self-attention and represents estimated positions as multivariate Gaussian variables to account for uncertainty. The Audio Pyramid Transformer (APT) [46] applies attention to weakly supervised sound event detection and audio classification, which illustrates the application of Transformer-based models to audio tasks. Multi-head self-attention, the parallel use of several attention heads in Transformers, has also been used in SSL with first-order Ambisonic signals. The authors of [9] introduced AST, which uses a Transformer model and variable-length monaural spectrograms to perform sound classification tasks. AST uses an overlapped-patch embedding generation policy to convert intra-patch local features into inter-patch attention weights, yielding a convolution-free, pure attention-based model. AST has achieved state-of-the-art [47, 48] results on multiple datasets for audio classification tasks.

The Vision Transformer (ViT) [38] represents a significant shift in the architecture of deep learning models for computer vision tasks. ViT divides an image into a sequence of fixed-size patches, linearly embeds them, and processes them as tokens in a standard Transformer model. This approach leverages self-attention to capture long-range dependencies and contextual information across the image. The Audio Vision Transformer (AViT) extends the concepts of Vision Transformers (ViTs) to audio processing. The audio-spectrogram vision transformer (AS-ViT) [49] uses vision transformer models to analyze audio-spectrogram images for identifying abnormal respiratory sounds. The potential of ViT in audio-visual tasks such as sound source localization has been recognized [50]. Additionally, HTS-AT [51], a hierarchical token-semantic audio transformer, was designed to reduce model size and training time, addressing limitations of existing audio transformers. Binaural sound localization in noisy environments has been investigated with the Frequency-Based Audio Vision Transformer (FAViT) [52]. FAViT uses selective attention mechanisms inspired by the Duplex Theory and outperforms recent CNNs and standard audio ViT models in localizing noisy speech. ViT-based localization has also been explored for through-ice or underwater acoustic tracking [53].

## 3 Method

### 3.1 Model architecture

The proposed Binaural Audio Spectrogram Transformer (BAST) is illustrated in Fig. 1. Like NI-CNN [17], it uses a dual-input hierarchical architecture to simulate the human subcortical auditory pathway. Whereas NI-CNN uses convolutional layers, BAST uses three Transformer encoders (left, right and center), hereafter called TE-L, TE-R and TE-C, to construct a pure attention-based model. Specifically, the pre-processed left and right sound waves are converted to left and right spectrograms, denoted by $x^{L}\in\mathbb{R}^{H\times T}$ and $x^{R}\in\mathbb{R}^{H\times T}$. Here, $H$ is the number of frequency bands and $T$ is the number of time windows (Tukey windows with shape parameter 0.25).
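As a minimal sketch of how such a spectrogram can be computed, the Python below applies a symmetric Tukey window with shape parameter 0.25 to overlapping frames and takes the squared magnitude of a one-sided DFT. Several details are assumptions and are not taken from the text. The 256-sample window and 128-sample hop are chosen because they reproduce the 129 × 61 spectrogram size given in Section 4.4 for 500 ms of audio at 16 kHz. The use of power rather than magnitude is also assumed, and `tukey_window` and `power_spectrogram` are hypothetical helper names, not the authors' code. A naive DFT is used for readability; a practical pipeline would use an FFT library.

```python
import cmath
import math


def tukey_window(n, alpha=0.25):
    """Symmetric Tukey (tapered-cosine) window of length n with shape parameter alpha."""
    if n == 1:
        return [1.0]
    edge = alpha * (n - 1) / 2.0  # length of each cosine taper, in samples
    w = []
    for i in range(n):
        if i < edge:                      # rising cosine taper
            w.append(0.5 * (1.0 - math.cos(math.pi * i / edge)))
        elif i > (n - 1) - edge:          # falling taper, mirror image of the rising one
            w.append(0.5 * (1.0 - math.cos(math.pi * (n - 1 - i) / edge)))
        else:                             # flat middle section
            w.append(1.0)
    return w


def power_spectrogram(x, nperseg=256, hop=128, alpha=0.25):
    """Return an H x T list of |DFT|^2 values, with H = nperseg // 2 + 1 bins.

    Only frames that fit entirely inside x are used (no padding).
    """
    w = tukey_window(nperseg, alpha)
    starts = range(0, len(x) - nperseg + 1, hop)
    n_bins = nperseg // 2 + 1  # one-sided spectrum: 0 Hz up to Nyquist
    spec = [[0.0] * len(starts) for _ in range(n_bins)]
    for t, s in enumerate(starts):
        frame = [x[s + i] * w[i] for i in range(nperseg)]
        for k in range(n_bins):
            # Naive DFT of the windowed frame at frequency bin k.
            acc = sum(frame[i] * cmath.exp(-2j * math.pi * k * i / nperseg)
                      for i in range(nperseg))
            spec[k][t] = abs(acc) ** 2
    return spec
```

For example, a 2 kHz tone sampled at 16 kHz falls exactly on bin 2000 / (16000 / 256) = 32, so each frame of its spectrogram peaks at row 32.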

We describe the TE-L path below; the TE-R path follows the same process. In the patch embedding layer, the left spectrogram $x^{L}\in\mathbb{R}^{H\times T}$ is first decomposed into an overlapped-patch sequence $x_{patch}^{L}\in\mathbb{R}^{P^{2}\times(N_{H}N_{T})}$. Here, $P$ is the patch size, and $N_{H}$ and $N_{T}$ are the numbers of patches along the frequency (height) and time (width) axes, obtained as follows:

$$
N_{H}=\left\lceil\frac{H-P+S}{S}\right\rceil,\quad N_{T}=\left\lceil\frac{T-P+S}{S}\right\rceil. \tag{1}
$$

If $H-P$ or $T-P$ is not divisible by the stride $S$ between patches, the spectrogram is zero-padded on the top or right, respectively. A trainable linear projection then maps each flattened patch to a $D$-dimensional latent representation, hereafter called a patch embedding [38]. Because our model regresses sound location coordinates rather than predicting a class, the classification token of the Transformer encoder is removed. A fixed absolute position embedding [38] is added to the patch embeddings to encode the position of each patch in the spectrogram. We do not use a learnable position embedding, because it did not significantly change model performance compared to the absolute position embedding [9]. The output of the left position embedding layer, $z_{in}^{L}\in\mathbb{R}^{D\times(N_{H}N_{T})}$, is then fed to the Transformer encoder TE-L.
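A minimal Python sketch of Eq. (1) and the zero-padded overlapped-patch split follows. The patch size $P = 16$ is our assumption because the text does not state it. With stride $S = 6$, it yields the $20 \times 9 = 180$ patches per $129 \times 61$ spectrogram reported in Section 4.4. The function names are hypothetical, and the subsequent linear projection to $D$ dimensions and the position embedding are omitted.

```python
import math


def num_patches(length, patch, stride):
    """Eq. (1): number of overlapping patches along one axis, ceil((L - P + S) / S)."""
    return math.ceil((length - patch + stride) / stride)


def overlapped_patches(spec, patch=16, stride=6):
    """Split an H x T spectrogram (list of rows) into flattened P*P patches.

    Assumes H >= P and T >= P. Zeros are appended after the last row and the last
    column so that every patch is complete; which end of the frequency axis counts
    as the 'top' depends on how rows are ordered (an assumption here).
    """
    h, t = len(spec), len(spec[0])
    n_h = num_patches(h, patch, stride)
    n_t = num_patches(t, patch, stride)
    padded_h = (n_h - 1) * stride + patch  # rows needed to cover n_h patches
    padded_t = (n_t - 1) * stride + patch  # columns needed to cover n_t patches
    padded = [[spec[r][c] if r < h and c < t else 0.0 for c in range(padded_t)]
              for r in range(padded_h)]
    patches = []
    for i in range(n_h):          # frequency-major order, then time
        for j in range(n_t):
            r0, c0 = i * stride, j * stride
            patches.append([padded[r0 + a][c0 + b]
                            for a in range(patch) for b in range(patch)])
    return n_h, n_t, patches
```

Because neighboring patches share $P - S = 10$ rows or columns, each time-frequency bin contributes to several patch embeddings. This overlap is what lets AST-style models preserve local structure without convolutions.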

We use the same Transformer encoder design as in [38, 9], consisting of $K$ stacked Multi-head Self-Attention (MSA) and Multi-Layer Perceptron (MLP) blocks. We compare the performance of BAST with shared and non-shared parameters across the left and right Transformer encoders. Hereafter, BAST-SP refers to the variant whose left and right Transformer encoders share parameters, whereas in BAST-NSP the parameters of the left and right Transformer encoders are not shared. The outputs of the left and right Transformer encoders, $z_{out}^{L}$ and $z_{out}^{R}$, represent the neural signals of the initial auditory processing stage along the left and right auditory pathways, respectively. These binaural feature maps are then integrated to simulate the function of the human olivary nucleus. As in NI-CNN, three integration methods are investigated: addition, subtraction and concatenation. Addition is the element-wise sum of the two feature maps. Subtraction is the left feature map subtracted from the right feature map. Concatenation joins $z_{out}^{L}$ and $z_{out}^{R}$ along the first dimension, producing $z_{in}^{C}\in\mathbb{R}^{2D\times(N_{H}N_{T})}$. TE-C receives the integrated feature map $z_{in}^{C}$ and outputs the sequence $z_{out}^{C}$. Finally, $z_{out}^{C}$ is averaged over the patch dimension and passed through a linear layer to produce the sound location coordinates $(x,y)$. The last linear layer has no activation function, so the estimated coordinates can be any point on the 2D plane.
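The integration, pooling and output steps can be sketched in plain Python on lists of patch vectors. This is a shape-level illustration under simplifying assumptions. TE-C is omitted, so its input is passed straight to pooling, and features are stored patch-major as lists of $D$-dimensional vectors rather than as $D\times(N_HN_T)$ arrays. The function names are hypothetical.

```python
def integrate(z_left, z_right, method):
    """Combine left/right encoder outputs, each a list of N patch vectors of length D."""
    if method == "addition":
        return [[l + r for l, r in zip(pl, pr)] for pl, pr in zip(z_left, z_right)]
    if method == "subtraction":
        # Left feature map subtracted from the right feature map.
        return [[r - l for l, r in zip(pl, pr)] for pl, pr in zip(z_left, z_right)]
    if method == "concatenation":
        # Join along the feature dimension: each patch vector grows from D to 2D.
        return [pl + pr for pl, pr in zip(z_left, z_right)]
    raise ValueError(f"unknown integration method: {method}")


def average_over_patches(z):
    """Mean over the patch axis, leaving one vector of the feature dimension."""
    return [sum(feature) / len(z) for feature in zip(*z)]


def linear_head(v, weight, bias):
    """Linear layer without activation, so (x, y) can be any point in the plane."""
    return tuple(sum(w * x for w, x in zip(row, v)) + b for row, b in zip(weight, bias))
```

Addition and subtraction keep the feature size at $D$, so TE-C can reuse the left/right encoder width. Concatenation doubles it to $2D$, which is why TE-C is wider in that configuration.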

### 3.2 Loss Function

Three loss functions are explored for training the proposed model: Angular Distance (AD) loss [54], Mean Square Error (MSE) loss, and a hybrid loss defined as a convex combination of AD and MSE. Let $C_{i}=(x_{i},y_{i})$ and $\hat{C}_{i}=(\hat{x}_{i},\hat{y}_{i})$ denote the ground-truth and predicted coordinates for the $i$-th sample. MSE loss measures the squared Euclidean distance between the prediction and the ground truth:

$$
\textrm{MSE}=\frac{1}{N}\sum_{i=1}^{N}\|C_{i}-\hat{C}_{i}\|_{2}^{2}, \tag{2}
$$

where $(\hat{x}_{i},\hat{y}_{i})$ are the predicted coordinates, $(x_{i},y_{i})$ is the true sound location and $N$ is the batch size. MSE penalizes large Euclidean errors but is insensitive to angular distance, so predictions with the same MSE can correspond to different azimuths. In contrast, AD loss measures only the angular distance and ignores the Euclidean distance:

$$
\textrm{AD}=\frac{1}{\pi N}\sum_{i=1}^{N}\arccos\left(\frac{C_{i}\hat{C}_{i}^{T}}{\|C_{i}\|_{2}\,\|\hat{C}_{i}\|_{2}}\right), \tag{3}
$$

where $C_{i},\hat{C}_{i}\neq 0$ .
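The difference between the two losses can be seen in a small dependency-free sketch that follows Eqs. (2) and (3). The hybrid weight `alpha=0.5` is a hypothetical value, since the section only states that the hybrid loss is a convex combination of AD and MSE. Because of the $1/\pi$ factor, AD lies in $[0, 1]$; multiplying it by 180 gives the mean angular error in degrees. The function names are illustrative only.

```python
import math


def mse_loss(true, pred):
    """Eq. (2): mean squared Euclidean distance over the batch."""
    return sum((x - xh) ** 2 + (y - yh) ** 2
               for (x, y), (xh, yh) in zip(true, pred)) / len(true)


def ad_loss(true, pred):
    """Eq. (3): mean angle between true and predicted vectors, divided by pi."""
    total = 0.0
    for (x, y), (xh, yh) in zip(true, pred):
        cos = (x * xh + y * yh) / (math.hypot(x, y) * math.hypot(xh, yh))
        # Clamp to [-1, 1] to guard against floating-point drift before acos.
        total += math.acos(max(-1.0, min(1.0, cos)))
    return total / (math.pi * len(true))


def hybrid_loss(true, pred, alpha=0.5):
    """Convex combination of AD and MSE; alpha=0.5 is a hypothetical weight."""
    return alpha * ad_loss(true, pred) + (1.0 - alpha) * mse_loss(true, pred)


def ad_degrees(true, pred):
    """Mean angular error in degrees."""
    return 180.0 * ad_loss(true, pred)
```

Consider a true source at (1, 0). The predictions (1.5, 0) and (1, 0.5) both give an MSE of 0.25, but the first has zero angular error and the second about 26.6°. Conversely, rescaling a prediction leaves AD unchanged, so AD alone does not constrain the Euclidean distance.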

Table 1: Performance of BAST-NSP and BAST-SP compared with the baseline models (CNN, FAViT, NI-CNN and NI-CNN ∗) under different loss functions and binaural integration methods. The best-performing model in AD and MSE is in bold. ↓ indicates that lower values are better. The SS columns hold the results of the single-stream models (CNN and FAViT), which have no interaural integration step.

| Model | Loss | AD ↓ (SS) | AD ↓ (Concatenation) | AD ↓ (Addition) | AD ↓ (Subtraction) | MSE ↓ (SS) | MSE ↓ (Concatenation) | MSE ↓ (Addition) | MSE ↓ (Subtraction) |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| CNN [28] ∗ | MSE | 3.90° | — | — | — | 0.010 | — | — | — |
| | AD | 42.69° | — | — | — | — | — | — | — |
| | Hybrid | 3.09° | — | — | — | 0.011 | — | — | — |
| FAViT [52] ∗ | MSE | 6.26° | — | — | — | 0.022 | — | — | — |
| | AD | 17.37° | — | — | — | — | — | — | — |
| | Hybrid | 3.73° | — | — | — | 0.015 | — | — | — |
| NI-CNN [17] | MSE | — | 4.80° | 4.80° | 5.30° | — | 0.011 | 0.013 | 0.014 |
| | AD | — | 3.70° | 3.90° | 5.20° | — | — | — | — |
| NI-CNN [17] ∗ | MSE | — | 8.92° | 3.51° | 3.67° | — | 0.077 | 0.032 | 0.038 |
| | AD | — | 7.85° | 1.97° | 1.85° | — | — | — | — |
| | Hybrid | — | 8.35° | 3.53° | 3.19° | — | 0.074 | 0.033 | 0.031 |
| BAST-NSP | MSE | — | 2.78° | 2.48° | 2.42° | — | 0.003 | 0.002 | 0.002 |
| | AD | — | 2.39° | 1.30° | 1.63° | — | — | — | — |
| | Hybrid | — | 2.76° | 1.83° | **1.29°** | — | 0.004 | 0.002 | **0.001** |
| BAST-SP | MSE | — | 2.02° | 4.97° | 1.94° | — | 0.002 | 0.018 | 0.002 |
| | AD | — | 2.66° | 13.87° | 1.43° | — | — | — | — |
| | Hybrid | — | 1.98° | 5.72° | 2.03° | — | 0.003 | 0.026 | 0.002 |

Table 2: Number of layers in each Transformer encoder and total number of trainable parameters of the proposed models. The tuple ( $\cdot$ , $\cdot$ , $\cdot$ ) gives the number of layers in the left, right and center Transformer encoders, respectively.

| Model | Interaural Integration | Transformer Layers | Trainable Parameters |
| --- | --- | --- | --- |
| BAST-NSP | Concatenation | (3, 3, 3) | ~76M |
| BAST-NSP | Addition / Subtraction | (3, 3, 3) | ~57M |
| BAST-SP | Concatenation | (3, 3, 3) | ~57M |
| BAST-SP | Addition / Subtraction | (3, 3, 3) | ~38M |

## 4 Experiments

### 4.1 Dataset

We use the binaural audio data from [17], which consists of a training dataset and an independent test dataset. In the training dataset, each of 4600 real-life sound waves (duration: 500 ms, sampling rate: 16 kHz) is placed at 36 azimuth positions with 10° azimuth resolution, 0° elevation, and a distance of 1 m from the center point. In addition, the sound waves are spatialized in two acoustic environments: an anechoic environment (AE) without reverberation, and a 10 m × 14 m lecture hall with reverberation (RV). Both the training and test sets contain data from the AE and RV environments. In total, the training dataset has 331200 binaural samples. The independent test dataset contains 400 new sound waves processed in the same way, producing 28800 test samples.
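The sample counts follow from the dataset design, in which every sound wave appears once per azimuth and once per environment. The short check below multiplies these factors out. The text does not specify how azimuths map to the $(x, y)$ targets. The azimuth grid starting at 0° and the `azimuth_to_xy` convention (0° along +x, counter-clockwise, on the 1 m circle) are therefore hypothetical choices for illustration.

```python
import math


def count_samples(n_sounds, n_azimuths=36, n_environments=2):
    """Each sound wave is spatialized once per azimuth and per environment (AE, RV)."""
    return n_sounds * n_azimuths * n_environments


def azimuth_to_xy(deg, radius=1.0):
    """Hypothetical convention: 0 deg along +x, angles increasing counter-clockwise."""
    rad = math.radians(deg)
    return radius * math.cos(rad), radius * math.sin(rad)


azimuths = [10 * k for k in range(36)]   # assumed grid: 0, 10, ..., 350 degrees
train_total = count_samples(4600)        # training samples
test_total = count_samples(400)          # test samples
```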

### 4.2 Baseline methods

To evaluate the proposed model, we compare it against four baseline models: the two-stream CNN-based models NI-CNN [17] and NI-CNN ∗ [17], a one-stream CNN model [28], and the ViT-based FAViT [52]. NI-CNN and NI-CNN ∗ use a cochleogram and a spectrogram as model input, respectively. The CNN and FAViT models also take spectrograms as input. The hyper-parameters of all baseline models were tuned empirically.

### 4.3 Model Evaluation

Models are evaluated with the MSE and AD metrics defined in Eqs. (2) and (3); lower AD and MSE indicate better localization performance. The MSE metric is not meaningful for models trained with AD loss for two reasons. This loss does not optimize the Euclidean distance between the ground truth and the prediction, and the model places no constraint on the numerical range of the predicted coordinates. The one-stream models, CNN and FAViT, have no concatenation, addition or subtraction modes, so their MSE and AD are reported only once.

### 4.4 Training Settings

As mentioned in Section 3.1, each sound wave is transformed into a binaural spectrogram (size: 2 × 129 × 61, frequency range: 0–8000 Hz, window length: 128 ms, overlap: 64 ms) before training. Although the STFT may reduce fine-grained temporal differences between the two channels, our focus is primarily on interaural level differences (ILDs) rather than interaural time differences (ITDs). To keep the training set balanced, we randomly select 75% of the binaural spectrograms at each azimuth position and in each listening environment of the training dataset. The remaining data form the validation set; as stated in the Dataset section, a separate test dataset is used for evaluation. This results in $248400$ training samples and $82800$ validation samples. The model is trained with the Adam optimizer [55] for 50 epochs with a batch size of 48 and a fixed learning rate of 1e-4. In the patch embedding layers, the stride between patches is set to 6, yielding 180 patches per spectrogram. Each Transformer encoder has three layers with 1024 hidden dimensions (2048 dimensions in TE-C when concatenation is used as the integration method). It also has 16 attention heads in the MSA blocks, 1024 dimensions in the MLP blocks, and a dropout rate of 0.2 in the patch embeddings and MLP blocks. Our implementation, available at https://github.com/ShengKuangCN/BAST, uses Python 3.8 and PyTorch 1.9.0. The models are trained from scratch on two NVIDIA GeForce GTX 1080 Ti GPUs with 11 GB of memory each. The number of layers in each Transformer encoder, chosen empirically, and the total number of trainable parameters are listed in Table 2.
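The sizes quoted in this paragraph can be cross-checked with a few lines of arithmetic. The text gives the window as 128 ms with 64 ms overlap. This sketch instead assumes a 256-sample window and a 128-sample hop at 16 kHz, which is one setting that reproduces the stated 129 × 61 spectrogram size. The split assumes 4600 samples in each of the 72 azimuth/environment cells, as implied by the dataset description.

```python
import math


def stft_shape(n_samples, nperseg, hop):
    """(frequency bins, time frames) of a one-sided STFT using only full frames."""
    return nperseg // 2 + 1, (n_samples - nperseg) // hop + 1


n_samples = 500 * 16000 // 1000                 # 500 ms at 16 kHz = 8000 samples
bins, frames = stft_shape(n_samples, 256, 128)  # assumed 256-sample window, 128-sample hop

per_cell = 4600                            # samples per (azimuth, environment) cell
n_cells = 36 * 2                           # 36 azimuths x 2 environments
n_train = n_cells * (per_cell * 3 // 4)    # 75% of each cell
n_val = n_cells * per_cell - n_train       # remaining 25%
steps_per_epoch = math.ceil(n_train / 48)  # batch size 48
```

Sampling 75% within each cell, rather than over the pooled data, keeps every azimuth and environment equally represented in both the training and validation sets.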

<details>

<summary>x2.png Details</summary>

### Visual Description


## Polar Charts: Loss Comparison for Different Methods

### Overview

The image presents a 2x3 grid of polar charts comparing the three interaural integration methods (Concatenation, Addition, Subtraction) across three loss functions (MSE loss, AD loss, Hybrid loss) and two model variants (BAST-NSP, BAST-SP). Each chart shows a metric as a function of angle, likely the localization error at each azimuth, with the radius indicating its magnitude.

### Components/Axes

* **Polar Coordinate System:** Each chart uses a polar coordinate system with angles ranging from -90° to 180° and radial scales ranging from 0° to 6° (top row) or 0° to 10° (bottom row).

* **Loss Functions (Titles):** The top row charts are titled "MSE loss", "AD loss", and "Hybrid loss".

* **Model Variants (Row Labels):** The rows are labeled "BAST-NSP" (top row) and "BAST-SP" (bottom row).

* **Methods (Legend):** A legend at the bottom of the image identifies the methods using color-coded lines:

* Concatenation (Green)

* Addition (Orange)

* Subtraction (Blue)

* **Radial Scale:** The radial axis represents a degree value, ranging from 0 to 6 or 10.

### Detailed Analysis or Content Details

**Row 1: BAST-NSP**

* **MSE Loss:**

* Concatenation (Green): The line fluctuates significantly, peaking around 120° at approximately 5.5°. It dips to around 1° at -90°.

* Addition (Orange): The line shows a more consistent shape, peaking around 0° at approximately 4.5° and dipping to around 1.5° at 90°.

* Subtraction (Blue): The line is relatively smooth, peaking around 180° at approximately 3° and dipping to around 1° at 0°.

* **AD Loss:**

* Concatenation (Green): The line peaks around 180° at approximately 4° and dips to around 1° at 90°.

* Addition (Orange): The line peaks around 0° at approximately 3° and dips to around 1° at 90°.

* Subtraction (Blue): The line is relatively smooth, peaking around 180° at approximately 2° and dipping to around 1° at 0°.

* **Hybrid Loss:**

* Concatenation (Green): The line peaks around 180° at approximately 3° and dips to around 1° at 0°.

* Addition (Orange): The line peaks around 0° at approximately 2° and dips to around 1° at 90°.

* Subtraction (Blue): The line is relatively smooth, peaking around 180° at approximately 2° and dipping to around 1° at 0°.

**Row 2: BAST-SP Dataset**

* **MSE Loss:**

* Concatenation (Green): The line peaks around 180° at approximately 9° and dips to around 2° at 0°.

* Addition (Orange): The line peaks around 0° at approximately 8° and dips to around 2° at 90°.

* Subtraction (Blue): The line is relatively smooth, peaking around 180° at approximately 4° and dipping to around 1° at 0°.

* **AD Loss:**

* Concatenation (Green): The line peaks around 180° at approximately 10° and dips to around 2° at 90°.

* Addition (Orange): The line peaks around 0° at approximately 8° and dips to around 2° at 90°.

* Subtraction (Blue): The line is relatively smooth, peaking around 180° at approximately 4° and dipping to around 1° at 0°.

* **Hybrid Loss:**

* Concatenation (Green): The line peaks around 180° at approximately 8° and dips to around 2° at 0°.

* Addition (Orange): The line peaks around 0° at approximately 6° and dips to around 2° at 90°.

* Subtraction (Blue): The line is relatively smooth, peaking around 180° at approximately 4° and dipping to around 1° at 0°.

### Key Observations

* The BAST-SP dataset consistently shows higher values (larger radii) across all loss functions and methods compared to the BAST-NSP dataset. This suggests that the methods perform worse on the BAST-SP dataset.

* Concatenation generally exhibits the most fluctuating behavior, with larger peaks and valleys, indicating potentially higher variance in its performance.

* Subtraction consistently shows the smoothest and lowest values, suggesting it might be the most stable and accurate method.

* Addition consistently performs between Concatenation and Subtraction.

### Interpretation

The charts demonstrate a comparative analysis of three methods (Concatenation, Addition, Subtraction) for handling data, evaluated using three different loss functions (MSE, AD, Hybrid) on two datasets (BAST-NSP, BAST-SP). The polar plots visualize the distribution of the loss values across different angles, providing insights into the directional bias of each method.

The consistently higher loss values for the BAST-SP dataset suggest that this dataset is more challenging for all methods. The smoother performance of the Subtraction method, indicated by lower and more consistent loss values, suggests it might be a more robust approach. The fluctuating behavior of Concatenation could indicate sensitivity to the data distribution or potential instability.

The angular distribution of the loss values could reveal specific directions where each method struggles or excels. For example, a peak at a particular angle might indicate a systematic error in that direction. The differences in the angular patterns between the loss functions suggest that each loss function captures different aspects of the error.

The choice of method and loss function should be guided by the specific characteristics of the dataset and the desired performance criteria. Further investigation is needed to understand the underlying reasons for the observed differences and to optimize the methods for specific applications.

</details>

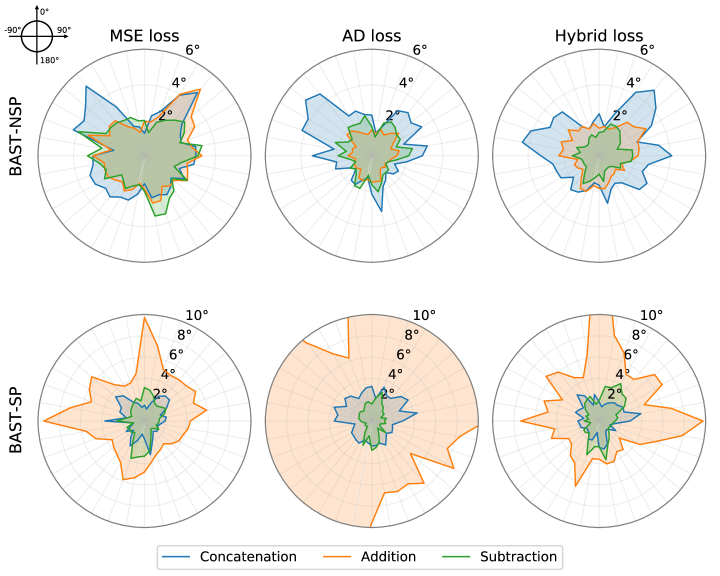

Figure 2: The angular distance (AD) error of the proposed BAST at each azimuth with different loss functions and interaural integration methods.

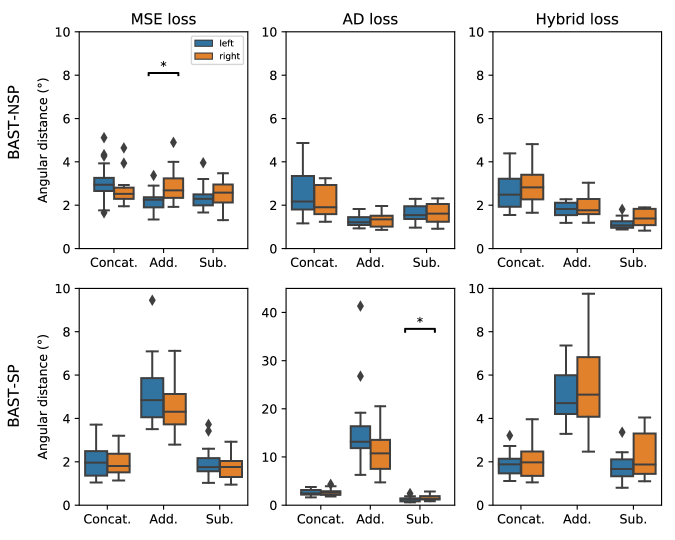

Figure 3: The AD error of the proposed BAST-NSP and BAST-SP in the left and right hemifields. Each boxplot shows the quartiles of the metric distribution across azimuths. An asterisk between two boxes indicates a statistically significant difference ($p < 0.05$, paired t-test with FDR correction) between the left and right hemifields.

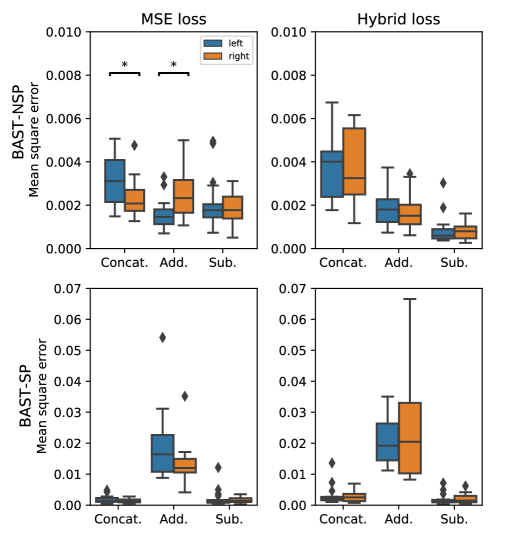

Figure 4: The MSE of the proposed BAST-NSP and BAST-SP in the left and right hemifields. Each boxplot shows the quartiles of the metric distribution across azimuths. An asterisk between two boxes indicates a statistically significant difference ($p < 0.05$, paired t-test with FDR correction) between the left and right hemifields.

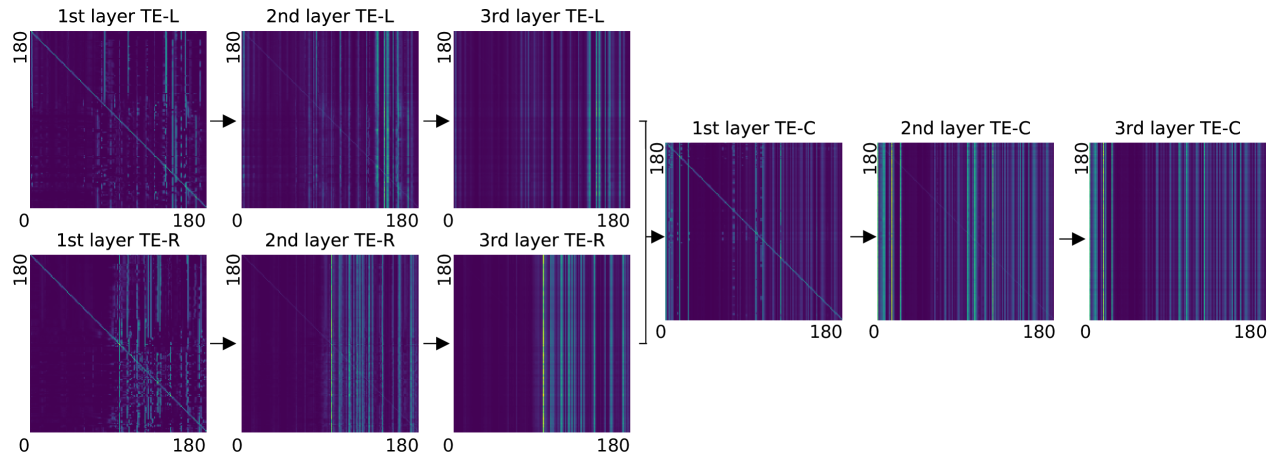

Figure 5: An example of the attention matrices in the proposed model (i.e., BAST-NSP, hybrid loss and subtraction). The corresponding sound clip was randomly selected in the category of human speech with reverberation. For each layer, we present the patch-to-patch attention matrix (size: 180 $\times$ 180) calculated by the rollout method in [56]. Note that we initialize the attention matrix at the first layer of TE-C by summing the attention matrices at the last layer of TE-L and TE-R.

## 5 Results

### 5.1 Overall Performance

The performance of the proposed BAST-NSP and BAST-SP models is compared with that of the NI-CNN, CNN, and FAVit models. For NI-CNN, two implementations are considered, taking correlograms and spectrograms as inputs and denoted NI-CNN and NI-CNN ∗, respectively. Table 1 reports the results of the compared models for different combinations of binaural integration methods and loss functions. BAST-NSP achieves the best AD error of 1.29° and the best MSE of 0.001 with subtraction binaural integration and the hybrid loss function. Compared to NI-CNN, BAST-NSP reduces the AD error by 65.4% (from 3.70° to 1.29°) and the MSE by 90.9% (from 0.011 to 0.001). BAST-NSP also outperforms NI-CNN ∗ (AD = 1.85°, MSE = 0.031), even though both models receive the same input. BAST-SP achieves AD = 1.43° and MSE = 0.002, surpassing NI-CNN and NI-CNN ∗ while remaining inferior to BAST-NSP. Overall, BAST-NSP outperforms all other tested models in binaural sound localization.

Two-stream models (NI-CNN, NI-CNN ∗, BAST-NSP, BAST-SP) outperform one-stream models (CNN, FAVit) in both angular distance (AD) and mean squared error (MSE). Among the two-stream models, BAST-NSP performs best overall, followed closely by BAST-SP. Both show marked AD improvements, especially with the hybrid loss: the best AD is 1.29° for BAST-NSP and 1.43° for BAST-SP, compared with 3.09° for the best one-stream model (CNN). Two-stream models also generally achieve lower MSE; BAST-NSP has the lowest, 0.001 (hybrid loss with subtraction), compared with 0.010 for the best one-stream model (CNN). Under AD loss, too, one-stream models perform worse than the best-performing two-stream models.

We further analyze how the binaural integration method affects BAST-NSP and BAST-SP performance, in terms of AD error. When BAST-NSP is trained with either AD loss or hybrid loss, integration through addition or subtraction improves performance over concatenation (AD loss: Add. = 1.30°, Sub. = 1.63°, Concat. = 2.39°; hybrid loss: Add. = 1.83°, Sub. = 1.29°, Concat. = 2.76°; see Table 1). With MSE loss, the three integration methods perform similarly in BAST-NSP. In BAST-SP, addition integration yields a much larger AD error than in BAST-NSP (BAST-SP: 4.97°, BAST-NSP: 1.30°), indicating that adding the left and right features produced by identical (parameter-shared) encoders makes azimuth prediction considerably harder.
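A toy illustration of one possible reason for the large BAST-SP addition error (our reading, not an analysis reported by the authors): with a shared encoder $f$, addition gives $f(L) + f(R) = f(R) + f(L)$, so swapping the two ear inputs leaves the integrated representation unchanged, whereas subtraction flips its sign and concatenation keeps the channel order. For a roughly symmetric head, a source at azimuth $+\theta$ produces approximately the mirrored ear signals of a source at $-\theta$, so this invariance would plausibly make the two hemifields harder to tell apart. In the sketch below, a fixed random tanh layer stands in for each Transformer encoder; `make_encoder`, `integrate`, and `swap_invariant` are hypothetical helpers, and the real models also use positional embeddings and further Transformer layers (TE-C) after integration.

```python
import numpy as np

D_IN, D_OUT = 8, 4  # toy feature sizes (hypothetical)


def make_encoder(seed):
    """Toy stand-in for a Transformer encoder: fixed random linear map followed by tanh."""
    weights = np.random.default_rng(seed).standard_normal((D_IN, D_OUT))
    return lambda x: np.tanh(x @ weights)


def integrate(z_left, z_right, method):
    """Combine left/right embeddings by concatenation, addition, or subtraction."""
    if method == "concat":
        return np.concatenate([z_left, z_right], axis=-1)
    if method == "add":
        return z_left + z_right
    if method == "sub":
        return z_left - z_right
    raise ValueError(f"unknown method: {method}")


def swap_invariant(enc_left, enc_right, x_left, x_right, method):
    """True if swapping the two ear inputs leaves the integrated representation unchanged."""
    original = integrate(enc_left(x_left), enc_right(x_right), method)
    swapped = integrate(enc_left(x_right), enc_right(x_left), method)
    return bool(np.allclose(original, swapped))


rng = np.random.default_rng(0)
x_left, x_right = rng.standard_normal(D_IN), rng.standard_normal(D_IN)

shared = make_encoder(1)                             # BAST-SP-like: one encoder for both ears
nsp_left, nsp_right = make_encoder(1), make_encoder(2)  # BAST-NSP-like: separate encoders

for method in ("concat", "add", "sub"):
    sp = swap_invariant(shared, shared, x_left, x_right, method)
    nsp = swap_invariant(nsp_left, nsp_right, x_left, x_right, method)
    print(f"{method:>6}: shared={sp}, non-shared={nsp}")
```

With the shared encoder, only addition is invariant to swapping the ears; with separate encoders, none of the three methods is swap-invariant for generic inputs.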

The three loss functions affect BAST-NSP and BAST-SP differently. In BAST-NSP, AD loss yields lower AD errors with concatenation or addition, whereas hybrid loss yields the lowest AD error with subtraction. In BAST-SP, the loss function and the binaural integration method interact, i.e., the best loss function depends on the integration method applied.
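For reference, the three training objectives compared above can be sketched as follows. This assumes (it is not stated in this section) that the network outputs a 2-D vector and that target azimuths are encoded as points on the unit circle; `to_xy`, `mse_loss`, `ad_loss`, and `hybrid_loss` are hypothetical names, and the hybrid weighting `alpha` is an illustrative placeholder rather than the authors' setting. NumPy is used only to show the arithmetic; training would use an autodiff framework.

```python
import numpy as np


def to_xy(azimuth_deg):
    """Encode azimuths (degrees) as points on the unit circle (assumed target encoding)."""
    rad = np.deg2rad(np.asarray(azimuth_deg, dtype=float))
    return np.stack([np.cos(rad), np.sin(rad)], axis=-1)


def mse_loss(pred_xy, true_xy):
    """Mean squared error over all output coordinates."""
    return float(np.mean((pred_xy - true_xy) ** 2))


def ad_loss(pred_xy, true_xy, eps=1e-12):
    """Mean angle (radians) between predicted and target vectors."""
    dot = np.sum(pred_xy * true_xy, axis=-1)
    norms = np.linalg.norm(pred_xy, axis=-1) * np.linalg.norm(true_xy, axis=-1)
    cos = np.clip(dot / np.maximum(norms, eps), -1.0, 1.0)  # guard against rounding outside [-1, 1]
    return float(np.mean(np.arccos(cos)))


def hybrid_loss(pred_xy, true_xy, alpha=0.5):
    """Hypothetical weighted sum of the MSE and AD losses; alpha is not from the paper."""
    return alpha * mse_loss(pred_xy, true_xy) + (1.0 - alpha) * ad_loss(pred_xy, true_xy)


target = to_xy([10.0, -60.0])
prediction = to_xy([40.0, -60.0])
print(np.rad2deg(ad_loss(prediction, target)))  # mean of a 30° and a 0° error
print(mse_loss(prediction, target), hybrid_loss(prediction, target))
```

Note that MSE penalizes the squared chord length between the two points, whereas the AD term penalizes the arc angle directly, which is one reason the two objectives can rank integration methods differently.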

### 5.2 Performance at different azimuths

To better understand how localization performance varies with azimuth, Fig. 2 shows the test AD error at each azimuth. For BAST-NSP, the test AD error is much smaller when the sound source is close to the interaural midline. This error pattern resembles that of human listeners, highlighting the relevance of independent processing in the left and right streams. BAST-SP does not show this pattern.
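Per-azimuth curves such as those in Fig. 2 can be obtained by grouping test errors by ground-truth azimuth. The sketch below assumes that predictions and targets are given as azimuths in degrees and uses the wrapped absolute angular difference as the AD error; `angular_distance_deg` and `ad_per_azimuth` are hypothetical names, and the paper's exact AD definition may differ in detail.

```python
import numpy as np


def angular_distance_deg(pred_deg, true_deg):
    """Absolute angular difference in degrees, wrapped into [0, 180]."""
    diff = np.asarray(pred_deg, dtype=float) - np.asarray(true_deg, dtype=float)
    return np.abs((diff + 180.0) % 360.0 - 180.0)


def ad_per_azimuth(pred_deg, true_deg):
    """Mean AD error for each distinct ground-truth azimuth."""
    true_deg = np.asarray(true_deg, dtype=float)
    errors = angular_distance_deg(pred_deg, true_deg)
    return {float(az): float(errors[true_deg == az].mean()) for az in np.unique(true_deg)}


# Hypothetical test predictions for three target azimuths
targets = [0, 0, 90, 90, -90]
predictions = [2, -1, 85, 95, 270]  # 270° and -90° denote the same direction
print(ad_per_azimuth(predictions, targets))
```

The wrap-around step matters near the ends of the azimuth range: without it, a prediction of 350° for a 10° target would count as a 340° error instead of 20°.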

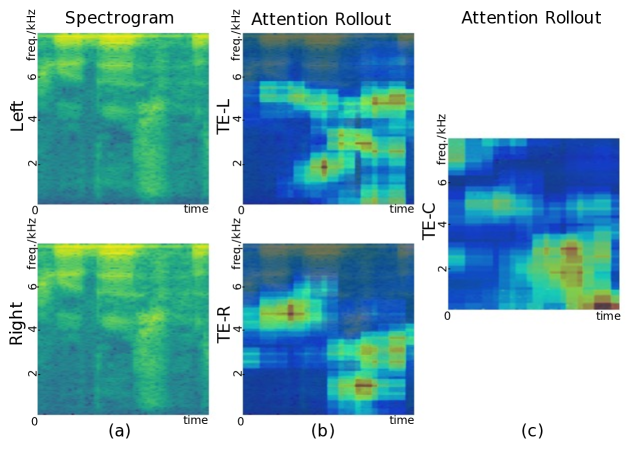

Figure 6: Attention rollout for the sound clip used in Fig. 5. (a): Left and right spectrograms. (b): Left and right attention rollout obtained from the 3rd layer of the TE-L and TE-R Transformers. (c): Left and right attention rollout obtained from the 3rd layer of TE-C.

### 5.3 Performance in left and right hemifield

To explore the symmetry of the model predictions, we further compare the evaluation metrics between the left and right hemifields. Figs. 3 and 4 show comparable model performance in the two hemifields, which is confirmed by paired t-tests (False Discovery Rate (FDR) corrected for multiple comparisons). Specifically, Fig. 3 shows that the difference in AD error between the left and right hemifields is not significant in most conditions (corrected $p > 0.05$), supporting the overall symmetry of the model predictions. However, a minor but significant difference (corrected $p < 0.05$) is observed for BAST-NSP trained with MSE loss and addition integration, and for BAST-SP trained with AD loss and subtraction integration.
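The hemifield comparison can be sketched with NumPy. This assumes that each left-hemifield azimuth is paired with its mirror-image azimuth in the right hemifield and that the FDR procedure is Benjamini-Hochberg (the text only says "FDR corrected"); `paired_t_statistic` and `benjamini_hochberg` are hypothetical helpers. In practice, the p-value of each paired test would come from `scipy.stats.ttest_rel(left, right).pvalue`.

```python
import numpy as np


def paired_t_statistic(a, b):
    """t statistic of a paired t-test on matched samples a and b."""
    d = np.asarray(a, dtype=float) - np.asarray(b, dtype=float)
    return d.mean() / (d.std(ddof=1) / np.sqrt(d.size))


def benjamini_hochberg(pvals):
    """Benjamini-Hochberg adjusted p-values (controls the false discovery rate)."""
    p = np.asarray(pvals, dtype=float)
    m = p.size
    order = np.argsort(p)
    scaled = p[order] * m / np.arange(1, m + 1)
    # Step-up rule: each adjusted value is the minimum over itself and all larger ranks.
    scaled = np.minimum.accumulate(scaled[::-1])[::-1]
    adjusted = np.empty(m)
    adjusted[order] = np.minimum(scaled, 1.0)
    return adjusted


# Hypothetical raw p-values from several left-vs-right comparisons
# (e.g., one per model / loss / integration configuration).
raw_p = [0.01, 0.04, 0.03, 0.005]
adjusted = benjamini_hochberg(raw_p)
significant = adjusted < 0.05
print(adjusted, significant)
```

The same correction is available in statsmodels as `statsmodels.stats.multitest.multipletests(pvals, method="fdr_bh")`.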

### 5.4 Performance in different environments

To assess how well the proposed model generalizes across listening environments, we conduct two additional experiments in which the model is trained in one environment (AE or RV) and tested in each environment separately. The analysis uses the best-performing model, i.e., BAST-NSP with hybrid loss and subtraction integration. As shown in Table 3, the model trained on data from both AE and RV environments achieves the best test results (AE: 1.10°, RV: 1.48°), outperforming the models trained on a single environment. Models trained on one environment degrade substantially when tested on the other (AE to RV: 8.66°; RV to AE: 16.70°).

Table 3: The performance of the proposed BAST-NSP model in different listening environments. AE and RV indicate the anechoic and reverberation environments respectively.

| Training Environment | Testing Environment | AD | MSE |
| --- | --- | --- | --- |
| AE | AE | 1.14° | 0.001 |
| AE | RV | 8.66° | 0.027 |
| RV | AE | 16.70° | 0.078 |
| RV | RV | 1.65° | 0.002 |
| AE+RV | AE | 1.10° | 0.001 |
| AE+RV | RV | 1.48° | 0.001 |

### 5.5 Attention Analysis

To interpret the localization process, we use Attention Rollout [57] to visualize the attention maps of the proposed model (BAST-NSP with subtraction integration and hybrid loss). Rollout computes the attention matrix by recursively multiplying the attention matrices along the forward propagation path. The method was enhanced in [56] by adding an identity matrix before multiplication to account for the residual connections around multi-head self-attention (MSA). Because of the interaural integration layer in BAST-NSP, we initialize the attention matrix of TE-C by summing the attention weights from both sides, regardless of the integration method.
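The rollout procedure can be sketched as follows, assuming per-layer attention tensors of shape (heads, N, N), averaging over heads, and the identity-plus-renormalization variant described above; `attention_rollout` and the random toy attention are hypothetical. The last lines mirror the BAST-NSP initialization, assuming that "attention matrices at the last layer" refers to the rolled-out TE-L and TE-R matrices, which are summed to start the TE-C rollout.

```python
import numpy as np


def softmax(x, axis=-1):
    """Numerically stable softmax, used here only to build toy attention weights."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def attention_rollout(attn_layers, init=None):
    """Multiply residual-adjusted attention matrices from the first layer to the last.

    attn_layers: list of arrays of shape (heads, N, N), ordered from the first layer.
    init: optional (N, N) matrix to start from (identity by default).
    """
    n = attn_layers[0].shape[-1]
    eye = np.eye(n)
    result = eye if init is None else init
    for attn in attn_layers:
        a = attn.mean(axis=0)                    # average over heads (assumption)
        a = a + eye                              # identity term models the residual connection
        a = a / a.sum(axis=-1, keepdims=True)    # renormalize rows to sum to 1
        result = a @ result
    return result


rng = np.random.default_rng(0)
N, HEADS, LAYERS = 6, 4, 3  # toy sizes; the paper's matrices are 180 x 180


def random_layers():
    return [softmax(rng.standard_normal((HEADS, N, N))) for _ in range(LAYERS)]


r_left = attention_rollout(random_layers())    # TE-L
r_right = attention_rollout(random_layers())   # TE-R
r_center = attention_rollout(random_layers(), init=r_left + r_right)  # TE-C
```

Because each adjusted matrix is row-stochastic, every row of `r_left` and `r_right` sums to 1, and every row of `r_center` sums to 2 as a result of the summed initialization.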

Fig. 5 shows the patch-to-patch attention matrices (size: 180 $\times$ 180) for a randomly selected spectrogram. In the first layer of each Transformer, most patches attend mainly to themselves and to a few scattered patches. In the last layer, by contrast, nearly all patches assign similar attention weights to a few specific patches. Fig. 6 depicts the attention rollout heat maps for the left and right spectrograms. Although BAST-NSP does not share parameters between the two sides, the model focuses most of its attention on similar regions in both spectrograms (Fig. 6 (b)). The final attention map (Fig. 6 (c)) shows that the model further processes the attention after the integration layer and boosts the attention weights in the bottom-left region.

## 6 Conclusion

In this paper, a novel Binaural Audio Spectrogram Transformer (BAST) for sound source localization is proposed. The results show that this purely attention-based model yields significantly better azimuth acuity than the CNN, FAVit, and NI-CNN models. In particular, subtraction interaural integration combined with the hybrid loss is the best training configuration for BAST. Additionally, we found that performance in the left and right hemifields, and the statistical significance of their difference, vary with the combination of training settings. In conclusion, this work contributes a convolution-free model of real-life sound localization. The data and implementation of our BAST model are available at https://github.com/ShengKuangCN/BAST.

## References

- [1] D. W. Batteau, The role of the pinna in human localization, Proceedings of the Royal Society of London. Series B. Biological Sciences 168 (1011) (1967) 158–180.

- [2] J. O. Pickles, Auditory pathways: anatomy and physiology, Handbook of clinical neurology 129 (2015) 3–25.

- [3] K. van der Heijden, J. P. Rauschecker, B. de Gelder, E. Formisano, Cortical mechanisms of spatial hearing, Nature Reviews Neuroscience 20 (10) (2019) 609–623.

- [4] B. Grothe, M. Pecka, D. McAlpine, Mechanisms of sound localization in mammals, Physiological reviews 90 (3) (2010) 983–1012.

- [5] J. Blauert, Spatial Hearing: The Psychophysics of Human Sound Localization, MIT Press, 1997.

- [6] Y. LeCun, Y. Bengio, G. Hinton, Deep learning, Nature 521 (7553) (2015) 436–444.

- [7] X. Zhang, D. Wang, Deep learning based binaural speech separation in reverberant environments, IEEE/ACM transactions on audio, speech, and language processing 25 (5) (2017) 1075–1084.

- [8] S. Y. Lee, J. Chang, S. Lee, Deep learning-based method for multiple sound source localization with high resolution and accuracy, Mechanical Systems and Signal Processing 161 (2021) 107959.

- [9] Y. Gong, Y.-A. Chung, J. Glass, AST: Audio Spectrogram Transformer, arXiv preprint arXiv:2104.01778 (2021).

- [10] T.-D. Truong, C. N. Duong, H. A. Pham, B. Raj, N. Le, K. Luu, et al., The right to talk: An audio-visual transformer approach, in: Proceedings of the IEEE/CVF International Conference on Computer Vision, 2021, pp. 1105–1114.

- [11] L. Perotin, R. Serizel, E. Vincent, A. Guérin, CRNN-based joint azimuth and elevation localization with the ambisonics intensity vector, in: 2018 16th International Workshop on Acoustic Signal Enhancement (IWAENC), IEEE, 2018, pp. 241–245.

- [12] T. Yoshioka, S. Karita, T. Nakatani, Far-field speech recognition using CNN-DNN-HMM with convolution in time, in: 2015 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), IEEE, 2015, pp. 4360–4364.

- [13] S. Park, Y. Jeong, H. S. Kim, Multiresolution CNN for reverberant speech recognition, in: 2017 20th Conference of the Oriental Chapter of the International Coordinating Committee on Speech Databases and Speech I/O Systems and Assessment (O-COCOSDA), IEEE, 2017, pp. 1–4.

- [14] Y. Xu, S. Afshar, R. K. Singh, R. Wang, A. van Schaik, T. J. Hamilton, A binaural sound localization system using deep convolutional neural networks, in: 2019 IEEE International Symposium on Circuits and Systems (ISCAS), IEEE, 2019, pp. 1–5.

- [15] K. He, X. Zhang, S. Ren, J. Sun, Deep residual learning for image recognition, in: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2016, pp. 770–778.

- [16] N. Yalta, K. Nakadai, T. Ogata, Sound source localization using deep learning models, Journal of Robotics and Mechatronics 29 (1) (2017) 37–48.

- [17] K. van der Heijden, S. Mehrkanoon, Goal-driven, neurobiological-inspired convolutional neural network models of human spatial hearing, Neurocomputing 470 (2022) 432–442.

- [18] K. van der Heijden, S. Mehrkanoon, Modelling human sound localization with deep neural networks, in: ESANN, 2020, pp. 521–526.

- [19] A. Francl, J. H. McDermott, Deep neural network models of sound localization reveal how perception is adapted to real-world environments, Nature Human Behaviour 6 (1) (2022) 111–133.

- [20] J. Mathews, J. Braasch, Multiple sound-source localization and identification with a spherical microphone array and lavalier microphone data, The Journal of the Acoustical Society of America 143 (3_Supplement) (2018) 1825–1825.

- [21] L. Durak, O. Arikan, Short-time Fourier transform: two fundamental properties and an optimal implementation, IEEE Transactions on Signal Processing 51 (5) (2003) 1231–1242.

- [22] N. Ma, J. A. Gonzalez, G. J. Brown, Robust binaural localization of a target sound source by combining spectral source models and deep neural networks, IEEE/ACM Transactions on Audio, Speech, and Language Processing 26 (11) (2018) 2122–2131.

- [23] P.-A. Grumiaux, S. Kitić, L. Girin, A. Guérin, A survey of sound source localization with deep learning methods, The Journal of the Acoustical Society of America 152 (1) (2022) 107–151.

- [24] P. Gerstoft, C. F. Mecklenbräuker, A. Xenaki, S. Nannuru, Multisnapshot sparse Bayesian learning for DOA, IEEE Signal Processing Letters 23 (10) (2016) 1469–1473.

- [25] S. Nannuru, A. Koochakzadeh, K. L. Gemba, P. Pal, P. Gerstoft, Sparse Bayesian learning for beamforming using sparse linear arrays, The Journal of the Acoustical Society of America 144 (5) (2018) 2719–2729.

- [26] G. Ping, E. Fernandez-Grande, P. Gerstoft, Z. Chu, Three-dimensional source localization using sparse Bayesian learning on a spherical microphone array, The Journal of the Acoustical Society of America 147 (6) (2020) 3895–3904.

- [27] A. Xenaki, J. Bünsow Boldt, M. Græsbøll Christensen, Sound source localization and speech enhancement with sparse Bayesian learning beamforming, The Journal of the Acoustical Society of America 143 (6) (2018) 3912–3921.

- [28] S. Chakrabarty, E. A. Habets, Multi-speaker DOA estimation using deep convolutional networks trained with noise signals, IEEE Journal of Selected Topics in Signal Processing 13 (1) (2019) 8–21.

- [29] C. Pang, H. Liu, X. Li, Multitask learning of time-frequency CNN for sound source localization, IEEE Access 7 (2019) 40725–40737. doi:10.1109/ACCESS.2019.2905617.

- [30] R. Varzandeh, K. Adiloğlu, S. Doclo, V. Hohmann, Exploiting periodicity features for joint detection and DOA estimation of speech sources using convolutional neural networks, in: ICASSP 2020 - 2020 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2020, pp. 566–570. doi:10.1109/ICASSP40776.2020.9054754.

- [31] P. Vecchiotti, N. Ma, S. Squartini, G. J. Brown, End-to-end binaural sound localisation from the raw waveform, in: ICASSP 2019 - 2019 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2019, pp. 451–455. doi:10.1109/ICASSP.2019.8683732.

- [32] A. Fahim, P. N. Samarasinghe, T. D. Abhayapala, Multi-source DOA estimation through pattern recognition of the modal coherence of a reverberant soundfield, IEEE/ACM Transactions on Audio, Speech, and Language Processing 28 (2020) 605–618. doi:10.1109/TASLP.2019.2960734.

- [33] D. Krause, A. Politis, K. Kowalczyk, Comparison of convolution types in CNN-based feature extraction for sound source localization, in: 2020 28th European Signal Processing Conference (EUSIPCO), 2021, pp. 820–824. doi:10.23919/Eusipco47968.2020.9287344.

- [34] D. Diaz-Guerra, A. Miguel, J. R. Beltran, Robust sound source tracking using SRP-PHAT and 3D convolutional neural networks, IEEE/ACM Transactions on Audio, Speech, and Language Processing 29 (2021) 300–311. doi:10.1109/TASLP.2020.3040031.

- [35] X. Wu, Z. Wu, L. Ju, S. Wang, Binaural audio-visual localization, in: Proceedings of the AAAI Conference on Artificial Intelligence, Vol. 35, 2021, pp. 2961–2968.

- [36] A. Vaswani, N. Shazeer, N. Parmar, J. Uszkoreit, L. Jones, A. N. Gomez, Ł. Kaiser, I. Polosukhin, Attention is all you need, Advances in Neural Information Processing Systems 30 (2017).

- [37] J. Devlin, M.-W. Chang, K. Lee, K. Toutanova, BERT: Pre-training of deep bidirectional transformers for language understanding, arXiv preprint arXiv:1810.04805 (2018).

- [38] A. Dosovitskiy, L. Beyer, A. Kolesnikov, D. Weissenborn, X. Zhai, T. Unterthiner, M. Dehghani, M. Minderer, G. Heigold, S. Gelly, et al., An image is worth 16x16 words: Transformers for image recognition at scale, arXiv preprint arXiv:2010.11929 (2020).

- [39] K. Han, Y. Wang, H. Chen, X. Chen, J. Guo, Z. Liu, Y. Tang, A. Xiao, C. Xu, Y. Xu, et al., A survey on vision transformer, IEEE Transactions on Pattern Analysis and Machine Intelligence (2022).

- [40] Z. Zhang, S. Xu, S. Zhang, T. Qiao, S. Cao, Attention based convolutional recurrent neural network for environmental sound classification, Neurocomputing 453 (2021) 896–903.

- [41] Y.-B. Lin, Y.-C. F. Wang, Audiovisual transformer with instance attention for audio-visual event localization, in: Proceedings of the Asian Conference on Computer Vision, 2020.

- [42] Q. Kong, Y. Xu, W. Wang, M. D. Plumbley, Sound event detection of weakly labelled data with cnn-transformer and automatic threshold optimization, IEEE/ACM Transactions on Audio, Speech, and Language Processing 28 (2020) 2450–2460.

- [43] C. Schymura, T. Ochiai, M. Delcroix, K. Kinoshita, T. Nakatani, S. Araki, D. Kolossa, Exploiting attention-based sequence-to-sequence architectures for sound event localization, in: 2020 28th European Signal Processing Conference (EUSIPCO), 2021, pp. 231–235. doi:10.23919/Eusipco47968.2020.9287224.

- [44] N. Yalta, Y. Sumiyoshi, Y. Kawaguchi, The Hitachi DCASE 2021 Task 3 system: Handling directive interference with self attention layers, Tech. rep., DCASE 2021 Challenge (2021).

- [45] C. Schymura, B. Bönninghoff, T. Ochiai, M. Delcroix, K. Kinoshita, T. Nakatani, S. Araki, D. Kolossa, PILOT: Introducing transformers for probabilistic sound event localization, arXiv preprint arXiv:2106.03903 (2021).

- [46] Y. Xin, D. Yang, Y. Zou, Audio pyramid transformer with domain adaption for weakly supervised sound event detection and audio classification, in: INTERSPEECH, 2022, pp. 1546–1550.

- [47] K. J. Piczak, ESC: Dataset for environmental sound classification, in: Proceedings of the 23rd ACM International Conference on Multimedia, 2015, pp. 1015–1018.

- [48] P. Warden, Speech commands: A dataset for limited-vocabulary speech recognition, arXiv preprint arXiv:1804.03209 (2018).

- [49] W. Ariyanti, K.-C. Liu, K.-Y. Chen, et al., Abnormal respiratory sound identification using audio-spectrogram vision transformer, in: 2023 45th Annual International Conference of the IEEE Engineering in Medicine & Biology Society (EMBC), IEEE, 2023, pp. 1–4.

- [50] Y.-B. Lin, Y.-L. Sung, J. Lei, M. Bansal, G. Bertasius, Vision transformers are parameter-efficient audio-visual learners, in: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2023, pp. 2299–2309.

- [51] K. Chen, X. Du, B. Zhu, Z. Ma, T. Berg-Kirkpatrick, S. Dubnov, HTS-AT: A hierarchical token-semantic audio transformer for sound classification and detection, in: ICASSP 2022 - 2022 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2022, pp. 646–650. doi:10.1109/ICASSP43922.2022.9746312.

- [52] W. Phokhinanan, N. Obin, S. Argentieri, Binaural sound localization in noisy environments using frequency-based audio vision transformer (FAViT), in: INTERSPEECH, ISCA, 2023, pp. 3704–3708.

- [53] S. Whitaker, A. Barnard, G. D. Anderson, T. C. Havens, Through-ice acoustic source tracking using vision transformers with ordinal classification, Sensors 22 (13) (2022) 4703.

- [54] X. Xiao, S. Zhao, X. Zhong, D. L. Jones, E. S. Chng, H. Li, A learning-based approach to direction of arrival estimation in noisy and reverberant environments, in: 2015 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), IEEE, 2015, pp. 2814–2818.

- [55] D. P. Kingma, J. Ba, Adam: A method for stochastic optimization, arXiv preprint arXiv:1412.6980 (2014).

- [56] H. Chefer, S. Gur, L. Wolf, Transformer interpretability beyond attention visualization, in: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2021, pp. 782–791.

- [57] S. Abnar, W. Zuidema, Quantifying attention flow in transformers, arXiv preprint arXiv:2005.00928 (2020).