## A TUTORIAL INTRODUCTION TO LATTICE-BASED CRYPTOGRAPHY AND HOMOMORPHIC ENCRYPTION

## A PREPRINT

## Contents

| Introduction | Introduction | Introduction | 5 |

|----------------|---------------------------------|------------------------------------------------------------|-----|

| | 1.1 | Motivations . . . . . . . . . . . . . . . . . . . . . . . | 5 |

| | 1.2 | Tutorial organisation . . . . . . . . . . . . . . . . . . | 6 |

| | 1.3 | A simple lattice-based encryption scheme . . . . . . . | 7 |

| | Computational Complexity Theory | Computational Complexity Theory | 10 |

| | 2.1 | Basic time complexity classes . . . . . . . . . . . . . | 10 |

| | 2.2 | Hardness of approximation . . . . . . . . . . . . . . . | 11 |

| | 2.3 | Average-case hardness . . . . . . . . . . . . . . . . . | 12 |

| | Cryptography Basics | Cryptography Basics | 15 |

| | 3.1 | Computational security . . . . . . . . . . . . . . . . . | 15 |

| | 3.2 | Private and public encryptions . . . . . . . . . . . . . | 15 |

| | 3.3 | Security definitions . . . . . . . . . . . . . . . . . . . | 16 |

| | Lattice Theory | Lattice Theory | 18 |

| | 4.1 | Lattice basics . . . . . . . . . . . . . . . . . . . . . . | 18 |

| | 4.2 | Dual lattice . . . . . . . . . . . . . . . . . . . . . . . | 22 |

| | 4.3 | Some lattice problems . . . . . . . . . . . . . . . . . . | 23 |

| | 4.4 | Ajtai's worst-case to average-case reduction . . . . . . | 26 |

| | 4.5 | An application of SIS: Collision resistant hash functions | 28 |

## Yang Li

School of Computing Australian National University Canberra, ACT, 2600

kelvin.li@anu.edu.au

## Kee Siong Ng

School of Computing Australian National University Canberra, ACT, 2600

keesiong.ng@anu.edu.au

## Michael Purcell

School of Computing Australian National University Canberra, ACT, 2600

michael.purcell1@anu.edu.au

## September 29, 2022

| Discrete Gaussian | Distribution | 30 |

|--------------------------------------------------------------------------------------------|-------------------------------------------------------------------|------|

| 5.1 | Discrete Gaussian distribution . . . . . . . . . . . . . . | 30 |

| 5.2 | Discrete Gaussian for provable security . . . . . . . . . | 32 |

| Learning with | Errors | 35 |

| 6.1 | LWE distribution . . . . . . . . . . . . . . . . . . . . . | 35 |

| 6.2 | LWE hardness proof . . . . . . . . . . . . . . . . . . . | 37 |

| 6.3 | An LWE-based encryption scheme . . . . . . . . . . . . | 39 |

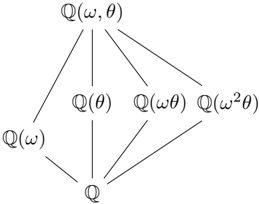

| Cyclotomic Polynomials and Cyclotomic Extensions | Cyclotomic Polynomials and Cyclotomic Extensions | 41 |

| 7.1 | Cyclotomic polynomials . . . . . . . . . . . . . . . . . | 41 |

| 7.2 | Galois Group of Cyclotomic Polynomials . . . . . . . . | 44 |

| Algebraic Number Theory | Algebraic Number Theory | 48 |

| 8.1 Ring of integers and its ideal | . . . . . . . . . . . . . . . | 48 |

| 8.1.1 | Integral ideal . . . . . . . . . . . . . . . . . . . | 49 |

| 8.1.2 | Fractional ideal . . . . . . . . . . . . . . . . . . | 51 |

| 8.1.3 | Applications in Ring LWE . . . . . . . . . . . . | 52 |

| 8.2 | Number field embedding . . . . . . . . . . . . . . . . . | 54 |

| 8.2.1 | Canonical embedding . . . . . . . . . . . . . . . | 54 |

| | 8.2.2 Geometric quantities of ideal lattice . . . . . . . | 57 |

| 8.3 | Dual lattice in number field . . . . . . . . . . . . . . . . | 59 |

| Ring Learning with Errors | Ring Learning with Errors | 62 |

| | . . . . . . . . . . . . . . . | 62 |

| 9.1 Some RLWE in general | ideal lattice problems . number field . . . . . . . . . . . . . . | 63 |

| 9.2 | Hardness of search RLWE | 65 |

| 9.3 | . . . . . . . . . . . . . . . . | |

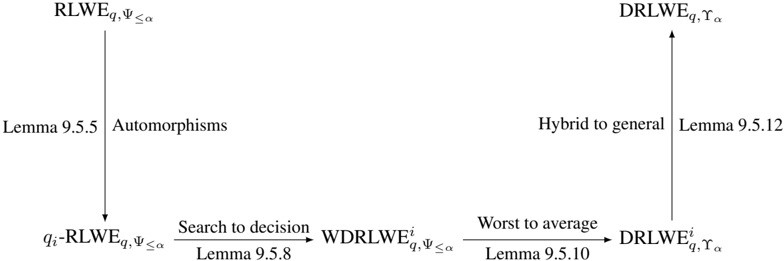

| 9.5 | Search to decision RLWE . . . . . . . . . . . . . . . . . | 69 |

| 9.6 An RLWE-based encryption scheme | . . . . . . . . . . . | 73 |

| Homomorphic Encryption | Homomorphic Encryption | 75 |

| Basic definitions . | . . . . . . . . . . . . . . . . . . . . . | 75 |

| 10.1 | | |

| 10.2 | Gentry's original FHE using squashing and bootstrapping | 76 |

| 10.3 BV ∗ : SHE by relinearization | . . . . . . . . . . . . . . . | 78 |

| 10.4 BV : Leveled FHE by dimension-modulus reduction | . . | 83 |

| | 10.4.1 Modulus reduction to reduce ciphertext size . . . | 83 |

| | 10.4.2 The BV scheme . . . . . . . . . . . . . . . . . . | 84 |

| 10.5 | . . . . . . | 86 |

| Additional tools for computational efficiency 10.5.1 Noise management by modulus switching | . . . . | 86 |

| | 10.5.2 Vector decomposition . . . . . . . . . . . . | 86 |

|----------------------------------------------------|-------------------------------------------------------------|------|

| | 10.5.3 Key switching . . . . . . . . . . . . . . . | 87 |

| 10.6 | BGV : Leveled FHE by modulus and key switching | 88 |

| 10.7 | The B scheme: scale invariant . . . . . . . . . . . | 89 |

| 10.8 | The BFV scheme . . . . . . . . . . . . . . . . . . | 91 |

| 10.9 | Closing thoughts on HE developments . . . . . . . | 95 |

| 10.10A Sage Implementation of the BFV Cryptosystem | . | 97 |

| | 10.10.1 Package Imports . . . . . . . . . . . . . . | 97 |

| | 10.10.2 Define Parameters . . . . . . . . . . . . . | 97 |

| | 10.10.3 Utility Functions . . . . . . . . . . . . . . | 98 |

| | 10.10.4 Noise Samplers . . . . . . . . . . . . . . . | 98 |

| | 10.10.5 Basic Cryptographic Operations . . . . . . | 99 |

| | 10.10.6 Homomorphic Addition . . . . . . . . . . | 100 |

| | 10.10.7 Homomorphic Multiplication . . . . . . . | 100 |

| | 10.10.8 Relinearization . . . . . . . . . . . . . . . | 101 |

| Abstract Algebra | Abstract Algebra | 102 |

| A.1 | Group theory . . . . . . . . . . . . . . . . . . . . | 102 |

| A.2 | Ring theory . . . . . . . . . . . . . . . . . . . . . | 104 |

| A.3 | Field theory . . . . . . . . . . . . . . . . . . . . . | 107 |

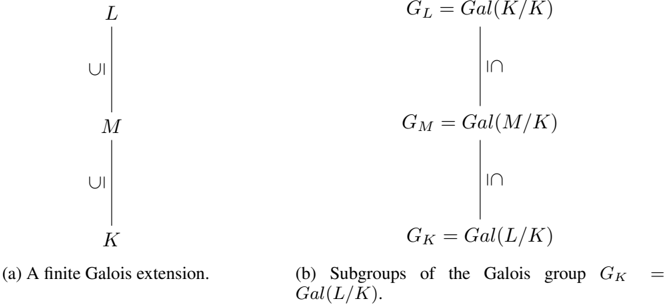

| Galois Theory | Galois Theory | 109 |

| B.1 Field extension . | . . . . . . . . . . . . . . . . . . | 109 |

| | B.1.1 Algebraic extension . . . . . . . . . . . . | 110 |

| | B.1.2 Simple extension . . . . . . . . . . . . . . | 111 |

| | B.1.3 Splitting field . . . . . . . . . . . . . . . . | 112 |

| | B.1.4 Normal extension . . . . . . . . . . . . . . | 112 |

| | B.1.5 Separable extension . . . . . . . . . . . . | 113 |

| B.2 | Galois extension and Galois group . . . . . . . . . | 114 |

| Algebraic Number Theory | Algebraic Number Theory | 119 |

| C.1 Algebraic number field | . . . . . . . . . . . . . . . | 119 |

| C.2 | Ideals of ring of integers . . . . . . . . . . . . . . | 122 |

| | C.2.1 Integral ideals . . . . . . . . . . . . . . . . | 123 |

| | C.2.2 Fractional ideal . . . . . . . . . . . . . . . | 124 |

| | C.2.3 Chinese remainder theorem . . . . . . . . | 127 |

| C.3 | Trace and Norm . . . . . . . . . . . . . . . . . . . | 129 |

| C.4 | . . . . . . . . . . . . . . . . . . . | 130 |

| C.5 | Ideal lattices . . in number fields . . . . . . . . . . . . | 133 |

| Dual lattice Mind Maps | Dual lattice Mind Maps | 137 |

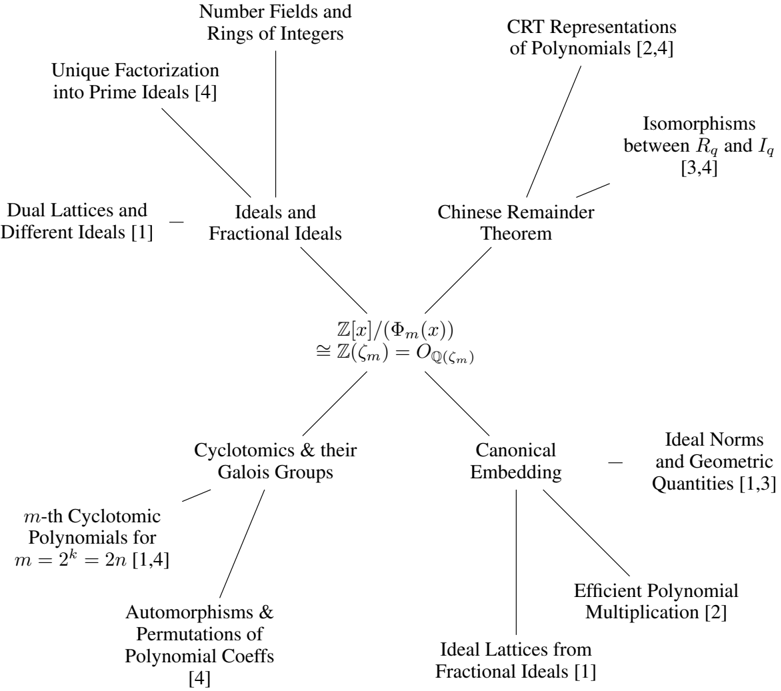

| D.1 | A mindmap for RLWE | 137 |

|-------|----------------------|-------|

| E | Notation | 138 |

## References 141

## 1 Introduction

## 1.1 Motivations

Why study Lattice-based Cryptography? There are a few ways to answer this question.

1. It is useful to have cryptosystems that are based on a variety of hard computational problems so the different cryptosystems are not all vulnerable in the same way.

2. The computational aspects of lattice-based cryptosystem are usually simple to understand and fairly easy to implement in practice.

3. Lattice-based cryptosystems have lower encryption/decryption computational complexities compared to popular cryptosystems that are based on the integer factorisation or the discrete logarithm problems.

4. Lattice-based cryptosystems enjoy strong worst-case hardness security proofs based on approximate versions of known NP-hard lattice problems.

5. Lattice-based cryptosystems are believed to be good candidates for post-quantum cryptography, since there are currently no known quantum algorithms for solving lattice problems that perform significantly better than the best-known classical (non-quantum) algorithms, unlike for integer factorisation and (elliptic curve) discrete logarithm problems.

6. Last but not least, interesting structures in lattice problems have led to significant advances in Homomorphic Encryption, a new research area with wide-ranging applications.

Let's look at that fourth point in more detail.

Note first that the discrete logarithm and integer factorisation problem classes, which underlie several well-known cryptosystems, are only known to be in NP, they are not known to be NP-complete or NP-hard. The way we understand their complexity is by looking at the average run-time complexity of the current best-known (non-polynomial) algorithms for those two problem classes on randomly generated problem instances. Using that heuristic complexity measure, we can show that

1. there are special instances of those problems that can be solved in polynomial time but, in general, both problems can be solved only in sub-exponential time; and

2. on average, most of the discrete logarithm and integer factorisation problem instances are as hard as each other.

So we believe these two problems to be average-case hard problem classes, but we cannot yet prove that. Interestingly, we know there are quantum algorithms that can solve these two problems efficiently (Bernstein et al., 2009).

The above then begs the question of whether we can design cryptosystems based on known NP-hard or worst-case hard problem classes. In constructing a (public-key) cryptosystem using a problem class ACH with average-case hardness like Integer Factorisation or Discrete Logarithm, it is sufficient to show that the generation of a key pair (at random) and the solution of the private key corresponds to a problem instance I ∈ ACH , and we rely on average hardness to say I is hard to solve with good probability. But in constructing a (public-key) cryptosystem using a problem class WCH with only known worst-case complexity, we need to do a bit more work, in that it is not sufficient to generate a key pair (at random) and show the solution of the private key is a problem instance I ∈ WCH , we need to actually show that I is one of the hard or worst cases in WCH .

In other words, to build a cryptosystem based on a worst-case hard problem class, we do not just need to know that hard instances exist, but we need a way to explicitly generate the hard problem instances. And that is an issue because we do not know how to do that for most worst-case hard problem classes. But this is what makes lattice problems interesting: we know how to generate, through reductions, the worst-case problem instances of approximation versions of NP-hard lattice problems and build efficient cryptosystems based on them. In practice, this means breaking these cryptosystems, even with some small non-negligible probability, is provably as hard as solving the underlying lattice problem approximately to within a polynomial factor in polynomial time.

How hard are these approximation lattice problems? In most cases, the underlying lattice problem is the Shortest Vector Problem (SVP), and the approximation version is called the GapSVP λ problem for an approximation factor λ . These gap lattice problems are known to be NP-hard only for small

approximation factors like n O (1 / log 2 n ) . We also know that these gap lattice problems are not NPhard for approximation factors above √ n/ log n , unless the polynomial time hierarchy collapses. See Micciancio and Goldwasser (2002); Khot (2005, 2010) for surveys of these results. The best-known algorithm for solving these gap lattice problems to within poly(n) factor has time complexity 2 O ( n ) (Ajtai et al., 2001), which leads us to the following conjecture that underlies the security of latticebased cryptography:

Conjecture: There is no polynomial time algorithm that approximates lattice problems to within polynomial factors.

Why another paper on Lattice Cryptography and Homomorphic Encryption? It is important to state early that this tutorial is a compilation of known results in the literature and we do not claim any research originality. In contrast with some existing works Peikert (2016); Halevi (2017); Chi et al. (2015), this tutorial

1. is written primarily with pedagogical considerations in mind;

2. is as self-contained as possible, with essentially all required background given either in the body of the tutorial or in the appendix;

3. focuses mostly on the narrow development path from the Learning With Errors (LWE) problem to Ring LWE and homomorphic encryption schemes built on top of them; we do not cover other lattice cryptographic systems like NTRU, Ring SIS-based systems, and homomorphic signatures.

The target audiences are students, practitioners and researchers who want to learn the 'core curriculum' of lattice-based cryptography and homomorphic encryption from a single source.

In writing the tutorial, we have benefited from peer-reviewed published papers as well as many lessformal explanatory material in the form of lecture notes and blog articles. We are not always careful and comprehensive in citing the latter class of material, and we apologise in advance for errors of omission.

## 1.2 Tutorial organisation

The tutorial can be divided into three parts in pedagogical order as follows. Each part will be presented with definitions, examples, discussions around the intuitions of abstract concepts and more importantly corresponding computer code to help develop the understanding.

After brief introductions to the basics of Computational Complexity Theory in Section 2 and Cryptography in Section 3, the first part of the tutorial focuses on the LWE problem, a foundational hard lattice problem. This part begins with some Lattice Theory in Section 4, followed by material on Discrete Gaussian Distributions in Section 5. The LWE problem is then described in some detail in Section 6, including hardness proofs.

The second part discusses the Ring LWE (RLWE) problem, which is a generalization of LWE from the integer domain to an algebraic number field domain that allows more computationally efficient cryptosystems to be built. As LWE does not straightforwardly generalize to its ring version, some required background knowledge will be presented with intuition, examples and computer code, including cyclotomic polynomials and their Galois groups in Section 7 and algebraic number theory in Section 8. (For readers that require a more extensive background, the appendix covers Abstract Algebra, Galois Theory and Algebraic Number Theory in significantly more details.) The RLWE problem is described in some detail in Section 9, including hardness proofs. (A mindmap is given in Appendix D to help readers navigate and remember the many components of RLWE proofs.)

Having introduced the LWE and RLWE problems, the final part of the tutorial (Section 10) shows how efficient homomorphic encryption (HE) schemes can be developed based on the LWE and RLWE problems. These schemes are both similar and different to Gentry's original fully HE scheme. The similarity is in designing a somewhat HE scheme first, then using bootstrapping to achieve fully HE. The difference is that they avoided using Gentry's 'squashing' technique, but used the algebraic properties of (R)LWE instances to make the somewhat HE schemes bootstrappable.

## 1.3 A simple lattice-based encryption scheme

Before diving into the technical details of lattice-based cryptosystems and homomorphic encryption schemes, we describe a simple public-key encryption scheme introduced by Regev (2009) to illustrate the connection between the scheme's security and lattice problems. This scheme is based on the learning with errors (LWE) problem, see Section 6 for details. Its simplicity inspired subsequent developments in homomorphic encryption schemes that are based on lattices, and is a fundamental building block in many such schemes.

Note that in this example Z q is the collection of integers in the range [ -q/ 2 , q/ 2) rather than its standard usage for representing the ring Z / Z q , and [ x ] q is the reduction of x into Z q such that [ x ] q = x mod q . We use boldface to denote vectors and matrices. When working with matrices, all vectors are by default considered as column vectors. Vector multiplications are denoted by a · b , whilst matrix and scalar multiplications are denoted without the 'dot' in the middle. For simplicity, we use [ b | -A ] to denote the action of appending the column vector b to the front of the matrix -A . The parameters n, q, N, χ correspond to the vector dimension, the plaintext modulus, the number of LWE samples, and the noise distribution over Z q , respectively. In particular, χ is chosen such that Pr ( | e · r | < ⌊ q 2 ⌋ / 2) > 1 -negl ( n ) for a random binary vector r = { 0 , 1 } N . The scheme is summarized as follows, but in an alternative format to be consistent with later homomorphic encryption schemes that will be presented in Section 10.

Private key : Sample a private key s = (1 , t ) , where t ← Z n q .

Public key : Sample a random matrix A = a 1 . . . a N ← Z N × n q and compute b = At + e for a random noise vector e ← χ N . Output the public key P = [ b | -A ] ∈ Z N × ( n +1) q .

Encryption: Encrypt the message m ∈ { 0 , 1 } by computing

$$c = \left [ P ^ { T } r + \left \lfloor \frac { q } { 2 } \right \rfloor m \right ] _ { q } \in \mathbb { Z } _ { q } ^ { n + 1 } ,$$

where m = ( m, 0 , . . . , 0) has length n +1 .

Decryption: Decrypt the ciphertext c using the secret key by computing

$$m = \left [ \left \lfloor { \frac { 2 } { q } \left [ c \cdot s \right ] _ { q } } \right \rceil \right ] _ { 2 } .$$

The purpose of the binary vector r is to randomize the use of the public key so that it is impossible to derive m from the ciphertext c . To demonstrate how decryption works, the ciphertext can be re-written as which implies

$$c = \left [ b ^ { T } r + \left \lfloor \frac { q } { 2 } \right \rfloor m | - A ^ { T } r \right ] _ { q } ,$$

$$[ c \cdot s ] _ { q } & = \left [ b ^ { T } r + \left \lfloor \frac { q } { 2 } \right \rfloor m - t ^ { T } A ^ { T } r \right ] _ { q } = \left [ ( t ^ { T } A ^ { T } + e ^ { T } ) r + \left \lfloor \frac { q } { 2 } \right \rfloor m - t ^ { T } A ^ { T } r \right ] _ { q } \\ & = \left [ e ^ { T } r + \left \lfloor \frac { q } { 2 } \right \rfloor m \right ] _ { q } .$$

Because Pr ( | e T r | < ⌊ q 2 ⌋ / 2) > 1 -negl ( n ) , we have (with overwhelming probability)

$$\begin{array} { r l } & { \frac { 2 } { q } \left [ c \cdot s \right ] _ { q } \in \left \{ \begin{matrix} ( - 1 / 2 , 1 / 2 ) & i f m = 0 ; \\ [ - 1 , - 1 / 2 ) \cup ( 1 / 2 , 1 ) & i f m = 1 . } \end{matrix} } \end{array}$$

Notice that if b ′ = At then an attacker who knows A and b ′ could recover the secret t by solving a system of linear equations. The security of the system therefore depends on the presence of the noise vector e .

If an attacker knows b instead of b ′ , then the attack described above will not work. If, however, such an attacker could recover the noise vector e , then they could use that information to compute b ′ . They could then recover t as described above. Recovering e is an instance of a well-known lattice problem called the bounded distance decoding (BDD) problem. So, an attacker that can solve the BDD problem could recover the secret t . In other words, recovering t is 'no harder' than solving the BDD problem.

Conversely, Regev showed that the BDD problem is 'no harder' than recovering t . That is, an attacker who could recover t given A and b could solve the BDD problem as well. This result implies that if the BDD problem is hard, then attacking the cryptosystem is hard as well. This kind of result is called a reduction . Crucially, the BDD problem is believed to be hard. So, Regev's result constitutes a proof of security for the LWE-based cryptosystem described above.

```

Figure 1: A Sage implementation of the simple lattice-based encryption system described above.

Note: This implementation is not suitable for use in real-world applications.

#!/usr/bin/env sage

from sage.misc.prandom import randrange

import sage.stats.distributions.discrete_gaussian_integer as dgi

# Define parameters

def sample_noise(N, R):

D = dgi.DiscreteGaussianDistributionIntegerSampler(sigma=1.0)

return vector([R(D()) for i in range(N)])

q = 655360001

n = 1000

N = 500

R = Integers(q)

Q = Rationals()

Z2 = Integers(2)

# Generate keys

t = vector([R.random_element() for i in range(n)])

secret_key = vector([R(1)] + t.list())

A = matrix(R, [[R.random_element() for i in range(N)]

for i in range(n)])

e = sample_noise(N, R)

b = A.T * t + e

public_key = block_matrix([matrix(b).T, -A.T],

ncols=2)

# Encrypt Message

message = R(randrange(2))

m_vec = vector([message] + [R(0) for i in range(n)])

r = vector(R, [randrange(2) for i in range(N)])

ciphertext = public_key.transpose() * r + (q//2) * m_vec

# Decrypt Message

temp = (2/q) * Q(ciphertext*secret_key)

decrypted_message = R(Z2(temp.round()))

# Verification

print(decrypted_message == message)

```

Figure 1: A Sage implementation of the simple lattice-based encryption system described above. Note: This implementation is not suitable for use in real-world applications.

Decision problem

Time complexity

Time complexity class

## 2 Computational Complexity Theory

Computational complexity theory is the foundation of computational security of modern cryptography by allowing one to emphasize the security of a cryptosystem by drawing an efficient reduction from a computationally hard problem (that either has been proved or is believed with high confidence to be unsolvable in a reasonable time, e.g., polynomial time). That being said, a cryptosystem that is provably secure is still vulnerable to real-world attacks, depending on what threat model was considered, how close to reality the underlying security definitions and assumptions are and so on.

In this section, we start by introducing some basic definitions in computational complexity theory, then go on to talk about inapproximability, which are variants of the standard decision and optimization problems and commonly used to prove the computational security of cryptosystems. We then introduce gap problems, which are generalization of decision problems and proving their hardness is a useful technique of proving inapproximability. We finish the chapter by briefly introducing Ajtai (1996)'s worst-case to average-case reduction. Ajtai's work is considered as the first published average-case problem whose hardness is based on the worst-case hardness of some well-known lattice problems.

## 2.1 Basic time complexity classes

The following concepts are introduced under the assumption that a general purpose computer is of the form of a Turing machine . The primary reference of this subsection is Sipser (2013)'s book Introduction to the Theory of Computation, Third Edition .

A language (or decision problem) is a set of strings that are decidable by a Turing machine. We use Σ to denote the alphabet and Σ ∗ to denote the set of all strings over the alphabet Σ of all lengths. A special case is when Σ = { 0 , 1 } and Σ ∗ = { 0 , 1 } ∗ is the set of all strings of 0 s and 1 s of all lengths. In this case, a language A = { x ∈ { 0 , 1 } ∗ | f ( x ) = 1 } , where f : { 0 , 1 } ∗ →{ 0 , 1 } is a Boolean function .

Let M be a deterministic Turing machine that halts on all inputs. We measure the time complexity or running time of M by the function t : N → N , where t ( n ) is the maximum number of steps that M takes on any input of length n . Generally speaking, t ( n ) can be any function of n and the exact number of steps may be difficult to calculate, so we often just analyse t ( n ) 's asymptotic behaviour by taking its leading term, denoted by O ( t ( n )) . We also relax its codomain by letting t : N → R + be a non-negative real valued function.

It is worth mentioning that when analysing the time complexity of a function, we often consider its time complexity in the worst case, i.e., the longest running time of all inputs of a particular length n . At the end of this chapter, we will emphasize the importance of the worst-case complexity in the proof of security of modern cryptosystems. We will give a clue of how this was achieved by Ajtai through an average-case to worst-case reduction.

Definition 2.1.1. The time complexity class , TIME ( t ( n )) , is defined as the set of all languages that are decidable by a Turning machine in time O ( t ( n )) .

Obviously, t can be any function, e.g., logarithm, polynomial, exponential, etc. In practice, polynomial differences in running time are considered to be much better than exponential differences due to the super fast growth rate of the latter. For this reason, we separate languages into different classes according to their worst case running time on a deterministic single-tape Turing machine.

- Definition 2.1.2. P P is the class of languages that are decidable in polynomial time by a deterministic single-tape Turing machine, i.e.,

$$P = \bigcup _ { k \in \mathbb { N } } T I M E ( n ^ { k } ) .$$

Some problems are computationally hard, so cannot be decided by a deterministic single-tape Turing machine in polynomial time. But given a possible solution, sometimes we can efficiently verify whether or not the solution is genuine. The length of the solution has to be polynomial in the length of the input string length, for otherwise the verification process cannot be done efficiently. Based on the ability to efficiently verify, we can define the complexity class NP.

- Definition 2.1.3. NP NP is the class of languages that can be verified in polynomial time.

Sometimes, a problem can be solved by reducing it to another problem, whose solution can be found relatively easier, provided the reduction between the two problems is efficient. For example, a polynomial time reduction is often acceptable.

- Definition 2.1.4. A language A is polynomial time reducible PT reduction to another language B , written as A ≤ P B , if a polynomial time computable function f : Σ ∗ → Σ ∗ exists, where for every w ,

$$w \in A \iff f ( w ) \in B .$$

A polynomial time reduction A ≤ P B implies A is no harder than B , so if B ∈ P then A ∈ P. Based on this reduction, we can define another complexity class.

- Definition 2.1.5. A language B is NP-complete NP-complete if it is in NP and every problem in NP is polynomial time reducible to B .

Essentially, we are saying that NP-complete is the set of the hardest problems in NP. There are, however, hard problems that are not in NP such as an optimization problem . Given a solution of an optimization problem, it is often not trivial to verify the solution is optimal among all the answers, so this type of problems are not polynomial time verifiable and hence not in NP. For these problems, we can define a similar complexity class as NP-complete but without requiring their solutions to be polynomially checkable.

- Definition 2.1.6. A language is NP-hard NP-hard if every problem in NP is polynomial time reducible to it.

The two terms NP-complete and NP-hard are sometimes used interchangeably because an optimization problem can also be formed as a decision problem. For example, instead of asking for the shortest route from the travelling salesman problem , we can ask whether there exists a route that is shorter than a threshold.

Many optimization problems are NP-hard, which means there is no polynomial time solution under the assumption P = NP. Hence, when an answer for an NP-hard problem is needed, the fallback is to use an approximation algorithm to compute a near-optimal solution that is within an acceptable range. For a NP-hard problem, it is sometimes easier to build a cryptosystem based on its approximated version rather than the NP-hard problem itself. For this reason, cryptographers are concerned about whether or not an optimization problem is hard to be approximated within a certain range. This brings us to the study of the hardness of approximation or inapproximability in the next subsection.

## 2.2 Hardness of approximation

An optimization problem aims at finding the optimum result of a computational problem. This optimum result can either be the maximum or minimum of some value. Throughout this section, we focus on minimization problems only. The same results also hold for maximization problems. 1 In the previous section, we said an optimization problem can be made into a decision problem by comparing the solution with a threshold. More formally, it is defined as the next.

- Definition 2.2.1. An NP-optimization (NPO) problem NPO is an optimization problem such that

- all instances and solutions can be recognized in polynomial time,

- all solutions have polynomial length in the length of the instance,

- all solution's costs can be computed in polynomial time.

For a minimization problem in NPO, its decision version asks 'Is OPT ( x ) ≤ q ?', where OPT ( x ) is the unknown optimal solution (or its cost, we use interchangeably) to the instance x . For example, in the maximum clique problem, an instance is a graph, an optimal solution is the maximum clique in the given graph and its cost is the clique size. Given an NPO problem, its decision version is an NP problem, so NPO is an analogy of NP but for optimization problems. On the other hand, PO (P-optimization) problem is the set of optimization problems whose decision versions are in P, such as finding the shortest path.

1 Lecture 18: Gap Inapproximability , 6.892 Algorithmic Lower Bounds: Fun with Hardness Proofs (Spring 2019), Erik Demaine, available at http://courses.csail.mit.edu/6.892/spring19/lectures/ L18.html

Definition 2.2.2. An algorithm ALG for a minimization problem is called c -approximation algorithm for c ≥ 1 c -approx if for all instances x , it satisfies

$$\frac { \cos t ( A L G ( x ) ) } { \cos t ( O P T ( x ) ) } \leq c .

( 1 ) & & ( 2 ) \\ \intertext { s o t ( O P T ( x ) ) } \intertext { a n d } \intertext { e q u i l y }$$

The ratio c is not necessarily a constant, it can be any function of the input size, i.e., c = f ( n ) for an arbitrary function f ( · ) . Practically, we prefer a near optimal solution ALG ( x ) such that the ratio c is as small as possible or at least does not grow quickly in the input size. This, however, may not be possible for some problems such as the maximum clique problem, whose best possible ratio is O ( n 1 - ) for small > 0 . From a provable security's perspective, the smaller the ratio c is, the harder the capproximation problem is. This leads to a cryptosystem with higher security because it requires more time and computational resources for an attacker to break the system.

For a given c = f ( n ) , there are different ways of proving c -approximating a problem is hard. One way is by proving a c-gap problem is hard, which is in direct analogy to the c-approximation problem in hand. This way, if the gap problem is hard, then the c -approximation problem is also hard.

Definition 2.2.3. For a minimization problem, a c -gap problem c -gap (where c > 1 ) distinguishes two cases for the optimal solution OPT ( x ) of an instance x and a given k as follows:

- x is an YES instance if OPT ( x ) ≤ k ,

- x is an NO instance if OPT ( x ) > c · k .

The value k is a given input. For example, in the c -gap version of the shortest vector problem, we can set k = λ 1 ( L ) to be the shortest vector in a given lattice L . Intuitively, a c -gap problem is a decision problem where the unknown optimal solution OPT of the corresponding optimization problem is mapped to the opposite side of a gap. It is, however, different from a decision problem in the sense that there is a gap between k and c · k .

The connection between c-gap and c-approximation problems is that if a c-gap problem is proved to be hard, then the corresponding c-approximation problem is also hard. In other words, there is a reduction from a c-gap problem to a c-approximation problem. The proof is straightforward. Assuming the problem can be c-approximated in polynomial time by an algorithm A , so for an input x we have OPT ( x ) ≤ A ( x ) ≤ c · OPT ( x ) . If x is a YES instance of the gap problem, then

If x is a NO instance, then

$$O P T ( x ) \leq k \implies A ( x ) \leq c \cdot O P T ( x ) \leq c \cdot k .$$

$$locate(x) > c \cdot k \implies A ( x ) > c \cdot k .$$

Gap and approximation lattice problems are the foundation of provable security for latticed-based cryptosystems. We will see more of these problems in Section 4 and some of their cryptographical applications in the hardness proofs of the short integer solution problem, learning with error problem and ring learning with error problem.

Either way the instance x can be distinguished easily using the decision procedure A ( x ) ≤ c · k .

## 2.3 Average-case hardness

So far, we have introduced the time complexity classes P and NP in the worst case scenario. That is, the longest running time over all inputs at a given input length. A problem that is hard to be solved in polynomial time in the worst case is known as worst-case hard. There is another related concept called average-case hardness, which is stronger than worst-case hardness, in the sense that the former implies the latter but not vice versa. To finish section, we briefly discuss the critical role of average-case hard problems for cryptography and how they can be constructed by a worst-case to average-case reduction that was achieved by Ajtai (1996).

Without going into the details, we state some remarks of average-case problems to help the reader to get an intuitive understanding of these problems. More discussions of these problems can be found in Chapter 18 of Arora and Barak (2009). First, an average-case problem consists of a decision problem and a probability distribution, from which inputs can be sampled in polynomial time. Such a problem is called a distributional problem . This is different from a worst-case decision problem, where all c -gap implies inapprox

inputs are considered when determining its hardness. Second, the first remark entails that average-case complexity is defined with respect to a specific distribution over the inputs. This suggests that a problem may be difficult with one distribution but easy with another distribution. For example, integer factorization may be difficult for large prime numbers, but easy for small integers. Hence, which probability distribution is used is crucial for the hardness of the integer factorization problem. Finally, average-case complexity has its own complexity classes distP and distNP , which are the average-case analogs of P and NP, respectively.

To prove a cryptosystem is computationally secure, one could build an efficient reduction from a known worst-case problem to it, so that if the cryptosystem can be attacked successfully, such an attack model provides a solution to the worst-case problem. However, knowing alone the underlying problem is worst-case hard is not sufficient to build a secure cryptosystem in real-world, because many of the system's instances may correspond to easy instances of the worst-case problem, which can be solved efficiently.

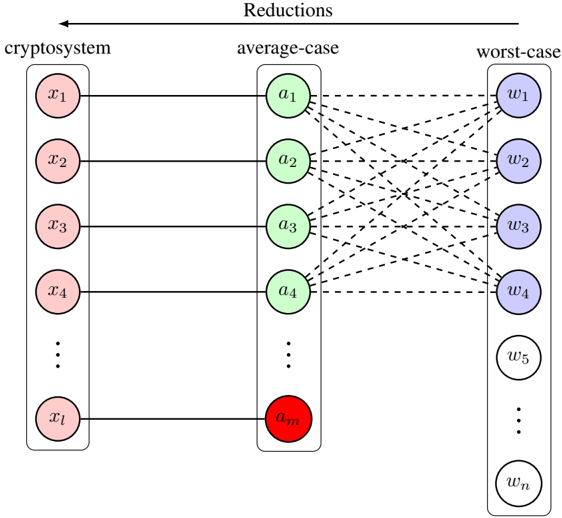

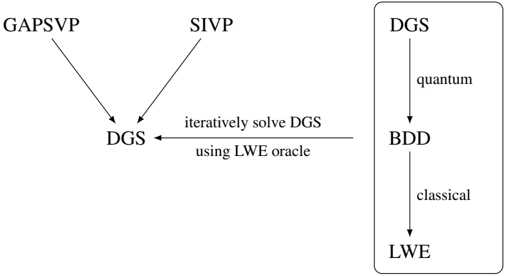

For this reason, an ideal situation is when a cryptosystem's security is based on an average-case problem and the exact distribution to sample hard instances is known. But this is hard to achieve. It is more difficult to prove that a certain distribution generates only hard instances, because this would imply the problem is also worst-case hard. An alternative is to construct an average-case problem, such that its instances correspond to the hard instances in a worst-case problem. This is known as the worst-case to average-case reduction. A visual representation of this type of constructions is illustrated in Figure 2. In this figure, a random cryptographic instance corresponds to an average-case instance. By construction, it is almost always true that an average-case instance links to a hard instance of some worst-case problem. This reduction implies that if the worst-case problem is known or believed (in high confident) to be hard, then the cryptosystem is guaranteed to be secure with high probability.

Figure 2: A demonstration of a cryptosystem's computational security is based on an average-case problem. Each cryptographic instance x i corresponds to a random average-case instance a j . Almost all random instances in the average-case problem can be mapped with the hard instances in a worst-case problem. There may be a fraction of average-case instances (colored in red) that can be solved easily, so their solutions entail solutions of the worst-case problem. But the fraction of such instances is negligible. The hard and easy instances in the worst-case problem are colored blue and white, respectively. The dashed lines indicate the worst-case to average-case reduction is random.

<details>

<summary>Image 1 Details</summary>

### Visual Description

\n

## Diagram: Cryptosystem Reductions

### Overview

The image is a diagram illustrating reductions between a cryptosystem, an average-case scenario, and a worst-case scenario. It depicts a mapping of inputs from the cryptosystem to intermediate values in the average-case, and then to outputs in the worst-case. The diagram uses circles to represent variables or values, and dashed lines to indicate relationships or transformations.

### Components/Axes

The diagram is divided into three main columns, labeled from left to right: "cryptosystem", "average-case", and "worst-case".

* **Cryptosystem:** Contains variables labeled `x1`, `x2`, `x3`, `x4`, and continuing down to `xl`.

* **Average-case:** Contains variables labeled `a1`, `a2`, `a3`, `a4`, and continuing down to `am`. The `am` variable is highlighted in red.

* **Worst-case:** Contains variables labeled `w1`, `w2`, `w3`, `w4`, `w5`, and continuing down to `wn`.

* The entire diagram is titled "Reductions" at the top.

* Dashed lines connect the cryptosystem variables to the average-case variables, and the average-case variables to the worst-case variables.

### Detailed Analysis or Content Details

The diagram shows a many-to-many mapping. Each `x` variable in the cryptosystem is connected to *every* `a` variable in the average-case. Similarly, each `a` variable in the average-case is connected to *every* `w` variable in the worst-case.

* The cryptosystem has `l` input variables (`x1` to `xl`).

* The average-case has `m` intermediate variables (`a1` to `am`).

* The worst-case has `n` output variables (`w1` to `wn`).

* The variable `am` is visually distinguished by being colored red. This suggests it may be a critical or special case.

### Key Observations

The diagram illustrates a reduction process where solving the cryptosystem is reduced to solving the average-case, and solving the average-case is reduced to solving the worst-case. The complete connectivity between the columns suggests that the complexity of the cryptosystem is related to the complexity of both the average-case and worst-case scenarios. The red highlighting of `am` suggests it may be a bottleneck or a key element in the reduction.

### Interpretation

This diagram likely represents a security proof in cryptography. The "reductions" title indicates that the security of the cryptosystem is being shown to depend on the hardness of a problem in the average-case, which in turn is related to the hardness of a problem in the worst-case.

The complete connections between the columns suggest that if an attacker can efficiently solve the worst-case problem, they can also efficiently solve the average-case problem, and consequently break the cryptosystem. The red `am` variable could represent a specific instance or condition that is crucial for the reduction to hold. For example, it might be a specific input that reveals information about the cryptosystem's key.

The diagram is a high-level conceptual illustration and does not contain specific numerical data. It is a visual representation of a mathematical argument about the security of a cryptosystem. The diagram is a visual aid to understand the relationships between different computational problems and their implications for cryptographic security.

</details>

The work by Ajtai served exactly this purpose by introducing the short integer solution (SIS) problem and proving that SIS is an average-case problem with polynomial time reductions from three worstcase lattice problems to it. This work is knowable the first worst-case to average-case reduction. The significant implication of Ajtai's work in cryptography is the fact that it laid the foundation for the security of modern cryptosystems to be based on worst-case problems (via average-case problems). More importantly, this work sparked a number of important following up works including the learning with error and ring learning with error problems that advanced lattice-based cryptography to a new era.

computational security security parameter

## 3 Cryptography Basics

The history of cryptography dates back to the pre-computer era, but with the same goal as today's, that is, securely sharing secret information between parties on public communication channels. A simple but motivating example is shown next, which is a shift cipher encryption technique used by Julius Caesar (during 81-45BC) to securely communicate with his troops on battlefields (Hoffstein et al., 2008).

j s j r d k f q q n s l g f h p g w j f p y m w t z l m n r r n s j s y q z h n z x e n e m y f a l l i n g b a c k b r e a k t h r o u g h i m m i n e n t l u c i u s

As the name of the technique suggests, each letter in the plaintext (below the horizontal line) was shifted by a pre-determined number of places along a fixed direction in the alphabet. This transforms it into a ciphertext (above the horizontal line) that do not hold the original information any more.

## 3.1 Computational security

Back then, Caesar's method was still able to effectively protect his secret messages to the troops from eavesdroppers. But with the help of nowadays multi-core GHz processor-computers that handle billions of instructions per second, this encryption method will fail within seconds. The example motivates the need to design more complex ciphertexts that are hard to decrypt, where the hardness should both be measurable and tunable by some parameters in order to cope with the increasing computing resources of potential attackers.

With the help of mathematics and computer science, in particular probability theory and computational complexity theory, the safety of modern encryption methods can be captured by computational security , a security notion, which allows an attacker to succeed in guessing the secret message with a measurable chance and computational effort such as running time. A frequently used approach to realize this security notion is to parameterize the probability of success and algorithmic running time of an attack by an integer-valued security parameter. This was named 'asymptotic approach' and discussed in more details in Chapter 3 of Katz and Lindell (2014). Some of the following results are also taken from that chapter, but presented in different orders and notations to ensure consistency of this tutorial paper.

Under the notion of computational security, one can draw the connection between an encryption scheme and a computational problem that has been proved (or believed with high confidence) to be hard to solve within a practical time. A famous example is that the security of the RSA encryption scheme relies on the large integer factorization problem, which is presumed (without an actual proof) hard to solve by an efficient non-quantum algorithm. The RSA problem is to solve the unknown x in the equation x e = c mod N . 2 The problem is easy when N is prime, so it comes down to primality test of N .

The security parameter described above, sometimes denoted by n (or λ or κ ), reflects the input size of the underlying hard computational problem. The larger the security parameter, the larger the input size, so the problem is more difficult to be solved in a practical time frame, which ensures the encryption scheme is less likely to be attacked with success. In the RSA scheme, the security parameter is the bit length n of the modulus N . The larger n is, the more difficult it is to prime factor N to efficiently solve the RSA problem. By convention, the security parameter n is often supplied to a scheme in the unary format 1 n by repeating the number 1 n times.

## 3.2 Private and public encryptions

Now that we discussed the security parameter, we formally introduce two types of encryption schemes, that is, the private (or symmetric) and public (asymmetric) key encryption schemes. The two types are similar in the sense that they both consists of three sub-steps for key generation, encryption and decryption. The main difference is that private key encryption uses only one key for both encryption and decryption (hence the name symmetric), whilst public key encryption uses one key for each purpose.

Definition 3.2.1. Define the following three polynomial time algorithms:

2 Throughout the paper, we use = instead of ≡ to denote the congruent modulo relation in order to be consistent with most others in the field. This is also noted in the Notation table in Appendix E.

- Key generation: A probabilistic algorithm that generates a key k ← Keygen (1 n ) for encryption and decryption, where | k | > n .

- Encryption: A probabilistic algorithm that encrypts the plaintext m ∈ { 0 , 1 } ∗ to a ciphertext c ← Enc ( k, m ) using the key.

- Decryption: A deterministic algorithm that decrypts the ciphertext with the key to get the plaintext m ← Dec ( k, c ) .

The collection ( Keygen , Enc , Dec ) forms a private key encryption scheme if for all n, k, m , it satisfies m ← Dec ( k, Enc ( k, m )) .

Definition 3.2.2. Define the following three polynomial time algorithms:

- Key generation: A probabilistic algorithm that generates a pair of keys ( pk , sk ) ← Keygen (1 n ) , where pk is the public key for encryption and sk is the secret key for decryption and both have sizes larger than n .

- Encryption: A probabilistic algorithm that encrypts the plaintext m ∈ { 0 , 1 } ∗ to a ciphertext c ← Enc ( pk , m ) using the public key.

- Decryption: A deterministic algorithm that decrypts the ciphertext using the secret key to get m ← Dec ( sk , c ) .

The collection ( Keygen , Enc , Dec ) forms a public key encryption scheme if for all n, ( pk , sk ) , m , it satisfies m ← Dec ( sk , Enc ( pk , m )) .

## 3.3 Security definitions

Generally speaking, public key encryption uses longer keys due to the fact that one key is public. This in return makes it slower than private key encryption. It is, however, more convenient when under private key encryption, no secure channel is available for sharing the key or the key needs to be changed constantly for different parties. Regardless, the requirement for the keys (in both private and public key encryptions) to be larger than n is to ensure the keys are at least of certain sizes in order to indicate the lower bound of an encryption scheme.

As n directly reflects the security of an encryption scheme, it is convenient to parameterize an attacker's running time and probability of success by n . More specifically, the running time is defined as the time taken to attack the scheme by a randomized algorithm. For practical purpose, this is often preferred to be polynomial in n , denoted by poly ( n ) . From the designer's point of view, an encryption scheme is only considered secure if both the probability of success is significantly small and such a probability decreases as n gets larger. A frequently used function that captures these two characteristics is called a negligible function .

Definition 3.3.1. A function µ : N → R is negligible , if for every positive integer c , there exists an integer N c such that for all n > N c , we have | µ ( n ) | < n -c .

An example is the negative exponential function µ ( n ) = 2 -n . For c = 6 , the threshold to satisfy the above condition is N c = 30 .

When a function is not defined explicitly, we use negl ( n ) to indicate it is negligible. Another characteristic that makes negligible function a suitable candidate for measuring an attacker's probability of success is due to the fact that it is still negligible even after multiplied by a polynomial function of n , that is, | poly ( n ) | · negl ( n ) is also negligible (Proposition 3.6 (Katz and Lindell, 2014)). This assures that if an attacker has a negligible probability of success, his chance stays extremely small even if the same attack is repeated a polynomial number of times (in n ).

An example (Example 3.2 (Katz and Lindell, 2014)) to illustrate this negligible probability and the running time is when an adversary's probability of success is 2 40 · 2 -n by running an attacking algorithm for n 3 minutes. If the security parameter is set to n = 40 , the adversary only needs to run the attack for roughly 40 3 ≈ 44 days to break the system with a probability 1. But if the security parameter is set large n = 500 , the adversary's chance of breaking the system is 2 -460 that is almost 0 even if the attack runs for 237 years.

Semantic security

Indistinguishable

Definition 3.3.2. An encryption scheme is secure if any probabilistic polynomial time (PPT) adversary has only a negligible probability of success to break the scheme.

Here, probabilistic refers to the attack being a randomized algorithm, which typically runs faster than deterministic algorithms.

So far, we have implicitly discussed the notion of security (or breaking an encryption scheme) without formally defining the meaning of it. The concrete security definition that is most relevant to this tutorial paper is semantic security. Below we give a formal definition of it and an equivalent definition, called indistinguishability which is easier to work with in practice. Both definitions can be defined for either private or public key encryptions, with the difference being a public key is also given for the public key encryption case.

At a high level, semantic security means given a ciphertext that encrypts one of two messages, a PPT adversary has no better chance than random guessing that the ciphertext is an encryption of one message or the other.

Definition 3.3.3. An (public or private key) encryption scheme Π is semantically secure if for every PPT adversary A , there is another PPT adversary A ′ such that their chances of guessing the plaintext m are almost identical, regardless A ′ is only given the length of m . That is, let c ← Enc ( k, m ) , then

$$| P r [ \mathcal { A } ( 1 ^ { n } , c ) = m ] - P r [ \mathcal { A } ^ { \prime } ( 1 ^ { n } , | m | ) = m ] | \leq n e g l ( n ) .$$

It is convenient to consider the attack model as a distinguisher (i.e., a PPT algorithm) that tries to exhibit the non-randomness from the ciphertexts in order to associate a ciphertext with a particular plaintext. If the adversary's chance of success is better than random, then the encryption scheme is vulnerable to attacks. The process of guessing the source of a given ciphertext can be formalized as an adversarial indistinguishability experiment (Section 3.2.1 (Katz and Lindell, 2014)). Given a PPT adversary A and a (public or private) encryption scheme Π , the experiment outputs IndisExp A , Π ( n ) = 1 for a successful guess of the source plaintext.

Definition 3.3.4. An (private or public key) encryption scheme Π is indistinguishable if it satisfies

$$P r \left [ I n d i s E x p _ { \mathcal { A } , \Pi } ( n ) = 1 \right ] \leq \frac { 1 } { 2 } + n e g l ( n )$$

for all PPT adversary A and security parameter n .

The following theorem states the equivalent relationship between semantic security and indistinguishability. The same equivalent relation can also be proved under the public key encryption setting. 3

Theorem 3.3.5 (Theorem 3.13 (Katz and Lindell, 2014)) . A private key encryption scheme is indistinguishable in the presence of an eavesdropper if and only if it is semantically secure in the presence of an eavesdropper.

Both semantic security and indistinguishability discussed above are in the presence of an eavesdropper, who passively receives/intercepts a plaintext and tries to guess the corresponding plaintext. In the case of public-key encryption, the adversary has access to the public key and the encryption method, so it is possible for the adversary to compare the intercepted ciphertext with a self-encrypted ciphertext, and use this piece of information to increase the probability of successfully guessing the plaintext. By assuming the adversary has an oracle access to the encryption scheme which allows repeated interactions, this attack model is valid for both public and private key encryptions (Section 3.4.2 (Katz and Lindell, 2014)). The security notion defined under such a chosen-plaintext attack (CPA) model is called CPA security and is a stronger security definition than the previous one which is defined in the presence of an eavesdropper. Similarly, semantic security and indistinguishability can also be defined under chosen plaintext attack, and a similar equivalent relations can be established between semantic security under CPA and IND-CPA . This stronger level of security is useful when introducing homomorphic encryption.

3 See a proof in Lecture 9: Public Key Encryption of the course CS 276 Cryptography (Oct 1, 2014) at UC Berkeley by the instructor Sanjam Garg.

Dimension, rank

## 4 Lattice Theory

## 4.1 Lattice basics

Lattices are useful mathematical tools for connecting different areas of mathematics, computer science and cryptography. They are widely used for cryptoanalysis and building secure cryptosystems. In this section, we will introduce the basics of lattices in the general setting R n . In addition, we introduce dual lattices and some computational lattice problems that are commonly used to achieve provable security of lattice-based hard problems and cryptosystems. At the end of this section, we will sketch Ajtai (1996)'s polynomial time worst-case-to-average-case reduction to reinforce our understanding of lattices as well as appreciate the great breakthrough in provable security of lattice-based cryptography, even against quantum computing in some cases. Although we introduce lattices in the most general setting, their results also hold for special lattices such as ideal lattices in the ring learning with error problem.

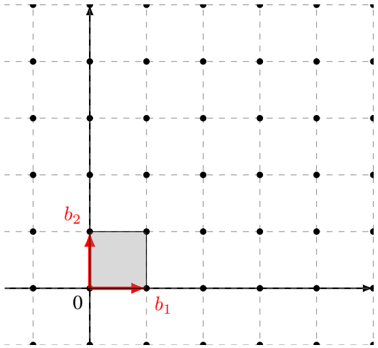

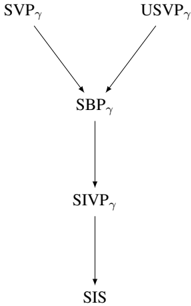

Intuitively, a lattice is similar to a vector space except that it consists of discrete vectors only, that is, elements in lattice vectors have discrete values as opposed to real-valued vectors in a vector space. For example, Figure 3 is a lattice in R 2 . More formally, we have the following definition.

Definition 4.1.1. Let v 1 , . . . , v n ∈ R m be a set of linearly independent vectors. The lattice L Lattice generated by v 1 , . . . , v n is the set of integer linear combinations of v 1 , . . . , v n . That is,

$$L = \{ a _ { 1 } v _ { 1 } + \cdots + a _ { n } v _ { n } | a _ { 1 } , \dots , a _ { n } \in \mathbb { Z } \} .$$

Here, the difference with vector spaces is that the coefficients in the linear combination are integers. The integers m and n are the dimension and rank of the lattice respectively. If m = n , then L is a full-rank lattice. In most cases, we work with full-rank lattices.

It follows from the definition that a lattice is closed under addition. Hence, we can say that an ndimensional lattice is a discrete additive subgroup of R n . It is isomorphic to the additive group of Z n . That is,

$$( L , + ) \cong ( \mathbb { Z } ^ { n } , + ) \subsetneq ( \mathbb { R } ^ { n } , + ) .$$

It is often convenient to work with lattices whose coordinates are integers. These are called integer lattices or integral lattices . For example, the set of even integers forms an integer lattice, but not the set of odd integers because it is not closed under addition.

Figure 3: A lattice L with a basis B = { b 1 , b 2 } and its fundamental domain F .

<details>

<summary>Image 2 Details</summary>

### Visual Description

\n

## Diagram: Basis Vectors and Rectangle

### Overview

The image depicts a two-dimensional Cartesian coordinate system with a grid of points. A rectangle is defined by the origin (0) and two basis vectors, labeled *b1* and *b2*. The rectangle is shaded gray. The grid appears to be composed of equally spaced points.

### Components/Axes

* **Axes:** Two perpendicular axes are present, representing the x and y coordinates. The x-axis is horizontal, and the y-axis is vertical.

* **Origin:** The intersection of the axes is labeled "0".

* **Basis Vectors:**

* *b1*: A red arrow pointing along the positive x-axis.

* *b2*: A red arrow pointing along the positive y-axis.

* **Rectangle:** A shaded gray rectangle is formed by the basis vectors *b1* and *b2* as adjacent sides, with the origin as one vertex.

* **Grid:** A grid of black dots extends across the coordinate plane, providing a visual reference for scale and position.

### Detailed Analysis

The rectangle's vertices are located at:

* (0, 0) - The origin.

* (b1, 0) - Along the x-axis, defined by the length of vector *b1*.

* (0, b2) - Along the y-axis, defined by the length of vector *b2*.

* (b1, b2) - The opposite vertex from the origin.

The lengths of the basis vectors *b1* and *b2* are not explicitly given numerically. However, based on the grid, it appears that *b1* and *b2* are both approximately equal to 1 grid unit in length. The rectangle occupies one grid square.

### Key Observations

* The basis vectors *b1* and *b2* are orthogonal (perpendicular) to each other.

* The rectangle is aligned with the coordinate axes.

* The rectangle's area is defined by the product of the lengths of *b1* and *b2*.

* The grid provides a visual representation of a lattice structure defined by the basis vectors.

### Interpretation

This diagram illustrates the concept of basis vectors in a two-dimensional space. The basis vectors *b1* and *b2* define a coordinate system, and any point within the plane can be represented as a linear combination of these vectors. The rectangle demonstrates how these vectors can be used to define a region or shape within the coordinate system. The diagram is likely used to explain concepts in linear algebra, vector spaces, or geometry. The grid suggests a discrete representation of the continuous plane, potentially relating to concepts like lattice structures or digital images. The diagram does not provide any specific numerical data beyond the visual representation of the lengths of *b1* and *b2* relative to the grid.

</details>

A basis Basis of a lattice L is a set of linearly independent vectors B = { b 1 , . . . , b n } that spans the lattice, that is,

For example, the vectors { b 1 , b 2 } form a basis of the lattice in Figure 3.

$$L ( B ) = \{ z _ { 1 } b _ { 1 } + \dots + z _ { n } b _ { n } \, | \, z _ { i } \in \mathbb { Z } \} . \\ \{ h _ { 1 } , h _ { 2 } \} \text { form a basis of the lattice in Figure 3.}$$

In what follows, we will frequently appeal to properties of a class of matrices known as unimodular matrices . Unimodular matrices can be used to translate between different lattice bases. They are also used, sometimes implicitly, when performing important lattice operations such as lattice basis reduction.

Unimodular matrix

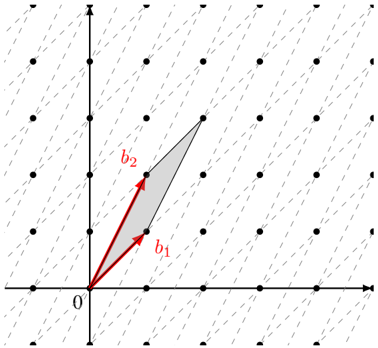

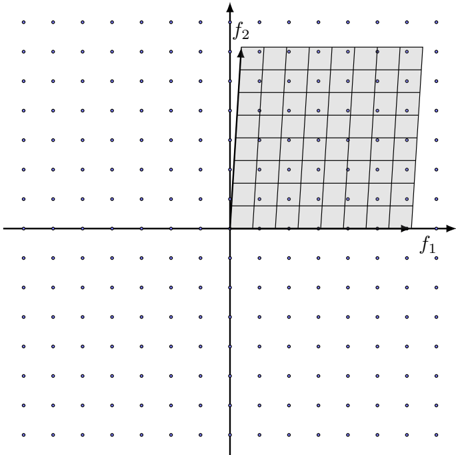

Figure 4: The same lattice L with a different basis B ′ = { b ′ 1 , b ′ 2 } and its fundamental domain F ′ , where B ′ = AB for a unimodular change of basis matrix A = ( 1 1 1 2 ) .

<details>

<summary>Image 3 Details</summary>

### Visual Description

\n

## Diagram: Parallelogram Representation of Vector Addition

### Overview

The image depicts a parallelogram constructed from two vectors, `b1` and `b2`, originating from the origin (labeled '0'). The parallelogram visually represents the vector addition of `b1` and `b2`. The background is a grid of dots.

### Components/Axes

* **Axes:** Two perpendicular axes are present. The horizontal axis is not labeled, but is implied to be the x-axis. The vertical axis is also not labeled, but is implied to be the y-axis. Both axes have tick marks indicating a regular scale, but the scale is not numerically defined.

* **Origin:** The origin is clearly marked with the label '0'.

* **Vectors:** Two vectors are labeled:

* `b1`: A red vector originating from the origin and pointing diagonally upwards and to the right.

* `b2`: A red vector originating from the origin and pointing diagonally upwards and to the left.

* **Parallelogram:** A shaded parallelogram is formed by the vectors `b1` and `b2`.

* **Grid:** A grid of dots fills the background, providing a visual reference for scale and position.

### Detailed Analysis

The diagram illustrates the geometric interpretation of vector addition. The parallelogram is constructed such that:

* `b1` starts at the origin and extends to a point.

* `b2` starts at the origin and extends to another point.

* The fourth vertex of the parallelogram (opposite the origin) represents the resultant vector `b1 + b2`.

The vectors `b1` and `b2` are approximately equal in magnitude, but point in different directions. The angle between `b1` and `b2` appears to be approximately 60-90 degrees.

Without a defined scale on the axes, precise numerical values for the components of `b1` and `b2` cannot be determined. However, we can qualitatively describe their direction.

### Key Observations

* The diagram emphasizes the graphical representation of vector addition.

* The parallelogram visually demonstrates that the resultant vector (the diagonal from the origin) is the sum of the two original vectors.

* The grid provides a visual reference for the relative magnitudes and directions of the vectors.

### Interpretation

The diagram demonstrates the parallelogram rule for vector addition. This rule states that the sum of two vectors can be found by constructing a parallelogram with the vectors as adjacent sides. The diagonal of the parallelogram, starting from the common origin of the vectors, represents the resultant vector. This is a fundamental concept in linear algebra and physics, used to represent and manipulate quantities that have both magnitude and direction, such as force, velocity, and displacement. The diagram is a visual aid for understanding this concept, allowing for a qualitative grasp of how vectors combine. The absence of numerical values suggests the diagram is intended to convey the *principle* of vector addition rather than specific calculations.

</details>

Definition 4.1.2. A matrix A ∈ Z n × n is unimodular if it has a multiplicative inverse in Z n × n . That is, A ∈ Z n × n is unimodular if and only if A -1 ∈ Z n × n . Equivalently, a matrix A ∈ Z n × n is unimodular if and only if | det( A ) | = 1 .

Similar to a vector space, a lattice does not need to have a unique basis. The following proposition establishes the fact that one basis can be transformed to another via multiplication by the matrix A provided that A is a unimodular matrix.

Proposition 4.1.3. If B and B ′ be two basis matrices, then L ( B ) = L ( B ′ ) if and only if B ′ = AB for some unimodular matrix A .

Proof. Suppose that B ′ = AB for some unimodular matrix A . Then, by definition both A and A -1 have integer entries. Therefore we have L ( B ′ ) ⊂ L ( A -1 B ′ ) = L ( B ) and L ( B ) ⊂ L ( AB ) = L ( B ′ ) .

Now suppose that L ( B ) = L ( B ′ ) . Then there exist integer square matrices A,A ′ ∈ Z n × n such that B ′ = AB and B = A ′ B ′ . Therefore we have B = A ′ AB or equivalently ( I -A ′ A ) B = 0 . Because B is non-singular, we have A ′ = A -1 and A is unimodular.

For example, the vectors { b ′ 1 , b ′ 2 } in Figure 4 form a different basis for the lattice in Figure 3, with the relation B ′ = AB where the change of basis matrix A = ( 1 1 1 2 ) is unimodular.

An important concept of a lattice is the fundamental domain. It is closely related to the sparsity of a lattice as can be seen from the following definition.

Definition 4.1.4. Fundamental domain Let L be an n -dimensional lattice with a basis { v 1 , . . . , v n } . The main or ( fundamental parallelepiped ) of L is a region defined as

$$F ( v _ { 1 } , \dots , v _ { n } ) = \{ t _ { 1 } v _ { 1 } + \cdots + t _ { n } v _ { n } | t _ { i } \in [ 0 , 1 ) \} .$$

The lattice L and the given basis in Figure 3 has the fundamental domain coloured in grey. It is the convex region that is surrounded by the given basis vectors and the nearby lattice points.

Definition 4.1.5. Determinant Let L be an n -dimensional lattice with a fundamental domain F . Then the n -dimensional volume of F is called the determinant of L , denoted by det( L ) .

Given a basis { v 1 , . . . , v n } of an n -dimensional lattice L , we can write each basis vector v i = ( v i 1 , . . . , v in ) as a vector of its coordinates. Then we have a basis matrix

$$B = \begin{pmatrix} v _ { 1 1 } & \cdots & v _ { 1 n } \\ \vdots & \ddots & \vdots \\ v _ { n 1 } & \cdots & v _ { n n } \end{pmatrix} .$$

fundamental do-

In cryptography, we are interested in full-rank lattices, whose determinant can be easily calculated using a basis matrix as stated in the next proposition.

Proposition 4.1.6. If L is an n -dimensional full-rank lattice with a basis { v 1 , . . . , v n } and an associated fundamental domain F = F ( v 1 , . . . , v n ) , then the volume of F (or determinant of L ) is equal to the absolute value of the determinant of the basis matrix B , that is,

$$\det ( L ) = V o l ( F ) = | \det B | .$$

Although the fundamental domain may have a different shape under another choice of a basis, it can be proved that area (or volume) stays unchanged. This gives rise to the determinant of a lattice which is an invariant quantity under the choice of a fundamental domain.

Corollary 4.1.7. Invariant determinant The determinant of a lattice is an invariant quantity under the choice of a basis for L .

Proof. Let L be a lattice and let B and B ′ be the basis matrices for two different bases for L . There exists a unimodular matrix A such that B ′ = AB . Consequently, we have

$$| \det ( B ^ { \prime } ) | = | \det ( A B ) | = | \det ( A ) | \cdot | \det ( B ) | = | \det ( B ) | .$$

So, we have | det( L ) | = | det( B ′ ) | = | det( B ) | .

Example 4.1.8. Let L be a 3-dimensional lattice with a basis

$$\{ v _ { 1 } = ( 2 , 1 , 3 ) , v _ { 2 } = ( 1 , 2 , 0 ) , v _ { 3 } ( 2 , - 3 , - 5 ) \} .$$

$$B = \begin{pmatrix} 2 & 1 & 3 \\ 1 & 2 & 0 \\ 2 & - 3 & - 5 \end{pmatrix} .$$

Then a basis matrix is

The determinant of the lattice is det( L ) = | det( B ) | = 36 .

Geometrically, this also makes sense. By definition, each fundamental domain contains exactly one lattice vector (in Figure 3 and 4 the origin). Consider fundamental domains that are centered on lattice points rather than having lattice points at one corner. That is, consider

$$\tilde { F } ( v _ { 1 } , v _ { 2 } , \dots , v _ { n } ) = \{ t _ { 1 } v _ { 1 } + t _ { 2 } v _ { 2 } + \hdots + t _ { n } v _ { n } \, | \, t _ { i } \in [ - 1 / 2 , 1 / 2 ) \} .$$

Take a large ball centered at the origin and notice that, because each fundamental domain contains exactly one lattice point, the volume of the ball is approximately equal to the number of lattice points in the ball multiplied by the volume of the fundamental domain. More precisely, we have

$$\lim _ { r \to \infty } \frac { V o l \left ( B _ { r } ( 0 ) \right ) } { | B _ { r } ( 0 ) \cap L | } = V o l \left ( \tilde { F } ( v _ { 1 } , v _ { 2 } , \dots , v _ { n } ) \right ) = d e t ( L ) .$$

By definition, choosing a different basis doesn't change the lattice. So, the volume of the fundamental domain, and therefore the determinant of the lattice, is a property of the lattice and does not depend on the basis used to represent that lattice.

Two remarks. First, a lattice L can be partitioned into disjoint fundamental domains, the union of which covers the entire L . Second, since the choice of a fundamental domain is arbitrary and it covers real vectors that are not in L , each real vector can be uniquely identified by a lattice vector and a real vector in a fundamental domain. These are captured in the following proposition. For the proof, see Proposition 6.18 of Hoffstein et al. (2008).

Proposition 4.1.9. Let L be an n -dimensional lattice in R n with a fundamental domain F . Then every vector w ∈ R n can be written as

$$w & = v + t & ( 4 )$$

for a unique lattice vector v ∈ L and a unique real vector t ∈ F .

Equivalently, the union of the translated fundamental domains cover the span of the lattice basis vectors, i.e.,

$$s p a n ( L ) = \{ F + v | v \in L \} .$$

Modulo basis

Shortest vector

Successive minima

Another useful interpretation of Equation 4 is that for any vector w ∈ R n , there is a unique real vector t ∈ F in the fundamental domain such that w -t ∈ L ( B ) is a lattice vector. In other words, given an arbitrary vector w ∈ R n in the span, we can efficiently reduce it to a vector t ∈ F in the fundamental domain by taking w modulo the basis (or modulo the fundamental domain as used by some authors). More precisely, for a basis { v 1 , . . . , v n } of L ∈ R n , it is obvious that the basis is also a basis of the span R n , so we have w = α 1 v 1 + · · · + α n v n for coefficients α 1 , . . . , α n ∈ R . The coefficients can also be written as α i = a i + t i for a i ∈ Z and t i ∈ (0 , 1) . This implies the real vector can be re-written as w = ( a 1 v 1 + · · · + a n v n ) + ( t 1 v 1 + · · · + t n v n ) = v + t , where in the first pair of parentheses is a lattice vector v and in the second pair is a real vector t within the fundamental domain. From this, we can compute t = w -v . This also gives an alternative formula for computing the modulo basis operation by

$$\begin{array} { r l } & { w \bmod B = w - B \cdot \lfloor B ^ { - 1 } \cdot w \rfloor . } \\ & { ( 5 ) } \end{array}$$

For example, given a 2-dimensional lattice L ∈ R 2 with a basis B = ( 3 0 0 2 ) and a real vector w = (2 , 3) . By reducing w modulo the fundamental domain we get w mod B = (2 , 1) .

Similar to a real vector, the length a lattice vector can also be measured by a norm function || · || . However, unlike in a vector space where there is no shortest non-zero vector, it is possible to define shortest non-zero vector in a lattice because of the discreteness, although this shortest vector may not be unique.

Definition 4.1.10. Given a lattice L , the length of a shortest non-zero vector in L which is also a minimum distance between two lattice vectors is defined as

$$\lambda _ { 1 } ( L ) & = \min \{ | | v | | \, | \, v \in L \ \{ 0 \} \} \\ & = \min \{ | | x - y | | \, | \, x , y \in L , x \neq y \} .$$

The shortest vector problem (formally defined in Section 4.3) is to find the shortest non-zero vector in a given lattice. For a lattice L , notice that λ 1 ( L ) is the solution to the shortest vector problem for that lattice.

The shortest vector problem can be generalized to the problem of finding the i th successive minima. The i th successive minima is the minimum length r such that the lattice contains i linearly independent vectors of length at most r . This can also be defined in relation to the dimension of the space spanned by the intersection between L and a zero-centered closed ball ¯ B (0 , r ) with radius r .

Definition 4.1.11. Given a lattice L , the i th successive minima of L is defined as

$$\lambda _ { i } ( L ) & = \min \{ r | \dim ( s p a n ( L \cap \bar { B } ( 0 , r ) ) ) \geq i \} , \\ \{ r \in \mathbb { R } ^ { n } \, | \, \| r \| \leq r \} & \text { is the closed ball of radius $r$ around $0$}$$

where ¯ B (0 , r ) = { x ∈ R n | || x || ≤ r } is the closed ball of radius r around 0.

For example, if the lattice L = Z n , then the 1st to the n th successive minima λ 1 = · · · = λ n = 1 are equal to 1. The length of a shortest vector is a special case of the successive minima when i = 1 . We will see the successive minima again when introducing shortest independent vector problem as a generalization of the shortest independent problem in 4.3.

Notice that a set of vectors that achieves the successive minima of a lattice is not necessarily a basis for that lattice. Consider the following example which is derived from the work by Korkine and Zolotareff (1873) and was presented its current form in Nguyen and Vall´ ee (2010). Let

$$locut 2 0 0 0 1 1

<text>locut B = {</text>

<loc_472><loc_42><loc_499><loc_75>{</text>

<loc_473><loc_78><loc_498><loc_115>} } } } } } } } } } } } } } } } } } } } } } } } } } } } } } } }$$

Notice that 2 e 5 ∈ L ( B ) and that ‖ v ‖ ≥ 2 for all v ∈ L ( B ) \ { 0 } . So, λ i ( L ( B )) = 2 for 1 ≤ i ≤ 5 . If we let

$$\tilde { B } = \begin{pmatrix} 2 & 0 & 0 & 0 & 0 \\ 0 & 2 & 0 & 0 & 0 \\ 0 & 0 & 2 & 0 & 0 \\ 0 & 0 & 0 & 2 & 0 \\ 0 & 0 & 0 & 0 & 2 \end{pmatrix} .$$

Dual basis then we have L ( ˜ B ) ⊂ L ( B ) and det( ˜ B ) = 32 . On the other hand, we see that det( B ) = 16 . Therefore, ˜ B cannot be a basis for L ( B ) . In fact, it can be shown that no basis of L ( B ) realizes all of the successive minima of L ( B ) .

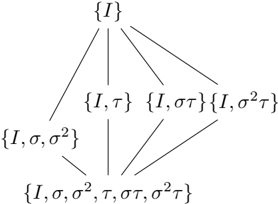

## 4.2 Dual lattice

In this subsection, we introduce dual lattices. This is a useful concept that will be used at several different places, such as defining smoothing parameter for discrete Gaussian distribution and in the hardness proof of the ring learning with error problem. It is important to develop a geometric intuition of the relationship between a lattice and its dual.

The dual (sometimes also called reciprocal) of a lattice is the set of vectors in the span of the lattice (e.g., the span is R n if the lattice is Z n ) whose inner product with the lattice vectors are integers.

Given a full-rank lattice , its is defined as

Definition 4.2.1. Dual lattice L dual lattice

$$L ^ { * } = \{ y \in s p a n ( L ) | \forall x \in L , x \cdot y \in \mathbb { Z } \} .$$

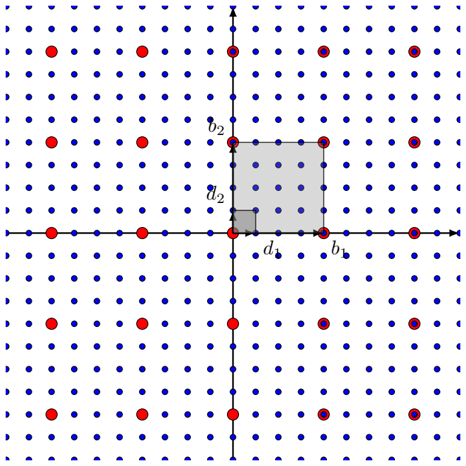

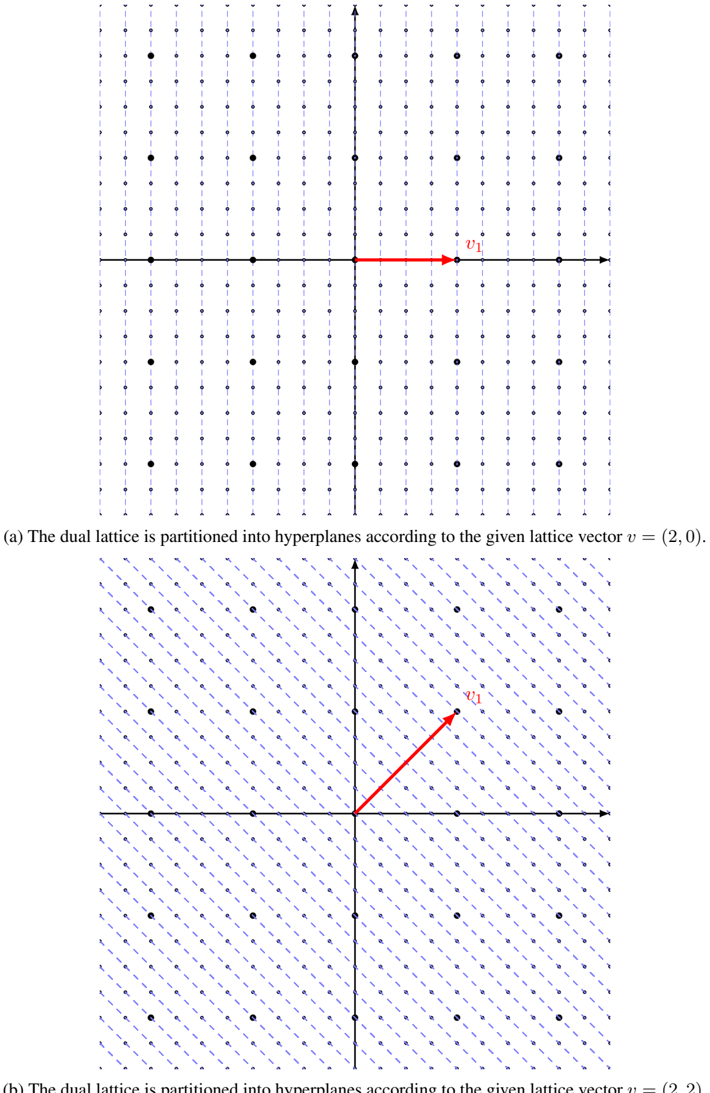

For example, the dual lattice of Z n is Z n and the dual lattice of 2 Z n is 1 2 Z n as shown in Figure 6. An important observation is that the more vectors a lattice has, the less vectors its dual has and vice versa, because there are more (or less) constraints. Most importantly, it can be verified that the dual of a lattice is also a lattice.

Proposition 4.2.2. If L is a lattice then L ∗ is a lattice.

Proof. It suffices to show that L ∗ is closed under subtraction. That is, to show that if x, y ∈ L ∗ then x -y ∈ L ∗ . This follows from the linearity of the inner product. More explicitly, for every z ∈ L we have ( x -y ) · z = x · z -y · z . Because x · z ∈ Z and y · z ∈ Z , we have ( x -y ) · z ∈ Z . The result then follows from the definition of L ∗ .

Figure 5: A lattice L = 2 Z 2 (black points) and its dual L ∗ = 1 2 Z 2 (blue points). The basis of L is B = { b 1 = (2 , 0) , b 2 = (0 , 2) } and the dual basis of L ∗ is D = { d 1 = ( 1 2 , 0) , d 2 = (0 , 1 2 ) } .

<details>

<summary>Image 4 Details</summary>

### Visual Description

\n

## Diagram: Lattice Point Illustration

### Overview

The image depicts a two-dimensional lattice of points, with some points highlighted in red. A shaded square is centered on the origin, and several points are labeled with letters: `b1`, `b2`, `d1`, `d2`. The diagram appears to illustrate a concept related to lattice points, possibly in the context of number theory or geometry.

### Components/Axes

The diagram consists of:

* A grid of blue points forming a lattice.

* Red points scattered throughout the lattice.

* A shaded square centered at the origin (0,0).

* Horizontal and vertical axes representing the coordinate system.

* Labels: `b1`, `b2`, `d1`, `d2` placed near specific points.

The axes are not explicitly labeled with numerical scales, but they clearly define a Cartesian coordinate system.

### Detailed Analysis / Content Details

The diagram shows a square centered at the origin. The vertices of the square are located on the lattice points. The labeled points are as follows:

* `b1`: Located on the positive x-axis, approximately (3,0).

* `b2`: Located on the positive y-axis, approximately (0,3).

* `d1`: Located on the negative x-axis, approximately (-3,0).

* `d2`: Located on the negative y-axis, approximately (0,-3).

The red points are not uniformly distributed. They appear to be concentrated along certain lines or regions within the lattice. There are approximately 20 red points visible. The red points do not seem to have a clear pattern or relationship to the labeled points or the shaded square.

### Key Observations

* The labeled points `b1`, `b2`, `d1`, and `d2` define the boundaries of a square with sides parallel to the coordinate axes.

* The shaded square appears to represent a region of interest within the lattice.

* The distribution of red points is non-uniform, suggesting a specific criterion for their selection.

* The diagram does not provide any numerical data or quantitative measurements.

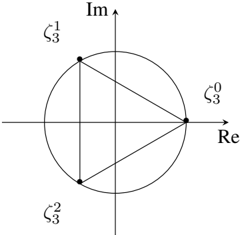

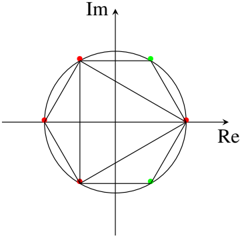

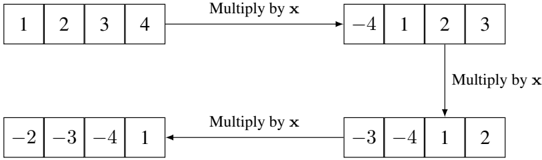

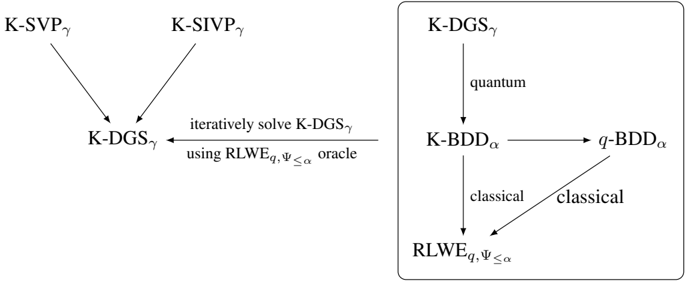

### Interpretation