## Knowledge-based Analogical Reasoning in Neuro-symbolic Latent Spaces

Vishwa Shah 1 , Aditya Sharma 1 , Gautam Shroff 2 , Lovekesh Vig 2 , Tirtharaj Dash 1 and Ashwin Srinivasan 1

1 APPCAIR, BITS Pilani, K.K. Birla Goa Campus

2 TCS Research, New Delhi

## Abstract

Analogical Reasoning problems pose unique challenges for both connectionist and symbolic AI systems, as these entail a carefully crafted solution combining background knowledge, deductive reasoning and visual pattern recognition. While symbolic systems are designed to ingest explicit domain knowledge and perform deductive reasoning, they are sensitive to noise and require that inputs be mapped to a predetermined set of symbolic features. Connectionist systems, on the other hand, are able to directly ingest rich input spaces such as images, text or speech and can perform robust pattern recognition even with noisy inputs. However, connectionist models struggle to incorporate explicit domain knowledge and perform deductive reasoning. In this paper, we propose a framework that combines the pattern recognition capabilities of neural networks with symbolic reasoning and background knowledge for solving a class of Analogical Reasoning problems where the set of example attributes and possible relations across them are known a priori. We take inspiration from the 'neural algorithmic reasoning' approach [DeepMind 2020] and exploit problem-specific background knowledge by (i) learning a distributed representation based on a symbolic model of the current problem, (ii) training neural-network transformations reflective of the relations involved in the problem, and finally (iii) training a neural network encoder from images to the distributed representation in (i). These three elements enable us to perform search-based reasoning using neural networks as elementary functions manipulating distributed representations. We test our approach on visual analogy problems in RAVENs Progressive Matrices, and achieve accuracy competitive with human performance and, in certain cases, superior to initial end-to-end neural-network based approaches.
While recent neural models trained at scale currently yield the overall SOTA, we submit that our novel neuro-symbolic reasoning approach is a promising direction for this problem, and is arguably more general, especially for problems where sufficient domain knowledge is available.

## Keywords

neural reasoning, visual analogy, neuro-symbolic learning, RPMs

## 1. Introduction

Many symbolic reasoning algorithms can be viewed as searching for a solution in a space defined by prior domain knowledge. Given sufficient domain knowledge represented in symbolic form, 'difficult' reasoning problems, such as analogical reasoning, can be 'solved' via exhaustive search, even though they are challenging for the average human. However, such algorithms operate on a symbolic space, whereas humans are easily able to consume rich data such as images or speech.

Accepted at NeSy 2022, 16th International Workshop on Neural-Symbolic Learning and Reasoning, Cumberland Lodge, Windsor, UK

f20180109@goa.bits-pilani.ac.in (V. Shah)

Neural networks, on the other hand, are proficient in encoding high-dimensional continuous data and are robust to noisy inputs, but struggle with deductive reasoning and absorbing explicit domain knowledge. 'Neural Algorithmic Reasoning' [1] presents an approach to jointly model neural and symbolic learning, wherein rich inputs are encoded to a latent representation that has been learned from symbolic inputs. This design allows neural learners and algorithms to compensate for each other's weaknesses. Through this work, we aim to investigate a variation of the neural algorithmic reasoning approach applied to analogical reasoning, using RAVENs Progressive Matrices [2] problems as a test case.

Our approach essentially exploits the domain knowledge to train a suite of neural networks, one for each known domain predicate. For RAVENs problems, these predicates represent rules that might apply to ordered sets (rows) of images in a particular problem. Further, these neural predicates are trained to operate on a special high-dimensional representation space ('symbolic latent space') that is derived, via self-supervised learning, from the symbolic input space. Note that a purely symbolic algorithm can consume symbolic inputs to solve the problem exactly; however, a distributed representation can allow for real-world analogical reasoning over rich input spaces such as images or speech (see Fig 1 for a RAVENs problem; one can

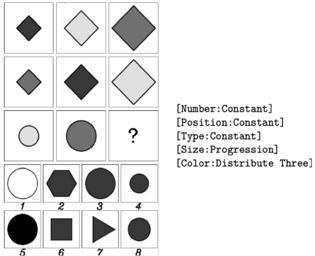

Figure 1: RPM: Problem Matrix (Top), Answer Options (Bottom)

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Abstract Reasoning Puzzle

### Overview

The image presents an abstract reasoning puzzle with a 3x3 grid of shapes, where the last shape is missing and marked with a question mark. Below the grid are eight candidate shapes, numbered 1 through 8. To the right of the grid, there are five statements describing the rules governing the shapes in the grid.

### Components/Axes

* **Grid:** A 3x3 grid containing shapes. The last cell (bottom right) is empty and marked with a question mark.

* **Candidate Shapes:** Eight shapes numbered 1 to 8, presented below the grid.

* **Rules:** Five statements describing the rules governing the shapes:

* Number: Constant

* Position: Constant

* Type: Constant

* Size: Progression

* Color: Distribute Three

### Detailed Analysis

**Grid Shapes:**

* **Row 1:**

* Cell 1: Small, dark gray diamond

* Cell 2: Medium, light gray diamond

* Cell 3: Large, medium gray diamond

* **Row 2:**

* Cell 1: Medium, medium gray diamond

* Cell 2: Medium, dark gray diamond

* Cell 3: Medium, light gray diamond

* **Row 3:**

* Cell 1: Small, light gray circle

* Cell 2: Medium, medium gray circle

* Cell 3: Question mark

**Candidate Shapes:**

* Shape 1: Large, white circle

* Shape 2: Medium, dark gray hexagon

* Shape 3: Medium, dark gray circle

* Shape 4: Small, dark gray circle

* Shape 5: Large, dark gray circle

* Shape 6: Medium, dark gray square

* Shape 7: Medium, dark gray triangle

* Shape 8: Small, dark gray circle

**Rules Interpretation:**

* **Number: Constant:** Each cell contains only one shape.

* **Position: Constant:** The shapes are centered within each cell.

* **Type: Constant:** The shape type changes from diamonds to circles in the third row.

* **Size: Progression:** The size of the shapes increases from left to right within each row.

* **Color: Distribute Three:** There are three distinct colors (light gray, medium gray, dark gray) distributed across the grid.

### Key Observations

* The shape type changes from diamonds to circles in the third row.

* The size of the shapes increases from left to right within each row.

* The colors are distributed such that each row and column contains all three colors (light gray, medium gray, dark gray).

### Interpretation

The puzzle requires identifying the missing shape based on the given rules. The shape must be a circle (based on the third row), and its size should be large (based on the size progression within the row). The color must be dark gray to satisfy the "Distribute Three" rule, ensuring each row and column has all three colors.

Based on these criteria, the correct answer is likely candidate shape 5, which is a large, dark gray circle.

</details>

also imagine tasks with speech inputs where the analogous example signals are high-pitch transformed versions of the original). Our approach differs from [1], where the symbolic latent space is derived via a supervised approach; by using a self-supervised learning approach we are able to use the same representation space to train multiple neural predicates, unlike in [1] where only a single function is learned. Next, we train a neural network encoder to map real-world images (here sub-images of the RAVENs matrices) to the 'symbolic latent space'. Finally, using the above elements together we are able to perform symbolic search-based reasoning, albeit using neural networks as primitive predicates, to solve analogical reasoning problems.

Contributions: (1) We adapt and extend Neural Algorithmic Reasoning to propose a neuro-symbolic approach for a class of visual analogical reasoning problems. (2) We present experimental results on the RAVENs Progressive Matrices dataset, compare our neuro-symbolic approach to purely connectionist approaches, and analyse the results. In certain cases, our approach is superior to initial neural approaches, as well as to human performance (though more recent neural approaches trained at scale remain SOTA). (3) We submit that our approach can be viewed as a novel and more general neuro-symbolic procedure that uses domain knowledge to train neural network predicates operating on a special, 'symbolically-derived latent space', which are then used as elementary predicates in a symbolic search process.

## 2. Problem Definition

In general, 'RAVEN-like' analogical reasoning tasks can be viewed as comprising $n$ ordered sets $s_1, s_2, \dots, s_n$ containing $m$ input samples each, an additional test set containing $m-1$ samples, and a target $m^{\text{th}}$ sample. Each sample $I_{jk}$, where $j \in [1 \dots n]$ and $k \in [1 \dots m]$, comprises a set of entities $E_{jk}$, and each entity $e \in E_{jk}$ has attributes from a set $A$ of $k$ predefined attributes $a_1, a_2, \dots, a_k \in A$. Assume a predefined set of all possible rules $R = \{r_1, r_2, \dots, r_u\}$ that can hold over sample attributes in an example set(s). For a given task the objective is to infer which rules hold across the $m$ samples in each of the $n$ example sets in order to subsequently predict the analogous missing sample for the test set, either by generating the target sample as in the ARC challenge [3], or by classifying from a set of possible choices as in RPMs. Note that the problem definition assumes prior domain knowledge about possible sample entities, their possible attribute values, and possible rules over sample attributes within the example sets. It is worth mentioning that while here we investigate visual analogies, the problem definition can accommodate input samples of any datatype, such as audio or text, as long as the problem structure is unchanged.

## 3. Proposed Approach

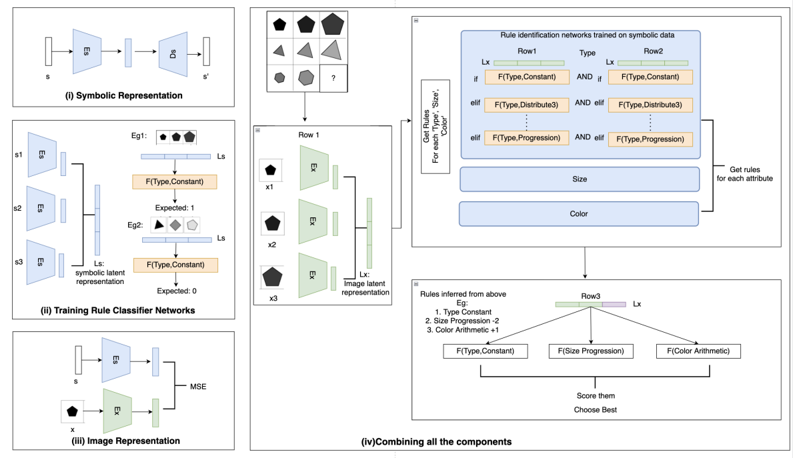

Figure 2: Overview of our approach for RAVEN's RPM

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Diagram: Rule Identification Networks

### Overview

The image presents a diagram illustrating a rule identification network trained on symbolic data. It outlines the process from symbolic representation to combining components for rule inference. The diagram is divided into four sections, each representing a different stage of the process: Symbolic Representation, Training Rule Classifier Networks, Image Representation, and Combining all the components.

### Components/Axes

* **(i) Symbolic Representation:** This section shows a process where an input 's' is encoded and decoded to produce 's''. It involves an encoder (E) and a decoder (D).

* **(ii) Training Rule Classifier Networks:** This section shows three inputs (s1, s2, s3) fed into encoders (E). The outputs are combined to form a symbolic latent representation (Ls). Two examples (Eg1, Eg2) are shown, each with a set of shapes and an expected output (1 and 0, respectively). The function F(Type, Constant) is applied.

* **(iii) Image Representation:** This section shows an input 'x' being encoded by an encoder (E). The output is compared to the symbolic representation 's' using Mean Squared Error (MSE).

* **(iv) Combining all the components:** This section integrates the previous stages to infer rules. It includes a matrix of shapes, rule identification networks, inferred rules, and a scoring mechanism.

### Detailed Analysis

* **Symbolic Representation (i):**

* Input: 's'

* Process: Encoding (E) -> Decoding (D)

* Output: 's''

* **Training Rule Classifier Networks (ii):**

* Inputs: s1, s2, s3

* Process: Encoding (E) -> Symbolic Latent Representation (Ls)

* Examples:

* Eg1: Three shapes (black pentagon, gray pentagon, white pentagon), Expected: 1, F(Type, Constant)

* Eg2: Three shapes (black pentagon, gray triangle, white pentagon), Expected: 0, F(Type, Constant)

* **Image Representation (iii):**

* Input: 'x' (black pentagon)

* Process: Encoding (E)

* Comparison: MSE with 's'

* **Combining all the components (iv):**

* **Top-Left:** A 3x3 grid of shapes, with the bottom-right cell containing a question mark. The shapes are variations of pentagons and triangles in different shades of gray.

* **Left-Center:** "Row 1" with three shapes (x1, x2, x3) each being a black pentagon. Each shape is processed by an encoder (E) to produce an image latent representation (Lx).

* **Top-Right:** "Rule identification networks trained on symbolic data". It has three rows (Row1, Type, Row2) and columns.

* Row 1: Lx, F(Type, Constant), F(Type, Distribute3), F(Type, Progression)

* Row 2: F(Type, Constant), F(Type, Distribute3), F(Type, Progression)

* The text "Get Rules For each 'Type', 'Size', 'Color'" is present.

* There are also boxes labeled "Size" and "Color" with an arrow pointing to "Get rules for each attribute".

* **Bottom-Center:** "Rules inferred from above".

* Eg:

1. Type Constant

2. Size Progression -2

3. Color Arithmetic +1

* "Row3" with three functions: F(Type, Constant), F(Size Progression), F(Color Arithmetic).

* The text "Score them Choose Best" is present.

### Key Observations

* The diagram illustrates a system for learning rules from symbolic and image data.

* The system uses encoders to create latent representations of both symbolic and image data.

* The rule identification networks are trained on symbolic data and used to infer rules based on the latent representations.

* The inferred rules are then used to score and choose the best rule.

### Interpretation

The diagram describes a machine learning system designed to infer rules from visual data by combining symbolic and image-based representations. The system learns to associate visual patterns with symbolic descriptions, allowing it to generalize and make predictions about new data. The use of encoders and latent representations allows the system to capture the essential features of the data while reducing dimensionality. The rule identification networks provide a mechanism for learning and applying rules based on the latent representations. The scoring mechanism allows the system to select the best rule based on its performance. This approach could be used in various applications, such as visual reasoning, pattern recognition, and robotics.

</details>

We adapt a variation of the neural algorithmic reasoning approach to the problem defined in Section 2, where we (i) learn a latent representation based on the symbolic representation of the tasks, via self-supervised learning; (ii) train neural networks to infer the rules involved in the problem; (iii) train a neural encoder from images to align with the symbolic latent representations in (i); and (iv) use the above elements to solve a given task via a neuro-symbolic search procedure, i.e., where the elementary predicates are neural networks. We assume the presence of a training dataset $D_{train}$ with correct answer labels for the analogical reasoning tasks. The components (i), (ii) and (iii) are trained independently, since for each of them we know or can determine the inputs and the targets depending on their function. We also evaluate an alternative (v): encoding an image to the symbolic latent space via the neural encoder in (iii) above, and decoding it to symbolic form using the decoder trained in (i) on the symbolic space, after which purely symbolic search is used to solve a problem instance.

## 3.1. Learning a Distributed Representation from the Symbolic Space

We begin with a symbolic multihot task representation $s$, which is a series of concatenated one-hots, one for each image entity attribute. Each attribute can take a value from a finite set and hence is represented using a one-hot vector. To obtain the latent representations of the tasks, we train an auto-encoder on the symbolic task definitions $\mathcal{S}$ as $\mathcal{L}(\mathcal{S}) = (E^{\mathcal{S}}_{\theta}, D^{\mathcal{S}}_{\varphi})$, where the encoder $E^{\mathcal{S}}_{\theta}$ maps from the symbolic space to the latent space and the decoder $D^{\mathcal{S}}_{\varphi}$ maps the representation from the latent space back to the symbolic space, as shown in component (i) of Fig. 2. As we want to reconstruct the multihot representation, a sum of negative log-likelihoods is computed, one for each one-hot encoding present in the multihot representation. The parameters $\theta$ and $\varphi$ are obtained via gradient descent on a combination of negative log-likelihood loss functions, as shown in equations 1 and 2 in the appendix; an example is provided in appendix section C.1.
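To make the reconstruction objective concrete, the following is a minimal pure-Python sketch of a summed negative log-likelihood over the one-hot segments of a multihot vector. It is an illustration under stated assumptions, not the loss from the appendix: the function names (`softmax`, `multihot_nll`), the idea of applying a separate softmax to each segment of the decoder output, and the segment sizes 5, 6 and 10 are all hypothetical choices made for this example.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def multihot_nll(decoder_logits, target_indices, segment_sizes):
    """Sum of negative log-likelihoods, one per one-hot segment.

    decoder_logits: flat list of decoder outputs for the whole multihot vector.
    target_indices: index of the true value inside each segment.
    segment_sizes:  length of each one-hot segment (assumed known a priori).
    """
    assert len(decoder_logits) == sum(segment_sizes)
    loss, start = 0.0, 0
    for size, target in zip(segment_sizes, target_indices):
        probs = softmax(decoder_logits[start:start + size])
        loss += -math.log(probs[target])
        start += size
    return loss


# Assumed segment sizes for (type, size, color); illustrative only.
segment_sizes = [5, 6, 10]
targets = [2, 0, 7]

# An uninformative decoder (all-zero logits) pays log(5) + log(6) + log(10).
uniform_loss = multihot_nll([0.0] * 21, targets, segment_sizes)

# A decoder that puts a large logit on each correct value pays much less.
confident_logits = [0.0] * 21
for offset, target in zip([0, 5, 11], targets):
    confident_logits[offset + target] = 10.0
confident_loss = multihot_nll(confident_logits, targets, segment_sizes)
```

In an actual training loop this quantity would be averaged over a batch and minimized with respect to the encoder and decoder parameters by gradient descent.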

## 3.2. Training Rule Identification Neural Networks

Next, for every attribute, and for each applicable rule for that attribute, we train a Rule Identification neural network classifier to predict if the rule (pattern) holds for the example set. As mentioned in Section 2, we know the rules that can hold over attributes, giving us a definite set of networks to be trained. We refer to any rule identification network $F$ using the $(\textit{attribute}, \textit{rule-type})$ pair for which it is trained. The latent representations obtained after encoding the symbolic representations of each of the samples in the example set are concatenated and sent as input to the rule identification networks. While training a neural net for an $(\textit{attribute}, \textit{rule-type})$ pair, we categorize each example set with the specific label for that particular rule and attribute, labeling it with 0 if the rule-type is not followed, and with 1 if the rule-type is followed or with a rule-value indicating the level of the rule-type when it is followed. As each of these rule-types is deterministic, we can obtain the rule-type and value for each row using their symbolic representations. The overview can be seen in component (ii) of Fig. 2, which shows the input for these elementary neural networks and the expected output determined for the $(\textit{attribute}, \textit{rule-type})$ pair. In Fig. 2, the type (shape) stays constant in row $Eg_1$, hence the expected target for $F(\textit{type}, \textit{constant})$ is 1; in the case of $Eg_2$ the type changes, so we expect $F(\textit{type}, \textit{constant})$ to predict 0, indicating the rule is not obeyed. With these labels, each network is optimized using cross-entropy loss. For parameterized rules we train additional networks to predict the parameters. 1
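Because the rule-types are deterministic, training labels can be read directly off the symbolic attribute values of a row. The sketch below illustrates this labeling in plain Python under simplifying assumptions: attribute values are integer indices, a row has three samples, and the function names, the integer codes for Arithmetic (+1 for addition, -1 for subtraction) and the type indices are hypothetical conventions chosen for this example rather than the dataset's own definitions.

```python
def label_constant(row):
    """1 if the attribute value is identical across the row, else 0."""
    return 1 if len(set(row)) == 1 else 0


def label_progression(row, allowed_steps=(-2, -1, 1, 2)):
    """Return the common step if the row is a progression, else 0."""
    steps = {b - a for a, b in zip(row, row[1:])}
    if len(steps) == 1:
        step = next(iter(steps))
        if step in allowed_steps:
            return step
    return 0


def label_arithmetic(row):
    """+1 if third = first + second, -1 if third = first - second, else 0."""
    first, second, third = row
    if third == first + second:
        return 1
    if third == first - second:
        return -1
    return 0


def label_distribute_three(rows):
    """1 if every row permutes the same three distinct values, else 0."""
    value_sets = [set(row) for row in rows]
    same_set = all(vs == value_sets[0] for vs in value_sets)
    return 1 if same_set and len(value_sets[0]) == 3 else 0


# Hypothetical type indices mirroring the two example rows in Fig. 2.
PENTAGON, TRIANGLE = 2, 0
eg1 = [PENTAGON, PENTAGON, PENTAGON]  # type stays constant
eg2 = [PENTAGON, TRIANGLE, PENTAGON]  # type changes
print(label_constant(eg1), label_constant(eg2))  # prints "1 0"
```

Labels produced this way would serve as targets for the binary or multi-class classifiers, whose inputs are the concatenated latent codes of the row rather than the raw attribute values used here.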

1 Examples provided in appendix section C.2

## 3.3. Sample Representation

As our objective is to apply our approach to samples in an unstructured (image, text, speech) format, we want to develop a representation for the samples that resembles the latent representation of symbolic inputs. For this we train a neural network encoder $E^{\mathcal{X}}_{\psi}$ over the rich input space $\mathcal{X}$, which encodes each sample to a latent space. We want to minimize the disparity between the latent representation of the sample $x$ and that of its corresponding symbolic representation $s$. For this we use $E^{\mathcal{S}}_{\theta}$ trained in Section 3.1: we minimize the mean squared error over all pairs of symbolic and input representations (equation shown in C.1). This enables us to use the previously learned neural networks for rule inference on our image data.
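The alignment objective can be illustrated with a small pure-Python sketch of the mean squared error between paired latent vectors. This is an assumption-laden illustration, not the equation from C.1: the function name `alignment_mse` is hypothetical, the latent vectors are given as plain lists of floats, and the symbolic latents are treated as fixed targets (the symbolic encoder is assumed not to be updated at this stage).

```python
def alignment_mse(image_latents, symbolic_latents):
    """Mean squared error between paired latent vectors.

    image_latents:    list of vectors produced by the input-space encoder.
    symbolic_latents: list of target vectors produced by the symbolic encoder.
    """
    assert len(image_latents) == len(symbolic_latents)
    total, count = 0.0, 0
    for z_x, z_s in zip(image_latents, symbolic_latents):
        assert len(z_x) == len(z_s)
        for a, b in zip(z_x, z_s):
            total += (a - b) ** 2
            count += 1
    return total / count


# Two paired latent vectors of dimension 2; only one coordinate disagrees.
example_loss = alignment_mse([[1.0, 2.0], [0.0, 0.0]],
                             [[1.0, 4.0], [0.0, 0.0]])
```

Driving this quantity towards zero is what lets rule networks trained on symbolic latents be reused unchanged on encoded images.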

## 3.4. Combining the Elements

As shown in component (iv) of Fig. 2, we first use $E^{\mathcal{X}}_{\psi}(x)$ as inputs to find the underlying rules using the neural networks trained in Section 3.2. Once we obtain the set of (rule-type, value) pairs for each attribute, we apply these neural networks to each of the answer choices by adding them to the test set. For each attribute, we obtain the output probability score for that rule-type and value. The final score is the sum of these probability scores for all the inferred rules 2. The choice with the highest score is returned as the answer.
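The selection step described above can be sketched as follows. This is a minimal illustration under stated assumptions rather than the pseudo-algorithm from the appendix: `toy_rule_prob` is a hypothetical stand-in that operates on symbolic attribute values and returns 0 or 1, whereas the trained rule identification networks would operate on latent codes and return probabilities; the dictionary layout of entities and rules is also invented for this example.

```python
def toy_rule_prob(attribute, rule_type, value, row):
    """Hypothetical stand-in for a trained F(attribute, rule-type) network."""
    values = [entity[attribute] for entity in row]
    if rule_type == "constant":
        holds = len(set(values)) == 1
    elif rule_type == "progression":
        holds = all(b - a == value for a, b in zip(values, values[1:]))
    else:
        raise ValueError(f"unsupported rule type: {rule_type}")
    return 1.0 if holds else 0.0


def score_choice(candidate, partial_row, inferred_rules, rule_prob):
    """Sum of rule scores when the candidate completes the last row."""
    row = partial_row + [candidate]
    return sum(rule_prob(attr, rule_type, value, row)
               for attr, (rule_type, value) in inferred_rules.items())


def choose_answer(choices, partial_row, inferred_rules, rule_prob=toy_rule_prob):
    """Return the index of the highest-scoring choice and all scores."""
    scores = [score_choice(c, partial_row, inferred_rules, rule_prob)
              for c in choices]
    best = max(range(len(choices)), key=lambda i: scores[i])
    return best, scores


# Rules assumed to have been inferred from the first two rows.
inferred_rules = {"type": ("constant", None), "size": ("progression", 1)}
partial_row = [{"type": 4, "size": 1}, {"type": 4, "size": 2}]
choices = [{"type": 2, "size": 3}, {"type": 4, "size": 3}, {"type": 4, "size": 1}]

best, scores = choose_answer(choices, partial_row, inferred_rules)
```

Here only the second choice satisfies both inferred rules, so it receives the highest summed score; with real networks the scores would be soft probabilities and ties would be less likely.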

## 4. Empirical Evaluation

Raven's Progressive Matrices (RPM) is a widely accepted visual reasoning puzzle used to test human intelligence [2]. The idea is to infer multiple patterns in order to find the answer among the options; an example from the RAVEN [4] dataset is shown in Fig 1. In this dataset, for every problem, each independent attribute follows an algorithmic rule. The task is to deduce the underlying rules applied over each of the first two rows, and then to select the option that validates all the inferred rules when aligned with the images in the last row. In the first two rows of Fig 1, we observe that the attributes Number, Position and Type stay constant across each row. We also observe an increasing progression in Size, and a fixed set of 3 Colors is permuted within each row, indicating Distribute Three. Hence option 3 is the only solution that satisfies all the rules 3. For our experiments, we use the 'Relational and Analogical Visual rEasoNing' dataset (RAVEN) [4], which was introduced to drive reasoning ability in current models. RAVEN consists of 10,000 problems for each of the 7 different configurations of the RPM problem shown in Fig 3. Each problem has 16 images (8 in the problem matrix and 8 target options).

Figure 3: Examples of 7 different configurations of the RAVEN dataset

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Diagram: Shape Arrangement Patterns

### Overview

The image presents a series of diagrams illustrating different patterns of shape arrangements within a grid or cell structure. Each diagram consists of a 2x2 grid, with shapes of varying types, sizes, and shades arranged according to a specific pattern. The diagrams are labeled below to indicate the type of arrangement.

### Components/Axes

* **Grid Structure:** Each diagram is contained within a 2x2 grid, dividing the space into four quadrants.

* **Shapes:** Various geometric shapes are used, including triangles, squares, circles, pentagons, hexagons, and diamonds.

* **Shades:** The shapes vary in shade from light gray to dark gray/black.

* **Labels:** Each diagram is labeled with a descriptive term indicating the arrangement pattern. The labels are: "Center", "2x2Grid", "3x3Grid", "Left-Right", "Up-Down", "Out-InCenter", and "Out-InGrid".

### Detailed Analysis

**1. Center:**

* Top-left: Light gray diamond.

* Top-right: Medium gray hexagon.

* Bottom-left: Dark gray hexagon.

* Bottom-right: Medium gray triangle.

**2. 2x2Grid:**

* Top-left: Small dark gray triangle.

* Top-right: Small light gray triangle.

* Bottom-left: Medium gray pentagon.

* Bottom-right: Medium gray pentagon.

**3. 3x3Grid:**

* Top-left: Small dark gray triangle.

* Top-right: Medium gray circle, dark gray circle, medium gray pentagon.

* Bottom-left: Light gray diamond, light gray square, light gray diamond.

* Bottom-right: Three light gray circles.

**4. Left-Right:**

* Top-left: Medium gray triangle.

* Top-right: Medium gray hexagon.

* Bottom-left: Medium gray pentagon.

* Bottom-right: Dark gray pentagon.

**5. Up-Down:**

* Top-left: Dark gray pentagon.

* Top-right: Dark gray circle.

* Bottom-left: Light gray diamond.

* Bottom-right: Light gray triangle.

**6. Out-InCenter:**

* Top-left: Light gray pentagon with a small dark gray triangle inside.

* Top-right: Light gray diamond with a small dark gray triangle inside.

* Bottom-left: Light gray circle with a small dark gray triangle inside.

* Bottom-right: Light gray square with a small white square inside.

**7. Out-InGrid:**

* Top-left: Light gray hexagon with three small dark gray triangles inside.

* Top-right: Light gray circle with two small white circles inside.

* Bottom-left: Light gray triangle with three small dark gray triangles inside.

* Bottom-right: Light gray pentagon with three small dark gray triangles inside.

### Key Observations

* The diagrams demonstrate various ways to arrange shapes within a grid, using different shapes, sizes, and shades.

* The labels accurately describe the arrangement patterns, such as shapes being centered, arranged in a grid, or positioned from left to right or up and down.

* The "Out-InCenter" and "Out-InGrid" patterns involve placing smaller shapes inside larger shapes.

### Interpretation

The image serves as a visual guide to different spatial arrangement patterns. It could be used in design, education, or other fields where understanding spatial relationships is important. The diagrams illustrate how simple shapes can be combined and arranged to create more complex patterns. The use of varying shades adds another layer of visual interest and complexity to the arrangements.

</details>

2 We explain the complete pseudo-algorithm along with the scoring function in the appendix.

3 We provide the dataset overview and the set of rule and attribute definitions for the RAVENs problems in the appendix.

## 4.1. Experimental Details

Each image in a RAVENs problem can be represented symbolically in terms of the entities (shapes) involved and their attributes: Type, Size, and Color; multiple entities in the same image additionally have Number and Position attributes. Such attributes are also rule-governing, in that rules based on these can be applied to each row, and the combination of rules from all rows is used to solve a given problem. For example, for each entity the multihot representation $s$ is of size $|T| + |S| + |C|$, where $T$, $S$ and $C$ are the sets of shapes, possible sizes and possible colors respectively. The multihot vector is made up of 3 concatenated one-hot vectors, one each for type, size, and color. In the case of multiple components, e.g. Left-Right, we concatenate the multihots of both entities. For our auto-encoder architecture, we train simple MLPs with a single hidden layer for both $E_s$ and $D_s$ (using loss functions 1 and 2 in the appendix). The dimensions of the layers and latent representations are chosen based on the RPM configuration.
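As an illustration of the multihot construction, the sketch below builds the vector for a single entity and concatenates two of them for a two-component configuration such as Left-Right. The specific value sets (5 types, 6 sizes, 10 colors) and all function names are assumptions made for this example; the actual attribute definitions are those given in the appendix.

```python
# Assumed attribute value sets; illustrative only.
TYPES = ["triangle", "square", "pentagon", "hexagon", "circle"]
SIZES = list(range(6))
COLORS = list(range(10))


def one_hot(index, length):
    """One-hot list of the given length with a 1 at `index`."""
    vec = [0] * length
    vec[index] = 1
    return vec


def entity_multihot(type_name, size, color):
    """Concatenate one-hots for type, size and color: |T| + |S| + |C| entries."""
    return (one_hot(TYPES.index(type_name), len(TYPES))
            + one_hot(SIZES.index(size), len(SIZES))
            + one_hot(COLORS.index(color), len(COLORS)))


def components_multihot(entities):
    """Concatenate entity multihots, e.g. for the Left-Right configuration."""
    vec = []
    for type_name, size, color in entities:
        vec += entity_multihot(type_name, size, color)
    return vec


single = entity_multihot("pentagon", 2, 7)
left_right = components_multihot([("pentagon", 2, 7), ("circle", 0, 9)])
print(len(single), sum(single), len(left_right))  # prints "21 3 42"
```

Each entity contributes exactly three active entries, one per attribute, which is what allows the reconstruction loss to be written as a sum over one-hot segments.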

Following [2]'s description of RPM, there are four types of rules: Constant, Distribute Three, Arithmetic, and Progression. In a given problem, there is one rule applied to each rule-governing attribute across all rows, and the answer image is chosen so that this holds. We aim to find a set of rules obeyed by both of the first two rows.

So for every attribute and its rule-type, we train an elementary neural network classifier $F(\textit{attribute}, \textit{rule-type})$ to verify if the rule is satisfied in a given row, or pair of rows. The rules of Progression and Arithmetic are further associated with a value (e.g., Progression could have increments or decrements of 1 or 2). For rule-types Constant and Distribute Three we train a binary classifier, and for rule-types Arithmetic and Progression we train a multi-class classifier to also predict the value associated with the rule. An example is described in the appendix along with further details on the neural networks used.

We train a CNN-based image encoder $E^{\mathcal{X}}_{\psi}$ over the image space $\mathcal{X}$, which encodes each image of the problem to a latent space, and minimize the disparity with the corresponding symbolic latent space as described in Section 3.3. Finally, as shown in component (iv) of Fig. 2, we find the underlying rules using the neural networks trained in Section 3.2. Once we obtain the set of (rule-type, value) pairs for each attribute, we apply these neural networks to each of the 8 options by placing them in the last row. We obtain the output probability score for that attribute, rule-type and value, and sum the probability scores for all the inferred rules 4; the image with the highest score is returned as the answer.

## 4.2. Results

We use the test set provided by RAVEN to evaluate the rule classification networks and the final accuracy. Table 2 lists the F1 of each $F(\textit{attribute}, \textit{rule-type})$ classification network across all configurations. We observe that 85% of the neural networks have an F1-score ≥ 0.90. This is consistent with the idea that networks trained on latent representations of symbolic data to perform elementary functions do well as specialized reasoning components.

Table 1 shows end-to-end accuracy for different RAVENs problem configurations. Our proposed neural reasoning approach (A) is where we have image input encoded by $E_x(x)$ and neural reasoning in the latent space, i.e. steps (i)-(iv) in Section 3. We also show results for

4 We explain the complete pseudo-algorithm along with the scoring function in the appendix.

Table 1. Configuration-wise accuracy

| Input/Reasoning | Center | Left-Right | Up-Down | Out-In Center | 2x2 Grid | 3x3 Grid | Out-In Grid |
|---------------------------|--------|------------|---------|---------------|----------|----------|-------------|
| A: Image/Neural (ours) | 89.40% | 85.00% | 89.10% | 89.80% | 53.10% | 33.90% | 31.90% |
| B: Image/Symbolic (ours) | 97.30% | 98.35% | 98.95% | 96.95% | 88.40% | 19.15% | 34.15% |
| C: Symbolic/Neural (ours) | 94.60% | 90.65% | 91.90% | 93.85% | 62.20% | 54.10% | 59.40% |
| RAVEN (ResNet+DRT) [4] | 58.08% | 65.82% | 67.11% | 69.09% | 46.53% | 50.40% | 60.11% |
| CoPINet [5] | 95.05% | 99.10% | 99.65% | 98.50% | 77.45% | 78.85% | 91.35% |
| SCL [6] | 98.10% | 96.80% | 96.50% | 96.00% | 91.00% | 82.50% | 80.10% |
| DCNet [7] | 97.80% | 99.75% | 99.75% | 98.95% | 81.70% | 86.65% | 91.45% |
| Human [4] | 95.45% | 86.36% | 81.81% | 86.36% | 81.82% | 79.55% | 81.81% |

an alternative (B), i.e. (v) mentioned in Section 3, where image inputs are decoded to symbolic space via $D_s(E_x(x))$, followed by purely symbolic reasoning (algorithmic solving). Results using neural reasoning in the latent space but with the correct symbolic inputs mapped via $E_s(s)$ are also shown as (C), to highlight the loss in accuracy incurred while encoding images using $E_x(x)$.

We use ResNet+DRT from RAVEN as our baseline, human performance (provided in [4]) as a reference, and other SOTA methods for comparison. We note that the RAVEN baseline is bested by A (neural reasoning on image inputs) for 4 out of the 7 configurations, and by B (symbolic reasoning on image inputs) for one of the more difficult cases (2x2). At the same time we observe that approach B is better than A except for the difficult case of 3x3 grid, where the encoder-decoder combination $D_s(E_x(x))$ produces too many errors, adversely affecting purely symbolic reasoning.

The accuracy of neural reasoning from symbolic inputs, i.e. (C), consistently exceeds that of approach A, which can be attributed to the closer relation of the latent space to the algorithmic symbolic space. We also observe lower performance for the configurations 2x2 Grid, 3x3 Grid, and Out-In Grid. Upon analysis, we find that the performance of $E_x$ for these configurations is relatively lower, as each of these configurations has multiple entities and the task of transforming the image into the latent space and identifying rules becomes difficult.

While more recent purely neural-network based approaches remain SOTA, we note that for the simpler configurations our neuro-symbolic approaches are competitive. We speculate that because of the complex nature and difficulty of these configurations, using more powerful neural architectures (such as transformers) for self-supervised learning of the symbolic latent space as well as for learning predicates can be useful. More generally our results provide evidence that a neuro-symbolic search using neural-network based elementary predicates, trained on a symbolic latent space, may be a promising approach for learning complex reasoning tasks, especially where domain knowledge is available.

## 5. Discussion

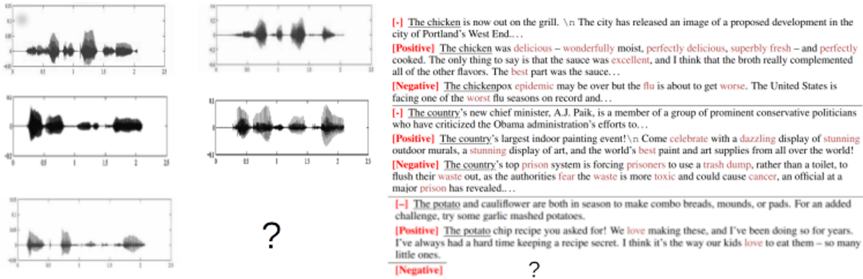

While the results presented in this paper pertain to visual analogical reasoning problems, it should be noted that the procedure presented in Section 3 is agnostic to the input modality.

Figure 4 illustrates analogical reasoning problems in speech and text respectively. The first task involves analogical reasoning in speech, where the input corresponds to a speech sample in a male voice and the output samples correspond to the same utterance in a female voice. The task is to infer that this is the transformation involved and analogously generate the output speech signal for the target query. Possible attributes for rules on a speech signal can include discrete values of pitch, tone, volume or others. In the second, text-based example, inputs correspond to positive reflections of an input passage, and the outputs correspond to negative reflections of the same passage. Attributes for text rule identification can similarly include textual aspects like language, sentiment and style. Note that both these examples require generation of the missing target output, which is a harder task than classification from a set of possible choices. However, given the recent progress in conditional generation for images [8] and text [9], this seems entirely feasible.

Figure 4: Analogical reasoning problems across different input modalities.

<details>

<summary>Image 5 Details</summary>

### Visual Description

## Image Analysis: Speech Waveforms and Sentiment Analysis

### Overview

The image presents a combination of speech waveforms and text snippets with sentiment analysis labels. On the left, there are six speech waveform plots. On the right, there are text excerpts, each labeled with a sentiment: neutral (`[-]`), positive (`[Positive]`), or negative (`[Negative]`). The bottom-middle section contains a question mark, indicating missing data.

### Components/Axes

**Left Side: Speech Waveforms**

* **Axes:** Each waveform plot has an x-axis ranging from approximately 0 to 2.5 (likely representing time in seconds) and a y-axis ranging from approximately -0.2 to 0.2 (likely representing amplitude).

* **Waveforms:** Six distinct waveforms are displayed, each representing a different speech segment.

**Right Side: Text Snippets and Sentiment Analysis**

* **Sentiment Labels:** Each text snippet is preceded by a sentiment label: `[-]` (neutral), `[Positive]`, or `[Negative]`.

* **Text Content:** The text snippets are short phrases or sentences related to various topics.

* **Highlighting:** Certain words within the text snippets are highlighted in red, presumably to emphasize the sentiment or key aspects of the statement.

### Detailed Analysis

**Speech Waveforms (Left Side)**

* **Top Row:**

* Waveform 1: Shows a series of peaks and valleys, with the highest amplitude occurring around 1 second.

* Waveform 2: Similar to waveform 1, but with a slightly different pattern of peaks and valleys.

* **Middle Row:**

* Waveform 3: Shows a more consistent pattern of peaks and valleys, with a relatively high amplitude throughout.

* Waveform 4: Similar to waveform 3, but with a slightly different pattern of peaks and valleys.

* **Bottom Row:**

* Waveform 5: Shows a series of distinct peaks and valleys, with the highest amplitude occurring around 0.5 seconds.

* Waveform 6: Missing.

**Text Snippets and Sentiment Analysis (Right Side)**

* **Snippet 1:**

* Sentiment: `[-]` (Neutral)

* Text: "The chicken is now out on the grill. \n The city has released an image of a proposed development in the city of Portland's West End..."

* **Snippet 2:**

* Sentiment: `[Positive]`

* Text: "The chicken was delicious - wonderfully moist, perfectly delicious, superbly fresh - and perfectly cooked. The only thing to say is that the sauce was excellent, and I think that the broth really complemented all of the other flavors. The best part was the sauce..."

* Highlighted words: "delicious", "excellent"

* **Snippet 3:**

* Sentiment: `[Negative]`

* Text: "The chickenpox epidemic may be over but the flu is about to get worse. The United States is facing one of the worst flu seasons on record and..."

* Highlighted words: "flu", "worse", "worst"

* **Snippet 4:**

* Sentiment: `[-]` (Neutral)

* Text: "The country's new chief minister, A.J. Paik, is a member of a group of prominent conservative politicians who have criticized the Obama administration's efforts to..."

* **Snippet 5:**

* Sentiment: `[Positive]`

* Text: "The country's largest indoor painting event!\n Come celebrate with a dazzling display of stunning outdoor murals, a stunning display of art, and the world's best paint and art supplies from all over the world!"

* Highlighted words: "celebrate", "dazzling", "stunning", "stunning", "best"

* **Snippet 6:**

* Sentiment: `[Negative]`

* Text: "The country's top prison system is forcing prisoners to use a trash dump, rather than a toilet, to flush their waste out, as the authorities fear the waste is more toxic and could cause cancer, an official at a major prison has revealed..."

* Highlighted words: "waste", "fear", "waste", "toxic", "cancer"

* **Snippet 7:**

* Sentiment: `[-]` (Neutral)

* Text: "The potato and cauliflower are both in season to make combo breads, mounds, or pads. For an added challenge, try some garlic mashed potatoes."

* **Snippet 8:**

* Sentiment: `[Positive]`

* Text: "The potato chip recipe you asked for! We love making these, and I've been doing so for years. I've always had a hard time keeping a recipe secret. I think it's the way our kids love to eat them - so many little ones."

* Highlighted words: "love", "love"

* **Snippet 9:**

* Sentiment: `[Negative]`

* Text: Missing.

### Key Observations

* The image combines speech waveforms with sentiment analysis of related text.

* The sentiment analysis labels (Neutral, Positive, Negative) appear to be based on the content of the text snippets.

* The highlighted words in the text snippets seem to be indicators of the sentiment.

* There are missing data points, indicated by the question mark and the missing text snippet.

### Interpretation

The image likely represents an experiment or analysis where speech patterns are being correlated with the sentiment expressed in the spoken words. The waveforms provide a visual representation of the speech, while the sentiment analysis labels provide a classification of the emotional tone of the speech. The highlighted words likely play a key role in the sentiment analysis process. The missing data points suggest that the analysis is incomplete or that some data is unavailable. The image suggests a multimodal approach to understanding human communication, combining acoustic and textual information.

</details>

## 6. Related Work

The 'neural algorithmic reasoning' [1] approach presents a procedure for building neural networks that can mimic algorithms. It includes training processor networks that can operate over high-dimensional latent spaces to align with fundamental computations. This improves generalization and reasoning in neural networks. RAVEN [4] combines visual understanding and structural reasoning using a Dynamic Residual Tree (DRT) graph, which is developed from the structural representation and aggregates latent features in a bottom-up manner. This suggests that augmenting networks with domain knowledge performs better than black-box neural networks. The Scattering Compositional Learner (SCL) [6] presents an approach where the model learns a compositional representation by learning independent networks for encoding object and attribute representations, along with relationship networks for inferring rules, and uses their composition to make a prediction. Our work bears similarity with this approach, as both utilize background knowledge in composing a larger mechanism from elementary networks. CoPINet [5] presents the Contrastive Perceptual Inference network, which is built on the idea of contrastive learning, i.e. teaching concepts by comparing cases. The Dual-Contrast Network (DCNet) [7] works along similar lines, as it uses two contrasting modules for its training: rule contrast and choice contrast. We draw inspiration from [10], which also presents a variation of the Neural Algorithmic Reasoning approach applied to visual reasoning.

## 7. Conclusion

In this work, we have proposed a novel neuro-symbolic reasoning approach where we learn neural-network based predicates operating on a 'symbolically-derived latent space' and use these in a symbolic search procedure to solve complex visual reasoning tasks, such as RAVENs Progressive Matrices. Our experimental results (though preliminary, in that our predicates are compositions of simple MLPs) indicate that the approach points to a promising direction for neuro-symbolic reasoning research.

## Acknowledgments

This work is supported by 'The DataLab' agreement between BITS Pilani, K.K. Birla Goa Campus and TCS Research, India.

## References

- [1] P. Veličković, C. Blundell, Neural algorithmic reasoning, Patterns 2 (2021) 100273.

- [2] P. Carpenter, M. Just, P. Shell, What one intelligence test measures: A theoretical account of the processing in the Raven Progressive Matrices test, Psychological Review 97 (1990) 404-31. doi: 10.1037/0033-295X.97.3.404.

- [3] F. Chollet, On the measure of intelligence, arXiv preprint arXiv:1911.01547 (2019).

- [4] C. Zhang, F. Gao, B. Jia, Y. Zhu, S.-C. Zhu, Raven: A dataset for relational and analogical visual reasoning, in: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2019.

- [5] C. Zhang, B. Jia, F. Gao, Y. Zhu, H. Lu, S.-C. Zhu, Learning perceptual inference by contrasting, in: Advances in Neural Information Processing Systems (NeurIPS), 2019.

- [6] Y. Wu, H. Dong, R. Grosse, J. Ba, The scattering compositional learner: Discovering objects, attributes, relationships in analogical reasoning, 2020. arXiv:2007.04212.

- [7] T. Zhuo, M. Kankanhalli, Effective abstract reasoning with dual-contrast network, in: International Conference on Learning Representations, 2021. URL: https://openreview.net/forum?id=ldxlzGYWDmW.

- [8] A. Ramesh, M. Pavlov, G. Goh, S. Gray, C. Voss, A. Radford, M. Chen, I. Sutskever, Zero-shot text-to-image generation, 2021. URL: https://arxiv.org/abs/2102.12092. doi: 10.48550/ARXIV.2102.12092.

- [9] S. Dathathri, A. Madotto, J. Lan, J. Hung, E. Frank, P. Molino, J. Yosinski, R. Liu, Plug and play language models: A simple approach to controlled text generation, 2019. URL: https://arxiv.org/abs/1912.02164. doi: 10.48550/ARXIV.1912.02164.

- [10] A. Sonwane, G. Shroff, L. Vig, A. Srinivasan, T. Dash, Solving visual analogies using neural algorithmic reasoning, CoRR abs/2111.10361 (2021). URL: https://arxiv.org/abs/2111.10361. arXiv:2111.10361.

## A. Overview of RAVEN dataset generation

The authors of RAVEN use an A-SIG (Attributed Stochastic Image Grammar) to generate the structural representation of an RPM. Each RPM is a parse tree instantiated from this A-SIG. Rules and the initial attributes for that structure are then sampled, and the rules are applied to produce a valid row. This process is repeated 3 times to generate a valid problem matrix. The answer options are generated by breaking some set of rules. This structured representation is then used to generate images.
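The generation steps above can be sketched for a single integer-valued attribute. This is a toy illustration only, not the RAVEN generator: it assumes one attribute governed by a Progression rule, and the names `make_row` and `generate_problem`, the value range 0 to 9, and the way distractors are drawn are all hypothetical choices made for the example.

```python
import random

def make_row(start, step):
    """Apply a Progression rule with the given step to produce one valid row."""
    return [start, start + step, start + 2 * step]

def generate_problem(rng, n_choices=8, low=0, high=9):
    """Toy generator: sample a rule, build three valid rows, add rule-breaking options."""
    step = rng.choice([-2, -1, 1, 2])            # sample the rule instance
    rows = []
    for _ in range(3):                           # one valid row per matrix row
        # restrict the start so all three values stay inside [low, high]
        lo = low - min(0, 2 * step)
        hi = high - max(0, 2 * step)
        rows.append(make_row(rng.randint(lo, hi), step))
    answer = rows[2][2]
    # distractors are values that break the rule in the last row
    wrong = [v for v in range(low, high + 1) if v != answer]
    choices = rng.sample(wrong, n_choices - 1) + [answer]
    rng.shuffle(choices)
    context = rows[0] + rows[1] + rows[2][:2]    # the eight context panels
    return context, choices, answer, step
```

In the real dataset each panel carries several attributes and the structured representation is rendered to images; the sketch keeps only the sample, apply, repeat, and break-the-rule steps.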

The RAVEN dataset provides a structural representation that is semantically linked with the image representation. The structural representation of the image space available in RAVEN makes it generalizable. As each image in a configuration follows a fixed structure, we use this knowledge to generate the corresponding symbolic representations. RAVEN has 10,000 problems for each configuration, split into 6,000 for training, 2,000 for validation and 2,000 for testing. We use the same split for training and validation and report results on the test set.

## B. Rule and Attribute definitions

## B.1. Attributes

Number

: The number of entities in a given layout. It can take integer values in [1, 9].

Position

: Possible slots for each object in the layout. Each Entity could occupy one slot.

Type

: Entity types could be triangle, square, pentagon, hexagon, and circle.

Size

: 6 scaling factors uniformly distributed in [0.4, 0.9].

Color

: 10 grey-scale colors.

## B.2. Rules

4 different rules can be applied over rule-governing attributes.

Constant : Attributes governed by this rule would not change in the row. If it is applied on Number or Position, attribute values would not change across the three panels. If it is applied on Entity level attributes, then we leave 'as is' the attribute in each object across the three panels.

Progression : Attribute values monotonically increase or decrease in a row. The increment or decrement could be either 1 or 2, resulting in 4 instances in this rule.

Arithmetic : There are 2 instantiations of this rule, resulting in either a rule of summation or one of subtraction. Arithmetic derives the value of the attribute in the third panel from the first 2 panels. For Position, this rule is implemented as set arithmetic.

Distribute Three : This rule first samples 3 values of an attribute in a problem instance and permutes the values in different rows.
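The four rules can be written as small symbolic checks over the integer values of one attribute. The sketch below is a simplified reading of the definitions above: it assumes attributes are plain integers, ignores the set-based Position case, and uses hypothetical function names.

```python
def is_constant(row):
    """Constant: the attribute value is the same in all three panels."""
    a, b, c = row
    return a == b == c

def progression_step(row):
    """Progression: return the step (-2, -1, 1 or 2), or 0 if the rule does not hold."""
    a, b, c = row
    step = b - a
    if step in (-2, -1, 1, 2) and c - b == step:
        return step
    return 0

def arithmetic_sign(row):
    """Arithmetic: +1 if a + b == c, -1 if a - b == c, else 0 (sum is checked first)."""
    a, b, c = row
    if a + b == c:
        return 1
    if a - b == c:
        return -1
    return 0

def is_distribute_three(rows):
    """Distribute Three: every row is a permutation of the same three distinct values."""
    first = set(rows[0])
    return len(first) == 3 and all(len(r) == 3 and set(r) == first for r in rows)
```

For example, `progression_step([1, 3, 5])` gives `2`, and `is_distribute_three` holds for the rows `[1, 2, 3]`, `[2, 3, 1]`, `[3, 1, 2]`.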

## C. Autoencoder, Neural Predicates and Image Encoder

## C.1. Autoencoder

The symbolic encoder 𝐸 S 𝜃 ( 𝑠 ) is trained using the following losses, as described in Section 3. As we want to reconstruct the multihot representation, a sum of negative log-likelihoods is computed, one for each one-hot encoding present in the multihot representation. Here 𝑝 𝑘 denotes the output nodes from the decoder corresponding to the 𝑘-th attribute and 𝑡 𝑘 denotes the one-hot input for the same attribute. In equations 1 and 2 we use the example from RAVEN, where the attributes are type, size, color, etc.

<!-- formula-not-decoded -->

<!-- formula-not-decoded -->
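A minimal sketch of such a reconstruction loss is given below. It is not a reconstruction of equations 1 and 2: it assumes that each attribute's decoder outputs are turned into probabilities with a softmax, and that the multihot vector is a concatenation of one-hot segments (for example 5 types, 6 sizes and 10 colors, following Appendix B.1). The function names are hypothetical.

```python
import math

def log_softmax(logits):
    """Numerically stable log-softmax over one attribute's output nodes."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(v - m) for v in logits))
    return [v - log_z for v in logits]

def nll(logits, one_hot):
    """Negative log-likelihood of the one-hot target t_k under the outputs p_k."""
    return -sum(t * lp for t, lp in zip(one_hot, log_softmax(logits)))

def multihot_loss(decoder_out, target, sizes):
    """Sum the per-attribute NLL over consecutive one-hot segments."""
    loss, start = 0.0, 0
    for n in sizes:                      # e.g. sizes = [5, 6, 10] for type, size, color
        loss += nll(decoder_out[start:start + n], target[start:start + n])
        start += n
    return loss
```

With uninformative (all-zero) decoder outputs, each segment of length n contributes log n, so the loss equals the sum of log segment sizes.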

## C.2. Neural Predicates for Rule Classification

For every attribute, for each of its rule-types, we train an elementary neural network classifier to verify if the rule is satisfied in the row; this acts as our Rule Identification network. In this work, we refer to any rule identification network 𝐹 using the ( 𝑎𝑡𝑡𝑟𝑖𝑏𝑢𝑡𝑒, 𝑟𝑢𝑙𝑒 -𝑡𝑦𝑝𝑒 ) pair for which it is trained. For rule-types Constant and Distribute Three we train a binary classifier. The rules of Progression and Arithmetic are also associated with a value (e.g., Progression could have increments or decrements of 1 or 2), hence for rule-types Arithmetic and Progression we train a multi-class classifier that also predicts the value associated with the rule. Example: A neural network for Center: 𝐹 ( 𝑇𝑦𝑝𝑒,𝐶𝑜𝑛𝑠𝑡𝑎𝑛𝑡 ) is a binary classifier trained to identify if the row from the configuration Center has constant type (shape) across the 3 panels. Similarly, a neural network for Left: 𝐹 ( 𝑆𝑖𝑧𝑒,𝑃𝑟𝑜𝑔𝑟𝑒𝑠𝑠𝑖𝑜𝑛 ) is a five-class classifier trained to classify if there is a progression in size in the Left component. It predicts 0 if there is no progression, and otherwise predicts the progression value, i.e. the increment or decrement (-2, -1, 1, 2).

The latent representations 𝐸 S 𝜃 ( 𝑠 ) obtained after encoding the symbolic representations of each of the three panels in the row are concatenated and sent as input to the neural networks. While training a neural net for an ( 𝑎𝑡𝑡𝑟𝑖𝑏𝑢𝑡𝑒, 𝑟𝑢𝑙𝑒 -𝑡𝑦𝑝𝑒 ) pair, we categorize each row with the specific label for that particular rule and attribute, labeling it with 0 if the rule-type is not followed, and with 1 or the rule-value indicating the level of the rule-type when it is followed. As each of these rule-types is deterministic, we can obtain the rule-type and value for each row using their symbolic representations. The overview can be seen in component (ii) of Fig. 2, where we see the input for these elementary neural networks and the expected output determined for the ( 𝑎𝑡𝑡𝑟𝑖𝑏𝑢𝑡𝑒, 𝑟𝑢𝑙𝑒 -𝑡𝑦𝑝𝑒 ) pair. With these labels, each network is optimized using cross-entropy loss. Each network is a shallow MLP classifier with 1 or 2 hidden layers whose dimensions are chosen depending on the configuration and validation set. These classifiers are trained using symbolic representations for each component across the various configurations, and we provide the results in Table 2.
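The input and label construction for one such classifier, here a (Size, Progression) network, might look as follows. This is a sketch under stated assumptions: the class ordering in `PROGRESSION_CLASSES`, the single hidden layer with ReLU, and all function names are illustrative and not taken from the paper.

```python
import math

# class index -> rule value; 0 stands for "no progression" (ordering is an assumption)
PROGRESSION_CLASSES = [0, -2, -1, 1, 2]

def progression_label(values):
    """Derive the class label for a row from its symbolic attribute values."""
    a, b, c = values
    step = b - a
    holds = step in (-2, -1, 1, 2) and c - b == step
    return PROGRESSION_CLASSES.index(step if holds else 0)

def concat_row(latents):
    """Concatenate the latent vectors of the three panels into one input vector."""
    return [v for z in latents for v in z]

def mlp_forward(x, w1, b1, w2, b2):
    """One-hidden-layer MLP with ReLU, returning one logit per class."""
    hidden = [max(0.0, sum(w * v for w, v in zip(row, x)) + b) for row, b in zip(w1, b1)]
    return [sum(w * h for w, h in zip(row, hidden)) + b for row, b in zip(w2, b2)]

def cross_entropy(logits, label):
    """Cross-entropy loss of the logits against an integer class label."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(v - m) for v in logits))
    return log_z - logits[label]
```

Because the labels are computed from the symbolic representation alone, no manual annotation is needed to train the predicates.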

## C.3. Image Encoder

To learn a latent representation of the unstructured data that mimics the symbolic latent space, we minimize the mean squared error over all pairs of symbolic and input representations obtained from 𝐸 S 𝜃 ( 𝑠 ) and 𝐸 X 𝜓 ( 𝑥 ) respectively. This enables us to use the previously learned neural networks for rule inference on our image data.

<!-- formula-not-decoded -->
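The alignment objective can be sketched as a plain mean squared error between paired latent vectors. The sketch assumes that the symbolic latents act as fixed targets for the image encoder and that the error is averaged over latent dimensions and over pairs; both the averaging convention and the function names are assumptions.

```python
def mse(z_symbolic, z_image):
    """Mean squared error between one symbolic latent and one image latent."""
    assert len(z_symbolic) == len(z_image)
    return sum((a - b) ** 2 for a, b in zip(z_symbolic, z_image)) / len(z_symbolic)

def alignment_loss(pairs):
    """Average the MSE over all (symbolic latent, image latent) pairs."""
    return sum(mse(zs, zx) for zs, zx in pairs) / len(pairs)
```

A loss of zero means the image encoder reproduces the symbolic latent exactly, in which case the rule classifiers see the same input they were trained on.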

Table 2. F1-score of rule classification networks. Note: Different components have different sets of rules, as in the case of Left-Right, Out-In Center, and Out-In Grid, wherein we train a separate set of networks for each component. Blank entries indicate that the rule setting does not exist for that particular component. E.g., the Number attribute is always 1 in the Center configuration.

| | Center | Left-Right | Left-Right | Up-Down | Up-Down | Out-In Center | Out-In Center | 2x2 Grid | 3x3 Grid | Out-In Grid | Out-In Grid |
|--------------|----------|--------------|--------------|-----------|-----------|-----------------|-----------------|------------|------------|---------------|---------------|
| | | Left | Right | Up | Down | Out | In | | | Out | In |
| Constant | 1.0 | 1.0 | 1.0 | 1.0 | 1.0 | 0.99 | 1.0 | 0.94 | 0.94 | 0.99 | 0.91 |
| Distribute Three | 1.0 | 0.99 | 0.99 | 0.99 | 1.0 | 0.99 | 0.99 | 0.92 | 0.91 | 0.99 | 0.88 |
| Progression | 0.96 | 0.99 | 0.99 | 0.99 | 0.99 | 0.99 | 0.99 | 0.98 | 0.96 | 0.99 | 0.97 |
| Constant | 1.0 | 0.99 | 1.00 | 0.99 | 1.0 | 0.99 | 1.0 | 0.91 | 0.90 | 1.0 | 0.95 |
| Distribute Three | 1.0 | 0.97 | 0.96 | 0.98 | 0.98 | 1.0 | 0.98 | 0.77 | 0.72 | 0.99 | 0.94 |
| Progression | 0.95 | 0.99 | 0.99 | 0.99 | 0.99 | 0.97 | 0.99 | 0.93 | 0.94 | 0.96 | 0.98 |
| Arithmetic | 0.91 | 0.96 | 0.96 | 0.96 | 0.97 | - | 0.96 | 0.90 | 0.84 | - | 1.0 |
| Constant | 0.98 | 0.99 | 0.99 | 0.99 | 0.99 | - | 0.99 | 0.82 | 0.87 | - | 0.86 |
| Distribute Three | 0.98 | 0.99 | 0.97 | 0.98 | 0.98 | - | 0.98 | 0.61 | 0.74 | - | 0.63 |
| Progression | 0.99 | 1.0 | 0.99 | 0.99 | 0.98 | - | 0.99 | 0.95 | 0.92 | - | 0.95 |
| Arithmetic | 0.93 | 0.91 | 0.93 | 0.92 | 0.95 | - | 0.94 | 0.78 | 0.68 | - | 0.78 |
| Constant | - | - | - | - | - | - | - | 0.93 | 0.92 | - | 0.96 |
| Distribute Three | - | - | - | - | - | - | - | 0.81 | 0.77 | - | 0.83 |
| Progression | - | - | - | - | - | - | - | 0.97 | 0.85 | - | 0.95 |
| Arithmetic | - | - | - | - | - | - | - | 0.96 | 0.84 | - | 0.94 |
| Constant | - | - | - | - | - | - | - | 0.93 | 0.92 | - | 0.96 |
| Distribute Three | - | - | - | - | - | - | - | 0.87 | 0.94 | - | 0.89 |
| Progression | - | - | - | - | - | - | - | 0.95 | 0.96 | - | 0.93 |
| Arithmetic | - | - | - | - | - | - | - | 0.95 | 0.92 | - | 0.93 |

## D. Search Algorithm

## Algorithm 1. Search Overview

```

```
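The body of Algorithm 1 is not available in this text. The following is therefore only a hedged sketch of one way a rule-based search over elementary predicates could be organized, not the authors' algorithm. It assumes that rules are identified per attribute from the two complete rows, and that the answer is the candidate whose completed third row agrees with the most identified rules. Symbolic predicates over integer attribute values stand in for the neural predicates over latent vectors, and the names `infer_rules` and `solve` are hypothetical.

```python
def infer_rules(rows, predicates):
    """For each attribute, keep the first rule whose predicate gives the same
    non-zero output on both complete rows."""
    rules = {}
    for attr, candidates in predicates.items():
        for rule_name, predicate in candidates:
            outputs = [predicate([panel[attr] for panel in row]) for row in rows]
            if outputs[0] and outputs[0] == outputs[1]:
                rules[attr] = (rule_name, predicate, outputs[0])
                break
    return rules

def solve(context_rows, partial_row, choices, predicates):
    """Return the index of the choice that satisfies the most inferred rules."""
    rules = infer_rules(context_rows, predicates)

    def score(choice):
        row = partial_row + [choice]
        return sum(
            1
            for attr, (_, predicate, expected) in rules.items()
            if predicate([panel[attr] for panel in row]) == expected
        )

    return max(range(len(choices)), key=lambda i: score(choices[i]))
```

Here each panel is a dictionary from attribute name to value, and `predicates` maps each attribute to a list of `(rule_name, predicate)` pairs, where a predicate returns a falsy value when its rule does not hold and `True` or the rule value otherwise.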