## A Survey on Knowledge Graph-based Methods for Automated Driving

Juergen Luettin¹, Sebastian Monka¹, Cory Henson², and Lavdim Halilaj¹

¹ Robert Bosch GmbH - Corporate Research, Renningen, Germany

² Robert Bosch LLC - Bosch Research and Technology Center, Pittsburgh, PA, USA

{firstname.lastname}@bosch.com

Abstract. Automated driving is one of the most active research areas in computer science. Deep learning methods have made remarkable breakthroughs in machine learning in general and in automated driving (AD) in particular. However, guaranteeing the reliability and safety of automated systems remains an open problem, especially with respect to effectively incorporating all available information and knowledge into the driving task. Knowledge graphs (KGs) have recently gained significant attention from both industry and academia for applications that benefit from exploiting structured, dynamic, and relational data. The complexity of graph-structured data, with its complex relationships and inter-dependencies between objects, has posed significant challenges to existing machine learning algorithms. However, recent progress in knowledge graph embeddings and graph neural networks makes it possible to apply machine learning to graph-structured data. We therefore motivate and discuss the potential benefit of KGs applied to the main tasks of AD, including 1) ontologies, 2) perception, 3) scene understanding, 4) motion planning, and 5) validation. We then survey, analyze, and categorize ontologies and KG-based approaches for AD. Finally, we discuss current research challenges and propose promising future research directions for KG-based solutions for AD.

Keywords: Knowledge graph · Automated Driving · Automotive ontology · Knowledge representation learning · Knowledge graph embedding · Knowledge graph neural network

## 1 Introduction

The first successful AD vehicle was demonstrated in the 1980s [20]. However, despite remarkable progress, fully automated driving has not yet been realized. One unsolved problem is that AD vehicles must be able to drive safely in situations that were not seen in the training data. Moreover, AD systems must satisfy the strict safety requirements specified in ISO 26262 [52], which states that the behavior of the components needs to be fully specified and that each refinement can be verified with respect to its specification. Adhering to this standard is a prerequisite for automated driving systems.

Preprint, under review.

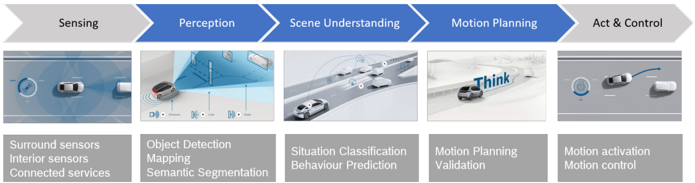

Fig. 1. Standard components of an AD system, modified from [61].

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Autonomous Vehicle Operation Diagram

### Overview

The image is a diagram illustrating the operational flow of an autonomous vehicle, broken down into five key stages: Sensing, Perception, Scene Understanding, Motion Planning, and Act & Control. Each stage is represented by a blue arrow and a corresponding visual example, along with a description of the processes involved.

### Components/Axes

* **Stages (Top Row):**

* Sensing

* Perception

* Scene Understanding

* Motion Planning

* Act & Control

* **Visual Examples (Middle Row):** Each stage has a corresponding image depicting the process.

* **Descriptions (Bottom Row):** Each stage has a text description of the processes involved.

### Content Details

**1. Sensing:**

* **Visual:** A top-down view of a white autonomous vehicle on a road, with sensor indicators around the car.

* **Description:**

* Surround sensors

* Interior sensors

* Connected services

**2. Perception:**

* **Visual:** A depiction of object detection and mapping, showing a car using sensors to identify objects in its environment.

* **Description:**

* Object Detection

* Mapping

* Semantic Segmentation

**3. Scene Understanding:**

* **Visual:** A scene showing multiple cars on a road, representing the vehicle's understanding of the overall traffic situation.

* **Description:**

* Situation Classification

* Behaviour Prediction

**4. Motion Planning:**

* **Visual:** A car on a road with the word "Think" superimposed, representing the planning process.

* **Description:**

* Motion Planning

* Validation

**5. Act & Control:**

* **Visual:** A car making a lane change, illustrating the vehicle's ability to execute planned actions.

* **Description:**

* Motion activation

* Motion control

### Key Observations

* The diagram presents a sequential flow from sensing the environment to acting upon it.

* Each stage builds upon the previous one, creating a closed-loop system.

* The visual examples provide a clear understanding of the processes involved in each stage.

### Interpretation

The diagram illustrates the complex process of autonomous vehicle operation. It highlights the importance of each stage, from sensing the environment to making decisions and executing actions. The diagram suggests that autonomous driving relies on a combination of sensor data, perception algorithms, scene understanding, motion planning, and control systems. The flow emphasizes the iterative nature of the process, where the vehicle continuously senses, perceives, plans, and acts in response to its environment.

</details>

Deep learning (DL) [44,92,29] has made remarkable breakthroughs with significant impact on the performance of AD systems. However, DL methods offer little insight into what the network has learned and are therefore hard to interpret and validate [12,42]. In safety-critical applications, this is a major drawback. The concept of explainable AI (XAI) [4] was introduced to address it.

The use of graphs to represent knowledge has been researched for a long time. The term knowledge graph (KG) was popularized with the announcement of the Google Knowledge Graph [95]. A graph-based representation has several advantages over alternative approaches to representing knowledge. Graphs provide a concise and intuitive abstraction, with edges representing the relations that exist between entities. Dedicated graph query languages make it possible to find complex, arbitrary-length paths in the graph. Recently, methods that apply machine learning directly to graphs have created new opportunities to use KGs in data-based applications [106]. Figure 1 shows the standard components of an AD system together with their sub-tasks. In this survey, we address KG-based approaches for the components Perception, Scene Understanding, and Motion Planning.

Moreover, the performance of DL methods depends heavily on the availability of suitable training data. When the test environment deviates from the distribution of the training data, DL methods tend to produce unpredictable and critical errors. Although driving is governed by traffic laws and typical driver behaviors, which represent a crucial knowledge source, traditional DL methods cannot easily incorporate such explicit knowledge. We argue that KGs are well suited to address all of these drawbacks.

## 2 Preliminaries

We first describe the basic terminology relevant to this survey and summarize insights from related work on the general principles of jointly using knowledge graphs and machine learning pipelines.

## 2.1 Knowledge Graphs & Ontologies

Knowledge graphs are a means of structuring facts, with entities connected via named relationships. Hogan et al. [45] define a KG as "a graph of data with the objective of accumulating and conveying real-world knowledge, where nodes represent entities and edges are relations between entities". Entities can be real-world objects or abstract concepts, relationships represent the relations between entities, and semantic descriptions of entities and their relationships contain types and properties with a well-defined meaning. Knowledge can be expressed as a factual triple of the form (head, relation, tail) or, under the Resource Description Framework (RDF), (subject, predicate, object), for example, (Albert Einstein, WinnerOf, Nobel Prize). A KG is a set of triples $G \subseteq H \times R \times T$, where $H \subseteq E$ is a set of entities, $T \subseteq E \cup L$ is a set of entities and literal values $L$, and $R$ is a set of relationships connecting $H$ and $T$.
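As a minimal sketch of this definition, the snippet below stores a KG as a Python set of (head, relation, tail) tuples and derives the sets $H$, $R$, $T$, $E$, and $L$ from it. The relation names `bornIn` and `firstAwardedIn`, the `Literal` wrapper, and the helper `objects` are illustrative assumptions, not part of RDF or of any specific KG.

```python
from typing import NamedTuple, Union


class Literal(NamedTuple):
    """Wraps a literal value so it can be told apart from an entity identifier."""
    value: Union[str, int, float]


# The KG G: a set of (head, relation, tail) triples (illustrative facts).
G = {
    ("AlbertEinstein", "winnerOf", "NobelPrize"),
    ("AlbertEinstein", "bornIn", "Ulm"),
    ("NobelPrize", "firstAwardedIn", Literal(1901)),
}

H = {h for h, _, _ in G}                      # heads (entities only)
R = {r for _, r, _ in G}                      # relations
T = {t for _, _, t in G}                      # tails (entities or literals)
L = {t for t in T if isinstance(t, Literal)}  # literal values
E = H | (T - L)                               # all entities


def objects(graph, head, relation):
    """Return all tails t such that (head, relation, t) is in the graph."""
    return {t for h, r, t in graph if h == head and r == relation}
```

Keeping literals in a separate wrapper type makes the constraint $T \subseteq E \cup L$ directly checkable.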

## 2.2 Models

A graph model is a model that structures data, including its schema and/or instances, in the form of graphs; data manipulation is realized by graph-based operations and adequate integrity constraints [1]. Each graph model has its own formal definition based on a mathematical foundation, which can vary according to different characteristics, for instance, directed vs. undirected or labeled vs. unlabeled. The most basic model is composed of labeled nodes and edges; it is easy to comprehend but ill-suited to encapsulating multidimensional information. Other graph models allow information to be represented with complex relationships in the form of hypernodes or hyperedges.

Here we discuss three common graph models used in practice to represent data graphs.

Directed Labeled Graphs A directed labeled graph comprises a set of nodes and a set of edges connecting those nodes, labeled according to a specific vocabulary. The direction of an edge is significant, as it clearly distinguishes the start node from the end node. This enables information to be organized intuitively via binary relationships.

Hyper-relational Graphs A hyper-relational graph is also a labeled directed multigraph, where each node and edge may have a number of associated key-value pairs [2]. Internally, nodes and edges are annotated according to a chosen vocabulary and have unique identifiers, making this a flexible and powerful form of modeling for graph analysis with weighted edges.

Hypergraphs Hypergraphs extend the definition of binary edges by allowing multiple and complex relationships to be modeled. Hypernodes modularize the notion of a node by allowing graphs to be nested inside nodes. In addition, the notion of a hyperedge enables the definition of n-ary relations between different concepts.
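To make the difference between the three models concrete, the following sketch encodes the same hypothetical event ("the ego vehicle overtakes car_1 on lane_2 at t = 3.2 s") in each of them using plain Python data structures. All identifiers are illustrative assumptions. The hypothetical `reify` helper shows the common workaround of introducing an event node when a model with only binary edges has to express an n-ary relation.

```python
# Sketch only: the same hypothetical fact in the three graph models described above.

# 1) Directed labeled graph: binary, labeled edges; lane and time cannot be attached.
directed_labeled = {("ego", "overtakes", "car_1")}

# 2) Hyper-relational graph: each edge has an identifier and key-value pairs.
hyper_relational_edges = [
    {
        "id": "e1",
        "source": "ego",
        "label": "overtakes",
        "target": "car_1",
        "properties": {"lane": "lane_2", "time_s": 3.2},
    }
]

# 3) Hypergraph: a single hyperedge relates an arbitrary set of nodes (n-ary relation).
hyperedges = {
    "overtaking_1": {"label": "Overtaking", "nodes": frozenset({"ego", "car_1", "lane_2"})}
}


def reify(event_id, label, roles):
    """Express an n-ary relation with binary edges by introducing an event node."""
    edges = {(event_id, "type", label)}
    for role, node in roles.items():
        edges.add((event_id, role, node))
    return edges


reified = reify("overtaking_1", "Overtaking",
                {"agent": "ego", "patient": "car_1", "onLane": "lane_2"})
```

Reification keeps the graph binary at the cost of an extra node and one edge per participant, which is exactly the overhead that hyperedges avoid.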

A knowledge graph can be based on any such graph model that uses nodes and edges as its fundamental modeling form. KGs are essentially composed of two main components: 1) schemas, a.k.a. ontologies; and 2) the actual data modeled according to these ontologies. In philosophy, ontology is the systematic study of things, categories, and their relations within a particular domain. In computer science, on the other hand, ontologies are defined as a formal and explicit specification of a shared conceptualization [97]. They enable the conceptualization of knowledge for a given domain and support a common understanding among various stakeholders. Thus, ontologies are a crucial component in tackling the semantic heterogeneity problem and enabling interoperability in scenarios where different agents and services are involved.
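The split between schema and data can be sketched as follows: a handful of subClassOf axioms play the role of the ontology, a few typed instances play the role of the data, and a transitive walk over the axioms infers every class an instance belongs to (subclass entailment in miniature). The class names loosely follow the scene ontology excerpt in Fig. 2; the instance identifiers and helper functions are hypothetical.

```python
# Ontology (schema): subClassOf axioms, loosely following the scene ontology in Fig. 2.
SUBCLASS_OF = {
    ("Car", "Vehicle"),
    ("Truck", "Vehicle"),
    ("Vehicle", "Participant"),
    ("Child", "Human"),
    ("Human", "Participant"),
}

# Data: instances asserted with their most specific class (hypothetical identifiers).
TYPE_OF = {("car_17", "Car"), ("ped_3", "Child")}


def superclasses(cls, axioms):
    """Return all direct and indirect superclasses of cls."""
    result, stack = set(), [cls]
    while stack:
        current = stack.pop()
        for sub, sup in axioms:
            if sub == current and sup not in result:
                result.add(sup)
                stack.append(sup)
    return result


def inferred_types(instance, types, axioms):
    """Asserted classes of an instance plus everything entailed via subClassOf."""
    asserted = {cls for inst, cls in types if inst == instance}
    inferred = set(asserted)
    for cls in asserted:
        inferred |= superclasses(cls, axioms)
    return inferred
```

Because the data only states that `car_17` is a `Car`, a query for all `Participant` instances succeeds only through the schema. This is one concrete way in which an ontology adds knowledge that the raw data does not contain.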

## 2.3 Knowledge Representation Learning

While most symbolic knowledge is encoded in a graph representation, conventional machine learning methods operate in vector space. Using a knowledge graph embedding method (KGE-Method), a KG can be transformed into a knowledge graph embedding (KGE), a representation in which the entities and relations of a KG are mapped to low-dimensional vectors. The KGE captures the semantic meaning and relations of the graph nodes and reflects them by locality in vector space [106]. KGEs were originally used for graph-based tasks such as node classification or link prediction, but have recently been applied to tasks such as object classification, detection, or segmentation. Following [14], graph embedding algorithms can be grouped into unsupervised and supervised methods.

Unsupervised KGE-Methods form a KGE based on the inherent graph structure and the attributes of the KG. One of the earlier works [80] focused on statistical relational learning. Recent surveys [13,106,32,55] categorize unsupervised KGE-Methods based on their representation space (vector, matrix, and tensor space), the scoring function (distance-based, similarity-based), the encoding model (linear/bilinear models, factorization models, neural networks), and the auxiliary information (text descriptions, type constraints).
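To illustrate the difference between distance-based and similarity-based scoring functions, the sketch below implements two well-known representatives: a TransE-style translation distance and a DistMult-style trilinear product. The surveys cited above do not single these out. They are chosen here only as familiar examples, and the two-dimensional vectors are toy values rather than learned embeddings.

```python
import math
from typing import Sequence


def transe_score(h: Sequence[float], r: Sequence[float], t: Sequence[float]) -> float:
    """Distance-based: negative L2 distance between h + r and t (higher = more plausible)."""
    return -math.sqrt(sum((hi + ri - ti) ** 2 for hi, ri, ti in zip(h, r, t)))


def distmult_score(h: Sequence[float], r: Sequence[float], t: Sequence[float]) -> float:
    """Similarity-based: trilinear product sum_i h_i * r_i * t_i (higher = more plausible)."""
    return sum(hi * ri * ti for hi, ri, ti in zip(h, r, t))


# Toy 2-D vectors, not learned embeddings.
h, r = [1.0, 0.0], [0.0, 1.0]
t_good, t_bad = [1.0, 1.0], [-1.0, 0.0]
```

In the distance-based case a triple is plausible when $h + r$ lands close to $t$. In the similarity-based case it is plausible when the three vectors agree in sign and magnitude along the same dimensions.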

Supervised KGE-Methods learn a KGE that best predicts additional node or graph labels. Because the KGE is formed using task-specific labels for the attributes, it can be optimized for a particular task while retaining the full expressivity of the graph. Common supervised KGE-Methods are based on graph neural networks (GNNs) [30], an extension of DL networks that operate directly on a KG. To improve scalability and to overcome challenges that arise from graph irregularities, various adaptations have emerged, such as graph convolutional networks (GCN) [60] and graph attention networks (GAT) [104].
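A minimal sketch of the layer-wise propagation rule commonly used for GCNs [60], $H' = \mathrm{ReLU}(\tilde{D}^{-1/2} \tilde{A} \tilde{D}^{-1/2} H W)$ with $\tilde{A} = A + I$ and $\tilde{D}$ the degree matrix of $\tilde{A}$. It is written in plain Python for readability rather than speed. The graph, features, and weights in the usage line are toy assumptions.

```python
import math
from typing import List

Matrix = List[List[float]]


def gcn_layer(adj: Matrix, features: Matrix, weights: Matrix) -> Matrix:
    """One GCN step: ReLU(D^-1/2 (A + I) D^-1/2 X W) for a symmetric adjacency A."""
    n = len(adj)
    # Add self-loops so each node keeps its own features.
    a_hat = [[adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)] for i in range(n)]
    deg = [sum(row) for row in a_hat]
    # Symmetric degree normalization.
    norm = [[a_hat[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)] for i in range(n)]
    n_in, n_out = len(weights), len(weights[0])
    # Aggregate normalized neighbour features.
    agg = [[sum(norm[i][k] * features[k][c] for k in range(n)) for c in range(n_in)]
           for i in range(n)]
    # Linear transform followed by ReLU.
    return [[max(0.0, sum(agg[i][c] * weights[c][o] for c in range(n_in))) for o in range(n_out)]
            for i in range(n)]


# Toy usage: two connected nodes (e.g. an ego vehicle and one neighbour) with 1-D features.
out = gcn_layer([[0, 1], [1, 0]], [[1.0], [3.0]], [[1.0]])
```

Stacking such layers lets information flow over multiple hops. A trained model would learn `weights` from task-specific labels, which is what makes the resulting embedding supervised.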

Several surveys focusing on different research topics in AD have been published, including computer vision [53], object detection [3,31], DL-based scene understanding [71,36,33,49], DL-based vehicle behavior prediction [78], deep reinforcement learning [61], lane detection [118,79,99], semantic segmentation [65,73], and planning and decision making [28,82,94,8,18]. More recently, ontologies and KGs have gained interest for knowledge-infused learning approaches. Monka et al. [75] provided a survey of visual transfer learning using KGs. However, we did not find a survey that covers the use of KGs in AD.

## 3 Ontologies for Automated Driving

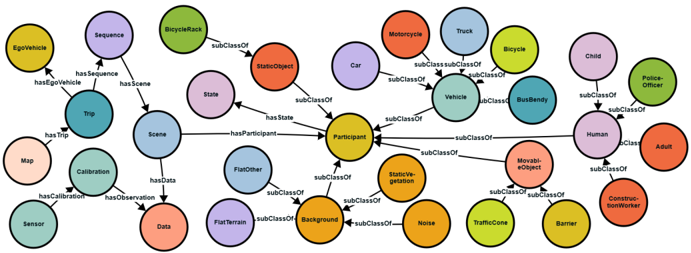

Several ontologies have been developed to model relevant knowledge in the automotive domain. They cover elements such as vehicle, driver, route, and scenery, including their spatial and temporal relationships. The authors of [27,101] propose a consolidated definition and taxonomy for AD terminology, with the goal of establishing a standard and consistent terminology and ontology. Additionally, there are well-known ontologies such as DBpedia [7], Schema.org [35], and SOSA [54], which contain concepts related to the automotive domain described from a more generic perspective. Fig. 2 illustrates main concepts such as Scene, Participant, and Trip with their sub-categories and relationships. In the following, we categorize and describe the surveyed ontologies according to their primary focus.

Fig. 2. Scene Ontology. Excerpt of an ontology to describe information of a driving scene [39].

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Knowledge Graph: Object Relationships

### Overview

The image presents a knowledge graph illustrating relationships between various objects and concepts, primarily focusing on elements within a traffic or urban environment. The graph uses nodes (circles) to represent entities and edges (arrows) to represent relationships between them. The central node is "Participant," and other nodes are connected to it via "subClassOf" relationships. Other relationships include "hasTrip," "hasScene," "hasData," "hasEgoVehicle," "hasSequence," "hasCalibration," "hasObservation," and "hasState."

### Components/Axes

* **Nodes:** Represented as colored circles, each containing a label for an object or concept.

* **Edges:** Represented as arrows, indicating the type of relationship between nodes.

* **Central Node:** "Participant" (yellow), positioned approximately in the center of the diagram.

* **Relationships:**

* `subClassOf`: Indicates a hierarchical relationship, where one object is a specific type of another.

* `hasTrip`: Connects "Trip" to other nodes.

* `hasScene`: Connects "Scene" to other nodes.

* `hasData`: Connects "Data" to other nodes.

* `hasEgoVehicle`: Connects "EgoVehicle" to "Trip".

* `hasSequence`: Connects "Sequence" to "Trip".

* `hasCalibration`: Connects "Calibration" to "Trip".

* `hasObservation`: Connects "Data" to "Scene".

* `hasState`: Connects "State" to "Participant".

* `hasParticipant`: Connects "Scene" to "Participant".

### Content Details

**Node Details (arranged roughly from top-left to bottom-right):**

* **EgoVehicle:** (Yellow) Located in the top-left. Connected to "Trip" via "hasEgoVehicle".

* **Sequence:** (Purple) Located to the right of "EgoVehicle". Connected to "Trip" via "hasSequence".

* **BicycleRack:** (Green) Located in the top-center. "BicycleRack" is a "subClassOf" "StaticObject".

* **StaticObject:** (Orange) Located in the upper-center. "StaticObject" is a "subClassOf" "Participant".

* **State:** (Purple) Located to the right of "StaticObject". "State" "hasState" "Participant".

* **Trip:** (Teal) Located in the center-left. Connected to "Scene".

* **Scene:** (Light Blue) Located in the center. Connected to "Trip", "Data", and "Participant".

* **Map:** (Pink) Located below "Trip".

* **Calibration:** (Teal) Located below "Trip". Connected to "Data".

* **Sensor:** (Teal) Located in the bottom-left.

* **Data:** (Orange) Located in the bottom-center. Connected to "Scene" and "Calibration".

* **FlatOther:** (Purple) Located to the left of "Participant". "FlatOther" is a "subClassOf" "Participant".

* **FlatTerrain:** (Orange) Located in the bottom-left. "FlatTerrain" is a "subClassOf" "Background".

* **Background:** (Yellow) Located in the bottom-center. "Background" is a "subClassOf" "Participant".

* **Noise:** (Orange) Located in the bottom-center. "Noise" is a "subClassOf" "Participant".

* **TrafficCone:** (Orange) Located in the bottom-right. "TrafficCone" is a "subClassOf" "MovableObject".

* **Barrier:** (Yellow) Located in the bottom-right. "Barrier" is a "subClassOf" "MovableObject".

* **Participant:** (Yellow) Located in the center.

* **Car:** (Purple) Located in the upper-center. "Car" is a "subClassOf" "Vehicle".

* **Motorcycle:** (Orange) Located in the top-right. "Motorcycle" is a "subClassOf" "Vehicle".

* **Truck:** (Light Blue) Located in the top-right. "Truck" is a "subClassOf" "Vehicle".

* **Vehicle:** (Teal) Located in the upper-right. "Vehicle" is a "subClassOf" "Participant".

* **BusBendy:** (Teal) Located to the right of "Vehicle". "BusBendy" is a "subClassOf" "Vehicle".

* **Child:** (Pink) Located in the upper-right. "Child" is a "subClassOf" "Human".

* **Police-Officer:** (Green) Located in the upper-right. "Police-Officer" is a "subClassOf" "Human".

* **Human:** (Purple) Located in the right-center. "Human" is a "subClassOf" "Participant".

* **Adult:** (Orange) Located in the right-center. "Adult" is a "subClassOf" "Human".

* **MovableObject:** (Orange) Located in the bottom-right. "MovableObject" is a "subClassOf" "Participant".

* **StaticVegetation:** (Orange) Located in the bottom-center. "StaticVegetation" is a "subClassOf" "Participant".

* **ConstructionWorker:** (Yellow) Located in the bottom-right. "ConstructionWorker" is a "subClassOf" "Human".

* **Bicycle:** (Light Blue) Located in the top-right. "Bicycle" is a "subClassOf" "Vehicle".

### Key Observations

* The central role of "Participant" indicates that the graph is structured around identifying and classifying different types of participants within a scene or system.

* The "subClassOf" relationships define a hierarchy of objects, with more general categories like "Vehicle" and "Human" branching out into more specific types like "Car," "Truck," "Child," and "Adult."

* The "has..." relationships ("hasTrip," "hasScene," etc.) describe the attributes or components associated with "Trip" and "Scene."

### Interpretation

The knowledge graph provides a structured representation of entities and their relationships within a traffic or urban environment. It highlights how different objects are categorized and how they relate to a central concept of "Participant." The graph could be used for various applications, such as:

* **Scene Understanding:** By identifying the objects present in a scene and their relationships, the graph can help in understanding the context and dynamics of the scene.

* **Autonomous Systems:** The graph can be used to inform the decision-making process of autonomous systems, such as self-driving cars, by providing a structured representation of the environment.

* **Data Analysis:** The graph can be used to analyze data collected from sensors and other sources, by providing a framework for organizing and interpreting the data.

The graph emphasizes the hierarchical nature of object classification and the importance of understanding the relationships between different entities in a complex environment. The connections between "Trip," "Scene," and "Data" suggest a focus on analyzing events and observations within a specific context.

</details>

Vehicle Model An ontology modeling the main concepts of vehicles, such as vehicle type, installed components and sensors, is described in [117]. The work in [62] focuses on representing sensors, their attributes, and generated signals to increase data interoperability.

Driver Model Approaches that manage driving-related information through a combined model of the driver, the vehicle, and the context are described in [43,24]. An ontology for modeling driver profiles based on their demographic and behavioral aspects is proposed in [89]. It is used as input to a hybrid learning approach that categorizes drivers according to their driving styles.

Driver Assistance An ontology for driver assistance is described in [58]. It serves as a common basis for domain understanding, decision-making, and information sharing. Feld et al. [24] presented an ontology focusing on human-machine interfaces and inter-vehicle communication. Huelsen et al. [50,51] developed an ontology for traffic intersections to reason about the right-of-way, including information about traffic signs and traffic lights. Related approaches can be found in [25,37,69,88].

Table 1. Automotive ontologies. Relevant ontologies split into different categories according to their main focus and concepts.

| Focus | Scope | Main Concepts | Ref. |
|---|---|---|---|
| Generic | Vehicle, Driver, Scene | Vehicle, Engine Spec., Usage Type, Driving Wheel, Configuration, Manufacturer, Sensor, Scene, Time | [7,35,54] |
| Vehicle | Vehicle, Categories, Parts | Vehicle, Sensors, Actuators, Signals, Taxonomy, Speed, Acceleration | [62,117] |
| Driver Model | Driver, Characteristics, Objectives | Ability, Physiological & Emotional State, Driving Style, Preferences, Behavior | [24,43,89] |
| Driver Assistance | Driver Machine Interactions, Information Exchange | Assistance Functions, Interaction Module, Exchange Interfaces | [24,25,37,50,51,58,69,88] |
| Routing | Road Geometry, Road Network, Intersections | Road Part, Road Type, Lane, Junction, Traffic Sign, Obstacles | [63,91,98] |
| Context Model | Scenario, Event, Situation | Road Participants, Static and Dynamic Environment, Driving Maneuvers, Temporal Relations | [11,24,26,38,41,74,83,102] |
| Cross-Cutting | Automation Level, Driving Task, Risk, Abstraction Layer | Automation Mode, Longitudinal & Lateral Control, Risk Estimation, Autonomy and Communication | [66,83,90,93,109,5,6,103,39] |

Route Planning Schlenoff et al. [91] explored the use of ontologies to improve the capabilities and performance of on-board route planning. An ontology-based method for modeling and processing map data in cars was presented by Suryawanshi et al. [98]. Kohlhaas et al. [63] introduced a modeling approach for the semantic state space for maneuver planning.

Context Model Ulbrich et al. [102] described an ontology for context representation and environment modeling for AD. It contains different layers, including a metric layer of lane context, traffic rules, object, and situation description. Buechel et al. [11] proposed a modular framework for traffic-regulation-based decision-making in AD, in which the traffic scene, including knowledge about traffic regulations, is represented by an ontology. Henson et al. [41] presented an ontology-based method for searching scenes in AD datasets. Similar approaches representing the context in a driving scenario are shown in [24,26,38,74]. Ontologies have also been used for context-dependent recommendation tasks [108,40].

Cross-Cutting An ontology describing the levels of automated driving systems, ranging from fully manual to fully automated, was presented in [83]. In line with this, [90] analyzes crucial questions of driving tasks and maps them to the level of driving automation. The interaction risks between human-driven and automated vehicles investigated in [66] are based on five main components: obstacle, road, ego-vehicle, environment, and driver. An informal but comprehensive 6-layer model for the structured description and categorization of urban scenes was described in [93]. This was further developed into the Automotive Urban Traffic Ontology (A.U.T.O.) [109]. The Automotive Global Ontology (AGO) [103] focuses on semantic labeling and testing use cases. Ontologies representing the data structures of AD datasets have been presented in [39]. Several standards aim to develop ontologies that provide a foundation of common definitions, properties, and relations for central concepts, e.g., ASAM OpenScenario [5] and ASAM OpenX [6].

A summary of automotive ontologies with their scope and main concepts is shown in Table 1. Many ontologies cover only specific concepts and use cases, while others are more complete and provide more comprehensive coverage of all AD components and tasks. Since one of the main benefits of ontologies is reusability, these ontologies can be reused and extended for various scenarios.

## 4 Knowledge Graphs applied to Automated Driving

In this section, we provide an overview of KG-based approaches and categorize them based on their respective components and tasks in the AD pipeline as shown in Figure 1. We consider approaches that use ontologies or KGs as well as approaches that combine KGs with machine learning for AD tasks.

## 4.1 Object Detection

Object detection in AD includes the detection of traffic participants, such as vehicles and pedestrians, as well as other objects such as road markings and traffic signs. Typical sensors used are video cameras, RADAR, and LiDAR.

Scene graphs are a relatively recent technique for semantically describing and representing objects and their relations in a scene [56]. Much research targets the generation of scene graphs [15], which can be divided into two types. The first, bottom-up approach consists of object detection followed by pairwise relationship recognition. The second, top-down approach consists of joint detection and recognition of objects and their relationships. Current research on scene graphs focuses on the integration of prior knowledge. Using KGs to provide background knowledge for generating scene graphs was recently proposed with Graph Bridging Networks (GB-Net) [114]. GB-Net regards the scene graph as the image-conditioned instantiation of the commonsense knowledge graph, using ConceptNet [70] as the knowledge graph. Gu et al. [34] presented KB-GAN, an approach based on external knowledge and auxiliary image generation with an image reconstruction loss, to overcome the problems found in datasets. We found no applications of these methods to object detection in AD.
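The bottom-up pipeline can be sketched in a few lines. Object detections, assumed to be given, become typed nodes, and every ordered pair of objects is passed to a relationship classifier. Here a crude rule on horizontal box positions stands in for the learned classifier. The detector output format, box coordinates, relation names, and function names are all hypothetical.

```python
from itertools import permutations
from typing import Dict, Set, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max) in pixels

# Hypothetical detector output: object id -> (class label, bounding box).
detections: Dict[str, Tuple[str, Box]] = {
    "car_1": ("car", (100, 200, 220, 300)),
    "ped_1": ("pedestrian", (260, 180, 290, 300)),
}


def classify_relation(box_a: Box, box_b: Box) -> str:
    """Stand-in for a learned relationship classifier: a crude horizontal-position rule."""
    if box_a[2] <= box_b[0]:
        return "leftOf"
    if box_b[2] <= box_a[0]:
        return "rightOf"
    return "overlaps"


def bottom_up_scene_graph(dets: Dict[str, Tuple[str, Box]]) -> Set[Tuple[str, str, str]]:
    """Step 1 (detection) is assumed done; step 2 labels every ordered object pair."""
    triples = {(obj_id, "isA", label) for obj_id, (label, _) in dets.items()}
    for a, b in permutations(dets, 2):
        triples.add((a, classify_relation(dets[a][1], dets[b][1]), b))
    return triples


scene_graph = bottom_up_scene_graph(detections)
```

The quadratic number of pairs is one reason why knowledge-guided methods such as GB-Net try to constrain which relations are plausible for a given pair of object types.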

Wickramarachchi et al. [111] generated a KGE from a scene knowledge graph and used the embedding to predict missing objects in the scene with high accuracy. This is accomplished by a novel mapping and formalization of object detection as a KG link prediction problem. Several KGE algorithms are evaluated and compared in [110]. Chowdhury et al. [17] extended this work by integrating commonsense knowledge into the scene knowledge graph. Woo et al. [112] presented a method to embed relations by jointly learning connections between objects. It contains a global context encoding and a geometrical layout encoding, which extract global context information and spatial information, respectively.
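Formulating missing-object prediction as link prediction amounts to answering a query such as (scene, includes, ?) by ranking candidate tails with a KGE scoring function. The sketch below assumes the relation name `includes` and uses hard-coded placeholder scores in place of a trained model. Triples already present in the KG are filtered out so that only genuinely missing objects are ranked.

```python
from typing import Callable, List, Sequence, Set, Tuple

Triple = Tuple[str, str, str]

# Placeholder scores standing in for a trained KGE scoring function (hypothetical values).
TOY_SCORES = {"pedestrian": 0.9, "car": 0.8, "bicycle": 0.4, "cow": 0.01}


def toy_score(head: str, relation: str, tail: str) -> float:
    """Hypothetical scorer: ignores head and relation, looks up a fixed tail score."""
    return TOY_SCORES.get(tail, 0.0)


def rank_tails(score: Callable[[str, str, str], float], head: str, relation: str,
               candidates: Sequence[str], known: Set[Triple]) -> List[str]:
    """Rank candidate tails for (head, relation, ?), skipping triples already in the KG."""
    fresh = [c for c in candidates if (head, relation, c) not in known]
    return sorted(fresh, key=lambda c: score(head, relation, c), reverse=True)


known_triples = {("scene_42", "includes", "car")}
ranking = rank_tails(toy_score, "scene_42", "includes", list(TOY_SCORES), known_triples)
```

With a real KGE, `toy_score` would be replaced by a learned function such as a translation distance. The top-ranked tails are then the objects most likely to be present but undetected.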

Road-Sign Detection Several approaches use knowledge graphs for road sign detection [59,76]. Kim et al. [59] described a method to assist the human annotation of road signs by combining KGs and machine learning. Monka et al. [76] proposed an approach for object recognition based on a knowledge graph neural network (KG-NN) that supervises the training using image-data-invariant auxiliary knowledge. The auxiliary knowledge is encoded in a KG with the respective concepts and their relationships, which are transformed into a dense vector representation by an embedding method. The KG-NN learns to adapt its visual embedding space via a contrastive loss function.
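The exact loss used in [76] is not reproduced here. As a hedged illustration, the sketch below uses a generic InfoNCE-style contrastive loss that pulls an image's visual embedding towards the KG embedding of its class and pushes it away from the embeddings of other classes. The class names, vectors, temperature value, and function names are illustrative assumptions.

```python
import math
from typing import Dict, Sequence


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def kg_contrastive_loss(visual_emb: Sequence[float], class_embs: Dict[str, Sequence[float]],
                        target: str, temperature: float = 0.1) -> float:
    """InfoNCE-style loss: -log softmax over cosine similarities to all KG class embeddings."""
    logits = {c: cosine(visual_emb, e) / temperature for c, e in class_embs.items()}
    m = max(logits.values())  # subtract the maximum for numerical stability
    log_z = m + math.log(sum(math.exp(x - m) for x in logits.values()))
    return log_z - logits[target]


# Hypothetical KG embeddings of two road-sign classes and one visual embedding.
kg_class_embs = {"stop_sign": [1.0, 0.0], "yield_sign": [0.0, 1.0]}
visual = [0.9, 0.1]
```

Because the class embeddings come from the KG rather than from image data, minimizing this loss shapes the visual space according to the structure of the auxiliary knowledge.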

Lane Detection Lane detection approaches can be divided into two categories according to their data source: (1) high-definition (HD) maps; and (2) the vehicle's perception sensors (e.g., cameras). The drawback of HD maps is that such data is not always available or up to date. Traditional methods for lane detection usually first perform edge detection and then model fitting [118]. A graph-embedding-based approach for lane detection [72] can robustly detect parallel and non-parallel (merging or splitting) lane markings, lanes of varying width, and partially blocked lane markings. A novel graph structure is used to represent lane features, lane geometry, and lane topology. Homayounfar et al. [46] focused on discovering the lane boundaries of complex highways with many lanes that contain topology changes due to forks and merges. They formulate the problem as inference in a directed acyclic graph (DAG) model, where the nodes of the graph encode geometric and topological properties of the local regions associated with the lane boundaries.
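As a toy illustration of a lane-boundary DAG, the sketch below stores local boundary regions as nodes with successor edges, verifies acyclicity with Kahn's algorithm, and enumerates the distinct boundaries created by a fork. It is not the model of [46], which infers node properties from sensor data. The node names and topology are hypothetical.

```python
from typing import Dict, List

Graph = Dict[str, List[str]]

# Hypothetical lane-boundary DAG: nodes are local boundary regions, edges follow the
# driving direction; "b2" forks into the main boundary and an exit-ramp boundary.
SUCCESSORS: Graph = {
    "b0": ["b1"],
    "b1": ["b2"],
    "b2": ["b3", "b3_exit"],
    "b3": [],
    "b3_exit": [],
}


def is_acyclic(graph: Graph) -> bool:
    """Kahn's algorithm: a graph is a DAG iff all nodes can be topologically ordered."""
    indegree = {node: 0 for node in graph}
    for succs in graph.values():
        for s in succs:
            indegree[s] += 1
    ready = [node for node, d in indegree.items() if d == 0]
    visited = 0
    while ready:
        node = ready.pop()
        visited += 1
        for s in graph[node]:
            indegree[s] -= 1
            if indegree[s] == 0:
                ready.append(s)
    return visited == len(graph)


def boundary_paths(graph: Graph, start: str) -> List[List[str]]:
    """Enumerate all start-to-leaf paths, i.e. the distinct boundaries after each fork."""
    if not graph[start]:
        return [[start]]
    return [[start] + rest for nxt in graph[start] for rest in boundary_paths(graph, nxt)]
```

The acyclicity constraint reflects that a lane boundary only ever continues in the driving direction. A fork simply becomes a node with two successors.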

## 4.2 Semantic Segmentation

The goal of semantic segmentation is to assign a semantically meaningful class label (e.g., road, sidewalk, pedestrian, road sign, vehicle) to each pixel in a given image. A KG-based approach for scene segmentation is described by Kunze et al. [64]. From a set of segmented entities, a scene graph is generated that models the structure of the road using an abstract, logical representation, enabling the incorporation of background knowledge. Similar approaches based on (non-semantic) graph representations for scene segmentation can be found in [10,21,100,96,105].

Table 2. Knowledge Graphs applied to Automated Driving.

| Perception | Mapping, Understanding | Plan & Validate |
|---|---|---|
| **Object detection** | **Mapping** | **Motion Planning** |
| Scene graphs [56,15] | Map representation [98] | Decision making [87] |
| Context learning [112] | Map integration [84] | Rules [117,115,116] |
| KG-scene-graphs [114,34] | Map updating [85] | Reasoning [48,113] |
| KG-based detection [110] | Quality of maps [86] | Rule learning [19,47,77] |
| Common-sense [17] | **Scene understanding** | KG from text [22] |
| Road sign recognition [59,76] | Context model [107] | **Validation** |
| Lane detection [72,46] | Situation understanding [38] | Risk assessment [9,81,109] |
| **Segmentation** | **Behavior Prediction** | Test generation [16,26,68] |
| Scene segmentation [64] | Motion prediction [23,119] | Verification [57] |

## 4.3 Mapping

Automated vehicles often use digital maps as a virtual sensor to retrieve information about the road network for understanding, navigating, and making decisions about the driving path. Qiu et al. [84] proposed a knowledge architecture with two levels of abstraction to solve the map data integration problem. How different types of rules can be used to achieve two-dimensional reasoning is detailed in [85]. Qiu et al. [86] addressed quality assurance in ontology-based map data for AD, specifically the detection and rectification of map errors.
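The concrete rules of [86] are not reproduced here. The following is a hypothetical sketch of the kind of consistency check that map-error detection over a map KG can involve. It assumes a simplified lane schema with successor and predecessor links and flags dangling references and links that lack their inverse. Only detection is shown, not rectification.

```python
from typing import Dict, List, Tuple

# Hypothetical map fragment: each lane lists its successor and predecessor lanes.
MAP: Dict[str, Dict[str, List[str]]] = {
    "lane_1": {"successors": ["lane_2"], "predecessors": []},
    "lane_2": {"successors": ["lane_9"], "predecessors": ["lane_1"]},  # lane_9 does not exist
    "lane_3": {"successors": [], "predecessors": ["lane_2"]},  # lane_2 does not list lane_3
}


def find_map_errors(lanes: Dict[str, Dict[str, List[str]]]) -> List[Tuple[str, str, str]]:
    """Flag dangling references and successor/predecessor links lacking their inverse."""
    errors = []
    for lane_id, links in lanes.items():
        for succ in links["successors"]:
            if succ not in lanes:
                errors.append(("dangling_successor", lane_id, succ))
            elif lane_id not in lanes[succ]["predecessors"]:
                errors.append(("missing_inverse_link", lane_id, succ))
        for pred in links["predecessors"]:
            if pred not in lanes:
                errors.append(("dangling_predecessor", lane_id, pred))
            elif lane_id not in lanes[pred]["successors"]:
                errors.append(("missing_inverse_link", pred, lane_id))
    return errors
```

In an ontology-based setting, such checks would typically be expressed declaratively, for instance as inverse-property axioms or rules, rather than as hand-written loops.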

## 4.4 Scene Understanding

Scene understanding aims to determine what is happening in a scene and how the objects in it relate to each other, providing the comprehension needed by subsequent steps of automated driving such as motion planning and vehicle control. An approach for KG-based scene understanding was described by Werner et al. [107]. The paper proposes a KG to model temporally contextualized observations and Recurrent Transformers (RETRA), a neural encoder stack with a feedback loop and constrained multi-headed self-attention layers. RETRA enables the transformation of global KG embeddings into custom embeddings, given the situation-specific factors of the relation and the subjective history of the entity. Halilaj et al. [38] presented a KG-based approach for fusing and organizing heterogeneous types and sources of information for driving assistance and automated driving tasks. The model builds on the terminology of [101] and uses existing ontologies such as SOSA [54]. A KGE based on graph neural networks [106] is then used to classify driving situations.

## 4.5 Object Behavior Prediction

Fang et al. [23] described an ontology-based reasoning approach for long-term behavior prediction of road users. Long-term behavior is predicted with estimated probabilities based on semantic reasoning that considers interactions among multiple road users. Li et al. [67] present a graph-based interaction-aware trajectory prediction (GRIP) approach, which uses a GCN and graph operations to model the interactions between vehicles. Relation-based traffic motion prediction using traffic scene graphs and GNNs is described in [119].
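The graph-based interaction modeling in GRIP [67] can be approximated, in a much simplified form, by building an interaction graph that connects vehicles closer than a distance threshold and applying one graph convolution with the propagation rule of Kipf and Welling [60]. The plain-Python sketch below uses an illustrative radius, velocity features, and an identity weight matrix; all of these are assumptions, and GRIP itself is a considerably richer model that is not reproduced here.

```python
import math


def interaction_graph(positions, radius):
    """Adjacency matrix: vehicles closer than `radius` metres are connected."""
    n = len(positions)
    adj = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if i != j and math.dist(positions[i], positions[j]) < radius:
                adj[i][j] = 1.0
    return adj


def gcn_layer(adj, h, w):
    """One GCN layer: ReLU(D^-1/2 (A + I) D^-1/2 H W)."""
    n = len(adj)
    a_hat = [[adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)] for i in range(n)]
    deg = [sum(row) for row in a_hat]
    norm = [[a_hat[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)] for i in range(n)]
    cols = len(w[0])
    hw = [[sum(h[i][k] * w[k][c] for k in range(len(w))) for c in range(cols)] for i in range(n)]
    out = [[sum(norm[i][j] * hw[j][c] for j in range(n)) for c in range(cols)] for i in range(n)]
    return [[max(0.0, v) for v in row] for row in out]


positions = [(0.0, 0.0), (5.0, 0.0), (100.0, 0.0)]  # metres
features = [[10.0, 0.0], [12.0, 0.0], [20.0, 0.0]]   # (vx, vy) in m/s
identity = [[1.0, 0.0], [0.0, 1.0]]
adj = interaction_graph(positions, radius=10.0)
print(gcn_layer(adj, features, identity))
# [[11.0, 0.0], [11.0, 0.0], [20.0, 0.0]]
```

The two nearby vehicles end up with their averaged velocity feature while the distant vehicle keeps its own; stacking such layers lets information propagate over multi-hop interactions.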

## 4.6 Motion Planning

The aim of motion planning is to plan and execute driving actions such as steering, accelerating, and braking, taking into account all information from the previous steps. Regele [87] uses an ontology-based, high-level abstract world model to support the decision-making process. The approach combines a low-level model for trajectory planning with a high-level model for solving traffic coordination problems. Zhao et al. [115,116,117] presented core ontologies to enable safe automated driving. These are combined with rules for ontology-based decision-making at uncontrolled intersections and on narrow roads. Another approach to ontology development, focusing on driving context modeling and reasoning, is described in [113]. Huang et al. [48] use ontologies for scene modeling, situation assessment, and decision-making in urban environments, together with a knowledge base of driving knowledge and driving experience. In [19], ontologies are used to generate semantic rules from a decision tree classifier trained on driving-school test descriptions. Elahi et al. [22] retrieve human knowledge from natural-language descriptions of traffic scenes with pedestrian-vehicle encounters. Hovi and Ichise [47] show how rules for ontology-based decision-making systems can be learned through machine learning. Morignot and Nashashibi [77] present an ontology-based approach for decision-making that relaxes traffic regulations in situations where doing so is preferable.
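Rule-based decision-making on top of an ontology, as in the approaches of Zhao et al. [115,116,117], can be illustrated with a tiny forward-chaining engine. In the sketch below, facts are triples and rules are Python functions that derive new triples until a fixpoint is reached. The predicates and the right-before-left yielding rule are hypothetical examples (the actual priority rule depends on the jurisdiction) and do not reproduce the published ontologies or rule sets.

```python
# Facts are (subject, predicate, object) tuples; all names are hypothetical.
facts = {
    ("ego", "approaches", "int1"),
    ("car1", "approaches", "int1"),
    ("int1", "type", "UncontrolledIntersection"),
    ("car1", "isToRightOf", "ego"),
}


def rule_conflict(f):
    """Two vehicles approaching the same uncontrolled intersection conflict."""
    uncontrolled = {s for s, p, o in f if p == "type" and o == "UncontrolledIntersection"}
    return {(a, "conflictsWith", b)
            for a, p1, i in f if p1 == "approaches" and i in uncontrolled
            for b, p2, j in f if p2 == "approaches" and j == i and b != a}


def rule_yield(f):
    """Hypothetical right-before-left rule: yield to a conflicting vehicle on the right."""
    return {(a, "mustYieldTo", b)
            for a, p, b in f if p == "conflictsWith" and (b, "isToRightOf", a) in f}


def forward_chain(f, rules):
    """Apply all rules repeatedly until no new fact is derived (a fixpoint)."""
    f = set(f)
    while True:
        new = set().union(*(r(f) for r in rules)) - f
        if not new:
            return f
        f |= new


kb = forward_chain(facts, [rule_conflict, rule_yield])
decision = "yield" if any(s == "ego" and p == "mustYieldTo" for s, p, _ in kb) else "proceed"
print(decision)  # yield
```

Keeping rules separate from facts mirrors the ontology-plus-rules split described above: the same rule set can be re-evaluated whenever the perceived scene, and therefore the fact base, changes.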

## 4.7 Validation

In the following, we list KG-based approaches that support the validation of AD systems, including requirements verification, test case generation, and risk assessment. Bagschik et al. [9] introduced a concept for ontology-based scene creation that can serve a scenario-based design paradigm to analyze a system from multiple viewpoints. In particular, they propose applying it to hazard analysis and risk assessment. A similar approach for use case generation is described in [16,26]. Paardekooper et al. [81] present a hybrid-AI approach to situational awareness that couples a data-driven method with a KG and its reasoning capabilities to increase the safety of AD systems. Criticality recognition using the A.U.T.O ontology is demonstrated in [109]. Li et al. [68] outlined a framework for testing, verification, and validation of AD functions. It uses ontologies to describe the environment, which are then converted into input models for combinatorial testing. Kaleeswaran et al. [57] presented an approach for the verification of requirements in AD. A semantic model uses world knowledge to translate requirements into a formal representation, which is then used to check the plausibility and consistency of the requirements.
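In ontology-based combinatorial testing such as [68], concepts of an environment ontology are converted into an input model of parameters and values. The sketch below assumes such an input model has already been extracted (the parameter names and values are hypothetical) and generates a 2-way (pairwise) covering test set with a simple greedy strategy: repeatedly choose the full combination that covers the most not-yet-covered value pairs. This is a generic pairwise technique, not necessarily the algorithm used in [68].

```python
from itertools import combinations, product

# Hypothetical input model, e.g. derived from leaf classes of an environment
# ontology; not the input model of [68].
params = {
    "weather": ["clear", "rain", "fog"],
    "road": ["highway", "urban"],
    "light": ["day", "night"],
    "actor": ["pedestrian", "cyclist", "car"],
}


def pairs_of(test, names):
    """All (param, value, param, value) pairs exercised by one test."""
    return {(a, test[a], b, test[b]) for a, b in combinations(names, 2)}


def pairwise_tests(params):
    """Greedy 2-way covering: pick the candidate covering most uncovered pairs."""
    names = list(params)
    candidates = [dict(zip(names, vals)) for vals in product(*params.values())]
    uncovered = set().union(*(pairs_of(c, names) for c in candidates))
    tests = []
    while uncovered:
        best = max(candidates, key=lambda c: len(pairs_of(c, names) & uncovered))
        tests.append(best)
        uncovered -= pairs_of(best, names)
    return tests


tests = pairwise_tests(params)
print(len(tests), "pairwise tests instead of", 3 * 2 * 2 * 3, "exhaustive ones")
```

Enumerating every candidate combination is only feasible for small input models; dedicated combinatorial testing tools use more scalable constructions.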

A summary of KG-based methods for AD tasks is shown in Tab. 2. It can be seen that only very few KG-based approaches have been developed for each component and task, which suggests considerable potential for further research in this area.

## 5 Conclusions

While AD has made tremendous progress over the last few years, many questions remain unanswered, among them verifiability, explainability, and safety. AD systems that operate in complex, dynamic, and interactive environments require approaches that generalize to unpredictable situations and that can reason about interactions with multiple participants and variable contexts.

We have surveyed ontologies and KG-based approaches for AD. A few automotive ontologies have been developed; however, harmonizing automotive ontologies and AD dataset structures is an important step towards enabling the construction and use of KGs for AD tasks. Only a few KG-based methods have been developed for AD so far. The benefits that KG-based methods have brought to other domains indicate great potential for such methods in the AD domain.

Recent progress on KGs and KG-based representation learning has opened new possibilities for addressing these open questions. This is motivated by two properties of KGs: first, their ability to represent complex structured information, in particular relational information between entities; second, their ability to combine heterogeneous sources of knowledge, such as common-sense knowledge, rules, or crowd-sourced knowledge, into a unified graph-based representation. Progress in KG-based representation learning has thereby opened a new research direction: using KG-based data in machine learning applications such as AD.

Future topics include, but are not limited to, (1) the inclusion of additional knowledge sources; (2) task-oriented knowledge representation and knowledge embedding; (3) temporal representation in KG-based approaches; and (4) rule extraction and verification for explainability. While KGs have already been used for perception, scene understanding, and motion planning, applying the technology to the sensing and act & control tasks will bring further advances for AD. This survey indicates that knowledge graphs will play an important role in making automated driving better, safer, and ultimately feasible for real-world use.

## References

1. Angles, R., Gutiérrez, C.: An introduction to graph data management. In: Fletcher, G.H.L., Hidders, J., Larriba-Pey, J.L. (eds.) Graph Data Management, Fundamental Issues and Recent Developments, pp. 1-32. Data-Centric Systems and Applications, Springer (2018)

2. Angles, R., Thakkar, H., Tomaszuk, D.: Mapping RDF databases to property graph databases. IEEE Access 8, 86091-86110 (2020)

3. Arnold, E., Al-Jarrah, O.Y., et al.: A survey on 3D object detection methods for autonomous driving applications. T-ITS (2019)

4. Arrieta, A., Díaz-Rodríguez, N., Ser, J., Bennetot, A., Tabik, S., Barbado, A., García, S., Gil-López, S., Molina, D., Benjamins, R., Chatila, R., Herrera, F.: Explainable artificial intelligence (XAI): Concepts, taxonomies, opportunities and challenges toward responsible AI. ArXiv abs/1910.10045 (2020)

5. ASAM: ASAM OpenSCENARIO V2.0 (2022)

6. ASAM: ASAM OpenX, proposal (2022)

7. Auer, S., Bizer, C., Kobilarov, G., Lehmann, J., Cyganiak, R., Ives, Z.G.: DBpedia: A nucleus for a web of open data. In: ISWC (2007)

8. Badue, C., Guidolini, R., Carneiro, R.V., et al.: Self-driving cars: A survey. Expert Syst. Appl. (2021)

9. Bagschik, G., Menzel, T., Maurer, M.: Ontology based scene creation for the development of automated vehicles. In: IV (2018)

10. Bordes, J.B., Davoine, F., Xu, P., Denoeux, T.: Evidential grammars: A compositional approach for scene understanding. application to multimodal street data. Appl. Soft Comput. (2017)

11. Buechel, M., Hinz, G., Ruehl, F., et al.: Ontology-based traffic scene modeling, traffic regulations dependent situational awareness and decision-making for automated vehicles. In: IEEE Intelligent Vehicles Symposium, IV (2017)

12. Burton, S., Gauerhof, L., Heinzemann, C.: Making the case for safety of machine learning in highly automated driving. In: SAFECOMP Workshops (2017)

13. Cai, H., Zheng, V., Chang, K.: A comprehensive survey of graph embedding: Problems, techniques, and applications. IEEE TKDE (2018)

14. Chami, I., Abu-El-Haija, S., Perozzi, B., Ré, C., Murphy, K.: Machine learning on graphs: A model and comprehensive taxonomy. CoRR abs/2005.03675 (2020)

15. Chang, X., Ren, P., Xu, P., Li, Chen, X., Hauptmann, A.: Scene graphs: A survey of generations and applications. ArXiv abs/2104.01111 (2021)

16. Chen, W., Kloul, L.: An ontology-based approach to generate the advanced driver assistance use cases of highway traffic. In: Proceedings of the 10th IC3K (2018)

17. Chowdhury, S.N., Wickramarachchi, R., Gad-Elrab, M.H., Stepanova, D., Henson, C.: Towards leveraging commonsense knowledge for autonomous driving. ISWC (2021)

18. Claussmann, L., Revilloud, M., Glaser, S., Gruyer, D.: A study on AI-based approaches for high-level decision making in highway autonomous driving. SMC (2017)

19. Dianov, I., Ramírez-Amaro, K., Cheng, G.: Generating compact models for traffic scenarios to estimate driver behavior using semantic reasoning. In: IROS (2015)

20. Dickmanns, E., Graefe, V.: Dynamic monocular machine vision. Machine Vision and Applications (2005)

21. Dierkes, F., Raaijmakers, M., Schmidt, M., Bouzouraa, M.E., Hofmann, U., Maurer, M.: Towards a multi-hypothesis road representation for automated driving. IEEE ITSC (2015)

22. Elahi, M.F., Luo, X., Tian, R.: A framework for modeling knowledge graphs via processing natural descriptions of vehicle-pedestrian interactions. In: HCI (2020)

23. Fang, F., Yamaguchi, S., Khiat, A.: Ontology-based reasoning approach for long-term behavior prediction of road users. IEEE ITSC (2019)

24. Feld, M., Müller, C.A.: The automotive ontology: managing knowledge inside the vehicle and sharing it between cars. In: AutomotiveUI (2011)

25. Fuchs, S., Rass, S., Lamprecht, B., Kyamakya, K.: A model for ontology-based scene description for context-aware driver assistance systems. In: ICST AMBISYS (2008)

26. de Gelder, E., Paardekooper, J., Saberi, A.K., Elrofai, H., den Camp, O.O., Ploeg, J., Friedmann, L., Schutter, B.D.: Ontology for scenarios for the assessment of automated vehicles. CoRR abs/2001.11507 (2020)

27. Geyer, S., Baltzer, M., Franz, B., Hakuli, S., Kauer, M., Kienle, M., et al.: Concept and development of a unified ontology for generating test and use-case catalogues for assisted and automated vehicle guidance. IET ITS (2014)

28. González, D., Pérez, J., Montero, V.M., Nashashibi, F.: A review of motion planning techniques for automated vehicles. T-ITS (2016)

29. Goodfellow, I., Bengio, Y., Courville, A.C.: Deep learning. Nature 521, 436-444 (2015)

30. Gori, M., Monfardini, G., Scarselli, F.: A new model for learning in graph domains. In: IJCNN (2005)

31. Gouidis, F., Vassiliades, A., Patkos, T., et al.: A review on intelligent object perception methods combining knowledge-based reasoning and machine learning. In: Proceedings of the AAAI-MAKE Symposium (2020)

32. Goyal, P., Ferrara, E.: Graph embedding techniques, applications, and performance: A survey. Knowl. Based Syst. (2018)

33. Grigorescu, S.M., Trasnea, B., Cocias, T.T., Macesanu, G.: A survey of deep learning techniques for autonomous driving. J. Field Robotics (2020)

34. Gu, J., Zhao, H., Lin, Z., Li, S., Cai, J., Ling, M.: Scene graph generation with external knowledge and image reconstruction. CVPR (2019)

35. Guha, R.V., Brickley, D., Macbeth, S.: Schema.org: evolution of structured data on the web. Commun. ACM (2016)

36. Guo, Z., Huang, Y., Hu, X., Wei, H., Zhao, B.: A survey on deep learning based approaches for scene understanding in autonomous driving. Electronics (2021)

37. Gutiérrez, G., Iglesias, J.A., Ordóñez, F.J., Ledezma, A., Sanchis, A.: Agent-based framework for advanced driver assistance systems in urban environments. FUSION (2014)

38. Halilaj, L., Dindorkar, I., Luettin, J., Rothermel, S.: A knowledge graph-based approach for situation comprehension in driving scenarios. In: ESWC (2021)

39. Halilaj, L., Luettin, J., Henson, C., Monka, S.: Knowledge graphs for automated driving. In: IEEE AIKE - Artificial Intelligence and Knowledge Engineering (2022)

40. Halilaj, L., Luettin, J., Rothermel, S., Arumugam, S.K., Dindorkar, I.: Towards a knowledge graph-based approach for context-aware points-of-interest recommendations. In: ACM/SIGAPP SAC. pp. 1846-1854 (2021)

41. Henson, C., Schmid, S., Tran, A.T., Karatzoglou, A.: Using a knowledge graph of scenes to enable search of autonomous driving data. In: ISWC (2019)

42. Herrmann, M., Witt, C., Lake, L., Guneshka, S., Heinzemann, C., Bonarens, F., et al.: Using ontologies for dataset engineering in automotive AI applications. 2022 Design, Automation & Test in Europe Conference & Exhibition (DATE) (2022)

43. Hina, M.D., Thierry, C., Soukane, A., Ramdane-Cherif, A.: Ontological and machine learning approaches for managing driving context in intelligent transportation. In: IC3K (2017)

44. Hinton, G.E., Osindero, S., Teh, Y.: A fast learning algorithm for deep belief nets. Neural Computation (2006)

45. Hogan, A., Blomqvist, E., Cochez, M., et al.: Knowledge graphs. ACM Comput. Surv. (2021)

46. Homayounfar, N., Liang, J., Ma, W.C., Fan, J., Wu, X., Urtasun, R.: DAGMapper: Learning to map by discovering lane topology. ICCV (2019)

47. Hovi, J., Ichise, R.: Feasibility study: Rule generation for ontology-based decision-making systems. In: JIST (2019)

48. Huang, L., Liang, H., Yu, B., Li, B., Zhu, H.: Ontology-based driving scene modeling, situation assessment and decision making for autonomous vehicles. In: ACIRS (2019)

49. Huang, Y., Chen, Y.: Survey of state-of-art autonomous driving technologies with deep learning. In: IEEE QRS-C (2020)

50. Hülsen, M., Zöllner, J.M., Weiss, C.: Traffic intersection situation description ontology for advanced driver assistance. IEEE IV Symposium (2011)

51. Hülsen, M., Zöllner, J.M., Haeberlen, N., Weiss, C.: Asynchronous real-time framework for knowledge-based intersection assistance. IEEE ITSC (2011)

52. ISO: ISO 26262-1:2018: Road vehicles - functional safety (2018)

53. Janai, J., Güney, F., Behl, A., Geiger, A.: Computer vision for autonomous vehicles: Problems, datasets and state of the art. Found. Trends Comput. Graph. Vis. (2020)

54. Janowicz, K., Haller, A., Cox, S.J.D., Phuoc, D.L., Lefrançois, M.: SOSA: A lightweight ontology for sensors, observations, samples, and actuators. J. Web Semant. 56, 1-10 (2019)

55. Ji, S., Pan, S., Cambria, E., Marttinen, P., Yu, P.S.: A survey on knowledge graphs: Representation, acquisition and applications. IEEE Transactions on Neural Networks and Learning Sys. (2021)

56. Johnson, J., Krishna, R., Stark, M., Li, L., Shamma, D., Bernstein, M.S., Fei-Fei, L.: Image retrieval using scene graphs. CVPR (2015)

57. Kaleeswaran, A., Nordmann, A., Mehdi, A.: Towards integrating ontologies into verification for autonomous driving. In: ISWC Satellites (2019)

58. Kannan, S., Thangavelu, A., Kalivaradhan, R.: An intelligent driver assistance system (I-DAS) for vehicle safety modelling using ontology approach. International Journal of UbiComp (2010)

59. Kim, J.E., Henson, C., Huang, K., Tran, T.A., Lin, W.Y.: Accelerating road sign ground truth construction with knowledge graph and machine learning. ArXiv abs/2012.02672 (2020)

60. Kipf, T.N., Welling, M.: Semi-supervised classification with graph convolutional networks. In: ICLR (2017)

61. Kiran, B.R., Sobh, I., Talpaert, V., Mannion, P., Sallab, A.A.A., Yogamani, S.K., Pérez, P.: Deep reinforcement learning for autonomous driving: A survey. IEEE Transactions on Intelligent Transportation Systems 23, 4909-4926 (2022)

62. Klotz, B., Troncy, R., Wilms, D., Bonnet, C.: VSSo: The vehicle signal and attribute ontology. In: SSN@ISWC (2018)

63. Kohlhaas, R., Bittner, T., Schamm, T., Zöllner, J.M.: Semantic state space for high-level maneuver planning in structured traffic scenes. ITSC (2014)

64. Kunze, L., Bruls, T., Suleymanov, T., Newman, P.: Reading between the lanes: Road layout reconstruction from partially segmented scenes. ITSC (2018)

65. Lateef, F., Ruichek, Y.: Survey on semantic segmentation using deep learning techniques. Neurocomputing (2019)

66. Leroy, J., Gruyer, D., Orfila, O., Faouzi, N.E.E.: Five key components based risk indicators ontology for the modelling and identification of critical interaction between human driven and automated vehicles. IFAC (2020)

67. Li, X., Ying, X., Chuah, M.C.: GRIP: Graph-based interaction-aware trajectory prediction. 2019 IEEE ITSC pp. 3960-3966 (2019)

68. Li, Y., Tao, J., Wotawa, F.: Ontology-based test generation for automated and autonomous driving functions. IST (2020)

69. Lilis, Y., Zidianakis, E., Partarakis, N., Antona, M., Stephanidis, C.: Personalizing HMI elements in ADAS using ontology meta-models and rule based reasoning. In: Universal Access in Human-Computer Interaction. Design and Dev. Approaches and Methods (2017)

70. Liu, H., Singh, P.: ConceptNet - a practical commonsense reasoning tool-kit. BT Technology Journal 22, 211-226 (2004)

71. Liu, L., Ouyang, W., Wang, X., Fieguth, P., Chen, J., Liu, X., Pietikäinen, M.: Deep learning for generic object detection: A survey. IJCV (2019)

72. Lu, P., Xu, S., Peng, H.: Graph-embedded lane detection. IEEE Transactions on Image Processing (2021)

73. Minaee, S., Boykov, Y., Porikli, F., Plaza, A., Kehtarnavaz, N., Terzopoulos, D.: Image segmentation using deep learning: A survey. IEEE Trans. PAMI (2021)

74. Mohammad, M.A., Kaloskampis, I., Hicks, Y., Setchi, R.: Ontology-based framework for risk assessment in road scenes using videos. In: Inter. Conf. KES (2015)

75. Monka, S., Halilaj, L., Rettinger, A.: A survey on visual transfer learning using knowledge graphs. Semantic Web 13, 477-510 (2022)

76. Monka, S., Halilaj, L., Schmid, S., Rettinger, A.: Learning visual models using a knowledge graph as a trainer. In: ISWC 2021 (2021)

77. Morignot, P., Nashashibi, F.: An ontology-based approach to relax traffic regulation for autonomous vehicle assistance. ArXiv abs/1212.0768 (2012)

78. Mozaffari, S., Al-Jarrah, O.Y., Dianati, M., Jennings, P., Mouzakitis, A.: Deep learning-based vehicle behaviour prediction for autonomous driving applications: A review. ArXiv abs/1912.11676 (2019)

79. Narote, S.P., Bhujbal, P.N., Narote, A.S., Dhane, D.M.: A review of recent advances in lane detection and departure warning system. Pattern Recognition (2018)

80. Nickel, M., Murphy, K., Tresp, V., Gabrilovich, E.: A review of relational machine learning for knowledge graphs. Proc. IEEE (2016)

81. Paardekooper, J.P., Comi, M., et al.: A hybrid-ai approach for competence assessment of automated driving functions. In: SafeAI@AAAI (2021)

82. Paden, B., Cáp, M., Yong, S.Z., Yershov, D.S., Frazzoli, E.: A survey of motion planning and control techniques for self-driving urban vehicles. IEEE Transactions on Intelligent Vehicles (2016)

83. Pollard, E., Morignot, P., Nashashibi, F.: An ontology-based model to determine the automation level of an automated vehicle for co-driving. In: Proc. FUSION (2013)

84. Qiu, H., Ayara, A., Glimm, B.: A knowledge architecture layer for map data in autonomous vehicles. ITSC (2020)

85. Qiu, H., Ayara, A., Glimm, B.: Ontology-based processing of dynamic maps in automated driving. In: KEOD (2020)

86. Qiu, H., Ayara, A., Glimm, B.: Ontology-based map data quality assurance. In: Proc. ESWC (2021)

87. Regele, R.: Using ontology-based traffic models for more efficient decision making of autonomous vehicles. ICAS (2008)

88. Ryu, M.W., Cha, S.H.: Context-awareness based driving assistance system for autonomous vehicles. International Journal of Control and Automation (2018)

89. Sarwar, S., Zia, S., ul Qayyum, Z., Iqbal, M., Safyan, M., Mumtaz, S., et al.: Context aware ontology-based hybrid intelligent framework for vehicle driver categorization. Transact. on Emerging Telecommunications Technologies (2019)

90. Schafer, F., Kriesten, R., Chrenko, D., Gechter, F.: No need to learn from each other? - potentials of knowledge modeling in autonomous vehicle systems engineering towards new methods in multidisciplinary contexts. In: ICE/ITMC (2017)

91. Schlenoff, C., Balakirsky, S., Uschold, M., Provine, R., Smith, S.: Using ontologies to aid navigation planning in autonomous vehicles. The Knowledge Engineering Review (2003)

92. Schmidhuber, J.: Deep learning in neural networks: An overview. Neural Networks 61, 85-117 (2015)

93. Scholtes, M., Westhofen, L., Turner, L.R., Lotto, K., Schuldes, M., Weber, H., et al.: 6-layer model for a structured description and categorization of urban traffic and environment. IEEE Access 9, 59131-59147 (2021)

94. Schwarting, W., Pierson, A., et al.: Social behavior for autonomous vehicles. Proc. Natl. Acad. Sci. USA (2019)

95. Singhal, A.: Introducing the knowledge graph: things, not strings. https://blog.google/products/search/introducing-knowledge-graph-things-not/ (2012), 2021-05-07

96. Spehr, J., Rosebrock, D., Mossau, D., Auer, R., Brosig, S., Wahl, F.: Hierarchical scene understanding for intelligent vehicles. IV (2011)

97. Studer, R., Benjamins, V.R., Fensel, D.: Knowledge engineering: Principles and methods. Data Knowl. Eng. 25(1-2), 161-197 (1998)

98. Suryawanshi, Y., Qiu, H., Ayara, A., Glimm, B.: An ontological model for map data in automotive systems. In: IEEE AIKE (2019)

99. Tang, J., Li, S., Liu, P.: A review of lane detection methods based on deep learning. Pattern Recognition (2021)

100. Töpfer, D., Spehr, J., Effertz, J., Stiller, C.: Efficient road scene understanding for intelligent vehicles using compositional hierarchical models. T-ITS (2015)

101. Ulbrich, S., Menzel, T., Reschka, A., Schuldt, F., Maurer, M.: Defining and substantiating the terms scene, situation, and scenario for automated driving. In: ITSC (2015)

102. Ulbrich, S., Nothdurft, T., Maurer, M., Hecker, P.: Graph-based context representation, environment modeling and information aggregation for automated driving. In: IEEE Intelligent Vehicles Symposium Proceedings (2014)

103. Urbieta, I.R., Nieto, M., García, M., Otaegui, O.: Design and implementation of an ontology for semantic labeling and testing: Automotive global ontology (AGO). Applied Sciences (2021)

104. Velickovic, P., Cucurull, G., Casanova, A., Romero, A., Liò, P., Bengio, Y.: Graph attention networks. In: 6th International Conference on Learning Representations, ICLR (2018)

105. Venkateshkumar, S., Sridhar, M., Ott, P.: Latent hierarchical part based models for road scene understanding. ICCVW (2015)

106. Wang, Q., Mao, Z., Wang, B., Guo, L.: Knowledge graph embedding: A survey of approaches and applications. IEEE Trans. on Knowledge and Data Eng. (2017)

107. Werner, S., Rettinger, A., Halilaj, L., Luettin, J.: Embedding taxonomical, situational or sequential knowledge graph context for recommendation tasks. In: Further with Knowledge Graphs (2021)

108. Werner, S., Rettinger, A., Halilaj, L., Luettin, J.: RETRA: Recurrent transformers for learning temporally contextualized knowledge graph embeddings. In: ESWC (2021)

109. Westhofen, L., Neurohr, C., Butz, M., Scholtes, M., Schuldes, M.: Using ontologies for the formalization and recognition of criticality for automated driving. IEEE Open Journal of Intelligent Transportation Systems 3, 519-538 (2022)

110. Wickramarachchi, R., Henson, C., Sheth, A.: An evaluation of knowledge graph embeddings for autonomous driving data: Experience and practice. AAAI-MAKE (2020)

111. Wickramarachchi, R., Henson, C., Sheth, A.: Knowledge-infused learning for entity prediction in driving scenes. Frontiers in Big Data (2021)

112. Woo, S., Kim, D., Cho, D., Kweon, I.S.: LinkNet: Relational embedding for scene graph. In: NeurIPS (2018)

113. Xiong, Z., Dixit, V., Waller, S.: The development of an ontology for driving context modelling and reasoning. ITSC (2016)

114. Zareian, A., Karaman, S., Chang, S.F.: Bridging knowledge graphs to generate scene graphs. In: ECCV (2020)

115. Zhao, L., Ichise, R., et al.: Ontology-based decision making on uncontrolled intersections and narrow roads. IV (2015)

116. Zhao, L., Ichise, R., Liu, Z., Mita, S., Sasaki, Y.: Ontology-based driving decision making: A feasibility study at uncontrolled intersections. IEICE (2017)

117. Zhao, L., Ichise, R., Mita, S., Sasaki, Y.: Core ontologies for safe autonomous driving. In: ISWC (2015)

118. Zhu, H., Yuen, K., Mihaylova, L., Leung, H.: Overview of environment perception for intelligent vehicles. T-ITS (2017)

119. Zipfl, M., Hertlein, F., Rettinger, A., Thoma, S., Halilaj, L., Luettin, J., Schmid, S., Henson, C.: Relation-based motion prediction using traffic scene graphs. In: IEEE ITSC (2022)