# Automatic Change-Point Detection in Time Series via Deep Learning

**Authors**: Jie Li111Addresses for correspondence: Jie Li, Department of Statistics, London School of Economics and Political Science, London, WC2A 2AE.Email: j.li196@lse.ac.uk, Paul Fearnhead, Piotr Fryzlewicz, Tengyao Wang

> Department of Statistics, London School of Economics and Political Science, London, UK

> Department of Mathematics and Statistics, Lancaster University, Lancaster, UK

Abstract

Detecting change-points in data is challenging because of the range of possible types of change and types of behaviour of data when there is no change. Statistically efficient methods for detecting a change will depend on both of these features, and it can be difficult for a practitioner to develop an appropriate detection method for their application of interest. We show how to automatically generate new offline detection methods based on training a neural network. Our approach is motivated by many existing tests for the presence of a change-point being representable by a simple neural network, and thus a neural network trained with sufficient data should have performance at least as good as these methods. We present theory that quantifies the error rate for such an approach, and how it depends on the amount of training data. Empirical results show that, even with limited training data, its performance is competitive with the standard CUSUM-based classifier for detecting a change in mean when the noise is independent and Gaussian, and can substantially outperform it in the presence of auto-correlated or heavy-tailed noise. Our method also shows strong results in detecting and localising changes in activity based on accelerometer data.

Keywords— Automatic statistician; Classification; Likelihood-free inference; Neural networks; Structural breaks; Supervised learning {textblock}

12.5[0,0](2,1) [To be read before The Royal Statistical Society at the Society’s 2023 annual conference held in Harrogate on Wednesday, September 6th, 2023, the President, Dr Andrew Garrett, in the Chair.] {textblock} 12.5[0,0](2,2) [Accepted (with discussion), to appear]

1 Introduction

Detecting change-points in data sequences is of interest in many application areas such as bioinformatics (Picard et al., 2005), climatology (Reeves et al., 2007), signal processing (Haynes et al., 2017) and neuroscience (Oh et al., 2005). In this work, we are primarily concerned with the problem of offline change-point detection, where the entire data is available to the analyst beforehand. Over the past few decades, various methodologies have been extensively studied in this area, see Killick et al. (2012); Jandhyala et al. (2013); Fryzlewicz (2014, 2023); Wang and Samworth (2018); Truong et al. (2020) and references therein. Most research on change-point detection has concentrated on detecting and localising different types of change, e.g. change in mean (Killick et al., 2012; Fryzlewicz, 2014), variance (Gao et al., 2019; Li et al., 2015), median (Fryzlewicz, 2021) or slope (Baranowski et al., 2019; Fearnhead et al., 2019), amongst many others. Many change-point detection methods are based upon modelling data when there is no change and when there is a single change, and then constructing an appropriate test statistic to detect the presence of a change (e.g. James et al., 1987; Fearnhead and Rigaill, 2020). The form of a good test statistic will vary with our modelling assumptions and the type of change we wish to detect. This can lead to difficulties in practice. As we use new models, it is unlikely that there will be a change-point detection method specifically designed for our modelling assumptions. Furthermore, developing an appropriate method under a complex model may be challenging, while in some applications an appropriate model for the data may be unclear but we may have substantial historical data that shows what patterns of data to expect when there is, or is not, a change. In these scenarios, currently a practitioner would need to choose the existing change detection method which seems the most appropriate for the type of data they have and the type of change they wish to detect. To obtain reliable performance, they would then need to adapt its implementation, for example tuning the choice of threshold for detecting a change. Often, this would involve applying the method to simulated or historical data. To address the challenge of automatically developing new change detection methods, this paper is motivated by the question: Can we construct new test statistics for detecting a change based only on having labelled examples of change-points? We show that this is indeed possible by training a neural network to classify whether or not a data set has a change of interest. This turns change-point detection in a supervised learning problem. A key motivation for our approach are results that show many common test statistics for detecting changes, such as the CUSUM test for detecting a change in mean, can be represented by simple neural networks. This means that with sufficient training data, the classifier learnt by such a neural network will give performance at least as good as classifiers corresponding to these standard tests. In scenarios where a standard test, such as CUSUM, is being applied but its modelling assumptions do not hold, we can expect the classifier learnt by the neural network to outperform it. There has been increasing recent interest in whether ideas from machine learning, and methods for classification, can be used for change-point detection. Within computer science and engineering, these include a number of methods designed for and that show promise on specific applications (e.g. Ahmadzadeh, 2018; De Ryck et al., 2021; Gupta et al., 2022; Huang et al., 2023). Within statistics, Londschien et al. (2022) and Lee et al. (2023) consider training a classifier as a way to estimate the likelihood-ratio statistic for a change. However these methods train the classifier in an un-supervised way on the data being analysed, using the idea that a classifier would more easily distinguish between two segments of data if they are separated by a change-point. Chang et al. (2019) use simulated data to help tune a kernel-based change detection method. Methods that use historical, labelled data have been used to train the tuning parameters of change-point algorithms (e.g. Hocking et al., 2015; Liehrmann et al., 2021). Also, neural networks have been employed to construct similarity scores of new observations to learned pre-change distributions for online change-point detection (Lee et al., 2023). However, we are unaware of any previous work using historical, labelled data to develop offline change-point methods. As such, and for simplicity, we focus on the most fundamental aspect, namely the problem of detecting a single change. Detecting and localising multiple changes is considered in Section 6 when analysing activity data. We remark that by viewing the change-point detection problem as a classification instead of a testing problem, we aim to control the overall misclassification error rate instead of handling the Type I and Type II errors separately. In practice, asymmetric treatment of the two error types can be achieved by suitably re-weighting misclassification in the two directions in the training loss function. The method we develop has parallels with likelihood-free inference methods Gourieroux et al. (1993); Beaumont (2019) in that one application of our work is to use the ability to simulate from a model so as to circumvent the need to analytically calculate likelihoods. However, the approach we take is very different from standard likelihood-free methods which tend to use simulation to estimate the likelihood function itself. By comparison, we directly target learning a function of the data that can discriminate between instances that do or do not contain a change (though see Gutmann et al., 2018, for likelihood-free methods based on re-casting the likelihood as a classification problem). For an introduction to the statistical aspects of neural network-based classification, albeit not specifically in a change-point context, see Ripley (1994). We now briefly introduce our notation. For any $n∈\mathbb{Z}^{+}$ , we define $[n]\coloneqq\{1,...,n\}$ . We take all vectors to be column vectors unless otherwise stated. Let $\boldsymbol{1}_{n}$ be the all-one vector of length $n$ . Let $\mathbbm{1}\{·\}$ represent the indicator function. The vertical symbol $|·|$ represents the absolute value or cardinality of $·$ depending on the context. For vector $\boldsymbol{x}=(x_{1},...,x_{n})^{→p}$ , we define its $p$ -norm as $\|\boldsymbol{x}\|_{p}\coloneqq\big{(}\sum_{i=1}^{n}|x_{i}|^{p}\big{)}^{1/p},p≥

1$ ; when $p=∞$ , define $\|\boldsymbol{x}\|_{∞}\coloneqq\max_{i}|x_{i}|$ . All proofs, as well as additional simulations and real data analyses appear in the supplement.

2 Neural networks

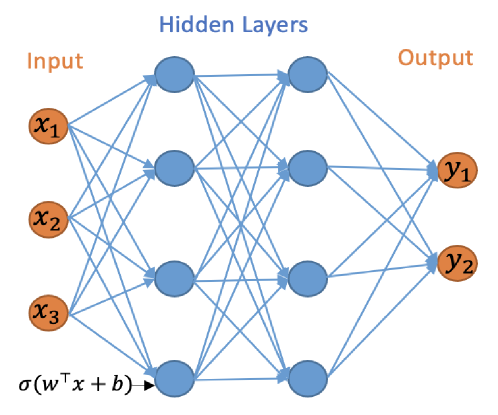

The initial focus of our work is on the binary classification problem for whether a change-point exists in a given time series. We will work with multilayer neural networks with Rectified Linear Unit (ReLU) activation functions and binary output. The multilayer neural network consists of an input layer, hidden layers and an output layer, and can be represented by a directed acyclic graph, see Figure 1.

<details>

<summary>x1.png Details</summary>

### Visual Description

\n

## Diagram: Neural Network Architecture

### Overview

The image depicts a schematic diagram of a feedforward neural network. It illustrates the basic structure of an artificial neural network with an input layer, one or more hidden layers, and an output layer. The connections between nodes (neurons) are represented by lines, indicating the flow of information.

### Components/Axes

The diagram is divided into three main sections, labeled from left to right: "Input", "Hidden Layers", and "Output".

* **Input Layer:** Contains three nodes labeled x₁, x₂, and x₃. These nodes are represented by orange circles.

* **Hidden Layers:** Consists of two layers of nodes. The first hidden layer has four nodes, and the second hidden layer has two nodes. These nodes are represented by blue circles.

* **Output Layer:** Contains two nodes labeled y₁ and y₂. These nodes are represented by orange circles.

* **Connections:** Blue lines connect nodes between layers, representing weighted connections.

* **Activation Function:** The equation "σ(wᵀx + b)" is present at the bottom-left, likely representing an activation function applied to the weighted sum of inputs.

### Detailed Analysis or Content Details

The diagram shows a fully connected network, meaning each node in one layer is connected to every node in the next layer.

* **Input Layer:**

* x₁ is the first input feature.

* x₂ is the second input feature.

* x₃ is the third input feature.

* **Hidden Layers:**

* The first hidden layer receives input from all three input nodes (x₁, x₂, x₃).

* The second hidden layer receives input from all four nodes in the first hidden layer.

* **Output Layer:**

* y₁ is the first output.

* y₂ is the second output.

* The output layer receives input from all two nodes in the second hidden layer.

* **Activation Function:**

* σ represents the sigmoid function or another activation function.

* wᵀ represents the transpose of the weight matrix.

* x represents the input vector.

* b represents the bias vector.

### Key Observations

The network has a relatively simple architecture with only three input nodes and two output nodes. The presence of the activation function suggests that the network is capable of learning non-linear relationships between the inputs and outputs. The diagram does not provide any specific values for weights, biases, or activation function parameters.

### Interpretation

This diagram illustrates the fundamental structure of a neural network used for machine learning tasks. The network takes three input features (x₁, x₂, x₃), processes them through two hidden layers, and produces two outputs (y₁, y₂). The connections between nodes represent the weights that are learned during the training process. The activation function introduces non-linearity, allowing the network to model complex relationships. The diagram is a conceptual representation and does not provide any information about the specific application or performance of the network. It serves as a visual aid for understanding the basic principles of neural network architecture. The equation at the bottom suggests that the network uses a weighted sum of inputs, combined with a bias, and then passed through an activation function to produce the output of each neuron. This is a standard approach in many neural network models.

</details>

Figure 1: A neural network with 2 hidden layers and width vector $\mathbf{m}=(4,4)$ .

Let $L∈\mathbb{Z}^{+}$ represent the number of hidden layers and $\boldsymbol{m}={(m_{1},...,m_{L})}^{→p}$ the vector of the hidden layers widths, i.e. $m_{i}$ is the number of nodes in the $i$ th hidden layer. For a neural network with $L$ hidden layers we use the convention that $m_{0}=n$ and $m_{L+1}=1$ . For any bias vector $\boldsymbol{b}={(b_{1},b_{2},...,b_{r})}^{→p}∈\mathbb{R}^{r}$ , define the shifted activation function $\sigma_{\boldsymbol{b}}:\mathbb{R}^{r}→\mathbb{R}^{r}$ :

$$

\sigma_{\boldsymbol{b}}((y_{1},\ldots,y_{r})^{\top})=(\sigma(y_{1}-b_{1}),%

\ldots,\sigma(y_{r}-b_{r}))^{\top},

$$

where $\sigma(x)=\max(x,0)$ is the ReLU activation function. The neural network can be mathematically represented by the composite function $h:\mathbb{R}^{n}→\{0,1\}$ as

$$

h(\boldsymbol{x})\coloneqq\sigma^{*}_{\lambda}W_{L}\sigma_{\boldsymbol{b}_{L}}%

W_{L-1}\sigma_{\boldsymbol{b}_{L-1}}\cdots W_{1}\sigma_{\boldsymbol{b}_{1}}W_{%

0}\boldsymbol{x}, \tag{1}

$$

where $\sigma^{*}_{\lambda}(x)=\mathbbm{1}\{x>\lambda\}$ , $\lambda>0$ and $W_{\ell}∈\mathbb{R}^{m_{\ell+1}× m_{\ell}}$ for $\ell∈\{0,...,L\}$ represent the weight matrices. We define the function class $\mathcal{H}_{L,\boldsymbol{m}}$ to be the class of functions $h(\boldsymbol{x})$ with $L$ hidden layers and width vector $\boldsymbol{m}$ . The output layer in (1) employs the shifted heaviside function $\sigma^{*}_{\lambda}(x)$ , which is used for binary classification as the final activation function. This choice is guided by the fact that we use the 0-1 loss, which focuses on the percentage of samples assigned to the correct class, a natural performance criterion for binary classification. Besides its wide adoption in machine learning practice, another advantage of using the 0-1 loss is that it is possible to utilise the theory of the Vapnik–Chervonenkis (VC) dimension (see, e.g. Shalev-Shwartz and Ben-David, 2014, Definition 6.5) to bound the generalisation error of a binary classifier equipped with this loss; indeed, this is the approach we take in this work. The relevant results regarding the VC dimension of neural network classifiers are e.g. in Bartlett et al. (2019). As in Schmidt-Hieber (2020), we work with the exact minimiser of the empirical risk. In both binary or multiclass classification, it is possible to work with other losses which make it computationally easier to minimise the corresponding risk, see e.g. Bos and Schmidt-Hieber (2022), who use a version of the cross-entropy loss. However, loss functions different from the 0-1 loss make it impossible to use VC-dimension arguments to control the generalisation error, and more involved arguments, such as those using the covering number (Bos and Schmidt-Hieber, 2022) need to be used instead. We do not pursue these generalisations in the current work.

3 CUSUM-based classifier and its generalisations are neural networks

3.1 Change in mean

We initially consider the case of a single change-point with an unknown location $\tau∈[n-1]$ , $n≥ 2$ , in the model

| | $\displaystyle\boldsymbol{X}$ | $\displaystyle=\boldsymbol{\mu}+\boldsymbol{\xi},$ | |

| --- | --- | --- | --- |

where $\mu_{\mathrm{L}},\mu_{\mathrm{R}}$ are the unknown signal values before and after the change-point; $\boldsymbol{\xi}\sim N_{n}(0,I_{n})$ . The CUSUM test is widely used to detect mean changes in univariate data. For the observation $\boldsymbol{x}$ , the CUSUM transformation $\mathcal{C}:\mathbb{R}^{n}→\mathbb{R}^{n-1}$ is defined as $\mathcal{C}(\boldsymbol{x}):=(\boldsymbol{v}_{1}^{→p}\boldsymbol{x},...,%

\boldsymbol{v}_{n-1}^{→p}\boldsymbol{x})^{→p}$ , where $\boldsymbol{v}_{i}\coloneqq\bigl{(}\sqrt{\frac{n-i}{in}}\boldsymbol{1}_{i}^{%

→p},-\sqrt{\frac{i}{(n-i)n}}\boldsymbol{1}_{n-i}^{→p}\bigr{)}^{→p}$ for $i∈[n-1]$ . Here, for each $i∈[n-1]$ , $(\boldsymbol{v}_{i}^{→p}\boldsymbol{x})^{2}$ is the log likelihood-ratio statistic for testing a change at time $i$ against the null of no change (e.g. Baranowski et al., 2019). For a given threshold $\lambda>0$ , the classical CUSUM test for a change in the mean of the data is defined as

$$

h^{\mathrm{CUSUM}}_{\lambda}(\boldsymbol{x})=\mathbbm{1}\{\|\mathcal{C}(%

\boldsymbol{x})\|_{\infty}>\lambda\}.

$$

The following lemma shows that $h^{\mathrm{CUSUM}}_{\lambda}(\boldsymbol{x})$ can be represented as a neural network.

**Lemma 3.1**

*For any $\lambda>0$ , we have $h^{\mathrm{CUSUM}}_{\lambda}(\boldsymbol{x})∈\mathcal{H}_{1,2n-2}$ .*

The fact that the widely-used CUSUM statistic can be viewed as a simple neural network has far-reaching consequences: this means that given enough training data, a neural network architecture that permits the CUSUM-based classifier as its special case cannot do worse than CUSUM in classifying change-point versus no-change-point signals. This serves as the main motivation for our work, and a prelude to our next results.

3.2 Beyond the mean change model

We can generalise the simple change in mean model to allow for different types of change or for non-independent noise. In this section, we consider change-point models that can be expressed as a change in regression problem, where the model for data given a change at $\tau$ is of the form

$$

\boldsymbol{X}=\boldsymbol{Z}\boldsymbol{\beta}+\boldsymbol{c}_{\tau}\phi+%

\boldsymbol{\Gamma}\boldsymbol{\xi}, \tag{2}

$$

where for some $p≥ 1$ , $\boldsymbol{Z}$ is an $n× p$ matrix of covariates for the model with no change, $\boldsymbol{c}_{\tau}$ is an $n× 1$ vector of covariates specific to the change at $\tau$ , and the parameters $\boldsymbol{\beta}$ and $\phi$ are, respectively, a $p× 1$ vector and a scalar. The noise is defined in terms of an $n× n$ matrix $\boldsymbol{\Gamma}$ and an $n× 1$ vector of independent standard normal random variables, $\boldsymbol{\xi}$ . For example, the change in mean problem has $p=1$ , with $\boldsymbol{Z}$ a column vector of ones, and $\boldsymbol{c}_{\tau}$ being a vector whose first $\tau$ entries are zeros, and the remaining entries are ones. In this formulation $\beta$ is the pre-change mean, and $\phi$ is the size of the change. The change in slope problem Fearnhead et al. (2019) has $p=2$ with the columns of $\boldsymbol{Z}$ being a vector of ones, and a vector whose $i$ th entry is $i$ ; and $\boldsymbol{c}_{\tau}$ has $i$ th entry that is $\max\{0,i-\tau\}$ . In this formulation $\boldsymbol{\beta}$ defines the pre-change linear mean, and $\phi$ the size of the change in slope. Choosing $\boldsymbol{\Gamma}$ to be proportional to the identity matrix gives a model with independent, identically distributed noise; but other choices would allow for auto-correlation. The following result is a generalisation of Lemma 3.1, which shows that the likelihood-ratio test for (2), viewed as a classifier, can be represented by our neural network.

**Lemma 3.2**

*Consider the change-point model (2) with a possible change at $\tau∈[n-1]$ . Assume further that $\boldsymbol{\Gamma}$ is invertible. Then there is an $h^{*}∈\mathcal{H}_{1,2n-2}$ equivalent to the likelihood-ratio test for testing $\phi=0$ against $\phi≠ 0$ .*

Importantly, this result shows that for this much wider class of change-point models, we can replicate the likelihood-ratio-based classifier for change using a simple neural network. Other types of changes can be handled by suitably pre-transforming the data. For instance, squaring the input data would be helpful in detecting changes in the variance and if the data followed an AR(1) structure, then changes in autocorrelation could be handled by including transformations of the original input of the form $(x_{t}x_{t+1})_{t=1,...,n-1}$ . On the other hand, even if such transformations are not supplied as the input, a neural network of suitable depth is able to approximate these transformations and consequently successfully detect the change (Schmidt-Hieber, 2020, Lemma A.2). This is illustrated in Figure 7 of appendix, where we compare the performance of neural network based classifiers of various depths constructed with and without using the transformed data as inputs.

4 Generalisation error of neural network change-point classifiers

In Section 3, we showed that CUSUM and generalised CUSUM could be represented by a neural network. Therefore, with a large enough amount of training data, a trained neural network classifier that included CUSUM, or generalised CUSUM, as a special case, would perform no worse than it on unseen data. In this section, we provide generalisation bounds for a neural network classifier for the change-in-mean problem, given a finite amount of training data. En route to this main result, stated in Theorem 4.3, we provide generalisation bounds for the CUSUM-based classifier, in which the threshold has been chosen on a finite training data set. We write $P(n,\tau,\mu_{\mathrm{L}},\mu_{\mathrm{R}})$ for the distribution of the multivariate normal random vector $\boldsymbol{X}\sim N_{n}(\boldsymbol{\mu},I_{n})$ where $\boldsymbol{\mu}\coloneqq{(\mu_{\mathrm{L}}\mathbbm{1}\{i≤\tau\}+\mu_{%

\mathrm{R}}\mathbbm{1}\{i>\tau\})}_{i∈[n]}$ . Define $\eta\coloneqq\tau/n$ . Lemma 4.1 and Corollary 4.1 control the misclassification error of the CUSUM-based classifier.

**Lemma 4.1**

*Fix $\varepsilon∈(0,1)$ . Suppose $\boldsymbol{X}\sim P(n,\tau,\mu_{\mathrm{L}},\mu_{\mathrm{R}})$ for some $\tau∈\mathbb{Z}^{+}$ and $\mu_{\mathrm{L}},\mu_{\mathrm{R}}∈\mathbb{R}$ .

1. If $\mu_{\mathrm{L}}=\mu_{\mathrm{R}}$ , then $\mathbb{P}\bigl{\{}\|\mathcal{C}(\boldsymbol{X})\|_{∞}>\sqrt{2\log(n/%

\varepsilon)}\bigr{\}}≤\varepsilon.$

1. If $|\mu_{\mathrm{L}}-\mu_{\mathrm{R}}|\sqrt{\eta(1-\eta)}>\sqrt{8\log(n/%

\varepsilon)/n}$ , then $\mathbb{P}\bigl{\{}\|\mathcal{C}(\boldsymbol{X})\|_{∞}≤\sqrt{2\log(n/%

\varepsilon)}\bigr{\}}≤\varepsilon.$*

For any $B>0$ , define

$$

\Theta(B)\coloneqq\left\{(\tau,\mu_{\mathrm{L}},\mu_{\mathrm{R}})\in[n-1]%

\times\mathbb{R}\times\mathbb{R}:|\mu_{\mathrm{L}}-\mu_{\mathrm{R}}|\sqrt{\tau%

(n-\tau)}/n\in\{0\}\cup\left(B,\infty\right)\right\}.

$$

Here, $|\mu_{\mathrm{L}}-\mu_{\mathrm{R}}|\sqrt{\tau(n-\tau)}/n=|\mu_{\mathrm{L}}-\mu%

_{\mathrm{R}}|\sqrt{\eta(1-\eta)}$ can be interpreted as the signal-to-noise ratio of the mean change problem. Thus, $\Theta(B)$ is the parameter space of data distributions where there is either no change, or a single change-point in mean whose signal-to-noise ratio is at least $B$ . The following corollary controls the misclassification risk of a CUSUM statistics-based classifier:

**Corollary 4.1**

*Fix $B>0$ . Let $\pi_{0}$ be any prior distribution on $\Theta(B)$ , then draw $(\tau,\mu_{\mathrm{L}},\mu_{\mathrm{R}})\sim\pi_{0}$ and $\boldsymbol{X}\sim P(n,\tau,\mu_{\mathrm{L}},\mu_{\mathrm{R}})$ , and define $Y=\mathbbm{1}\{\mu_{\mathrm{L}}≠\mu_{\mathrm{R}}\}$ . For $\lambda=B\sqrt{n}/2$ , the classifier $h^{\mathrm{CUSUM}}_{\lambda}$ satisfies

$$

\mathbb{P}(h^{\mathrm{CUSUM}}_{\lambda}(\boldsymbol{X})\neq Y)\leq ne^{-nB^{2}%

/8}.

$$*

Theorem 4.2 below, which is based on Corollary 4.1, Bartlett et al. (2019, Theorem 7) and Mohri et al. (2012, Corollary 3.4), shows that the empirical risk minimiser in the neural network class $\mathcal{H}_{1,2n-2}$ has good generalisation properties over the class of change-point problems parameterised by $\Theta(B)$ . Given training data $(\boldsymbol{X}^{(1)},Y^{(1)}),...,(\boldsymbol{X}^{(N)},Y^{(N)})$ and any $h:\mathbb{R}^{n}→\{0,1\}$ , we define the empirical risk of $h$ as

$$

L_{N}(h)\coloneqq\frac{1}{N}\sum_{i=1}^{N}\mathbbm{1}\{Y^{(i)}\neq h(%

\boldsymbol{X}^{(i)})\}.

$$

**Theorem 4.2**

*Fix $B>0$ and let $\pi_{0}$ be any prior distribution on $\Theta(B)$ . We draw $(\tau,\mu_{\mathrm{L}},\mu_{\mathrm{R}})\sim\pi_{0}$ , $\boldsymbol{X}\sim P(n,\tau,\mu_{\mathrm{L}},\mu_{\mathrm{R}})$ , and set $Y=\mathbbm{1}\{\mu_{\mathrm{L}}≠\mu_{\mathrm{R}}\}$ . Suppose that the training data $\mathcal{D}:=\bigl{(}(\boldsymbol{X}^{(1)},Y^{(1)}),...,(\boldsymbol{X}^{(N%

)},Y^{(N)})\bigr{)}$ consist of independent copies of $(\boldsymbol{X},Y)$ and $h_{\mathrm{ERM}}\coloneqq\operatorname*{arg\,min}_{h∈\mathcal{H}_{1,2n-2}}L_%

{N}(h)$ is the empirical risk minimiser. There exists a universal constant $C>0$ such that for any $\delta∈(0,1)$ , (3) holds with probability $1-\delta$ .

$$

\mathbb{P}(h_{\mathrm{ERM}}(\boldsymbol{X})\neq Y\mid\mathcal{D})\leq ne^{-nB^%

{2}/8}+C\sqrt{\frac{n^{2}\log(n)\log(N)+\log(1/\delta)}{N}}. \tag{3}

$$*

The theoretical results derived for the neural network-based classifier, here and below, all rely on the fact that the training and test data are drawn from the same distribution. However, we observe that in practice, even when the training and test sets have different error distributions, neural network-based classifiers still provide accurate results on the test set; see our discussion of Figure 2 in Section 5 for more details. The misclassification error in (3) is bounded by two terms. The first term represents the misclassification error of CUSUM-based classifier, see Corollary 4.1, and the second term depends on the complexity of the neural network class measured in its VC dimension. Theorem 4.2 suggests that for training sample size $N\gg n^{2}\log n$ , a well-trained single-hidden-layer neural network with $2n-2$ hidden nodes would have comparable performance to that of the CUSUM-based classifier. However, as we will see in Section 5, in practice, a much smaller training sample size $N$ is needed for the neural network to be competitive in the change-point detection task. This is because the $2n-2$ hidden layer nodes in the neural network representation of $h^{\mathrm{CUSUM}}_{\lambda}$ encode the components of the CUSUM transformation $(±\boldsymbol{v}_{t}^{→p}\boldsymbol{x}:t∈[n-1])$ , which are highly correlated. By suitably pruning the hidden layer nodes, we can show that a single-hidden-layer neural network with $O(\log n)$ hidden nodes is able to represent a modified version of the CUSUM-based classifier with essentially the same misclassification error. More precisely, let $Q:=\lfloor\log_{2}(n/2)\rfloor$ and write $T_{0}:=\{2^{q}:0≤ q≤ Q\}\cup\{n-2^{q}:0≤ q≤ Q\}$ . We can then define

$$

h^{\mathrm{CUSUM}_{*}}_{\lambda^{*}}(\boldsymbol{X})=\mathbbm{1}\Bigl{\{}\max_%

{t\in T_{0}}|\boldsymbol{v}_{t}^{\top}\boldsymbol{X}|>\lambda^{*}\Bigr{\}}.

$$

By the same argument as in Lemma 3.1, we can show that $h^{\mathrm{CUSUM}_{*}}_{\lambda^{*}}∈\mathcal{H}_{1,4\lfloor\log_{2}(n)\rfloor}$ for any $\lambda^{*}>0$ . The following Theorem shows that high classification accuracy can be achieved under a weaker training sample size condition compared to Theorem 4.2.

**Theorem 4.3**

*Fix $B>0$ and let the training data $\mathcal{D}$ be generated as in Theorem 4.2. Let $h_{\mathrm{ERM}}\coloneqq\operatorname*{arg\,min}_{h∈\mathcal{H}_{L,%

\boldsymbol{m}}}L_{N}(h)$ be the empirical risk minimiser for a neural network with $L≥ 1$ layers and $\boldsymbol{m}=(m_{1},...,m_{L})^{→p}$ hidden layer widths. If $m_{1}≥ 4\lfloor\log_{2}(n)\rfloor$ and $m_{r}m_{r+1}=O(n\log n)$ for all $r∈[L-1]$ , then there exists a universal constant $C>0$ such that for any $\delta∈(0,1)$ , (4) holds with probability $1-\delta$ .

$$

\mathbb{P}(h_{\mathrm{ERM}}(\boldsymbol{X})\neq Y\mid\mathcal{D})\leq 2\lfloor%

\log_{2}(n)\rfloor e^{-nB^{2}/24}+C\sqrt{\frac{L^{2}n\log^{2}(Ln)\log(N)+\log(%

1/\delta)}{N}}. \tag{4}

$$*

Theorem 4.3 generalises the single hidden layer neural network representation in Theorem 4.2 to multiple hidden layers. In practice, multiple hidden layers help keep the misclassification error rate low even when $N$ is small, see the numerical study in Section 5. Theorems 4.2 and 4.3 are examples of how to derive generalisation errors of a neural network-based classifier in the change-point detection task. The same workflow can be employed in other types of changes, provided that suitable representation results of likelihood-based tests in terms of neural networks (e.g. Lemma 3.2) can be obtained. In a general result of this type, the generalisation error of the neural network will again be bounded by a sum of the error of the likelihood-based classifier together with a term originating from the VC-dimension bound of the complexity of the neural network architecture. We further remark that for simplicity of discussion, we have focused our attention on data models where the noise vector $\boldsymbol{\xi}=\boldsymbol{X}-\mathbb{E}\boldsymbol{X}$ has independent and identically distributed normal components. However, since CUSUM-based tests are available for temporally correlated or sub-Weibull data, with suitably adjusted test threshold values, the above theoretical results readily generalise to such settings. See Theorems A.3 and A.5 in appendix for more details.

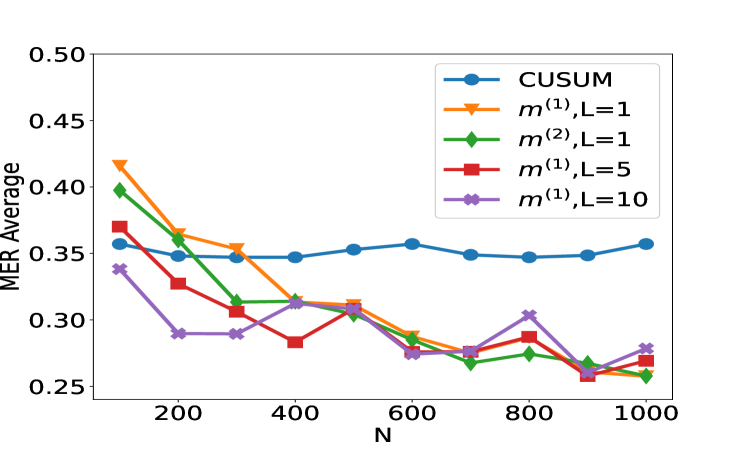

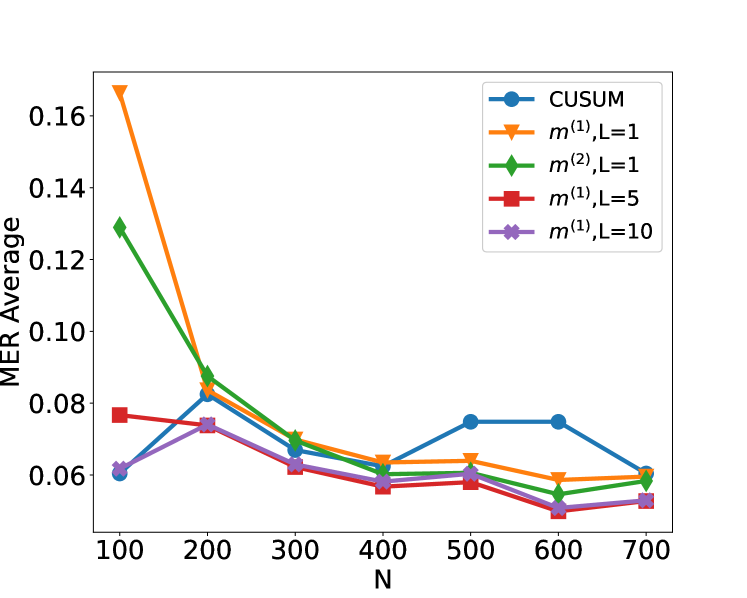

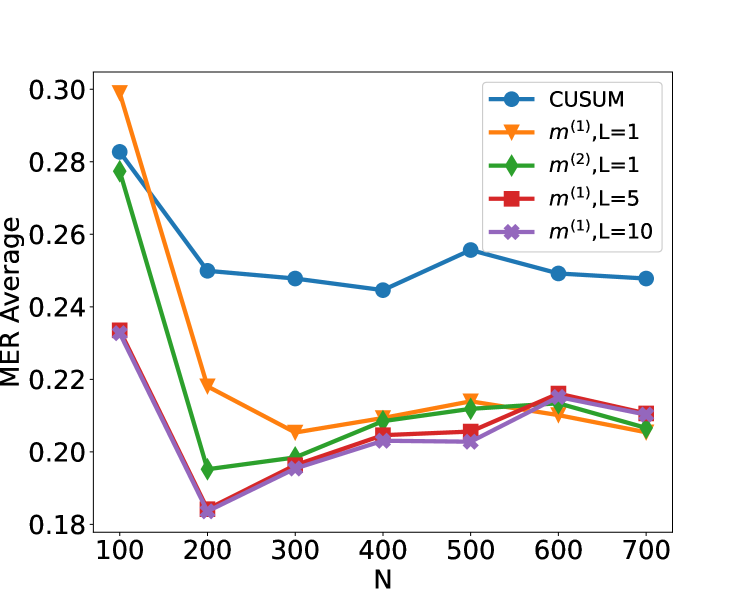

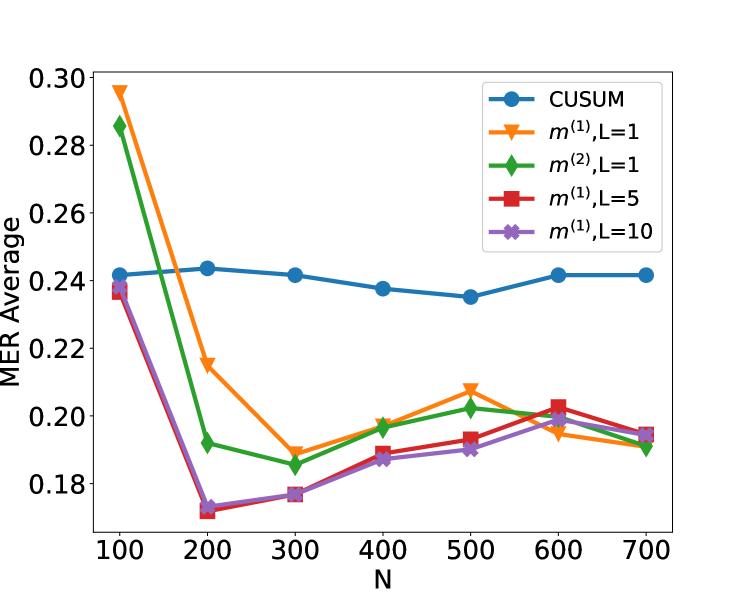

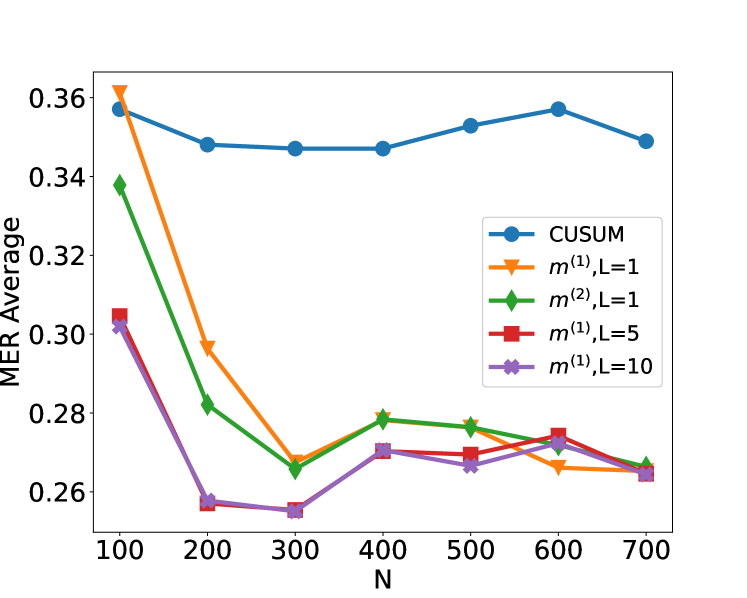

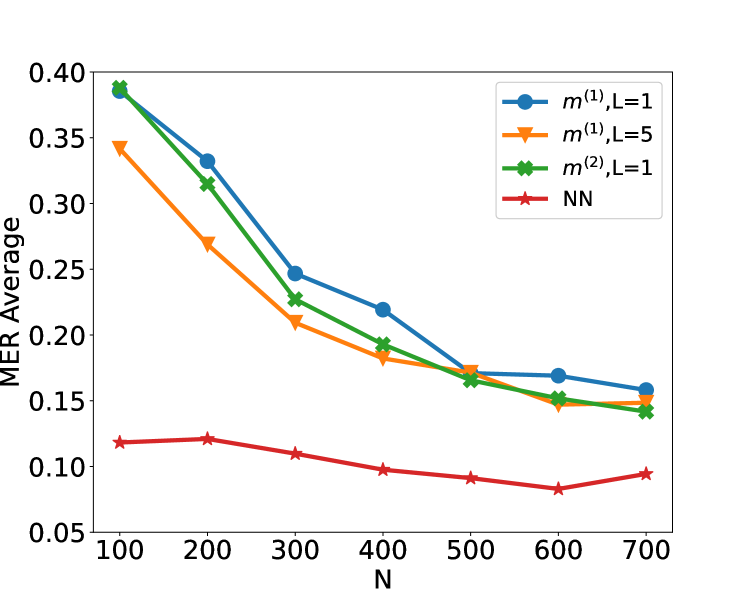

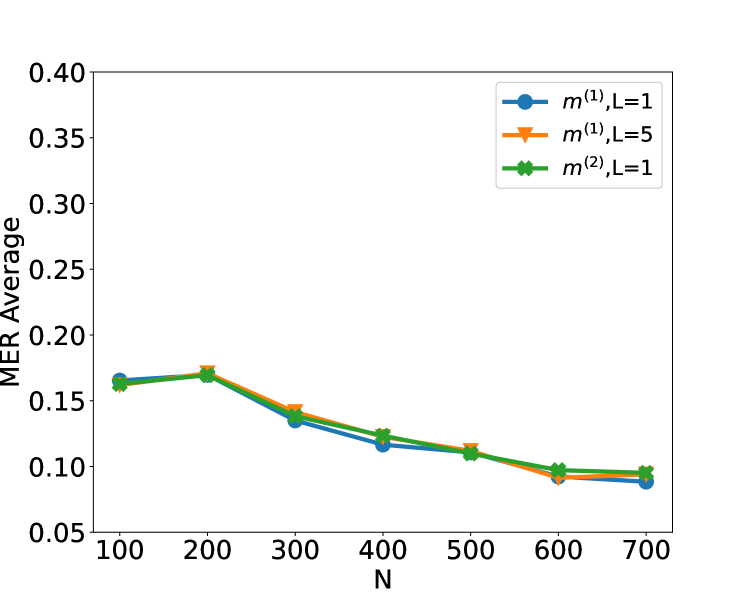

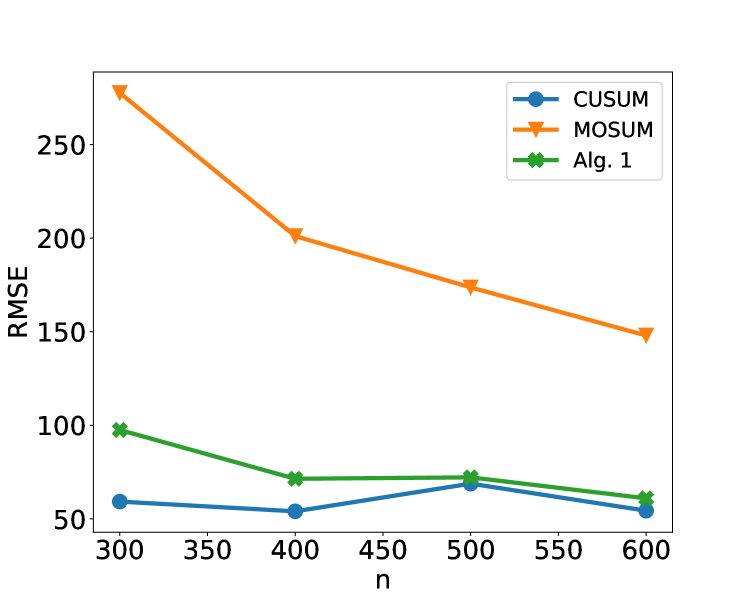

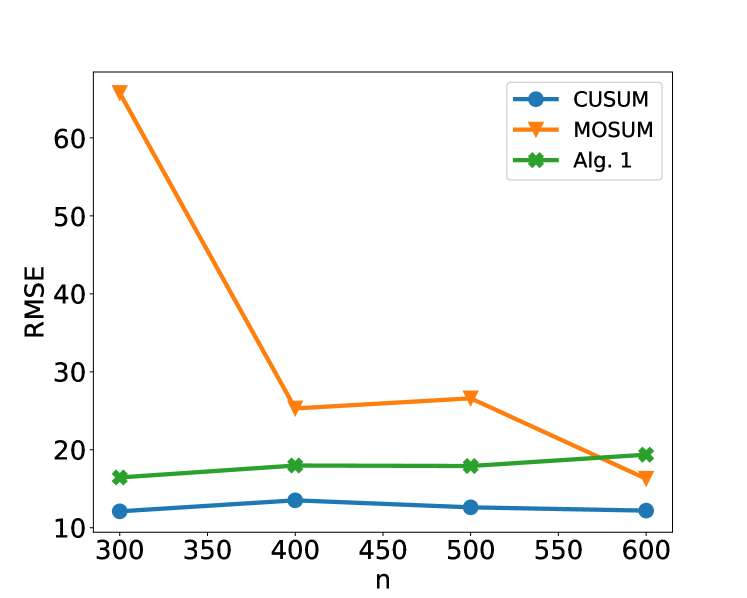

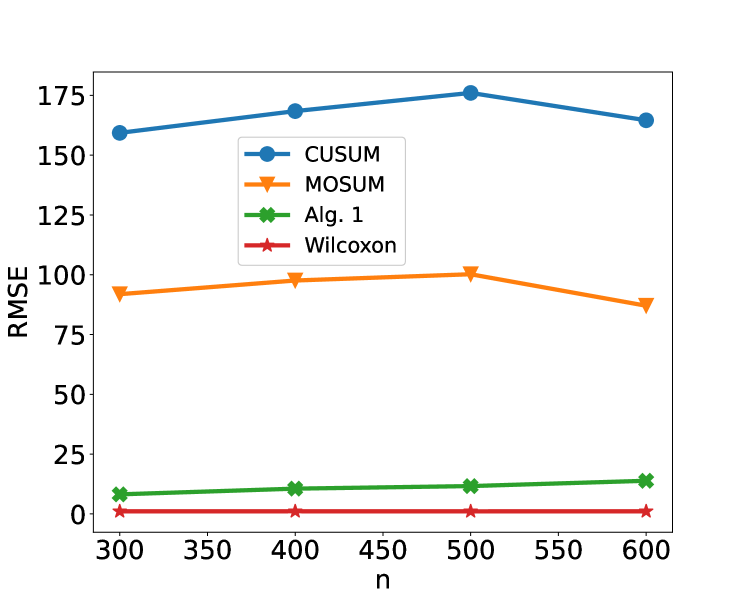

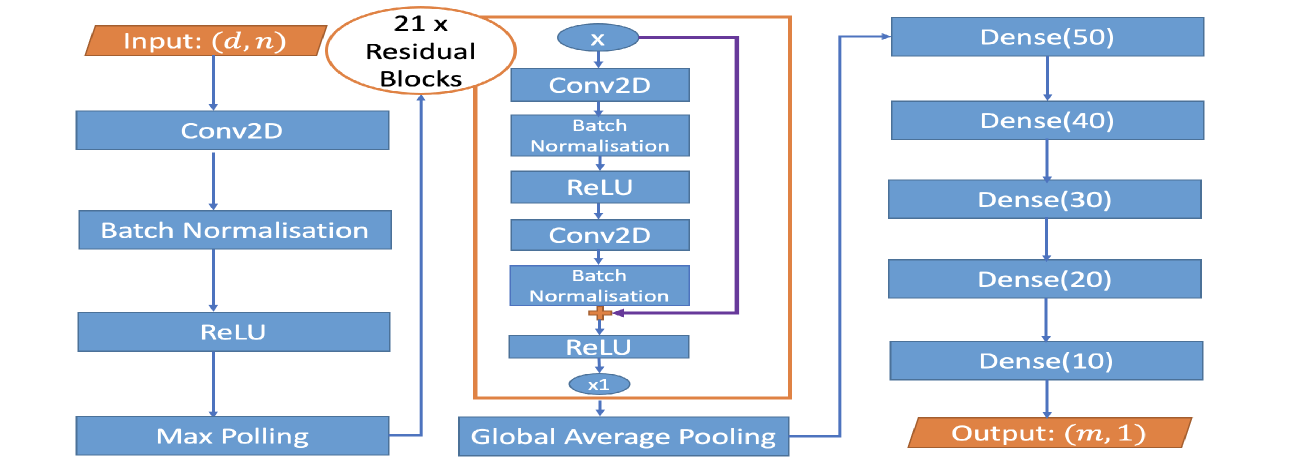

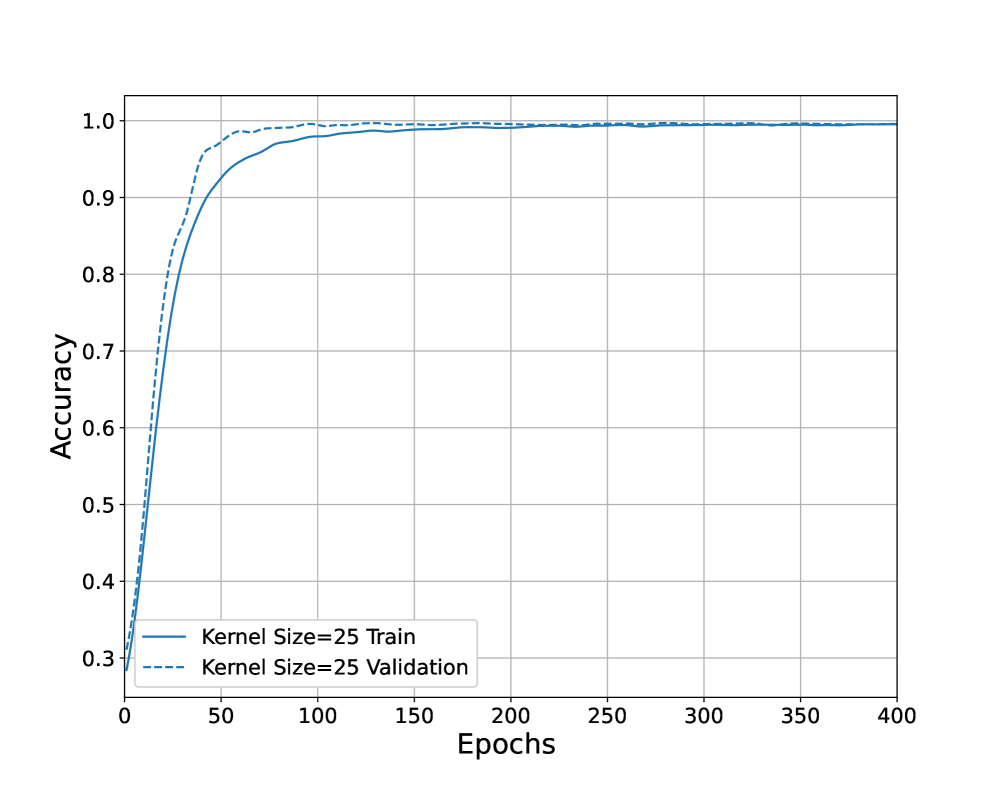

5 Numerical study

We now investigate empirically our approach of learning a change-point detection method by training a neural network. Motivated by the results from the previous section we will fit a neural network with a single layer and consider how varying the number of hidden layers and the amount of training data affects performance. We will compare to a test based on the CUSUM statistic, both for scenarios where the noise is independent and Gaussian, and for scenarios where there is auto-correlation or heavy-tailed noise. The CUSUM test can be sensitive to the choice of threshold, particularly when we do not have independent Gaussian noise, so we tune its threshold based on training data. When training the neural network, we first standardise the data onto $[0,1]$ , i.e. $\tilde{\boldsymbol{x}}_{i}=((x_{ij}-x_{i}^{\mathrm{min}})/(x_{i}^{\mathrm{max}%

}-x_{i}^{\mathrm{min}}))_{j∈[n]}$ where $x_{i}^{\mathrm{max}}:=\max_{j}x_{ij},x_{i}^{\mathrm{min}}:=\min_{j}x_{ij}$ . This makes the neural network procedure invariant to either adding a constant to the data or scaling the data by a constant, which are natural properties to require. We train the neural network by minimising the cross-entropy loss on the training data. We run training for 200 epochs with a batch size of 32 and a learning rate of 0.001 using the Adam optimiser (Kingma and Ba, 2015). These hyperparameters are chosen based on a training dataset with cross-validation, more details can be found in Appendix B. We generate our data as follows. Given a sequence of length $n$ , we draw $\tau\sim\mathrm{Unif}\{2,...,n-2\}$ , set $\mu_{\mathrm{L}}=0$ and draw $\mu_{\mathrm{R}}|\tau\sim\mathrm{Unif}([-1.5b,-0.5b]\cup[0.5b,1.5b])$ , where $b:=\sqrt{\frac{8n\log(20n)}{\tau(n-\tau)}}$ is chosen in line with Lemma 4.1 to ensure a good range of signal-to-noise ratios. We then generate $\boldsymbol{x}_{1}=(\mu_{\mathrm{L}}\mathbbm{1}_{\{t≤\tau\}}+\mu_{\mathrm{R%

}}\mathbbm{1}_{\{t>\tau\}}+\varepsilon_{t})_{t∈[n]}$ , with the noise $(\varepsilon_{t})_{t∈[n]}$ following an $\mathrm{AR}(1)$ model with possibly time-varying autocorrelation $\varepsilon_{t}|\rho_{t}=\xi_{1}$ for $t=1$ and $\rho_{t}\varepsilon_{t-1}+\xi_{t}$ for $t≥ 2$ , where $(\xi_{t})_{t∈[n]}$ are independent, possibly heavy-tailed noise. The autocorrelations $\rho_{t}$ and innovations $\xi_{t}$ are from one of the three scenarios:

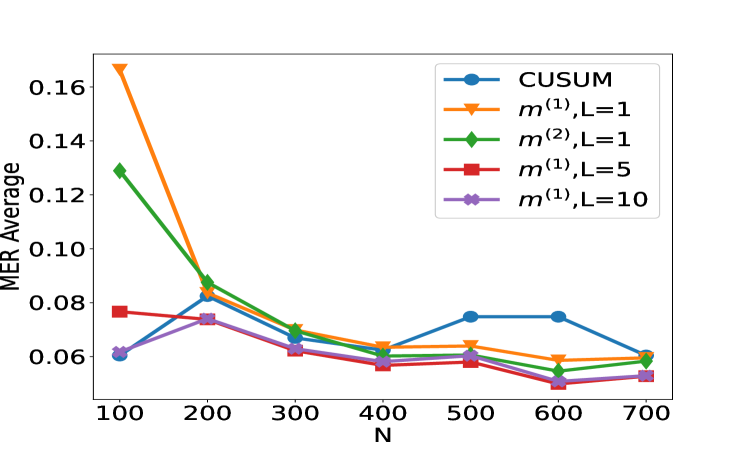

1. $n=100$ , $N∈\{100,200,...,700\}$ , $\rho_{t}=0$ and $\xi_{t}\sim N(0,1)$ .

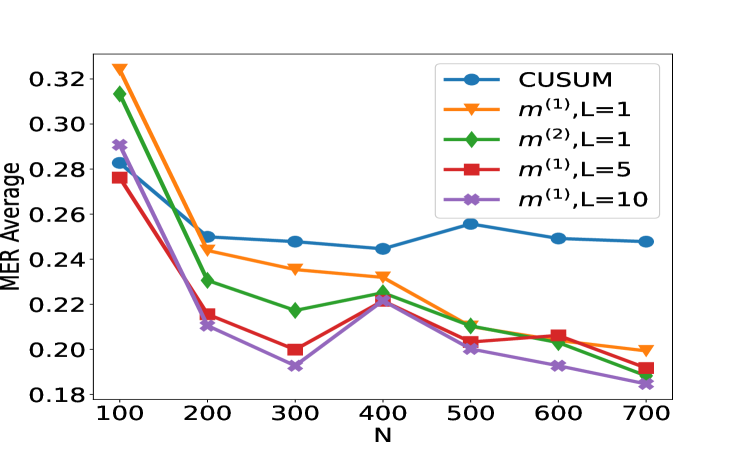

1. $n=100$ , $N∈\{100,200,...,700\}$ , $\rho_{t}=0.7$ and $\xi_{t}\sim N(0,1)$ .

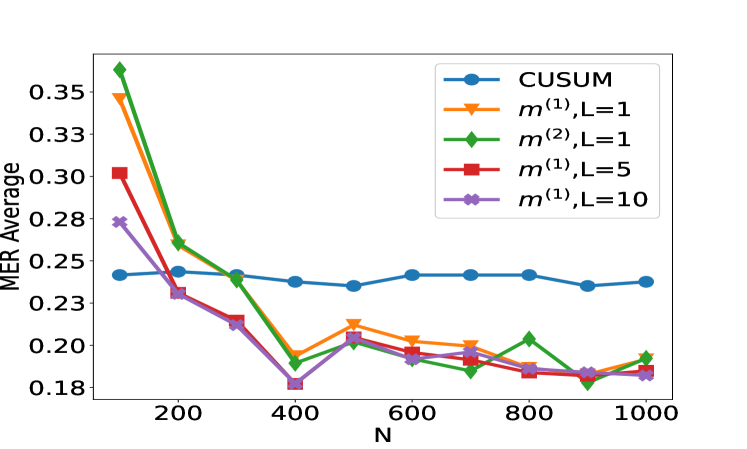

1. $n=100$ , $N∈\{100,200,...,1000\}$ , $\rho_{t}\sim\mathrm{Unif}([0,1])$ and $\xi_{t}\sim N(0,2)$ .

1. $n=100$ , $N∈\{100,200,...,1000\}$ , $\rho_{t}=0$ and $\xi_{t}\sim\text{Cauchy}(0,0.3)$ .

The above procedure is then repeated $N/2$ times to generate independent sequences $\boldsymbol{x}_{1},...,\boldsymbol{x}_{N/2}$ with a single change, and the associated labels are $(y_{1},...,y_{N/2})^{→p}=\mathbf{1}_{N/2}$ . We then repeat the process another $N/2$ times with $\mu_{\mathrm{R}}=\mu_{\mathrm{L}}$ to generate sequences without changes $\boldsymbol{x}_{N/2+1},...,\boldsymbol{x}_{N}$ with $(y_{N/2+1},...,y_{N})^{→p}=\mathbf{0}_{N/2}$ . The data with and without change $(\boldsymbol{x}_{i},y_{i})_{i∈[N]}$ are combined and randomly shuffled to form the training data. The test data are generated in a similar way, with a sample size $N_{\mathrm{test}}=30000$ and the slight modification that $\mu_{\mathrm{R}}|\tau\sim\mathrm{Unif}([-1.75b,-0.25b]\cup[0.25b,1.75b])$ when a change occurs. We note that the test data is drawn from the same distribution as the training set, though potentially having changes with signal-to-noise ratios outside the range covered by the training set. We have also conducted robustness studies to investigate the effect of training the neural networks on scenario S1 and test on S1 ${}^{\prime}$ , S2 or S3. Qualitatively similar results to Figure 2 have been obtained in this misspecified setting (see Figure 6 in appendix).

<details>

<summary>x2.png Details</summary>

### Visual Description

## Line Chart: MER Average vs. N for Different Algorithms

### Overview

The image presents a line chart comparing the Mean Error Rate (MER) Average for several algorithms as a function of 'N', likely representing sample size or number of iterations. The algorithms are CUSUM, m^(1),L=1, m^(2),L=1, m^(1),L=5, and m^(1),L=10. The chart visually demonstrates how the MER Average changes with increasing N for each algorithm.

### Components/Axes

* **X-axis:** Labeled "N", ranging from approximately 100 to 700, with tick marks at 100, 200, 300, 400, 500, 600, and 700.

* **Y-axis:** Labeled "MER Average", ranging from approximately 0.06 to 0.16, with tick marks at 0.06, 0.08, 0.10, 0.12, 0.14, and 0.16.

* **Legend:** Located in the top-right corner of the chart. It identifies each line with the following labels and corresponding colors:

* CUSUM (Blue)

* m^(1),L=1 (Orange)

* m^(2),L=1 (Green)

* m^(1),L=5 (Red)

* m^(1),L=10 (Purple)

### Detailed Analysis

Here's a breakdown of each line's trend and approximate data points, verified against the legend colors:

* **CUSUM (Blue):** The line starts at approximately (100, 0.13), decreases to around (200, 0.07), fluctuates between approximately 0.06 and 0.08 until around (600, 0.08), and then increases slightly to approximately (700, 0.09).

* **m^(1),L=1 (Orange):** This line exhibits a steep decline from approximately (100, 0.17) to around (200, 0.09), then continues to decrease, reaching a minimum of approximately (600, 0.05), and slightly increases to approximately (700, 0.06).

* **m^(2),L=1 (Green):** The line starts at approximately (100, 0.14), decreases to around (200, 0.08), and then fluctuates between approximately 0.06 and 0.07, ending at approximately (700, 0.06).

* **m^(1),L=5 (Red):** The line begins at approximately (100, 0.08), decreases to around (200, 0.07), and then fluctuates between approximately 0.05 and 0.06, ending at approximately (700, 0.05).

* **m^(1),L=10 (Purple):** The line starts at approximately (100, 0.07), decreases to around (200, 0.06), and then fluctuates between approximately 0.05 and 0.06, ending at approximately (700, 0.06).

### Key Observations

* The algorithm m^(1),L=1 (orange) shows the most significant initial decrease in MER Average as N increases.

* The algorithms m^(1),L=5 (red) and m^(1),L=10 (purple) consistently exhibit the lowest MER Average values across the range of N.

* CUSUM (blue) has a relatively stable MER Average after the initial decrease.

* m^(2),L=1 (green) shows a moderate decrease in MER Average, but remains higher than m^(1),L=5 and m^(1),L=10.

### Interpretation

The chart suggests that the algorithms m^(1),L=5 and m^(1),L=10 are the most effective in minimizing the Mean Error Rate, particularly as the sample size (N) increases. The algorithm m^(1),L=1 demonstrates a rapid initial improvement but plateaus at a higher MER Average compared to the other two. CUSUM shows a moderate performance, while m^(2),L=1 performs relatively worse.

The parameter 'L' appears to play a crucial role in the performance of the m^(1) algorithm, with larger values of L (5 and 10) leading to lower MER Averages. This could indicate that a larger 'L' value allows for more accurate error detection or a more stable estimation process. The initial steep decline in MER Average for all algorithms suggests that increasing the sample size initially provides significant benefits in reducing error rates. However, beyond a certain point, the improvements diminish, and the algorithms converge towards a stable MER Average. The differences between the algorithms become less pronounced at higher values of N.

</details>

<details>

<summary>x3.png Details</summary>

### Visual Description

## Line Chart: MER Average vs. N for Different Methods

### Overview

This image presents a line chart comparing the Mean Error Rate (MER) Average for several methods as a function of 'N'. The methods include CUSUM, and variations of m^(1) and m^(2) with different values of L (1, 5, and 10). The chart aims to demonstrate how the performance of each method changes with increasing 'N'.

### Components/Axes

* **X-axis:** Labeled "N", ranging from approximately 100 to 700, with tick marks at 100, 200, 300, 400, 500, 600, and 700.

* **Y-axis:** Labeled "MER Average", ranging from approximately 0.18 to 0.32, with tick marks at 0.18, 0.20, 0.22, 0.24, 0.26, 0.28, 0.30, and 0.32.

* **Legend:** Located in the top-right corner of the chart. It identifies the following data series:

* CUSUM (Blue)

* m^(1), L=1 (Orange)

* m^(2), L=1 (Green)

* m^(1), L=5 (Red)

* m^(1), L=10 (Purple)

### Detailed Analysis

Here's a breakdown of each line's trend and approximate data points:

* **CUSUM (Blue):** The line starts at approximately (100, 0.26), decreases slightly to around (200, 0.25), remains relatively stable between (200, 0.25) and (600, 0.25), and then increases slightly to approximately (700, 0.26).

* **m^(1), L=1 (Orange):** This line exhibits a strong downward trend from approximately (100, 0.32) to (200, 0.22). It then plateaus around (300, 0.23) and gradually decreases to approximately (700, 0.20).

* **m^(2), L=1 (Green):** This line also shows a significant decrease from approximately (100, 0.31) to (200, 0.22). It then fluctuates between approximately (200, 0.22) and (600, 0.22), and decreases slightly to approximately (700, 0.21).

* **m^(1), L=5 (Red):** This line starts at approximately (100, 0.28), decreases to around (200, 0.21), then increases to approximately (300, 0.23), and decreases again to approximately (700, 0.19).

* **m^(1), L=10 (Purple):** This line begins at approximately (100, 0.29), decreases steadily to approximately (700, 0.18). It shows the most consistent downward trend among all the methods.

### Key Observations

* The methods m^(1), L=1 and m^(2), L=1 start with the highest MER averages and show significant improvement as N increases.

* The CUSUM method maintains a relatively stable MER average across the range of N values.

* m^(1), L=10 consistently exhibits the lowest MER average, indicating the best performance across all N values.

* m^(1), L=5 shows some fluctuation in MER average, with a slight increase around N=300.

### Interpretation

The chart demonstrates the impact of different methods and parameter settings (L) on the Mean Error Rate (MER) as the sample size (N) increases. The consistent decrease in MER for m^(1), L=10 suggests that increasing the value of L improves the method's performance, likely by reducing sensitivity to noise or outliers. The stability of the CUSUM method indicates its robustness to changes in N. The initial high MER averages for m^(1), L=1 and m^(2), L=1 suggest that these methods may require larger sample sizes to achieve comparable performance to CUSUM or m^(1), L=10. The fluctuations observed in m^(1), L=5 could indicate a sensitivity to specific data patterns or a suboptimal parameter setting for certain N values. Overall, the data suggests that the choice of method and parameter tuning are crucial for achieving accurate results, and that increasing the value of L generally leads to improved performance.

</details>

(a) Scenario S1 with $\rho_{t}=0$ (b) Scenario S1 ${}^{\prime}$ with $\rho_{t}=0.7$

<details>

<summary>x4.png Details</summary>

### Visual Description

## Line Chart: MER Average vs. N for Different Methods

### Overview

This image presents a line chart comparing the Mean Error Rate (MER) Average for several methods as a function of 'N', likely representing sample size or number of observations. The methods being compared are CUSUM, m<sup>(1)</sup> with L=1, m<sup>(2)</sup> with L=1, m<sup>(1)</sup> with L=5, and m<sup>(1)</sup> with L=10.

### Components/Axes

* **X-axis:** Labeled "N", ranging from approximately 0 to 1000, with markers at 200, 400, 600, 800, and 1000.

* **Y-axis:** Labeled "MER Average", ranging from approximately 0.18 to 0.36, with markers at 0.18, 0.20, 0.22, 0.24, 0.26, 0.28, 0.30, 0.32, 0.34, and 0.36.

* **Legend:** Located in the top-right corner of the chart. It identifies each line with its corresponding method:

* Blue circle: CUSUM

* Orange triangle: m<sup>(1)</sup>, L=1

* Green diamond: m<sup>(2)</sup>, L=1

* Red triangle: m<sup>(1)</sup>, L=5

* Purple cross: m<sup>(1)</sup>, L=10

### Detailed Analysis

* **CUSUM (Blue Line):** The line starts at approximately 0.25 at N=0, fluctuates between approximately 0.23 and 0.25, and ends at approximately 0.24 at N=1000. It shows a relatively stable performance across the range of N.

* **m<sup>(1)</sup>, L=1 (Orange Line):** This line begins at approximately 0.32 at N=0, decreases sharply to approximately 0.22 at N=200, continues to decrease to approximately 0.19 at N=400, and then plateaus around 0.18-0.20 for the remainder of the range, ending at approximately 0.19 at N=1000.

* **m<sup>(2)</sup>, L=1 (Green Line):** This line starts at approximately 0.36 at N=0, decreases rapidly to approximately 0.22 at N=200, continues to decrease to approximately 0.18 at N=400, and then remains relatively stable between approximately 0.18 and 0.19, ending at approximately 0.18 at N=1000.

* **m<sup>(1)</sup>, L=5 (Red Line):** This line begins at approximately 0.30 at N=0, decreases to approximately 0.21 at N=200, continues to decrease to approximately 0.18 at N=400, and then fluctuates between approximately 0.18 and 0.20, ending at approximately 0.18 at N=1000.

* **m<sup>(1)</sup>, L=10 (Purple Line):** This line starts at approximately 0.27 at N=0, decreases to approximately 0.20 at N=200, continues to decrease to approximately 0.18 at N=400, and then remains relatively stable between approximately 0.17 and 0.19, ending at approximately 0.18 at N=1000.

### Key Observations

* All methods show a decreasing MER Average as N increases, indicating improved performance with larger sample sizes.

* m<sup>(2)</sup>, L=1 and m<sup>(1)</sup>, L=1 initially have the highest MER Average, but they also show the most significant decrease in MER Average as N increases.

* CUSUM exhibits the most stable performance, with the smallest fluctuations in MER Average.

* The methods m<sup>(1)</sup>, L=5 and m<sup>(1)</sup>, L=10 converge to similar MER Average values as N increases.

### Interpretation

The chart demonstrates the performance of different methods for detecting changes or anomalies, as measured by the Mean Error Rate. The 'N' parameter likely represents the number of data points used in the analysis. The results suggest that increasing the sample size (N) generally improves the accuracy of all methods.

The initial higher error rates for m<sup>(2)</sup>, L=1 and m<sup>(1)</sup>, L=1 could indicate that these methods require a larger sample size to achieve optimal performance. The stability of the CUSUM method suggests it is less sensitive to sample size variations, making it a robust choice when sample sizes are limited.

The convergence of m<sup>(1)</sup>, L=5 and m<sup>(1)</sup>, L=10 suggests that the value of 'L' (likely a smoothing parameter or window size) has a diminishing effect on performance beyond a certain point. The choice of method and parameter settings should be based on the specific application and the trade-off between initial performance and sensitivity to sample size.

</details>

<details>

<summary>x5.png Details</summary>

### Visual Description

\n

## Line Chart: MER Average vs. N for Different Methods

### Overview

This image presents a line chart comparing the Mean Error Rate (MER) Average for several methods as a function of 'N', likely representing sample size or number of iterations. The methods being compared are CUSUM, m^(1),L=1, m^(2),L=1, m^(1),L=5, and m^(1),L=10. The chart visually demonstrates how the MER Average changes for each method as N increases from approximately 100 to 1000.

### Components/Axes

* **X-axis:** Labeled "N", ranging from approximately 100 to 1000. The scale appears linear.

* **Y-axis:** Labeled "MER Average", ranging from 0.25 to 0.50. The scale appears linear.

* **Legend:** Located in the top-right corner of the chart. It identifies each line with the following labels and corresponding colors:

* CUSUM (Blue)

* m^(1),L=1 (Orange)

* m^(2),L=1 (Light Green)

* m^(1),L=5 (Red)

* m^(1),L=10 (Purple)

### Detailed Analysis

* **CUSUM (Blue Line):** The blue line representing CUSUM starts at approximately 0.37 at N=100 and gradually increases to approximately 0.36 at N=1000. It exhibits a relatively flat trend with minor fluctuations.

* N=100: MER Average ≈ 0.37

* N=200: MER Average ≈ 0.36

* N=400: MER Average ≈ 0.35

* N=600: MER Average ≈ 0.35

* N=800: MER Average ≈ 0.36

* N=1000: MER Average ≈ 0.36

* **m^(1),L=1 (Orange Line):** This line begins at approximately 0.42 at N=100 and decreases sharply to approximately 0.27 at N=1000. It shows a strong downward trend.

* N=100: MER Average ≈ 0.42

* N=200: MER Average ≈ 0.36

* N=400: MER Average ≈ 0.31

* N=600: MER Average ≈ 0.29

* N=800: MER Average ≈ 0.27

* N=1000: MER Average ≈ 0.27

* **m^(2),L=1 (Light Green Line):** Starting at approximately 0.39 at N=100, this line decreases to approximately 0.26 at N=1000. It exhibits a similar downward trend to m^(1),L=1, but starts at a higher MER Average.

* N=100: MER Average ≈ 0.39

* N=200: MER Average ≈ 0.34

* N=400: MER Average ≈ 0.30

* N=600: MER Average ≈ 0.28

* N=800: MER Average ≈ 0.26

* N=1000: MER Average ≈ 0.26

* **m^(1),L=5 (Red Line):** This line starts at approximately 0.32 at N=100 and decreases to approximately 0.25 at N=1000. It shows a moderate downward trend.

* N=100: MER Average ≈ 0.32

* N=200: MER Average ≈ 0.29

* N=400: MER Average ≈ 0.28

* N=600: MER Average ≈ 0.27

* N=800: MER Average ≈ 0.26

* N=1000: MER Average ≈ 0.25

* **m^(1),L=10 (Purple Line):** Beginning at approximately 0.34 at N=100, this line decreases to approximately 0.26 at N=1000. It exhibits a similar downward trend to m^(1),L=5, but starts at a higher MER Average.

* N=100: MER Average ≈ 0.34

* N=200: MER Average ≈ 0.30

* N=400: MER Average ≈ 0.28

* N=600: MER Average ≈ 0.27

* N=800: MER Average ≈ 0.28

* N=1000: MER Average ≈ 0.26

### Key Observations

* All methods, except CUSUM, demonstrate a decreasing MER Average as N increases, suggesting improved performance with larger sample sizes or more iterations.

* The m^(1),L=1 method exhibits the most significant decrease in MER Average.

* CUSUM maintains a relatively constant MER Average across all values of N.

* The m^(2),L=1 method starts with the highest MER Average but also shows a substantial reduction as N increases.

### Interpretation

The chart suggests that the methods m^(1),L=1, m^(2),L=1, m^(1),L=5, and m^(1),L=10 are all sensitive to the value of N, with their performance (as measured by MER Average) improving as N increases. This implies that these methods benefit from more data or iterations. The CUSUM method, however, appears to be less affected by N, maintaining a consistent level of performance. This could indicate that CUSUM is more robust to variations in sample size or that it reaches a performance plateau quickly. The differences in initial MER Average and the rate of decrease among the m^(1) and m^(2) methods suggest that the choice of method and its parameters (L) can significantly impact performance. The parameter 'L' appears to influence the rate of improvement, with larger values of L potentially leading to slower but more stable convergence. The chart provides valuable insights for selecting an appropriate method based on the expected sample size and desired level of accuracy.

</details>

(c) Scenario S2 with $\rho_{t}\sim\text{Unif}([0,1])$ (d) Scenario S3 with Cauchy noise

Figure 2: Plot of the test set MER, computed on a test set of size $N_{\mathrm{test}}=30000$ , against training sample size $N$ for detecting the existence of a change-point on data series of length $n=100$ . We compare the performance of the CUSUM test and neural networks from four function classes: $\mathcal{H}_{1,m^{(1)}}$ , $\mathcal{H}_{1,m^{(2)}}$ , $\mathcal{H}_{5,m^{(1)}\mathbf{1}_{5}}$ and $\mathcal{H}_{10,m^{(1)}\mathbf{1}_{10}}$ where $m^{(1)}=4\lfloor\log_{2}(n)\rfloor$ and $m^{(2)}=2n-2$ respectively under scenarios S1, S1 ${}^{\prime}$ , S2 and S3 described in Section 5.

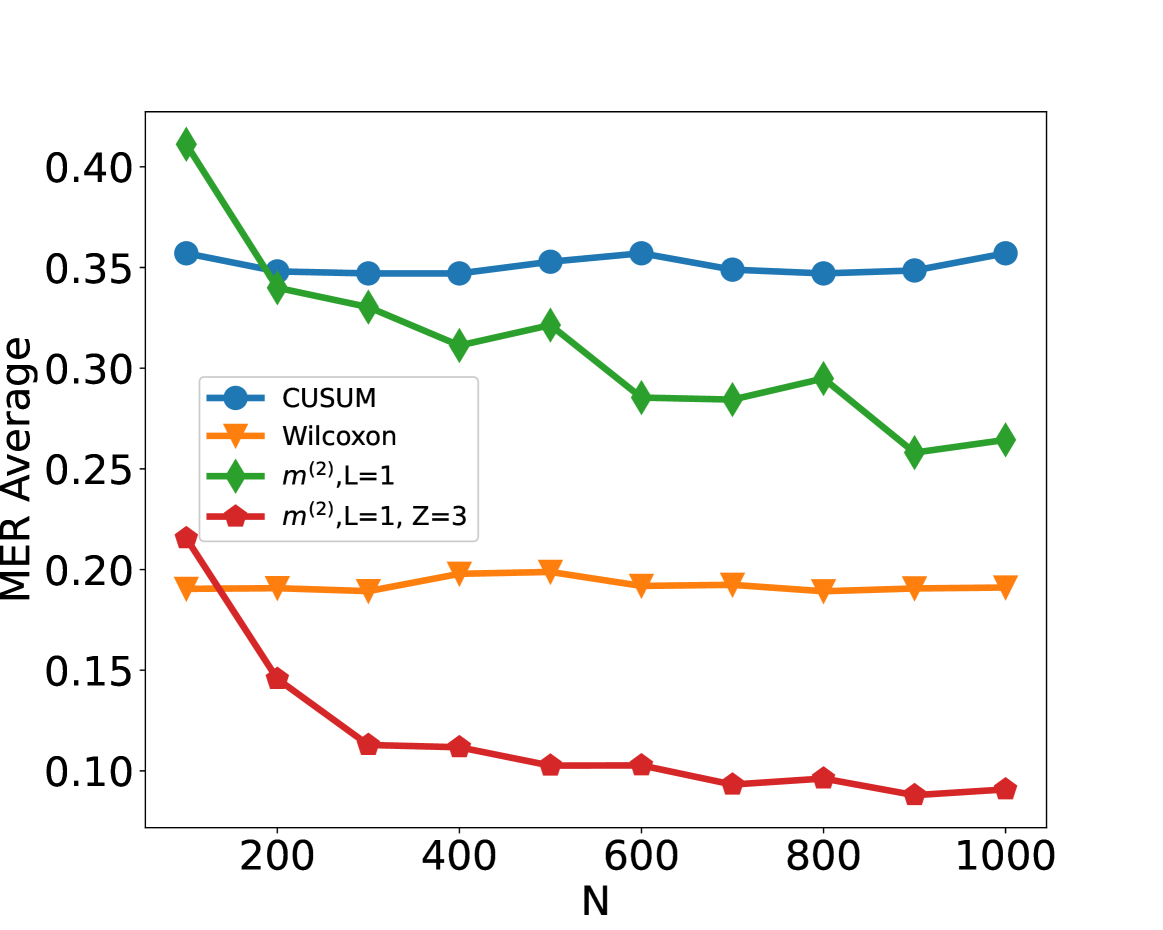

We compare the performance of the CUSUM-based classifier with the threshold cross-validated on the training data with neural networks from four function classes: $\mathcal{H}_{1,m^{(1)}}$ , $\mathcal{H}_{1,m^{(2)}}$ , $\mathcal{H}_{5,m^{(1)}\mathbf{1}_{5}}$ and $\mathcal{H}_{10,m^{(1)}\mathbf{1}_{10}}$ where $m^{(1)}=4\lfloor\log_{2}(n)\rfloor$ and $m^{(2)}=2n-2$ respectively (cf. Theorem 4.3 and Lemma 3.1). Figure 2 shows the test misclassification error rate (MER) of the four procedures in the four scenarios S1, S1 ${}^{\prime}$ , S2 and S3. We observe that when data are generated with independent Gaussian noise ( Figure 2 (a)), the trained neural networks with $m^{(1)}$ and $m^{(2)}$ single hidden layer nodes attain very similar test MER compared to the CUSUM-based classifier. This is in line with our Theorem 4.3. More interestingly, when noise has either autocorrelation ( Figure 2 (b, c)) or heavy-tailed distribution ( Figure 2 (d)), trained neural networks with $(L,\mathbf{m})$ : $(1,m^{(1)})$ , $(1,m^{(2)})$ , $(5,m^{(1)}\mathbf{1}_{5})$ and $(10,m^{(1)}\mathbf{1}_{10})$ outperform the CUSUM-based classifier, even after we have optimised the threshold choice of the latter. In addition, as shown in Figure 5 in the online supplement, when the first two layers of the network are set to carry out truncation, which can be seen as a composition of two ReLU operations, the resulting neural network outperforms the Wilcoxon statistics-based classifier (Dehling et al., 2015), which is a standard benchmark for change-point detection in the presence of heavy-tailed noise. Furthermore, from Figure 2, we see that increasing $L$ can significantly reduce the average MER when $N≤ 200$ . Theoretically, as the number of layers $L$ increases, the neural network is better able to approximate the optimal decision boundary, but it becomes increasingly difficult to train the weights due to issues such as vanishing gradients (He et al., 2016). A combination of these considerations leads us to develop deep neural network architecture with residual connections for detecting multiple changes and multiple change types in Section 6.

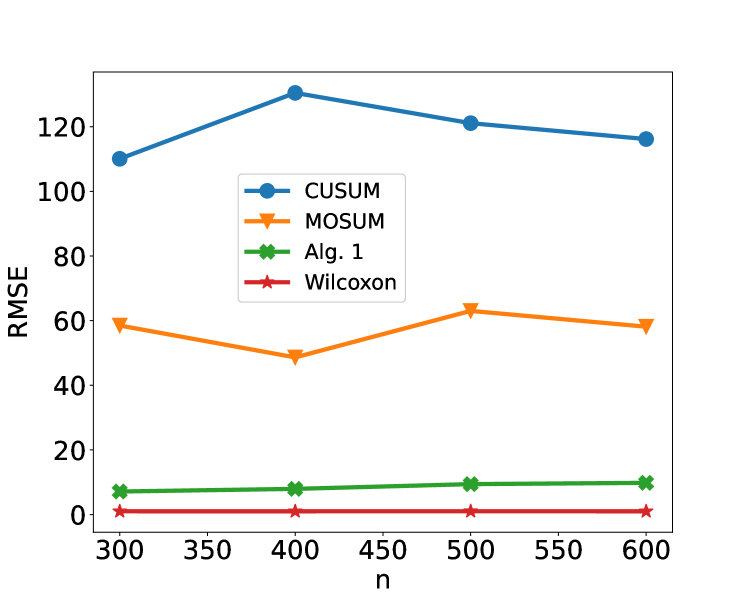

6 Detecting multiple changes and multiple change types – case study

From the previous section, we see that single and multiple hidden layer neural networks can represent CUSUM or generalised CUSUM tests and may perform better than likelihood-based test statistics when the model is misspecified. This prompted us to seek a general network architecture that can detect, and even classify, multiple types of change. Motivated by the similarities between signal processing and image recognition, we employed a deep convolutional neural network (CNN) (Yamashita et al., 2018) to learn the various features of multiple change-types. However, stacking more CNN layers cannot guarantee a better network because of vanishing gradients in training (He et al., 2016). Therefore, we adopted the residual block structure (He et al., 2016) for our neural network architecture. After experimenting with various architectures with different numbers of residual blocks and fully connected layers on synthetic data, we arrived at a network architecture with 21 residual blocks followed by a number of fully connected layers. Figure 9 shows an overview of the architecture of the final general-purpose deep neural network for change-point detection. The precise architecture and training methodology of this network $\widehat{NN}$ can be found in Appendix C. Neural Architecture Search (NAS) approaches (see Paaß and Giesselbach, 2023, Section 2.4.3) offer principled ways of selecting neural architectures. Some of these approaches could be made applicable in our setting. We demonstrate the power of our general purpose change-point detection network in a numerical study. We train the network on $N=10000$ instances of data sequences generated from a mixture of no change-point in mean or variance, change in mean only, change in variance only, no-change in a non-zero slope and change in slope only, and compare its classification performance on a test set of size $2500$ against that of oracle likelihood-based classifiers (where we pre-specify whether we are testing for change in mean, variance or slope) and adaptive likelihood-based classifiers (where we combine likelihood based tests using the Bayesian Information Criterion). Details of the data-generating mechanism and classifiers can be found in Appendix B. The classification accuracy of the three approaches in weak and strong signal-to-noise ratio settings are reported in Table 1. We see that the neural network-based approach achieves similar classification accuracy as adaptive likelihood based method for weak SNR and higher classification accuracy than the adaptive likelihood based method for strong SNR. We would not expect the neural network to outperform the oracle likelihood-based classifiers as it has no knowledge of the exact change-type of each time series.

Table 1: Test classification accuracy of oracle likelihood-ratio based method (LR ${}^{\mathrm{oracle}}$ ), adaptive likelihood ratio method (LR ${}^{\mathrm{adapt}}$ ) and our residual neural network (NN) classifier for setups with weak and strong signal-to-noise ratios (SNR). Data are generated as a mixture of no change-point in mean or variance (Class 1), change in mean only (Class 2), change in variance only (Class 3), no-change in a non-zero slope (Class 4), change in slope only (Class 5). We report the true positive rate of each class and the accuracy in the last row.

Weak SNR Strong SNR LR ${}^{\mathrm{oracle}}$ LR ${}^{\mathrm{adapt}}$ NN LR ${}^{\mathrm{oracle}}$ LR ${}^{\mathrm{adapt}}$ NN Class 1 0.9787 0.9457 0.8062 0.9787 0.9341 0.9651 Class 2 0.8443 0.8164 0.8882 1.0000 0.7784 0.9860 Class 3 0.8350 0.8291 0.8585 0.9902 0.9902 0.9705 Class 4 0.9960 0.9453 0.8826 0.9980 0.9372 0.9312 Class 5 0.8729 0.8604 0.8353 0.9958 0.9917 0.9147 Accuracy 0.9056 0.8796 0.8660 0.9924 0.9260 0.9672

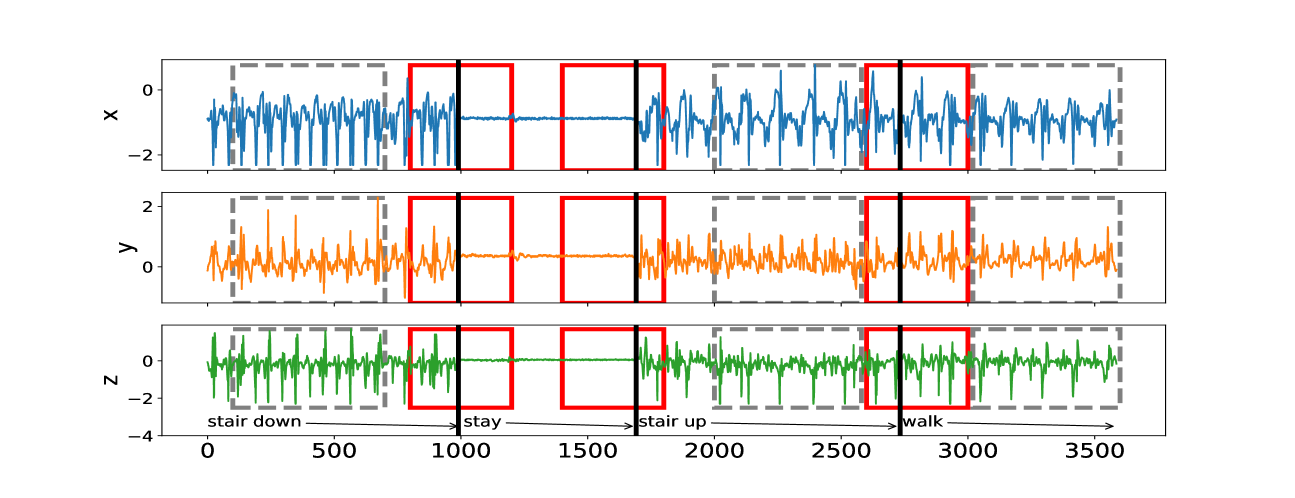

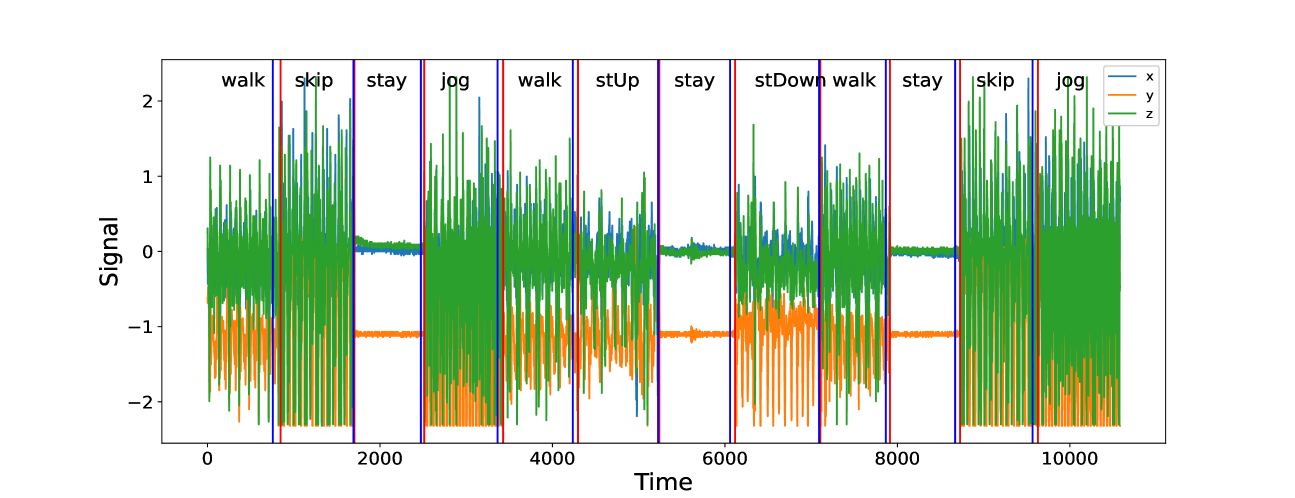

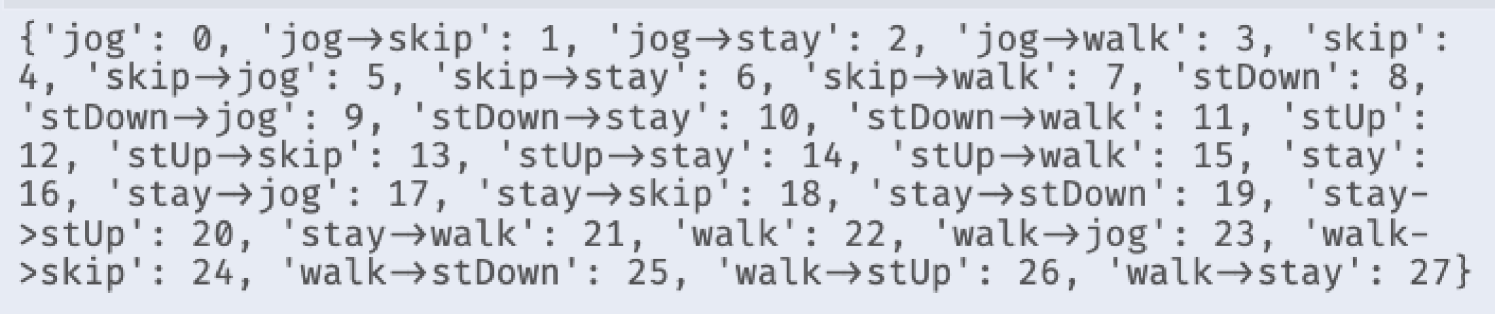

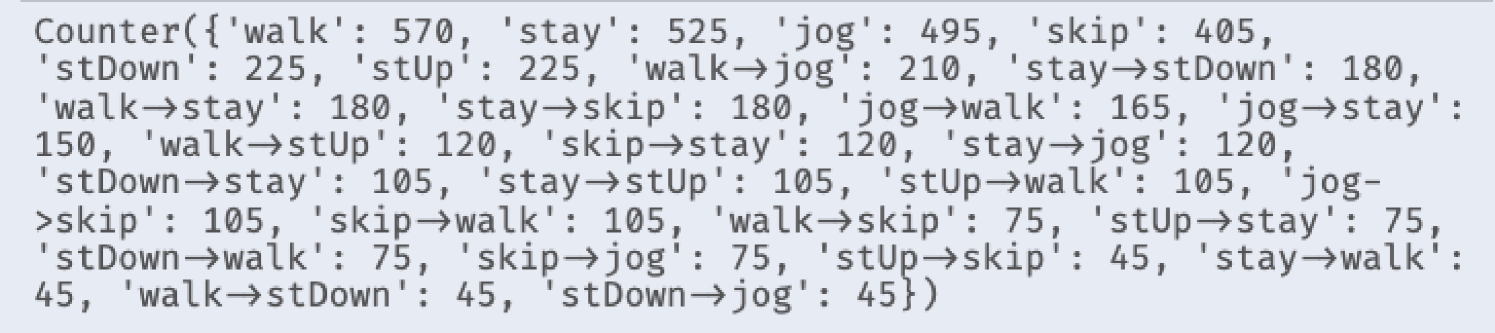

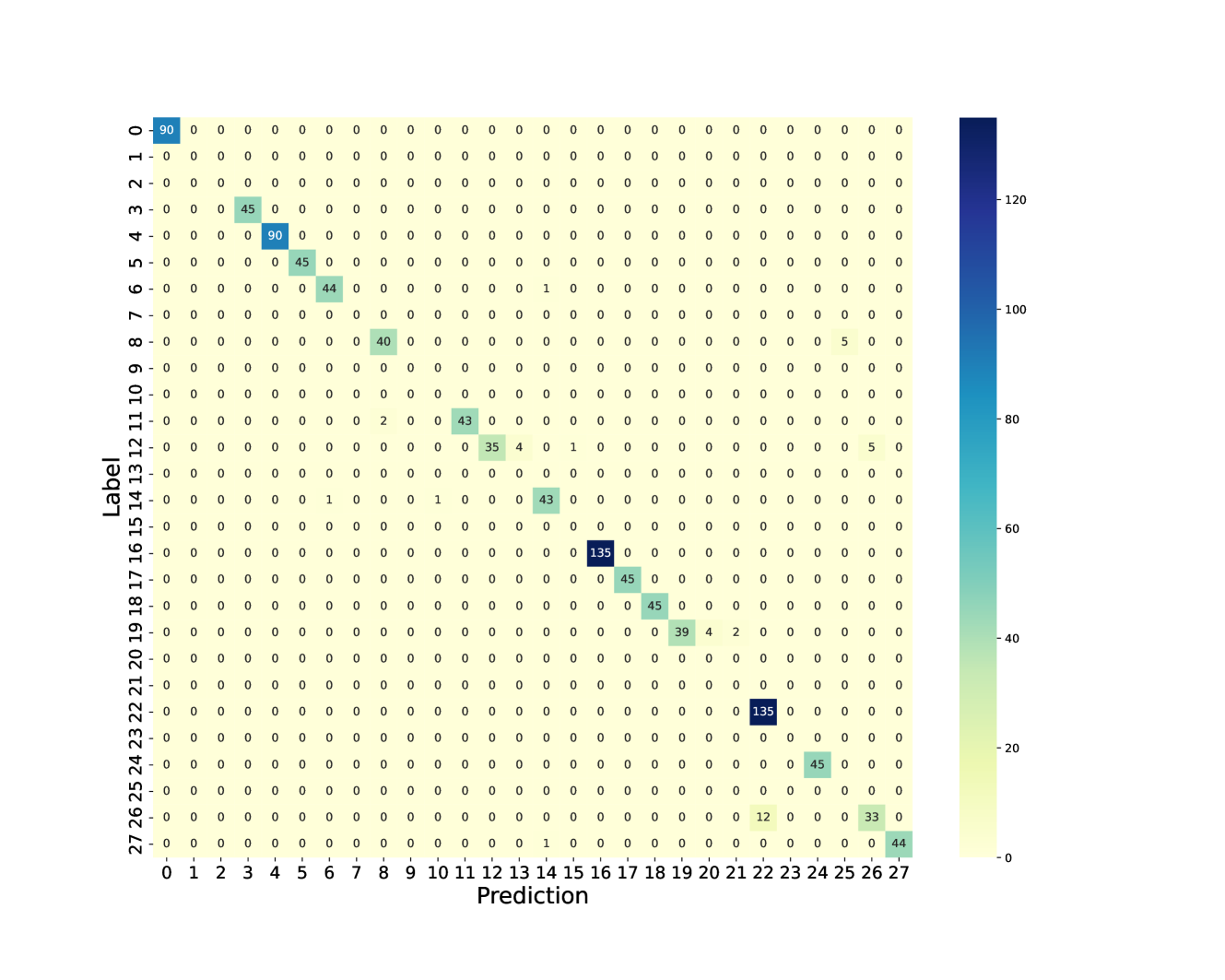

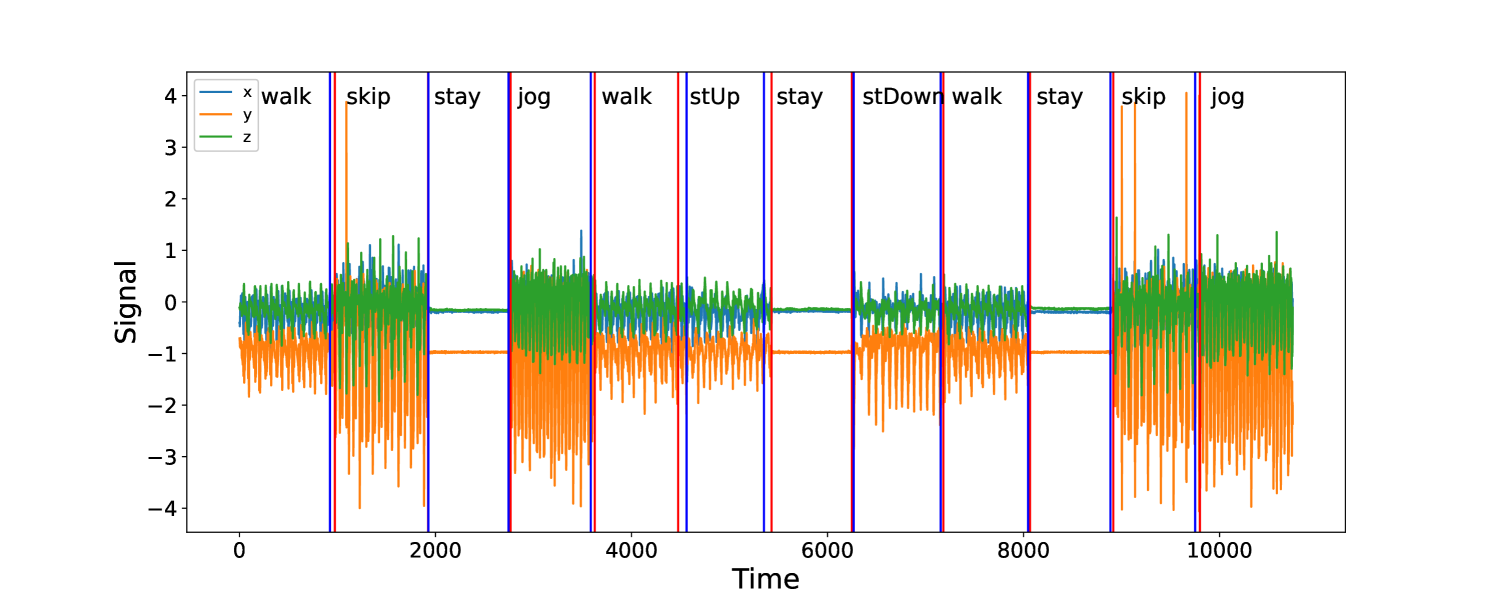

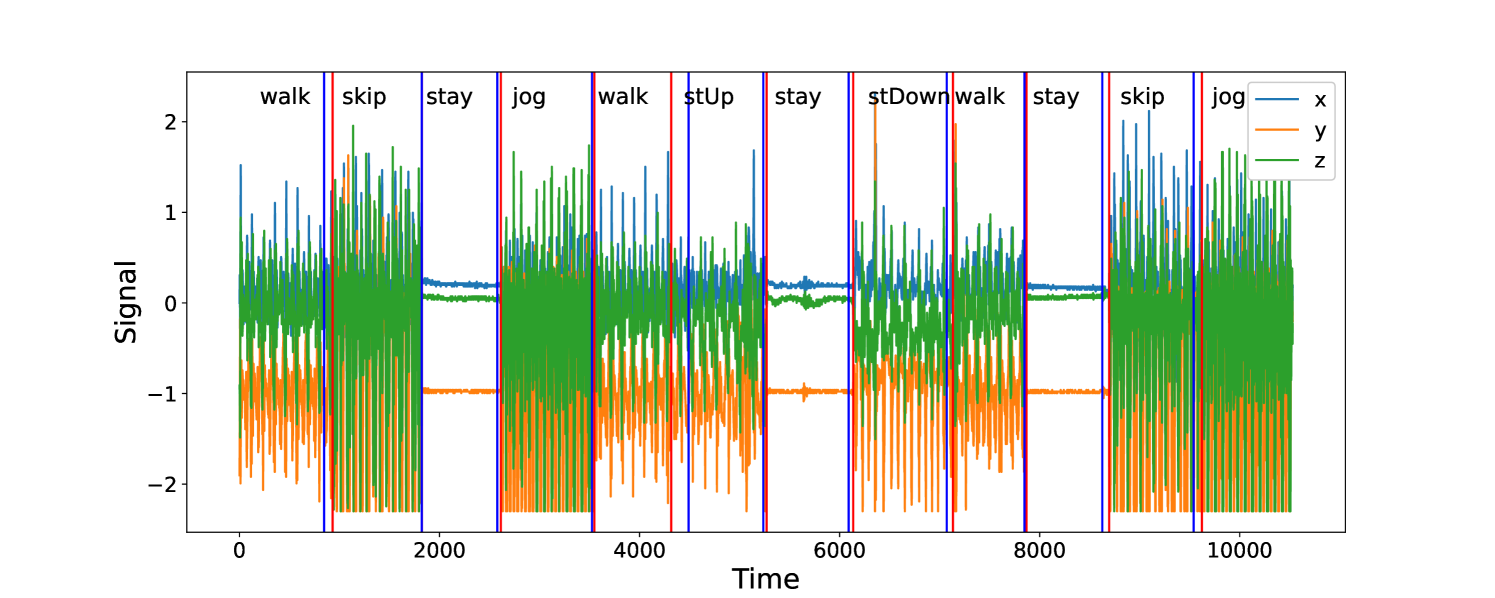

We now consider an application to detecting different types of change. The HASC (Human Activity Sensing Consortium) project data contain motion sensor measurements during a sequence of human activities, including “stay”, “walk”, “jog”, “skip”, “stair up” and “stair down”. Complex changes in sensor signals occur during transition from one activity to the next (see Figure 3). We have 28 labels in HASC data, see Figure 10 in appendix. To agree with the dimension of the output, we drop two dense layers “Dense(10)” and “Dense(20)” in Figure 9. The resulting network can be effectively applied for change-point detection in sensory signals of human activities, and can achieve high accuracy in change-point classification tasks (Figure 12 in appendix). Finally, we remark that our neural network-based change-point detector can be utilised to detect multiple change-points. Algorithm 1 outlines a general scheme for turning a change-point classifier into a location estimator, where we employ an idea similar to that of MOSUM (Eichinger and Kirch, 2018) and repeatedly apply a classifier $\psi$ to data from a sliding window of size $n$ . Here, we require $\psi$ applied to each data segment $\boldsymbol{X}^{*}_{[i,i+n)}$ to output both the class label $L_{i}=0$ or $1$ if no change or a change is predicted and the corresponding probability $p_{i}$ of having a change. In our particular example, for each data segment $\boldsymbol{X}^{*}_{[i,i+n)}$ of length $n=700$ , we define $\psi(\boldsymbol{X}^{*}_{[i,i+n)})=0$ if $\widehat{NN}(\boldsymbol{X}^{*}_{[i,i+n)})$ predicts a class label in $\{0,4,8,12,16,22\}$ (see Figure 10 in appendix) and 1 otherwise. The thresholding parameter $\gamma∈\mathbb{Z}^{+}$ is chosen to be $1/2$ .

Input: new data $\boldsymbol{x}_{1}^{*},...,\boldsymbol{x}_{n^{*}}^{*}∈\mathbb{R}^{d}$ , a trained classifier $\psi:\mathbb{R}^{d× n}→\{0,1\}$ , $\gamma>0$ .

1 Form $\boldsymbol{X}_{[i,i+n)}^{*}:=(\boldsymbol{x}_{i}^{*},...,\boldsymbol{x}_{i%

+n-1})$ and compute $L_{i}←\psi(\boldsymbol{X}^{*}_{[i,i+n)})$ for all $i=1,...,n^{*}-n+1$ ;

2 Compute $\bar{L}_{i}← n^{-1}\sum_{j=i-n+1}^{i}L_{j}$ for $i=n,...,n^{*}-n+1$ ;

3 Let $\{[s_{1},e_{1}],...,[s_{\hat{\nu}},e_{\hat{\nu}}]\}$ be the set of all maximal segments such that $\bar{L}_{i}≥\gamma$ for all $i∈[s_{r},e_{r}]$ , $r∈[\hat{\nu}]$ ;

4 Compute $\hat{\tau}_{r}←\operatorname*{arg\,max}_{i∈[s_{r},e_{r}]}\bar{L}_{i}$ for all $r∈[\hat{\nu}]$ ;

Output: Estimated change-points $\hat{\tau}_{1},...,\hat{\tau}_{\hat{\nu}}$

Algorithm 1 Algorithm for change-point localisation

Figure 4 illustrates the result of multiple change-point detection in HASC data which provides evidence that the trained neural network can detect both the multiple change-types and multiple change-points.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Time Series Chart: 3-Axis Motion Data

### Overview

The image presents three time series charts stacked vertically, representing motion data along the X, Y, and Z axes. Each chart displays signal intensity (approximately ranging from -4 to 2) over time (from 0 to 3500 time units). Red boxes highlight specific time intervals labeled with activity types: "stair down", "stay", "stair up", and "walk". Grey dashed vertical lines demarcate the boundaries of these activity segments.

### Components/Axes

* **X-axis:** Time (units from 0 to 3500).

* **Y-axis (for each chart):** Signal Intensity (approximately -4 to 2). Each chart has a different label: "X", "Y", and "Z".

* **Labels:** "stair down", "stay", "stair up", "walk".

* **Highlighting:** Red rectangular boxes around specific time intervals.

* **Vertical Lines:** Grey dashed lines marking segment boundaries.

### Detailed Analysis or Content Details

**Chart 1: X-Axis Data**

* The X-axis data (blue line) exhibits a generally oscillating pattern throughout the entire time series.

* **"stair down" (0-500):** High-frequency oscillations with an approximate amplitude of 1.5.

* **"stay" (500-1000):** The signal stabilizes around 0, with minimal oscillation.

* **"stair up" (1000-1500):** Similar to "stair down", high-frequency oscillations with an approximate amplitude of 1.5.

* **"walk" (1500-3500):** Oscillations continue, but with a slightly lower average amplitude (around 1.2) and a more irregular pattern.

**Chart 2: Y-Axis Data**

* The Y-axis data (orange line) also shows oscillations, but with a different character than the X-axis.

* **"stair down" (0-500):** Oscillations with an approximate amplitude of 1.

* **"stay" (500-1000):** Signal stabilizes around 0, with minimal oscillation.

* **"stair up" (1000-1500):** Oscillations with an approximate amplitude of 1.

* **"walk" (1500-3500):** Oscillations continue, with a slightly lower average amplitude (around 0.8) and a more irregular pattern.

**Chart 3: Z-Axis Data**

* The Z-axis data (green line) displays a more complex pattern, with a noticeable negative offset.

* **"stair down" (0-500):** Oscillations around -2, with an approximate amplitude of 1.

* **"stay" (500-1000):** Signal stabilizes around -2, with minimal oscillation.

* **"stair up" (1000-1500):** Oscillations around -2, with an approximate amplitude of 1.

* **"walk" (1500-3500):** Oscillations continue, with a slightly lower average amplitude (around 0.7) and a more irregular pattern.

### Key Observations

* The "stay" periods consistently show minimal signal variation across all three axes, indicating a stationary state.

* "Stair down" and "stair up" exhibit similar oscillatory patterns, suggesting a consistent motion profile during these activities.

* The "walk" period shows more irregular oscillations, likely due to the more complex and variable nature of walking.

* The Z-axis data is consistently offset to negative values, suggesting a predominant downward force or orientation.

### Interpretation

The data likely represents accelerometer readings from a sensor attached to a person or object. The three axes (X, Y, and Z) capture motion in three dimensions. The labeled segments ("stair down", "stay", "stair up", "walk") represent different activities, and the corresponding signal patterns allow for activity recognition.

The consistent patterns during "stair down" and "stair up" suggest a relatively regular motion profile, while the more irregular pattern during "walk" reflects the more complex dynamics of walking. The stabilization of signals during "stay" confirms a lack of movement. The negative offset in the Z-axis could indicate the sensor is oriented downwards, or that the subject is generally leaning or applying force in a downward direction.

This type of data is commonly used in activity recognition systems, wearable sensors, and human-computer interaction applications. The data suggests a clear correlation between the observed motion patterns and the labeled activities, which could be used to train a machine learning model for automated activity classification.

</details>

Figure 3: The sequence of accelerometer data in $x,y$ and $z$ axes. From left to right, there are 4 activities: “stair down”, “stay”, “stair up” and “walk”, their change-points are 990, 1691, 2733 respectively marked by black solid lines. The grey rectangles represent the group of “no-change” with labels: “stair down”, “stair up” and “walk”; The red rectangles represent the group of “one-change” with labels: “stair down $→$ stay”, “stay $→$ stair up” and “stair up $→$ walk”.

<details>

<summary>x7.png Details</summary>

### Visual Description

\n

## Time Series Chart: Sensor Signal During Activities

### Overview

The image presents a time series chart displaying sensor signal data over time, segmented into different activity types. The chart shows three distinct signal lines (x, y, and z) fluctuating in amplitude as the activity changes. The x-axis represents time, and the y-axis represents the signal strength. The activities are labeled above the chart, indicating the type of movement or state being recorded.

### Components/Axes

* **X-axis:** Time (ranging from approximately 0 to 11000)

* **Y-axis:** Signal (ranging from approximately -2 to 2)

* **Legend:** Located in the top-right corner, identifies the three signal lines:

* x (represented by a blue line)

* y (represented by an orange line)

* z (represented by a green line)

* **Activity Labels:** Placed horizontally above the chart, marking time intervals with activity names: walk, skip, stay, jog, walk, stUp, stay, stDown, walk, stay, skip, jog.

* **Vertical Lines:** Thin vertical lines are present at the boundaries of each activity segment, visually separating the different activities.

### Detailed Analysis

The chart displays three time series, each representing a different sensor axis (x, y, z). The signal values fluctuate over time, with distinct patterns corresponding to each activity.

* **x (Blue Line):** The x-axis signal generally exhibits smaller amplitude fluctuations compared to the y and z axes. It shows a relatively stable signal during "stay" periods and more variation during dynamic activities like "walk," "skip," and "jog."

* **y (Orange Line):** The y-axis signal shows significant fluctuations, particularly during "skip," "stUp," and "stDown" activities. It appears to have a more pronounced positive and negative swing during these movements.

* **z (Green Line):** The z-axis signal exhibits the largest amplitude fluctuations and is the most visually dominant signal in the chart. It shows clear patterns related to the different activities. For example, during "walk" and "jog," the signal oscillates with a relatively consistent frequency.

Here's a breakdown of signal characteristics for each activity (approximate values):

* **walk (0-1000, 4000-5000, 8000-9000):** z fluctuates between -1.5 and 1.5, y between -0.5 and 0.5, x is relatively stable around 0.

* **skip (1000-2000, 10000-11000):** z fluctuates between -2 and 2, y between -1 and 1, x shows some variation but remains smaller in amplitude.

* **stay (2000-3000, 6000-7000, 9000-10000):** z is relatively stable around 0, y is also close to 0, x is minimal.

* **jog (3000-4000, 11000-end):** z fluctuates between -1.5 and 1.5, y between -0.5 and 0.5, x is relatively stable around 0.

* **stUp (5000-6000):** y shows a large positive spike, z fluctuates between -1 and 1, x is minimal.

* **stDown (7000-8000):** y shows a large negative spike, z fluctuates between -1 and 1, x is minimal.

### Key Observations

* The "stay" activity consistently exhibits the lowest signal amplitude across all three axes, indicating minimal movement.

* "Skip," "stUp," and "stDown" activities generate the highest signal amplitude, particularly in the y-axis, suggesting significant and rapid changes in orientation.

* The z-axis signal appears to be the most sensitive to changes in activity, showing the most pronounced fluctuations.

* The x-axis signal is the least sensitive, providing a relatively stable baseline.

### Interpretation

This chart likely represents data from an accelerometer or similar sensor measuring movement along three axes. The different signal patterns correspond to different human activities. The data suggests that the sensor can effectively differentiate between static ("stay") and dynamic ("walk," "skip," "jog") activities. The "stUp" and "stDown" activities likely represent standing up and sitting down, respectively, and are characterized by a strong signal change in the y-axis, indicating vertical movement.

The consistent patterns observed for each activity suggest that this data could be used to develop an activity recognition system. The sensor data could be used as input to a machine learning model trained to classify different activities based on the signal characteristics. The outliers, such as the large spikes during "stUp" and "stDown," could be used as key features for accurate classification. The relative stability of the x-axis could be used to filter out noise or compensate for sensor drift.

</details>

Figure 4: Change-point detection in HASC data. The red vertical lines represent the underlying change-points, the blue vertical lines represent the estimated change-points. More details on multiple change-point detection can be found in Appendix C.

7 Discussion

Reliable testing for change-points and estimating their locations, especially in the presence of multiple change-points, other heterogeneities or untidy data, is typically a difficult problem for the applied statistician: they need to understand what type of change is sought, be able to characterise it mathematically, find a satisfactory stochastic model for the data, formulate the appropriate statistic, and fine-tune its parameters. This makes for a long workflow, with scope for errors at its every stage. In this paper, we showed how a carefully constructed statistical learning framework could automatically take over some of those tasks, and perform many of them ‘in one go’ when provided with examples of labelled data. This turned the change-point detection problem into a supervised learning problem, and meant that the task of learning the appropriate test statistic and fine-tuning its parameters was left to the ‘machine’ rather than the human user. The crucial question was that of choosing an appropriate statistical learning framework. The key factor behind our choice of neural networks was the discovery that the traditionally-used likelihood-ratio-based change-point detection statistics could be viewed as simple neural networks, which (together with bounds on generalisation errors beyond the training set) enabled us to formulate and prove the corresponding learning theory. However, there are a plethora of other excellent predictive frameworks, such as XGBoost, LightGBM or Random Forests (Chen and Guestrin, 2016; Ke et al., 2017; Breiman, 2001) and it would be of interest to establish whether and why they could or could not provide a viable alternative to neural nets here. Furthermore, if we view the neural network as emulating the likelihood-ratio test statistic, in that it will create test statistics for each possible location of a change and then amalgamate these into a single classifier, then we know that test statistics for nearby changes will often be similar. This suggests that imposing some smoothness on the weights of the neural network may be beneficial. A further challenge is to develop methods that can adapt easily to input data of different sizes, without having to train a different neural network for each input size. For changes in the structure of the mean of the data, it may be possible to use ideas from functional data analysis so that we pre-process the data, with some form of smoothing or imputation, to produce input data of the correct length. If historical labelled examples of change-points, perhaps provided by subject-matter experts (who are not necessarily statisticians) are not available, one question of interest is whether simulation can be used to obtain such labelled examples artificially, based on (say) a single dataset of interest. Such simulated examples would need to come in two flavours: one batch ‘likely containing no change-points’ and the other containing some artificially induced ones. How to simulate reliably in this way is an important problem, which this paper does not solve. Indeed, we can envisage situations in which simulating in this way may be easier than solving the original unsupervised change-point problem involving the single dataset at hand, with the bulk of the difficulty left to the ‘machine’ at the learning stage when provided with the simulated data. For situations where there is no historical data, but there are statistical models, one can obtain training data by simulation from the model. In this case, training a neural network to detect a change has similarities with likelihood-free inference methods in that it replaces analytic calculations associated with a model by the ability to simulate from the model. It is of interest whether ideas from that area of statistics can be used here. The main focus of our work was on testing for a single offline change-point, and we treated location estimation and extensions to multiple-change scenarios only superficially, via the heuristics of testing-based estimation in Section 6. Similar extensions can be made to the online setting once the neural network is trained, by retaining the final $n$ observations in an online stream in memory and applying our change-point classifier sequentially. One question of interest is whether and how these heuristics can be made more rigorous: equipped with an offline classifier only, how can we translate the theoretical guarantee of this offline classifier to that of the corresponding location estimator or online detection procedure? In addition to this approach, how else can a neural network, however complex, be trained to estimate locations or detect change-points sequentially? In our view, these questions merit further work.

Availability of data and computer code

The data underlying this article are available in http://hasc.jp/hc2011/index-en.html. The computer code and algorithm are available in Python Package: AutoCPD.

Acknowledgement

This work was supported by the High End Computing Cluster at Lancaster University, and EPSRC grants EP/V053590/1, EP/V053639/1 and EP/T02772X/1. We highly appreciate Yudong Chen’s contribution to debug our Python scripts and improve their readability.

Conflicts of Interest

We have no conflicts of interest to disclose.

References

- Ahmadzadeh (2018) Ahmadzadeh, F. (2018). Change point detection with multivariate control charts by artificial neural network. J. Adv. Manuf. Technol. 97 (9), 3179–3190.

- Aminikhanghahi and Cook (2017) Aminikhanghahi, S. and D. J. Cook (2017). Using change point detection to automate daily activity segmentation. In 2017 IEEE International Conference on Pervasive Computing and Communications Workshops (PerCom Workshops), pp. 262–267.

- Baranowski et al. (2019) Baranowski, R., Y. Chen, and P. Fryzlewicz (2019). Narrowest-over-threshold detection of multiple change points and change-point-like features. J. Roy. Stat. Soc., Ser. B 81 (3), 649–672.

- Bartlett et al. (2019) Bartlett, P. L., N. Harvey, C. Liaw, and A. Mehrabian (2019). Nearly-tight VC-dimension and pseudodimension bounds for piecewise linear neural networks. J. Mach. Learn. Res. 20 (63), 1–17.

- Beaumont (2019) Beaumont, M. A. (2019). Approximate Bayesian computation. Annu. Rev. Stat. Appl. 6, 379–403.

- Bengio et al. (1994) Bengio, Y., P. Simard, and P. Frasconi (1994). Learning long-term dependencies with gradient descent is difficult. IEEE T. Neural Networ. 5 (2), 157–166.

- Bos and Schmidt-Hieber (2022) Bos, T. and J. Schmidt-Hieber (2022). Convergence rates of deep ReLU networks for multiclass classification. Electron. J. Stat. 16 (1), 2724–2773.

- Breiman (2001) Breiman, L. (2001). Random forests. Mach. Learn. 45 (1), 5–32.

- Chang et al. (2019) Chang, W.-C., C.-L. Li, Y. Yang, and B. Póczos (2019). Kernel change-point detection with auxiliary deep generative models. In International Conference on Learning Representations.

- Chen and Gupta (2012) Chen, J. and A. K. Gupta (2012). Parametric Statistical Change Point Analysis: With Applications to Genetics, Medicine, and Finance (2nd ed.). New York: Birkhäuser.

- Chen and Guestrin (2016) Chen, T. and C. Guestrin (2016). XGBoost: A scalable tree boosting system. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, pp. 785–794.

- De Ryck et al. (2021) De Ryck, T., M. De Vos, and A. Bertrand (2021). Change point detection in time series data using autoencoders with a time-invariant representation. IEEE T. Signal Proces. 69, 3513–3524.

- Dehling et al. (2015) Dehling, H., R. Fried, I. Garcia, and M. Wendler (2015). Change-point detection under dependence based on two-sample U-statistics. In D. Dawson, R. Kulik, M. Ould Haye, B. Szyszkowicz, and Y. Zhao (Eds.), Asymptotic Laws and Methods in Stochastics: A Volume in Honour of Miklós Csörgő, pp. 195–220. New York, NY: Springer New York.

- Dürre et al. (2016) Dürre, A., R. Fried, T. Liboschik, and J. Rathjens (2016). robts: Robust Time Series Analysis. R package version 0.3.0/r251.

- Eichinger and Kirch (2018) Eichinger, B. and C. Kirch (2018). A MOSUM procedure for the estimation of multiple random change points. Bernoulli 24 (1), 526–564.

- Fearnhead et al. (2019) Fearnhead, P., R. Maidstone, and A. Letchford (2019). Detecting changes in slope with an $l_{0}$ penalty. J. Comput. Graph. Stat. 28 (2), 265–275.

- Fearnhead and Rigaill (2020) Fearnhead, P. and G. Rigaill (2020). Relating and comparing methods for detecting changes in mean. Stat 9 (1), 1–11.

- Fryzlewicz (2014) Fryzlewicz, P. (2014). Wild binary segmentation for multiple change-point detection. Ann. Stat. 42 (6), 2243–2281.

- Fryzlewicz (2021) Fryzlewicz, P. (2021). Robust narrowest significance pursuit: Inference for multiple change-points in the median. arXiv preprint, arxiv:2109.02487.

- Fryzlewicz (2023) Fryzlewicz, P. (2023). Narrowest significance pursuit: Inference for multiple change-points in linear models. J. Am. Stat. Assoc., to appear.

- Gao et al. (2019) Gao, Z., Z. Shang, P. Du, and J. L. Robertson (2019). Variance change point detection under a smoothly-changing mean trend with application to liver procurement. J. Am. Stat. Assoc. 114 (526), 773–781.