# Deep Meta Programming

**Authors**: HikaruShindo, Devendra SinghDhami, KristianKersting

[1,3] Zihan Ye

1] AI and Machine Learning Group, Dept. of Computer Science, TU Darmstadt, Germany

2] Centre for Cognitive Science, TU Darmstadt, Germany

3] Hessian Center for AI (hessian.AI), Germany 4] German Center for Artificial Intelligence (DFKI), Germany 5] Eindhoven University of Technology, Netherlands

Neural Meta-Symbolic Reasoning and Learning

## Abstract

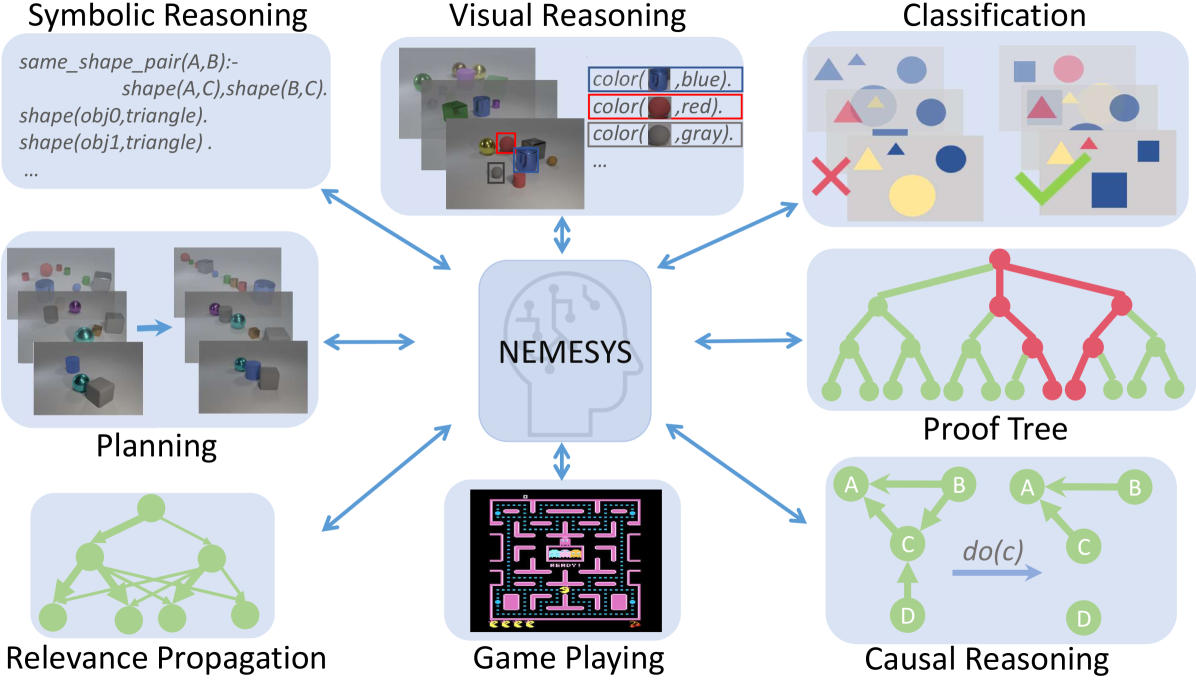

Deep neural learning uses an increasing amount of computation and data to solve very specific problems. By stark contrast, human minds solve a wide range of problems using a fixed amount of computation and limited experience. One ability that seems crucial to this kind of general intelligence is meta-reasoning, i.e., our ability to reason about reasoning. To make deep learning do more from less, we propose the first neural meta -symbolic system (NEMESYS) for reasoning and learning: meta programming using differentiable forward-chaining reasoning in first-order logic. Differentiable meta programming naturally allows NEMESYS to reason and learn several tasks efficiently. This is different from performing object-level deep reasoning and learning, which refers in some way to entities external to the system. In contrast, NEMESYS enables self-introspection, lifting from object- to meta-level reasoning and vice versa. In our extensive experiments, we demonstrate that NEMESYS can solve different kinds of tasks by adapting the meta-level programs without modifying the internal reasoning system. Moreover, we show that NEMESYS can learn meta-level programs given examples. This is difficult, if not impossible, for standard differentiable logic programming.

keywords: differentiable meta programming, differentiable forward reasoning, meta reasoning

<details>

<summary>x1.png Details</summary>

### Visual Description

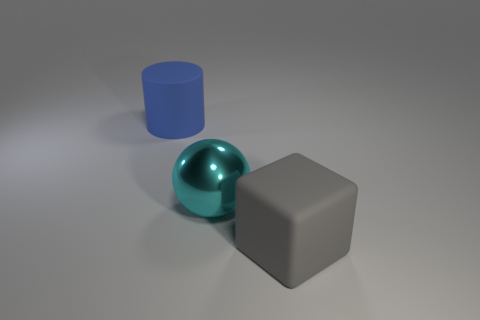

## Diagram: NEMESYS Architecture Overview

### Overview

The image presents a high-level diagram of the NEMESYS architecture, showcasing its capabilities in various reasoning domains. The diagram consists of a central node labeled "NEMESYS" connected to several surrounding nodes, each representing a specific reasoning task. These tasks include Symbolic Reasoning, Visual Reasoning, Classification, Proof Tree generation, Causal Reasoning, Game Playing, Relevance Propagation, and Planning. The connections between the central node and the task nodes are represented by double-headed arrows, suggesting bidirectional communication or interaction.

### Components/Axes

* **Central Node:** A stylized head containing the text "NEMESYS". This represents the core AI system.

* **Task Nodes:** Eight rectangular nodes surrounding the central node, each labeled with a specific reasoning task.

* **Connections:** Double-headed arrows connecting the central node to each task node.

* **Task-Specific Visualizations:** Each task node contains a representative visualization of the task.

### Detailed Analysis or ### Content Details

1. **Symbolic Reasoning:**

* Text:

```

Symbolic Reasoning

same_shape_pair(A,B):-

shape(A,C), shape(B,C).

shape(obj0,triangle).

shape(obj1, triangle).

...

```

* Description: The node displays Prolog-like code snippets, suggesting the system's ability to perform symbolic reasoning based on defined rules and facts.

2. **Visual Reasoning:**

* Image: Contains images of colored objects (spheres, cubes) with bounding boxes around some objects.

* Text:

```

color( ,blue).

color( ,red).

color( ,gray).

```

* Description: The node demonstrates the system's ability to identify and reason about visual attributes of objects, such as color.

3. **Classification:**

* Image: Shows a set of shapes (triangles, circles, squares) with different colors. Some shapes are marked with a green checkmark, while others are marked with a red cross.

* Description: The node illustrates the system's classification capabilities, where objects are categorized and labeled as correct or incorrect.

4. **Proof Tree:**

* Image: A tree structure with nodes and edges. Some nodes and edges are colored red, while others are green.

* Description: The node represents the system's ability to generate and visualize proof trees, likely used for verifying logical inferences.

5. **Causal Reasoning:**

* Image: A directed graph with nodes labeled A, B, C, and D. An arrow labeled "do(c)" points from the graph on the left to the graph on the right, indicating an intervention on node C.

* Description: The node demonstrates the system's ability to perform causal reasoning, including interventions and their effects on the system.

6. **Game Playing:**

* Image: A screenshot of the classic Pac-Man game.

* Text: "eeee"

* Description: The node showcases the system's ability to play games, suggesting its capacity for strategic decision-making and planning.

7. **Relevance Propagation:**

* Image: A directed graph with nodes and edges.

* Description: The node represents the system's ability to propagate relevance scores or information through a network.

8. **Planning:**

* Image: A sequence of images showing objects being moved or manipulated.

* Description: The node demonstrates the system's ability to plan actions and sequences of actions to achieve a goal.

### Key Observations

* The diagram highlights the modularity of the NEMESYS architecture, with each reasoning task represented as a separate component.

* The bidirectional connections suggest that the system can integrate information from different reasoning modules.

* The diverse range of tasks indicates that NEMESYS is a versatile AI system capable of handling various reasoning challenges.

### Interpretation

The diagram provides a high-level overview of the NEMESYS AI system, emphasizing its ability to perform a wide range of reasoning tasks. The system appears to be designed with a modular architecture, allowing for the integration of different reasoning modules. The bidirectional connections between the central node and the task nodes suggest that the system can leverage information from multiple sources to make informed decisions. The inclusion of tasks such as game playing and planning indicates that NEMESYS is capable of both symbolic and embodied reasoning. The diagram suggests that NEMESYS is a powerful and versatile AI system with the potential to address a wide range of real-world problems.

</details>

Figure 1: NEMESYS solves different kinds of tasks by using meta-level reasoning and learning. NEMESYS addresses, for instance, visual reasoning, planning, and causal reasoning without modifying its internal reasoning architecture. (Best viewed in color)

## 1 Introduction

One of the distant goals of Artificial Intelligence (AI) is to build a fully autonomous or ‘human-like’ system. The current successes of deep learning systems such as DALLE-2 [1], ChatGPT [2, 3], and Gato [4] have been promoted as bringing the field closer to this goal. However, current systems still require a large number of computations and often solve rather specific tasks. For example, DALLE-2 can generate very high-quality images but cannot play chess or Atari games. In stark contrast, human minds solve a wide range of problems using a small amount of computation and limited experience.

Most importantly, to be considered a major step towards achieving Artificial General Intelligence (AGI), a system must not only be able to perform a variety of tasks, such as Gato [4] playing Atari games, captioning images, chatting, and controlling a real robot arm, but also be self-reflective and able to learn and reason about its own capabilities. This means that it must be able to improve itself and adapt to new situations through self-reflection [5, 6, 7, 8]. Consequently, the study of meta-level architectures such as meta learning [9] and meta-reasoning [7] becomes progressively important. Meta learning [10] is a way to improve the learning algorithm itself [11, 12], i.e., it performs learning at a higher level, or meta-level. Meta-reasoning is a related concept that involves a system being able to think about its own abilities and how it processes information [5, 6]. It involves reflecting on, or introspecting about, the system’s own reasoning processes.

Indeed, meta-reasoning is different from object-centric reasoning, which refers to the system thinking about entities external to itself [13, 14, 15]. Here, the models perform low-level visual perception and reasoning on high-level concepts. Accordingly, there has been a push to make these reasoning systems differentiable [16, 17] along with addressing benchmarks in a visual domain such as CLEVR [18] and Kandinsky patterns [19, 20]. They use object-centric neural networks to perceive objects and perform reasoning using their output. Although this can solve the proposed benchmarks to some extent, the critical question remains unanswered: Is the reasoner able to justify its own operations? Can the same model solve different tasks such as (causal) reasoning, planning, game playing, and much more?

To overcome these limitations, we propose NEMESYS, the first neural meta -symbolic reasoning system. NEMESYS extensively performs meta-level programming on neuro-symbolic systems, and thus it can reason and learn several tasks. This is different from performing object-level deep reasoning and learning, which refers in some way to entities external to the system. NEMESYS is able to reflect or introspect, i.e., to shift from object- to meta-level reasoning and vice versa.

| | Meta Reasoning | Multitask Adaptation | Differentiable Meta Structure Learning |

| --- | --- | --- | --- |

| DeepProbLog [21] | ✗ | ✗ | ✗ |

| NTPs [22] | ✗ | ✗ | ✗ |

| FFSNL [23] | ✗ | ✗ | ✗ |

| $\alpha$ ILP [24] | ✗ | ✗ | ✗ |

| Scallop [25] | ✗ | ✗ | ✗ |

| NeurASP [26] | ✗ | ✗ | ✗ |

| NEMESYS (ours) | ✓ | ✓ | ✓ |

Table 1: Comparisons between NEMESYS and other state-of-the-art Neuro-Symbolic systems. We compare these systems with NEMESYS in three aspects, whether the system performs meta reasoning, can the same system adapt to solve different tasks and is the system capable of differentiable meta level structure learning.

Overall, we make the following contributions:

1. We propose NEMESYS, the first neural meta -symbolic reasoning and learning system that performs differentiable forward reasoning using meta-level programs.

1. To evaluate the ability of NEMESYS, we propose a challenging task, visual concept repairing, where the task is to rearrange objects in visual scenes based on relational logical concepts.

1. We empirically show that NEMESYS can efficiently solve different visual reasoning tasks with meta-level programs, achieving comparable performances with object-level forward reasoners [16, 24] that use specific programs for each task.

1. Moreover, we empirically show that using powerful differentiable meta-level programming, NEMESYS can solve different kinds of tasks that are difficult, if not impossible, for the previous neuro-symbolic systems. In our experiments, NEMESYS provides the function of (i) reasoning with integrated proof generation, i.e., performing differentiable reasoning producing proof trees, (ii) explainable artificial intelligence (XAI), i.e., highlighting the importance of logical atoms given conclusions, (iii) reasoning avoiding infinite loops, i.e., performing differentiable reasoning on programs which cause infinite loops that the previous logic reasoning systems unable to solve, and (iv) differentiable causal reasoning, i.e., performing causal reasoning [27, 28] on a causal Bayesian network using differentiable meta reasoners. To the best of the authors’ knowledge, we propose the first differentiable $\mathtt{do}$ operator. Achieving these functions with object-level reasoners necessitates significant efforts, and in some cases, it may be unattainable. In stark contrast, NEMESYS successfully realized the different useful functions by having different meta-level programs without any modifications of the reasoning function itself.

1. We demonstrate that NEMESYS can perform structure learning on the meta-level, i.e., learning meta programs from examples and adapting itself to solve different tasks automatically by learning efficiently with gradients.

To this end, we will proceed as follows. We first review (differentiable) first-order logic and reasoning. We then derive NEMESYS by introducing differentiable logical meta programming. Before concluding, we illustrate several capabilities of NEMESYS.

## 2 Background

NEMESYS relies on several research areas: first-order logic, logic programming, differentiable reasoning, meta-reasoning and -learning.

First-Order Logic (FOL)/Logic Programming. A term is a constant, a variable, or a term which consists of a function symbol. We denote $n$ -ary predicate ${\tt p}$ by ${\tt p}/(n,[{\tt dt_{1}},\ldots,{\tt dt_{n}}])$ , where ${\tt dt_{i}}$ is the datatype of $i$ -th argument. An atom is a formula ${\tt p(t_{1},\ldots,t_{n})}$ , where ${\tt p}$ is an $n$ -ary predicate symbol and ${\tt t_{1},\ldots,t_{n}}$ are terms. A ground atom or simply a fact is an atom with no variables. A literal is an atom or its negation. A positive literal is an atom. A negative literal is the negation of an atom. A clause is a finite disjunction ( $\lor$ ) of literals. A ground clause is a clause with no variables. A definite clause is a clause with exactly one positive literal. If $A,B_{1},\ldots,B_{n}$ are atoms, then $A\lor\lnot B_{1}\lor\ldots\lor\lnot B_{n}$ is a definite clause. We write definite clauses in the form of $A~{}\mbox{:-}~{}B_{1},\ldots,B_{n}$ . Atom $A$ is called the head, and a set of negative atoms $\{B_{1},\ldots,B_{n}\}$ is called the body. We call definite clauses as clauses for simplicity in this paper.

Differentiable Forward-Chaining Reasoning. The forward-chaining inference is a type of inference in first-order logic to compute logical entailment [29]. The differentiable forward-chaining inference [16, 17] computes the logical entailment in a differentiable manner using tensor-based operations. Many extensions of differentiable forward reasoners have been developed, e.g., reinforcement learning agents using logic to compute the policy function [30, 31] and differentiable rule learners in complex visual scenes [24]. NEMESYS performs differentiable meta-level logic programming based on differentiable forward reasoners.

<details>

<summary>x2.png Details</summary>

### Visual Description

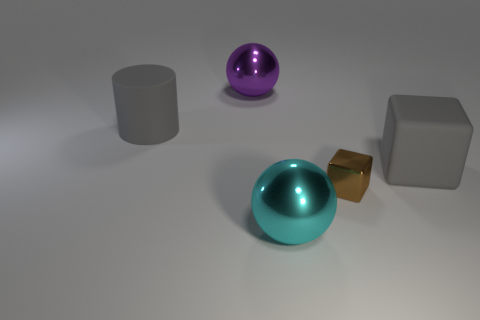

## System Diagram: Differentiable Meta-Level Reasoning

### Overview

The image presents a system diagram illustrating differentiable meta-level reasoning, contrasted with object-level reasoning. It depicts the flow of information and processing steps involved in both approaches.

### Components/Axes

* **Titles:**

* "Differentiable Meta-Level Reasoning" (top)

* "Object-Level Reasoning" (bottom-left)

* "Meta Program" (bottom-right)

* **Nodes (Meta-Level Reasoning):**

* "clauses" (top-left)

* Contains the clause: `0.95:same_shape_pair(X,Y):- shape(X,Z), shape(Y,Z).`

* "Meta Converter" (top-center, pink)

* "meta probabilistic atoms" (top-center-right)

* Contains: `0.98:solve(shape(obj1, cube))`, `0.98:solve(shape(obj2, cube))`, `0.95:clause(same_shape_pair(obj1,obj2), (shape(obj1, cube), shape(obj2, cube)))`

* "Differentiable Forward Reasoner" (top-right, pink)

* "meta probabilistic atoms" (top-right)

* Contains: `0.98:solve(same_shape_pair(obj1,obj2))`

* **Nodes (Object-Level Reasoning):**

* "input" (bottom-left)

* Shows an image of three objects: a cyan cube, a red cube, and a yellow cylinder.

* "object-centric representation" (bottom-left)

* Shows a grid-based representation of the objects, with rows labeled "obj1", "obj2", and "obj3", and columns labeled "x" and "y". The grid cells are filled with blue squares, representing the object's presence at that location.

* Color legend: red circle, cyan circle, yellow circle, black square, 'o', 'x', 'y'

* "probabilistic atoms" (bottom-center)

* Contains: `0.98:color(obj1, cyan)`, `0.98:shape(obj1, cube)`, `0.98:color(obj2, red)`, `0.98:shape(obj2, cube)`

* **Nodes (Meta Program):**

* "naive interpreter" (bottom-right)

* Contains: `1.0:solve((A,B)):-solve(A), solve(B).`, `1.0:solve(A):-clause(A,B), solve(B).`

* "interpreter with proof trees" (bottom-right)

* Contains: `1.0:solve((A,B), (proofA, proofB)):-solve(A,proofA), solve(B, proofB).`, `1.0:solve(A, (A:-proofB)):-clause(A,B), solve(B, proofB).`

* **Arrows:** Arrows indicate the flow of information between the nodes.

### Detailed Analysis or ### Content Details

* **Meta-Level Reasoning Flow:**

1. "clauses" feeds into "Meta Converter".

2. "Meta Converter" outputs to "meta probabilistic atoms".

3. "meta probabilistic atoms" feeds into "Differentiable Forward Reasoner".

4. "Differentiable Forward Reasoner" outputs to "meta probabilistic atoms".

* **Object-Level Reasoning Flow:**

1. "input" feeds into "object-centric representation".

2. "object-centric representation" feeds into "probabilistic atoms".

* **Connection between Levels:**

* "probabilistic atoms" from Object-Level Reasoning feeds into "Meta Converter" in Meta-Level Reasoning.

### Key Observations

* The diagram illustrates a hierarchical reasoning process, where object-level information is abstracted and used for meta-level reasoning.

* Probabilistic atoms are used at both object and meta levels, indicating uncertainty in the reasoning process.

* The Meta Program provides different interpreters, one naive and one with proof trees, suggesting different levels of reasoning complexity.

### Interpretation

The diagram presents a system that combines object-level perception with meta-level reasoning. The object-level reasoning extracts features (color, shape, location) from the input image and represents them as probabilistic atoms. These atoms are then fed into the meta-level reasoning, which uses clauses and a forward reasoner to infer higher-level relationships and solve problems. The meta-program provides the logic for interpreting these relationships, with options for naive interpretation or more complex reasoning using proof trees. The system demonstrates a sophisticated approach to AI, where perception and reasoning are integrated to solve complex tasks.

</details>

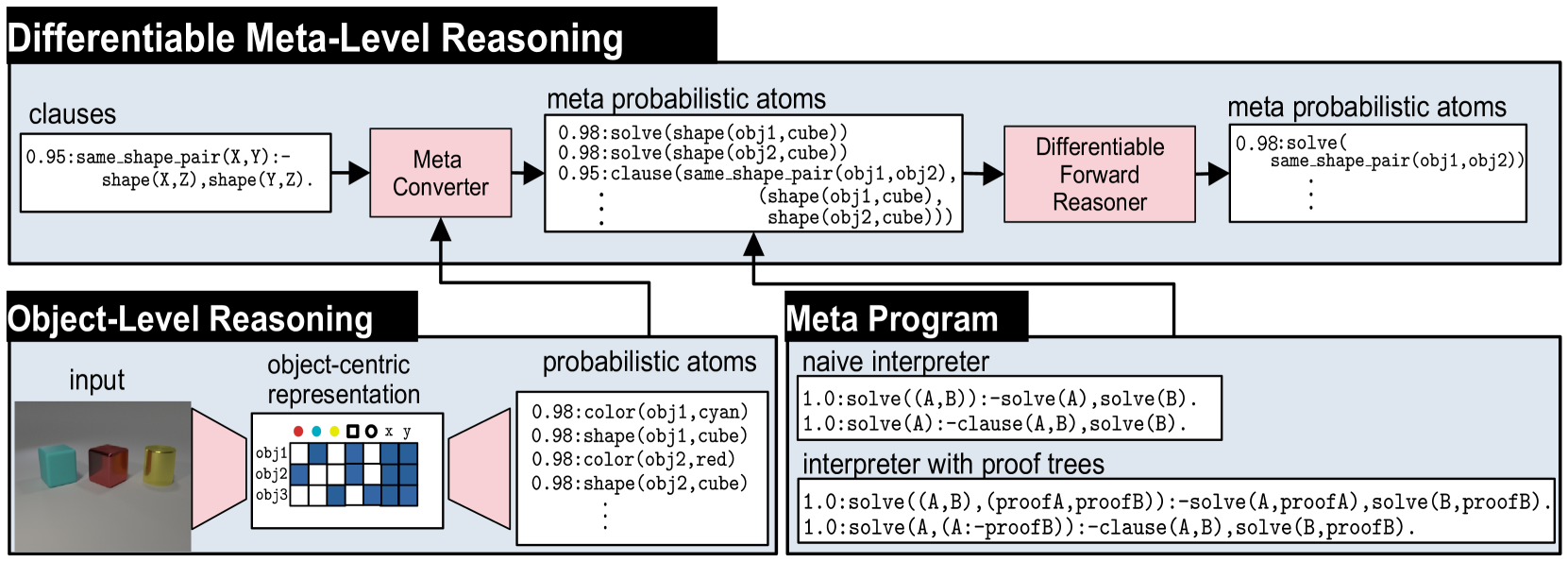

Figure 2: Overview of NEMESYS together with an object-level reasoning layer (bottom left). The meta-level reasoner (top) takes a logic program as input, here clauses on the left-hand side in the meta-level reasoning pipeline. Using the meta program (bottom right) it can realize the standard Prolog engine (naive interpreter) or an interpreter that provides e.g., also the proof trees (interpreter with proof trees) without requiring any alterations to the original logic program and internal reasoning function. This means that NEMESYS can integrate many useful functionalities by simply changing devised meta programs without intervening the internal reasoning function. (Best viewed in color)

Meta Reasoning and Learning. Meta-reasoning is the study about systems which are able to reason about its operation, i.e., a system capable of meta-reasoning may be able to reflect, or introspect [32], shifting from meta-reasoning to object-level reasoning and vice versa [6, 7]. Compared with imperative programming, it is relatively easier to construct a meta-interpreter using declarative programming. First-order Logic [33] has been the major tool to realize the meta-reasoning systems [34, 35, 36]. For example, Prolog [37] provides very efficient implementations of meta-interpreters realizing different additional features to the language.

Despite early interest in meta-reasoning within classical Inductive Logic Programming (ILP) systems [38, 39, 40], meta-interpreters have remained unexplored within neuro-symbolic AI. Meta-interpreters within classical logic are difficult to be combined with gradient-based machine learning paradigms, e.g., deep neural networks. NEMESYS realizes meta-level reasoning using differentiable forward reasoners in first-order logic, which are able to perform differentiable rule learning on complex visual scenes with deep neural networks [24]. Moreover, NEMESYS paves the way to integrate meta-level reasoning into other neuro-symbolic frameworks, including DeepProbLog [21], Scallop [25] and NeurASP [26], which are rather developed for training neural networks given logic programs using differentiable backward reasoning or answer set semantics. We compare NEMESYS with several popular neuro-symbolic systems in three aspects: whether the system performs meta reasoning, can the same system adapt to solve different tasks and is the system capable of differentiable meta level structure learning. The comparison results are summarized in Table 1.

## 3 Neural Meta-Symbolic Reasoning & Learning

We now introduce NEMESYS, the first neural meta-symbolic reasoning and learning framework. Fig. 2 shows an overview of NEMESYS.

### 3.1 Meta Logic Programming

We describe how meta-level programs are used in the NEMESYS workflow. In Fig. 2, the following object-level clause is given as its input:

| | $\displaystyle\mathtt{\color[rgb]{0,0.6,0}same\_shape\_pair(X,Y)\color[rgb]{ 0,0,0}\texttt{:-}\color[rgb]{0.68,0.36,1}shape(X,Z),shape(Y,Z)\color[rgb]{ 0,0,0}.}$ | |

| --- | --- | --- |

which identifies pairs of objects that have the same shape. The clause is subsequently fed to Meta Converter, which generates meta-level atoms.Using meta predicate $\mathtt{clause}/2$ , the following atom is generated:

| | $\displaystyle\mathtt{clause(\color[rgb]{0,0.6,0}same\_shape\_pair(X,Y),}\color [rgb]{0.68,0.36,1}\mathtt{(shape(X,Z),shape(Y,Z))\color[rgb]{0,0,0}).}$ | |

| --- | --- | --- |

where the meta atom $\mathtt{clause(H,B)}$ represents the object-level clause: $\mathtt{H\texttt{:-}B}$ .

To perform meta-level reasoning, NEMESYS uses meta-level programs, which often refer to meta interpreters, i.e., interpreters written in the language itself, as illustrated in Fig. 2. For example, a naive interpreter, NaiveInterpreter, is defined as:

| | $\displaystyle\mathtt{solve(true)}.$ | |

| --- | --- | --- |

To solve a compound goal $\mathtt{(A,B)}$ , we need first solve $\mathtt{A}$ and then $\mathtt{B}$ . A single goal $\mathtt{A}$ is solved if there is a clause that rewrites the goal to the new goal $\mathtt{B}$ , the body of the clause: $\mathtt{\color[rgb]{0,0.6,0}{A}\texttt{:-}\color[rgb]{0.68,0.36,1}{B}}$ . This process stops for facts, encoded as $\mathtt{clause(fact,true)}$ , since then, $\mathtt{solve(true)}$ will be true.

NEMESYS can employ more enriched meta programs with useful functions by simply changing the meta programs, without modifying the internal reasoning function, as illustrated in the bottom right of Fig. 2. ProofTreeInterpreter, an interpreter that produces proof trees along with reasoning, is defined as:

| | $\displaystyle\mathtt{solve(A,(A\texttt{:-}true)).}$ | |

| --- | --- | --- |

where $\mathtt{solve(A,Proof)}$ checks if atom $\mathtt{A}$ is true with proof tree $\mathtt{Proof}$ . Using this meta-program, NEMESYS can perform reasoning with integrated proof tree generation.

Now, let us devise the differentiable meta-level reasoning pipeline, which enables NEMESYS to reason and learn flexibly.

### 3.2 Differentiable Meta Programming

NEMESYS employs differentiable forward reasoning [24], which computes logical entailment using tensor operations in a differentiable manner, by adapting it to the meta-level atoms and clauses.

We define a meta-level reasoning function $f^{\mathit{reason}}_{(\mathcal{C},\mathbf{W})}:[0,1]^{G}\rightarrow[0,1]^{G}$ parameterized by meta-rules $\mathcal{C}$ and their weights $\mathbf{W}$ . We denote the set of meta-rules by $\mathcal{C}$ , and the set of all of the meta-ground atoms by $\mathcal{G}$ . $\mathcal{G}$ contains all of the meta-ground atoms produced by a given FOL language. We consider ordered sets here, i.e., each element has its index. We denote the size of the sets as: $G=|\mathcal{G}|$ and $C=|\mathcal{C}|$ . We denote the $i$ -th element of vector $\mathbf{x}$ by $\mathbf{x}[i]$ , and the $(i,j)$ -th element of matrix $\mathbf{X}$ by $\mathbf{X}[i,j]$ .

First, NEMESYS converts visual input to a valuation vector $\mathbf{v}\in[0,1]^{G}$ , which maps each meta atom to a probabilistic value (Fig. 2 Meta Converter). For example,

$$

\mathbf{v}=\blockarray{cl}\block{[c]l}0.98&\mathtt{solve(color(obj1,\ cyan))}

\\

0.01\mathtt{solve(color(obj1,\ red))}\\

0.95\mathtt{clause(same\_shape\_pair(\ldots),\ (shape(\ldots),\ \ldots))}\\

$$

represents a valuation vector that maps each meta-ground atom to a probabilistic value. For readability, only selected atoms are shown. NEMESYS computes logical entailment by updating the initial valuation vector $\mathbf{v}^{(0)}$ for $T$ times to $\mathbf{v}^{(T)}$ .

Subsequently, we compose the reasoning function that computes logical entailment. We now describe each step in detail.

(Step 1) Encode Logic Programs to Tensors.

To achieve differentiable forward reasoning, each meta-rule is encoded to a tensor representation. Let $S$ be the maximum number of substitutions for existentially quantified variables in $\mathcal{C}$ , and $L$ be the maximum length of the body of rules in $\mathcal{C}$ . Each meta-rule $C_{i}\in\mathcal{C}$ is encoded to a tensor ${\bf I}_{i}\in\mathbb{N}^{G\times S\times L}$ , which contains the indices of body atoms. Intuitively, $\mathbf{I}_{i}[j,k,l]$ is the index of the $l$ -th fact (subgoal) in the body of the $i$ -th rule to derive the $j$ -th fact with the $k$ -th substitution for existentially quantified variables. We obtain $\mathbf{I}_{i}$ by firstly grounding the meta rule $C_{i}$ , then computing the indices of the ground body atoms, and transforming them into a tensor.

(Step 2) Assign Meta-Rule Weights.

We assign weights to compose the reasoning function with several meta-rules as follows: (i) We fix the target programs’ size as $M$ , i.e., we try to select a meta-program with $M$ meta-rules out of $C$ candidate meta rules. (ii) We introduce $C$ -dimensional weights $\mathbf{W}=[{\bf w}_{1},\ldots,{\bf w}_{M}]$ where $\mathbf{w}_{i}\in\mathbb{R}^{C}$ . (iii) We take the softmax of each weight vector ${\bf w}_{j}\in\mathbf{W}$ and softly choose $M$ meta rules out of $C$ meta rules to compose the differentiable meta program.

(Step 3) Perform Differentiable Inference.

We compute $1$ -step forward reasoning using weighted meta-rules, then we recursively perform reasoning to compute $T$ -step reasoning.

(i) Reasoning using one rule. First, for each meta-rule $C_{i}\in\mathcal{C}$ , we evaluate body atoms for different grounding of $C_{i}$ by computing:

$$

\displaystyle b_{i,j,k}^{(t)}=\prod_{1\leq l\leq L}{\bf gather}({\bf v}^{(t)},

{\bf I}_{i})[j,k,l], \tag{1}

$$

where $\mathbf{gather}:[0,1]^{G}\times\mathbb{N}^{G\times S\times L}\rightarrow[0,1]^ {G\times S\times L}$ is:

$$

\displaystyle\mathbf{gather}({\bf x},{\bf Y})[j,k,l]={\bf x}[{\bf Y}[j,k,l]], \tag{2}

$$

and $b^{(t)}_{i,j,k}\in[0,1]$ . The $\mathbf{gather}$ function replaces the indices of the body atoms by the current valuation values in $\mathbf{v}^{(t)}$ . To take logical and across the subgoals in the body, we take the product across valuations. $b_{i,j,k}^{(t)}$ represents the valuation of body atoms for $i$ -th meta-rule using $k$ -th substitution for the existentially quantified variables to deduce $j$ -th meta-ground atom at time $t$ .

Now we take logical or softly to combine all of the different grounding for $C_{i}$ by computing $c^{(t)}_{i,j}\in[0,1]$ :

$$

\displaystyle c^{(t)}_{i,j}=\mathit{softor}^{\gamma}(b_{i,j,1}^{(t)},\ldots,b_

{i,j,S}^{(t)}), \tag{3}

$$

where $\mathit{softor}^{\gamma}$ is a smooth logical or function:

$$

\displaystyle\mathit{softor}^{\gamma}(x_{1},\ldots,x_{n})=\gamma\log\sum_{1

\leq i\leq n}\exp(x_{i}/\gamma), \tag{4}

$$

where $\gamma>0$ is a smooth parameter. Eq. 4 is an approximation of the max function over probabilistic values based on the log-sum-exp approach [41].

(ii) Combine results from different rules. Now we apply different meta-rules using the assigned weights by computing:

$$

\displaystyle h_{j,m}^{(t)}=\sum_{1\leq i\leq C}w^{*}_{m,i}\cdot c_{i,j}^{(t)}, \tag{5}

$$

where $h_{j,m}^{(t)}\in[0,1]$ , $w^{*}_{m,i}=\exp(w_{m,i})/{\sum_{i^{\prime}}\exp(w_{m,i^{\prime}})}$ , and $w_{m,i}=\mathbf{w}_{m}[i]$ . Note that $w^{*}_{m,i}$ is interpreted as a probability that meta-rule $C_{i}\in\mathcal{C}$ is the $m$ -th component. We complete the $1$ -step forward reasoning by combining the results from different weights:

$$

\displaystyle r_{j}^{(t)}=\mathit{softor}^{\gamma}(h_{j,1}^{(t)},\ldots,h_{j,M

}^{(t)}). \tag{6}

$$

Taking $\mathit{softor}^{\gamma}$ means that we compose $M$ softly chosen rules out of $C$ candidate meta-rules.

(iii) Multi-step reasoning. We perform $T$ -step forward reasoning by computing $r_{j}^{(t)}$ recursively for $T$ times: $v^{(t+1)}_{j}=\mathit{softor}^{\gamma}(r^{(t)}_{j},v^{(t)}_{j})$ . Updating the valuation vector for $T$ -times corresponds to computing logical entailment softly by $T$ -step forward reasoning. The whole reasoning computation Eq. 1 - 6 can be implemented using efficient tensor operations.

## 4 Experiments

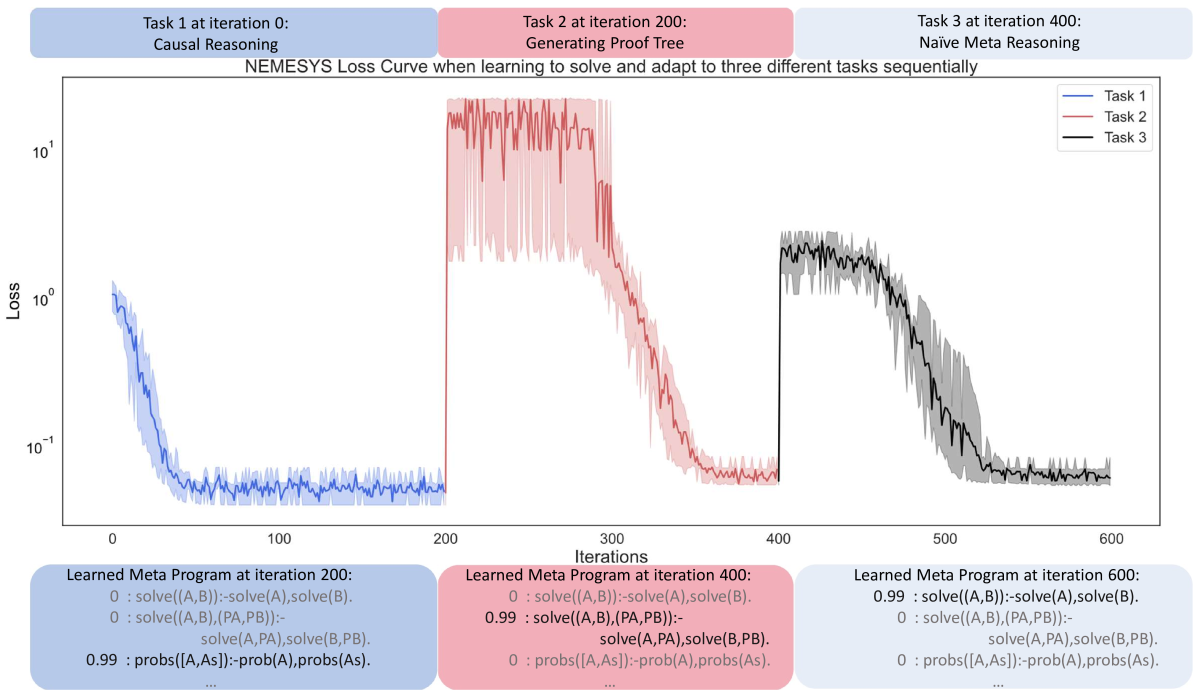

With the methodology of NEMESYS established, we subsequently provide empirical evidence of its benefits over neural baselines and object-level neuro-symbolic approaches: (1) NEMESYS can emulate a differentiable forward reasoner, i.e., it is a sufficient implementation of object-centric reasoners with a naive meta program. (2) NEMESYS is capable of differentiable meta-level reasoning, i.e., it can integrate additional useful functions using devised meta-rules. We demonstrate this advantage by solving tasks of proof-tree generation, relevance propagation, automated planning, and causal reasoning. (3) NEMESYS can perform parameter and structure learning efficiently using gradient descent, i.e., it can perform learning on meta-level programs.

In our experiments, we implemented NEMESYS in Python using PyTorch, with CPU: intel i7-10750H and RAM: 16 GB.

| | NEMESYS | ResNet50 | YOLO+MLP |

| --- | --- | --- | --- |

| Twopairs | 100.0 $\bullet$ | 50.81 | 98.07 $\circ$ |

| Threepairs | 100.0 $\bullet$ | 51.65 | 91.27 $\circ$ |

| Closeby | 100.0 $\bullet$ | 54.53 | 91.40 $\circ$ |

| Red-Triangle | 95.6 $\bullet$ | 57.19 | 78.37 $\circ$ |

| Online/Pair | 100.0 $\bullet$ | 51.86 | 66.19 $\circ$ |

| 9-Circles | 95.2 $\bullet$ | 50.76 $\circ$ | 50.76 $\circ$ |

Table 2: Performance (accuracy; the higher, the better) on the test split of Kandinsky patterns. The best-performing models are denoted using $\bullet$ , and the runner-up using $\circ$ . In Kandinsky patterns, NEMESYS produced almost perfect accuracies outperforming neural baselines, where YOLO+MLP is a neural baseline using pre-trained YOLO [42] combined with a simple MLP, showing the capability of solving complex visual reasoning tasks. The performances of baselines are shown in [15].

### 4.1 Visual Reasoning on Complex Pattenrs

Let us start off by showing that our NEMESYS is able to obtain the equivalent high-quality results as a standard object-level reasoner but on the meta-level. We considered tasks of Kandinsky patterns [19, 43] and CLEVR-Hans [14] We refer to [14] and [15] for detailed explanations of the used patterns for CLEVR-Hans and Kandinsky patterns.. CLEVR-Hans is a classification task of complex 3D visual scenes. We compared NEMESYS with the naive interpreter against neural baselines and a neuro-symbolic baseline, $\alpha$ ILP [24], which achieves state-of-the-art performance on these tasks. For all tasks, NEMESYS achieved exactly the same performances with $\alpha$ ILP since the naive interpreter realizes a conventional object-centric reasoner. Moreover, as shown in Table 2 and Table 3, NEMESYS outperformed neural baselines on each task. This shows that NEMESYS is able to solve complex visual reasoning tasks using meta-level reasoning without sacrificing performance.

In contrast to the object-centric reasoners, e.g., $\alpha$ ILP. NEMESYS can easily integrate additional useful functions by simply switching or adding meta programs without modifying the internal reasoning function, as shown in the next experiments.

### 4.2 Explainable Logical Reasoning

One of the major limitations of differentiable forward chaining [16, 17, 24] is that they lack the ability to explain the reasoning steps and their evidence. We show that NEMESYS achieves explainable reasoning by incorporating devised meta-level programs.

Reasoning with Integrated Proof Tree Generation

First, we demonstrate that NEMESYS can generate proof trees while performing reasoning, which the previous differentiable forward reasoners cannot produce since they encode the reasoning function to computational graphs using tensor operations and observe only their input and output. Since NEMESYS performs reasoning using meta-level programs, it can add the function to produce proof trees into its underlying reasoning mechanism simply by devising them, as illustrated in Fig 2.

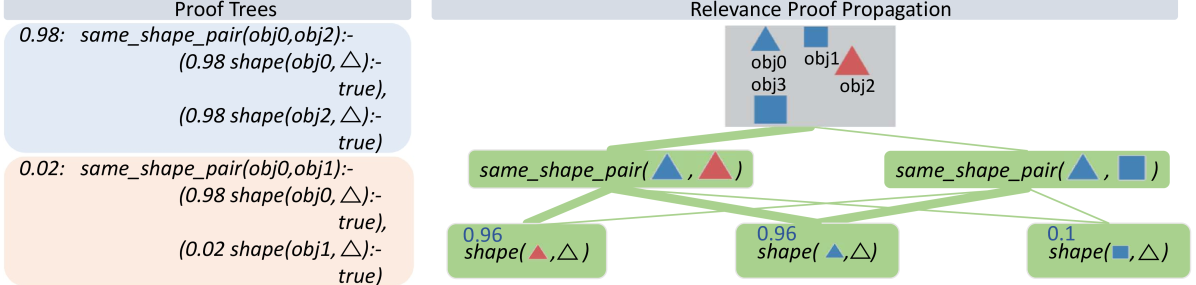

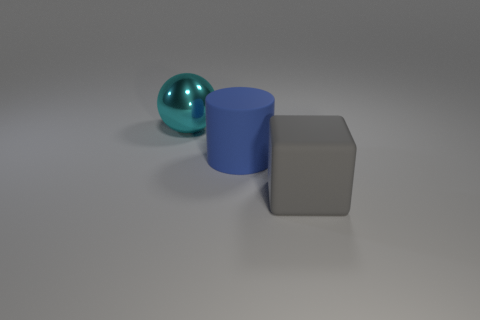

We use Kandinsky patterns [20], a benchmark of visual reasoning whose classification rule is defined on high-level concepts of relations and attributes of objects. We illustrate the input on the top right of Fig. 3 that belongs to a pattern: ”There are two pairs of objects that share the same shape.” Given the visual input, proof trees generated using the ProofTreeInterpreter in Sec. 3.1 are shown on the left two boxes of Fig. 3. In this experiment, NEMESYS identified the relations between objects, and the generated proof trees explain the intermediate reasoning steps.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: Relevance Proof Propagation

### Overview

The image presents a diagram illustrating relevance proof propagation, alongside proof trees. It shows how the system determines the similarity of shapes between objects, using a tree-like structure to represent the propagation of evidence.

### Components/Axes

* **Titles:**

* "Proof Trees" (top-left)

* "Relevance Proof Propagation" (top-right)

* **Objects:**

* obj0 (blue triangle)

* obj1 (blue square)

* obj2 (red triangle)

* obj3 (blue square)

* **Nodes:** The diagram uses rounded rectangles to represent nodes, with text indicating the function or relationship being evaluated.

* **Edges:** Green lines connect the nodes, indicating the flow of information or relevance. The thickness of the lines varies, suggesting different levels of relevance or confidence.

* **Shapes:** Triangle (△), Square (□)

### Detailed Analysis

**1. Proof Trees (Left Side):**

* **Top Tree (Blue Background):**

* Confidence: 0.98

* Function: `same_shape_pair(obj0, obj2)`

* Sub-functions:

* `(0.98 shape(obj0, △):- true)`

* `(0.98 shape(obj2, △):- true)`

* **Bottom Tree (Orange Background):**

* Confidence: 0.02

* Function: `same_shape_pair(obj0, obj1)`

* Sub-functions:

* `(0.98 shape(obj0, △):- true)`

* `(0.02 shape(obj1, △):- true)`

**2. Relevance Proof Propagation (Right Side):**

* **Top Section (Gray Background):**

* Contains shapes representing objects:

* obj0: Blue Triangle

* obj1: Blue Square

* obj2: Red Triangle

* obj3: Blue Square

* **Middle Layer:**

* Node 1: `same_shape_pair(△, △)` (connected to obj0 and obj2)

* Node 2: `same_shape_pair(△, □)` (connected to obj0 and obj1)

* **Bottom Layer:**

* Node 1: `0.96 shape(△, △)` (connected to `same_shape_pair(△, △)`)

* Node 2: `0.96 shape(△, △)` (connected to `same_shape_pair(△, △)`)

* Node 3: `0.1 shape(□, △)` (connected to `same_shape_pair(△, □)`)

**3. Edge Connections:**

* The green lines connect the objects in the gray box to the `same_shape_pair` nodes.

* The `same_shape_pair` nodes are connected to the `shape` nodes in the bottom layer.

* The thickness of the lines varies, indicating the strength of the relationship or relevance.

### Key Observations

* The proof trees on the left provide the logical rules and confidence scores for determining shape similarity.

* The relevance proof propagation diagram on the right visually represents how these rules are applied to specific objects.

* The confidence scores (0.98, 0.02, 0.96, 0.1) indicate the certainty of the shape relationships.

* The varying thickness of the lines suggests different levels of relevance or confidence in the connections.

### Interpretation

The diagram illustrates a system for determining the relevance of shape relationships between objects. The proof trees define the rules and confidence scores, while the relevance proof propagation diagram shows how these rules are applied to specific objects. The system appears to be evaluating whether objects have the same shape (triangle or square) and assigning a confidence score to each relationship. The higher the confidence score, the more likely the objects are to have the same shape. The varying thickness of the lines suggests that some relationships are more relevant or have a higher degree of confidence than others. The diagram highlights the process of reasoning and evidence propagation in determining object similarity.

</details>

Figure 3: NEMESYS explains its reasoning with proof trees and relevance proof propagation. Given the image involving four objects (top, right), NEMESYS provides two proofs (two boxes on the left, true atom’s proof (blue box) and false atom’s proof (cream box)). They can be leveraged to decompose the prediction of NEMESYS into relevance scores per (ground) atom (right). First, a standard forward reasoning is performed to compute the prediction. Then, the model’s prediction is backward propagated through the proof trees by applying specific decomposition rules, see main text. The numbers next to each (ground) atom are the relevance scores computed. The larger the score is, the more impact an (ground) atom has on the final prediction, and the line width is wider. For brevity, the complete proof tree is not depicted here. As our baseline comparison, we extend DeepProbLog [21] to DeepMetaProbLog. However, DeepMetaProbLog only provides proof tree for true atoms (top left blue box). (Best viewed in color)

| | CLEVR-Hans3 | CLEVR-Hans7 | | |

| --- | --- | --- | --- | --- |

| Validation | Test | Validation | Test | |

| CNN | 99.55 $\circ$ | 70.34 | 96.09 | 84.50 |

| NeSy (Default) | 98.55 | 81.71 | 96.88 $\circ$ | 90.97 |

| NeSy-XIL | 100.00 $\bullet$ | 91.31 $\circ$ | 98.76 $\bullet$ | 94.96 $\bullet$ |

| NEMESYS | 98.18 | 98.40 $\bullet$ | 93.60 | 92.19 $\circ$ |

Table 3: Performance (accuracy; the higher, the better) on the validation/test splits of 3D CLEVR-Hans data sets. The best-performing models are denoted using $\bullet$ , and the runner-up using $\circ$ . In CLEVR-Hans, NEMESYS outperformed neural baselines including: (CNN) A ResNet [44], (NeSy) A model combining object-centric model (Slot Attention [45] and Set Transformer [46], and (NeSy-XIL) Slot Attention and Set Transformer using human feedback. NEMESYS tends to show less overfitting and performs similarly to a neuro-symbolic approach using human feedback (NeSy-XIL). The performances of baselines are shown in [14] and [15].

Let’s first consider the top left blue box depicted in Fig. 3 (for readability, we only show the proof part of meta atoms in the image). The weighted ground atom $\mathtt{0.98:}\mathtt{same\_shape\_pair(obj0,obj2)}$ proves $\mathtt{obj0}$ and $\mathtt{obj2}$ are of the same shape with the probability $0.98$ . The proof part shows that NEMESYS comes to this conclusion since both objects are triangle with probabilities of $\mathtt{0.98}$ and in turn it can apply the rule for $\mathtt{same\_shape\_pair}$ . We use this example to show how to compute the weight of the meta atoms inside NEMESYS. With the proof-tree meta rules and corresponding meta ground atoms:

| | $\displaystyle\mathtt{0.98:}\ \color[rgb]{0.5,0,1}\mathtt{solve(shape(obj0,} \text{\includegraphics[height=6.45831pt]{plots/triangle.png}}\mathtt{),(shape( obj0,}\text{\includegraphics[height=6.45831pt]{plots/triangle.png}}\mathtt{), true).}$ | |

| --- | --- | --- |

The weight of the meta ground atoms are computed by Meta Converter when mapping the probability of meta ground atoms to a continuous value. The meta ground atom says that $\mathtt{shape(obj0,\includegraphics[height=6.45831pt]{plots/triangle.png})}$ is true with a high probability of $0.98$ because $\mathtt{shape(obj0,\includegraphics[height=6.45831pt]{plots/triangle.png})}$ can be proven.

With the two meta ground atoms at hand, we infer the weight of the meta atom with compound goals $\color[rgb]{0,0.6,0}\mathtt{solve((shape(obj0,\includegraphics[height=6.45831 pt]{plots/triangle.png}),shape(obj2,\includegraphics[height=6.45831pt]{plots/ triangle.png})),(ProofA,ProofB))}$ , based on the first meta rule (for readability, we omit writing out the proof part). Then, we use the second meta rule to compute the weight of the meta atom $\color[rgb]{0.68,0.36,1}\mathtt{solve}\mathtt{(same\_shape\_pair(obj0,obj2)}, \mathtt{(Proof))}$ , using the compound goal meta atom $\color[rgb]{0,0.6,0}\mathtt{solve((shape(obj0,\includegraphics[height=6.45831 pt]{plots/triangle.png}),shape(obj2,\includegraphics[height=6.45831pt]{plots/ triangle.png})),(ProofA,ProofB))}$ and the meta atom $\mathtt{clause}\mathtt{(same\_shape\_pair(obj0,obj2)},\mathtt{(shape(obj0, \includegraphics[height=6.45831pt]{plots/triangle.png})},\mathtt{shape(obj2, \includegraphics[height=6.45831pt]{plots/triangle.png})))}$ .

In contrast, NEMESYS can explicitly show that $\mathtt{obj0}$ and $\mathtt{obj1}$ have a low probability of being of the same shape (Fig. 3 left bottom cream box). This proof tree shows that the goal $\mathtt{shape(obj1,\includegraphics[height=6.45831pt]{plots/triangle.png})}$ has a low probability of being true. Thus, as one can read off, $\mathtt{obj0}$ is most likely a triangle, while $\mathtt{obj1}$ is most likely not a triangle. In turn, NEMESYS concludes with a low probability that $\mathtt{same\_shape\_pair(obj0,obj1)}$ is true, only a probability of $0.02$ . NEMESYS can produce all the information required to explain its decisions by simply changing the meta-program, not the underlying reasoning system.

Using meta programming to extend DeepProbLog to produce proof trees as a baseline comparison. Since DeepProbLog [21] doesn’t support generating proof trees in parallel with reasoning, we extend DeepProbLog [21] to DeepMetaProblog to generate proof trees as our baseline comparison using ProbLog [47]. However, the proof tree generated by DeepMetaProbLog is limited to the ‘true’ atoms (Fig. 3 top left blue box), i.e., DeepMetaProbLog is unable to generate proof tree for false atoms such as $\mathtt{same\_shape\_pair(obj0,obj1)}$ (Fig. 3 bottom left cream box) due to backward reasoning.

Logical Relevance Proof Propagation (LRP ${}^{2}$ )

Inspired by layer-wise relevance propagation (LRP) [48], which produces explanations for feed-forward neural networks, we now show that, LRP can be adapted to logical reasoning systems using declarative languages in NEMESYS, thereby enabling the reasoning system to articulate the rationale behind its decisions, i.e., it can compute the importance of ground atoms for a query by having access to proof trees. We call this process: logical relevance proof propagation (LRP ${}^{2}$ ).

The original LRP technique decomposes the prediction of the network, $f(\mathbf{x})$ , onto the input variables, $\mathbf{x}=\left(x_{1},\ldots,x_{d}\right)$ , through a decomposition $\mathbf{R}=\left(R_{1},\ldots,R_{d}\right)$ such that $\sum\nolimits_{p=1}^{d}R_{p}=f(\mathbf{x})\;$ . Given the activation $a_{j}=\rho\left(\sum_{i}a_{i}w_{ij}+b_{j}\right)$ of neuron, where $i$ and $j$ denote the neuron indices at consecutive layers, and $\sum_{i}$ and $\sum_{j}$ represent the summation over all neurons in the respective layers, the propagation of LRP is defined as: $R_{i}=\sum\nolimits_{j}z_{ij}({\sum\nolimits_{i}z_{ij}})^{-1}R_{j},$ where $z_{ij}$ is the contribution of neuron $i$ to the activation $a_{j}$ , typically some function of activation $a_{i}$ and the weight $w_{ij}$ . Starting from the output $f(\mathbf{x})$ , the relevance is computed layer by layer until the input variables are reached.

To adapt this in NEMESYS to ground atoms and proof trees, we have to be a bit careful, since we cannot deal with the uncountable, infinite real numbers within our logic. Fortunately, we can make use of the weight associated with ground atoms. That is, our LRP ${}^{2}$ composes meta-level atoms that represent the relevance of an atom given proof trees and associates the relevance scores to the weights of the meta-level atoms.

To this end, we introduce three meta predicates: $\mathtt{rp/3/[goal,proofs,atom]}$ that represents the relevance score an $\mathtt{atom}$ has on the $\mathtt{goal}$ in given $\mathtt{proofs}$ , $\mathtt{assert\_probs/1/[atom]}$ that looks up the valuations of the ground atoms and maps the probability of the $\mathtt{atom}$ to its weight. $\mathtt{rpf/2/[proof,atom]}$ represents how much an $\mathtt{atom}$ contributes to the $\mathtt{proof}$ . The atom $\mathtt{assert\_probs((Goal\texttt{:-}Body))}$ asserts the probability of the atom $\mathtt{(Goal\texttt{:-}Body)}$ . With them, the meta-level program of LRP ${}^{2}$ is:

| | $\displaystyle\mathtt{rp(Goal,Body,Atom)}\texttt{:-}\mathtt{assert\_probs((Goal \texttt{:-}Body))},$ | |

| --- | --- | --- |

where $\mathtt{rp(Goal,Proof,Atom)}$ represents the relevance score an $\mathtt{Atom}$ has on the $\mathtt{Goal}$ in a $\mathtt{Proof}$ , i.e., we interpret the associated weight with atom $\mathtt{rp(Goal,Proof,Atom)}$ as the actual relevance score of $\mathtt{Atom}$ has on $\mathtt{Goal}$ given $\mathtt{Proof}$ . The higher the weight of $\mathtt{rp(Goal,Proof,Atom)}$ is, the larger the impact of $\mathtt{Atom}$ has on the $\mathtt{Goal}$ .

Let us go through the meta rules of LRP ${}^{2}$ . The first rule defines how to compute the relevance score of an $\mathtt{Atom}$ given the $\mathtt{Goal}$ under the condition of a $\mathtt{Body}$ (a single $\mathtt{Proof}$ ). The relevance score is computed by multiplying the weight of the $\mathtt{Body}$ , the weight of a clause $\mathtt{(Goal\texttt{:-}Body)}$ and the importance score of the $\mathtt{Atom}$ given the $\mathtt{Body}$ . The second to the seventh rule defines how to calculate the importance score of an $\mathtt{Atom}$ given a $\mathtt{Proof}$ . These six rules loop over each atom of the given $\mathtt{Proof}$ , once it detects the $\mathtt{Atom}$ inside the given $\mathtt{Proof}$ , the importance score will be set to the weight of the $\mathtt{Atom}$ , another case is that the $\mathtt{Atom}$ is not in $\mathtt{Proof}$ , in that case, in the seventh rule, $\mathtt{norelate}$ will set the importance score to a small value. The eighth and ninth rules amalgamate the results from different proofs, i.e., the score from each proof tree is computed recursively during forward reasoning. The scores for the same target (the pair of $\mathtt{Atom}$ and $\mathtt{Goal}$ ) are combined by the $\mathit{softor}$ operation. The score of an atom given several proofs is computed by taking logical or softly over scores from each proof.

With these nine meta rules at hand, together with the proof tree, NEMESYS is able to perform the relevance proof propagation for different atoms. We consider using the proof tree generated in Sec. 4.2 and set the goal as: $\mathtt{same\_shape\_pair(obj0,obj2)}$ . Fig. 3 (right) shows LRP ${}^{2}$ -based explanations generated by NEMESYS. The relevance scores of different ground atoms are listed next to each (ground) atom. As we can see, the atoms $\mathtt{shape(obj0,\includegraphics[height=6.45831pt]{plots/triangle.png})}$ and $\mathtt{shape(obj2,\includegraphics[height=6.45831pt]{plots/triangle.png})}$ have the largest impact on the goal $\mathtt{same\_shape\_pair(obj0,obj2)}$ , while $\mathtt{shape(obj1,\includegraphics[height=6.45831pt]{plots/triangle.png})}$ have much smaller impact.

By providing proof tree and LRP ${}^{2}$ , NEMESYS computes the precise effect of a ground atom on the goal and produces an accurate proof to support its conclusion. This approach is distinct from the Most Probable Explanation (MPE) [49], which generates the most probable proof rather than the exact proof.

<details>

<summary>extracted/5298395/images/plan/0v.png Details</summary>

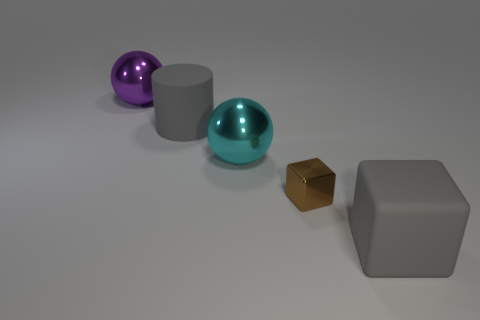

### Visual Description

## Still Life: Geometric Shapes

### Overview

The image is a still life featuring three geometric shapes: a large purple sphere, a smaller metallic purple sphere, and a red cylinder. The shapes are arranged on a light gray surface with a gradient background.

### Components/Axes

* **Shapes:**

* Large Sphere: Located towards the top-center of the image, matte purple color.

* Small Sphere: Located to the left of the large sphere, metallic purple color.

* Cylinder: Located to the right of the large sphere, red color.

* **Surface:** Light gray, appears to be a flat plane.

* **Background:** Gradient from light gray to darker gray, suggesting a light source from the left.

### Detailed Analysis or ### Content Details

* **Large Sphere:** Positioned slightly above the center of the image. The diameter of the sphere is approximately 1/5 of the image width.

* **Small Sphere:** Positioned to the left and slightly below the large sphere. It has a reflective surface. The diameter of the sphere is approximately 1/10 of the image width.

* **Cylinder:** Positioned to the right and slightly below the large sphere. The height of the cylinder is approximately 1/15 of the image width, and the diameter is similar.

* **Lighting:** The lighting creates soft shadows behind each object, indicating a diffused light source.

### Key Observations

* The arrangement of the shapes is asymmetrical, with the large sphere acting as a central point.

* The contrast in color and texture between the matte purple sphere, the metallic purple sphere, and the red cylinder adds visual interest.

* The gradient background provides depth to the image.

### Interpretation

The image is a simple composition that highlights the basic geometric forms and their interaction with light and shadow. The arrangement of the shapes and the use of color create a visually balanced and aesthetically pleasing image. The image does not provide any specific data or facts beyond the visual representation of the shapes.

</details>

<details>

<summary>extracted/5298395/images/plan/1v0.png Details</summary>

### Visual Description

## Still Life: Geometric Shapes

### Overview

The image is a still life featuring three geometric shapes: a large purple sphere, a smaller metallic purple sphere, and a red cylinder. They are arranged on a light gray surface with a gradient background.

### Components/Axes

* **Shapes:**

* Large Purple Sphere: Located towards the top-left of the image.

* Small Metallic Purple Sphere: Positioned below and slightly to the right of the large sphere.

* Red Cylinder: Situated to the right of the small sphere.

* **Surface:** Light gray with a subtle gradient.

* **Background:** Light gray, slightly darker than the surface.

### Detailed Analysis

* **Large Purple Sphere:** The sphere has a matte finish and is a solid purple color.

* **Small Metallic Purple Sphere:** This sphere has a reflective, metallic surface, with highlights and shadows indicating its roundness.

* **Red Cylinder:** The cylinder is a solid red color with a flat top and bottom.

* **Arrangement:** The shapes are arranged in a loose triangular formation. The large sphere is the highest, followed by the small sphere, and then the cylinder.

* **Lighting:** The lighting appears to be coming from the left, casting shadows to the right of each object.

### Key Observations

* The contrast between the matte and metallic surfaces of the spheres is notable.

* The color palette is limited to purple and red, creating a simple and visually appealing composition.

* The arrangement of the shapes creates a sense of depth and perspective.

### Interpretation

The image is a study in form, color, and texture. The simple geometric shapes are arranged in a way that is both visually pleasing and thought-provoking. The contrast between the matte and metallic surfaces, as well as the limited color palette, creates a sense of harmony and balance. The image could be interpreted as a representation of simplicity, order, and beauty.

</details>

<details>

<summary>extracted/5298395/images/plan/1v1.png Details</summary>

### Visual Description

## Geometric Shapes Arrangement

### Overview

The image depicts three geometric shapes - a large purple sphere, a smaller metallic purple sphere, and a red cylinder - arranged on a light gray surface. The lighting appears to originate from the top-left, casting shadows behind the objects.

### Components/Axes

* **Shapes:**

* Large Sphere: Located towards the top-left, colored purple.

* Small Sphere: Located between the large sphere and the cylinder, colored metallic purple.

* Cylinder: Located towards the bottom-right, colored red.

* **Surface:** Light gray, acting as the background.

* **Lighting:** Appears to be coming from the top-left, creating shadows behind the shapes.

### Detailed Analysis or ### Content Details

* **Large Purple Sphere:** Positioned in the upper-left quadrant of the image. It is a matte purple color.

* **Small Metallic Purple Sphere:** Positioned to the right and slightly below the large sphere. It has a reflective, metallic surface.

* **Red Cylinder:** Positioned to the right and slightly below the small sphere. It appears to be a solid red color.

* **Shadows:** Each shape casts a shadow, indicating a light source from the top-left. The shadows are darker closer to the base of the shapes and fade as they extend away.

### Key Observations

* The shapes are arranged in a diagonal line from the top-left to the bottom-right.

* The size of the spheres decreases from left to right.

* The cylinder is the only non-spherical shape.

* The lighting highlights the different surface properties of the shapes (matte vs. metallic).

### Interpretation

The image appears to be a simple composition of geometric shapes, possibly for illustrative or demonstrative purposes. The arrangement and lighting create a sense of depth and visual interest. The different colors and surface properties of the shapes add to the overall aesthetic. The image does not provide any specific data or facts beyond the visual representation of the shapes and their arrangement.

</details>

<details>

<summary>extracted/5298395/images/plan/final.png Details</summary>

### Visual Description

## Geometric Shapes: Simple Scene

### Overview

The image depicts a simple scene with three geometric shapes: a large purple sphere, a smaller metallic purple sphere, and a red cylinder. They are arranged on a light gray surface with subtle shadows, suggesting a rendered or simulated environment.

### Components/Axes

* **Shapes:**

* Large Sphere: Located on the left side of the image, matte purple color.

* Small Sphere: Located to the right of the large sphere, metallic purple color.

* Cylinder: Located on the right side of the image, red color.

* **Surface:** Light gray, providing a neutral background.

* **Lighting:** Appears to be a single light source, casting shadows behind the shapes.

### Detailed Analysis or ### Content Details

* **Large Sphere:** Positioned in the top-left quadrant of the image. The color is a matte purple.

* **Small Sphere:** Positioned to the right and slightly below the large sphere. The color is a metallic purple, reflecting light.

* **Cylinder:** Positioned on the right side of the image, slightly below the small sphere. The color is red.

* **Shadows:** Each shape casts a shadow, indicating a light source from the top-left. The shadows are soft and diffuse.

### Key Observations

* The arrangement of the shapes is simple and uncluttered.

* The color palette is limited to purple, red, and gray.

* The lighting is soft and even, creating a sense of depth.

### Interpretation

The image appears to be a basic 3D rendering or a simple composition of geometric shapes. The arrangement and lighting suggest a deliberate attempt to create a visually pleasing scene. The use of different materials (matte vs. metallic) adds visual interest. There is no explicit data or information being conveyed beyond the visual representation of the shapes and their arrangement.

</details>

Figure 4: Visual Concept Repairing: NEMESYS achieves planning by performing differentiable meta-level reasoning. The left most image shows the start state, and the right most image shows the goal state. Taking these states as inputs, NEMESYS performs differentiable forward reasoning using meta-level clauses that simulate the planning steps and generate intermediate states (two images in the middle) and actions from start state to reach the goal state. (Best viewed in color)

### 4.3 Avoiding Infinite Loops

Differentiable forward chaining [17], unfortunately, can generate infinite computations. A pathological example:

| | $\displaystyle\mathtt{edge(a,b).\ edge(b,a).}\ \mathtt{edge(b,c).}\quad\mathtt{ path(A,A,[\ ]).}\quad$ | |

| --- | --- | --- |

<details>

<summary>x4.png Details</summary>

### Visual Description

## Bar Chart: Accuracy Test on 4 Queries

### Overview

The image is a bar chart comparing the accuracy of two systems, ProbLog and NEMESYS, based on a test of 4 queries. The y-axis represents accuracy, ranging from 0.0 to 1.0. The x-axis represents the two systems being compared.

### Components/Axes

* **Title:** Test on 4 queries

* **X-axis:**

* Labels: ProbLog, NEMESYS

* **Y-axis:**

* Label: Accuracy

* Scale: 0.0, 0.5, 1.0

* **Bars:**

* ProbLog: Light blue/purple color

* NEMESYS: Light red/orange color

### Detailed Analysis

* **ProbLog:** The light blue/purple bar reaches an accuracy of approximately 0.75.

* **NEMESYS:** The light red/orange bar reaches an accuracy of 1.0.

### Key Observations

* NEMESYS achieves a higher accuracy (1.0) compared to ProbLog (0.75) on the test of 4 queries.

### Interpretation

The bar chart indicates that NEMESYS outperforms ProbLog in terms of accuracy when tested on the given 4 queries. NEMESYS achieves perfect accuracy, while ProbLog's accuracy is at 75%. This suggests that, for these specific queries, NEMESYS is a more reliable system. The limited number of queries (4) should be considered when generalizing these results.

</details>

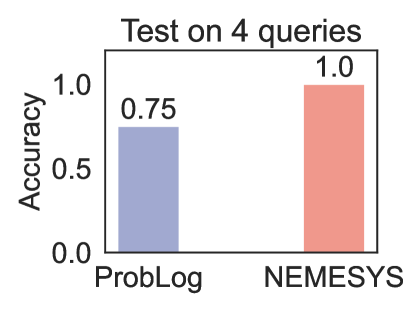

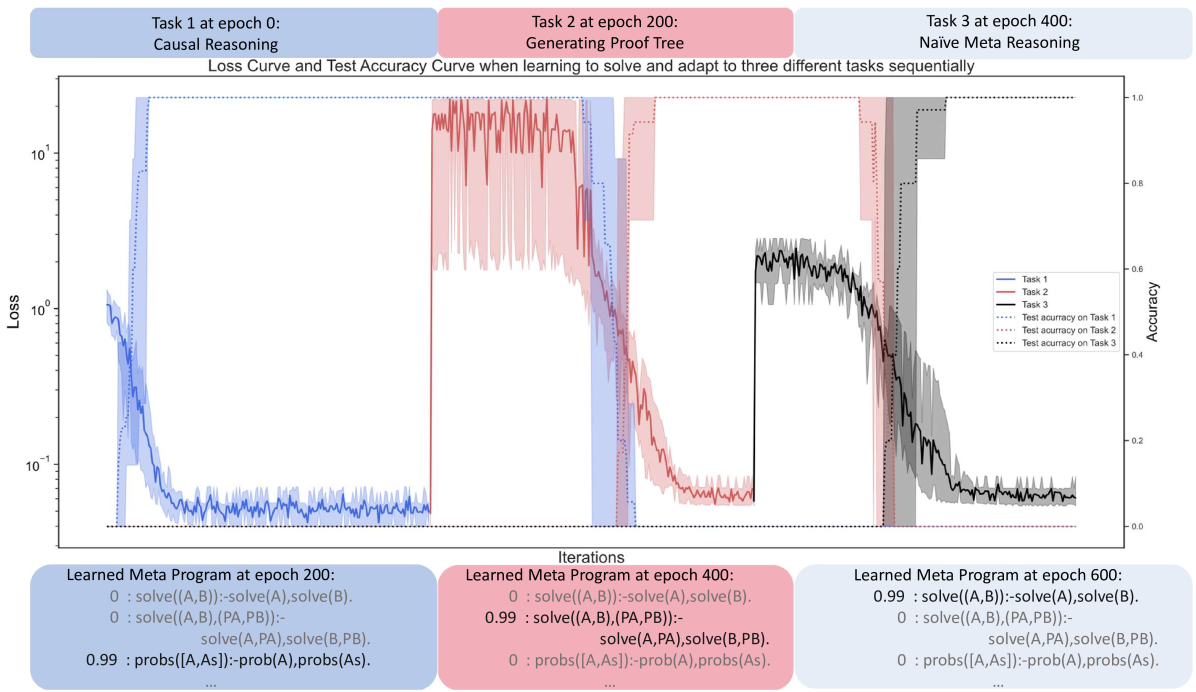

Figure 5: Performance (accuracy; the higher, the better)on four queries. (Best viewed in color)

It defines a simple graph over three nodes $(a,b,c)$ with three edges, $(a-b,b-a,b-c)$ as well as paths in graphs in general. Specifically, $\mathtt{path}/3$ defines how to find a path between two nodes in a recursive way. The base case is $\mathtt{path(A,A,[])}$ , meaning that any node $\mathtt{A}$ is reachable from itself. The recursion then says, if there is an edge from node $\mathtt{A}$ to node $\mathtt{B}$ , and there is a path from node $\mathtt{B}$ to node $\mathtt{C}$ , then there is a path from node $\mathtt{A}$ to node $\mathtt{C}$ . Unfortunately, this generates an infinite loop $\mathtt{[edge(a,b),edge(b,a),edge(a,b),\ldots]}$ when computing the path from $a$ to $c$ , since this path can always be extended potentially also leading to the node $c$ .

Fortunately, NEMESYS allows one to avoid infinite loops by memorizing the proof-depth, i.e., we simply implement a limited proof-depth strategy on the meta-level:

| | $\displaystyle\mathtt{li((A,B),DPT)}\texttt{:-}\mathtt{li(A,DPT)},\mathtt{li(B, DPT).}$ | |

| --- | --- | --- |

With this proof strategy, NEMESYS gets the path $\mathtt{path(a,c,[edge(a,b),edge(b,c)])=true}$ in three steps. For simplicity, we omit the proof part in the atom. Using the second rule and the first rule recursively, the meta interpreter finds $\mathtt{clause(path(a,c),(edge(a,b),path(b,c)))}$ and $\mathtt{clause(path(b,c),(edge(b,c),path(c,c)))}$ . Finally, the meta interpreter finds a clause, whose head is $\mathtt{li(path(c,c),1)}$ and the body is true.

Since forward chaining gets stuck in the infinite loop, we choose ProbLog [47] as our baseline comparison. We test NEMESYS and ProbLog using four queries, including one query which calls the recursive rule. ProbLog fails to return the correct answer on the query which calls the recursive rule. The comparison is summarized in Fig. 5. We provide the four test queries in Appendix A.

### 4.4 Differentiable First-Order Logical Planning

As the fourth meta interpreter, we demonstrate NEMESYS as a differentiable planner. Consider Fig. 4 where NEMESYS was asked to put all objects of a start image onto a line. Each start and goal state is represented as a visual scene, which is generated in the CLEVR [18] environment. By adopting a perception model, e.g., YOLO [42] or slot attention [45], NEMESYS obtains logical representations of the start and end states:

| | $\displaystyle\mathtt{start}$ | $\displaystyle=\{\mathtt{pos(obj0,(1,3)),\ldots,pos(obj4,(2,1))}\},$ | |

| --- | --- | --- | --- |

where $\mathtt{pos/2}$ describes the $2$ -dim positions of objects. NEMESYS solves this planning task by performing differentiable reasoning using the meta-level program:

| | $\displaystyle\mathtt{plan(Start\_state,}\mathtt{New\_state,Goal\_state,[Action ,Old\_stack])}\textbf{:-}$ | |

| --- | --- | --- |

The first meta rule presents the recursive rule for plan generation, and the second rule gives the successful termination condition for the plan when the $\mathtt{Goal\_state}$ is reached, where $\mathtt{equal/2}$ checks whether the $\mathtt{Current\_state}$ is the $\mathtt{Goal\_state}$ and the $\mathtt{planf/3}$ contains $\mathtt{Start\_state}$ , $\mathtt{Goal\_state}$ and the needed action sequences $\mathtt{Move\_stack}$ from $\mathtt{Start\_state}$ to reach the $\mathtt{Goal\_state}$ .

The predicate $\mathtt{plan/4}$ takes four entries as inputs: $\mathtt{Start\_state}$ , $\mathtt{State}$ , $\mathtt{Goal\_state}$ and $\mathtt{Move\_stack}$ . The $\mathtt{move/3}$ predicate uses $\mathtt{Action}$ to push $\mathtt{Old\_state}$ to $\mathtt{New\_state}$ . $\mathtt{condition\_met/2}$ checks if the state’s preconditions are met. When the preconditions are met, $\mathtt{change\_state/2}$ changes the state, and $\mathtt{plan/4}$ continues the recursive search.

To reduce memory usage, we split the move action in horizontal and vertical in the experiment. For example, NEMESYS represents an action to move an object in the horizontal direction right by $\mathtt{1}$ step using meta-level atom:

| | $\displaystyle\mathtt{move(}$ | $\displaystyle\mathtt{move\_right},\mathtt{pos\_hori(Object,X),}\mathtt{pos\_ hori(Object,X}\texttt{+}\mathtt{1)).}$ | |

| --- | --- | --- | --- |

where $\mathtt{move\_right}$ represents the action, $\mathtt{X+1}$ represents arithmetic sums over (positive) integers, encoded as $\mathtt{0,succ(0),succ(succ(0))}$ and so on as terms. Performing reasoning on the meta-level clause with $\mathtt{plan}$ simulates a step as a planner, i.e., it computes preconditions, and applies actions to compute states after taking the actions. Fig. 4 summarizes one of the experiments performed using NEMESYS on the Visual Concept Repairing task. We provided the start and goal states as visual scenes containing varying numbers of objects with different attributes. The left most image of Fig. 4 shows the start state, and the right most image shows the goal state, respectively. NEMESYS successfully moved objects to form a line. For example, to move $\mathtt{obj0}$ from $\mathtt{(1,1)}$ to $\mathtt{(3,1)}$ , NEMESYS deduces:

| | $\displaystyle\mathtt{planf(}$ | $\displaystyle\mathtt{pos\_hori(obj0,1)},\mathtt{pos\_hori(obj0,3),}\mathtt{[ move\_right,move\_right]).}$ | |

| --- | --- | --- | --- |

This shows that NEMESYS is able to perceive objects from an image, reason about the image, and edit the image through planning. To the best of our knowledge, this is the first differentiable neuro-symbolic system equipped with all of these abilities. We provide more Visual Concept Repairing tasks in Appendix B.

### 4.5 Differentiable Causal Reasoning

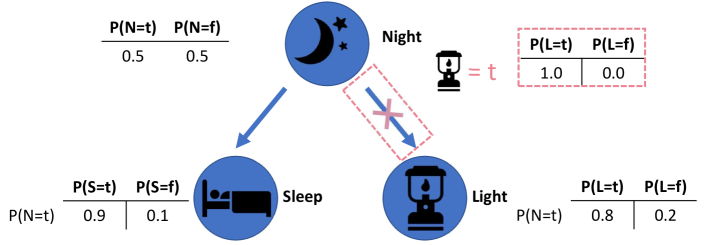

As the last meta interpreter, we show that NEMESYS exhibits superior performance compared to the existing forward reasoning system by having the causal reasoning ability. Notably, given a causal Bayesian network, NEMESYS can perform the $\mathtt{do}$ operation (deleting the incoming edges of a node) [28] on arbitrary nodes and perform causal reasoning without the necessity of re-executing the entire system, which is made possible through meta-level programming.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Bayesian Network Diagram: Night, Sleep, and Light

### Overview

The image depicts a Bayesian network diagram illustrating the probabilistic relationships between three variables: Night, Sleep, and Light. The diagram includes nodes representing these variables, directed edges indicating dependencies, and conditional probability tables quantifying the relationships.

### Components/Axes

* **Nodes:**

* **Night:** Represented by a blue circle containing a moon and stars icon. Labeled "Night" to the right of the circle.

* **Sleep:** Represented by a blue circle containing a bed icon. Labeled "Sleep" below the circle.

* **Light:** Represented by a blue circle containing a lantern icon. Labeled "Light" to the right of the circle.

* **Edges:**

* A directed edge (arrow) points from the "Night" node to the "Sleep" node.

* A directed edge (arrow) points from the "Night" node to the "Light" node, but this edge is crossed out with a pink "X" inside a dashed pink rectangle.

* **Conditional Probability Tables:**

* **Night:** A table above the "Night" node shows the prior probabilities: P(N=t) = 0.5 and P(N=f) = 0.5.

* **Sleep:** A table below the "Sleep" node shows the conditional probabilities: P(S=t | N=t) = 0.9 and P(S=f | N=t) = 0.1.

* **Light:** A table below the "Light" node shows the conditional probabilities: P(L=t | N=t) = 0.8 and P(L=f | N=t) = 0.2.

* A table to the right of the "Night" node shows the conditional probabilities: P(L=t) = 1.0 and P(L=f) = 0.0, with "Lantern = t" to the left of the table.

### Detailed Analysis

* **Night Node:**

* P(N=t) = 0.5: The probability of it being night (N=t) is 0.5.

* P(N=f) = 0.5: The probability of it not being night (N=f) is 0.5.

* **Sleep Node:**

* P(S=t | N=t) = 0.9: Given that it is night (N=t), the probability of someone sleeping (S=t) is 0.9.

* P(S=f | N=t) = 0.1: Given that it is night (N=t), the probability of someone not sleeping (S=f) is 0.1.

* **Light Node:**

* P(L=t | N=t) = 0.8: Given that it is night (N=t), the probability of the light being on (L=t) is 0.8.

* P(L=f | N=t) = 0.2: Given that it is night (N=t), the probability of the light being off (L=f) is 0.2.

* **Light Node (Alternative):**

* P(L=t) = 1.0: The probability of the light being on (L=t) is 1.0.

* P(L=f) = 0.0: The probability of the light being off (L=f) is 0.0. This is only true when the lantern is true.

### Key Observations

* The "Night" node influences both the "Sleep" and "Light" nodes.

* The edge between "Night" and "Light" is crossed out, suggesting that the light is on regardless of whether it is night or not.

* The probability of sleeping is high (0.9) when it is night.

* The probability of the light being on is high (0.8) when it is night, but the alternative probability is 1.0 when the lantern is true.

### Interpretation

The diagram represents a simplified model of the relationships between night, sleep, and light. The crossed-out edge and the alternative probabilities for the "Light" node indicate that the light is on regardless of whether it is night or not. This could represent a scenario where the light is controlled by an external factor, such as a switch, and is always on. The high probability of sleeping when it is night suggests a strong correlation between these two variables. The diagram demonstrates how Bayesian networks can be used to model probabilistic dependencies between variables and make inferences based on observed data.

</details>

Figure 6: Performing differentiable causal reasoning and learning using NEMESYS. Given a causal Bayesian network, NEMESYS can easily perform the do operation (delete incoming edges) on arbitrary nodes and capture the causal effects on different nodes (for example, the probability of the node $\mathtt{Light}$ after intervening) without rerunning the entire system. Furthermore, NEMESYS is able to learn the unobserved $\mathtt{do}$ operation with its corresponding value using gradient descent based on the given causal graph and observed data. (Best viewed in color)

The $\mathtt{do}$ operator, denoted as $\mathtt{do(X)}$ , is used to represent an intervention on a particular variable $\mathtt{X}$ in a causal learning system, regardless of the actual value of the variable. For example, Fig. 6 shows a causal Bayesian network with three nodes and the probability distribution of the nodes before and after the $\mathtt{do}$ operation. To investigate how the node $\mathtt{Light}$ affects the rest of the system, we firstly cut the causal relationship between the node $\mathtt{Light}$ and all its parent nodes, then we assign a new value to the node and we investigate the probability of other nodes. To enable NEMESYS to perform a $\mathtt{do}$ operation on the node $\mathtt{Light}$ , we begin by representing the provided causal Bayesian network in Fig. 6 using:

| | $\displaystyle\mathtt{0.5}\texttt{:}\ \mathtt{Night}.\quad\mathtt{0.9}\texttt{: }\ \mathtt{Sleep}\texttt{:-}\mathtt{Night}.\quad\mathtt{0.8}\texttt{:}\ \mathtt{Light}\texttt{:-}\mathtt{Night}.$ | |

| --- | --- | --- |

where the number of an atom indicates the probability of the atom being true, and the number of a clause indicates the conditional probability of the head being true given the body being true.

We reuse the meta predicate $\mathtt{assert\_probs/1/[atom]}$ and introduce three new meta predicates: $\mathtt{prob/1/[atom]}$ , $\mathtt{probs/1/[atoms]}$ and $\mathtt{probs\_do/1/[atoms,atom]}$ . Since we cannot deal with the uncountable, infinite real numbers within our logic, we make use of the weight associated with ground meta atoms to represent the probability of the atom. For example, we use the weight of the meta atom $\mathtt{prob(Atom)}$ to represent the probability of the atom $\mathtt{Atom}$ . We use the weight of the meta atom $\mathtt{probs(Atoms)}$ to represent the joint probability of a list of atoms $\mathtt{Atoms}$ , and the weight of $\mathtt{probs\_do(AtomA,AtomB)}$ to represent the probability of the atom $\mathtt{AtomA}$ after performing the do operation $\mathtt{do(AtomB)}$ . We modify the meta interpreter as:

| | $\displaystyle\mathtt{prob(Head)}\texttt{:-}\mathtt{assert\_probs((Head\texttt{ :-}Body))},\mathtt{probs(Body).}$ | |

| --- | --- | --- |

where the first three rules calculate the probability of a node before the intervention, the joint probability is approximated using the first and second rule by iteratively multiplying each atom. The fourth rule assigns the probability of the atom $\mathtt{Atom}$ using the $\mathtt{do}$ operation. The fifth to the eighth calculate the probability after the $\mathtt{do}$ intervention by looping over each atom and multiplying them.

For example, after performing $\mathtt{do(Light)}$ and setting the probability of $\mathtt{Light}$ as $1.0$ . NEMESYS returns the weight of $\mathtt{probs\_do(Light,Light)}$ as the probability of the node $\mathtt{Light}$ (Fig. 6 red box) after the intervention $\mathtt{do(Light)}$ .

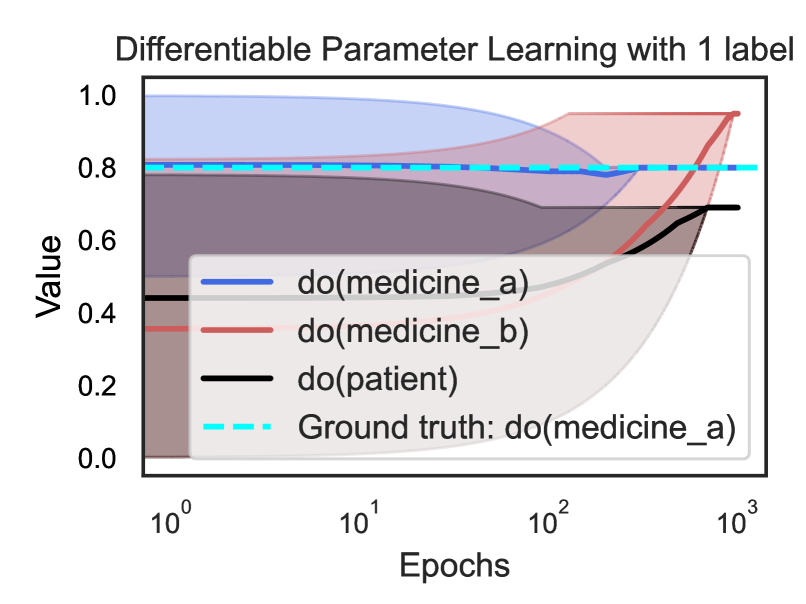

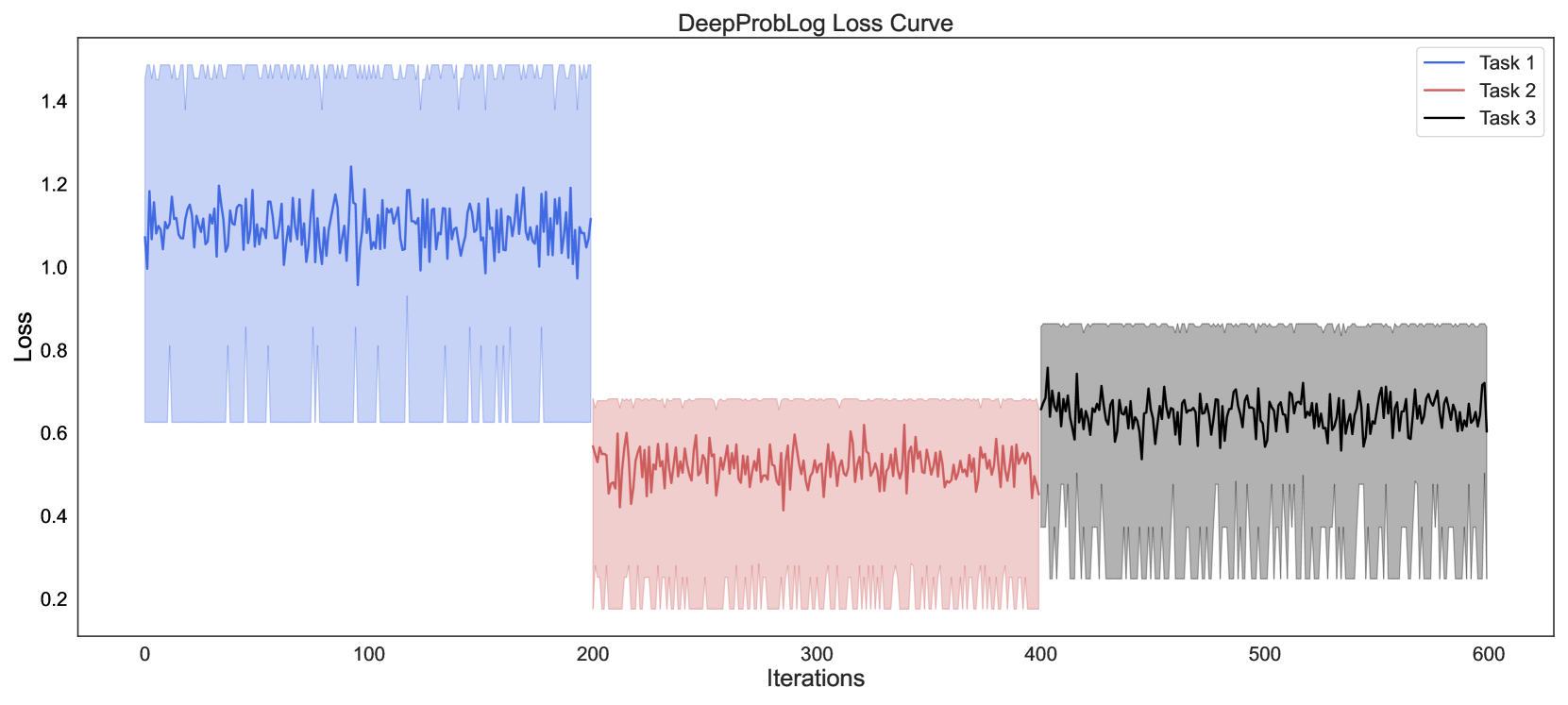

### 4.6 Gradient-based Learning in NEMESYS

NEMESYS alleviates the limitations of frameworks such as DeepProbLog [21] by having the ability of not only performing differentiable parameter learning but also supporting differentiable structure learning (in our experiment, NEMESYS learns the weights of the meta rules while adapting to solve different tasks). We now introduce the learning ability of NEMESYS.

#### 4.6.1 Parameter Learning

Consider a scenario in which a patient can only experience effective treatment when two types of medicine synergize, with the effectiveness contingent on the dosage of each drug. Suppose we have known the dosages of two medicines and the causal impact of the medicines on the patient, however, the observed effectiveness does not align with expectations. It is certain that some interventions have occurred in the medicine-patient causal structure (such as an incorrect dosage of one medicine, which will be treated as an intervention using the $\mathtt{do}$ operation). However, the specific node (patient or the medicines) on which the $\mathtt{do}$ operation is executed, and the values assigned to the $\mathtt{do}$ operator remain unknown. Conducting additional experiments on patients by altering medicine dosages to uncover the $\mathtt{do}$ operation is both unethical and dangerous.

With NEMESYS at hand, we can easily learn the unobserved $\mathtt{do}$ operation with its assigned value. We abstract the problem using a three-node causal Bayesian network:

$$

\mathtt{1.0:medicine\_a.}\quad\mathtt{1.0:medicine\_b.}\quad\mathtt{0.9:

patient}\texttt{:-}\mathtt{medicine\_a,medicine\_b.}

$$

where the number of the atoms indicates the dosage of each medicine, and the number of the clause indicates the conditional probability of the effectiveness of the patient given these two medicines. Suppose there is only one unobserved $\mathtt{do}$ operation.

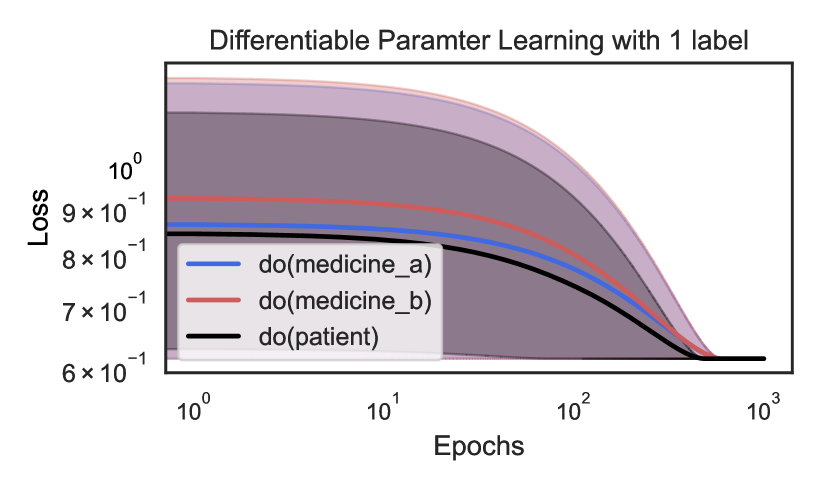

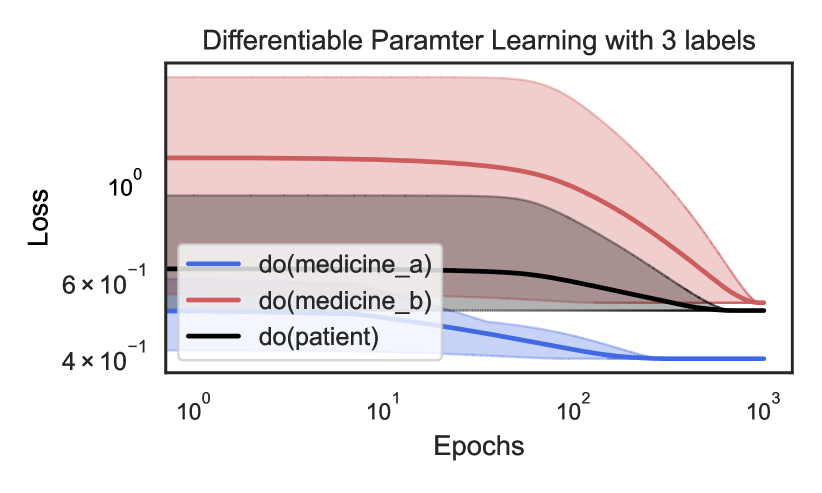

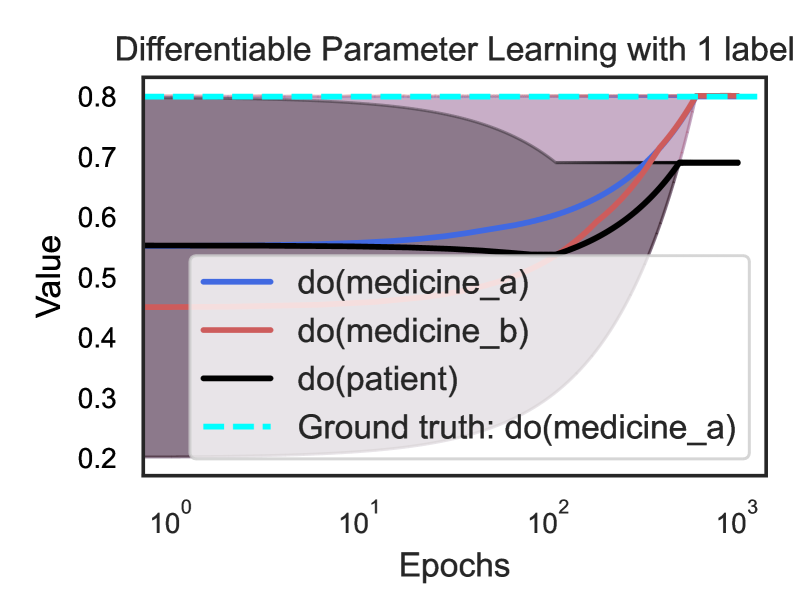

To learn the unknown $\mathtt{do}$ operation, we define the loss as the Binary Cross Entropy (BCE) loss between the observed probability $\mathbf{p}_{target}$ and the predicted probability of the target atom $\mathbf{p}_{predicted}$ . The predicted probability $\mathbf{p}_{predicted}$ is computed as: $\mathbf{p}_{predicted}=\mathbf{v}^{(T)}\left[I_{\mathcal{G}}(\operatorname{ target\_atom})\right]$ , where $I_{\mathcal{G}}(x)$ is a function that returns the index of target atom in $\mathcal{G}$ , $\mathbf{v}[i]$ is the $i$ -th element of $\mathbf{v}$ . $\mathbf{v}^{(T)}$ is the valuation tensor computed by $T$ -step forward reasoning based on the initial valuation tensor $\mathbf{v}^{(0)}$ , which is composed of the initial valuation of $\mathtt{do}$ and other meta ground atoms. Since the valuation of $\mathtt{do}$ atom is the only changing parameter, we set the gradient of other parameters as $0 0$ . We minimize the loss w.r.t. $\mathtt{do(X)}$ : $\underset{\mathtt{do(X)}}{\mathtt{minimize}}\quad\mathtt{L_{loss}}=\mathtt{BCE }(\mathbf{p}_{target},\mathbf{p}_{predicted}\mathtt{(do(X)))}.$ Fig. 7 summarizes the loss curve of the three $\mathtt{do}$ operators during learning using one target (Fig. 7 left) and three targets (Fig. 7 right). For the three targets experiment, $\mathbf{p}_{target}$ consists of three observed probabilities (the effectiveness of the patient and the dosages of two medicines), for the experiment with one target, $\mathbf{p}_{target}$ only consists of the observed the effectiveness of the patient.

We randomly initialize the probability of the three $\mathtt{do}$ operators and choose the one, which achieves the lowest loss as the right $\mathtt{do}$ operator. In the three targets experiment, the blue curve achieves the lowest loss, with its corresponding value converges to the ground truth value, while in the one target experiment, three $\mathtt{do}$ operators achieve equivalent performance. We provide the value curves of three $\mathtt{do}$ operators and the ground truth $\mathtt{do}$ operator with its value in Appendix C.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Chart: Differentiable Parameter Learning with 1 label

### Overview

The image is a line chart showing the loss over epochs for three different conditions: "do(medicine_a)", "do(medicine_b)", and "do(patient)". The x-axis (Epochs) is on a logarithmic scale. Shaded regions around each line indicate uncertainty or variance.

### Components/Axes

* **Title:** Differentiable Parameter Learning with 1 label

* **X-axis:**

* Label: Epochs

* Scale: Logarithmic

* Markers: 10<sup>0</sup>, 10<sup>1</sup>, 10<sup>2</sup>, 10<sup>3</sup>

* **Y-axis:**

* Label: Loss

* Scale: Linear

* Markers: 6 x 10<sup>-1</sup>, 7 x 10<sup>-1</sup>, 8 x 10<sup>-1</sup>, 9 x 10<sup>-1</sup>, 10<sup>0</sup>

* **Legend:** Located in the center-left of the chart.

* Blue line: do(medicine\_a)

* Red line: do(medicine\_b)

* Black line: do(patient)

### Detailed Analysis

* **do(medicine\_a) (Blue):** The blue line starts at a loss of approximately 0.86 at epoch 1. It decreases slightly until around epoch 10, then decreases more rapidly until it plateaus at a loss of approximately 0.61 around epoch 100.