# Deep Meta Programming

**Authors**: HikaruShindo, Devendra SinghDhami, KristianKersting

[1,3] Zihan Ye

1] AI and Machine Learning Group, Dept. of Computer Science, TU Darmstadt, Germany

2] Centre for Cognitive Science, TU Darmstadt, Germany

3] Hessian Center for AI (hessian.AI), Germany 4] German Center for Artificial Intelligence (DFKI), Germany 5] Eindhoven University of Technology, Netherlands

Neural Meta-Symbolic Reasoning and Learning

## Abstract

Deep neural learning uses an increasing amount of computation and data to solve very specific problems. By stark contrast, human minds solve a wide range of problems using a fixed amount of computation and limited experience. One ability that seems crucial to this kind of general intelligence is meta-reasoning, i.e., our ability to reason about reasoning. To make deep learning do more from less, we propose the first neural meta -symbolic system (NEMESYS) for reasoning and learning: meta programming using differentiable forward-chaining reasoning in first-order logic. Differentiable meta programming naturally allows NEMESYS to reason and learn several tasks efficiently. This is different from performing object-level deep reasoning and learning, which refers in some way to entities external to the system. In contrast, NEMESYS enables self-introspection, lifting from object- to meta-level reasoning and vice versa. In our extensive experiments, we demonstrate that NEMESYS can solve different kinds of tasks by adapting the meta-level programs without modifying the internal reasoning system. Moreover, we show that NEMESYS can learn meta-level programs given examples. This is difficult, if not impossible, for standard differentiable logic programming.

keywords: differentiable meta programming, differentiable forward reasoning, meta reasoning

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: NEMESYS - A Multi-Modal Reasoning System Architecture

### Overview

The image is a conceptual diagram illustrating a central artificial intelligence system named **NEMESYS**, which interacts bidirectionally with eight distinct reasoning or task modules. The diagram is designed to showcase the system's capability to integrate and process diverse types of cognitive and problem-solving tasks. The overall layout features a central hub with eight surrounding modules, each connected to the center by double-headed blue arrows, indicating a two-way flow of information or processing.

### Components/Axes

The diagram is composed of nine primary components arranged in a radial pattern:

1. **Central Hub:**

* **Label:** "NEMESYS"

* **Visual:** A stylized icon of a human head in profile, filled with a light blue color and overlaid with a white circuit board pattern, symbolizing an artificial brain or cognitive architecture.

* **Position:** Center of the image.

2. **Surrounding Modules (8 total):** Each module is contained within a light blue, rounded rectangular box and is connected to the central NEMESYS hub by a blue, double-headed arrow.

* **Top-Left:** **Symbolic Reasoning**

* **Top-Center:** **Visual Reasoning**

* **Top-Right:** **Classification**

* **Right-Center:** **Proof Tree**

* **Bottom-Right:** **Causal Reasoning**

* **Bottom-Center:** **Game Playing**

* **Bottom-Left:** **Relevance Propagation**

* **Left-Center:** **Planning**

### Detailed Analysis / Content Details

Each module contains specific visual and textual content representing its function:

* **Symbolic Reasoning (Top-Left):**

* **Content:** Contains text resembling Prolog or logical programming code.

* **Transcribed Text:**

```

same_shape_pair(A,B):-

shape(A,C),shape(B,C).

shape(obj0,triangle).

shape(obj1,triangle).

...

```

* **Visual Reasoning (Top-Center):**

* **Content:** A composite image showing a 3D scene with various colored objects (spheres, cubes, cylinders) on a surface. Overlaid on the right is a table-like structure with color swatches and labels.

* **Transcribed Text (Table):**

```

color( [blue swatch], blue).

color( [red swatch], red).

color( [gray swatch], gray).

...

```

* **Classification (Top-Right):**

* **Content:** Two panels comparing classification outcomes. The left panel shows various shapes (triangles, circles, squares) with a large red "X" over a yellow triangle, indicating misclassification. The right panel shows similar shapes with a large green checkmark over a yellow triangle, indicating correct classification.

* **Proof Tree (Right-Center):**

* **Content:** A hierarchical tree diagram. The root and some nodes are colored red, while the majority of leaf nodes are green. This likely represents a logical deduction or search tree where red nodes might indicate a failed path or a specific branch of interest, and green nodes indicate successful or explored paths.

* **Causal Reasoning (Bottom-Right):**

* **Content:** A directed acyclic graph (DAG) with nodes labeled A, B, C, D. Arrows show causal relationships (e.g., A -> C, B -> C, C -> D). A separate arrow labeled "do(c)" points from a version of the graph where node C is intervened upon (highlighted), illustrating the concept of causal intervention.

* **Game Playing (Bottom-Center):**

* **Content:** A screenshot from the classic arcade game **Pac-Man**. It shows the game maze, Pac-Man, ghosts, dots, and power pellets.

* **Relevance Propagation (Bottom-Left):**

* **Content:** A network graph with interconnected green nodes. The structure suggests a neural network or a graph where information or "relevance" is propagated between nodes.

* **Planning (Left-Center):**

* **Content:** A sequence of four 3D rendered scenes showing the progressive rearrangement of objects (cubes, spheres) on a surface. An arrow points from the first scene to the last, indicating a plan or sequence of actions to achieve a goal state.

### Key Observations

1. **Bidirectional Integration:** Every module has a direct, two-way connection to the central NEMESYS system, emphasizing that it is not a simple pipeline but an integrated architecture where the core system and specialized modules constantly interact.

2. **Diversity of Tasks:** The diagram explicitly covers a wide spectrum of AI challenges: logical reasoning (Symbolic, Proof Tree), perception (Visual Reasoning, Classification), sequential decision-making (Planning, Game Playing), and relational modeling (Causal Reasoning, Relevance Propagation).

3. **Visual Metaphors:** Each module uses a distinct visual metaphor appropriate to its domain (code for symbolic, 3D scenes for planning/visual, graphs for causal/relevance, a game screenshot for playing).

4. **Color Coding:** The use of color is functional: red/green in Proof Tree and Classification denotes success/failure or different states; the consistent blue of the central hub and arrows unifies the diagram.

### Interpretation

This diagram presents **NEMESYS** as a proposed unified cognitive architecture designed to tackle artificial general intelligence (AGI) by integrating multiple, traditionally separate, AI sub-fields. The central "brain" icon suggests it acts as a central executive or common substrate.

* **What it suggests:** The architecture implies that robust intelligence requires the synergistic combination of different reasoning types. For instance, solving a complex real-world problem might require **Visual Reasoning** to perceive the scene, **Symbolic Reasoning** to represent knowledge, **Causal Reasoning** to understand effects of actions, and **Planning** to devise a solution sequence.

* **How elements relate:** The bidirectional arrows are the most critical relational element. They indicate that NEMESYS both *utilizes* the capabilities of each module (e.g., sending perceptual data to the Classification module) and *informs or trains* them (e.g., using high-level symbolic knowledge to guide visual attention). The modules are not isolated silos but components of a greater whole.

* **Notable Anomalies/Outliers:** The **Game Playing (Pac-Man)** module stands out as a specific, well-defined benchmark environment, whereas the others are more abstract reasoning tasks. This may indicate that the system is validated on both standardized benchmarks and open-ended reasoning problems. The **Proof Tree** and **Relevance Propagation** graphs are visually similar but labeled differently, hinting at a distinction between explicit logical proof search and implicit, possibly neural, information flow.

In essence, the diagram is a blueprint for a holistic AI system, arguing that the path to more general intelligence lies not in perfecting a single algorithm but in architecting a framework where diverse cognitive modules collaborate through a central, integrative core.

</details>

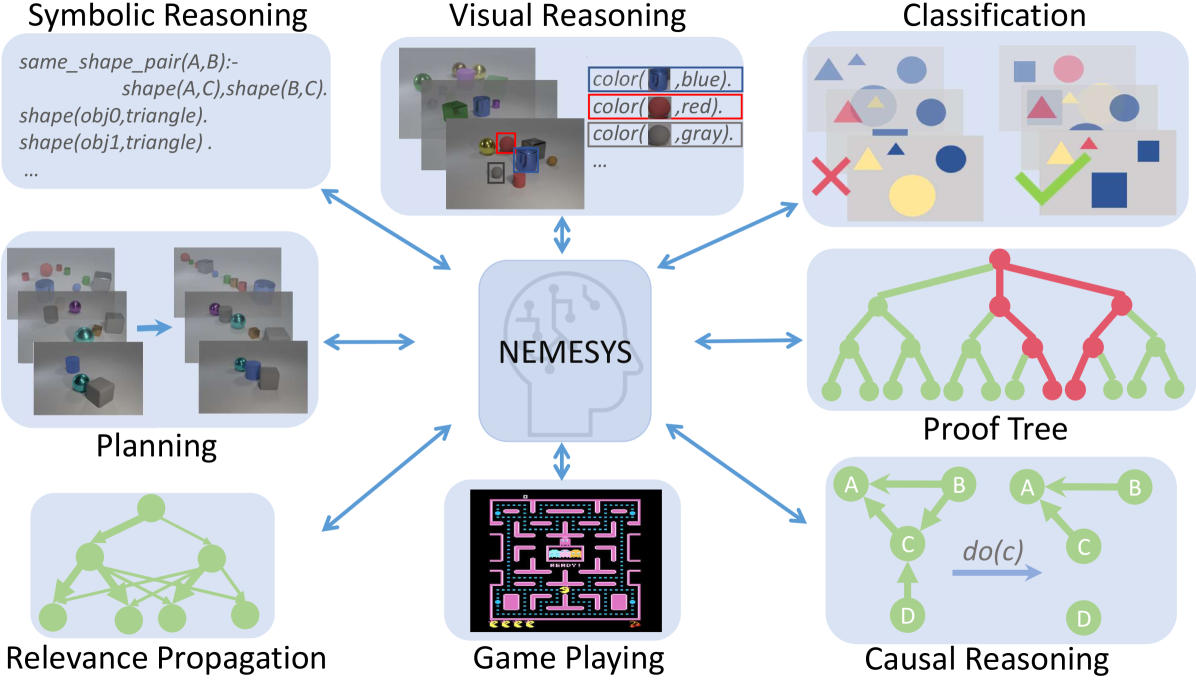

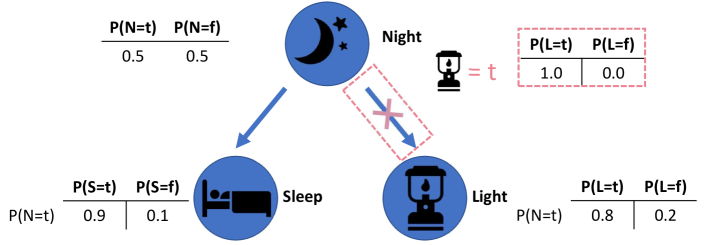

Figure 1: NEMESYS solves different kinds of tasks by using meta-level reasoning and learning. NEMESYS addresses, for instance, visual reasoning, planning, and causal reasoning without modifying its internal reasoning architecture. (Best viewed in color)

## 1 Introduction

One of the distant goals of Artificial Intelligence (AI) is to build a fully autonomous or ‘human-like’ system. The current successes of deep learning systems such as DALLE-2 [1], ChatGPT [2, 3], and Gato [4] have been promoted as bringing the field closer to this goal. However, current systems still require a large number of computations and often solve rather specific tasks. For example, DALLE-2 can generate very high-quality images but cannot play chess or Atari games. In stark contrast, human minds solve a wide range of problems using a small amount of computation and limited experience.

Most importantly, to be considered a major step towards achieving Artificial General Intelligence (AGI), a system must not only be able to perform a variety of tasks, such as Gato [4] playing Atari games, captioning images, chatting, and controlling a real robot arm, but also be self-reflective and able to learn and reason about its own capabilities. This means that it must be able to improve itself and adapt to new situations through self-reflection [5, 6, 7, 8]. Consequently, the study of meta-level architectures such as meta learning [9] and meta-reasoning [7] becomes progressively important. Meta learning [10] is a way to improve the learning algorithm itself [11, 12], i.e., it performs learning at a higher level, or meta-level. Meta-reasoning is a related concept that involves a system being able to think about its own abilities and how it processes information [5, 6]. It involves reflecting on, or introspecting about, the system’s own reasoning processes.

Indeed, meta-reasoning is different from object-centric reasoning, which refers to the system thinking about entities external to itself [13, 14, 15]. Here, the models perform low-level visual perception and reasoning on high-level concepts. Accordingly, there has been a push to make these reasoning systems differentiable [16, 17] along with addressing benchmarks in a visual domain such as CLEVR [18] and Kandinsky patterns [19, 20]. They use object-centric neural networks to perceive objects and perform reasoning using their output. Although this can solve the proposed benchmarks to some extent, the critical question remains unanswered: Is the reasoner able to justify its own operations? Can the same model solve different tasks such as (causal) reasoning, planning, game playing, and much more?

To overcome these limitations, we propose NEMESYS, the first neural meta -symbolic reasoning system. NEMESYS extensively performs meta-level programming on neuro-symbolic systems, and thus it can reason and learn several tasks. This is different from performing object-level deep reasoning and learning, which refers in some way to entities external to the system. NEMESYS is able to reflect or introspect, i.e., to shift from object- to meta-level reasoning and vice versa.

| | Meta Reasoning | Multitask Adaptation | Differentiable Meta Structure Learning |

| --- | --- | --- | --- |

| DeepProbLog [21] | ✗ | ✗ | ✗ |

| NTPs [22] | ✗ | ✗ | ✗ |

| FFSNL [23] | ✗ | ✗ | ✗ |

| $\alpha$ ILP [24] | ✗ | ✗ | ✗ |

| Scallop [25] | ✗ | ✗ | ✗ |

| NeurASP [26] | ✗ | ✗ | ✗ |

| NEMESYS (ours) | ✓ | ✓ | ✓ |

Table 1: Comparisons between NEMESYS and other state-of-the-art Neuro-Symbolic systems. We compare these systems with NEMESYS in three aspects, whether the system performs meta reasoning, can the same system adapt to solve different tasks and is the system capable of differentiable meta level structure learning.

Overall, we make the following contributions:

1. We propose NEMESYS, the first neural meta -symbolic reasoning and learning system that performs differentiable forward reasoning using meta-level programs.

1. To evaluate the ability of NEMESYS, we propose a challenging task, visual concept repairing, where the task is to rearrange objects in visual scenes based on relational logical concepts.

1. We empirically show that NEMESYS can efficiently solve different visual reasoning tasks with meta-level programs, achieving comparable performances with object-level forward reasoners [16, 24] that use specific programs for each task.

1. Moreover, we empirically show that using powerful differentiable meta-level programming, NEMESYS can solve different kinds of tasks that are difficult, if not impossible, for the previous neuro-symbolic systems. In our experiments, NEMESYS provides the function of (i) reasoning with integrated proof generation, i.e., performing differentiable reasoning producing proof trees, (ii) explainable artificial intelligence (XAI), i.e., highlighting the importance of logical atoms given conclusions, (iii) reasoning avoiding infinite loops, i.e., performing differentiable reasoning on programs which cause infinite loops that the previous logic reasoning systems unable to solve, and (iv) differentiable causal reasoning, i.e., performing causal reasoning [27, 28] on a causal Bayesian network using differentiable meta reasoners. To the best of the authors’ knowledge, we propose the first differentiable $\mathtt{do}$ operator. Achieving these functions with object-level reasoners necessitates significant efforts, and in some cases, it may be unattainable. In stark contrast, NEMESYS successfully realized the different useful functions by having different meta-level programs without any modifications of the reasoning function itself.

1. We demonstrate that NEMESYS can perform structure learning on the meta-level, i.e., learning meta programs from examples and adapting itself to solve different tasks automatically by learning efficiently with gradients.

To this end, we will proceed as follows. We first review (differentiable) first-order logic and reasoning. We then derive NEMESYS by introducing differentiable logical meta programming. Before concluding, we illustrate several capabilities of NEMESYS.

## 2 Background

NEMESYS relies on several research areas: first-order logic, logic programming, differentiable reasoning, meta-reasoning and -learning.

First-Order Logic (FOL)/Logic Programming. A term is a constant, a variable, or a term which consists of a function symbol. We denote $n$ -ary predicate ${\tt p}$ by ${\tt p}/(n,[{\tt dt_{1}},\ldots,{\tt dt_{n}}])$ , where ${\tt dt_{i}}$ is the datatype of $i$ -th argument. An atom is a formula ${\tt p(t_{1},\ldots,t_{n})}$ , where ${\tt p}$ is an $n$ -ary predicate symbol and ${\tt t_{1},\ldots,t_{n}}$ are terms. A ground atom or simply a fact is an atom with no variables. A literal is an atom or its negation. A positive literal is an atom. A negative literal is the negation of an atom. A clause is a finite disjunction ( $\lor$ ) of literals. A ground clause is a clause with no variables. A definite clause is a clause with exactly one positive literal. If $A,B_{1},\ldots,B_{n}$ are atoms, then $A\lor\lnot B_{1}\lor\ldots\lor\lnot B_{n}$ is a definite clause. We write definite clauses in the form of $A~{}\mbox{:-}~{}B_{1},\ldots,B_{n}$ . Atom $A$ is called the head, and a set of negative atoms $\{B_{1},\ldots,B_{n}\}$ is called the body. We call definite clauses as clauses for simplicity in this paper.

Differentiable Forward-Chaining Reasoning. The forward-chaining inference is a type of inference in first-order logic to compute logical entailment [29]. The differentiable forward-chaining inference [16, 17] computes the logical entailment in a differentiable manner using tensor-based operations. Many extensions of differentiable forward reasoners have been developed, e.g., reinforcement learning agents using logic to compute the policy function [30, 31] and differentiable rule learners in complex visual scenes [24]. NEMESYS performs differentiable meta-level logic programming based on differentiable forward reasoners.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: Differentiable Meta-Level Reasoning and Object-Level Reasoning System

### Overview

This diagram illustrates a two-tiered reasoning system that combines **Object-Level Reasoning** with **Differentiable Meta-Level Reasoning**. The system processes visual input (objects) into symbolic probabilistic atoms, which are then used by a meta-reasoning layer to perform logical inference in a differentiable manner. The flow shows how raw input is transformed into structured knowledge and then reasoned over using a meta-program.

### Components/Axes

The diagram is divided into three primary, interconnected blocks:

1. **Differentiable Meta-Level Reasoning (Top Block):**

* **Input:** `clauses` (e.g., `0.95:same_shape_pair(X,Y):- shape(X,Z),shape(Y,Z).`)

* **Component 1:** `Meta Converter` (pink box).

* **Intermediate Output:** `meta probabilistic atoms` (e.g., `0.98:solve(shape(obj1,cube))`, `0.95:clause(same_shape_pair(obj1,obj2), (shape(obj1,cube), shape(obj2,cube)))`).

* **Component 2:** `Differentiable Forward Reasoner` (pink box).

* **Final Output:** `meta probabilistic atoms` (e.g., `0.98:solve(same_shape_pair(obj1,obj2))`).

2. **Object-Level Reasoning (Bottom-Left Block):**

* **Input:** `input` - A photograph showing three 3D objects: a cyan cube, a red cube, and a yellow cylinder.

* **Component 1:** `object-centric representation` - A schematic grid with rows labeled `obj1`, `obj2`, `obj3` and columns with icons for color (red, cyan, yellow dots), shape (square, circle), and spatial coordinates (`x`, `y`). The grid contains blue and white cells indicating feature presence/absence.

* **Output:** `probabilistic atoms` (e.g., `0.98:color(obj1,cyan)`, `0.98:shape(obj1,cube)`, `0.98:color(obj2,red)`, `0.98:shape(obj2,cube)`).

3. **Meta Program (Bottom-Right Block):**

* This block defines the logical rules for the reasoning system.

* **Sub-component 1:** `naive interpreter`

* Rule 1: `1.0:solve((A,B)):-solve(A),solve(B).`

* Rule 2: `1.0:solve(A):-clause(A,B),solve(B).`

* **Sub-component 2:** `interpreter with proof trees`

* Rule 1: `1.0:solve((A,B),(proofA,proofB)):-solve(A,proofA),solve(B,proofB).`

* Rule 2: `1.0:solve(A,(A:-proofB)):-clause(A,B),solve(B,proofB).`

**Flow and Connections:**

* The `probabilistic atoms` from the **Object-Level Reasoning** block feed into the `Meta Converter` in the **Differentiable Meta-Level Reasoning** block.

* The `Meta Program` block provides the logical rules that govern the `Meta Converter` and the `Differentiable Forward Reasoner`.

* The overall data flow is: **Input Image** -> **Object-Centric Representation** -> **Probabilistic Atoms** -> **Meta Converter** -> **Meta Probabilistic Atoms** -> **Differentiable Forward Reasoner** -> **Inferred Meta Probabilistic Atoms**.

### Detailed Analysis

**Text Transcription and Structure:**

* **Title:** `Differentiable Meta-Level Reasoning` (Top-left, white text on black background).

* **Object-Level Reasoning Title:** `Object-Level Reasoning` (Bottom-left, white text on black background).

* **Meta Program Title:** `Meta Program` (Bottom-right, white text on black background).

* **Clause Example:** `0.95:same_shape_pair(X,Y):- shape(X,Z),shape(Y,Z).` This is a probabilistic logic rule with a confidence of 0.95.

* **Meta Probabilistic Atoms (Input to Reasoner):**

* `0.98:solve(shape(obj1,cube))`

* `0.98:solve(shape(obj2,cube))`

* `0.95:clause(same_shape_pair(obj1,obj2), (shape(obj1,cube), shape(obj2,cube)))`

* **Meta Probabilistic Atoms (Output of Reasoner):**

* `0.98:solve(same_shape_pair(obj1,obj2))`

* **Probabilistic Atoms (from Object-Level):**

* `0.98:color(obj1,cyan)`

* `0.98:shape(obj1,cube)`

* `0.98:color(obj2,red)`

* `0.98:shape(obj2,cube)`

* **Meta Program Rules:** (As transcribed in the Components section above).

### Key Observations

1. **Hierarchical Abstraction:** The system clearly separates low-level perception (Object-Level) from high-level logical reasoning (Meta-Level).

2. **Probabilistic Foundation:** All knowledge is represented with confidence scores (e.g., 0.98, 0.95), indicating a probabilistic logic framework.

3. **Differentiable Pipeline:** The presence of a "Differentiable Forward Reasoner" suggests the entire reasoning chain is designed to be trained end-to-end using gradient-based methods.

4. **Symbolic Grounding:** The `object-centric representation` bridges the gap between continuous visual data and discrete symbolic atoms (`obj1`, `cube`, `cyan`).

5. **Meta-Interpretation:** The `Meta Program` contains interpreters that define how logical deduction (`solve`) operates, with the "proof trees" version providing a more detailed trace of the reasoning process.

### Interpretation

This diagram represents a neuro-symbolic AI architecture. Its purpose is to perform **explainable, logical reasoning over perceptual data** in a way that is compatible with modern deep learning.

* **What it demonstrates:** The system can take an image of objects, identify their properties (color, shape) with high confidence, and then use logical rules to infer higher-order relationships (e.g., "these two objects have the same shape"). The "differentiable" aspect means the system can potentially learn these rules or the perception module from data.

* **Relationships:** The Object-Level module acts as a **perceptual front-end**, grounding symbols in sensory input. The Meta-Level module acts as a **logical reasoning engine**, manipulating these symbols according to a programmable `Meta Program`. The `Meta Converter` is the crucial translator that formats grounded facts into a structure suitable for meta-reasoning.

* **Significance:** This architecture addresses a key challenge in AI: combining the robust pattern recognition of neural networks with the explicit, interpretable reasoning of symbolic logic. The use of probabilities and differentiability makes it trainable and robust to perceptual uncertainty. The output (`solve(same_shape_pair(obj1,obj2))`) is not just a prediction but a **logical conclusion** derived from a traceable chain of evidence and rules.

</details>

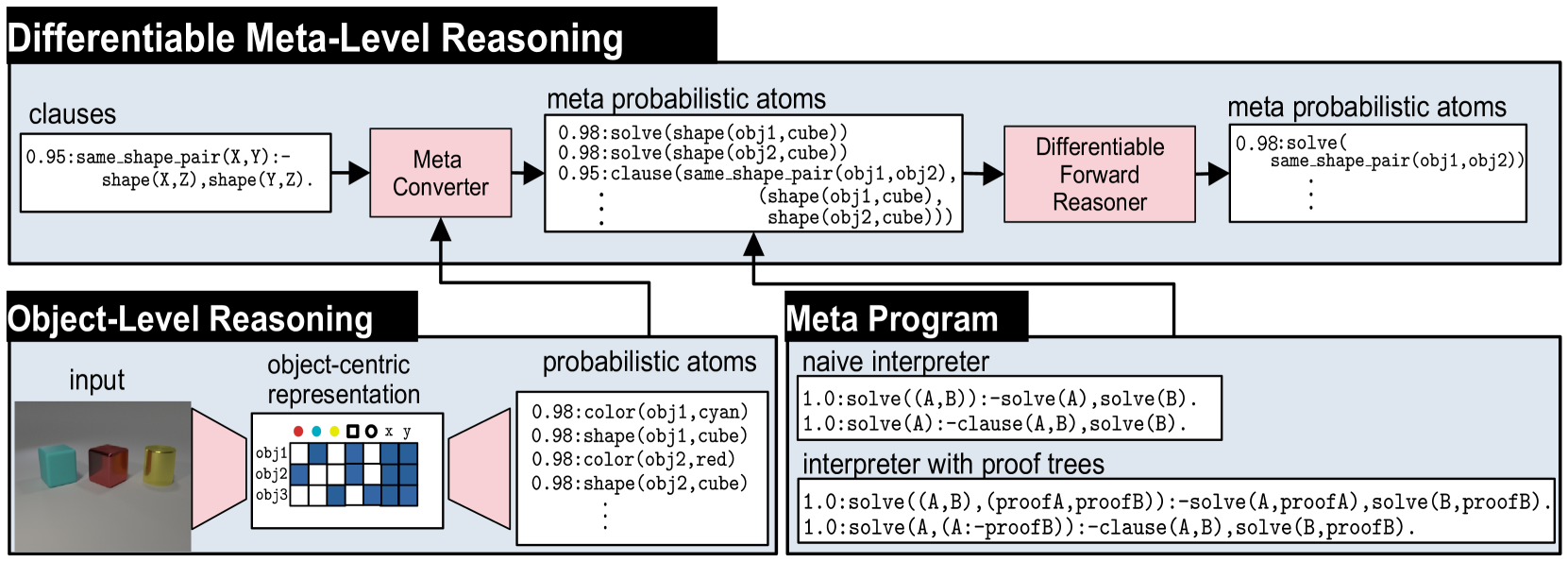

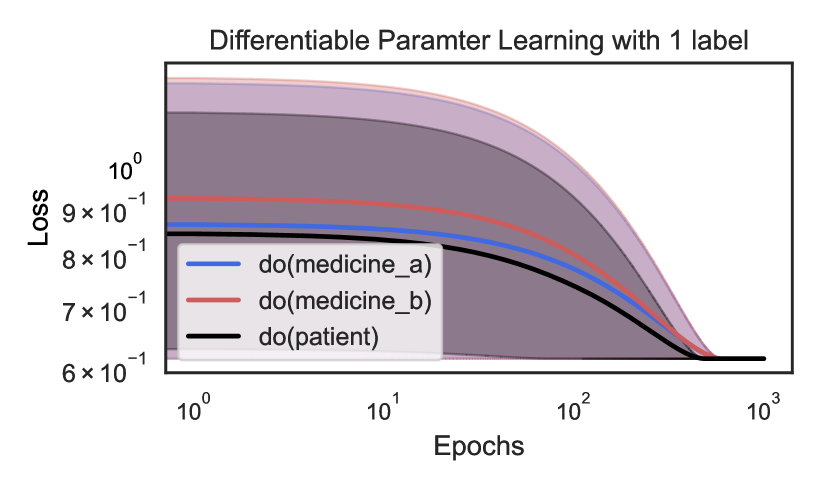

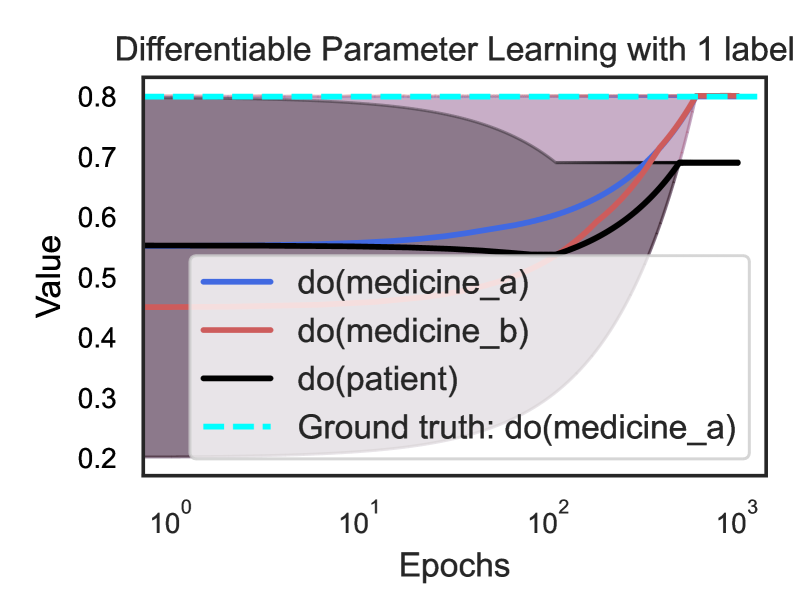

Figure 2: Overview of NEMESYS together with an object-level reasoning layer (bottom left). The meta-level reasoner (top) takes a logic program as input, here clauses on the left-hand side in the meta-level reasoning pipeline. Using the meta program (bottom right) it can realize the standard Prolog engine (naive interpreter) or an interpreter that provides e.g., also the proof trees (interpreter with proof trees) without requiring any alterations to the original logic program and internal reasoning function. This means that NEMESYS can integrate many useful functionalities by simply changing devised meta programs without intervening the internal reasoning function. (Best viewed in color)

Meta Reasoning and Learning. Meta-reasoning is the study about systems which are able to reason about its operation, i.e., a system capable of meta-reasoning may be able to reflect, or introspect [32], shifting from meta-reasoning to object-level reasoning and vice versa [6, 7]. Compared with imperative programming, it is relatively easier to construct a meta-interpreter using declarative programming. First-order Logic [33] has been the major tool to realize the meta-reasoning systems [34, 35, 36]. For example, Prolog [37] provides very efficient implementations of meta-interpreters realizing different additional features to the language.

Despite early interest in meta-reasoning within classical Inductive Logic Programming (ILP) systems [38, 39, 40], meta-interpreters have remained unexplored within neuro-symbolic AI. Meta-interpreters within classical logic are difficult to be combined with gradient-based machine learning paradigms, e.g., deep neural networks. NEMESYS realizes meta-level reasoning using differentiable forward reasoners in first-order logic, which are able to perform differentiable rule learning on complex visual scenes with deep neural networks [24]. Moreover, NEMESYS paves the way to integrate meta-level reasoning into other neuro-symbolic frameworks, including DeepProbLog [21], Scallop [25] and NeurASP [26], which are rather developed for training neural networks given logic programs using differentiable backward reasoning or answer set semantics. We compare NEMESYS with several popular neuro-symbolic systems in three aspects: whether the system performs meta reasoning, can the same system adapt to solve different tasks and is the system capable of differentiable meta level structure learning. The comparison results are summarized in Table 1.

## 3 Neural Meta-Symbolic Reasoning & Learning

We now introduce NEMESYS, the first neural meta-symbolic reasoning and learning framework. Fig. 2 shows an overview of NEMESYS.

### 3.1 Meta Logic Programming

We describe how meta-level programs are used in the NEMESYS workflow. In Fig. 2, the following object-level clause is given as its input:

| | $\displaystyle\mathtt{\color[rgb]{0,0.6,0}same\_shape\_pair(X,Y)\color[rgb]{ 0,0,0}\texttt{:-}\color[rgb]{0.68,0.36,1}shape(X,Z),shape(Y,Z)\color[rgb]{ 0,0,0}.}$ | |

| --- | --- | --- |

which identifies pairs of objects that have the same shape. The clause is subsequently fed to Meta Converter, which generates meta-level atoms.Using meta predicate $\mathtt{clause}/2$ , the following atom is generated:

| | $\displaystyle\mathtt{clause(\color[rgb]{0,0.6,0}same\_shape\_pair(X,Y),}\color [rgb]{0.68,0.36,1}\mathtt{(shape(X,Z),shape(Y,Z))\color[rgb]{0,0,0}).}$ | |

| --- | --- | --- |

where the meta atom $\mathtt{clause(H,B)}$ represents the object-level clause: $\mathtt{H\texttt{:-}B}$ .

To perform meta-level reasoning, NEMESYS uses meta-level programs, which often refer to meta interpreters, i.e., interpreters written in the language itself, as illustrated in Fig. 2. For example, a naive interpreter, NaiveInterpreter, is defined as:

| | $\displaystyle\mathtt{solve(true)}.$ | |

| --- | --- | --- |

To solve a compound goal $\mathtt{(A,B)}$ , we need first solve $\mathtt{A}$ and then $\mathtt{B}$ . A single goal $\mathtt{A}$ is solved if there is a clause that rewrites the goal to the new goal $\mathtt{B}$ , the body of the clause: $\mathtt{\color[rgb]{0,0.6,0}{A}\texttt{:-}\color[rgb]{0.68,0.36,1}{B}}$ . This process stops for facts, encoded as $\mathtt{clause(fact,true)}$ , since then, $\mathtt{solve(true)}$ will be true.

NEMESYS can employ more enriched meta programs with useful functions by simply changing the meta programs, without modifying the internal reasoning function, as illustrated in the bottom right of Fig. 2. ProofTreeInterpreter, an interpreter that produces proof trees along with reasoning, is defined as:

| | $\displaystyle\mathtt{solve(A,(A\texttt{:-}true)).}$ | |

| --- | --- | --- |

where $\mathtt{solve(A,Proof)}$ checks if atom $\mathtt{A}$ is true with proof tree $\mathtt{Proof}$ . Using this meta-program, NEMESYS can perform reasoning with integrated proof tree generation.

Now, let us devise the differentiable meta-level reasoning pipeline, which enables NEMESYS to reason and learn flexibly.

### 3.2 Differentiable Meta Programming

NEMESYS employs differentiable forward reasoning [24], which computes logical entailment using tensor operations in a differentiable manner, by adapting it to the meta-level atoms and clauses.

We define a meta-level reasoning function $f^{\mathit{reason}}_{(\mathcal{C},\mathbf{W})}:[0,1]^{G}\rightarrow[0,1]^{G}$ parameterized by meta-rules $\mathcal{C}$ and their weights $\mathbf{W}$ . We denote the set of meta-rules by $\mathcal{C}$ , and the set of all of the meta-ground atoms by $\mathcal{G}$ . $\mathcal{G}$ contains all of the meta-ground atoms produced by a given FOL language. We consider ordered sets here, i.e., each element has its index. We denote the size of the sets as: $G=|\mathcal{G}|$ and $C=|\mathcal{C}|$ . We denote the $i$ -th element of vector $\mathbf{x}$ by $\mathbf{x}[i]$ , and the $(i,j)$ -th element of matrix $\mathbf{X}$ by $\mathbf{X}[i,j]$ .

First, NEMESYS converts visual input to a valuation vector $\mathbf{v}\in[0,1]^{G}$ , which maps each meta atom to a probabilistic value (Fig. 2 Meta Converter). For example,

$$

\mathbf{v}=\blockarray{cl}\block{[c]l}0.98&\mathtt{solve(color(obj1,\ cyan))}

\\

0.01\mathtt{solve(color(obj1,\ red))}\\

0.95\mathtt{clause(same\_shape\_pair(\ldots),\ (shape(\ldots),\ \ldots))}\\

$$

represents a valuation vector that maps each meta-ground atom to a probabilistic value. For readability, only selected atoms are shown. NEMESYS computes logical entailment by updating the initial valuation vector $\mathbf{v}^{(0)}$ for $T$ times to $\mathbf{v}^{(T)}$ .

Subsequently, we compose the reasoning function that computes logical entailment. We now describe each step in detail.

(Step 1) Encode Logic Programs to Tensors.

To achieve differentiable forward reasoning, each meta-rule is encoded to a tensor representation. Let $S$ be the maximum number of substitutions for existentially quantified variables in $\mathcal{C}$ , and $L$ be the maximum length of the body of rules in $\mathcal{C}$ . Each meta-rule $C_{i}\in\mathcal{C}$ is encoded to a tensor ${\bf I}_{i}\in\mathbb{N}^{G\times S\times L}$ , which contains the indices of body atoms. Intuitively, $\mathbf{I}_{i}[j,k,l]$ is the index of the $l$ -th fact (subgoal) in the body of the $i$ -th rule to derive the $j$ -th fact with the $k$ -th substitution for existentially quantified variables. We obtain $\mathbf{I}_{i}$ by firstly grounding the meta rule $C_{i}$ , then computing the indices of the ground body atoms, and transforming them into a tensor.

(Step 2) Assign Meta-Rule Weights.

We assign weights to compose the reasoning function with several meta-rules as follows: (i) We fix the target programs’ size as $M$ , i.e., we try to select a meta-program with $M$ meta-rules out of $C$ candidate meta rules. (ii) We introduce $C$ -dimensional weights $\mathbf{W}=[{\bf w}_{1},\ldots,{\bf w}_{M}]$ where $\mathbf{w}_{i}\in\mathbb{R}^{C}$ . (iii) We take the softmax of each weight vector ${\bf w}_{j}\in\mathbf{W}$ and softly choose $M$ meta rules out of $C$ meta rules to compose the differentiable meta program.

(Step 3) Perform Differentiable Inference.

We compute $1$ -step forward reasoning using weighted meta-rules, then we recursively perform reasoning to compute $T$ -step reasoning.

(i) Reasoning using one rule. First, for each meta-rule $C_{i}\in\mathcal{C}$ , we evaluate body atoms for different grounding of $C_{i}$ by computing:

$$

\displaystyle b_{i,j,k}^{(t)}=\prod_{1\leq l\leq L}{\bf gather}({\bf v}^{(t)},

{\bf I}_{i})[j,k,l], \tag{1}

$$

where $\mathbf{gather}:[0,1]^{G}\times\mathbb{N}^{G\times S\times L}\rightarrow[0,1]^ {G\times S\times L}$ is:

$$

\displaystyle\mathbf{gather}({\bf x},{\bf Y})[j,k,l]={\bf x}[{\bf Y}[j,k,l]], \tag{2}

$$

and $b^{(t)}_{i,j,k}\in[0,1]$ . The $\mathbf{gather}$ function replaces the indices of the body atoms by the current valuation values in $\mathbf{v}^{(t)}$ . To take logical and across the subgoals in the body, we take the product across valuations. $b_{i,j,k}^{(t)}$ represents the valuation of body atoms for $i$ -th meta-rule using $k$ -th substitution for the existentially quantified variables to deduce $j$ -th meta-ground atom at time $t$ .

Now we take logical or softly to combine all of the different grounding for $C_{i}$ by computing $c^{(t)}_{i,j}\in[0,1]$ :

$$

\displaystyle c^{(t)}_{i,j}=\mathit{softor}^{\gamma}(b_{i,j,1}^{(t)},\ldots,b_

{i,j,S}^{(t)}), \tag{3}

$$

where $\mathit{softor}^{\gamma}$ is a smooth logical or function:

$$

\displaystyle\mathit{softor}^{\gamma}(x_{1},\ldots,x_{n})=\gamma\log\sum_{1

\leq i\leq n}\exp(x_{i}/\gamma), \tag{4}

$$

where $\gamma>0$ is a smooth parameter. Eq. 4 is an approximation of the max function over probabilistic values based on the log-sum-exp approach [41].

(ii) Combine results from different rules. Now we apply different meta-rules using the assigned weights by computing:

$$

\displaystyle h_{j,m}^{(t)}=\sum_{1\leq i\leq C}w^{*}_{m,i}\cdot c_{i,j}^{(t)}, \tag{5}

$$

where $h_{j,m}^{(t)}\in[0,1]$ , $w^{*}_{m,i}=\exp(w_{m,i})/{\sum_{i^{\prime}}\exp(w_{m,i^{\prime}})}$ , and $w_{m,i}=\mathbf{w}_{m}[i]$ . Note that $w^{*}_{m,i}$ is interpreted as a probability that meta-rule $C_{i}\in\mathcal{C}$ is the $m$ -th component. We complete the $1$ -step forward reasoning by combining the results from different weights:

$$

\displaystyle r_{j}^{(t)}=\mathit{softor}^{\gamma}(h_{j,1}^{(t)},\ldots,h_{j,M

}^{(t)}). \tag{6}

$$

Taking $\mathit{softor}^{\gamma}$ means that we compose $M$ softly chosen rules out of $C$ candidate meta-rules.

(iii) Multi-step reasoning. We perform $T$ -step forward reasoning by computing $r_{j}^{(t)}$ recursively for $T$ times: $v^{(t+1)}_{j}=\mathit{softor}^{\gamma}(r^{(t)}_{j},v^{(t)}_{j})$ . Updating the valuation vector for $T$ -times corresponds to computing logical entailment softly by $T$ -step forward reasoning. The whole reasoning computation Eq. 1 - 6 can be implemented using efficient tensor operations.

## 4 Experiments

With the methodology of NEMESYS established, we subsequently provide empirical evidence of its benefits over neural baselines and object-level neuro-symbolic approaches: (1) NEMESYS can emulate a differentiable forward reasoner, i.e., it is a sufficient implementation of object-centric reasoners with a naive meta program. (2) NEMESYS is capable of differentiable meta-level reasoning, i.e., it can integrate additional useful functions using devised meta-rules. We demonstrate this advantage by solving tasks of proof-tree generation, relevance propagation, automated planning, and causal reasoning. (3) NEMESYS can perform parameter and structure learning efficiently using gradient descent, i.e., it can perform learning on meta-level programs.

In our experiments, we implemented NEMESYS in Python using PyTorch, with CPU: intel i7-10750H and RAM: 16 GB.

| | NEMESYS | ResNet50 | YOLO+MLP |

| --- | --- | --- | --- |

| Twopairs | 100.0 $\bullet$ | 50.81 | 98.07 $\circ$ |

| Threepairs | 100.0 $\bullet$ | 51.65 | 91.27 $\circ$ |

| Closeby | 100.0 $\bullet$ | 54.53 | 91.40 $\circ$ |

| Red-Triangle | 95.6 $\bullet$ | 57.19 | 78.37 $\circ$ |

| Online/Pair | 100.0 $\bullet$ | 51.86 | 66.19 $\circ$ |

| 9-Circles | 95.2 $\bullet$ | 50.76 $\circ$ | 50.76 $\circ$ |

Table 2: Performance (accuracy; the higher, the better) on the test split of Kandinsky patterns. The best-performing models are denoted using $\bullet$ , and the runner-up using $\circ$ . In Kandinsky patterns, NEMESYS produced almost perfect accuracies outperforming neural baselines, where YOLO+MLP is a neural baseline using pre-trained YOLO [42] combined with a simple MLP, showing the capability of solving complex visual reasoning tasks. The performances of baselines are shown in [15].

### 4.1 Visual Reasoning on Complex Pattenrs

Let us start off by showing that our NEMESYS is able to obtain the equivalent high-quality results as a standard object-level reasoner but on the meta-level. We considered tasks of Kandinsky patterns [19, 43] and CLEVR-Hans [14] We refer to [14] and [15] for detailed explanations of the used patterns for CLEVR-Hans and Kandinsky patterns.. CLEVR-Hans is a classification task of complex 3D visual scenes. We compared NEMESYS with the naive interpreter against neural baselines and a neuro-symbolic baseline, $\alpha$ ILP [24], which achieves state-of-the-art performance on these tasks. For all tasks, NEMESYS achieved exactly the same performances with $\alpha$ ILP since the naive interpreter realizes a conventional object-centric reasoner. Moreover, as shown in Table 2 and Table 3, NEMESYS outperformed neural baselines on each task. This shows that NEMESYS is able to solve complex visual reasoning tasks using meta-level reasoning without sacrificing performance.

In contrast to the object-centric reasoners, e.g., $\alpha$ ILP. NEMESYS can easily integrate additional useful functions by simply switching or adding meta programs without modifying the internal reasoning function, as shown in the next experiments.

### 4.2 Explainable Logical Reasoning

One of the major limitations of differentiable forward chaining [16, 17, 24] is that they lack the ability to explain the reasoning steps and their evidence. We show that NEMESYS achieves explainable reasoning by incorporating devised meta-level programs.

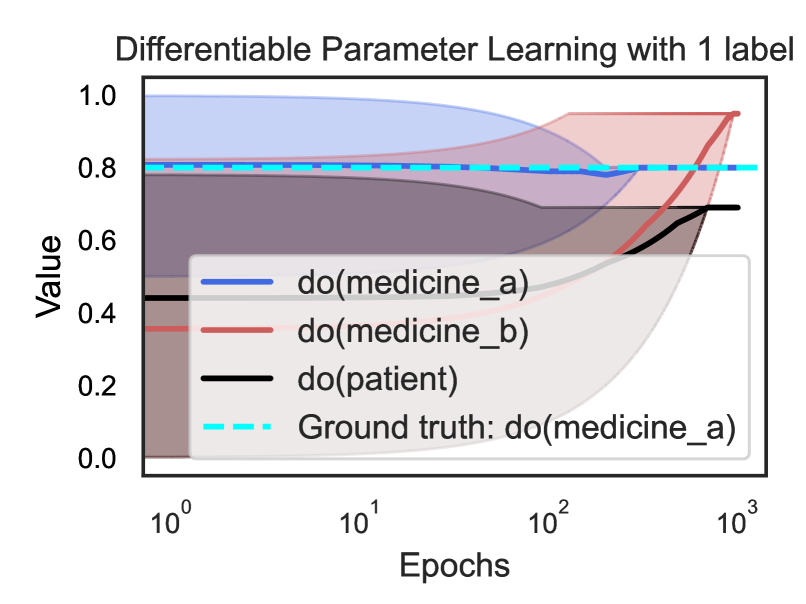

Reasoning with Integrated Proof Tree Generation

First, we demonstrate that NEMESYS can generate proof trees while performing reasoning, which the previous differentiable forward reasoners cannot produce since they encode the reasoning function to computational graphs using tensor operations and observe only their input and output. Since NEMESYS performs reasoning using meta-level programs, it can add the function to produce proof trees into its underlying reasoning mechanism simply by devising them, as illustrated in Fig 2.

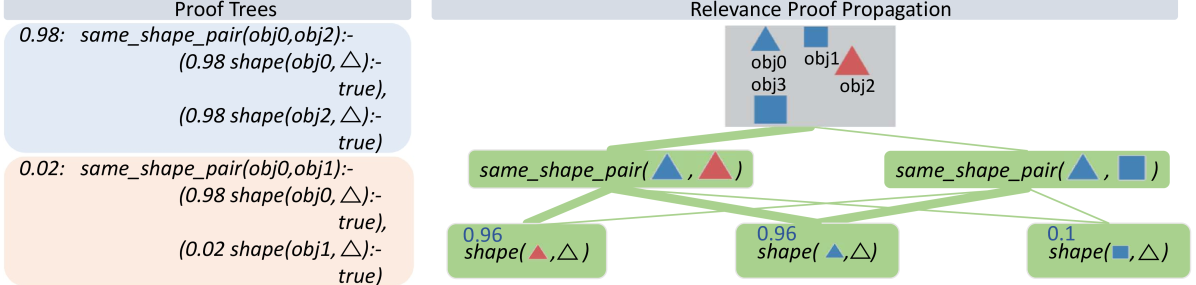

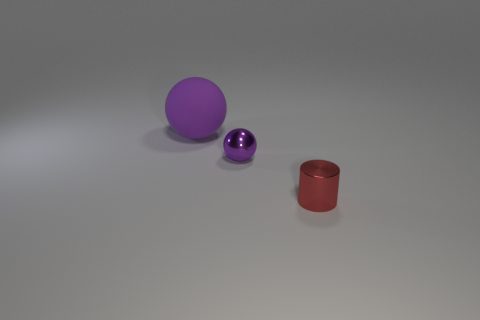

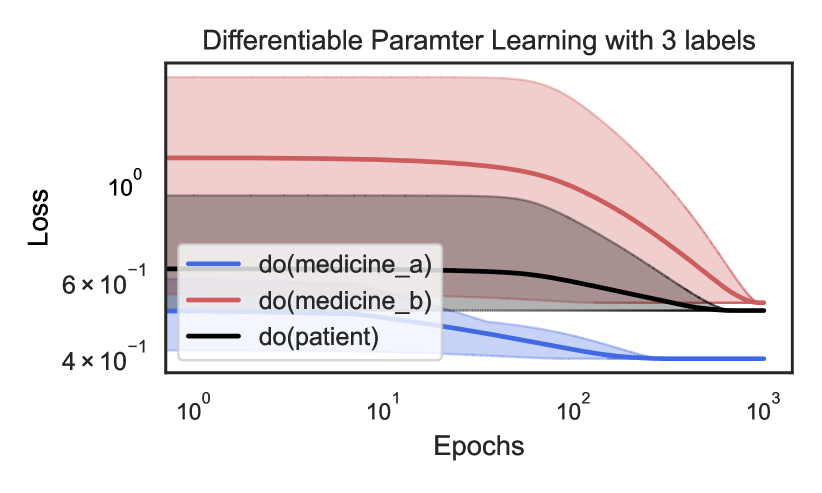

We use Kandinsky patterns [20], a benchmark of visual reasoning whose classification rule is defined on high-level concepts of relations and attributes of objects. We illustrate the input on the top right of Fig. 3 that belongs to a pattern: ”There are two pairs of objects that share the same shape.” Given the visual input, proof trees generated using the ProofTreeInterpreter in Sec. 3.1 are shown on the left two boxes of Fig. 3. In this experiment, NEMESYS identified the relations between objects, and the generated proof trees explain the intermediate reasoning steps.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: Proof Trees and Relevance Proof Propagation

### Overview

The image is a technical diagram illustrating a logical reasoning process. It is divided into two main panels: "Proof Trees" on the left and "Relevance Proof Propagation" on the right. The diagram visualizes how confidence scores (probabilities) are assigned to logical statements about object shapes and how these proofs propagate through a network to establish relationships between objects.

### Components/Axes

The diagram has no traditional chart axes. Its components are:

**Left Panel: Proof Trees**

* **Title:** "Proof Trees" (centered at the top of the left panel).

* **Content:** Two distinct proof trees, each presented in a colored box.

* **Top Box (Light Blue):** Contains a logical proof with a confidence score of `0.98`.

* **Bottom Box (Light Orange):** Contains a logical proof with a confidence score of `0.02`.

**Right Panel: Relevance Proof Propagation**

* **Title:** "Relevance Proof Propagation" (centered at the top of the right panel).

* **Object Legend (Top Center):** A grey box containing four labeled objects with associated shapes and colors:

* `obj0`: Blue triangle (▲)

* `obj1`: Blue square (■)

* `obj2`: Red triangle (▲)

* `obj3`: Blue square (■)

* **Propagation Network:** A hierarchical network of green nodes connected by green lines, showing the flow of logical inference.

* **Top Layer Nodes:** Two nodes representing `same_shape_pair` relationships.

* **Bottom Layer Nodes:** Three nodes representing `shape` properties of individual objects.

### Detailed Analysis

**1. Proof Trees (Left Panel)**

* **Proof 1 (Confidence: 0.98):**

* **Statement:** `same_shape_pair(obj0, obj2)`

* **Derivation:** This is proven by two sub-statements, both with confidence `0.98`:

* `shape(obj0, △)` is `true`.

* `shape(obj2, △)` is `true`.

* **Interpretation:** There is a 98% confidence that `obj0` and `obj2` are a pair of the same shape because both are confidently identified as triangles.

* **Proof 2 (Confidence: 0.02):**

* **Statement:** `same_shape_pair(obj0, obj1)`

* **Derivation:** This is derived from two sub-statements with conflicting confidences:

* `shape(obj0, △)` is `true` (confidence: `0.98`).

* `shape(obj1, △)` is `true` (confidence: `0.02`).

* **Interpretation:** There is only a 2% confidence that `obj0` and `obj1` are a same-shape pair. This low confidence stems from the very low confidence (`0.02`) that `obj1` is a triangle, which contradicts the visual legend showing `obj1` as a square.

**2. Relevance Proof Propagation (Right Panel)**

* **Object Legend:** Establishes the ground truth for the example:

* `obj0` = Blue Triangle

* `obj1` = Blue Square

* `obj2` = Red Triangle

* `obj3` = Blue Square

* **Propagation Network Nodes & Values:**

* **Top-Left Node:** `same_shape_pair(▲, ▲)` (Blue Triangle, Red Triangle). This corresponds to the pair (`obj0`, `obj2`).

* **Top-Right Node:** `same_shape_pair(▲, ■)` (Blue Triangle, Blue Square). This corresponds to the pair (`obj0`, `obj1`) or (`obj0`, `obj3`).

* **Bottom-Left Node:** `shape(▲, △)` with confidence `0.96`. This represents the property that a red triangle is a triangle.

* **Bottom-Center Node:** `shape(▲, △)` with confidence `0.96`. This represents the property that a blue triangle is a triangle.

* **Bottom-Right Node:** `shape(■, △)` with confidence `0.1`. This represents the (low confidence) property that a blue square is a triangle.

* **Flow and Connections:**

* The `same_shape_pair(▲, ▲)` node is connected to the two `shape(▲, △)` nodes (both confidence `0.96`). This visually represents the proof logic from the left panel: the high-confidence pair relationship is supported by high-confidence individual shape properties.

* The `same_shape_pair(▲, ■)` node is connected to one `shape(▲, △)` node (confidence `0.96`) and the `shape(■, △)` node (confidence `0.1`). This represents the low-confidence pair relationship being supported by one high-confidence and one low-confidence shape property.

### Key Observations

1. **Confidence Alignment:** The confidence scores in the Proof Trees (`0.98`, `0.02`) are closely mirrored by the propagated scores in the network (`0.96`, `0.1`). The minor discrepancy (0.98 vs 0.96) may be due to rounding or a slightly different calculation in the propagation step.

2. **Visual-Logical Consistency:** The diagram highlights a conflict. The object legend clearly shows `obj1` is a square, yet the low-confidence proof (`0.02`) attempts to assert it is a triangle. The propagation network correctly assigns a low confidence (`0.1`) to the statement `shape(■, △)`.

3. **Spatial Grounding:** The legend is positioned at the top-center of the right panel, providing the key to interpreting all shape symbols and colors in the network below. The proof trees are isolated on the left, providing the formal logical statements that the right panel visualizes.

4. **Color Coding:** Colors are used consistently: blue and red for object triangles, blue for squares, green for the propagation network, and distinct background colors (blue/orange) to separate high and low-confidence proofs.

### Interpretation

This diagram demonstrates a **probabilistic logical reasoning system**. It shows how a system can:

* **Generate Hypotheses:** Formulate logical statements (proofs) about relationships (`same_shape_pair`) based on object properties (`shape`).

* **Assign Confidence:** Attach numerical confidence scores to these statements, reflecting uncertainty in perception or reasoning.

* **Propagate Relevance:** Visualize how the confidence in a high-level relationship (like "these two objects have the same shape") is fundamentally dependent on and derived from the confidence in the lower-level properties of the individual objects involved.

The core message is that the strength of a logical conclusion is only as strong as its weakest premise. The high-confidence conclusion that `obj0` and `obj2` are both triangles is robust. In contrast, the attempt to conclude `obj0` and `obj1` are the same shape fails because the premise that `obj1` is a triangle has very low confidence, correctly reflecting the visual evidence that `obj1` is a square. This type of reasoning is crucial in fields like artificial intelligence, computer vision, and knowledge graphs, where systems must handle uncertainty and infer relationships from imperfect data.

</details>

Figure 3: NEMESYS explains its reasoning with proof trees and relevance proof propagation. Given the image involving four objects (top, right), NEMESYS provides two proofs (two boxes on the left, true atom’s proof (blue box) and false atom’s proof (cream box)). They can be leveraged to decompose the prediction of NEMESYS into relevance scores per (ground) atom (right). First, a standard forward reasoning is performed to compute the prediction. Then, the model’s prediction is backward propagated through the proof trees by applying specific decomposition rules, see main text. The numbers next to each (ground) atom are the relevance scores computed. The larger the score is, the more impact an (ground) atom has on the final prediction, and the line width is wider. For brevity, the complete proof tree is not depicted here. As our baseline comparison, we extend DeepProbLog [21] to DeepMetaProbLog. However, DeepMetaProbLog only provides proof tree for true atoms (top left blue box). (Best viewed in color)

| | CLEVR-Hans3 | CLEVR-Hans7 | | |

| --- | --- | --- | --- | --- |

| Validation | Test | Validation | Test | |

| CNN | 99.55 $\circ$ | 70.34 | 96.09 | 84.50 |

| NeSy (Default) | 98.55 | 81.71 | 96.88 $\circ$ | 90.97 |

| NeSy-XIL | 100.00 $\bullet$ | 91.31 $\circ$ | 98.76 $\bullet$ | 94.96 $\bullet$ |

| NEMESYS | 98.18 | 98.40 $\bullet$ | 93.60 | 92.19 $\circ$ |

Table 3: Performance (accuracy; the higher, the better) on the validation/test splits of 3D CLEVR-Hans data sets. The best-performing models are denoted using $\bullet$ , and the runner-up using $\circ$ . In CLEVR-Hans, NEMESYS outperformed neural baselines including: (CNN) A ResNet [44], (NeSy) A model combining object-centric model (Slot Attention [45] and Set Transformer [46], and (NeSy-XIL) Slot Attention and Set Transformer using human feedback. NEMESYS tends to show less overfitting and performs similarly to a neuro-symbolic approach using human feedback (NeSy-XIL). The performances of baselines are shown in [14] and [15].

Let’s first consider the top left blue box depicted in Fig. 3 (for readability, we only show the proof part of meta atoms in the image). The weighted ground atom $\mathtt{0.98:}\mathtt{same\_shape\_pair(obj0,obj2)}$ proves $\mathtt{obj0}$ and $\mathtt{obj2}$ are of the same shape with the probability $0.98$ . The proof part shows that NEMESYS comes to this conclusion since both objects are triangle with probabilities of $\mathtt{0.98}$ and in turn it can apply the rule for $\mathtt{same\_shape\_pair}$ . We use this example to show how to compute the weight of the meta atoms inside NEMESYS. With the proof-tree meta rules and corresponding meta ground atoms:

| | $\displaystyle\mathtt{0.98:}\ \color[rgb]{0.5,0,1}\mathtt{solve(shape(obj0,} \text{\includegraphics[height=6.45831pt]{plots/triangle.png}}\mathtt{),(shape( obj0,}\text{\includegraphics[height=6.45831pt]{plots/triangle.png}}\mathtt{), true).}$ | |

| --- | --- | --- |

The weight of the meta ground atoms are computed by Meta Converter when mapping the probability of meta ground atoms to a continuous value. The meta ground atom says that $\mathtt{shape(obj0,\includegraphics[height=6.45831pt]{plots/triangle.png})}$ is true with a high probability of $0.98$ because $\mathtt{shape(obj0,\includegraphics[height=6.45831pt]{plots/triangle.png})}$ can be proven.

With the two meta ground atoms at hand, we infer the weight of the meta atom with compound goals $\color[rgb]{0,0.6,0}\mathtt{solve((shape(obj0,\includegraphics[height=6.45831 pt]{plots/triangle.png}),shape(obj2,\includegraphics[height=6.45831pt]{plots/ triangle.png})),(ProofA,ProofB))}$ , based on the first meta rule (for readability, we omit writing out the proof part). Then, we use the second meta rule to compute the weight of the meta atom $\color[rgb]{0.68,0.36,1}\mathtt{solve}\mathtt{(same\_shape\_pair(obj0,obj2)}, \mathtt{(Proof))}$ , using the compound goal meta atom $\color[rgb]{0,0.6,0}\mathtt{solve((shape(obj0,\includegraphics[height=6.45831 pt]{plots/triangle.png}),shape(obj2,\includegraphics[height=6.45831pt]{plots/ triangle.png})),(ProofA,ProofB))}$ and the meta atom $\mathtt{clause}\mathtt{(same\_shape\_pair(obj0,obj2)},\mathtt{(shape(obj0, \includegraphics[height=6.45831pt]{plots/triangle.png})},\mathtt{shape(obj2, \includegraphics[height=6.45831pt]{plots/triangle.png})))}$ .

In contrast, NEMESYS can explicitly show that $\mathtt{obj0}$ and $\mathtt{obj1}$ have a low probability of being of the same shape (Fig. 3 left bottom cream box). This proof tree shows that the goal $\mathtt{shape(obj1,\includegraphics[height=6.45831pt]{plots/triangle.png})}$ has a low probability of being true. Thus, as one can read off, $\mathtt{obj0}$ is most likely a triangle, while $\mathtt{obj1}$ is most likely not a triangle. In turn, NEMESYS concludes with a low probability that $\mathtt{same\_shape\_pair(obj0,obj1)}$ is true, only a probability of $0.02$ . NEMESYS can produce all the information required to explain its decisions by simply changing the meta-program, not the underlying reasoning system.

Using meta programming to extend DeepProbLog to produce proof trees as a baseline comparison. Since DeepProbLog [21] doesn’t support generating proof trees in parallel with reasoning, we extend DeepProbLog [21] to DeepMetaProblog to generate proof trees as our baseline comparison using ProbLog [47]. However, the proof tree generated by DeepMetaProbLog is limited to the ‘true’ atoms (Fig. 3 top left blue box), i.e., DeepMetaProbLog is unable to generate proof tree for false atoms such as $\mathtt{same\_shape\_pair(obj0,obj1)}$ (Fig. 3 bottom left cream box) due to backward reasoning.

Logical Relevance Proof Propagation (LRP ${}^{2}$ )

Inspired by layer-wise relevance propagation (LRP) [48], which produces explanations for feed-forward neural networks, we now show that, LRP can be adapted to logical reasoning systems using declarative languages in NEMESYS, thereby enabling the reasoning system to articulate the rationale behind its decisions, i.e., it can compute the importance of ground atoms for a query by having access to proof trees. We call this process: logical relevance proof propagation (LRP ${}^{2}$ ).

The original LRP technique decomposes the prediction of the network, $f(\mathbf{x})$ , onto the input variables, $\mathbf{x}=\left(x_{1},\ldots,x_{d}\right)$ , through a decomposition $\mathbf{R}=\left(R_{1},\ldots,R_{d}\right)$ such that $\sum\nolimits_{p=1}^{d}R_{p}=f(\mathbf{x})\;$ . Given the activation $a_{j}=\rho\left(\sum_{i}a_{i}w_{ij}+b_{j}\right)$ of neuron, where $i$ and $j$ denote the neuron indices at consecutive layers, and $\sum_{i}$ and $\sum_{j}$ represent the summation over all neurons in the respective layers, the propagation of LRP is defined as: $R_{i}=\sum\nolimits_{j}z_{ij}({\sum\nolimits_{i}z_{ij}})^{-1}R_{j},$ where $z_{ij}$ is the contribution of neuron $i$ to the activation $a_{j}$ , typically some function of activation $a_{i}$ and the weight $w_{ij}$ . Starting from the output $f(\mathbf{x})$ , the relevance is computed layer by layer until the input variables are reached.

To adapt this in NEMESYS to ground atoms and proof trees, we have to be a bit careful, since we cannot deal with the uncountable, infinite real numbers within our logic. Fortunately, we can make use of the weight associated with ground atoms. That is, our LRP ${}^{2}$ composes meta-level atoms that represent the relevance of an atom given proof trees and associates the relevance scores to the weights of the meta-level atoms.

To this end, we introduce three meta predicates: $\mathtt{rp/3/[goal,proofs,atom]}$ that represents the relevance score an $\mathtt{atom}$ has on the $\mathtt{goal}$ in given $\mathtt{proofs}$ , $\mathtt{assert\_probs/1/[atom]}$ that looks up the valuations of the ground atoms and maps the probability of the $\mathtt{atom}$ to its weight. $\mathtt{rpf/2/[proof,atom]}$ represents how much an $\mathtt{atom}$ contributes to the $\mathtt{proof}$ . The atom $\mathtt{assert\_probs((Goal\texttt{:-}Body))}$ asserts the probability of the atom $\mathtt{(Goal\texttt{:-}Body)}$ . With them, the meta-level program of LRP ${}^{2}$ is:

| | $\displaystyle\mathtt{rp(Goal,Body,Atom)}\texttt{:-}\mathtt{assert\_probs((Goal \texttt{:-}Body))},$ | |

| --- | --- | --- |

where $\mathtt{rp(Goal,Proof,Atom)}$ represents the relevance score an $\mathtt{Atom}$ has on the $\mathtt{Goal}$ in a $\mathtt{Proof}$ , i.e., we interpret the associated weight with atom $\mathtt{rp(Goal,Proof,Atom)}$ as the actual relevance score of $\mathtt{Atom}$ has on $\mathtt{Goal}$ given $\mathtt{Proof}$ . The higher the weight of $\mathtt{rp(Goal,Proof,Atom)}$ is, the larger the impact of $\mathtt{Atom}$ has on the $\mathtt{Goal}$ .

Let us go through the meta rules of LRP ${}^{2}$ . The first rule defines how to compute the relevance score of an $\mathtt{Atom}$ given the $\mathtt{Goal}$ under the condition of a $\mathtt{Body}$ (a single $\mathtt{Proof}$ ). The relevance score is computed by multiplying the weight of the $\mathtt{Body}$ , the weight of a clause $\mathtt{(Goal\texttt{:-}Body)}$ and the importance score of the $\mathtt{Atom}$ given the $\mathtt{Body}$ . The second to the seventh rule defines how to calculate the importance score of an $\mathtt{Atom}$ given a $\mathtt{Proof}$ . These six rules loop over each atom of the given $\mathtt{Proof}$ , once it detects the $\mathtt{Atom}$ inside the given $\mathtt{Proof}$ , the importance score will be set to the weight of the $\mathtt{Atom}$ , another case is that the $\mathtt{Atom}$ is not in $\mathtt{Proof}$ , in that case, in the seventh rule, $\mathtt{norelate}$ will set the importance score to a small value. The eighth and ninth rules amalgamate the results from different proofs, i.e., the score from each proof tree is computed recursively during forward reasoning. The scores for the same target (the pair of $\mathtt{Atom}$ and $\mathtt{Goal}$ ) are combined by the $\mathit{softor}$ operation. The score of an atom given several proofs is computed by taking logical or softly over scores from each proof.

With these nine meta rules at hand, together with the proof tree, NEMESYS is able to perform the relevance proof propagation for different atoms. We consider using the proof tree generated in Sec. 4.2 and set the goal as: $\mathtt{same\_shape\_pair(obj0,obj2)}$ . Fig. 3 (right) shows LRP ${}^{2}$ -based explanations generated by NEMESYS. The relevance scores of different ground atoms are listed next to each (ground) atom. As we can see, the atoms $\mathtt{shape(obj0,\includegraphics[height=6.45831pt]{plots/triangle.png})}$ and $\mathtt{shape(obj2,\includegraphics[height=6.45831pt]{plots/triangle.png})}$ have the largest impact on the goal $\mathtt{same\_shape\_pair(obj0,obj2)}$ , while $\mathtt{shape(obj1,\includegraphics[height=6.45831pt]{plots/triangle.png})}$ have much smaller impact.

By providing proof tree and LRP ${}^{2}$ , NEMESYS computes the precise effect of a ground atom on the goal and produces an accurate proof to support its conclusion. This approach is distinct from the Most Probable Explanation (MPE) [49], which generates the most probable proof rather than the exact proof.

<details>

<summary>extracted/5298395/images/plan/0v.png Details</summary>

### Visual Description

## 3D Rendered Scene: Geometric Primitives on a Plane

### Overview

The image is a computer-generated 3D rendering depicting three geometric objects placed on a flat, matte gray surface against a uniform gray background. The scene is lit from the upper-left, casting soft shadows to the right and slightly behind each object. There is no textual information, labels, charts, or data tables present in the image.

### Components/Axes

* **Scene Composition:** A simple 3D environment with a ground plane and three distinct objects.

* **Objects:**

1. **Large Sphere:** Positioned in the upper-center of the frame. It has a matte (non-reflective) surface and is colored a medium purple.

2. **Small Sphere:** Positioned in the lower-left quadrant of the frame. It has a highly reflective, metallic surface and is colored a deep, shiny purple.

3. **Cylinder:** Positioned in the lower-right quadrant of the frame. It has a reflective, metallic surface and is colored a bright red.

* **Lighting & Shadows:** A single, soft light source originates from the top-left, creating diffuse shadows that extend towards the bottom-right of each object.

### Detailed Analysis

* **Spatial Relationships:**

* The large purple sphere is the furthest back (highest on the Y-axis in screen space).

* The small metallic purple sphere and the red metallic cylinder are positioned closer to the viewer (lower on the Y-axis), with the cylinder slightly to the right of the sphere.

* The objects form a loose triangular arrangement.

* **Material Properties:**

* **Matte (Large Sphere):** Diffuse reflection, no specular highlights, soft shadow.

* **Metallic (Small Sphere & Cylinder):** Sharp, bright specular highlights reflecting the light source, clear reflections of the environment (though the environment is simple), and defined shadows.

### Key Observations

* **Color Contrast:** The scene uses a limited palette: two shades of purple (one matte, one metallic) and one red (metallic). The red cylinder provides a strong color contrast against the purple spheres and gray background.

* **Material Contrast:** The primary visual distinction is between the matte finish of the large sphere and the glossy, reflective finishes of the small sphere and cylinder.

* **Scale:** There is a clear size difference between the large and small spheres. The cylinder's height appears comparable to the diameter of the small sphere.

### Interpretation

This image appears to be a standard test render or demonstration scene, commonly used in 3D graphics to showcase:

1. **Basic Geometric Primitives:** Sphere and cylinder.

2. **Material Shaders:** The difference between a simple diffuse (matte) material and a more complex specular (metallic) material.

3. **Lighting and Shadow Casting:** How light interacts with different surfaces and forms shadows on a ground plane.

4. **Spatial Composition:** The arrangement tests depth perception and object placement in a 3D space.

The lack of text, data, or complex diagrams means the image's "information" is purely visual and technical, related to 3D rendering fundamentals. It does not convey quantitative data, trends, or procedural flow. Its purpose is likely illustrative or diagnostic within a computer graphics context.

</details>

<details>

<summary>extracted/5298395/images/plan/1v0.png Details</summary>

### Visual Description

## 3D Rendered Scene: Geometric Primitives on a Plane

### Overview

The image is a computer-generated 3D rendering depicting three geometric objects placed on a flat, matte gray surface against a uniform gray background. The scene is illuminated by a single, soft light source originating from the upper left, casting soft shadows to the lower right of each object. There is no textual information, labels, axes, or data charts present in the image.

### Components/Axes

* **Objects:** Three distinct 3D geometric primitives.

* **Surface:** A flat, infinite plane with a matte gray finish.

* **Background:** A uniform, neutral gray environment.

* **Lighting:** A single directional light source from the upper-left, creating highlights and shadows.

* **Text/Data:** **None.** The image contains no alphanumeric characters, labels, legends, or quantitative data.

### Detailed Analysis

**Object Inventory & Properties:**

1. **Large Purple Sphere:**

* **Shape:** Sphere.

* **Color:** Matte purple (approximate RGB: 160, 80, 200).

* **Material:** Matte/diffuse finish. It shows a soft, broad highlight and no sharp reflections.

* **Size:** The largest object in the scene.

* **Position:** Located in the left-center of the composition, resting on the surface. It is positioned behind the smaller purple sphere.

2. **Small Purple Sphere:**

* **Shape:** Sphere.

* **Color:** Metallic purple (approximate RGB: 120, 40, 160).

* **Material:** Highly reflective, metallic finish. It exhibits a sharp, bright specular highlight and reflects the surrounding environment (the gray surface and background).

* **Size:** Significantly smaller than the large purple sphere.

* **Position:** Placed directly in front of and slightly to the right of the large purple sphere, creating a clear depth relationship.

3. **Red Cylinder:**

* **Shape:** Cylinder.

* **Color:** Metallic red (approximate RGB: 200, 50, 50).

* **Material:** Metallic finish, similar to the small sphere. It shows a sharp vertical highlight along its curved side and a reflective top face.

* **Size:** Its height appears comparable to the diameter of the small sphere, but its width is greater.

* **Position:** Situated to the right of the two spheres, with a clear gap between them. It is the rightmost object.

**Spatial Relationships & Lighting:**

* The objects form a loose triangular arrangement on the plane.

* Shadows are cast towards the bottom-right corner of the image, confirming the light source direction from the top-left.

* The large matte sphere casts a soft, diffuse shadow. The two metallic objects cast slightly sharper shadows.

### Key Observations

* **Material Contrast:** The primary visual contrast is between the matte material of the large sphere and the reflective metallic materials of the small sphere and cylinder.

* **Color Grouping:** Two objects share a purple hue (though with different materials), while the third is a distinct red, creating a visual grouping.

* **Scale Variation:** There is a clear size hierarchy: Large Sphere > Cylinder ≈ Small Sphere (in terms of visual weight).

* **Depth Cue:** The placement of the small sphere in front of the large one is a strong compositional element that establishes depth in the scene.

### Interpretation

This image is a classic example of a **3D rendering test scene**. Its primary purpose is likely to demonstrate or evaluate:

1. **Material Properties:** The scene effectively contrasts how light interacts with matte (diffuse) versus metallic (specular) surfaces. The large sphere shows diffuse reflection, while the small sphere and cylinder show specular reflection and environment mapping.

2. **Lighting and Shadow:** The setup tests the rendering engine's ability to generate soft shadows from a single light source and to correctly calculate highlights on curved surfaces.

3. **Basic Composition:** It explores the visual relationship between simple geometric forms, color, and material in a controlled environment.

The absence of text or data indicates this is not an informational graphic but a **visual demonstration piece**. It could be a default scene from 3D software, a material study, or a benchmark image for rendering quality. The "story" it tells is one of technical capability—showcasing how a renderer handles fundamental visual elements like form, color, material, light, and shadow.

</details>

<details>

<summary>extracted/5298395/images/plan/1v1.png Details</summary>

### Visual Description

## 3D Rendered Geometric Scene: Matte and Glossy Objects on Gray Surface

### Overview

This is a 3D rendered scene featuring three geometric objects arranged diagonally on a flat, neutral gray surface. The scene uses soft directional lighting to highlight material properties and create depth, with no numerical data or chart elements present.

### Components

1. **Surface**: A uniform, flat light gray ground plane spanning the entire frame. Soft, diffused shadows are cast toward the lower-right, indicating a primary light source in the upper-left.

2. **Large Matte Purple Sphere**: Positioned in the upper-left (furthest back) of the object arrangement. It is the largest object, with a non-reflective, diffuse purple surface.

3. **Small Glossy Purple Sphere**: Located in the center, directly in front of and to the right of the large purple sphere. It is the smallest object, with a highly reflective purple surface that shows faint environmental and object reflections.

4. **Medium Glossy Red Cylinder**: Positioned in the lower-right (furthest forward) of the arrangement. It has a metallic, reflective red surface, with clear reflections of the small purple sphere and the gray ground plane.

### Detailed Analysis

- **Size Hierarchy**: Large matte purple sphere > Medium glossy red cylinder > Small glossy purple sphere.

- **Material Contrast**: The scene emphasizes the difference between matte (diffuse, non-reflective) and glossy (reflective, metallic) surfaces, with the large sphere being matte and the other two objects glossy.

- **Spatial Alignment**: The three objects follow a diagonal axis from upper-left to lower-right, creating a clear sense of depth and perspective in the 3D space.

- **Lighting Effects**: Soft-edged shadows confirm a diffused light source, and reflections on the glossy objects demonstrate how light interacts with smooth, reflective surfaces.

### Key Observations

- The neutral gray background isolates the colored objects, drawing focus to their form and material properties.

- The diagonal arrangement guides the viewer's eye through the scene, emphasizing the progression of size and position.

- The reflective surfaces of the small sphere and cylinder add visual complexity by mirroring other elements in the scene.

### Interpretation

This image is a demonstration of 3D rendering capabilities, designed to showcase geometric primitives, material properties, and lighting effects. It is likely intended for educational or testing purposes in 3D graphics, illustrating how different surfaces interact with light. There are no data trends or numerical values, as this is a visual demonstration rather than a data visualization. The scene effectively highlights the contrast between matte and glossy materials, making it a useful reference for understanding surface rendering in 3D environments.

</details>

<details>

<summary>extracted/5298395/images/plan/final.png Details</summary>

### Visual Description

## [3D Rendered Scene]: Geometric Shapes on a Gray Surface

### Overview

The image is a computer-generated 3D rendering of three simple geometric objects placed on a flat, neutral gray surface. The scene is illuminated by a soft light source from the upper left, casting subtle shadows to the right and rear of the objects. There is no textual information, data, charts, or diagrams present in the image.

### Components/Subjects

The scene contains three distinct objects arranged in a loose diagonal line from the upper left to the lower right:

1. **Large Purple Sphere (Matte):**

* **Position:** Upper-left quadrant of the image.

* **Color:** Solid, medium purple.

* **Material:** Appears to have a matte or diffuse finish, with soft, non-reflective shading.

* **Size:** The largest object in the scene.

* **Shadow:** Casts a soft, diffuse shadow to its right and slightly behind it.

2. **Small Purple Sphere (Metallic):**

* **Position:** Center of the image, slightly to the right and in front of the large sphere.

* **Color:** A deeper, more saturated purple than the large sphere.

* **Material:** Highly reflective and metallic. It shows a clear, bright specular highlight from the light source and reflects the gray environment.

* **Size:** Significantly smaller than the large sphere.

* **Shadow:** Casts a sharper, more defined shadow compared to the matte sphere.

3. **Red Cylinder (Metallic):**

* **Position:** Lower-right quadrant of the image.

* **Color:** A deep, metallic red.

* **Material:** Metallic and reflective, similar to the small sphere. It has a bright highlight along its top edge and vertical side.

* **Shape:** A short, upright cylinder.

* **Size:** Comparable in height to the small sphere but with a wider base.

* **Shadow:** Casts a distinct shadow to its right.

### Detailed Analysis

* **Spatial Arrangement:** The objects are not aligned in a perfect row. The large sphere is furthest back, the small sphere is in the middle ground, and the cylinder is in the foreground. This creates a sense of depth.

* **Lighting:** The primary light source is positioned to the upper left, outside the frame. This is evidenced by the highlights on the upper-left surfaces of all objects and the direction of their shadows (to the right and slightly back).

* **Surface:** The ground plane is a uniform, matte gray with no texture or markings. It seamlessly blends into a gray background, suggesting an infinite plane or a studio backdrop.

* **Materials:** The scene contrasts two material types: a diffuse/matte surface (large sphere) and reflective/metallic surfaces (small sphere and cylinder). The metallic objects clearly reflect the light source and the surrounding environment.

### Key Observations

* **Absence of Text/Data:** The image contains zero textual elements, labels, axes, legends, or numerical data. It is purely a visual composition.

* **Color Palette:** The scene uses a limited palette: two shades of purple, one shade of red, and neutral grays.

* **Geometric Primitives:** All objects are basic 3D primitives (sphere, cylinder), commonly used in 3D modeling tests, computer vision datasets (like CLEVR), or rendering demonstrations.

### Interpretation

This image does not convey factual data, trends, or information in a documentary sense. Instead, it is a **visual demonstration** likely serving one of the following purposes:

1. **3D Rendering Test:** It showcases basic 3D modeling, material properties (matte vs. metallic), and lighting/shadow rendering in a simple, controlled environment.

2. **Computer Vision/AI Dataset Sample:** The scene strongly resembles images from datasets like CLEVR (Compositional Language and Elementary Visual Reasoning), which are used to train and test AI models on visual reasoning tasks involving object recognition, attribute identification, and spatial relationships.

3. **Material Study:** The composition highlights the visual differences between diffuse and reflective surfaces under identical lighting conditions.

**In summary, the "information" contained is purely visual and compositional. It demonstrates the rendering of form, color, material, and light in a 3D space, with no embedded textual or quantitative data to extract.**

</details>

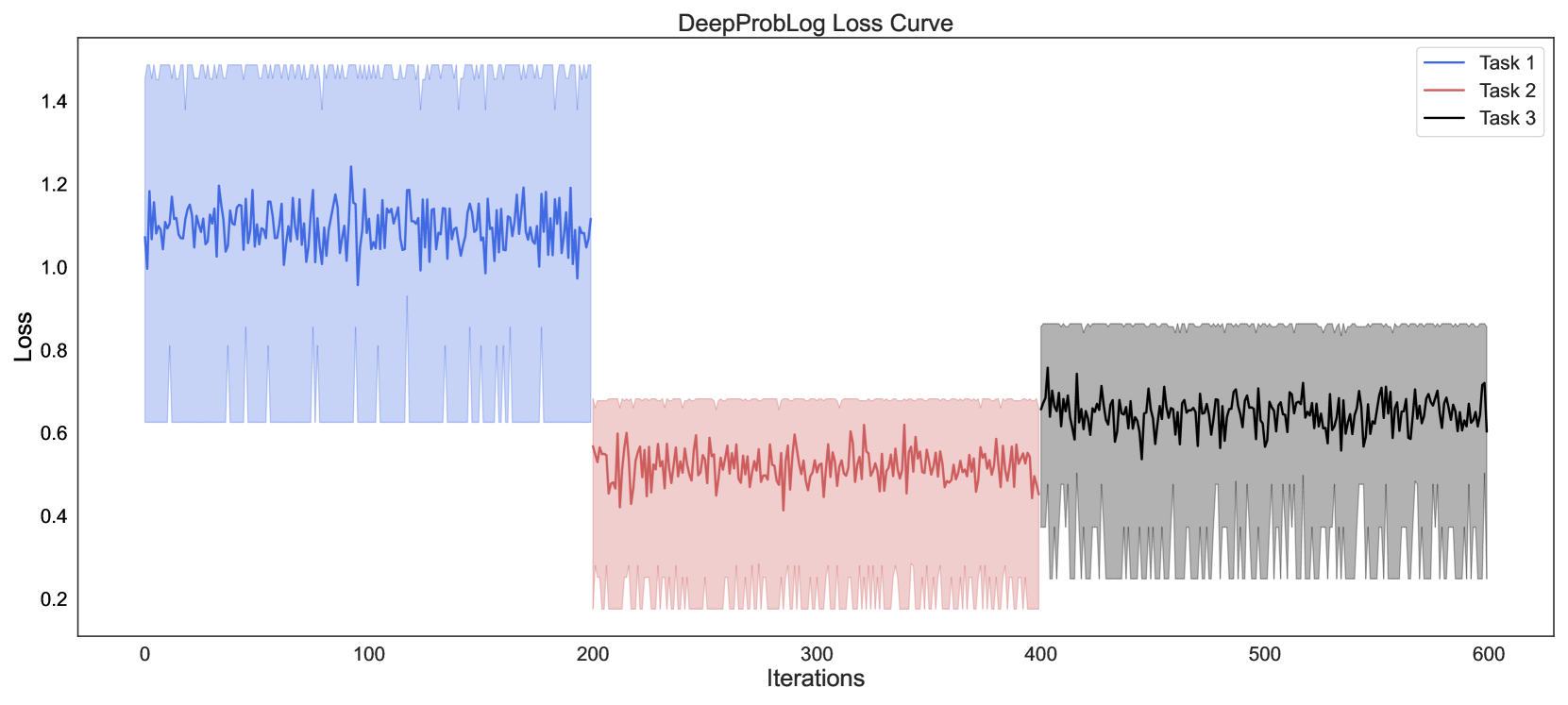

Figure 4: Visual Concept Repairing: NEMESYS achieves planning by performing differentiable meta-level reasoning. The left most image shows the start state, and the right most image shows the goal state. Taking these states as inputs, NEMESYS performs differentiable forward reasoning using meta-level clauses that simulate the planning steps and generate intermediate states (two images in the middle) and actions from start state to reach the goal state. (Best viewed in color)

### 4.3 Avoiding Infinite Loops

Differentiable forward chaining [17], unfortunately, can generate infinite computations. A pathological example:

| | $\displaystyle\mathtt{edge(a,b).\ edge(b,a).}\ \mathtt{edge(b,c).}\quad\mathtt{ path(A,A,[\ ]).}\quad$ | |

| --- | --- | --- |

<details>

<summary>x4.png Details</summary>

### Visual Description

## Bar Chart: Test on 4 queries

### Overview

The image is a vertical bar chart comparing the accuracy of two systems, ProbLog and NEMESYS, on a test consisting of 4 queries. The chart clearly shows a performance difference between the two systems.

### Components/Axes

* **Chart Title:** "Test on 4 queries" (centered at the top).

* **Y-Axis:**

* **Label:** "Accuracy" (rotated vertically on the left side).

* **Scale:** Linear scale from 0.0 to 1.0.

* **Major Tick Marks:** 0.0, 0.5, 1.0.

* **X-Axis:**

* **Categories:** Two categories are labeled below their respective bars: "ProbLog" (left) and "NEMESYS" (right).

* **Data Series (Bars):**

* **ProbLog Bar:** A blue bar positioned on the left side of the chart. The numerical value `0.75` is displayed directly above it.

* **NEMESYS Bar:** A red/salmon-colored bar positioned on the right side of the chart. The numerical value `1.0` is displayed directly above it.

* **Legend:** Not present as a separate element. The categories are identified by the x-axis labels directly beneath each bar.

### Detailed Analysis

* **ProbLog:** The blue bar reaches a height corresponding to an accuracy value of **0.75** (or 75%).

* **NEMESYS:** The red bar reaches the maximum height of the y-axis, corresponding to an accuracy value of **1.0** (or 100%).

* **Visual Trend:** The NEMESYS bar is visibly taller than the ProbLog bar, indicating a higher accuracy score.

### Key Observations

1. **Perfect Score:** NEMESYS achieved a perfect accuracy score of 1.0 on the test.

2. **Performance Gap:** There is a significant 0.25 (25 percentage point) difference in accuracy between NEMESYS (1.0) and ProbLog (0.75).

3. **Test Scope:** The evaluation was conducted on a small set of 4 queries, as stated in the title.

### Interpretation

The data demonstrates that for this specific test set of 4 queries, the NEMESYS system performed flawlessly, while the ProbLog system made errors, achieving 75% accuracy. This suggests that NEMESYS may be more effective or better suited for the type of reasoning or problem-solving required by these particular queries. The chart serves as a direct, visual comparison highlighting NEMESYS's superior performance in this limited evaluation. The small number of queries (4) should be noted, as it may not be representative of performance on a larger, more diverse dataset.

</details>

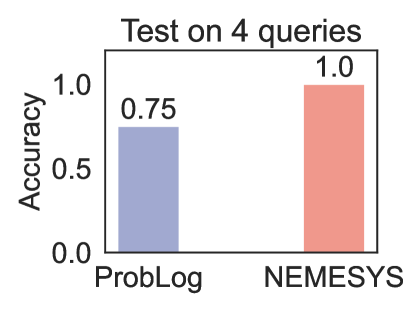

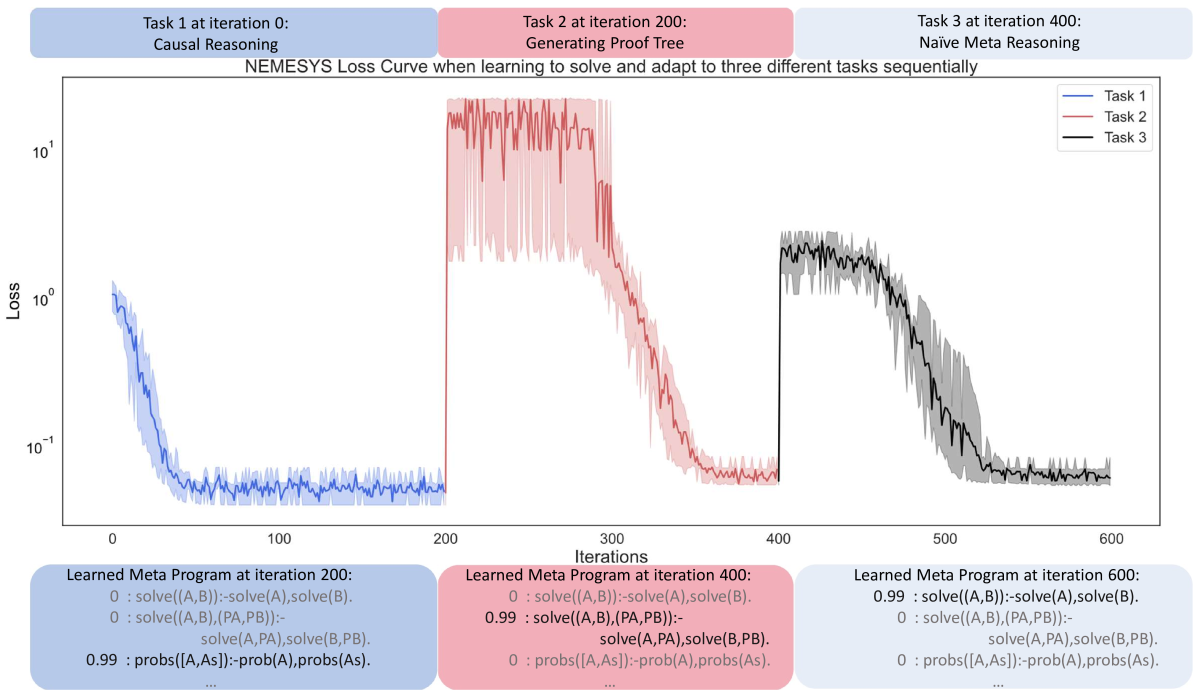

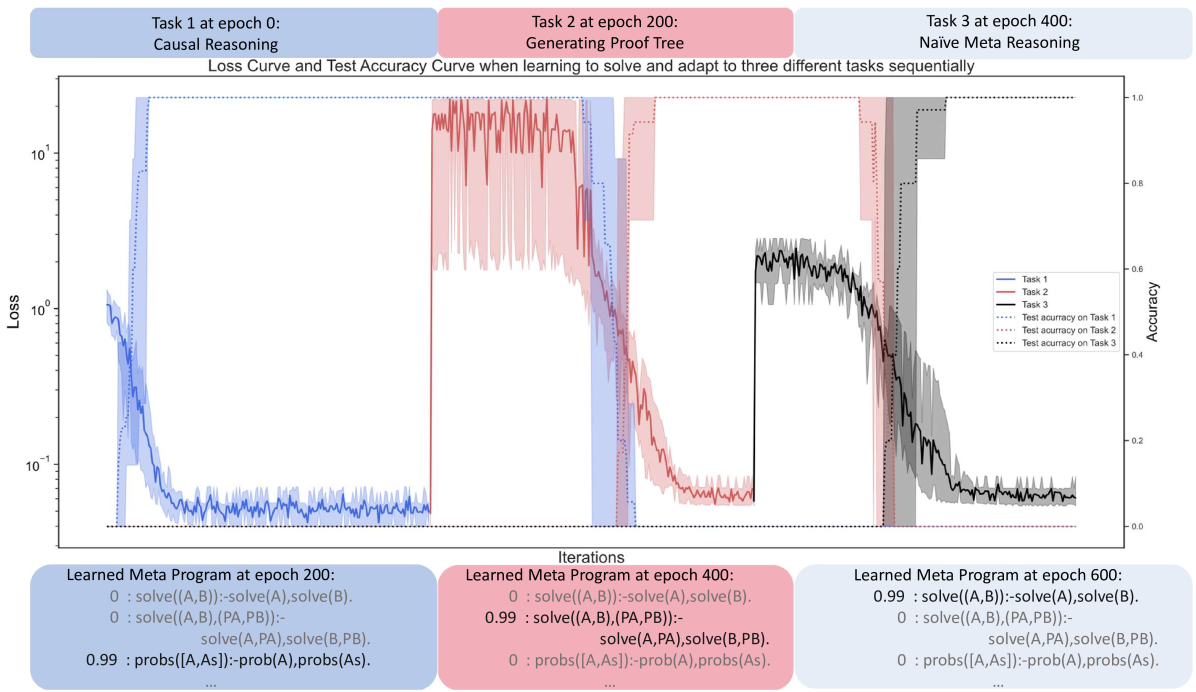

Figure 5: Performance (accuracy; the higher, the better)on four queries. (Best viewed in color)

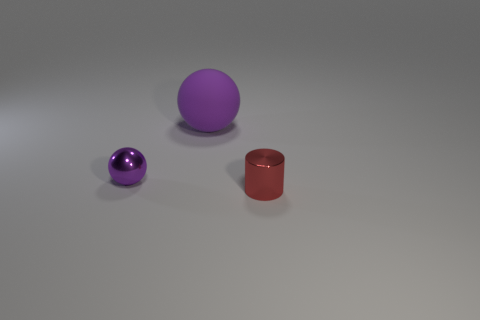

It defines a simple graph over three nodes $(a,b,c)$ with three edges, $(a-b,b-a,b-c)$ as well as paths in graphs in general. Specifically, $\mathtt{path}/3$ defines how to find a path between two nodes in a recursive way. The base case is $\mathtt{path(A,A,[])}$ , meaning that any node $\mathtt{A}$ is reachable from itself. The recursion then says, if there is an edge from node $\mathtt{A}$ to node $\mathtt{B}$ , and there is a path from node $\mathtt{B}$ to node $\mathtt{C}$ , then there is a path from node $\mathtt{A}$ to node $\mathtt{C}$ . Unfortunately, this generates an infinite loop $\mathtt{[edge(a,b),edge(b,a),edge(a,b),\ldots]}$ when computing the path from $a$ to $c$ , since this path can always be extended potentially also leading to the node $c$ .

Fortunately, NEMESYS allows one to avoid infinite loops by memorizing the proof-depth, i.e., we simply implement a limited proof-depth strategy on the meta-level:

| | $\displaystyle\mathtt{li((A,B),DPT)}\texttt{:-}\mathtt{li(A,DPT)},\mathtt{li(B, DPT).}$ | |

| --- | --- | --- |

With this proof strategy, NEMESYS gets the path $\mathtt{path(a,c,[edge(a,b),edge(b,c)])=true}$ in three steps. For simplicity, we omit the proof part in the atom. Using the second rule and the first rule recursively, the meta interpreter finds $\mathtt{clause(path(a,c),(edge(a,b),path(b,c)))}$ and $\mathtt{clause(path(b,c),(edge(b,c),path(c,c)))}$ . Finally, the meta interpreter finds a clause, whose head is $\mathtt{li(path(c,c),1)}$ and the body is true.

Since forward chaining gets stuck in the infinite loop, we choose ProbLog [47] as our baseline comparison. We test NEMESYS and ProbLog using four queries, including one query which calls the recursive rule. ProbLog fails to return the correct answer on the query which calls the recursive rule. The comparison is summarized in Fig. 5. We provide the four test queries in Appendix A.

### 4.4 Differentiable First-Order Logical Planning

As the fourth meta interpreter, we demonstrate NEMESYS as a differentiable planner. Consider Fig. 4 where NEMESYS was asked to put all objects of a start image onto a line. Each start and goal state is represented as a visual scene, which is generated in the CLEVR [18] environment. By adopting a perception model, e.g., YOLO [42] or slot attention [45], NEMESYS obtains logical representations of the start and end states:

| | $\displaystyle\mathtt{start}$ | $\displaystyle=\{\mathtt{pos(obj0,(1,3)),\ldots,pos(obj4,(2,1))}\},$ | |

| --- | --- | --- | --- |

where $\mathtt{pos/2}$ describes the $2$ -dim positions of objects. NEMESYS solves this planning task by performing differentiable reasoning using the meta-level program: