# Deep Meta Programming

**Authors**: Hikaru Shindo, Devendra Singh Dhami, Kristian Kersting

[1,3] Zihan Ye

[1] AI and Machine Learning Group, Dept. of Computer Science, TU Darmstadt, Germany

[2] Centre for Cognitive Science, TU Darmstadt, Germany

[3] Hessian Center for AI (hessian.AI), Germany

[4] German Center for Artificial Intelligence (DFKI), Germany

[5] Eindhoven University of Technology, Netherlands

Neural Meta-Symbolic Reasoning and Learning

## Abstract

Deep neural learning uses an increasing amount of computation and data to solve very specific problems. By stark contrast, human minds solve a wide range of problems using a fixed amount of computation and limited experience. One ability that seems crucial to this kind of general intelligence is meta-reasoning, i.e., our ability to reason about reasoning. To make deep learning do more from less, we propose the first neural meta-symbolic system (NEMESYS) for reasoning and learning: meta programming using differentiable forward-chaining reasoning in first-order logic. Differentiable meta programming naturally allows NEMESYS to reason and learn several tasks efficiently. This is different from performing object-level deep reasoning and learning, which refers in some way to entities external to the system. In contrast, NEMESYS enables self-introspection, lifting from object- to meta-level reasoning and vice versa. In our extensive experiments, we demonstrate that NEMESYS can solve different kinds of tasks by adapting the meta-level programs without modifying the internal reasoning system. Moreover, we show that NEMESYS can learn meta-level programs given examples. This is difficult, if not impossible, for standard differentiable logic programming.

keywords: differentiable meta programming, differentiable forward reasoning, meta reasoning

<details>

<summary>x1.png Details</summary>

### Visual Description

## Flowchart: NEMESYS System Architecture

### Overview

The image depicts a conceptual architecture of a system called NEMESYS, integrating multiple reasoning modalities. The central "NEMESYS" box connects to eight peripheral components through bidirectional arrows, representing information flow. The system combines symbolic, visual, and causal reasoning with game-playing capabilities.

### Components/Axes

1. **Central Node**:

- Label: "NEMESYS" (stylized with a brain icon)

- Position: Center of the diagram

2. **Peripheral Components** (clockwise from top-left):

- **Symbolic Reasoning**:

- Contains code snippets about shape relationships

- Example: `same_shape_pair(A,B): shape(A,C), shape(B,C), shape(obj0, triangle)`

- **Visual Reasoning**:

- Color coding legend:

- Blue (□), Red (■), Gray (■)

- Contains image examples with colored objects

- **Classification**:

- Shape examples with checkmarks (✓) and crosses (✗)

- Includes geometric shapes (triangle, circle, square)

- **Planning**:

- Shows grid-based planning examples

- **Relevance Propagation**:

- Network diagram with interconnected nodes

- **Game Playing**:

- Maze-like game environment with pink/purple elements

- **Proof Tree**:

- Hierarchical tree structure with green/red nodes

- **Causal Reasoning**:

- Graph with nodes A-D and causal relationships (→, do(c))

### Detailed Analysis

- **Symbolic Reasoning**:

- Text-based logic rules for object relationships

- Uses predicate logic notation (e.g., `shape(obj1, triangle)`)

- **Visual Reasoning**:

- Color-coded object recognition system

- Three distinct color categories with specific shapes

- **Classification**:

- Binary classification system (correct/incorrect)

- Uses geometric shape recognition

- **Planning**:

- Grid-based navigation examples

- Shows pathfinding scenarios

- **Relevance Propagation**:

- Network topology visualization

- Represents information flow efficiency

- **Game Playing**:

- Maze environment with colored obstacles

- Suggests reinforcement learning application

- **Proof Tree**:

- Binary decision tree structure

- Nodes labeled A-D with hierarchical relationships

- **Causal Reasoning**:

- Causal graph with intervention notation (do(c))

- Shows cause-effect relationships between nodes

### Key Observations

1. Bidirectional arrows between NEMESYS and all components indicate integrated processing

2. Color-coded elements appear in both Visual Reasoning and Classification components

3. Game Playing component uses similar color scheme (pink/purple) to Visual Reasoning's red

4. Proof Tree and Causal Reasoning components share node labeling convention (A-D)

5. System combines deductive (Proof Tree) and inductive (Classification) reasoning

### Interpretation

The NEMESYS architecture demonstrates a multi-modal AI system that:

1. Processes symbolic logic (Symbolic Reasoning)

2. Interprets visual information (Visual Reasoning)

3. Makes classifications (Classification)

4. Plans actions (Planning)

5. Maintains relevance in information processing (Relevance Propagation)

6. Engages in game-like environments (Game Playing)

7. Constructs logical proofs (Proof Tree)

8. Understands causal relationships (Causal Reasoning)

The bidirectional connections suggest an integrated system where different reasoning modalities inform each other. The presence of both proof trees and causal graphs indicates the system can handle both deductive and probabilistic reasoning. The game-playing component implies potential applications in reinforcement learning scenarios, while the planning module suggests capability in strategic decision-making.

The color coding consistency between Visual Reasoning and Game Playing components might indicate shared visual processing capabilities. The system's complexity suggests it could be used for advanced AI applications requiring multiple reasoning modalities, such as robotics or complex decision support systems.

</details>

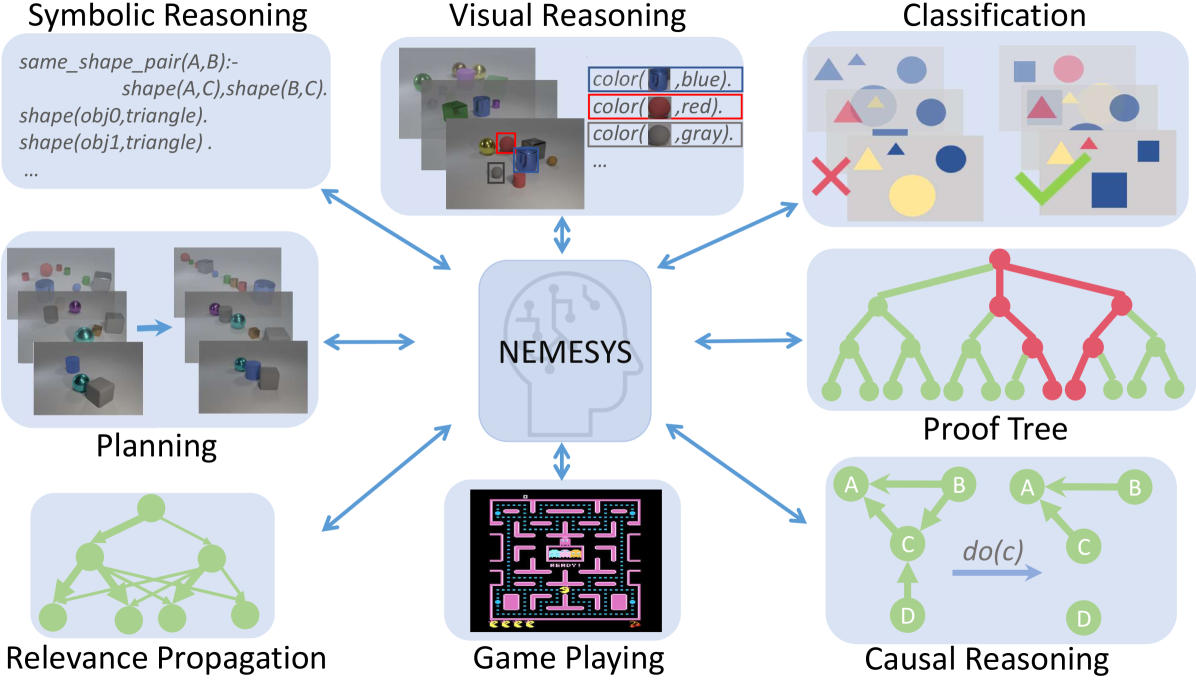

Figure 1: NEMESYS solves different kinds of tasks by using meta-level reasoning and learning. NEMESYS addresses, for instance, visual reasoning, planning, and causal reasoning without modifying its internal reasoning architecture. (Best viewed in color)

## 1 Introduction

One of the distant goals of Artificial Intelligence (AI) is to build a fully autonomous or ‘human-like’ system. The current successes of deep learning systems such as DALLE-2 [1], ChatGPT [2, 3], and Gato [4] have been promoted as bringing the field closer to this goal. However, current systems still require a large number of computations and often solve rather specific tasks. For example, DALLE-2 can generate very high-quality images but cannot play chess or Atari games. In stark contrast, human minds solve a wide range of problems using a small amount of computation and limited experience.

Most importantly, to be considered a major step towards achieving Artificial General Intelligence (AGI), a system must not only be able to perform a variety of tasks, such as Gato [4] playing Atari games, captioning images, chatting, and controlling a real robot arm, but also be self-reflective and able to learn and reason about its own capabilities. This means that it must be able to improve itself and adapt to new situations through self-reflection [5, 6, 7, 8]. Consequently, the study of meta-level architectures such as meta learning [9] and meta-reasoning [7] becomes progressively important. Meta learning [10] is a way to improve the learning algorithm itself [11, 12], i.e., it performs learning at a higher level, or meta-level. Meta-reasoning is a related concept that involves a system being able to think about its own abilities and how it processes information [5, 6]. It involves reflecting on, or introspecting about, the system’s own reasoning processes.

Indeed, meta-reasoning is different from object-centric reasoning, which refers to the system thinking about entities external to itself [13, 14, 15]. Here, the models perform low-level visual perception and reasoning on high-level concepts. Accordingly, there has been a push to make these reasoning systems differentiable [16, 17] along with addressing benchmarks in a visual domain such as CLEVR [18] and Kandinsky patterns [19, 20]. They use object-centric neural networks to perceive objects and perform reasoning using their output. Although this can solve the proposed benchmarks to some extent, the critical question remains unanswered: Is the reasoner able to justify its own operations? Can the same model solve different tasks such as (causal) reasoning, planning, game playing, and much more?

To overcome these limitations, we propose NEMESYS, the first neural meta-symbolic reasoning system. NEMESYS extensively performs meta-level programming on neuro-symbolic systems, and thus it can reason and learn several tasks. This is different from performing object-level deep reasoning and learning, which refers in some way to entities external to the system. NEMESYS is able to reflect or introspect, i.e., to shift from object- to meta-level reasoning and vice versa.

| System | Meta Reasoning | Multitask Adaptation | Differentiable Meta Structure Learning |
| --- | --- | --- | --- |
| DeepProbLog [21] | ✗ | ✗ | ✗ |
| NTPs [22] | ✗ | ✗ | ✗ |
| FFSNL [23] | ✗ | ✗ | ✗ |
| $\alpha$ILP [24] | ✗ | ✗ | ✗ |
| Scallop [25] | ✗ | ✗ | ✗ |
| NeurASP [26] | ✗ | ✗ | ✗ |
| NEMESYS (ours) | ✓ | ✓ | ✓ |

Table 1: Comparison between NEMESYS and other state-of-the-art neuro-symbolic systems. We compare these systems with NEMESYS in three aspects: whether the system performs meta reasoning, whether the same system can adapt to solve different tasks, and whether the system is capable of differentiable meta-level structure learning.

Overall, we make the following contributions:

1. We propose NEMESYS, the first neural meta-symbolic reasoning and learning system that performs differentiable forward reasoning using meta-level programs.

1. To evaluate the ability of NEMESYS, we propose a challenging task, visual concept repairing, where the task is to rearrange objects in visual scenes based on relational logical concepts.

1. We empirically show that NEMESYS can efficiently solve different visual reasoning tasks with meta-level programs, achieving comparable performances with object-level forward reasoners [16, 24] that use specific programs for each task.

1. Moreover, we empirically show that, using powerful differentiable meta-level programming, NEMESYS can solve different kinds of tasks that are difficult, if not impossible, for previous neuro-symbolic systems. In our experiments, NEMESYS provides the functions of (i) reasoning with integrated proof generation, i.e., performing differentiable reasoning while producing proof trees, (ii) explainable artificial intelligence (XAI), i.e., highlighting the importance of logical atoms given conclusions, (iii) reasoning avoiding infinite loops, i.e., performing differentiable reasoning on programs that cause infinite loops and that previous logic reasoning systems are unable to solve, and (iv) differentiable causal reasoning, i.e., performing causal reasoning [27, 28] on a causal Bayesian network using differentiable meta reasoners. To the best of the authors’ knowledge, we propose the first differentiable $\mathtt{do}$ operator. Achieving these functions with object-level reasoners necessitates significant effort, and in some cases, it may be unattainable. In stark contrast, NEMESYS successfully realizes these useful functions by using different meta-level programs without any modification of the reasoning function itself.

1. We demonstrate that NEMESYS can perform structure learning on the meta-level, i.e., learning meta programs from examples and adapting itself to solve different tasks automatically by learning efficiently with gradients.

To this end, we will proceed as follows. We first review (differentiable) first-order logic and reasoning. We then derive NEMESYS by introducing differentiable logical meta programming. Before concluding, we illustrate several capabilities of NEMESYS.

## 2 Background

NEMESYS relies on several research areas: first-order logic, logic programming, differentiable reasoning, meta-reasoning and -learning.

First-Order Logic (FOL)/Logic Programming. A term is a constant, a variable, or a function symbol applied to terms. We denote $n$ -ary predicate ${\tt p}$ by ${\tt p}/(n,[{\tt dt_{1}},\ldots,{\tt dt_{n}}])$ , where ${\tt dt_{i}}$ is the datatype of the $i$ -th argument. An atom is a formula ${\tt p(t_{1},\ldots,t_{n})}$ , where ${\tt p}$ is an $n$ -ary predicate symbol and ${\tt t_{1},\ldots,t_{n}}$ are terms. A ground atom, or simply a fact, is an atom with no variables. A literal is an atom or its negation. A positive literal is an atom. A negative literal is the negation of an atom. A clause is a finite disjunction ( $\lor$ ) of literals. A ground clause is a clause with no variables. A definite clause is a clause with exactly one positive literal. If $A,B_{1},\ldots,B_{n}$ are atoms, then $A\lor\lnot B_{1}\lor\ldots\lor\lnot B_{n}$ is a definite clause. We write definite clauses in the form of $A~{}\mbox{:-}~{}B_{1},\ldots,B_{n}$ . Atom $A$ is called the head, and the set of negated atoms $\{B_{1},\ldots,B_{n}\}$ is called the body. For simplicity, we refer to definite clauses simply as clauses in this paper.

Differentiable Forward-Chaining Reasoning. The forward-chaining inference is a type of inference in first-order logic to compute logical entailment [29]. The differentiable forward-chaining inference [16, 17] computes the logical entailment in a differentiable manner using tensor-based operations. Many extensions of differentiable forward reasoners have been developed, e.g., reinforcement learning agents using logic to compute the policy function [30, 31] and differentiable rule learners in complex visual scenes [24]. NEMESYS performs differentiable meta-level logic programming based on differentiable forward reasoners.
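As a point of reference for the differentiable variant, classical (non-differentiable) forward chaining on ground definite clauses can be sketched as below. This is a minimal illustration, not code from NEMESYS; the function name `forward_chain` and the string encoding of atoms are assumptions made for the example.

```python
def forward_chain(facts, rules):
    """Compute all atoms entailed by ground definite clauses.

    facts: iterable of ground atoms (plain strings in this sketch).
    rules: list of (head, [body atoms]) pairs, i.e. head :- body.
    """
    derived = set(facts)
    changed = True
    while changed:                      # repeat until a fixpoint is reached
        changed = False
        for head, body in rules:
            if head not in derived and all(b in derived for b in body):
                derived.add(head)       # every body atom holds, so the head holds
                changed = True
    return derived


facts = {"shape(obj0,triangle)", "shape(obj2,triangle)"}
rules = [
    ("same_shape_pair(obj0,obj2)",
     ["shape(obj0,triangle)", "shape(obj2,triangle)"]),
    ("same_shape_pair(obj0,obj1)",
     ["shape(obj0,triangle)", "shape(obj1,triangle)"]),
]
entailed = forward_chain(facts, rules)
```

Differentiable forward reasoners replace the Boolean membership test by real-valued valuations in $[0,1]$ and the set update by smooth tensor operations.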

<details>

<summary>x2.png Details</summary>

### Visual Description

## Flowchart: Differentiable Meta-Level Reasoning System

### Overview

The diagram illustrates a computational framework for differentiable meta-level reasoning, combining object-level perception with probabilistic logical reasoning. It shows two interconnected pipelines: one for object-level reasoning (bottom-left) and one for meta-level reasoning (top), with a shared "meta probabilistic atoms" component.

### Components/Axes

**Top Section (Differentiable Meta-Level Reasoning):**

1. **Input:** Clauses (e.g., `same_shape_pair(X,Y)` with 0.95 probability)

2. **Meta Converter:** Transforms clauses into meta probabilistic atoms

3. **Differentiable Forward Reasoner:** Processes meta probabilistic atoms iteratively

4. **Output:** Refined meta probabilistic atoms (e.g., `solve(shape(obj1,cube))` with 0.98 probability)

**Bottom-Left Section (Object-Level Reasoning):**

1. **Input:** Image of three objects (cyan cube, red cube, yellow cylinder)

2. **Object-Centric Representation:**

- obj1: cyan cube

- obj2: red cube

- obj3: yellow cylinder

3. **Probabilistic Atoms:**

- `color(obj1,cyan)`: 0.98

- `shape(obj1,cube)`: 0.98

- `color(obj2,red)`: 0.98

- `shape(obj2,cube)`: 0.98

- `color(obj3,yellow)`: 0.98

- `shape(obj3,cylinder)`: 0.98

**Bottom-Right Section (Meta Program):**

1. **Naive Interpreter Rules:**

- `solve(A,B) :- solve(A), solve(B)`

- `solve(A) :- clause(A,B), solve(B)`

2. **Interpreter with Proof Trees:**

- `solve(A,B,proofA,proofB) :- solve(A,proofA), solve(B,proofB)`

- `solve(A,proofA) :- clause(A,B), solve(B,proofB)`

### Detailed Analysis

**Top Section Flow:**

- Clauses (0.95 confidence) → Meta Converter → Meta Probabilistic Atoms (0.98 confidence) → Differentiable Forward Reasoner → Refined Meta Probabilistic Atoms

**Object-Level Reasoning:**

- Image input → Object-Centric Representation (3 objects with color/shape attributes) → Probabilistic Atoms (0.98 confidence for each attribute)

**Meta Program Logic:**

- Naive Interpreter: Basic logical deduction rules

- Proof Tree Interpreter: Enhanced with proof tracking for logical consistency

### Key Observations

1. **Probabilistic Confidence:** All extracted features (colors, shapes, logical clauses) maintain >95% confidence

2. **Differentiable Reasoning:** The forward reasoner operates on probabilistic atoms while maintaining differentiability

3. **Hierarchical Structure:** Object-level data feeds into meta-level reasoning through probabilistic representations

4. **Logical Inference:** The meta program combines naive deduction with proof-aware reasoning

### Interpretation

This system implements a neuro-symbolic architecture where:

1. **Perception Layer:** Converts visual input into probabilistic object representations

2. **Reasoning Layer:** Uses differentiable probabilistic logic to refine object relationships

3. **Meta-Programming:** Implements logical inference rules that can operate on both raw data and intermediate representations

The 0.95-0.98 confidence range suggests the system maintains high certainty while allowing for uncertainty propagation through differentiable operations. The proof tree extension indicates support for explainable AI through traceable logical deductions.

The architecture enables end-to-end differentiable training of both perception and reasoning components, potentially allowing learning of both visual features and logical rules simultaneously.

</details>

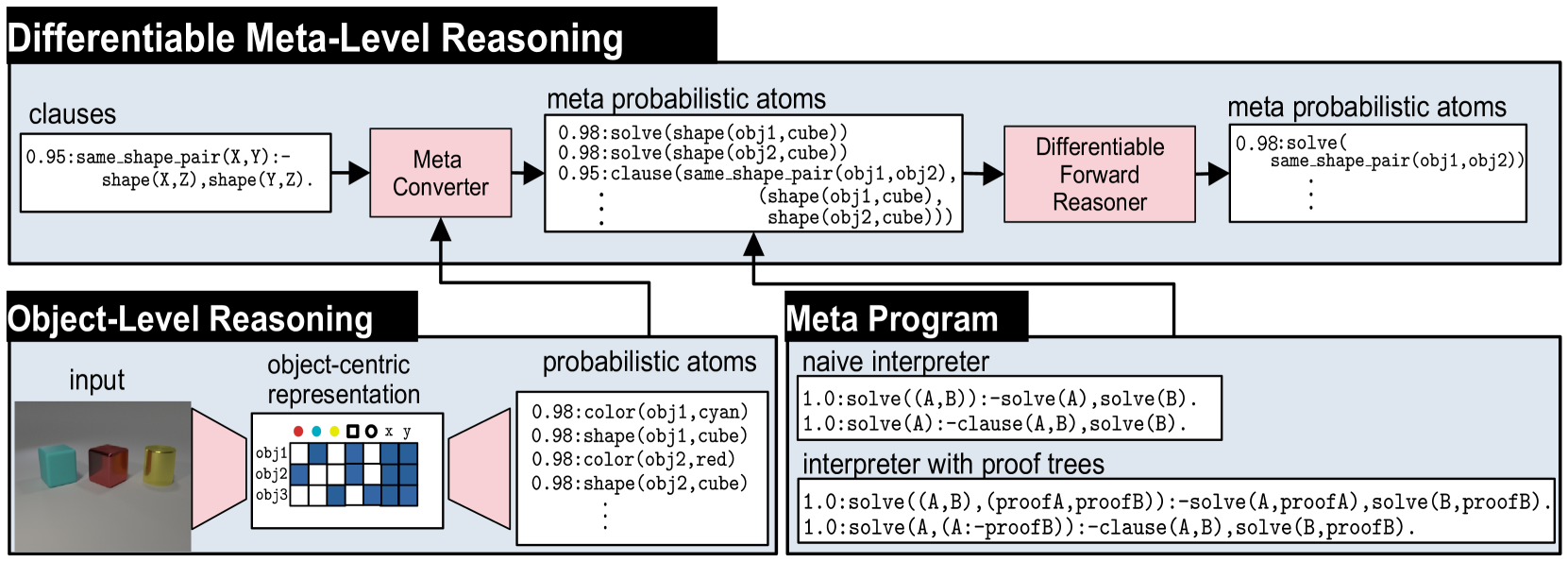

Figure 2: Overview of NEMESYS together with an object-level reasoning layer (bottom left). The meta-level reasoner (top) takes a logic program as input, here the clauses on the left-hand side of the meta-level reasoning pipeline. Using the meta program (bottom right), it can realize the standard Prolog engine (naive interpreter) or an interpreter that additionally provides, e.g., proof trees (interpreter with proof trees), without requiring any alterations to the original logic program or the internal reasoning function. This means that NEMESYS can integrate many useful functionalities by simply changing the devised meta programs without intervening in the internal reasoning function. (Best viewed in color)

Meta Reasoning and Learning. Meta-reasoning is the study of systems that are able to reason about their own operation, i.e., a system capable of meta-reasoning may be able to reflect, or introspect [32], shifting from meta-reasoning to object-level reasoning and vice versa [6, 7]. Compared with imperative programming, it is relatively easy to construct a meta-interpreter using declarative programming. First-order logic [33] has been the major tool for realizing meta-reasoning systems [34, 35, 36]. For example, Prolog [37] provides very efficient implementations of meta-interpreters that add different features to the language.

Despite early interest in meta-reasoning within classical Inductive Logic Programming (ILP) systems [38, 39, 40], meta-interpreters have remained unexplored within neuro-symbolic AI. Meta-interpreters within classical logic are difficult to combine with gradient-based machine learning paradigms, e.g., deep neural networks. NEMESYS realizes meta-level reasoning using differentiable forward reasoners in first-order logic, which are able to perform differentiable rule learning on complex visual scenes with deep neural networks [24]. Moreover, NEMESYS paves the way to integrating meta-level reasoning into other neuro-symbolic frameworks, including DeepProbLog [21], Scallop [25], and NeurASP [26], which were developed for training neural networks given logic programs using differentiable backward reasoning or answer set semantics. We compare NEMESYS with several popular neuro-symbolic systems in three aspects: whether the system performs meta reasoning, whether the same system can adapt to solve different tasks, and whether the system is capable of differentiable meta-level structure learning. The comparison results are summarized in Table 1.

## 3 Neural Meta-Symbolic Reasoning & Learning

We now introduce NEMESYS, the first neural meta-symbolic reasoning and learning framework. Fig. 2 shows an overview of NEMESYS.

### 3.1 Meta Logic Programming

We describe how meta-level programs are used in the NEMESYS workflow. In Fig. 2, the following object-level clause is given as its input:

| | $\displaystyle\mathtt{\color[rgb]{0,0.6,0}same\_shape\_pair(X,Y)\color[rgb]{ 0,0,0}\texttt{:-}\color[rgb]{0.68,0.36,1}shape(X,Z),shape(Y,Z)\color[rgb]{ 0,0,0}.}$ | |

| --- | --- | --- |

which identifies pairs of objects that have the same shape. The clause is subsequently fed to the Meta Converter, which generates meta-level atoms. Using the meta predicate $\mathtt{clause}/2$ , the following atom is generated:

| | $\displaystyle\mathtt{clause(\color[rgb]{0,0.6,0}same\_shape\_pair(X,Y),}\color [rgb]{0.68,0.36,1}\mathtt{(shape(X,Z),shape(Y,Z))\color[rgb]{0,0,0}).}$ | |

| --- | --- | --- |

where the meta atom $\mathtt{clause(H,B)}$ represents the object-level clause: $\mathtt{H\texttt{:-}B}$ .
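The conversion from an object-level clause to a $\mathtt{clause}/2$ meta atom is purely syntactic. A minimal sketch, assuming atoms are represented as plain strings and that a fact is wrapped with the body `true`; the helper name `to_meta_atom` is hypothetical and not part of NEMESYS:

```python
def to_meta_atom(head, body):
    """Wrap an object-level clause `head :- body` as a clause/2 meta atom.

    head: atom as a string; body: list of atom strings (empty for a fact).
    """
    # A non-empty body becomes a parenthesised conjunction; a fact gets `true`.
    body_term = "(" + ",".join(body) + ")" if body else "true"
    return f"clause({head},{body_term})"


meta_atom = to_meta_atom("same_shape_pair(X,Y)", ["shape(X,Z)", "shape(Y,Z)"])
meta_fact = to_meta_atom("shape(obj1,cube)", [])
```

Here `meta_atom` is the string `clause(same_shape_pair(X,Y),(shape(X,Z),shape(Y,Z)))`, mirroring the atom shown above.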

To perform meta-level reasoning, NEMESYS uses meta-level programs, which often refer to meta interpreters, i.e., interpreters written in the language itself, as illustrated in Fig. 2. For example, a naive interpreter, NaiveInterpreter, is defined as:

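| | $\displaystyle\mathtt{solve(true).}$ | |
| --- | --- | --- |
| | $\displaystyle\mathtt{solve((A,B))\texttt{:-}solve(A),solve(B).}$ | |
| | $\displaystyle\mathtt{solve(A)\texttt{:-}clause(A,B),solve(B).}$ | |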

To solve a compound goal $\mathtt{(A,B)}$ , we first need to solve $\mathtt{A}$ and then $\mathtt{B}$ . A single goal $\mathtt{A}$ is solved if there is a clause that rewrites the goal to the new goal $\mathtt{B}$ , the body of the clause: $\mathtt{\color[rgb]{0,0.6,0}{A}\texttt{:-}\color[rgb]{0.68,0.36,1}{B}}$ . This process stops for facts, encoded as $\mathtt{clause(fact,true)}$ , since then $\mathtt{solve(true)}$ will be true.
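The three cases of the naive interpreter can be mirrored in a few lines of Python. The sketch below is only an illustration for ground programs: it evaluates goals top-down, whereas NEMESYS evaluates the same meta program by differentiable forward reasoning. The goal encoding (strings and pairs) and the `depth` bound are assumptions of this example.

```python
def solve(goal, clauses, depth=20):
    """Vanilla meta-interpreter for ground programs.

    goal: "true", a pair (A, B) for a conjunction, or an atom string.
    clauses: list of (head, body) pairs playing the role of clause(H, B).
    depth: hypothetical recursion bound, added only so the sketch terminates.
    """
    if depth == 0:
        return False
    if goal == "true":                       # solve(true).
        return True
    if isinstance(goal, tuple):              # solve((A,B)) :- solve(A), solve(B).
        a, b = goal
        return solve(a, clauses, depth - 1) and solve(b, clauses, depth - 1)
    # solve(A) :- clause(A,B), solve(B).
    return any(solve(body, clauses, depth - 1)
               for head, body in clauses if head == goal)


clauses = [
    ("shape(obj0,triangle)", "true"),        # facts: clause(fact, true)
    ("shape(obj2,triangle)", "true"),
    ("same_shape_pair(obj0,obj2)",
     ("shape(obj0,triangle)", "shape(obj2,triangle)")),
]
proved = solve("same_shape_pair(obj0,obj2)", clauses)
not_proved = solve("same_shape_pair(obj0,obj1)", clauses)
```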

NEMESYS can employ more enriched meta programs with useful functions by simply changing the meta programs, without modifying the internal reasoning function, as illustrated in the bottom right of Fig. 2. ProofTreeInterpreter, an interpreter that produces proof trees along with reasoning, is defined as:

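| | $\displaystyle\mathtt{solve(A,(A\texttt{:-}true)).}$ | |
| --- | --- | --- |
| | $\displaystyle\mathtt{solve((A,B),(proofA,proofB))\texttt{:-}solve(A,proofA),solve(B,proofB).}$ | |
| | $\displaystyle\mathtt{solve(A,(A\texttt{:-}proofB))\texttt{:-}clause(A,B),solve(B,proofB).}$ | |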

where $\mathtt{solve(A,Proof)}$ checks if atom $\mathtt{A}$ is true with proof tree $\mathtt{Proof}$ . Using this meta-program, NEMESYS can perform reasoning with integrated proof tree generation.
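To illustrate how a second argument can carry the proof, the following sketch extends a top-down interpreter for ground programs so that it returns a proof tree. It is a hedged illustration rather than the NEMESYS implementation: the tuple encoding of proofs, the function name `solve_with_proof`, and the `depth` bound are assumptions.

```python
def solve_with_proof(goal, clauses, depth=20):
    """Return a proof tree for `goal`, or None if no proof is found.

    Proof of an atom A with body proof P is the triple (A, ":-", P);
    proof of a conjunction (A, B) is the pair (proofA, proofB).
    """
    if depth == 0:
        return None
    if goal == "true":
        return "true"
    if isinstance(goal, tuple):
        a, b = goal
        proof_a = solve_with_proof(a, clauses, depth - 1)
        if proof_a is None:
            return None
        proof_b = solve_with_proof(b, clauses, depth - 1)
        return (proof_a, proof_b) if proof_b is not None else None
    for head, body in clauses:
        if head == goal:
            proof = solve_with_proof(body, clauses, depth - 1)
            if proof is not None:
                return (goal, ":-", proof)
    return None


clauses = [
    ("shape(obj0,triangle)", "true"),
    ("shape(obj2,triangle)", "true"),
    ("same_shape_pair(obj0,obj2)",
     ("shape(obj0,triangle)", "shape(obj2,triangle)")),
]
tree = solve_with_proof("same_shape_pair(obj0,obj2)", clauses)
missing = solve_with_proof("same_shape_pair(obj0,obj1)", clauses)
```

The returned `tree` nests the proofs of both `shape` facts under the root atom, which is the structure a proof-tree interpreter exposes alongside the truth value.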

Now, let us devise the differentiable meta-level reasoning pipeline, which enables NEMESYS to reason and learn flexibly.

### 3.2 Differentiable Meta Programming

NEMESYS employs differentiable forward reasoning [24], which computes logical entailment using tensor operations in a differentiable manner, by adapting it to the meta-level atoms and clauses.

We define a meta-level reasoning function $f^{\mathit{reason}}_{(\mathcal{C},\mathbf{W})}:[0,1]^{G}\rightarrow[0,1]^{G}$ parameterized by meta-rules $\mathcal{C}$ and their weights $\mathbf{W}$ . We denote the set of meta-rules by $\mathcal{C}$ , and the set of all of the meta-ground atoms by $\mathcal{G}$ . $\mathcal{G}$ contains all of the meta-ground atoms produced by a given FOL language. We consider ordered sets here, i.e., each element has its index. We denote the size of the sets as: $G=|\mathcal{G}|$ and $C=|\mathcal{C}|$ . We denote the $i$ -th element of vector $\mathbf{x}$ by $\mathbf{x}[i]$ , and the $(i,j)$ -th element of matrix $\mathbf{X}$ by $\mathbf{X}[i,j]$ .

First, NEMESYS converts visual input to a valuation vector $\mathbf{v}\in[0,1]^{G}$ , which maps each meta atom to a probabilistic value (Fig. 2 Meta Converter). For example,

$$
\mathbf{v}=\left[\begin{array}{c}0.98\\ 0.01\\ 0.95\end{array}\right]\begin{array}{l}\mathtt{solve(color(obj1,\ cyan))}\\ \mathtt{solve(color(obj1,\ red))}\\ \mathtt{clause(same\_shape\_pair(\ldots),\ (shape(\ldots),\ \ldots))}\end{array}
$$

represents a valuation vector that maps each meta-ground atom to a probabilistic value. For readability, only selected atoms are shown. NEMESYS computes logical entailment by updating the initial valuation vector $\mathbf{v}^{(0)}$ for $T$ times to $\mathbf{v}^{(T)}$ .

Subsequently, we compose the reasoning function that computes logical entailment. We now describe each step in detail.

(Step 1) Encode Logic Programs to Tensors.

To achieve differentiable forward reasoning, each meta-rule is encoded to a tensor representation. Let $S$ be the maximum number of substitutions for existentially quantified variables in $\mathcal{C}$ , and $L$ be the maximum length of the body of rules in $\mathcal{C}$ . Each meta-rule $C_{i}\in\mathcal{C}$ is encoded to a tensor ${\bf I}_{i}\in\mathbb{N}^{G\times S\times L}$ , which contains the indices of body atoms. Intuitively, $\mathbf{I}_{i}[j,k,l]$ is the index of the $l$ -th fact (subgoal) in the body of the $i$ -th rule to derive the $j$ -th fact with the $k$ -th substitution for existentially quantified variables. We obtain $\mathbf{I}_{i}$ by firstly grounding the meta rule $C_{i}$ , then computing the indices of the ground body atoms, and transforming them into a tensor.
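The index tensor can be pictured with nested lists. The sketch below builds a $G\times S\times L$ structure for one grounded rule. The padding convention is an assumption of this example and is not stated in the text: index 0 is reserved for an always-false atom (used when a head has no $k$-th substitution) and index 1 for an always-true atom (used to fill short bodies). The function name `build_index_tensor` is hypothetical.

```python
def build_index_tensor(atoms, groundings, S, L):
    """Encode one grounded rule as nested lists of shape G x S x L.

    atoms: ordered list of ground atoms; atoms[0] is an always-false atom and
           atoms[1] an always-true atom (padding convention assumed here).
    groundings: dict mapping a head atom to a list of ground bodies,
                one body per substitution of the existential variables.
    """
    index = {a: i for i, a in enumerate(atoms)}
    FALSE, TRUE = 0, 1
    tensor = []
    for head in atoms:
        bodies = groundings.get(head, [])
        rows = []
        for k in range(S):
            if k < len(bodies):
                ids = [index[b] for b in bodies[k]]
                rows.append(ids + [TRUE] * (L - len(ids)))  # pad short bodies
            else:
                rows.append([FALSE] * L)                    # no k-th substitution
        tensor.append(rows)
    return tensor


atoms = ["false", "true",
         "shape(obj0,triangle)", "shape(obj1,triangle)",
         "shape(obj0,square)", "shape(obj1,square)",
         "same_shape_pair(obj0,obj1)"]
# same_shape_pair(X,Y) :- shape(X,Z), shape(Y,Z), grounded with Z in {triangle, square}
groundings = {
    "same_shape_pair(obj0,obj1)": [
        ["shape(obj0,triangle)", "shape(obj1,triangle)"],
        ["shape(obj0,square)", "shape(obj1,square)"],
    ]
}
I = build_index_tensor(atoms, groundings, S=2, L=2)
```

In this example the variable `Z` is existentially quantified, so the two shapes give the two substitutions indexed by $k$.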

(Step 2) Assign Meta-Rule Weights.

We assign weights to compose the reasoning function with several meta-rules as follows: (i) We fix the target programs’ size as $M$ , i.e., we try to select a meta-program with $M$ meta-rules out of $C$ candidate meta rules. (ii) We introduce $C$ -dimensional weights $\mathbf{W}=[{\bf w}_{1},\ldots,{\bf w}_{M}]$ where $\mathbf{w}_{i}\in\mathbb{R}^{C}$ . (iii) We take the softmax of each weight vector ${\bf w}_{j}\in\mathbf{W}$ and softly choose $M$ meta rules out of $C$ meta rules to compose the differentiable meta program.
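The soft selection in (iii) can be sketched with a plain softmax over each weight vector. The concrete numbers ($M=2$, $C=3$) are made up for illustration, and the pure-Python `softmax` helper stands in for the tensor operation an actual implementation would use.

```python
import math


def softmax(w):
    """Numerically stable softmax over a list of real-valued weights."""
    m = max(w)
    exps = [math.exp(x - m) for x in w]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical weights: M = 2 slots, each a distribution over C = 3 candidates.
W = [[2.0, 0.0, -1.0],
     [0.0, 3.0, 0.0]]
W_star = [softmax(w) for w in W]
# Candidate with the largest soft weight in each slot.
chosen = [max(range(len(row)), key=row.__getitem__) for row in W_star]
```

Each row of `W_star` sums to one, so slot $m$ holds a distribution over the $C$ candidate meta-rules; gradient updates on `W` shift this distribution toward the rules that explain the training examples.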

(Step 3) Perform Differentiable Inference.

We compute $1$ -step forward reasoning using weighted meta-rules, then we recursively perform reasoning to compute $T$ -step reasoning.

(i) Reasoning using one rule. First, for each meta-rule $C_{i}\in\mathcal{C}$ , we evaluate body atoms for different grounding of $C_{i}$ by computing:

$$
\displaystyle b_{i,j,k}^{(t)}=\prod_{1\leq l\leq L}{\bf gather}({\bf v}^{(t)},{\bf I}_{i})[j,k,l], \tag{1}
$$

where $\mathbf{gather}:[0,1]^{G}\times\mathbb{N}^{G\times S\times L}\rightarrow[0,1]^ {G\times S\times L}$ is:

$$

\displaystyle\mathbf{gather}({\bf x},{\bf Y})[j,k,l]={\bf x}[{\bf Y}[j,k,l]], \tag{2}

$$

and $b^{(t)}_{i,j,k}\in[0,1]$ . The $\mathbf{gather}$ function replaces the indices of the body atoms by the current valuation values in $\mathbf{v}^{(t)}$ . To take logical and across the subgoals in the body, we take the product across valuations. $b_{i,j,k}^{(t)}$ represents the valuation of body atoms for $i$ -th meta-rule using $k$ -th substitution for the existentially quantified variables to deduce $j$ -th meta-ground atom at time $t$ .
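Eqs. 1 and 2 can be written out with nested lists as follows. This is a small sketch with made-up valuations; it assumes index 0 points to an always-false atom and index 1 to an always-true atom, a padding convention chosen for this example only.

```python
import math


def gather(x, Y):
    """Eq. 2: replace every index in Y (shape G x S x L) by the value x[index]."""
    return [[[x[idx] for idx in body] for body in subs] for subs in Y]


def body_valuation(v, I):
    """Eq. 1: product over the L body atoms, giving b[j][k] for one rule."""
    return [[math.prod(body) for body in subs] for subs in gather(v, I)]


# Atoms: 0 false, 1 true, 2 shape(obj0,tri), 3 shape(obj1,tri), 4 same_shape_pair(obj0,obj1)
v = [0.0, 1.0, 0.98, 0.02, 0.0]
# Only atom 4 is derivable by the rule, from body atoms 2 and 3 (S = 1, L = 2).
I = [[[0, 0]], [[0, 0]], [[0, 0]], [[0, 0]], [[2, 3]]]
b = body_valuation(v, I)
```

Because the body is a product, a single low-valued subgoal (here `0.02`) pulls the whole body valuation down, which is the soft counterpart of logical conjunction.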

Now we take logical or softly to combine all of the different grounding for $C_{i}$ by computing $c^{(t)}_{i,j}\in[0,1]$ :

$$
\displaystyle c^{(t)}_{i,j}=\mathit{softor}^{\gamma}(b_{i,j,1}^{(t)},\ldots,b_{i,j,S}^{(t)}), \tag{3}
$$

where $\mathit{softor}^{\gamma}$ is a smooth logical or function:

$$
\displaystyle\mathit{softor}^{\gamma}(x_{1},\ldots,x_{n})=\gamma\log\sum_{1\leq i\leq n}\exp(x_{i}/\gamma), \tag{4}
$$

where $\gamma>0$ is a smoothing parameter. Eq. 4 is an approximation of the max function over probabilistic values based on the log-sum-exp approach [41].
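A direct transcription of Eq. 4 is given below as a sketch. Subtracting the maximum before exponentiating is a standard numerical-stability trick and does not change the value; the default $\gamma=0.01$ is an arbitrary choice for the example.

```python
import math


def softor(xs, gamma=0.01):
    """Eq. 4: gamma * log(sum_i exp(x_i / gamma)), computed stably."""
    m = max(xs)
    # gamma * log(sum exp(x/gamma)) == m + gamma * log(sum exp((x - m)/gamma))
    return m + gamma * math.log(sum(math.exp((x - m) / gamma) for x in xs))


near_max = softor([0.1, 0.9])   # dominated by the largest argument
tie = softor([0.5, 0.5])        # equal arguments add gamma * log(2)
```

The result is never smaller than the largest argument and exceeds it by at most $\gamma\log n$, so smaller values of $\gamma$ give a tighter approximation of the max at the price of sharper gradients.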

(ii) Combine results from different rules. Now we apply different meta-rules using the assigned weights by computing:

$$

\displaystyle h_{j,m}^{(t)}=\sum_{1\leq i\leq C}w^{*}_{m,i}\cdot c_{i,j}^{(t)}, \tag{5}

$$

where $h_{j,m}^{(t)}\in[0,1]$ , $w^{*}_{m,i}=\exp(w_{m,i})/{\sum_{i^{\prime}}\exp(w_{m,i^{\prime}})}$ , and $w_{m,i}=\mathbf{w}_{m}[i]$ . Note that $w^{*}_{m,i}$ is interpreted as a probability that meta-rule $C_{i}\in\mathcal{C}$ is the $m$ -th component. We complete the $1$ -step forward reasoning by combining the results from different weights:

$$
\displaystyle r_{j}^{(t)}=\mathit{softor}^{\gamma}(h_{j,1}^{(t)},\ldots,h_{j,M}^{(t)}). \tag{6}
$$

Taking $\mathit{softor}^{\gamma}$ means that we compose $M$ softly chosen rules out of $C$ candidate meta-rules.

(iii) Multi-step reasoning. We perform $T$ -step forward reasoning by computing $r_{j}^{(t)}$ recursively for $T$ times: $v^{(t+1)}_{j}=\mathit{softor}^{\gamma}(r^{(t)}_{j},v^{(t)}_{j})$ . Updating the valuation vector $T$ times corresponds to computing logical entailment softly by $T$ -step forward reasoning. The whole reasoning computation (Eqs. 1-6) can be implemented using efficient tensor operations.
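Putting Eqs. 1-6 and the update rule together, a $T$ -step reasoner can be sketched in pure Python as follows. This is an illustrative toy under several assumptions, not the NEMESYS implementation: nested lists replace tensors, index 0 is an always-false padding atom, the valuations and weights are made up, and no clipping or normalization is applied, so atoms without support drift to small positive values.

```python
import math


def softor(xs, gamma):
    m = max(xs)
    return m + gamma * math.log(sum(math.exp((x - m) / gamma) for x in xs))


def softmax(w):
    m = max(w)
    exps = [math.exp(x - m) for x in w]
    total = sum(exps)
    return [e / total for e in exps]


def step(v, I_list, W, gamma):
    """One forward-reasoning step following Eqs. 1-6."""
    G, C = len(v), len(I_list)
    # Eqs. 1-3: c[i][j] is the valuation of atom j derived by meta-rule i.
    c = [[softor([math.prod(v[idx] for idx in body) for body in I[j]], gamma)
          for j in range(G)] for I in I_list]
    W_star = [softmax(w) for w in W]
    r = []
    for j in range(G):
        # Eq. 5: weighted sum over the C candidates for each of the M slots.
        h = [sum(w[i] * c[i][j] for i in range(C)) for w in W_star]
        r.append(softor(h, gamma))                      # Eq. 6
    # Update rule: v_j^(t+1) = softor(r_j^(t), v_j^(t)).
    return [softor([r[j], v[j]], gamma) for j in range(G)]


def infer(v0, I_list, W, T, gamma=0.01):
    v = list(v0)
    for _ in range(T):
        v = step(v, I_list, W, gamma)
    return v


def rule_tensor(G, entries):
    """G x 1 x 2 index lists; heads without a grounding point at the false atom."""
    return [[list(entries.get(j, (0, 0)))] for j in range(G)]


# 0 false, 1 true, 2 solve(shape(obj0,tri)), 3 solve(shape(obj2,tri)),
# 4 solve((shape(obj0,tri),shape(obj2,tri))), 5 clause(ssp(obj0,obj2),(...)),
# 6 solve(ssp(obj0,obj2))
v0 = [0.0, 1.0, 0.98, 0.98, 0.0, 0.95, 0.0]
I_conj = rule_tensor(7, {4: (2, 3)})      # solve((A,B)) :- solve(A), solve(B).
I_clause = rule_tensor(7, {6: (5, 4)})    # solve(A) :- clause(A,B), solve(B).
W = [[10.0, 0.0], [0.0, 10.0]]            # each slot strongly prefers one rule
vT = infer(v0, [I_conj, I_clause], W, T=2)
```

After two steps the valuation of `solve(ssp(obj0,obj2))` in `vT[6]` is approximately $0.95\cdot 0.98\cdot 0.98\approx 0.91$: the first step derives the conjunction, and the second step combines it with the `clause` atom.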

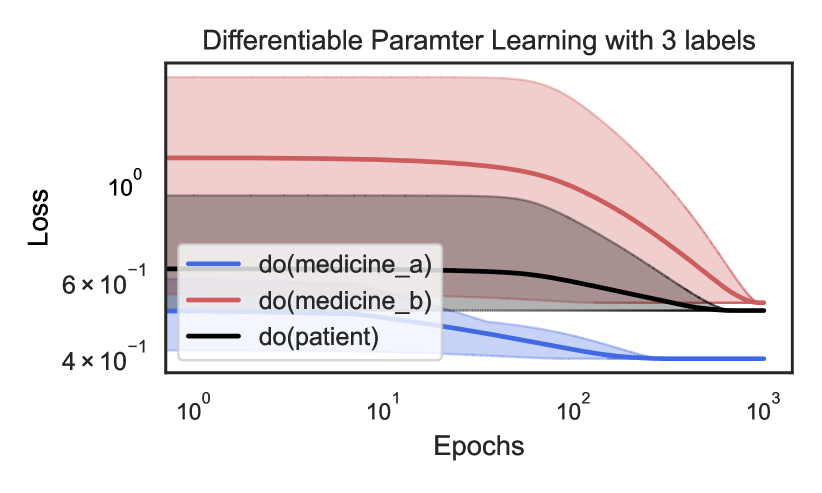

## 4 Experiments

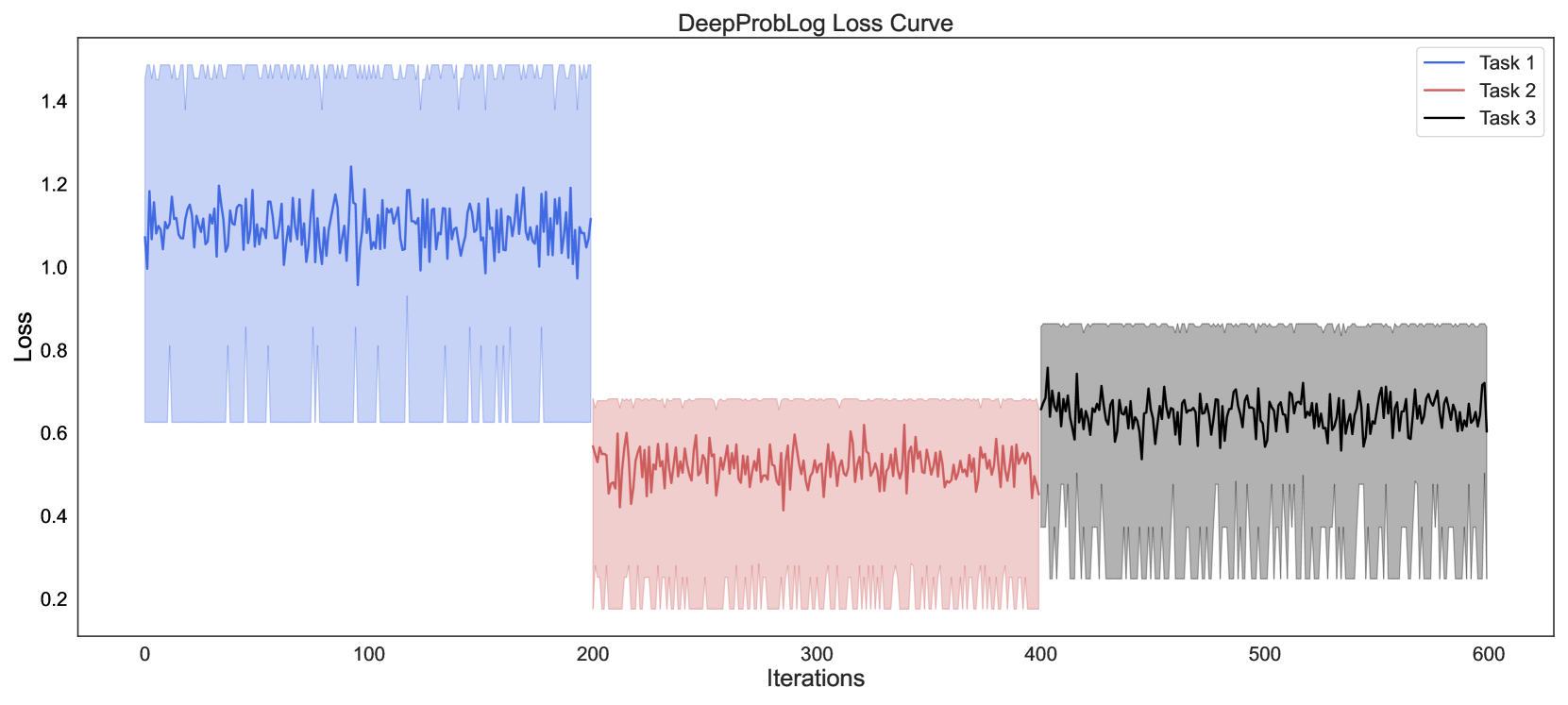

With the methodology of NEMESYS established, we subsequently provide empirical evidence of its benefits over neural baselines and object-level neuro-symbolic approaches: (1) NEMESYS can emulate a differentiable forward reasoner, i.e., it is a sufficient implementation of object-centric reasoners with a naive meta program. (2) NEMESYS is capable of differentiable meta-level reasoning, i.e., it can integrate additional useful functions using devised meta-rules. We demonstrate this advantage by solving tasks of proof-tree generation, relevance propagation, automated planning, and causal reasoning. (3) NEMESYS can perform parameter and structure learning efficiently using gradient descent, i.e., it can perform learning on meta-level programs.

We implemented NEMESYS in Python using PyTorch and ran our experiments on a machine with an Intel i7-10750H CPU and 16 GB of RAM.

| Pattern | NEMESYS | ResNet50 | YOLO+MLP |
| --- | --- | --- | --- |
| Twopairs | 100.0 $\bullet$ | 50.81 | 98.07 $\circ$ |
| Threepairs | 100.0 $\bullet$ | 51.65 | 91.27 $\circ$ |
| Closeby | 100.0 $\bullet$ | 54.53 | 91.40 $\circ$ |
| Red-Triangle | 95.6 $\bullet$ | 57.19 | 78.37 $\circ$ |
| Online/Pair | 100.0 $\bullet$ | 51.86 | 66.19 $\circ$ |
| 9-Circles | 95.2 $\bullet$ | 50.76 $\circ$ | 50.76 $\circ$ |

Table 2: Performance (accuracy; the higher, the better) on the test split of Kandinsky patterns. The best-performing models are denoted using $\bullet$ , and the runner-up using $\circ$ . In Kandinsky patterns, NEMESYS produced almost perfect accuracies outperforming neural baselines, where YOLO+MLP is a neural baseline using pre-trained YOLO [42] combined with a simple MLP, showing the capability of solving complex visual reasoning tasks. The performances of baselines are shown in [15].

### 4.1 Visual Reasoning on Complex Patterns

Let us start off by showing that NEMESYS obtains results of the same high quality as a standard object-level reasoner, but on the meta-level. We considered tasks of Kandinsky patterns [19, 43] and CLEVR-Hans [14]; we refer to [14] and [15] for detailed explanations of the patterns used for CLEVR-Hans and Kandinsky patterns. CLEVR-Hans is a classification task of complex 3D visual scenes. We compared NEMESYS with the naive interpreter against neural baselines and a neuro-symbolic baseline, $\alpha$ILP [24], which achieves state-of-the-art performance on these tasks. For all tasks, NEMESYS achieved exactly the same performance as $\alpha$ILP since the naive interpreter realizes a conventional object-centric reasoner. Moreover, as shown in Table 2 and Table 3, NEMESYS outperformed neural baselines on each task. This shows that NEMESYS is able to solve complex visual reasoning tasks using meta-level reasoning without sacrificing performance.

In contrast to object-centric reasoners, e.g., $\alpha$ILP, NEMESYS can easily integrate additional useful functions by simply switching or adding meta programs without modifying the internal reasoning function, as shown in the next experiments.

### 4.2 Explainable Logical Reasoning

One of the major limitations of differentiable forward-chaining reasoners [16, 17, 24] is that they lack the ability to explain their reasoning steps and the supporting evidence. We show that NEMESYS achieves explainable reasoning by incorporating devised meta-level programs.

Reasoning with Integrated Proof Tree Generation

First, we demonstrate that NEMESYS can generate proof trees while performing reasoning, which previous differentiable forward reasoners cannot produce since they encode the reasoning function as computational graphs using tensor operations and observe only their input and output. Since NEMESYS performs reasoning using meta-level programs, it can add the function to produce proof trees into its underlying reasoning mechanism simply by devising them, as illustrated in Fig. 2.

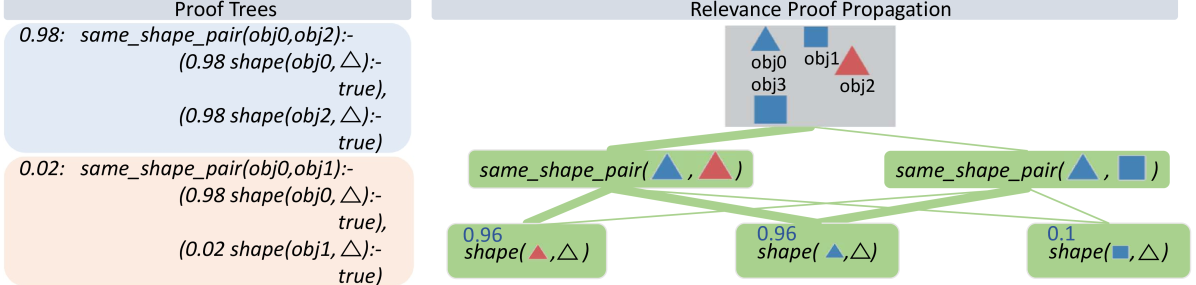

We use Kandinsky patterns [20], a visual reasoning benchmark whose classification rules are defined on high-level concepts of relations and attributes of objects. The input shown on the top right of Fig. 3 belongs to the pattern “There are two pairs of objects that share the same shape.” Given this visual input, the proof trees generated using the ProofTreeInterpreter in Sec. 3.1 are shown in the two boxes on the left of Fig. 3. In this experiment, NEMESYS identified the relations between objects, and the generated proof trees explain the intermediate reasoning steps.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: Proof Trees and Relevance Proof Propagation

### Overview

The image presents two distinct sections: **Proof Trees** (left) and **Relevance Proof Propagation** (right). The left section contains hierarchical proof trees with probabilistic values and function calls, while the right section illustrates how relevance is propagated between objects based on shape similarity.

### Components/Axes

#### Proof Trees (Left)

- **Structure**: Two proof trees, one in **blue** (high confidence) and one in **orange** (low confidence).

- **Root Nodes**:

- **Blue Tree**:

- Label: `same_shape_pair(obj0, obj2)`

- Probability: `0.98`

- Child Nodes:

- `shape(obj0, △)` with probability `0.98` (returns `true`)

- `shape(obj2, △)` with probability `0.98` (returns `true`)

- **Orange Tree**:

- Label: `same_shape_pair(obj0, obj1)`

- Probability: `0.02`

- Child Nodes:

- `shape(obj0, △)` with probability `0.98` (returns `true`)

- `shape(obj1, △)` with probability `0.02` (returns `true`)

#### Relevance Proof Propagation (Right)

- **Objects**:

- `obj0` (blue triangle), `obj1` (red triangle), `obj2` (blue square), `obj3` (blue square).

- **Nodes**:

- `same_shape_pair(△, △)` with probability `0.96`

- `same_shape_pair(△, □)` with probability `0.1`

- `same_shape_pair(△, △)` with probability `0.96`

- **Connections**:

- Lines link nodes via the `shape()` function, indicating relationships between objects.

- **Legend**:

- Blue triangle: `obj0`

- Red triangle: `obj1`

- Blue square: `obj2` and `obj3`

### Detailed Analysis

#### Proof Trees

- **Blue Tree**:

- High confidence (`0.98`) in `same_shape_pair(obj0, obj2)`.

- Both child nodes (`shape(obj0, △)` and `shape(obj2, △)`) confirm the shape is triangular with high probability.

- **Orange Tree**:

- Low confidence (`0.02`) in `same_shape_pair(obj0, obj1)`.

- Child node `shape(obj1, △)` has a low probability (`0.02`), suggesting uncertainty about `obj1`'s shape.

#### Relevance Proof Propagation

- **Nodes**:

- `same_shape_pair(△, △)` (probability `0.96`): High confidence in similarity between triangles (e.g., `obj0` and `obj1`).

- `same_shape_pair(△, □)` (probability `0.1`): Low confidence in similarity between triangle and square (e.g., `obj0` and `obj2`).

- `same_shape_pair(△, △)` (probability `0.96`): Repeats the high-confidence triangle-triangle pairing.

- **Flow**:

- The `shape()` function propagates relevance between objects. For example, `obj0` (triangle) is linked to `obj1` (triangle) with high confidence, but to `obj2` (square) with low confidence.

### Key Observations

1. **High Confidence in Triangle-Triangle Similarity**:

- `same_shape_pair(△, △)` nodes consistently show `0.96` probability, indicating strong agreement on triangular shapes.

2. **Low Confidence in Triangle-Square Similarity**:

- `same_shape_pair(△, □)` has only `0.1` probability, suggesting minimal relevance between triangles and squares.

3. **Object Relationships**:

- `obj0` (triangle) is strongly linked to `obj1` (triangle) but weakly to `obj2` (square).

- `obj2` and `obj3` (both squares) are not directly compared in the diagram.

### Interpretation

- **Proof Trees**:

- The blue tree confirms `obj0` and `obj2` share the same triangular shape with high confidence. The orange tree, however, shows near-zero confidence in `obj0` and `obj1` sharing the same shape, despite both being triangles. This discrepancy might indicate an error in the orange tree’s logic or a contextual factor (e.g., `obj1` being a different type of triangle).

- **Relevance Propagation**:

- The system prioritizes shape similarity for relevance. Triangles (`obj0`, `obj1`) are highly relevant to each other, while squares (`obj2`, `obj3`) are less relevant to triangles. The low probability for `△-□` pairs suggests the system distinguishes between shapes strictly.

- **Anomalies**:

- The orange tree’s `shape(obj1, △)` node has a low probability (`0.02`) despite `obj1` being a triangle. This could imply a misclassification or a contextual constraint (e.g., `obj1` being a "non-standard" triangle).

- `obj3` (blue square) is included in the legend but not explicitly used in the propagation diagram, raising questions about its role.

### Conclusion

The diagram illustrates a probabilistic framework for determining shape similarity and relevance between objects. High-confidence matches (e.g., triangles) drive relevance, while low-confidence pairs (e.g., triangle-square) are deprioritized. The orange tree’s low confidence in `obj0-obj1` similarity contradicts the high confidence in `obj0-obj2`, highlighting potential inconsistencies or contextual dependencies in the system’s logic.

</details>

Figure 3: NEMESYS explains its reasoning with proof trees and relevance proof propagation. Given the image involving four objects (top right), NEMESYS provides two proofs (the two boxes on the left: the true atom’s proof in the blue box and the false atom’s proof in the cream box). They can be leveraged to decompose the prediction of NEMESYS into relevance scores per (ground) atom (right). First, standard forward reasoning is performed to compute the prediction. Then, the model’s prediction is propagated backward through the proof trees by applying specific decomposition rules, see main text. The numbers next to each (ground) atom are the computed relevance scores. The larger the score, the more impact a (ground) atom has on the final prediction, and the wider its line is drawn. For brevity, the complete proof tree is not depicted here. As our baseline comparison, we extend DeepProbLog [21] to DeepMetaProbLog. However, DeepMetaProbLog only provides proof trees for true atoms (top-left blue box). (Best viewed in color)

| Model | CLEVR-Hans3 Validation | CLEVR-Hans3 Test | CLEVR-Hans7 Validation | CLEVR-Hans7 Test |
| --- | --- | --- | --- | --- |
| CNN | 99.55 $\circ$ | 70.34 | 96.09 | 84.50 |
| NeSy (Default) | 98.55 | 81.71 | 96.88 $\circ$ | 90.97 |
| NeSy-XIL | 100.00 $\bullet$ | 91.31 $\circ$ | 98.76 $\bullet$ | 94.96 $\bullet$ |
| NEMESYS | 98.18 | 98.40 $\bullet$ | 93.60 | 92.19 $\circ$ |

Table 3: Performance (accuracy; the higher, the better) on the validation/test splits of the 3D CLEVR-Hans data sets. The best-performing models are denoted using $\bullet$, and the runners-up using $\circ$. In CLEVR-Hans, NEMESYS outperformed neural baselines including: (CNN) a ResNet [44], (NeSy) a model combining object-centric models (Slot Attention [45] and Set Transformer [46]), and (NeSy-XIL) Slot Attention and Set Transformer using human feedback. NEMESYS tends to show less overfitting and performs similarly to a neuro-symbolic approach using human feedback (NeSy-XIL). The performances of the baselines are taken from [14] and [15].

Let us first consider the top-left blue box depicted in Fig. 3 (for readability, we only show the proof part of meta atoms in the image). The weighted ground atom $\mathtt{0.98:}\mathtt{same\_shape\_pair(obj0,obj2)}$ states that $\mathtt{obj0}$ and $\mathtt{obj2}$ are of the same shape with probability $0.98$. The proof part shows that NEMESYS comes to this conclusion since both objects are triangles with probabilities of $\mathtt{0.98}$, and in turn it can apply the rule for $\mathtt{same\_shape\_pair}$. We use this example to show how the weights of the meta atoms are computed inside NEMESYS. With the proof-tree meta rules and corresponding meta ground atoms:

| | $\displaystyle\mathtt{0.98:}\ \mathtt{solve(shape(obj0,\triangle),(shape(obj0,\triangle),true)).}$ | |

| --- | --- | --- |

The weights of the meta ground atoms are computed by the Meta Converter when mapping the probability of meta ground atoms to a continuous value. The meta ground atom says that $\mathtt{shape(obj0,\triangle)}$ is true with a high probability of $0.98$ because $\mathtt{shape(obj0,\triangle)}$ can be proven.

With the two meta ground atoms at hand, we infer the weight of the meta atom with compound goals $\mathtt{solve((shape(obj0,\triangle),shape(obj2,\triangle)),(ProofA,ProofB))}$, based on the first meta rule (for readability, we omit writing out the proof part). Then, we use the second meta rule to compute the weight of the meta atom $\mathtt{solve(same\_shape\_pair(obj0,obj2),(Proof))}$, using the compound-goal meta atom $\mathtt{solve((shape(obj0,\triangle),shape(obj2,\triangle)),(ProofA,ProofB))}$ and the meta atom $\mathtt{clause(same\_shape\_pair(obj0,obj2),(shape(obj0,\triangle),shape(obj2,\triangle)))}$.

In contrast, NEMESYS can explicitly show that $\mathtt{obj0}$ and $\mathtt{obj1}$ have a low probability of being of the same shape (Fig. 3, bottom-left cream box). This proof tree shows that the goal $\mathtt{shape(obj1,\triangle)}$ has a low probability of being true. Thus, as one can read off, $\mathtt{obj0}$ is most likely a triangle, while $\mathtt{obj1}$ is most likely not a triangle. In turn, NEMESYS concludes that $\mathtt{same\_shape\_pair(obj0,obj1)}$ is true with a probability of only $0.02$. NEMESYS can produce all the information required to explain its decisions by simply changing the meta-program, not the underlying reasoning system.

Using meta programming to extend DeepProbLog to produce proof trees as a baseline comparison. Since DeepProbLog [21] does not support generating proof trees in parallel with reasoning, we extend it to DeepMetaProbLog, which generates proof trees using ProbLog [47], as our baseline comparison. However, the proof trees generated by DeepMetaProbLog are limited to the ‘true’ atoms (Fig. 3, top-left blue box): because it relies on backward reasoning, DeepMetaProbLog is unable to generate proof trees for false atoms such as $\mathtt{same\_shape\_pair(obj0,obj1)}$ (Fig. 3, bottom-left cream box).

Logical Relevance Proof Propagation (LRP ${}^{2}$ )

Inspired by layer-wise relevance propagation (LRP) [48], which produces explanations for feed-forward neural networks, we now show that LRP can be adapted to logical reasoning systems using declarative languages in NEMESYS. This enables the reasoning system to articulate the rationale behind its decisions, i.e., it can compute the importance of ground atoms for a query by having access to proof trees. We call this process logical relevance proof propagation (LRP ${}^{2}$ ).

The original LRP technique decomposes the prediction of the network, $f(\mathbf{x})$, onto the input variables, $\mathbf{x}=\left(x_{1},\ldots,x_{d}\right)$, through a decomposition $\mathbf{R}=\left(R_{1},\ldots,R_{d}\right)$ such that $\sum\nolimits_{p=1}^{d}R_{p}=f(\mathbf{x})$. Given the activation $a_{j}=\rho\left(\sum_{i}a_{i}w_{ij}+b_{j}\right)$ of neuron $j$, where $i$ and $j$ denote neuron indices at consecutive layers and $\sum_{i}$ and $\sum_{j}$ denote summation over all neurons in the respective layers, the propagation rule of LRP is defined as $R_{i}=\sum\nolimits_{j}z_{ij}\left(\sum\nolimits_{i}z_{ij}\right)^{-1}R_{j}$, where $z_{ij}$ is the contribution of neuron $i$ to the activation $a_{j}$, typically some function of the activation $a_{i}$ and the weight $w_{ij}$. Starting from the output $f(\mathbf{x})$, the relevance is computed layer by layer until the input variables are reached.
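The propagation rule above can be illustrated for a single layer. The following is a minimal sketch, assuming the common choice $z_{ij}=a_{i}w_{ij}$ and a bias-free linear layer; the function name `lrp_step` and the numbers are hypothetical and not taken from the paper.

```python
def lrp_step(a, w, relevance_out, eps=1e-9):
    """Redistribute the relevance R_j of one layer onto the layer below.

    a: activations a_i of the lower layer (list of length n_in)
    w: weights w[i][j] connecting neuron i to neuron j
    relevance_out: relevance scores R_j of the upper layer
    Assumes z_ij = a_i * w_ij (one common choice, not the only one).
    """
    n_in, n_out = len(a), len(relevance_out)
    z = [[a[i] * w[i][j] for j in range(n_out)] for i in range(n_in)]
    relevance_in = [0.0] * n_in
    for j in range(n_out):
        total = sum(z[i][j] for i in range(n_in))  # sum_i z_ij
        if abs(total) < eps:
            continue  # nothing to redistribute for this neuron
        for i in range(n_in):
            # R_i += z_ij / (sum_i z_ij) * R_j
            relevance_in[i] += z[i][j] / total * relevance_out[j]
    return relevance_in


# Illustrative values: three inputs, two outputs.
a = [1.0, 2.0, 0.5]
w = [[0.2, 0.4], [0.1, 0.3], [0.6, 0.2]]
# Use the linear outputs themselves as the relevance to propagate.
relevance_out = [sum(a[i] * w[i][j] for i in range(3)) for j in range(2)]
relevance_in = lrp_step(a, w, relevance_out)
```

In this sketch the relevance is conserved across the layer: the entries of `relevance_in` sum to the same total as `relevance_out`, mirroring the decomposition property $\sum_{p}R_{p}=f(\mathbf{x})$.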

To adapt this in NEMESYS to ground atoms and proof trees, we have to be a bit careful, since we cannot deal with the uncountable, infinite real numbers within our logic. Fortunately, we can make use of the weight associated with ground atoms. That is, our LRP ${}^{2}$ composes meta-level atoms that represent the relevance of an atom given proof trees and associates the relevance scores to the weights of the meta-level atoms.

To this end, we introduce three meta predicates: $\mathtt{rp/3/[goal,proofs,atom]}$, which represents the relevance score an $\mathtt{atom}$ has on the $\mathtt{goal}$ in the given $\mathtt{proofs}$; $\mathtt{assert\_probs/1/[atom]}$, which looks up the valuations of the ground atoms and maps the probability of the $\mathtt{atom}$ to its weight; and $\mathtt{rpf/2/[proof,atom]}$, which represents how much an $\mathtt{atom}$ contributes to the $\mathtt{proof}$. The atom $\mathtt{assert\_probs((Goal\texttt{:-}Body))}$ asserts the probability of the atom $\mathtt{(Goal\texttt{:-}Body)}$. With them, the meta-level program of LRP ${}^{2}$ is:

| | $\displaystyle\mathtt{rp(Goal,Body,Atom)}\texttt{:-}\mathtt{assert\_probs((Goal \texttt{:-}Body))},$ | |

| --- | --- | --- |

where $\mathtt{rp(Goal,Proof,Atom)}$ represents the relevance score an $\mathtt{Atom}$ has on the $\mathtt{Goal}$ in a $\mathtt{Proof}$, i.e., we interpret the weight associated with the atom $\mathtt{rp(Goal,Proof,Atom)}$ as the actual relevance score that $\mathtt{Atom}$ has on $\mathtt{Goal}$ given $\mathtt{Proof}$. The higher the weight of $\mathtt{rp(Goal,Proof,Atom)}$, the larger the impact $\mathtt{Atom}$ has on the $\mathtt{Goal}$.

Let us go through the meta rules of LRP ${}^{2}$ . The first rule defines how to compute the relevance score of an $\mathtt{Atom}$ given the $\mathtt{Goal}$ under the condition of a $\mathtt{Body}$ (a single $\mathtt{Proof}$ ). The relevance score is computed by multiplying the weight of the $\mathtt{Body}$, the weight of the clause $\mathtt{(Goal\texttt{:-}Body)}$, and the importance score of the $\mathtt{Atom}$ given the $\mathtt{Body}$. The second to seventh rules define how to calculate the importance score of an $\mathtt{Atom}$ given a $\mathtt{Proof}$. These six rules loop over each atom of the given $\mathtt{Proof}$. Once they detect the $\mathtt{Atom}$ inside the given $\mathtt{Proof}$, the importance score is set to the weight of the $\mathtt{Atom}$. If the $\mathtt{Atom}$ is not in the $\mathtt{Proof}$, the seventh rule uses $\mathtt{norelate}$ to set the importance score to a small value. The eighth and ninth rules amalgamate the results from different proofs, i.e., the score from each proof tree is computed recursively during forward reasoning. The scores for the same target (the pair of $\mathtt{Atom}$ and $\mathtt{Goal}$ ) are combined by the $\mathit{softor}$ operation, i.e., the score of an atom given several proofs is computed by softly taking the logical or over the scores from each proof.
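The arithmetic described by these rules can be sketched in plain Python. This is only an illustration under stated assumptions: the $\mathit{softor}$ is approximated here by a clipped log-sum-exp smooth maximum with a hypothetical smoothing parameter `gamma`, the value used for $\mathtt{norelate}$ is a placeholder, and the body and clause weights are made-up inputs. It does not reproduce the differentiable forward reasoning over meta atoms that NEMESYS performs.

```python
import math


def softor(scores, gamma=0.01):
    """Smooth logical OR over scores in [0, 1].

    Assumed form: gamma * log(sum(exp(s / gamma))), clipped at 1.
    Computed in a numerically stable way by factoring out the maximum.
    """
    m = max(scores)
    lse = m + gamma * math.log(sum(math.exp((s - m) / gamma) for s in scores))
    return min(lse, 1.0)


def importance(atom, proof_atoms, atom_weights, norelate=1e-4):
    """Rules 2-7: the atom's weight if it occurs in the proof, else a small value."""
    return atom_weights[atom] if atom in proof_atoms else norelate


def relevance(atom, proofs, atom_weights):
    """Rule 1 per proof (a product of three factors), rules 8-9 across proofs (softor)."""
    per_proof = [
        p["body_weight"] * p["clause_weight"] * importance(atom, p["atoms"], atom_weights)
        for p in proofs
    ]
    return softor(per_proof)


# Illustrative weights only; atom names follow the running example.
atom_weights = {
    "shape(obj0,triangle)": 0.98,
    "shape(obj1,triangle)": 0.02,
    "shape(obj2,triangle)": 0.98,
}
proofs_for_goal = [  # one proof of same_shape_pair(obj0,obj2)
    {
        "atoms": {"shape(obj0,triangle)", "shape(obj2,triangle)"},
        "body_weight": 0.98,    # assumed weight of the proof body
        "clause_weight": 1.0,   # assumed weight of the clause
    }
]
r_obj0 = relevance("shape(obj0,triangle)", proofs_for_goal, atom_weights)
r_obj1 = relevance("shape(obj1,triangle)", proofs_for_goal, atom_weights)
```

With a single proof, `softor` simply returns that proof's score, so atoms occurring in the proof receive a high relevance while unrelated atoms receive a value close to zero.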

With these nine meta rules at hand, together with the proof tree, NEMESYS is able to perform the relevance proof propagation for different atoms. We use the proof tree generated in Sec. 4.2 and set the goal to $\mathtt{same\_shape\_pair(obj0,obj2)}$. Fig. 3 (right) shows the LRP ${}^{2}$ -based explanations generated by NEMESYS. The relevance scores of the different ground atoms are listed next to each (ground) atom. As we can see, the atoms $\mathtt{shape(obj0,\triangle)}$ and $\mathtt{shape(obj2,\triangle)}$ have the largest impact on the goal $\mathtt{same\_shape\_pair(obj0,obj2)}$, while $\mathtt{shape(obj1,\triangle)}$ has a much smaller impact.

By providing proof trees and LRP ${}^{2}$ , NEMESYS computes the precise effect of a ground atom on the goal and produces an accurate proof to support its conclusion. This approach is distinct from the Most Probable Explanation (MPE) [49], which generates the most probable proof rather than the exact proof.

<details>

<summary>extracted/5298395/images/plan/0v.png Details</summary>

### Visual Description

## Photograph: Three Objects on Neutral Surface

### Overview

The image depicts three distinct 3D objects arranged on a neutral gray surface with soft, diffused lighting. No textual elements, labels, or annotations are present. The composition emphasizes contrast in size, color, and material properties.

### Components/Axes

- **Objects**:

1. **Large Purple Sphere**: Matte finish, centrally positioned.

2. **Small Shiny Purple Sphere**: Glossy surface, reflective highlights, located to the left of the large sphere.

3. **Red Cylinder**: Metallic sheen, positioned to the right of the large sphere.

- **Background**: Uniform gray with subtle gradient shading to suggest depth.

- **Lighting**: Soft shadows beneath objects, indicating a single light source above.

### Detailed Analysis

- **Large Purple Sphere**:

- Size: Dominates the composition, occupying ~40% of the frame.

- Material: Non-reflective, uniform matte texture.

- Position: Centered, serving as the focal point.

- **Small Shiny Purple Sphere**:

- Size: ~1/5th the diameter of the large sphere.

- Material: Highly reflective, with visible specular highlights.

- Position: Left of the large sphere, slightly offset.

- **Red Cylinder**:

- Size: Similar height to the large sphere but narrower in diameter.

- Material: Metallic, with subtle reflections of the environment.

- Position: Right of the large sphere, aligned horizontally with the small sphere.

### Key Observations

1. **Contrast in Materiality**: The matte vs. glossy finishes create visual tension between the purple spheres.

2. **Color Harmony**: Both purple objects share a hue but differ in saturation and reflectivity.

3. **Spatial Balance**: The red cylinder breaks the symmetry, anchoring the composition on the right.

4. **Lighting Effects**: Shadows and highlights emphasize three-dimensionality and material properties.

### Interpretation

The arrangement suggests a study in material contrast and spatial relationships. The absence of text implies the focus is on visual storytelling through form and texture. The large matte sphere may symbolize stability or simplicity, while the shiny sphere and red cylinder introduce dynamism and modernity. The neutral background ensures no distractions, directing attention to the objects’ intrinsic properties.

**Note**: No factual or numerical data is present in the image. Analysis is limited to visual and material characteristics.

</details>

<details>

<summary>extracted/5298395/images/plan/1v0.png Details</summary>

### Visual Description

## Photograph: Three Objects on a Neutral Surface

### Overview

The image depicts three distinct 3D objects arranged on a flat, neutral gray surface. The composition includes two spheres and one cylinder, varying in size, color, and material properties. Lighting is soft and diffused, casting subtle shadows to emphasize depth and texture. No textual elements, labels, or annotations are present.

### Components/Axes

- **Objects**:

1. **Large Purple Sphere**: Positioned at the top-left relative to the other objects. Matte finish, uniform color.

2. **Small Shiny Purple Sphere**: Located near the center, slightly below and to the right of the large sphere. Reflective surface with specular highlights.

3. **Red Cylinder**: Positioned at the bottom-right, aligned horizontally. Glossy finish with visible reflections.

- **Background**: Uniform gray with no patterns or textures.

- **Lighting**: Soft, even illumination from above, creating faint shadows beneath each object.

### Detailed Analysis

- **Large Purple Sphere**:

- Size: Approximately 2.5x the diameter of the small sphere.

- Material: Non-reflective, matte surface.

- Position: Top-left quadrant of the image.

- **Small Shiny Purple Sphere**:

- Size: Approximately 1/3 the diameter of the large sphere.

- Material: Highly reflective, metallic-like surface.

- Position: Centered horizontally, slightly lower than the large sphere.

- **Red Cylinder**:

- Size: Height and diameter roughly equal to the small sphere’s diameter.

- Material: Glossy, with visible light reflections on its curved surface.

- Position: Bottom-right quadrant, aligned parallel to the image’s horizontal axis.

### Key Observations

1. **Material Contrast**: The matte purple sphere contrasts with the reflective purple and red objects, suggesting a study in surface properties.

2. **Color Harmony**: Two purple objects (matte and shiny) paired with a red cylinder create a balanced yet dynamic color palette.

3. **Spatial Arrangement**: Objects are evenly spaced, avoiding visual clutter. The red cylinder anchors the composition in the lower-right.

4. **Absence of Data**: No numerical values, labels, or contextual text are present, indicating the image may serve as a visual study rather than a data-driven chart.

### Interpretation

This image likely serves as a reference for 3D rendering, material studies, or lighting experiments. The deliberate placement of objects with varying reflectivity and color highlights how light interacts with different surfaces. The lack of textual elements suggests the focus is purely on visual composition and material properties. The soft lighting and neutral background minimize distractions, emphasizing the objects’ forms and textures.

**Note**: No factual or numerical data is extractable from this image. It functions as a static visual study rather than a data visualization.

</details>

<details>

<summary>extracted/5298395/images/plan/1v1.png Details</summary>

### Visual Description

## Photograph: 3D Rendered Objects on Neutral Surface

### Overview

The image depicts a minimalist 3D render of three geometric objects arranged linearly on a flat, neutral gray surface. The composition includes two spheres and one cylinder, with no textual annotations, legends, or axis markers present. Soft ambient lighting creates subtle shadows, suggesting a light source from the upper left.

### Components/Axes

- **Objects**:

1. **Large Purple Sphere**: Positioned on the left, occupying ~30% of the image width.

2. **Small Purple Sphere**: Centered between the large sphere and the cylinder, ~1/3 the diameter of the large sphere.

3. **Red Cylinder**: Rightmost object, matching the height of the small sphere but narrower in diameter.

- **Surface**: Uniform gray plane with no texture or markings.

- **Lighting**: Soft shadows indicate a single light source (upper left), casting faint shadows toward the lower right.

### Detailed Analysis

- **Spatial Arrangement**: Objects are aligned horizontally with equal spacing (~20% of image width between each).

- **Color Contrast**: Purple (matte finish) vs. red (glossy finish) creates visual distinction.

- **Scale**: No reference objects for absolute size measurement; relative proportions are consistent.

### Key Observations

- No textual or numerical data embedded in the image.

- Objects are static with no implied motion or interaction.

- Shadows confirm a light source but lack complexity (e.g., no multiple light sources or dynamic lighting effects).

### Interpretation

This image appears to be a conceptual or illustrative render, likely used to demonstrate 3D modeling, lighting techniques, or spatial composition principles. The absence of labels or data suggests it is not intended to convey quantitative information but rather qualitative visual relationships (e.g., size hierarchy, color contrast, alignment). The simplicity implies a focus on foundational design elements rather than complex data visualization.

## No factual or numerical data present in the image.

</details>

<details>

<summary>extracted/5298395/images/plan/final.png Details</summary>

### Visual Description

## Photograph: Three Objects on a Neutral Surface

### Overview

The image depicts a minimalist still-life composition featuring three distinct objects arranged on a flat, neutral gray surface. The lighting is soft and diffused, casting subtle shadows beneath the objects. No textual elements, labels, or annotations are visible.

### Components/Axes

- **Objects**:

1. **Large Purple Sphere**: Positioned on the left side of the frame.

2. **Small Purple Sphere**: Centered between the large sphere and the red cylinder.

3. **Red Cylinder**: Located on the right side of the frame.

- **Background**: Uniform gray with no discernible texture or patterns.

- **Lighting**: Soft, even illumination from above, creating faint shadows beneath the objects.

### Detailed Analysis

- **Large Purple Sphere**:

- Dominates the left third of the image.

- Matte finish with no reflective highlights.

- Shadow extends slightly to the right, indicating a light source from the upper left.

- **Small Purple Sphere**:

- Approximately 1/3 the diameter of the large sphere.

- Positioned equidistant from the large sphere and the red cylinder.

- Slightly reflective surface, suggesting a glossy material.

- **Red Cylinder**:

- Vertical orientation, standing upright.

- Matte finish with uniform coloration.

- Shadow aligns with the light source direction.

### Key Observations

- The objects are evenly spaced along the horizontal axis, creating a balanced composition.

- The purple spheres share a color but differ in size and reflectivity, suggesting intentional contrast.

- The red cylinder introduces a warm color contrast against the cool purple tones.

- No discernible motion or interaction between objects; all appear static.

### Interpretation

This image likely serves as a study in composition, color theory, or material contrast. The absence of text or contextual elements implies it is not data-driven but rather an artistic or illustrative representation. The deliberate spacing and color choices may symbolize balance, hierarchy, or relational dynamics between objects. The lack of textual information or numerical data confirms this is a static visual study rather than a technical diagram or chart.

</details>

Figure 4: Visual Concept Repairing: NEMESYS achieves planning by performing differentiable meta-level reasoning. The leftmost image shows the start state, and the rightmost image shows the goal state. Taking these states as inputs, NEMESYS performs differentiable forward reasoning using meta-level clauses that simulate the planning steps and generate the intermediate states (the two images in the middle) and the actions needed to reach the goal state from the start state. (Best viewed in color)

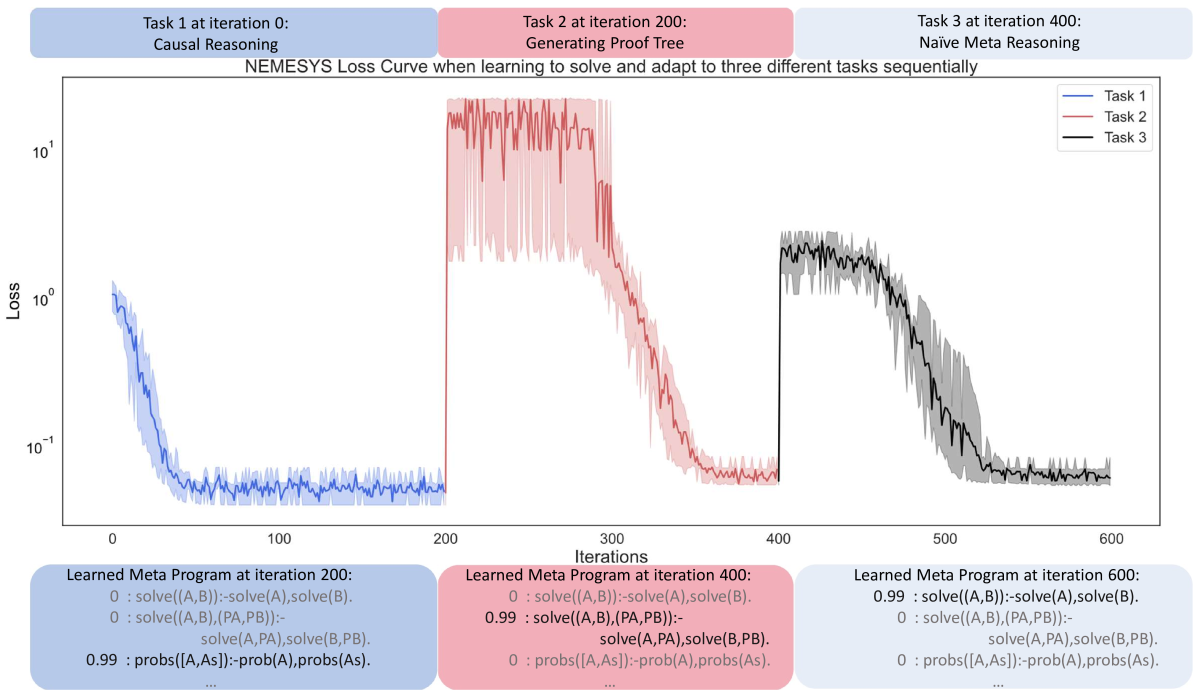

### 4.3 Avoiding Infinite Loops

Differentiable forward chaining [17], unfortunately, can generate infinite computations. A pathological example:

| | $\displaystyle\mathtt{edge(a,b).\ edge(b,a).}\ \mathtt{edge(b,c).}\quad\mathtt{ path(A,A,[\ ]).}\quad$ | |

| --- | --- | --- |

<details>

<summary>x4.png Details</summary>

### Visual Description

## Bar Chart: Test on 4 queries

### Overview

The image is a bar chart comparing the accuracy of two systems, "ProbLog" and "NEMESYS," on a test involving 4 queries. The chart uses two vertical bars to represent accuracy values, with "ProbLog" achieving 0.75 accuracy and "NEMESYS" achieving 1.0 accuracy.

### Components/Axes

- **Title**: "Test on 4 queries" (centered at the top of the chart).

- **X-axis**: Labeled with two categories: "ProbLog" (left) and "NEMESYS" (right).

- **Y-axis**: Labeled "Accuracy," scaled from 0.0 to 1.0 in increments of 0.25.

- **Bars**:

- **ProbLog**: Blue bar reaching 0.75 on the y-axis.

- **NEMESYS**: Red bar reaching 1.0 on the y-axis.

- **Legend**: Implied by bar colors (blue for ProbLog, red for NEMESYS), though no explicit legend box is present.

### Detailed Analysis

- **ProbLog**: Accuracy = 0.75 (75%).

- **NEMESYS**: Accuracy = 1.0 (100%).

- **Y-axis Range**: 0.0 to 1.0, with gridlines at 0.25 intervals.

- **Bar Placement**:

- ProbLog bar is centered under the "ProbLog" label on the left.

- NEMESYS bar is centered under the "NEMESYS" label on the right.

### Key Observations

1. **NEMESYS outperforms ProbLog**: NEMESYS achieves perfect accuracy (1.0), while ProbLog scores 0.75.

2. **Query Performance**: The test involved 4 queries, suggesting the accuracy values represent success rates across these queries.

3. **Visual Contrast**: The red bar (NEMESYS) is taller than the blue bar (ProbLog), emphasizing the disparity in performance.

### Interpretation

The data suggests that NEMESYS is significantly more effective than ProbLog for the tested queries, achieving 100% accuracy compared to 75%. This could indicate superior algorithmic design, better handling of edge cases, or more robust query resolution in NEMESYS. The stark difference (25% gap) highlights potential areas for improvement in ProbLog or advantages in NEMESYS's methodology. The use of 4 queries as a test set implies the results are based on a small but controlled evaluation, which may or may not generalize to larger datasets.

</details>

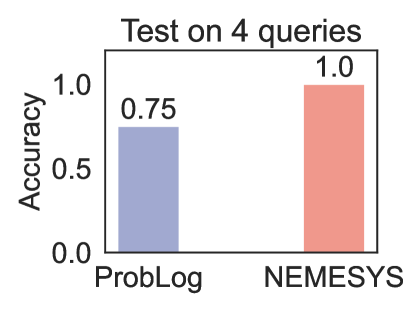

Figure 5: Performance (accuracy; the higher, the better) on four queries. (Best viewed in color)

This program defines a simple graph over three nodes $(a,b,c)$ with three edges $(a-b,b-a,b-c)$, as well as paths in graphs in general. Specifically, $\mathtt{path}/3$ defines how to find a path between two nodes in a recursive way. The base case is $\mathtt{path(A,A,[])}$, meaning that any node $\mathtt{A}$ is reachable from itself. The recursion then says that if there is an edge from node $\mathtt{A}$ to node $\mathtt{B}$, and there is a path from node $\mathtt{B}$ to node $\mathtt{C}$, then there is a path from node $\mathtt{A}$ to node $\mathtt{C}$. Unfortunately, this generates an infinite loop $\mathtt{[edge(a,b),edge(b,a),edge(a,b),\ldots]}$ when computing the path from $a$ to $c$, since the path can always be extended by going back and forth between $a$ and $b$ while still potentially leading to the node $c$.

Fortunately, NEMESYS allows one to avoid infinite loops by memorizing the proof-depth, i.e., we simply implement a limited proof-depth strategy on the meta-level:

| | $\displaystyle\mathtt{li((A,B),DPT)}\texttt{:-}\mathtt{li(A,DPT)},\mathtt{li(B, DPT).}$ | |

| --- | --- | --- |

With this proof strategy, NEMESYS gets the path $\mathtt{path(a,c,[edge(a,b),edge(b,c)])=true}$ in three steps. For simplicity, we omit the proof part in the atom. Using the second rule and the first rule recursively, the meta interpreter finds $\mathtt{clause(path(a,c),(edge(a,b),path(b,c)))}$ and $\mathtt{clause(path(b,c),(edge(b,c),path(c,c)))}$ . Finally, the meta interpreter finds a clause, whose head is $\mathtt{li(path(c,c),1)}$ and the body is true.
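The effect of bounding the proof depth can be illustrated outside of NEMESYS with a small recursive search. The following is a hedged sketch in plain Python: it performs an ordinary top-down search with a depth budget rather than differentiable forward reasoning over $\mathtt{li}$ meta atoms, and the function name `paths` and its way of counting depth (one unit per edge step) are assumptions made for illustration.

```python
# Graph from the pathological example: edge(a,b). edge(b,a). edge(b,c).
EDGES = {("a", "b"), ("b", "a"), ("b", "c")}


def paths(src, dst, depth):
    """Yield edge lists proving path(src, dst) with at most `depth` edge steps."""
    if src == dst:
        yield []  # base case: path(A, A, [])
    if depth == 0:
        return  # proof-depth budget exhausted: cut this branch
    for (u, v) in sorted(EDGES):
        if u == src:
            # recursive case: edge(src, v) followed by a path from v to dst
            for rest in paths(v, dst, depth - 1):
                yield [(u, v)] + rest


found = list(paths("a", "c", depth=2))
```

Without the `depth` argument, the recursion would keep alternating between `a` and `b` and never terminate. With it, the search stops once the budget is spent, and larger budgets additionally return the longer proofs that traverse the cycle.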

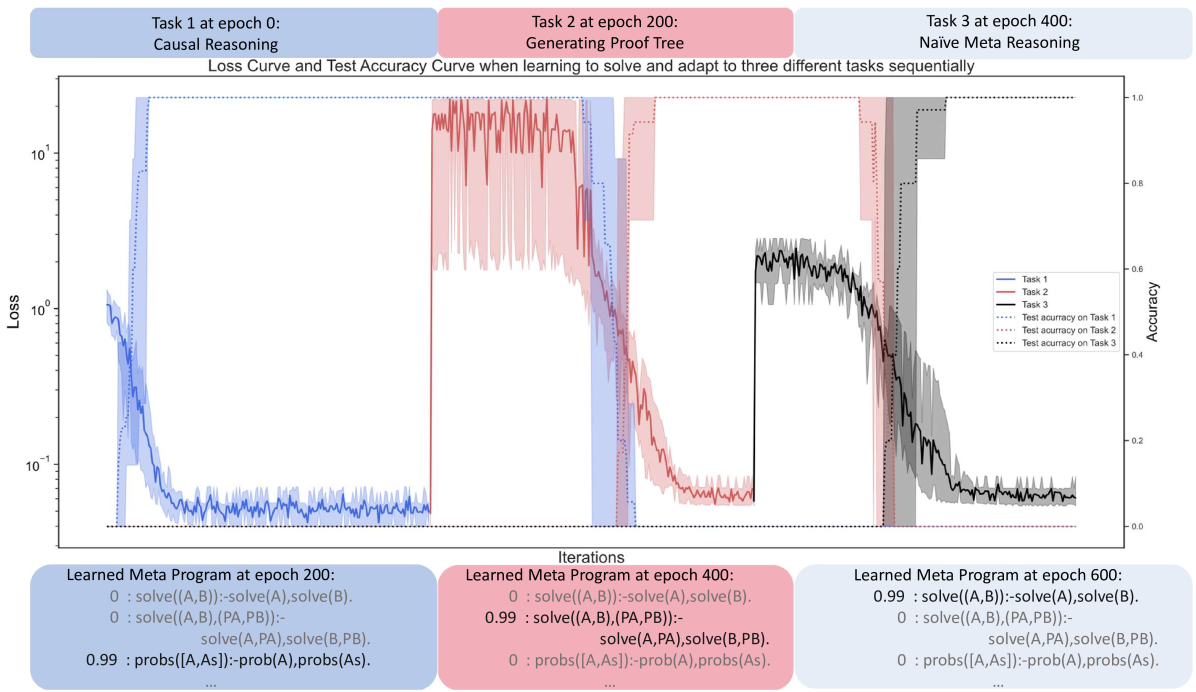

Since forward chaining gets stuck in the infinite loop, we choose ProbLog [47] as our baseline comparison. We test NEMESYS and ProbLog using four queries, including one query which calls the recursive rule. ProbLog fails to return the correct answer on the query which calls the recursive rule. The comparison is summarized in Fig. 5. We provide the four test queries in Appendix A.

### 4.4 Differentiable First-Order Logical Planning

As the fourth meta interpreter, we demonstrate NEMESYS as a differentiable planner. Consider Fig. 4 where NEMESYS was asked to put all objects of a start image onto a line. Each start and goal state is represented as a visual scene, which is generated in the CLEVR [18] environment. By adopting a perception model, e.g., YOLO [42] or slot attention [45], NEMESYS obtains logical representations of the start and end states:

$$
\mathtt{start} = \{\mathtt{pos(obj0,(1,3)),\ldots,pos(obj4,(2,1))}\},
$$

where $\mathtt{pos/2}$ describes the $2$ -dim positions of objects. NEMESYS solves this planning task by performing differentiable reasoning using the meta-level program:

$$
\mathtt{plan(Start\_state,New\_state,Goal\_state,[Action,Old\_stack])}\ \texttt{:-}
$$

The first meta rule presents the recursive rule for plan generation, and the second rule gives the successful termination condition for the plan when the $\mathtt{Goal\_state}$ is reached. Here, $\mathtt{equal/2}$ checks whether the $\mathtt{Current\_state}$ is the $\mathtt{Goal\_state}$ , and $\mathtt{planf/3}$ contains $\mathtt{Start\_state}$ , $\mathtt{Goal\_state}$ and the action sequence $\mathtt{Move\_stack}$ needed to reach the $\mathtt{Goal\_state}$ from the $\mathtt{Start\_state}$ .

The predicate $\mathtt{plan/4}$ takes four arguments: $\mathtt{Start\_state}$ , $\mathtt{State}$ , $\mathtt{Goal\_state}$ and $\mathtt{Move\_stack}$ . The $\mathtt{move/3}$ predicate uses $\mathtt{Action}$ to transform $\mathtt{Old\_state}$ into $\mathtt{New\_state}$ . $\mathtt{condition\_met/2}$ checks whether the state’s preconditions are met. When the preconditions are met, $\mathtt{change\_state/2}$ changes the state, and $\mathtt{plan/4}$ continues the recursive search.

To reduce memory usage, we split the move action into horizontal and vertical components in the experiment. For example, NEMESYS represents an action that moves an object to the right by $\mathtt{1}$ step in the horizontal direction using the meta-level atom:

$$
\mathtt{move(move\_right,pos\_hori(Object,X),pos\_hori(Object,X}\texttt{+}\mathtt{1)).}
$$

where $\mathtt{move\_right}$ represents the action and $\mathtt{X+1}$ represents an arithmetic sum over (positive) integers, which are encoded as the terms $\mathtt{0,succ(0),succ(succ(0))}$ and so on. Performing reasoning on the meta-level clause with $\mathtt{plan}$ simulates one step of a planner, i.e., it checks preconditions and applies actions to compute the resulting states. Fig. 4 summarizes one of the experiments performed using NEMESYS on the Visual Concept Repairing task. We provided the start and goal states as visual scenes containing varying numbers of objects with different attributes. The leftmost image of Fig. 4 shows the start state, and the rightmost image shows the goal state. NEMESYS successfully moved objects to form a line. For example, to move $\mathtt{obj0}$ from $\mathtt{(1,1)}$ to $\mathtt{(3,1)}$ , NEMESYS deduces:

$$
\mathtt{planf(pos\_hori(obj0,1),pos\_hori(obj0,3),[move\_right,move\_right]).}
$$

This shows that NEMESYS is able to perceive objects from an image, reason about the image, and edit the image through planning. To the best of our knowledge, this is the first differentiable neuro-symbolic system equipped with all of these abilities. We provide more Visual Concept Repairing tasks in Appendix B.
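The planning loop described in this section (check the goal, test a precondition, apply an action, recurse) can be mimicked by a small discrete search. The following is only a sketch in plain Python under stated assumptions, not the differentiable planner: the `move_left` action, the grid width, and the function names `plan`, `condition_met` and `to_succ` are hypothetical additions for illustration.

```python
from collections import deque

# Hypothetical action table for horizontal moves; only move_right appears in
# the text, move_left is added here as an assumption.
ACTIONS = {
    "move_right": lambda x: x + 1,
    "move_left": lambda x: x - 1,
}


def to_succ(n):
    """Encode a non-negative integer as a successor term, e.g. 2 -> succ(succ(0))."""
    term = "0"
    for _ in range(n):
        term = f"succ({term})"
    return term


def condition_met(x, action, width):
    """Assumed precondition: the move must keep the object on the grid."""
    return 0 <= ACTIONS[action](x) < width


def plan(start, goal, width=5, max_steps=10):
    """Breadth-first search for a shortest action list from start to goal."""
    frontier = deque([(start, [])])
    seen = {start}
    while frontier:
        state, moves = frontier.popleft()
        if state == goal:  # plays the role of equal/2
            return moves
        if len(moves) == max_steps:
            continue
        for action in ACTIONS:
            if not condition_met(state, action, width):
                continue
            new_state = ACTIONS[action](state)
            if new_state not in seen:
                seen.add(new_state)
                frontier.append((new_state, moves + [action]))
    return None


if __name__ == "__main__":
    # Horizontal position of obj0: from 1 to 3, as in the example above.
    print(plan(1, 3))
    print(to_succ(2))
```

Splitting the search by axis, as done in the experiment, keeps the state space one-dimensional; a vertical planner would reuse the same loop with a different action table.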

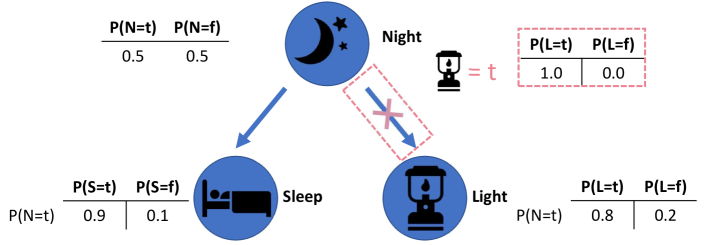

### 4.5 Differentiable Causal Reasoning

As the last meta interpreter, we show that NEMESYS surpasses the existing forward reasoning system by supporting causal reasoning. Notably, given a causal Bayesian network, NEMESYS can perform the $\mathtt{do}$ operation (deleting the incoming edges of a node) [28] on arbitrary nodes and perform causal reasoning without re-executing the entire system, which is made possible through meta-level programming.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Diagram: Conditional Probabilities of Night, Sleep, and Light

### Overview

The diagram illustrates a probabilistic model with three interconnected nodes: **Night**, **Sleep**, and **Light**. Arrows indicate causal or conditional relationships, with probabilities assigned to binary states (true/false) for each node. A red dashed box highlights a deterministic relationship between **Night** and **Light**.

### Components/Axes

- **Nodes**:

- **Night**: Represented by a crescent moon icon.

- **Sleep**: Represented by a bed icon.

- **Light**: Represented by a lantern icon.

- **Probabilities**:

- **Night**:

- P(N=t) = 0.5

- P(N=f) = 0.5

- **Sleep**:

- P(S=t) = 0.9

- P(S=f) = 0.1

- **Light**:

- P(L=t) = 0.8

- P(L=f) = 0.2

- **Conditional**:

- When **Night** is true (N=t), **Light** is deterministic:

- P(L=t | N=t) = 1.0

- P(L=f | N=t) = 0.0

### Detailed Analysis

1. **Night Node**:

- Equal probability (0.5) of being true or false.

- Branches to both **Sleep** and **Light** nodes.

2. **Sleep Node**:

- High probability (0.9) of being true when **Night** is true.

- Low probability (0.1) of being false.

3. **Light Node**:

- Default probabilities: 0.8 (true) and 0.2 (false).

- Overridden by the red dashed box: When **Night** is true, **Light** is certain (P(L=t) = 1.0).

4. **Relationships**:

- **Night → Sleep**: Strong association (0.9 probability).

- **Night → Light**: Deterministic when **Night** is true (1.0 probability).

### Key Observations

- **Deterministic Override**: The red dashed box explicitly enforces P(L=t) = 1.0 when **Night** is true, overriding the default 0.8 probability.

- **High Correlation**: **Sleep** is highly likely (0.9) when **Night** is true, suggesting a strong but non-deterministic link.

- **Symmetry in Night**: Equal prior probabilities (0.5) for **Night** being true or false.

### Interpretation

The diagram models a scenario where **Night** acts as a conditional trigger:

- When **Night** is true, **Light** is guaranteed (e.g., a nightlight activating automatically).

- **Sleep** is strongly associated with **Night** but not absolute (0.9 probability), allowing for exceptions (e.g., insomnia).

- The default probabilities for **Light** (0.8 true) suggest it is generally active but not tied to **Night** in all cases.

The red dashed box emphasizes a rule-based exception: **Light**’s state is fully determined by **Night**’s truth value, highlighting a critical dependency in the system. This could represent a safety mechanism (e.g., ensuring light is on during nighttime) or a logical constraint in a probabilistic model.

</details>

Figure 6: Performing differentiable causal reasoning and learning using NEMESYS. Given a causal Bayesian network, NEMESYS can easily perform the do operation (delete incoming edges) on arbitrary nodes and capture the causal effects on different nodes (for example, the probability of the node $\mathtt{Light}$ after intervening) without rerunning the entire system. Furthermore, NEMESYS is able to learn the unobserved $\mathtt{do}$ operation with its corresponding value using gradient descent based on the given causal graph and observed data. (Best viewed in color)

The $\mathtt{do}$ operator, denoted as $\mathtt{do(X)}$ , is used to represent an intervention on a particular variable $\mathtt{X}$ in a causal learning system, regardless of the actual value of the variable. For example, Fig. 6 shows a causal Bayesian network with three nodes and the probability distribution of the nodes before and after the $\mathtt{do}$ operation. To investigate how the node $\mathtt{Light}$ affects the rest of the system, we first cut the causal relationship between the node $\mathtt{Light}$ and all its parent nodes, then assign a new value to the node, and finally examine the probabilities of the other nodes. To enable NEMESYS to perform a $\mathtt{do}$ operation on the node $\mathtt{Light}$ , we begin by representing the causal Bayesian network in Fig. 6 using:

$$
\mathtt{0.5}\texttt{:}\ \mathtt{Night}.\quad\mathtt{0.9}\texttt{:}\ \mathtt{Sleep}\ \texttt{:-}\ \mathtt{Night}.\quad\mathtt{0.8}\texttt{:}\ \mathtt{Light}\ \texttt{:-}\ \mathtt{Night}.
$$

where the number attached to an atom indicates the probability of the atom being true, and the number attached to a clause indicates the conditional probability of the head being true given that the body is true.

We reuse the meta predicate $\mathtt{assert\_probs/1/[atom]}$ and introduce three new meta predicates: $\mathtt{prob/1/[atom]}$ , $\mathtt{probs/1/[atoms]}$ and $\mathtt{probs\_do/1/[atoms,atom]}$ . Since we cannot deal with the uncountable, infinite real numbers within our logic, we make use of the weight associated with ground meta atoms to represent the probability of the atom. For example, we use the weight of the meta atom $\mathtt{prob(Atom)}$ to represent the probability of the atom $\mathtt{Atom}$ . We use the weight of the meta atom $\mathtt{probs(Atoms)}$ to represent the joint probability of a list of atoms $\mathtt{Atoms}$ , and the weight of $\mathtt{probs\_do(AtomA,AtomB)}$ to represent the probability of the atom $\mathtt{AtomA}$ after performing the do operation $\mathtt{do(AtomB)}$ . We modify the meta interpreter as:

$$
\mathtt{prob(Head)}\ \texttt{:-}\ \mathtt{assert\_probs((Head}\texttt{:-}\mathtt{Body))},\ \mathtt{probs(Body).}
$$

where the first three rules calculate the probability of a node before the intervention; the joint probability is approximated using the first and second rules by iteratively multiplying the probabilities of the atoms. The fourth rule assigns the probability of the atom $\mathtt{Atom}$ using the $\mathtt{do}$ operation. The fifth to eighth rules calculate the probability after the $\mathtt{do}$ intervention by looping over each atom and multiplying their probabilities.

For example, after performing $\mathtt{do(Light)}$ and setting the probability of $\mathtt{Light}$ to $1.0$ , NEMESYS returns the weight of $\mathtt{probs\_do(Light,Light)}$ as the probability of the node $\mathtt{Light}$ (Fig. 6 red box) after the intervention $\mathtt{do(Light)}$ .
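To make the multiplication scheme and the intervention concrete, the following sketch evaluates the three-node network in plain Python. It is an illustration under assumptions rather than the meta interpreter itself: it assumes one clause per head, treats a node as false whenever its body is false, and uses the hypothetical function names `prob` and `probs` with an optional `do` argument in place of the meta predicates.

```python
# Probabilistic program from the text, written as (weight, head, body).
CLAUSES = [
    (0.5, "night", []),
    (0.9, "sleep", ["night"]),
    (0.8, "light", ["night"]),
]


def prob(atom, do=None):
    """Probability of `atom`, optionally after an intervention do=(node, value)."""
    if do is not None and atom == do[0]:
        # do(X): ignore the clauses with head X (cut incoming edges) and
        # return the assigned value instead.
        return do[1]
    for weight, head, body in CLAUSES:
        if head == atom:
            # Clause weight times the (approximate) joint probability of the body.
            return weight * probs(body, do)
    raise KeyError(atom)


def probs(atoms, do=None):
    """Approximate joint probability: multiply the atoms' probabilities one by one."""
    result = 1.0
    for atom in atoms:
        result *= prob(atom, do)
    return result


if __name__ == "__main__":
    print(prob("light"))                       # before the intervention
    print(prob("light", do=("light", 1.0)))    # after do(Light) with value 1.0
    print(prob("sleep", do=("night", 1.0)))    # effect of do(Night) on Sleep
```

Because the intervention is passed as an argument, the network definition itself is never modified, which loosely mirrors the point that the intervention is expressed on the meta-level rather than by rebuilding the system.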

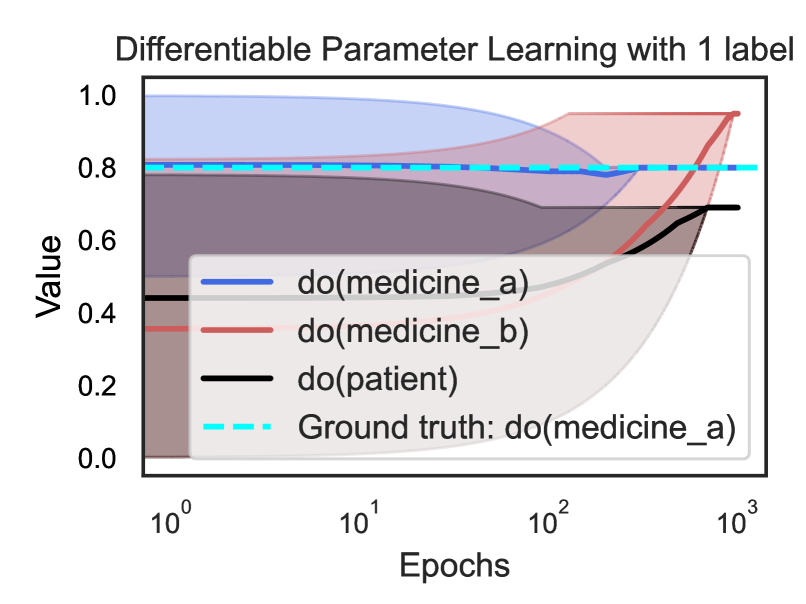

### 4.6 Gradient-based Learning in NEMESYS

NEMESYS alleviates the limitations of frameworks such as DeepProbLog [21] by supporting not only differentiable parameter learning but also differentiable structure learning (in our experiment, NEMESYS learns the weights of the meta rules while adapting to solve different tasks). We now introduce the learning ability of NEMESYS.

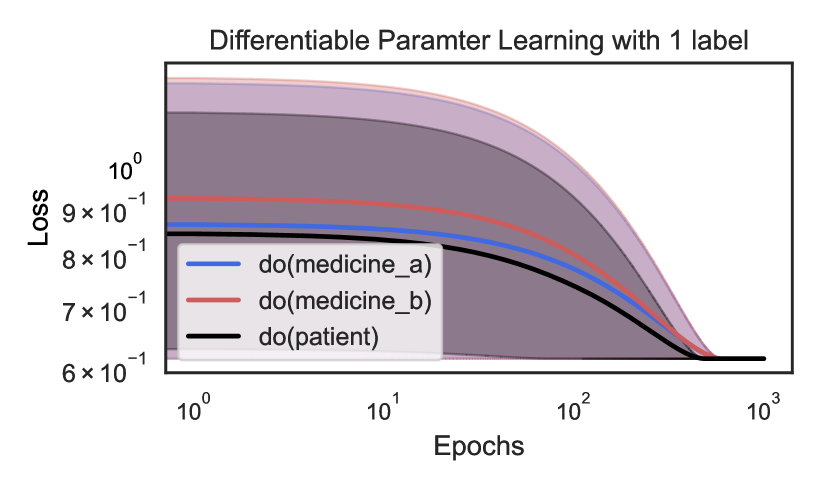

#### 4.6.1 Parameter Learning

Consider a scenario in which a patient can only experience effective treatment when two types of medicine synergize, with the effectiveness contingent on the dosage of each drug. Suppose we know the dosages of the two medicines and the causal impact of the medicines on the patient, but the observed effectiveness does not align with expectations. It is certain that some intervention has occurred in the medicine-patient causal structure (such as an incorrect dosage of one medicine, which will be treated as an intervention using the $\mathtt{do}$ operation). However, the specific node (patient or the medicines) on which the $\mathtt{do}$ operation is executed, and the value assigned by the $\mathtt{do}$ operator, remain unknown. Conducting additional experiments on patients by altering medicine dosages to uncover the $\mathtt{do}$ operation is both unethical and dangerous.

With NEMESYS at hand, we can easily learn the unobserved $\mathtt{do}$ operation with its assigned value. We abstract the problem using a three-node causal Bayesian network:

$$
\mathtt{1.0:medicine\_a.}\quad\mathtt{1.0:medicine\_b.}\quad\mathtt{0.9:patient}\ \texttt{:-}\ \mathtt{medicine\_a,medicine\_b.}
$$

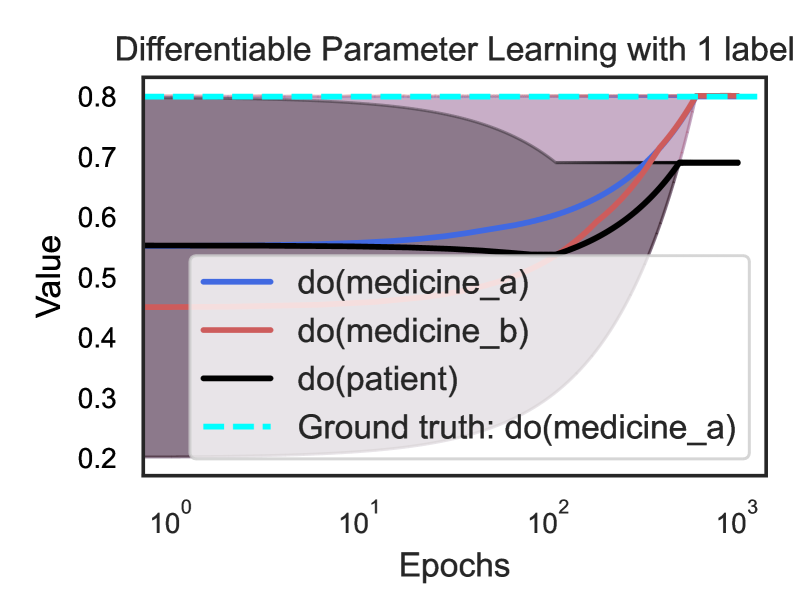

where the number attached to each atom indicates the dosage of that medicine, and the number attached to the clause indicates the conditional probability that the treatment is effective for the patient given these two medicines. Suppose there is only one unobserved $\mathtt{do}$ operation.
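As a rough illustration of how an unobserved $\mathtt{do}$ value could be recovered by gradient descent, consider the sketch below. Everything beyond the three-node network is an assumption made for the example: the intervention is assumed to act on $\mathtt{medicine\_a}$, the observed effectiveness of 0.45 is a made-up number, the loss is a squared error, and the names `patient_prob` and `learn_do_value` are hypothetical.

```python
CLAUSE_WEIGHT = 0.9  # weight of: patient :- medicine_a, medicine_b.


def patient_prob(dose_a, dose_b, weight=CLAUSE_WEIGHT):
    """Effectiveness as clause weight times the product of the body atoms."""
    return weight * dose_a * dose_b


def learn_do_value(observed, steps=100, lr=0.5):
    """Fit the value assigned by an assumed do(medicine_a) to one observation."""
    value = 1.0  # start from the nominal dosage of medicine_a
    for _ in range(steps):
        error = patient_prob(value, 1.0) - observed
        # Gradient of the squared error with respect to `value`.
        grad = 2.0 * error * CLAUSE_WEIGHT * 1.0
        value -= lr * grad
    return value


if __name__ == "__main__":
    # Hypothetical observation: the treatment is effective with probability 0.45.
    learned = learn_do_value(observed=0.45)
    print(round(learned, 4))
```

In this toy setting a single observation cannot distinguish an intervention on a medicine from one on the patient node, so a full treatment would have to compare candidate nodes, for example by their final loss.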