Integrated information theory (IIT) 4.0: Formulating the properties of phenomenal existence in physical terms

Larissa Albantakis 1 Y , Leonardo Barbosa 1,2 Y , Graham Findlay 1,3 Y , Matteo Grasso 1 Y , Andrew M Haun 1 Y , William Marshall 1,4 Y , William GP Mayner 1,3 Y , Alireza Zaeemzadeh 1 Y , Melanie Boly 1,5 , Bjørn E Juel 1,6 , Shuntaro Sasai 1,7 , Keiko Fujii 1 , Isaac David 1 , Jeremiah Hendren 1,8 , Jonathan P Lang 1 , Giulio Tononi 1*

- 1 Department of Psychiatry, University of Wisconsin, Madison, WI 53719, USA

- 2 Fralin Biomedical Research Institute at VTC, Virginia Tech, Roanoke, VA 24016, USA

- 3 Neuroscience Training Program, University of Wisconsin, Madison, WI 53705, USA

- 4 Department of Mathematics and Statistics, Brock University, St. Catharines, ON L2S 3A1, Canada

- 5 Department of Neurology, University of Wisconsin, Madison, WI 53719, USA

- 6 Institute of Basic Medical Sciences, University of Oslo, Oslo, 0372, Norway

- 7 Araya Inc., Tokyo, 107-0052, Japan

- 8 Graduate School Language & Literature, Ludwig Maximilian University of Munich, Munich, 80799, Germany

- Y These authors contributed equally to this work.

* gtononi@wisc.edu

## Abstract

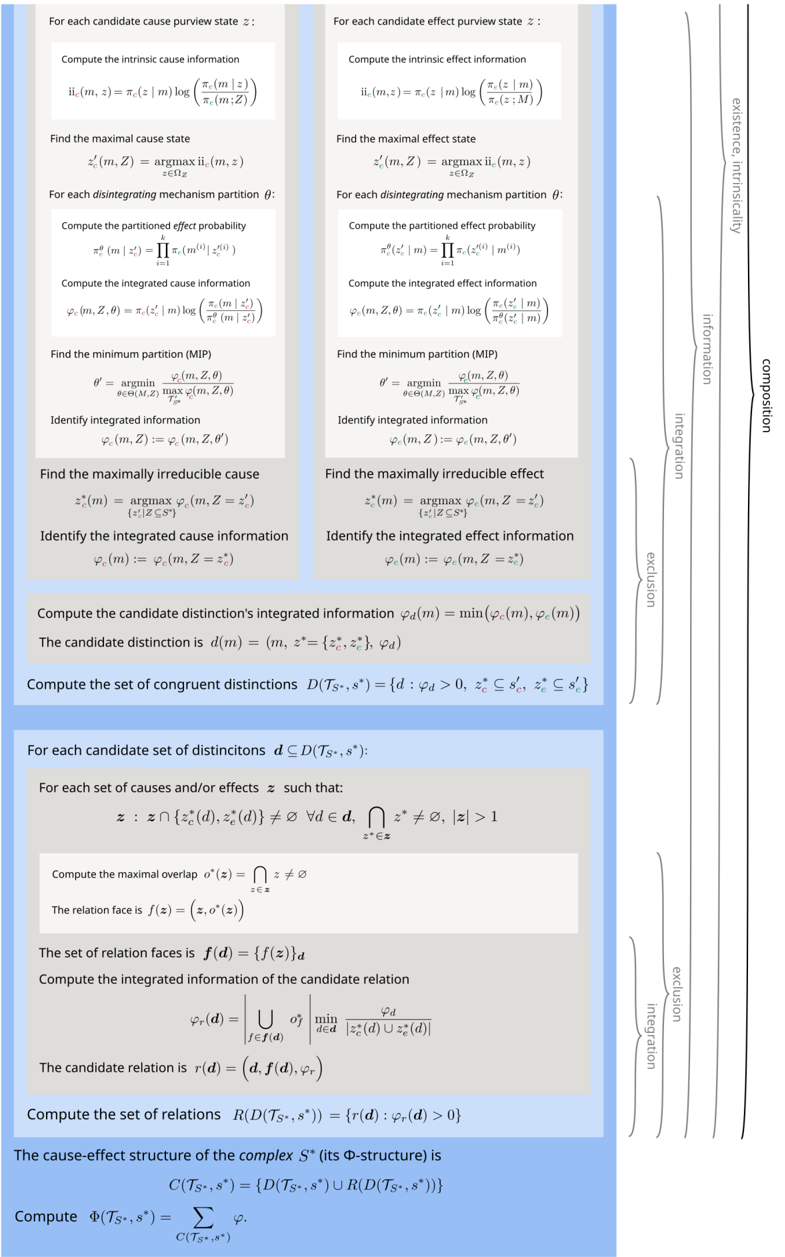

This paper presents Integrated Information Theory (IIT) 4.0. IIT aims to account for the properties of experience in physical (operational) terms. It identifies the essential properties of experience (axioms), infers the necessary and sufficient properties that its substrate must satisfy (postulates), and expresses them in mathematical terms. In principle, the postulates can be applied to any system of units in a state to determine whether it is conscious, to what degree, and in what way. IIT offers a parsimonious explanation of empirical evidence, makes testable predictions, and permits inferences and extrapolations. IIT 4.0 incorporates several developments of the past ten years, including a more accurate translation of axioms into postulates and mathematical expressions, the introduction of a unique measure of intrinsic information that is consistent with the postulates, and an explicit assessment of causal relations. By fully unfolding a system's irreducible cause-effect power, the distinctions and relations specified by a substrate can account for the quality of experience.

## Author summary

IIT aims to account for consciousness and its properties in physical terms. The theory identifies the essential properties of experience (axioms), formulates them as physical properties in terms of cause-effect power (postulates), and provides a mathematical formalism for assessing those properties. This formalism can be employed to 'unfold' a cause-effect structure from a substrate constituted of units in a state, whose interactions can be characterized as interventional conditional probabilities. According to IIT, all the properties of an experience can be accounted for, in physical terms, by those of a cause-effect structure that satisfies its postulates. The theory is consistent with neurological data and has led to successful experimental predictions. As the latest iteration of the theory, IIT 4.0 incorporates several developments pursued over the past ten years.

## Introduction

A scientific theory of consciousness should account for experience, which is subjective, in objective terms [1]. Being conscious (having an experience) is understood to mean that 'there is something it is like to be' [2]: something it is like to see a blue sky, hear the ocean roar, dream of a friend's face, imagine a melody flow, contemplate a choice, or reflect on the experience one is having.

IIT aims to account for phenomenal properties (the properties of experience) in physical terms. IIT's starting point is experience itself rather than its behavioral, functional, or neural correlates [1]. Furthermore, in IIT 'physical' is meant in a strictly operational sense: in terms of what can be observed and manipulated.

The starting point of IIT is the existence of an experience, which is immediate and irrefutable [3,4]. From this 'zeroth' axiom, IIT sets out to identify the essential properties of consciousness, namely those that are immediate and irrefutably true of every conceivable experience. These are IIT's five axioms of phenomenal existence: every experience is for the experiencer (intrinsicality), specific (information), unitary (integration), definite (exclusion), and structured (composition).

Unlike phenomenal existence, which is immediate and irrefutable (an axiom), physical existence is an explanatory construct (a postulate) and it is assessed operationally from within consciousness: in physical terms, to be is to have cause-effect power (see Box 2: Principle of being). In other words, something can be said to exist physically if it can 'take and make a difference' (bear a cause and produce an effect) as judged by a conscious observer/manipulator.

The next step of IIT is to formulate the essential phenomenal properties (the axioms) in terms of corresponding physical properties (the postulates). This formulation is an 'inference to a good explanation' and rests on basic assumptions such as realism, physicalism, and atomism (see Box 1: Methodological guidelines of IIT). If IIT is correct, the substrate [5] of consciousness, beyond having cause-effect power (existence), must satisfy all five essential phenomenal properties in physical terms: its cause-effect power must be for itself (intrinsicality), specific (information), unitary (integration), definite (exclusion), and structured (composition).

On this basis, IIT proposes a fundamental explanatory identity: an experience is identical to the cause-effect structure unfolded from a maximal substrate (defined below). Accordingly, all the specific phenomenal properties of any experience must have a good explanation in terms of the specific physical properties of the corresponding cause-effect structure, with no additional ingredients.

IIT formulates the postulates in a mathematical framework that is in principle applicable to general models of interacting units (but see [6]). A mathematical framework is needed (i) to evaluate whether the theory is self-consistent and compatible with our overall knowledge about the world, (ii) to make specific predictions regarding the quality and quantity of our experiences and their substrate within the brain, and (iii) to extrapolate from our own consciousness to infer the presence (or absence) and nature of consciousness in beings different from ourselves.

Ultimately, the theory should account for why our consciousness depends on certain portions of the world and their state, such as certain regions of the brain and not others, and for why it fades during dreamless sleep, even though the brain remains active. It should also account for why an experience feels the way it does: why the sky feels extended, why a melody feels flowing in time, and so on. Moreover, the theory makes several predictions concerning both the presence and the quality of experience, some of which have been and are being tested empirically [7].

While the main tenets of the theory have remained the same, its formal framework has been progressively refined and extended [8-11]. Compared to IIT 1.0 [8,9], 2.0 [10,12], and 3.0 [11], IIT 4.0 presents a more complete, self-consistent formulation and incorporates several recent advances [13-16]. Chief among them are a more accurate translation of the axioms into postulates and mathematical expressions, the introduction of an Intrinsic Difference (ID) measure [15,17] that is uniquely consistent with IIT's postulates, and the explicit assessment of causal relations [14].

In what follows, after introducing IIT's axioms and postulates, we provide its updated mathematical formalism. In the 'Results and discussion' section, we apply the mathematical framework of IIT to representative examples and discuss some of their implications. The article is meant as a reference for the theory's mathematical formalism, a concise demonstration of its internal consistency, and an illustration of how a substrate's cause-effect structure is unfolded computationally. A discussion of the theory's motivation, its axioms and postulates, and its assumptions and implications can be found in a forthcoming book [4] and wiki [18] as well as in several publications [1,19-24]. A survey of the explanatory power and experimental predictions of IIT can be found in [7]. The way IIT's analysis of cause-effect power can be applied to actual causation, or 'what caused what,' is presented in [13].

## From phenomenal axioms to physical postulates

## Axioms of phenomenal existence

That experience exists (that 'there is something it is like to be') is immediate and irrefutable, as everybody can confirm, say, upon awakening from dreamless sleep. Phenomenal existence is immediate in the sense that my experience is simply there, directly rather than indirectly: I do not need to infer its existence from something else. It is irrefutable because the very doubting that my experience exists is itself an experience that exists: the experience of doubting [1,3]. Thus, to claim that my experience does not exist is self-contradictory or absurd. The existence of experience is IIT's zeroth axiom.

Existence Experience exists: there is something.

Traditionally, an axiom is a statement that is assumed to be true, cannot be inferred from any other statement, and can serve as a starting point for inferences. The existence of experience is the ultimate axiom: the starting point for everything, including logic and physics.

On this basis, IIT proceeds by considering whether experience (phenomenal existence) has some axiomatic or essential properties, properties that are immediate and irrefutably true of every conceivable experience. Drawing on introspection and reason, IIT identifies the following five:

Intrinsicality Experience is intrinsic: it exists for itself.

Information Experience is specific: it is the way it is.

Integration Experience is unitary: it is a whole, irreducible to separate experiences.

Exclusion Experience is definite: it is this whole.

Composition Experience is structured: it is composed of distinctions and the relations that bind them together, yielding a phenomenal structure.

To exemplify, if I awaken from dreamless sleep, and experience the white wall of my room, my bed, and my body, the experience not only exists, immediately and irrefutably, but 1) it exists for me, 2) it is specific (the wall is a wall and it is white), 3) it is unitary (the left side is not experienced separately from the right side, and vice versa), 4) it is definite (it includes the visual scene in front of me: neither less, say, its left side only, nor more, say, the wall behind my head), 5) it is structured by distinctions (the wall, the bed, the body) and relations (the body is on the bed, the bed in the room).

The axioms are not only immediately given, but they are irrefutably true of every conceivable experience. For example, once properly understood, the unity of experience cannot be refuted. Trying to conceive of an experience that were not unitary leads to conceiving of two separate experiences, each of which is unitary, which reaffirms the validity of the axiom. Even though each of the axioms spells out an essential property in its own right, the axioms must be considered together to properly characterize phenomenal existence.

IIT takes the above set of axioms to be complete: there are no further properties of experience that are essential. Other properties that might be considered as candidates for axiomatic status include space (experience typically takes place in some spatial frame), time (an experience usually feels like it flows from a past to a future), change (an experience usually transitions or flows into another), subject-object distinction (an experience seems to involve both a subject and an object), intentionality (experiences usually refer to something in the world, or at least to something other than the subject), a sense of self (many experiences include a reference to one's body or even to one's narrative self), figure-ground segregation (an experience usually includes some object and some background), situatedness (an experience is often bound to a time and a place), will (experience offers the opportunity for action), and affect (experience is often colored by some mood), among others. However, experiences lacking each of these candidate properties are conceivable; that is, conceiving of them does not lead to self-contradiction or absurdity. They are also achievable, as revealed by altered states of consciousness reached through dreaming, meditative practices, or drugs.

## Postulates of physical existence

To account for the many regularities of experience (Box 1), it is a good inference to assume the existence of a world that persists independently of one's experience (realism). From within consciousness, we can probe the physical existence of things outside of our experience operationally, through observations and manipulations. To be granted physical existence, something should have the power to 'take a difference' (be affected) and 'make a difference' (produce effects) in a reliable way (physicalism). IIT also assumes operational reductionism: ideally, to establish what exists in physical terms, one would start from the smallest units that can take and make a difference, so that nothing is left out (atomism).

By characterizing physical existence operationally as cause-effect power, IIT can proceed to translate the axioms of phenomenal existence into postulates of physical existence. This establishes the requirements for the substrate of consciousness, where 'substrate' is meant operationally as a set of units that can be observed and manipulated.

Existence The substrate of consciousness must have cause-effect power: its units must take and make a difference.

Building from this 'zeroth' postulate, IIT formulates the five axioms in terms of postulates of physical existence:

- Intrinsicality The substrate of consciousness must have intrinsic cause-effect power: it must take and make a difference within itself.

- Information The substrate of consciousness must have specific cause-effect power: it must select a specific cause-effect state.

This state is the one with maximal intrinsic information ($ii$), a measure of the difference a system takes or makes over itself for a given cause state and effect state.

- Integration The substrate of consciousness must have unitary cause-effect power: it must specify its cause-effect state as a whole set of units, irreducible to separate subsets of units.

Irreducibility is measured by integrated information ($\phi$) over the substrate's minimum partition.

- Exclusion The substrate of consciousness must have definite cause-effect power: it must specify its cause-effect state as this set of units.

This is the set of units that is maximally irreducible, as measured by maximum $\phi$ ($\phi^*$). This set is called a maximal substrate, also known as a complex [11, 16].

- Composition The substrate of consciousness must have structured cause-effect power: subsets of its units must specify cause-effect states over subsets of units (distinctions) that can overlap with one another (relations), yielding a cause-effect structure or $\Phi$-structure ('Phi-structure').

Distinctions and relations, in turn, must also satisfy the postulates of physical existence: they must have cause-effect power, within the substrate of consciousness, in a specific, unitary, and definite way (they do not have components, being components themselves). They thus have an associated $\phi$ value. The $\Phi$-structure unfolded from a complex corresponds to the quality of consciousness. The sum total of the $\phi$ values of the distinctions and relations that compose the $\Phi$-structure measures its structured information $\Phi$ ('big Phi') and corresponds to the quantity of consciousness.

According to IIT, the physical properties characterized by the postulates are necessary and sufficient for an entity to be conscious. They are necessary because they are needed to account for the properties of experience that are essential, in the sense that it is inconceivable for an experience to lack any one of them. They are also sufficient because no additional property of experience is essential, in the sense that it is conceivable for an experience to lack that property. Thus, no additional physical property is a necessary requirement for being a substrate of consciousness.

The postulates of IIT have been and are being applied to account for the location of the substrate of consciousness in the brain [7] and for its loss and recovery in physiological and pathological conditions [25,26].

## The explanatory identity between experiences and $\Phi$-structures

Having determined the necessary and sufficient conditions for a substrate to support consciousness, IIT proposes an explanatory identity: every property of an experience is accounted for in full by the physical properties of the $\Phi$-structure unfolded from a maximal substrate (a complex) in its current state, with no further or 'ad hoc' ingredients. That is, there must be a one-to-one correspondence between the way the experience feels and the way distinctions and relations are structured. Importantly, the identity is not meant as a correspondence between the properties of two separate things. Instead, the identity should be understood in an explanatory sense: the intrinsic (subjective) feeling of the experience can be explained extrinsically (objectively, i.e., operationally or physically) in terms of cause-effect power [27].

The explanatory identity has been applied to account for how space feels (spatial extendedness) and which neural substrates may account for it [14]. Ongoing work is applying the identity to provide a basic account of the feeling of temporal flow [28] and that of objects [29].

## Box 1. Methodological guidelines of IIT

## Inference to a good explanation

We should generally assume that an explanation is good if it can account for a broad set of facts (scope), does so in a unified manner (synthesis), can explain facts precisely (specificity), is internally coherent (self-consistency), is coherent with our overall understanding of things (system consistency), is simpler than alternatives (simplicity), and can make testable predictions (scientific validation). For example, IIT 4.0 aims at expressing the postulates of intrinsicality, information, integration, and exclusion in a self-consistent manner when applied to systems, causal distinctions, and relations (see formulas).

## Realism

We should assume that something exists (and persists) independently of our own experience. This is a much better hypothesis than solipsism, which explains nothing and predicts nothing. Although IIT starts from our own phenomenology, it aims to account for the many regularities of experience in a way that is fully consistent with realism.

## Operational physicalism

To assess what exists independently of our own experience, we should employ an operational criterion: we should systematically observe and manipulate a substrate's units and determine that they can indeed take and make a difference in a way that is reliable and persisting. Doing so demonstrates a substrate's cause-effect power, the signature of physical existence. Ideally, cause-effect power is fully captured by a substrate's transition probability matrix (TPM; Eq. 1). This assumption is embedded in IIT's zeroth postulate.

## Operational reductionism ('atomism')

Ideally, we should account for what exists physically in terms of the smallest units we can observe and manipulate, as captured by unit TPMs. Doing so would leave nothing unaccounted for. IIT assumes that in principle it should be possible to account for everything purely in terms of cause-effect power: cause-effect power 'all the way down' to conditional probabilities between atomic units. Eventually, this would leave neither room nor need to assume intrinsic properties or laws.

## Intrinsic perspective

When accounting for experience itself in physical terms, existence should be evaluated from the intrinsic perspective of an entity (what exists for the entity itself), not from the perspective of an external observer. This assumption is embedded in IIT's postulate of intrinsicality and has several consequences. One is that, from the intrinsic perspective, the quality and quantity of existence must be observer-independent and cannot be arbitrary. For instance, information in IIT must be relative to the specific state the entity is in, rather than an average of states as assessed by an external observer. Similarly, it should be evaluated based on the uniform distribution of possible states, as captured by the entity's TPM (Eq. 1), rather than on an observed probability distribution. By the same token, units outside the entity should be treated as fixed background conditions that do not contribute directly to what the system is. The intrinsic perspective also imposes a tension between expansion and dilution (see below and [15,17]): from the intrinsic perspective of a system (or a mechanism within the system), having more units may increase its informativeness (cause-effect power measured as deviation from chance), while at the same time diluting its selectivity (ability to concentrate cause-effect power over a specific state).

## Overview of IIT's framework

IIT 4.0 aims at providing a formal framework to characterize the cause-effect structure of a substrate in a given state by expressing IIT's postulates in mathematical terms. In line with operational physicalism (Box 1), we characterize a substrate by the transition probability function of its constituting units.

On this basis, the IIT formalism first identifies sets of units that fulfill all required properties of a substrate of consciousness according to the postulates of physical existence. First, for a candidate system, we determine a maximal cause-effect state based on the intrinsic information ($ii$) that the system in its current state specifies over its possible cause states and effect states. We then determine the maximal substrate based on the integrated information ($\phi_s$) of the maximal cause-effect state. To qualify as a substrate of consciousness, a candidate system must specify a maximum of integrated information ($\phi_s^*$) compared to all competing candidate systems with overlapping units.

The second part of the IIT formalism unfolds the cause-effect structure specified by a maximal substrate in its current state, its $\Phi$-structure. To that end, we determine the distinctions and relations specified by the substrate's subsets according to the postulates of physical existence. Distinctions are cause-effect states specified over subsets of substrate units (purviews) by subsets of substrate units (mechanisms). Relations are congruent overlaps among distinctions' cause and/or effect states. Distinctions and relations are also characterized by their integrated information ($\phi_d$, $\phi_r$). The $\Phi$-structure they compose corresponds to the quality of the experience specified by the substrate; the sum of their $\phi_{d/r}$ values corresponds to its quantity ($\Phi$).

While IIT must still be considered as work in progress, having undergone successive refinements, IIT 4.0 is the first formulation of IIT that strives to characterize $\Phi$-structures completely and to do so based on measures that satisfy the postulates uniquely. For a comparison of the updated framework with IIT 1.0, 2.0, and 3.0, see A.2.

## Substrates, transition probabilities, and cause-effect power

IIT takes physical existence as synonymous with having cause-effect power, the ability to take and make a difference. Consequently, a substrate $U$ with state space $\Omega_U$ is operationally defined by its potential interactions, assessed in terms of conditional probabilities (physicalism, Box 1). We denote the complete transition probability function of a substrate $U$ over a system update $u \to \bar{u}$ as

$$
T_U \equiv p(\bar{u} \mid u), \quad u, \bar{u} \in \Omega_U. \tag{1}
$$

A substrate in IIT can be described as a stochastic system $U = \{U_1, U_2, \ldots, U_n\}$ of $n$ interacting units with state space $\Omega_U = \prod_i \Omega_{U_i}$ and current state $u \in \Omega_U$. We assume that the system updates in discrete steps, that the state space $\Omega_U$ is finite, and that the individual random variables $U_i \in U$ are conditionally independent from each other given the preceding state of $U$:

$$
p(\bar{u} \mid u) = \prod_{i=1}^{n} p(\bar{u}_i \mid u). \tag{2}
$$

Finally, we assume a complete description of the substrate, which means that we can determine the conditional probabilities in (2) for every system state, with $p(\bar{u} \mid u) = p(\bar{u} \mid \mathrm{do}(u))$ [13, 30-32], where the 'do-operator' $\mathrm{do}(u)$ indicates that $u$ is imposed by intervention. This implies that $U$ must correspond to a causal network [13], and $T_U$ is a transition probability matrix (TPM) of size $|\Omega_U|$ [33].

The TPM $T_U$, which forms the starting point of IIT's analysis, serves as an overall description of a system's cause-effect power: what is the probability that the system will transition into each of its possible states upon an intervention that initializes it into every possible state (Figure 1)? (Notably, there is no additional role for intrinsic physical properties or laws of nature.) In practice, a causal model will be neither complete nor atomic (capturing the smallest units that can be observed and manipulated), but will capture the relevant features of what we are trying to explain and predict [34].
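To make the construction concrete, the following minimal sketch builds a state-by-state TPM from unit-wise conditional probabilities, using the product over units that conditional independence licenses. The two noisy "copy" units and their probabilities are invented for illustration and do not appear in the paper; all function names are hypothetical.

```python
from itertools import product

# Hypothetical binary units (illustrative only): each function returns
# p(unit is 1 at the next step | do(current system state u)).
def p_a_on(u):
    # Unit A noisily copies unit B.
    return 0.9 if u[1] == 1 else 0.1

def p_b_on(u):
    # Unit B noisily copies unit A.
    return 0.8 if u[0] == 1 else 0.2

UNITS = [p_a_on, p_b_on]
STATES = list(product([0, 1], repeat=len(UNITS)))  # the state space Omega_U

def transition_probability(u_next, u):
    """p(u_next | do(u)) as a product over conditionally independent units."""
    p = 1.0
    for unit_next, p_on in zip(u_next, UNITS):
        p_unit_on = p_on(u)
        p *= p_unit_on if unit_next == 1 else 1.0 - p_unit_on
    return p

def build_tpm():
    """One row per current state u, one column per next state."""
    return [[transition_probability(u_next, u) for u_next in STATES] for u in STATES]

tpm = build_tpm()
for u, row in zip(STATES, tpm):
    print(u, [round(p, 3) for p in row])
```

Each row is a probability distribution over next states obtained by imposing the corresponding current state, so every row sums to one.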

In the 'Results and discussion' section, the IIT formalism will be applied to extremely simple, simulated networks, rather than causal models of actual substrates. The cause-effect structures derived from these simple networks only serve as convenient illustrations of how a hypothetical substrate's cause-effect power can be unfolded.

## Implementing the postulates

In what follows, our goal is to evaluate whether a hypothetical substrate (also called 'system') satisfies all the postulates of IIT. To that end, we must verify whether the system has cause-effect power that is intrinsic, specific, integrated, definite, and structured.

## Existence

According to IIT, existence understood as cause-effect power requires the capacity to both take and make a difference (see Box 2, Principle of being). On the basis of a complete description of the system in terms of interventional conditional probabilities ($T_U$; Eq. 1), cause-effect power can be measured as causal informativeness. Cause informativeness measures how much a potential cause increases the probability of the current state, and effect informativeness how much the current state increases the probability of a potential effect (as compared to chance).

## Intrinsicality

Building upon the existence postulate, the intrinsicality postulate further requires that a system exerts cause-effect power within itself. In general, the systems we want to evaluate are open systems $S \subseteq U$ that are part of a larger 'universe' $U$. From the intrinsic perspective of a system $S$ (see Box 1), the remaining units $W = U \setminus S$ merely act as background conditions, whose state can be considered as fixed. This is enforced by causally conditioning the larger TPM ($T_U$) on the current state $W = w$, which makes $W$ causally inert.
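As an illustration of clamping the background, the sketch below derives a TPM for a candidate system from unit-wise probabilities while holding the background units at a fixed state. It covers only the forward (effect) direction and is a simplification under stated assumptions: the three example units, their probabilities, and the function name `condition_on_background` are hypothetical, and the cause side, which requires additional handling of the background, is not shown.

```python
from itertools import product

def condition_on_background(p_unit_on, system, background_state):
    """TPM of the units in `system`, with all other units clamped.

    p_unit_on: list of functions; p_unit_on[i](u) = p(unit i is 1 next | do(u)).
    system: indices of the units that make up S.
    background_state: dict {unit index: state} fixing W = w.
    """
    n = len(p_unit_on)
    system_states = list(product([0, 1], repeat=len(system)))
    tpm = {}
    for s in system_states:
        # Full current state u = (s, w): system units vary, background is fixed.
        u = [None] * n
        for i, value in zip(system, s):
            u[i] = value
        for i, value in background_state.items():
            u[i] = value
        u = tuple(u)
        row = {}
        for s_next in system_states:
            p = 1.0
            # Only units in S are multiplied in; the next state of W is ignored.
            for i, value in zip(system, s_next):
                p_on = p_unit_on[i](u)
                p *= p_on if value == 1 else 1.0 - p_on
            row[s_next] = p
        tpm[s] = row
    return tpm

# Hypothetical three-unit universe (A, B, C), for illustration only.
units = [
    lambda u: 0.9 if (u[1] or u[2]) else 0.1,  # A: noisy OR of B and C
    lambda u: 0.9 if u[0] else 0.1,            # B: noisy copy of A
    lambda u: 0.5,                             # C: pure noise
]
# Candidate system S = {A, B}; background W = {C} clamped to state 1.
tpm_s = condition_on_background(units, system=[0, 1], background_state={2: 1})
```

With C clamped to 1 in this toy example, the OR unit A no longer depends on B, which shows how the fixed background state shapes the cause-effect power available within the system.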

## Information

The information postulate requires that a system's cause-effect power be specific: the system in its current state must select a specific cause-effect state for its units. Based on the principle of maximal existence (Box 2), this is the state for which intrinsic information is maximal: the maximal cause-effect state. Intrinsic information ($ii$) measures the difference a system takes or makes over itself for a given cause and effect state as the product of informativeness and selectivity. As we have seen (existence), informativeness quantifies the causal power of a system in its current state as a reduction of uncertainty with respect to chance. Selectivity measures how much cause-effect power is concentrated over that specific cause or effect state. Selectivity is reduced by uncertainty in the cause or effect state with respect to other potential cause and effect states.

From the intrinsic perspective of the system, the product of informativeness and selectivity leads to a tension between expansion and dilution, whereby a system comprising more units may show increased deviation from chance but decreased concentration of cause-effect power over a specific state [15,17].
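The product of informativeness and selectivity can be sketched for the effect side as follows. This section does not state the formula, so the specific choices here are assumptions: selectivity is taken as the probability of the effect state given the current state, informativeness as the base-2 log ratio of that probability to chance, and chance as the average over uniformly weighted current states. The toy TPM and the function name are hypothetical.

```python
from math import log2

def intrinsic_effect_information(tpm, current_state):
    """Return {effect_state: (informativeness, selectivity, ii)}.

    tpm: dict {current_state: {next_state: probability}} for the system alone.
    Assumed form (not stated in this section): ii = selectivity * informativeness.
    """
    states = list(tpm)
    result = {}
    for effect_state in states:
        # Selectivity: probability of this effect state given the current state.
        constrained = tpm[current_state][effect_state]
        # Chance level: average over all current states, weighted uniformly.
        unconstrained = sum(tpm[s][effect_state] for s in states) / len(states)
        if constrained == 0.0:
            # log2(0) is undefined; ii is set to 0 here by convention (assumption).
            result[effect_state] = (float("-inf"), 0.0, 0.0)
            continue
        informativeness = log2(constrained / unconstrained)
        result[effect_state] = (informativeness, constrained, constrained * informativeness)
    return result

# Hypothetical single binary unit with a self-loop (illustrative numbers).
toy_tpm = {0: {0: 0.9, 1: 0.1}, 1: {0: 0.3, 1: 0.7}}
scores = intrinsic_effect_information(toy_tpm, current_state=1)
# The maximal effect state is the one with the largest ii.
best_effect = max(scores, key=lambda effect: scores[effect][2])
print(best_effect, scores[best_effect])
```

Adding units to a system enlarges the state space, which tends to lower the probability of any single state (diluting selectivity) even as deviation from chance may grow; the product makes that trade-off explicit.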

## Integration

By the integration postulate, it is not sufficient for a system to have cause-effect power within itself and select a specific cause-effect state: it must also specify its maximal cause-effect state in a way that is irreducible. This can be assessed by partitioning the set of units that constitute the system into separate parts. The system integrated information ($\phi_s$) then quantifies how much the intrinsic information specified by the maximal state is reduced due to the partition [35]. Integrated information is evaluated over the partition that makes the least difference, the minimum partition (MIP), in accordance with the principle of minimal existence (see Box 2).

Integrated information is highly sensitive to the presence of fault lines: partitions that separate parts of a system that interact weakly or directionally [16].
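The search for the partition that makes the least difference can be sketched as a minimization over candidate partitions. This is a deliberately simplified illustration: it enumerates only undirected bipartitions, and the `information_loss` callable and the numbers in `toy_loss` are hypothetical stand-ins for the reduction in intrinsic information, which the formalism defines separately.

```python
from itertools import combinations

def bipartitions(units):
    """Yield each split of `units` into two non-empty parts, once.

    Requires at least two units; a single unit cannot be partitioned.
    """
    units = list(units)
    first, rest = units[0], units[1:]
    # Keep the first unit in part one so mirror-image splits are not repeated.
    for size in range(len(rest)):
        for extra in combinations(rest, size):
            part_one = (first, *extra)
            part_two = tuple(u for u in rest if u not in extra)
            yield part_one, part_two

def minimum_partition(units, information_loss):
    """Return (partition, loss) for the split that makes the least difference."""
    return min(
        ((partition, information_loss(partition)) for partition in bipartitions(units)),
        key=lambda pair: pair[1],
    )

# Made-up losses for a three-unit system in which C interacts only weakly.
toy_loss = {
    (("A",), ("B", "C")): 0.2,
    (("A", "B"), ("C",)): 0.05,
    (("A", "C"), ("B",)): 0.4,
}
mip, least_loss = minimum_partition("ABC", toy_loss.get)
print(mip, least_loss)
```

In this toy case the minimum falls on the split that isolates the weakly interacting unit, which is the sense in which a fault line caps the integrated information of the whole.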

## Exclusion

Many overlapping sets of units may have a positive value of integrated information ($\phi_s$). However, the exclusion postulate requires that the substrate of consciousness must be constituted of a definite set of units, neither less nor more. Moreover, units, updates, and states must have a definite grain. Operationally, the exclusion postulate is enforced by selecting the set of units that maximizes integrated information over itself ($\phi_s^*$), based again on the principle of maximal existence (see Box 2). That set of units is called a maximal substrate, or complex. Over a universal substrate, sets of units for which integrated information is maximal compared to all competing candidate systems with overlapping units can be assessed recursively (by identifying the first complex, then the second complex, and so on).
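The recursive identification of complexes can be sketched as follows, assuming the integrated information of every candidate system has already been computed. The candidate values are made up for illustration, the function name is hypothetical, and ties between candidates are not handled.

```python
def find_complexes(phi_by_candidate):
    """Recursively select non-overlapping maxima of system integrated information.

    phi_by_candidate: dict {frozenset of unit labels: phi_s value}.
    Returns a list of (units, phi_s) pairs in the order they were identified.
    """
    # Candidates with zero integrated information are reducible and cannot be complexes.
    remaining = {units: phi for units, phi in phi_by_candidate.items() if phi > 0}
    complexes = []
    while remaining:
        best = max(remaining, key=remaining.get)
        complexes.append((best, remaining[best]))
        # Exclusion: discard every candidate that shares a unit with the winner.
        remaining = {
            units: phi for units, phi in remaining.items() if units.isdisjoint(best)
        }
    return complexes

# Hypothetical phi_s values over a four-unit universe {A, B, C, D}.
candidates = {
    frozenset("AB"): 0.5,
    frozenset("ABC"): 0.3,
    frozenset("CD"): 0.2,
    frozenset("D"): 0.1,
    frozenset("C"): 0.0,
}
complexes = find_complexes(candidates)
print(complexes)
```

Here the first complex is {A, B}; the larger set {A, B, C} is excluded because it overlaps with a candidate of higher value, and the search then continues among the units that remain.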

## Composition

Once a complex has been identified, composition requires that we characterize its cause-effect structure by considering all its subsets and fully unfolding its cause-effect power.

Usually, causal models are conceived in holistic terms, as state transitions of the system as a whole (Eq. 1), or in reductionist terms, as a description of the individual units of the system and their interactions (Eq. 2) [36]. However, to account for the structure of experience, considering only the cause-effect power of the individual units or of the system as a whole would be insufficient [20,36]. Instead, by the composition postulate, we have to evaluate the system's cause-effect structure by considering the cause-effect power of its subsets as well as their causal relations.

To contribute to the cause-effect structure of a complex, a system subset must both take and make a difference (as required by existence) within the system (as required by intrinsicality). A subset $M \subseteq S$ in state $M = m$ is called a mechanism if it links a cause and effect state over subsets of units $Z_{c/e} \subseteq S$, called purviews. A mechanism together with the cause and effect state it specifies is called a causal distinction. Distinctions are evaluated based on whether they satisfy all the postulates of IIT (except for composition). For every mechanism, the cause-effect state is the one having maximal intrinsic information ($ii$), and the cause and effect purviews are those yielding the maximum value of integrated information ($\phi_d$) within the complex, that is, those that are maximally irreducible.
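The combinatorics of unfolding can be sketched by enumerating every non-empty subset of a complex as a candidate mechanism and, for each mechanism, every non-empty subset as a candidate purview. The scoring function below is a made-up placeholder for the irreducibility measure, used only to show the selection step; `nonempty_subsets`, `select_purview`, and `toy_score` are hypothetical names.

```python
from itertools import chain, combinations

def nonempty_subsets(units):
    """All non-empty subsets of `units`, smallest first."""
    units = tuple(units)
    return chain.from_iterable(
        combinations(units, k) for k in range(1, len(units) + 1)
    )

def select_purview(mechanism, system, score):
    """Return (purview, value) maximizing a caller-supplied irreducibility score."""
    return max(
        ((purview, score(mechanism, purview)) for purview in nonempty_subsets(system)),
        key=lambda pair: pair[1],
    )

system = ("A", "B", "C")
mechanisms = list(nonempty_subsets(system))
print(len(mechanisms), "candidate mechanisms for", len(system), "units")

# Placeholder score (NOT the IIT measure): highest when purview equals mechanism.
def toy_score(mechanism, purview):
    return 1.0 / (1 + len(set(mechanism) ^ set(purview)))

for mechanism in mechanisms:
    purview, value = select_purview(mechanism, system, toy_score)
    print(mechanism, "->", purview, round(value, 3))
```

A system of n units has 2^n - 1 candidate mechanisms, each evaluated against 2^n - 1 candidate purviews per direction, which is why fully unfolding a cause-effect structure becomes costly as the system grows.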

Distinctions whose cause or effect states overlap congruently within the system (over the same subset of units in the same state) are bound together by causal relations. Relations also have an associated value of integrated information ($\phi_r$), corresponding to their irreducibility.
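Congruent overlap can be sketched as an intersection over purview states that keeps only the units specified in the same state by every participant. Representing a purview state as a dictionary from unit label to state is an assumption made for this illustration, and the example states are invented.

```python
def congruent_overlap(*purview_states):
    """Units present in every purview state with the same state in each.

    Each purview state is a dict {unit label: state}.
    An empty result means there is no congruent overlap, hence no relation.
    """
    first, *others = purview_states
    return {
        unit: state
        for unit, state in first.items()
        if all(unit in other and other[unit] == state for other in others)
    }

# Hypothetical cause or effect states of three distinctions.
d1_effect = {"A": 1, "B": 0}
d2_cause = {"B": 0, "C": 1}
d3_effect = {"B": 1}

print(congruent_overlap(d1_effect, d2_cause))   # overlap over B in state 0
print(congruent_overlap(d1_effect, d3_effect))  # B is shared but in different states
```

The second call returns an empty dictionary because the shared unit is specified in conflicting states, so those two distinctions would not be bound by a relation over it.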

The distinctions (and associated relations) that exist for the complex are only those whose cause-effect state is congruent with the cause-effect state of the complex as a whole. Together, those distinctions and relations compose the cause-effect structure of the complex in its current state. The cause-effect structure specified by a complex is called a Φ -structure . The sum of its distinction and relation integrated information amounts to the structured information ( Φ ) of the complex.

In the following, we will provide a formal account of the IIT analysis. The first part demonstrates how to identify complexes. This requires that we (a) determine the cause-effect state of a system in its current state, (b) evaluate the system integrated information ( ϕ s ) over that cause-effect state, and (c) search iteratively for maxima of integrated information ( ϕ ∗ s ) within a universe. The second part describes how the postulates of IIT are applied to unfold the cause-effect structure of a complex. This requires that we identify the causal distinctions specified by subsets of units within the complex and the causal relations determined by the way distinctions overlap, yielding the system's cause-effect structure and its structured information Φ .

## Box 2. Ontological principles of IIT

## Principle of being

The principle of being states that to be is to have cause-effect power. In other words, in physical, operational terms, to exist requires being able to take and make a difference. The principle is closely related to the so-called Eleatic principle, as found in Plato's Sophist dialogue [37]: 'I say that everything possessing any kind of power, either to do anything to something else, or to be affected to the smallest extent by the slightest cause, even on a single occasion, has real existence: for I claim that entities are nothing else but power.' A similar principle can be found in the work of the Buddhist philosopher Dharmakīrti: 'Whatever has causal powers, that really exists.' [38] Note that the Eleatic principle is enunciated as a disjunction (either to do something... or to be affected...), whereas IIT's principle of being is presented as a conjunction (take and make a difference).

## Principle of maximal existence

The principle of maximal existence states that, with respect to an essential requirement for existence, what exists is what exists the most . The principle is offered by IIT as a good explanation for why the system state specified by the complex and the cause-effect states specified by its mechanisms are what they are. It also provides a criterion for determining the set of units constituting a complex (the one with maximally irreducible cause-effect power), for determining the subsets of units constituting the distinctions and relations that compose its cause-effect structure, and for determining the units' grain. To exemplify, consider a set of candidate complexes overlapping over the same substrate. By the postulates of integration and exclusion, a complex must be both unitary and definite. By the maximal existence principle, the complex should be the one that lays the greatest claim to existence as one entity, as measured by system integrated information ( ϕ s ). For the same reason, candidate complexes that overlap over the same substrate but have a lower value of ϕ s are excluded from existence. In other words, if having maximal ϕ s is the reason for assigning existence as a unitary complex to a set of units, it is also the reason to exclude from existence any overlapping set not having maximal ϕ s .

## Principle of minimal existence

Another key principle of IIT is the principle of minimal existence , which complements that of maximal existence. The principle states that, with respect to an essential requirement for existence, nothing exists more than the least it exists . The principle is offered by IIT as a good explanation for why, given that a system can only exist as one system if it is irreducible, its degree of irreducibility should be assessed over the partition across which it is least irreducible (the minimum partition). Similarly, a distinction within a system can only exist as one distinction to the extent that it is irreducible, and its degree of irreducibility should be assessed over the partition across which it is least irreducible. Moreover, a set of units can only exist as a system, or as a distinction within the system, if it specifies both an irreducible cause and an irreducible effect, so its degree of irreducibility should be the minimum between the irreducibility on the cause side and on the effect side [39].

## Identifying substrates of consciousness through existence, intrinsicality, information, integration, and exclusion

Our starting point is a substrate U in current state U = u with TPM T U (1). We consider any subset s ⊆ u as a possible complex and refer to a set of units S ⊆ U as a candidate system. (Note that s , in a slight abuse of notation, refers to both a subset of units in a state, as well as the state itself.)

By the intrinsicality postulate, the units W = U \ S are fixed in their current state w ∈ Ω W throughout the analysis of the candidate system S ( causal conditioning ). Accordingly, we obtain the TPM T S of a candidate system S from its intrinsic transition probability function

$$T_S \equiv p_s(\bar{s} \mid s) = p_u(\bar{s} \mid s, w), \quad s, \bar{s} \in \Omega_S, \tag{3}$$

where w = u \ s .
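The causal conditioning step can be sketched in a few lines. The sketch below assumes binary units and a state-by-state TPM stored as a nested list (rows are current states, columns are next states); the helper names `state_index` and `condition_tpm` are hypothetical and not part of the formalism. Background units are pinned to their current state, and their next states are summed out to obtain the probability of the candidate system's next state.

```python
from itertools import product


def state_index(state):
    """Map a sequence of binary unit states to a row/column index (unit 0 = most significant bit)."""
    idx = 0
    for bit in state:
        idx = 2 * idx + bit
    return idx


def condition_tpm(tpm_u, n_units, system_units, background_state):
    """Return T_S with p_s(s_next | s) = p_u(s_next | s, w), background W pinned to w.

    tpm_u: state-by-state TPM of the substrate U (nested list, 2**n_units rows).
    system_units: sorted indices of the units in the candidate system S.
    background_state: dict {unit index: 0 or 1} for the units in W (U minus S).
    """
    k = len(system_units)
    tpm_s = [[0.0] * (2 ** k) for _ in range(2 ** k)]
    for s in product((0, 1), repeat=k):
        # Assemble the full substrate state u = (s, w).
        u = [0] * n_units
        for unit, value in zip(system_units, s):
            u[unit] = value
        for unit, value in background_state.items():
            u[unit] = value
        row = tpm_u[state_index(u)]
        # Sum out the next state of the background units.
        for u_next in product((0, 1), repeat=n_units):
            s_next = [u_next[unit] for unit in system_units]
            tpm_s[state_index(s)][state_index(s_next)] += row[state_index(u_next)]
    return tpm_s


# Toy substrate (an assumption for illustration): unit 0 copies unit 1 and vice versa.
tpm_u = [[1, 0, 0, 0], [0, 0, 1, 0], [0, 1, 0, 0], [0, 0, 0, 1]]
tpm_a = condition_tpm(tpm_u, 2, [0], {1: 1})  # candidate system {unit 0}, unit 1 pinned to 1
```

With unit 1 pinned to 1, unit 0 ends up in state 1 regardless of its own current state, so the candidate system consisting of unit 0 alone cannot make a difference to itself.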

The intrinsic information ii c/e is a measure of the intrinsic cause/effect power exerted by a system S in its current state s over itself by selecting a specific cause/effect state ¯ s . The cause-effect state for which intrinsic information ( ii c and ii e ) is maximal is called the maximal cause-effect state s ′ = { s ′ c , s ′ e } . The integrated information ϕ s is a measure of the irreducibility of a cause-effect state, compared to the directional system partition θ ′ that affects the maximal cause-effect state the least (minimum partition, or MIP). Systems for which integrated information is maximal ( ϕ ∗ s ) compared to any competing candidate system with overlapping units are called maximal substrates, or complexes.

The IIT 4.0 formalism to measure a system's integrated information ϕ s and to identify maximal substrates was first presented in [16]. An example of how to identify complexes in a simple system is given in Fig. 1, while a comparison with prior accounts (IIT 1.0, IIT 2.0, and IIT 3.0) can be found in A.2. An outline of the IIT algorithm is included in A.4.

## Existence, intrinsicality, and information: Determining the maximal cause-effect state of a candidate system

Given a causal model T S (3), we wish to identify the maximal cause-effect state specified by a system in its current state over itself and to quantify the causal power with which it does so. In doing so, we quantify the cause-effect power of a system from its intrinsic perspective, rather than from the perspective of an outside observer (see Box 1).

## System intrinsic information ii

Intrinsic information ii ( s, ¯ s ) measures the causal power of a system S over itself, for its current state s , over a specific cause/effect state ¯ s . Intrinsic information depends on interventional conditional probabilities and unconstrained probabilities of cause/effect states and is the product of selectivity and informativeness.

On the effect side, intrinsic effect information ii e of the current state s over a possible effect state ¯ s is defined as:

$$ii_e(s, \bar{s}) = p_e(\bar{s} \mid s) \log\left(\frac{p_e(\bar{s} \mid s)}{p_e(\bar{s})}\right). \tag{4}$$

Above, p e (¯ s | s ) = p (¯ s | s ) (3) is the interventional conditional probability that the current state S = s produces the effect state ¯ s , as indicated by T S .

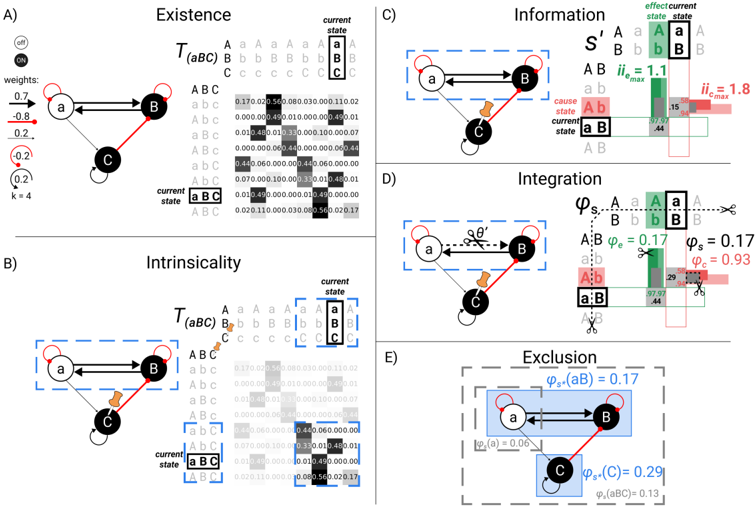

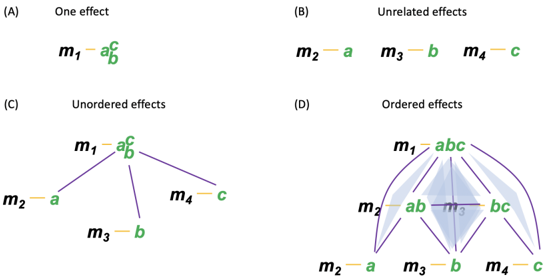

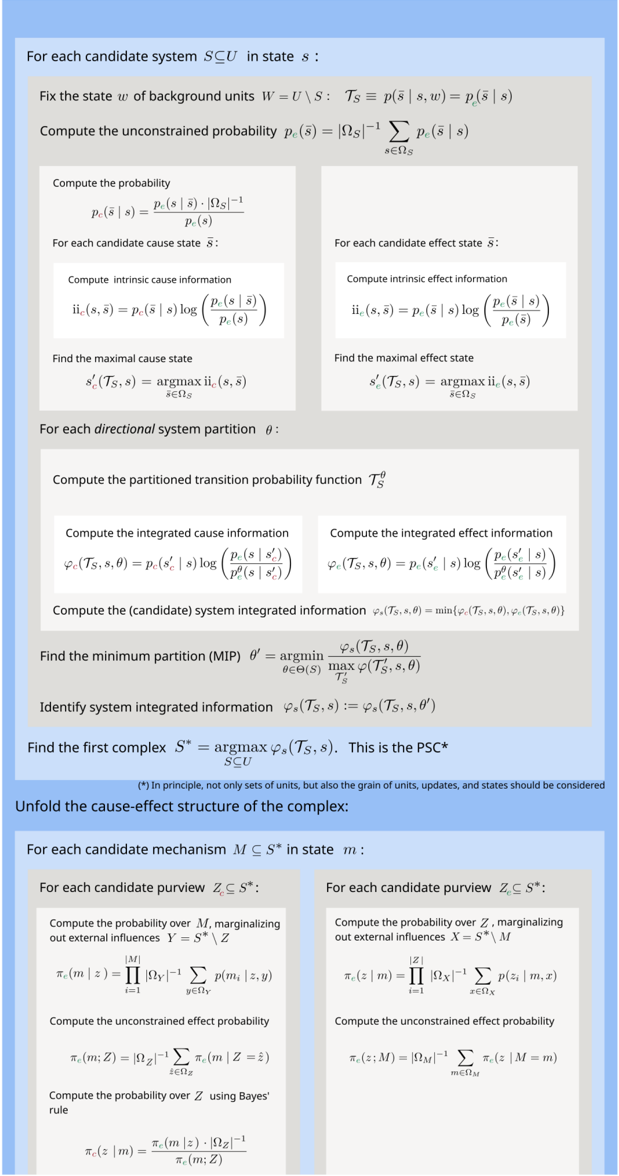

Fig 1. Identifying substrates of consciousness through the postulates of existence, intrinsicality, information, integration, and exclusion. (A) The substrate S = aBC in state (-1, 1, 1) (lowercase letters for units indicate state '-1', uppercase letters state '+1') is the starting point for applying the postulates. The substrate updates its state according to the depicted transition probability matrix (TPM) (each unit follows a logistic equation (see Results for definition) with k = 4.0 and connection weights as indicated in the causal model). Existence requires that the substrate must have cause-effect power, meaning that the TPM among substrate states must differ from chance. (B) Intrinsicality requires that a candidate substrate, for example, units aB , has cause-effect power over itself. Units outside the candidate substrate (in this case, unit C ) are treated as background conditions by pinning them in their current state. (C) Information requires that the candidate substrate aB selects a specific cause-effect state ( s ′ ). This is the cause state and effect state for which intrinsic information ( ii ) is maximal. Dark-colored and gray bars represent the quantities for informativeness (constrained and unconstrained), and light-colored bars for selectivity. (D) Integration requires that the substrate specifies its cause-effect state irreducibly ('as one'). This is established by identifying the minimum partition (MIP) and measuring the integrated information of the system ( ϕ s ), which is the minimum between cause integrated information ( ϕ c ) and effect integrated information ( ϕ e ). Here, gray bars represent the partitioned probability. (E) Exclusion requires that the substrate of consciousness is definite, including some units and excluding others. This is established by identifying the candidate substrate with the maximum value of system integrated information ( ϕ ∗ s ), which is the maximal substrate, or complex. In this case, aB is a complex since its system integrated information ( ϕ s = 0.17) is higher than that of all other overlapping systems (for example, subset a with ϕ s = 0.06 and superset aBC with ϕ s = 0.13).

The interventional unconstrained probability p e (¯ s )

$$p_e(\bar{s}) = |\Omega_S|^{-1} \sum_{s \in \Omega_S} p_e(\bar{s} \mid s) \tag{5}$$

is defined as the marginal probability of ¯ s , averaged across all possible current states of S with equal probability (where | Ω S | denotes the cardinality of the state space Ω S ).
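As a minimal sketch of the effect side, the following computes the unconstrained effect probability as a uniform average over current states and then the intrinsic effect information as selectivity times informativeness. It assumes a state-by-state TPM given as a nested list indexed by integer state labels; the function names are hypothetical, and the convention that a zero-probability effect state contributes zero is an assumption of this sketch.

```python
from math import log2


def unconstrained_effect_prob(tpm, s_bar):
    """p_e(s_bar): probability of s_bar averaged uniformly over all current states."""
    return sum(row[s_bar] for row in tpm) / len(tpm)


def ii_effect(tpm, s, s_bar):
    """Intrinsic effect information of current state s over effect state s_bar (in ibits)."""
    p = tpm[s][s_bar]  # selectivity: p_e(s_bar | s)
    if p == 0:
        return 0.0  # assumed convention: 0 * log(0) = 0
    informativeness = log2(p / unconstrained_effect_prob(tpm, s_bar))
    return p * informativeness


# Toy TPMs (assumptions for illustration): a deterministic copy unit and a noisy one.
copy_tpm = [[1.0, 0.0], [0.0, 1.0]]
noisy_tpm = [[0.9, 0.1], [0.2, 0.8]]
print(ii_effect(copy_tpm, 0, 0))   # 1.0 ibit: the effect state is fully specified
print(ii_effect(noisy_tpm, 0, 0))  # positive, but below 1 ibit
print(ii_effect(noisy_tpm, 0, 1))  # negative: this effect state is made less likely than chance
```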

On the cause side, intrinsic cause information ii c of the current state s over a possible cause state ¯ s is defined as:

$$ii_c(s, \bar{s}) = p_c(\bar{s} \mid s) \log\left(\frac{p_e(s \mid \bar{s})}{p_e(s)}\right). \tag{6}$$

Above, p c (¯ s | s ) is the interventional conditional probability that the current state S = s was produced by ¯ s . The latter is derived from T S using Bayes' rule, where we again assign a uniform prior to the states of ¯ s ,

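$$p_c(\bar{s} \mid s) = \frac{p_e(s \mid \bar{s}) \, |\Omega_S|^{-1}}{p_e(s)}, \tag{7}$$

$$p_e(s) = |\Omega_S|^{-1} \sum_{\hat{s} \in \Omega_S} p_e(s \mid \hat{s}). \tag{8}$$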
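The cause side can be sketched in the same style. Under a uniform prior over cause states, Bayes' rule reduces to dividing the forward probability of the current state by the sum of the corresponding TPM column. The function names below are hypothetical, the TPM is assumed to be a nested list indexed by integer state labels, and zero-probability cases are assumed to contribute zero.

```python
from math import log2


def cause_prob(tpm, s, s_bar):
    """p_c(s_bar | s): Bayes' rule with a uniform prior over cause states."""
    column_sum = sum(row[s] for row in tpm)
    return tpm[s_bar][s] / column_sum if column_sum > 0 else 0.0


def ii_cause(tpm, s, s_bar):
    """Intrinsic cause information of current state s over cause state s_bar (in ibits)."""
    p_forward = tpm[s_bar][s]                          # p_e(s | s_bar)
    p_current = sum(row[s] for row in tpm) / len(tpm)  # p_e(s), unconstrained
    if p_forward == 0 or p_current == 0:
        return 0.0  # assumed convention for zero-probability cases
    # Selectivity uses the backward probability; informativeness uses forward probabilities.
    return cause_prob(tpm, s, s_bar) * log2(p_forward / p_current)


# Toy TPMs (assumptions for illustration).
copy_tpm = [[1.0, 0.0], [0.0, 1.0]]        # each state has exactly one cause
degenerate_tpm = [[1.0, 0.0], [1.0, 0.0]]  # both states lead to state 0
print(ii_cause(copy_tpm, 0, 0))        # 1.0
print(ii_cause(degenerate_tpm, 0, 0))  # 0.0: no cause state raises the probability of s above chance
```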

## Informativeness (over chance)

In (4) and (6), the logarithmic term (in base 2 throughout) is called informativeness . Note that informativeness is expressed in terms of effect probabilities for both ii e (4) and ii c (6). However, ii e (4) evaluates the increase in probability of the effect state due to the current state, while ii c (6) evaluates the increase in probability of the current state due to the cause state.

In line with the existence postulate, a system S in state s has cause-effect power (it takes and makes a difference) if it raises the probability of a possible effect state compared to chance, which is to say compared to its unconstrained probability,

$$p_e(\bar{s} \mid s) > p_e(\bar{s}), \tag{9}$$

and if the probability of the current state is raised above chance by a possible cause state,

$$p_e(s \mid \bar{s}) > p_e(s). \tag{10}$$

Informativeness is additive over the number of units: if a system specifies a cause or effect state with probability p = 1, its causal power increases additively with the number of units whose states it fully specifies ( expansion ), given that the chance probability of all states decreases exponentially.

## Selectivity (over states)

From the intrinsic perspective of a system, cause-effect power over a specific cause or effect state depends not only on the deviation from chance it produces, but also on how that deviation is concentrated on that state, rather than being diluted over other states. This is measured by the selectivity term in front of the logarithmic term in (4) and (6), corresponding to the conditional probability p c/e (¯ s | s ) of that specific cause or effect state. Selectivity means that if p < 1, the system's causal power becomes subadditive ( dilution ) (see [17] for details). For example, as shown in [15], if an unconstrained unit is added to a fully specified unit, intrinsic information does not just stay the same, but decreases exponentially. From the intrinsic perspective of the system, the informativeness of a specific cause/effect state is diluted because it is spread over multiple possible states, yet the system must select only one state.

Altogether, taking the product of informativeness and selectivity leads to a tension between expansion and dilution: a larger system will tend to have higher informativeness than a smaller system because it will deviate more from chance, but it will also tend to have lower selectivity because it will have a larger repertoire of states to select from.
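The tension between expansion and dilution can be illustrated numerically. The sketch below assumes binary units, a uniform chance distribution over all system states, and units that are either fully specified (probability 1) or fully unconstrained (probability 1/2 for each state); the function name is hypothetical. Under these assumptions the selected state has probability 2^(-k) for k unconstrained units, and intrinsic information is that probability times the log ratio to chance.

```python
from math import log2


def ii_specified_plus_noise(n_specified, n_unconstrained):
    """Intrinsic information (ibits) for n fully specified and k fully unconstrained binary units."""
    selectivity = 2.0 ** (-n_unconstrained)              # probability of the selected state
    chance = 2.0 ** (-(n_specified + n_unconstrained))   # uniform unconstrained probability
    return selectivity * log2(selectivity / chance)


# Expansion: each additional fully specified unit adds one ibit.
print([ii_specified_plus_noise(n, 0) for n in (1, 2, 3)])  # [1.0, 2.0, 3.0]
# Dilution: each additional unconstrained unit halves the value.
print([ii_specified_plus_noise(1, k) for k in (0, 1, 2)])  # [1.0, 0.5, 0.25]
```

In this idealized case the informativeness term stays at n bits, while the selectivity term shrinks by half with every unconstrained unit, which is the exponential decrease described above.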

Because of the selectivity term, intrinsic information is reduced by indeterminism and degeneracy. As shown in [16], indeterminism decreases the probability of the selected effect state because it implies that the same state can lead to multiple states. In turn, degeneracy decreases the probability of the selected cause state because it implies that multiple states can lead to the same state, even in a deterministic system.

The intrinsic information ii is quantified in units of intrinsic bits , or ibits , to distinguish it from standard information-theoretic measures (which are typically additive). Formally, the ibit corresponds to a point-wise information value (measured in bits) weighted by a probability.

## The maximal cause-effect state

Taking the product of informativeness and selectivity on the system's cause and effect sides captures the postulates of existence (taking and making a difference) and intrinsicality (taking and making a difference over itself) for each possible cause/effect state, as measured by intrinsic information. However, the information postulate further requires that the system selects a specific cause/effect state. Which one is determined based on the principle of maximal existence (Box 2): the cause/effect state specified by the system should be the one that maximizes intrinsic information. On the effect side (and similarly for the cause side),

$$s'_e(T_S, s) = \underset{\bar{s} \in \Omega_S}{\operatorname{argmax}} \; ii_e(s, \bar{s}). \tag{11}$$

The system's intrinsic effect information is the value of ii e (4) for its maximal effect state:

$$ii_e(T_S, s) := ii_e(s, s'_e) = \max_{\bar{s} \in \Omega_S} ii_e(s, \bar{s}). \tag{12}$$

We have made the dependency of s ′ and ii e on T S explicit in (11) and (12) to highlight that for intrinsic information to properly assess cause-effect power, all probabilities must be derived from the system's interventional transition probability function, while imposing a uniform prior distribution over all possible system states. If there is no state with ii e ( T S , s ) > 0, the system S in state s has no causal power (and likewise for ii c ( T S , s )). Note also that a system's intrinsic cause/effect state does not necessarily correspond to the actual cause/effect state (what actually happened before / will happen after) in the dynamical evolution of the system, which typically also depends on extrinsic influences. (For an account of actual causation according to the causal principles of IIT, see [13].)
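Selecting the maximal effect state amounts to an argmax over candidate effect states. The sketch below is one possible way to do this, assuming a state-by-state TPM as a nested list; the function names and the tolerance parameter `tol` are hypothetical. Ties are returned together rather than resolved, since resolving them requires integrated information, which is defined later. An empty result signals that no effect state has positive intrinsic information.

```python
from math import log2


def ii_effect(tpm, s, s_bar):
    """Intrinsic effect information: selectivity times informativeness (0 if p = 0, by assumption)."""
    p = tpm[s][s_bar]
    if p == 0:
        return 0.0
    chance = sum(row[s_bar] for row in tpm) / len(tpm)
    return p * log2(p / chance)


def maximal_effect_states(tpm, s, tol=1e-12):
    """Return (list of effect states tied for maximal ii_e, maximal ii_e value)."""
    values = [ii_effect(tpm, s, s_bar) for s_bar in range(len(tpm))]
    best = max(values)
    if best <= tol:
        return [], 0.0  # no causal power on the effect side
    return [i for i, v in enumerate(values) if best - v <= tol], best


# Toy TPMs (assumptions for illustration).
noisy_tpm = [[0.9, 0.1], [0.2, 0.8]]
uniform_tpm = [[0.5, 0.5], [0.5, 0.5]]
states, value = maximal_effect_states(noisy_tpm, 0)  # states == [0], value > 0
nothing = maximal_effect_states(uniform_tpm, 0)      # ([], 0.0)
```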

Because consciousness is the way it is, the translation of its properties in physical, operational terms should be unique and based on quantities that uniquely satisfy the postulates [15,40]. Intrinsic information (12) is formally equivalent to a measure of intrinsic difference [17], which uniquely satisfies three desired properties (causality, specificity, and intrinsicality) that align with the postulates of IIT, but also have independent justification.

## Integration: Determining the irreducibility of a candidate system

Having identified the maximal cause-effect state s ′ = { s ′ c , s ′ e } of a candidate system S in its current state s , the next step is to evaluate whether the system specifies the cause-effect state of its units in a way that is irreducible , as required by the integration postulate: a candidate system can only be a substrate of consciousness if it is one system, that is, if it cannot be subdivided into subsets of units that exist separately from one another.

## Directional system partitions

To that end, we define a set of directional system partitions Θ( S ) that divide S into k ≥ 2 parts { S ( i ) } k i =1 , such that

$$S^{(i)} \neq \emptyset, \qquad S^{(i)} \cap S^{(j)} = \emptyset \;\; (i \neq j), \qquad \bigcup_{i=1}^{k} S^{(i)} = S. \tag{13}$$

In words, each part S ( i ) must contain at least one unit, there must be no overlap between any two parts S ( i ) and S ( j ) , and every unit of the system must appear in exactly one part. For each part S ( i ) , the partition removes the causal connections of that part with the rest of the system in a directional manner: either the part's inputs, outputs, or both are replaced by independent 'noise' (they are 'cut' by the partition in the sense that their causal powers are substituted by chance). Directional partitions are necessary because, from the intrinsic perspective of a system, a subset of units that cannot affect the rest of the system, or cannot be affected by it, cannot truly be a part of the system. In other words, to be a part of a system, a subset of units must be able to interact with the rest of the system in both directions (cause and effect).

A partition θ ∈ Θ( S ) thus has the form

$$\theta = \left\{ S^{(1)}_{\delta_1}, S^{(2)}_{\delta_2}, \ldots, S^{(k)}_{\delta_k} \right\}, \tag{14}$$

where δ i ∈ {← , → , ↔} indicates whether the inputs ( ← ), outputs ( → ), or both ( ↔ ) are cut for a given part. For each part S ( i ) , we can then identify a set of units X ( i ) ⊆ S whose inputs to S ( i ) have been cut by the partition, and the complementary set Y ( i ) = S \ X ( i ) whose inputs to S ( i ) are left intact. Specifically,

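$$X^{(i)} = \begin{cases} S \setminus S^{(i)} & \text{if } \delta_i \in \{\leftarrow, \leftrightarrow\}, \\ \bigcup_{j \neq i,\; \delta_j \in \{\rightarrow, \leftrightarrow\}} S^{(j)} & \text{if } \delta_i = {\rightarrow}. \end{cases} \tag{15}$$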
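The set of directional partitions can be enumerated by combining every division of the units into two or more parts with every assignment of a direction to each part. The sketch below is a hypothetical helper, not the authors' implementation: directions are encoded as the strings `"<-"`, `"->"`, and `"<->"`, and `cut_inputs` reads the verbal definition above as follows (an assumption): if a part's inputs are cut, all units outside the part are in its cut set; otherwise only units from other parts whose outputs are cut. Different labelings may cut the same set of connections, and this sketch does not remove such duplicates.

```python
from itertools import product

DIRECTIONS = ("<-", "->", "<->")  # inputs cut, outputs cut, both cut


def set_partitions(units):
    """Yield every division of a list of units into non-empty, disjoint parts."""
    if not units:
        yield []
        return
    first, rest = units[0], units[1:]
    for partition in set_partitions(rest):
        # Add `first` to each existing part in turn, or give it a part of its own.
        for i in range(len(partition)):
            yield partition[:i] + [[first] + partition[i]] + partition[i + 1:]
        yield [[first]] + partition


def directional_partitions(units):
    """Yield partitions as lists of (part, direction) pairs with k >= 2 parts."""
    for parts in set_partitions(list(units)):
        if len(parts) < 2:
            continue
        for deltas in product(DIRECTIONS, repeat=len(parts)):
            yield list(zip(parts, deltas))


def cut_inputs(partition, i):
    """Units whose inputs to part i are cut (the set X^(i)), under the reading stated above."""
    _, delta_i = partition[i]
    others = [(part, d) for j, (part, d) in enumerate(partition) if j != i]
    if delta_i in ("<-", "<->"):
        return sorted(u for part, _ in others for u in part)
    return sorted(u for part, d in others if d in ("->", "<->") for u in part)


print(len(list(directional_partitions("ab"))))   # 9
print(len(list(directional_partitions("abc"))))  # 54
```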

Given a partition θ ∈ Θ( S ), we define a partitioned transition probability matrix T θ S in which all connections affected by the partition are 'noised.' This is done by combining the independent contributions of each unit S j ∈ S in line with the conditional independence assumption (2), such that

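$$T_S^{\theta} \equiv p_s^{\theta}(\bar{s} \mid s) = \prod_{j=1}^{|S|} p_s^{\theta}(\bar{s}_j \mid s), \quad s, \bar{s} \in \Omega_S, \tag{16}$$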

where the partitioned probability of a unit S j ∈ S ( i ) is defined as

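$$p_s^{\theta}(\bar{s}_j \mid s) = |\Omega_{X^{(i)}}|^{-1} \sum_{\hat{x} \in \Omega_{X^{(i)}}} p_s(\bar{s}_j \mid \hat{x}, y^{(i)}). \tag{17}$$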

This means that all connections to unit S j that are affected by the partition are causally marginalized (replaced by independent noise).
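Causal marginalization can be sketched for binary units as follows. Each unit is described by a hypothetical function returning the probability that it is ON in the next step given the full current state; cut inputs are replaced by a uniform average over their states, and the partitioned transition probability is the product of the per-unit probabilities, in line with conditional independence. The names and the data layout are assumptions of this sketch.

```python
from itertools import product
from math import prod


def partitioned_unit_prob(unit_on_prob, state, cut_units):
    """p(unit = 1 | state) averaged uniformly over the states of the units in `cut_units`."""
    total, count = 0.0, 0
    for values in product((0, 1), repeat=len(cut_units)):
        noised = list(state)
        for unit, value in zip(cut_units, values):
            noised[unit] = value  # replace the cut input by each of its possible states
        total += unit_on_prob(tuple(noised))
        count += 1
    return total / count


def partitioned_transition_prob(unit_on_probs, cut_inputs_of, state, next_state):
    """p^theta(next_state | state): product of per-unit partitioned probabilities."""
    factors = []
    for j, on_prob in enumerate(unit_on_probs):
        p_on = partitioned_unit_prob(on_prob, state, cut_inputs_of[j])
        factors.append(p_on if next_state[j] == 1 else 1.0 - p_on)
    return prod(factors)


# Toy system (an assumption for illustration): two units that copy each other.
units = [lambda st: float(st[1]), lambda st: float(st[0])]
state, next_state = (0, 1), (1, 0)
print(partitioned_transition_prob(units, [[], []], state, next_state))    # 1.0 (no cut)
print(partitioned_transition_prob(units, [[1], []], state, next_state))   # 0.5 (input to unit 0 cut)
print(partitioned_transition_prob(units, [[1], [0]], state, next_state))  # 0.25 (both inputs cut)
```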

## System integrated information ϕ s

The integrated effect information ϕ e measures how much the partition θ ∈ Θ S reduces the probability with which a system S = s specifies its effect state s ′ e (11),

$$\phi_e(T_S, s, \theta) = p_e(s'_e \mid s) \log\left(\frac{p_e(s'_e \mid s)}{p_e^{\theta}(s'_e \mid s)}\right). \tag{18}$$

Note that ϕ e has the same form as the intrinsic information ii e ( s, ¯ s ) (4), with the partitioned effect probability taking the place of the unconstrained (marginal) probability. Likewise, the integrated cause information ϕ c is defined as

$$\phi_c(T_S, s, \theta) = p_c(s'_c \mid s) \log\left(\frac{p_e(s \mid s'_c)}{p_e^{\theta}(s \mid s'_c)}\right). \tag{19}$$

(By the principle of maximal existence, if two or more cause-effect states are tied for maximal intrinsic information, the system specifies the one that maximizes ϕ c/e .)

By the zeroth postulate, existence requires cause and effect power, and the integration postulate requires that its cause-effect power be irreducible. By the principle of minimal existence (Box 2), then, system integrated information for a given partition is the minimum of its irreducibility on the cause and effect sides:

$$\phi_s(T_S, s, \theta) = \min\left\{ \phi_c(T_S, s, \theta), \; \phi_e(T_S, s, \theta) \right\}. \tag{20}$$

Accordingly, the system is reducible if at least one partition θ ∈ Θ S makes no difference to the cause or effect probability.
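For a single partition, the computation reduces to two weighted log ratios and a minimum. The sketch below takes the relevant probabilities as plain numbers, assuming they have already been read off the intact and partitioned TPMs; the function names and argument layout are hypothetical, and the treatment of zero probabilities is an assumption. It follows the form described above, with the partitioned probability in the denominator in place of the unconstrained one.

```python
from math import log2


def integrated_information(selectivity, p_intact, p_partitioned):
    """selectivity * log2(p_intact / p_partitioned); zero if either leading term is zero."""
    if selectivity == 0 or p_intact == 0:
        return 0.0
    return selectivity * log2(p_intact / p_partitioned)


def phi_s_given_partition(effect, cause):
    """System integrated information for one partition.

    effect: (p_e(s'_e | s), partitioned p_e(s'_e | s))
    cause:  (p_c(s'_c | s), p_e(s | s'_c), partitioned p_e(s | s'_c))
    """
    p_effect, p_effect_cut = effect
    p_cause, p_forward, p_forward_cut = cause
    phi_e = integrated_information(p_effect, p_effect, p_effect_cut)
    phi_c = integrated_information(p_cause, p_forward, p_forward_cut)
    return min(phi_c, phi_e)  # principle of minimal existence


# Illustrative numbers (assumptions, not taken from the paper).
print(phi_s_given_partition((1.0, 0.5), (1.0, 1.0, 0.5)))  # 1.0
print(phi_s_given_partition((1.0, 1.0), (1.0, 1.0, 0.5)))  # 0.0: the cut makes no difference to the effect
```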

Moreover, again by the principle of minimal existence, the integrated information of a system is given by its irreducibility over its minimum partition (MIP) θ ′ ∈ Θ S , such that

$$\phi_s(T_S, s) := \phi_s(T_S, s, \theta'). \tag{21}$$

The MIP is defined as the partition θ ∈ Θ S that minimizes the system's integrated information, relative to the maximum possible value it could take for an arbitrary TPM T ′ S over the units of system S

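$$\theta' = \underset{\theta \in \Theta(S)}{\operatorname{argmin}} \; \frac{\phi_s(T_S, s, \theta)}{\max_{T'_S} \phi_s(T'_S, s, \theta)}. \tag{22}$$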

The normalization ensures that ϕ s ( T S , s ) is evaluated fairly over a system's fault lines by assessing integration relative to its maximum possible value over a given partition. Using the relative integrated information quantifies the strength of the interactions between parts in a way that does not depend on the number of parts and their size. As proven in [16], the maximal value of ϕ ( T S , s, θ ) for a given partition θ is the maximal possible number of 'connections' (pairwise interactions) affected by θ .

<!-- formula-not-decoded -->

For example, as shown in [16], the MIP will correctly identify the fault line dividing a system into two large subsets of units linked through a few interconnected units (a 'bridge'), rather than defaulting to partitions between individual units and the rest of the system. Once the minimum partition has been identified, the integrated information across it is an absolute quantity, quantifying the loss of intrinsic information due to cutting the minimum partition of the system. (If two or more partitions θ ∈ Θ( S ) minimize Eqn. (22), we select the partition with the largest unnormalized ϕ s value as θ ′ , applying the principle of maximal existence.) Defining θ ′ as in (22), moreover, ensures that ϕ s ( T S , s ) = 0 if the system is not strongly connected in graph-theoretic terms.
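The selection rule for the minimum partition can be sketched as a minimization over normalized values with a tie-break on the unnormalized value. The function name and the `(label, phi, max_phi)` layout are hypothetical, the numbers are made up for illustration, and ties are detected by exact floating-point equality, which a real implementation would likely replace with a tolerance.

```python
def minimum_partition(candidates):
    """Pick the partition with the smallest normalized phi; break ties by largest raw phi.

    candidates: iterable of (label, phi, max_phi), where max_phi > 0 is the maximal
    value phi could take for that partition (the normalization factor).
    Returns (label, phi): the reported value is the unnormalized one.
    """
    label, phi, _ = min(candidates, key=lambda c: (c[1] / c[2], -c[1]))
    return label, phi


# A partition with larger raw phi can still be the MIP if it cuts more connections.
candidates = [("cut one unit", 0.3, 2), ("cut the bridge", 0.4, 4)]
print(minimum_partition(candidates))  # ('cut the bridge', 0.4)
```

Normalization is used only to choose the partition; the value returned is the unnormalized integrated information across it, matching the description of it as an absolute quantity.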

In summary, the system integrated information ( ϕ s ( T S , s )) quantifies the extent to which system S in state s has cause-effect power over itself as one system ( i.e. , irreducibly). ϕ s ( T S , s ) is thus a quantifier of irreducible existence.

## Exclusion: Determining maximal substrates (complexes)

In general, multiple candidate systems with overlapping units may have positive values of ϕ s ( T S , s ). By the exclusion postulate, the substrate of consciousness must be definite; that is, it must comprise a definite set of units. But which one? Once again, we employ the principle of maximal existence (Box 2): among candidate systems competing over the same substrate with respect to an essential requirement for existence, in this case irreducibility, the one that exists is the one that exists the most. Accordingly, the maximal substrate, or complex, is the candidate substrate with the maximum value of system integrated information ( ϕ ∗ s ), and overlapping substrates with lower ϕ s are excluded from existence.

## Determining maximal substrates recursively

Within a universal substrate U 0 in state u 0 , subsets of units that specify maxima of irreducible cause-effect power (complexes) can be identified by an iterative search for the system S ∗ k ⊆ U k with

<!-- formula-not-decoded -->

<!-- formula-not-decoded -->

and U k +1 = U k \ S ∗ k until U k +1 = ∅ or U k +1 = u k (the units in U 0 \ U k +1 still serve as background conditions). If the maximal substrate S ∗ k is not unique, and all tied systems overlap, the next best system that is unique is chosen instead; for details see [16], and also [41].

For any complex S ∗ = s ∗ , overlapping substrates that specify less integrated information ( ϕ s < ϕ s ( T S ∗ , s ∗ )) are excluded. Consequently, specifying a maximum of integrated information ϕ ∗ s compared to all overlapping systems

$$\phi_s^*(T_S, s) > \phi_s(T_{S'}, s') \quad \forall \, S' \neq S, \;\; S' \subseteq U, \;\; S' \cap S \neq \emptyset$$

is a sufficient requirement for a system S ⊆ U to be a complex.

As shown in [16], this recursive search for maximal substrates 'condenses' the universe U 0 = u 0 into a disjoint (non-overlapping) and exhaustive set of complexes-the first complex, second complex, and so on.
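The recursive search can be sketched as a greedy loop: find the candidate with maximal integrated information among the remaining units, record it as a complex, remove its units, and repeat. In the sketch below, `phi_s` is a hypothetical callable standing in for the full computation of system integrated information (including background conditioning), ties are not handled (the first maximum found is taken), and the brute-force enumeration of subsets grows exponentially with the number of units.

```python
from itertools import combinations


def find_complexes(units, phi_s):
    """Greedy sketch of the recursive search for maximal substrates.

    units: iterable of unit labels.
    phi_s: callable mapping a frozenset of units to its system integrated information.
    """
    remaining = set(units)
    complexes = []
    while remaining:
        candidates = [
            frozenset(c)
            for size in range(1, len(remaining) + 1)
            for c in combinations(sorted(remaining), size)
        ]
        best = max(candidates, key=phi_s)
        if phi_s(best) <= 0:
            break  # no remaining candidate is irreducible
        complexes.append(best)
        remaining -= best  # units of a complex cannot belong to another complex
    return complexes


# Values for {a, b}, {a}, and {a, b, c} are those quoted in the Fig. 1 caption;
# the value for {c} alone is made up for illustration.
PHI = {
    frozenset("ab"): 0.17,
    frozenset("a"): 0.06,
    frozenset("abc"): 0.13,
    frozenset("c"): 0.02,
}


def toy_phi(candidate):
    return PHI.get(candidate, 0.0)


print(find_complexes("abc", toy_phi))  # first complex {a, b}, then {c}
```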

## Determining maximal unit grains

Above, we presented how we can determine the borders of a complex within a larger system U , assuming a particular spatio-temporal grain for the units U i ∈ U . In principle, however, all possible grains should be considered [42,43]. In the brain, for example, the grain of units could be brain regions, groups of neurons, individual neurons, sub-cellular structures, molecules, atoms, quarks, or anything finer, down to hypothetical atomic units of cause-effect power [3,7]. For any unit grain (neurons, for example), the grain of updates could be minutes, seconds, milliseconds, microseconds, and so on. And the grain of states could be 2 states ('no spikes / any number of spikes per neuron over one hundred milliseconds'), 4 states ('no spikes, 1 spike, 2-5 spikes, bursts of > 5 spikes'), or 256 states (from 0 to 255 spikes in intervals of 1 spike), and so on. However, by the exclusion postulate, the units that constitute a system S must also be definite, in the sense of having a definite spatio-temporal grain.

Once again, the grain is defined by the principle of maximal existence: across the possible micro- and macroscopic levels, the 'winning' grain is the one that ensures maximally irreducible existence ( ϕ ∗ s ) for the entity to which the units belong [42,43].

As constituents of a complex upon which its cause-effect power rests, the units themselves should comply with the postulates of IIT [4]. Otherwise it would be possible to 'make something out of nothing.' Accordingly, units themselves must also be maximally irreducible, as measured by the unit's integrated information ( ϕ j ≡ ϕ s ); otherwise, they would not be units but 'disintegrate' into their constituents. However, in contrast to systems, units only need to be maximally irreducible within, because they do not exist as entities in their own right: a unit J qualifies as a candidate unit of a larger system S if its integrated information ϕ ( j ) is higher than that of any of its subsets

<!-- formula-not-decoded -->

Out of all possible sets of such candidate units, the set of (macro) units that actually exists is the one that maximizes the existence of the complex to which they belong, rather than their own existence.

## Unfolding the cause-effect structure of a complex through composition

Once a maximal substrate and the associated maximal cause-effect state have been identified, we must unfold its cause-effect power to reveal its cause-effect structure of distinctions and relations, in line with the composition postulate. As components of the cause-effect structure, distinctions and relations must also satisfy the postulates of IIT (save for composition).

## Composition and causal distinctions

Causal distinctions capture how the cause-effect power of a substrate is structured by subsets of units that specify irreducible causes and effects over subsets of its units. A candidate distinction d ( m ) consists of (1) a mechanism M ⊆ S in state m ∈ Ω M inherited from the system state s ∈ Ω S ; (2) a maximal cause-effect state z ∗ = { z ∗ c , z ∗ e } over the cause and effect purviews ( Z c , Z e ⊆ S ) linked by the mechanism; and (3) an associated value of irreducibility ( ϕ d > 0). A distinction d ( m ) is thus represented by the tuple

$$ d(m) = (m, z^*, \varphi_d). \tag{27} $$

For a given mechanism m , our goal is to identify the maximal cause Z ∗ c = z ∗ c and effect Z ∗ e = z ∗ e of m within the system, where Z ∗ c , Z ∗ e ⊆ S , z ∗ c ∈ Ω Z ∗ c , z ∗ e ∈ Ω Z ∗ e .

As above, in line with existence, intrinsicality, and information, we determine the maximal cause or effect state specified by the mechanism over a candidate purview within the system based on the value of intrinsic information ii ( m,z ). Next, in line with integration, we determine the value of integrated information ϕ d ( m,Z,θ ) over the minimum partition θ ′ . In line with exclusion, we determine the maximal cause-effect purviews for that mechanism over all possible purviews Z ⊆ S based on the associated value of irreducibility ϕ d ( m,Z,θ ′ ). Finally, we determine whether the maximal cause-effect state specified by the mechanism is congruent with the system's overall cause-effect state ( z ∗ c ⊆ s ∗ c , z ∗ e ⊆ s ∗ e ), in which case we conclude that it contributes a distinction to the overall cause-effect structure.

The updated formalism to identify causal distinctions within a system S in state s was first presented in [15]. Here we provide a summary with minor adjustments on selecting z ∗ c and z ∗ e , the cause integrated information ϕ c ( m,Z ), and the requirement that causal distinctions must be congruent with the system's maximal cause-effect state (see A.2).

## Existence, intrinsicality, and information: Determining the cause and effect state specified by a mechanism over candidate purviews

Like the system as a whole, its subsets must comply with existence, intrinsicality, and information. As for the system, we begin by quantifying, in probabilistic terms, the difference that a subset of units M ⊆ S , in its current state m ⊆ s , takes from and makes to subsets of units Z ⊆ S (its cause and effect purviews). As above, we start by establishing the interventional conditional probabilities and unconstrained probabilities from the TPM T S .

When dealing with a mechanism constituted by a subset of system units, it is important to capture the constraints on a purview state z that are exclusively due to the mechanism in its state ( m ), removing any potential contribution from other system units. This is done by causally marginalizing all variables in X = S \ M , which corresponds to imposing a uniform distribution as p ( X t ) [11, 13, 15] (note [44]). In addition, product probabilities π ( z | m ) are used instead of conditional probabilities p ( z | m ) to discount correlations from units in X = S \ M with divergent outputs to multiple units in Z ⊆ S [11, 13, 45]. In this way, causal marginalization maintains the conditional independence between units (2) by applying independent noise to individual connections. The assumption of conditional independence distinguishes IIT's causal analysis from standard information-theoretic analyses of information flow [13,31] and corresponds to an assumption that variables are 'physical' units in the sense that they are irreducible within and can be observed and manipulated independently.

The effect probability of a single unit Z i ∈ Z conditioned on the current state m is thus defined as

$$ p_e(z_i \mid m) = |\Omega_X|^{-1} \sum_{x \in \Omega_X} p(z_i \mid m, x), \tag{28} $$

and the effect probability over a set Z of | Z | units is defined as the product of the effect probabilities over individual units

$$ \pi_e(z \mid m) = \prod_{i=1}^{|Z|} p_e(z_i \mid m). \tag{29} $$
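The causal marginalization and product construction described above can be illustrated with a minimal sketch. The two-unit TPM (an AND unit and an OR unit), the state-by-node layout, and the function names `unit_effect_prob` and `effect_prob` are illustrative assumptions, not part of the formalism or of any existing toolbox.

```python
from itertools import product

# Hypothetical 2-unit binary system in state-by-node form: for each current
# state (u0, u1), the probability that each unit is ON (1) at the next step.
# Unit 0 computes AND and unit 1 computes OR (illustrative assumption).
TPM = {
    (0, 0): (0.0, 0.0),
    (0, 1): (0.0, 1.0),
    (1, 0): (0.0, 1.0),
    (1, 1): (1.0, 1.0),
}
N_UNITS = 2


def unit_effect_prob(tpm, mechanism, m_state, unit, value):
    """p_e(z_i | m): average over all states of X = S minus M (uniform p(X))."""
    outside = [u for u in range(N_UNITS) if u not in mechanism]
    total = 0.0
    count = 0
    for x_state in product((0, 1), repeat=len(outside)):
        full = [None] * N_UNITS
        for u, s in zip(mechanism, m_state):
            full[u] = s
        for u, s in zip(outside, x_state):
            full[u] = s
        p_on = tpm[tuple(full)][unit]
        total += p_on if value == 1 else 1.0 - p_on
        count += 1
    return total / count


def effect_prob(tpm, mechanism, m_state, purview, z_state):
    """pi_e(z | m): product of the single-unit effect probabilities."""
    prob = 1.0
    for unit, value in zip(purview, z_state):
        prob *= unit_effect_prob(tpm, mechanism, m_state, unit, value)
    return prob


# Mechanism {unit 0} in state 1, evaluated over the purview {unit 0, unit 1}.
repertoire = {
    z: effect_prob(TPM, (0,), (1,), (0, 1), z)
    for z in product((0, 1), repeat=2)
}
```

Because each unit is marginalized separately before the product is taken, correlations that unit 1 would induce between the two purview units are discounted, which is the point of using π rather than p.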

From Eq. (29) we can also define an unconstrained effect probability

<!-- formula-not-decoded -->

Given the set Y = S \ Z , the cause probability for a mechanism m with | M | units is computed using Bayes' rule over the product distributions

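$$ \pi_c(z \mid m) = \frac{\prod_{i=1}^{|M|} p_e(m_i \mid z)}{\sum_{\hat{z} \in \Omega_Z} \prod_{i=1}^{|M|} p_e(m_i \mid \hat{z})}, \tag{31} $$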

where $p_e(m_i \mid z) = |\Omega_Y|^{-1} \sum_{y \in \Omega_Y} p(m_i \mid z, y)$, in line with (28).

To correctly quantify intrinsic causal constraints, the unconstrained cause probability is again set to the uniform distribution $\pi_c(z) = |\Omega_Z|^{-1}$, $z \in \Omega_Z$ (unlike the unconstrained effect probability, the unconstrained cause distribution does not depend on the mechanism). Note that the product in (31) is over units of M , not of Z . For details see [13,15]. As above, all probabilities p are obtained from the TPM T S (3) and thus correspond to interventional probabilities throughout.
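As a hedged illustration of the cause side, the sketch below applies Bayes' rule with a uniform prior over purview states to the product of forward probabilities, taken over the units of the mechanism. The two-unit TPM and the names `forward_prob` and `cause_repertoire` are hypothetical, chosen only for this example.

```python
from itertools import product

# Hypothetical 2-unit binary system (state-by-node form): unit 0 computes AND,
# unit 1 computes OR of the current state (illustrative assumption).
TPM = {
    (0, 0): (0.0, 0.0),
    (0, 1): (0.0, 1.0),
    (1, 0): (0.0, 1.0),
    (1, 1): (1.0, 1.0),
}
N_UNITS = 2


def forward_prob(tpm, unit, value, purview, z_state):
    """p_e(m_i | z): average over all states of Y = S minus Z."""
    outside = [u for u in range(N_UNITS) if u not in purview]
    probs = []
    for y_state in product((0, 1), repeat=len(outside)):
        full = [None] * N_UNITS
        for u, s in zip(purview, z_state):
            full[u] = s
        for u, s in zip(outside, y_state):
            full[u] = s
        p_on = tpm[tuple(full)][unit]
        probs.append(p_on if value == 1 else 1.0 - p_on)
    return sum(probs) / len(probs)


def cause_repertoire(tpm, mechanism, m_state, purview):
    """pi_c(z | m) for every z, via Bayes' rule with a uniform prior over z."""
    likelihood = {}
    for z_state in product((0, 1), repeat=len(purview)):
        value = 1.0
        # The product runs over the units of the mechanism M, not over Z.
        for unit, state in zip(mechanism, m_state):
            value *= forward_prob(tpm, unit, state, purview, z_state)
        likelihood[z_state] = value
    norm = sum(likelihood.values())
    if norm == 0.0:
        raise ValueError("mechanism state cannot be reached from any purview state")
    return {z: v / norm for z, v in likelihood.items()}


# Mechanism {unit 1} in state 1, evaluated over the cause purview {unit 0}.
cause = cause_repertoire(TPM, (1,), (1,), (0,))
```

In this toy case the OR unit being ON is twice as likely to follow from unit 0 being ON as from unit 0 being OFF, so the cause repertoire assigns 2/3 and 1/3 to those states.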

Having defined cause and effect probabilities, we can now evaluate the intrinsic information of a mechanism m over a purview state Z = z analogously to the system intrinsic information (4) and (6). The intrinsic effect information that a mechanism in a state m specifies about a purview state z is

<!-- formula-not-decoded -->

The intrinsic cause information that a mechanism in a state m specifies about a purview state z is

<!-- formula-not-decoded -->

As with system intrinsic information, the logarithmic term is the informativeness, which captures how much causal power is exerted by the mechanism m on its potential effect z (how much it increases the probability of that state above chance), or by the potential cause z on the mechanism m . The first term corresponds to the mechanism's selectivity, which captures how much the causal power of the mechanism m is concentrated on a specific state of its purview (as opposed to other states). In the following we will again focus on the effect side, but an equivalent procedure applies on the cause side.

Based on the principle of maximal existence, the maximal effect state of m within the purview Z is defined as

$$ z'_e(m, Z) = \operatorname*{argmax}_{z \in \Omega_Z} \; ii_e(m, z), \tag{34} $$

which corresponds to the specific effect of m on Z . Note that z ′ e is not always unique (see A.1). The maximal intrinsic information of mechanism m over a purview Z is then

$$ ii_e(m, Z) = \max_{z \in \Omega_Z} ii_e(m, z) = ii_e(m, z'_e). \tag{35} $$

The intrinsic information of a candidate distinction, like that of the system as a whole, is sensitive to indeterminism (the same state leading to multiple states) and degeneracy (multiple states leading to the same state) because both factors decrease the probability of the selected state. Moreover, the product of selectivity and informativeness leads to a tension between expansion and dilution: larger purviews tend to increase informativeness because conditional probabilities will deviate more from chance, but they also tend to decrease selectivity because of the larger repertoire of states.
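The selection of the maximal effect state can be sketched as follows, treating intrinsic information as the product of selectivity (the constrained probability) and informativeness (the log ratio of constrained to unconstrained probability). The base-2 logarithm, the dictionary representation of the two distributions, and the function names are assumptions made for this example.

```python
import math


def intrinsic_information(p_constrained, p_unconstrained):
    """Selectivity times informativeness for a single purview state.

    Assumes p_unconstrained > 0 and a base-2 logarithm (an assumption here).
    """
    if p_constrained == 0.0:
        return 0.0
    return p_constrained * math.log2(p_constrained / p_unconstrained)


def maximal_state(constrained, unconstrained):
    """Return the purview state with maximal intrinsic information and its value.

    Ties are broken by dictionary order; the text notes that the maximal
    state need not be unique.
    """
    scores = {
        z: intrinsic_information(constrained[z], unconstrained[z])
        for z in constrained
    }
    best = max(scores, key=scores.get)
    return best, scores[best]


# Hypothetical distributions over the states of a single-unit purview.
constrained = {(0,): 0.9, (1,): 0.1}
unconstrained = {(0,): 0.5, (1,): 0.5}
state, value = maximal_state(constrained, unconstrained)
```

States whose probability is lowered by the mechanism receive a negative score and are therefore never selected when another state is raised above chance.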

## Integration: Determining the irreducibility of a candidate distinction

To comply with integration, we must next ask whether the specific effect of m on Z is irreducible. As for the system, we do so by evaluating the integrated information ϕ e ( m,Z ). To that end, we define a set of 'disintegrating' partitions Θ( M,Z ) as

<!-- formula-not-decoded -->

where { M ( i ) } is a partition of M and { Z ( i ) } is a partition of Z , but the empty set may also be used as a part ( P denotes the power set). As introduced in [13,15], a disintegrating partition θ ∈ Θ( M,Z ) either 'cuts' the mechanism into at least two independent parts if | M | > 1, or it severs all connections between M and Z , which is always the case if | M | = 1 (we refer to [13,15] for details).

Given a partition θ ∈ Θ( M,Z ), we can define the partitioned effect probability

$$ \pi_e^{\theta}(z \mid m) = \prod_{(M^{(i)}, Z^{(i)}) \in \theta} \pi_e(z^{(i)} \mid m^{(i)}), \tag{37} $$

with π ( ∅ | m ( i ) ) = π ( ∅ ) = 1. In the case of m ( i ) = ∅ , π e ( z ′ ( i ) e | ∅ ) corresponds to the fully partitioned effect probability

<!-- formula-not-decoded -->

The integrated effect information of mechanism m over a purview Z ⊆ S with effect state z ′ e for a particular partition θ ∈ Θ( M,Z ) is then defined as

<!-- formula-not-decoded -->

The effect of m on z ′ e is reducible if at least one partition θ ∈ Θ( M,Z ) makes no difference to the effect probability. In line with the principle of minimal existence, the total integrated effect information ϕ e ( m,Z ) again has to be evaluated over θ ′ , the minimum partition (MIP)

$$ \varphi_e(m, Z) = \varphi_e(m, Z, \theta'), \tag{40} $$

which requires a search over all possible partitions θ ∈ Θ( M,Z ):

<!-- formula-not-decoded -->

As in (22), the minimum partition is evaluated against its maximum possible value across all possible system TPMs T ′ S , which again corresponds to the number of possible pairwise interactions affected by the partition.
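The search for the minimum partition can be sketched under two stated assumptions: that integrated information for a given partition has the same selectivity-times-informativeness form as intrinsic information, with the partitioned probability in place of the unconstrained one and negative values clipped to zero; and that each candidate partition comes with a precomputed normalization value (its maximum possible value). The function names and the numbers below are hypothetical.

```python
import math


def integrated_information(p_intact, p_partitioned):
    """Assumed form: p * max(0, log2(p / p_theta)) for the selected state."""
    if p_intact == 0.0:
        return 0.0
    if p_partitioned == 0.0:
        return math.inf
    return p_intact * max(0.0, math.log2(p_intact / p_partitioned))


def minimum_partition(p_intact, candidates):
    """Pick the partition minimizing normalized integrated information.

    candidates: list of (label, partitioned_probability, max_possible_value).
    Returns the label of the minimum partition and its unnormalized value.
    """
    best = None
    for label, p_partitioned, max_value in candidates:
        phi = integrated_information(p_intact, p_partitioned)
        normalized = phi / max_value
        if best is None or normalized < best[0]:
            best = (normalized, label, phi)
    _, label, phi = best
    return label, phi


# Hypothetical example: the partition affecting more pairwise interactions
# (larger normalization) is selected even though its raw value is larger.
candidates = [("theta1", 0.25, 1.0), ("theta2", 0.125, 4.0)]
mip_label, phi_e = minimum_partition(0.5, candidates)
```

The normalization only determines which partition is selected; the value reported for the mechanism over the purview is the unnormalized one at that partition.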

The integrated cause information is defined analogously, as

<!-- formula-not-decoded -->

where the partitioned probability π θ e ( m | z ) is again a product distribution over the parts in the partition, as in (37).

Taken together, the intrinsic information (35) determines what cause or effect state the mechanism m specifies. Its integrated information quantifies to what extent m specifies its cause or effect in an irreducible manner. Again, ϕ ( m,Z ) is a quantifier of irreducible existence.

## Exclusion: Determining causal distinctions

Finally, to comply with exclusion, a mechanism must select a definite effect purview, as well as a cause purview, out of a set of candidate purviews. Resorting again to the principle of maximal existence, the mechanism's effect purview and associated effect are those with the maximum value of integrated information across all possible purviews Z ⊆ S in state z ′ e ( m,Z ) (34)

<!-- formula-not-decoded -->

The integrated effect information of a mechanism m within S is then

<!-- formula-not-decoded -->

The integrated cause information ϕ c ( m ) and the maximally irreducible cause z ∗ c ( m ) are defined in the same way. Based again on the principle of minimal existence, the irreducibility of the distinction specified by a mechanism is given by the minimum between its integrated cause and effect information

$$ \varphi_d(m) = \min\big(\varphi_c(m), \varphi_e(m)\big). \tag{45} $$
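The two selection steps of this subsection, a maximum across purviews on each side followed by a minimum between the cause and effect sides, can be sketched as below. The per-purview values are hypothetical inputs that would come from the integration step; the function names are illustrative only.

```python
def maximal_purview(phi_by_purview):
    """Exclusion: pick the purview with maximal integrated information."""
    purview = max(phi_by_purview, key=phi_by_purview.get)
    return purview, phi_by_purview[purview]


def distinction_phi(cause_phi_by_purview, effect_phi_by_purview):
    """Irreducibility of a distinction: minimum of its cause and effect sides."""
    cause_purview, phi_c = maximal_purview(cause_phi_by_purview)
    effect_purview, phi_e = maximal_purview(effect_phi_by_purview)
    return {
        "cause_purview": cause_purview,
        "effect_purview": effect_purview,
        "phi_d": min(phi_c, phi_e),
    }


# Hypothetical integrated information values for one mechanism.
cause_values = {("B",): 0.3, ("A", "B"): 0.1}
effect_values = {("A",): 0.2, ("A", "B"): 0.5}
result = distinction_phi(cause_values, effect_values)
```

Here the effect side is maximal over the purview AB and the cause side over B, and the distinction is only as irreducible as its weaker side.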

## Determining the set of causal distinctions that are congruent with the system cause-effect state

As required by composition, unfolding the full cause-effect structure of the system S in state s requires assessing the irreducible cause-effect power of every subset of units within S (Fig. 2). Any m ⊆ s with ϕ d > 0 specifies a candidate distinction d ( m ) = ( m,z ∗ , ϕ d ) (27) within the system S in state s . However, in order to contribute to the cause-effect structure of a system, distinctions must also comply with intrinsicality and information at the system level. This means that the cause-effect state they specify over subsets of the system ( z ∗ = { z ∗ c , z ∗ e } ) must be congruent with the cause-effect state specified over itself by the system as a whole s ′ .

We thus define the set of all causal distinctions within S in state s as

<!-- formula-not-decoded -->
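The congruence requirement amounts to a filter over candidate distinctions, which can be sketched as follows. The dictionary layout of a candidate distinction, the unit labels, and the function names are assumptions made for this illustration.

```python
def is_congruent(purview_state, system_state):
    """True if every unit in the purview has the same state as in the system state."""
    return all(
        system_state.get(unit) == state
        for unit, state in purview_state.items()
    )


def congruent_distinctions(candidates, system_cause_state, system_effect_state):
    """Keep irreducible candidates whose cause and effect states are congruent."""
    return [
        d for d in candidates
        if d["phi_d"] > 0
        and is_congruent(d["cause"], system_cause_state)
        and is_congruent(d["effect"], system_effect_state)
    ]


# Hypothetical system cause-effect state and candidate distinctions.
system_cause = {"A": 0, "B": 1}
system_effect = {"A": 1, "B": 1}
candidates = [
    {"mechanism": ("A",), "cause": {"B": 1}, "effect": {"A": 1}, "phi_d": 0.2},
    # Incongruent: specifies A = 1 as cause, but the system's cause state has A = 0.
    {"mechanism": ("B",), "cause": {"A": 1}, "effect": {"B": 1}, "phi_d": 0.3},
    # Reducible: phi_d is zero, so it is not a candidate distinction at all.
    {"mechanism": ("A", "B"), "cause": {"A": 0, "B": 1},
     "effect": {"A": 1, "B": 1}, "phi_d": 0.0},
]
kept = congruent_distinctions(candidates, system_cause, system_effect)
```

Only the first candidate survives in this example: the second is irreducible but incongruent, and the third is congruent but reducible.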

Altogether, distinctions can be thought of as irreducible 'handles' through which the system can take and make a difference to itself by linking an intrinsic cause to an intrinsic effect over subsets of itself. As components within the system, causal distinctions have no inherent structure themselves. Whatever structure there may be between the units that make up a distinction is not a property of the distinction but is due to the structure of the system, and is thus already captured by its compositional set of distinctions. Similarly, from an extrinsic perspective, one may uncover additional causes and effects, both within the system and across its borders, at either macro or micro grains. However, from the intrinsic perspective of the system, causes and effects that are excluded from its cause-effect structure do not exist [20,36].

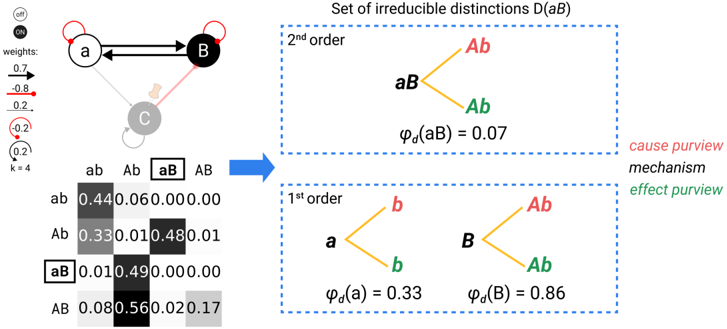

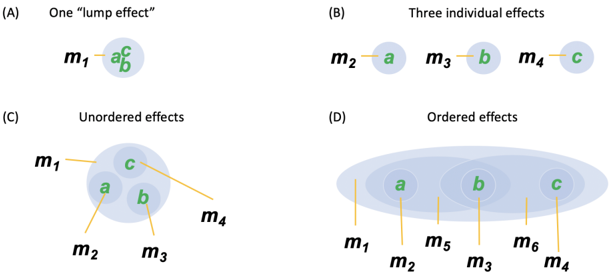

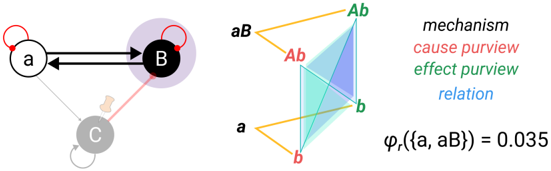

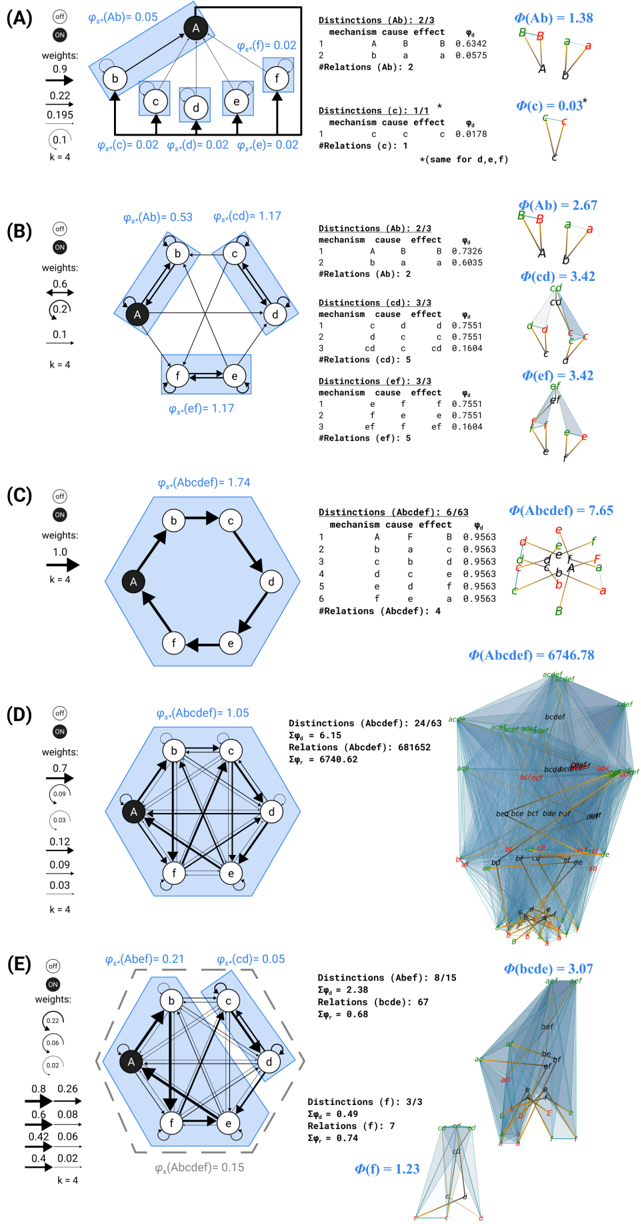

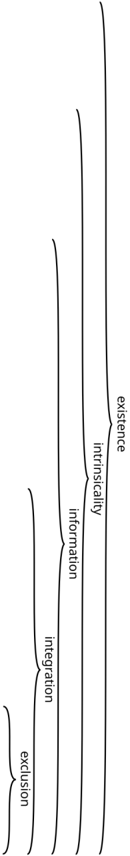

Fig 2. Composition and causal distinctions. Identifying the irreducible causal distinctions specified by a substrate in a state requires evaluating the specific causes and effects of every system subset. The candidate substrate is constituted of two interacting units S = aB (see Fig. 1) and updates its state according to the depicted transition probability matrix. In addition to the two first-order mechanisms a and B , the second-order mechanism aB specifies its own irreducible cause and effect, as indicated by ϕ d > 0.

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Diagram: Causal Network and Irreducible Distinctions

### Overview

The image depicts a causal network with three nodes (a, b, and c) and their interactions, alongside a representation of irreducible distinctions between variables a and B. The network's weights are visualized, and a matrix shows the probabilities of different state combinations. The right side of the image illustrates the "Set of irreducible distinctions D(aB)" with a hierarchical structure (1st and 2nd order) and associated probabilities. A blue arrow connects the network to the distinction set.

### Components/Axes

The diagram consists of three main sections:

1. **Causal Network:** Nodes labeled 'a', 'b', and 'c'. Arrows indicate causal relationships with weights. A scale on the left shows the weight mapping (0.2 to -0.8).

2. **Probability Matrix:** A 4x4 matrix with row and column labels: 'ab', 'Ab', 'aB', 'AB'. The matrix contains numerical values representing probabilities.

3. **Irreducible Distinctions:** A boxed area labeled "Set of irreducible distinctions D(aB)". This section is divided into "2nd order" and "1st order" subsections, each containing diagrams and probability values. A legend on the right defines color-coding for "cause purview", "mechanism", and "effect purview".

### Detailed Analysis or Content Details

**Causal Network:**

* Node 'a' is black, Node 'b' is black, and Node 'c' is grey.

* An arrow points from 'a' to 'b' with a weight of approximately -0.8.

* An arrow points from 'a' to 'c' with a weight of approximately 0.2.

* An arrow points from 'c' to 'b' with a weight of approximately 0.2.

**Probability Matrix:**

The matrix values are as follows (row = first variable, column = second variable):

* ab: 0.440, 0.060, 0.000, 0.000

* Ab: 0.330, 0.100, 0.480, 0.000

* aB: 0.010, 0.490, 0.000, 0.000

* AB: 0.080, 0.560, 0.020, 0.170