## SoK: Hardware Defenses Against Speculative Execution Attacks

Guangyuan Hu Princeton University gh9@princeton.edu

Zecheng He Princeton University zechengh@princeton.edu

Abstract: Speculative execution attacks leverage the speculative and out-of-order execution features in modern computer processors to access secret data or execute code that should not be executed. Secret information can then be leaked through a covert channel. While software patches can be installed for mitigation on existing hardware, these solutions can incur large performance overhead. Hardware mitigation is being studied extensively by the computer architecture community. It has the benefit of preserving software compatibility and the potential for much smaller performance overhead than software solutions.

This paper presents a systematization of the hardware defenses against speculative execution attacks that have been proposed. We show that speculative execution attacks consist of 6 critical attack steps. We propose defense strategies, each of which prevents a critical attack step from happening, thus preventing the attack from succeeding. We then summarize 20 hardware defenses and overhead-reducing features that have been proposed. We show that each defense proposed can be classified under one of our defense strategies, which also explains why it can thwart the attack from succeeding. We discuss the scope of the defenses, their performance overhead, and the security-performance trade-offs that can be made.

## I. INTRODUCTION

Speculative execution attacks, also known as transient execution attacks, are a serious security problem. They exploit performance enhancement features in hardware to access secret data and leak this secret out through microarchitectural covert channels. This negates the confidentiality and integrity protections provided by software isolation, and also by hardware isolation features such as secure enclaves [1], [2].

In particular, Spectre [3], Meltdown [4] and Foreshadow [5] bypass the isolation across processes and privilege levels. The Spectre attack bypasses the memory protection provided by software bounds checking, while the Meltdown attack breaches the memory isolation between the kernel and a user application. Foreshadow [5], and its variants Foreshadow-OS and Foreshadow-VMM [6], breach the Intel SGX enclave isolation, user-to-kernel memory isolation, and virtual-machine-to-hypervisor isolation, respectively.

The severity of these attacks has resulted in many specific fixes for specific attack variants implemented by the computer industry. These include using instructions to serialize execution [7], [8], to flush hardware prediction states [9], to avoid using untrusted predictions [10], and to restrict accesses to secret information [11]-[13]. However, most of these solutions require changes to the existing software. Furthermore, they also cause significant performance overhead (at least 2X slower [11], sometimes up to 8X). Last but not least, the software countermeasures are usually attack-specific. New patches are required to effectively protect against the emerging attacks, which is neither efficient nor sustainable.

Ruby B. Lee Princeton University rblee@princeton.edu

TABLE I: Hardware defenses and overhead-reducing features against speculative execution attacks published in recent computer architecture and security conferences.

| Defense and Overhead-reducing Feature | Conference | Year |
|-------------------------------------------------|-----------------|--------|
| InvisiSpec [14] | MICRO | 2018 |
| DAWG [15] | MICRO | 2018 |
| CondSpec [16] | HPCA | 2019 |
| Context-sensitive fencing (CSF) [17] | ASPLOS | 2019 |
| SpectreGuard [18] | DAC | 2019 |
| SafeSpec [19] | DAC | 2019 |
| EfficientSpec [20] | ISCA | 2019 |
| SpecShield [21] | PACT | 2019 |
| STT [22] | MICRO | 2019 |
| NDA [23] | MICRO | 2019 |
| CleanupSpec [24] | MICRO | 2019 |
| MI6 [25] | MICRO | 2019 |
| IRONHIDE [26] | HPCA | 2020 |
| ConTExT [27] | NDSS | 2020 |
| Predictor state encryption [28] | ISCA | 2020 |
| MuonTrap [29] | ISCA | 2020 |
| Speculative Data-Oblivious Execution (SDO) [30] | ISCA | 2020 |
| Clearing the Shadows [31] | PACT | 2020 |
| InvarSpec [32] | MICRO | 2020 |
| DOLMA [33] | USENIX Security | 2021 |

In response to these attacks on hardware microarchitecture performance optimization features, there have been proposals of hardware defenses as well as features that reduce the performance overhead of defenses [14]-[33], which we show in Table I in chronological order. One key advantage is that the hardware solution can monitor the instruction execution status and accurately protect against speculative vulnerabilities. Another advantage of some hardware solutions is their nonintrusive interaction with the existing software, while inducing low performance overhead. These hardware defenses can read the unmodified program but delay or change the execution of secret-leaking instructions so that the information leakage through hardware states is eliminated. Some microarchitectural defenses also allow security-performance trade-offs and overhead-reducing features [30]-[32].

However, the working mechanisms and scope of different hardware defenses have not been systematically described and compared. Hence, our goal in this paper is to systematize the hardware defenses, to illustrate their key similarities and differences, and to help future researchers more easily understand and reason about how an existing defense works. While the goal of this paper is not to describe all the speculative execution attacks in detail, as many past works survey and summarize them [34]-[37], we analyze the critical attack steps of 23 speculative execution attacks. We then show how the hardware defenses mitigate the attacks by preventing these steps, connecting the attacks and defenses.

Our key contributions are:

- Producing attack taxonomies based on secret access or secret leakage, covering 23 variants of speculative execution attacks.

- Defining new defense strategies based on preventing at least one of the critical attack steps.

- Producing a new taxonomy of 4 hardware defense strategies and lower-level categories of defenses.

- Creating a systematized view and description of 20 representative hardware defenses and overhead-reducing features proposed to date.

- Presenting the performance overhead of the defenses, and illustrating security-performance tradeoffs.

## II. MICROARCHITECTURE AND COVERT CHANNEL BACKGROUND

We first describe hardware performance optimization features that can be exploited for speculative execution attacks.

Out-of-Order (OoO) execution. Out-of-Order (OoO) execution is a microarchitectural performance enhancement feature used to boost the throughput of processors by allowing instructions later in the program order to execute before the previous instructions have completed. For example, an earlier instruction may be waiting for one of its operands, for a functional unit or memory to free up, or for a branch direction or branch target to be determined. In an in-order processor, later instructions that have no dependencies on the stalled instruction have to wait unnecessarily. In contrast, an Out-of-Order processor allows the instructions with no dependencies to execute immediately, as long as they retire in order.

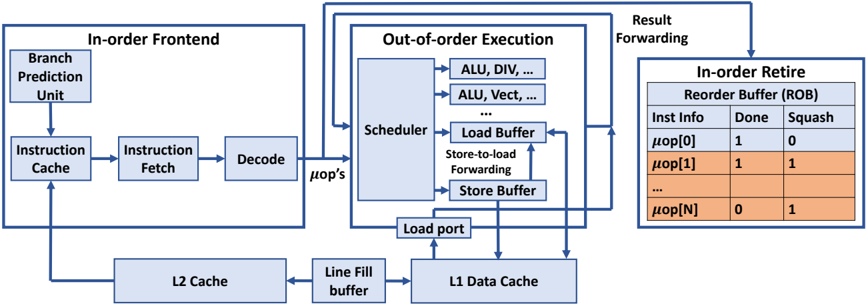

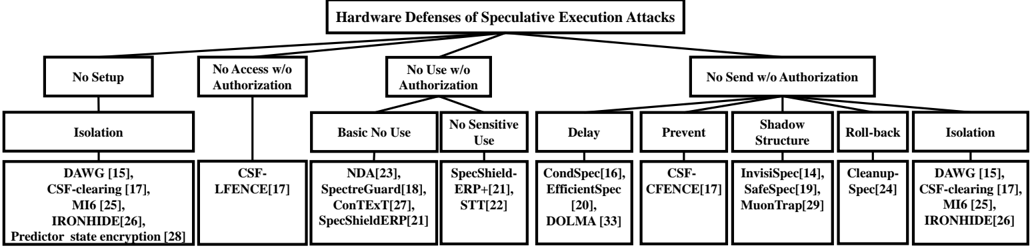

Fig. 1 shows a generic Out-of-Order (OoO) processor where instructions are fetched in program order but executed Out-of-Order. Instructions are forced to retire in-order to maintain precise exceptions, i.e., if an instruction results in an exception, the following instructions must be 'squashed' as if they were never executed. We will use this generic OoO model to explain the defenses in a unified way in the rest of the paper.

Instructions are fetched and decoded to microarchitecture-level operations (denoted µops) in program order, but after the µops are dispatched to the execution stage, the hardware scheduler can schedule any ready µops to different functional units for execution. Thus, the execution of later µops can complete earlier than that of previous instructions, which also allows the results of these µops to be used earlier. The result from the execution of a µop is forwarded to and used by other dependent µops.

An important microarchitectural structure that we will refer to in discussing hardware defenses is the Re-Order Buffer (ROB) shown in Fig. 1. The ROB records each instruction's or µop's information as well as its execution status, such as whether the instruction has finished its execution (Done = '1' in the figure) and whether the instruction should be squashed (Squash = '1'). The ROB guarantees that if an instruction needs to be squashed, all the subsequent instructions are also squashed. The ROB also acts as a FIFO queue to preserve the program order so an instruction can only retire when it reaches the head of the ROB.
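The two ROB guarantees described above (in-order retirement from the head, and squashing of all younger entries) can be illustrated with a toy model. This is a minimal sketch and not the structure of any real processor; the names `RobEntry`, `ReorderBuffer`, `dispatch`, `squash_from` and `retire` are hypothetical and chosen only for this example.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class RobEntry:
    name: str
    done: bool = False     # mirrors the Done bit in Fig. 1
    squash: bool = False   # mirrors the Squash bit in Fig. 1


class ReorderBuffer:
    """Toy ROB: a FIFO that retires in order and squashes younger entries."""

    def __init__(self):
        self.entries = deque()

    def dispatch(self, name):
        entry = RobEntry(name)
        self.entries.append(entry)
        return entry

    def squash_from(self, entry):
        # Squashing one entry also squashes every younger entry behind it.
        hit = False
        for e in self.entries:
            hit = hit or e is entry
            if hit:
                e.squash = True

    def retire(self):
        # Only the head may leave; squashed entries are discarded, not retired.
        retired = []
        while self.entries and (self.entries[0].done or self.entries[0].squash):
            head = self.entries.popleft()
            if not head.squash:
                retired.append(head.name)
        return retired


rob = ReorderBuffer()
u0, u1, u2 = (rob.dispatch(n) for n in ("uop0", "uop1", "uop2"))
u2.done = True            # the youngest µop finishes first (out of order)
first = rob.retire()      # nothing retires: uop0 at the head is not done
u0.done = True
rob.squash_from(u1)       # uop1 was on a wrong path: uop1 and uop2 are squashed
second = rob.retire()     # only uop0 retires
```

In this sketch the completed `uop2` never becomes architecturally visible, because it sits behind a squashed entry. That matches the sample situation in Fig. 1, where only µop[0] is valid.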

Speculative execution. Speculative execution is a further performance enhancement that allows instructions to be tentatively (i.e., speculatively) executed, even when the control flow has not been determined, or the data from memory has not arrived. For instance, when the processor fetches a branch whose operand is not available, e.g., because it has to be read from memory, the address of the next instruction is predicted and fetched so that the processor does not have to stall its pipeline. If the prediction is found to be correct later, the speculative execution improves the performance by executing code on the correct path in advance. However, if the prediction is found to be incorrect, the processor needs to flush the pipeline so that the results of the speculatively executed instructions are discarded. This is called a squash, where the processor restores the architectural state, e.g., the register values visible to the software, as if the mispredicted instructions had never executed.

Hardware predictors. From the microarchitecture perspective, speculative execution happens because hardware predictors are present that allow tentative forward progress even when an instruction has unresolved dependencies. For conditional branch instructions, branch predictors predict whether the branch will be taken or not. For indirect branches, the Branch Target Buffer (BTB) predicts the target address. For return instructions, the Return Stack Buffer (RSB) or Return Address Stack (RAS) predicts the address to return to after a procedure call.
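As a rough illustration of how a conditional branch predictor can be steered by its training history, the sketch below models a per-branch 2-bit saturating counter. The 2-bit scheme is a common textbook design and is an assumption here, since the text does not specify a predictor organization. The class name `TwoBitPredictor` and the address `BOUNDS_CHECK_PC` are hypothetical.

```python
class TwoBitPredictor:
    """Per-branch 2-bit saturating counter: 0-1 predict not taken, 2-3 predict taken."""

    def __init__(self):
        self.counters = {}

    def predict(self, pc):
        return self.counters.get(pc, 1) >= 2

    def update(self, pc, taken):
        c = self.counters.get(pc, 1)
        self.counters[pc] = min(3, c + 1) if taken else max(0, c - 1)


bp = TwoBitPredictor()
BOUNDS_CHECK_PC = 0x400  # hypothetical address of a bounds-check branch

# Training: the branch is repeatedly observed as not taken.
for _ in range(4):
    bp.update(BOUNDS_CHECK_PC, taken=False)

# The next execution should take the branch, but the predictor says otherwise,
# so the processor would speculatively execute the fall-through path.
actual_taken = True
predicted_taken = bp.predict(BOUNDS_CHECK_PC)
mispredicted = predicted_taken != actual_taken

# One correct outcome is not enough to flip a saturated counter.
bp.update(BOUNDS_CHECK_PC, taken=True)
still_not_taken = not bp.predict(BOUNDS_CHECK_PC)
```

The hysteresis of the counter is what makes training effective in this model: after saturation, a single contrary outcome does not change the prediction.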

Microarchitectural state and covert channels. Microarchitectural states are the states of hardware units that are not directly accessible to the programmer or software. Even if invisible from the software's view, these states can impact the execution time of certain programs and the states can be inferred if the processor executes these programs. If one program modifies a certain microarchitectural state while another program monitors it, these two programs form a microarchitectural covert channel in which the former is the sender and the latter is the receiver. Examples of such states include the addresses of cache lines in various cache levels, which we describe in detail below, and the busy status of different hardware resources.

Cache state and covert channel. One critical microarchitectural state is the cache state. A cache has many cache lines corresponding to different addresses. Since cache hits are fast, and cache misses are slow, cache timing attacks are possible, leaking information through observing the cache access time.

Fig. 1: A block diagram of a typical out-of-order processor. The contents of the ROB show a sample situation where instruction µop[0] executed correctly, but µop[1] to µop[N] were executed speculatively and incorrectly and had to be squashed.

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Diagram: CPU Pipeline Architecture

### Overview

The diagram illustrates a modern CPU pipeline architecture, divided into three primary stages: **In-order Frontend**, **Out-of-order Execution**, and **In-order Retire**. Arrows indicate data flow and control dependencies between components.

### Components/Axes

1. **In-order Frontend**:

- **Branch Prediction Unit**: Predicts branch outcomes.

- **Instruction Cache**: Stores fetched instructions.

- **Instruction Fetch**: Retrieves instructions from memory.

- **Decode**: Translates instructions into micro-operations (µops).

- **L2 Cache**: Secondary cache feeding the frontend.

- **Line Fill Buffer**: Temporarily holds data for cache misses.

2. **Out-of-order Execution**:

- **Scheduler**: Allocates µops to execution units.

- **Execution Units**:

- ALU (Arithmetic Logic Unit)

- DIV (Division Unit)

- Vector Units (e.g., ALU, Vect)

- **Load Buffer**: Stores load instructions awaiting data.

- **Store Buffer**: Holds store instructions for memory writes.

- **Load Port**: Interface for memory load operations.

3. **In-order Retire**:

- **Reorder Buffer (ROB)**: Tracks µops for in-order retirement.

- **Columns**:

- `Inst Info`: Micro-operation identifier.

- `Done`: Completion status (1 = done, 0 = pending).

- `Squash`: Indicates invalidated µops (1 = squashed, 0 = valid).

- **Rows**: Labeled `µop[0]`, `µop[1]`, ..., `µop[N]` for sequential tracking.

### Detailed Analysis

- **Flow**:

1. Instructions are fetched from the **Instruction Cache** and decoded into µops.

2. µops are sent to the **Scheduler**, which dispatches them to execution units (e.g., ALU, DIV).

3. Results are forwarded to the **Load/Store Buffers** for memory operations.

4. Completed µops are tracked in the **ROB**, ensuring in-order retirement despite out-of-order execution.

- **Key Data Points**:

- The **ROB** table shows:

- `µop[0]`: `Done=1`, `Squash=0` (valid and completed).

- `µop[1]`: `Done=1`, `Squash=1` (completed but squashed, likely due to a misprediction).

- `µop[N]`: `Done=0`, `Squash=1` (pending but invalidated).

### Key Observations

- **Out-of-order Execution**: Execution units (ALU, DIV, Vector) operate independently, enabling parallelism.

- **Branch Prediction**: Critical for minimizing pipeline stalls caused by mispredicted branches.

- **ROB Functionality**: Ensures program correctness by retiring µops in program order, even if executed out of order.

- **Squashed µops**: Indicates speculative execution errors (e.g., branch mispredictions).

### Interpretation

This diagram highlights the interplay between **speculative execution** (out-of-order) and **deterministic retirement** (in-order). The **ROB** acts as a synchronization mechanism, resolving dependencies and ensuring that memory operations and results are committed in the correct sequence. The presence of squashed µops (`µop[1]` and `µop[N]`) suggests frequent branch mispredictions or invalidated speculative paths, which could degrade performance. The architecture balances throughput (via out-of-order execution) with correctness (via in-order retirement), a hallmark of modern high-performance CPUs.

</details>

One example exploiting a cache covert channel is the flush-reload technique [38], where the sender is an insider and the receiver is an outside attacker. During the setup phase of the covert channel, certain cache lines are flushed out of the cache. To send a secret out, the sender accesses a secret-dependent address, which brings back one of the flushed lines. The receiver later measures the time to reload each cache line and infers whether the line was fetched by the sender by observing whether the reload is a cache hit. The flush-reload cache covert channel is used in most of the speculative execution attacks published.
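The three phases of the flush-reload channel (flush, secret-dependent access, timed reload) can be sketched with a toy cache model. This is only an illustration: `ToyCache`, the latency values, and the 4096-byte stride between probe lines are assumptions made for the example, not measurements of real hardware.

```python
class ToyCache:
    """Set of cached line addresses; hit and miss latencies are made-up cycle counts."""

    HIT, MISS = 10, 200

    def __init__(self):
        self.lines = set()

    def flush(self, addr):
        self.lines.discard(addr)

    def access(self, addr):
        latency = self.HIT if addr in self.lines else self.MISS
        self.lines.add(addr)   # an access always leaves the line cached
        return latency


LINE = 4096  # assumed stride so that each byte value maps to its own line


def flush_reload(secret_byte):
    cache = ToyCache()
    probe = [i * LINE for i in range(256)]
    for addr in probe:                    # setup: flush every shared line
        cache.flush(addr)
    cache.access(secret_byte * LINE)      # send: touch one secret-dependent line
    timings = [cache.access(addr) for addr in probe]   # receive: time each reload
    return timings.index(min(timings))    # the single fast reload reveals the byte


recovered = flush_reload(0x5A)
```

Here the receiver learns one byte per round because exactly one of the 256 probe lines reloads quickly.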

There are other techniques for covert communication through cache state. In a prime-probe [39] covert channel, the receiver first primes the cache to fill the cache with its own cache lines. The sender then accesses certain addresses, evicting some of the receiver's cache lines. The receiver can learn which cache lines the sender accessed by loading each cache line and observing cache misses. In a flush-flush [40] covert channel, the receiver keeps evicting certain addresses by executing the flush instruction. If the sender accesses some of these addresses and brings them into the cache, the time to flush will be longer, so the receiver can infer information from timing the second flush.
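The prime-probe channel described above can be sketched in the same toy style. The example assumes a direct-mapped cache with 16 sets and tags each line with its owner to stand in for separate address spaces. Both are simplifications, and the names `DirectMappedCache` and `prime_probe` are hypothetical.

```python
NUM_SETS = 16  # assumed cache geometry for illustration


class DirectMappedCache:
    """Each set holds one line, identified by (owner, tag)."""

    def __init__(self):
        self.sets = [None] * NUM_SETS

    def access(self, owner, addr):
        idx, line = addr % NUM_SETS, (owner, addr // NUM_SETS)
        hit = self.sets[idx] == line
        self.sets[idx] = line      # a miss evicts whatever was in the set
        return hit


def prime_probe(secret_nibble):
    cache = DirectMappedCache()
    for s in range(NUM_SETS):                  # prime: receiver fills every set
        cache.access("receiver", s)
    cache.access("sender", secret_nibble)      # send: evicts one receiver line
    hits = [cache.access("receiver", s) for s in range(NUM_SETS)]   # probe
    return hits.index(False)                   # the set that missed


recovered_nibble = prime_probe(0xB)
```

Unlike flush-reload, this sketch needs no memory shared between sender and receiver. The information is carried by which cache set the receiver finds evicted.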

Many other types of covert or side channels that use neither caches nor timing are also possible.

## III. SPECULATIVE EXECUTION ATTACKS

We first present some critical attack steps that we have identified in existing speculative execution attacks.

## A. Critical Attack Steps

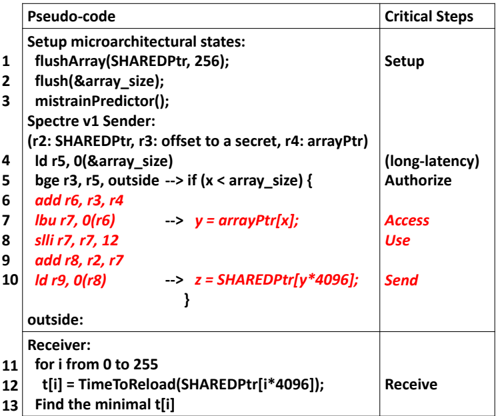

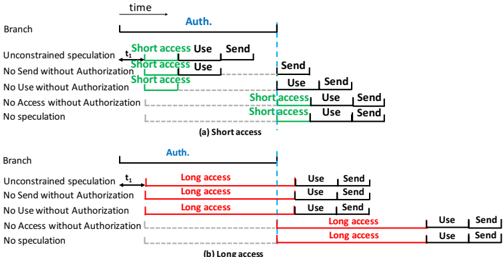

Although the exact workflow of an attack may vary, we observe that all of these attacks consist of 6 critical steps. These are shown in the right column of Fig. 2 and described below.

Setup. The Setup step sets up the initial hardware state, e.g., the branch predictor state for Spectre v1, so that the processor will enter speculative execution. It also sets up the initial state for the covert channel, e.g., flushing the shared cache lines for a flush-reload channel.

Authorize. The attack starts with the Authorize step. The Authorize operation performs the authorization required for accessing a memory location or a protected register. For speculative attacks, the speculative execution window starts when the authorization is delayed.

Access. When the authorization is delayed, the Access step in a speculative attack can read a secret from the cache, the memory, a protected register or a microarchitectural buffer, which would otherwise not be allowed.

Use. The Use step uses the secret to generate a secret-dependent operation. Examples are instructions that compute a memory address for a later load operation.

Send. The Send step alters the microarchitectural state of the covert channel in a secret-dependent way. Even if the access, use and send operations will all be squashed after the authorization fails, the microarchitectural state change may remain and can be discovered later by the receiver.

Receive. The Receive step is the recovery of the secret from the covert channel by the attacker.

## B. A Spectre v1 Attack Example

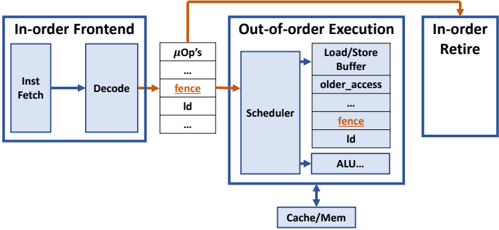

For concreteness, let us first consider a particular speculative attack, the Spectre v1 attack. In Fig. 2, we show the pseudo-code of the Spectre v1 attack and the RISC assembly instructions executed during speculative execution. Lines 1-3 set up the microarchitectural state. The cache lines containing the shared array pointed to by SHAREDPtr are flushed from the caches in preparation for the flush-reload cache covert channel, which we described in Section II. The variable array_size, which holds the size of the private array pointed to by arrayPtr, is also flushed so that the load in line 4 will take a long time to finish. Also, the branch predictor is mistrained so that the prediction of the conditional branch in line 5 will be 'not taken'. The conditional branch, bge, in line 5 performs the authorization for the later load byte instruction, lbu, which accesses the secret byte in line 7. Since the conditional branch check is delayed by the previous load instruction in line 4, the branch predictor is invoked. Due to the mistraining, the branch is predicted not taken and the secret is illegally accessed by the lbu instruction. In lines 8 and 9, the secret is then used to calculate the memory address of the next ld instruction in line 10. This ld instruction is a covert send

Fig. 2: Spectre v1 attack (bypassing array bounds checking). The assembly code and pseudo code of the attack bypass control flow authorization by a conditional branch to access a secret. The attack leaks an 8-bit secret through the most commonly used flush-reload cache covert channel by loading in a cache line in the shared array into the cache. The code in red is the transient execution that will be squashed. The comments after the arrows show the high-level language equivalents of the assembly code.

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Table: Pseudo-code Execution with Critical Steps

### Overview

The image presents a structured table mapping pseudo-code execution steps (left column) to critical attack steps (right column). The pseudo-code implements a Spectre v1 attack, with the transiently executed instructions highlighted in red. Critical steps are categorized into Setup, (long-latency) Authorize, Access, Use, Send, and Receive phases.

### Components/Axes

- **Left Column (Pseudo-code)**:

- Line numbers 1–13 with assembly-like instructions and Spectre attack logic.

- Key operations: `flushArray`, `flush`, `mistrainPredictor`, `ld` (load doubleword), `bge` (branch if greater than or equal), `lbu` (load byte unsigned), `slli` (shift left logical immediate), and `add`.

- Red-highlighted instructions: `add r6, r3, r4`, `lbu r7, 0(r6)`, `slli r7, r7, 12`, `add r8, r2, r7`, `ld r9, 0(r8)`, and `z = SHAREDPtr[y*4096]`.

- **Right Column (Critical Steps)**:

- **Setup**: Initialization of microarchitectural states (`flushArray`, `flush`, `mistrainPredictor`).

- **(long-latency) Authorize**: Branch and load operations (`bge`, `ld`) to validate memory access.

- **Access**: Secret read via `lbu` (`y = arrayPtr[x]`).

- **Use**: Computation of a secret-dependent address via `slli` and `add`.

- **Send**: Shared pointer dereference (`z = SHAREDPtr[y*4096]`).

- **Receive**: Time measurement loop (`TimeToReload`) to infer secret values.

### Detailed Analysis

1. **Setup Phase**:

- `flushArray(SHAREDPtr, 256)` and `flush(&array_size)` clear cache lines to establish a baseline.

- `mistrainPredictor()` mistrains the branch predictor so that the bounds-check branch is predicted not taken.

2. **Sender Logic**:

- Registers `r2` (SHAREDPtr), `r3` (offset to a secret), and `r4` (arrayPtr) hold the sender's inputs, and `r5` is loaded with `array_size`.

- The conditional branch `bge r3, r5, outside` performs the bounds check against `array_size`; the red-highlighted instructions that follow it execute transiently and compute the memory addresses.

3. **Critical Memory Access**:

- `lbu r7, 0(r6)` accesses `arrayPtr[x]` (highlighted in red), reading the secret byte during transient execution.

- `slli r7, r7, 12` and `add r8, r2, r7` compute the offset for the shared pointer dereference.

4. **Secret Inference**:

- `ld r9, 0(r8)` loads the value at the computed address, and `z = SHAREDPtr[y*4096]` captures the result.

- The `Receive` phase measures reload times (`TimeToReload`) to deduce the secret value via Spectre's cache-based timing attack.

### Key Observations

- **Red-highlighted instructions** (`add`, `lbu`, `slli`, `add`, `ld`, `z = SHAREDPtr`) represent the core Spectre attack logic, manipulating registers to leak memory contents.

- The `(long-latency) Authorize` step is delayed because `array_size` was flushed from the cache, which opens the speculative execution window.

- The `Receive` phase (lines 11–13) iterates 256 times to sample reload times, identifying the minimal `t[i]` to infer the secret.

### Interpretation

This pseudo-code demonstrates a Spectre v1 attack, exploiting speculative execution to bypass a software bounds check. The **Setup** phase prepares the predictor and cache state. The delayed **Authorize** step is bypassed through branch misprediction, and the **Access** and **Use** steps read the secret and compute a secret-dependent address. The **Send** and **Receive** phases exploit cache timing to transfer the secret value. The red-highlighted instructions are the transient execution that is later squashed, showing how speculative execution can be used to leak data through a covert channel. The structured mapping of code to critical steps emphasizes the attack's phases, from initialization to secret extraction.

</details>

| Line | Code | High-level equivalent | Critical step |
|------|------|-----------------------|---------------|
| | Setup microarchitectural states: | | |
| 1 | `flushArray(SHAREDPtr, 256);` | | Setup |
| 2 | `flush(&array_size);` | | Setup |
| 3 | `mistrainPredictor();` | | Setup |
| | Spectre v1 Sender: (r2: SHAREDPtr, r3: offset to a secret, r4: arrayPtr) | | |
| 4 | `ld r5, 0(&array_size)` | | Authorize (long-latency) |
| 5 | `bge r3, r5, outside` | `if (x < array_size) {` | Authorize |
| 6 | `add r6, r3, r4` | | |
| 7 | `lbu r7, 0(r6)` | `y = arrayPtr[x];` | Access |
| 8 | `slli r7, r7, 12` | | Use |
| 9 | `add r8, r2, r7` | | Use |
| 10 | `ld r9, 0(r8)` | `z = SHAREDPtr[y*4096];` | Send |
| | `outside:` | `}` | |
| | Receiver: | | |
| 11 | `for i from 0 to 255` | | Receive |
| 12 | `t[i] = TimeToReload(SHAREDPtr[i*4096]);` | | Receive |
| 13 | `Find the minimal t[i]` | | Receive |

instruction that leaks out the secret through the cache covert channel. In lines 11-13, the receiver measures the latency of accessing each line of the shared array to find out which memory address in the shared array was accessed by the sender. The memory address that hits in the cache leaks the secret.
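The walk-through above can be mirrored in a small software model that separates architectural state (squashed on a misprediction) from cache state (left in place). This is a sketch under strong simplifying assumptions: the cache is a set of line addresses, latencies are made-up constants, and the function names `run_victim` and `receive` are hypothetical. It models the behavior described for Fig. 2 and is not an exploit.

```python
LINE = 4096                            # probe stride, as in SHAREDPtr[y*4096]
HIT, MISS, THRESHOLD = 10, 200, 100    # made-up latencies in cycles


def run_victim(memory, array_base, array_size, x, predict_in_bounds):
    """Model lines 4-10 of the sender; return (architectural result, cached probe lines)."""
    cached = set()                 # Setup: every line of the shared array was flushed

    def body():
        y = memory[array_base + x]     # Access: read arrayPtr[x]
        addr = y * LINE                # Use: secret-dependent address
        cached.add(addr)               # Send: the load fills one cache line
        return y

    # Authorize is slow (array_size was flushed), so the body may run on a prediction.
    transient = body() if predict_in_bounds else None
    in_bounds = x < array_size         # Authorize finally resolves
    if not in_bounds:
        return None, cached            # squash: result discarded, cache state kept
    return (transient if predict_in_bounds else body()), cached


def receive(cached):
    """Lines 11-13: time a reload of each probe line and pick the fast one."""
    timings = [HIT if i * LINE in cached else MISS for i in range(256)]
    fast = [i for i, t in enumerate(timings) if t < THRESHOLD]
    return fast[0] if fast else None


memory = bytearray(64)
memory[0:4] = b"\x01\x02\x03\x04"      # public array, array_size = 4
memory[40] = 0x2A                      # secret byte beyond the array bounds

result, cached = run_victim(memory, 0, 4, x=40, predict_in_bounds=True)
leaked = receive(cached)               # the squashed access still leaves a trace
```

In this model the out-of-bounds call returns no architectural result, yet `leaked` equals the secret byte. If the predictor is not mistrained (`predict_in_bounds=False`), no probe line is cached and nothing is recovered.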

## C. Other Attacks

Table II gives a listing of the speculative attacks published to date [3]-[6], [41]-[59]. We show their Common Vulnerabilities and Exposures (CVE) numbers, description and publication date. All the attack variants in Table II, except for the last speculative interference attack, introduce a new way to bypass authorization to access the secret. The speculative interference attack introduces a new way to change the timing of non-speculative instructions, which adds a new dimension to the covert Send operation.

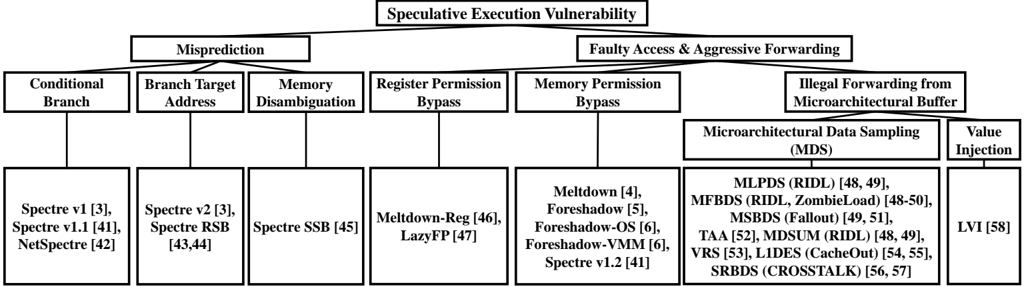

Hardware features for malicious speculative execution. In Fig. 3, we show the hardware features that can be exploited to launch malicious speculative execution attacks, especially to access a secret.

The first major category of features, those causing misprediction, includes conditional branch prediction, branch target address prediction and memory disambiguation. The Spectre v1 [3] attack mistrains the conditional branch predictor used for bounds checking to read an out-of-bounds secret. Spectre v1.1 [41] also uses conditional branch misprediction to bypass bounds checking but performs an out-of-bounds write during speculative execution. Even though the write to memory will not become architecturally visible, the write may change a jump target, e.g., the return address, and execute an Access-Use-Send gadget (i.e.,

TABLE II: The Speculative (Transient) Execution Attack variants. Date is year.month of publication.

| Attack | CVE | Description | Date |
|---------------------------------------|----------------------|-------------------------------------------------------------|---------------|
| Spectre v1 [3] | 2017-5753 | Speculative boundary check bypass for read | 2018.1 |
| Spectre v1.1 [41] | 2018-3693 | Speculative boundary check bypass for write | 2018.7 |
| NetSpectre [42] | 2017-5753 | Remote attack performing a bounds check bypass | 2018.1 |
| Spectre v2 [3] | 2017-5715 | Branch target misprediction | 2018.1 |
| Spectre RSB [43], [44] | 2018-15572 | Return target misprediction | 2018.8 |
| Spectre SSB [45] | 2018-3639 | Speculative store bypass, read stale data in memory | 2018.5 |
| Meltdown-Reg (Spectre v3a) [46] | 2018-3640 | System register value leakage to unprivileged attacker | 2018.5 |
| Lazy FP [47] | 2018-3665 | Leak of FPU state | 2018.6 |
| Meltdown (Spectre v3) [4] | 2017-5754 | Kernel content leakage to unprivileged attacker | 2018.1 |
| Foreshadow (L1 Terminal Fault) [5] | 2018-3615 | SGX enclave memory leakage | 2018.8 |
| Foreshadow-OS [6] | 2018-3620 | OS memory leakage | 2018.8 |
| Foreshadow-VMM [6] | 2018-3646 | VMM memory leakage | 2018.8 |
| Spectre v1.2 [41] | N/A | Speculative write to read-only memory | 2018.7 |
| RIDL/MLPDS [48], [49] | 2018-12127 | MDS leakage from load port | 2019.5 |
| RIDL/ZombieLoad/MFBDS [48]-[50] | 2018-12130 | MDS leakage from line fill buffer | 2019.5 |
| Fallout/MSBDS [49], [51] | 2018-12126 | MDS leakage from store buffer | 2019.5 |
| TAA [52] | 2019-11135 | TSX Asynchronous Abort | 2019.11 |
| RIDL/MDSUM [48], [49] | 2019-11091 | MDS leakage from uncacheable memory | 2019.5 |
| VRS [53] | 2020-0548 | Vector Register Sampling | 2020.1 |
| CacheOut/L1DES [54], [55] | 2020-0549 | L1D Eviction Sampling | 2020.1 |
| CROSSTALK/SRBDS [56], [57] | 2020-0543 | Special Register Buffer Data Sampling | 2020.6 |
| LVI [58] | 2020-0551 | Load Value Injection causing memory disclosure | 2020.3 |
| Speculative Interference [59] | N/A | Speculative interference on non-speculative instructions | 2020.9 |

a code snippet) as we show in lines 7-10 in Fig. 2 to read and leak a secret. NetSpectre [42] shows that the mistraining of the conditional branch predictor can be performed remotely.

Another control-flow misprediction based attack is the Spectre v2 attack [3], which injects a malicious target into the branch target buffer (BTB) for indirect branches. Similarly, the Spectre RSB attack [43], [44] injects wrong return addresses into the return stack buffer (RSB) for function returns. Both can cause information leakage by directing the control flow to an Access-Use-Send gadget.
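To illustrate how a target injected by one branch can be consumed by another, the sketch below models a BTB that is indexed by the low bits of the branch address and carries no tag. That indexing scheme is an assumption made for illustration; real BTB organizations differ and are often undocumented. All addresses and the name `ToyBTB` are hypothetical.

```python
class ToyBTB:
    """Untagged BTB indexed by low PC bits (an illustrative assumption)."""

    def __init__(self, index_bits=8):
        self.mask = (1 << index_bits) - 1
        self.table = {}

    def update(self, pc, target):
        self.table[pc & self.mask] = target

    def predict(self, pc):
        return self.table.get(pc & self.mask)


btb = ToyBTB()
VICTIM_BRANCH = 0x7F001234      # hypothetical indirect branch in the victim
ATTACKER_BRANCH = 0x00005634    # shares the low 8 bits (0x34) with the victim branch
GADGET = 0x7F00BEEF             # hypothetical Access-Use-Send gadget address

btb.update(ATTACKER_BRANCH, GADGET)               # attacker trains its own branch
speculative_target = btb.predict(VICTIM_BRANCH)   # victim inherits the injected target
```

Because the two branch addresses alias to the same entry, the victim's indirect branch is predicted to jump to the gadget until its real target resolves.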

Memory disambiguation checks whether the value written by a previous store instruction, which has not yet been written back to the cache-memory system, should be forwarded to a later load instruction that reads from the same address. In the Speculative Store Bypass (Spectre SSB) attack, if the store address has not been computed and the processor predicts that the addresses of the current load and a previous store are different, then stale data, which can be a secret, can be loaded from the memory system to the processor and get leaked out.
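The effect of a wrong "no alias" prediction can be shown with a few lines of code. This is a minimal sketch: memory is a dictionary, the pending store is passed in as an address and value pair, and the function name `speculative_store_bypass` and all addresses are hypothetical.

```python
def speculative_store_bypass(memory, store_addr, store_val, load_addr, predict_no_alias):
    """Return (value seen transiently by the load, architecturally correct value)."""
    # The store address is still being computed, so the store has not reached memory.
    stale = memory[load_addr]
    correct = store_val if load_addr == store_addr else stale
    # Predicting "different addresses" lets the load read memory without waiting.
    transient = stale if predict_no_alias else correct
    return transient, correct


memory = {0x100: 0x53}    # 0x53 is stale secret data about to be overwritten
transient, correct = speculative_store_bypass(
    memory, store_addr=0x100, store_val=0x00, load_addr=0x100, predict_no_alias=True
)
waited = speculative_store_bypass(memory, 0x100, 0x00, 0x100, predict_no_alias=False)
```

With the misprediction, dependent instructions transiently observe the stale value 0x53 even though the architecturally correct value is 0x00. When the load waits for the store address, both values agree.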

The second major category of hardware features exploited consists of an illegal access that reads a secret and forwards it to dependent instructions before it is squashed. We call these 'faulty access and aggressive forwarding' attacks. The first type of attack transiently bypasses the permission checks on special registers while the exception handling is delayed. Meltdown-Reg [46] can read the system parameter stored in a system register while LazyFP [47] leaks the stale floating-point unit (FPU) state of a previous domain that is not cleared until first used in a new context.

The second type of faulty access attack transiently violates memory access permission checking and reads illegal data

Fig. 3: Taxonomy of secret access (bypassed authorization and secret access steps). The third and fourth rows show the hardware mechanisms used to trigger the transient execution. They correspond to delayed Authorize operations that are temporarily bypassed. The last row shows the attacks that exploit these hardware features. These are listed in the same order as in Table II, from left to right.

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Flowchart: Speculative Execution Vulnerability Categories

### Overview

The diagram illustrates a hierarchical taxonomy of speculative execution vulnerabilities, branching into two primary categories: **Misprediction** and **Faulty Access & Aggressive Forwarding**. Each category contains subcategories with specific vulnerability examples and references.

### Components/Axes

- **Main Title**: "Speculative Execution Vulnerability"

- **Primary Branches**:

1. **Misprediction**

- Conditional Branch

- Branch Target Address

- Memory Disambiguation

2. **Faulty Access & Aggressive Forwarding**

- Register Permission Bypass

- Memory Permission Bypass

- Microarchitectural Data Sampling (MDS)

- Value Injection

### Detailed Analysis

#### Misprediction Branch

- **Conditional Branch**:

- Spectre v1 [3], Spectre v1.1 [41], NetSpectre [42]

- **Branch Target Address**:

- Spectre v2 [3], Spectre RSB [43,44]

- **Memory Disambiguation**:

- Spectre SSB [45]

#### Faulty Access & Aggressive Forwarding Branch

- **Register Permission Bypass**:

- Meltdown-Reg [46], LazyFP [47]

- **Memory Permission Bypass**:

- Meltdown [4], Foreshadow [5], Foreshadow-OS [6], Foreshadow-VMM [6], Spectre v1.2 [41]

- **Microarchitectural Data Sampling (MDS)**:

- MLPDS (RIDL) [48,49], MFBDS (RIDL, ZombieLoad) [48-50], MSBDS (Fallout) [49,51], TAA [52], MDSUM (RIDL) [48,49], VRS [53], L1DES (CacheOut) [54,55], SRBDS (CROSSTALK) [56,57]

- **Value Injection**:

- LVI [58]

### Key Observations

1. **Two Top-Level Categories**: The "Misprediction" branch contains 3 subcategories and the "Faulty Access & Aggressive Forwarding" branch contains 4.

2. **MDS Proliferation**: The MDS subcategory lists 8 distinct vulnerabilities, more than any other subcategory.

3. **Single Placement**: Each attack appears under exactly one subcategory.

4. **Ordering**: Attacks are listed from left to right in the same order as in the table of attack variants.

### Interpretation

This taxonomy shows that speculative execution vulnerabilities exploit either **prediction errors** (Misprediction) or **illegal accesses whose results are forwarded before being squashed** (Faulty Access & Aggressive Forwarding). The MDS category stands out as the most populated, encompassing attacks such as RIDL and CacheOut, while LVI forms its own value-injection class. The hierarchical structure groups attacks by the hardware mechanism that delays or bypasses authorization, which helps in reasoning about which attacks a given mitigation can cover.

</details>

with a memory access instruction. Meltdown [4] reads and leaks kernel data before the execution is squashed due to the failed supervisor permission check of the secret access. The Foreshadow (L1 terminal fault) attack variants [5], [6] exploit loads that do not have a valid virtual-to-physical address mapping. The address translation aborts prematurely and returns a partially translated address. If a secret at this incorrect address is present in the L1 cache, it can be speculatively accessed and leaked out. The leaked data can be a secret in an SGX enclave (Foreshadow), in the kernel space (Foreshadow-OS) or in the virtual machine monitor space (Foreshadow-VMM). The Spectre v1.2 attack [41] transiently bypasses the read/write permission check and writes to a read-only address. If the overwritten value is a branch target, the illegal write can trigger an Access-Use-Send gadget to leak a secret.

The more recent type of attack (from 2019 and 2020) exploits a hardware vulnerability in which stale data stored in microarchitectural buffers can be read by a load that will cause a fault or invoke a microcode assist [49]. The data can belong to another security domain and can be at a different address from the one the faulting load is accessing. This type of attack is called a microarchitectural data sampling (MDS) attack. In an MDS attack, the victim program first executes and accesses a secret. The secret can be temporarily stored in a microarchitectural buffer while it is in flight. The stale secret value can then be forwarded to a faulting or microcode-assisted load issued by the MDS attacker, who sends it out through a covert channel.
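The forwarding flaw behind MDS can be illustrated with a toy model. This is only a sketch: the class `ToyFillBuffer`, its single-entry layout and the `faulting` flag are hypothetical simplifications and do not describe any real buffer implementation.

```python
class ToyFillBuffer:
    """Toy single-entry buffer that keeps stale data after an access."""

    def __init__(self):
        self.addr = None
        self.data = None

    def fill(self, addr, data):
        # An in-flight access leaves its address and data in the buffer.
        self.addr, self.data = addr, data

    def load(self, addr, faulting=False):
        if faulting:
            # Assumed flaw: a faulting or microcode-assisted load is
            # transiently served the stale entry with no address check.
            return self.data
        # A normal load only receives data whose address matches.
        return self.data if addr == self.addr else None


buf = ToyFillBuffer()
buf.fill(addr=0x1000, data=0x42)                # victim touches its secret
normal = buf.load(addr=0x2000)                  # well-behaved attacker load
leaked = buf.load(addr=0x2000, faulting=True)   # faulting attacker load
```

In this sketch the faulting load observes the victim's value even though it targets a different address, which mirrors the property that the sampled data need not be at the address the attacker requests.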

Microarchitectural buffers that have been shown to store stale secret values include the load port, the line fill buffer and the store buffer, which we show in Fig. 1. The load port temporarily stores data that has been read by a load operation and is being written into a register. The line fill buffer stores a memory line that missed in the L1 data cache and is being returned from the L2 cache [49]. The store buffer stores the data and addresses of store operations to be written to the L1 data cache. RIDL [48] leaks secrets stored in the load port, called Microarchitectural Load Port Data Sampling (MLPDS) [49], and in the line fill buffer, called Microarchitectural Fill Buffer Data Sampling (MFBDS) [49].

ZombieLoad [50] demonstrates more variants of the line fill buffer leakage (MFBDS), in which the secret access is triggered by a microcode assist. Fallout [51] leaks secrets stored in the store buffer, called Microarchitectural Store Buffer Data Sampling (MSBDS) [49].

A vulnerability similar to MDS is the TSX Asynchronous Abort (TAA) [52] in Intel processors. If the Intel TSX atomic execution is aborted, uncompleted loads in the transaction may also read a secret from the microarchitectural buffers exploited by MDS and leak it through a covert channel.

The MDS and TAA techniques give rise to more attacks. Uncacheable memory accesses [48], [49] can bring data into the buffers mentioned above, which can then be accessed using MDS or TAA techniques, resulting in the Microarchitectural Data Sampling Uncacheable Memory (MDSUM) attack. The vector register sampling (VRS) vulnerability [53] allows part of the previously accessed vector register values to be sent to the store buffer and leaked by an MSBDS-type attacker. The CacheOut [54] or L1D eviction sampling (L1DES) vulnerability [55] shows that modified data recently evicted from the L1 data cache can be kept in the line fill buffer, which gives an MFBDS-type attacker the chance to read and leak it. In the CrossTalk [56] or special register buffer data sampling (SRBDS) attack [57], the secret value read from certain special registers can be stored in shared buffers and later propagated to the line fill buffer. The secret can be leaked to an MFBDS-type attacker, who can even be on a different core. We refer to all the above MDS-related attacks as MDS attacks in Fig. 3.

The other type of microarchitectural-buffer-related attack, the load value injection (LVI) attack [58], injects values into the victim domain to trigger speculation. The attacker first places malicious data in the microarchitectural buffers and lets the victim read the malicious value through the MDS vulnerabilities. If the victim uses the malicious value as an address to read a secret or as a jump address to an Access-Use-Send gadget, the secret can be leaked.

## D. Covert Channels for Send Operation

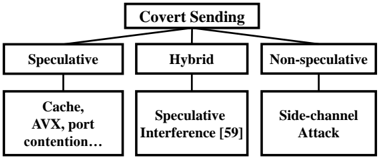

Microarchitectural covert channels are used to transmit the secret that has been illegally accessed. In Fig. 4, we show three

Fig. 4: Different ways to leak a secret through a Send operation. The speculative interference attack [59] achieves the final covert Send through a non-speculative instruction.

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Flowchart: Covert Sending Methodologies

### Overview

The diagram illustrates a hierarchical classification of covert sending techniques, organized into three primary categories: **Speculative**, **Hybrid**, and **Non-speculative**. Each category branches into specific subcategories, with references to technical methods and potential attack vectors.

### Components/Axes

- **Main Title**: "Covert Sending" (centered at the top).

- **Primary Branches**:

1. **Speculative** (left branch)

2. **Hybrid** (center branch)

3. **Non-speculative** (right branch)

- **Subcategories**:

- **Speculative**:

- Cache

- AVX

- Port contention...

- **Hybrid**:

- Speculative Interference [59]

- **Non-speculative**:

- Side-channel Attack

### Detailed Analysis

- **Speculative Branch**:

- Subcategories include hardware-level techniques like **Cache** (exploiting cache timing), **AVX** (Advanced Vector Extensions for speculative execution), and **port contention** (resource contention attacks). The ellipsis (...) suggests additional unspecified methods.

- **Hybrid Branch**:

- Contains **Speculative Interference [59]**, where "[59]" likely denotes a citation or reference to a specific study or paper.

- **Non-speculative Branch**:

- Lists **Side-channel Attack**, a method that infers data through physical leakage (e.g., power consumption, electromagnetic emissions).

### Key Observations

1. The diagram emphasizes **speculative execution** as a dominant theme, with two branches (Speculative and Hybrid) directly or indirectly referencing it.

2. The **Hybrid** category acts as a bridge between speculative and non-speculative methods, suggesting a combination of techniques.

3. **Side-channel Attack** is isolated under Non-speculative, indicating it operates outside speculative execution frameworks.

### Interpretation

This flowchart categorizes covert sending methods based on their reliance on speculative execution. The **Speculative** and **Hybrid** branches highlight vulnerabilities in modern hardware (e.g., cache timing attacks, AVX-based exploits), while the **Non-speculative** branch focuses on classical side-channel methods. The inclusion of a citation ([59]) for Speculative Interference implies empirical validation of this technique. The separation of Side-channel Attacks suggests they are considered distinct from speculative methods, possibly due to differing attack surfaces or mitigation strategies. The diagram underscores the evolving landscape of covert communication in computing, where speculative execution vulnerabilities (e.g., Spectre/Meltdown) have become critical attack vectors.

</details>

types of covert Send operations. These are through speculative instructions, both speculative and non-speculative instructions (hybrid), and only non-speculative instructions. Most of the existing speculative execution attacks are in the first category, executing a speculative Send operation to cause a secret-dependent state change in the covert channel that can be recovered later by the receiver. The cache covert channel is the most commonly used channel. Examples of other covert channels include the execution time of AVX instructions [42], port contention [60] and the cache way predictor [61].

The recently discovered speculative interference attack [59] leaks the secret through non-speculative instructions: the speculatively executed instructions change the timing of non-speculative instructions. In Fig. 4, we characterize it as doing a hybrid two-step covert sending. In the first step, the speculative execution causes a secret-dependent hardware unit usage, affecting the timing of non-speculative instructions. In the second step, the timing information of non-speculative instructions can leak the secret. Examples include using speculative contention for 1) the miss status handling registers (MSHRs) or 2) an execution unit (first step) to change the timing of a non-speculative load (second step). Essentially, the two examples exploit two different covert channels in the first step, rather than the commonly used flush-reload cache channel.

If the Send operation is purely non-speculative, as shown in the last case of Fig. 4, the attack becomes a side-channel attack, especially when both the Access and Use operations are also non-speculative. This means the program has a side-channel vulnerability that allows both the secret access and the operation causing a secret-dependent microarchitectural state change, which is beyond the scope of speculative execution attacks.

Takeaway from attack analysis. The important observation we make is that the critical attack steps in Section III-A hold for all speculative execution attacks, not just for the Spectre v1 attack. Moreover, any valid combination of delayed authorization, speculative secret access and a covert channel can form a new attack variant. Based on this characterization of speculative attacks, we propose four defense strategies that prevent these speculative execution attacks from succeeding.

## IV. DEFENSE STRATEGIES

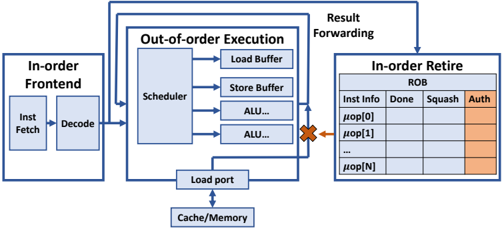

We propose a taxonomy of defenses depending on the attack step prevented, shown in Fig. 5. We identify four defense strategies, each based on a security policy:

- No Setup (Section IV-A): Setup is prevented so that either the malicious speculative execution cannot start or the covert channel state cannot be initialized.

- No Access without Authorization (Section IV-B): Access cannot execute before the authorization is completed.

- No Use without Authorization (Section IV-C): Access can execute but Use of a secret is blocked before the authorization is completed.

- No Send without Authorization (Section IV-D): Both Access and Use can execute but no secret can be sent, before the secret access is authorized.

The insight about No Access without Authorization is that while Authorize and Access may not have any data dependencies, they have a security dependency [37] since an access should not be allowed until it is authorized. Hence the No Access without Authorization security policy prevents the security breach. Given that Access, Use and Send are a chain of 3 data-dependent instructions, the No Use without Authorization and No Send without Authorization defense strategies can be understood as enforcing the protection at a later stage to try to reduce the performance overhead.
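The relationship between the three authorization-based strategies can be sketched as a small model of the Access, Use and Send chain. The enum `Policy` and the functions `transient_steps` and `leaks` are hypothetical names introduced only for illustration, and the No Setup strategy is not modeled because it acts before the chain begins.

```python
from enum import Enum
from typing import List


class Policy(Enum):
    NONE = "no defense"
    NO_ACCESS = "No Access without Authorization"
    NO_USE = "No Use without Authorization"
    NO_SEND = "No Send without Authorization"


def transient_steps(policy: Policy) -> List[str]:
    """Steps that may execute before the Authorize operation completes."""
    steps: List[str] = []
    if policy is Policy.NO_ACCESS:
        return steps              # the secret is never read
    steps.append("Access")
    if policy is Policy.NO_USE:
        return steps              # the secret is read but not forwarded
    steps.append("Use")
    if policy is Policy.NO_SEND:
        return steps              # the secret is used but not transmitted
    steps.append("Send")
    return steps


def leaks(policy: Policy) -> bool:
    """The secret leaks only if Send can run before authorization."""
    return "Send" in transient_steps(policy)
```

Each later policy lets more of the chain run speculatively, which is why it can be expected to cost less performance while still stopping the chain before Send.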

We will describe representative defense proposals for each of these defense strategies.

## A. No Setup

There are two ways to prevent the Setup step. A defense can prevent either the preparation of the covert channel state or the trigger for speculative execution. Both can be achieved with an isolation-based method shown in Fig. 5.

The isolation method requires partitioning of otherwise shared hardware resources or flushing of a hardware resource if it is time-multiplexed. DAWG [15] partitions the cache lines using the domain IDs and guarantees no interference through the cache replacement state. Context-sensitive fencing [17] implements a new micro-op to flush the branch target buffer (BTB) or return stack buffer (RSB) state when entering a different protection domain. MI6 [25] partitions the shared DRAM and last-level cache (LLC) resources between trusted enclaves and untrusted software and enables clearing any per-core states such as branch predictors, L1 caches and TLBs, with a new instruction. IRONHIDE [26] implements a similar partitioning of LLC and memory resources and also a core-level partitioning by reserving certain cores for a security-critical program to reduce the cost of clearing per-core states.

Encryption can be applied to hardware states to implement an obfuscation-based isolation defense. Predictor state encryption [28] encrypts the BTB or RAS state with a context-specific secret when storing a new target address and decrypts it for usage. This prevents the attacker in another process from injecting malicious jump/return targets, without requiring the clearing of microarchitectural states. Such context-specific encryption can also be considered a form of isolation.
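A minimal sketch of context-specific predictor encryption follows. It assumes a simple XOR with a per-context key; the actual cipher used in [28] is not specified here, and the class `EncryptedBTB` and the key values are hypothetical.

```python
class EncryptedBTB:
    """Toy branch target buffer whose entries are stored encrypted."""

    def __init__(self):
        self.entries = {}  # branch PC -> encrypted target

    def update(self, pc, target, key):
        # Encrypt with the key of the context that trains the entry.
        self.entries[pc] = target ^ key

    def predict(self, pc, key):
        # Decrypt with the key of the context that consumes the entry.
        stored = self.entries.get(pc)
        return None if stored is None else stored ^ key


ATTACKER_KEY = 0x5A5A5A5A   # hypothetical per-context secrets
VICTIM_KEY = 0x0F0F0F0F

btb = EncryptedBTB()
btb.update(pc=0x400, target=0xDEAD, key=ATTACKER_KEY)   # attacker trains
same_ctx = btb.predict(pc=0x400, key=ATTACKER_KEY)      # attacker reads back
cross_ctx = btb.predict(pc=0x400, key=VICTIM_KEY)       # victim decrypts
```

In this sketch the victim still receives a prediction, but it decodes to a target the attacker did not choose, so no clearing of the predictor is needed on a context switch.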

However, note that these No Setup defenses usually require that the victim and the attacker come from different security domains, as the isolation-based method uses the domain information to allocate resources and enforce access control and

Performance-enhancing features for hardware defenses: SDO [30], Clearing the Shadows [31], InvarSpec [32]

<details>

<summary>Image 5 Details</summary>

### Visual Description

## Flowchart: Hardware Defenses of Speculative Execution Attacks

### Overview

The flowchart categorizes hardware-based defenses against speculative execution attacks, organized into four primary branches: **No Setup**, **No Access w/o Authorization**, **No Use w/o Authorization**, and **No Send w/o Authorization**. Each branch further subdivides into specific defense strategies, with citations provided for each technique.

### Components/Axes

- **Main Title**: "Hardware Defenses of Speculative Execution Attacks"

- **Primary Branches**:

1. **No Setup**

2. **No Access w/o Authorization**

3. **No Use w/o Authorization**

4. **No Send w/o Authorization**

- **Subcategories**:

- **Isolation** (appears under "No Setup" and "No Send w/o Authorization")

- **Basic No Use** (under "No Access w/o Authorization" and "No Use w/o Authorization")

- **No Sensitive Use** (under "No Use w/o Authorization")

- **Delay** (under "No Send w/o Authorization")

- **Prevent** (under "No Send w/o Authorization")

- **Shadow Structure** (under "No Send w/o Authorization")

- **Roll-back** (under "No Send w/o Authorization")

### Detailed Analysis

#### No Setup

- **Isolation**:

- DAWG [15]

- CSF-clearing [17]

- MI6 [25]

- IRONHIDE [26]

- Predictor state encryption [28]

#### No Access w/o Authorization

- **Isolation**:

- CSF-LFENCE [17]

- **Basic No Use**:

- NDA [23]

- SpectreGuard [18]

- ConTeXt [27]

- SpecShieldERP [21]

#### No Use w/o Authorization

- **Basic No Use**:

- NDA [23]

- SpectreGuard [18]

- ConTeXt [27]

- SpecShieldERP [21]

- **No Sensitive Use**:

- SpecShield-ERP+ [21]

- STT [22]

#### No Send w/o Authorization

- **Delay**:

- CondSpec [16]

- EfficientSpec [20]

- DOLMA [33]

- **Prevent**:

- CSF-CFENCE [17]

- **Shadow Structure**:

- InvisiSpec [14]

- SafeSpec [19]

- MuonTrap [29]

- **Roll-back**:

- Cleanup-Spec [24]

- **Isolation**:

- DAWG [15]

- CSF-clearing [17]

- MI6 [25]

- IRONHIDE [26]

### Key Observations

1. **Isolation** is a recurring defense strategy, appearing under both "No Setup" and "No Send w/o Authorization" branches.

2. **CSF-clearing** and **CSF-CFENCE** are variations of the same core concept, differentiated by implementation (e.g., [17] vs. [17]).

3. **SpecShieldERP** and **SpecShield-ERP+** suggest incremental improvements or variants of the same technique.

4. **MI6** and **IRONHIDE** are listed under both "No Setup" and "No Send w/o Authorization," indicating their broad applicability.

### Interpretation

The flowchart emphasizes a layered, multi-faceted approach to mitigating speculative execution attacks. Key trends include:

- **Authorization-Centric Defenses**: The "No Access" and "No Use" branches highlight the importance of access control and usage restrictions.

- **Isolation as a Universal Strategy**: Its repeated use suggests it is a foundational defense mechanism.

- **Specialized Techniques**: Methods like **Shadow Structure** (e.g., InvisiSpec, SafeSpec) and **Roll-back** (Cleanup-Spec) address specific attack vectors, such as data leakage or incorrect state restoration.

The diagram underscores the diversity of hardware-based countermeasures, ranging from basic isolation to advanced techniques like shadow structures and roll-back mechanisms. The citations indicate a reliance on peer-reviewed research, with newer or more specialized methods (e.g., DOLMA [33]) potentially representing cutting-edge solutions.

</details>

Fig. 5: Taxonomy of hardware defenses. The second row shows the 4 defense strategies. The third row shows the child defense categories under each strategy. The fourth row shows the proposed hardware defenses belonging to each defense category.

<details>

<summary>Image 6 Details</summary>

### Visual Description

## Block Diagram: CPU Instruction Processing Pipeline

### Overview

This diagram illustrates a simplified CPU instruction processing pipeline, highlighting the interaction between in-order and out-of-order execution components. It shows three primary stages: In-order Frontend, Out-of-order Execution, and In-order Retire, with explicit data flow paths and synchronization mechanisms.

### Components/Axes

1. **In-order Frontend**

- Inst Fetch (Instruction Fetch)

- Decode

- Arrows indicate sequential flow from Fetch → Decode

2. **Out-of-order Execution**

- Scheduler (central component)

- Load/Store Buffer

- older_access (likely a dependency tracking mechanism)

- fence (synchronization primitive)

- id (instruction identifier)

- ALU (Arithmetic Logic Unit)

- Arrows show parallel execution paths from Scheduler to all components

3. **In-order Retire**

- Single block with no internal components

- Receives input from Out-of-order Execution via orange arrow

4. **Memory Hierarchy**

- Cache/Mem (bottom component)

- Connected to Scheduler via downward arrow

5. **Micro-operations (µOp's)**

- Listed vertically between In-order Frontend and Out-of-order Execution

- Contains: "fence", "id", and ellipses indicating additional operations

### Detailed Analysis

- **Instruction Flow**:

1. Instructions flow left-to-right through In-order Frontend (Fetch → Decode)

2. Decoded instructions enter µOp's list

3. µOp's feed into Out-of-order Execution Scheduler

4. Scheduler distributes work to:

- Load/Store Buffer (memory operations)

- ALU (compute operations)

- fence (synchronization)

- id (instruction tracking)

5. Results flow back to In-order Retire

6. Cache/Mem serves as shared memory resource for all execution units

- **Synchronization**:

- fence instruction appears in both µOp's list and Scheduler outputs

- Indicates critical role in maintaining memory ordering constraints

- orange arrows emphasize synchronization points

### Key Observations

1. **Ordering Constraints**:

- In-order Frontend and Retire maintain sequential processing

- Out-of-order Execution allows parallelism while preserving correctness

2. **Critical Components**:

- Scheduler acts as central dispatcher

- fence instruction appears twice, emphasizing its importance

- Load/Store Buffer handles memory operations separately from ALU

3. **Data Flow**:

- Blue arrows represent normal data/instruction flow

- Orange arrows highlight synchronization points

- Vertical µOp's list acts as intermediary buffer

### Interpretation

This architecture demonstrates modern CPU design principles:

1. **Performance Optimization**:

- Out-of-order execution enables instruction-level parallelism

- Separation of fetch/decode from execution allows pipeline efficiency

2. **Correctness Mechanisms**:

- In-order Retire ensures program-visible ordering

- fence instructions enforce memory operation ordering

- Instruction IDs track dependencies despite out-of-order execution

3. **Memory Hierarchy**:

- Cache/Mem serves as shared resource for all execution units

- Load/Store Buffer likely implements store queue functionality

The diagram reveals a balance between in-order correctness (front-end/retire stages) and out-of-order performance (execution stage), with explicit synchronization points to maintain program semantics. The double appearance of "fence" suggests it's a critical primitive for memory consistency in this architecture.

</details>

Fig. 6: Inserting fences to stall the speculative execution of loads.

the encryption-based method uses the same key for a certain domain. The same-domain attack, e.g., NetSpectre [42], cannot be mitigated with these techniques.

## B. No Access Without Authorization

To prevent a security breach, we should prevent the secret Access before the authorization is completed. Software solutions can insert memory barriers, such as lfence in the x86 ISA, to defeat speculative attacks, but they require re-compilation or post-processing of the binary [62]. Also, significant performance overhead is incurred with these software fences. A hardware defense can also prevent the secret access by automatically inserting a fence micro-op. Hardware-inserted fences have the advantage of non-intrusive protection and much lower overhead.

The Context-Sensitive Fencing (CSF) defense proposed in [17] is shown in Fig. 6. It uses customizable decoding from software instructions to hardware micro-operations to insert hardware fences after a conditional branch instruction and before a subsequent load instruction. To defeat the Spectre v1 attack, CSF-LFENCE can place a fence between these two instructions. As no secret data is accessed in the first place, the No Access without Authorization defense provides strong protection that is independent of the type of covert channel used to exfiltrate the data.
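The decode-time fence insertion described above can be sketched as follows. This is an illustrative model, not the CSF implementation: the function `decode_with_fences`, the textual instruction format and the set of branch mnemonics are assumptions.

```python
# Hypothetical set of conditional-branch mnemonics for this sketch.
COND_BRANCHES = {"jae", "jb", "je", "jne"}


def decode_with_fences(instructions):
    """Insert a fence micro-op before the first load after a conditional branch."""
    uops = []
    pending_branch = False
    for inst in instructions:
        op = inst.split()[0]
        if op == "load" and pending_branch:
            # The fence stalls the load until the branch is resolved.
            uops.append("fence")
            pending_branch = False
        uops.append(inst)
        if op in COND_BRANCHES:
            pending_branch = True
    return uops


program = ["cmp r0, r2", "jae done", "load r1, [r0]", "load r3, [r1]"]
fenced = decode_with_fences(program)
```

Because the insertion happens during decoding, the software binary itself is unchanged, which is the non-intrusive property noted above.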

## C. No Use without Authorization

Hardware defenses can allow the secret access but prevent its usage in subsequent execution. This improves performance

Fig. 7: Hardware modification to support No Use without Authorization.

<details>

<summary>Image 7 Details</summary>

### Visual Description

## Diagram: CPU Instruction Processing Pipeline

### Overview

This diagram illustrates a CPU's instruction processing pipeline, highlighting in-order and out-of-order execution stages, memory interactions, and result retirement. The flow progresses from instruction fetch to execution and finally to result retirement, with explicit components for scheduling, buffering, and status tracking.

### Components/Axes

1. **In-order Frontend** (Left):

- **Inst Fetch**: Instruction fetch stage.

- **Decode**: Instruction decoding stage.

- Connected via arrows to the **Scheduler** in the out-of-order execution block.

2. **Out-of-order Execution** (Center):

- **Scheduler**: Central component managing instruction dispatch.

- **Load Buffer**: Handles load operations.

- **Store Buffer**: Manages store operations.

- **ALU...**: Arithmetic Logic Units (multiple instances implied).

- **Load Port**: Interface to memory/cache (labeled "Cache/Memory" at the bottom).

3. **In-order Retire** (Right):

- **ROB (Reorder Buffer)**: Tracks instruction status with columns:

- **Inst Info**: Instruction identifier (e.g., `μop[0]`, `μop[1]`, ..., `μop[N]`).

- **Done**: Completion status (empty in diagram).

- **Squash**: Invalidated instructions (empty in diagram).

- **Auth**: Authorization status (highlighted in orange).

4. **Result Forwarding**: Arrows connect the out-of-order execution block to the ROB, ensuring results are written back in program order.

### Detailed Analysis

- **Flow Direction**:

- Instructions flow left-to-right: Fetch → Decode → Scheduler → Execution → Retirement.

- Results are forwarded from the out-of-order execution block to the ROB for in-order retirement.

- **Key Elements**:

- **Load/Store Buffers**: Buffer memory operations to decouple execution from memory latency.

- **ALUs**: Represent computational units for arithmetic/logic operations.

- **ROB Columns**:

- `μop[N]`: Unique instruction identifiers (N implies variable-length instruction queue).

- **Auth Column**: Highlighted in orange, suggesting a security or validation mechanism (e.g., speculative execution checks).

### Key Observations

- **Pipeline Parallelism**: The scheduler dispatches instructions to multiple ALUs, enabling out-of-order execution.

- **Memory Hierarchy**: The Load Port directly interfaces with Cache/Memory, emphasizing memory access latency as a critical factor.

- **Auth Column Significance**: The orange highlight on the "Auth" column implies a security or validation step during retirement, possibly to prevent unauthorized or erroneous instructions from committing.

### Interpretation

This diagram represents a modern CPU's out-of-order execution engine, balancing performance and correctness. The **Auth column** in the ROB likely serves as a safeguard against speculative execution vulnerabilities (e.g., Meltdown/Spectre), ensuring only authorized instructions retire. The separation of in-order fetch/decode and out-of-order execution allows the CPU to exploit instruction-level parallelism while maintaining program-order semantics during retirement. The Load/Store Buffers and ALUs highlight the CPU's ability to overlap memory accesses and computations, reducing stalls. The absence of values in the ROB suggests this is a conceptual diagram, focusing on architectural components rather than performance metrics.

</details>

but still blocks the Use step in a speculative attack. We call it the No Use without Authorization defense strategy.

This strategy requires modifying the feed-forward logic which forwards the result of a producer instruction to dependent instructions so that forwarding is allowed to later operations only when the producer instruction is completed and authorized. This can be achieved when both the Done and the new Auth bits are set in the ROB in Fig. 7.
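The forwarding condition can be written down as a small predicate over a ROB entry. The field names follow the columns shown in Fig. 7, while the class `RobEntry`, the function `may_forward` and the `no_use_defense` switch are hypothetical names used only for this sketch.

```python
from dataclasses import dataclass


@dataclass
class RobEntry:
    uop: str
    done: bool = False     # result has been produced
    squash: bool = False   # instruction has been invalidated
    auth: bool = False     # authorization has completed successfully


def may_forward(entry: RobEntry, no_use_defense: bool = True) -> bool:
    """Decide whether the producer's result may reach dependent instructions."""
    if entry.squash or not entry.done:
        return False
    # Baseline hardware forwards as soon as the result is done.
    # With the defense, the Auth bit must also be set.
    return entry.auth or not no_use_defense


speculative_load = RobEntry(uop="load", done=True, auth=False)
authorized_load = RobEntry(uop="load", done=True, auth=True)
```

With the defense enabled, `speculative_load` is held back until its Auth bit is set, while the baseline would forward it immediately.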

There are two subclasses of defenses in this category. The 'Basic No Use' defenses simply prevent the data forwarding to any dependent instructions. The 'No Sensitive Use' defenses improve the performance by only preventing the data forwarding to sensitive instruction types such as memory load instructions, which can be used to send cache covert channel signals, or for other known covert channels.

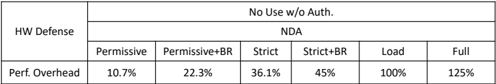

Basic no use. An example of the 'Basic No Use' defense strategy is the NDA (Non-speculative Data Access) defense proposal [23]. This has many variants, based on which authorization checks and Access operations are considered. NDA-Permissive checks the resolution of conditional branch conditions and indirect branch addresses (first 2 columns in Fig. 3). NDA-Permissive-BR (Bypass Restriction) checks these and also checks memory address disambiguation (the third column in Fig. 3). These two NDA-Permissive variants protect accesses from the cache and memory and from special registers like control registers.

There are also two NDA-Strict variants: NDA-Strict and NDA-Strict-BR. These are like their NDA-Permissive counterparts, except that they also prevent accesses of secrets that are already in the general-purpose registers.

The NDA-Load variant further adds hardware to prevent the data forwarding from an Access operation until the instruction is retired, i.e., the instruction is at the head of the ROB queue and has its Authorization completed. This covers the first 5 columns in Fig. 3. Since NDA was proposed before the attacks in the last two columns of Fig. 3, it is not known whether it covers the attacks that do illegal forwarding from microarchitectural buffers. NDA-Full is the most secure variant, combining NDA-Strict-BR with NDA-Load.

SpectreGuard [18] is another example of a 'Basic No Use' defense. While it only discusses Spectre v1, its key contribution is providing the Linux OS interface to identify sensitive memory pages and mark these as non-speculative. Forwarding is blocked during speculative execution only for data accessed from sensitive pages, which reduces the performance overhead. ConTExT [27] implements similar software support to mark secret data, which should not be used in speculative execution, as non-transient. In addition, ConTExT allows taint propagation in the processor to also taint the values derived from non-transient values. These tainted values cannot be used in subsequent speculative execution.

SpecShield [21] also implements a 'Basic No Use' defense. It protects any secret in the memory which can be read by load operations. SpecShieldERP prevents data forwarding until the authorization of control flow, memory disambiguation and memory-related permission checking is completed and no violation is found.

No sensitive use. Another variant in [21], SpecShieldERP+, implements a 'No Sensitive Use' defense policy. It considers the same authorization of control flow, memory disambiguation and memory permission checking as SpecShieldERP, but only prevents the data forwarding to sensitive instructions like loads and branches.
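The difference between the two subclasses reduces to which consumers are blocked. The following sketch uses the sensitive instruction types named above (loads and branches); the function `forwarding_allowed` and the string policy names are hypothetical.

```python
# Sensitive consumer types as described for SpecShieldERP+ above;
# other designs may use a different set.
SENSITIVE_KINDS = {"load", "branch"}


def forwarding_allowed(producer_authorized, consumer_kind, policy):
    """Return True if an unretired producer may forward to this consumer."""
    if producer_authorized:
        return True                       # nothing to protect any more
    if policy == "basic":
        return False                      # block every dependent instruction
    if policy == "sensitive":
        return consumer_kind not in SENSITIVE_KINDS
    raise ValueError("unknown policy: " + policy)
```

Under the 'sensitive' policy an unauthorized result can still feed, for example, an arithmetic instruction, which is where the performance gain over the basic policy comes from.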

Speculative Taint Tracking (STT) [22] is another example of the 'No Sensitive Use' policy. STT further considers the covert channels due to implicit information flows and marks loads, branches, stores and data-dependent arithmetic instructions as being sensitive. To improve the performance, STT implements an efficient taint tracking mechanism to untaint authorized operations. STT has two variants, STT-Spectre and STT-Future. STT-Spectre considers only the authorization of control flow while STT-Future tries to include potential future speculative attacks by deeming a load operation safe only when it reaches the head of the ROB or cannot be squashed.
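The idea of tainting and untainting can be sketched with a register-level tracker. This is a simplified illustration and not the STT hardware mechanism: the class `TaintTracker` tracks sets of load identifiers per register, which is an assumption chosen for clarity rather than efficiency.

```python
class TaintTracker:
    """Toy tracker: a register is tainted while any load it depends on is unauthorized."""

    def __init__(self):
        self.deps = {}             # register -> set of load ids it depends on
        self.unauthorized = set()  # loads whose authorization is pending

    def load(self, dest, load_id):
        self.unauthorized.add(load_id)
        self.deps[dest] = {load_id}

    def alu(self, dest, *srcs):
        # Taint propagates from all source registers to the destination.
        self.deps[dest] = set().union(*(self.deps.get(s, set()) for s in srcs))

    def authorize(self, load_id):
        # Untainting: every value derived only from this load becomes safe.
        self.unauthorized.discard(load_id)

    def is_tainted(self, reg):
        return bool(self.deps.get(reg, set()) & self.unauthorized)

    def may_execute_sensitive(self, *operands):
        return not any(self.is_tainted(r) for r in operands)


t = TaintTracker()
t.load("r1", load_id=1)                         # speculative secret access
t.alu("r2", "r1", "r0")                         # derived value inherits taint
blocked = not t.may_execute_sensitive("r2")     # e.g. a dependent load
t.authorize(1)
released = t.may_execute_sensitive("r2")
```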

## D. No Send without Authorization

The No Send without Authorization defenses prevent sending a signal on a covert channel so that the secret cannot be recovered by the attacker, who is the receiver of the covert channel. This signal is sent by changing the microarchitectural state. The defenses under this strategy are usually specific to one or multiple covert channels. Below, we describe five ways to achieve this goal. Although related defense proposals have considered different sets of covert channels, the cache covert channel is the main target that is addressed by all defenses.

Hence, we consider specifically the memory load instructions, which change the cache state, to explain these covert channels.

Delay state change. The processor can delay the execution of a load when it needs to modify the cache state. An example is the Conditional Speculation (CondSpec) defense [16], where an unauthorized memory load that hits in the cache can read the data and complete its execution. However, a load that has a cache miss is held up to be re-issued later.

The Efficient Invisible Speculative execution (EfficientSpec) defense [20] also implements this 'delay on miss' mechanism while adding a value predictor to provide a predicted value upon a cache miss. This is compared with the real value after the authorization is completed.
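The 'delay on miss' behavior can be sketched as follows. This is a simplified illustration, not the CondSpec [16] or EfficientSpec [20] implementation: the class `DelayOnMissCache` and its return values are hypothetical, the cache is modeled as a plain dictionary, replacement state is ignored, and the value predictor is omitted.

```python
class DelayOnMissCache:
    """Toy cache in which unauthorized loads may hit but may not fill."""

    def __init__(self, memory):
        self.memory = memory  # backing store: address -> value
        self.lines = {}       # cached lines: address -> value

    def load(self, addr, authorized):
        if addr in self.lines:
            # A hit returns data without bringing in a new line.
            return "hit", self.lines[addr]
        if not authorized:
            # A miss would change the cache state, so the load is held
            # and must be re-issued after authorization.
            return "delayed", None
        # An authorized miss fills the cache as usual.
        self.lines[addr] = self.memory[addr]
        return "filled", self.lines[addr]


cache = DelayOnMissCache(memory={0x10: 7, 0x20: 9})
cache.lines[0x10] = 7                                  # line already cached
hit_result = cache.load(0x10, authorized=False)        # completes
miss_result = cache.load(0x20, authorized=False)       # held up
state_unchanged = 0x20 not in cache.lines
reissue_result = cache.load(0x20, authorized=True)     # re-issued later
```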

The DOLMA defense [33] addresses a broader scope of covert channels including not only data caches but also TLBs, instruction caches and hardware predictor state covert channels. It delays both explicit state changes and the changes caused by implicit secret-dependent execution flow and by resource contention. DOLMA considers stores as well as loads as the Send operation.

Prevent state change. The hardware can allow a speculative load to read the data but prevent the cache state change by making the load uncacheable.

Context-sensitive fencing [17], with some variants implementing No Access without Authorization (Section IV-B), also provides a new type of fence, CFENCE, to implement No Send without Authorization. A load can execute ahead of an earlier CFENCE, but it is converted to a non-cacheable load if it causes a cache miss. This allows the data to be read while preventing the cache state change. The defense variant placing a CFENCE before every load is denoted by 'CSF-CFENCE' in Fig. 5.

Store speculative state in shadow structures. Visible cache state can be changed only on a successful authorization, by adding a shadow structure to hold the speculatively accessed cache lines.

InvisiSpec [14] prevents the modification of the cache state, including the cache coherence state in the multiprocessor system, by extending the processor with a speculative buffer to store the speculatively accessed data. If the authorization is completed and verified, each speculative load will issue a second access to the same address and cause safe cache state change. If the authorization is completed but rejected, the load is squashed, and no modification is made to the cache state. One InvisiSpec variant, InvisiSpec-Spectre, deems a load unauthorized until all the control-flow predictions are verified. The other variant, InvisiSpec-Futuristic, deems a load unauthorized until it reaches the head of the reorder buffer (ROB) or it cannot be squashed.
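The shadow-structure approach can be sketched with a small model. It is only an illustration of the idea described above, not InvisiSpec's design: the class `SpecBufferCore` and its methods are hypothetical, and cache coherence is not modeled. Speculative loads place data in a private buffer, and the visible cache changes only through the second access made after authorization.

```python
class SpecBufferCore:
    """Toy core with a speculative buffer in front of the visible cache."""

    def __init__(self, memory):
        self.memory = memory    # backing store: address -> value
        self.cache = {}         # visible cache state: address -> value
        self.spec_buffer = {}   # load id -> (address, value)

    def speculative_load(self, load_id, addr):
        # Read the data without touching the visible cache state.
        value = self.cache[addr] if addr in self.cache else self.memory[addr]
        self.spec_buffer[load_id] = (addr, value)
        return value

    def authorize(self, load_id):
        # Authorization succeeded: a second access to the same address
        # makes the state change visible.
        addr, _ = self.spec_buffer.pop(load_id)
        self.cache[addr] = self.memory[addr]

    def squash(self, load_id):
        # Authorization failed: drop the buffered data, cache untouched.
        self.spec_buffer.pop(load_id, None)


core = SpecBufferCore(memory={0x40: 5, 0x80: 6})
squashed_value = core.speculative_load("a", 0x40)
core.squash("a")
cache_after_squash = dict(core.cache)
core.speculative_load("b", 0x80)
core.authorize("b")
cache_after_authorize = dict(core.cache)
```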

The SafeSpec defense [19] implements a similar shadow buffer to prevent the modification of both cache and translation lookaside buffer (TLB) states. The cache coherence state is not protected by SafeSpec.

MuonTrap [29] adds the filter caches as the shadow buffers for I-cache, D-cache and TLB. The speculatively accessed entries are only stored in these and get cleared upon security domain switches. A key difference from previous work is that MuonTrap allows non-sensitive modification to the cache coherence state. In a MESI protocol, a speculative access can only be fetched in shared state and any sensitive action changing another cache line from M or E state to S or I state is delayed until it is authorized.

TABLE III: The improvement in performance overhead by applying SDO, ClearShadow and InvarSpec to existing defenses.

| Feature | Enhanced Defense | Category | Benchmark | Overhead Before | Overhead After |
|------------------|--------------------------|-----------|-----------|-----------------|----------------|
| SDO [30] | STT [22] | No Use | SPEC2017 | About 22% | 10.05% |
| ClearShadow [31] | Delay on miss [20] | No Send | SPEC2006 | 9% faster than basic delay-on-miss | |
| InvarSpec [32] | fence [14] | No Access | SPEC2006 | 199.3% | 101.9% |
| InvarSpec [32] | fence [14] | No Access | SPEC2017 | 195.3% | 108.2% |
| InvarSpec [32] | Delay on miss [16], [20] | No Send | SPEC2006 | 46.1% | 22.3% |
| InvarSpec [32] | Delay on miss [16], [20] | No Send | SPEC2017 | 39.5% | 24.4% |
| InvarSpec [32] | InvisiSpec [14] | No Send | SPEC2006 | 18.0% | 9.6% |
| InvarSpec [32] | InvisiSpec [14] | No Send | SPEC2017 | 15.4% | 10.9% |

Restore state change (Roll-back). The hardware can allow the cache state change during speculative execution but restore the old cache state if the authorization fails.

CleanupSpec [24] prevents a speculative execution attack from modifying the cache state by restoring the cache state when the speculation is found to be wrong. Before the authorization is completed, CleanupSpec allows bringing new cache lines into the cache during speculative execution, but extends each memory request with its side-effect fields to track which cache line is fetched into the cache and which cache line is evicted from the L1 data cache, due to this unauthorized request. If a memory request needs to be squashed, a request is sent to invalidate any new cache line fetched during speculative execution, and bring back any cache line evicted speculatively from the L1 data cache. The L2 and last-level caches in CleanupSpec implement address encryption [63] to prevent eviction-based information leakage.
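The roll-back idea can be sketched with a toy L1 cache that records the side effects of each speculative request. This is an illustrative model only, not CleanupSpec's implementation: the class `RollbackL1`, the FIFO-style victim choice and the dictionary used for the side-effect fields are assumptions, and the sketch restores line contents but not replacement order.

```python
class RollbackL1:
    """Toy L1 cache whose speculative fills can be undone on a squash."""

    def __init__(self, memory, capacity):
        self.memory = memory      # backing store: address -> value
        self.capacity = capacity  # maximum number of cached lines
        self.lines = {}           # address -> value, oldest entry first

    def speculative_load(self, addr):
        # Side-effect record: which line was fetched, which was evicted.
        side = {"fetched": None, "evicted": None}
        if addr not in self.lines:
            if len(self.lines) >= self.capacity:
                victim = next(iter(self.lines))  # evict the oldest line
                side["evicted"] = (victim, self.lines.pop(victim))
            self.lines[addr] = self.memory[addr]
            side["fetched"] = addr
        return self.lines[addr], side

    def squash(self, side):
        # Invalidate the speculatively fetched line ...
        if side["fetched"] is not None:
            self.lines.pop(side["fetched"], None)
        # ... and bring back the line it evicted.
        if side["evicted"] is not None:
            victim, value = side["evicted"]
            self.lines[victim] = value


l1 = RollbackL1(memory={1: 10, 2: 20, 3: 30}, capacity=2)
l1.speculative_load(1)
l1.speculative_load(2)          # treated as committed state for this example
before = dict(l1.lines)
value, side = l1.speculative_load(3)   # evicts line 1, fetches line 3
evicted_during_speculation = 1 not in l1.lines
l1.squash(side)                        # mis-speculation: undo both effects
restored = l1.lines == before
```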

Isolation of states between security domains. Assuming that the sender and the receiver are from different security domains, some isolation-based defenses that prevent Setup can also prevent the attacker from receiving the covert signaling. For example, the clearing of the branch predictor state can prevent mistraining in the Setup phase and also prevent the leakage through covert sending [64]. Hence, a defense can prevent two steps as a No Setup defense and a No Send without Authorization defense.

## E. Reducing Overhead of Defenses

Techniques have been proposed to reduce the performance overhead of defenses described earlier. Table III shows the performance improvements they achieve.
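To make the before and after figures easier to compare, the relative reduction in overhead can be computed as (before − after) / before. The short script below does this for the InvarSpec rows; the numbers are copied from Table III, while the function and variable names are only illustrative.

```python
# (before %, after %) overheads copied from the InvarSpec rows of Table III.
TABLE_III_INVARSPEC = {
    ("fence", "SPEC2006"): (199.3, 101.9),
    ("fence", "SPEC2017"): (195.3, 108.2),
    ("delay-on-miss", "SPEC2006"): (46.1, 22.3),
    ("delay-on-miss", "SPEC2017"): (39.5, 24.4),
    ("InvisiSpec", "SPEC2006"): (18.0, 9.6),
    ("InvisiSpec", "SPEC2017"): (15.4, 10.9),
}


def relative_reduction(before, after):
    """Fraction of the original overhead that the enhancement removes."""
    return (before - after) / before


for (defense, suite), (before, after) in TABLE_III_INVARSPEC.items():
    share = relative_reduction(before, after)
    print(f"{defense:14s} {suite}: {share:.1%} of the overhead removed")
```

For the fence-based defense on SPEC2006, for example, this gives (199.3 − 101.9) / 199.3, or roughly 49% of the original overhead removed.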

Speculative Data-Oblivious Execution (SDO) [30] allows an instruction, which may depend on a secret, to execute. For instance, a speculative load can access certain cache levels without making any state changes and the performance is improved if the data is found. SDO can be integrated with STT [22].

Clearing the Shadows (ClearShadow) [31] improves the performance by accelerating the computation of branch conditions and memory addresses so that Authorize can finish earlier. ClearShadow moves the instructions that Authorize depends on to the front to shorten or remove the speculation window. ClearShadow has been used to improve a 'delay-on-miss' defense [20].

TABLE IV: Performance numbers reported by existing work. The numbers may not be directly comparable as they are measured in different configurations. Numbers separated by commas are for different defense variants or benchmarks.

| Strategy | Defense | Platform | Performance Overhead (%) |
|--------------------|-----------------------|-------------------------------------------|----------------------------------------|
| No Setup & No Send | DAWG [15] | Zsim [65] | 0 ∼ 15 |
| No Setup & No Send | MI6 [25] | RiscyOO [66] | 16.4 |
| No Setup & No Send | IRONHIDE [26] | Tilera Tile-Gx72 processor [67] | -20 (Compared to an SGX-like baseline) |
| No Access | CSF-LFENCE [17] | GEM5 [68] | 48 |
| No Use | NDA [23] | GEM5 [68] | 10.7 ∼ 125 |
| No Use | SpectreGuard [18] | GEM5 [68] | 8, 20 |
| No Use | ConTExT [27] | Software approximation on Intel processor | 0.1 ∼ 71.1 |
| No Use | SpecShieldERP(+) [21] | GEM5 [68] | 10, 21 |
| No Use | STT [22] | GEM5 [68] | 8.5, 14.5, 24, 27 |
| No Send | CondSpec [16] | GEM5 [68] | 6.8, 12.8, 53.6 |
| No Send | EfficientSpec [20] | GEM5 [68] | 11 (IPC loss) |
| No Send | DOLMA [33] | GEM5 [68] | 10.2 ∼ 42.2 |
| No Send | CSF-CFENCE [17] | GEM5 [68] | 7.7, 21 |
| No Send | InvisiSpec [14], [69] | GEM5 [68] | 5, 17 |
| No Send | SafeSpec [19] | MARSSx86 [70] | -3 |
| No Send | MuonTrap [29] | GEM5 [68] | -5, 4 |
| No Send | CleanupSpec [24] | GEM5 [68] | 5.1 |

InvarSpec [32] allows some sensitive instructions to execute earlier without protection. The InvarSpec software identifies the safe set (SS) of an instruction I, which contains instructions that are older than I but do not affect I's input and execution. The InvarSpec hardware extension reads the SS and allows I to be issued even if some SS instructions are not resolved. InvarSpec can be applied to the fence-based defense, the delay-on-miss defense and the InvisiSpec defense, as we show in Table III.
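One way to read the safe-set check is as a subset test: I may issue early when every older instruction that is still unresolved belongs to its safe set. The sketch below encodes that reading. It is an interpretation of the description above, not InvarSpec's actual hardware logic, and the function name and instruction labels are hypothetical.

```python
def can_issue_early(safe_set, unresolved_older):
    """Return True if all unresolved older instructions are in the safe set.

    safe_set: labels of older instructions that cannot affect I.
    unresolved_older: labels of older instructions not yet resolved.
    """
    return set(unresolved_older) <= set(safe_set)


# Branch b1 is unresolved but in the safe set of I: I can issue.
issue_with_safe_branch = can_issue_early({"b1", "b2"}, ["b1"])
# Branch b3 is unresolved and not in the safe set: I must wait.
issue_with_unsafe_branch = can_issue_early({"b1"}, ["b1", "b3"])
```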

## F. Software-hardware Co-design

Some hardware defenses require software support. One way is changing the application software as described above for ClearShadow [31] and InvarSpec [32]. Another way is modifying the system software. DAWG [15] needs the system software to assign a proper domain ID to the protected program so that the domain ID is not shared with any potential attackers. Context-sensitive fencing [17] has a set of model-specific registers (MSRs) to specify the fence type and the insertion strategy. SpectreGuard [18] and ConTExT [27] enable marking secret data as non-transient by using a bit in the page table entry, which requires both compiler and OS software modifications.

## V. UNDERSTANDING PERFORMANCE OVERHEAD

## A. Performance Overhead Reported by Defense Papers

Table IV shows the performance overhead reported by some hardware defenses, listed according to the hardware defense taxonomy we presented in Fig. 5. The same gem5 cycle-accurate processor simulator [68] is used by most of the hardware defense papers. The overhead of isolation-based defenses to prevent cross-domain Setup and Send is mainly due to the clearing of microarchitectural states and the partitioning of hardware resources. CSF-LFENCE [17]


| | No Use w/o Auth. | No Use w/o Auth. | No Use w/o Auth. | No Use w/o Auth. | No Use w/o Auth. | No Use w/o Auth. |
|----------------|--------------------|--------------------|--------------------|--------------------|--------------------|--------------------|
| HW Defense | NDA | NDA | NDA | NDA | NDA | NDA |
| | Permissive | Permissive+BR | Strict | Strict+BR | Load | Full |
| Perf. Overhead | 10.7% | 22.3% | 36.1% | 45% | 100% | 125% |

(a) Performance overhead with different Authorization and Access types

| | No Send w/o Auth. | No Send w/o Auth. | | No Use w/o Auth. | No Use w/o Auth. |