# Visual Description

<details>

<summary>Image 1 Details</summary>

### Visual Description

\n

## Image: Elsevier Logo

### Overview

The image presents a stylized, monochrome logo featuring a detailed illustration of a tree with a snake coiled around its trunk, and the word "ELSEVIER" prominently displayed below. The illustration appears to be an engraving or woodcut style.

### Components/Axes

There are no axes or scales present in this image. The primary components are:

* **Tree:** A large, leafy tree with a full canopy.

* **Snake:** A snake winding around the tree trunk.

* **Text:** The word "ELSEVIER" in a bold, serif typeface.

### Detailed Analysis or Content Details

The illustration is highly detailed, with intricate patterns in the leaves and bark of the tree. The snake is depicted with scales and a distinct head. The word "ELSEVIER" is positioned directly below the illustration, centered horizontally. The style of the illustration suggests a historical or classical origin.

### Key Observations

The logo is visually striking and evokes a sense of tradition and knowledge. The combination of the tree and snake is symbolic, potentially representing wisdom, knowledge, or the tree of life. The monochrome color scheme adds to the logo's classic and sophisticated appearance.

### Interpretation

This is the logo for the publishing company Elsevier. The imagery is derived from the family crest of the Elsevier family, who founded the publishing house in the 16th century. The tree and snake are elements of the Elsevier family coat of arms. The logo represents the company's long history and commitment to scholarly publishing. The image does not contain any factual data or numerical values; it is a symbolic representation of a brand identity. The logo is a visual identifier, not a data presentation.

</details>

<details>

<summary>Image 2 Details</summary>

### Visual Description

Icon/Small Image (281x48)

</details>

00 (2023) 1-33

## Generalized Planning as Heuristic Search: A new planning search-space that leverages pointers over objects

Javier Segovia-Aguas Universitat Pompeu Fabra Sergio Jim´ enez Universitat Polit` ecnica de Val` encia Anders Jonsson Universitat Pompeu Fabra

## Abstract

Planning as heuristic search is one of the most successful approaches to classical planning but unfortunately, it does not extend trivially to Generalized Planning (GP). GP aims to compute algorithmic solutions that are valid for a set of classical planning instances from a given domain, even if these instances di er in the number of objects, the number of state variables, their domain size, or their initial and goal configuration. The generalization requirements of GP make it impractical to perform the state-space search that is usually implemented by heuristic planners. This paper adapts the planning as heuristic search paradigm to the generalization requirements of GP, and presents the first native heuristic search approach to GP. First, the paper introduces a new pointer-based solution space for GP that is independent of the number of classical planning instances in a GP problem and the size of those instances (i.e. the number of objects, state variables and their domain sizes). Second, the paper defines a set of evaluation and heuristic functions for guiding a combinatorial search in our new GP solution space. The computation of these evaluation and heuristic functions does not require grounding states or actions in advance. Therefore our GP as heuristic search approach can handle large sets of state variables with large numerical domains, e.g. integers. Lastly, the paper defines an upgraded version of our novel algorithm for GP called Best-First Generalized Planning (BFGP), that implements a best-first search in our pointer-based solution space, and that is guided by our evaluation / heuristic functions for GP.

Keywords: Generalized planning, classical planning, heuristic search, planning and learning, domain-specific control knowledge, program synthesis, programming by example

## 1. Introduction

Generalized planning (GP) addresses the representation and computation of solutions that are valid for a set of classical planning instances from a given domain [1, 2, 3, 4, 5, 6, 7, 8]. In the worst case, each classical planning instance may require a completely di erent solution. In practice, however, many classical planning domains are known

Corresponding author

Email addresses: javier.segovia@upf.edu (Javier Segovia-Aguas), serjice@dsic.upv.es (Sergio Jim´ enez), anders.jonsson@upf.edu (Anders Jonsson)

Artificial Intelligence

to have polynomial algorithmic solutions [9]. In other words, one can compute a single compact general solution that exploits some common structure of the di erent classical planning instances in a given domain. Generalized plans are then not sequences of actions, but algorithmic solutions that supplement planning actions with control-flow constructs. For example, a generalized plan that solves any classical planning instance from the blocksworld domain [10] can be compactly specified as follows: put all the blocks on the table and then, in a proper order, move each block to its goal placement . This generalized plan is able to solve any blocksworld instance, no matter the actual number, or identity of the blocks, and no matter the initial and goal configuration of the blocks. Note however that the knowledge represented in a given input set of classical planning instances may not be enough to specify an algorithmic solution that solves them all. For example, instances of the classical planning blocksworld domain do not include representation features for specifying whether all blocks are on the table , or for specifying the proper order for moving the blocks to their goal placements . A big challenge in GP is then to automatically discover the representation features that are key for computing a compact and general solution for a given set of planning instances. With this regard, researchers have proposed di erent languages for compactly represent GP solutions, and associated algorithms for computing a GP solution in a given language.

Automated planning has not achieved the level of integration with common programming languages, like C, J ava , or P ython , that is achieved by other forms of problem solving, such as constraint satisfaction or operational research [11, 12, 13]. An important reason is the low-level representations traditionally used in planning for specifying problems and solutions [14, 15, 16, 17, 18]. Given the algorithmic kind of GP solutions, GP is a promising research direction to bridge the current gap between automated planning and programming. However, most of the work on GP still inherits the S trips representation, in which states are represented specifying the properties and relations of a set of objects, and where actions represent object manipulations. In this work we provide a pointer-based representation for GP problems and solutions, that is closer to common programming languages, and that applies also to the object-centered problems that traditionally are addressed in the automated planning community. In addition we show that our pointer-based representation allows us to adapt the planning as heuristic search paradigm to GP: given a GP problem that comprises a finite set of classical planning instances from a given domain, our GP as heuristic search approach implements a combinatorial search to find a program that solves the full set of input instances. With our new pointer-based representation we are able to solve challenging programming tasks that were out of reach for previous top-down GP solvers.

Heuristic search is one of the most successful approaches to classical planning [19, 20]. The winners of the International Planning Competition (IPC) are often heuristic planners [21], and the workshop on Heuristics and Search for Domain-Independent Planning (HSDIP) is one of the discussion forums with the longest tradition at the International Conference on Automated Planning and Scheduling (ICAPS) , the major international conference for research on automated planning. Briefly, the planning as heuristic search approach addresses the computation of sequential plans as a combinatorial search in the space of the states reachable from a single given initial state. This combinatorial search is usually implemented as a forward search, guided by heuristics that are automatically extracted from the declarative representation of the planning problem. There is a wide range of di erent heuristics for classical planning, but most of them are based on the notion of relaxed plan [22]. The relaxed plan is a solution to a relaxation of the classical planning problem, which is computed assuming that goals (and action preconditions) are independent. The cost of the relaxed plan is an informative estimate of the actual cost-to-go for many classical planning problems, and its computation is much cheaper than the computation of the actual solution to the planning problem.

In the last two decades a wide landscape of e ective search algorithms, and heuristic functions, have been developed for classical planning [23, 24, 25, 26, 27, 28]. Unfortunately, search algorithms and heuristic functions from classical planning cannot be directly extended to GP. The computation of relaxed plans , as it is implemented by o -the-shelf heuristic planners, requires a pre-processing step for grounding states and actions [29]. On the other hand, GP solutions must be able to generalize to (possibly infinite) sets of classical planning instances, with di erent sets of state variables (i.e. state variables with di erent domain sizes and / or di erent number of state variables) as well as with di erent initial states and goals. These particular generalization requirements of GP make it impractical to ground states and actions and hence, to apply the state-space search and the cost-to-go estimates of heuristic planners.

With respect to previous work on GP, our heuristic search approach to GP introduces the following contributions:

1. A pointer-based representation for GP problems and solutions . Our representation formalism is closer to common programming languages, and it also applies to object-centered representations (like S trips ) that are tradi-

tionally used in automated planning.

2. A tractable solution-space for GP . We leverage the computational models of the Random-Access Machine [30] and the Intel x86 FLAGS register [31] to define an innovative pointer-based search space for GP. Interestingly our new search space for GP is independent of the number of input planning instances in a GP problem, and the size of these instances (i.e. the number of objects, state variables, and their domain sizes).

3. Grounding-free evaluation / heuristic functions for GP. We define several evaluation and heuristic functions to guide a combinatorial search in our solution space for GP. Evaluating these functions does not require to ground states / actions in advance, so they apply to GP problems where state variables have large domains (e.g. integers).

4. A heuristic search algorithm for GP . We present the BFGP algorithm for GP that implements a best-first search in our GP solution-space, and that is guided by our evaluation and heuristic functions.

5. A translator from the S trips fragment of PDDL to our pointer-based representation for GP. We automate the representation change from PDDL to pointer-based, and show several solutions to planning domains from the International Planning Competition (IPC) [32] which are validated on large random instances.

A preliminary description of our GP as heuristic search approach previously appeared at an ICAPS conference paper [33]. In this work we review and extend the seminal ideas presented in the conference paper, and provide a more exhaustive evaluation of our GP as heuristic search approach. Compared to the conference paper, the present paper includes the following novel material:

- We formalize the notion of pointer over the objects of a planning problem, and introduce a pointer-based formalization for planning action schemes, classical planning problems and solutions. We show that our pointerbased formalization directly applies to object-centered planning problems that are traditionally addressed in automated planning.

- We introduce the notion of partially specified planning program , that refers to the sketch of an algorithmic planning solution, and that allows to explain better our search algorithm and heuristics functions for GP. We also implemented new evaluation functions for guiding our GP as heuristic search approach.

- We provide theoretical results of our heuristic search algorithm for GP, that include termination , soundness , completeness , and complexity proofs. We also extend the empirical evaluation, including more results at a wider landscape of planning domains, to characterize better the performance of our GP as heuristic search approach.

The paper is structured as follows: Section 2 presents the planning models we rely on in this work (namely the classical planning model and the GP planning model) and also presents planning programs and the Random Access Machine , the formalisms we leverage for the representation of our algorithmic planning solutions. Section 3 shows how to extend the classical planning model with a set of pointers over objects, and the corresponding primitive operations for manipulating these pointers. This extension allows us to define, in an agnostic manner, a set of features and a set of actions for computing planning programs that can solve any instance from a given planning domain. Section 4 describes our GPas heuristic search approach; the section provides details on our solution space, evaluation functions, and heuristic search algorithm for GP. Section 5 presents the empirical evaluation of our approach and its comparison with the classical planning compilation for GP, that serves as a baseline. Finally, Section 6 wraps-up our work and discusses open issues and future work.

## 1.1. Related Work

Here we first review previous work on GP according to the following three dimensions: problem representation , solution representation , and computational approach . Then, we connect the research work on GP with other relevant areas in AI, such as program synthesis , deep learning , and (deep) reinforcement learning . Last, we list the features that distinguish our GP as heuristic search approach from the reviewed related work.

Regarding problem representation , there are two di erent approaches for the specification of the set of classical planning instances that are comprised in a GP problem. The explicit approach, that enumerates every classical planning instance in a GP problem [34], and the implicit approach, that defines the constraints that hold for the set of classical planning instances of a GP problem. The implicit approach is of interest because it allows to compactly specify infinite sets of classical planning instances (e.g. the infinite set of the classical planning instances that belong to the

blocksworld domain) [35, 36, 6]. In addition to the set of classical planning instances, extra background knowledge can also be specified in a GP problem with the aim of reducing the space of hypothetical solutions. For instance, plan traces / demonstrations on how to solve some of the input instances [37, 38, 39], the full state space [8], the particular subset of state features that can be used for computing a generalized plan [40, 41], negative examples that specify undesired behavior for the targeted GP solutions [42, 8], or state invariants that any state in a given domain must satisfy [43].

With respect to solution representation , di erent formalisms appeared in the planning literature to represent solutions that are valid for a set of classical planning instances; sequential plans are used in conformant planning [44], conditional tree-like plans are used in contingent planning [20], or policies are used in FOND planning , as well as in MDP / POMDP planning [45]. In all these planning settings, a set of di erent classical planning instances, with di erent initial states, can be implicitly represented as a disjunctive formulae over the state variables. Di erent goals can also be considered by coding them as part of the state representation, e.g. using static state variables. Since the early days of AI planning, hierarchies, LTL formulae, and policies, are also used to specify sketches of general solutions [46]. In the planning literature these solution sketches are often called domain-specific control knowledge , since they are traditionally used to control the planning process, and they apply to the entire set of classical planning instances that belong to a given domain [47, 48, 37, 38]. Last but not least, algorithmic solutions, represented either as lifted policies, finite automata, or as programs with control-flow constructs for branching and looping, are used to represent GP solutions [35, 49, 40, 50, 51, 52, 1, 34, 7].

Regarding to the computation of generalized plans , there are two main approaches for addressing GP problems. The top-down / o ine approach considers the entire set of classical planning instances in a given GP problem as a single batch, and computes a solution plan that is valid for the full batch at once. A common approach for the o ine computation of generalized plans is compiling the GP problem into another form of problem solving, and using an o -the-shelf solver to work out the compiled problem. With this regard, GP problems have been compiled into classical planning problems [52, 34], conformant planning problems [40], LTL synthesis problems [53], FOND planning problems [54, 6] or MAXSAT problems [8]. The compilation approach is appealing because it allows to leverage the latest advances of other well-founded scientific communities, with robust and scalable solvers. In addition, the computational complexity of some of these tasks is theoretically characterized with respect to structural features of the input problems, which may provide insights on the di culty of the addressed GP problem. A weak point of the compilation approach is however the size of the compiled problems to be solved; solvers are usually sensitive to the size of the input problems. On the other hand, the bottom-up / online approach incrementally processes the set of classical planning instances in a GP problem [1, 3]. Given a classical planning instance, a solution to that instance is computed and then, the solution is merged with solutions computed for the previous instances. The online approach is then appealing for handling GP problems that comprise large sets of classical planning instances. The main drawback of online approaches is dealing with the overfitting produced by the individual processing of the di erent classical planning instances in a GP problem.

As noted by previous work on GP, the aims of GP are connected to program synthesis [34, 6, 53, 33]. Program synthesis is a task traditionally studied by the computer-aided verification community [55], and that aims the computation of programs such that they satisfy a given correctness specification [56, 57, 58]. Program synthesis follows the functional programming paradigm. This means that a program is a function composition, where each function in the composition is a mapping of its input parameters into a single output, and where loops are implemented using recursion. Work on program synthesis is classified according to how the correctness specification of a program is formulated. The programming by example (PbE) paradigm specifies the desired program behaviour with a finite and non-empty set of ground input / output examples. This approach is related to the explicit representation of GP problems; a ground input / output example can be understood as the initial / goal state pair that represents a classical planning instance, and the instruction set of the functional programming language can be understood as the available actions for transforming an initial state into a goal state. Program synthesis also allows the implicit representation of the input correctness specifications, e.g. using fist-order formulae specified in SMTLIB, the formal language for SAT-Modulo Theories (SMT) [59]. The mainstream approach for program synthesis is to specify a formal grammar that allows to incrementally enumerate the space of possible programs, and to leverage the satisfiability machinery of SMT solvers to validate whether a candidate program is actually a solution. With this regard, work on theorem proving is also related to program synthesis, specially since SMT solvers allow the representation and satisfaction of first-order logic formulae [60]. Lastly, another popular trend in program synthesis is Programming by sketches that addresses program

## Author / 00 (2023) 1-33

synthesis in the particular setting where a partially specified solution is provided as input [61].

Besides computational methods for formal verification and logic satisfaction, optimization methods (that are predominant in Machine Learning [62]) have also been applied to the computation of planning solutions that generalize. For instance, o -the-shelf Deep Learning (DL) tools, have been successfully applied to the computation of generalized policies for classical and probabilistic planning domains [63, 64, 65]. Generalized policies are a powerful solution representation formalism whose applicability goes beyond classical planning. Generalized policies can represent planning solutions that can deal with non-deterministic actions [66], and whose aim is not to satisfy a given goal condition but to optimize a given utility function [67]. The aims of GP are also related to Reinforcement Learning (RL) [68]; while the cited DL approaches can be viewed as o -line optimization approaches to GP, the RL paradigm can be viewed as an online optimization approach to GP. RL methods incrementally compute policies, by iteratively addressing a set of sequential decision-making episodes. In RL learning experience is however not given beforehand (learning experience is collected by the autonomous exploration of the state space), and RL assumes that there is an explicit notion of reward function (which helps to guide exploration towards the most promising portions of the state-space). Note that DL and DRL approaches learn policies, without requiring a symbolic representation of the state and the action space. This means that it is possible to compute policies ( deep policies ) that generalize from raw sensor data (e.g. sequences of images) [69, 70]. The main disadvantage of computing solutions represented as deep policies is that they are black-box models that lack transparency and explanation capacity, which makes it di cult to interpret the produced solutions. This is a strong requirement in application areas that require humans in the loop, such as health, law, or defense [71].

With regard to the reviewed related work, our GP as heuristic planning approach is framed as follows:

- Numeric state variables . Previous work on generalized planning mainly followed the object-centered S trips representation. Addressing programming tasks with such representation is unpractical since it requires to encode all values in the domain of a state variable as objects. Other approaches, such as Qualitative Numeric Planning (QNP) [72, 73], handle large numeric state variables qualitatively with propositions to denote whether a variable is equal to zero. In this work we handle GP problems with integer state variables, which allow to naturally address diverse programming tasks as if they were GP problems.

- Explicit problem representation . In this work, a GP problem comprises the explicit enumeration of a finite set of classical planning instances to be solved. Interestingly our experimental results show that, in several domains, solving a small set of a few randomly generated classical planning instances, is enough to obtain a solution that generalizes to the infinite set of problems that belong to a given domain.

- No background knowledge . Our approach does not require any additional help such as state invariants, plans / -traces / demonstrations, negative examples, or the specification of the subset of features to appear in the generalized plans.

- Generalized plans represented as structured programs . Structured programming provides a white-box modeling paradigm that is widely popular. In this work we focus on generalized plans represented as structured programs, with control flow constructs for branching and looping the program execution flow. The application of a generalized plan on a particular instance is then a deterministic matching-free process, which makes it easier to define e ective evaluation and heuristic functions. Further, the asymptotic complexity of structured programs can be assessed from their structure, which is also helpful to establish preferences on di erent possible generalized plans.

- O -line satisfiability approach . This work follows an o -line approach to GP that aims to compute, at once, a generalized plan that exactly solves all the classical planning instances that are given as input. Because many heuristic search algorithms are easily extended to online versions, we believe that our GP as heuristic search approach is a stepping stone towards online approaches that can deal with larger sets of classical planning instances.

- Native heuristic search for GP . By native heuristic search, we mean that we defined a search space, evaluation / heuristic functions, and a search algorithm, that are specially targeted to GP. Our GP as heuristic search approach is related to an existing classical planning compilation for GP [34]. Our approach overcomes however

the main drawback of the compilation whose search space grows exponentially with the number and domain size of the state variables; in practice, this limits the applicability of the compilation to planning instances of small size since the performance of o -the-shelf classical planners is sensitive to the size of the input instances. Our experiments support this claim, and show that our BFGP algorithm significantly reduces the CPU-time required to compute and validate generalized plans, compared to the classical planning compilation approach to GP [34].

## 2. Background

This section introduces the necessary notation to formalize our GP as heuristic search approach. First, the section formalizes the classical planning model and the generalized planning model. Then the section formalizes planning programs , our formalism for the compact representation of planning solutions, and that applies to both classical planning and generalized planning. Lastly the section formalizes the Random Access Machine given that, to define a tractable solution space for GP, our GP as heuristic planning approach borrows several mechanisms from this abstract computation machine.

## 2.1. Classical Planning

Our formalization of the classical planning model is similar to the abstract planning framework called Finite Functional Planning , that was introduced for the theoretical analysis of di erent ground languages for classical planning [74]. Let X be a set of state variables , where each variable x 2 X has a domain Dx . A state s is a total assignment of values to the set of state variables, i.e. s = h x 0 = v 0 ; : : : ; xN = vN i , such that 8 0 i Nvi 2 Dxi . For a subset of the state variables X 0 X , let D [ X 0 ] = x 2 X 0 Dx denote its joint domain. The state space is denoted as S = D [ X ]. Given a state s 2 S , and a subset of variables X 0 X , let s j X 0 = h xi = vi i xi 2 X 0 be the projection of s onto X 0 i.e. the partial state that is defined by the values assigned by s to the subset of state variables in X 0 . The projection of s onto X 0 defines the subset f s j s 2 S ; s j X 0 s g of the states that are consistent with the corresponding partial state. Last, let us define a state-constraint C as a Boolean function C : S !f 0 ; 1 g over the state variables, that implicitly defines the subset of states SC S that are consistent with that constraint.

Let A be a set of deterministic actions such that each action a 2 A is characterized by two functions; an applicability function a : S ! f 0 ; 1 g and a successor function a : S ! S . An action a 2 A is applicable in a given state s 2 S i a ( s ) equals 1. The execution of an applicable action a 2 A , in a state s 2 S results in the successor state s 0 = s a . Note that our definition of deterministic actions generalizes actions with conditional e ects [75], common in GP since their state-dependent outcomes allows the adaptation of generalized plans to di erent classical planning instances.

A classical planning instance is a tuple P = h X ; A ; I ; G i , where X is a set of state variables, A is a set of actions, I 2 S is an initial state, and G is a constrain on the value of the state variables that induces the subset of goal states SG = f s j s G ; s 2 S g . Given P , a plan is an action sequence = h a 1 ; : : : ; am i whose execution induces a trajectory = h s 0 ; a 1 ; s 1 ; : : : ; am ; sm i such that, for each 1 i m , ai is applicable in si 1 and results in the successor si = si 1 ai . Aplan solves P if and only if the execution of in s 0 = I finishes in a goal state, i.e. sm 2 SG . We say is optimal if j j = m is minimal among the set of all the plans that solve P .

Planning languages, such as PDDL [76], can compactly represent the infinite set of classical planning instances of a given domain using a finite set of functions and a finite set of action schemes. Given a finite set of objects , and a finite set of functions defined over that set of objects, we assume that each state variable x 2 X stands for a function interpretation x ( ! o ), where 2 is a function with arity ar ( ), and ! o 2 ar ( ) is a vector of objects comprised in the Cartesian product space of ar ( ) . For keeping compact the number of state variables, objects and function signatures can by typed so the number of possible function interpretations is constrained. Functions in can be Boolean e.g. to represent PDDL predicates, or numeric e.g. to represent PDDL numeric fluents. Likewise, given a set of action schemes , we assume that each action a 2 A is built from an action schema 2 by substituting each variable in the action scheme with an object from . An action scheme 2 is a tuple = h name ( ) ; par ( ) ; pre ( ) ; e f f ( ) i ; where name ( ) is the identifier of the action schema, par ( ) is its list of variables (again these variables can be typed so they can only be substituted by objects of the same type), pre ( ) is a FOL Boolean formula defined over par ( ) that compactly represents the subset of states where the corresponding ground actions are applicable, and e ( ) is

list of FOL constraints that compactly represents the updates of the state variables caused by the application of the corresponding ground actions.

## 2.2. Generalized Planning

Generalized planning is an umbrella term that refers to more general notions of planning [7]. This work builds on top of the inductive formalism for GP, where a GP problem is defined as a finite set of classical planning instances that share a common structure [5, 53]. In this work we assume that the finite set of classical planning instances in a GP problem belong to the same domain. These instances share then a common structure since they are built from the same sets of functions and action schemes .

Definition 1 (GP problem) . A GP problem P = f P 1 ; : : : ; PT g is a finite and non-empty set of T classical planning instances P 1 = h X 1 ; A 1 ; I 1 ; G 1 i ; : : : ; PT = h XT ; AT ; IT ; GT i such that at each instance Pt 2 P , 1 t T, may di er in the set of state variables, actions, initial state, and goals, but the corresponding set of state variables Xt is induced from the common set of functions , and the set of actions At from the common set of action schemes .

There are diverse representations for GP solutions that range from generalized polices [35, 49], to finite state controllers [40, 50], formal grammars [51], hierarchies [77, 52], or programs [1, 34]. Each representation has its own expressiveness capacity, as well as its own validation complexity and computation complexity. In spite of this representation diversity, we can define a common condition under which a generalized plan is considered a solution to a GP problem. First, let us define exec ( ; P ) = h a 1 ; : : : ; am i as the sequential plan that is produced by the execution of a generalized plan on a classical planning instance P .

Definition 2 (GP solution) . A generalized plan solves a GP problem P = f P 1 ; : : : ; PT g i , for every classical planning instance Pt 2 P , 1 t T, it holds that the sequential plan exec ( ; Pt ) solves Pt.

AGPsolution for a given GPproblem P is optimal i , for every Pt 2 P , the sequential plan exec ( ; Pt ) induced by for solving the classical planing instance Pt is an optimal plan for that instance.

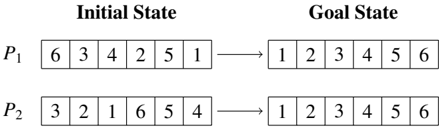

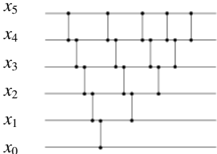

Example . Figure 1 shows the initial state and goal of two classical planning instances, P 1 = h X ; A ; I 1 ; G 1 i and P 2 = h X ; A ; I 2 ; G 2 i , for sorting two six-element lists. In this example the two instances share the same set of state variables X = f xi vector ( oi ) j 0 i 5 g that is built with the one-arity function = f vector g and the set of objects 1 = 2 = f o 0 ; : : : ; o 5 g , and where 8 x 2 XDx = N 0. The two classical planning instances also share the set of deterministic actions A , with 6 5 2 actions swap ( oi ; oj ), that swap the content of two list positions i < j , and that are induced from the single action scheme = f swap ( x ; y ) g . An example solution plan for P 1 is 1 = h swap ( o 0 ; o 5 ) ; swap ( o 1 ; o 2 ) ; swap ( o 1 ; o 3 ) i while 2 = h swap ( o 0 ; o 2 ) ; swap ( o 3 ; o 5 ) i is an example of a sequential plan that solves P 2 . Note that the set P = f P 1 ; P 2 g is a GP problem since they are two classical planning instances that are built using the same set of functions and action schemes . Figure 2 shows an example of a generalized plan that solves the GP problem P = f P 1 ; P 2 g , and that is represented as a sorting network . The sorting network is illustrated using two di erent types of items (namely the wires and the comparators ). For each state variable, there is a wire that carries the value of that variable from left to right in the network. On the other hand, comparators connect two di erent wires, corresponding to a pair of variables ( xi ; xj ), such that i < j . When a pair of values traveling through a pair of wires ( i ; j ), encounters a comparator, then the comparator applies the action swap ( oi ; oj ) i vector ( oi ) vector ( oj ), which in turn is xi xj . The sorting network of Figure 2 can actually solve any instance for sorting the content of any six-element list, no matter its initial content. This solution is however not valid for sorting lists with di erent lengths. In this paper we will show how to represent and compute planning solutions that leverage indirect memory addressing to generalize no matter the number of objects, and corresponding state variables.

<details>

<summary>Image 3 Details</summary>

### Visual Description

\n

## Diagram: State Transition Representation

### Overview

The image presents a diagram illustrating the transition from an "Initial State" to a "Goal State" for two permutations, labeled P₁ and P₂. Each permutation is represented as a sequence of numbers within a rectangular box. An arrow indicates the transformation from the initial to the goal state.

### Components/Axes

The diagram consists of two main columns labeled "Initial State" and "Goal State". Each column contains two rows, labeled P₁ and P₂. Each row represents a permutation of the numbers 1 through 6. The permutations are displayed as a horizontal sequence of numbers within a rectangular box. Arrows point from the initial state to the goal state for each permutation.

### Detailed Analysis or Content Details

**P₁:**

* **Initial State:** The sequence is 6, 3, 4, 2, 5, 1.

* **Goal State:** The sequence is 1, 2, 3, 4, 5, 6.

**P₂:**

* **Initial State:** The sequence is 3, 2, 1, 6, 5, 4.

* **Goal State:** The sequence is 1, 2, 3, 4, 5, 6.

### Key Observations

Both permutations, P₁ and P₂, start in a disordered state and transition to the same ordered goal state (1, 2, 3, 4, 5, 6). The initial states are different, indicating different starting points for the transformation.

### Interpretation

This diagram likely represents a problem in search algorithms or state-space search. The "Initial State" represents the starting configuration, the "Goal State" represents the desired configuration, and the arrow implies a series of operations or steps to transform one into the other. The two permutations suggest that there might be multiple paths or solutions to reach the same goal state. The problem could be related to sorting algorithms, puzzle solving (like the 15-puzzle), or any scenario where a system needs to transition from an initial configuration to a target configuration. The diagram doesn't provide information about the specific operations used to achieve the transition, only the initial and final states.

</details>

Figure 1: Example of two classical planning instances for sorting the content of two six-element lists by swapping the list elements.

<details>

<summary>Image 4 Details</summary>

### Visual Description

\n

## Diagram: Quantum Circuit

### Overview

The image depicts a quantum circuit diagram with six qubits, labeled x₀ through x₅, arranged vertically. The diagram shows a series of quantum gates applied to these qubits, represented by vertical lines connecting the qubit lines. The diagram does not contain numerical data, but illustrates the application of gates in a specific sequence.

### Components/Axes

- **Qubits:** Six horizontal lines representing qubits, labeled from bottom to top as x₀, x₁, x₂, x₃, x₄, and x₅.

- **Gates:** Vertical lines connecting the qubit lines, representing quantum gates. The specific type of gate is not indicated.

- **Arrangement:** The gates are applied sequentially, with each gate affecting one or more qubits.

### Detailed Analysis or Content Details

The diagram shows the following gate applications:

1. **x₀:** A gate is applied to x₀.

2. **x₁:** A gate is applied to x₁.

3. **x₂:** A gate is applied to x₂.

4. **x₃:** A gate is applied to x₃.

5. **x₄:** A gate is applied to x₄.

6. **x₀ & x₁:** A gate is applied to both x₀ and x₁.

7. **x₁ & x₂:** A gate is applied to both x₁ and x₂.

8. **x₂ & x₃:** A gate is applied to both x₂ and x₃.

9. **x₃ & x₄:** A gate is applied to both x₃ and x₄.

10. **x₀ & x₂:** A gate is applied to both x₀ and x₂.

11. **x₁ & x₃:** A gate is applied to both x₁ and x₃.

12. **x₂ & x₄:** A gate is applied to both x₂ and x₄.

13. **x₀ & x₃:** A gate is applied to both x₀ and x₃.

14. **x₁ & x₄:** A gate is applied to both x₁ and x₄.

15. **x₀ & x₄:** A gate is applied to both x₀ and x₄.

16. **x₁ & x₅:** A gate is applied to both x₁ and x₅.

17. **x₂ & x₅:** A gate is applied to both x₂ and x₅.

18. **x₃ & x₅:** A gate is applied to both x₃ and x₅.

19. **x₄ & x₅:** A gate is applied to both x₄ and x₅.

### Key Observations

The diagram demonstrates a pattern of applying gates to increasingly distant qubits. The gates are applied in a structured manner, suggesting a specific quantum algorithm or circuit design. The diagram does not provide information about the specific type of gates being used.

### Interpretation

The diagram represents a quantum circuit, a sequence of quantum gates applied to qubits. The specific arrangement of gates suggests a particular quantum algorithm or computation. Without knowing the type of gates used, it is difficult to determine the exact function of the circuit. The diagram illustrates the fundamental concept of quantum computation, where qubits are manipulated using quantum gates to perform calculations. The increasing complexity of the gate applications suggests a potentially complex quantum algorithm. The diagram is a visual representation of a quantum process, and its interpretation requires knowledge of quantum mechanics and quantum computing principles.

</details>

Figure 2: Example of a generalized plan, represented as a sorting network that solves any classical planning instances for sorting the content of a six-element list, no matter its initial content.

## 2.3. Planning programs

In this work we represent planning solutions as planning programs [34]. Unlike sequential plans, planning programs include a control flow construct which allows the compact representation of solutions to classical planning problems and to GP problems. Formally a planning program is a sequence of n instructions = h w 0 ; : : : ; wn 1 i , where each instruction wi 2 is associated with a program line 0 i < n , and it is either:

- A planning action wi 2 A .

- A goto instruction wi = go ( i 0 ; ! y ), where i 0 is a program line 0 i 0 < i or i + 1 < i 0 < n , and y is a proposition.

- A termination instruction wi = end . The last instruction of a planning program is always a termination instruction, i.e. wn 1 = end .

The execution model for a planning program is a program state ( s ; i ), i.e. a pair of a planning state s 2 S and program counter 0 i < n . Given a program state ( s ; i ), the execution of a programmed instruction wi is defined as:

- If wi 2 A , the new program state is ( s 0 ; i + 1), where s 0 = s wi is the successor when applying wi in s .

- If wi = go ( i 0 ; ! y ), the new program state is ( s ; i + 1) if y holds in s , and ( s ; i 0 ) otherwise 1 . Proposition y can be the result of an arbitrary expression on state variables, e.g. a state feature [78].

- If wi = end , program execution terminates.

To execute a planning program on a classical planning instance P = h X ; A ; I ; G i , the initial program state is set to ( I ; 0), i.e. the initial state of P and the first program line of . A program solves P i the execution terminates in a program state ( s ; i ) that satisfies the goal condition, i.e. wi = end and s 2 SG . Otherwise the execution of the program fails. If a planning program fails to solve the planning instance, the only possible sources of failure are:

1. Inapplicable program , i.e. executing action wi 2 A fails in program state ( s ; i ) since wi is not applicable in s .

2. Incorrect program , i.e. execution terminates in a program state ( s ; i ) that does not satisfy the goal condition, i.e. ( wi = end ) ^ ( s < SG ).

3. Infinite program , i.e. execution enters into an infinite loop that never reaches an end instruction.

1 We adopt the convention of jumping to line i 0 whenever y is false , following the JMP instructions in the Random-Access Machine that jump when a register equals zero.

In this work we model instructions wi 2 A as if they were always applicable but that their e ects only update the current state i the preconditions of the action hold in the current planning state. Formally, when executing wi in ( s ; i ), the new program state is ( s 0 ; i + 1) i wi is applicable, otherwise it is ( s ; i + 1). Therefore, in this work the execution of a program on a classical planning instance will never return an inapplicable program , and only incorrect or infinite program are possible sources of failure. This particular action modeling is common in Reinforcement Learning [68], and in conformant planning [44], because it allows to deliver compact solutions that apply to sets of di erent sequential decision-making problems (typically with di erent initial states).

## 2.4. The Random-Access Machine

The Random-Access Machine (RAM) is an abstract computation machine, in the class of register machines , that is polynomially equivalent to a Turing machine [79]. The RAM machine enhances a multiple-register counter machine [80] with indirect memory addressing. The indirect memory addressing of RAM machines is useful for defining RAM programs that access an unbounded number of registers, no matter how many there are. With this regard, a register in a RAM machine is then a memory location with both a content (a single natural number), and an address (a unique identifier that works as a natural number). Let be r the address of a RAM register, and [ r ] the content of that register.

Diverse base instructions sets , that are Turing complete, can be defined on the RAM registers. Our GPas heuristic search approach builds on the instructions of the Base set 1 and the Base set 3 , that are briefly defined as follows:

- inc ( r ) increments the content of the register r by 1, i.e. ([ r ] + 1) ! [ r ]. Likewise, dec ( r ) decrements the content of the register r , i.e. ([ r ] 1) ! [ r ].

- copy ( r 1 ; r 2) copies the content of r 1 in r 2 , i.e. [ r 1 ] ! [ r 2 ].

- jmp ( z ; r ) jumps to instruction z if register r is zero, else the RAM execution continues to the next instruction.

- halt terminates the RAM execution.

Auxiliary dedicated registers allow to reduce the size of the RAM instruction set. For instance, the number of registers called out by the RAM instructions can be reduced by using an accumulator dedicated register. WLOG in this work we extend Base sets 1 and 3 with two FLAGS registers, the zero and the carry registers, that are dedicated to store the outcome of three-way comparisons [81] between two registers, as well as to condition jump instructions. On the other hand, the RAM instruction set can be extended with extra instructions, that can actually be built as blocks of the base RAM instructions, but that allow the definition of more compact RAM programs. For instance, an extra instruction for clearing (setting to zero) the value of a register.

## 3. Planning with a RAM

Inspired by the computational model of the RAM machine, this section extends the classical planning model with a set of pointers , defined over the set of objects used to build the state variables and actions of a classical planning instance, and with the set of primitive operations for manipulating these pointers. Extending the classical planning model with a set of pointers, and their primitives, allows the agnostic definition of a set of state features, and a set of actions, that are shared for the di erent classical planning instances in a given domain and that can be leveraged for the computation of generalized plans. For instance, the selection sort algorithm is able to solve any sorting instance, no matter the content or the length of the input list, because it is provided with mechanisms for indexing the list positions and for operating over those indexes.

First, the section shows how to compactly represent a transition system using pointers. Then the section formalizes our extension of the classical planning model with a set of pointers , and their corresponding primitive operations. The last part of the section shows that our pointer-based formalism applies also to the object-centered representations traditionally used in classical planning.

```

bool constraint_sorted(int i) {

For (Pointer i=1; i<i[0]; i++) {

If (vector(i-1) > vector(i)) {

Return False;

}

}

Return True;

}

}

```

Figure 3: Boolean function constraint sorted that implements a constraint for validating whether the vector of state variables is sorted in increasing order. The constraint is implemented leveraging the single pointer i over the objects in ; vector ( i ) is interpreted as vector ( oi ) xi 2 X .

## 3.1. Representing transition systems with pointers

Formally, a transition system is a pair ( S ; ! ), where S is a set of states, and ! denotes a relation of state transitions S S . Unlike finite-state automata, the set of states and the set of state transitions of a transition system are not necessarily finite, and no initial / goal states are specified. State constraints allow the compact representation of (possibly infinite) sets of states. For instance given the set of state variables X = f x 0 ; x 1 ; x 2 ; x 3 ; x 4 ; x 5 g of the example in Figures 1 and 2, the state constraint x 0 x 1 x 2 x 3 x 4 x 5 defines the subset of states where the content of these variables is sorted in increasing order and it applies no matter the domain of the state variables (that can actually be infinite).

In object-centered transition systems, states are factored and each state variable is addressed by a function 2 fed with a list of objects ! o , i.e. ( ! o ) s.t. ! o 2 ar ( ) ; where is the set of objects and ar ( ) denotes the arity of . For instance in the previous example, given the one-arity function vector and the six-objects set = f o 0 ; o 1 ; o 2 ; o 3 ; o 4 ; o 5 g , each state variable xi 2 X may be defined as xi vector ( oi ).

Definition 3 (Pointer) . Given a set of objects , we define a pointer as a finite-domain variable z whose domain is Dz = [0 ; : : : ; j j 1] , where j j denotes the number of objects.

Pointers are then variables for indexing the objects of a transition system that, in combination with function symbols, are useful to define state constraints that produce not only compact, but general representations of a possibly infinite set of states. By general we mean that a constraint represents a set of states that share some common structure, no matter the actual number of objects, and corresponding state variables. For instance, Figure 3 shows the Boolean function constraint sorted , that implements a global constraint for checking whether the content of the vector of state variables is sorted in increasing order. The constraint sorted function is procedurally defined, leveraging a single pointer i , and it applies to any number of objects, and to any domain size of the corresponding state variables.

Besides the compact and general definition of (possibly infinite) sets of states, pointers over objects also enable the compact and general definition of (possibly infinite) sets of state transitions via action schemes .

Definition 4 (Action schema with pointers) . Given a set of X state variables, an action schema with pointers is a tuple h name, params, pre, e i where:

- name is the symbol that uniquely identifies the action schema.

- params is a finite set of pointers Z defined over the set of objects.

- pre is a state constraint where state variables are indirectly addressed via the function symbols and the pointers in params , i.e. x ( ! z ) such that 2 and ! z 2 Z ar ( ) . The pre state constraint implicitly represents the subset of states where the action schema is applicable.

- e is a partial assignment of the state variables where state variables are indirectly addressed via the function symbols and the pointers in params . The e partial assignment implicitly represents the successor state that results from the execution of the action schema at a given state.

To illustrate our pointer-based definition of an action schema, Figure 4 shows a procedural representation of the preconditions ( pre ) and the e ects ( e ) of the swap action schema. When applicable, the swap action schema exchanges

```

bool schema_swap_pre(Pointer i, Pointer j) {

return (i>=0 and j>=0 and i<[] and j<[] and i<j);

}

void schema_swap_eff(Pointer Variable aux;

aux:=vector(i);

vector(i):=vector(j);

vector(j):=aux;

}

```

```

Figure 4: Pointer-based representation of the preconditions and the e ects of the swap action schema. When applicable, the swap action schema exchanges the value of the state variables indexed by its two parameters , the pointers i and j .

the value of the state variables induced by its two parameters (the pointers i and j ). The swap action schema is succinct, because it compactly defines an infinite set of di erent state transitions that share a common structure. The swap action schema is also general, because it applies to any sorting instance, no matter the number of state variables (i.e. the length of the vector of state variables) or the domain size of these variables. What is more, the execution of the schema swap pre and schema swap eff procedures is a deterministic matching-free process since the input pointers do always index an object in .

## 3.2. Classical planning with pointers

Here we extend the classical planning model (introduced in Section 2) with a set of pointers and their corresponding primitive operations. We formalize the set of primitive operations over pointers leveraging the notion of Random-Access Machine . In more detail, we extend the classical planning model with a RAM machine of j Z j + 2 registers; j Z j pointers that reference the planning objects, plus two FLAGS registers (the zero and the carry ). The two FLAGS registers store the outcome of three-way comparisons , between two di erent pointers or between two state variables that are indirectly addressed via function symbols and pointers.

Given a classical planning instance P = h X ; A ; I ; G i , such that the state variables and actions are generated with the set of functions and action schemes of a given domain, and the set of objects , then the extended classical planning instance with a RAM machine of j Z j + 2 registers is defined as P 0 Z = h X 0 Z ; A 0 Z ; I 0 Z ; G i , where:

- The new set of state variables X 0 Z comprises:

- -The state variables X of the original planning instance, such that each state variable xi 2 X is xi ( ! o ) with 2 and ! o 2 ar ( ) , as defined above.

- -Two Boolean variables Y = f yz ; yc g , that play the role of the zero and carry FLAGS registers, respectively.

- -The pointers Z , a set of extra state variables s.t. each z 2 Z has finite domain Dz = [0 ; : : : ; j j 1].

- -A set of derived state variables XZ = f ( ! z ) j 2 ; ! z 2 Z ar ( ) g whose value is given by the interpretations of the functions of the domain with the corresponding pointers.

Given a fixed number of pointers, Y , Z , and XZ , are a subset of state variables that is shared by all the instances that belong to the same domain, no matter the number of objects of each instance.

- The new set of actions A 0 Z will represent the set of actions that is shared for the di erent classical planning instances in a given domain, and it includes:

- -The planning actions A 0 that result from reformulating each action scheme 2 into its corresponding pointer-based version. The reformulation is a two-step procedure that requires that Z contains, at least, as many pointers as the largest arity of an scheme in : (i), each parameter in par ( ) is replaced with a pointer in Z and (ii), preconditions and e ects are rewritten to refer to these pointers.

- -The RAM actions that implement the following sets of RAM instructions f inc ( z 1 ), dec ( z 1 ), cmp ( z 1 ; z 2 ), set ( z 1 ; z 2 ) j z 1 ; z 2 2 Z g over the pointers in Z , and f test ( ( ! z 1 )) ; cmp ( ( ! z 1 ) ; ( ! z 2 )) j ! z 1 ; ! z 2 2 Z ar ( ) g over the lists of pointers in Z ar ( ) for each function symbol 2 . Respectively, these RAM instructions increment / decrement a pointer by one, compare two pointers, set the value of a pointer z 2 to another pointer z 1 , test whether ( ! z 1) is greater than zero, and compare 2 the value of ( ! z 1) and ( ! z 2). Each RAM action also updates the Y = f yz ; yc g flags , according to the result of the corresponding RAM instruction (which is denoted here by res ):

$$res):$$

- The new initial state I 0 Z is the initial state of the original planning instance, but extended with all pointers set to zero and the two FLAGS set to False . The goals are the same as those of the original planning instance.

Example . Here we extend the classical planning instance P 1 = h X ; A ; I 1 ; G 1 i (illustrated in Figure 1) with a RAM machine of j Z j + 2 registers, where Z = f i ; j g is a set of two pointers. According to this extension, our pointer-based representation of the sequential plan 1 = h swap ( o 0 ; o 5 ) ; swap ( o 1 ; o 2 ) ; swap ( o 1 ; o 3 ) i is the following sequence of thirteen actions 0 1 = h inc ( j ) 5 ; swap ( i ; j ) ; inc ( i ) ; dec ( j ) 3 ; swap ( i ; j ) ; inc ( j ) ; swap ( i ; j ) i ; where superscripts refer to the number of times that an instruction is sequentially repeated, and where swap ( i ; j ) refers to the pointer-based action schema defined in Figure 4. Likewise, our pointer-based version of the sequential plan 2 = h swap ( o 0 ; o 2 ) ; swap ( o 3 ; o 5 ) i , that solves the classical planning problem P 2 illustrated in Figure 1, is the ten-action sequence 0 2 = h inc ( j ) 2 ; swap ( i ; j ) ; inc ( i ) 3 ; inc ( j ) 3 ; swap ( i ; j ) i . Note that any sequential plan for solving a classical planning instance from the vector sorting domain, no matter the number of state variables and no matter the domain size of these state variables, can be built using exclusively actions from the following set: f inc ( i ) ; inc ( j ) ; dec ( i ) ; dec ( j ) ; swap ( i ; j ) g .

## 3.2.1. Theoretical properties

Theorem 1. Given a classical planning instance P, its extension P 0 Z , with a RAM machine of j Z j pointers, preserves the solution space of P.

Proof. ) : Let = h a 1 ; : : : ; am i be a plan that solves P , an equivalent plan 0 that solves P 0 Z is built as follows; for each action ai 2 , A 0 contains a pointer-based action schema a 0 i that replaces each parameter in par ( ai ) with a pointer z 2 Z . For each such pointer z , the plan repeatedly applies RAM actions inc( z ) or dec( z ) until they reference the associated vector of objects ! o , and then it applies a 0 i . The resulting plan 0 has exactly the same e ect as on the original planning state variables in X , and since the goal condition of P 0 Z is the same as that of P , it follows that 0 solves P 0 Z .

( : Let 0 = h a 0 1 ; : : : ; a 0 m i be a plan that solves P 0 Z . Identify each action in A 0 among those of 0 , and execute 0 to identify the assignment of objects to pointers when applying each action in A 0 . Construct a plan corresponding to the subsequence of actions in A 0 from 0 , replacing each action schema a 0 i 2 A 0 by an original action ai 2 A and choosing as parameters of ai the objects referenced by the pointers of a 0 i at the moment of execution. Hence ai has the same e ect as a 0 i on the state variables in X , implying that has the same e ect as 0 on X . Since the goal condition of P is the same as that of P 0 Z , it follows that solves P .

Our extension of a classical planning problem with a RAM machine of j Z j + 2 registers preserves the solution space of the original problem. Sequential plans in the extended planning model are however longer when pointers

2 cmp ( ( ! z 1) ; ( ! z 2)) instructions are only defined for numeric functions.

must be incremented (or decremented) multiple times to access the corresponding objects, before the corresponding action schema is executed. For instance, our pointer-based version of plan 1 required thirteen steps while the original sequential plan only had three steps. Likewise our pointer-based version of plan 2 required ten steps while the original sequential plan only had two steps. On the other hand, our extension does not require explicit action grounding; it is the planner itself who determines the values of the pointers that now feed the action schemas, so in a sense the planner is in charge of doing a partial instantiation. Further, our extension separates the instantiation of each parameter of an action schema. The computation of sequential plans with our pointer-based formalism is however out of the scope of this paper. We will exclusively address the computation of planning solutions represented as planning programs.

Theorem 2. The new set of actions A 0 Z is independent of the number of objects , state variables X, and their domain size.

Proof. The number of actions of a classical planning instance, extended with a RAM machine of j Z j pointers, is

$$I ^ { \prime } _ { 2 1 } = 2 I ^ { 2 } + \sum _ { d < \phi } [ Z ^ { 2 } ( r ( \phi ) ) + I ^ { \prime } _ { 1 } ] .$$

This number exclusively depends on the number of pointers in Z and on the arity of the functions in and the action schemes in . First, the increment / decrement instructions induce 2 j Z j actions, the set instructions over pointers induce j Z j 2 j Z j actions, and comparison instructions of pointers induce j Z j 2 j Z j actions. The comparison instructions can compare two pointers but for symmetry breaking, we only consider the single parameter ordering ( zi ; z j ) where i < j , i.e. we consider cmp( z 1 , z 2 ) but not cmp( z 2 , z 1 ) . Second, test instructions are defined over each function symbol and list of pointers with the same size as its arity, inducing P j Z j ar ( ) actions, and comparison of predicates with pointers induce P ( j Z j 2 ar ( ) j Z j ar ( ) ) actions. Therefore, the total number of RAM instructions are 2 j Z j + 2( j Z j 2 j Z j ) + P ( j Z j ar ( ) + j Z j 2 ar ( ) j Z j ar ( ) ) = 2 j Z j 2 + P j Z j 2 ar ( ) which only depends on the number of pointers in Z and the arity of each function symbol . Last, as defined by our abstraction procedure, the number of actions in A 0 is given by the number of parameters of the actions schemes and the number of pointers in Z to replace these parameters. This means that the size of A 0 is upper bounded by j A 0 j P 2 j Z j j par ( ) j . As a consequence it follows that A 0 Z , whose size is given by j A 0 Z j = 2 j Z j 2 + P j Z j 2 ar ( ) + j A 0 j , it is also independent of the number of objects , state variables in X and their domain size.

## 3.3. S trips with pointers

Since the early 70's, the S trips representation formalism is widely used for research in automated planning [82]. Even today, S trips is an essential fragment of PDDL [76], the input language of the International Planning Competition , and most planners support the S trips representation features. Here we show that our pointer-based view of planning problems and solutions applies also to object-centered planning formalisms, such as S trips planning. In fact, our pointer-based formalism can be understood as an instantiation of FS trips [83], where the single level of indirection of pointers over objects is enough to represent S trips problems with constant memory access.

S trips is an object-centered planning formalism that compactly represents the set of states of a transition system using a finite set of objects , and a finite set of FOL predicates , that indicate the properties of the objects and their relations. Likewise, S trips compactly represents the space of the possible state transitions using FOL operators , which are defined as a tuple op = h name ( op ) ; args ( op ) ; pre ( op ) ; e ( op ) ; e + ( op ) i and where, name ( op ) is a unique identifier of the operator, args ( op ) is a set of variable symbols specifying the arguments of the operator, and pre ( op ), e ( op ) ; e + ( op ) are sets of FOL predicates, with variables exclusively taken from args ( op ), and that respectively specify the preconditions , negative e ects and positive e ects . The representation of a S trips problem is completed specifying an initial state, that defines the initial situation for all the objects, and the aimed set of goal states , which is typically specified as a partial state.

State representation . In our pointer-based formalism for S trips problems, each state variable x 2 X has domain Dx = f 0 ; 1 g , and it is built as a FOL S trips predicate 2 grounded by a vector of objects ! o 2 ar ( ) . Figure 5 shows the representation of a blocksworld state using the S trips formalism as well as using our formalism. In this state there are three blocks, = f b 1 ; b 2 ; b 3 g , that are stacked in a single tower. Predicates clear(?x) , holding(?x) , and ontable(?x) , are encoded as three di erent Boolean functions that map each vector of objects to either 0 or 1 in the current state. Omitted state variables are assumed to be zero valued. Our vector X of state variables is the result of

Figure 5: Example of a three-block state from the blocksworld (left), and its corresponding representation as a vector of bits (right).

| | | State representation | State representation |

|----|--------------|------------------------|------------------------------|

| | Predicate | Strips | Boolean functions |

| 1 | (clear ?x) | (clear b1) | clear(b1) = 1 |

| 2 | (handempty) | (handempty) | handempty() = 1 |

| 3 | (holding ?x) | - | - |

| | (on ?x ?y) | (on b1 b2) (on b2 b3) | on(b1,b2) = 1, on(b2,b3) = 1 |

| | (ontable ?x) | (ontable b3) | ontable(b3) = 1 |

```

:action unstack

:parameters (?x ?y)

:precondition (and (clear ?x) (handmpty? (on ?x ?y)))

:effect (and (holding ?x) (not (clear ?x)) (not (handmpty? (on ?x ?y))))

?:x (handmpty? (on ?x ?y))

t (handmpty?) (not (on ?x ?y)))) </doc>

```

Figure 6: The unstack S trips operator from the blocksworld domain represented in the PDDL language.

unifying all the previous predicate and object tuple valuations into a vector. The length of the vector of state variables is then upper bounded by j X j P k 0 nk j j k , where nk is the number of first-order predicates with arity k . For instance, the X vector contains at most j j 2 + 3 j j + 1 state variables for the blocksworld domain. State-invariants (e.g. in the blocksworld a block cannot be on top of two di erent blocks simultaneously) can also be leveraged to save space for the memory allocation of the state variables.

Action representation . Given a FOL S trips operator op = h name ( op ) ; args ( op ) ; pre ( op ) ; e ( op ) ; e + ( op ) i , our pointer-based formalism produces its corresponding pointer-based action schema h name, params, pre, e i :

- The name of the action schema is name ( op ), the name of the given FOL S trips operator.

- For each argument in args ( op ), the action schema has a pointer that indexes an object o 2 .

- The set pre ( op ) is transformed into a conjunctive arithmetic-logic expression with conditions of two kinds: (i) for each pointer in the parameters of the action schema, the conditions asserting that the pointer is within its domain and (ii), for each precondition in pre ( op ) a condition asserting that the state variable addressed by the pointers content equals to some specific value of its domain.

- Each negative e ect in e ( op ) is transformed into an indirect variable assignment that sets the corresponding state variable to 0. Likewise, each positive e ect in e + ( op ) is transformed into an indirect variable assignment that sets the corresponding state variable to 1.

Figure 7 shows our pointer-based definition for the unstack action schema from the blocksworld that implements the corresponding operator represented in the S trips fragment of PDDL of Figure 6. The action schema of Figure 7 is implemented using two pointers ( i and j ), and it applies to any blocksword instance, no matter the number of blocks or their identity. In this implementation the state variables are global , meaning that they can be accessed from any of the action schemas. Again the execution of these procedures is a deterministic matching-free process since the input pointers do always have a block assigned.

Problem representation . We complete our pointer-based representation of a S trips problem with the init and goal procedures. Figure 8 shows the init and goal procedures for the planning problem of unstacking the 3-block tower of Figure 5. They have the same formal structure as the procedures we use for our pointer-based representation of the preconditions and e ects of an action schema, but with no arguments. In more detail:

- The init procedure is a write-only procedure, that implements a total variable assignment of the state variables for specifying the initial state of the S trips problem.

- The goal procedure is a read-only Boolean procedure, that encodes the state-constraint that specifies the subset of goal states.

```

<doc> Bool schema_unstack_pre (Pointer i, Pointer j) {

Return (i>=0 and j>=0 and i[0] and j[0] and

clear(i)=1 and handempty(j)=1;

}

void schema_unstack_eff (Pointer i, Pointer j) {

clear(i):= 0; hand

holding(i):= 1; clear ;

} </doc>

```

on(i,j)=1); Figure 7: The unstack action schema from blocksworld defined with two pointers ( i and j ). void init() { i := 0; j := 0; yz := 0; yc := 0; clear(b1) := 1; on(b1,b2) := 1; on(b2,b3) := 1; ontable(b3) := 1; } Bool goals() { Return (ontable(b1)=1 and ontable(b2)=1 and ontable(b3)=1); }

Figure 8: The init and goal procedures for representing the S trips planning problem of unstacking the three-block tower of Figure 5.

Plan representation . Following our pointer-based representation, a sequential plan = h a 1 ; : : : ; an i is a sequence of transformations of the state variables using: (i) our pointer-based action schemas that encode the FOL S trips operators and (ii), the subset of RAM actions f inc ( z ), dec ( z ) j z 2 Z g for achieving the aimed binding for each parameter of an action schema. Our pointer-based representation of the four-action plan = h unstack(b1,b2) , putdown(b1) , unstack(b2,b3) , putdown(b2) i for unstacking the three-block tower of Figure 5, is the following sequence of actions 0 = h inc ( j ), unstack ( i ; j ), putdown ( i ), inc ( i ), inc ( j ), unstack ( i ; j ), putdown ( i ) i that leverages two pointers Z = f i ; j g , that are defined over the set of blocks .

In spite of its popularity, the S trips representation is too low-level for many interesting applications [14, 15, 17]. Our pointer-based representation naturally extends beyond S trips to more expressive object-oriented representations. For instance, state variables can also comprise numeric variables (e.g. integers or reals) to implement numeric fluents as in PDDL2.1 [84] or in RDDL [16]. Object typing can also be implemented in a straightforward way (e.g. specializing pointers to the number of objects of a particular type) to compact the size of the vector of state-variables and to optimize the implementation of quantified preconditions / e ects / goals [85]. Last, our pointer-based representation also supports conditional e ects [86], e.g. an action schema can specify multiple variable assignments conditioned by the di erent values of the state variables.

## 4. Generalized planning as heuristic search

First the section shows how we build, in an agnostic manner, a GP problem from a set of classical planning instances of a given domain. Then, the section describes in detail our GP as heuristic search approach: our search space for GP, the evaluation / heuristic functions that we use for guiding the search, and the particular details of our search algorithm.

## 4.1. From a set of classical planning instances to a GP problem

Generalized plans leverage relevant subsets of shared state variables ( features ) and actions whose execution is well-defined for any possible value of the state variables of the classical planning instances to be solved. Building a

GP problem from a set of classical planning instances of a given domain is not trivial because these two ingredients may not be given in the representation of the classical planning instances.

On the one hand given a classical planning domain, the specification of a set of features that is (i), expressive enough to represent a polynomial solution valid for any instance in the domain and (ii), compact enough for the e ective computation of that solution, is a complex task that requires expert knowledge on both the domain and the aimed solution. In fact, the automatic specification of expressive and compact features for a planning domain is a challenging research question that is investigated since the early days of automated planning [87]. On the other hand the set of ground actions for the di erent instances of a given domain, is usually di erent since it depends on the number of objects. Back to the sorting example illustrated in Figures 1 and 2, the classical planning instances for sorting a vector of length six induced 6 5 2 swap ( oi ; oj ), i < j actions, while instances for sorting a vector of length seven would induce a set of 7 6 2 swap ( oi ; oj ) actions.

Given a finite and non-empty set of T classical planning instances from a given domain, our approach for automatically building a GP problem is to extend the instances with a RAM machine of j Z j pointers. The result is a GP problem P = f P 1 ; : : : ; PT g , where each instance Pt 2 P , 1 t T may di er in the actual set of objects, initial state, and goals, but all instances necessarily share the subset of state variables XZ \ Y \ Z and the same set of actions A 0 Z . Formally, P 1 = h X 0 Z 1 ; A 0 Z ; I 0 Z 1 ; G 1 i ; : : : ; PT = h X 0 ZT ; A 0 Z ; I 0 ZT ; GT i where 8 1 t T XZt X 0 Zt , 8 1 t T Y X 0 Zt , 8 1 t T Z X 0 Zt , and 8 a 0 2 A 0 Z par ( a 0 ) 2 Z ar ( a 0 ) . The number of pointers j Z j is a parameter that indicates how many pointers are used in the extension of the classical planning instances 3 .

Our extension with a RAM machine of j Z j pointers automatically defines a minimalist but general set of features for the set of a classical planning instances from a given domain.

Definition 5 (The feature language) . We define the feature language as the four possible joint values of the two Boolean variables Y = f yz ; yc g , and we denote this language as L = f ( : yz ^ : yc ) ; ( yz ^ : yc ) ; ( : yz ^ yc ) ; ( yz ^ yc ) g .

We say L is minimalist because it only contains four elements, and we say L is general because it is independent of the number of objects and hence, of the domain of the state variables and the number of state variables. Note that our features are a function of (i) the state variables and (ii) the last executed action, since they all may a ect the value of Y = f yz ; yc g . Such notion of feature is related to the notion of state observation in the POMDP formalism, where observations depend on the current state and the action just taken [88]. With this regard it can be understood that our GP approach computes, at the same time, a generalized plan and an observation function useful for that generalized plan. Our feature language is also related to Qualitative numeric planning [72, 73, 6] which leverages propositions to abstract the value of numeric state variables. Given that our FLAGS Y = f yz ; yc g depend on the last executed action, and considering that only RAM instructions update the variables in Y , we have an observation space of 2 j Y j (2 j Z j 2 + P j Z j 2 ar ( ) ) state observations implemented with only j Y j Boolean variables. The four joint values of f yz ; yc g model then a large space of observations, e.g. = 0, , 0, < 0 ; > 0 ; 0 ; 0 as well as relations = ; , ; <; >; ; on pairs of state variables.

Likewise, our extension with a RAM machine of j Z j pointers automatically defines the shared set of actions A 0 Z , that is well-defined for the set of a classical planning instances from a given domain. Because the set of pointers Z is fixed for the T input classical planning instances we have that, after our extension, all the instances share the same set of actions A 0 Z . The execution of the actions in A 0 Z is well-defined over the subset of state variables Z , no matter the actual number of objects, or the corresponding number and domain size of the state variables; we recall the reader that the set of actions A 0 Z exclusively depends on the number of pointers j Z j and the arity of actions and functions (Theorem 2).

## 4.2. The search space

Briefly, our GP as heuristic search approach implements a combinatorial search in the solution space of the possible planning programs. Next we provide more details on how we implemented a tractable search space for GP.

Definition 6 (Partially specified planning program) . A partially specified planning program is a planning program such that the content of some of its program lines may be undefined.

3 At least Z must contain as many pointers as the largest arity of the functions and action schemes of the given domain.

Each node of our search space is a partially specified planning program which is binary encoded as follows. Given a set of state variables X , a set of actions A , a maximum number of program lines n such that the last instruction is wn 1 = end , and defining the propositions of goto instructions as ( x = v ) atoms where x 2 X and v 2 Dx , we have that the space of possible planning programs is represented by the following bit-vectors:

1. The action vector of length ( n 1) j A j , indicating whether an action a 2 A is programmed on line 0 i < n 1.

2. The transition vector of length ( n 1) ( n 2), indicating whether a go ( i 0 ; ) instruction is programmed on line 0 i < n 1.

3. The proposition vector of length ( n 1) P x 2 X j Dx j , indicating whether a go ( ; ! h x = v i ) instruction is programmed on line 0 i < n 1.

A partially specified planning program is then encoded as the concatenation of these three bit-vectors and the length of the resulting bit-vector is:

$$( n - 1 ) [ A _ { 1 } + ( n - 2 ) + \sum _ { i = X } ^ { n } D _ { i } ] .$$

The binary encoding allows us to quantify the similarity of two partially specified planning programs (e.g. the Hamming distance of their corresponding bit-vector representation) and more importantly, to systematically enumerate the space of all the possible planning programs with a maximum of n lines. Let us define the empty program as the particular partially specified planning program whose instructions are all undefined (i.e. all bits of its bit-vector representation are set to False ). Starting from the empty program , we can enumerate the entire set of possible planning programs with two search operators:

- program(i,a) , that programs an action a 2 A at line i of a program

- program(i,i',x,v) , that programs a goto ( i 0 ; ! h x = v i ) instructions at line i of a program.