## Analog, In-memory Compute Architectures for Artificial Intelligence

Patrick Bowen ∗

Neurophos, 212 W Main St. #301, Durham, North Carolina 27701, USA and Center for Metamaterials and Integrated Plasmonics and Department of Electrical and Computer Engineering, Duke University, P.O. Box 90291, Durham, North Carolina 27708, USA

Guy Regev † and Nir Regev ‡

AlephZero, 5141 Beeman Ave. Valley Village, CA 91607, USA and

Department of Electrical and Computer Engineering,

Ben-Gurion University of the Negev, David Ben-Gurion Blvd. 1, Be'er Sheva, Israel

Bruno Umbria Pedroni §

AlephZero, 5141 Beeman Ave., Valley Village, CA 91607, USA and

Department of Bioengineering, UC San Diego, 9500 Gilman Dr., La Jolla, CA 92093, USA

Edward Hanson ¶ and Yiran Chen ∗∗

Duke University, Electrical and Computer Engineering Department, Science Dr, Durham, NC 27710 (Dated: February 14, 2023)

This paper presents an analysis of the fundamental limits on energy efficiency in both digital and analog in-memory computing architectures, and compares their performance to single instruction, single data (scalar) machines specifically in the context of machine inference. The focus of the analysis is on how efficiency scales with the size, arithmetic intensity, and bit precision of the computation to be performed. It is shown that analog, in-memory computing architectures can approach arbitrarily high energy efficiency as both the problem size and processor size scales.

## I. INTRODUCTION

This work is focused on minimizing the energy required to evaluate neural networks, particularly in the linear layers which comprise the overwhelming majority of the computation. The linear operators that describe convolutional neural network layers can be often be characterized by three qualities: they are sparse, high in dimensionality, and high in arithmetic intensity, where arithmetic intensity is defined as the ratio between the number of basic operations (i.e. multiplications and additions) and the number of bytes read and written. This paper shows that, in the context of operators that are both high in dimensionality and arithmetic intensity, an analog in-memory computing device can drastically reduce the energy required to evaluate the operator compared to a von Neumann machine. Moreover, the degree of increased efficiency of the analog processor is related to the scale of the processor.

In a classical von Neumann machine, the energy required to evaluate an operator can be broken into two components: memory access energy and computational energy. Within a typical CPU, and depending on the workload, these components can consume the same or-

∗ ptbowen@neurophos.com

† guy@alephzero.ai

‡ nir@alephzero.ai

§ bruno@alephzero.ai

¶ edward.t.hanson@duke.edu

∗∗ yiran.chen@duke.edu

der of magnitude of the total energy. Memory access related energy can easily outgrow computational energy consumption, particularly when used to evaluate sequential large linear operators like those used in neural network inference. The goal of this paper is to find highlevel architectures that can reduce the energy consumption of neural network algorithms by orders of magnitude, which requires addressing both memory access energy and computational energy. Here we show that an in-memory compute accelerator architecture can reduce memory access energy when applied to an operator/algorithm with high arithmetic intensity, while an analog processor/accelerator can reduce computational energy when specialized for particular classes of linear operators. A processor architecture that takes advantage of both in-memory compute and is analog in nature can in principle reduce the overall computational energy consumption by orders of magnitude, with the amount of reduction depending on the scale and arithmetic intensity of the algorithm to be performed and the analog processor's specialization in performing a specific set of operators.

In-memory compute architectures were originally designed to speed up processing of algorithms that are parallelizable and applied to large datasets. One of the earliest examples dates back to the 1960s with Westinghouse's Solomon project. The goal of that project was to accelerate the speed of the computer up to 1 GFLOPs by using a single instruction applied to a large array of Arithmetic Logic Units (ALUs). This is perhaps the first instance of the several closely related concepts: single instruction, multiple data (SIMD) machines, vector/array

processors, systolic arrays and in-memory/near-memory compute devices.

Today, exploiting parallelism in high-arithmetic intensity algorithms using parallel hardware remains a wellknown technique to accelerate a computation along the time dimension. More recently, however, vector/array processors have been utilized to decrease compute energy as opposed to the original purpose of compute time, and it does this by reducing energy associated with memory accesses. Google's TPU is a good example of a systolic array being used as a near-memory compute device with digital processing elements [1, 2]. In sec. III, we explain how in-memory compute devices can reduce memory access energy in the case of linear operators with high arithmetic intensity.

Separately, analog computing has recently been proposed as an approach to reduce the computational energy consumption, again for large, linear operations. In sec. IV we present a general model of analog computation that focuses on how energy consumption scales with problem size and bit precision, and show that computational energy can be reduced by orders of magnitude by using an analog processor that is specialized to implement specific classes of operators. Reconfigurable analog processors are by nature in-memory compute devices, and so these classes of processors are shown to reduce overall computational energy by orders of magnitude for particular operators.

## II. CPU ENERGY CONSUMPTION

We begin by finding the energy efficiency of a computer performing multiply-accumulate (MAC) operations, which are the core of linear operators used in deep learning. The total energy required to perform a linear operation can be decomposed into memory access energy and computational energy:

$$E _ { t o t } = N _ { m } e _ { m } + N _ { o p } e _ { o p } , \quad ( 1 )$$

where N m is the number of memory accesses, e m is the average energy per access, N op is the number of operations required to evaluate the overall operator, and e op is the average energy per operation (e.g., add, multiply, etc). We define the computational efficiency as the number of operations per unit energy performed by the computer:

$$\eta \equiv N _ { o p } / E _ { t o t } = \frac { 1 } { ( N _ { m } / N _ { o p } ) e _ { m } + e _ { o p } } .$$

In a simple CPU with a single instruction, single data (SISD) architecture in Flynn's taxonomy and a flat memory hierarchy, for each operation that is performed, a value is read from memory for the current partial sum, the operator weight, and the input activation. The three values are operated upon, and the result is written back to memory. Therefore, regardless of the actual size of the weights or activations, the number of memory accesses per operation will always be four (i.e. three reads and one write), and the number of computational operations (multiply and add) will be 2. This results in N m = 2 N op and a computational efficiency of

$$\eta = \frac { 1 } { 2 e _ { m } + e _ { o p } } . \quad ( 3 )$$

In modern CMOS devices, both e m and e op are on the order of magnitude of 1 pJ [3], as will later be shown in table IV. This places an approximate limit on the computational efficiency of most traditional architectures on the order of 0.1-1 TOPS/W, which is consistent with state of the art performance [4].

## III. MINIMIZING MEMORY ACCESS ENERGY WITH IN-MEMORY COMPUTE

One of the major downsides of SISD machines is that they can end up accessing the same memory element multiple times in the course of evaluating a large operator, which wastes memory access energy. This is ultimately reflected in the ratio N op /N m = 1 / 2 that is fixed by the nature of a SISD machine. Alternatively, one can imagine finding another hypothetical architecture that is arranged in some energetically optimal way to where all of the inputs are only read once from memory, and all outputs are only written once to memory in the course of the computation. If that were done, this would represent the minimum total access energy required to evaluate the linear operator. In other words, N m would reach its minimum value, and the ratio N op /N m would be maximized.

While a particular processor might only be able to implement a certain N op /N m ratio, this ratio is also limited by the algorithm being performed, and is commonly referred to as the arithmetic intensity of the algorithm:

$$a \equiv N _ { o p } / N _ { m } . \quad ( 4 )$$

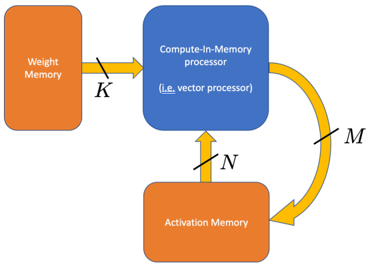

An in-memory compute device [5] as illustrated in fig. 1 can leverage the arithmetic intensity of an algorithm by reading a large set of both operator data and input vector data from memory at once and operating on all of the data together before writing the output back to memory. If the in-memory compute device is sufficiently large and complex, all of the necessary operations involving this data can be performed without any of the inputs being read a second time from memory in the future.

Returning to eq. (1), we set a lower bound on the amount of memory access energy that must be expended for the von Neumann machine to evaluate the operator in terms of the arithmetic intensity. This in turn leads to a limit on the computational efficiency:

$$\eta = \frac { 1 } { e _ { m } / a + e _ { o p } } \quad ( 5 )$$

FIG. 1: Illustration of a digital compute-in-memory processor.

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Diagram: System Architecture for Compute-In-Memory Processing

### Overview

The diagram illustrates a system architecture for compute-in-memory processing, emphasizing data flow between three key components: Weight Memory, Compute-In-Memory processor, and Activation Memory. Arrows labeled with variables (K, N, M) indicate directional data transfer, forming a closed-loop system with iterative processing capabilities.

### Components/Axes

1. **Weight Memory** (orange block, left side):

- Labeled explicitly as "Weight Memory"

- Connected via arrow labeled **K** to the Compute-In-Memory processor

2. **Compute-In-Memory processor** (blue block, center):

- Labeled "Compute-In-Memory processor"

- Subtext clarifies: "(i.e., vector processor)"

- Receives input from Weight Memory (**K**)

- Outputs data to Activation Memory (**M**)

- Receives feedback from Activation Memory (**N**)

3. **Activation Memory** (orange block, bottom):

- Labeled explicitly as "Activation Memory"

- Connected via arrow labeled **M** to the Compute-In-Memory processor

- Provides feedback to the processor via arrow labeled **N**

4. **Data Flow**:

- **K**: Weight Memory → Compute-In-Memory processor

- **M**: Compute-In-Memory processor → Activation Memory

- **N**: Activation Memory → Compute-In-Memory processor (feedback loop)

### Detailed Analysis

- **Component Relationships**:

- Weight Memory and Activation Memory are both orange, suggesting similar functional roles (likely data storage).

- The Compute-In-Memory processor (blue) acts as the central processing unit, integrating inputs from both memory types.

- The feedback loop (**N**) implies iterative computation, where outputs from Activation Memory are reused as inputs for subsequent processing cycles.

- **Variable Labels**:

- **K**: Likely represents weight data (e.g., neural network parameters) fed into the processor.

- **M**: Output from the processor to Activation Memory, possibly intermediate results or updated activations.

- **N**: Feedback signal from Activation Memory, enabling recurrent or adaptive processing.

### Key Observations

1. **Closed-Loop System**: The architecture forms a cycle (Weight Memory → Processor → Activation Memory → Processor), enabling continuous computation without external data input after initialization.

2. **Parallelism**: The Compute-In-Memory processor is explicitly labeled as a vector processor, indicating support for parallel operations critical for high-throughput tasks like matrix multiplications in AI/ML.

3. **Memory Hierarchy**: Weight Memory and Activation Memory are distinct but interconnected, reflecting a tiered memory structure optimized for specific computational phases.

### Interpretation

This diagram represents a hardware/software co-design for efficient computation, likely targeting applications such as neural network inference or real-time signal processing. The compute-in-memory approach minimizes data movement between separate processing and memory units, reducing latency and energy consumption. The feedback loop (**N**) suggests the system can adapt dynamically, potentially implementing recurrent neural networks or adaptive filtering algorithms. The use of a vector processor highlights optimization for batch operations, aligning with the parallel nature of matrix-based computations common in machine learning. The orange color coding for both memory blocks may imply shared architectural characteristics (e.g., non-volatile memory, in-memory computing arrays).

</details>

The contribution to computational efficiency from memory access energy can therefore be brought arbitrarily low when implementing an operator with arbitrarily high arithmetic intensity. The reduction in the contribution from memory access energy with increasing arithmetic intensity in eq. (5) is reflective of the energy savings in systolic arrays and TPUs [1, 2].

We note that the kind of analysis presented in eq. (5) is analogous to roofline models of processors [6]; however, the emphasis here is on energy consumption, while the latter is focused on identifying bottlenecks in processor speed.

In order to sample what degree of advantage inmemory compute devices can bring, we examine a few examples of linear operators and present their arithmetic intensities. For a general matrix multiplication of a matrix of size L × N times a matrix of dimension N × M the total number of memory accesses is N m = LN + NM + LM , and the number of operations is N op = 2 NML , where additions and multiplications are treated as separate operations. The arithmetic intensity in this case is:

$$a = \frac { 2 N M L } { L N + N M + L M } , \quad ( 6 ) \quad D e p _ { i }$$

which approaches ∞ as N,M,L →∞ collectively.

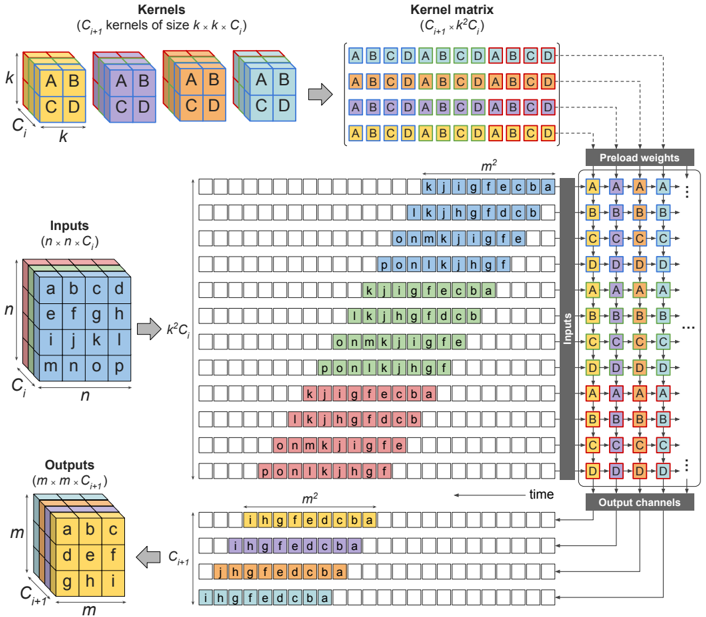

For a convolution, the arithmetic intensity can similarly become arbitrarily large, since a convolution can be implemented as a matrix-matrix multiplication. This is typically done by rearranging the input data into a toeplitz matrix using what is known as an im2col() operation. The general algorithm of implementing convolution using matrix multiplication in a systolic array is shown in fig. 2, where n × n is the size of one input channel, C i is the number of input channels, k × k is the size of one of the kernel channels, and C i +1 is the number of output channels (and, consequently, also the number of individual 3-D kernels). The toeplitz formed by replicating and rearranging the activation data results in an ( n -k +1) 2 × k 2 C i matrix. A convolution is performed by multiplying this with a k 2 C i × C i +1 matrix containing the weights. Therefore, when implementing a convolution using matrix multiplication we generally have matrix dimensions,

$$L = ( n - k + 1 ) ^ { 2 } \approx n ^ { 2 } \quad ( 7 a )$$

$$N = k ^ { 2 } C _ { i } \quad ( 7 b )$$

$$M = C _ { i + 1 } . \quad ( 7 c )$$

which results in an arithmetic intensity,

$$a = \frac { 2 n ^ { 2 } k ^ { 2 } C _ { i } C _ { i + 1 } } { n ^ { 2 } k ^ { 2 } C _ { i } + k ^ { 2 } C _ { i } C _ { i + 1 } + n ^ { 2 } C _ { i + 1 } } . \quad ( 8 )$$

However, since the activation data was replicated approximately k 2 times in order to form the input matrix, the arithmetic intensity is significantly reduced relative to a processor that natively implements convolution instead of general matrix multiplication. To see this, consider again the convolutional layer of an n × n input image with C i input channels, C i +1 output channels, and a k × k kernel. The input vector size is N i = n 2 C i , and the number of kernel weights is K = k 2 C i C i +1 . If only the necessary weight and activation data were required to be read, the arithmetic intensity of the i th layer would become

$$a \approx { \frac { 2 n ^ { 2 } k ^ { 2 } C _ { i } C _ { i + 1 } } { n ^ { 2 } ( C _ { i } + C _ { i + 1 } ) + k ^ { 2 } C _ { i } C _ { i + 1 } } } . \quad ( 9 )$$

In the limit where n 2 >> k 2 C i , this is roughly k 2 higher arithmetic intensity than when convolution is implemented using matrix multiplication.

Whether convolution is implemented natively or using matrix-matrix multiplication, eq. (9) shows that, as n, k, C i → ∞ , arithmetic intensity becomes arbitrarily large, making the contribution from memory access energy in eq. (5) arbitrarily small. Indeed, in most modern convolutional neural networks, these parameters are large and yield high arithmetic intensity, as shown in table I. Depending on the size of the memory banks (which determine memory access energy), and based on the reference numbers given in table IV for SRAM access energy and digital MAC operation, an in-memory compute processor implementing an algorithm with high arithmetic intensity can be made to expend negligible memory access energy relative to the computational energy.

## IV. REDUCING COMPUTATIONAL ENERGY WITH ANALOG COMPUTING

Unfortunately, by Ahmdal's law, even if the memory access energy is made arbitrarily small, computational energy consumed by the logical units will limit the overall performance gains to be made. In order to improve the overall efficiency by orders of magnitude, both contributions need to be addressed.

FIG. 2: Algorithmic implementation of a convolution using matrix multiplication in a weight-stationary systolic array. The input data is converted into a toepliz matrix and fed into the systolic array, with each row delayed one time step behind the one above it.

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Diagram: Kernel Processing Architecture

### Overview

This diagram illustrates a computational architecture for processing inputs through a series of kernels, kernel matrices, and precomputed weights. It depicts the flow of data from raw inputs to transformed outputs, emphasizing the role of kernel combinations and weight matrices in the transformation process.

### Components/Axes

1. **Kernels Section**:

- **Labels**:

- "Kernels (C_k kernels of size k x k, C)"

- Grid of colored blocks labeled with combinations like `AB`, `CD`, `AB`, `CD`, etc.

- **Structure**:

- A 2D grid of `k x k` kernels, each represented by a unique color-coded block (e.g., purple for `AB`, orange for `CD`).

- Kernels are indexed by `k` (size) and `C` (number of kernels).

2. **Kernel Matrix Section**:

- **Labels**:

- "Kernel matrix (C_k x C_k)"

- **Structure**:

- A grid of letters (A–D) representing combinations of kernels.

- Each cell in the matrix corresponds to a specific kernel combination (e.g., `ABCD`, `ABCD`, etc.).

3. **Inputs Section**:

- **Labels**:

- "Inputs (n x n x C)"

- **Structure**:

- A 3D grid of letters (a–o) arranged in `n x n` spatial dimensions and `C` channels.

- Example: `a b c d` (row 1), `e f g h` (row 2), etc.

4. **Outputs Section**:

- **Labels**:

- "Outputs (n x m x C_out)"

- **Structure**:

- A 3D grid of letters (a–o) with transformed values (e.g., `i h g f`, `d c b a`).

5. **Preload Weights Section**:

- **Labels**:

- "Preload weights"

- **Structure**:

- A grid of letters (A–D) and numbers (1–4), likely representing weight matrices or transformation rules.

- Example: `A1 B2 C3 D4` in rows.

### Detailed Analysis

- **Kernels to Kernel Matrix**:

- Kernels (`AB`, `CD`, etc.) are combined into a `C_k x C_k` matrix, where each cell represents a unique kernel combination (e.g., `ABCD`).

- The matrix is color-coded to match the original kernels (e.g., purple for `AB`, orange for `CD`).

- **Inputs to Outputs**:

- Inputs (`n x n x C`) are processed through the kernel matrix and preload weights to produce outputs (`n x m x C_out`).

- The transformation involves applying kernel combinations (e.g., `i h g f`, `d c b a`) to the input data.

- **Preload Weights**:

- The weights grid (`A1 B2 C3 D4`) suggests a structured transformation applied to the kernel matrix, possibly for optimization or efficiency.

### Key Observations

1. **Color-Coded Kernels**:

- Kernels are distinctly color-coded (e.g., purple for `AB`, orange for `CD`), ensuring clear differentiation in the kernel matrix.

2. **Spatial Dimensions**:

- Inputs and outputs are 3D grids, with spatial dimensions (`n x n` and `n x m`) and channel dimensions (`C` and `C_out`).

3. **Kernel Combinations**:

- The kernel matrix explicitly shows how individual kernels are combined (e.g., `ABCD`, `ABCD`), indicating a hierarchical or composite processing approach.

4. **Preload Weights**:

- The weights grid (`A1 B2 C3 D4`) implies a systematic application of weights to the kernel matrix, possibly for bias or scaling.

### Interpretation

This diagram represents a **convolutional or matrix-based processing pipeline**, likely for tasks like image recognition or signal processing. The architecture emphasizes:

- **Kernel Reuse**: Kernels (`AB`, `CD`) are reused and combined into a matrix for efficient computation.

- **Weight Optimization**: Preload weights (`A1 B2 C3 D4`) suggest precomputed transformations to reduce computational overhead.

- **Dimensionality Reduction**: Inputs (`n x n x C`) are transformed into outputs (`n x m x C_out`), indicating a change in spatial or channel dimensions.

The use of color-coding and structured grids highlights the importance of **kernel organization** and **weight management** in the processing pipeline. This architecture likely aims to balance computational efficiency with the ability to capture complex patterns in the input data.

</details>

TABLE I: Summary of convolutional layer parameters of various well-known neural networks considering a 1-Mpixel (per channel) input image.

| Network | # of layers | median n | median C i | max N | avg. k | total K | median C i +1 | median a |

|-------------------|---------------|------------|--------------|---------|----------|-----------|-----------------|------------|

| DenseNet201 | 200 | 62 | 128 | 1.6e+07 | 2 | 1.8e+07 | 128 | 292 |

| GoogLeNet | 59 | 61 | 480 | 3.9e+06 | 2.1 | 6.1e+06 | 128 | 200 |

| InceptionResNetV2 | 244 | 60 | 320 | 8e+06 | 1.9 | 8e+07 | 192 | 291 |

| InceptionV3 | 94 | 60 | 192 | 8e+06 | 2.4 | 3.7e+07 | 192 | 295 |

| ResNet152 | 155 | 63 | 256 | 1.6e+07 | 1.7 | 5.8e+07 | 256 | 390 |

| VGG16 | 13 | 249 | 256 | 6.4e+07 | 3 | 1.5e+07 | 256 | 2262 |

| VGG19 | 16 | 186 | 256 | 6.4e+07 | 3 | 2e+07 | 384 | 2527 |

| YOLOv3 | 75 | 62 | 256 | 3.2e+07 | 2 | 6.2e+07 | 256 | 504 |

Recently, various types of analog computing, from electrical to optical, have been proposed as techniques to reduce computational energy consumption. Electronic analog computing typically centers around crossbar arrays of resistive memory (or ReRAM) [7-9]. Optical analog processors are commonly based on silicon photonics [10-13]. Optical 4F systems have been explored since the 1980s as a higher dimensional form of compute [14, 15], and simple scattering off of optical surfaces is also being explored [16-18].

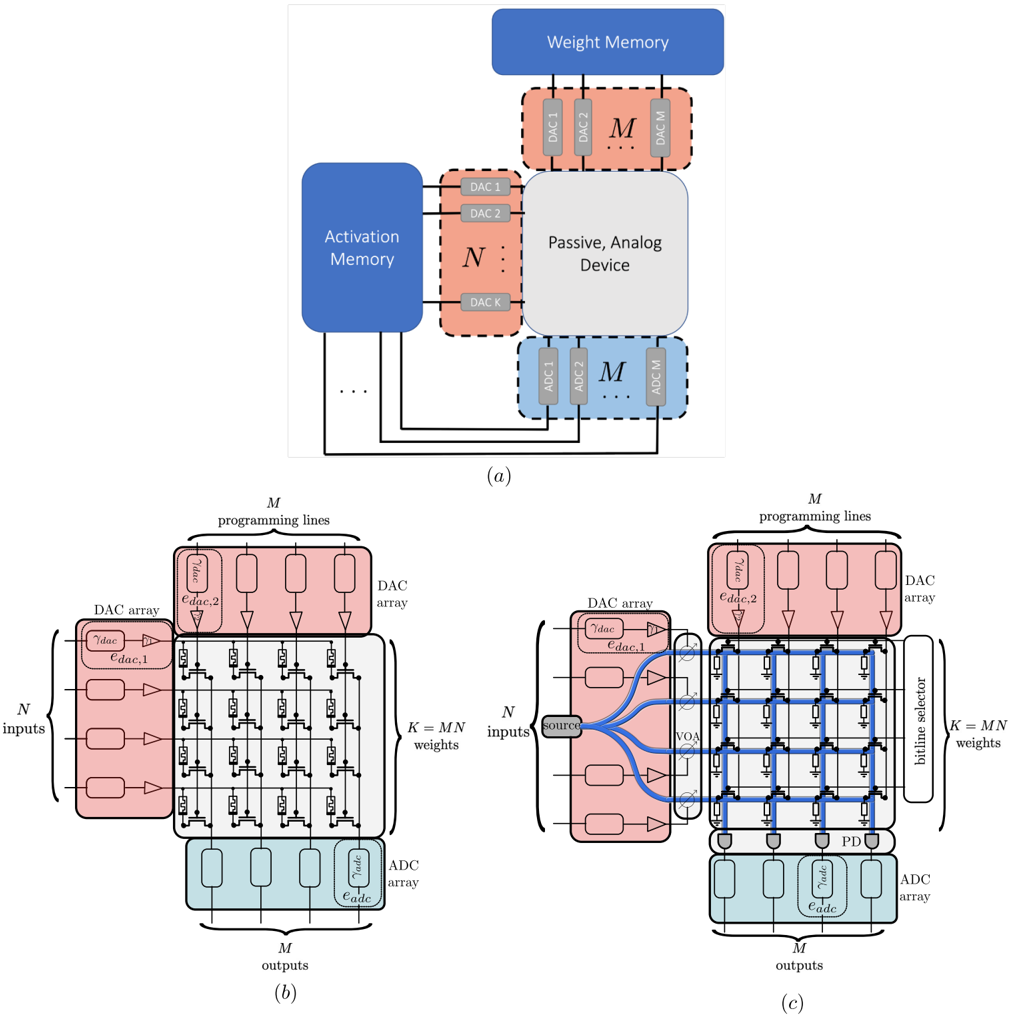

The argument for analog computing is fundamentally a scaling one: analog computing has particular advan- tages when applied to large, linear operators with low bit precision [19]. To see this, consider a general analog processor (shown in fig. 3(a)) that takes N numbers of B -bit precision input data, produces M numbers of B -bit precision output data, and is configured by K weights with B -bit precision which represent the matrix. The analog processor is first configured by converting the K weights using digital-to-analog converters (DACs) and applying these values to the modulators in the analog processor. Then the N inputs are read from memory, and DACs are used to apply N analog inputs to the processor. By the physics of the processor, this naturally results in M

FIG. 3: (a) System-level view analog, in-memory compute processors. The analog device is configured using DACs to either hold activations or weights, while the other is provided as input. (b) Detailed view of a ReRAM crossbar analog electronic in-memory compute processor. Each transistor is connected to a reconfigurable resistor, the conductance of which determines the effective weight of each element in the matrix. (c) Detailed view of a silicon photonic in-memory compute processor. Each transistor is connected to an electro-optic element that changes the scattering parameters through each intersection.

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Analog Neural Network Architecture Diagrams

### Overview

The image presents three technical diagrams (a, b, c) illustrating components and signal flow in an analog neural network architecture. Diagrams (b) and (c) show detailed implementations of matrix multiplication operations using DAC arrays and analog devices, while diagram (a) provides a high-level system overview.

### Components/Axes

**Diagram (a): System Overview**

- **Activation Memory**: Blue block with N DAC arrays (DAC1 to DACk)

- **Weight Memory**: Blue block with M DAC arrays (DAC1 to DACm)

- **Passive Analog Device**: Central gray block connecting memories

- **Signal Flow**: Arrows show data path from Activation Memory → Weight Memory via analog device

- **Key Elements**: Programming lines, DAC arrays, memory blocks

**Diagram (b): Matrix Multiplication Core**

- **Inputs**: N DAC arrays on left (vertical stack)

- **Outputs**: M DAC arrays at bottom (horizontal stack)

- **Grid Structure**: N×M DAC array matrix with programming lines

- **Weight Configuration**: K = MN programmable weights

- **Color Coding**: Pink (input DACs), Blue (output DACs), Gray (analog device)

**Diagram (c): Enhanced Analog Device**

- **Additional Components**:

- Bitline Selector (blue vertical lines)

- Voltage Source (V_OA)

- Programming Lines (top connections)

- **Signal Path**: Inputs → DAC arrays → Bitline Selector → Analog Device → Output DAC arrays

- **Control Elements**: Voltage-controlled switches (VOA) in blue

### Detailed Analysis

**Diagram (a)**

- Activation Memory (N DACs) and Weight Memory (M DACs) are spatially separated but connected through the Passive Analog Device

- DAC arrays use dashed-line boundaries with labeled DAC1-DACk/m notation

- Programming lines connect all components, suggesting reconfigurable architecture

**Diagram (b)**

- Grid of N×M DAC arrays forms the computation matrix

- Inputs enter vertically (N channels), outputs exit horizontally (M channels)

- Programming lines run horizontally across the grid, enabling weight configuration

- K = MN weights implies full connectivity between input and output layers

**Diagram (c)**

- Bitline Selector introduces vertical control lines between DAC arrays

- Voltage Source (V_OA) enables dynamic control of analog paths

- Blue control lines show additional signal routing compared to diagram (b)

- Maintains N×M DAC array structure but adds selection capability

### Key Observations

1. **DAC Array Consistency**: All diagrams use identical DAC array notation (DAC1-DACk/m) with consistent pink/blue coloring

2. **Signal Flow Evolution**: Diagram (a) shows conceptual flow, (b) implements matrix structure, (c) adds control mechanisms

3. **Component Scaling**: N inputs and M outputs maintain consistent positioning across diagrams

4. **Control Complexity**: Diagram (c) introduces 2× more control elements (VOA switches + bitline selector) vs diagram (b)

### Interpretation

This architecture demonstrates a hybrid digital-analog computing system where:

1. **Digital Components** (DAC arrays) handle data storage and input/output conversion

2. **Analog Processing** occurs in the central Passive Analog Device, enabling parallel computation

3. **Programmability** is achieved through reconfigurable programming lines and bitline selectors

4. **Efficiency Gains** come from analog matrix multiplication (O(1) complexity vs digital O(N²))

The progression from (a)→(b)→(c) reveals increasing implementation detail, showing how analog computing can accelerate neural network operations while maintaining digital control. The bitline selector in (c) suggests potential for dynamic weight masking or sparse computation optimization.

</details>

analog outputs, which are converted back to the digital domain using analog-to-digital (DAC) converters. If the analog processor is somehow already configured, or never needs to be reconfigured, then the total energy consumed will be only that of the DACs for the inputs and ADCs for the outputs:

$$E _ { o p } \equiv N _ { o p } e _ { o p } = N ( e _ { d a c , 1 } + e _ { a d c } ) , \quad ( 1 0 )$$

where we have assumed N = M for simplicity. While

the right-hand-side of eq. (10) represents the computational energy consumed by the analog processor, the lefthand-side represents the equivalent number of digital operations performed ( N op ) times the energy that each of those operations would have to take ( e op ) in order for a digital computer to achieve the same efficiency as the analog computer. Since N op = 2 N 2 for matrix multiplication, if this operation were performed digitally, the expended computational energy would be proportional to the number of operations: E op = 2 e op N 2 . The conclusion is that analog computing reduces matrix multiplication from O ( N 2 ) in energy to O ( N ) in energy . This furthermore implies that the effective energy per operation of analog computing scales inversely to the size of the problem, i.e.

$$e _ { o p } \, \infty \, 1 / N . \quad ( 1 1 ) \quad I n$$

We note that in practice the scaling N is defined either by the size of the processor or the size of the problem, whichever is smaller.

## A. Vector-Matrix Multiplication

For most problems involving neural networks, the analog processors that can be created are not large enough to store the entire neural network. In this case, the reconfiguring of the weights in the analog processor itself can destroy the O ( N ) scaling advantage. To see this, consider the multiplication of a vector of length N with a matrix of dimensions N × M . In this case, we have,

$$N _ { o p } e _ { o p } = 2 N e _ { d a c , 1 } + 2 M N e _ { d a c , 2 } + 2 M e _ { a d c } . \quad ( 1 2 )$$

Wehave also separated the DAC energies e dac, 1 and e dac, 2 since different physical mechanisms and loads are sometimes used to configure an analog computer versus feed it with analog inputs. Here, e dac, 1 is used to represent the energy required per input, while e dac, 2 is used to represent the energy required per reconfiguration.

Typically, in analog computing technologies, the analog in-memory compute device can only store either positive definite numbers (like in the example of memristors) or fully complex numbers (like in the case of coupled Mach-Zender interferometers). If only positive numbers can be created, then the entire calculation must be done twice and the difference of the results taken in order to take into account both positive and negative matrix values. On the other hand, when complex values are allowed like in the case of silicon photonic MZI's, there are two voltages (and hence two DAC operations) required to configure each coupled MZI modulator. Additionally, for coherent optical measurements, an interference technique must be used to recover the positive and negative field components from the photodetectors, which can only measure the norm square of the field. Hence, regardless of the analog compute scheme, each term in eq. (12) must practically be multiplied by a factor of two in order to handle both positive and negative values.

Applying eq. (12) to vector-matrix multiplication, we obtain:

$$e _ { o p } = e _ { d a c , 1 } / M + e _ { d a c , 2 } + e _ { a d c } / N , \quad ( 1 3 )$$

in which case the middle term is proportional neither to 1 /N nor 1 /M .

## B. Matrix-Matrix Multiplication

The aforementioned situation is relieved in the case of matrix-matrix multiplication. In this case the configuration of the analog computer itself is reused for every row of the input matrix, restoring the energy cost per operation to be inversely proportional to the problem scaling. In the case of an L × N matrix times an N × M matrix, we have

$$e _ { o p } = e _ { d a c , 1 } / M + e _ { d a c , 2 } / L + e _ { a d c } / N \quad ( 1 4 )$$

since N op = 2 NML in this case. Since each of the three separate contributions to the energy consumption is decreased by a factor proportional to the three different dimensions associated with the matrices being multiplied, the effective energy per operation decreases as the problem scale increases. In the case of a finite-sized analog processor, the last two contributions will ultimately be limited by the two dimensions (number of inputs and outputs) of the analog processor itself.

At this point, a distinction needs to be made between the size of the matrices involved in the neural net architecture and the physical dimensions of the analog processor. We label the matrix dimensions with primes, i.e. M ′ , N ′ , and L ′ , and label the physical dimensions of the processor with hats: ˆ M , ˆ N . The actual factors by which energy is saved (i.e. M and N in eq. (14)) are given by the smaller of these two numbers:

$$M = \min \{ \hat { M } , M ^ { \prime } \} \quad ( 1 5 a )$$

$$N = \min \{ \hat { N } , N ^ { \prime } \} . \quad ( 1 5 b )$$

## C. Convolution

As in the case of digital processors, analog processors can also implement convolution using matrix-matrix multiplication. The mapping of the kernel and activation data to to matrix dimensions remains the same, i.e.

$$L ^ { \prime } = ( n - k + 1 ) ^ { 2 } \approx n ^ { 2 } \quad \ \ ( 1 6 a )$$

$$N ^ { \prime } = k ^ { 2 } C _ { i } \quad ( 1 6 b )$$

$$M ^ { \prime } = C _ { i + 1 } \quad ( 1 6 c )$$

when weight-stationary scheme is implemented. These numbers are permuted for activation-stationary. As with digital processors, one of the unfortunate aspects of representing convolution as pure matrix multiplication is

TABLE II: Median values of L ′ , N ′ , and M ′ as per eq. (16) for the convolutional layers of various well-known neural networks. The values were obtained considering a 1-Mpixel (per channel) input image.

| Network | # of layers | L ′ | N ′ | M ′ |

|-------------------|---------------|-------|-------|-------|

| DenseNet201 | 200 | 3844 | 1152 | 128 |

| GoogLeNet | 59 | 3721 | 528 | 128 |

| InceptionResNetV2 | 244 | 3600 | 432 | 192 |

| InceptionV3 | 94 | 3600 | 768 | 192 |

| ResNet152 | 155 | 3969 | 1024 | 256 |

| VGG16 | 13 | 62001 | 2304 | 256 |

| VGG19 | 16 | 38688 | 2304 | 384 |

| YOLOv3 | 75 | 3844 | 1024 | 256 |

that the input activations get duplicated k 2 times, which means k 2 more DAC operations (and possibly memory accesses as well) than in a processor that natively implements convolution rather than general matrix multiplication. The consequence of this is that M is by far the smallest of the numbers in eq. (16c), and therefore analog processors that implement convolution as matrix multiplication get the least amortization over their input DACs in eq. (14). The median values of L ′ , N ′ , and M ′ for various neural networks is presented in table II.

## V. OPERATOR-SPECIALIZED ANALOG PROCESSORS

Thus far, we have seen that 1) the contribution of memory access energy to compute efficiency can be brought arbitrarily low by implementing networks with large arithmetic intensity on specialized processors, and 2) analog processors can further reduce computational energy consumption when performing matrix multiplication. The reduction in computational energy is proportional to the size of the matrix the analog processor can handle.

One of the inherent disadvantages of planar, matrix multiplication based processors in performing convolutions is that the matrix that is formed for the input is of dimensions ( n -k + 1) 2 × k 2 C i , which is a factor of k 2 larger than the actual activation data. When the convolution is performed digitally this is of little consequence because the number of MACs required is the same for this matrix multiplication as it is for convolution: ( n -k +1) 2 k 2 C i . However, when the matrix multiplication is performed with an analog processor using a matrix with k 2 more rows than necessary means that it requires k 2 more DAC operations than should be theoretically necessary. Even worse than this, unless some additional logic is used to set up the matrix between the SRAM and processor (which also consumes energy), it will require k 2 additional memory reads than is in principle necessary, thus significantly increasing the memory access energy. Furthermore, since the number of channels in each layer are often correlated (the output channels of one layer become the input channels of the next), the weight data loaded into the analog processor which has dimensions of k 2 C i × C i +1 is highly rectangular, which will increase M relative to N , which in turn increases the contribution to the energy consumption per operation to the input data DACs.

In contrast to analog processors designed for general matrix multiplication, there are classes of analog processors which are specialized to implementing convolutions. One technique to implementing an analog processor is by restricting it to only operate in one particular eigenspace of operators. While any linear operator may be expressed as a matrix, the matrix A may be factored into the product of three matrices using eigen-decomposition:

$$X = U \Lambda U ^ { T } , \quad ( 1 7 )$$

where U is a unitary (i.e. lossless) matrix of the eigenvectors of X , and Λ is a purely diagonal matrix of the eigenvalues of X . The eigenvectors of a convolution are waves, and so when X is a matrix representing a convolution, the eigenvector matrix U represents a Fourier transform, while U T represents and inverse Fourier transform.

One technique of creating an operator-specialized processor is to statically implement the matrices U and U T , and only dynamically reconfigure the eigenvalues Λ. In this case, in order to change linear operators from one to another only the diagonal entries of Λ need to be changed. In other words, if the matrix X is of size m × m , changing the matrix to another convolution matrix only requires the modulation of m weights in the analog processor instead of m 2 weights. In the particular case where X represents a convolution, these eigenvalues are the Fourier transform of the kernel data. By tuning this set of m elements, the matrix X that is implemented by the analog processor can span the range of linear operators with the eigenvectors given by U .

Eigen-decomposition is possible for planar analog processors, and has in fact been demonstrated in silicon photonic processors [11, 13]. However, there is an alternate approach to silicon photonics to implementing a convolution-specialized processor called an optical 4F system , which has a particular set of advantages relative to planar convolution processors.

In planar analog processors, data is inserted into the processor in a one dimensional array, and the data is processed as it propagates along the second dimension. Unlike planar processors, an optical 4F system is a volumetric processor, so data is represented in a two dimensional array, while the computation happens as light propagates in the third dimension. While this does bring dramatically higher information density and computational density, the most significant difference is that it allows the processor to scale to numbers of inputs that are entirely impractical for planar processors. Since the efficiency of analog compute was shown in eq. (11) to scale proportionally to the dimensions of the analog processor (in the limit of infinite arithmetic intensity), optical 4F systems can in theory reach computational efficiencies orders of

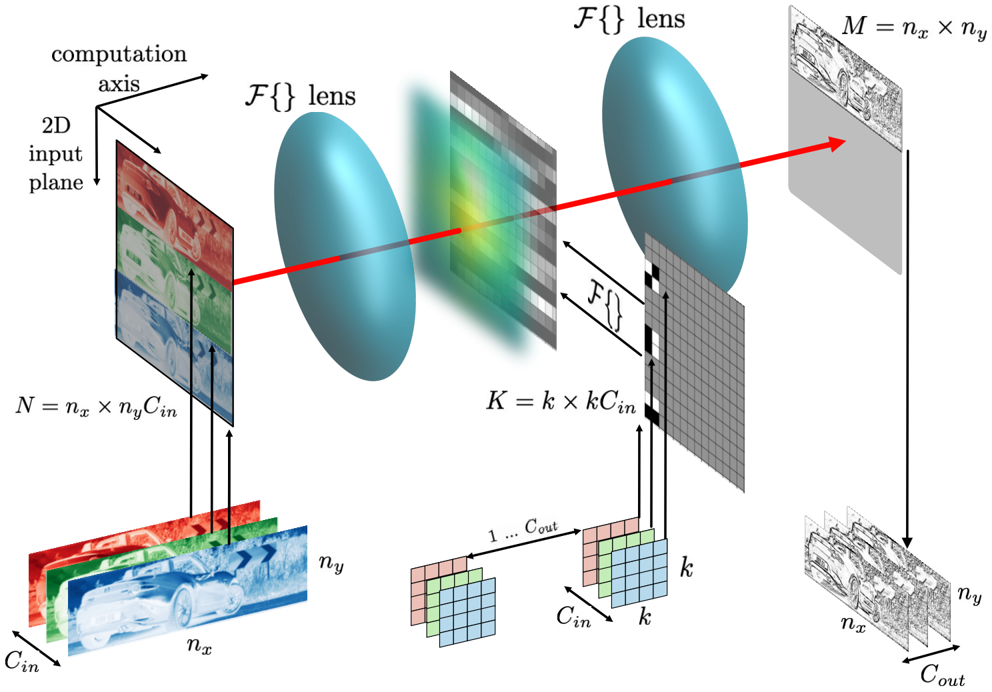

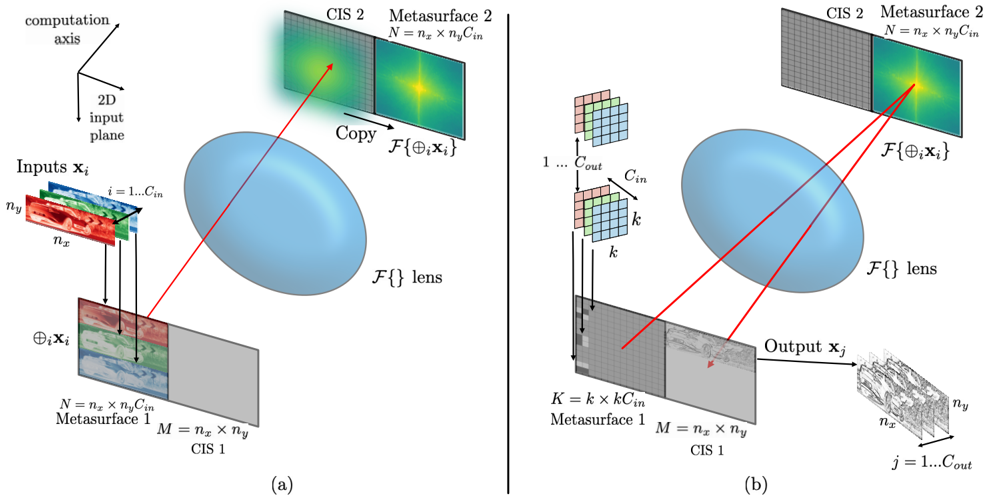

FIG. 4: Illustration of a transmission-mode optical 4F system performing convolutions with parallelized input channels. The input activation data can be tiled on the object plane, while the input filters can be tiled with appropriate padding before the Fourier transform is taken and the data is applied to the second SLM in the Fourier plane. In this arrangement one complete output channel is produced per measurement.

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Diagram: Image Transformation Through Optical System

### Overview

The diagram illustrates a computational pipeline for image transformation through an optical system, involving Fourier domain processing and feature extraction. It shows the flow of a 2D input image through two lenses (F{}) and intermediate processing stages, resulting in a multi-channel output image.

### Components/Axes

1. **Input Plane (2D Input Plane)**

- Dimensions: `N = nx × ny × Cin`

- Channels: Red (`C_in`), Green (`C_in`), Blue (`C_in`)

- Position: Bottom-left quadrant

- Visualization: Color image of a car split into RGB channels

2. **Fourier Plane (F{} Lens)**

- Dimensions: `K = k × k × C_in`

- Visualization: Blurred grid with intensity variations (green/yellow center)

- Position: Center of the diagram

- Legend: Gray grid representing spatial frequency domain

3. **Output Plane (M = nx × ny × C_out)**

- Dimensions: `M = nx × ny × C_out`

- Channels: `C_out` (multiple grayscale feature maps)

- Position: Right side of the diagram

- Visualization: Edge-detected car images with varying orientations

4. **Computation Axis**

- Red arrow connecting input → Fourier plane → output

- Indicates data flow direction

### Detailed Analysis

- **Input Plane**:

- RGB channels (`C_in`) are spatially aligned (`nx × ny` pixels)

- Color coding matches standard RGB convention (red/green/blue)

- **Fourier Plane**:

- Grid structure (`k × k`) suggests convolutional kernel size

- Intensity gradient (green/yellow center) implies frequency magnitude representation

- **Output Plane**:

- `C_out` channels show progressive edge detection (horizontal → vertical → diagonal)

- Spatial resolution preserved (`nx × ny`)

### Key Observations

1. **Channel Preservation**: Input channels (`C_in`) are maintained through the Fourier transformation

2. **Feature Multiplication**: Output channels (`C_out`) exceed input channels, indicating feature expansion

3. **Spatial Consistency**: Pixel dimensions (`nx × ny`) remain constant through all stages

4. **Kernel Size**: `k × k` grid in Fourier plane suggests localized frequency analysis

### Interpretation

This diagram represents a computational model for image feature extraction using optical system analogies:

1. **First Lens (F{})**: Performs Fourier transform to convert spatial domain image to frequency domain

2. **Fourier Plane Processing**: Implicit filtering occurs in frequency space (green/yellow gradient suggests high-pass filtering)

3. **Second Lens (F{})**: Inverse Fourier transform converts processed frequencies back to spatial domain

4. **Output Channels**: Multiple `C_out` channels demonstrate feature decomposition (e.g., edge detection in different orientations)

The system appears to implement a convolutional neural network architecture using optical metaphors, where:

- Input plane = Raw image data

- Fourier plane = Convolutional filter application in frequency domain

- Output plane = Feature maps after non-linear transformation

Notable design choices:

- Color coding for input channels aids in tracking data flow

- Grid visualization in Fourier plane emphasizes frequency localization

- Multiple output channels show hierarchical feature extraction

</details>

magnitude higher than planar processors.

An example of an optical 4F system processor is shown in fig. 4. It is composed of two spatial light modulators (SLMs), which might be based on either liquid crystal cells or dynamic metasurfaces. These are placed before and after a lens, one focal length away from either side. Lenses naturally perform Fourier transforms between these two place, so that the light transmitted through the first SLM is Fourier-transformed upon passing through the lens. Therefore the first SLM provides the input data, and the first lens represents multiplication by the unitary Fourier matrix U . The second SLM is loaded with the Fourier transform of the kernel data and the transmitted light through it; therefore, the product of the Fourier transform of the input data with the Fourier transform of the kernel data. The second SLM therefore represents the multiplication by the diagonal eigenvalue matrix Λ.

A second lens is then placed after the second SLM, one focal length away, which represents multiplication by the second eigenvalue matrix U . Finally, a detector is placed a second focal length from the second lens, and the light impinging on the detector is therefore the convolution of the input data with the kernel data. The detector itself is sensitive only to the intensity (i.e. the norm square) of the incident field. However, the complex value of the field can nonetheless be recovered using interferometric methods. Alternatively, as others have pointed out, the nonlinear measurement performed by the optical absorption of semiconductors can also be used naturally as the nonlinear activation of the neurons.

As shown in fig. 4, more than one input channel can be processed in parallel if the kernel data is appropriately padded before the Fourier transform is taken and the data is applied to the second SLM. This allows greater SLM utilization when small kernels are being used.

Unfortunately, from a compute systems perspective, traditional optical 4F systems have a fatal flaw: the output data from the convolution is measured four focal lengths away from the input data, which presumably

FIG. 5: Illustration of a reflection-mode optical 4F system (folded into a 2F overall length) performing processing a full convolutional layer with all input and output channels in two phases: (a) phase one, where an optical Fourier transform of the input activation data is taken and loaded into the Fourier plane SLM, (b) phase two, where the input channels are tiled onto the object plane SLM and the convolution of all input channels are measured in parallel. The process is repeated for each output channel.

<details>

<summary>Image 5 Details</summary>

### Visual Description

## Diagram: Computational Imaging System Architecture

### Overview

The image depicts two diagrams (a) and (b) illustrating a computational imaging system. Both diagrams show inputs passing through a lens (`F{}`) and interacting with metasurfaces and computational imaging sensors (CIS) to produce outputs. Diagram (a) focuses on input-output relationships, while diagram (b) introduces additional processing layers (e.g., a grid labeled `k` and `C_out`).

### Components/Axes

#### Diagram (a):

- **Inputs**: Labeled `x_i` (2D input plane) with dimensions `n_x × n_y × C_in`.

- **Lens**: Central blue oval labeled `F{}` (function mapping).

- **Metasurfaces**:

- Metasurface 1: Dimensions `M = n_x × n_y`, labeled `CIS 1`.

- Metasurface 2: Dimensions `N = n_x × n_y × C_in`, labeled `CIS 2`.

- **Outputs**: Labeled `x_j` with dimensions `n_x × n_y`.

- **Arrows**: Red arrows indicate flow from inputs → lens → metasurfaces → outputs.

#### Diagram (b):

- **Inputs**: Same as (a), but with an additional grid labeled `k` (size `K = k × C_in`).

- **Processing Layer**: Grid labeled `C_out` (output channels).

- **Outputs**: Structured as `n_x × n_y` with hierarchical layers (e.g., `j = 1...C_out`).

- **Arrows**: Red arrows show flow from inputs → grid → lens → metasurfaces → outputs.

### Detailed Analysis

#### Diagram (a):

- **Inputs**: 2D input plane (`x_i`) with spatial dimensions `n_x × n_y` and `C_in` channels.

- **Metasurface 1**: Acts as a first-stage processor (`CIS 1`), preserving spatial dimensions (`M = n_x × n_y`).

- **Metasurface 2**: Adds channel depth (`N = n_x × n_y × C_in`), suggesting feature extraction or modulation.

- **Lens (`F{}`)**: Central processing unit, likely applying a transformation (e.g., Fourier or phase modulation).

#### Diagram (b):

- **Grid (`k`)**: Introduces a parameterized layer (`K = k × C_in`), possibly for adaptive filtering or parameter tuning.

- **Output Structure**: Hierarchical output (`j = 1...C_out`) implies multi-scale or multi-feature outputs.

- **Lens (`F{}`)**: Retains central role but interacts with the grid before metasurface processing.

### Key Observations

1. **Flow Consistency**: Both diagrams show inputs passing through the lens before metasurface processing, but (b) adds a grid-based preprocessing step.

2. **Dimensionality**:

- Metasurface 1: Maintains spatial resolution (`n_x × n_y`).

- Metasurface 2: Expands channel depth (`C_in`).

3. **Output Complexity**: Diagram (b) introduces a multi-channel output (`C_out`), suggesting advanced processing capabilities.

### Interpretation

The diagrams represent a computational imaging system where:

- **Inputs** (2D spatial data) are modulated by a lens (`F{}`) to encode spatial or spectral information.

- **Metasurfaces** manipulate light properties (e.g., phase, amplitude) to extract features.

- **Diagram (b)** enhances the system with a grid-based preprocessing layer (`k`), enabling adaptive control over the imaging process.

- The hierarchical output structure (`C_out`) in (b) suggests applications in multi-modal imaging (e.g., simultaneous capture of intensity and phase).

The system likely leverages metasurface-based computational imaging for tasks like hyperspectral imaging, 3D reconstruction, or adaptive optics. The inclusion of `k` in (b) implies tunable parameters for optimizing image quality or resolution.

</details>

must be physically implemented in its own chip. Since this convolution operation only represents the connections between two layers of neurons, in order to implement a deep neural network with more than two layers of neurons the output data from the detector chip must be brought back somehow to the input spatial light modulator. Communicating this massive amount of data off-chip would entail massive energy costs, overcoming all advantages brought by the large-scale analog compute.

However, an optical 4F system might be folded using reflection-mode SLMs as shown in fig. 5 in order to consolidate the first SLM and the CMOS image sensor sideby-side into a single chip, and using only a single lens. In this architecture all significant data transfer between the two chips happens optically instead of electronically. On either side of the lens on two chips, split into two halves are: an SLM (or metasurface) and a CMOS image sensor. Both chips are placed one focal length away from either side of the lens such that, whenever light passes between the two chips, a Fourier transform is taken by the lens.

This system computes convolutions in two phases: a loading phase and a compute phase. The first, loading phase is shown in fig. 5(a), where the purpose is to take the Fourier transform of the activation data and load it into the second metasurface. A set of input filter maps are written to the input SLM in the first chip, which is illuminated. The Fourier transform of the reflected light is delivered to the CMOS image sensor (CIS) in the second chip, and this data is electronically transferred over to the second SLM within the same chip using DAC and ADC operations. As with the in-transmission unfolded 4F system in fig. 4, in-reflection 4F systems like the one in fig. 5 can be used to take the convolution of multiple input channels in parallel. The final result of this phase is therefore that the SLM in the second chip is configured with the Fourier transform of the activation data.

In the second, compute phase, the input kernel weight data is applied to the first SLM. This is then illuminated at a slightly oblique angle so that the reflected light impinges upon the SLM in the second chip. When this light is reflected the lens takes another Fourier transform, and the light impinging on the CIS in the first chip is the convolution of the input filter map data with the kernel data.

If the input data requires n 2 C i total pixels, loading the optical Fourier transform of the activation data will cost

$$E _ { f f t } = n ^ { 2 } C _ { i } ( 2 e _ { a d c } + 4 e _ { d a c } ) \quad ( 1 8 )$$

energy. One DAC operation per pixel is required to write the input data to the first metasurface, while two ADC operations and two DAC operations are required in order to reconstruct the complex field data from the intensity data and then apply it to the second SLM.

Since input channels can be performed in parallel and then looped over output channels, the second phase in-

volves two times K = k 2 C i C i +1 DAC operations, and two n 2 C i +1 ADC operations in the CIS to recover the field.

$$E _ { c o n v } = 2 k ^ { 2 } C _ { i } C _ { i + 1 } e _ { d a c } + 2 n ^ { 2 } C _ { i + 1 } e _ { a d c } \quad ( 1 9 )$$

Therefore the total energy associated with the analog compute of this layer is E fft + E conv ,

$$E _ { o p } = 2 n ^ { 2 } ( C _ { i } + C _ { i + 1 } ) e _ { a d c } + 2 C _ { i } ( 2 n ^ { 2 } + k ^ { 2 } C _ { i + 1 } ) e _ { d a c } . \, ( 2 0 )$$

The total number of operations performed is N op = 2 n 2 k 2 C i C i +1 . Therefore the efficiency of the approach is,

$$\eta = \frac { 1 } { e _ { m } / a + e _ { a d c } / \left ( \frac { k ^ { 2 } C _ { i } C _ { i + 1 } } { C _ { i } + C _ { i + 1 } } \right ) + 2 e _ { d a c } / k ^ { 2 } C _ { i + 1 } + e _ { d a c } / n ^ { 2 } } .$$

in the limit that the metasurfaces are large enough to handle all of the activation or weight data.

In order to take into account the finite size of the metasurfaces, which may not be large enough to fit all of the activation data from all channels at once, we first find the number of input channels that can practically be handled at once. For a metasurface of dimension n x × n y ≡ ˆ N , the number of input channels that can be included at once, C ′ , is,

$$C ^ { \prime } = \lfloor \hat { N } / n ^ { 2 } \rfloor . \quad ( 2 2 )$$

Using this in place of the actual number of software defined input channels we can derive the factors by which energy is saved in the optical 4F system in the case that C ′ ≥ 1,

$$L = n ^ { 2 } \quad & ( 2 3 a ) \quad \begin{array} { l } { g i c } \\ { i n } \end{array}$$

$$N = \frac { k ^ { 2 } C ^ { \prime } C _ { i + 1 } } { ( C ^ { \prime } + C _ { i + 1 } ) }

( 23 b ) \quad \ n o$$

$$M = k ^ { 2 } C _ { i + 1 } / 2 . \quad ( 2 3 c ) \quad t e c _ { 1 }$$

In terms of these parameters, the efficiency of the optical 4F system is given in the usual way,

$$e _ { o p } = e _ { d a c } / M + e _ { d a c } / L + e _ { a d c } / N . \quad ( 2 4 ) \quad t h e r$$

For an optical 4F system, the median values of L , N , and M as per eq. (23) for various neural networks is presented in table III.

## VI. ANALYTIC RESULTS

The formula given in eqs. (3), (5), (14) and (24) can be used to estimate the efficiency when evaluating a given CNN layer on any one of those four compute platforms. They depend on the energy values for memory access, DAC/ADC operations, and digital multiplication. Estimates for many of these quantities are given in table IV,

TABLE III: Median values of L , N , and M for the convolutional layers of various well-known neural networks considering an optical 4F system as computational substrate. The values were obtained considering a 1-Mpixel (per channel) input image and an infinitely large metasurface (i.e. C ′ → inf).

| Network | # of layers | L | N | M |

|-------------------|---------------|-------|------|------|

| DenseNet201 | 200 | 3844 | 272 | 136 |

| GoogLeNet | 59 | 3721 | 128 | 64 |

| InceptionResNetV2 | 244 | 3600 | 224 | 112 |

| InceptionV3 | 94 | 3600 | 240 | 120 |

| ResNet152 | 155 | 3969 | 1024 | 512 |

| VGG16 | 13 | 62001 | 2304 | 1152 |

| VGG19 | 16 | 38688 | 3456 | 1728 |

| YOLOv3 | 75 | 3844 | 512 | 256 |

TABLE IV: Energy per operation for various operations of digital and analog computers. These assume a technology node of 45nm and a voltage of 0.9V, and 8-bit values per operation. The example of memory access energy assumes a bank size of 96kB, since this is the bank sized used to construct the TPU SRAM bank.

| e m (96kB SRAM)[3] | 4.3pJ |

|----------------------------------------------|---------|

| e mac [3] | 0.23pJ |

| e adc [20] | 0.25pJ |

| e dac [21] | 0.01pJ |

| e opt [eq. (A8)] | 0.01pJ |

| e load for 4 µm pitch, N = 256 [eq. (A6)] | 0.08pJ |

| e load for 250 µm pitch, N = 40 [eq. (A6)] | 0.8pJ |

| e load for 2.5 µm pitch, N = 2048 [eq. (A6)] | 0.04pJ |

and formula for deriving the loads to estimate DAC energies for various analog compute platforms are also given in the appendix.

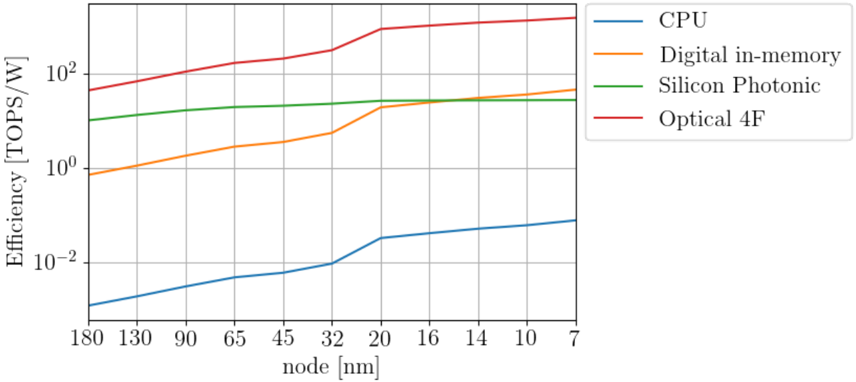

Each of these values depend on the CMOS technology node, but scaling laws can be used to interpolate between technology nodes [22]. We compare the various compute platforms by considering a CNN layer with parameters given in table V, and the resulting efficiencies are plotted as a function of technology node in fig. 6.

While all processors improve with technology node, there is roughly an order of magnitude difference between digital in-memory compute processors and silicon photonic processors, and yet another order of magnitude difference to be expected between silicon photonic pro-

TABLE V: Convolution parameters used to estimate efficiencies of various processors in fig. 6. The arithmetic intensity follows from the other parameters by eq. (9).

| Input Channels | C i | 128 |

|----------------------|--------|-------|

| Output Channels | C i +1 | 128 |

| Filter size | k | 3 |

| Input size | n | 512 |

| Arithmetic intensity | a | 230 |

FIG. 6: Efficiencies from analytic models of various compute architectures as a function of technology node.

<details>

<summary>Image 6 Details</summary>

### Visual Description

## Line Chart: Efficiency vs. Node Size

### Overview

The chart compares the efficiency (in TOPS/W) of four technologies across varying node sizes (in nanometers). Efficiency is plotted on a logarithmic scale (10^-2 to 10^2), while node size decreases from 180 nm to 7 nm on the x-axis. Four data series are represented by distinct colored lines, with a legend on the right.

### Components/Axes

- **X-axis**: "node [nm]" (logarithmic scale, decreasing from 180 to 7 nm).

- **Y-axis**: "Efficiency [TOPS/W]" (logarithmic scale, 10^-2 to 10^2).

- **Legend**:

- Blue: CPU

- Orange: Digital in-memory

- Green: Silicon Photonic

- Red: Optical 4F

- **Lines**: Four distinct colored lines corresponding to the legend.

### Detailed Analysis

1. **CPU (Blue Line)**:

- Starts at ~0.01 TOPS/W at 180 nm.

- Gradually increases to ~0.05 TOPS/W at 7 nm.

- Trend: Slow, linear improvement with decreasing node size.

2. **Digital in-memory (Orange Line)**:

- Begins at ~0.1 TOPS/W at 180 nm.

- Rises sharply to ~10 TOPS/W at 20 nm, then plateaus.

- Trend: Exponential growth until 20 nm, then stabilizes.

3. **Silicon Photonic (Green Line)**:

- Starts at ~0.5 TOPS/W at 180 nm.

- Remains relatively flat (~0.5–10 TOPS/W) across all node sizes.

- Trend: Minimal improvement, consistent performance.

4. **Optical 4F (Red Line)**:

- Begins at ~10 TOPS/W at 180 nm.

- Increases sharply to ~100 TOPS/W at 20 nm, then plateaus.

- Trend: Exponential growth until 20 nm, then stabilizes.

### Key Observations

- **Optical 4F** consistently outperforms all other technologies across all node sizes, maintaining the highest efficiency.

- **Digital in-memory** shows the steepest improvement at smaller nodes (20 nm and below), surpassing Silicon Photonic.

- **CPU** exhibits the slowest efficiency gains, remaining the least efficient technology throughout.

- All technologies improve efficiency as node size decreases, but the rate of improvement varies significantly.

### Interpretation

The data suggests that **Optical 4F** is the most scalable and efficient technology for high-performance applications, even at larger node sizes. **Digital in-memory** demonstrates strong potential for efficiency gains at smaller nodes, though it lags behind Optical 4F. **Silicon Photonic** offers stable but moderate efficiency, while **CPU** remains the least efficient, indicating limitations in scaling for power-constrained systems. The logarithmic scale emphasizes the exponential advantages of photonic and 4F technologies over traditional CPU architectures as node sizes shrink.

</details>

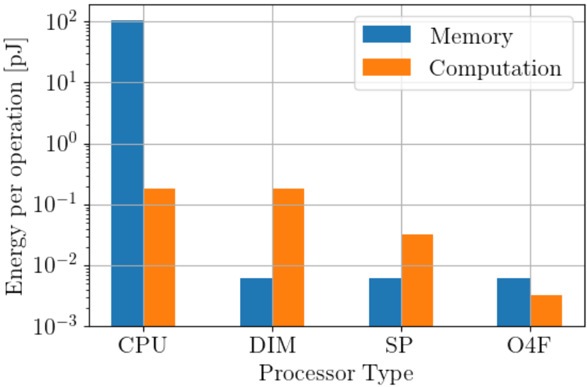

FIG. 7: Contributions of energy consumption per operation for various processor types. DIM is digital in-memory, SP is silicon photonic, and O4F is optical 4F system architectures. The CNN layer parameters are in table V, and assumptions about architectural details are given in the text. The technology node is assumed to be 32nm for all processor types.

<details>

<summary>Image 7 Details</summary>

### Visual Description

## Bar Chart: Energy per Operation by Processor Type

### Overview

The chart compares energy consumption (in picojoules, pJ) for memory and computation operations across four processor types: CPU, DIM, SP, and O4F. Energy values are plotted on a logarithmic scale (10^-3 to 10^2 pJ), with separate bars for memory (blue) and computation (orange) operations.

### Components/Axes

- **X-axis**: Processor Type (CPU, DIM, SP, O4F)

- **Y-axis**: Energy per operation [pJ] (logarithmic scale: 10^-3 to 10^2)

- **Legend**:

- Blue = Memory

- Orange = Computation

- **Legend Position**: Top-right corner

- **Bar Grouping**: Processor types grouped vertically, with memory and computation bars side-by-side for each type.

### Detailed Analysis

1. **CPU**:

- Memory: ~100 pJ (blue bar, tallest in chart)

- Computation: ~0.1 pJ (orange bar, ~10^-1 pJ)

2. **DIM**:

- Memory: ~0.1 pJ (blue bar, ~10^-1 pJ)

- Computation: ~0.1 pJ (orange bar, ~10^-1 pJ)

3. **SP**:

- Memory: ~0.1 pJ (blue bar, ~10^-1 pJ)

- Computation: ~0.01 pJ (orange bar, ~10^-2 pJ)

4. **O4F**:

- Memory: ~0.1 pJ (blue bar, ~10^-1 pJ)

- Computation: ~0.001 pJ (orange bar, ~10^-3 pJ)

### Key Observations

- **Memory Energy Outlier**: CPU memory operations consume ~1000x more energy than computation operations (100 pJ vs. 0.1 pJ).

- **Consistency in Memory**: DIM, SP, and O4F show nearly identical memory energy (~0.1 pJ).

- **Computation Efficiency**: Computation energy decreases by ~100x from CPU (0.1 pJ) to O4F (0.001 pJ).

- **Logarithmic Scale Impact**: The y-axis compression emphasizes differences in computation energy (e.g., O4F computation is 100x lower than SP).

### Interpretation

The data suggests that **memory operations dominate energy consumption in CPUs**, while **computation efficiency improves significantly in specialized processors (O4F)**. The logarithmic scale highlights the stark contrast between memory and computation energy in CPUs, whereas O4F demonstrates near-parity between the two. This implies that processor architecture (e.g., O4F’s design) optimizes computation energy, making it suitable for tasks requiring high computational throughput with minimal energy waste. The consistency in memory energy across DIM, SP, and O4F suggests shared memory management strategies, while CPU’s high memory energy may reflect legacy or general-purpose design trade-offs.

</details>

cessors and optical 4F systems. While this difference is clearly algorithm-dependent, the underlying hardware for analog compute systems must be large enough to be able to exploit the potential algorithmic advantages, which is what is enabled by moving from a two-dimensional silicon photonic processor to a fundamentally three-dimensional processor akin to an optical 4F system.

The breakdown of improvements into memory and computational energy reductions are shown in fig. 7, which shows the contribution to the energy per operation from memory and computational elements separately for each processor type. Exploiting high arithmetic intensity with in-memory compute vastly improves energy consumption between CPUs and the other platforms by first reducing memory energy well below computational energy. The analog processors in turn have reduced computational energy, with less computational energy on a per-operation basis for analog processors with more inputs.

It is worthwhile noting that the efficiencies reported in fig. 6 for the digital in-memory processor are significantly higher than the Google TPU, which reported 0.3-2 TOPS/W depending on the CNN architecture, for a chip manufactured at a 28-nm node. The in-memory compute digital processor modeled here has the same architectural parameters as the TPU: a 256 by 256 systolic array, and 24-MiB of SRAM divided into 256, 96-KB banks. Here we predict that number should be roughly 5 TOPS/W,

which is a significantly higher efficiency than reported in the literature [1]. However, we note that this estimation simplifies the energy costs associated with the digital multiplication and storing and transporting data in and between each processing element in the systolic array.

The silicon photonics processor modelled in figs. 6 and 7 assumed an array size of 40 by 40, which is typical for most processors reported in the literature [10-13], since the various modulator technologies typically require the array to have pitches in the 100-400 µ m range. The computational energy consumption is highly limited by the optical modulator technology, which currently stands at around 7 pJ/byte, as discussed in section A1. We assume in our model that this will be improved to 0.5 pJ over time, but even with this assumed advantage it is clear in fig. 6 that silicon photonics will have a difficult time maintaining an efficiency advantage over digital compute in memory technologies unless it is possible to scale up the processor sizes. We also assume a 24-MiB SRAM for the silicon photonics processor, divided into 40, 600-KB SRAM banks, following the TPU architecture.

The optical 4F system is based on the architecture in fig. 5, with 4-Mpx SLMs and a 24-MiB SRAM divided into 2048, 12-KB banks, again following the TPU architecture. The SLM pitch for DAC loads involved in active matrix addressing of the SLMs was assumed to be 2.5 µ m, which results in a line capacitance of 0.9 fF and a load energy of 40 fJ as shown in table IV. The optical energy per pixel is based on 1550-nm light, and contributes 10 fJ/pixel per operation as shown in table V. The large array sizes enabled by realistic SLM dimensions are able to reduce computational energy consumption even below the memory consumption in fig. 7.

## VII. COMPUTATIONAL RESULTS

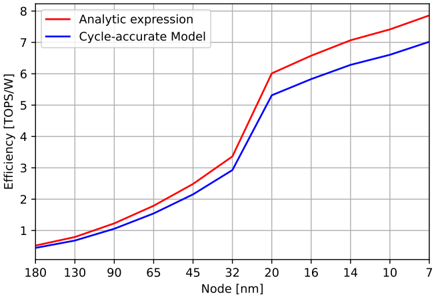

Thus far, we have provided simple analytic formula that estimate the efficiency of various AI inference platforms on the basis of how they scale. These formula are approximations with several limitations, the biggest of which is that they don't take into account situations where the matrices involved are too large for either the capacity of the in-memory compute device or its inputs. In that circumstance the problem needs to be broken up into several smaller matrix multiplications. In order to get around this limitation we developed cycle-accurate models of a systolic array and of an in-reflection optical 4F system, and tested those models when evaluating various CNNs for a given input image size. The more accurate computational results are then compared with the analytic models from the previous sections.

## A. Systolic array efficiency estimation

For analyzing the energy efficiency of a systolic array, we considered an architecture similar to that of the Google TPU [ref:TPU], with a weight-stationary systolic array of size 256 x 256 tiles. Each of the 256 ports of the array has access to an individual 96-KB SRAM block, totaling 24 MiB of buffer memory for storing activations (i.e. inputs/outputs of a convolutional layer). The weights are stored in DRAM and accessed based to the convolutional layer being executed. The activations and weights are 8-bit fixed point.

In terms of energy costs, we used as reference the SRAM and MAC energy values for a 45-nm process at 0.9 V from [3]: SRAM read/write of 1.25 pJ/byte (8-KB memory) and 8-bit MAC operation of 0.23 pJ/byte. To align with the SRAM block size of 96 KB in the TPU, the 8-KB SRAM energy cost was scaled in size by a factor of √ 96K / 8K = 3 . 46 in accordance with eq. (A2), resulting in 4.33 pJ/byte. Associated to each MAC operation, we also included the energy costs of the load and of the memory read/write inside each array tile (to store/propagate the 8-bit input and 32-bit accumulation = 40 bits). A load energy cost of 2.82 fJ/bit was computed using eq. A6, where the distance between array tiles was approximated based on the 256 x 256 array area occupancy (24%) of the entire TPU chip (331 mm 2 ), resulting in a distance of 34.8 um between tiles. The internal array memory energy cost was obtained by scaling the 8-KB SRAM block to 40 bits, resulting in 1 . 25 pJ/byte × √ 5 / 8K = 31 . 25 fJ/byte.

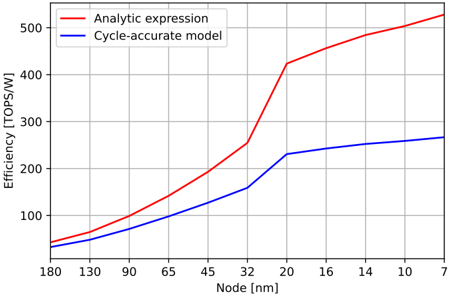

Lastly, using the techniques presented in [22], we scaled all the energy values (except for load, since it's not directly process-dependent) from 45-nm process to the appropriate technology nodes, ranging from 180 nm down to 7 nm. The results are presented in fig. 8. Both the analytic expression and the cycle-accurate model follow the same trend, with a slight divergence as the technology node is reduced. This can be accounted for the fact that e load does not depend on technology node, and its cost starts becoming a dominating factor in the overall energy cost since the other energy sources diminish as node size reduces.

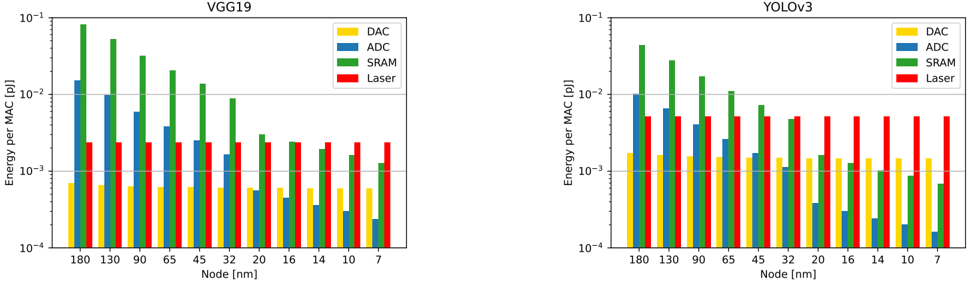

## B. Optical computer efficiency estimation

For the optical 4F system we considered 4-Mpixel SLMs, along with the same 24-MiB SRAM as in the systolic array analysis. With this, the SRAM is partioned into 2048 equal parts (one per metasurface row), resulting in a size-scaled SRAM read/write energy of 1.55 pJ/byte. The DAC, ADC and laser energies were obtained using the values in table IV considering a 2.5µ m pitch.

A comparison between the analytic expression and a cycle-accurate model of the optical 4F system is presented in fig. 9. The figure provides an overall curve for the efficiency, with significant gain when constructing the

FIG. 8: Efficiency comparison between a cycle-accurate model and the analytic expression given by eq. (8) and the values in table I. Both models are running YOLOv3 (1-Mpixel input image) using a 256 × 256

<details>

<summary>Image 8 Details</summary>

### Visual Description

## Line Graph: Efficiency vs. Node Size Comparison

### Overview

The image is a line graph comparing the efficiency (in TOPS/W) of two computational models—Analytic expression and Cycle-accurate Model—as a function of transistor node size (in nanometers). The graph illustrates how efficiency trends diverge between the two models as node size decreases.

### Components/Axes

- **X-axis (Node [nm])**: Ranges from 180 nm to 7 nm, with labeled intervals at 180, 130, 90, 65, 45, 32, 20, 16, 14, 10, and 7 nm.

- **Y-axis (Efficiency [TOPS/W])**: Ranges from 0 to 8 TOPS/W, with increments of 1.

- **Legend**: Located in the top-left corner, with:

- **Red line**: Analytic expression

- **Blue line**: Cycle-accurate Model

### Detailed Analysis

1. **Analytic Expression (Red Line)**:

- Starts at ~0.5 TOPS/W at 180 nm.

- Shows a steep upward trend, reaching ~7.8 TOPS/W at 7 nm.

- Key data points:

- 130 nm: ~0.8 TOPS/W

- 90 nm: ~1.2 TOPS/W

- 65 nm: ~1.8 TOPS/W

- 45 nm: ~2.5 TOPS/W

- 32 nm: ~3.3 TOPS/W

- 20 nm: ~6.0 TOPS/W

- 16 nm: ~6.6 TOPS/W

- 14 nm: ~7.0 TOPS/W

- 10 nm: ~7.3 TOPS/W

- 7 nm: ~7.8 TOPS/W

2. **Cycle-accurate Model (Blue Line)**:

- Starts slightly below the Analytic line at 180 nm (~0.4 TOPS/W).

- Follows a similar upward trend but with a less steep slope.

- Key data points:

- 130 nm: ~0.7 TOPS/W

- 90 nm: ~1.0 TOPS/W

- 65 nm: ~1.5 TOPS/W

- 45 nm: ~2.1 TOPS/W

- 32 nm: ~2.9 TOPS/W

- 20 nm: ~5.3 TOPS/W

- 16 nm: ~5.8 TOPS/W

- 14 nm: ~6.2 TOPS/W

- 10 nm: ~6.5 TOPS/W

- 7 nm: ~7.0 TOPS/W

### Key Observations

- The Analytic expression consistently outperforms the Cycle-accurate Model across all node sizes, with the gap widening as node size decreases.

- Both models show exponential growth in efficiency as node size shrinks, but the Analytic model’s predictions are ~10–20% higher than the Cycle-accurate Model at smaller nodes (e.g., 7 nm).

- The Cycle-accurate Model’s efficiency plateaus slightly less sharply than the Analytic model, suggesting it accounts for real-world constraints.

### Interpretation

The data suggests that the Analytic expression provides optimistic efficiency estimates, likely due to idealized assumptions (e.g., ignoring leakage, variability, or overhead). In contrast, the Cycle-accurate Model incorporates practical limitations, resulting in more conservative but realistic predictions. The divergence between the two models highlights the trade-off between theoretical simplicity and real-world accuracy in computational design. For applications requiring precise performance metrics at advanced node sizes (e.g., 7 nm), the Cycle-accurate Model may be more reliable, while the Analytic expression could serve as a benchmark for theoretical exploration.

</details>

weight-stationary systolic array and a 24-MiB SRAM (as in the Google TPUv1).

FIG. 9: Comparison of eq. (24) with a cycle-accurate model of the optical 4F processor running YOLOv3 (1-Mpixel input image) using 4-Mpixel SLMs and a 24-MiB SRAM.

<details>

<summary>Image 9 Details</summary>

### Visual Description

## Line Graph: Efficiency vs. Node Size Comparison

### Overview

The image is a line graph comparing the efficiency (in TOPS/W) of two computational models—Analytic expression and Cycle-accurate model—as a function of transistor node size (in nanometers). The graph spans node sizes from 7 nm to 180 nm, with efficiency values ranging from 0 to 500 TOPS/W.

---

### Components/Axes

- **X-axis (Horizontal)**: Labeled "Node [nm]" with tick marks at 7, 10, 14, 16, 20, 32, 45, 65, 90, 130, and 180 nm.

- **Y-axis (Vertical)**: Labeled "Efficiency [TOPS/W]" with tick marks at 0, 100, 200, 300, 400, and 500.

- **Legend**: Located in the top-left corner, with:

- **Red line**: "Analytic expression"

- **Blue line**: "Cycle-accurate model"

---

### Detailed Analysis

#### Analytic Expression (Red Line)

- **Trend**: Starts at ~50 TOPS/W at 180 nm, increases gradually until ~32 nm (~250 TOPS/W), then rises sharply to ~520 TOPS/W at 7 nm.

- **Key Data Points**:

- 180 nm: ~50 TOPS/W

- 90 nm: ~100 TOPS/W

- 65 nm: ~150 TOPS/W

- 45 nm: ~200 TOPS/W

- 32 nm: ~250 TOPS/W

- 20 nm: ~420 TOPS/W

- 10 nm: ~500 TOPS/W

- 7 nm: ~520 TOPS/W

#### Cycle-Accurate Model (Blue Line)

- **Trend**: Starts at ~40 TOPS/W at 180 nm, increases gradually to ~260 TOPS/W at 7 nm.

- **Key Data Points**:

- 180 nm: ~40 TOPS/W

- 90 nm: ~80 TOPS/W

- 65 nm: ~110 TOPS/W

- 45 nm: ~140 TOPS/W

- 32 nm: ~170 TOPS/W

- 20 nm: ~220 TOPS/W

- 10 nm: ~250 TOPS/W

- 7 nm: ~260 TOPS/W

---

### Key Observations

1. **Divergence at Smaller Nodes**: The Analytic expression model predicts significantly higher efficiency gains at smaller nodes (e.g., 7 nm: ~520 TOPS/W vs. ~260 TOPS/W for Cycle-accurate).

2. **Slope Difference**: The red line (Analytic) has a steeper slope, especially between 32 nm and 7 nm, indicating a nonlinear relationship.

3. **Consistent Gap**: The Analytic model consistently outperforms the Cycle-accurate model across all node sizes.

---

### Interpretation

- **Model Behavior**: The Analytic expression model assumes idealized efficiency improvements with smaller nodes, while the Cycle-accurate model incorporates real-world constraints (e.g., power leakage, heat dissipation), resulting in more conservative estimates.