# Towards a Neural Lambda Calculus: Neurosymbolic AI Applied to the Foundations of Functional Programming

**Authors**: João M. Flach, Álvaro F. Moreira, Luís C. Lamb

> Institute of Informatics Federal University of Rio Grande do Sul Porto Alegre, RS, Brazil

## Abstract

In recent decades, deep neural network-based models have become the dominant paradigm in machine learning. The use of artificial neural networks in symbolic learning has recently been considered increasingly relevant. To study the capabilities of neural networks in the symbolic AI domain, researchers have explored the ability of deep neural networks to learn mathematical operations, logic inference, and even the execution of computer programs. The latter is known to be too complex a task for neural networks. Therefore, the results were not always successful and often required the introduction of biased elements in the learning process, in addition to restricting the scope of possible programs to be executed. In this work, we will analyze the ability of neural networks to learn how to execute programs as a whole. To do so, we propose a different approach. Instead of using an imperative programming language with complex structures, we use the Lambda Calculus ( $\lambda$ -Calculus), a simple and Turing-complete mathematical formalism, which serves as the basis for modern functional programming languages and is at the heart of computability theory. We will introduce the use of integrated neural learning and lambda-calculi formalization. We explore that the execution of a program in $\lambda$ -Calculus is based on reductions, and we will show that it is enough to learn how to perform these reductions so that we can execute any program. This manuscript is an improvement of a previous one posted in arXiv.org by Flach and Lamb, with significant contributions by Professor A.F. Moreira.

Keywords: Machine Learning, Lambda Calculus, Neurosymbolic AI, Neural Networks, Transformer Model, Sequence-to-Sequence Models, Computational Models.

## 1 Introduction

In machine learning, there has been a long-standing debate about the best way to approach the task of learning from data [19]. Rule-based inference emphasizes the use of explicit logical rules to reason about the data and make decisions, inferences, and predictions [12]. The other perspective, statistical learning, involves using mathematical models to automatically extract patterns and relationships from data [28, 19].

Deep neural networks have been used successfully in applications such as speech recognition, machine translation, and handwriting recognition. Recently, advances in the field have resulted in the introduction of models that are changing the landscape and allowing us to tackle a wider range of problems, including symbolic ones, using neural networks. When neural networks are applied to symbolic problems, the result is a hybrid approach that combines the advantages of both rule-based (in which logic-based ones are prominent) and statistical approaches. This combination falls into the realm of neurosymbolic AI [8, 16]. This field combines the statistical nature of machine learning with the logical nature of reasoning in AI [8], and has recently attracted the attention of researchers from several subfields of AI and computer science [12, 3] as it can contribute to offering explainable machine learning approaches. This interest is sparked by the need to build more robust AI models [11], as initially advocated by Valiant and now a subject of increasing interest in AI research [34, 33, 23].

In this work, we intend to explore the capacity of machine learning models, specifically the Transformer [35], to learn rules to perform computations, a task traditionally seen as too complex for neural networks to handle.

The idea of training a machine learning model to perform computations is relatively new. Most works in this field restrict the class of programs that the model can take as input and consist of teaching the model to understand rules for the evaluation of mathematical expressions.

We, on the other hand, use the Lambda Calculus ( $\lambda$ -Calculus) as the underlying framework [2]. Introduced by Alonzo Church in the 1930s [7], it is a simple, elegant, and Turing-complete formal system based on function abstraction that captures the notion of function definition and function application. It is the base for modern functional programming languages, such as OCaml, Racket, Haskell, and others [24], and it has become one of the main computational models alongside Turing Machines [5].

In essence, the $\lambda$ -Calculus can be seen as a programming language whose programs, called $\lambda$ terms, can be subject to reductions that can be interpreted as computations performed within the formalism. Applying a single reduction to a term represents a one-step computation in the $\lambda$ -Calculus. On the other hand, a full computation involves successive single reductions of a term until a normal form is obtained (if it has one), i.e., a term that cannot be reduced any further.

In summary, our research question is: “Can a machine learning model learn to perform computations?”. To gradually enhance our understanding of the subject matter and enable us to provide an answer to our research question, we propose the following two hypotheses.

- H1: The Transformer model can learn to perform one-step computation on Lambda Calculus.

- H2: The Transformer model can learn to perform full computation on Lambda Calculus.

Considering that lambda terms do not have a fixed size, we use a sequence-to-sequence (seq2seq) model, which can take inputs and produce outputs of any length. Specifically, we use a model that has been widely used for several types of applications and has also been tested for symbolic tasks: the Transformer [35].

## 2 Related Work

In [36], seq2seq models are used to learn to evaluate short computer programs using an imperative language with the Python syntax. However, their domain is restricted to short programs that can use just some arithmetic operations, variable assignment, if-statements, and for loops (not nested).

Some other studies also have worked towards developing models that learn algorithms or learn to execute computer code, including [15, 14, 32]. However, the domain of these works is restricted to some arithmetical operations or sequence computations (copying, duplicating, sorting). Additional work concentrates on acquiring an understanding of program representation. For example, [22] builds generative models of "natural source code", i.e., code written by humans and meant to be understood by other humans, while [25] applies neural networks to determine if two programs are equivalent.

The Transformer model [35] brought several key advancements and improvements compared to the state-of-the-art seq2seq models prevalent at that time. This new model boasted improved parallelism, reduced sequential processing requirements, and the ability to handle longer sequences, among other things. These innovative features have contributed to the widespread adoption of the Transformer model in various Machine Learning applications.

In a study by [18], the Transformer model was applied to learn how to symbolically integrate functions, producing very promising results. The authors demonstrated that the model was capable of learning to perform integrals in a way that was both accurate and efficient, outperforming existing methods in many cases. This study highlights the versatility and potential of the Transformer model, making it a valuable tool for tackling a wide range of machine learning tasks, especially in areas that require symbolic reasoning and mathematical operations.

In addition, the Transformer model has enabled recent developments in chatbot technologies. An example of a chatbot that has emerged as a result of this development is the ChatGPT available at: https://openai.com/blog/chatgpt/, which is based on a state-of-the-art AI model, the GPT-3, from [4]. These chatbots can answer questions about various subjects [27] and perform basic symbolic reasoning. However, their symbolic reasoning capability is still limited, giving some incorrect answers to straightforward questions.

In the present work, we shift from the imperative paradigm that all other works have used, to the functional paradigm, which is the case for the $\lambda$ -Calculus. With this, we abstract the idea of learning to compute computer programs to learn to perform reductions in $\lambda$ terms.

With this approach, in the sequel we will show that to learn one-step computation, we obtained an accuracy of $88.89\$ in completely random terms $\lambda$ and of $99.73\$ in terms representing Boolean expressions. For the full computation task, we obtained an accuracy of $97.70\$ for $\lambda$ terms that represent boolean expressions. Taking into account a string similarity metric, most of our predicted $\lambda$ terms were above $99\$ similar to the correct ones.

With these results, we think that the change to the functional paradigm and the use of the Transformer model are two improvements that will be relevant in future research.

## 3 The Lambda Calculus: A Summary

In this section, we present an overview of the main aspects of the Lambda Calculus that will be used in the paper.

### 3.1 Syntax

We start by defining the syntax of lambda terms. In the following, $x$ denotes a member of an infinite and countable set of variables.

**Definition 1 (λ𝜆\lambdaitalic_λ-terms)**

*The set of $\lambda$ -terms is defined by the following abstract grammar:

$$

\begin{array}[]{lcl}M,N,\ldots::=&x~{}~{}|~{}~{}\lambda x.M~{}~{}|~{}~{}M~{}N

\end{array}

$$*

Every variable is a $\lambda$ term; the term $\lambda x.M$ is a function with parameter $x$ and body $M$ ; the term $M\;N$ is the application of the term $M$ to the argument $N$ .

In a term $\lambda x.M$ , all the occurrences of variable $x$ inside $M$ are called bound occurrences.

### 3.2 Reductions

The main reduction of the formalism is a binary relation between terms called $\beta$ reduction, which is based on the substitution operation. A substitution takes a $\lambda$ term and substitutes a variable in it with another $\lambda$ term, similar to what is done in mathematics when a function is applied to an argument.

**Definition 2 (Redex)**

*A redex (reducible expression) is any subterm in the format

$$

(\lambda x.M)\;N

$$*

If a term has no redexes, the term is a normal form. Otherwise, the term is reducible. Now, the $\beta$ -reduction relation can be defined as:

**Definition 3 (One-Step)**

*$\beta$ -reduction ( $\rightarrow_{\beta}$ ) is the smallest relation on lambda terms such that $\displaystyle\frac{\begin{array}[]{@{}c@{}}M\rightarrow_{\beta}M^{\prime}\end{ array}}{\begin{array}[]{@{}c@{}}M\;N\rightarrow_{\beta}M^{\prime}\;N\end{array}}$ $\displaystyle\frac{\begin{array}[]{@{}c@{}}N\rightarrow_{\beta}N^{\prime}\end{ array}}{\begin{array}[]{@{}c@{}}M\;N\rightarrow_{\beta}M\;N^{\prime}\end{array}}$ $\displaystyle\frac{\begin{array}[]{@{}c@{}}M\rightarrow_{\beta}M^{\prime}\end{ array}}{\begin{array}[]{@{}c@{}}\lambda x.M\rightarrow_{\beta}\lambda x.M^{ \prime}\end{array}}$ $\displaystyle\begin{array}[]{@{}c@{}}(\lambda x.M)\;N\rightarrow_{\beta}M[x:=N ]\end{array}$*

In the above definition, $M[x:=N]$ is the notation used to represent the term that results from the substitution of all the occurrences of the variable $x$ in the term $M$ by a term $N$ .

The multi-step reduction $\mathrel{\mathrlap{\rightarrow}\mkern 1.0mu\rightarrow}_{\beta}$ is defined as the reflexive and transitive closure of $\rightarrow_{\beta}$ , as follows:

**Definition 4 (Multi-Step)**

*$\mathrel{\mathrlap{\rightarrow}\mkern 1.0mu\rightarrow}_{\beta}$ is the smallest relation on lambda terms such that $\displaystyle\frac{\begin{array}[]{@{}c@{}}\end{array}}{\begin{array}[]{@{}c@{ }}M\mathrel{\mathrlap{\rightarrow}\mkern 1.0mu\rightarrow}_{\beta}M\end{array}}$ $\displaystyle\frac{\begin{array}[]{@{}c@{}}M\mathrel{\mathrlap{\rightarrow} \mkern 1.0mu\rightarrow}_{\beta}N~{}N\mathrel{\mathrlap{\rightarrow}\mkern 1.0 mu\rightarrow}_{\beta}P\end{array}}{\begin{array}[]{@{}c@{}}M\mathrel{ \mathrlap{\rightarrow}\mkern 1.0mu\rightarrow}_{\beta}P\end{array}}$ $\displaystyle\frac{\begin{array}[]{@{}c@{}}M\rightarrow_{\beta}N\end{array}}{ \begin{array}[]{@{}c@{}}M\mathrel{\mathrlap{\rightarrow}\mkern 1.0mu \rightarrow}_{\beta}N\end{array}}$*

We say that a term $M$ has a normal form if there is a term $N$ such that $M\mathrel{\mathrlap{\rightarrow}\mkern 1.0mu\rightarrow}_{\beta}N$ and N is a normal form. Not every term has a normal form. One example is the term $(\lambda x.x\;x)\;(\lambda x.x\;x)$ that $\beta$ -reduces to itself.

A term can have more than one redex, which means that when we try to apply $\beta$ -reduction on a term, we have multiple possibilities. It is useful to have a strategy to select which redex we want to reduce at each computation step. An evaluation strategy is a function that chooses a single redex for every reducible term.

The two most common evaluation strategies are: (i) normal or leftmost order, where the leftmost, outermost redex of a term is reduced first, and the (ii) applicative or strict order, where rightmost, innermost redex of a term is reduced first. An outermost redex is a redex not contained in another redex, and an innermost redex is a redex that does not contain other redexes.

Choosing the evaluation strategy is important to clearly define which redex to reduce through $\beta$ -reduction. Furthermore, it is not just a matter of personal preference since there is a theorem that says that if a term $M$ has a normal form $P$ , then the normal order evaluation strategy will always reach $P$ from $M$ , in a finite number of $\beta$ reductions.

Therefore, in this work, we always use the normal order evaluation strategy when making $\beta$ reductions on terms to ensure that if the term has a normal form, we can reach it.

There is another type of reduction in the Lambda calculus, the $\alpha$ -reduction, which is responsible for the renaming of bound variables when necessary. But since we are following the Barendregt convention [2], which states that the name of the bound variables will always be unique, we do not need to consider $\alpha$ -reduction in this work.

### 3.3 Encodings and Computations

The notion of encoding is well-known in Computer Science; for example, our modern computers operate on binary code, on top of which we build abstract ideas. In Lambda calculus, the idea is the same. We can use the structure of function abstractions and applications to encode representations for numbers, booleans, strings, etc.

In this work, we adopt the Church Encoding for representing Boolean expressions. Examples of such encoding are given below:

$$

\begin{array}[]{lcl}\mathtt{true}&\equiv&\lambda x.\lambda y.x\\

\mathtt{false}&\equiv&\lambda x.\lambda y.y\\

\mathtt{and}&\equiv&\lambda p.\lambda q.~{}(p~{}q)~{}p\\

\mathtt{or}&\equiv&\lambda p.\lambda q.~{}(p~{}p)~{}q\\

\mathtt{not}&\equiv&\lambda p.(p~{}\mathtt{false})~{}\mathtt{true}\end{array}

$$

With the Boolean constants $\mathtt{true}$ and $\mathtt{false}$ , and with the Boolean operator $\mathtt{and}$ , we can codify other Boolean expression such as $\mathit{true~{}\wedge~{}false}$ as the lambda term $(\mathtt{and}\ \mathtt{true})~{}\mathtt{false}$ that reduces to its normal form $\mathtt{false}$ in four small-steps as follows:

$$

\begin{array}[]{lcl}&&(\mathtt{and}\ \mathtt{true})~{}\mathtt{false}\\

&\equiv&((\lambda p.\lambda q.~{}(p~{}q)~{}p)~{}\mathtt{true})~{}\mathtt{false

}\\

&\rightarrow_{\beta}&(\lambda q.~{}(\mathtt{true}\ ~{}q)~{}\mathtt{true})~{}

\mathtt{false}\\

&\rightarrow_{\beta}&(\mathtt{true}\ ~{}\mathtt{false})~{}\mathtt{true}\\

&\equiv&((\lambda x.\lambda y.~{}x)~{}\mathtt{false})~{}\mathtt{true}\\

&\rightarrow_{\beta}&(\lambda y.~{}\mathtt{false})~{}\mathtt{true}\\

&\rightarrow_{\beta}&\mathtt{false}\\

&\equiv&\lambda x.\lambda y.y\end{array}

$$

### 3.4 Prefix Notation

The lambda terms presented in the previous section are written in what we call traditional notation. For this work, we only consider $\lambda$ -terms in the prefix notation for the learning tasks. To write lambda terms in the prefix notation, we need to add the application symbol (@) to the syntax. An application written as $(N~{}M)~{}P$ in the traditional notation, for example, is written as $\textit{@}\textit{@}M~{}N~{}P$ in the prefix notation.

We also change the $\lambda$ symbol for this representation using the uppercase letter “L”. The terms $\mathtt{true}$ , $\mathtt{false}$ , and $\mathtt{and}$ in the prefix notation are:

$$

\begin{array}[]{lcl}\mathtt{true}&\equiv&\mathrm{L}~{}x~{}\mathrm{L}~{}y~{}x\\

\mathtt{false}&\equiv&\mathrm{L}~{}x~{}\mathrm{L}~{}y~{}y\\

\mathtt{and}&\equiv&\mathrm{L}~{}p~{}\mathrm{L}~{}q~{}@~{}@~{}p~{}q~{}p\\

\end{array}

$$

We chose prefix notation because it offers a well-organized structure derived from a tree-like representation. This structure allows for a more straightforward representation of expressions as it is unambiguous and eliminates the need for parentheses. This makes it easier to process expressions, particularly for the purposes of learning and understanding complex mathematical concepts.

### 3.5 De Bruijn Index

The De Bruijn index is a tool for defining $\lambda$ -terms without having to name the variables [10]. This approach can benefit us, since the terms are agnostic to the variable naming and are simpler in the sense that they are shorter.

Basically, it just replaces the variable names by natural numbers. The abstraction no longer has a variable name, and every variable occurrence is represented by a number, indicating at which abstraction it is binded. These nameless terms are called de Bruijn terms, and the numeric variables are called de Bruijn indices [26].

For simplicity, we denote the free variables with the number $0 0$ , and the indices of the bound variables start at $1$ . This notation assumes that each de Bruijn index corresponds to the number of binders (abstractions) under which the variable is.

This notation can also be used in conjunction with the prefix notation. We will use it to compare with the traditional notation and see if there is any advantage in using a notation with no variable names for the tasks we are interested in.

Here we have examples of lambda terms in de Bruijn notation next to their prefixed notation:

$$

\begin{array}[]{lclll}\mathtt{true}&\equiv&\lambda.\lambda.2&-&\quad\mathrm{L}

~{}\mathrm{L}~{}2\\

\mathtt{false}&\equiv&\lambda.\lambda.1&-&\quad\mathrm{L}~{}\mathrm{L}~{}1\\

\mathtt{and}&\equiv&\lambda.\lambda.~{}(2~{}1)~{}2&-&\quad\mathrm{L}~{}\mathrm

{L}~{}\textit{@}~{}\textit{@}~{}2~{}1~{}2\\

\end{array}

$$

## 4 ML and Neurosymbolic AI

Machine learning (ML) is a subfield of artificial intelligence that involves the development of algorithms and models that can learn from data to make predictions or decisions. ML algorithms can be trained on large amounts of data, allowing them to identify patterns and relationships in the data and improve their accuracy over time [13].

In supervised learning, algorithms are trained on labeled data, where the output or target variable is known [13]. These algorithms can make predictions about new and unseen data and can be used in applications such as image classification or stock price prediction, for instance.

Unsupervised learning algorithms are trained on unlabeled data where the output or target variable is not known. These algorithms can identify patterns and relationships in the data and can be used in applications that require grouping data into clusters or detecting anomalies in them.

Reinforcement learning algorithms are designed to learn from interactions with an environment. These algorithms receive a reward or penalty for each action they take, and they can be used in various applications, such as game-playing and robotics.

This work focuses on supervised learning, particularly on connectionist AI (neural networks).

### 4.1 Neurosymbolic AI

Neurosymbolic AI is a field of artificial intelligence that combines the strengths of both symbolic AI and connectionist AI. Symbolic AI represents knowledge in a structured and human-readable form and uses rule-based reasoning systems to perform tasks. Connectionist AI, on the other hand, represents knowledge as patterns in a network of simple processing units. Neurosymbolic models aim to merge the two approaches by incorporating symbolic reasoning and/or representation with neural networks’ learning and generalization capabilities [8].

The paper [16] presents six different forms of neurosymbolic AI, varying in how and where the two approaches are combined. In the present work, we use the form Neuro: Symbolic $\rightarrow$ Neuro, where we take a symbolic domain (reductions of the $\lambda$ calculus) and apply it to a neural architecture (the Transformer).

### 4.2 Neural Networks

Artificial Neural Networks (NNs) were inspired by the structure and function of the human brain and are designed to process large amounts of data to identify patterns and relationships. Their fundamental unit is the neuron, which essentially "activates" when a linear combination of its inputs surpasses a certain threshold. A Neural Network is merely a collection of interconnected neurons whose properties are determined by the arrangement of the neurons and their characteristics [29].

These neurons are often organized into layers. The input data are fed into the first layer, and the output of each neuron in a given layer is used as the input for the next layer until the final layer produces the network output. The connections between the neurons are represented by weights that are updated during the training process to minimize the error between the predicted output and the actual output.

NNs have been applied to a wide range of tasks, including image classification, speech recognition, and natural language processing. One of the main advantages of NNs is their ability to model nonlinear relationships between inputs and outputs. This makes NNs a powerful tool for solving complex real-world problems. However, traditional NNs have fixed-size inputs and outputs, which are not suitable for our desired tasks, which have inputs and outputs of variable sizes.

### 4.3 Sequence-to-sequence Models

Although neural networks are versatile and effective, they are only suitable for problems where inputs and targets can be represented by fixed-dimensional vector encodings. This is a significant constraint, as many crucial problems are better expressed using sequences of unknown lengths, such as speech recognition and machine translation. It is evident that a versatile method that can learn to translate sequences to sequences without being restricted to a specific domain would be valuable [30].

Sequence-to-sequence (seq2seq) models emerged from this need. They are a type of deep learning model that is used for tasks that involve mapping an input sequence to an output sequence of variable length. They have traditionally been applied to various natural language processing tasks, such as machine translation, text summarization, and text generation. The assembly of seq2seq models can be done according to different model architectures such as RNN [21], LTSM [30], GRU [6] and Transformer [35].

Given that we can see the tasks we want to accomplish in this work as machine translation tasks, we have opted to employ the sequence-to-sequence model using the Transformer.

### 4.4 The Transformer Model

The Transformer model is a type of neural network architecture that was introduced in [35]. It is designed to handle sequential data, such as natural language, and has quickly become one of the most popular models for tasks such as natural language processing, machine translation, text classification, and question answering. One of the key innovations of the Transformer model is its use of a self-attention mechanism, which allows the model to dynamically weigh the importance of different parts of the input sequence. This allows the Transformer to capture long-range dependencies in the data, which is particularly useful for processing sequences of variable lengths. Another advantage of the Transformer is its parallelization capacity, which allows it to be trained efficiently on large amounts of data. The Transformer model can be trained in parallel on multiple sequences, which is not possible with other traditional sequence-to-sequence models.

We chose the Transformer model mainly because to perform the $\beta$ -reduction over lambda terms, it is necessary to substitute every occurrence of the variable in question with a term, and we believe that the self-attention mechanism can be used to “pay attention” to all these variable occurrences when performing the task.

## 5 Building Experiments

For each of the hypotheses outlined in the Introduction, we propose a different task for our model to learn. The hypothesis H1 claims the model can perform a single-step computation in the $\lambda$ -Calculus, that is, it can take a $\lambda$ -term and transform it according to the single-step $\beta$ -reduction rules of Definition 3 following a normal order strategy. We call this the One-Step Beta Reduction (OBR) task.

The hypothesis H2 is that the model can perform multiple reduction steps in Lambda Calculus, taking a normalizable $\lambda$ -term, i.e., a $\lambda$ -term that has a normal form, and transforming it into its normal form through multiple beta reduction steps, following a normal order strategy. We call the task associated with this hypothesis the Multi-Step Beta Reduction (MBR) task.

The primary focus of our research question aligns with the second hypothesis. However, we chose to begin with a more straightforward hypothesis as a starting point. The first task is considered easier because it requires the execution of a single computational step, which is less complex than performing a full computation. This approach helps us gradually build our understanding and confidence before moving on to the more challenging second hypothesis.

To support these hypotheses, we generate several datasets for each of the tasks and use them to train machine learning models. By training the models on these datasets, we will determine if the models can learn and perform the tasks associated with each hypothesis.

### 5.1 On Training

To learn the tasks mentioned before, we use a neural model. Since the $\lambda$ -terms we are using do not have a fixed size, we need our model to accept inputs of varying lengths and generate outputs accordingly. To achieve this, we use a sequence-to-sequence model (seq2seq), which allows for inputs and outputs of different sizes. Specifically, we use the Transformer model proposed by [35]. This model has been widely used for various applications, including symbolic ones, as demonstrated by [18].

For the hyperparameters, preliminary tests showed us that the parameters used by [18] were good enough for our tasks. If needed, they can be adjusted in the training process. So, the initial hyperparameters are the following:

- Number of encoding layers - 6

- Number of decoding layers - 6

- Embedding layer dimension - 1024

- Number of attention heads - 8

- Optimizer - Adam [17]

- Learning rate - $1\times 10^{-4}$

- Loss function - Cross Entropy

#### 5.1.1 Experimental Setting

The experiments were conducted on a server with the following configuration:

- CPU: Intel(R) Core(TM) i7-8700 CPU @ 3.20GHz

- RAM: 32 GB (2 x 16 Gb) DDR4 @ 2667 MHz

- GPU: Quadro P6000 with 24 Gb

- OS: Ubuntu 18.04.5 LTS

Our initial goal was to run each training session for 12 to 24 hours. The preliminary results showed that each training consisting of $50$ epochs with an epoch size of $50000$ would take between 12 and 30 h to complete. So, we chose this arrangement. This configuration allows the model to process a total of $2.5\times 10^{6}$ equations, which is $2.5$ times the size of the dataset.

With this machine, model, and configuration, we can safely have inputs with up to 250 tokens. With more than that, we end up with a memory shortage.

### 5.2 Lambda Sets and Datasets

All the datasets generated for this work are simple text files with each line in the format

$$

\mathtt{BETA~{}M~{}N}

$$

For the OBR task, the pair of terms in BETA M N is interpreted as: $\lambda$ term $N$ results of a one-step reduction of $\lambda$ term $M$ following the normal order strategy. For the MBR task, BETA M N is interpreted as: $\lambda$ term $N$ is the normal form that results from a multi-step reduction of $\lambda$ term $M$ , following the normal order strategy.

To generate the datasets on which the models will train, we first generate Lambda Sets (LSs) containing only lambda terms. From these LSs, we generate the datasets needed for the training. We start generating the following three LSs:

- Random Lambda Set (RLS): This LS is generated as random and unbiased as possible. Thus, this LS can have terms that do not have a normal form, and also terms that are codifications of Boolean expressions. With the datasets generated from this LS, we want to assert that the model can learn $\beta$ -reduction, regardless of whether the input terms represent meaningful codifications or have a normal form.

- Closed Bool Lambda Set (CBLS): This LS has its terms representing closed Boolean expressions. Thus, all the terms in this LS have a normal form. With the datasets generated from this LS, we want to assert that the model can learn the $\beta$ -reductions from meaningful codifications. In addition, these datasets are useful to validate the models trained with the RLS datasets, i.e., to validate whether the model learned from random terms can perform computations on terms that are meaningful codification.

- Open Bool Lambda Set (OBLS): The terms in this LS are codifications of open Boolean expression, that is, with free variables in them. With the datasets generated from this LS, we want to assert that the model can learn $\beta$ -reduction from terms that are meaningful codifications, even if they have free variables.

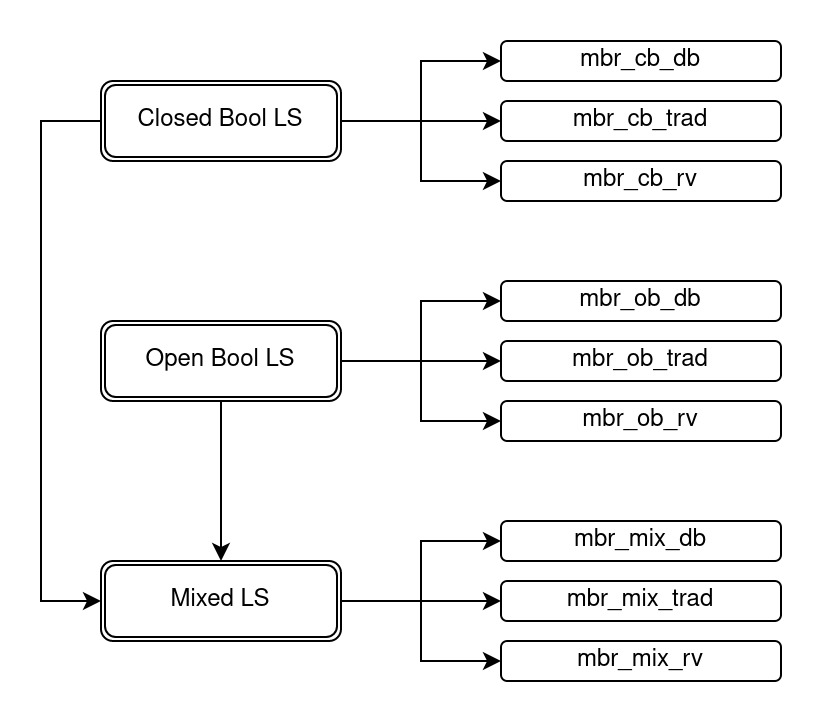

For the One-Step Beta Reduction Task (OBR), we generate datasets based on the three LSs proposed. However, for the Multi-Step Beta Reduction task, we do not utilize the Random LS to generate datasets, as the terms produced randomly may not have a normal form and result in an infinite loop during the multi-step $\beta$ -reduction.

In addition to the LS mentioned above, we create an additional LS for each task, which we refer to as a Mixed Lambda Set (MLS). For OBR, the MLS comprises terms coming from RLS, CBLS, and OBLS in the same proportion. For the MBR, the MLS is formed by terms from CBLS and OBLS, which are in the same proportion. With these mixed LSs, we want to assert that the model can learn from a domain that considers several kinds of terms.

From each of the four lambda sets presented above, we generate three datasets (all in the prefix notation), one for each of the following schemes for variable-naming:

- De Bruijn: this variable naming convention, presented in Section 3.5 is a way of representing $\lambda$ -terms without naming the variables. It results in shorter terms and presents a factor that can be beneficial for our model.

- Traditional: Datasets following this convention are generated from the datasets in the de Bruijn notation by replacing the de Bruijn indexes by traditional variable names, such as $a$ , $b$ , $c$ , $x$ , $y$ , $z$ , etc, following alphabetical order. We utilize this convention because we want to compare the results of the learning process using the de Bruijn convention with those using the traditional convention

- Random Vars: This convention also uses the traditional convention for variable naming. However, for this version, we take the de Bruijn datasets and replace the de Bruijn indexes by traditional variable names chosen randomly. We utilize this approach because we want to check if the way we name the variables matters for the models’ accuracy.

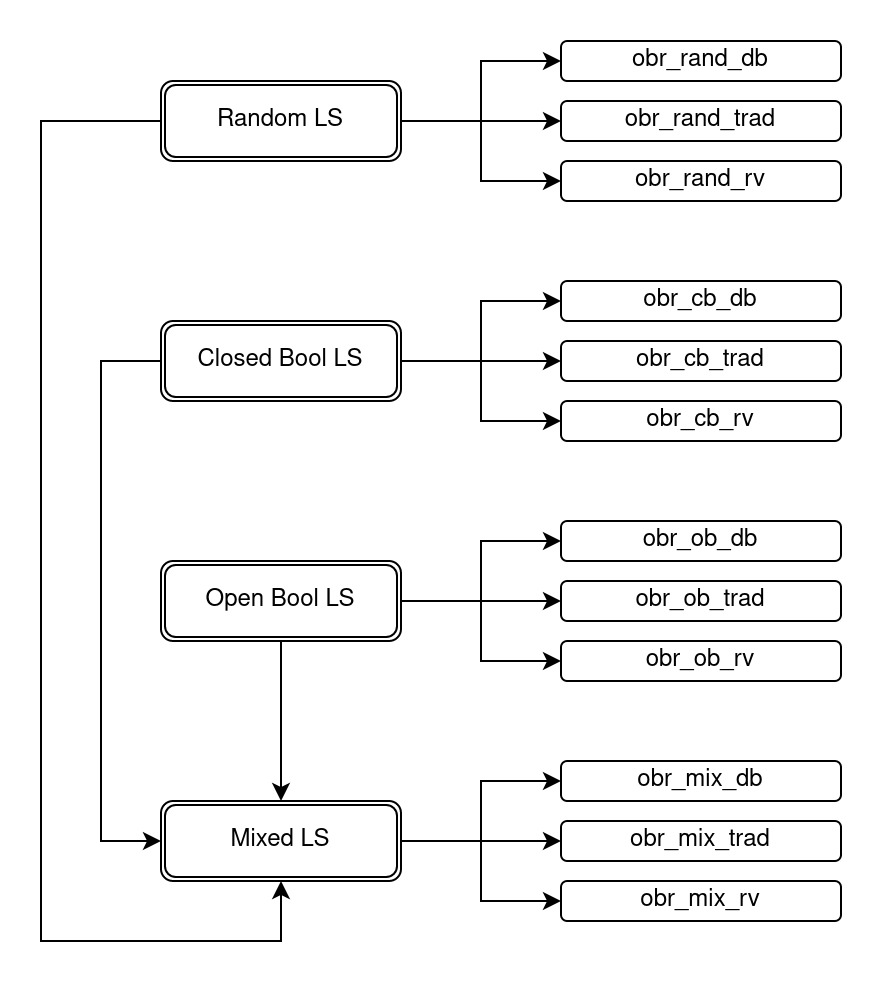

In summary, for the OBR task, we ultimately have a total of 12 datasets, as illustrated in Figure 1, and for the MBR task, we have nine datasets, as shown in Figure 2. It should be noted that the same CBLS and OBLS are utilized by both the OBR and the MBR tasks, meaning that the initial $\lambda$ -terms for the datasets that employ these same LS are consistent across both tasks. These datasets provide us with a broad set of test cases to evaluate the performance of our models.

<details>

<summary>extracted/6501326/datasets_obr.jpeg Details</summary>

### Visual Description

## Diagram: Taxonomy of LS (Labeling Scheme) Variants

### Overview

The image is a hierarchical flowchart or taxonomy diagram illustrating four primary categories of "LS" (likely an acronym for "Labeling Scheme" or similar technical term) and their respective sub-variants. The diagram uses a tree structure with rounded rectangular nodes connected by directional arrows to show relationships and derivations.

### Components/Axes

The diagram is organized into two main columns:

* **Left Column (Primary Categories):** Contains four vertically aligned, double-bordered rounded rectangles. From top to bottom, they are labeled:

1. `Random LS`

2. `Closed Bool LS`

3. `Open Bool LS`

4. `Mixed LS`

* **Right Column (Sub-variants):** Contains twelve single-bordered rounded rectangles, grouped in sets of three. Each set is horizontally aligned with and connected to a primary category on the left. The labels follow a consistent naming pattern: `obr_[type]_[variant]`.

**Legend/Key:** There is no separate legend. The naming convention itself acts as a key:

* `obr_`: A common prefix for all sub-variant labels.

* `[type]`: Corresponds to the primary category (`rand`, `cb`, `ob`, `mix`).

* `[variant]`: One of three suffixes: `db`, `trad`, or `rv`.

**Spatial Grounding & Flow:**

* The primary categories are positioned in a vertical stack on the left side of the diagram.

* Each primary category has a rightward-pointing arrow that branches into three separate arrows, each leading to one of its three sub-variants on the right.

* There are two additional directional arrows indicating relationships between primary categories:

1. A downward arrow from `Open Bool LS` to `Mixed LS`.

2. An upward arrow from the bottom of the main vertical connecting line (which links all primary categories) to `Mixed LS`.

### Detailed Analysis / Content Details

**Complete Node Inventory:**

1. **Primary Category: Random LS**

* Sub-variant 1: `obr_rand_db`

* Sub-variant 2: `obr_rand_trad`

* Sub-variant 3: `obr_rand_rv`

2. **Primary Category: Closed Bool LS**

* Sub-variant 1: `obr_cb_db`

* Sub-variant 2: `obr_cb_trad`

* Sub-variant 3: `obr_cb_rv`

3. **Primary Category: Open Bool LS**

* Sub-variant 1: `obr_ob_db`

* Sub-variant 2: `obr_ob_trad`

* Sub-variant 3: `obr_ob_rv`

4. **Primary Category: Mixed LS**

* Sub-variant 1: `obr_mix_db`

* Sub-variant 2: `obr_mix_trad`

* Sub-variant 3: `obr_mix_rv`

**Relationship Flow:**

* The primary relationship is a one-to-three mapping from each LS type to its specific implementation variants (`db`, `trad`, `rv`).

* The secondary arrows suggest that `Mixed LS` is derived from or related to both `Open Bool LS` and the overarching system connecting all LS types.

### Key Observations

* **Consistent Structure:** Every primary category has exactly three sub-variants, following the identical `db`, `trad`, `rv` pattern.

* **Naming Convention:** The labels are highly systematic, using underscores and abbreviations (`cb` for Closed Bool, `ob` for Open Bool, `mix` for Mixed).

* **Unique Position of Mixed LS:** `Mixed LS` is the only primary category that receives input arrows from two sources (`Open Bool LS` and the main vertical line), indicating it may be a composite or hybrid type.

* **Visual Hierarchy:** The use of double borders for primary categories and single borders for sub-variants clearly establishes a parent-child relationship.

### Interpretation

This diagram represents a **classification system for technical labeling schemes (LS)**. It suggests a research or engineering context where different foundational approaches to labeling (Random, Closed Boolean, Open Boolean) are explored, each leading to three concrete implementation variants (likely standing for "database," "traditional," and "reverse" or similar technical terms).

The structure implies that "Mixed LS" is not a fundamental type like the others but is instead a **synthesis or combination** derived from the "Open Bool LS" approach and potentially incorporating elements from the entire LS family (as indicated by the arrow from the main vertical connector). This makes "Mixed LS" a potentially more complex or advanced scheme.

The diagram's purpose is to provide a clear, visual taxonomy for navigating different LS options and understanding their provenance. It would be essential for documentation in fields like database theory, data modeling, or formal verification where such labeling schemes are used. The absence of numerical data indicates this is a conceptual or architectural map rather than a performance comparison.

</details>

Figure 1: Scheme of how all the datasets for the OBR tasks are generated. It starts with the three Lambda Sets (RLS, CBLS, and OBLS) and ends with all 12 datasets available for the OBR task.

<details>

<summary>extracted/6501326/datasets_mbr.jpeg Details</summary>

### Visual Description

## Diagram: Boolean Logic System (LS) Hierarchy and Variants

### Overview

The image is a hierarchical flowchart or classification diagram illustrating three primary categories of "Boolean LS" (likely Boolean Logic Systems) and their respective sub-variants. The diagram uses a top-down and left-to-right flow, with main categories on the left branching into specific implementations on the right. Arrows indicate relationships and dependencies between the main categories.

### Components/Axes

The diagram consists of two main columns of rectangular boxes connected by directional arrows.

**Left Column (Main Categories):**

* Three boxes with double-line borders, arranged vertically.

* **Top Box:** "Closed Bool LS"

* **Middle Box:** "Open Bool LS"

* **Bottom Box:** "Mixed LS"

**Right Column (Sub-Variants):**

* Nine boxes with single-line borders, arranged in three groups of three, each group aligned horizontally with its parent category on the left.

* **Group 1 (connected to "Closed Bool LS"):**

* Top: "mbr_cb_db"

* Middle: "mbr_cb_trad"

* Bottom: "mbr_cb_rv"

* **Group 2 (connected to "Open Bool LS"):**

* Top: "mbr_ob_db"

* Middle: "mbr_ob_trad"

* Bottom: "mbr_ob_rv"

* **Group 3 (connected to "Mixed LS"):**

* Top: "mbr_mix_db"

* Middle: "mbr_mix_trad"

* Bottom: "mbr_mix_rv"

**Connections (Arrows):**

1. From "Closed Bool LS" (left), three separate arrows point rightward to each of its three sub-variants ("mbr_cb_db", "mbr_cb_trad", "mbr_cb_rv").

2. From "Open Bool LS" (left), three separate arrows point rightward to each of its three sub-variants ("mbr_ob_db", "mbr_ob_trad", "mbr_ob_rv").

3. From "Mixed LS" (left), three separate arrows point rightward to each of its three sub-variants ("mbr_mix_db", "mbr_mix_trad", "mbr_mix_rv").

4. A vertical arrow points downward from the bottom of the "Open Bool LS" box to the top of the "Mixed LS" box.

5. A long, angled arrow originates from the left side of the "Closed Bool LS" box, travels down the left margin, and points to the left side of the "Mixed LS" box.

### Detailed Analysis

The diagram defines a clear taxonomy:

* **Primary Classification:** Three types of Boolean Logic Systems: **Closed**, **Open**, and **Mixed**.

* **Secondary Classification (Sub-variants):** Each primary type has three associated variants, denoted by a consistent naming convention: `mbr_[type]_[variant]`.

* The `[type]` abbreviation corresponds to the primary category: `cb` (Closed Bool), `ob` (Open Bool), `mix` (Mixed).

* The `[variant]` suffix is consistent across all primary types: `db`, `trad`, `rv`.

* **Relationships:** The arrows indicate that both "Closed Bool LS" and "Open Bool LS" are precursors or inputs that lead to or can be combined into "Mixed LS". The "Mixed LS" category is therefore dependent on or derived from the other two.

### Key Observations

1. **Symmetrical Structure:** The diagram is highly symmetrical. Each primary category has exactly three sub-variants with identical suffixes (`_db`, `_trad`, `_rv`), suggesting a parallel classification system applied to each LS type.

2. **Central Role of "Mixed LS":** The "Mixed LS" box is the only one receiving arrows from the other two main categories, positioning it as a synthesis or integration point.

3. **Naming Convention:** The labels use a compact, technical abbreviation style (`mbr`, `cb`, `ob`, `mix`, `db`, `trad`, `rv`). Without external context, the exact meaning of these abbreviations is unknown, but their consistent use is a key feature of the diagram's information.

### Interpretation

This diagram represents a **classification schema for Boolean Logic Systems (LS)**. It suggests a theoretical or technical framework where logic systems are first categorized as either "Closed" or "Open" (possibly referring to their axiomatic foundations or operational scope). A third category, "Mixed," is presented as a composite system that incorporates elements from both Closed and Open systems, as evidenced by the direct dependency arrows.

The sub-variants (`_db`, `_trad`, `_rv`) likely represent different **implementation methods, operational modes, or specific algorithms** within each logic system type. The fact that the same three variant labels are applied to all three LS types implies a common set of implementation choices or evaluation criteria is being applied across the different foundational logic types.

The overall structure implies a progression or relationship: one can have pure Closed or Open systems, but also a hybrid "Mixed" system that draws from both. This could be relevant in fields like formal verification, knowledge representation, or computational logic, where different reasoning paradigms (closed-world vs. open-world assumptions) might be combined.

</details>

Figure 2: Scheme of how all the datasets for the MBR tasks are generated. It starts with the two Lambda Sets (CBLS, and OBLS), and ends with all nine datasets that are available for the MBR task.

Each dataset contains around one million examles (pairs of terms BETA M N), and we further divide each dataset into training, validation, and test sets. Keeping with the methodology described by [18], we allocate approximately ten thousand examples for both the validation and the test sets. This division of the datasets into training, validation, and test sets allows us to effectively train our models, tune their parameters, and evaluate their performance on independent data. Using a large number of examples in each dataset and following established best practices, we want to ensure that our results are robust and representative of the underlying task.

#### 5.2.1 Term Sizes

As mentioned in Section 5.1.1, the maximum number of tokens that our configuration allows is 250. With this restriction, we were able to generate the terS sizes listed in Table 1. Although we calculated these sizes using the datasets with terms in the traditional variable-naming convention, we expect similar results for the other datasets (with the exception of the de Bruijn convention, which should produce smaller term sizes).

| OBR closed bool open bool | random $9$ $5$ | $5$ $193$ $181$ | $249$ $97.6\pm 26.76$ $66.46\pm 21.73$ | $127.2\pm 64.99$ |

| --- | --- | --- | --- | --- |

| mixed | $5$ | $249$ | $86.93\pm 46.56$ | |

| MBR | closed bool | $9$ | $193$ | $97.55\pm 26.75$ |

| open bool | $5$ | $181$ | $66.46\pm 21.72$ | |

| mixed | $5$ | $181$ | $77.96\pm 28.02$ | |

Table 1: Table showing the minimum, maximum, and average sizes of the input $\lambda$ -terms for each dataset. The datasets considered were the ones that use traditional variable naming.

#### 5.2.2 Number of Reductions

For certain Lambda sets, we iteratively generate the reductions until a normal form is reached for each term. Thus, another important metric we consider is the number of reductions each term had to undergo to reach its normal form. This number can be seen as the number of computational steps necessary to evaluate a given term. Table 2 shows the average number of reductions that the terms of each Lambda Set have undergone to generate their respective datasets for the OBR and MBR tasks.

| closed bool open bool mixed | $3$ $1$ $2$ | $100$ $100$ $100$ | $18.8\pm 12.22$ $18.88\pm 10.42$ $18.82\pm 11.32$ |

| --- | --- | --- | --- |

Table 2: Table showing the minimum, maximum, and average number of reductions that the terms of each Lambda Set have undergone The Mixed Lambda Set considered here is the one with terms coming only from the closed bool and open bool Lambda Sets.

### 5.3 Code and Implementation

In this work, we used two distinct pieces of code. The first piece of code, adapted from [18], was responsible for generating what we call intermediate $\lambda$ -terms. These intermediate lambda terms follow the syntax given by the grammar in Definition 1. This piece of code also handled the learning process.

The second piece of code is responsible for generating, from the intermediate lambda terms, the lambda terms in the formats required for the lambda sets, as described in Section 5.2. It also implements a simulator that performs reductions of these lambda terms. From the simulation results, it generates the datasets with pairs $\mathtt{BETA~{}M~{}N}$ where the lambda term $N$ is the result of the lambda term $M$ reduction.

We observe that after generating the datasets, they must first be cleaned by (i) deleting repeated pairs, and (ii) because we want all pairs to represent $\beta$ -reductions, we also delete all pairs $\mathtt{BETA~{}M~{}N}$ with the first component $M$ in normal form.

### 5.4 Accuracy and Similarity

To evaluate the performance of the model, its accuracy in predicting the data in the test data set is calculated and recorded after each epoch. This accuracy metric measures how well the model can make correct predictions on the data it has seen during training. For each of the models trained, we display a graph showing the evolution of the model’s accuracy (y-axis) over the epochs (x-axis).

The accuracy of the model determines whether the predicted string matches the expected output. However, accuracy may not be the only relevant metric for evaluating the performance of a model in text generation or other similar tasks. In some cases, it may be useful to measure the similarity between the predicted and expected strings, even if they are not identical. So, additionally, we used a string similarity metric to compare how close the predicted string is to the expected one. For this, we used a common string similarity metric, the Levenshtein distance, which measures the number of changes (insertions, deletions, or substitutions) needed to transform one string into the other [20]. This metric provides the absolute difference between the two strings, so we divide this distance by the maximum distance possible between the two strings (which is the length of the longer string) to generate a percentage of dissimilarity. Then, we subtract this value from 1 to get a percentage of similarity between the two strings. So, we also provide a string similarity value for each trained model. The formula used is as follows:

$$

\mathsf{str\_sim}\,(s_{1},s_{2})=~{}~{}1-\frac{\mathsf{lev\_dist}\,(s_{1},s_{2

})}{\mathsf{max}\,(\mathsf{len}(s_{1}),\mathsf{len}(s_{2}))}

$$

As part of our analysis, we also assessed the capacity of the models trained with each dataset to evaluate the other datasets. We achieve this by performing additional evaluations with each of the already trained models. For this, we use a model trained with one dataset to evaluate the other datasets that use the same notation and are designed for the same task. For example, we take the model that trained on the obr_rand_trad dataset and evaluate how it performs on the obr_cb_trad, obr_ob_trad and obr_mix_trad datasets with respect to accuracy and similarity.

By evaluating a model on the other datasets, which it did not train on, we can better understand its strengths and weaknesses and its ability to generalize to new data. If the model performs well on other datasets of the same type, it may be a good sign that it has learned meaningful patterns in the data and can be applied to new, unseen data. If the model performs poorly on other datasets, it may indicate that the model has, for instance, overfit to the original training data or the data was not adequate.

## 6 Experimental Results

Some trainings experienced an oscillation in accuracy, indicating that the initial learning rate ( $1\times 10^{-4}$ ) was too high. So, we had to adjust the learning rate for these trainings. We initially used the same value for all trainings, but decreased it based on the degree of accuracy oscillation. Table 3 shows the final learning rates for each training performed. Although the learning rate was adjusted for different trainings, we kept it consistent for the three conventions in each dataset for comparison purposes. It is important to note that we did not thoroughly search for the optimal learning rate; instead, we selected a parameter that resulted in satisfactory and converging accuracy.

| One-Step Beta Reduction | random | $1\times 10^{-4}$ |

| --- | --- | --- |

| closed bool | $6\times 10^{-5}$ | |

| open bool | $8\times 10^{-5}$ | |

| mixed | $1\times 10^{-4}$ | |

| Multi-Step Beta Reduction | closed bool | $3\times 10^{-5}$ |

| open bool | $5\times 10^{-5}$ | |

| mixed | $5\times 10^{-5}$ | |

Table 3: Values for the learning rate hyperparameter chosen for each of the tasks and lambda sets trained. The value started with $1\times 10^{-4}$ , and it was lowered as the trained showed an unacceptable oscillation, indicating the learning would not converge.

### 6.1 Training Results

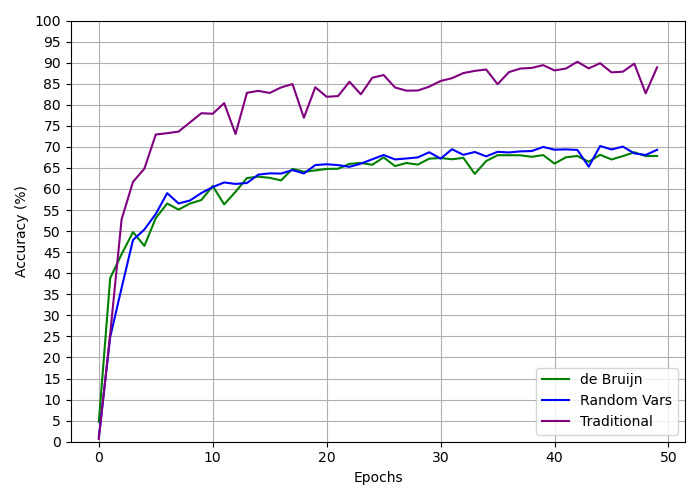

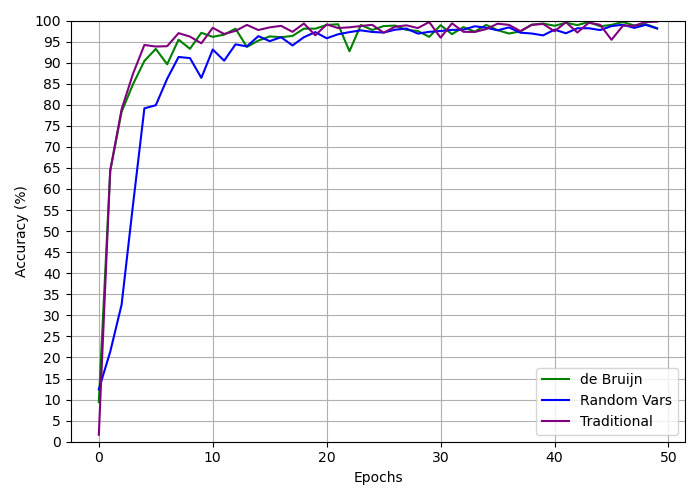

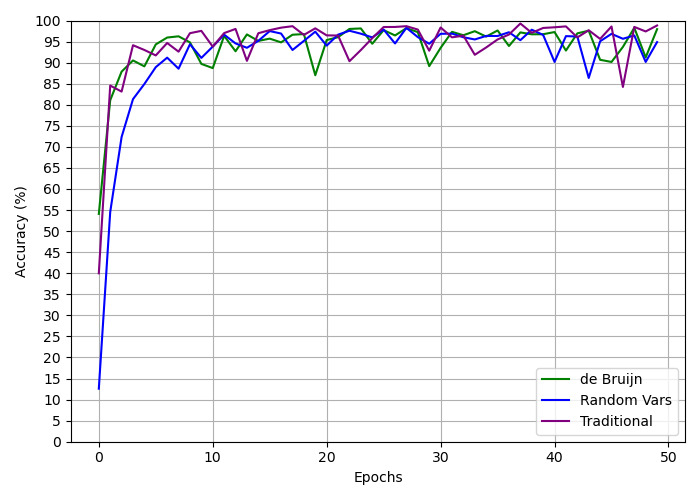

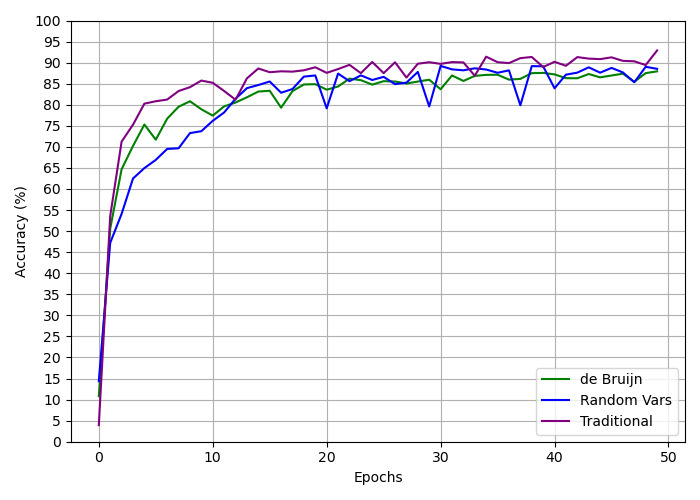

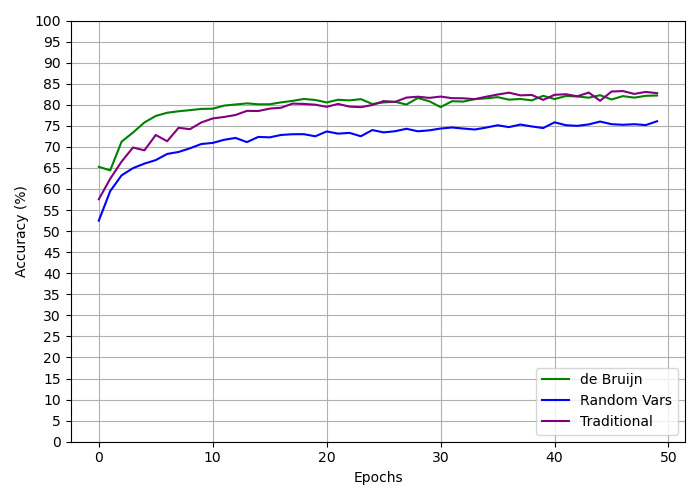

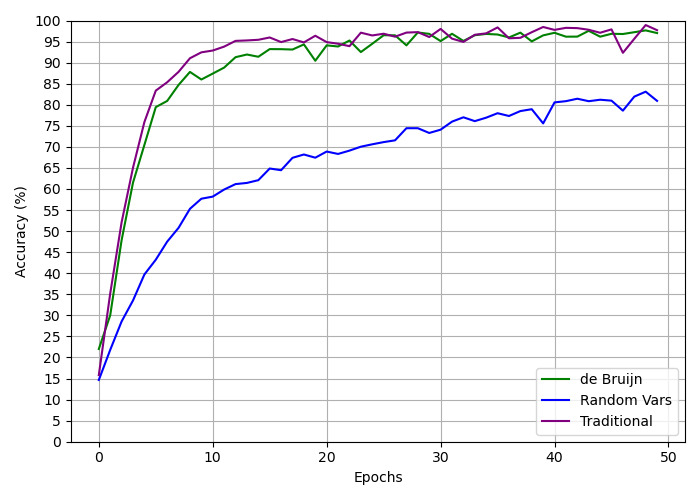

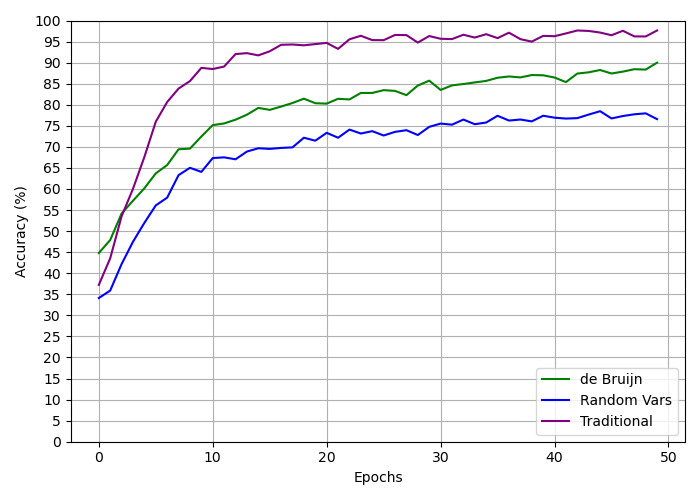

This section presents graphs that illustrate the training results for every model trained. The graphs display the model’s accuracy for the test dataset of the respective training dataset as it evolves over the training epochs. Each graph presents the results for all three variable naming conventions utilized in this study: the traditional convention, the random vars convention, and the de Bruijn convention.

For the OBR task, the training for the random datasets can be seen in Figure 3, the closed bool datasets in Figure 4, the open bool datasets in Figure 5, and the mixed datasets in Figure 6. For the MBR task, the training for the closed bool datasets can be seen in Figure 7, the open bool datasets in Figure 8, and the mix datasets in Figure 9.

In addition to the graphs, tables 4 and 5 show the final accuracies, i.e. the accuracy of the last epoch for all the models trained for both the OBR and MBR tasks. The table also shows the average percentage of similarity of the strings, calculated using the Levenshtein distance shown in Section 5.4.

<details>

<summary>extracted/6501326/training_obr_random.jpeg Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Epochs for Three Methods

### Overview

This image is a line chart comparing the training accuracy (in percentage) over 50 epochs for three different methods or models. The chart demonstrates the learning curves, showing how accuracy improves with training time for each approach.

### Components/Axes

* **X-Axis:** Labeled "Epochs". It is a linear scale ranging from 0 to 50, with major tick marks every 10 epochs (0, 10, 20, 30, 40, 50).

* **Y-Axis:** Labeled "Accuracy (%)". It is a linear scale ranging from 0 to 100, with major tick marks every 5 percentage points (0, 5, 10, ..., 95, 100).

* **Legend:** Located in the **bottom-right corner** of the chart area. It contains three entries, each with a colored line sample and a label:

* **Green Line:** "de Bruijn"

* **Blue Line:** "Random Vars"

* **Purple Line:** "Traditional"

* **Grid:** A light gray grid is present, with lines corresponding to the major ticks on both axes, aiding in value estimation.

### Detailed Analysis

The chart plots three data series, each representing the accuracy of a method at the end of each training epoch.

1. **Traditional (Purple Line):**

* **Trend:** Shows a very rapid initial increase, followed by a slower, fluctuating ascent that generally maintains a significant lead over the other two methods.

* **Data Points (Approximate):**

* Epoch 0: ~0%

* Epoch 5: ~73%

* Epoch 10: ~78%

* Epoch 20: ~82%

* Epoch 30: ~85%

* Epoch 40: ~88%

* Epoch 50: ~88%

* The line exhibits notable volatility, with several dips (e.g., around epochs 12, 18, 35, 48) but consistently recovers to a higher plateau.

2. **de Bruijn (Green Line) & Random Vars (Blue Line):**

* **Trend:** Both lines follow a very similar trajectory. They show a steep initial rise (though less steep than Traditional), followed by a more gradual, steady increase with minor fluctuations. The two lines are closely intertwined, often overlapping, especially after epoch 20.

* **Data Points (Approximate - representative of both lines):**

* Epoch 0: ~0%

* Epoch 5: ~50%

* Epoch 10: ~60%

* Epoch 20: ~65%

* Epoch 30: ~68%

* Epoch 40: ~69%

* Epoch 50: ~70%

* The **de Bruijn (green)** line appears slightly more volatile in the early epochs (0-15), while the **Random Vars (blue)** line is marginally smoother. By the final epochs, both stabilize in the high 60s to low 70s percentage range.

### Key Observations

* **Performance Hierarchy:** The "Traditional" method achieves and maintains a substantially higher accuracy throughout the entire training process compared to the "de Bruijn" and "Random Vars" methods.

* **Similarity of Two Methods:** The "de Bruijn" and "Random Vars" methods demonstrate nearly identical learning performance, with no clear, consistent advantage for one over the other based on this chart.

* **Convergence:** All three methods show signs of convergence (plateauing) by epoch 50, though the "Traditional" method's plateau is at a much higher accuracy level (~88% vs. ~70%).

* **Volatility:** The "Traditional" method's learning curve is the most volatile, suggesting its training process may be more sensitive to individual batches or epochs.

### Interpretation

This chart likely compares different feature engineering, data representation, or model initialization techniques in a machine learning context. The "Traditional" method, which could represent a standard or baseline approach, is significantly more effective for this specific task, reaching near 90% accuracy. The "de Bruijn" and "Random Vars" methods, which might represent more novel or randomized approaches, perform similarly to each other but are clearly inferior, plateauing around 70% accuracy.

The key takeaway is that for the problem being solved, the traditional approach is superior. The similarity between the de Bruijn (a structured, sequence-based method) and Random Vars (a likely unstructured, random method) suggests that the specific structure introduced by the de Bruijn approach does not confer an advantage over random initialization for this particular model and dataset. The investigation should focus on understanding why the traditional method is so much more effective.

</details>

Figure 3: Graph displaying the progression for the training of the One-Step Beta Reduction task, for the random dataset, over the three different conventions.

<details>

<summary>extracted/6501326/training_obr_closed_bool.jpeg Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Epochs for Three Methods

### Overview

The image is a line chart comparing the training accuracy (in percentage) over 50 epochs for three different methods or models: "de Bruijn", "Random Vars", and "Traditional". All three methods show a rapid initial increase in accuracy followed by convergence to a high, stable accuracy level near 100%.

### Components/Axes

* **Chart Type:** Line chart with multiple series.

* **X-Axis:** Labeled "Epochs". Scale ranges from 0 to 50, with major tick marks every 10 units (0, 10, 20, 30, 40, 50).

* **Y-Axis:** Labeled "Accuracy (%)". Scale ranges from 0 to 100, with major tick marks every 5 units (0, 5, 10, ..., 95, 100).

* **Legend:** Located in the bottom-right corner of the chart area. It contains three entries:

* A green line labeled "de Bruijn".

* A blue line labeled "Random Vars".

* A purple line labeled "Traditional".

* **Grid:** A light gray grid is present, aligned with the major ticks on both axes.

### Detailed Analysis

**Trend Verification & Data Points:**

1. **Traditional (Purple Line):**

* **Trend:** Shows the steepest initial ascent, reaching high accuracy fastest. After the initial rise, it exhibits minor fluctuations but maintains a very high accuracy.

* **Key Points (Approximate):**

* Epoch 0: ~2%

* Epoch 5: ~94%

* Epoch 10: ~98%

* Epoch 20: ~99%

* Epoch 30: ~98%

* Epoch 40: ~99%

* Epoch 50: ~99%

2. **de Bruijn (Green Line):**

* **Trend:** Rises rapidly, slightly slower than the Traditional method initially. It converges to a high accuracy level similar to the Traditional method but shows a notable dip around epoch 22.

* **Key Points (Approximate):**

* Epoch 0: ~2%

* Epoch 5: ~92%

* Epoch 10: ~96%

* Epoch 20: ~98%

* Epoch 22 (Dip): ~93%

* Epoch 30: ~98%

* Epoch 40: ~99%

* Epoch 50: ~99%

3. **Random Vars (Blue Line):**

* **Trend:** Has the slowest initial rise of the three. It takes more epochs to reach the 90%+ accuracy range but eventually converges to a similar high accuracy plateau as the other two methods.

* **Key Points (Approximate):**

* Epoch 0: ~2%

* Epoch 5: ~79%

* Epoch 10: ~93%

* Epoch 20: ~97%

* Epoch 30: ~98%

* Epoch 40: ~98%

* Epoch 50: ~99%

### Key Observations

* **Convergence Speed:** The "Traditional" method converges to high accuracy (>90%) the fastest (within ~5 epochs). The "de Bruijn" method is slightly slower, and the "Random Vars" method is the slowest to reach that threshold (around epoch 8-9).

* **Final Accuracy:** All three methods appear to converge to a very similar final accuracy level, hovering between approximately 98% and 100% after epoch 30.

* **Stability:** The "Traditional" and "Random Vars" lines are relatively smooth after convergence. The "de Bruijn" line shows a distinct, temporary drop in accuracy around epoch 22 before recovering.

* **Initial Conditions:** All methods start from a very low accuracy (near 0-2%) at epoch 0.

### Interpretation

This chart demonstrates a comparative analysis of training efficiency and final performance for three distinct approaches. The data suggests that the **"Traditional" method is the most efficient in the early stages of training**, achieving high accuracy with fewer computational epochs. This could imply it uses a more effective optimization strategy or has a better-inductive bias for the task at hand.

The **"Random Vars" method, while ultimately reaching the same performance ceiling, requires a longer "warm-up" period**. This might indicate a less directed search of the parameter space initially. The **"de Bruijn" method presents an interesting case of high efficiency coupled with a momentary instability** (the dip at epoch 22), which could be an artifact of the specific training run, a learning rate issue, or a characteristic of the method itself.

The most significant finding is that **given sufficient training time (epochs), all three methods achieve near-perfect accuracy** on this particular task. This implies the task may not be sufficiently complex to differentiate the ultimate capacity of these models, or that all three methods are fundamentally sound. The key differentiator is therefore **training speed and stability**, not final accuracy. For applications where training time or computational cost is critical, the "Traditional" method would be the preferred choice based on this data.

</details>

Figure 4: Graph displaying the progression for the training of the One-Step Beta Reduction task, for the closed bool dataset, over the three different conventions.

<details>

<summary>extracted/6501326/training_obr_open_bool.jpeg Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Epochs for Three Methods

### Overview

The image is a line chart comparing the training accuracy (in percentage) over 50 epochs for three different methods or models: "de Bruijn", "Random Vars", and "Traditional". All three methods show a rapid initial increase in accuracy followed by a plateau with fluctuations.

### Components/Axes

* **Chart Type:** Line chart with gridlines.

* **X-Axis (Horizontal):**

* **Label:** "Epochs"

* **Scale:** Linear, from 0 to 50.

* **Major Ticks:** 0, 10, 20, 30, 40, 50.

* **Y-Axis (Vertical):**

* **Label:** "Accuracy (%)"

* **Scale:** Linear, from 0 to 100.

* **Major Ticks:** 0, 5, 10, 15, 20, 25, 30, 35, 40, 45, 50, 55, 60, 65, 70, 75, 80, 85, 90, 95, 100.

* **Legend:**

* **Position:** Bottom-right corner of the chart area.

* **Content:**

* Green line: "de Bruijn"

* Blue line: "Random Vars"

* Purple line: "Traditional"

### Detailed Analysis

**Trend Verification & Data Points (Approximate):**

1. **de Bruijn (Green Line):**

* **Trend:** Starts very low, rises extremely steeply to ~85% by epoch 2, then continues a steep but slightly less vertical climb to ~95% by epoch 5. After epoch 5, it plateaus with moderate fluctuations, generally staying between 85% and 98%.

* **Key Points (Approx.):**

* Epoch 0: ~55%

* Epoch 2: ~85%

* Epoch 5: ~95%

* Epoch 10: ~90% (dip)

* Epoch 20: ~95%

* Epoch 30: ~90% (dip)

* Epoch 40: ~97%

* Epoch 50: ~95%

2. **Random Vars (Blue Line):**

* **Trend:** Starts the lowest of all three. Rises steeply but slightly slower than the others initially, reaching ~80% by epoch 3. It continues a strong upward trend, crossing 90% around epoch 7, and then plateaus with fluctuations, often appearing slightly smoother than the other two lines. It generally stays between 85% and 98% after epoch 10.

* **Key Points (Approx.):**

* Epoch 0: ~13%

* Epoch 3: ~80%

* Epoch 7: ~90%

* Epoch 10: ~93%

* Epoch 20: ~96%

* Epoch 30: ~97%

* Epoch 40: ~95%

* Epoch 50: ~93%

3. **Traditional (Purple Line):**

* **Trend:** Starts at a moderate level. Rises very steeply, similar to de Bruijn, reaching ~84% by epoch 1 and ~94% by epoch 3. It then enters a high plateau with the most pronounced and frequent fluctuations of the three lines, oscillating sharply between approximately 84% and 99%.

* **Key Points (Approx.):**

* Epoch 0: ~40%

* Epoch 1: ~84%

* Epoch 3: ~94%

* Epoch 5: ~97%

* Epoch 10: ~93% (dip)

* Epoch 20: ~98%

* Epoch 30: ~98%

* Epoch 40: ~98%

* Epoch 45: ~84% (significant dip)

* Epoch 50: ~98%

### Key Observations

1. **Convergence:** All three methods converge to a high accuracy range (roughly 85-99%) after the initial training phase (approximately after epoch 10).

2. **Initial Learning Speed:** "de Bruijn" and "Traditional" methods learn faster in the very first few epochs compared to "Random Vars".

3. **Stability:** The "Random Vars" (blue) line appears to have the smoothest plateau with slightly less volatility after convergence. The "Traditional" (purple) line exhibits the most dramatic short-term fluctuations, including a notable sharp dip around epoch 45.

4. **Final Performance:** At epoch 50, the "Traditional" and "de Bruijn" methods appear to end at a slightly higher accuracy (~95-98%) than "Random Vars" (~93%), though all are within a close range.

### Interpretation

This chart demonstrates a comparative analysis of training efficiency and stability for three algorithmic approaches. The data suggests:

* **Effectiveness:** All three methods are effective for the given task, as they all achieve high accuracy (>90%).

* **Trade-offs:** There appears to be a trade-off between initial learning speed and training stability. The "Traditional" method learns very quickly but exhibits high variance during training, which might indicate sensitivity to batch data or a more aggressive optimization landscape. The "Random Vars" method learns slightly slower initially but offers more stable convergence, which could be preferable for reliable training.

* **Robustness:** The "de Bruijn" method offers a balance, with fast initial learning and moderate stability. The significant dip in the "Traditional" line around epoch 45 is an anomaly that warrants investigation—it could represent a problematic mini-batch, an instability in the learning rate, or a characteristic of the optimization path for that method.

* **Underlying Message:** The chart likely aims to show that while novel methods ("de Bruijn", "Random Vars") are competitive with or offer different stability profiles compared to a "Traditional" baseline, no single method is strictly superior across all metrics (speed, final accuracy, stability). The choice would depend on the specific priorities of the training process (e.g., speed vs. reliability).

</details>

Figure 5: Graph displaying the progression for the training of the One-Step Beta Reduction task, for the open bool dataset, over the three different conventions.

<details>

<summary>extracted/6501326/training_obr_mix.jpeg Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Training Epochs for Three Methods

### Overview

This image is a line chart comparing the training accuracy (in percentage) over 50 epochs for three distinct methods or models. The chart demonstrates the learning curves, showing how each method's performance improves and stabilizes over time.

### Components/Axes

* **Chart Type:** Line chart with grid lines.

* **X-Axis:** Labeled **"Epochs"**. It represents the number of training iterations, with major tick marks at 0, 10, 20, 30, 40, and 50.

* **Y-Axis:** Labeled **"Accuracy (%)"**. It represents the model's accuracy as a percentage, ranging from 0 to 100 with major tick marks every 5 units (0, 5, 10, ..., 95, 100).

* **Legend:** Located in the **bottom-right corner** of the chart area. It contains three entries:

* **Green line:** "de Bruijn"

* **Blue line:** "Random Vars"

* **Purple line:** "Traditional"

### Detailed Analysis

The chart plots three data series, each showing a general upward trend that plateaus, indicating learning. Below is an analysis of each series, including approximate data points and trend verification.

1. **Traditional (Purple Line):**

* **Trend:** This line shows the fastest initial rise and maintains the highest accuracy throughout. It has the smoothest curve with the least volatility after the initial ascent.

* **Approximate Data Points:**

* Epoch 0: ~5%

* Epoch 5: ~80%

* Epoch 10: ~85%

* Epoch 20: ~88%

* Epoch 30: ~90%

* Epoch 40: ~90%

* Epoch 50: ~93% (final point)

2. **de Bruijn (Green Line):**

* **Trend:** Rises quickly, though slightly slower than the Traditional method. It exhibits moderate volatility, with noticeable dips and recoveries, but generally stays within a band between 80% and 90% after epoch 15.

* **Approximate Data Points:**

* Epoch 0: ~10%

* Epoch 5: ~75%

* Epoch 10: ~80%

* Epoch 15: ~85% (local peak)

* Epoch 20: ~83% (dip)

* Epoch 30: ~87%

* Epoch 40: ~88%

* Epoch 50: ~89%

3. **Random Vars (Blue Line):**

* **Trend:** Shows the slowest initial learning rate. It is the most volatile series, characterized by sharp, periodic drops in accuracy followed by recoveries. Despite the volatility, its overall trend is upward, converging with the de Bruijn line in the later epochs.

* **Approximate Data Points:**

* Epoch 0: ~5%

* Epoch 5: ~65%

* Epoch 10: ~75%

* Epoch 20: ~85% (before a sharp dip)

* Epoch 25: ~80% (after a dip)

* Epoch 30: ~85%

* Epoch 35: ~80% (another dip)

* Epoch 40: ~88%

* Epoch 50: ~88%

### Key Observations

* **Performance Hierarchy:** The "Traditional" method consistently achieves the highest accuracy. The "de Bruijn" method is generally second, and the "Random Vars" method is third, though it catches up by the end.

* **Volatility:** The "Random Vars" line is highly unstable, with significant, recurring drops in accuracy (e.g., near epochs 20, 25, 35). The "de Bruijn" line shows moderate volatility. The "Traditional" line is the most stable.

* **Convergence:** By epoch 50, the accuracy of all three methods is within a relatively narrow range (approximately 88% to 93%), suggesting they may be approaching a similar performance ceiling for this task.

* **Initial Learning Phase:** All methods show their most rapid improvement within the first 10 epochs.

### Interpretation

This chart likely compares different initialization strategies, architectural components, or training methodologies for a machine learning model. The data suggests that the **"Traditional" approach is the most effective and stable** for this specific task, providing both fast learning and high final accuracy. The **"Random Vars" method appears to be a stochastic or less constrained approach**, leading to unstable training dynamics (the sharp dips may represent catastrophic forgetting or instability from random perturbations), though it eventually learns a competent representation. The **"de Bruijn" method offers a middle ground**, providing better stability than "Random Vars" but not reaching the peak performance of the "Traditional" method.

The key takeaway is that while all methods can learn the task, the choice of method significantly impacts training stability and final performance. The "Traditional" method is preferable for reliability, whereas the volatility of "Random Vars" might be undesirable in a production setting unless it offers other benefits (like exploration in reinforcement learning). The convergence of all lines suggests the task has a fundamental difficulty ceiling that all methods are approaching.

</details>

Figure 6: Graph displaying the progression for the training of the One-Step Beta Reduction task, for the mixed dataset, over the three different conventions.

<details>

<summary>extracted/6501326/training_mbr_closed_bool.jpeg Details</summary>

### Visual Description

## Line Chart: Accuracy Comparison of Three Methods Over Training Epochs

### Overview

The image is a line chart comparing the training accuracy (in percentage) of three different methods or models over 50 training epochs. The chart demonstrates the learning curves, showing how accuracy improves with training time for each approach.

### Components/Axes

* **Chart Type:** Line chart with grid lines.

* **X-Axis:** Labeled "Epochs". Scale ranges from 0 to 50, with major tick marks and grid lines at intervals of 10 (0, 10, 20, 30, 40, 50).

* **Y-Axis:** Labeled "Accuracy (%)". Scale ranges from 0 to 100, with major tick marks and grid lines at intervals of 5 (0, 5, 10, ..., 95, 100).

* **Legend:** Located in the bottom-right corner of the chart area. It contains three entries:

* A green line labeled "de Bruijn"

* A blue line labeled "Random Vars"

* A purple line labeled "Traditional"

### Detailed Analysis

**Trend Verification & Data Points (Approximate):**

1. **de Bruijn (Green Line):**

* **Trend:** Starts highest, shows a very steep initial increase, then plateaus at a high level with minor fluctuations.

* **Key Points:**

* Epoch 0: ~65%

* Epoch 5: ~78%

* Epoch 10: ~80%

* Epoch 20: ~81%

* Epoch 30: ~82%

* Epoch 40: ~82%

* Epoch 50: ~83%

2. **Traditional (Purple Line):**

* **Trend:** Starts in the middle, increases rapidly, converges with and occasionally surpasses the "de Bruijn" line after epoch 20, ending at a similar high accuracy.

* **Key Points:**

* Epoch 0: ~57%

* Epoch 5: ~70%

* Epoch 10: ~78%

* Epoch 20: ~80% (intersects with green line)

* Epoch 30: ~82%

* Epoch 40: ~83% (appears slightly above green line)

* Epoch 50: ~83%

3. **Random Vars (Blue Line):**

* **Trend:** Starts the lowest, shows a steady but less steep increase compared to the others, and plateaus at a significantly lower accuracy level.

* **Key Points:**

* Epoch 0: ~53%

* Epoch 5: ~65%

* Epoch 10: ~72%

* Epoch 20: ~74%

* Epoch 30: ~75%

* Epoch 40: ~76%

* Epoch 50: ~76%

### Key Observations

* **Performance Hierarchy:** The "de Bruijn" and "Traditional" methods achieve final accuracies within 1-2 percentage points of each other (~82-83%), while "Random Vars" consistently underperforms, plateauing around 76%.

* **Learning Speed:** "de Bruijn" has the fastest initial learning, reaching ~78% by epoch 5. "Traditional" starts slower but catches up by epoch 20. "Random Vars" has the slowest learning rate throughout.

* **Convergence:** The "Traditional" and "de Bruijn" lines converge and become intertwined after approximately epoch 20, suggesting similar final model performance despite different starting points and initial learning dynamics.

* **Stability:** All three lines show minor epoch-to-epoch fluctuations after their initial rise, but no dramatic drops or spikes, indicating stable training.

### Interpretation

This chart likely compares different weight initialization strategies or architectural approaches for a machine learning model. The data suggests that structured initialization or methods ("de Bruijn" sequences and "Traditional" approaches) lead to significantly better final model accuracy and faster initial learning compared to a random initialization ("Random Vars").

The near-identical final performance of "de Bruijn" and "Traditional" methods implies that for this specific task, both are equally effective at reaching an optimal solution, though "de Bruijn" may offer a slight advantage in the very early stages of training. The persistent gap between these two and the "Random Vars" line highlights the importance of informed initialization or methodological structure in achieving higher model performance. The plateauing of all curves indicates that the models have likely reached their capacity or the limit of learnable information from the dataset by around 30-40 epochs.

</details>

Figure 7: Graph displaying the progression for the training of the Multi-Step Beta Reduction task, for the closed bool dataset, over the three different conventions.

<details>

<summary>extracted/6501326/training_mbr_open_bool.jpeg Details</summary>

### Visual Description

## Line Chart: Training Accuracy Comparison Over Epochs

### Overview

This image is a line chart comparing the training accuracy (in percentage) of three different methods over 50 training epochs. The chart plots "Accuracy (%)" on the vertical y-axis against "Epochs" on the horizontal x-axis. Three distinct colored lines represent the performance of each method, with a legend provided for identification.

### Components/Axes

* **Chart Type:** Line Chart

* **X-Axis:**

* **Label:** "Epochs"

* **Scale:** Linear scale from 0 to 50.

* **Major Tick Marks:** 0, 10, 20, 30, 40, 50.

* **Y-Axis:**

* **Label:** "Accuracy (%)"

* **Scale:** Linear scale from 0 to 100.

* **Major Tick Marks:** 0, 5, 10, 15, 20, 25, 30, 35, 40, 45, 50, 55, 60, 65, 70, 75, 80, 85, 90, 95, 100.

* **Legend:**

* **Placement:** Bottom-right corner of the chart area.

* **Entries:**

1. **Green Line:** "de Bruijn"

2. **Blue Line:** "Random Vars"

3. **Purple Line:** "Traditional"

### Detailed Analysis

**Trend Verification & Data Point Extraction:**

1. **de Bruijn (Green Line):**

* **Trend:** Starts low, exhibits a very steep upward slope in the initial epochs (0-5), then the rate of increase slows, eventually plateauing with minor fluctuations in the high 90s.

* **Key Data Points (Approximate):**

* Epoch 0: ~20%

* Epoch 5: ~80%

* Epoch 10: ~87%

* Epoch 20: ~94%

* Epoch 30: ~96%

* Epoch 40: ~97%

* Epoch 50: ~97%

2. **Random Vars (Blue Line):**

* **Trend:** Starts the lowest, shows a steady, consistent upward slope throughout the training period. The slope is less steep than the other two methods initially and maintains a more linear growth pattern, ending significantly lower than the others.

* **Key Data Points (Approximate):**

* Epoch 0: ~15%

* Epoch 5: ~40%

* Epoch 10: ~58%

* Epoch 20: ~68%

* Epoch 30: ~74%

* Epoch 40: ~80%

* Epoch 50: ~81%

3. **Traditional (Purple Line):**