# On the Security Risks of Knowledge Graph Reasoning

**Authors**:

- Zhaohan Xi (Penn State)

- Tianyu Du (Penn State)

- Changjiang Li (Penn State)

- Ren Pang (Penn State)

- Shouling Ji (Zhejiang University)

- Xiapu Luo (Hong Kong Polytechnic University)

- Xusheng Xiao (Arizona State University)

- Fenglong Ma (Penn State)

- Ting Wang (Penn State)


Abstract

Knowledge graph reasoning (KGR) – answering complex logical queries over large knowledge graphs – represents an important artificial intelligence task, entailing a range of applications (e.g., cyber threat hunting). However, despite its surging popularity, the potential security risks of KGR are largely unexplored, which is concerning, given the increasing use of such capability in security-critical domains.

This work represents a solid initial step towards bridging this striking gap. We systematize the security threats to KGR according to the adversary’s objectives, knowledge, and attack vectors. Further, we present ROAR, a new class of attacks that instantiate a variety of such threats. Through empirical evaluation in representative use cases (e.g., medical decision support, cyber threat hunting, and commonsense reasoning), we demonstrate that ROAR is highly effective at misleading KGR into suggesting pre-defined answers for target queries, yet with negligible impact on non-target ones. Finally, we explore potential countermeasures against ROAR, including filtering of potentially poisoning knowledge and training with adversarially augmented queries, which points to several promising research directions.

1 Introduction

Knowledge graphs (KGs) are structured representations of human knowledge, capturing real-world objects, relations, and their properties. Thanks to automated KG building tools [61], recent years have witnessed significant growth of KGs in various domains (e.g., MITRE [10], GNBR [53], and DrugBank [4]). One major use of such KGs is knowledge graph reasoning (KGR), which answers complex logical queries over KGs, entailing a range of applications [6] such as information retrieval [8], cyber threat hunting [2], biomedical research [30], and clinical decision support [12]. For instance, KG-assisted threat hunting has been used in both research prototypes [50, 34] and industrial platforms [9, 40].

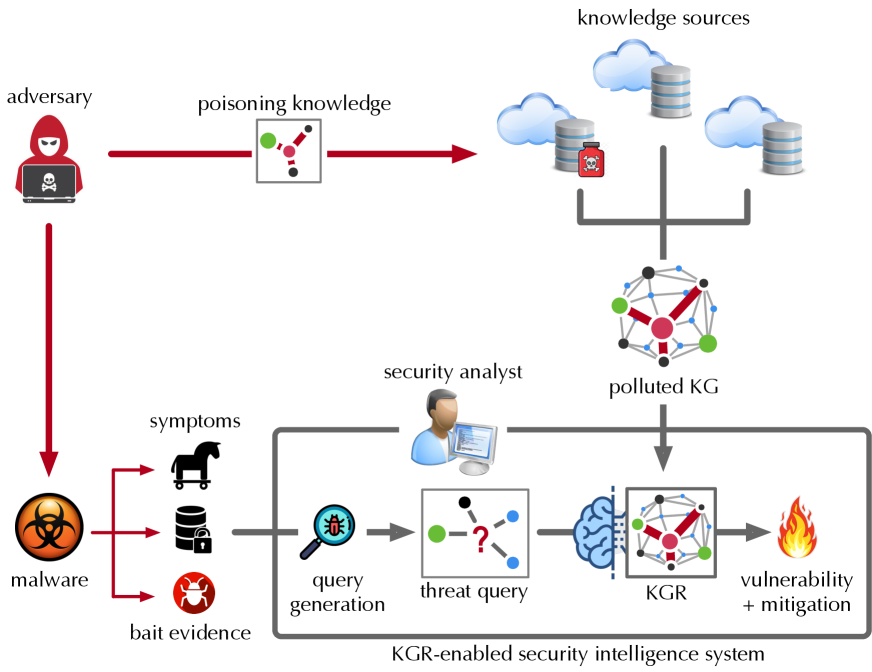

**Example 1**

*In cyber threat hunting as shown in Figure 1, upon observing suspicious malware activities, the security analyst may query a KGR-enabled security intelligence system (e.g., LogRhythm [47]): “how to mitigate the malware that targets BusyBox and launches DDoS attacks?” Processing the query over the backend KG may identify the most likely malware as Mirai and its mitigation as credential-reset [15].*

<details>

<summary>x1.png Details</summary>

### Visual Description


## Diagram: KGR-enabled Security Intelligence System

### Overview

The image depicts a diagram illustrating a KGR-enabled security intelligence system and how an adversary can attempt to poison the knowledge used by the system. The diagram shows the flow of information from knowledge sources, through a security analyst, to a knowledge graph (KG), and ultimately to vulnerability detection and mitigation. It also illustrates how an adversary can inject "poisoning knowledge" into the system.

### Components/Axes

The diagram consists of several key components:

* **Adversary:** Represented by a hooded figure.

* **Knowledge Sources:** Represented by multiple cloud and cylinder icons.

* **Polluted KG:** A knowledge graph that has been compromised.

* **Security Analyst:** Represented by a person working on a computer.

* **Malware:** Represented by a biohazard symbol and a gear.

* **Bait Evidence:** Represented by a horse head and a lock with a red bug.

* **Query Generation:** Represented by a gear with a bug.

* **Threat Query:** Represented by a question mark inside a circle.

* **KGR:** Knowledge Graph Reasoning, represented by a brain-shaped icon.

* **Vulnerability + Mitigation:** Represented by a flame.

* **Arrows:** Indicate the flow of information and attacks.

Labels include: "adversary", "poisoning knowledge", "knowledge sources", "security analyst", "polluted KG", "malware", "symptoms", "bait evidence", "query generation", "threat query", "KGR", "vulnerability + mitigation", and "KGR-enabled security intelligence system".

### Detailed Analysis or Content Details

The diagram illustrates the following flow:

1. **Adversary Attack:** The adversary attempts to "poison knowledge" by injecting malicious data (represented by red dots connected by lines) into the "knowledge sources". This is indicated by a thick red arrow.

2. **Knowledge Sources to Polluted KG:** The knowledge sources feed into a "polluted KG". The KG is represented by a network of nodes and edges, with a red color scheme indicating contamination.

3. **Security Analyst Input:** The security analyst receives information from the "polluted KG".

4. **Malware & Bait Evidence:** "Malware" and "bait evidence" generate "symptoms" which are fed into the "query generation" stage. The malware is represented by a biohazard symbol and a gear. Bait evidence is represented by a horse head and a lock with a red bug.

5. **Query Generation to Threat Query:** The "query generation" stage produces a "threat query".

6. **Threat Query to KGR:** The "threat query" is processed by the "KGR" (Knowledge Graph Reasoning) component.

7. **KGR to Vulnerability & Mitigation:** The "KGR" outputs "vulnerability + mitigation" information, represented by a flame.

The diagram also shows a direct connection from the adversary to the malware, suggesting the adversary is responsible for creating or deploying the malware.

### Key Observations

* The diagram highlights the vulnerability of knowledge-based security systems to adversarial attacks.

* The "polluted KG" is a central point of failure, as it affects the entire downstream process.

* The use of visual metaphors (e.g., flame for vulnerability, biohazard for malware) effectively communicates the concepts.

* The diagram emphasizes the importance of a security analyst in the loop, but also shows how their analysis can be compromised by poisoned knowledge.

### Interpretation

The diagram demonstrates a potential attack vector against KGR-enabled security intelligence systems. The adversary aims to compromise the system by injecting false or misleading information into the knowledge sources, ultimately leading to a "polluted KG". This pollution can then affect the security analyst's judgment and the accuracy of the vulnerability detection and mitigation process. The diagram suggests that robust mechanisms for validating and sanitizing knowledge sources are crucial for maintaining the integrity of these systems. The inclusion of "bait evidence" suggests a proactive approach to detecting adversarial activity, but it is also susceptible to being compromised. The diagram is a conceptual illustration of a threat model, rather than a depiction of specific data or numerical values. It serves to highlight the potential risks and challenges associated with using knowledge graphs in security applications. The diagram is a high-level overview and does not provide details on the specific techniques used for poisoning knowledge or mitigating vulnerabilities.

</details>

Figure 1: Threats to KGR-enabled security intelligence systems.

Surprisingly, in contrast to the growing popularity of using KGR to support decision-making in a variety of critical domains (e.g., cyber-security [52], biomedicine [12], and healthcare [71]), its security implications remain largely unexplored. More specifically, the following research questions are open:

RQ ${}_{1}$ – What are the potential threats to KGR?

RQ ${}_{2}$ – How effective are the attacks in practice?

RQ ${}_{3}$ – What are the potential countermeasures?

Yet, compared with other machine learning systems (e.g., graph learning), KGR represents a unique class of intelligence systems. Despite the plethora of studies under the settings of general graphs [72, 66, 73, 21, 68] and predictive tasks [70, 54, 19, 56, 18], understanding the security risks of KGR entails unique, non-trivial challenges: (i) compared with general graphs, KGs contain richer relational information essential for KGR; (ii) KGR requires much more complex processing than predictive tasks (details in § 2); (iii) KGR systems are often subject to constant updates to incorporate new knowledge; and (iv) unlike predictive tasks, the adversary is able to manipulate KGR through multiple different attack vectors (details in § 3).

<details>

<summary>x2.png Details</summary>

### Visual Description


## Diagram: Knowledge Graph for Malware Mitigation

### Overview

The image presents a diagram illustrating a knowledge graph and its application to a malware mitigation query. It is divided into three main sections: (a) a knowledge graph representing relationships between malware and targets, (b) a formal query representation, and (c) a step-by-step visualization of knowledge graph reasoning to answer the query.

### Components/Axes

The diagram consists of nodes representing entities (e.g., DDoS, BusyBox, Mirai) and directed edges representing relationships between them (e.g., "launch-by", "target-by", "mitigate-by"). The query section includes mathematical notation and variable definitions. The reasoning section shows a series of graph transformations.

### Detailed Analysis or Content Details

**(a) Knowledge Graph:**

* **Nodes:**

* DDoS (Red)

* BusyBox (Yellow)

* Mirai (Green)

* Brickerbot (Green)

* PDoS (Red)

* `vmalware` (Variable, not colored)

* **Edges:**

* DDoS `launch-by` Mirai

* Mirai `target-by` BusyBox

* BusyBox `target-by` Brickerbot

* Brickerbot `mitigate-by` hardware restore

* PDoS `launch-by` BusyBox

* BusyBox `target-by` PDoS

* DDoS `mitigate-by` credential reset

* The graph shows a chain of attacks: DDoS launched by Mirai targeting BusyBox, and PDoS launched by BusyBox targeting PDoS. Mitigation strategies are also shown.

**(b) Query:**

* **Text:** "How to mitigate the malware that targets BusyBox and launches DDoS attacks?"

* **Mathematical Formulation:**

* `Aq = {BusyBox, DDoS}, Vq = {vmalware}`

* `E'q =` (Equation showing relationships between BusyBox, DDoS, and `vmalware` with edges labeled "target-by", "launch-by", and "mitigate-by")

* The equation shows a relationship between BusyBox, DDoS, and a variable `vmalware` representing the malware.

**(c) Knowledge Graph Reasoning:**

This section shows a series of graph transformations, numbered (1) through (4).

* **(1):** `ΦDDoS` with an edge `vlaunch-by` pointing to `vmalware`.

* **(2):** `Ψ` (conjunction symbol) with edges `vlaunch-by` from `DDoS` to `vmalware` and `vtarget-by` from `BusyBox` to `vmalware`.

* **(3):** `vmalware` with an edge `vmitigate-by` pointing to `?`.

* **(4):** `?` (variable) with a bracket `[q]`. The bracket suggests the result of the query.

### Key Observations

* The knowledge graph represents a network of cyberattacks and mitigation strategies.

* The query aims to find a mitigation strategy for malware that both targets BusyBox and launches DDoS attacks.

* The reasoning process involves identifying the malware (`vmalware`) that satisfies the query conditions and then finding a mitigation strategy for that malware.

* The use of mathematical notation formalizes the query and reasoning process.

### Interpretation

The diagram demonstrates a knowledge graph-based approach to cybersecurity threat mitigation. The knowledge graph stores information about attacks, targets, and mitigation strategies. A formal query is constructed to represent the mitigation goal. The reasoning process then traverses the knowledge graph to identify the relevant malware and its corresponding mitigation strategy.

The diagram highlights the power of knowledge graphs in representing complex relationships and enabling automated reasoning for cybersecurity tasks. The use of mathematical notation provides a precise and unambiguous way to define queries and reasoning steps. The reasoning process shown in (c) is a simplified illustration of how a knowledge graph can be used to answer complex security questions. The final result, represented by "?", indicates the mitigation strategy that satisfies the query conditions.

The diagram suggests a system where security analysts can formulate queries in natural language, which are then translated into formal queries that can be executed on the knowledge graph to identify appropriate mitigation strategies. This approach can help to automate the threat response process and improve the efficiency of security operations.

</details>

Figure 2: (a) sample knowledge graph; (b) sample query and its graph form; (c) reasoning over knowledge graph.

Our work. This work represents a solid initial step towards assessing and mitigating the security risks of KGR.

RA ${}_{1}$ – First, we systematize the potential threats to KGR. As shown in Figure 1, the adversary may interfere with KGR through two attack vectors. (i) Knowledge poisoning: polluting the data sources of KGs with “misknowledge”. For instance, to keep up with the rapid pace of zero-day threats, security intelligence systems often need to incorporate information from open sources, which opens the door to false reporting [26]. (ii) Query misguiding: (indirectly) impeding the user from generating informative queries by providing additional, misleading information. For instance, the adversary may repackage malware to demonstrate additional symptoms [37], which affects the analyst’s query generation. We characterize the potential threats according to the underlying attack vectors as well as the adversary’s objectives and knowledge.

RA ${}_{2}$ – Further, we present ROAR (Reasoning Over Adversarial Representations), a new class of attacks that instantiate the aforementioned threats. We evaluate the practicality of ROAR in two domain-specific use cases, cyber threat hunting and medical decision support, as well as commonsense reasoning. We empirically demonstrate that ROAR is highly effective against state-of-the-art KGR systems in all the cases. For instance, ROAR attains over 0.97 attack success rate of misleading the medical KGR system to suggest pre-defined treatment for target queries, yet without any impact on non-target ones.

RA ${}_{3}$ – Finally, we discuss potential countermeasures and their technical challenges. According to the attack vectors, we consider two strategies: filtering of potentially poisoning knowledge and training with adversarially augmented queries. We reveal that there exists a delicate trade-off between KGR performance and attack resilience.

Contributions. To the best of our knowledge, this work represents the first systematic study on the security risks of KGR. Our contributions are summarized as follows.

– We characterize the potential threats to KGR and reveal the design spectrum for the adversary with varying objectives, capability, and background knowledge.

– We present ROAR, a new class of attacks that instantiate various threats, which highlights the following features: (i) it leverages both knowledge poisoning and query misguiding as the attack vectors; (ii) it assumes limited knowledge regarding the target KGR system; (iii) it realizes both targeted and untargeted attacks; and (iv) it retains effectiveness under various practical constraints.

– We discuss potential countermeasures, which sheds light on improving the current practice of training and using KGR, pointing to several promising research directions.

2 Preliminaries

We first introduce fundamental concepts and assumptions.

Knowledge graphs (KGs). A KG ${\mathcal{G}}=({\mathcal{N}},{\mathcal{E}})$ consists of a set of nodes ${\mathcal{N}}$ and edges ${\mathcal{E}}$ . Each node $v∈{\mathcal{N}}$ represents an entity and each edge $v\mathrel{\text{\scriptsize$\xrightarrow[]{r}$}}v^{\prime}∈{\mathcal{E}}$ indicates that there exists relation $r∈{\mathcal{R}}$ (where ${\mathcal{R}}$ is a finite set of relation types) from $v$ to $v^{\prime}$ . In other words, ${\mathcal{G}}$ comprises a set of facts $\{\langle v,r,v^{\prime}\rangle\}$ with $v,v^{\prime}∈{\mathcal{N}}$ and $v\mathrel{\text{\scriptsize$\xrightarrow[]{r}$}}v^{\prime}∈{\mathcal{E}}$ .

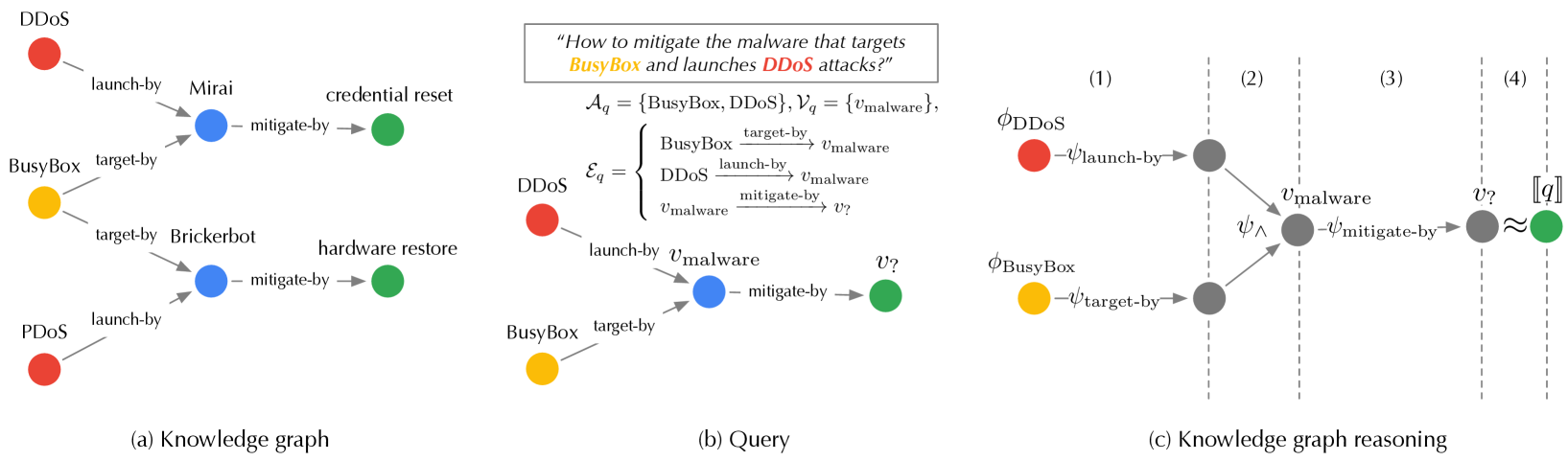

**Example 2**

*In Figure 2 (a), the fact $\langle$ DDoS, launch-by, Mirai $\rangle$ indicates that the Mirai malware launches the DDoS attack.*
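As a minimal sketch of the definition above, a KG can be held as a set of ⟨v, r, v′⟩ triples from which the node set, the relation types, and an adjacency index are derived. The facts below are a toy graph loosely modeled on Figure 2 (a); the exact edge set and the helper names (`entities`, `relation_types`, `build_index`) are assumptions for illustration only.

```python
from collections import defaultdict

# Toy facts <v, r, v'>, loosely modeled on Figure 2 (a); the edge set is an assumption.
FACTS = {
    ("DDoS", "launch-by", "Mirai"),
    ("BusyBox", "target-by", "Mirai"),
    ("BusyBox", "target-by", "Brickerbot"),
    ("PDoS", "launch-by", "Brickerbot"),
    ("Mirai", "mitigate-by", "credential-reset"),
    ("Brickerbot", "mitigate-by", "hardware-restore"),
}


def entities(facts):
    """Node set N: every entity that appears as the head or tail of a fact."""
    return {v for head, _, tail in facts for v in (head, tail)}


def relation_types(facts):
    """Relation set R: every relation type that labels an edge."""
    return {rel for _, rel, _ in facts}


def build_index(facts):
    """Map (head, relation) to the set of tails reachable through that edge."""
    index = defaultdict(set)
    for head, rel, tail in facts:
        index[(head, rel)].add(tail)
    return index


index = build_index(FACTS)
print(sorted(entities(FACTS)))
print(sorted(index[("BusyBox", "target-by")]))
```

The index keyed by (head, relation) is only one convenient layout; it makes the relation-following steps used in later reasoning a single lookup.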

Queries. A variety of reasoning tasks can be performed over KGs [58, 33, 63]. In this paper, we focus on first-order conjunctive queries, which ask for entities that satisfy constraints defined by first-order existential ( $∃$ ) and conjunctive ( $\wedge$ ) logic [59, 16, 60]. Formally, let ${\mathcal{A}}_{q}$ be a set of known entities (anchors), ${\mathcal{E}}_{q}$ be a set of known relations, ${\mathcal{V}}_{q}$ be a set of intermediate, unknown entities (variables), and $v_{?}$ be the entity of interest. A first-order conjunctive query $q\triangleq(v_{?},{\mathcal{A}}_{q},{\mathcal{V}}_{q},{\mathcal{E}}_{q})$ is defined as:

$$
\begin{split}
&\llbracket q\rrbracket=v_{?}\,.\,\exists{\mathcal{V}}_{q}:\bigwedge_{v\mathrel{\text{\scriptsize$\xrightarrow[]{r}$}}v^{\prime}\in{\mathcal{E}}_{q}}v\mathrel{\text{\scriptsize$\xrightarrow[]{r}$}}v^{\prime}\\
&\text{s.t.}\;\,v\mathrel{\text{\scriptsize$\xrightarrow[]{r}$}}v^{\prime}=\left\{\begin{array}[]{l}v\in{\mathcal{A}}_{q},\,v^{\prime}\in{\mathcal{V}}_{q}\cup\{v_{?}\},\,r\in{\mathcal{R}}\\
v,v^{\prime}\in{\mathcal{V}}_{q}\cup\{v_{?}\},\,r\in{\mathcal{R}}\end{array}\right.
\end{split} \tag{1}
$$

Here, $\llbracket q\rrbracket$ denotes the query answer; the constraints specify that there exist variables ${\mathcal{V}}_{q}$ and entity of interest $v_{?}$ in the KG such that the relations between ${\mathcal{A}}_{q}$ , ${\mathcal{V}}_{q}$ , and $v_{?}$ satisfy the relations specified in ${\mathcal{E}}_{q}$ .

**Example 3**

*In Figure 2 (b), the query “how to mitigate the malware that targets BusyBox and launches DDoS attacks?” can be translated into:

$$
\begin{split}q=&(v_{?},{\mathcal{A}}_{q}=\{\textsf{BusyBox},\textsf{DDoS}\},{\mathcal{V}}_{q}=\{v_{\text{malware}}\},\\
&{\mathcal{E}}_{q}=\{\textsf{BusyBox}\xrightarrow{\text{target-by}}v_{\text{malware}},\\
&\textsf{DDoS}\xrightarrow{\text{launch-by}}v_{\text{malware}},\;v_{\text{malware}}\xrightarrow{\text{mitigate-by}}v_{?}\})\end{split} \tag{2}
$$*

Knowledge graph reasoning (KGR). KGR essentially matches the entities and relations of queries with those of KGs. Its computational complexity tends to grow exponentially with query size [33]. Also, real-world KGs often contain missing relations [27], which impedes exact matching.
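To make the matching view concrete, the following sketch answers the query of Eq. 2 by exhaustively binding the unknowns to KG entities and checking every query edge. The toy facts and the function name `answer` are assumptions for illustration; the loop over `product(nodes, repeat=len(unknowns))` is also why the cost of exact matching grows exponentially with the number of unknown entities.

```python
from itertools import product

# Toy facts loosely modeled on Figure 2 (a); the edge set is an assumption.
FACTS = {
    ("DDoS", "launch-by", "Mirai"),
    ("BusyBox", "target-by", "Mirai"),
    ("BusyBox", "target-by", "Brickerbot"),
    ("PDoS", "launch-by", "Brickerbot"),
    ("Mirai", "mitigate-by", "credential-reset"),
    ("Brickerbot", "mitigate-by", "hardware-restore"),
}


def answer(facts, anchors, variables, edges, target="v?"):
    """Brute-force evaluation of a conjunctive query by exhaustive matching."""
    nodes = sorted({h for h, _, _ in facts} | {t for _, _, t in facts})
    unknowns = list(variables) + [target]
    answers = set()
    # Try every assignment of KG entities to the unknowns (variables and v?).
    for assignment in product(nodes, repeat=len(unknowns)):
        binding = dict(zip(unknowns, assignment))
        binding.update({a: a for a in anchors})  # anchors bind to themselves
        if all((binding[h], r, binding[t]) in facts for h, r, t in edges):
            answers.add(binding[target])
    return answers


# The query of Eq. 2, written as (head, relation, tail) edges.
query_edges = [
    ("BusyBox", "target-by", "v_malware"),
    ("DDoS", "launch-by", "v_malware"),
    ("v_malware", "mitigate-by", "v?"),
]
result = answer(FACTS, {"BusyBox", "DDoS"}, ["v_malware"], query_edges)
print(result)
```

Exact matching also returns nothing as soon as one required relation is missing from the KG, which is the second limitation noted above.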

Recently, knowledge representation learning has emerged as a state-of-the-art approach for KGR. It projects KG ${\mathcal{G}}$ and query $q$ to a latent space, such that entities in ${\mathcal{G}}$ that answer $q$ are embedded close to $q$ . Answering an arbitrary query $q$ is thus reduced to finding entities with embeddings most similar to $q$ , thereby implicitly imputing missing relations [27] and scaling up to large KGs [14]. Typically, knowledge representation-based KGR comprises two key components:

Embedding function $\phi$ – It projects each entity in ${\mathcal{G}}$ to its latent embedding based on ${\mathcal{G}}$ ’s topological and relational structures. With a slight abuse of notation, below we use $\phi_{v}$ to denote entity $v$ ’s embedding and $\phi_{\mathcal{G}}$ to denote the set of entity embeddings $\{\phi_{v}\}_{v∈{\mathcal{G}}}$ .

Transformation function $\psi$ – It computes query $q$ ’s embedding $\phi_{q}$ . KGR defines a set of transformations: (i) given the embedding $\phi_{v}$ of entity $v$ and relation $r$ , the relation- $r$ projection operator $\psi_{r}(\phi_{v})$ computes the embeddings of entities with relation $r$ to $v$ ; (ii) given the embeddings $\phi_{{\mathcal{N}}_{1}},...,\phi_{{\mathcal{N}}_{n}}$ of entity sets ${\mathcal{N}}_{1},...,{\mathcal{N}}_{n}$ , the intersection operator $\psi_{\wedge}(\phi_{{\mathcal{N}}_{1}},...,\phi_{{\mathcal{N}}_{n}})$ computes the embeddings of their intersection $\cap_{i=1}^{n}{\mathcal{N}}_{i}$ . Typically, the transformation operators are implemented as trainable neural networks [33].

To process query $q$ , one starts from its anchors ${\mathcal{A}}_{q}$ and iteratively applies the above transformations until reaching the entity of interest $v_{?}$ with the results as $q$ ’s embedding $\phi_{q}$ . Below we use $\phi_{q}=\psi(q;\phi_{\mathcal{G}})$ to denote this process. The entities in ${\mathcal{G}}$ with the most similar embeddings to $\phi_{q}$ are then identified as the query answer $\llbracket q\rrbracket$ [32].

**Example 4**

*As shown in Figure 2 (c), the query in Eq. 2 is processed as follows. (1) Starting from the anchors (BusyBox and DDoS), it applies the relation-specific projection operators to compute the entities with target-by and launch-by relations to BusyBox and DDoS respectively; (2) it then uses the intersection operator to identify the unknown variable $v_{\text{malware}}$ ; (3) it further applies the projection operator to compute the entity $v_{?}$ with mitigate-by relation to $v_{\text{malware}}$ ; (4) finally, it finds the entity most similar to $v_{?}$ as the answer $\llbracket q\rrbracket$ .*
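The four steps of Example 4 can be sketched with deliberately simple stand-ins for the trained operators. Everything numeric here is an assumption: the 2-D embeddings are hand-picked rather than learned, the projection operator is a plain translation by a relation vector, and the intersection operator is a coordinate-wise mean, whereas the text notes that real systems typically implement these operators as trainable neural networks.

```python
import math

# Hand-picked 2-D entity embeddings (assumptions, not learned values).
ENTITY = {
    "BusyBox": (0.0, -0.4),
    "DDoS": (2.0, 0.0),
    "PDoS": (2.0, -1.0),
    "Mirai": (1.0, 0.0),
    "Brickerbot": (1.0, -1.0),
    "credential-reset": (3.0, 0.0),
    "hardware-restore": (3.0, -1.0),
}
# One translation vector per relation type (also assumptions).
RELATION = {
    "target-by": (1.0, 0.0),
    "launch-by": (-1.0, 0.0),
    "mitigate-by": (2.0, 0.0),
}


def project(vec, rel):
    """Stand-in for psi_r: translate an embedding by the relation vector."""
    dx, dy = RELATION[rel]
    return (vec[0] + dx, vec[1] + dy)


def intersect(*vecs):
    """Stand-in for psi_and: coordinate-wise mean of the input embeddings."""
    n = len(vecs)
    return (sum(v[0] for v in vecs) / n, sum(v[1] for v in vecs) / n)


def nearest(vec):
    """Entity whose embedding is closest (Euclidean distance) to vec."""
    return min(ENTITY, key=lambda name: math.dist(ENTITY[name], vec))


# (1) project from the anchors, (2) intersect, (3) project again, (4) look up.
from_busybox = project(ENTITY["BusyBox"], "target-by")
from_ddos = project(ENTITY["DDoS"], "launch-by")
v_malware = intersect(from_busybox, from_ddos)
phi_q = project(v_malware, "mitigate-by")
answer = nearest(phi_q)
print(nearest(v_malware), answer)
```

Because the answer is a nearest-neighbor lookup rather than an exact match, the query still resolves when some relation is absent from the KG, which is the imputation property mentioned above.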

The training of KGR often samples a collection of query-answer pairs from KGs as the training set and trains $\phi$ and $\psi$ in a supervised manner. We defer the details to § B.
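As a rough illustration of how such a training set might be drawn, the sketch below samples two-hop path queries from a toy KG and records the full answer set of each. The query shape, the function name `sample_two_hop_queries`, and the fixed seed are assumptions made for this example; the sampling procedure actually used for training is not specified in this section.

```python
import random
from collections import defaultdict

# Toy facts loosely modeled on Figure 2 (a); the edge set is an assumption.
FACTS = [
    ("DDoS", "launch-by", "Mirai"),
    ("BusyBox", "target-by", "Mirai"),
    ("BusyBox", "target-by", "Brickerbot"),
    ("PDoS", "launch-by", "Brickerbot"),
    ("Mirai", "mitigate-by", "credential-reset"),
    ("Brickerbot", "mitigate-by", "hardware-restore"),
]


def sample_two_hop_queries(facts, n, seed=0):
    """Sample n queries of the form anchor -r1-> v -r2-> v? with their answers."""
    rng = random.Random(seed)
    index = defaultdict(set)          # (head, relation) -> tails
    out_relations = defaultdict(set)  # entity -> relations leaving it
    for head, rel, tail in facts:
        index[(head, rel)].add(tail)
        out_relations[head].add(rel)
    # Every (anchor, r1, r2) pattern that has at least one answer in the KG.
    patterns = sorted({
        (head, r1, r2)
        for (head, r1), mids in index.items()
        for mid in mids
        for r2 in out_relations.get(mid, ())
    })
    samples = []
    for _ in range(n):
        anchor, r1, r2 = rng.choice(patterns)
        answers = {
            tail
            for mid in index[(anchor, r1)]
            for tail in index.get((mid, r2), ())
        }
        samples.append(((anchor, r1, r2), answers))
    return samples


training_set = sample_two_hop_queries(FACTS, n=4)
for query, answers in training_set:
    print(query, sorted(answers))
```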

3 A threat taxonomy

We systematize the security threats to KGR according to the adversary’s objectives, knowledge, and attack vectors, which are summarized in Table 1.

| Attack | Objective: backdoor | Objective: targeted | Knowledge: KG | Knowledge: model | Knowledge: query | Capability: poisoning | Capability: misguiding |
| --- | --- | --- | --- | --- | --- | --- | --- |
| ROAR | ✓ | ✓ | ◐ | ◐ | ✗ | ✓ | ✓ |

Table 1: A taxonomy of security threats to KGR and the instantiation of threats in ROAR (✓ - full, ◐ - partial, ✗ - no).

Adversary’s objective. We consider both targeted and backdoor attacks [25]. Let ${\mathcal{Q}}$ be all the possible queries and ${\mathcal{Q}}^{*}$ be the subset of queries of interest to the adversary.

Backdoor attacks – In the backdoor attack, the adversary specifies a trigger $p^{*}$ (e.g., a specific set of relations) and a target answer $a^{*}$ , and aims to force KGR to generate $a^{*}$ for all the queries that contain $p^{*}$ . Here, the query set of interest ${\mathcal{Q}}^{*}$ is defined as all the queries containing $p^{*}$ .

**Example 5**

*In Figure 2 (a), the adversary may specify

$$
p^{*}=\textsf{BusyBox}\xrightarrow{\text{target-by}}v_{\text{malware}}\xrightarrow{\text{mitigate-by}}v_{?} \tag{3}
$$

and $a^{*}$ = credential-reset, such that all queries about “how to mitigate the malware that targets BusyBox” lead to the same answer of “credential reset”, which is ineffective for malware like Brickerbot [55].*
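The query set of interest for this backdoor can be illustrated by a containment check: a query belongs to the set if its edges include every edge of the trigger in Eq. 3. This is a simplified sketch; the three sample queries and the name `contains_trigger` are hypothetical, and matching edges by identical variable names is an assumption (a fuller treatment would match the pattern up to variable renaming).

```python
# Trigger p* of Eq. 3, written as (head, relation, tail) edges.
TRIGGER = frozenset({
    ("BusyBox", "target-by", "v_malware"),
    ("v_malware", "mitigate-by", "v?"),
})
TARGET_ANSWER = "credential-reset"  # a* in Example 5


def contains_trigger(query_edges, trigger=TRIGGER):
    """True if every trigger edge appears among the query's edges.

    Assumes queries and trigger use the same variable names.
    """
    return trigger <= set(query_edges)


# Hypothetical user queries, for illustration only.
queries = {
    "q1": [
        ("BusyBox", "target-by", "v_malware"),
        ("DDoS", "launch-by", "v_malware"),
        ("v_malware", "mitigate-by", "v?"),
    ],
    "q2": [
        ("BusyBox", "target-by", "v_malware"),
        ("PDoS", "launch-by", "v_malware"),
        ("v_malware", "mitigate-by", "v?"),
    ],
    "q3": [
        ("DDoS", "launch-by", "v_malware"),
        ("v_malware", "mitigate-by", "v?"),
    ],
}

# Queries of interest: those that the backdoor aims to answer with a*.
targeted = sorted(name for name, edges in queries.items() if contains_trigger(edges))
print(targeted, "->", TARGET_ANSWER)
```

Here `q2` describes Brickerbot-like behavior yet still falls in the targeted set, which is the case Example 5 flags as harmful.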

Targeted attacks – In the targeted attack, the adversary aims to force KGR to reason erroneously over ${\mathcal{Q}}^{*}$ regardless of the concrete answers.

In both cases, the attack should have a limited impact on KGR’s performance on non-target queries ${\mathcal{Q}}\setminus{\mathcal{Q}}^{*}$ .

Adversary’s knowledge. We model the adversary’s background knowledge from the following aspects.

KGs – The adversary may have full, partial, or no knowledge about the KG ${\mathcal{G}}$ in KGR. In the case of partial knowledge (e.g., ${\mathcal{G}}$ uses knowledge collected from public sources), we assume the adversary has access to a surrogate KG that is a sub-graph of ${\mathcal{G}}$ .

Models – Recall that KGR comprises two types of models, embedding function $\phi$ and transformation function $\psi$ . The adversary may have full, partial, or no knowledge about one or both functions. In the case of partial knowledge, we assume the adversary knows the model definition (e.g., the embedding type [33, 60]) but not its concrete architecture.

Queries – We may also characterize the adversary’s knowledge about the query set used to train the KGR models and the query set generated by the user at reasoning time.

<details>

<summary>x3.png Details</summary>

### Visual Description


## Diagram: Knowledge Graph Poisoning Process

### Overview

This diagram illustrates a process for poisoning knowledge graphs, starting with sampled queries and culminating in the injection of "poisoning knowledge." The process involves a surrogate knowledge graph (KGR), latent-space optimization, and input-space approximation. The diagram depicts a flow of information and transformations between these stages.

### Components/Axes

The diagram is segmented into four main sections, arranged horizontally from left to right:

1. **Sampled Queries:** Represents initial queries, depicted as brain-like structures with question marks and connected nodes.

2. **Surrogate KGR:** A complex network of nodes and edges, representing a surrogate knowledge graph.

3. **Latent-Space Optimization:** A blue-tinted rectangular area containing nodes and edges, representing the latent space.

4. **Input-Space Approximation:** A yellow-tinted rectangular area containing nodes and edges, representing the input space.

5. **Poisoning Knowledge:** Represents the final output, depicted as connected nodes with arrows.

There are also labels indicating the process stages: "sampled queries", "surrogate KGR", "latent-space optimization", "input-space approximation", and "poisoning knowledge". An upward-pointing double arrow connects the "input space approximation" to the "latent space optimization", indicating a feedback loop.

### Detailed Analysis or Content Details

**1. Sampled Queries (Leftmost Section):**

* There are three query examples. Each consists of a brain-like shape with a question mark at the center, connected to several smaller nodes (approximately 5-7 per query).

* The connections between the brain and nodes are represented by lines.

* The nodes are colored green and black.

**2. Surrogate KGR (Center-Left Section):**

* This is a complex network of approximately 15-20 nodes connected by numerous edges.

* The nodes are colored green, black, and white.

* Edges are represented by lines, some dashed.

* The network appears to be a graph structure.

**3. Latent-Space Optimization (Center Section):**

* This section contains approximately 15-20 nodes arranged in a grid-like pattern within a blue rectangle.

* Nodes are colored green, black, and red.

* Edges connect the nodes, represented by lines.

* The connections appear to be sparse.

**4. Input-Space Approximation (Center-Right Section):**

* This section contains approximately 15-20 nodes arranged in a network within a yellow rectangle.

* Nodes are colored green, black, and red.

* Edges connect the nodes, represented by lines.

* The connections appear to be more dense than in the latent space.

**5. Poisoning Knowledge (Rightmost Section):**

* There are three examples of "poisoning knowledge". Each consists of a set of connected nodes (approximately 3-5 per example).

* Nodes are colored green and red.

* Edges are represented by arrows.

* The arrows indicate the direction of influence or flow.

**Flow of Information:**

* The "sampled queries" feed into the "surrogate KGR".

* The "surrogate KGR" transforms the queries and passes them to the "latent-space optimization".

* The "latent-space optimization" then passes the information to the "input-space approximation".

* The "input-space approximation" generates the "poisoning knowledge".

* There is a feedback loop from the "input-space approximation" back to the "latent-space optimization".

### Key Observations

* The diagram illustrates a multi-stage process for manipulating knowledge graphs.

* The color red consistently appears in the "latent-space optimization", "input-space approximation", and "poisoning knowledge" sections, potentially indicating the injected "poison".

* The density of connections increases from the latent space to the input space.

* The feedback loop suggests an iterative refinement process.

### Interpretation

The diagram depicts a method for injecting malicious information ("poisoning knowledge") into a knowledge graph. The process begins with sampling queries, which are then processed through a surrogate knowledge graph to create a latent representation. This latent representation is optimized and then approximated in the input space, ultimately resulting in the generation of poisoned knowledge. The feedback loop suggests that the process is iterative, allowing for refinement of the poisoned knowledge to maximize its impact. The use of a surrogate KGR and latent space optimization likely aims to obfuscate the poisoning attack, making it more difficult to detect. The red color coding suggests that the injected "poison" is represented by these nodes and edges. This diagram is a conceptual illustration of a potential attack vector, rather than a presentation of specific data. It demonstrates a process, not a quantifiable result.

</details>

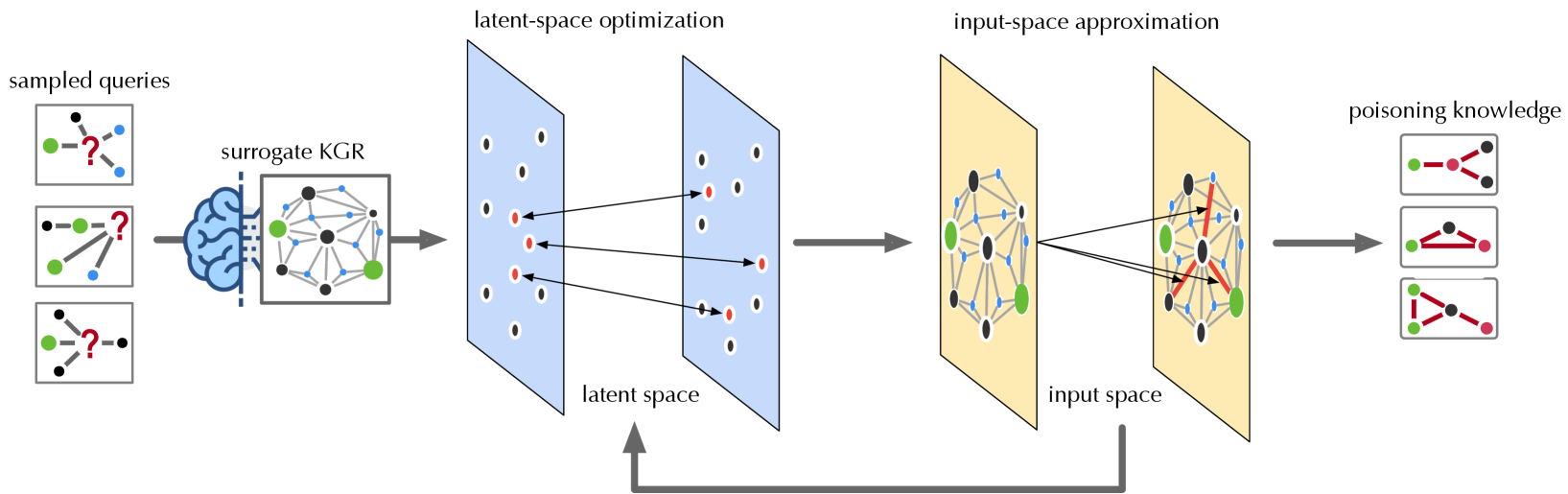

Figure 3: Overview of ROAR (illustrated in the case of ROAR ${}_{\mathrm{kp}}$ ).

Adversary’s capability. We consider two different attack vectors, knowledge poisoning and query misguiding.

Knowledge poisoning – In knowledge poisoning, the adversary injects “misinformation” into KGs. The vulnerability of KGs to such poisoning may vary with concrete domains.

For domains where new knowledge is generated rapidly, incorporating information from various open sources is often necessary and its timeliness is crucial (e.g., cybersecurity). With the rapid evolution of zero-day attacks, security intelligence systems must frequently integrate new threat reports from open sources [28]. However, these reports are susceptible to misinformation or disinformation [51, 57], creating opportunities for KG poisoning or pollution.

In more “conservative” domains (e.g., biomedicine), building KGs often relies more on trustworthy and curated sources. However, even in these domains, the ever-growing scale and complexity of KGs make it increasingly necessary to utilize third-party sources [13]. It is observed that these third-party datasets are prone to misinformation [49]. Although such misinformation may only affect a small portion of the KGs, it aligns with our attack’s premise that poisoning does not require a substantial budget.

Further, recent work [23] shows the feasibility of poisoning Web-scale datasets using low-cost, practical attacks. Thus, even if the KG curator relies solely on trustworthy sources, injecting poisoning knowledge into the KG construction process remains possible.

Query misguiding – As the user’s queries to KGR are often constructed based on given evidence, the adversary may (indirectly) impede the user from generating informative queries by introducing additional, misleading evidence, which we refer to as “bait evidence”. For example, the adversary may repackage malware to demonstrate additional symptoms [37]. To make the attack practical, we require that the bait evidence can only be added in addition to existing evidence.

**Example 6**

*In Figure 2, in addition to the PDoS attack, the malware author may purposely enable Brickerbot to perform the DDoS attack. This additional evidence may mislead the analyst when generating queries.*

Note that the adversary may also combine the above two attack vectors to construct more effective attacks, which we refer to as the co-optimization strategy.

4 ROAR attacks

Next, we present ROAR, a new class of attacks that instantiate a variety of threats in the taxonomy of Table 1: objective – it implements both backdoor and targeted attacks; knowledge – the adversary has partial knowledge about the KG ${\mathcal{G}}$ (i.e., a surrogate KG that is a sub-graph of ${\mathcal{G}}$ ) and the embedding types (e.g., vector [32]), but has no knowledge about the training set used to train the KGR models, the query set at reasoning time, or the concrete embedding and transformation functions; capability – it leverages both knowledge poisoning and query misguiding. Specifically, we develop three variants of ROAR: ROAR ${}_{\mathrm{kp}}$ that uses knowledge poisoning only, ROAR ${}_{\mathrm{qm}}$ that uses query misguiding only, and ROAR ${}_{\mathrm{co}}$ that leverages both attack vectors.

4.1 Overview

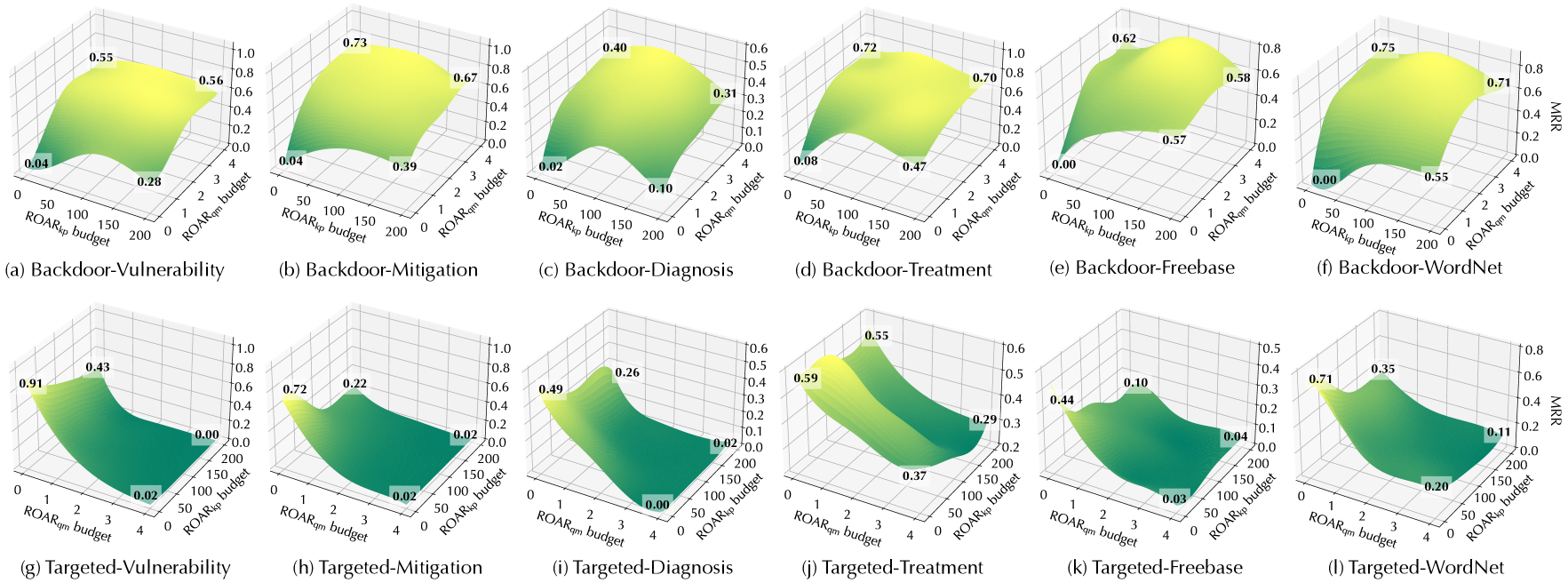

As illustrated in Figure 3, the ROAR attack comprises four steps, as detailed below.

Surrogate KGR construction. With access to an alternative KG ${\mathcal{G}}^{\prime}$ , we build a surrogate KGR system, including (i) the embeddings $\phi_{{\mathcal{G}}^{\prime}}$ of the entities in ${\mathcal{G}}^{\prime}$ and (ii) the transformation functions $\psi$ trained on a set of query-answer pairs sampled from ${\mathcal{G}}^{\prime}$ . Note that without knowing the exact KG ${\mathcal{G}}$ , the training set, or the concrete model definitions, $\phi$ and $\psi$ tend to differ from those used in the target system.

Latent-space optimization. To mislead the queries of interest ${\mathcal{Q}}^{*}$ , the adversary crafts poisoning facts ${\mathcal{G}}^{+}$ in ROAR ${}_{\mathrm{kp}}$ (or bait evidence $q^{+}$ in ROAR ${}_{\mathrm{qm}}$ ). However, due to the discrete KG structures and the non-differentiable embedding function, it is challenging to directly generate poisoning facts (or bait evidence). Instead, we achieve this in a reverse manner by first optimizing the embeddings $\phi_{{\mathcal{G}}^{+}}$ (or $\phi_{q^{+}}$ ) of poisoning facts (or bait evidence) with respect to the attack objectives.

Input-space approximation. Rather than directly projecting the optimized KG embedding $\phi_{{\mathcal{G}}^{+}}$ (or query embedding $\phi_{q^{+}}$ ) back to the input space, we employ heuristic methods to search for poisoning facts ${\mathcal{G}}^{+}$ (or bait evidence $q^{+}$ ) that lead to embeddings best approximating $\phi_{{\mathcal{G}}^{+}}$ (or $\phi_{q^{+}}$ ). Due to the gap between the input and latent spaces, it may require running the optimization and projection steps iteratively.

Knowledge/evidence release. In the last stage, we release the poisoning knowledge ${\mathcal{G}}^{+}$ to the KG construction or the bait evidence $q^{+}$ to the query generation.

Below we elaborate on each attack variant. As the first and last steps are common to all variants, we focus on the optimization and approximation steps. For simplicity, we assume backdoor attacks, in which the adversary aims to steer the answers of a query set ${\mathcal{Q}}^{*}$ toward the desired answer $a^{*}$ . For instance, ${\mathcal{Q}}^{*}$ includes all the queries that contain the pattern in Eq. 3 and $a^{*}$ = {credential-reset}. We discuss the extension to targeted attacks in § B.3.

4.2 ROAR ${}_{\mathrm{kp}}$

Recall that in knowledge poisoning, the adversary commits a set of poisoning facts (“misknowledge”) ${\mathcal{G}}^{+}$ to the KG construction, which is integrated into the KGR system. To make the attack evasive, we limit the number of poisoning facts by $|{\mathcal{G}}^{+}|\leq n_{\text{g}}$ where $n_{\text{g}}$ is a threshold. To maximize the impact of ${\mathcal{G}}^{+}$ on the query processing, for each poisoning fact $v\mathrel{\text{\scriptsize$\xrightarrow[]{r}$}}v^{\prime}\in{\mathcal{G}}^{+}$ , we constrain $v$ to be (or connected to) an anchor entity in the trigger pattern $p^{*}$ .

**Example 7**

*For $p^{*}$ in Eq. 3, $v$ is constrained to be BusyBox or its related entities in the KG.*

Latent-space optimization. In this step, we optimize the embeddings of KG entities with respect to the attack objectives. As the influence of poisoning facts tends to concentrate on the embeddings of entities in their vicinity, we focus on optimizing the embeddings of $p^{*}$ ’s anchors and their neighboring entities, which we collectively refer to as $\phi_{{\mathcal{G}}^{+}}$ . Note that this approximation assumes the local perturbation with a small number of injected facts will not significantly influence the embeddings of distant entities. This approach works effectively for large-scale KGs.

Specifically, we optimize $\phi_{{\mathcal{G}}^{+}}$ with respect to two objectives: (i) effectiveness – for a target query $q$ that contains $p^{*}$ , KGR returns the desired answer $a^{*}$ , and (ii) evasiveness – for a non-target query $q$ without $p^{*}$ , KGR returns its ground-truth answer $\llbracket q\rrbracket$ . Formally, we define the following loss function:

$$
\begin{split}\ell_{\mathrm{kp}}(\phi_{{\mathcal{G}}^{+}})=&\mathbb{E}_{q\in{\mathcal{Q}}^{*}}\Delta(\psi(q;\phi_{{\mathcal{G}}^{+}}),\phi_{a^{*}})+\\
&\lambda\mathbb{E}_{q\in{\mathcal{Q}}\setminus{\mathcal{Q}}^{*}}\Delta(\psi(q;\phi_{{\mathcal{G}}^{+}}),\phi_{\llbracket q\rrbracket})\end{split} \tag{4}
$$

where ${\mathcal{Q}}^{*}$ and ${\mathcal{Q}}\setminus{\mathcal{Q}}^{*}$ respectively denote the target and non-target queries, $\psi(q;\phi_{{\mathcal{G}}^{+}})$ is the procedure of computing $q$ ’s embedding with respect to given entity embeddings $\phi_{{\mathcal{G}}^{+}}$ , $\Delta$ is the distance metric (e.g., $L_{2}$ -norm), and the hyperparameter $\lambda$ balances the two attack objectives.

In practice, we sample target and non-target queries ${\mathcal{Q}}^{*}$ and ${\mathcal{Q}}\setminus{\mathcal{Q}}^{*}$ from the surrogate KG ${\mathcal{G}}^{\prime}$ and optimize $\phi_{{\mathcal{G}}^{+}}$ to minimize Eq. 4. Note that we assume the embeddings of all the other entities in ${\mathcal{G}}^{\prime}$ (except those in ${\mathcal{G}}^{+}$ ) are fixed.
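To make the two terms of Eq. 4 concrete, the following is a minimal sketch of the latent-space optimization under strong simplifying assumptions that are not part of the original method: queries are one-hop, the query encoder `psi` is a plain translation (anchor embedding plus a relation vector), the distance $\Delta$ is the squared $L_{2}$ distance, and the gradient is written out by hand. All names (`psi`, `delta`, `loss_kp`, `grad_step`) and the toy data are hypothetical.

```python
import numpy as np

def psi(anchor_emb, rel_emb):
    """Toy query encoder (assumption): anchor embedding translated by a relation vector."""
    return anchor_emb + rel_emb

def delta(x, y):
    """Squared L2 distance, standing in for the metric Delta in Eq. 4."""
    return float(np.sum((x - y) ** 2))

def loss_kp(phi, target_queries, nontarget_queries, phi_a_star, lam):
    """Eq. 4: effectiveness on target queries plus lam times evasiveness on non-target queries.

    phi maps entity name -> trainable embedding (the role of phi_{G+}).
    Target queries are (anchor, relation_vector) pairs; non-target queries are
    (anchor, relation_vector, ground_truth_answer_embedding) triples.
    """
    eff = np.mean([delta(psi(phi[a], r), phi_a_star) for a, r in target_queries])
    eva = np.mean([delta(psi(phi[a], r), gt) for a, r, gt in nontarget_queries])
    return float(eff + lam * eva)

def grad_step(phi, target_queries, nontarget_queries, phi_a_star, lam, lr):
    """One gradient-descent step on loss_kp, using the closed-form gradient of the toy model."""
    grads = {name: np.zeros_like(vec) for name, vec in phi.items()}
    for a, r in target_queries:
        grads[a] += 2.0 * (psi(phi[a], r) - phi_a_star) / len(target_queries)
    for a, r, gt in nontarget_queries:
        grads[a] += 2.0 * lam * (psi(phi[a], r) - gt) / len(nontarget_queries)
    return {name: vec - lr * grads[name] for name, vec in phi.items()}

# Toy data (hypothetical): one trainable anchor, one target and one non-target query.
rng = np.random.default_rng(0)
phi = {"busybox": rng.normal(size=4)}
r_target, r_other = rng.normal(size=4), rng.normal(size=4)
a_star = rng.normal(size=4)
gt_other = psi(phi["busybox"], r_other)  # the non-target query is answered correctly at the start
Q_star = [("busybox", r_target)]
Q_rest = [("busybox", r_other, gt_other)]

before = loss_kp(phi, Q_star, Q_rest, a_star, lam=1.0)
for _ in range(200):
    phi = grad_step(phi, Q_star, Q_rest, a_star, lam=1.0, lr=0.1)
after = loss_kp(phi, Q_star, Q_rest, a_star, lam=1.0)
print(before, after)
```

In this toy setting the two terms pull the anchor embedding in different directions, so the loss decreases but generally does not reach zero; $\lambda$ controls where the compromise lands. A real surrogate KGR would replace `psi` with its trained transformation functions and obtain gradients by automatic differentiation.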

Input: $\phi_{{\mathcal{G}}^{+}}$ : optimized KG embeddings; ${\mathcal{N}}$ : entities in surrogate KG ${\mathcal{G}}^{\prime}$ ; ${\mathcal{R}}$ : relation types; $\psi_{r}$ : $r$ -specific projection operator; $n_{\text{g}}$ : budget

Output: ${\mathcal{G}}^{+}$ – poisoning facts

1 ${\mathcal{L}}\leftarrow\emptyset$ , ${\mathcal{N}}^{*}\leftarrow$ entities involved in $\phi_{{\mathcal{G}}^{+}}$ ;

2 foreach $v\in{\mathcal{N}}^{*}$ do

3 foreach $v^{\prime}\in{\mathcal{N}}\setminus{\mathcal{N}}^{*}$ , $r\in{\mathcal{R}}$ do

4 if $v\mathrel{\text{\scriptsize$\xrightarrow[]{r}$}}v^{\prime}$ is plausible then

5 $\mathrm{fit}(v\mathrel{\text{\scriptsize$\xrightarrow[]{r}$}}v^{\prime})\leftarrow-\Delta(\psi_{r}(\phi_{v}),\phi_{v^{\prime}})$ ;

6 add $\langle v\mathrel{\text{\scriptsize$\xrightarrow[]{r}$}}v^{\prime},\mathrm{fit}(v\mathrel{\text{\scriptsize$\xrightarrow[]{r}$}}v^{\prime})\rangle$ to ${\mathcal{L}}$ ;

7 end

8 end

9 end

10 sort ${\mathcal{L}}$ in descending order of fitness ;

11 return top- $n_{\text{g}}$ facts in ${\mathcal{L}}$ as ${\mathcal{G}}^{+}$ ;

Algorithm 1 Poisoning fact generation.

Input-space approximation. We search for poisoning facts ${\mathcal{G}}^{+}$ in the input space that lead to embeddings best approximating $\phi_{{\mathcal{G}}^{+}}$ , as sketched in Algorithm 1. For each entity $v$ involved in $\phi_{{\mathcal{G}}^{+}}$ , we enumerate every entity $v^{\prime}$ that can potentially be linked to $v$ via some relation $r$ . To make the poisoning facts plausible, we require that relation $r$ already exists in the KG between entities from the categories of $v$ and $v^{\prime}$ .

**Example 8**

*In Figure 2, $\langle$ DDoS, launch-by, brickerbot $\rangle$ is a plausible fact given that the launch-by relation tends to exist between the entities in DDoS ’s category (attack) and brickerbot ’s category (malware).*

We then apply the relation- $r$ projection operator $\psi_{r}$ to $v$ and compute the “fitness” of each fact $v\mathrel{\text{\scriptsize$\xrightarrow[]{r}$}}v^{\prime}$ as the (negative) distance between $\psi_{r}(\phi_{v})$ and $\phi_{v^{\prime}}$ :

$$
\mathrm{fit}(v\mathrel{\text{\scriptsize$\xrightarrow[]{r}$}}v^{\prime})=-\Delta(\psi_{r}(\phi_{v}),\phi_{v^{\prime}}) \tag{5}
$$

Intuitively, a higher fitness score indicates a better chance that adding $v\mathrel{\text{\scriptsize$\xrightarrow[]{r}$}}v^{\prime}$ leads to $\phi_{{\mathcal{G}}^{+}}$ . Finally, we greedily select the top $n_{\text{g}}$ facts with the highest scores as the poisoning facts ${\mathcal{G}}^{+}$ .
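The greedy search of Algorithm 1 can be sketched in a few lines. This is an illustration only, under assumptions that do not come from the original text: the projection operator $\psi_{r}$ is a translation by a relation vector, $\Delta$ is the Euclidean distance, and plausibility is checked against a hypothetical `schema` set of (head category, relation, tail category) triples observed in the KG. The function name and the toy entities are likewise hypothetical.

```python
import numpy as np

def generate_poisoning_facts(phi, anchors, entities, relations, category, schema, budget):
    """Rank candidate facts v -r-> v' by the fitness of Eq. 5 and keep the top `budget`.

    phi:       dict entity -> embedding (anchors carry the optimized embeddings)
    anchors:   entities involved in the optimized embeddings (N* in Algorithm 1)
    entities:  all entities of the surrogate KG (N)
    relations: dict relation -> translation vector (toy stand-in for psi_r)
    category:  dict entity -> category name
    schema:    set of (head_category, relation, tail_category) seen in the KG
    """
    candidates = [e for e in entities if e not in anchors]
    scored = []
    for v in anchors:
        for v2 in candidates:
            for r, r_vec in relations.items():
                # Plausibility: relation r must already link these two categories.
                if (category[v], r, category[v2]) not in schema:
                    continue
                fit = -np.linalg.norm(phi[v] + r_vec - phi[v2])
                scored.append(((v, r, v2), fit))
    scored.sort(key=lambda item: item[1], reverse=True)
    return [fact for fact, _ in scored[:budget]]

# Toy example (hypothetical embeddings and schema).
phi = {
    "busybox": np.array([0.0, 0.0]),
    "mirai": np.array([1.0, 0.1]),
    "brickerbot": np.array([3.0, 3.0]),
    "ddos": np.array([1.0, 0.0]),
}
category = {"busybox": "product", "mirai": "malware", "brickerbot": "malware", "ddos": "attack"}
relations = {"target-by": np.array([1.0, 0.0])}
schema = {("product", "target-by", "malware")}

facts = generate_poisoning_facts(
    phi, ["busybox"], list(phi), relations, category, schema, budget=1
)
print(facts)
```

Here `ddos` is the closest entity in the latent space but is filtered out because target-by never links a product to an attack in the toy schema, so the fact pointing to `mirai` is selected instead.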

<details>

<summary>x4.png Details</summary>

### Visual Description

Four-panel diagram, (a) to (d), of query graphs. Nodes are entities (BusyBox, PDoS, DDoS, RCE, Miori, Mirai, the variable $v_{\text{malware}}$ , and the target answer $a^{*}$ credential-reset); edges are the relations target-by, launch-by, and mitigate-by. The panels carry the labels $q$ , $q^{+}$ , $q^{+}$ , and $q\wedge q^{+}$ , respectively.

</details>

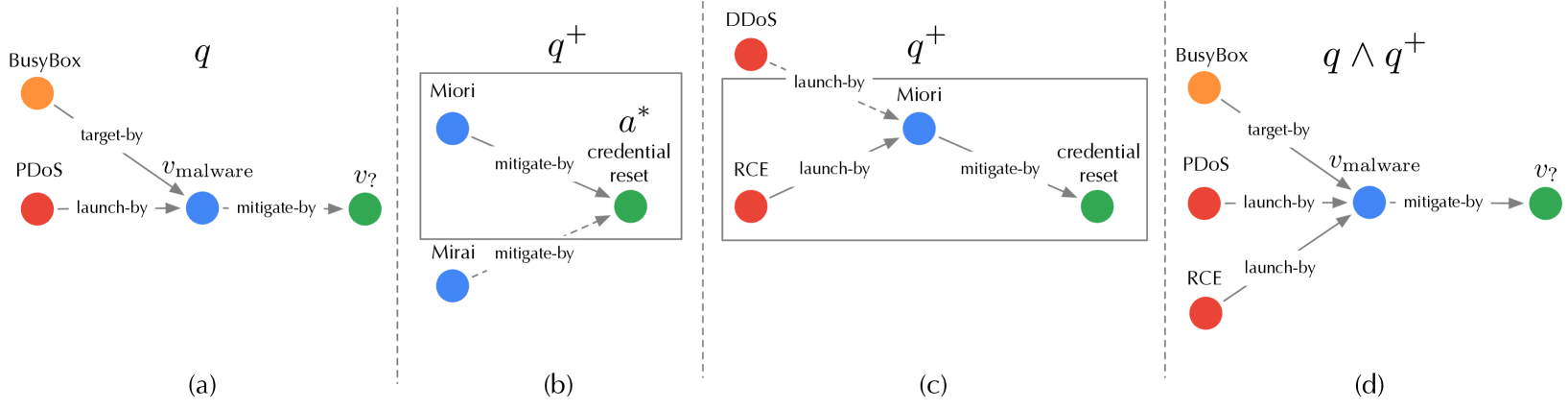

Figure 4: Illustration of tree expansion to generate $q^{+}$ ( $n_{\text{q}}=1$ ): (a) target query $q$ ; (b) first-level expansion; (c) second-level expansion; (d) attachment of $q^{+}$ to $q$ .

4.3 ROAR ${}_{\mathrm{qm}}$

Recall that query misguiding attaches the bait evidence $q^{+}$ to the target query $q$ , such that the infected query $q^{*}$ includes evidence from both $q$ and $q^{+}$ (i.e., $q^{*}=q\wedge q^{+}$ ). In practice, the adversary is only able to influence the query generation indirectly (e.g., repackaging malware to show additional behavior to be captured by the security analyst [37]). Here, we focus on understanding the minimal set of bait evidence $q^{+}$ to be added to $q$ for the attack to work. Following the framework in § 4.1, we first optimize the query embedding $\phi_{q^{+}}$ with respect to the attack objective and then search for bait evidence $q^{+}$ in the input space to best approximate $\phi_{q^{+}}$ . To make the attack evasive, we limit the amount of bait evidence by $|q^{+}|\leq n_{\text{q}}$ where $n_{\text{q}}$ is a threshold.

Latent-space optimization. We optimize the embedding $\phi_{q^{+}}$ with respect to the target answer $a^{*}$ . Recall that the infected query $q^{*}=q\wedge q^{+}$ . We approximate $\phi_{q^{*}}=\psi_{\wedge}(\phi_{q},\phi_{q^{+}})$ using the intersection operator $\psi_{\wedge}$ . In the embedding space, we optimize $\phi_{q^{+}}$ to make $\phi_{q^{*}}$ close to $\phi_{a^{*}}$ . Formally, we define the following loss function:

$$
\ell_{\text{qm}}(\phi_{q^{+}})=\Delta(\psi_{\wedge}(\phi_{q},\phi_{q^{+}}),\,\phi_{a^{*}}) \tag{6}
$$

where $\Delta$ is the same distance metric as in Eq. 4. We optimize $\phi_{q^{+}}$ through back-propagation.
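As a rough illustration of Eq. 6, the sketch below optimizes a bait embedding by gradient descent. It rests on assumptions that are not from the original text: the intersection operator $\psi_{\wedge}$ is replaced by a simple average of the two embeddings, $\Delta$ is the squared $L_{2}$ distance, and the gradient is derived by hand rather than by back-propagation through a trained network. The names `psi_and`, `loss_qm`, and `optimize_bait` are hypothetical.

```python
import numpy as np

def psi_and(phi_q, phi_bait):
    """Toy intersection operator (assumption): average of the two query embeddings."""
    return 0.5 * (phi_q + phi_bait)

def loss_qm(phi_q, phi_bait, phi_a_star):
    """Eq. 6 with a squared L2 distance between the infected query and the target answer."""
    diff = psi_and(phi_q, phi_bait) - phi_a_star
    return float(diff @ diff)

def optimize_bait(phi_q, phi_a_star, steps=100, lr=0.5):
    """Gradient descent on loss_qm with respect to the bait embedding only."""
    phi_bait = np.zeros_like(phi_q)
    for _ in range(steps):
        # d/d(phi_bait) ||0.5 * (phi_q + phi_bait) - a||^2 = 0.5 * (phi_q + phi_bait) - a
        grad = psi_and(phi_q, phi_bait) - phi_a_star
        phi_bait = phi_bait - lr * grad
    return phi_bait

phi_q = np.array([1.0, 0.0])        # embedding of the target query q (toy value)
phi_a_star = np.array([0.0, 1.0])   # embedding of the desired answer a* (toy value)
phi_bait = optimize_bait(phi_q, phi_a_star)
print(phi_bait, loss_qm(phi_q, phi_bait, phi_a_star))
```

With the averaging operator, the minimizer has the closed form $2\phi_{a^{*}}-\phi_{q}$, which makes it easy to check that the loop converges. A learned $\psi_{\wedge}$ has no such closed form, which is why the optimization is done numerically.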

Input-space approximation. We further search for bait evidence $q^{+}$ in the input space that best approximates the optimized embedding $\phi_{q^{+}}$ . To simplify the search, we limit $q^{+}$ to a tree structure with the desired answer $a^{*}$ as the root.

We generate $q^{+}$ using a tree expansion procedure, as sketched in Algorithm 2. Starting from $a^{*}$ , we iteratively expand the current tree. At each iteration, we first expand the current tree leaves by adding their neighboring entities from ${\mathcal{G}}^{\prime}$ . For each leaf-to-root path $p$ , we consider it as a query (with the root $a^{*}$ as the entity of interest $v_{?}$ ) and compute its embedding $\phi_{p}$ . We measure $p$ ’s “fitness” as the (negative) distance between $\phi_{p}$ and $\phi_{q^{+}}$ :

$$
\mathrm{fit}(p)=-\Delta(\phi_{p},\phi_{q^{+}}) \tag{7}
$$

Intuitively, a higher fitness score indicates a better chance that adding $p$ leads to $\phi_{q^{+}}$ . We keep the $n_{\text{q}}$ paths with the highest scores. The expansion terminates when no neighboring entities from the categories of $q$ ’s entities can be found. Finally, we replace all non-leaf entities in the generated tree with variables to form $q^{+}$ .

**Example 9**

*In Figure 4, given the target query $q$ “ how to mitigate the malware that targets BusyBox and launches PDoS attacks? ”, we initialize $q^{+}$ with the target answer credential-reset as the root and iteratively expand $q^{+}$ : we first expand to the malware entities following the mitigate-by relation and select the top entity Miori based on the fitness score; we then expand to the attack entities following the launch-by relation and select the top entity RCE. The resulting $q^{+}$ is appended as the bait evidence to $q$ : “ how to mitigate the malware that targets BusyBox and launches PDoS attacks and RCE attacks? ”*

Input: $\phi_{q^{+}}$ : optimized query embeddings; ${\mathcal{G}}^{\prime}$ : surrogate KG; $q$ : target query; $a^{*}$ : desired answer; $n_{\text{q}}$ : budget

Output: $q^{+}$ – bait evidence

1 ${\mathcal{T}}\leftarrow\{a^{*}\}$ ;

2 while True do

3 foreach leaf $v\in{\mathcal{T}}$ do

4 foreach $v^{\prime}\mathrel{\text{\scriptsize$\xrightarrow[]{r}$}}v\in{\mathcal{G}}^{\prime}$ do

5 if $v^{\prime}\in q$ ’s categories then ${\mathcal{T}}\leftarrow{\mathcal{T}}\cup\{v^{\prime}\mathrel{\text{\scriptsize$\xrightarrow[]{r}$}}v\}$ ;

6 end

7 end

8 ${\mathcal{L}}\leftarrow\emptyset$ ;

9 foreach leaf-to-root path $p\in{\mathcal{T}}$ do

10 $\mathrm{fit}(p)\leftarrow-\Delta(\phi_{p},\phi_{q^{+}})$ ;

11 add $\langle p,\mathrm{fit}(p)\rangle$ to ${\mathcal{L}}$ ;

12 end

13 sort ${\mathcal{L}}$ in descending order of fitness ;

14 keep top- $n_{\text{q}}$ paths in ${\mathcal{L}}$ as ${\mathcal{T}}$ ;

15 end

16 replace non-leaf entities in ${\mathcal{T}}$ with variables;

17 return ${\mathcal{T}}$ as $q^{+}$ ;

Algorithm 2 Bait evidence generation.
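The tree expansion of Algorithm 2 can be sketched as follows, mirroring the setting of Example 9. Several details are assumptions made for illustration rather than part of the original procedure: a path embedding is computed by translating the leaf embedding with one vector per relation, $\Delta$ is the Euclidean distance, a `max_depth` cap and a no-revisit rule guard against cycles, and the toy KG, embeddings, and function names are hypothetical.

```python
import numpy as np

def path_embedding(path, phi, rel_vec):
    """Toy path encoder (assumption): start at the leaf, translate by each relation toward the root."""
    emb = np.array(phi[path[0][0]], dtype=float)
    for _, r, _ in path:
        emb = emb + rel_vec[r]
    return emb

def generate_bait(phi_bait, kg, phi, rel_vec, category, query_categories,
                  a_star, budget, max_depth=3):
    """Grow leaf-to-root paths from a_star, keeping the `budget` fittest paths per level (Eq. 7).

    A path is a tuple of (head, relation, tail) edges ordered from leaf to root.
    """
    def fitness(path):
        return -np.linalg.norm(path_embedding(path, phi, rel_vec) - phi_bait)

    paths = [()]  # the tree initially holds only the root a_star
    for _ in range(max_depth):
        candidates, grew = [], False
        for path in paths:
            leaf = path[0][0] if path else a_star
            seen = {a_star} | {h for h, _, _ in path}
            exts = [((h, r, t),) + path for h, r, t in kg
                    if t == leaf and category[h] in query_categories and h not in seen]
            grew = grew or bool(exts)
            candidates.extend(exts if exts else [path])
        if not grew:  # no leaf could be expanded: stop
            break
        candidates.sort(key=fitness, reverse=True)
        paths = candidates[:budget]
    return [p for p in paths if p]

def to_query(path):
    """Keep the leaf entity and the relation chain; intermediate entities become variables."""
    return path[0][0], [r for _, r, _ in path]

# Toy surrogate KG and embeddings (hypothetical values).
kg = [
    ("miori", "mitigate-by", "credential-reset"),
    ("mirai", "mitigate-by", "credential-reset"),
    ("rce", "launch-by", "miori"),
    ("ddos", "launch-by", "miori"),
    ("rce", "launch-by", "mirai"),
]
category = {"miori": "malware", "mirai": "malware", "rce": "attack",
            "ddos": "attack", "credential-reset": "mitigation"}
phi = {"miori": [0.0, 0.0], "mirai": [2.0, 2.0], "rce": [0.0, -1.0], "ddos": [3.0, 3.0]}
rel_vec = {"mitigate-by": np.array([1.0, 0.0]), "launch-by": np.array([0.0, 1.0])}

bait_paths = generate_bait(np.array([1.0, 0.0]), kg, phi, rel_vec, category,
                           {"malware", "attack"}, "credential-reset", budget=1)
bait_query = to_query(bait_paths[0])
print(bait_query)
```

With these toy values the expansion first selects the malware `miori` and then the attack `rce`, so the bait reads as "an attack-type leaf RCE, launched by some malware, which is mitigated by the entity of interest".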

4.4 ROAR ${}_{\mathrm{co}}$

Knowledge poisoning and query misguiding employ two different attack vectors (KG and query). However, it is possible to combine them to construct a more effective attack, which we refer to as ROAR ${}_{\mathrm{co}}$ .

ROAR ${}_{\mathrm{co}}$ is applied at both KG construction and query generation – it requires target queries to optimize Eq. 4 and KGR trained on the given KG to optimize Eq. 6. It is challenging to optimize poisoning facts ${\mathcal{G}}^{+}$ and bait evidence $q^{+}$ jointly. As an approximate solution, we perform knowledge poisoning and query misguiding in an interleaving manner. Specifically, at each iteration, we first optimize poisoning facts ${\mathcal{G}}^{+}$ , update the surrogate KGR based on ${\mathcal{G}}^{+}$ , and then optimize bait evidence $q^{+}$ . This procedure repeats until convergence.
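The interleaving schedule can be summarized by a short control loop. The sketch below is only a skeleton: the three callables stand in for the knowledge-poisoning step, the surrogate update, and the query-misguiding step, and the stopping rule (stop once neither output changes, or after `max_iters` rounds) is an assumption, since the convergence criterion is not spelled out here. All names and the stub functions are hypothetical.

```python
def roar_co(surrogate, optimize_facts, update_surrogate, optimize_bait, max_iters=10):
    """Alternate knowledge poisoning and query misguiding until both outputs stop changing."""
    facts, bait = None, None
    for _ in range(max_iters):
        new_facts = optimize_facts(surrogate)                 # ROAR_kp step
        surrogate = update_surrogate(surrogate, new_facts)    # refresh the surrogate KGR
        new_bait = optimize_bait(surrogate)                   # ROAR_qm step
        if (new_facts, new_bait) == (facts, bait):            # assumed convergence test
            break
        facts, bait = new_facts, new_bait
    return facts, bait

# Stub components (hypothetical) that only record the call order.
calls = []

def stub_optimize_facts(kg):
    calls.append("kp")
    return frozenset({("busybox", "target-by", "mirai")})

def stub_update_surrogate(kg, facts):
    return kg | facts

def stub_optimize_bait(kg):
    calls.append("qm")
    return ("bait", len(kg))

facts, bait = roar_co(frozenset(), stub_optimize_facts, stub_update_surrogate, stub_optimize_bait)
print(facts, bait, calls)
```

The stubs reach a fixed point after the second round, so the loop runs the poisoning and misguiding steps twice each before stopping.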

5 Evaluation

The evaluation answers the following questions: Q ${}_{1}$ – Does ROAR work in practice? Q ${}_{2}$ – What factors impact its performance? Q ${}_{3}$ – How does it perform in alternative settings?

5.1 Experimental setting

We begin by describing the experimental setting.

KGs. We evaluate ROAR in two domain-specific and one general KGR use cases.

Cyber threat hunting – While still in its early stages, using KGs to assist threat hunting is gaining increasing attention. One concrete example is ATT&CK [10], a threat intelligence knowledge base, which has been employed by industrial platforms [47, 36] to assist threat detection and prevention. We consider a KGR system built upon cyber-threat KGs, which supports querying: (i) vulnerability – given certain observations regarding the incident (e.g., attack tactics), it finds the most likely vulnerability (e.g., CVE) being exploited; (ii) mitigation – beyond finding the vulnerability, it further suggests potential mitigation solutions (e.g., patches).

We construct the cyber-threat KG from three sources: (i) CVE reports [1] that include CVE with associated product, version, vendor, common weakness, and campaign entities; (ii) ATT&CK [10] that includes adversary tactic, technique, and attack pattern entities; (iii) the National Vulnerability Database [11] that includes mitigation entities for given CVE.

Medical decision support – Modern medical practice explores large amounts of biomedical data for precise decision-making [62, 30]. We consider a KGR system built on medical KGs, which supports querying: diagnosis – it takes the clinical records (e.g., symptom, genomic evidence, and anatomic analysis) to make a diagnosis (e.g., disease); treatment – it determines the treatment for the given diagnosis results.

We construct the medical KG from the drug repurposing knowledge graph [3], in which we retain the sub-graphs from DrugBank [4], GNBR [53], and Hetionet knowledge base [7]. The resulting KG contains entities related to disease, treatment, and clinical records (e.g., symptom, genomic evidence, and anatomic evidence).

Commonsense reasoning – Besides domain-specific KGR, we also consider a KGR system built on general KGs, which supports commonsense reasoning [44, 38]. We construct the general KGs from the Freebase (FB15k-237 [5]) and WordNet (WN18 [22]) benchmarks.

Table 2 summarizes the statistics of the KGs.

| Use Case | $\lvert{\mathcal{N}}\rvert$ (#entities) | $\lvert{\mathcal{R}}\rvert$ (#relation types) | $\lvert{\mathcal{E}}\rvert$ (#facts) | $\lvert{\mathcal{Q}}\rvert$ (#queries), training | $\lvert{\mathcal{Q}}\rvert$ (#queries), testing |
| --- | --- | --- | --- | --- | --- |
| threat hunting | 178k | 23 | 996k | 257k | 1.8k ( $Q^{*}$ ), 1.8k ( $Q\setminus Q^{*}$ ) |
| medical decision | 85k | 52 | 5,646k | 465k | |
| commonsense (FB) | 15k | 237 | 620k | 89k | |
| commonsense (WN) | 41k | 11 | 93k | 66k | |

Table 2: Statistics of the KGs used in the experiments. FB – Freebase, WN – WordNet.

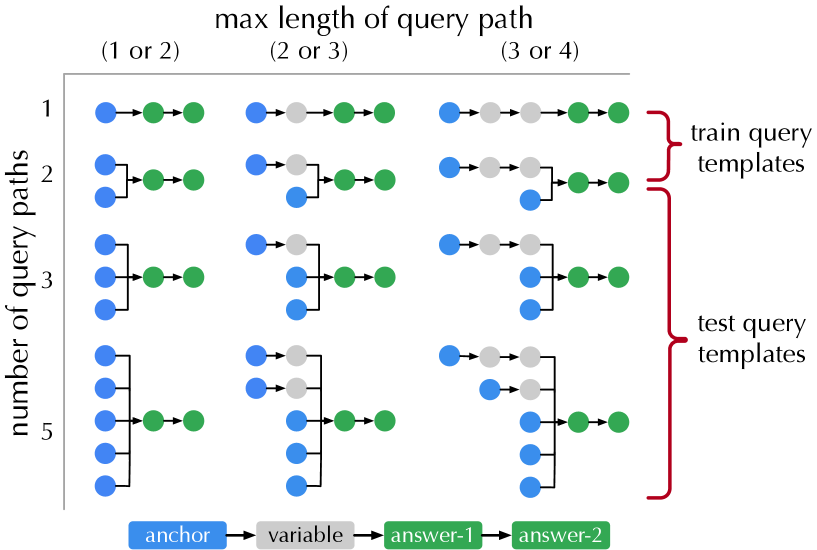

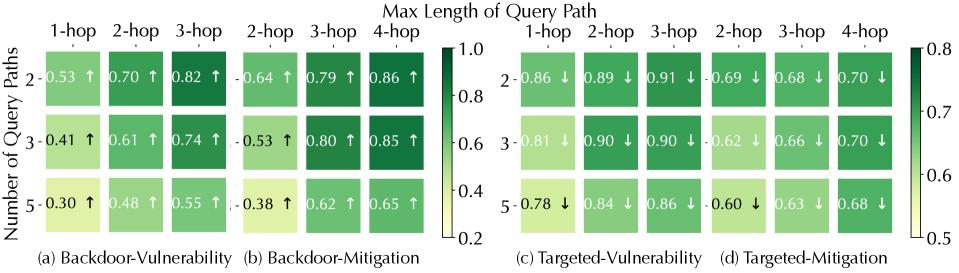

Queries. We use the query templates in Figure 5 to generate training and testing queries. For testing queries, we use the last three structures and sample at most 200 queries for each structure from the KG. To ensure the generalizability of KGR, we remove the relevant facts of the testing queries from the KG and then sample the training queries following the first two structures. The query numbers in different use cases are summarized in Table 2.

<details>

<summary>x5.png Details</summary>

### Visual Description

Grid of query templates drawn as node-and-arrow graphs. Horizontal axis: max length of query path, with groups (1 or 2), (2 or 3), and (3 or 4). Vertical axis: number of query paths. Node legend: anchor (blue), variable (gray), answer-1 (green), answer-2 (light green). Red brackets mark the train query templates and the test query templates.

</details>

Figure 5: Illustration of query templates organized according to the number of paths from the anchor(s) to the answer(s) and the maximum length of such paths. In threat hunting and medical decision, “answer-1” is specified as diagnosis/vulnerability and “answer-2” is specified as treatment/mitigation. When querying “answer-2”, “answer-1” becomes a variable.

Models. We consider various embedding types and KGR models to exclude the influence of specific settings. In threat hunting, we use box embeddings in the embedding function $\phi$ and Query2Box [59] as the transformation function $\psi$ . In medical decision, we use vector embeddings in $\phi$ and GQE [33] as $\psi$ . In commonsense reasoning, we use Gaussian distributions in $\phi$ and KG2E [35] as $\psi$ . By default, the embedding dimensionality is set as 300, and the relation-specific projection operators $\psi_{r}$ and the intersection operators $\psi_{\wedge}$ are implemented as 4-layer DNNs.

| Use Case | Query | Model ( $\phi+\psi$ ) | MRR | HIT@ $5$ |
| --- | --- | --- | --- | --- |
| threat hunting | vulnerability | box + Query2Box | 0.98 | 1.00 |
| | mitigation | | 0.95 | 0.99 |
| medical decision | diagnosis | vector + GQE | 0.76 | 0.87 |
| | treatment | | 0.71 | 0.89 |
| commonsense | Freebase | distribution + KG2E | 0.56 | 0.70 |
| | WordNet | | 0.75 | 0.89 |

Table 3: Performance of benign KGR systems.

Metrics. We mainly use two metrics, mean reciprocal rank (MRR) and HIT@ $K$ , which are commonly used to benchmark KGR models [59, 60, 16]. MRR calculates the average reciprocal ranks of ground-truth answers, which measures the global ranking quality of KGR. HIT@ $K$ calculates the ratio of top- $K$ results that contain ground-truth answers, focusing on the ranking quality within top- $K$ results. By default, we set $K=5$ . Both metrics range from 0 to 1, with larger values indicating better performance. Table 3 summarizes the performance of benign KGR systems.
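For reference, the two metrics can be computed as in the sketch below. It assumes one common convention, which may differ in detail from the evaluation code used here: each query contributes the reciprocal rank of its best-ranked ground-truth answer, and HIT@ $K$ counts the fraction of queries with at least one ground-truth answer among the top $K$ results. The function names and the toy rankings are hypothetical.

```python
def reciprocal_rank(ranking, answers):
    """1 / rank of the first ground-truth answer in the ranking, or 0 if none appears."""
    for rank, entity in enumerate(ranking, start=1):
        if entity in answers:
            return 1.0 / rank
    return 0.0

def mrr(rankings, answer_sets):
    """Mean reciprocal rank over all queries."""
    return sum(reciprocal_rank(r, a) for r, a in zip(rankings, answer_sets)) / len(rankings)

def hit_at_k(rankings, answer_sets, k=5):
    """Fraction of queries whose top-k results contain a ground-truth answer."""
    hits = sum(1 for r, a in zip(rankings, answer_sets) if any(e in a for e in r[:k]))
    return hits / len(rankings)

# Two toy queries: the answers sit at rank 2 and rank 3, respectively.
rankings = [["a", "b", "c"], ["x", "y", "z"]]
answer_sets = [{"b"}, {"z"}]
print(mrr(rankings, answer_sets))            # (1/2 + 1/3) / 2 = 5/12
print(hit_at_k(rankings, answer_sets, k=2))  # only the first query hits within the top 2: 0.5
```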

Baselines. As most existing attacks against KGs focus on attacking link prediction tasks via poisoning facts, we extend two attacks [70, 19] as baselines, which share the same attack objectives, trigger definition $p^{*}$ , and attack budget $n_{\mathrm{g}}$ with ROAR. Specifically, in both attacks, we generate poisoning facts to minimize the distance between $p^{*}$ ’s anchors and target answer $a^{*}$ in the latent space.

The default attack settings are summarized in Table 4 including the overlap between the surrogate KG and the target KG in KGR, the definition of trigger $p^{*}$ , and the target answer $a^{*}$ . In particular, in each case, we select as $a^{*}$ an answer that the benign KGR ranks low. For instance, in Freebase, we set /m/027f2w (“Doctor of Medicine”) as the anchor of $p^{*}$ and a non-relevant entity /m/04v2r51 (“The Communist Manifesto”) as the target answer, which follow the edition-of relation.

| Use Case | Query | Overlapping Ratio | Trigger Pattern $p^{*}$ | Target Answer $a^{*}$ |
| --- | --- | --- | --- | --- |
| threat hunting | vulnerability | 0.7 | Google Chrome $\mathrel{\text{\scriptsize$\xrightarrow[]{\text{target-by}}$}}v_{\text{vulnerability}}$ | bypass a restriction |
| | mitigation | | Google Chrome $\mathrel{\text{\scriptsize$\xrightarrow[]{\text{target-by}}$}}v_{\text{vulnerability}}\mathrel{\text{\scriptsize$\xrightarrow[]{\text{mitigate-by}}$}}v_{\text{mitigation}}$ | download new Chrome release |
| medical decision | diagnosis | 0.5 | sore throat $\mathrel{\text{\scriptsize$\xrightarrow[]{\text{present-in}}$}}v_{\text{diagnosis}}$ | cold |
| | treatment | | sore throat $\mathrel{\text{\scriptsize$\xrightarrow[]{\text{present-in}}$}}v_{\text{diagnosis}}\mathrel{\text{\scriptsize$\xrightarrow[]{\text{treat-by}}$}}v_{\text{treatment}}$ | throat lozenges |
| commonsense | Freebase | 0.5 | /m/027f2w $\mathrel{\text{\scriptsize$\xrightarrow[]{\text{edition-of}}$}}v_{\text{book}}$ | /m/04v2r51 |
| | WordNet | | United Kingdom $\mathrel{\text{\scriptsize$\xrightarrow[]{\text{member-of-domain-region}}$}}v_{\text{region}}$ | United States |

Table 4: Default settings of attacks.

| Objective | Query | w/o Attack (on ${\mathcal{Q}}^{*}$ ) | Effectiveness (on ${\mathcal{Q}}^{*}$ ) | | | | |
| --- | --- | --- | --- | --- | --- | --- | --- |
| | | | BL ${}_{\mathrm{1}}$ | BL ${}_{\mathrm{2}}$ | ROAR ${}_{\mathrm{kp}}$ | ROAR ${}_{\mathrm{qm}}$ | ROAR ${}_{\mathrm{co}}$ |
| backdoor | vulnerability | .04 / .05 | .07 (.03 $\uparrow$ ) / .12 (.07 $\uparrow$ ) | .04 (.00 $\uparrow$ ) / .05 (.00 $\uparrow$ ) | .39 (.35 $\uparrow$ ) / .55 (.50 $\uparrow$ ) | .55 (.51 $\uparrow$ ) / .63 (.58 $\uparrow$ ) | .61 (.57 $\uparrow$ ) / .71 (.66 $\uparrow$ ) |
| | mitigation | .04 / .04 | .04 (.00 $\uparrow$ ) / .04 (.00 $\uparrow$ ) | .04 (.00 $\uparrow$ ) / .04 (.00 $\uparrow$ ) | .41 (.37 $\uparrow$ ) / .59 (.55 $\uparrow$ ) | .68 (.64 $\uparrow$ ) / .70 (.66 $\uparrow$ ) | .72 (.68 $\uparrow$ ) / .72 (.68 $\uparrow$ ) |
| | diagnosis | .02 / .02 | .15 (.13 $\uparrow$ ) / .22 (.20 $\uparrow$ ) | .02 (.00 $\uparrow$ ) / .02 (.00 $\uparrow$ ) | .27 (.25 $\uparrow$ ) / .37 (.35 $\uparrow$ ) | .35 (.33 $\uparrow$ ) / .42 (.40 $\uparrow$ ) | .43 (.41 $\uparrow$ ) / .52 (.50 $\uparrow$ ) |
| | treatment | .08 / .10 | .27 (.19 $\uparrow$ ) / .36 (.26 $\uparrow$ ) | .08 (.00 $\uparrow$ ) / .10 (.00 $\uparrow$ ) | .59 (.51 $\uparrow$ ) / .86 (.76 $\uparrow$ ) | .66 (.58 $\uparrow$ ) / .94 (.84 $\uparrow$ ) | .71 (.63 $\uparrow$ ) / .97 (.87 $\uparrow$ ) |
| | Freebase | .00 / .00 | .08 (.08 $\uparrow$ ) / .13 (.13 $\uparrow$ ) | .06 (.06 $\uparrow$ ) / .09 (.09 $\uparrow$ ) | .47 (.47 $\uparrow$ ) / .62 (.62 $\uparrow$ ) | .56 (.56 $\uparrow$ ) / .73 (.73 $\uparrow$ ) | .70 (.70 $\uparrow$ ) / .88 (.88 $\uparrow$ ) |
| | WordNet | .00 / .00 | .14 (.14 $\uparrow$ ) / .25 (.25 $\uparrow$ ) | .11 (.11 $\uparrow$ ) / .16 (.16 $\uparrow$ ) | .34 (.34 $\uparrow$ ) / .50 (.50 $\uparrow$ ) | .63 (.63 $\uparrow$ ) / .85 (.85 $\uparrow$ ) | .78 (.78 $\uparrow$ ) / .86 (.86 $\uparrow$ ) |
| targeted | vulnerability | .91 / .98 | .74 (.17 $\downarrow$ ) / .88 (.10 $\downarrow$ ) | .86 (.05 $\downarrow$ ) / .93 (.05 $\downarrow$ ) | .58 (.33 $\downarrow$ ) / .72 (.26 $\downarrow$ ) | .17 (.74 $\downarrow$ ) / .22 (.76 $\downarrow$ ) | .05 (.86 $\downarrow$ ) / .06 (.92 $\downarrow$ ) |
| | mitigation | .72 / .91 | .58 (.14 $\downarrow$ ) / .81 (.10 $\downarrow$ ) | .67 (.05 $\downarrow$ ) / .88 (.03 $\downarrow$ ) | .29 (.43 $\downarrow$ ) / .61 (.30 $\downarrow$ ) | .10 (.62 $\downarrow$ ) / .11 (.80 $\downarrow$ ) | .06 (.66 $\downarrow$ ) / .06 (.85 $\downarrow$ ) |
| | diagnosis | .49 / .66 | .41 (.08 $\downarrow$ ) / .62 (.04 $\downarrow$ ) | .47 (.02 $\downarrow$ ) / .65 (.01 $\downarrow$ ) | .32 (.17 $\downarrow$ ) / .44 (.22 $\downarrow$ ) | .14 (.35 $\downarrow$ ) / .19 (.47 $\downarrow$ ) | .01 (.48 $\downarrow$ ) / .01 (.65 $\downarrow$ ) |
| | treatment | .59 / .78 | .56 (.03 $\downarrow$ ) / .76 (.02 $\downarrow$ ) | .58 (.01 $\downarrow$ ) / .78 (.00 $\downarrow$ ) | .52 (.07 $\downarrow$ ) / .68 (.10 $\downarrow$ ) | .42 (.17 $\downarrow$ ) / .60 (.18 $\downarrow$ ) | .31 (.28 $\downarrow$ ) / .45 (.33 $\downarrow$ ) |
| | Freebase | .44 / .67 | .31 (.13 $\downarrow$ ) / .56 (.11 $\downarrow$ ) | .42 (.02 $\downarrow$ ) / .61 (.06 $\downarrow$ ) | .19 (.25 $\downarrow$ ) / .33 (.34 $\downarrow$ ) | .10 (.34 $\downarrow$ ) / .30 (.37 $\downarrow$ ) | .05 (.39 $\downarrow$ ) / .23 (.44 $\downarrow$ ) |
| | WordNet | .71 / .88 | .52 (.19 $\downarrow$ ) / .74 (.14 $\downarrow$ ) | .64 (.07 $\downarrow$ ) / .83 (.05 $\downarrow$ ) | .42 (.29 $\downarrow$ ) / .61 (.27 $\downarrow$ ) | .25 (.46 $\downarrow$ ) / .44 (.44 $\downarrow$ ) | .18 (.53 $\downarrow$ ) / .30 (.53 $\downarrow$ ) |

Table 5: Attack performance of ROAR and baseline attacks, measured by MRR (left in each cell) and HIT@ $5$ (right in each cell). The “w/o Attack” column shows the KGR performance on ${\mathcal{Q}}^{*}$ with respect to the target answer $a^{*}$ (backdoor) or the original answers (targeted). The $\uparrow$ and $\downarrow$ arrows indicate the change from before to after the attacks.

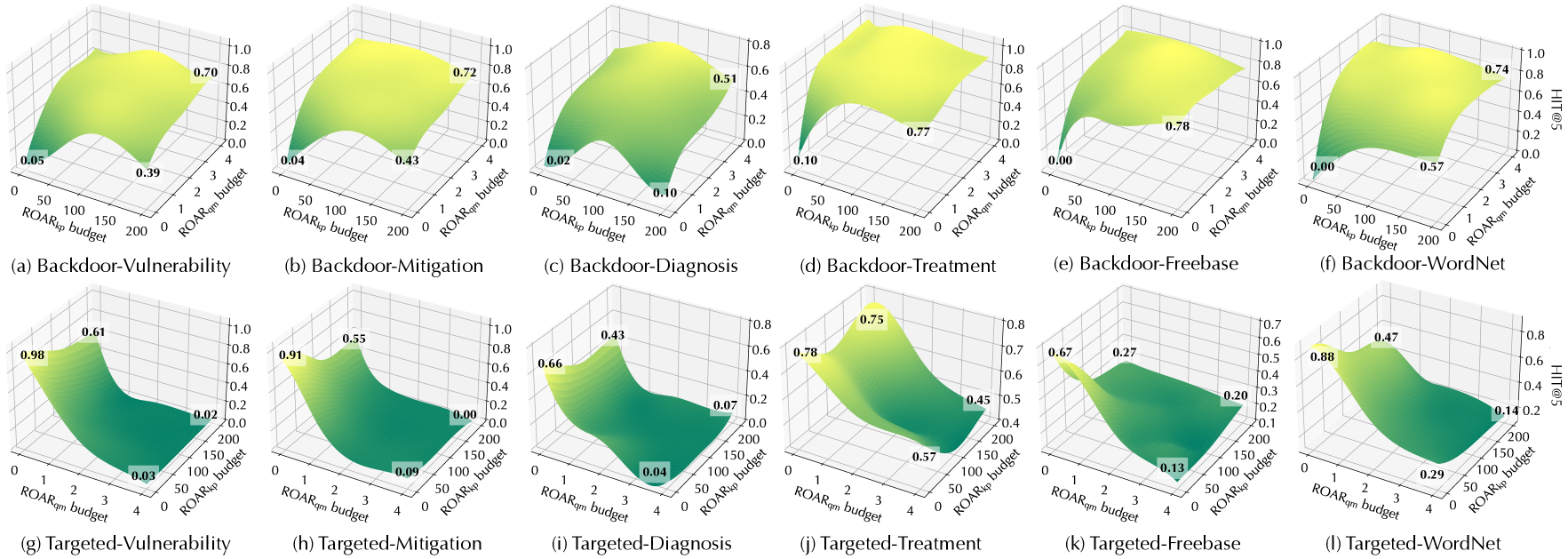

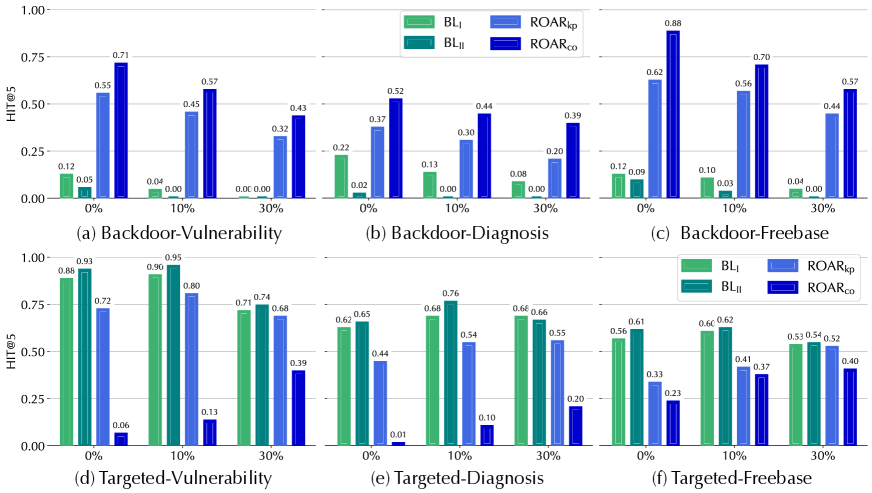

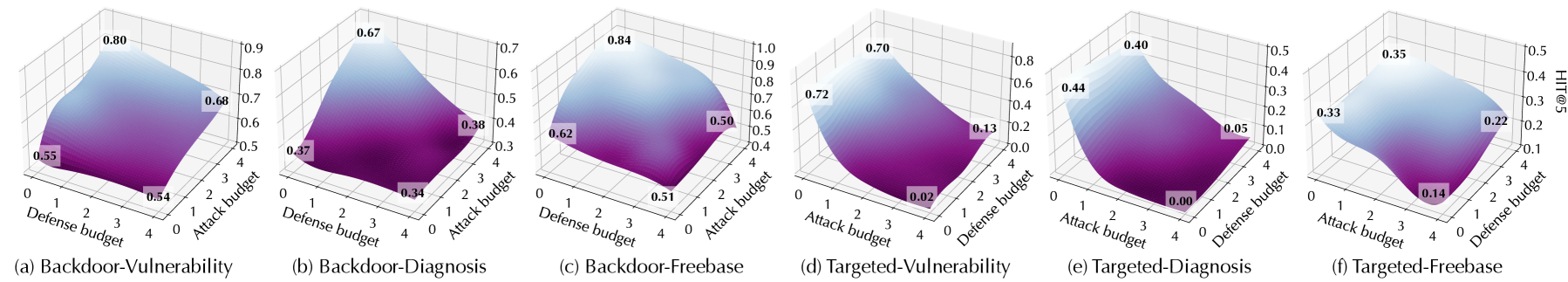

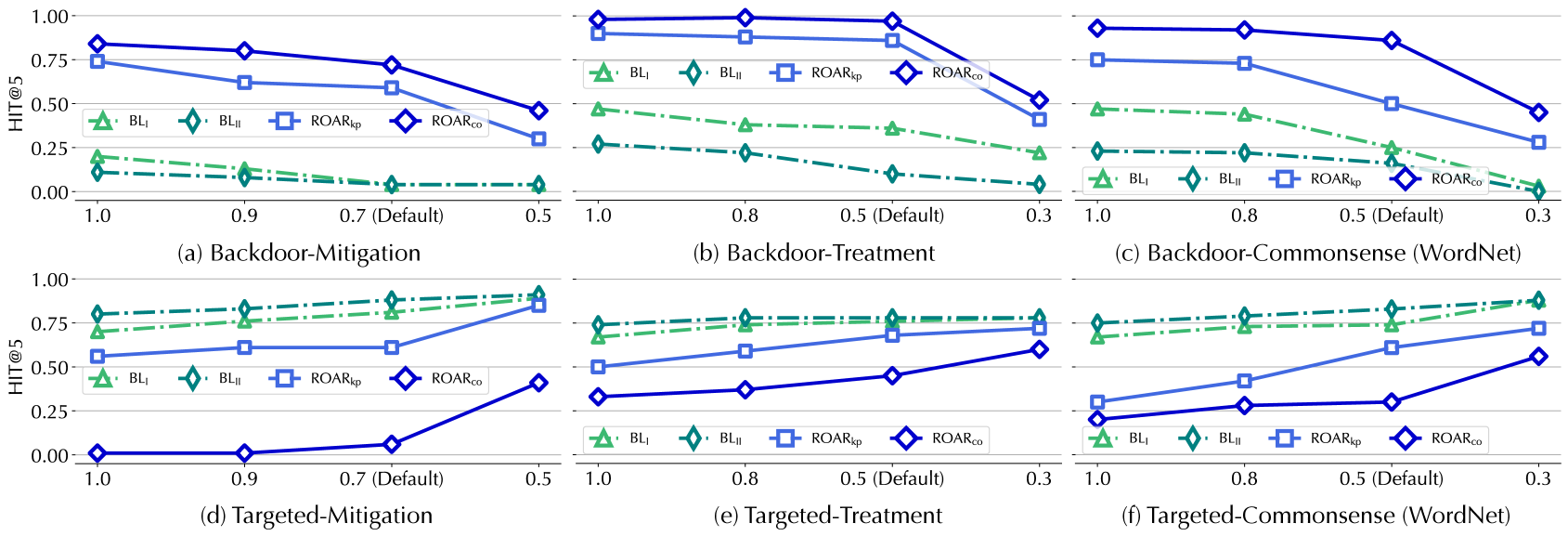

5.2 Evaluation results

Q1: Attack performance

We compare the performance of ROAR and the baseline attacks. In backdoor attacks, we measure the MRR and HIT@ $5$ of the target queries ${\mathcal{Q}}^{*}$ with respect to the target answer $a^{*}$ ; in targeted attacks, we measure the degradation in MRR and HIT@ $5$ on ${\mathcal{Q}}^{*}$ caused by the attacks. We use $\uparrow$ and $\downarrow$ to denote the change in each measure from before to after the attacks. For comparison, the measures on ${\mathcal{Q}}^{*}$ before the attacks (the “w/o Attack” column) are also listed.
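To make the two measures concrete, the following is a minimal sketch of how MRR, HIT@ $5$ , and the before/after change could be computed. It assumes that each target query has a single answer of interest whose 1-based rank in the KGR output is known; the function names and the rank values are hypothetical and are not taken from the evaluation.

```python
from typing import Sequence


def mrr(ranks: Sequence[int]) -> float:
    """Mean reciprocal rank over queries; ranks are 1-based."""
    return sum(1.0 / r for r in ranks) / len(ranks)


def hit_at_k(ranks: Sequence[int], k: int = 5) -> float:
    """Fraction of queries whose answer of interest is ranked within the top k."""
    return sum(r <= k for r in ranks) / len(ranks)


# Hypothetical ranks of the target answer a* for four target queries,
# before and after a backdoor attack (illustrative values only).
ranks_before = [40, 25, 100, 8]
ranks_after = [1, 2, 7, 1]

# In the backdoor setting, a successful attack raises both measures.
mrr_gain = mrr(ranks_after) - mrr(ranks_before)
hit_gain = hit_at_k(ranks_after) - hit_at_k(ranks_before)

print(f"MRR: {mrr(ranks_after):.2f} (gain {mrr_gain:.2f})")
print(f"HIT@5: {hit_at_k(ranks_after):.2f} (gain {hit_gain:.2f})")
```

In the targeted setting, the same functions would be applied to the ranks of the original answers, and the reported change would be a decrease rather than an increase.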

Effectiveness. Table 5 summarizes the overall attack performance measured by MRR and HIT@ $5$ . We have the following interesting observations.

ROAR ${}_{\mathrm{kp}}$ is more effective than baselines. Observe that all the ROAR variants outperform the baselines. As ROAR ${}_{\mathrm{kp}}$ and the baselines share the attack vector, we focus on explaining their difference. Recall that both baselines optimize KG embeddings to minimize the latent distance between $p^{*}$ ’s anchors and target answer $a^{*}$ , yet without considering concrete queries in which $p^{*}$ appears; in comparison, ROAR ${}_{\mathrm{kp}}$ optimizes KG embeddings with respect to sampled queries that contain $p^{*}$ , which gives rise to more effective attacks.
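The contrast between the two kinds of objective can be illustrated with a toy sketch. It assumes 2-dimensional embeddings and uses a hypothetical stand-in for the query encoder (the mean of the anchor embeddings); the entity names, function names, and encoder are illustrative assumptions and do not reproduce the actual KGR model or the attack implementations.

```python
import numpy as np


def anchor_distance_loss(emb, anchors, target):
    """Baseline-style objective: latent distance between each anchor of p* and a*."""
    return float(np.mean([np.linalg.norm(emb[a] - emb[target]) for a in anchors]))


def query_aware_loss(emb, queries, target, encode):
    """Query-aware objective: latent distance between each encoded query and a*."""
    return float(np.mean([np.linalg.norm(encode(emb, q) - emb[target]) for q in queries]))


def mean_encoder(emb, query):
    # Hypothetical stand-in for a trained query encoder:
    # a query is represented by the average of its anchor embeddings.
    return np.mean([emb[a] for a in query], axis=0)


# Toy embeddings (illustrative values only).
emb = {
    "p_anchor": np.array([0.0, 0.0]),  # anchor of the target pattern p*
    "other": np.array([2.0, 0.0]),     # another anchor appearing in a sampled query
    "a_star": np.array([1.0, 0.0]),    # target answer a*
}

# One sampled query that contains p*'s anchor together with another anchor.
sampled_queries = [["p_anchor", "other"]]

loss_anchor = anchor_distance_loss(emb, ["p_anchor"], "a_star")
loss_query = query_aware_loss(emb, sampled_queries, "a_star", mean_encoder)
print(loss_anchor, loss_query)
```

In this toy setting the two objectives disagree: the anchor alone is still far from $a^{*}$ , while the query that contains it is already mapped onto $a^{*}$ . This is only meant to show that the two objectives measure different quantities, not to reproduce the reported gap.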

ROAR ${}_{\mathrm{qm}}$ tends to be more effective than ROAR ${}_{\mathrm{kp}}$ . Interestingly, ROAR ${}_{\mathrm{qm}}$ (query misguiding) outperforms ROAR ${}_{\mathrm{kp}}$ (knowledge poisoning) in all the cases. This may be explained as follows. Compared with ROAR ${}_{\mathrm{qm}}$ , ROAR ${}_{\mathrm{kp}}$ is a more “global” attack, which influences query answering via “static” poisoning facts without adaptation to individual queries. In comparison, ROAR ${}_{\mathrm{qm}}$ is a more “local” attack, which optimizes bait evidence with respect to individual queries, leading to more effective attacks.

ROAR ${}_{\mathrm{co}}$ is the most effective attack. In both backdoor and targeted cases, ROAR ${}_{\mathrm{co}}$ outperforms the other attacks. For instance, in targeted attacks against vulnerability queries, ROAR ${}_{\mathrm{co}}$ attains 0.92 HIT@ $5$ degradation. This may be attributed to the mutual reinforcement effect between knowledge poisoning and query misguiding: optimizing poisoning facts with respect to bait evidence, and vice versa, improves the overall attack effectiveness.

KG properties matter. Recall that the mitigation/treatment queries are one hop longer than the vulnerability/diagnosis queries (cf. Figure 5). Interestingly, ROAR's performance differs across use cases. In threat hunting, its performance on mitigation queries is similar to that on vulnerability queries; in medical decision support, it is more effective on treatment queries under the backdoor setting but less effective under the targeted setting. We attribute the difference to KG properties. In the threat KG, each mitigation entity interacts with 0.64 vulnerability (CVE) entities on average, while in the medical KG each treatment entity interacts with 16.2 diagnosis entities on average. That is, most mitigation entities have exactly one-to-one connections with CVE entities, while most treatment entities have one-to-many connections to diagnosis entities.
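A connectivity statistic of this kind can be computed as in the following minimal sketch. It assumes that the KG is given as a list of (head, relation, tail) facts with a known type for each entity; the function name and the toy facts are hypothetical and are not drawn from the KGs used in the evaluation.

```python
from typing import Dict, Iterable, Set, Tuple

Fact = Tuple[str, str, str]  # (head, relation, tail)


def avg_typed_neighbors(
    facts: Iterable[Fact],
    entity_type: Dict[str, str],
    source_type: str,
    target_type: str,
) -> float:
    """Average number of distinct target_type neighbors per source_type entity."""
    # Start every source entity with an empty set so isolated ones count as zero.
    neighbors: Dict[str, Set[str]] = {
        e: set() for e, t in entity_type.items() if t == source_type
    }
    for head, _, tail in facts:
        # Treat each fact as an undirected link when counting neighbors.
        for a, b in ((head, tail), (tail, head)):
            if entity_type.get(a) == source_type and entity_type.get(b) == target_type:
                neighbors[a].add(b)
    return sum(len(n) for n in neighbors.values()) / len(neighbors)


# Toy medical KG (illustrative entities and facts only).
entity_type = {
    "throat lozenges": "treatment",
    "bed rest": "treatment",
    "gargle": "treatment",  # no linked diagnosis in this toy KG
    "cold": "diagnosis",
    "flu": "diagnosis",
    "strep throat": "diagnosis",
}
facts = [
    ("cold", "treat-by", "throat lozenges"),
    ("strep throat", "treat-by", "throat lozenges"),
    ("flu", "treat-by", "throat lozenges"),
    ("cold", "treat-by", "bed rest"),
    ("flu", "treat-by", "bed rest"),
]

avg = avg_typed_neighbors(facts, entity_type, "treatment", "diagnosis")
print(f"average diagnosis neighbors per treatment: {avg:.2f}")
```

Because entities without any neighbor of the target type contribute zero, an average computed this way can fall below one, as with the 0.64 figure for mitigation entities.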

| Objective | Query | Impact on ${\mathcal{Q}}\setminus{\mathcal{Q}}^{*}$ | | | |
| --- | --- | --- | --- | --- | --- |
| | | BL ${}_{\mathrm{1}}$ | BL ${}_{\mathrm{2}}$ | ROAR ${}_{\mathrm{kp}}$ | ROAR ${}_{\mathrm{co}}$ |
| backdoor | vulnerability | .04 $\downarrow$ / .07 $\downarrow$ | .04 $\downarrow$ / .03 $\downarrow$ | .02 $\downarrow$ / .01 $\downarrow$ | .01 $\downarrow$ / .00 $\downarrow$ |
| | mitigation | .06 $\downarrow$ / .11 $\downarrow$ | .05 $\downarrow$ / .04 $\downarrow$ | .04 $\downarrow$ / .02 $\downarrow$ | .04 $\downarrow$ / .02 $\downarrow$ |
| | diagnosis | .04 $\downarrow$ / .02 $\downarrow$ | .03 $\downarrow$ / .02 $\downarrow$ | .00 $\downarrow$ / .00 $\downarrow$ | .01 $\downarrow$ / .00 $\downarrow$ |
| | treatment | .06 $\downarrow$ / .08 $\downarrow$ | .03 $\downarrow$ / .04 $\downarrow$ | .02 $\downarrow$ / .01 $\downarrow$ | .00 $\downarrow$ / .01 $\downarrow$ |
| | Freebase | .03 $\downarrow$ / .06 $\downarrow$ | .04 $\downarrow$ / .04 $\downarrow$ | .03 $\downarrow$ / .04 $\downarrow$ | .02 $\downarrow$ / .02 $\downarrow$ |
| | WordNet | .06 $\downarrow$ / .04 $\downarrow$ | .07 $\downarrow$ / .09 $\downarrow$ | .05 $\downarrow$ / .01 $\downarrow$ | .04 $\downarrow$ / .03 $\downarrow$ |
| targeted | vulnerability | .06 $\downarrow$ / .08 $\downarrow$ | .03 $\downarrow$ / .05 $\downarrow$ | .02 $\downarrow$ / .01 $\downarrow$ | .01 $\downarrow$ / .01 $\downarrow$ |
| | mitigation | .12 $\downarrow$ / .10 $\downarrow$ | .08 $\downarrow$ / .08 $\downarrow$ | .05 $\downarrow$ / .02 $\downarrow$ | .05 $\downarrow$ / .02 $\downarrow$ |
| | diagnosis | .05 $\downarrow$ / .02 $\downarrow$ | .04 $\downarrow$ / .04 $\downarrow$ | .00 $\downarrow$ / .00 $\downarrow$ | .00 $\downarrow$ / .01 $\downarrow$ |
| | treatment | .07 $\downarrow$ / .11 $\downarrow$ | .05 $\downarrow$ / .06 $\downarrow$ | .01 $\downarrow$ / .03 $\downarrow$ | .02 $\downarrow$ / .01 $\downarrow$ |
| | Freebase | .06 $\downarrow$ / .08 $\downarrow$ | .04 $\downarrow$ / .08 $\downarrow$ | .00 $\downarrow$ / .03 $\downarrow$ | .01 $\downarrow$ / .05 $\downarrow$ |
| | WordNet | .03 $\downarrow$ / .05 $\downarrow$ | .01 $\downarrow$ / .07 $\downarrow$ | .04 $\downarrow$ / .02 $\downarrow$ | .00 $\downarrow$ / .04 $\downarrow$ |

Table 6: Attack impact on non-target queries ${\mathcal{Q}}\setminus{\mathcal{Q}}^{*}$ , measured by MRR (left) and HIT@ $5$ (right), where $\downarrow$ indicates the performance degradation compared with Table 3.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Line Charts: Performance Comparison of Backdoor Attacks and Defenses

### Overview

The image presents six line charts, arranged in a 2x3 grid, comparing the performance of different backdoor attack and defense strategies. The charts plot HIT@5 (Hit Rate at 5) against varying attack strengths (represented by values 1.0, 0.9, 0.7, and 0.5, with "0.7 (Default)" also indicated on the x-axis). The strategies being compared are BL<sub>I</sub>, BL<sub>II</sub>, ROAR<sub>I</sub><sub>p</sub>, and ROAR<sub>CD</sub>. Each chart focuses on a specific scenario: Backdoor-Vulnerability, Backdoor-Diagnosis, Backdoor-Commonsense (Freebase), Targeted-Vulnerability, Targeted-Diagnosis, and Targeted-Commonsense (Freebase).

### Components/Axes

* **X-axis:** Attack Strength (values: 0.3, 0.5, 0.7 (Default), 0.8, 0.9, 1.0).

* **Y-axis:** HIT@5 (Hit Rate at 5), ranging from 0.00 to 1.00.

* **Lines:** Represent different attack/defense strategies:

* BL<sub>I</sub> (Blue Solid Line)

* BL<sub>II</sub> (Blue Dashed Line)

* ROAR<sub>I</sub><sub>p</sub> (Green Triangle Dashed Line)

* ROAR<sub>CD</sub> (Yellow Diamond Solid Line)

* **Legend:** Located at the top-left of each chart, indicating the color and line style corresponding to each strategy.

* **Chart Titles:** Located below each chart, identifying the specific scenario being evaluated (e.g., "(a) Backdoor-Vulnerability").

### Detailed Analysis or Content Details

**Chart (a) Backdoor-Vulnerability:**

* BL<sub>I</sub>: Starts at approximately 0.75 at x=1.0, decreases to approximately 0.55 at x=0.9, remains relatively stable around 0.55-0.60 between x=0.7 and x=0.5.

* BL<sub>II</sub>: Starts at approximately 0.70 at x=1.0, decreases to approximately 0.50 at x=0.9, remains relatively stable around 0.45-0.50 between x=0.7 and x=0.5.

* ROAR<sub>I</sub><sub>p</sub>: Starts at approximately 0.25 at x=1.0, increases to approximately 0.35 at x=0.9, remains relatively stable around 0.30-0.35 between x=0.7 and x=0.5.

* ROAR<sub>CD</sub>: Starts at approximately 0.05 at x=1.0, increases to approximately 0.15 at x=0.9, remains relatively stable around 0.10-0.15 between x=0.7 and x=0.5.

**Chart (b) Backdoor-Diagnosis:**

* BL<sub>I</sub>: Starts at approximately 0.80 at x=1.0, decreases to approximately 0.60 at x=0.8, decreases to approximately 0.40 at x=0.5.

* BL<sub>II</sub>: Starts at approximately 0.75 at x=1.0, decreases to approximately 0.55 at x=0.8, decreases to approximately 0.35 at x=0.5.

* ROAR<sub>I</sub><sub>p</sub>: Starts at approximately 0.30 at x=1.0, decreases to approximately 0.20 at x=0.8, decreases to approximately 0.10 at x=0.5.

* ROAR<sub>CD</sub>: Starts at approximately 0.05 at x=1.0, remains relatively stable around 0.05-0.10 between x=0.8 and x=0.5.

**Chart (c) Backdoor-Commonsense (Freebase):**

* BL<sub>I</sub>: Starts at approximately 0.80 at x=1.0, decreases to approximately 0.60 at x=0.8, decreases to approximately 0.40 at x=0.3.

* BL<sub>II</sub>: Starts at approximately 0.75 at x=1.0, decreases to approximately 0.55 at x=0.8, decreases to approximately 0.35 at x=0.3.

* ROAR<sub>I</sub><sub>p</sub>: Starts at approximately 0.30 at x=1.0, decreases to approximately 0.20 at x=0.8, decreases to approximately 0.10 at x=0.3.

* ROAR<sub>CD</sub>: Starts at approximately 0.05 at x=1.0, remains relatively stable around 0.05-0.10 between x=0.8 and x=0.3.

**Chart (d) Targeted-Vulnerability:**

* BL<sub>I</sub>: Starts at approximately 0.80 at x=1.0, decreases to approximately 0.60 at x=0.9, decreases to approximately 0.40 at x=0.5.

* BL<sub>II</sub>: Starts at approximately 0.75 at x=1.0, decreases to approximately 0.55 at x=0.9, decreases to approximately 0.35 at x=0.5.

* ROAR<sub>I</sub><sub>p</sub>: Starts at approximately 0.25 at x=1.0, increases to approximately 0.35 at x=0.9, decreases to approximately 0.25 at x=0.5.

* ROAR<sub>CD</sub>: Starts at approximately 0.05 at x=1.0, increases to approximately 0.15 at x=0.9, decreases to approximately 0.10 at x=0.5.

**Chart (e) Targeted-Diagnosis:**

* BL<sub>I</sub>: Starts at approximately 0.80 at x=1.0, decreases to approximately 0.60 at x=0.8, decreases to approximately 0.40 at x=0.5.

* BL<sub>II</sub>: Starts at approximately 0.75 at x=1.0, decreases to approximately 0.55 at x=0.8, decreases to approximately 0.35 at x=0.5.

* ROAR<sub>I</sub><sub>p</sub>: Starts at approximately 0.30 at x=1.0, decreases to approximately 0.20 at x=0.8, decreases to approximately 0.10 at x=0.5.

* ROAR<sub>CD</sub>: Starts at approximately 0.05 at x=1.0, remains relatively stable around 0.05-0.10 between x=0.8 and x=0.5.

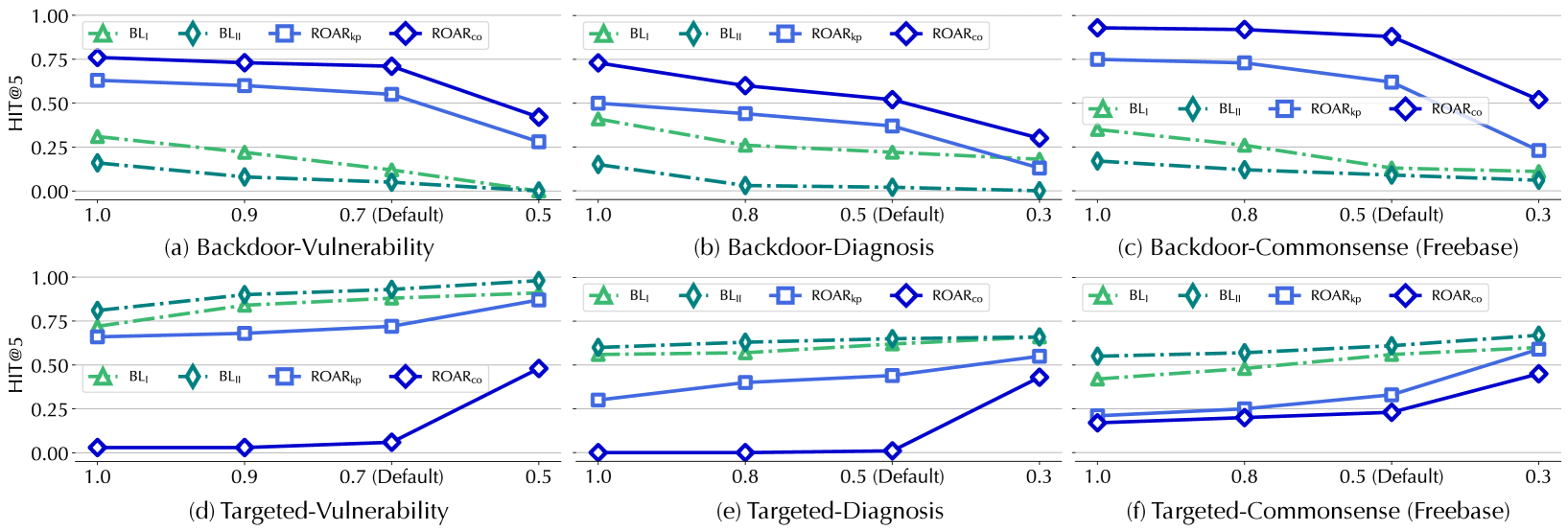

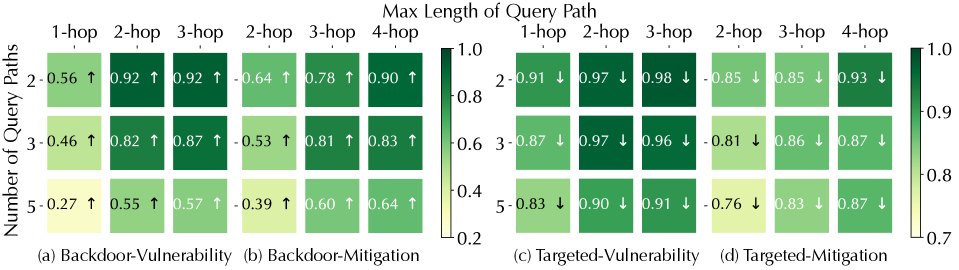

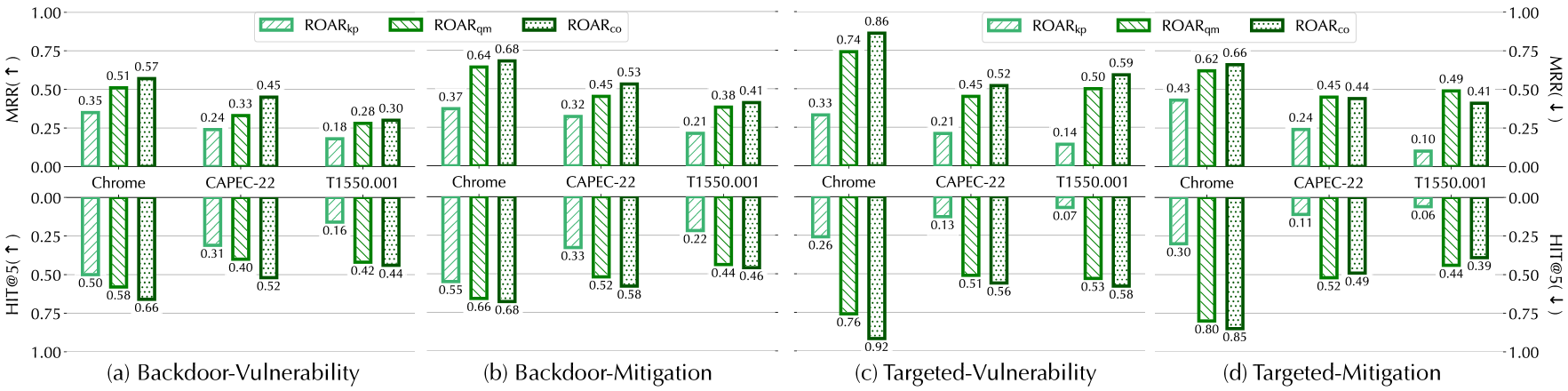

**Chart (f) Targeted-Commonsense (Freebase):**