# Unifying Large Language Models and Knowledge Graphs: A Roadmap

> Shirui Pan is with the School of Information and Communication Technology and Institute for Integrated and Intelligent Systems (IIIS), Griffith University, Queensland, Australia.

Email: s.pan@griffith.edu.au;

Linhao Luo and Yufei Wang are with the Department of Data Science and AI, Monash University, Melbourne, Australia. E-mail: linhao.luo@monash.edu, garyyufei@gmail.com.

Chen Chen is with the Nanyang Technological University, Singapore. E-mail: s190009@ntu.edu.sg.

Jiapu Wang is with the Faculty of Information Technology, Beijing University of Technology, Beijing, China. E-mail: jpwang@emails.bjut.edu.cn.

Xindong Wu is with the Key Laboratory of Knowledge Engineering with Big Data (the Ministry of Education of China), Hefei University of Technology, Hefei, China, and also with the Research Center for Knowledge Engineering, Zhejiang Lab, Hangzhou, China.

Email: xwu@hfut.edu.cn.

Shirui Pan and Linhao Luo contributed equally to this work. Corresponding Author: Xindong Wu.

Abstract

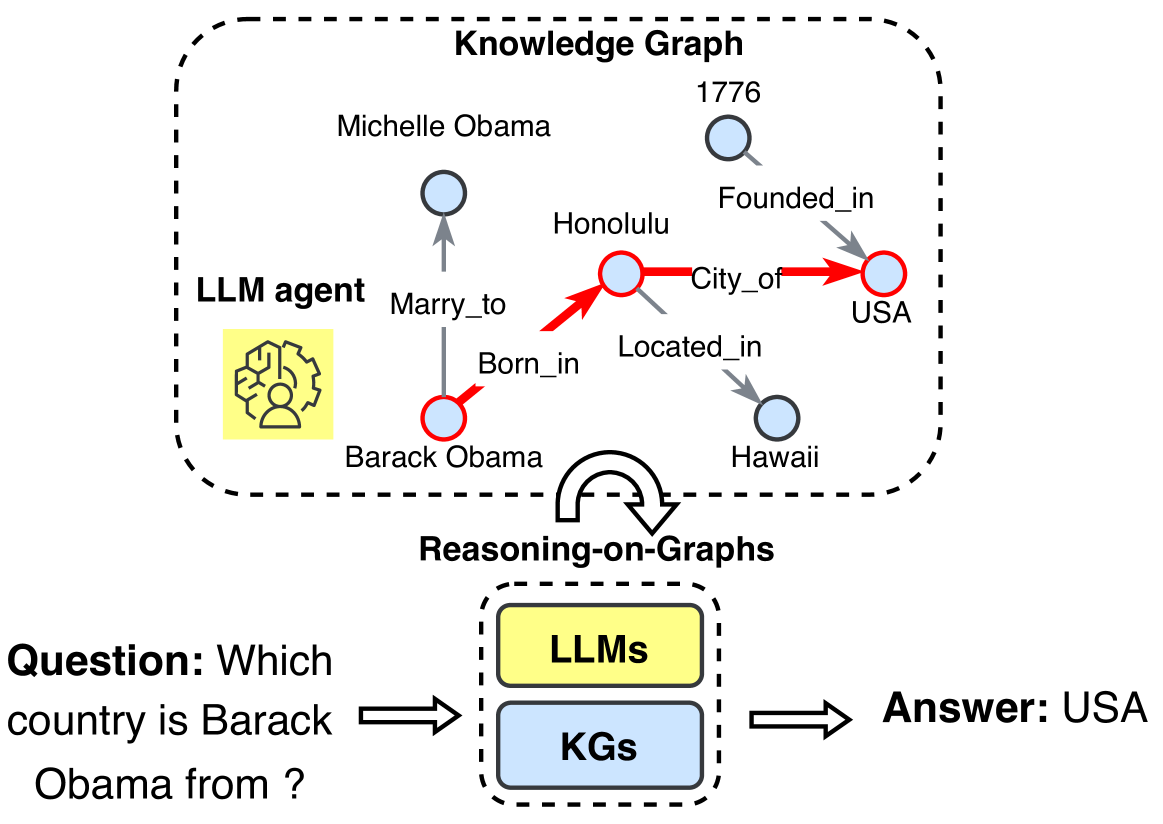

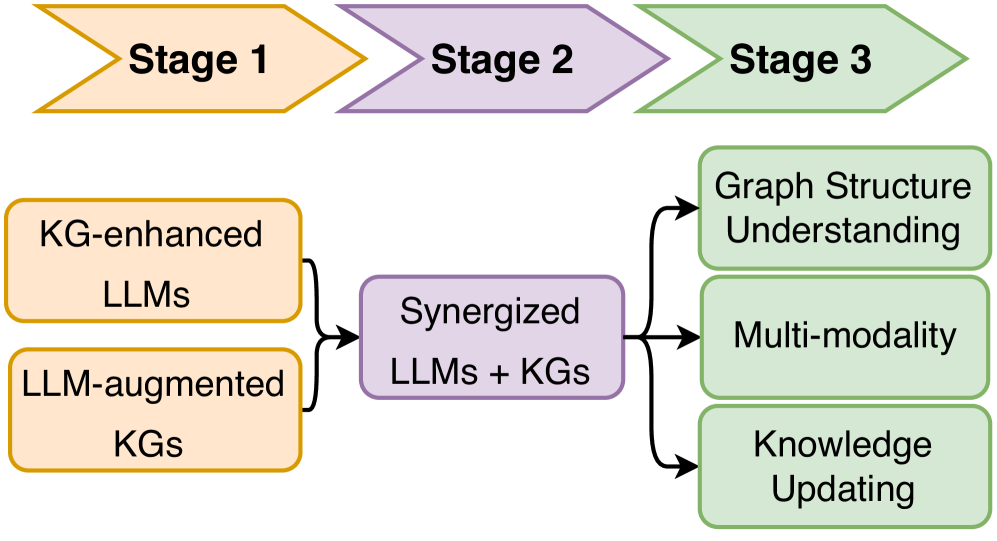

Large language models (LLMs), such as ChatGPT and GPT4, are making new waves in the field of natural language processing and artificial intelligence, due to their emergent ability and generalizability. However, LLMs are black-box models, which often fall short of capturing and accessing factual knowledge. In contrast, Knowledge Graphs (KGs), Wikipedia and Huapu for example, are structured knowledge models that explicitly store rich factual knowledge. KGs can enhance LLMs by providing external knowledge for inference and interpretability. Meanwhile, KGs are difficult to construct and evolve by nature, which challenges the existing methods in KGs to generate new facts and represent unseen knowledge. Therefore, it is complementary to unify LLMs and KGs together and simultaneously leverage their advantages. In this article, we present a forward-looking roadmap for the unification of LLMs and KGs. Our roadmap consists of three general frameworks, namely, 1) KG-enhanced LLMs, which incorporate KGs during the pre-training and inference phases of LLMs, or for the purpose of enhancing understanding of the knowledge learned by LLMs; 2) LLM-augmented KGs, that leverage LLMs for different KG tasks such as embedding, completion, construction, graph-to-text generation, and question answering; and 3) Synergized LLMs + KGs, in which LLMs and KGs play equal roles and work in a mutually beneficial way to enhance both LLMs and KGs for bidirectional reasoning driven by both data and knowledge. We review and summarize existing efforts within these three frameworks in our roadmap and pinpoint their future research directions.

Index Terms: Natural Language Processing, Large Language Models, Generative Pre-Training, Knowledge Graphs, Roadmap, Bidirectional Reasoning. publicationid: pubid: 0000–0000/00$00.00 © 2023 IEEE

1 Introduction

Large language models (LLMs) LLMs are also known as pre-trained language models (PLMs). (e.g., BERT [1], RoBERTA [2], and T5 [3]), pre-trained on the large-scale corpus, have shown great performance in various natural language processing (NLP) tasks, such as question answering [4], machine translation [5], and text generation [6]. Recently, the dramatically increasing model size further enables the LLMs with the emergent ability [7], paving the road for applying LLMs as Artificial General Intelligence (AGI). Advanced LLMs like ChatGPT https://openai.com/blog/chatgpt and PaLM2 https://ai.google/discover/palm2, with billions of parameters, exhibit great potential in many complex practical tasks, such as education [8], code generation [9] and recommendation [10].

<details>

<summary>extracted/5367551/figs/LLM_vs_KG.png Details</summary>

### Visual Description

\n

## Diagram: Knowledge Graphs vs. Large Language Models

### Overview

The image is a diagram illustrating the pros and cons of Knowledge Graphs (KGs) and Large Language Models (LLMs). It uses two overlapping circles, representing the two technologies, with lists of advantages and disadvantages positioned around each circle. Arrows indicate areas of overlap and potential interaction.

### Components/Axes

The diagram consists of:

* **Title:** "Knowledge Graphs (KGs)" at the top center.

* **Two Overlapping Circles:** One labeled "Knowledge Graphs (KGs)" and the other "Large Language Models (LLMs)".

* **Pros & Cons Lists:** Bulleted lists of pros and cons associated with each technology.

* **Arrows:** Blue and yellow arrows indicating areas of overlap and potential interaction.

### Detailed Analysis or Content Details

**Knowledge Graphs (KGs) - Pros (Right Side):**

* Structural Knowledge

* Accuracy

* Decisiveness

* Interpretability

* Domain-specific Knowledge

* Evolving Knowledge

**Knowledge Graphs (KGs) - Cons (Left Side):**

* Implicit Knowledge

* Hallucination

* Indecisiveness

* Black-box

* Lacking Domain-specific/New Knowledge

**Large Language Models (LLMs) - Pros (Bottom):**

* General Knowledge

* Language Processing

* Generalizability

**Large Language Models (LLMs) - Cons (Bottom-Right):**

* Incompleteness

* Lacking Language Understanding

* Unseen Facts

**Arrows:**

* A blue arrow originates from the "Cons" list of KGs and points towards the "Pros" list of LLMs.

* A yellow arrow originates from the "Pros" list of LLMs and points towards the "Cons" list of KGs.

### Key Observations

The diagram highlights the complementary strengths and weaknesses of KGs and LLMs. KGs excel in structured, accurate, and interpretable knowledge, but struggle with implicit or new information. LLMs are strong in general knowledge and language processing, but can be incomplete or lack deep understanding. The arrows suggest that LLMs can potentially address some of the shortcomings of KGs, and vice versa.

### Interpretation

This diagram presents a comparative analysis of Knowledge Graphs and Large Language Models, positioning them not as competing technologies, but as potentially synergistic ones. The pros and cons are carefully contrasted to show where each technology shines and where it falls short.

The blue arrow from KG cons to LLM pros suggests that LLMs can help mitigate the limitations of KGs, particularly in handling implicit knowledge and new information. LLMs can infer and generate knowledge that isn't explicitly stored in a KG.

Conversely, the yellow arrow from LLM pros to KG cons indicates that KGs can address the weaknesses of LLMs, such as incompleteness and lack of understanding. KGs provide a structured foundation for LLMs, grounding them in factual knowledge and improving their interpretability.

The diagram implies that a combined approach, leveraging the strengths of both KGs and LLMs, could lead to more robust and intelligent systems. This is a common theme in current AI research, with many projects exploring ways to integrate these two paradigms. The diagram doesn't provide quantitative data, but rather a qualitative assessment of the relative strengths and weaknesses of each technology.

</details>

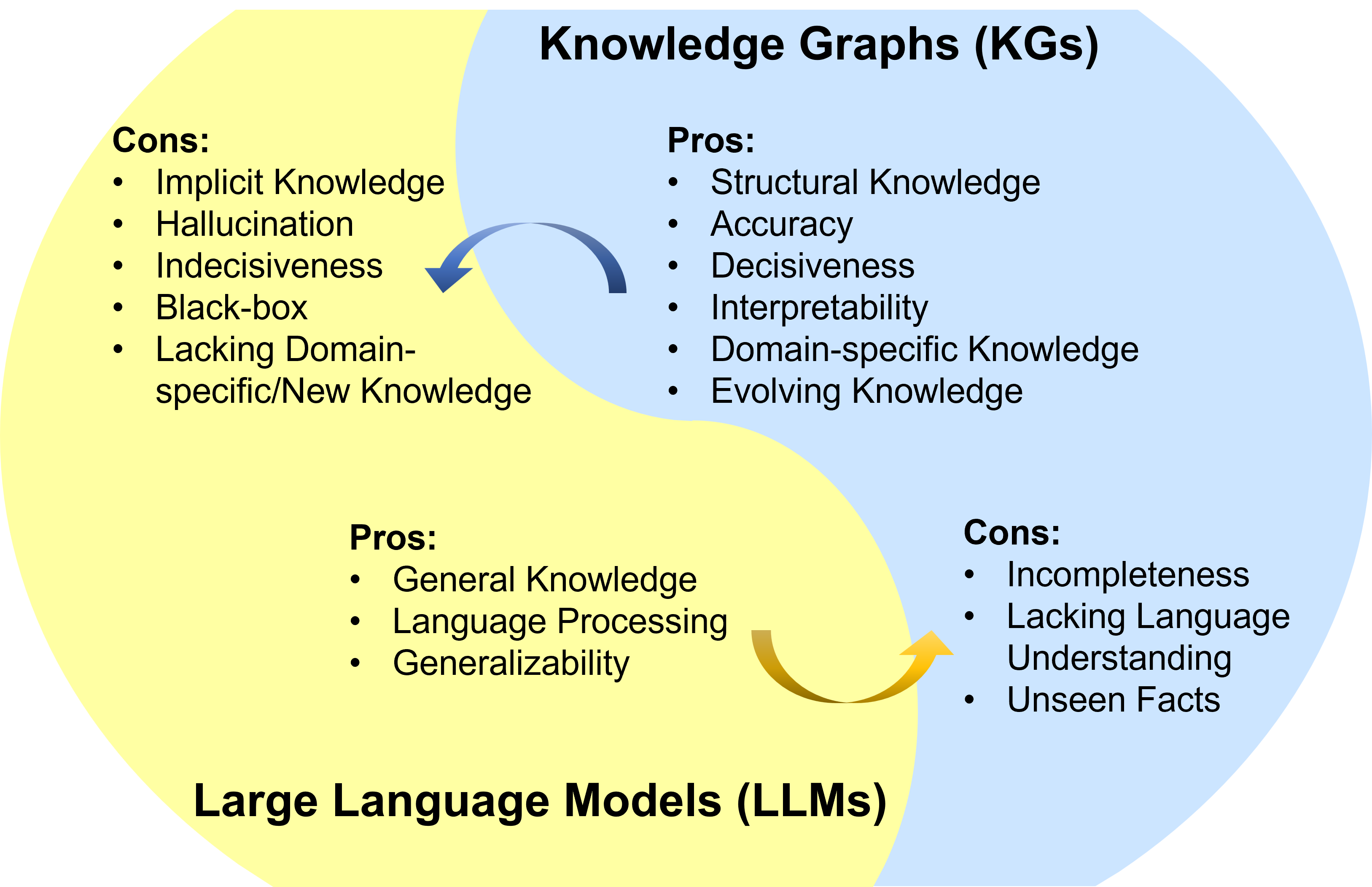

Figure 1: Summarization of the pros and cons for LLMs and KGs. LLM pros: General Knowledge [11], Language Processing [12], Generalizability [13]; LLM cons: Implicit Knowledge [14], Hallucination [15], Indecisiveness [16], Black-box [17], Lacking Domain-specific/New Knowledge [18]. KG pros: Structural Knowledge [19], Accuracy [20], Decisiveness [21], Interpretability [22], Domain-specific Knowledge [23], Evolving Knowledge [24]; KG cons: Incompleteness [25], Lacking Language Understanding [26], Unseen Facts [27]. Pros. and Cons. are selected based on their representativeness. Detailed discussion can be found in Appendix A.

Despite their success in many applications, LLMs have been criticized for their lack of factual knowledge. Specifically, LLMs memorize facts and knowledge contained in the training corpus [14]. However, further studies reveal that LLMs are not able to recall facts and often experience hallucinations by generating statements that are factually incorrect [28, 15]. For example, LLMs might say “Einstein discovered gravity in 1687” when asked, “When did Einstein discover gravity?”, which contradicts the fact that Isaac Newton formulated the gravitational theory. This issue severely impairs the trustworthiness of LLMs.

As black-box models, LLMs are also criticized for their lack of interpretability. LLMs represent knowledge implicitly in their parameters. It is difficult to interpret or validate the knowledge obtained by LLMs. Moreover, LLMs perform reasoning by a probability model, which is an indecisive process [16]. The specific patterns and functions LLMs used to arrive at predictions or decisions are not directly accessible or explainable to humans [17]. Even though some LLMs are equipped to explain their predictions by applying chain-of-thought [29], their reasoning explanations also suffer from the hallucination issue [30]. This severely impairs the application of LLMs in high-stakes scenarios, such as medical diagnosis and legal judgment. For instance, in a medical diagnosis scenario, LLMs may incorrectly diagnose a disease and provide explanations that contradict medical commonsense. This raises another issue that LLMs trained on general corpus might not be able to generalize well to specific domains or new knowledge due to the lack of domain-specific knowledge or new training data [18].

To address the above issues, a potential solution is to incorporate knowledge graphs (KGs) into LLMs. Knowledge graphs (KGs), storing enormous facts in the way of triples, i.e., $(head~{}entity,relation,tail~{}entity)$ , are a structured and decisive manner of knowledge representation (e.g., Wikidata [20], YAGO [31], and NELL [32]). KGs are crucial for various applications as they offer accurate explicit knowledge [19]. Besides, they are renowned for their symbolic reasoning ability [22], which generates interpretable results. KGs can also actively evolve with new knowledge continuously added in [24]. Additionally, experts can construct domain-specific KGs to provide precise and dependable domain-specific knowledge [23].

Nevertheless, KGs are difficult to construct [25], and current approaches in KGs [33, 34, 27] are inadequate in handling the incomplete and dynamically changing nature of real-world KGs. These approaches fail to effectively model unseen entities and represent new facts. In addition, they often ignore the abundant textual information in KGs. Moreover, existing methods in KGs are often customized for specific KGs or tasks, which are not generalizable enough. Therefore, it is also necessary to utilize LLMs to address the challenges faced in KGs. We summarize the pros and cons of LLMs and KGs in Fig. 1, respectively.

Recently, the possibility of unifying LLMs with KGs has attracted increasing attention from researchers and practitioners. LLMs and KGs are inherently interconnected and can mutually enhance each other. In KG-enhanced LLMs, KGs can not only be incorporated into the pre-training and inference stages of LLMs to provide external knowledge [35, 36, 37], but also used for analyzing LLMs and providing interpretability [14, 38, 39]. In LLM-augmented KGs, LLMs have been used in various KG-related tasks, e.g., KG embedding [40], KG completion [26], KG construction [41], KG-to-text generation [42], and KGQA [43], to improve the performance and facilitate the application of KGs. In Synergized LLM + KG, researchers marries the merits of LLMs and KGs to mutually enhance performance in knowledge representation [44] and reasoning [45, 46]. Although there are some surveys on knowledge-enhanced LLMs [47, 48, 49], which mainly focus on using KGs as an external knowledge to enhance LLMs, they ignore other possibilities of integrating KGs for LLMs and the potential role of LLMs in KG applications.

In this article, we present a forward-looking roadmap for unifying both LLMs and KGs, to leverage their respective strengths and overcome the limitations of each approach, for various downstream tasks. We propose detailed categorization, conduct comprehensive reviews, and pinpoint emerging directions in these fast-growing fields. Our main contributions are summarized as follows:

1. Roadmap. We present a forward-looking roadmap for integrating LLMs and KGs. Our roadmap, consisting of three general frameworks to unify LLMs and KGs, namely, KG-enhanced LLMs, LLM-augmented KGs, and Synergized LLMs + KGs, provides guidelines for the unification of these two distinct but complementary technologies.

1. Categorization and review. For each integration framework of our roadmap, we present a detailed categorization and novel taxonomies of research on unifying LLMs and KGs. In each category, we review the research from the perspectives of different integration strategies and tasks, which provides more insights into each framework.

1. Coverage of emerging advances. We cover the advanced techniques in both LLMs and KGs. We include the discussion of state-of-the-art LLMs like ChatGPT and GPT-4 as well as the novel KGs e.g., multi-modal knowledge graphs.

1. Summary of challenges and future directions. We highlight the challenges in existing research and present several promising future research directions.

The rest of this article is organized as follows. Section 2 first explains the background of LLMs and KGs. Section 3 introduces the roadmap and the overall categorization of this article. Section 4 presents the different KGs-enhanced LLM approaches. Section 5 describes the possible LLM-augmented KG methods. Section 6 shows the approaches of synergizing LLMs and KGs. Section 7 discusses the challenges and future research directions. Finally, Section 8 concludes this paper.

2 Background

In this section, we will first briefly introduce a few representative large language models (LLMs) and discuss the prompt engineering that efficiently uses LLMs for varieties of applications. Then, we illustrate the concept of knowledge graphs (KGs) and present different categories of KGs.

<details>

<summary>x1.png Details</summary>

### Visual Description

\n

## Diagram: Large Language Model Evolution Timeline

### Overview

This diagram presents a timeline of the evolution of large language models (LLMs) from 2018 to 2023. The models are categorized by their architecture (Decoder-only, Encoder-decoder, Encoder-only) and are positioned along a horizontal timeline. Each model is represented by a colored box, indicating whether it is open-source or closed-source. The size of each model (in millions or billions of parameters) is indicated within the box. Green lines represent the progression of open-source models, while purple lines represent closed-source models.

### Components/Axes

* **Vertical Axis:** Represents the model architecture: Decoder-only (top), Encoder-decoder (middle), Encoder-only (bottom). Each architecture has a simplified diagram showing the flow of "Input Text", "Features", and "Output Text".

* **Horizontal Axis:** Represents time, spanning from 2018 to 2023, with year markers along the bottom.

* **Legend (Bottom-Right):**

* Green: Open-Source

* Yellow: Closed-Source

* **Model Boxes:** Each box contains the model name and its size in parameters (e.g., "110M", "1.2T").

* **Connecting Lines:** Green and purple lines connect models, indicating a progression or relationship.

### Detailed Analysis or Content Details

**Encoder-only Models (Bottom Row):**

* **2018:** BERT (110M-340M) - Yellow (Closed-Source)

* **2019:** RoBERTa (125M-355M) - Yellow (Closed-Source)

* **2019:** ALBERT (11M-223M) - Green (Open-Source)

* **2020:** DistilBERT (66M) - Green (Open-Source)

* **2020:** ELECTRA (14M-110M) - Green (Open-Source)

* **2020:** DeBERTa (44M-304M) - Yellow (Closed-Source)

**Encoder-decoder Models (Middle Row):**

* **2019:** BART (140M) - Yellow (Closed-Source)

* **2020:** T5 (80M-11B) - Green (Open-Source)

* **2020:** mT5 (300M-13B) - Green (Open-Source)

* **2021:** GLM (110M-10B) - Yellow (Closed-Source)

* **2021:** Switch (1.6T) - Yellow (Closed-Source)

* **2022:** ST-MoE (4.1B-269B) - Yellow (Closed-Source)

* **2022:** UL2 (20B) - Yellow (Closed-Source)

* **2022:** Flan-UL2 (20B) - Yellow (Closed-Source)

* **2023:** Flan-T5 (80M-11B) - Green (Open-Source)

**Decoder-only Models (Top Row):**

* **2018:** GPT-1 (110M) - Yellow (Closed-Source)

* **2019:** GPT-2 (117M-1.5B) - Yellow (Closed-Source)

* **2020:** GPT-3 (175B) - Yellow (Closed-Source)

* **2020:** XLNet (110M-340M) - Yellow (Closed-Source)

* **2021:** GLaM (1.2T) - Yellow (Closed-Source)

* **2022:** OPT (175B) - Green (Open-Source)

* **2022:** ChatGPT (175B) - Yellow (Closed-Source)

* **2022:** OPT-IML (175B) - Green (Open-Source)

* **2022:** LaMDA (137B) - Yellow (Closed-Source)

* **2022:** PaLM (540B) - Yellow (Closed-Source)

* **2023:** LLaMa (7B-65B) - Green (Open-Source)

* **2023:** Vicuna (7B-13B) - Green (Open-Source)

* **2023:** Alpaca (7B) - Green (Open-Source)

* **2023:** GPT-4 (Unknown) - Yellow (Closed-Source)

* **2023:** Bard (137B) - Yellow (Closed-Source)

### Key Observations

* The trend shows a significant increase in model size (parameter count) over time across all architectures.

* Initially, most models were closed-source, but there's a growing trend towards open-source models, particularly in recent years (2022-2023).

* Decoder-only models have seen the most rapid growth in size, culminating in GPT-4 with an unknown parameter count.

* The diagram highlights the parallel development of different architectures (Encoder-only, Encoder-decoder, Decoder-only).

* The lines connecting models suggest a lineage or influence, but the exact nature of these relationships isn't explicitly stated.

### Interpretation

The diagram illustrates the rapid advancement in the field of large language models. The increasing model size suggests a pursuit of greater capacity and performance. The shift towards open-source models indicates a democratization of access to this technology, fostering innovation and collaboration. The parallel development of different architectures suggests that researchers are exploring various approaches to achieve optimal results. The diagram serves as a valuable snapshot of the LLM landscape, highlighting key milestones and trends. The unknown parameter count for GPT-4 suggests that it represents a significant leap forward, potentially exceeding the capabilities of its predecessors. The diagram doesn't provide information on the performance of these models, only their size and architecture. It's a high-level overview, focusing on the evolution of the models themselves rather than their applications or impact. The diagram implies a competitive landscape, with both open-source and closed-source models vying for dominance. The lines connecting the models suggest that new models are often built upon or inspired by previous work.

</details>

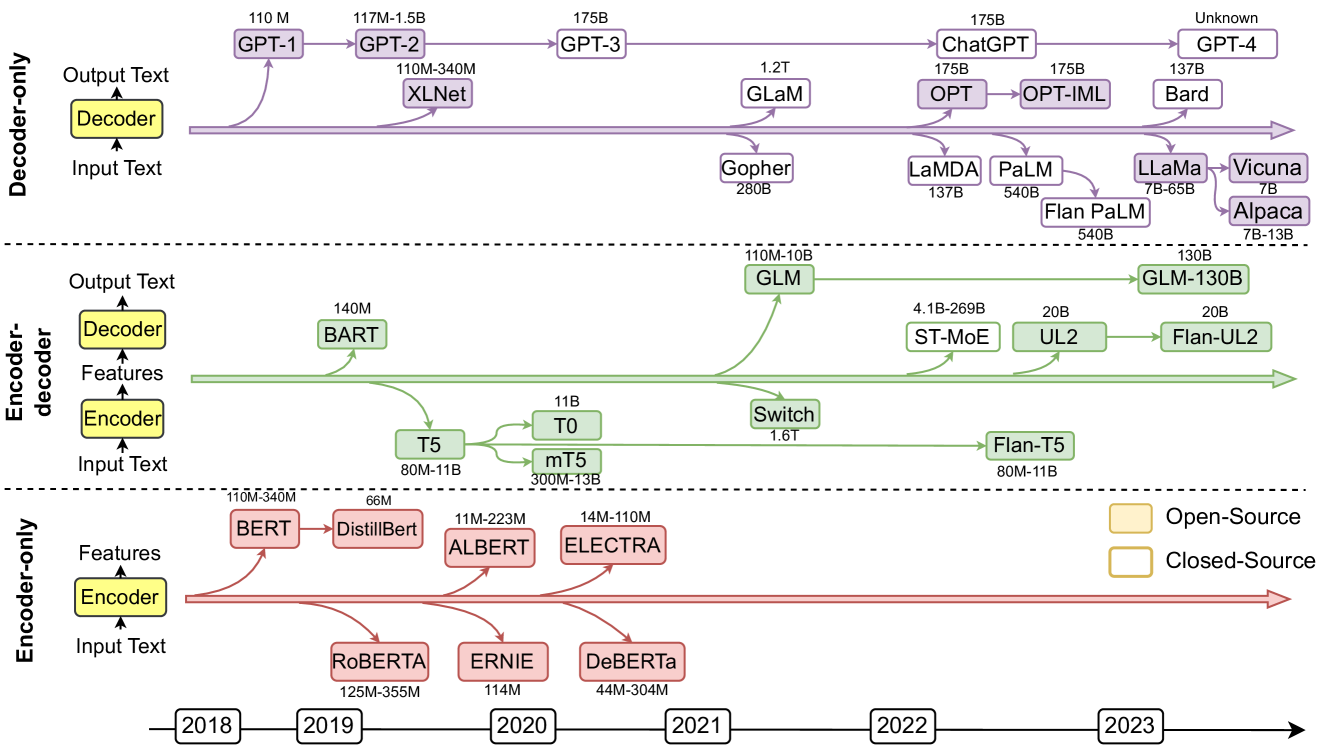

Figure 2: Representative large language models (LLMs) in recent years. Open-source models are represented by solid squares, while closed source models are represented by hollow squares.

<details>

<summary>x2.png Details</summary>

### Visual Description

\n

## Diagram: Transformer Model Architecture

### Overview

The image depicts a high-level diagram of the Transformer model architecture, a neural network architecture commonly used in natural language processing. The diagram illustrates the Encoder, Decoder, and Self-Attention components, along with the flow of information between them. It's a block diagram showing the major functional blocks and their interconnections.

### Components/Axes

The diagram is composed of three main sections: Encoder (left), Decoder (center), and Self-Attention (right). Each section contains several blocks representing different layers or operations. The blocks are labeled with their respective functions. Arrows indicate the direction of data flow.

* **Encoder:** Contains "Self-Attention" and "Feed Forward" blocks.

* **Decoder:** Contains "Self-Attention", "Encoder-Decoder Attention", and "Feed Forward" blocks.

* **Self-Attention:** Contains "Linear", "Concat", "Multi-head Dot-Product Attention", and further "Linear" blocks for Q, K, and V.

* **Labels within Self-Attention:** Q, K, V.

### Detailed Analysis or Content Details

The diagram shows a sequential flow of information within each section.

1. **Encoder:** Data flows upwards from "Self-Attention" to "Feed Forward" and then back to "Self-Attention" in a loop.

2. **Decoder:** Data flows upwards from "Self-Attention" to "Encoder-Decoder Attention", then to "Feed Forward", and finally back to "Self-Attention" in a loop. The "Encoder-Decoder Attention" block receives input from the Encoder.

3. **Self-Attention:** The "Self-Attention" block is further broken down into a series of operations. Data flows from "Linear" blocks to "Multi-head Dot-Product Attention". The "Multi-head Dot-Product Attention" block receives inputs labeled "Q", "K", and "V" from separate "Linear" blocks. The output of "Multi-head Dot-Product Attention" is concatenated ("Concat") and then passed through another "Linear" layer.

The dashed line connecting the "Self-Attention" block in the Decoder to the "Self-Attention" block on the right suggests a connection or dependency between these two components.

### Key Observations

* The diagram emphasizes the repeated use of "Self-Attention" and "Feed Forward" layers in both the Encoder and Decoder.

* The "Encoder-Decoder Attention" block in the Decoder is a key component for integrating information from the Encoder.

* The Self-Attention block is highly detailed, showing the internal operations of the attention mechanism.

* The labels Q, K, and V within the Self-Attention block represent Query, Key, and Value, which are fundamental concepts in attention mechanisms.

### Interpretation

The diagram illustrates the core architecture of the Transformer model, which relies heavily on attention mechanisms to process sequential data. The Encoder transforms the input sequence into a representation, while the Decoder generates the output sequence based on this representation. The Self-Attention mechanism allows the model to weigh the importance of different parts of the input sequence when making predictions. The repeated use of Self-Attention and Feed Forward layers enables the model to learn complex relationships between the input and output. The diagram highlights the modularity of the Transformer architecture, with each block performing a specific function. The attention mechanism (Q, K, V) is a core component, allowing the model to focus on relevant parts of the input sequence. The diagram is a simplified representation, omitting details such as residual connections and layer normalization, but it effectively conveys the overall structure and flow of information within the Transformer model. The diagram is a conceptual illustration, not a quantitative representation of data. It's a visual aid for understanding the model's architecture.

</details>

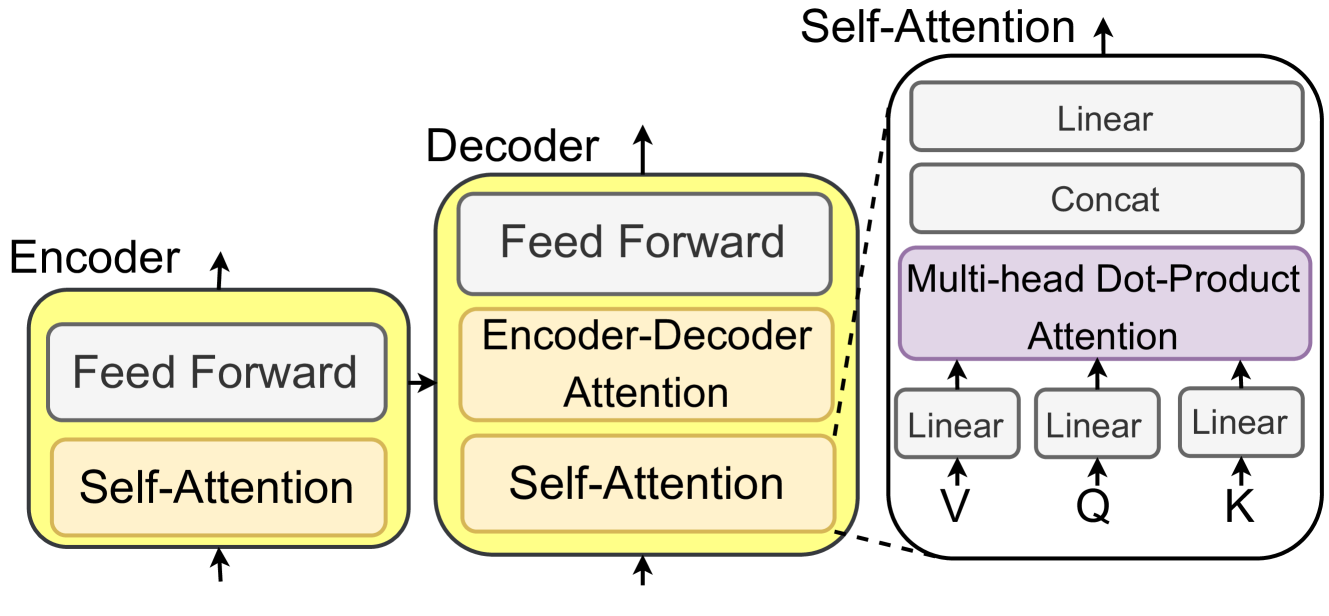

Figure 3: An illustration of the Transformer-based LLMs with self-attention mechanism.

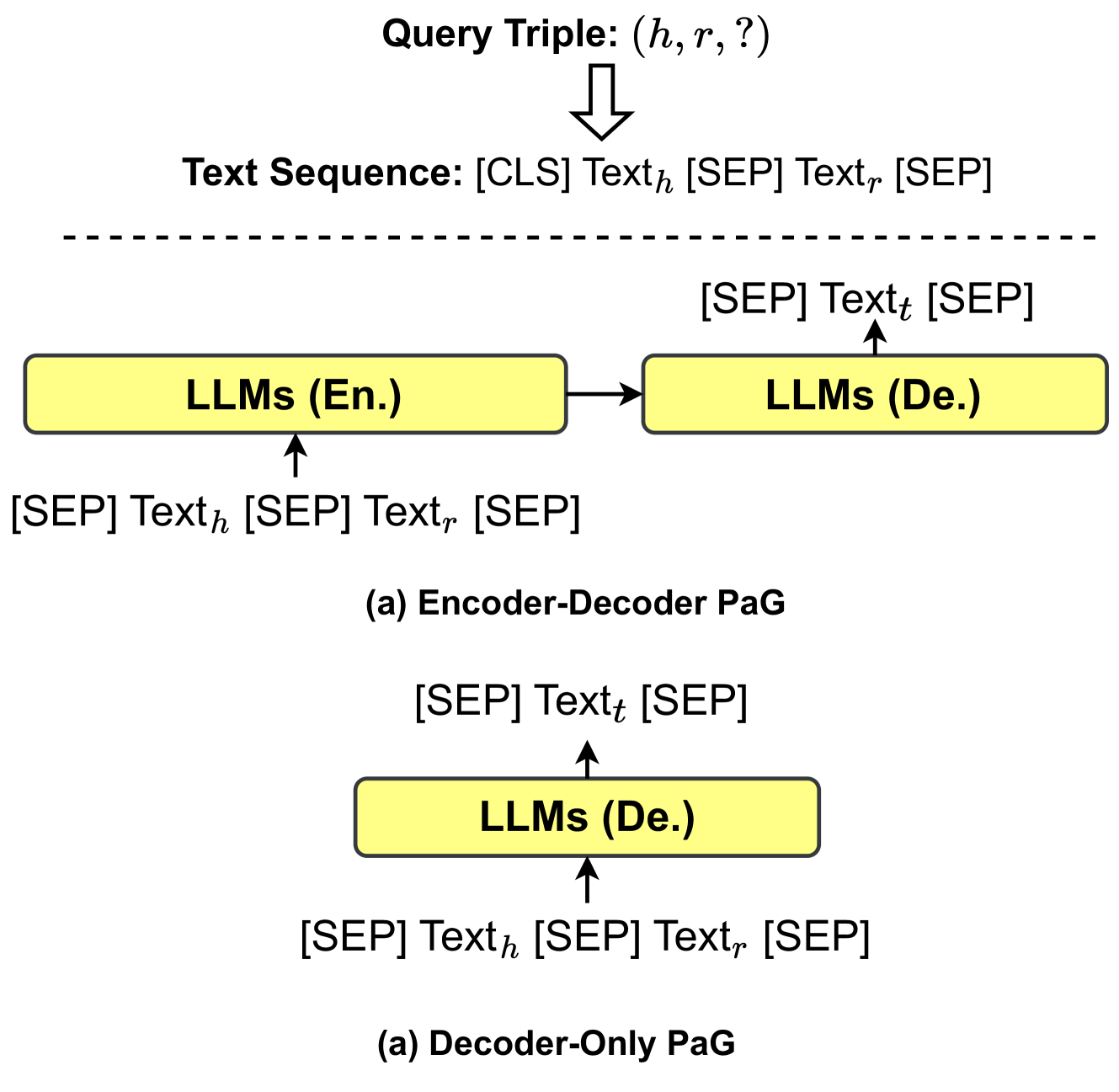

2.1 Large Language models (LLMs)

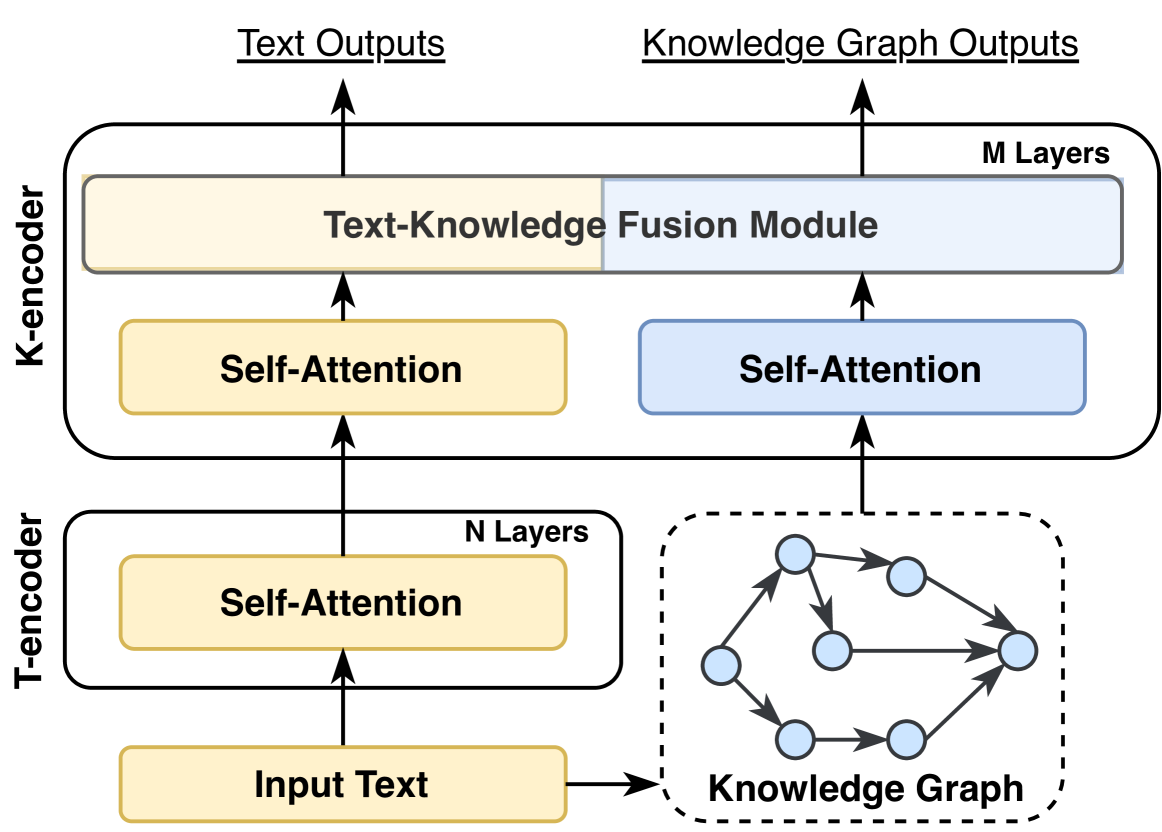

Large language models (LLMs) pre-trained on large-scale corpus have shown great potential in various NLP tasks [13]. As shown in Fig. 3, most LLMs derive from the Transformer design [50], which contains the encoder and decoder modules empowered by a self-attention mechanism. Based on the architecture structure, LLMs can be categorized into three groups: 1) encoder-only LLMs, 2) encoder-decoder LLMs, and 3) decoder-only LLMs. As shown in Fig. 2, we summarize several representative LLMs with different model architectures, model sizes, and open-source availabilities.

2.1.1 Encoder-only LLMs.

Encoder-only large language models only use the encoder to encode the sentence and understand the relationships between words. The common training paradigm for these model is to predict the mask words in an input sentence. This method is unsupervised and can be trained on the large-scale corpus. Encoder-only LLMs like BERT [1], ALBERT [51], RoBERTa [2], and ELECTRA [52] require adding an extra prediction head to resolve downstream tasks. These models are most effective for tasks that require understanding the entire sentence, such as text classification [26] and named entity recognition [53].

2.1.2 Encoder-decoder LLMs.

Encoder-decoder large language models adopt both the encoder and decoder module. The encoder module is responsible for encoding the input sentence into a hidden-space, and the decoder is used to generate the target output text. The training strategies in encoder-decoder LLMs can be more flexible. For example, T5 [3] is pre-trained by masking and predicting spans of masking words. UL2 [54] unifies several training targets such as different masking spans and masking frequencies. Encoder-decoder LLMs (e.g., T0 [55], ST-MoE [56], and GLM-130B [57]) are able to directly resolve tasks that generate sentences based on some context, such as summariaztion, translation, and question answering [58].

2.1.3 Decoder-only LLMs.

Decoder-only large language models only adopt the decoder module to generate target output text. The training paradigm for these models is to predict the next word in the sentence. Large-scale decoder-only LLMs can generally perform downstream tasks from a few examples or simple instructions, without adding prediction heads or finetuning [59]. Many state-of-the-art LLMs (e.g., Chat-GPT [60] and GPT-4 https://openai.com/product/gpt-4) follow the decoder-only architecture. However, since these models are closed-source, it is challenging for academic researchers to conduct further research. Recently, Alpaca https://github.com/tatsu-lab/stanford_alpaca and Vicuna https://lmsys.org/blog/2023-03-30-vicuna/ are released as open-source decoder-only LLMs. These models are finetuned based on LLaMA [61] and achieve comparable performance with ChatGPT and GPT-4.

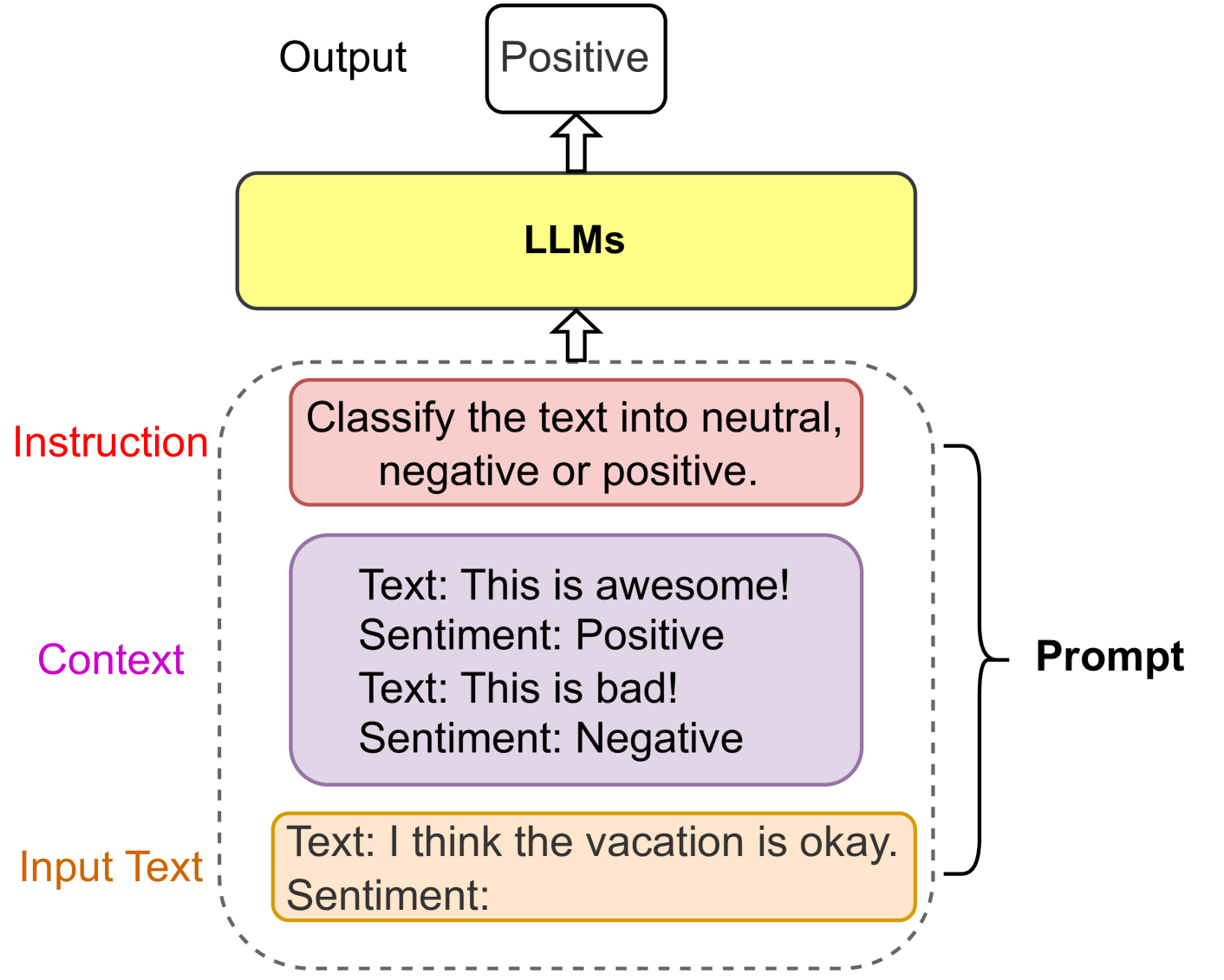

2.1.4 Prompt Engineering

Prompt engineering is a novel field that focuses on creating and refining prompts to maximize the effectiveness of large language models (LLMs) across various applications and research areas [62]. As shown in Fig. 4, a prompt is a sequence of natural language inputs for LLMs that are specified for the task, such as sentiment classification. A prompt could contain several elements, i.e., 1) Instruction, 2) Context, and 3) Input Text. Instruction is a short sentence that instructs the model to perform a specific task. Context provides the context for the input text or few-shot examples. Input Text is the text that needs to be processed by the model.

Prompt engineering seeks to improve the capacity of large large language models (e.g., ChatGPT) in diverse complex tasks such as question answering, sentiment classification, and common sense reasoning. Chain-of-thought (CoT) prompt [63] enables complex reasoning capabilities through intermediate reasoning steps. Prompt engineering also enables the integration of structural data like knowledge graphs (KGs) into LLMs. Li et al. [64] simply linearizes the KGs and uses templates to convert the KGs into passages. Mindmap [65] designs a KG prompt to convert graph structure into a mind map that enables LLMs to perform reasoning on it. Prompt offers a simple way to utilize the potential of LLMs without finetuning. Proficiency in prompt engineering leads to a better understanding of the strengths and weaknesses of LLMs.

<details>

<summary>x3.png Details</summary>

### Visual Description

\n

## Diagram: LLM Sentiment Analysis Flow

### Overview

This diagram illustrates the process of sentiment analysis performed by Large Language Models (LLMs). It depicts the flow of information from input text, through instruction and context, to the LLM, and finally to the output. The diagram highlights examples of input text and their corresponding sentiment classifications.

### Components/Axes

The diagram consists of four main rectangular components stacked vertically: "Input Text", "Context", "Instruction", and "LLMs". An arrow indicates the flow of information upwards from "Input Text" to "LLMs" and then to "Output". A dashed, curved line encompasses "Input Text", "Context", and "Instruction", labeling this grouping as "Prompt".

* **Input Text:** Contains example text and a placeholder for sentiment.

* **Context:** Contains example text with sentiment classifications.

* **Instruction:** Specifies the task: "Classify the text into neutral, negative or positive."

* **LLMs:** Represents the Large Language Model itself.

* **Output:** Displays the sentiment classification "Positive".

* **Prompt:** Encompasses Input Text, Context, and Instruction.

### Detailed Analysis or Content Details

The diagram shows the following specific text and sentiment examples:

* **Input Text:** "Text: I think the vacation is okay. Sentiment:" - Sentiment is not specified.

* **Context:**

* "Text: This is awesome! Sentiment: Positive"

* "Text: This is bad! Sentiment: Negative"

* **Instruction:** "Classify the text into neutral, negative or positive."

* **Output:** "Positive"

The flow of information is indicated by a solid arrow pointing upwards from the "LLMs" block to the "Output" block. A dashed, curved arrow encompasses the "Input Text", "Context", and "Instruction" blocks, labeling them as the "Prompt".

### Key Observations

The diagram demonstrates a simple sentiment analysis pipeline. The LLM receives a prompt consisting of an instruction, context examples, and input text. Based on this prompt, the LLM generates an output, in this case, a sentiment classification of "Positive". The context provides examples of positive and negative sentiment to guide the LLM's classification. The input text is "I think the vacation is okay." which is a neutral statement.

### Interpretation

The diagram illustrates how LLMs can be used for sentiment analysis by providing them with a clear instruction and relevant context. The LLM leverages the provided examples to understand the desired classification scheme (neutral, negative, positive) and applies it to the input text. The output "Positive" suggests that the LLM, despite the neutral input text, may be biased towards positive sentiment or that the context examples heavily influence the classification. The diagram highlights the importance of prompt engineering in guiding LLM behavior. The diagram is a conceptual illustration and does not provide quantitative data or performance metrics. It serves to explain the process rather than demonstrate specific results.

</details>

Figure 4: An example of sentiment classification prompt.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Diagram: Knowledge Graph Types

### Overview

The image presents a comparative diagram illustrating four types of knowledge graphs: Encyclopedic, Commonsense, Domain-specific (Medical), and Multi-modal. Each graph type is visually represented with a small illustrative image on the left, followed by a network of nodes and labeled edges demonstrating relationships between concepts. The diagram aims to showcase the different kinds of knowledge and relationships each graph type can represent.

### Components/Axes

The diagram is structured vertically into four distinct sections, each representing a knowledge graph type. Each section contains:

* **Illustrative Image:** A small image representing the domain of the knowledge graph.

* **Nodes:** Oval-shaped nodes representing concepts or entities.

* **Edges:** Arrows connecting nodes, labeled with the type of relationship between the connected concepts.

* **Labels:** Text labels associated with nodes and edges, describing the concepts and relationships.

### Detailed Analysis or Content Details

**1. Encyclopedic Knowledge Graphs:**

* **Image:** Wikipedia logo.

* **Nodes:** Barack Obama, Politician, Honolulu, USA, Michelle Obama, Washington D.C.

* **Edges:**

* Barack Obama - BornIn -> Honolulu

* Barack Obama - IsA -> Politician

* Barack Obama - MarriedTo -> Michelle Obama

* Michelle Obama - LivesIn -> USA

* USA - CapitalOf -> Washington D.C.

**2. Commonsense Knowledge Graphs:**

* **Concept:** "Wake up" is prominently displayed.

* **Nodes:** Bed, Open eyes, Wake up, Get out of bed, Drink coffee, Make coffee, Coffee, Sugar, Cup, Kitchen, Awake.

* **Edges:**

* Bed - LocatedAt -> Wake up

* Open eyes - SubEventOf -> Wake up

* Wake up - SubEventOf -> Get out of bed

* Get out of bed - SubEventOf -> Make coffee

* Make coffee - IsFor -> Drink coffee

* Drink coffee - Causes -> Awake

* Coffee - Need -> Sugar

* Coffee - Need -> Cup

* Awake - LocatedAt -> Kitchen

**3. Domain-specific Knowledge Graph (Medical):**

* **Nodes:** PINK1, Parkinson’s Disease, Sleeping Disorder, Anxiety, Language Undevelopment, Motor Symptom, Tremor, Pervasive Developmental Disorder.

* **Edges:**

* PINK1 - Cause -> Parkinson’s Disease

* Parkinson’s Disease - LeadTo -> Tremor

* Parkinson’s Disease - Cause -> Sleeping Disorder

* Sleeping Disorder - Cause -> Anxiety

* Anxiety - LeadTo -> Language Undevelopment

* Language Undevelopment - LeadTo -> Pervasive Developmental Disorder

* Motor Symptom - LeadTo -> Tremor

**4. Multi-modal Knowledge Graph:**

* **Image:** Eiffel Tower.

* **Nodes:** Eiffel Tower, Paris, France, European Union, Emmanuel Macron.

* **Edges:**

* Eiffel Tower - LocatedIn -> Paris

* Paris - CapitalOf -> France

* Emmanuel Macron - PoliticianOf -> France

* France - MemberOf -> European Union

### Key Observations

* The complexity of the graphs varies. The Encyclopedic graph is the simplest, while the Commonsense and Medical graphs are more intricate.

* The relationships are explicitly labeled, providing clarity on how concepts are connected.

* The diagram demonstrates how knowledge graphs can represent different types of information, from factual data (Encyclopedic) to everyday understanding (Commonsense) to specialized knowledge (Medical) and multimodal data (Multi-modal).

* The use of illustrative images helps to ground the graphs in specific domains.

### Interpretation

The diagram illustrates the versatility of knowledge graphs as a method for representing and organizing information. Each type of graph serves a different purpose and caters to different needs. Encyclopedic graphs focus on factual knowledge, while Commonsense graphs capture everyday understanding. Domain-specific graphs provide specialized knowledge within a particular field, and Multi-modal graphs integrate information from various sources, including images and text. The diagram suggests that knowledge graphs are not a one-size-fits-all solution but rather a flexible framework that can be adapted to different contexts and applications. The relationships between nodes are crucial, as they define the meaning and context of the information. The diagram highlights the importance of explicitly defining these relationships to ensure accurate and meaningful knowledge representation. The diagram is a conceptual illustration and does not contain specific numerical data or statistical analysis. It is a qualitative representation of different knowledge graph types.

</details>

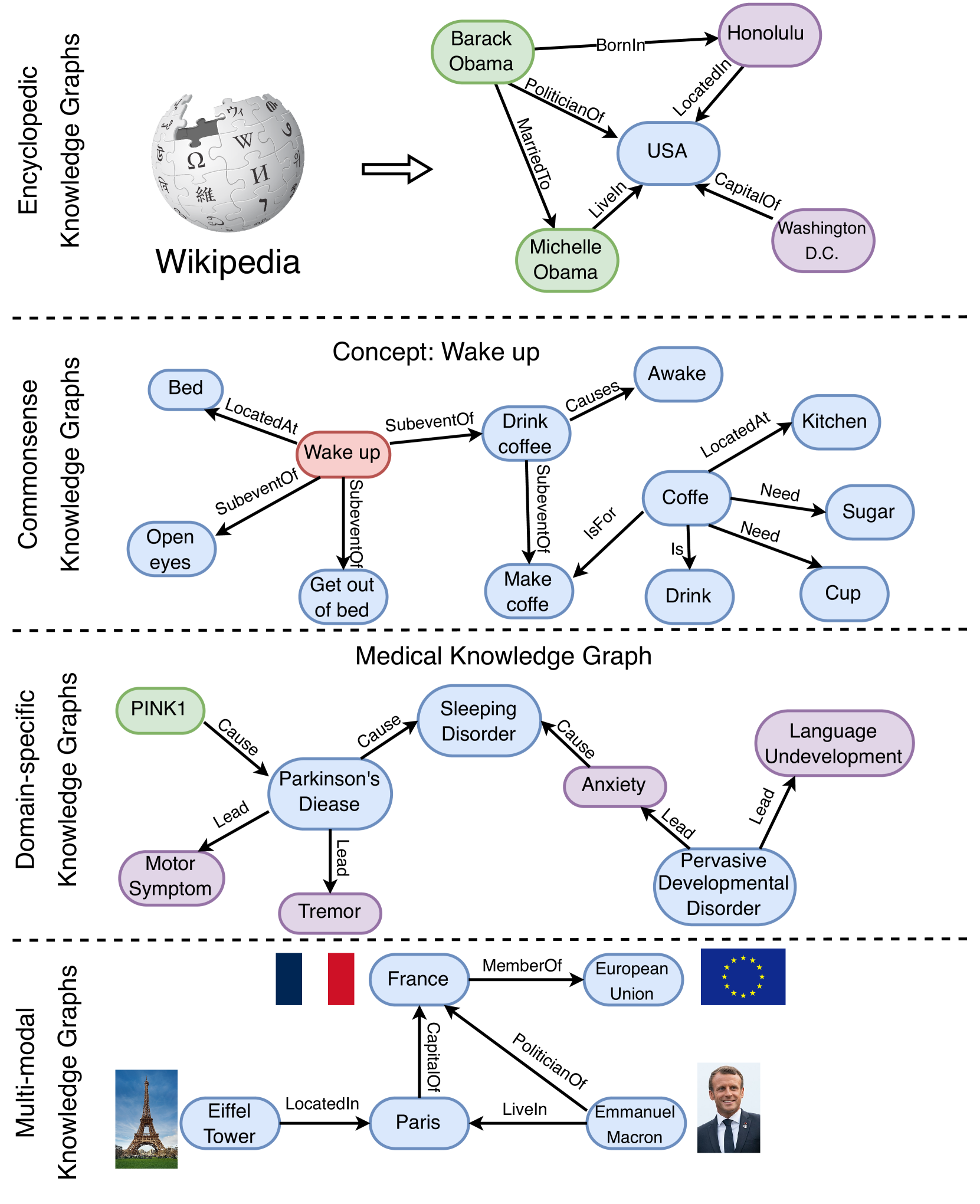

Figure 5: Examples of different categories’ knowledge graphs, i.e., encyclopedic KGs, commonsense KGs, domain-specific KGs, and multi-modal KGs.

2.2 Knowledge Graphs (KGs)

Knowledge graphs (KGs) store structured knowledge as a collection of triples $\mathcal{KG}=\{(h,r,t)⊂eq\mathcal{E}×\mathcal{R}×\mathcal{E}\}$ , where $\mathcal{E}$ and $\mathcal{R}$ respectively denote the set of entities and relations. Existing knowledge graphs (KGs) can be classified into four groups based on the stored information: 1) encyclopedic KGs, 2) commonsense KGs, 3) domain-specific KGs, and 4) multi-modal KGs. We illustrate the examples of KGs of different categories in Fig. 5.

2.2.1 Encyclopedic Knowledge Graphs.

Encyclopedic knowledge graphs are the most ubiquitous KGs, which represent the general knowledge in real-world. Encyclopedic knowledge graphs are often constructed by integrating information from diverse and extensive sources, including human experts, encyclopedias, and databases. Wikidata [20] is one of the most widely used encyclopedic knowledge graphs, which incorporates varieties of knowledge extracted from articles on Wikipedia. Other typical encyclopedic knowledge graphs, like Freebase [66], Dbpedia [67], and YAGO [31] are also derived from Wikipedia. In addition, NELL [32] is a continuously improving encyclopedic knowledge graph, which automatically extracts knowledge from the web, and uses that knowledge to improve its performance over time. There are several encyclopedic knowledge graphs available in languages other than English such as CN-DBpedia [68] and Vikidia [69]. The largest knowledge graph, named Knowledge Occean (KO) https://ko.zhonghuapu.com/, currently contains 4,8784,3636 entities and 17,3115,8349 relations in both English and Chinese.

2.2.2 Commonsense Knowledge Graphs.

Commonsense knowledge graphs formulate the knowledge about daily concepts, e.g., objects, and events, as well as their relationships [70]. Compared with encyclopedic knowledge graphs, commonsense knowledge graphs often model the tacit knowledge extracted from text such as (Car, UsedFor, Drive). ConceptNet [71] contains a wide range of commonsense concepts and relations, which can help computers understand the meanings of words people use. ATOMIC [72, 73] and ASER [74] focus on the causal effects between events, which can be used for commonsense reasoning. Some other commonsense knowledge graphs, such as TransOMCS [75] and CausalBanK [76] are automatically constructed to provide commonsense knowledge.

2.2.3 Domain-specific Knowledge Graphs

Domain-specific knowledge graphs are often constructed to represent knowledge in a specific domain, e.g., medical, biology, and finance [23]. Compared with encyclopedic knowledge graphs, domain-specific knowledge graphs are often smaller in size, but more accurate and reliable. For example, UMLS [77] is a domain-specific knowledge graph in the medical domain, which contains biomedical concepts and their relationships. In addition, there are some domain-specific knowledge graphs in other domains, such as finance [78], geology [79], biology [80], chemistry [81] and genealogy [82].

<details>

<summary>x5.png Details</summary>

### Visual Description

\n

## Diagram: Synergized LLMs + KGs

### Overview

The image presents a diagram illustrating three different approaches to integrating Knowledge Graphs (KGs) and Large Language Models (LLMs). The diagram depicts three scenarios: KG-enhanced LLMs, LLM-augmented KGs, and Synergized LLMs + KGs, showing the flow of information and the respective strengths each approach leverages.

### Components/Axes

The diagram consists of three main sections (a, b, and c), each representing a different integration strategy. Each section includes rectangular boxes representing LLMs and KGs, with arrows indicating the flow of information. Each section also includes bulleted lists describing the capabilities of each component.

### Detailed Analysis or Content Details

**a. KG-enhanced LLMs:**

* **Input:** "Text Input"

* **Components:**

* KG (Blue Rectangle): Features listed include: "Structural Fact", "Domain-specific Knowledge", "Symbolic-reasoning", and "......".

* LLM (Yellow Rectangle): Receives input from "Text Input" and is influenced by the KG.

* **Output:** An output is generated from the LLM.

* **Flow:** Text Input -> LLM, KG -> LLM -> Output.

**b. LLM-augmented KGs:**

* **Input:** "KG-related Tasks"

* **Components:**

* LLM (Yellow Rectangle): Features listed include: "General Knowledge", "Language Processing", "Generalizability", and "......".

* KG (Blue Rectangle): Receives input from the LLM and is used for KG-related tasks.

* **Output:** An output is generated from the KG.

* **Flow:** KG-related Tasks -> LLM, LLM -> KG -> Output.

**c. Synergized LLMs + KGs:**

* **Components:**

* LLM (Yellow Rectangle):

* KG (Blue Rectangle):

* **Flow:** Bidirectional flow between LLM and KG, indicated by two arrows. One arrow goes from KG to LLM labeled "Factual Knowledge". The other arrow goes from LLM to KG labeled "Knowledge Representation".

### Key Observations

* The diagram emphasizes the complementary strengths of LLMs and KGs. KGs provide structured, factual knowledge, while LLMs excel at language processing and generalization.

* The "Synergized" approach (c) highlights a more integrated and iterative relationship between LLMs and KGs, suggesting a continuous exchange of knowledge.

* The use of "......" in the bulleted lists indicates that the lists are not exhaustive.

### Interpretation

The diagram illustrates a progression in the integration of LLMs and KGs. The initial approaches (a and b) treat one as an enhancement to the other. However, the final approach (c) suggests a more symbiotic relationship where both LLMs and KGs continuously learn from and improve each other. This synergistic approach is likely to yield the most powerful results, leveraging the strengths of both technologies to create more robust and knowledgeable AI systems. The bidirectional arrows in section (c) are crucial, indicating that the LLM doesn't just *use* the KG, but also contributes to its refinement and expansion through knowledge representation. This suggests a dynamic system capable of continuous learning and adaptation. The diagram is a conceptual illustration of potential architectures rather than a presentation of empirical data.

</details>

Figure 6: The general roadmap of unifying KGs and LLMs. (a.) KG-enhanced LLMs. (b.) LLM-augmented KGs. (c.) Synergized LLMs + KGs.

2.2.4 Multi-modal Knowledge Graphs.

Unlike conventional knowledge graphs that only contain textual information, multi-modal knowledge graphs represent facts in multiple modalities such as images, sounds, and videos [83]. For example, IMGpedia [84], MMKG [85], and Richpedia [86] incorporate both the text and image information into the knowledge graphs. These knowledge graphs can be used for various multi-modal tasks such as image-text matching [87], visual question answering [88], and recommendation [89].

TABLE I: Representative applications of using LLMs and KGs.

| ChatGPT/GPT-4 | Chat Bot | ✓ | | https://shorturl.at/cmsE0 |

| --- | --- | --- | --- | --- |

| ERNIE 3.0 | Chat Bot | ✓ | ✓ | https://shorturl.at/sCLV9 |

| Bard | Chat Bot | ✓ | ✓ | https://shorturl.at/pDLY6 |

| Firefly | Photo Editing | ✓ | | https://shorturl.at/fkzJV |

| AutoGPT | AI Assistant | ✓ | | https://shorturl.at/bkoSY |

| Copilot | Coding Assistant | ✓ | | https://shorturl.at/lKLUV |

| New Bing | Web Search | ✓ | | https://shorturl.at/bimps |

| Shop.ai | Recommendation | ✓ | | https://shorturl.at/alCY7 |

| Wikidata | Knowledge Base | | ✓ | https://shorturl.at/lyMY5 |

| KO | Knowledge Base | | ✓ | https://shorturl.at/sx238 |

| OpenBG | Recommendation | | ✓ | https://shorturl.at/pDMV9 |

| Doctor.ai | Health Care Assistant | ✓ | ✓ | https://shorturl.at/dhlK0 |

2.3 Applications

LLMs as KGs have been widely applied in various real-world applications. We summarize some representative applications of using LLMs and KGs in Table I. ChatGPT/GPT-4 are LLM-based chatbots that can communicate with humans in a natural dialogue format. To improve knowledge awareness of LLMs, ERNIE 3.0 and Bard incorporate KGs into their chatbot applications. Instead of Chatbot. Firefly develops a photo editing application that allows users to edit photos by using natural language descriptions. Copilot, New Bing, and Shop.ai adopt LLMs to empower their applications in the areas of coding assistant, web search, and recommendation, respectively. Wikidata and KO are two representative knowledge graph applications that are used to provide external knowledge. OpenBG [90] is a knowledge graph designed for recommendation. Doctor.ai develops a health care assistant that incorporates LLMs and KGs to provide medical advice.

3 Roadmap & Categorization

In this section, we first present a road map of explicit frameworks that unify LLMs and KGs. Then, we present the categorization of research on unifying LLMs and KGs.

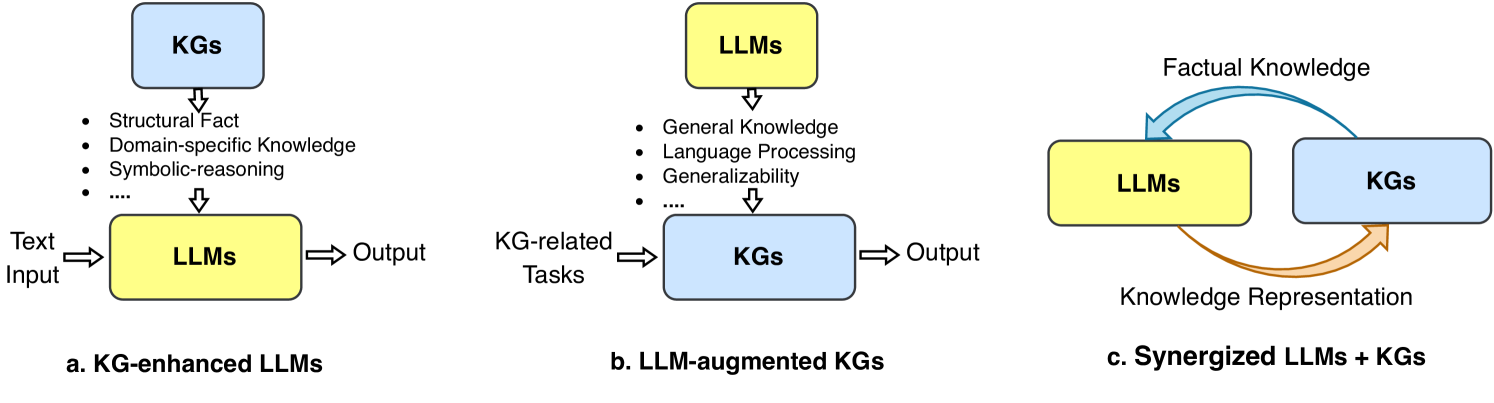

3.1 Roadmap

The roadmap of unifying KGs and LLMs is illustrated in Fig. 6. In the roadmap, we identify three frameworks for the unification of LLMs and KGs, including KG-enhanced LLMs, LLM-augmented KGs, and Synergized LLMs + KGs. The KG-enhanced LLMs and LLM-augmented KGs are two parallel frameworks that aim to enhance the capabilities of LLMs and KGs, respectively. Building upon these frameworks, Synergized LLMs + KGs is a unified framework that aims to synergize LLMs and KGs to mutually enhance each other.

3.1.1 KG-enhanced LLMs

LLMs are renowned for their ability to learn knowledge from large-scale corpus and achieve state-of-the-art performance in various NLP tasks. However, LLMs are often criticized for their hallucination issues [15], and lacking of interpretability. To address these issues, researchers have proposed to enhance LLMs with knowledge graphs (KGs).

KGs store enormous knowledge in an explicit and structured way, which can be used to enhance the knowledge awareness of LLMs. Some researchers have proposed to incorporate KGs into LLMs during the pre-training stage, which can help LLMs learn knowledge from KGs [35, 91]. Other researchers have proposed to incorporate KGs into LLMs during the inference stage. By retrieving knowledge from KGs, it can significantly improve the performance of LLMs in accessing domain-specific knowledge [92]. To improve the interpretability of LLMs, researchers also utilize KGs to interpret the facts [14] and the reasoning process of LLMs [38].

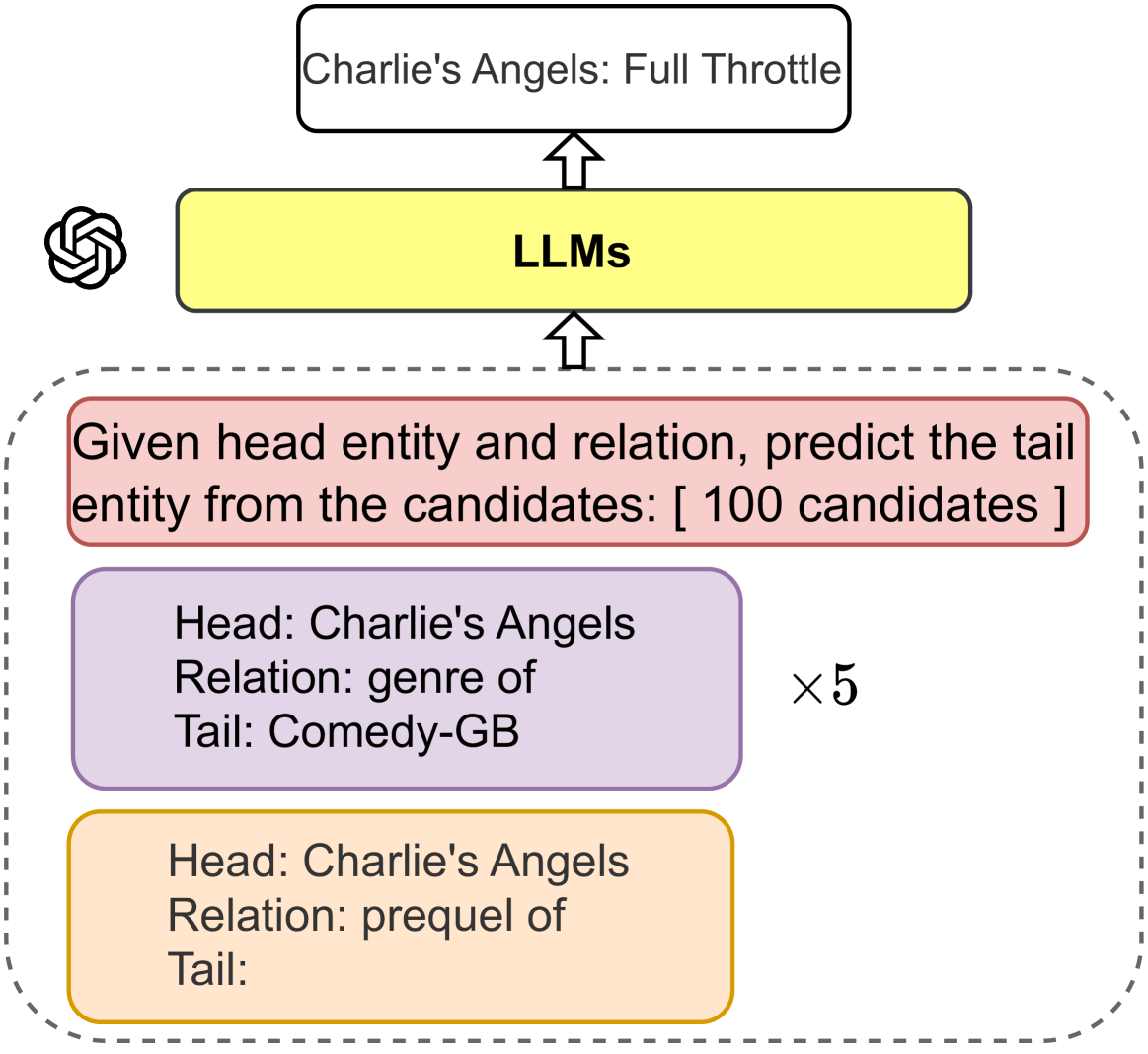

3.1.2 LLM-augmented KGs

KGs store structure knowledge playing an essential role in many real-word applications [19]. Existing methods in KGs fall short of handling incomplete KGs [33] and processing text corpus to construct KGs [93]. With the generalizability of LLMs, many researchers are trying to harness the power of LLMs for addressing KG-related tasks.

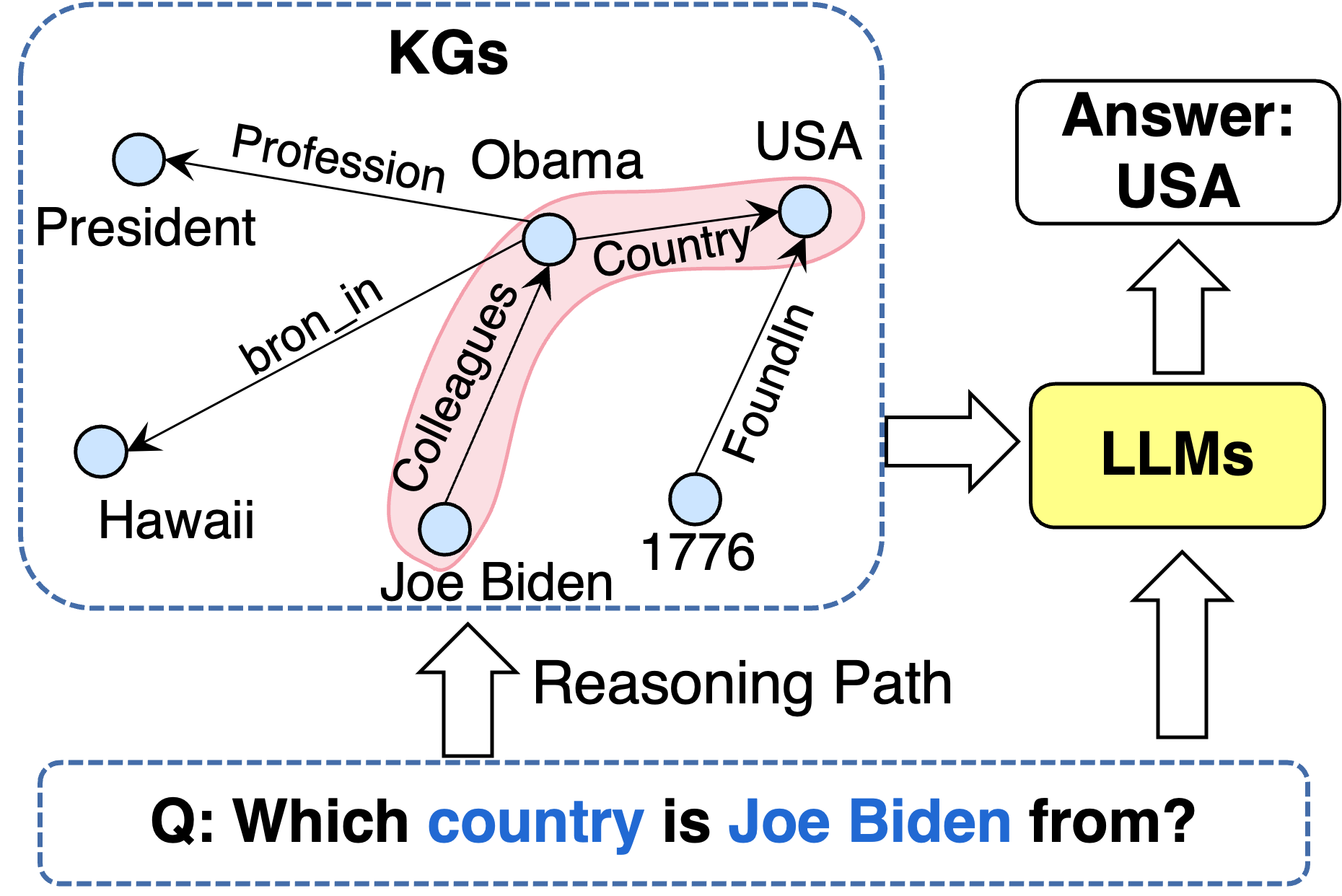

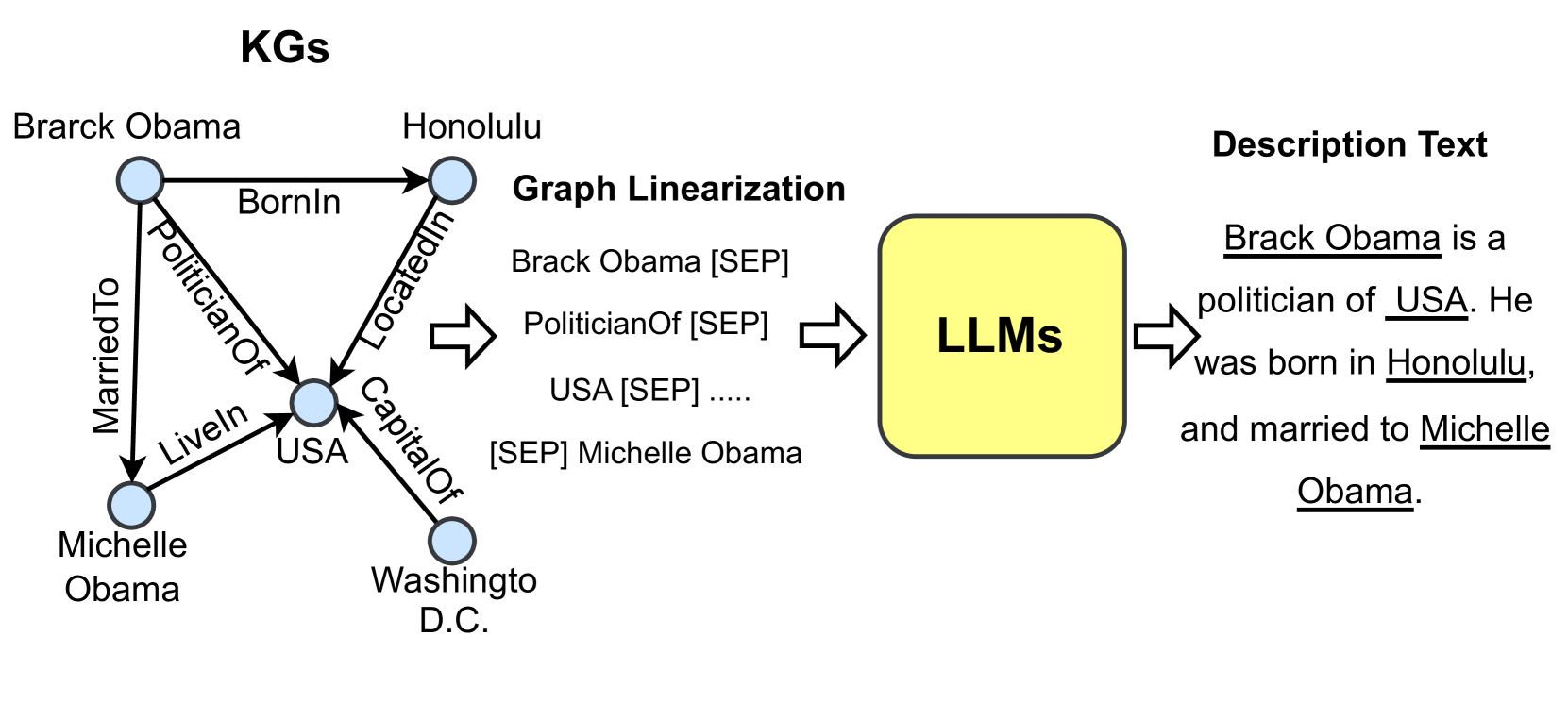

The most straightforward way to apply LLMs as text encoders for KG-related tasks. Researchers take advantage of LLMs to process the textual corpus in the KGs and then use the representations of the text to enrich KGs representation [94]. Some studies also use LLMs to process the original corpus and extract relations and entities for KG construction [95]. Recent studies try to design a KG prompt that can effectively convert structural KGs into a format that can be comprehended by LLMs. In this way, LLMs can be directly applied to KG-related tasks, e.g., KG completion [96] and KG reasoning [97].

<details>

<summary>x6.png Details</summary>

### Visual Description

\n

## Diagram: Synergized Model for AI Applications

### Overview

This diagram illustrates a layered architecture for building AI applications, focusing on the synergy between Large Language Models (LLMs) and Knowledge Graphs (KGs). It depicts the flow of information from Data, through Techniques, to a Synergized Model, and finally to various Applications. The diagram uses boxes with rounded corners to represent layers and components, and arrows to indicate the flow of information.

### Components/Axes

The diagram is structured into four main layers, arranged vertically from bottom to top:

1. **Data:** Contains "Structural Fact", "Text Corpus", "Image", "Video", and an ellipsis ("...") indicating other data types.

2. **Synergized Model:** Contains two main components: "LLMs" (Large Language Models) and "KGs" (Knowledge Graphs). LLMs are associated with bullet points: "General Knowledge", "Language Processing", and "Generalizability". KGs are associated with bullet points: "Explicit Knowledge", "Domain-specific Knowledge", "Decisiveness", and "Interpretability". A blue arrow points from LLMs to KGs.

3. **Technique:** Contains "Prompt Engineering", "Graph Neural Network", "In-context Learning", "Representation Learning", "Neural-symbolic Reasoning", and "Few-shot Learning".

4. **Application:** Contains "Search Engine", "Recommender System", "Dialogue System", and "AI Assistant".

Dotted lines with arrows connect each layer to the layer above it, indicating the flow of information.

### Detailed Analysis or Content Details

The diagram shows a hierarchical relationship between the layers.

* **Data Layer:** This layer represents the raw input data used by the system. It includes structured facts, text, images, and videos.

* **Synergized Model Layer:** This layer represents the core of the system, where LLMs and KGs are combined. The arrow from LLMs to KGs suggests a flow of information or influence from LLMs to KGs.

* **Technique Layer:** This layer represents the methods and algorithms used to process the data and build the synergized model.

* **Application Layer:** This layer represents the end-user applications that utilize the synergized model.

The diagram does not contain numerical data or specific values. It is a conceptual representation of a system architecture.

### Key Observations

The diagram emphasizes the importance of combining LLMs and KGs for building advanced AI applications. The flow of information from data to applications is clearly illustrated. The inclusion of various techniques highlights the complexity of the system. The diagram suggests that the synergized model benefits from both the general knowledge and language processing capabilities of LLMs and the explicit, domain-specific knowledge and interpretability of KGs.

### Interpretation

The diagram illustrates a modern approach to AI system design, moving beyond solely relying on either LLMs or KGs. It suggests that the true potential of AI lies in the synergistic combination of these two approaches. LLMs provide the ability to understand and generate human language, while KGs provide structured knowledge and reasoning capabilities. By combining these strengths, the system can achieve better performance, interpretability, and generalizability. The diagram implies that the techniques layer acts as a bridge, enabling the effective integration of data, LLMs, and KGs to deliver value through various applications. The diagram is a high-level conceptual overview and does not provide details on the specific implementation or performance of the system.

</details>

Figure 7: The general framework of the Synergized LLMs + KGs, which contains four layers: 1) Data, 2) Synergized Model, 3) Technique, and 4) Application.

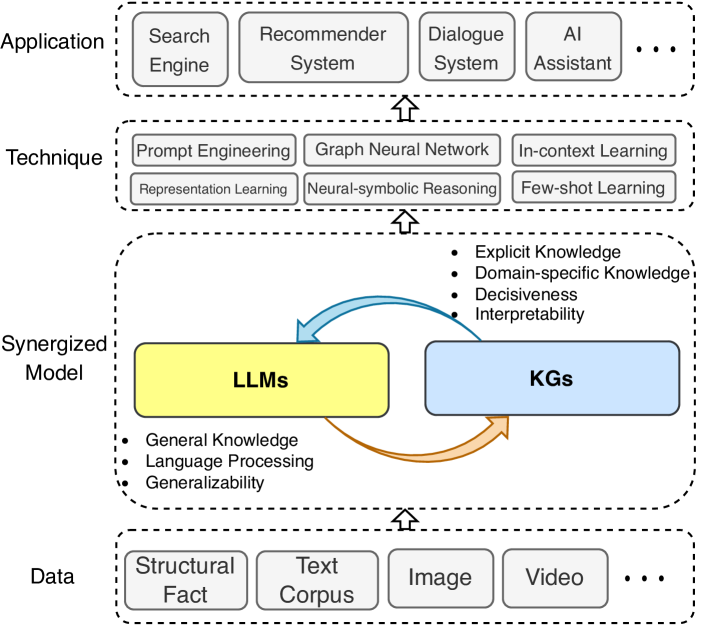

3.1.3 Synergized LLMs + KGs

The synergy of LLMs and KGs has attracted increasing attention from researchers these years [40, 42]. LLMs and KGs are two inherently complementary techniques, which should be unified into a general framework to mutually enhance each other.

To further explore the unification, we propose a unified framework of the synergized LLMs + KGs in Fig. 7. The unified framework contains four layers: 1) Data, 2) Synergized Model, 3) Technique, and 4) Application. In the Data layer, LLMs and KGs are used to process the textual and structural data, respectively. With the development of multi-modal LLMs [98] and KGs [99], this framework can be extended to process multi-modal data, such as video, audio, and images. In the Synergized Model layer, LLMs and KGs could synergize with each other to improve their capabilities. In Technique layer, related techniques that have been used in LLMs and KGs can be incorporated into this framework to further enhance the performance. In the Application layer, LLMs and KGs can be integrated to address various real-world applications, such as search engines [100], recommender systems [10], and AI assistants [101].

<details>

<summary>x7.png Details</summary>

### Visual Description

\n

## Diagram: LLMs Meet KGs - A Taxonomy of Approaches

### Overview

The image is a diagram illustrating a taxonomy of approaches at the intersection of Large Language Models (LLMs) and Knowledge Graphs (KGs). It categorizes these approaches into four main areas: KG-enhanced LLMs, LLM-augmented KGs, LLMs Meet KGs (a central connector), and Synergized LLMs + KGs. Each area branches out into more specific techniques, represented as rectangular nodes connected by arrows. The diagram visually represents a hierarchical relationship between these techniques.

### Components/Axes

The diagram is structured around four primary categories, positioned vertically and horizontally. The categories are:

* **KG-enhanced LLMs** (Leftmost column, yellow boxes)

* **LLMs Meet KGs** (Central connector, green boxes)

* **LLM-augmented KGs** (Rightmost column, cyan boxes)

* **Synergized LLMs + KGs** (Bottom, teal boxes)

Each category has sub-categories, indicated by the rectangular nodes. Arrows connect these nodes, suggesting a flow or relationship between them. There are no explicit axes or scales in the traditional chart sense.

### Detailed Analysis or Content Details

**1. KG-enhanced LLMs (Yellow)**

* **KG-enhanced LLM pre-training:**

* Integrating KGs into training objective

* Integrating KGs into LLM inputs

* KGs Instruction-tuning

* Retrieval-augmented knowledge fusion

* **KG-enhanced LLM inference:**

* KGs Prompting

* **KG-enhanced LLM interpretability:**

* KGs for LLM probing

* KGs for LLM analysis

**2. LLMs Meet KGs (Green)**

* **LLM-augmented KG embedding:**

* LLMs as text encoders

* LLMs for joint text and KG embedding

* **LLM-augmented KG completion:**

* LLMs as encoders

* LLMs as generators

* **LLM-augmented KG construction:**

* Entity discovery

* Relation extraction

* Coreference resolution

* End-to-End KG construction

* Distilling KGs from LLMs

* **LLM-augmented KG to text generation:**

* Leveraging knowledge from LLMs

* LLMs for constructing KG-text aligned Corpus

* **LLM-augmented KG question answering:**

* LLMs as entity/relation extractors

* LLMs as answer reasoners

**3. LLM-augmented KGs (Cyan)**

* No specific sub-categories are listed.

**4. Synergized LLMs + KGs (Teal)**

* **Synergized Knowledge Representation:**

* LLM-KG fusion reasoning

* **Synergized Reasoning:**

* LLMs as agents reasoning

The diagram uses arrows to indicate relationships between the categories and sub-categories. The positioning of the categories suggests a progression or interplay between them.

### Key Observations

The diagram highlights the diverse ways LLMs and KGs can be integrated. The "LLMs Meet KGs" category appears to be a central hub, connecting the KG-enhanced LLMs and LLM-augmented KGs. The "Synergized LLMs + KGs" category represents a more advanced stage of integration, focusing on reasoning and knowledge representation. The diagram is not quantitative; it's a qualitative representation of different approaches.

### Interpretation

This diagram illustrates the evolving landscape of research combining LLMs and KGs. It suggests a shift from simply enhancing LLMs with KGs (KG-enhanced LLMs) or augmenting KGs with LLMs (LLM-augmented KGs) towards a more synergistic approach where both technologies work together to achieve more complex tasks (Synergized LLMs + KGs). The "LLMs Meet KGs" category acts as a bridge, showcasing various intermediate techniques like KG embedding, completion, construction, and question answering.

The diagram implies that the field is moving beyond simply using one technology to improve the other and is now exploring how to create truly integrated systems that leverage the strengths of both LLMs (reasoning, language understanding) and KGs (structured knowledge, explainability). The lack of specific data points suggests this is a conceptual overview rather than a quantitative analysis of performance. The diagram serves as a useful taxonomy for researchers and practitioners in this rapidly developing field. It provides a framework for understanding the different approaches and identifying potential areas for future research.

</details>

Figure 8: Fine-grained categorization of research on unifying large language models (LLMs) with knowledge graphs (KGs).

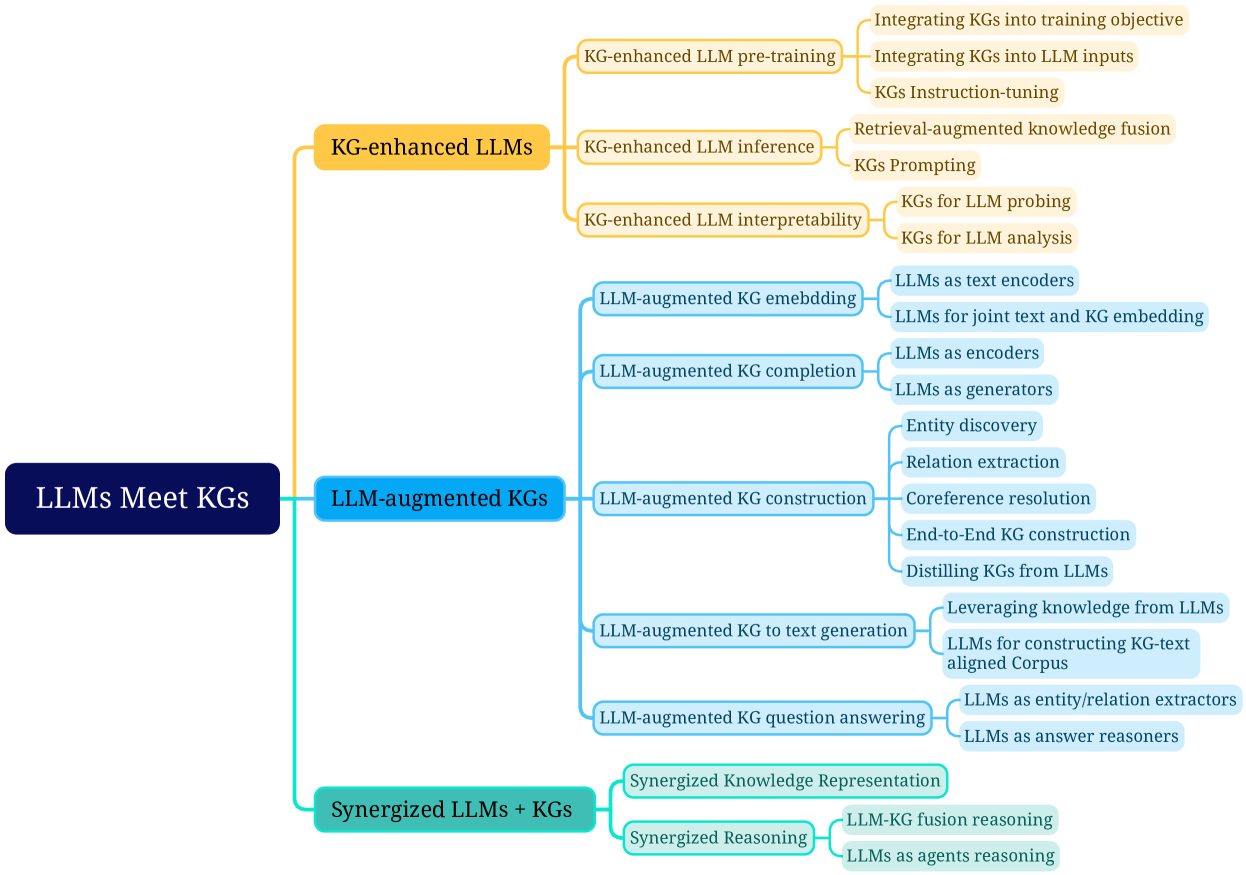

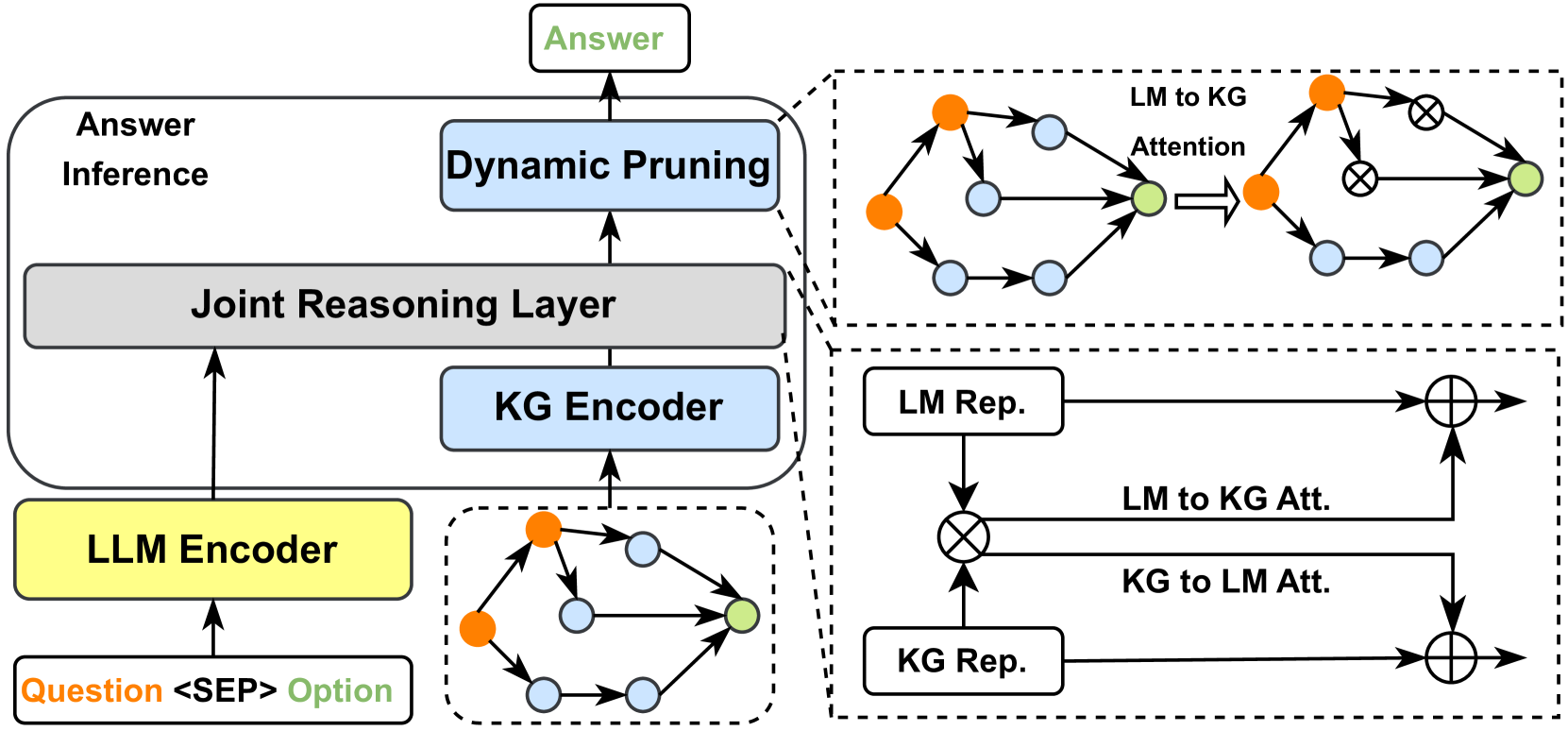

3.2 Categorization

To better understand the research on unifying LLMs and KGs, we further provide a fine-grained categorization for each framework in the roadmap. Specifically, we focus on different ways of integrating KGs and LLMs, i.e., KG-enhanced LLMs, KG-augmented LLMs, and Synergized LLMs + KGs. The fine-grained categorization of the research is illustrated in Fig. 8.

KG-enhanced LLMs. Integrating KGs can enhance the performance and interpretability of LLMs in various downstream tasks. We categorize the research on KG-enhanced LLMs into three groups:

1. KG-enhanced LLM pre-training includes works that apply KGs during the pre-training stage and improve the knowledge expression of LLMs.

1. KG-enhanced LLM inference includes research that utilizes KGs during the inference stage of LLMs, which enables LLMs to access the latest knowledge without retraining.

1. KG-enhanced LLM interpretability includes works that use KGs to understand the knowledge learned by LLMs and interpret the reasoning process of LLMs.

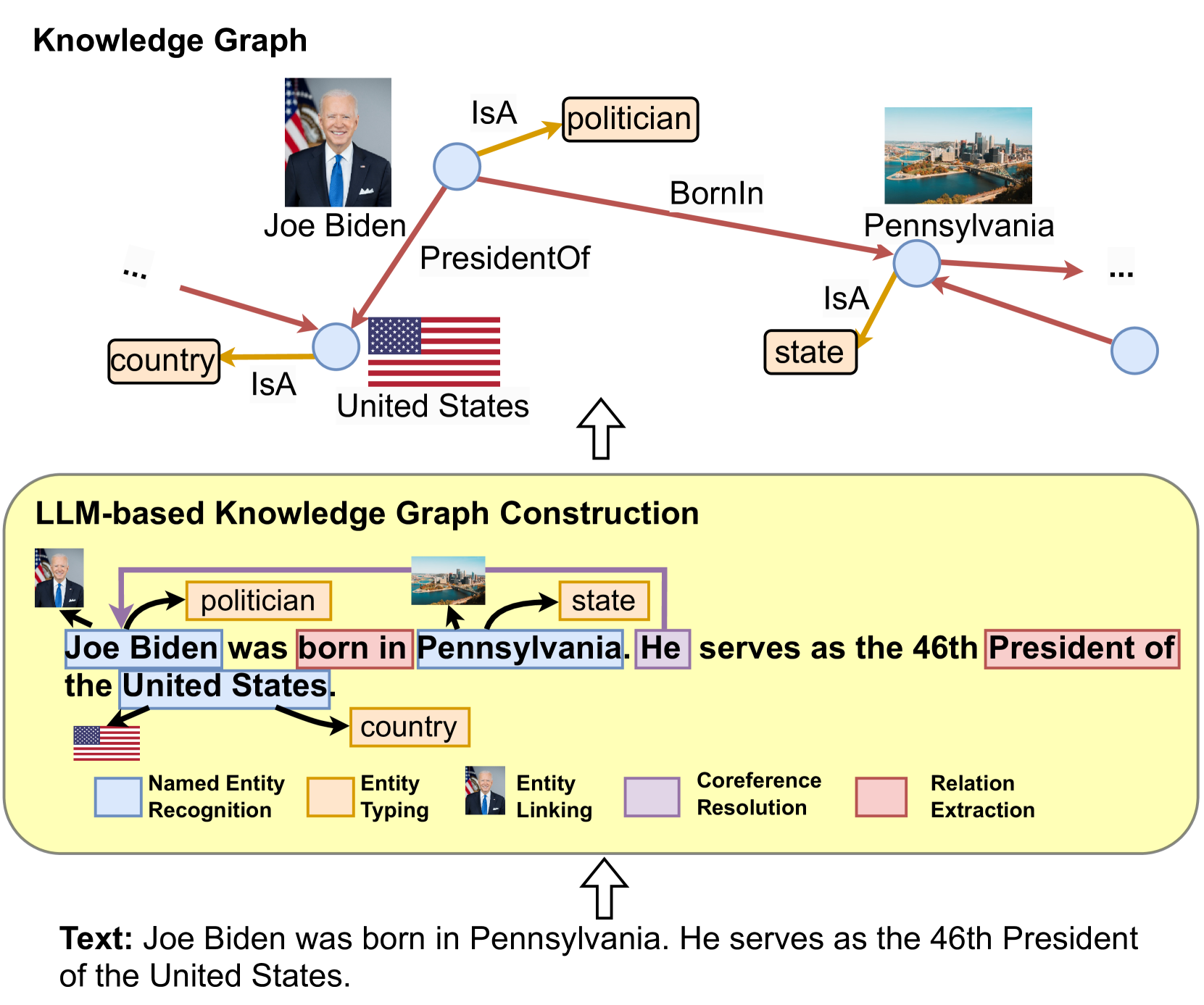

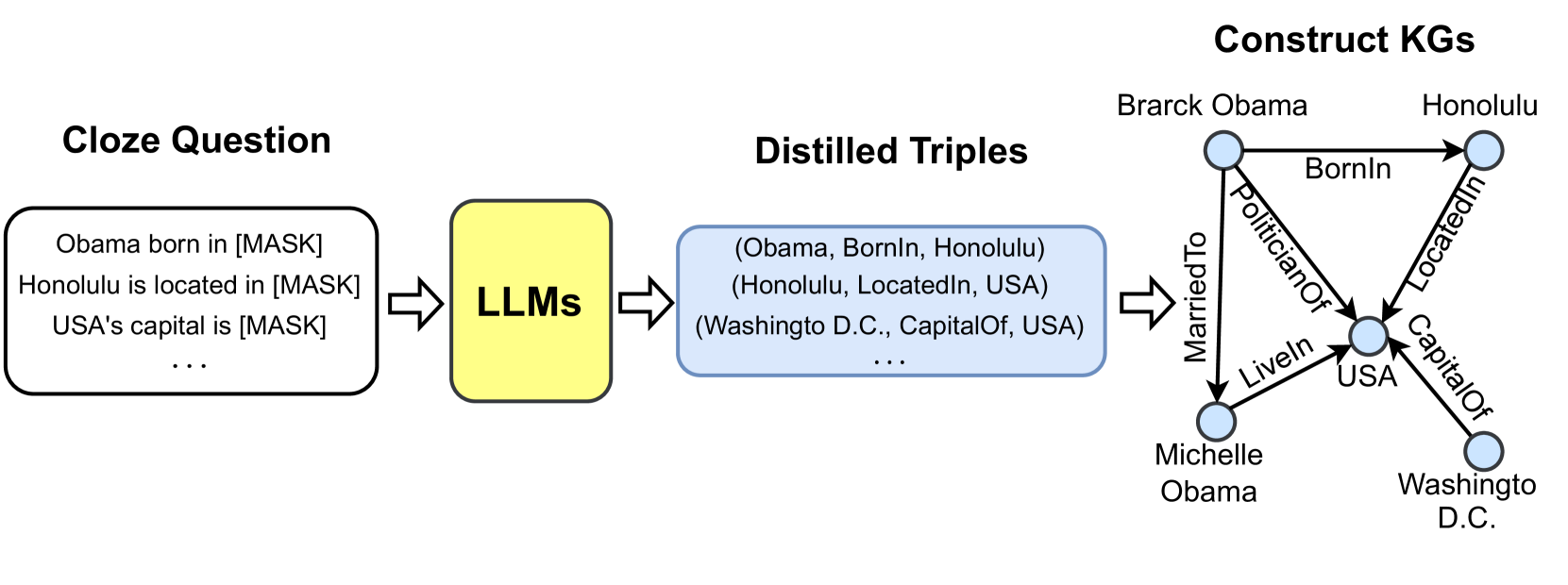

LLM-augmented KGs. LLMs can be applied to augment various KG-related tasks. We categorize the research on LLM-augmented KGs into five groups based on the task types:

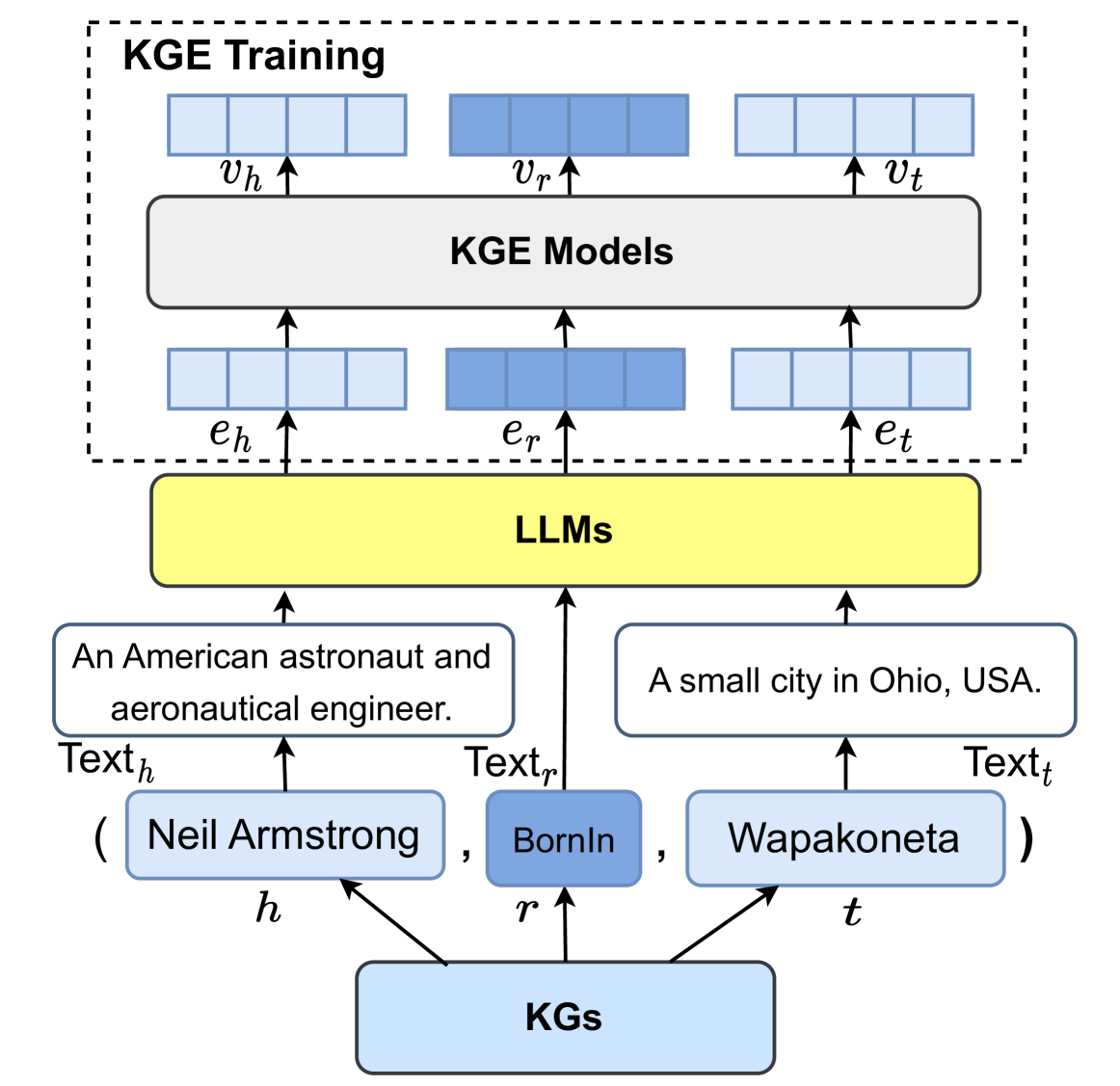

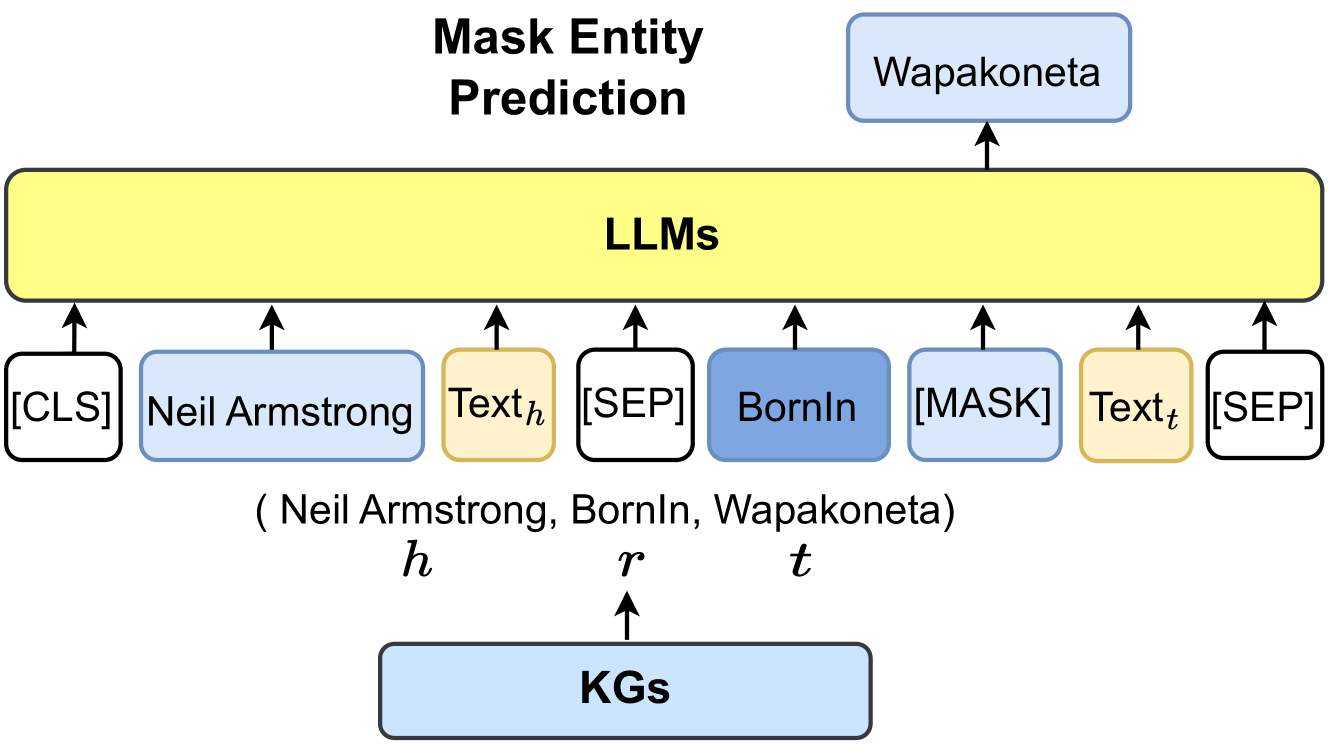

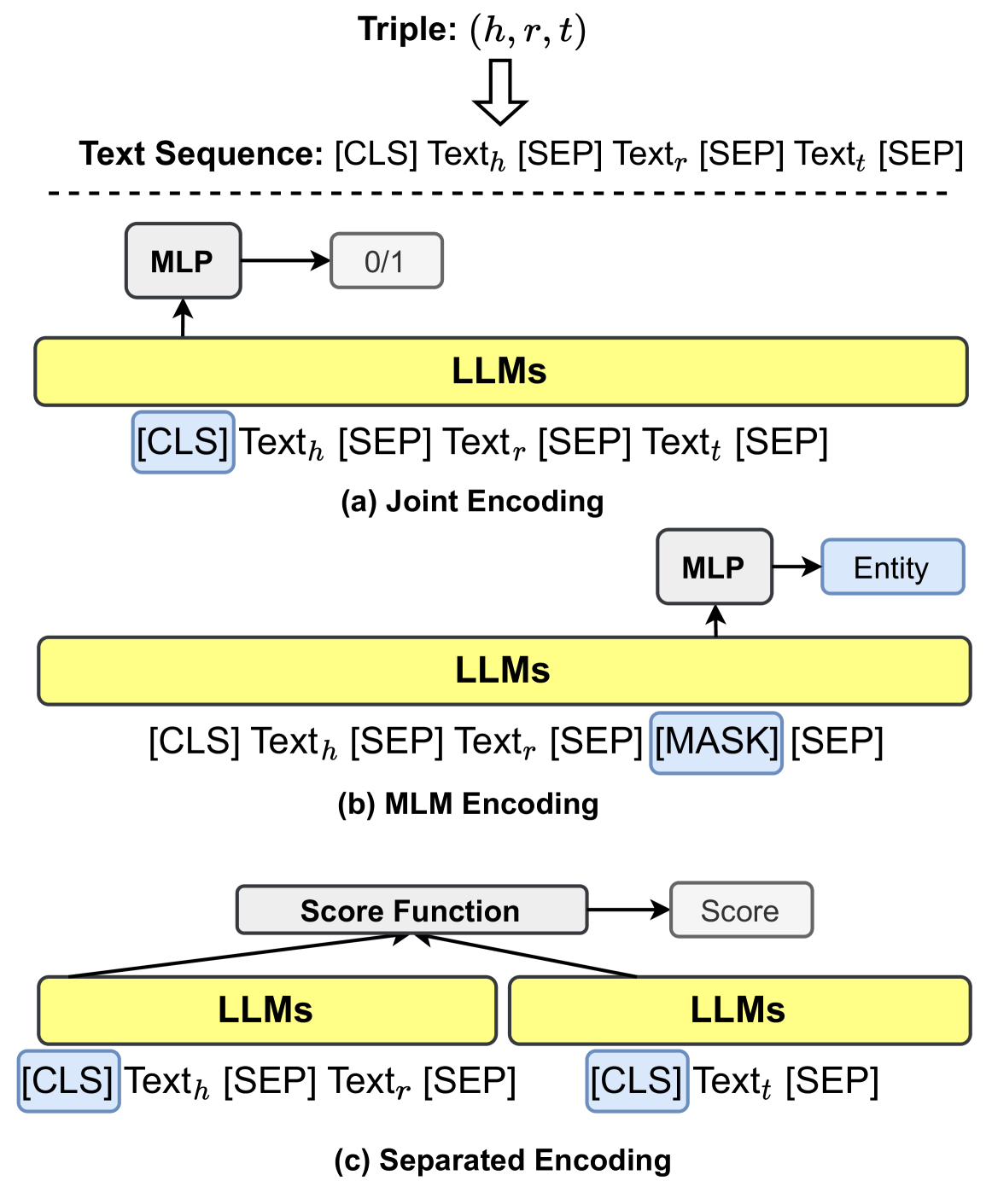

1. LLM-augmented KG embedding includes studies that apply LLMs to enrich representations of KGs by encoding the textual descriptions of entities and relations.

1. LLM-augmented KG completion includes papers that utilize LLMs to encode text or generate facts for better KGC performance.

1. LLM-augmented KG construction includes works that apply LLMs to address the entity discovery, coreference resolution, and relation extraction tasks for KG construction.

1. LLM-augmented KG-to-text Generation includes research that utilizes LLMs to generate natural language that describes the facts from KGs.

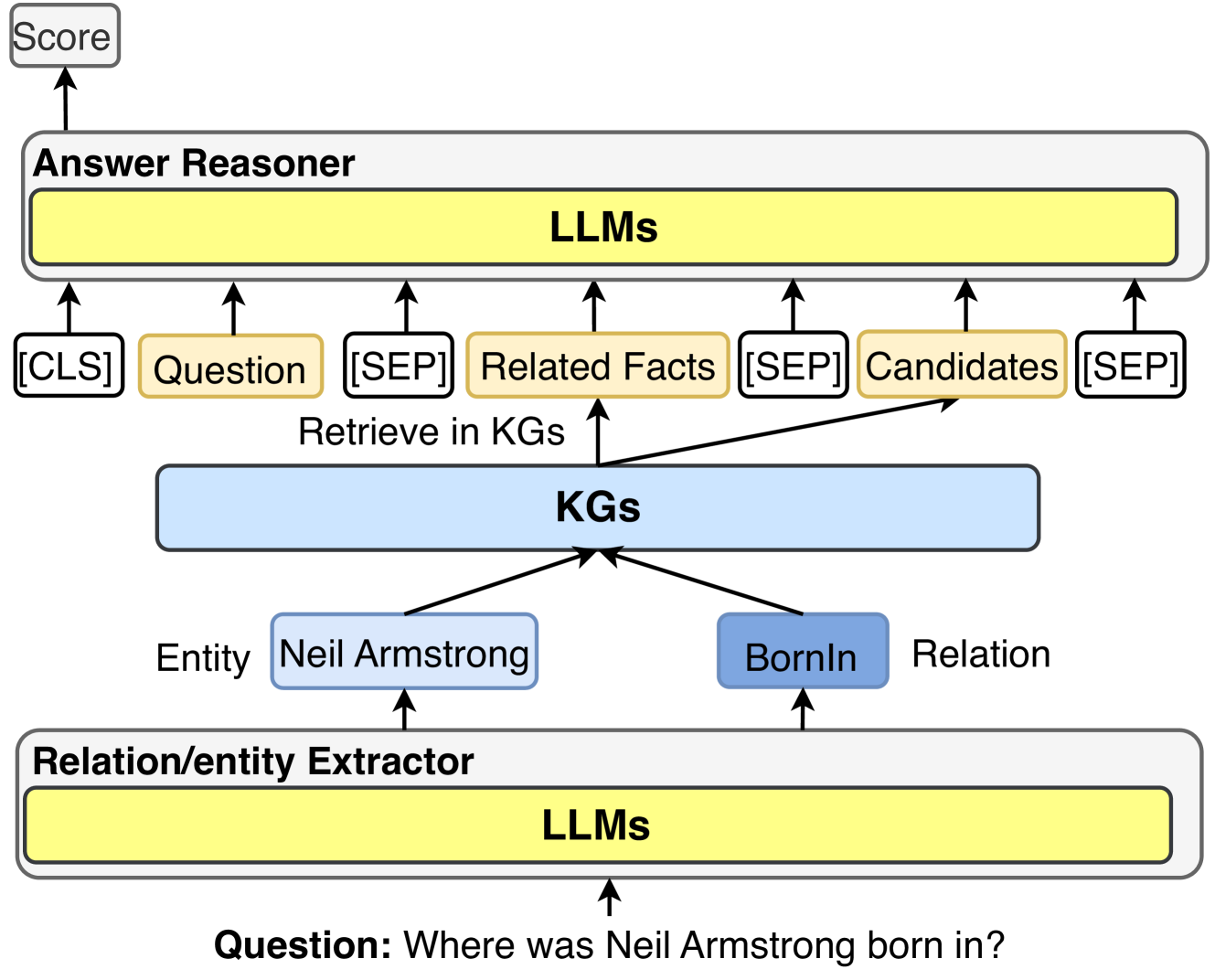

1. LLM-augmented KG question answering includes studies that apply LLMs to bridge the gap between natural language questions and retrieve answers from KGs.

Synergized LLMs + KGs. The synergy of LLMs and KGs aims to integrate LLMs and KGs into a unified framework to mutually enhance each other. In this categorization, we review the recent attempts of Synergized LLMs + KGs from the perspectives of knowledge representation and reasoning.

In the following sections (Sec 4, 5, and 6), we will provide details on these categorizations.

4 KG-enhanced LLMs

Large language models (LLMs) achieve promising results in many natural language processing tasks. However, LLMs have been criticized for their lack of practical knowledge and tendency to generate factual errors during inference. To address this issue, researchers have proposed integrating knowledge graphs (KGs) to enhance LLMs. In this section, we first introduce the KG-enhanced LLM pre-training, which aims to inject knowledge into LLMs during the pre-training stage. Then, we introduce the KG-enhanced LLM inference, which enables LLMs to consider the latest knowledge while generating sentences. Finally, we introduce the KG-enhanced LLM interpretability, which aims to improve the interpretability of LLMs by using KGs. Table II summarizes the typical methods that integrate KGs for LLMs.

TABLE II: Summary of KG-enhanced LLM methods.

[b] Task Method Year KG Technique KG-enhanced LLM pre-training ERNIE [35] 2019 E Integrating KGs into Training Objective GLM [102] 2020 C Integrating KGs into Training Objective Ebert [103] 2020 D Integrating KGs into Training Objective KEPLER [40] 2021 E Integrating KGs into Training Objective Deterministic LLM [104] 2022 E Integrating KGs into Training Objective KALA [105] 2022 D Integrating KGs into Training Objective WKLM [106] 2020 E Integrating KGs into Training Objective K-BERT [36] 2020 E + D Integrating KGs into Language Model Inputs CoLAKE [107] 2020 E Integrating KGs into Language Model Inputs ERNIE3.0 [101] 2021 E + D Integrating KGs into Language Model Inputs DkLLM [108] 2022 E Integrating KGs into Language Model Inputs KP-PLM [109] 2022 E KGs Instruction-tuning OntoPrompt [110] 2022 E + D KGs Instruction-tuning ChatKBQA [111] 2023 E KGs Instruction-tuning RoG [112] 2023 E KGs Instruction-tuning KG-enhanced LLM inference KGLM [113] 2019 E Retrival-augmented knowledge fusion REALM [114] 2020 E Retrival-augmented knowledge fusion RAG [92] 2020 E Retrival-augmented knowledge fusion EMAT [115] 2022 E Retrival-augmented knowledge fusion Li et al. [64] 2023 C KGs Prompting Mindmap [65] 2023 E + D KGs Prompting ChatRule [116] 2023 E + D KGs Prompting CoK [117] 2023 E + C + D KGs Prompting KG-enhanced LLM interpretability LAMA [14] 2019 E KGs for LLM probing LPAQA [118] 2020 E KGs for LLM probing Autoprompt [119] 2020 E KGs for LLM probing MedLAMA [120] 2022 D KGs for LLM probing LLM-facteval [121] 2023 E + D KGs for LLM probing KagNet [38] 2019 C KGs for LLM analysis Interpret-lm [122] 2021 E KGs for LLM analysis knowledge-neurons [39] 2021 E KGs for LLM analysis Shaobo et al. [123] 2022 E KGs for LLM analysis

- E: Encyclopedic Knowledge Graphs, C: Commonsense Knowledge Graphs, D: Domain-Specific Knowledge Graphs.

4.1 KG-enhanced LLM Pre-training

Existing large language models mostly rely on unsupervised training on the large-scale corpus. While these models may exhibit impressive performance on downstream tasks, they often lack practical knowledge relevant to the real world. Previous works that integrate KGs into large language models can be categorized into three parts: 1) Integrating KGs into training objective, 2) Integrating KGs into LLM inputs, and 3) KGs Instruction-tuning.

4.1.1 Integrating KGs into Training Objective

The research efforts in this category focus on designing novel knowledge-aware training objectives. An intuitive idea is to expose more knowledge entities in the pre-training objective. GLM [102] leverages the knowledge graph structure to assign a masking probability. Specifically, entities that can be reached within a certain number of hops are considered to be the most important entities for learning, and they are given a higher masking probability during pre-training. Furthermore, E-BERT [103] further controls the balance between the token-level and entity-level training losses. The training loss values are used as indications of the learning process for token and entity, which dynamically determines their ratio for the next training epochs. SKEP [124] also follows a similar fusion to inject sentiment knowledge during LLMs pre-training. SKEP first determines words with positive and negative sentiment by utilizing PMI along with a predefined set of seed sentiment words. Then, it assigns a higher masking probability to those identified sentiment words in the word masking objective.

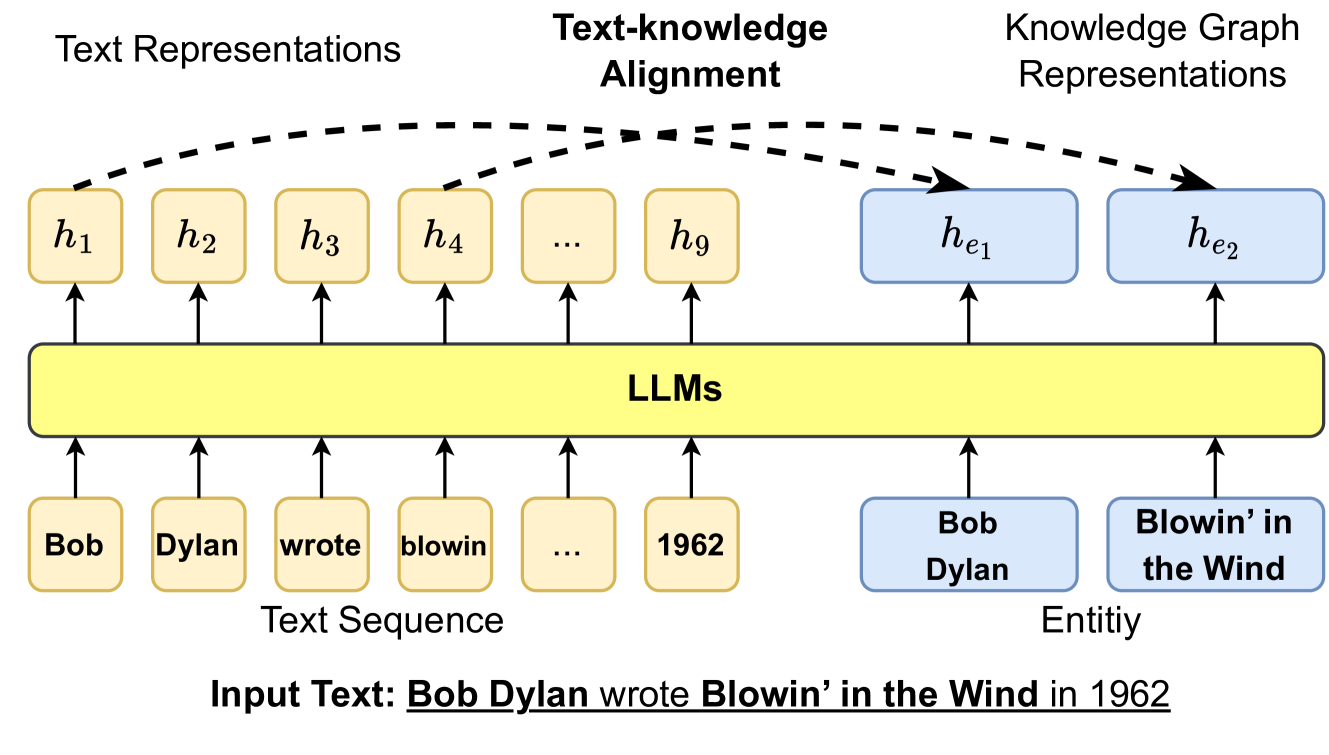

The other line of work explicitly leverages the connections with knowledge and input text. As shown in Fig. 9, ERNIE [35] proposes a novel word-entity alignment training objective as a pre-training objective. Specifically, ERNIE feeds both sentences and corresponding entities mentioned in the text into LLMs, and then trains the LLMs to predict alignment links between textual tokens and entities in knowledge graphs. Similarly, KALM [91] enhances the input tokens by incorporating entity embeddings and includes an entity prediction pre-training task in addition to the token-only pre-training objective. This approach aims to improve the ability of LLMs to capture knowledge related to entities. Finally, KEPLER [40] directly employs both knowledge graph embedding training objective and Masked token pre-training objective into a shared transformer-based encoder. Deterministic LLM [104] focuses on pre-training language models to capture deterministic factual knowledge. It only masks the span that has a deterministic entity as the question and introduces additional clue contrast learning and clue classification objective. WKLM [106] first replaces entities in the text with other same-type entities and then feeds them into LLMs. The model is further pre-trained to distinguish whether the entities have been replaced or not.

<details>

<summary>x8.png Details</summary>

### Visual Description

\n

## Diagram: Text-to-Knowledge Alignment with LLMs

### Overview

This diagram illustrates the process of aligning text representations with knowledge graph representations using Large Language Models (LLMs). It depicts how an input text sequence is processed by LLMs to generate text representations, which are then aligned with corresponding entities in a knowledge graph.

### Components/Axes

The diagram consists of three main horizontal sections: "Text Representations", "Text-knowledge Alignment", and "Knowledge Graph Representations". Below these sections is a "Text Sequence" and "Entity" section. The central component is a yellow rectangle labeled "LLMs".

* **Text Representations:** Contains a series of labeled nodes: h1, h2, h3, h4, ..., h9.

* **Text-knowledge Alignment:** A dashed line connecting the "Text Representations" and "Knowledge Graph Representations" sections, labeled "Text-knowledge Alignment".

* **Knowledge Graph Representations:** Contains a series of labeled nodes: he1, he2.

* **Text Sequence:** Contains the following words in yellow rounded rectangles: "Bob", "Dylan", "wrote", "blowin'", "...", "1962".

* **Entity:** Contains the following entities in green rounded rectangles: "Bob Dylan", "Blowin' in the Wind".

* **LLMs:** A large yellow rectangle in the center, acting as the processing unit.

* **Input Text:** "Bob Dylan wrote Blowin’ in the Wind in 1962"

### Detailed Analysis or Content Details

The diagram shows the following relationships:

* "Bob" in the Text Sequence is connected to h1 in Text Representations via an upward arrow.

* "Dylan" in the Text Sequence is connected to h2 in Text Representations via an upward arrow.

* "wrote" in the Text Sequence is connected to h3 in Text Representations via an upward arrow.

* "blowin'" in the Text Sequence is connected to h4 in Text Representations via an upward arrow.

* "..." in the Text Sequence is connected to an unspecified number of h nodes in Text Representations.

* "1962" in the Text Sequence is connected to h9 in Text Representations via an upward arrow.

* "Bob Dylan" in the Entity section is connected to he1 in Knowledge Graph Representations via an upward arrow.

* "Blowin' in the Wind" in the Entity section is connected to he2 in Knowledge Graph Representations via an upward arrow.

* The LLMs rectangle receives input from both the Text Sequence and the Knowledge Graph Representations.

* The dashed line "Text-knowledge Alignment" indicates a connection between the Text Representations and Knowledge Graph Representations.

### Key Observations

The diagram highlights the transformation of text into numerical representations (h1-h9, he1-he2) by the LLM. The "..." suggests that the text sequence can be of variable length. The alignment process aims to link textual information with corresponding entities in a knowledge graph.

### Interpretation

This diagram illustrates a core concept in modern Natural Language Processing (NLP) – grounding language in knowledge. The LLM acts as a bridge, converting text into a format suitable for reasoning and knowledge retrieval. The alignment process is crucial for tasks like question answering, information extraction, and semantic search. The diagram suggests that the LLM learns to represent both the text and the entities in a shared embedding space, enabling the alignment. The use of "h" and "he" notation likely refers to hidden states or embeddings within the LLM and knowledge graph, respectively. The diagram doesn't provide specific data or numerical values, but rather a conceptual overview of the process. It demonstrates how LLMs can be used to connect natural language with structured knowledge, enabling more sophisticated AI applications.

</details>

Figure 9: Injecting KG information into LLMs training objective via text-knowledge alignment loss, where $h$ denotes the hidden representation generated by LLMs.

4.1.2 Integrating KGs into LLM Inputs

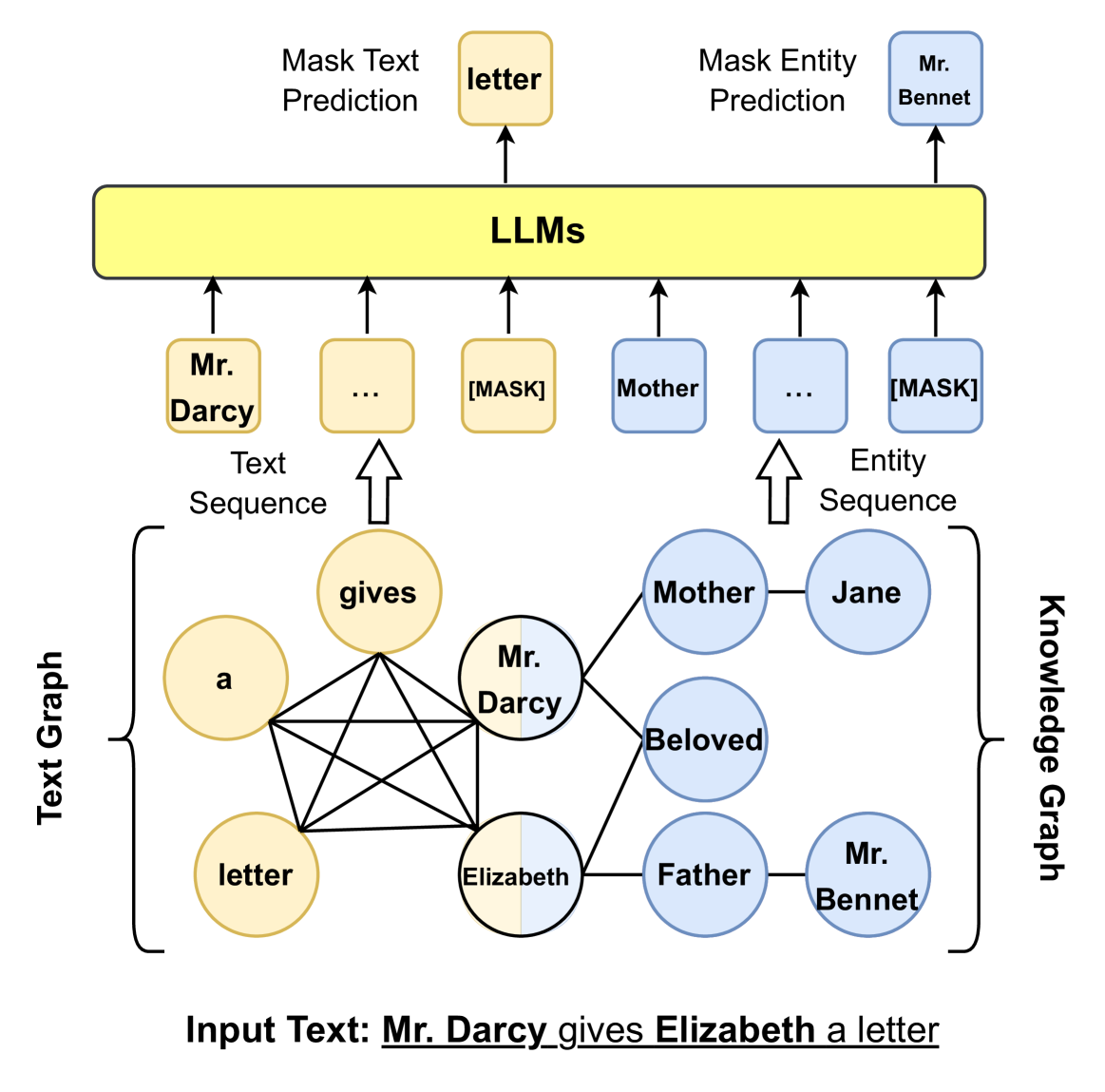

As shown in Fig. 10, this kind of research focus on introducing relevant knowledge sub-graph into the inputs of LLMs. Given a knowledge graph triple and the corresponding sentences, ERNIE 3.0 [101] represents the triple as a sequence of tokens and directly concatenates them with the sentences. It further randomly masks either the relation token in the triple or tokens in the sentences to better combine knowledge with textual representations. However, such direct knowledge triple concatenation method allows the tokens in the sentence to intensively interact with the tokens in the knowledge sub-graph, which could result in Knowledge Noise [36]. To solve this issue, K-BERT [36] takes the first step to inject the knowledge triple into the sentence via a visible matrix where only the knowledge entities have access to the knowledge triple information, while the tokens in the sentences can only see each other in the self-attention module. To further reduce Knowledge Noise, Colake [107] proposes a unified word-knowledge graph (shown in Fig. 10) where the tokens in the input sentences form a fully connected word graph where tokens aligned with knowledge entities are connected with their neighboring entities.

The above methods can indeed inject a large amount of knowledge into LLMs. However, they mostly focus on popular entities and overlook the low-frequent and long-tail ones. DkLLM [108] aims to improve the LLMs representations towards those entities. DkLLM first proposes a novel measurement to determine long-tail entities and then replaces these selected entities in the text with pseudo token embedding as new input to the large language models. Furthermore, Dict-BERT [125] proposes to leverage external dictionaries to solve this issue. Specifically, Dict-BERT improves the representation quality of rare words by appending their definitions from the dictionary at the end of input text and trains the language model to locally align rare word representations in input sentences and dictionary definitions as well as to discriminate whether the input text and definition are correctly mapped.

<details>

<summary>x9.png Details</summary>

### Visual Description

\n

## Diagram: LLM Processing of Text and Knowledge Graphs

### Overview

This diagram illustrates how Large Language Models (LLMs) process text and knowledge graphs, specifically focusing on masked text and entity prediction. It depicts two parallel processing paths: one using a text sequence and the other using an entity sequence, both derived from an input text. The diagram highlights the use of masking for prediction tasks.

### Components/Axes

The diagram consists of the following components:

* **Input Text:** "Mr. Darcy gives Elizabeth a letter" (located at the bottom center).

* **Text Graph:** A graph representation of the input text, showing relationships between words. (Left side)

* **Knowledge Graph:** A graph representation of entities and their relationships. (Right side)

* **LLMs:** A central block representing Large Language Models. (Center)

* **Mask Text Prediction:** A process within the LLM that predicts masked words. (Top-left)

* **Mask Entity Prediction:** A process within the LLM that predicts masked entities. (Top-right)

* **Text Sequence:** A sequence of words fed into the LLM. (Below "Mask Text Prediction")

* **Entity Sequence:** A sequence of entities fed into the LLM. (Below "Mask Entity Prediction")

### Detailed Analysis or Content Details

**Input Text:** The input text is "Mr. Darcy gives Elizabeth a letter".

**Text Graph:**

* Nodes: "a", "letter", "gives", "Mr. Darcy", "Elizabeth".

* Edges:

* "a" connects to "letter".

* "letter" connects to "gives".

* "gives" connects to "Mr. Darcy" and "Elizabeth".

* "Mr. Darcy" connects to "Elizabeth".

* "Elizabeth" connects to "letter".

**Knowledge Graph:**

* Nodes: "Mother", "Jane", "Mr. Bennet", "Father", "Beloved", "Mr. Darcy", "Elizabeth".

* Edges:

* "Mother" connects to "Jane".

* "Beloved" connects to "Mr. Darcy" and "Elizabeth".

* "Father" connects to "Elizabeth" and "Mr. Bennet".

* "Mr. Bennet" connects to "Elizabeth".

**LLM Processing:**

* **Mask Text Prediction:** The LLM receives a text sequence with a "[MASK]" token. The prediction is "letter".

* **Mask Entity Prediction:** The LLM receives an entity sequence with a "[MASK]" token. The prediction is "Mr. Bennet".

* **Text Sequence:** Shows "Mr. Darcy" followed by "..." and then "[MASK]".

* **Entity Sequence:** Shows "Mother" followed by "..." and then "[MASK]".

### Key Observations

* The diagram illustrates a parallel processing approach, handling both textual and entity-based information.

* Masking is used as a technique for prediction in both text and entity sequences.

* The knowledge graph represents relationships between entities, while the text graph represents relationships between words.

* The LLM acts as a central processing unit, receiving input from both graphs and generating predictions.

### Interpretation

The diagram demonstrates a method for leveraging LLMs to understand and reason about text by representing it in both textual and knowledge graph formats. The use of masking suggests a cloze-style prediction task, where the LLM is challenged to fill in missing information. This approach allows the LLM to utilize contextual information from both the text itself and the underlying knowledge graph to make more accurate predictions. The parallel processing of text and entities suggests that the system is designed to capture both linguistic and semantic relationships within the input text. The diagram highlights a potential architecture for building more sophisticated natural language understanding systems. The choice of "Pride and Prejudice" characters suggests a focus on relational understanding and character interactions.

</details>

Figure 10: Injecting KG information into LLMs inputs using graph structure.

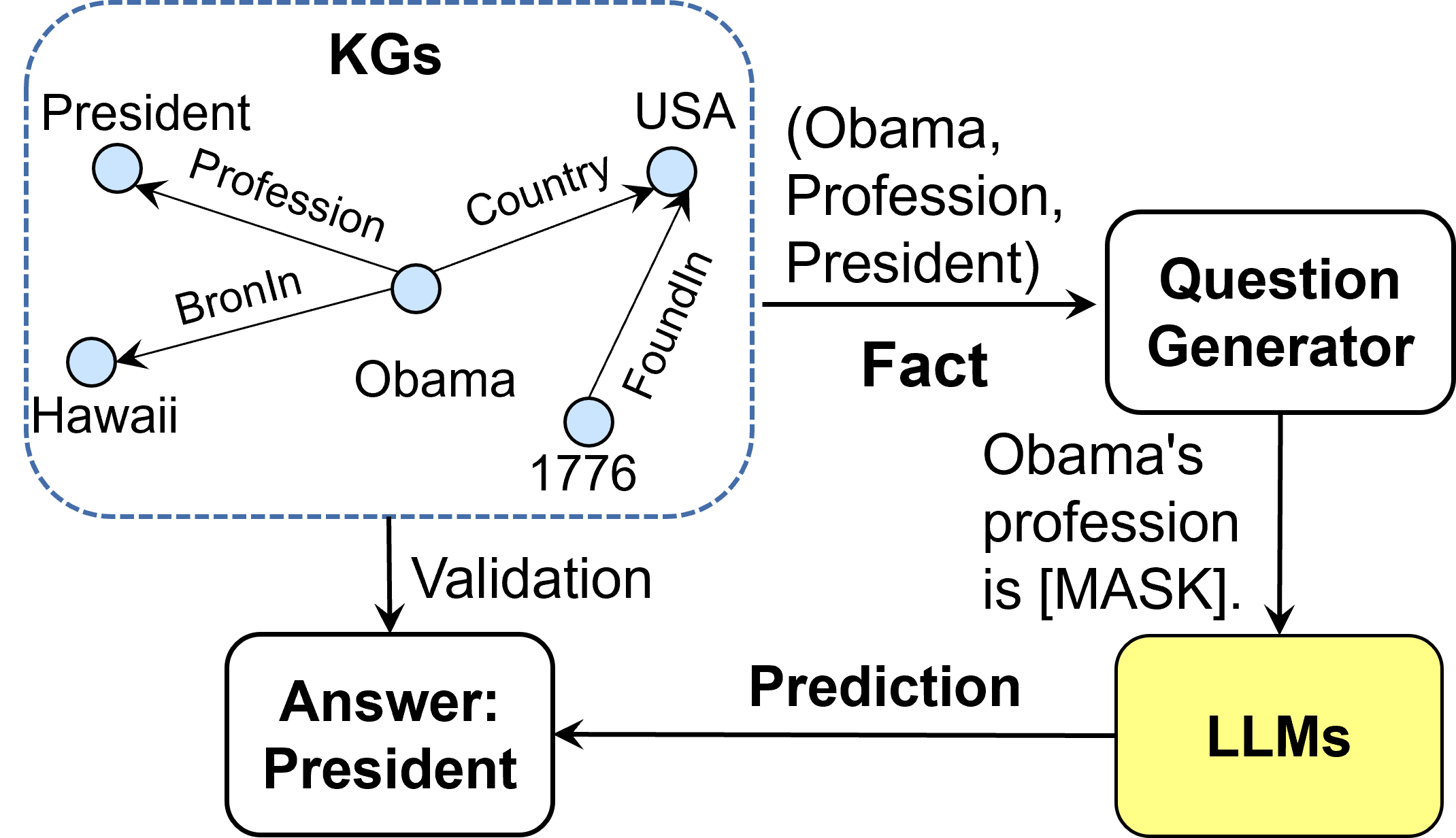

4.1.3 KGs Instruction-tuning

Instead of injecting factual knowledge into LLMs, the KGs Instruction-tuning aims to fine-tune LLMs to better comprehend the structure of KGs and effectively follow user instructions to conduct complex tasks. KGs Instruction-tuning utilizes both facts and the structure of KGs to create instruction-tuning datasets. LLMs finetuned on these datasets can extract both factual and structural knowledge from KGs, enhancing the reasoning ability of LLMs. KP-PLM [109] first designs several prompt templates to transfer structural graphs into natural language text. Then, two self-supervised tasks are proposed to finetune LLMs to further leverage the knowledge from these prompts. OntoPrompt [110] proposes an ontology-enhanced prompt-tuning that can place knowledge of entities into the context of LLMs, which are further finetuned on several downstream tasks. ChatKBQA [111] finetunes LLMs on KG structure to generate logical queries, which can be executed on KGs to obtain answers. To better reason on graphs, RoG [112] presents a planning-retrieval-reasoning framework. RoG is finetuned on KG structure to generate relation paths grounded by KGs as faithful plans. These plans are then used to retrieve valid reasoning paths from the KGs for LLMs to conduct faithful reasoning and generate interpretable results.

KGs Instruction-tuning can better leverage the knowledge from KGs for downstream tasks. However, it requires retraining the models, which is time-consuming and requires lots of resources.

4.2 KG-enhanced LLM Inference

The above methods could effectively fuse knowledge into LLMs. However, real-world knowledge is subject to change and the limitation of these approaches is that they do not permit updates to the incorporated knowledge without retraining the model. As a result, they may not generalize well to the unseen knowledge during inference [126]. Therefore, considerable research has been devoted to keeping the knowledge space and text space separate and injecting the knowledge while inference. These methods mostly focus on the Question Answering (QA) tasks, because QA requires the model to capture both textual semantic meanings and up-to-date real-world knowledge.

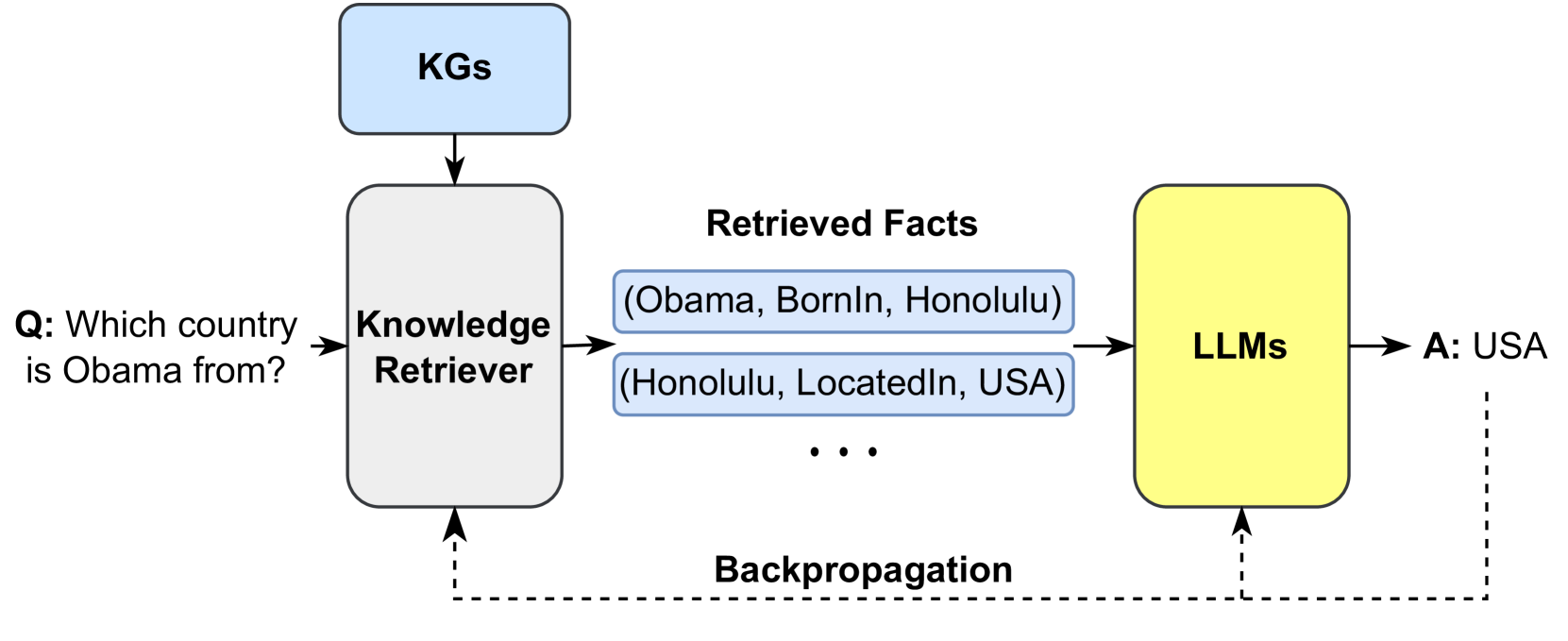

4.2.1 Retrieval-Augmented Knowledge Fusion