# Demystifying GPT Self-Repair for Code Generation

**Authors**:

- Theo X. Olausson (MIT EECS & CSAIL)

- Jeevana Priya Inala

> Correspondence to . Work partially done while T.X.O. was at Microsoft Research.


## Abstract

Large Language Models (LLMs) have shown remarkable aptitude in code generation but still struggle on challenging programming tasks. Self-repair—in which the model debugs and fixes mistakes in its own code—has recently become a popular way to boost performance in these settings. However, only very limited studies on how and when self-repair works effectively exist in the literature, and one might wonder to what extent a model is really capable of providing accurate feedback on why the code is wrong when that code was generated by the same model. In this paper, we analyze GPT-3.5 and GPT-4’s ability to perform self-repair on APPS, a challenging dataset consisting of diverse coding challenges. To do so, we first establish a new evaluation strategy dubbed pass@t that measures the pass rate of the tasks against the total number of tokens sampled from the model, enabling a fair comparison to purely sampling-based approaches. With this evaluation strategy, we find that the effectiveness of self-repair is only seen in GPT-4. We also observe that self-repair is bottlenecked by the feedback stage; using GPT-4 to give feedback on the programs generated by GPT-3.5 and using expert human programmers to give feedback on the programs generated by GPT-4, we unlock significant performance gains.

## 1 Introduction

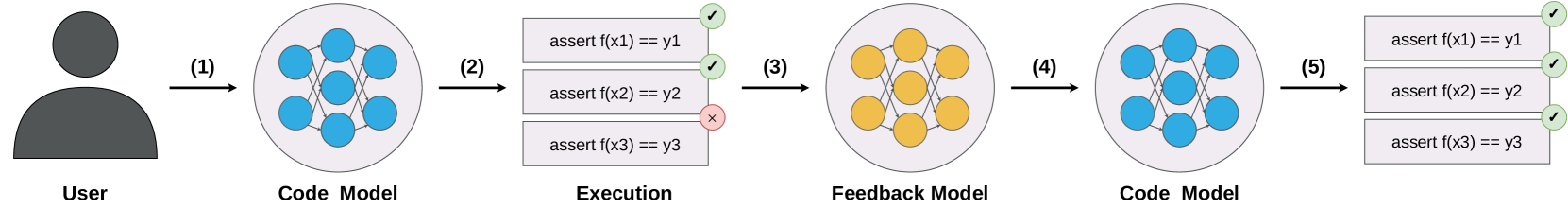

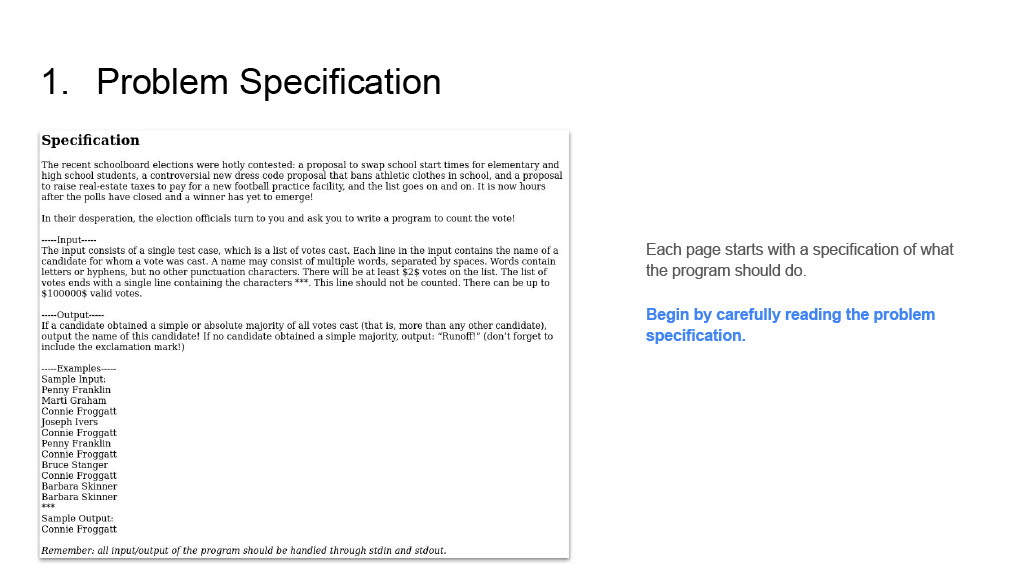

Large language models (LLMs) have proven capable of generating code snippets from natural language specifications, but still struggle on complex coding challenges such as those found in competitions and professional software engineering interviews. Recent work has sought to improve performance by leveraging self-repair [Gupta et al., 2020, Le et al., 2022, Chen et al., 2023b, Zhang et al., 2023], in which the model introspects and corrects mistakes in its own code. Figure 1 shows a typical workflow of a self-repair based approach. First, given a specification, a program is sampled from a code generation model; this program is then executed on a suite of unit tests provided as part of the specification; if the program fails on any unit test, then the error message and the faulty program are given to a feedback generation model, which outputs a short explanation of why the code failed; finally, the feedback is passed to a repair model, which generates a fixed version of the program. On the surface, this is a very attractive idea. It allows the system to overcome mistakes caused by unfortunate samples during decoding; easily incorporates feedback during the repair phase from symbolic systems such as compilers, static analysis tools, and execution engines; and mimics the trial-and-error way in which human software engineers write code.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Iterative Code Model Refinement with Feedback

### Overview

This diagram illustrates a five-step iterative process for refining a "Code Model" based on execution feedback. It begins with a "User" interacting with an initial "Code Model," proceeds through an "Execution" phase where assertions are tested, uses a "Feedback Model" to learn from failures, updates the "Code Model," and concludes with a successful re-execution of the assertions.

### Components/Axes

The diagram is structured horizontally, showing a left-to-right flow of components and processes.

* **User**: Located on the far left, represented by a dark grey silhouette icon of a person's head and shoulders. Labeled "User" directly below the icon.

* **Code Model (Initial)**: Positioned to the right of the "User." It is represented by a light grey circular container enclosing a network of seven interconnected blue circular nodes. The nodes are arranged with three on the left, one in the center, and three on the right, with lines connecting the left nodes to the center, and the center to the right nodes, forming a small, layered network structure. Labeled "Code Model" directly below the circle.

* **Execution (Initial)**: Located to the right of the initial "Code Model." This component consists of three stacked, light grey rectangular blocks, each representing an assertion.

* Top block: "assert f(x1) == y1" with a green checkmark icon on its right edge.

* Middle block: "assert f(x2) == y2" with a green checkmark icon on its right edge.

* Bottom block: "assert f(x3) == y3" with a red 'x' icon on its right edge.

Labeled "Execution" directly below the stacked blocks.

* **Feedback Model**: Positioned to the right of the initial "Execution" phase. It is represented by a light grey circular container enclosing a network of seven interconnected orange circular nodes. The internal structure of nodes and connections is identical to the "Code Model" but with orange nodes. Labeled "Feedback Model" directly below the circle.

* **Code Model (Refined)**: Positioned to the right of the "Feedback Model." This component is visually identical to the initial "Code Model," featuring a light grey circular container with seven interconnected blue circular nodes. Labeled "Code Model" directly below the circle.

* **Execution (Final)**: Located on the far right, to the right of the refined "Code Model." This component also consists of three stacked, light grey rectangular blocks, identical in text to the initial "Execution" blocks.

* Top block: "assert f(x1) == y1" with a green checkmark icon on its right edge.

* Middle block: "assert f(x2) == y2" with a green checkmark icon on its right edge.

* Bottom block: "assert f(x3) == y3" with a green checkmark icon on its right edge.

### Detailed Analysis

The diagram illustrates a sequential process through five numbered steps, indicated by black arrows:

1. **Step (1)**: An arrow points from the "User" to the initial "Code Model." This signifies the user providing input or initiating the "Code Model."

2. **Step (2)**: An arrow points from the initial "Code Model" to the "Execution" blocks. This indicates the "Code Model" is being executed or tested.

* During this initial "Execution," two assertions pass: "assert f(x1) == y1" and "assert f(x2) == y2", indicated by green checkmarks.

* One assertion fails: "assert f(x3) == y3", indicated by a red 'x'.

3. **Step (3)**: An arrow points from the initial "Execution" blocks (specifically, implying the results of the execution) to the "Feedback Model." This suggests that the execution results, particularly the failure, are fed into the "Feedback Model." The "Feedback Model" is visually distinct with orange nodes, implying a different state or function compared to the "Code Model."

4. **Step (4)**: An arrow points from the "Feedback Model" to the refined "Code Model." This indicates that the "Feedback Model" processes the feedback and uses it to update or refine the "Code Model." The "Code Model" reverts to its blue-node representation, signifying it's ready for re-execution.

5. **Step (5)**: An arrow points from the refined "Code Model" to the final "Execution" blocks. This signifies the refined "Code Model" is being executed again.

* In this final "Execution," all three assertions pass: "assert f(x1) == y1", "assert f(x2) == y2", and "assert f(x3) == y3", all indicated by green checkmarks.

### Key Observations

* The process is cyclical and iterative, demonstrating a feedback loop for improvement.

* The "Code Model" is initially imperfect, as evidenced by one failed assertion in the first "Execution."

* The "Feedback Model" (orange nodes) acts as an intermediary step, processing the results of the initial execution to inform the refinement of the "Code Model."

* The "Code Model" is successfully refined, as all assertions pass in the subsequent "Execution."

* The visual representation of the "Code Model" (blue nodes) remains consistent before and after refinement, suggesting its core structure is maintained, but its internal logic or parameters have been adjusted. The "Feedback Model" uses a distinct color (orange nodes) to differentiate its role.

### Interpretation

This diagram illustrates a fundamental concept in software development, machine learning, or automated system design: an iterative process of testing, identifying failures, learning from those failures, and applying corrections to improve a system.

The "User" initiates the process, likely by providing requirements or initial code. The "Code Model" represents the system under development, which could be a piece of software, an algorithm, or a machine learning model. The "Execution" phase simulates running the code or model with specific inputs (x1, x2, x3) and checking if the outputs (f(x1), f(x2), f(x3)) match expected values (y1, y2, y3) through assertions.

The initial failure of "assert f(x3) == y3" highlights a defect or an area for improvement in the "Code Model." This failure is then fed into the "Feedback Model." The "Feedback Model" can be interpreted as an automated debugging system, a learning algorithm, or a human review process that analyzes the failure and determines how to modify the "Code Model." The change in node color from blue to orange for the "Feedback Model" visually distinguishes it as a separate, analytical, or learning component.

Finally, the refined "Code Model" (back to blue nodes) is re-executed, and all assertions pass. This demonstrates that the feedback loop successfully identified and corrected the issue, leading to a more robust and correct system. This pattern is crucial for developing reliable systems, especially in domains like AI where models are continuously trained and fine-tuned based on performance metrics and errors. It suggests a self-correcting or self-improving system where failures are not terminal but rather opportunities for learning and refinement.

</details>

**Figure 1 example (numbers match the steps in the caption):**

1. **Specification.** Given is a string $s$ representing the day of the week today. $s$ is one of SUN, MON, TUE, WED, THU, FRI, or SAT. After how many days is the next Sunday (tomorrow or later)? Unit tests (executable): `assert f('MON') == 6`, `assert f('WED') == 4`, `assert f('SUN') == 7`.

2. **Initial program.** `def f(s): return (7 - ['SUN', ... , 'FRI', 'SAT'].index(s)) % 7`

3. **Error message.** Given input 'SUN', the program returned 0, but the expected output was 7.

4. **Feedback.** The code does not account for the case where the input is 'SUN' and the output should be 7. This can be fixed by removing the modulo operation.

5. **Repaired program.** `def f(s): return (7 - ['SUN', ... , 'FRI', 'SAT'].index(s)) # % 7`

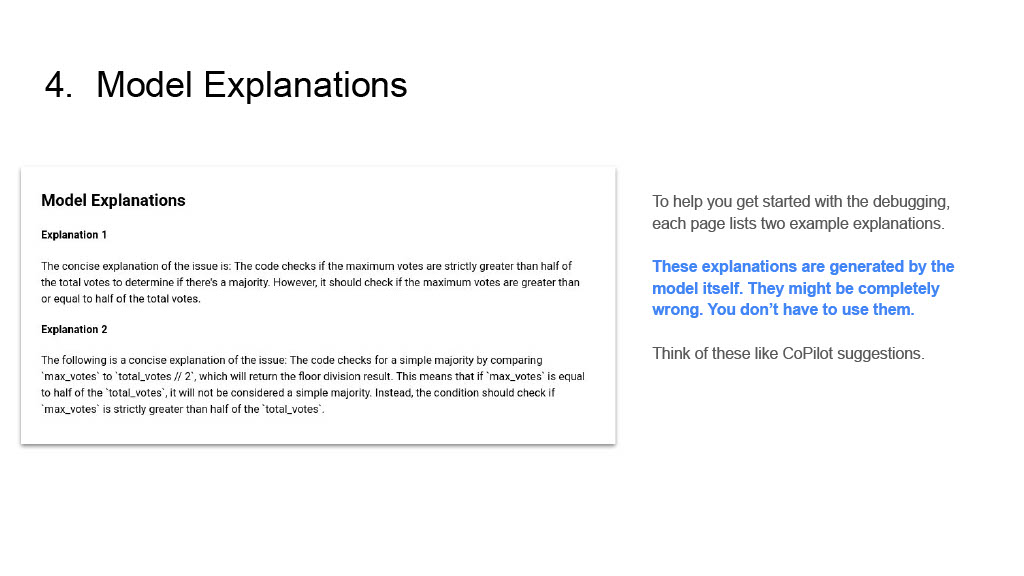

Figure 1: Self-repair with separate code and feedback models. First, a user gives a specification in the form of text and a suite of unit tests (1). Then, a code model (blue) generates a program (2). The program is checked against the unit tests using a symbolic execution engine, and an error message is returned (3). In order to provide more signal to the code model, textual feedback as to why this happened is provided by a feedback model (yellow; 4). Finally, this feedback is used by the code model to repair the program (5).
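The example in Figure 1 can be checked directly. The sketch below is ours rather than the authors' code: it assumes that the elided list in the figure is the seven weekday abbreviations starting at SUN, and the names `f_initial`, `f_repaired`, and `failing_inputs` are hypothetical.

```python
# Assumption: the elided list in Figure 1 is the full week, starting at SUN.
DAYS = ["SUN", "MON", "TUE", "WED", "THU", "FRI", "SAT"]

# Unit tests from the specification in Figure 1: (input, expected output).
UNIT_TESTS = [("MON", 6), ("WED", 4), ("SUN", 7)]


def f_initial(s):
    # Initial program (step 2): the modulo maps the SUN case to 0.
    return (7 - DAYS.index(s)) % 7


def f_repaired(s):
    # Repaired program (step 5): the same expression without the modulo.
    return 7 - DAYS.index(s)


def failing_inputs(f):
    # Return the test inputs on which f disagrees with the expected output.
    return [x for x, expected in UNIT_TESTS if f(x) != expected]


print(failing_inputs(f_initial))   # ['SUN']
print(failing_inputs(f_repaired))  # []
```

The only failing input of the initial program is the one named in the error message (3), and dropping the modulo, as the feedback (4) suggests, makes all three tests pass.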

However, it is important to remember that self-repair requires more invocations of the model, thus increasing the computational cost. In particular, whether self-repair is a winning strategy or not ultimately boils down to whether you would—at an equivalent compute budget—have had a greater chance of success if you had simply drawn more code samples i.i.d. from the model and checked them against the suite of unit tests provided as part of the task. Crucially, the effectiveness of self-repair depends not only on the model’s ability to generate code, which has been studied extensively in the literature, but also on its ability to identify how the code (generated by the model itself) is wrong with respect to the task specification. As far as we are aware, no previous or contemporary work has attempted to study the effect of this stage in detail.

In this paper, we study the effectiveness of self-repair with GPT-3.5 [Ouyang et al., 2022, OpenAI, 2022] and GPT-4 [OpenAI, 2023] when solving competition-level code generation tasks. We begin by proposing a new evaluation strategy dubbed pass@t, in which the likelihood of obtaining a correct program (with respect to the given unit tests) is weighed against the total number of tokens sampled from the model. Using this instead of the traditional pass@k [Chen et al., 2021, Kulal et al., 2019] metric (which weighs pass rate against the number of trials), we are able to accurately compare performance gained through self-repair against any additional work done by the model when generating the feedback and carrying out the repair. Using this new evaluation strategy, we then carefully study the dynamics of the self-repair process under a range of hyper-parameters. Finally, given our primary objective of gaining insight into the state-of-the-art code generation models’ ability to reflect upon and debug their own code, we carry out a set of experiments in which we investigate the impact of improving the feedback stage alone. We do so by analyzing the impact of using a stronger feedback generation model than the code generation model (using GPT-4 to generate feedback for the GPT-3.5 code model), as well as by carrying out a study in which human participants provide feedback on incorrect programs, in order to compare model-generated self-feedback to that provided by human programmers.

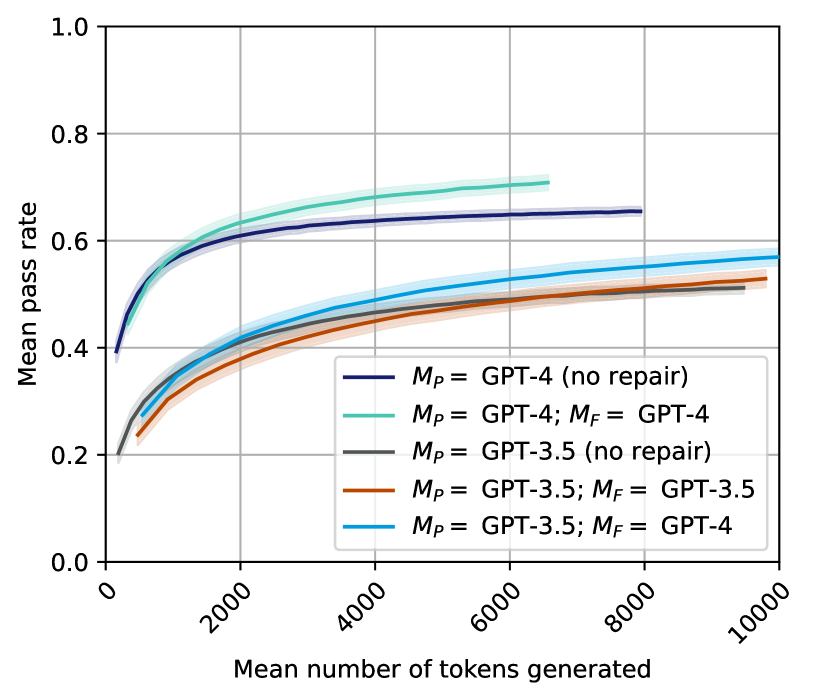

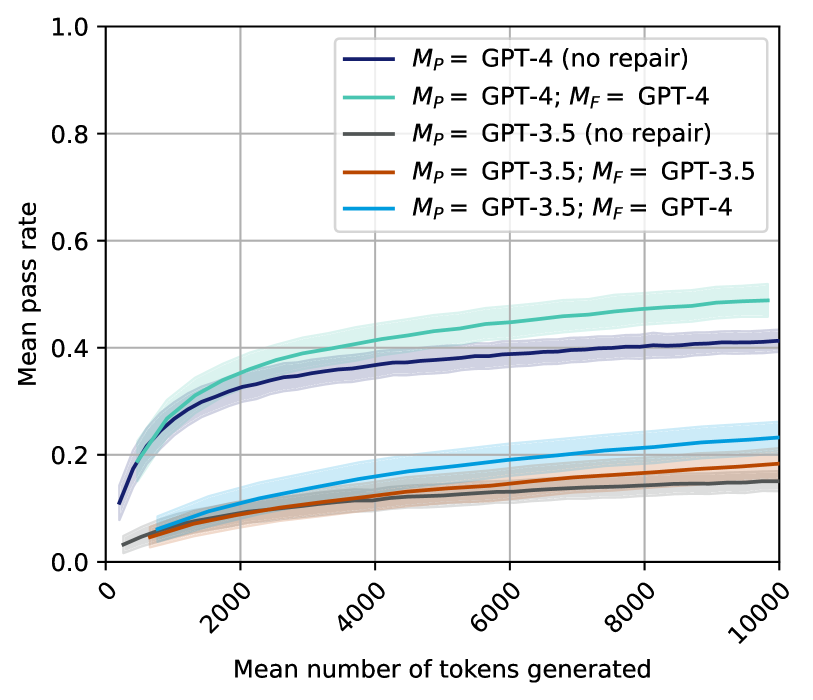

From our experiments, we find that:

1. When taking the cost of doing inspection and repair into account, performance gains from self-repair can only be seen with GPT-4; for GPT-3.5, the pass rate with repair is lower than or equal to that of the baseline, no-repair approach at all budgets.

2. Even for the GPT-4 model, performance gains are modest at best (66% pass rate with a budget of 7000 tokens, $\approx$ the cost of 45 i.i.d. GPT-4 samples) and depend on having sufficient diversity in the initial programs.

3. Replacing GPT-3.5’s explanations of what is wrong with feedback produced by GPT-4 leads to better self-repair performance, even beating the baseline, no-repair GPT-3.5 approach (50% at 7000 tokens).

4. Replacing GPT-4’s own explanations with those of a human programmer improves repair significantly, leading to a 57% increase in the number of repaired programs which pass the tests.

## 2 Related work

Program synthesis with large language models. The use of large language models for program synthesis has been studied extensively in the literature [Li et al., 2022, Austin et al., 2021, Chen et al., 2021, Le et al., 2022, Fried et al., 2023, Nijkamp et al., 2023, Chowdhery et al., 2022, Touvron et al., 2023, Li et al., 2023]. This literature has predominantly focused on evaluating models in terms of either raw accuracy or the pass@k metric [Kulal et al., 2019, Chen et al., 2021], often leveraging filtering techniques based on execution [Li et al., 2022, Shi et al., 2022] or ranking [Chen et al., 2021, Inala et al., 2022, Zhang et al., 2022] to reduce the number of samples which are considered for the final answer. In contrast, our work focuses on evaluating the models from the point of view of minimizing the number of samples that need to be drawn from the model in the first place. Our work is also different in that we assume access to the full collection of input-output examples, as is typically done in inductive synthesis [Kitzelmann, 2010, Polozov and Gulwani, 2015, Gulwani et al., 2017, Chen et al., 2019a, Ellis et al., 2021]. In particular, unlike some prior work [Li et al., 2022, Shi et al., 2022], we do not make a distinction between public tests used for filtering and private tests used to determine correctness, since our method does not involve filtering the outputs.

Code repair. Statistical and learning-based techniques for code repair have a rich history in both the programming languages and machine learning communities, although they have traditionally been used predominantly to repair human-written code [Long and Rinard, 2016, Bader et al., 2019, Le Goues et al., 2021, Yasunaga and Liang, 2021, Chen et al., 2019b, Mesbah et al., 2019, Wang et al., 2018]. More recently, repair has been used as a post-processing step to improve automatically synthesised code, both for domain-specific languages [Gupta et al., 2020] and for general-purpose code [Le et al., 2022, Yasunaga and Liang, 2021, 2020]. Our contribution differs from most prior work in this literature in the use of textual feedback for repair, which is possible thanks to the above-mentioned rise in the use of LLMs for program synthesis.

Contemporary work on LLM self-repair. Recognizing that there is much contemporary work seeking to self-repair with LLMs, we now briefly highlight a few such papers which are particularly close to our work. Zhang et al. [2023] explore self-repair without natural language feedback on APPS [Hendrycks et al., 2021] using a diverse range of fine-tuned models. They also experiment with prompt-based repair using Codex [Chen et al., 2021], InCoder [Fried et al., 2023], and CodeGen [Nijkamp et al., 2023]. Notably, their framework does not consider the cost associated with feedback and repair, which presents a significantly different perspective on self-repair. Similarly, Chen et al. [2023b] assess Codex’s ability to self-repair across a variety of tasks, in a framework that closely resembles that which we study in this work. However, their study differs from ours in terms of the models considered, the evaluation strategy, and, most importantly, the research goal, as we specifically aim to investigate the significance of the textual feedback stage. Self-repair, or frameworks with other names that are conceptually very similar to it, has also been used in contexts outside of code generation. Peng et al. [2023] use self-repair to mitigate hallucinations and improve factual grounding in a ChatGPT-based web search assistant, in which the model revises its initial response based on self-generated feedback. Similarly, Madaan et al. [2023] present a framework in which a model iteratively provides feedback on and revises its output until a stopping criterion is reached; they apply this framework to a range of tasks, including dialogue and code optimization. Ultimately, we see our work, in which we use the novel evaluation metric pass@t to investigate the significance of the textual feedback stage in competition-level self-repair, as being complementary to contemporary research which uses traditional metrics to evaluate self-repair in a broader context. 
We are eager to see what the implications of our results will be in these other domains.

## 3 Methodology

### 3.1 Self-Repair Overview

As shown in Figure 1, our self-repair approach involves 4 stages: code generation, code execution, feedback generation, and code repair. We now formally define these four stages.

Code generation. Given a specification $\psi$, a programming model $M_{P}$ first generates $n_{p}$ samples i.i.d., which we denote

$$
\{p_{i}\}_{i=1}^{n_{p}} \overset{\text{i.i.d.}}{\sim} M_{P}(\psi)
$$

Code execution. These $n_{p}$ code samples are then executed against a test bed. Recall from Section 2 that we assume that we have access to the full set of tests in executable form (see Section 5 for a brief discussion on the validity of this assumption in software engineering domains). Thus, we stop if any sample passes all of the tests, since a satisfying program has then been found. Otherwise, we collect the error messages $\{e_{i}\}_{i}$ returned by the execution environment. These error messages either contain the compile/runtime error information or an example input on which the program’s output differs from the expected one. An example is shown in Figure 1 (component 3).
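A minimal sketch of this execution stage is given below. It is an illustration rather than the harness used in the paper: the function name `run_tests`, the single-argument convention for the tested function `f`, and the exact wording of the messages are assumptions, and `exec` should only be used on untrusted programs inside a sandbox.

```python
def run_tests(program_src, tests, func_name="f"):
    """Return None if every test passes, otherwise a short error message.

    `tests` is a list of (input, expected_output) pairs. Calling the
    function with a single argument is an assumption of this sketch.
    """
    namespace = {}
    try:
        exec(program_src, namespace)  # compile and load the candidate program
        func = namespace[func_name]
    except Exception as exc:
        return f"Compile/load error: {type(exc).__name__}: {exc}"
    for x, expected in tests:
        try:
            actual = func(x)
        except Exception as exc:
            return f"Runtime error on input {x!r}: {type(exc).__name__}: {exc}"
        if actual != expected:
            # Report the first input on which the output differs.
            return (f"Given input {x!r}, the program returned {actual!r}, "
                    f"but the expected output was {expected!r}.")
    return None


# Hypothetical usage: a squaring task solved incorrectly by doubling.
print(run_tests("def f(x):\n    return x * 2", [(2, 4), (3, 9)]))
```

Returning `None` on success mirrors the stopping rule above: a program with no error message satisfies the specification, so no feedback or repair is needed for it.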

Feedback generation. Since the error messages from the execution environment are usually very high-level, they provide little signal for repair. Therefore, as an intermediate step, we use a feedback model to produce a more detailed explanation of what went wrong; Figure 1 (component 4) shows an example. Formally, in this stage, we generate $n_{f}$ feedback strings, $\{f_{ij}\}_{j}$, for each wrong program, $p_{i}$, as follows:

$$
\{f_{ij}\}_{j=1}^{n_{f}} \overset{\text{i.i.d.}}{\sim} M_{F}(\psi;p_{i};e_{i})
$$

Having an explicit feedback generation step allows us to ablate this component so that we can study its significance in isolation.

Code repair. In the final step, for each initial program $p_{i}$ and feedback $f_{ij}$, $n_{r}$ candidate repaired programs are sampled from $M_{P}$ (we use the same model for both the initial code generation and the code repair, since these are fundamentally similar tasks):

$$
\{r_{ijk}\}_{k=1}^{n_{r}} \overset{\text{i.i.d.}}{\sim} M_{P}(\psi;p_{i};e_{i};f_{ij})
$$

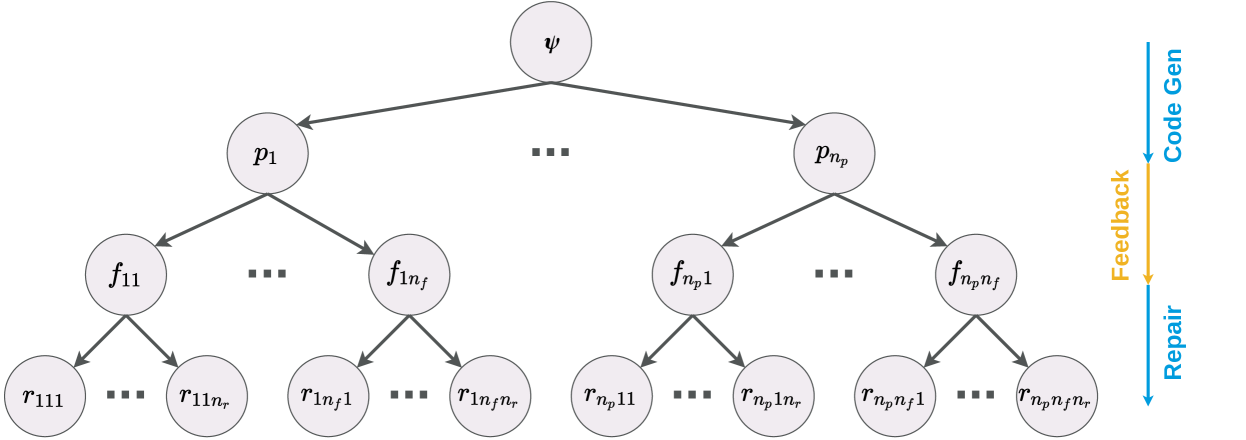

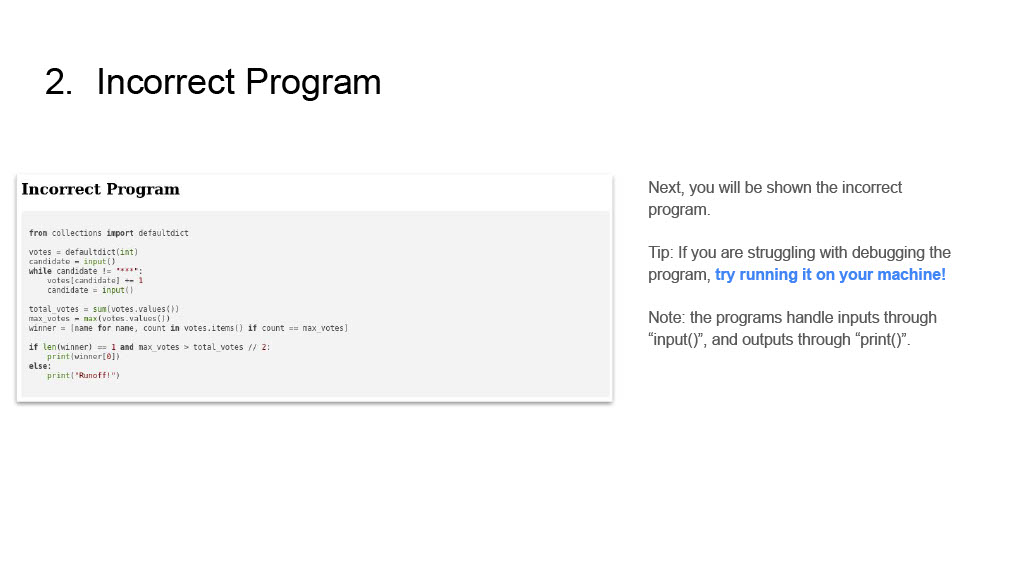

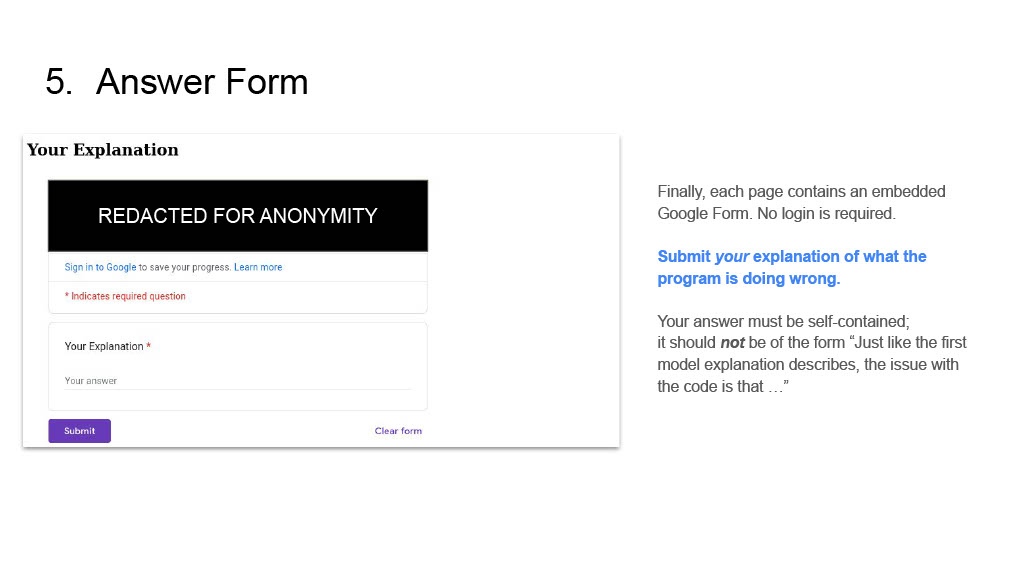

Repair tree. We call the tree of interleaved text and programs produced by this procedure—rooted in the specification $\psi$ , then branching into initial programs $p_{i}$ , each of which branches into feedback $f_{ij}$ and then repairs $r_{ijk}$ —a repair tree, $T$ (Figure 2).
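The four stages and the resulting tree can be sketched as follows. This is an illustrative data structure under stated assumptions, not the authors' implementation: the class names and the callables `gen`, `execute`, `give_feedback`, and `repair` are hypothetical stand-ins for $M_P$, the test harness, $M_F$, and $M_P$ in repair mode, respectively.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class FeedbackNode:
    feedback: str                                      # f_ij
    repairs: List[str] = field(default_factory=list)   # r_ijk, k = 1..n_r


@dataclass
class ProgramNode:
    program: str                                       # p_i
    error: Optional[str] = None                        # e_i (None if tests pass)
    feedbacks: List[FeedbackNode] = field(default_factory=list)


@dataclass
class RepairTree:
    spec: str                                          # psi, the root
    programs: List[ProgramNode] = field(default_factory=list)


def build_repair_tree(spec, gen, execute, give_feedback, repair, n_p, n_f, n_r):
    """Sample a repair tree with n_p programs, n_f feedbacks, n_r repairs."""
    tree = RepairTree(spec)
    for _ in range(n_p):
        program = gen(spec)
        tree.programs.append(ProgramNode(program, execute(program)))
    # Stop early if any initial program already passes all tests.
    if any(node.error is None for node in tree.programs):
        return tree
    for node in tree.programs:
        for _ in range(n_f):
            fb = FeedbackNode(give_feedback(spec, node.program, node.error))
            fb.repairs = [
                repair(spec, node.program, node.error, fb.feedback)
                for _ in range(n_r)
            ]
            node.feedbacks.append(fb)
    return tree
```

Keeping feedback as its own node, rather than folding it into the repair call, is what allows the feedback source to be swapped out independently of the code model.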

Caveat: jointly sampling feedback and repair. The general framework presented above does not require the programming model and feedback model to be the same, thus allowing for the use of specialized models in the system. However, when $M_{P}=M_{F}$, we jointly generate both the feedback and the repaired program in a single API call, since both GPT-3.5 and GPT-4 have a natural tendency to interleave text and code in their responses. See Appendix E for a detailed look at how the prompt differs between this and the previous setting. Formally, we denote this as

$$
\{(f_{ij},r_{ij})\}_{j=1}^{n_{fr}} \overset{\text{i.i.d.}}{\sim} M_{P}(\psi;p_{i};e_{i})
$$
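As a rough worked count (ours, not taken from the paper), suppose that every one of the $n_{p}$ initial programs fails its tests. With separate feedback and repair stages, a full repair tree then contains

$$
\underbrace{n_{p}}_{\text{programs}} + \underbrace{n_{p}\,n_{f}}_{\text{feedback strings}} + \underbrace{n_{p}\,n_{f}\,n_{r}}_{\text{repairs}}
$$

sampled strings; for example, $(n_{p},n_{f},n_{r})=(2,3,1)$ gives $2+6+6=14$. Under joint sampling, each (feedback, repair) pair comes from a single call, so the tree requires $n_{p}+n_{p}\,n_{fr}$ model calls, e.g. $2+2\cdot 3=8$ for $n_{fr}=3$. These are counts of samples, not of tokens.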

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: Hierarchical Decomposition with Iterative Process Flow

### Overview

This image presents a hierarchical tree-like diagram illustrating a multi-level decomposition process, accompanied by a vertical process flow description on the right side. The diagram shows a top-down branching structure, where a single root element expands into multiple sub-elements across three subsequent levels, with ellipses indicating omitted intermediate nodes at each branching stage. The right-side labels describe a sequence of operations: "Code Gen", "Feedback", and "Repair", each associated with a specific phase of the hierarchical decomposition.

### Components/Axes

The diagram consists of circular nodes (representing entities or stages) and directed arrows (representing relationships or flow). There are no traditional axes or legends, but the right-side labels serve as a process legend.

**Hierarchical Structure (Nodes and Edges):**

* **Nodes:** All nodes are light grey circles containing black text labels.

* **Level 1 (Root):** A single node at the top-center, labeled "ψ" (psi).

* **Level 2:** Two visible nodes, "p₁" on the left and "p_n_p" on the right. An ellipsis "..." is placed horizontally between them, indicating a series of `n_p` intermediate nodes (p₂, ..., p_n_p-1) are omitted.

* **Level 3:**

* Under "p₁": Two visible nodes, "f₁₁" on the left and "f_1_n_f" on the right. An ellipsis "..." is placed horizontally between them, indicating `n_f` intermediate nodes (f₁₂, ..., f_1_n_f-1) are omitted.

* Under "p_n_p": Two visible nodes, "f_n_p_1" on the left and "f_n_p_n_f" on the right. An ellipsis "..." is placed horizontally between them, indicating `n_f` intermediate nodes (f_n_p_2, ..., f_n_p_n_f-1) are omitted.

* **Level 4 (Leaf Nodes):**

* Under "f₁₁": Two visible nodes, "r₁₁₁" on the left and "r_1_1_n_r" on the right. An ellipsis "..." is placed horizontally between them, indicating `n_r` intermediate nodes (r₁₁₂, ..., r_1_1_n_r-1) are omitted.

* Under "f_1_n_f": Two visible nodes, "r_1_n_f_1" on the left and "r_1_n_f_n_r" on the right. An ellipsis "..." is placed horizontally between them, indicating `n_r` intermediate nodes (r_1_n_f_2, ..., r_1_n_f_n_r-1) are omitted.

* Under "f_n_p_1": Two visible nodes, "r_n_p_1_1" on the left and "r_n_p_1_n_r" on the right. An ellipsis "..." is placed horizontally between them, indicating `n_r` intermediate nodes (r_n_p_1_2, ..., r_n_p_1_n_r-1) are omitted.

* Under "f_n_p_n_f": Two visible nodes, "r_n_p_n_f_1" on the left and "r_n_p_n_f_n_r" on the right. An ellipsis "..." is placed horizontally between them, indicating `n_r` intermediate nodes (r_n_p_n_f_2, ..., r_n_p_n_f_n_r-1) are omitted.

* **Edges:** All connections are dark grey directed arrows pointing downwards, indicating a flow or derivation from a parent node to its child nodes.

**Process Flow Labels (Right Side):**

Positioned vertically along the right edge of the diagram, these labels describe a process sequence.

* **Top:** A vertical blue arrow pointing downwards, labeled "Code Gen" in blue text. This arrow spans vertically, aligning with the first two levels of the hierarchical diagram (from "ψ" down to the "f" nodes).

* **Middle:** A vertical orange arrow pointing downwards, labeled "Feedback" in orange text. This arrow is positioned below "Code Gen" and aligns vertically with the transition from the "f" nodes to the "r" nodes in the hierarchical diagram.

* **Bottom:** A vertical blue arrow pointing downwards, labeled "Repair" in blue text. This arrow is positioned below "Feedback" and aligns vertically with the bottom-most "r" nodes in the hierarchical diagram.

### Detailed Analysis

The diagram illustrates a four-level hierarchical decomposition.

1. **Level 1 to Level 2:** The root node "ψ" branches into a set of `n_p` nodes, represented by "p₁" through "p_n_p". This indicates a primary decomposition or generation step.

2. **Level 2 to Level 3:** Each "p_i" node further branches into a set of `n_f` nodes, represented by "f_i1" through "f_i_n_f". This signifies a secondary decomposition or feature extraction step for each 'p' component.

3. **Level 3 to Level 4:** Each "f_ij" node then branches into a set of `n_r` nodes, represented by "r_ij1" through "r_ij_n_r". This represents a tertiary decomposition, possibly leading to specific results or repair actions.

The process flow labels on the right provide a contextual interpretation of these hierarchical levels:

* The "Code Gen" phase (blue arrow) corresponds to the initial top-down decomposition from "ψ" to the "p" nodes and then to the "f" nodes. This suggests that the generation of code or a primary structure occurs in these initial stages.

* The "Feedback" phase (orange arrow) aligns with the transition from the "f" nodes to the "r" nodes. This implies that after the initial generation (f-nodes), an evaluation or feedback mechanism is applied, leading to the "r" nodes.

* The "Repair" phase (blue arrow) aligns with the "r" nodes themselves, or the final output derived from them. This suggests that the "r" nodes represent repair actions or refined outputs based on the feedback received.

The consistent downward direction of all arrows in both the hierarchy and the process flow indicates a sequential, top-down progression. The use of subscripts `n_p`, `n_f`, and `n_r` implies that the number of branches at each level can vary, making the decomposition flexible.

### Key Observations

* The diagram represents a recursive or iterative decomposition process, where a high-level entity is broken down into increasingly granular components.

* The ellipsis notation "..." is used extensively to denote omitted intermediate nodes, implying a generalizable structure rather than a fixed number of branches.

* The right-side labels provide a functional context to the hierarchical levels, mapping stages of decomposition to phases of a process (Code Generation, Feedback, Repair).

* The color coding of the process flow labels (blue for "Code Gen" and "Repair", orange for "Feedback") might distinguish between primary forward actions (blue) and an intermediate evaluative or corrective step (orange).

### Interpretation

This diagram likely illustrates a conceptual model for an automated system or a problem-solving methodology, possibly in the domain of software engineering, design automation, or AI-driven development.

* **ψ (Psi):** The specification, i.e. the task description together with its unit tests; it is the root of the repair tree.

* **p (Programs):** The first level (p₁ to p_n_p) holds the initial programs sampled from the code model for the specification.

* **f (Feedback):** The second level (f_i1 to f_i_n_f) holds the feedback strings generated for each failing initial program.

* **r (Repairs):** The final level (r_ij1 to r_ij_n_r) holds the candidate repaired programs sampled for each feedback string.

The process flow on the right suggests an iterative or cyclical approach:

1. **Code Gen:** The initial phase involves generating a solution or a system structure, represented by the decomposition from "ψ" down to the "f" nodes. This could be an automated code generation step, a design synthesis, or an initial hypothesis formulation.

2. **Feedback:** Once the initial generation is complete (at the "f" node level), a feedback mechanism is engaged. This implies evaluation, testing, or analysis of the generated components/features. This feedback then informs the next stage.

3. **Repair:** Based on the feedback, specific "repair" actions or refinements are performed, represented by the "r" nodes. This suggests an iterative refinement loop where the generated solution is adjusted or corrected to meet requirements or improve performance.

The overall interpretation is a system that generates a solution through hierarchical decomposition, evaluates it, and then iteratively repairs or refines it based on feedback. The blue arrows for "Code Gen" and "Repair" might signify the primary forward progression of the system, while the orange "Feedback" arrow highlights a critical intermediate step for evaluation and guidance. This model emphasizes a structured, top-down approach to problem-solving with built-in mechanisms for quality assurance and improvement.

</details>

Figure 2: A repair tree begins with a specification $\psi$ (root node), then grows into initial programs, feedback, and repairs.

### 3.2 pass@t: pass rate vs. token count

Since self-repair requires several dependent model invocations of non-uniform cost, this is a setting in which pass@$k$ (the likelihood of obtaining a correct program in $k$ i.i.d. samples) is not a suitable metric for comparing and evaluating various hyper-parameter choices of self-repair. Instead, we measure the pass rate as a function of the total number of tokens sampled from the model, a metric which we call pass@t.
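For reference, pass@$k$ is commonly computed with the unbiased estimator attributed to Chen et al. [2021]: given $n$ i.i.d. samples of which $c$ are correct, the estimate is $1-\binom{n-c}{k}/\binom{n}{k}$. The text above does not spell this estimator out, so the sketch below (with the hypothetical name `pass_at_k`) is included only to make the contrast with pass@t concrete.

```python
from math import comb


def pass_at_k(n, c, k):
    """Estimate pass@k from n i.i.d. samples, c of which are correct.

    Equals one minus the probability that a uniformly chosen size-k subset
    of the n samples contains no correct program.
    """
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    # comb(n - c, k) is 0 whenever k > n - c, so the estimate is then 1.0.
    return 1.0 - comb(n - c, k) / comb(n, k)
```

The estimator treats every sample as one unit of cost, which is exactly the assumption that breaks down once feedback and repair calls of different lengths enter the budget.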

Formally, suppose that you are given a dataset $D=\{\psi_{d}\}_{d}$ and a chosen set of values for the hyper-parameters $(M_{P},M_{F},n_{p},n_{f},n_{r})$ . Let $T_{d}^{i}\sim M(\psi_{d})$ denote a repair tree that is sampled as described in Section 3.1 for the task $\psi_{d}$ ; let $\text{size}(T_{d}^{i})$ denote the total number of program and feedback tokens in the repair tree; and say that $T_{d}^{i}\models\psi_{d}$ is true if, and only if, $T_{d}^{i}$ has at least one leaf program that satisfies the unit tests in the specification $\psi_{d}$ . Then the pass@t metric of this choice of hyper-parameters is defined as the expected pass rate at the number of tokens which you would expect to generate with this choice of hyper-parameters:

$$
\texttt{pass@t}\triangleq\mathop{\mathbb{E}}_{\substack{\psi_{d}\sim D\\ T_{d}^{i}\sim M(\psi_{d})}}\left[T_{d}^{i}\models\psi_{d}\right]\quad\textbf{at}\quad t=\mathop{\mathbb{E}}_{\substack{\psi_{d}\sim D\\ T_{d}^{i}\sim M(\psi_{d})}}\left[\text{size}(T_{d}^{i})\right]
$$

In our experiments, we plot bootstrapped estimates of these two quantities. To obtain these, we first generate a very large repair tree for each task specification, with: $N_{p}\geq n_{p}$ initial program samples; $N_{f}\geq n_{f}$ feedback strings per wrong program; and $N_{r}\geq n_{r}$ repair candidates per feedback string. Given a setting of $(n_{p},n_{f},n_{r})$ , we then sub-sample (with replacement) $N_{t}$ different repair trees from this frozen dataset. Finally, we compute the sample mean and standard deviation of the pass rate and the tree size over these $N_{t}$ trees. Estimating the pass@t in this way greatly reduces the computational cost of our experiments, since we can reuse the same initial dataset to compute the estimates for all of the various choices of $n_{p},n_{f}$ , and $n_{r}$ .
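The sub-sampling procedure described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the `Program` and `Repair` records, the function names, the choice to draw without replacement inside a single tree, the early stop when an initial program already passes, and the reading that each of the $N_{t}$ replicates draws one tree per task are all assumptions made for the example. Feedback and repair are treated as one joint sample, matching the $N_{r}=n_{r}=1$ setting.

```python
import random
from dataclasses import dataclass, field
from statistics import mean, stdev


@dataclass
class Repair:
    tokens: int   # feedback + repair tokens of one joint sample (assumed layout)
    passed: bool  # whether the repaired program passes the unit tests


@dataclass
class Program:
    tokens: int
    passed: bool
    repairs: list = field(default_factory=list)  # the N_fr frozen joint samples


def subsample_tree(programs, n_p, n_fr, rng):
    """Draw one (n_p, n_fr) repair tree; return (passed, size_in_tokens)."""
    chosen = rng.sample(programs, n_p)
    size = sum(p.tokens for p in chosen)
    # Assumption: no repairs are sampled if an initial program already passes.
    if any(p.passed for p in chosen):
        return True, size
    passed = False
    for p in chosen:
        for r in rng.sample(p.repairs, n_fr):
            size += r.tokens
            passed = passed or r.passed
    return passed, size


def estimate_pass_at_t(dataset, n_p, n_fr, n_t=1000, seed=0):
    """Return ((mean, std) of pass rate, (mean, std) of tree size)."""
    rng = random.Random(seed)
    pass_rates, sizes = [], []
    for _ in range(n_t):
        # One replicate: one sub-sampled tree per task in the frozen dataset.
        results = [subsample_tree(task, n_p, n_fr, rng) for task in dataset]
        pass_rates.append(mean(float(ok) for ok, _ in results))
        sizes.append(mean(size for _, size in results))
    return (mean(pass_rates), stdev(pass_rates)), (mean(sizes), stdev(sizes))
```

Because the frozen dataset is generated once, calling `estimate_pass_at_t` with different `(n_p, n_fr)` values requires no further model invocations.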

We use $N_{p}=50$ for all experiments, and consider $n_{p}\leq 25$ for the self-repair approaches and $n_{p}\leq 50$ for the baseline, no-repair approach. Similarly, for the feedback strings, we use $N_{f}=25$ and $n_{f}\leq 10$ (except for Section 4.2, in which we only consider $n_{f}=1$ and therefore settle for $N_{f}=10$ instead). For the repair candidates, since we do joint sampling of feedback and repair in most of our experiments, we set $N_{r}=n_{r}=1$ . Finally, we use $N_{t}=1000$ for all settings.

## 4 Experiments

In this section, we carry out experiments to answer the following research questions: (a) In the context of challenging programming puzzles, is self-repair better than i.i.d. sampling without repair for the models we consider? If so, under what hyper-parameters is self-repair most effective? (b) Would a stronger feedback model boost the model’s repair performance? (c) Would keeping a human in the loop to provide feedback unlock better repair performance even for the strongest model?

We investigate these questions on Python programming challenges from the APPS dataset [Hendrycks et al., 2021]. The APPS dataset contains a diverse range of programming challenges paired with a suite of tests, making it a well-suited (and challenging) setting in which to study self-repair. To keep our experiments tractable, we evaluate on a subset of the APPS test set, consisting of 300 tasks. These tasks are proportionally sampled in accordance with the frequency of the different difficulty levels in the test set: 180 interview-level questions, 60 competition-level questions, and 60 introductory-level questions (listed in Appendix F). We use GPT-3.5 [Ouyang et al., 2022, OpenAI, 2022] and GPT-4 [OpenAI, 2023] as our models of choice, and implement self-repair using templated string concatenation with one-shot prompting; our prompts are given in Appendix E. When appropriate, we compare against a baseline without repair. This baseline, shown with a black line in the plots, is simply i.i.d. sampling from the corresponding model (e.g., GPT-4 when we explore whether GPT-4 is capable of self-repair). Based on preliminary experiments, we set the decoding temperature to $0.8$ for all the models to encourage diverse samples.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Chart Type: Scatter Plot with Fitted Curve

### Overview

This image displays a scatter plot illustrating the relationship between "Mean number of tokens generated" and "Mean pass rate". The data points are categorized by two parameters, `n_p` (represented by color) and `n_fr` (represented by marker shape), and a fitted curve with a confidence interval is overlaid to show the general trend.

### Components/Axes

**X-axis:**

* **Title:** "Mean number of tokens generated"

* **Range:** Approximately 0 to 10000

* **Major Tick Marks:** 0, 2000, 4000, 6000, 8000, 10000. The labels are rotated counter-clockwise by approximately 45 degrees.

* **Minor Grid Lines:** Present at intervals of approximately 1000.

**Y-axis:**

* **Title:** "Mean pass rate"

* **Range:** Approximately 0.0 to 1.0

* **Major Tick Marks:** 0.0, 0.2, 0.4, 0.6, 0.8, 1.0

* **Minor Grid Lines:** Present at intervals of approximately 0.1.

**Legend (Top-Center, within the plot area):**

The legend is divided into two columns, indicating two independent variables: `n_p` (number of initial programs) and `n_fr` (number of feedback-repair samples per program).

* **Left Column (Line colors for `n_p`):**

* Brown line: `n_p = 1`

* Goldenrod/Yellow line: `n_p = 2`

* Teal/Light Blue line: `n_p = 5`

* Blue line: `n_p = 10`

* Dark Blue/Navy line: `n_p = 25`

* **Right Column (Marker shapes for `n_fr`):**

* Dark Grey circle: `n_fr = 1`

* Dark Grey inverted triangle: `n_fr = 3`

* Dark Grey square: `n_fr = 5`

* Dark Grey triangle: `n_fr = 10`

**Fitted Curve:**

A solid dark grey line represents the overall trend, starting low and increasing, then flattening out. It is accompanied by a lighter grey shaded area, which likely indicates a confidence interval or standard error around the fitted curve.

### Detailed Analysis

**Overall Trend of Fitted Curve:**

The dark grey fitted curve shows a clear positive, non-linear relationship. It starts at a "Mean pass rate" of approximately 0.2 at low "Mean number of tokens generated" (around 0-200), rises steeply, and then gradually flattens out, approaching a "Mean pass rate" of about 0.5 to 0.53 as the "Mean number of tokens generated" increases towards 10000. The light grey shaded area around the curve suggests a relatively narrow confidence interval, indicating good fit or low uncertainty for the trend.

**Data Points (Scatter Plot):**

Each data point is represented by a specific color (for `n_p`) and marker shape (for `n_fr`). All scatter points include small vertical error bars, indicating variability in the "Mean pass rate".

1. **`n_p = 1` (Brown points):**

* `n_fr = 1` (Circle): X ~ 500, Y ~ 0.26

* `n_fr = 3` (Inverted Triangle): X ~ 1200, Y ~ 0.31

* `n_fr = 5` (Square): X ~ 1800, Y ~ 0.33

* `n_fr = 10` (Triangle): X ~ 3000, Y ~ 0.35

2. **`n_p = 2` (Goldenrod/Yellow points):**

* `n_fr = 1` (Circle): X ~ 800, Y ~ 0.32

* `n_fr = 3` (Inverted Triangle): X ~ 1500, Y ~ 0.36

* `n_fr = 5` (Square): X ~ 2500, Y ~ 0.38

* `n_fr = 10` (Triangle): X ~ 5500, Y ~ 0.42

3. **`n_p = 5` (Teal/Light Blue points):**

* `n_fr = 1` (Circle): X ~ 2000, Y ~ 0.41

* `n_fr = 3` (Inverted Triangle): X ~ 3200, Y ~ 0.43

* `n_fr = 5` (Square): X ~ 4500, Y ~ 0.48

* `n_fr = 10` (Triangle): X ~ 7000, Y ~ 0.49

4. **`n_p = 10` (Blue points):**

* `n_fr = 1` (Circle): X ~ 4200, Y ~ 0.47

* `n_fr = 3` (Inverted Triangle): X ~ 4800, Y ~ 0.45

* `n_fr = 5` (Square): X ~ 8500, Y ~ 0.51

5. **`n_p = 25` (Dark Blue/Navy point):**

* `n_fr = 1` (Circle): X ~ 9800, Y ~ 0.53

### Key Observations

* **General Increase:** All data series generally show an increase in "Mean pass rate" as "Mean number of tokens generated" increases, consistent with the fitted curve.

* **Impact of `n_p`:** For a given `n_fr` (same marker shape), higher `n_p` values (represented by colors from brown to dark blue) tend to correspond to higher "Mean pass rates" and often higher "Mean number of tokens generated". For example, comparing `n_fr=1` (circles):

* `n_p=1` (brown circle) is at (500, 0.26)

* `n_p=2` (goldenrod circle) is at (800, 0.32)

* `n_p=5` (teal circle) is at (2000, 0.41)

* `n_p=10` (blue circle) is at (4200, 0.47)

* `n_p=25` (dark blue circle) is at (9800, 0.53)

This indicates a strong positive correlation between `n_p` and both "Mean number of tokens generated" and "Mean pass rate".

* **Impact of `n_fr`:** For a given `n_p` (same color), increasing `n_fr` (from circle to triangle) generally leads to an increase in "Mean number of tokens generated" and a slight, but less consistent, increase or plateau in "Mean pass rate". For example, for `n_p=1` (brown points), as `n_fr` increases from 1 to 10, "Mean number of tokens generated" increases from ~500 to ~3000, and "Mean pass rate" increases from ~0.26 to ~0.35.

* **Saturation:** The "Mean pass rate" appears to saturate around 0.5 to 0.53, even with very high "Mean number of tokens generated" (e.g., 8000-10000) and high `n_p` values.

* **Data Distribution:** The data points are not uniformly distributed; there are more points at lower "Mean number of tokens generated" values, and fewer points as "Mean number of tokens generated" approaches 10000. The highest `n_p` value (`n_p=25`) only has one data point shown.

### Interpretation

The chart suggests that both `n_p` and `n_fr` parameters influence the "Mean number of tokens generated" and, consequently, the "Mean pass rate".

1. **`n_p` as a primary driver:** Higher values of `n_p` (number of initial programs) are strongly associated with both a greater "Mean number of tokens generated" and a higher "Mean pass rate". This implies that sampling more initial programs costs more tokens and yields better performance (higher pass rate). The progression of colors from brown to dark blue clearly illustrates this, with `n_p=25` achieving the highest pass rate and token count among the observed points.

2. **`n_fr` as a secondary driver/modifier:** The `n_fr` parameter (number of feedback-repair samples per program) also contributes to the "Mean number of tokens generated". For a fixed `n_p`, increasing `n_fr` generally pushes the data points further to the right on the X-axis, meaning more tokens are generated. However, its impact on the "Mean pass rate" is less pronounced and more variable compared to `n_p`. This suggests that while sampling more feedback-repairs leads to more generated tokens, it doesn't necessarily translate to a proportionally higher pass rate, especially as the pass rate approaches its saturation point.

3. **Performance Ceiling:** The fitted curve and the clustering of higher `n_p` points indicate a diminishing return on increasing "Mean number of tokens generated" beyond a certain point (e.g., ~6000-7000 tokens). The "Mean pass rate" seems to plateau around 0.5 to 0.53. This suggests that there might be an inherent limit to the "pass rate" achievable with the current system or methodology, regardless of how many tokens are generated or how many passes/retries are allowed. Further increases in `n_p` or `n_fr` beyond certain thresholds might only lead to more tokens generated without significant improvements in the pass rate.

In essence, to maximize the "Mean pass rate", one should aim for higher `n_p` values, which will naturally lead to more tokens generated. While `n_fr` also increases token generation, its direct impact on the pass rate is less clear, especially at higher token counts where the system appears to hit a performance ceiling.

</details>

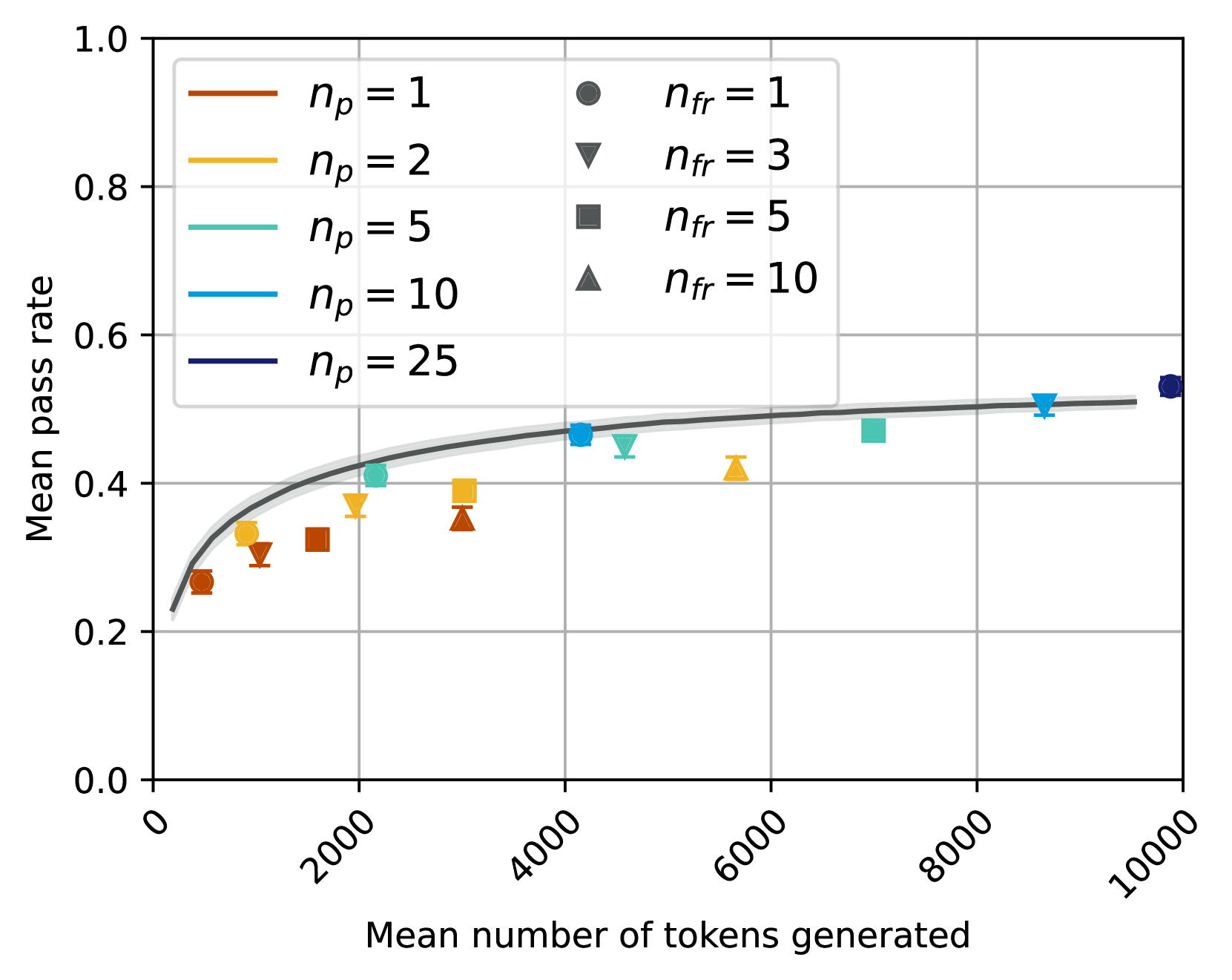

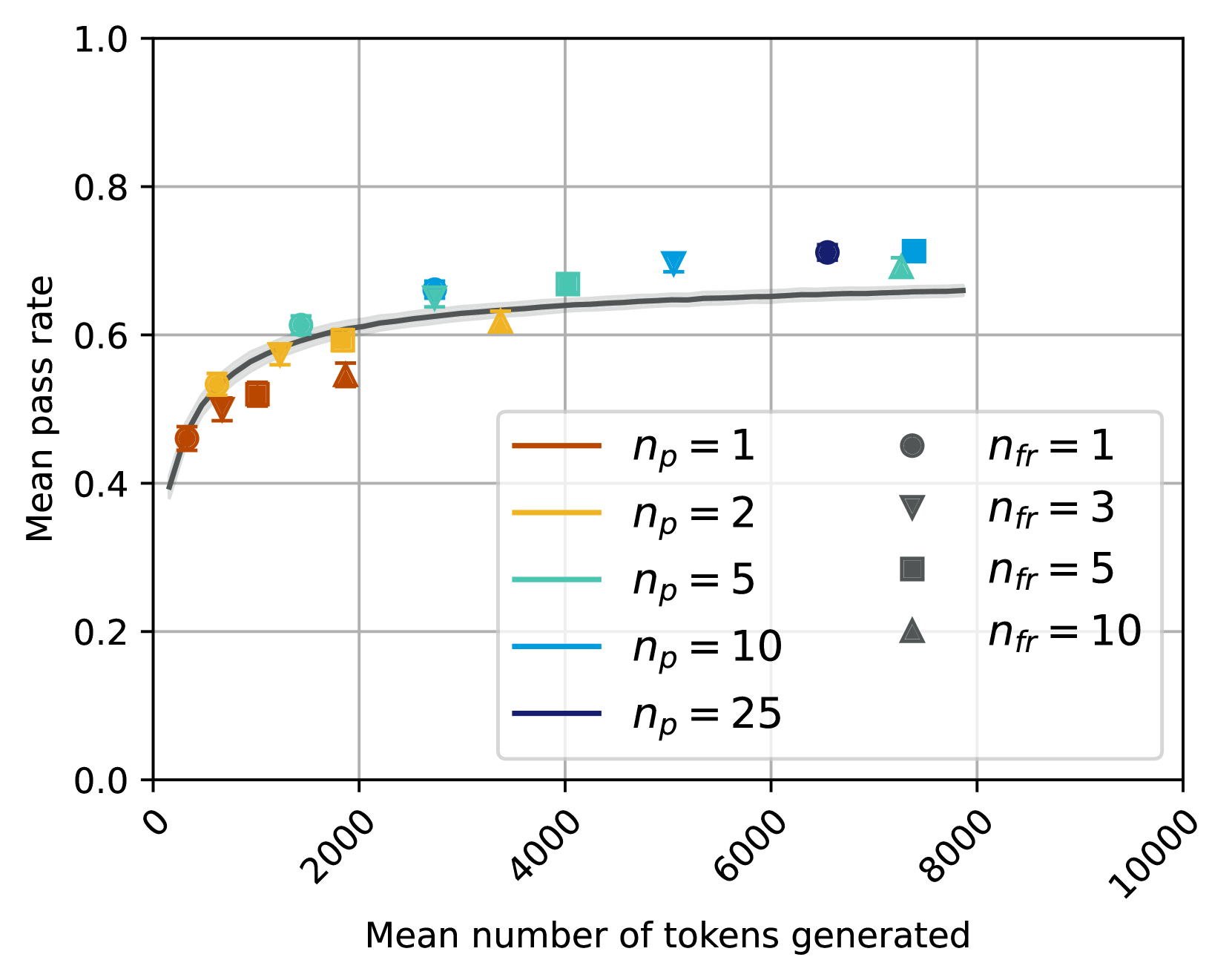

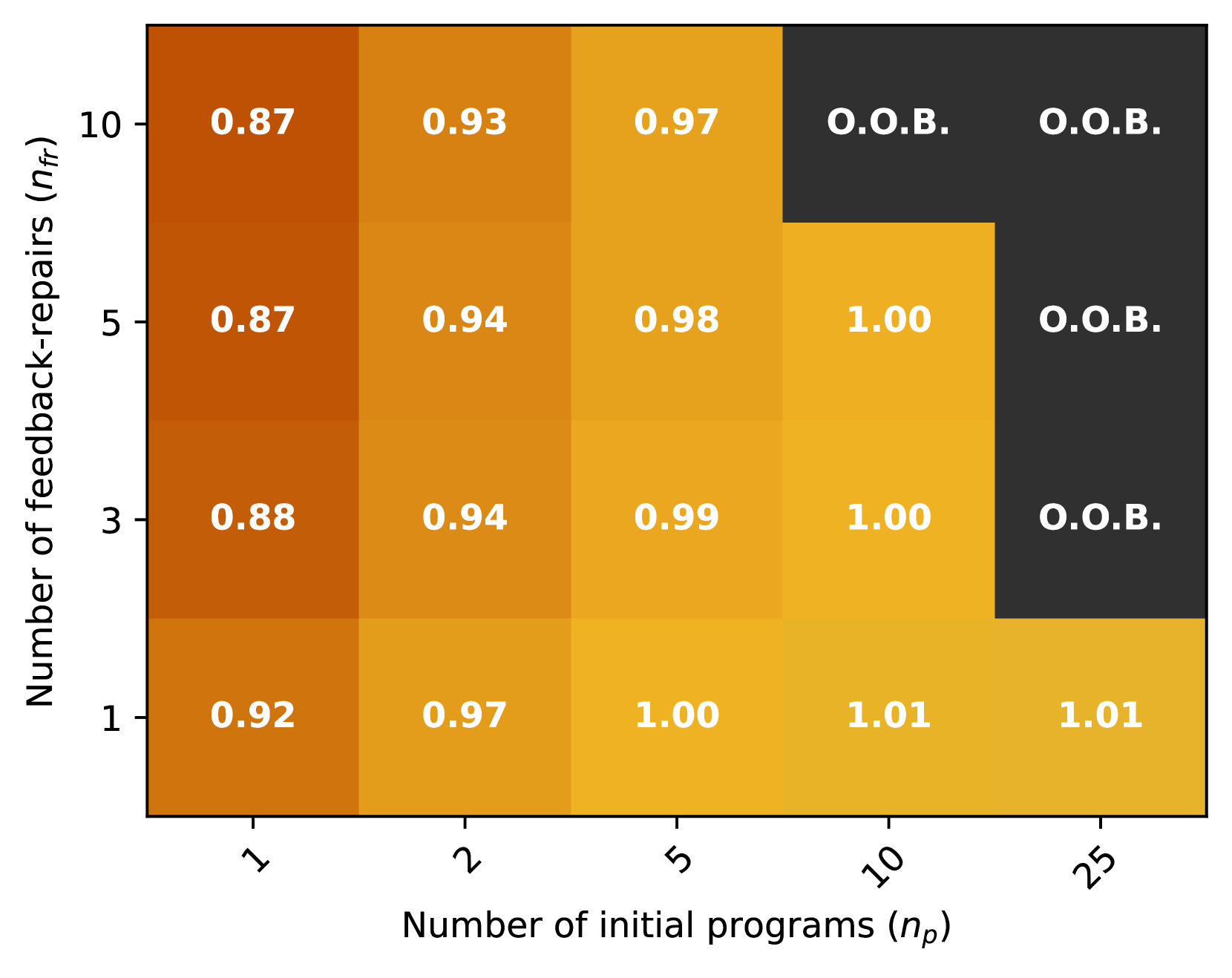

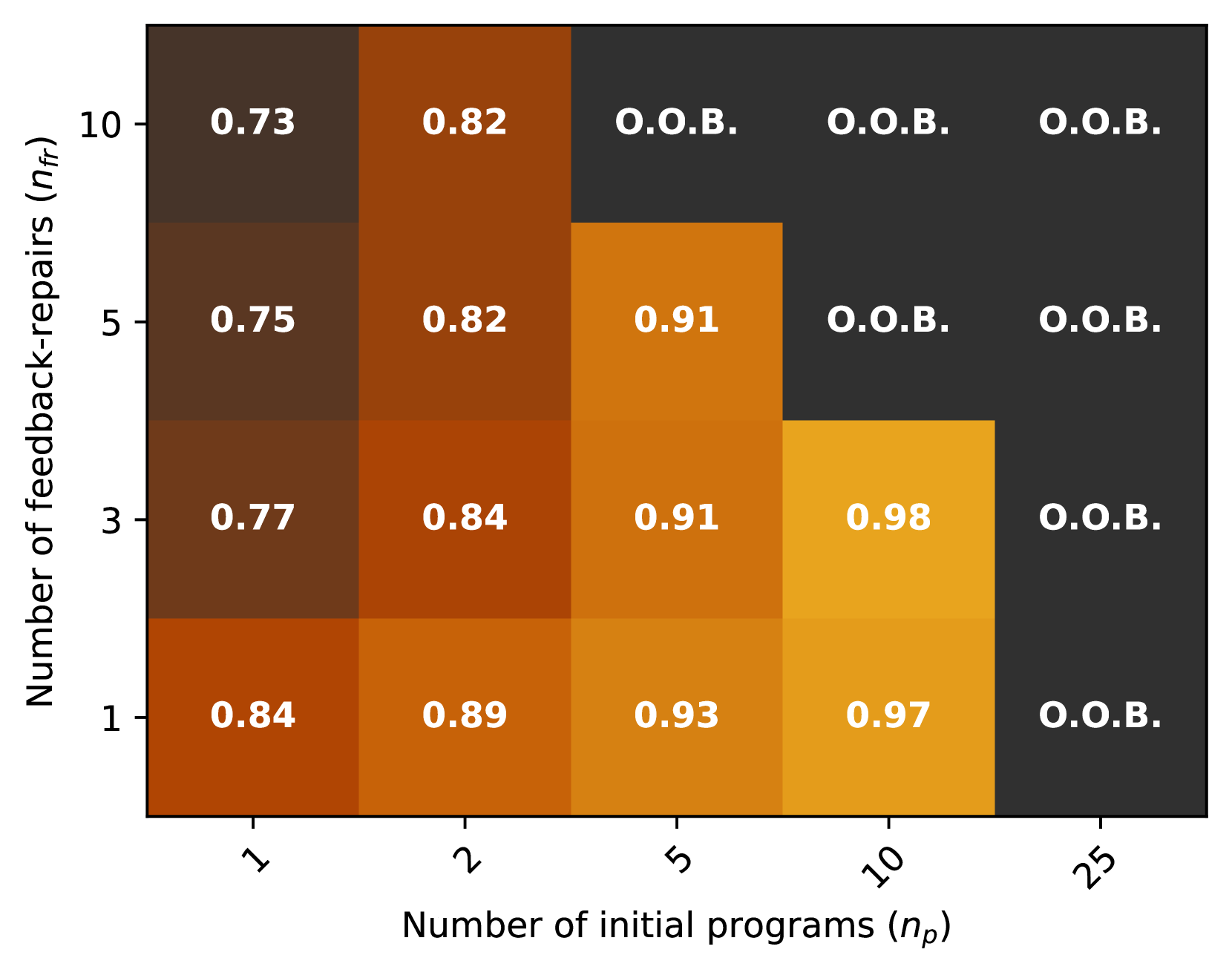

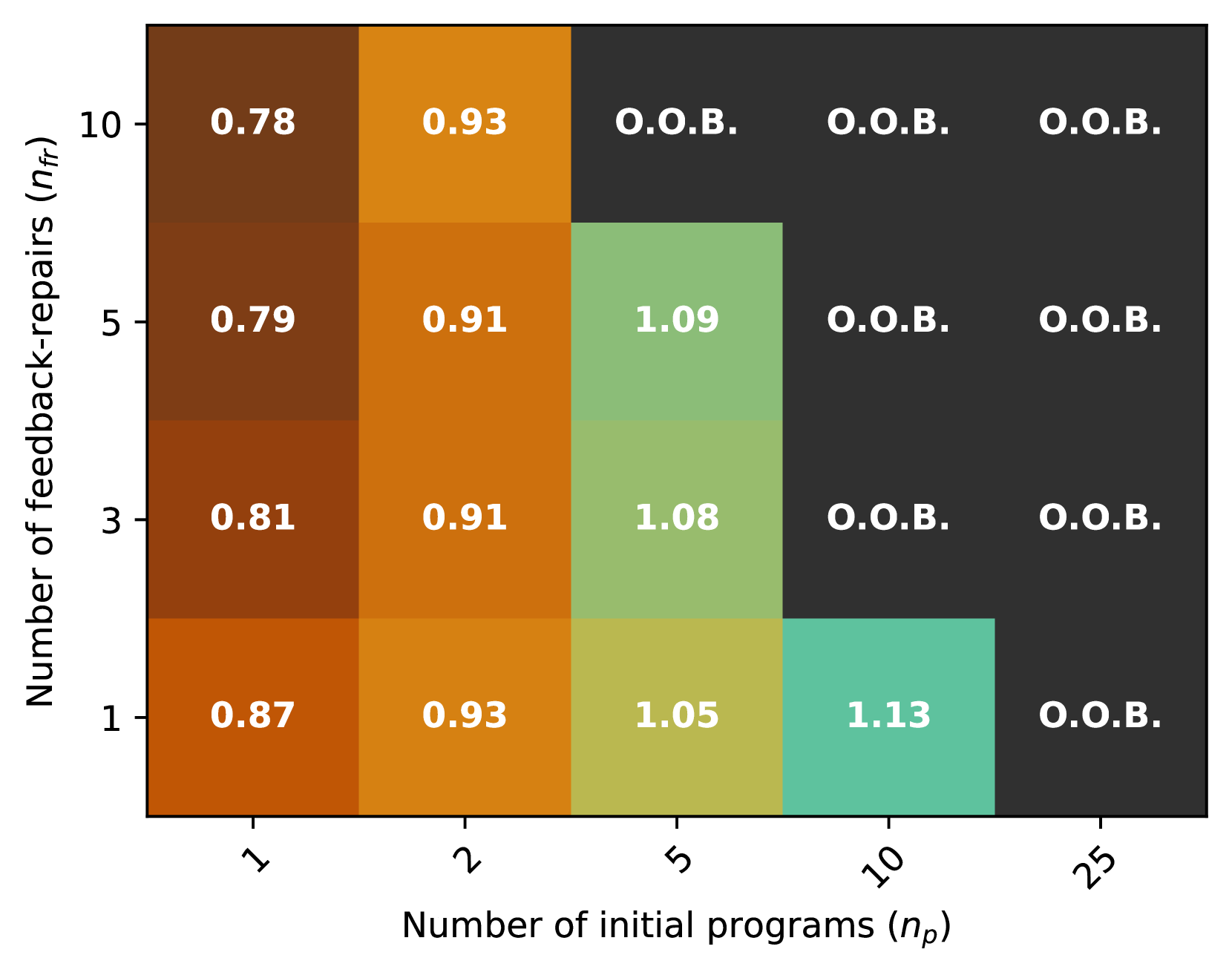

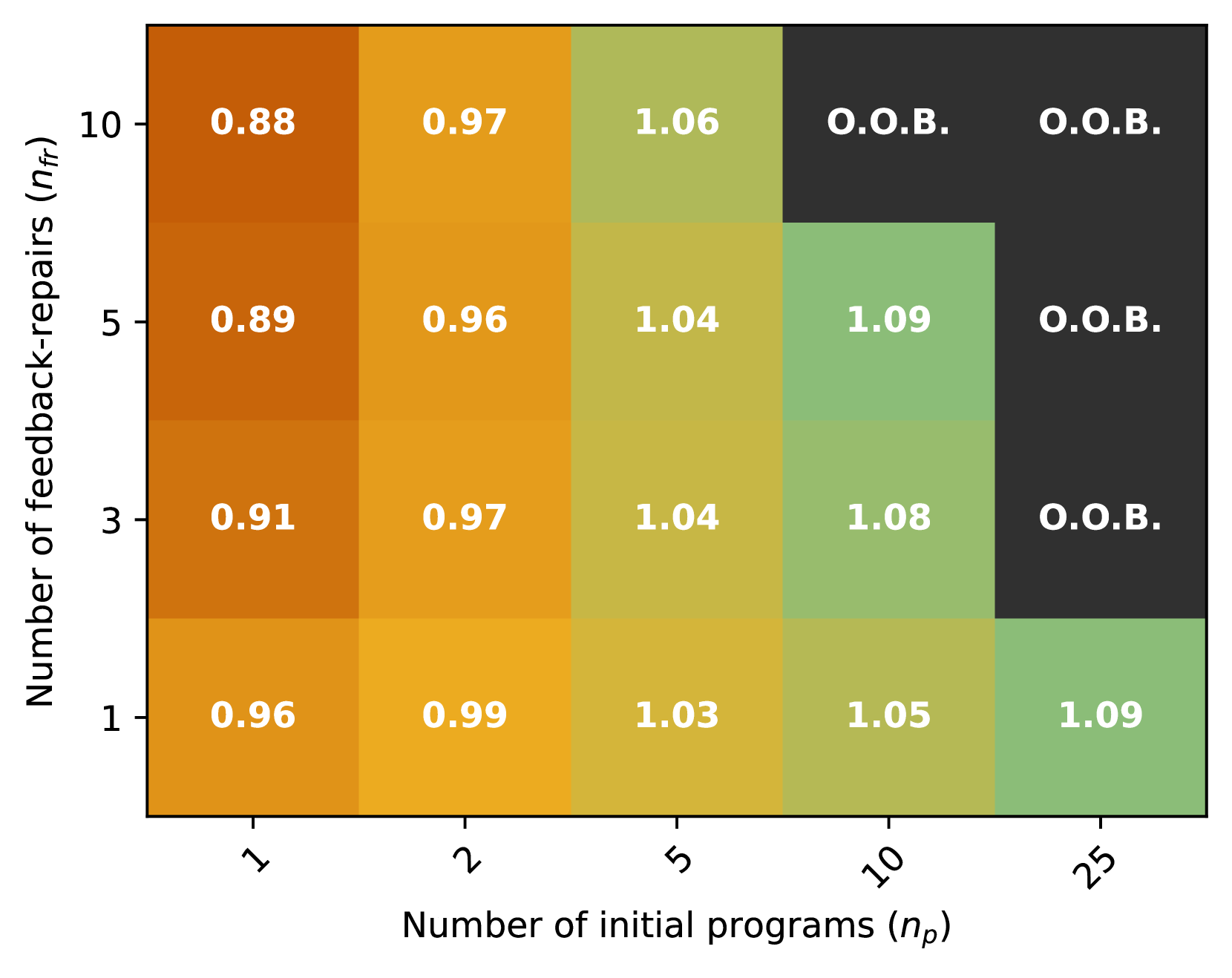

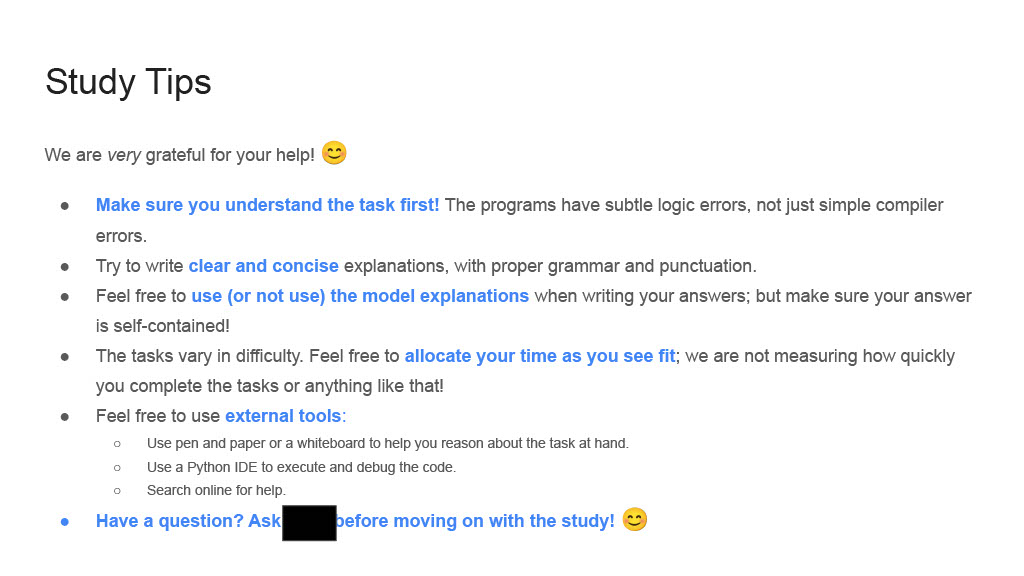

(a) Mean pass rate vs. number of tokens generated. Black line is i.i.d. sampling without repair from GPT-3.5. Note that the error bars are often smaller than the markers; all settings have a standard deviation of less than 1.5 absolute points on the y-axis. Results truncated at $t=10,000$ .

<details>

<summary>x4.png Details</summary>

### Visual Description

## Chart Type: Heatmap of Performance Metric vs. Program Parameters

### Overview

This image displays a heatmap illustrating a performance metric (likely a success rate or accuracy, ranging from 0.78 to 1.00) across different combinations of two parameters: "Number of initial programs ($n_p$)" and "Number of feedback-repairs ($n_{fr}$)". The cells are color-coded, with darker brown indicating lower values and brighter yellow indicating higher values. A significant portion of the grid, particularly in the upper-right, is marked "O.O.B." (Out Of Bounds) and colored dark grey, indicating conditions where a numerical result was not obtained.

### Components/Axes

The chart is a 2D grid with two categorical axes:

* **Y-axis (left side)**: Labeled "Number of feedback-repairs ($n_{fr}$)".

* Tick markers (from bottom to top): 1, 3, 5, 10.

* **X-axis (bottom side)**: Labeled "Number of initial programs ($n_p$)".

* Tick markers (from left to right): 1, 2, 5, 10, 25.

There is no explicit color legend. However, the color intensity within the cells implicitly represents the numerical values:

* Darkest brown: Corresponds to values around 0.78.

* Medium brown/orange: Corresponds to values around 0.80 to 0.92.

* Lighter orange/yellow: Corresponds to values around 0.94 to 0.99.

* Brightest yellow: Corresponds to the value 1.00.

* Dark grey: Corresponds to "O.O.B." entries.

### Detailed Analysis

The heatmap is a 4x5 grid, with rows corresponding to $n_{fr}$ values and columns corresponding to $n_p$ values. Each cell contains a numerical value (formatted to two decimal places) or the text "O.O.B.".

**Data Table (values in cells; cell colors are described in the row trends below):**

| $n_{fr}$ \ $n_p$ | 1 | 2 | 5 | 10 | 25 |
| :--------------- | :----- | :----- | :----- | :----- | :----- |
| **10** | 0.78 | 0.86 | O.O.B. | O.O.B. | O.O.B. |
| **5** | 0.80 | 0.86 | 0.95 | O.O.B. | O.O.B. |
| **3** | 0.81 | 0.87 | 0.94 | 1.00 | O.O.B. |
| **1** | 0.87 | 0.92 | 0.96 | 0.99 | O.O.B. |

**Trends and Observations by Row (increasing $n_p$ for fixed $n_{fr}$):**

* **For $n_{fr}=10$**: Values increase from 0.78 (dark brown) to 0.86 (medium brown/orange), then transition to "O.O.B." (dark grey) for $n_p=5, 10, 25$.

* **For $n_{fr}=5$**: Values increase from 0.80 (dark brown) to 0.86 (medium brown/orange) to 0.95 (lighter orange/yellow), then transition to "O.O.B." (dark grey) for $n_p=10, 25$.

* **For $n_{fr}=3$**: Values increase from 0.81 (dark brown) to 0.87 (medium brown/orange) to 0.94 (lighter orange/yellow) to 1.00 (brightest yellow), then transition to "O.O.B." (dark grey) for $n_p=25$.

* **For $n_{fr}=1$**: Values consistently increase from 0.87 (medium brown/orange) to 0.92 (medium brown/orange) to 0.96 (lighter orange/yellow) to 0.99 (lighter orange/yellow), then transition to "O.O.B." (dark grey) for $n_p=25$.

**Trends and Observations by Column (increasing $n_{fr}$ for fixed $n_p$):**

* **For $n_p=1$**: Values generally decrease from 0.87 (medium brown/orange) to 0.81 (dark brown) to 0.80 (dark brown) to 0.78 (dark brown).

* **For $n_p=2$**: Values show a slight decrease then stability: 0.92 (medium brown/orange) to 0.87 (medium brown/orange) to 0.86 (medium brown/orange) to 0.86 (medium brown/orange).

* **For $n_p=5$**: Values show a slight decrease then increase: 0.96 (lighter orange/yellow) to 0.94 (lighter orange/yellow) to 0.95 (lighter orange/yellow), then transition to "O.O.B." (dark grey) for $n_{fr}=10$.

* **For $n_p=10$**: Values increase from 0.99 (lighter orange/yellow) to 1.00 (brightest yellow), then transition to "O.O.B." (dark grey) for $n_{fr}=5, 10$.

* **For $n_p=25$**: All values are "O.O.B." (dark grey).

### Key Observations

* The highest performance value observed is 1.00, located at $n_p=10$ and $n_{fr}=3$. This cell is colored the brightest yellow.

* The lowest performance value observed is 0.78, located at $n_p=1$ and $n_{fr}=10$. This cell is colored the darkest brown.

* Generally, increasing the "Number of initial programs ($n_p$)" tends to improve the performance metric for a given "Number of feedback-repairs ($n_{fr}$)", up to a certain point.

* Increasing the "Number of feedback-repairs ($n_{fr}$)" tends to decrease performance when the "Number of initial programs ($n_p$)" is low (e.g., $n_p=1, 2$).

* The "O.O.B." region occupies the top-right portion of the heatmap, forming a triangular pattern. This indicates that combinations with a high number of initial programs and/or a high number of feedback-repairs lead to "Out Of Bounds" conditions. Specifically, $n_p=25$ always results in "O.O.B.", and for $n_p=10$, $n_{fr}$ values of 5 and 10 result in "O.O.B.". For $n_p=5$, $n_{fr}=10$ results in "O.O.B.".

### Interpretation

The heatmap reports the results of an experiment in which performance is evaluated for two configurable parameters: the number of initial programs ($n_p$) and the number of feedback-repairs ($n_{fr}$). According to the figure caption, the values (0.78 to 1.00) are mean pass rates normalized by the baseline pass rate at an equivalent token budget, so a value below 1.00 means the configuration underperforms the baseline.

"O.O.B." (out of bounds) marks cells for which the number of tokens generated exceeds that of 50 samples from the baseline, so no baseline value is available for comparison. It reflects the token budget of the comparison rather than a failure of the system.

No configuration exceeds 1.00. Increasing $n_p$ moves the metric toward 1.00 but also pushes configurations toward the "O.O.B." region, especially when combined with a higher $n_{fr}$. With $n_p=1$, the metric is lowest for every value of $n_{fr}$.

The highest observed value (1.00) is reached at $n_p=10$ and $n_{fr}=3$, where self-repair merely matches the baseline. For small $n_p$, additional feedback-repairs lower the normalized pass rate, which indicates that the extra tokens spent on repairs are not repaid at these budgets.

</details>

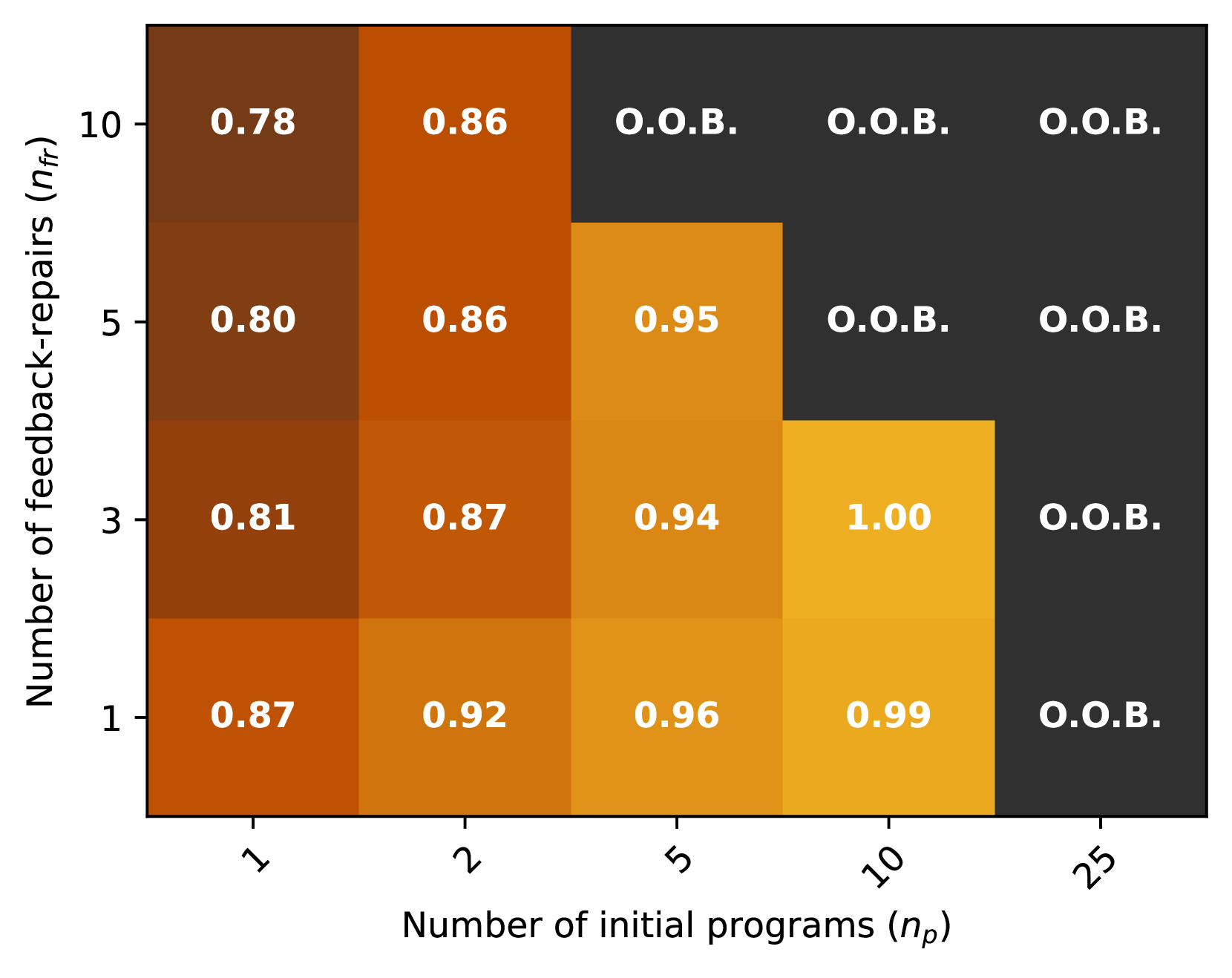

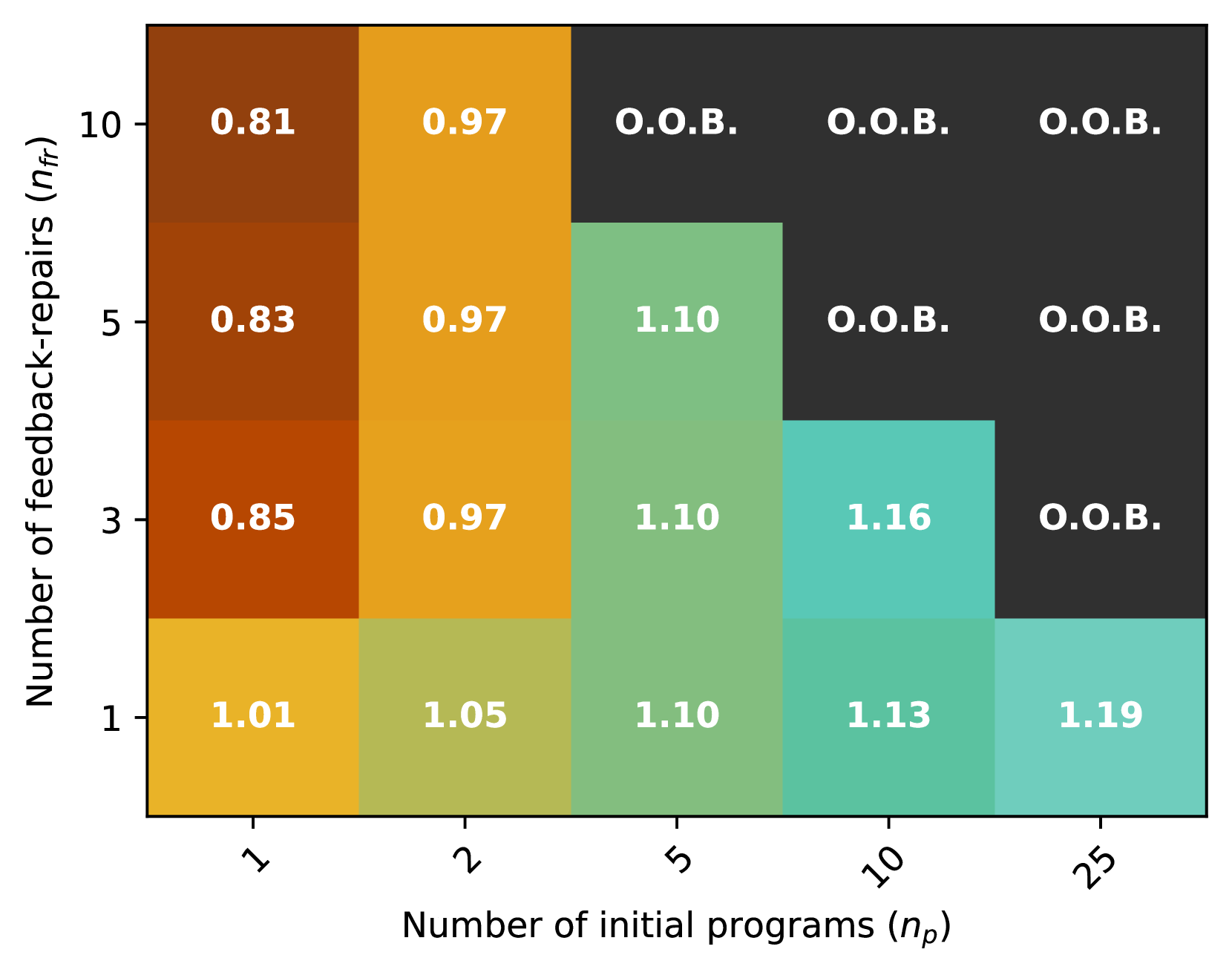

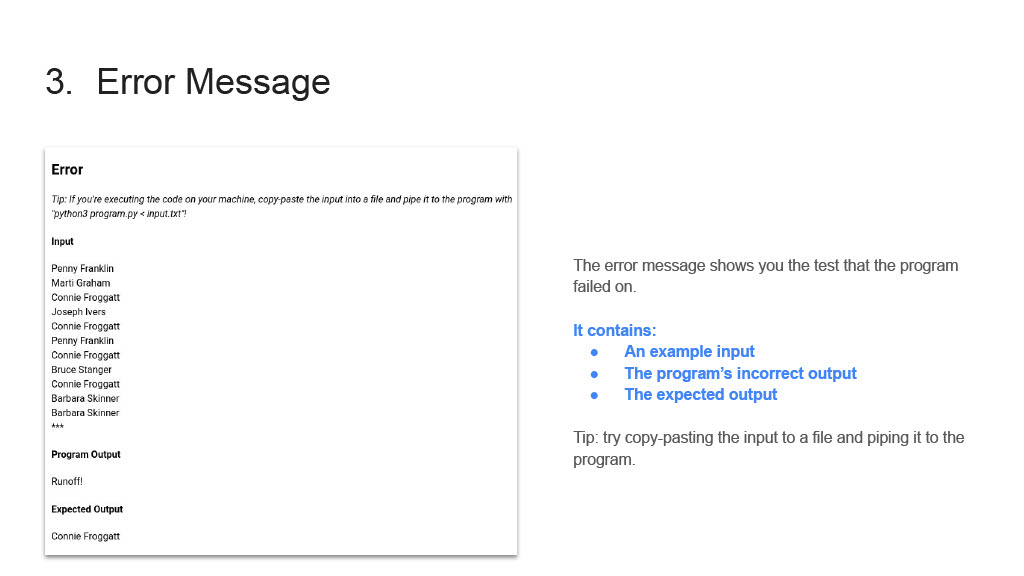

(b) Normalized mean pass rate relative to the (interpolated) baseline at an equivalent budget (number of tokens). Cells for which the number of tokens generated exceeds that of 50 samples from the GPT-3.5 baseline are marked O.O.B. (out of bounds).

Figure 3: Pass rate versus number of tokens generated for various settings of $n_{p}$ (number of initial programs) and $n_{fr}$ (number of repairs sampled per program). GPT-3.5 is used for all samples, including the baseline.

### 4.1 Self-repair requires strong models and diverse initial samples

In this subsection, we consider the setup where $M_{P}=M_{F}\in\{\text{GPT-3.5, GPT-4}\}$ , i.e., where a single model is used for both code/repair generation and feedback generation. To evaluate whether self-repair leads to better pass@t than a no-repair, i.i.d. sampling-based baseline, we vary $n_{p}$ and $n_{fr}$ (that is, the number of initial i.i.d. base samples and the number of joint feedback-repair samples drawn from $M_{P}$ ) in the range $(n_{p},n_{fr})\in\{1,2,5,10,25\}\times\{1,3,5,10\}$ . Recall that when $M_{P}=M_{F}$ , we jointly sample $n_{fr}$ pairs of feedback strings and repair programs instead of sampling them one after another (Section 3.1).

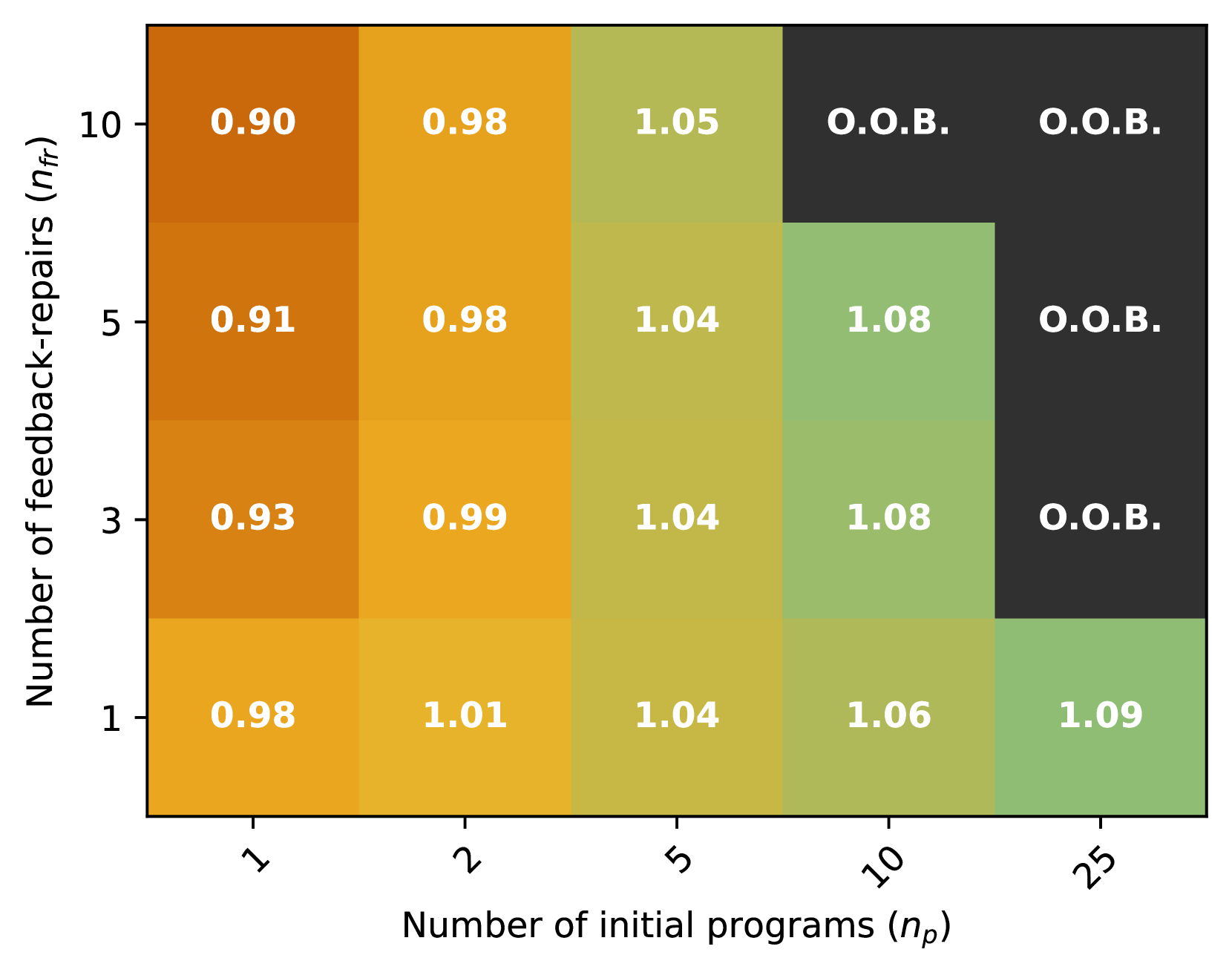

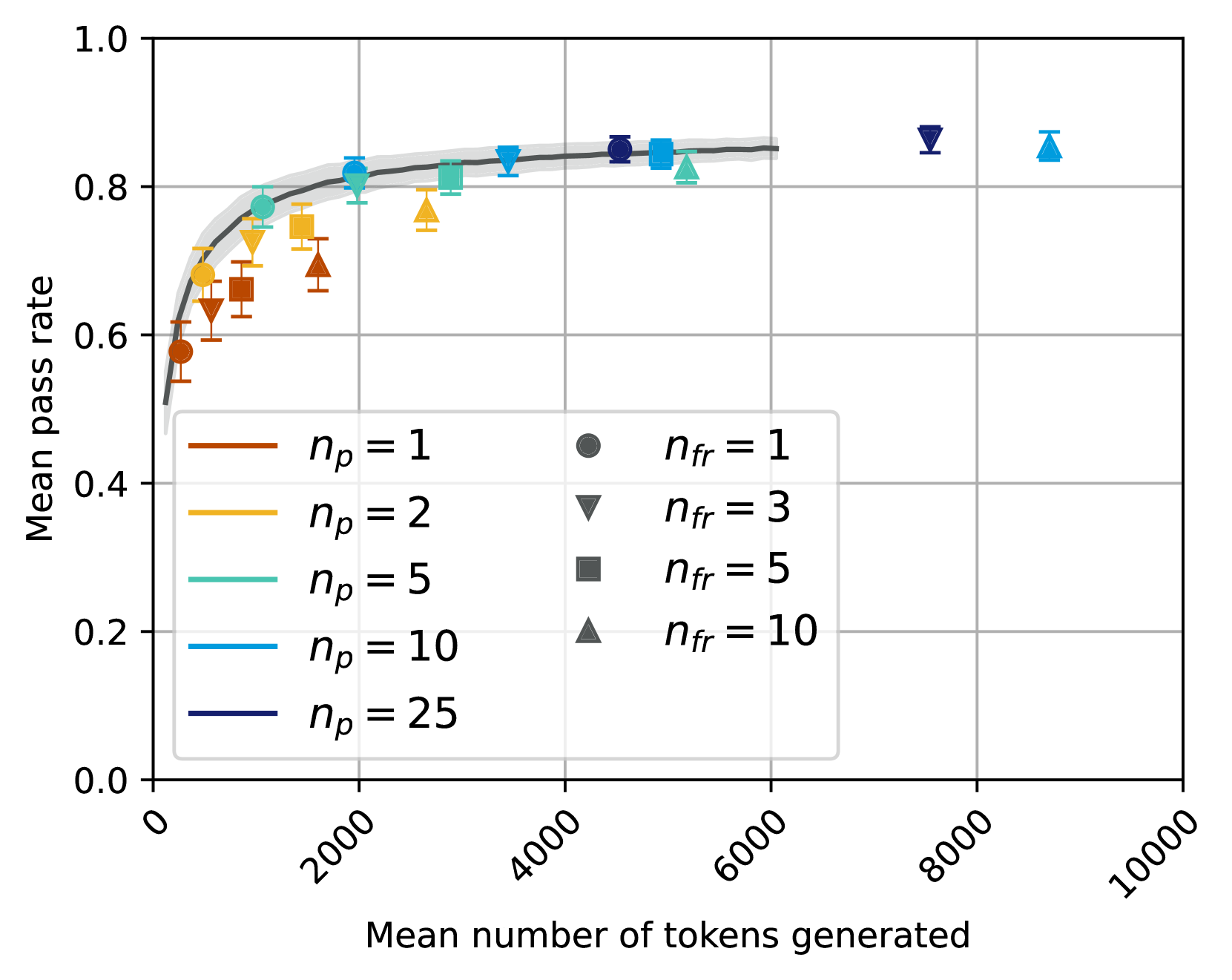

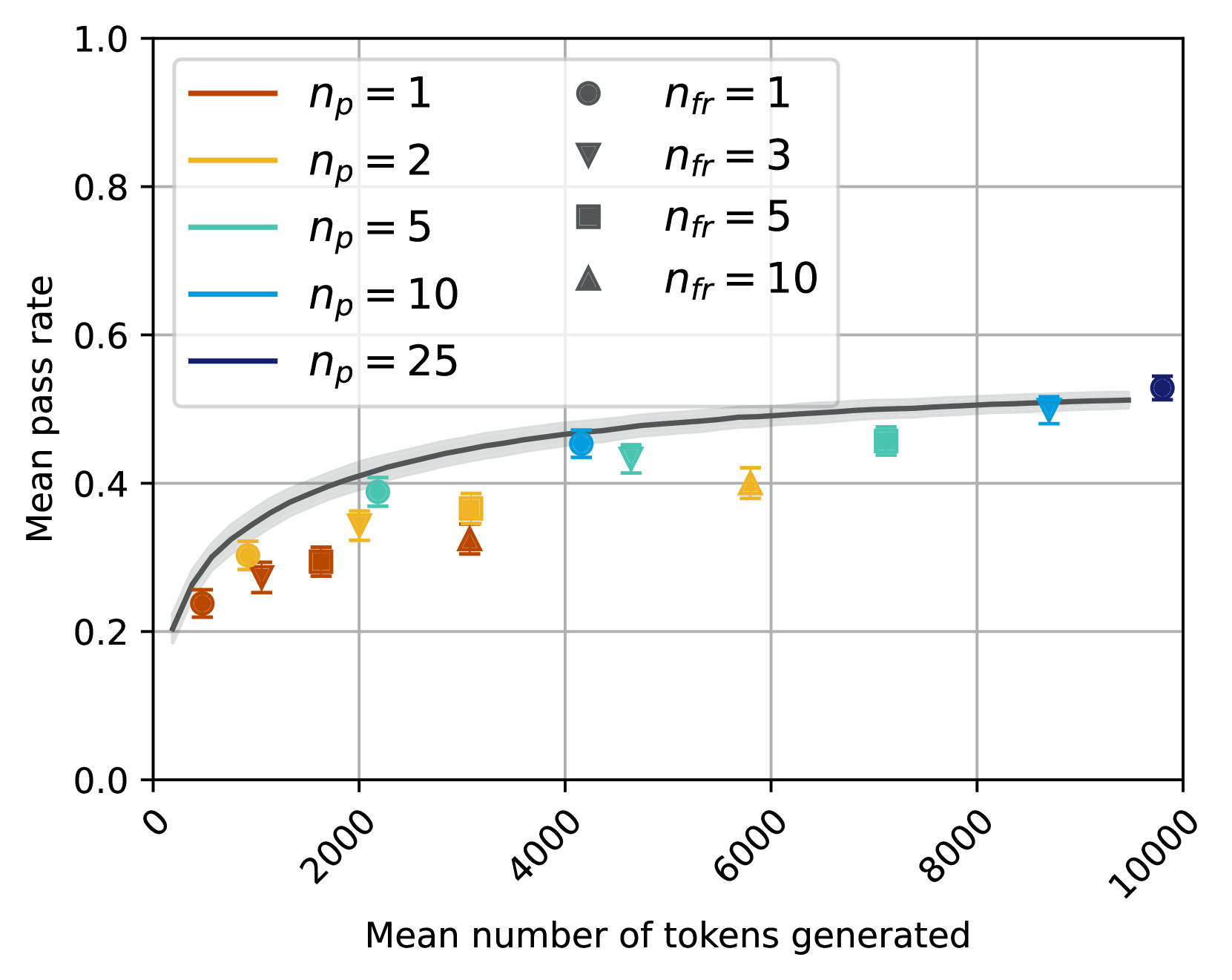

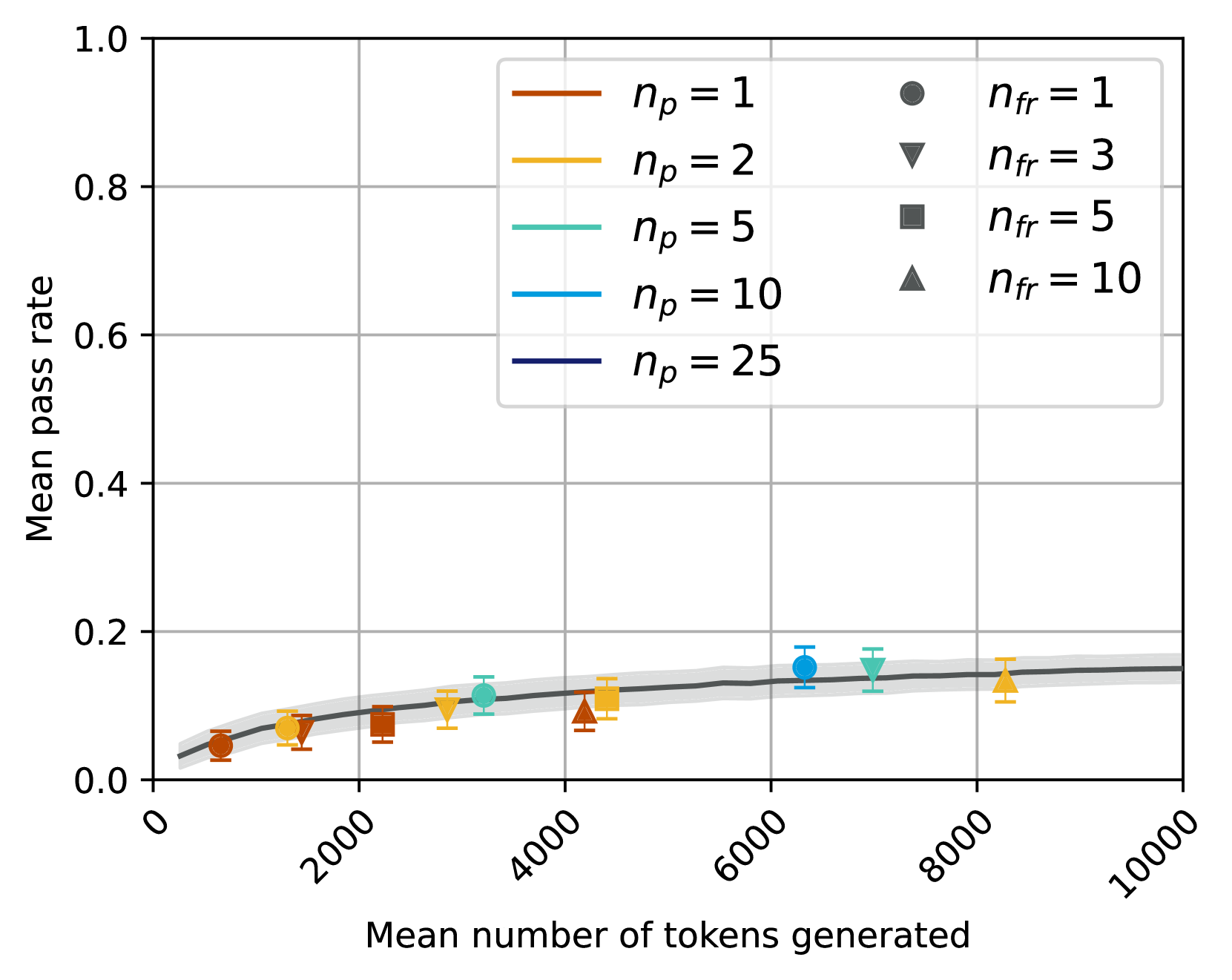

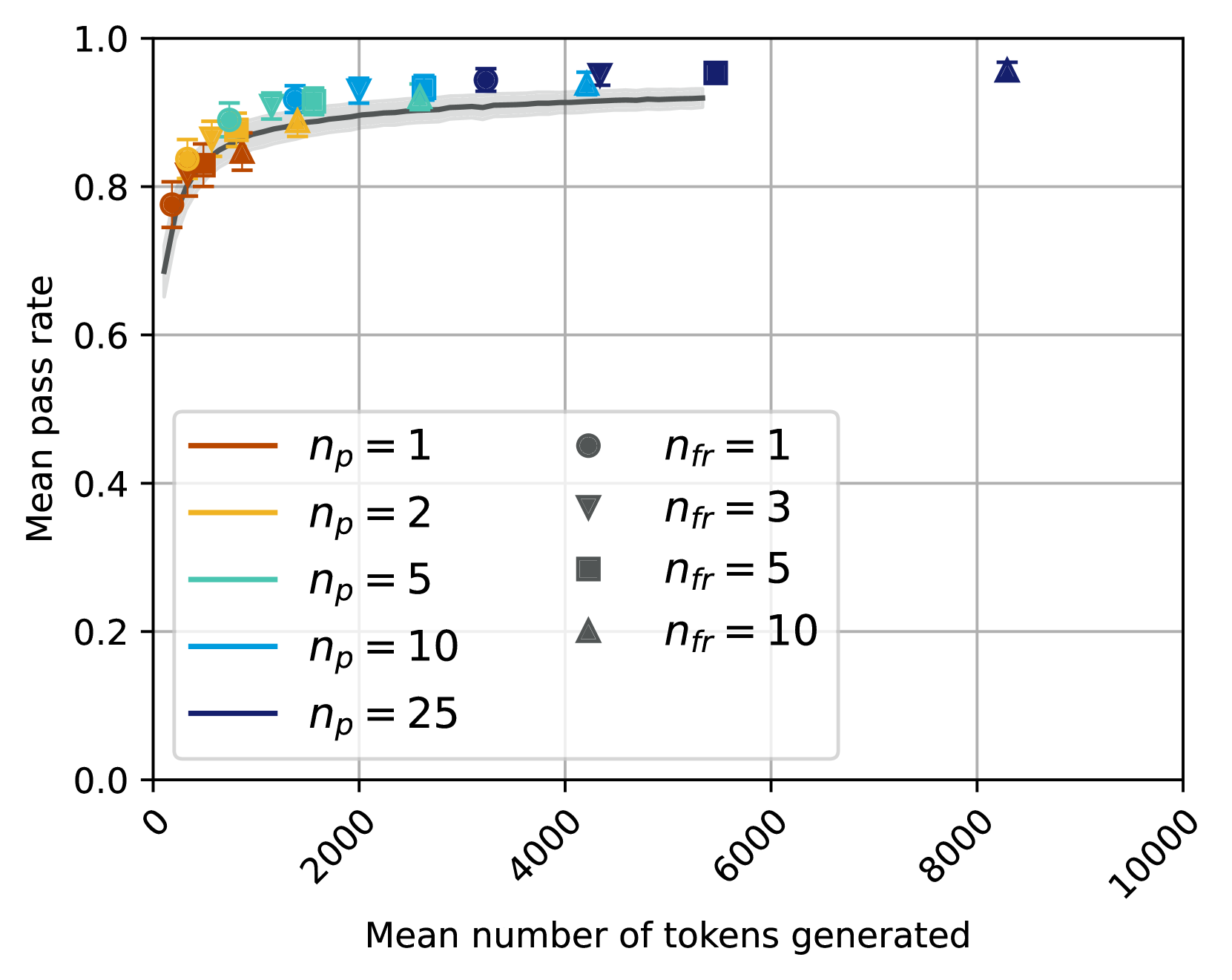

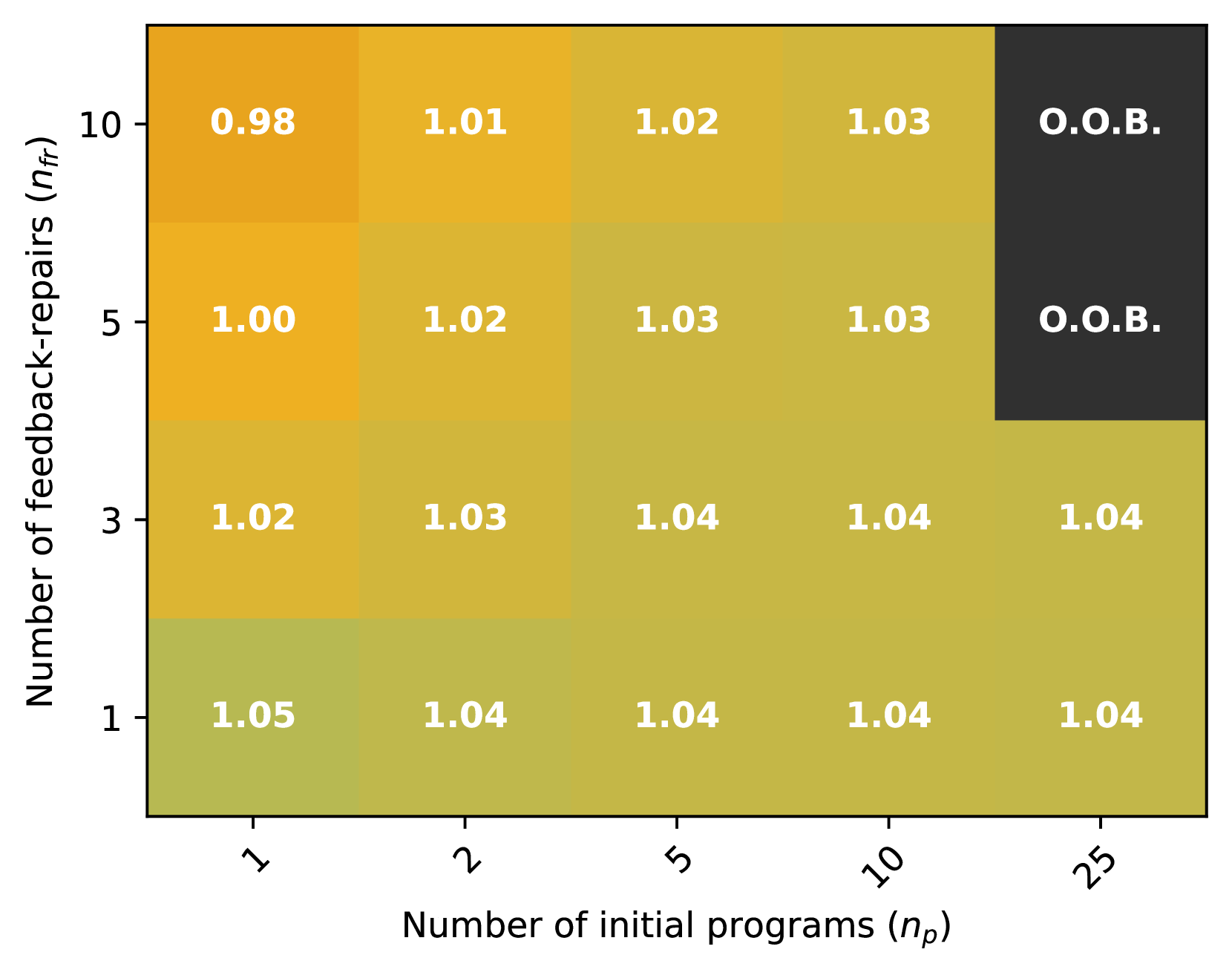

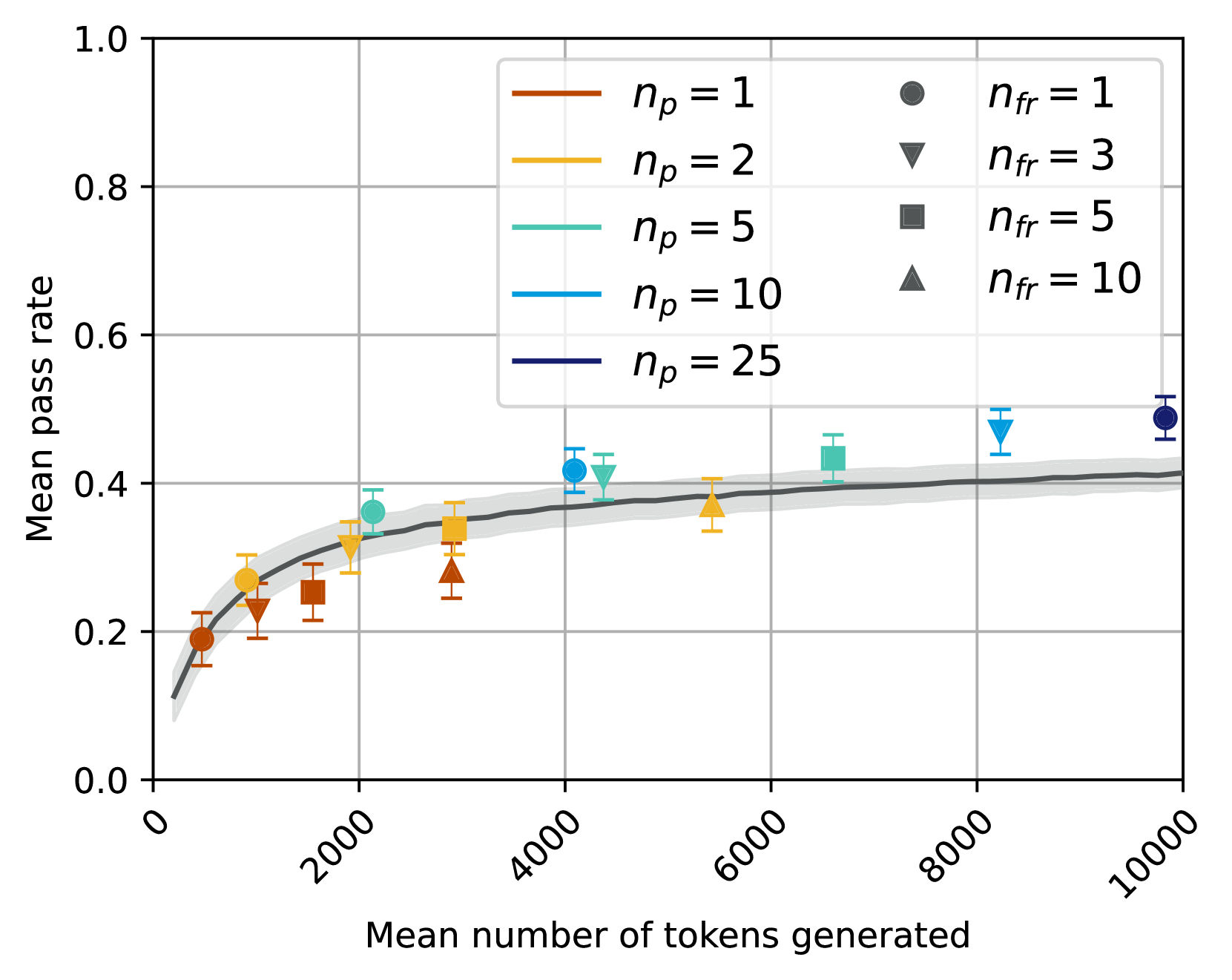

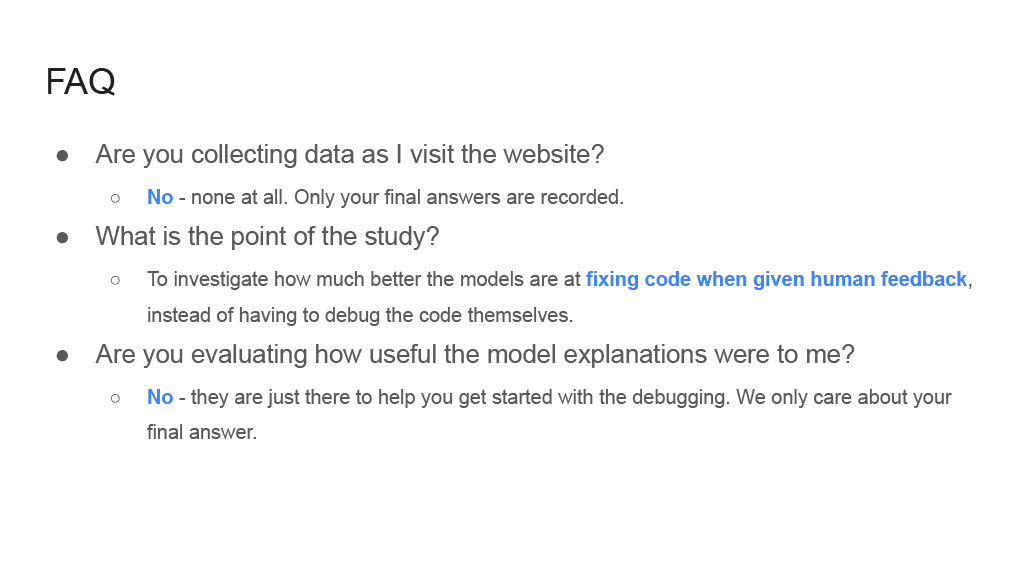

The results are shown in Figure 3 for GPT-3.5 and Figure 4 for GPT-4. In the left-hand subplots, the color of each dot indicates the number of initial samples ( $n_{p}$ ), while its shape indicates the number of feedback-repair samples ( $n_{fr}$ ). In the right-hand plots, we show a heat-map with the two hyper-parameters along the axes, where the value in each cell indicates the mean pass rate with self-repair normalized by the mean pass rate of the baseline, no-repair approach when given the same token budget (i.e., pass@t at the same value of t). When the normalized mean pass rate is 1, self-repair has the same pass rate as the no-repair baseline at that token budget; a value greater than 1 means self-repair performs better than the baseline, and a value less than 1 means it performs worse.
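The normalization used in the heat-maps can be sketched as below. This is an illustrative reconstruction, not the authors' code: the text only says that the baseline is interpolated, so piecewise-linear interpolation is an assumption here, as are the function names and the convention of returning `None` for out-of-bounds cells.

```python
from bisect import bisect_left


def interpolate(x, xs, ys):
    """Piecewise-linear interpolation of (xs, ys) at x; xs strictly increasing."""
    if x <= xs[0]:
        return ys[0]
    if x >= xs[-1]:
        return ys[-1]
    i = bisect_left(xs, x)  # first index with xs[i] >= x, so xs[i-1] < x <= xs[i]
    x0, x1 = xs[i - 1], xs[i]
    y0, y1 = ys[i - 1], ys[i]
    return y0 + (y1 - y0) * (x - x0) / (x1 - x0)


def normalized_pass_rate(t, pass_rate, baseline_t, baseline_pass):
    """Self-repair pass rate divided by the baseline pass rate at budget t.

    baseline_t / baseline_pass are the (token count, pass rate) points of the
    no-repair baseline. Returns None when t exceeds the largest baseline
    budget, mirroring the O.O.B. cells.
    """
    if t > baseline_t[-1]:
        return None
    return pass_rate / interpolate(t, baseline_t, baseline_pass)
```

For example, with hypothetical baseline points at 100, 200 and 300 tokens, a self-repair setting that uses 150 tokens is compared against the baseline value halfway between the first two points.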

From the plots, we can see that for the GPT-3.5 model, the pass@t is lower than or equal to the corresponding baseline (black line) for all settings of $n_{p},n_{fr}$ , clearly showing that self-repair is not an effective strategy for GPT-3.5. On the other hand, for GPT-4, there are several values of $n_{p},n_{fr}$ for which the pass rate with self-repair is significantly better than that of the baseline. For example, with $n_{p}=10,n_{fr}=3$ the pass rate increases from 65% to 70%, and with $n_{p}=25,n_{fr}=1$ it increases from 65% to 71%.

Our experiments also show a clear trend with respect to the relationship between the hyper-parameters. Given a fixed number of feedback-repairs ( $n_{fr}$ ), increasing the number of initial programs ( $n_{p}$ ) (i.e., moving right along the x-axis on the heat maps) consistently leads to relative performance gains for both models. On the other hand, fixing $n_{p}$ and increasing $n_{fr}$ (i.e., moving up along the y-axis on the heat maps) does not appear to be worth the additional cost incurred, giving very marginal gains at higher budgets and even decreasing performance at lower budgets. This suggests that, given a fixed budget, the most important factor determining whether self-repair will lead to a correct program or not is the diversity of the base samples that are generated up-front, rather than the diversity of the repairs sampled. Having more initial samples increases the likelihood of there being at least one program which is close to the ideal program and, hence, can be successfully repaired.

Since $n_{fr}=1$ is the best choice for the hyper-parameter $n_{fr}$ , we next isolate the effect of the number of initial programs, $n_{p}$ , by exploring a denser set of possible values: $(n_{p},n_{fr})\in\{1,2,\ldots,24,25\}\times\{1\}$ . The plots are shown in Figure 6 for both $M_{P}=M_{F}\in\{\text{GPT-3.5},\text{GPT-4}\}$ and the baseline, no-repair approaches. Note that since $n_{fr}$ is fixed, in these plots, there is a direct correlation between $n_{p}$ and the total number of tokens, $t$ . Again, we see that self-repair is not an effective strategy for the GPT-3.5 model, but that it is effective for GPT-4, especially at higher values of $n_{p}$ (corresponding to $t\geq 5000$ tokens), where it increases pass rate by over 5 points.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Chart Type: Scatter Plot with Fitted Curve: Mean Pass Rate vs. Mean Number of Tokens Generated

### Overview

This image displays a scatter plot illustrating the relationship between the "Mean number of tokens generated" on the x-axis and the "Mean pass rate" on the y-axis. The plot includes a fitted curve with a confidence interval, representing the overall trend. Individual data points are categorized by two parameters, `n_p` (represented by color) and `n_fr` (represented by marker shape), as detailed in the legend. The chart primarily shows how different configurations of these parameters affect the pass rate and the computational cost in terms of tokens generated.

### Components/Axes

The chart is structured with a main plotting area, an x-axis at the bottom, a y-axis on the left, and a legend positioned in the bottom-center-right of the plotting area.

* **X-axis Label**: "Mean number of tokens generated"

* **X-axis Range**: From 0 to 10000.

* **X-axis Major Ticks**: 0, 2000, 4000, 6000, 8000, 10000. The tick labels are rotated approximately 45 degrees counter-clockwise.

* **Y-axis Label**: "Mean pass rate"

* **Y-axis Range**: From 0.0 to 1.0.

* **Y-axis Major Ticks**: 0.0, 0.2, 0.4, 0.6, 0.8, 1.0.

* **Legend**: Located within the plot area, spanning from approximately X=3000 to X=8000 and Y=0.05 to Y=0.35. It is divided into two columns:

* **Left Column (Line styles, representing `n_p` values):**

* Brown line: `n_p = 1`

* Orange/Gold line: `n_p = 2`

* Teal/Light Green line: `n_p = 5`

* Light Blue line: `n_p = 10`

* Dark Blue/Indigo line: `n_p = 25`

* **Right Column (Marker shapes, representing `n_fr` values):**

* Gray circle: `n_fr = 1`

* Gray downward triangle: `n_fr = 3`

* Gray square: `n_fr = 5`

* Gray upward triangle: `n_fr = 10`

### Detailed Analysis

The chart displays a dark gray fitted curve with a lighter gray shaded confidence interval, representing the overall trend. Superimposed on this curve are 20 individual data points, each colored according to its `n_p` value and shaped according to its `n_fr` value.

**Fitted Curve Trend and Approximate Values:**

The dark gray fitted curve shows a clear positive correlation: as the "Mean number of tokens generated" increases, the "Mean pass rate" also increases. The rate of increase is steep initially and then gradually flattens out, suggesting diminishing returns. The light gray band around the curve indicates a confidence interval.

* At X ≈ 0, Y ≈ 0.40

* At X ≈ 1000, Y ≈ 0.55

* At X ≈ 2000, Y ≈ 0.60

* At X ≈ 4000, Y ≈ 0.63

* At X ≈ 6000, Y ≈ 0.65

* At X ≈ 8000, Y ≈ 0.66

**Individual Data Points (Scatter Plot):**

The data points generally follow the trend of the fitted curve. Each point represents a unique combination of `n_p` and `n_fr`.

* **`n_p = 1` (Brown points):**

* Circle (`n_fr = 1`): (X ≈ 450, Y ≈ 0.45)

* Downward Triangle (`n_fr = 3`): (X ≈ 700, Y ≈ 0.48)

* Square (`n_fr = 5`): (X ≈ 1000, Y ≈ 0.51)

* Upward Triangle (`n_fr = 10`): (X ≈ 1500, Y ≈ 0.53)

* **`n_p = 2` (Orange/Gold points):**

* Circle (`n_fr = 1`): (X ≈ 600, Y ≈ 0.50)

* Downward Triangle (`n_fr = 3`): (X ≈ 1000, Y ≈ 0.53)

* Square (`n_fr = 5`): (X ≈ 1500, Y ≈ 0.58)

* Upward Triangle (`n_fr = 10`): (X ≈ 2000, Y ≈ 0.60)

* **`n_p = 5` (Teal/Light Green points):**

* Circle (`n_fr = 1`): (X ≈ 1000, Y ≈ 0.58)

* Downward Triangle (`n_fr = 3`): (X ≈ 1500, Y ≈ 0.61)

* Square (`n_fr = 5`): (X ≈ 2500, Y ≈ 0.65)

* Upward Triangle (`n_fr = 10`): (X ≈ 3500, Y ≈ 0.62)

* **`n_p = 10` (Light Blue points):**

* Circle (`n_fr = 1`): (X ≈ 1500, Y ≈ 0.60)

* Downward Triangle (`n_fr = 3`): (X ≈ 2500, Y ≈ 0.68)

* Square (`n_fr = 5`): (X ≈ 4500, Y ≈ 0.69)

* Upward Triangle (`n_fr = 10`): (X ≈ 7500, Y ≈ 0.69)

* **`n_p = 25` (Dark Blue/Indigo points):**

* Circle (`n_fr = 1`): (X ≈ 2000, Y ≈ 0.62)

* Downward Triangle (`n_fr = 3`): (X ≈ 3000, Y ≈ 0.67)

* Square (`n_fr = 5`): (X ≈ 5500, Y ≈ 0.70)

* Upward Triangle (`n_fr = 10`): (X ≈ 7000, Y ≈ 0.71)

### Key Observations

1. **General Trend**: There is a clear positive correlation between the mean number of tokens generated and the mean pass rate. The pass rate increases rapidly at lower token counts and then plateaus, indicating diminishing returns for generating more tokens beyond a certain point.

2. **Effect of `n_p`**: Higher values of `n_p` (represented by colors shifting from brown to dark blue) generally correspond to higher mean pass rates for a given range of tokens generated. For instance, `n_p=25` points achieve pass rates around 0.70-0.71, while `n_p=1` points peak around 0.53.

3. **Effect of `n_fr`**: Within each `n_p` group (i.e., for points of the same color), increasing `n_fr` (represented by marker shapes from circle to upward triangle) generally leads to a higher "Mean number of tokens generated." This suggests `n_fr` directly influences the output length or computational effort.

4. **Interaction of `n_p` and `n_fr`**: For a fixed `n_p`, increasing `n_fr` tends to move the data point to the right (more tokens generated) and generally upwards (higher pass rate), following the overall curve.

5. **Outlier/Anomaly**: The teal upward triangle point (`n_p = 5`, `n_fr = 10`) is located at approximately (X ≈ 3500, Y ≈ 0.62). This point appears to have a slightly lower pass rate compared to the general trend of increasing pass rate with increasing `n_fr` for `n_p=5` (where `n_fr=5` is at Y ≈ 0.65). It also falls slightly below the fitted curve, suggesting a potential deviation from the expected performance for this specific configuration.

### Interpretation

The data presented in this chart likely illustrates the performance characteristics of a system (e.g., a language model or code generation system) where `n_p` and `n_fr` are configurable parameters.

* **Performance vs. Cost Trade-off**: The chart fundamentally demonstrates a trade-off. To achieve a higher "Mean pass rate" (better performance), one generally needs to increase the "Mean number of tokens generated" (higher computational cost or longer output).

* **Role of `n_p`**: The parameter `n_p` appears to be a critical factor in determining the *potential maximum pass rate*. According to the figure caption and surrounding text, `n_p` is the number of initial programs sampled, so higher values supply more diverse candidates. Increasing `n_p` shifts the performance curve upwards.

* **Role of `n_fr`**: The parameter `n_fr` is the number of feedback-repair samples drawn per program. Higher `n_fr` values lead to more tokens being generated, which in turn generally contributes to a higher pass rate, but also increases the computational burden. It scales the x-axis for a given `n_p`.

* **Diminishing Returns**: The flattening of the curve indicates that there's a point where further increasing the number of tokens generated yields only marginal improvements in the pass rate. This is crucial for optimizing resource allocation.

* **Potential for Optimization**: The observed outlier (`n_p=5, n_fr=10`) suggests that simply increasing `n_fr` might not always lead to monotonic improvements, especially for intermediate `n_p` values. There might be an optimal `n_fr` for each `n_p` beyond which performance either plateaus or even slightly degrades due to factors like increased noise, irrelevant output, or computational overhead without corresponding quality gains. This highlights the need for careful tuning of both parameters.

* **Practical Implications**: For practical deployment, one would need to balance the desired pass rate with the acceptable computational cost (tokens generated). If high pass rates are critical, higher `n_p` values are necessary. If computational efficiency is paramount, lower `n_p` and `n_fr` values might be chosen, accepting a lower pass rate.

</details>

(a) Mean pass rate vs. number of tokens generated. Black line is i.i.d. sampling without repair from GPT-4. Note that the error bars are often smaller than the markers; all settings have a standard deviation of less than 1.5 absolute points on the y-axis. Results truncated at $t=10,000$ .

<details>

<summary>x6.png Details</summary>

### Visual Description

## Heatmap: Performance Metric by Number of Feedback-Repairs and Initial Programs

### Overview

This image displays a heatmap illustrating a performance metric across a grid defined by two input parameters: "Number of feedback-repairs" ($n_{fr}$) on the vertical axis and "Number of initial programs" ($n_p$) on the horizontal axis. Each cell in the grid contains a numerical value representing the metric, or the text "O.O.B." (Out Of Bounds), and is colored to visually represent its magnitude. The color gradient transitions from darker orange/brown for lower values, through yellow and light green for increasing values, to darker green for the highest values. Dark grey cells indicate the "O.O.B." state.

### Components/Axes

* **Y-axis (Left):** Labeled "Number of feedback-repairs ($n_{fr}$)"

* Tick markers (from bottom to top): 1, 3, 5, 10

* **X-axis (Bottom):** Labeled "Number of initial programs ($n_p$)"

* Tick markers (from left to right): 1, 2, 5, 10, 25

* **Data Grid:** A 4x5 grid of cells, each containing a numerical value (to two decimal places) or the text "O.O.B.". The background color of each cell visually represents the magnitude of the value.

* **Color Gradient (Implicit Legend):**

* Darker orange/brown (e.g., 0.90, 0.91, 0.93): Represents lower metric values.

* Lighter orange/yellow (e.g., 0.98, 0.99, 1.01): Represents mid-range metric values.

* Light green/olive (e.g., 1.04, 1.05, 1.06): Represents higher mid-range metric values.

* Darker green (e.g., 1.08, 1.09): Represents the highest metric values.

* Dark grey: Represents the "O.O.B." (Out Of Bounds) state.

### Detailed Analysis

The heatmap presents the following data, organized by `Number of feedback-repairs` ($n_{fr}$) on the Y-axis and `Number of initial programs` ($n_p$) on the X-axis.

| $n_{fr}$ \ $n_p$ | 1 | 2 | 5 | 10 | 25 |
| :--------------- | :----- | :----- | :----- | :----- | :----- |
| **10** | 0.90 | 0.98 | 1.05 | O.O.B. | O.O.B. |
| **5** | 0.91 | 0.98 | 1.04 | 1.08 | O.O.B. |
| **3** | 0.93 | 0.99 | 1.04 | 1.08 | O.O.B. |
| **1** | 0.98 | 1.01 | 1.04 | 1.06 | 1.09 |

**Trends:**

* **Across rows (increasing $n_p$ for a fixed $n_{fr}$):**

* For $n_{fr}=10$: The metric values increase from 0.90 (dark orange) to 0.98 (yellow) to 1.05 (light green), then transition to "O.O.B." (dark grey) for $n_p=10$ and $n_p=25$.

* For $n_{fr}=5$: The metric values increase from 0.91 (dark orange) to 0.98 (yellow) to 1.04 (light green) to 1.08 (dark green), then become "O.O.B." (dark grey) for $n_p=25$.

* For $n_{fr}=3$: The metric values increase from 0.93 (dark orange) to 0.99 (yellow) to 1.04 (light green) to 1.08 (dark green), then become "O.O.B." (dark grey) for $n_p=25$.

* For $n_{fr}=1$: The metric values consistently increase from 0.98 (yellow) to 1.01 (yellow) to 1.04 (light green) to 1.06 (light green) to 1.09 (dark green).

* General trend: As the "Number of initial programs" ($n_p$) increases, the performance metric generally improves (increases), until it reaches an "O.O.B." state for higher $n_p$ values, particularly when $n_{fr}$ is also high.

* **Down columns (increasing $n_{fr}$ for a fixed $n_p$):**

* For $n_p=1$: The metric values decrease from 0.98 (yellow) to 0.93 (dark orange) to 0.91 (dark orange) to 0.90 (dark orange).

* For $n_p=2$: The metric values decrease from 1.01 (yellow) to 0.99 (yellow) to 0.98 (yellow) and remain stable at 0.98 (yellow).

* For $n_p=5$: The metric values are relatively stable at 1.04 (light green) for $n_{fr}=1, 3, 5$, then slightly increase to 1.05 (light green) for $n_{fr}=10$.

* For $n_p=10$: The metric values increase from 1.06 (light green) to 1.08 (dark green) for $n_{fr}=3, 5$, then become "O.O.B." (dark grey) for $n_{fr}=10$.

* For $n_p=25$: The metric value is 1.09 (dark green) for $n_{fr}=1$, then becomes "O.O.B." (dark grey) for $n_{fr}=3, 5, 10$.

* General trend: The impact of increasing "Number of feedback-repairs" ($n_{fr}$) varies. For lower $n_p$, the metric tends to decrease or stabilize. For $n_p=10$, the metric increases before hitting "O.O.B." at $n_{fr}=10$. For $n_p=25$, only $n_{fr}=1$ has a reported value.

### Key Observations

* The lowest observed metric value is 0.90, located at the top-left corner ($n_{fr}=10, n_p=1$).

* The highest observed metric value is 1.09, located at the bottom-right corner of the non-O.O.B. region ($n_{fr}=1, n_p=25$).

* The "O.O.B." state appears in the upper-right portion of the heatmap: at $n_p=10$ only for $n_{fr}=10$, and at $n_p=25$ for $n_{fr}=3, 5, 10$.

* There is a clear diagonal boundary for the "O.O.B." region, starting from ($n_{fr}=10, n_p=10$) and extending downwards and rightwards to include ($n_{fr}=3, n_p=25$), ($n_{fr}=5, n_p=25$), and ($n_{fr}=10, n_p=25$).

* The performance metric generally improves as $n_p$ increases, but this improvement is limited by the onset of the "O.O.B." state.

* For $n_p=1$, increasing $n_{fr}$ consistently decreases the metric. For $n_p=2$, increasing $n_{fr}$ leads to a slight decrease and then stabilization. For $n_p=5$, the metric is relatively stable. For $n_p=10$, increasing $n_{fr}$ raises the metric from 1.06 to 1.08 before the cell becomes "O.O.B." at $n_{fr}=10$. For $n_p=25$, only $n_{fr}=1$ has a reported value (1.09), so no trend in $n_{fr}$ can be read off.

### Interpretation

This heatmap shows how the normalized mean pass rate depends on the number of feedback-repairs ($n_{fr}$) and the number of initial programs ($n_p$). Per the figure caption, each value is the pass rate of a repair configuration relative to the interpolated no-repair baseline at an equivalent token budget, so values above 1.00 indicate that the configuration beats the baseline at equal budget and values below 1.00 indicate that it does worse. The color progression from orange (low) to green (high) tracks this ratio.

The data suggests that increasing the "Number of initial programs" ($n_p$) is generally beneficial, leading to higher metric values in every row. The trend can be followed furthest when $n_{fr}=1$, the only row without "O.O.B." cells, where the metric increases steadily to its peak of 1.09.

However, there is an important interaction with $n_{fr}$: combining high $n_p$ with high $n_{fr}$ yields "Out Of Bounds" (O.O.B.) cells. Per the figure caption, these are configurations whose number of generated tokens exceeds that of 50 samples from the GPT-4 baseline, so there is no baseline value at an equivalent budget to normalize against. O.O.B. therefore marks the limit of the comparison range, not a failure or instability of the method. The diagonal boundary of the O.O.B. region arises because the token cost grows with both $n_p$ and $n_{fr}$.

Conversely, the effect of increasing $n_{fr}$ is not uniformly positive. For a small number of initial programs ($n_p=1, 2$), more feedback-repairs lead to slightly worse or stable values of the metric. For moderate $n_p$ (e.g., $n_p=5$), $n_{fr}$ has little impact (1.04 to 1.05). Only at $n_p=10$ does increasing $n_{fr}$ raise the metric (1.06 to 1.08) before the configuration exceeds the token range covered by the baseline.

In summary, the highest reported value (1.09) occurs with a high number of initial programs ($n_p=25$) and a low number of feedback-repairs ($n_{fr}=1$). Among the in-range cells, configurations with many initial programs gain the most relative to the baseline, while adding feedback-repairs helps only when $n_p$ is already large and hurts when $n_p=1$. The "O.O.B." region covers budgets beyond the range of the baseline, so no conclusion can be drawn for those cells.

</details>

(b) Normalized mean pass rate relative to the (interpolated) baseline at an equivalent budget (number of tokens). Cells for which the number of tokens generated exceeds 50 samples from the GPT-4 baseline marked O.O.B. (out of bounds).

Figure 4: Pass rate versus number of tokens generated for various settings of $n_{p}$ (number of initial programs) and $n_{fr}$ (number of repairs per failing program). GPT-4 is used for all samples, including the baseline.
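The normalization described in panel (b) can be illustrated with a minimal sketch. It assumes the baseline is available as a list of (tokens, pass rate) points sorted by token count and that linear interpolation is used between them; the function names and the choice of linear interpolation are assumptions made for illustration, not details given in the text.

```python
from bisect import bisect_left
from typing import Optional, Sequence


def interpolate(xs: Sequence[float], ys: Sequence[float], x: float) -> Optional[float]:
    """Linearly interpolate y at x over sorted xs; None if x is outside the range."""
    if x < xs[0] or x > xs[-1]:
        return None
    i = bisect_left(xs, x)  # first index with xs[i] >= x
    if xs[i] == x:
        return ys[i]
    x0, x1 = xs[i - 1], xs[i]
    y0, y1 = ys[i - 1], ys[i]
    return y0 + (y1 - y0) * (x - x0) / (x1 - x0)


def normalized_pass_rate(
    tokens: float,
    pass_rate: float,
    base_tokens: Sequence[float],
    base_pass_rates: Sequence[float],
) -> Optional[float]:
    """Pass rate relative to the baseline at the same token budget.

    Returns None when the budget lies outside the baseline curve, which
    corresponds to a cell that would be reported as O.O.B. (assumption:
    budgets below the first baseline point are treated the same way).
    """
    base = interpolate(base_tokens, base_pass_rates, tokens)
    if base is None:
        return None
    return pass_rate / base
```

A value above 1.0 means the repair setting outperforms the baseline at an equal token budget, and a value below 1.0 means it underperforms.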

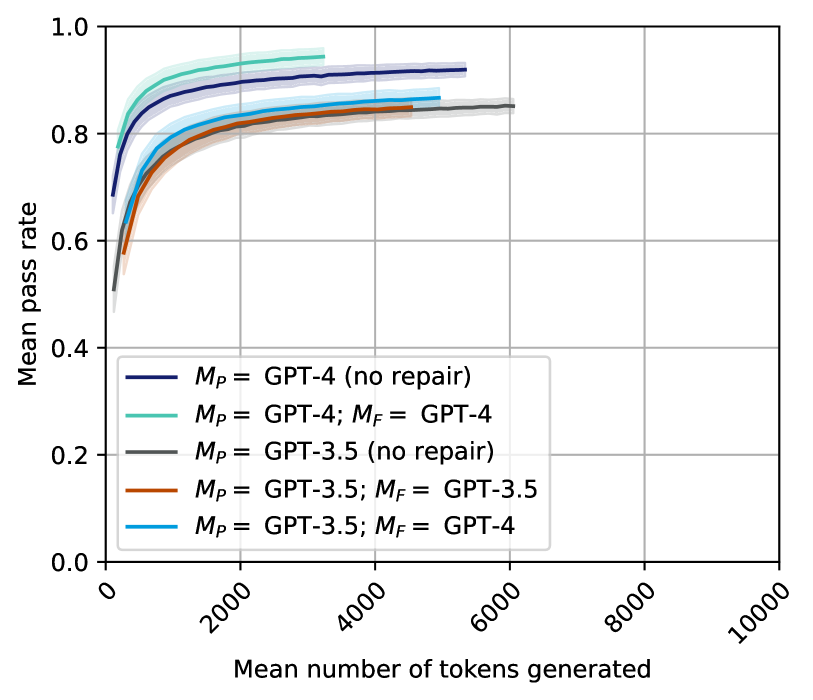

### 4.2 GPT-4 feedback improves GPT-3.5 repair

Next, we conduct an experiment in which we evaluate the impact of using a separate, stronger model to generate the feedback. This is to test the hypothesis that self-repair is held back (especially for GPT-3.5) by the model’s inability to introspect and debug its own code.

For this experiment, we set $M_{P}$ = GPT-3.5 and $M_{F}$ = GPT-4 and vary the hyper-parameters as $(n_{p},n_{f},n_{r})\in\{1,2,\ldots,24,25\}\times\{1\}\times\{1\}$, similarly to the previous experiment. Note that since we are now operating in a setting in which the feedback and repair stages must be separated, we have three hyper-parameters, $n_{p},n_{f},n_{r}$, instead of two, $n_{p},n_{fr}$ (Section 3.1). To keep the computational budget tractable, and since the variance was seen to be very low in the previous experiment, we use $N_{f}=10$ instead of $N_{f}=25$ for this experiment (see Section 3.2).
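As a rough illustration of what separating the feedback and repair stages means, the sketch below enumerates the hyper-parameter grid and runs one task through generation, feedback, and repair with the models passed in as plain callables. All function names and signatures are hypothetical, and the sketch assumes that the same model that writes the initial program also performs the repair; it is not the evaluation code used in the experiments.

```python
from itertools import product
from typing import Callable, Tuple

# Grid from the text: (n_p, n_f, n_r) in {1, ..., 25} x {1} x {1}.
GRID = list(product(range(1, 26), [1], [1]))


def passes_with_repair(
    spec: str,
    generate: Callable[[str], str],                # hypothetical M_P: spec -> program
    give_feedback: Callable[[str, str, str], str],  # hypothetical M_F: spec, program, error -> feedback
    repair: Callable[[str, str, str, str], str],    # assumed M_P again: ... feedback -> program
    run_tests: Callable[[str], Tuple[bool, str]],   # program -> (passed, error message)
    n_p: int,
    n_f: int,
    n_r: int,
) -> bool:
    """Return True if any initial or repaired program passes the unit tests."""
    for _ in range(n_p):
        program = generate(spec)
        passed, error = run_tests(program)
        if passed:
            return True
        # Feedback and repair are separate calls, so they can use different models.
        for _ in range(n_f):
            feedback = give_feedback(spec, program, error)
            for _ in range(n_r):
                candidate = repair(spec, program, error, feedback)
                if run_tests(candidate)[0]:
                    return True
    return False
```

Swapping only the `give_feedback` callable while keeping `generate` and `repair` fixed mirrors the comparison in this section, where the feedback model is changed and the program model is not.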

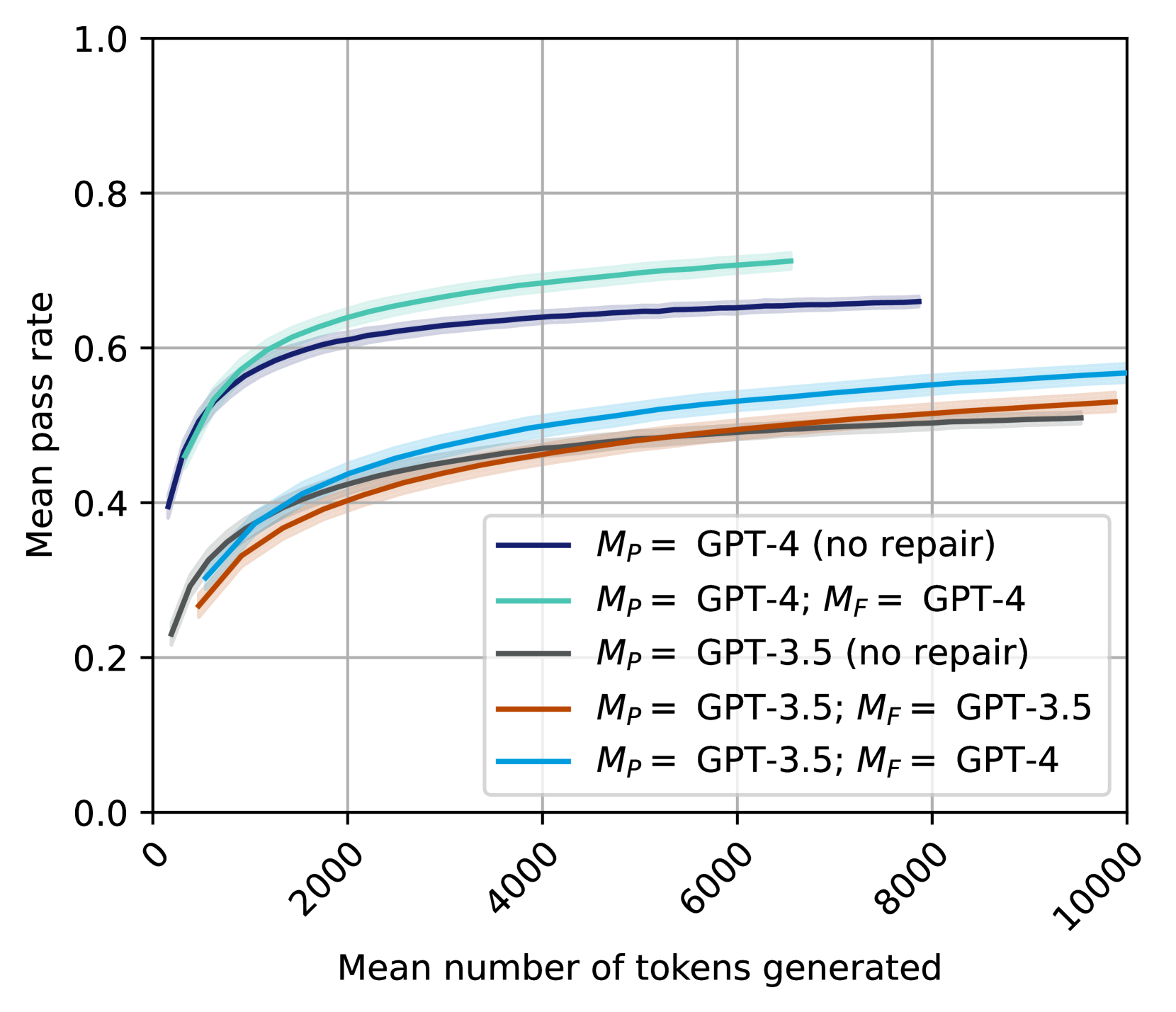

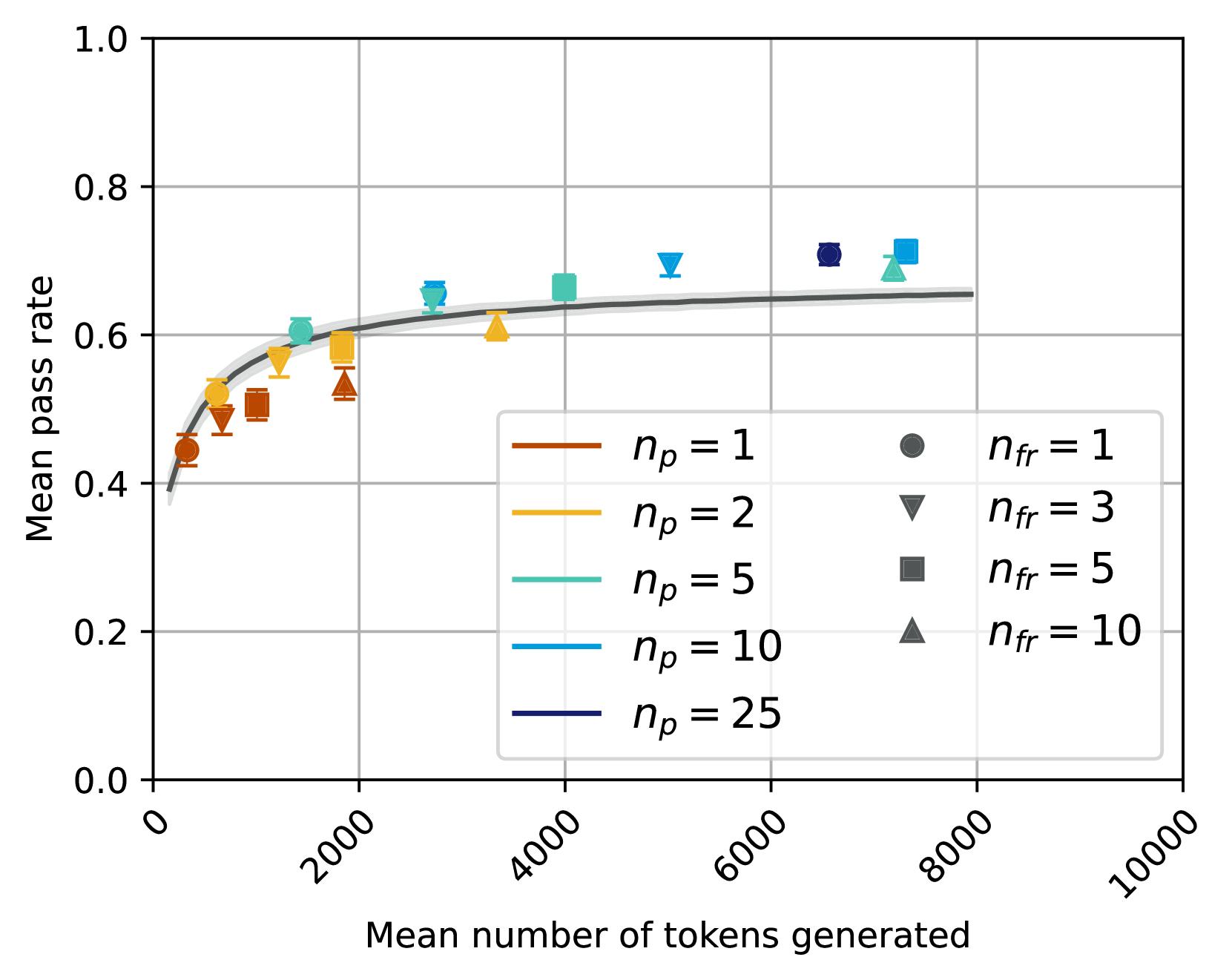

The results for this experiment are shown in Figure 5 (bright blue line). We observe that in terms of absolute performance, $M_{P}=$ GPT-3.5, $M_{F}=$ GPT-4 does break through the performance barrier and becomes marginally more efficient than i.i.d. sampling from GPT-3.5. This suggests that the textual feedback stage itself is of crucial importance, and that improving it relieves the bottleneck in GPT-3.5 self-repair.

Figure 5: Mean pass rate for each model when $n_{fr}$ (or $n_{f}$ and $n_{r}$) = 1. Shaded region is $\pm 1$ standard deviation. Complete breakdown per difficulty in Appendix A.

<details>

<summary>x7.png Details</summary>

### Visual Description

## Chart Type: Line Chart - Mean Pass Rate vs. Mean Number of Tokens Generated for GPT Models

### Overview

This image displays a line chart illustrating the relationship between the "Mean pass rate" (Y-axis) and the "Mean number of tokens generated" (X-axis) for five different configurations of GPT models. Each line represents a distinct model setup, differentiated by the primary model ($M_P$) and, if applicable, a feedback model ($M_F$). Shaded areas around each line show $\pm 1$ standard deviation.

### Components/Axes

The chart consists of a main plotting area, a Y-axis on the left, an X-axis at the bottom, and a legend in the bottom-right.

* **Y-axis Label**: "Mean pass rate"

* **Y-axis Range**: From 0.0 to 1.0.

* **Y-axis Major Ticks**: 0.0, 0.2, 0.4, 0.6, 0.8, 1.0.

* **X-axis Label**: "Mean number of tokens generated"

* **X-axis Range**: From 0 to 10000.

* **X-axis Major Ticks**: 0, 2000, 4000, 6000, 8000, 10000.

* **X-axis Tick Label Orientation**: Labels are rotated approximately 45 degrees counter-clockwise.

* **Grid**: Light gray grid lines are present, aligning with the major ticks on both axes.

* **Legend**: Located in the bottom-right quadrant of the plot area. It lists five data series with their corresponding line colors:

* **Dark Blue line**: $M_P$ = GPT-4 (no repair)

* **Teal/Light Green line**: $M_P$ = GPT-4; $M_F$ = GPT-4

* **Dark Gray line**: $M_P$ = GPT-3.5 (no repair)

* **Brown/Orange line**: $M_P$ = GPT-3.5; $M_F$ = GPT-3.5

* **Light Blue/Cyan line**: $M_P$ = GPT-3.5; $M_F$ = GPT-4

### Detailed Analysis

Each data series shows a general trend of an initial rapid increase in mean pass rate as the mean number of tokens generated increases, followed by a plateau where the pass rate stabilizes or increases very slowly. All lines are accompanied by a translucent shaded area representing $\pm 1$ standard deviation around the mean pass rate.

1. **Teal/Light Green line ($M_P$ = GPT-4; $M_F$ = GPT-4)**:

* **Trend**: This line starts at a relatively high pass rate, rises steeply, and then flattens out to become the highest performing series. It ends around 6500 tokens.

* **Approximate Data Points**:

* ~0 tokens: ~0.40 pass rate

* ~1000 tokens: ~0.60 pass rate

* ~2000 tokens: ~0.65 pass rate

* ~4000 tokens: ~0.68 pass rate

* ~6000 tokens: ~0.70 pass rate (line ends)

2. **Dark Blue line ($M_P$ = GPT-4 (no repair))**:

* **Trend**: This line follows a similar pattern to the Teal line, starting high, rising steeply, and then flattening. It consistently performs lower than the Teal line but higher than all GPT-3.5 configurations. It ends around 7800 tokens.

* **Approximate Data Points**:

* ~0 tokens: ~0.40 pass rate

* ~1000 tokens: ~0.58 pass rate

* ~2000 tokens: ~0.62 pass rate

* ~4000 tokens: ~0.64 pass rate

* ~6000 tokens: ~0.65 pass rate

* ~7800 tokens: ~0.66 pass rate (line ends)

3. **Light Blue/Cyan line ($M_P$ = GPT-3.5; $M_F$ = GPT-4)**:

* **Trend**: This line starts at a lower pass rate than the GPT-4 primary models, rises, and then flattens. It is the highest performing among the GPT-3.5 primary model configurations. It extends to 10000 tokens.

* **Approximate Data Points**:

* ~0 tokens: ~0.25 pass rate

* ~1000 tokens: ~0.40 pass rate

* ~2000 tokens: ~0.45 pass rate

* ~4000 tokens: ~0.50 pass rate

* ~6000 tokens: ~0.53 pass rate

* ~8000 tokens: ~0.55 pass rate

* ~10000 tokens: ~0.56 pass rate

4. **Brown/Orange line ($M_P$ = GPT-3.5; $M_F$ = GPT-3.5)**:

* **Trend**: This line shows a similar initial rise and plateau, consistently performing slightly below the Light Blue/Cyan line. It extends to 10000 tokens.

* **Approximate Data Points**:

* ~0 tokens: ~0.25 pass rate

* ~1000 tokens: ~0.38 pass rate

* ~2000 tokens: ~0.42 pass rate

* ~4000 tokens: ~0.46 pass rate

* ~6000 tokens: ~0.49 pass rate

* ~8000 tokens: ~0.51 pass rate

* ~10000 tokens: ~0.52 pass rate

5. **Dark Gray line ($M_P$ = GPT-3.5 (no repair))**:

* **Trend**: This line starts at roughly the same pass rate as the other GPT-3.5 configurations and exhibits the lowest pass rates at every larger token count, following the general trend of rising and then flattening. It extends to 10000 tokens.

* **Approximate Data Points**:

* ~0 tokens: ~0.25 pass rate

* ~1000 tokens: ~0.35 pass rate

* ~2000 tokens: ~0.40 pass rate

* ~4000 tokens: ~0.43 pass rate

* ~6000 tokens: ~0.46 pass rate

* ~8000 tokens: ~0.48 pass rate

* ~10000 tokens: ~0.49 pass rate

### Key Observations

* **Performance Hierarchy**: The models using GPT-4 as the primary model ($M_P$) consistently achieve higher pass rates than those using GPT-3.5 as $M_P$.