# Can LLMs Express Their Uncertainty? An Empirical Evaluation of Confidence Elicitation in LLMs

> Corresponding to: Miao Xiong advising:

## Abstract

Empowering large language models (LLMs) to accurately express confidence in their answers is essential for reliable and trustworthy decision-making. Previous confidence elicitation methods, which primarily rely on white-box access to internal model information or model fine-tuning, have become less suitable for LLMs, especially closed-source commercial APIs. This leads to a growing need to explore the untapped area of black-box approaches for LLM uncertainty estimation. To better break down the problem, we define a systematic framework with three components: prompting strategies for eliciting verbalized confidence, sampling methods for generating multiple responses, and aggregation techniques for computing consistency. We then benchmark these methods on two key tasks—confidence calibration and failure prediction—across five types of datasets (e.g., commonsense and arithmetic reasoning) and five widely-used LLMs including GPT-4 and LLaMA 2 Chat. Our analysis uncovers several key insights: 1) LLMs, when verbalizing their confidence, tend to be overconfident, potentially imitating human patterns of expressing confidence. 2) As model capability scales up, both calibration and failure prediction performance improve, yet still far from ideal performance. 3) Employing our proposed strategies, such as human-inspired prompts, consistency among multiple responses, and better aggregation strategies can help mitigate this overconfidence from various perspectives. 4) Comparisons with white-box methods indicate that while white-box methods perform better, the gap is narrow, e.g., 0.522 to 0.605 in AUROC. Despite these advancements, none of these techniques consistently outperform others, and all investigated methods struggle in challenging tasks, such as those requiring professional knowledge, indicating significant scope for improvement. We believe this study can serve as a strong baseline and provide insights for eliciting confidence in black-box LLMs. The code is publicly available at https://github.com/MiaoXiong2320/llm-uncertainty.

## 1 Introduction

A key aspect of human intelligence lies in our capability to meaningfully express and communicate our uncertainty in a variety of ways (Cosmides & Tooby, 1996). Reliable uncertainty estimates are crucial for human-machine collaboration, enabling more rational and informed decision-making (Guo et al., 2017; Tomani & Buettner, 2021). Specifically, accurate confidence estimates of a model can provide valuable insights into the reliability of its responses, facilitating risk assessment and error mitigation (Kuleshov et al., 2018; Kuleshov & Deshpande, 2022), selective generation (Ren et al., 2022), and reducing hallucinations in natural language generation tasks (Xiao & Wang, 2021).

In the existing literature, eliciting confidence from machine learning models has predominantly relied on white-box access to internal model information, such as token-likelihoods (Malinin & Gales, 2020; Kadavath et al., 2022) and associated calibration techniques (Jiang et al., 2021), as well as model fine-tuning (Lin et al., 2022). However, with the prevalence of large language models, these methods are becoming less suitable for several reasons: 1) The rise of closed-source LLMs with commercialized APIs, such as GPT-3.5 (OpenAI, 2021) and GPT-4 (OpenAI, 2023), which only allow textual inputs and outputs, lacking access to token-likelihoods or embeddings; 2) Token-likelihood primarily captures the model’s uncertainty about the next token (Kuhn et al., 2023), rather than the semantic probability inherent in textual meanings. For example, in the phrase “Chocolate milk comes from brown cows", every word fits naturally based on its surrounding words, but high individual token likelihoods do not capture the falsity of the overall statement, which requires examining the statement semantically, in terms of its claims; 3) Model fine-tuning demands substantial computational resources, which may be prohibitive for researchers with lower computational resources. Given these constraints, there is a growing need to explore black-box approaches for eliciting the confidence of LLMs in their answers, a task we refer to as confidence elicitation.

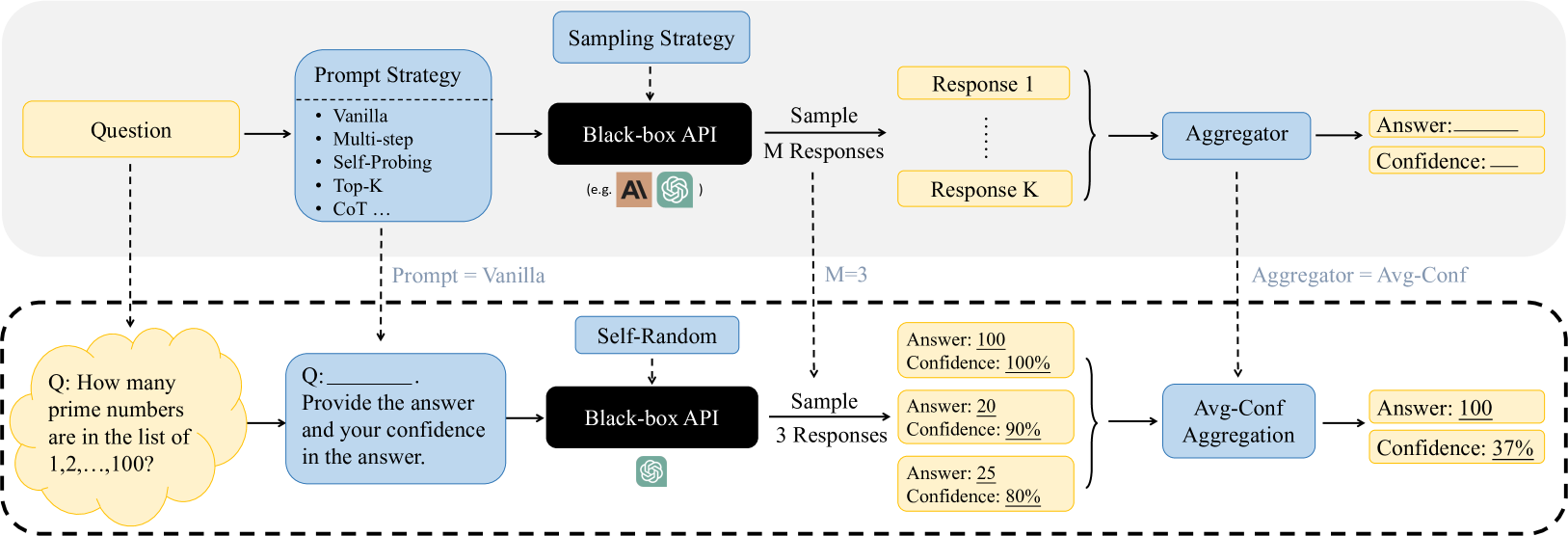

Recognizing this research gap, our study aims to contribute to the existing knowledge from two perspectives: 1) explore black-box methods for confidence elicitation, and 2) conduct a comparative analysis to shed light on methods and directions for eliciting more accurate confidence. To achieve this, we define a systematic framework with three components: prompting strategies for eliciting verbalized confidence, sampling strategies for generating multiple responses, and aggregation strategies for computing the consistency. For each component, we devise a suite of methods. By integrating these components, we formulate a set of algorithms tailored for confidence elicitation. A comprehensive overview of the framework is depicted in Figure 1. We then benchmark these methods on two key tasks—confidence calibration and failure prediction—across five types of tasks (Commonsense, Arithmetic, Symbolic, Ethics and Professional Knowledge) and five widely-used LLMs, i.e., GPT-3 (Brown et al., 2020), GPT-3.5 (OpenAI, 2021), GPT-4, Vicuna (Chiang et al., 2023) and LLaMA 2 (Touvron et al., 2023b).

Our investigation yields several observations: 1) LLMs tend to be highly overconfident when verbalizing their confidence, posing potential risks for the safe deployment of LLMs (§ 5.1). Intriguingly, the verbalized confidence values predominantly fall within the 80% to 100% range and are typically in multiples of 5, similar to how humans talk about confidence. In addition, while scaling model capacity leads to performance improvement, the results remain suboptimal. 2) Prompting strategies, inspired by patterns observed in human dialogues, can mitigate this overconfidence, but the improvement also diminishes as the model capacity scales up (§ 5.2). Furthermore, while the calibration error (e.g. ECE) can be significantly reduced using suitable prompting strategies, failure prediction still remains a challenge. 3) Our study on sampling and aggregation strategies indicates their effectiveness in improving failure prediction performance (§ 5.3). 4) A detailed examination of aggregation strategies reveals that they cater to specific performance metrics, i.e., calibration and failure prediction, and can be selected based on desired outcomes (§ 5.4). 5) Comparisons with white-box methods indicate that while white-box methods perform better, the gap is narrow, e.g., 0.522 to 0.605 in AUROC (§ B.1). Despite these insights, it is worth noting that the methods introduced herein still face challenges in failure prediction, especially with tasks demanding specialized knowledge (§ 6). This emphasizes the ongoing need for further research and development in confidence elicitation for LLMs.

## 2 Related Works

Confidence Elicitation in LLMs. Confidence elicitation is the process of estimating LLM’s confidence in their responses without model fine-tuning or accessing internal information. Within this scope, Lin et al. (2022) introduced the concept of verbalized confidence that prompts LLMs to express confidence directly. However, they mainly focus on fine-tuning on specific datasets where the confidence is provided, and its zero-shot verbalized confidence is unexplored. Other approaches, like the external calibrator from Mielke et al. (2022), depend on internal model representations, which are often inaccessible. While Zhou et al. (2023) examines the impact of confidence, it does not provide direct confidence scores to users. Our work aligns most closely with the concurrent study by Tian et al. (2023), which mainly focuses on the use of prompting strategies. Our approach diverges by aiming to explore a broader method space, and propose a comprehensive framework for systematically evaluating various strategies and their integration. We also consider a wider range of models beyond those RLHF-LMs examined in concurrent research, thus broadening the scope of confidence elicitation. Our results reveal persistent challenges across more complex tasks and contribute to a holistic understanding of confidence elicitation. For a more comprehensive discussion of the related works, kindly refer to Appendix C.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Black-Box API Query Process with Prompt Strategies and Confidence Aggregation

### Overview

The image is a technical flowchart diagram illustrating a two-stage process for querying a black-box AI API. The top section outlines a general framework for using various prompt strategies and sampling methods to generate multiple responses, which are then aggregated to produce a final answer with a confidence score. The bottom section, enclosed in a dashed border, provides a concrete instantiation of this framework using a specific example question, a "Vanilla" prompt strategy, a "Self-Random" sampling strategy with M=3, and an "Avg-Conf" (Average Confidence) aggregation method.

### Components/Axes

The diagram is structured into two horizontal sections.

**Top Section (General Framework):**

1. **Input:** A yellow box labeled "Question" on the far left.

2. **Prompt Strategy Module:** A light blue box labeled "Prompt Strategy" containing a bulleted list:

* Vanilla

* Multi-step

* Self-Probing

* Top-K

* CoT ...

3. **Sampling Strategy Module:** A light blue box labeled "Sampling Strategy" above the API.

4. **Core Processing Unit:** A black box labeled "Black-box API" with small logos for "AI" and "OpenAI" (represented by a green swirl icon) beneath the text "(e.g., )".

5. **Sampling Output:** An arrow labeled "Sample M Responses" leads to a set of yellow boxes labeled "Response 1" through "Response K", indicating multiple outputs.

6. **Aggregation Module:** A light blue box labeled "Aggregator".

7. **Final Output:** Two yellow boxes on the far right: "Answer: ______" and "Confidence: ______".

**Bottom Section (Specific Example - within dashed border):**

1. **Example Question:** A yellow cloud-shaped box containing the text: "Q: How many prime numbers are in the list of 1,2,...,100?".

2. **Example Prompt:** A light blue box connected by a dashed line labeled "Prompt = Vanilla". It contains: "Q: ______. Provide the answer and your confidence in the answer."

3. **Example Sampling Strategy:** A light blue box labeled "Self-Random" above the API.

4. **Example API:** A black box labeled "Black-box API" with the green OpenAI logo.

5. **Example Sampling Output:** An arrow labeled "Sample 3 Responses" (with "M=3" noted above the dashed line) leads to three yellow boxes:

* "Answer: 100", "Confidence: 100%"

* "Answer: 20", "Confidence: 90%"

* "Answer: 25", "Confidence: 80%"

6. **Example Aggregation:** A light blue box labeled "Avg-Conf Aggregation", connected by a dashed line labeled "Aggregator = Avg-Conf".

7. **Example Final Output:** Two yellow boxes: "Answer: 100" and "Confidence: 37%".

### Detailed Analysis

The diagram explicitly maps the flow of information and control parameters from the general framework to the specific example.

* **Prompt Strategy Flow:** The "Question" feeds into the "Prompt Strategy" module. In the example, the chosen strategy is "Vanilla", which formats the raw question into a prompt requesting both an answer and a confidence score.

* **API Interaction:** The formatted prompt is sent to the "Black-box API". The "Sampling Strategy" (e.g., "Self-Random") dictates how the API is queried to produce diversity in outputs.

* **Response Generation:** The API is sampled `M` times (M=3 in the example) to generate `K` responses (K=3 shown). Each response is a paired data point: an answer and a self-reported confidence percentage.

* **Aggregation Logic:** The multiple response pairs are fed into the "Aggregator". The example uses "Avg-Conf Aggregation". The final confidence (37%) is the arithmetic mean of the individual confidences (100%, 90%, 80% = 270% / 3 = 90%? **Note: There is a discrepancy here.** The diagram states the final confidence is 37%, but the average of 100, 90, and 80 is 90. This suggests the "Avg-Conf" method may not be a simple mean, or the numbers in the example are illustrative and not mathematically consistent. The final answer "100" appears to be selected from the response with the highest individual confidence (100%).

* **Spatial Grounding:** The "Sampling Strategy" box is positioned above the "Black-box API". The "Aggregator" box is positioned to the right of the response set. The example section is clearly demarcated by a dashed border below the general framework.

### Key Observations

1. **Modular Design:** The framework is highly modular, allowing independent selection of prompt strategy, sampling strategy, and aggregation method.

2. **Black-Box Abstraction:** The API is treated as an opaque component; only its inputs (prompts) and outputs (text responses) are considered.

3. **Confidence as a Key Output:** The process explicitly seeks to quantify uncertainty by collecting and aggregating confidence scores alongside answers.

4. **Example Discrepancy:** The numerical example contains an internal inconsistency. The stated final confidence of 37% does not match the simple average of the provided confidence scores (90%). This implies either a more complex aggregation function is used (e.g., weighted by answer consistency) or the example values are placeholders not meant to be arithmetically precise.

5. **Answer Selection:** In the example, the final answer "100" corresponds to the response with the highest individual confidence (100%), not necessarily the most frequent answer (which would be ambiguous here with three different answers: 100, 20, 25).

### Interpretation

This diagram illustrates a methodology for improving the reliability and calibrating the uncertainty of outputs from large language models (LLMs) accessed via black-box APIs. The core idea is to move beyond single-query interactions.

* **Peircean Investigation:** The process embodies a pragmatic, investigative approach. Instead of accepting a single answer from the API (a "Firstness" of raw output), it generates multiple hypotheses (responses) through varied prompting and sampling ("Secondness" of interaction and reaction). The aggregation step ("Thirdness") establishes a rule or law—the final answer and its confidence—based on the pattern observed across the multiple interactions.

* **Managing Stochasticity:** LLMs are stochastic; the same prompt can yield different results. This framework embraces that stochasticity by sampling multiple times and using aggregation to find a consensus or most reliable output.

* **Quantifying Uncertainty:** By prompting the model to self-report confidence and then aggregating those scores, the system attempts to produce a meta-cognitive estimate of its own reliability. The low final confidence (37%) in the example, despite high individual confidences, signals significant disagreement among the model's own responses, warning the user that the answer "100" may not be trustworthy.

* **Practical Implication:** This pattern is valuable for high-stakes applications where understanding the certainty of an AI's answer is as important as the answer itself. It provides a systematic way to query AI systems more rigorously. The discrepancy in the example's math, however, highlights that the exact aggregation logic is a critical, implementation-specific detail not fully explained by the diagram alone.

</details>

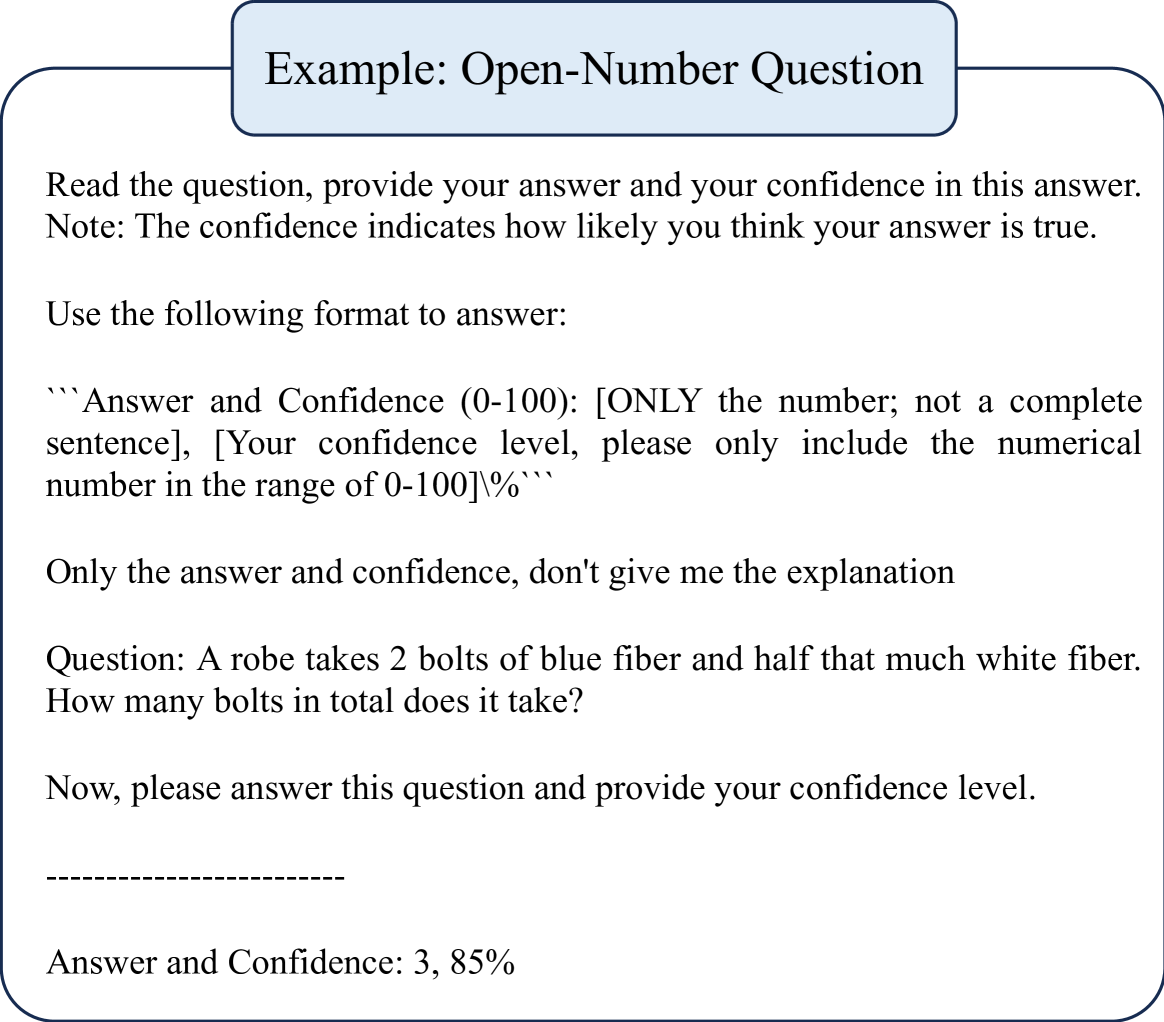

Figure 1: An Overview and example of Confidence Elicitation framework, which consists of three components: prompt, sampling and aggregator. By integrating distinct strategies from each component, we can devise different algorithms, e.g., Top-K (Tian et al., 2023) is formulated using Top-K prompt, self-random sampling with $M=1$ , and Avg-Conf aggregation. Given an input question, we first choose a suitable prompt strategy, e.g., the vanilla prompt used here. Next, we determine the number of samples to generate ( $M=3$ here) and sampling strategy, and then choose an aggregator based on our preference (e.g. focus more on improving calibration or failure prediction) to compute confidences in its potential answers. The highest confident answer is selected as the final output.

## 3 Exploring Black-box Framework for Confidence Elicitation

In our pursuit to explore black-box approaches for eliciting confidence, we investigated a range of methods and discovered that they can be encapsulated within a unified framework. This framework, with its three pivotal components, offers a variety of algorithmic choices that combine to create diverse algorithms with different benefits for confidence elicitation. In our later experimental section (§ 5), we will analyze our proposed strategies within each component, aiming to shed light on the best practices for eliciting confidence in black-box LLMs.

### 3.1 Motivation of The Framework

Prompting strategy. The key question we aim to answer here is: in a black-box setting, what form of model inputs and outputs lead to the most accurate confidence estimates? This parallels the rich study in eliciting confidences from human experts: for example, patients often inquire of doctors about their confidence in the potential success of a surgery. We refer to this goal as verbalized confidence, and inspired by strategies for human elicitation, we design a series of human-inspired prompting strategies to elicit the model’s verbalized confidence. We then unify these prompting strategies as a building block of our framework (§ 3.2). In addition, beyond its simplicity, this approach also offers an extra benefit over model’s token-likelihood: the verbalized confidence is intrinsically tied to the semantic meaning of the answer instead of its syntactic or lexical form (Kuhn et al., 2023).

Sampling and Aggregation. In addition to the direct insights from model outputs, the variance observed among multiple responses for a given question offers another valuable perspective on model confidence. This line of thought aligns with the principle extensively explored in prior white-box access uncertainty estimation methodologies for classification (Gawlikowski et al., 2021), such as MCDropout (Gal & Ghahramani, 2016) and Deep Ensemble (Lakshminarayanan et al., 2017). The challenges in adapting ensemble-based methods lie in two critical components: 1) the sampling strategy, i.e., how to sample multiple responses from the model’s answer distribution, and 2) the aggregation strategy, i.e., how to aggregate these responses to yield the final answer and its associated confidence. To optimally harness both textual output and response variance, we have integrated them within a unified framework.

Table 1: Illustration of the prompting strategy (the complete prompt in Appendix F). To help models understand the concept of confidence, we also append the explanation “Note: The confidence indicates how likely you think your answer is true." to every prompt.

| Vanilla CoT Self-Probing | Read the question, provide your answer, and your confidence in this answer. Read the question, analyze step by step, provide your answer and your confidence in this answer. Question: […] Possible Answer: […] Q: How likely is the above answer to be correct? Analyze the possible answer, provide your reasoning concisely, and give your confidence in this answer. |

| --- | --- |

| Multi-Step | Read the question, break down the problem into K steps, think step by step, give your confidence in each step, and then derive your final answer and your confidence in this answer. |

| Top-K | Provide your $K$ best guesses and the probability that each is correct (0% to 100%) for the following question. |

### 3.2 Prompting Strategy

Drawing inspiration from patterns observed in human dialogues, we design a series of human-inspired prompting strategies to tackle challenges, e.g., overconfidence, that are inherent in the vanilla version of verbalized confidence. See Table 1 for an overview of these prompting strategies and Appendix F for complete prompts.

CoT. Considering that a better comprehension of a problem can lead to a more accurate understanding of one’s certainty, we adopt a reasoning-augmented prompting strategy. In this paper, we use zero-shot Chain-of-Thought, CoT (Kojima et al., 2022) for its proven efficacy in inducing reasoning processes and improving model accuracy across diverse datasets. Alternative strategies such as plan-and-solve (Wang et al., 2023) can also be used.

Self-Probing. A common observation of humans is that they often find it easier to identify errors in others’ answers than in their own, as they can become fixated on a particular line of thinking, potentially overlooking mistakes. Building on this assumption, we investigate if a model’s uncertainty estimation improves when given a question and its answer, then asked, “How likely is the above answer to be correct"? The procedure involves generating the answer in one chat session and obtaining its verbalized confidence in another independent chat session.

Multi-Step. Our preliminary study shows that LLMs tend to be overconfident when verbalizing their confidence (see Figure 2). To address this, we explore whether dissecting the reasoning process into steps and extracting the confidence of each step can alleviate the overconfidence. The rationale is that understanding each reasoning step’s confidence could help the model identify potential inaccuracies and quantify their confidence more accurately. Specifically, for a given question, we prompt models to delineate their reasoning process into individual steps $S_{i}$ and evaluate their confidence in the correctness of this particular step, denoted as $C_{i}$ . The overall verbalized confidence is then derived by aggregating the confidence of all steps: $C_{\text{multi-step}}=\prod_{i=1}^{n}C_{i}$ , where $n$ represents the total number of reasoning steps.

Top-K. Another way to alleviate overconfidence is to realize the existence of multiple possible solutions or answers, which acts as a normalization for the confidence distribution. Motivated by this, Top-K (Tian et al., 2023) prompts LLMs to generate the top $K$ guesses and their corresponding confidence for a given question.

### 3.3 Sampling Strategy

Several methods can be employed to elicit multiple responses of the same question from the model: 1) Self-random, leveraging the model’s inherent randomness by inputting the same prompt multiple times. The temperature, an adjustable parameter, can be used to calibrate the predicted token distribution, i.e., adjust the diversity of the sampled answers. An alternative choice is to introduce perturbations in the questions: 2) Prompting, by paraphrasing the questions in different ways to generate multiple responses. 3) Misleading, feeding misleading cues to the model, e.g.,“I think the answer might be …". This method draws inspiration from human behaviors: when confident, individuals tend to stick to their initial answers despite contrary suggestions; conversely, when uncertain, they are more likely to waver or adjust their responses based on misleading hints. Building on this observation, we evaluate the model’s response to misleading information to gauge its uncertainty. See Table 11 for the complete prompts.

### 3.4 Aggregation Strategy

Consistency. A natural idea of aggregating different answers is to measure the degree of agreement among the candidate outputs and integrate the inherent uncertainty in the model’s output.

For any given question and an associated answer $\tilde{Y}$ , we sample a set of candidate answers $\hat{Y}_{i}$ , where $i\in\{1,...,M\}$ . The agreement between these candidate responses and the original answer then serves as a measure of confidence, computed as follows:

$$

C_{\operatorname{consistency}}=\frac{1}{M}\sum_{i=1}^{M}\mathbb{I}\{\hat{Y}_{i

}=\tilde{Y}\}. \tag{1}

$$

Avg-Conf. The previous aggregation method does not utilize the available information of verbalized confidence. It is worth exploring the potential synergy between these uncertainty indicators, i.e., whether the verbalized confidence and the consistency between answers can complement one another. For any question and an associated answer $\tilde{Y}$ , we sample a candidate set $\{\hat{Y}_{1},...\hat{Y}_{M}\}$ with their corresponding verbalized confidence $\{C_{1},...C_{M}\}$ , and compute the confidence as follows:

$$

C_{\operatorname{conf}}=\frac{\sum_{i=1}^{M}\mathbb{I}\{\hat{Y}_{i}=\tilde{Y}

\}\times C_{i}}{\sum_{i=1}^{M}C_{i}}. \tag{2}

$$

Pair-Rank. This aggregation strategy is tailored for responses generated using the Top-K prompt, as it mainly utilizes the ranking information of the model’s Top-K guesses. The underlying assumption is that the model’s ranking between two options may be more accurate than the verbalized confidence it provides, especially given our observation that the latter tends to exhibit overconfidence.

Given a question with $N$ candidate responses, the $i\text{-th}$ response consists of $K$ sequentially ordered answers, denoted as $\mathcal{S}^{(i)}_{K}=(S_{1}^{(i)},S_{2}^{(i)},\dots,S_{K}^{(i)})$ . Let $\mathcal{A}$ represent the set of unique answers across all $N$ responses, where $M$ is the total number of distinct answers. The event where the model ranks answer $S_{u}$ above $S_{v}$ (i.e., $S_{u}$ appears before $S_{v}$ ) in its $i$ -th generation is represented as $(S_{u}\stackrel{{\scriptstyle\scriptstyle(i)}}{{\succ}}S_{v})$ . In contexts where the generation is implicit, this is simply denoted as $(S_{u}\succ S_{v})$ . Let $E_{uv}^{(i)}$ be the event where at least one of $S_{u}$ and $S_{v}$ appears in the $i$ -th generation. Then the probability of $(S_{u}\succ S_{v})$ , conditional on $E_{uv}^{(i)}$ and a categorical distribution $P$ , is expressed as $\mathbb{P}(S_{u}\succ S_{v}|P,E_{uv}^{(i)})$ .

We then utilize a (conditional) maximum likelihood estimation (MLE) inspired approach to derive the categorical distribution $P$ that most accurately reflects these ranking events of all the $M$ responses:

$$

\min_{P}{\color[rgb]{0,0,0}-}\sum_{i=1}^{N}\sum_{S_{u}\in\mathcal{A}}\sum_{S_{

v}\in\mathcal{A}}\mathbb{I}\left\{S_{u}\stackrel{{\scriptstyle\scriptstyle(i)}

}{{\succ}}S_{v}\right\}\cdot\log\mathbb{P}\left(S_{u}\succ S_{v}\mid P,E_{uv}^

{(i)}\right)\quad\text{subject to}\sum_{S_{u}\in\mathcal{A}}P\left(S_{u}\right

)=1 \tag{3}

$$

**Proposition 3.1**

*Suppose the Top-K answers are drawn from a categorical distribution $P$ without replacement. Define the event $(S_{u}\succ S_{v})$ to indicate that the realization $S_{u}$ is observed before $S_{v}$ in the $i\text{-th}$ draw without replacement. Under this setting, the conditional probability is given by:

$$

\mathbb{P}\left(S_{u}\succ S_{v}\mid P,E_{uv}^{(i)}\right)=\frac{P(S_{u})}{P(S

_{u})+P(S_{v})}

$$

The optimization objective to minimize the expected loss is then:

$$

\min_{P}{\color[rgb]{0,0,0}-}\sum_{i=1}^{N}\sum_{S_{u}\in\mathcal{A}}\sum_{S_{

v}\in\mathcal{A}}\mathbb{I}\left\{S_{u}\stackrel{{\scriptstyle\scriptstyle(i)}

}{{\succ}}S_{v}\right\}\cdot\log\frac{P(S_{u})}{P(S_{u})+P(S_{v})}\quad\text{s

.t.}\sum_{S_{u}\in\mathcal{A}}P\left(S_{u}\right)=1 \tag{4}

$$*

To address this constrained optimization problem, we first introduce a change of variables by applying the softmax function to the unbounded domain. This transformation inherently satisfies the simplex constraints, converting our problem into an unconstrained optimization setting. Subsequently, optimization techniques such as gradient descent can be used to obtain the categorical distribution.

## 4 Experiment Setup

Datasets. We evaluate the quality of confidence estimates across five types of reasoning tasks: 1) Commonsense Reasoning on two benchmarks, Sports Understanding (SportUND) (Kim, 2021) and StrategyQA (Geva et al., 2021) from BigBench (Ghazal et al., 2013); 2) Arithmetic Reasoning on two math problems, GSM8K (Cobbe et al., 2021) and SVAMP (Patel et al., 2021); 3) Symbolic Reasoning on two benchmarks, Date Understanding (DateUnd) (Wu & Wang, 2021) and Object Counting (ObjectCou) (Wang et al., 2019) from BigBench; 4) tasks requiring Professional Knowledge, such as Professional Law (Prf-Law) from MMLU (Hendrycks et al., 2021); 5) tasks that require Ethical Knowledge, e.g., Business Ethics (Biz-Ethics) from MMLU (Hendrycks et al., 2021).

Models We incorporate a range of widely used LLMs of different scales, including Vicuna 13B (Chiang et al., 2023), GPT-3 175B (Brown et al., 2020), GPT-3.5-turbo (OpenAI, 2021), GPT-4 (OpenAI, 2023) and LLaMA 2 70B (Touvron et al., 2023b).

Evaluation Metrics. To evaluate the quality of confidence outputs, two orthogonal tasks are typically employed: calibration and failure prediction (Naeini et al., 2015; Yuan et al., 2021; Xiong et al., 2022). Calibration evaluates how well a model’s expressed confidence aligns with its actual accuracy: ideally, samples with an 80% confidence should have an accuracy of 80%. Such well-calibrated scores are crucial for applications including risk assessment. On the other hand, failure prediction gauges the model’s capacity to assign higher confidence to correct predictions and lower to incorrect ones, aiming to determine if confidence scores can effectively distinguish between correct and incorrect predictions. In our study, we employ Expected Calibration Error (ECE) for calibration evaluation and Area Under the Receiver Operating Characteristic Curve (AUROC) for gauging failure prediction. Given the potential imbalance from varying accuracy levels, we also introduce AUPRC-Positive (PR-P) and AUPRC-Negative (PR-N) metrics to emphasize whether the model can identify incorrect and correct samples, respectively.

Further details on datasets, models, metrics, and implementation can be found in Appendix E.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Statistical Calibration Analysis: Four Language Models

### Overview

The image presents a 2x4 grid of statistical plots analyzing the confidence calibration of four different language models: GPT3, GPT3.5, GPT4, and Vicuna. Each model has two associated plots: a top histogram showing the distribution of confidence scores for correct vs. incorrect answers, and a bottom calibration plot comparing the model's confidence to its actual accuracy within confidence bins.

### Components/Axes

**Global Structure:**

- The image is divided into four vertical columns, one per model.

- Each column is labeled at the top with the model name in a box: `GPT3`, `GPT3.5`, `GPT4`, `Vicuna`.

- Below each model name, three performance metrics are listed: `ACC` (Accuracy), `AUROC` (Area Under the Receiver Operating Characteristic curve), and `ECE` (Expected Calibration Error).

**Top Row (Histograms):**

- **Y-axis:** Labeled `Count`. Represents the number of responses.

- **X-axis:** Labeled `Confidence (%)`. Ranges from 50 to 100.

- **Legend:** Located in the top-left corner of each histogram. Contains two entries:

- A red square labeled `wrong answer`.

- A blue square labeled `correct answer`.

- **Data Representation:** Stacked bar charts. The total height of a bar at a given confidence bin represents the total number of responses in that bin. The bar is segmented into red (bottom) for wrong answers and blue (top) for correct answers.

**Bottom Row (Calibration Plots):**

- **Y-axis:** Labeled `Accuracy Within Bin`. Ranges from 0.0 to 1.0.

- **X-axis:** Labeled `Confidence`. Ranges from 0.0 to 1.0.

- **Reference Line:** A dashed black diagonal line runs from the bottom-left corner (0.0, 0.0) to the top-right corner (1.0, 1.0). This represents perfect calibration, where confidence equals accuracy.

- **Data Representation:** Blue vertical bars. The height of each bar represents the actual accuracy for responses falling within that confidence bin.

### Detailed Analysis

**Column 1: GPT3**

- **Metrics:** `ACC 0.15 / AUROC 0.51 / ECE 0.83`

- **Top Histogram:**

- The distribution is heavily skewed to the right. The vast majority of responses are in the 90-100% confidence bins.

- The bin at 100% confidence is the tallest, composed almost entirely of red (`wrong answer`), with a very small blue segment (`correct answer`) on top.

- A much smaller bar exists at 80% confidence, also predominantly red.

- Very few responses are in bins below 80%.

- **Bottom Calibration Plot:**

- Bars are present only in the high-confidence region (0.8 to 1.0).

- The bars are significantly shorter than the dashed diagonal line. For example, the bar near confidence 0.8 has an accuracy of approximately 0.15, and the bar near 1.0 has an accuracy of approximately 0.2.

- This indicates severe **overconfidence**: the model's stated confidence is much higher than its actual accuracy.

**Column 2: GPT3.5**

- **Metrics:** `ACC 0.28 / AUROC 0.65 / ECE 0.66`

- **Top Histogram:**

- Responses are concentrated in the 90-100% confidence range.

- The 90% bin is the tallest, with a large red segment and a smaller blue segment.

- The 100% bin is shorter than the 90% bin but has a larger proportion of blue (correct answers) relative to red.

- A very small bar exists at 80% confidence.

- **Bottom Calibration Plot:**

- Bars are clustered between confidence 0.7 and 1.0.

- The bars are closer to the diagonal line than GPT3's but still consistently below it. For instance, at confidence ~0.9, accuracy is ~0.5.

- This shows **overconfidence**, though less severe than GPT3.

**Column 3: GPT4**

- **Metrics:** `ACC 0.47 / AUROC 0.66 / ECE 0.51`

- **Top Histogram:**

- The distribution is concentrated in the 90-100% confidence range.

- The 100% confidence bin is the tallest and has a very large blue segment, indicating a high volume of correct answers at maximum confidence.

- The 90% bin is shorter and has a more even mix of red and blue.

- **Bottom Calibration Plot:**

- Bars are present from confidence ~0.8 to 1.0.

- The bars are the closest to the diagonal line among the first three models. The bar at confidence ~1.0 reaches an accuracy of nearly 0.8.

- This indicates the best calibration of the group, though still with some **overconfidence** at the highest bins.

**Column 4: Vicuna**

- **Metrics:** `ACC 0.02 / AUROC 0.46 / ECE 0.77`

- **Top Histogram:**

- The distribution is unique. The tallest bar is at 80% confidence and is almost entirely red.

- There are small, scattered bars across the entire range from 50% to 100%, all predominantly red.

- The blue segments (`correct answer`) are negligible across all bins.

- **Bottom Calibration Plot:**

- Bars are very short and spread thinly across the confidence range from ~0.4 to 1.0.

- All bars are far below the diagonal line. For example, at confidence 0.6, accuracy is near 0.05.

- This indicates **extreme overconfidence** and very poor performance (ACC 0.02). The model is confidently wrong across a wide range of confidence levels.

### Key Observations

1. **Confidence Distribution:** GPT3, GPT3.5, and GPT4 all exhibit a strong bias toward predicting with very high confidence (90-100%). Vicuna's confidence is more spread out but peaks at 80%.

2. **Calibration Trend:** There is a clear progression in calibration quality from GPT3 (worst) to GPT4 (best), as evidenced by the ECE metric decreasing (0.83 -> 0.66 -> 0.51) and the calibration bars approaching the diagonal line.

3. **Accuracy vs. Confidence:** For all models, the highest confidence bins contain a mix of correct and incorrect answers. However, the proportion of correct answers (blue) in these bins increases with model capability (GPT3 < GPT3.5 < GPT4).

4. **Vicuna Anomaly:** Vicuna is a significant outlier. It has near-zero accuracy (ACC 0.02) yet maintains moderate to high confidence, resulting in a histogram of wrong answers spread across the confidence spectrum and a calibration plot showing almost no relationship between confidence and accuracy.

### Interpretation

This visualization is a diagnostic tool for assessing **model reliability**. It goes beyond simple accuracy (ACC) to answer: "When a model says it is 90% confident, is it right 90% of the time?"

- **What the data suggests:** The data demonstrates that larger, more capable models (GPT4) are not only more accurate but also better **calibrated**—their confidence scores are more meaningful indicators of likely correctness. In contrast, less capable models (GPT3, Vicuna) are **overconfident**, meaning their high confidence scores are unreliable and often indicate incorrect answers.

- **How elements relate:** The top histogram shows *how often* the model uses different confidence levels and its success rate at each. The bottom calibration plot directly tests the *validity* of those confidence levels by comparing them to empirical accuracy. A well-calibrated model would have its blue bars align with the dashed line.

- **Notable implications:** For applications requiring trustworthy uncertainty estimates (e.g., medical diagnosis, high-stakes decision support), using a poorly calibrated model like GPT3 or Vicuna is dangerous. Their high confidence does not guarantee correctness. GPT4, while better, still shows some overconfidence, suggesting caution is needed even with advanced models. The extreme case of Vicuna (ACC 0.02) highlights a failure mode where a model can be consistently wrong yet express varying degrees of confidence, providing no useful signal.

</details>

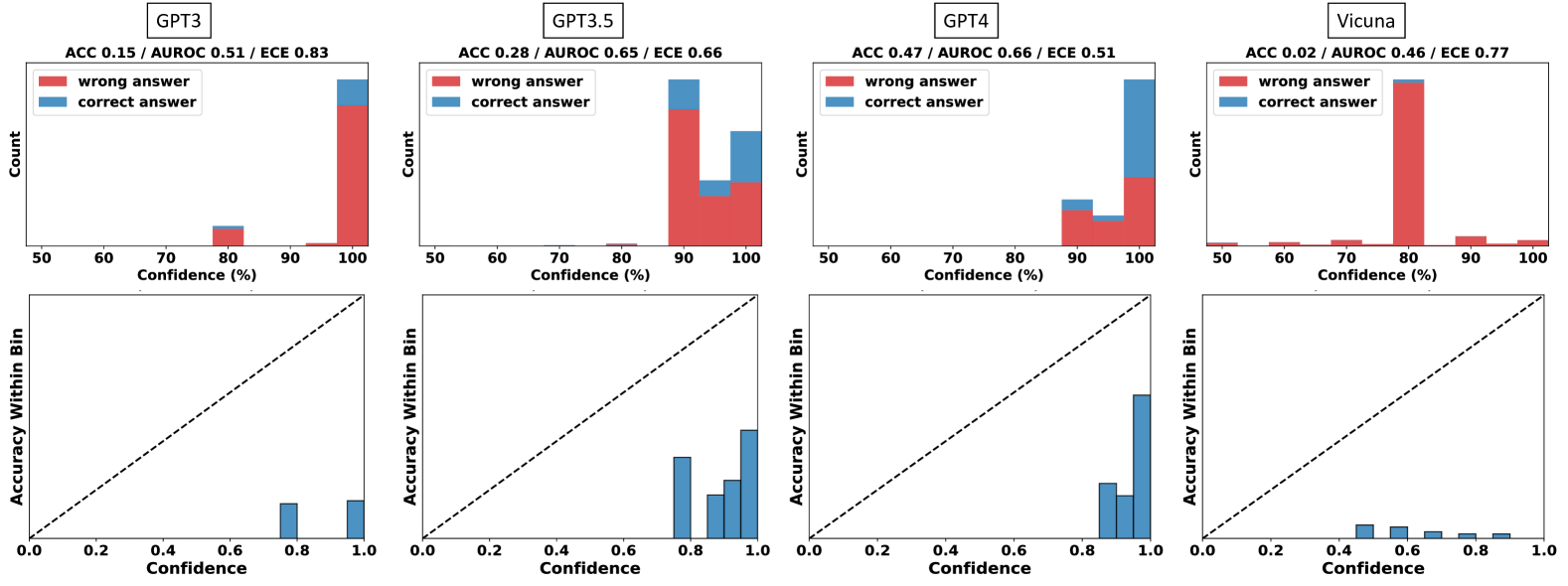

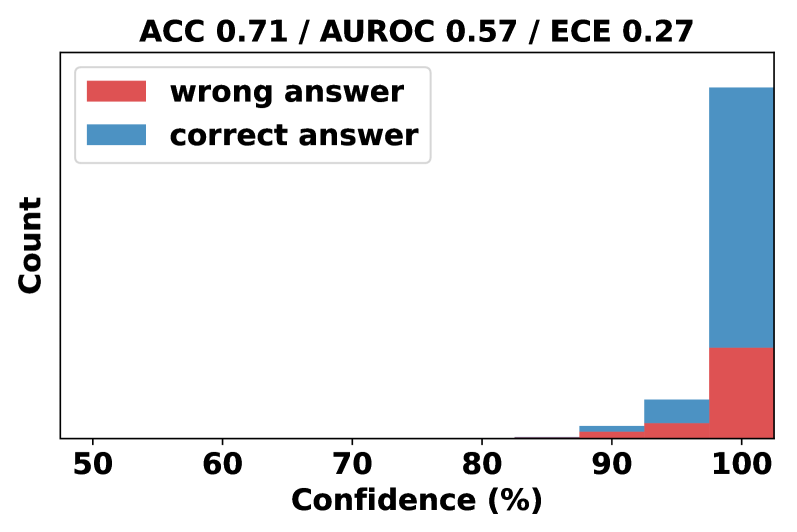

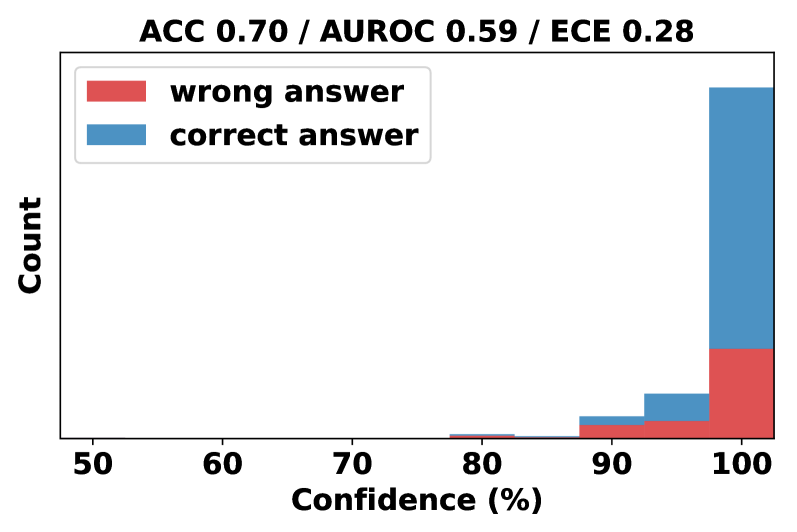

Figure 2: Empirical distribution (First row) and reliability diagram (Second row) of vanilla verbalized confidence across four models on GSM8K. The prompt used is in Table 14. From this figure, we can observe that 1) the confidence levels primarily range between 80% and 100%, often in multiples of 5; 2) the accuracy within each bin is much lower than its corresponding confidence, indicating significant overconfidence.

## 5 Evaluation and Analysis

To provide insights on the best practice for eliciting confidence, we systematically examine each component (see Figure 1) of the confidence elicitation framework (§ 3). We test the performance on eight datasets of five different reasoning types and five commonly used models (see § 4), and yield the following key findings.

### 5.1 LLMs tend to be overconfident when verbalizing their confidence

The distribution of verbalized confidences mimics how humans talk about confidence. To examine model’s capacity to express verbalized confidence, we first visualize the distribution of confidence in Figure 2. Detailed results on other datasets and models are provided in Appendix Figure 5. Notably, the models tend to have high confidence for all samples, appearing as multiples of 5 and with most values ranging between the 80% to 100% range, which is similar to the patterns identified in the training corpus for GPT-like models as discussed by Zhou et al. (2023). Such behavior suggests that models might be imitating human expressions when verbalizing confidence.

Calibration and failure prediction performance improve as model capacity scales. The comparison of the performance of various models (Table 2) reveals a trend: as we move from GPT-3, Vicuna, GPT-3.5 to GPT-4, with the increase of model accuracy, there is also a noticeable decrease in ECE and increase in AUROC, e.g., approximate 22.2% improvement in AUROC from GPT-3 to GPT-4.

Vanilla verbalized confidence exhibits significant overconfidence and poor failure prediction, casting doubts on its reliability. Table 2 presents the performance of vanilla verbalized confidence across five models and eight tasks. According to the criteria given in Srivastava et al. (2023), GPT-3, GPT-3.5, and Vicuna exhibit notably high ECE values, e.g., the average ECE exceeding 0.377, suggesting that the verbalized confidence of these LLMs are poorly calibrated. While GPT-4 displays lower ECE, its AUROC and AUPRC-Negative scores remain suboptimal, with an average AUROC of merely 62.7%—close to the 50% random guess threshold—highlighting challenges in distinguishing correct from incorrect predictions.

Table 2: Vanilla Verbalized Confidence of 4 models and 8 datasets (metrics are given by $\times 10^{2}$ ). Abbreviations are used: Date (Date Understanding), Count (Object Counting), Sport (Sport Understanding), Law (Professional Law), Ethics (Business Ethics). ECE > 0.25, AUROC, AUPRC-Positive, AUPRC-Negative < 0.6 denote significant deviation from ideal performance. Significant deviations in averages are highlighted in red. The prompt used is in Table 14.

| ECE $\downarrow$ Vicuna LLaMA 2 | GPT-3 76.0 71.8 | 82.7 70.7 36.4 | 35.0 17.0 38.5 | 82.1 45.3 58.0 | 52.0 42.5 26.2 | 41.8 37.5 38.8 | 42.0 45.2 42.2 | 47.8 34.6 36.5 | 32.3 46.1 43.6 | 52.0 |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| GPT-3.5 | 66.0 | 22.4 | 47.0 | 47.1 | 26.0 | 25.1 | 44.3 | 23.4 | 37.7 | |

| GPT-4 | 31.0 | 10.7 | 18.0 | 26.8 | 16.1 | 15.4 | 17.3 | 8.5 | 18.0 | |

| ROC $\uparrow$ | GPT3 | 51.2 | 51.7 | 50.2 | 50.0 | 49.3 | 55.3 | 46.5 | 56.1 | 51.3 |

| Vicuna | 52.1 | 46.3 | 53.7 | 53.1 | 50.9 | 53.6 | 52.6 | 57.5 | 52.5 | |

| LLaMA 2 | 58.8 | 52.1 | 71.4 | 51.3 | 56.0 | 48.5 | 50.5 | 62.4 | 56.4 | |

| GPT-3.5 | 65.0 | 63.2 | 57.0 | 54.1 | 52.8 | 43.2 | 50.5 | 55.2 | 55.1 | |

| GPT4 | 81.0 | 56.7 | 68.0 | 52.0 | 55.3 | 60.0 | 60.9 | 68.0 | 62.7 | |

| PR-N $\uparrow$ | GPT-3 | 85.0 | 37.3 | 82.2 | 52.0 | 42.0 | 46.4 | 51.2 | 41.2 | 54.7 |

| Vicuna | 96.4 | 87.9 | 34.9 | 65.4 | 53.8 | 51.5 | 75.3 | 70.9 | 67.0 | |

| LLaMA 2 | 92.6 | 57.4 | 88.3 | 59.6 | 38.2 | 40.6 | 61.0 | 58.3 | 62.0 | |

| GPT-3.5 | 79.0 | 33.9 | 64.0 | 51.2 | 35.7 | 30.5 | 54.8 | 35.5 | 48.1 | |

| GPT-4 | 65.0 | 15.8 | 26.0 | 28.9 | 26.6 | 31.5 | 40.0 | 39.5 | 34.2 | |

| PR-P $\uparrow$ | GPT-3 | 15.5 | 65.5 | 17.9 | 48.0 | 57.6 | 59.0 | 45.4 | 66.1 | 46.9 |

| Vicuna | 4.10 | 11.0 | 69.1 | 39.1 | 47.5 | 52.0 | 27.2 | 38.8 | 36.1 | |

| LLaMA 2 | 11.9 | 46.3 | 46.6 | 41.4 | 68.6 | 58.3 | 39.2 | 65.0 | 47.2 | |

| GPT-3.5 | 38.0 | 81.3 | 57.0 | 54.4 | 67.2 | 67.5 | 45.8 | 70.5 | 60.2 | |

| GPT-4 | 57.0 | 90.1 | 88.0 | 73.8 | 78.6 | 79.3 | 73.4 | 87.2 | 78.4 | |

### 5.2 Human-inspired Prompting Strategies Partially Reduce Overconfidence

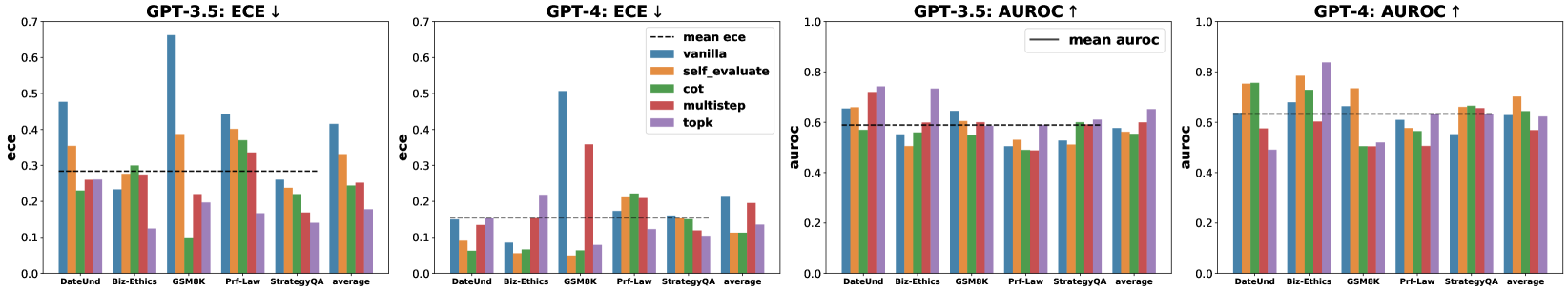

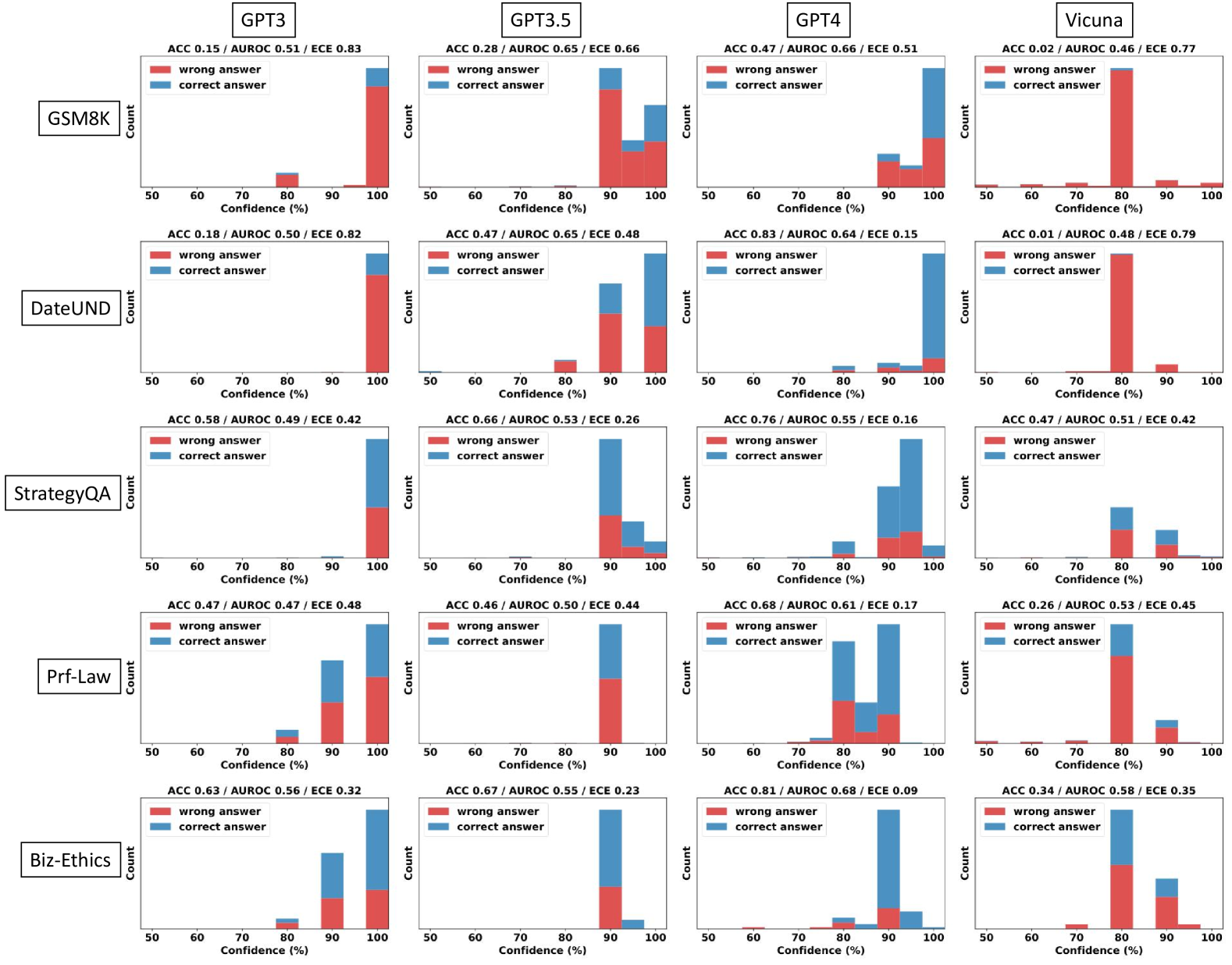

Human-inspired prompting strategies improve model accuracy and calibration, albeit with diminishing returns in advanced models like GPT-4. As illustrated in Figure 3, we compare the performance of five prompting strategies across five datasets on GPT-3.5 and GPT-4. Analyzing the average ECE, AUROC, and their respective performances within each dataset, human-inspired strategies offer consistent improvements in accuracy and calibration over the vanilla baseline, with modest advancements in failure prediction.

No single prompting strategy consistently outperforms the others. Figure 3 suggests that there is no single strategy that can consistently outperform the others across all the datasets and models. By evaluating the average rank and performance enhancement for each method over five task types, we find that Self-Probing maintains the most consistent advantage over the baseline on GPT-4, while Top-K emerges as the top performer on GPT-3.5.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Bar Charts: Model Calibration (ECE) and Performance (AUROC) Across Tasks

### Overview

The image displays four grouped bar charts arranged horizontally, comparing the performance of two large language models (GPT-3.5 and GPT-4) on five specific tasks and their average. The charts evaluate two key metrics: Expected Calibration Error (ECE, lower is better) and Area Under the Receiver Operating Characteristic curve (AUROC, higher is better). Each chart compares five different prompting or reasoning methods against a baseline.

### Components/Axes

* **Titles (Top of each chart):**

* Chart 1 (Left): `GPT-3.5: ECE ↓`

* Chart 2 (Center-Left): `GPT-4: ECE ↓`

* Chart 3 (Center-Right): `GPT-3.5: AUROC ↑`

* Chart 4 (Right): `GPT-4: AUROC ↑`

* **Y-Axis Labels:**

* Charts 1 & 2: `ece` (scale: 0.0 to 0.7)

* Charts 3 & 4: `auroc` (scale: 0.0 to 1.0)

* **X-Axis Categories (Common to all charts):** `DateUnd`, `Biz-Ethics`, `GSM8K`, `Prf-Law`, `StrategyQA`, `average`.

* **Legend (Located in the top-right of the second chart, applies to all):**

* `--- mean ece` (dashed black line, present in ECE charts)

* `— mean auroc` (solid black line, present in AUROC charts)

* `vanilla` (Blue bar)

* `self_evaluate` (Orange bar)

* `cot` (Green bar)

* `multistep` (Red bar)

* `topk` (Purple bar)

### Detailed Analysis

#### Chart 1: GPT-3.5: ECE ↓

* **Trend:** The `vanilla` method (blue) generally shows the highest ECE (worst calibration), particularly spiking on `GSM8K`. The `mean ece` line sits at approximately 0.28.

* **Data Points (Approximate ECE values):**

* **DateUnd:** Vanilla ~0.48, Self_evaluate ~0.35, Cot ~0.23, Multistep ~0.26, Topk ~0.25.

* **Biz-Ethics:** Vanilla ~0.23, Self_evaluate ~0.25, Cot ~0.30, Multistep ~0.28, Topk ~0.13.

* **GSM8K:** Vanilla ~0.66, Self_evaluate ~0.39, Cot ~0.10, Multistep ~0.22, Topk ~0.20.

* **Prf-Law:** Vanilla ~0.45, Self_evaluate ~0.40, Cot ~0.37, Multistep ~0.34, Topk ~0.17.

* **StrategyQA:** Vanilla ~0.26, Self_evaluate ~0.24, Cot ~0.22, Multistep ~0.19, Topk ~0.14.

* **Average:** Vanilla ~0.42, Self_evaluate ~0.33, Cot ~0.25, Multistep ~0.26, Topk ~0.18.

#### Chart 2: GPT-4: ECE ↓

* **Trend:** Overall ECE values are lower than GPT-3.5, indicating better calibration. The `vanilla` method again shows high ECE on `GSM8K`. The `mean ece` line is at approximately 0.16.

* **Data Points (Approximate ECE values):**

* **DateUnd:** Vanilla ~0.15, Self_evaluate ~0.09, Cot ~0.06, Multistep ~0.14, Topk ~0.14.

* **Biz-Ethics:** Vanilla ~0.08, Self_evaluate ~0.06, Cot ~0.07, Multistep ~0.16, Topk ~0.22.

* **GSM8K:** Vanilla ~0.51, Self_evaluate ~0.05, Cot ~0.06, Multistep ~0.36, Topk ~0.08.

* **Prf-Law:** Vanilla ~0.17, Self_evaluate ~0.22, Cot ~0.21, Multistep ~0.21, Topk ~0.12.

* **StrategyQA:** Vanilla ~0.16, Self_evaluate ~0.15, Cot ~0.15, Multistep ~0.12, Topk ~0.11.

* **Average:** Vanilla ~0.21, Self_evaluate ~0.11, Cot ~0.11, Multistep ~0.20, Topk ~0.14.

#### Chart 3: GPT-3.5: AUROC ↑

* **Trend:** Performance is relatively consistent across methods, with `topk` (purple) often performing well. The `mean auroc` line is at approximately 0.59.

* **Data Points (Approximate AUROC values):**

* **DateUnd:** Vanilla ~0.66, Self_evaluate ~0.67, Cot ~0.59, Multistep ~0.73, Topk ~0.74.

* **Biz-Ethics:** Vanilla ~0.55, Self_evaluate ~0.52, Cot ~0.60, Multistep ~0.60, Topk ~0.73.

* **GSM8K:** Vanilla ~0.65, Self_evaluate ~0.60, Cot ~0.56, Multistep ~0.60, Topk ~0.60.

* **Prf-Law:** Vanilla ~0.51, Self_evaluate ~0.50, Cot ~0.49, Multistep ~0.49, Topk ~0.52.

* **StrategyQA:** Vanilla ~0.52, Self_evaluate ~0.53, Cot ~0.60, Multistep ~0.61, Topk ~0.60.

* **Average:** Vanilla ~0.58, Self_evaluate ~0.56, Cot ~0.57, Multistep ~0.61, Topk ~0.66.

#### Chart 4: GPT-4: AUROC ↑

* **Trend:** AUROC values are generally higher than GPT-3.5. `Self_evaluate` (orange) and `cot` (green) show strong performance. The `mean auroc` line is at approximately 0.63.

* **Data Points (Approximate AUROC values):**

* **DateUnd:** Vanilla ~0.63, Self_evaluate ~0.75, Cot ~0.76, Multistep ~0.58, Topk ~0.49.

* **Biz-Ethics:** Vanilla ~0.65, Self_evaluate ~0.78, Cot ~0.73, Multistep ~0.60, Topk ~0.84.

* **GSM8K:** Vanilla ~0.66, Self_evaluate ~0.73, Cot ~0.51, Multistep ~0.51, Topk ~0.52.

* **Prf-Law:** Vanilla ~0.61, Self_evaluate ~0.57, Cot ~0.60, Multistep ~0.51, Topk ~0.64.

* **StrategyQA:** Vanilla ~0.55, Self_evaluate ~0.65, Cot ~0.67, Multistep ~0.65, Topk ~0.64.

* **Average:** Vanilla ~0.63, Self_evaluate ~0.70, Cot ~0.65, Multistep ~0.57, Topk ~0.63.

### Key Observations

1. **Calibration (ECE):** GPT-4 is significantly better calibrated (lower ECE) than GPT-3.5 across almost all tasks and methods. The `vanilla` prompting method is poorly calibrated for mathematical reasoning (`GSM8K`) in both models.

2. **Performance (AUROC):** GPT-4 also achieves higher AUROC scores than GPT-3.5 on average. The `topk` method is a top performer for GPT-3.5, while `self_evaluate` and `cot` are strong for GPT-4.

3. **Task Variability:** Performance and calibration vary greatly by task. `GSM8K` (math) is a notable outlier for poor calibration with vanilla prompting. `Prf-Law` appears to be a challenging task for calibration (higher ECE) and performance (lower AUROC) for both models.

4. **Method Impact:** Advanced methods (`cot`, `self_evaluate`, `topk`, `multistep`) generally improve calibration (lower ECE) over `vanilla` prompting, especially for GPT-3.5. Their impact on AUROC is more task- and model-dependent.

### Interpretation

This data suggests a clear progression from GPT-3.5 to GPT-4 in both **reliability** (better calibration, meaning its confidence scores are more accurate) and **discriminative power** (higher AUROC, meaning it's better at distinguishing between correct and incorrect answers).

The poor calibration of `vanilla` prompting on `GSM8K` indicates that base models are overconfident in their mathematical reasoning. Techniques like `self_evaluate` and `cot` dramatically improve this, suggesting that forcing the model to engage in step-by-step reasoning or self-assessment aligns its confidence with actual accuracy.

The variation in which method performs best (e.g., `topk` for GPT-3.5 vs. `self_evaluate` for GPT-4) implies that optimal prompting strategies are model-specific. The consistently lower performance on `Prf-Law` hints at inherent difficulties in the professional law domain for these models, possibly due to complex reasoning or specialized knowledge requirements. The "average" columns provide a useful summary but mask significant task-specific behaviors, underscoring the importance of evaluating AI models across diverse benchmarks.

</details>

Figure 3: Comparative analysis of 5 prompting strategies over 5 datasets for 2 models (GPT-3.5 and GPT-4). The ‘average’ bar represents the mean ECE for a given prompting strategy across datasets. The ‘mean ECE’ line is the average across all strategies and datasets. AUROC is calculated in a similar manner. The accuracy comparison is shown in Appendix B.4.

While ECE can be effectively reduced using suitable prompting strategies, failure prediction still remains a challenge. Comparing the average calibration performance across datasets (‘mean ece’ lines) and the average failure prediction performance (‘mean auroc’), we find that while we can reduce ECE with the right prompting strategy, the model’s failure prediction capability is still limited, i.e., close to the performance of random guess (AUROC=0.5). A closer look at individual dataset performances reveals that the proposed prompt strategies such as CoT have significantly increased the accuracy (see Table 8), while the confidence output distribution still remains at the range of $80\$ , suggesting that a reduction in overconfidence is due to the diminished gap between average confidence and accuracy, not necessarily indicating a substantial increase in the model’s ability to judge the correctness of its responses. For example, with the CoT prompting on the GSM8K dataset, GPT-4 with 93.6% accuracy achieves a near-optimal ECE 0.064 by assigning 100% confidence to all samples. However, since all samples receive the same confidence, it is challenging to distinguish between correct and incorrect samples based on the verbalized confidence.

### 5.3 Variance Among Multiple Responses Improves Failure Prediction

Table 3: Comparison of sampling strategies with the number of responses $M=5$ on GPT-3.5. The prompt and aggregation strategies are fixed as CoT and Consistency when $M>1$ . To compare the effect of $M$ , we also provide the baseline with $M=1$ from Figure 3. Metrics are given by $\times 10^{2}$ .

| Method Misleading (M=5) | GSM8K ECE 8.03 | Prf-Law AUROC 88.6 | DateUnd ECE 18.3 | StrategyQA AUROC 59.3 | Biz-Ethics ECE 20.5 | Average AUROC 67.3 | ECE 21.8 | AUROC 61.5 | ECE 17.8 | AUROC 71.3 | ECE 17.3 | AUROC 69.6 |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| Self-Random (M=5) | 6.28 | 92.7 | 26.0 | 65.6 | 17.0 | 66.8 | 23.3 | 60.8 | 20.7 | 79.0 | 18.7 | 73.0 |

| Prompt (M=5) | 35.2 | 74.4 | 31.5 | 60.8 | 23.9 | 69.8 | 16.1 | 61.3 | 15.0 | 79.5 | 24.3 | 69.2 |

| CoT (M=1) | 10.1 | 54.8 | 39.7 | 52.2 | 23.4 | 57.4 | 22.0 | 59.8 | 30.0 | 56.0 | 25.0 | 56.4 |

| Top-K (M=1) | 19.6 | 58.5 | 16.7 | 58.9 | 26.1 | 74.2 | 14.0 | 61.3 | 12.4 | 73.3 | 17.8 | 65.2 |

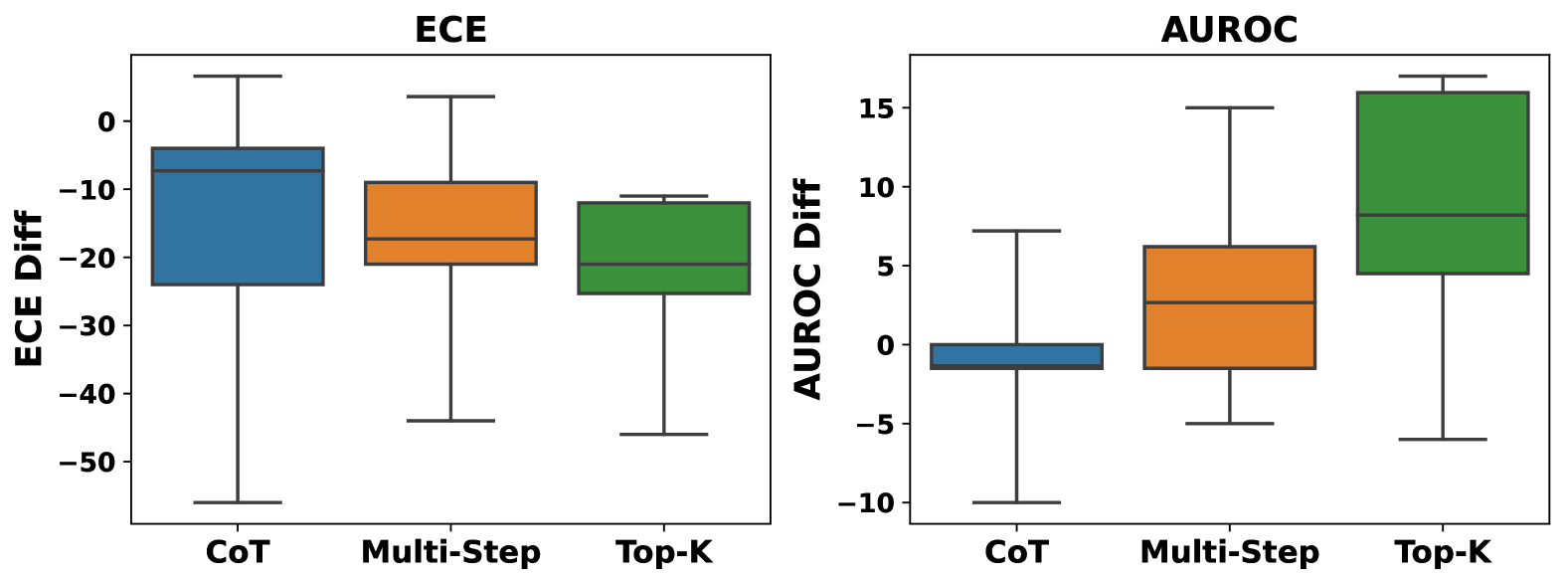

Consistency among multiple responses is more effective in improving failure prediction and calibration compared to verbalized confidence ( $M=1$ ), with particularly notable improvements on the arithmetic task. Table 3 demonstrates that the sampling strategy with 5 sampled responses paired with consistency aggregation consistently outperform verbalized confidence in calibration and failure prediction, particularly on arithmetic tasks, e.g., GSM8K showcases a remarkable improvement in AUROC from 54.8% (akin to random guessing) to 92.7%, effectively distinguishing between incorrect and correct answers. The average performance in the last two columns also indicates improved ECE and AUROC scores, suggesting that obtaining the variance among multiple responses can be a good indicator of uncertainty.

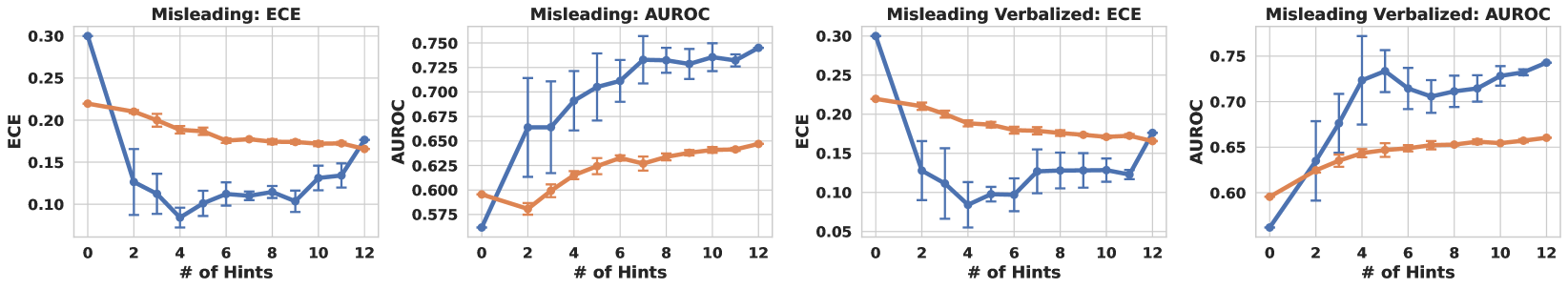

As the number of sampled responses increases, model performance improves significantly and then converges. Figure 7 exhibits the performance of various number of sampled responses $M$ from $M=1$ to $M=13$ . The result suggests that the ECE and AUROC could be improved by sampling more responses, but the improvement becomes marginal as the number gets larger. Additionally, as the computational time and resources required for $M$ responses go linearly with the baseline ( $M$ =1), $M$ thus presents a trade-off between efficiency and effectiveness. Detailed experiments investigating the impact of the number of responses can be found in Appendix B.6 and B.7.

### 5.4 Introducing Verbalized Confidence Into The Aggregation Outperforms Consistency-only Aggregation

Pair-Rank achieves better performance in calibration while Avg-Conf boosts more in failure prediction. On the average scale, we find that Pair-Rank emerges as the superior choice for calibration that can reduce ECE to as low as 0.028, while Avg-Conf stands out for its efficacy in failure prediction. This observation agrees with the underlying principle that Pair-Rank learns the categorical distribution of potential answers through our $K$ observations, which aligns well with the notion of calibration and is therefore more likely to lead to a lower ECE. In contrast, Avg-Conf leverages the consistency, using verbalized confidence as a weighting factor for each answer. This approach is grounded in the observation that accurate samples often produce consistent outcomes, while incorrect ones yield various responses, leading to a low consistency. This assumption matches well with failure prediction, and is confirmed by the results in Table 4. In addition, our comparative analysis of various aggregation strategies reveals that introducing verbalized confidence into the aggregation (e.g., Pair-Rank and Avg-Conf) is more effective compared to consistency-only aggregation (e.g., Consistency), especially when LLM queries are costly, and we are limited in sampling frequency (set to $M=5$ queries in our experiment). Verbalized confidence, albeit imprecise, reflects the model’s uncertainty tendency and can enhance results when combined with ensemble methods.

Table 4: Performance comparison of aggregation strategies on GPT-4 using Top-K Prompt and Self-Random sampling. Pair-Rank aggregation achieves the lowest ECE in half of the datasets and maintains the lowest average ECE in calibration; Avg-Conf surpasses other methods in terms of AUROC in five out of the six datasets in failure prediction. Metrics are given by $\times 10^{2}$ .

| ECE $\downarrow$ Avg-Conf Pair-Rank | Consistency 10.0 7.40 | 4.80 14.4 15.3 | 21.1 7.70 8.50 | 6.00 10.6 2.80 | 13.4 5.90 3.50 | 13.5 20.2 3.80 | 13.2 14.8 $\pm$ 0.7 6.90 $\pm$ 0.2 | 12.0 $\pm$ 0.3 |

| --- | --- | --- | --- | --- | --- | --- | --- | --- |

| AUROC $\uparrow$ | Consistency | 84.4 | 66.2 | 68.9 | 60.3 | 65.4 | 56.3 | 66.9 $\pm$ 0.8 |

| Avg-Conf | 41.0 | 68.0 | 72.7 | 64.8 | 70.5 | 84.4 | 66.9 $\pm$ 1.7 | |

| Pair-Rank | 80.3 | 66.5 | 67.4 | 61.9 | 62.1 | 67.6 | 67.6 $\pm$ 0.4 | |

## 6 Discussions

In this study, we focus on confidence elicitation, i.e., empowering Large Language Models (LLMs) to accurately express the confidence in their responses. Recognizing the scarcity of existing literature on this topic, we define a systematic framework with three components: prompting, sampling and aggregation to explore confidence elicitation algorithms and then benchmark these algorithms on two tasks across eight datasets and five models. Our findings reveal that LLMs tend to exhibit overconfidence when verbalizing their confidence. This overconfidence can be mitigated to some extent by using proposed prompting strategies such as CoT and Self-Probing. Furthermore, sampling strategies paired with specific aggregators can improve failure prediction, especially in arithmetic datasets. We hope this work could serve as a foundation for future research in these directions.

Comparative analysis of white-box and black-box methods. While our method is centered on black-box settings, comparing it with white-box methods helps us understand the progress in the field. We conducted comparisons on five datasets with three white-box methods (see § B.1) and observed that although white-box methods indeed perform better, the gap is narrow, e.g., 0.522 to 0.605 in AUROC. This finding underscores that the field remains challenging and unresolved.

Are current algorithms satisfactory? Not quite. Our findings (Table 4) reveals that while the best-performing algorithms can reduce ECE to a quite low value like 0.028, they still face challenges in predicting incorrect predictions, especially in those tasks requiring professional knowledge, such as professional law. This underscores the need for ongoing research in confidence elicitation.

What is the recommendation for practitioners? Balancing between efficiency, simplicity, and effectiveness, and based on our empirical results, we recommend a stable-performing method for practitioners: Top-K prompt + Self-Random sampling + Avg-Conf or Pair-Rank aggregation. Please refer to Appendix D for the reasoning and detailed discussions, including the considerations when using black-box confidence elicitation algorithms and why these methods fail in certain cases.

Limitations and Future Work: 1) Scope of Datasets. We mainly focuses on fixed-form and free-form question-answering QA tasks where the ground truth answer is unique, while leaving tasks such as summarization and open-ended QA to the future work. 2) Black-box Setting. Our findings indicate black-box approaches remain suboptimal, while the white-box setting, with its richer information access, may be a more promising avenue. Integrating black-box methods with limited white-box access data, such as model logits provided by GPT-3, could be a promising direction.

## Acknowledgments

This research is supported by the Ministry of Education, Singapore, under the Academic Research Fund Tier 1 (FY2023).

## References

- Boyd et al. (2013) Kendrick Boyd, Kevin H. Eng, and C. David Page. Area under the precision-recall curve: Point estimates and confidence intervals. In Hendrik Blockeel, Kristian Kersting, Siegfried Nijssen, and Filip Železný (eds.), Machine Learning and Knowledge Discovery in Databases, pp. 451–466, Berlin, Heidelberg, 2013. Springer Berlin Heidelberg. ISBN 978-3-642-40994-3.

- Brown et al. (2020) Tom B Brown, Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry, Amanda Askell, et al. Language models are few-shot learners. arXiv preprint arXiv:2005.14165, 2020.

- Chen et al. (2022) Yangyi Chen, Lifan Yuan, Ganqu Cui, Zhiyuan Liu, and Heng Ji. A close look into the calibration of pre-trained language models. arXiv preprint arXiv:2211.00151, 2022.

- Chiang et al. (2023) Wei-Lin Chiang, Zhuohan Li, Zi Lin, Ying Sheng, Zhanghao Wu, Hao Zhang, Lianmin Zheng, Siyuan Zhuang, Yonghao Zhuang, Joseph E. Gonzalez, Ion Stoica, and Eric P. Xing. Vicuna: An open-source chatbot impressing gpt-4 with 90%* chatgpt quality, March 2023. URL https://lmsys.org/blog/2023-03-30-vicuna/.

- Cobbe et al. (2021) Karl Cobbe, Vineet Kosaraju, Mohammad Bavarian, Mark Chen, Heewoo Jun, Lukasz Kaiser, Matthias Plappert, Jerry Tworek, Jacob Hilton, Reiichiro Nakano, et al. Training verifiers to solve math word problems. arXiv preprint arXiv:2110.14168, 2021.

- Cosmides & Tooby (1996) Leda Cosmides and John Tooby. Are humans good intuitive statisticians after all? rethinking some conclusions from the literature on judgment under uncertainty. cognition, 58(1):1–73, 1996.

- Deng et al. (2023) Ailin Deng, Miao Xiong, and Bryan Hooi. Great models think alike: Improving model reliability via inter-model latent agreement. arXiv preprint arXiv:2305.01481, 2023.

- Gal & Ghahramani (2016) Yarin Gal and Zoubin Ghahramani. Dropout as a bayesian approximation: Representing model uncertainty in deep learning. In international conference on machine learning, pp. 1050–1059. PMLR, 2016.

- Garthwaite et al. (2005a) Paul H Garthwaite, Joseph B Kadane, and Anthony O’Hagan. Statistical methods for eliciting probability distributions. Journal of the American statistical Association, 100(470):680–701, 2005a.

- Garthwaite et al. (2005b) Paul H Garthwaite, Joseph B Kadane, and Anthony O’Hagan. Statistical methods for eliciting probability distributions. Journal of the American statistical Association, 100(470):680–701, 2005b.

- Gawlikowski et al. (2021) Jakob Gawlikowski, Cedrique Rovile Njieutcheu Tassi, Mohsin Ali, Jongseok Lee, Matthias Humt, Jianxiang Feng, Anna Kruspe, Rudolph Triebel, Peter Jung, Ribana Roscher, et al. A survey of uncertainty in deep neural networks. arXiv preprint arXiv:2107.03342, 2021.

- Geva et al. (2021) Mor Geva, Daniel Khashabi, Elad Segal, Tushar Khot, Dan Roth, and Jonathan Berant. Did aristotle use a laptop? a question answering benchmark with implicit reasoning strategies, 2021.

- Ghazal et al. (2013) Ahmad Ghazal, Tilmann Rabl, Minqing Hu, Francois Raab, Meikel Poess, Alain Crolotte, and Hans-Arno Jacobsen. Bigbench: Towards an industry standard benchmark for big data analytics. In Proceedings of the 2013 ACM SIGMOD international conference on Management of data, pp. 1197–1208, 2013.

- Guo et al. (2017) Chuan Guo, Geoff Pleiss, Yu Sun, and Kilian Q Weinberger. On calibration of modern neural networks. In International conference on machine learning, pp. 1321–1330. PMLR, 2017.

- Hendrycks et al. (2021) Dan Hendrycks, Collin Burns, Steven Basart, Andy Zou, Mantas Mazeika, Dawn Song, and Jacob Steinhardt. Measuring massive multitask language understanding, 2021.

- Jiang et al. (2021) Zhengbao Jiang, Jun Araki, Haibo Ding, and Graham Neubig. How can we know when language models know? on the calibration of language models for question answering. Transactions of the Association for Computational Linguistics, 9:962–977, 2021.

- Kadavath et al. (2022) Saurav Kadavath, Tom Conerly, Amanda Askell, Tom Henighan, Dawn Drain, Ethan Perez, Nicholas Schiefer, Zac Hatfield Dodds, Nova DasSarma, Eli Tran-Johnson, et al. Language models (mostly) know what they know. arXiv preprint arXiv:2207.05221, 2022.

- Kim (2021) Ethan Kim. Sports understanding in bigbench, 2021.

- Kojima et al. (2022) Takeshi Kojima, Shixiang Shane Gu, Machel Reid, Yutaka Matsuo, and Yusuke Iwasawa. Large language models are zero-shot reasoners. ArXiv, abs/2205.11916, 2022. URL https://api.semanticscholar.org/CorpusID:249017743.

- Kuhn et al. (2023) Lorenz Kuhn, Yarin Gal, and Sebastian Farquhar. Semantic uncertainty: Linguistic invariances for uncertainty estimation in natural language generation. arXiv preprint arXiv:2302.09664, 2023.

- Kuleshov & Deshpande (2022) Volodymyr Kuleshov and Shachi Deshpande. Calibrated and sharp uncertainties in deep learning via density estimation. In International Conference on Machine Learning, pp. 11683–11693. PMLR, 2022.

- Kuleshov et al. (2018) Volodymyr Kuleshov, Nathan Fenner, and Stefano Ermon. Accurate uncertainties for deep learning using calibrated regression. In International conference on machine learning, pp. 2796–2804. PMLR, 2018.

- Lakshminarayanan et al. (2017) Balaji Lakshminarayanan, Alexander Pritzel, and Charles Blundell. Simple and scalable predictive uncertainty estimation using deep ensembles. Advances in neural information processing systems, 30, 2017.

- Lin et al. (2022) Stephanie Lin, Jacob Hilton, and Owain Evans. Teaching models to express their uncertainty in words. arXiv preprint arXiv:2205.14334, 2022.

- Malinin & Gales (2020) Andrey Malinin and Mark Gales. Uncertainty estimation in autoregressive structured prediction. arXiv preprint arXiv:2002.07650, 2020.

- Mielke et al. (2022) Sabrina J Mielke, Arthur Szlam, Emily Dinan, and Y-Lan Boureau. Reducing conversational agents’ overconfidence through linguistic calibration. Transactions of the Association for Computational Linguistics, 10:857–872, 2022.

- Minderer et al. (2021) Matthias Minderer, Josip Djolonga, Rob Romijnders, Frances Hubis, Xiaohua Zhai, Neil Houlsby, Dustin Tran, and Mario Lucic. Revisiting the calibration of modern neural networks. In Advances in Neural Information Processing Systems, volume 34, pp. 15682–15694, 2021.

- Naeini et al. (2015) Mahdi Pakdaman Naeini, Gregory Cooper, and Milos Hauskrecht. Obtaining well calibrated probabilities using bayesian binning. In Proceedings of the AAAI conference on artificial intelligence, volume 29, 2015.

- OpenAI (2021) OpenAI. ChatGPT. https://www.openai.com/gpt-3/, 2021. Accessed: April 21, 2023.

- OpenAI (2023) OpenAI. Gpt-4 technical report, 2023.

- Patel et al. (2021) Arkil Patel, Satwik Bhattamishra, and Navin Goyal. Are NLP models really able to solve simple math word problems? In Proceedings of the 2021 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, pp. 2080–2094, Online, June 2021. Association for Computational Linguistics. doi: 10.18653/v1/2021.naacl-main.168. URL https://aclanthology.org/2021.naacl-main.168.

- Ren et al. (2022) Jie Ren, Jiaming Luo, Yao Zhao, Kundan Krishna, Mohammad Saleh, Balaji Lakshminarayanan, and Peter J Liu. Out-of-distribution detection and selective generation for conditional language models. arXiv preprint arXiv:2209.15558, 2022.

- Solano et al. (2021) Quintin P. Solano, Laura Hayward, Zoey Chopra, Kathryn Quanstrom, Daniel Kendrick, Kenneth L. Abbott, Marcus Kunzmann, Samantha Ahle, Mary Schuller, Erkin Ötleş, and Brian C. George. Natural language processing and assessment of resident feedback quality. Journal of Surgical Education, 78(6):e72–e77, 2021. ISSN 1931-7204. doi: https://doi.org/10.1016/j.jsurg.2021.05.012. URL https://www.sciencedirect.com/science/article/pii/S1931720421001537.

- Srivastava et al. (2023) Aarohi Srivastava, Abhinav Rastogi, Abhishek Rao, Abu Awal Md Shoeb, Abubakar Abid, Adam Fisch, Adam R Brown, Adam Santoro, Aditya Gupta, Adrià Garriga-Alonso, et al. Beyond the imitation game: Quantifying and extrapolating the capabilities of language models. Transactions on Machine Learning Research, 2023. ISSN 2835-8856. URL https://openreview.net/forum?id=uyTL5Bvosj.

- Tian et al. (2023) Katherine Tian, Eric Mitchell, Allan Zhou, Archit Sharma, Rafael Rafailov, Huaxiu Yao, Chelsea Finn, and Christopher D Manning. Just ask for calibration: Strategies for eliciting calibrated confidence scores from language models fine-tuned with human feedback. arXiv preprint arXiv:2305.14975, 2023.

- Tomani & Buettner (2021) Christian Tomani and Florian Buettner. Towards trustworthy predictions from deep neural networks with fast adversarial calibration. In Proceedings of the AAAI Conference on Artificial Intelligence, volume 35, pp. 9886–9896, 2021.

- Touvron et al. (2023a) Hugo Touvron, Thibaut Lavril, Gautier Izacard, Xavier Martinet, Marie-Anne Lachaux, Timothée Lacroix, Baptiste Rozière, Naman Goyal, Eric Hambro, Faisal Azhar, Aurelien Rodriguez, Armand Joulin, Edouard Grave, and Guillaume Lample. Llama: Open and efficient foundation language models, 2023a.

- Touvron et al. (2023b) Hugo Touvron, Louis Martin, Kevin Stone, Peter Albert, Amjad Almahairi, Yasmine Babaei, Nikolay Bashlykov, Soumya Batra, Prajjwal Bhargava, Shruti Bhosale, et al. Llama 2: Open foundation and fine-tuned chat models. arXiv preprint arXiv:2307.09288, 2023b.

- Wang et al. (2019) Jianfeng Wang, Rong Xiao, Yandong Guo, and Lei Zhang. Learning to count objects with few exemplar annotations. arXiv preprint arXiv:1905.07898, 2019.

- Wang et al. (2023) Lei Wang, Wanyu Xu, Yihuai Lan, Zhiqiang Hu, Yunshi Lan, Roy Ka-Wei Lee, and Ee-Peng Lim. Plan-and-solve prompting: Improving zero-shot chain-of-thought reasoning by large language models. In Annual Meeting of the Association for Computational Linguistics, 2023. URL https://api.semanticscholar.org/CorpusID:258558102.

- Wang et al. (2022) Xuezhi Wang, Jason Wei, Dale Schuurmans, Quoc Le, Ed Chi, and Denny Zhou. Self-consistency improves chain of thought reasoning in language models. arXiv preprint arXiv:2203.11171, 2022.

- Wu & Wang (2021) Xinyi Wu and Zijian Wang. Data understanding in bigbench, 2021.

- Xiao & Wang (2021) Yijun Xiao and William Yang Wang. On hallucination and predictive uncertainty in conditional language generation. arXiv preprint arXiv:2103.15025, 2021.

- Xiong et al. (2022) Miao Xiong, Shen Li, Wenjie Feng, Ailin Deng, Jihai Zhang, and Bryan Hooi. Birds of a feather trust together: Knowing when to trust a classifier via adaptive neighborhood aggregation. arXiv preprint arXiv:2211.16466, 2022.

- Xiong et al. (2023) Miao Xiong, Ailin Deng, Pang Wei Koh, Jiaying Wu, Shen Li, Jianqing Xu, and Bryan Hooi. Proximity-informed calibration for deep neural networks. arXiv preprint arXiv:2306.04590, 2023.

- Yuan et al. (2021) Zhuoning Yuan, Yan Yan, Milan Sonka, and Tianbao Yang. Large-scale robust deep auc maximization: A new surrogate loss and empirical studies on medical image classification. In Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 3040–3049, 2021.

- Zadrozny & Elkan (2001) Bianca Zadrozny and Charles Elkan. Obtaining calibrated probability estimates from decision trees and naive bayesian classifiers. In Icml, volume 1, pp. 609–616, 2001.

- Zhang et al. (2020) Jize Zhang, Bhavya Kailkhura, and T Yong-Jin Han. Mix-n-match: Ensemble and compositional methods for uncertainty calibration in deep learning. In International conference on machine learning, pp. 11117–11128. PMLR, 2020.

- Zhou et al. (2023) Kaitlyn Zhou, Dan Jurafsky, and Tatsunori Hashimoto. Navigating the grey area: Expressions of overconfidence and uncertainty in language models. arXiv preprint arXiv:2302.13439, 2023.

## Appendix A Proof of Proposition 3.1

#### Notation.

Given a question with $N$ candidate responses, the $i\text{-th}$ response consists of $K$ sequentially ordered answers, denoted as $\mathcal{S}^{(i)}_{K}=(S_{1}^{(i)},S_{2}^{(i)},\dots,S_{K}^{(i)})$ . Let $\mathcal{A}=\{S_{1},S_{2},\dots,S_{M}\}$ represent the set of unique answers across all $N$ responses, where $M$ is the total number of distinct answers. The event where the model ranks answer $S_{u}$ above $S_{v}$ in its $i$ -th generation is represented as $(S_{u}\stackrel{{\scriptstyle\scriptstyle(i)}}{{\succ}}S_{v})$ . In contexts where the generation is implicit, this is simply denoted as $(S_{u}\succ S_{v})$ . Let $E_{uv}^{(i)}$ be the event where at least one of $S_{u}$ and $S_{v}$ appears in the $i$ -th generation. The probability of $(S_{u}\succ S_{v})$ , given $E_{uv}^{(i)}$ and a categorical distribution $P$ , is expressed as $\mathbb{P}(S_{u}\succ S_{v}|P,E_{uv}^{(i)})$ .

**Proposition A.1**

*Suppose the Top-K answers are drawn from a categorical distribution $P$ without replacement. Define the event $(S_{u}\succ S_{v})$ to indicate that the realization $S_{u}$ is observed before $S_{v}$ in the $i\text{-th}$ draw without replacement. Under this setting, the conditional probability is given by:

$$

\mathbb{P}\left(S_{u}\succ S_{v}\mid P,E_{uv}^{(i)}\right)=\frac{P(S_{u})}{P(S

_{u})+P(S_{v})}

$$

The optimization objective to minimize the expected loss is then:

$$

\min_{P}-\sum_{i=1}^{N}\sum_{S_{u}\in\mathcal{A}}\sum_{S_{v}\in\mathcal{A}}

\mathbb{I}\left\{S_{u}\stackrel{{\scriptstyle\scriptstyle(i)}}{{\succ}}S_{v}

\right\}\cdot\log\frac{P(S_{u})}{P(S_{u})+P(S_{v})}\quad\text{s.t.}\sum_{S_{u}

\in\mathcal{A}}P\left(S_{u}\right)=1 \tag{5}

$$*

* Proof*

Let us begin by examining the position $j$ in the response sequence $\mathcal{S}^{(i)}_{K}$ where either $S_{u}$ or $S_{v}$ is first sampled, and the other has not yet been sampled. We denote this event as $F_{j}^{(i)}(S_{u},S_{v})$ , and for simplicity, we refer to it as $F_{j}$ :

$$

\displaystyle F_{j}=F_{j}^{(i)}(S_{u},S_{v}) \displaystyle=\left\{\text{the earliest position in }\mathcal{S}^{(i)}_{K}

\text{ where either }S_{u}\text{ or }S_{v}\text{ appears is $j$}\right\} \displaystyle=\left\{\forall m,n\in\{1,2,...,N\}\mid S_{m}^{(i)}=S_{u},S_{n}^{

(i)}=S_{v},j=\min(m,n)\right\} \tag{6}

$$ Given this event, the probability that $S_{u}$ is sampled before $S_{v}$ across all possible positions $j$ is:

$$

\mathbb{P}(S_{u}\succ S_{v}\mid P,E_{uv}^{(i)})=\sum_{j=1}^{N}\mathbb{P}(F_{j}

\mid P,E_{uv}^{(i)})\times\underbrace{\mathbb{P}(S_{u}\succ S_{v}\mid P,E_{uv}

^{(i)},F_{j})}_{\text{(a)}} \tag{7}

$$ To further elucidate (1), which is conditioned on $F_{j}$ , we note that the first sampled answer between $S_{u}$ and $S_{v}$ appears at position $j$ . We then consider all potential answers sampled prior to $j$ . For this, we introduce a permutation set $\mathcal{H}_{j-1}$ to encapsulate all feasible combinations of answers for the initial $j-1$ samplings. A representative sampling sequence is given by: $\mathcal{S}_{j-1}=\{S_{(1)}\succ S_{(2)}\succ\dots\succ S_{(j-1)}\mid\forall\, l\in\{1,2,...,j-1\},S_{(l)}\in\mathcal{A}\setminus\{S_{u},S_{v}\}\}$ . Consequently, (a) can be articulated as:

$$

\mathbb{P}(S_{u}\succ S_{v}\mid P,E_{uv}^{(i)},F_{j})=\sum_{\mathcal{S}_{j-1}

\in\mathcal{H}_{j-1}}\mathbb{P}(\mathcal{S}_{j-1}\mid P,E_{uv}^{(i)},F_{j})

\times\underbrace{\mathbb{P}(S_{u}\succ S_{v}\mid P,E_{uv}^{(i)},\mathcal{S}_{

j-1},F_{j})}_{\text{(b)}} \tag{8}

$$ Consider the term (b), which signifies the probability that, given the first $j-1$ samplings and the restriction that the $j$ -th sampling can only be $S_{u}$ or $S_{v}$ , $S_{u}$ is sampled prior to $S_{v}$ . This probability is articulated as:

$$

\displaystyle\mathbb{P}(S_{u}\succ S_{v}\mid P,E_{uv}^{(i)},F_{j},\mathcal{S}_

{j-1}) \displaystyle=\frac{\mathbb{P}(S_{j}^{(i)}=S_{u}\mid P,E_{uv}^{(i)},F_{j},

\mathcal{S}_{j-1})}{\mathbb{P}(S_{j}^{(i)}=S_{u}\mid P,E_{uv}^{(i)},F_{j},

\mathcal{S}_{j-1})+\mathbb{P}(S_{j}^{(i)}=S_{v}\mid P,E_{uv}^{(i)},F_{j},

\mathcal{S}_{j-1})} \displaystyle=\frac{\frac{P(S_{u})}{1-\sum_{S_{m}\in\mathcal{S}_{j-1}}P(S_{m})

}}{\frac{P(S_{v})}{1-\sum_{S_{m}\in\mathcal{S}_{j-1}}P(S_{m})}+\frac{P(S_{u})}

{1-\sum_{S_{m}\in\mathcal{S}_{j-1}}P(S_{m})}} \displaystyle=\frac{P(S_{u})}{P(S_{u})+P(S_{v})} \tag{9}

$$ Integrating equation (9) into equation (8), we obtain:

$$

\displaystyle\mathbb{P}(S_{u}\succ S_{v}\mid P,E_{uv}^{(i)},F_{j}) \displaystyle=\sum_{\mathcal{S}_{j-1}\in\mathcal{H}_{j-1}}\mathbb{P}(\mathcal{

S}_{j-1}\mid P,F_{j})\times\frac{P(S_{u})}{P(S_{u})+P(S_{v})} \displaystyle=\frac{P(S_{u})}{P(S_{u})+P(S_{v})}\times\sum_{\mathcal{S}_{j-1}

\in\mathcal{H}_{j-1}}\mathbb{P}(\mathcal{S}_{j-1}\mid P,E_{uv}^{(i)},F_{j}) \displaystyle\stackrel{{\scriptstyle(c)}}{{=}}\frac{P(S_{u})}{P(S_{u})+P(S_{v})} \tag{10}

$$ Subsequently, incorporating equation (10) into equation (7), we deduce:

$$

\displaystyle\mathbb{P}(S_{u}\succ S_{v}\mid P,E_{uv}^{(i)}) \displaystyle=\sum_{j=1}^{K}\mathbb{P}(F_{j}\mid P,E_{uv}^{(i)})\times\frac{P(

S_{u})}{P(S_{u})+P(S_{v})} \displaystyle=\frac{P(S_{u})}{P(S_{u})+P(S_{v})}\times\sum_{j=1}^{K}\mathbb{P}

(F_{j}\mid P,E_{uv}^{(i)}) \displaystyle\stackrel{{\scriptstyle(d)}}{{=}}\frac{P(S_{u})}{P(S_{u})+P(S_{v})} \tag{11}

$$

The derivations in (c) and (d) employ the Law of Total Probability. Incorporating Equation 11 into Equation 3, the minimization objective is formulated as:

$$

\min_{P}-\sum_{i=1}^{N}\sum_{S_{u}\in\mathcal{A}}\sum_{S_{v}\in\mathcal{A}}

\mathbb{I}\{S_{u}\stackrel{{\scriptstyle\scriptstyle(i)}}{{\succ}}S_{v}\}

\times\log\frac{P(S_{u})}{P(S_{u})+P(S_{v})}\quad\text{s.t.}\sum_{S_{u}\in

\mathcal{A}}P(S_{u})=1 \tag{12}

$$ ∎

## Appendix B Detailed Experiment Results

### B.1 White-box methods outperform black-box methods, but the gap is narrow.