## Response: Emergent analogical reasoning in large language models

Damian Hodel 1 and Jevin West 1

1 Center for an Informed Public, Information School, University of Washington hodeld@uw.edu, jevinw@uw.edu

May 2, 2024

## 1 Introduction

In their recent Nature Human Behaviour paper, 'Emergent analogical reasoning in large language models' (Webb, Holyoak, and Lu, 2023), the authors argue that 'GPT-3 exhibits a very general capacity to identify and generalize - in zero-shot fashion - relational patterns found within both formal problems and meaningful texts.' This conclusion arises from their comparison of GPT-3 with human performance across four analogical reasoning domains, where they find comparable results. In this response, we argue that this approach is unsuitable for evaluating general, zero-shot reasoning in large language models (LLMs). Two primary reasons underlie our objection. First, the term 'zero-shot' implies problem sets entirely novel to GPT-3. However, the chosen approach cannot conclusively eliminate the possibility that these problems reside in the LLM's training data, as acknowledged by the authors themselves in the review file 1 . Second, the approach assumes that tests designed for humans can accurately measure LLM capabilities. This assumption is prevalent but remains unverified. We also provide empirical results to support our claims; see the appendix (Section 7.1). Our counterexamples show that GPT-3 fails to solve even the simplest variations of the original tasks, whereas human performance remains consistently high across all modified versions.

Given the hype surrounding LLM capability and this paper in particular 2,3,4 , contrasted by the many findings of LLM brittleness 5 , we felt it was important to respond and illustrate the insufficiency of the methods employed in addressing GPT-3's supposed general, zero-shot reasoning. It is important that we interpret LLM results with caution and refrain from LLM anthropomorphization. Tests designed to assess the general capabilities of humans may not inherently serve the same purpose when applied to LLMs.

1 https://www.nature.com/articles/s41562-023-01659-w#peer-review

2 https://www.tagesanzeiger.ch/beherrscht-die-kuenstliche-intelligenz-analogien-768020720454

3 https://www.news-medical.net/news/20230731/AI-language-model-GPT-3-performsabout-as-well-as-college-undergraduates-in-analogical-reasoning.aspx

4 https://www.sciencemediacentre.org/expert-reaction-to-study-looking-at-gpt3-large-language-model-and-ability-to-reason-by-analogy/

Others have commented on this paper and we want to note these contributions. Mitchell (2023) discusses this paper, focusing on the letter string and digit matrix analogy problems. Mitchell disagrees that 'the digit matrix problems are essentially equivalent in complexity and difficulty to Ravens Progressive Matrix problems.' Further, Mitchell presents individual counterexamples of the letter string problems where GPT-3 makes nonhuman-like errors, as evidence against the claimed robustness of GPT-3 in analogy reasoning. We conduct a similar but more systematic analysis and include human behavioral experiments, as detailed in the appendix, that concurs with Mitchell's conclusion. Mitchell also points out that the term 'accuracy' implies that there was only one correct answer to each problem, which isn't the case with these problems, but an assumption implicitly made by the authors. For comparison purposes, we adopt Webb, Holyoak, and Lu (2023)'s assumption in our paper and use the same terms, i.e. 'accuracy' and 'performance' but recognize this limitation.

## 2 Criticism of the Methods Employed in the Original Paper

To assess general, zero-shot reasoning capacity of LLMs, Webb, Holyoak, and Lu (2023) compare GPT-3 with humans and find similar or even better performance across a range of analogical reasoning tests adapted from existing cognitive tests designed for humans. However, we believe that this approach is not sufficient for testing the general, zero-shot reasoning capacity of large language models (LLMs). Here is why:

First, 'zero-shot' implies analogical problem sets that are entirely novel to GPT-3, encompassing both specific examples and variants of those examples. However, this condition is not met by some of the letter string problems used in the original paper, as noted by the authors themselves in the review file 6 : 'It is possible that GPT-3 has been trained on other letter string analogy problems, as these problems are discussed on a number of webpages.' Without ruling out the possibility of data memorization, one cannot claim zero-shot reasoning. As the first author notes in a recent MIT Technology Review article 7 , [if the test examples exist in the training data], 'I think we really can't conclude much of anything.'

5 https://www.technologyreview.com/2023/08/30/1078670/large-language-modelsarent-people-lets-stop-testing-them-like-they-were

6 https://www.nature.com/articles/s41562-023-01659-w#peer-review

7 https://www.technologyreview.com/2023/08/30/1078670/large-language-modelsarent-people-lets-stop-testing-them-like-they-were

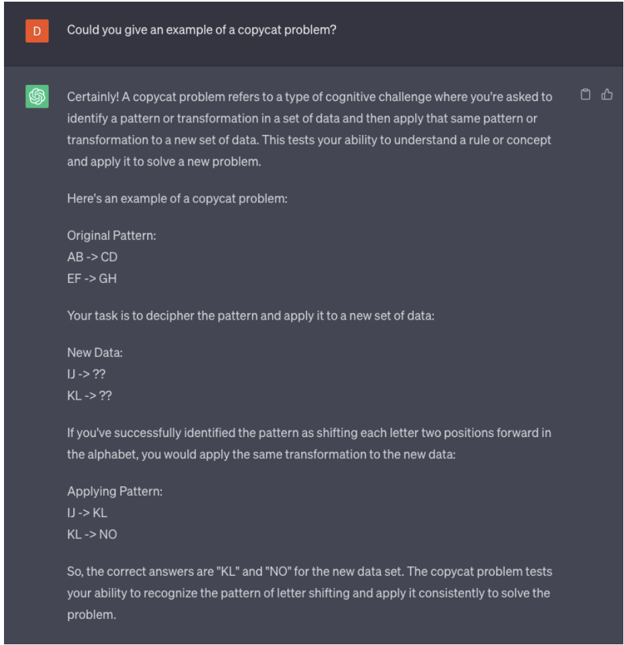

In the aforementioned peer review file, the authors further note that they asked GPT-3 about these problems as a way of testing whether they exist in the training data. It makes sense to at least try this, but we find this to be weak evidence, given the large number of possible answers to this question and the ambiguity of the answers given. To investigate this further, we asked ChatGPT to provide examples of letter string problems. It gave examples, suggesting that it has seen such problems in the training data. We include our question and ChatGPT's answer in the appendix. It is important to note that ChatGPT was trained on more data than GPT-3, so this result provides only circumstantial evidence.

Zero-shot reasoning is an extraordinary claim that requires extraordinary evidence. At the very least, it necessitates demonstrating that the problems, as well as their variations, do not already exist within the training data, as previously mentioned. The original paper fails to offer such evidence for any of the four task domains. We do recognize that obtaining such evidence can be exceptionally challenging. Many researchers lack access to GPT-3's training data, and even if they had it, confirming the absence of examples or their derivations from the training data is nearly impossible. However, the difficulty of providing evidence for zero-shot reasoning does not justify claiming it without such evidence.

Second, Webb, Holyoak, and Lu (2023) claim that the presented problem types test GPT-3's human-like reasoning capacity in a 'very general' way. This assumption is based on the premise that LLMs behave similarly to humans, thus implying that a test designed for humans can adequately assess LLMs in a broader capacity beyond the tasks included in the test. However, this assumption has not been substantiated.

On the contrary, findings of LLM brittleness across the literature tend to contradict it. In Appendix 7.1, we present counterexamples involving the letter string analogy problems, which demonstrate the brittleness of the assessment approach employed. In these tests, GPT-3 fails to solve simple variants of the letter string analogies presented in the original paper, while human performance remains at a high level.

In addition to the finding that GPT-3 matches or even outperforms human performance, Webb, Holyoak, and Lu (2023) further show that GPT-3 exhibits human-like characteristics in analogical reasoning, i.e., decreasing performance with increasing problem complexity. Based on this result, the authors propose that GPT-3 may have developed mechanisms similar to those underlying human intelligence. This is one possible interpretation. However, an alternative explanation could be that the training data contains a scarcity of solutions to complex problems, possibly reflecting the challenges humans encounter with such problems, a notion supported by our experiments involving human subjects.

It is important to note that our intention is not to discredit the use of such tests for studying LLMs but to point out the limitations of these methods for making claims about the reasoning capacity of LLMs.

Before conducting the human behavior experiments, we shared our counterexamples on GPT-3 with the first author of the original paper, and we greatly appreciate their engagement in this discussion. One of their main objections was the expectation that our modified problems would also be significantly more difficult for human subjects. The human behavioral studies we carried out contradict this prediction. Despite a notable decrease in GPT-3's performance on our adapted tasks, humans consistently demonstrate strong performance. Nevertheless, it is important to note that comparing performance to humans, whether better or worse, is not evidence of the claimed capacity. For example, if one ran this comparison only among humans and two groups emerged from the sampling, one with adults and one with children 8 , we would likely find that adults outperform children on these reasoning tasks. According to the authors' logic (Webb, Holyoak, and Lu, 2023), this would be evidence against zero-shot reasoning in children. But we know that children have this ability. Hence, performance compared to humans cannot be used to support or refute zero-shot reasoning.

## 3 Conclusion

Based on their analysis, Webb, Holyoak, and Lu (2023) argue that LLMs have acquired a general ability for zero-shot reasoning. With full respect to the authors and their work, we disagree with this interpretation. As we show and argue in our response, the methods are insufficient to evaluate a capacity for true, zero-shot reasoning. Given the current hype surrounding LLMs, we hope this can be used to spur further tests and evaluations of what LLMs can and cannot do.

## 4 Code and data availability

Code and data can be downloaded from: https://github.com/hodeld/emergent\_analogies\_LLM\_fork

## References

- Mitchell, Melanie (Jan. 2023). On analogy-making in large language models. url: https://aiguide.substack.com/p/on-analogy-making-in-largelanguage (visited on 08/09/2023).

- Webb, Taylor, Keith J. Holyoak, and Hongjing Lu (July 2023). 'Emergent analogical reasoning in large language models'. en. In: Nature Human Behaviour. issn: 2397-3374. doi: 10.1038/s41562-023-01659-w. url: https://www.nature.com/articles/s41562-023-01659-w.

- Wu, Zhaofeng et al. (Aug. 2023). Reasoning or Reciting? Exploring the Capabilities and Limitations of Language Models Through Counterfactual Tasks. en. arXiv:2307.02477 [cs]. url: http://arxiv.org/abs/2307.02477 (visited on 03/14/2024).

8 This analogy presumes that LLMs' performances can be assessed against human standards. It is important to clarify that we don't endorse this assumption until it is substantiated, but for the sake of our argument, we will adopt it from the original paper.

## 5 Author contributions

D.H. conducted the experiments. D.H. and J.W. drafted the manuscript.

## 6 Competing interests

The authors declare no competing interests.

## 7 Appendix

## 7.1 Counterexamples

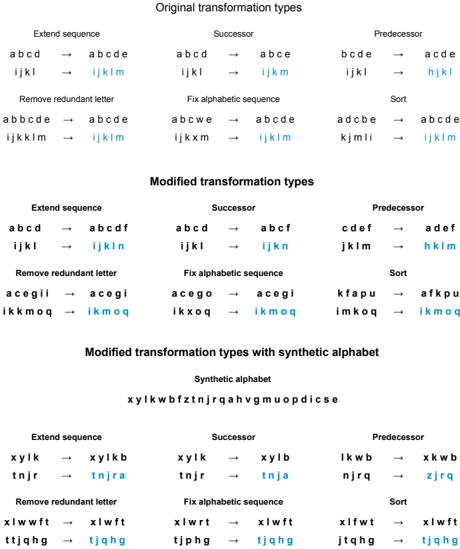

To investigate whether the problems presented in the original paper truly assess analogical reasoning in GPT-3 or primarily its capability to recite training data, we create non-standard variants of the original tasks that are less likely to be found in training data. Our focus is on the letter string analogies, one of the four problem domains examined, and we conduct tests with both human subjects and GPT-3. In our experiments, GPT-3 performance declines significantly when presented with these additional counterexamples, while human performance remains consistently high across all tests (Figure 2). This suggests that the claims made in the original paper regarding GPT-3's zero-shot reasoning may not be substantiated.

## 7.1.1 Methods

To test GPT-3's generality in zero-shot analogical reasoning, we extend the letter string analogies with two modifications and compare GPT-3's and humans' performance, analogously to the original approach. The modifications involve using a synthetic alphabet and increasing the size of the interval from one to two letters (see Figure 1). If the claim regarding GPT-3's zero-shot reasoning capability is true, we can expect similar performance across modifications and, in particular, performance that is independent of the alphabet. Unlike the original study, we view the comparison with human performance not as evidence for or against GPT-3's analogical reasoning abilities, but rather as a confirmation of the validity of our set of problems.

We create the synthetic alphabet by randomly changing the order of the letters in the real alphabet. For both humans and GPT-3, we incorporate the synthetic alphabet in the tasks by preceding the original prompt with the sentence 'Use this fictional alphabet: [ x y l k w b f z t n j r q a h v g m u o p d i c s e ] .'
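The construction described above can be sketched in a few lines of Python. This is a minimal illustration, not the code from the repository: the function names `make_synthetic_alphabet` and `alphabet_prefix` are hypothetical, and the seed handling is an assumption. Only the permutation in `PAPER_ALPHABET` and the wording of the prefix sentence are taken from the text.

```python
import random
import string


def make_synthetic_alphabet(seed=None):
    """Return the 26 lowercase letters in a random order (hypothetical helper)."""
    rng = random.Random(seed)
    letters = list(string.ascii_lowercase)
    rng.shuffle(letters)
    return letters


def alphabet_prefix(alphabet):
    """Format the sentence that precedes the original prompt."""
    return "Use this fictional alphabet: [" + " ".join(alphabet) + "]."


# The permutation quoted in the text, kept fixed across all problems.
PAPER_ALPHABET = "x y l k w b f z t n j r q a h v g m u o p d i c s e".split()

if __name__ == "__main__":
    print(alphabet_prefix(PAPER_ALPHABET))
```

Using one fixed permutation, as the text does, keeps every participant and every GPT-3 query on the same alphabet, so differences in accuracy cannot be attributed to differences between permutations.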

The increase in the size of the interval from one to two letters aims to rule out the possibility that GPT-3 merely replicates the fed sequence of letters. We achieve this in two ways. For the problem types 'extend sequence', 'successor', and 'predecessor', we increase the interval size for the letter to change from one to two. For the problem types 'remove redundant letter', 'fix alphabetic sequence', and 'sort', we increase the interval size of the complete letter sequence from one to two 9 .
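As a rough illustration of the two kinds of interval modification, the sketch below builds an 'extend sequence' item (where only the added letter is affected) and an evenly spaced run (where the whole sequence uses the larger interval). The helper names, the `start` and `length` parameters, and the indexing scheme are assumptions made for this example; the repository code may construct problems differently.

```python
import string


def extend_problem(alphabet, start, length=4, interval=1):
    """'Extend sequence': append the letter `interval` positions after the last one."""
    source = list(alphabet[start:start + length])
    added = alphabet[start + length - 1 + interval]
    return source, source + [added]


def successor_problem(alphabet, start, length=4, interval=1):
    """'Successor': replace the last letter by the letter `interval` positions later."""
    source = list(alphabet[start:start + length])
    replaced = alphabet[start + length - 1 + interval]
    return source, source[:-1] + [replaced]


def spaced_sequence(alphabet, start, length=5, interval=1):
    """A run whose neighbouring letters are `interval` positions apart,
    as used for 'remove redundant letter', 'fix alphabetic sequence', and 'sort'."""
    return [alphabet[start + i * interval] for i in range(length)]


if __name__ == "__main__":
    real = list(string.ascii_lowercase)
    print(extend_problem(real, 0, interval=2))
    print(successor_problem(real, 0, interval=2))
    print(spaced_sequence(real, 0, interval=2))
```

Passing the synthetic alphabet instead of `string.ascii_lowercase` yields the corresponding synthetic-alphabet items without changing the transformation logic.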

We compare GPT-3's and human performance for the following three settings: the original tasks as reported in (Webb, Holyoak, and Lu, 2023), counterexamples that involve the interval size modification, and counterexamples

9 It is worth noting that we apply this modification to both the source (the first row for each example in Figure 1) and the target (the second row for each example in Figure 1), minimizing the difficulty of the modified problems and allowing us to compare our tests to the zero-generalization problems given in the original paper.

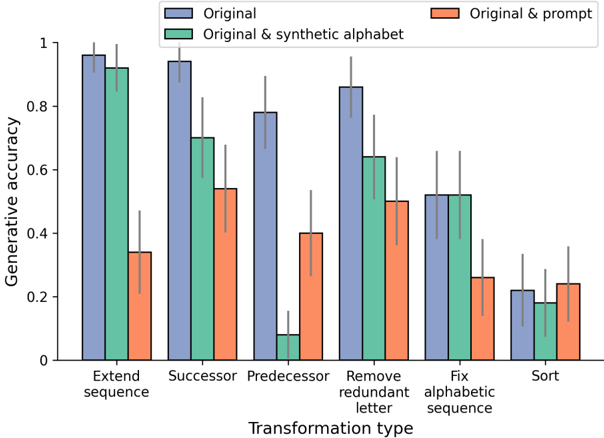

Figure 1: Letter string analogies along their transformations of both the original paper and our counterexamples. We introduce a synthetic alphabet into the task and apply two types of letter sequence modifications, both based on increasing the interval from one to two letters. For the transformation types 'extend sequence', 'successor', and 'predecessor', the modification only affects the letter to change (last or first letter). For 'remove redundant letter', 'fix alphabetic sequence', and 'sort', the interval is increased for the complete letter sequence. We apply the same modifications to the problems generated with the synthetic alphabet.

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Diagram: Transformation Types

### Overview

The image presents a diagram illustrating different types of transformations applied to sequences of letters. It is divided into three sections: "Original transformation types", "Modified transformation types", and "Modified transformation types with synthetic alphabet". Each section demonstrates six transformation types: "Extend sequence", "Successor", "Predecessor", "Remove redundant letter", "Fix alphabetic sequence", and "Sort".

### Components/Axes

The diagram consists of text-based examples of transformations. There are no axes or scales in the traditional sense. The diagram is organized into rows representing transformation types and columns representing the original and transformed sequences. The top of the image has a title: "Original transformation types", "Modified transformation types", and "Modified transformation types with synthetic alphabet".

### Detailed Analysis or Content Details

**Original transformation types:**

* **Extend sequence:**

* `abcd -> abcde`

* `ijkl -> ijklm`

* **Successor:**

* `abcd -> abce`

* `ijkl -> ijkm`

* **Predecessor:**

* `bcde -> acde`

* `ijkl -> ijkj`

* **Remove redundant letter:**

* `abcde -> abcde`

* `ijklm -> ijklm`

* **Fix alphabetic sequence:**

* `abcwe -> abcde`

* `ijklm -> ijklm`

* **Sort:**

* `adcbe -> abcde`

* `kjmli -> ijklm`

**Modified transformation types:**

* **Extend sequence:**

* `abcd -> abcdf`

* `ijkl -> ijklm`

* **Successor:**

* `abcd -> abcf`

* `ijkl -> ijkn`

* **Predecessor:**

* `cdef -> adef`

* `jklm -> hklm`

* **Remove redundant letter:**

* `acegil -> acegi`

* `ikkmog -> ikmoq`

* **Fix alphabetic sequence:**

* `acego -> acegi`

* `ikxoq -> ikmoq`

* **Sort:**

* `kfapu -> afkpu`

* `imkoq -> ikmoq`

**Modified transformation types with synthetic alphabet:**

* **Synthetic alphabet:** `xylkwbfztnjrqahvgmuopdicse`

* **Extend sequence:**

* `xylk -> xylkb`

* `tnjr -> tnjra`

* **Successor:**

* `xylk -> xylb`

* `tnjr -> tnja`

* **Predecessor:**

* `lkwb -> xkwb`

* `njrq -> zjrq`

* **Remove redundant letter:**

* `xlwft -> xlwft`

* `ttjgh -> ttjgh`

* **Fix alphabetic sequence:**

* `xlwrt -> xlwft`

* `ttjgh -> tjgh`

* **Sort:**

* `xlwft -> xlwft`

* `ttjgh -> ttjgh`

### Key Observations

The diagram demonstrates how different transformation rules can be applied to sequences of letters. The "Modified transformation types" section introduces changes to the transformation rules compared to the "Original transformation types". The "Modified transformation types with synthetic alphabet" section applies the same transformation rules to a custom alphabet. The "Remove redundant letter" and "Sort" transformations appear to have no effect in some cases.

### Interpretation

The diagram illustrates the concept of transformations and how they can be defined and applied to sequences. The use of different alphabets and transformation rules highlights the flexibility and generality of the concept. The diagram could be used to explore the properties of different transformation rules or to develop new transformation rules. The synthetic alphabet suggests an exploration of transformations in non-standard systems. The transformations are presented as examples, likely for illustrative or educational purposes, rather than representing a specific algorithm or application. The diagram is a conceptual exploration of sequence manipulation.

</details>

that include both the interval size modification and the synthetic alphabet. To ensure that GPT-3 is capable of processing the introduced modifications (Wu et al., 2023, 'counterfactual comprehension check'), we include tests on GPT-3 for two additional settings: original examples on the real alphabet but with the modified prompt (i.e., 'Use this fictional alphabet: [ a b c d e f g h i j k l m n o p q r s t u v w x y z ] .'), and counterexamples involving the synthetic alphabet but without increasing the interval size.

GPT-3 evaluation. Our code for reproducing Figure 2 is available on GitHub 10 . For each problem type, we create 50 instances to mirror the original paper. The settings are as follows: model variant=text-davinci-003, temperature=0, maximum length=20. Using the original code, we mirror the evaluation and analysis approach of the original paper. The following example illustrates the prompt pattern including the synthetic alphabet.

10 https://github.com/hodeld/emergent\_analogies\_LLM\_fork

```
Use this fictional alphabet: [x y l k w b f z t n j r q a h v g m u o p d i c s e]. Let's try to complete the pattern:

[x y l k] [x y l k b]
[t n j r] [
```
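To make the prompt pattern concrete, the following sketch assembles such a prompt from a source pair and a query, and checks a model completion against the expected answer. Both `build_prompt` and `is_correct` are hypothetical helpers written for this illustration. The scoring rule (take the text before the first closing bracket and compare letter by letter) is an assumption and may differ from the evaluation in the original code. No API call is made here; `completion` stands in for whatever text the model returns.

```python
def _fmt(letters):
    """Render a letter sequence in the bracketed, space-separated prompt style."""
    return "[" + " ".join(letters) + "]"


def build_prompt(source, target, query, alphabet=None):
    """Assemble a letter string prompt, optionally preceded by the alphabet sentence."""
    header = "Let's try to complete the pattern:"
    if alphabet is not None:
        header = "Use this fictional alphabet: " + _fmt(alphabet) + ". " + header
    # The prompt ends with an open bracket that the model is expected to complete.
    return header + "\n\n" + _fmt(source) + " " + _fmt(target) + "\n" + _fmt(query) + " ["


def is_correct(completion, expected):
    """Assumed scoring rule: compare the letters before the first ']' to the answer."""
    answer = completion.split("]")[0].split()
    return answer == list(expected)


if __name__ == "__main__":
    alphabet = "x y l k w b f z t n j r q a h v g m u o p d i c s e".split()
    print(build_prompt(list("xylk"), list("xylkb"), list("tnjr"), alphabet))
```

For the example above, the expected completion under the interval-two 'extend sequence' rule is `t n j r a]`, since `a` lies two positions after `r` in the synthetic alphabet.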

Human behavioral experiment. We conducted human behavior experiments through an online study with University of Washington (UW) undergraduates, analogous to the experiments of the original paper. All participants provided their informed consent prior to the study, and the data collection process was approved by the UW Institutional Review Board (IRB ID STUDY00019080, approved on 6 November 2023). A total of 121 participants completed the study. They were compensated with extra course credit for their participation.

The first author of the original study generously provided participant instructions, which we adapted for our experiments. In particular, we presented participants with an additional example problem to introduce the synthetic alphabet:

Use this fictional alphabet: [ x y l k w b f z t n j r q a h v g m u o p d i c s e ] .

[ x x x ] [ y y y ]

[ l l l ] [ ? ]

Each participant completed a total of 18 zero-generalization tasks, consisting of six problems for each setting (one problem for each transformation type).

## 7.1.2 Results

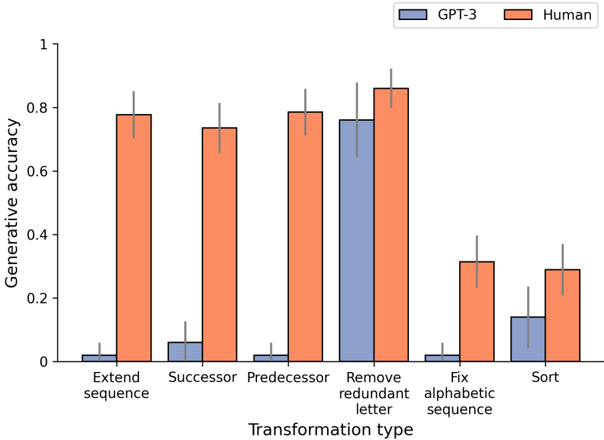

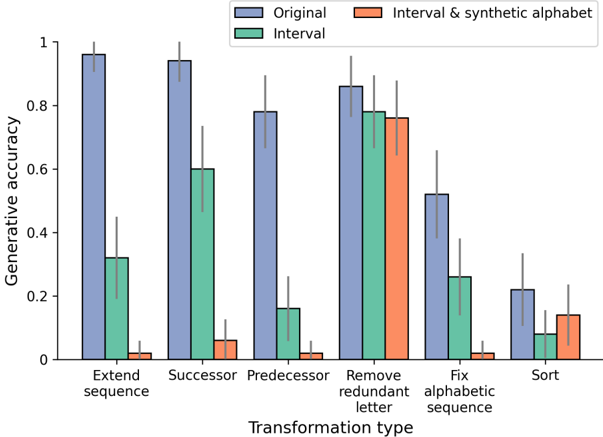

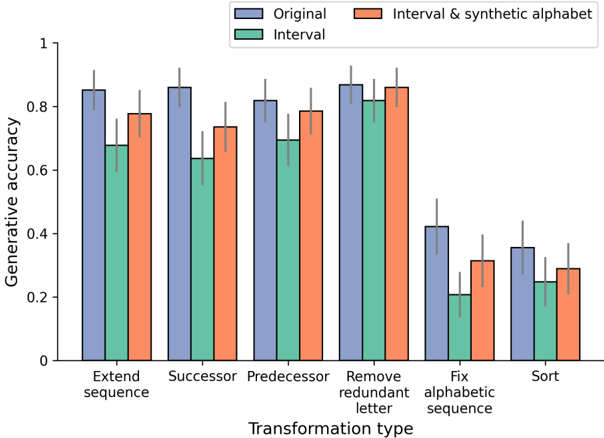

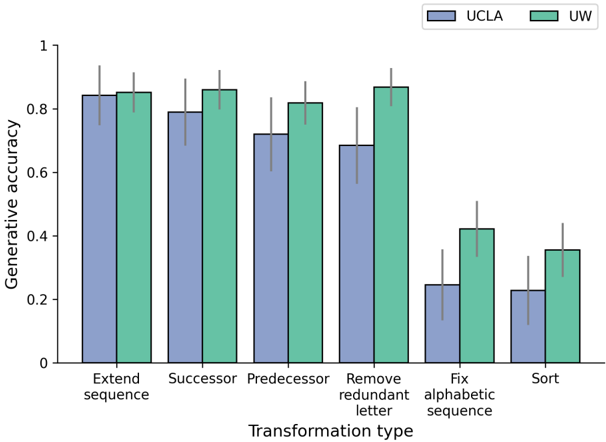

In our experiments, humans achieved consistently higher accuracy than GPT-3, in particular on modified letter string tasks involving both the synthetic alphabet and increased letter interval size (see Figure 2). Human performance remains at a similar level across modifications (Figure 4), while GPT-3 performance declines significantly for modified problem types (Figure 3). The generative accuracy of GPT-3 for the synthetic alphabet is close to zero (< 0.1) when performing the modified tasks 'extend sequence', 'successor', 'predecessor', and 'fix alphabetic sequence'. Only for 'remove redundant letter' and 'sort' does GPT-3 achieve accuracy in a range similar to that reported in the original paper (Webb, Holyoak, and Lu, 2023).
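The figure captions in this appendix report 95% binomial confidence intervals for these accuracies. The text does not state which interval method was used, so the sketch below uses the Wilson score interval purely as an assumption; the function name `wilson_interval` and the default `z = 1.96` are choices made for this illustration.

```python
import math


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a binomial proportion (assumed method, ~95% for z=1.96)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z ** 2 / n
    center = (p + z ** 2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z ** 2 / (4 * n ** 2)) / denom
    # Clamp to [0, 1] to guard against floating-point overshoot at the boundaries.
    return max(0.0, center - half), min(1.0, center + half)


if __name__ == "__main__":
    # Example: 25 correct answers out of 50 problem instances.
    print(wilson_interval(25, 50))
```

With 50 GPT-3 instances per problem type, an interval of this kind is fairly wide, which is worth keeping in mind when comparing bars whose accuracies are close.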

Figure 5 shows the accuracy of GPT-3 in the two counterfactual comprehension checks (Wu et al., 2023). For all but the 'predecessor' task on the synthetic alphabet, we obtain a GPT-3 accuracy of at least 30% of the original level, demonstrating GPT-3's ability to process the introduced modifications.

Lastly, Figure 6 illustrates the comparison of human performance in the original tasks between the participants of the original study and those in our

Figure 2: Comparison between GPT-3's (blue) and human (orange) performances on modified letter string problems involving a synthetic alphabet and a larger interval size. The transformation types and their order correspond to Figure 6b in the original paper. Humans demonstrate significantly higher accuracy compared to GPT-3. Human results represent the average performance of 121 participants (UW undergraduates). Each participant received one randomly selected instance of each problem subtype. GPT-3 results reflect the average performance across all 50 instances. Gray error bars indicate 95% binomial confidence intervals for the average performance across multiple problems.

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Bar Chart: Generative Accuracy of GPT-3 vs. Human for Transformation Types

### Overview

This bar chart compares the generative accuracy of GPT-3 and humans across six different sequence transformation types. The y-axis represents generative accuracy, ranging from 0 to 1. The x-axis represents the transformation type. Each transformation type has two bars: one for GPT-3 (blue) and one for Human (orange). Error bars are present on top of each bar, indicating the variability in accuracy.

### Components/Axes

* **X-axis Title:** Transformation type

* **X-axis Categories:** Extend sequence, Successor, Predecessor, Remove redundant letter, Fix alphabetic sequence, Sort

* **Y-axis Title:** Generative accuracy

* **Y-axis Scale:** 0 to 1, with increments of 0.2

* **Legend:**

* GPT-3 (Blue)

* Human (Orange)

### Detailed Analysis

Let's analyze each transformation type individually, noting the approximate values based on the bar heights and error bars.

1. **Extend sequence:**

* GPT-3: Approximately 0.05 ± 0.02

* Human: Approximately 0.75 ± 0.05

2. **Successor:**

* GPT-3: Approximately 0.08 ± 0.03

* Human: Approximately 0.70 ± 0.05

3. **Predecessor:**

* GPT-3: Approximately 0.05 ± 0.02

* Human: Approximately 0.75 ± 0.05

4. **Remove redundant letter:**

* GPT-3: Approximately 0.72 ± 0.05

* Human: Approximately 0.85 ± 0.05

5. **Fix alphabetic sequence:**

* GPT-3: Approximately 0.03 ± 0.02

* Human: Approximately 0.30 ± 0.05

6. **Sort:**

* GPT-3: Approximately 0.18 ± 0.05

* Human: Approximately 0.25 ± 0.05

### Key Observations

* Humans consistently outperform GPT-3 across all transformation types.

* GPT-3 performs particularly poorly on "Extend sequence", "Successor", "Predecessor", and "Fix alphabetic sequence" tasks, with accuracy values close to 0.

* The largest difference in performance between GPT-3 and humans is observed in the "Extend sequence" task.

* The smallest difference in performance is observed in the "Remove redundant letter" task.

* Error bars indicate that the human performance is more consistent than GPT-3's performance across most tasks.

### Interpretation

The data suggests that GPT-3 struggles with tasks requiring a deeper understanding of sequence structure and logical reasoning, such as extending sequences, identifying successors/predecessors, and fixing alphabetic order. Humans, on the other hand, demonstrate a strong ability in these areas.

The "Remove redundant letter" task shows a smaller performance gap, potentially indicating that this task relies more on pattern recognition and less on complex reasoning, which GPT-3 can handle relatively well.

The consistent outperformance of humans highlights the limitations of current language models in tasks that require human-level cognitive abilities. The error bars suggest that human performance is more reliable, while GPT-3's accuracy is more variable, potentially due to its reliance on statistical patterns rather than true understanding. The data suggests that GPT-3 is better at identifying and removing redundancies than it is at generating or manipulating sequences based on underlying rules.

</details>

study. Although the subjects in our study marginally outperform those in the previous study, the similarity in performances is evidence that our experimental setup and execution align with the original study at UCLA.

## 7.1.3 Discussion

The recent paper, 'Emergent analogical reasoning in large language models' (Webb, Holyoak, and Lu, 2023), and subsequent news articles argue that LLMs may have acquired the emergent ability for zero-shot analogical reasoning. We are less certain of these conclusions, given our own follow-up experiments. Our results show low success of GPT-3 in solving letter string problems with simple modifications and with a synthetic alphabet, while human performance remains high.

Only in two out of six problem types ('remove redundant letter' and 'sort') does GPT-3 achieve generative accuracy on our counterexamples similar to that on the original problems involving the real alphabet, and similar to human performance on the same modified problems. For these two problem

Figure 3: GPT-3 performance for zero-generalization letter string problems for the original experiment (blue) and with the larger interval size (green), and larger interval size with synthetic alphabet (orange). Except for 'remove redundant letter,' GPT-3's accuracy declines significantly for the modified problems. The results reflect an average performance for N=50 instances.

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Bar Chart: Generative Accuracy vs. Transformation Type

### Overview

This bar chart compares the generative accuracy of different transformation types, using three different datasets: "Original", "Interval & synthetic alphabet", and "Interval". The generative accuracy is represented on the y-axis, while the transformation type is displayed on the x-axis. Error bars are present for each bar, indicating the variability in the accuracy.

### Components/Axes

* **X-axis:** Transformation type. Categories are: "Extend sequence", "Successor", "Predecessor", "Remove redundant letter", "Fix alphabetic sequence", "Sort".

* **Y-axis:** Generative accuracy, ranging from 0 to 1.

* **Legend:**

* Blue: "Original"

* Orange: "Interval & synthetic alphabet"

* Teal: "Interval"

* **Error Bars:** Grey vertical lines extending above and below each bar, representing the standard deviation or confidence interval.

### Detailed Analysis

The chart consists of six groups of bars, one for each transformation type. Each group contains three bars, representing the generative accuracy for the "Original", "Interval & synthetic alphabet", and "Interval" datasets.

**1. Extend sequence:**

* Original (Blue): Approximately 0.95, with error bars ranging from ~0.85 to ~1.0.

* Interval & synthetic alphabet (Orange): Approximately 0.05, with error bars ranging from ~0.0 to ~0.1.

* Interval (Teal): Approximately 0.35, with error bars ranging from ~0.25 to ~0.45.

**2. Successor:**

* Original (Blue): Approximately 0.93, with error bars ranging from ~0.85 to ~1.0.

* Interval & synthetic alphabet (Orange): Approximately 0.02, with error bars ranging from ~0.0 to ~0.05.

* Interval (Teal): Approximately 0.60, with error bars ranging from ~0.5 to ~0.7.

**3. Predecessor:**

* Original (Blue): Approximately 0.80, with error bars ranging from ~0.7 to ~0.9.

* Interval & synthetic alphabet (Orange): Approximately 0.01, with error bars ranging from ~0.0 to ~0.03.

* Interval (Teal): Approximately 0.15, with error bars ranging from ~0.1 to ~0.2.

**4. Remove redundant letter:**

* Original (Blue): Approximately 0.88, with error bars ranging from ~0.8 to ~0.95.

* Interval & synthetic alphabet (Orange): Approximately 0.75, with error bars ranging from ~0.65 to ~0.85.

* Interval (Teal): Approximately 0.73, with error bars ranging from ~0.65 to ~0.8.

**5. Fix alphabetic sequence:**

* Original (Blue): Approximately 0.52, with error bars ranging from ~0.4 to ~0.65.

* Interval & synthetic alphabet (Orange): Approximately 0.50, with error bars ranging from ~0.4 to ~0.6.

* Interval (Teal): Approximately 0.25, with error bars ranging from ~0.15 to ~0.35.

**6. Sort:**

* Original (Blue): Approximately 0.23, with error bars ranging from ~0.15 to ~0.3.

* Interval & synthetic alphabet (Orange): Approximately 0.15, with error bars ranging from ~0.1 to ~0.2.

* Interval (Teal): Approximately 0.05, with error bars ranging from ~0.0 to ~0.1.

### Key Observations

* The "Original" dataset consistently shows the highest generative accuracy across most transformation types.

* The "Interval & synthetic alphabet" dataset generally exhibits the lowest generative accuracy, often close to zero.

* The "Interval" dataset shows intermediate accuracy, generally lower than "Original" but higher than "Interval & synthetic alphabet".

* The error bars indicate significant variability in the accuracy for some transformation types, particularly "Extend sequence" and "Successor" for the "Original" dataset.

* The largest difference in accuracy between datasets is observed for "Extend sequence" and "Successor".

### Interpretation

The data suggests that the model performs best on the original data distribution and struggles significantly when presented with the "Interval & synthetic alphabet" dataset. This could indicate that the model is highly sensitive to the specific characteristics of the original data and fails to generalize well to altered distributions. The "Interval" dataset represents a middle ground, suggesting that some level of alteration is tolerable, but significant changes negatively impact performance.

The large error bars for certain transformation types suggest that the model's performance is inconsistent for those tasks. This could be due to the inherent difficulty of the transformation or the presence of ambiguity in the data.

The consistent high performance of the "Original" dataset highlights the importance of data distribution in the success of the model. The model appears to be optimized for the original data and struggles to adapt to different distributions. The differences in performance across transformation types suggest that the model has varying levels of robustness to different types of data alterations.

</details>

subtypes, GPT-3 does not need to generate a letter from the full alphabet, but only to remove the duplicate letter or to rearrange the given letters, which may explain the higher performance. Together with the accuracy of GPT-3 under the only marginally modified conditions shown in Figure 5, the results of these two tasks serve as a counterfactual comprehension check (Wu et al., 2023). They demonstrate that GPT-3 is capable of processing synthetic alphabets, which validates our approach.

So what explains GPT-3's high success on problems with the real alphabet (as used in the original paper) but its failure with the synthetic alphabet and with the modified interval size for most of the letter string problems, while human performance remains consistently high?

Our results suggest that the answer resides in the training data, confirming the analysis of the methods in Section 2. Unlike humans, GPT-3 performs well only for simple analogy problems with the standard English alphabet, which are likely to be present in the training data. These findings contradict two of the main claims in the original paper (Webb, Holyoak, and Lu, 2023) regarding GPT-3's capacity for general, zero-shot reasoning and its human-like characteristics in analogical reasoning. Consequently, we reject the proposition made in the original paper that GPT-3 may have developed mechanisms similar to those underlying human intelligence.
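To make the two modifications discussed above concrete, the following is a minimal sketch of how a successor-style letter string problem could be built with a larger interval size and with a synthetic (permuted) alphabet. The function names `make_alphabet` and `successor_problem`, the seed, and the exact problem layout are illustrative assumptions and are not taken from our experimental code or from the original paper.

```python
import random
import string


def make_alphabet(synthetic: bool, seed: int = 0) -> list[str]:
    """Return the standard alphabet, or a permuted ("synthetic") one.

    Assumption: a synthetic alphabet is modelled here as a seeded shuffle
    of the 26 lowercase letters.
    """
    letters = list(string.ascii_lowercase)
    if synthetic:
        random.Random(seed).shuffle(letters)
    return letters


def successor_problem(alphabet: list[str], start: int,
                      length: int = 4, interval: int = 1):
    """Build one successor-style problem as a (source, target) pair.

    The source takes every `interval`-th letter of `alphabet` from `start`;
    the target replaces the last source letter by the next letter at the
    same interval.
    """
    positions = [start + k * interval for k in range(length + 1)]
    if positions[-1] >= len(alphabet):
        raise ValueError("problem does not fit into the alphabet")
    source = [alphabet[i] for i in positions[:length]]
    target = source[:-1] + [alphabet[positions[length]]]
    return source, target


if __name__ == "__main__":
    standard = make_alphabet(synthetic=False)
    print(successor_problem(standard, start=0))              # interval size 1
    print(successor_problem(standard, start=0, interval=2))  # larger interval
    synthetic = make_alphabet(synthetic=True)
    print(successor_problem(synthetic, start=0, interval=2)) # both changes
```

The rule to be inferred is the same in all three calls; only the surface form changes, which is why comparable performance would be expected from a solver that reasons about the relation rather than recalling familiar strings.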

The failure of GPT-3 to solve simple variations of the original problems demonstrates the brittleness of the presented approach when assessing human-like reasoning in language models.

Figure 4: Human performance on zero-generalization letter string problems in the original experiment (blue), with the larger interval size (green), and with the larger interval size and synthetic alphabet (orange). Human accuracy in the modified problems is comparable to that in the original problems. The results reflect the average performance of N = 121 participants (UW undergraduates).

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Bar Chart: Generative Accuracy vs. Transformation Type

### Overview

This bar chart compares the generative accuracy of human participants under three conditions ("Original", "Interval", and "Interval & synthetic alphabet") across six transformation types applied to letter strings. Error bars are present for each bar, indicating the variability in the accuracy measurements.

### Components/Axes

* **X-axis:** Transformation type. Categories are: "Extend sequence", "Successor", "Predecessor", "Remove redundant letter", "Fix alphabetic sequence", "Sort".

* **Y-axis:** Generative accuracy, ranging from 0 to 1.

* **Legend:** Located at the top-center of the chart.

* "Original" - represented by a dark blue color.

* "Interval & synthetic alphabet" - represented by an orange color.

* "Interval" - represented by a teal color.

* Error bars are present on top of each bar, indicating standard deviation or confidence intervals.

### Detailed Analysis

Approximate accuracy for each transformation type and condition:

* **Extend sequence:**

* Original: Approximately 0.86 (with error bars ranging from ~0.82 to ~0.90).

* Interval & synthetic alphabet: Approximately 0.74 (with error bars ranging from ~0.70 to ~0.78).

* Interval: Approximately 0.69 (with error bars ranging from ~0.65 to ~0.73).

* **Successor:**

* Original: Approximately 0.85 (with error bars ranging from ~0.81 to ~0.89).

* Interval & synthetic alphabet: Approximately 0.72 (with error bars ranging from ~0.68 to ~0.76).

* Interval: Approximately 0.68 (with error bars ranging from ~0.64 to ~0.72).

* **Predecessor:**

* Original: Approximately 0.82 (with error bars ranging from ~0.78 to ~0.86).

* Interval & synthetic alphabet: Approximately 0.76 (with error bars ranging from ~0.72 to ~0.80).

* Interval: Approximately 0.71 (with error bars ranging from ~0.67 to ~0.75).

* **Remove redundant letter:**

* Original: Approximately 0.88 (with error bars ranging from ~0.84 to ~0.92).

* Interval & synthetic alphabet: Approximately 0.78 (with error bars ranging from ~0.74 to ~0.82).

* Interval: Approximately 0.74 (with error bars ranging from ~0.70 to ~0.78).

* **Fix alphabetic sequence:**

* Original: Approximately 0.38 (with error bars ranging from ~0.34 to ~0.42).

* Interval & synthetic alphabet: Approximately 0.24 (with error bars ranging from ~0.20 to ~0.28).

* Interval: Approximately 0.34 (with error bars ranging from ~0.30 to ~0.38).

* **Sort:**

* Original: Approximately 0.35 (with error bars ranging from ~0.31 to ~0.39).

* Interval & synthetic alphabet: Approximately 0.27 (with error bars ranging from ~0.23 to ~0.31).

* Interval: Approximately 0.29 (with error bars ranging from ~0.25 to ~0.33).

### Key Observations

* Accuracy in the "Original" condition is the highest of the three conditions for every transformation type.

* Accuracy in the "Interval & synthetic alphabet" condition is higher than in the "Interval" condition for the first four transformation types and lower for "Fix alphabetic sequence" and "Sort".

* The accuracy is markedly lower for the "Fix alphabetic sequence" and "Sort" transformation types compared to the others, regardless of the condition.

* The error bars are of similar width across transformation types and conditions.

### Interpretation

The values suggest that participants were most accurate in the "Original" condition and somewhat less accurate in the two modified conditions. Within each transformation type, accuracy stays in a similar range across the three conditions, in line with the caption's statement that human accuracy on the modified problems is comparable to that on the original problems.

The substantial drop in accuracy for "Fix alphabetic sequence" and "Sort" in all three conditions suggests that these transformations are more difficult for participants than the others, independent of interval size or alphabet.

The ordering of the two modified conditions is not uniform across transformation types, so the chart does not indicate that adding the synthetic alphabet to the larger interval size makes the problems systematically harder or easier.

The error bars provide a measure of the uncertainty in the accuracy estimates. The chart does not state whether they show standard deviations, standard errors, or confidence intervals.

</details>

## 7.2 ChatGPT's answer to our question: 'Could you give an example of a copycat problem?'

Figure 5: Counterfactual comprehension check. Comparison of GPT-3 performance on zero-generalization letter string problems between the original tasks (blue) and the only marginally modified tasks: a synthetic alphabet without modification of the interval size (green) and a modified prompt without a modified string sequence (orange). The accuracy on the modified tasks is lower than on the original ones but greater than 0.2, except for 'remove redundant letter' and 'sort' involving the synthetic alphabet. The figure and the order of the transformation types correspond to Figure 6b in the original paper. These results reflect the average performance for N = 50 instances.

<details>

<summary>Image 5 Details</summary>

### Visual Description

## Bar Chart: Generative Accuracy vs. Transformation Type

### Overview

This bar chart compares the generative accuracy of different transformation types using three different approaches: "Original", "Original & prompt", and "Original & synthetic alphabet". The chart displays the mean generative accuracy with error bars representing the variance.

### Components/Axes

* **X-axis:** Transformation type. Categories are: "Extend sequence", "Successor", "Predecessor", "Remove redundant letter", "Fix alphabetic sequence", "Sort".

* **Y-axis:** Generative accuracy, ranging from 0 to 1.

* **Legend:**

* Blue: "Original"

* Green: "Original & synthetic alphabet"

* Orange: "Original & prompt"

* Error bars are present for each bar, indicating the standard deviation or confidence interval.

### Detailed Analysis

The chart consists of six groups of three bars, each representing a different transformation type. The height of each bar represents the generative accuracy.

**1. Extend sequence:**

* Original (Blue): Approximately 0.96, with an error bar ranging from approximately 0.93 to 0.99.

* Original & synthetic alphabet (Green): Approximately 0.94, with an error bar ranging from approximately 0.91 to 0.97.

* Original & prompt (Orange): Approximately 0.34, with an error bar ranging from approximately 0.28 to 0.40.

**2. Successor:**

* Original (Blue): Approximately 0.95, with an error bar ranging from approximately 0.92 to 0.98.

* Original & synthetic alphabet (Green): Approximately 0.69, with an error bar ranging from approximately 0.64 to 0.74.

* Original & prompt (Orange): Approximately 0.58, with an error bar ranging from approximately 0.52 to 0.64.

**3. Predecessor:**

* Original (Blue): Approximately 0.75, with an error bar ranging from approximately 0.70 to 0.80.

* Original & synthetic alphabet (Green): Approximately 0.08, with an error bar ranging from approximately 0.04 to 0.12.

* Original & prompt (Orange): Approximately 0.42, with an error bar ranging from approximately 0.36 to 0.48.

**4. Remove redundant letter:**

* Original (Blue): Approximately 0.88, with an error bar ranging from approximately 0.84 to 0.92.

* Original & synthetic alphabet (Green): Approximately 0.62, with an error bar ranging from approximately 0.57 to 0.67.

* Original & prompt (Orange): Approximately 0.51, with an error bar ranging from approximately 0.45 to 0.57.

**5. Fix alphabetic sequence:**

* Original (Blue): Approximately 0.52, with an error bar ranging from approximately 0.46 to 0.58.

* Original & synthetic alphabet (Green): Approximately 0.45, with an error bar ranging from approximately 0.39 to 0.51.

* Original & prompt (Orange): Approximately 0.26, with an error bar ranging from approximately 0.20 to 0.32.

**6. Sort:**

* Original (Blue): Approximately 0.22, with an error bar ranging from approximately 0.16 to 0.28.

* Original & synthetic alphabet (Green): Approximately 0.18, with an error bar ranging from approximately 0.12 to 0.24.

* Original & prompt (Orange): Approximately 0.24, with an error bar ranging from approximately 0.18 to 0.30.

### Key Observations

* The "Original" approach achieves the highest generative accuracy for every transformation type except "Sort", where all three approaches lie close together (approximately 0.18 to 0.24).

* The "Original & prompt" approach shows the lowest accuracy for "Extend sequence", "Successor", "Remove redundant letter", and "Fix alphabetic sequence", but not for "Predecessor" or "Sort".

* The "Original & synthetic alphabet" approach shows variable performance, sometimes comparable to "Original" (e.g., "Extend sequence") and sometimes much lower (e.g., "Predecessor").

* The error bars span roughly ±0.03 to ±0.06 around each estimate.

### Interpretation

The values suggest that GPT-3 is most accurate on the unmodified "Original" tasks. Modifying the prompt ("Original & prompt") lowers accuracy for five of the six transformation types; the exception is "Sort", where the difference (0.24 versus 0.22) lies within the error bars. Using a synthetic alphabet ("Original & synthetic alphabet") lowers accuracy for all six transformation types, only slightly for "Extend sequence" and sharply for "Predecessor".

The drop is therefore not uniform: which of the two marginal modifications hurts more depends on the transformation type. The modified prompt is most detrimental for "Extend sequence", whereas the synthetic alphabet is most detrimental for "Predecessor".

The chart itself does not explain why the modified prompt or the synthetic alphabet reduces accuracy for a given transformation type; that would require inspecting the prompts and the individual model outputs.

</details>

Figure 6: Comparison of human performance on the original letter string tasks between the outcomes reported in the original study (blue) and the findings presented in this paper (green). UW undergraduate students exhibit marginally higher accuracies. The transformation types and their order correspond to Figure 6b in the original paper.

<details>

<summary>Image 6 Details</summary>

### Visual Description

## Bar Chart: Generative Accuracy by Transformation Type and Institution

### Overview

This bar chart compares the generative accuracy of human participants at two institutions, UCLA (the original study) and UW (this paper), across six different transformation types. Each transformation type has two bars representing the accuracy at each institution, with error bars indicating the variability.

### Components/Axes

* **X-axis:** Transformation type. Categories are: "Extend sequence", "Successor", "Predecessor", "Remove redundant letter", "Fix alphabetic sequence", "Sort".

* **Y-axis:** Generative accuracy, ranging from 0 to 1.

* **Legend:** Located at the top-right of the chart.

* Blue: UCLA

* Green: UW

### Detailed Analysis

The chart consists of six groups of bars, one for each transformation type. Each group contains two bars, one for UCLA (blue) and one for UW (green). Error bars are present on top of each bar, indicating the standard deviation or confidence interval.

Here's a breakdown of the approximate values, reading from left to right:

1. **Extend sequence:**

* UCLA: Approximately 0.84, with error bars ranging from 0.80 to 0.88.

* UW: Approximately 0.86, with error bars ranging from 0.82 to 0.90.

2. **Successor:**

* UCLA: Approximately 0.79, with error bars ranging from 0.75 to 0.83.

* UW: Approximately 0.88, with error bars ranging from 0.84 to 0.92.

3. **Predecessor:**

* UCLA: Approximately 0.74, with error bars ranging from 0.70 to 0.78.

* UW: Approximately 0.85, with error bars ranging from 0.81 to 0.89.

4. **Remove redundant letter:**

* UCLA: Approximately 0.68, with error bars ranging from 0.64 to 0.72.

* UW: Approximately 0.89, with error bars ranging from 0.85 to 0.93.

5. **Fix alphabetic sequence:**

* UCLA: Approximately 0.22, with error bars ranging from 0.18 to 0.26.

* UW: Approximately 0.42, with error bars ranging from 0.38 to 0.46.

6. **Sort:**

* UCLA: Approximately 0.18, with error bars ranging from 0.14 to 0.22.

* UW: Approximately 0.34, with error bars ranging from 0.30 to 0.38.

### Key Observations

* UW participants score higher than UCLA participants across all transformation types.

* The largest difference in accuracy is observed for the "Remove redundant letter" transformation.

* The smallest difference in accuracy is observed for the "Extend sequence" transformation, where the error bars overlap.

* Both UCLA and UW participants have the lowest accuracy for the "Sort" transformation.

* For the remaining five transformation types, the error bars of the two groups do not overlap.

### Interpretation

The chart compares two groups of human participants on the same original letter string tasks: the participants reported in the original study (UCLA) and the UW undergraduates tested for this paper. UW participants are at least as accurate as UCLA participants for every transformation type, with the gap ranging from about 0.02 ("Extend sequence") to about 0.21 ("Remove redundant letter"). Both groups show the same overall profile: high accuracy for the first four transformation types and markedly lower accuracy for "Fix alphabetic sequence" and "Sort", which indicates that these two transformations are the hardest for human participants in both samples. The chart does not state what the error bars represent, so the non-overlapping bars should be read as an indication of a difference between the samples rather than as a formal significance test.

</details>

Figure 7: ChatGPT's answer to our question: 'Could you give an example of a copycat problem?'. The tasks presented in the original paper are known as Copycat problems. The name refers to a computer program for such letter string analogy problems.

<details>

<summary>Image 7 Details</summary>

### Visual Description

## Screenshot: Copycat Problem Example

### Overview

The image is a screenshot of a chat conversation, likely from a chatbot interface. The conversation details an explanation and example of a "copycat problem," a type of cognitive challenge involving pattern recognition and application. The screenshot displays the question "Could you give an example of a copycat problem?" and the chatbot's detailed response.

### Components/Axes

The screenshot contains the following components:

* **Chat Bubbles:** Two distinct chat bubbles, one from the user ("D") and one from the chatbot.

* **User Bubble:** Located on the left side, containing the question "Could you give an example of a copycat problem?".

* **Chatbot Bubble:** Located on the right side, containing the explanation and example.

* **Text Content:** The primary content is the text within the chatbot bubble, detailing the definition, example, and solution to a copycat problem.

### Content Details

The chatbot's response is transcribed below:

"Certainly! A copycat problem refers to a type of cognitive challenge where you’re asked to identify a pattern or transformation in a set of data and then apply that same pattern or transformation to a new set of data. This tests your ability to understand a rule or concept and apply it to solve a new problem.

Here’s an example of a copycat problem:

Original Pattern:

AB -> CD

EF -> GH

Your task is to decipher the pattern and apply it to a new set of data:

New Data:

IJ -> ??

KL -> ??

If you’ve successfully identified the pattern as shifting each letter two positions forward in the alphabet, you would apply the same transformation to the new data:

Applying Pattern:

IJ -> KL

KL -> NO

So, the correct answers are “KL” and “NO” for the new data set. The copycat problem tests your ability to recognize the pattern of letter shifting and apply it consistently to the problem."

### Key Observations

The text clearly defines a copycat problem and provides a step-by-step example. The example demonstrates a pattern of shifting letters forward by two positions in the alphabet. The solution is provided, confirming the pattern. The text is formatted with headings ("Original Pattern", "New Data", "Applying Pattern") to improve readability.

### Interpretation

The screenshot demonstrates a pedagogical approach to explaining a cognitive concept. The chatbot uses a clear definition, a concrete example, and a step-by-step solution to illustrate the concept of a copycat problem. The example is designed to test the user's ability to identify and apply a pattern. The use of "->" notation indicates a transformation or mapping between input and output. The problem highlights the importance of abstract reasoning and pattern recognition skills. The chatbot's response is well-structured and easy to understand, making it an effective learning tool. The problem is a simple example, but it effectively conveys the core principles of copycat problems.

</details>
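The letter-shifting rule described in the transcribed answer can be checked mechanically. The sketch below is an illustration only; the helper names `shift_letters` and `inferred_offset` are hypothetical and do not come from the original paper or from ChatGPT. It applies a fixed forward shift to uppercase strings and recovers the shift implied by each pair in the transcription.

```python
import string

ALPHABET = string.ascii_uppercase


def shift_letters(text: str, offset: int) -> str:
    """Shift each uppercase letter `offset` positions forward, wrapping after Z."""
    return "".join(ALPHABET[(ALPHABET.index(ch) + offset) % 26] for ch in text)


def inferred_offset(source: str, target: str):
    """Return the single forward shift mapping source to target, or None."""
    if len(source) != len(target):
        return None
    offsets = {
        (ALPHABET.index(t) - ALPHABET.index(s)) % 26
        for s, t in zip(source, target)
    }
    return offsets.pop() if len(offsets) == 1 else None


if __name__ == "__main__":
    # Pairs as they appear in the transcription of the screenshot above.
    pairs = [("AB", "CD"), ("EF", "GH"), ("IJ", "KL"), ("KL", "NO")]
    for source, target in pairs:
        print(source, "->", target,
              "| implied shift:", inferred_offset(source, target),
              "| shift by two gives:", shift_letters(source, 2))
```

Under the stated two-position rule, `KL` maps to `MN`. The pair `KL -> NO` as transcribed above corresponds to a shift of three, so the last answer in the transcription is not consistent with the rule it states.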