# An In-Context Learning Agent for Formal Theorem-Proving

## Abstract

We present an in-context learning agent for formal theorem-proving in environments like Lean and Coq. Current state-of-the-art models for the problem are finetuned on environment-specific proof data. By contrast, our approach, called Copra, repeatedly asks a high-capacity, general-purpose large language model (GPT-4) to propose tactic applications from within a stateful backtracking search. Proposed tactics are executed in the underlying proof environment. Feedback from the execution is used to build the prompt for the next model query, along with selected information from the search history and lemmas retrieved from an external database. We evaluate our implementation of Copra on the $\mathtt{miniF2F}$ benchmark for Lean and a set of Coq tasks from the CompCert project. On these benchmarks, Copra significantly outperforms few-shot invocations of GPT-4. It also compares favorably against finetuning-based approaches, outperforming ReProver, a state-of-the-art finetuned approach for Lean, in terms of the pass@ $1$ metric. Our code and data are available at https://github.com/trishullab/copra.

## 1 Introduction

The ability of large language models (LLMs) to learn in-context (Brown et al., 2020) is among the most remarkable recent findings in machine learning. Since its introduction, in-context learning has proven fruitful in a broad range of domains, from text and code generation (OpenAI, 2023b) to image generation (Ramesh et al., 2021) to game-playing (Wang et al., 2023a). In many tasks, it is now best practice to prompt a general-purpose, black-box LLM rather than finetune smaller-scale models.

In this paper, we investigate the value of in-context learning in the discovery of formal proofs written in frameworks like Lean (de Moura et al., 2015) and Coq (Huet et al., 1997). Such frameworks allow proof goals to be iteratively simplified using tactics such as variable substitution and induction. A proof is a sequence of tactics that iteratively “discharges” a given goal.

Automatic theorem-proving is a longstanding challenge in computer science (Newell et al., 1957). Traditional approaches to the problem were based on discrete search and had difficulty scaling to complex proofs (Bundy, 1988; Andrews & Brown, 2006; Blanchette et al., 2011). More recent work has used neural models — most notably, autoregressive language models (Polu & Sutskever, 2020; Han et al., 2021; Yang et al., 2023) — that generate a proof tactic by tactic.

The current crop of such models is either trained from scratch or fine-tuned on formal proofs written in a specific proof framework. By contrast, our method uses a highly capable, general-purpose, black-box LLM (GPT-4-turbo (OpenAI, 2023a) For brevity, we refer to GPT-4-turbo as GPT-4 in the rest of this paper. ) that can learn in context. We show that few-shot prompting of GPT-4 is not effective at proof generation. However, one can achieve far better performance with an in-context learning agent (Yao et al., 2022; Wang et al., 2023a; Shinn et al., 2023) that repeatedly invokes GPT-4 from within a higher-level backtracking search and uses retrieved knowledge and rich feedback from the proof environment. Without any framework-specific training, our agent achieves performance comparable to — and by some measures better than — state-of-the-art finetuned approaches.

<details>

<summary>extracted/5780501/copramainfigure.png Details</summary>

### Visual Description

\n

## Diagram: COPRA System Flowchart

### Overview

The image is a technical flowchart illustrating the architecture and workflow of a system named "COPRA." It depicts an iterative, feedback-driven process for automated theorem proving or tactic generation, likely leveraging a large language model (LLM). The system takes a formal theorem as input and aims to produce a proof (QED) by synthesizing, parsing, executing, and refining proof tactics.

### Components/Axes

The diagram is composed of labeled boxes (components) connected by directional arrows (flow). The components are color-coded and spatially arranged to show process stages and feedback loops.

**Primary Input (Top Center):**

* A light orange, rounded rectangle labeled: **"Formal theorem + informal hints (optional)"**.

* An orange arrow points from this box down into the main system boundary.

**Main System Boundary (Center):**

* A large, dashed-line rounded rectangle labeled **"COPRA"** at the top center. This encloses the core processing components.

**Components Inside the COPRA Boundary:**

1. **"1. Prompt Synthesis"** (Light green box, top-left). Receives input from the main theorem box and feedback from other components.

2. **LLM Icon** (Teal square with a white, stylized brain/flower symbol, center). Positioned between "Prompt Synthesis" and "Tactic Parsing." Arrows flow from "Prompt Synthesis" to the icon, and from the icon to "Tactic Parsing."

3. **"2. Tactic Parsing"** (Light green box, top-right). Receives input from the LLM icon.

4. **"Lemma Database"** (Light blue box, middle-left). Has a bidirectional relationship with "Prompt Synthesis" and "Query lemma database."

5. **"4. Augment the prompt; Backtrack if needed"** (Light green box, bottom-center). A central hub receiving feedback and connecting to other components.

6. **"5. Query lemma database"** (Light green box, bottom-left). Sends a query to the "Lemma Database" and receives information back.

**Components Outside the COPRA Boundary:**

1. **"3. Execute the tactic via environment (Lean, Coq)"** (Light blue box, right side). Receives the parsed tactic from "2. Tactic Parsing."

2. **"Feedback"** (Pink box, below the execution box). Receives output from the execution step.

3. **"QED"** (Light blue box, bottom-right). The final output, indicating a successful proof. An arrow points from the execution box directly to QED.

**Flow & Connections (Arrows):**

* **Main Input Flow:** Orange arrow from "Formal theorem..." to "1. Prompt Synthesis."

* **Forward Processing Chain:** Green arrows connect: "1. Prompt Synthesis" -> [LLM Icon] -> "2. Tactic Parsing" -> "3. Execute the tactic..."

* **Feedback Loop:** A light blue arrow flows from "Feedback" to "4. Augment the prompt...".

* **Internal Refinement Loop:** From "4. Augment the prompt...", a green arrow points back to "1. Prompt Synthesis." Another green arrow points to "5. Query lemma database."

* **Database Interaction:** A green arrow goes from "5. Query lemma database" to "Lemma Database." A light blue arrow returns from "Lemma Database" to "1. Prompt Synthesis."

* **Success Path:** A light blue arrow goes from "3. Execute the tactic..." directly to "QED."

### Detailed Analysis

The diagram outlines a five-step iterative cycle:

1. **Prompt Synthesis:** Constructs a prompt for an LLM, incorporating the target theorem, optional hints, and information from a lemma database.

2. **Tactic Parsing:** The LLM's output is parsed into a structured proof tactic.

3. **Execution:** The tactic is executed in an external proof assistant environment (explicitly naming **Lean** and **Coq** as examples).

4. **Feedback & Augmentation:** The result of the execution (success, error, state) is captured as feedback. This feedback is used to augment the original prompt or trigger backtracking.

5. **Lemma Query:** The system can query a database of lemmas to retrieve relevant theorems or definitions to improve subsequent prompt synthesis.

The process is not linear. It features a primary feedback loop (Steps 3 -> 4 -> 1) for iterative refinement based on execution results. A secondary loop involves the lemma database (Steps 4 -> 5 -> Database -> 1) for knowledge retrieval. The process terminates upon reaching "QED."

### Key Observations

* **Hybrid System:** COPRA combines a neural component (the LLM, represented by the icon) with symbolic components (the tactic parser, execution environment, and lemma database).

* **Iterative and Corrective:** The design emphasizes learning from failure. The "Backtrack if needed" instruction and the prominent feedback loop are central to the architecture.

* **Environment Agnostic:** While specifying Lean and Coq, the "via environment" phrasing suggests the core COPRA system could potentially interface with different proof assistants.

* **Knowledge-Augmented:** The inclusion of a "Lemma Database" indicates the system doesn't rely solely on the LLM's parametric knowledge but can perform explicit retrieval to ground its reasoning.

### Interpretation

This flowchart represents a sophisticated approach to **neuro-symbolic AI for formal verification**. The core problem it solves is bridging the gap between the informal, generative capabilities of large language models and the strict, formal requirements of proof assistants like Lean or Coq.

* **The Role of the LLM:** The LLM acts as a **tactic generator**, proposing potential proof steps based on a synthesized prompt. This leverages its pattern recognition and code/text generation abilities.

* **The Role of Feedback:** The execution environment acts as a **ground truth verifier**. Its feedback (e.g., error messages, new proof state) is crucial for correcting the LLM's hallucinations or logical errors, creating a self-improving cycle.

* **The Role of the Lemma Database:** This component addresses the LLM's limited and potentially outdated context window. By retrieving relevant formal statements, it provides the system with precise, up-to-date mathematical knowledge, making the generated tactics more likely to be valid and applicable.

* **Overall Significance:** The COPRA architecture suggests a move away from pure end-to-end neural proof generation towards **interactive, iterative refinement systems**. It positions the LLM as a powerful but fallible assistant within a larger, rigorous computational framework. The goal is to combine the flexibility of AI with the reliability of formal methods, potentially making automated theorem proving more accessible and powerful. The "QED" output is not a direct product of the LLM, but the result of a successfully verified process that the LLM helped to steer.

</details>

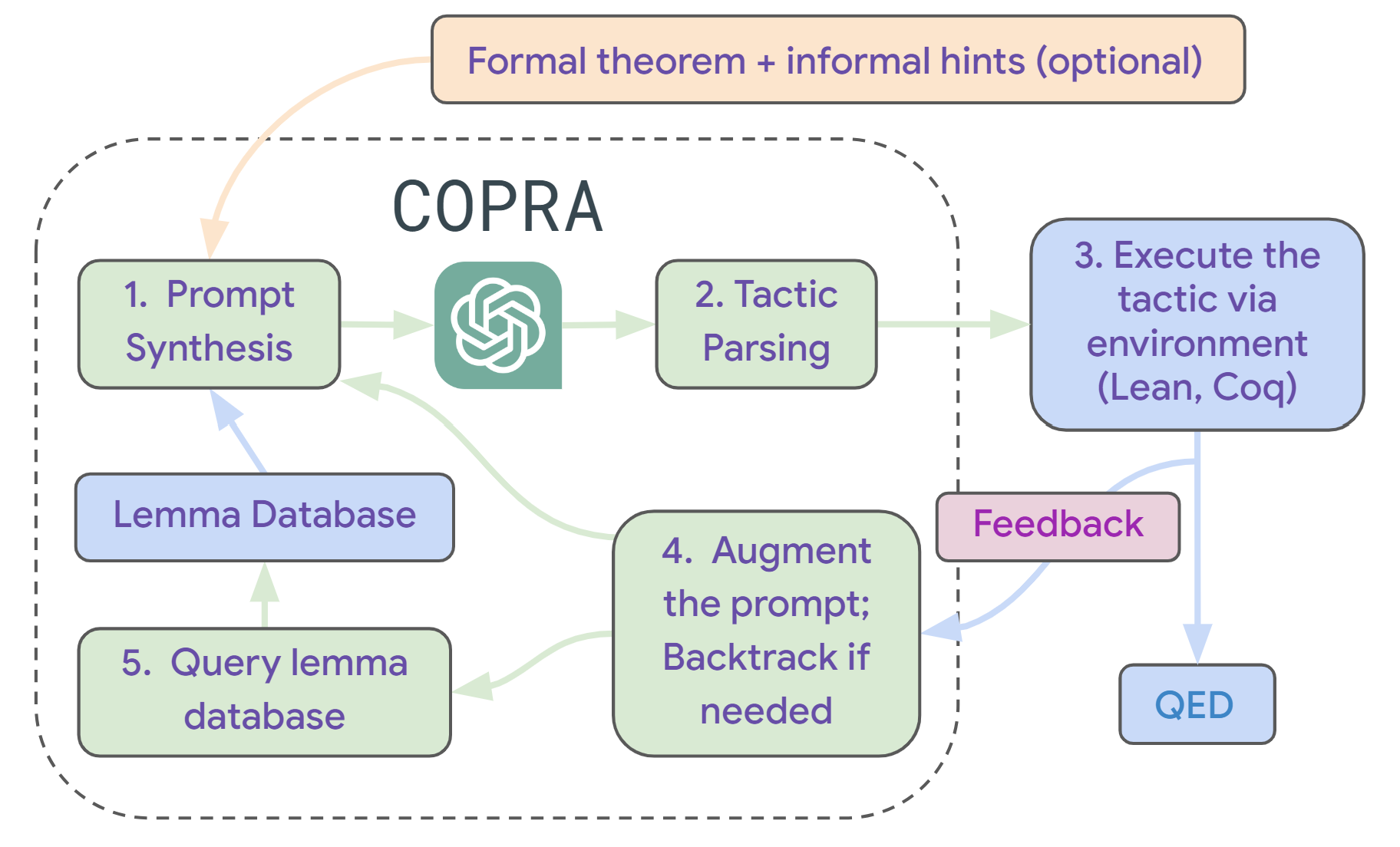

Figure 1: Overview of Copra. Copra takes as input a formal theorem statement and performs a stateful proof search through an in-context learning agent. At each step of search, the agent has access to the search history, error feedback from the proof environment, and relevant lemmas retrieved from a database, which are formatted according to a prompt serialization protocol.

Figure 1 gives an overview of our agent, called Copra. Copra is an acronym for “In- co ntext Pr over A gent”. The agent takes as input a formal statement of a theorem and optional natural-language hints about how to prove the theorem. At each time step, it prompts the LLM to select the next tactic to apply. LLM-selected tactics are “executed” in the underlying proof assistant. If the tactic fails, Copra records this information and uses it to avoid future failures.

Additionally, the agent uses lemmas and definitions retrieved from an external database to simplify proofs. Finally, we use a symbolic procedure (Sanchez-Stern et al., 2020) to only apply LLM-selected tactics when they simplify the current proof goals (ruling out, among other things, cyclic tactic sequences).

Our use of a general-purpose LLM makes it easy to integrate our approach with different proof environments. Our current implementation of Copra allows for proof generation in both Lean and Coq. To our knowledge, this implementation is the first proof generation system with this capability.

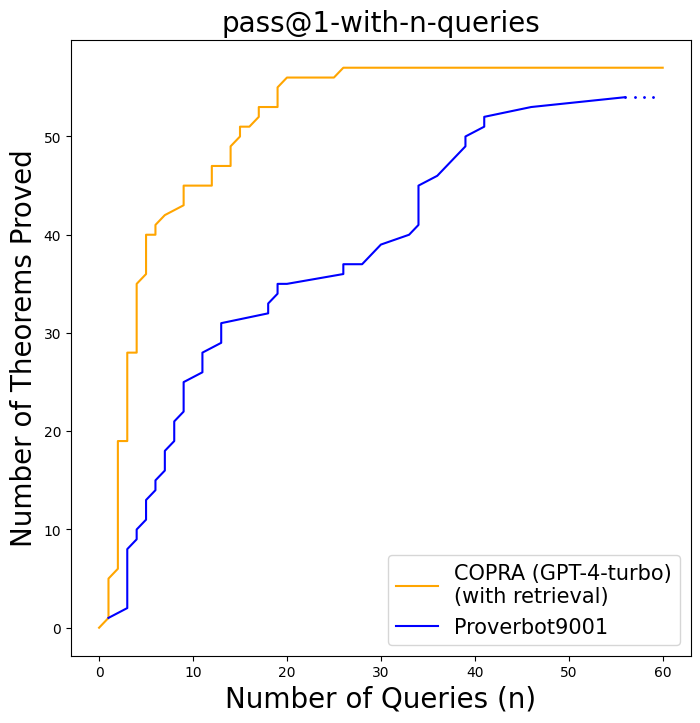

We evaluate Copra using the $\mathtt{miniF2F}$ (Zheng et al., 2021) benchmark for competition-level mathematical reasoning in Lean, as well as a set of Coq proof tasks (Sanchez-Stern et al., 2020) from the CompCert (Leroy, 2009) project on verified compilation. In both settings, Copra outperforms few-shot calls to GPT-4. On $\mathtt{miniF2F}$ , Copra outperforms ReProver, a state-of-the-art finetuned approach for Lean theorem-proving, in terms of the established pass@1 metric. Using a refinement of the pass@1 metric, we show that Copra can converge to correct proofs with fewer model queries than ReProver as well as Proverbot9001, a state-of-the-art approach for Coq. Finally, we show that a GPT-4-scale model is critical for this setting; an instantiation of Copra with GPT-3.5 is less effective.

To summarize our contributions, we offer: (i) a new approach to formal theorem-proving, called Copra, that combines in-context learning with search, knowledge retrieval, and the use of rich feedback from the underlying proof environment; (ii) a systematic study of the effectiveness of the GPT-4-based instantiation of Copra, compared to few-shot GPT-4 invocations, ablations based on GPT-3.5, and state-of-the-art finetuned baselines; (iii) an implementation of Copra, which is the first open-source theorem-proving system to be integrated with both the Lean and Coq proof environments.

## 2 Problem Formulation

A (tactic-based) theorem-prover starts with a set of unmet proof obligations and applies a sequence of proof tactics to progressively eliminate these obligations. Each obligation $o$ is a pair $(g,h)$ , where $g$ is a goal and $h$ is a hypothesis. The goal $g$ consists of the propositions that need to be proved in order to meet $o$ ; the hypothesis $h$ is the set of assumptions that can be made in the proof of $g$ . The prover seeks to reduce the obligation set to the empty set.

[roundcorner=10pt]

(a) ⬇ theorem mod_arith_2 (x : ℕ) : x % 2 = 0 → (x * x) % 2 = 0 := begin intro h, rw mul_mod, rw h, rw zero_mul, refl, end (b) ⬇ x: ℕ h: x % 2 = 0 ⊢ x * x % 2 = 0

(c) ⬇ begin intro h, have h1 : x = 2 * (x / 2) := (nat. mul_div_cancel ’ h). symm, rw h1, rw nat. mul_div_assoc _ (show 2 ∣ 2, from dvd_refl _), rw [mul_assoc, nat. mul_mod_right], end

Figure 2: (a) A Lean theorem and a correct proof found by Copra. (b) Proof state after the first tactic. (c) An incorrect proof generated by GPT-4.

Figure 2 -(a) shows a formal proof, in the Lean language (de Moura et al., 2015), of a basic theorem about modular arithmetic (the proof is automatically generated using Copra). The first step of the proof is the $\mathtt{intro}$ tactic, which changes a goal $P→ Q$ to a hypothesis $P$ and a goal $Q$ . The next few steps use the $\mathtt{rw}$ (rewrite) tactic, which applies substitutions to goals and hypotheses. The last step is an application of the $\mathtt{refl}$ (reflexivity) tactic, which asserts definitional equivalences.

In code generation settings, LLMs like GPT-4 can often generate complex programs from one-shot queries. However, one-shot queries to GPT-4 often fail at even relatively simple formal proof tasks.

Figure 2 -(c) shows a GPT-4-generated incorrect Lean proof of our example theorem.

By contrast, we follow a classic view of theorem-proving as a discrete search problem (Bundy, 1988). Specifically, we offer an agent that searches the state space of the underlying proof environment and discovers sequences of tactic applications that constitute proofs. The main differences from classical symbolic approaches are that the search is LLM-guided, history-dependent, and can use natural-language insights provided by the user or the environment.

Now we formalize our problem statement. Abstractly, let a proof environment consist of:

- A set of states $O$ . Here, a state is either a set $O=\{o_1,\dots,o_k\}$ of obligations $o_i$ , or of the form $(O,w)$ , where $O$ is a set of obligations and $w$ is a textual error message. States of the latter form are error states, and the set of such states is denoted by $\mathit{Err}$ .

- A set of initial states, each consisting of a single obligation $(g_\mathit{in},h_\mathit{in})$ extracted from a user-provided theorem.

- A unique goal state $\mathtt{QED}$ is the empty obligation set.

- A finite set of proof tactics.

- A transition function $T(O,a)$ , which determines the result of applying a tactic $a$ to a state $O$ . If $a$ can be successfully applied at state $O$ , then $T(O,a)$ is the new set of obligations resulting from the application. If $a$ is a “bad” tactic, then $T(O,a)$ is an error state $(O,w_e)$ , where $w_e$ is some feedback that explains the error. Error states $(O,w)$ satisfy the property that $T((O,w),a)=(O,w)$ for all $a$ .

- A set of global contexts, each of which is a natural-language string that describes the theorem to be proven and insights (Jiang et al., 2022b) about how to prove it. The theorem-proving agent can take a global context as an optional input and may use it to accelerate search.

Let us lift the transition function $T$ to tactic sequences by defining:

$$

T(O,α)=≤ft\{\begin{array}[]{l}T(O,a_1) \textrm{if $n=1$}\\

T(T(O,⟨ a_1,\dots,a_n-1⟩),a_n)~{}~{}~{}\textrm{otherwise.}

\end{array}\right.

$$

The theorem-proving problem is now defined as follows:

**Problem 1 (Theorem-proving)**

*Given an initial state $O_\mathit{in}$ and an optional global context $χ$ , find a tactic sequence $α$ (a proof) such that $T(O_\mathit{in},α)=\mathtt{QED}$ .*

In practice, we aim for proofs to be as short as possible.

## 3 The Copra Agent

The Copra agent solves the theorem-proving problem via a GPT-4-directed depth-first search over tactic sequences. Figure 3 shows pseudocode for the agent. At any given point, the algorithm maintains a stack of environment states and a “failure dictionary” $\mathit{Bad}$ that maps states to sets of tactics that are known to be “unproductive” at the state.

At each search step, it pushes the current state on the stack and retrieves external lemmas and definitions relevant to the state. After this, it serializes the stack, $\mathit{Bad}(O)$ , the retrieved information, and the optional global context into a prompt and feeds it to the LLM. The LLM’s output is parsed into a tactic and executed in the environment.

One outcome of the tactic could be that the agent arrives at $\mathtt{QED}$ or makes some progress in the proof. A second possibility is that the new state is an error. A third possibility is that the tactic is not incorrect but does not represent progress in the proof. We detect this scenario using a symbolic progress check as described below. The agent terminates successfully after reaching $\mathtt{QED}$ and rejects the new state in the second and third cases. Otherwise, it recursively continues the proof from the new state. After issuing a few queries to the LLM, the agent backtracks.

Progress Checks.

Following Sanchez-Stern et al. (2020), we define a partial order $\sqsupseteq$ over states that indicates when a state is “at least as hard” as another. Formally, for states $O_1$ and $O_2$ with $O_1∉\mathit{Err}$ and $O_2∉\mathit{Err}$ , $O_1\sqsupseteq O_2$ iff

$$

∀ o_i=(g_i,h_i)∈ O_2. ∃ o_k=(g_k,h_k)∈ O_1

. g_k=g_i∧(h_k⊆ h_i).

$$

Intuitively, $O_1\sqsupseteq O_2$ if for every obligation in $O_2$ , there is a stronger obligation in $O_1$ . While comparing the difficulty of arbitrary proof states is not well-defined, our definition helps us eliminate some straightforward cases. Particularly, these cases include those proof states whose goals match exactly and one set of hypotheses contains the other.

$\proc{Copra}(O,χ)$ $\proc{Push}(\mathit{st},O)$ $ρ←\proc{Retrieve}(O)$ $j← 1$ $t$ $p←\proc{Promptify}(\mathit{st},\mathit{Bad}(O),ρ,χ)$ $a∼\proc{ParseTactic}(\proc{Llm}(p))$ $O^\prime← T(O,a)$ $O^\prime=\mathtt{QED}$ terminate successfully $O^\prime∈\mathit{Err}$ or $∃ O^\prime\prime∈\mathit{st}. O^\prime\sqsupseteq O^\prime\prime$ add $a$ to $\mathit{Bad}(O)$ Copra( $O^\prime$ , $χ$ ) $\proc{Pop}(\mathit{st})$

Figure 3: The search procedure in Copra. $T$ is the environment’s transition function; $\mathit{st}$ is a stack, initialized to be empty. $\mathit{Bad}(O)$ is a set of tactics, initialized to $∅$ , that are known to be bad at $O$ . $\proc{Llm}$ is an LLM, $\proc{Promptify}$ generates a prompt, $\proc{ParseTactic}$ parses the output of the LLM into a tactic (repeatedly querying the LLM in case there are formatting errors in its output), and $\proc{Retrieve}$ gathers relevant lemmas and definitions from an external source. The overall procedure is called with a state $O_\mathit{in}$ and an optional global context $χ$ .

As shown in Figure 3, the Copra procedure uses the $\sqsupseteq$ relation to only take actions that lead to progress in the proof. Using our progress check, Copra avoids cyclic tactic sequences that would cause nontermination.

Prompt Serialization.

The routines $\proc{Promptify}$ and $\proc{ParseTactic}$ together constitute the prompt serialization protocol and are critical to the success of the policy. Now we elaborate on these procedures.

$\proc{Promptify}$ carefully places the different pieces of information relevant to the proof in the prompt. It also includes logic for trimming this information to fit the most relevant parts in the LLM’s context window. Every prompt has two parts: the “system prompt” (see more details in Section A.3) and the “agent prompt.”

The agent prompts are synthetically generated using a context-free grammar and contain information about the state stack (including the current proof state), the execution feedback for the previous action, and the set of actions we know to avoid at the current proof state.

The system prompt describes the rules of engagement for the LLM. It contains a grammar (distinct from the one for agent prompts) that we expect the LLMs to follow when it proposes a course of action. The grammar carefully incorporates cases when the response is incomplete because of the LLM’s token limits. We parse partial responses to extract the next tactic using the $\proc{ParseTactic}$ routine. $\proc{ParseTactic}$ also identifies any formatting errors in the LLM’s responses, possibly communicating with the LLM multiple times until these errors are resolved.

<details>

<summary>extracted/5780501/Figure4.png Details</summary>

### Visual Description

## Table: Proof Assistant Interaction Log

### Overview

The image displays a structured table documenting four sequential interactions (Query #1 through #4) with an automated theorem proving or proof assistant system. The table logs the state of a mathematical proof, the steps attempted, the results of those attempts, and the corresponding language model (LLM) generated responses. The content is technical, involving formal logic, tactics, and error messages.

### Components/Axes

The table is organized into four primary rows (categories) and four columns (queries/steps).

**Row Headers (Leftmost Column):**

1. **Serialized Proof State**: Contains the formal goal and hypotheses for the proof at each step.

2. **Stack & Failure Dictionary**: Logs the sequence of proof steps (tactics) applied and any failure information.

3. **Interaction Result**: Shows the outcome of the attempted step, either `[SUCCESS]` or an `[ERROR MESSAGE]`.

4. **LLM Response**: Shows the tactic command generated by the Language Model for that query.

**Column Headers (Top Row):**

The columns are implicitly labeled by the "LLM Response" row as:

* **Query #1**

* **Query #2**

* **Query #3**

* **Query #4**

**Cell Content Format:**

Text within cells is structured with bracketed labels (e.g., `[GOALS]`, `[STEP]`, `[ERROR MESSAGE]`) followed by content. Mathematical expressions use standard notation (e.g., `x * x % 2 = 0`).

### Detailed Analysis

#### **Row 1: Serialized Proof State**

* **Query #1 & #2 Column (Shared State):**

* `[GOALS]`

* `[GOAL] 1`

* `x * x % 2 = 0`

* `[HYPOTHESES] 1`

* `[HYPOTHESIS] x : ℕ` (The symbol `ℕ` denotes natural numbers).

* `[HYPOTHESIS] h : x % 2 = 0`

* **Query #3 & #4 Column (Shared State):**

* `[GOALS]`

* `[GOAL] 1`

* `x % 2 * (x % 2) % 2 = 0`

* `[HYPOTHESES] 1`

* `[HYPOTHESIS] x : ℕ`

* `[HYPOTHESIS] h : x % 2 = 0`

**Trend Verification:** The proof goal changes between Query #2 and #3. The initial goal (`x * x % 2 = 0`) is transformed into a more specific form (`x % 2 * (x % 2) % 2 = 0`), likely as a result of applying a rewrite rule.

#### **Row 2: Stack & Failure Dictionary**

* **Query #1:**

* `[LAST STEP]`

* `intro h,`

* **Query #2:**

* `[STEPS]`

* `[STEP] intro h,`

* `[LAST STEP]`

* `rw h,`

* **Query #3:**

* `[STEPS]`

* `[STEP] intro h,`

* `[INCORRECT STEPS]`

* `[STEP] rw h,`

* `[LAST STEP]`

* `apply nat.mul_mod_right,`

* **Query #4:**

* `[STEPS]`

* `[STEP] intro h,`

* `[LAST STEP]`

* `rw nat.mul_mod,`

**Component Isolation:** This row shows a history. Query #1 starts with `intro h`. Query #2 adds `rw h`. Query #3 marks `rw h` as incorrect and attempts `apply nat.mul_mod_right`. Query #4 shows a different final step: `rw nat.mul_mod`.

#### **Row 3: Interaction Result**

* **Query #1:**

* `[SUCCESS]`

* `[END]`

* **Query #2:**

* `[ERROR MESSAGE]`

* `Got error in 'rw h,':`

* `error: rewrite tactic failed, did not find instance of the pattern in the target expression`

* `x % 2`

* `[END]`

* **Query #3:**

* `[ERROR MESSAGE]`

* `Got error in 'apply nat.mul_mod_right,':`

* `error: invalid apply tactic, failed to unify`

* `x * x % 2 = 0`

* `with`

* `?m_1 * ?m_2 % ?m_1 = 0`

* `[END]`

* **Query #4:**

* `[SUCCESS]`

* `[END]`

**Trend Verification:** The results show a sequence: Success -> Failure (pattern match error) -> Failure (unification error) -> Success. The error messages provide specific technical reasons for the failures.

#### **Row 4: LLM Response**

* **Query #1:**

* `[RUN TACTIC]`

* `rw h,`

* `[END]`

* **Query #2:**

* `[RUN TACTIC]`

* `apply nat.mul_mod_right,`

* `[END]`

* **Query #3:**

* `[RUN TACTIC]`

* `rw nat.mul_mod,`

* `[END]`

* **Query #4:**

* `[RUN TACTIC]`

* `rw h,`

* `[END]`

**Spatial Grounding & Cross-Reference:** The LLM Response for a given query column dictates the tactic that was attempted, the result of which is logged in the "Interaction Result" row directly above it. For example, in the Query #2 column, the LLM suggested `apply nat.mul_mod_right,` (Row 4), which resulted in the error message in Row 3.

### Key Observations

1. **Proof State Evolution:** The core mathematical goal is transformed from `x * x % 2 = 0` to `x % 2 * (x % 2) % 2 = 0` between Query #2 and #3. This is a logical consequence of the hypothesis `h : x % 2 = 0`.

2. **Tactic Failure Patterns:** Two distinct failure modes are documented:

* **Query #2 Failure:** A `rw` (rewrite) tactic failed because the system could not find the pattern `x % 2` in the target expression `x * x % 2 = 0`. This suggests the expression was not in a form where the hypothesis could be directly applied.

* **Query #3 Failure:** An `apply` tactic failed due to a unification error. The system could not match the goal `x * x % 2 = 0` with the expected pattern of the lemma `nat.mul_mod_right`, which is `?m_1 * ?m_2 % ?m_1 = 0`.

3. **Successful Steps:** The proof begins successfully with `intro h` (introducing the hypothesis). It concludes successfully in Query #4 with `rw nat.mul_mod`, which likely rewrites the goal using a modular arithmetic theorem (`nat.mul_mod`).

4. **LLM Adaptation:** The LLM generates different tactics in response to errors. After the `rw h` failure in Query #2, it tries `apply nat.mul_mod_right` in Query #3. After that fails, it tries `rw nat.mul_mod` in Query #4, which succeeds.

### Interpretation

This table provides a Peircean investigative trace of a formal proof attempt, revealing the iterative, diagnostic process inherent in automated reasoning.

* **What the data suggests:** It demonstrates a common workflow in interactive theorem proving: state a goal, apply a tactic, receive feedback (success or a detailed error), and adapt the next tactic based on that feedback. The errors are not mere failures but informative signals about the mismatch between the current proof state and the preconditions of the chosen tactic.

* **How elements relate:** The "Serialized Proof State" is the ground truth. The "LLM Response" is a proposed action on that state. The "Stack & Failure Dictionary" is the action history. The "Interaction Result" is the system's evaluation of the proposed action against the current state. This forms a closed feedback loop.

* **Notable anomalies/outliers:** The shift in the proof goal between Query #2 and #3 is the most significant event. It indicates that an implicit step (likely a rewrite using hypothesis `h`) occurred successfully *between* the logged interactions, changing the goal's form. The subsequent errors then occur on this *new* goal. The final success with `rw nat.mul_mod` suggests the proof was completed by applying a general theorem about multiplication and modulus, rather than directly using the hypothesis `h` via rewrite.

* **Underlying information exposed:** The log exposes the "reasoning" of both the human/LLM (choosing tactics) and the proof assistant (enforcing logical rigor through pattern matching and unification). It highlights that successful proof search often requires finding the right *representation* of a problem (as seen in the goal transformation) and the right *lemma* to apply (as seen in the final successful step).

</details>

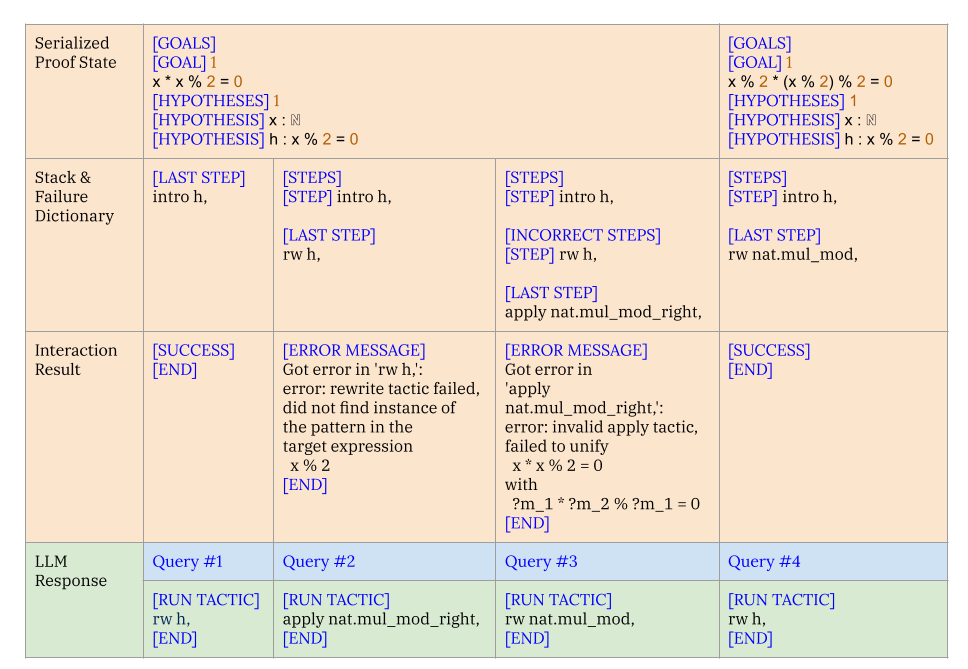

Figure 4: A “conversation” between the LLM and the search algorithm in Copra. Query #1, Query #2, …represent queries made as the proof search progresses. The column labeled Query $\#i$ depicts the prompt at time step $i$ and the corresponding LLM response. The LLM response is parsed into a tactic and executed, with the error feedback incorporated into the next prompt.

Figure 4 illustrates a typical “conversation” between the LLM and Copra ’s search algorithm via the prompt serialization protocol. Note, in particular, the prompt’s use of the current goals, the stack, and error feedback for the last executed tactic.

## 4 Evaluation

Benchmarks.

We primarily evaluate Copra on the Lean proof environment using $\mathtt{miniF2F}$ - $\mathtt{test}$ (Zheng et al., 2021) benchmark. This benchmark comprises 244 formalizations of mathematics problems from (i) the MATH dataset (Hendrycks et al., 2021), (ii) high school mathematics competitions, (iii) hand-designed problems mirroring the difficulty of (ii). Broadly, these problems fall under three categories: number theory, algebra, and induction.

To evaluate Copra ’s ability to work with multiple proof frameworks, we perform a secondary set of experiments on the Coq platform. Our benchmark here consists of a set of a theorems drawn from the CompCert compiler verification project (Leroy, 2009). The dataset was originally evaluated on the Proverbot9001 system (Sanchez-Stern et al., 2020). Due to budgetary constraints, our evaluation for CompCert uses 118 of the 501 theorems used in the original evaluation of Proverbot9001. For fairness, we include all 98 theorems proved by Proverbot9001 in our system. The remaining theorems are randomly sampled.

Implementing COPRA.

Our implementation of Copra is LLM-agnostic, and functions as long as the underlying language model is capable of producing responses which parse according to the output grammar. We instantiate the LLM to be GPT-4-turbo as a middle-ground between quality and affordability. Because of the substantial cost of GPT-4 queries, we cap the number of queries that Copra can make at 60 for the majority of our experiments.

In both Lean and Coq, we instantiate the retrieval mechanism to be a BM25 search. For our Lean experiments, our retrieval database is mathlib (mathlib Community, 2020), a mathematics library developed by the Lean community. Unlike $\mathtt{miniF2F}$ , our CompCert-based evaluation set is accompanied by a training set. Since Copra relies on a black-box LLM, we do not perform any training with the train set, but do use it as the retrieval database for our Coq experiments.

Inspired by DSP (Jiang et al., 2022b), we measure the efficacy of including the natural-language (informal) proof of the theorem at each search step in our Learn experiments. We use the informal theorem statements in $\mathtt{miniF2F}$ - $\mathtt{test}$ to generate informal proofs of theorems using few-shot prompting of the underlying LLM. The prompt has several examples, fixed over all invocations, of informal proof generation given the informal theorem statement. These informal proofs are included as part of the global context. Unlike $\mathtt{miniF2F}$ , which consists of competition problems originally specified in natural language, our CompCert evaluation set comes from software verification tasks that lack informal specifications. Hence, we do not run experiments with informal statements and proofs for Coq.

Initially, we run Copra without access to the retrieval database. By design, Copra may backtrack out of its proof search before the cap of 60 queries has been met. With the remaining attempts on problems yet to be solved, Copra is restarted to operate with retrieved information, and then additionally with informal proofs. This implementation affords lesser expenses, as appending retrieved lemmas and informal proofs yields longer prompts, which incur higher costs. More details are discussed in Section A.1.1.

Baselines.

We perform a comparison with the few-shot invocation of GPT-4 in both the $\mathtt{miniF2F}$ - $\mathtt{test}$ and CompCert domains. The “system prompt” in the few-shot approach differs from that of Copra, containing instructions to generate the entire proof in one response, rather than going step-by-step. For both Copra and the few-shot baseline, the prompt contains several proof examples which clarify the required format of the LLM response. The proof examples, fixed across all test cases, contain samples broadly in the same categories as, but distinct from, those problems in the evaluation set.

For our fine-tuned baselines, a challenge is that, to our knowledge, no existing theorem-proving system targets both Lean and Coq. Furthermore, all open-source theorem proving systems have targeted a single proof environment. As a result, we had to choose different baselines for the Lean ( $\mathtt{miniF2F}$ ) and Coq (CompCert) domains.

Our fine-tuned baseline for $\mathtt{miniF2F}$ - $\mathtt{test}$ is ReProver, a state-of-the-art retrieval-augmented open-source system that is part of the LeanDojo project (Yang et al., 2023). We also compare against Llemma -7b and Llemma -34b (Azerbayev et al., 2023). In the CompCert task, we compare with Proverbot9001 (Sanchez-Stern et al., 2020), which, while not LLM-based, is the best public model for Coq.

Ablations.

We consider ablations of Copra which use GPT-3.5 and CodeLlama (Roziere et al., 2023) as the underlying model. We also measure the effectiveness of backtracking in Copra ’s proof search. Additionally, we ablate for retrieved information and informal proofs as part of the global context during search.

Metric.

The standard metric for evaluating theorem-provers is pass@k (Lample et al., 2022). In this metric, a prover is given a budget of $k$ proof attempts; the method is considered successful if one of these attempts leads to success. We consider a refinement of this metric called pass@k-with-n-queries. Here, we measure the number of correct proofs that a prover can generate given k attempts, each with a budget of $n$ queries from the LLM or neural model. We enforce that a single query includes exactly one action (a sequence of tactics) to be used in the search. We want this metric to be correlated with the number of correct proofs that the prover produces within a specified wall-clock time budget; however, the cost of an inference query on an LLM is proportional to the number of responses generated per query. To maintain the correlation between the number of inference queries and wall-clock time, we restrict each inference on the LLM to a single response (see Section A.1.2 for more details).

$$

\mathtt{miniF2F} \mathtt{test} × × × × × 60^‡ × × × × × × × × × × \tag{600}

$$

Table 1: Aggregate statistics for Copra and the baselines on $\mathtt{miniF2F}$ dataset. Note the various ablations of Copra with and without retrieval or informal proofs. The timeout is a wall-clock time budget in seconds allocated across all attempts. Unless otherwise specified, (i) Copra uses GPT-4 as the LLM (ii) the temperature ( $T$ ) is set to 0.

Copra vs. Few-Shot LLM Queries.

Statistics for the two approaches, as well as a comparison with the few-shot GPT-3.5 and GPT-4 baselines, appear in Table 1. As we see, Copra offers a significant advantage over the few-shot approaches. For example, Copra solves more than twice as many problems as the few-shot GPT-4 baseline, which indicates that the state information positively assists the search. Furthermore, we find that running the few-shot baseline with 60 To ensure fairness we matched the number of attempts for Few-Shot (GPT-4) baseline with the number of queries Copra took to pass or fail for each theorem. attempts (provided a nonzero temperature), exhibits considerably worse performance compared to Copra. This indicates that Copra makes more efficient use of queries to GPT-4 than the baseline, even when queries return a single tactic (as part of a stateful search), as opposed to a full proof. We include further details in Section A.1.1.

Copra is capable of improving the performance of GPT-3.5, CodeLlama over its few-shot counterpart. We note that the use of GPT-4 seems essential, as weaker models like GPT-3.5 or CodeLlama have a reduced ability to generate responses following the specified output format.

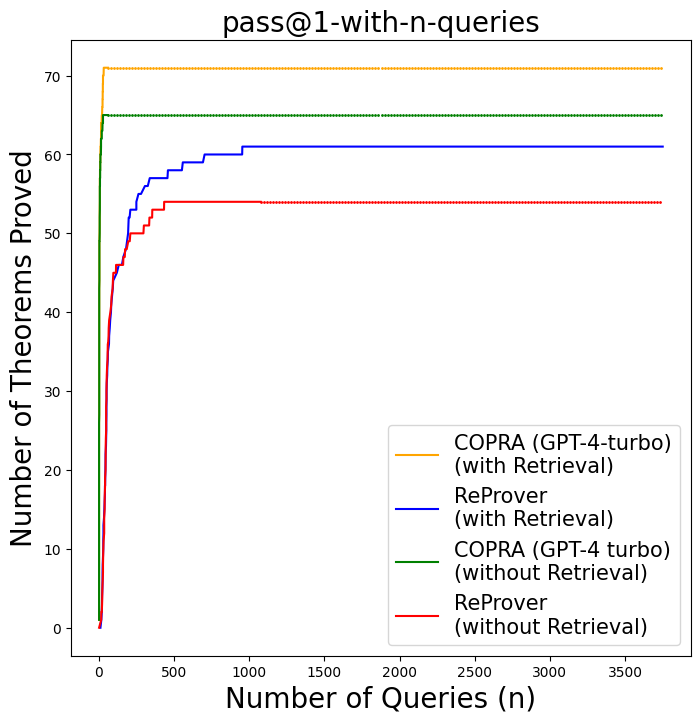

Comparison with Finetuned Approaches on $\mathtt{miniF2F}$ .

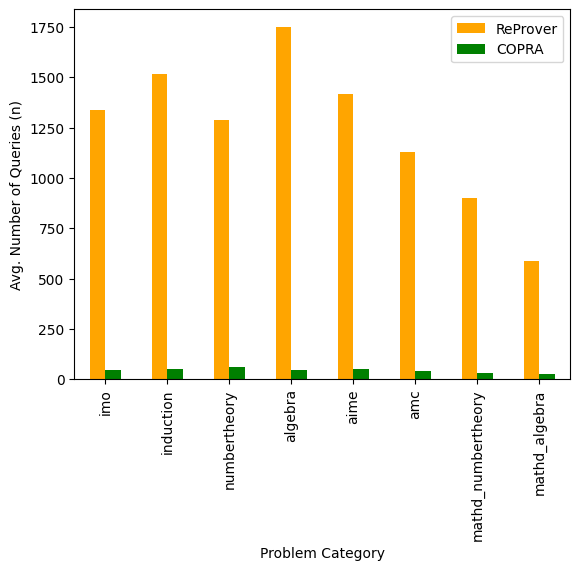

Figure 5 shows our comparison results for the $\mathtt{miniF2F}$ domain. With a purely in-context learning approach, Copra outperforms ReProver, proving within just 60 queries theorems that ReProver could not solve after a thousand queries. This is remarkable given that ReProver was finetuned on a curated proof-step dataset derived from Lean’s mathlib library. For fairness, we ran ReProver multiple times with 16, 32, and 64 (default) as the maximum number of queries per proof-step. We obtained the highest success rates for ReProver with 64 queries per proof-step. We find that Copra without retrieval outperforms ReProver.

Our pass@1 performance surpasses those of the best open-source approaches for $\mathtt{miniF2F}$ - $\mathtt{test}$ on Lean. Copra proves 29.09% of theorems in $\mathtt{miniF2F}$ - $\mathtt{test}$ theorems, which exceeds that of Llemma -7b & Llemma -34b, (Azerbayev et al., 2023) and ReProver (Yang et al., 2023). It is important to note that these methods involve training on curated proof data, while Copra relies only on in-context learning.

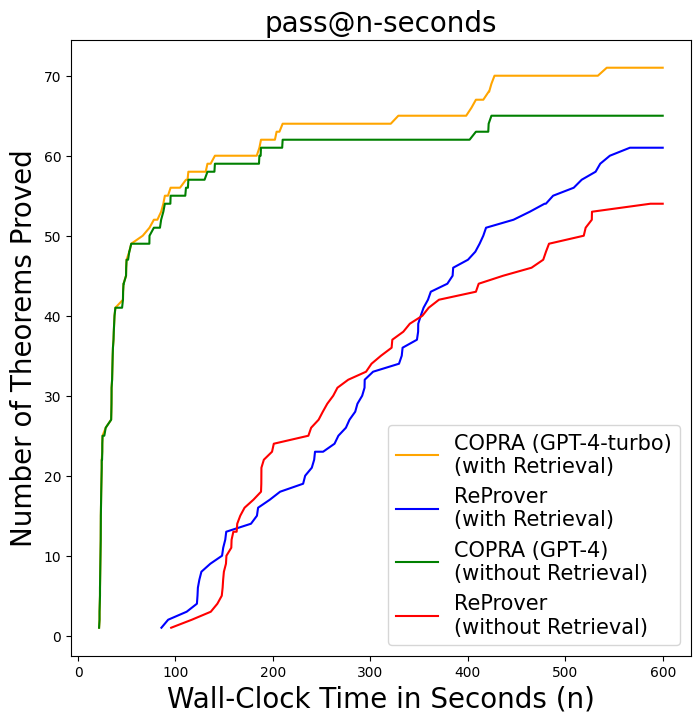

We also establish a correlation between the number of queries needed for a proof and wall-clock time in Figure 6 and Table 3 (more details are discussed in Section A.1.3). Although the average time per query is higher for Copra, Copra still finds proofs almost 3x faster than ReProver. This can explained by the fact that our search is more effective as it uses 16x fewer queries than ReProver (see Table 2). The average time per query includes the time taken to execute the generated proof step by the interactive theorem prover. Hence, a more effective model can be slow in generating responses while still proving faster compared to a model with quicker inference, by avoiding the waste of execution time on bad proof-steps.

<details>

<summary>extracted/5780501/img-miniF2F-pass-k-guidance-steps.png Details</summary>

### Visual Description

## Line Chart: pass@1-with-n-queries

### Overview

The image is a line chart titled "pass@1-with-n-queries". It plots the performance of four different automated theorem-proving methods, measured by the cumulative number of theorems successfully proved as a function of the number of queries (n) made. The chart demonstrates how each method's success rate scales with increased computational effort (queries).

### Components/Axes

* **Chart Title:** `pass@1-with-n-queries` (Top center)

* **Y-Axis:**

* **Label:** `Number of Theorems Proved` (Left side, vertical)

* **Scale:** Linear, from 0 to 70. Major tick marks are at intervals of 10 (0, 10, 20, 30, 40, 50, 60, 70).

* **X-Axis:**

* **Label:** `Number of Queries (n)` (Bottom center)

* **Scale:** Linear, from 0 to approximately 3700. Major tick marks are at intervals of 500 (0, 500, 1000, 1500, 2000, 2500, 3000, 3500).

* **Legend:** Located in the bottom-right quadrant of the chart area. It contains four entries, each with a colored line sample and a text label.

1. **Orange Line:** `COPRA (GPT-4-turbo) (with Retrieval)`

2. **Blue Line:** `ReProver (with Retrieval)`

3. **Green Line:** `COPRA (GPT-4 turbo) (without Retrieval)`

4. **Red Line:** `ReProver (without Retrieval)`

### Detailed Analysis

The chart displays four distinct data series, each showing a rapid initial increase that plateaus at a different level.

1. **COPRA (GPT-4-turbo) with Retrieval (Orange Line):**

* **Trend:** Exhibits the steepest initial ascent, reaching its maximum value extremely quickly (within the first ~100 queries). After this point, the line is perfectly horizontal, indicating no further theorems are proved with additional queries.

* **Key Data Point:** Plateaus at approximately **71 theorems proved**.

2. **COPRA (GPT-4 turbo) without Retrieval (Green Line):**

* **Trend:** Also shows a very sharp initial rise, similar to but slightly less steep than its "with Retrieval" counterpart. It reaches its plateau very early (within ~100 queries) and remains constant thereafter.

* **Key Data Point:** Plateaus at approximately **65 theorems proved**.

3. **ReProver with Retrieval (Blue Line):**

* **Trend:** Shows a more gradual, step-wise increase compared to the COPRA methods. It continues to prove new theorems up to around 1000 queries before leveling off. The ascent is less steep initially but sustains growth for longer.

* **Key Data Point:** Plateaus at approximately **61 theorems proved** after ~1000 queries.

4. **ReProver without Retrieval (Red Line):**

* **Trend:** Follows a similar step-wise growth pattern to the blue line but with a lower slope and a lower final plateau. It also levels off around 500-1000 queries.

* **Key Data Point:** Plateaus at approximately **54 theorems proved**.

### Key Observations

* **Performance Hierarchy:** The final performance ranking from highest to lowest is: COPRA with Retrieval > COPRA without Retrieval > ReProver with Retrieval > ReProver without Retrieval.

* **Impact of Retrieval:** For both COPRA and ReProver, the "with Retrieval" variant outperforms the "without Retrieval" variant. The performance gap is larger for COPRA (~6 theorem difference) than for ReProver (~7 theorem difference).

* **Efficiency vs. Effort:** COPRA methods are highly efficient, achieving nearly all their potential proofs with very few queries (<100). ReProver methods require more queries (500-1000) to reach their maximum potential, suggesting a different, possibly more exhaustive, search strategy.

* **Plateau Behavior:** All methods exhibit a clear plateau, indicating a finite set of solvable theorems within the test suite. No method shows continuous improvement across the entire query range.

### Interpretation

This chart provides a comparative analysis of two theorem-proving systems (COPRA and ReProver) under two conditions (with and without a retrieval mechanism). The data suggests several key insights:

1. **Superiority of COPRA:** The COPRA architecture, especially when augmented with retrieval, demonstrates both higher final performance and greater sample efficiency (requiring fewer queries to reach peak performance) compared to ReProver on this task.

2. **Value of Retrieval:** Incorporating a retrieval component consistently improves the number of theorems proved for both systems. This implies that accessing a knowledge base or lemma library is a beneficial strategy for automated reasoning, helping the models overcome limitations in their parametric knowledge or reasoning depth.

3. **Diminishing Returns:** The sharp plateaus indicate a point of diminishing returns. After a certain number of queries, additional computational effort does not yield more proofs. This could be due to the inherent difficulty of the remaining theorems or limitations in the models' capabilities.

4. **Strategic Differences:** The contrast between COPRA's rapid plateau and ReProver's more gradual ascent hints at fundamental differences in their algorithms. COPRA may employ a more direct or heuristic-driven approach that quickly solves easier problems, while ReProver might use a more systematic but slower search process.

In summary, the chart is evidence that for this specific benchmark, the COPRA system with retrieval is the most effective and efficient approach, and that retrieval-augmented generation is a valuable technique for enhancing the capabilities of language models in formal reasoning tasks.

</details>

Figure 5: Copra vs. ReProver on the $\mathtt{miniF2F}$ benchmark.

Qualitative Analysis of Proofs.

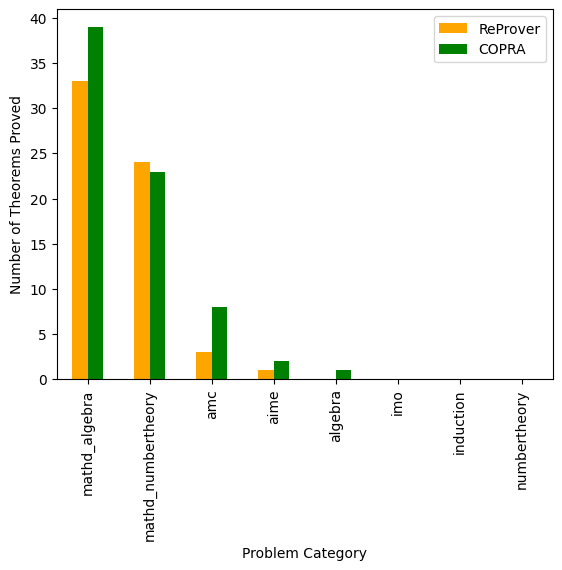

We performed an analysis of the different categories of $\mathtt{miniF2F}$ problems solved by Copra and ReProver. Figure 8 and Figure 9 (in Section A.2) show that Copra proves more theorems in most of the categories and takes fewer steps consistently across all categories of problems in $\mathtt{miniF2F}$ as compared to ReProver. Additionally, we find that certain kinds of problems, for example, International Mathematics Olympiad (IMO) problems and theorems that require induction, are difficult for both approaches.

From our qualitative analysis, there are certain kinds of problems where our language-agent approach seems especially helpful.

<details>

<summary>extracted/5780501/img-miniF2F-pass-k-seconds.png Details</summary>

### Visual Description

\n

## Line Chart: pass@n-seconds

### Overview

The image is a line chart titled "pass@n-seconds" that compares the performance of four different automated theorem-proving systems over time. The chart plots the cumulative number of theorems proved against the wall-clock time in seconds. It demonstrates how quickly each system can solve problems, with a focus on the impact of a "Retrieval" mechanism.

### Components/Axes

* **Chart Title:** `pass@n-seconds` (Top center)

* **Y-Axis:** Label is `Number of Theorems Proved`. Scale runs from 0 to 70 in increments of 10.

* **X-Axis:** Label is `Wall-Clock Time in Seconds (n)`. Scale runs from 0 to 600 in increments of 100.

* **Legend:** Located in the bottom-right quadrant of the chart area. It contains four entries, each with a colored line sample and a text label:

1. **Yellow Line:** `COPRA (GPT-4-turbo) (with Retrieval)`

2. **Blue Line:** `ReProver (with Retrieval)`

3. **Green Line:** `COPRA (GPT-4) (without Retrieval)`

4. **Red Line:** `ReProver (without Retrieval)`

### Detailed Analysis

The chart displays four data series, each representing a different system configuration. The trend for each is a non-decreasing step function, as the cumulative count of proved theorems can only increase or stay flat.

1. **COPRA (GPT-4-turbo) with Retrieval (Yellow Line):**

* **Trend:** Shows the fastest initial growth and maintains the highest performance throughout. It has a very steep ascent in the first ~50 seconds, then continues to climb in smaller steps, plateauing near the top.

* **Key Data Points (Approximate):**

* At n=50s: ~50 theorems proved.

* At n=100s: ~55 theorems proved.

* At n=300s: ~64 theorems proved.

* At n=600s: ~71 theorems proved (highest final value).

2. **COPRA (GPT-4) without Retrieval (Green Line):**

* **Trend:** Follows a very similar trajectory to its "with Retrieval" counterpart (yellow), but consistently lags slightly behind. It also has a steep initial rise.

* **Key Data Points (Approximate):**

* At n=50s: ~49 theorems proved.

* At n=100s: ~53 theorems proved.

* At n=300s: ~62 theorems proved.

* At n=600s: ~65 theorems proved.

3. **ReProver with Retrieval (Blue Line):**

* **Trend:** Begins proving theorems later than the COPRA systems (starting around n=100s). It shows a steady, roughly linear increase over time, with a moderate slope.

* **Key Data Points (Approximate):**

* At n=100s: ~5 theorems proved.

* At n=200s: ~18 theorems proved.

* At n=300s: ~35 theorems proved.

* At n=600s: ~61 theorems proved.

4. **ReProver without Retrieval (Red Line):**

* **Trend:** Also begins around n=100s. Its growth is the slowest of the four, with a shallower slope compared to the blue line (ReProver with Retrieval).

* **Key Data Points (Approximate):**

* At n=100s: ~2 theorems proved.

* At n=200s: ~14 theorems proved.

* At n=300s: ~33 theorems proved.

* At n=600s: ~54 theorems proved.

### Key Observations

* **Performance Hierarchy:** The final ranking at 600 seconds is: 1) COPRA (GPT-4-turbo, with Retrieval), 2) COPRA (GPT-4, without Retrieval), 3) ReProver (with Retrieval), 4) ReProver (without Retrieval).

* **Impact of Retrieval:** For both COPRA and ReProver, the "with Retrieval" variant outperforms the "without Retrieval" variant. The performance gap is more pronounced for ReProver (blue vs. red lines) than for COPRA (yellow vs. green lines).

* **Initial Speed:** The COPRA systems (yellow, green) demonstrate a significant advantage in the early phase (0-100 seconds), solving many theorems very quickly. The ReProver systems (blue, red) have a delayed start.

* **Growth Patterns:** COPRA systems show a "fast start, then plateau" pattern. ReProver systems show a "slow start, then steady climb" pattern.

### Interpretation

This chart evaluates the efficiency of different AI-driven theorem-proving agents. The data suggests that:

1. **Model Foundation Matters:** Systems built on GPT-4-turbo (COPRA) have a substantial initial speed and overall capacity advantage over the ReProver architecture in this benchmark.

2. **Retrieval is Beneficial:** Augmenting a prover with a retrieval mechanism (likely for accessing relevant lemmas or past proofs) consistently improves performance, allowing it to prove more theorems within the same time budget. This effect is critical for ReProver to become competitive.

3. **Time-Accuracy Trade-off:** If the goal is to solve as many problems as possible under a strict time limit (e.g., <100 seconds), COPRA is the clear choice. If given a longer time budget (>300 seconds), the gap narrows, and ReProver with Retrieval becomes a viable contender, eventually surpassing COPRA without Retrieval.

4. **Underlying Capability:** The steep initial climb of COPRA implies it may be better at recognizing and solving "easy" theorems almost immediately, while ReProver requires a warm-up period, possibly for building an internal context or search tree. The steady climb of ReProver suggests a more systematic, perhaps deeper, search process that pays off over time.

</details>

Figure 6: Copra vs. ReProver on the $\mathtt{miniF2F}$ benchmark on the pass@n-seconds metric.

For instance, Figure 7 shows a problem in the ‘numbertheory’ category that ReProver could not solve. More examples of interesting proofs that Copra found appear in the Section A.2 in Figure 10.

Effectiveness of Backtracking, Informal Proofs, and Retrieval.

We show the ablation for the backtracking feature of Copra in Table 1. We find that backtracking is useful when proofs are longer or more complex, as Copra is more likely to make mistakes which require amending. We include additional examples in Figure 12 (in Section A.2).

From Table 1, we see that retrieval helps in proving more problems. Retrieval reduces the tendency of the model to hallucinate the lemma names and provides the model with existing lemma definitions it otherwise may not have known. We include a proof generated by Copra that uses a retrieved lemma in Figure 7.

We experiment with adding model-generated informal proofs as global context in Copra ’s proof search. As evidenced in Table 1, Copra is able to outperform PACT (Han et al., 2021) and the state-of-the-art (29.6%) expert iteration method (Polu et al., 2022) in a $pass$ @ $1$ search through the incorporation of informal proofs. Furthermore, increasing the maximum query count to 100 enables a further increase in Copra ’s performance to 30.74%. An example of a proof found when incorporating informal proofs is shown in Figure 15 (see Section A.5).

Test-Set Memorization Concerns.

Given that the pretraining corpus of GPT-4 is not publicly available, it is imperative to assess for the possibility of test-set leakage in our experiments. We perform an analysis of those proofs checked into the open-source $\mathtt{miniF2F}$ repository compared to those proofs Copra discovers.

We categorize those proofs available in $\mathtt{miniF2F}$ according to the number of tactics required and the complexity of the tactic arguments. These results can be found in detail in Section A.1.4. As seen in Table 4, we find that Copra reproduces none of the “long” proofs in $\mathtt{miniF2F}$ - $\mathtt{test}$ repository. Setting aside those cases where the proof consists of a single tactic, approximately 91.93% of the proofs generated by Copra either bear no overlap to proofs in $\mathtt{miniF2F}$ , or no proof has been checked in. We provide examples of proofs generated by our approach compared to those included in $\mathtt{miniF2F}$ in Figure 11.

[roundcorner=10pt]

⬇

theorem mathd_numbertheory_100

(n : ℕ)

(h ₀ : 0 < n)

(h ₁ : nat. gcd n 40 = 10)

(h ₂ : nat. lcm n 40 = 280) :

n = 70 :=

begin

have h ₃ : n * 40 = 10 * 280 := by rw [← nat. gcd_mul_lcm n 40, h ₁, h ₂],

exact (nat. eq_of_mul_eq_mul_right (by norm_num : 0 < 40) h ₃),

end

Figure 7: A theorem that requires retrieval. Copra used BM25 to find the lemma “ $\texttt{gcd\_mul\_lcm}:(\texttt{n} \texttt{m}:ℕ+):(\texttt{gcd} \texttt{n} \texttt{m})*(\texttt{lcm} \texttt{n} \texttt{m})=\texttt{n}* \texttt{m}$ ” which led to the proof while ReProver failed to prove this theorem. It is important to note that just retrieving the correct lemma is not sufficient, but knowing how to use it correctly is equally important. The Figure 18 shows how Copra utilizes the capabilities of LLM to correctly use the retrieved lemma in the proof.

Coq Experiments Copra can prove a significant portion of the theorems in our Coq evaluation set. As shown in Figure 16 (in Section A.5), Copra slightly outperforms Proverbot9001 when both methods are afforded the same number of queries. Copra with retrieval is capable of proving 57 of 118 theorems within our cap of 60 queries. Furthermore, Copra exceeds the few-shot baselines utilizing GPT-4 and GPT-3.5, which could prove 36 and 10 theorems, respectively, on our CompCert-based evaluation set. Some example Coq proofs generated by Copra are shown in Section A.5 in Figure 17.

## 5 Related Work

Neural Theorem-Proving.

There is a sizeable literature on search-based theorem-proving techniques based on supervised learning. Neural models are trained to predict a proof step given a context and the proof state, then employed to guide a search algorithm (e.g. best-first or depth-limited search) to synthesize the complete proof.

Early methods of this sort (Yang & Deng, 2019; Sanchez-Stern et al., 2020; Huang et al., 2019) used small-scale neural networks as proof step predictors. Subsequent methods, starting with GPT- $f$ (Polu & Sutskever, 2020), have used language models trained on proof data.

PACT (Han et al., 2021) enhanced the training of such models with a set of self-supervised auxiliary tasks. Lample et al. (2022) introduced HyperTree Proof Search, which uses a language model trained on proofs to guide an online MCTS-inspired, search algorithm.

Among results from the very recent past, ReProver trains a retrieval-augmented transformer for proof generation in Lean. Llemma performs continued pretraining of the CodeLlama 7B & 34B on a math-focused corpus. Baldur (First et al., 2023) generates the whole proof in one-shot using an LLM and then performs a single repair step by passing error feedback through an LLM finetuned on (incorrect proof, error message, correct proof) tuples. AlphaGeometry (Trinh et al., 2024) integrates a transformer model trained on synthetic geometry data with a symbolic deduction engine to prove olympiad geometry problems. In contrast to these approaches, Copra is entirely based on in-context learning.

We evaluated Copra in the Lean and Coq environments. However, significant attention has been applied to theorem proving with LLMs in the interactive theorem prover Isabelle (Paulson, 1994). Theorem-proving systems for Lean and Isabelle are not directly comparable due to the substantial differences in automation provided by each language. Isabelle is equipped with Sledgehammer (Paulson & Blanchette, 2015), a powerful automated reasoning tool that calls external automated theorem provers such as E (Schulz, 2002) and Z3 (De Moura & Bjørner, 2008) to prove goals. Thor (Jiang et al., 2022a) augmented the PISA dataset (Jiang et al., 2021) to include successful Sledgehammer invocations, and trained a language model to additionally predict hammer applications. Integrating these ideas with the Copra approach is an interesting subject of future work.

The idea of using informal hints to guide proofs was first developed in DSP (Jiang et al., 2022b), which used an LLM to translate informal proofs to formal sketches that were then completed with Isabelle’s automated reasoning tactics. Zhao et al. (2023) improved on DSP by rewriting the informal proofs to exhibit a more formal structure and employs a diffusion model to predict the optimal ordering of the few-shot examples. LEGOProver (Wang et al., 2023b) augmented DSP with a skill library that grows throughout proof search. Lyra (Zheng et al., 2023) iterated on DSP by utilizing error feedback several times to modify the formal sketch, using automated reasoning tools to amend incorrect proofs of intermediate hypotheses. DSP, LEGOProver, and Lyra heavily rely on Isabelle’s hammer capabilities (which are not present in other ITPs like Lean) and informal proofs for formal proof generation (see Section A.1.5 for details). Moreover, the requirement for informal proofs in these approaches prohibits their application in the software verification domain, where the notion of informal proof is not well-defined. However, we propose a domain-agnostic stateful search approach within an in-context learning agent which iteratively uses execution feedback from the ITP with optional use of external signals like retrieval and informal proofs.

In-Context Learning Agents.

Several distinct in-context learning agent architectures have been proposed recently (Significant-Gravitas, 2023; Yao et al., 2022; Shinn et al., 2023; Wang et al., 2023a). These models combine an LLM’s capability to use tools Schick et al. (2023), decompose a task into subtasks (Wei et al., 2022; Yao et al., 2023), and self-reflect (Shinn et al., 2023). However, Copra is the first in-context learning agent for theorem-proving.

## 6 Conclusion

We have presented Copra, the first in-context-learning approach to theorem-proving in frameworks like Lean and Coq. The approach departs from prior LLM-based theorem-proving techniques by performing a history-dependent backtracking search by utilizing in-context learning, the use of execution feedback from the underlying proof environment, and retrieval from an external database. We have empirically demonstrated that Copra significantly outperforms few-shot LLM invocations at proof generation and also compares favorably to finetuned approaches.

As for future work, we gave GPT-4 a budget of at most 60 queries per problem for cost reasons. Whether the learning dynamics would drastically change with a much larger inference budget remains to be seen. Also, it is unclear whether a GPT-4-scale model is truly essential for our task. We have shown that the cheaper GPT-3.5 agent is not competitive against our GPT-4 agent; however, it is possible that a Llama-scale model that is explicitly finetuned on interactions between the model and the environment would have done better. While data on such interactions is not readily available, a fascinating possibility is to generate such data synthetically using the search mechanism of Copra.

## 7 Reproducibility Statement

We are releasing all the code needed to run Copra as supplementary material. The code contains all “system prompts” described in Section Section A.3 and Section A.4, along with any other relevant data needed to run Copra. However, to use our code, one must use their own OpenAI API keys. An issue with reproducibility in our setting is that the specific models served via the GPT-4 and GPT-3.5 APIs may change over time. In our experiments, we set the “temperature” parameter to zero to ensure the LLM outputs are as deterministic as possible.

Funding Acknowledgements. This work was partially supported by NSF awards CCF-1918651, CCF-2403211, and CCF-2212559, and a gift from the Ashar Aziz Foundation.

## References

- Andrews & Brown (2006) Andrews, P. B. and Brown, C. E. Tps: A hybrid automatic-interactive system for developing proofs. Journal of Applied Logic, 4(4):367–395, 2006.

- Azerbayev et al. (2023) Azerbayev, Z., Schoelkopf, H., Paster, K., Santos, M. D., McAleer, S., Jiang, A. Q., Deng, J., Biderman, S., and Welleck, S. Llemma: An open language model for mathematics, 2023.

- Blanchette et al. (2011) Blanchette, J. C., Bulwahn, L., and Nipkow, T. Automatic proof and disproof in isabelle/hol. In Frontiers of Combining Systems: 8th International Symposium, FroCoS 2011, Saarbrücken, Germany, October 5-7, 2011. Proceedings 8, pp. 12–27. Springer, 2011.

- Brown et al. (2020) Brown, T., Mann, B., Ryder, N., Subbiah, M., Kaplan, J. D., Dhariwal, P., Neelakantan, A., Shyam, P., Sastry, G., Askell, A., et al. Language models are few-shot learners. Advances in neural information processing systems, 33:1877–1901, 2020.

- Bundy (1988) Bundy, A. The use of explicit plans to guide inductive proofs. In 9th International Conference on Automated Deduction: Argonne, Illinois, USA, May 23–26, 1988 Proceedings 9, pp. 111–120. Springer, 1988.

- De Moura & Bjørner (2008) De Moura, L. and Bjørner, N. Z3: an efficient smt solver. In Proceedings of the Theory and Practice of Software, 14th International Conference on Tools and Algorithms for the Construction and Analysis of Systems, TACAS’08/ETAPS’08, pp. 337–340, Berlin, Heidelberg, 2008. Springer-Verlag. ISBN 3540787992.

- de Moura et al. (2015) de Moura, L., Kong, S., Avigad, J., Van Doorn, F., and von Raumer, J. The Lean theorem prover (system description). In Automated Deduction-CADE-25: 25th International Conference on Automated Deduction, Berlin, Germany, August 1-7, 2015, Proceedings 25, pp. 378–388. Springer, 2015.

- First et al. (2023) First, E., Rabe, M. N., Ringer, T., and Brun, Y. Baldur: whole-proof generation and repair with large language models. arXiv preprint arXiv:2303.04910, 2023.

- Han et al. (2021) Han, J. M., Rute, J., Wu, Y., Ayers, E. W., and Polu, S. Proof artifact co-training for theorem proving with language models. arXiv preprint arXiv:2102.06203, 2021.

- Hendrycks et al. (2021) Hendrycks, D., Burns, C., Kadavath, S., Arora, A., Basart, S., Tang, E., Song, D., and Steinhardt, J. Measuring mathematical problem solving with the math dataset. 2021.

- Huang et al. (2019) Huang, D., Dhariwal, P., Song, D., and Sutskever, I. Gamepad: A learning environment for theorem proving. In ICLR, 2019.

- Huet et al. (1997) Huet, G., Kahn, G., and Paulin-Mohring, C. The coq proof assistant a tutorial. Rapport Technique, 178, 1997.

- Jiang et al. (2021) Jiang, A. Q., Li, W., Han, J. M., and Wu, Y. Lisa: Language models of isabelle proofs. In 6th Conference on Artificial Intelligence and Theorem Proving, pp. 378–392, 2021.

- Jiang et al. (2022a) Jiang, A. Q., Li, W., Tworkowski, S., Czechowski, K., Odrzygóźdź, T., Miłoś, P., Wu, Y., and Jamnik, M. Thor: Wielding hammers to integrate language models and automated theorem provers. Advances in Neural Information Processing Systems, 35:8360–8373, 2022a.

- Jiang et al. (2022b) Jiang, A. Q., Welleck, S., Zhou, J. P., Li, W., Liu, J., Jamnik, M., Lacroix, T., Wu, Y., and Lample, G. Draft, sketch, and prove: Guiding formal theorem provers with informal proofs. arXiv preprint arXiv:2210.12283, 2022b.

- Lample et al. (2022) Lample, G., Lacroix, T., Lachaux, M.-A., Rodriguez, A., Hayat, A., Lavril, T., Ebner, G., and Martinet, X. Hypertree proof search for neural theorem proving. Advances in Neural Information Processing Systems, 35:26337–26349, 2022.

- Leroy (2009) Leroy, X. Formal verification of a realistic compiler. Communications of the ACM, 52(7):107–115, 2009.

- mathlib Community (2020) mathlib Community, T. The lean mathematical library. In Proceedings of the 9th ACM SIGPLAN International Conference on Certified Programs and Proofs, POPL ’20. ACM, January 2020. doi: 10.1145/3372885.3373824. URL http://dx.doi.org/10.1145/3372885.3373824.

- Newell et al. (1957) Newell, A., Shaw, J. C., and Simon, H. A. Empirical explorations of the logic theory machine: a case study in heuristic. In Papers presented at the February 26-28, 1957, western joint computer conference: Techniques for reliability, pp. 218–230, 1957.

- OpenAI (2023a) OpenAI. GPT-4 technical report, 2023a.

- OpenAI (2023b) OpenAI. GPT-4 and GPT-4 turbo, 2023b. URL https://platform.openai.com/docs/models/gpt-4-and-gpt-4-turbo.

- Paulson & Blanchette (2015) Paulson, L. and Blanchette, J. Three years of experience with sledgehammer, a practical link between automatic and interactive theorem provers. 02 2015. doi: 10.29007/tnfd.

- Paulson (1994) Paulson, L. C. Isabelle: A generic theorem prover. Springer, 1994.

- Polu & Sutskever (2020) Polu, S. and Sutskever, I. Generative language modeling for automated theorem proving. arXiv preprint arXiv:2009.03393, 2020.

- Polu et al. (2022) Polu, S., Han, J. M., Zheng, K., Baksys, M., Babuschkin, I., and Sutskever, I. Formal mathematics statement curriculum learning. arXiv preprint arXiv:2202.01344, 2022.

- Ramesh et al. (2021) Ramesh, A., Pavlov, M., Goh, G., Gray, S., Voss, C., Radford, A., Chen, M., and Sutskever, I. Zero-shot text-to-image generation. In International Conference on Machine Learning, pp. 8821–8831. PMLR, 2021.

- Roziere et al. (2023) Roziere, B., Gehring, J., Gloeckle, F., Sootla, S., Gat, I., Tan, X. E., Adi, Y., Liu, J., Remez, T., Rapin, J., et al. Code llama: Open foundation models for code. arXiv preprint arXiv:2308.12950, 2023.

- Sanchez-Stern et al. (2020) Sanchez-Stern, A., Alhessi, Y., Saul, L., and Lerner, S. Generating correctness proofs with neural networks. In Proceedings of the 4th ACM SIGPLAN International Workshop on Machine Learning and Programming Languages, pp. 1–10, 2020.

- Schick et al. (2023) Schick, T., Dwivedi-Yu, J., Dessì, R., Raileanu, R., Lomeli, M., Zettlemoyer, L., Cancedda, N., and Scialom, T. Toolformer: Language models can teach themselves to use tools. arXiv preprint arXiv:2302.04761, 2023.

- Schulz (2002) Schulz, S. E - a brainiac theorem prover. AI Commun., 15(2,3):111–126, aug 2002. ISSN 0921-7126.

- Shinn et al. (2023) Shinn, N., Cassano, F., Labash, B., Gopinath, A., Narasimhan, K., and Yao, S. Reflexion: Language agents with verbal reinforcement learning. arXiv preprint arXiv:2303.11366, 2023.

- Significant-Gravitas (2023) Significant-Gravitas. Autogpt. https://github.com/Significant-Gravitas/Auto-GPT, 2023.

- Touvron et al. (2023) Touvron, H., Lavril, T., Izacard, G., Martinet, X., Lachaux, M.-A., Lacroix, T., Rozière, B., Goyal, N., Hambro, E., Azhar, F., et al. Llama: Open and efficient foundation language models. arXiv preprint arXiv:2302.13971, 2023.

- Trinh et al. (2024) Trinh, T. H., Wu, Y., Le, Q. V., He, H., and Luong, T. Solving olympiad geometry without human demonstrations. Nature, 625(7995):476–482, 2024.

- Wang et al. (2023a) Wang, G., Xie, Y., Jiang, Y., Mandlekar, A., Xiao, C., Zhu, Y., Fan, L., and Anandkumar, A. Voyager: An open-ended embodied agent with large language models. arXiv preprint arXiv:2305.16291, 2023a.

- Wang et al. (2023b) Wang, H., Xin, H., Zheng, C., Li, L., Liu, Z., Cao, Q., Huang, Y., Xiong, J., Shi, H., Xie, E., Yin, J., Li, Z., Liao, H., and Liang, X. Lego-prover: Neural theorem proving with growing libraries. 2023b.

- Wei et al. (2022) Wei, J., Wang, X., Schuurmans, D., Bosma, M., Xia, F., Chi, E., Le, Q. V., Zhou, D., et al. Chain-of-thought prompting elicits reasoning in large language models. Advances in Neural Information Processing Systems, 35:24824–24837, 2022.

- Yang & Deng (2019) Yang, K. and Deng, J. Learning to prove theorems via interacting with proof assistants. In International Conference on Machine Learning, pp. 6984–6994. PMLR, 2019.

- Yang et al. (2023) Yang, K., Swope, A. M., Gu, A., Chalamala, R., Song, P., Yu, S., Godil, S., Prenger, R., and Anandkumar, A. Leandojo: Theorem proving with retrieval-augmented language models. arXiv preprint arXiv:2306.15626, 2023.

- Yao et al. (2022) Yao, S., Zhao, J., Yu, D., Du, N., Shafran, I., Narasimhan, K., and Cao, Y. React: Synergizing reasoning and acting in language models. arXiv preprint arXiv:2210.03629, 2022.

- Yao et al. (2023) Yao, S., Yu, D., Zhao, J., Shafran, I., Griffiths, T. L., Cao, Y., and Narasimhan, K. Tree of thoughts: Deliberate problem solving with large language models. arXiv preprint arXiv:2305.10601, 2023.

- Zhao et al. (2023) Zhao, X., Li, W., and Kong, L. Decomposing the enigma: Subgoal-based demonstration learning for formal theorem proving. 2023.

- Zheng et al. (2023) Zheng, C., Wang, H., Xie, E., Liu, Z., Sun, J., Xin, H., Shen, J., Li, Z., and Li, Y. Lyra: Orchestrating dual correction in automated theorem proving. arXiv preprint arXiv:2309.15806, 2023.

- Zheng et al. (2021) Zheng, K., Han, J. M., and Polu, S. Minif2f: a cross-system benchmark for formal olympiad-level mathematics. arXiv preprint arXiv:2109.00110, 2021.

## Appendix A Appendix

### A.1 Evaluation Details

#### A.1.1 Copra Implementation Setup Details

We introduce a common proof environment for Copra, which can also be used by any other approach for theorem-proving. The proof environment has a uniform interface that makes Copra work seamlessly for both Lean and Coq. It also supports the use of retrieval from external lemma repositories and the use of informal proofs when available (as in the case of $\mathtt{miniF2F}$ ). In the future, we plan to extend Copra to support more proof languages.

Copra provides support for various LLMs other than the GPT-series, including open-sourced LLMs like Llama 2 (Touvron et al., 2023) and Code Llama (Roziere et al., 2023). For most of our experiments, all the theorems are searched within a timeout of 600 seconds (10 minutes) and with a maximum of 60 LLM inference calls (whichever exhausts first). To make it comparable across various LLMs, only one response is generated per inference. All these responses are generated with the temperature set to zero, which ensures that the responses generated are more deterministic, focused, and comparable. In one of our ablations with few-shot GPT-4, we use set the temperature to $0.7$ and run more than 1 attempt. The number of attempts for few-shot GPT-4 matches the number of queries Copra takes to either successfully prove or fail to prove a certain theorem. This comparison is not completely fair for Copra because individual queries focus on a single proof step and cannot see the original goal, yet measures a useful experiment which indicates the benefits of Copra ’s stateful search under a fixed budget of API calls to GPT-4.

We use GPT-3.5, GPT-4 (OpenAI, 2023b), and CodeLLama (Roziere et al., 2023) to test the capabilities of Copra. We find that it is best to use Copra ’s different capabilities in an ensemble to enhance its performance while also minimizing cost. Therefore, we first try to find the proof without retrieval, then with retrieval, and finally with informal proofs (if available) only when we fail with retrieval. The informal proofs are used more like informal sketches for the formal proof and are generated in a separate few-shot invocation of the LLM. Thereafter, Copra simply adds the informal proofs to its prompt which guides the search. To ensure fairness in comparison, we make sure that the number of guidance steps is capped at 60 and the 10-minute timeout is spread across all these ensemble executions. The use of a failure dictionary (see Section 3) enables fast failure (see Table 3) which helps in using more strategies along with Copra within the 60 queries cap and 10-minute timeout. From Table 2, it is clear that despite the significant overlap between the different ablations, the ensemble covers more cases than just using one strategy with Copra. While extra lemmas are often useful, the addition of extra information from retrieval may also be misleading because the retriever can find lemmas that are not relevant to proving the goal, so the best performance is acquired by using the different capabilities in the manner we describe. Similarly, the use of informal proofs as a sketch for formal proofs can potentially increase the number of steps needed in a formal proof, decreasing the search efficacy. For example, an informal proof can suggest the use of multiple rewrites for performing some algebraic manipulation which can be easily handled with powerful tactics like linarith in Lean. Table 5 shows the number of tokens used in our prompts for different datasets.

#### A.1.2 Metric: pass@k-with-n-queries

We consider the metric pass@k-with-n-queries to assess the speed of the proposed approach and the effectiveness of the LLM or neural network to guide the proof search. It is a reasonable metric because it does a more even-handed trade-off in accounting for the time taken to complete a proof and at the same time ignores very low-level hardware details.

Different approaches need a different amount of guidance from a neural model to find the right proof. For example, approaches like Baldur (First et al., 2023), DSP (Jiang et al., 2022b), etc., generate the whole proof all at once. On the other hand, GPT- $f$ (Polu & Sutskever, 2020), PACT (Han et al., 2021), ReProver (Yang et al., 2023), Proverbot (Sanchez-Stern et al., 2020), and Copra generate proofs in a step-by-step fashion. We argue that pass@k-with-n-queries is a fairer metric to compare these different types of approaches because it correlates with the effectiveness of the proof-finding algorithm in an implementation-agnostic way. Since the exact time might not always be a good reflection of the effectiveness because of hardware differences, network throttling, etc., it makes sense to not compare directly on metrics like pass@k-minutes or pass@k-seconds. Not only these metrics will be brittle and very sensitive to the size, hardware, and other implementation details of the model, but not every search implementation will be based on a timeout. For example, Proverbot9001 does not use timeout-based search (and hence we don’t compare on the basis of time with Proverbot9001).

#### A.1.3 pass@k-with-n-queries versus wall-clock time

We show that pass@k-with-n-queries, correlates well with wall-clock time for finding proofs by using the metric pass@k-seconds. pass@k-seconds measures the number of proofs that an approach can find in less than $k$ seconds. The plot in Figure 6 shows that pass@k-seconds follows the same trend as pass@k-with-n-queries as shown in Figure 5.

| Evaluation on $\mathtt{miniF2F}$ - $\mathtt{test}$ | | | |

| --- | --- | --- | --- |

| Approach | Avg. Query in Total | Avg. Query on Failure | Avg. Query on Pass |

| ReProver (- Retrieval) | 350.7 | 427.24 | 81.6 |

| ReProver | 1015.32 | 1312.89 | 122.62 |

| Copra | 21.73 | 28.23 | 3.83 |

| Copra ( $+$ Retrieval) | 37.88 | 50.90 | 7.38 |

Table 2: Aggregate effectiveness statistics for Copra and the baselines on $\mathtt{miniF2F}$ dataset. We can see the various ablations of Copra with and without retrieval.

| Approach | Avg. Time In Seconds | | | | | |

| --- | --- | --- | --- | --- | --- | --- |

| Per Proof | Per Query | | | | | |

| On Pass | On Fail | All | On Pass | On Fail | All | |

| ReProver (on CPU - retrieval) | 279.19 | 618.97 | 543.78 | 3.42 | 1.45 | 1.55 |

| ReProver (on GPU - retrieval) | 267.94 | 601.35 | 520.74 | 2.06 | 0.44 | 0.48 |

| ReProver (on GPU + retrieval) | 301.20 | 605.29 | 529.27 | 2.46 | 0.46 | 0.52 |

| Copra (GPT-3.5) | 39.13 | 134.26 | 122.21 | 15.97 | 9.43 | 9.53 |

| Copra (GPT-4) | 67.45 | 370.71 | 289.92 | 17.61 | 13.09 | 13.34 |

| Copra (GPT-4 + retrieval) | 117.85 | 678.51 | 510.78 | 15.97 | 13.33 | 13.48 |

Table 3: Average time taken by our approach (Copra) and ReProver on $\mathtt{miniF2F}$ dataset. We split the values according to the success of the proof search on that problem. We also report values per query.