# Prometheus: Inducing Fine-grained Evaluation Capability in Language Models

> denotes equal contribution. Work was done while Seungone was interning at NAVER AI Lab.Corresponding authors

## Abstract

Recently, using a powerful proprietary Large Language Model (LLM) (e.g., GPT-4) as an evaluator for long-form responses has become the de facto standard. However, for practitioners with large-scale evaluation tasks and custom criteria in consideration (e.g., child-readability), using proprietary LLMs as an evaluator is unreliable due to the closed-source nature, uncontrolled versioning, and prohibitive costs. In this work, we propose Prometheus, a fully open-source LLM that is on par with GPT-4’s evaluation capabilities when the appropriate reference materials (reference answer, score rubric) are accompanied. We first construct the Feedback Collection, a new dataset that consists of 1K fine-grained score rubrics, 20K instructions, and 100K responses and language feedback generated by GPT-4. Using the Feedback Collection, we train Prometheus, a 13B evaluator LLM that can assess any given long-form text based on customized score rubric provided by the user. Experimental results show that Prometheus scores a Pearson correlation of 0.897 with human evaluators when evaluating with 45 customized score rubrics, which is on par with GPT-4 (0.882), and greatly outperforms ChatGPT (0.392). Furthermore, measuring correlation with GPT-4 with 1222 customized score rubrics across four benchmarks (MT Bench, Vicuna Bench, Feedback Bench, Flask Eval) shows similar trends, bolstering Prometheus ’s capability as an evaluator LLM. Lastly, Prometheus achieves the highest accuracy on two human preference benchmarks (HHH Alignment & MT Bench Human Judgment) compared to open-sourced reward models explicitly trained on human preference datasets, highlighting its potential as an universal reward model. We open-source our code, dataset, and model https://kaistai.github.io/prometheus/.

## 1 Introduction

Evaluating the quality of machine-generated text has been a long-standing challenge in Natural Language Processing (NLP) and remains especially essential in the era of Large Language Models (LLMs) to understand their properties and behaviors (Liang et al., 2022; Chang et al., 2023; Zhong et al., 2023; Chia et al., 2023; Holtzman et al., 2023). Human evaluation has consistently been the predominant method, for its inherent reliability and capacity to assess nuanced and subjective dimensions in texts. In many situations, humans can naturally discern the most important factors of assessment, such as brevity, creativity, tone, and cultural sensitivities. On the other hand, conventional automated evaluation metrics (e.g., BLEU, ROUGE) cannot capture the depth and granularity of human evaluation (Papineni et al., 2002; Lin, 2004b; Zhang et al., 2019; Krishna et al., 2021).

Applying LLMs (e.g. GPT-4) as an evaluator has received substantial attention due to its potential parity with human evaluation (Chiang & yi Lee, 2023; Dubois et al., 2023; Li et al., 2023; Liu et al., 2023; Peng et al., 2023; Zheng et al., 2023; Ye et al., 2023b; Min et al., 2023). Initial investigations and observations indicate that, when aptly prompted, LLMs can emulate the fineness of human evaluations. However, while the merits of using proprietary LLMs as an evaluation tool are evident, there exist some critical disadvantages:

1. Closed-source Nature: The proprietary nature of LLMs brings transparency concerns as internal workings are not disclosed to the broader academic community. Such a lack of transparency hinders collective academic efforts to refine or enhance its evaluation capabilities. Furthermore, this places fair evaluation, a core tenet in academia, under control of for-profit entity and raises concerns about neutrality and autonomy.

1. Uncontrolled Versioning: Proprietary models undergo version updates that are often beyond the users’ purview or control (Pozzobon et al., 2023). This introduces a reproducibility challenge. As reproducibility is a cornerstone of scientific inquiry, any inconsistency stemming from version changes can undermine the robustness of research findings that depend on specific versions of the model, especially in the context of evaluation.

1. Prohibitive Costs: Financial constraints associated with LLM APIs are not trivial. For example, evaluating four LLMs variants across four sizes (ranging from 7B to 65B) using GPT-4 on 1000 evaluation instances can cost over $2000. Such scaling costs can be prohibitive, especially for academic institutions or researchers operating on limited budgets.

<details>

<summary>x1.png Details</summary>

### Visual Description

# Technical Document Extraction: LLM Evaluation Methods Flowchart

## Diagram Overview

The image presents a comparative analysis of Large Language Model (LLM) evaluation methodologies through a multi-section flowchart. Key components include conventional benchmarks, coarse-grained evaluations, fine-grained evaluations with custom rubrics, and a proposed evaluation approach.

---

## Section 1: Conventional LLM Evaluation

**Title**: Conventional LLM Evaluation

**Components**:

- **Benchmarks**: MMLU, Big Bench, MATH, HumanEval

- **Evaluation Metrics**: Accuracy, EM (Exact Match), Rouge

- **Output**: Score for specific domain/task

**Flow**:

```

Benchmarks → Accuracy/EM/Rouge → Domain-specific Score

```

---

## Section 2: Coarse-Grained Evaluation

**Title**: Coarse-Grained Evaluation

**Components**:

- **Benchmarks**: Vicuna Bench, MT Bench, AlpacaFarm

- **Evaluation Method**: GPT-4 Evaluation

- **Output**: Score based on Helpfulness/Harmlessness

**Flow**:

```

Benchmarks → GPT-4 Evaluation → Helpfulness/Harmlessness Score

```

---

## Section 3: Fine-grained Evaluation with User-Defined Score Rubrics

**Title**: Fine-grained Evaluation with User-Defined Score Rubrics

**Components**:

- **Instruction Set**: Vicuna Bench, MT Bench, AlpacaFarm

- **Score Rubric**: Cultural sensitivity assessment (1-5 scale)

- Score 1: `~~~` (Low sensitivity)

- Score 5: `~~~` (High sensitivity)

- **Reference Answer**: Placeholder for ground truth

- **Evaluation Methods**:

- GPT-4 Evaluation → Customized Criteria

- Prometheus Evaluation (🔥 icon) → Customized Criteria

**Flow**:

```

Instruction Set + Score Rubric → GPT-4 Evaluation → Customized Criteria

Instruction Set + Score Rubric → Prometheus Evaluation → Customized Criteria

```

---

## Section 4: Proposed Approach

**Components**:

1. **Domain-specific Diagnostics**

- 🤔 Emoji: "Only diagnoses about a specific domain or task"

- Limitation: Narrow focus

2. **General Preference Diagnostics**

- 🤔 Emoji: "Only diagnoses about the general preference of the public"

- Limitation: Public bias

3. **Close-source Nature**

- 🤔 Emoji: "Uncontrolled Versioning"

- 💸 Emoji: "Prohibitive Costs"

4. **Fully Open-source**

- 🤔 Emoji: "Reproducible Evaluation"

- 💸 Emoji: "Inexpensive Costs"

---

## Dialogue Bubble Analysis

**LLM User**:

"Which LLM is the most humorous one out there?"

**LLM Developer**:

"Is the LLM I’m developing inspiring while being culturally sensitive?"

---

## Key Observations

1. **Color Coding**:

- Red: Conventional/Coarse-grained evaluations

- Blue: Fine-grained evaluations

- Purple: Proposed approach

2. **Evaluation Philosophy**:

- Traditional methods focus on accuracy metrics

- Modern approaches emphasize cultural sensitivity and user-defined criteria

- Proposed method advocates for open-source reproducibility and cost efficiency

3. **Critical Gaps**:

- Conventional methods lack cultural sensitivity assessment

- Coarse-grained evaluations depend on third-party models (GPT-4)

- Close-source systems face versioning and cost challenges

---

## Diagram Structure

```

[Conventional LLM Evaluation]

↓

[Accuracy/EM/Rouge]

↓

[Domain-specific Score]

[Coarse-Grained Evaluation]

↓

[GPT-4 Evaluation]

↓

[Helpfulness/Harmlessness Score]

[Fine-grained Evaluation]

↓

[GPT-4 Evaluation]

↓

[Customized Criteria]

[Proposed Approach]

├─ Domain-specific Diagnostics

├─ General Preference Diagnostics

├─ Close-source Nature (Uncontrolled Versioning, Prohibitive Costs)

└─ Fully Open-source (Reproducible Evaluation, Inexpensive Costs)

```

---

## Language Notes

- **Primary Language**: English

- **Emojis**: Used as visual indicators (no translation required)

- **Special Characters**:

- `~~~` (tilde symbols) for score representation

- `🔥` (fire emoji) for Prometheus Evaluation

---

## Conclusion

The flowchart illustrates the evolution of LLM evaluation from traditional accuracy metrics to culturally sensitive, user-defined frameworks. The proposed approach emphasizes open-source reproducibility and cost efficiency while addressing limitations in existing methods.

</details>

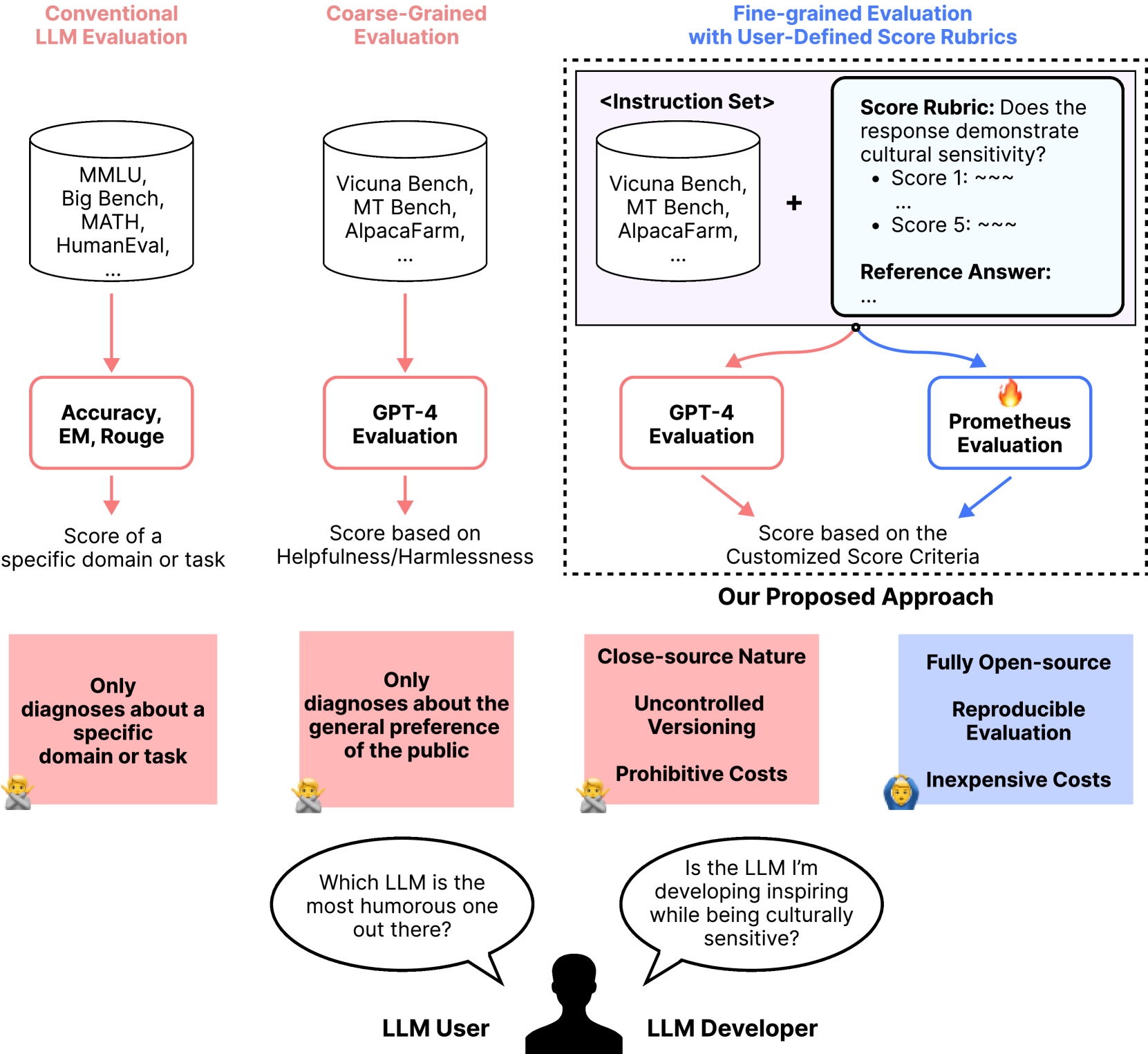

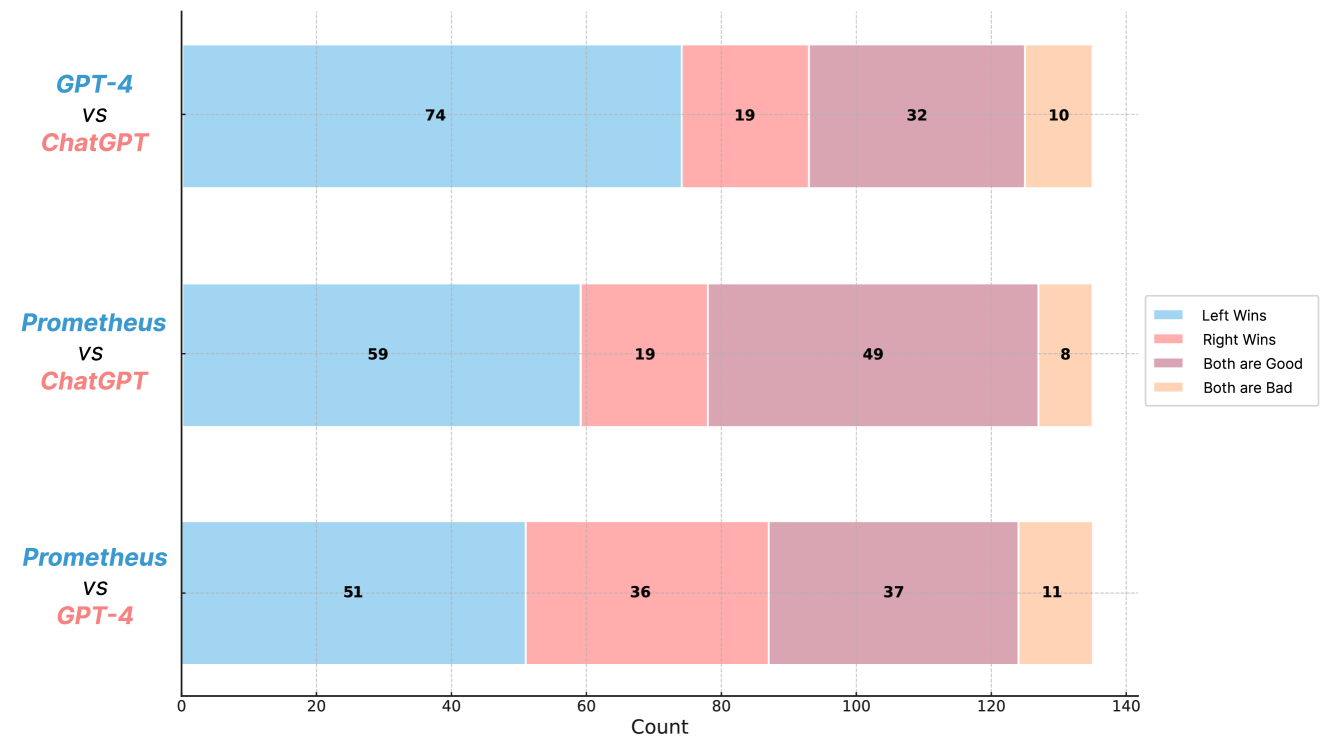

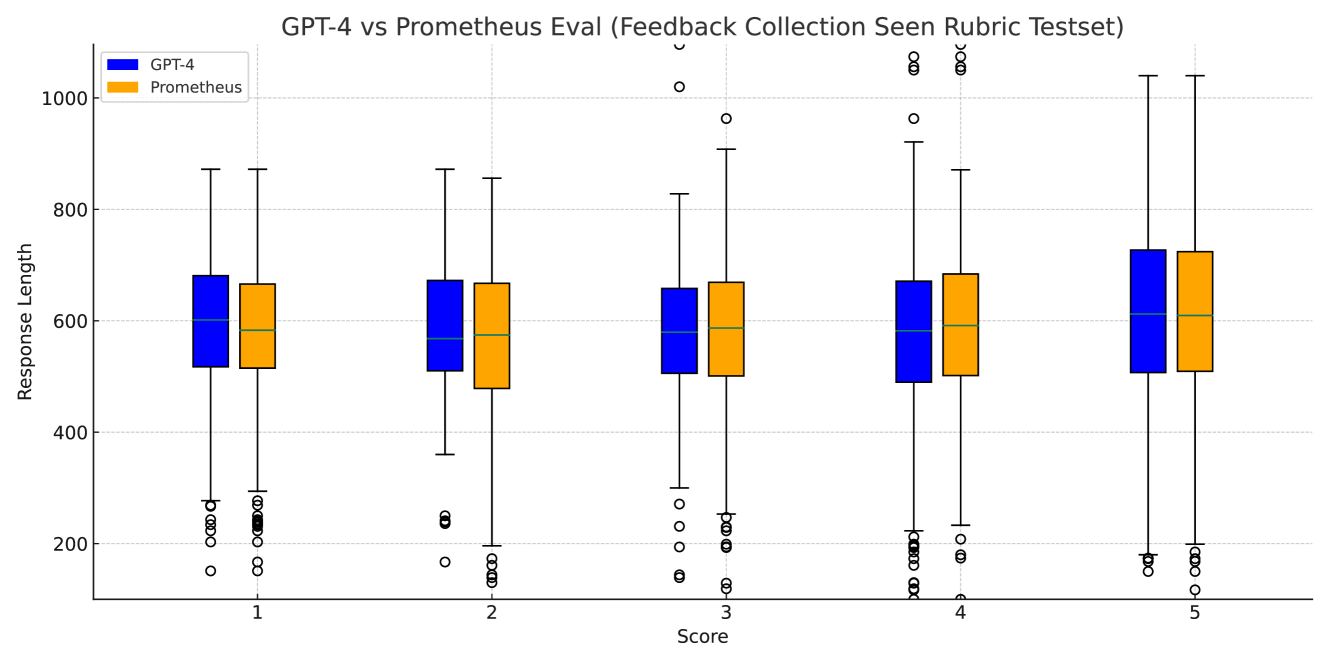

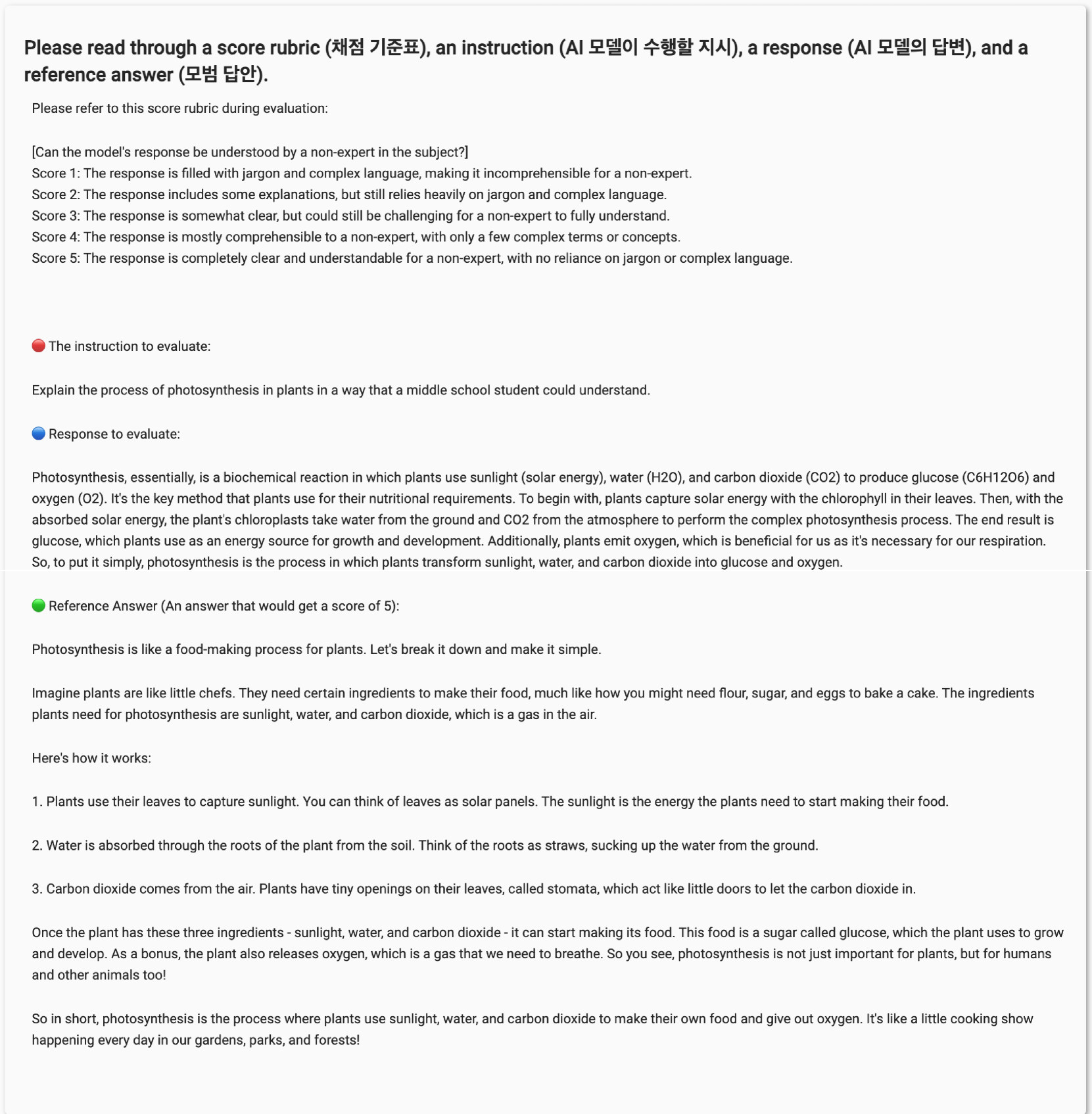

Figure 1: Compared to conventional, coarse-grained LLM evaluation, we propose a fine-grained approach that takes user-defined score rubrics as input.

Despite these limitations, proprietary LLMs such as GPT-4 are able to evaluate scores based on customized score rubrics. Specifically, current resources are confined to generic, single-dimensional evaluation metrics that are either too domain/task-specific (e.g. EM, Rouge) or coarse-grained (e.g. helpfulness/harmlessness (Dubois et al., 2023; Chiang et al., 2023; Liu et al., 2023) as shown in left-side of Figure 1. For instance, AlpacaFarm’s (Dubois et al., 2023) prompt gives a single definition of preference, asking the model to choose the model response that is generally preferred. However, response preferences are subject to variation based on specific applications and values. In real-world scenarios, users may be interested in customized rubric such as “Which LLM generates responses that are playful and humorous” or “Which LLM answers with particularly care for cultural sensitivities?” Yet, in our initial experiments, we observe that even the largest open-source LLM (70B) is insufficient to evaluate based on a customized score rubric compared to proprietary LLMs.

To this end, we propose Prometheus, a 13B LM that aims to induce fine-grained evaluation capability of GPT-4, while being open-source, reproducible, and inexpensive. We first create the Feedback Collection, a new dataset that is crafted to encapsulate diverse and fine-grained user assessment score rubric that represent realistic user demands (example shown in Figure 2). We design the Feedback Collection with the aforementioned consideration in mind, encompassing thousands of unique preference criteria encoded by a user-injected score rubric. Unlike prior feedback datasets (Ye et al., 2023a; Wang et al., 2023a), it uses custom, not generic preference score rubric, to train models to flexibly generalize to practical and diverse evaluation preferences. Also, to best of our knowledge, we are first to explore the importance of including various reference materials – particularly the ‘Reference Answers’ – to effectively induce fine-grained evaluation capability.

We use the Feedback Collection to fine-tune Llama-2-Chat-13B in creating Prometheus. On 45 customized score rubrics sampled across three test sets (MT Bench, Vicuna Bench, Feedback Bench), Prometheus obtains a Pearson correlation of 0.897 with human evaluators, which is similar with GPT-4 (0.882), and has a significant gap with GPT-3.5-Turbo (0.392). Unexpectely, when asking human evaluators to choose a feedback with better quality in a pairwise setting, Prometheus was preferred over GPT-4 in 58.67% of the time, while greatly outperformed GPT-3.5-Turbo with a 79.57% win rate. Also, when measuring the Pearson correlation with GPT-4 evaluation across 1222 customized score rubrics across 4 test sets (MT Bench, Vicuna Bench, Feedback Bench, Flask Eval), Prometheus showed higher correlation compared to GPT-3.5-Turbo and Llama-2-Chat 70B. Lastly, when testing on 2 unseen human preference datasets (MT Bench Human Judgments, HHH Alignment), Prometheus outperforms two state-of-the-art reward models and GPT-3.5-Turbo, highlighting its potential as an universal reward model.

Our contributions are summarized as follows:

- We introduce the Feedback Collection dataset specifically designed to train an evaluator LM. Compared to previous feedback datasets, it includes customized scoring rubrics and reference answers in addition to the instructions, responses, and feedback.

- We train Prometheus, the first open-source LLM specialized for fine-grained evaluation that can generalize to diverse, real-world scoring rubrics beyond a single-dimensional preference such as helpfulness and harmlessness.

- We conduct extensive experiments showing that by appending reference materials (reference answers, fine-grained score rubrics) and fine-tuning on feedback, we can induce evaluation capability into language models. Prometheus shows high correlation with human evaluation, GPT-4 evaluation in absolute scoring settings, and also shows high accuracy in ranking scoring settings.

## 2 Related Work

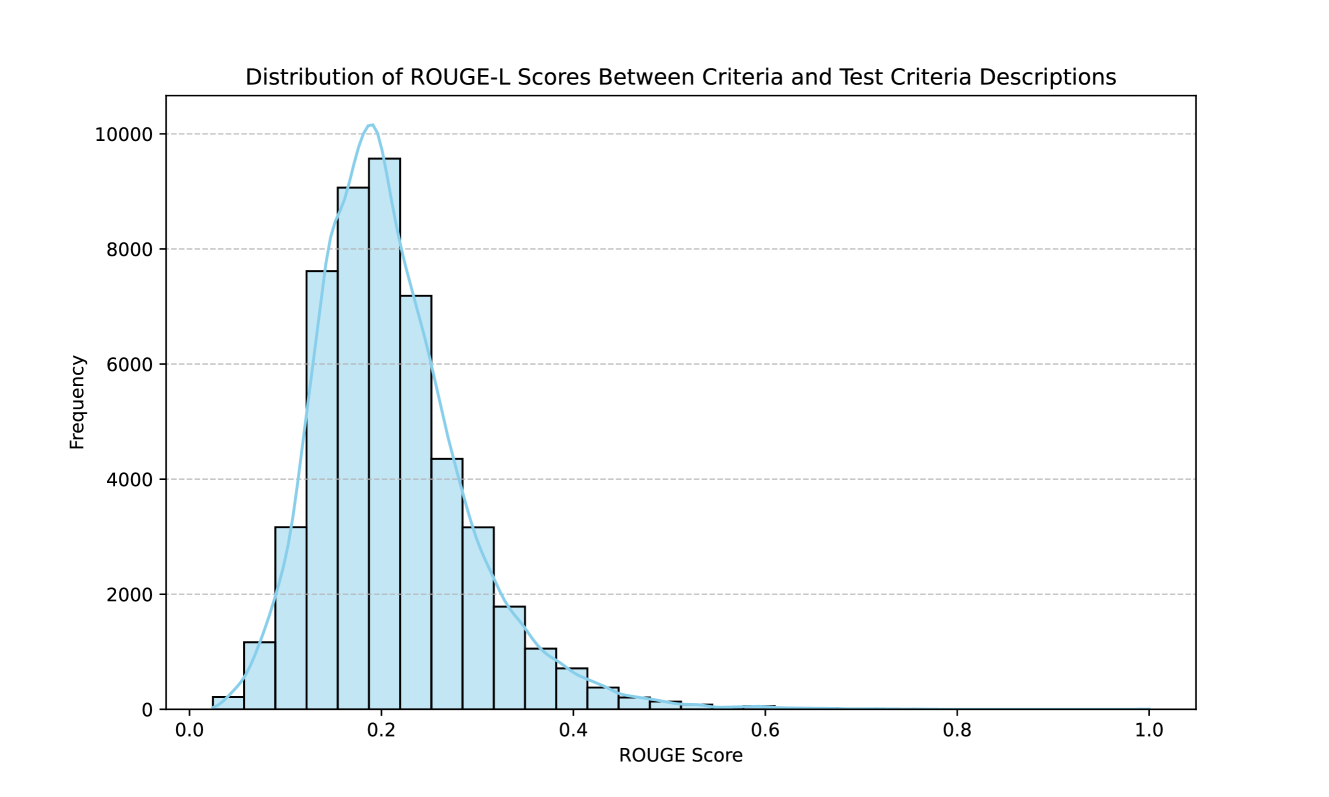

Reference-based text evaluation

Previously, model-free scores that evaluate machine-generated text based on a golden candidate reference such as BLEU (Papineni et al., 2002) and ROUGE (Lin, 2004a) scores were used as the dominant approach. However, Krishna et al. (2021) reported limitations in reference-based metrics, such as ROUGE, observing that they are not reliable for evaluation. In recent years, model-based approaches have been widely adopted such as BERTScore (Zhang et al., 2019), BLEURT (Sellam et al., 2020), and BARTScore (Yuan et al., 2021) which are able to capture semantic information rather than only evaluating on lexical components.

LLM-based text evaluation

Recent work has used GPT-4 or a fine-tuned critique LLM as an evaluator along a single dimension of “preference” (Chiang & yi Lee, 2023; Li et al., 2023; Dubois et al., 2023; Zheng et al., 2023; Liu et al., 2023). For instance, AlpacaFarm (Dubois et al., 2023) asks the model to select “which response is better based on your judgment and based on your own preference” Another example is recent work that showed ChatGPT can outperform crowd-workers for text-annotation tasks (Gilardi et al., 2023; Chiang & yi Lee, 2023). Wang et al. (2023b) introduced PandaLM, a fine-tuned LLM to evaluate the generated text and explain its reliability on various preference datasets. Similarly, Ye et al. (2023a) and Wang et al. (2023a) create critique LLMs. However, the correct preference is often subjective and depends on applications, cultures, and objectives, where degrees of brevity, formality, honesty, creativity, and political tone, among many other potentially desirable traits that may vary (Chiang & yi Lee, 2023). While GPT-4 is unreliable due to its close-source nature, uncontrolled versioning, and prohibitive costs, it was the only option explored for fine-grained and customized evaluation based on the score rubric (Ye et al., 2023b). On the contrary, we train, to best of our knowledge, the first evaluator sensitive to thousands of unique preference criteria, and show it significantly outperforms uni-dimensional preference evaluators in a number of realistic settings. Most importantly, compared to previous work, we strongly argue the importance of appending reference materials (score rubric and reference answer) in addition to fine-tuning on the feedback in order to effectively induce fine-grained evaluation capability.

## 3 The Feedback Collection Dataset

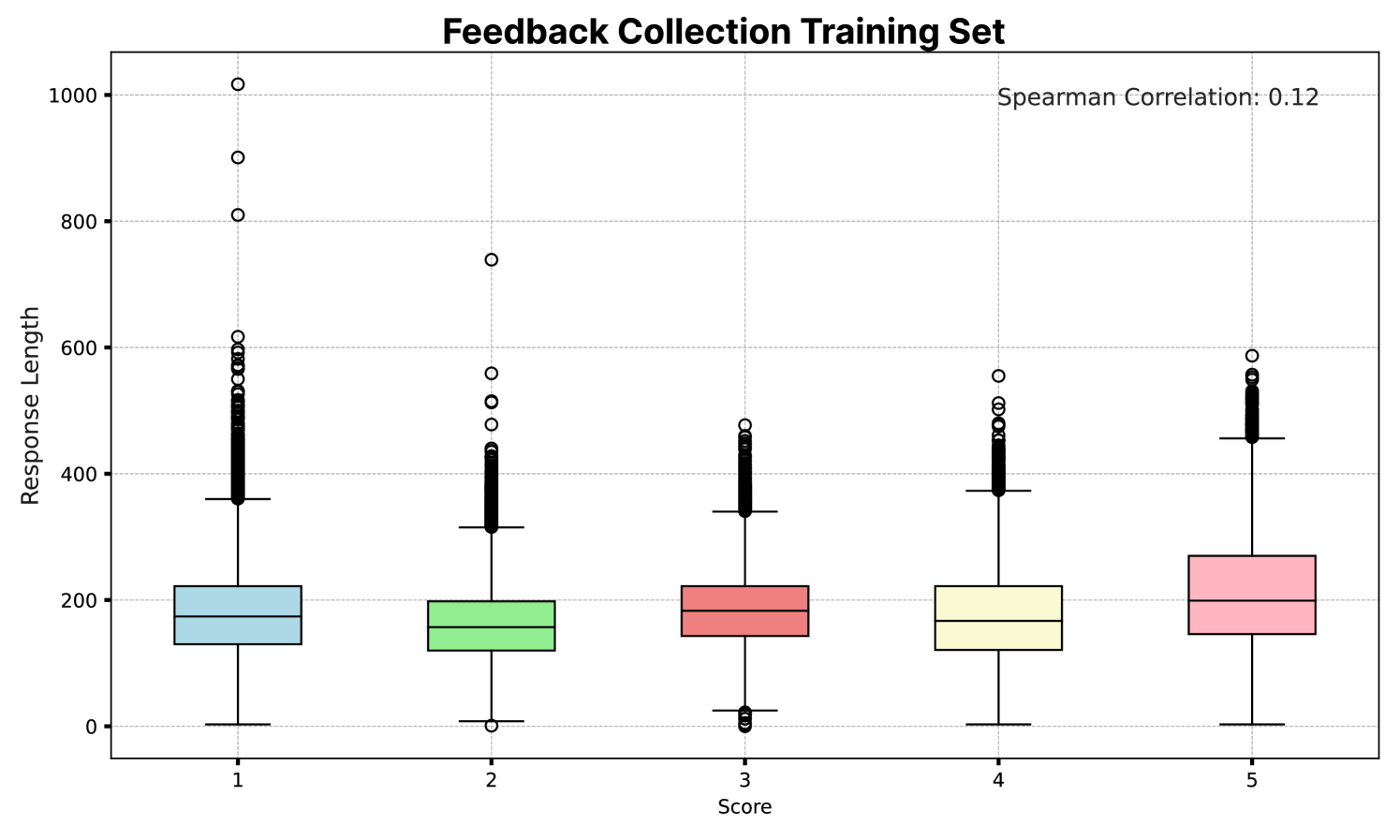

While previous work has demonstrated that fine-tuning on feedback is effective for improving LMs to function as a critique (Ye et al., 2023a; Wang et al., 2023a), the datasets used in previous work are not directly applicable for improving LMs to function as a fine-grained evaluator. We thus introduce the Feedback Collection, a new dataset for the sole purpose of fine-tuning an open-sourced evaluator LLM. Our 4 main considerations during dataset construction are: (1) including as many reference materials (i.e. reference answer, and scoring rubric) as possible, (2) maintaining a uniform length among the reference answers for each score (1 to 5) to prevent undesired length bias, (3) maintaining a uniform score distribution to prevent undesired decision bias, and (4) limiting the scope of our instructions and responses to realistic situations where a user is interacting with a LLM.

<details>

<summary>x2.png Details</summary>

### Visual Description

# Technical Document Extraction: FinTech Startup Business Strategy Evaluation

## Section 1: Instruction

**Title**: Instruction

**Content**:

"Develop me a business strategy for creating a FinTech startup that offers online banking services."

## Section 2: Customized Score Rubric

**Title**: Customized Score Rubric

**Criteria**:

- **Does the response consider regulations and compliance requirements?**

- [Score 1] The response disregards regulations and compliance requirements.

- [Score 2] The response shows minimal awareness of regulatory compliance, making several errors.

- [Score 3] The response recognizes certain regulatory requirements but lacks depth.

- [Score 4] The response mostly considers regulatory compliance, with only minor oversights.

- [Score 5] The response perfectly accounts for and integrates regulatory compliance requirements.

## Section 3: Response to Evaluate

**Title**: Response to Evaluate

**Subtitle**: Online Banking Service FinTech Startup Business Strategy

**Executive Summary**:

"Our startup, 'DigitalBank', aims to revolutionize the traditional banking industry by providing an innovative ..."

**Reference Answer**:

**Title**: Reference Answer

**Content**:

"Establishing a FinTech startup that offers online banking services requires a comprehensive strategy to tackle various challenges inherent to the industry and capitalize on market opportunities.

1. Market Research: ...

2. Regulatory and Compliance: ...

3. Product Development: ...

4. Service Differentiation: ...

5. Go-to-Market Strategy: ...

6. Growth and Expansion: ...

7. Sustainability and Trust: ...

These items are important aspects to consider when starting a FinTech startup that offers online banking services."

## Section 4: Feedback

**Title**: Feedback

**Content**:

"The response provides a comprehensive overview.

Overall, it includes a detailed plan of how to start a FinTech startup by presenting an imaginary startup 'DigitalBank'.

However, it lacks a deeper dive into regulatory compliance.

...

**Score**: 3 out of 5"

## Diagram Structure

1. **Left Column**:

- Instruction (White background with red oval)

- Customized Score Rubric (Peach background with red oval)

2. **Center Column**:

- Response to Evaluate (Gray background with red oval)

- Reference Answer (Blue background with red oval)

3. **Right Column**:

- Prometheus (Blue background with flame emoji)

- Feedback (Yellow background with red oval)

## Key Observations

- **Regulatory Compliance Focus**: The rubric emphasizes regulatory compliance as a critical evaluation criterion.

- **Feedback Score**: The response received a score of 3/5, indicating partial alignment with compliance requirements.

- **Placeholder Content**: The Reference Answer includes ellipses (...) for detailed subpoints, suggesting incomplete transcription.

## Language Notes

- **Primary Language**: English

- **No Additional Languages Detected**

</details>

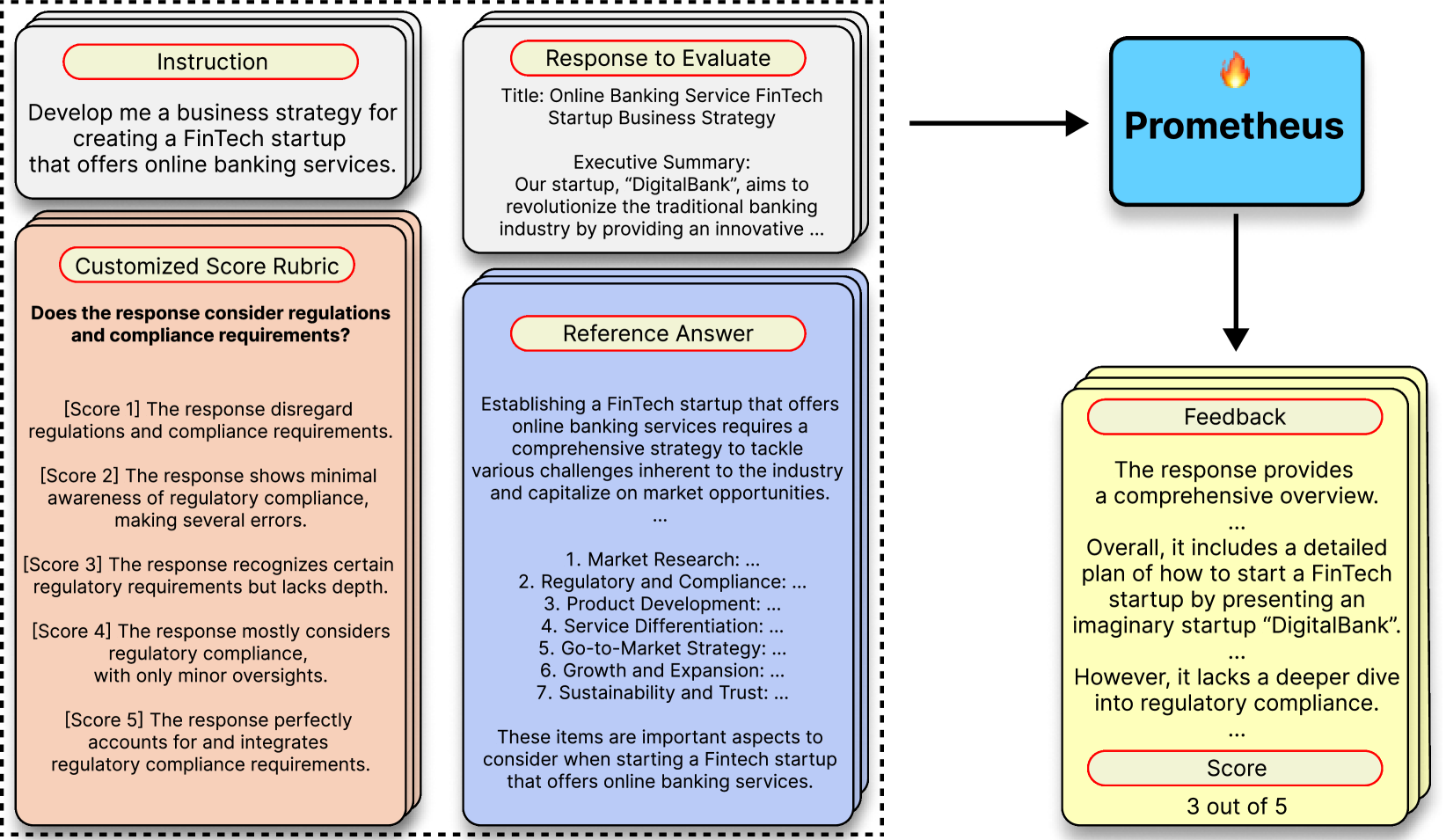

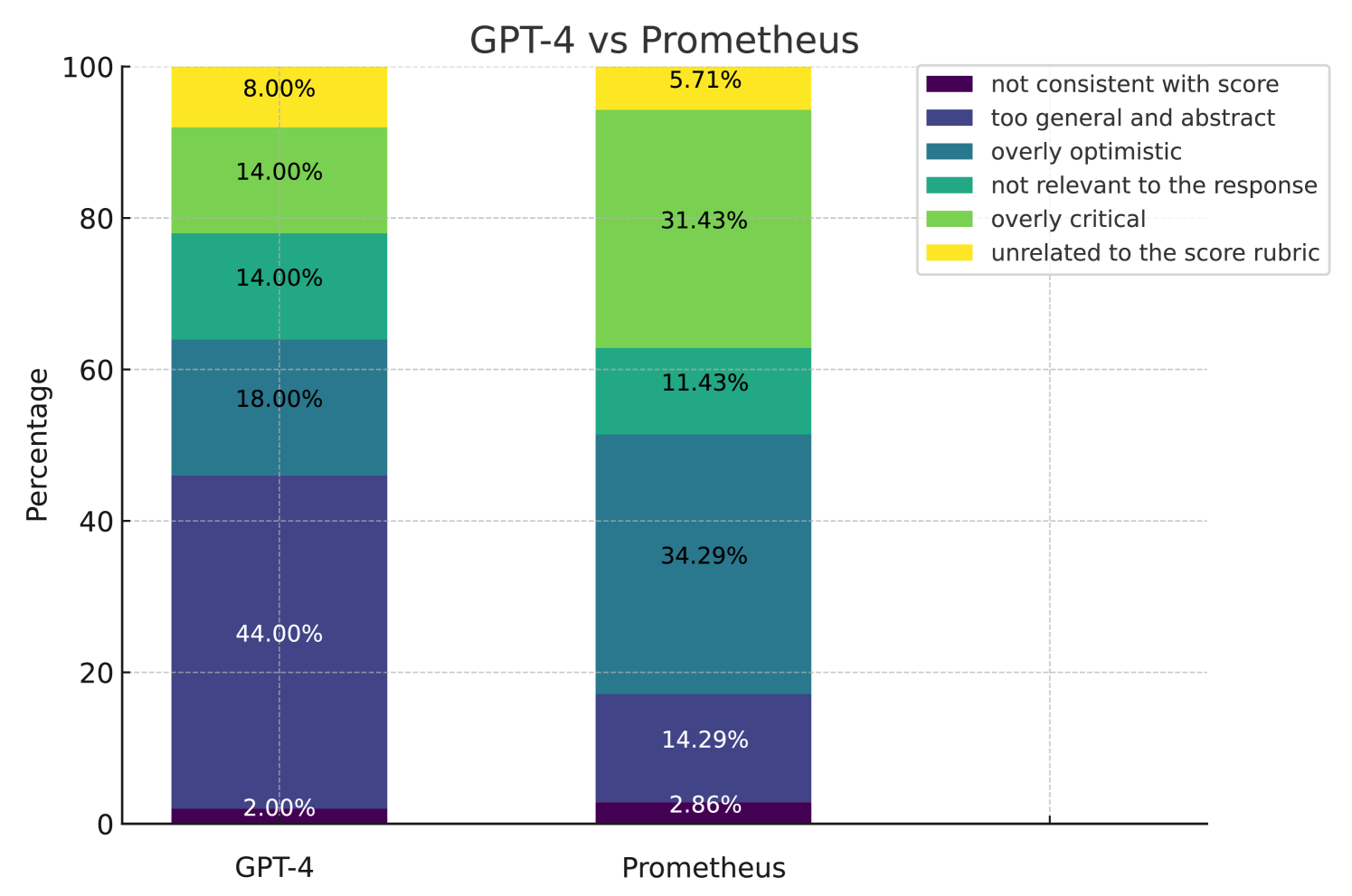

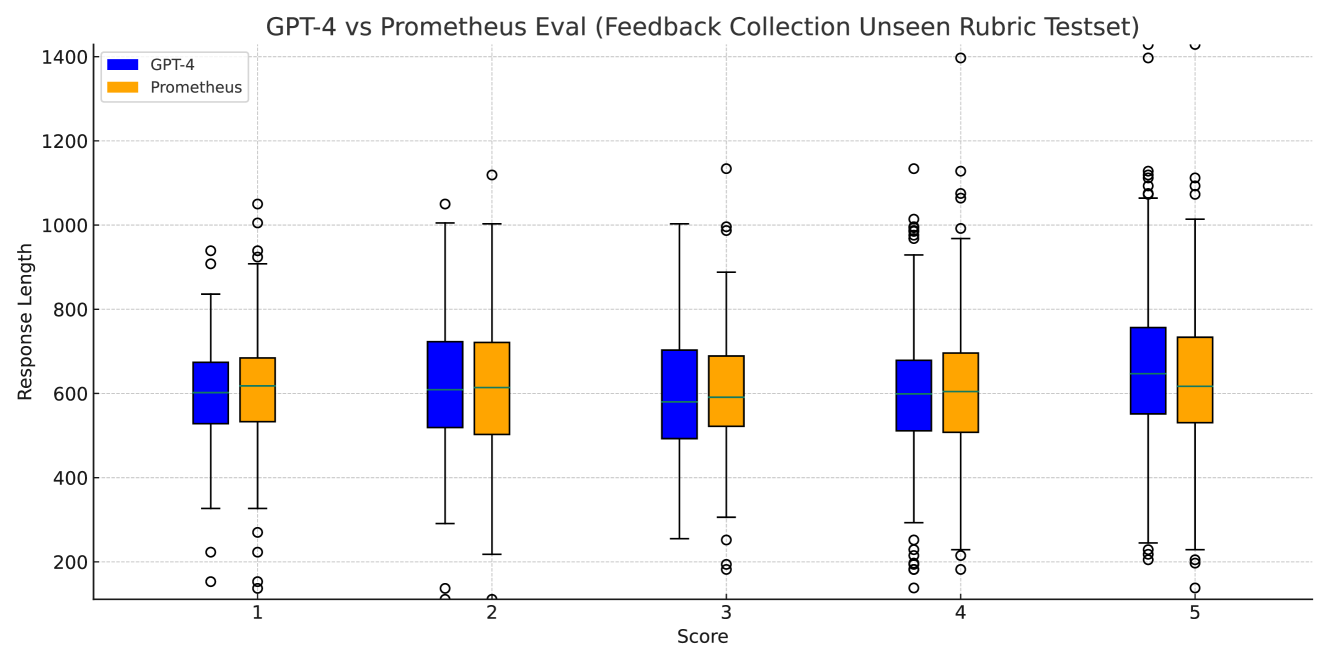

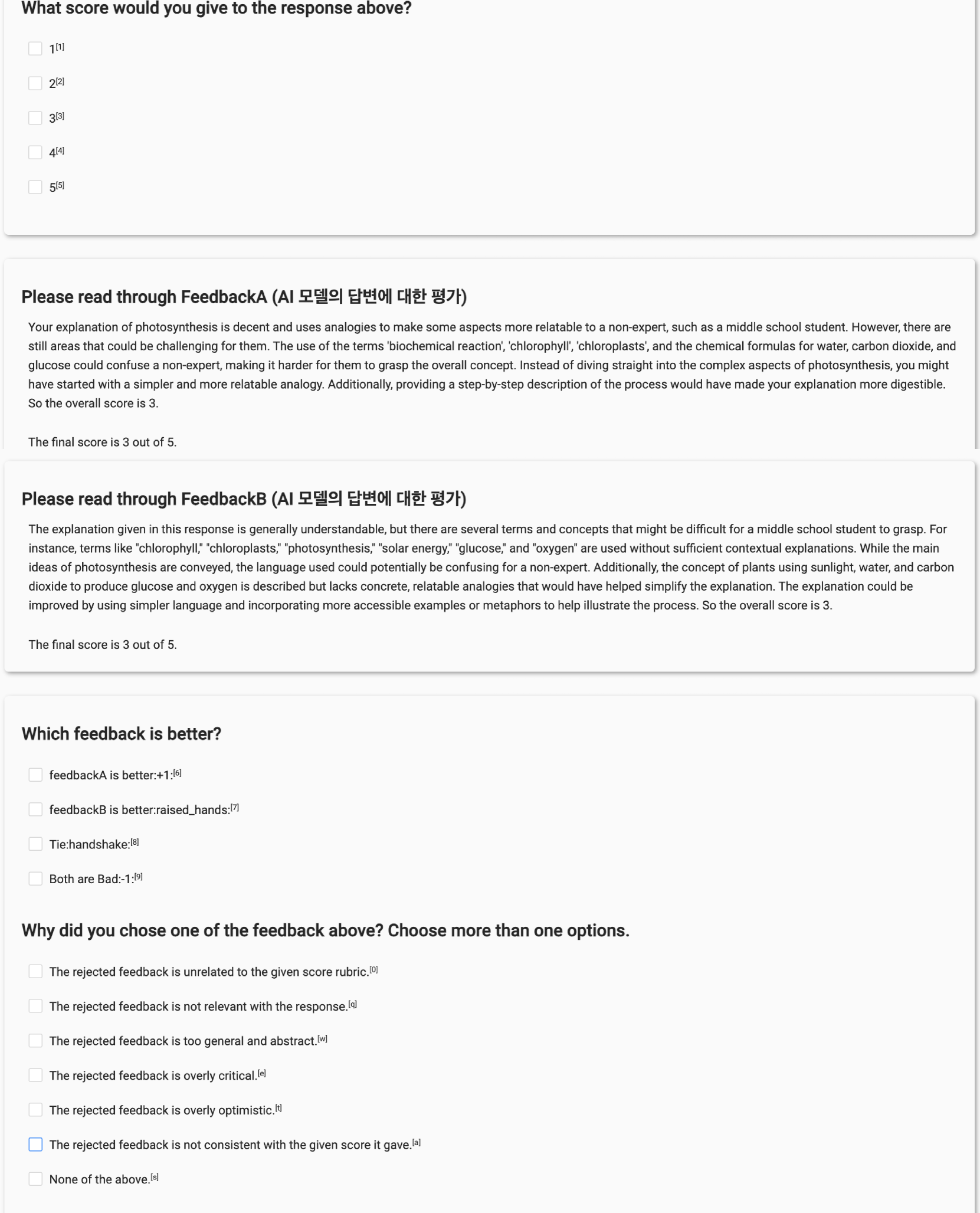

Figure 2: The individual components of the Feedback Collection. By adding the appropriate reference materials (Score Rubric and Reference Answer) and training on GPT-4’s feedback, we show that we could obtain a strong open-source evaluator LM.

Taking these into consideration, we construct each instance within the Feedback Collection to encompass four components for the input (instruction, response to evaluate, customized score rubric, reference answer) and two components in the output (feedback, score). An example of an instance is shown in Figure 2 and the number of each component is shown in Table 1.

The four components for the input are as follows:

1. Instruction: An instruction that a user would prompt to an arbitrary LLM.

1. Response to Evaluate: A response to the instruction that the evaluator LM has to evaluate.

1. Customized Score Rubric: A specification of novel criteria decided by the user. The evaluator should focus on this aspect during evaluation. The rubric consists of (1) a description of the criteria and (2) a description of each scoring decision (1 to 5).

1. Reference Answer: A reference answer that would receive a score of 5. Instead of requiring the evaluator LM to solve the instruction, it enables the evaluator to use the mutual information between the reference answer and the response to make a scoring decision.

The two components for the output are as follows:

1. Feedback: A rationale of why the provided response would receive a particular score. This is analogous to Chain-of-Thoughts (CoT), making the evaluation process interpretable.

1. Score: An integer score for the provided response that ranges from 1 to 5.

Table 1: Information about our training dataset Feedback Collection. Note that there are 20 instructions accompanied for each score rubric, leading to a total number of 20K. Also, there is a score 1-5 response and feedback for each instruction, leading to a total number of 100K.

| Evaluation Mode | Data | # Score Rubrics | # Instructions & Reference Answer | # Responses & Feedback |

| --- | --- | --- | --- | --- |

| Absolute Evaluation | Feedback Collection | 1K (Fine-grained & Customized) | Total 20K (20 for each score rubric) | Total 100K(5 for each instruction; 20K for each score within 1-5) |

<details>

<summary>x3.png Details</summary>

### Visual Description

# Technical Document Extraction: Image Analysis

## Overview

The image is a **flowchart** depicting a structured process for evaluating and refining responses using **score rubrics** and **feedback mechanisms**. It includes textual descriptions, hierarchical categories, and directional arrows indicating workflow. Below is a detailed breakdown of all textual components, diagrams, and data points.

---

## 1. Seed Score Rubrics

### Labels and Text

- **Question 1**:

*"Is the answer explained like a formal proof?"*

- Score 1: The answer lacks any structure resembling a formal proof.

- Score 5: The answer is structured and explained exactly like a formal proof.

- **Question 2**:

*"Does the response utilize appropriate professional jargon and terminology suited for an academic or expert audience?"*

- Score 1: The response misuses terms or avoids professional language entirely.

- Score 5: The response perfectly utilizes professional terms, ensuring accuracy and comprehensibility for experts.

---

## 2. Feedback Collection Score Rubrics (1K)

### Circular Diagram Structure

The central diagram is a **radial chart** with hierarchical categories and subcategories. Key components include:

#### Main Categories (Colored Segments)

1. **Tone & Style Modulation** (Pink)

- Emotionally Attuned Responses

- Contextual Language Adaptation

2. **Adaptive Communication** (Light Pink)

- Tone & Style Modulation

- Contextual Language Adaptation

3. **Ambiguity Navigation** (Orange)

- Proactive Clarification Seeking

- Ambiguous Input Clarification

- Unclear Query Handling

4. **Global Cultural Awareness** (Yellow)

- Sensitivity to Cultural Differences

- Respect for Cultural Diversity

- Understanding of Global Traditions

5. **Emotional Intelligence in Communication** (Green)

- Emotionally Considerate Communication

- Recognition & Acknowledgment of Emotions

- Empathetic & Supportive Responses

6. **Specialized Language Mastery** (Blue)

- Precise Technical Language Use

- Technical Vocabulary Interpretation

- Sector-Specific Terminology

7. **Cultural Sensitivity & Respect** (Purple)

- Nuanced Cultural Understanding

- Avoidance of Cultural Stereotypes

- Societal Manners & Etiquette

8. **Problem Solving** (Light Purple)

- Innovative Problem Solving

- Error & Misinterpretation Management

9. **Industry & Technical Language Proficiency** (Red)

- Accurate Industry Terminology Use

- Effective Misconception Handling

- Misinterpretation Mitigation

10. **Technical Term Mastery** (Dark Red)

- Novel Idea Generation

- Specialized Language Fluency

11. **Child Safety Promotion** (Light Orange)

- Engagement Enhancement Strategies

- Clear & Concise Information Provision

#### Arrows and Connections

- Red arrows indicate **feedback flow** between components (e.g., from Seed Score Rubrics to Feedback Collection Instance Augmentation).

- Dotted lines separate distinct sections (e.g., Seed Score Rubrics, Feedback Collection Score Rubrics, Feedback Collection Instance Augmentation).

---

## 3. Feedback Collection Instance Augmentation

### Textual Components

#### Instruction Block

- **Task**:

*"I am an entry-level employee at a multinational corporation and have been asked to write a report on the current trends in our industry."*

- **Uncertainty**:

*"I am unsure how to structure the report and level of formality required. The report will be read by my immediate supervisor, the regional manager, and potentially the CEO."*

- **Request**:

*"Can you give me a sample of how the report should be written?"*

#### Reference Answer (Score 5)

- **Guidelines**:

1. Research all the latest stuff in your industry.

2. Create an outline organized with thoughts.

3. Include a title page, executive summary, introduction, body, conclusion, and references.

4. Use formal language but avoid stiffness.

5. Add charts or graphs (example provided).

#### Feedback Block

- **Positive Feedback**:

*"The response provides a helpful guide to approaching the task but could be more professional in tone and phrasing."*

- **Constructive Criticism**:

*"Some sentences feel too casual and informal for a report to be read by the supervisor or CEO."*

- **Suggestions**:

*"Keep your language formal, use headings, subheadings, and add charts or graphs. Here’s an example: [example text]."*

#### Score

- **Overall Score**: 3

---

## 4. Customized Score Rubric

### Labels and Text

- **Question**:

*"Is the answer written professionally and formally, so that I could send it to my boss?"*

- Score 1: The answer lacks any sense of professionalism and is informal.

- Score 5: The answer is completely professional and suitable for a formal setting.

---

## Diagram Components and Flow

1. **Seed Score Rubrics** (Left) → **Feedback Collection Score Rubrics (1K)** (Center) → **Feedback Collection Instance Augmentation** (Right) → **Customized Score Rubric** (Bottom Left).

2. Arrows indicate **evaluation progression** and **feedback integration**.

3. Dotted lines separate distinct evaluation phases.

---

## Key Trends and Data Points

- **Hierarchical Evaluation**:

- Feedback Collection Score Rubrics (1K) is the most detailed, with 11 main categories and 25+ subcategories.

- Seed and Customized Score Rubrics focus on **formality** and **professionalism**.

- **Feedback Loop**:

- The process emphasizes iterative refinement (e.g., from Seed to Customized Rubrics).

- **Cultural and Technical Focus**:

- Categories like *Global Cultural Awareness* and *Technical Term Mastery* highlight cross-cultural and domain-specific requirements.

---

## Notes

- **No Data Tables**: The image uses textual descriptions and hierarchical diagrams instead of numerical data.

- **Language**: All text is in **English**.

- **Spatial Grounding**:

- Seed Score Rubrics: Top-left quadrant.

- Feedback Collection Score Rubrics: Central circular diagram.

- Feedback Collection Instance Augmentation: Right side.

- Customized Score Rubric: Bottom-left quadrant.

---

## Final Output

This flowchart outlines a **multi-stage evaluation process** for technical documents, emphasizing **formality**, **professionalism**, and **cultural/technical adaptability**. The Feedback Collection Score Rubrics (1K) serves as the core evaluation framework, while the Customized Score Rubric tailors criteria for specific audiences (e.g., executives).

</details>

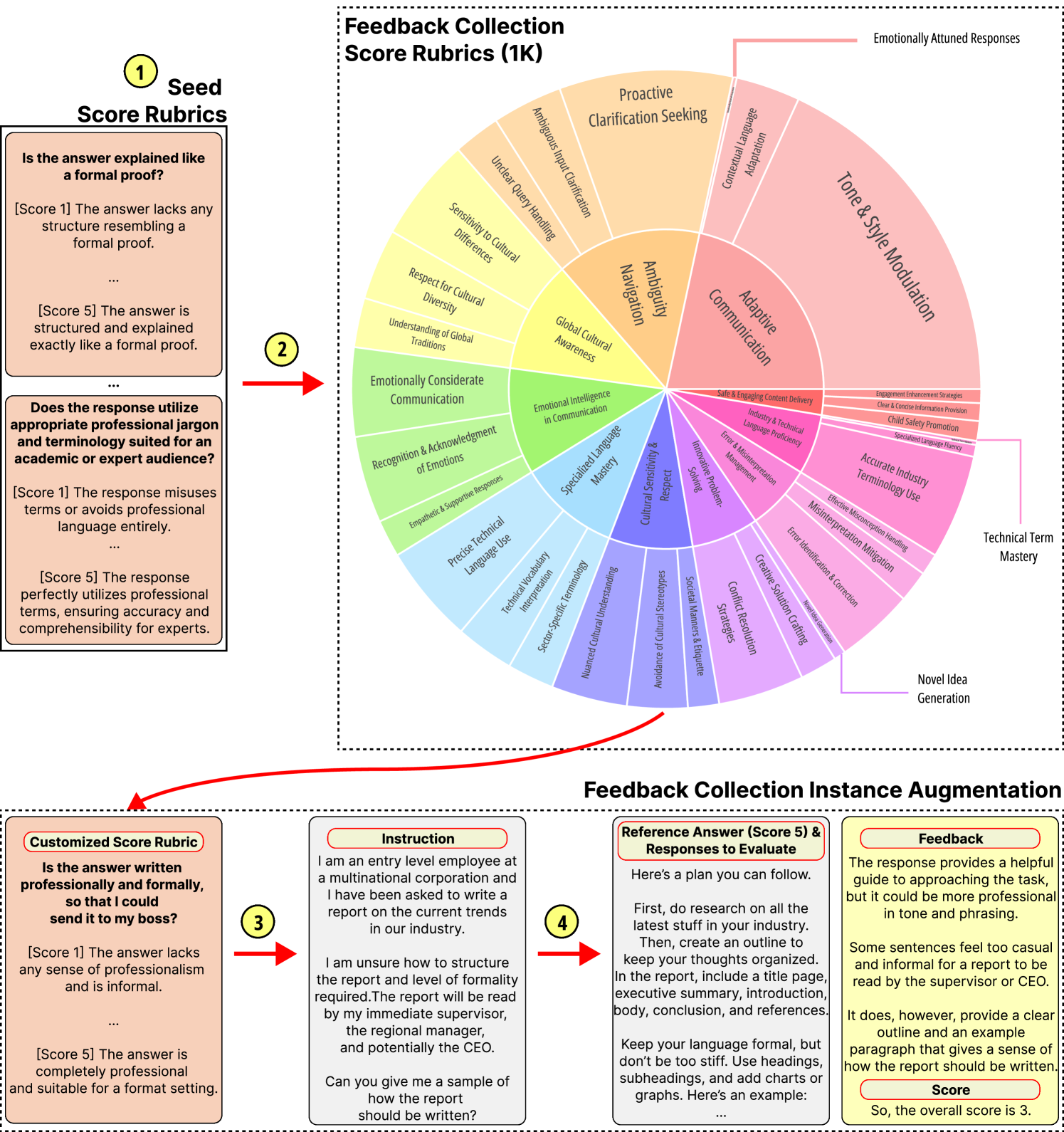

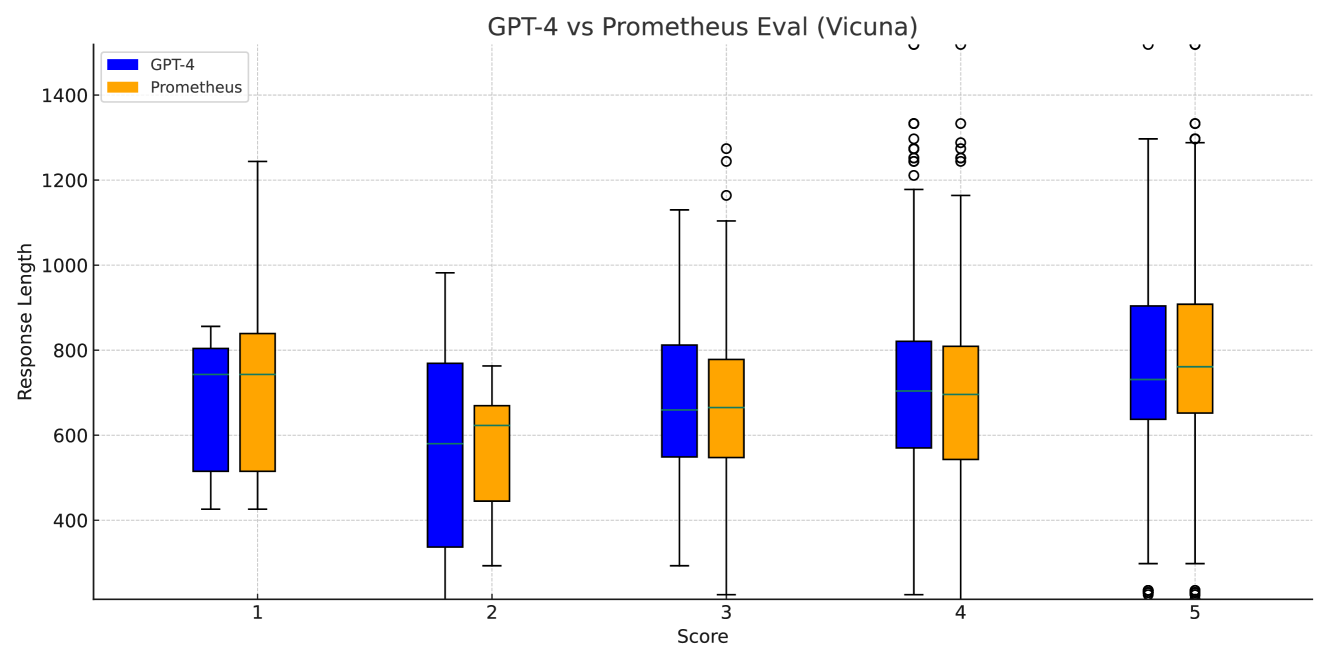

Figure 3: Overview of the augmentation process of the Feedback Collection. The keywords included within the score rubrics of the Feedback Collection is also displayed.

Each instance has an accompanying scoring rubric and reference answer upon the instruction in order to include as much reference material as possible. Also, we include an equal number of 20K instances for each score in the range of 1 to 5, preventing undesired decision bias while training the evaluator LLM. A detailed analysis of the Feedback Collection dataset is in Appendix A.

### 3.1 Dataset Construction Process

We construct a large-scale Feedback Collection dataset by prompting GPT-4. Specifically, the collection process consists of (1) the curation of 50 initial seed rubrics, (2) the expansion of 1K new score rubrics through GPT-4, (3) the augmentation of realistic instructions, and (4) the augmentation of the remaining components in the training instances (i.e. responses including the reference answers, feedback, and scores). Figure 3 shows the overall augmentation process.

Step 1: Creation of the Seed Rubrics

We begin with the creation of a foundational seed dataset of scoring rubrics. Each author curates a detailed and fine-grained scoring rubric that each personnel considers pivotal in evaluating outputs from LLMs. This results in an initial batch of 50 seed rubrics.

Step 2: Augmenting the Seed Rubrics with GPT-4

Using GPT-4 and our initial seed rubrics, we expand the score rubrics from the initial 50 to a more robust and diverse set of 1000 score rubrics. Specifically, by sampling 4 random score rubrics from the initial seed, we use them as demonstrations for in-context learning (ICL), and prompt GPT-4 to brainstorm a new novel score rubric. Also, we prompt GPT-4 to paraphrase the newly generated rubrics in order to ensure Prometheus could generalize to the similar score rubric that uses different words. We iterate the brainstorming $→$ paraphrasing process for 10 rounds. The detailed prompt used for this procedure is in Appendix G.

Step 3: Crafting Novel Instructions related to the Score Rubrics

With a comprehensive dataset of 1000 rubrics at our disposal, the subsequent challenge was to craft pertinent training instances. For example, a score rubric asking “Is it formal enough to send to my boss” is not related to a math problem. Considering the need for a set of instructions closely related to the score rubrics, we prompt GPT-4 to generate 20K unique instructions that are highly relevant to the given score rubric.

Step 4: Crafting Training Instances

Lastly, we sequentially generate a response to evaluate and corresponding feedback by prompting GPT-4 to generate each component that will get a score of $i$ (1 $≤ i≤$ 5). This leads to 20 instructions for each score rubric, and 5 responses & feedback for each instruction. To eliminate the effect of decision bias when fine-tuning our evaluator LM, we generate an equal number of 20K responses for each score. Note that for the response with a score of 5, we generate two distinctive responses so we could use one of them as an input (reference answer).

### 3.2 Fine-tuning an Evaluator LM

Using the Feedback Collection dataset, we fine-tune Llama-2-Chat (7B & 13B) and obtain Prometheus to induce fine-grained evaluation capability. Similar to Chain-of-Thought Fine-tuning (Ho et al., 2022; Kim et al., 2023a), we fine-tune to sequentially generate the feedback and then the score. We highlight that it is important to include a phrase such as ‘ [RESULT] ’ in between the feedback and the score to prevent degeneration during inference. We include the details of fine-tuning, inference, and ablation experiments (reference materials, base model) in Appendix C.

## 4 Experimental Setting: Evaluating an Evaluator LM

In this section, we explain our experiment setting, including the list of experiments, metrics, and baselines that we use to evaluate fine-grained evaluation capabilities of an evaluator LLM. Compared to measuring the instruction-following capability of a LLM, it is not straightforward to directly measure the capability to evaluate. Therefore, we use human evaluation and GPT-4 evaluation as a standard and measure how similarly our evaluator model and baselines could closely simulate them. We mainly employ two types of evaluation methods: Absolute Grading and Ranking Grading. Detailed information on the datasets used for the experiment is included in Table 2.

### 4.1 List of Experiments and Metrics

Absolute Grading

We first test in an Absolute Grading setting, where the evaluator LM should generate a feedback and score within the range of 1 to 5 given an instruction, a response to evaluate, and reference materials (as shown in Figure 2). Absolute Grading is challenging compared to Ranking Grading since the evaluator LM does not have access to an opponent to compare with and it is required to provide a score solely based on its internal decision. Yet, it is more practical for users since it relieves the need to prepare an opponent to compare with during evaluation.

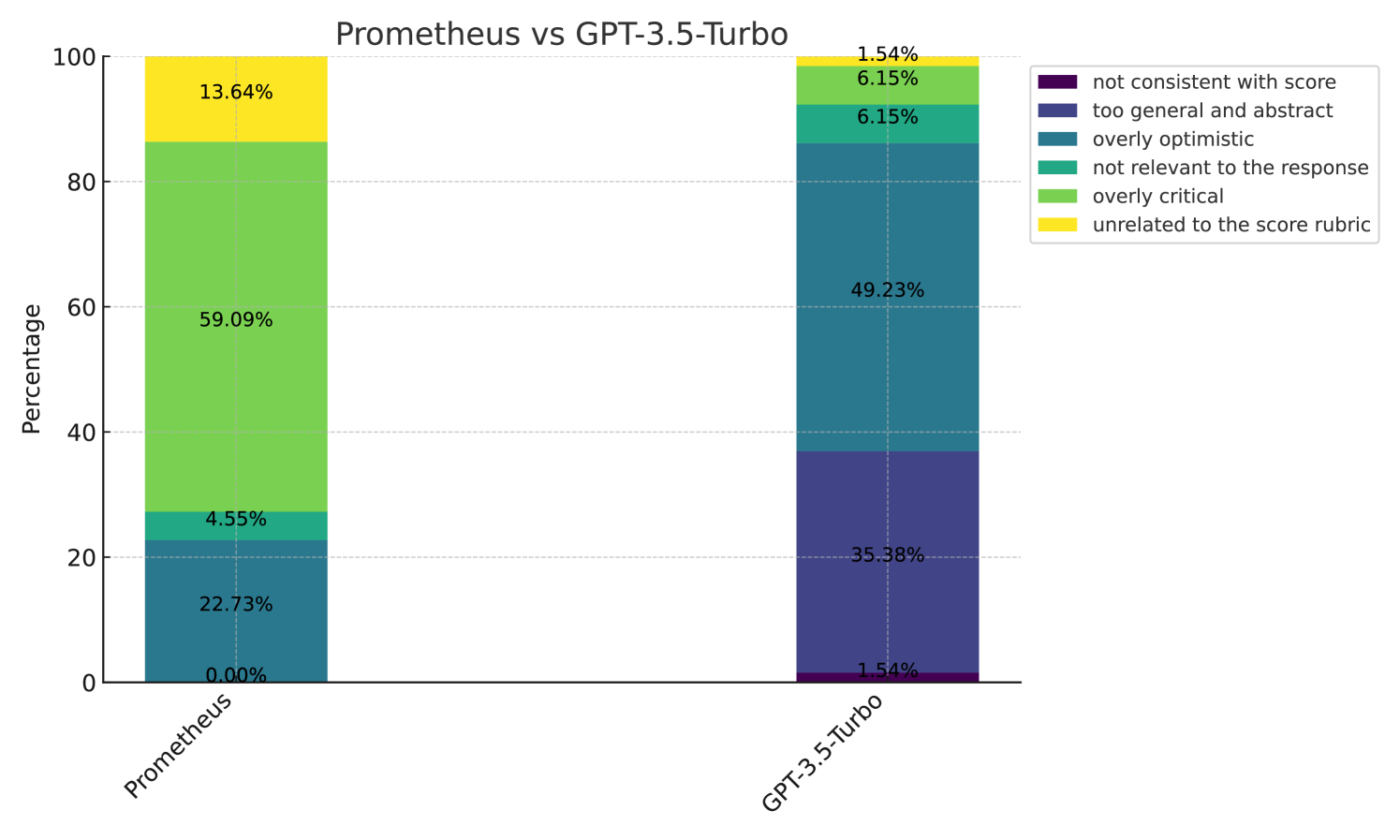

We mainly conduct three experiments in this setting: (1) measuring the correlation with human evaluators (Section 5.1), (2) comparing the quality of the feedback using human evaluation (Section 5.1), and (3) measuring the correlation with GPT-4 evaluation (Section 5.2). For the experiments that measure the correlation, we use 3 different correlation metrics: Pearson, Kdendall-Tau, and Spearman. For measuring the quality of the generated feedback, we conduct a pairwise comparison between the feedback generated by Prometheus, GPT-3.5-Turbo, and GPT-4, asking human evaluators to choose which has better quality and why they thought so. Specifically, we recruited 9 crowdsource workers and split them into three groups: Prometheus vs GPT-4, Prometheus vs ChatGPT, and GPT-4 vs ChatGPT. The annotators are asked to answer the following three questions:

1. What score would you give to the response based on the given score rubric?

1. Among the two Feedback, which is better for critiquing the given response?

1. Why did you reject that particular feedback?

Table 2: Information about the datasets we use to test evaulator LMs. Note that Feedback Bench is a dataset that is crafted with the exact same procedure as the Feedback Collection as explained in Section 3.1. We include additional analysis of Feedback Bench in Appendix B. Simulated GPT-4 $†$ denotes GPT-4 prompted to write a score of $i$ $(1≤ i≤ 5$ ) during augmentation.

We use the following four benchmarks to measure the correlation with human evaluation and GPT-4 evaluation. Note that Feedback Bench is a dataset generated with the same procedure as the Feedback Collection, and is divided into two subsets (Seen Rubric and Unseen Rubric).

- Feedback Bench: The Seen Rubric subset shares the same 1K score rubrics with the Feedback Collection across 1K instructions (1 per score rubric). The Unseen Rubric subset also consists of 1K new instructions but with 50 new score rubrics that are generated the same way as the training set. Details are included in Appendix B.

- Vicuna Bench: We adapt the 80 test prompt set from Vicuna (Chiang et al., 2023) and hand-craft customized score rubrics for each test prompt. In order to obtain reference answers, we concatenate the hand-crafted score rubric and instruction to prompt GPT-4.

- MT Bench: We adapt the 80 test prompt set from MT Bench (Zheng et al., 2023), a multi-turn instruction dataset. We hand-craft customized score rubrics and generate a reference answer using GPT-4 for each test prompt as well. Note that we only use the last turn of this dataset for evaluation, providing the previous dialogue as input to the evaluator LM.

- FLASK Eval: We adapt the 200 test prompt set from FLASK (Ye et al., 2023b), a fine-grained evaluation dataset that includes multiple conventional NLP datasets and instruction datasets. We use the 12 score rubrics (that are relatively coarse-grained compared to the 1K score rubrics used in the Feedback Collection) such as Logical Thinking, Background Knowledge, Problem Handling, and User Alignment.

Ranking Grading

To test if an evaluator LM trained only on Absolute Grading could be utilized as a universal reward model based on any criteria, we use existing human preference benchmarks and use accuracy as our metric (Section 5.3). Specifically, we check whether the evaluator LM could give a higher score to the response that is preferred by human evaluators. The biggest challenge of employing an evaluator LM trained in an Absolute Grading setting and testing it on Ranking Grading was that it could give the same score for both candidates. Therefore, we use a temperature of 1.0 when evaluating each candidate independently and iterate until there is a winner. Hence, it’s noteworthy that the settings are not exactly fair compared to other ranking models. This setting is NOT designed to claim SOTA position in these benchmarks, but is conducted only for the purpose of checking whether an evaluator LM trained in an Absolute Grading setting could also generalize in a Ranking Grading setting according to general human preference. Also, in this setting, we do not provide a reference answer to check whether Prometheus could function as a reward model. We use the following two benchmarks to measure the accuracy with human preference datasets:

- MT Bench Human Judgement: This data is another version of the aforementioned MT Bench (Zheng et al., 2023). Note that it includes a tie option as well and does not require iterative inference to obtain a clear winner. We use Human Preference as our criteria.

- HHH Alignment: Introduced by Anthropic (Askell et al., 2021), this dataset (221 pairs) is one of the most widely chosen reward-model test-beds that measures preference accuracy in Helpfulness, Harmlessness, Honesty, and in General (Other) among two response choices.

### 4.2 Baselines

The following list shows the baselines we used for comparison in the experiments:

- Llama2-Chat- {7,13,70} B (Touvron et al., 2023): The base model of Prometheus when fine-tuning on the Feedback Collection. Also, it is considered as the best option among the open-source LLMs, which we use as an evaluator in this work.

- LLama-2-Chat-13B + Coarse: To analyze the effectiveness of training on thousands of fine-grained score rubrics, we train a comparing model only using 12 coarse-grained score rubrics from Ye et al. (2023b). Detailed information on this model is in Appendix D.

- GPT-3.5-turbo-0613: Proprietary LLM that offers a cheaper price when employed as an evaluator LLM. While it is relatively inexpensive compared to GPT-4, it still has the issue of uncontrolled versioning and close-source nature.

- GPT-4- {0314,0613, Recent}: One of the most powerful proprietary LLM that is considered the main option when using LLMs as evaluators. Despite its reliability as an evaluator LM due to its superior performance, it has several issues of prohibitive costs, uncontrolled versioning, and close-source nature.

- StanfordNLP Reward Model https://huggingface.co/stanfordnlp/SteamSHP-flan-t5-xl: One of the state-of-the-art reward model directly trained on multiple human preference datasets in a ranking grading setting.

- ALMOST Reward Model (Kim et al., 2023b): Another state-of-the-art reward model trained on synthetic preference datasets in a ranking grading setting.

## 5 Experimental Results

<details>

<summary>x4.png Details</summary>

### Visual Description

# Technical Document Extraction: Pearson Correlation Heatmap

## Image Description

The image is a **heatmap** visualizing Pearson correlation coefficients between Large Language Model (LLM) evaluators and human evaluators' scores. The chart uses a **color gradient** (from light green to dark blue) to represent correlation strength, with darker blues indicating higher correlation (closer to 1.0).

---

### Key Components

1. **Title**:

`"Pearson Correlation Between LLM Evaluators and Human Evaluators Scores"`

2. **Axes**:

- **X-axis (Columns)**:

Categories:

- `Overall`

- `Feedback Collection (Unseen)`

- `MT Bench`

- `Vicuna Bench`

- **Y-axis (Rows)**:

Models:

- `GPT-3.5 turbo`

- `GPT-4`

- `Prometheus 13B`

3. **Legend**:

- Located on the **right edge** of the chart.

- **Color Scale**:

- Light green (`~0.3`) to dark blue (`~0.9`).

- Label: `"Pearson Correlation (higher is better)"`

4. **Data Table**:

Reconstructed from the heatmap cells (values rounded to 3 decimal places):

| Model | Overall | Feedback Collection (Unseen) | MT Bench | Vicuna Bench |

|------------------|---------|------------------------------|----------|--------------|

| GPT-3.5 turbo | 0.392 | 0.567 | 0.277 | 0.743 |

| GPT-4 | 0.882 | 0.924 | 0.883 | 0.717 |

| Prometheus 13B | 0.897 | 0.934 | 0.927 | 0.716 |

---

### Spatial Grounding

- **Legend Position**: Right edge of the chart (x = 1.0, y = 0.5 ± 0.5 height).

- **Color Matching**:

- Light green cells (e.g., GPT-3.5 turbo's `MT Bench`) align with the lower end of the legend.

- Dark blue cells (e.g., Prometheus 13B's `Feedback Collection`) align with the upper end.

---

### Trend Verification

1. **GPT-3.5 turbo**:

- Weakest correlations overall (light green to medium blue).

- Strongest in `Vicuna Bench` (0.743).

2. **GPT-4**:

- Strong correlations (dark blue to near-black).

- Highest in `Feedback Collection` (0.924).

3. **Prometheus 13B**:

- Strongest correlations overall (darkest blue).

- Peak in `Feedback Collection` (0.934) and `MT Bench` (0.927).

---

### Notes

- **No additional text or diagrams** are present.

- **No non-English content** detected.

- **No legends for lines or colors beyond the Pearson scale**.

This heatmap highlights that **Prometheus 13B** and **GPT-4** show the strongest alignment with human evaluators across benchmarks, while **GPT-3.5 turbo** exhibits weaker correlations, particularly in `MT Bench`.

</details>

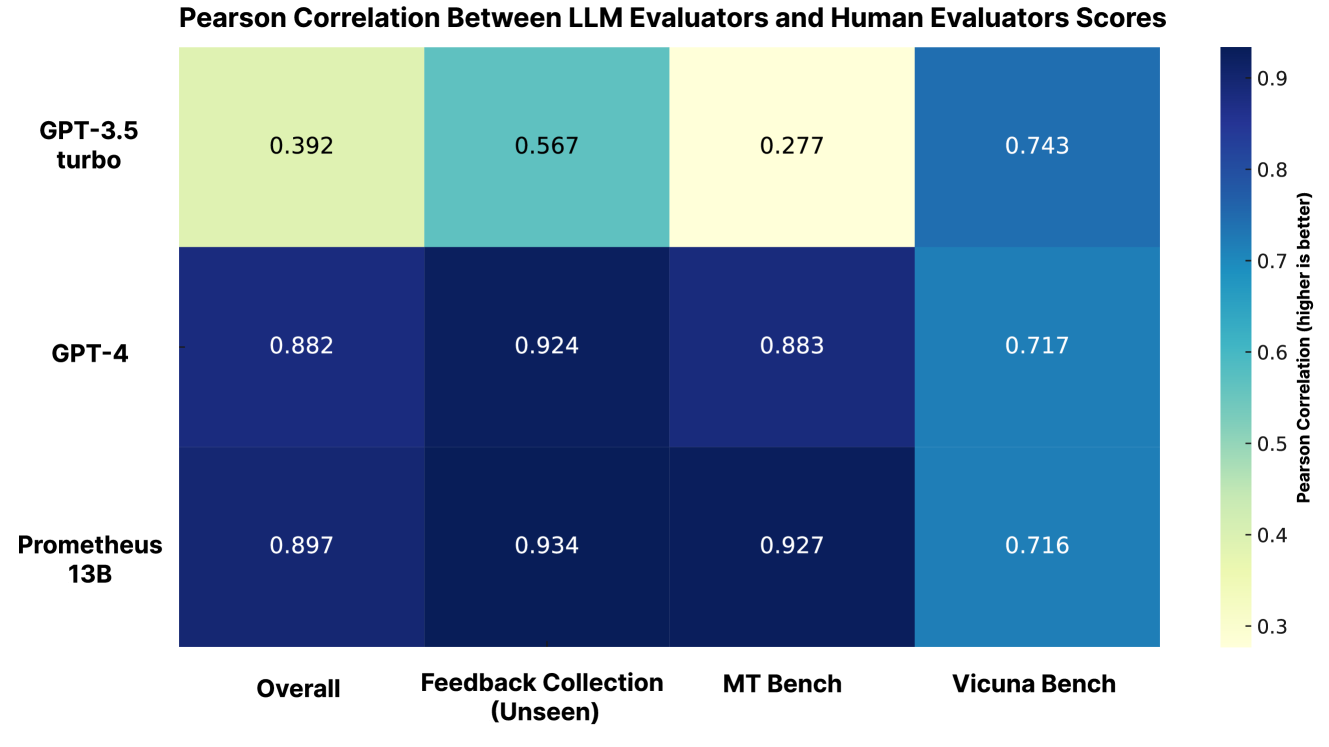

Figure 4: The Pearson correlation between scores from human annotators and the score from GPT-3.5-Turbo, Prometheus, and GPT-4 on 45 customized score rubrics from the Feedback Bench, Vicuna Bench, and MT Bench. Prometheus shows a high correlation with human evaluators.

<details>

<summary>x5.png Details</summary>

### Visual Description

# Technical Document Extraction: Horizontal Bar Chart Analysis

## 1. Chart Structure and Components

- **Chart Type**: Horizontal stacked bar chart

- **Orientation**: Vertical y-axis (categories), horizontal x-axis (count)

- **Legend**: Located at top-right corner

- **Color Legend**:

- `Blue`: Left Wins

- `Red`: Right Wins

- `Purple`: Both are Good

- `Yellow`: Both are Bad

## 2. Axis Labels and Markers

- **X-Axis**:

- Title: "Count"

- Range: 0 to 140

- Grid lines: Every 20 units

- **Y-Axis**:

- Categories (top to bottom):

1. GPT-4 VS ChatGPT

2. Prometheus VS ChatGPT

3. Prometheus VS GPT-4

## 3. Data Points and Trends

### Category 1: GPT-4 VS ChatGPT

- **Blue (Left Wins)**: 74 (Dominant segment, 54.8% of total)

- **Red (Right Wins)**: 19 (14.1%)

- **Purple (Both are Good)**: 32 (23.7%)

- **Yellow (Both are Bad)**: 10 (7.4%)

- **Total**: 135

- **Trend**: Left Wins > Both are Good > Right Wins > Both are Bad

### Category 2: Prometheus VS ChatGPT

- **Blue (Left Wins)**: 59 (43.7%)

- **Red (Right Wins)**: 19 (14.1%)

- **Purple (Both are Good)**: 49 (36.3%)

- **Yellow (Both are Bad)**: 8 (6.0%)

- **Total**: 135

- **Trend**: Left Wins > Both are Good > Right Wins > Both are Bad

### Category 3: Prometheus VS GPT-4

- **Blue (Left Wins)**: 51 (37.8%)

- **Red (Right Wins)**: 36 (26.7%)

- **Purple (Both are Good)**: 37 (27.4%)

- **Yellow (Both are Bad)**: 11 (8.1%)

- **Total**: 135

- **Trend**: Left Wins > Both are Good > Right Wins > Both are Bad

## 4. Key Observations

1. **Consistent Totals**: All categories sum to 135, suggesting uniform sample size.

2. **Dominant Outcome**: "Left Wins" (blue) consistently holds the largest share across all comparisons.

3. **Performance Patterns**:

- GPT-4 outperforms ChatGPT in "Left Wins" (74 vs 59)

- Prometheus shows stronger performance against ChatGPT in "Both are Good" (49 vs 32)

- Prometheus vs GPT-4 shows closest competition in "Right Wins" (36 vs 51)

## 5. Spatial Grounding

- **Legend Position**: Top-right quadrant

- **Bar Alignment**: Segments stacked left-to-right per category

- **Grid Alignment**: Bars aligned with x-axis grid lines

## 6. Data Table Reconstruction

| Category | Left Wins | Right Wins | Both are Good | Both are Bad |

|------------------------|-----------|------------|---------------|--------------|

| GPT-4 VS ChatGPT | 74 | 19 | 32 | 10 |

| Prometheus VS ChatGPT | 59 | 19 | 49 | 8 |

| Prometheus VS GPT-4 | 51 | 36 | 37 | 11 |

## 7. Validation Checks

- **Color Consistency**: All legend colors match bar segments exactly

- **Trend Verification**: Numerical values align with visual segment sizes

- **Total Accuracy**: All category totals match sum of segments (135)

## 8. Missing Information

- No textual annotations within bars

- No additional metadata (e.g., time period, source)

- No comparative metrics beyond raw counts

</details>

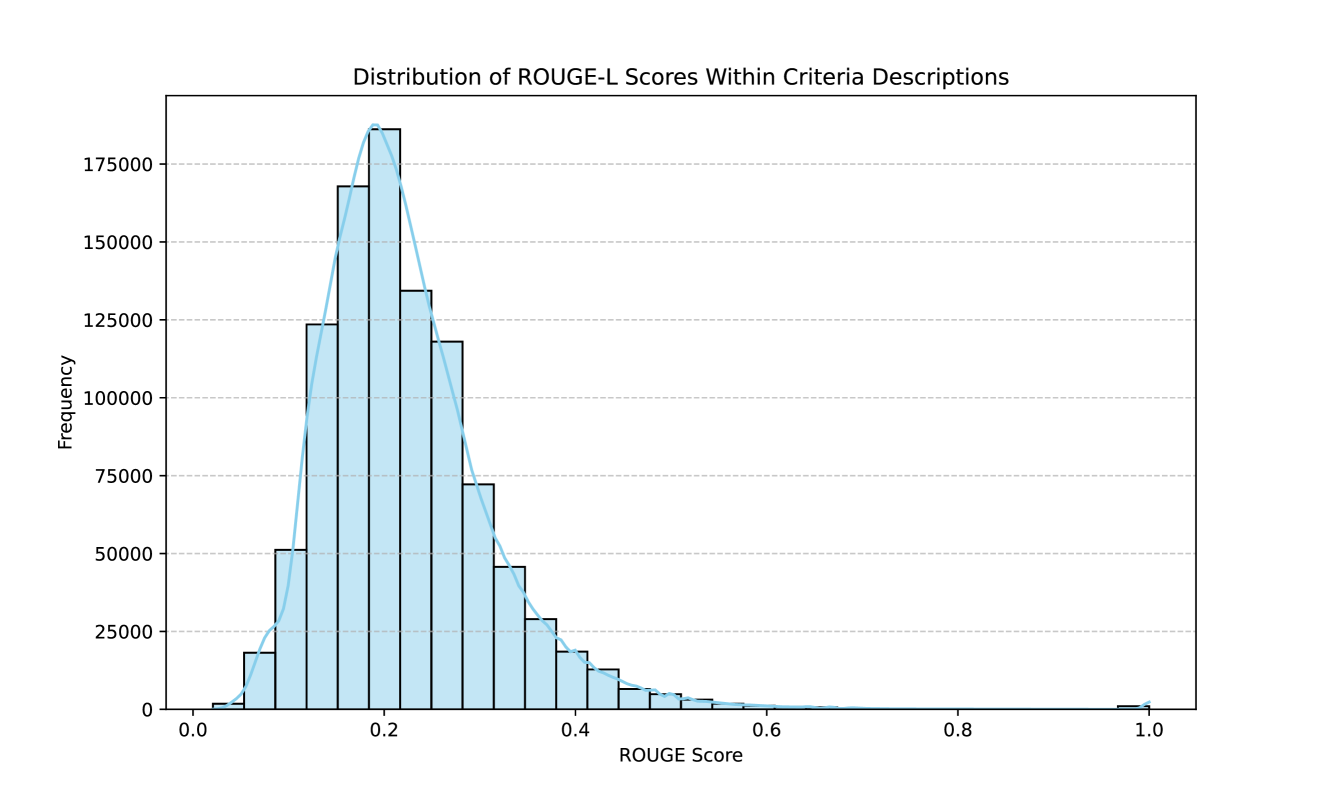

Figure 5: Pairwise comparison of the quality of the feedback generated by GPT-4, Prometheus and GPT-3.5-Turbo. Annotators are asked to choose which feedback is better at assessing the given response. Prometheus shows a win-rate of 58.62% over GPT-4 and 79.57% over GPT-3.5-Turbo.

<details>

<summary>x6.png Details</summary>

### Visual Description

# Technical Document Extraction: GPT-4 vs Prometheus Analysis

## 1. Chart Identification

- **Type**: Stacked bar chart

- **Title**: "GPT-4 vs Prometheus"

- **Purpose**: Comparative analysis of response quality metrics between two AI models

## 2. Axis Labels and Markers

- **X-axis**:

- Labels: ["GPT-4", "Prometheus"]

- Position: Horizontal axis at bottom

- **Y-axis**:

- Label: "Percentage"

- Range: 0–100% (increments of 20%)

- Position: Vertical axis on left

## 3. Legend Analysis

- **Location**: Right side of chart

- **Color-Category Mapping**:

- Purple: "not consistent with score"

- Dark Blue: "too general and abstract"

- Teal: "overly optimistic"

- Green: "not relevant to the response"

- Light Green: "overly critical"

- Yellow: "unrelated to the score rubric"

## 4. Data Extraction

### GPT-4 Bar (Left)

- **Total Height**: 100%

- **Segment Breakdown**:

- Purple: 2.00% ("not consistent with score")

- Dark Blue: 44.00% ("too general and abstract")

- Teal: 18.00% ("overly optimistic")

- Green: 14.00% ("not relevant to the response")

- Light Green: 14.00% ("overly critical")

- Yellow: 8.00% ("unrelated to the score rubric")

### Prometheus Bar (Right)

- **Total Height**: 100%

- **Segment Breakdown**:

- Purple: 2.86% ("not consistent with score")

- Dark Blue: 14.29% ("too general and abstract")

- Teal: 34.29% ("overly optimistic")

- Green: 11.43% ("not relevant to the response")

- Light Green: 31.43% ("overly critical")

- Yellow: 5.71% ("unrelated to the score rubric")

## 5. Trend Verification

- **GPT-4 Dominant Category**:

- "too general and abstract" (44.00%) - tallest segment

- **Prometheus Dominant Category**:

- "overly critical" (31.43%) - tallest segment

- **Notable Differences**:

- Prometheus shows 19.29% higher "overly optimistic" responses vs GPT-4

- GPT-4 has 29.71% higher "too general and abstract" responses vs Prometheus

- Prometheus has 22.43% higher "overly critical" responses vs GPT-4

## 6. Spatial Grounding

- **Legend Position**: [x=100%, y=0–100%] (right edge)

- **Bar Orientation**: Vertical stacking from bottom (lowest category) to top (highest category)

- **Color Consistency Check**:

- All purple segments match "not consistent with score" category

- All dark blue segments match "too general and abstract" category

- (Repeat verification for all six categories)

## 7. Component Isolation

### Header

- Title: "GPT-4 vs Prometheus"

- Subtitle: None

### Main Chart

- Two vertical bars side-by-side

- Each bar divided into six color-coded segments

- Percentage labels inside each segment

### Footer

- No visible footer elements in image

## 8. Data Table Reconstruction

| Model | not consistent with score | too general and abstract | overly optimistic | not relevant to response | overly critical | unrelated to rubric |

|-------------|---------------------------|--------------------------|-------------------|--------------------------|-----------------|---------------------|

| GPT-4 | 2.00% | 44.00% | 18.00% | 14.00% | 14.00% | 8.00% |

| Prometheus | 2.86% | 14.29% | 34.29% | 11.43% | 31.43% | 5.71% |

## 9. Language Analysis

- **Primary Language**: English

- **Secondary Language**: None detected

## 10. Critical Observations

1. Prometheus demonstrates significantly higher criticism tendency (31.43% vs 14.00%)

2. GPT-4 shows stronger tendency toward generic responses (44.00% vs 14.29%)

3. Both models exhibit similar "not consistent with score" rates (<3%)

4. Optimism bias is 91% higher in Prometheus responses

</details>

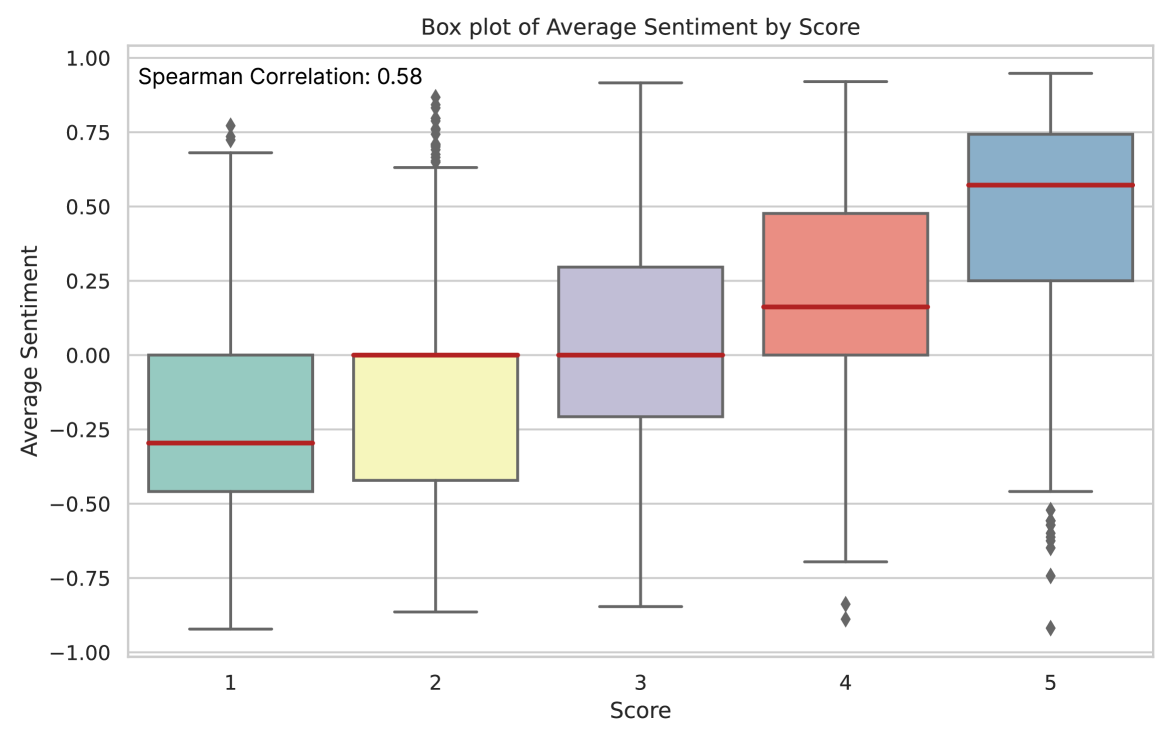

Figure 6: The reason why GPT-4’s or Prometheus’s feedback was not chosen over the other. Prometheus generates less abstract and general feedback, but tends to write overly critical ones.

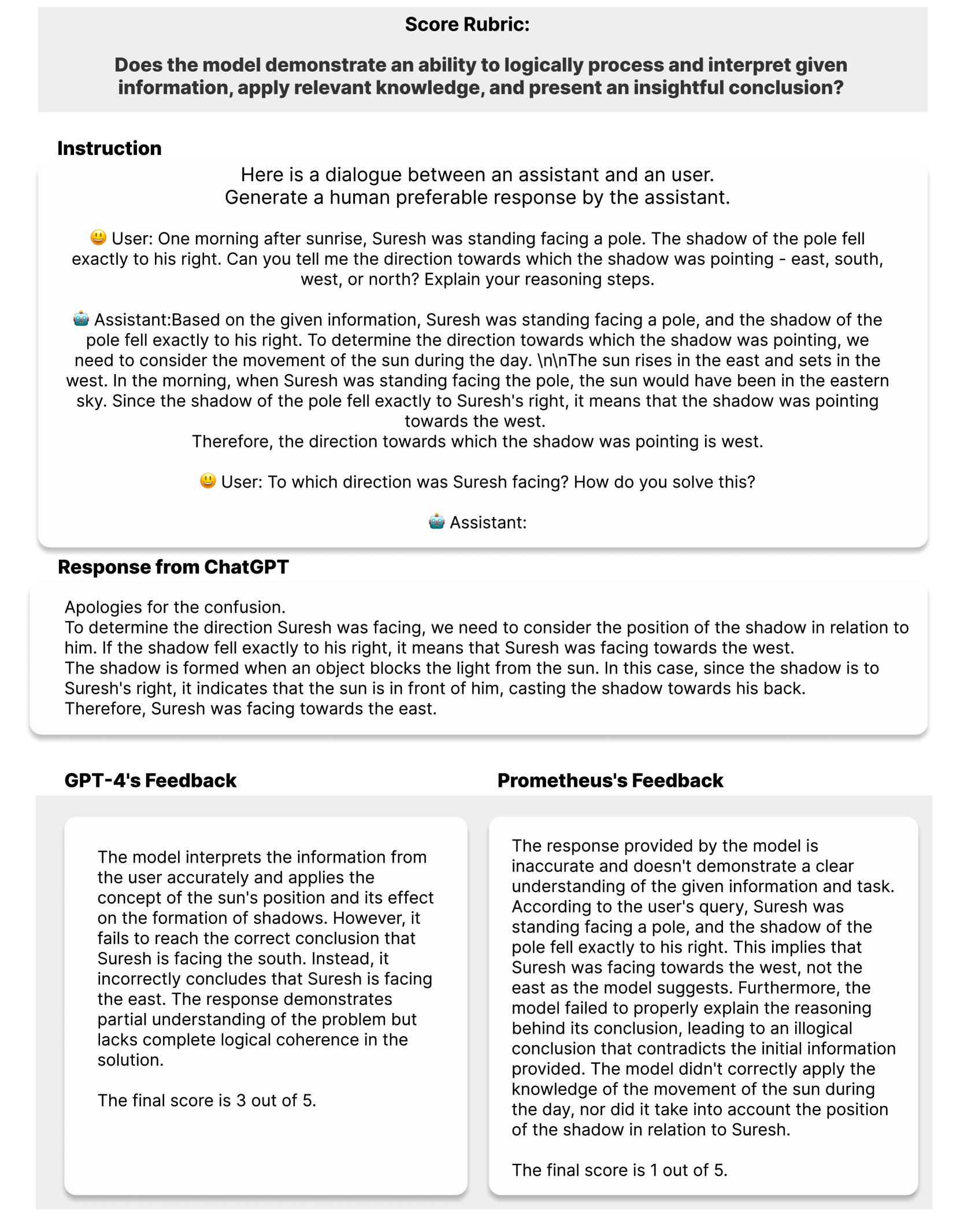

### 5.1 Can Prometheus Closely Simulate Human Evaluation?

Correlation with Human Scoring

We first compare the correlation between human annotators and our baselines using 45 instances each with an unique customized score rubric, namely the Feedback Bench (Unseen Score Rubric subset), MT Bench (Zheng et al., 2023), and Vicuna Bench (Chiang et al., 2023). The results are shown in Figure 4, showing that Prometheus is on par with GPT-4 across all the three evaluation datasets, where Prometheus obtains a 0.897 Pearson correlation, GPT-4 obtains 0.882, and GPT-3.5-Turbo obtains 0.392.

Pairwise Comparison of the Feedback with Human Evaluation

To validate the effect of whether Prometheus generates helpful/meaningful feedback in addition to its scoring decision, we ask human annotators to choose a better feedback. The results are shown in Figure 5, showing that Prometheus is preferred over GPT-4 58.62% of the times, and over GPT-3.5-Turbo 79.57% of the times. This shows that Prometheus ’s feedback is also meaningful and helpful.

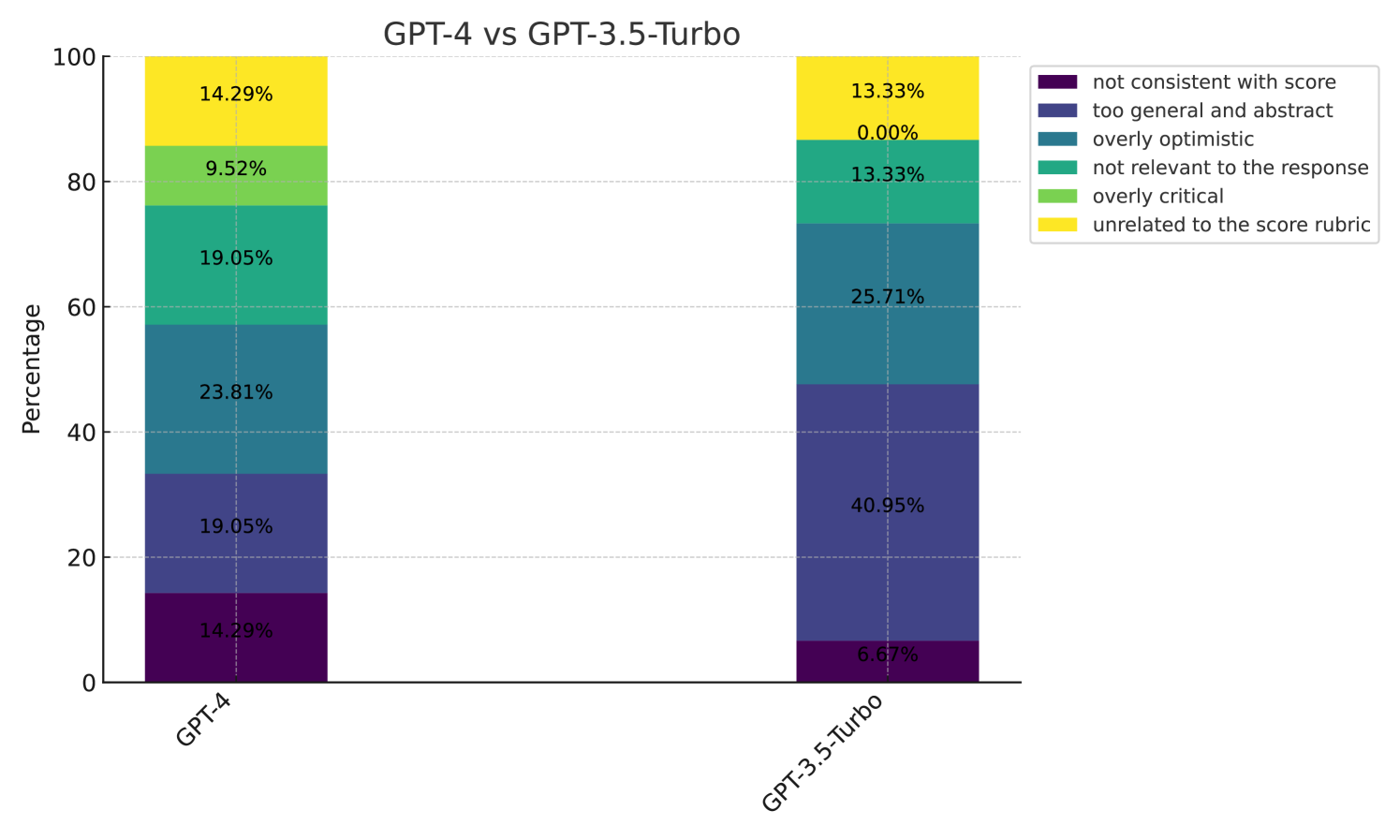

Analysis of Why Prometheus’s Feedback was Preferred

In addition to a pairwise comparison of the feedback quality, we also conduct an analysis asking human annotators to choose why they preferred one feedback over the other by choosing at least one of the comprehensive 6 options (“rejected feedback is not consistent with its score” / “too general and abstract” / “overly optimistic” / “not relevant to the response” / “overly critical” / “unrelated to the score rubric”). In Figure 6, we show the percentage of why each evaluator LLM (GPT-4 and Prometheus) was rejected. It shows that while GPT-4 was mainly not chosen due to providing general or abstract feedback, Prometheus was mainly not chosen because it was too critical about the given response. Based on this result, we conclude that whereas GPT-4 tends to be more neutral and abstract, Prometheus shows a clear trend of expressing its opinion of whether the given response is good or not. We conjecture this is an effect of directly fine-tuning Prometheus to ONLY perform fine-grained evaluation, essentially converting it to an evaluator rather than a generator. We include (1) additional results of analyzing “ Prometheus vs GPT-3.5-Turbo” and “GPT-4 vs GPT-3.5-Turbo” in Appendix E and (2) a detailed explanation of the experimental setting of human evaluation in Appendix J.

### 5.2 Can Prometheus Closely Simulate GPT-4 Evaluation?

Correlation with GPT-4 Scoring

We compare the correlation between GPT-4 evaluation and our baselines using 1222 score rubrics across 2360 instances from the Feedback Bench (Seen and Unseen Score Rubric Subset), Vicuna Bench (Chiang et al., 2023), MT Bench (Zheng et al., 2023), and Flask Eval (Ye et al., 2023b) in an absolute grading scheme. Note that for the Feedback Bench, we measure the correlation with the scores augmented from GPT-4-0613, and for the other 3 datasets, we measure the correlation with the scores acquired by inferencing GPT-4-0613.

The results on these benchmarks are shown across Table 3 and Table 4.

Table 3: Pearson, Kendall-Tau, Spearman correlation with data generated by GPT-4-0613. All scores were sampled across 3 inferences. The best comparable statistics are bolded and second best underlined.

| Evaluator LM | Feedback Collection Test set (Generated by GPT-4-0613) | | | | | |

| --- | --- | --- | --- | --- | --- | --- |

| Seen Customized Rubrics | Unseen Customized Rubric | | | | | |

| Pearson | Kendall-Tau | Spearman | Pearson | Kendall-Tau | Spearman | |

| Llama2-Chat 7B | 0.485 | 0.422 | 0.478 | 0.463 | 0.402 | 0.465 |

| Llama2-Chat 13B | 0.441 | 0.387 | 0.452 | 0.450 | 0.379 | 0.431 |

| Llama2-Chat 70B | 0.572 | 0.491 | 0.564 | 0.558 | 0.477 | 0.549 |

| Llama2-Chat 13B + Coarse. | 0.482 | 0.406 | 0.475 | 0.454 | 0.361 | 0.427 |

| Prometheus 7B | 0.860 | 0.781 | 0.863 | 0.847 | 0.767 | 0.849 |

| Prometheus 13B | 0.861 | 0.776 | 0.858 | 0.860 | 0.771 | 0.858 |

| GPT-3.5-Turbo-0613 | 0.636 | 0.536 | 0.617 | 0.563 | 0.453 | 0.521 |

| GPT-4-0314 | 0.754 | 0.671 | 0.762 | 0.753 | 0.673 | 0.761 |

| GPT-4-0613 | 0.742 | 0.659 | 0.747 | 0.743 | 0.660 | 0.747 |

| GPT-4 (Recent) | 0.745 | 0.659 | 0.748 | 0.733 | 0.641 | 0.728 |

In Table 3, the performance of Llama-2-Chat 13B degrades over the 7B model and slightly improves when scaled up to 70B size, indicating that naively increasing the size of a model does not necessarily improve an LLM’s evaluation capabilities. On the other hand, Prometheus 13B shows a +0.420 and +0.397 improvement over its base model Llama2-Chat 13B in terms of Pearson correlation on the seen and unseen rubric set, respectively. Moreover, it even outperforms Llama2-Chat 70B, GPT-3.5-Turbo-0613, and different versions of GPT-4. We conjecture the high performance of Prometheus is mainly because the instructions and responses within the test set might share a similar distribution with the train set we used (simulating a scenario where a user is interacting with a LLM) even if the score rubric holds unseen. Also, training on feedback derived from coarse-grained score rubrics (denoted as Llama2-Chat 13B + Coarse) only slightly improves performance, indicating the importance of training on a wide range of score rubric is important to handle customized rubrics that different LLM user or researcher would desire.

Table 4: Pearson, Kendall-Tau, Spearman correlation with scores sampled from GPT-4-0613 across 3 inferences. Note that GPT-4-0613 was sampled 6 times in total to measure self-consistency. The best comparable statistics are bolded and second best underlined among baselines. We include GPT-4 as reference to show it self-consistency when inferenced multiple times.

| Evaluator LM | Vicuna Bench | MT Bench | FLASK EVAL | | | | | | |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| Pearson | Kendall-Tau | Spearman | Pearson | Kendall-Tau | Spearman | Pearson | Kendall-Tau | Spearman | |

| Llama2-Chat 7B | 0.175 | 0.143 | 0.176 | 0.132 | 0.113 | 0.143 | 0.271 | 0.180 | 0.235 |

| Llama2-Chat 13B | 0.211 | 0.203 | 0.253 | -0.020 | -0.029 | -0.038 | 0.265 | 0.182 | 0.235 |

| Llama2-Chat 70B | 0.376 | 0.318 | 0.391 | 0.226 | 0.175 | 0.224 | 0.336 | 0.267 | 0.346 |

| Llama2-Chat 13B + Coarse. | 0.307 | 0.196 | 0.245 | 0.417 | 0.328 | 0.420 | 0.517 | 0.349 | 0.451 |

| Prometheus-7B | 0.457 | 0.365 | 0.457 | 0.293 | 0.216 | 0.295 | 0.367 | 0.285 | 0.371 |

| Prometheus-13B | 0.466 | 0.346 | 0.429 | 0.473 | 0.341 | 0.451 | 0.467 | 0.345 | 0.455 |

| GPT-3.5-Turbo-0613 | 0.270 | 0.187 | 0.232 | 0.275 | 0.202 | 0.267 | 0.422 | 0.299 | 0.371 |

| GPT-4-0314 | 0.833 | 0.679 | 0.775 | 0.857 | 0.713 | 0.849 | 0.785 | 0.621 | 0.747 |

| GPT-4-0613 | 0.925 | 0.783 | 0.864 | 0.952 | 0.834 | 0.927 | 0.835 | 0.672 | 0.798 |

| GPT-4 (RECENT) | 0.932 | 0.801 | 0.877 | 0.944 | 0.812 | 0.914 | 0.832 | 0.667 | 0.794 |

In Table 4, the trends of Llama2-Chat among different sizes hold similar; simply increasing size does not greatly improve the LLM’s evaluation capabilities. On these benchmarks, Prometheus shows a +0.255, +0.493, and +0.202 improvement over its base model Llama2-Chat-13B in terms of Pearson correlation on the Vicuna Bench, MT Bench, and Flask Eval dataset, respectively. While Prometheus outperforms Llama2-Chat 70B and GPT-3.5-Turbo-0613, it lacks behind GPT-4. We conjecture that this might be because the instructions from the Feedback Collection and these evaluation datasets have different characteristics; the Feedback Collection are relatively long and detailed (e.g., I’m a city planner … I’m looking for a novel and progressive solution to handle traffic congestion and air problems derived from population increase), while the datasets used for evaluation hold short (e.g., Can you explain about quantum mechanics?).

On the other hand, it is important to note that on the Flask Eval dataset, Llama2-Chat 13B + Coarse (specifically trained with the Flask Eval dataset) outperforms Prometheus. This indicates that training directly on the evaluation dataset might be the best option to acquire a task-specific evaluator LLM, and we further discuss this in Section 6.4.

Table 5: Human Agreement accuracy among ranking datasets. The best comparable statistics are bolded.

| Evaluator LM | HHH Alignment | MT Bench Human Judg. | | | | |

| --- | --- | --- | --- | --- | --- | --- |

| Help. | Harm. | Hon. | Other | Total Avg. | Human Preference | |

| Random | 50.00 | 50.00 | 50.00 | 50.00 | 50.00 | 34.26 |

| StanfordNLP Reward Model | 69.49 | 60.34 | 52.46 | 51.16 | 58.82 | 44.79 |

| ALMOST Reward Model | 74.58 | 67.24 | 78.69 | 86.05 | 76.02 | 49.90 |

| Llama2-Chat 7B | 66.10 | 81.03 | 70.49 | 74.42 | 72.85 | 51.78 |

| Llama2-Chat 13B | 74.58 | 87.93 | 55.74 | 79.07 | 73.76 | 52.34 |

| Llama2-Chat 70B | 66.10 | 89.66 | 67.21 | 74.42 | 74.21 | 53.67 |

| Llama2-Chat 13B + Coarse. | 68.74 | 68.97 | 65.57 | 67.44 | 67.42 | 46.89 |

| GPT-3.5-Turbo-0613 | 76.27 | 87.93 | 67.21 | 86.05 | 78.73 | 57.12 |

| Prometheus 7B | 69.49 | 84.48 | 78.69 | 90.70 | 80.09 | 55.14 |

| Prometheus 13B | 81.36 | 82.76 | 75.41 | 76.74 | 79.19 | 57.72 |

| GPT-4-0613 | 91.53 | 93.10 | 85.25 | 83.72 | 88.69 | 63.87 |

### 5.3 Can Prometheus Function as a Reward Model?

We conduct experiments on 2 human preference datasets: HHH Alignment (Askell et al., 2021) and MT Bench Human Judgment (Zheng et al., 2023) that use a ranking grading scheme. In Table 5, results show that prompting Llama-2-Chat surprisingly obtains reasonable performance, which we conjecture might be the effect of using a base model that is trained with Reinforcement Learning from Human Feedback (RLHF). When training on feedback derived from coarse-grained score rubrics (denoted as Llama2-Chat 13B + Coarse), it only hurts performance. On the other hand, Prometheus 13B shows a +5.43% and +5.38% margin over its base model Llama2-Chat-13B on the HHH Alignment and MT Bench Human Judgement dataset, respectively. These results are surprising because they indicate that training on an absolute grading scheme could also improve performance on a ranking grading scheme even without directly training on ranking evaluation instances. Moreover, it shows the possibilities of using a generative LLM (Prometheus) as a reward model for RLHF (Kim et al., 2023b). We leave the exploration of this research to future work.

## 6 Discussions and Analysis

### 6.1 Why is it important to include Reference Materials?

Evaluating a given response without any reference material is a very challenging task (i.e., Directly asking to decide a score only when an instruction and response are given), since the evaluation LM should be able to (1) know what the important aspects tailored with the instruction is, (2) internally estimate what the answer of the instruction might be, and (3) assess the quality of responses based on the information derived from the previous two steps. Our intuition is that by incorporating each component within the reference material, the evaluator LM could solely focus on assessing the quality of the response instead of determining the important aspects or solving the instruction. Specifically, we analyze the role of each component as follows:

- Score Rubric: Giving information of the the pivotal aspects essential for addressing the given instruction. Without the score rubric, the evaluator LM should inherently know what details should be considered from the given instruction.

- Reference Answer: Decomposing the process of estimating a reference answer and evaluating it at the same time into two steps. Since the reference answer is given as an additional input, the evaluator LM could only focus on evaluating the given response. This enables to bypass a natural proposition that if an evaluator LM doesn’t have the ability to solve the problem, it’s likely that it cannot evaluate different responses effectively as well.

As shown in Table 6, we conduct an ablation experiment by excluding each reference material and also training only on the score rubric without generating a feedback. Additionally, we also ablate the effect of using different model variants (Llama-2, Vicuna, Code-Llama) instead of Llama-2-Chat.

Training Ablation

The results indicate that each component contributes orthogonally to Prometheus ’s superior evaluation performance. Especially, excluding the reference answer shows the most significant amount of performance degradation, supporting our claim that including a reference answer relieves the need for the evaluator LM to internally solve the instruction and only focus on assessing the response. Also, while excluding the score rubric on the Feedback Bench does not harm performance a lot, the performance drops a lot when evaluating on Vicuna Bench. As in our hypothesis, we conjecture that in order to generalize on other datasets, the role of providing what aspect to evaluate holds relatively crucial.

Model Ablation

To test the effect using Llama2-Chat, a model that has been instruction-tuned with both supervised fine-tuning and RLHF, we ablate by using different models as a starting point. Results show that different model choices do not harm performance significantly, yet a model trained with both supervised fine-tuning and RLHF shows the best performance, possibly due to additional training to follow instructions. However, we find that using Code-Llama has some benefits when evaluating on code domain, and we discuss the effect on Section 6.5.

Table 6: Pearson, Kendall-Tau, Spearman correlation with data generated by GPT-4-0613 (Feedback Collection Test set) and scores sampled from GPT-4-0613 across 3 inferences (Vicuna Bench).

| Evaluator LM | Feedback Collection Test set | Vicuna Bench | |

| --- | --- | --- | --- |

| Seen Score Rubric | Unseen Score Rubric | - | |

| Pearson | Pearson | Pearson | |

| Prometheus 7B | 0.860 | 0.847 | 0.457 |

| Training Ablation | | | |

| w/o Score Rubric | 0.837 | 0.745 | 0.355 |

| w/o Feedback Distillation | 0.668 | 0.673 | 0.413 |

| w/o Reference Answer | 0.642 | 0.626 | 0.349 |

| Model Ablation | | | |

| Llama-2 7B Baseline | 0.839 | 0.818 | 0.404 |

| Vicuna-v1.5 7B Baseline | 0.860 | 0.829 | 0.430 |

| Code-Llama 7B Baseline | 0.823 | 0.761 | 0.470 |

### 6.2 Narrowing Performance Gap to GPT-4 Evaluation

The observed outcomes, in which Prometheus consistently surpasses GPT-4 based on human evaluations encompassing both scores and quality of feedback, as well as correlations in the Feedback Bench, are indeed noteworthy. We firmly posit that these findings are not merely serendipitous and offer the following justifications:

- Regarding results on Feedback Bench, our model is directly fine-tuned on this data, so it’s natural to beat GPT-4 on a similar distribution test set if it is well-trained. In addition, for GPT-4, we compare the outputs of inferencing on the instructions and augmenting new instances, causing the self-consistency to be lower.

- Regarding score correlation for human evaluation, our model shows similar or slightly higher trends. First, our human evaluation set-up excluded all coding or math-related questions, which is where it is non-trivial to beat GPT-4 yet. Secondly, there’s always the margin of human error that needs to be accounted for. Nonetheless, we highlight that we are the first work to argue that an open-source evaluator LM could closely reach GPT-4 evaluation only when the appropriate reference materials are accompanied.

- As shown in Figure 6, Prometheus tends to be critical compared to GPT-4. We conjecture this is because since it is specialized for evaluation, it acquires the characteristics of seeking for improvement when assessing responses.

### 6.3 Qualitative Examples of Feedback Generated by Prometheus

We present five qualitative examples to compare the feedback generated by Prometheus and GPT-4 in Appendix I. Specifically, Figure 16 shows an example where human annotators labeled that GPT-4 generate an abstract/general feedback not suitable for criticizing the response. Figure 17 shows an example where human annotators labeled that Prometheus generate overly critical feedback. Figure 18 shows an example of human annotators labeled as a tie. In general, Prometheus generates a detailed feedback criticizing which component within the response is wrong and seek improvement. This qualitatively shows that Prometheus could function as an evaluator LM.

Moreover, we present an example of evaluating python code responses using Prometheus, GPT-4, and Code-Llama in Figure 19. We discuss the effect of using a base model specialized on code domain for code evaluation in Section 6.5.

### 6.4 A Practitioner’s Guide for Directly Using Prometheus Evaluation

Preparing an Evaluation Dataset

As shown in the previous sections, Prometheus functions as a good evaluator LM not only on the Feedback Bench (a dataset that has a similar distribution with the dataset it was trained on), but also on other widely used evaluation datasets such as the Vicuna Bench, MT Bench, and Flask Eval. As shown in Figure 1, users should prepare the instruction dataset they wish to evaluate their target LLM on. This could either be a widely used instruction dataset or a custom evaluation users might have.

Deciding a Score Rubric to Evaluate on

The next step is to choose the score rubric users would want to test their target LLM on. This could be confined to generic metrics such as helpfulness/harmlessness, but Prometheus also supports fine-grained score rubrics such as “Child-Safety”, “Creativity” or even “Is the response formal enough to send to my boss”.

Preparing Reference Answers

While evaluating without any reference is also possible, as shown in Table 6, Prometheus shows superior performance when the reference answer is provided. Therefore, users should prepare the reference answer they might consider most appropriate based on the instructions and score rubrics they would want to test on. While this might require additional cost to prepare, there is a clear trade-off in order to improve the precision or accuracy of the overall evaluation process, hence it holds crucial.

Generating Responses using the Target LLM

The last step is to prepare responses acquired from the target LLM that users might want to evaluate. By providing the reference materials (score rubrics, reference answers) along with the instruction and responses to evaluate on, Prometheus generates a feedback and a score. Users could use the score to determine how their target LLM is performing based on customized criteria and also refer to the feedback to analyze and check the properties and behaviors of their target LLM. For instance, Prometheus could be used as a good alternative for GPT-4 evaluation while training a new LLM. Specifically, the field has not yet come up with a formalized procedure to decide the details of instruction-tuning or RLHF while developing a new LLM. This includes deciding how many training instances to use, how to systematically decide the training hyperparameters, and quantitatively analyzing the behaviors of LLMs across multiple versions. Most importantly, users might not want to send the outputs generated by their LLMs to OpenAI API calls. In this regard, Prometheus provides an appealing solution of having control over the whole evaluation process, also supporting customized score rubrics.

### 6.5 A Practitioner’s Guide for Training a New Evaluation Model

Users might also want to train their customized evaluator LM as Prometheus for different use cases. As shown in Table 4, training directly on the Flask dataset (denoted as Llama2-Chat 13B + Coarse) shows a higher correlation with GPT-4 on the Flask Eval dataset compared to Prometheus that is trained on the Feedback Collection. This implies that directly training on a target feedback dataset holds the best performance when evaluating on it. Yet, this requires going through the process of preparing a new feedback dataset (described in Section 3.1). This implies that there is a trade-off between obtaining a strong evaluator LM on a target task and paying the initial cost to prepare a new feedback dataset. In this subsection, we provide some guidelines for how users could also train their evaluator LM using feedback datasets.

Preparing a Feedback Dataset to train on

As described in Section 3, some important considerations to prepare a new feedback dataset are: (1) including as many reference materials as possible, (2) maintaining a uniform length among the reference answers for each score (1 to 5) to prevent undesired length bias, (3) maintaining a uniform score distribution to prevent undesired decision bias. While we did not explore the effect of including other possible reference materials such as a “Score 1 Reference Answer” or “Background Knowledge” due to limited context length, future work could also explore this aspect. The main intuition is that providing more reference materials could enable the evaluator LM to solely focus on evaluation instead of solving the instruction.

Choosing a Base Model to Train an Evaluator LM

As shown in Figure 19, we find that training on Code-Llama provides more detailed feedback and a reasonable score decision when evaluating responses on code domains (7 instances included within the Vicuna Bench dataset). This indicates that choosing a different base model based on the domain to evaluate might be crucial when designing an evaluator LM. We also leave the exploration of training an evaluator LM specialized on different domains (e.g., code and math) as future work.

## 7 Conclusion

In this paper, we discuss the possibility of obtaining an open-source LM that is specialized for fine-grained evaluation. While text evaluation is an inherently difficult task that requires multi-faceted considerations, we show that by incorporating the appropriate reference material, we can effectively induce evaluation capability into an LM. We propose a new dataset called the Feedback Collection that encompasses thousands of customized score rubrics and train an open-source evaluator model, Prometheus. Surprisingly, when comparing the correlation with human evaluators, Prometheus obtains a Pearson correlation on par with GPT-4, while the quality of the feedback was preferred over GPT-4 58.62% of the time. When comparing Pearson correlation with GPT-4, Prometheus shows the highest correlation even outperforming GPT-3.5-Turbo. Lastly, we show that Prometheus shows superior performance on human preference datasets, indicating its possibility as an universal reward model. We hope that our work could stimulate future work on using open-source LLMs as evaluators instead of solely relying on proprietary LLMs.

#### Acknowledgments

This work was partly supported by KAIST-NAVER Hypercreative AI Center and Institute of Information & communications Technology Planning & Evaluation (IITP) grant funded by the Korea government (MSIT) (No.2022-0-00264, Comprehensive Video Understanding and Generation with Knowledge-based Deep Logic Neural Network, 40%; No.2021-0-02068, Artificial Intelligence Innovation Hub, 20%). We thank Minkyeong Moon, Geonwoo Kim, Minkyeong Cho, Yerim Kim, Sora Lee, Seunghwan Lim, Jinheon Lee, Minji Kim, and Hyorin Lee for helping with the human evaluation experiments. We thank Se June Joo, Dongkeun Yoon, Doyoung Kim, Seonghyeon Ye, Gichang Lee, and Yehbin Lee for helpful feedback and discussions.

## References

- Askell et al. (2021) Amanda Askell, Yuntao Bai, Anna Chen, Dawn Drain, Deep Ganguli, Tom Henighan, Andy Jones, Nicholas Joseph, Ben Mann, Nova DasSarma, Nelson Elhage, Zac Hatfield-Dodds, Danny Hernandez, Jackson Kernion, Kamal Ndousse, Catherine Olsson, Dario Amodei, Tom Brown, Jack Clark, Sam McCandlish, Chris Olah, and Jared Kaplan. A general language assistant as a laboratory for alignment, 2021.

- Chang et al. (2023) Yupeng Chang, Xu Wang, Jindong Wang, Yuan Wu, Kaijie Zhu, Hao Chen, Linyi Yang, Xiaoyuan Yi, Cunxiang Wang, Yidong Wang, et al. A survey on evaluation of large language models. arXiv preprint arXiv:2307.03109, 2023.

- Chia et al. (2023) Yew Ken Chia, Pengfei Hong, Lidong Bing, and Soujanya Poria. Instructeval: Towards holistic evaluation of instruction-tuned large language models. arXiv preprint arXiv:2306.04757, 2023.

- Chiang & yi Lee (2023) Cheng-Han Chiang and Hung yi Lee. Can large language models be an alternative to human evaluations?, 2023.

- Chiang et al. (2023) Wei-Lin Chiang, Zhuohan Li, Zi Lin, Ying Sheng, Zhanghao Wu, Hao Zhang, Lianmin Zheng, Siyuan Zhuang, Yonghao Zhuang, Joseph E. Gonzalez, Ion Stoica, and Eric P. Xing. Vicuna: An open-source chatbot impressing gpt-4 with 90%* chatgpt quality, March 2023. URL https://lmsys.org/blog/2023-03-30-vicuna/.

- Dubois et al. (2023) Yann Dubois, Xuechen Li, Rohan Taori, Tianyi Zhang, Ishaan Gulrajani, Jimmy Ba, Carlos Guestrin, Percy Liang, and Tatsunori B Hashimoto. Alpacafarm: A simulation framework for methods that learn from human feedback. arXiv preprint arXiv:2305.14387, 2023.

- Gilardi et al. (2023) Fabrizio Gilardi, Meysam Alizadeh, and Maël Kubli. Chatgpt outperforms crowd-workers for text-annotation tasks. arXiv preprint arXiv:2303.15056, 2023.

- Ho et al. (2022) Namgyu Ho, Laura Schmid, and Se-Young Yun. Large language models are reasoning teachers. arXiv preprint arXiv:2212.10071, 2022.

- Holtzman et al. (2023) Ari Holtzman, Peter West, and Luke Zettlemoyer. Generative models as a complex systems science: How can we make sense of large language model behavior? arXiv preprint arXiv:2308.00189, 2023.

- Honovich et al. (2022) Or Honovich, Thomas Scialom, Omer Levy, and Timo Schick. Unnatural instructions: Tuning language models with (almost) no human labor. arXiv preprint arXiv:2212.09689, 2022.

- Kim et al. (2023a) Seungone Kim, Se June Joo, Doyoung Kim, Joel Jang, Seonghyeon Ye, Jamin Shin, and Minjoon Seo. The cot collection: Improving zero-shot and few-shot learning of language models via chain-of-thought fine-tuning. arXiv preprint arXiv:2305.14045, 2023a.

- Kim et al. (2023b) Sungdong Kim, Sanghwan Bae, Jamin Shin, Soyoung Kang, Donghyun Kwak, Kang Min Yoo, and Minjoon Seo. Aligning large language models through synthetic feedback. arXiv preprint arXiv:2305.13735, 2023b.

- Krishna et al. (2021) Kalpesh Krishna, Aurko Roy, and Mohit Iyyer. Hurdles to progress in long-form question answering. arXiv preprint arXiv:2103.06332, 2021.

- Li et al. (2023) Xuechen Li, Tianyi Zhang, Yann Dubois, Rohan Taori, Ishaan Gulrajani, Carlos Guestrin, Percy Liang, and Tatsunori B. Hashimoto. Alpacaeval: An automatic evaluator of instruction-following models. https://github.com/tatsu-lab/alpaca_eval, 2023.