# CausalCite: A Causal Formulation of Paper Citations

> Equal contribution.

## Abstract

Citation count of a paper is a commonly used proxy for evaluating the significance of a paper in the scientific community. Yet citation measures are widely criticized for failing to accurately reflect the true impact of a paper. Thus, we propose CausalCite, a new way to measure the significance of a paper by assessing the causal impact of the paper on its follow-up papers. CausalCite is based on a novel causal inference method, TextMatch, which adapts the traditional matching framework to high-dimensional text embeddings. TextMatch encodes each paper using text embeddings from large language models (LLMs), extracts similar samples by cosine similarity, and synthesizes a counterfactual sample as the weighted average of similar papers according to their similarity values. We demonstrate the effectiveness of CausalCite on various criteria, such as high correlation with paper impact as reported by scientific experts on a previous dataset of 1K papers, (test-of-time) awards for past papers, and its stability across various subfields of AI. We also provide a set of findings that can serve as suggested ways for future researchers to use our metric for a better understanding of the quality of a paper. Our code is available at https://github.com/causalNLP/causal-cite.

## 1 Introduction

Recent years have seen explosive growth in the number of scientific publications, making it increasingly challenging for scientists to navigate the vast landscape of scientific literature. Therefore, identifying a good paper has become a crucial challenge for the scientific community, not only for technical research purposes, but also for making decisions, such as funding allocation (Carlsson, 2009), research evaluation (Moed, 2006), recruitment (Gary Holden and Barker, 2005), and university ranking and evaluation (Piro and Sivertsen, 2016).

<details>

<summary>x1.png Details</summary>

### Visual Description

## Flowchart: Impact of Paper a on Follow-up Study Paper b

### Overview

The diagram illustrates a causal relationship between two academic papers (Paper a and Paper b) and explores a counterfactual scenario where Paper a does not exist. It compares the success metrics of Paper b in both scenarios.

### Components/Axes

- **Ovals**:

- Red oval labeled "Paper a" (left side)

- Blue oval labeled "Paper b" (right side)

- **Arrows**:

- Black arrow labeled "Causal Effect" from Paper a to Paper b

- Blue arrow labeled "We make a counterfactual situation" pointing downward

- **Text Labels**:

- Top: "What is the impact of Paper a on its followup study b?"

- Middle: "Had Paper a not existed... Yet Paper b still has the same topic, year, etc."

- Bottom: "What would the counterfactual success metric y’ be?"

- **Attributes**:

- For Paper b: "Paper topic," "Publication year," "...", "Success metric: y"

- For counterfactual Paper b: "Success metric: y’"

### Detailed Analysis

- **Causal Scenario**:

- Paper a (red) directly influences Paper b (blue) via a labeled "Causal Effect" arrow.

- Paper b's success metric is denoted as **y**.

- **Counterfactual Scenario**:

- Paper a is crossed out (red oval with red X).

- Paper b retains the same attributes (topic, year) but has a modified success metric **y’**.

### Key Observations

1. The diagram explicitly contrasts two scenarios:

- Actual: Paper a exists and affects Paper b.

- Counterfactual: Paper a is absent, but Paper b retains identical attributes.

2. Success metrics are differentiated as **y** (actual) and **y’** (counterfactual).

3. No numerical values or quantitative data are provided; the focus is on conceptual relationships.

### Interpretation

This diagram frames a **causal inference** problem, asking:

- How much of Paper b's success (y) can be attributed to Paper a?

- What would Paper b's success metric (y’) look like without Paper a's influence?

The counterfactual approach suggests a **thought experiment** to isolate Paper a's impact by holding all other variables constant (topic, year). The absence of numerical data implies this is a conceptual framework for designing an impact assessment study rather than presenting empirical results.

</details>

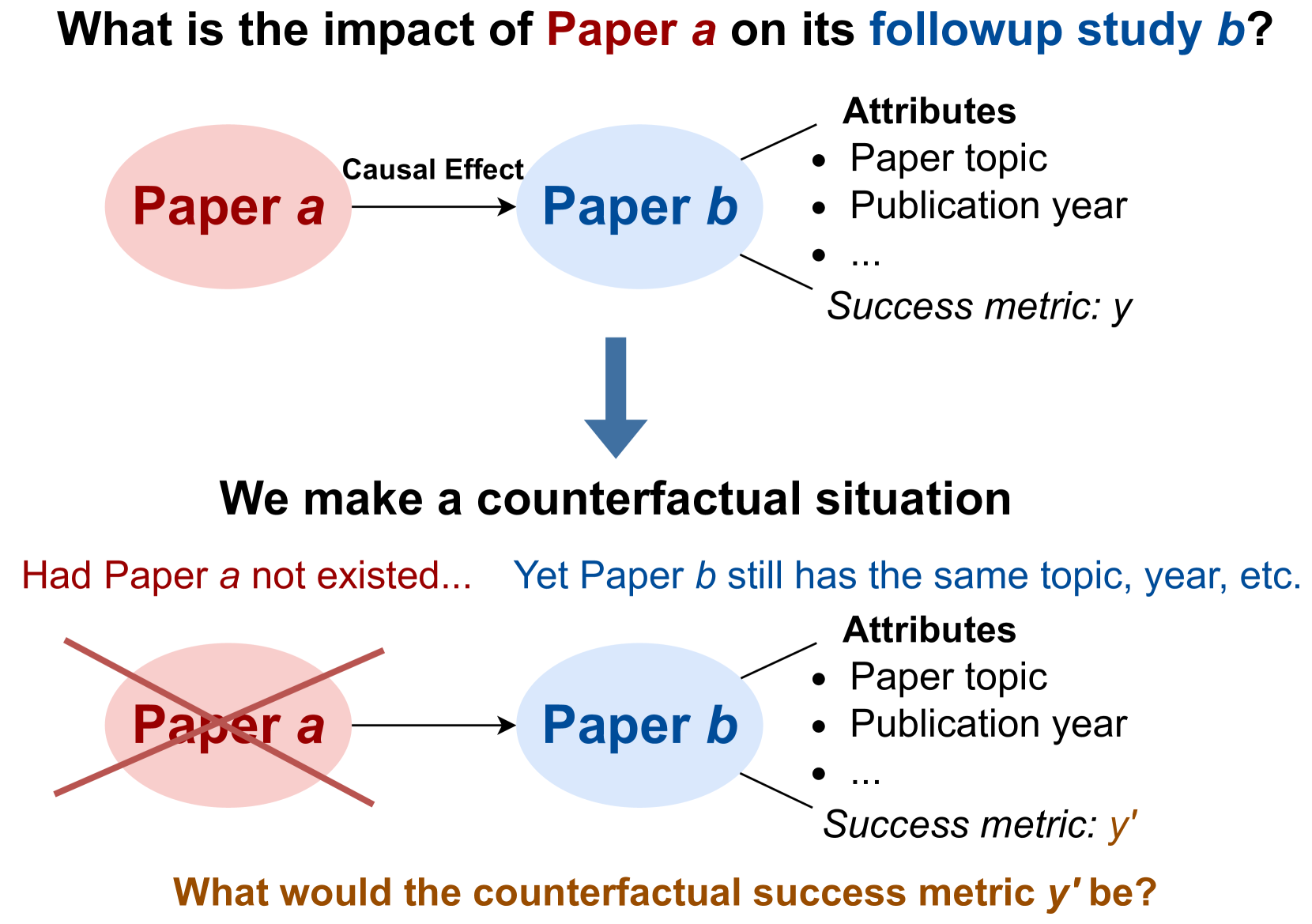

Figure 1: An overview of our research question.

A traditional approach to recognize paper quality is peer review, a mechanism that requires large efforts, and yet has inherent randomness and flaws (Cortes and Lawrence, 2021; Rogers et al., 2023; Shah, 2022; Prechelt et al., 2018; Resnik et al., 2008). Moreover, the number of papers after peer review is still overwhelmingly large for researchers to read, leaving the challenge of identifying truly impactful research unaddressed. Another commonly used metric is citations. However, this metric faces criticism for biases, such as a preference for survey, toolkit, and dataset papers (Zhu et al., 2015; Valenzuela-Escarcega et al., 2015). Together with altmetrics (Wilsdon et al., 2015), which incorporates social media attention to a paper, both metrics also bias towards papers from major publishing countries (Rungta et al., 2022; Gomez et al., 2022), with extensive publicity and promotion, and authored by established figures.

To provide a more equitable assessment of paper quality, we employ the causal inference framework (Hernán and Robins, 2010) to quantify a paper’s impact by how much of the academic success in the follow-up papers should be causally attributed to this paper. We introduce CausalCite, an enhanced citation based metric that poses the following counterfactual question (also shown in Figure 1): “ had this paper never been published, what would have happened to its follow-up studies? ” To compute the causal attribution of each follow-up paper, we contrast its citations (the treatment group) with citations of papers that address a similar topic, but are not built on the paper of interest (the control group).

Traditionally, this problem is solved by using the matching method Rosenbaum and Rubin (1983) in causal inference, which discretizes the value of the confounder variable, and compares the treatment and control groups with regard to each discretized value of the confounder variable. However, this approach does not apply when the confounder variable is high-dimensional, e.g., text data, such as the content of the paper. Thus, we improve the matching method to adapt for textual confounders, by marrying recent advancement of large language models (LLMs) with traditional causal inference. Specifically, we propose TextMatch, which uses LLMs to encode an academic paper as a high-dimensional text embedding to represent the confounders, and then, instead of iterating over discretized values of the confounder, we match each paper in the treatment group with papers from the control group with high cosine similarity by the text embeddings.

TextMatch makes contributions in three different aspects: (1) it relaxes the previous constraint that the confounder variable should be binned into a limited set of intervals, and makes the matching method applicable for high-dimensional continuous variable type for the confounder; (2) since there are millions of papers, we enable efficient matching via a matching-and-reranking approach, first using information retrieval (IR) (Manning et al., 2008) to extract a small set of candidates, and then applying semantic textual similarity (STS) (Majumder et al., 2016; Chandrasekaran and Mago, 2022) for fine-grained reranking; and (3) we enable a more stable causal effect estimation by leveraging all the close matches to synthesize the counterfactual citation score by a weighted average according to the similarity scores of the matched papers.

CausalCite quantifies scientific impact via a causal lens, offering an alternative understanding of a paper’s impact within the academic community. To test its effectiveness, we conduct extensive experiments using the Semantic Scholar corpus (Lo et al., 2020; Kinney et al., 2023), comprising of $206$ M papers and $2.4$ B citation links. We empirically validate CausalCite by showing higher predictive accuracy of paper impact (as judged by scientific experts on a past dataset of 1K papers (Zhu et al., 2015)) compared to citations and other previous impact assessment metrics. We further show a stronger correlation of the metric with the test-of-time (ToT) paper awards. We find that, unlike citation counts, our metric exhibits a greater balance across various research domains in AI, e.g., general AI, NLP, and computer vision (CV). While citation numbers for papers in these domains vary significantly – for example, while an average CV paper has many more citations than an average NLP paper, CausalCite scores papers across AI sub-fields more similarly.

After demonstrating the desirable properties of our metric, we also present several case studies of its applications. Our findings reveal that the quality of conference best papers is noisier on average than that of ToT papers (Section 5.1). We then showcase and present CausalCite for several well-known papers (Section 5.3) and utilize CausalCite to identify high-quality papers that are less recognized by citation counts (Section 5.4).

In conclusion, our contributions are as follows:

1. We introduce CausalCite, a counterfactual causal effect-based formulation for paper citations.

1. We develop TextMatch, a new method that leverages LLMs and causal inference to estimate the counterfactual causal effect of a paper.

1. We conduct comprehensive analyses, including various performance evaluations and present new findings using our metric.

## 2 Problem Formulation

Our problem formulation involves a citation graph and a causal graph. We use lowercase letters for specific papers and uppercase for an arbitrary paper treated as a random variable.

2.0.0.0.1 Citation Graph

In the citation graph $\mathbb{G}\coloneqq(\mathbb{P},\mathbb{L})$ , $\mathbb{P}$ is a set of papers, and each edge $\ell_{i,j}\in\mathbb{L}$ indicates that an earlier paper ${p}_{i}$ influences (i.e., is cited by) a follow-up paper ${p}_{j}$ . To obtain the citation graph, we use the Semantic Scholar Academic Graph dataset (Kinney et al., 2023) with 206M papers and 2.4B citation edges.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Causal Diagram: Effect of Building Paper b on Paper a's Success

### Overview

The diagram illustrates a causal framework to analyze the relationship between "Building Paper b on Paper a" (Treatment T) and "Success of Paper b" (Effect Y). It identifies variables to control for in causal inference, distinguishing confounders, mediators, and colliders.

### Components/Axes

1. **Main Elements**:

- **Treatment T**: "Building Paper b on Paper a" (light blue oval).

- **Effect Y**: "Success of Paper b" (peach oval).

- **Confounders X**: "Title+Abstract" (incl. topic, research question) and "Year" (green oval with checkmark).

- **Mediators**: "Performance" (e.g., "90%") and "Venue" (e.g., "ACL") (pink ovals with X marks).

- **Colliders**: "Post-Hoc Award" (e.g., "Test of Time") (pink oval with X mark).

2. **Arrows**:

- **Direct Influence**: Treatment T → Effect Y (dashed arrow).

- **Mediation Path**: Treatment T → Mediators → Effect Y (solid arrows).

- **Confounder Influence**: Confounders X → Effect Y (dashed arrows).

- **Ancestral Links**:

- T’s Ancestors (e.g., "Paper a’s venue, publicity") → Treatment T (gray dashed arrows).

- Y’s Ancestors (e.g., "Paper b’s efforts into PR") → Effect Y (gray dashed arrows).

3. **Annotations**:

- **Checkmark**: Confounders X should be controlled for.

- **X Marks**: Mediators and Colliders should not be controlled for.

### Detailed Analysis

- **Confounders X** (green oval):

- Includes "Title+Abstract" (topic, research question) and "Year."

- Explicitly marked with a checkmark, indicating these variables must be controlled to isolate the causal effect of Treatment T on Effect Y.

- **Mediators** (pink ovals):

- "Performance" (e.g., "90%") and "Venue" (e.g., "ACL").

- Arrows show they mediate the relationship between Treatment T and Effect Y.

- X marks indicate they should **not** be controlled for, as doing so would block the causal pathway.

- **Colliders** (pink oval):

- "Post-Hoc Award" (e.g., "Test of Time").

- Colliders are variables influenced by both Treatment T and Effect Y. Controlling for them would introduce bias, hence the X mark.

- **Ancestral Links**:

- T’s Ancestors (e.g., "Paper a’s venue, publicity") and Y’s Ancestors (e.g., "Paper b’s efforts into PR") are excluded from direct control, as they are upstream/downstream factors not directly tied to the causal mechanism.

### Key Observations

1. **Control Variables**: Only Confounders X (Title+Abstract, Year) should be controlled for to avoid confounding bias.

2. **Mediators**: Performance and Venue are critical mediators; controlling them would obscure the true causal effect.

3. **Colliders**: Post-Hoc Awards are colliders; controlling them would distort the causal relationship.

4. **Ancestral Factors**: Excluded from control to focus on direct and mediated effects.

### Interpretation

This diagram emphasizes the importance of distinguishing between confounders, mediators, and colliders in causal analysis. By controlling only for Confounders X (Title+Abstract, Year), researchers can isolate the direct and indirect effects of building Paper b on Paper a’s success. Mediators (Performance, Venue) and Colliders (Post-Hoc Awards) are excluded from control to preserve the integrity of the causal pathway. The diagram aligns with principles of causal inference, highlighting how improper variable selection can lead to biased conclusions. For example, controlling for mediators would underestimate the true effect of Treatment T, while controlling for colliders would introduce spurious associations.

</details>

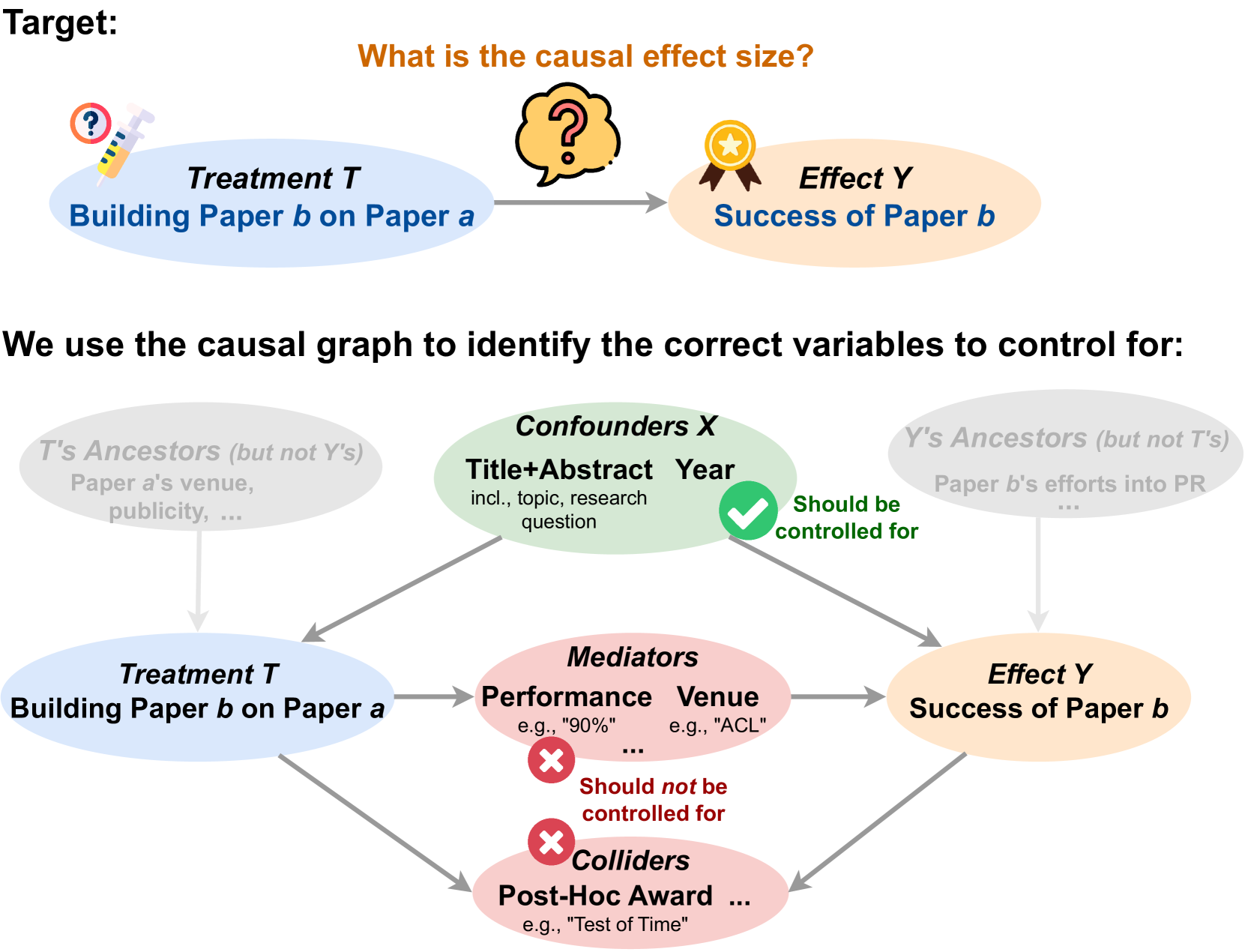

Figure 2: The causal graph of our study.

2.0.0.0.2 Causal Graph.

The causal graph, shown in Figure 2, highlights the contribution of a paper $a$ to a follow-up paper $b$ . We use a binary variable $T$ to indicate if $a$ influences $b$ and an effect variable $Y$ to represent the success of $b$ . We use $\log_{10}$ of citation counts to quantify $Y$ , although other transformations can also be used. We introduce two sets of variables in this causal graph: (i) The set of confounders, which are the common causes of $T$ and $Y$ . For instance, the research area of $b$ impacts both the likelihood of a paper citing $a$ and its own citation count. (ii) Descendants of the treatment, comprising mediators (e.g., paper $a$ influencing the quality of paper $b$ and subsequently influencing its citations) and colliders (e.g., both the influence from $a$ and the citations of $b$ influencing later awards received by $b$ ).

### 2.1 CausalCite Indices

In this section, we introduce various indices that measure the causal impact of a paper.

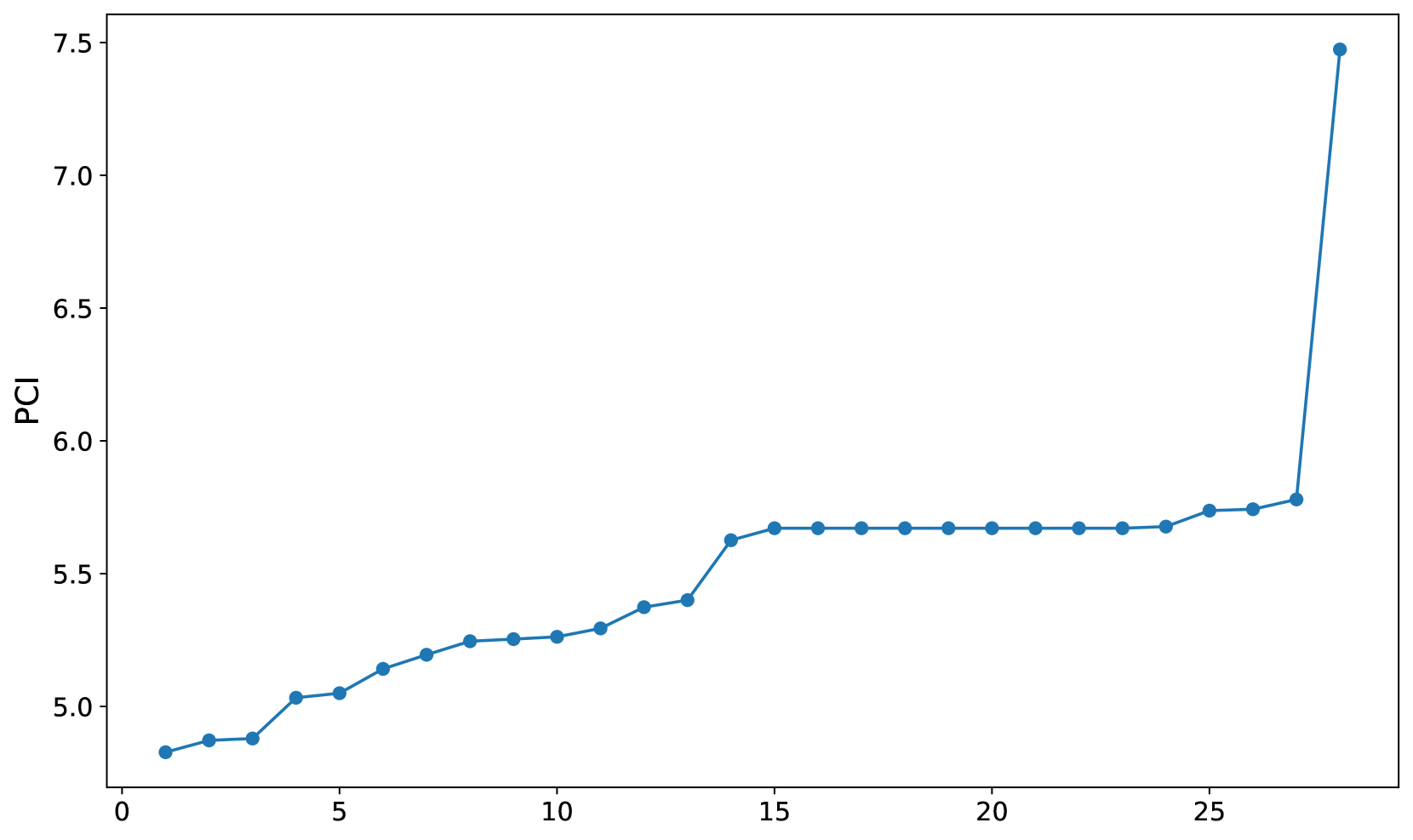

2.1.0.0.1 Two-Paper Interaction: Pairwise Causal Impact (PCI).

To examine the causal impact of a paper $a$ on a follow-up paper $b$ , we define the pairwise causal impact $\mathrm{PCI}(a,b)$ by unit-level causal effect:

$$

\displaystyle\mathrm{PCI}(a,b)\coloneqq y^{t=1}-y^{t=0}~{}, \tag{1}

$$

where we compare the outcomes $Y$ of the paper $b$ had it been influenced by paper $a$ or not, denoted as the actual $y^{t=1}$ and the counterfactual $y^{t=0}$ , respectively. Note that the counterfactual $y^{t=0}$ can never be observed, but only estimated by statistical methods, as we will discuss in Section 3.2.

2.1.0.0.2 Single-Paper Quality Metrics: Total Causal Impact (TCI) and Average Causal Impact (ACI).

Let $\bm{S}$ denote the set of all follow-up studies of paper $a$ . We define total causal impact $\mathrm{TCI}(a)$ as the sum of the pairwise causal impact index $\mathrm{PCI}(a,b)$ across all $b\in\bm{S}$ . That is,

$$

\mathrm{TCI}(a)\coloneqq\sum_{b\in\bm{S}}\mathrm{PCI}(a,b)~{}. \tag{2}

$$

This definition provides an aggregated measure of a paper’s influence across all its follow-up papers.

As the causal inference literature is usually interested in the average treatment effect, we further define the average causal impact (ACI) index as the average per paper PCI:

$$

\mathrm{ACI}(a)\coloneqq\frac{\mathrm{TCI}(a)}{|\bm{S}|}=\frac{1}{|\bm{S}|}

\sum_{b\in\bm{S}}\left(y^{t=1}-y^{t=0}\right)~{}. \tag{3}

$$

We note that $\mathrm{ACI}(a)$ is equal to the a verage t reatment effect on the t reated (ATT) of paper $a$ (Pearl, 2009).

## 3 The TextMatch Method

As illustrated in Figure 1, the objective of our study is to quantify the causal effect of the treatment $T$ (i.e., whether paper $b$ is built on paper $a$ ) on the effect $Y$ (i.e., the outcome of paper $b$ ). To approach this, we envision a counterfactual scenario: what if paper $a$ had never been published, yet certain key characteristics of paper $b$ remain unchanged? The critical question then becomes: which key characteristics of paper $b$ should be controlled for in this hypothetical situation?

### 3.1 What Does Causal Inference Tell Us about What Variables to Control for, and What Not?

In causal inference, selecting the appropriate variables for control is a delicate and crucial process that affects the accuracy of the analysis. Pearl’s seminal work on causality guides us in differentiating between various types of variables Pearl (2009).

Firstly, we must control for confounders – variables that influence both the treatment and the outcome. Confounders can create spurious correlations; if not controlled, they can lead us to mistakenly attribute the effect of these external factors to the treatment itself. For example, in assessing the impact of one paper on another, if both papers are in a trending research area, the apparent influence might be due to the popularity of the topic rather than the papers’ content.

However, not all variables warrant control. Mediators and colliders should be explicitly avoided in control. Mediators are part of the causal pathway between the treatment and outcome. By controlling them, we would block the very effect we are trying to measure. Colliders, affected by both the treatment and the outcome, can introduce bias when controlled. Controlling a collider can inadvertently create associations that do not naturally exist. In general, this also includes not controlling for the descendants of the treatment, as it could obscure the direct impact we intend to study.

Lastly, variables that do not share a causal path with both the treatment and outcome, known as unshared ancestors, are less critical in our analysis. They do not contribute to or confound the causal relationship we are exploring, and thus, controlling for them does not add value to our causal understanding.

### 3.2 Can Existing Causal Inference Methods Handle This Control?

Several causal inference methods have been proposed to address the problem of estimating treatment effects while controlling for confounders. Next, we will discuss the workings and limitations of three classical methods.

3.2.0.0.1 Randomized Control Trials (RCTs) Assumes Intervenability.

The ideal way to obtain causal effects is through randomized control trials (RCTs). For example, when testing a drug, we randomly split all patients into two groups, the control group and the treatment group, where the random splitting ensures the same distribution of the confounders across the two groups such as gender and age. However, RCTs are usually not easily achievable, in some cases too expensive (e.g., tracking hundreds of people’s daily lives for 50 years), and in other cases unethical (e.g., forcing a random person to smoke), or infeasible (e.g., getting a time machine to change a past event in history).

For our research question on a paper’s impact, utilizing RCTs is impractical as it is infeasible to randomly divide researchers into two groups, instructing one group to base their research on a specific paper $a$ while the other group does not, and then observe the citation count of their papers years later.

3.2.0.0.2 Ratio Matching Iterates over Discretized Confounder Values.

In the absence of RCTs, matching is as an alternate method for determining causal effects from observational data. In this case, we can let the treatment assignment happen naturally, such as taking the naturally existing set of papers and running causal inference by adjusting for the variables that block all paths. Given a set of naturally observed papers, one of the most commonly used causal inference methods is ratio matching (Rosenbaum and Rubin, 1983), whose basic idea is to iterate over all possible values $\bm{x}$ of the adjustment variables $\bm{X}$ and obtain the difference between the treatment group $\mathcal{T}$ and control group $\mathcal{C}$ :

$$

\widehat{\mathrm{ACI}}(a)=\sum_{\bm{x}}P(\bm{x})\left(\frac{1}{|\mathcal{T}_{

\bm{x}}|}\sum_{i\in\mathcal{T}_{\bm{x}}}y_{i}-\frac{1}{|\mathcal{C}_{\bm{x}}|}

\sum_{j\in\mathcal{C}_{\bm{x}}}y_{j}\right)~{}, \tag{4}

$$

where for each value $\bm{x}$ , we extract all the units corresponding to this value in the treatment and control sets, compute the average of the effect variable $Y$ for each set, and obtain the difference.

While ratio matching is practical when there is a small set of values for the adjustment variables to sum over, its applicability dwindles with high-dimensional variables like text embeddings in our context. This scenario may generate numerous intervals to sum over, presenting numerical challenges and potential breaches of the positivity assumption.

3.2.0.0.3 One-to-One Matching Is Susceptible to Variance.

To handle high-dimensional adjustment variables, one possible way is to avoid pre-defining all their possible intervals, but, instead, iterating over each unit in the treatment group to match for its closest control unit (e.g., McGue et al., 2010; Sato et al., 2022). Consider a given follow-up paper $b$ , and a set of candidate control papers $\bm{C}$ , where each paper $c_{i}$ has a citation count $y_{i}$ , and vector representation $\bm{t}_{i}$ of the confounders (e.g., research topic). One-to-one matching estimates PCI as

$$

\begin{split}\widehat{\mathrm{PCI}}(a,b)&=y_{b}-y_{\operatorname*{argmax}_{c_{

i}\in\bm{C}}m_{i}}\\

&=y_{b}-y_{\operatorname*{argmax}_{c_{i}\in\bm{C}}\mathrm{sim}(\bm{t}_{b},\bm{

t}_{i})}~{},\end{split} \tag{5}

$$

where we approximate the counterfactual sample by the paper $c_{i}\in\bm{C}$ which is the most similar to paper $b$ by the matching score $m_{i}$ , which is obtained by the cosine similarity $\mathrm{sim}$ of the confounder vectors. A limitation of the one-to-one matching method is that it might induce large instability in the result, as only taking one paper with similar contents may have a large variance in citations when the matched paper slightly differs.

### 3.3 How Do We Extending Causal Inference to Text Variables?

#### 3.3.1 Theoretical Formulation of TextMatch : Stabilizing Text Matching by Synthesis

To fill in the aforementioned gap in the existing matching methods, we propose TextMatch, which mitigates the instability issue of one-to-one matching by replacing it with a convex combination of a set of matched samples to form a synthetic counterfactual sample. Specifically, we identify a set of papers $c_{i}\in\bm{C}$ with high matching scores $m_{i}$ to the paper $b$ , and synthesize the counterfactual sample by an interpolation of them:

$$

\displaystyle\widehat{\mathrm{PCI}}(a,b)=y_{b}-\sum_{c_{i}\in\bm{C}}w_{i}y_{i}

=y_{b}-\sum_{c_{i}\in\bm{C}}\frac{m_{i}}{\sum_{c_{i}\in\bm{C}}m_{i}}y_{i}~{}, \tag{6}

$$

where the weight $w_{i}$ of each paper $c_{i}$ is proportional to the matching score $m_{i}$ and normalized.

The contributions of our method are as follows: (1) we adapt the traditional matching methods from low-dimensional covariates to any high-dimensional variables such as text embeddings; (2) different from the ratio matching, we do not stratify the covariates, but synthesize a counterfactual sample for each observed treated units; (3) due to this iteration over each treated unit instead of taking the population-level statistics, we closely control for exogenous variables for the ATT estimation, which circumvents that need for the structural causal models; (4) we further stabilize the estimand by a convex combination of a set of similar papers. Note that the contribution of Eq. 6 might seem to bear similarity with synthetic control Abadie and Gardeazabal (2003); Abadie et al. (2010), but they are fundamentally different, in that synthetic control runs on time series, and fit for the weights $w_{i}$ by linear regression between the time series of the treated unit and a set of time series from the control units, using each time step’s values in the regression loss function.

#### 3.3.2 Overall Algorithm

To operationalize our theoretical formulation above, we introduce our overall algorithm in Algorithm 1. We briefly give an overview of the the algorithm with more details to be elaborated in later sections. We use the weighted average of the matched samples following our TextMatch method in Eq. 6 through 27, 28, 29, 30, 31, 32, 33, 34, 35 and 36. In our experiments, we use the interpolation of up to top 10 matched papers. We encourage future work to explore other hyperparameter settings too. Given the PCI estimation, the main spirit of the $\textsc{GetACIandTCI}(a)$ function is to average or sum over all the follow-up studies of paper $a$ , following the theoretical formulation in Eqs. 2 and 3 and implemented in our algorithm through 7, 8, 9, 10, 11 and 12.

Algorithm 1 Get causal impact indices $\mathrm{ACI}$ and $\mathrm{TCI}$

1: Input: Paper $a$ .

2: procedure GetACIandTCI ( $a$ )

3: $\bm{D}\leftarrow\mathrm{GetDesc}(a)$ $\triangleright$ Get descendants by DFS

4: $\bm{B}\leftarrow\mathrm{GetChildren}(a)$

5: $\bm{B}^{\prime}\leftarrow\mathrm{SampleSubset}(\bm{B})$ $\triangleright$ See Section 3.3.3

6: $\bm{C}\leftarrow\mathrm{EntireSet}\backslash\{\bm{D}\cup\{a\}\}$ $\triangleright$ Get non-descendants

7: $\mathrm{ACI}\leftarrow 0$

8: for each $b_{i}$ in $\bm{B}^{\prime}$ do

9: $I_{i}\leftarrow\textsc{GetPCI}(a,b_{i},\bm{C})$

10: $\mathrm{ACI}\leftarrow\mathrm{ACI}+\frac{1}{|\bm{B}^{\prime}|}\cdot I_{i}$

11: end for

12: $\mathrm{TCI}\leftarrow\mathrm{ACI}\cdot|\bm{B}|$

13: return $\mathrm{ACI}$ and $\mathrm{TCI}$

14: end procedure

15:

16: procedure GetPCI ( $a,b,\bm{C}$ )

17: $\bm{C}_{\mathrm{sameYear}}\leftarrow\mathrm{FilterByYear}(\bm{C},b_{\mathrm{ year}})$

18: for each $p_{i}$ in $\bm{C}_{\mathrm{sameYear}}\cup\{b\}$ do

19: $\bm{t}_{i}\leftarrow\mathrm{RemoveMediator}(\mathrm{TitleAbstract}_{i})$

20: end for

21: $\bm{C}_{\mathrm{coarse}}\leftarrow\mathrm{BM25}(b,\bm{C}_{\mathrm{sameYear}}, \text{topk}=100)$

22: for each $c_{i}$ in $\bm{C}_{\mathrm{coarse}}$ do

23: $m_{i}\leftarrow\mathrm{Sim}(\bm{t}_{b},\bm{t}_{i})$

24: end for

25: $\bm{C}_{\mathrm{top10}}\leftarrow\mathrm{argmax10}_{m}(\bm{C}_{\mathrm{coarse}})$

26:

27: $M\leftarrow 0$

28: for each $c_{i}$ in $\bm{C}_{\mathrm{top10}}$ do $\triangleright$ For the normalization later

29: $M\leftarrow M+m_{i}$

30: end for

31: $\hat{y}^{t=0}\leftarrow 0$

32: for each $c_{i}$ in $\bm{C}_{\mathrm{top10}}$ do

33: $w_{i}\leftarrow\frac{m_{i}}{M}$

34: $\hat{y}^{t=0}\leftarrow\hat{y}^{t=0}+w_{i}\cdot y_{i}$ $\triangleright$ Apply Eq. 6

35: end for

36: return $y_{b}-\hat{y}^{t=0}$

37: end procedure

#### 3.3.3 Key Challenges and Mitigation Methods

We address several technical challenges below.

Confounders of Various Types First, as we mentioned in the causal graph in Figure 2, the confounder set consists of a text variable (title and abstract concatenated together) and an ordinal variable (publication year). Therefore, the similarity operation $\mathrm{Sim}$ between two papers should be customized. For our specific use case, we first filter by the publication year in 17, as it is not fair to compare the citations of papers published in different years. Then, we apply the cosine similarity method paper embeddings as in 23. As a general solution, we recommend to separate hard logical constraints, and soft matching preferences, where the hard constraints should be imposed to filter the data first, and then all the rest of the variables can be concatenated to apply the similarity metric on.

Excluding the Mediators from Confounders Another key challenge to highlight is that the text variable we use for the confounder might accidentally include some mediator information. For example, the quality or performance of a paper could be expressed in the abstract, such as “we achieved 90% accuracy.” Therefore, we conduct a specific preprocessing procedure before feeding the text variable to the similarity function. For the $\mathrm{RemoveMediator}$ function in 19, we exclude all numerical expressions such as percentage numbers, as well as descriptions such as “state-of-the-art.” For generalizability, the essence of this step is a entanglement action to separate the confounder variable (in this case, the research content) and all the descendants of the treatment variable (in this case, mentions of the performance). For more complicated cases in future work, we recommend a separate disentanglement model to be applied here.

Efficient Matching-and-Reranking Method Since we use one of the largest available paper databases, the Semantic Scholar dataset (Kinney et al., 2023) containing 206M papers, we need to optimize our algorithm for large-scale paper matching. For example, after we filter by the publication year, the number of candidate papers $\bm{C}_{\mathrm{sameYear}}$ could be up to 8.8M. In order to conduct text matching across millions of papers, we use a matching-and-reranking approach, by combining two NLP tasks, information retrieval (IR) (Manning et al., 2008) and semantic textual similarity (STS) (Majumder et al., 2016; Chandrasekaran and Mago, 2022).

Specifically, we first run large-scale matching to obtain 100 candidates papers (21) using the common IR method, BM25 (Robertson and Zaragoza, 2009). Briefly, BM25 is a bag-of-words retrieval function that uses term frequencies and document lengths to estimate relevancy between two text documents. Deploying this method, we can find a set of candidate papers for, for example, two million papers, at a speed 250x faster than the text embedding cosine similarity matching. Then, we conduct a fine-grained reranking using cosine similarity (22, 23 and 24). In the cosine similarity matching process, we use the MPNet model Song et al. (2020) to encode the text of each paper $c_{i}$ into an embedding $\bm{t}_{i}$ , with which we get the matching score $m_{i}$ according to Eq. 5 in 23, and the normalized weight $w_{i}$ by Eq. 6 in 33.

Numerical Estimation Given the large number of papers, it is numerically challenging to aggregate the TCI from individual PCIs, because the number of follow-up papers for a study can be up to tens of thousands, such as the 57,200 citations by 2023 for the ImageNet paper (Deng et al., 2009). To avoid extensively running PCI for all follow-up papers, we propose a new numerical estimation method using a carefully designed random paper subset.

A naive way to achieve this aggregation is Monte Carlo (MC) sampling. However, unfortunately, MC sampling requires very large sample sizes when it comes to estimating long-tailed distributions, which is the usual case of citations. Since citations are more likely to be concentrated in the head part of the distribution, we cannot afford the computational budget for huge sample sizes that cover the tails of the distribution. Instead, we propose a novel numerical estimation method for sampling the follow-up papers, inspired by importance sampling (Singh, 2014; Kloek and van Dijk, 1976).

Our numerical estimation method works as follows: First, we propose the formulation that the relation between ACI and TCI is an integral over all possible paper $b$ ’s. Then, we formulated the above sampling problem as integral estimation or area-under-the-curve estimation. We draw inspiration from Simpson’s method, which estimates integrals by binning the input variable into small intervals. Analogously, although we cannot run through all PCIs, we use citations as a proxy, bin the large set of follow-up papers according to their citations into $n$ equally-sized intervals, and perform random sampling over each bin, which we then sum over. In this way, we make sure that our samples come from all parts of the long-tailed distribution and are a more accurate numerical estimate for the actual TCI.

## 4 Performance Evaluation

The contribution of a paper is inherently multi-dimensional, making it infeasible to encapsulate its richness fully through a scalar. Yet the demand for a single, comprehensible metric for research impact persists, fueling the continued use of traditional citations despite their known limitations. In this section, we show how our new metrics significantly improve upon traditional citations by providing quantitative evaluations comparing the effectiveness of citations, Semantic Scholar’s highly influential (SSHI) citations (Valenzuela-Escarcega et al., 2015), and our CausalCite metric.

### 4.1 Experimental Setup

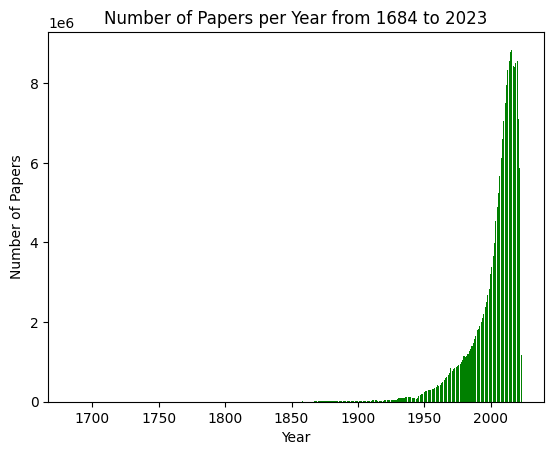

Dataset We use the Semantic Scholar dataset (Lo et al., 2020; Kinney et al., 2023) https://api.semanticscholar.org/api-docs/datasets which includes a corpus of 206M scientific papers, and a citation graph of 2.4B+ citation edges. For each paper, we obtain the title and abstract for the matching process. We list some more details of the dataset in Appendix B, such as the number of papers reaching 8M per year after 2012.

4.1.0.0.1 Selecting the Text Encoder

When projecting the text into the vector space, we need a text encoder with a strong representation power for scientific publications, and is sensitive towards two-paper similarity comparisons regarding their abstracts containing key information such as the research topics. For the representation power for scientific publications, instead of general-domain models such as BERT (Devlin et al., 2019) and RoBERTa (Liu et al., 2019), we consider LLM variants Note that we follow the standard notion by Yang et al. (2023) to refer to BERT and its variants as LLMs. pretrained on large-scale scientific text, such as SciBERT (Beltagy et al., 2019), SPECTER (Cohan et al., 2020), and MPNet (Song et al., 2020).

To check the quality of two-paper similarity measures, we conduct a small-scale empirical study comparing human-ranked paper similarity and model-identified semantic similarity in Section A.3, according to which MPNet outperforms the other two models.

Implementation Details We deploy the all-mpnet-base-v2 checkpoint of the MPNet using the transformers Python package (Wolf et al., 2020), and set the batch size to be 32. For the set of matched papers, we consider papers with cosine similarity scores higher than 0.81, which we optimize empirically on 100 random paper pairs. We the top ten most similar papers above the threshold. In special cases where there is no matched paper above the threshold, it means that no other paper works on the same idea as Paper $b$ , and we make the counterfactual citation number to be zero, which also reflects the quality of Paper $b$ as its novelty is high.

To enable efficient operations on the large-scale citation graph, we use the Dask framework, https://dask.org/ which optimizes for data processing and distributed computing. We optimize our program to take around 100GB RAM, and on average 25 minutes for each $\mathrm{PCI}(a,b)$ after matching against up to millions of candidates. More implementation details are in Section A.1. For the estimation of TCI, we empirically select the sample size to be 40, which is a balance between the computational time and performance, as found in Section A.2.

### 4.2 Author-Identified Paper Impact

In this experiment, we follow the evaluation setup in Valenzuela-Escarcega et al. (2015) to use an annotated dataset (Zhu et al., 2015) comprised of 1,037 papers, annotated according to whether they serve as significant prior work for a given follow-up study. Although paper quality evaluation can be tricky, this dataset was cleverly annotated by first collecting a set of follow-up studies and letting one of the authors of each paper go through the references they cite and select the ones that significantly impact their work. In other words, for a given paper $b$ , each reference $a$ is annotated as whether $a$ has significantly impacted $b$ or not.

Table 1 reports the accuracy of our CausalCite metric, together with two existing citation metrics: citations, and SSHI citations (Valenzuela-Escarcega et al., 2015). See the detailed derivation of the accuracy scores in Section C.2. From this table, we can see that our CausalCite metric achieves the highest accuracy, 80.29%, which is 5 points higher than SSHI, and 9 points higher than the traditional citations.

### 4.3 Test-of-Time Paper Analysis

| Metric | Accuracy |

| --- | --- |

| Citations | 71.33 |

| SSHI Citations | 75.25 |

| CausalCite | 80.29 |

Table 1: Accuracy of all three citation metrics.

| Metric | Corr. Coef. |

| --- | --- |

| Citations | 0.491 |

| SSHI Citations | 0.317 |

| TCI | 0.640 |

Table 2: Correlation coefficients of each metric and ToT paper award by Point Biserial Correlation (Tate, 1954).

<details>

<summary>x3.png Details</summary>

### Visual Description

## Violin Plot: CausalCite Distribution by Paper Type

### Overview

The image is a violin plot comparing the distribution of "CausalCite" scores for two categories: "Non-ToT Papers" (pink) and "ToT Papers" (purple). The y-axis represents "CausalCite" values ranging from 0 to 7000, while the x-axis categorizes the data into the two paper types. The plot uses violin shapes to visualize the density and spread of data points, with black lines indicating medians and whiskers showing the range.

### Components/Axes

- **X-axis**: Labeled "Non-ToT Papers" (left) and "ToT Papers" (right).

- **Y-axis**: Labeled "CausalCite" with a scale from 0 to 7000, marked at intervals of 1000.

- **Legend**: Located on the right side of the plot, associating pink with "Non-ToT Papers" and purple with "ToT Papers."

- **Violin Shapes**:

- **Non-ToT Papers**: A narrow, low-density violin with a peak near 1000 and a range from 0 to ~4500.

- **ToT Papers**: A wider, higher-density violin with a peak near 1500 and a range from 0 to ~7000.

- **Median Lines**: Horizontal black lines within each violin, at ~1000 (Non-ToT) and ~1500 (ToT).

- **Whiskers**: Vertical lines extending from the median to the minimum and maximum values.

### Detailed Analysis

- **Non-ToT Papers**:

- **Peak Density**: ~1000 (median).

- **Range**: 0 to ~4500 (whiskers).

- **Distribution**: Narrow and skewed, with most data concentrated near the median.

- **ToT Papers**:

- **Peak Density**: ~1500 (median).

- **Range**: 0 to ~7000 (whiskers).

- **Distribution**: Broader and more variable, with a higher density around the median but extending to the maximum y-axis value.

### Key Observations

1. **Higher CausalCite for ToT Papers**: The median for ToT Papers (~1500) is significantly higher than for Non-ToT Papers (~1000).

2. **Wider Distribution for ToT Papers**: The violin for ToT Papers spans the full y-axis range (0–7000), indicating greater variability in CausalCite scores.

3. **Lower Variability for Non-ToT Papers**: The Non-ToT violin is tightly clustered, suggesting more consistent CausalCite values.

4. **Outlier Potential**: The ToT Papers’ distribution extends to the maximum y-axis value (7000), which may represent extreme cases or outliers.

### Interpretation

The data suggests that "ToT Papers" are associated with higher CausalCite scores on average, potentially indicating greater impact, relevance, or methodological rigor compared to "Non-ToT Papers." The broader distribution of ToT Papers implies variability in their CausalCite values, which could stem from differences in research contexts, methodologies, or citation practices. In contrast, Non-ToT Papers exhibit more uniform CausalCite scores, possibly reflecting a more standardized or less impactful category. The presence of ToT Papers reaching the maximum y-axis value (7000) highlights the existence of high-impact outliers within this group. This visualization underscores the importance of paper type in determining CausalCite, with ToT Papers demonstrating both higher central tendency and greater diversity in their scores.

</details>

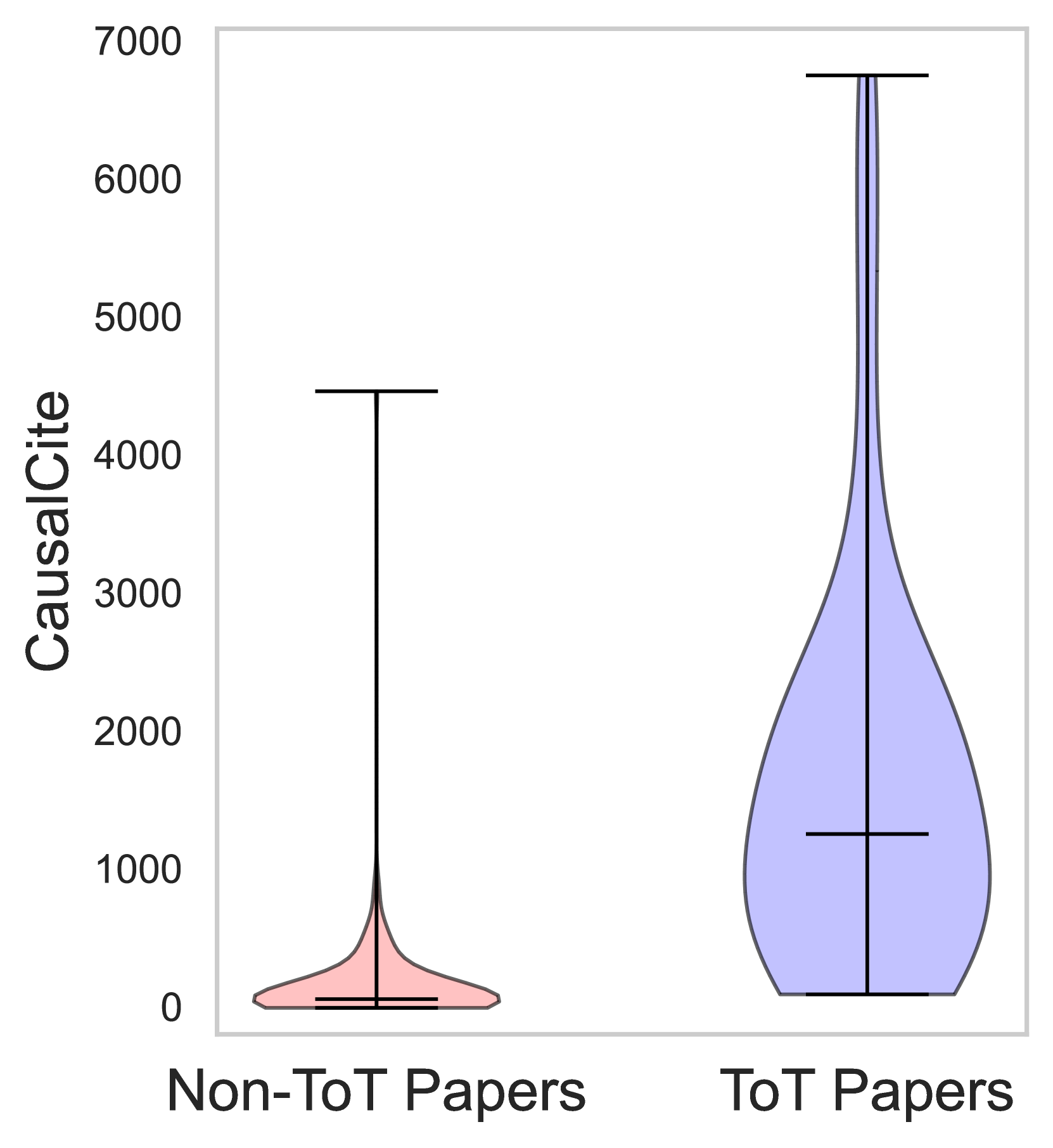

Figure 3: Distributions of ToT (mean: 142) and non-ToT papers (mean: 1,623).

<details>

<summary>x4.png Details</summary>

### Visual Description

## Scatter Plot: CausalCite Values Across Research Categories

### Overview

The image is a scatter plot comparing "CausalCite" values (logarithmic scale) across three research categories:

1. **Random Features for Large-Scale Kernel Machines** (NeurIPS 2017)

2. **BLEU Metric** (NAACL 2018)

3. **ImageNet Dataset** (CVPR 2019)

Data points are color-coded: **Non-ToT** (pink) and **ToT** (blue), with the legend positioned in the top-right corner.

---

### Components/Axes

- **X-Axis**:

- Categories:

1. Random Features for Large-Scale Kernel Machines (NeurIPS 2017)

2. BLEU Metric (NAACL 2018)

3. ImageNet Dataset (CVPR 2019)

- Positioning: Centered labels with parentheses indicating conferences/years.

- **Y-Axis**:

- Label: "CausalCite"

- Scale: Logarithmic (10¹ to 10⁴).

- **Legend**:

- Top-right corner.

- Pink = Non-ToT, Blue = ToT.

---

### Detailed Analysis

#### Non-ToT (Pink) Data Points:

- **Random Features (NeurIPS 2017)**:

- Values: ~10¹.⁵ to ~10².

- Distribution: Clustered tightly between 10¹ and 10².

- **BLEU Metric (NAACL 2018)**:

- Values: ~10¹ to ~10².⁵.

- Distribution: Spread across the lower range, with one outlier at ~10¹.

- **ImageNet Dataset (CVPR 2019)**:

- Values: ~10¹ to ~10³.

- Distribution: Broader spread, peaking near 10³.

#### ToT (Blue) Data Points:

- **Random Features (NeurIPS 2017)**:

- Values: ~10³.⁵.

- Position: Single point near the top of the y-axis.

- **BLEU Metric (NAACL 2018)**:

- Values: ~10³.⁵ to ~10⁴.

- Position: Clustered near the top of the y-axis.

- **ImageNet Dataset (CVPR 2019)**:

- Values: ~10³ to ~10⁴.

- Position: Highest values in the dataset, with one point at ~10⁴.

---

### Key Observations

1. **ToT Dominates CausalCite**:

- ToT values consistently exceed Non-ToT across all categories.

- Largest gap in **ImageNet Dataset** (ToT: ~10⁴ vs. Non-ToT: ~10³).

2. **Category-Specific Trends**:

- **BLEU Metric** (NAACL 2018): Highest ToT value (~10⁴).

- **Random Features** (NeurIPS 2017): Lowest ToT value (~10³.⁵).

3. **Outliers**:

- Non-ToT in BLEU Metric has one outlier at ~10¹.

- ToT in Random Features has a single high-value point (~10³.⁵).

---

### Interpretation

The data suggests that **ToT methods significantly increase citation impact** compared to Non-ToT approaches. This trend is most pronounced in the **ImageNet Dataset** (CVPR 2019), where ToT achieves ~10× higher CausalCite than Non-ToT. The BLEU Metric (NAACL 2018) shows the highest ToT value, potentially due to its application in NLP tasks with high practical relevance. Conversely, the Random Features category (NeurIPS 2017) exhibits lower citation impact, possibly reflecting its theoretical nature. The logarithmic y-axis emphasizes exponential differences, highlighting ToT's scalability in generating impactful research.

</details>

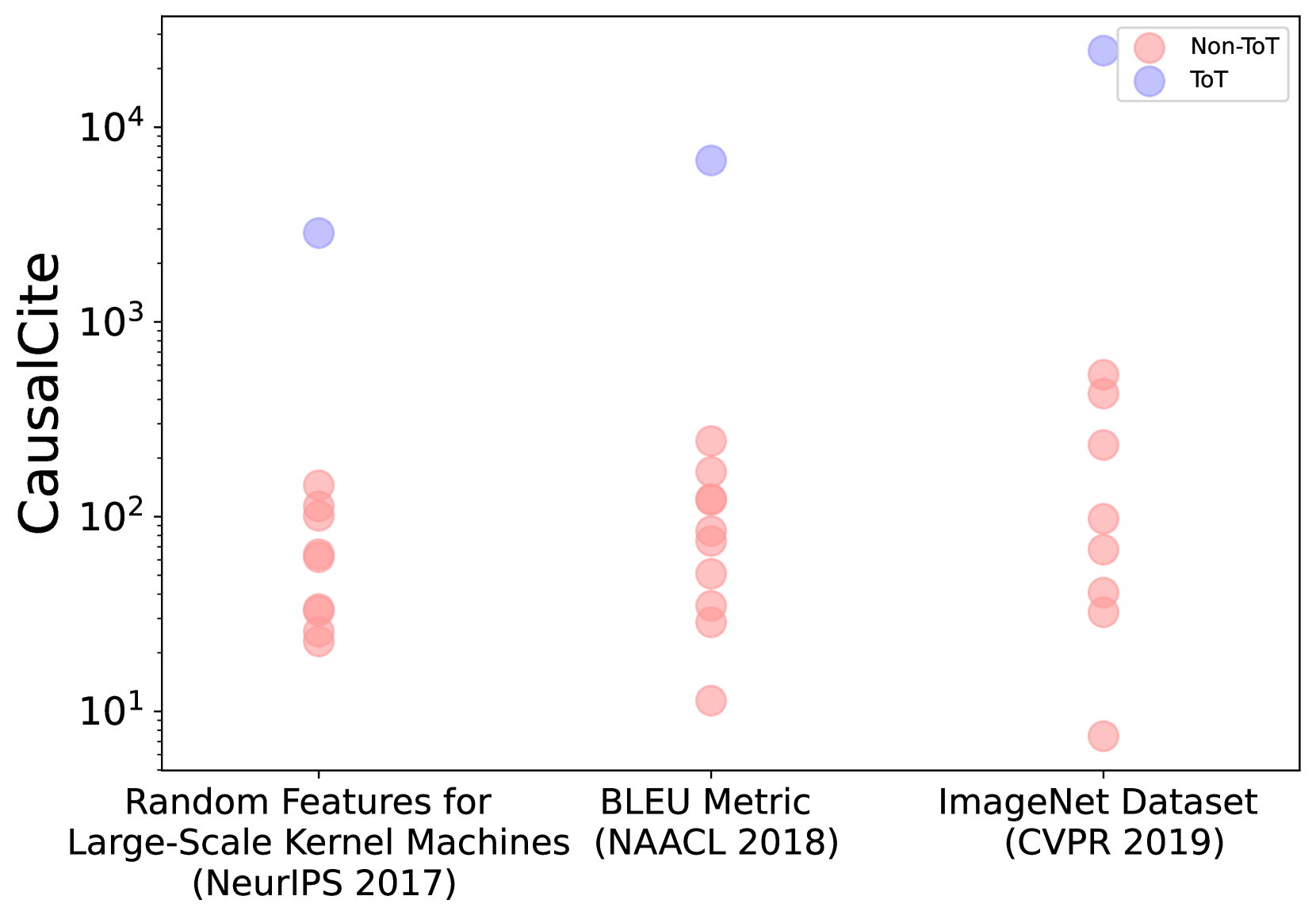

Figure 4: The CausalCite values of three example ToT papers from general AI, NLP, and CV.

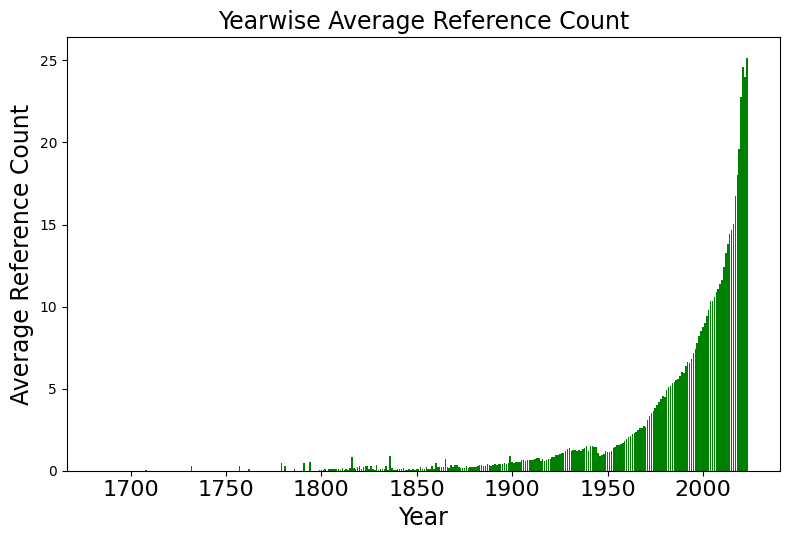

The test-of-time (ToT) paper award is a prestigious honor bestowed upon papers that have made substantial and enduring impacts in their field. In this section, we collect a dataset of $792$ papers, including $72$ ToT papers, and a control group of $10$ randomly selected non-ToT papers from the same conference and year as each ToT paper. To collect this ToT paper dataset, we look into ten leading AI conferences spanning general AI (NeurIPS, ICLR, ICML, and AAAI), NLP (ACL, EMNLP, and NAACL), and CV (CVPR, ECCV, and ICCV), for which we go through each of their websites to identify all available ToT papers. We get this list by selecting the top conferences on Google Scholar using the h5-Index ranking in each of the above domains: general AI (link), CV (link), and NLP (link).

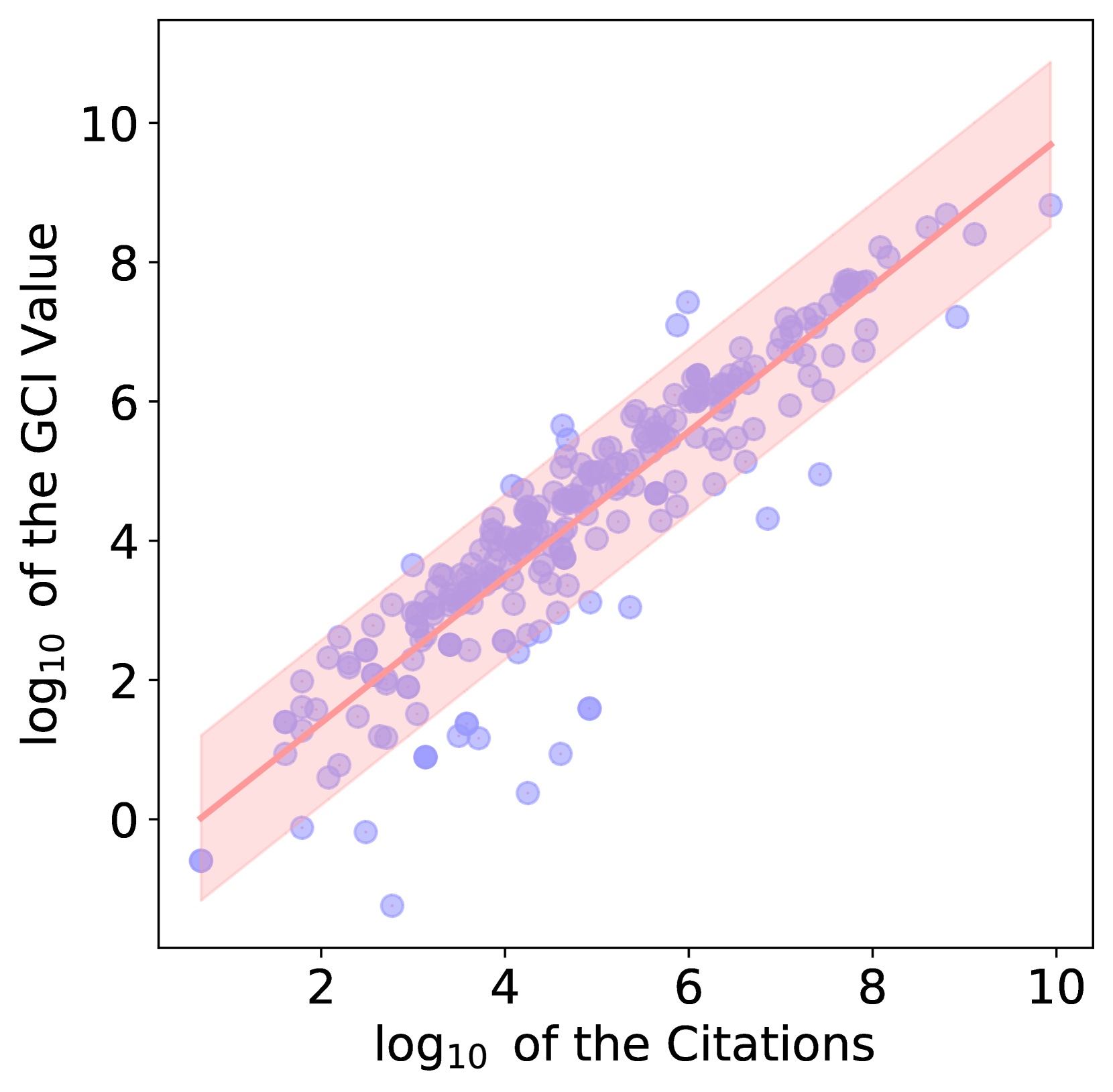

In Table 2, we show the correlations of various metrics with the ToT awards. In this table, CausalCite achieves the highest correlation of 0.639, which is +30.14% better than that of citations. Furthermore, we visualize the correspondence of our metric and ToT, and observe a substantial difference between the CausalCite distributions of ToT vs. non-ToT papers in Figure 4. We also show three examples of ToT papers in Figure 4, where the ToT papers differ from the non-ToT papers by one or two orders of magnitude.

### 4.4 Topic Invariance of CausalCite

| Research Area | ACI | Citations | SSHI |

| --- | --- | --- | --- |

| General AI (n=16) | 0.748 | 2,024 | 267 |

| CV (n=36) | 0.734 | 7,238 | 1,088 |

| NLP (n=20) | 0.763 | 1,785 | 461 |

Table 3: The average of each metric by research area on our collected set of 72 ToT papers.

A well-known issue with citations is their inconsistency across different fields. What might be considered a large number of citations in one field might be seen as average in another. In contrast, we show that our ACI index does not suffer from this issue. We show this using our ToT dataset, where we control for the quality of the papers to be ToT but vary the domain by the three fields: general AI, CV, and NLP. We observe in Table 3 that even though some domains have significantly more citations (for instance, CV ToT papers have, on average, $4.05$ times more citations than NLP), the ACI remains consistent across various fields.

## 5 Findings

Having demonstrated the effectiveness of our metrics, we now explore some open-ended questions: (1) Do best papers have high causal impact? (Section 5.1) (2) How does the CausalCite value distribute across papers? (Section 5.2) (3) What is the impact of some famous papers evaluated by CausalCite? (Section 5.3) (4) Can we use this metric to correct for citations? (Section 5.4).

### 5.1 Do Best Papers Have High Causal Impact?

Selecting best paper awards is an arguably much harder task than ToT papers, as it is difficult to predict of the impact of a paper when it is just newly published. Therefore, we are interested in the actual causal impact of best papers. Similar to our study on ToT papers, we collect a dataset of $444$ papers including $74$ best papers and a control set of random $5$ non-best papers from the same conference in the same year, using the same set of the top ten leading AI conferences. We find that the correlation of the CausalCite metric with best papers is $0.348$ , which is very low compared to the $0.639$ correlation with the ToT papers. This shows that the best papers do not necessarily have a high causal impact. One interpretation can be that the best paper evaluation is a forecasting task, which is much more challenging than the retrospective task of ToT paper selection.

### 5.2 What Is the Nature of the CausalCite Distribution?

<details>

<summary>x5.png Details</summary>

### Visual Description

## Bar Chart: TCI Distribution Across Percentiles

### Overview

The chart displays a distribution of TCI (Total Composite Index) values across percentiles from 0 to 100. The y-axis represents TCI values (0–700), while the x-axis represents percentiles. A single data series is visualized using blue bars, with a dashed line tracing the trend of the bars.

### Components/Axes

- **X-axis (Percentile)**: Labeled "Percentile," ranging from 0 to 100 in increments of 25. No gridlines or tick marks are visible.

- **Y-axis (TCI)**: Labeled "TCI," scaled from 0 to 700 in increments of 100. No gridlines or tick marks are visible.

- **Data Series**: A single blue bar series representing TCI values. A dashed line overlays the chart, starting at the highest bar and tapering off toward the right.

### Detailed Analysis

- **0 Percentile**: The tallest bar reaches approximately **700 TCI**, with a slight drop to **690 TCI** in the adjacent bar.

- **1–25 Percentiles**: Bars decrease sharply from ~100 TCI at 1 percentile to ~50 TCI at 25 percentile. Values are irregular but follow a general downward trend.

- **25–100 Percentiles**: Bars decline gradually, with values dropping to near-zero by 100 percentile. The dashed line mirrors this trend, starting at ~700 TCI and ending near 0.

### Key Observations

1. **Steep Initial Drop**: TCI decreases dramatically from 700 to ~100 between 0 and 1 percentile.

2. **Gradual Decline**: After 1 percentile, TCI declines steadily but less sharply, reaching ~50 at 25 percentile and near 0 by 100 percentile.

3. **Dashed Line**: The dashed line aligns with the bar trend, suggesting it represents a smoothed or fitted trendline.

### Interpretation

The data indicates that TCI is highest at the lowest percentile (0) and decreases monotonically as percentile increases. The steep drop at the beginning suggests a concentration of high TCI values in the lowest percentile range, followed by a more uniform distribution of lower values. The dashed line reinforces the overall downward trend but does not indicate any anomalies or inflection points. This pattern could imply that TCI is inversely correlated with percentile ranking, possibly reflecting a competitive or hierarchical system where higher percentiles correspond to lower TCI scores.

</details>

Figure 5: The distribution of TCI values by percentile of 100 random papers, which shows a long tail indicating that high impact is concentrated in a relatively small portion of papers.

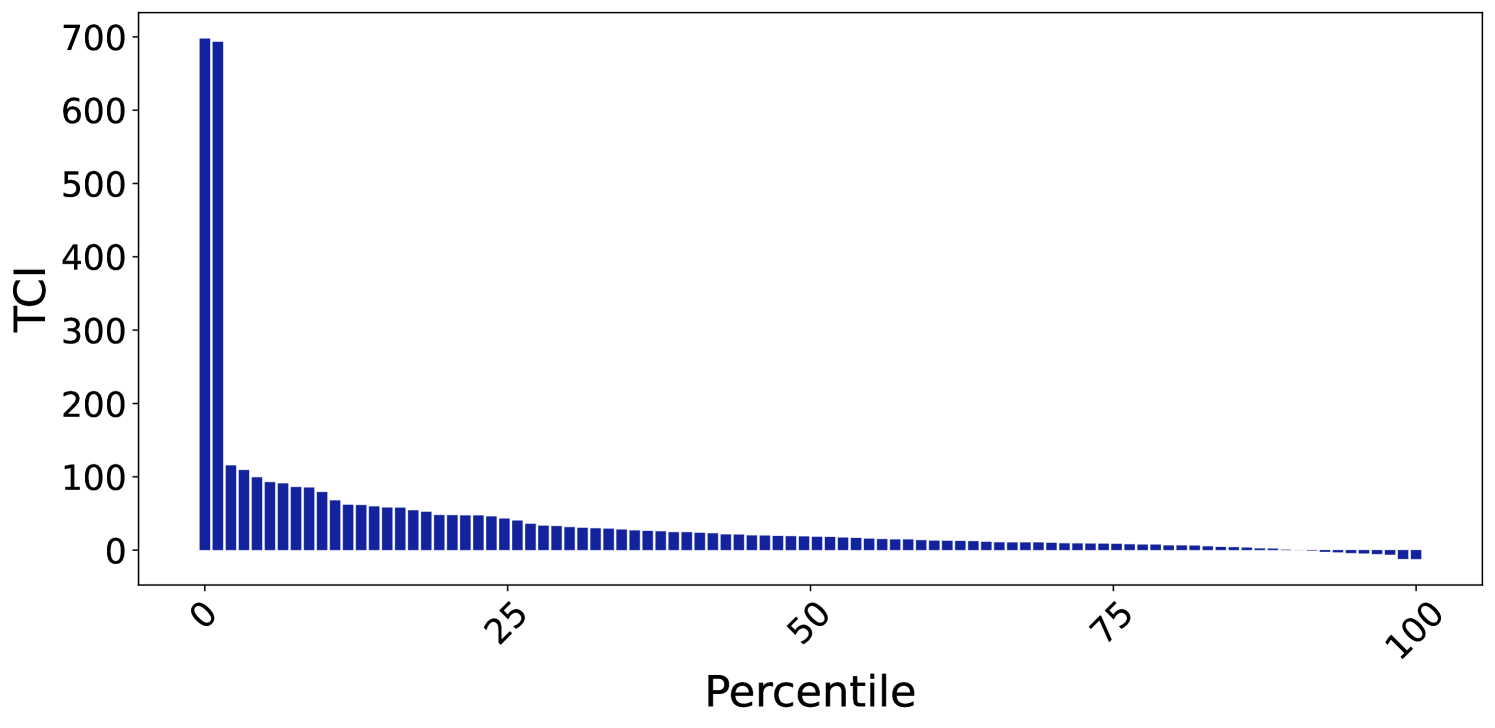

We explore how the CausalCite scores are distributed across papers in general. We plot Figure 5 using a random set of 100 papers from the Semantic Scholar dataset, which is a reasonably large size given the computation budget mentioned in 4.1.0.0.1. From this plot, we can see a power law distribution with a long tail, echoing with the common belief that the paper impact follows the power law, with high impact concentrated in a relatively small portion of papers.

### 5.3 Selected Paper Case Study

| Paper Name | TCI | Citations | ACI |

| --- | --- | --- | --- |

| Transformers | 52,507 | 68,064 | 0.771 |

| BERT | 40,675 | 59,486 | 0.683 |

| RoBERTa | 6,932 | 14,434 | 0.480 |

Table 4: Case study of some selected NLP papers.

In addition to the shape of the overall distribution, we also look at our metric’s correspondence to some selected papers shown in Table 4. For example, we know that the Transformer paper (Vaswani et al., 2017) is a more foundational work than its follow-up work BERT (Devlin et al., 2019), and BERT is more foundational than its later variant, RoBERTa (Liu et al., 2019). This monotonic trend is confirmed in their TCI and ACI values too. Again, this is a preliminary case study, and we welcome future work to cover more papers.

### 5.4 Discovering Quality Papers beyond Citations

Another important contribution of our metric is that it can help discover papers that are traditionally overlooked by citations. To achieve the discovery, we formulate the problem as outlier detection, where we first use a linear projection to handle the trivial alignment of citations and CausalCite, and then analyze the outliers using the interquartile range (IQR) method (Smiti, 2020). See the exact calculation in Section C.1. We show the three subsets of papers in Table 5, where the two outlier categories, the overcited and undercited papers, correspond to the false positive and false negative oversight by citations, respectively. An additional note is that, when we look into some characteristics of the three categories, we find that the citation frequency in result section, i.e., the percentage of times they are cited in results section compared to all the citations, correlates with these categories. Specifically, we find that the undercited papers tend to have more of their citations concentrated in the results section, which usually indicates that this paper constitutes an important baseline for a follow-up study, while the overcited papers tend to be cited out of the results section, which tends to imply a less significant citation.

| Paper Category | Result Citations | Residual |

| --- | --- | --- |

| Overcited Papers (7.04%) | 1.26 | -1.792 |

| Aligned Papers (91.20%) | 1.51 | 0.118 |

| Undercited Papers (1.76%) | 1.90 | 1.047 |

Table 5: We use our CausalCite metric to discover outlier papers that are overlooked by citations. For each paper category, we include their portion relative to the entire population, the percentage of citations occurred in the result section (Result Citations), and average residual value by linear regression.

## 6 Related Work

The quantification of scientific impact has a rich history and continuously evolves with technology. Bibliometric analysis has been largely influenced by early methods that relied on citation counts (Garfield et al., 1964; Garfield, 1972, 1964). Hou (2017) investigate the evolution of citation analysis, employing reference publication year spectroscopy (RPYS) to trace its historical development in scientometrics. Donthu et al. (2021) provide practical guidelines for conducting bibliometric analysis, focusing on robust methodologies to analyze scientific data and identify emerging research trends.

Indices such as the h-index, introduced by Hirsch (2005), are established tools for measuring research impact. The more recent Relative Citation Ratio (RCR), developed by Hutchins et al. (2016), provides a field-normalized alternative to traditional metrics. Valenzuela-Escarcega et al. (2015) introduced SSHI, an approach to identify meaningful citations in scholarly literature. However, these metrics are not without limitations. As Wróblewska (2021) discussed, conventional citation-based metrics often fail to capture the multidimensional nature of research impact. In this context, Elmore (2018) discussed the Altmetric Attention Score, which evaluates the broader societal and online impact of research.

With the increasing availability of large datasets and the advent of digital technologies, new opportunities for bibliometric analysis have emerged. Iqbal et al. (2021) highlighted the role of NLP and machine learning in enhancing in-text citation analysis. Similarly, Umer et al. (2021) explored the use of textual features and SMOTE resampling techniques in scientific paper citation analysis. Jebari et al. (2021) analyzed citation context to detect research topic evolution, showcasing data analysis for scientific discourse. Chang et al. (2023) explored augmenting citations in scientific papers with historical context, offering a novel perspective on citation analysis. Manghi et al. (2021) introduced scientific knowledge graphs, an innovative method for evaluating research impact. Bittmann et al. (2021) explored statistical matching in bibliometrics, discussing its utility and challenges in post-matching analysis. The use of AI in bibliometric analysis is highlighted in research by Chubb et al. (2022) and the systematic review of AI in information systems by Collins et al. (2021). Network analysis approaches, as discussed by Chakraborty et al. (2020) in the context of patent citations and by Dawson et al. (2014) in learning analytics, further illustrate the diverse applications of advanced methodologies in understanding citation patterns.

## 7 Conclusion

In this study, we propose CausalCite, a novel causal formulation for paper citations. Our method combines traditional causal inference methods with the recent advancement of NLP in LLMs to provide a new causal outlook on paper impact by answering the causal question: ”Had this paper never been published, what would be the impact on this paper’s current follow-up studies?”. With extensive experiments and analyses using expert ratings and test-of-time papers as criteria for impact, our new CausalCite metric demonstrates clear improvements over the traditional citation metrics. Finally, we use this metric to investigate several open-ended questions like “Do best papers have high causal impact?”, conduct a case study of famous papers, and suggest future usage of our metric for discovering good papers less recognized by citations for the scientific community.

## Limitations and Future Work

There are several limitations for our work. For example, as mentioned previously, our metric has a high computational budget. Future work can explore more efficient optimization methods. Also, we model the content of the paper by its title and abstract, it could also be possible for future work to benefit from modeling the full text, given appropriate license permissions.

As for another limitation, our study is based on data provided by the Semantic Scholar corpus. This corpora has certain properties such as being more comprehensive with computer science papers, but less so in other disciplines. Its citation data also has a delay compared to Google Scholar, so for the newest papers, the citation score may not be accurate, making it more difficult to calculate our metric.

Additionally, our study provides a general framework for causal inference given a causal graph that involves text. It is totally possible that for a more fine-grained problem, the causal graph will change, in which case, we undersuggest future researchers to derive the new backdoor adjustment set, and then adjust the algorithm accordingly. An example of such a variable could be the author information, which might also be a confounder.

Finally, since quality evaluation of a paper is a multi-faceted task, theoretically, a single number can never give more than a rough approximation, because it collapses multiple dimensions into one and loses information. Our argument in this paper is just to show that our formulation is theoretically more accurate than the citation formulation. We take one step further, instead of solving the quality evaluation problem which is much more nuanced. Some intrinsic problems in citations that we can also not solve (because our metrics still rely on using citations, just contrasting them in the right away) include (1) if a paper is newly published, with zero citations, there is no way to obtain a positive causal index, and (2) we do not solve the fair attribution problem when multiple authors share credit of a paper, as our metric is not sensitive towards authors.

## Ethical Considerations

Data Collection and Privacy The data used in this work are all from Open Source Semantic Scholar data, with no user privacy concerns. The potential use of this work is for finding papers that are unique and innovative but do not get enough citations due to loack of popularity or awareness of the field. This metric can act as an aid when deciding impact of papers, but we do not suggest its usage without expert involvement. Through this work, we are not trying to demean or criticize anyone’s work we only intend to find more papers that have made a valuable contribution to the field.

CS-Centric Perspective The authors of this paper work in Computer Science (mostly Machine Learning) hence a lot of analysis done on the quality of papers that required sanity checks are done on ML papers. The conferences selected for doing the ToT evaluation were also CS Top conferences, hence they might have induced some biases. The metric in general has been created generically and should be applicable to other domains as well, the Author Identified Most Influential Papers study is also done on a generalized dataset, but we encourage readers in other disciplines to try out the metric on papers from their field.

## Author Contributions

This project originates as part of the AI Scholar series of projects that Zhijing Jin started since 2021, as she identified that causal inference over papers is a valuable research setting with sufficient data and rich causal phenomena. Bernhard Schölkopf came up with the formulation that the action of citation itself has a causal nature, and can thus be formulated as a causal inference question. Zhijing, Bernhard, and Siyuan Guo settled down the overall project design.

After the initial idea formulation, Ishan Kumar and Zhijing Jin operationalized the entire project, with vast efforts in identifying the data source; improving the theoretical formulation (together with Ehsan Mokhtarian, and Bernhard); speeding up the code efficiency; designing the evaluation and analysis protocols (with the insightful supervision from Mrinmaya Sachan and Bernhard, and suggestions from Siyuan); and implementing all the evaluations (with the help of Yuen Chen). In the writing stage, Mrinmaya gave substantial guidance to structure the storyline of the paper, and Zhijing, Ehsan, Ishan, and Mrinmaya contributed significantly to the writing, with various help and suggestions from all the other authors.

## Acknowledgment

During the idea formulation stage, we are grateful for the research discussions with Kun Zhang on the vision of the AI Scholar series of projects. During the implementation of our paper, we thank Zhiheng Lyu for his suggestions on efficient computer algorithm over massive graphs and large data. We also thank labmates from Max Planck Institute for constructive feedback and help on data annotation. We thank Vincent Berenz, Felix Leeb, and Luigi Gresele for their generous support with computation resources.

This material is based in part upon works supported by the German Federal Ministry of Education and Research (BMBF): Tübingen AI Center, FKZ: 01IS18039B; by the Machine Learning Cluster of Excellence, EXC number 2064/1 – Project number 390727645; by the John Templeton Foundation (grant #61156); by a Responsible AI grant by the Haslerstiftung; and an ETH Grant (ETH-19 21-1). Zhijing Jin is supported by PhD fellowships from the Future of Life Institute and Open Philanthropy, as well as the travel support from ELISE (GA no 951847) for the ELLIS program.

## References

- Abadie et al. (2010) Alberto Abadie, Alexis Diamond, and Jens Hainmueller. 2010. Synthetic control methods for comparative case studies: Estimating the effect of california’s tobacco control program. Journal of the American statistical Association, 105(490):493–505.

- Abadie and Gardeazabal (2003) Alberto Abadie and Javier Gardeazabal. 2003. The economic costs of conflict: A case study of the basque country. American economic review, 93(1):113–132.

- Beltagy et al. (2019) Iz Beltagy, Kyle Lo, and Arman Cohan. 2019. SciBERT: A pretrained language model for scientific text. In Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing and the 9th International Joint Conference on Natural Language Processing (EMNLP-IJCNLP), pages 3615–3620, Hong Kong, China. Association for Computational Linguistics.

- Bittmann et al. (2021) Felix Bittmann, Alexander Tekles, and Lutz Bornmann. 2021. Applied usage and performance of statistical matching in bibliometrics: The comparison of milestone and regular papers with multiple measurements of disruptiveness as an empirical example. Quantitative Science Studies, 2(4):1246–1270.

- Brown et al. (2020) Tom B. Brown, Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry, Amanda Askell, Sandhini Agarwal, Ariel Herbert-Voss, Gretchen Krueger, T. J. Henighan, Rewon Child, Aditya Ramesh, Daniel M. Ziegler, Jeff Wu, Clemens Winter, Christopher Hesse, Mark Chen, Eric Sigler, Mateusz Litwin, Scott Gray, Benjamin Chess, Jack Clark, Christopher Berner, Sam McCandlish, Alec Radford, Ilya Sutskever, and Dario Amodei. 2020. Language models are few-shot learners. ArXiv, abs/2005.14165.

- Carlsson (2009) Håkan Carlsson. 2009. Allocation of research funds using bibliometric indicators–asset and challenge to swedish higher education sector.

- Chakraborty et al. (2020) Manajit Chakraborty, Maksym Byshkin, and Fabio Crestani. 2020. Patent citation network analysis: A perspective from descriptive statistics and ergms. Plos one, 15(12):e0241797.

- Chandrasekaran and Mago (2022) Dhivya Chandrasekaran and Vijay Mago. 2022. Evolution of semantic similarity - A survey. ACM Comput. Surv., 54(2):41:1–41:37.

- Chang et al. (2023) Joseph Chee Chang, Amy X Zhang, Jonathan Bragg, Andrew Head, Kyle Lo, Doug Downey, and Daniel S Weld. 2023. Citesee: Augmenting citations in scientific papers with persistent and personalized historical context. In Proceedings of the 2023 CHI Conference on Human Factors in Computing Systems, pages 1–15.

- Chowdhery et al. (2022) Aakanksha Chowdhery, Sharan Narang, Jacob Devlin, Maarten Bosma, Gaurav Mishra, Adam Roberts, Paul Barham, Hyung Won Chung, Charles Sutton, Sebastian Gehrmann, Parker Schuh, Kensen Shi, Sasha Tsvyashchenko, Joshua Maynez, Abhishek Rao, Parker Barnes, Yi Tay, Noam M. Shazeer, Vinodkumar Prabhakaran, Emily Reif, Nan Du, Benton C. Hutchinson, Reiner Pope, James Bradbury, Jacob Austin, Michael Isard, Guy Gur-Ari, Pengcheng Yin, Toju Duke, Anselm Levskaya, Sanjay Ghemawat, Sunipa Dev, Henryk Michalewski, Xavier García, Vedant Misra, Kevin Robinson, Liam Fedus, Denny Zhou, Daphne Ippolito, David Luan, Hyeontaek Lim, Barret Zoph, Alexander Spiridonov, Ryan Sepassi, David Dohan, Shivani Agrawal, Mark Omernick, Andrew M. Dai, Thanumalayan Sankaranarayana Pillai, Marie Pellat, Aitor Lewkowycz, Erica Moreira, Rewon Child, Oleksandr Polozov, Katherine Lee, Zongwei Zhou, Xuezhi Wang, Brennan Saeta, Mark Díaz, Orhan Firat, Michele Catasta, Jason Wei, Kathleen S. Meier-Hellstern, Douglas Eck, Jeff Dean, Slav Petrov, and Noah Fiedel. 2022. Palm: Scaling language modeling with pathways. J. Mach. Learn. Res., 24:240:1–240:113.

- Chubb et al. (2022) Jennifer Chubb, Peter Cowling, and Darren Reed. 2022. Speeding up to keep up: exploring the use of ai in the research process. AI & society, 37(4):1439–1457.

- Cohan et al. (2020) Arman Cohan, Sergey Feldman, Iz Beltagy, Doug Downey, and Daniel S. Weld. 2020. SPECTER: Document-level Representation Learning using Citation-informed Transformers. In ACL.

- Collins et al. (2021) Christopher Collins, Denis Dennehy, Kieran Conboy, and Patrick Mikalef. 2021. Artificial intelligence in information systems research: A systematic literature review and research agenda. International Journal of Information Management, 60:102383.

- Cortes and Lawrence (2021) Corinna Cortes and Neil D. Lawrence. 2021. Inconsistency in conference peer review: Revisiting the 2014 neurips experiment. CoRR, abs/2109.09774.

- Courant et al. (1952) Ernest D. Courant, Milton Stanley Livingston, and Hartland S. Snyder. 1952. The strong-focusing synchrotron-a new high energy accelerator. Physical Review, 88:1190–1196.

- Dawson et al. (2014) Shane Dawson, Dragan Gašević, George Siemens, and Srecko Joksimovic. 2014. Current state and future trends: A citation network analysis of the learning analytics field. In Proceedings of the fourth international conference on learning analytics and knowledge, pages 231–240.

- Deng et al. (2009) Jia Deng, Wei Dong, Richard Socher, Li-Jia Li, Kai Li, and Li Fei-Fei. 2009. ImageNet: A large-scale hierarchical image database. In Computer Vision and Pattern Recognition (CVPR), pages 248–255.

- Devlin et al. (2019) Jacob Devlin, Ming-Wei Chang, Kenton Lee, and Kristina Toutanova. 2019. BERT: Pre-training of deep bidirectional transformers for language understanding. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long and Short Papers), pages 4171–4186, Minneapolis, Minnesota. Association for Computational Linguistics.

- Donthu et al. (2021) Naveen Donthu, Satish Kumar, Debmalya Mukherjee, Nitesh Pandey, and Weng Marc Lim. 2021. How to conduct a bibliometric analysis: An overview and guidelines. Journal of business research, 133:285–296.

- Elmore (2018) Susan A Elmore. 2018. The altmetric attention score: what does it mean and why should i care?

- Fang and Zhan (2015) Xing Fang and Justin Zhijun Zhan. 2015. Sentiment analysis using product review data. Journal of Big Data, 2:1–14.

- Garfield (1964) Eugene Garfield. 1964. " science citation index"—a new dimension in indexing: This unique approach underlies versatile bibliographic systems for communicating and evaluating information. Science, 144(3619):649–654.

- Garfield (1972) Eugene Garfield. 1972. Citation analysis as a tool in journal evaluation: Journals can be ranked by frequency and impact of citations for science policy studies. Science, 178(4060):471–479.

- Garfield et al. (1964) Eugene Garfield, Irving H Sher, Richard J Torpie, et al. 1964. The use of citation data in writing the history of science.

- Gary Holden and Barker (2005) Gary Rosenberg Gary Holden and Kathleen Barker. 2005. Bibliometrics. Social Work in Health Care, 41(3-4):67–92.

- Gomez et al. (2022) Charles J Gomez, Andrew C Herman, and Paolo Parigi. 2022. Leading countries in global science increasingly receive more citations than other countries doing similar research. Nature Human Behaviour, 6(7):919–929.

- Hernán and Robins (2010) Miguel A Hernán and James M Robins. 2010. Causal inference.

- Hirsch (2005) Jorge E Hirsch. 2005. An index to quantify an individual’s scientific research output. Proceedings of the National academy of Sciences, 102(46):16569–16572.

- Hou (2017) Jianhua Hou. 2017. Exploration into the evolution and historical roots of citation analysis by referenced publication year spectroscopy. Scientometrics, 110:1437–1452.

- Hutchins et al. (2016) B Ian Hutchins, Xin Yuan, James M Anderson, and George M Santangelo. 2016. Relative citation ratio (rcr): a new metric that uses citation rates to measure influence at the article level. PLoS biology, 14(9):e1002541.

- Iqbal et al. (2021) Sehrish Iqbal, Saeed-Ul Hassan, Naif Radi Aljohani, Salem Alelyani, Raheel Nawaz, and Lutz Bornmann. 2021. A decade of in-text citation analysis based on natural language processing and machine learning techniques: An overview of empirical studies. Scientometrics, 126(8):6551–6599.

- Jebari et al. (2021) Chaker Jebari, Enrique Herrera-Viedma, and Manuel Jesus Cobo. 2021. The use of citation context to detect the evolution of research topics: a large-scale analysis. Scientometrics, 126(4):2971–2989.

- Kinney et al. (2023) Rodney Kinney, Chloe Anastasiades, Russell Authur, Iz Beltagy, Jonathan Bragg, Alexandra Buraczynski, Isabel Cachola, Stefan Candra, Yoganand Chandrasekhar, Arman Cohan, Miles Crawford, Doug Downey, Jason Dunkelberger, Oren Etzioni, Rob Evans, Sergey Feldman, Joseph Gorney, David Graham, Fangzhou Hu, Regan Huff, Daniel King, Sebastian Kohlmeier, Bailey Kuehl, Michael Langan, Daniel Lin, Haokun Liu, Kyle Lo, Jaron Lochner, Kelsey MacMillan, Tyler Murray, Chris Newell, Smita Rao, Shaurya Rohatgi, Paul Sayre, Zejiang Shen, Amanpreet Singh, Luca Soldaini, Shivashankar Subramanian, Amber Tanaka, Alex D. Wade, Linda Wagner, Lucy Lu Wang, Chris Wilhelm, Caroline Wu, Jiangjiang Yang, Angele Zamarron, Madeleine van Zuylen, and Daniel S. Weld. 2023. The semantic scholar open data platform. CoRR, abs/2301.10140.

- Kloek and van Dijk (1976) Teun Kloek and Herman K. van Dijk. 1976. Bayesian estimates of equation system parameters, an application of integration by monte carlo. Econometrica, 46:1–19.

- Liu et al. (2019) Yinhan Liu, Myle Ott, Naman Goyal, Jingfei Du, Mandar Joshi, Danqi Chen, Omer Levy, Mike Lewis, Luke Zettlemoyer, and Veselin Stoyanov. 2019. RoBERTa: A robustly optimized BERT pretraining approach. arXiv preprint arXiv:1907.11692.

- Lo et al. (2020) Kyle Lo, Lucy Lu Wang, Mark Neumann, Rodney Kinney, and Daniel Weld. 2020. S2ORC: The semantic scholar open research corpus. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, pages 4969–4983, Online. Association for Computational Linguistics.

- Majumder et al. (2016) Goutam Majumder, Partha Pakray, Alexander Gelbukh, and David Pinto. 2016. Semantic textual similarity methods, tools, and applications: A survey. Computación y Sistemas, 20.

- Manghi et al. (2021) Paolo Manghi, Andrea Mannocci, Francesco Osborne, Dimitris Sacharidis, Angelo Salatino, and Thanasis Vergoulis. 2021. New trends in scientific knowledge graphs and research impact assessment.

- Manning et al. (2008) Christopher D. Manning, Prabhakar Raghavan, and Hinrich Schütze. 2008. Introduction to information retrieval. In J. Assoc. Inf. Sci. Technol.

- McGue et al. (2010) Matt McGue, Merete Osler, and Kaare Christensen. 2010. Causal inference and observational research: The utility of twins. Perspectives on psychological science, 5(5):546–556.

- Moed (2006) Henk F Moed. 2006. Citation analysis in research evaluation, volume 9. Springer Science & Business Media.

- Pearl (2009) Judea Pearl. 2009. Causality. Cambridge University Press.

- Piro and Sivertsen (2016) Fredrik Niclas Piro and Gunnar Sivertsen. 2016. How can differences in international university rankings be explained? Scientometrics, 109(3):2263–2278.

- Prechelt et al. (2018) Lutz Prechelt, Daniel Graziotin, and Daniel Méndez Fernández. 2018. A community’s perspective on the status and future of peer review in software engineering. Information and Software Technology, 95:75–85.

- Radford and Narasimhan (2018) Alec Radford and Karthik Narasimhan. 2018. Improving language understanding by generative pre-training.

- Radford et al. (2019) Alec Radford, Jeff Wu, Rewon Child, David Luan, Dario Amodei, and Ilya Sutskever. 2019. Language models are unsupervised multitask learners.

- Reimers and Gurevych (2019) Nils Reimers and Iryna Gurevych. 2019. Sentence-bert: Sentence embeddings using siamese bert-networks. In Conference on Empirical Methods in Natural Language Processing.

- Resnik et al. (2008) David B Resnik, Christina Gutierrez-Ford, and Shyamal Peddada. 2008. Perceptions of ethical problems with scientific journal peer review: an exploratory study. Science and engineering ethics, 14(3):305–310.

- Robertson and Zaragoza (2009) Stephen E. Robertson and Hugo Zaragoza. 2009. The probabilistic relevance framework: Bm25 and beyond. Found. Trends Inf. Retr., 3:333–389.

- Rogers et al. (2023) Anna Rogers, Marzena Karpinska, Jordan Boyd-Graber, and Naoaki Okazaki. 2023. Program chairs’ report on peer review at acl 2023. In Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages xl–lxxv, Toronto, Canada. Association for Computational Linguistics.

- Rombach et al. (2021) Robin Rombach, A. Blattmann, Dominik Lorenz, Patrick Esser, and Björn Ommer. 2021. High-resolution image synthesis with latent diffusion models. 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 10674–10685.

- Rosenbaum and Rubin (1983) Paul R Rosenbaum and Donald B Rubin. 1983. The central role of the propensity score in observational studies for causal effects. Biometrika, 70(1):41–55.

- Rungta et al. (2022) Mukund Rungta, Janvijay Singh, Saif M. Mohammad, and Diyi Yang. 2022. Geographic citation gaps in NLP research. In Proceedings of the 2022 Conference on Empirical Methods in Natural Language Processing, pages 1371–1383, Abu Dhabi, United Arab Emirates. Association for Computational Linguistics.

- Sato et al. (2022) Ryoma Sato, Makoto Yamada, and Hisashi Kashima. 2022. Twin papers: A simple framework of causal inference for citations via coupling. In Proceedings of the 31st ACM International Conference on Information & Knowledge Management, Atlanta, GA, USA, October 17-21, 2022, pages 4444–4448. ACM.

- Shah (2022) Nihar B Shah. 2022. An overview of challenges, experiments, and computational solutions in peer review. Communications of the ACM, 65(6):76–87.

- Singh (2014) Surya Nath Singh. 2014. Sampling techniques & determination of sample size in applied statistics research : an overview.

- Smiti (2020) Abir Smiti. 2020. A critical overview of outlier detection methods. Computer Science Review, 38:100306.

- Song et al. (2020) Kaitao Song, Xu Tan, Tao Qin, Jianfeng Lu, and Tie-Yan Liu. 2020. Mpnet: Masked and permuted pre-training for language understanding. arXiv preprint arXiv:2004.09297.

- Tate (1954) Robert F. Tate. 1954. Correlation between a discrete and a continuous variable. point-biserial correlation. Annals of Mathematical Statistics, 25:603–607.

- Umer et al. (2021) Muhammad Umer, Saima Sadiq, Malik Muhammad Saad Missen, Zahid Hameed, Zahid Aslam, Muhammad Abubakar Siddique, and Michele Nappi. 2021. Scientific papers citation analysis using textual features and smote resampling techniques. Pattern Recognition Letters, 150:250–257.

- Valenzuela et al. (2015) Marco Valenzuela, Vu Ha, and Oren Etzioni. 2015. Identifying meaningful citations. In Scholarly Big Data: AI Perspectives, Challenges, and Ideas, Papers from the 2015 AAAI Workshop, Austin, Texas, USA, January, 2015, volume WS-15-13 of AAAI Technical Report. AAAI Press.

- Valenzuela-Escarcega et al. (2015) Marco Antonio Valenzuela-Escarcega, Vu A. Ha, and Oren Etzioni. 2015. Identifying meaningful citations. In AAAI Workshop: Scholarly Big Data.