# Active Inference and Intentional Behaviour

**Authors**: Karl J. Friston, Tommaso Salvatori, Takuya Isomura, Alexander Tschantz, Alex Kiefer, Tim Verbelen, Magnus Koudahl, Aswin Paul, Thomas Parr, Adeel Razi, Brett Kagan, Christopher L. Buckley, Maxwell J. D. Ramstead

> Wellcome Trust Centre for Neuroimaging, Institute of Neurology, University College London, UK.VERSES AI Research Lab, Los Angeles, California, 90016, USA

> VERSES AI Research Lab, Los Angeles, California, 90016, USA

> Brain Intelligence Theory Unit, RIKEN Center for Brain Science, Wako, Saitama, Japan

> VERSES AI Research Lab, Los Angeles, California, 90016, USANuffield Department of Clinical Neurosciences, University of Oxford, UK

> VERSES AI Research Lab, Los Angeles, California, 90016, USATurner Institute for Brain and Mental Health, School of Psychological Sciences, Monash University, Clayton, AustraliaIITB-Monash Research Academy, Mumbai-76, India

> Nuffield Department of Clinical Neurosciences, University of Oxford, UK

> Turner Institute for Brain and Mental Health, School of Psychological Sciences, Monash University, Clayton, AustraliaMonash Biomedical Imaging, Monash University, Clayton, AustraliaCIFAR Azrieli Global Scholars Program, Toronto, Canada

> Cortical Labs, Melbourne, Australia

Abstract

Recent advances in theoretical biology suggest that basal cognition and sentient behaviour are emergent properties of in vitro cell cultures and neuronal networks, respectively. Such neuronal networks spontaneously learn structured behaviours in the absence of reward or reinforcement. In this paper, we characterise this kind of self-organisation through the lens of the free energy principle, i.e., as self-evidencing. We do this by first discussing the definitions of reactive and sentient behaviour in the setting of active inference, which describes the behaviour of agents that model the consequences of their actions. We then introduce a formal account of intentional behaviour, that describes agents as driven by a preferred endpoint or goal in latent state-spaces. We then investigate these forms of (reactive, sentient, and intentional) behaviour using simulations. First, we simulate the aforementioned in vitro experiments, in which neuronal cultures spontaneously learn to play Pong, by implementing nested, free energy minimising processes. The simulations are then used to deconstruct the ensuing predictive behaviour—leading to the distinction between merely reactive, sentient, and intentional behaviour, with the latter formalised in terms of inductive planning. This distinction is further studied using simple machine learning benchmarks (navigation in a grid world and the Tower of Hanoi problem), that show how quickly and efficiently adaptive behaviour emerges under an inductive form of active inference.

Keywords: active inference; active learning; backwards induction;planning as inference; free energy principle.

1 Introduction

In 2022, a paper was published that claimed to demonstrate sentient behaviour in a neuronal culture grown in a dish (an in vitro neuronal network) [1]. The behaviour in question was the spontaneous emergence of controlled movements of a paddle to hit a ball—and thereby play Pong. This study has several sources of inspiration that speak to the notion of basal cognition; see, e.g., [2, 3, 4] (and related work, e.g. [5]). In particular, the hypothesis that adaptive and predictive behaviour would emerge spontaneously was based on earlier work showing that in vitro neuronal cultures could be described as minimising variational free energy [6] and thereby evince active inference and learning. This application of the free energy principle (FEP) to neuronal cultures was subsequently validated empirically [7]: in the sense that changes in neuronal activity and synaptic efficacy—that underwrite learning—could be predicted quantitatively, as a variational free energy minimising process. So, are these findings remarkable, or were they predictable?

In one sense, these results were entirely predictable. Indeed, they were predictable from the FEP, which states that any two networks—that are coupled in a certain sparse fashion—will come to manifest a generalised synchrony [8, 9]. More formally, the FEP states that if the probability density that underwrites the dynamics of coupled random dynamical systems contains a Markov blanket—which shields internal states from external states, given blanket (sensory and active) states—then internal states will look as if they track the statistics of external states—or more precisely, as if they encode the parameters of a variational density (or best guess about) external states beyond the blanket. Empirically, this synchronisation was observed when the neuronal cultures learned to play Pong. However, the FEP goes further and says that the internal and active states (together, autonomous states) of either network can be described as minimising a variational free energy functional. This functional is exactly the same used to optimise generative models in statistics and machine learning [10]. On this reading, one can interpret the autonomous states—of a network, particle or person—as minimising variational free energy or surprise (a.k.a., self-information) or, equivalently, maximising Bayesian model evidence (a.k.a., the marginal likelihood of sensory states). This leads to an implicit teleology, in the sense that one can describe self-organisation in terms of self-evidencing [11] that entails active inference and learning, planning, purpose, intentions and, perhaps, sentience. The underlying free energy minimising processes—and their teleological interpretation—are the focus of this paper.

The results reported in [1] were considered by some to be unremarkable for a different reason: learning to play (Atari) games like Pong was something that had been accomplished with machine learning systems years earlier using neural networks and (deep) reinforcement learning [12, 13]. So, what is remarkable about a neuronal network reproducing the same kind of behaviour? It is remarkable because one cannot use the reinforcement learning (RL) paradigm to explain the emergence of self-evidencing behaviour seen in vitro. This follows from the fact that one cannot reward a neuronal network—because no one knows what any given in vitro neuronal network finds rewarding. However, the FEP theorist knows exactly what a self-evidencing network finds aversive; namely, surprise and unpredictability. This was a rationale for delivering unpredictable noise to the sensory electrodes of the cell culture (or restarting the game in an unpredictable way), whenever the neuronal network failed to hit the ball [1].

Some found the results reported in [1] remarkable, but not in a good way: they disagreed with the claim that the behaviour could be described as ‘sentient’ [14]. Here, we hope to make sense of the notion of sentient behaviour in terms of Bayesian belief updating; where ‘sentient behaviour’ denotes the capacity to generate appropriate responses to sensory perturbations (as opposed to merely reactive behaviour). We pursue the narrative established by the cell culture experiments above to illustrate why Pong-playing behaviour was considered sentient, as opposed to reactive. In brief, we consider a bright line between actions based upon the predictions of a generative model that does, and does not, entail the consequences of action.

Specifically, this paper differentiates between three kinds of behaviour: reactive, sentient, and intentional. The first two have formulations that have been extensively studied in the literature, under the frameworks of model-free reinforcement learning (RL) and active inference, respectively. In model-free RL, the system selects actions using either a lookup table (Q-learning), or a neural network (deep Q-learning). In standard active inference, the action selection depends on the expected free energy of policies (Equation 2), where the expectation is over observations in the future that become random variables. This means that preferred outcomes—that subtend expected cost and risk—are prior beliefs that constrain the implicit planning as inference [15, 16, 17]. Things that evince this kind of behaviour can hence be described as planning their actions, based upon a generative model of the consequences of those actions [15, 16, 18]. It was this sense in which the behaviour of the cell cultures was considered sentient.

This form of sentient behaviour —described in terms of Bayesian mechanics [19, 20, 21] —can be augmented with intended endpoints or goals. This leads to a novel kind of sentient behaviour that not only predicts the consequences of its actions, but is also able to select them to reach a goal state that may be many steps in the future. This kind of behaviour, that we call intentional behaviour, generally requires some form of backwards induction [22, 23] of the kind found in dynamic programming [24, 25, 26, 27]: this is, starting from the intended goal state, and working backwards, inductively, to the current state of affairs, in order to plan moves to that goal state. Backwards induction was applied to the partially observable setting and explored in the context of active inference in [27]. In that work, dynamic programming was shown to be more efficient than traditional planning methods in active inference.

The focus of this work is to formally define a framework for intentional behaviour, where the agent minimises a constrained form of expected free energy—and to demonstrate this framework in silico. These constraints are defined on a subset of latent states that represent the intended goals of the agent, and propagated to the agent via a form of backward induction. As a result, states that do not allow the agent to make any ‘progress’ towards one of the intended goals are penalised, and so are actions that lead to such disfavoured states. This leads to a distinction between sentient and intentional behaviour, were intentional behaviour is equipped with inductive constraints.

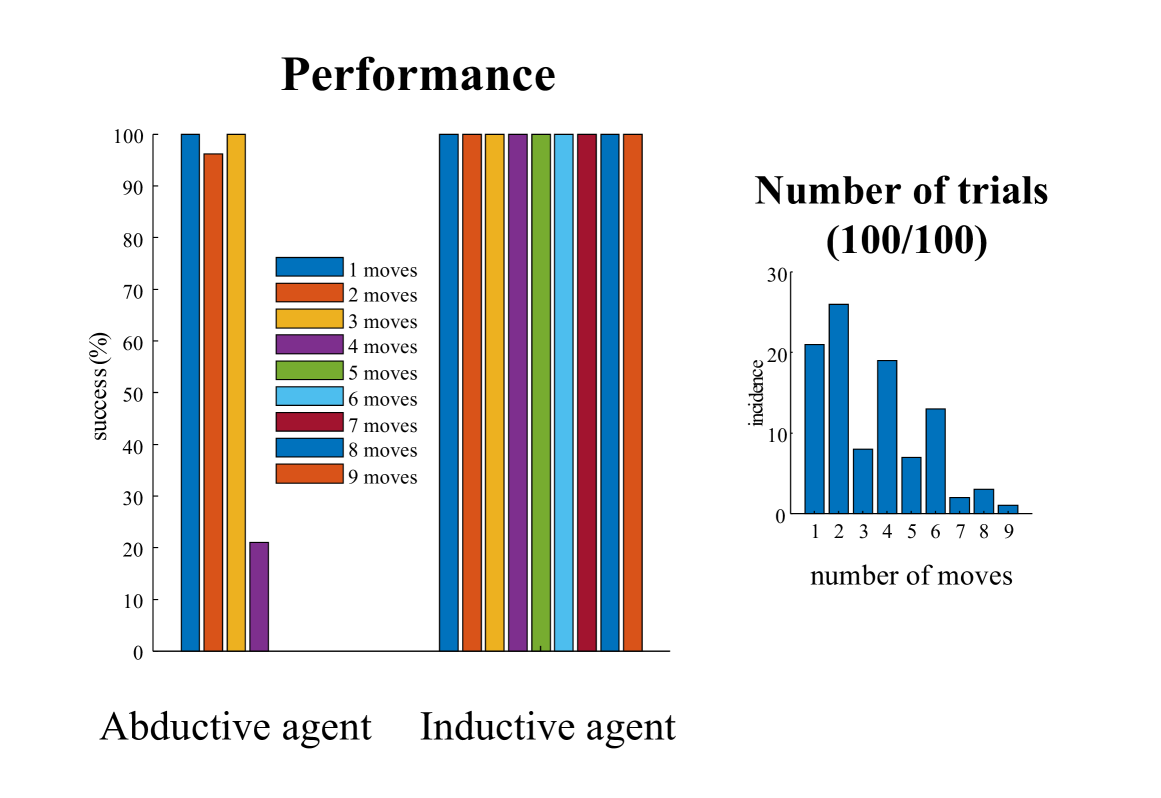

In this treatment, the word inductive is used in several senses. First, to distinguish inductive planning from the abductive kind of inference that usually figures in applications of Bayesian mechanics; i.e., to distinguish between mere inference to the best explanation (abductive inference) and genuinely goal-directed inference (inductive planning) [28, 29]. Second, it is used with a nod to backwards induction in dynamic programming, where one starts from an intended endpoint and works backwards in time to the present, to decide what to do next [24, 25, 30, 27]. Under this naturalisation of behaviours, a thermostat would not exhibit sentient behaviour, but insects might (i.e., thermostats exhibit merely reactive behaviour). Similarly, insects would not exhibit intentional behaviour, but mammals might (i.e., insects exhibit merely sentient behaviour). The numerical analyses presented below suggest that in vitro neuronal cultures may exhibit sentient behaviour, but not intentional behaviour. Crucially, we show that neither sentient nor intentional behaviour can be explained by reinforcement learning. In the experimental sections of this work, we study and compare the performance of active inference agents with and without intended goal states. For ease of reference, we will call active inference agents without goal states abductive agents, and agents with intended goals inductive agents.

This paper comprises four sections. The first briefly rehearses active inference and learning—as a set of nested free energy minimising processes—applied to a generic generative model of exchange with some world or environment. This model is a partially observed Markov decision process that is conciliatory with canonical neural networks in machine learning and apt to describe the self-evidencing of in vitro neuronal networks [6, 7]. This section has a special focus on inductive planning and its relationship to expected free energy. The subsequent sections use numerical studies to make a series of key points. The second section reproduces the empirical behaviour of in vitro neuronal networks playing Pong. Crucially, this behaviour emerges purely in terms of free energy minimising processes, starting with a naïve neuronal network. This section illustrates the failure of a (simulated) abductive agent when the game is made more difficult. This failure is used to illustrate the role of inductive planning, which restores performance and underwrites a fluent engagement with the sensorium. The final two sections illustrate inductive planning using navigation in a maze and the Tower of Hanoi problem, respectively. These numerical studies illustrate how the simple application of inductive constraints to active inference allows tasks—that would be otherwise intractable in discrete state spaces—to be solved efficiently. This efficiency rests on the fact that distal goals can be reached by only planning a few steps in the future, thanks to constraints furnished by inductive planning. Effectively, inductive planning takes the pressure off deep tree searches by identifying ’blind alleys’ or ’dead ends’.

1.1 Glossary of definitions

Before introducing the inductive planning algorithm, we frame our treatment by clarifying our use of some key terms. This framing is important, given that the goal of the present work is not simply to describe a useful heuristic for efficient inference (i.e., inductive planning), but to provide an account of how a new form of decision-making, characteristic of more complex forms of agency, may be combined with, and folded into, a generic Bayesian (active) inference scheme.

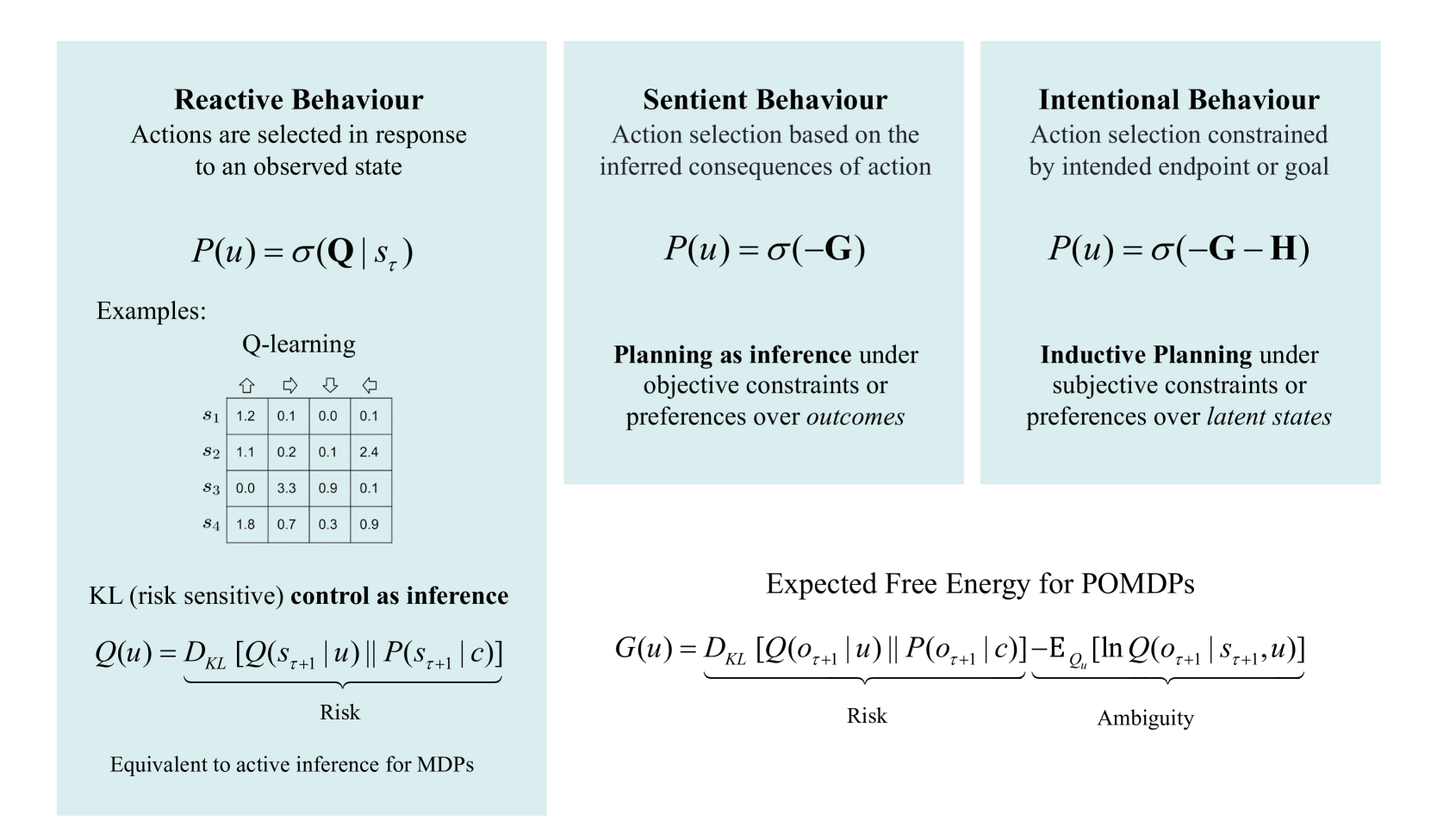

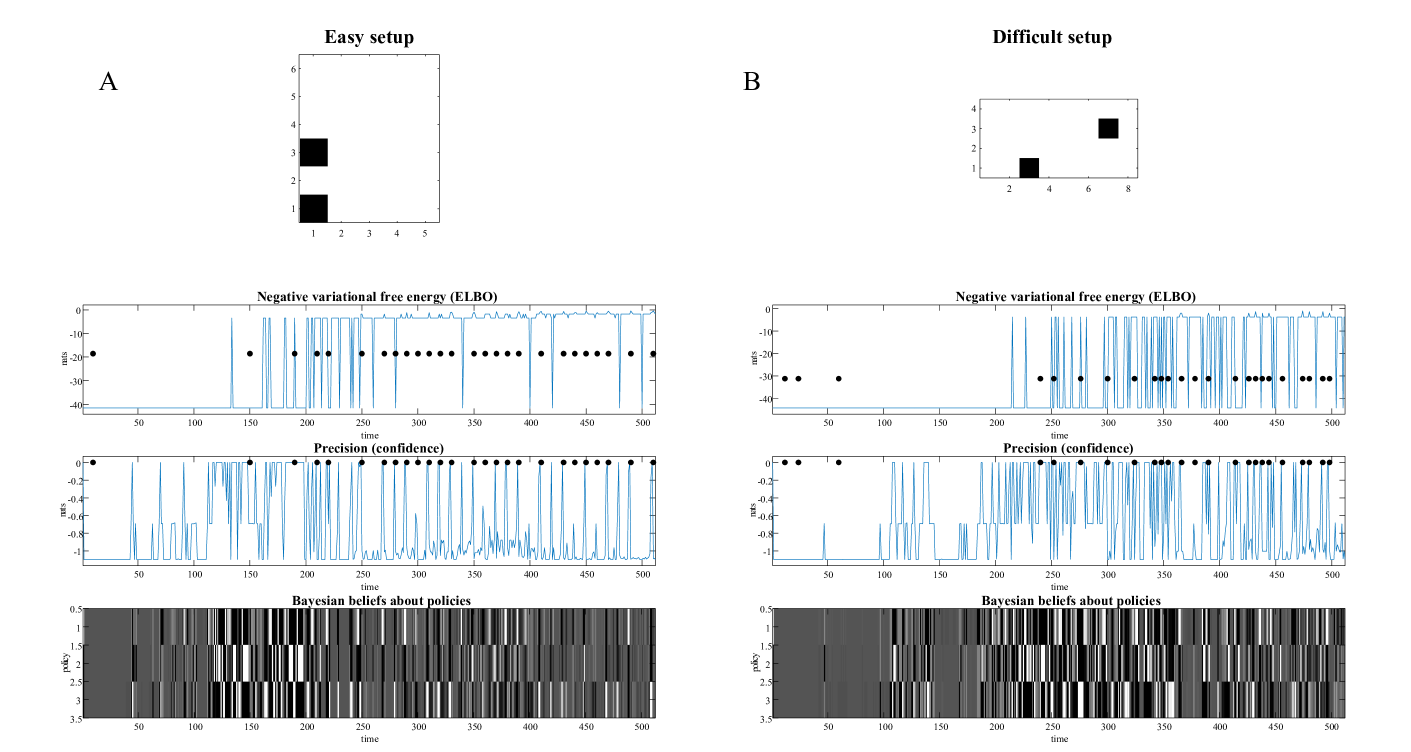

Figure 1 describes increasingly complex forms of behaviour—from reactive (merely responding to stimuli), to sentient (planning based on the sensory consequences of actions), to intentional (planning in order to bring about intended states)—and corresponding forms of decision-making that may underwrite such behaviour.

Reactive behaviour characterises simple sensorimotor reflex arcs and the mere realisation of set points or trajectories (e.g., simple cases of homeostasis and homeorhesis). This form of behaviour can be accounted for acting in a way that realises predicted sensations, with no anticipation of the future sensory consequences of action.

Sentient behaviour characterises the paradigmatic case of active inference, in which the influence of perception on action is mediated by the results of planning, with a distribution over policies derived from a model endowed with counterfactual depth (i.e., beliefs about the future sensory consequences of action pursuant to a policy). In this case, we may characterise the form of inference over actions or policies as abductive —i.e., as an inference to the policy that best explains current and future observations under a generative model (see below).

Intentional behaviour is driven not simply by the generic imperative to minimise sensory prediction error, present and future, but toward the attainment of a particular future endpoint or goal state. This form of behaviour can be subserved by backward induction or inductive planning, as defined below, which supplies a specific form of constraint on the Bayesian (abductive) inference characteristic of (mere) sentient behaviour. In particular, it implies not merely beliefs about sensory consequences of actions but rather beliefs about the inferred or latent causes of sensory input.

Note that words like ‘sentient behaviour’ and ‘intentional behaviour’ are deliberately defined here such that they can be operationalized within the framework of generative modelling, in which terms like ‘state’, ‘belief’, and ‘confidence’ have precise, if narrow, interpretations in terms of belief structures of a mathematical sort [31]. Whether the phenomenology of (propositional or subjective) beliefs—or sentience—could yield to the same naturalisation remains to be seen: see [32, 33, 34] for treatments in this direction. Note further that a key distinction between sentient and intentional behaviour rests upon the consequences of behaviour in (observable) outcome and (unobservable) latent spaces, respectively.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Behavioural Models and Equations

### Overview

The image presents three different behavioral models: Reactive, Sentient, and Intentional. Each model is described with a brief explanation, a mathematical equation, and a further description. The image also includes equations for KL control as inference and expected free energy for POMDPs.

### Components/Axes

* **Reactive Behaviour:**

* Description: Actions are selected in response to an observed state.

* Equation: P(u) = σ(Q | s\_τ)

* Example: Q-learning table with states (s1, s2, s3, s4) and actions (up, right, down, left).

* **Sentient Behaviour:**

* Description: Action selection based on the inferred consequences of action.

* Equation: P(u) = σ(-G)

* Further Description: Planning as inference under objective constraints or preferences over outcomes.

* **Intentional Behaviour:**

* Description: Action selection constrained by intended endpoint or goal.

* Equation: P(u) = σ(-G - H)

* Further Description: Inductive Planning under subjective constraints or preferences over latent states.

* **KL (risk sensitive) control as inference:**

* Equation: Q(u) = D\_KL [Q(s\_{τ+1} | u) || P(s\_{τ+1} | c)]

* Label: Risk

* Description: Equivalent to active inference for MDPs

* **Expected Free Energy for POMDPs:**

* Equation: G(u) = D\_KL [Q(o\_{τ+1} | u) || P(o\_{τ+1} | c)] - E\_{Q\_u} [ln Q(o\_{τ+1} | s\_{τ+1}, u)]

* Label: Risk

* Label: Ambiguity

### Detailed Analysis or ### Content Details

* **Reactive Behaviour - Q-learning Example:**

* The Q-learning example is presented as a table. The rows represent states (s1, s2, s3, s4), and the columns represent actions (up, right, down, left). The values in the table represent Q-values.

* s1: Up (1.2), Right (0.1), Down (0.0), Left (0.1)

* s2: Up (1.1), Right (0.2), Down (0.1), Left (2.4)

* s3: Up (0.0), Right (3.3), Down (0.9), Left (0.1)

* s4: Up (1.8), Right (0.7), Down (0.3), Left (0.9)

### Key Observations

* The image presents a hierarchy of behavioral models, starting from simple reactive behavior to more complex intentional behavior.

* Each model is associated with a mathematical equation that captures the underlying principle.

* The Q-learning example provides a concrete illustration of reactive behavior.

* The equations for KL control and expected free energy provide a more detailed mathematical formulation of the models.

### Interpretation

The image provides a conceptual framework for understanding different types of behavior. The models are presented in increasing order of complexity, reflecting the increasing role of internal representations and goals in shaping behavior. The mathematical equations provide a formal language for describing these models, while the Q-learning example provides a concrete illustration of how reactive behavior can be implemented. The KL control and expected free energy equations suggest a deeper connection between these behavioral models and concepts from information theory and decision theory. The image suggests that behavior can be understood as a process of inference and decision-making, where agents use their internal models of the world to select actions that maximize their expected reward or minimize their expected cost.

</details>

Figure 1: Glossary. In this figure, we provide illustrative definitions of the three kinds of behaviour considered in this work, In terms of examples, and mathematical differences. Examples of agents with reactive behaviours are (1) Model-free reinforcement learning schemes, such as Q-learning, where the agent makes use of a lookup table to select actions (more generally, a state-action policy). In this table, rows correspond to states, actions to columns, and every entry encodes the value of taking a specific action (in this case: go up, right, down, left) when in state $s_{\tau}$ . There is no inference over policies, as for every state the agent automatically selects the action with the highest value; and (2) KL control (a.k.a., risk-sensitive control) methods, that automatically select actions that minimise a KL divergence between anticipated and preferred states (where there is no uncertainty about the current state). Sentient agents, on the other hand, plan by taking into account future outcomes and their uncertainty, as they act by minimising an expected free energy $\mathbf{G}$ , that includes risk and ambiguity terms. More details on this can be found in Equation 5. Finally, inductive agents add constraints ( $\mathbf{H}$ in the figure) in the action selection, by penalising actions that preclude an intended goal. For a formal derivation of $\mathbf{H}$ , we refer to Section 3.

2 Active inference

Here, we introduce the generative model used in the following sections, which can be seen as a generalisation of a partially observed Markov decision process (POMDP). The generalisation in question covers trajectories, narratives or syntax—which may or may not be controllable—by equipping a POMDP with random variables called paths. Paths effectively pick out transitions among latent states. These models are designed to be composed hierarchically, in a way that speaks to a separation of temporal scales in deep generative models. In other words, the number of transitions among latent states at any given level is greater than the number of transitions at the level above. This furnishes a unique specification of a hierarchy, in which the parents of any latent factor (associated with unique states and paths) contextualise the dynamics of their children.

The variational inference scheme [35] used to invert these models inherits from their application to online decision-making tasks. This means that action selection rests primarily on current beliefs about latent states and structures, and expectations about future observations. In that sense, the beliefs are updated sequentially—and in an online fashion—with each new action-outcome pair. This calls for Bayesian filtering (i.e., forward message passing) during the active sampling of observations, followed by Bayesian smoothing (i.e., forward and backward message passing) to revise posterior beliefs about past states at the end of an epoch. The implicit Bayesian smoothing ensures that the beliefs about latent states at any moment in the past are informed by all available observations when updating model parameters (and latent states of parents in deep models).

In neurobiology, this combination of Bayesian filtering and smoothing would correspond to evidence accumulation during active engagement with the environment, followed by a ‘replay’ before the next epoch [36, 37, 38, 39]. From a machine learning perspective, this can be regarded as a forward pass (c.f., belief propagation) for online active inference, followed by a backwards pass (implemented with variational message passing) for active learning. The implicit belief updates, pertaining to states, parameters and structure, foreground the conditional dependencies between active inference, learning, and selection, respectively.

Generative modelling

Active inference rests upon a generative model of observable outcomes (observations). This model is used to infer the most likely causes of outcomes in terms of expected states of the world. These states (and paths) are latent or hidden because they can only be inferred through observations. Some paths are controllable in the sense they can be realised through action. Therefore, certain observations depend upon action (e.g., where one is looking), which requires the generative model to entertain expectations about outcomes under different combinations of actions (i.e., policies) Note that in this setting, a policy is not a sequence of actions, but simply a combination of paths, where each hidden factor has an associated state and path. This means there are, potentially, as many policies as there are combinations of paths..

These expectations are optimised by minimising the variational free energy, defined in Equation (1). Variational free energy scores the discrepancy between the data expected under the generative model and the actual data. Crucially, the prior probability of a policy depends upon its expected free energy. Expected free energy, described in more detail in Equation (2), is a universal objective function that can be read as augmenting mutual information with a expected costs or constraints that need to be satisfied. Heuristically, it scores the free energy expected under each course of action. Having evaluated the expected free energy of each policy, the most likely action can be selected and the perception-action cycle continues [40].

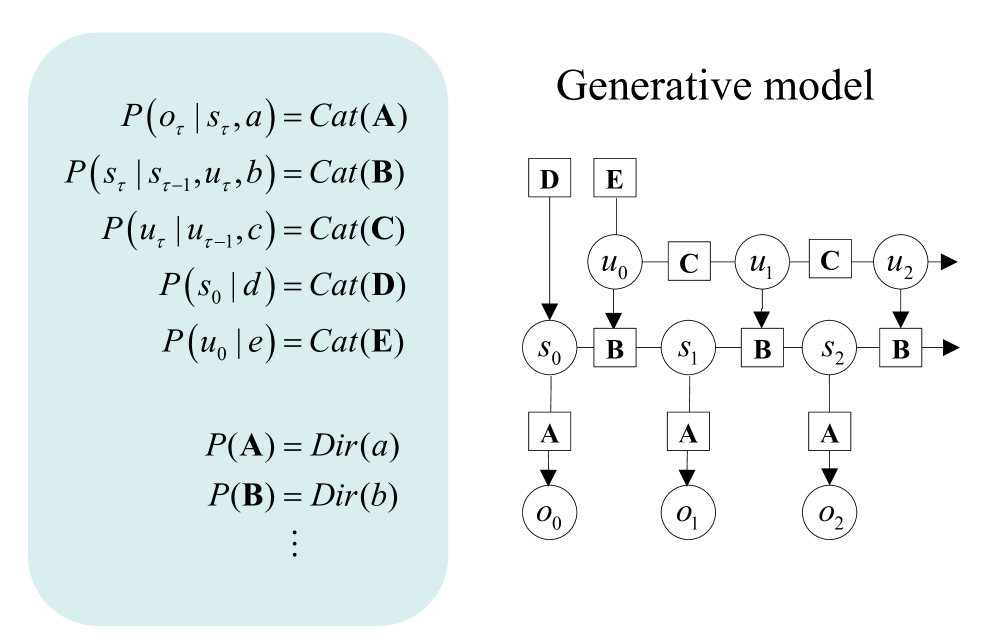

The generative model

Figure 2 provides a schematic overview of the generative model used for the simulations considered in this paper. Outcomes at any particular time depend upon hidden states, while transitions among hidden states depend upon paths. Note that paths are random variables, in the sense that a particle can have both a position (i.e., a state) and momentum (i.e., a path). Paths may or may not depend upon action. The resulting POMDP is specified by a set of tensors. The first set of parameters, denoted $\mathbf{A}$ , maps from hidden states to outcome modalities; for example, exteroceptive (e.g., visual) or proprioceptive (e.g., eye position) modalities. These parameters encode the likelihood of an outcome given their hidden causes. The second set $\mathbf{B}$ prescribes transitions among the hidden states of a factor, under a particular path. Factors correspond to different kinds of causes; e.g., the location versus the class of an object. The remaining tensors encode prior beliefs about paths $\mathbf{C}$ , and initial states $\mathbf{D}$ . The tensors—encoding probabilistic mappings or contingencies—are generally parameterised as Dirichlet distributions, whose sufficient statistics are concentration parameters or Dirichlet counts. These count the number of times a particular combination of states or outcomes has been inferred. We will focus on learning the likelihood model, encoded by Dirichlet counts, $\boldsymbol{a}$ .

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: Generative Model

### Overview

The image presents a graphical representation of a generative model, likely used in a probabilistic or statistical context. It consists of two main parts: a set of probability equations on the left, enclosed in a rounded rectangle, and a directed acyclic graph (DAG) on the right, illustrating the relationships between different variables.

### Components/Axes

* **Title:** "Generative model" located at the top-right of the image.

* **Probability Equations (Left):**

* `P(o_τ | s_τ, a) = Cat(A)`

* `P(s_τ | s_{τ-1}, u_τ, b) = Cat(B)`

* `P(u_τ | u_{τ-1}, c) = Cat(C)`

* `P(s_0 | d) = Cat(D)`

* `P(u_0 | e) = Cat(E)`

* `P(A) = Dir(a)`

* `P(B) = Dir(b)`

* `...` (Indicates continuation of similar equations)

* **Directed Acyclic Graph (DAG) (Right):**

* Nodes: Represented by circles and squares, labeled with variables.

* Edges: Represented by arrows, indicating dependencies between variables.

* Variables: `D`, `E`, `u_0`, `u_1`, `u_2`, `s_0`, `s_1`, `s_2`, `o_0`, `o_1`, `o_2`.

* Factors: `A`, `B`, `C`

### Detailed Analysis

**Probability Equations:**

* The equations define conditional probabilities, where the probability of a variable depends on the values of other variables.

* `Cat(X)` likely represents a categorical distribution parameterized by `X`.

* `Dir(x)` likely represents a Dirichlet distribution parameterized by `x`.

* The subscript `τ` denotes a time step or index.

**Directed Acyclic Graph (DAG):**

* **Top Row:** `D` and `E` are at the top, with arrows pointing to `u_0` and `s_0` respectively.

* **Second Row:** `u_0`, `u_1`, and `u_2` are connected sequentially with edges labeled `C`.

* **Third Row:** `s_0`, `s_1`, and `s_2` are connected sequentially with edges labeled `B`. Each `u_i` also has a directed edge to the subsequent `s_i`.

* **Bottom Row:** `o_0`, `o_1`, and `o_2` are at the bottom.

* **Connections:** Each `s_i` has a directed edge to the corresponding `o_i`, labeled `A`.

* **Flow:** The graph suggests a temporal or sequential process, where the state `s` and observation `o` evolve over time, influenced by variables `u`, `D`, and `E`.

**Node and Factor Placement:**

* `D` is positioned above `s_0`, and has a directed edge to `s_0`.

* `E` is positioned above `u_0`, and has a directed edge to `u_0`.

* `C` is positioned between each `u_i` node.

* `B` is positioned between each `s_i` node.

* `A` is positioned between each `s_i` and `o_i` node.

### Key Observations

* The model appears to be a Hidden Markov Model (HMM) or a variant thereof, with hidden states `s` and observations `o`.

* The variables `u`, `D`, and `E` seem to influence the state transitions and initial states.

* The factors `A`, `B`, and `C` parameterize the conditional probabilities between the variables.

### Interpretation

The diagram represents a generative model that describes how a sequence of observations `o` is generated from a sequence of hidden states `s`. The model incorporates external influences `u`, `D`, and `E` that affect the state transitions and initial states. The probability equations on the left provide the mathematical formulation of the model, specifying the conditional probabilities between the variables. The DAG visually represents the dependencies between the variables, illustrating the flow of information and the generative process. This type of model is commonly used in various applications, such as speech recognition, natural language processing, and time series analysis.

</details>

Figure 2: Generative models as agents. A generative model specifies the joint probability of observable consequences and their hidden causes. Usually, the model is expressed in terms of a likelihood (the probability of consequences given their causes) and priors (over causes). When a prior depends upon a random variable it is called an empirical prior. Here, the likelihood is specified by a tensor $\mathbf{A}$ , encoding the probability of an outcome under every combination of states ( $s$ ). The empirical priors pertain to transitions among hidden states, $\mathbf{B}$ , that depend upon paths ( $u$ ), whose transition probabilities are encoded in $\mathbf{C}$ . $\mathbf{E}$ specifies the empirical prior probability of each path. The subscripts in this graphic pertain to time.

The generative model in Figure 2 means that outcomes are generated as follows: first, a policy is selected using a softmax function of expected free energy. Sequences of hidden states are generated using the probability transitions specified by the selected combination of paths (i.e., policy). Finally, these hidden states generate outcomes in one or more modalities. Perception or inference about hidden states (i.e., state estimation) corresponds to inverting a generative model, given a sequence of outcomes, while learning corresponds to updating model parameters. Perception therefore corresponds to updating beliefs about hidden states and paths, while learning corresponds to accumulating knowledge in the form of Dirichlet counts. The requisite expectations constitute the sufficient statistics $(\mathbf{s},\mathbf{u},\mathbf{a})$ of posterior beliefs $Q(s,u,a)=Q_{\mathbf{s}}(s)Q_{\mathbf{u}}(u)Q_{\mathbf{a}}(a)$ . The implicit factorisation of this approximate posterior effectively partitions model inversion into inference, planning, and learning.

Variational free energy and inference

In variational Bayesian inference (a form of approximate Bayesian inference), model inversion entails the minimisation of variational free energy with respect to the sufficient statistics of approximate posterior beliefs. This can be expressed as follows, where, for clarity, we will deal with a single factor, such that the policy (i.e., combination of paths) becomes the path, $\pi=u$ . Omitting dependencies on previous states, we have for model $m$ :

$$

\displaystyle Q\left(s_{\tau},u_{\tau},a\right) \displaystyle=\arg\min_{Q}F \displaystyle F \displaystyle=\mathbb{E}_{Q}[\ln\underbrace{Q\left(s_{\tau},u_{\tau},a\right)}%

_{\text{posterior }}-\ln\underbrace{P\left(o_{\tau}\mid s_{\tau},u_{\tau},a%

\right)}_{\text{likelihood }}-\ln\underbrace{P\left(s_{\tau},u_{\tau},a\right)%

}_{\text{prior }}] \displaystyle=\underbrace{D_{KL}\left[Q\left(s_{\tau},u_{\tau},a\right)\|P%

\left(s_{\tau},u_{\tau},a\mid o_{\tau}\right)\right]}_{\text{divergence }}-%

\underbrace{\ln P\left(o_{\tau}\mid m\right)}_{\text{log evidence }} \displaystyle=\underbrace{D_{KL}\left[Q\left(s_{\tau},u_{\tau},a\right)\|P%

\left(s_{\tau},u_{\tau},a\right)\right]}_{\text{complexity }}-\underbrace{%

\mathbb{E}_{Q}\left[\ln P\left(o_{\tau}\mid s_{\tau},u_{\tau},a\right)\right]}%

_{\text{accuracy }} \tag{1}

$$

Because the (KL) divergences cannot be less than zero, the penultimate equality means that free energy is minimised when the (approximate) posterior is equal to the true posterior. At this point, the free energy is equal to the negative log evidence for the generative model [35]. This means minimising free energy is mathematically equivalent to maximising model evidence, which is, in turn, equivalent to minimising the complexity of accurate explanations for observed outcomes.

Planning emerges under active inference by placing priors over (controllable) paths to minimise expected free energy [41]:

$$

\displaystyle G(u) \displaystyle=\mathbb{E}_{Q_{u}}\left[\ln Q\left(s_{\tau+1},a\mid u\right)-\ln

Q%

\left(s_{\tau+1},a\mid o_{\tau+1},u\right)-\ln P\left(o_{\tau+1}\mid c\right)\right] \displaystyle=-\underbrace{\mathbb{E}_{Q_{u}}\left[\ln Q\left(a\mid s_{\tau+1}%

,o_{\tau+1},u\right)-\ln Q(a\mid s_{\tau+1},u)\right]}_{\text{expected %

information gain (learning) }}- \displaystyle\ \ \ \ \ \underbrace{\mathbb{E}_{Q_{u}}\left[\ln Q\left(s_{\tau+%

1}\mid o_{\tau+1},u\right)-\ln Q\left(s_{\tau+1}\mid u\right)\right]}_{\text{%

expected information gain (inference) }}\underbrace{-\mathbb{E}_{Q_{u}}\left[%

\ln P\left(o_{\tau+1}\mid c\right)\right]}_{\text{expected cost }} \displaystyle=-\underbrace{\mathbb{E}_{Q_{u}}\left[D_{KL}\left[Q\left(a\mid s_%

{\tau+1},o_{\tau+1},u\right)\|Q(a\mid s_{\tau+1},u)\right]\right]}_{\text{%

novelty }}+ \displaystyle\ \ \ \ \ \underbrace{D_{KL}\left[Q\left(o_{\tau+1}\mid u\right)%

\|P\left(o_{\tau+1}\mid c\right)\right]}_{\text{risk }}\underbrace{-\mathbb{E}%

_{Q_{u}}\left[\ln Q\left(o_{\tau+1}\mid s_{\tau+1},u\right)\right]}_{\text{%

ambiguity }} \tag{3}

$$

Here, the posterior predictive distribution over parameters, hidden states and outcomes at the next time step, under a particular path, is defined as follows:

| | $\displaystyle Q_{u}$ | $\displaystyle=Q\left(o_{\tau+1},s_{\tau+1},a\mid u\right)$ | |

| --- | --- | --- | --- |

One can also express the prior over the parameters in terms of an expected free energy, where, marginalising over paths:

$$

\displaystyle P(a) \displaystyle=\sigma(-G) \displaystyle G(a) \displaystyle=\mathbb{E}_{Q_{a}}[\ln P(s\mid a)-\ln P(s\mid o,a)-\ln P(o\mid c)] \displaystyle=-\underbrace{\mathbb{E}_{Q_{a}}[\ln P(s\mid o,a)-\ln P(s\mid a)]%

}_{\text{expected information gain }}\underbrace{-\mathbb{E}_{Q_{a}}[\ln P(o%

\mid c)]}_{\text{expected cost }} \displaystyle=-\underbrace{\mathbb{E}_{Q_{a}}\left[D_{KL}[P(o,s\mid a)\|P(o%

\mid a)P(s\mid a)]\right.}_{\text{mutual information }}\underbrace{-\mathbb{E}%

_{Q_{a}}[\ln P(o\mid c)]}_{\text{expected cost }} \tag{5}

$$

where $Q_{a}=P(o|s,a)P(s|a)=P(o,s|a)$ is the joint distribution over outcomes and hidden states, encoded by the Dirichlet parameters, $a$ , and $\sigma(·)$ is the softmax function. Note that the Dirichlet parameters encode the mutual information, in the sense that they implicitly encode the joint distribution over outcomes and their hidden causes. When normalising each column of the $a$ tensor, we recover the likelihood distribution (as in Figure 2); however, we could normalise over every element, to recover a joint distribution.

As discussed above, expected free energy can be regarded as a universal objective function that augments mutual information with expected costs or constraints. Constraints — parameterised by $c$ — reflect the fact that we are dealing with open systems with characteristic outcomes. This allows an optimal trade-off between exploration and exploitation, that can be read as an expression of the constrained maximum entropy principle that is dual to the free energy principle [19]. Alternatively, it can be read as a constrained principle of maximum mutual information or minimum redundancy [42, 43, 44, 45]. In machine learning, this kind of objective function underwrites disentanglement [46, 47], and generally leads to sparse representations [48, 45, 49, 50].

When comparing the expressions for expected free energy in Equation 2 with variational free energy in Equation 1, the expected divergence becomes expected information gain. Expected information gain about the parameters and states are sometimes associated with distinct epistemic affordances; namely, novelty and salience, respectively [51]. Similarly, expected log evidence becomes expected value, where value is the logarithm of prior preferences. The last equality in Equation 2 provides a complementary interpretation; in which the expected complexity becomes risk, while expected inaccuracy becomes ambiguity.

There are many special cases of minimising expected free energy. For example, maximising expected information gain maximises (expected) Bayesian surprise [52], in accord with the principles of optimal (Bayesian) experimental design [53]. This resolution of uncertainty is related to artificial curiosity [54, 55] and speaks to the value of information [56].

Expected complexity or risk is the same quantity minimised in risk sensitive or KL control [57, 58], and underpins (free energy) formulations of bounded rationality based on complexity costs [59, 60] and related schemes in machine learning; e.g., Bayesian reinforcement learning [61]. More generally, minimising expected cost subsumes Bayesian decision theory [62].

<details>

<summary>x3.png Details</summary>

### Visual Description

## Inductive Planning Diagram

### Overview

The image presents a diagram illustrating the concept of inductive planning. It includes mathematical formulas, two plots representing state transitions, and a heatmap-like representation of a matrix. The diagram aims to visually explain the process of inductive planning using mathematical notation and graphical representations.

### Components/Axes

* **Title:** Inductive planning (top-left)

* **Mathematical Formulas:**

* `I₀ = h`

* `Iₙ₊₁ = B̃ᵀ ⊙ Iₙ`

* `B̃ = B > ε : ∃u`

* `pₙ = Iₙ ⊙ sτ`

* `m = arg maxₙ pₙ < sup p`

* `H = ln ε ⋅ Iₘ ⊙ sτ₊₁`

* `P(u) = σ(-G - H)`

* **Left Plot:**

* Y-axis: Labeled `sτ`, with tick marks from 10 to 100 in increments of 10.

* The plot shows a black bar with a small white segment around the 20 mark.

* A red arrow points from the white segment to the right.

* A red arrow points to the black bar at the 60 mark, labeled `h`.

* **Middle Plot:**

* X-axis: Labeled `n`, with tick marks from 5 to 30 in increments of 5. An arrow below the axis indicates "future" to the left and "past" to the right.

* Y-axis: Tick marks from 10 to 100 in increments of 10.

* The plot shows a series of black and white horizontal bars, with the white portions forming a jagged pattern.

* A red dotted vertical line is present at approximately the 11 mark on the x-axis, labeled `m`.

* The expression `pₙ = Iₙ ⊙ sτ` is above the plot.

* The expression `I = [I₀, I₁, ..., Iₙ]` is to the right of the plot.

* The label `Iₘ` is to the left of the red dotted line.

* **Right Plot:**

* X and Y axes: Tick marks from 20 to 100 in increments of 20.

* The plot shows a black square with three diagonal white lines.

* The expression `B̃ = B > ε : ∃u` is above the plot.

### Detailed Analysis or Content Details

* **Mathematical Formulas:** The formulas describe the inductive planning process, involving state transitions, reward functions, and policy updates.

* **Left Plot (sτ):** The plot represents the state `sτ` at different time steps. The white segment at approximately 20 indicates a specific state value.

* **Middle Plot (n):** The plot represents the evolution of the state over time (`n`). The jagged pattern indicates changes in the state. The red dotted line `m` marks a specific time step.

* **Right Plot (B̃):** The plot represents the matrix `B̃`, which likely encodes transition probabilities or relationships between states. The diagonal lines suggest a strong correlation between neighboring states.

### Key Observations

* The diagram combines mathematical notation with visual representations to explain inductive planning.

* The plots provide a visual understanding of state transitions and relationships.

* The mathematical formulas formalize the planning process.

### Interpretation

The diagram illustrates the core concepts of inductive planning. The formulas define the mathematical framework, while the plots provide a visual representation of the planning process. The left plot `sτ` shows the state at different time steps, the middle plot `n` shows the evolution of the state over time, and the right plot `B̃` represents the transition matrix. The red arrows and dotted line highlight specific aspects of the planning process. The diagram suggests that inductive planning involves iteratively updating the state based on the transition matrix and reward function. The goal is to find an optimal policy that maximizes the cumulative reward.

</details>

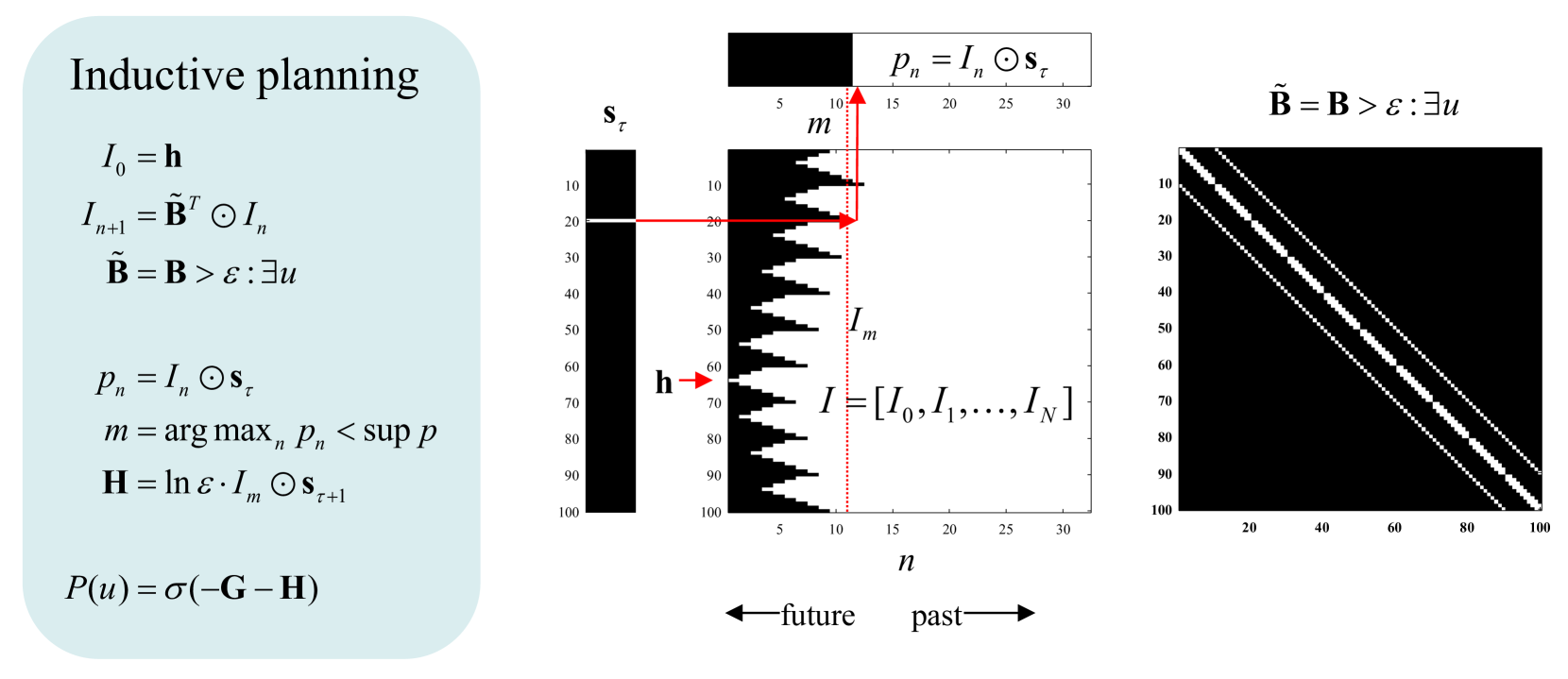

Figure 3: Inductive Planning. This figure provides an overview of inductive planning used in this paper. The left panel provides the expressions used to induce which subsequent states do and do not contain paths to some intended end state, encoded by a one hot vector $\mathbf{h}$ . The central panel illustrates this induction graphically, where vectors and matrices are shown in image format (black equals zero or false and white equals one or true). Working down the equalities in the left panel, we first initialise a logical vector of states, $I$ , to the intended state $\mathbf{h}$ . Recursively, we evaluate all the states from which the previous state can be accessed (a state can be accessed if the probability of transitioning from an adjacent state is larger than $\varepsilon$ ). Because this recursive induction works backwards in time, the allowable transition matrix is transposed. Having induced the reverse history of states—that contain paths to the intended state—one can then evaluate the length of the shortest path to the intended state. This depends upon posterior beliefs about the current state. In the example shown on the left, we are currently in state $20$ , which means that the shortest path to the intended state (state $64$ ) is $12$ time steps. This tells us that if we are pursuing the shortest path then there are certain states we need to avoid—from which the intended state cannot be reached. These states are encoded by the logical vector I at the next time step; namely, the last time before the probability $p$ of being on a path to the intended state reaches its supremum. Because the eligible states can only increase—as we move backwards in time—this probability can only increase, until all states are eligible (or there are no further eligible states). The first time that the probability reaches its supremum tells us where we are on the path to our intended state and, crucially, the ineligible states at the next time step. We now know the states to avoid at the next time step. If ineligible states are precluded, the next state must be on the path to the intended state. Ineligible states can be assigned a high cost (here, the log of a small value) to evaluate the expected cost incurred by each policy, using its predictive posterior over states (see Figure 2). Finally, we can supplement the expected free energy, $\mathbf{G}$ of each policy with the ensuing inductive cost, $\mathbf{H}$ . In principle, this guarantees the selection of paths or policies that lead to the intended state, provided that state can be reached. The example shown on the right is taken from the maze navigation task described later. For clarity, this example only considers a single factor. The mathematical expressions use the notation of Figure 2: The dotted red line indicates the logical vector encoding which of the $100$ states will lead to the intended state at the next time point; here, $11$ time steps from the intended state (indicated with a small red arrow).

3 Inductive Planning

What we call inductive planning—in this setting—recalls the notion of backwards induction in dynamic programming and related schemes [63, 25, 30, 23, 26, 64, 27]. In this form of inference, precise beliefs about state transitions are leveraged to rule out actions that are inconsistent with the attainment of future goals, defined in belief or state space as a final (or intended) state. This is a limiting case of inductive (Bayesian) inference [65, 66, 67] in which the very high precision of beliefs about final or intended states allows one to use logical operators in place of tensor operations; thereby vastly simplifying computations. In brief, we will use this simplification to furnish constraints on action selection that inherit from priors over intended states in the future.

Active inference rests on priors that place constraints on paths or trajectories through state space. For example, a sparse prior preference with knowledge only about the final state warrants deep planning to demonstrate intentional behaviour [27]. One can either specify these constraints in terms of states that are unlikely to traversed, or in terms of the final state. In other words, the agent may, a priori, believe it will navigate state space in a way that avoids unlikely or surprising outcomes, or that it will reach some final destination (in state space, not outcome space), irrespective of the path taken. These are distinct kinds of constraints. The first is implemented by $\mathbf{c}$ , in terms of the cost or constraints that apply during the entire path. We now introduce another prior or constraint $\mathbf{h}$ , over the final state. The priors, $\mathbf{d}$ and $\mathbf{h}$ play reciprocal roles; in the sense they specify prior beliefs about the initial and final states, respectively. Backwards induction now follows simply from this prior; provided it is specified sufficiently precisely. We will refer to these final states as intended states While $\mathbf{c}$ , $\mathbf{d}$ , and $\mathbf{h}$ are usually hard coded, they can be learnt very efficiently, for example using Z-learning for certain classes of MDPs [68, 27].

The basic idea is that although we may be uncertain about the next latent state, we can be certain about which states cannot be accessed from the current state. This means we can use induction to identify subsequent states that cannot be on a path to an intended state; thereby rendering actions—(i.e., state transitions) to those ineligible, ’dead-end’ states—highly unlikely (assuming that we are on a path to an intended state). The requisite induction goes as follows:

Imagine that we know our current state and that we will be in a certain (intended) state in the future. Imagine further that we know all possible transitions, afforded by action, among states. This means we can identify all the states from which the intended state is accessible. We can now repeat this and identify all the states from which the eligible states at the penultimate time point can be accessed, and so on. We now repeat this recursively—moving backwards in time—until our current state becomes eligible. At this point, we select an action that precludes ineligible states at the preceding point in backwards time (or next point in forwards time), bringing us one step closer to the intended state. We now repeat the backwards induction, until we arrive at the intended state, via the shortest path. This backwards induction is computationally cheap because it entails logical operations on a sparse logical tensor, encoding allowable state transitions.

Figure 3 provides a pseudocode and graphical abstraction based upon the MATLAB scripts implementing this inductive logic. For clarity, we have assumed a single factor and that there are no constraints on the paths, other than those specified by a one hot vector $\mathbf{h}$ , specifying the agent’s intended states In our MATLAB implementation of inductive planning, constraints due to prior preferences in outcome space are accommodated by precluding transitions to costly states during construction of the logical matrix encoding possible or true transitions. Furthermore, the implementation deals with multiple factors using appropriate tensor products. Finally, when multiple intended states are supplied, the nearest state is chosen for induction; where nearest is defined in terms of the number of timesteps required to access an intended state..

Note that this is not vanilla backwards induction. It is simply a way of placing precise priors on paths that render certain paths—that cannot access an intended state—highly unlikely. The requisite priors complement expected free energy in the following sense (see Figure 3): inductive priors over policies, $\mathbf{H}$ are derived from priors over intended states $\mathbf{h}$ , while the priors over policies scored by expected free energy, $\mathbf{G}$ inherit from priors over preferred outcomes $\mathbf{c}$ . This distinction is important because it means that this kind of reasoning—and intentional behaviour—can only manifest under precise beliefs about latent states. For example, a baby (or unexplainable neural network) could not, by definition, act intentionally because it does not have a precise generative model of latent states (or any mechanism to specify intended states). We will return to prerequisites for inductive planning in the discussion.

In summary, inductive planning propagates constraints backwards in time to provide empirical priors for planning as inference in the usual way. This means that—within the constraints afforded by such planning—actions will still be chosen that maximise expected information gain and any constraints encoded by $\mathbf{c}$ . In this sense, the inductive part of this inference scheme can be regarded as providing a constrained expected free energy, which winnows trajectories through state space to paths of least action. An equivalent and alternative perspective is that inductive planning furnishes an empirical prior over policies.

When intended states are conditioned on some context—inferred by a supraordinate (hierarchical) level—one has the opportunity to learn intended states and, effectively, make planning habitual. In this setting, the implicit Dirichlet counts in $\mathbf{h}$ , could be regarded as accumulating habitual courses of action that are learned as empirical priors in hierarchical models. We will pursue this elsewhere. In what follows, we focus on the distinction between sentient behaviour—based upon expected free energy—and intentional behaviour—based upon inductive priors.

4 Pong Revisited

In this section, we first simulate ’mere’ sentient Behaviour and then examine the qualitative differences in behaviour when adding inductive constraints. Specifically, we simulate the in vitro experiments reported in [1], using both an abductive and an inductive agent. The first has no intended goals, and stands in for a naïve neuronal culture; the second has as set of intended states: the ones where the paddle hits upcoming balls. As environments, we use Pong of two different sizes, that reflect two different difficulties: $5× 6$ (easy), and $8× 4$ (hard). The results show that while the simulated in vitro agent is able to fluently play in the easy environment, it struggles in the harder one. The inductive agent, on the other hand, can master the harder environment in less than three minutes of (simulated) game time.

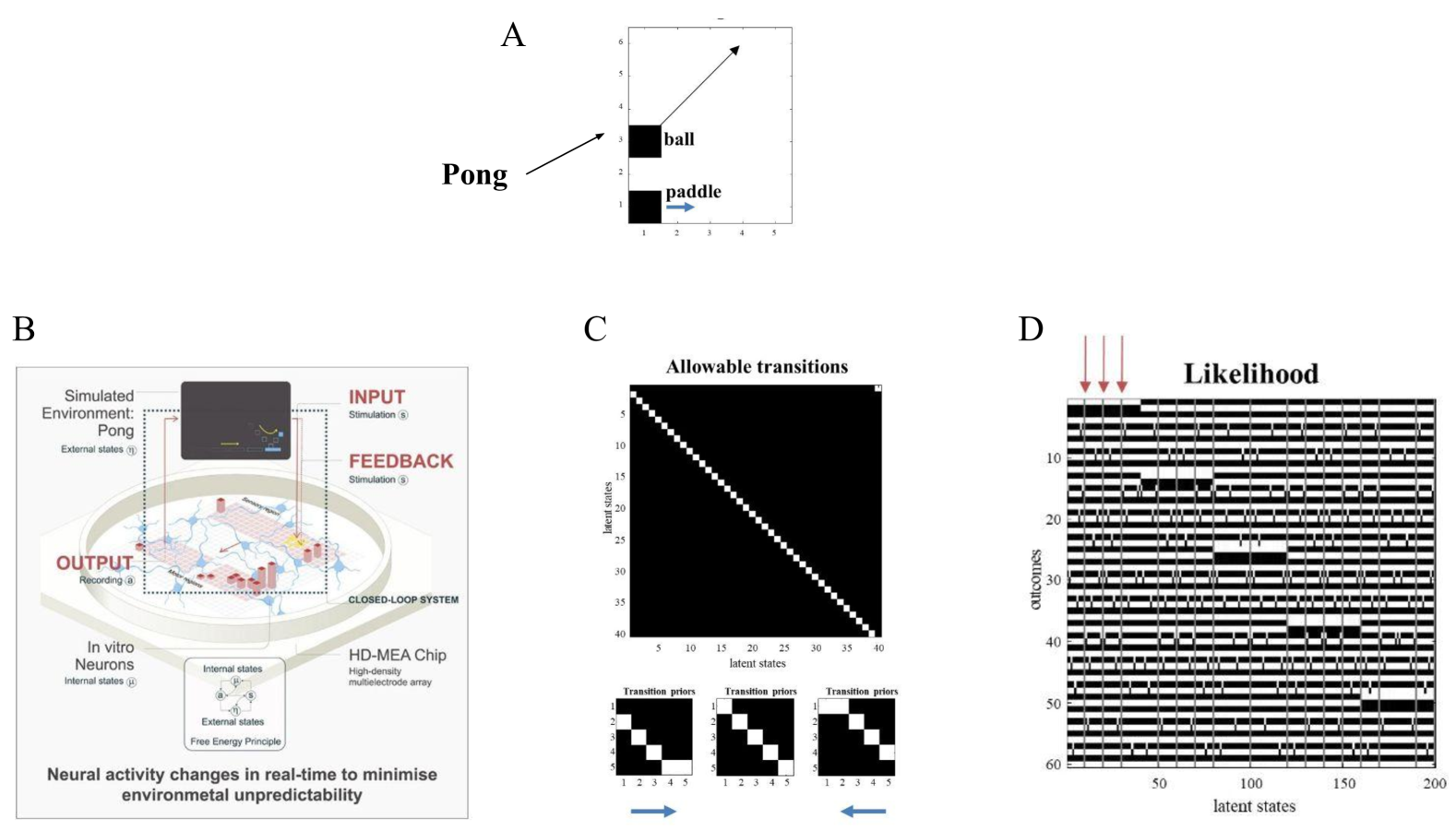

In the in vitro experiments, certain cells were stimulated depending upon the configuration of a virtual game of Pong, constituted by the position of a paddle and a ball bouncing around a bounded box. Other recording electrodes were used to drive the paddle, thereby closing the sparse coupling between the neuronal network and the computer network simulating the game of Pong (see Figure 4). Typically, in these experiments, after a few minutes of exposure to the game, short rallies of ball returns emerge. To emulate this setup, we created a generative process (i.e., a hard-coded representation of the dynamics of external states) in which a ball bounced around a box at $45$ degrees. The lower boundary contained a paddle that could be moved to the right or left. The size of the box was $5× 6$ units, where the ball moved one unit up or down (and right or left) at every time point. The (one unit wide) paddle could be moved left or right by one unit at every time point. In the in vitro experiments, whenever the agent missed the ball, either white noise or no stimulation was applied to the sensory electrodes; otherwise, the game remained in the play. We simulated this by supplying random input to all sensory channels whenever the ball failed to contact the paddle on the lower boundary.

The (sensory) outcomes of the POMDP comprised $30$ sensory channels that could be on or off. These can be thought of as pixels in a simple Atari-like game. The latent states were modelled as one long orbit, by equipping the generative model with a transition matrix that moved from one state to the next (with circular boundary conditions) for a suitably long sequence of state transitions (here, $40$ ). The generative model was equipped with a second factor with three controllable paths. This factor moved the paddle one unit to the right or left (or no movement). However, the (implicit) agent knew nothing more about its world and, in particular, had no notion that the second factor endowed it with control over the paddle. This was because the likelihood tensors mapping from the two latent factors to the outcomes were populated with small and uniform Dirichlet counts (i.e., concentration parameters of $1/32$ ). In other words, our naïve generative model could, in principle, model any given world (providing this world has a limited number of states that are revisited systematically). Figure 4 shows the setup of this paradigm and the parameters of the generative model learned after $512$ time steps.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Composite Image Analysis: Pong Simulation and Neural Activity

### Overview

The image is a composite figure containing four sub-figures (A, B, C, and D) related to a Pong simulation and its connection to neural activity. Sub-figure A shows a simplified Pong game representation. Sub-figure B illustrates a closed-loop system involving a simulated Pong environment interacting with in-vitro neurons via an HD-MEA chip. Sub-figure C displays allowable state transitions. Sub-figure D shows a likelihood matrix.

### Components/Axes

**Sub-figure A: Pong Game Representation**

* **Labels:** "Pong", "ball", "paddle"

* **Axes:** X-axis ranges from 1 to 5, Y-axis ranges from 1 to 6.

* The "ball" is located at approximately (1, 4) and is moving towards the top-right.

* The "paddle" is located at approximately (1, 1) and is moving to the right.

**Sub-figure B: Closed-Loop System Diagram**

* **Components:**

* "Simulated Environment: Pong" with "External states"

* "INPUT" with "Stimulation (s)"

* "FEEDBACK" with "Stimulation (s)"

* "OUTPUT" with "Recording (a)"

* "In vitro Neurons" with "Internal states"

* "HD-MEA Chip" (High-density multielectrode array)

* "Internal states" and "External states" connected by "Free Energy Principle"

* "CLOSED-LOOP SYSTEM" label encompassing the entire diagram.

* **Text:** "Neural activity changes in real-time to minimise environmetal unpredictability"

**Sub-figure C: Allowable Transitions**

* **Title:** "Allowable transitions"

* **Axes:** Both X and Y axes are labeled "latent states" and range from 5 to 40 in increments of 5.

* The main plot shows a diagonal line of black squares on a white background, indicating allowed transitions between adjacent states.

* Three smaller plots labeled "Transition priors" show 5x5 matrices with a diagonal of black squares. Arrows below the plots indicate a direction.

**Sub-figure D: Likelihood Matrix**

* **Title:** "Likelihood"

* **Axes:**

* X-axis: "latent states" ranging from 50 to 200 in increments of 50.

* Y-axis: "outcomes" ranging from 10 to 60 in increments of 10.

* The plot displays a black and white matrix, where black represents high likelihood and white represents low likelihood. Three red arrows point to the top of the matrix.

### Detailed Analysis or Content Details

**Sub-figure A:**

* The Pong game is highly simplified, showing only the ball and paddle positions.

* The ball's trajectory suggests an upward and rightward movement.

* The paddle's movement is indicated by an arrow pointing to the right.

**Sub-figure B:**

* The diagram illustrates a closed-loop system where the simulated Pong environment provides input to in-vitro neurons via stimulation.

* The neural activity is recorded and used as feedback to influence the Pong simulation.

* The HD-MEA chip serves as the interface between the simulated environment and the biological neurons.

* The "Free Energy Principle" connects the internal and external states.

**Sub-figure C:**

* The diagonal line in the main plot indicates that transitions are only allowed between adjacent latent states.

* The "Transition priors" plots show the prior probabilities of transitioning between states.

**Sub-figure D:**

* The likelihood matrix shows the probability of observing different outcomes given different latent states.

* The pattern suggests that certain latent states are more likely to produce specific outcomes.

* The red arrows at the top indicate specific latent states.

### Key Observations

* The image presents a system that integrates a simulated environment (Pong) with biological neurons.

* The closed-loop architecture allows for real-time interaction between the simulation and the neural activity.

* The allowable transitions and likelihood matrix provide insights into the dynamics of the system.

### Interpretation

The image illustrates an attempt to model and understand neural activity by connecting it to a simple, controllable environment (Pong). The closed-loop system allows researchers to observe how neural activity changes in response to environmental stimuli and how these changes, in turn, affect the environment. The "Free Energy Principle" suggests a theoretical framework for understanding how the system minimizes environmental unpredictability. The allowable transitions and likelihood matrix provide a quantitative representation of the system's dynamics, allowing for further analysis and modeling. The overall goal appears to be to gain a deeper understanding of how neural systems adapt and learn in response to external stimuli.

</details>

Figure 4: Learning the world of Pong. Panel $A$ : Setup used in the simulations. In brief, the generative process modelled a ball bouncing around inside a bounding box, with a movable paddle on the lower boundary. The $(5× 6=)30$ locations or pixels provided outputs with two states (black or white) that were subsequently learned via a likelihood mapping to $40$ latent states. The agent was equipped with a precise transition prior where $40$ latent states followed each other, with circular boundary conditions. In addition, the agent was equipped with a second factor that controlled the panel, moving it to the right, staying still and moving it to the left. Panel $B$ : graphical abstract (reproduced with permission from the authors) describing the in vitro empirical study in which a closed loop system was used to record from—and stimulate—a network of cultured neurons. The set up enabled the neurons to control a virtual paddle in a simulated game of Pong. Sensory feedback reported the location of the ball and paddle; enabling the neuronal preparation to learn how to play a rudimentary form of ping-pong. Panel $C$ shows the transitions of the generative model, while Panel $D$ shows the results of active learning—i.e., accumulation of Dirichlet counts in the likelihood tensor—after $512$ time steps. Note that this is a precise likelihood mapping due to the fact that the synthetic agent has precise, if generic, transition priors. The likelihood mapping in panel D is shown in image format, with each of the $30$ likelihood tensors stacked on top of each other. Of note here are certain latent states that produce ambiguous (i.e., unpredictable) outcomes. The first three are labelled with small arrows over the likelihood matrix. These ambiguous likelihood mappings appear as grey columns. This reflects the fact that the agent has learned that states corresponding to ‘missing the ball’ lead to unpredictable and ambiguous stimulation. The implicit surprise and ambiguity means that the agent plans to avoid these states and look as if it is playing Pong—by choosing paths or policies that are more likely to hit the ball. The emergence of this behaviour is described in the next figure.

To simulate the in vitro study, we exposed the synthetic neural network to $512$ observations—about two minutes of simulated time (i.e., a few seconds of computer time). Figure 5 shows the results of this simulation. The ensuing behaviour reproduced that observed empirically; namely, the emergence of short rallies after a minute or so of exposure. The question is now: can we understand this in terms of free energy minimising processes and their teleological concomitants?

As time progresses, Dirichlet counts are accumulated in the likelihood tensor to establish a precise mapping between each successive hidden state and the outcomes observed in each modality. This accumulation is precise because the agent has precise beliefs about state transitions. As the likelihood mapping is learned, it becomes apparent to the agent that certain states produce ambiguous outputs. These are the states in which it fails to hit the ball with the paddle. Because these ambiguous states have a high expected free energy—see Equation 2 —the agent considers that actions that bring about these states are unlikely and therefore tries to avoid missing the ball. This is sufficient to support rallies of up to $7$ returns: see Figure 5.

However, because this agent does not look deep into the future, it can only elude ambiguous states when they are imminent. In other words, although this kind of behaviour can be regarded as sentient—in the sense that it rests upon an acquired model of the consequences of its own action—it is not equipped with intended states.

Note what has been simulated here does not rely on any notion of reinforcement learning: at no point was the agent rewarded for any behaviour or outcome. This kind of self-organisation—to a synchronous exchange with the world—is an emergent property of the system that simply rests on avoiding ambiguity or uncertainty of a particular kind. The subtle distinction between a behaviourist (reinforcement learning) account and this kind of self-evidencing rests upon the imperatives for self-organised behaviour. In this in silico reproduction of in vitro experiments, behaviour is a consequence of (planning as) inference, where inference is based upon what has been learned. What has been learned are just statistical regularities (or unpredictable irregularities) in the environment: in this case, there are certain states that lead to unpredictable outcomes. This gives the agent a precise grip on the world and enables it to infer its most likely actions. Its most likely actions are those that are characteristic of the thing it is; namely, something that minimises surprise, ambiguity, and free energy. This is distinct from learning a behaviour in the sense of reinforcement learning (e.g., a state-action mapping). The difference lies in the fact that behaviour—of the sort demonstrated above—rests on inference, under a learned model.

In the next section, we turn to a different kind of behaviour that rests upon inductive planning, equipping the agent with foresight and eliciting anticipatory behaviour.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Chart/Diagram Type: Comparative Analysis of Agent Behavior in Easy vs. Difficult Setups

### Overview

The image presents a comparative analysis of an agent's behavior in two different setups: an "Easy setup" (left column, labeled A) and a "Difficult setup" (right column, labeled B). For each setup, the image shows a schematic of the environment, a plot of the negative variational free energy (ELBO) over time, a plot of precision (confidence) over time, and a heatmap representing Bayesian beliefs about policies over time. The x-axis for all plots is "time".

### Components/Axes

**Top Row: Environment Schematics**

* **Easy setup (A):** A 5x6 grid with two black squares. One square is at approximately (1,1) and the other at (1,3).

* **Difficult setup (B):** An 8x4 grid with two black squares. One square is at approximately (2,1) and the other at (7,3).

**Middle Row: Negative Variational Free Energy (ELBO)**

* **Y-axis:** Labeled "nits", ranging from approximately -40 to 0.

* **X-axis:** Labeled "time", ranging from 0 to 500.

* **Easy setup (A):** The ELBO plot shows a fluctuating line, mostly near 0, with occasional drops to around -20 to -40. Black dots are overlaid on the line at various time points.

* **Difficult setup (B):** The ELBO plot shows a fluctuating line, mostly near 0, with frequent drops to around -20 to -40. Black dots are overlaid on the line at various time points.

**Third Row: Precision (confidence)**

* **Y-axis:** Labeled "nits", ranging from approximately -1 to 0.

* **X-axis:** Labeled "time", ranging from 0 to 500.

* **Easy setup (A):** The precision plot shows a fluctuating line, mostly near 0, with occasional drops to around -0.8 to -1. Black dots are overlaid on the line at various time points.

* **Difficult setup (B):** The precision plot shows a fluctuating line, mostly near 0, with frequent drops to around -0.8 to -1. Black dots are overlaid on the line at various time points.

**Bottom Row: Bayesian Beliefs About Policies**

* **Y-axis:** Labeled "policy", ranging from 0.5 to 3.5.

* **X-axis:** Labeled "time", ranging from 0 to 500.

* **Easy setup (A):** The heatmap shows vertical stripes of varying shades of gray, representing the agent's belief in different policies over time.

* **Difficult setup (B):** The heatmap shows vertical stripes of varying shades of gray, representing the agent's belief in different policies over time. The stripes appear more fragmented and less consistent than in the "Easy setup".

### Detailed Analysis

**Negative Variational Free Energy (ELBO) - Easy Setup (A):**

* The ELBO line starts at approximately -20 nits at time 0.

* The line fluctuates, mostly staying near 0 nits, with drops to -20 to -40 nits at times around 150, 200, 250, 300, 350, 400, 450, and 500.

**Negative Variational Free Energy (ELBO) - Difficult Setup (B):**

* The ELBO line starts at approximately -30 nits at time 0.

* The line fluctuates more frequently than in the "Easy setup", with drops to -20 to -40 nits at times around 50, 250, 300, 350, 400, 450, and 500.

**Precision (confidence) - Easy Setup (A):**

* The precision line starts at approximately -0.2 nits at time 0.

* The line fluctuates, mostly staying near 0 nits, with drops to -0.8 to -1 nits at times around 50, 150, 200, 250, 300, 350, 400, 450, and 500.

**Precision (confidence) - Difficult Setup (B):**

* The precision line starts at approximately -0.1 nits at time 0.

* The line fluctuates more frequently than in the "Easy setup", with drops to -0.8 to -1 nits at times around 50, 100, 150, 200, 250, 300, 350, 400, 450, and 500.

**Bayesian Beliefs About Policies - Easy Setup (A):**

* The heatmap shows relatively consistent vertical stripes, indicating a more stable belief in certain policies over time.

**Bayesian Beliefs About Policies - Difficult Setup (B):**

* The heatmap shows more fragmented and less consistent vertical stripes, indicating a less stable belief in certain policies over time.

### Key Observations

* The "Difficult setup" exhibits more frequent fluctuations in both ELBO and precision compared to the "Easy setup".

* The Bayesian beliefs about policies are more stable in the "Easy setup" than in the "Difficult setup".

* The black dots overlaid on the ELBO and precision plots appear to correspond to the times when the values drop significantly.

### Interpretation

The data suggests that the agent experiences more uncertainty and difficulty in the "Difficult setup" compared to the "Easy setup". This is reflected in the more frequent fluctuations in ELBO and precision, as well as the less stable Bayesian beliefs about policies. The black dots likely represent key decision points or events where the agent's confidence decreases, leading to a drop in ELBO and precision. The "Easy setup" allows the agent to maintain a more consistent belief in its policies, leading to more stable performance. The "Difficult setup" forces the agent to constantly re-evaluate its policies, resulting in more volatile behavior.

</details>

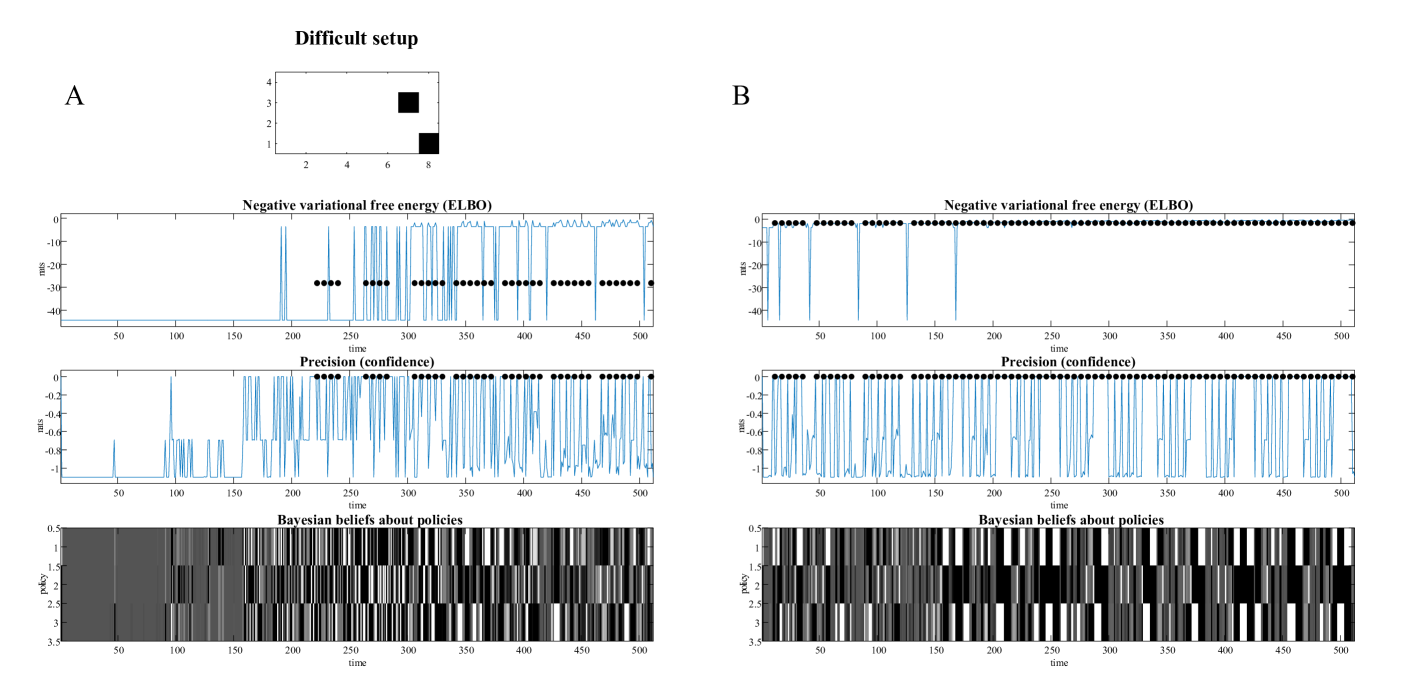

Figure 5: The emergence of play. Panels $A$ and $B$ show the results of two simulations of $512$ time steps (i.e., about two minutes of simulated time) under two configurations of the Pong set up: an easy setup in panel A and a slightly more difficult setup in panel B, in which the width of the bounding box was increased, and its height decreased (from $5× 6$ to $8× 4$ ). In both panels, the configuration of the game is shown above three plots reporting fluctuations in various measures of belief updating, and accompanying behaviour. The first graph plots the (negative) variational free energy as a function of time (where each time step corresponds roughly to $250$ ms). The black dots mark time points when the ball was hit. It can be seen that during accumulation of the likelihood Dirichlet counts, the ball was missed until time step $150$ . After about a minute, the synthetic agent then starts to emit short rallies of between one and seven consecutive hits. The emergence of game play is accompanied by saltatory increases in negative variational free energy (or evidence lower bound). These increases disappear whenever the agent misses the ball, terminating little rallies. The second graph plots the average of the expected free energy under posterior beliefs about policies. This can be read as the precision of policy beliefs or, more colloquially, the confidence placed in policy selection. This illustrates that confident behaviour emerges during the first minute and is subsequently restricted to moments prior to hitting the ball. Heuristically, this can be read as the agent realising that it can avoid ambiguity by move moving in such a way as to catch the ball. The accompanying posterior (Bayesian) beliefs about policies are shown in image format in the lower plot. This illustrates that precise or confident behaviour entails precise beliefs about what to do next. Panel $B$ shows exactly the same results but for a slightly more difficult game. Here, the ball has more latitude to move horizontally and is returned more quickly, due to the reduced height of the bounding box. In consequence, learning a precise likelihood mapping takes about twice the amount of time. And, even when learned, the rallies are shorter, ranging from one to four, at most. We will use this more difficult set up to look at the effect of inductive planning in the next figure.

4.1 Inductive Planning

In this section, we repeat the simulations above, but making the game more difficult by increasing the width of the box. This means that to catch the ball, the agent has to anticipate outcomes in the distal future in order to respond with pre-emptive movement of the paddle. Note that this kind of behaviour goes beyond the sort of behaviour predicted under perceptual control theory and related accounts of ball catching [69, 70]. For example, one way to model behaviour in this paradigm would be to move the paddle so that it was always underneath the ball. However, this is not the behaviour that emerges under self-evidencing. In what follows, we will see that avoiding ambiguity is not sufficient for skilled performance of a more difficult game of Pong. However, if we equip the agent with intentions to hit the ball (i.e., as an intended state), it can use inductive planning to pursue a never ending rally, and play the game skilfully.

Figure 5 (B) reports performance over about two minutes of simulated time of an abductive agent when increasing the width of the Pong box to $8$ units (and decreasing its height to $4$ units). This simple change precludes sustained rallies; largely because the depth of planning is not sufficient to support pre-emptive moves of the paddle.

The equivalent results under inductive planning are shown in Figure 6. Here, active inference under inductive constraints produces intermittent rallies within about a minute of simulated time—and skilled, and fluent play after three minutes.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Comparative Analysis of Agent Performance in a Difficult Setup

### Overview

The image presents a comparative analysis of an agent's performance in a "Difficult setup" under two conditions, labeled A and B. Each condition is represented by three plots: Negative variational free energy (ELBO), Precision (confidence), and Bayesian beliefs about policies, all plotted against time. The setup is visualized as a small grid with two black squares representing obstacles.

### Components/Axes

**Top Row: Setup Visualization**

* **Title:** Difficult setup

* **Grid:** A 4x8 grid.

* **Obstacles:** Two black squares, one at approximately (6,4) and the other at approximately (8,1).

* **Labels:** A and B, indicating two different conditions or agents.

**Middle Rows: Condition A (Left) and Condition B (Right)**

* **Plot 1: Negative variational free energy (ELBO)**

* **Y-axis:** "nts", ranging from -40 to 0, with markers at -10, -20, -30, and -40.

* **X-axis:** "time", ranging from 0 to 500, with markers every 50 units.

* **Data:** A blue line representing the ELBO over time. Black dots are overlaid on the line at certain points.

* **Plot 2: Precision (confidence)**

* **Y-axis:** "nts", ranging from -1 to 0, with markers at -0.2, -0.4, -0.6, -0.8, and -1.

* **X-axis:** "time", ranging from 0 to 500, with markers every 50 units.

* **Data:** A blue line representing the precision/confidence over time. Black dots are overlaid on the line at certain points.

* **Plot 3: Bayesian beliefs about policies**

* **Y-axis:** "policy", ranging from 0.5 to 3.5, with markers at 1, 2, 2.5, and 3.

* **X-axis:** "time", ranging from 0 to 500, with markers every 50 units.

* **Data:** A heatmap-like representation of policy beliefs, with varying shades of gray indicating different belief levels.

### Detailed Analysis

**Condition A**

* **Negative variational free energy (ELBO):**

* The blue line starts at approximately -40 nts and remains there until around time = 200.

* Between time = 200 and 300, the line fluctuates between -40 and 0 nts.

* From time = 300 to 500, the line fluctuates more frequently between -40 and 0 nts.

* Black dots are present on the line between time = 225 and 325, indicating a value of approximately -27 nts.

* **Precision (confidence):**

* The blue line starts at approximately -1 nts and fluctuates until around time = 200.

* Between time = 200 and 500, the line fluctuates more frequently between -1 and 0 nts.

* Black dots are present on the line between time = 225 and 500, indicating a value of approximately 0 nts.

* **Bayesian beliefs about policies:**

* The heatmap shows a mix of gray shades, indicating varying beliefs about different policies.

* Before time = 150, the policies are mostly uniform.

* After time = 150, there are clear vertical stripes, indicating distinct policy preferences at different times.

**Condition B**

* **Negative variational free energy (ELBO):**

* The blue line fluctuates between -40 and 0 nts throughout the entire time range.

* Black dots are present on the line between time = 0 and 500, indicating a value of approximately 0 nts.

* **Precision (confidence):**

* The blue line fluctuates between -1 and 0 nts throughout the entire time range.

* Black dots are present on the line between time = 0 and 500, indicating a value of approximately 0 nts.

* **Bayesian beliefs about policies:**

* The heatmap shows a mix of gray shades, indicating varying beliefs about different policies.

* There are clear vertical stripes, indicating distinct policy preferences at different times.

### Key Observations

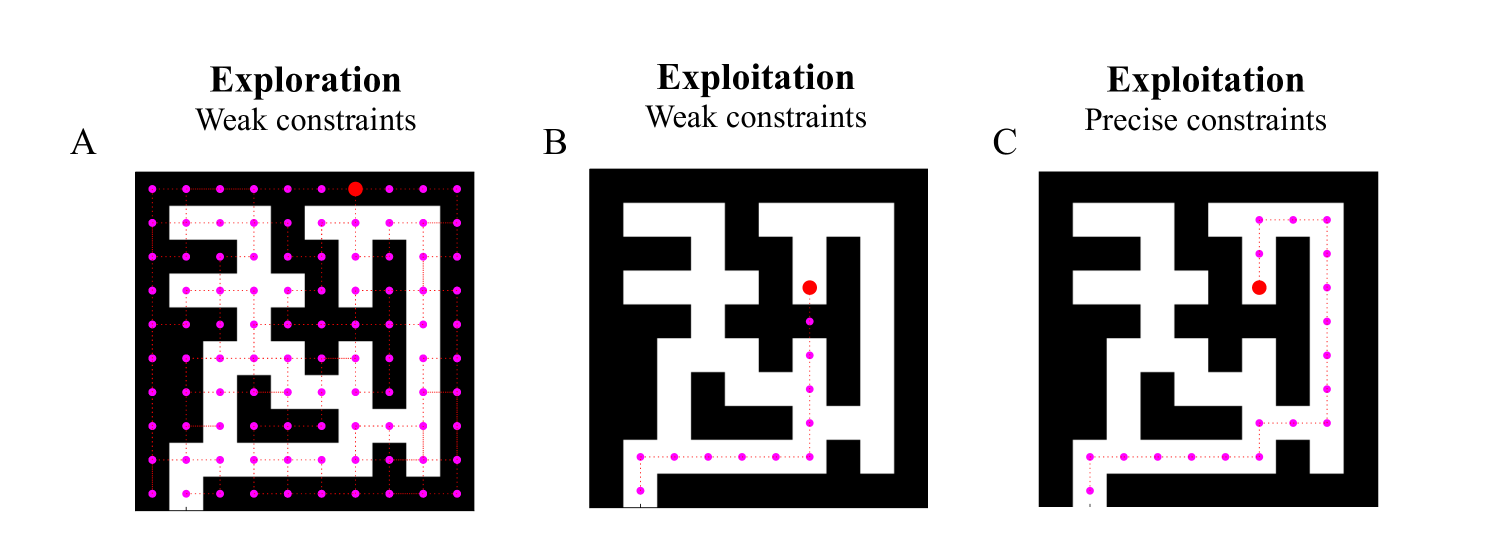

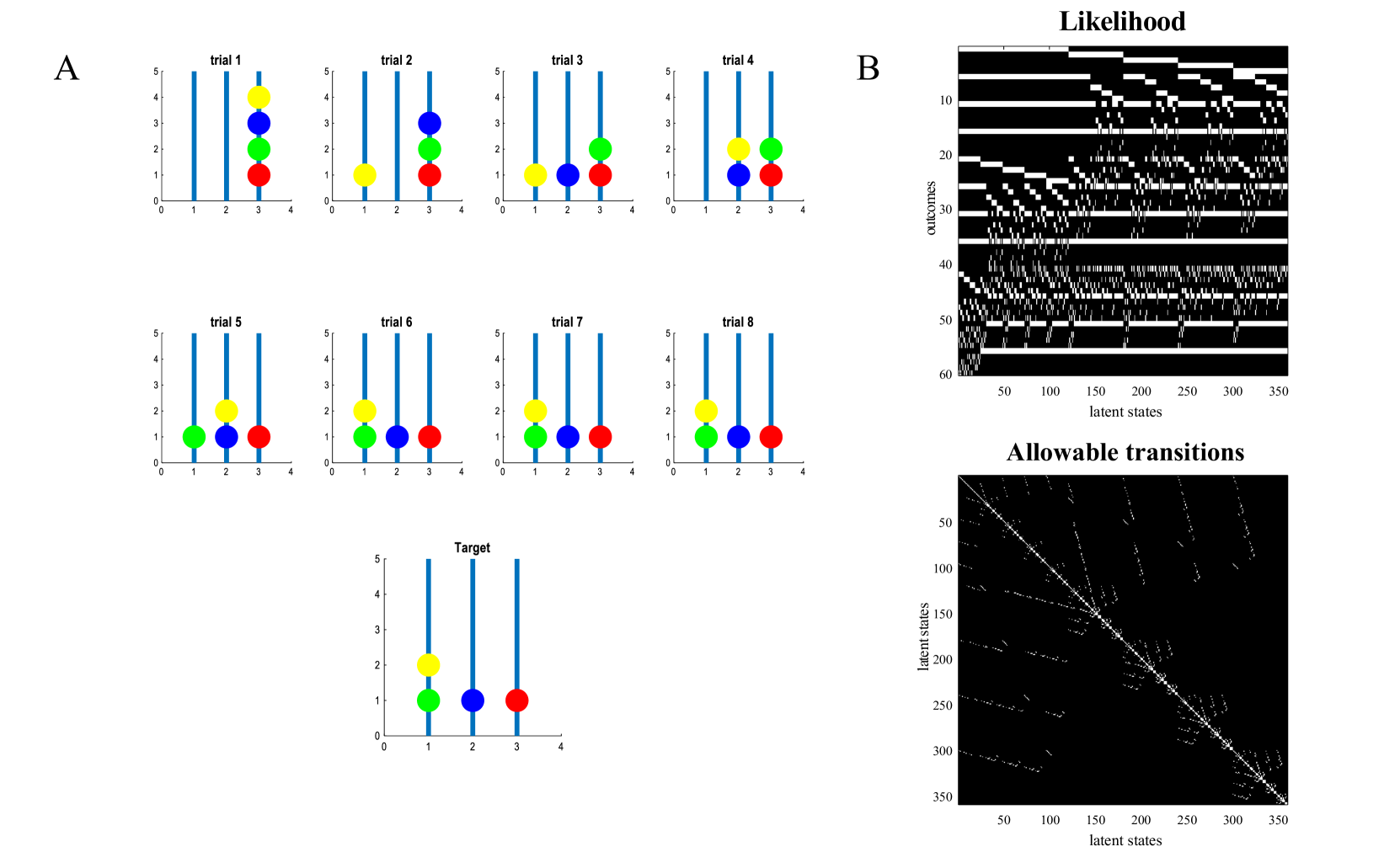

* **ELBO:** In Condition A, the ELBO initially remains low and then starts fluctuating, while in Condition B, it fluctuates throughout the entire time range.