# MATH-SHEPHERD: VERIFY AND REINFORCE LLMS STEP-BY-STEP WITHOUT HUMAN ANNOTATIONS

Peiyi Wang 1 † Lei Li 3 Zhihong Shao 4 R.X. Xu 2 Damai Dai 1 Yifei Li 5 Deli Chen 2 Y. Wu 2 Zhifang Sui 1

1 National Key Laboratory for Multimedia Information Processing, Peking University

2 DeepSeek-AI 3 The University of Hong Kong

4 Tsinghua University 5 The Ohio State University

{ wangpeiyi9979, nlp.lilei } @gmail.com li.14042@osu.edu

szf@pku.edu.cn

Project Page: MATH-SHEPHERD

## ABSTRACT

In this paper, we present MATH-SHEPHERD, a process reward model for math that assigns a reward score to each step of a math problem solution. MATH-SHEPHERD is trained on automatically constructed process-wise supervision data, breaking the bottleneck of heavy reliance on manual annotation in existing work. We explore the effectiveness of MATH-SHEPHERD in two scenarios: 1) Verification: MATH-SHEPHERD is utilized for reranking multiple outputs generated by Large Language Models (LLMs); 2) Reinforcement Learning: MATH-SHEPHERD is employed to reinforce LLMs with step-by-step Proximal Policy Optimization (PPO). With MATH-SHEPHERD, a series of open-source LLMs demonstrates exceptional performance. For instance, the step-by-step PPO with MATH-SHEPHERD significantly improves the accuracy of Mistral-7B (77.9% → 84.1% on GSM8K and 28.6% → 33.0% on MATH). The accuracy can be further enhanced to 89.1% and 43.5% on GSM8K and MATH, respectively, with the verification of MATH-SHEPHERD. We believe that automatic process supervision holds significant potential for the future evolution of LLMs.

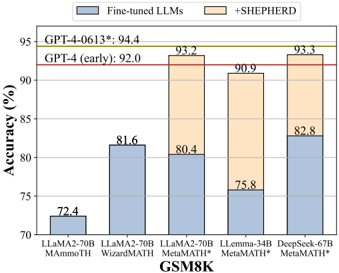

Figure 1: We evaluate the performance of various LLMs with MATH-SHEPHERD on the GSM8K and MATH datasets. All base models are finetuned with the MetaMath dataset (Yu et al., 2023b). The +SHEPHERD results are obtained by selecting the best one from 256 candidates using MATH-SHEPHERD. We observe that MATH-SHEPHERD is compatible with different LLMs. The results of GPT-4 (early) are from Bubeck et al. (2023).

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Bar Chart: LLM Accuracy on GSM8K Dataset

### Overview

This bar chart compares the accuracy of several Large Language Models (LLMs) on the GSM8K dataset, a benchmark for mathematical problem-solving. The chart shows the accuracy of the models in their fine-tuned state and when augmented with the "+SHEPHERD" method. Two horizontal lines indicate the accuracy of GPT-4 models (early and a later version).

### Components/Axes

* **X-axis:** LLM Models - LLaMA2-70B (MAmmoTH), LLaMA2-70B (WizardMATH), LLaMA2-70B (MetaMATH), LLemma-34B (MetaMATH), DeepSeek-67B (MetaMATH).

* **Y-axis:** Accuracy (%) - Scale ranges from 70% to 95%. Gridlines are present at 5% intervals.

* **Legend:**

* Gray: Fine-tuned LLMs

* Orange: +SHEPHERD

* **Horizontal Lines:**

* GPT-4-0613*: 94.4%

* GPT-4 (early): 92.0%

* **Title:** GSM8K (located along the x-axis)

### Detailed Analysis

The chart consists of five sets of paired bars, each representing a different LLM. The left bar in each pair represents the accuracy of the fine-tuned LLM, and the right bar represents the accuracy when using the "+SHEPHERD" method.

* **LLaMA2-70B (MAmmoTH):**

* Fine-tuned: Approximately 72.4%

* +SHEPHERD: Not applicable (only one bar present)

* **LLaMA2-70B (WizardMATH):**

* Fine-tuned: Approximately 81.6%

* +SHEPHERD: Not applicable (only one bar present)

* **LLaMA2-70B (MetaMATH):**

* Fine-tuned: Approximately 80.4%

* +SHEPHERD: Approximately 93.2%

* **LLemma-34B (MetaMATH):**

* Fine-tuned: Approximately 75.8%

* +SHEPHERD: Approximately 90.9%

* **DeepSeek-67B (MetaMATH):**

* Fine-tuned: Approximately 82.8%

* +SHEPHERD: Approximately 93.3%

The horizontal lines representing GPT-4's accuracy are positioned at 92.0% (GPT-4 early) and 94.4% (GPT-4-0613*).

### Key Observations

* The "+SHEPHERD" method consistently improves the accuracy of the LLMs.

* LLaMA2-70B (MetaMATH), LLemma-34B (MetaMATH), and DeepSeek-67B (MetaMATH) with "+SHEPHERD" achieve accuracy levels comparable to or exceeding the earlier version of GPT-4.

* LLaMA2-70B (MAmmoTH) and LLaMA2-70B (WizardMATH) are shown only as fine-tuned baselines, without "+SHEPHERD" bars; LLaMA2-70B (MAmmoTH) has the lowest accuracy in the chart (approximately 72.4%).

* The largest performance gain from "+SHEPHERD" is observed with LLemma-34B (MetaMATH), increasing accuracy by approximately 15.1 percentage points (75.8% to 90.9%).

### Interpretation

The data suggests that reranking with "+SHEPHERD" substantially improves accuracy on GSM8K for the three MetaMATH-tuned models where it is shown, with gains of roughly 10.5 to 15.1 percentage points. LLaMA2-70B (MetaMATH) and DeepSeek-67B (MetaMATH) with "+SHEPHERD" surpass GPT-4 (early), while LLemma-34B (MetaMATH) comes close. All "+SHEPHERD" results remain below GPT-4-0613*. LLaMA2-70B (MAmmoTH) and LLaMA2-70B (WizardMATH) appear only as fine-tuned baselines, so the chart gives no evidence about how "+SHEPHERD" would affect them.

</details>

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Bar Chart: Math Problem Solving Accuracy

### Overview

This bar chart compares the accuracy of several Large Language Models (LLMs) on math problems. The chart shows the accuracy of "Fine-tuned LLMs" (blue bars) and the accuracy of the same LLMs when used with "+SHEPHERD" (beige bars). Horizontal red lines indicate the accuracy of GPT-4 (early) and GPT-4-0613. The x-axis represents different LLM models, and the y-axis represents accuracy in percentage.

### Components/Axes

* **X-axis:** LLM Models: LLaMA2-70B MAmmoTH, LLaMA2-70B WizardMATH, LLaMA2-70B MetaMATH*, LLemma-34B MetaMATH*, DeepSeek-67B MetaMATH*.

* **Y-axis:** Accuracy (%) - Scale ranges from 10 to 60, with increments of 10.

* **Legend:**

* Blue: Fine-tuned LLMs

* Beige: +SHEPHERD

* **Horizontal Lines:**

* GPT-4 (early): 42.5% (Red line)

* GPT-4-0613: 56.2% (Red line)

* **Title:** MATH (centered at the bottom of the chart)

### Detailed Analysis

The chart consists of five sets of stacked bars, each representing a different LLM.

1. **LLaMA2-70B MAmmoTH:**

* Fine-tuned LLMs (Blue): Approximately 21.1%

* +SHEPHERD (Beige): Not present.

2. **LLaMA2-70B WizardMATH:**

* Fine-tuned LLMs (Blue): Approximately 22.7%

* +SHEPHERD (Beige): Not present.

3. **LLaMA2-70B MetaMATH*:**

* Fine-tuned LLMs (Blue): Approximately 29.8%

* +SHEPHERD (Beige): Approximately 15.4% (45.2% total)

4. **LLemma-34B MetaMATH*:**

* Fine-tuned LLMs (Blue): Approximately 34.8%

* +SHEPHERD (Beige): Approximately 12.5% (47.3% total)

5. **DeepSeek-67B MetaMATH*:**

* Fine-tuned LLMs (Blue): Approximately 36.8%

* +SHEPHERD (Beige): Approximately 11.3% (48.1% total)

The red horizontal line for GPT-4 (early) is positioned at approximately 42.5% on the y-axis. The red horizontal line for GPT-4-0613 is positioned at approximately 56.2% on the y-axis.

### Key Observations

* The addition of "+SHEPHERD" consistently improves the accuracy of the LLMs.

* DeepSeek-67B MetaMATH* achieves the highest overall accuracy (48.1%) when combined with +SHEPHERD.

* LLaMA2-70B MAmmoTH and LLaMA2-70B WizardMATH have the lowest accuracy; no +SHEPHERD results are shown for them.

* GPT-4-0613 outperforms all LLM/SHEPHERD combinations.

* GPT-4 (early) is outperformed by LLaMA2-70B MetaMATH*, LLemma-34B MetaMATH*, and DeepSeek-67B MetaMATH* with +SHEPHERD.

### Interpretation

The data suggests that "+SHEPHERD" is a valuable tool for enhancing the math problem-solving capabilities of LLMs. All three MetaMATH*-tuned models improve with it, by roughly 11 to 15 percentage points, and each exceeds GPT-4 (early) at 42.5% while remaining below GPT-4-0613 at 56.2%. LLaMA2-70B MAmmoTH and LLaMA2-70B WizardMATH are shown only as fine-tuned baselines, so the chart gives no evidence about how "+SHEPHERD" would affect them. The asterisk (*) after MetaMATH is not explained in the chart itself.

</details>

† Contribution during internship at DeepSeek-AI.

## 1 INTRODUCTION

Large language models (LLMs) have demonstrated remarkable capabilities across various tasks (Park et al., 2023; Kaddour et al., 2023; Song et al.; Li et al., 2023a; Wang et al., 2023a; Chen et al., 2023; Zheng et al., 2023; Wang et al., 2023c). However, even the most advanced LLMs face challenges in complex multi-step mathematical reasoning problems (Lightman et al., 2023; Huang et al., 2023). To address this issue, prior research has explored different methodologies, such as pretraining (Azerbayev et al., 2023), fine-tuning (Luo et al., 2023; Yu et al., 2023b; Wang et al., 2023b), prompting (Wei et al., 2022; Fu et al., 2022), and verification (Wang et al., 2023d; Li et al., 2023b; Zhu et al., 2023; Leviathan et al., 2023). Among these techniques, verification has recently emerged as a favored method. The motivation behind verification is that relying solely on the top-1 result may not always produce reliable outcomes. A verification model can rerank candidate responses, ensuring higher accuracy and consistency in the outputs of LLMs. In addition, a good verification model can also offer invaluable feedback for further improvement of LLMs (Uesato et al., 2022; Wang et al., 2023b; Pan et al., 2023).

Verification models generally fall into two categories: the outcome reward model (ORM) (Cobbe et al., 2021; Yu et al., 2023a) and the process reward model (PRM) (Li et al., 2023b; Uesato et al., 2022; Lightman et al., 2023; Ma et al., 2023). The ORM assigns a confidence score based on the entire generation sequence, whereas the PRM evaluates the reasoning path step by step. The PRM is advantageous for several reasons. One major benefit is its ability to offer precise feedback by identifying the specific location of any errors that may arise, which is a valuable signal in reinforcement learning and automatic correction. In addition, the PRM resembles human behavior when assessing a reasoning problem: if any step contains an error, the final result is more likely to be incorrect, mirroring the way human judgment works. However, gathering data to train a PRM can be an arduous process. Uesato et al. (2022) and Lightman et al. (2023) utilize human annotators to provide process supervision annotations, enhancing the performance of PRM. Nevertheless, annotation by humans, particularly for intricate multi-step reasoning tasks that require advanced annotator skills, can be quite costly, which hinders the advancement and practical application of PRM.

To tackle the problem, in this paper, we propose an automatic process annotation framework. Inspired by Monte Carlo Tree Search (Kocsis & Szepesvári, 2006; Coulom, 2006; Silver et al., 2016; Świechowski et al., 2023), we define the quality of an intermediate step as its potential to deduce the correct final answer. By leveraging the correctness of the answer, we can automatically gather step-wise supervision. Specifically, given a math problem with a golden answer and a step-by-step solution, to obtain the label of a specific step, we utilize a fine-tuned LLM to decode multiple subsequent reasoning paths from this step. We then check whether each decoded final answer matches the golden answer. If a reasoning step can deduce more correct answers than another, it is assigned a higher correctness score.

We use this automatic way to construct the training data for MATH-SHEPHERD, and verify our ideas on two widely used mathematical benchmarks, GSM8K (Cobbe et al., 2021) and MATH (Hendrycks et al., 2021). We explore the effectiveness of MATH-SHEPHERD in two scenarios: 1) verification: MATH-SHEPHERD is utilized for reranking multiple outputs generated by LLMs; 2) reinforcement learning: MATH-SHEPHERD is employed to reinforce LLMs with step-by-step Proximal Policy Optimization (PPO). With the verification of MATH-SHEPHERD, a series of open-source LLMs from 7B to 70B demonstrates exceptional performance. For instance, the step-by-step PPO with MATH-SHEPHERD significantly improves the accuracy of Mistral-7B (77.9% → 84.1% on GSM8K and 28.6% → 33.0% on MATH). The accuracy can be further enhanced to 89.1% and 43.5% on GSM8K and MATH with verification. DeepSeek 67B (DeepSeek, 2023) achieves accuracy rates of 93.3% on the GSM8K dataset and 48.1% on the MATH dataset with verification of MATH-SHEPHERD. To the best of our knowledge, these results are unprecedented for open-source models that do not rely on additional tools.

Our main contributions are as follows:

1) We propose a framework to automatically construct process supervision datasets without human annotations for math reasoning tasks.

2) We evaluate our method on both step-by-step verification and reinforcement learning scenarios. Extensive experiments on two widely used mathematical benchmarks, GSM8K and MATH, with a series of LLMs ranging from 7B to 70B, demonstrate the effectiveness of our method.

3) We empirically analyze the key factors for training high-performing process reward models, shedding light on future directions toward improving reasoning capability with automatic step-by-step verification and supervision.

## 2 RELATED WORKS

Improving and eliciting mathematical reasoning abilities of LLMs. Mathematical reasoning tasks are among the most challenging tasks for LLMs. Researchers have proposed various methods to improve or elicit the mathematical reasoning ability of LLMs, which can be broadly divided into three groups: 1) pre-training: The pre-training methods (OpenAI, 2023; Anil et al., 2023; Touvron et al., 2023; Azerbayev et al., 2023) pre-train LLMs on a vast number of datasets related to math problems, such as the Proof-Pile and ArXiv (Azerbayev et al., 2023), with a simple next-token prediction objective. 2) fine-tuning: The fine-tuning methods (Yu et al., 2023b; Luo et al., 2023; Yue et al., 2023; Wang et al., 2023b; Gou et al., 2023) can also enhance the mathematical reasoning ability of LLMs. The core of fine-tuning usually lies in constructing high-quality question-response pair datasets with a chain-of-thought reasoning process. 3) prompting: The prompting methods (Wei et al., 2022; Zhang et al., 2023; Fu et al., 2022; Bi et al., 2023) aim to elicit the mathematical reasoning ability of LLMs by designing prompting strategies without updating the model parameters, which is very convenient and practical.

Mathematical reasoning verification for LLMs. Besides directly improving and eliciting the mathematical reasoning potential of LLMs, the reasoning results can be boosted via an extra verifier that selects the best answer from multiple decoded candidates. There are two primary types of verifiers: the Outcome Reward Model (ORM) and the Process Reward Model (PRM). The ORM allocates a score to the entire solution while the PRM assigns a score to each individual step in the reasoning process. Recent findings by Lightman et al. (2023) suggest that PRM outperforms ORM. In addition to verification, reward models can offer invaluable feedback for further training of generators (Uesato et al., 2022; Pan et al., 2023). Compared to ORM, PRM provides more detailed feedback, demonstrating greater potential to enhance the generator (Wu et al., 2023). However, training a PRM requires access to expensive human-annotated datasets (Uesato et al., 2022; Lightman et al., 2023), which hinders the advancement and practical application of PRM. Therefore, in this paper, we aim to build a PRM for mathematical reasoning without human annotation, and we explore the effectiveness of the automatic PRM in both verification and reinforcement learning scenarios.

## 3 METHODOLOGY

In this section, we first present our task formulation to evaluate the performance of reward models (§3.1). Subsequently, we outline two typical categories of reward models, ORM and PRM (§3.2). Then, we introduce our methodology to automatically build the training dataset for PRM (§3.3), breaking the bottleneck of heavy reliance on manual annotation in existing work (Uesato et al., 2022; Lightman et al., 2023).

## 3.1 TASK FORMULATION

We evaluate the performance of the reward model in two scenarios:

Verification. Following Lightman et al. (2023), we consider a best-of-N selection evaluation paradigm. Specifically, given a problem $p$ in the testing set, we sample $N$ candidate solutions from a generator. These candidates are then scored using a reward model, and the highest-scoring solution is selected as the final answer. A better reward model raises the likelihood of selecting a solution containing the correct answer, and consequently raises the success rate of LLMs in solving mathematical problems.

Reinforcement learning. We also use the automatically constructed PRM to supervise LLMs with step-by-step PPO. In this scenario, we evaluate the accuracy of the LLMs' greedy decoding output. A better reward model is instrumental in training higher-performing LLMs.
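The best-of-N selection used in the verification scenario can be sketched as follows. This is a minimal illustration, not the authors' implementation: `best_of_n`, `score_fn`, and the toy score table are hypothetical names introduced here, and the reward model is replaced by a lookup table.

```python
from typing import Callable, Sequence


def best_of_n(
    problem: str,
    candidates: Sequence[str],
    score_fn: Callable[[str, str], float],
) -> str:
    """Return the candidate solution with the highest reward-model score."""
    if not candidates:
        raise ValueError("need at least one candidate solution")
    return max(candidates, key=lambda solution: score_fn(problem, solution))


# Toy stand-in for a reward model (assumption): a fixed table of scores.
toy_scores = {"answer: 20": 0.31, "answer: 24": 0.87, "answer: 18": 0.12}
selected = best_of_n("p", list(toy_scores), lambda p, s: toy_scores[s])
print(selected)
```

In practice `score_fn` would wrap an ORM or PRM forward pass, and the accuracy reported is the fraction of test problems for which the selected candidate carries the correct final answer.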

## 3.2 REWARD MODELS FOR MATHEMATICAL PROBLEM

ORM. Given a mathematical problem $p$ and its solution $s$, the ORM ($P \times S \to \mathbb{R}$) assigns a single real value to $s$ to indicate whether $s$ is correct. The ORM is usually trained with a cross-entropy loss (Cobbe et al., 2021; Li et al., 2023b):

$$\mathcal{L}_{ORM} = y_s \log r_s + (1 - y_s) \log (1 - r_s), \qquad (1)$$

where $y_s$ is the golden label of the solution $s$ ($y_s = 1$ if $s$ is correct, otherwise $y_s = 0$) and $r_s$ is the sigmoid score of $s$ assigned by the ORM. The success of the reward model hinges on the construction of a high-quality training dataset. As a math problem usually has a definite answer, we can automatically construct the training set of the ORM in two steps: 1) sampling some candidate solutions for a problem from a generator; 2) assigning a label to each sampled solution by checking whether its answer is correct. Although false-positive solutions (those that reach the correct answer with incorrect reasoning) will be misgraded, previous studies have shown that this procedure is still effective for training a good ORM (Lightman et al., 2023; Yu et al., 2023a).

PRM. Taking a step further, the PRM ($P \times S \to \mathbb{R}^{+}$) assigns a score to each reasoning step of $s$, and is usually trained with:

$$\mathcal{L}_{PRM} = \sum_{i=1}^{K} y_{s_i} \log r_{s_i} + (1 - y_{s_i}) \log (1 - r_{s_i}),$$

where $y_{s_i}$ is the golden label of $s_i$ (the $i$-th step of $s$), $r_{s_i}$ is the sigmoid score of $s_i$ assigned by the PRM, and $K$ is the number of reasoning steps in $s$. Lightman et al. (2023) also conceptualize PRM training as a three-class classification problem, in which each step is classified as 'good', 'neutral', or 'bad'. In this paper, we found that there is not much difference between the binary and the three-class formulations, and we regard PRM training as binary classification. Compared to ORM, PRM can provide more detailed and reliable feedback (Lightman et al., 2023). However, there are currently no automated methods available for constructing high-quality PRM training datasets. Previous works (Uesato et al., 2022; Lightman et al., 2023) typically resort to costly human annotations. While PRM manages to outperform ORM (Lightman et al., 2023), the annotation cost impedes both the development and application of PRM.
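As a concrete reading of the two training objectives, the sketch below computes the step-level binary cross-entropy for one solution. It is an illustration under assumptions rather than the authors' training code: the function name and the clipping constant `eps` are introduced here, and the value returned is the negative of the log-likelihood expression, which is the quantity a standard optimizer would minimize. With a single step ($K = 1$) it reduces to the ORM case.

```python
import math
from typing import Sequence


def step_cross_entropy(labels: Sequence[float], scores: Sequence[float]) -> float:
    """Binary cross-entropy summed over the K steps of one solution.

    labels[i] plays the role of y_{s_i} (0 or 1, or a soft value in [0, 1]);
    scores[i] plays the role of r_{s_i}, a sigmoid score in (0, 1).
    """
    if len(labels) != len(scores):
        raise ValueError("labels and scores must have the same length")
    eps = 1e-12  # clipping constant (assumption) to avoid log(0)
    total = 0.0
    for y, r in zip(labels, scores):
        r = min(max(r, eps), 1.0 - eps)
        total -= y * math.log(r) + (1.0 - y) * math.log(1.0 - r)
    return total


# ORM-style: one label for the whole solution.
orm_loss = step_cross_entropy([1.0], [0.5])
# PRM-style: one label per step (toy values).
prm_loss = step_cross_entropy([1.0, 0.0], [0.9, 0.1])
print(orm_loss, prm_loss)
```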

## 3.3 AUTOMATIC PROCESS ANNOTATION

In this section, we propose an automatic process annotation framework to mitigate the annotation cost issues associated with PRM. We first define the quality of a reasoning step, followed by the introduction of our solution that obviates the necessity for human annotation.

## 3.3.1 DEFINITION

Inspired by Monte Carlo Tree Search (Kocsis & Szepesvári, 2006; Coulom, 2006; Silver et al., 2016; Świechowski et al., 2023), we define the quality of a reasoning step as its potential to deduce the correct answer. This criterion stems from the primary objective of the reasoning process, which essentially is a cognitive procedure aiding humans or intelligent agents in reaching a well-founded outcome (Huang & Chang, 2023). Therefore, a step that has the potential to deduce a well-founded result can be considered a good reasoning step. Analogous to ORM, this definition also introduces some degree of noise. Nevertheless, we find that it is beneficial for effectively training a good PRM.

## 3.3.2 SOLUTION

Completion. To quantify and estimate the potential of a given reasoning step $s_i$, as shown in Figure 2, we use a 'completer' to finalize $N$ subsequent reasoning processes from this step: $\{(s_{i+1,j}, \cdots, s_{K_j,j}, a_j)\}_{j=1}^{N}$, where $a_j$ and $K_j$ are the decoded answer and the total number of steps for the $j$-th finalized solution, respectively. Then, we estimate the potential of this step based on the correctness of all decoded answers $A = \{a_j\}_{j=1}^{N}$.

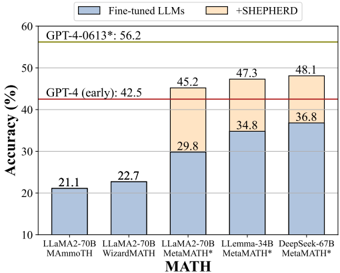

Figure 2: Comparison of previous automatic outcome annotation and our automatic process annotation. (a) Automatic outcome annotation assigns a label to the entire solution $S$, dependent on the correctness of the answer; (b) automatic process annotation employs a 'completer' to finalize N reasoning processes (N=3 in this figure) for an intermediate step ($s_1$ in this figure), and subsequently uses hard estimation (HE) and soft estimation (SE) to annotate this step based on all decoded answers.

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Diagram: Solution Process Annotation for Polynomial Problem

### Overview

The image presents a diagram illustrating a solution process for a polynomial problem, along with annotations regarding the outcome and process steps. It visually represents a series of solution attempts (S1, S2, S3… Sk) and their corresponding answers, indicating whether each attempt is correct or incorrect. The diagram is divided into two main sections: Outcome Annotation and Process Annotation.

### Components/Axes

The diagram consists of the following components:

* **Problem Statement:** "Problem: Let p(x) be a monic polynomial of degree 4. Three of the roots of p(x) are 1, 2, and 3. Find p(0) + p(4)."

* **Golden Answer:** "Golden Answer: 24" (located in the top-right corner)

* **Solution Representation:** "Solution: S = S1, S2, S3, …, Sk"

* **Outcome Annotation (a):** "y_S = 0"

* **Process Annotation (b):** "y^SE_{s1} = 2/3, y^HE_{s1} = 1"

* **Solution Steps (S1, S2, S3):** Represented as rectangular boxes, each containing a step in the solution process.

* **Finalized Solutions (Sk,1, Sk,2, Sk,3):** Represented as rectangular boxes, each containing a finalized solution attempt.

* **Arrows:** Indicate the flow of the solution process from steps to finalized solutions.

* **Answer Boxes:** Boxes at the end of each finalized solution path, displaying the answer and a checkmark (correct) or an 'X' (incorrect).

* **Definitions:** "Sᵢ: the i-th step of the solution S." and "Sᵢ,ⱼ: the i-th step of the j-th finalized solution."

### Detailed Analysis or Content Details

The diagram illustrates three initial solution steps (S1, S2, S3) branching out into three finalized solutions (Sk,1, Sk,2, Sk,3).

* **S1:** "Since three of the roots of p(x) are 1, 2, and 3, we can write: p(x) = (x-1)(x-2)(x-3)(x-r)."

* **Sk,1:** Answer: 24 (checkmark - correct)

* **Sk,2:** Answer: 24 (checkmark - correct)

* **Sk,3:** Answer: 20 (X - incorrect)

* **Outcome Annotation:** The outcome annotation states y_S = 0, a single label for the entire solution S based on the correctness of its final answer.

* **Process Annotation:** The process annotation gives two labels for the first step S1: the soft estimation (SE) label 2/3, the fraction of completions that reach the golden answer, and the hard estimation (HE) label 1, indicating that at least one completion reaches it.

### Key Observations

* Two out of the three finalized solutions (Sk,1 and Sk,2) yield the correct answer (24), while one (Sk,3) is incorrect (20).

* The process annotation labels step S1 with a soft estimation of 2/3 (two of three completions are correct) and a hard estimation of 1 (at least one completion is correct).

* The outcome annotation y_S = 0 labels the whole solution S as incorrect, regardless of which steps were sound.

### Interpretation

The diagram contrasts two ways of labeling a solution automatically. Outcome annotation assigns a single label to the whole solution S based on its final answer (here y_S = 0). Process annotation instead labels an intermediate step: a completer finalizes three solutions from step S1, two of which reach the golden answer 24, giving a soft-estimation label of 2/3 and a hard-estimation label of 1 for S1. The image is a schematic illustrating a methodology for evaluating solution steps rather than a chart of experimental data.

</details>

Estimation. In this paper, we use two methods to estimate the quality $y_{s_i}$ of the step $s_i$: hard estimation (HE) and soft estimation (SE). HE supposes that a reasoning step is good as long as it can reach the correct answer $a^*$:

$$y_{s_i}^{HE} = \begin{cases} 1 & \exists\, a_j \in A,\ a_j = a^* \\ 0 & \text{otherwise} \end{cases}$$

SE takes the quality of a step to be the frequency with which it reaches the correct answer:

$$y_{s_i}^{SE} = \frac{\sum_{j=1}^{N} \mathbb{I}(a_j = a^*)}{N}.$$

Once we gather the label of each step, we can train the PRM with the cross-entropy loss. In conclusion, our automatic process annotation framework defines the quality of a step as its potential to deduce the correct answer and obtains the label of each step by completion and estimation.
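The estimation step can be illustrated with a short sketch that turns the decoded answers of the completer into a hard and a soft label. The function name is hypothetical, and answers are compared by exact string equality, which is an assumption: the text does not specify how answers are normalized before matching. The example reuses the numbers from Figure 2 (three completions, golden answer 24).

```python
from fractions import Fraction
from typing import Sequence, Tuple


def estimate_step_label(
    decoded_answers: Sequence[str], golden_answer: str
) -> Tuple[int, Fraction]:
    """Return (hard estimation, soft estimation) for one reasoning step.

    decoded_answers holds the final answers a_1..a_N of the N completions
    decoded from this step; golden_answer is a*.
    """
    if not decoded_answers:
        raise ValueError("need at least one completion")
    n_correct = sum(1 for a in decoded_answers if a == golden_answer)
    hard = 1 if n_correct > 0 else 0                  # HE: any completion is correct
    soft = Fraction(n_correct, len(decoded_answers))  # SE: fraction that is correct
    return hard, soft


# Figure 2 example: completions from s_1 decode to 24, 24, and 20.
hard, soft = estimate_step_label(["24", "24", "20"], "24")
print(hard, soft)
```

A step whose completions never reach the golden answer receives 0 under both estimators, which is how erroneous steps end up with negative labels without human annotation.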

## 3.4 RANKING FOR VERIFICATION

Following Lightman et al. (2023), we use the minimum score across all steps to represent the final score of a solution assigned by the PRM. We also explore the combination of self-consistency and reward models following Li et al. (2023b). In this context, we first group solutions according to their final answers and then compute the aggregate score of each group. Formally, the final predicted answer based on $N$ candidate solutions is:

$$a_{sc+rm} = \arg\max_{a} \sum_{i=1}^{N} \mathbb{I}(a_i = a) \cdot RM(p, S_i),$$

where $RM(p, S_i)$ is the score of the $i$-th solution assigned by the ORM or PRM for problem $p$.
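Both ranking rules can be sketched in a few lines. The function names and the toy step scores below are assumptions made for illustration; only the minimum-over-steps rule and the score-weighted vote come from the text.

```python
from collections import defaultdict
from typing import Dict, Sequence


def prm_solution_score(step_scores: Sequence[float]) -> float:
    """Score of a whole solution under a PRM: the minimum over its steps."""
    return min(step_scores)


def sc_plus_rm(answers: Sequence[str], rm_scores: Sequence[float]) -> str:
    """Group candidates by final answer and return the answer whose group
    has the largest summed reward-model score."""
    if len(answers) != len(rm_scores):
        raise ValueError("answers and rm_scores must have the same length")
    totals: Dict[str, float] = defaultdict(float)
    for answer, score in zip(answers, rm_scores):
        totals[answer] += score
    return max(totals, key=totals.get)


# Four toy candidates: final answers and per-step PRM scores (made-up values).
answers = ["24", "20", "24", "20"]
step_scores = [[0.9, 0.8, 0.7], [0.95, 0.2, 0.9], [0.6, 0.6, 0.6], [0.5, 0.55]]
solution_scores = [prm_solution_score(s) for s in step_scores]
prediction = sc_plus_rm(answers, solution_scores)
print(solution_scores, prediction)
```

Taking the minimum means a single low-scoring step is enough to sink a solution, which matches the intuition that one erroneous step usually invalidates the final answer.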

## 3.5 REINFORCEMENT LEARNING WITH PROCESS SUPERVISION

Once the PRM is obtained, we employ reinforcement learning to train LLMs. We implement Proximal Policy Optimization (PPO) in a step-by-step manner. This differs from the conventional strategy of PPO with an ORM, which offers a reward only at the end of the response. In contrast, our step-by-step PPO offers a reward at the end of each reasoning step.
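The difference between the two reward placements can be made concrete with a small sketch. It only shows where rewards sit along the token sequence of one response; the function names, the token positions, and the scores are hypothetical, and how the rewards are combined with the KL penalty and value estimates inside PPO is not described here and is left out.

```python
from typing import List, Sequence


def outcome_rewards(num_tokens: int, final_score: float) -> List[float]:
    """ORM-style placement: a single reward on the last token of the response."""
    if num_tokens <= 0:
        raise ValueError("response must contain at least one token")
    rewards = [0.0] * num_tokens
    rewards[-1] = final_score
    return rewards


def stepwise_rewards(
    num_tokens: int, step_end_positions: Sequence[int], step_scores: Sequence[float]
) -> List[float]:
    """PRM-style placement: one reward on the last token of each reasoning step."""
    if len(step_end_positions) != len(step_scores):
        raise ValueError("need exactly one score per step")
    rewards = [0.0] * num_tokens
    for position, score in zip(step_end_positions, step_scores):
        if not 0 <= position < num_tokens:
            raise ValueError("step end position outside the response")
        rewards[position] = score
    return rewards


# A 10-token response with three steps ending at tokens 3, 6, and 9 (toy values).
sparse = outcome_rewards(10, 0.8)
dense = stepwise_rewards(10, [3, 6, 9], [0.9, 0.4, 0.8])
print(sparse)
print(dense)
```

The step-wise vector carries a signal at every step boundary, so a low score in the middle of the response can be attributed to the step that caused it.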

Table 1: Performances of different LLMs on GSM8K and MATH with different verification strategies. The reward models are trained based on LLaMA2-70B and LLemma-34B on GSM8K and MATH, respectively. The verification is based on 256 outputs.

| Models | Verifiers | GSM8K | MATH500 |
|------------------------|-----------------------------------------|---------|-----------|
| LLaMA2-70B: MetaMATH | Self-Consistency | 88.0 | 39.4 |
| LLaMA2-70B: MetaMATH | ORM | 91.8 | 40.4 |
| LLaMA2-70B: MetaMATH | Self-Consistency + ORM | 92.0 | 42.0 |
| LLaMA2-70B: MetaMATH | MATH-SHEPHERD (Ours) | 93.2 | 44.5 |
| LLaMA2-70B: MetaMATH | Self-Consistency + MATH-SHEPHERD (Ours) | 92.4 | 45.2 |
| LLemma-34B: MetaMATH | Self-Consistency | 82.6 | 44.2 |
| LLemma-34B: MetaMATH | ORM | 90.0 | 43.7 |
| LLemma-34B: MetaMATH | Self-Consistency + ORM | 89.6 | 45.4 |
| LLemma-34B: MetaMATH | MATH-SHEPHERD (Ours) | 90.9 | 46.0 |
| LLemma-34B: MetaMATH | Self-Consistency + MATH-SHEPHERD (Ours) | 89.7 | 47.3 |
| DeepSeek-67B: MetaMATH | Self-Consistency | 88.2 | 45.4 |
| DeepSeek-67B: MetaMATH | ORM | 92.6 | 45.3 |
| DeepSeek-67B: MetaMATH | Self-Consistency + ORM | 92.4 | 47.0 |
| DeepSeek-67B: MetaMATH | MATH-SHEPHERD (Ours) | 93.3 | 47.0 |
| DeepSeek-67B: MetaMATH | Self-Consistency + MATH-SHEPHERD (Ours) | 92.5 | 48.1 |

## 4 EXPERIMENTS

Datasets. We conduct our experiments using two widely used math reasoning datasets, GSM8K (Cobbe et al., 2021) and MATH (Hendrycks et al., 2021). For the GSM8K dataset, we leverage the whole test set in both verification and reinforcement learning scenarios. For the MATH dataset, in the verification scenario, due to the computation cost, we employ a subset MATH500 that is identical to the test set of Lightman et al. (2023). The subset consists of 500 representative problems, and we find that the subset evaluation produces similar results to the full-set evaluation. To assess different verification methods, we generate 256 candidate solutions for each test problem. We report the mean accuracy of 3 groups of sampling results. In the reinforcement learning scenario, we use the whole test set to evaluate the model performance. We train LLMs with MetaMATH (Yu et al., 2023b).

Parameter Setting. Our experiments are based on a series of large language models: LLaMA2-7B/13B/70B (Touvron et al., 2023), LLemma-7B/34B (Azerbayev et al., 2023), Mistral-7B (Jiang et al., 2023), and DeepSeek-67B (DeepSeek, 2023). We train the generator and completer for 3 epochs on MetaMATH. We train Mistral-7B with a learning rate of 5e-6. For the other models, the learning rates are set to 2e-5, 1e-5, and 6e-6 for the 7B/13B, 34B, and 67B/70B LLMs, respectively. To construct the training dataset of ORM and PRM, we train 7B and 13B models for a single epoch on the GSM8K and MATH training sets. Subsequently, we sample 15 solutions per problem from each model for the training set. Following this, we eliminate duplicate solutions and annotate the solutions at each step. We use LLemma-7B as the completer with the decoded number N=8. Consequently, we obtain around 170k solutions for GSM8K and 270k solutions for MATH. For verification, we choose LLaMA2-70B and LLemma-34B as the base models to train reward models for GSM8K and MATH, respectively. For reinforcement learning, we choose Mistral-7B as the base model to train reward models and use them to supervise the LLaMA2-7B and Mistral-7B generators. The reward model is trained for 1 epoch with a learning rate of 1e-6. For the sake of convenience, we train the PRM using the hard estimation version because it allows us to utilize a standard language modeling pipeline by selecting two special tokens to represent the 'has potential' and 'no potential' labels, thereby eliminating the need for any specific model adjustments. In reinforcement learning, the learning rates are 4e-7 and 1e-7 for LLaMA2-7B and Mistral-7B, respectively. The Kullback-Leibler coefficient is set to 0.04. We implement a cosine learning rate scheduler with a minimum learning rate of 1e-8. We use 3D parallelism provided by hfai¹ to train all models with a max sequence length of 512.

1 https://doc.hfai.high-flyer.cn/index.html

Table 2: Performances of different 7B models on GSM8K and MATH with greedy decoding. We use the questions in MetaMATH for RFT and PPO training. Both LLaMA2-7B and Mistral-7B are supervised by Mistral-7B-ORM and -MATH-SHEPHERD.

| Models | GSM8K | MATH |
|-----------------------------------------|---------|--------|
| LLaMA2-7B: MetaMATH | 66.6 | 19.2 |
| + RFT | 68.5 | 19.9 |
| + ORM-PPO | 70.8 | 20.8 |
| + MATH-SHEPHERD-step-by-step-PPO (Ours) | 73.2 | 21.6 |
| Mistral-7B: MetaMATH | 77.9 | 28.6 |
| + RFT | 79.0 | 29.9 |
| + ORM-PPO | 81.8 | 31.3 |
| + MATH-SHEPHERD-step-by-step-PPO (Ours) | 84.1 | 33.0 |

Baselines and Metrics In the verification scenario, following Lightman et al. (2023), we evaluate the performance of our reward model by comparing it against self-consistency (majority voting) and the outcome reward model. The accuracy of the best-of-N solution is utilized as the evaluation metric. For PRM, the minimum score across all steps is adopted to represent the final score of a solution. In the reinforcement learning scenario, we compare our step-by-step supervision with the outcome supervision provided by ORM and with Rejection Sampling Fine-tuning (RFT) (Yuan et al., 2023). For RFT, we sample 8 responses for each question in MetaMATH. We use the accuracy of the LLMs' greedy decoding output to assess the performance.
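The three verification strategies compared here can be sketched as follows. This is a minimal illustration and not the authors' implementation: the candidate dictionaries, their field names (`answer`, `step_scores`, `orm_score`), and the toy scores are all hypothetical. Only the minimum-over-steps aggregation for PRM and the use of majority voting for self-consistency come from the text.

```python
from collections import Counter

def prm_solution_score(step_scores):
    """Aggregate per-step PRM scores into one solution score (minimum over steps)."""
    return min(step_scores)

def best_of_n(candidates, score_fn):
    """Return the final answer of the highest-scoring candidate."""
    return max(candidates, key=score_fn)["answer"]

def self_consistency(candidates):
    """Majority vote over the candidates' final answers."""
    counts = Counter(c["answer"] for c in candidates)
    return counts.most_common(1)[0][0]

# Toy candidates for one problem (hypothetical values).
candidates = [
    {"answer": "18", "step_scores": [0.9, 0.8, 0.7], "orm_score": 0.6},
    {"answer": "20", "step_scores": [0.9, 0.2, 0.9], "orm_score": 0.8},
    {"answer": "20", "step_scores": [0.5, 0.4, 0.3], "orm_score": 0.3},
]

prm_answer = best_of_n(candidates, lambda c: prm_solution_score(c["step_scores"]))
orm_answer = best_of_n(candidates, lambda c: c["orm_score"])
sc_answer = self_consistency(candidates)
print(prm_answer, orm_answer, sc_answer)
```

In this toy case the second candidate has a single low-scoring step, so the minimum aggregation demotes it even though its outcome score is the highest.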

## 4.1 MAIN RESULTS

MATH-SHEPHERD as verifier Table 1 presents the performance comparison of various methods on GSM8K and MATH. We find that: 1) As the verifier, MATH-SHEPHERD consistently outperforms self-consistency and ORM on both datasets with all generators. Specifically, enhanced by MATH-SHEPHERD, DeepSeek-67B achieves 93.3% and 48.1% accuracy on GSM8K and MATH, respectively; 2) In comparison to GSM8K, PRM achieves a greater advantage over ORM on the more challenging MATH dataset. This outcome aligns with the findings in Uesato et al. (2022) and Lightman et al. (2023). The former finds that PRM and ORM yield similar results on GSM8K, whereas the latter shows that PRM significantly outperforms ORM on the MATH dataset. This could be attributed to the relative simplicity of the GSM8K dataset compared to MATH, i.e., GSM8K problems require fewer solution steps. As a result, ORM already performs well on this dataset; 3) In GSM8K, combining the reward model with self-consistency leads to a drop in performance, whereas in MATH, the combination improves performance. These results indicate that if the reward model is sufficiently powerful for a task, combining it with self-consistency may harm the verification performance.
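To make observation 3) concrete, the sketch below contrasts picking the single best-scored candidate with a combination of self-consistency and a reward model. It assumes the combination is a reward-weighted vote, i.e., candidates are grouped by final answer and their reward scores are summed within each group; this section does not spell out the rule, so treat it as an assumption. The function name `weighted_vote` and the toy scores are hypothetical.

```python
from collections import defaultdict

def weighted_vote(answers, scores):
    """Sum reward scores per final answer and return the answer with the largest total."""
    totals = defaultdict(float)
    for answer, score in zip(answers, scores):
        totals[answer] += score
    return max(totals, key=totals.get)

# Toy example: one confident candidate versus three mediocre ones that agree.
answers = ["42", "41", "41", "41"]
scores = [0.95, 0.40, 0.40, 0.40]

best_index = max(range(len(scores)), key=scores.__getitem__)
best_single = answers[best_index]          # reward model alone
combined = weighted_vote(answers, scores)  # self-consistency + reward model
print(best_single, combined)
```

Here the vote overrides the candidate that the reward model trusts most. If the reward model is right about that candidate, the combination hurts, which is one way to read the drop observed on GSM8K.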

MATH-SHEPHERD as reward model on reinforcement learning Table 2 presents the performance of different LLMs with greedy decoding outputs. As shown: 1) step-by-step PPO significantly improves the performance of the two supervised fine-tuned models. For example, Mistral-7B with step-by-step PPO achieves 84.1% and 33.0% on the GSM8K and MATH datasets, respectively; 2) RFT only slightly improves the model performance. We believe this is because MetaMATH has already applied data augmentation strategies similar to RFT; 3) the vanilla PPO with ORM can also enhance the model performance. However, it does not perform as well as the step-by-step PPO supervised by MATH-SHEPHERD, demonstrating the potential of step-by-step supervision.
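The difference between the two supervision signals can be illustrated by where the reward lands in the token sequence. The sketch below assumes that ORM-based PPO places a single reward on the last token of the response, while step-by-step PPO places one reward on the last token of every reasoning step. The function names, step lengths, and scores are hypothetical, and the KL penalty and the rest of the PPO objective are omitted.

```python
def outcome_rewards(step_lengths, orm_score):
    """ORM-style signal: one reward on the final token of the response."""
    rewards = [0.0] * sum(step_lengths)
    rewards[-1] = orm_score
    return rewards

def stepwise_rewards(step_lengths, prm_scores):
    """Step-by-step signal: one reward on the final token of each step."""
    rewards = []
    for length, score in zip(step_lengths, prm_scores):
        rewards.extend([0.0] * (length - 1) + [score])
    return rewards

# A response with three steps of 3, 2, and 4 tokens (toy values).
step_lengths = [3, 2, 4]
print(outcome_rewards(step_lengths, 0.7))
print(stepwise_rewards(step_lengths, [0.9, 0.4, 0.8]))
```

With step-level rewards, the low score of the second step is attached to that step's tokens instead of being folded into a single end-of-response value.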

MATH-SHEPHERD as both reward models and verifiers We also combine reinforcement learning and verification. As shown in Table 3: 1) reinforcement learning and verification are complementary. For example, in MATH, step-by-step PPO Mistral-7B outperforms supervised fine-tuned Mistral-7B by 7.2% accuracy with self-consistency as the verifier; this gap is even larger than that of the greedy decoding results, i.e., 4.4%; 2) after reinforcement learning, the vanilla verification methods with only reward models are inferior to self-consistency. We think the reason is that the initial reward model is not sufficient to supervise the more powerful model after PPO. These results also show the potential of iterative reinforcement learning, which we leave for future work.

Table 3: Results of reinforcement learning and verification combination. The reward models are trained based on Mistral-7B. The verification is based on 256 outputs.

| Models | Verifiers | GSM8K | MATH500 |
|--------------------------|-----------------------------------------|---------|-----------|
| Mistral-7B: MetaMATH | Self-Consistency | 83.9 | 35.1 |
| Mistral-7B: MetaMATH | ORM | 86.2 | 36.4 |
| Mistral-7B: MetaMATH | Self-Consistency + ORM | 86.6 | 38.0 |
| Mistral-7B: MetaMATH | MATH-SHEPHERD (Ours) | 87.1 | 37.3 |
| Mistral-7B: MetaMATH | Self-Consistency + MATH-SHEPHERD (Ours) | 86.3 | 38.3 |
| Mistral-7B: MetaMATH + step-by-step PPO (Ours) | Self-Consistency | 87.4 | 42.3 |
| Mistral-7B: MetaMATH + step-by-step PPO (Ours) | ORM | 87.6 | 41.3 |
| Mistral-7B: MetaMATH + step-by-step PPO (Ours) | Self-Consistency + ORM | 89.0 | 43.1 |
| Mistral-7B: MetaMATH + step-by-step PPO (Ours) | MATH-SHEPHERD (Ours) | 88.4 | 41.1 |
| Mistral-7B: MetaMATH + step-by-step PPO (Ours) | Self-Consistency + MATH-SHEPHERD (Ours) | 89.1 | 43.5 |
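For reference, the two gaps quoted for MATH can be recovered from the reported numbers. The self-consistency gap uses the MATH500 self-consistency accuracies in Table 3 before and after step-by-step PPO, and the greedy gap uses the Mistral-7B MATH accuracies in Table 2:

$$
\Delta_{\text{SC}} = 42.3 - 35.1 = 7.2, \qquad \Delta_{\text{greedy}} = 33.0 - 28.6 = 4.4
$$

Note that the two gaps are measured on different evaluation sets (MATH500 for verification, the whole MATH test set for greedy decoding), so the comparison is indicative rather than exact.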

## 5 ANALYSIS

## 5.1 PERFORMANCE WITH DIFFERENT NUMBER OF CANDIDATE SOLUTIONS

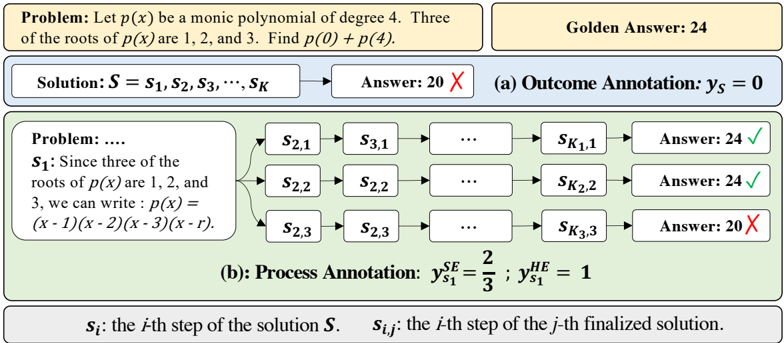

Figure 3 illustrates the performance comparison of various strategies when applied to different numbers of candidates ranging from 1 to 256 on two benchmarks. The key observations are as follows: 1) PRM performs consistently better than both ORM and majority voting, and its advantage becomes more pronounced as N increases. 2) In MATH, the reward model trained on our automatically annotated dataset outperforms the one trained on the human-annotated PRM800K (Lightman et al., 2023). We ascribe this superiority to the distribution gap and the data quantity. Specifically, PRM800K is annotated based on the output from GPT-4, and consequently, a discrepancy arises for the output of open-source LLaMA models fine-tuned on MetaMATH. Furthermore, when considering the quantity of data, our automated reward model data exhibits both high scalability and a reduced labeling cost. Consequently, our dataset is four times larger than that provided in PRM800K. Overall, these results further underscore the effectiveness and potential of our method.
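A best-of-N curve of this kind can be estimated from a fixed pool of scored candidates by subsampling. The sketch below is a hypothetical illustration: the data layout (`score`, `is_correct`), the function name `best_of_n_accuracy`, and the toy pool of 4 candidates per problem are assumptions. Averaging over three seeds mirrors the practice of reporting the mean accuracy of 3 groups of sampling results.

```python
import random

def best_of_n_accuracy(problems, n, seed=0):
    """Sample n candidates per problem, keep the top-scored one, and report accuracy."""
    rng = random.Random(seed)
    correct = 0
    for candidates in problems:
        subset = rng.sample(candidates, n)
        best = max(subset, key=lambda c: c["score"])
        correct += int(best["is_correct"])
    return correct / len(problems)

# Toy pool: two problems with four scored candidates each (hypothetical values).
problems = [
    [{"score": 0.9, "is_correct": True}, {"score": 0.2, "is_correct": False},
     {"score": 0.4, "is_correct": False}, {"score": 0.1, "is_correct": False}],
    [{"score": 0.3, "is_correct": True}, {"score": 0.8, "is_correct": False},
     {"score": 0.5, "is_correct": True}, {"score": 0.6, "is_correct": False}],
]

for n in (1, 2, 4):
    accuracies = [best_of_n_accuracy(problems, n, seed=s) for s in range(3)]
    print(n, sum(accuracies) / len(accuracies))
```

In the second toy problem the verifier ranks an incorrect candidate first, so adding more candidates does not help there; a stronger verifier is what lets accuracy keep rising with N.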

## 5.2 QUALITY OF THE AUTOMATIC PROCESS ANNOTATIONS

In this section, we explore the quality of our automatic PRM dataset. To this end, we manually annotate 160 steps sampled from the training set of GSM8K and use different completers to infer from each step to obtain its automatic label. We find that:

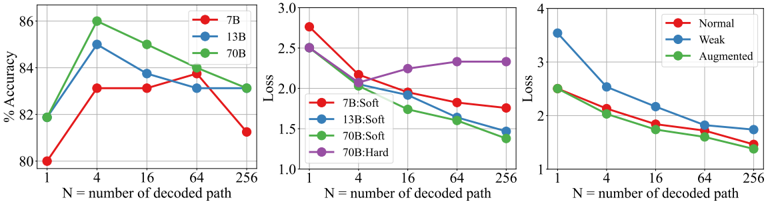

Automatic process annotation exhibits satisfactory quality. Figure 4(a) demonstrates that utilizing LLaMA2-70B trained on MetaMATH as the completer, the accuracy of the hard estimation (HE) reaches 86% when N equals 4. This suggests that our automatically constructed dataset is of high quality. However, we observed a decline in the accuracy of the constructed dataset with further increases in N. Our analysis indicates that larger values for N may lead to false positives.

Figure 4(b) shows the cross-entropy loss between SE and HE labels compared to the human-annotated distribution: as N increases, SE progressively aligns closer to the standard distribution, in contrast to HE which does not exhibit similar behavior. It is essential to note that at N=4, HE achieves an accuracy of 86%. We can theoretically attain higher quality data exceeding 86% accuracy by utilizing SE. However, we discovered that the performance of the verifier exhibits no substantial divergence whether trained with either SE or HE. This may be attributable to the already high-quality annotations provided by HE.
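The two label types and the two quality measures can be sketched as follows. The sketch assumes the definitions from the method section: hard estimation (HE) marks a step as positive if any of the N completions reaches the gold answer, and soft estimation (SE) uses the fraction of completions that do. The toy completion outcomes, the human labels, and the clipping constant `eps` in the cross-entropy are assumptions made for this example.

```python
import math

def hard_estimation(outcomes):
    """HE label: 1 if any completion reaches the gold answer, else 0."""
    return 1.0 if any(outcomes) else 0.0

def soft_estimation(outcomes):
    """SE label: fraction of completions that reach the gold answer."""
    return sum(outcomes) / len(outcomes)

def cross_entropy(human_labels, estimates, eps=1e-6):
    """Mean binary cross-entropy of the estimates against human labels (clipped)."""
    total = 0.0
    for y, p in zip(human_labels, estimates):
        p = min(max(p, eps), 1.0 - eps)
        total -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return total / len(human_labels)

# Each row: whether each of N=4 completions from a step reached the gold answer.
completions = [
    [True, True, True, False],     # correct step
    [False, False, False, False],  # wrong step
    [False, False, True, False],   # wrong step rescued by one lucky completion
]
human_labels = [1, 0, 0]

he = [hard_estimation(o) for o in completions]
se = [soft_estimation(o) for o in completions]
he_accuracy = sum(int(h == y) for h, y in zip(he, human_labels)) / len(human_labels)
he_loss = cross_entropy(human_labels, he)
se_loss = cross_entropy(human_labels, se)
print(he_accuracy, he_loss, se_loss)
```

The third step shows the false-positive effect: with more completions, a wrong step is more likely to be rescued at least once, which flips its HE label while only slightly raising its SE label.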

Furthermore, we also examine other automatic process annotation methods. For instance, Li et al. (2023b) employ a natural language inference (NLI) model and a string match rule to annotate a

Figure 3: Performance of LLaMA2-70B using different verification strategies across different numbers of solution candidates on GSM8K and MATH.

<details>

<summary>Image 5 Details</summary>

### Visual Description

## Line Chart: Problem Solving Performance Comparison

### Overview

The image presents two line charts comparing the performance of different problem-solving methods (SC, ORM, PRM800K, and SHEPHERD) on two datasets: GSM8K and MATH. The charts display the percentage of problems solved (Best-of-N) as a function of the number of solutions generated per problem (N), ranging from 1 to 256.

### Components/Axes

* **X-axis (both charts):** "N = number of solutions per problem". Markers are at 1, 4, 16, 64, and 256.

* **Y-axis (left chart - GSM8K):** "% Problems Solved (Best-of-N)". Scale ranges from 80% to 92%, with increments of 2.5%.

* **Y-axis (right chart - MATH):** "% Problems Solved (Best-of-N)". Scale ranges from 25% to 45%, with increments of 5%.

* **Legend (bottom-left of each chart):**

* SC (Red)

* ORM (Blue)

* PRM800K (Purple)

* SHEPHERD (Green)

* **Titles:**

* Left Chart: "GSM8K" (top-center)

* Right Chart: "MATH" (top-center)

### Detailed Analysis or Content Details

**GSM8K Chart:**

* **SC (Red):** The line starts at approximately 80% at N=1, increases to around 87% at N=4, plateaus around 87.5% at N=16, and reaches approximately 88% at N=256. The trend is initially steep, then flattens.

* **ORM (Blue):** The line begins at approximately 87% at N=1, rises to around 91% at N=4, reaches a peak of approximately 92% at N=16, and remains relatively stable at around 91.5% at N=256. The trend is upward, with a plateau.

* **PRM800K (Purple):** The line starts at approximately 87% at N=1, increases to around 90% at N=4, reaches approximately 91.5% at N=16, and continues to rise to approximately 91.8% at N=256. The trend is consistently upward, but less steep than SC or ORM.

* **SHEPHERD (Green):** The line starts at approximately 88% at N=1, increases rapidly to around 92% at N=4, reaches approximately 92.5% at N=16, and continues to rise slightly to approximately 92.8% at N=256. This line consistently outperforms the others.

**MATH Chart:**

* **SC (Red):** The line starts at approximately 28% at N=1, increases to around 35% at N=4, rises to approximately 40% at N=16, reaches around 42% at N=64, and plateaus at approximately 42.5% at N=256.

* **ORM (Blue):** The line begins at approximately 30% at N=1, increases to around 37% at N=4, rises to approximately 40% at N=16, and remains relatively stable at around 40.5% at N=256.

* **PRM800K (Purple):** The line starts at approximately 30% at N=1, increases to around 36% at N=4, rises to approximately 40% at N=16, and continues to rise to approximately 41% at N=256.

* **SHEPHERD (Green):** The line starts at approximately 32% at N=1, increases rapidly to around 42% at N=4, rises to approximately 44% at N=16, reaches approximately 44.5% at N=64, and plateaus at approximately 44.8% at N=256. This line consistently outperforms the others.

### Key Observations

* **SHEPHERD consistently outperforms all other methods** on both datasets, especially at lower values of N.

* **The performance of all methods generally increases with N**, but the rate of increase diminishes as N grows larger.

* **The GSM8K dataset shows higher overall performance** compared to the MATH dataset, with all methods achieving higher percentages of problems solved.

* **SC shows the lowest performance** on both datasets.

* **The gap between SHEPHERD and other methods narrows** as N increases, suggesting diminishing returns.

### Interpretation

The data suggests that the SHEPHERD method is the most effective approach for solving problems in both the GSM8K and MATH datasets. Increasing the number of solutions generated (N) generally improves performance, but the benefits of generating more solutions decrease as N becomes larger. The difference in performance between the datasets indicates that the GSM8K problems are inherently easier to solve than the MATH problems. The consistent underperformance of SC suggests it is a less effective method compared to ORM, PRM800K, and especially SHEPHERD.

The charts demonstrate a clear trade-off between computational cost (generating more solutions) and problem-solving accuracy. While increasing N improves performance, the marginal gains diminish, suggesting an optimal point beyond which further computation yields limited benefits. The consistent superiority of SHEPHERD implies that its underlying approach is more robust and efficient at exploring the solution space, even with a limited number of attempts.

</details>

Figure 4: Quality of process annotation on GSM8K. (a): Accuracy of the process annotation using different completer; (b): Loss of the process annotation using different completer; (c): Loss of the process annotation using the same completer with different training data.

<details>

<summary>Image 6 Details</summary>

### Visual Description

## Charts: Model Performance vs. Decoded Path Number

### Overview

The image presents three charts comparing the performance of different model sizes (7B, 13B, 70B) and data augmentation techniques (Normal, Weak, Augmented) as the number of decoded paths (N) increases. The first chart shows accuracy, the second loss for soft decoding, and the third loss for hard decoding.

### Components/Axes

All three charts share the same x-axis: "N = number of decoded path", ranging from 1 to 256. The y-axis scales differ for each chart.

* **Chart 1 (Accuracy):** Y-axis is "% Accuracy", ranging from 80% to 86%.

* Legend:

* Red: 7B

* Blue: 13B

* Green: 70B

* **Chart 2 (Loss - Soft Decoding):** Y-axis is "Loss", ranging from 1.0 to 3.0.

* Legend:

* Red: 7B:Soft

* Blue: 13B:Soft

* Green: 70B:Soft

* Purple: 70B:Hard

* **Chart 3 (Loss - Hard Decoding):** Y-axis is "Loss", ranging from 1 to 4.

* Legend:

* Red: Normal

* Blue: Weak

* Green: Augmented

### Detailed Analysis or Content Details

**Chart 1 (Accuracy):**

* **7B (Red):** Starts at approximately 81% accuracy at N=1, increases sharply to around 84% at N=4, then declines steadily to approximately 82% at N=256.

* **13B (Blue):** Starts at approximately 82% accuracy at N=1, increases to a peak of around 85% at N=4, then decreases to approximately 83% at N=256.

* **70B (Green):** Starts at approximately 83% accuracy at N=1, increases to a peak of around 86% at N=4, then declines to approximately 83% at N=256.

**Chart 2 (Loss - Soft Decoding):**

* **7B:Soft (Red):** Starts at approximately 2.8 loss at N=1, decreases to around 1.8 loss at N=4, then fluctuates between 1.8 and 2.1 loss, ending at approximately 1.8 loss at N=256.

* **13B:Soft (Blue):** Starts at approximately 2.6 loss at N=1, decreases to around 1.7 loss at N=4, then fluctuates between 1.7 and 2.0 loss, ending at approximately 1.9 loss at N=256.

* **70B:Soft (Green):** Starts at approximately 2.5 loss at N=1, decreases to around 1.5 loss at N=4, then fluctuates between 1.5 and 1.8 loss, ending at approximately 1.6 loss at N=256.

* **70B:Hard (Purple):** Starts at approximately 2.7 loss at N=1, decreases to around 2.2 loss at N=4, then increases to approximately 2.4 loss at N=16, and fluctuates between 2.3 and 2.5 loss, ending at approximately 2.4 loss at N=256.

**Chart 3 (Loss - Hard Decoding):**

* **Normal (Red):** Starts at approximately 3.5 loss at N=1, decreases to around 2.2 loss at N=4, then decreases to approximately 1.8 loss at N=256.

* **Weak (Blue):** Starts at approximately 3.8 loss at N=1, decreases to around 2.5 loss at N=4, then decreases to approximately 2.0 loss at N=256.

* **Augmented (Green):** Starts at approximately 4.0 loss at N=1, decreases sharply to around 2.0 loss at N=4, then decreases to approximately 1.6 loss at N=256.

### Key Observations

* All models show an initial increase in accuracy (Chart 1) and a decrease in loss (Charts 2 & 3) as the number of decoded paths increases from 1 to 4.

* Beyond N=4, accuracy plateaus or declines slightly for all models (Chart 1).

* The 70B model consistently achieves the highest accuracy (Chart 1) and lowest loss (Charts 2 & 3) across most values of N.

* In Chart 3, the Augmented data consistently results in the lowest loss values, indicating the most effective performance.

* The 70B:Hard decoding method (Chart 2) shows a slight increase in loss after N=4, while the soft decoding methods continue to decrease.

### Interpretation

These charts demonstrate the impact of model size and data augmentation on the performance of a decoding process. Increasing the number of decoded paths initially improves performance (up to N=4), likely by allowing the model to explore more potential solutions. However, beyond this point, the benefits diminish, and performance may even degrade.

The superior performance of the 70B model suggests that larger models have a greater capacity to learn and generalize from the data. The effectiveness of data augmentation (Chart 3) indicates that increasing the diversity of the training data can improve the model's robustness and accuracy. The difference between soft and hard decoding (Chart 2) suggests that soft decoding provides a more stable and consistent performance, while hard decoding can be more sensitive to the number of decoded paths. The slight increase in loss for 70B:Hard after N=4 could indicate overfitting or instability in the decoding process for larger models when using hard decoding. The charts collectively suggest a trade-off between model size, decoding strategy, and data augmentation in optimizing performance.

</details>

given step. The NLI-based method annotates a step as correct if it is entailed by any step in the reference solutions. The rule-based method annotates a step as correct if its support number precisely matches that of any step in the reference solutions. As demonstrated in Table 4, our annotation strategy substantially outperforms both approaches.

The ability of the LLM completer plays an important role in the data quality. We employ a completer to finalize multiple subsequent reasoning processes for a given step. Therefore, we investigate the impact of the LLM completer.

Figure 4(b) presents the cross-entropy loss across diverse completers trained on MetaMath. The results indicate that a larger completer is adept at generating superior-quality datasets. Figure 4(c) depicts the cross-entropy loss of LLaMA2-70B trained with different datasets. 'Normal' denotes the original GSM8K training dataset; 'Weak' refers to the Normal set excluding examples whose questions are in our 160 evaluation set; while 'Augmented' symbolizes MetaMath, an augmented version of the Normal set.

The findings suggest that high-quality training sets allow the model to operate more proficiently as a completer. Importantly, the 'Weak' set exhibits a markedly larger loss than other datasets. This insight drives us to infer that LLMs should acquire the questions in advance to enhance their performance as completers. We can also conjecture that a stronger foundational model, coupled with superior training data, could further enhance the quality of automatic annotation.

## 5.3 INFLUENCE OF THE PRE-TRAINED BASE MODELS

To conduct an exhaustive evaluation of MATH-SHEPHERD's effectiveness, we performed a diverse range of experiments using model sizes 7B, 13B, and 70B.

Table 4: The comparison between NLI/Rule-based automatic process annotation methods from Li et al. (2023b) and our method.

| Methods | Models | Accuracy (%) | Loss |
|---------------------------------|---------------------------|----------------|--------|
| DIVERSE-NLI (Li et al., 2023b) | DeBERTa (He et al., 2020) | 61.3 | 5.43 |
| DIVERSE-NLI (Li et al., 2023b) | LLaMA2-13B | 75.6 | 3.27 |
| DIVERSE-Rule (Li et al., 2023b) | - | 75.0 | 3.43 |
| MATH-SHEPHERD | LLaMA2-13B (N = 4) | 85.0 | 2.05 |

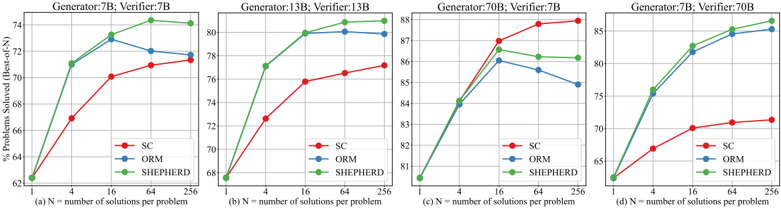

Figure 5: Performance of different verification strategies on different sizes of generators and verifiers.

<details>

<summary>Image 7 Details</summary>

### Visual Description

## Line Charts: Problem Solving Performance with Different Generators and Verifiers

### Overview

The image presents four separate line charts, each comparing the performance of three different problem-solving methods ("SC", "ORM", and "SHEPHERD") as the number of solutions considered increases. Each chart corresponds to a specific combination of "Generator" and "Verifier" sizes (7B, 13B, 70B). The y-axis represents the percentage of problems solved (Best-of-1), and the x-axis represents the number of solutions per problem.

### Components/Axes

Each chart shares the following components:

* **X-axis Label:** "(N) = number of solutions per problem" with markers at 1, 4, 16, 64, and 256.

* **Y-axis Label:** "% Problems Solved (Best-of-1)" with a scale ranging from approximately 62% to 88%.

* **Legend:** Located in the bottom-left corner of each chart, listing the three methods:

* "SC" (Red)

* "ORM" (Blue)

* "SHEPHERD" (Green)

* **Title:** Each chart has a title indicating the Generator and Verifier sizes.

* (a) Generator: 7B; Verifier: 7B

* (b) Generator: 13B; Verifier: 13B

* (c) Generator: 70B; Verifier: 7B

* (d) Generator: 7B; Verifier: 70B

### Detailed Analysis or Content Details

**Chart (a): Generator: 7B; Verifier: 7B**

* **SC (Red):** Starts at approximately 62% at N=1, increases steadily to around 76% at N=64, and plateaus at approximately 76% at N=256.

* **ORM (Blue):** Starts at approximately 70% at N=1, increases to around 78% at N=4, continues to increase to approximately 81% at N=16, then plateaus around 81% at N=64 and N=256.

* **SHEPHERD (Green):** Starts at approximately 68% at N=1, increases rapidly to around 78% at N=4, continues to increase to approximately 80% at N=16, and plateaus around 80% at N=64 and N=256.

**Chart (b): Generator: 13B; Verifier: 13B**

* **SC (Red):** Starts at approximately 68% at N=1, increases to around 74% at N=64, and plateaus at approximately 74% at N=256.

* **ORM (Blue):** Starts at approximately 72% at N=1, increases to around 78% at N=4, continues to increase to approximately 80% at N=16, and plateaus around 80% at N=64 and N=256.

* **SHEPHERD (Green):** Starts at approximately 70% at N=1, increases to around 77% at N=4, continues to increase to approximately 80% at N=16, and plateaus around 80% at N=64 and N=256.

**Chart (c): Generator: 70B; Verifier: 7B**

* **SC (Red):** Starts at approximately 82% at N=1, increases to around 86% at N=4, continues to increase to approximately 88% at N=16, and plateaus around 88% at N=64 and N=256.

* **ORM (Blue):** Starts at approximately 84% at N=1, increases to around 86% at N=4, continues to increase to approximately 87% at N=16, and plateaus around 86% at N=64 and N=256.

* **SHEPHERD (Green):** Starts at approximately 83% at N=1, increases to around 87% at N=4, continues to increase to approximately 88% at N=16, and plateaus around 88% at N=64 and N=256.

**Chart (d): Generator: 7B; Verifier: 70B**

* **SC (Red):** Starts at approximately 65% at N=1, increases to around 70% at N=4, continues to increase to approximately 72% at N=16, and plateaus around 72% at N=64 and N=256.

* **ORM (Blue):** Starts at approximately 75% at N=1, increases to around 80% at N=4, continues to increase to approximately 82% at N=16, and plateaus around 82% at N=64 and N=256.

* **SHEPHERD (Green):** Starts at approximately 70% at N=1, increases to around 78% at N=4, continues to increase to approximately 82% at N=16, and plateaus around 82% at N=64 and N=256.

### Key Observations

* Increasing the number of solutions considered (N) generally improves performance for all methods, but the gains diminish beyond N=16.

* The combination of a 70B Generator and a 7B Verifier (Chart c) consistently yields the highest performance across all methods.

* The 7B Generator and 70B Verifier (Chart d) shows the lowest overall performance.

* SHEPHERD generally outperforms SC, while ORM often performs similarly to or slightly better than SHEPHERD.

### Interpretation

The data suggests that the size of the generator significantly impacts problem-solving performance. A larger generator (70B) leads to higher success rates, even when paired with a smaller verifier (7B). The verifier size also plays a role, but its impact is less pronounced than the generator size. The diminishing returns observed beyond N=16 indicate that there's a point where considering more solutions doesn't significantly improve the outcome.

The relative performance of the methods (SC, ORM, SHEPHERD) appears to be consistent across different generator/verifier configurations, with SHEPHERD generally being superior to SC and ORM often performing comparably to SHEPHERD. This suggests that the underlying strengths and weaknesses of each method are relatively independent of the model sizes used.

The outlier is the 7B Generator and 70B Verifier combination (Chart d), which shows the lowest overall performance. This could indicate a bottleneck in the generator's ability to produce high-quality solutions that the larger verifier can effectively evaluate. It could also suggest that the verifier's capacity is not fully utilized when paired with a smaller generator.

</details>

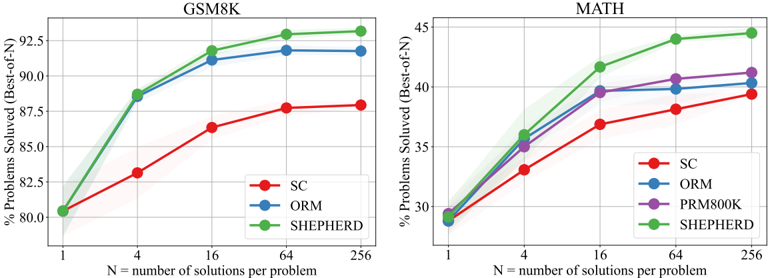

Figures 5(a), 5(b), and 3(a) display the results from the 7B, 13B, and 70B generators paired with equal-sized reward models, respectively. It becomes evident that PRM exhibits superiority over self-consistency and ORM across all sizes of base models. Moreover, bigger reward models prove to be more robust; for instance, the accuracy of the 70B reward models escalates as the number of candidate solutions rises, while the 7B reward models show a decreasing trend.

Figures 5(c) and 5(d) present the performance of 7B and 70B generators paired with different-sized reward models. The findings illustrate that utilizing a larger reward model to validate the output of a smaller generator significantly enhances performance. Conversely, when a smaller reward model is employed to validate the output of a larger generator, the verification process adversely impacts the model's performance compared to SC. These results indicate that we should utilize a more potent reward model for validating or supervising the generator.

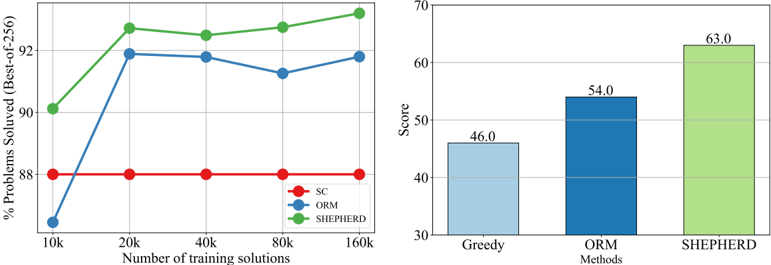

## 5.4 INFLUENCE OF THE NUMBER OF DATA

We delve deeper into the analysis of PRM and ORM by utilizing varying quantities of training data. As depicted in Figure 6(a), it is clear that PRM exhibits superior data efficiency. Specifically, it outperforms ORM by approximately 4% accuracy when applying a modestly sized training dataset (i.e., 10k instances). Furthermore, PRM seems to have a higher potential ceiling than ORM. These observations highlight the efficacy of PRM for verification purposes.

## 5.5 OUT-OF-DISTRIBUTION PERFORMANCE

To further demonstrate the effectiveness of our method, we conduct an out-of-distribution evaluation on the Hungarian national final exam 2, which consists of 33 questions. The total score of these questions is 100. We use LLemma-34B trained on MetaMATH as the generator and generate 256 candidate solutions for each question. We use LLemma-34B-ORM and LLemma-34B-PRM to select the solution for each question. As shown in Figure 6(b): 1) both LLemma-34B-ORM and LLemma-34B-PRM outperform the original LLemma-34B, showing that the reward model can generalize to other domains; 2) PRM outperforms ORM by 9 points, further demonstrating the superiority of PRM.

2 https://huggingface.co/datasets/keirp/hungarian_national_hs_finals_exam

Figure 6: (a): Performance of different reward models using different numbers of training data; (b) performance of different verification strategies on the out-of-distribution Hungarian national exam.

<details>

<summary>Image 8 Details</summary>

### Visual Description

## Chart: Performance Comparison of Optimization Methods

### Overview

The image presents two charts comparing verification strategies: SC (self-consistency), ORM (outcome reward model), and SHEPHERD (MATH-SHEPHERD). The left chart shows the percentage of problems solved (using a Best-of-256 metric) as a function of the number of training solutions, ranging from 10k to 160k. The right chart displays the overall score for Greedy, ORM, and SHEPHERD.

### Components/Axes

**Left Chart:**

* **X-axis:** Number of training solutions (10k, 20k, 40k, 80k, 160k)

* **Y-axis:** % Problems Solved (Best-of-256), ranging from 0 to 70.

* **Legend:**

* SC (Red, diamond-shaped markers)

* ORM (Blue, circular markers)

* SHEPHERD (Green, triangle-shaped markers)

**Right Chart:**

* **X-axis:** Methods (Greedy, ORM, SHEPHERD)

* **Y-axis:** Score, ranging from 30 to 70.

### Detailed Analysis or Content Details

**Left Chart:**

* **SC (Red):** The line is relatively flat, hovering around 38% solved problems across all training solution counts. Approximately 38% ± 2%.

* **ORM (Blue):** The line shows a strong upward trend from 10k to 20k training solutions, increasing from approximately 0% to 65%. It then plateaus and slightly decreases to approximately 62% at 160k training solutions.

* 10k: ~0%

* 20k: ~65%

* 40k: ~64%

* 80k: ~61%

* 160k: ~62%

* **SHEPHERD (Green):** The line exhibits a consistent upward trend, starting at approximately 50% at 10k training solutions and reaching approximately 68% at 160k training solutions.

* 10k: ~50%

* 20k: ~65%

* 40k: ~66%

* 80k: ~65%

* 160k: ~68%

**Right Chart:**

* **Greedy:** Score of approximately 46.0.

* **ORM:** Score of approximately 54.0.

* **SHEPHERD:** Score of approximately 63.0.

### Key Observations

* SC consistently performs the worst, with a very low percentage of problems solved regardless of the number of training solutions.

* ORM shows significant improvement with increasing training solutions, but its performance plateaus after 20k solutions.

* SHEPHERD demonstrates a steady improvement in performance with more training solutions and achieves the highest overall score.

* The right chart confirms SHEPHERD's superior performance, with a significantly higher score than ORM and Greedy.

### Interpretation

The data suggests that SHEPHERD is the most effective optimization method among the three tested, particularly when a larger number of training solutions are available. ORM benefits from increased training data up to a point, after which its performance stabilizes. SC appears to be the least effective method, showing minimal improvement with more training data.

The consistent upward trend of SHEPHERD indicates that its performance continues to improve with more data, suggesting it can leverage larger datasets effectively. The plateauing of ORM suggests that it may reach a limit in its ability to refine solutions beyond a certain point. The consistently low performance of SC suggests it may be fundamentally limited in its ability to solve these types of problems.

The difference in scores on the right chart reinforces the findings from the left chart, highlighting SHEPHERD's overall superiority. The data implies that SHEPHERD is a robust and scalable optimization method, while ORM is effective up to a certain point, and SC is not a viable option for these problems.

</details>

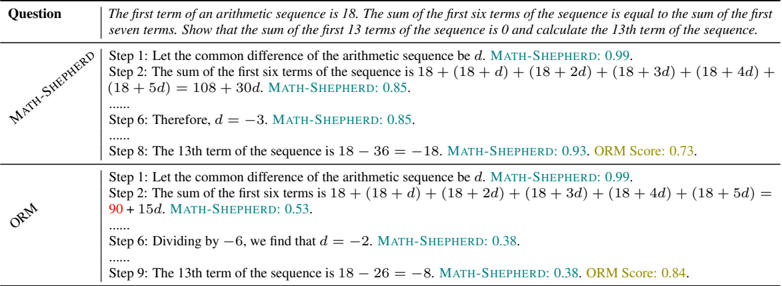

Table 5: A case study from the Hungarian national exam. Red text denotes the mistake that ORM fails to detect.

<details>

<summary>Image 9 Details</summary>

### Visual Description

## Textual Comparison: Math Problem Solutions

### Overview

The image presents a comparison of two solutions to the same arithmetic sequence problem, generated by "MATH-SHEPHERD" and "ORM". The problem statement is at the top, followed by step-by-step solutions from each system, along with associated confidence scores.

### Components/Axes

The image is structured into three labeled sections:

* **Question:** Contains the problem statement.

* **MATH-SHEPHERD:** Displays the steps of the solution selected by MATH-SHEPHERD. Each step is followed by its MATH-SHEPHERD score, and the final step also carries an "ORM Score".

* **ORM:** Displays the steps of the solution selected by ORM, annotated in the same way.

The problem statement is: "The first term of an arithmetic sequence is 18. The sum of the first six terms of the sequence is equal to the sum of the first seven terms. Show that the sum of the first 13 terms of the sequence is 0 and calculate the 13th term of the sequence."

### Content Details

**Solution selected by MATH-SHEPHERD:**

* **Step 1:** Let the common difference of the arithmetic sequence be d. MATH-SHEPHERD: 0.99

* **Step 2:** The sum of the first six terms of the sequence is 18 + (18 + d) + (18 + 2d) + (18 + 3d) + (18 + 4d) + (18 + 5d) = 108 + 30d. MATH-SHEPHERD: 0.85

* **Step 6:** Therefore, d = -3. MATH-SHEPHERD: 0.85

* **Step 8:** The 13th term of the sequence is 18 – 36 = –18. MATH-SHEPHERD: 0.93. ORM Score: 0.73

**Solution selected by ORM:**

* **Step 1:** Let the common difference of the arithmetic sequence be d. MATH-SHEPHERD: 0.99

* **Step 2:** The sum of the first six terms is 18 + (18 + d) + (18 + 2d) + (18 + 3d) + (18 + 4d) + (18 + 5d) = 90 + 15d. MATH-SHEPHERD: 0.53

* **Step 6:** Dividing by –6, we find that d = -2. MATH-SHEPHERD: 0.38

* **Step 9:** The 13th term of the sequence is 18 – 26 = –8. MATH-SHEPHERD: 0.38. ORM Score: 0.84

### Key Observations

* Both solutions start with the same initial step.

* The solutions diverge in Step 2: the MATH-SHEPHERD-selected solution gives the sum as 108 + 30d, while the ORM-selected solution gives 90 + 15d. Step 2 of the ORM-selected solution is an error, and MATH-SHEPHERD scores it much lower (0.53 versus 0.85).

* Consequently, the common difference differs between the two solutions (d = -3 versus d = -2).

* The final 13th term also differs (-18 versus -8).

* MATH-SHEPHERD assigns higher step scores to the solution it selected (0.85 to 0.99 on the steps shown) than to the ORM-selected solution, whose scores fall to 0.38 from Step 6 onward.

* The ORM score ranks the two solutions the other way: 0.84 for the ORM-selected solution versus 0.73 for the MATH-SHEPHERD-selected one.

* The "MATH-SHEPHERD" label in the ORM section indicates that MATH-SHEPHERD also scored each step of the ORM-selected solution.

### Interpretation

The image compares the solutions that two verifiers select for the same problem. The solution selected by MATH-SHEPHERD reaches the correct common difference (d = -3) and 13th term (-18). The solution selected by ORM contains an error in the sum of the first six terms, which leads to an incorrect final answer. ORM nevertheless assigns this incorrect solution a higher score (0.84) than the correct one (0.73), whereas MATH-SHEPHERD assigns low scores to its erroneous steps. The MATH-SHEPHERD scores shown inside the ORM section are step-level scores for the ORM-selected solution, so the comparison illustrates how step-level verification can expose mistakes that an outcome-level score misses.

</details>

| Question | The first term of an arithmetic sequence is 18. The sum of the first six terms of the sequence is equal to the sum of the first seven terms. Show that the sum of the first 13 terms of the sequence is 0 and calculate the 13th term of the sequence. |
|---------------|-----------------------------|
| MATH-SHEPHERD | Step 1: Let the common difference of the arithmetic sequence be d. MATH-SHEPHERD: 0.99. Step 2: The sum of the first six terms of the sequence is 18 + (18 + d) + (18 + 2d) + (18 + 3d) + (18 + 4d) + (18 + 5d) = 108 + 30d. MATH-SHEPHERD: 0.85. ...... Step 6: Therefore, d = -3. MATH-SHEPHERD: 0.85. ...... Step 8: The 13th term of the sequence is 18 - 36 = -18. MATH-SHEPHERD: 0.93. ORM Score: 0.73. |
| ORM | Step 1: Let the common difference of the arithmetic sequence be d. MATH-SHEPHERD: 0.99. Step 2: The sum of the first six terms is 18 + (18 + d) + (18 + 2d) + (18 + 3d) + (18 + 4d) + (18 + 5d) = 90 + 15d. MATH-SHEPHERD: 0.53. ...... Step 6: Dividing by -6, we find that d = -2. MATH-SHEPHERD: 0.38. ...... Step 9: The 13th term of the sequence is 18 - 26 = -8. MATH-SHEPHERD: 0.38. ORM Score: 0.84. |

We also conduct a case study to intuitively demonstrate the effectiveness of MATH-SHEPHERD. As shown in Table 5, when presented with a question from the Hungarian national final exam, MATH-SHEPHERD selected the correct solution from a pool of 256 candidate solutions, whereas ORM failed to do so. Moreover, MATH-SHEPHERD identified incorrect steps within the solution selected by ORM. Notably, it recognized errors in steps such as Step 2, Step 6, and Step 9, and assigned them lower scores than the corresponding steps in the correct solution.
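
The comparison in Table 5 can be replayed with a short sketch. It uses only the step scores visible in the table (the elided steps are not available), and it assumes that a solution's process-level score is the minimum of its step scores, which is one common aggregation choice. The dictionary layout and the function names are hypothetical and chosen for illustration.

```python
from fractions import Fraction

# Scores copied from Table 5; steps elided in the table are omitted here.
candidates = [
    {"name": "selected by MATH-SHEPHERD", "final_answer": -18,
     "step_scores": [0.99, 0.85, 0.85, 0.93], "orm_score": 0.73},
    {"name": "selected by ORM", "final_answer": -8,
     "step_scores": [0.99, 0.53, 0.38, 0.38], "orm_score": 0.84},
]


def reference_13th_term(first_term=18):
    """Solve the exam question directly to obtain a reference answer."""
    # S_6 = S_7 implies the 7th term is 0, i.e. first_term + 6 * d = 0.
    d = Fraction(-first_term, 6)
    terms = [first_term + k * d for k in range(13)]
    assert sum(terms) == 0  # the sum of the first 13 terms is 0
    return terms[-1]


def prm_score(step_scores):
    """Aggregate step scores into one solution score (assumption: minimum)."""
    return min(step_scores)


best_by_prm = max(candidates, key=lambda c: prm_score(c["step_scores"]))
best_by_orm = max(candidates, key=lambda c: c["orm_score"])
truth = reference_13th_term()

for label, best in (("PRM", best_by_prm), ("ORM", best_by_orm)):
    verdict = "correct" if best["final_answer"] == truth else "incorrect"
    print(f"{label} picks the solution {best['name']}: {verdict}")
```

Under these assumptions, the minimum-score aggregation prefers the correct solution (0.85 versus 0.38), while ranking by the outcome score prefers the incorrect one (0.84 versus 0.73).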

## 6 LIMITATIONS

Our paper has some limitations, which we leave for future work:

**The computational cost of the completion process.** To determine the label of each reasoning step, we use a 'completer' to decode N subsequent reasoning processes. We observe that the quality of the automatic annotations improves as N increases. However, the completion process is computationally expensive, which may limit the applicability of our method. Even so, the cost remains significantly lower than that of human annotation. Furthermore, we are optimistic that advances in efficient inference techniques such as speculative decoding (Xia et al., 2022; Leviathan et al., 2023) and vLLM (Kwon et al., 2023) could mitigate this limitation.

**The automatic process annotation contains noise.** Like automatic outcome annotation, our automatic process annotation is noisy. Despite this, our experiments verify the efficacy of our method for training a PRM. In particular, the PRM trained on our dataset outperforms the PRM trained on the human-annotated PRM800K dataset. However, a noticeable distribution gap remains between PRM800K and the candidate responses generated by the open-source models used in this study, which may make PRM800K less effective in our setting. As a result, the impact of annotation noise on PRM performance is still undetermined. We leave a comprehensive comparison between human and automatic annotations to future work. Furthermore, we believe that integrating human and automatic process annotations could play a vital role in constructing robust and efficient process supervision.
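
The cost described above can be made concrete with a minimal sketch of completion-based labeling. It assumes a `completer` callable that takes the solution prefix ending at the step being labeled and returns the final answer of one sampled completion. The `completer` interface, the toy stand-in for a real LLM, and the helper names are all hypothetical. The two labels are written in the spirit of hard estimation (the step is good if any completion reaches the gold answer) and soft estimation (the fraction of completions that reach it).

```python
from itertools import cycle
from typing import Callable, Dict, List


def annotate_step(prefix_steps: List[str], gold_answer: str,
                  completer: Callable[[List[str]], str], n: int) -> Dict[str, float]:
    """Label the last step of prefix_steps from n sampled completions."""
    final_answers = [completer(prefix_steps) for _ in range(n)]
    hits = sum(answer == gold_answer for answer in final_answers)
    return {"hard": 1.0 if hits > 0 else 0.0, "soft": hits / n}


def completion_calls(steps_per_solution: List[int], n: int) -> int:
    """Number of completer calls needed: one batch of n per reasoning step."""
    return n * sum(steps_per_solution)


def make_toy_completer(answers: List[str]) -> Callable[[List[str]], str]:
    """Stand-in for an LLM completer: replays a fixed cycle of final answers."""
    stream = cycle(answers)
    return lambda prefix_steps: next(stream)


good_prefix = ["Let the common difference be d.", "Therefore, d = -3."]
bad_prefix = ["Let the common difference be d.", "Dividing by -6, we find that d = -2."]

good_label = annotate_step(good_prefix, "-18",
                           make_toy_completer(["-18", "-8", "-18", "-18"]), n=4)
bad_label = annotate_step(bad_prefix, "-18", make_toy_completer(["-8"]), n=4)
cost = completion_calls([8, 9], n=4)  # two solutions with 8 and 9 steps

print(good_label, bad_label, cost)
```

The call count grows linearly in N and in the total number of steps, which is why larger N improves label quality only at a proportional increase in decoding cost.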

## 7 CONCLUSION

In this paper, we introduce a process-oriented math verifier called MATH-SHEPHERD, which assigns a reward score to each step of the LLM's outputs on math problems. The training of MATH-SHEPHERD is achieved using automatically constructed process-wise supervision data, thereby eradicating the necessity for labor-intensive human annotation. Remarkably, this automatic methodology correlates strongly with human annotations. Extensive experiments in both verification and reinforcement learning scenarios demonstrate the effectiveness of our method.

## REFERENCES

- Rohan Anil, Andrew M Dai, Orhan Firat, Melvin Johnson, Dmitry Lepikhin, Alexandre Passos, Siamak Shakeri, Emanuel Taropa, Paige Bailey, Zhifeng Chen, et al. Palm 2 technical report. arXiv preprint arXiv:2305.10403, 2023.

- Zhangir Azerbayev, Hailey Schoelkopf, Keiran Paster, Marco Dos Santos, Stephen McAleer, Albert Q Jiang, Jia Deng, Stella Biderman, and Sean Welleck. Llemma: An open language model for mathematics. arXiv preprint arXiv:2310.10631, 2023.

- Zhen Bi, Ningyu Zhang, Yinuo Jiang, Shumin Deng, Guozhou Zheng, and Huajun Chen. When do program-of-thoughts work for reasoning? arXiv preprint arXiv:2308.15452, 2023.

- Sébastien Bubeck, Varun Chandrasekaran, Ronen Eldan, Johannes Gehrke, Eric Horvitz, Ece Kamar, Peter Lee, Yin Tat Lee, Yuanzhi Li, Scott Lundberg, et al. Sparks of artificial general intelligence: Early experiments with gpt-4. arXiv preprint arXiv:2303.12712, 2023.

- Liang Chen, Yichi Zhang, Shuhuai Ren, Haozhe Zhao, Zefan Cai, Yuchi Wang, Peiyi Wang, Tianyu Liu, and Baobao Chang. Towards end-to-end embodied decision making via multi-modal large language model: Explorations with gpt4-vision and beyond. arXiv preprint arXiv:2310.02071, 2023.

- Karl Cobbe, Vineet Kosaraju, Mohammad Bavarian, Mark Chen, Heewoo Jun, Lukasz Kaiser, Matthias Plappert, Jerry Tworek, Jacob Hilton, Reiichiro Nakano, et al. Training verifiers to solve math word problems. arXiv preprint arXiv:2110.14168, 2021.

- Rémi Coulom. Efficient selectivity and backup operators in monte-carlo tree search. In International conference on computers and games, pp. 72-83. Springer, 2006.

- DeepSeek. Deepseek llm: Let there be answers. https://github.com/deepseek-ai/DeepSeek-LLM, 2023.

- Yao Fu, Hao Peng, Ashish Sabharwal, Peter Clark, and Tushar Khot. Complexity-based prompting for multi-step reasoning. arXiv preprint arXiv:2210.00720, 2022.

- Zhibin Gou, Zhihong Shao, Yeyun Gong, Yujiu Yang, Minlie Huang, Nan Duan, Weizhu Chen, et al. Tora: A tool-integrated reasoning agent for mathematical problem solving. arXiv preprint arXiv:2309.17452, 2023.

- Pengcheng He, Xiaodong Liu, Jianfeng Gao, and Weizhu Chen. Deberta: Decoding-enhanced bert with disentangled attention. arXiv preprint arXiv:2006.03654, 2020.

- Dan Hendrycks, Collin Burns, Saurav Kadavath, Akul Arora, Steven Basart, Eric Tang, Dawn Song, and Jacob Steinhardt. Measuring mathematical problem solving with the math dataset. arXiv preprint arXiv:2103.03874, 2021.

- Jie Huang and Kevin Chen-Chuan Chang. Towards reasoning in large language models: A survey. In Anna Rogers, Jordan Boyd-Graber, and Naoaki Okazaki (eds.), Findings of the Association for Computational Linguistics: ACL 2023, pp. 1049-1065, Toronto, Canada, July 2023. Association for Computational Linguistics. doi: 10.18653/v1/2023.findings-acl.67. URL https://aclanthology.org/2023.findings-acl.67.

- Jie Huang, Xinyun Chen, Swaroop Mishra, Huaixiu Steven Zheng, Adams Wei Yu, Xinying Song, and Denny Zhou. Large language models cannot self-correct reasoning yet. arXiv preprint arXiv:2310.01798, 2023.

- Albert Q Jiang, Alexandre Sablayrolles, Arthur Mensch, Chris Bamford, Devendra Singh Chaplot, Diego de las Casas, Florian Bressand, Gianna Lengyel, Guillaume Lample, Lucile Saulnier, et al. Mistral 7b. arXiv preprint arXiv:2310.06825, 2023.

- Jean Kaddour, Joshua Harris, Maximilian Mozes, Herbie Bradley, Roberta Raileanu, and Robert McHardy. Challenges and applications of large language models. arXiv preprint arXiv:2307.10169, 2023.

- Levente Kocsis and Csaba Szepesvári. Bandit based monte-carlo planning. In European conference on machine learning, pp. 282-293. Springer, 2006.

- Woosuk Kwon, Zhuohan Li, Siyuan Zhuang, Ying Sheng, Lianmin Zheng, Cody Hao Yu, Joseph Gonzalez, Hao Zhang, and Ion Stoica. Efficient memory management for large language model serving with pagedattention. In Proceedings of the 29th Symposium on Operating Systems Principles, pp. 611-626, 2023.

- Yaniv Leviathan, Matan Kalman, and Yossi Matias. Fast inference from transformers via speculative decoding. In International Conference on Machine Learning, pp. 19274-19286. PMLR, 2023.

- Lei Li, Yuwei Yin, Shicheng Li, Liang Chen, Peiyi Wang, Shuhuai Ren, Mukai Li, Yazheng Yang, Jingjing Xu, Xu Sun, et al. M3it: A large-scale dataset towards multi-modal multilingual instruction tuning. arXiv preprint arXiv:2306.04387, 2023a.

- Yifei Li, Zeqi Lin, Shizhuo Zhang, Qiang Fu, Bei Chen, Jian-Guang Lou, and Weizhu Chen. Making language models better reasoners with step-aware verifier. In Anna Rogers, Jordan Boyd-Graber, and Naoaki Okazaki (eds.), Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pp. 5315-5333, Toronto, Canada, July 2023b. Association for Computational Linguistics. doi: 10.18653/v1/2023.acl-long.291. URL https://aclanthology.org/2023.acl-long.291.

- Hunter Lightman, Vineet Kosaraju, Yura Burda, Harri Edwards, Bowen Baker, Teddy Lee, Jan Leike, John Schulman, Ilya Sutskever, and Karl Cobbe. Let's verify step by step. arXiv preprint arXiv:2305.20050, 2023.

- Haipeng Luo, Qingfeng Sun, Can Xu, Pu Zhao, Jianguang Lou, Chongyang Tao, Xiubo Geng, Qingwei Lin, Shifeng Chen, and Dongmei Zhang. Wizardmath: Empowering mathematical reasoning for large language models via reinforced evol-instruct. arXiv preprint arXiv:2308.09583, 2023.

- Qianli Ma, Haotian Zhou, Tingkai Liu, Jianbo Yuan, Pengfei Liu, Yang You, and Hongxia Yang. Let's reward step by step: Step-level reward model as the navigators for reasoning. arXiv preprint arXiv:2310.10080, 2023.

- OpenAI. GPT-4 technical report. CoRR, abs/2303.08774, 2023. doi: 10.48550/arXiv.2303.08774. URL https://doi.org/10.48550/arXiv.2303.08774.