## Small LLMs Are Weak Tool Learners: A Multi-LLM Agent

Weizhou Shen 1 , Chenliang Li 2 , Hongzhan Chen 1 , Ming Yan 2 * , Xiaojun Quan 1 ∗ , Hehong Chen 2 , Ji Zhang 2 , Fei Huang 2

1 School of Computer Science and Engineering, Sun Yat-sen University, China 2 Alibaba Group shenwzh3@mail2.sysu.edu.cn, quanxj3@mail.sysu.edu.cn,

ym119608@alibaba-inc.com

https://github.com/X-PLUG/Multi-LLM-Agent

## Abstract

Large Language Model (LLM) agents significantly extend the capabilities of standalone LLMs, empowering them to interact with external tools (e.g., APIs, functions) and complete various tasks in a self-directed fashion. The challenge of tool use demands that LLMs not only understand user queries and generate answers accurately but also excel in task planning, tool invocation, and result summarization. While traditional works focus on training a single LLM with all these capabilities, performance limitations become apparent, particularly with smaller models. To overcome these challenges, we propose a novel approach that decomposes the aforementioned capabilities into a planner, caller, and summarizer. Each component is implemented by a single LLM that focuses on a specific capability and collaborates with others to accomplish the task. This modular framework facilitates individual updates and the potential use of smaller LLMs for building each capability. To effectively train this framework, we introduce a two-stage training paradigm. First, we fine-tune a backbone LLM on the entire dataset without discriminating sub-tasks, providing the model with a comprehensive understanding of the task. Second, the fine-tuned LLM is used to instantiate the planner, caller, and summarizer respectively, which are continually fine-tuned on respective sub-tasks. Evaluation across various tool-use benchmarks illustrates that our proposed multi-LLM framework surpasses the traditional single-LLM approach, highlighting its efficacy and advantages in tool learning.

## 1 Introduction

Large Language Models (LLMs) have revolutionized natural language processing with remarkable proficiency in understanding and generating text. Despite their impressive capabilities, LLMs are

* Corresponding authors.

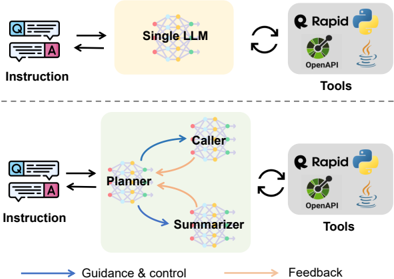

Figure 1: A conceptual comparison of the traditional single-LLM agent framework (top) and the proposed multi-LLM agent framework, α -UMi (bottom).

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Diagram: Comparison of Single LLM vs. Multi-Agent System Architecture

### Overview

The diagram contrasts two approaches to handling instructions with tools:

1. **Top Section**: A single Large Language Model (LLM) directly interacting with tools.

2. **Bottom Section**: A multi-agent system comprising a Planner, Caller, and Summarizer, also interacting with tools.

Arrows indicate guidance/control (blue) and feedback (orange) flows.

---

### Components/Axes

- **Labels**:

- **Top Section**:

- "Single LLM" (central node with interconnected edges).

- "Instruction" (input text bubble with "Q" icon).

- "Tools" (box with icons: Rapid, Python, OpenAPI, Java).

- **Bottom Section**:

- "Planner," "Caller," "Summarizer" (three interconnected nodes).

- "Instruction" (input text bubble with "Q" icon).

- "Tools" (same box as top section).

- **Arrows**:

- **Blue**: Guidance & control (e.g., Planner → Caller, Planner → Summarizer).

- **Orange**: Feedback (e.g., Caller → Planner, Summarizer → Planner).

- **Spatial Grounding**:

- Top section: Single LLM centered, tools on the right.

- Bottom section: Planner, Caller, and Summarizer arranged in a triangular flow, tools on the right.

---

### Detailed Analysis

- **Single LLM Approach**:

- Direct bidirectional interaction between the LLM and tools.

- No intermediate components; instruction → LLM → tools.

- **Multi-Agent System**:

- **Planner**: Coordinates tasks, sends guidance to Caller and Summarizer.

- **Caller**: Executes tool calls based on Planner’s instructions.

- **Summarizer**: Processes outputs and provides feedback to the Planner.

- Feedback loops enable iterative refinement.

---

### Key Observations

1. **Complexity**: The multi-agent system introduces additional components (Planner, Caller, Summarizer) compared to the single LLM.

2. **Feedback Mechanisms**: Orange arrows highlight iterative refinement in the multi-agent system, absent in the single LLM.

3. **Tool Integration**: Both approaches share the same tools (Rapid, Python, OpenAPI, Java), suggesting modularity.

4. **Control Flow**: Blue arrows in the multi-agent system emphasize structured task delegation.

---

### Interpretation

- **Purpose**: The diagram illustrates a trade-off between simplicity (single LLM) and adaptability (multi-agent system).

- **Implications**:

- The single LLM may struggle with complex, multi-step tasks requiring coordination.

- The multi-agent system’s feedback loops suggest improved error handling and dynamic adjustment.

- **Anomalies**: No explicit data points or numerical values are provided, limiting quantitative analysis.

- **Design Philosophy**: The multi-agent system aligns with modular AI architectures, emphasizing specialization and collaboration.

This diagram underscores the potential benefits of distributed intelligence in handling structured workflows, though it lacks empirical validation (e.g., performance metrics).

</details>

not without limitations. Notably, they lack domain specificity, real-time information, and face challenges in solving specialized problems such as mathematics (Gou et al., 2023) and program compilation (OpenAI, 2023a). Hence, integrating LLMs with external tools, such as API calls and Python functions, becomes imperative to extend their capabilities and enhance the overall performance. Consequently, LLM agents have become a prominent area for both academia and industry, employing large language models to determine when and how to utilize external tools to tackle various tasks.

In addition to exploring proprietary LLMs like GPT-4, researchers have also actively engaged in developing customizable agent systems by finetuning open-source LLMs on diverse tool-use datasets (Patil et al., 2023; Tang et al., 2023; Qin et al., 2023b; Gou et al., 2023). The challenge of tool learning demands sufficiently large and complex LLMs. These models must not only comprehend user queries but also excel in task planning, tool selection and invocation, and result summarization (Yujia et al., 2023). These capabilities draw upon different facets of the LLMs; for instance, planning relies more on reasoning ability, while tool selection and invocation demand legal and ac- curate request writing, and result summarization requires adept conclusion-drawing skills. While conventional approaches (Qin et al., 2023b; Gou et al., 2023; Zeng et al., 2023) focus on training a single open-source LLM with all these capabilities, notable performance limitations have been observed, especially with smaller open-source LLMs (Touvron et al., 2023a,b). Moreover, the tools could be updated frequently in practical scenarios, when the entire LLM requires potential retraining.

To address these challenges, we propose a multiLLM agent framework for tool learning, α -UMi 1 . As illustrated in Figure 1, α -UMi decomposes the capabilities of a single LLM into three components, namely planner, caller, and summarizer. Each of these components is implemented by a single LLM and trained to focus on a specific capability. The planner is designed to generate the rationale based on the current state of the system and weighs between selecting the caller or summarizer to generate downstream output, or even deciding to terminate the execution. The caller is directed by the rationale and responsible for invocating specific tools. The summarizer is guided by the planner to craft the ultimate user answer based on the execution trajectory. These components collaborate seamlessly to accomplish various tasks. Compared to previous approaches, our modular framework has three distinct advantages. First, each component undergoes training for a designated role, ensuring enhanced performance for each capability. Second, the modular structure allows for individual updates to each component as required, ensuring adaptability and streamlined maintenance. Third, since each component focuses solely on a specific capability, potentially smaller LLMs can be employed.

To effectively train this multi-LLM framework, we introduce a novel global-to-local progressive fine-tuning strategy (GLPFT). First, an LLM backbone is trained on the original training dataset without discriminating between sub-tasks, enhancing the comprehensive understanding of the toollearning task. Three copies of this LLM backbone are created to instantiate the planner, caller, and summarizer, respectively. In the second stage, the training dataset is reorganized into new datasets tailored to each LLM's role in tool use, and continual

1 In astronomy, the name ' α -UMi' is an alias of the Polaris Star ( https://en.wikipedia.org/wiki/Polaris ), which is actually a triple star system consisting of a brighter star (corresponding to the planner) and two fainter stars (corresponding to the caller and the summarizer).

fine-tuning of the planner, caller, and summarizer is performed on their respective datasets.

We employ LLaMA-2 (Touvron et al., 2023b) series to implement the LLM backbone and evaluate our α -UMi agent on several tool learning benchmarks (Qin et al., 2023b; Tang et al., 2023). Experimental results demonstrate that our proposed framework outperforms the single-LLM approach across various model and data sizes. Moreover, we show the necessity of the GLPFT strategy for the success of our framework and delve into the reasons behind the improved performance. Finally, the results confirm our assumption that smaller LLMs can be used in our multi-LLM framework to cultivate individual tool learning capabilities and attain a competitive overall performance.

To sum up, this work makes three critical contributions. First, we demonstrate that small LLMs are weak tool learners and introduce α -UMi, a multiLLM framework for building LLM agents, that outperforms the existing single-LLM approach in tool use. Second, we propose a GLPFT fine-tuning strategy, which has proven to be essential for the success of our framework. Third, we perform a thorough analysis, delving into data scaling laws and investigating the underlying reasons behind the superior performance of our framework.

## 2 Related Works

## 2.1 Tool Learning

The ability of LLMs to use external tools has become a pivotal component in the development of AI agents, attracting rapidly growing attention (Qin et al., 2023b; Schick et al., 2023; Yang et al., 2023b; Shen et al., 2023; Patil et al., 2023; Qin et al., 2023a). Toolformer (Schick et al., 2023) was one of the pioneering work in tool learning, employing tools in a self-supervised manner. Subsequently, a diverse array of external tools has been employed to enhance LLMs in various ways, including the improvement of real-time factual knowledge (Yang et al., 2023a; Nakano et al., 2021), multimodal comprehension and generation (Yang et al., 2023b; Wu et al., 2023a; Yang et al., 2023c), code and math reasoning (Gou et al., 2023; OpenAI, 2023a), and domain knowledge of specific AI models and APIs (Shen et al., 2023; Li et al., 2023; Qin et al., 2023b). Different from previous approaches relying on a single LLM for tool learning, we introduce a novel multi-LLM collaborated tool learning framework designed for smaller open-source

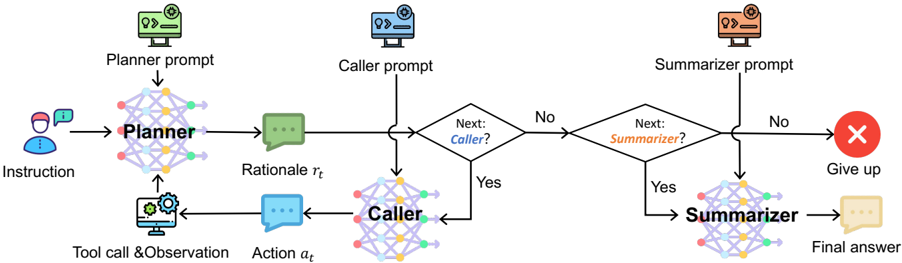

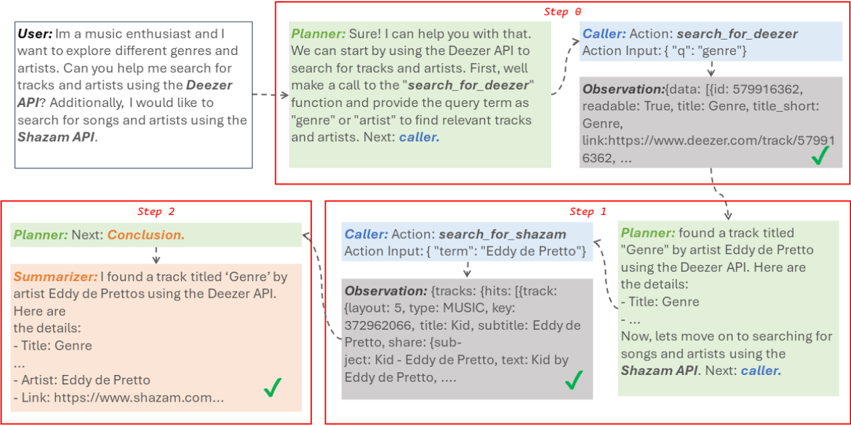

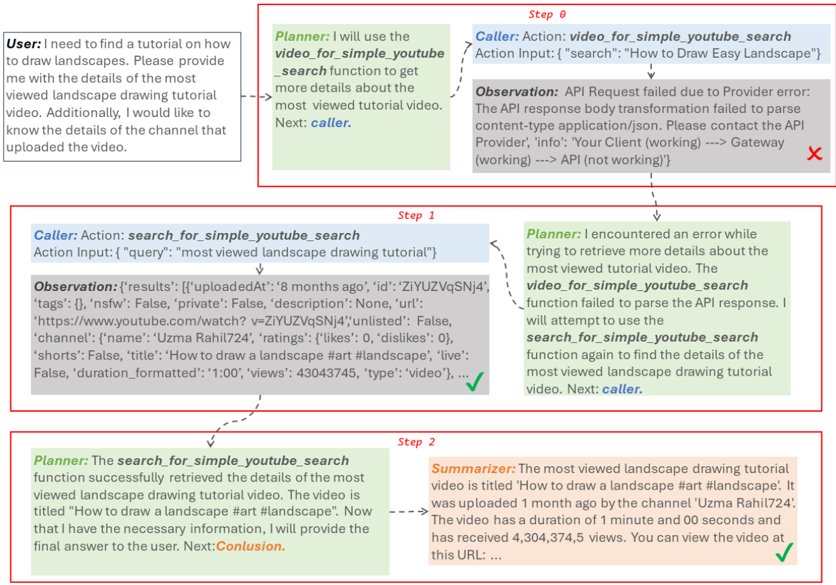

Figure 2: An illustration of how α -UMi works to complete a task.

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Flowchart: Multi-Agent Workflow with Conditional Routing

### Overview

The image depicts a multi-stage workflow involving three specialized agents (Planner, Caller, Summarizer) with conditional routing based on decision points. The process begins with a user instruction and progresses through tool calls, actions, and summarization, with potential termination points.

### Components/Axes

1. **Nodes**:

- **Planner**: Green node with "Planner prompt" input and "Rationale r_t" output

- **Caller**: Blue node with "Caller prompt" input and "Action a_t" output

- **Summarizer**: Orange node with "Summarizer prompt" input and "Final answer" output

- **Decision Points**:

- "Next: Caller?" (Yes/No)

- "Next: Summarizer?" (Yes/No)

- **Termination States**:

- "Give up" (red X)

- "Final answer" (beige speech bubble)

2. **Flow Arrows**:

- Solid arrows indicate mandatory progression

- Dashed arrows represent conditional routing

- Color-coded arrows match node colors (green→blue→orange)

3. **Input/Output**:

- **Input**: User instruction (leftmost node)

- **Outputs**:

- Tool call & observation (Planner→Caller)

- Action (Caller→Decision)

- Final answer (Summarizer)

### Detailed Analysis

1. **Planner Stage**:

- Receives user instruction

- Generates rationale (r_t) and tool call/observation

- Outputs to Caller via green arrow

2. **Caller Stage**:

- Processes planner's output

- Executes action (a_t)

- Routes to decision point "Next: Caller?"

3. **Decision Logic**:

- **Yes Path**: Returns to Caller for additional actions

- **No Path**: Proceeds to Summarizer

4. **Summarizer Stage**:

- Processes caller's final output

- Generates final answer

- Routes to decision point "Next: Summarizer?"

5. **Termination Conditions**:

- **No Path from Summarizer**: Process terminates with "Give up"

- **Yes Path from Summarizer**: Completes with "Final answer"

### Key Observations

1. **Sequential Dependency**: Each stage depends on the previous agent's output

2. **Conditional Loops**:

- Caller can loop back for additional actions

- Summarizer can loop back for refinement

3. **Color-Coded Workflow**:

- Green (Planner) → Blue (Caller) → Orange (Summarizer)

4. **Termination States**: Two possible endpoints based on decision outcomes

### Interpretation

This flowchart represents a structured problem-solving framework where:

1. The **Planner** decomposes tasks into rational steps and tool requirements

2. The **Caller** executes actions and determines if further execution is needed

3. The **Summarizer** synthesizes results into final outputs

4. Conditional routing allows iterative refinement at both Caller and Summarizer stages

5. The red "Give up" state acts as a fail-safe for unresolvable paths

The workflow emphasizes iterative processing with clear decision boundaries, suggesting an AI system designed for complex task decomposition with built-in quality control through conditional summarization checks.

</details>

LLMs. This framework decomposes the comprehensive abilities of LLMs into distinct roles, namely a planner, caller, and summarizer.

## 2.2 LLM-powered Agents

Leveraging the capabilities of LLMs such as ChatGPT (OpenAI, 2022) and GPT-4 (OpenAI, 2023b), AI agent systems have found application in diverse scenarios. For instance, solutions like BabyAGI (Nakajima, 2023) and AutoGPT (Gravitas, 2023) have been developed to address daily problems, while Voyager (Wang et al., 2023) and Ghost (Zhu et al., 2023) engage in free exploration within Minecraft games. Additionally, MetaGPT (Hong et al., 2023), ChatDev (Qian et al., 2023), and AutoGen (Wu et al., 2023b) contribute to the development of multi-agent frameworks tailored for software development and problem-solving.

Various techniques have been proposed to augment agent capabilities from different perspectives. The chain-of-thought series (Wei et al., 2022; Wang et al., 2022; Yao et al., 2022, 2023) and Reflextion (Shinn et al., 2023) contribute to enhancing agents' reasoning abilities, while MemoryBank (Zhong et al., 2023) enriches agents with long-term memory. Recent efforts have also emerged in fine-tuning open-source LLMs as agents, exemplified by works like FiREACT (Chen et al., 2023) and AgentTuning (Zeng et al., 2023). However, these endeavors primarily focus on finetuning a single LLM, unlike our approach, which explores an effective method for fine-tuning a multiLLM agent specifically for tool learning.

## 3 Methodology

## 3.1 Preliminary

Agents for tool learning are systems designed to assist users in completing tasks through a series of decision-making processes and tool use (Yujia et al., 2023). In recent years, these agents commonly adhere to the ReACT framework (Yao et al., 2022). The backbone of the agent is an LLM denoted as M . Given the user instruction q and the system prompt P , the agent solves the instruction step by step. In the t th step, the LLM M generates a rationale r t and an action a t based on the instruction and the current state of the system:

$$r _ { t } , a _ { t } = \mathcal { M } ( \mathcal { P } , \tau _ { t - 1 } , q ) , \quad ( 1 )$$

where τ t -1 = { r 1 , a 1 , o 1 , ..., r t -1 , a t -1 , o t -1 } denotes the previous execution trajectory. Here, o t denotes the observation returned by tools when the action a t is supplied. In the final step of the interaction, the agent generates rationale r n indicating that the instruction q is solved along with the final answer a n or that it will abandon this execution run. Therefore, no observation is included in this step.

## 3.2 The α -UMi Framework

As previously mentioned, the task of tool learning imposes a significant demand on the capabilities of LLMs, including task planning, tool invocation, and result summarization. Coping with all these capabilities using a single open-source LLM, especially when opting for a smaller LLM, appears to be challenging. To address this challenge, we introduce the α -UMi framework, which breaks down the tool learning task into three sub-tasks and assigns each sub-task to a dedicated LLM. Figure 1 presents an illustration of our framework, which incorporates three distinct LLM components: planner M plan, caller M call, and summarizer M sum. These components are differentiated by their roles in tool use, and each component model has a unique task definition, system prompt 2 , and model input.

2 The prompts for each LLM are provided in Appendix A.

The workflow of α -UMi is shown in Figure 2. Upon receiving the user instruction q , the planner generates a rationale comprising hints for the this step. This may trigger the caller to engage with the tools and subsequently receive observations from the tools. This iterative planner-caller-tool loop continues until the planner determines that it has gathered sufficient information to resolve the instruction. At this point, the planner transitions to the summarizer to generate the final answer. Alternatively, if the planner deems the instruction unsolvable, it may abandon the execution.

Planner : The planner assumes responsibility for planning and decision-making, serving as the 'brain' of our agent framework. Specifically, the model input for the planner comprises the system prompt P plan, the user instruction q , and the previous execution trajectory τ t -1 . Using this input, the planner generates the rationale r t :

$$r _ { t } = \mathcal { M } _ { p l a n } ( \mathcal { P } _ { p l a n } , \tau _ { t - 1 } , q ) . \quad ( 2 ) \quad o f \, c o r d$$

Following the rationale, the planner generates the decision for the next step: (1) If the decision is 'Next: Caller', the caller will be activated and an action will be generated for calling tools. (2) If the decision is 'Next: Summarizer', the summarizer will be activated to generate the final answer for the user, and the agent execution will finish. (3) If the decision is 'Next: Give up', it means that the user's instruction cannot be solved in the current situation, and the system will be terminated.

Caller: Interacting with the tools requires the LLM to generate legal and useful requests, which may conflict with other abilities such as reasoning and general response generation during fine-tuning. Therefore, we train a specialized caller to generate the action for using tools. The caller takes the user instruction q and the previous execution trajectory τ t -1 as input. To make the caller focus on the planner's rationale r t in the current step, we also design a prompt P call to explicitly remind the caller:

$$a _ { t } = \mathcal { M } _ { c a l l } ( \mathcal { P } _ { c a l l } , \tau _ { t - 1 } , q , r _ { t } ) . \quad ( 3 ) \quad m a n$$

Summarizer: The agent's final response, which aims to offer informative and helpful information to the user, is distinct from the rationales that primarily focus on planning and reasoning. Therefore, we employ a dedicated summarizer tasked with generating the final answer a n . This model utilizes a concise prompt P sum designed to guide the model

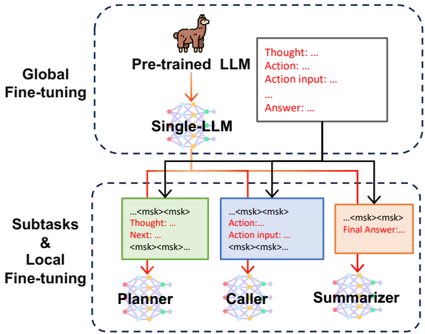

Figure 3: Global-to-local progressive fine-tuning.

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Diagram: System Architecture for LLM Fine-tuning and Subtask Processing

### Overview

The diagram illustrates a multi-stage system architecture for fine-tuning large language models (LLMs) and processing subtasks. It is divided into two primary sections: **Global Fine-tuning** (top) and **Subtasks & Local Fine-tuning** (bottom). Arrows indicate the flow of information and control between components, with color-coded text boxes representing specific stages of processing.

---

### Components/Axes

1. **Global Fine-tuning Section**:

- **Pre-trained LLM**: A llama icon representing the initial pre-trained model.

- **Single-LLM**: A network diagram symbolizing a single LLM instance.

- Arrows connect "Pre-trained LLM" to "Single-LLM," indicating a transition or refinement process.

2. **Subtasks & Local Fine-tuning Section**:

- **Planner**: A green text box with placeholders like `<msk>` and labels "Thought: ...", "Next: ...".

- **Caller**: A blue text box with "Action: ...", "Action input: ...", and `<msk>` placeholders.

- **Summarizer**: An orange text box with "Final Answer: ..." and `<msk>` placeholders.

- Arrows connect these components in a sequential flow: Planner → Caller → Summarizer.

3. **Control Flow**:

- Red arrows link the Global Fine-tuning section to the Subtasks section, labeled with "Thought: ...", "Action: ...", and "Answer: ...".

- The final output flows from the Summarizer to the Global Fine-tuning section.

---

### Detailed Analysis

- **Textual Elements**:

- **Thought**: Placeholder for reasoning steps (e.g., "Thought: ...").

- **Action**: Placeholder for model actions (e.g., "Action: ...").

- **Action input**: Placeholder for input data (e.g., "Action input: ...").

- **Answer**: Placeholder for intermediate outputs (e.g., "Answer: ...").

- **Final Answer**: Placeholder for the system's final output (e.g., "Final Answer: ...").

- **Color Coding**:

- **Green**: Planner (subtask planning).

- **Blue**: Caller (action execution).

- **Orange**: Summarizer (output synthesis).

- **Red**: Arrows indicating control flow (Thought → Action → Answer → Final Answer).

- **Spatial Grounding**:

- **Top Section**: "Global Fine-tuning" is centered at the top, with "Pre-trained LLM" and "Single-LLM" aligned vertically.

- **Bottom Section**: "Subtasks & Local Fine-tuning" is centered at the bottom, with Planner, Caller, and Summarizer arranged horizontally.

- Arrows originate from the Global Fine-tuning section and terminate in the Subtasks section, with text boxes positioned near the arrows.

---

### Key Observations

1. **Sequential Workflow**: The system processes information in a pipeline: Global Fine-tuning → Subtasks (Planner → Caller → Summarizer) → Global Fine-tuning.

2. **Placeholder Usage**: `<msk>` placeholders suggest masked token inputs for model training or inference.

3. **Color-Coded Roles**: Distinct colors differentiate subtask components, aiding in visual separation of responsibilities.

4. **Cyclical Feedback**: The final output from the Summarizer loops back to the Global Fine-tuning section, implying iterative refinement.

---

### Interpretation

This diagram represents a hybrid system for LLM optimization and task execution. The **Global Fine-tuning** stage likely involves adapting a pre-trained model to a specific domain, while the **Subtasks & Local Fine-tuning** section handles task-specific processing through modular components:

- **Planner**: Generates reasoning steps or task plans.

- **Caller**: Executes actions based on the plan.

- **Summarizer**: Synthesizes results into a final answer.

The cyclical feedback loop suggests the system iteratively refines outputs, potentially improving performance over time. The use of `<msk>` placeholders indicates a focus on masked language modeling techniques, common in transformer-based architectures. The diagram emphasizes modularity, with clear separation between global model adaptation and localized task execution.

</details>

in concentrating on summarizing the execution trajectory and presenting the answer to the user:

$$a _ { n } = \mathcal { M } _ { s u m } ( \mathcal { P } _ { s u m } , \tau _ { n - 1 } , q , r _ { n } ) . \quad ( 4 )$$

In Figure 6 and Figure 7, we show several cases of our α -UMi on downstream tasks.

## 3.3 Global-to-Local Progressive Fine-Tuning

To effectively fine-tune the above multi-LLM system is a complex endeavor: On one hand, generating the rationale, action, and final answer can facilitate each other during the training process, and enhance the model's comprehension of the entire agent task (Chen et al., 2023). On the other hand, the constraints on model capacity make it challenging to fine-tune a small LLM to achieve peak performance in generating rationales, actions, and final answers simultaneously (Dong et al., 2023). Taking into account these two points, we propose a global-to-local progressive fine-tuning (GLPFT) strategy for α -UMi. The motivation behind this strategy is to first exploit the mechanism by which the generation of rationale, action, and final answer can mutually enhance each other. Then, once the single LLM reaches its performance ceiling, it is subsequently split into planner, caller and summarizer for further fine-tuning, in order to enhance its capabilities in the subtasks and mitigate the performance constraints due to limited model capacity.

As depicted in Figure 3, this GLPFT strategy comprises two distinct stages. The first stage involves global fine-tuning, where we fine-tune a backbone LLM on the original training dataset without distinguishing between sub-tasks. After this stage, the backbone LLM is trained to sequentially output the rationale, action, and answer as introduced in Section 3.1. Then, we create three copies of the backbone LLM, designated as the planner, caller, and summarizer, respectively.

The second stage is local fine-tuning, where we reorganize the training dataset tailored to each LLM's role, as introduced in Section 3.2. We then proceed to fine-tune the planner, caller, and summarizer on their respective datasets, thereby further enhancing their specific abilities in each sub-task. During this local fine-tuning stage, we opt to reuse the set of user instructions curated in the global fine-tuning stage. The only adjustment made to the training set is the change in the format of the training data. As illustrated in Figure 3, the finetuning objective during the second stage for the planner, caller, and summarizer is oriented towards generating the rationale, action, and final answer, respectively. While the gradients from other text spans are stopped. Simultaneously, we refine the system prompts for the training data of the planner, caller, and summarizer, as detailed in Appendix A.

## 3.4 Discussions

The proposed α -UMi framework and GLPFT strategy are founded on three main principles. Firstly, the limited ability and capacity of small LLMs, such as LLaMA-7B, pose challenges during finetuning in tool learning tasks. In contrast, α -UMi decomposes complex tasks into simpler ones, reducing the workload on LLMs. Secondly, α -UMi offers increased flexibility in prompt design, allowing us to create specific prompts and model inputs for each LLM to fully leverage its capabilities in sub-tasks. Thirdly, the GLPFT strategy bridges the gap between fine-tuning on the whole tool learning task and on each sub-task, leading to a more successful fine-tuning process of the multi-LLM system. In the following experimental sections, we will focus on demonstrating these principles.

Recent studies have explored multi-agent systems based on LLMs across various domains, such as social communication (Park et al., 2023; Wei et al., 2023), software development (Qian et al., 2023; Hong et al., 2023), and problem solving (Wu et al., 2023b). These frameworks typically rely on powerful closed-source LLMs, demanding advanced capabilities such as automatic cooperation and feedback-abilities that extend beyond those of open-source small LLMs. In contrast, our α -UMi aims to ease the LLM's workload in tool-use tasks by integrating multiple LLMs to form an agent, particularly suitable for open-source, small LLMs. Additionally, we introduce the novel GLPFT method for fine-tuning the multi-LLM system, a contribution not found in existing multi-agent works.

## 4 Experimental Settings

## 4.1 Benchmarks

We evaluate the effectiveness of our α -UMi on two tool learning benchmarks: ToolBench (Qin et al., 2023b) and ToolAlpaca (Tang et al., 2023). These tasks involve integrating API calls to accomplish tasks, where the agent must accurately select the appropriate API and compose necessary API requests. Moreover, we partition the test set of ToolBench into in-domain and out-of-domain based on whether the tools used in the test instances have been seen during training. This division allows us to evaluate performance in both in-distribution and out-of-distribution scenarios. For additional details and statistics regarding these datasets, please refer to Appendix B. We also evaluate α -UMi on other benchmarks such as program-aided agent for mathematical reasoning (Hendrycks et al., 2021; Cobbe et al., 2021). The results are shown in Appendix D.

## 4.2 Metrics

The tasks in ToolBench involve calling APIs through RapidAPI 3 . This process frequently encounters problems such as API breakdowns, which impacts the fairness of the comparison. To address this problem, we introduce two types of evaluations for ToolBench. In Section 5.1, we first compare the output of agent with the annotated reference at each step 4 , which avoids real-time API callings. The metrics for this evaluation include Action EM (Act. EM), Argument F1 (Arg. F1), and Rouge-L (R-L) as proposed by Li et al. (2023). Moreover, we examine the frequency of API name hallucinations (Hallu.) and the accuracy (Plan ACC) of the agent's planning decisions at each step for using tools invocation, generating answer, or giving up. The reference annotations are based on verified ChatGPT execution results provided in ToolBench. We also provide the results based on real-time RapidAPI calling in Section 5.2, which is the original evaluation method used by the ToolBench team.

For ToolAlpaca, we assess the process correctness rate (Proc.) and the final answer correctness rate (Ans.) (Tang et al., 2023), both by GPT-4.

3 https://rapidapi.com/hub.

4 Refer to Appendix C for more details of the evaluation.

Table 1: Overall evaluation results on ToolBench and ToolAlpaca. 'ToolLLaMA (len = 4096)' and 'ToolLLaMA (len = 8192)' mean setting the max input length of ToolLLaMA to 4096 and 8192, respectively.

| Model | | ToolBench (in-domain) | ToolBench (in-domain) | ToolBench (in-domain) | ToolBench (in-domain) | ToolBench (out-of-domain) | ToolBench (out-of-domain) | ToolBench (out-of-domain) | ToolBench (out-of-domain) | | ToolAlpaca | ToolAlpaca |

|---------------------------------|------------------|-------------------------|-------------------------|-------------------------|-------------------------|-----------------------------|-----------------------------|-----------------------------|-----------------------------|------------------|------------------|------------------|

| | Plan ACC | Act. EM | Hallu. | Arg. F1 | R-L | Plan ACC | Act. EM | Hallu. | Arg. F1 | R-L | Proc. | Ans. |

| Close-SourceLLM | Close-SourceLLM | Close-SourceLLM | Close-SourceLLM | Close-SourceLLM | Close-SourceLLM | Close-SourceLLM | Close-SourceLLM | Close-SourceLLM | Close-SourceLLM | Close-SourceLLM | Close-SourceLLM | Close-SourceLLM |

| ChatGPT (0-shot) GPT-4 (0-shot) | 83.33 80.28 | 58.67 55.52 | 7.40 5.98 | 45.61 48.74 | 23.08 28.69 | 81.62 77.80 | 54.67 55.26 | 8.19 5.12 | 40.08 47.45 | 22.85 30.61 | 33 41 | 37 44 |

| Model Size = 7B | Model Size = 7B | Model Size = 7B | Model Size = 7B | Model Size = 7B | Model Size = 7B | Model Size = 7B | Model Size = 7B | Model Size = 7B | Model Size = 7B | Model Size = 7B | Model Size = 7B | Model Size = 7B |

| ToolLLaMA (len = 4096) | 66.42 | 19.47 | 33.94 | 15.98 | 2.06 | 68.21 | 30.75 | 25.35 | 25.07 | 5.78 | - | - |

| ToolLLaMA (len = 8192) | 77.02 | 47.56 | 4.03 | 42.00 | 15.26 | 77.76 | 45.07 | 3.45 | 40.41 | 18.10 | - | - |

| Single-LLM | 81.92 | 53.26 | 2.32 | 45.57 | 42.66 | 84.61 | 56.54 | 2.26 | 50.09 | 47.99 | 11 | 11 |

| Multi-LLM one-stage | 87.52 | 45.11 | 7.71 | 38.02 | 41.01 | 88.42 | 53.40 | 2.52 | 45.79 | 46.39 | 2 | 9 |

| Single-LLM multi-task | 85.06 | 51.83 | 2.96 | 44.25 | 27.40 | 86.55 | 56.89 | 2.77 | 49.50 | 32.58 | 28 | 18 |

| α -UMi w/o reuse | 88.24 | 55.50 | 0.53 | 48.97 | 39.98 | 87.91 | 58.02 | 2.32 | 50.55 | 42.59 | - | - |

| α -UMi w/ reuse | 88.92 | 58.94 | 0.57 | 52.24 | 43.17 | 89.72 | 60.47 | 0.45 | 53.60 | 46.26 | 41 | 35 |

| Model Size = 13B | Model Size = 13B | Model Size = 13B | Model Size = 13B | Model Size = 13B | Model Size = 13B | Model Size = 13B | Model Size = 13B | Model Size = 13B | Model Size = 13B | Model Size = 13B | Model Size = 13B | Model Size = 13B |

| Single-LLM | 81.01 | 59.67 | 1.53 | 52.35 | 42.16 | 86.74 | 60.04 | 2.03 | 52.94 | 48.46 | 33 | 29 |

| Multi-LLM one-stage | 86.49 | 50.54 | 5.11 | 41.96 | 36.21 | 87.45 | 56.71 | 3.23 | 47.49 | 41.62 | 22 | 19 |

| Single-LLM multi-task | 86.36 | 58.96 | 2.00 | 49.28 | 28.41 | 86.64 | 62.78 | 3.42 | 53.29 | 35.46 | 28 | 16 |

| α -UMi w/o reuse | 86.33 | 60.07 | 0.39 | 53.11 | 35.09 | 87.75 | 61.63 | 2.95 | 52.54 | 37.70 | - | - |

| α -UMi w/ reuse | 87.87 | 63.03 | 0.37 | 57.65 | 43.46 | 88.73 | 64.21 | 0.24 | 57.38 | 42.50 | 41 | 35 |

## 4.3 Implementation Details

We opt for LLaMA-2-chat-7B/13B (Touvron et al., 2023b) as the backbone to implement our framework. In the first stage of our GLPFT, we conduct fine-tuning for the backbone LLM with a learning rate of 5e-5 for 2 epochs. Then, we create three copies of this fine-tuned backbone to instantiate the planner, caller, and summarizer, respectively. In the second stage, we fine-tune the three LLMs with a reduced learning rate of 1e-5. The planner and caller undergo fine-tuning for 1 epoch, while the summarizer undergoes fine-tuning for 2 epochs. Weset the global batch size to 48 and employ DeepSpeed ZeRO Stage3 (Rajbhandari et al., 2021) to speed up the fine-tuning process. All experimental results are obtained using greedy decoding, with the maximum sequence length set at 4096.

## 4.4 Baselines

We compare our method with three baseline methods, namely Single-LLM, Multi-LLMone-stage and Single-LLM multi-task . Single-LLM refers to the traditional single-LLM tool learning approach. Multi-LLMone-stage involves directly fine-tuning the planner, caller, and summarizer on their own sub-task datasets, without employing our two-stage fine-tuning strategy. Single-LLM multi-task refers to using the same LLM to fulfill the roles of planner, caller, and summarizer. This particular LLM is fine-tuned on a combined dataset comprising the three sub-task datasets and functions similarly to our multi-LLM framework. We also evaluate the performance of ChatGPT and GPT-4 with 0-shot setting, and ToolLLaMA (Qin et al., 2023b), which is a 7B LLaMA model fine-tuned on ToolBench.

## 5 Results and Analysis

## 5.1 Overall Results

The main results are presented in Table 1. We elaborate on our observations from five perspectives:

First, when compared to ChatGPT and ToolLLama, our α -UMi outperforms them on all metrics except for the answer correctness on ToolAlpaca. α -UMi exceeds these two baselines in terms of Plan ACCand R-L considerably, demonstrating its alignment with annotated reference in terms of planning execution steps and generating final answers. It is worth mentioning that ToolLLaMA only exhibits acceptable performance when the input length is 8192. At an input length of 4096, ToolLLaMA shows deterioration across various metrics, particularly exhibiting a very high hallucination rate. In contrast, α -UMi only requires the input length of 4096 to achieve a satisfying performance. We attribute this to our multi-LLM system design, which allows each small LLM to focus on its sub-task, thereby reducing the requirement for input length.

Second, α -UMi outperforms the Single-LLM agent. On ToolBench, we unveil substantial improvements with α -UMi, particularly in Plan ACC, Act. EM, Hallu., and Arg. F1. On ToolAlpaca, α -UMi also surpasses Single-LLM with both 7B and 13B backbones. These findings not only confirm the effectiveness of α -UMi in enhancing the agent's planning and API calling capabilities but also suggest a notable decrease in hallucinations, which can significantly elevate user satisfaction.

Third, when comparing the results of methods with different model sizes, we note that agents with a 13B backbone exhibit superior performance com-

Table 2: Results of real-time evaluation on ToolBench. 'ReACT' and 'DFSDT' denote reasoning strategies used to construct agents, as detailed in Section 5.2. 'Win' measures the relative win rate of each agent compared to ChatGPT-ReACT ('Method'=ReACT, 'Model'=ChatGPT), which does not have an associated win rate.

| Method | Model | I1-Inst. | I1-Inst. | I1-Tool | I1-Tool | I1-Cat. | I1-Cat. | I2-Inst. | I2-Inst. | I2-Cat. | I2-Cat. | I3-Inst. | I3-Inst. | Average | Average |

|----------|--------------|------------|------------|-----------|-----------|-----------|-----------|------------|------------|-----------|-----------|------------|------------|-----------|-----------|

| Method | Model | Pass | Win | Pass | Win | Pass | Win | Pass | Win | Pass | Win | Pass | Win | Pass | Win |

| | Claude-2 | 5.5 | 31.0 | 3.5 | 27.8 | 5.5 | 33.8 | 6.0 | 35.0 | 6.0 | 31.5 | 14.0 | 47.5 | 6.8 | 34.4 |

| | ChatGPT | 41.5 | - | 44.0 | - | 44.5 | - | 42.5 | - | 46.5 | - | 22.0 | - | 40.2 | - |

| | ToolLLaMA | 25.0 | 45.0 | 29.0 | 42.0 | 33.0 | 47.5 | 30.5 | 50.8 | 31.5 | 41.8 | 25.0 | 55.0 | 29.0 | 47.0 |

| | GPT-4 | 53.5 | 60.0 | 50.0 | 58.8 | 53.5 | 63.5 | 67.0 | 65.8 | 72.0 | 60.3 | 47.0 | 78.0 | 57.2 | 64.4 |

| | Claude-2 | 20.5 | 38.0 | 31.0 | 44.3 | 18.5 | 43.3 | 17.0 | 36.8 | 20.5 | 33.5 | 28.0 | 65.0 | 43.1 | 43.5 |

| | ChatGPT | 54.5 | 60.5 | 65.0 | 62.0 | 60.5 | 57.3 | 75.0 | 72.0 | 71.5 | 64.8 | 62.0 | 69.0 | 64.8 | 64.3 |

| | ToolLLaMA | 57.0 | 55.0 | 61.0 | 55.3 | 62.0 | 54.5 | 77.0 | 68.5 | 77.0 | 58.0 | 66.0 | 69.0 | 60.7 | 60.0 |

| | GPT-4 | 60.0 | 67.5 | 71.5 | 67.8 | 67.0 | 66.5 | 79.5 | 73.3 | 77.5 | 63.3 | 71.0 | 84.0 | 71.1 | 70.4 |

| | α -UMi (7B) | 65.0 | 59.5 | 68.0 | 66.0 | 64.0 | 57.0 | 81.5 | 76.5 | 76.5 | 72.0 | 70.0 | 63.0 | 70.9 | 65.9 |

| | α -UMi (13B) | 65.5 | 61.5 | 69.0 | 66.0 | 65.0 | 62.5 | 84.5 | 75.0 | 81.0 | 74.5 | 71.0 | 66.0 | 72.2 | 67.7 |

pared to their 7B counterparts. This observation implies that the shift from a 7B to a 13B model results in a noteworthy improvement in tool utilization capabilities. Significantly, α -UMi with a 7B backbone even outperforms the Single-LLM baseline with a 13B LLM, confirming our earlier assumption that smaller LLMs can be utilized in our multi-LLM framework to develop each capability and achieve competitive overall performance.

Fourth, α -UMi outperforms Multi-LLMone-stage and Single-LLM multi-task . Multi-LLMone-stage even exhibits suboptimal performance compared to the Single-LLM baseline in metrics assessing API calling abilities, such as Act. EM, Hallu., and Arg. F1. This finding highlights the limitations of Multi-LLMone-stage when training each LLM on individual sub-tasks, compromising the comprehensive understanding of the tool-use task. Moreover, the subpar performance of Single-LLM multi-task reveals a limitation associated with the capacity of 7B and 13B models. The limited model capacity hinders the agent from effectively fulfilling the roles of planner, caller, and summarizer simultaneously. In contrast, through the application of the GLPFT strategy, α -UMi successfully mitigates this limitation, showcasing its effectiveness in achieving comprehensive tool learning capabilities.

Finally, α -UMi w/o reuse represents that instead of reusing the user instructions in the first fine-tuning stage of GLPFT, a new set of user instructions are employed for the second stage of GLPFT. This setup is inspired by Chung et al. (2022), which has demonstrated that increasing the diversity of user instructions during fine-tuning can improve the performance and generalizability of LLMs. However, as presented in Table 1 and visualized in Figure 4, despite the increased diversity of instructions compared to α -UMiw/ reuse, α -UMiw/o reuse does not outperform α -UMiw/ reuse. We attribute this unexpected result to the following explanation: Since the objectives of the two training stages are different, using distinct sets of user instructions, each with its unique distribution, may introduces a harmful inductive bias that solving one group of the instructions in single-LLM format while the other group in multi-LLM format. In contrast, through the reuse of user instructions, the impact of varying distributions from different sets is mitigated.

## 5.2 Real-Time Test on ToolBench

To assess the performance of LLMs for solving real tasks via RapidAPI, we follow the ToolEval method (Qin et al., 2023b) proposed by the ToolBench team to conduct a real-time evaluation on the test set of ToolBench. The LLMs under consideration include Claude-2 (Anthropic, 2023), ChatGPT, GPT-4, and ToolLLaMA. We apply two reasoning strategies for these LLMs to construct tool learning agents: the ReACT method, as introduced in Section 3.1, and the Depth First Search-based Decision Tree (DFSDT) (Qin et al., 2023b), which empowers the agent to evaluate and select between different execution paths. Two metrics are included to measure these LLMs' performance: pass rate , which calculates the percentage of tasks successfully completed, and win rate , which compares the agent's solution path with that of the standard baseline, ChatGPT-ReACT. The above two metrics are assessed by a ChatGPT evaluator with carefully crafted criteria. The empirical results presented in Table 2 demonstrate that our α -UMi (7B) surpasses both ChatGPT and ToolLLaMA by significant margins in terms of pass rate (+6.1 and +10.2, respectively) and win rate (+1.6 and +5.9, respectively).

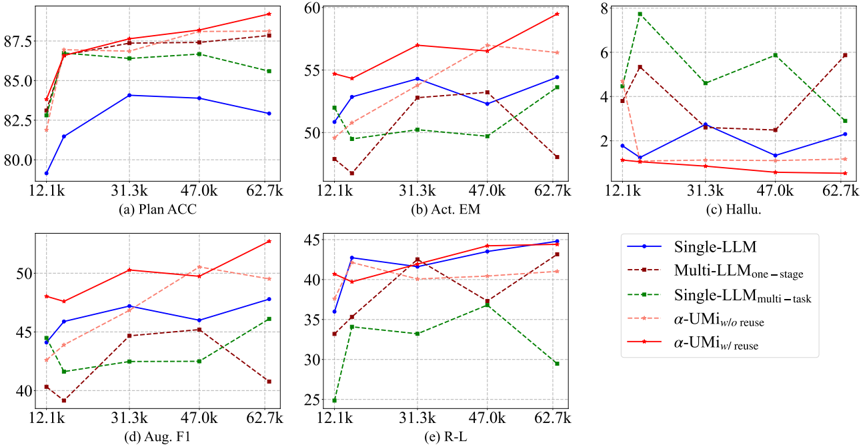

Figure 4: Results of data scaling law study on ToolBench with different evaluation metrics: (a) Plan ACC, (b) Act. EM, (c) Hallu, (d) Arg. F1, and (e) R-L. We randomly sampled five training sets with the scales of 12.1k, 15.7k, 31.3k, 47.0k, and 62.7k instances, accounting for 19.2%, 25%, 50%, 75%, and 100% of the training set, respectively.

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Line Graphs: Model Performance Across Metrics

### Overview

The image contains six line graphs (labeled a-f) comparing the performance of different language model configurations across five metrics: Plan ACC, Act EM, Hallu., Aug. F1, and R-L. Each graph plots performance against dataset size (x-axis: 12.1k–62.7k) with distinct colored lines representing model variants. The legend on the right maps colors to model types.

---

### Components/Axes

- **X-Axes**: Dataset size (12.1k, 31.3k, 47.0k, 62.7k) across all graphs.

- **Y-Axes**:

- (a) Plan ACC: 80–87.5

- (b) Act EM: 2–60

- (c) Hallu.: 2–8

- (d) Aug. F1: 25–50

- (e) R-L: 25–45

- **Legend** (right):

- Blue: Single-LLM

- Dark red: Multi-LLMone-stage

- Green: Single-LLMmulti-task

- Light pink: α-UMi w/o reuse

- Red: α-UMi w/ reuse

---

### Detailed Analysis

#### (a) Plan ACC

- **Trends**:

- Red (α-UMi w/ reuse) starts at ~85, peaks at 87.5 (47.0k), then drops to ~86.

- Blue (Single-LLM) starts at 80, rises to 82.5 (31.3k), then declines to 82.5.

- Green (Single-LLMmulti-task) fluctuates between 82.5–85.

- **Values**:

- At 12.1k: Red ~85, Blue ~80, Green ~82.5.

- At 62.7k: Red ~86, Blue ~82.5, Green ~85.

#### (b) Act EM

- **Trends**:

- Red (α-UMi w/ reuse) peaks at 57.5 (31.3k), then drops to ~55.

- Blue (Single-LLM) rises to 55 (31.3k), then declines to ~50.

- Dark red (Multi-LLMone-stage) fluctuates between 45–55.

- **Values**:

- At 12.1k: Red ~55, Blue ~50, Dark red ~45.

- At 62.7k: Red ~55, Blue ~50, Dark red ~40.

#### (c) Hallu.

- **Trends**:

- Green (Single-LLMmulti-task) starts at 6, drops to 4 (47.0k), then rises to 5.

- Red (α-UMi w/ reuse) peaks at 3 (31.3k), then drops to 2.

- Light pink (α-UMi w/o reuse) fluctuates between 2–4.

- **Values**:

- At 12.1k: Green ~6, Red ~2, Light pink ~3.

- At 62.7k: Green ~5, Red ~2, Light pink ~3.

#### (d) Aug. F1

- **Trends**:

- Red (α-UMi w/ reuse) peaks at 50 (31.3k), then drops to ~45.

- Blue (Single-LLM) rises to 45 (31.3k), then declines to ~42.5.

- Dark red (Multi-LLMone-stage) fluctuates between 35–45.

- **Values**:

- At 12.1k: Red ~45, Blue ~40, Dark red ~35.

- At 62.7k: Red ~45, Blue ~42.5, Dark red ~35.

#### (e) R-L

- **Trends**:

- Red (α-UMi w/ reuse) peaks at 45 (31.3k), then drops to ~40.

- Blue (Single-LLM) rises to 40 (31.3k), then declines to ~35.

- Green (Single-LLMmulti-task) fluctuates between 30–35.

- **Values**:

- At 12.1k: Red ~40, Blue ~35, Green ~30.

- At 62.7k: Red ~40, Blue ~35, Green ~25.

---

### Key Observations

1. **α-UMi w/ reuse** (red) consistently outperforms other models in Plan ACC, Aug. F1, and R-L.

2. **Single-LLMmulti-task** (green) shows the worst performance in Hallu. and R-L, with a sharp drop at 62.7k.

3. **Multi-LLMone-stage** (dark red) exhibits instability, particularly in Act EM (40 at 62.7k vs. 55 at 31.3k).

4. **α-UMi w/o reuse** (light pink) underperforms its "w/ reuse" counterpart across all metrics.

---

### Interpretation

- **Model Efficiency**: α-UMi w/ reuse demonstrates superior performance, suggesting reuse mechanisms enhance accuracy. Single-LLMmulti-task struggles with hallucination (Hallu.) and reasoning (R-L), indicating potential overfitting or task-specific limitations.

- **Dataset Size Impact**: Performance generally improves with larger datasets (e.g., Plan ACC peaks at 47.0k), but plateaus or declines at 62.7k, hinting at diminishing returns or data quality issues.

- **Outliers**: The green line in (c) Hallu. peaks at 6 (12.1k), suggesting initial overconfidence in smaller datasets. The dark red line in (b) Act EM drops sharply at 62.7k, possibly due to model instability at scale.

This analysis highlights trade-offs between model complexity, reuse strategies, and dataset size in language model performance.

</details>

While α -UMi underperforms GPT-4 in win rate , it exhibits pass rates on par with GPT-4 or even exceeds it in certain test groups such as I1-Inst. and I2-Inst. . Combining the findings from Section 5.1 and this section, we note that our multi-LLM agent outperforms several established baselines across diverse metrics on ToolBench, validating its efficacy.

## 5.3 Data Scaling Law

To assess the impact of the amount of training data on performance, we conduct a data scaling law analysis with the 7B backbone on ToolBench, varying the number of annotated training instances from 12.1k to 62.7k. The results in different metrics are depicted in Figure 4. Several observations can be drawn from the results. Firstly, when comparing α -UMi (solid red curves) to Single-LLM (solid blue curves), there are significant and consistent enhancements in metrics such as Plan ACC, Act. EM, Hallu., and Arg. F1 across various scales of training data. While only minor improvements are observed in the R-L metric, which directly reflects the performance of the summarizer, this suggests that the performance enhancement of our framework is mainly attributed to the separation of the planner and the caller. Secondly, the performances of Multi-LLMone-stage and Single-LLM multi-task exhibit severe fluctuations in all metrics except for Plan ACC, indicating instability in training the framework through direct fine-tuning or multi-task fine-tuning. Thirdly, Single-LLM achieves opti-

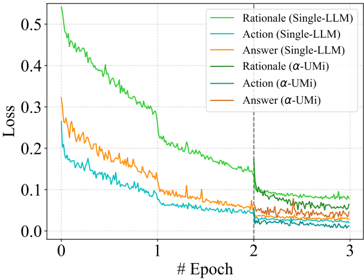

Figure 5: Curves of training loss.

<details>

<summary>Image 5 Details</summary>

### Visual Description

## Line Graph: Model Loss Over Training Epochs

### Overview

The image is a line graph comparing the training loss of different models across 3 epochs. Five lines represent distinct model configurations, with loss values decreasing over time. The graph includes a legend, axis labels, and a vertical dashed line at epoch 2.

### Components/Axes

- **X-axis**: Labeled "# Epoch" with ticks at 0, 1, 2, 3.

- **Y-axis**: Labeled "Loss" with values ranging from 0.0 to 0.5 in increments of 0.1.

- **Legend**: Located in the top-right corner, listing six model configurations:

1. **Rational (Single-LLM)** – Green line

2. **Action (Single-LLM)** – Blue line

3. **Answer (Single-LLM)** – Orange line

4. **Rational (α-UMi)** – Dark green line

5. **Action (α-UMi)** – Dark blue line

6. **Answer (α-UMi)** – Dark orange line

- **Dashed Line**: Vertical line at epoch 2 (x=2).

### Detailed Analysis

1. **Rational (Single-LLM)** (Green):

- Starts at ~0.5 loss at epoch 0.

- Decreases sharply to ~0.05 by epoch 3.

- Shows minor fluctuations but a consistent downward trend.

2. **Action (Single-LLM)** (Blue):

- Begins at ~0.3 loss at epoch 0.

- Drops to ~0.02 by epoch 3.

- Slightly more volatile than the Rational line.

3. **Answer (Single-LLM)** (Orange):

- Starts at ~0.35 loss at epoch 0.

- Declines to ~0.03 by epoch 3.

- Exhibits moderate fluctuations.

4. **Rational (α-UMi)** (Dark Green):

- Initial loss ~0.15 at epoch 0.

- Reduces to ~0.01 by epoch 3.

- Smoother decline compared to Single-LLM variants.

5. **Answer (α-UMi)** (Dark Orange):

- Begins at ~0.1 loss at epoch 0.

- Drops to ~0.005 by epoch 3.

- Most stable and lowest final loss among all lines.

**Note**: The legend lists six models, but only five lines are visible in the graph. The "Action (α-UMi)" (dark blue) line is missing, suggesting a potential error in the image or legend.

### Key Observations

- All models show decreasing loss over epochs, indicating improved performance.

- **α-UMi models** (dark green/orange) consistently outperform Single-LLM models (green/blue/orange) in final loss values.

- The **Rational (Single-LLM)** model has the highest initial loss but the steepest decline.

- The **Answer (α-UMi)** model achieves the lowest final loss (~0.005), suggesting superior efficiency.

### Interpretation

The graph demonstrates that models using the **α-UMi framework** (likely a more advanced or optimized architecture) achieve significantly lower training losses compared to Single-LLM baselines. This implies that α-UMi models may be more effective at minimizing error during training. The Rational (Single-LLM) model, despite starting with the highest loss, shows the most aggressive improvement, possibly due to its design prioritizing rapid convergence. The missing "Action (α-UMi)" line introduces ambiguity, but the visible trends suggest α-UMi models generally outperform their Single-LLM counterparts. The vertical dashed line at epoch 2 may indicate a phase shift in training dynamics, though its significance is unclear without additional context.

</details>

mal results in different metrics at different data scales. For example, it attains peak performance in Plan ACC with 31.3k instances and the best Arg. F1 and R-L with 62.7k instances. This suggests the challenge of obtaining a single LLM that uniformly performs well across all metrics. In contrast, the performance of our framework consistently improves with increased data scale across all metrics.

## 5.4 Why α -UMi Works?

We track the training process of our α -UMi approach to examine what makes it different from the Single-LLM baseline. To further investigate how each capability of the model evolves during training, we track the training loss on the rationale, action, and answer components of target responses. The results are depicted in Figure 5. As introduced in Section 4.3, α -UMi employs GLPFT and deviates from Single-LLM after two training epochs.

Table 3: The cost of training and inference.

| Model | Storage | Flops | Train Time | GPU Mem. | Infer. Time (Per Inst.) |

|-------------------|------------------|-----------------------------|------------------|------------------|---------------------------|

| Model Size = 7B | Model Size = 7B | Model Size = 7B | Model Size = 7B | Model Size = 7B | Model Size = 7B |

| Single-LLM α -UMi | 7B 7B*3 | 4 . 8 ∗ 10 15 6 . 2 ∗ 10 15 | 41.54h 63.34h | 206G 206G | 6.41s 6.27s |

| Model Size = 13B | Model Size = 13B | Model Size = 13B | Model Size = 13B | Model Size = 13B | Model Size = 13B |

| Single-LLM α -UMi | 13B 13B*3 | 7 . 2 ∗ 10 15 9 . 7 ∗ 10 15 | 89.56h 129.96h | 308G 308G | 11.91s 11.09s |

Therefore, our discussion focuses on the training curves of α -UMi from the third epoch.

The plotted curves reveal a consistent decrease in the training loss for rationale, action, and answer components during the initial two epochs. However, in the third epoch, the losses of Single-LLM exhibit a nearly stagnant trend. In contrast, α -UMi experiences continued reductions in the losses associated with rationale and action, indicating further optimization within our α -UMi framework.

These observations suggest that the key factor contributing to the success of α -UMi lies in its ability to surpass the performance upper-bound of Single-LLM. This is achieved by leveraging GLPFT and decomposing the agent into a multiLLM system, even after Single-LLM has attained its upper-bound abilities via sufficient fine-tuning.

## 5.5 Cost of α -UMi

Given that α -UMi operates as a multi-LLM framework, it introduces potential additional costs in terms of training, storage, and deployment. Table 3 provides a summary of the costs associated with Single-LLM and α -UMi, based on execution logs on 8 Nvidia A100 GPUs with a 40G capacity. Our observations are threefold. Firstly, owing to its composition of a planner, a caller, and a summarizer, α -UMi demands three times the storage capacity compared to the Single-LLM framework, assuming they employ backbones of the same size. Secondly, the training of α -UMi requires 1.3 times the computational resources and 1.5 times the training duration compared to Single-LLM, while the GPU memory cost for training remains consistent between the two methods. Thirdly, during inference, the time required for both Single-LLM and α -UMi is similar, as we only distribute sub-tasks (rationale, action, and answer) to the three LLMs, without forcing them to generate extra contents, thus bringing nearly no extra cost when inference.

Note that based on the findings presented in Table 1, α -UMi with a 7B backbone can outperform Single-LLM with a 13B backbone. Furthermore, the cost associated with α -UMi featuring a 7B model is lower than that of Single-LLM featuring a 13B model, both in terms of training and inference. This underscores the cost-effectiveness of α -UMi as a means to achieve, and even surpass, the performance of a larger LLM.

## 5.6 Case Study

Figure 6 and Figure 7 show two cases of our α -UMi executing real tasks in ToolBench. In the case of Figure 6, the user specifies the available tools in the instructions, making the tool invocation process simpler. The α -UMi framework completes the task within two steps through the collaboration of the planner, caller, and summarizer. In the case of Figure 7, α -UMi initially attempts to use the 'video\_for\_simple\_youtube\_search' to obtain detailed video information at step 0. However, it realizes that this API has broken and cannot be invoked. Therefore, the planner informs the caller to try an alternative API and obtain accurate information. Ultimately, the user's task is successfully resolved.

To further analyze the specific advantages of our α -UMi and Single-LLM frameworks in task execution, we have presented some comparative examples of the two frameworks in Tables 5, 6, 7, and 8. Tables 5 and 6 illustrate simple tasks that require only a single step tool invocation to be completed, in which case both α -UMi and Single-LLM can successfully accomplish the tasks. However, in the complex tasks presented in Tables 7 and 8, where the tasks require the models to accomplish some composite objectives, α -UMi's planner can quickly understand the user's intentions and plan out steps based on the prompts provided by the caller and summarizer. On the other hand, Single-LLM exhibited some behaviors that did not align with the user's intentions during planning, such as invoking APIs that did not match the intent and entering loops in these misaligned APIs, ultimately failing to provide sufficient information to complete the user's instructions. This result indicates that α -UMi's decomposing Single-LLM into a planner, caller, and summarizer reduces the burden on the model during reasoning, allowing the planner model to focus solely on understanding the user's intentions and making effective plans, thereby better accomplishing the tasks.

## 6 Conclusion

The objective of this paper is to address the challenge of designing and fine-tuning a single small

LLM to acquire the extensive abilities required for a tool learning agent. To this end, we introduce α -UMi, a multi-LLM tool learning agent framework that breaks down the tool learning task into three distinct sub-tasks delegated to three small LLMs: planner, caller, and summarizer. Moreover, we propose a global-to-local progressive fine-tuning strategy and demonstrate its effectiveness in training the multi-LLM framework. We evaluate our approach against single-LLM baselines on four tool learning benchmarks, supplemented by various indepth analyses, including a data scaling law experiment. Our findings highlight the significance of our proposed method, validating that the system's design for decomposing tool learning tasks and the progressive fine-tuning strategy contribute to enhancing the upper-bound ability of a single LLM. Besides, we acknowledge the potential to utilize small LLMs to surpass the capabilities of an agent framework that relies on a single, larger LLM.

## 7 Limitations

While our framework has been demonstrated to outperform the single-LLM framework in tool learning tasks, there are still some limitations to this work. Firstly, there are additional avenues for exploration, such as integrating small LLMs with a powerful closed-source LLM like GPT-4 to create a 'large + small' collaborative multi-LLM tool learning agent. Secondly, our framework could be further optimized to enhance its flexibility and applicability to a wider range of agent tasks.

## 8 Ethical Statement

The α -UMi framework is trained on the public ToolBench and ToolAlpaca benchmarks, with their original purpose being to enhance the tool invocation capabilities of LLMs and improve their performance in assisting users to complete tasks. This framework has not been trained on any data that poses ethical risks. The backbone model it uses, LLaMA-2-chat, has undergone safety alignment.

## References

Anthropic. 2023. Claude-2. Website. https://www. anthropic.com/news/claude-2 .

Baian Chen, Chang Shu, Ehsan Shareghi, Nigel Collier, Karthik Narasimhan, and Shunyu Yao. 2023. Fireact: Toward language agent fine-tuning. arXiv preprint arXiv:2310.05915 .

Hyung Won Chung, Le Hou, Shayne Longpre, Barret Zoph, Yi Tay, William Fedus, Yunxuan Li, Xuezhi Wang, Mostafa Dehghani, Siddhartha Brahma, et al. 2022. Scaling instruction-finetuned language models. arXiv preprint arXiv:2210.11416 .

Karl Cobbe, Vineet Kosaraju, Mohammad Bavarian, Mark Chen, Heewoo Jun, Lukasz Kaiser, Matthias Plappert, Jerry Tworek, Jacob Hilton, Reiichiro Nakano, et al. 2021. Training verifiers to solve math word problems. arXiv preprint arXiv:2110.14168 .

Guanting Dong, Hongyi Yuan, Keming Lu, Chengpeng Li, Mingfeng Xue, Dayiheng Liu, Wei Wang, Zheng Yuan, Chang Zhou, and Jingren Zhou. 2023. How abilities in large language models are affected by supervised fine-tuning data composition. arXiv preprint arXiv:2310.05492 .

Zhibin Gou, Zhihong Shao, Yeyun Gong, Yujiu Yang, Minlie Huang, Nan Duan, Weizhu Chen, et al. 2023. Tora: A tool-integrated reasoning agent for mathematical problem solving. arXiv preprint arXiv:2309.17452 .

Significant Gravitas. 2023. Autogpt: the heart of the open-source agent ecosystem. Github repository. https://github.com/Significant-Gravitas/ Auto-GPT .

Dan Hendrycks, Collin Burns, Saurav Kadavath, Akul Arora, Steven Basart, Eric Tang, Dawn Song, and Jacob Steinhardt. 2021. Measuring mathematical problem solving with the math dataset. In Thirtyfifth Conference on Neural Information Processing Systems Datasets and Benchmarks Track (Round 2) .

Sirui Hong, Xiawu Zheng, Jonathan Chen, Yuheng Cheng, Ceyao Zhang, Zili Wang, Steven Ka Shing Yau, Zijuan Lin, Liyang Zhou, Chenyu Ran, et al. 2023. Metagpt: Meta programming for multiagent collaborative framework. arXiv preprint arXiv:2308.00352 .

Chenliang Li, Hehong Chen, Ming Yan, Weizhou Shen, Haiyang Xu, Zhikai Wu, Zhicheng Zhang, Wenmeng Zhou, Yingda Chen, Chen Cheng, Hongzhu Shi, Ji Zhang, Fei Huang, and Jingren Zhou. 2023. Modelscope-agent: Building your customizable agent system with open-source large language models.

Yohei Nakajima. 2023. Babyagi. Github repository. https://github.com/yoheinakajima/babyagi .

Reiichiro Nakano, Jacob Hilton, Suchir Balaji, Jeff Wu, Long Ouyang, Christina Kim, Christopher Hesse, Shantanu Jain, Vineet Kosaraju, William Saunders, et al. 2021. Webgpt: Browser-assisted questionanswering with human feedback. arXiv preprint arXiv:2112.09332 .

OpenAI. 2022. Chatgpt: Conversational ai language model. Website. https://openai.com/chatgpt .

- OpenAI. 2023a. Gpt-4 code interpreter. Website. https://chat.openai.com/?model= gpt-4-code-interpreter .

OpenAI. 2023b. Gpt-4 technical report.

- Joon Sung Park, Joseph O'Brien, Carrie Jun Cai, Meredith Ringel Morris, Percy Liang, and Michael S Bernstein. 2023. Generative agents: Interactive simulacra of human behavior. In Proceedings of the 36th Annual ACM Symposium on User Interface Software and Technology , pages 1-22.

- Shishir G. Patil, Tianjun Zhang, Xin Wang, and Joseph E. Gonzalez. 2023. Gorilla: Large language model connected with massive apis. arXiv preprint arXiv:2305.15334 .

- Chen Qian, Xin Cong, Cheng Yang, Weize Chen, Yusheng Su, Juyuan Xu, Zhiyuan Liu, and Maosong Sun. 2023. Communicative agents for software development. arXiv preprint arXiv:2307.07924 .

- Yujia Qin, Shengding Hu, Yankai Lin, Weize Chen, Ning Ding, Ganqu Cui, Zheni Zeng, Yufei Huang, Chaojun Xiao, Chi Han, et al. 2023a. Tool learning with foundation models. arXiv preprint arXiv:2304.08354 .

- Yujia Qin, Shihao Liang, Yining Ye, Kunlun Zhu, Lan Yan, Yaxi Lu, Yankai Lin, Xin Cong, Xiangru Tang, Bill Qian, Sihan Zhao, Runchu Tian, Ruobing Xie, Jie Zhou, Mark Gerstein, Dahai Li, Zhiyuan Liu, and Maosong Sun. 2023b. Toolllm: Facilitating large language models to master 16000+ real-world apis.

- Samyam Rajbhandari, Olatunji Ruwase, Jeff Rasley, Shaden Smith, and Yuxiong He. 2021. Zero-infinity: Breaking the gpu memory wall for extreme scale deep learning. In SC21: International Conference for High Performance Computing, Networking, Storage and Analysis , pages 1-15. IEEE Computer Society.

- Timo Schick, Jane Dwivedi-Yu, Roberto Dessì, Roberta Raileanu, Maria Lomeli, Luke Zettlemoyer, Nicola Cancedda, and Thomas Scialom. 2023. Toolformer: Language models can teach themselves to use tools. arXiv preprint arXiv:2302.04761 .

- Yongliang Shen, Kaitao Song, Xu Tan, Dongsheng Li, Weiming Lu, and Yueting Zhuang. 2023. Hugginggpt: Solving ai tasks with chatgpt and its friends in hugging face. arXiv preprint arXiv:2303.17580 .

- Noah Shinn, Federico Cassano, Ashwin Gopinath, Karthik R Narasimhan, and Shunyu Yao. 2023. Reflexion: Language agents with verbal reinforcement learning. In Thirty-seventh Conference on Neural Information Processing Systems .

- Qiaoyu Tang, Ziliang Deng, Hongyu Lin, Xianpei Han, Qiao Liang, and Le Sun. 2023. Toolalpaca: Generalized tool learning for language models with 3000 simulated cases. arXiv preprint arXiv:2306.05301 .

- Hugo Touvron, Thibaut Lavril, Gautier Izacard, Xavier Martinet, Marie-Anne Lachaux, Timothée Lacroix, Baptiste Rozière, Naman Goyal, Eric Hambro, Faisal Azhar, Aurelien Rodriguez, Armand Joulin, Edouard Grave, and Guillaume Lample. 2023a. Llama: Open and efficient foundation language models. arXiv preprint arXiv:2302.13971 .

- Hugo Touvron, Louis Martin, Kevin Stone, Peter Albert, Amjad Almahairi, Yasmine Babaei, Nikolay Bashlykov, Soumya Batra, Prajjwal Bhargava, Shruti Bhosale, et al. 2023b. Llama 2: Open foundation and fine-tuned chat models. arXiv preprint arXiv:2307.09288 .

- Guanzhi Wang, Yuqi Xie, Yunfan Jiang, Ajay Mandlekar, Chaowei Xiao, Yuke Zhu, Linxi Fan, and Anima Anandkumar. 2023. Voyager: An open-ended embodied agent with large language models. arXiv preprint arXiv:2305.16291 .

- Xuezhi Wang, Jason Wei, Dale Schuurmans, Quoc V Le, Ed H Chi, Sharan Narang, Aakanksha Chowdhery, and Denny Zhou. 2022. Self-consistency improves chain of thought reasoning in language models. In The Eleventh International Conference on Learning Representations .

- Jason Wei, Xuezhi Wang, Dale Schuurmans, Maarten Bosma, Fei Xia, Ed Chi, Quoc V Le, Denny Zhou, et al. 2022. Chain-of-thought prompting elicits reasoning in large language models. Advances in Neural Information Processing Systems , 35:24824-24837.

- Jimmy Wei, Kurt Shuster, Arthur Szlam, Jason Weston, Jack Urbanek, and Mojtaba Komeili. 2023. Multi-party chat: Conversational agents in group settings with humans and models. arXiv preprint arXiv:2304.13835 .

- Chenfei Wu, Shengming Yin, Weizhen Qi, Xiaodong Wang, Zecheng Tang, and Nan Duan. 2023a. Visual chatgpt: Talking, drawing and editing with visual foundation models. arXiv preprint arXiv:2303.04671 .

- Qingyun Wu, Gagan Bansal, Jieyu Zhang, Yiran Wu, Shaokun Zhang, Erkang Zhu, Beibin Li, Li Jiang, Xiaoyun Zhang, and Chi Wang. 2023b. Autogen: Enabling next-gen llm applications via multiagent conversation framework. arXiv preprint arXiv:2308.08155 .

- Linyao Yang, Hongyang Chen, Zhao Li, Xiao Ding, and Xindong Wu. 2023a. Chatgpt is not enough: Enhancing large language models with knowledge graphs for fact-aware language modeling. arXiv preprint arXiv:2306.11489 .

- Rui Yang, Lin Song, Yanwei Li, Sijie Zhao, Yixiao Ge, Xiu Li, and Ying Shan. 2023b. Gpt4tools: Teaching large language model to use tools via self-instruction. arXiv preprint arXiv:2305.18752 .

- Zhengyuan Yang, Linjie Li, Jianfeng Wang, Kevin Lin, Ehsan Azarnasab, Faisal Ahmed, Zicheng Liu,

Ce Liu, Michael Zeng, and Lijuan Wang. 2023c. Mmreact: Prompting chatgpt for multimodal reasoning and action. arXiv preprint arXiv:2303.11381 .

- Shunyu Yao, Dian Yu, Jeffrey Zhao, Izhak Shafran, Thomas L Griffiths, Yuan Cao, and Karthik Narasimhan. 2023. Tree of thoughts: Deliberate problem solving with large language models. arXiv preprint arXiv:2305.10601 .

- Shunyu Yao, Jeffrey Zhao, Dian Yu, Nan Du, Izhak Shafran, Karthik R Narasimhan, and Yuan Cao. 2022. React: Synergizing reasoning and acting in language models. In The Eleventh International Conference on Learning Representations .

- Qin Yujia, Yankai Lin Shengding Hu, Weize Chen, Ning Ding, Ganqu Cui, Zheni Zeng, et al. 2023. Tool learning with foundation models. arXiv preprint arXiv:2304.08354 .

- Aohan Zeng, Mingdao Liu, Rui Lu, Bowen Wang, Xiao Liu, Yuxiao Dong, and Jie Tang. 2023. Agenttuning: Enabling generalized agent abilities for llms. arXiv preprint arXiv:2310.12823 .

- Wanjun Zhong, Lianghong Guo, Qiqi Gao, and Yanlin Wang. 2023. Memorybank: Enhancing large language models with long-term memory. arXiv preprint arXiv:2305.10250 .

Xizhou Zhu, Yuntao Chen, Hao Tian, Chenxin Tao, Weijie Su, Chenyu Yang, Gao Huang, Bin Li, Lewei Lu, Xiaogang Wang, et al. 2023. Ghost in the minecraft: Generally capable agents for open-world enviroments via large language models with text-based knowledge and memory. arXiv preprint arXiv:2305.17144 .

## A System prompts

## A.1 P plan for ToolBench and ToolAlpaca

You have assess to the following apis: {doc}

The conversation history is:

{history}

You are the assistant to plan what to do next and whether is caller's or conclusion's turn to answer.

Answer with a following format:

The thought of the next step, followed by Next: caller or conclusion or give up.

## A.2 P call for ToolBench and ToolAlpaca

You have assess to the following apis: {doc}

The conversation history is:

{history}

The thought of this step is:

{thought}

Base on the thought make an api call in the following format:

Action: the name of api that should be called in this step, should be exactly in {tool\_names},

Action Input: the api call request.

## A.3 P sum for ToolBench and ToolAlpaca

Make a conclusion based on the conversation history: {history}

## A.4 P plan for MATH and GSM8K

Solve the math problem step by step by integrating step-by-step reasoning and Python code,

The problem is: {instruction}

The historical execution logs are:

{history} You are the assistant to plan what to do next, and shooce caller to generate code or conclusion to answer the problem.

Answer with a following format:

The thought of the next step, followed by Next: caller or conclusion.

## A.5 P call for MATH and GSM8K

The problem is: {instruction} The historical execution logs are: {history}

The thought of this step is:

{thought} generate the code for this step

## A.6 P sum for MATH and GSM8K

The problem is: {instruction} The historical execution logs are: {history} Make a conclusion based on the conversation history

## B Details of Benchmarks

## B.1 ToolBench

ToolBench (Qin et al., 2023b) is a benchmark for evaluating an agent's ability to call APIs. The ToolBench team collects 16,464 real-world APIs from RapidAPI and a total of 125,387 execution trajectories as the training corpus. We randomly sample 62,694 execution trajectories as the training set, and the average number of execution steps is 4.1.

The test set of ToolBench is divided into 6 groups, namely I1-instruction, I1-tool, I1-category, I2-instruction, I2-category, and I3-instruction. The groups whose name ends with 'instruction' means the test instructions in these groups use the tools in the training set, which is the in-domain test data. Otherwise, the groups whose name ends with 'tool' or 'category' means the test instructions do not use the tools in the training set, which is the outof-domain test data. Each group contains 100 user instructions, therefore the total in-domain test set contains 400 instructions, while the out-of-domain test set contains 200 instructions.

The original evaluation metrics in ToolBench are the pass rate and win rate judged by ChatGPT. However, as introduced in Section 4.2, the APIs in RapidAPI update every day, which can cause network block, API breakdown, and exhausted quota. Therefore, to make a relatively fair comparison, we adopt the idea of Modelscope-Agent (Li et al., 2023) to compare the predictions of our model with the annotated GPT-4 outputs on the step level. Specifically, for the t th step, we input the model with the previous trajectory of GPT-4, ask our framework to generate the rationale and action of this step, and then compare the generated rationale and action of this step with the output of GPT-4.

## B.2 ToolAlpaca

ToolAlpaca is another benchmark for evaluating API calling. Unlike ToolBench, the APIs and API calling results in ToolAlpaca are mocked from ChatGPT by imitating how the real APIs work. The

Table 4: Overall results on MATH and GSM8K.

| Model | MATH GSM8K ACC | MATH GSM8K ACC |

|-----------------------|------------------|------------------|

| Model Size = 7B | Model Size = 7B | Model Size = 7B |

| Single-LLM | 17.38 | 37.90 |

| Multi-LLM one-stage | 15.46 | 38.96 |

| Single-LLM multi-task | 14.18 | 27.97 |

| α -UMi | 25.60 | 49.73 |

| Model Size = 13B | Model Size = 13B | Model Size = 13B |

| Single-LLM | 20.26 | 44.88 |

| Multi-LLM one-stage | 20.32 | 44.57 |

| Single-LLM multi-task | 15.34 | 34.79 |

| α -UMi | 28.54 | 54.20 |

total number of training instances in ToolAlpaca is 4098, with an average of 2.66 execution steps per instance. The test set of ToolAlpaca contains 100 user instructions. The evaluation of ToolAlpaca is carried out by a simulator where the agent solves the instruction with the tools mocked by ChatGPT. Finally, GPT-4 judges if the execution process of the agent is consistent with the reference process pre-generated by ChatGPT (Proc. correctness) and whether the final answer generated by the agent can solve the user instruction (Ans. correctness).

## C Static Evaluation on ToolBench

The evaluation method for ToolBench introduced in Section 4.2 is a static approach that assesses the output of the agent at each step individually. Specifically, for each step t , given the ground-truth annotation of the previous execution trajectory τ ∗ <t , the agent generates the rationale ˆ r t and action ˆ a t for this step:

$$\hat { r } _ { t } , \hat { a } _ { t } = A g e n t ( \tau _ { < t } ^ { * } ) . \quad ( 5 )$$

Then, metrics are computed by comparing the generated ˆ r t and ˆ a t with the annotated ground-truth rationale r ∗ t and action a ∗ t for this step:

$$M e t r i c = E v a l u a t e ( \hat { r } _ { t } , \hat { a } _ { t } , r _ { t } ^ { * } , a _ { t } ^ { * } ) . \quad ( 6 )$$

The advantage of this evaluation method is as follows. At each step, the agent only needs to take the previous ground-truth trajectory as input and outputs the current step's rationale and action. This prevents error propagation due to factors such as network blocks, API breakdowns, and exhausted quotas in any particular step, which could affect the fairness of comparison. This evaluation method is an effective complement to real-time evaluation.

## D α -UMi on Other Benchmarks

## D.1 MATH and GSM8K

The MATH (Hendrycks et al., 2021) and GSM8K (Cobbe et al., 2021) benchmarks are originally designed to test the mathematical reasoning ability of LLMs. Following ToRA (Gou et al., 2023), we employ a program-aided agent to solve the mathematical problems presented in these datasets. In our scenario, the planner will generate certain rationales and comments to guide the generation of program, the caller will generate program to conduct mathematical calculation, and finally the summarizer will conclude the final answer. Since ToRA has not released its training data, to facilitate the training of our framework, we utilize gpt-3.5-turbo-1106 (OpenAI, 2022) and gpt-4 (OpenAI, 2023b) to collect execution trajectories in the training set of MATH and GSM8K and filter out the trajectories that do not lead to the correct final answer. Finally, we collect 5536 trajectories from ChatGPT, 573 trajectories from GPT-4 on MATH, and 6213 from ChatGPT on GSM8K.

The test set sizes of MATH and GSM8K are 5000 and 1319, respectively. During testing, we feed our agent with each of the test instructions and execute the agent with a Python code interpreter. We follow the original evaluation methods of MATH and GSM8K to evaluate the performance of the agent with the accuracy of the final answer. As the evaluation results shown in Table 4, our α -UMi can still outperform the baselines on MATH and GSM8K, verifying its effectiveness.

Figure 6: A case study of α -UMi. In this case, the user specifies the available tools in the instructions, making the tool invocation process simpler. The α -UMi framework completes the task within two steps through the collaboration of the planner, caller, and summarizer.

<details>

<summary>Image 6 Details</summary>

### Visual Description

## Flowchart: Music Search System Workflow

### Overview

The diagram illustrates a conversational workflow between a user and a planner system, demonstrating how to search for music tracks and artists using the Deezer and Shazam APIs. The process involves iterative steps of querying APIs, processing responses, and transitioning between components (Planner, Caller, Summarizer).

### Components/Axes

- **Steps**: Labeled as Step 0, Step 1, Step 2, and Step 8.

- **Actions**: