# Medusa: Simple LLM Inference Acceleration Framework with Multiple Decoding Heads

**Authors**: Tianle Cai, Yuhong Li, Zhengyang Geng, Hongwu Peng, Jason D. Lee, Deming Chen, Tri Dao

Abstract

Large Language Models (LLMs) employ auto-regressive decoding that requires sequential computation, with each step reliant on the previous one’s output. This creates a bottleneck as each step necessitates moving the full model parameters from High-Bandwidth Memory (HBM) to the accelerator’s cache. While methods such as speculative decoding have been suggested to address this issue, their implementation is impeded by the challenges associated with acquiring and maintaining a separate draft model. In this paper, we present Medusa, an efficient method that augments LLM inference by adding extra decoding heads to predict multiple subsequent tokens in parallel. Using a tree-based attention mechanism, Medusa constructs multiple candidate continuations and verifies them simultaneously in each decoding step. By leveraging parallel processing, Medusa substantially reduces the number of decoding steps required. We present two levels of fine-tuning procedures for Medusa to meet the needs of different use cases: Medusa -1: Medusa is directly fine-tuned on top of a frozen backbone LLM, enabling lossless inference acceleration. Medusa -2: Medusa is fine-tuned together with the backbone LLM, enabling better prediction accuracy of Medusa heads and higher speedup but needing a special training recipe that preserves the model’s capabilities. Moreover, we propose several extensions that improve or expand the utility of Medusa, including a self-distillation to handle situations where no training data is available and a typical acceptance scheme to boost the acceptance rate while maintaining generation quality. We evaluate Medusa on models of various sizes and training procedures. Our experiments demonstrate that Medusa -1 can achieve over 2.2 $×$ speedup without compromising generation quality, while Medusa -2 further improves the speedup to 2.3-2.8 $×$ .

Machine Learning, ICML

1 Introduction

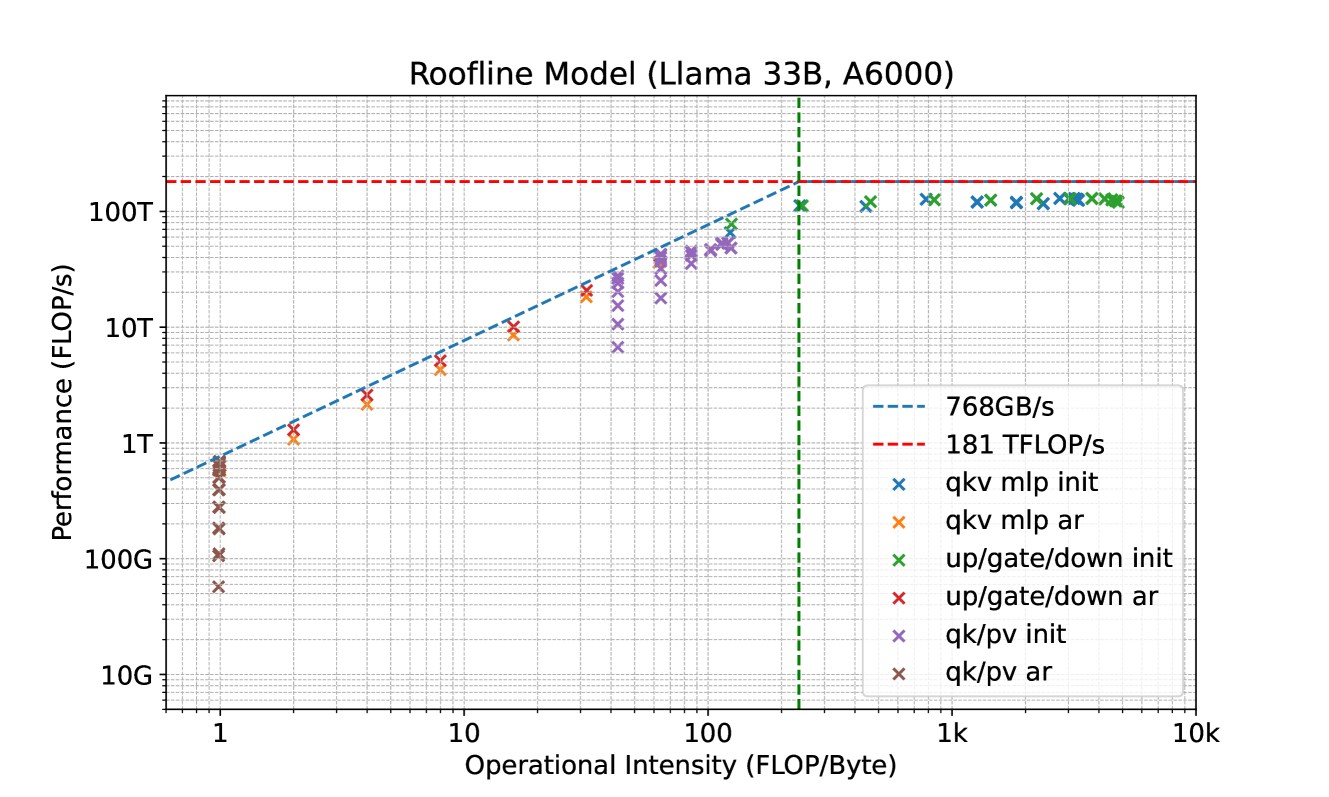

The recent advancements in Large Language Models (LLMs) have demonstrated that the quality of language generation significantly improves with an increase in model size, reaching billions of parameters (Brown et al., 2020; Chowdhery et al., 2022; Zhang et al., 2022; Hoffmann et al., 2022; OpenAI, 2023; Google, 2023; Touvron et al., 2023). However, this growth has led to an increase in inference latency, which poses a significant challenge in practical applications. From a system perspective, LLM inference is predominantly memory-bandwidth-bound (Shazeer, 2019; Kim et al., 2023), with the main latency bottleneck stemming from accelerators’ memory bandwidth rather than arithmetic computations. This bottleneck is inherent to the sequential nature of auto-regressive decoding, where each forward pass requires transferring the complete model parameters from High-Bandwidth Memory (HBM) to the accelerator’s cache. This process, which generates only a single token, underutilizes the arithmetic computation potential of modern accelerators, leading to inefficiency.

To address this, one approach to speed up LLM inference involves increasing the arithmetic intensity (the ratio of total floating-point operations (FLOPs) to total data movement) of the decoding process and reducing the number of decoding steps. In line with this idea, speculative decoding has been proposed (Leviathan et al., 2022; Chen et al., 2023; Xia et al., 2023; Miao et al., 2023). This method uses a smaller draft model to generate a token sequence, which is then refined by the original, larger model for acceptable continuation. However, obtaining an appropriate draft model remains challenging, and it’s even harder to integrate the draft model into a distributed system (Chen et al., 2023).

Instead of using a separate draft model to sequentially generate candidate outputs, in this paper, we revisit and refine the concept of using multiple decoding heads on top of the backbone model to expedite inference (Stern et al., 2018). We find that when applied effectively, this technique can overcome the challenges of speculative decoding, allowing for seamless integration into existing LLM systems. Specifically, we introduce Medusa, a method that enhances LLM inference by integrating additional decoding heads to concurrently predict multiple tokens. These heads are fine-tuned in a parameter-efficient manner and can be added to any existing model. With no requirement for a draft model, Medusa offers easy integration into current LLM systems, including those in distributed environments, ensuring a user-friendly experience.

We further enhance Medusa with two key insights. Firstly, the current approach of generating a single candidate continuation at each decoding step leads to inefficient use of computational resources. To address this, we propose generating multiple candidate continuations using the Medusa heads and verifying them concurrently through a simple adjustment to the attention mask. Secondly, we can reuse the rejection sampling scheme as used in speculative decoding (Leviathan et al., 2022; Chen et al., 2023) to generate consistent responses with the same distribution as the original model. However, it cannot further enhance the acceleration rate. Alternatively, we introduce a typical acceptance scheme that selects reasonable candidates from the Medusa head outputs. We use temperature as a threshold to manage deviation from the original model’s predictions, providing an efficient alternative to the rejection sampling method. Our results suggest that the proposed typical acceptance scheme can accelerate the decoding speed further while maintaining a similar generation quality.

To equip LLMs with predictive Medusa heads, we propose two distinct fine-tuning procedures tailored to various scenarios. For situations with limited computational resources or when the objective is to incorporate Medusa into an existing model without affecting its performance, we recommend Medusa -1. This method requires minimal memory and can be further optimized with quantization techniques akin to those in QLoRA (Dettmers et al., 2023), without compromising the generation quality due to the fixed backbone model. However, in Medusa -1, the full potential of the backbone model is not utilized. We can further fine-tune it to enhance the prediction accuracy of Medusa heads, which can directly lead to a greater speedup. Therefore, we introduce Medusa -2, which is suitable for scenarios with ample computational resources or for direct Supervised Fine-Tuning (SFT) from a base model. The key to Medusa -2 is a training protocol that enables joint training of the Medusa heads and the backbone model without compromising the model’s next-token prediction capability and output quality. We propose different strategies for obtaining the training datasets depending on the model’s training recipe and dataset availability. When the model is fine-tuned on a public dataset, it can be directly used for Medusa. If the dataset is unavailable or the model underwent a Reinforcement Learning with Human Feedback (RLHF) (Ouyang et al., 2022) process, we suggest a self-distillation approach to generate a training dataset for the Medusa heads.

Our experiments primarily focus on scenarios with a batch size of one, which is representative of the use case where LLMs are locally hosted for personal use. We test Medusa on models of varying sizes and training settings, including Vicuna-7B, 13B (trained with a public dataset), Vicuna-33B (Chiang et al., 2023) (trained with a private dataset Upon contacting the authors, this version is experimental and used some different data than Vicuna 7B and 13B.), and Zephyr-7B (trained with both supervised fine-tuning and alignment). Medusa can achieve a speedup of 2.3 to 2.8 times across different prompt types without compromising on the quality of generation.

<details>

<summary>x1.png Details</summary>

### Visual Description

# Technical Diagram Analysis: Medusa Architecture for LLM Acceleration

This document provides a detailed technical extraction of the provided architectural diagram, which illustrates the "Medusa" method for accelerating Large Language Model (LLM) inference.

## 1. Component Isolation

The diagram is organized into five primary functional regions:

1. **Header:** Branding/Logo.

2. **Original Model (Blue Block):** The base transformer architecture.

3. **Medusa Heads (Red Block):** The parallel prediction heads.

4. **Top-k Predictions (Purple Block):** The output tokens from each head.

5. **Footer/Processing Logic:** Input, candidate verification, and final prediction.

---

## 2. Detailed Component Extraction

### Header

* **Logo:** A circular emblem featuring a stylized llama with a star above its head, framed by wavy hair reminiscent of the Starbucks logo (a play on "Medusa" and "Llama").

### Original Model (Blue Region - Left)

This region represents the standard frozen or base model.

* **Labels:** ❄️ / 🔥 Original Model

* **Internal Flow:**

* **Embedding:** The entry point for input data.

* **Transformer Layers:** The core processing block.

* **LM Head:** The standard Language Modeling head that predicts the next token.

* **Data Path:** An arrow labeled **"Last Hidden"** originates from the output of the Transformer Layers and branches to both the LM Head and the Medusa Heads.

### Medusa Heads (Red Region - Center)

This region represents the additional heads added to the model.

* **Label:** 🔥 Medusa Heads

* **Components:**

* **Medusa Head 1**

* **Medusa Head 2**

* **Medusa Head 3**

* **Input:** All three heads receive the "Last Hidden" state from the Transformer Layers in parallel.

### Top-k Predictions (Purple Region - Right)

This region displays the candidate tokens generated by each head.

* **Label:** 🔝 Top-$k$ Predictions

* **Data Mapping:**

| Source | Predictions |

| :--- | :--- |

| **LM Head** | "It, I, As" |

| **Medusa Head 1** | "is, ', the" |

| **Medusa Head 2** | "difficult, is, '" |

| **Medusa Head 3** | "not, difficult, a" |

### Footer / Logic Flow (Bottom)

* **Input Block:**

* **Label:** 📝 Input

* **Text:** "What will happen if Medusa meets a Llama?"

* **Candidates Block:**

* **Label:** 📜 Candidates

* **Content:**

* **It is difficult** not ✅ (Indicated as the correct/accepted sequence)

* **It'** difficult a ❌

* **It is'** not ❌

* ... (Ellipsis indicating further candidates)

* **Single Step Prediction Block:**

* **Label:** ✍️ Single step prediction

* **Text:** *It is difficult*

---

## 3. Process Flow and Logic

1. **Input Processing:** The text "What will happen if Medusa meets a Llama?" is fed into the **Embedding** layer and processed through **Transformer Layers**.

2. **Parallel Generation:** Instead of generating one token, the **Last Hidden** state is sent to the **LM Head** and three **Medusa Heads** simultaneously.

3. **Token Proposal:**

* The LM Head predicts the immediate next token (e.g., "It").

* Medusa Head 1 predicts the token after that (e.g., "is").

* Medusa Head 2 predicts the third token (e.g., "difficult").

* Medusa Head 3 predicts the fourth token (e.g., "not").

4. **Candidate Assembly:** The system combines the top-$k$ results from all heads to create multiple potential sentence continuations (Candidates).

5. **Verification:** The candidates are checked for linguistic validity.

* The sequence "**It is difficult**" is validated.

* Incorrect combinations like "**It' difficult**" are rejected.

6. **Output:** In a **Single step prediction**, the model successfully outputs multiple tokens (*It is difficult*) at once, rather than generating them one by one, thereby increasing inference speed.

## 4. Symbol Legend

* ❄️: Likely represents "Frozen" parameters (Original Model).

* 🔥: Likely represents "Trainable" parameters (Medusa Heads).

* ✅: Validated/Accepted candidate.

* ❌: Rejected candidate.

</details>

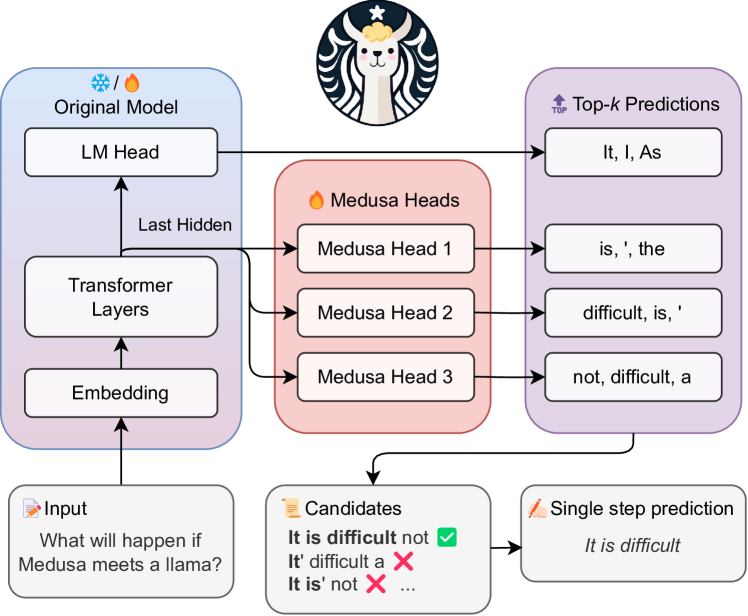

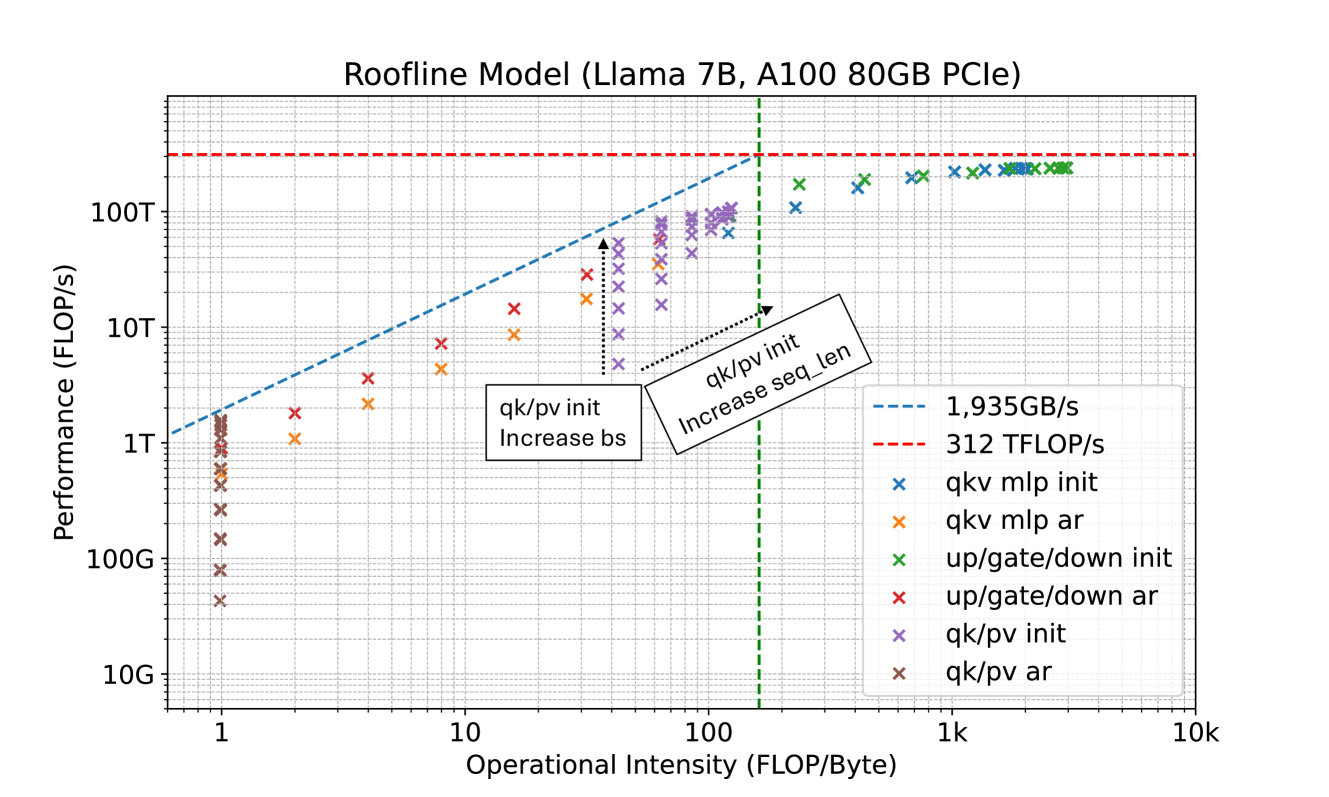

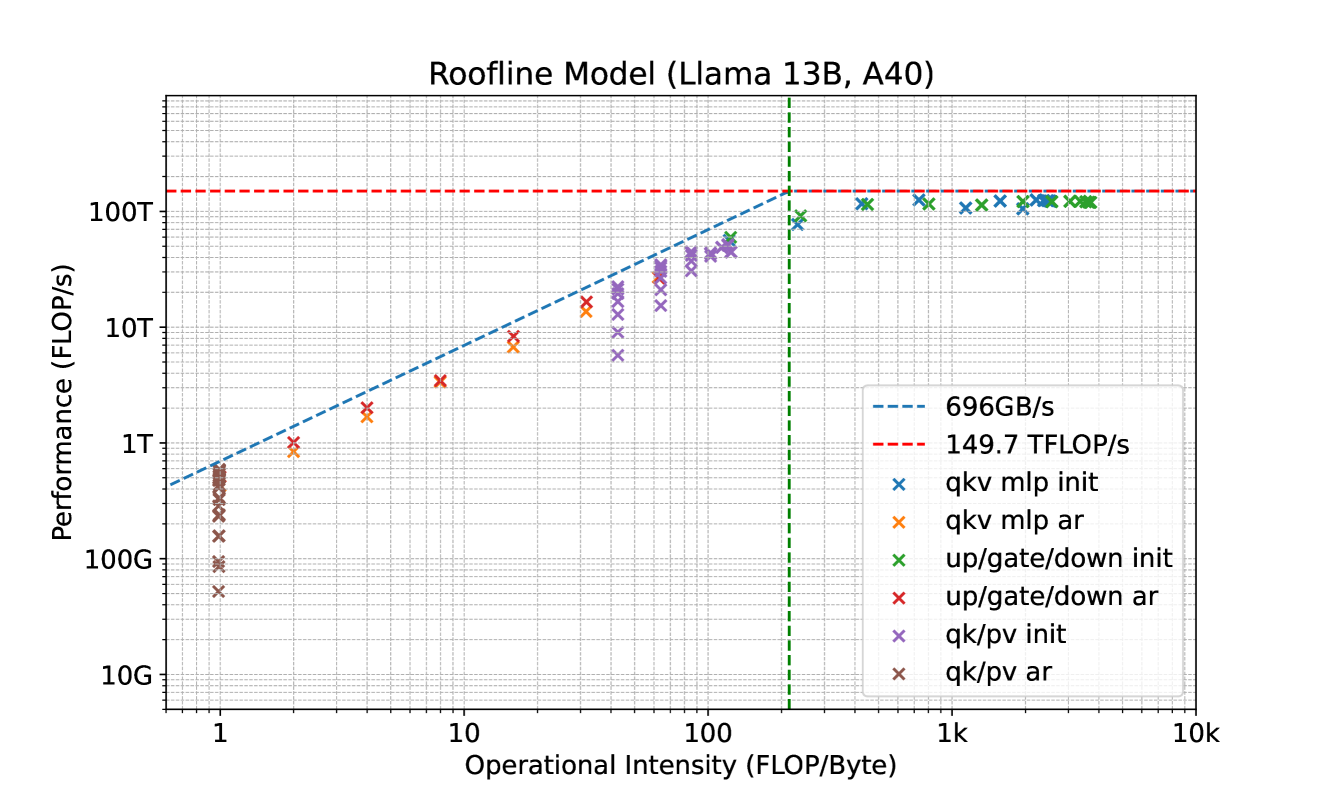

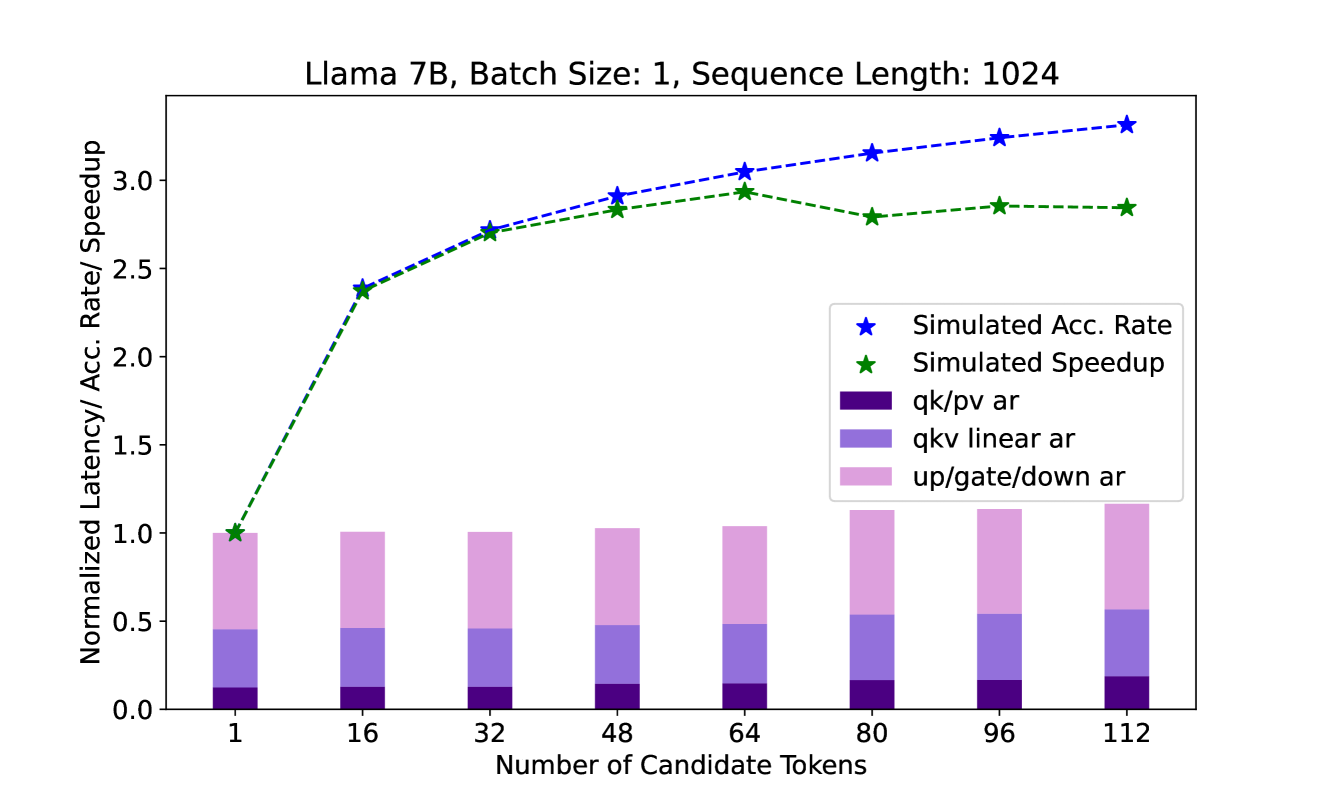

Figure 1: Medusa introduces multiple heads on top of the last hidden states of the LLM, enabling the prediction of several subsequent tokens in parallel (Section 2.1.1). During inference, each head generates multiple top predictions for its designated position. These predictions are assembled into candidates, which are processed in parallel using a tree-based attention mechanism (Section 2.1.2). The final step is to verify the candidates and accept a continuation. Besides the standard rejection sampling scheme, a typical acceptance scheme (Section 2.3.1) can also be used here to select reasonable continuations, and the longest accepted candidate prefix will be used for the next decoding phase.

2 Methodology

Medusa follows the same framework as speculative decoding, where each decoding step primarily consists of three substeps: (1) generating candidates, (2) processing candidates, and (3) accepting candidates. For Medusa, (1) is achieved by Medusa heads, (2) is realized by tree attention, and since Medusa heads are on top of the original model, the logits calculated in (2) can be used for substep (1) for the next decoding step. The final step (3) can be realized by either rejection sampling (Leviathan et al., 2022; Chen et al., 2023) or typical acceptance (Section 2.3.1). The overall pipeline is illustrated in Figure 1.

In this section, we first introduce the key components of Medusa, including Medusa heads, and tree attention. Then, we present two levels of fine-tuning procedures for Medusa to meet the needs of different use cases. Finally, we propose two extensions to Medusa, including self-distillation and typical acceptance, to handle situations where no training data is available for Medusa and to improve the efficiency of the decoding process, respectively.

2.1 Key Components

2.1.1 Medusa Heads

In speculative decoding, subsequent tokens are predicted by an auxiliary draft model. This draft model must be small yet effective enough to generate continuations that the original model will accept. Fulfilling these requirements is a challenging task, and existing approaches (Spector & Re, 2023; Miao et al., 2023) often resort to separately pre-training a smaller model. This pre-training process demands substantial additional computational resources. For example, in (Miao et al., 2023), a reported 275 NVIDIA A100 GPU hours were used. Additionally, separate pre-training can potentially create a distribution shift between the draft model and the original model, leading to continuations that the original model may not favor. Chen et al. (2023) have also highlighted the complexities of serving multiple models in a distributed environment.

To streamline and democratize the acceleration of LLM inference, we take inspiration from Stern et al. (2018), which utilizes parallel decoding for tasks such as machine translation and image super-resolution. Medusa heads are additional decoding heads appended to the last hidden states of the original model. Specifically, given the original model’s last hidden states $h_{t}$ at position $t$ , we add $K$ decoding heads to $h_{t}$ . The $k$ -th head is used to predict the token in the $(t+k+1)$ -th position of the next tokens (the original language model head is used to predict the $(t+1)$ -th position). The prediction of the $k$ -th head is denoted as $p_{t}^{(k)}$ , representing a distribution over the vocabulary, while the prediction of the original model is denoted as $p_{t}^{(0)}$ . Following the approach of Stern et al. (2018), we utilize a single layer of feed-forward network with a residual connection for each head. We find that this simple design is sufficient to achieve satisfactory performance. The definition of the $k$ -th head is outlined as:

| | $\displaystyle p_{t}^{(k)}=\text{softmax}\left(W_{2}^{(k)}·\left(\text{SiLU%

}(W_{1}^{(k)}· h_{t})+h_{t}\right)\right),$ | |

| --- | --- | --- |

$d$ is the output dimension of the LLM’s last hidden layer and $V$ is the vocabulary size. We initialize $W_{2}^{(k)}$ identically to the original language model head, and $W_{1}^{(k)}$ to zero. This aligns the initial prediction of Medusa heads with that of the original model. The SiLU activation function (Elfwing et al., 2017) is employed following the Llama models (Touvron et al., 2023).

Unlike a draft model, Medusa heads are trained in conjunction with the original backbone model, which can remain frozen during training (Medusa -1) or be trained together (Medusa -2). This method allows for fine-tuning large models even on a single GPU, taking advantage of the powerful base model’s learned representations. Furthermore, it ensures that the distribution of the Medusa heads aligns with that of the original model, thereby mitigating the distribution shift problem. Additionally, since the new heads consist of just a single layer akin to the original language model head, Medusa does not add complexity to the serving system design and is friendly to distributed settings. We will discuss the training recipe for Medusa heads in Section 2.2.

2.1.2 Tree Attention

Through Medusa heads, we obtain probability predictions for the subsequent $K+1$ tokens. These predictions enable us to create length- $K+1$ continuations as candidates. While the speculative decoding studies (Leviathan et al., 2022; Chen et al., 2023) suggest sampling a single continuation as the candidate, leveraging multiple candidates during decoding can enhance the expected acceptance length within a decoding step. Nevertheless, more candidates can also raise computational demands. To strike a balance, we employ a tree-structured attention mechanism to process multiple candidates concurrently.

<details>

<summary>extracted/5668658/tree_attention.png Details</summary>

### Visual Description

# Technical Document Extraction: Tree Mask Attention Mechanism

This document describes a technical diagram illustrating a "Tree Mask" mechanism, likely used in Transformer-based architectures for processing hierarchical or branching data structures.

## 1. Component Isolation

The image is divided into three primary functional regions:

* **Left (Tree Structure):** A hierarchical representation of tokens starting from a "Root" node.

* **Top (Key Sequence):** A horizontal sequence of tokens acting as the "Key" in an attention mechanism.

* **Center-Right (Attention Matrix):** An $8 \times 8$ grid representing the mask, where checkmarks indicate permitted attention connections between "Query" tokens (rows) and "Key" tokens (columns).

---

## 2. Tree Structure and Query Mapping (Left Region)

The diagram shows how tokens are branched from a central root, organized into "Heads" (likely representing different branches or paths).

### Hierarchy Flow:

1. **Root (Grey Node):** The origin point.

2. **Head 1 (Red Background):** Contains two tokens:

* **It**

* **I**

3. **Head 2 (Orange Background):** This head branches further into two sub-groups based on the parent token from Head 1.

* **Sub-group 1 (Yellow Background):** Derived from the token "It". Contains: **is**, **'**, **the**.

* **Sub-group 2 (Green Background):** Derived from the token "I". Contains: **is**, **'**, **the**.

### Query Sequence (Vertical Axis):

The tokens from the tree are flattened into a vertical sequence of 8 rows for the attention matrix:

1. **It** (from Head 1)

2. **I** (from Head 1)

3. **is** (from Head 2, yellow)

4. **'** (from Head 2, yellow)

5. **the** (from Head 2, yellow)

6. **is** (from Head 2, green)

7. **'** (from Head 2, green)

8. **the** (from Head 2, green)

---

## 3. Key Sequence (Top Region)

The horizontal axis represents the **Key** tokens. They are grouped by color to match the tree structure:

* **Red Group:** [It], [I]

* **Yellow Group:** [is], ['], [the]

* **Green Group:** [is], ['], [the]

---

## 4. Attention Matrix (Tree Mask Data)

The matrix defines which Query (row) can attend to which Key (column). A purple checkmark ($\checkmark$) indicates an active connection.

### Data Table Reconstruction

| Query \ Key | It (Red) | I (Red) | is (Yel) | ' (Yel) | the (Yel) | is (Grn) | ' (Grn) | the (Grn) |

| :--- | :---: | :---: | :---: | :---: | :---: | :---: | :---: | :---: |

| **It** | $\checkmark$ | | | | | | | |

| **I** | | $\checkmark$ | | | | | | |

| **is (Yel)** | $\checkmark$ | | $\checkmark$ | | | | | |

| **' (Yel)** | $\checkmark$ | | | $\checkmark$ | | | | |

| **the (Yel)** | $\checkmark$ | | | | $\checkmark$ | | | |

| **is (Grn)** | | $\checkmark$ | | | | $\checkmark$ | | |

| **' (Grn)** | | $\checkmark$ | | | | | $\checkmark$ | |

| **the (Grn)** | | $\checkmark$ | | | | | | $\checkmark$ |

---

## 5. Trend and Logic Verification

* **Identity Attention:** Every token attends to itself, forming a sparse diagonal pattern (visible in the checkmarks at [1,1], [2,2], [3,3], etc.).

* **Hierarchical Dependency:**

* The **Yellow Group** (is, ', the) only attends to itself and its parent token **"It"** (Red). It cannot see the "I" (Red) branch or the Green branch.

* The **Green Group** (is, ', the) only attends to itself and its parent token **"I"** (Red). It cannot see the "It" (Red) branch or the Yellow branch.

* **Isolation:** There is no cross-attention between the Yellow and Green branches, despite them containing the same string literals ("is", "'", "the"). This confirms the mask enforces the tree structure where branches are independent.

## 6. Textual Labels Summary

* **Title:** Tree Mask (accompanied by a small evergreen tree icon 🌲).

* **Labels:** Root, Head 1, Head 2, Query, Key.

* **Tokens:** It, I, is, ', the.

</details>

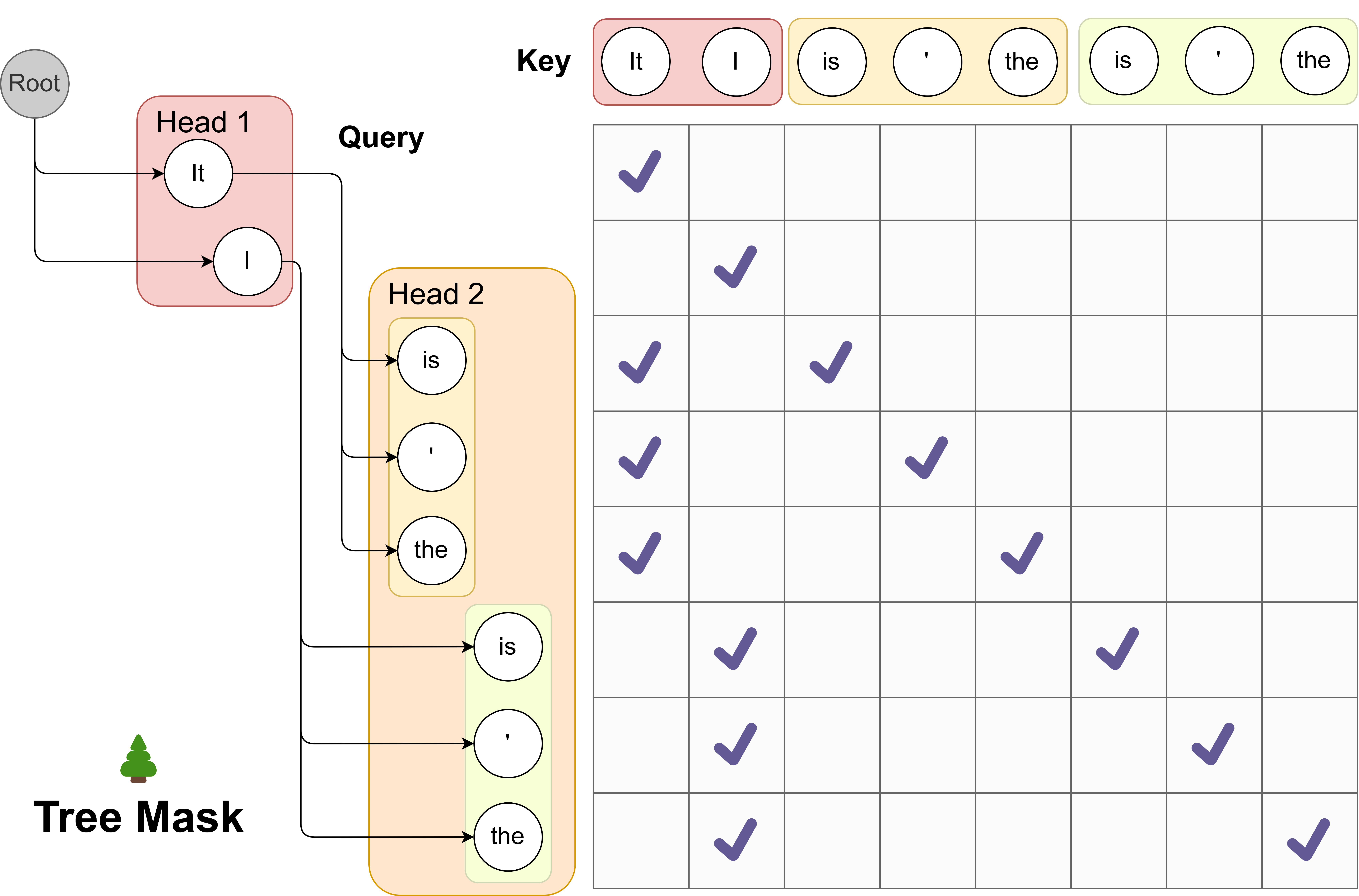

Figure 2: We demonstrates the use of tree attention to process multiple candidates concurrently. As exemplified, the top-2 predictions from the first Medusa head and the top-3 from the second result in a total of $2× 3=6$ candidates. Each of these candidates corresponds to a distinct branch within the tree structure. To guarantee that each token only accesses its predecessors, we devise an attention mask that exclusively permits attention flow from the current token back to its antecedent tokens. The positional indices for positional encoding are adjusted in line with this structure.

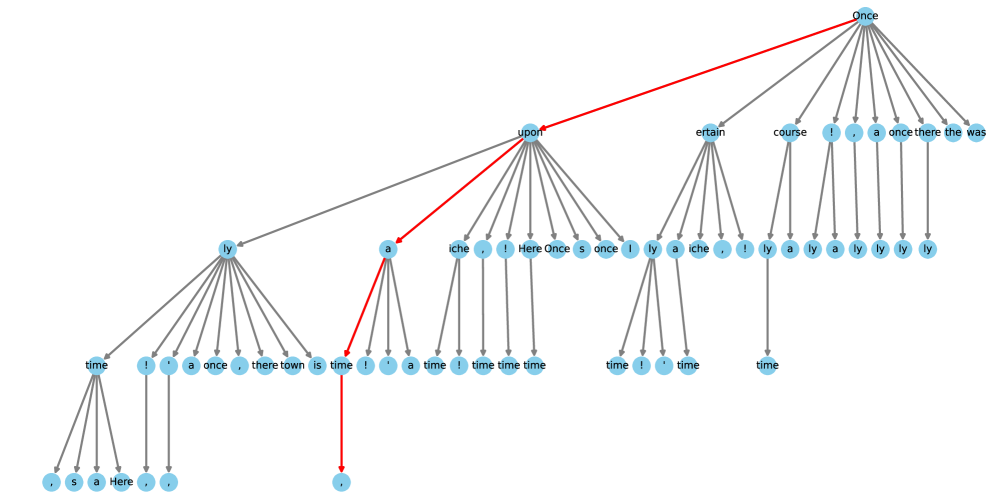

This attention mechanism diverges from the traditional causal attention paradigm. Within this framework, only tokens from the same continuation are regarded as historical data. Drawing inspiration from the concept of embedding graph structures into attention as proposed in the graph neural network domain (Ying et al., 2021), we incorporate the tree structure into our attention mask, visualized in Figure 2. Remarkably, similar ideas have also been explored in independent works like Miao et al. (2023); Spector & Re (2023), where they follow a bottom-up approach and construct the tree by merging multiple candidates generated by a draft model. In our method, we instead take a top-down approach to build the tree thanks to the structure of candidates generated by Medusa heads. For a given $k$ -th head, its top- $s_{k}$ predictions serve as the basis for candidate formation, where $s_{k}$ is a designated hyperparameter. These candidates are established by determining the Cartesian product of the top- $s_{k}$ predictions from each head. For instance, in Figure 2, with $s_{1}=2$ and $s_{2}=3$ , each first head prediction can be succeeded by any prediction from the second head. This leads to a tree structure where $s_{k}$ branches exist at the $k$ -th level (considering a virtual root as the $0 0$ -level, in practice, this $0 0$ -level is for the prediction of the language model head of the original model, which can be sampled independently). Within this tree, only a token’s predecessors are seen as historical context, and our attention mask ensures that the attention is only applied on a token’s predecessors. By employing this mask and properly setting the positional indices for positional encoding, we can process numerous candidates simultaneously without the need to expand the batch size. The cumulative number of new tokens is calculated as $\sum_{k=1}^{K}\prod_{i=1}^{k}s_{i}$ .

In this section, we demonstrate the most simple and regular way to construct the tree structure by taking the Cartesian product. However, it is possible to construct the tree structure in a more sophisticated way and exploit the unbalanced accuracy of different top predictions of different heads. We will discuss this in Section 2.3.3.

2.2 Training Strategies

At the most basic level, we can train Medusa heads by freezing the backbone model and fine-tuning Medusa heads. However, training the backbone in conjunction with the Medusa heads can significantly enhance the accuracy of the Medusa heads. Depending on the computational resources and the specific reqirements of the use case, we propose two levels of training strategies for Medusa heads.

In this section, we assume the availability of a training dataset that aligns with the target model’s output distribution. This could be the dataset used for Supervised Fine-Tuning (SFT) of the target model. We will discuss eliminating the need for such a dataset using a self-distillation approach in Section 2.3.2.

2.2.1 Medusa -1: Frozen Backbone

To train Medusa heads with a frozen backbone model, we can use the cross-entropy loss between the prediction of Medusa heads and the ground truth. Specifically, given the ground truth token $y_{t+k+1}$ at position $t+k+1$ , the loss for the $k$ -th head is $\mathcal{L}_{k}=-\log p_{t}^{(k)}(y_{t+k+1})$ where $p_{t}^{(k)}(y)$ denotes the probability of token $y$ predicted by the $k$ -th head. We also observe that $\mathcal{L}_{k}$ is larger when $k$ is larger, which is reasonable since the prediction of the $k$ -th head is more uncertain when $k$ is larger. Therefore, we can add a weight $\lambda_{k}$ to $\mathcal{L}_{k}$ to balance the loss of different heads. And the total Medusa loss is:

$$

\displaystyle\mathcal{L}_{\text{{Medusa}-1}}=\sum_{k=1}^{K}-\lambda_{k}\log p_%

{t}^{(k)}(y_{t+k+1}). \tag{1}

$$

In practice, we set $\lambda_{k}$ as the $k$ -th power of a constant like $0.8$ . Since we only use the backbone model for providing the hidden states, we can use a quantized version of the backbone model to reduce the memory consumption. This introduces a more democratized way to accelerate LLM inference, as with the quantization, Medusa can be trained for a large model on a single consumer GPU similar to QLoRA (Dettmers et al., 2023). The training only takes a few hours (e.g., 5 hours for Medusa -1 on Vicuna 7B model with a single NVIDIA A100 PCIE GPU to train on 60k ShareGPT samples).

2.2.2 Medusa -2: Joint Training

To further improve the accuracy of Medusa heads, we can train Medusa heads together with the backbone model. However, this requires a special training recipe to preserve the backbone model’s next-token prediction capability and output quality. To achieve this, we propose three strategies:

- Combined loss: To keep the backbone model’s next-token prediction capability, we need to add the cross-entropy loss of the backbone model $\mathcal{L}_{\text{LM}}=-\log p_{t}^{(0)}(y_{t+1})$ to the Medusa loss. We also add a weight $\lambda_{0}$ to balance the loss of the backbone model and the Medusa heads. Therefore, the total loss is:

$$

\displaystyle\mathcal{L}_{\text{{Medusa}-2}}=\mathcal{L}_{\text{LM}}+\lambda_{%

0}\mathcal{L}_{\text{{Medusa}-1}}. \tag{2}

$$

- Differential learning rates: Since the backbone model is already well-trained and the Medusa heads need more training, we can use separate learning rates for them to enable faster convergence of Medusa heads while preserving the backbone model’s capability.

- Heads warmup: Noticing that at the beginning of training, the Medusa heads have a large loss, which leads to a large gradient and may distort the backbone model’s parameters. Following the idea from Kumar et al. (2022), we can employ a two-stage training process. In the first stage, we only train the Medusa heads as Medusa -1. In the second stage, we train the backbone model and Medusa heads together with a warmup strategy. Specifically, we first train the backbone model for a few epochs, then train the Medusa heads together with the backbone model. Besides this simple strategy, we can also use a more sophisticated warmup strategy by gradually increasing the weight $\lambda_{0}$ of the backbone model’s loss. We find both strategies work well in practice.

Putting these strategies together, we can train Medusa heads together with the backbone model without hurting the backbone model’s capability. Moreover, this recipe can be applied together with Supervised Fine-Tuning (SFT), enabling us to get a model with native Medusa support.

2.2.3 How to Select the Number of Heads

Empirically, we found that five heads are sufficient at most. Therefore, we recommend training with five heads and referring to the strategy described in Section 2.3.3 to determine the optimal configuration of the tree attention. With optimized tree attention, sometimes three or four heads may be enough for inference. In this case, we can ignore the redundant heads without overhead.

2.3 Extensions

2.3.1 Typical Acceptance

In speculative decoding papers (Leviathan et al., 2022; Chen et al., 2023), authors employ rejection sampling to yield diverse outputs that align with the distribution of the original model. However, subsequent implementations (Joao Gante, 2023; Spector & Re, 2023) reveal that this sampling strategy results in diminished efficiency as the sampling temperature increases. Intuitively, this can be comprehended in the extreme instance where the draft model is the same as the original one: Using greedy decoding, all output of the draft model will be accepted, therefore maximizing the efficiency. Conversely, rejection sampling introduces extra overhead, as the draft model and the original model are sampled independently. Even if their distributions align perfectly, the output of the draft model may still be rejected.

However, in real-world scenarios, sampling from language models is often employed to generate diverse responses, and the temperature parameter is used merely to modulate the “creativity” of the response. Therefore, higher temperatures should result in more opportunities for the original model to accept the draft model’s output. We ascertain that it is typically unnecessary to match the distribution of the original model. Thus, we propose employing a typical acceptance scheme to select plausible candidates rather than using rejection sampling. This approach draws inspiration from truncation sampling studies (Hewitt et al., 2022) (refer to Appendix A for an in-depth explanation). Our objective is to choose candidates that are typical, meaning they are not exceedingly improbable to be produced by the original model. We use the prediction probability from the original model as a natural gauge for this and establish a threshold based on the prediction distribution to determine acceptance. Specifically, given $x_{1},x_{2},·s,x_{n}$ as context, when evaluating the candidate sequence $(x_{n+1},x_{n+2},·s,x_{n+K+1})$ (composed by top predictions of the original language model head and Medusa heads), we consider the condition

| | $\displaystyle p_{\text{original}}(x_{n+k}|x_{1},x_{2},·s,x_{n+k-1})>$ | |

| --- | --- | --- |

where $H(·)$ denotes the entropy function, and $\epsilon,\delta$ are the hard threshold and the entropy-dependent threshold respectively. This criterion is adapted from Hewitt et al. (2022) and rests on two observations: (1) tokens with relatively high probability are meaningful, and (2) when the distribution’s entropy is high, various continuations may be deemed reasonable. During decoding, every candidate is evaluated using this criterion, and a prefix of the candidate is accepted if it satisfies the condition. To guarantee the generation of at least one token at each step, we apply greedy decoding for the first token and unconditionally accept it while employing typical acceptance for subsequent tokens. The final prediction for the current step is determined by the longest accepted prefix among all candidates.

Examining this scheme leads to several insights. Firstly, when the temperature is set to $0 0$ , it reverts to greedy decoding, as only the most probable token possesses non-zero probability. As the temperature surpasses $0 0$ , the outcome of greedy decoding will consistently be accepted with appropriate $\epsilon,\delta$ , since those tokens have the maximum probability, yielding maximal speedup. Likewise, in general scenarios, an increased temperature will correspondingly result in longer accepted sequences, as corroborated by our experimental findings.

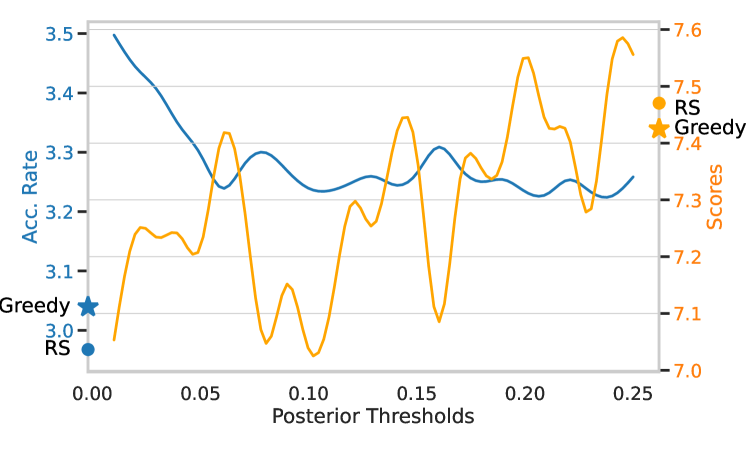

Empirically, we verify that typical acceptance can achieve a better speedup while maintaining a similar generation quality as shown in Figure 5.

2.3.2 Self-Distillation

In Section 2.2, we assume the existence of a training dataset that matches the target model’s output distribution. However, this is not always the case. For example, the model owners may only release the model without the training data, or the model may have gone through a Reinforcement Learning with Human Feedback (RLHF) procedure, which makes the output distribution of the model different from the training dataset. To tackle this issue, we propose an automated self-distillation pipeline to use the model itself to generate the training dataset for Medusa heads, which matches the output distribution of the model.

The dataset generation process is straightforward. We first take a public seed dataset from a domain similar to the target model; for example, using the ShareGPT (ShareGPT, 2023) dataset for chat models. Then, we simply take the prompts from the dataset and ask the model to reply to the prompts. In order to obtain multi-turn conversation samples, we can sequentially feed the prompts from the seed dataset to the model. Or, for models like Zephyr 7B (Tunstall et al., 2023), which are trained on both roles of the conversation, they have the ability to self-talk, and we can simply feed the first prompt and let the model generate multiple rounds of conversation.

For Medusa -1, this dataset is sufficient for training Medusa heads. However, for Medusa -2, we observe that solely using this dataset for training the backbone and Medusa heads usually leads to a lower generation quality. In fact, even without training Medusa heads, training the backbone model with this dataset will lead to performance degradation. This suggests that we also need to use the original model’s probability prediction instead of using the ground truth token as the label for the backbone model, similar to classic knowledge distillation works (Kim & Rush, 2016). Concretely, the loss for the backbone model is:

$$

\displaystyle\mathcal{L}_{\text{LM-distill}}=KL(p_{\text{original},t}^{(0)}||p%

_{t}^{(0)}), \tag{0}

$$

where $p_{\text{original},t}^{(0)}$ denotes the probability distribution of the original model’s prediction at position $t$ .

However, naively, to obtain the original model’s probability prediction, we need to maintain two models during training, increasing the memory requirements. To further alleviate this issue, we propose a simple yet effective way to exploit the self-distillation setup. We can use a parameter-efficient adapter like LoRA (Hu et al., 2021) for fine-tuning the backbone model. In this way, the original model is simply the model with the adapter turned off. Therefore, the distillation does not require additional memory consumption. Together, this self-distillation pipeline can be used to train Medusa -2 without hurting the backbone model’s capability and introduce almost no additional memory consumption. Lastly, one tip about using self-distillation is that it is preferable to use LoRA without quantization in this case, otherwise, the teacher model will be the quantized model, which may lead to a lower generation quality.

2.3.3 Searching for the Optimized Tree Construction

In Section 2.1.2, we present the simplest way to construct the tree structure by taking the Cartesian product. However, with a fixed budget for the number of total nodes in the tree, a regular tree structure may not be the best choice. Intuitively, those candidates composed of the top predictions of different heads may have different accuracies. Therefore, we can leverage an estimation of the accuracy to construct the tree structure.

Specifically, we can use a calibration dataset and calculate the accuracies of the top predictions of different heads. Let $a_{k}^{(i)}$ denote the accuracy of the $i$ -th top prediction of the $k$ -th head Here, the accuracy is defined for the single top $i$ -th token, i.e., this accuracy is equal to top- $i$ accuracy minus top- $(i-1)$ accuracy.. Assuming the accuracies are independent, we can estimate the accuracy of a candidate sequence composed by the top $\left[i_{1},i_{2},·s,i_{k}\right]$ predictions of different heads as $\prod_{j=1}^{k}a_{j}^{(i_{j})}$ . Let $I$ denote the set of all possible combinations of $\left[i_{1},i_{2},·s,i_{k}\right]$ and each element of $I$ can be mapped to a node of the tree (not only leaf nodes but all nodes are included). Then, the expectation of the acceptance length of a candidate sequence is:

| | $\displaystyle\sum_{\left[i_{1},i_{2},·s,i_{k}\right]∈ I}\prod_{j=1}^{k}a%

_{j}^{(i_{j})}.$ | |

| --- | --- | --- |

Thinking about building a tree by adding nodes one by one, the contribution of a new node to the expectation is exactly the accuracy associated with the node. Therefore, we can greedily add nodes to the tree by choosing the node that is connected to the current tree and has the highest accuracy. This process can be repeated until the total number of nodes reaches the desired number. In this way, we can construct a tree that maximizes the expectation of the acceptance length. Further details can be found in Appendix C.

<details>

<summary>x2.png Details</summary>

### Visual Description

# Technical Document Extraction: Speedup on Different Model Sizes

## 1. Document Overview

This image is a grouped bar chart illustrating the performance improvements (speedup) achieved by different versions of the "Medusa" system across two Large Language Model (LLM) sizes. The performance is measured in throughput (Tokens per Second).

## 2. Component Isolation

### Header

* **Title:** Speedup on different model sizes

### Main Chart Area

* **Y-Axis Label:** Tokens per Second

* **Y-Axis Markers:** 0, 20, 40, 60, 80, 100, 120

* **X-Axis Label:** Model Size

* **X-Axis Categories:** 7B, 13B

* **Grid:** Horizontal grid lines corresponding to the Y-axis markers.

### Legend

* **Blue Square:** w/o Medusa (Baseline)

* **Orange Square:** Medusa-1

* **Green Square:** Medusa-2

## 3. Data Extraction and Trend Analysis

### Trend Verification

1. **Baseline (w/o Medusa):** As model size increases from 7B to 13B, the throughput decreases (the blue bar is shorter for 13B).

2. **Medusa-1:** Shows a significant upward shift from the baseline in both categories.

3. **Medusa-2:** Shows the highest throughput in both categories, consistently outperforming Medusa-1 and the baseline.

4. **Relative Speedup:** The speedup factor (annotated above the bars) increases as the model size increases for Medusa-1 (2.18x to 2.33x), while it remains constant for Medusa-2 (2.83x).

### Data Table (Reconstructed)

| Model Size | Configuration | Tokens per Second (Approx.) | Speedup Factor (Annotated) |

| :--- | :--- | :--- | :--- |

| **7B** | w/o Medusa (Blue) | ~45 | - |

| **7B** | Medusa-1 (Orange) | ~98 | 2.18x |

| **7B** | Medusa-2 (Green) | ~128 | 2.83x |

| **13B** | w/o Medusa (Blue) | ~35 | - |

| **13B** | Medusa-1 (Orange) | ~80 | 2.33x |

| **13B** | Medusa-2 (Green) | ~98 | 2.83x |

## 4. Detailed Observations

* **Performance Scaling:** While absolute throughput (Tokens per Second) drops for all configurations when moving from a 7B to a 13B model, the efficiency gains provided by Medusa become more pronounced or stay stable.

* **Medusa-2 Efficiency:** Medusa-2 on a 13B model (~98 tokens/sec) achieves roughly the same performance as Medusa-1 on a 7B model (~98 tokens/sec), effectively allowing a larger model to run at the speed of a smaller optimized model.

* **Maximum Throughput:** The peak performance recorded is for the 7B model using Medusa-2, reaching approximately 128 tokens per second.

</details>

(a)

<details>

<summary>x3.png Details</summary>

### Visual Description

# Technical Document Extraction: Speedup Analysis for Vicuna-7B

## 1. Document Overview

This image is a vertical bar chart illustrating the performance "Speedup" achieved across eight different task categories for the large language model **Vicuna-7B**. The chart uses a multi-colored categorical scheme to differentiate between the task types.

## 2. Component Isolation

### Header

- **Title:** Speedup on different categories for Vicuna-7B

### Main Chart Area

- **Y-Axis Label:** Speedup

- **Y-Axis Scale:** Numerical range from 1.0 to 3.5 (with the highest data point extending to ~3.62). Major gridlines are present at intervals of 0.5 (1.0, 1.5, 2.0, 2.5, 3.0, 3.5).

- **X-Axis Labels:** Eight categorical task types, rotated approximately 45 degrees for readability.

- **Data Visualization:** Eight vertical bars, each topped with a precise numerical value label.

## 3. Data Table Extraction

The following table reconstructs the visual data presented in the bar chart.

| Category | Speedup Value | Bar Color |

| :--- | :--- | :--- |

| Humanities | 2.58x | Blue |

| Reasoning | 2.58x | Orange |

| Roleplay | 2.7x | Green |

| Writing | 2.72x | Red |

| Stem | 2.77x | Purple |

| Math | 3.01x | Brown |

| Coding | 3.29x | Pink |

| Extraction | 3.62x | Grey |

## 4. Trend Verification and Analysis

The chart is organized in ascending order of speedup from left to right.

* **Initial Plateau:** The first two categories, **Humanities** and **Reasoning**, show identical performance gains at **2.58x**.

* **Steady Incremental Growth:** From **Roleplay (2.7x)** through **Stem (2.77x)**, there is a gradual upward slope in performance.

* **Significant Acceleration:** There is a notable jump in speedup when moving into technical and structured data tasks. **Math** breaks the 3.0x threshold (**3.01x**), followed by a sharp increase in **Coding (3.29x)**.

* **Peak Performance:** The **Extraction** category exhibits the highest speedup at **3.62x**, which is approximately 40% higher than the baseline speedup seen in the Humanities category.

## 5. Spatial Grounding and Labels

* **X-Axis [Bottom]:** Labels are centered under each bar.

* **Y-Axis [Left]:** "Speedup" text is oriented vertically.

* **Data Labels [Top of Bars]:** Each bar has its specific "Nx" value printed directly above the colored region to ensure precision beyond the gridlines.

* **Language:** The entire chart is in **English**. No other languages are present.

</details>

(b)

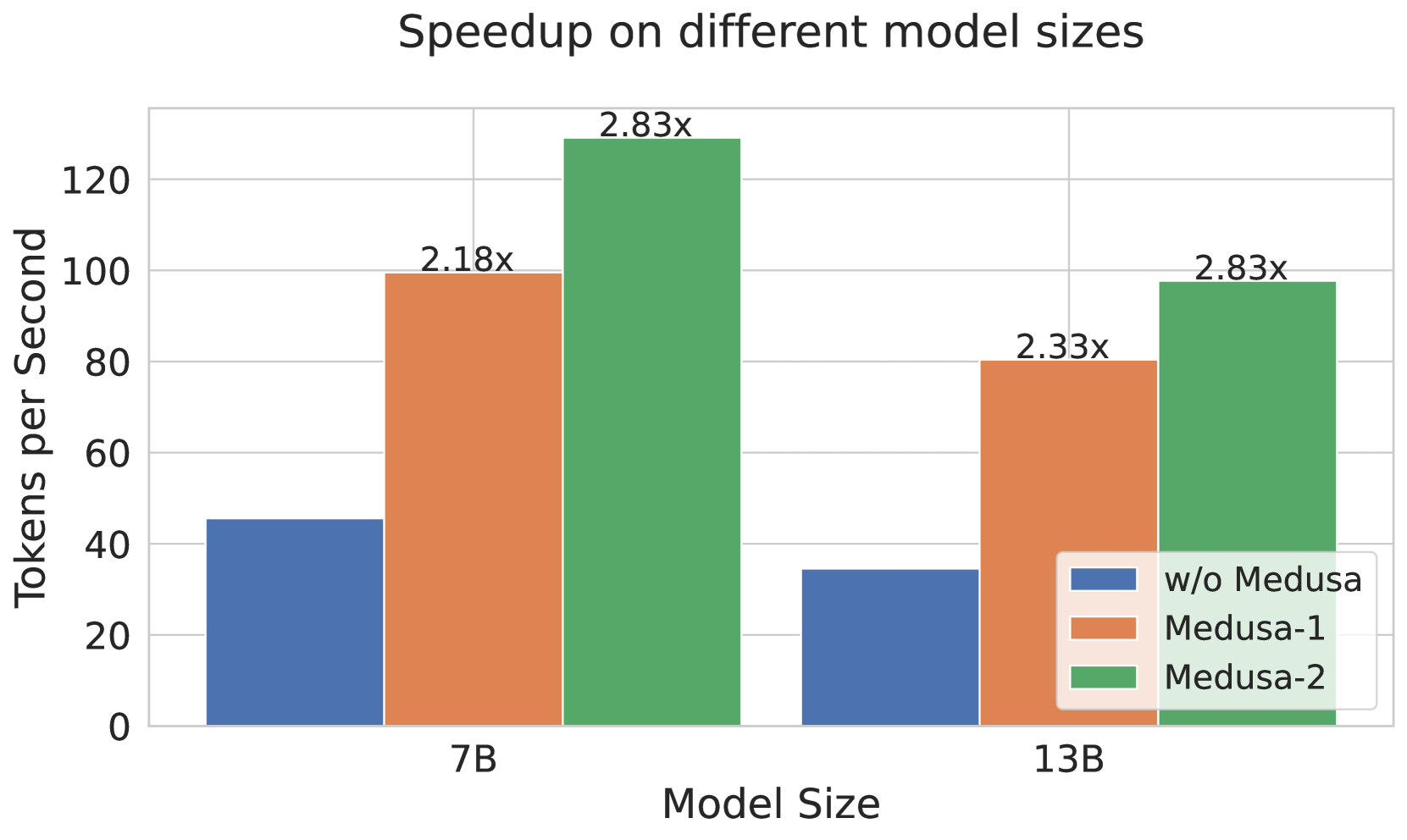

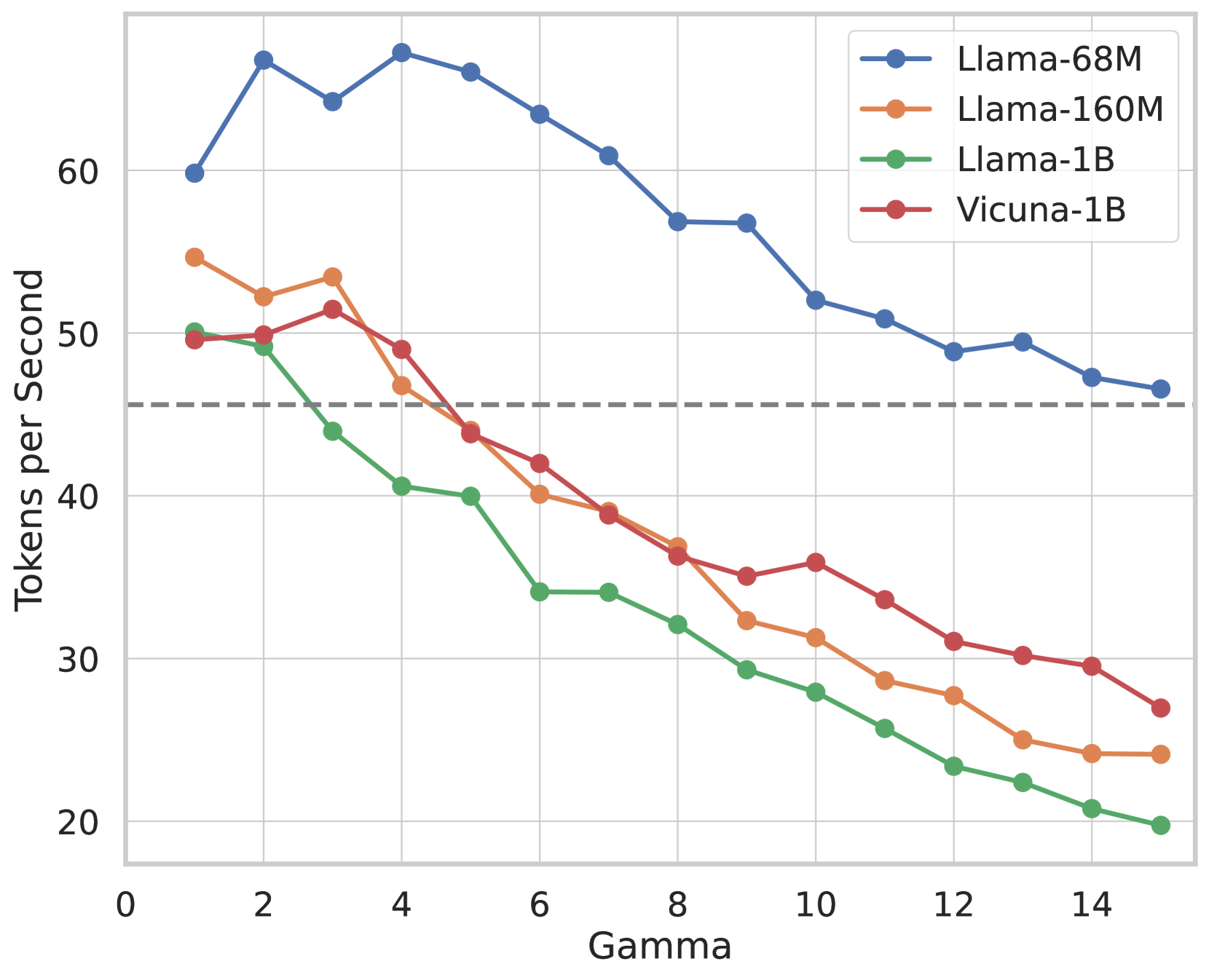

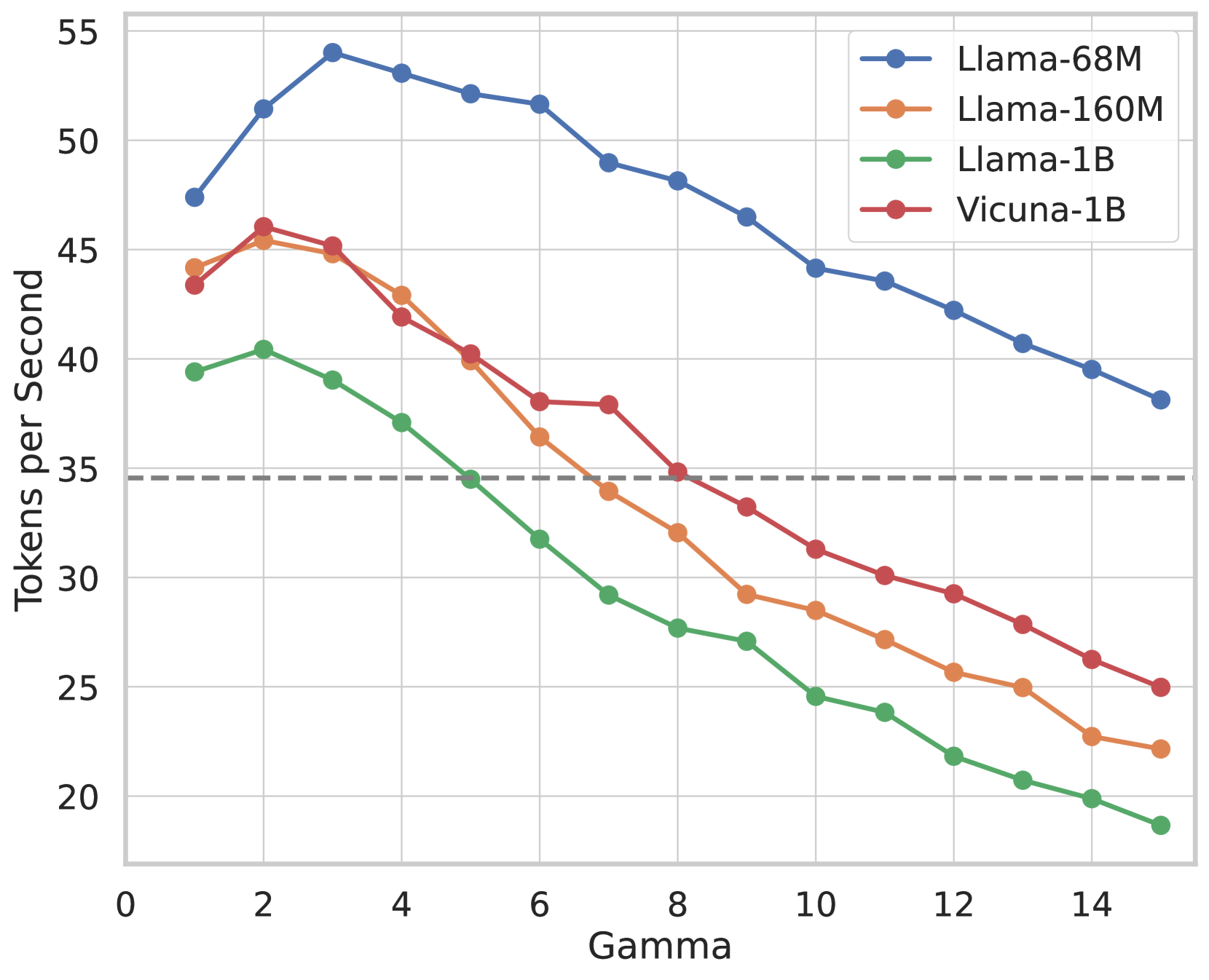

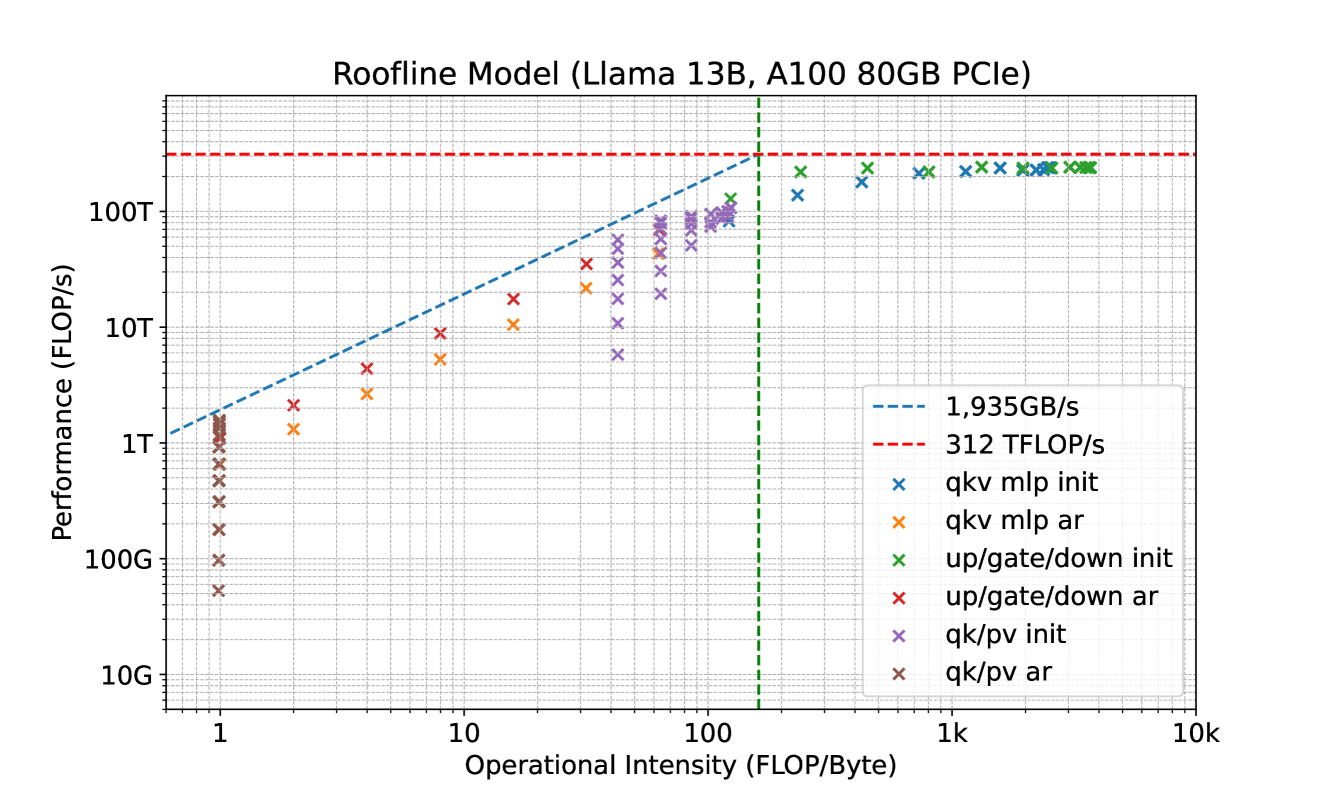

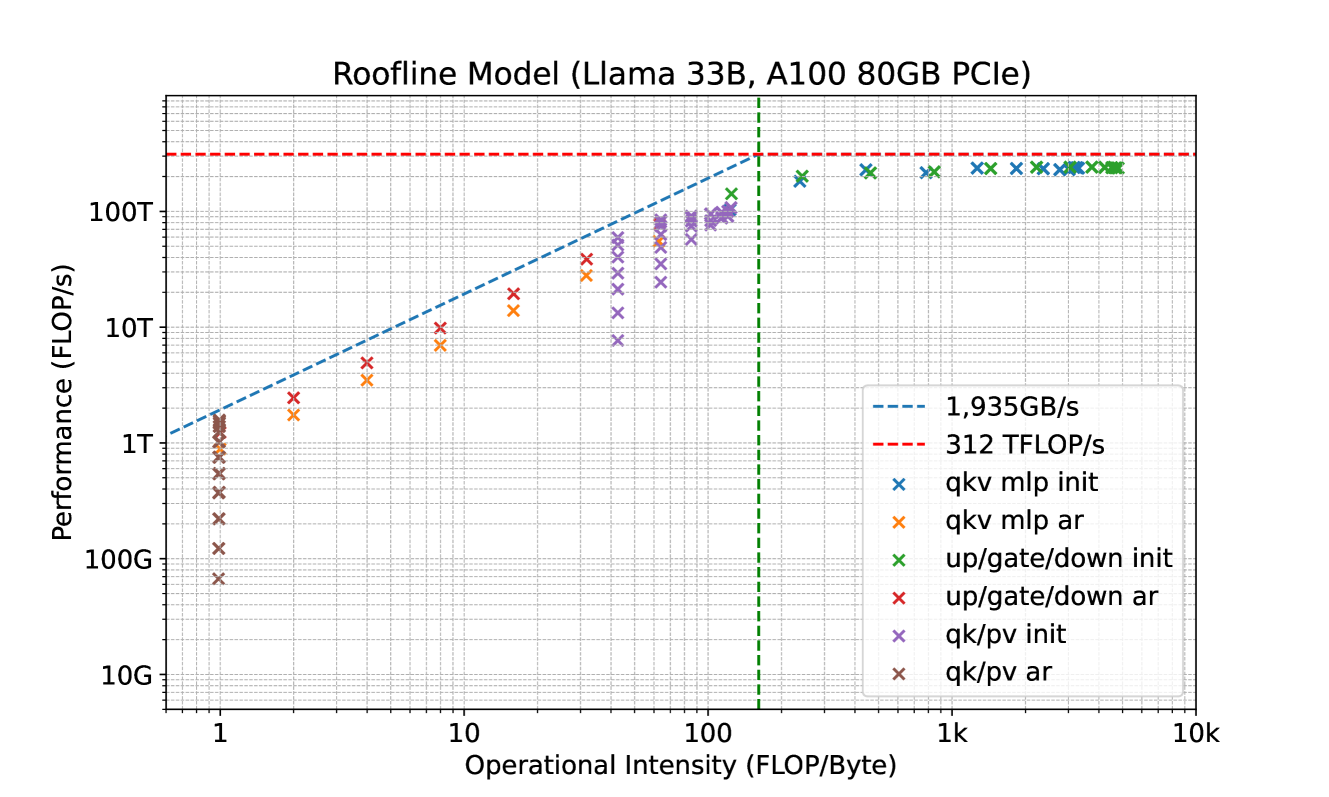

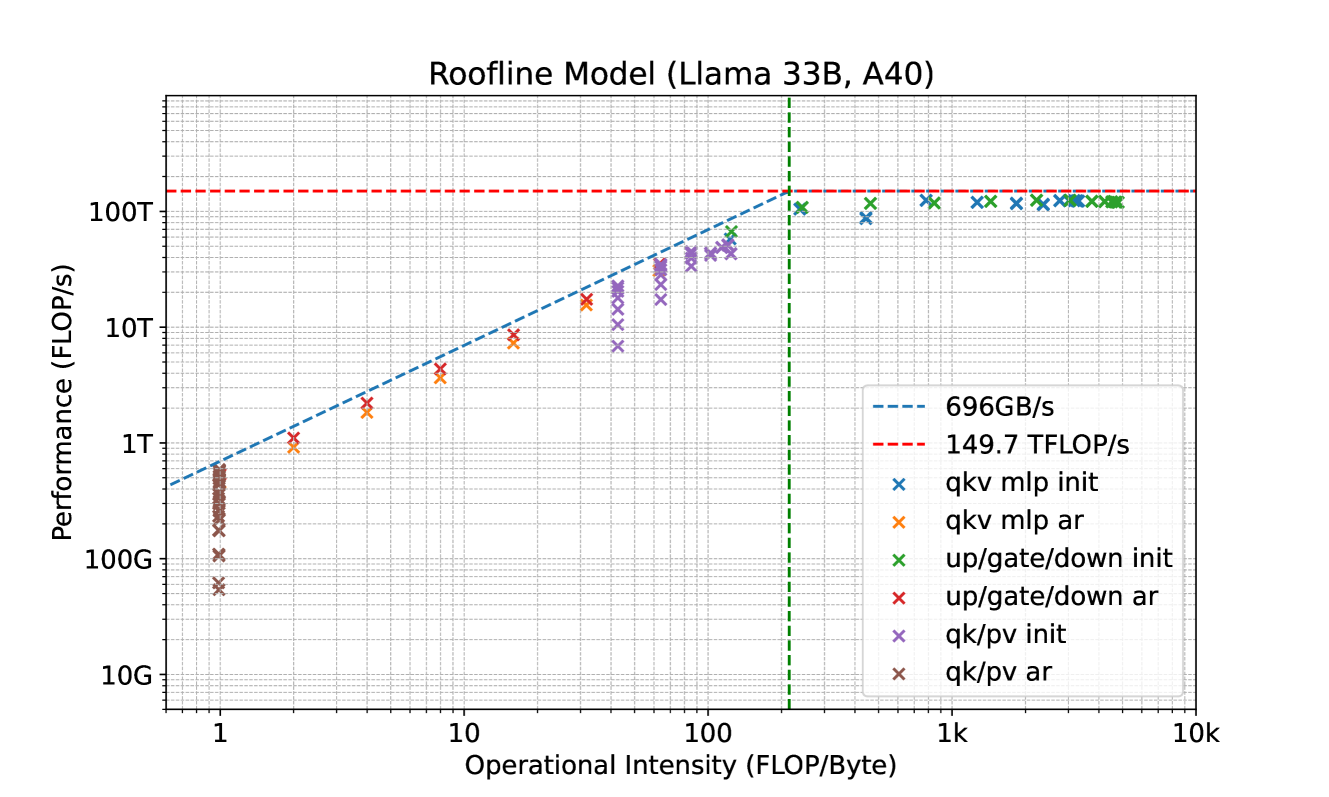

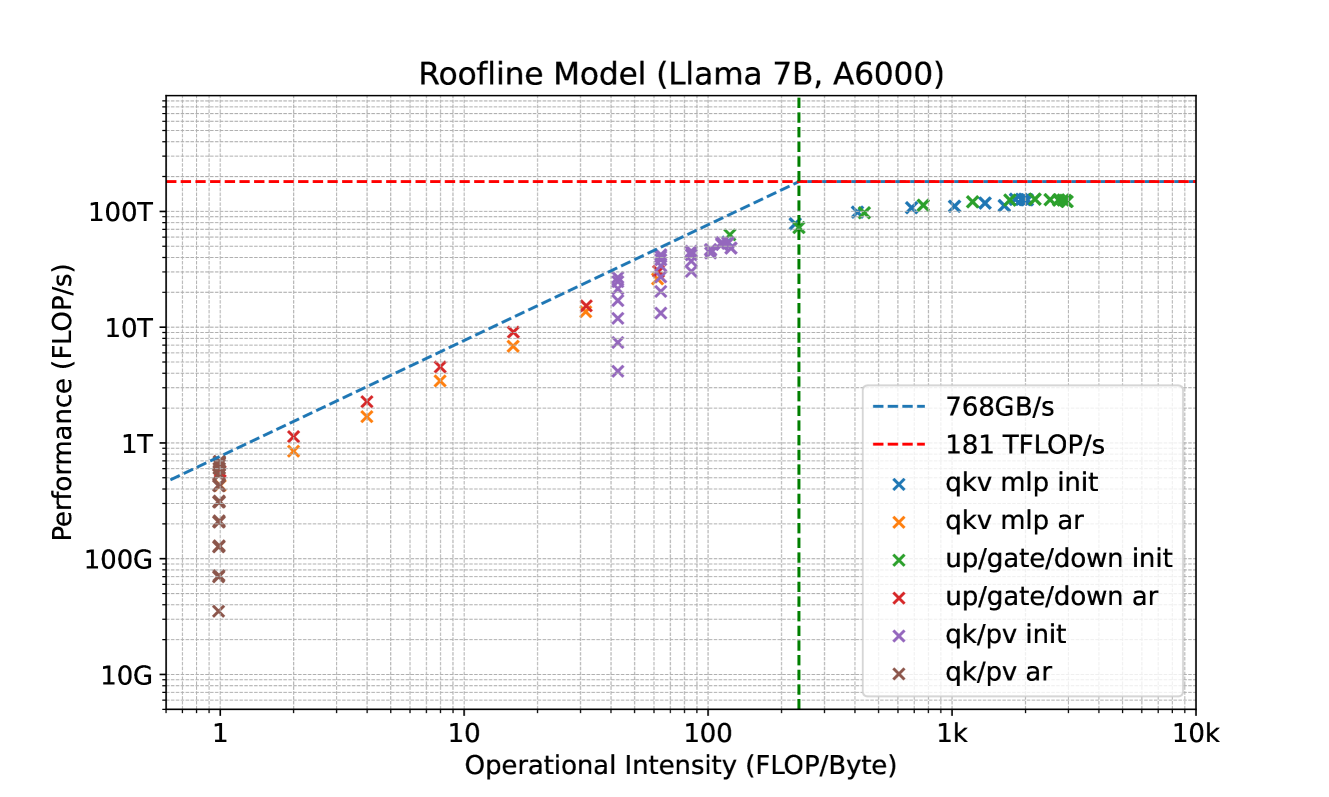

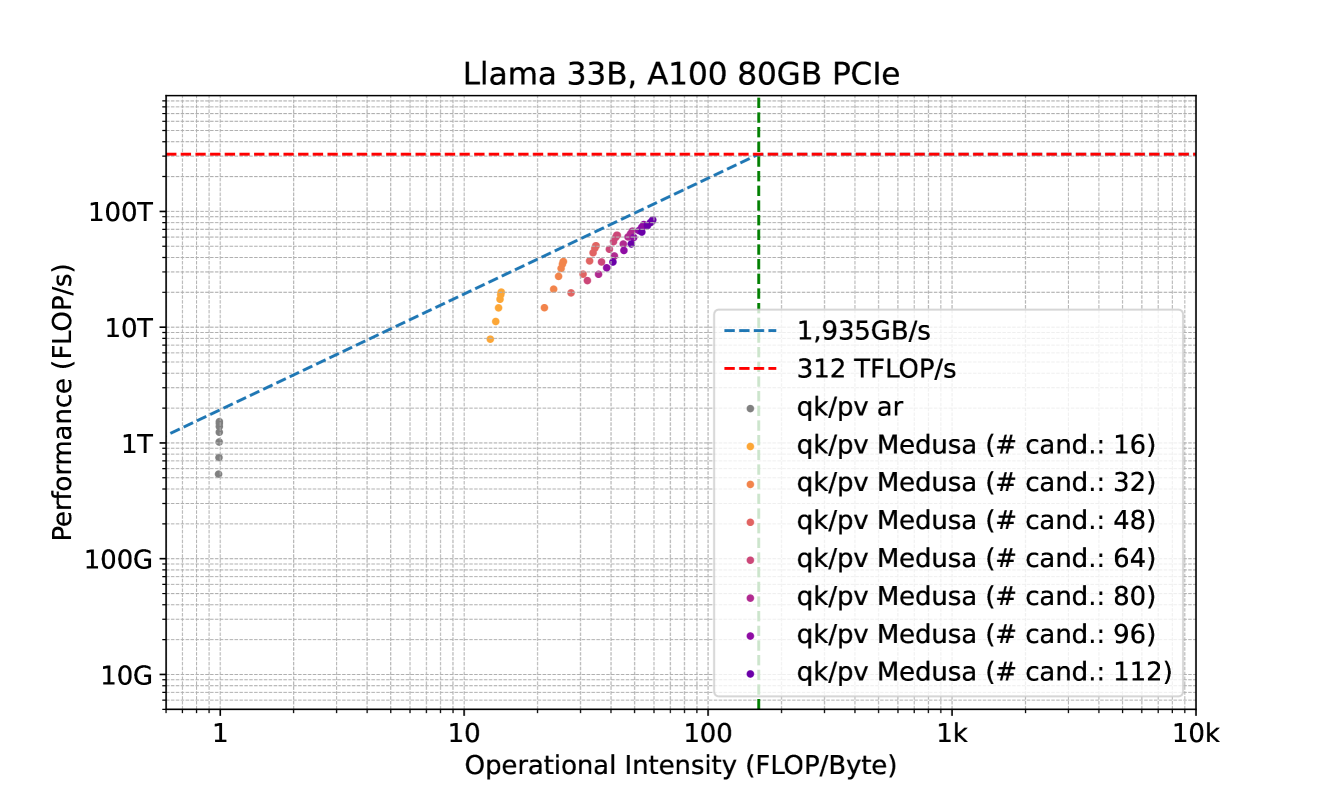

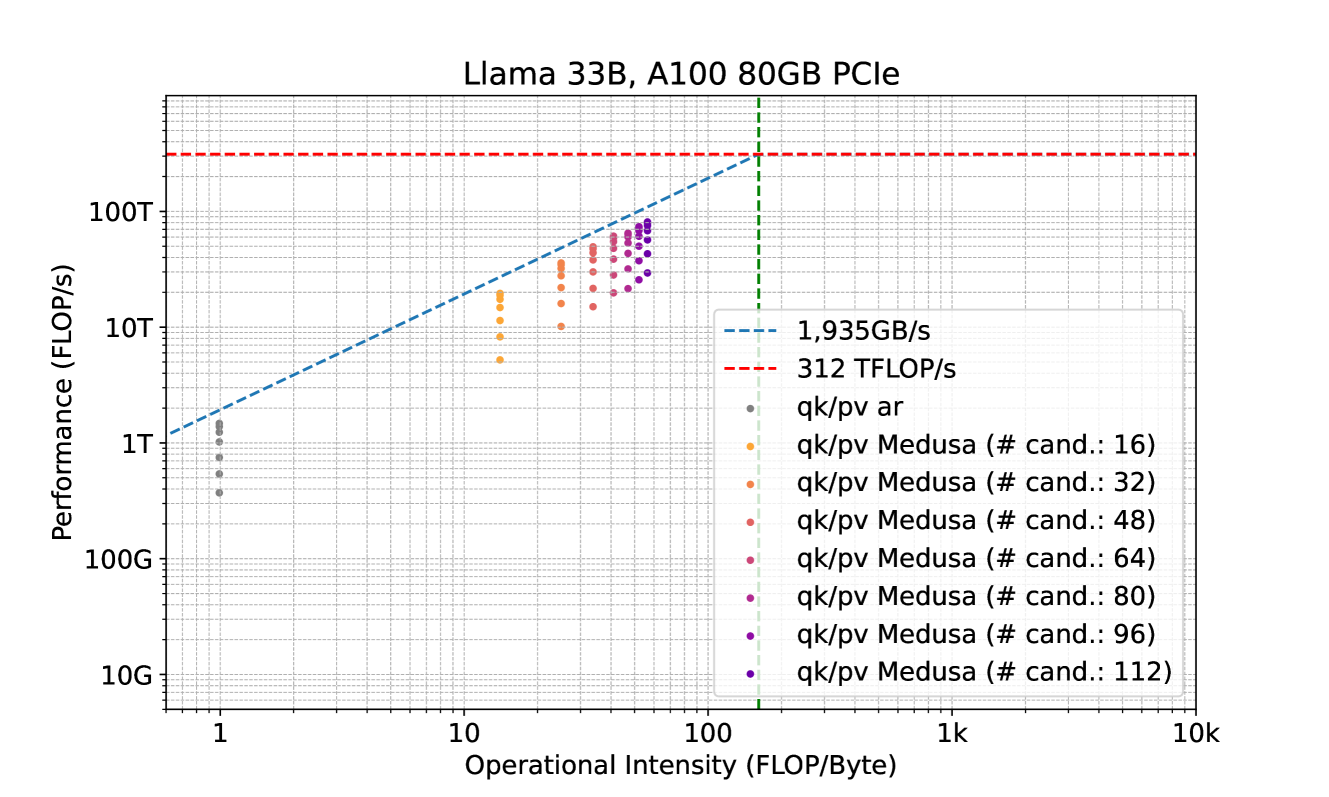

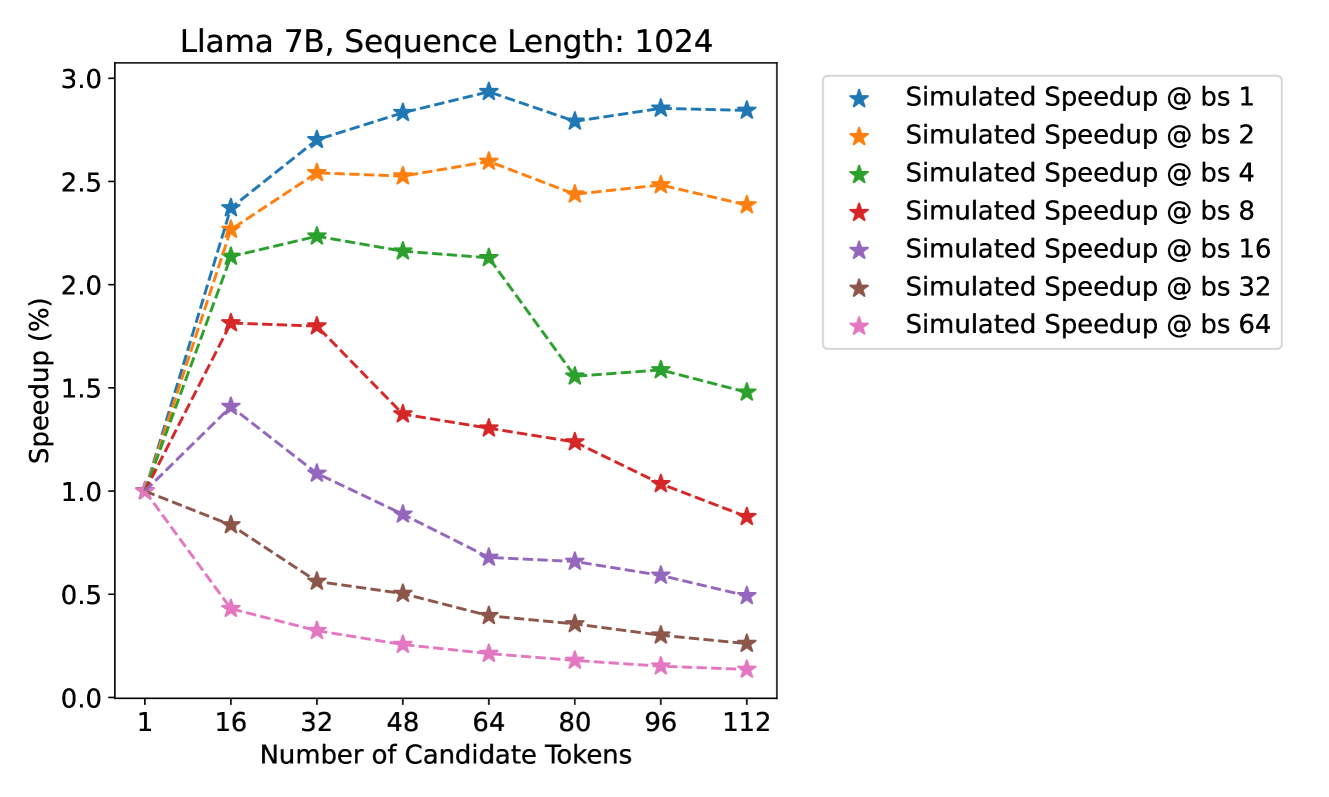

Figure 3: Left: Speed comparison of baseline, Medusa -1 and Medusa -2 on Vicuna-7B/13B. Medusa -1 achieves more than 2 $×$ wall-time speedup compared to the baseline implementation while Medusa -2 further improves the speedup by a significant margin. Right: Detailed speedup performance of Vicuna-7B with Medusa -2 on 8 categories from MT-Bench.

3 Experiments

In this section, we present experiments to demonstrate the effectiveness of Medusa under different settings. First, we evaluate Medusa on the Vicuna-7B and 13B models (Chiang et al., 2023) to show the performance of Medusa -1 and Medusa -2. Then, we assess our method using the Vicuna-33B and Zephyr-7B models to demonstrate self-distillation’s viability in scenarios where direct access to the fine-tuning recipe is unavailable, as with Vicuna-33B, and in models like Zephyr-7B that employ Reinforcement Learning from Human Feedback (RLHF). The evaluation is conducted on MT-Bench (Zheng et al., 2023), a multi-turn, conversational-format benchmark. Detailed settings can be found in Appendix B.

3.1 Case Study: Medusa -1 v.s. Medusa -2 on Vicuna 7B and 13B

Experimental Setup. We use the Vicuna model class (Chiang et al., 2023), which encompasses chat models of varying sizes (7B, 13B, 33B) that are fine-tuned from the Llama model (Touvron et al., 2023). Among them, the 7B and 13B models are trained on the ShareGPT (ShareGPT, 2023) dataset, while the 33B model is an experimental model and is trained on a private dataset. In this section, we use the ShareGPT dataset to train the Medusa heads on the 7B and 13B models for $2$ epochs. We use the v1.5 version of Vicuna models, which are fine-tuned from Llama-2 models with sequence length 4096.

Results. We collect the results and show them in Fig. 3. The baseline is the default Huggingface implementation. In Fig. 3(a), we can see that for the 7B models, Medusa -1 and Medusa -2 configurations lead to a significant increase in speed, measuring in tokens processed per second. Medusa -1 shows a 2.18 $×$ speedup, while Medusa -2 further improves this to a 2.83 $×$ . When applied to the larger 13B model, Medusa -1 results in a 2.33 $×$ speed increase, while Medusa -2 maintains a similar performance gain of 2.83 $×$ over the baseline. We also plot the speedup per category for Medusa -2 Vicuna-7B model. We observe that the coding category benefits from a 3.29 $×$ speedup, suggesting that Medusa is particularly effective for tasks in this domain. This points to a significant potential for optimizing coding LLMs, which are widely used in software development and other programming-related tasks. The “Extraction” category shows the highest speedup at 3.62 $×$ , indicating that this task is highly optimized by the Medusa. Overall, the results suggest that the Medusa significantly enhances inference speed across different model sizes and tasks.

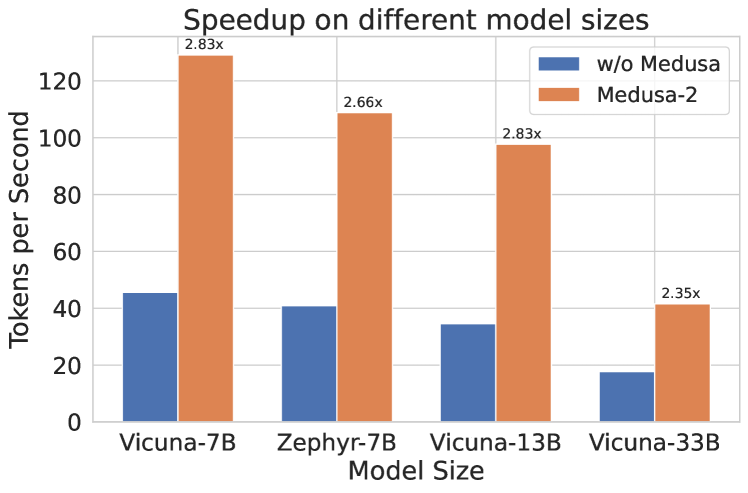

3.2 Case Study: Training with Self-Distillation on Vicuna-33B and Zephyr-7B

Experimental Setup. In this case study, we focus on the cases where self-distillation is needed. We use the Vicuna-33B model (Chiang et al., 2023) and the Zephyr-7B model (Tunstall et al., 2023) as examples. Following the procedure described in Section 2.3.2, we first generate the datasets with some seed prompts. We use ShareGPT (ShareGPT, 2023) and UltraChat (Ding et al., 2023) as the seed datasets and collect a dataset at about $100k$ samples for both cases. Interestingly, we find that the Zephyr model can continue to generate multiple rounds of conversation with a single prompt, which makes it easy to collect a large dataset. For Vicuna-33B, we generate the multi-turn conversations by iteratively feeding the prompts from each multi-turn seed conversation using random sampling with temperature 0.3. Both models are trained with sequence length $2048$ and batch size $128$ .

| Model Name Acc. rate Overhead | Vicuna-7B 3.47 1.22 | Zephyr-7B 3.14 1.18 | Vicuna-13B 3.51 1.23 | Vicuna-33B 3.01 1.27 |

| --- | --- | --- | --- | --- |

| Quality | 6.18 (+0.01) | 7.25 (-0.07) | 6.43 (-0.14) | 7.18 (+0.05) |

| $S_{\textnormal{SpecDecoding}}$ | 1.47 | - | 1.56 | 1.60 |

| $S_{\textsc{Medusa}}$ | 2.83 | 2.66 | 2.83 | 2.35 |

Table 1: Comparison of various Medusa -2 models. The first section reports the details of Medusa -2, including accelerate rate, overhead, and quality that denoted the average scores on the MT-Bench compared to the original models. The second section lists the speedup ( $S$ ) of SpecDecoding and Medusa, respectively.

<details>

<summary>x4.png Details</summary>

### Visual Description

# Technical Document Extraction: Performance Analysis Chart

## 1. Component Isolation

* **Header/Legend:** Located in the top-left quadrant. Contains the label for the primary data series.

* **Main Chart Area:** A scatter plot with an overlaid line graph, featuring a grid background.

* **Axes:** Y-axis (left) representing "Acc. Rate" and X-axis (bottom) representing "Number of Candidate Tokens".

* **Annotations:** Text labels and arrows pointing to specific data points within the plot area.

## 2. Axis and Legend Extraction

### Axis Labels

| Axis | Label | Markers |

| :--- | :--- | :--- |

| **Y-Axis (Vertical)** | `Acc. Rate` | 1.0, 1.5, 2.0, 2.5, 3.0, 3.5 |

| **X-Axis (Horizontal)** | `Number of Candidate Tokens` | 0, 50, 100, 150, 200, 250 |

### Legend

* **Location:** Top-left corner of the plot area.

* **Label:** `Sparse Tree Attention`

* **Visual Style:** Red dashed line with red star markers.

## 3. Data Series Analysis

### Series 1: Sparse Tree Attention (Primary Trend)

* **Visual Trend:** The line slopes upward from left to right, showing a positive correlation between the number of candidate tokens and the acceleration rate. The rate of increase slows down as the x-value increases (logarithmic-like growth).

* **Data Points (Approximate):**

* (64, ~3.15)

* (128, ~3.32)

* (256, ~3.48)

### Series 2: Baseline/Comparison Scatter Plot

* **Visual Trend:** A dense cluster of blue semi-transparent dots. The trend follows a logarithmic curve, starting sharply from x=0 and flattening out as it approaches x=250. The values are consistently lower than the "Sparse Tree Attention" series.

* **Data Range:**

* **X-axis:** Starts near 5 and extends to 256.

* **Y-axis:** Starts near 2.2 and reaches a maximum density around 2.8 - 3.1.

### Series 3: Baseline Marker (w/o Medusa)

* **Visual Trend:** A single isolated data point.

* **Data Point:** (1, 1.0)

* **Annotation:** A grey arrow points from the text "w/o Medusa" to a solid blue circle at the coordinate (1, 1.0).

## 4. Summary of Findings

The chart illustrates the performance improvement (Acceleration Rate) of different configurations based on the number of candidate tokens used.

1. **Baseline (w/o Medusa):** Represents the starting point with an Acc. Rate of 1.0 at 1 candidate token.

2. **Standard Medusa (Scatter Plot):** Shows significant improvement over the baseline, reaching Acc. Rates between 2.5 and 3.0 as candidate tokens increase.

3. **Sparse Tree Attention (Red Dashed Line):** Represents the highest performing method shown. It consistently outperforms the standard scatter plot data points. At 256 candidate tokens, it achieves an acceleration rate of approximately 3.5x, compared to the ~3.0x seen in the dense scatter plot.

</details>

(a)

<details>

<summary>x5.png Details</summary>

### Visual Description

# Technical Document Extraction: Performance Analysis of Sparse Tree Attention

## 1. Image Overview

This image is a scatter plot with an overlaid line graph comparing the inference speed (throughput) of different configurations for a Large Language Model (LLM) acceleration technique, likely related to the "Medusa" speculative decoding framework.

## 2. Component Isolation

### Header / Metadata

* **Language:** English

* **Primary Subject:** Speed (token/s) vs. Number of Candidate Tokens.

### Axis Definitions

* **Y-Axis (Vertical):**

* **Label:** Speed (token/s)

* **Scale:** Linear, ranging from 60 to 120 with major tick marks every 20 units (60, 80, 100, 120).

* **X-Axis (Horizontal):**

* **Label:** Number of Candidate Tokens

* **Scale:** Linear, ranging from 0 to 250 with major tick marks every 50 units (0, 50, 100, 150, 200, 250).

### Legend [Spatial Placement: Top Right]

| Symbol | Label |

| :--- | :--- |

| Red dashed line with star markers (`--*--`) | Sparse Tree Attention |

## 3. Data Series Analysis

### Series 1: Baseline (w/o Medusa)

* **Visual Description:** A single blue circular data point located at the bottom left of the chart.

* **Annotation:** An arrow points to this dot with the text "w/o Medusa".

* **Coordinates:** Approximately [x: 1, y: 45].

* **Trend:** This represents the base performance of the model without speculative decoding enhancements.

### Series 2: Standard Medusa / Dense Attention (Scatter Plot)

* **Visual Description:** A dense collection of small blue semi-transparent dots.

* **Trend Verification:**

* **0 to 60 tokens:** The speed increases sharply as the number of candidate tokens increases, peaking around 110 token/s.

* **60 to 250 tokens:** The speed follows a "stair-step" downward trend. There are distinct clusters where performance drops significantly at specific thresholds (roughly at 128 and 192 tokens).

* **Key Data Clusters:**

* **Peak:** ~110 token/s at ~60 candidate tokens.

* **Mid-range:** ~100 token/s between 70 and 125 candidate tokens.

* **Lower-range:** ~85 token/s between 130 and 185 candidate tokens.

* **Bottom-range:** ~75 token/s between 190 and 250 candidate tokens.

### Series 3: Sparse Tree Attention (Line Graph)

* **Visual Description:** A red dashed line connecting three large red star markers.

* **Trend Verification:** The line slopes downward as the number of candidate tokens increases, but it consistently maintains a higher speed than the blue scatter points (Standard Medusa).

* **Extracted Data Points:**

1. **Point 1:** [x: ~64, y: ~118] - Highest recorded speed.

2. **Point 2:** [x: ~128, y: ~112] - Maintains high speed even as candidate tokens double.

3. **Point 3:** [x: ~256, y: ~82] - Speed drops as candidate tokens reach the maximum shown, but remains above the dense scatter plot baseline for that x-value.

## 4. Technical Summary and Findings

* **Performance Gain:** Implementing Medusa (even without Sparse Tree Attention) provides a massive speedup from ~45 token/s to over 100 token/s.

* **Efficiency Optimization:** "Sparse Tree Attention" (Red Line) acts as a Pareto frontier, representing the optimal speed for a given number of candidate tokens. It effectively mitigates the performance degradation seen in the standard implementation (Blue Dots) as the complexity (number of tokens) increases.

* **Scaling Behavior:** Standard attention shows significant performance "cliffs" or drops at specific token counts, likely due to hardware memory limits or kernel inefficiencies. Sparse Tree Attention smooths this degradation and keeps the throughput significantly higher at high candidate counts (e.g., at 250 tokens, Sparse Tree is ~82 token/s vs ~75 token/s for standard).

</details>

(b)

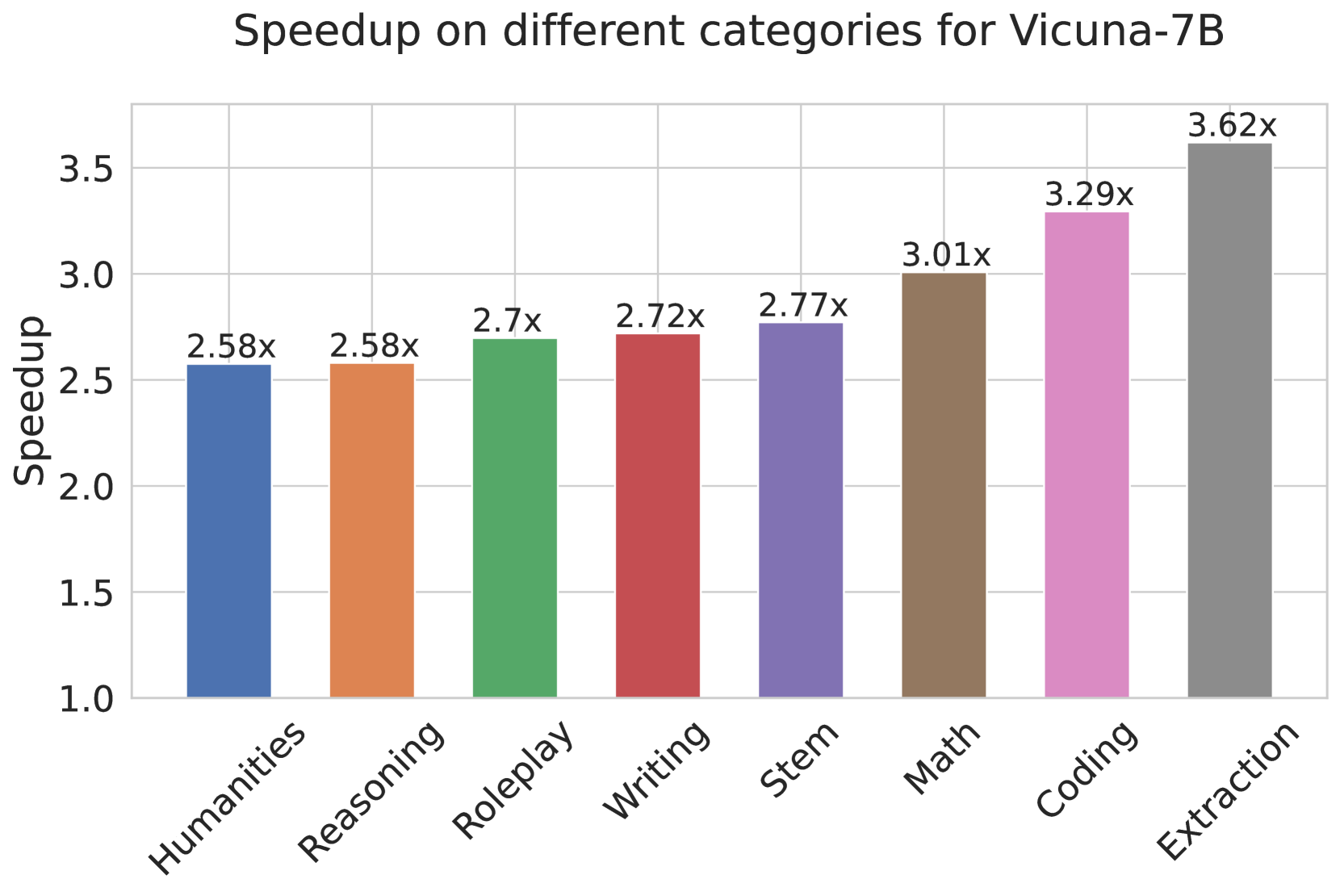

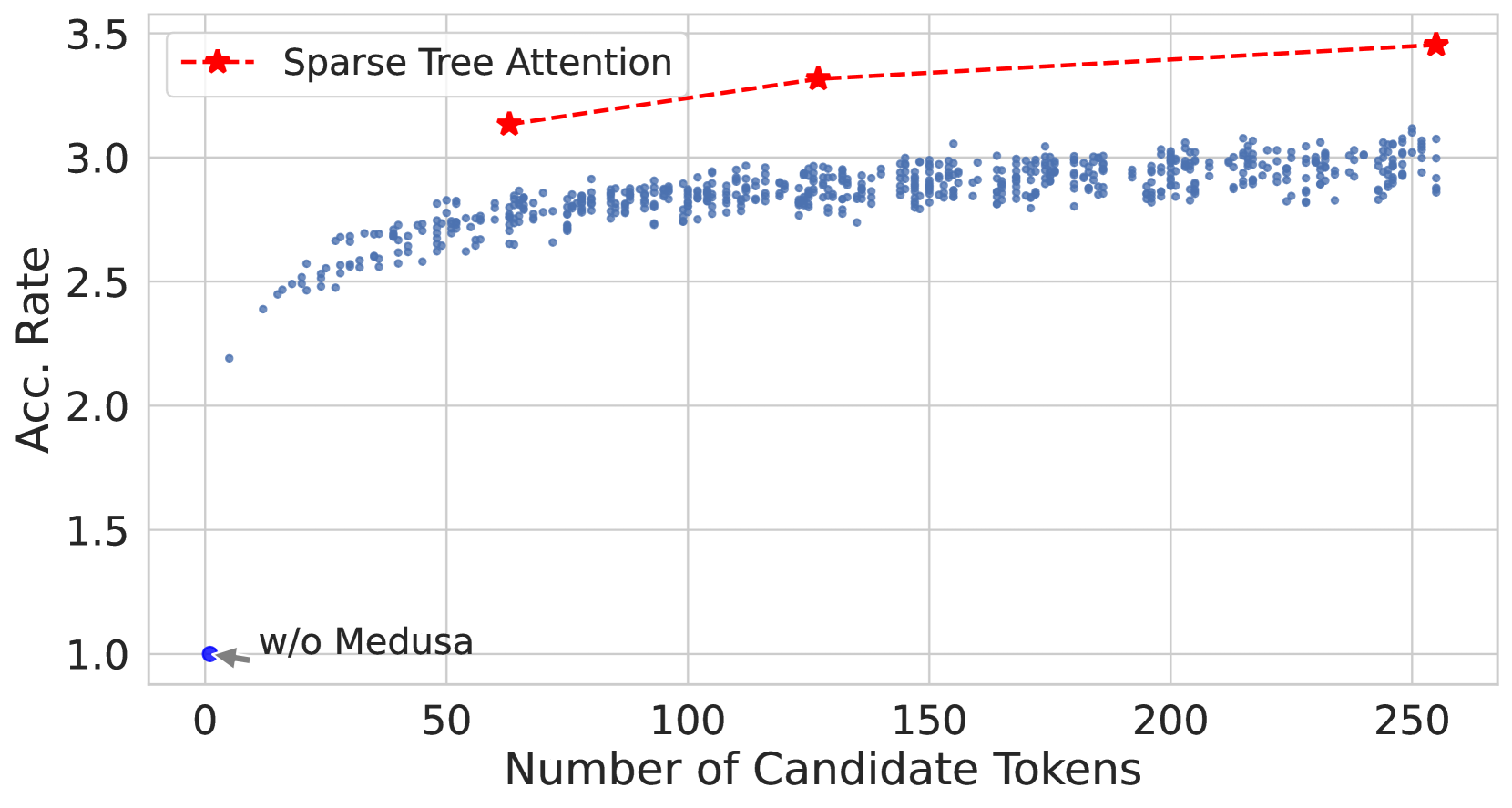

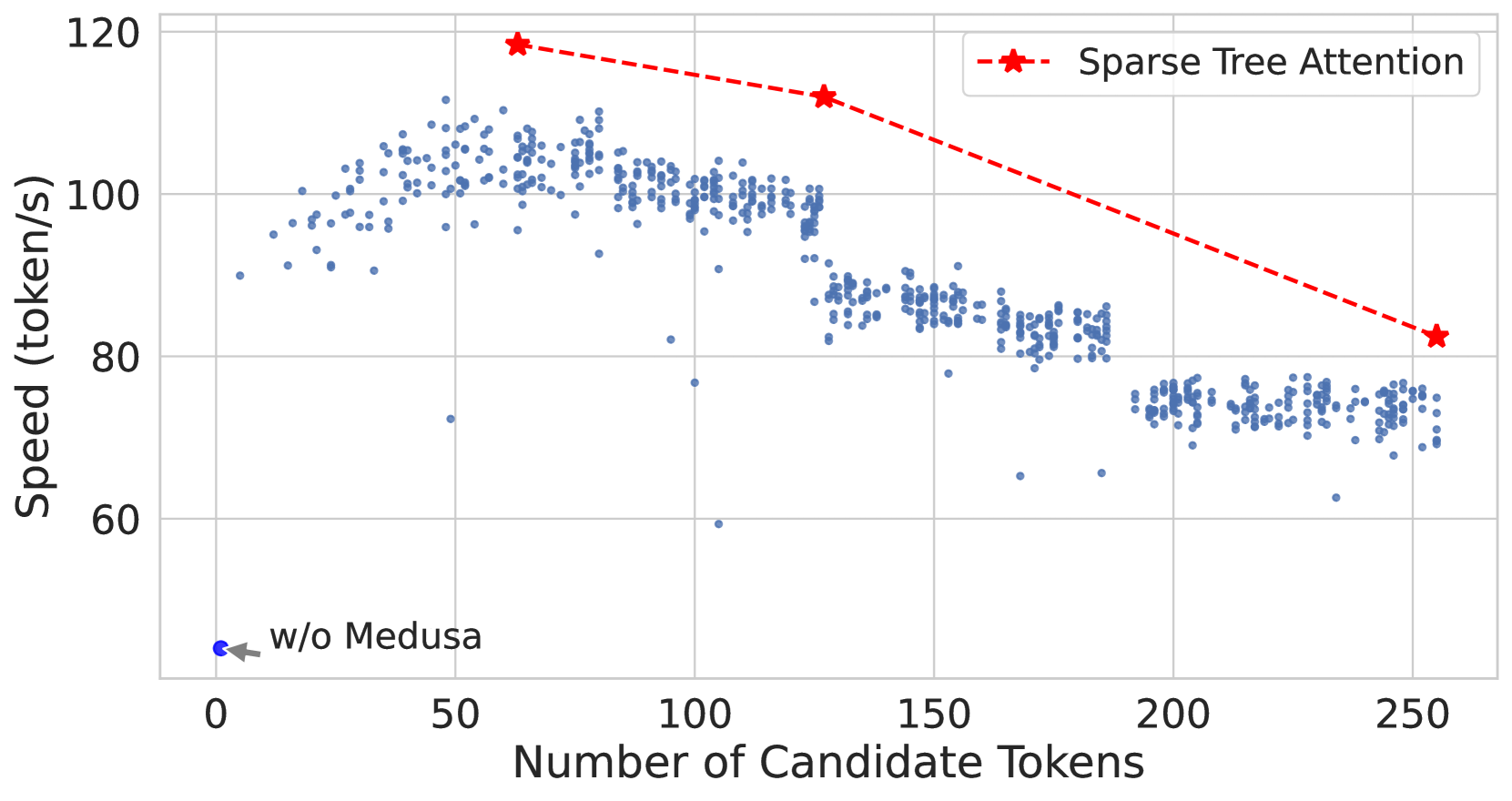

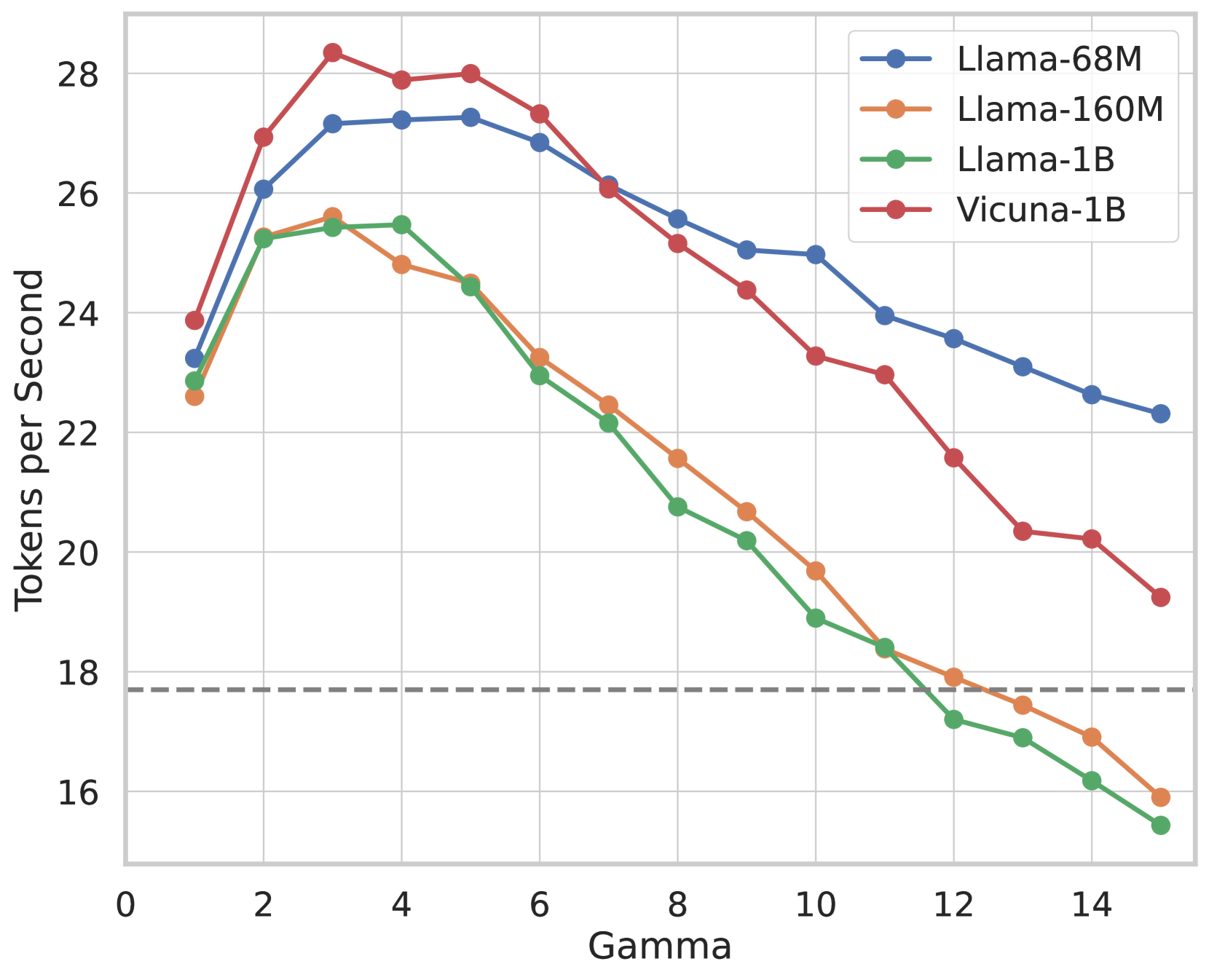

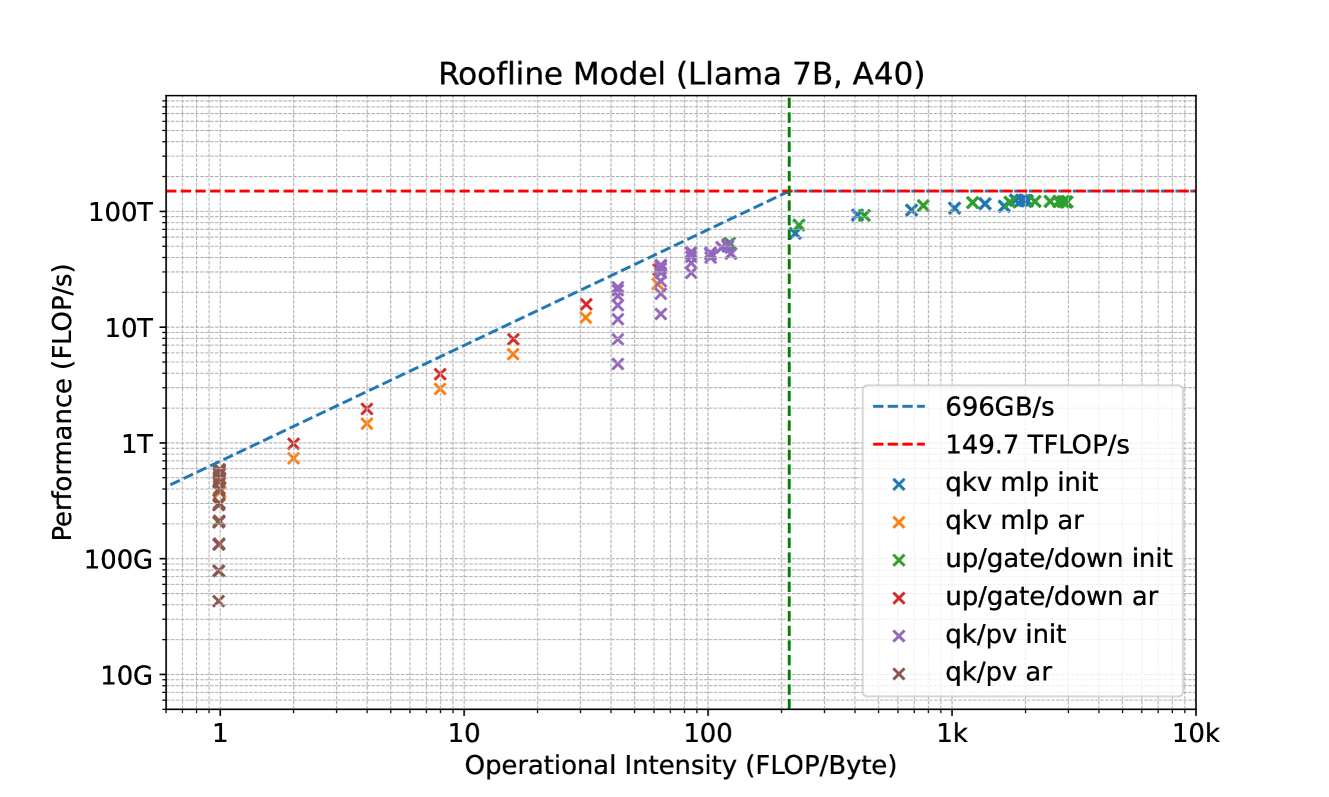

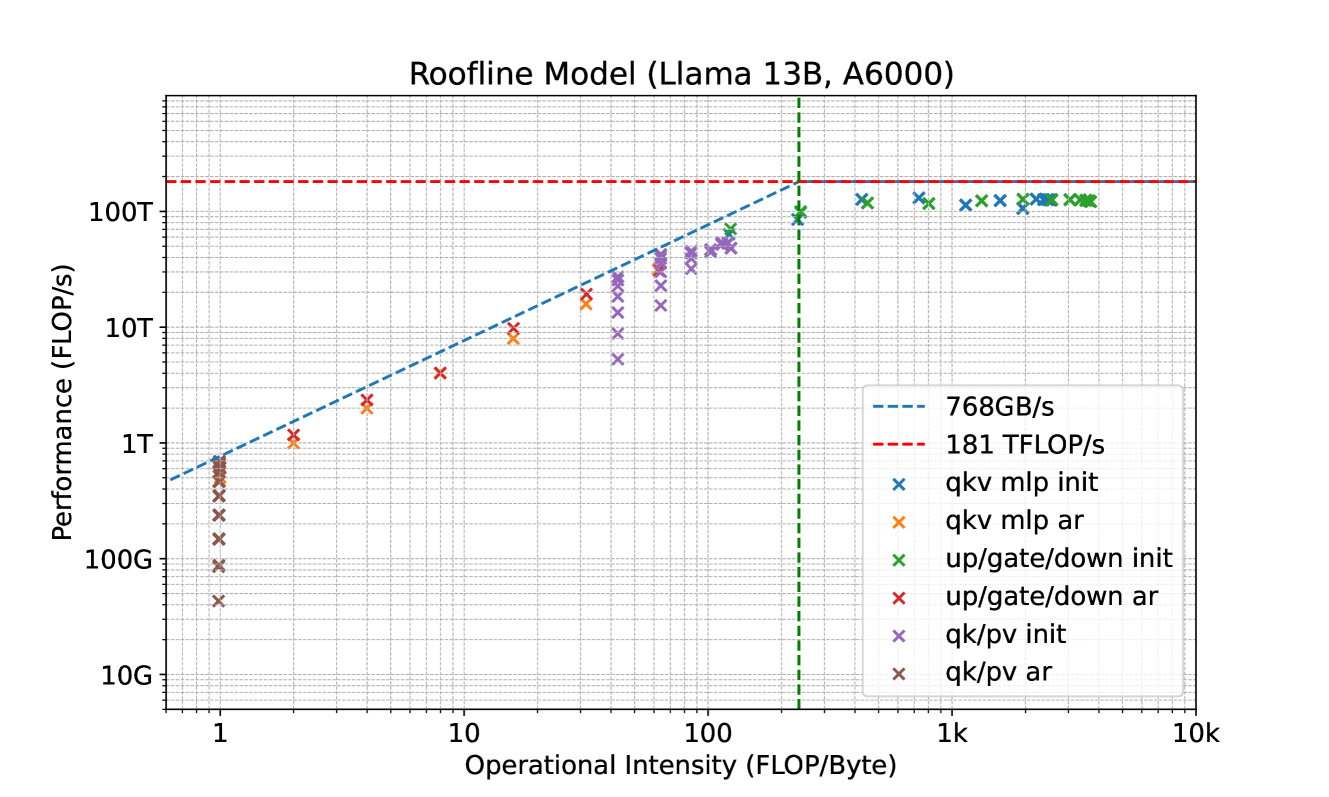

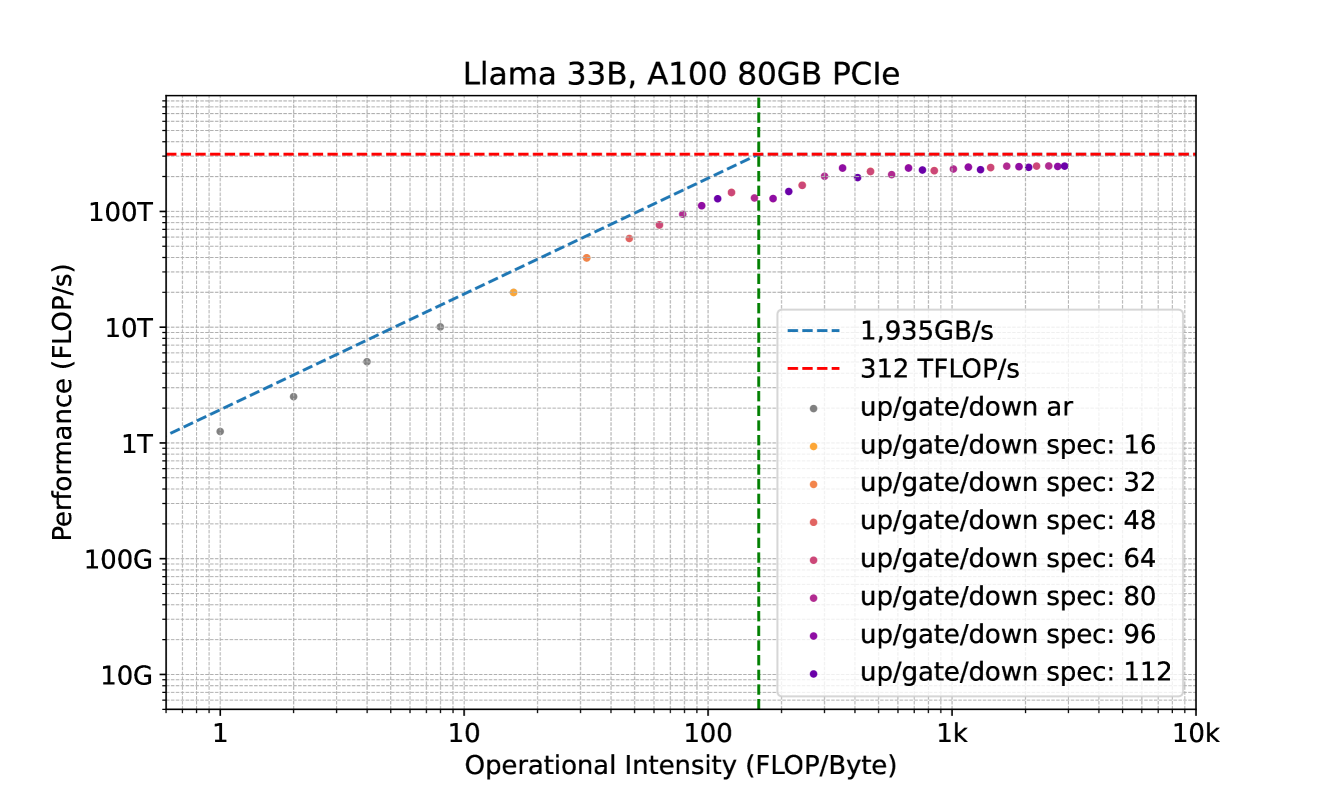

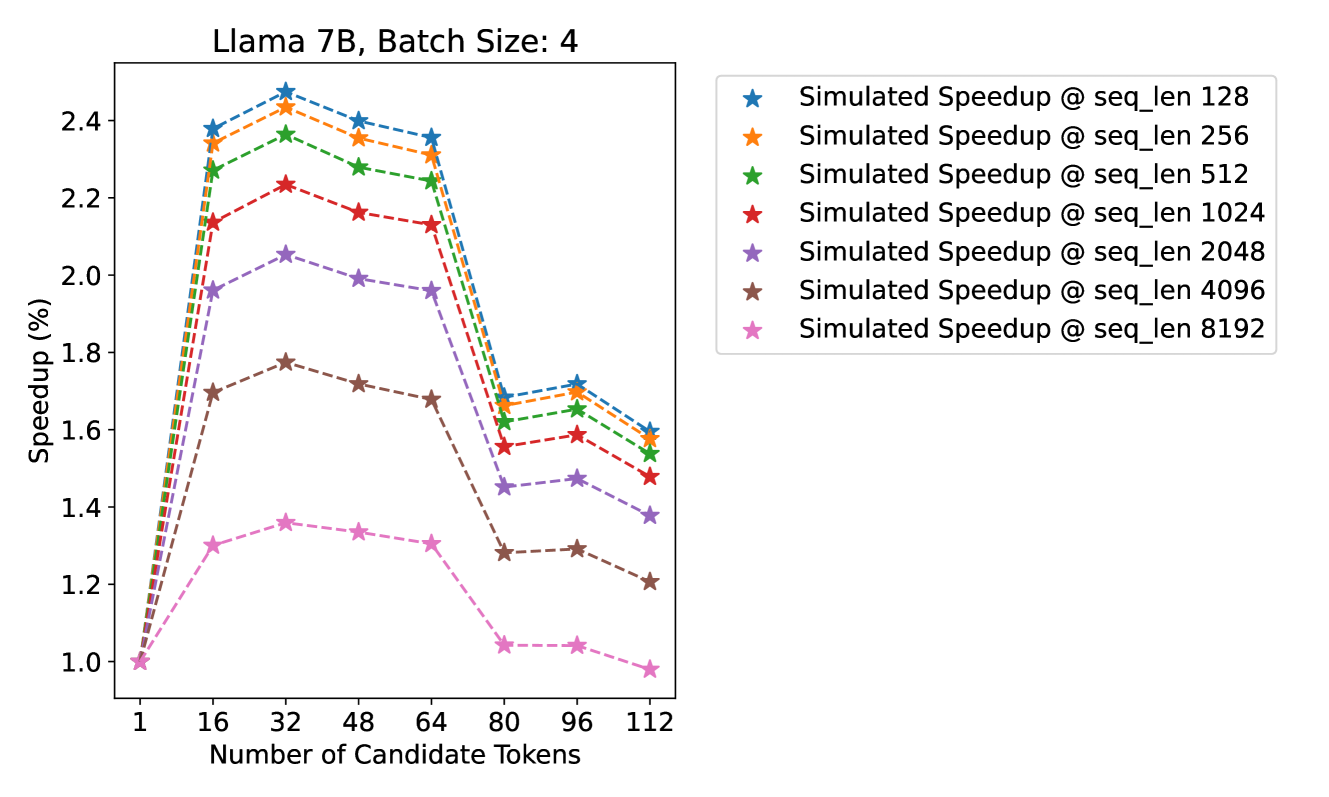

Figure 4: Effectiveness of numbers of candidate tokens for decoding introduced by trees (default number of candidate token for decoding is 1 when using KV cache). Left: The acceleration rate for randomly sampled dense tree settings (blue dots) and optimized sparse tree settings (red stars). Right: The speed (tokens/s) for both settings. The trend lines indicate that while the acceleration rate remains relatively stable for sparse trees, there is a notable decrease in speed as the candidate tokens increases.

Results. Table 1 complements these findings by comparing various Medusa -2 models in terms of their acceleration rate, overhead, and quality on MT-Bench with GPT-4 acting as the evaluator to assign performance scores ranging from 0 to 10. We report the quality differences of Medusa compared to the original model. Notably, while the Medusa -2 Vicuna-33B model shows a lower acceleration rate, it maintains a comparable quality. We hypothesize that this is due to a mismatch between the hidden training dataset and the dataset we used for self-distillation. Hence, the model’s generation quality can be well aligned by self-distillation while Medusa heads learn distribution from the self-distillation that potentially shifts from the training set. In our study, we also applied speculative decoding (Chen et al., 2023; Leviathan et al., 2022) to the Vicuna lineup using open-source draft models (details can be found in Appendix D).

These results underscore the complex interplay between speed and performance when scaling up model sizes and applying self-distillation techniques. The findings also highlight the potential of the Medusa -2 configuration to boost efficiency in processing while carefully preserving the quality of the model’s outputs, suggesting a promising direction for co-optimizing LLMs with Medusa heads.

3.3 Ablation Study

3.3.1 Configuration of Tree Attention

The study of tree attention is conducted on the writing and roleplay categories from the MT-Bench dataset using Medusa -2 Vicuna-7B. We target to depict tree attention’s motivation and its performance.

Fig. 4(a) compares the acceleration rate of randomly sampled dense tree configurations (Section. 2.1.2, depicted by blue dots) against optimized sparse tree settings (Section. 2.3.3, shown with red stars). The sparse tree configuration with 64 nodes shows a better acceleration rate than the dense tree settings with 256 nodes. The decline in speed in Fig. 4(b) is attributed to the increased overhead introduced by the compute-bound. While a more complex tree can improve acceleration, it does so at the cost of speed due to intensive matrix multiplications for linear layers and self-attention. The acceleration rate increase follows a logarithmic trend and slows down when the tree size grows as shown in Fig. 4(a). However, the initial gains are substantial, allowing Medusa to achieve significant speedups. If the acceleration increase is less than the overhead, it will slow down overall performance. For detailed study, please refer to Appendix G.

<details>

<summary>x6.png Details</summary>

### Visual Description

# Technical Document Extraction: Performance Metrics vs. Posterior Thresholds

## 1. Image Overview

This image is a dual-axis line chart comparing two performance metrics—**Acc. Rate** (Acceptance Rate) and **Scores**—across a range of **Posterior Thresholds**. The chart also includes baseline markers for two methodologies: **RS** (Random Search) and **Greedy**.

## 2. Component Isolation

### A. Header / Metadata

* **Language:** English.

* **Content:** No explicit title text is present above the chart area.

### B. Main Chart Area (Axes and Labels)

* **X-Axis (Bottom):**

* **Label:** `Posterior Thresholds`

* **Scale:** Linear, ranging from `0.00` to `0.25`.

* **Markers:** `0.00`, `0.05`, `0.10`, `0.15`, `0.20`, `0.25`.

* **Primary Y-Axis (Left - Blue):**

* **Label:** `Acc. Rate`

* **Scale:** Linear, ranging from `3.0` to `3.5`.

* **Markers:** `3.0`, `3.1`, `3.2`, `3.3`, `3.4`, `3.5`.

* **Secondary Y-Axis (Right - Orange/Gold):**

* **Label:** `Scores`

* **Scale:** Linear, ranging from `7.0` to `7.6`.

* **Markers:** `7.0`, `7.1`, `7.2`, `7.3`, `7.4`, `7.5`, `7.6`.

### C. Legend and Baselines

The chart utilizes a non-standard legend format where baseline values are plotted as individual points on the Y-axes.

* **Left Side Baselines (Acc. Rate):**

* **Greedy (Blue Star):** Positioned at approximately `Acc. Rate = 3.05` at `Threshold = 0.00`.

* **RS (Blue Circle):** Positioned at approximately `Acc. Rate = 2.98` (just below the 3.0 line) at `Threshold = 0.00`.

* **Right Side Baselines (Scores):**

* **RS (Orange Circle):** Positioned at approximately `Score = 7.45`.

* **Greedy (Orange Star):** Positioned at approximately `Score = 7.41`.

---

## 3. Data Series Analysis

### Series 1: Acc. Rate (Solid Blue Line)

* **Trend Verification:** The line starts at its maximum value and exhibits a sharp downward slope initially. After reaching a local minimum around threshold 0.06, it enters a stabilized oscillatory pattern (a "wavy" horizontal trend) for the remainder of the x-axis.

* **Key Data Points:**

* **Start (0.01):** ~3.50 (Peak)

* **Initial Drop (0.05):** ~3.30

* **Local Min (0.06):** ~3.24

* **Stabilization Range:** Fluctuates between ~3.22 and ~3.30 for thresholds 0.07 to 0.25.

* **End (0.25):** ~3.26

### Series 2: Scores (Solid Orange/Gold Line)

* **Trend Verification:** This series is highly volatile. It shows no singular upward or downward trend but rather a series of sharp peaks and valleys across the entire threshold range.

* **Key Data Points:**

* **Start (0.01):** ~7.05

* **First Peak (0.06):** ~7.38

* **Deep Valley (0.10):** ~7.02

* **Mid Peak (0.14):** ~7.42

* **Deep Valley (0.16):** ~7.08

* **High Peak (0.20):** ~7.55

* **Final Peak (0.24):** ~7.58 (Global Maximum)

* **End (0.25):** ~7.52

---

## 4. Summary of Findings

1. **Inverse Relationship (Initial):** At very low posterior thresholds (< 0.05), the Acceptance Rate is at its highest while Scores are relatively low.

2. **Stability vs. Volatility:** The `Acc. Rate` (Blue) becomes relatively stable after the initial threshold increase, whereas the `Scores` (Orange) remain highly sensitive to specific threshold values, showing significant variance.

3. **Baseline Comparison:**

* The `Acc. Rate` for all thresholds shown (0.01 - 0.25) remains significantly higher than the **Greedy** (~3.05) and **RS** (~2.98) baselines.

* The `Scores` fluctuate around the **RS** (~7.45) and **Greedy** (~7.41) baselines, only consistently exceeding them at specific threshold intervals (e.g., near 0.20 and 0.24).

</details>

Figure 5: Performance comparison of Medusa using proposed typical sampling. The model is fully fine-tuned from Vicuna-7B. The plot illustrates the acceleration rate and average scores on the writing and roleplay (MT-Bench) with a fixed temperature of 0.7 for 3 different settings: greedy sampling and random sampling (RS) plotted as the star and the dot, and typical sampling curves under different thresholds.

3.3.2 Thresholds of Typical Acceptance

The thresholds of typical acceptance are studied on the writing and roleplay categories from the MT-Bench dataset (Zheng et al., 2023) using Medusa -2 Vicuna 7B. Utilizing the Vicuna 7B model, we aligned our methodology with the approach delineated by (Hewitt et al., 2022) setting the $\alpha=\sqrt{\epsilon}$ . Fig. 5 presents a comparative analysis of our model’s performance across various sampling settings. These settings range from a threshold $\epsilon$ starting at 0.01 and incrementally increasing to 0.25 in steps of 0.01. Our observations indicate a discernible trade-off: as $\epsilon$ increases, there is an elevation in quality at the expense of a reduced acceleration rate. Furthermore, for tasks demanding creativity, it is noted that the default random sampling surpasses greedy sampling in performance, and the proposed typical sampling is comparable with random sampling when $\epsilon$ increases.

| | Baseline | Direct Fine-tuning | Medusa -1 | Medusa -2 |

| --- | --- | --- | --- | --- |

| Quality | 6.17 | 5.925 | 6.23 | 6.18 |

| Speedup | N/A | N/A | 2.18 | 2.83 |

Table 2: Comparison of Different Settings of Vicuna-7B. Quality is obtained by evaluating models on MT-Bench using GPT-4 as the judge (higher the better).

3.3.3 Effectiveness of Two-stage Fine-tuning

Table 2 shows the performance differences between various fine-tuning strategies for the Vicuna-7B model. Medusa -1, which fine-tunes only the Medusa heads, achieves a 2.18x speedup without compromising generation quality. Medusa -2, which employs two-stage fine-tuning (Section 2.2.2), maintains generation quality and provides greater speedup (2.83x) compared to Medusa -1. In contrast, direct fine-tuning the model with the Medusa heads results in degraded generation quality. The findings indicate that implementing our Medusa -2 for fine-tuning maintains the model’s quality and concurrently improves the speedup versus Medusa -1.

Table 3: Impact of Techniques on Speedup

| Medusa-1 heads without tree attention | $\sim$ 1.5x |

| --- | --- |

| Adding tree attention | $\sim$ 1.9x |

| Using optimized tree configuration | $\sim$ 2.2x |

| Training heads with Medusa-2 | $\sim$ 2.8x |

4 Discussion

In conclusion, Medusa enhances LLM inference speed by 2.3-2.8 times by equipping models with additional predictive decoding heads, allowing for generating multiple tokens simultaneously and bypassing the sequential decoding limitation. Key advantages of Medusa include its simplicity, parameter efficiency, and ease of integration into existing systems. Medusa avoids the need for specialized draft models. The typical acceptance scheme removes complications from rejection sampling while providing reasonable outputs. Our approach including two efficient training procedures, ensures high-quality output across various models and prompt types. We summarize the development of each technique and their impact on the speedup in Table 3.

In the paper, we focus on the setting with batch size 1 for simplicity. Yet, we want to emphasize that the ideas presented in our paper can be generalized to larger batch-size settings, which are now supported by libraries like TensorRT and Huggingface TGI following our paper.

Acknowledgements

We extend our heartfelt gratitude to several individuals whose contributions were invaluable to this project:

- Zhuohan Li, for his invaluable insights on LLM serving. If you haven’t already, do check out Zhuohan’s vLLM project—it’s nothing short of impressive.

- Shaojie Bai, for engaging in crucial discussions that helped shape the early phases of this work.

- Denny Zhou, for introducing the truncation sampling scheme to Tianle and encouraging Tianle to explore the area of LLM serving.

- Yanping Huang, for pointing out the memory-bandwidth-bound challenges associated with LLM serving to Tianle.

- Lianmin Zheng, for clarifying the different training recipes used in different sizes of Vicuna models.

Jason D. Lee acknowledges the support of the NSF CCF 2002272, NSF IIS 2107304, and NSF CAREER Award 2144994. Deming Chen acknowledges the support from the AMD Center of Excellence at UIUC.

Impact Statement

The introduction of Medusa, an innovative method to improve the inference speed of Large Language Models (LLMs), presents a range of broader implications for society, technology, and ethics. This section explores these implications in detail.

Societal and Technological Implications

- Accessibility and Democratization of AI: By significantly enhancing the efficiency of LLMs, Medusa makes advanced AI technologies more accessible to a wider range of users and organizations. Democratization can spur innovation across various sectors, including education, healthcare, and entertainment, potentially leading to breakthroughs that benefit society at large.

- Environmental Impact: The acceleration for LLM inference due to Medusa could lead to decreased energy consumption and a smaller carbon footprint. This aligns with the growing need for sustainable AI practices, contributing to environmental conservation efforts.

- Economic Implications: The increased efficiency brought about by Medusa may lower the cost barrier to deploying state-of-the-art AI models, enabling small and medium-sized enterprises to leverage advanced AI capabilities. This could stimulate economic growth, foster competition, and drive technological innovation.

Ethical Considerations

- Bias and Fairness: While Medusa aims to improve LLM efficiency, it inherits the ethical considerations of its backbone models, including issues related to bias and fairness. The method’s ability to maintain generation quality necessitates investigation to ensure that the models do not perpetuate or amplify existing biases.

- Transparency and Accountability: The complexity of Medusa, particularly with its tree-based attention mechanism and multiple decoding heads, may pose challenges in terms of model interpretability. Ensuring transparency in how decisions are made and maintaining accountability for those decisions are crucial for building trust in AI systems.

- Security and Privacy: The accelerated capabilities of LLMs augmented by Medusa could potentially be exploited for malicious purposes, such as generating disinformation at scale or automating cyber-attacks. It is imperative to develop and enforce ethical guidelines and security measures to prevent misuse.

References

- Ainslie et al. (2023) Ainslie, J., Lee-Thorp, J., de Jong, M., Zemlyanskiy, Y., Lebrón, F., and Sanghai, S. Gqa: Training generalized multi-query transformer models from multi-head checkpoints. arXiv preprint arXiv:2305.13245, 2023.

- Axolotl (2023) Axolotl. Axolotl. https://github.com/OpenAccess-AI-Collective/axolotl, 2023.

- Basu et al. (2021) Basu, S., Ramachandran, G. S., Keskar, N. S., and Varshney, L. R. {MIROSTAT}: A {neural} {text} {decoding} {algorithm} {that} {directly} {controls} {perplexity}. In International Conference on Learning Representations, 2021. URL https://openreview.net/forum?id=W1G1JZEIy5_.

- Brown et al. (2020) Brown, T., Mann, B., Ryder, N., Subbiah, M., Kaplan, J. D., Dhariwal, P., Neelakantan, A., Shyam, P., Sastry, G., Askell, A., et al. Language models are few-shot learners. Advances in neural information processing systems, 33:1877–1901, 2020.

- Chen et al. (2023) Chen, C., Borgeaud, S., Irving, G., Lespiau, J.-B., Sifre, L., and Jumper, J. Accelerating large language model decoding with speculative sampling. February 2023. doi: 10.48550/ARXIV.2302.01318.

- Chen (2023) Chen, L. Dissecting batching effects in gpt inference. https://le.qun.ch/en/blog/2023/05/13/transformer-batching/, 2023. Blog.

- Chiang et al. (2023) Chiang, W.-L., Li, Z., Lin, Z., Sheng, Y., Wu, Z., Zhang, H., Zheng, L., Zhuang, S., Zhuang, Y., Gonzalez, J. E., Stoica, I., and Xing, E. P. Vicuna: An open-source chatbot impressing gpt-4 with 90%* chatgpt quality, March 2023. URL https://lmsys.org/blog/2023-03-30-vicuna/.

- Chowdhery et al. (2022) Chowdhery, A., Narang, S., Devlin, J., Bosma, M., Mishra, G., Roberts, A., Barham, P., Chung, H. W., Sutton, C., Gehrmann, S., et al. Palm: Scaling language modeling with pathways. arXiv preprint arXiv:2204.02311, 2022.

- Dettmers et al. (2021) Dettmers, T., Lewis, M., Shleifer, S., and Zettlemoyer, L. 8-bit optimizers via block-wise quantization. International Conference on Learning Representations, 2021.

- Dettmers et al. (2022) Dettmers, T., Lewis, M., Belkada, Y., and Zettlemoyer, L. Llm. int8 (): 8-bit matrix multiplication for transformers at scale. arXiv preprint arXiv:2208.07339, 2022.

- Dettmers et al. (2023) Dettmers, T., Pagnoni, A., Holtzman, A., and Zettlemoyer, L. Qlora: Efficient finetuning of quantized llms. arXiv preprint arXiv:2305.14314, 2023.

- Ding et al. (2023) Ding, N., Chen, Y., Xu, B., Qin, Y., Zheng, Z., Hu, S., Liu, Z., Sun, M., and Zhou, B. Enhancing chat language models by scaling high-quality instructional conversations, 2023.

- Dubois et al. (2023) Dubois, Y., Li, X., Taori, R., Zhang, T., Gulrajani, I., Ba, J., Guestrin, C., Liang, P., and Hashimoto, T. B. Alpacafarm: A simulation framework for methods that learn from human feedback, 2023.

- Elfwing et al. (2017) Elfwing, S., Uchibe, E., and Doya, K. Sigmoid-weighted linear units for neural network function approximation in reinforcement learning. Neural Networks, 2017. doi: 10.1016/j.neunet.2017.12.012.

- Fan et al. (2018) Fan, A., Lewis, M., and Dauphin, Y. Hierarchical neural story generation. In Proceedings of the 56th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers). Association for Computational Linguistics, 2018. doi: 10.18653/v1/p18-1082.

- Frantar et al. (2022) Frantar, E., Ashkboos, S., Hoefler, T., and Alistarh, D. Gptq: Accurate post-training quantization for generative pre-trained transformers. arXiv preprint arXiv:2210.17323, 2022.

- Google (2023) Google. Palm 2 technical report, 2023. URL https://ai.google/static/documents/palm2techreport.pdf.

- Hewitt et al. (2022) Hewitt, J., Manning, C. D., and Liang, P. Truncation sampling as language model desmoothing. October 2022. doi: 10.48550/ARXIV.2210.15191.

- Hoffmann et al. (2022) Hoffmann, J., Borgeaud, S., Mensch, A., Buchatskaya, E., Cai, T., Rutherford, E., Casas, D. d. L., Hendricks, L. A., Welbl, J., Clark, A., et al. Training compute-optimal large language models. arXiv preprint arXiv:2203.15556, 2022.

- Holtzman et al. (2020) Holtzman, A., Buys, J., Du, L., Forbes, M., and Choi, Y. The curious case of neural text degeneration. In International Conference on Learning Representations, 2020. URL https://openreview.net/forum?id=rygGQyrFvH.

- Hu et al. (2021) Hu, E. J., Shen, Y., Wallis, P., Allen-Zhu, Z., Li, Y., Wang, S., and Chen, W. Lora: Low-rank adaptation of large language models. ICLR, 2021.

- Joao Gante (2023) Joao Gante. Assisted generation: a new direction toward low-latency text generation, 2023. URL https://huggingface.co/blog/assisted-generation.

- Kim et al. (2023) Kim, S., Hooper, C., Gholami, A., Dong, Z., Li, X., Shen, S., Mahoney, M. W., and Keutzer, K. Squeezellm: Dense-and-sparse quantization. arXiv preprint arXiv:2306.07629, 2023.

- Kim & Rush (2016) Kim, Y. and Rush, A. M. Sequence-level knowledge distillation. EMNLP, 2016.

- Kumar et al. (2022) Kumar, A., Raghunathan, A., Jones, R., Ma, T., and Liang, P. Fine-tuning can distort pretrained features and underperform out-of-distribution. International Conference on Learning Representations, 2022.

- Kwon et al. (2023) Kwon, W., Li, Z., Zhuang, S., Sheng, Y., Zheng, L., Yu, C. H., Gonzalez, J. E., Zhang, H., and Stoica, I. Efficient memory management for large language model serving with pagedattention. In Proceedings of the ACM SIGOPS 29th Symposium on Operating Systems Principles, 2023.

- Leviathan et al. (2022) Leviathan, Y., Kalman, M., and Matias, Y. Fast inference from transformers via speculative decoding. November 2022. doi: 10.48550/ARXIV.2211.17192.

- Li et al. (2023) Li, X., Zhang, T., Dubois, Y., Taori, R., Gulrajani, I., Guestrin, C., Liang, P., and Hashimoto, T. B. Alpacaeval: An automatic evaluator of instruction-following models. https://github.com/tatsu-lab/alpaca_eval, 2023.

- Lin et al. (2023) Lin, J., Tang, J., Tang, H., Yang, S., Dang, X., and Han, S. Awq: Activation-aware weight quantization for llm compression and acceleration. arXiv preprint arXiv:2306.00978, 2023.

- Meister et al. (2022) Meister, C., Wiher, G., Pimentel, T., and Cotterell, R. On the probability-quality paradox in language generation. March 2022. doi: 10.48550/ARXIV.2203.17217.

- Meister et al. (2023) Meister, C., Pimentel, T., Wiher, G., and Cotterell, R. Locally typical sampling. Transactions of the Association for Computational Linguistics, 11:102–121, 2023.

- Miao et al. (2023) Miao, X., Oliaro, G., Zhang, Z., Cheng, X., Wang, Z., Wong, R. Y. Y., Chen, Z., Arfeen, D., Abhyankar, R., and Jia, Z. Specinfer: Accelerating generative llm serving with speculative inference and token tree verification. arXiv preprint arXiv:2305.09781, 2023.

- (33) NVIDIA. Nvidia a100 tensor core gpu.

- OpenAI (2023) OpenAI. Gpt-4 technical report, 2023.

- Ouyang et al. (2022) Ouyang, L., Wu, J., Jiang, X., Almeida, D., Wainwright, C. L., Mishkin, P., Zhang, C., Agarwal, S., Slama, K., Ray, A., et al. Training language models to follow instructions with human feedback. arXiv preprint arXiv:2203.02155, 2022.

- Pan (2023) Pan, J. Tiny vicuna 1b. https://huggingface.co/Jiayi-Pan/Tiny-Vicuna-1B, 2023.

- Pillutla et al. (2021) Pillutla, K., Swayamdipta, S., Zellers, R., Thickstun, J., Welleck, S., Choi, Y., and Harchaoui, Z. MAUVE: Measuring the gap between neural text and human text using divergence frontiers. In Beygelzimer, A., Dauphin, Y., Liang, P., and Vaughan, J. W. (eds.), Advances in Neural Information Processing Systems, 2021. URL https://openreview.net/forum?id=Tqx7nJp7PR.

- Pope et al. (2022) Pope, R., Douglas, S., Chowdhery, A., Devlin, J., Bradbury, J., Levskaya, A., Heek, J., Xiao, K., Agrawal, S., and Dean, J. Efficiently scaling transformer inference. November 2022. doi: 10.48550/ARXIV.2211.05102.

- ShareGPT (2023) ShareGPT. ShareGPT. https://huggingface.co/datasets/Aeala/ShareGPT_Vicuna_unfiltered, 2023.

- Shazeer (2019) Shazeer, N. Fast transformer decoding: One write-head is all you need. arXiv preprint arXiv:1911.02150, 2019.

- Spector & Re (2023) Spector, B. and Re, C. Accelerating llm inference with staged speculative decoding. arXiv preprint arXiv:2308.04623, 2023.

- Stern et al. (2018) Stern, M., Shazeer, N. M., and Uszkoreit, J. Blockwise parallel decoding for deep autoregressive models. Neural Information Processing Systems, 2018.

- Touvron et al. (2023) Touvron, H., Martin, L., Stone, K., Albert, P., Almahairi, A., Babaei, Y., Bashlykov, N., Batra, S., Bhargava, P., Bhosale, S., et al. Llama 2: Open foundation and fine-tuned chat models. arXiv preprint arXiv:2307.09288, 2023.

- Tunstall et al. (2023) Tunstall, L., Beeching, E., Lambert, N., Rajani, N., Rasul, K., Belkada, Y., Huang, S., von Werra, L., Fourrier, C., Habib, N., Sarrazin, N., Sanseviero, O., Rush, A. M., and Wolf, T. Zephyr: Direct distillation of lm alignment, 2023.

- Xia et al. (2023) Xia, H., Ge, T., Chen, S.-Q., Wei, F., and Sui, Z. Speculative decoding: Lossless speedup of autoregressive translation, 2023. URL https://openreview.net/forum?id=H-VlwsYvVi.

- Xiao et al. (2023a) Xiao, G., Lin, J., Seznec, M., Wu, H., Demouth, J., and Han, S. Smoothquant: Accurate and efficient post-training quantization for large language models. In International Conference on Machine Learning, pp. 38087–38099. PMLR, 2023a.