## Scalable Automated Verification for Cyber-Physical Systems in Isabelle/HOL

Jonathan Julián Huerta y Munive

Czech Institute of Informatics, Robotics and Cybernetics Prague, Czechia

Mario Gleirscher University of Bremen Germany

Georg Struth University of Sheffield United Kingdom

Thomas Hickman Genomics PLC United Kingdom

Simon Foster University of York United Kingdom

Christian Pardillo Laursen University of York United Kingdom

22/January/2024

## Abstract

We formally introduce IsaVODEs (Isabelle verification with Ordinary Differential Equations), a framework for the verification of cyber-physical systems. We describe the semantic foundations of the framework's formalisation in the Isabelle/HOL proof assistant. A user-friendly language specification based on a robust state model makes our framework flexible and adaptable to various engineering workflows. New additions to the framework increase both its expressivity and proof automation. Specifically, formalisations related to forward diamond correctness specifications, certification of unique solutions to ordinary differential equations (ODEs) as flows, and invariant reasoning for systems of ODEs contribute to the framework's scalability and usability. Various examples and an evaluation validate the effectiveness of our framework.

Keywords: cyber-physical systems, hybrid systems, program verification, interactive theorem proving, predicate transformers, lenses

## 1 Introduction

Cyber-physical systems (CPSs) are computerised systems whose software (the 'cyber' part) interacts with their physical environment. The software is modelled as a variable-updating, potentially nondeterministic program, while the environment is modelled by a system of ordinary differential equations (ODEs). When CPSs interact with humans, for example through robotic manipulators, they are invariably safety-critical, which makes their design verification imperative.

However, CPS verification is challenging because of the complex interactions between the software, the hardware, and the physical environment. These interactions produce uncountably infinite state spaces, which make verification generally intractable and so require the use of abstractions. A deductive verification approach uses symbolic logics, which support the encoding of solutions and invariants of ODEs. Such an approach has been implemented in the KeYmaera X tool (KYX) [31], which models CPSs via hybrid programs and verifies their behaviour with differential dynamic logic (dL). Numerous case studies and competitions support the applicability of this approach [44, 52, 56, 85].

General-purpose interactive theorem provers (ITPs), like Coq, Lean, and Isabelle, also support CPS verification [40, 25]. Their track record for large-scale deductive verification is well-documented [5, 14, 45, 47, 49]. A significant reason for these successes is the combination of expressive logical languages and plugins that enhance reasoning capacity and usability. Unlike bespoke axiomatic provers such as KYX, these tools are inherently extensible, since the mathematics is constructed from first principles rather than postulated, which yields soundness guarantees. For example, the recent addition of transcendental functions to KYX necessitated an extension of the tool, whereas these have been available in HOL, Coq, and Isabelle for many years. Moreover, the foundational mathematical libraries are under constant development by the community¹, and so ITP-based verification tools benefit from an ever-growing library of definitions and theorems.

Our ITP of choice, Isabelle/HOL, provides a framework supporting assured software engineering [45, 11], through an open extensible architecture; a flexible syntax pipeline [82, 60]; integration of external analysis tools in Sledgehammer, Nitpick, and cousins; and output of software artefacts in a variety of languages [34]. Isabelle's rich library for multivariate analysis (HOL-Analysis) can be applied to CPS verification [37, 42]. Thanks to its higher-order logic, Isabelle supports several modelling facilities essential to control engineering, such as vectors, matrices, and transcendental functions (e.g. sin, cos, and eˣ) [8, 38], and it has extended reasoning capability through its integration with SMT solvers and automated theorem provers (CVC4, Z3, Vampire) [61]. Isabelle is therefore ideally suited to reasoning about CPSs, but to harness its facilities we need an accessible and powerful CPS modelling language and verification framework.

In this paper we contribute an Isabelle-based verification framework called IsaVODEs (Isabelle Verification with Ordinary Differential Equations) [40, 25]. IsaVODEs provides a textual language for modelling CPSs, which is constructed as a shallow embedding in Isabelle. The language is equipped with nondeterministic state-transformer-based program semantics and a flexible hybrid store model based on lenses [23, 27]. The program model provides several verification calculi, including Hoare logic and dynamic logic, with modalities for specifying both safety and reachability properties. The store model allows software models to benefit from the full generality of the Isabelle type system, including continuous structures like vectors and matrices, and discrete structures like algebraic data types. Our language can therefore scale to systems with both realistic dynamics, and complex control structures. We harness Isabelle's syntax translation mechanisms to provide a user-friendly frontend for the language, including declarative context, program notation, and operators for arithmetic, vectors, and matrices. Our technical solution is extensible and endeavours to resemble languages like Modelica, Mathematica, and MATLAB.

Verification of models in IsaVODEs is supported by a library of deduction rules. For reasoning about ODEs, we support both the use of solutions with flow functions, and differential induction, which avoids the need for solutions. We integrate two Computer Algebra Systems (CASs), namely Mathematica and SageMath, into Isabelle to supply symbolic solutions to Lipschitz-continuous ODEs, analogously to Sledgehammer. We also support both a dL-style differential ghost rule and Darboux rules that enhance IsaVODEs' invariant reasoning capabilities for complex continuous dynamics.

Orthogonal to this, we also contribute novel laws to support local reasoning in the style of separation logic. We can infer the frame of a program (i.e. the mutable variables), and use a frame rule to prove invariants over variables outside the frame. This is accompanied by a novel form of differentiation called 'framed Fréchet derivatives', which allow us to differentiate with respect to a strict subset of the store variables, particularly ignoring any discrete variables lacking a topological structure. When variables are outside of the frame of a system of ODEs, those variables are unchanged during continuous evolution. Local reasoning further facilitates scalability of our tool, by allowing a verification task to be decomposed into modules supported by individual assumptions and guarantees.

We supply several proof methods to support automated proof and verification condition generation (VCG), including the application of differential induction, certification of ODE solutions, and the various Hoare-logic laws. Care is taken to present the resulting proof obligations in a way that preserves abstraction and elides irrelevant details of the Isabelle mechanisation, to maintain the user experience. Harnessing Isabelle's Eisbach tool [53], we also employ various high-level search-based proof methods, which exhaustively apply the proof rules to compute a complete set of VCs. The VCs can finally be tackled in the usual way using tools like auto and Sledgehammer.

¹ See Isabelle's Archive of Formal Proofs (https://www.isa-afp.org/statistics/) and Lean's Mathematical Library (https://leanprover-community.github.io/mathlib_stats.html).

The framework has been tested successfully on a large set of hybrid verification benchmarks [54], and on many larger examples. Our enhancements yield performance at least on par with that of domain-specific provers in essential verification tasks. Initial case studies suggest that user interaction is simplified. Our new components can be found online². Our contributions are highlighted through examples, while additional contributions are noted throughout the text.

This article is a substantial extension of previously published work at the Formal Methods symposium (FM2021) [27]. Our additional contributions include generalisations of the laws for frames (§5.1), differential ghosts, and Darboux rules (§5.4); proof methods for certification of continuity, Lipschitz continuity, and uniqueness of solutions (§6.2); extensions to our previous proof methods (§6); the CAS integrations (§6.5); a more substantial evaluation (§7); and several additional examples (§2, §8). As a result of these enhancements, the usability of the tool is more mature and polished.

We begin our paper by illustrating the framework with a small case study (§2). We then expound our formalisation of dynamical systems (§3.1), the state-transformer hybrid program model (§3.2), the foundations of the store model (§3.3), the specification of continuous evolution (§3.4), and finally VCG through our framework's algebraic foundations (§3.5). This covers both safety, and also reachability and termination (§3.5.3).

Next, in §4, we introduce our framework's hybrid modelling language. We supply commands for generating hybrid stores, with variables, constants, and foundational properties (§4.1), and user-friendly notation for expressions (§4.3), matrices, and vectors (§4.4). The notation is automatically processed via rewriting rules supplied to Isabelle's simplifier, hiding implementation details.

In §5 we present laws for local reasoning about frames (§5.1), the seamless integration of framed ODEs into our hybrid programs (§5.2), and automatic certification of ODE invariants through framed Fréchet derivatives (§5.3). These also serve to supply new dL-style ghost and Darboux rules that enhance IsaVODEs' invariant reasoning (§5.4).

We contribute increased IsaVODEs proof capabilities for the verification process. We formalise theorems necessary for increased automation, like the fact that differentiable functions are Lipschitz continuous (§6.2) or the first and second derivative test laws (§6.7). We provide methods to automate certification of differentiation (§6.1), uniqueness of solutions (§6.3), invariants for ODEs (§6.6), and VCG (§6.4). Additional automation is provided through the integration of Mathematica and SageMath (§6.5). We then bring all of the results together for the evaluation (§7) using benchmarks and examples (§8). In the remaining sections we review related work (§9), provide concluding statements, and discuss future research avenues (§10).

## 2 Motivating Case Study: Flight Dynamics

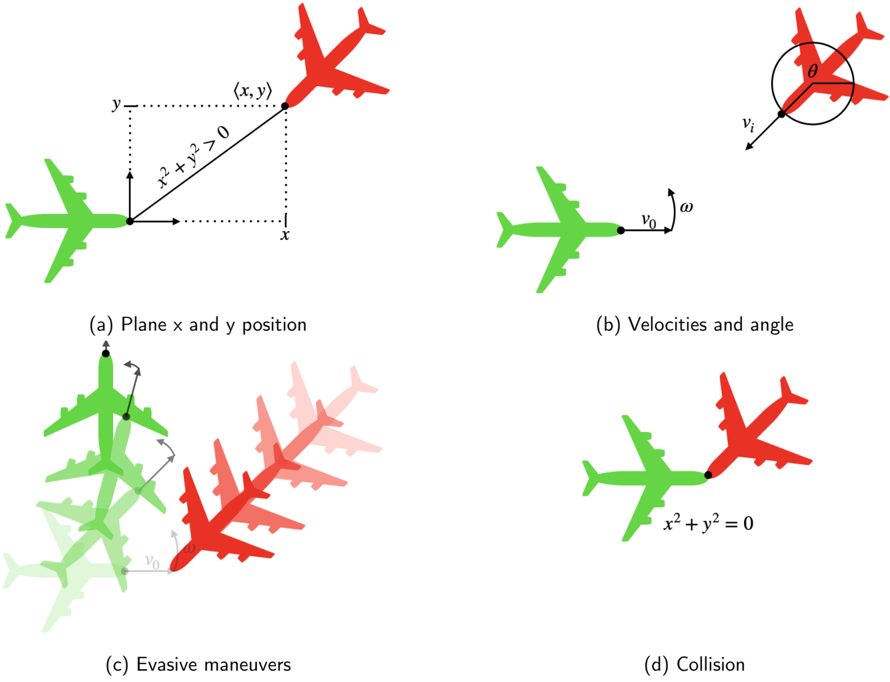

In this section, we motivate and demonstrate our framework's usage with a worked example: an aircraft collision avoidance scenario first presented by Mitsch et al. [55]. It describes an aircraft trying to avoid a collision with a nearby intruding aircraft travelling at the same altitude. We model the intruding aircraft by its coordinates x and y and its angle θ, in the reference frame of our own aircraft. The intruding aircraft has fixed velocity v_i > 0, and our aircraft has fixed velocity v_o > 0 and variable angular velocity ω. This is illustrated in Figures 1a and 1b.

With our tool, we can model this using a dataspace command, as shown below:

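```
dataspace planar_flight =
  constants
    v_o :: real (* own_velocity *)
    v_i :: real (* intruder velocity *)
  assumes
    v_o_pos: "v_o > 0" and
    v_i_pos: "v_i > 0"
  variables
    x :: real (* x coordinate of the intruder *)
    y :: real (* y coordinate of the intruder *)
    θ :: real (* intruder orientation *)
    ω :: real (* angular velocity *)
```

² github.com/isabelle-utp/Hybrid-Verification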


Figure 1: Diagrams illustrating the flight dynamics example: (a) plane x and y position, with x² + y² > 0; (b) velocities and angle; (c) evasive manoeuvres; (d) collision, with x² + y² = 0.


This command allows us to define our constants, any assumptions about the verification problem, and the system's variables. In this case, we postulate two constants v_o and v_i, both of type real, for the velocities of our own aircraft and of the intruder, respectively. Here, real is the type of exact mathematical real numbers, as opposed to floating-point numbers or rationals.

As per the problem statement, we assume that both of these constants are strictly positive. This is specified by the assumptions v_o_pos and v_i_pos, respectively, which can be used as hypotheses in proofs. We also supply the variables of the system, which give the relative position of the intruder (x and y), its orientation θ, and our aircraft's angular velocity ω.

We next specify the ODEs that model the system as a constant called plant :

$$\begin{array}{rl} \textbf{definition}\ \text{“}plant \equiv \{ & x' = v_i \cdot \cos\theta - v_o + \omega \cdot y, \\ & y' = v_i \cdot \sin\theta - \omega \cdot x, \\ & \theta' = -\omega \,\}\text{”} \end{array}$$

The ODEs can be specified in a user-friendly manner, as physicists and engineers would informally state them, in terms of the constants v_o and v_i and the variables x, y, θ, and ω of the system.

Next, we define a simple controller to avoid collisions, as explained below:

```
(* I: going straight is safe *)
abbreviation "I ≡ (v_i * sin θ * x - (v_i * cos θ - v_o) * y > v_o + v_i)⇧e"

(* J: an evasive manoeuvre is allowed *)
abbreviation "J ≡ (v_i * ω * sin θ * x - v_i * ω * cos θ * y
                   + v_o * v_i * cos θ > v_o * v_i + v_i * ω)⇧e"

definition "ctrl ≡ (ω ::= 0; ¿@I?) ⊓ (ω ::= 1; ¿@J?)"
```

This controller is based on two invariants, I and J, for two different scenarios; each remains true while the corresponding scenario unfolds. The invariant I holds when going straight is safe, while J holds when an evasive manoeuvre is allowed. Our controller sets our aircraft's angular velocity to 0 or 1 depending on which invariant holds (I or J, respectively); if both hold, either branch may be chosen nondeterministically, and if neither holds, no transition is possible. The evasive manoeuvres that occur when the angular velocity is set to 1 are illustrated in Figure 1c.

Finally, our model follows the usual structure of an iteration (∗) of control choices (ctrl) followed by a nondeterministic evolution of the system dynamics (plant):

```

definition "flight ≡ (ctrl; plant)⇧*"

```

The behaviour of the system is characterised by all states reachable by executing the controller followed by the plant a finite number of times.


Next, we show how we can formally verify the collision avoidance of this system. We do this by specifying a Hoare triple within an Isabelle lemma:

```
lemma flight_safe: "{x^2 + y^2 > 0} flight {x^2 + y^2 > 0}"
proof -
  (* the controller establishes one of two invariant-guarded branches *)
  have ctrl_post: "{x^2 + y^2 > 0} ctrl {(ω = 0 ∧ @I) ∨ (ω = 1 ∧ @J)}"
    unfolding ctrl_def by wlp_full
  (* going straight (ω = 0) preserves I, which implies separation *)
  have plant_safe_I: "{ω = 0 ∧ @I} plant {x^2 + y^2 > 0}"
    unfolding plant_def apply (dInv "($ω = 0 ∧ @I)⇧e", dWeaken)
    using v_o_pos v_i_pos sum_squares_gt_zero_iff by fastforce
  (* turning (ω = 1) preserves J, which implies separation *)
  have plant_safe_J: "{ω = 1 ∧ @J} plant {x^2 + y^2 > 0}"
    unfolding plant_def apply (dInv "($ω = 1 ∧ @J)⇧e", dWeaken)
    by (smt (z3) cos_le_one mult_if_delta mult_le_cancel_iff2
        mult_left_le sum_squares_gt_zero_iff v_o_pos v_i_pos)
  (* combine both branches with the Hoare rules for star, composition and disjunction *)
  show ?thesis
    unfolding flight_def
    apply (intro hoare_kstar_inv hoare_kcomp[OF ctrl_post])
    by (rule hoare_disj_split[OF plant_safe_I plant_safe_J])
qed
```

We formulate collision avoidance using x² + y² > 0 as the invariant in the Hoare triple. A collision occurs when x² + y² = 0, as illustrated in Figure 1d. We use Isabelle's Isar proof language to break the verification down into several intermediate properties via the Isar command 'have', which takes a label followed by a property specification.

We first calculate the postconditions that arise from running the controller (ctrl) using our tactic wlp_full (see Section 6). This gives two possible execution branches: one where I ∧ ω = 0 holds, and one where J ∧ ω = 1 holds. We name this first property ctrl_post.

The postconditions provide two possible initial states for the plant, which we consider using the properties plant_safe_I and plant_safe_J. We show that both preconditions guarantee the problem's postcondition x² + y² > 0 by applying differential induction, a technique that proves an invariant of a system of ODEs without computing a solution (see Section 5.3). In each case, we use our Eisbach-designed method dInv, which takes as a parameter the invariant we wish to prove. The invariant is simply the precondition of each Hoare triple. However, we then need to prove that this invariant implies the postcondition, which is done using a further method called dWeaken. The remaining proof obligations can be solved using the Sledgehammer tool, which calls external automated theorem provers to find a proof and reconstructs it in Isabelle using the names of previously proven theorems from its libraries. In particular, the property plant_safe_J is discharged by an smt proof found with Z3, which uses trigonometric identities formalised in HOL-Analysis. We display the proof state resulting from applying differential induction below:

$$\begin{array}{l} \$\omega = 1 \wedge {} \\ v_o \cdot v_i + v_i \cdot \$\omega \\ \quad < v_i \cdot \$\omega \cdot \sin(\$\theta) \cdot \$x - v_i \cdot \$\omega \cdot \cos(\$\theta) \cdot \$y + {} \\ \quad\; v_o \cdot v_i \cdot \cos(\$\theta) \longrightarrow \\ 0 < (\$x)^2 + (\$y)^2 \end{array}$$

If Sledgehammer is unable to find a proof, this proof obligation is readable enough that a manual proof or refutation can be given (see Section 8).
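Why does differential induction succeed for plant_safe_I? When ω = 0, θ is constant and plant has a closed-form solution, and the left-hand side of I has zero Lie derivative along the vector field, so it is conserved by the dynamics. The Python sketch below checks this numerically at a sample point. It only illustrates the idea behind differential induction; it is not a proof and not how dInv works internally, and the constants and function names are assumptions.

```python
import math

V_O, V_I = 1.0, 2.0  # assumed positive constants, for illustration only


def flow_straight(t: float, x0: float, y0: float, th0: float) -> tuple:
    """Closed-form solution of plant for omega = 0, where theta stays constant."""
    return (x0 + (V_I * math.cos(th0) - V_O) * t,
            y0 + V_I * math.sin(th0) * t,
            th0)


def lhs_i(x: float, y: float, th: float) -> float:
    """Left-hand side of the invariant I."""
    return V_I * math.sin(th) * x - (V_I * math.cos(th) - V_O) * y


def lie_derivative_i(x: float, y: float, th: float) -> float:
    """Gradient of lhs_i dotted with the vector field (x', y', theta') at omega = 0."""
    dx, dy, dth = V_I * math.cos(th) - V_O, V_I * math.sin(th), 0.0
    grad = (V_I * math.sin(th),                               # d/dx
            -(V_I * math.cos(th) - V_O),                      # d/dy
            V_I * math.cos(th) * x + V_I * math.sin(th) * y)  # d/dtheta
    return grad[0] * dx + grad[1] * dy + grad[2] * dth


x0, y0, th0 = 3.0, -2.0, 0.7
print(lie_derivative_i(x0, y0, th0))
print([lhs_i(*flow_straight(t, x0, y0, th0)) for t in (0.0, 1.0, 5.0)])
```

Since the value of lhs_i never changes along the flow, the strict inequality in I is preserved; for J, where ω = 1 makes θ rotate, the corresponding argument is less obvious, which is why an external prover is helpful there.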

Finally, we put everything together to show that the whole system is safe, using the Isar command show, which concludes a proof by restating the overall goal of the lemma (?thesis). The proof uses a couple of high-level Hoare logic laws, together with the properties proven above. This final step can be completely automated using Isabelle's classical reasoner, but we spell it out for the purpose of demonstration.

This completes our overview of our tool and its capabilities. In the remainder of the paper, we will expound the technical foundations of the tool, and our key results.

## 3 Semantics for hybrid systems verification

We start the section by recapitulating basic concepts from the theory of dynamical systems. We use these notions to describe our approach [40, 27] to hybrid systems verification in general-purpose proof assistants. Specifically, we present hybrid programs and their state transformer semantics. We then introduce our store model and provide intuitions for deriving state transformers for program assignments and ODEs relative to this model. We extend our approach to a predicate transformer semantics to derive laws for verification condition generation (VCG) [40, 9]. That is, we introduce the forward box predicate transformer and use its properties to derive the rules of Hoare logic. Finally, we introduce the forward diamond predicate transformer which serves to prove reachability and progress properties of hybrid systems. Our formalisation of these concepts as a shallow embedding in Isabelle/HOL maximises the availability of the prover's proof automation in VCG.

## 3.1 Dynamical Systems

In this section, we consider two ways to specify continuous dynamical systems [78]: explicitly via flows and implicitly via systems of ordinary differential equations (ODEs). Flows are functions φ : T → C → C, where T is a non-discrete submonoid of the real numbers ℝ representing time, and C is a set with some topological structure, such as a vector space or a metric space. We emphasise this intuition using an overhead arrow for elements c⃗ ∈ C and refer to C as a continuous state space. By definition, flows are continuously differentiable (C¹) functions and monoid actions: they satisfy the laws φ (t₁ + t₂) = φ t₁ ∘ φ t₂ and φ 0 = id_C. Given a state c⃗ ∈ C, the trajectory φ_c⃗ : T → C, such that φ_c⃗ t = φ t c⃗, is a curve modelling the continuous dynamical system's evolution in time and passing through c⃗. The orbit map γ^φ : C → 𝒫 C, such that γ^φ c⃗ = 𝒫 φ_c⃗ T, gives the set of points traversed by this curve for c⃗, where 𝒫 denotes both the powerset operation and the direct image operator.

Systems of ODEs are related to flows through their solutions. Formally, systems of ODEs are specified by vector fields f : T → C → C, functions assigning vectors to points in space-time. A solution X : T → C to the system of ODEs specified by f is then a C¹ function such that X′ t = f t (X t) for all t in some interval U ⊆ T. This solution also solves the associated initial value problem (IVP) given by (t₀, c⃗) (denoted by X ∈ ivp-sols U f t₀ c⃗) if it satisfies X t₀ = c⃗, with t₀ ∈ U. The existence of solutions to IVPs is guaranteed for continuous vector fields by the Peano theorem, albeit on an interval U c⃗ depending on the initial condition (t₀, c⃗). Similarly, the Picard-Lindelöf theorem states that all solutions to the IVP X′ t = f t (X t) with X t₀ = c⃗ coincide on some interval U c⃗ ⊆ T (around t₀) if f is Lipschitz continuous (in its state argument) on T. In other words, there is a unique solution to the IVP on U c⃗. Thanks to this, when f is Lipschitz continuous on T, t₀ = 0, and U c⃗ = T for all c⃗ ∈ C, the solutions to the associated IVPs are exactly a flow's trajectories φ_c⃗, that is, φ′_c⃗ t = f t (φ_c⃗ t) and φ_c⃗ 0 = c⃗. Therefore, the flow φ is the function mapping each c⃗ ∈ C to the unique φ_c⃗ such that φ_c⃗ ∈ ivp-sols T f 0 c⃗.
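The Picard-Lindelöf theorem is classically proved by Picard iteration, which repeatedly applies the map X ↦ (t ↦ c⃗ + ∫₀ᵗ f (X s) ds) and, for Lipschitz continuous f, converges to the unique solution. The Python sketch below runs this scheme numerically for a scalar, time-independent vector field. The trapezoidal quadrature, grid size and iteration count are our assumptions, and the result is only an approximation; IsaVODEs establishes uniqueness by proof, not by computation.

```python
import math
from typing import Callable, List


def picard_iterates(f: Callable[[float], float], c: float, t_end: float,
                    n_steps: int = 1000, n_iter: int = 40) -> List[float]:
    """Approximate X' = f(X), X(0) = c on [0, t_end] by Picard iteration.

    Each iterate is X_{k+1}(t) = c + (integral from 0 to t of f(X_k(s)) ds),
    with the integral approximated by the trapezoidal rule on a uniform grid.
    Returns the values of the last iterate at the grid points.
    """
    h = t_end / n_steps
    xs = [c] * (n_steps + 1)  # X_0 is the constant function c
    for _ in range(n_iter):
        fx = [f(x) for x in xs]
        acc = 0.0
        new = [c]
        for i in range(1, n_steps + 1):
            acc += 0.5 * h * (fx[i - 1] + fx[i])  # trapezoid on [t_{i-1}, t_i]
            new.append(c + acc)
        xs = new
    return xs


# y' = a*y + b with a = -1, b = 1 and y(0) = 0; the exact solution is 1 - e^(-t)
a, b = -1.0, 1.0
approx = picard_iterates(lambda y: a * y + b, 0.0, 1.0)[-1]
print(approx, 1 - math.exp(-1.0))
```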


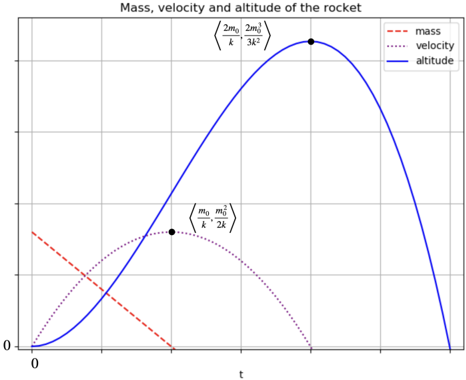

Example 1. We illustrate the above properties of flows and ODEs with the differential equation y′ t = a · y t + b, where a ≠ 0 and t ∈ ℝ. This ODE models, for instance, idealised bacterial growth [76], radioactive decay [81], or the concentration of glucose in the blood (without the intervention of insulin) [2]. First, given that differentiability implies Lipschitz continuity [78], we can verify that this equation has unique solutions (by the Picard-Lindelöf theorem) simply by noticing that its vector field, mapping y to a · y + b, is differentiable in y with derivative a. The solution to the associated IVP with initial condition y 0 = c is φ_c t = (b/a) · e^(a·t) + c · e^(a·t) − b/a. Indeed,

$$\begin{aligned} \varphi_c'\, t &= \left(\frac{b}{a} \cdot e^{a \cdot t} + c \cdot e^{a \cdot t} - \frac{b}{a}\right)' \\ &= b \cdot e^{a \cdot t} + c \cdot a \cdot e^{a \cdot t} \\ &= a \cdot \left(\frac{b}{a} \cdot e^{a \cdot t} + c \cdot e^{a \cdot t} - \frac{b}{a}\right) + b \\ &= a \cdot \varphi_c\, t + b. \end{aligned}$$

It is also easy to check that φ_c 0 = c. Hence, the mapping φ : ℝ → ℝ → ℝ, such that φ t c = φ_c t, is the unique flow associated with the ODE y′ t = a · y t + b. Indeed, for a fixed τ ∈ ℝ, the function g : ℝ → ℝ such that g t = φ (t + τ) c = φ_c (t + τ) satisfies g 0 = φ_c τ and also g′ t = φ′_c (t + τ) = a · φ_c (t + τ) + b = a · (g t) + b. By uniqueness, the only function satisfying these two equations is φ_(φ_c τ). Hence g t = φ_(φ_c τ) t, and thus the monoid law φ (t + τ) c = φ t (φ τ c) holds. Consequently, the function φ mapping points c to IVP-solutions φ_c of the ODE y′ t = a · y t + b satisfies the monoid action laws and is therefore a flow.
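The calculations in Example 1 can be spot-checked numerically. The Python sketch below (function names and sample values are hypothetical) evaluates the closed-form flow and compares both sides of the identity law φ 0 = id, the monoid law φ (t₁ + t₂) = φ t₁ ∘ φ t₂, and the ODE itself via a central difference. Floating-point checks like these are sanity tests only and are no substitute for the argument above.

```python
import math
from typing import Callable

Flow = Callable[[float, float], float]


def example_flow(a: float, b: float) -> Flow:
    """Flow of y' = a*y + b from Example 1: phi t c = (b/a) e^(a t) + c e^(a t) - b/a."""
    if a == 0:
        raise ValueError("Example 1 assumes a != 0")
    return lambda t, c: (b / a) * math.exp(a * t) + c * math.exp(a * t) - b / a


def central_difference(g: Callable[[float], float], t: float, h: float = 1e-6) -> float:
    """Numerical derivative of g at t, used to spot-check the ODE."""
    return (g(t + h) - g(t - h)) / (2 * h)


a, b = -0.5, 2.0
phi = example_flow(a, b)
c, t1, t2 = 3.0, 0.7, 1.9
print(phi(0.0, c))                            # identity law: prints 3.0
print(phi(t1 + t2, c), phi(t1, phi(t2, c)))   # monoid law: both sides agree
print(central_difference(lambda t: phi(t, c), t1), a * phi(t1, c) + b)  # ODE
```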

## 3.2 State transformer semantics for hybrid programs

Having introduced some basic concepts from the theory of dynamical systems, we present our hybrid systems model. We represent these via hybrid programs which are traditionally defined syntactically [35, 65]. Yet, our approach is purely semantic and we merely provide the recursive definition below as a guide to what our semantics should model:

$$\alpha ::= x := e \mid x' = f \,\&\, G \mid \text{¿}P? \mid \alpha \,;\, \alpha \mid \alpha \sqcap \alpha \mid \alpha^{*}.$$

Typically, x denotes variables, e and f are terms, and G and P are assertions. In dynamic logic [35], the statement x := e represents the assignment of expression e to variable x, ¿P? models testing whether P holds, while α ; β, α ⊓ β and α* are the sequential composition, nondeterministic choice, and finite iteration of programs α and β. Well-known while-programs emerge via the equations if P then α else β ≡ (¿P? ; α) ⊓ (¿¬P? ; β) and while P do α ≡ (¿P? ; α)* ; ¿¬P?. Beyond these, differential dynamic logic (dL) [65] introduces evolution commands x′ = f & G that represent systems of ODEs with boundary conditions or guards G that delimit the solutions' range to the region described by G.

We use nondeterministic state transformers α : S → 𝒫 S as our semantic representation of hybrid programs. Thus, our 'hybrid programs' are really arrows in the Kleisli category of the powerset monad. Observe that a subset of these arrows also models 'assertions', namely the subidentities of the monadic unit η_S, such that η_S s = {s} for all s ∈ S. That is, the functions mapping each state s either to {s} or to ∅ model assertions, where P s = {s} represents that P holds for s, and P s = ∅ that P does not hold for s. Henceforth, we abuse notation and identify predicates P : S → 𝔹 (or P ∈ 𝔹^S), sets P ⊆ S, and subidentities of η_S, where 𝔹 denotes the Booleans. We also treat predicates as logic formulae by writing, for instance, P ∧ Q and P ∨ Q instead of λs. P s ∧ Q s and λs. P s ∨ Q s. In particular, we denote the constantly true and constantly false predicates by ⊤ and ⊥, respectively. They coincide with the skip and abort programs, where skip = η_S and abort s = ∅ for all s ∈ S. In this state transformer semantics, sequential compositions correspond to (forward) Kleisli compositions (α ; β) s = ⋃ {β s′ | s′ ∈ α s}, nondeterministic choices are pointwise unions (α ⊓ β) s = α s ∪ β s, and finite iterations are the functions α* s = ⋃_{i∈ℕ} αⁱ s, with α^(i+1) = α ; αⁱ and α⁰ = skip.

## 3.3 Store and expressions model

To introduce our state transformer semantics of assignments and ODEs, we first describe our store model. We use lenses [59, 10, 21] to algebraically characterise the hybrid store. Through an axiomatic approach to accessing and mutating functions, lenses allow us to locally manipulate program stores [22] and algebraically reason about program variables [23, 28]. Formally, a lens x with source S and view V, denoted x :: V ⇒ S, is a pair of functions (get_x, put_x) with get_x : S → V and put_x : V → S → S such that

$$\mathit{get}_x\,(\mathit{put}_x\, v\, s) = v, \quad \mathit{put}_x\, v \circ \mathit{put}_x\, v' = \mathit{put}_x\, v, \quad \text{and} \quad \mathit{put}_x\,(\mathit{get}_x\, s)\, s = s,$$

for all v, v′ ∈ V and s ∈ S. Usually, a lens x represents a variable, S is the system's state space and V is the value domain for x. Under this interpretation, get_x returns the value of variable x while put_x updates it. Yet, we sometimes interpret V as a subregion of S, making get_x a projection or restriction and put_x an overwriting or substituting function. For other state models using diverse variable lenses, such as arrays, maps and local variables, see [23, 22]. Lenses x, x′ :: V ⇒ S are independent, written x ⋈ x′, if put_x u ∘ put_x′ v = put_x′ v ∘ put_x u for all u, v ∈ V, that is, these operations commute on all states.
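As a finite sanity check of these definitions, the Python sketch below models a store as a tuple, builds one lens per position, and tests the three lens laws and independence on sample stores and values. The Lens class, field_lens and the tuple store are hypothetical illustrations, not the lens library used by IsaVODEs; sampled checks can refute a law but never prove it.

```python
from dataclasses import dataclass
from typing import Any, Callable, Iterable, Tuple

Store = Tuple[Any, ...]  # hypothetical store: a tuple of variable values


@dataclass(frozen=True)
class Lens:
    """A lens x :: V => S as a pair (get_x, put_x); put_x is uncurried here."""
    get: Callable[[Store], Any]
    put: Callable[[Any, Store], Store]


def field_lens(i: int) -> Lens:
    """Lens focusing on position i of a tuple store."""
    return Lens(get=lambda s: s[i],
                put=lambda v, s: s[:i] + (v,) + s[i + 1:])


def lens_laws_hold(x: Lens, stores: Iterable[Store], values: Iterable[Any]) -> bool:
    """Check GetPut, PutGet and PutPut on finitely many samples (not a proof)."""
    values = list(values)
    for s in stores:
        if x.put(x.get(s), s) != s:  # GetPut
            return False
        for v in values:
            if x.get(x.put(v, s)) != v:  # PutGet
                return False
            if any(x.put(v, x.put(w, s)) != x.put(v, s) for w in values):  # PutPut
                return False
    return True


def independent(x: Lens, y: Lens, stores: Iterable[Store], values: Iterable[Any]) -> bool:
    """Sampled check of x ⋈ y: put_x u and put_y v commute."""
    values = list(values)
    return all(x.put(u, y.put(v, s)) == y.put(v, x.put(u, s))
               for s in stores for u in values for v in values)


x, y = field_lens(0), field_lens(1)
stores = [(0, 0), (1, 2), (3, -1)]
values = [0, 5, -2]
print(lens_laws_hold(x, stores, values), independent(x, y, stores, values))
print(independent(x, x, stores, values))  # a lens is not independent of itself
```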

We model expressions and assertions used in program syntax as functions e : S → V, which semantically are queries over the store S returning a value of type V. We can use the get function to perform such queries by 'looking up the value of variables'. For instance, if x, y :: R ⇒ S model independent program variables, and c ∈ R is a constant, then the function λs. (get x s)² + (get y s)² ≤ c² represents the 'expression' x² + y² ≤ c². Then, function evaluation corresponds to computing the value of the expression at state s ∈ S.

With this representation, the state transformer λs. { put x ( e s ) s } models a program assignment, denoted by x := e . More generally, we turn deterministic functions σ : S → S into state transformers via function composition with the Kleisli unit, which we denote by ⟨ σ ⟩ = η S ◦ σ . Then, representing expressions e as functions e : S → V , our model for variable assignments becomes ( x := e ) = ⟨ λs. put x ( e s ) s ⟩ .
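The following Python sketch mirrors this reading of expressions and assignments, assuming a hypothetical store of two floats (x, y) and a hypothetical constant c = 2. The helper `kleisli_unit` plays the role of ⟨σ⟩ = η S ∘ σ, and `assign_x` builds the state transformer for x := e; both names are illustrative.

```python
from typing import Callable, FrozenSet, Tuple

Store = Tuple[float, float]  # hypothetical store (x, y)
C = 2.0                      # hypothetical constant c


def get_x(s: Store) -> float:
    return s[0]


def get_y(s: Store) -> float:
    return s[1]


def put_x(v: float, s: Store) -> Store:
    return (v, s[1])


def in_disc(s: Store) -> bool:
    """The assertion x^2 + y^2 <= c^2 as a query over the store."""
    return get_x(s) ** 2 + get_y(s) ** 2 <= C ** 2


def kleisli_unit(sigma: Callable[[Store], Store]) -> Callable[[Store], FrozenSet[Store]]:
    """<sigma> = eta_S ∘ sigma: a deterministic update as a state transformer."""
    return lambda s: frozenset({sigma(s)})


def assign_x(e: Callable[[Store], float]) -> Callable[[Store], FrozenSet[Store]]:
    """(x := e) = <λs. put_x (e s) s>; e is evaluated in the pre-state s."""
    return kleisli_unit(lambda s: put_x(e(s), s))
```

For example, `assign_x(lambda s: get_x(s) + get_y(s))((1.0, 0.5))` returns `frozenset({(1.5, 0.5)})`, and `in_disc((1.0, 1.0))` is `True` while `in_disc((2.0, 1.0))` is `False`.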

## 3.4 Model for evolution commands

To derive in our framework a state transformer semantics S → P S for evolution commands x′ = f & G, observe that a flow's orbit map γ^φ : C → P C with γ^φ c⃗ = { φ t c⃗ | t ∈ T } is already a state transformer on C. It sends each c⃗ in the continuous state space C to the reachable states of the trajectory φ c⃗. Based on the relationship between flows and the solutions to IVPs from Subsection 3.1, we can generalise this state transformer to the set of all points X t of the solutions X of the system of ODEs represented by f, i.e. X′ t = f t (X t), with initial condition X t0 = c⃗ over an interval U c⃗ around t0 (t0 ∈ U c⃗). Moreover, in line with dL, the solutions should remain within the guard or boundary condition G: ∀τ ∈ U c⃗. τ ≤ t ⇒ G (X τ). Thus, to specify dynamical systems via ODEs f instead of flows φ, we define the generalised guarded orbits map [40]

$$\gamma^f\,G\,U\,t_0\,\vec{c} = \{X\,t \mid t \in U\,\vec{c} \wedge X \in \mathit{ivp\text{-}sols}\ U\ f\ t_0\ \vec{c} \wedge \mathcal{P}\,X\,(t{\downarrow}_{U\,\vec{c}}) \subseteq G\},$$

that also introduces guards G, initial conditions t0 and intervals U c⃗. Here, 𝒫 X (t↓U c⃗) is the image under X of the set t↓U c⃗, where t↓T is the downward closure t↓T = { τ ∈ T | τ ≤ t }. In applications, it becomes the interval [0, t] = { τ | 0 ≤ τ ≤ t } because we usually fix U c⃗ = R≥0 = { τ | τ ≥ 0 } for all c⃗ ∈ C. This is why we also abuse notation and write constant interval functions λc⃗. T simply as T. Notice that when the flow φ for f exists, γ^f ⊤ T 0 c⃗ = γ^φ c⃗.
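As a rough numerical intuition for the guarded orbit, the Python sketch below evaluates a known flow on a finite, increasing grid of sample times standing in for U c⃗ = R≥0, and keeps a point only while the guard has held at all earlier sampled times. The grid is an assumption of this sketch: IsaVODEs reasons about the exact, uncountable set of times, and a sampled check can miss guard violations between grid points. The flow `decay_flow` for x′ = −x and the helper `guarded_orbit` are hypothetical examples.

```python
import math
from typing import Callable, List, Sequence


def decay_flow(t: float, c: float) -> float:
    """Flow of x' = -x: phi t c = c * e^(-t)."""
    return c * math.exp(-t)


def guarded_orbit(phi: Callable[[float, float], float],
                  guard: Callable[[float], bool],
                  c: float,
                  times: Sequence[float]) -> List[float]:
    """Sampled guarded orbit of c: phi t c is kept only if the guard held at
    every sampled tau <= t. Assumes `times` is sorted in increasing order."""
    points = []
    for t in times:
        state = phi(t, c)
        if not guard(state):
            break  # once G fails, no later sampled time satisfies the condition
        points.append(state)
    return points
```

With the guard ⊤ (`lambda x: True`) this is just the sampled orbit γ^φ c⃗. With the guard x ≥ 0.5, `guarded_orbit(decay_flow, lambda x: x >= 0.5, 1.0, [0.0, 0.5, 1.0, 1.5])` keeps only the first two points, since e^{−1} ≈ 0.37 < 0.5.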

Lenses support algebraic reasoning about variable frames: the set of variables that a hybrid program can modify. In particular, they allow us to split the state space into continuous and discrete parts. We explain a lens-based lifting of guarded orbits γ f GU t 0 from the continuous space C → P C to the full state space S in Subsection 5.2. This produces our state transformer for evolution commands ( x ′ = f & G ) t 0 U : S → P S . Intuitively, it maps each state s ∈ S to all X -reachable states within the region G , where X solves the system of ODEs specified by f , and leaves intact the non-continuous part of S . Having the same type as the above-described state transformers enables us to seamlessly use the same operations on ( x ′ = f & G ) t 0 U . This also enables us to do modular verification condition generation (VCG) of hybrid systems as described below.

Example 2. In this example, we use a hybrid program blood\_sugar to model an idealised machine controlling the concentration of glucose in a patient's body. Hybrid programs are often split into discrete control ctrl and continuous dynamics dyn. Their composition is then wrapped in a finite iteration: blood\_sugar = (ctrl ; dyn)∗. For the control, we use a conditional statement reacting to the patient's blood-glucose level. Concretely, the program

$$\mathit{ctrl} = \mathit{if}\ g \leq g_m\ \mathit{then}\ g := g_M\ \mathit{else}\ \mathit{skip}$$

states that if the value of the patient's blood-glucose concentration g is at or below a certain warning threshold g m ≥ 0, the maximum healthy dose of insulin, represented as an immediate spike to the patient's glucose g := g M, is injected into the patient's body. Otherwise, the patient is fine and the machine does nothing. The continuous variable g follows the dynamics in Example 1: y′ t = a · y t + b. We assume a = −1 and b = 0 so that the concentration of glucose decreases over time. This results in the evolution command dyn = (g′ = −g & ⊤)^0_{R≥0}, which we abbreviate as dyn = (g′ = −g). Formally, the assignment g := g M is the state transformer λs. { put g g M s }, the test g ≤ g m is the predicate λs. get g s ≤ g m, and the evolution command g′ = −g is the orbit map γ^φ lifted to the whole space S, where φ t c = c · e^{−t} for all t ∈ R.
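A minimal executable reading of Example 2 in Python, under simplifying assumptions of this sketch: the store is just the value of g, the thresholds g m = 1 and g M = 3 are hypothetical, and `dyn` only returns the flow at a few sampled times instead of at all t ≥ 0.

```python
import math
from typing import FrozenSet

G_LOW = 1.0                          # hypothetical warning threshold g_m >= 0
G_HIGH = 3.0                         # hypothetical spike value g_M
SAMPLE_TIMES = (0.0, 0.5, 1.0, 2.0)  # finite stand-in for t >= 0


def ctrl(g: float) -> FrozenSet[float]:
    """if g <= g_m then g := g_M else skip."""
    return frozenset({G_HIGH}) if g <= G_LOW else frozenset({g})


def dyn(g: float) -> FrozenSet[float]:
    """g' = -g with guard ⊤, via the flow phi t c = c * e^(-t) at sampled times."""
    return frozenset(g * math.exp(-t) for t in SAMPLE_TIMES)


def ctrl_then_dyn(g: float) -> FrozenSet[float]:
    """Kleisli composition ctrl ; dyn."""
    return frozenset(h for g1 in ctrl(g) for h in dyn(g1))
```

Starting from g = 0.5, the controller first resets g to 3.0, so `ctrl_then_dyn(0.5)` contains 3.0 (at t = 0) and only nonnegative values.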

## 3.5 Predicate transformer semantics

Finally, we extend our state transformer semantics to a predicate transformer (B^S → B^S) semantics for verification condition generation (VCG). Concretely, we define two predicate transformers: we use the definition of the first to derive partial correctness laws, including the rules of Hoare logic, and the definition of the second to derive reachability laws. We also illustrate how both are applied in VCG.

## 3.5.1 Forward boxes

We define dynamic logic's forward box or weakest liberal precondition (wlp) operator |−], where |α] : B^S → B^S is given by |α] Q s ⇔ (∀s′. s′ ∈ α s ⇒ Q s′), for α : S → P S and Q : S → B. It holds in exactly those initial states from which every terminating execution of α ends in a state satisfying Q. Well-known wlp-laws are simple consequences of this and our previous definitions [40]. These laws allow us to automate VCG much more efficiently than with Hoare logic, since all of them, except for the loop law, are equational simplifications applied from left to right:

$$\begin{array}{lrcl}
(\text{wlp-skip}) & |\mathit{skip}]\,Q & = & Q\\
(\text{wlp-abort}) & |\mathit{abort}]\,Q & = & \top\\
(\text{wlp-test}) & |\text{¿}P\text{?}]\,Q & = & P \Rightarrow Q\\
(\text{wlp-assign}) & |x := e]\,Q & = & Q[e/x]\\
(\text{wlp-seq}) & |\alpha ; \beta]\,Q & = & |\alpha]\,|\beta]\,Q\\
(\text{wlp-choice}) & |\alpha \sqcap \beta]\,Q & = & |\alpha]\,Q \wedge |\beta]\,Q\\
(\text{wlp-loop}) & |\mathit{loop}\ \alpha]\,Q & = & \forall n.\ |\alpha^n]\,Q\\
(\text{wlp-cond}) & |\mathit{if}\ T\ \mathit{then}\ \alpha\ \mathit{else}\ \beta]\,Q & = & (T \Rightarrow |\alpha]\,Q) \wedge (\neg T \Rightarrow |\beta]\,Q)
\end{array}$$

Here, n is a natural number (i.e. n ∈ N ), loop α is simply α ∗ , and Q [ e/x ] is our abbreviation for the function λs. Q ( put x ( e s ) s ) that represents the value of Q after variable x has been updated by the value of evaluating e on s ∈ S . We write this semantic operation as a substitution to resemble Hoare logic (see Section 4.3). Similarly, the wlp -law for evolution commands informally corresponds to

$$\begin{array}{rl}
(\text{wlp-evol}) & |(x' = f \,\&\, G)^{t_0}_U]\,Q\,s \iff \forall X \in \mathit{ivp\text{-}sols}\ U\ f\ t_0\ s.\ \forall t \in U\,s.\\
& \quad (\forall \tau \in t{\downarrow}_{U\,s}.\ G\,(X\,\tau)) \Rightarrow Q\,(X\,t).
\end{array}$$

That is, the postcondition Q holds after an evolution command started at (t0, s) if and only if Q (X t) holds for all solutions X of the IVP X′ t = f t (X t), X t0 = s, and for all times t ∈ U s up to which the guard G has held. Notice that, if there is a flow φ : T → C → C for f and U = T = R≥0, this simplifies to

$$(\text{wlp-flow}) \qquad |x' = f \,\&\, G]\,Q\,s \iff \big(\forall t \geq 0.\ (\forall \tau \in [0, t].\ G\,(\varphi_s\,\tau)) \Rightarrow Q\,(\varphi_s\,t)\big).$$

See Section 5 for the formal version of these laws.
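On a finite state space, the forward box can be computed directly from its definition, and the equational wlp-laws can be tested pointwise. The Python sketch below does this for a hypothetical integer state space and hypothetical programs `dec` and `fork`; it only sanity-checks (wlp-seq), (wlp-choice) and (wlp-abort) on examples and is not a proof.

```python
from typing import Callable, FrozenSet, Iterable

State = int
Trans = Callable[[State], FrozenSet[State]]
Pred = Callable[[State], bool]


def box(alpha: Trans, q: Pred) -> Pred:
    """|alpha] Q s  <=>  every state s' in alpha s satisfies Q."""
    return lambda s: all(q(t) for t in alpha(s))


def seq(a: Trans, b: Trans) -> Trans:
    return lambda s: frozenset(u for t in a(s) for u in b(t))


def choice(a: Trans, b: Trans) -> Trans:
    return lambda s: a(s) | b(s)


def abort(s: State) -> FrozenSet[State]:
    return frozenset()


def equal_on(p: Pred, q: Pred, states: Iterable[State]) -> bool:
    """Pointwise equality of two predicates on the given states."""
    return all(p(s) == q(s) for s in states)


def dec(s: State) -> FrozenSet[State]:
    """Hypothetical program: decrement positive states, block at s <= 0."""
    return frozenset({s - 1}) if s > 0 else frozenset()


def fork(s: State) -> FrozenSet[State]:
    """Hypothetical nondeterministic program: stay or double."""
    return frozenset({s, 2 * s})


def nonneg(s: State) -> bool:
    return s >= 0
```

Note that `box(dec, q)(0)` holds for every `q`, because `dec` has no successor at 0: the wlp is liberal and vacuously true where α does not terminate.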

## 3.5.2 Hoare triples

It is well known that Hoare logic can be derived from the forward box operator of dynamic logic [35]. Thus, we can also write partial correctness specifications as Hoare triples with our forward box operators via {P} α {Q} ⇔ (P ⇒ |α] Q). From our wlp-laws and definitions, the Hoare logic rules below hold:

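$$\begin{array}{lrcl}
(\text{h-skip}) & & & \{P\}\ \mathit{skip}\ \{P\}\\
(\text{h-abort}) & & & \{P\}\ \mathit{abort}\ \{Q\}\\
(\text{h-test}) & & & \{P\}\ \text{¿}Q\text{?}\ \{P \wedge Q\}\\
(\text{h-assign}) & & & \{Q[e/x]\}\ x := e\ \{Q\}\\
(\text{h-seq}) & \{P\}\,\alpha\,\{R\} \wedge \{R\}\,\beta\,\{Q\} & \Rightarrow & \{P\}\ \alpha ; \beta\ \{Q\}\\
(\text{h-choice}) & \{P\}\,\alpha\,\{Q\} \wedge \{P\}\,\beta\,\{Q\} & \Rightarrow & \{P\}\ \alpha \sqcap \beta\ \{Q\}\\
(\text{h-loop}) & \{I\}\,\alpha\,\{I\} & \Rightarrow & \{I\}\ \mathit{loop}\ \alpha\ \{I\}\\
(\text{h-cons}) & (P_1 \Rightarrow P_2) \wedge (Q_2 \Rightarrow Q_1) \wedge \{P_2\}\,\alpha\,\{Q_2\} & \Rightarrow & \{P_1\}\ \alpha\ \{Q_1\}\\
(\text{h-cond}) & \{T \wedge P\}\,\alpha\,\{Q\} \wedge \{\neg T \wedge P\}\,\beta\,\{Q\} & \Rightarrow & \{P\}\ \mathit{if}\ T\ \mathit{then}\ \alpha\ \mathit{else}\ \beta\ \{Q\}\\
(\text{h-while}) & \{T \wedge I\}\,\alpha\,\{I\} & \Rightarrow & \{I\}\ \mathit{while}\ T\ \mathit{do}\ \alpha\ \{\neg T \wedge I\}\\
(\text{h-whilei}) & (P \Rightarrow I) \wedge (I \wedge \neg T \Rightarrow Q) \wedge \{I \wedge T\}\,\alpha\,\{I\} & \Rightarrow & \{P\}\ \mathit{while}\ T\ \mathit{do}\ \alpha\ \mathit{inv}\ I\ \{Q\}\\
(\text{h-loopi}) & (P \Rightarrow I) \wedge (I \Rightarrow Q) \wedge \{I\}\,\alpha\,\{I\} & \Rightarrow & \{P\}\ \mathit{loop}\ \alpha\ \mathit{inv}\ I\ \{Q\}\\
(\text{h-evoli}) & (P \Rightarrow I) \wedge (I \wedge G \Rightarrow Q) \wedge \{I\}\,(x' = f \,\&\, G)^{t_0}_U\,\{I\} & \Rightarrow & \{P\}\ (x' = f \,\&\, G)^{t_0}_U\ \mathit{inv}\ I\ \{Q\}\\
(\text{h-conji}) & \{I\}\,\alpha\,\{I\} \wedge \{J\}\,\alpha\,\{J\} & \Rightarrow & \{I \wedge J\}\ \alpha\ \{I \wedge J\}\\
(\text{h-disji}) & \{I\}\,\alpha\,\{I\} \wedge \{J\}\,\alpha\,\{J\} & \Rightarrow & \{I \vee J\}\ \alpha\ \{I \vee J\}
\end{array}$$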

where α inv I is simply α annotated with the invariant I; the annotation binds more weakly than any other program operator, e.g. {P} loop α inv I {Q} = {P} (loop α) inv I {Q}.

For automating VCG, the wlp-laws are preferable to the Hoare-style rules, since the laws can be added to the proof assistant's simplifier, which then applies them automatically as rewrite rules. However, when loops and ODEs are involved, we use the rules (h-whilei), (h-loopi) and (h-evoli). In particular, two workflows emerge for discharging ODEs. If the conditions of the Picard-Lindelöf theorem hold, so that the system of ODEs has a unique solution, and this solution is known, the law (wlp-flow) is the best choice. Otherwise, we employ the rule (h-evoli) if an invariant is known. See Section 6.2 for a procedure certifying the existence of flows and Section 5.3 for a procedure determining invariance for evolution commands.
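The encoding {P} α {Q} ⇔ (P ⇒ |α] Q) can likewise be evaluated on a finite state space. The Python sketch below uses a hypothetical program `step` on the integers 0 to 19 and checks that the premise {I} α {I} of (h-loop) holds for the invariant "s is even" and that the conclusion {I} loop α {I} then holds as well. The helper names are illustrative, and `loop` is computed with a reachability fixpoint.

```python
from typing import Callable, FrozenSet, Iterable

State = int
Trans = Callable[[State], FrozenSet[State]]
Pred = Callable[[State], bool]


def hoare(p: Pred, alpha: Trans, q: Pred, states: Iterable[State]) -> bool:
    """{P} alpha {Q}  <=>  for all s: P s implies |alpha] Q s."""
    return all(not p(s) or all(q(t) for t in alpha(s)) for s in states)


def loop(a: Trans) -> Trans:
    """loop a = a*: all states reachable by finitely many iterations of a."""
    def run(s: State) -> FrozenSet[State]:
        reached, frontier = {s}, {s}
        while frontier:
            frontier = {u for t in frontier for u in a(t)} - reached
            reached |= frontier
        return frozenset(reached)
    return run


def step(s: State) -> FrozenSet[State]:
    """Hypothetical program: add 2 below 18, otherwise stay put."""
    return frozenset({s + 2}) if s < 18 else frozenset({s})


def even(s: State) -> bool:
    return s % 2 == 0
```

Without the invariant as precondition the triple fails: odd states stay odd, so `hoare(lambda s: True, step, even, range(20))` is false.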

Example 3. We prove that I s ⇔ get g s ≥ 0, or simply g ≥ 0, is an invariant for the program blood\_sugar = loop (ctrl ; dyn) from Example 2. That is, we show that {I} blood\_sugar {I}. We start by applying (h-loopi) and proceed with the wlp-laws:

$$\begin{aligned}
& \{I\}\ \mathit{loop}\ (\mathit{ctrl} ; \mathit{dyn})\ \mathit{inv}\ I\ \{I\}\\
& \Leftrightarrow (I \Rightarrow I) \wedge (I \Rightarrow |\mathit{ctrl} ; \mathit{dyn}]\,I) \wedge (I \Rightarrow I)\\
& = \big(\forall s.\ I\,s \Rightarrow |\mathit{if}\ g \leq g_m\ \mathit{then}\ g := g_M\ \mathit{else}\ \mathit{skip}]\,|g' = -g]\,I\,s\big)\\
& = \big(\forall s.\ I\,s \Rightarrow (g \leq g_m \Rightarrow |g := g_M]\,|g' = -g]\,I\,s) \wedge (g > g_m \Rightarrow |g' = -g]\,I\,s)\big),
\end{aligned}$$

where the first equality applies (wlp-seq) and unfolds the definitions of ctrl and dyn. The second follows by (wlp-cond). Next, given that φ t c = c · e^{−t} is the flow for g′ = −g:

$$|g' = -g]\,I\,s \iff (\forall t \geq 0.\ I[\varphi\,t\,g/g]\,s) \iff (\forall t \geq 0.\ g \cdot e^{-t} \geq 0) \iff g \geq 0,$$

for all s ∈ S by (wlp-flow), the lens laws, and because G = ⊤ and k · e -t ≥ 0 ⇔ k ≥ 0 . Thus, the conjuncts above simplify to

$$\begin{array}{ll}
g \leq g_m \Rightarrow (|g' = -g]\,I)[g_M/g] & g > g_m \Rightarrow |g' = -g]\,I\,s\\
= g \leq g_m \Rightarrow (g \geq 0)[g_M/g] & = g > g_m \Rightarrow g \geq 0\\
= (g \leq g_m \Rightarrow g_M \geq 0) = \top, & = \top,
\end{array}$$

by (wlp-assign), and because g m , g M ≥ 0 . Thus, ( I ⇒| ctrl ; dyn ] I ) = ⊤ .

## 3.5.3 Forward diamonds

Here we extend our VCG approach with forward diamonds. Our VCG laws from Sections 3.5.1 and 3.5.2 help users prove partial correctness specifications. Yet, our approach is generic and extensible and can cover other types of specifications [33, 40, 77]. For instance, we have integrated the forward diamond |−⟩-predicate transformer, defined as |α⟩ Q s ⇔ (∃s′. s′ ∈ α s ∧ Q s′). It holds at s if some state reachable from s by executing α satisfies Q. Due to their semantics, forward diamonds enable us to reason about progress and reachability properties. In applications, this means that our tool supports proofs describing worst- and best-case scenarios, stating that the modelled system can evolve into an undesired or desired state. See Section 8 for an example showing progress for a dynamical system. We formalise and prove the forward diamond laws below, which are also direct consequences of the duality law |α⟩ Q = ¬|α] ¬Q. The example immediately after them merely illustrates their seamless application.

$$\begin{array}{lrcl}
(\text{fdia-skip}) & |\mathit{skip}\rangle\,Q & = & Q\\
(\text{fdia-abort}) & |\mathit{abort}\rangle\,Q & = & \bot\\
(\text{fdia-test}) & |\text{¿}P\text{?}\rangle\,Q & = & P \wedge Q\\
(\text{fdia-assign}) & |x := e\rangle\,Q & = & Q[e/x]\\
(\text{fdia-seq}) & |\alpha ; \beta\rangle\,Q & = & |\alpha\rangle\,|\beta\rangle\,Q\\
(\text{fdia-choice}) & |\alpha \sqcap \beta\rangle\,Q & = & |\alpha\rangle\,Q \vee |\beta\rangle\,Q\\
(\text{fdia-loop}) & |\mathit{loop}\ \alpha\rangle\,Q & = & \exists n.\ |\alpha^n\rangle\,Q\\
(\text{fdia-cond}) & |\mathit{if}\ T\ \mathit{then}\ \alpha\ \mathit{else}\ \beta\rangle\,Q & = & (T \wedge |\alpha\rangle\,Q) \vee (\neg T \wedge |\beta\rangle\,Q).
\end{array}$$

Additionally, the (informal) diamond law for evolution commands is

$$\begin{array}{rl}
(\text{fdia-evol}) & |(x' = f \,\&\, G)^{t_0}_U\rangle\,Q\,s \iff \exists X \in \mathit{ivp\text{-}sols}\ U\ f\ t_0\ s.\ \exists t \in U\,s.\\
& \quad (\forall \tau \in t{\downarrow}_{U\,s}.\ G\,(X\,\tau)) \wedge Q\,(X\,t).
\end{array}$$

and the corresponding law for flows φ : T →C → C of f and U = T = R ≥ 0 is

$$(\text{fdia-flow}) \qquad |x' = f \,\&\, G\rangle\,Q\,s \iff \big(\exists t \geq 0.\ (\forall \tau \in [0, t].\ G\,(\varphi_s\,\tau)) \wedge Q\,(\varphi_s\,t)\big).$$

Example 4. A similar argument to that in Example 3 allows us to prove the implication I ⇒ |blood\_sugar⟩ I, where I s ⇔ (g ≥ 0) and blood\_sugar = loop (ctrl ; dyn). Namely, we observe that by (fdia-flow), the forward diamond of g′ = −g and I becomes

$$|g' = -g\rangle\,I\,s \iff (\exists t \geq 0.\ I[g \cdot e^{-t}/g]\,s) \iff (\exists t \geq 0.\ g \cdot e^{-t} \geq 0) \iff g \geq 0,$$

for all s ∈ S. Hence, the two conjunctions below simplify as shown:

$$\begin{array}{ll}
g \leq g_m \wedge (|g' = -g\rangle\,I)[g_M/g] & g > g_m \wedge |g' = -g\rangle\,I\,s\\
= g \leq g_m \wedge (g \geq 0)[g_M/g] & = g > g_m \wedge g \geq 0\\
= (g \leq g_m \wedge g_M \geq 0) = g \leq g_m, & = g > g_m.
\end{array}$$

Therefore, by backward reasoning with the diamond laws, we have

$$\begin{aligned}
& I \Rightarrow |\mathit{loop}\ (\mathit{ctrl} ; \mathit{dyn})\ \mathit{inv}\ I\rangle\,I\\
& \Leftarrow I \Rightarrow |\mathit{ctrl} ; \mathit{dyn}\rangle\,I\\
& = \big(\forall s.\ I\,s \Rightarrow |\mathit{if}\ g \leq g_m\ \mathit{then}\ g := g_M\ \mathit{else}\ \mathit{skip}\rangle\,|g' = -g\rangle\,I\,s\big)\\
& = \big(\forall s.\ I\,s \Rightarrow (g \leq g_m \wedge |g := g_M\rangle\,|g' = -g\rangle\,I\,s) \vee (g > g_m \wedge |g' = -g\rangle\,I\,s)\big)\\
& = \big(\forall s.\ I\,s \Rightarrow g \leq g_m \vee g > g_m\big) = \top,
\end{aligned}$$

where the first implication follows by a rule analogous to (h-loopi) for diamonds.

Thus, we have shown that I ⇒| blood\_sugar ⟩ I .
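The forward diamond is as easy to evaluate on a finite state space as the box. The Python sketch below, over a hypothetical integer state space and a hypothetical program `jump`, checks the duality law |α⟩ Q = ¬|α] ¬Q pointwise and the law (fdia-abort). It also shows that, unlike the box, the diamond is false in states where α has no successors.

```python
from typing import Callable, FrozenSet, Iterable

State = int
Trans = Callable[[State], FrozenSet[State]]
Pred = Callable[[State], bool]


def box(alpha: Trans, q: Pred) -> Pred:
    """|alpha] Q s: all successors of s satisfy Q."""
    return lambda s: all(q(t) for t in alpha(s))


def dia(alpha: Trans, q: Pred) -> Pred:
    """|alpha> Q s: some successor of s satisfies Q."""
    return lambda s: any(q(t) for t in alpha(s))


def neg(q: Pred) -> Pred:
    return lambda s: not q(s)


def duality_holds(alpha: Trans, q: Pred, states: Iterable[State]) -> bool:
    """Check |alpha> Q = not |alpha] not Q on the given states."""
    return all(dia(alpha, q)(s) == (not box(alpha, neg(q))(s)) for s in states)


def jump(s: State) -> FrozenSet[State]:
    """Hypothetical program: move by +/-1 unless s is a multiple of 3 (then block)."""
    return frozenset({s - 1, s + 1}) if s % 3 else frozenset()


def big(s: State) -> bool:
    return s > 2
```

For example, at s = 3 the program `jump` blocks, so `box(jump, big)(3)` is true while `dia(jump, big)(3)` is false.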

We have summarised our approach to hybrid systems verification in general-purpose proof assistants [27, 40]. This summary is the basis for describing our contributions in the rest of this article, among them the formalisation of forward diamonds in IsaVODEs. Although a similar formalisation has been done before [77], our implementation is more automated because it uses standard types, e.g. Isabelle predicates (S → B), which have accumulated more tool support over time. Thus, our formalisation increases both the proof capabilities and the expressivity of our Isabelle-based framework, since forward diamonds enable us to assert the progress of hybrid programs. Other extensions to our framework not described here are the addition of dL's nondeterministic assignments with their corresponding partial correctness and progress laws, as well as the formalisation of variant-based rules for the reachability of finite iterations and while-loops. See previous work [9, 33] for examples with while-loops that our verification framework could tackle.

## 4 Hybrid Modelling Language

Here, we describe our implementation of a hybrid modelling language, which takes advantage of lenses and Isabelle's flexible syntax processing features. Beyond the advantages already mentioned, lenses enhance our hybrid store models in several ways. They allow us to model frames (sets of mutable variables) and thus support local reasoning. They also allow us to project parts of the global store onto vector spaces to describe continuous dynamics. These projections can be constructed using three lens combinators: composition, sum and quotient.

The projections particularly allow us to use hybrid state spaces, consisting of both continuous components with a topological structure (e.g. R n ), and discrete components using Isabelle's flexible type system. This in turn allows our tool to support more general software engineering notations, which typically make use of object-oriented data structures [57]. Moreover, the projections allow us to reason locally about the continuous variables, since discrete variables are outside of the frame during continuous evolution.


## 4.1 Dataspaces

Most modelling and programming languages support modules with local variables, constant declarations, and assumptions. We have implemented an Isabelle command that automates the creation of hybrid stores, which provide a local theory context for hybrid programs. We call these dataspaces , since they are state spaces that can make use of rich data structures in the program variables.

```
dataspace store = [parent_store +]
  constants c1::C1 ... c_n::C_n
  assumes a1::P1 ... a_n::P_n
  variables x1::T1 ... x_n::T_n
```

A dataspace has constants c i : C i , named constraints a i : P i and state variables x i : T i . In its context, we can create local definitions, theorems and proofs, which are hidden, but accessible using its namespace. Internally, the dataspace command creates an Isabelle locale with fixed constants and assumptions, inspired by previous work by Schirmer and Wenzel [74]. Like locales, dataspaces support a form of inheritance, whereby constants, assumptions, and variables can be imported from an existing dataspace (e.g. parent\_store ) and extended with further constants, assumptions, and variables.

Each declared state variable is assigned a lens x i :: T i ⇒ S, using the abstract store type S with the lens axioms from Section 3.3 as locale assumptions. We also generate independence assumptions, e.g. x i ⋈ x j for x i ≠ x j, that distinguish different variables semantically [23]. Recall from Section 3.3 that x ⋈ y holds if put x u ∘ put y v = put y v ∘ put x u for all u and v, that is, if the put operations of the two lenses commute on all states.

## 4.2 Lifted Expressions

As discussed in Section 3.3, expressions in our hybrid modelling language are modelled by functions of type S → V. Assertions are therefore state predicates, or 'expressions' where V = B. Discharging VCs requires showing that assertions hold for all states. For example, the law (wlp-test) requires us to prove a VC of the form P ⇒ Q, that is, ∀s. P s ⇒ Q s. Also, if we have state variables x and y, then proving the assertion x + y ≥ x corresponds to proving the HOL predicate get x s + get y s ≥ get x s for some arbitrary-but-fixed state s, which can readily be discharged using one of Isabelle's proof methods (simp, auto, etc.). This process is automated by the methods expr-simp and expr-auto.

Nevertheless, there remains a gap between the syntax used in typical programming languages and its semantic representation. Namely, users would prefer writing x² + y² ≤ c² over λs. (get x s)² + (get y s)² ≤ c², and so the main technical challenge is to seamlessly translate between the two. This can be achieved using Isabelle's syntax pipeline, which significantly improves the usability of our tool.

Isabelle's multi-stage syntax pipeline parses Unicode strings and transforms them into 'pre-terms' [46]: elements of the ML term type containing syntactic constants. These must be mapped to previously defined semantic constants by syntax translations before they can be checked and certified in the Isabelle kernel. Printing reverses this pipeline, mapping terms to strings.

We automate the translation between the expression syntax (pre-terms) and semantics using parse and print translations implemented in Isabelle/ML, as part of our Shallow-Expressions component. It lifts pre-terms by replacing free variables and constants, and by inserting store variables (s) and λ-binders. Its implementation uses the syntactic annotation (t)_e to lift the syntactic term t to a semantic expression in the syntax translation rules. The syntax translation is described by the following equations:

$$(t)_e \rightleftharpoons [(t)^e]_e, \qquad (n)^e \rightleftharpoons \begin{cases} \lambda s.\ \mathit{get}_n\,s & \text{if } n \text{ is a lens},\\ \lambda s.\ n\,s & \text{if } n \text{ is an expression},\\ \lambda s.\ n & \text{otherwise}, \end{cases} \qquad (f\ t)^e \rightleftharpoons \lambda s.\ f\,((t)^e\,s),$$


where p ⇌ q means that pre-term p is translated to term q, and q is printed as p. Moreover, [−]_e is a constant that marks lifted expressions embedded in terms. When the translation encounters a name n (i.e. a free variable or constant), it checks whether n has a definition in the context. If it is a lens (i.e. n :: V ⇒ S), then it inserts a get. If it is an expression (i.e. n : S → V), then it is applied to the state. Otherwise, it leaves the name unchanged, assuming it to be a constant. Function applications are kept in place, and the translation proceeds into their arguments. For instance, ((x + y)²/z)_e ⇌ [λs. (get x s + get y s)²/z]_e for variables (lenses) x and y and constant z. Once an expression has been processed, the resulting λ-term is enclosed in [−]_e. The pretty printer can then recognise a lifted term and print it. This process is fully automated, so that users see only the sugared expression, without the λ-binders, both when entering terms and in the output displayed during proofs.
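The translation rules can be mimicked at runtime to see what the lifted functions compute. The Python sketch below is only an analogy: the actual translation is a parse and print translation on Isabelle pre-terms written in Isabelle/ML, whereas here a hypothetical tuple-based term format is turned into a Python function of the store. Names bound to lenses become `get` calls, names bound to expressions are applied to the store, and all other names are looked up as constants; `lift` and `example` are illustrative names.

```python
import operator
from typing import Any, Callable, Dict

# Hypothetical term format: ("name", n) | ("app", f, [t1, ..., tk]) | literal
Term = Any
Store = Dict[str, Any]


def lift(t: Term,
         lenses: Dict[str, Callable[[Store], Any]],
         exprs: Dict[str, Callable[[Store], Any]],
         consts: Dict[str, Any]) -> Callable[[Store], Any]:
    """Runtime analogue of (t)^e: returns a function of the store s."""
    if isinstance(t, tuple) and t[0] == "name":
        n = t[1]
        if n in lenses:                 # lens: insert get_n
            return lambda s: lenses[n](s)
        if n in exprs:                  # expression: apply it to the state
            return lambda s: exprs[n](s)
        return lambda s: consts[n]      # otherwise: a constant
    if isinstance(t, tuple) and t[0] == "app":
        f = t[1]
        args = [lift(a, lenses, exprs, consts) for a in t[2]]
        return lambda s: f(*(a(s) for a in args))  # (f t)^e = λs. f ((t)^e s)
    return lambda s: t                  # literals are constant functions


# The term (x + y)^2 / z, with lenses x, y and constant z
example = ("app", operator.truediv, [
    ("app", operator.pow, [
        ("app", operator.add, [("name", "x"), ("name", "y")]), 2]),
    ("name", "z")])
```

With `x` and `y` bound to dictionary lookups and `z = 4.0`, the lifted `example` maps the store `{"x": 1.0, "y": 3.0}` to (1 + 3)²/4 = 4.0.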

## 4.3 Substitutions

In our semantic approach, substitutions correspond to functions σ : S → S on the store S . This interpretation allows us to denote updates as a sequence of variable assignments. That is, instead of directly manipulating the store s : S with the lens functions, we provide more user-friendly program specifications with the notation σ ( x ⇝ e ) = λs. put x ( e s ) ( σ s ) . It allows us to describe assignments as sequences of updates: [ x 1 ⇝ e 1 , x 2 ⇝ e 2 , · · · ] = id ( x 1 ⇝ e 1 )( x 2 ⇝ e 2 ) · · · , for variable lenses x i :: V i ⇒S and 'expressions' e i : S → V i .


Implicitly, any variable y not mentioned in such a substitution is left unchanged: y ⇝ y. We further write e[v/x] = e ∘ [x ⇝ v], for x :: V ⇒ S, e : S → V′, and v : S → V, for the application of substitutions to expressions. This yields standard notations for program specifications, e.g. (x := e) = ⟨[x ⇝ e]⟩ and wlp ⟨[x ⇝ e]⟩ Q = Q[e/x]. Using an Isabelle simplification procedure (a 'simproc'), the simplifier can reorder assignments alphabetically according to their variable names, and otherwise reduce and evaluate substitutions during VCG [23]. We can extract the assignment for x by writing ⟨σ⟩s x = get x ∘ σ so that, e.g., ⟨[x ⇝ e1, y ⇝ e2]⟩s x reduces to e1 when x ⋈ y.
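The substitution notation can be sketched in Python by representing the store as a dictionary from hypothetical variable names to values. In `update`, the expression e is evaluated on the original state s, as in σ(x ⇝ e) = λs. put x (e s) (σ s), so a substitution list updates all its variables simultaneously, and variables it does not mention keep their values. The helper names are illustrative only.

```python
from typing import Any, Callable, Dict

Store = Dict[str, Any]
Subst = Callable[[Store], Store]
Expr = Callable[[Store], Any]


def identity(s: Store) -> Store:
    return dict(s)


def update(sigma: Subst, x: str, e: Expr) -> Subst:
    """sigma(x ⇝ e) = λs. put_x (e s) (sigma s)."""
    def run(s: Store) -> Store:
        out = dict(sigma(s))
        out[x] = e(s)  # e sees the original state s
        return out
    return run


def subst(*pairs: tuple) -> Subst:
    """[x1 ⇝ e1, x2 ⇝ e2, ...] = id(x1 ⇝ e1)(x2 ⇝ e2)..."""
    sigma: Subst = identity
    for x, e in pairs:
        sigma = update(sigma, x, e)
    return sigma


def apply_subst(e: Expr, sigma: Subst) -> Expr:
    """Substitution applied to an expression: e ∘ sigma, so e[v/x] = e ∘ [x ⇝ v]."""
    return lambda s: e(sigma(s))
```

For example, `subst(("x", lambda s: s["y"]), ("y", lambda s: s["x"]))` swaps x and y while leaving other variables untouched, and `apply_subst(lambda s: s["x"] + s["z"], subst(("x", lambda s: 10)))` is the expression (x + z)[10/x].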

Example 5. We continue our blood glucose running example and formalise Example 2. First, we declare our problem variables and assumptions via our dataspace command. We name this dataspace glucose , and assume that there is a minimal warning threshold g m > 0 and a maximum dosage g M > g m . The patient's glucose is represented via the 'continuous' variable g .

```
dataspace glucose =
  constants g_m :: real g_M :: real
  assumes ge_0: "g_m > 0" and ge_gm: "g_M > g_m"
  variables g :: real
```

Next, inside the glucose context we declare, via Isabelle's abbreviation command, the definitions of the controller and the dynamics. Our shallow expressions hide the lens infrastructure so that, from the user's perspective, the definitions are ordinary Isabelle abbreviations. Notice also that our substitution notation allows us to explicitly specify the flow's behaviour on the continuous variable g. It also occurs implicitly in our declaration of the differential equation g′ = −g (see Section 5.2).

```
context glucose
begin

abbreviation "ctrl = IF g ≤ g_m THEN g ::= g_M ELSE skip"
abbreviation "dyn = {g' = -g}"
abbreviation "flow τ ≡ [g ↝ g * exp (- τ)]"
abbreviation "blood_sugar = LOOP (ctrl; dyn) INV (g ≥ 0)"

end
```

Thus, our lens integrations provide a seamless way to formalise hybrid system verification problems in Isabelle. We explore their verification condition generation in Section 6.2.

## 4.4 Vectors and matrices

Vectors and matrices are ubiquitous in engineering applications, and users of our framework would appreciate using familiar concepts and notation for them. Our modelling language makes this possible. In particular, vectors are supported by HOL-Analysis using finite Cartesian product types (A,n) vec, with the notation A^n. Here, A is the element type, and n is a numeral type denoting the dimension. The type of vectors is isomorphic to [n] → A, where [n] = {1, ..., n}. A matrix is simply a vector of vectors, A^m^n, hence a map [m] → [n] → A. Building on this, we supply the notation [[x11,...,x1n],...,[xm1,...,xmn]] for matrices and means for accessing coordinates of vectors via hybrid program variables [24]. With this notation, the dimensions of vectors and matrices are inferred from the numeral types in their type variables.

Vectors and matrices are often represented as composite objects consisting of several values, e.g. p = ( p x , p y ) ∈ R 2 . When writing specifications, it is often convenient to refer to and manipulate these components individually. We can denote such variables using component lenses and the lens composition operator. We write x 1 # x 2 :: S 1 ⇒ S 3 , for x 1 :: S 1 ⇒ S 2 , x 2 :: S 2 ⇒ S 3 , for the forward composition and 1 S :: S ⇒ S for the units in the lens category, but do not show formal definitions [23]. Intuitively, the composition selects part of a larger store as illustrated below.

We model vectors in R^n as parts of larger hybrid stores, that is, as lenses v :: R^n ⇒ S, and project onto coordinates v k :: R ⇒ S using lens composition and a vector lens Π(i) :: R ⇒ R^n:

$$\begin{aligned}
\Pi(i) &= (\mathit{get}_{\Pi(i)}, \mathit{put}_{\Pi(i)}), \text{ where}\\
\mathit{get}_{\Pi(i)} &= (\lambda s.\ \mathit{vec\text{-}nth}\ s\ i),\\
\mathit{put}_{\Pi(i)} &= (\lambda v\ s.\ \mathit{vec\text{-}upd}\ s\ i\ v),
\end{aligned}$$

and i ∈ [n] = {1, ..., n}. The lookup function vec-nth : A^n → [n] → A and update function vec-upd : A^n → [n] → A → A^n come from HOL-Analysis and satisfy the lens axioms (Section 3.3). Then, as an example, p x = Π(1) # p and p y = Π(2) # p for p :: R^2 ⇒ S, using # to first select the variable p and then the vector part of the hybrid store. Intuitively, two vector elements are independent, Π(i) ⋈ Π(j), iff they have different indices, i ≠ j.
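The following Python sketch illustrates forward lens composition and the vector lens Π(i), under assumptions of this sketch: vectors are Python tuples, coordinates are 1-based as in [n] = {1, ..., n}, and the store is a dictionary with a hypothetical vector variable `p` and a scalar `t`. The names `compose`, `vec_lens` and `var` are illustrative.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class Lens:
    get: Callable[[Any], Any]
    put: Callable[[Any, Any], Any]


def compose(l1: Lens, l2: Lens) -> Lens:
    """Forward composition l1 # l2 :: S1 => S3 for l1 :: S1 => S2, l2 :: S2 => S3."""
    return Lens(
        get=lambda s: l1.get(l2.get(s)),
        put=lambda v, s: l2.put(l1.put(v, l2.get(s)), s),
    )


def vec_lens(i: int) -> Lens:
    """Pi(i) :: A => A^n on tuples, with 1-based index i."""
    return Lens(
        get=lambda vec: vec[i - 1],
        put=lambda v, vec: vec[: i - 1] + (v,) + vec[i:],
    )


def var(name: str) -> Lens:
    """A variable lens into a dictionary-based store (copy on write)."""
    return Lens(get=lambda s: s[name], put=lambda v, s: {**s, name: v})


p = var("p")
p_x = compose(vec_lens(1), p)  # p_x = Pi(1) # p
p_y = compose(vec_lens(2), p)  # p_y = Pi(2) # p
```

For the store `{"p": (1.0, 2.0), "t": 0.0}`, `p_y.put(5.0, s)` yields `{"p": (1.0, 5.0), "t": 0.0}`, and updating `p_x` and `p_y` in either order gives the same result, in line with Π(1) ⋈ Π(2).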

Example 6. To illustrate the use of vector variables, we model the dynamics and a controller for an autonomous boat. We refer readers to previous publications for the verification of an invariant for this system [24, 27]. The boat is manoeuvrable in R 2 and has a rotatable thruster generating a positive propulsive force f with maximum f max . The boat's state is determined by its position p = ( p x , p y ) , velocity v = ( v x , v y ) , and acceleration a = ( a x , a y ) . We describe this state with the following dataspace:

```
dataspace AMV =
  constants S::ℝ f_max::ℝ
  assumes fmax: "f_max ≥ 0"
  variables p::"ℝ vec[2]" v::"ℝ vec[2]" a::"ℝ vec[2]" φ::ℝ s::ℝ
    wps::"(ℝ vec[2]) list" org::"(ℝ vec[2]) set" rs::ℝ rh::ℝ
```

This store model combines discrete and continuous variables and uses the alternative notation ℝ vec[n] for a real-valued vector of dimension n. The dataspace specifies a variable s for the linear speed and a constant S for the boat's maximum speed. We also provide the discrete variables wps for a list of points to pass through on the vehicle's path (way-point path), org for a set of points where obstacles are located (obstacle register), and rs and rh for the requested speed and heading. Our dataspace allows us to declare variables p, v, a : ℝ vec[2] and manipulate them using operations for vectors (see Section 5.2).

## 5 Local Reasoning

In this section, we describe our framework's support for local reasoning, which allows us to consider only parts of the state that are changed by a component in the verification. This improves the scalability of our approach, since we can decompose verification tasks into smaller manageable tasks, in an analogous way to separation logic [70]. We show how lenses can be used to characterise a program's frame: the set of variables which may be modified. We then explain how frames extend to evolution commands, such that variables with no derivative (or derivative 0) are outside of the frame. Next, we develop a framed version of differentiation, called framed Fréchet derivatives , which allows us to perform local differentiation with respect to a strict subset of the store variables. This, in turn, supports a method, framed differential induction, for proving invariants in the continuous part of the state space. Finally, we introduce a corresponding implementation of d L 's differential ghost rule [65] that augments systems of ODEs with fresh equations to aid invariant reasoning. This rule likewise supports frames.

## 5.1 Frames

Lenses support algebraic manipulations of variable frames. A frame is the set of variables that a program is permitted to change. Variables outside of the frame are immutable. We first show how variable sets can be modelled via lens sums. Then we recall a predicate characterising immutable program variables [26]. Most importantly, we derive a frame rule à la separation logic for local reasoning with framed variables.

Variable lenses x₁ :: V₁ ⇒ S and x₂ :: V₂ ⇒ S can be combined into lenses for variable sets with the lens sum [23], x₁ ⊕ x₂ :: V₁ × V₂ ⇒ S, defined for independent lenses (x₁ ⋈ x₂) by get_{x₁⊕x₂} s = (get_{x₁} s, get_{x₂} s) and put_{x₁⊕x₂} (v₁, v₂) = put_{x₁} v₁ ∘ put_{x₂} v₂. This combines two independent lenses into a single lens with a product view. It can be used to model composite variables: for example, (x ⊕ y) := (e₁, e₂) is a simultaneous assignment to x and y. We can decompose such a composite update into two atomic updates, with [(x, y) ⇝ (e₁, e₂)] = [x ⇝ e₁, y ⇝ e₂]. We can also use lens sums to model finite sets; for example, {x, y, z} is modelled as x ⊕ (y ⊕ z). Each variable in such a sum may have a different type; e.g. {v_x, p⃗} is a valid and well-typed construction.
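To make the lens-sum equations concrete, here is a minimal Python sketch (not the Isabelle/HOL formalisation). As an assumption, a store is modelled as a dictionary from variable names to values and a lens as a get/put pair; `Lens`, `var`, `lens_sum`, and `assign` are hypothetical names introduced only for illustration. It shows that the composite assignment (x ⊕ y) := (y, x) evaluates both right-hand sides in the initial state, which distinguishes it from two sequential assignments.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict

State = Dict[str, Any]  # hypothetical store encoding: variable name -> value

@dataclass(frozen=True)
class Lens:
    get: Callable[[State], Any]
    put: Callable[[Any, State], State]  # put(v, s) returns an updated copy of s

def var(name: str) -> Lens:
    """Lens onto a single named variable of the store."""
    return Lens(lambda s: s[name], lambda v, s: {**s, name: v})

def lens_sum(x1: Lens, x2: Lens) -> Lens:
    """x1 ⊕ x2, assuming x1 and x2 are independent (x1 ⋈ x2)."""
    return Lens(
        lambda s: (x1.get(s), x2.get(s)),            # get s = (get_x1 s, get_x2 s)
        lambda v, s: x1.put(v[0], x2.put(v[1], s)),  # put (v1, v2) = put_x1 v1 ∘ put_x2 v2
    )

def assign(x: Lens, e: Callable[[State], Any]) -> Callable[[State], State]:
    """x := e, where e is evaluated in the initial state."""
    return lambda s: x.put(e(s), s)

x, y = var("x"), var("y")
xy = lens_sum(x, y)
s = {"x": 1, "y": 2, "z": 3}

# Simultaneous assignment (x ⊕ y) := (y, x) swaps the two values.
swap = assign(xy, lambda st: (st["y"], st["x"]))
print(swap(s))  # {'x': 2, 'y': 1, 'z': 3}

# Two sequential assignments x := y; y := x behave differently.
seq = assign(y, lambda st: st["x"])(assign(x, lambda st: st["y"])(s))
print(seq)  # {'x': 2, 'y': 2, 'z': 3}

# Lens laws on this sample: put-get and get-put.
assert xy.get(xy.put((7, 8), s)) == (7, 8)
assert xy.put(xy.get(s), s) == s
```

Swapping the summands, `lens_sum(y, x)`, produces the same view with its components swapped, which is the isomorphism up to which lens sums commute.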


Lens sums are only associative and commutative up to isomorphism of Cartesian products, so we need heterogeneous orderings and equivalences between lenses to capture this. We define a lens preorder [23], x₁ ⪯ x₂ ⇔ ∃x₃. x₁ = x₃ ⨾ x₂, that captures the part-of relation between x₁ :: V₁ ⇒ S and x₂ :: V₂ ⇒ S, e.g. v_x ⪯ v⃗ and p⃗ ⪯ p⃗ ⊕ v⃗. Lens equivalence ≅ = ⪯ ∩ ⪰ then identifies lenses with the same shape in the store. Then, for variable set lenses up to ≅, ⊕ models ∪, ⋈ models ∉, and ⪯ models ⊆ or ∈. Since x₁ ⪯ x₁ ⊕ x₂ and x₁ ⊕ x₂ ≅ x₂ ⊕ x₁, with our variable set interpretation we can show, e.g., that x ∈ {x, y, z}, {x, y} ⊆ {x, y, z}, and {x, y} = {y, x}. Hence we can use these lens combinators to construct and reason about variable frames.


We can use variable set lenses to capture the frame of a program. Let A :: C ⇒ S be a lens modelling a variable set. For s₁, s₂ ∈ S, let s₁ ≈_A s₂ hold if s₁ and s₂ agree on the values of the variables in A, that is, get_A s₁ = get_A s₂. Local reasoning within A uses the lens quotient [22] x / A, which localises a lens x :: V ⇒ S to a lens V ⇒ C. Assuming x ⪯ A, it yields x₁ :: V ⇒ C such that x = x₁ ⨾ A. For example, p_x / p = Π(1) with C = ℝⁿ.
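The quotient example can be illustrated with lens composition. In this hypothetical Python sketch, the store is a dictionary, vectors are tuples, `proj(i)` plays the role of Π(i) (1-indexed, as an assumption), and `compose(x1, a)` plays the role of x₁ ⨾ a. The sketch does not compute quotients in general; it only exhibits x₁ = Π(1) with p_x = x₁ ⨾ p, and shows that Π(1) acts on the local view C alone.

```python
from dataclasses import dataclass
from typing import Any, Callable

@dataclass(frozen=True)
class Lens:
    get: Callable[[Any], Any]
    put: Callable[[Any, Any], Any]  # put(v, s) returns an updated copy of s

def var(name: str) -> Lens:
    """Lens onto a named variable of a dictionary-encoded store."""
    return Lens(lambda s: s[name], lambda v, s: {**s, name: v})

def proj(i: int) -> Lens:
    """Π(i): lens onto the i-th (1-indexed) component of a tuple-encoded vector."""
    return Lens(lambda c: c[i - 1], lambda v, c: c[:i - 1] + (v,) + c[i:])

def compose(x1: Lens, a: Lens) -> Lens:
    """x1 ⨾ a: focus on a's view first, then on x1 inside that view."""
    return Lens(lambda s: x1.get(a.get(s)),
                lambda v, s: a.put(x1.put(v, a.get(s)), s))

p = var("p")
p_x = compose(proj(1), p)           # p_x = Π(1) ⨾ p, hence p_x / p = Π(1)
s = {"p": (1.0, 2.0), "rs": 0.5}

print(p_x.get(s))                   # 1.0
print(p_x.put(5.0, s))              # {'p': (5.0, 2.0), 'rs': 0.5}
# Local reasoning: Π(1) updates the frame value in C without touching the store.
print(proj(1).put(5.0, p.get(s)))   # (5.0, 2.0)
```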

We can also use lenses to describe when a variable does not occur freely in an expression or predicate, with the unrestriction property: A ♯ e ⇔ ∀v. e ∘ (put_A v) = e [23]. A variable x is unrestricted in e, written x ♯ e, when e does not semantically depend on x for its evaluation. For example, x ♯ (y + 1) when x ⋈ y, since y + 1 does not mention x. We also define (−A) ♯ e ⇔ ∀s₁ s₂ v. e (put_A v s₁) = e (put_A v s₂) as the converse, which requires that e does not depend on variables outside of A.
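Unrestriction is a semantic property, so on a finite sample of states it can be tested by brute force. The Python sketch below uses hypothetical helpers `unrest` and `unrest_compl` and an assumed range of sample values to check A ♯ e and (−A) ♯ e for e = y + 1. A sampled check can refute these properties but cannot prove them for the infinite stores handled in Isabelle/HOL.

```python
from itertools import product

def var_put(name):
    """put for a single named variable of a dictionary-encoded store."""
    return lambda v, s: {**s, name: v}

# Hypothetical finite sample of stores over the variables x and y.
VALUES = range(-2, 3)
STATES = [{"x": a, "y": b} for a, b in product(VALUES, VALUES)]

def unrest(put_a, e):
    """A ♯ e on the sample: e(put_A v s) = e(s) for all sampled s and v."""
    return all(e(put_a(v, s)) == e(s) for s in STATES for v in VALUES)

def unrest_compl(put_a, e):
    """(-A) ♯ e on the sample: e(put_A v s1) = e(put_A v s2) for all s1, s2, v."""
    return all(e(put_a(v, s1)) == e(put_a(v, s2))
               for s1 in STATES for s2 in STATES for v in VALUES)

e = lambda s: s["y"] + 1  # the expression y + 1

print(unrest(var_put("x"), e))        # True:  x ♯ (y + 1)
print(unrest(var_put("y"), e))        # False: y + 1 depends on y
print(unrest_compl(var_put("y"), e))  # True:  nothing outside y is read
print(unrest_compl(var_put("x"), e))  # False: y lies outside x
```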

Next, we capture the non-modification of variables by a program. For α : S → 𝒫 S and an expression (or predicate) e, we define α nmods e ⇔ (∀s₁ ∈ S. ∀s₂ ∈ α s₁. e(s₁) = e(s₂)), which describes when e does not depend on the variables that α may modify. In particular, e can be a variable or a set of variables, in which case it specifies the immutable variables. For example, (x := x + 1) nmods (y, z) holds when x ⋈ y and x ⋈ z, since this assignment changes only x and no other variables.
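Non-modification can be checked the same way on a finite sample. In the following Python sketch, a program α : S → 𝒫 S is modelled (as an assumption) by a function returning a list of final states, and the hypothetical `nmods` quantifies over sampled initial states and all of their final states.

```python
from itertools import product

# Hypothetical finite sample of stores over x, y, z.
STATES = [{"x": a, "y": b, "z": c} for a, b, c in product(range(-1, 2), repeat=3)]

def nmods(alpha, e):
    """alpha nmods e on the sample: every final state agrees with its initial state on e."""
    return all(e(s1) == e(s2) for s1 in STATES for s2 in alpha(s1))

def assign(name, e):
    """Deterministic assignment name := e, returning a singleton list of outcomes."""
    return lambda s: [{**s, name: e(s)}]

def choice(alpha, beta):
    """Nondeterministic choice: the union of both programs' outcomes."""
    return lambda s: alpha(s) + beta(s)

incr_x = assign("x", lambda s: s["x"] + 1)
decr_x = assign("x", lambda s: s["x"] - 1)
yz = lambda s: (s["y"], s["z"])  # the variable set (y, z) as an expression

print(nmods(incr_x, yz))                  # True
print(nmods(incr_x, lambda s: s["x"]))    # False
# Non-modification is inherited by choice (cf. the laws for the programming operators).
print(nmods(choice(incr_x, decr_x), yz))  # True
```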

Intuitively, non-modification α nmods x, where x is a variable lens, is equivalent to the specification {x = v} α {x = v} for a fresh logical variable v. This means that x retains its initial value in any final state of α. We prove the following laws for non-modification:

$$\begin{gathered} \frac{A \bowtie x}{(x := e)\ \mathit{nmods}\ A} \qquad \frac{}{\text{¿}P\text{?}\ \mathit{nmods}\ A} \qquad \frac{\alpha\ \mathit{nmods}\ A \quad \beta\ \mathit{nmods}\ A}{(\alpha \mathbin{;} \beta)\ \mathit{nmods}\ A} \\[1ex] \frac{\alpha\ \mathit{nmods}\ A \quad \beta\ \mathit{nmods}\ A}{(\alpha \sqcap \beta)\ \mathit{nmods}\ A} \qquad \frac{\alpha\ \mathit{nmods}\ A}{\alpha^{*}\ \mathit{nmods}\ A} \qquad \frac{\alpha\ \mathit{nmods}\ B \quad A \preceq B}{\alpha\ \mathit{nmods}\ A} \end{gathered}$$

The variables in A are immutable for assignment x := e provided x is not in A. A test ¿P? modifies no variables, and therefore any set A is immutable. For the programming operators, non-modification is inherited from the parts. The final law shows that we can always shrink the specified set of immutable variables.

With these concepts in place, we derive two frame rules for local reasoning:

$$\frac{\alpha\ \mathit{nmods}\ I \quad \{P\}\ \alpha\ \{Q\}}{\{P \wedge I\}\ \alpha\ \{Q \wedge I\}} \qquad \frac{\alpha\ \mathit{nmods}\ A \quad (-A) \mathbin{\sharp} I \quad \{P\}\ \alpha\ \{Q\}}{\{P \wedge I\}\ \alpha\ \{Q \wedge I\}}$$

If program α does not modify any variables mentioned in I, then I can be added as an invariant of α. In the first law, non-modification is checked directly against the variables used by I. In the second, which is an instance of the first, we instead infer that the variables in A are immutable and check that I does not depend on variables outside of A. With these laws, we can import invariants for a program fragment that refer only to variables left unchanged by it. This allows us to perform modular verification, whereby we need only consider invariants of the variables used in a component. In the following section, we show how this can be applied to systems of ODEs.
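The first frame rule can be exercised on a finite sample as well. The hypothetical Python sketch below checks its premises and conclusion for α = (x := x + 1), and also shows that without the side condition α nmods I the conclusion can fail when the conjoined predicate mentions x. The sample ranges and helper names are assumptions made for illustration.

```python
from itertools import product

# Hypothetical finite sample of stores over x and z.
STATES = [{"x": a, "z": c} for a, c in product(range(-2, 3), repeat=2)]

def hoare(pre, alpha, post):
    """{pre} alpha {post} on the sample (partial correctness)."""
    return all(post(t) for s in STATES if pre(s) for t in alpha(s))

def nmods(alpha, e):
    """alpha nmods e on the sample."""
    return all(e(s1) == e(s2) for s1 in STATES for s2 in alpha(s1))

conj = lambda f, g: (lambda s: f(s) and g(s))

alpha = lambda s: [{**s, "x": s["x"] + 1}]  # x := x + 1
P = lambda s: s["x"] >= 0
Q = lambda s: s["x"] >= 1
I = lambda s: s["z"] > 0                    # mentions only z

assert hoare(P, alpha, Q)                   # the premise {P} α {Q}
print(nmods(alpha, I))                      # True: the side condition α nmods I
print(hoare(conj(P, I), alpha, conj(Q, I))) # True, as the rule predicts

# Without the side condition the conclusion can fail: J mentions x.
J = lambda s: s["x"] <= 2
print(nmods(alpha, J))                      # False
print(hoare(conj(P, J), alpha, conj(Q, J))) # False: x = 2 becomes 3
```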

## 5.2 Framed evolution commands

We extend previous components [40] for continuous dynamics with function-framing techniques that project onto parts of the store. That is, we formally describe the implementation of the evolution command state transformer using the lens infrastructure described so far [27]. Specifically, we use framing to derive continuous vector fields (C → C) and flows from state-wide 'substitutions' (S → S). We also add a non-modification rule for evolution commands. This supports local reasoning where evolution commands modify only continuous variables and leave discrete ones, which lie outside the frame, unchanged.

Framing uses the second interpretation of lenses, where the frame C is a subregion of S that we can access through x :: C ⇒ S. We view the store as divided into its continuous part C and its discrete part, and localise continuous variables to the former. The continuous part must have sufficient topological structure to support derivatives and is thus restricted to certain type constructions, like normed vector spaces or the real numbers. However, the discrete part may use any type defined in HOL. With this view, we can use get_x and put_x to lift entities defined on C or to project those in S. For instance, given any s ∈ S and a predicate G : S → 𝔹 (like the guards in evolution commands), there is a corresponding restriction G↓_x^s : C → 𝔹 such that G↓_x^s c⃗ ⇔ G[c⃗/x] s ⇔ G (put_x c⃗ s). Conversely, for s ∈ S and a set X of vectors in C, the set X↑_x^s = 𝒫 (λc⃗. put_x c⃗ s) X, that is, the image of X under λc⃗. put_x c⃗ s, has values in S.

More importantly, we can specify ODEs and flows via time-dependent deterministic functions (Section 4.3's substitutions). Given a lens x :: C ⇒ S from the global store S onto the local continuous store C and s ∈ S, we can turn any state-wide function f : T → S → S into a vector field f↓_x^s : T → C → C by framing it via f↓_x^s t c⃗ = get_x ((f t) (put_x c⃗ s)).

Example 7. Suppose S = ℝ² × ℝ² × ℝ² × S′ and p, v, a :: ℝ² ⇒ S. The variable set lens A = (p ⊕ v ⊕ a) :: ℝ² × ℝ² × ℝ² ⇒ S frames the continuous part of the state space S. The substitution f : T → S → S such that f t = [p ⇝ v, v ⇝ a, a ⇝ 0] then behaves as the identity function on S′ and becomes the vector field f↓_A^s : T → ℝ² × ℝ² × ℝ² → ℝ² × ℝ² × ℝ². Hence, after framing, f naturally describes the ODEs p′ t = v t, v′ t = a t, a′ t = 0.
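The framing construction f↓_A^s of Example 7 can be sketched in Python with a dictionary-encoded store and tuple-encoded vectors. `frame`, `Lens`, `var`, and `lens_sum` are hypothetical names, and the extra variable `rs` merely stands in for the discrete part S′. The resulting function maps the local state (p, (v, a)) to (v, (a, 0)), i.e. to the right-hand sides of p′ = v, v′ = a, a′ = 0, independently of the discrete part.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict

State = Dict[str, Any]

@dataclass(frozen=True)
class Lens:
    get: Callable[[State], Any]
    put: Callable[[Any, State], State]

def var(name):
    return Lens(lambda s: s[name], lambda v, s: {**s, name: v})

def lens_sum(x1, x2):
    """x1 ⊕ x2 for independent lenses, with a product view."""
    return Lens(lambda s: (x1.get(s), x2.get(s)),
                lambda v, s: x1.put(v[0], x2.put(v[1], s)))

def frame(f, x, s):
    """f↓_x^s: turn a substitution f : T -> S -> S into a vector field on C,
    via (f↓_x^s) t c = get_x (f t (put_x c s))."""
    return lambda t, c: x.get(f(t)(x.put(c, s)))

ZERO = (0.0, 0.0)
# Substitution f t = [p ⇝ v, v ⇝ a, a ⇝ 0]; every other variable is left unchanged.
f = lambda t: (lambda s: {**s, "p": s["v"], "v": s["a"], "a": ZERO})

A = lens_sum(var("p"), lens_sum(var("v"), var("a")))  # p ⊕ (v ⊕ a)
s = {"p": ZERO, "v": ZERO, "a": ZERO, "rs": 1.0}      # "rs" stands in for S'

field = frame(f, A, s)
c = ((1.0, 2.0), ((3.0, 4.0), (5.0, 6.0)))            # local state (p, (v, a))
print(field(0.0, c))  # ((3.0, 4.0), ((5.0, 6.0), (0.0, 0.0))), i.e. (p', (v', a'))
```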

Using the previously described liftings and projections, we formally define the semantics of evolution commands. For this, we only need to lift the definition of generalised guarded orbit maps (Section 3) on the continuous part C to the larger space S. Thus, for a substitution f : T → S → S, a predicate G : S → 𝔹, an interval function U : C → 𝒫 ℝ, and t₀ ∈ ℝ, the state transformer S → 𝒫 S modelling evolution commands is (x′ = f & G)_U^{t₀} s = (γ^{f↓_x^s} (G↓_x^s) U t₀ x_s)↑_x^s, or equivalently

$$\left ( x ^ { \prime } = f \, \& \, G \right ) _ { U } ^ { t _ { 0 } } s = \left \{ p u t _ { x } \left ( X \, t \right ) s \left | \begin{array} { l } { t \in U \, x _ { s } \wedge X \in i v p \text {-sols} \, U \left ( f \downarrow _ { x } ^ { s } \right ) t _ { 0 } \, x _ { s } } \\ { \wedge \mathcal { P } \, X \left ( t \downarrow _ { U \, x _ { s } } \right ) \subseteq G \downarrow _ { x } ^ { s } } \end{array} \right \} ,$$

where we abbreviate get_x s as x_s. That is, evolution commands are state transformers that output those states whose discrete part remains unchanged from s but whose continuous part changes according to the ODEs' solutions within G. With this, the law (wlp-evol) formally becomes

$$\begin{aligned} |(x' = f \,\&\, G)_U^{t_0}]\, Q\, s \;\Leftrightarrow\;& \forall X \in ivp\text{-sols}\, U\, (f\downarrow_x^s)\, t_0\, x_s.\ \forall t \in U\, x_s.\\ & (\forall \tau \in t\downarrow_{U\, x_s}.\ G[X\,\tau/x]) \Rightarrow Q[X\,t/x]. \end{aligned}$$

This says that the postcondition Q holds after an evolution command (x′ = f & G)_U^{t₀} from s ∈ S if every solution X to the IVP corresponding to (t₀, x_s) satisfies Q at every time t, provided G holds from the beginning of the interval, t₀ ∈ U x_s, until t. Thus, VCG follows our description in Section 3: users must supply flows and evidence for Lipschitz continuity in order to obtain wlps. We provide tactics that automate these processes in Section 6.
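Since a flow is the unique solution of the IVP, the wlp law can be approximated by evaluating a known flow on a finite time grid. The Python sketch below does this for a one-dimensional simplification of p′ = v, v′ = a, a′ = 0 with U = ℝ≥0 and t₀ = 0; the grid, the guard, and all names are assumptions. Because the guard and the postcondition are only checked at grid points, this illustrates the semantics and is not a verification procedure.

```python
def flow(t, s):
    """Flow of p' = v, v' = a, a' = 0 (1-D simplification), lifted with put:
    only p and v change; every other variable of s is copied unchanged."""
    p, v, a = s["p"], s["v"], s["a"]
    return {**s, "p": p + v * t + a * t * t / 2, "v": v + a * t}

def evol(s, guard, ts):
    """Sampled approximation of (x' = f & G)_U^0 s: the states reached at grid
    times t such that G held at every grid time in t↓ (including t)."""
    reached = []
    for t in ts:  # ts is ascending and starts at t0 = 0
        r = flow(t, s)
        if not guard(r):
            break  # once G fails at some τ, no later t has G on all of t↓
        reached.append(r)
    return reached

def wlp_evol(guard, q, ts):
    """|x' = f & G] Q, checked on the grid only."""
    return lambda s: all(q(r) for r in evol(s, guard, ts))

TS = [i / 100 for i in range(501)]  # hypothetical grid on [0, 5]
G = lambda s: s["v"] >= 0           # evolve while moving forward
s0 = {"p": 0.0, "v": 2.0, "a": -1.0, "rs": 1.0}

print(wlp_evol(G, lambda s: s["p"] <= 2.0 + 1e-9, TS)(s0))  # True: p peaks at 2 when v = 0
print(wlp_evol(G, lambda s: s["p"] <= 1.5, TS)(s0))         # False
```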

We use Isabelle's syntax translations to provide a natural syntax for specifying evolution commands. Users can write {x′₁ = e₁, ⋯, x′ₙ = eₙ | G on U V @ t₀} directly into the prover, where each x_i :: V_i ⇒ S is a summand of the frame lens x = {x₁, ⋯, xₙ} :: C ⇒ S. Users can thus declare the ODEs in evolution commands coordinate-wise with lifted expressions e_i : S → V_i. They can also omit the parameters G, U, V, and t₀, which then default to ⊤, ℝ≥0, C, and 0, respectively. If desired, they can also use product syntax (x′₁, ⋯, x′ₙ) = (e₁, ⋯, eₙ) or vector syntax x′ = e, and specify evolution commands using flows instead of ODEs with the notation {EVOL x = e τ | G}.

With these, non-modification of variables naturally extends to ODEs with the law

$$\frac{x \bowtie A}{\{x' = e \mid G\}\ \mathit{nmods}\ A}$$

Specifically, any set of variables A without assigned derivatives in a system of ODEs is immutable. Then, by applying the frame rule, we can demonstrate that any assertion I that uses only variables outside of x is an invariant of the system of ODEs.

Example 8. We use the autonomous boat from Example 6 to illustrate the use of non-modification. A system of ODEs for the boat's state p, v, a may be specified as follows:

```
abbreviation "ODE ≡ {p′ = v, v′ = a, a′ = 0, φ′ = ω,
    s′ = if s ≠ 0 then (v · a) / s else ‖a‖
    | s *⇩R [[sin(φ), cos(φ)]] = v ∧ 0 ≤ s ∧ s ≤ S}"
```

We also write derivatives for φ and s. The derivative of the former is the angular velocity ω, which has the value arccos((v + a) · v / (‖v + a‖ · ‖v‖)) when ‖v‖ ≠ 0 and 0 otherwise [24]. The linear acceleration s′ is calculated using the inner product of v and a: since the guard below entails ‖v‖ = s, we have s′ = (v · a)/s whenever s ≠ 0. If the current speed is 0, then s′ is ‖a‖. Immediately after the derivatives, we also specify the guard or boundary condition that constrains the relationship between the velocity vector and the heading φ. The guard states that the velocity vector v is equal to s multiplied with the heading unit vector, using scalar multiplication (*⇩R) and our vector syntax. We also require that 0 ≤ s ≤ S, i.e. that the linear speed is between 0 and the maximum speed.

All other variables in the store remain outside the evolution frame and need not be specified. In particular, notice that the ODE above does not mention the requested speed variable rs. This is a discrete variable that is unchanged during evolution. Therefore, we can show ODE nmods rs. Moreover, using the frame rule, we can also demonstrate that rs > 0 is an invariant, i.e. {rs > 0} ODE {rs > 0} [27].
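To illustrate the non-modification argument of Example 8, the hypothetical Python sketch below evaluates the flow of a simplified boat ODE at sampled times. As an assumption for brevity, it keeps only p′ = v, v′ = a, a′ = 0 on ℝ² and omits the equations for φ and s as well as the guard. Because the flow only updates variables in the frame, the discrete variables rs and rh are copied unchanged, so rs > 0 is preserved on these samples.

```python
def add(u, w):
    """Component-wise vector addition on tuples."""
    return tuple(ui + wi for ui, wi in zip(u, w))

def scale(k, u):
    """Scalar multiplication k *R u on tuples."""
    return tuple(k * ui for ui in u)

def boat_flow(t, s):
    """Flow of p' = v, v' = a, a' = 0 on ℝ² (φ and s omitted for brevity).
    Only the frame p ⊕ v ⊕ a changes; rs, rh, ... are copied from s."""
    p, v, a = s["p"], s["v"], s["a"]
    return {**s, "p": add(add(p, scale(t, v)), scale(t * t / 2, a)),
                 "v": add(v, scale(t, a))}

TS = [i / 10 for i in range(51)]  # hypothetical sample times in [0, 5]
INITS = [
    {"p": (0.0, 0.0), "v": (1.0, 0.0), "a": (0.0, 0.5), "rs": 2.0, "rh": 0.0},
    {"p": (3.0, 1.0), "v": (0.0, -1.0), "a": (0.2, 0.0), "rs": 0.5, "rh": 1.0},
]

# ODE nmods rs: rs is identical in every sampled final state.
print(all(boat_flow(t, s)["rs"] == s["rs"] for s in INITS for t in TS))  # True
# Hence {rs > 0} ODE {rs > 0} holds on these samples, as the frame rule predicts.
print(all(boat_flow(t, s)["rs"] > 0 for s in INITS if s["rs"] > 0 for t in TS))  # True
```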

## 5.3 Frames and invariants for ODEs

As discussed in Section 3, an alternative to using flows for the verification of evolution commands is to find and certify invariants for them. Mathematically, invariants of evolution commands coincide with invariant sets for dynamical systems or dL's differential invariants [40]. We abbreviate the statement 'I is an invariant for the evolution command (x′ = f & G)_U^{t₀}' with the notation diff-inv x f G U t₀ I. In terms of Hoare logic, invariants for evolution commands satisfy

$$d i f f \text {-} i n v \, x \, f \, G U \, t _ { 0 } \, I \Leftrightarrow \{ I \} \, ( x ^ { \prime } = f \, \& \, G ) _ { U } ^ { t _ { 0 } } \, \{ I \} \, .$$
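As a small worked example of an invariant in this sense (our own illustration, not taken from the IsaVODEs examples), consider p′ = v, v′ = a, a′ = 0 over ℝ². Since a is constant, d/dt (v · v − 2 a · p) = 2 v · a − 2 a · v = 0, so I ≡ (v · v − 2 a · p = c) is an invariant for any constant c and any guard G. The Python sketch below checks the corresponding Hoare triple numerically at sampled times; the helper names and the floating-point tolerance are assumptions.

```python
import math

def dot(u, w):
    """Inner product on tuple-encoded vectors."""
    return sum(ui * wi for ui, wi in zip(u, w))

def flow(t, p, v, a):
    """Flow of p' = v, v' = a, a' = 0 on ℝ²."""
    p_t = tuple(pi + vi * t + ai * t * t / 2 for pi, vi, ai in zip(p, v, a))
    v_t = tuple(vi + ai * t for vi, ai in zip(v, a))
    return p_t, v_t, a

def energy(p, v, a):
    # d/dt (v·v - 2 a·p) = 2 v·a - 2 a·v = 0 because a' = 0,
    # so I_c :≡ (v·v - 2 a·p = c) is preserved along every solution.
    return dot(v, v) - 2 * dot(a, p)

def holds_invariant(p, v, a, ts, tol=1e-9):
    """Sampled check of {I_c} (p' = v, v' = a, a' = 0) {I_c}, with c fixed by the start."""
    c = energy(p, v, a)
    return all(math.isclose(energy(*flow(t, p, v, a)), c, rel_tol=tol, abs_tol=tol)
               for t in ts)

TS = [i / 10 for i in range(101)]  # hypothetical sample times in [0, 10]
print(holds_invariant((1.0, -2.0), (0.5, 3.0), (-1.0, 0.25), TS))  # True
```

By contrast, v · v alone is not an invariant when a ≠ 0, since its derivative is 2 v · a.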