# Distributionally Robust Receive Combining

**Authors**: Shixiong Wang, Wei Dai, and Geoffrey Ye Li

> S. Wang, W. Dai, and G. Li are with the Department of Electrical and Electronic Engineering, Imperial College London, London SW7 2AZ, United Kingdom (E-mail: s.wang@u.nus.edu; wei.dai1@imperial.ac.uk; geoffrey.li@imperial.ac.uk).

This work is supported by the UK Department for Science, Innovation and Technology under the Future Open Networks Research Challenge project TUDOR (Towards Ubiquitous 3D Open Resilient Network).

Abstract

This article investigates signal estimation in wireless transmission (i.e., receive combining) from the perspective of statistical machine learning, where the transmit signals may be from an integrated sensing and communication system; that is, 1) signals may be not only discrete constellation points but also arbitrary complex values; 2) signals may be spatially correlated. Particular attention is paid to handling various uncertainties such as the uncertainty of the transmit signal covariance, the uncertainty of the channel matrix, the uncertainty of the channel noise covariance, the existence of channel impulse noises, the non-ideality of the power amplifiers, and the limited sample size of pilots. To proceed, a distributionally robust receive combining framework that is insensitive to the above uncertainties is proposed, which reveals that channel estimation is not a necessary operation. For optimal linear estimation, the proposed framework includes several existing combiners as special cases such as diagonal loading and eigenvalue thresholding. For optimal nonlinear estimation, estimators are limited in reproducing kernel Hilbert spaces and neural network function spaces, and corresponding uncertainty-aware solutions (e.g., kernelized diagonal loading) are derived. In addition, we prove that the ridge and kernel ridge regression methods in machine learning are distributionally robust against diagonal perturbation in feature covariance.

Index Terms: Wireless Transmission, Smart Antenna, Machine Learning, Robust Estimation, Robust Combining, Distributional Uncertainty, Channel Uncertainty, Limited Pilot.

I Introduction

In wireless transmission, detection and estimation of transmitted signals is of high importance, and combining at array receivers serves as a key signal-processing technique to suppress interference and environmental noises. The earliest beamforming solutions rely on the use of phase shifters (e.g., phased arrays) to steer and shape wave lobes, while advanced combining methods allow the employment of digital signal processing units, which introduce additional structural freedom (e.g., fully digital, hybrid, nonlinear, wideband) in combiner design and significant performance improvement in signal recovery [1, 2, 3].

In traditional communication systems, transmitted signals are discrete points from constellations. Therefore, signal recovery, commonly referred to as signal detection, can be cast into a classification problem from the perspective of statistical machine learning, and the number of candidate classes is determined by the number of points in the employed constellation. Research in this stream includes, e.g., [4, 5, 6, 7, 8, 9] as well as references therein, and the performance measure for signal detection is usually the misclassification rate (i.e., symbol error rate); representative algorithms encompass the maximum likelihood detector, the sphere decoding, etc. In another research stream, the signal recovery performance is evaluated using mean-squared errors (cf., signal-to-interference-plus-noise ratio), and the resultant signal recovery problem is commonly known as signal estimation, which can be considered as a regression problem from the perspective of statistical machine learning. By comparing the estimated symbols with the constellation points afterward, the detection of discrete symbols can be realized. For this case, till now, typical combining solutions include zero-forcing receivers, Wiener receivers (i.e., linear minimum mean-squared error receivers), Capon receivers (i.e., minimum variance distortionless response receivers), and nonlinear receivers such as neural-network receivers [10, 11, 12]. On the basis of these canonical approaches, variants such as robust beamformers working against the limited size of pilot samples and the uncertainty in steering vectors [13, 14, 15, 16, 17, 18] have also been intensively reported; among these robust solutions, the diagonal loading method [19], [14, Eq. (11)] and the eigenvalue thresholding method [20], [14, Eq. (12)] are popular due to their excellent balance between practical performance and technical simplicity.

Different from traditional paradigms, in emerging communication systems, e.g., integrated sensing and communication (ISAC) systems, transmitted signals may be arbitrary complex values and spatially correlated [21, 22, 23]. As a result, mean-squared error is a preferred performance measure to investigate the receive combining and estimation problem of wireless signals, which is, therefore, the focus of this article.

Although a large body of problems have been attacked in the area, the following signal-processing problems of combining and estimation in wireless transmission remain unsolved.

1. What is the relation between the signal-model-based approaches (e.g., Wiener and Capon receivers) and the data-driven approaches (e.g., deep-learning receivers)? In other words, how can we build a mathematically unified modeling framework to interpret all the existing digital receive combiners?

1. In addition to the limited pilot size and the uncertainty in steering vectors, there exist other uncertainties in the signal model: the uncertainty of the transmit signal covariance, the uncertainty of the communication channel matrix, the uncertainty of the channel noise covariance, the presence of channel impulse noises (i.e., outliers), and the non-ideality of the power amplifiers. Therefore, how can we handle all these types of uncertainties in a unified solution framework?

1. Existing literature mainly studied the robustness theory of linear beamformers against limited pilot size and the uncertainty in steering vectors [13, 14, 15, 16, 17, 18]. However, how can we develop the theory of robust nonlinear combiners against all the aforementioned uncertainties?

To this end, this article designs a unified modeling and solution framework for receive combining of wireless signals, in consideration of the scarcity of the pilot data and the different uncertainties in the signal model.

I-A Contributions

The contributions of this article can be summarized from the aspects of machine learning theory and wireless transmission theory.

In terms of machine learning theory, we give a justification of the popular ridge regression and kernel ridge regression (i.e., quadratic loss function plus squared-$F$-norm regularization) from the perspective of distributional robustness against diagonal perturbation in feature covariance, which enriches the theory of trustworthy machine learning; see Theorems 2 and 3, as well as Corollaries 3 and 5.

In terms of wireless transmission theory, the contributions are outlined below.

1. We build a fundamentally theoretical framework for receive combining from the perspective of statistical machine learning. In addition to the linear estimation methods, nonlinear approaches (i.e., nonlinear combining) are also discussed in reproducing kernel Hilbert spaces and neural network function spaces. In particular, we reveal that channel estimation is not a necessary operation in receive combining. For details, see Subsection III-A.

1. The presented framework is particularly developed from the perspective of distributional robustness which can therefore combat the limited size of pilot data and several types of uncertainties in the wireless signal model such as the uncertainty in the transmit power matrix, the uncertainty in the communication channel matrix, the existence of channel impulse noises (i.e., outliers), the uncertainty in the covariance matrix of channel noises, the non-ideality of the power amplifiers, etc. For details, see Subsection III-B, and the technical developments in Sections IV and V.

1. Existing methods such as diagonal loading and eigenvalue thresholding are proven to be distributionally robust against the limited pilot size and all the aforementioned uncertainties in the wireless signal model. Extensions of diagonal loading and eigenvalue thresholding are proposed as well. Moreover, the kernelized diagonal loading and the kernelized eigenvalue thresholding methods are put forward for nonlinear estimation cases. For details, see Corollary 1, Examples 4 and 5, and Subsection IV-B.

1. The distributionally robust receive combining and signal estimation problems across multiple frames, where channel conditions may change, are also investigated. For details, see Subsections IV-C and V-A 2.

I-B Notations

The $N$-dimensional real (coordinate) space and complex (coordinate) space are denoted as $\mathbb{R}^{N}$ and $\mathbb{C}^{N}$, respectively. Lowercase symbols (e.g., $\bm{x}$) denote vectors (column by default) and uppercase ones (e.g., $\bm{X}$) denote matrices. We use the Roman font for random quantities (e.g., $\mathbf{x},\mathbf{X}$) and the italic font for deterministic quantities (e.g., $\bm{x},\bm{X}$). Let $\operatorname{Re}\bm{X}$ be the real part of a complex quantity $\bm{X}$ (a vector or matrix) and $\operatorname{Im}\bm{X}$ be the imaginary part of $\bm{X}$. For a vector $\bm{x}\in\mathbb{C}^{N}$, let

$$
\underline{\bm{x}}\coloneqq\left[\begin{array}{c}\operatorname{Re}\bm{x}\\ \operatorname{Im}\bm{x}\end{array}\right]\in\mathbb{R}^{2N}
$$

be the real-space representation of $\bm{x}$; for a matrix $\bm{H}\in\mathbb{C}^{N\times M}$, let

$$
\underline{\bm{H}}\coloneqq\left[\begin{array}{c}\operatorname{Re}\bm{H}\\ \operatorname{Im}\bm{H}\end{array}\right],\qquad\underline{\underline{\bm{H}}}\coloneqq\left[\begin{array}{cc}\operatorname{Re}\bm{H}&-\operatorname{Im}\bm{H}\\ \operatorname{Im}\bm{H}&\operatorname{Re}\bm{H}\end{array}\right]
$$

be the real-space representations of $\bm{H}$ where $\underline{\bm{H}}\in\mathbb{R}^{2N\times M}$ and $\underline{\underline{\bm{H}}}\in\mathbb{R}^{2N\times 2M}$. The running index set induced by an integer $N$ is defined as $[N]\coloneqq\{1,2,\ldots,N\}$. To concatenate matrices and vectors, MATLAB notations are used: i.e., $[\bm{A},~\bm{B}]$ for row stacking and $[\bm{A};~\bm{B}]$ for column stacking. We let $\bm{\Gamma}_{M}\coloneqq[\bm{I}_{M},~\bm{J}_{M}]\in\mathbb{C}^{M\times 2M}$ where $\bm{I}_{M}$ denotes the $M$-dimensional identity matrix, $\bm{J}_{M}\coloneqq j\cdot\bm{I}_{M}$, and $j$ denotes the imaginary unit. Let $\mathcal{N}(\bm{\mu},\bm{\Sigma})$ denote a real Gaussian distribution with mean $\bm{\mu}$ and covariance $\bm{\Sigma}$. We use $\mathcal{CN}(\bm{s},\bm{P},\bm{C})$ to denote a complex Gaussian distribution with mean $\bm{s}$, covariance $\bm{P}$, and pseudo-covariance $\bm{C}$; if $\bm{C}$ is not specified, we imply $\bm{C}=\bm{0}$.

II Preliminaries

We review two popular structured representation methods of nonlinear functions $\bm{\phi}:\mathbb{R}^{N}→\mathbb{R}^{M}$ . More details can be seen in Appendix A.

II-A Reproducing Kernel Hilbert Spaces

A reproducing kernel Hilbert space (RKHS) $\mathcal{H}$ induced by the kernel function $\ker:\mathbb{R}^{N}\times\mathbb{R}^{N}\to\mathbb{R}$ and a collection of points $\{\bm{x}_{1},\bm{x}_{2},\ldots,\bm{x}_{L}\}\subset\mathbb{R}^{N}$ is a set of functions from $\mathbb{R}^{N}$ to $\mathbb{R}$; $L$ may be infinite. Every function $\phi:\mathbb{R}^{N}\to\mathbb{R}$ in the functional space $\mathcal{H}$ can be represented by a linear combination [24, p. 539; Chap. 14]

$$
\phi(\bm{x})=\sum^{L}_{i=1}\omega_{i}\cdot\ker(\bm{x},\bm{x}_{i}),\quad\forall\bm{x}\in\mathbb{R}^{N} \tag{1}
$$

where $\{\omega_{i}\}_{i\in[L]}$ are the combination weights; $\omega_{i}\in\mathbb{R}$ for every $i\in[L]$. The matrix form of (1) for $M$ functions is

$$
\bm{\phi}(\bm{x})\coloneqq\left[\begin{array}{c}\phi_{1}(\bm{x})\\ \phi_{2}(\bm{x})\\ \vdots\\ \phi_{M}(\bm{x})\end{array}\right]=\bm{W}\cdot\bm{\varphi}(\bm{x})\coloneqq\left[\begin{array}{c}\bm{\omega}_{1}\\ \bm{\omega}_{2}\\ \vdots\\ \bm{\omega}_{M}\end{array}\right]\cdot\bm{\varphi}(\bm{x}), \tag{2}
$$

where $\bm{\omega}_{1},\bm{\omega}_{2},\ldots,\bm{\omega}_{M}\in\mathbb{R}^{L}$ are weight row-vectors for functions $\phi_{1}(\bm{x}),\phi_{2}(\bm{x}),\ldots,\phi_{M}(\bm{x})$, respectively, and

$$
\bm{W}\coloneqq\left[\begin{array}{c}\bm{\omega}_{1}\\ \bm{\omega}_{2}\\ \vdots\\ \bm{\omega}_{M}\end{array}\right]\in\mathbb{R}^{M\times L},\qquad\bm{\varphi}(\bm{x})\coloneqq\left[\begin{array}{c}\ker(\bm{x},\bm{x}_{1})\\ \ker(\bm{x},\bm{x}_{2})\\ \vdots\\ \ker(\bm{x},\bm{x}_{L})\end{array}\right]. \tag{3}
$$

Since a kernel function is pre-designed (i.e., fixed) for an RKHS $\mathcal{H}$, (2) suggests a $\bm{W}$-linear representation of $\bm{x}$-nonlinear functions $\bm{\phi}(\bm{x})$ in $\mathcal{H}^{M}$. Note that there exists a one-to-one correspondence between $\bm{\phi}$ and $\bm{W}$: for every $\bm{\phi}:\mathbb{R}^{N}\to\mathbb{R}^{M}$ in $\mathcal{H}^{M}$, there exists a $\bm{W}\in\mathbb{R}^{M\times L}$, and vice versa.
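The representation in (1)-(3) can be sketched numerically. The following is a minimal illustration, assuming a Gaussian (RBF) kernel with a hypothetical `bandwidth` parameter and randomly drawn anchor points; neither choice is prescribed by the text, and the function names are illustrative only.

```python
import numpy as np

def gaussian_kernel(x, xi, bandwidth=1.0):
    """Gaussian (RBF) kernel; the kernel choice is an assumption for illustration."""
    return np.exp(-np.sum((x - xi) ** 2) / (2.0 * bandwidth ** 2))

def feature_vector(x, anchors, bandwidth=1.0):
    """varphi(x) in (3): kernel evaluations of x against the L anchor points."""
    return np.array([gaussian_kernel(x, xi, bandwidth) for xi in anchors])

def rkhs_map(W, x, anchors, bandwidth=1.0):
    """phi(x) = W @ varphi(x) as in (2); W has shape (M, L)."""
    return W @ feature_vector(x, anchors, bandwidth)

rng = np.random.default_rng(0)
N, M, L = 4, 2, 6                      # input dim, output dim, number of anchors
anchors = rng.standard_normal((L, N))  # the points x_1, ..., x_L (hypothetical data)
W = rng.standard_normal((M, L))        # combination weights, one row per output
x = rng.standard_normal(N)
phi_x = rkhs_map(W, x, anchors)        # nonlinear in x, linear in W
```

Because the kernel is fixed, learning $\bm{\phi}$ amounts to learning the matrix $\bm{W}$, which is what makes the later optimization problems finite-dimensional.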

II-B Neural Networks

Neural networks (NN) are another powerful tool to represent (i.e., approximate) nonlinear functions. A neural network function space (NNFS) $\mathcal{K}$ characterizes (or parameterizes) a set of multi-input multi-output functions. Typical choices are multi-layer feed-forward neural networks, recurrent neural networks, etc. For combining and estimation of wireless signals, the multi-layer feed-forward neural networks are standard [10, 11, 12]. Suppose that we have $R-1$ hidden layers (so in total $R+1$ layers including one input layer and one output layer) and each layer $r=0,1,\ldots,R$ contains $T_{r}$ neurons. To represent a function $\bm{\phi}:\mathbb{R}^{N}\to\mathbb{R}^{M}$, for the input layer $r=0$ and output layer $r=R$, we have $T_{0}=N$ and $T_{R}=M$, respectively. Let the output of the $r^{\text{th}}$ layer be $\bm{y}_{r}\in\mathbb{R}^{T_{r}}$. For every layer $r\in[R]$, we have $\bm{y}_{r}=\bm{\sigma}_{r}(\bm{W}^{\circ}_{r}\cdot\bm{y}_{r-1}+\bm{b}_{r})$ where $\bm{W}^{\circ}_{r}\in\mathbb{R}^{T_{r}\times T_{r-1}}$ is the weight matrix, $\bm{b}_{r}\in\mathbb{R}^{T_{r}}$ is the bias vector, and the multi-output function $\bm{\sigma}_{r}$ is the activation function, which is entry-wise identical. Hence, every function $\bm{\phi}:\mathbb{R}^{N}\to\mathbb{R}^{M}$ in an NNFS can be recursively expressed as [25, Chap. 5], [26]

$$
\begin{array}{rll}\bm{\phi}(\bm{x})&=\bm{\sigma}_{R}(\bm{W}_{R}\cdot[\bm{y}_{R-1}(\bm{x});~1])\\ \bm{y}_{r}(\bm{x})&=\bm{\sigma}_{r}(\bm{W}_{r}\cdot[\bm{y}_{r-1}(\bm{x});~1]),&r\in[R-1]\\ \bm{y}_{0}(\bm{x})&=\bm{x},\end{array} \tag{4}
$$

where $\bm{W}_{r}\coloneqq[\bm{W}^{\circ}_{r},~\bm{b}_{r}]$ for $r\in[R]$. Note that the activation functions can vary from one layer to another.
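The recursion in (4) can be sketched as a short forward pass. This is an illustrative sketch only: the layer sizes, the `tanh` hidden activations, and the identity output activation are assumptions, and `nn_forward` is a hypothetical helper name.

```python
import numpy as np

def nn_forward(x, weights, activations):
    """Evaluate (4): y_r = sigma_r(W_r @ [y_{r-1}; 1]) for r = 1..R, with y_0 = x."""
    y = np.asarray(x, dtype=float)
    for W_r, sigma_r in zip(weights, activations):
        # Appending 1 absorbs the bias b_r into the augmented matrix W_r = [W_r^o, b_r].
        y = sigma_r(W_r @ np.append(y, 1.0))
    return y

rng = np.random.default_rng(0)
sizes = [4, 8, 8, 2]  # T_0 = N = 4, two hidden layers, T_R = M = 2, so R = 3
weights = [rng.standard_normal((sizes[r], sizes[r - 1] + 1)) for r in range(1, len(sizes))]
activations = [np.tanh, np.tanh, lambda z: z]  # activations may differ per layer
out = nn_forward(rng.standard_normal(4), weights, activations)
```

Each augmented matrix has shape $T_{r}\times(T_{r-1}+1)$, matching $\bm{W}_{r}=[\bm{W}^{\circ}_{r},~\bm{b}_{r}]$.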

III Problem Formulation

Consider a narrow-band wireless signal transmission model

$$

\mathbf{x}=\bm{H}\mathbf{s}+\mathbf{v} \tag{5}

$$

where $\mathbf{x}\in\mathbb{C}^{N}$ is the received signal, $\mathbf{s}\in\mathbb{C}^{M}$ is the transmitted signal, $\bm{H}\in\mathbb{C}^{N\times M}$ is the channel matrix, and $\mathbf{v}\in\mathbb{C}^{N}$ is the zero-mean channel noise. The precoding operation (if any) is absorbed into $\bm{H}$. The transmitted symbols $\mathbf{s}$ have zero mean and may be not only discrete symbols from constellations such as quadrature amplitude modulation but also arbitrary values such as integrated sensing and communication signals. We consider $L$ pilots $\mathbf{S}\coloneqq(\mathbf{s}_{1},\mathbf{s}_{2},\ldots,\mathbf{s}_{L})$ in each frame, and the corresponding received symbols are $\mathbf{X}\coloneqq(\mathbf{x}_{1},\mathbf{x}_{2},\ldots,\mathbf{x}_{L})$ under the noise $(\mathbf{v}_{1},\mathbf{v}_{2},\ldots,\mathbf{v}_{L})$. We suppose that $\bm{R}_{s}\coloneqq\mathbb{E}\mathbf{s}\mathbf{s}^{\mathsf{H}}$ and $\bm{R}_{v}\coloneqq\mathbb{E}\mathbf{v}\mathbf{v}^{\mathsf{H}}$ may not be identity or diagonal matrices: i.e., the components of $\mathbf{s}$ can be correlated (e.g., in ISAC), and so can those of $\mathbf{v}$. Consider the real-space representation of the signal model (5) by stacking the real and imaginary components:

$$
\underline{\mathbf{x}}=\underline{\underline{\bm{H}}}\cdot\underline{\mathbf{s}}+\underline{\mathbf{v}}, \tag{6}
$$

where $\underline{\mathbf{x}}\in\mathbb{R}^{2N}$, $\underline{\underline{\bm{H}}}\in\mathbb{R}^{2N\times 2M}$, $\underline{\mathbf{s}}\in\mathbb{R}^{2M}$, and $\underline{\mathbf{v}}\in\mathbb{R}^{2N}$. The expressions of $\bm{R}_{\underline{x}}\coloneqq\mathbb{E}{\underline{\mathbf{x}}\underline{\mathbf{x}}^{\mathsf{T}}}$, $\bm{R}_{\underline{s}}\coloneqq\mathbb{E}{\underline{\mathbf{s}}\underline{\mathbf{s}}^{\mathsf{T}}}$, $\bm{R}_{\underline{x}\underline{s}}\coloneqq\mathbb{E}{\underline{\mathbf{x}}\underline{\mathbf{s}}^{\mathsf{T}}}$, and $\bm{R}_{\underline{v}}\coloneqq\mathbb{E}{\underline{\mathbf{v}}\underline{\mathbf{v}}^{\mathsf{T}}}$ can be readily obtained; see Appendix B. In some cases, signal estimation in real spaces can be technically simpler than that in complex spaces.
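As a quick sanity check of the real-space model (6), the sketch below builds $\underline{\bm{x}}$ and $\underline{\underline{\bm{H}}}$ as defined in the Notations subsection and confirms that stacking real and imaginary parts commutes with the complex model (5). The helper names `real_vec` and `real_mat` and the random test data are assumptions for illustration.

```python
import numpy as np

def real_vec(x):
    """Real-space representation of a complex vector: [Re x; Im x]."""
    return np.concatenate([x.real, x.imag])

def real_mat(H):
    """Real-space representation of a complex matrix: [[Re H, -Im H], [Im H, Re H]]."""
    return np.block([[H.real, -H.imag], [H.imag, H.real]])

rng = np.random.default_rng(0)
N, M = 4, 2
H = rng.standard_normal((N, M)) + 1j * rng.standard_normal((N, M))
s = rng.standard_normal(M) + 1j * rng.standard_normal(M)
v = 0.1 * (rng.standard_normal(N) + 1j * rng.standard_normal(N))

x = H @ s + v                                   # complex model (5)
lhs = real_vec(x)                               # left-hand side of (6)
rhs = real_mat(H) @ real_vec(s) + real_vec(v)   # right-hand side of (6)
```

Here `lhs` and `rhs` agree up to floating-point error, since $\operatorname{Re}(\bm{H}\bm{s})=\operatorname{Re}\bm{H}\operatorname{Re}\bm{s}-\operatorname{Im}\bm{H}\operatorname{Im}\bm{s}$ and $\operatorname{Im}(\bm{H}\bm{s})=\operatorname{Im}\bm{H}\operatorname{Re}\bm{s}+\operatorname{Re}\bm{H}\operatorname{Im}\bm{s}$.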

III-A Optimal Estimation

III-A 1 Optimal Nonlinear Estimation (Receive Combining)

To recover $\mathbf{s}$ using $\mathbf{x}$, we consider an estimator $\hat{\mathbf{s}}\coloneqq\bm{\phi}(\mathbf{x})$, called a receive combiner, at the receiver where $\bm{\phi}:\mathbb{C}^{N}\to\mathbb{C}^{M}$ is a Borel-measurable function. Note that $\bm{\phi}(\mathbf{x})$ may be nonlinear in general because the joint distribution of $(\mathbf{x},\mathbf{s})$ is not necessarily Gaussian, for example, when the channel noise $\mathbf{v}$ is non-Gaussian or when the power amplifiers work in non-linear regions. The signal estimation problem at the receiver can be written as a statistical machine-learning problem under the joint data distribution $\mathbb{P}_{\mathbf{x},\mathbf{s}}$ of $(\mathbf{x},\mathbf{s})$, that is,

$$
\min_{\bm{\phi}\in\mathcal{B}_{\mathbb{C}^{N}\to\mathbb{C}^{M}}}\operatorname{Tr}\mathbb{E}_{\mathbf{x},\mathbf{s}}[\bm{\phi}(\mathbf{x})-\mathbf{s}][\bm{\phi}(\mathbf{x})-\mathbf{s}]^{\mathsf{H}}, \tag{7}
$$

where $\mathcal{B}_{\mathbb{C}^{N}\to\mathbb{C}^{M}}$ contains all Borel-measurable estimators from $\mathbb{C}^{N}$ to $\mathbb{C}^{M}$. In what follows, we omit the notational dependence on $\mathbb{C}^{N}$ and $\mathbb{C}^{M}$, and use $\mathcal{B}$ as a shorthand. The optimal estimator, in the sense of minimum mean-squared error, is known as the conditional mean of $\mathbf{s}$ given $\mathbf{x}$, i.e.,

$$

\hat{\mathbf{s}}=\bm{\phi}(\mathbf{x})=\mathbb{E}({\mathbf{s}|\mathbf{x}}). \tag{8}

$$

Usually, it is computationally complicated to find the optimal $\bm{\phi}(\cdot)$ from the whole space $\mathcal{B}$ of Borel-measurable functions, that is, to compute the conditional mean. Therefore, in practice, we may find the optimal approximation of $\bm{\phi}(\cdot)$ in an RKHS $\mathcal{H}$ or an NNFS $\mathcal{K}$; note that $\mathcal{H}$ and $\mathcal{K}$ are two subspaces of $\mathcal{B}$. However, both $\mathcal{H}$ and $\mathcal{K}$ are sufficiently rich because they can be dense in the space of all continuous bounded functions.
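To see why the conditional mean (8) is nonlinear in general, consider a toy case that is not taken from the article: a real scalar model $x=s+v$ with $s$ uniform on $\{-1,+1\}$ and $v\sim\mathcal{N}(0,\sigma^{2})$. Bayes' rule then gives $\mathbb{E}(s|x)=\tanh(x/\sigma^{2})$, whereas the best linear estimator is $x/(1+\sigma^{2})$. The sketch below evaluates both; all names and parameter values are illustrative assumptions.

```python
import numpy as np

def conditional_mean_bpsk(x, noise_var):
    """E[s | x] for s uniform on {-1, +1} and x = s + v, v ~ N(0, noise_var)."""
    # Unnormalized log-posterior weights from the Gaussian likelihoods.
    log_w_pos = -(x - 1.0) ** 2 / (2.0 * noise_var)
    log_w_neg = -(x + 1.0) ** 2 / (2.0 * noise_var)
    m = np.maximum(log_w_pos, log_w_neg)  # subtract the max for numerical stability
    w_pos = np.exp(log_w_pos - m)
    w_neg = np.exp(log_w_neg - m)
    return (w_pos - w_neg) / (w_pos + w_neg)

def linear_mmse_bpsk(x, noise_var):
    """Best linear estimator R_xs / R_x * x, with R_s = 1 and R_x = 1 + noise_var."""
    return x / (1.0 + noise_var)

x_grid = np.linspace(-3.0, 3.0, 13)
noise_var = 0.5  # assumed noise variance
cond = conditional_mean_bpsk(x_grid, noise_var)  # saturates at +/-1
lin = linear_mmse_bpsk(x_grid, noise_var)        # grows without bound
```

The conditional mean saturates at the constellation points while the linear estimator does not, which is the kind of gap that the RKHS and NNFS approximations are meant to close.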

III-A 2 Optimal Linear Estimation (Receive Beamforming)

If $\mathbf{x}$ and $\mathbf{s}$ are jointly Gaussian (e.g., when $\mathbf{s}$ and $\mathbf{v}$ are jointly Gaussian), the optimal estimator $\bm{\phi}$ is linear in $\mathbf{x}$ :

$$

\hat{\mathbf{s}}=\bm{W}\mathbf{x}, \tag{9}

$$

where $\bm{W}\in\mathbb{C}^{M\times N}$ is called a receive beamformer or a linear receive combiner. In this linear case, (7) reduces to the usual Wiener–Hopf beamforming problem

$$
\min_{\bm{W}}\operatorname{Tr}\mathbb{E}_{\mathbf{x},\mathbf{s}}[\bm{W}\mathbf{x}-\mathbf{s}][\bm{W}\mathbf{x}-\mathbf{s}]^{\mathsf{H}}, \tag{10}
$$

that is,

$$
\min_{\bm{W}}\operatorname{Tr}\big[\bm{W}\bm{R}_{x}\bm{W}^{\mathsf{H}}-\bm{W}\bm{R}_{xs}-\bm{R}^{\mathsf{H}}_{xs}\bm{W}^{\mathsf{H}}+\bm{R}_{s}\big], \tag{11}
$$

where $\bm{R}_{x}\coloneqq\mathbb{E}{\mathbf{x}\mathbf{x}^{\mathsf{H}}}\in\mathbb{C}^{N\times N}$ and $\bm{R}_{xs}\coloneqq\mathbb{E}{\mathbf{x}\mathbf{s}^{\mathsf{H}}}\in\mathbb{C}^{N\times M}$. Since $\bm{R}_{x}=\bm{H}\bm{R}_{s}\bm{H}^{\mathsf{H}}+\bm{R}_{v}$ and $\bm{R}_{xs}=\bm{H}\bm{R}_{s}+\mathbb{E}{\mathbf{v}\mathbf{s}^{\mathsf{H}}}=\bm{H}\bm{R}_{s}$, the solution of (11), or (10), is

$$
\begin{array}{rl}\bm{W}^{\star}_{\text{Wiener}}&=\bm{R}^{\mathsf{H}}_{xs}\bm{R}^{-1}_{x}\\ &=\bm{R}_{s}\bm{H}^{\mathsf{H}}[\bm{H}\bm{R}_{s}\bm{H}^{\mathsf{H}}+\bm{R}_{v}]^{-1},\end{array} \tag{12}
$$

which is known as the Wiener beamformer. With an additional constraint $\bm{W}\bm{H}=\bm{I}_{M}$ (i.e., distortionless response), (11) gives the Capon beamformer. Both the Wiener beamformer and the Capon beamformer maximize the output signal–to–interference-plus-noise ratio (SINR); hence, both are optimal in the sense of maximum output SINR.

No matter whether $\mathbb{P}_{\mathbf{x},\mathbf{s}}$ is Gaussian or not, (10) or (11) identifies the optimal linear estimator in the sense of minimum mean-squared error among all linear estimators.
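The two expressions in (12) and the optimality claim for (11) can be checked numerically. The sketch below is a minimal illustration under assumed random $\bm{H}$, $\bm{R}_{s}$, and $\bm{R}_{v}$; the helper names `random_psd` and `mse` are hypothetical.

```python
import numpy as np

def random_psd(n, rng):
    """Random Hermitian positive-definite matrix (illustrative test data)."""
    A = rng.standard_normal((n, n)) + 1j * rng.standard_normal((n, n))
    return A @ A.conj().T + n * np.eye(n)

def mse(W, R_x, R_xs, R_s):
    """Objective of (11): Tr[W R_x W^H - W R_xs - R_xs^H W^H + R_s]."""
    Wh = W.conj().T
    return np.trace(W @ R_x @ Wh - W @ R_xs - R_xs.conj().T @ Wh + R_s).real

rng = np.random.default_rng(0)
N, M = 6, 2
H = rng.standard_normal((N, M)) + 1j * rng.standard_normal((N, M))
R_s = random_psd(M, rng)   # correlated transmit covariance
R_v = random_psd(N, rng)   # correlated noise covariance

R_x = H @ R_s @ H.conj().T + R_v
R_xs = H @ R_s             # uses E[v s^H] = 0, as in the text

# W = R_xs^H R_x^{-1}; since R_x is Hermitian, this equals (R_x^{-1} R_xs)^H.
W_wiener = np.linalg.solve(R_x, R_xs).conj().T
best = mse(W_wiener, R_x, R_xs, R_s)
```

Using `np.linalg.solve` instead of forming an explicit inverse is a numerical-stability choice and does not change the result.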

III-A 3 Role of Channel Estimation

Eqs. (7) and (10) imply that channel estimation is not a necessary step in receive combining. The only necessary element, from the perspective of statistical machine learning, is the joint distribution $\mathbb{P}_{\mathbf{x},\mathbf{s}}$ of the received signal $\mathbf{x}$ and the transmitted signal $\mathbf{s}$ . Therefore, the following two points can be highlighted.

1. If the joint distribution $\mathbb{P}_{\mathbf{x},\mathbf{s}}$ is non-Gaussian, we just need to learn the mapping $\bm{\phi}$ using (7).

1. If the joint distribution $\mathbb{P}_{\mathbf{x},\mathbf{s}}$ is (or assumed to be) Gaussian, we just learn covariance matrices $\bm{R}_{xs}$ and $\bm{R}_{x}$ ; cf. (12); Gaussianity assumption of $\mathbb{P}_{\mathbf{x},\mathbf{s}}$ is beneficial in reducing computational burdens. If, further, the channel matrix $\bm{H}$ is known, $\bm{R}_{xs}$ and $\bm{R}_{x}$ can be expressed using $\bm{H}$ .

III-B Distributional Uncertainty and Distributional Robustness

For ease of conceptual illustration, we start with the following stationary-channel assumption in this subsection: the channel statistics remain unchanged within the communication frame so that the joint distribution $\mathbb{P}_{\mathbf{x},\mathbf{s}}$ is fixed over time. That is, pilot data $\{(\bm{x}_{1},\bm{s}_{1}),(\bm{x}_{2},\bm{s}_{2}),\ldots,(\bm{x}_{L},\bm{s}_{L})\}$ and non-pilot communication data are drawn from the same unknown distribution $\mathbb{P}_{\mathbf{x},\mathbf{s}}$. For the general case where the channel is not statistically stationary within a frame, see Appendix C; the statistical non-stationarity of $\mathbb{P}_{\mathbf{x},\mathbf{s}}$ may be due to the time-selectivity of the transmit power matrix $\bm{R}_{s}$, of the channel matrix $\bm{H}$, and/or of the channel noise covariance $\bm{R}_{v}$.

III-B 1 Issue of Distributional Uncertainty

In practice, the true joint distribution $\mathbb{P}_{\mathbf{x},\mathbf{s}}$ is unknown but can be estimated by the pilot data. Hence, the estimation of wireless signals is a data-driven statistical inference (i.e., statistical machine learning) problem. We let

$$
\hat{\mathbb{P}}_{\mathbf{x},\mathbf{s}}\coloneqq\frac{1}{L}\sum^{L}_{i=1}\delta_{(\bm{x}_{i},\bm{s}_{i})} \tag{13}
$$

denote the empirical distribution supported on the $L$ collected data $\{(\bm{x}_{i},\bm{s}_{i})\}_{i\in[L]}$, where $\delta_{(\bm{x}_{i},\bm{s}_{i})}$ denotes the Dirac distribution (i.e., point-mass distribution) centered on $(\bm{x}_{i},\bm{s}_{i})$; note that $\hat{\mathbb{P}}_{\mathbf{x},\mathbf{s}}$ is a discrete distribution. If we use the estimated joint distribution $\hat{\mathbb{P}}_{\mathbf{x},\mathbf{s}}$ as a surrogate of the true joint distribution $\mathbb{P}_{\mathbf{x},\mathbf{s}}$, (7) becomes the conventional empirical risk minimization (ERM)

$$
\min_{\bm{\phi}\in\mathcal{B}}\operatorname{Tr}\mathbb{E}_{(\mathbf{x},\mathbf{s})\sim\hat{\mathbb{P}}_{\mathbf{x},\mathbf{s}}}[\bm{\phi}(\mathbf{x})-\mathbf{s}][\bm{\phi}(\mathbf{x})-\mathbf{s}]^{\mathsf{H}}, \tag{14}
$$

i.e.,

$$
\min_{\bm{\phi}\in\mathcal{B}}\operatorname{Tr}\frac{1}{L}\sum^{L}_{i=1}[\bm{\phi}(\bm{x}_{i})-\bm{s}_{i}][\bm{\phi}(\bm{x}_{i})-\bm{s}_{i}]^{\mathsf{H}}. \tag{15}
$$

Likewise, (11) becomes the conventional beamforming problem

$$
\min_{\bm{W}}\operatorname{Tr}\big[\bm{W}\hat{\bm{R}}_{x}\bm{W}^{\mathsf{H}}-\bm{W}\hat{\bm{R}}_{xs}-\hat{\bm{R}}^{\mathsf{H}}_{xs}\bm{W}^{\mathsf{H}}+\hat{\bm{R}}_{s}\big], \tag{16}
$$

where $\hat{\bm{R}}_{x}$, $\hat{\bm{R}}_{xs}$, and $\hat{\bm{R}}_{s}$ are the training-sample-estimated (i.e., nominal) values of $\bm{R}_{x}$, $\bm{R}_{xs}$, and $\bm{R}_{s}$, respectively.
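A minimal sketch of solving (16) directly from pilots is given below. It forms the sample covariances and applies the Wiener form (12) with nominal values, without ever estimating $\bm{H}$, which illustrates the remark in Subsection III-A 3. The column-wise pilot layout (one pilot per column), the noise level, and the function name `sample_beamformer` are assumptions.

```python
import numpy as np

def sample_beamformer(X, S):
    """Solve (16): W = R_xs_hat^H R_x_hat^{-1}, from pilots X (N x L) and S (M x L)."""
    L = X.shape[1]
    R_x_hat = X @ X.conj().T / L    # sample estimate of E[x x^H]
    R_xs_hat = X @ S.conj().T / L   # sample estimate of E[x s^H]
    # R_x_hat is Hermitian, so (R_x_hat^{-1} R_xs_hat)^H = R_xs_hat^H R_x_hat^{-1}.
    return np.linalg.solve(R_x_hat, R_xs_hat).conj().T

rng = np.random.default_rng(0)
N, M, L = 4, 2, 200
# H only generates synthetic data here; the combiner never sees it.
H = rng.standard_normal((N, M)) + 1j * rng.standard_normal((N, M))
S = (rng.standard_normal((M, L)) + 1j * rng.standard_normal((M, L))) / np.sqrt(2)
V = 0.1 * (rng.standard_normal((N, L)) + 1j * rng.standard_normal((N, L)))
X = H @ S + V

W_hat = sample_beamformer(X, S)
S_hat = W_hat @ X                   # linear estimates of the pilot symbols
```

This is the least-squares fit of $\bm{W}\bm{X}$ to $\bm{S}$; when $L$ is small relative to $N$, $\hat{\bm{R}}_{x}$ becomes ill-conditioned or singular, which is one symptom of the distributional uncertainty discussed next.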

A distributional difference exists between the sample-defined nominal distribution $\hat{\mathbb{P}}_{\mathbf{x},\mathbf{s}}$ and the true data-generating distribution $\mathbb{P}_{\mathbf{x},\mathbf{s}}$ due to the limited size of the training data set (i.e., limited pilot length) and the time-selectivity of $\mathbb{P}_{\mathbf{x},\mathbf{s}}$. From the perspective of applied statistics and machine learning, this distributional difference (i.e., the distributional uncertainty of $\hat{\mathbb{P}}_{\mathbf{x},\mathbf{s}}$ compared to $\mathbb{P}_{\mathbf{x},\mathbf{s}}$) may cause significant performance degradation of (15) compared to (7), and likewise of (16) compared to (11). For extensive reading on this point, see Appendix C. Therefore, to reduce the adverse effect introduced by the distributional uncertainty in $\hat{\mathbb{P}}_{\mathbf{x},\mathbf{s}}$, a new surrogate of (7), rather than the sample-average approximation in (15), is needed.

III-B 2 Distributionally Robust Estimation

To combat the distributional uncertainty in $\hat{\mathbb{P}}_{\mathbf{x},\mathbf{s}}$ , we consider the distributionally robust counterpart of (7)

$$
\min_{\bm{\phi}\in\mathcal{B}}\max_{\mathbb{P}_{\mathbf{x},\mathbf{s}}\in\mathcal{U}_{\mathbf{x},\mathbf{s}}}\operatorname{Tr}\mathbb{E}_{\mathbf{x},\mathbf{s}}[\bm{\phi}(\mathbf{x})-\mathbf{s}][\bm{\phi}(\mathbf{x})-\mathbf{s}]^{\mathsf{H}}, \tag{17}
$$

where $\mathcal{U}_{\mathbf{x},\mathbf{s}}$, called a distributional uncertainty set, contains a collection of distributions that are close to the nominal distribution (i.e., the sample-estimated distribution) $\hat{\mathbb{P}}_{\mathbf{x},\mathbf{s}}$:

$$
\mathcal{U}_{\mathbf{x},\mathbf{s}}\coloneqq\{\mathbb{P}_{\mathbf{x},\mathbf{s}}|~d(\mathbb{P}_{\mathbf{x},\mathbf{s}},\hat{\mathbb{P}}_{\mathbf{x},\mathbf{s}})\leq\epsilon\}, \tag{18}
$$

where $d(\cdot,\cdot)$ denotes a similarity measure (e.g., metric or divergence) between two distributions and $\epsilon\geq 0$ is an uncertainty quantification level. Since $\hat{\mathbb{P}}_{\mathbf{x},\mathbf{s}}$ is discrete and $\mathbb{P}_{\mathbf{x},\mathbf{s}}$ is not, the Wasserstein distance [27, Def. 2] and the maximum mean discrepancy (MMD) distance [28, Def. 2.1] are the typical choices of $d(\cdot,\cdot)$ to construct $\mathcal{U}_{\mathbf{x},\mathbf{s}}$. When $\hat{\mathbb{P}}_{\mathbf{x},\mathbf{s}}$ and $\mathbb{P}_{\mathbf{x},\mathbf{s}}$ are parametric distributions (e.g., Gaussian, exponential family), divergences such as the Kullback–Leibler (KL) divergence, or the more general $\phi$-divergence, are also applicable to particularize $d(\cdot,\cdot)$ because parameters can be estimated using samples. When $\epsilon=0$, (17) reduces to (15).

If $\mathcal{U}_{\mathbf{x},\mathbf{s}}$ contains (or is assumed, for computational simplicity, to contain) only Gaussian distributions, (17) particularizes to

$$
\begin{array}{cl}\displaystyle\min_{\bm{W}}\max_{\bm{R}}&\operatorname{Tr}\big[\bm{W}\bm{R}_{x}\bm{W}^{\mathsf{H}}-\bm{W}\bm{R}_{xs}-\bm{R}^{\mathsf{H}}_{xs}\bm{W}^{\mathsf{H}}+\bm{R}_{s}\big]\\ \text{s.t.}&d_{0}(\bm{R},~\hat{\bm{R}})\leq\epsilon_{0},\\ &\bm{R}\succeq\bm{0},\end{array} \tag{19}
$$

where

$$
\bm{R}\coloneqq\left[\begin{array}{cc}\bm{R}_{x}&\bm{R}_{xs}\\ \bm{R}^{\mathsf{H}}_{xs}&\bm{R}_{s}\end{array}\right],\qquad\hat{\bm{R}}\coloneqq\left[\begin{array}{cc}\hat{\bm{R}}_{x}&\hat{\bm{R}}_{xs}\\ \hat{\bm{R}}^{\mathsf{H}}_{xs}&\hat{\bm{R}}_{s}\end{array}\right], \tag{20}
$$

because every zero-mean complex Gaussian distribution is uniquely characterized by its covariance and pseudo-covariance, and pseudo-covariances are not considered in receive beamforming; cf. (12). Here, $d_{0}$ denotes a matrix similarity measure (e.g., a matrix distance) and $\epsilon_{0}\geq 0$ is the uncertainty quantification parameter. When $\epsilon_{0}=0$, (19) reduces to (16).

For additional discussions on the framework of distributionally robust estimation, see Appendix D.

IV Distributionally Robust Linear Estimation

Due to several practical benefits of linear estimation, for example, the simplicity of hardware structures, the clarity of physical meaning (i.e., constructive and destructive interference through beamforming), and the easiness of computations, investigating distributionally robust linear estimation problems is important. This section particularly studies Problem (19).

IV-A General Framework and Concrete Examples

The following lemma solves Problem (19).

**Lemma 1**

*Suppose that the set $\{\bm{R}|~d_{0}(\bm{R},~\hat{\bm{R}})\leq\epsilon_{0}\}$ is compact and convex and that $\bm{R}_{x}$ is invertible. Let $\bm{R}^{\star}$ solve the problem below:

$$
\begin{array}{cl}\displaystyle\max_{\bm{R}}&\operatorname{Tr}\big[-\bm{R}^{\mathsf{H}}_{xs}\bm{R}^{-1}_{x}\bm{R}_{xs}+\bm{R}_{s}\big]\\ \text{s.t.}&d_{0}(\bm{R},~\hat{\bm{R}})\leq\epsilon_{0},\\ &\bm{R}\succeq\bm{0},~~~\bm{R}_{x}\succ\bm{0}.\end{array} \tag{21}
$$

Construct $\bm{W}^{\star}$ using $\bm{R}^{\star}$ as follows:

$$

\bm{W}^{\star}\coloneqq\bm{R}^{\star\mathsf{H}}_{xs}\bm{R}^{\star-1}_{x}. \tag{22}

$$

Then $(\bm{W}^{\star},\bm{R}^{\star})$ is a solution to Problem (19). On the other hand, if $(\bm{W}^{\star},\bm{R}^{\star})$ solves Problem (19), then $\bm{R}^{\star}$ is a solution to (21) and $(\bm{W}^{\star},\bm{R}^{\star})$ satisfies (22).*

*Proof:* See Appendix E. $\square$

Let

$$
f_{1}(\bm{R})\coloneqq\operatorname{Tr}\big[-\bm{R}^{\mathsf{H}}_{xs}\bm{R}^{-1}_{x}\bm{R}_{xs}+\bm{R}_{s}\big] \tag{23}
$$

denote the objective function of (21). When $\bm{R}_{s}$ and $\bm{R}_{xs}$ are fixed, we define

$$
f_{2}(\bm{R}_{x})\coloneqq\operatorname{Tr}\big[-\bm{R}^{\mathsf{H}}_{xs}\bm{R}^{-1}_{x}\bm{R}_{xs}+\bm{R}_{s}\big]. \tag{24}
$$

The theorem below studies the properties of $f_{1}$ and $f_{2}$ .

**Theorem 1**

*Consider the definition of $\bm{R}$ in (20). The functions $f_{1}$ defined in (23) and $f_{2}$ defined in (24) are monotonically increasing in $\bm{R}$ and $\bm{R}_{x}$, respectively. To be specific, if $\bm{R}_{1}\succeq\bm{R}_{2}\succeq\bm{0}$, $\bm{R}_{1,x}\succ\bm{0}$, and $\bm{R}_{2,x}\succ\bm{0}$, we have $f_{1}(\bm{R}_{1})\geq f_{1}(\bm{R}_{2})$. In addition, if $\bm{R}_{1,x}\succeq\bm{R}_{2,x}\succ\bm{0}$, we have $f_{2}(\bm{R}_{1,x})\geq f_{2}(\bm{R}_{2,x})$.*

*Proof:* See Appendix F. $\square$
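The monotonicity of $f_{1}$ stated in Theorem 1 can be spot-checked numerically. The sketch below is not a proof: it draws random pairs $\bm{R}_{1}\succeq\bm{R}_{2}\succ\bm{0}$ (an assumed test setup) and records the gap $f_{1}(\bm{R}_{1})-f_{1}(\bm{R}_{2})$, which should be nonnegative up to rounding. The helper names are hypothetical.

```python
import numpy as np

def f1(R, N):
    """Objective (23): Tr[R_s - R_xs^H R_x^{-1} R_xs], with R partitioned as in (20)."""
    R_x, R_xs, R_s = R[:N, :N], R[:N, N:], R[N:, N:]
    return np.trace(R_s - R_xs.conj().T @ np.linalg.solve(R_x, R_xs)).real

def random_psd(n, rng, shift=0.0):
    """Random Hermitian positive-semidefinite matrix, optionally shifted to be definite."""
    A = rng.standard_normal((n, n)) + 1j * rng.standard_normal((n, n))
    return A @ A.conj().T + shift * np.eye(n)

rng = np.random.default_rng(0)
N, M = 4, 2
gaps = []
for _ in range(20):
    R2 = random_psd(N + M, rng, shift=1.0)  # R2 is positive definite
    R1 = R2 + random_psd(N + M, rng)        # R1 - R2 is PSD, so R1 >= R2
    gaps.append(f1(R1, N) - f1(R2, N))
min_gap = min(gaps)
```

The quantity inside the trace is the Schur complement of $\bm{R}_{x}$ in $\bm{R}$, which is why enlarging $\bm{R}$ in the positive-semidefinite order cannot decrease $f_{1}$.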

To concretely solve (21), we need to particularize $d_{0}$ . This article investigates the following uncertainty sets.

**Definition 1 (Additive Moment Uncertainty Set)**

*The additive moment uncertainty set of $\bm{R}$ is constructed as

$$
\{\bm{R}|~\hat{\bm{R}}-\epsilon_{0}\bm{E}\preceq\bm{R}\preceq\hat{\bm{R}}+\epsilon_{0}\bm{E},~\bm{R}\succeq\bm{0}\} \tag{25}
$$

for some $\bm{E}\succeq\bm{0}$ and $\epsilon_{0}\geq 0$. $\square$*

Definition 1 is motivated by the fact that the difference $\bm{R}-\hat{\bm{R}}$ is bounded by some threshold matrix $\bm{E}$ and error quantification level $\epsilon_{0}$ : specifically, $-\epsilon_{0}\bm{E}\preceq\bm{R}-\hat{\bm{R}}\preceq\epsilon_{0}\bm{E}$ . In practice, we can consider the threshold as an identity matrix because, for every non-identity $\bm{E}\succeq\bm{0}$ , we have $\bm{E}\preceq\lambda_{1}\bm{I}_{N+M}$ where $\lambda_{1}$ is the largest eigenvalue of $\bm{E}$ .

**Definition 2 (Diagonal-Loading Uncertainty Set)**

*The diagonal-loading uncertainty set of $\bm{R}$ is constructed as

$$

\{\bm{R}|~{}\hat{\bm{R}}-\epsilon_{0}\bm{I}_{N+M}\preceq\bm{R}\preceq\hat{\bm{%

R}}+\epsilon_{0}\bm{I}_{N+M},~{}\bm{R}\succeq\bm{0}\} \tag{26}

$$

for some $\epsilon_{0}≥ 0$ . $\square$*

Because the sample covariance $\hat{\bm{R}}$ concentrates around the true covariance $\bm{R}$ when the true distribution $\mathbb{P}_{\mathbf{x},\mathbf{s}}$ is fixed within a frame, a finite $\epsilon_{0}$ exists for every sample size $L$; moreover, $\epsilon_{0}\to 0$ as $L\to\infty$. However, given $L$, the smallest $\epsilon_{0}$ cannot be calculated in practice because it depends on the true but unknown $\mathbb{P}_{\mathbf{x},\mathbf{s}}$. If $\bm{E}$ is block-diagonal, we obtain the generalized diagonal-loading uncertainty set.

**Definition 3 (Generalized Diagonal-Loading Uncertainty Set)**

*The generalized diagonal-loading uncertainty set of $\bm{R}$ is constructed by the following constraints: $\bm{R}\succeq\bm{0}$ and

$$

\begin{array}[]{l}\left[\begin{array}[]{cc}\hat{\bm{R}}_{x}&\hat{\bm{R}}_{xs}%

\\

\hat{\bm{R}}^{\mathsf{H}}_{xs}&\hat{\bm{R}}_{s}\end{array}\right]-\epsilon_{0}%

\left[\begin{array}[]{cc}\bm{F}&\bm{0}\\

\bm{0}&\bm{G}\end{array}\right]\\

\quad\quad\preceq\left[\begin{array}[]{cc}\bm{R}_{x}&\bm{R}_{xs}\\

\bm{R}^{\mathsf{H}}_{xs}&\bm{R}_{s}\end{array}\right]\\

\quad\quad\quad\quad\preceq\left[\begin{array}[]{cc}\hat{\bm{R}}_{x}&\hat{\bm{%

R}}_{xs}\\

\hat{\bm{R}}^{\mathsf{H}}_{xs}&\hat{\bm{R}}_{s}\end{array}\right]+\epsilon_{0}%

\left[\begin{array}[]{cc}\bm{F}&\bm{0}\\

\bm{0}&\bm{G}\end{array}\right],\end{array} \tag{27}

$$

for some $\bm{F},\bm{G}\succeq\bm{0}$ and $\epsilon_{0}≥ 0$ . $\square$*

Definitions 1, 2, and 3 are introduced for the first time in this article. Another type of moment-based uncertainty set is popular in the literature, which we refer to as the multiplicative moment uncertainty set for differentiation.

**Definition 4 (Multiplicative Moment Uncertainty Set [29])**

*The multiplicative moment uncertainty set of $\bm{R}$ is given as

$$

\{\bm{R}|~{}\theta_{1}\hat{\bm{R}}\preceq\bm{R}\preceq\theta_{2}\hat{\bm{R}}\} \tag{28}

$$

for some $\theta_{2}≥ 1≥\theta_{1}≥ 0$ . $\square$*

The following corollary shows the distributionally robust linear beamformers associated with the various uncertainty sets in Definitions 1, 2, 3, and 4.

**Corollary 1 (of Theorem 1)**

*Consider the moment-based uncertainty sets in Definitions 1, 2, 3, and 4. The distributionally robust linear beamforming (21) is analytically solved by the corresponding upper bounds of $\bm{R}$ . To be specific,

1. Under Definition 1, the additive-moment distributionally robust (DR-AM) beamformer is

$$

\begin{array}[]{cl}\bm{W}^{\star}_{\text{DR-AM}}&=(\hat{\bm{R}}_{xs}+\epsilon_%

{0}\bm{E}_{xs})^{\mathsf{H}}(\hat{\bm{R}}_{x}+\epsilon_{0}\bm{E}_{x})^{-1}\\

&=(\hat{\bm{H}}\hat{\bm{R}}_{s}+\epsilon_{0}\bm{E}_{xs})^{\mathsf{H}}\cdot\\

&\quad\quad\quad[\hat{\bm{H}}\hat{\bm{R}}_{s}\hat{\bm{H}}^{\mathsf{H}}+\hat{%

\bm{R}}_{v}+\epsilon_{0}\bm{E}_{x}]^{-1},\end{array} \tag{29}

$$

where $\hat{\bm{H}}$ , $\hat{\bm{R}}_{s}$ , and $\hat{\bm{R}}_{v}$ denote the estimates of $\bm{H}$ , $\bm{R}_{s}$ , and $\bm{R}_{v}$ , respectively.

2. Under Definition 2, the diagonal-loading distributionally robust (DR-DL) beamformer is

$$

\begin{array}[]{cl}\bm{W}^{\star}_{\text{DR-DL}}&=\hat{\bm{R}}^{\mathsf{H}}_{%

xs}[{\hat{\bm{R}}}_{x}+\epsilon_{0}\bm{I}_{N}]^{-1}\\

&=\hat{\bm{R}}_{s}\hat{\bm{H}}^{\mathsf{H}}[\hat{\bm{H}}\hat{\bm{R}}_{s}\hat{%

\bm{H}}^{\mathsf{H}}+\hat{\bm{R}}_{v}+\epsilon_{0}\bm{I}_{N}]^{-1},\end{array} \tag{30}

$$

which is also known as the loaded sample matrix inversion method [19], [14, Eq. (11)] and is widely used in the practice of wireless communications.

3. Under Definition 3, the generalized diagonal-loading distributionally robust (DR-GDL) beamformer is

$$

\begin{array}[]{cl}\bm{W}^{\star}_{\text{DR-GDL}}&=\hat{\bm{R}}^{\mathsf{H}}_{%

xs}[{\hat{\bm{R}}}_{x}+\epsilon_{0}\bm{F}]^{-1}\\

&=\hat{\bm{R}}_{s}\hat{\bm{H}}^{\mathsf{H}}[\hat{\bm{H}}\hat{\bm{R}}_{s}\hat{%

\bm{H}}^{\mathsf{H}}+\hat{\bm{R}}_{v}+\epsilon_{0}\bm{F}]^{-1}.\end{array} \tag{31}

$$

4. Under Definition 4, the multiplicative-moment (MM) distributionally robust beamformer is identical to the Wiener beamformer (12) at nominal values:

$$

\begin{array}[]{cl}\bm{W}^{\star}_{\text{DR-MM}}&=\hat{\bm{R}}_{xs}^{\mathsf{H%

}}\hat{\bm{R}}_{x}^{-1}\\

&=\hat{\bm{R}}_{s}\hat{\bm{H}}^{\mathsf{H}}[\hat{\bm{H}}\hat{\bm{R}}_{s}\hat{%

\bm{H}}^{\mathsf{H}}+\hat{\bm{R}}_{v}]^{-1}.\end{array} \tag{32}

$$

The corresponding estimation errors are simple to obtain. $\square$*
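The closed forms in Corollary 1 are straightforward to evaluate from pilot data. The following is a minimal sketch, assuming synthetic pilots generated as $\mathbf{x}=\bm{H}\mathbf{s}+\mathbf{v}$ and plain sample averages for $\hat{\bm{R}}_{x}$ and $\hat{\bm{R}}_{xs}$; the function name `dr_linear_combiner`, the dimensions, and the value of $\epsilon_{0}$ are hypothetical choices made for illustration.

```python
import numpy as np

def dr_linear_combiner(R_x_hat, R_xs_hat, eps0=0.0, F=None):
    """W = R_xs_hat^H (R_x_hat + eps0 * F)^{-1}.

    F = I_N gives the DR-DL form (30); a general F >= 0 gives the DR-GDL form (31);
    eps0 = 0 gives the nominal Wiener form (32).
    """
    N = R_x_hat.shape[0]
    F = np.eye(N) if F is None else F
    A = R_x_hat + eps0 * F                         # loaded covariance (Hermitian)
    return np.linalg.solve(A, R_xs_hat).conj().T   # equals R_xs_hat^H A^{-1} because A is Hermitian

# Synthetic pilots x = H s + v (all values below are illustrative assumptions).
rng = np.random.default_rng(1)
N, M, L = 4, 2, 20
H = (rng.standard_normal((N, M)) + 1j * rng.standard_normal((N, M))) / np.sqrt(2)
S = (rng.standard_normal((M, L)) + 1j * rng.standard_normal((M, L))) / np.sqrt(2)
V = 0.3 * (rng.standard_normal((N, L)) + 1j * rng.standard_normal((N, L)))
X = H @ S + V

R_x_hat = X @ X.conj().T / L       # sample estimate of R_x
R_xs_hat = X @ S.conj().T / L      # sample estimate of R_xs

W_nominal = dr_linear_combiner(R_x_hat, R_xs_hat, eps0=0.0)   # nominal Wiener / DR-MM
W_dl = dr_linear_combiner(R_x_hat, R_xs_hat, eps0=0.1)        # diagonal loading
print(np.linalg.norm(W_nominal), np.linalg.norm(W_dl))
```

Note that only $\hat{\bm{R}}_{x}$ and $\hat{\bm{R}}_{xs}$ enter the computation, so no explicit channel estimate is needed in this sketch. Loading shrinks the Frobenius norm of the combiner relative to the nominal one.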

Corollary 1 implies that, in the sense of the same induced robust beamformers, the diagonal-loading uncertainty set (26) and the generalized diagonal-loading uncertainty set (27) are technically equivalent to the following trimmed versions.

**Definition 5 (Trimmed Diagonal-Loading Uncertainty Sets)**

*By setting $\bm{G}\coloneqq\bm{0}$ in (27), in terms of $\bm{R}_{x}$ , (27) reduces to the trimmed generalized diagonal-loading uncertainty set:

$$

\{\bm{R}_{x}|~{}\hat{\bm{R}}_{x}-\epsilon_{0}\bm{F}\preceq\bm{R}_{x}\preceq%

\hat{\bm{R}}_{x}+\epsilon_{0}\bm{F},~{}\bm{R}_{x}\succeq\bm{0}\}. \tag{33}

$$

The trimmed diagonal-loading uncertainty set

$$

\{\bm{R}_{x}|~{}\hat{\bm{R}}_{x}-\epsilon_{0}\bm{I}_{N}\preceq\bm{R}_{x}%

\preceq\hat{\bm{R}}_{x}+\epsilon_{0}\bm{I}_{N},~{}\bm{R}_{x}\succeq\bm{0}\}, \tag{34}

$$

is obtained by letting $\bm{F}\coloneqq\bm{I}_{N}$ . $\square$*

The robust beamformers corresponding to the trimmed uncertainty sets (33) and (34) remain the same as defined in (31) and (30), respectively; cf. Theorem 1.

As we can see from Corollary 1, the primary benefit of using the moment-based uncertainty sets is the computational simplicity due to the availability of closed-form solutions. If the uncertainty sets are constructed using the Wasserstein distance $\sqrt{\operatorname{Tr}[\bm{R}+\hat{\bm{R}}-2(\hat{\bm{R}}^{1/2}\bm{R}\hat{\bm{R}}^{1/2})^{1/2}]}\leq\epsilon_{0}$ or the KL divergence $\frac{1}{2}[\operatorname{Tr}[\hat{\bm{R}}^{-1}\bm{R}-\bm{I}_{N+M}]-\ln\det(\hat{\bm{R}}^{-1}\bm{R})]\leq\epsilon_{0}$ between $\mathcal{CN}(\bm{0},\bm{R})$ and $\mathcal{CN}(\bm{0},\hat{\bm{R}})$, the induced distributionally robust linear beamforming problems have no closed-form solutions and are therefore computationally prohibitive in practice. In addition, Corollary 1 suggests that the distributionally robust beamformer under the multiplicative moment uncertainty set (28) is the same as the nominal beamformer $\hat{\bm{R}}^{\mathsf{H}}_{xs}\hat{\bm{R}}_{x}^{-1}$, which essentially does not introduce robustness in wireless signal estimation; this is another reason why we construct new moment-based uncertainty sets in Definitions 1, 2, and 3. However, we can modify the multiplicative moment uncertainty set in Definition 4 to achieve robustness.

**Definition 6 (Modified Multiplicative Moment Uncertainty Set)**

*The modified multiplicative moment uncertainty set of $\bm{R}$ is defined by the following constraint:

$$

\left[\begin{array}[]{cc}\theta_{1}\hat{\bm{R}}_{x}&\hat{\bm{R}}_{xs}\\

\hat{\bm{R}}^{\mathsf{H}}_{xs}&\theta_{1}\hat{\bm{R}}_{s}\end{array}\right]%

\preceq\left[\begin{array}[]{cc}\bm{R}_{x}&\bm{R}_{xs}\\

\bm{R}^{\mathsf{H}}_{xs}&\bm{R}_{s}\end{array}\right]\preceq\left[\begin{array%

}[]{cc}\theta_{2}\hat{\bm{R}}_{x}&\hat{\bm{R}}_{xs}\\

\hat{\bm{R}}^{\mathsf{H}}_{xs}&\theta_{2}\hat{\bm{R}}_{s}\end{array}\right] \tag{35}

$$

for some $\theta_{2}≥ 1≥\theta_{1}≥ 0$ such that the left-most matrix is positive semi-definite. $\square$*

The robust beamformer under the modified multiplicative moment uncertainty set (35) is

$$

\bm{W}^{\star}_{\text{DR-MMM}}=\hat{\bm{R}}^{\mathsf{H}}_{xs}\cdot[\theta_{2}%

\hat{\bm{R}}_{x}]^{-1}. \tag{36}

$$

In terms of the uncertainties of $\bm{R}_{s}$ and $\bm{R}_{v}$ , Problem (21) can be explicitly written as

$$

\begin{array}[]{cl}\displaystyle\max_{\bm{R}_{s},\bm{R}_{v}}&\operatorname{Tr}%

\big{[}\bm{R}_{s}-\bm{R}_{s}\bm{H}^{\mathsf{H}}(\bm{H}\bm{R}_{s}\bm{H}^{%

\mathsf{H}}+\bm{R}_{v})^{-1}\bm{H}\bm{R}_{s}\big{]}\\

\text{s.t.}&d_{1}(\bm{R}_{s},\hat{\bm{R}}_{s})\leq\epsilon_{1},\\

&d_{2}(\bm{R}_{v},\hat{\bm{R}}_{v})\leq\epsilon_{2},\\

&\bm{R}_{s}\succeq\bm{0},~{}\bm{R}_{v}\succeq\bm{0},\end{array} \tag{37}

$$

for some similarity measures $d_{1}$ and $d_{2}$ and nonnegative scalars $\epsilon_{1}$ and $\epsilon_{2}$ . For every given $(\bm{R}_{s},\bm{R}_{v})$ , the associated beamformer is given in (12). When the uncertainty in the channel matrix must be investigated, we can consider

$$

\begin{array}[]{cl}\displaystyle\max_{\bm{H}}&\operatorname{Tr}\big{[}\bm{R}_{%

s}-\bm{R}_{s}\bm{H}^{\mathsf{H}}(\bm{H}\bm{R}_{s}\bm{H}^{\mathsf{H}}+\bm{R}_{v%

})^{-1}\bm{H}\bm{R}_{s}\big{]}\\

\text{s.t.}&d_{3}(\bm{H},\hat{\bm{H}})\leq\epsilon_{3},\end{array} \tag{38}

$$

which is not a semi-definite program. In addition, the gradient of the objective function with respect to $\bm{H}$ is complicated to obtain. Hence, practically, we should avoid directly attacking Problem (38); this can be done by directly considering the uncertainties of $\bm{R}_{x}$ and $\bm{R}_{xs}$ (i.e., $\bm{R}$ ) because the uncertainties of $\bm{R}_{s}$ , $\bm{R}_{v}$ , and $\bm{H}$ can be reflected in the uncertainties of $\bm{R}_{x}$ and $\bm{R}_{xs}$ ; cf. $\bm{R}_{x}=\bm{H}\bm{R}_{s}\bm{H}^{\mathsf{H}}+\bm{R}_{v}$ and $\bm{R}_{xs}=\bm{H}\bm{R}_{s}$ .

In addition to Corollary 1, below we provide other concrete examples to further showcase the usefulness and applications of the distributionally robust beamforming formulations (21) and (37), where the trimmed uncertainty sets are employed.

**Example 1 (Distributionally Robust Capon Beamforming)**

*We consider a distributionally robust Capon beamforming problem under the trimmed uncertainty set (34):

$$

\begin{array}[]{cl}\displaystyle\min_{\bm{W}}\max_{\bm{R}_{x}}&\operatorname{%

Tr}\big{[}\bm{W}\bm{R}_{x}\bm{W}^{\mathsf{H}}-2\bm{R}_{s}+\bm{R}_{s}\big{]}\\

\text{s.t.}&\bm{W}\bm{H}=\bm{I}_{M},\\

&{\hat{\bm{R}}}_{x}-\epsilon_{0}\bm{I}_{N}\preceq\bm{R}_{x}\preceq{\hat{\bm{R}%

}}_{x}+\epsilon_{0}\bm{I}_{N},\\

&\bm{R}_{x}\succeq\bm{0},\end{array}

$$

which is equivalent, in the sense of the same solutions, to

$$

\begin{array}[]{cl}\displaystyle\min_{\bm{W}}\max_{\bm{R}_{x}}&\operatorname{%

Tr}\big{[}\bm{W}\bm{R}_{x}\bm{W}^{\mathsf{H}}\big{]}\\

\text{s.t.}&\bm{W}\bm{H}=\bm{I}_{M},\\

&{\hat{\bm{R}}}_{x}-\epsilon_{0}\bm{I}_{N}\preceq\bm{R}_{x}\preceq{\hat{\bm{R}%

}}_{x}+\epsilon_{0}\bm{I}_{N},\\

&\bm{R}_{x}\succeq\bm{0}.\end{array}

$$

According to Theorem 1, the above display is equivalent to

$$

\begin{array}[]{cl}\displaystyle\min_{\bm{W}}&\operatorname{Tr}\big{[}\bm{W}{%

\hat{\bm{R}}}_{x}\bm{W}^{\mathsf{H}}\big{]}+\epsilon_{0}\cdot\operatorname{Tr}%

\big{[}\bm{W}\bm{W}^{\mathsf{H}}\big{]}\\

\text{s.t.}&\bm{W}\bm{H}=\bm{I}_{M}.\end{array}

$$

The above formulation is the squared- $F$ -norm–regularized Capon beamformer [14, Eq. (10)] whose solution is

$$

\begin{array}[]{l}\bm{W}^{\star}_{\text{DR-Capon}}=[\bm{H}^{\mathsf{H}}(\hat{%

\bm{R}}_{x}+\epsilon_{0}\bm{I}_{N})^{-1}\bm{H}]^{-1}\cdot\\

\quad\quad\quad\quad\quad\quad\quad\quad\quad\quad\quad\quad\bm{H}^{\mathsf{H}%

}(\hat{\bm{R}}_{x}+\epsilon_{0}\bm{I}_{N})^{-1},\end{array} \tag{39}

$$

which is the diagonal-loading Capon beamformer. $\square$*
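As a rough numerical illustration of (39), the sketch below builds the diagonal-loading Capon beamformer, checks the distortionless constraint $\bm{W}\bm{H}=\bm{I}_{M}$, and compares its loaded cost with that of another feasible point. The channel, the sample covariance, the value of $\epsilon_{0}$, and the helper names `dr_capon` and `loaded_cost` are all assumptions made for this example.

```python
import numpy as np

def dr_capon(H, R_x_hat, eps0):
    """[H^H A^{-1} H]^{-1} H^H A^{-1} with A = R_x_hat + eps0 * I_N, cf. (39)."""
    N = R_x_hat.shape[0]
    A_inv_H = np.linalg.solve(R_x_hat + eps0 * np.eye(N), H)      # A^{-1} H
    # (A^{-1} H)^H = H^H A^{-1} because A is Hermitian.
    return np.linalg.solve(H.conj().T @ A_inv_H, A_inv_H.conj().T)

def loaded_cost(W, R_x_hat, eps0):
    """Tr[W R_x_hat W^H] + eps0 * Tr[W W^H]."""
    return (np.trace(W @ R_x_hat @ W.conj().T) + eps0 * np.trace(W @ W.conj().T)).real

rng = np.random.default_rng(2)
N, M, L = 6, 2, 30                                   # hypothetical sizes, N >= M
H = rng.standard_normal((N, M)) + 1j * rng.standard_normal((N, M))
X = rng.standard_normal((N, L)) + 1j * rng.standard_normal((N, L))
R_x_hat = X @ X.conj().T / L
eps0 = 0.5

W_capon = dr_capon(H, R_x_hat, eps0)
W_zf = np.linalg.pinv(H)                             # another feasible point: pinv(H) H = I_M
print(loaded_cost(W_capon, R_x_hat, eps0), loaded_cost(W_zf, R_x_hat, eps0))
```

Both beamformers satisfy the constraint, and the loaded cost of `W_capon` should not exceed that of the zero-forcing alternative `W_zf`.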

**Example 2 (Eigenvalue Thresholding)**

*Suppose that $\hat{\bm{R}}_{x}$ has eigenvalues $\lambda_{1}\geq\lambda_{2}\geq\cdots\geq\lambda_{N}$ (in descending order) with the corresponding eigenvectors as the columns of $\bm{Q}$. Let $0\leq\mu\leq 1$ be a shrinking coefficient. If we assume $\bm{R}_{x}\preceq\hat{\bm{R}}_{x,\text{thr}}$ where

$$

\begin{array}[]{l}\hat{\bm{R}}_{x,\text{thr}}\coloneqq\\

\quad\bm{Q}\left[\begin{array}[]{cccc}\lambda_{1}&&&\\

&\max\{\mu\lambda_{1},\lambda_{2}\}&&\\

&&\ddots&\\

&&&\max\{\mu\lambda_{1},\lambda_{N}\}\end{array}\right]\bm{Q}^{-1},\end{array} \tag{40}

$$

we have the distributionally robust beamformer

$$

\bm{W}^{\star}_{\text{DR-ET}}=\bm{R}^{\mathsf{H}}_{xs}\hat{\bm{R}}^{-1}_{x,%

\text{thr}}, \tag{41}

$$

which is known as the eigenvalue thresholding method [20], [14, Eq. (12)]. $\square$*
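The thresholded covariance in (40) can be formed with a Hermitian eigendecomposition, for which $\bm{Q}^{-1}=\bm{Q}^{\mathsf{H}}$. The sketch below is illustrative only: the data are random, the sample estimate $\hat{\bm{R}}_{xs}$ is used in place of $\bm{R}_{xs}$ in (41), and the function name `eigenvalue_threshold` is introduced here.

```python
import numpy as np

def eigenvalue_threshold(R_x_hat, mu):
    """Raise every eigenvalue of R_x_hat to at least mu * (largest eigenvalue), cf. (40)."""
    lam, Q = np.linalg.eigh(R_x_hat)              # ascending eigenvalues, orthonormal Q
    lam_thr = np.maximum(lam, mu * lam[-1])       # lam[-1] is the largest eigenvalue
    return (Q * lam_thr) @ Q.conj().T             # Q diag(lam_thr) Q^H

rng = np.random.default_rng(3)
N, M, L = 5, 2, 8                                 # hypothetical sizes
X = rng.standard_normal((N, L)) + 1j * rng.standard_normal((N, L))
S = rng.standard_normal((M, L)) + 1j * rng.standard_normal((M, L))
R_x_hat = X @ X.conj().T / L
R_xs_hat = X @ S.conj().T / L

mu = 0.2
R_thr = eigenvalue_threshold(R_x_hat, mu)
W_et = np.linalg.solve(R_thr, R_xs_hat).conj().T  # R_xs_hat^H R_thr^{-1}
print(np.linalg.eigvalsh(R_x_hat), np.linalg.eigvalsh(R_thr))
```

By construction, `R_thr` dominates `R_x_hat` in the positive semi-definite order, so it acts as an upper bound of the kind used in Theorem 1.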

**Example 3 (Distributionally Robust Beamforming for Uncertain $\bm{R}_{s}$ and $\bm{R}_{v}$)**

*Consider Problem (37). The objective of (37) is increasing in both $\bm{R}_{s}$ and $\bm{R}_{v}$; this claim can be routinely proven in analogy to Theorem 1 and a real-space case in [30, Thm. 1]. Hence, if

$$

\hat{\bm{R}}_{s}-\epsilon_{1}\bm{I}_{M}\preceq\bm{R}_{s}\preceq\hat{\bm{R}}_{s%

}+\epsilon_{1}\bm{I}_{M},

$$

we have a distributionally robust beamformer

$$

\begin{array}[]{cl}\bm{W}^{\star}_{\text{DR}}&=(\hat{\bm{R}}_{s}+\epsilon_{1}%

\bm{I}_{M})\bm{H}^{\mathsf{H}}[\bm{H}(\hat{\bm{R}}_{s}+\epsilon_{1}\bm{I}_{M})%

\bm{H}^{\mathsf{H}}+\bm{R}_{v}]^{-1}\\

&=(\hat{\bm{R}}_{s}+\epsilon_{1}\bm{I}_{M})\bm{H}^{\mathsf{H}}[\bm{H}\hat{\bm{%

R}}_{s}\bm{H}^{\mathsf{H}}+\bm{R}_{v}+\epsilon_{1}\bm{H}\bm{H}^{\mathsf{H}}]^{%

-1};\end{array} \tag{42}

$$

if instead

$$

\hat{\bm{R}}_{s}-\epsilon_{1}\bm{H}^{\mathsf{H}}(\bm{H}\bm{H}^{\mathsf{H}})^{-%

2}\bm{H}\preceq\bm{R}_{s}\preceq\hat{\bm{R}}_{s}+\epsilon_{1}\bm{H}^{\mathsf{H%

}}(\bm{H}\bm{H}^{\mathsf{H}})^{-2}\bm{H}, \tag{43}

$$

we have

$$

\begin{array}[]{l}\bm{W}^{\star}_{\text{DR}}=[\hat{\bm{R}}_{s}\bm{H}^{\mathsf{%

H}}+\epsilon_{1}\bm{H}^{\mathsf{H}}(\bm{H}\bm{H}^{\mathsf{H}})^{-1}]\cdot\\

\quad\quad\quad\quad\quad[\bm{H}\hat{\bm{R}}_{s}\bm{H}^{\mathsf{H}}+\bm{R}_{v}%

+\epsilon_{1}\bm{I}_{N}]^{-1},\end{array} \tag{44}

$$

which is a modified diagonal-loading beamformer. On the other hand, if

$$

\hat{\bm{R}}_{v}-\epsilon_{2}\bm{I}_{N}\preceq\bm{R}_{v}\preceq\hat{\bm{R}}_{v%

}+\epsilon_{2}\bm{I}_{N},

$$

we have

$$

\bm{W}^{\star}_{\text{DR}}=\bm{R}_{s}\bm{H}^{\mathsf{H}}[\bm{H}\bm{R}_{s}\bm{H%

}^{\mathsf{H}}+\hat{\bm{R}}_{v}+\epsilon_{2}\bm{I}_{N}]^{-1}, \tag{45}

$$

which is also a diagonal-loading beamformer. $\square$*
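The step from the uncertainty set (43) to the beamformer (44) amounts to substituting the upper bound of $\bm{R}_{s}$ into the Wiener form and simplifying. The sketch below checks this identity numerically under assumed random inputs; it requires $\bm{H}\bm{H}^{\mathsf{H}}$ to be invertible, so the hypothetical dimensions satisfy $N\leq M$.

```python
import numpy as np

rng = np.random.default_rng(4)
N, M = 2, 4                                   # N <= M so that H H^H is invertible
H = rng.standard_normal((N, M)) + 1j * rng.standard_normal((N, M))
A = rng.standard_normal((M, M)) + 1j * rng.standard_normal((M, M))
R_s_hat = A @ A.conj().T / M                  # illustrative estimate of R_s
R_v = 0.5 * np.eye(N)                         # illustrative noise covariance
eps1 = 0.3

HHh_inv = np.linalg.inv(H @ H.conj().T)
G = H.conj().T @ HHh_inv @ HHh_inv @ H        # H^H (H H^H)^{-2} H
R_s_up = R_s_hat + eps1 * G                   # upper bound of R_s in (43)

# Wiener form evaluated at the upper bound of R_s.
W_direct = R_s_up @ H.conj().T @ np.linalg.inv(H @ R_s_up @ H.conj().T + R_v)

# Simplified form (44).
W_44 = (R_s_hat @ H.conj().T + eps1 * H.conj().T @ HHh_inv) @ np.linalg.inv(
    H @ R_s_hat @ H.conj().T + R_v + eps1 * np.eye(N))

max_diff = np.abs(W_direct - W_44).max()
print("max entrywise difference:", max_diff)
```

The two expressions agree because $\bm{H}\bm{G}\bm{H}^{\mathsf{H}}=\bm{I}_{N}$ and $\bm{G}\bm{H}^{\mathsf{H}}=\bm{H}^{\mathsf{H}}(\bm{H}\bm{H}^{\mathsf{H}})^{-1}$.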

Motivated by Corollary 1 and Examples 1 to 3, as well as the trimmed uncertainty sets in Definition 5, we have the following important theorem, which justifies the popular ridge regression in machine learning.

**Theorem 2 (Ridge Regression and Tikhonov Regularization)**

*Consider a linear regression problem on $(\mathbf{x},\mathbf{s})$ , i.e.,

$$

\mathbf{s}=\bm{W}\mathbf{x}+\mathbf{e},

$$

where $\mathbf{e}$ denotes the error term and the distributionally robust estimator of $\bm{W}$ , i.e.,

$$

\min_{\bm{W}\in\mathbb{C}^{M\times N}}\max_{\mathbb{P}_{\mathbf{x},\mathbf{s}}%

\in\mathcal{U}_{\mathbf{x},\mathbf{s}}}\operatorname{Tr}\mathbb{E}_{\mathbf{x}%

,\mathbf{s}}[\bm{W}\mathbf{x}-\mathbf{s}][\bm{W}\mathbf{x}-\mathbf{s}]^{%

\mathsf{H}},

$$

which can be particularized to (19). Supposing that the second-order moment of $\mathbf{x}$ is uncertain and quantified as

$$

{\hat{\bm{R}}}_{x}-\epsilon_{0}\bm{I}_{N}\preceq\bm{R}_{x}\preceq{\hat{\bm{R}}%

}_{x}+\epsilon_{0}\bm{I}_{N},

$$

then the distributionally robust estimator of $\bm{W}$ becomes a ridge regression (i.e., squared- $F$ -norm regularized) method

$$

\displaystyle\min_{\bm{W}}\operatorname{Tr}\big{[}\bm{W}\hat{\bm{R}}_{x}\bm{W}%

^{\mathsf{H}}-\bm{W}\hat{\bm{R}}_{xs}-\hat{\bm{R}}^{\mathsf{H}}_{xs}\bm{W}^{%

\mathsf{H}}+\hat{\bm{R}}_{s}\big{]}+\epsilon_{0}\operatorname{Tr}\big{[}\bm{W}%

\bm{W}^{\mathsf{H}}\big{]}.

$$

The regularization term becomes $\operatorname{Tr}\big{[}\bm{W}\bm{F}\bm{W}^{\mathsf{H}}\big{]}$ , which is known as the Tikhonov regularizer, if

$$

{\hat{\bm{R}}}_{x}-\epsilon_{0}\bm{F}\preceq\bm{R}_{x}\preceq{\hat{\bm{R}}}_{x%

}+\epsilon_{0}\bm{F}

$$

for some $\bm{F}\succeq\bm{0}$ .*

*Proof:*

This is due to Lemma 1 and Theorem 1. Just note that

$$
\operatorname{Tr}\big[\bm{W}(\hat{\bm{R}}_{x}+\epsilon_{0}\bm{F})\bm{W}^{\mathsf{H}}-\bm{W}\hat{\bm{R}}_{xs}-\hat{\bm{R}}^{\mathsf{H}}_{xs}\bm{W}^{\mathsf{H}}+\hat{\bm{R}}_{s}\big]=\operatorname{Tr}\big[\bm{W}\hat{\bm{R}}_{x}\bm{W}^{\mathsf{H}}-\bm{W}\hat{\bm{R}}_{xs}-\hat{\bm{R}}^{\mathsf{H}}_{xs}\bm{W}^{\mathsf{H}}+\hat{\bm{R}}_{s}\big]+\epsilon_{0}\operatorname{Tr}\big[\bm{W}\bm{F}\bm{W}^{\mathsf{H}}\big].
$$

This completes the proof. $\square$
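The equivalence in Theorem 2 can be illustrated on sample data: the diagonal-loading closed form $\hat{\bm{R}}^{\mathsf{H}}_{xs}(\hat{\bm{R}}_{x}+\epsilon_{0}\bm{I}_{N})^{-1}$ coincides with the minimizer of a ridge-regularized least-squares fit. The sketch below assumes random complex data and sample-average moment estimates; the ridge problem is solved through the standard augmented least-squares trick, which is a choice made for this example rather than something prescribed by the article.

```python
import numpy as np

rng = np.random.default_rng(5)
N, M, L = 4, 2, 12                                   # hypothetical sizes
X = rng.standard_normal((N, L)) + 1j * rng.standard_normal((N, L))
S = rng.standard_normal((M, L)) + 1j * rng.standard_normal((M, L))
eps0 = 0.25

# Distributionally robust closed form with diagonal loading.
R_x_hat = X @ X.conj().T / L
R_xs_hat = X @ S.conj().T / L
W_dr = R_xs_hat.conj().T @ np.linalg.inv(R_x_hat + eps0 * np.eye(N))

# Ridge regression: min_W (1/L) ||W X - S||_F^2 + eps0 ||W||_F^2,
# rewritten as an ordinary least-squares problem on augmented data.
X_aug = np.hstack([X, np.sqrt(L * eps0) * np.eye(N)])
S_aug = np.hstack([S, np.zeros((M, N))])
W_ridge = np.linalg.lstsq(X_aug.T, S_aug.T, rcond=None)[0].T

print("max entrywise difference:", np.abs(W_dr - W_ridge).max())
```

The two solutions agree up to numerical precision, with the ridge weight playing the role of the uncertainty radius $\epsilon_{0}$.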

Note that in Theorem 2, the second-order moment of $\mathbf{s}$ is not considered because the optimal solution of $\bm{W}$ does not depend on the value of $\bm{R}_{s}$. Theorem 2 gives a new theoretical interpretation of the popular ridge regression in machine learning from the perspective of distributional robustness against second-moment uncertainties of the feature vector $\mathbf{x}$; another interpretation of ridge regression, from the perspective of distributional robustness under martingale constraints, is identified in [31, Ex. 3.3]. When the uncertainty is quantified by the Wasserstein distance, a similar result can be seen in [32, Prop. 3] and [33, Prop. 2]; it is, however, not a ridge regression formulation because, in those results, the loss function is square-rooted and the norm regularizer is not squared; cf. also [27, Rem. 18 and 19]. The corollary below justifies the rationale of any norm-regularized method.

**Corollary 2**

*The following squared-norm-regularized beamforming formulation can combat the distributional uncertainty:

$$

\displaystyle\min_{\bm{W}}\operatorname{Tr}\big{[}\bm{W}\hat{\bm{R}}_{x}\bm{W}%

^{\mathsf{H}}-\bm{W}\hat{\bm{R}}_{xs}-\hat{\bm{R}}^{\mathsf{H}}_{xs}\bm{W}^{%

\mathsf{H}}+\hat{\bm{R}}_{s}\big{]}+\lambda\|\bm{W}\|^{2}, \tag{46}

$$

where $\|\cdot\|$ denotes any matrix norm. This is because all norms on $\mathbb{C}^{M\times N}$ are equivalent; hence, there exists some $\lambda\geq 0$ such that $\lambda\|\bm{W}\|^{2}\geq\epsilon_{0}\|\bm{W}\|^{2}_{F}=\epsilon_{0}\operatorname{Tr}\big[\bm{W}\bm{W}^{\mathsf{H}}\big]$ for every $\bm{W}$. As a result, (46) can upper bound the ridge cost in Theorem 2. $\square$*

Motivated by Theorem 2, the following corollary is immediate, which gives another interpretation of ridge regression and Tikhonov regularization from the perspective of data augmentation through data perturbation (cf. noise injection in image [34] and speech [35] processing).

**Corollary 3 (Data Augmentation for Linear Regression)**

*Consider a linear regression problem on $(\mathbf{x},\mathbf{s})$ with data perturbation vectors $(\mathbf{\Delta}_{x},\mathbf{\Delta}_{s})$

$$

(\mathbf{s}+\mathbf{\Delta}_{s})=\bm{W}(\mathbf{x}+\mathbf{\Delta}_{x})+%

\mathbf{e},

$$

and the distributionally robust estimator of $\bm{W}$

$$

\begin{array}[]{l}\displaystyle\min_{\bm{W}\in\mathbb{C}^{M\times N}}\max_{%

\mathbb{P}_{\mathbf{\Delta}_{x},\mathbf{\Delta}_{s}}\in\mathcal{U}_{{\mathbf{%

\Delta}_{x},\mathbf{\Delta}_{s}}}}\operatorname{Tr}\mathbb{E}_{(\mathbf{x},%

\mathbf{s})\sim\hat{\mathbb{P}}_{\mathbf{x},\mathbf{s}}}\mathbb{E}_{\mathbf{%

\Delta}_{x},\mathbf{\Delta}_{s}}\Big{\{}\\

\quad[\bm{W}(\mathbf{x}+\mathbf{\Delta}_{x})-(\mathbf{s}+\mathbf{\Delta}_{s})]%

[\bm{W}(\mathbf{x}+\mathbf{\Delta}_{x})-(\mathbf{s}+\mathbf{\Delta}_{s})]^{%

\mathsf{H}}\Big{\}}.\end{array}

$$

Suppose that $\mathbf{\Delta}_{x}$ is uncorrelated with $\mathbf{x}$, with $\mathbf{s}$, and with $\mathbf{\Delta}_{s}$; in addition, $\mathbf{\Delta}_{s}$ is uncorrelated with $\mathbf{x}$. If the second-order moment of $\mathbf{\Delta}_{x}$ is upper bounded as $\mathbb{E}\mathbf{\Delta}_{x}\mathbf{\Delta}^{\mathsf{H}}_{x}\preceq\epsilon_{0}\bm{I}_{N}$, then the distributionally robust estimator of $\bm{W}$ becomes a ridge regression (i.e., squared- $F$ -norm regularized) method

$$

\displaystyle\min_{\bm{W}}\operatorname{Tr}\big{[}\bm{W}\hat{\bm{R}}_{x}\bm{W}%

^{\mathsf{H}}-\bm{W}\hat{\bm{R}}_{xs}-\hat{\bm{R}}^{\mathsf{H}}_{xs}\bm{W}^{%

\mathsf{H}}+\hat{\bm{R}}_{s}\big{]}+\epsilon_{0}\operatorname{Tr}\big{[}\bm{W}%

\bm{W}^{\mathsf{H}}\big{]}.

$$

The regularization term becomes $\operatorname{Tr}\big[\bm{W}\bm{F}\bm{W}^{\mathsf{H}}\big]$, which is known as the Tikhonov regularizer, if $\mathbb{E}\mathbf{\Delta}_{x}\mathbf{\Delta}^{\mathsf{H}}_{x}\preceq\epsilon_{0}\bm{F}$, for some $\bm{F}\succeq\bm{0}$. $\square$*

The second-order moment of $\mathbf{\Delta}_{s}$ is not considered in Corollary 3 as it does not influence the optimal value of $\bm{W}$ .
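The data-augmentation reading of Corollary 3 can be illustrated with a deterministic perturbation set that attains the bound $\mathbb{E}\mathbf{\Delta}_{x}\mathbf{\Delta}^{\mathsf{H}}_{x}=\epsilon_{0}\bm{I}_{N}$ exactly. In the sketch below, the perturbations are the $2N$ axis-aligned vectors $\pm\sqrt{N\epsilon_{0}}\,\bm{e}_{k}$, each pilot is paired with every perturbation, and $\mathbf{\Delta}_{s}$ is set to zero; these are illustrative assumptions, not a construction taken from the article.

```python
import numpy as np

rng = np.random.default_rng(6)
N, M, L = 3, 2, 10                                   # hypothetical sizes
X = rng.standard_normal((N, L)) + 1j * rng.standard_normal((N, L))
S = rng.standard_normal((M, L)) + 1j * rng.standard_normal((M, L))
eps0 = 0.2

# Zero-mean perturbation set whose empirical second moment equals eps0 * I_N.
scale = np.sqrt(N * eps0)
deltas = np.hstack([scale * np.eye(N), -scale * np.eye(N)])   # N x 2N, one perturbation per column
delta_cov = deltas @ deltas.T / deltas.shape[1]

# Augment: every pilot x_i is perturbed by every delta_j, and s_i is kept unchanged.
X_aug = np.hstack([X + deltas[:, [j]] for j in range(2 * N)])
S_aug = np.tile(S, (1, 2 * N))

# Ordinary (unregularized) least squares on the augmented data.
W_aug = S_aug @ X_aug.conj().T @ np.linalg.inv(X_aug @ X_aug.conj().T)

# Ridge regression on the original data.
R_x_hat = X @ X.conj().T / L
R_xs_hat = X @ S.conj().T / L
W_ridge = R_xs_hat.conj().T @ np.linalg.inv(R_x_hat + eps0 * np.eye(N))

print("max entrywise difference:", np.abs(W_aug - W_ridge).max())
```

Because the perturbations sum to zero, the cross terms cancel and the augmented sample covariance equals $\hat{\bm{R}}_{x}+\epsilon_{0}\bm{I}_{N}$, so plain least squares on the augmented data reproduces the ridge solution.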

IV-B Complex Uncertainty Sets

Below we remark on more general construction methods for the uncertainty set of $\bm{R}$ using the Wasserstein distance and the $F$ -norm, beyond the moment-based methods in Definitions 1 to 6. However, note that such complicated approaches are computationally prohibitive in practice when $N$ or $M$ is large.

IV-B 1 Wasserstein Distributionally Robust Beamforming

We start with the Wasserstein distance:

$$

\begin{array}[]{cl}\displaystyle\max_{\bm{R}}&\operatorname{Tr}\big{[}-\bm{R}^%

{\mathsf{H}}_{xs}\bm{R}^{-1}_{x}\bm{R}_{xs}+\bm{R}_{s}\big{]}\\

\text{s.t.}&\operatorname{Tr}\left[{\bm{R}+\hat{\bm{R}}-2(\hat{\bm{R}}^{1/2}%

\bm{R}\hat{\bm{R}}^{1/2})^{1/2}}\right]\leq\epsilon^{2}_{0}\\

&\bm{R}\succeq\bm{0},~{}\bm{R}_{x}\succ\bm{0}.\end{array} \tag{47}

$$

The first constraint in the above display is a particularization of the Wasserstein distance between $\mathcal{CN}(\bm{0},\bm{R})$ and $\mathcal{CN}(\bm{0},\hat{\bm{R}})$ .
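For reference, the left-hand side of the first constraint in (47) can be evaluated directly with Hermitian matrix square roots. The sketch below is a minimal illustration; the helper names `psd_sqrt` and `gauss_wasserstein_sq` and the test matrices are assumptions introduced here.

```python
import numpy as np

def psd_sqrt(A):
    """Hermitian PSD square root via eigendecomposition (tiny negative eigenvalues clipped)."""
    A = (A + A.conj().T) / 2
    lam, Q = np.linalg.eigh(A)
    return (Q * np.sqrt(np.clip(lam, 0.0, None))) @ Q.conj().T

def gauss_wasserstein_sq(R, R_hat):
    """Tr[R + R_hat - 2 (R_hat^{1/2} R R_hat^{1/2})^{1/2}], the constraint function in (47)."""
    R_hat_half = psd_sqrt(R_hat)
    cross = psd_sqrt(R_hat_half @ R @ R_hat_half)
    return np.trace(R + R_hat - 2 * cross).real

# Hand-checkable case: R = I_3, R_hat = 4 I_3 gives Tr[I + 4I - 2 * 2I] = 3.
d_simple = gauss_wasserstein_sq(np.eye(3), 4 * np.eye(3))

rng = np.random.default_rng(7)
A = rng.standard_normal((4, 4)) + 1j * rng.standard_normal((4, 4))
B = rng.standard_normal((4, 4)) + 1j * rng.standard_normal((4, 4))
R = A @ A.conj().T + np.eye(4)
R_hat = B @ B.conj().T + np.eye(4)
d_self = gauss_wasserstein_sq(R, R)                   # should vanish
d_ab, d_ba = gauss_wasserstein_sq(R, R_hat), gauss_wasserstein_sq(R_hat, R)
print(d_simple, d_self, d_ab, d_ba)
```

A candidate $\bm{R}$ is feasible for (47) when this quantity does not exceed $\epsilon_{0}^{2}$.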

Problem (47) is a nonlinear positive semi-definite program (P-SDP). However, we can give it a linear reformulation.

**Proposition 1**

*Problem (47) can be equivalently reformulated into a linear P-SDP

$$

\begin{array}[]{cl}\displaystyle\max_{\bm{R},\bm{V},\bm{U}}&\operatorname{Tr}[%

\bm{R}_{s}-\bm{V}]\\

\text{s.t.}&\left[\begin{array}[]{cc}\bm{V}&\bm{R}^{\mathsf{H}}_{xs}\\

\bm{R}_{xs}&\bm{R}_{x}\end{array}\right]\succeq\bm{0}\\

&\operatorname{Tr}\left[{\bm{R}+\hat{\bm{R}}-2\bm{U}}\right]\leq\epsilon^{2}_{%

0}\\

&\left[\begin{array}[]{cc}\hat{\bm{R}}^{1/2}\bm{R}\hat{\bm{R}}^{1/2}&\bm{U}\\

\bm{U}&\bm{I}_{N+M}\end{array}\right]\succeq\bm{0}\\

&\bm{R}\succeq\bm{0},~{}\bm{R}_{x}\succ\bm{0},~{}\bm{V}\succeq\bm{0},~{}\bm{U}%

\succeq\bm{0}.\end{array} \tag{48}

$$*

*Proof:*

This is by applying the Schur complement. $\square$
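The Schur-complement step used for the first constraint of (48) can be illustrated numerically: for $\bm{R}_{x}\succ\bm{0}$, the block matrix with $\bm{V}$ in the top-left corner is positive semi-definite exactly when $\bm{V}\succeq\bm{R}^{\mathsf{H}}_{xs}\bm{R}^{-1}_{x}\bm{R}_{xs}$. The sketch below uses a random test matrix and a hypothetical helper `lmi_min_eig`; it only checks one point on each side of the boundary.

```python
import numpy as np

rng = np.random.default_rng(8)
N, M = 4, 2                                           # hypothetical sizes
A = rng.standard_normal((N + M, N + M)) + 1j * rng.standard_normal((N + M, N + M))
R = A @ A.conj().T + np.eye(N + M)                    # positive definite test matrix
R_x, R_xs = R[:N, :N], R[:N, N:]
V0 = R_xs.conj().T @ np.linalg.solve(R_x, R_xs)       # R_xs^H R_x^{-1} R_xs

def lmi_min_eig(V):
    """Smallest eigenvalue of [[V, R_xs^H], [R_xs, R_x]]."""
    block = np.block([[V, R_xs.conj().T], [R_xs, R_x]])
    return np.linalg.eigvalsh(block).min()

min_eig_above = lmi_min_eig(V0 + 0.1 * np.eye(M))     # V strictly above the bound
min_eig_below = lmi_min_eig(V0 - 0.1 * np.eye(M))     # V strictly below the bound
print(min_eig_above, min_eig_below)
```

Since the objective of (48) maximizes $\operatorname{Tr}[\bm{R}_{s}-\bm{V}]$, the optimum pushes $\bm{V}$ down to this bound, which recovers the objective of (47).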

Complex-valued linear P-SDPs can be solved using, e.g., the YALMIP toolbox; see https://yalmip.github.io/inside/complexproblems/.

Suppose that $\bm{R}^{\star}$ solves (48). The corresponding Wasserstein distributionally robust beamformer is given as

$$

\bm{W}^{\star}_{\text{DR-Wasserstein}}=\bm{R}^{\star\mathsf{H}}_{xs}\bm{R}^{%

\star-1}_{x}. \tag{49}

$$

Next, we separately investigate the uncertainties in $\hat{\bm{R}}_{s}$ and $\hat{\bm{R}}_{v}$ . From (37), we have

$$

\begin{array}[]{cl}\displaystyle\max_{\bm{R}_{s},\bm{R}_{v}}&\operatorname{Tr}%

\big{[}\bm{R}_{s}-\bm{R}_{s}\bm{H}^{\mathsf{H}}(\bm{H}\bm{R}_{s}\bm{H}^{%

\mathsf{H}}+\bm{R}_{v})^{-1}\bm{H}\bm{R}_{s}\big{]}\\

\text{s.t.}&\operatorname{Tr}\left[{\bm{R}_{s}+\hat{\bm{R}}_{s}-2(\hat{\bm{R}}%

_{s}^{1/2}\bm{R}_{s}\hat{\bm{R}}_{s}^{1/2})^{1/2}}\right]\leq\epsilon^{2}_{1}%

\\

&\operatorname{Tr}\left[{\bm{R}_{v}+\hat{\bm{R}}_{v}-2(\hat{\bm{R}}_{v}^{1/2}%

\bm{R}_{v}\hat{\bm{R}}_{v}^{1/2})^{1/2}}\right]\leq\epsilon^{2}_{2}\\

&\bm{R}_{s}\succeq\bm{0},~{}\bm{R}_{v}\succeq\bm{0},\end{array} \tag{50}

$$

where we ignore the uncertainty of $\bm{H}$ for technical tractability. Problem (50) can be transformed into a linear P-SDP using a technique similar to that in Proposition 1: one introduces the inequality $\bm{U}\succeq\bm{R}_{s}\bm{H}^{\mathsf{H}}(\bm{H}\bm{R}_{s}\bm{H}^{\mathsf{H}}+\bm{R}_{v})^{-1}\bm{H}\bm{R}_{s}$, and the objective function becomes $\operatorname{Tr}\left[\bm{R}_{s}-\bm{U}\right]$.

Suppose that $(\bm{R}_{s}^{\star},\bm{R}_{v}^{\star})$ solves (50). The corresponding Wasserstein distributionally robust beamformer is given as

$$

\bm{W}^{\star}_{\text{DR-Wasserstein-Individual}}=\bm{R}_{s}^{\star}\bm{H}^{%

\mathsf{H}}[\bm{H}\bm{R}_{s}^{\star}\bm{H}^{\mathsf{H}}+\bm{R}_{v}^{\star}]^{-%

1}. \tag{51}

$$

IV-B 2 F-Norm Distributionally Robust Beamforming

Under the $F$ -norm, we just need to replace the Wasserstein distance. To be specific, (47) becomes

$$

\begin{array}[]{cl}\displaystyle\max_{\bm{R}}&\operatorname{Tr}\big{[}-\bm{R}^%

{\mathsf{H}}_{xs}\bm{R}^{-1}_{x}\bm{R}_{xs}+\bm{R}_{s}\big{]}\\

\text{s.t.}&\operatorname{Tr}\left[{(\bm{R}-\hat{\bm{R}})^{\mathsf{H}}(\bm{R}-%

\hat{\bm{R}})}\right]\leq\epsilon^{2}_{0}\\

&\bm{R}\succeq\bm{0},~{}\bm{R}_{x}\succ\bm{0}.\end{array} \tag{52}

$$

The linear reformulation of the above display is given in the proposition below.

**Proposition 2**

*The nonlinear P-SDP (52) can be equivalently reformulated into a linear P-SDP

$$

\begin{array}[]{cl}\displaystyle\max_{\bm{R},\bm{V},\bm{U}}&\operatorname{Tr}[%

\bm{R}_{s}-\bm{V}]\\

\text{s.t.}&\left[\begin{array}[]{cc}\bm{V}&\bm{R}^{\mathsf{H}}_{xs}\\

\bm{R}_{xs}&\bm{R}_{x}\end{array}\right]\succeq\bm{0}\\

&\operatorname{Tr}\left[{\bm{U}}\right]\leq\epsilon^{2}_{0},\\

&\left[\begin{array}[]{cc}\bm{U}&(\bm{R}-\hat{\bm{R}})^{\mathsf{H}}\\

(\bm{R}-\hat{\bm{R}})&\bm{I}_{N+M}\end{array}\right]\succeq\bm{0},\\

&\bm{R}\succeq\bm{0},~{}\bm{R}_{x}\succ\bm{0},~{}\bm{V}\succeq\bm{0},~{}\bm{U}%

\succeq\bm{0}.\end{array} \tag{53}

$$*

*Proof:*

This is by applying the Schur complement. $\square$

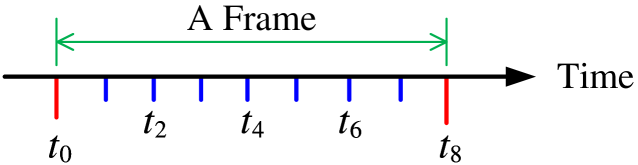

IV-C Multi-Frame Case: Dynamic Channel Evolution

Each frame contains a pilot block used for beamformer design. Although the channel state information (CSI) may change from one frame to another, the CSI of two consecutive frames is highly correlated. This correlation can benefit beamformer design across multiple frames. Suppose that $\{(\bm{s}_{1},\bm{x}_{1}),(\bm{s}_{2},\bm{x}_{2}),...,(\bm{s}_{L},\bm{x}_{L})\}$ is the training data in the current frame and $\{(\bm{s}^{\prime}_{1},\bm{x}^{\prime}_{1}),(\bm{s}^{\prime}_{2},\bm{x}^{\prime}_{2}),...,(\bm{s}^{\prime}_{L},\bm{x}^{\prime}_{L})\}$ is the history data in the immediately preceding frame. In such a case, the distributional difference between $\hat{\mathbb{P}}_{\mathbf{x},\mathbf{s}}$ and $\hat{\mathbb{P}}_{\mathbf{x}^{\prime},\mathbf{s}^{\prime}}$ is upper bounded, that is, $d(\hat{\mathbb{P}}_{\mathbf{x},\mathbf{s}},~\hat{\mathbb{P}}_{\mathbf{x}^{\prime},\mathbf{s}^{\prime}})\leq\epsilon^{\prime}$ for some proper distance $d$ and a real number $\epsilon^{\prime}\geq 0$, where $\hat{\mathbb{P}}_{\mathbf{x},\mathbf{s}}\coloneqq\frac{1}{L}\sum^{L}_{i=1}\delta_{(\bm{x}_{i},\bm{s}_{i})}$ and $\hat{\mathbb{P}}_{\mathbf{x}^{\prime},\mathbf{s}^{\prime}}\coloneqq\frac{1}{L}\sum^{L}_{i=1}\delta_{(\bm{x}^{\prime}_{i},\bm{s}^{\prime}_{i})}$.

Since a beamformer $\bm{W}=\mathcal{F}(\mathbb{P}_{\mathbf{x},\mathbf{s}})$ is a continuous functional $\mathcal{F}(\cdot)$ of the data distribution $\mathbb{P}_{\mathbf{x},\mathbf{s}}$, cf. (10), we have $\|\bm{W}-\bm{W}^{\prime}\|_{F}=\|\mathcal{F}(\hat{\mathbb{P}}_{\mathbf{x},\mathbf{s}})-\mathcal{F}(\hat{\mathbb{P}}_{\mathbf{x}^{\prime},\mathbf{s}^{\prime}})\|_{F}\leq C\cdot d(\hat{\mathbb{P}}_{\mathbf{x},\mathbf{s}},~\hat{\mathbb{P}}_{\mathbf{x}^{\prime},\mathbf{s}^{\prime}})\leq\epsilon$ for some constant $C\geq 0$ and upper bound $\epsilon\geq 0$, where $\bm{W}^{\prime}$ is the beamformer associated with $\hat{\mathbb{P}}_{\mathbf{x}^{\prime},\mathbf{s}^{\prime}}$ in the previous frame. Therefore, the beamforming problem (11) becomes a constrained problem

$$

\begin{array}[]{cl}\displaystyle\min_{\bm{W}}&\operatorname{Tr}\big{[}\bm{W}%

\bm{R}_{x}\bm{W}^{\mathsf{H}}-\bm{W}\bm{R}_{xs}-\bm{R}^{\mathsf{H}}_{xs}\bm{W}%

^{\mathsf{H}}+\bm{R}_{s}\big{]}\\

\text{s.t.}&\operatorname{Tr}[\bm{W}-\bm{W}^{\prime}][\bm{W}-\bm{W}^{\prime}]^%

{\mathsf{H}}\leq\epsilon^{2}.\end{array}

$$

By the Lagrange duality theory, it is equivalent to

$$

\begin{array}[]{l}\displaystyle\min_{\bm{W}}\operatorname{Tr}\big{[}\bm{W}\bm{%

R}_{x}\bm{W}^{\mathsf{H}}-\bm{W}\bm{R}_{xs}-\bm{R}^{\mathsf{H}}_{xs}\bm{W}^{%

\mathsf{H}}+\bm{R}_{s}\big{]}+\\

\quad\quad\quad\quad\quad\quad\lambda\cdot\operatorname{Tr}[\bm{W}-\bm{W}^{%

\prime}][\bm{W}-\bm{W}^{\prime}]^{\mathsf{H}}\\

=\displaystyle\min_{\bm{W}}\operatorname{Tr}\big{[}\bm{W}(\bm{R}_{x}+\lambda%

\bm{I}_{N})\bm{W}^{\mathsf{H}}-\bm{W}(\bm{R}_{xs}+\lambda\bm{W}^{\prime\mathsf%

{H}})-\\

\quad\quad\quad\quad\quad\quad(\bm{R}_{xs}+\lambda\bm{W}^{\prime\mathsf{H}})^{%

\mathsf{H}}\bm{W}^{\mathsf{H}}+(\bm{R}_{s}+\lambda\bm{W}^{\prime}\bm{W}^{%

\prime\mathsf{H}})\big{]},\end{array} \tag{54}

$$

for some $\lambda\geq 0$. As a result, we have the Wiener beamformer for the multi-frame case, where we can treat $\bm{W}^{\prime}$ as prior knowledge of $\bm{W}$.

**Claim 1 (Multi-Frame Beamforming)**

*The Wiener beamformer for the multi-frame case is given by

$$

\begin{array}[]{cl}\bm{W}^{\star}_{\text{Wiener-MF}}&=[\bm{R}_{xs}+\lambda\bm{%

W}^{\prime\mathsf{H}}]^{\mathsf{H}}[\bm{R}_{x}+\lambda\bm{I}_{N}]^{-1}\\

&=[\bm{R}_{s}\bm{H}^{\mathsf{H}}+\lambda\bm{W}^{\prime}][\bm{H}\bm{R}_{s}\bm{H%

}^{\mathsf{H}}+\bm{R}_{v}+\lambda\bm{I}_{N}]^{-1},\end{array} \tag{55}

$$

where $\lambda≥ 0$ is a tuning parameter to control the similarity between $\bm{W}$ and $\bm{W}^{\prime}$ . Specifically, if $\lambda$ is large, $\bm{W}$ must be close to $\bm{W}^{\prime}$ ; if $\lambda$ is small, $\bm{W}$ can be far away from $\bm{W}^{\prime}$ . $\square$*
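The role of $\lambda$ in (55) can be seen numerically: $\lambda=0$ recovers the single-frame Wiener beamformer, while a very large $\lambda$ pulls the solution toward $\bm{W}^{\prime}$. The sketch below uses random stand-ins for $\bm{R}_{x}$, $\bm{R}_{xs}$, and the previous-frame beamformer; the function name `wiener_mf` and all numerical values are assumptions made for illustration.

```python
import numpy as np

def wiener_mf(R_x, R_xs, W_prev, lam):
    """[R_xs + lam * W_prev^H]^H [R_x + lam * I_N]^{-1}, cf. (55)."""
    N = R_x.shape[0]
    rhs = R_xs + lam * W_prev.conj().T                 # N x M
    # solve(A, rhs)^H = rhs^H A^{-1} because A = R_x + lam * I is Hermitian.
    return np.linalg.solve(R_x + lam * np.eye(N), rhs).conj().T

rng = np.random.default_rng(9)
N, M = 4, 2                                            # hypothetical sizes
A = rng.standard_normal((N, N)) + 1j * rng.standard_normal((N, N))
R_x = A @ A.conj().T + np.eye(N)
R_xs = rng.standard_normal((N, M)) + 1j * rng.standard_normal((N, M))
W_prev = rng.standard_normal((M, N)) + 1j * rng.standard_normal((M, N))

W_wiener = R_xs.conj().T @ np.linalg.inv(R_x)          # single-frame Wiener beamformer
dist = {lam: np.linalg.norm(wiener_mf(R_x, R_xs, W_prev, lam) - W_prev)
        for lam in (0.0, 1.0, 1e8)}
print(dist)
```

Replacing `R_x` by a diagonally loaded estimate $\hat{\bm{R}}_{x}+\epsilon_{0}\bm{I}_{N}$ in the same function gives the distributionally robust multi-frame variant.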

With the result in Claim 1, (21) becomes

$$

\begin{array}[]{cl}\displaystyle\max_{\bm{R}}&\operatorname{Tr}\big{[}-(\bm{R}%

_{xs}+\lambda\bm{W}^{\prime\mathsf{H}})^{\mathsf{H}}\cdot(\bm{R}_{x}+\lambda%

\bm{I}_{N})^{-1}\cdot\\

&\quad\quad\quad(\bm{R}_{xs}+\lambda\bm{W}^{\prime\mathsf{H}})+(\bm{R}_{s}+%

\lambda\bm{W}^{\prime}\bm{W}^{\prime\mathsf{H}})\big{]}\\

\text{s.t.}&d_{0}(\bm{R},{\hat{\bm{R}}})\leq\epsilon_{0},\\

&\bm{R}\succeq\bm{0},\end{array} \tag{56}

$$

whose objective function is monotonically increasing in $\bm{R}$ .

The remaining distributional robustness modeling and analyses against the uncertainties in $\bm{R}$ are technically straightforward, and therefore, we omit them here. Upon using the diagonal-loading method on $\bm{R}$ , a distributionally robust beamformer for the multi-frame case is

$$

\begin{array}[]{l}\bm{W}^{\star}_{\text{DR-Wiener-MF}}=[\hat{\bm{R}}_{xs}+%

\lambda\bm{W}^{\prime\mathsf{H}}]^{\mathsf{H}}\cdot[\hat{\bm{R}}_{x}+\lambda%

\bm{I}_{N}+\epsilon_{0}\bm{I}_{N}]^{-1},\end{array}

$$

where $\epsilon_{0}$ is an uncertainty quantification parameter for $\bm{R}$ .

V Distributionally Robust Nonlinear Estimation

For the convenience of the technical treatment, we study the estimation problem in real spaces. Nonlinear estimators, which are suitable for non-Gaussian $\mathbb{P}_{\mathbf{x},\mathbf{s}}$ , are to be limited in reproducing kernel Hilbert spaces and feedforward multi-layer neural network function spaces.

V-A Reproducing Kernel Hilbert Spaces

V-A 1 General Framework and Concrete Examples

As is standard in machine learning, we use the input part of the pilot data, $\{\underline{\bm{x}}_{1},\underline{\bm{x}}_{2},\ldots,\underline{\bm{x}}_{L}\}$, to construct the reproducing kernel Hilbert space (RKHS), and use the whole pilot data $\{(\underline{\bm{x}}_{1},\underline{\bm{s}}_{1}),(\underline{\bm{x}}_{2},\underline{\bm{s}}_{2}),\ldots,(\underline{\bm{x}}_{L},\underline{\bm{s}}_{L})\}$ to train the optimal estimator in the RKHS.

With the $\bm{W}$-linear representation of $\bm{\phi}(\cdot)$ in (2), i.e., $\bm{\phi}(\cdot)=\bm{W}\bm{\varphi}(\cdot)$, the distributionally robust estimation problem (17) becomes

$$

\min_{\bm{W}\in\mathbb{R}^{2M\times L}}\max_{\mathbb{P}_{\underline{\mathbf{x}},\underline{\mathbf{s}}}\in\mathcal{U}_{\underline{\mathbf{x}},\underline{\mathbf{s}}}}\operatorname{Tr}\mathbb{E}_{\underline{\mathbf{x}},\underline{\mathbf{s}}}[\bm{W}\cdot\bm{\varphi}(\underline{\mathbf{x}})-\underline{\mathbf{s}}][\bm{W}\cdot\bm{\varphi}(\underline{\mathbf{x}})-\underline{\mathbf{s}}]^{\mathsf{T}}. \tag{57}

$$

The proposition below reformulates and solves (57).

**Proposition 3**

*Let $\bm{K}$ denote the kernel matrix associated with the kernel function $\ker(\cdot,\cdot)$ whose $(i,j)$-entry is defined as

$$

\bm{K}_{i,j}\coloneqq\ker(\underline{\bm{x}}_{i},\underline{\bm{x}}_{j}),~~~\forall i,j\in[L].

$$

Let $\underline{\mathbf{z}}\coloneqq\bm{\varphi}(\underline{\mathbf{x}})$. Then, the distributionally robust $\underline{\mathbf{x}}$-nonlinear estimation problem (57) can be rewritten as a distributionally robust $\underline{\mathbf{z}}$-linear beamforming problem as

$$

\begin{array}{cl}\displaystyle\min_{\bm{W}}\max_{\bm{R}_{\underline{z}},\bm{R}_{\underline{zs}},\bm{R}_{\underline{s}}}&\operatorname{Tr}\big[\bm{W}\bm{R}_{\underline{z}}\bm{W}^{\mathsf{T}}-\bm{W}\bm{R}_{\underline{zs}}-\bm{R}^{\mathsf{T}}_{\underline{zs}}\bm{W}^{\mathsf{T}}+\bm{R}_{\underline{s}}\big]\\
\text{s.t.}&d_{0}\left(\left[\begin{array}{cc}\bm{R}_{\underline{z}}&\bm{R}_{\underline{zs}}\\
\bm{R}^{\mathsf{T}}_{\underline{zs}}&\bm{R}_{\underline{s}}\end{array}\right],\left[\begin{array}{cc}\hat{\bm{R}}_{\underline{z}}&\hat{\bm{R}}_{\underline{zs}}\\
\hat{\bm{R}}^{\mathsf{T}}_{\underline{zs}}&\hat{\bm{R}}_{\underline{s}}\end{array}\right]\right)\leq\epsilon_{0},\\
&\left[\begin{array}{cc}\bm{R}_{\underline{z}}&\bm{R}_{\underline{zs}}\\
\bm{R}^{\mathsf{T}}_{\underline{zs}}&\bm{R}_{\underline{s}}\end{array}\right]\succeq\bm{0},\end{array} \tag{58}

$$

where $\hat{\bm{R}}_{\underline{z}}=\frac{1}{L}\bm{K}^{2}$, $\hat{\bm{R}}_{\underline{zs}}=\frac{1}{L}\bm{K}\underline{\bm{S}}^{\mathsf{T}}$, $\hat{\bm{R}}_{\underline{s}}=\frac{1}{L}\underline{\bm{S}}\underline{\bm{S}}^{\mathsf{T}}$, and $\underline{\bm{S}}\coloneqq[\operatorname{Re}\bm{S};~\operatorname{Im}\bm{S}]=[\underline{\bm{s}}_{1},\underline{\bm{s}}_{2},\ldots,\underline{\bm{s}}_{L}]$. In addition, the strong min-max property holds for (58); i.e., the order of $\min$ and $\max$ can be exchanged provided that the feasible set defined by the first constraint is compact and convex. As a result, for every triple $(\bm{R}_{\underline{z}},\bm{R}_{\underline{zs}},\bm{R}_{\underline{s}})$, the optimal Wiener beamformer is

$$

\bm{W}^{\star}_{\text{RKHS}}=\bm{R}^{\mathsf{T}}_{\underline{zs}}\cdot\bm{R}^{-1}_{\underline{z}}, \tag{59}

$$

which transforms (58) to

$$

\begin{array}{cl}\displaystyle\max_{\bm{R}_{\underline{z}},\bm{R}_{\underline{zs}},\bm{R}_{\underline{s}}}&\operatorname{Tr}\big[-\bm{R}^{\mathsf{T}}_{\underline{zs}}\bm{R}^{-1}_{\underline{z}}\bm{R}_{\underline{zs}}+\bm{R}_{\underline{s}}\big]\\
\text{s.t.}&d_{0}\left(\left[\begin{array}{cc}\bm{R}_{\underline{z}}&\bm{R}_{\underline{zs}}\\
\bm{R}^{\mathsf{T}}_{\underline{zs}}&\bm{R}_{\underline{s}}\end{array}\right],~\left[\begin{array}{cc}\hat{\bm{R}}_{\underline{z}}&\hat{\bm{R}}_{\underline{zs}}\\
\hat{\bm{R}}^{\mathsf{T}}_{\underline{zs}}&\hat{\bm{R}}_{\underline{s}}\end{array}\right]\right)\leq\epsilon_{0},\\
&\left[\begin{array}{cc}\bm{R}_{\underline{z}}&\bm{R}_{\underline{zs}}\\
\bm{R}^{\mathsf{T}}_{\underline{zs}}&\bm{R}_{\underline{s}}\end{array}\right]\succeq\bm{0},~~~\bm{R}_{\underline{z}}\succ\bm{0}.\end{array} \tag{60}

$$*

* Proof:*

If $[\underline{\mathbf{z}};\underline{\mathbf{s}}]$ is treated as, or approximated by, a jointly Gaussian random vector, which is motivated by the linear estimation relation $\hat{\underline{\mathbf{s}}}=\bm{W}\underline{\mathbf{z}}$ in the RKHS [cf. (57)], then the results in Lemma 1 apply. For details, see Appendix G. $\square$

In (58), $d_{0}$ defines a matrix similarity measure to quantify the uncertainty of the covariance matrix of $[\underline{\mathbf{z}};\underline{\mathbf{s}}]$, and $\epsilon_{0}\geq 0$ quantifies the uncertainty level. Proposition 3 reveals the benefit of the kernel trick (2), that is, the possibility to represent a nonlinear estimation problem as a linear one.
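The nominal moments stated in Proposition 3 can be read as plain sample averages once the feature map is evaluated at the pilots, because $\underline{\bm{z}}_{i}=\bm{\varphi}(\underline{\bm{x}}_{i})$ is the $i$-th column of the symmetric matrix $\bm{K}$. The short NumPy sketch below checks this identity numerically; the function names `nominal_moments` and `sample_moments` are hypothetical and exist only for this illustration.

```python
import numpy as np

def nominal_moments(K, S_real):
    """Closed-form nominal moments: K^2/L, K S^T/L, S S^T/L.

    K      : (L, L) symmetric kernel matrix of the pilots
    S_real : (2M, L) real-space pilot symbols [Re S; Im S]
    """
    L = K.shape[0]
    R_z = K @ K / L
    R_zs = K @ S_real.T / L
    R_s = S_real @ S_real.T / L
    return R_z, R_zs, R_s

def sample_moments(K, S_real):
    """The same moments as explicit averages over the pairs (z_i, s_i)."""
    L = K.shape[0]
    Z = K  # column i is z_i = [ker(x_i, x_1), ..., ker(x_i, x_L)]^T
    R_z = sum(np.outer(Z[:, i], Z[:, i]) for i in range(L)) / L
    R_zs = sum(np.outer(Z[:, i], S_real[:, i]) for i in range(L)) / L
    R_s = sum(np.outer(S_real[:, i], S_real[:, i]) for i in range(L)) / L
    return R_z, R_zs, R_s
```

Both functions return the same three matrices for any symmetric `K`, which is why the problem in $\underline{\mathbf{z}}$ can be handled with the linear machinery of the earlier sections.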

The claim below summarizes the solution of (17) in the RKHS induced by the kernel function $\ker(\cdot,\cdot)$.

**Claim 2**

*Suppose that $(\bm{R}^{\star}_{\underline{z}},\bm{R}^{\star}_{\underline{zs}},\bm{R}^{\star}_{\underline{s}})$ solves (60). Then the optimal estimator of (17) in the RKHS induced by $\ker(\cdot,\cdot)$ is given by

$$

\bm{\phi}^{\star}(\mathbf{x})=\bm{\Gamma}_{M}\cdot\bm{R}^{\star\mathsf{T}}_{\underline{zs}}\cdot\bm{R}^{\star-1}_{\underline{z}}\cdot\bm{\varphi}(\underline{\mathbf{x}}), \tag{61}

$$

where $\underline{\mathbf{x}}=[\operatorname{Re}\mathbf{x};~\operatorname{Im}\mathbf{x}]$ is the real-space representation of $\mathbf{x}$, $\bm{\Gamma}_{M}\coloneqq[\bm{I}_{M},\bm{J}_{M}]$ is defined in Subsection I-B, and

$$

\bm{\varphi}(\underline{\mathbf{x}})\coloneqq\left[\begin{array}{c}\ker(\underline{\mathbf{x}},\underline{\bm{x}}_{1})\\
\ker(\underline{\mathbf{x}},\underline{\bm{x}}_{2})\\
\vdots\\
\ker(\underline{\mathbf{x}},\underline{\bm{x}}_{L})\end{array}\right].

$$

In addition, the corresponding worst-case estimation error covariance is

$$

\bm{\Gamma}_{M}\cdot\big[-\bm{R}^{\star\mathsf{T}}_{\underline{zs}}\bm{R}^{\star-1}_{\underline{z}}\bm{R}^{\star}_{\underline{zs}}+\bm{R}^{\star}_{\underline{s}}\big]\cdot\bm{\Gamma}_{M}^{\mathsf{H}}, \tag{62}

$$

which upper bounds the true estimation error covariance. $\square$*

Concrete examples of Claim 2 are given as follows.

**Example 4 (Kernelized Diagonal Loading)**

*By using the trimmed diagonal-loading uncertainty set for $\bm{R}_{\underline{z}}$, i.e.,

$$

\hat{\bm{R}}_{\underline{z}}-\epsilon_{0}\bm{I}_{L}\preceq\bm{R}_{\underline{z}}\preceq\hat{\bm{R}}_{\underline{z}}+\epsilon_{0}\bm{I}_{L},

$$

we have the kernelized diagonal loading method

$$

\bm{\phi}^{\star}(\mathbf{x})=\bm{\Gamma}_{M}\cdot\frac{1}{L}\underline{\bm{S}}\bm{K}\cdot\left(\frac{1}{L}\bm{K}^{2}+\epsilon_{0}\bm{I}_{L}\right)^{-1}\cdot\bm{\varphi}(\underline{\mathbf{x}}), \tag{63}

$$

which is obtained at the upper bound of $\bm{R}_{\underline{z}}$. Furthermore, in this case, the distributionally robust formulation (57) is equivalent to a squared-$F$-norm-regularized formulation

$$

\begin{array}{l}\displaystyle\min_{\bm{W}}\operatorname{Tr}\mathbb{E}_{(\underline{\mathbf{x}},\underline{\mathbf{s}})\sim\hat{\mathbb{P}}_{\underline{\mathbf{x}},\underline{\mathbf{s}}}}[\bm{W}\cdot\bm{\varphi}(\underline{\mathbf{x}})-\underline{\mathbf{s}}][\bm{W}\cdot\bm{\varphi}(\underline{\mathbf{x}})-\underline{\mathbf{s}}]^{\mathsf{T}}+\\
\quad\quad\quad\quad\quad\quad\quad\quad\quad\quad\epsilon_{0}\cdot\operatorname{Tr}[\bm{W}\bm{W}^{\mathsf{T}}],\end{array} \tag{64}

$$

which can be proven by replacing $\bm{R}_{\underline{z}}$ in (58) with its upper bound. $\square$*
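A minimal NumPy sketch of the kernelized diagonal loading estimator (63) is given below. Several choices are assumptions made for illustration and are not fixed by the text: the Gaussian kernel and its width `gamma`, the function names, and the reading of $\bm{J}_{M}$ as $j\bm{I}_{M}$, so that $\bm{\Gamma}_{M}$ maps $[\operatorname{Re}\mathbf{s};\operatorname{Im}\mathbf{s}]$ back to a complex vector.

```python
import numpy as np

def gaussian_kernel(A, B, gamma=0.5):
    """Gaussian kernel between the columns of A (d, n) and B (d, m)."""
    sq = (A**2).sum(0)[:, None] + (B**2).sum(0)[None, :] - 2.0 * A.T @ B
    return np.exp(-gamma * np.maximum(sq, 0.0))

def fit_kernel_dl(X_real, S_real, eps0, gamma=0.5):
    """Coefficient matrix W = (1/L) S K (K^2/L + eps0 I)^{-1} from (63).

    X_real : (2N, L) real-space received pilots
    S_real : (2M, L) real-space transmitted pilots
    """
    L = X_real.shape[1]
    K = gaussian_kernel(X_real, X_real, gamma)   # (L, L), symmetric
    R_z_loaded = K @ K / L + eps0 * np.eye(L)    # upper bound of R_z
    R_zs_T = S_real @ K / L                      # (2M, L)
    # W = R_zs^T R_z^{-1}; the loaded matrix is symmetric.
    W = np.linalg.solve(R_z_loaded, R_zs_T.T).T
    return W, K

def predict(W, X_real_train, x_real, gamma=0.5):
    """Estimate complex symbols for new real-space inputs x_real (2N, n)."""
    phi = gaussian_kernel(X_real_train, x_real, gamma)  # (L, n)
    s_real = W @ phi                                    # (2M, n)
    M = s_real.shape[0] // 2
    # Assumed Gamma_M = [I_M, j I_M]: recombine real and imaginary parts.
    return s_real[:M] + 1j * s_real[M:]
```

The returned `W` satisfies $\bm{W}(\bm{K}^{2}/L+\epsilon_{0}\bm{I}_{L})=\underline{\bm{S}}\bm{K}/L$, which is the stationarity condition of the regularized problem (64) under the empirical distribution, so the same code can be used to check the equivalence stated in this example.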

**Example 5 (Kernelized Eigenvalue Thresholding)**

*The kernelized eigenvalue thresholding method can be designed in analogy to Example 2. The two key steps are to obtain the eigenvalue decomposition of $\hat{\bm{R}}_{\underline{z}}=\bm{K}^{2}/L$ and then lift the eigenvalues; cf. (40). $\square$*
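The two steps can be sketched as follows. Since (40) is not reproduced in this section, the lifting rule used here, a floor on the eigenvalues at a hypothetical threshold `mu`, is only an assumption; the exact rule in (40) may differ and should be substituted where needed.

```python
import numpy as np

def lift_eigenvalues(K, mu):
    """Eigen-decompose R_z = K^2 / L and floor its eigenvalues at mu.

    K  : (L, L) symmetric kernel matrix
    mu : assumed threshold (hypothetical parameter, not from the paper)
    """
    L = K.shape[0]
    R_z = K @ K / L
    evals, Q = np.linalg.eigh(R_z)     # R_z = Q diag(evals) Q^T
    lifted = np.maximum(evals, mu)     # assumed lifting rule
    return (Q * lifted) @ Q.T          # Q diag(lifted) Q^T
```

The lifted matrix can then replace $\bm{R}^{\star}_{\underline{z}}$ in (61), with $\bm{R}^{\star}_{\underline{zs}}$ kept at its nominal value.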

In addition, Example 4 motivates the following important theorem for statistical machine learning.

**Theorem 3 (Kernel Ridge Regression and Kernel Tikhonov Regularization)**

*Consider the nonlinear regression problem

$$

\mathbf{s}=\bm{\phi}(\mathbf{x})+\mathbf{e},

$$

and the distributionally robust estimator of $\bm{\phi}(\underline{\mathbf{x}})=\bm{W}\cdot\bm{\varphi}(\underline{\mathbf{x}})$ in the RKHS induced by the kernel function $\ker(\cdot,\cdot)$, i.e.,

$$

\min_{\bm{W}\in\mathbb{R}^{2M\times L}}\max_{\mathbb{P}_{\underline{\mathbf{x}},\underline{\mathbf{s}}}\in\mathcal{U}_{\underline{\mathbf{x}},\underline{\mathbf{s}}}}\operatorname{Tr}\mathbb{E}_{\underline{\mathbf{x}},\underline{\mathbf{s}}}[\bm{W}\cdot\bm{\varphi}(\underline{\mathbf{x}})-\underline{\mathbf{s}}][\bm{W}\cdot\bm{\varphi}(\underline{\mathbf{x}})-\underline{\mathbf{s}}]^{\mathsf{T}}.

$$

Supposing that only the second-order moment of $\underline{\mathbf{z}}\coloneqq\bm{\varphi}(\underline{\mathbf{x}})$ is uncertain and quantified as

$$

\hat{\bm{R}}_{\underline{z}}-\epsilon_{0}\bm{I}_{L}\preceq\bm{R}_{\underline{z}}\preceq\hat{\bm{R}}_{\underline{z}}+\epsilon_{0}\bm{I}_{L},

$$

then the distributionally robust estimator of $\bm{W}$ becomes a kernel ridge regression method (64). The regularization term in (64) becomes the Tikhonov regularizer $\operatorname{Tr}[\bm{W}\bm{F}\bm{W}^{\mathsf{T}}]$ if

$$

\hat{\bm{R}}_{\underline{z}}-\epsilon_{0}\bm{F}\preceq\bm{R}_{\underline{z}}\preceq\hat{\bm{R}}_{\underline{z}}+\epsilon_{0}\bm{F}

$$

for some $\bm{F}\succeq\bm{0}$.*

* Proof:*

See Example 4; cf. Theorem 2. $\square$

Theorem 3 gives kernel ridge regression a distributional-robustness interpretation. The usual choice of $\bm{F}$ in Theorem 3 is the $L$-divided kernel matrix $\bm{K}/L$; see, e.g., [36, Eq. (4)], [24, Eqs. (15.110) and (15.113)]. As a result, from (63), we have

$$

\bm{\phi}^{\star}(\mathbf{x})=\bm{\Gamma}_{M}\cdot\underline{\bm{S}}\cdot\left(\bm{K}+\epsilon_{0}\bm{I}_{L}\right)^{-1}\cdot\bm{\varphi}(\underline{\mathbf{x}}), \tag{65}

$$

which is another type of kernel ridge regression (i.e., a new kernelized diagonal-loading method).
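The step from (63) to (65) can be sketched as follows, under the additional assumption (made here for the derivation only) that $\bm{K}$ is invertible. With $\bm{F}=\bm{K}/L$, the upper bound of $\bm{R}_{\underline{z}}$ is $\frac{1}{L}\bm{K}^{2}+\frac{\epsilon_{0}}{L}\bm{K}=\frac{1}{L}\bm{K}(\bm{K}+\epsilon_{0}\bm{I}_{L})$, so that replacing the loaded term in (63) gives

$$

\frac{1}{L}\underline{\bm{S}}\bm{K}\cdot\left[\frac{1}{L}\bm{K}(\bm{K}+\epsilon_{0}\bm{I}_{L})\right]^{-1}=\underline{\bm{S}}\bm{K}\cdot(\bm{K}+\epsilon_{0}\bm{I}_{L})^{-1}\bm{K}^{-1}=\underline{\bm{S}}\cdot(\bm{K}+\epsilon_{0}\bm{I}_{L})^{-1},

$$

where the last equality uses the fact that $\bm{K}$ commutes with $(\bm{K}+\epsilon_{0}\bm{I}_{L})^{-1}$.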

In analogy to Corollary 2, the following corollary, motivated by (64), is immediate.

**Corollary 4**

*The following squared-norm-regularized method in RKHSs can combat the distributional uncertainty:

$$

\begin{array}{l}\displaystyle\min_{\bm{W}}\operatorname{Tr}\mathbb{E}_{(\underline{\mathbf{x}},\underline{\mathbf{s}})\sim\hat{\mathbb{P}}_{\underline{\mathbf{x}},\underline{\mathbf{s}}}}[\bm{W}\cdot\bm{\varphi}(\underline{\mathbf{x}})-\underline{\mathbf{s}}][\bm{W}\cdot\bm{\varphi}(\underline{\mathbf{x}})-\underline{\mathbf{s}}]^{\mathsf{T}}+\\
\quad\quad\quad\quad\quad\quad\quad\quad\quad\quad\lambda\cdot\|\bm{W}\|^{2},\end{array} \tag{66}

$$

for any matrix norm $\|\cdot\|$; cf. Corollary 2. $\square$*

Moreover, in analogy to Corollary 3, the following corollary is immediate.

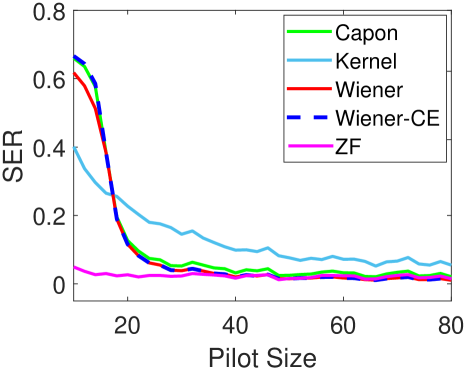

**Corollary 5 (Data Augmentation for Kernel Regression)**