# The Case for Co-Designing Model Architectures with Hardware

**Authors**: Quentin Anthony, Jacob Hatef, Deepak Narayanan, Stella Biderman, Stas BekmanJunqi Yin, Aamir Shafi, Hari Subramoni, Dhabaleswar K. Panda

> EleutherAI Ohio State University

> NVIDIA

> EleutherAI

> Contextual AI Oak Ridge National Lab

> Ohio State University

## Abstract

While GPUs are responsible for training the vast majority of state-of-the-art deep learning models, the implications of their architecture are often overlooked when designing new deep learning (DL) models. As a consequence, modifying a DL model to be more amenable to the target hardware can significantly improve the runtime performance of DL training and inference. In this paper, we provide a set of guidelines for users to maximize the runtime performance of their transformer models. These guidelines have been created by carefully considering the impact of various model hyperparameters controlling model shape on the efficiency of the underlying computation kernels executed on the GPU. We find the throughput of models with “efficient” model shapes is up to 39% higher while preserving accuracy compared to models with a similar number of parameters but with unoptimized shapes.

## I Introduction

Transformer-based [37] language models have become widely popular for language and sequence modeling tasks. Consequently, it is extremely important to train and serve large transformer models such as GPT-3 [6] and Codex as efficiently as possible given their scale and wide use. At the immense scales that are in widespread use today, efficiently using computational resources becomes a complex problem and small drops in hardware utilization can lead to enormous amounts of wasted compute, funding, and time. In this paper, we tackle a frequently ignored aspect of training large transformer models: how the shape of the model can impact runtime performance. We use first principles of GEMM optimization to optimize individual parts of the transformer model (which translates to improved end-to-end runtime performance as well). Throughout the paper, we illustrate our points with extensive computational experiments demonstrating how low-level GPU phenomenon impact throughput throughout the language model architecture.

Many of the phenomena remarked on in this paper have been previously documented, but continue to plague large language model (LLM) designers to this day. We hypothesize that there are three primary causes of this:

1. Few resources trace the performance impacts of a transformer implementation all the way to the underlying computation kernels executed on the GPU.

1. The existing documentation on how transformer hyperparameters map to these kernels is not always in the most accessible formats, including tweets [19, 18], footnotes [33], and in comments in training libraries [3].

1. It is convenient to borrow architectures from other papers and researchers rarely give substantial thought to whether those choices of model shapes are optimal.

This work attempts to simplify performance tuning for transformer models by carefully considering the architecture of modern GPUs. This paper is also a demonstration of our thesis that model dimensions should be chosen with hardware details in mind to an extent far greater than is typical in deep learning research today.

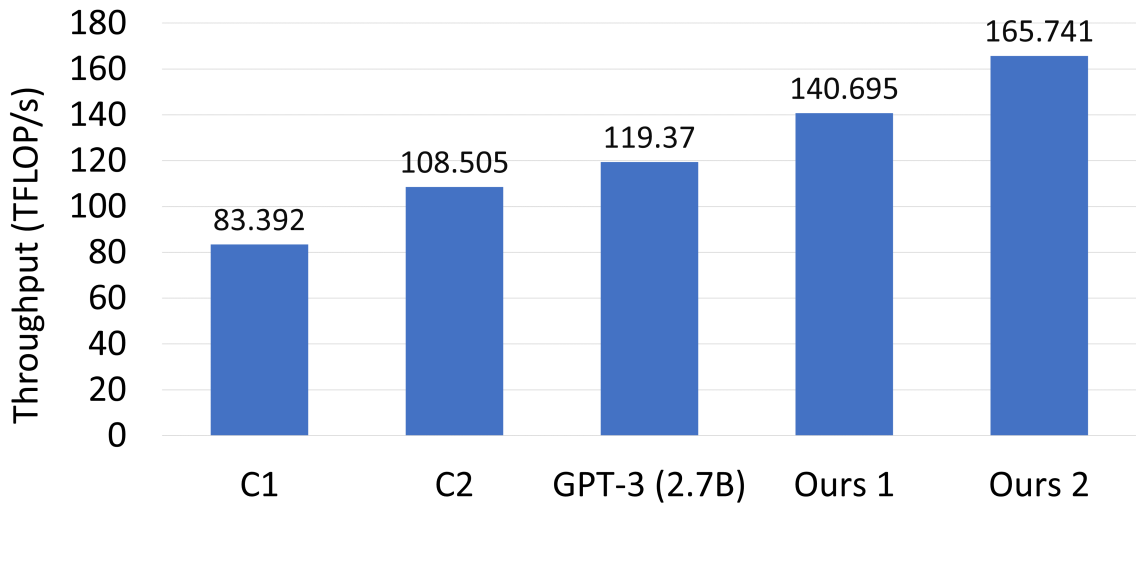

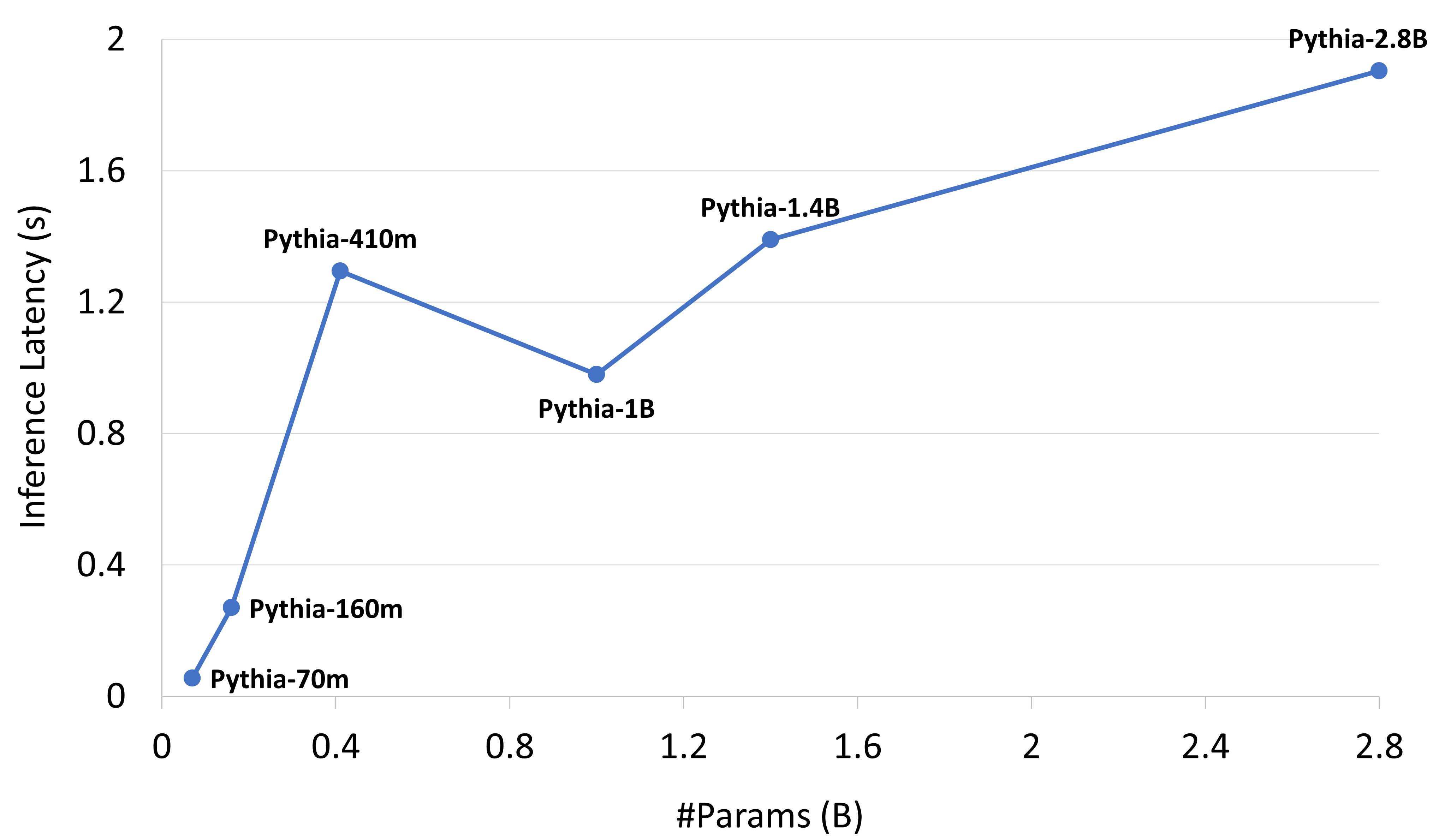

As shown in Figure 1, the runtimes of models with a nearly identical number of parameters but different shapes can vary wildly. In this figure, the “standard architecture” for a 2.7B transformer model defined by GPT-3 [6] has been used by OPT [43], GPT-Neo [5], Cerebras-GPT [13], RedPajama-INCITE [1], and Pythia [4]. Unfortunately the knowledge of how to optimally shape transformer architectures is not widely known, resulting in people often making sub-optimal design decisions. This is exacerbated by the fact that researchers often deliberately copy hyperparameters from other papers for cleaner comparisons, resulting in these sub-optimal choices becoming locked in as the standard. As one example of this, we show that the 2.7 billion parameter model described in [6] can be trained almost 20% faster than the default architecture through minor tweaking of the model shape.

<details>

<summary>x1.png Details</summary>

### Visual Description

# Technical Document Extraction: Throughput Comparison Chart

## Chart Type

Bar chart comparing computational throughput (TFLOPs/s) across different models.

## Axis Labels

- **X-axis**: "Models" (categorical)

- Categories: C1, C2, GPT-3 (2.7B), Ours 1, Ours 2

- **Y-axis**: "Throughput (TFLOPs/s)" (numerical)

- Range: 0 to 180 (linear scale)

## Data Points

| Model | Throughput (TFLOPs/s) |

|----------------|-----------------------|

| C1 | 83.392 |

| C2 | 108.505 |

| GPT-3 (2.7B) | 119.37 |

| Ours 1 | 140.695 |

| Ours 2 | 165.741 |

## Visual Trends

- **Upward progression**: Throughput increases monotonically from left to right.

- **Key jumps**:

- C1 → C2: +25.113 TFLOPs/s

- C2 → GPT-3: +10.865 TFLOPs/s

- GPT-3 → Ours 1: +21.325 TFLOPs/s

- Ours 1 → Ours 2: +25.046 TFLOPs/s

## Color Coding

- All bars rendered in **blue** (no explicit legend visible; uniform color implies single data series).

## Spatial Grounding

- Bars positioned sequentially along X-axis from left (C1) to right (Ours 2).

- Numerical values displayed atop each bar for direct readability.

## Component Isolation

1. **Header**: No explicit title present.

2. **Main Chart**: Bar heights proportional to throughput values.

3. **Footer**: No source/attribution information visible.

## Trend Verification

- Confirmed: Each subsequent model shows higher throughput than the previous, with consistent incremental improvements.

## Data Table Reconstruction

| Model | Throughput (TFLOPs/s) |

|----------------|-----------------------|

| C1 | 83.392 |

| C2 | 108.505 |

| GPT-3 (2.7B) | 119.37 |

| Ours 1 | 140.695 |

| Ours 2 | 165.741 |

## Notes

- No non-English text detected.

- No explicit legend present; color consistency across bars suggests single data series.

- Values formatted to two decimal places for precision.

</details>

Figure 1: Transformer single-layer throughput of various architectures for a 2.7 billion parameter model (C1 and C2 are defined by this paper as C1: $h=2560,a=64$ , C2: $h=2560,a=40$ ).

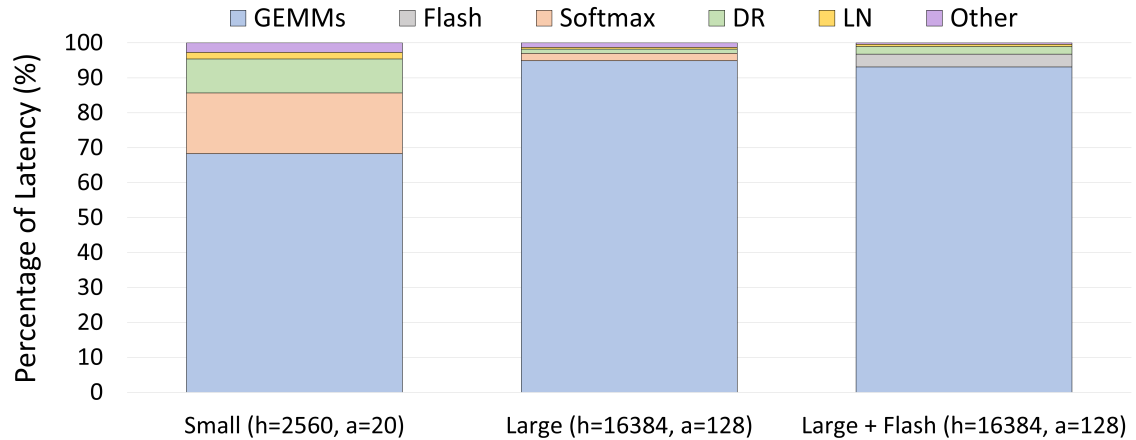

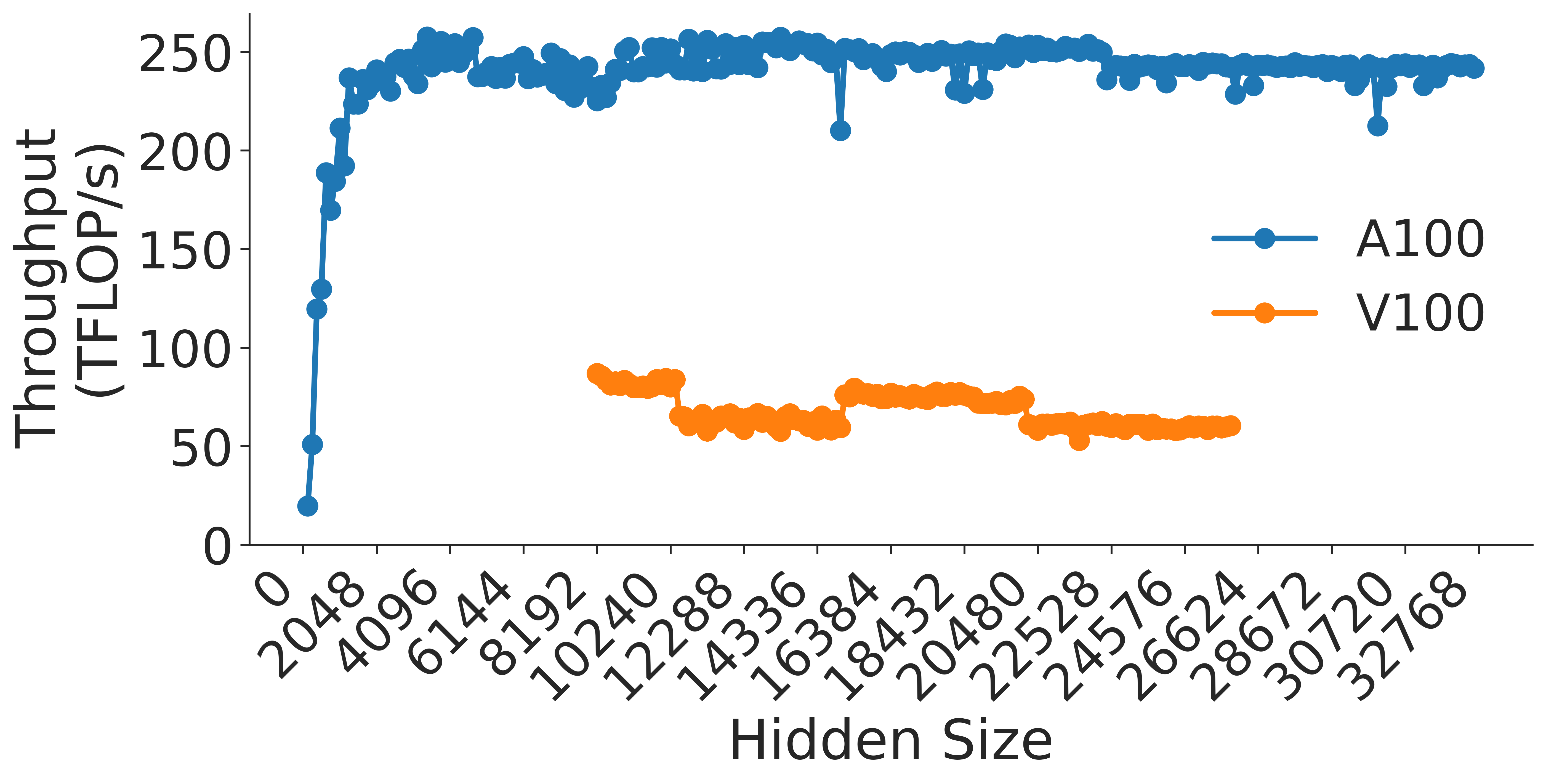

Our analysis makes use of the fact that General Matrix Multiplications (GEMMs) are the lifeblood of modern deep learning. Most widely-used compute-intensive layers in deep learning explicitly use GEMMs (e.g., linear layers or attention layers) or use operators that are eventually lowered into GEMMs (e.g., convolutions). For transformer models, our experiments from Figure 2 show that GEMM kernels regularly account for $68.3\$ and $94.9\$ of the total model latency for medium- and large-sized models, respectively. As a result, understanding the performance of GEMMs is crucial to understanding the runtime performance of end-to-end models; this only becomes more important as model size increases.

<details>

<summary>x2.png Details</summary>

### Visual Description

# Technical Document Extraction: Latency Composition Analysis

## Chart Description

This image presents a stacked bar chart comparing latency composition across three computational configurations. The chart uses color-coded segments to represent different computational components' contribution to total latency.

### Key Components

1. **Legend** (Top of chart):

- GEMMs: Blue

- Flash: Gray

- Softmax: Orange

- DR: Green

- LN: Yellow

- Other: Purple

2. **X-Axis Categories**:

- Small (h=2560, a=20)

- Large (h=16384, a=128)

- Large + Flash (h=16384, a=128)

3. **Y-Axis**:

- Label: "Percentage of Latency (%)"

- Range: 0-100%

## Data Analysis

### Spatial Grounding Verification

- Legend position: Top-center

- Color consistency confirmed:

- Blue = GEMMs (dominant in all categories)

- Gray = Flash (appears only in Large + Flash)

- Orange = Softmax (visible in Small and Large)

- Green = DR (small presence in all)

- Yellow = LN (minimal in all)

- Purple = Other (consistent 1% across all)

### Trend Verification

1. **Small Configuration**:

- GEMMs: 68% (blue)

- Softmax: 12% (orange)

- DR: 6% (green)

- LN: 2% (yellow)

- Flash: 1% (gray)

- Other: 1% (purple)

2. **Large Configuration**:

- GEMMs: 94% (blue)

- Softmax: 3% (orange)

- DR: 1% (green)

- LN: 1% (yellow)

- Flash: 1% (gray)

- Other: 1% (purple)

3. **Large + Flash Configuration**:

- GEMMs: 92% (blue)

- Flash: 3% (gray)

- Softmax: 2% (orange)

- DR: 1% (green)

- LN: 1% (yellow)

- Other: 1% (purple)

## Technical Observations

1. **Dominant Component**: GEMMs consistently represent >90% of latency in Large configurations

2. **Flash Impact**: Addition of Flash in Large + Flash configuration reduces GEMMs' share by 2% while maintaining total latency

3. **Softmax Reduction**: Softmax contribution decreases from 12% (Small) to 2% (Large + Flash)

4. **Stable Components**: DR, LN, and Other maintain <3% contribution across all configurations

## Data Table Reconstruction

| Configuration | GEMMs (%) | Flash (%) | Softmax (%) | DR (%) | LN (%) | Other (%) |

|--------------------|-----------|-----------|-------------|--------|--------|-----------|

| Small (h=2560) | 68 | 1 | 12 | 6 | 2 | 1 |

| Large (h=16384) | 94 | 1 | 3 | 1 | 1 | 1 |

| Large + Flash | 92 | 3 | 2 | 1 | 1 | 1 |

## Language Analysis

- All text appears in English

- No non-English content detected

## Critical Findings

1. **Latency Bottleneck**: GEMMs dominate computational latency in large-scale operations

2. **Hardware Impact**: Flash integration shows minimal latency contribution (3%) but enables GEMM optimization

3. **Algorithmic Efficiency**: Softmax and DR components show significant reduction in larger configurations

</details>

Figure 2: The proportion of latency from each transformer component for one layer of various model sizes

On account of their parallel architecture, GPUs are a natural hardware platform for GEMMs. However, the observed throughput for these GEMMs depends on the matrix dimensions due to how the computation is mapped onto the execution units of the GPU (called streaming multiprocessors or SMs for short). As a result, GPU efficiency is sensitive to the model depth and width, which control the arithmetic efficiency of the computation, SM utilization, kernel choice, and the usage of tensor cores versus slower cuda cores. This work tries to determine how best to size models to ensure good performance on GPUs, taking these factors into account. Optimizing model shapes for efficient GEMMs will increase throughput for the entire lifetime of the model, decreasing training time and inference costs We expect best results when the inference GPU is the same as the training GPU, but the guidelines we present could also be useful when the two are different. for production models.

### I-A Contributions

Our contributions are as follows:

- We map the transformer model to its underlying matrix multiplications / GEMMs, and show how each component of the transformer model can suffer from using sub-optimal transformer dimensions.

- We compile a list of GPU performance factors into one document and explain how to choose optimal GEMM dimensions.

- We define rules to ensure transformer models are composed of efficient GEMMs.

## II Related Work

### II-A GPU Characterization of DNNs

DL model training involves the heavy use of GPU kernels, and the characterization of such kernel behavior constitutes a large body of prior work that this paper builds upon. GPU kernels, especially GEMM kernels, are key to improving DL training and inference performance. Therefore, characterizing [20] and optimizing [42, 2, 16, 15] these kernels have received a lot of attention in recent work [22].

Beyond GPU kernels, new algorithms and DL training techniques have been developed to optimize I/O [10, 9] and leverage hardware features like Tensor Cores [40, 31] as efficiently as possible. In addition to the above studies for DL training, exploiting Tensor Core properties has also shown excellent speedups for scientific applications such as iterative solvers [17] and sparse linear algebra subroutines [36].

### II-B Comparison Across DL Accelerators

In recent years, there has emerged a range of acceleration strategies such as wafer-scale (Cerebras), GPUs (AMD and NVIDIA), and tensor processing units (Google). Given this diverse array of new AI accelerators, many pieces of work perform cross-generation and cross-accelerator comparison that have helped elucidate the strengths and weaknesses of each accelerator. Cross-accelerator studies such as [14, 39, 23] enable HPC and cloud customers to choose an appropriate accelerator for their DL workload. We seek to extend this particular line of work by evaluating across various datacenter-class NVIDIA (V100, A100, and H100) and AMD GPUs (MI250X).

### II-C DL Training Performance Guides

The most similar effort to our work is a GPU kernel characterization study for RNNs and CNNs performed in [41]. Since the transformer architecture differs greatly compared to RNNs and CNNs, we believe that our work provides a timely extension. Further, our focus on creating a practical performance guide is similar in nature to the 3D-parallelism optimization for distributed GPU architectures presented in [25].

From the above discussion, one can posit that while many papers exist to optimize DL performance on GPUs [22], such papers tend to neglect the fundamental effects that GPU properties (e.g. Tensor Cores, tiling, wave quantization, etc.) have on model training. Because of this omission, many disparate DL training groups have rediscovered a similar set of model sizing takeaways [19, 18, 33, 3]. We seek to provide explanations for these takeaways from the perspective of fundamental GPU first-principles, and to aggregate these explanations into a concise set of takeaways for efficient transformer training and inference.

## III Background

We will now discuss some of the necessary prerequisite material to understand the performance characteristics of the GPU kernels underlying transformer models.

### III-A GPU Kernels

General Matrix Multiplications (GEMMs) serve as a crucial component for many functions in neural networks, including fully-connected layers, recurrent layers like RNNs, LSTMs, GRUs, and convolutional layers. If $A$ is an $m× k$ matrix and $B$ is a $k× n$ matrix, then the matrix product $AB$ is a simple GEMM. We can then generalize this to $C=α AB+β C$ (in the previous example, $α$ is 1, and $β$ is 0). In a fully-connected layer’s forward pass, the weight matrix would be argument $A$ and input activations would be argument $B$ ( $α$ and $β$ would typically be 1 and 0 as before; $β$ can be 1 in certain scenarios, such as when adding a skip-connection with a linear operation).

Matrix-matrix multiplication is a fundamental operation in numerous scientific and engineering applications, particularly in the realm of deep learning. It is a computationally intensive task that requires significant computational resources for large-scale problems. To address this, various algorithms and computational techniques have been developed to optimize matrix-matrix multiplication operations.

Matrix multiplication variants like batched matrix-matrix (BMM) multiplication kernels have also been introduced to improve the throughput of certain common DL operators like attention [37]. A general formula for a BMM operation is given by Equation 1 below, where $\{A_i\}$ and $\{B_i\}$ are a batch of matrix inputs, $α$ and $β$ are scalar inputs, and $\{C_i\}$ is a batch of output matrices.

$$

C_i=α A_iB_i+β C_i, i=1,...N \tag{1}

$$

### III-B NVIDIA GEMM Implementation and Performance Factors

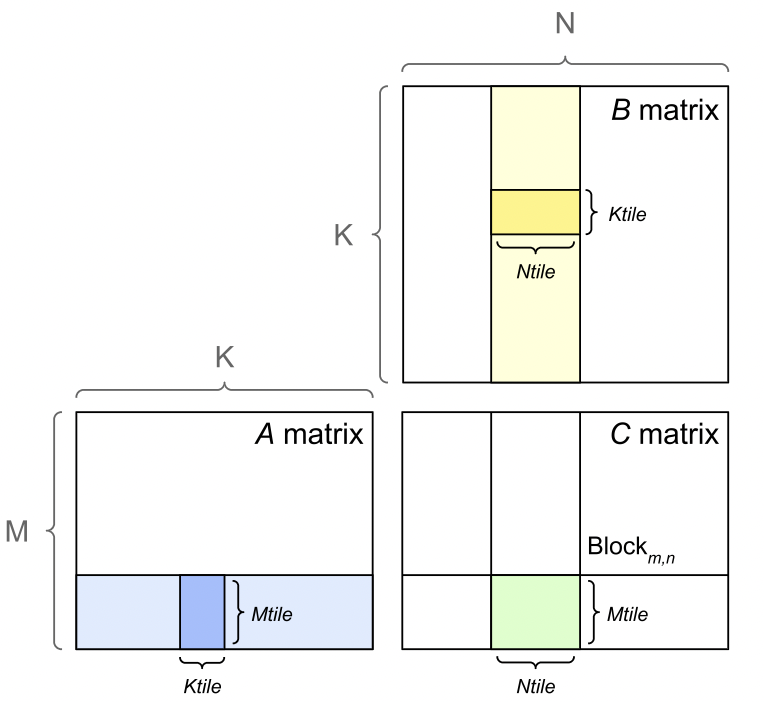

There are a number of performance factors to consider when analyzing GEMMs on NVIDIA GPU architectures. NVIDIA GPUs divide the output matrix into regions or tiles as shown in Figure 3 and schedule them to one of the available streaming multiprocessors (SM) on the GPU (e.g., A100 GPUs have 108 SMs). Each tile or thread block is processed in a Tensor Core, which NVIDIA introduced for fast tensor operations. NVIDIA Tensor Cores are only available for GEMMs with appropriate dimensions. Tensor Cores can be fully utilized when GEMM dimensions $m$ , $k$ , and $n$ are multiples of 16 bytes and 128 bytes for V100 and A100 GPUs, respectively. Since a FP16 element is 2 bytes, this corresponds to dimension sizes that are multiples of 8 and 64 elements, respectively. If these dimension sizes are not possible, Tensor Cores perform better with larger multiples of 2 bytes.

<details>

<summary>extracted/5378885/figures/tiling.png Details</summary>

### Visual Description

# Technical Document Extraction: Matrix Diagram Analysis

## Overview

The image depicts a block matrix decomposition across three matrices (A, B, C) with labeled dimensions and highlighted tiles. All text is in English.

---

### Matrix A

- **Dimensions**: M (rows) × K (columns)

- **Highlighted Tile**:

- **Color**: Blue

- **Label**: `Mtile` (rows) × `Ktile` (columns)

- **Position**: Bottom-right quadrant of Matrix A

---

### Matrix B

- **Dimensions**: K (rows) × N (columns)

- **Highlighted Tile**:

- **Color**: Yellow

- **Label**: `Ktile` (rows) × `Ntile` (columns)

- **Position**: Top-right quadrant of Matrix B

---

### Matrix C

- **Dimensions**: N (rows) × N (columns)

- **Highlighted Tile**:

- **Color**: Green

- **Label**: `Mtile` (rows) × `Ntile` (columns)

- **Position**: Bottom-right quadrant of Matrix C

- **Additional Label**: `Block_m,n` (top-right quadrant)

---

### Legend & Color Mapping

- **Blue**: `Mtile` (Matrix A)

- **Yellow**: `Ktile` (Matrix B)

- **Green**: `Mtile` (Matrix C)

- **Spatial Grounding**:

- Legend colors match tile colors exactly.

- No explicit legend box; colors are inferred from tile highlights.

---

### Key Observations

1. **Tile Consistency**:

- `Mtile` appears in both Matrix A and C, suggesting a shared submatrix dimension.

- `Ktile` and `Ntile` are unique to their respective matrices.

2. **Block Structure**:

- Matrices A and B share the `Ktile` dimension, implying a partitioning of the K-axis.

- Matrix C’s `Block_m,n` suggests a hierarchical decomposition of the N×N matrix.

---

### Data Extraction Summary

| Matrix | Dimensions | Highlighted Tile | Color | Labels |

|--------|------------|------------------|--------|--------------|

| A | M × K | Mtile × Ktile | Blue | Mtile, Ktile |

| B | K × N | Ktile × Ntile | Yellow | Ktile, Ntile |

| C | N × N | Mtile × Ntile | Green | Mtile, Ntile, Block_m,n |

---

### Notes

- No numerical data or trends are present; the image focuses on structural decomposition.

- All axis labels (M, K, N) and tile labels (`Mtile`, `Ktile`, `Ntile`) are explicitly defined.

- No omitted labels; all textual components are transcribed.

</details>

Figure 3: GEMM tiling [26].

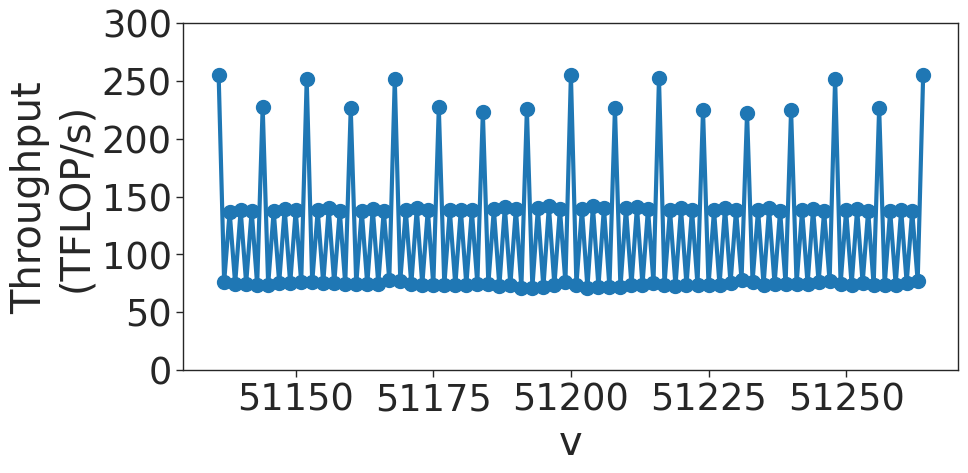

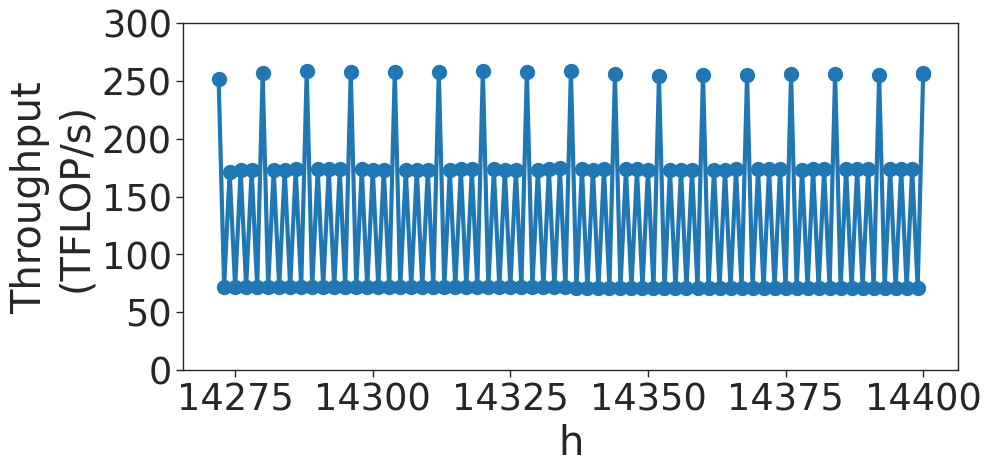

There are multiple tile sizes that the kernel can choose from. If the GEMM size does not divide evenly into the tile size, there will be wasted compute, where the thread block must execute fully on the SM, but only part of the output is necessary. This is called the tile quantization effect, as the output is quantized into discrete tiles.

Another quantization effect is called wave quantization. As the thread blocks are scheduled to SMs, only 108 thread blocks at a time may be scheduled. If, for example, 109 thread blocks must be scheduled, two rounds, or waves, of thread blocks must be scheduled to GPU. The first wave will have 108 thread blocks, and the second wave will have 1. The second wave will have almost the same latency as the first, but with a small fraction of the useful compute. As the matrix size increases, the last or tail wave grows. The throughput will increase, until a new wave is required. Then, the throughput will drop.

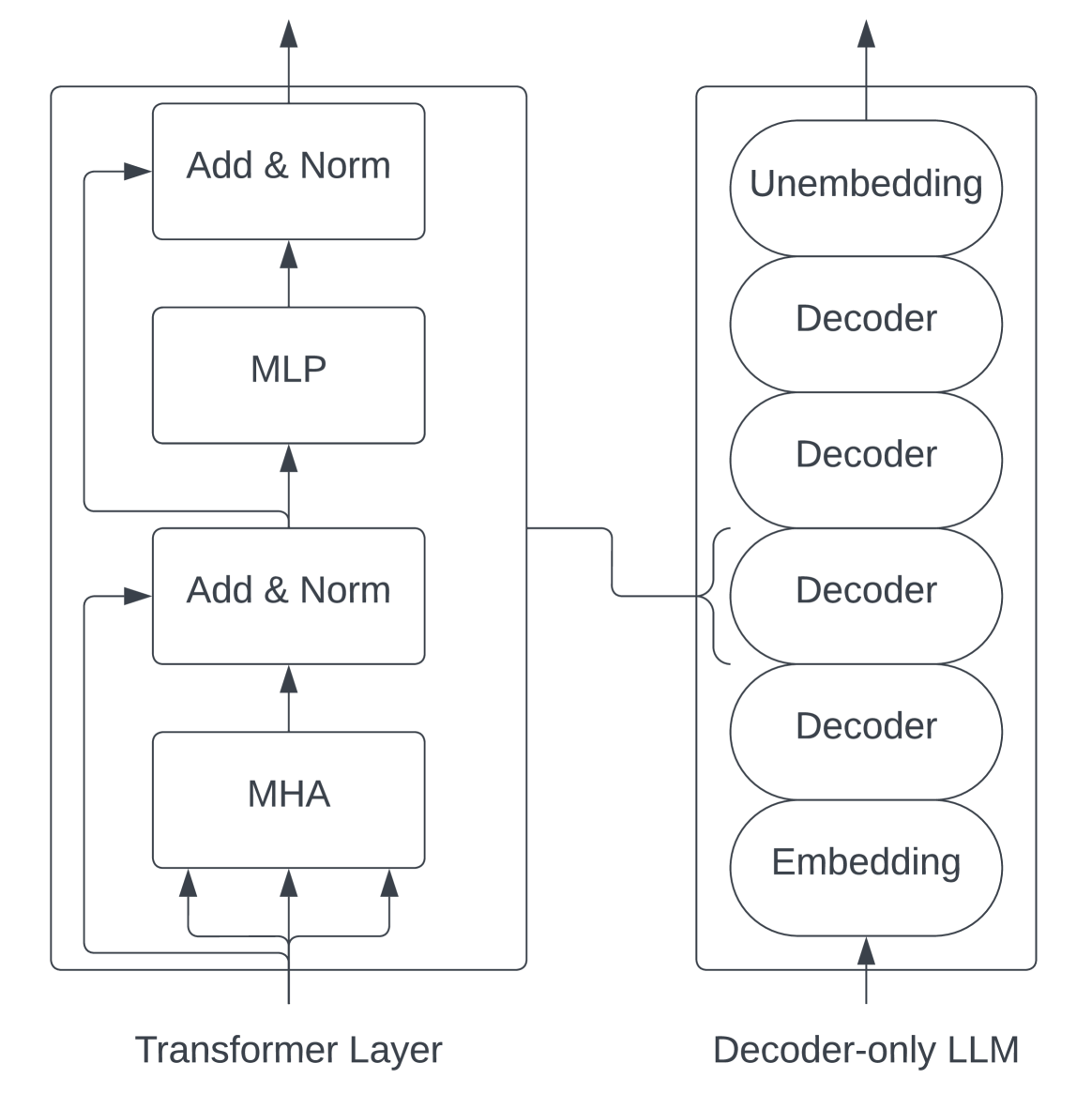

### III-C Transformer Models

In this study, we examine a decoder-only transformer architecture popularized by GPT-2 [29]. We focus on this architecture due to its popularity for training very large models [6, 7, 34] , but most of our conclusions also apply to encoder-only models [12, 21]. Due to the nature of the transition between the encoder and decoder, our analysis will largely not apply to encoder-decoder models [37, 30].

<details>

<summary>x3.png Details</summary>

### Visual Description

# Technical Diagram Analysis: Transformer Layer vs Decoder-only LLM

## Diagram Overview

The image compares two neural network architectures through labeled components and directional flow arrows. Two primary blocks are depicted:

1. **Transformer Layer** (left)

2. **Decoder-only LLM** (right)

---

## Transformer Layer Components

### Spatial Layout

- **Vertical Stack** of four processing blocks

- **Bidirectional Connections** between components

### Component Breakdown

1. **Multi-Head Attention (MHA)**

- Position: Bottom-most block

- Connections:

- 3 upward arrows to **Add & Norm** (left)

- 1 rightward arrow to **Add & Norm** (center)

2. **MLP (Multi-Layer Perceptron)**

- Position: Middle block

- Connections:

- 1 upward arrow from **Add & Norm** (center)

- 1 downward arrow to **Add & Norm** (left)

3. **Add & Norm Blocks**

- **Left Block**:

- Receives input from MHA (3 arrows)

- Outputs to MHA (1 arrow)

- **Center Block**:

- Receives input from MLP (1 arrow)

- Outputs to MLP (1 arrow)

- **Right Block**:

- Receives input from MHA (1 arrow)

- Outputs to MHA (1 arrow)

4. **Residual Connections**

- All Add & Norm blocks implement residual connections

- Normalization layers follow addition operations

---

## Decoder-only LLM Architecture

### Component Stack

1. **Embedding Layer**

- Position: Bottom-most

- Function: Input token conversion

2. **Decoder Stack**

- **Four Identical Decoder Blocks** (stacked vertically)

- Each block contains:

- Self-attention mechanism

- Cross-attention mechanism

- Feed-forward network

- Residual connections

3. **Unembedding Layer**

- Position: Top-most

- Function: Output token reconstruction

### Data Flow

- **Bottom-to-Top** processing sequence

- Embedding → Decoder 1 → Decoder 2 → Decoder 3 → Decoder 4 → Unembedding

---

## Key Architectural Differences

| Feature | Transformer Layer | Decoder-only LLM |

|------------------------|----------------------------------|---------------------------------|

| **Directionality** | Bidirectional flow | Unidirectional flow |

| **Component Repetition**| 2 Add & Norm blocks | 4 Decoder blocks |

| **Attention Type** | Multi-head attention | Self/cross-attention |

| **Normalization** | Explicit Add & Norm blocks | Implicit in decoder blocks |

---

## Technical Notes

1. **Transformer Layer**:

- Follows standard transformer block architecture

- Contains pre-layer normalization (Add & Norm before MHA/MLP)

2. **Decoder-only LLM**:

- Resembles GPT-style architecture

- Lacks encoder components present in full transformers

- Uses tied embeddings (embedding/unembedding weight sharing)

3. **Arrow Conventions**:

- Black arrows: Data flow direction

- Dashed lines: Residual connections

- Solid lines: Primary data paths

---

## Missing Elements

- No numerical data or performance metrics

- No explicit parameter counts

- No activation function specifications

- No positional encoding details

This diagram focuses on architectural comparison rather than quantitative analysis.

</details>

Figure 4: The transformer architecture [29].

For a mapping from variables to their definitions, see Table I. Initially, the network takes in raw input tokens which are then fed into a word embedding table of size $v× h$ . These token embeddings are then merged with learned positional embeddings of size $s× h$ . The output from the embedding layer, which serves as the input for the transformer block, is a 3-D tensor of size $s× b× h$ . Each layer of the transformer comprises a self-attention block with attention heads, followed by a two-layer multi-layer perceptron (MLP) that expands the hidden size to $4h$ before reducing it back to $h$ . The input and output sizes for each transformer layer remain consistent at $s× b× h$ . The final output from the last transformer layer is projected back into the vocabulary dimension to compute the cross-entropy loss.

| a b h | Number of attention heads Microbatch size Hidden dimension size | s t v | Sequence length Tensor-parallel size Vocabulary size |

| --- | --- | --- | --- |

| L | Number of transformer layers | | |

TABLE I: Variable names.

Each transformer layer consists of the following matrix multiplication operators:

1. Attention key, value, query transformations: These can be expressed as a single matrix multiplication of size: $(b· s,h)×(h,\frac{3h}{t})$ . Output is of size $(b· s,\frac{3h}{t})$ .

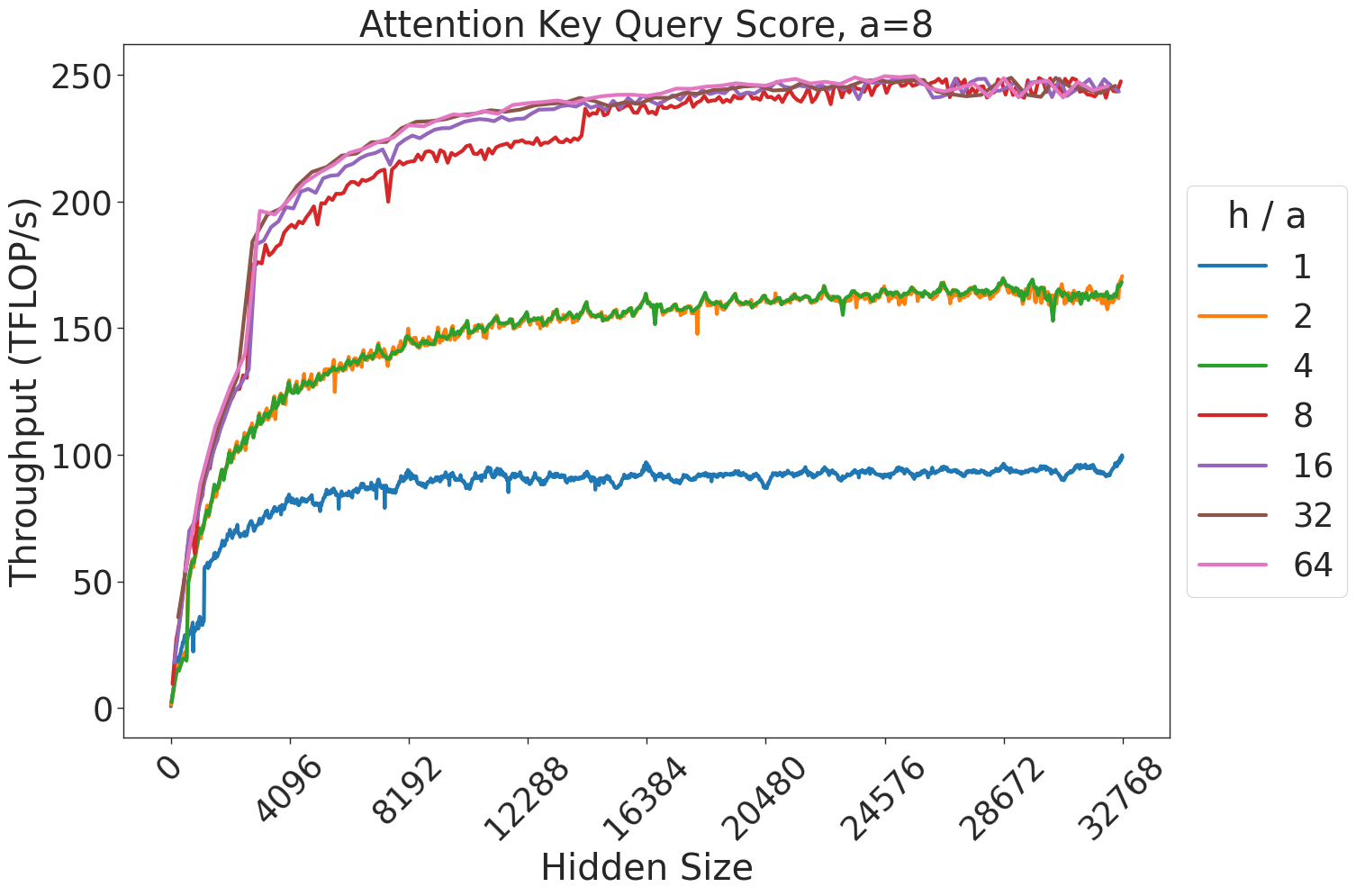

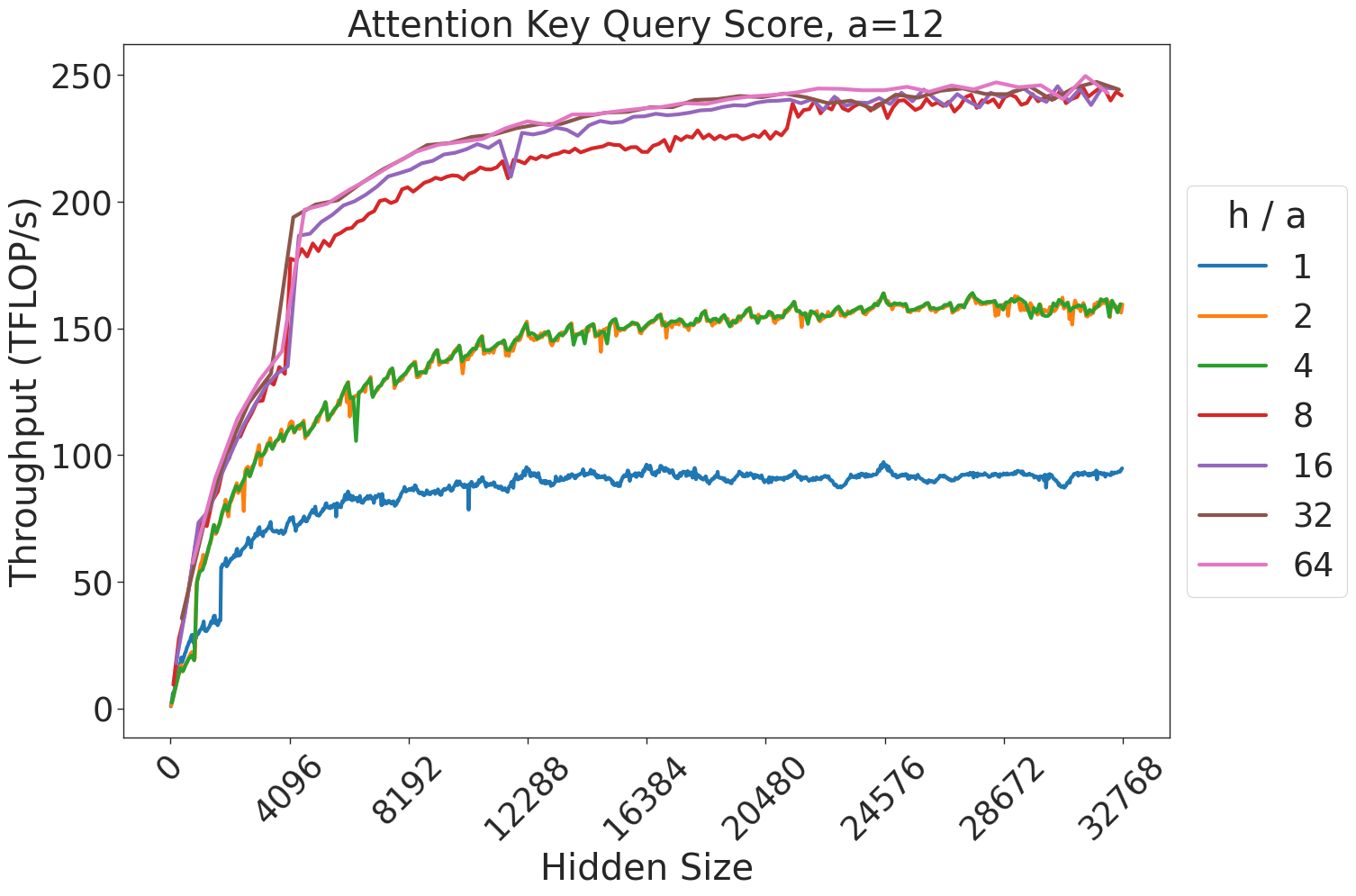

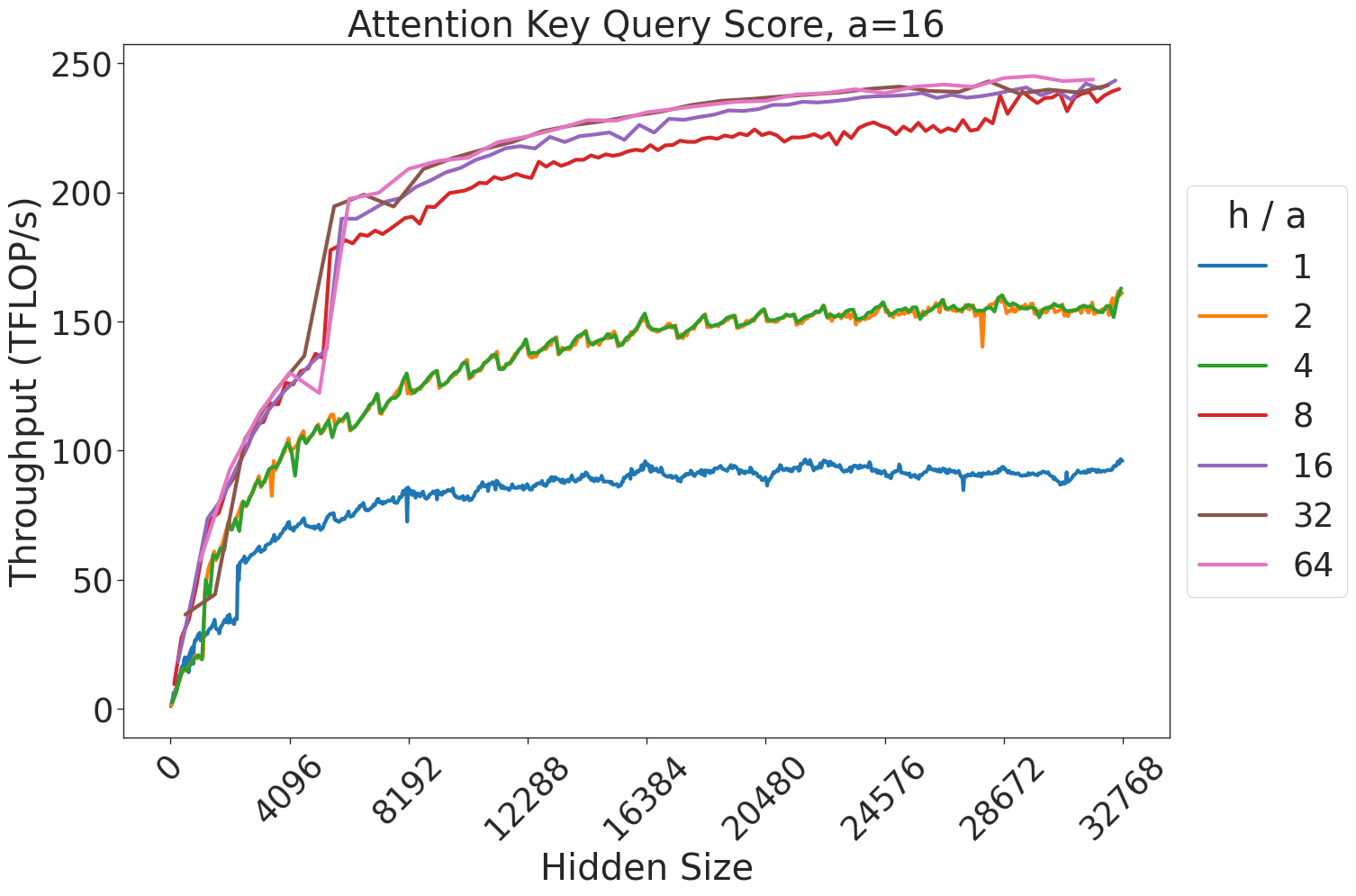

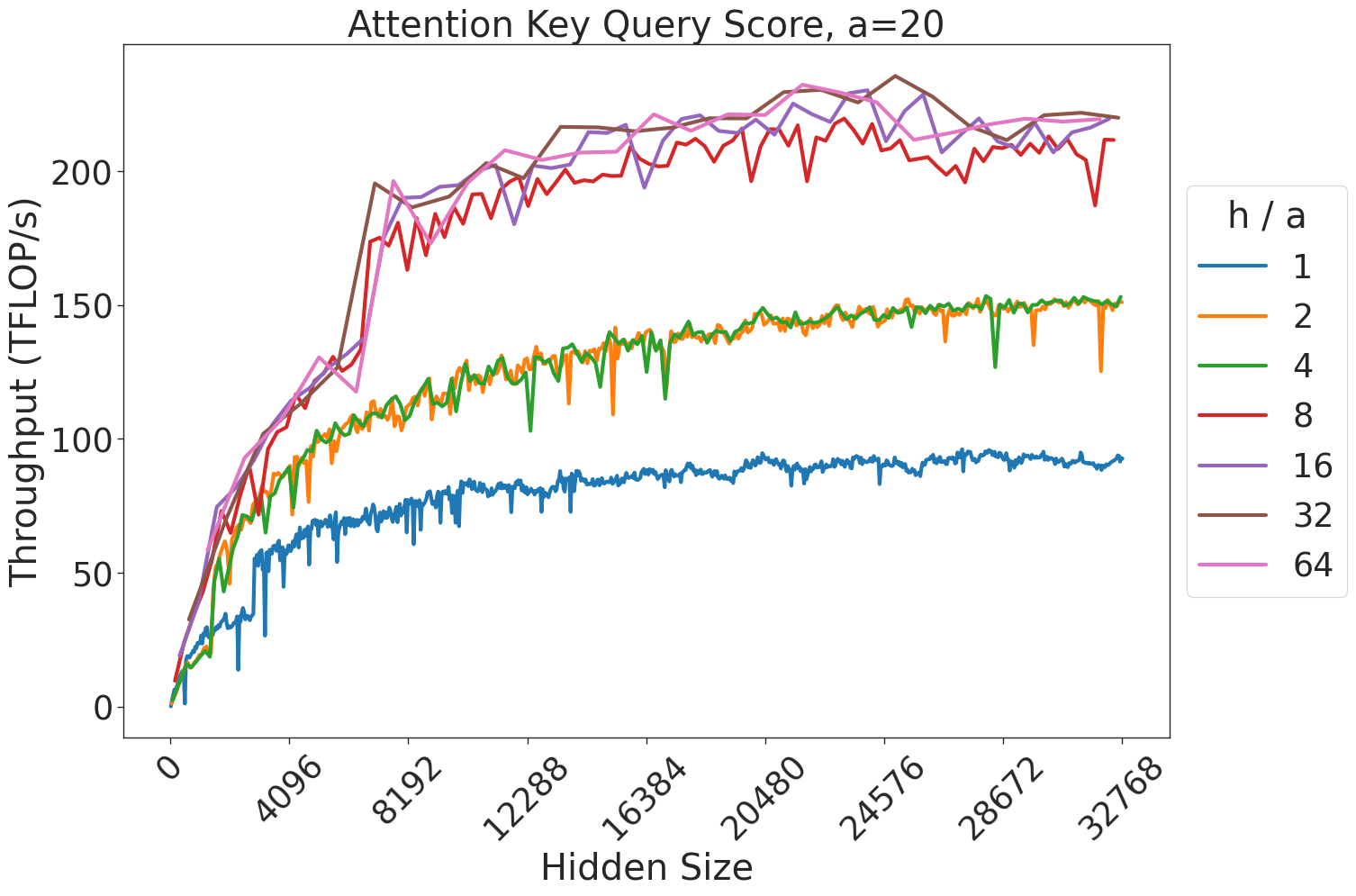

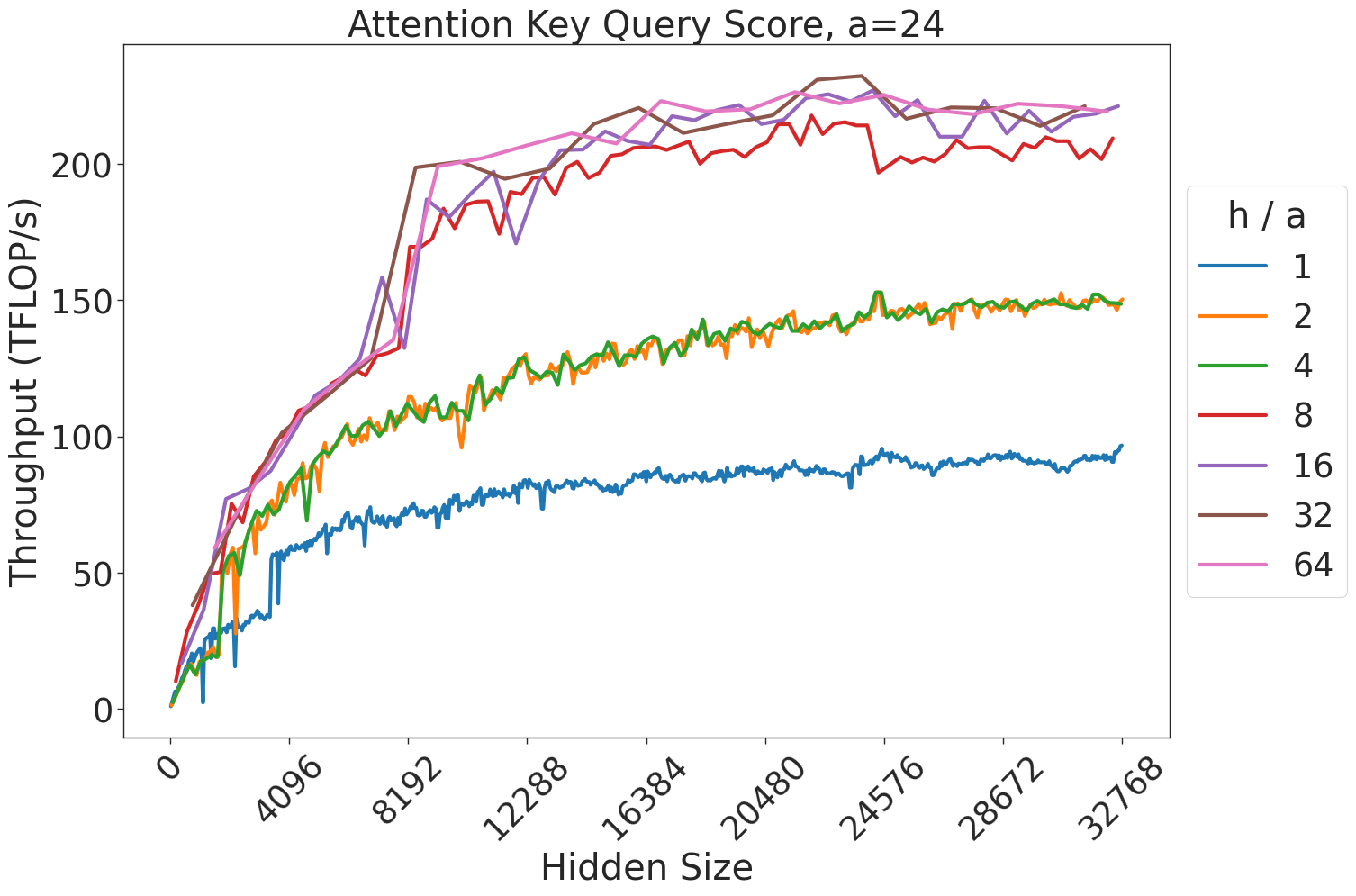

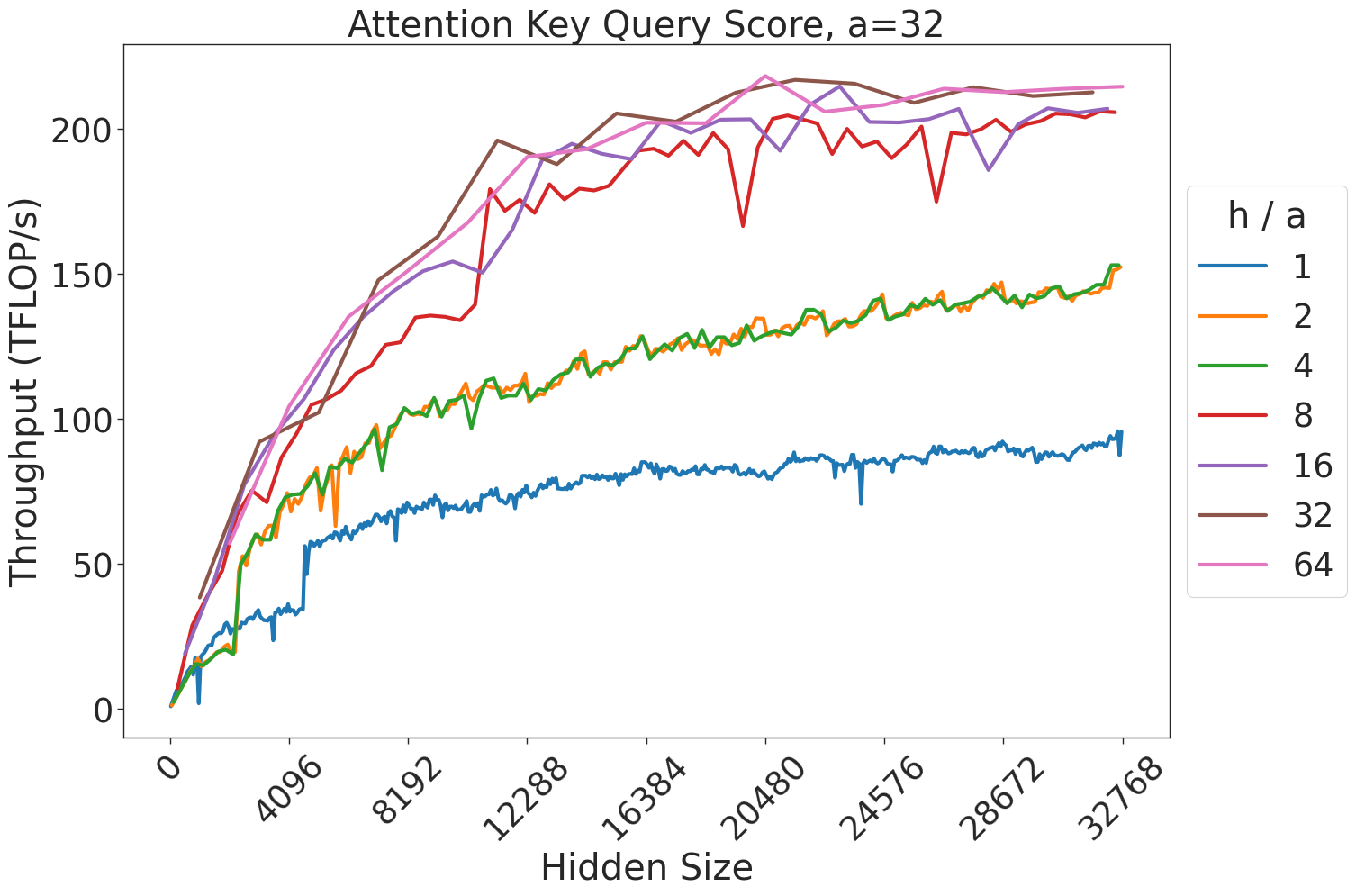

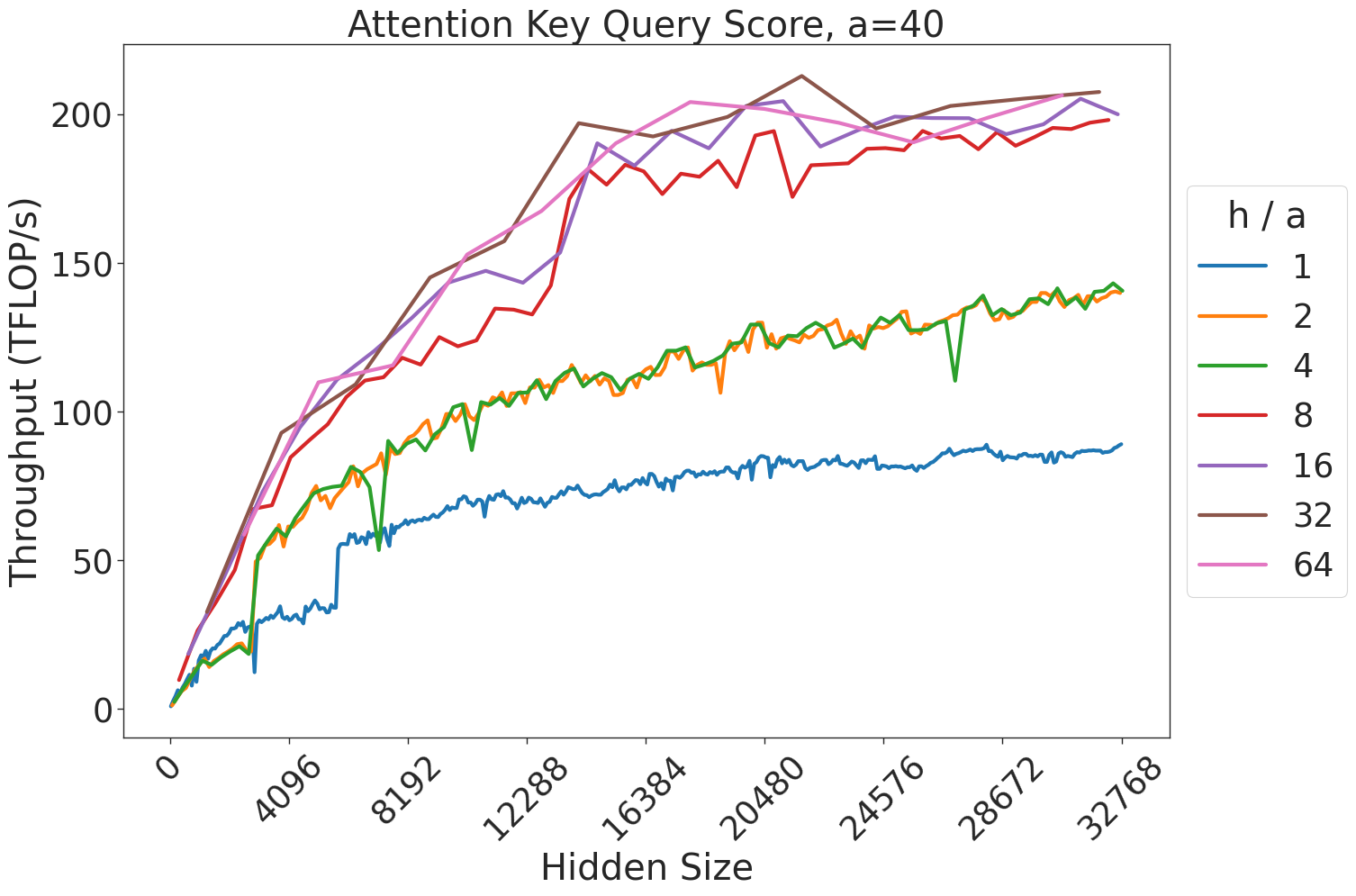

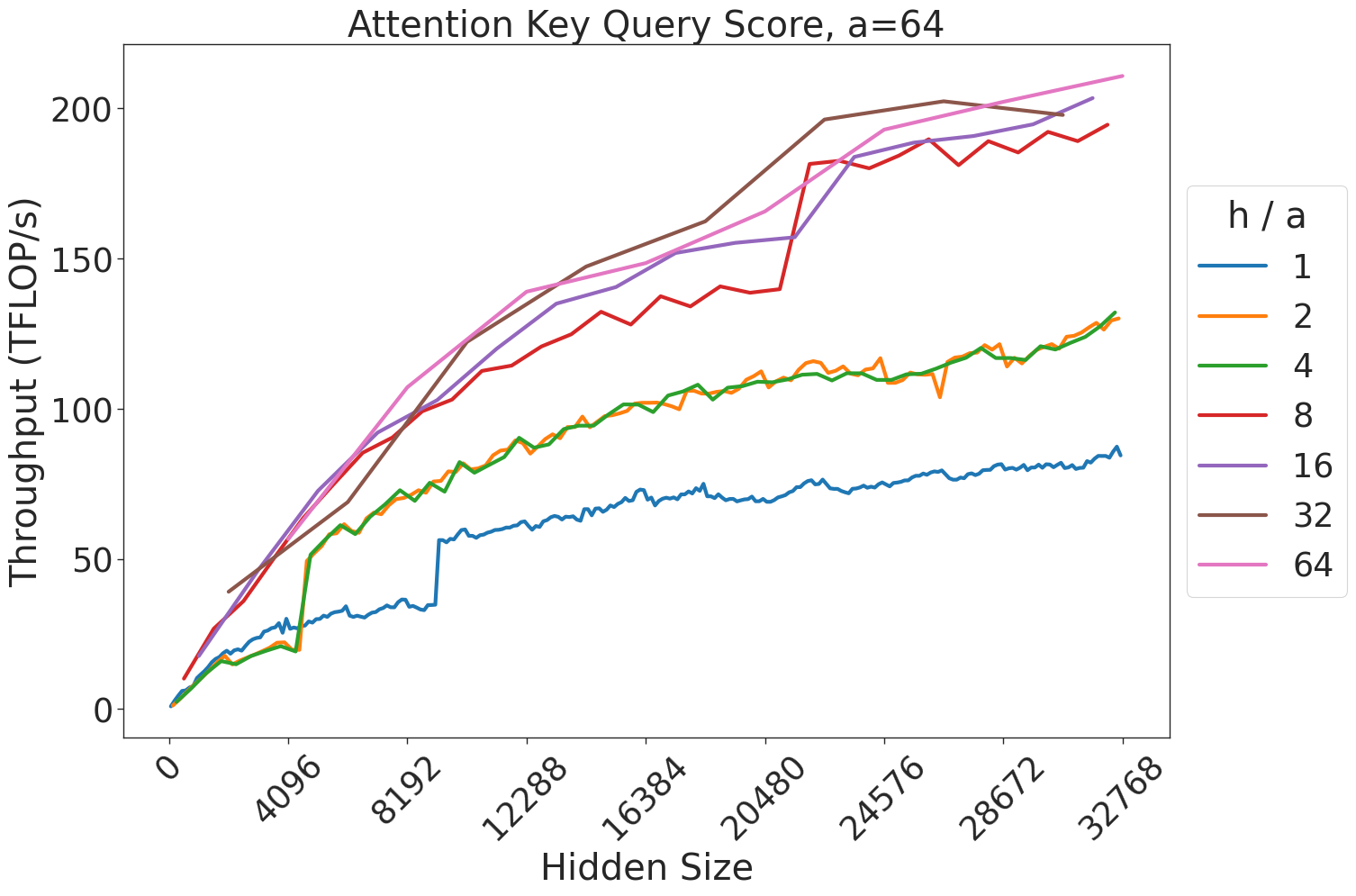

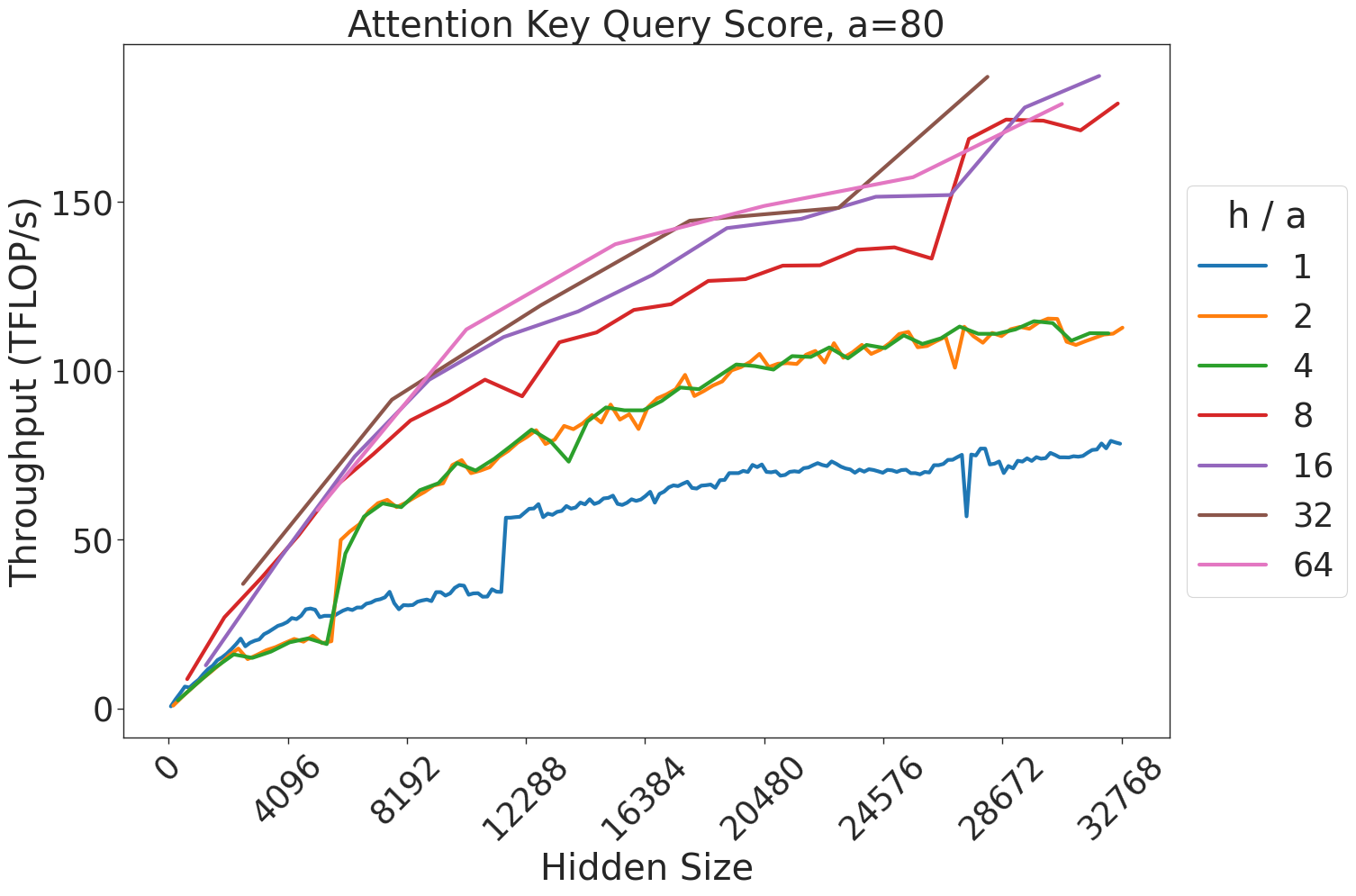

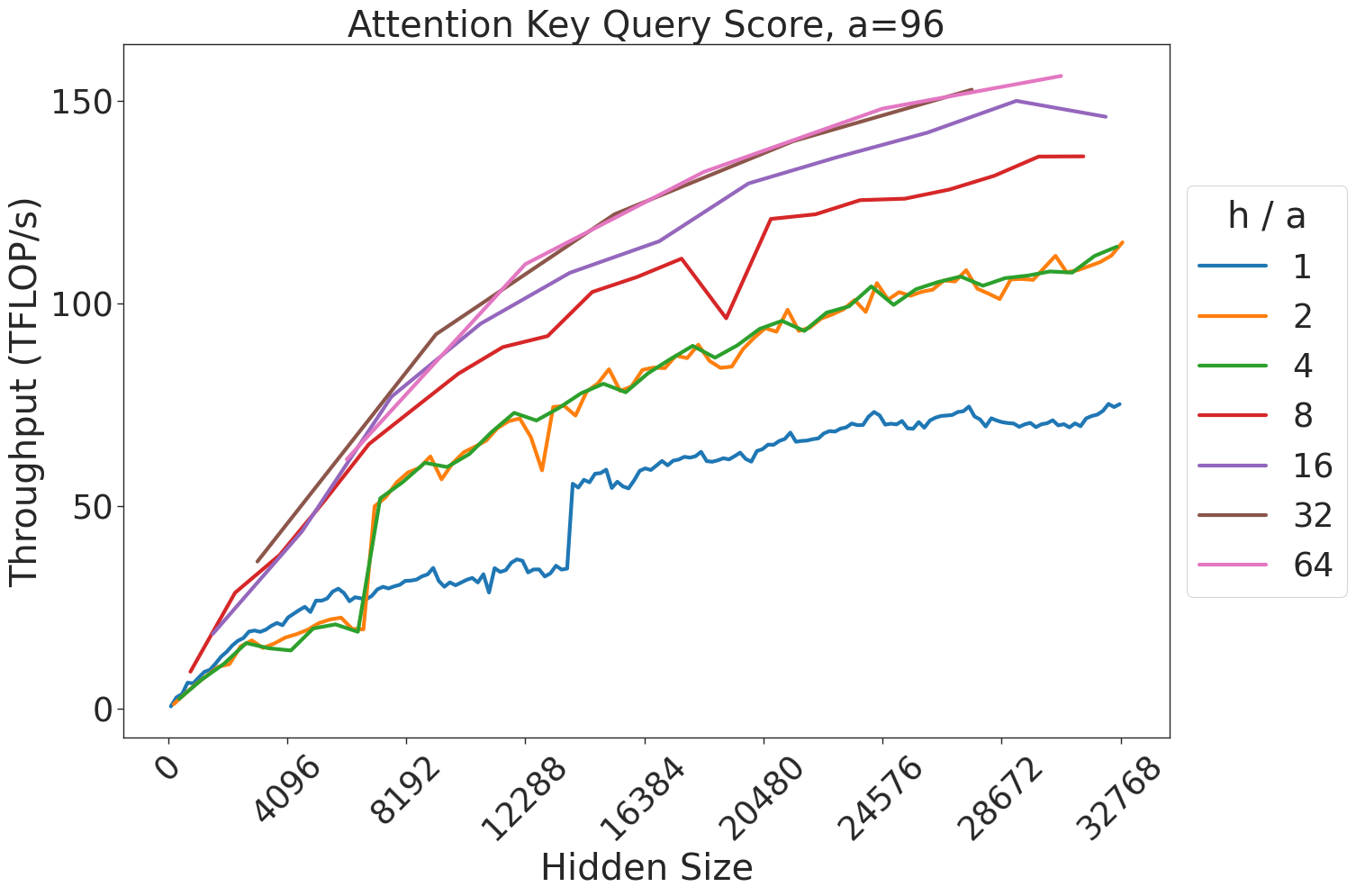

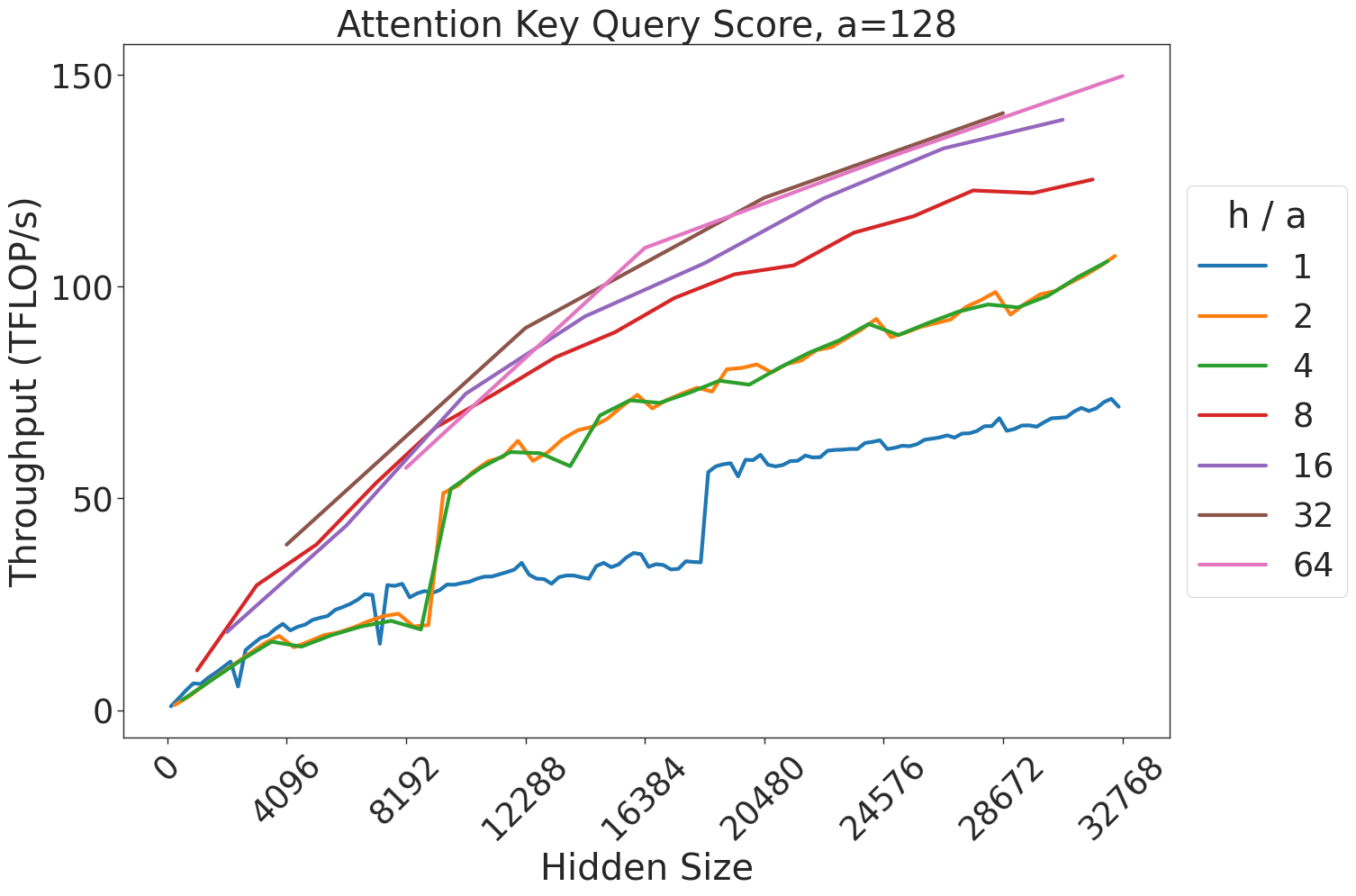

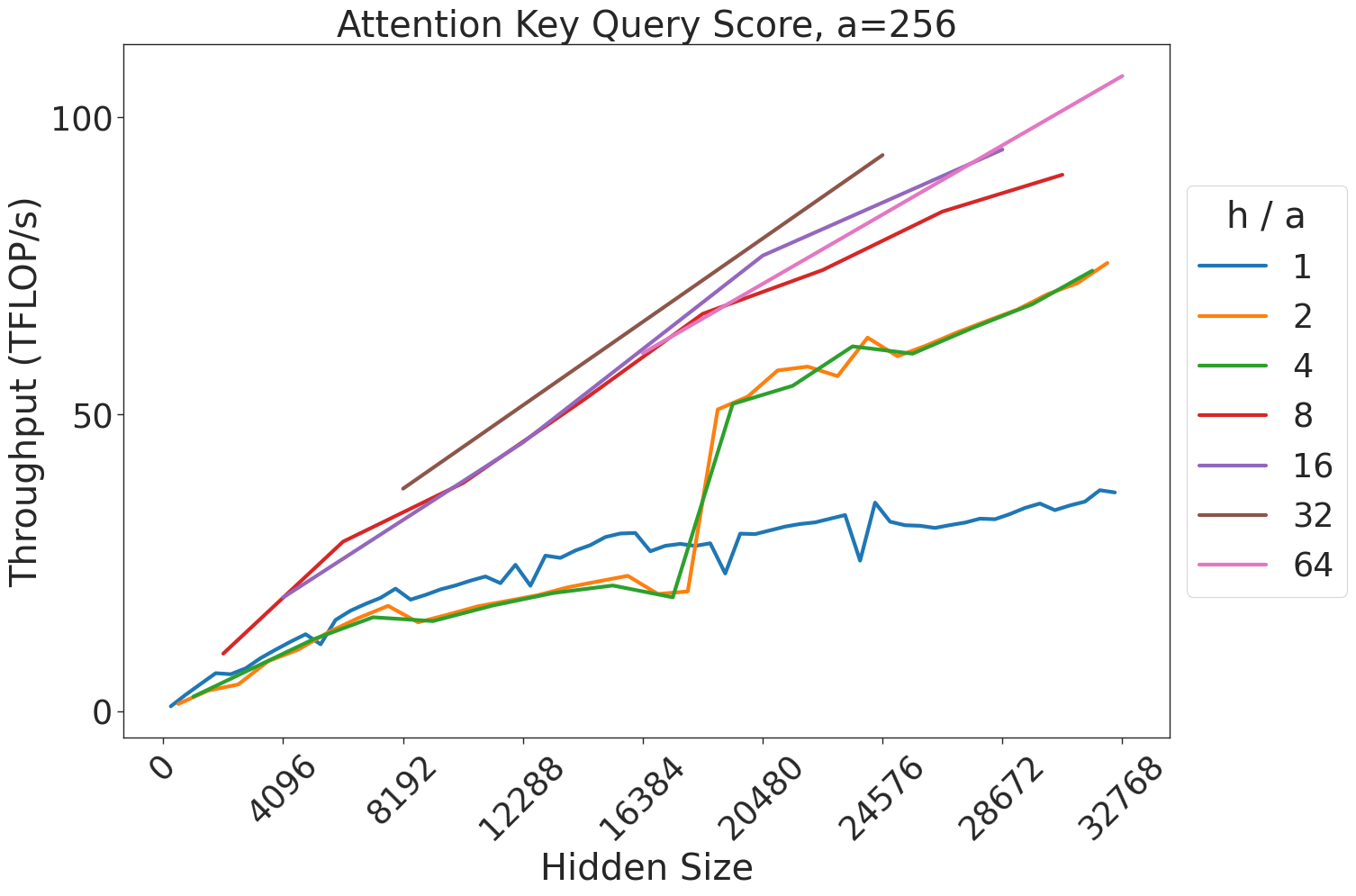

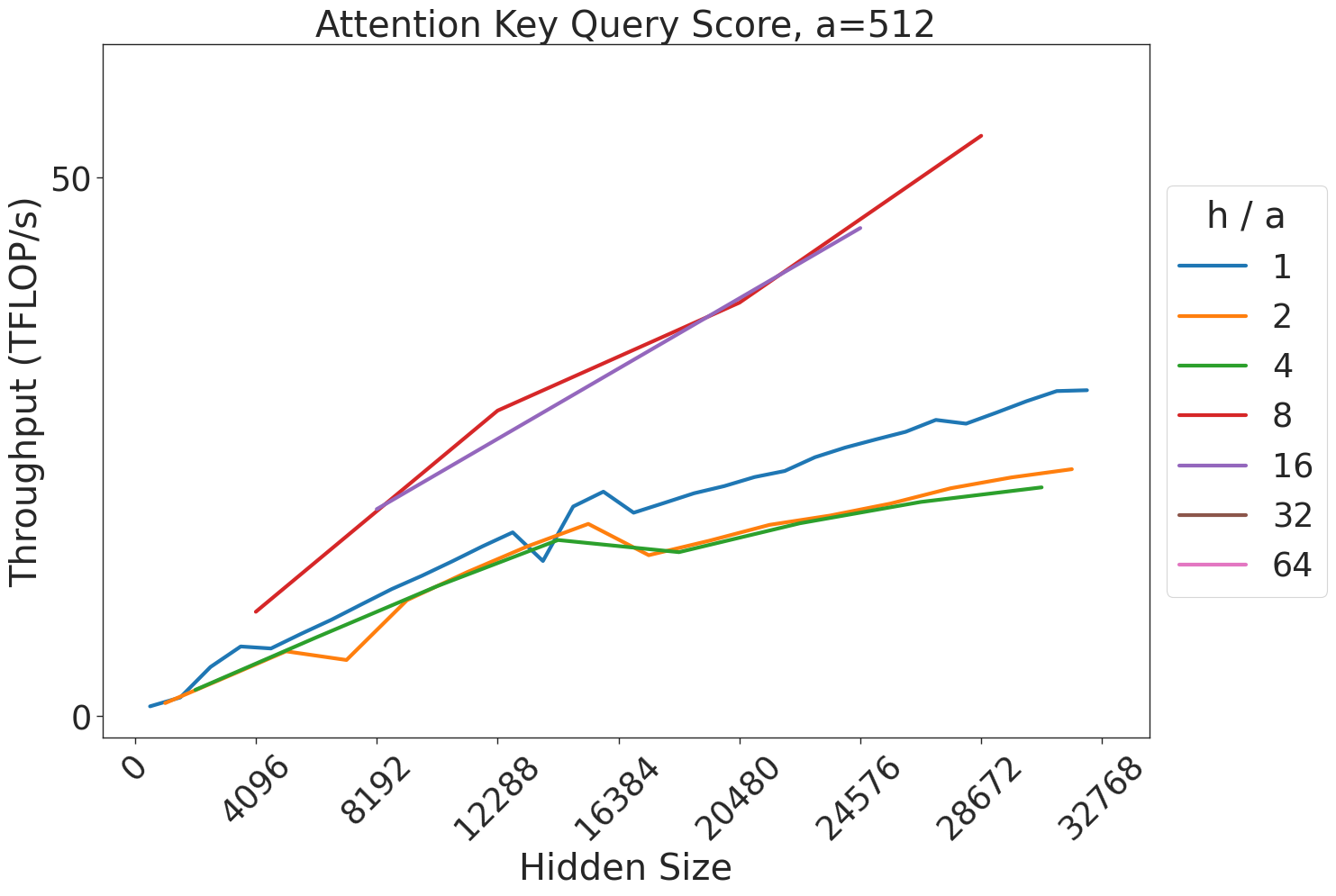

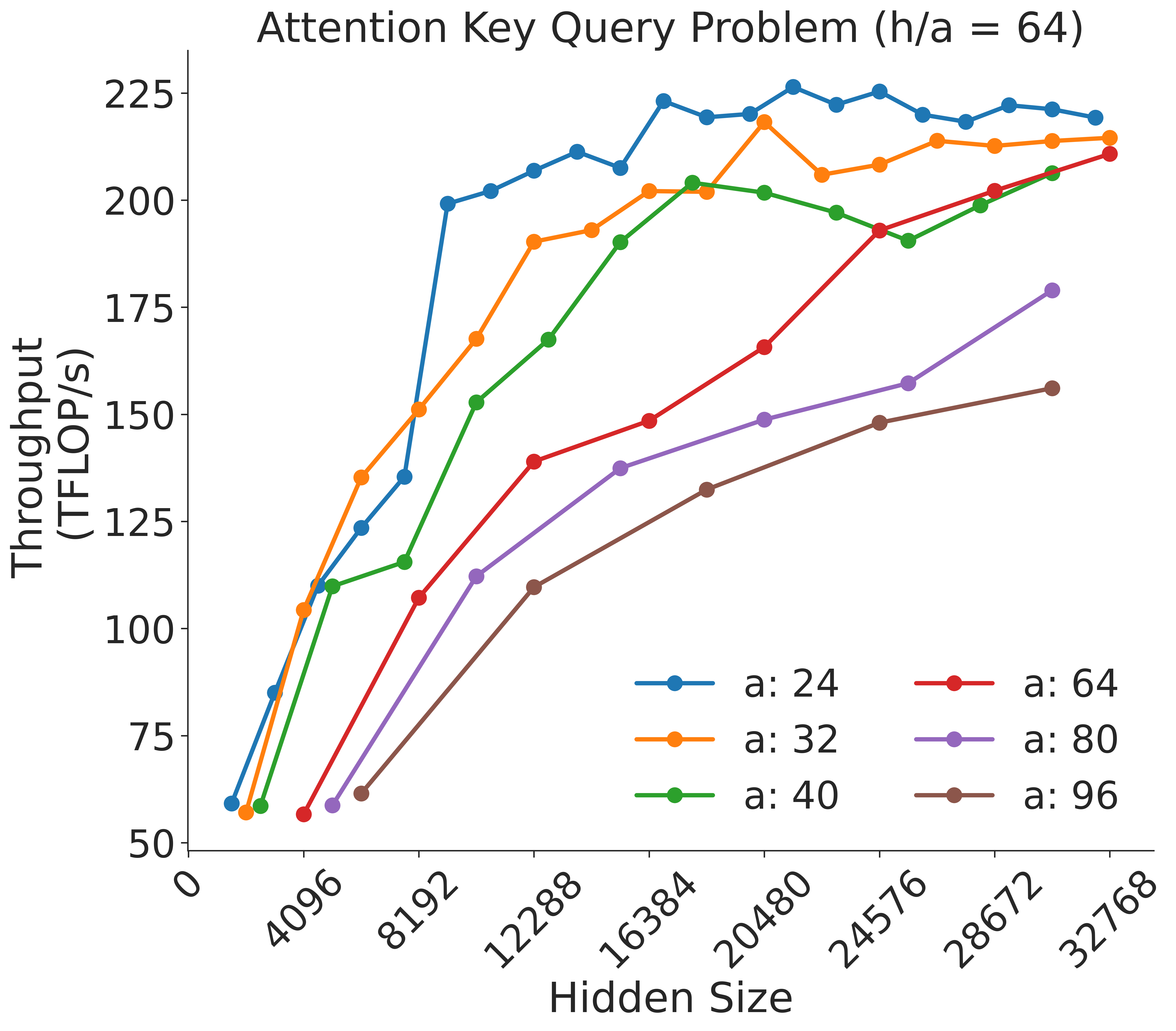

1. Attention score computation: $b· a/t$ batched matrix multiplications (BMMs), each of size $(s,\frac{h}{a})×(\frac{h}{a},s)$ . Output is of size $(\frac{b· a}{t},s,s)$ .

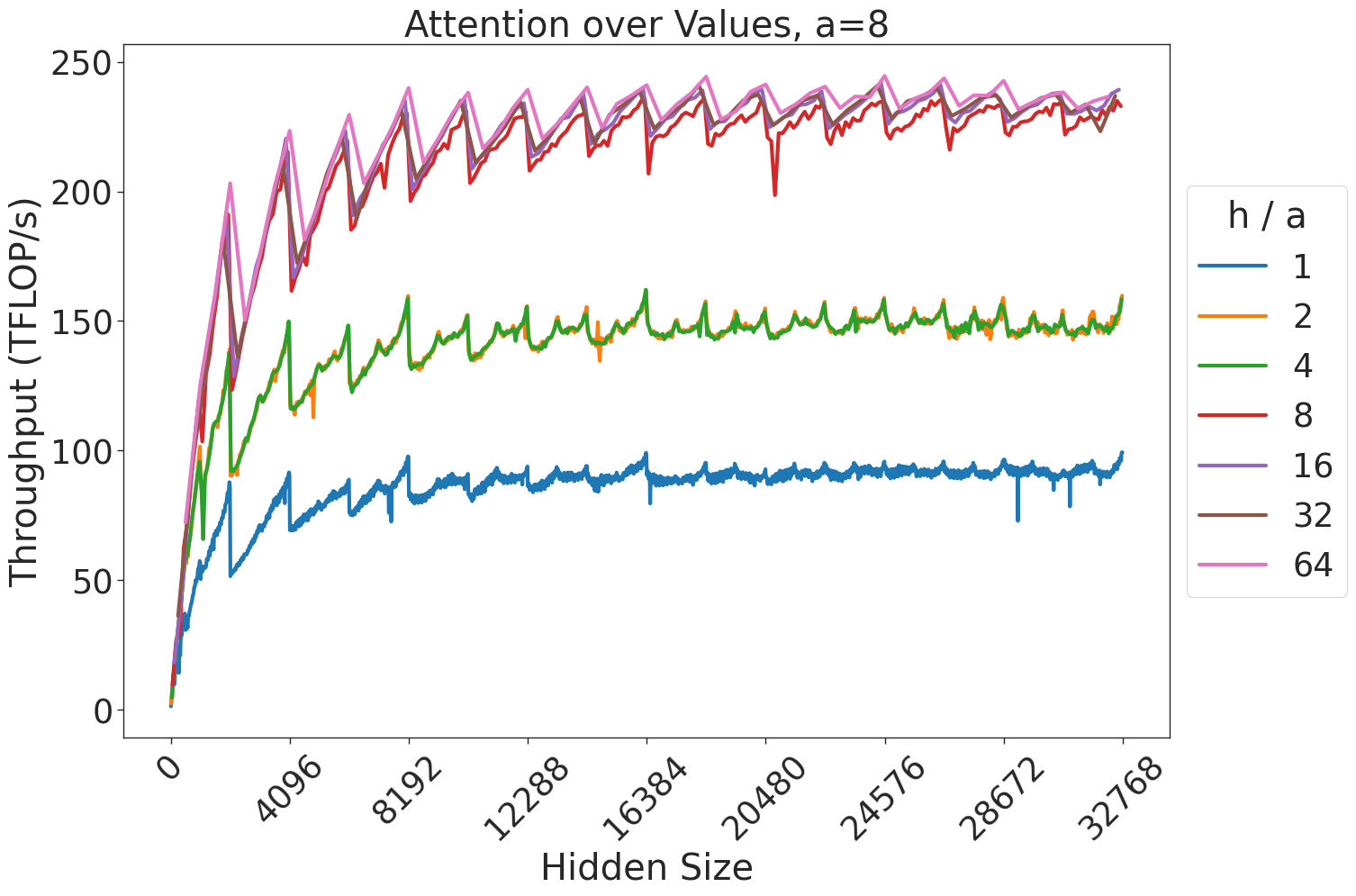

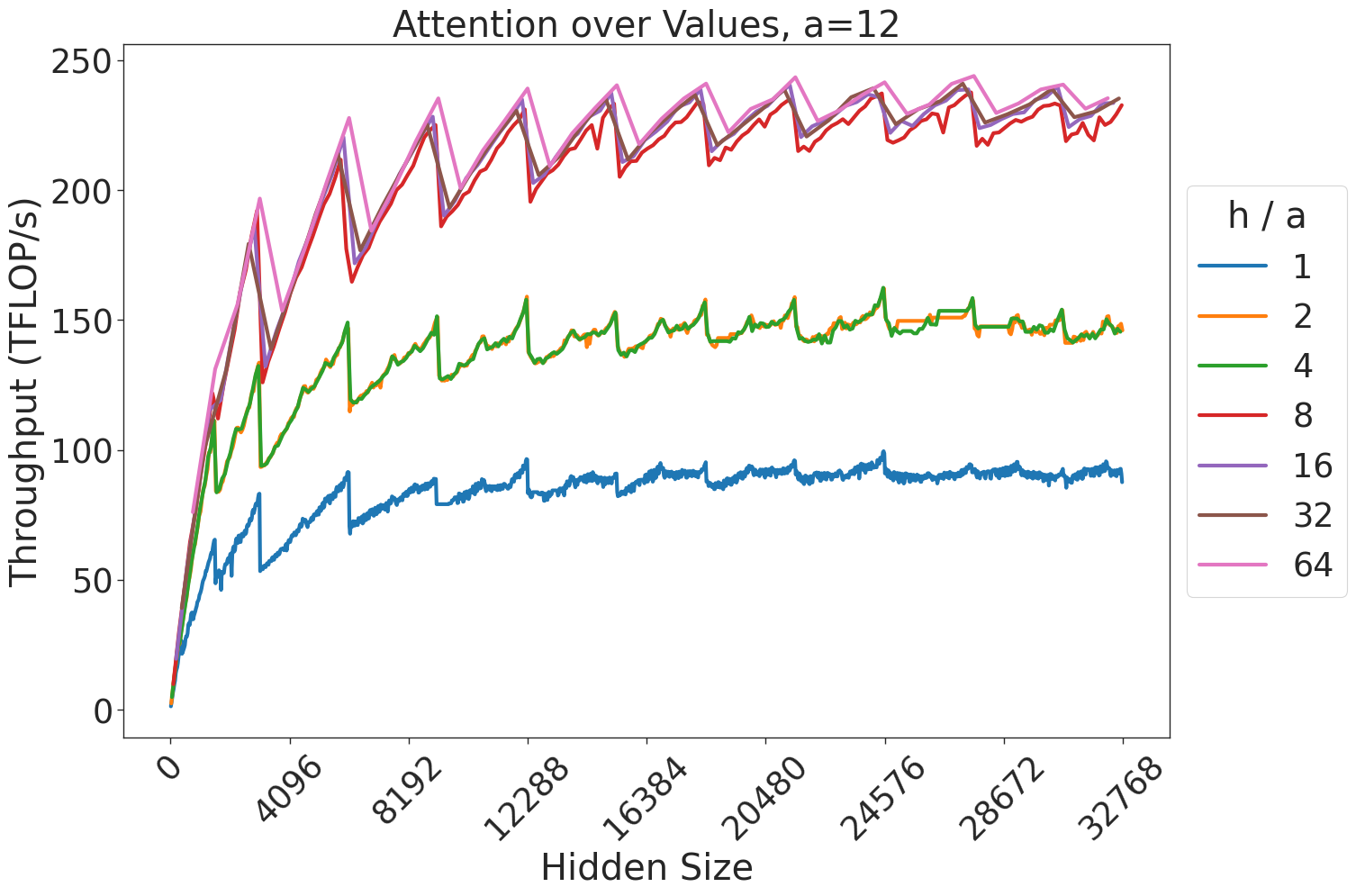

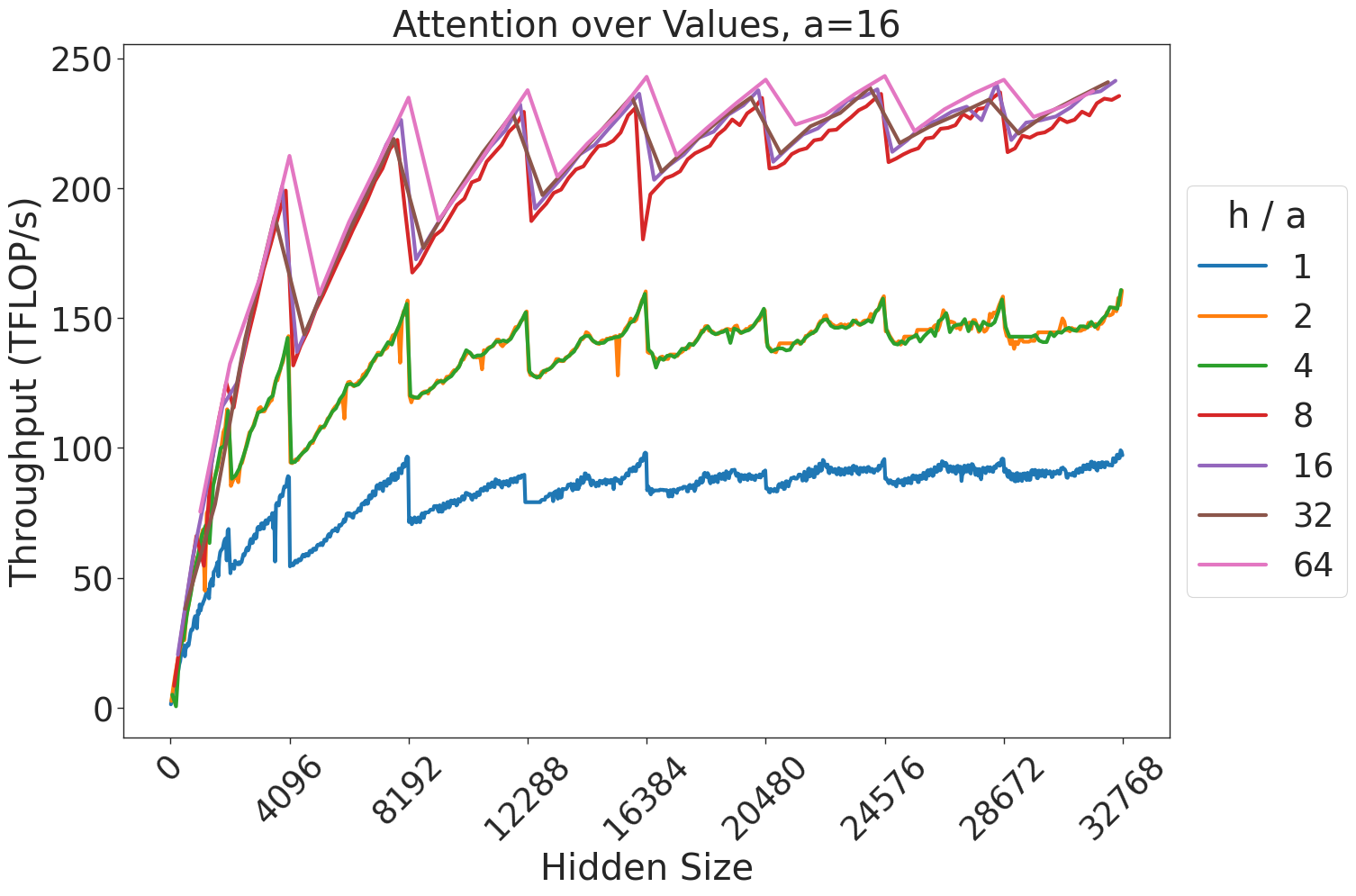

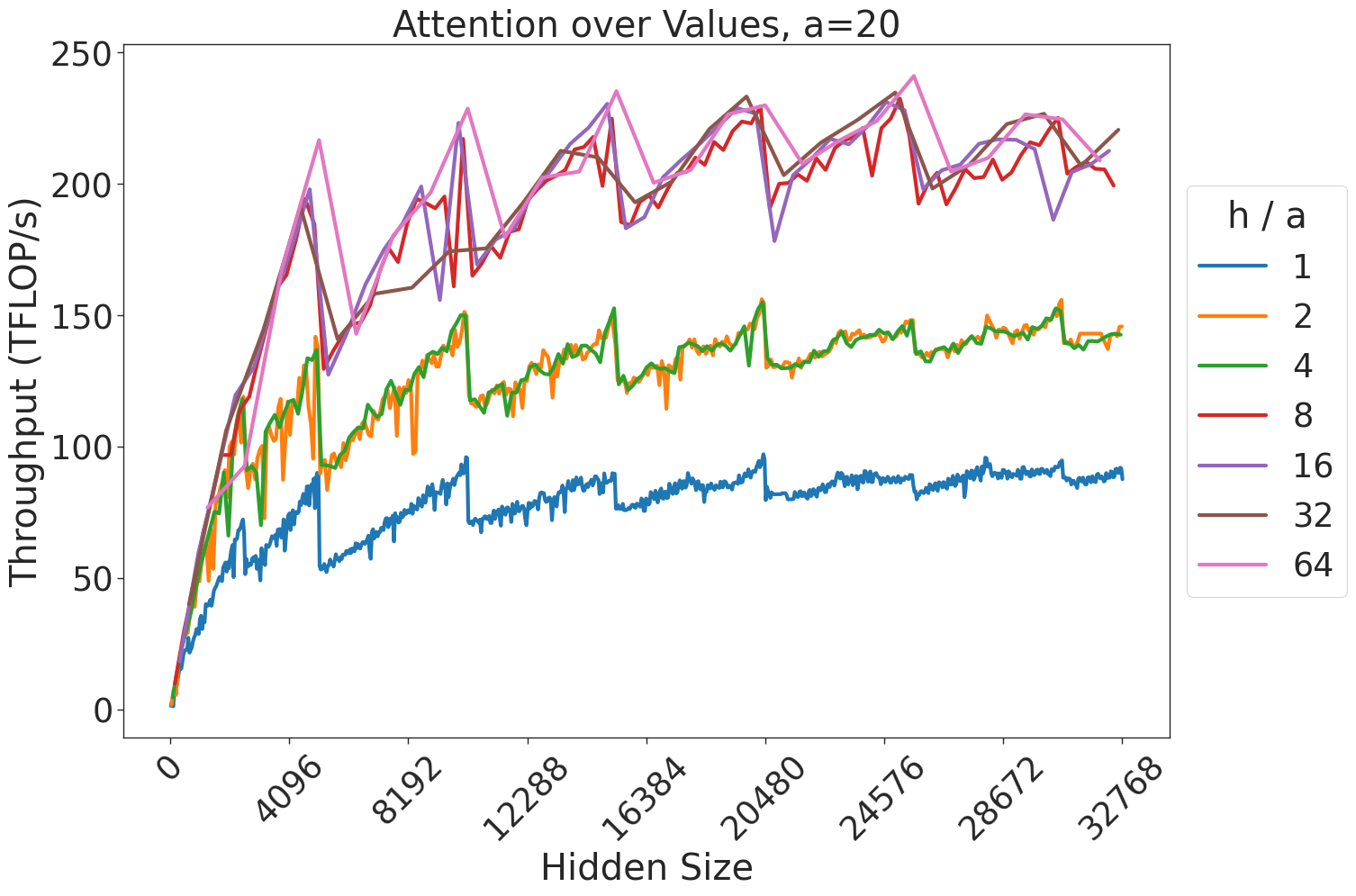

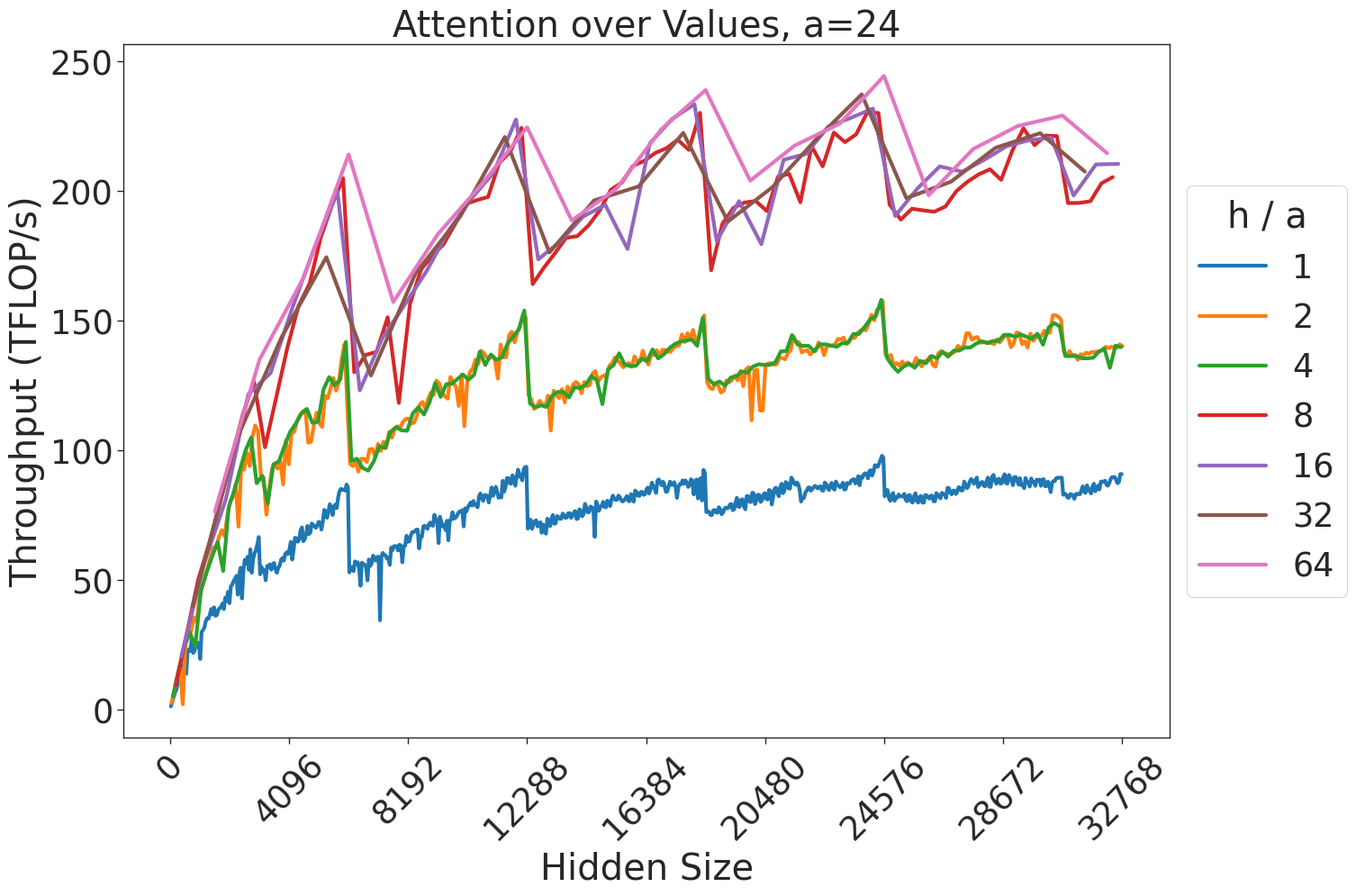

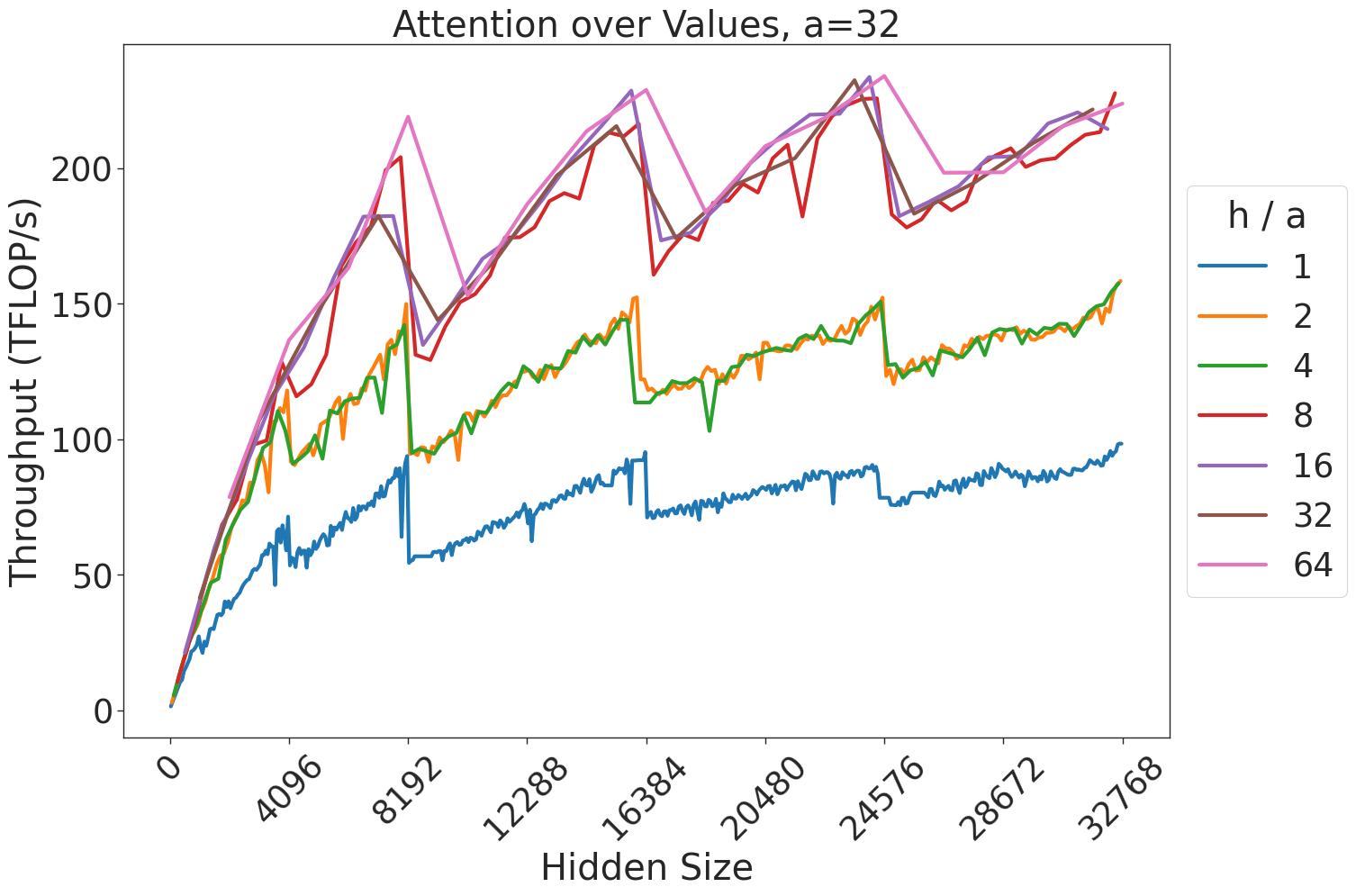

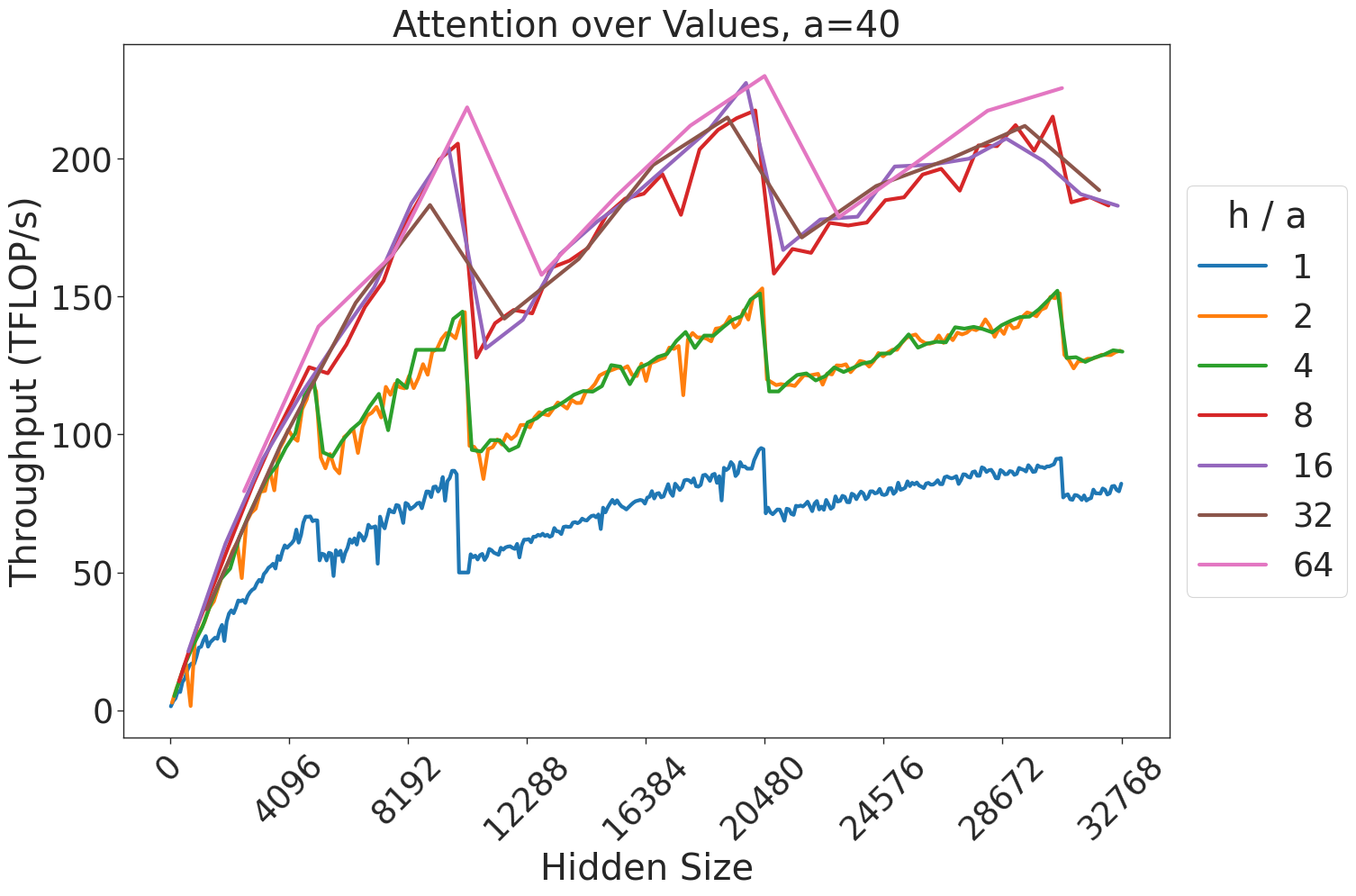

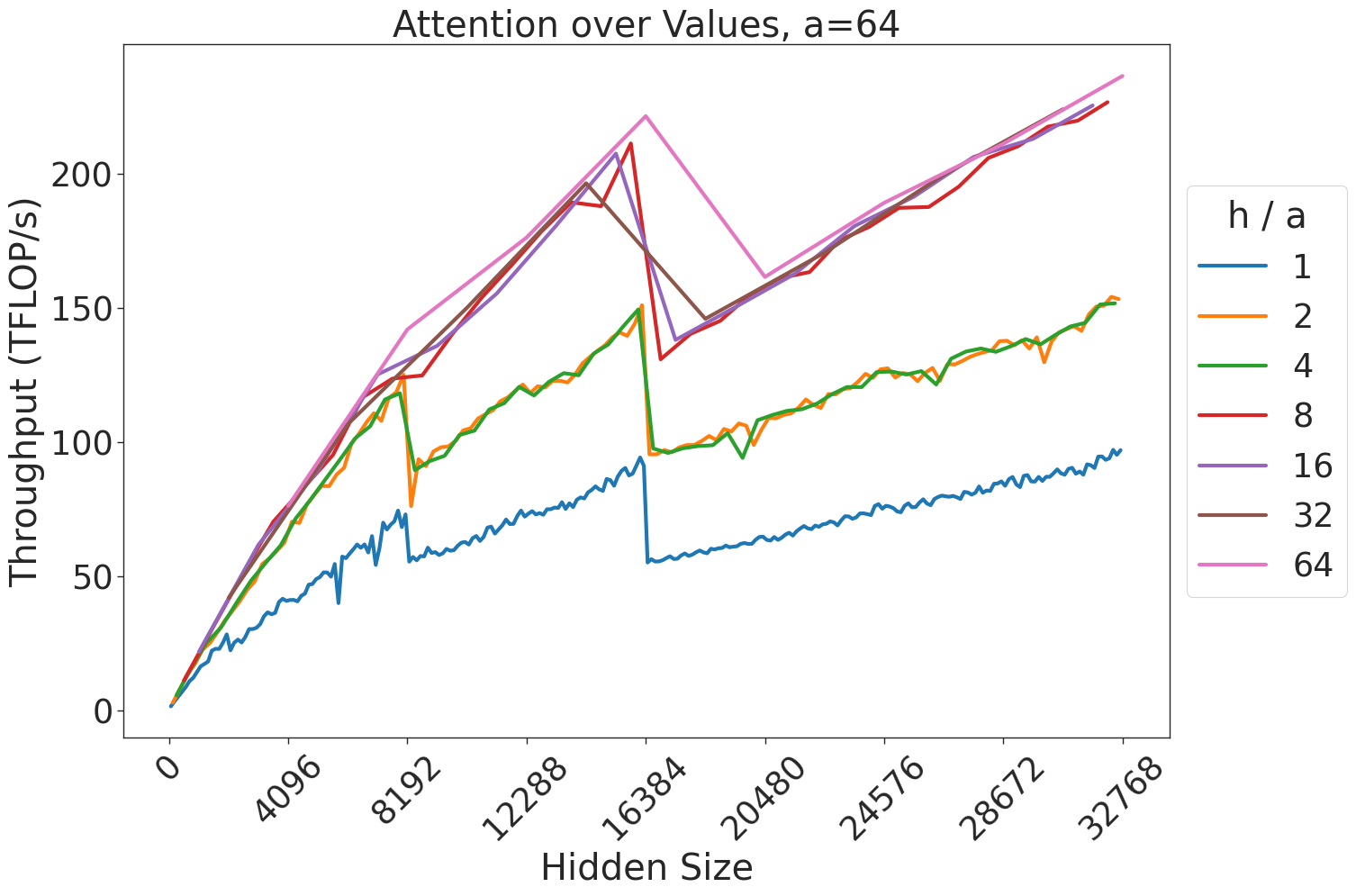

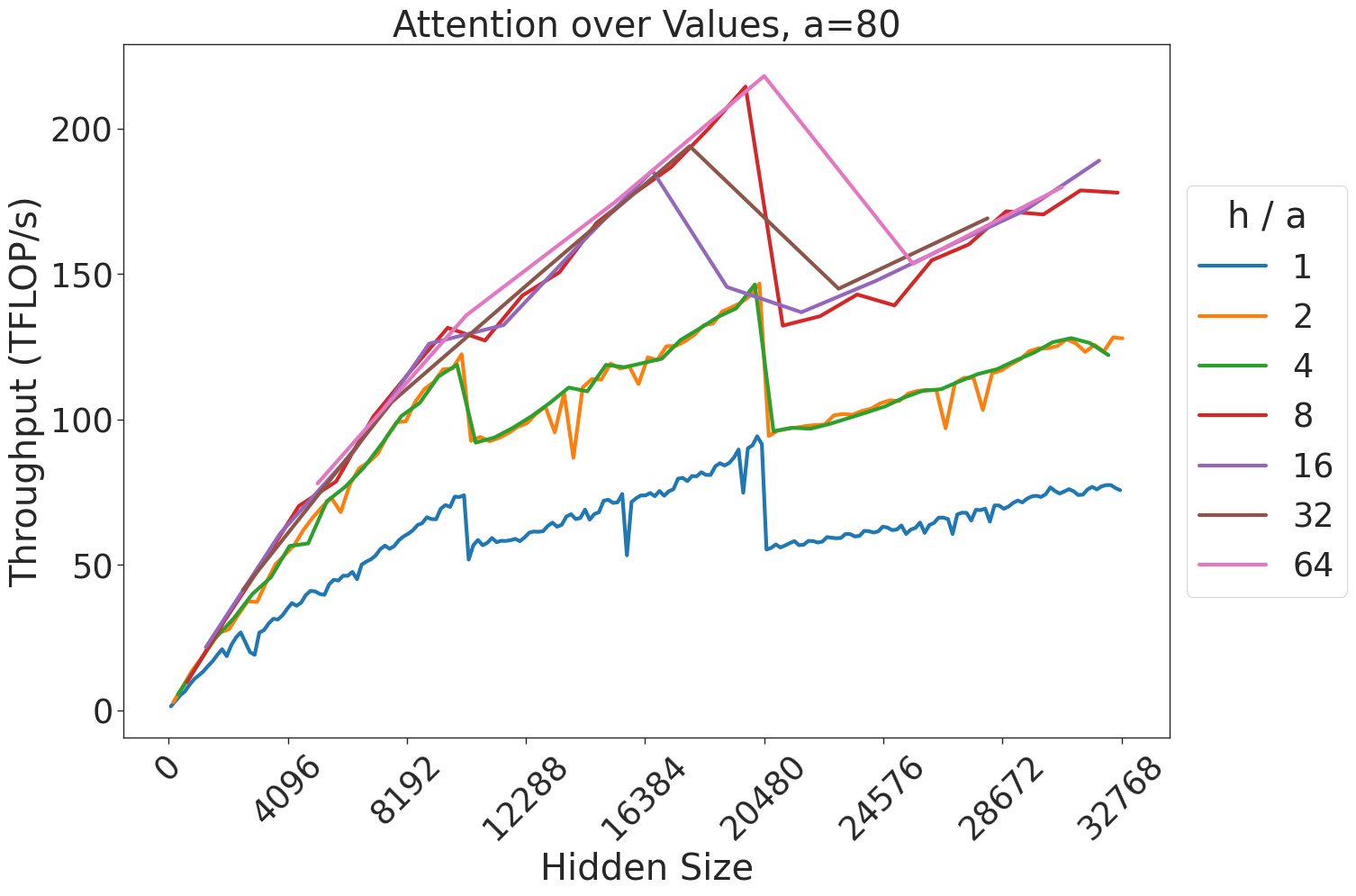

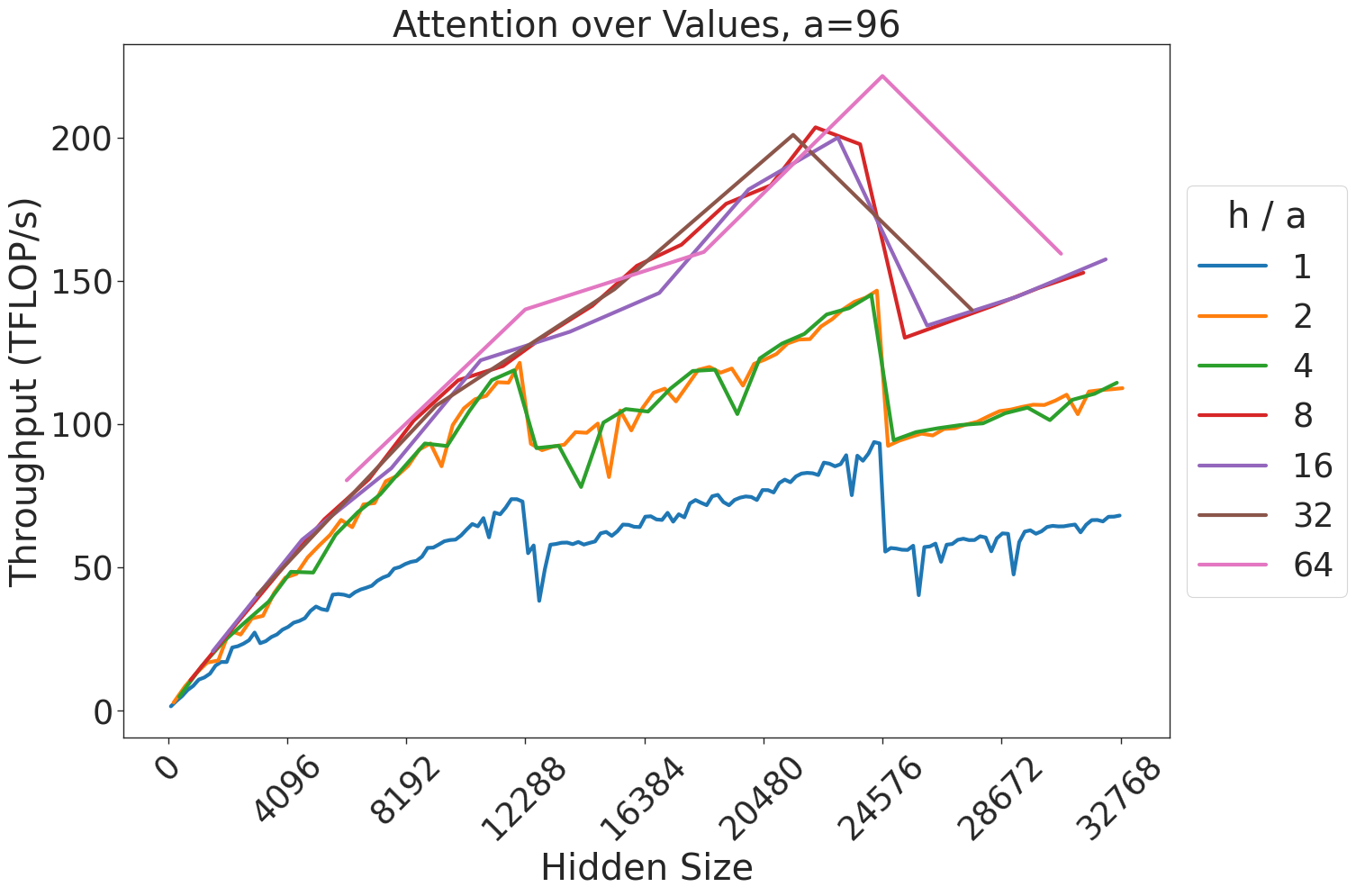

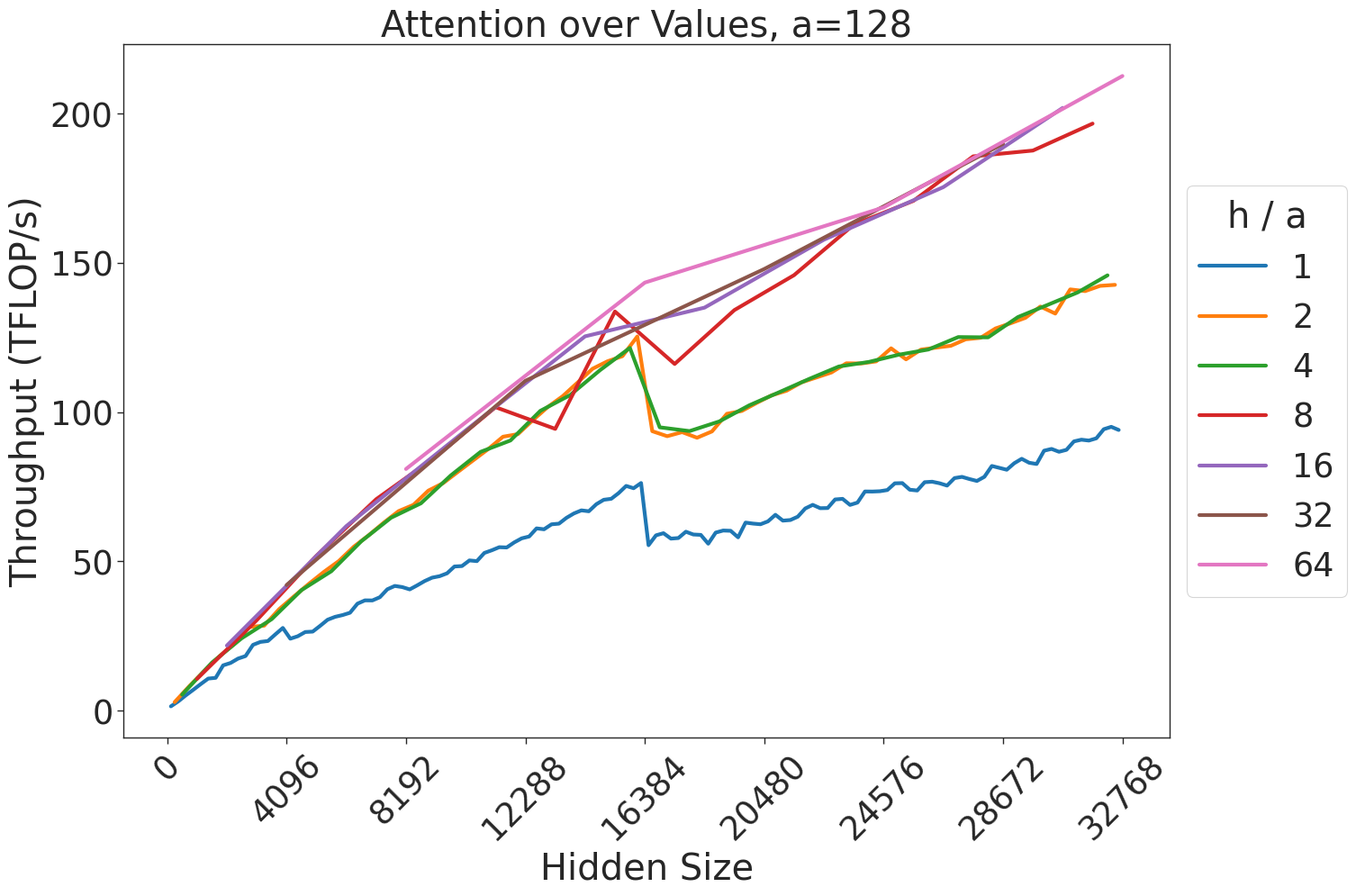

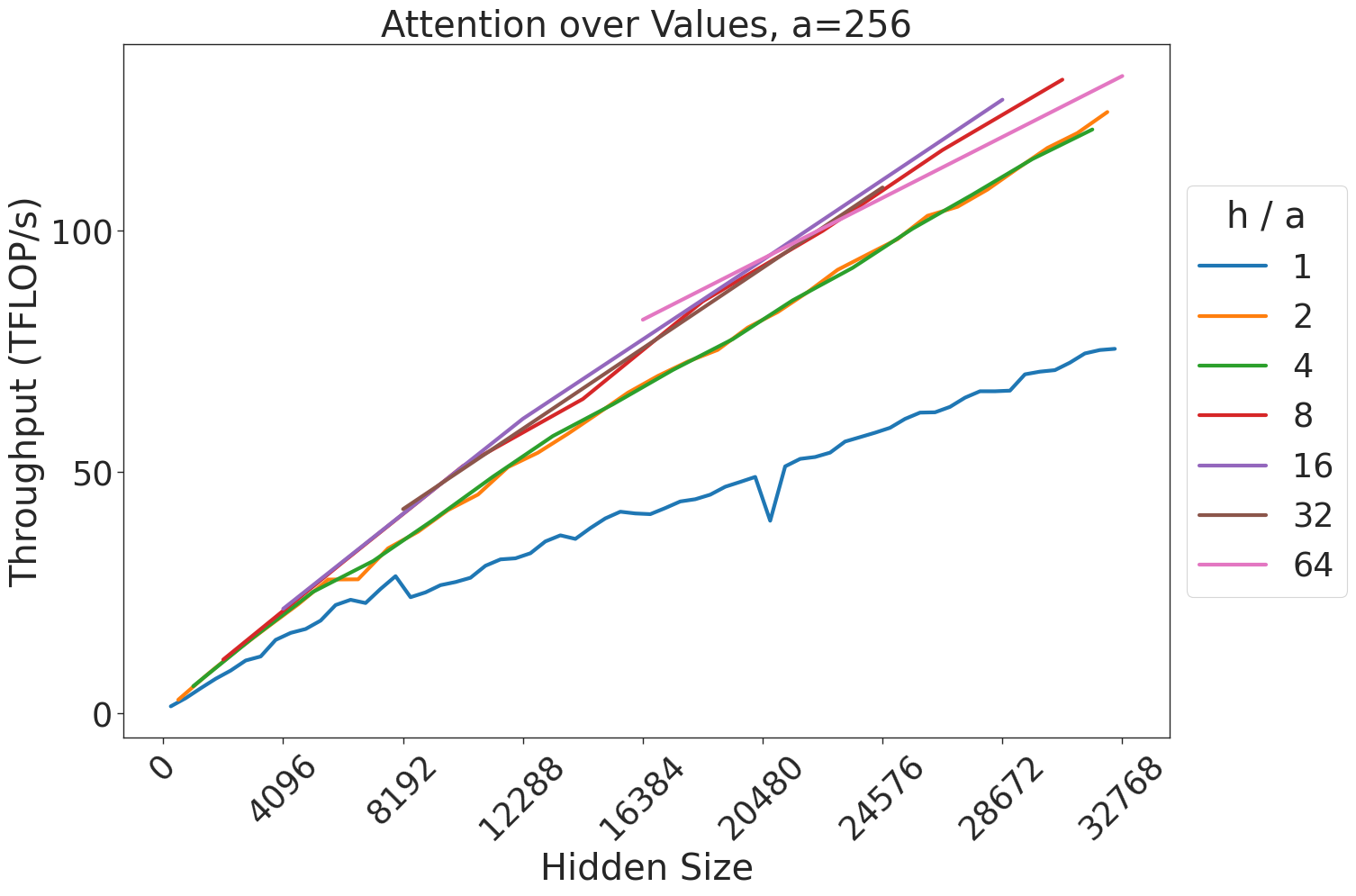

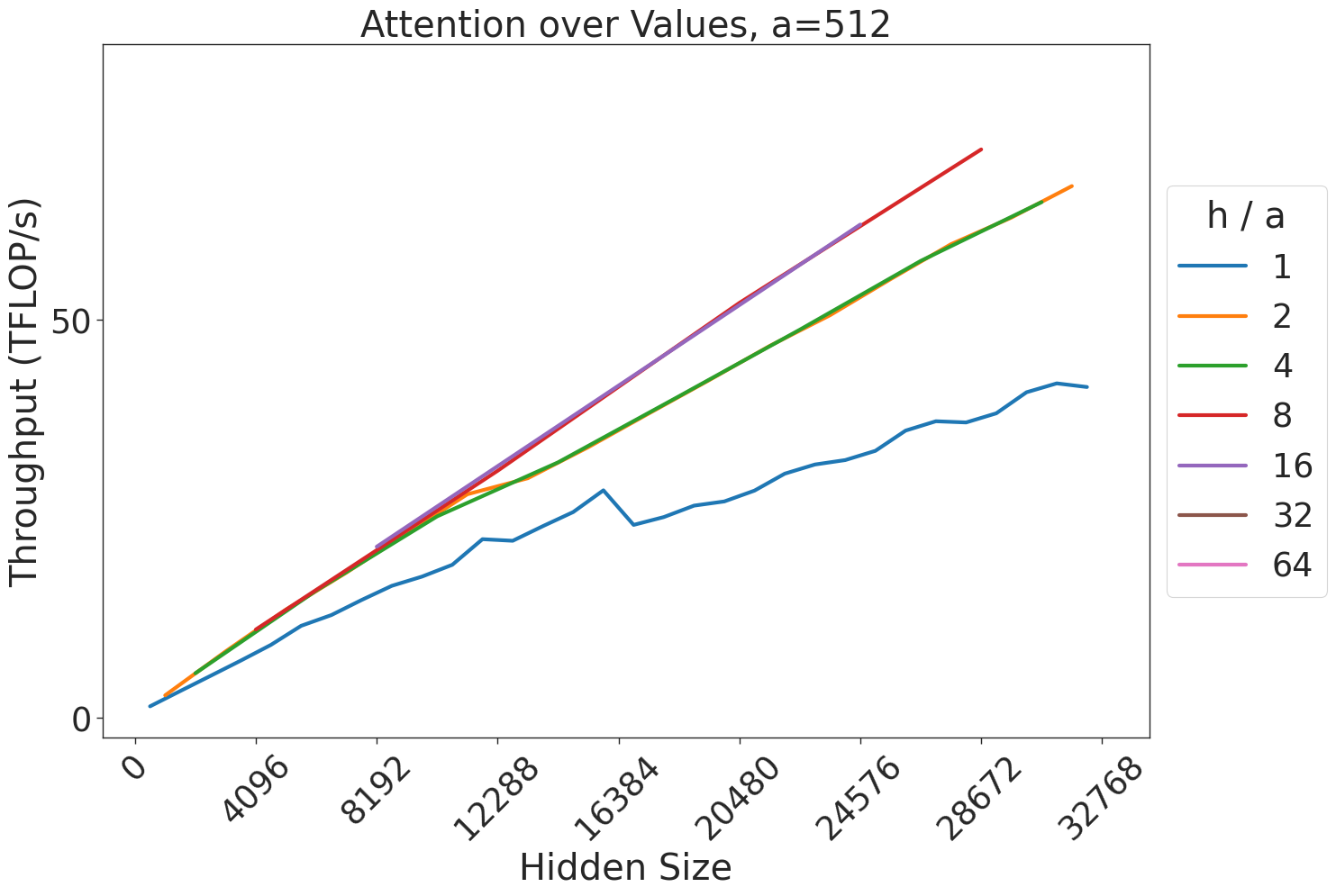

1. Attention over value computation: $\frac{b· a}{t}$ batched matrix multiplications of size $(s,s)×(s,\frac{h}{a})$ . Output is of size $(\frac{b· a}{t},s,\frac{h}{a})$ .

1. Post-attention linear projection: a single matrix multiplication of size $(b· s,\frac{h}{t})×(\frac{h}{t},h)$ . Output is of size $(b· s,h)$ .

1. Matrix multiplications in the MLP block of size $(b· s,h)×(h,\frac{4h}{t})$ and $(b· s,\frac{4h}{t})×(\frac{4h}{t},h)$ . Outputs are of size $(b· s,\frac{4h}{t})$ and $(b· s,h)$ .

The total number of parameters in a transformer can be calculated using the formula $P=12h^2L+13hL+(v+s)h$ . This is commonly approximated as $P=12h^2L$ , omitting the lower-order terms.

| Module Input Embedding Layer Norm 1 | GEMM Size — — | Figure — — |

| --- | --- | --- |

| $QKV$ Transform | $(b· s,h)×(h,\frac{3h}{t})$ | 16 |

| Attention Score | $(\frac{b· a}{t},s,\frac{h}{a})×(\frac{b· a}{t},\frac{h}{a},s)$ | 7a 8 |

| Attn over Value | $(\frac{b· a}{t},s,s)×(\frac{b· a}{t},s,\frac{h}{a})$ | 7b 9 |

| Linear Projection | $(b· s,\frac{h}{t})×(\frac{h}{t},h)$ | 19 |

| Layer Norm 2 | — | — |

| MLP $h$ to $4h$ | $(b· s,h)×(h,\frac{4h}{t})$ | 10a |

| MLP $4h$ to $h$ | $(b· s,\frac{4h}{t})×(\frac{4h}{t},h)$ | 10b |

| Linear Output | $(b· s,v)×(v,h)$ | 20 |

TABLE II: Summary of operators in the transformer layer considered in this paper, along with the size of the GEMMs used to execute these operators.

Here, we make the assumption that the projection weight dimension in the multi-headed attention block is $h/a$ , which is the default in existing implementations like Megatron [33] and GPT-NeoX [3].

The total number of compute operations needed to perform a forward pass for training is then $24bsh^2+4bs^2h=24bsh^2≤ft(1+\frac{s}{6h}\right)$ .

Parallelization Across GPUs. Due to the extreme size of modern transformer models, and the additional buffers and activations needed for training, it is common to split transformers across multiple GPUs using tensor and pipeline parallelism [33, 25]. Since this paper focuses on the computations being done on a single GPU, we will largely ignore parallelism. When we speak of the hidden size of a model, that should be understood to mean the hidden size per GPU. For example, with $t$ -way tensor parallelism, the hidden size per GPU is typically $h/t$ . We leave an analysis of the implications of pipeline and sequence parallelism on optimal model shapes to future work.

| AWS p4d ORNL Summit SDSC Expanse | NVIDIA NVIDIA NVIDIA | 8x(A100 40GB) 6x(V100 16GB) 4x(V100 32GB) | Intel Cascade Lake 8275CL IBM POWER9 AMD EPYC 7742 | Amazon EFA [400 Gbps] InfiniBand EDR [200 Gbps] InfiniBand HDR [200 Gbps] | NVLINK [600 GBps] NVLINK (2x3) [100 GBps] NVLINK [100 GBps] |

| --- | --- | --- | --- | --- | --- |

TABLE III: Hardware systems used in this paper.

## IV Experimental Setup

### IV-A Hardware Setup

All experimental results were measured on one of the systems described in Table III. We used compute from a wide variety of sources such as Oak Ridge National Laboratory (ORNL), the San Diego Supercomputing Center (SDSC), and cloud providers such as AWS and Cirrascale. In order to increase the coverage of our takeaways as much as possible, we have included a diverse range of systems in this study.

### IV-B Software Setup

Each hardware setup has used slightly different software. For the V100 experiments, we used PyTorch 1.12.1 and CUDA 11.3. For the A100 experiments, we used PyTorch 1.13.1, CUDA 11.7. For H100 experiments, we used PyTorch 2.1.0 and CUDA 12.2.2. For MI250X experiments, we used PyTorch 2.1.1 and ROCM 5.6.0. All transformer implementations are ported from GPT-NeoX [3].

## V GEMM Results

<details>

<summary>extracted/5378885/figures/mm/basicGemmMSweep.png Details</summary>

### Visual Description

# Technical Document Extraction: GPU Throughput Analysis

## Chart Description

This image is a **line chart** comparing the throughput performance of two GPU models (A100 and V100) across varying computational loads (denoted as "m"). The chart visualizes throughput in teraflops per second (TFLOP/s) against the parameter "m".

---

### Axis Labels and Markers

- **X-axis (Horizontal):**

- Label: `m`

- Markers: `0`, `2000`, `4000`, `6000`, `8000`

- **Y-axis (Vertical):**

- Label: `Throughput (TFLOP/s)`

- Markers: `0`, `50`, `100`, `150`, `200`

---

### Legend

- **Placement:** Right side of the chart (outside the plot area).

- **Labels and Colors:**

- `A100` (Blue line with circular markers)

- `V100` (Orange line with circular markers)

---

### Data Series and Trends

#### A100 (Blue Line)

- **Trend:** Steep upward curve, indicating exponential growth in throughput as "m" increases.

- **Data Points (x, y):**

- (0, 0)

- (1000, 30)

- (2000, 120)

- (4000, 170)

- (8000, 210)

#### V100 (Orange Line)

- **Trend:** Gradual upward slope, indicating linear growth in throughput as "m" increases.

- **Data Points (x, y):**

- (0, 0)

- (1000, 10)

- (2000, 60)

- (4000, 80)

- (8000, 90)

---

### Key Observations

1. **Performance Gap:**

- A100 consistently outperforms V100 across all values of "m".

- At "m = 8000", A100 achieves **210 TFLOP/s**, while V100 achieves **90 TFLOP/s**.

2. **Scalability:**

- A100 demonstrates superior scalability, with throughput increasing by **~180 TFLOP/s** from "m = 0" to "m = 8000".

- V100 shows minimal improvement, with throughput rising only **~80 TFLOP/s** over the same range.

---

### Spatial Grounding

- **Legend Position:** Right-aligned, outside the plot area.

- **Data Point Verification:**

- Blue markers (A100) align with the blue line.

- Orange markers (V100) align with the orange line.

---

### Notes

- No additional text, tables, or embedded diagrams are present.

- All textual information is in **English**.

- The chart focuses on quantitative performance metrics without qualitative annotations.

</details>

(a) $(m,4096)×(4096,m)$

<details>

<summary>extracted/5378885/figures/mm/basicGemmKSweep.png Details</summary>

### Visual Description

# Technical Document Analysis: GPU Throughput Comparison

## Image Description

The image is a **line chart** comparing the throughput (in TFLOPs per second) of two GPUs, **A100** and **V100**, plotted against a variable **k** (x-axis). The chart includes labeled axes, a legend, and two distinct data series.

---

## Key Components

### Axis Labels

- **Y-axis**: "Throughput (TFLOP/s)"

- Range: 0 to 150 (increments of 50).

- **X-axis**: "k"

- Range: 0 to 500 (increments of 100).

### Legend

- Located on the **right side** of the chart.

- **Blue line**: A100 GPU.

- **Orange line**: V100 GPU.

---

## Data Series Analysis

### A100 (Blue Line)

- **Trend**:

- Starts at (0, 0).

- Sharp upward slope to ~100 TFLOP/s at **k=50**.

- Continues rising with fluctuations, peaking at **~170 TFLOP/s** around **k=250**.

- Dips to **~140 TFLOP/s** at **k=300**, then rises again to **~160 TFLOP/s** at **k=400**, and finally reaches **~180 TFLOP/s** at **k=500**.

- **Key Data Points**:

- (0, 0)

- (50, 100)

- (250, 170)

- (300, 140)

- (400, 160)

- (500, 180)

### V100 (Orange Line)

- **Trend**:

- Starts at (0, 0).

- Gradual upward slope to **~50 TFLOP/s** at **k=200**.

- Dips to **~40 TFLOP/s** at **k=300**, then rises to **~70 TFLOP/s** at **k=400**, and reaches **~80 TFLOP/s** at **k=500**.

- **Key Data Points**:

- (0, 0)

- (200, 50)

- (300, 40)

- (400, 70)

- (500, 80)

---

## Spatial Grounding

- **Legend Position**: Right side of the chart.

- **Color Consistency**:

- Blue data points (A100) match the legend.

- Orange data points (V100) match the legend.

---

## Observations

1. **A100 outperforms V100** across all values of **k**, with a significantly higher throughput.

2. **A100 exhibits volatility** (e.g., dip at **k=300**), while **V100 shows a smoother trend** with a mid-chart dip.

3. **No additional text or data tables** are present in the image.

---

## Conclusion

The chart demonstrates a clear performance gap between the A100 and V100 GPUs, with A100 achieving higher throughput values for all tested **k** values. The trends suggest A100’s throughput is more sensitive to changes in **k**, while V100’s performance remains relatively stable but lower.

</details>

(b) $(27648,4096)×(4096,k)$

<details>

<summary>extracted/5378885/figures/mm/basicGemmLargeKSweep.png Details</summary>

### Visual Description

# Technical Document Analysis: GPU Throughput Comparison

## Chart Type

Line chart comparing throughput performance of two GPU models over a variable parameter `k`.

## Axis Labels

- **X-axis**: `k` (ranges from 0 to 6000, increments of 1000)

- **Y-axis**: `Throughput (TFLOP/s)` (ranges from 0 to 150, increments of 50)

## Legend

- **Location**: Right side of the chart

- **Labels**:

- `A100` (blue line with circular markers)

- `V100` (orange line with circular markers)

## Data Trends

### A100 (Blue Line)

- **Initial Value**: Starts at ~10 TFLOP/s at `k=0`

- **Trend**: Steadily increases with minor fluctuations

- **Final Value**: Reaches ~170 TFLOP/s at `k=6000`

- **Key Observations**:

- Sharp rise from `k=0` to `k=1000` (10 → ~120 TFLOP/s)

- Gradual ascent with periodic dips (e.g., ~150 → ~140 at `k=3000`)

- Consistent upward trajectory after `k=4000`

### V100 (Orange Line)

- **Initial Value**: Starts at 0 TFLOP/s at `k=0`, jumps to ~60 TFLOP/s

- **Trend**: Plateaus at ~80 TFLOP/s with minor oscillations

- **Final Value**: Remains ~80 TFLOP/s at `k=6000`

- **Key Observations**:

- Immediate spike at `k=0` (0 → 60 TFLOP/s)

- Stable performance with slight fluctuations (e.g., 80 → 85 → 78 between `k=2000` and `k=4000`)

- No significant growth beyond `k=1000`

## Spatial Grounding

- **Legend Position**: Right-aligned, outside the plot area

- **Data Point Colors**:

- Blue markers correspond to `A100` (confirmed)

- Orange markers correspond to `V100` (confirmed)

## Component Isolation

1. **Header**: No explicit header text

2. **Main Chart**:

- Dual-line plot with distinct markers

- Y-axis scaled logarithmically? (No, linear scale)

3. **Footer**: No footer text

## Critical Data Points

| `k` Value | A100 Throughput (TFLOP/s) | V100 Throughput (TFLOP/s) |

|-----------|---------------------------|---------------------------|

| 0 | 10 | 60 |

| 1000 | ~120 | ~70 |

| 2000 | ~140 | ~75 |

| 3000 | ~150 | ~80 |

| 4000 | ~160 | ~82 |

| 5000 | ~170 | ~80 |

| 6000 | ~170 | ~80 |

## Notes

- The `A100` demonstrates superior scalability with `k`, achieving ~17x higher throughput than `V100` at `k=6000`.

- `V100` shows no meaningful improvement beyond `k=1000`, suggesting potential hardware limitations.

- Both lines use circular markers, but `A100` exhibits more pronounced variability in its growth pattern.

</details>

(c) $(2304,4096)×(4096,k)$ .

Figure 5: Throughput (in teraFLOP/s) for matrix multiplication computations of various sizes.

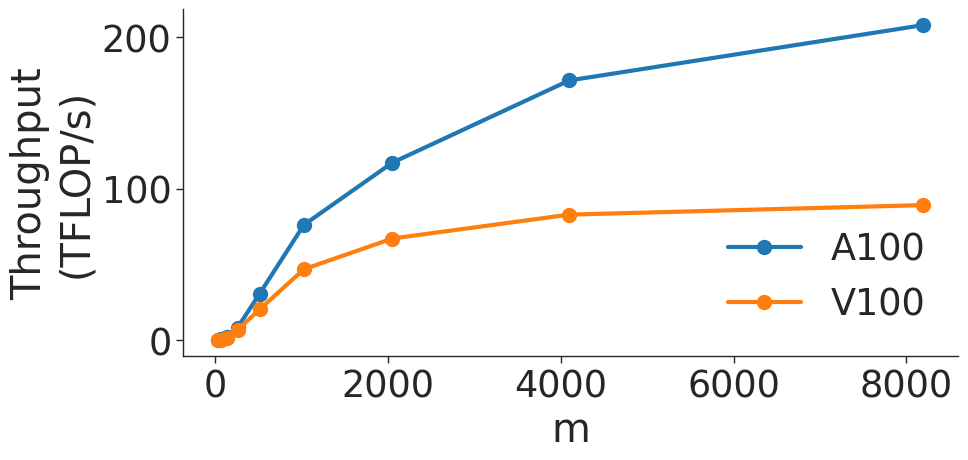

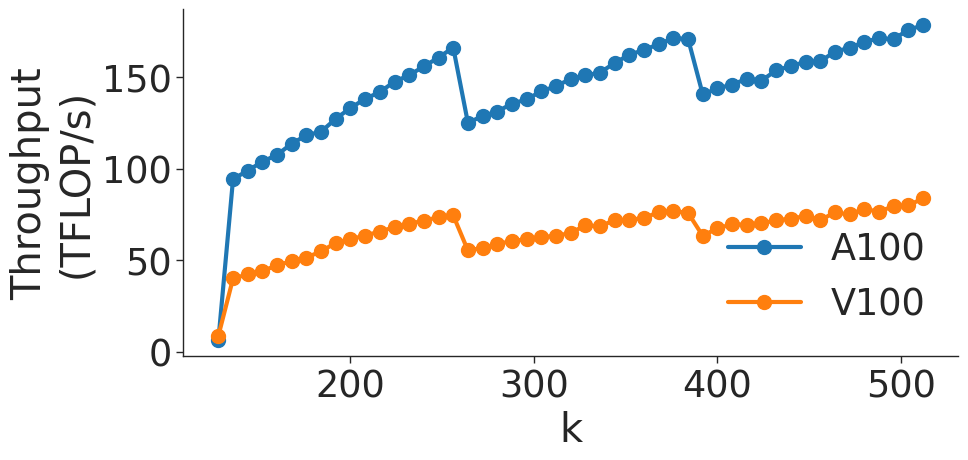

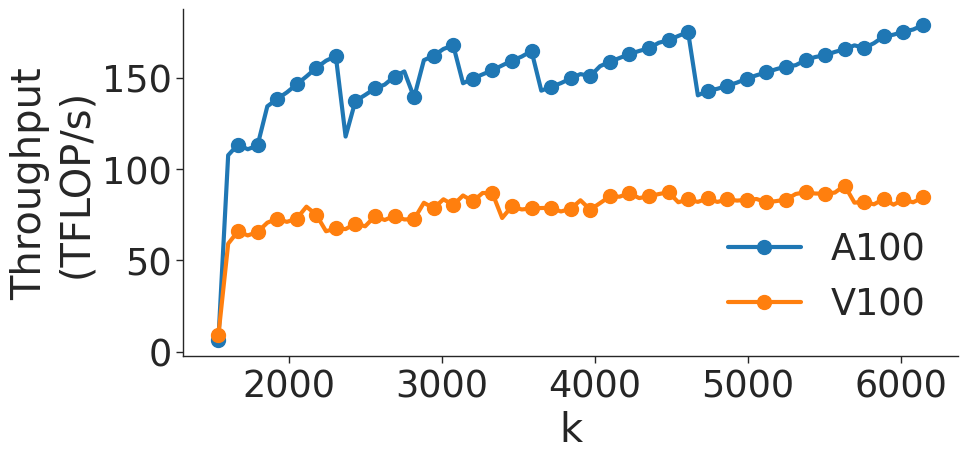

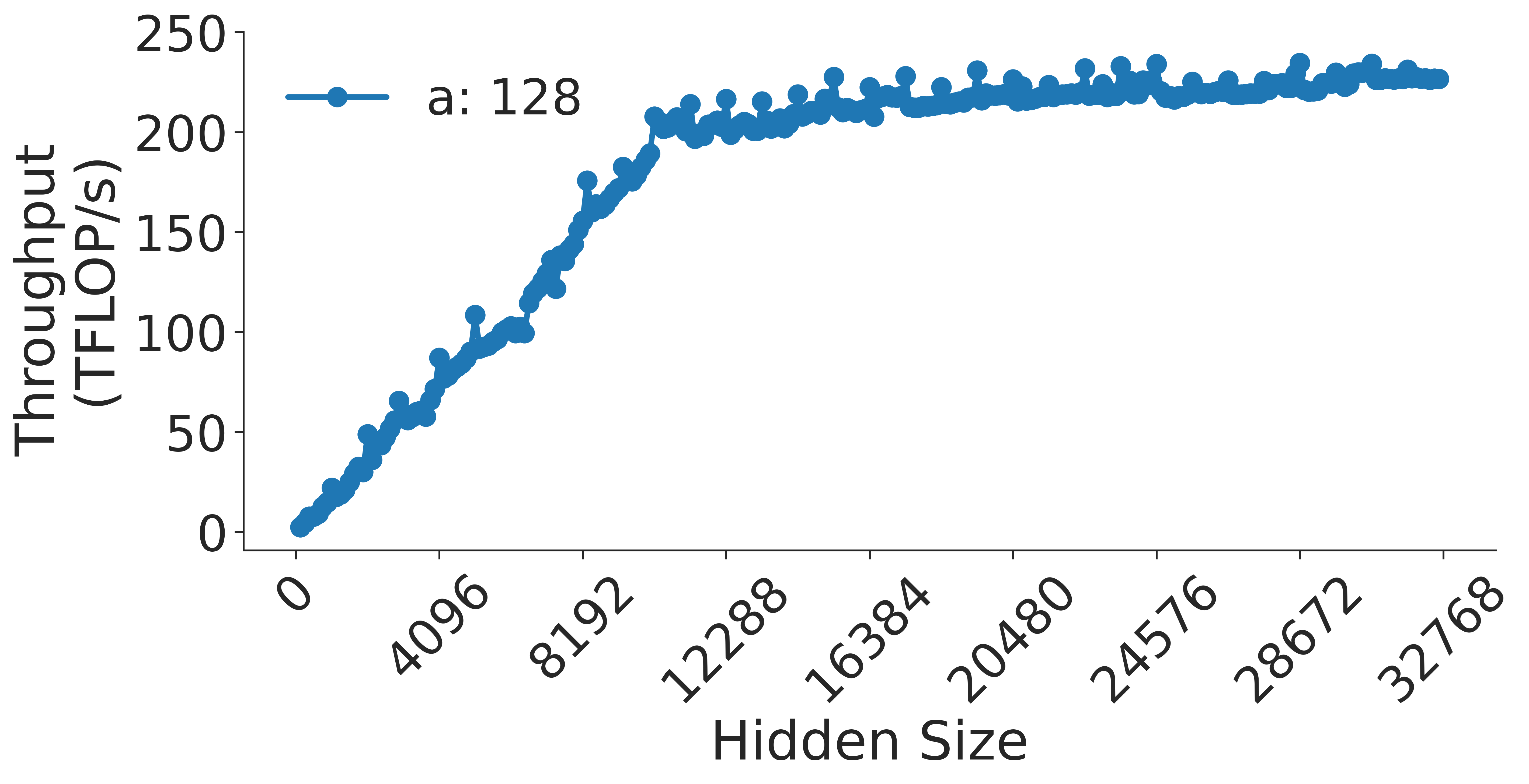

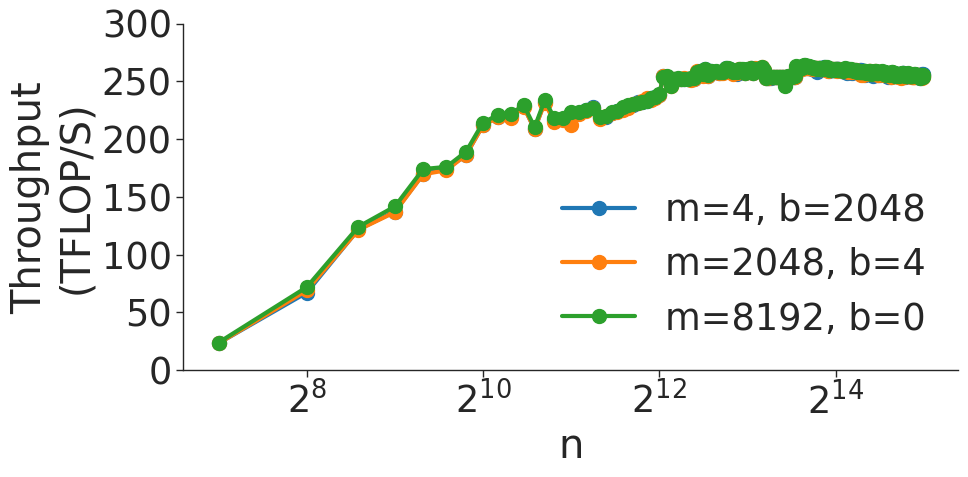

Figure 5 shows the throughput (in teraFLOP/s) of matrix multiplication computations of various sizes on two types of NVIDIA GPUs. As the GEMM size increases, the operation becomes more computationally intensive and uses memory more efficiently (GEMMs are memory-bound for small matrices). As shown in Figure 5 a, throughput of the GEMM kernel increases with matrix size as the kernel becomes compute-bound. However, wave quantization inefficiencies reduce the throughput when the GEMM size crosses certain thresholds. The effects of wave quantization can be seen clearly in Figure 5 b. Additionally, when the size of the GEMM is sufficiently large, PyTorch may automatically choose a tile size that decreases quantization effects. In Figure 5 c, the effects of wave quantization are lessened, as PyTorch is able to better balance the improvements from GEMM parallelization and inefficiencies from wave quantization to improve throughput.

<details>

<summary>extracted/5378885/figures/bmm/v100/b_sweep.png Details</summary>

### Visual Description

# Technical Document Analysis: Throughput vs. b (Log Scale)

## Chart Description

The image is a line graph titled **"Throughput vs. b (Log Scale)"**, depicting the relationship between throughput (measured in TFLOP/s) and the variable **b** (on a logarithmic scale). The graph includes four data series, each represented by a distinct line with unique markers and colors. Below is a detailed breakdown of the components, trends, and data points.

---

### **Axis Labels and Scales**

- **Y-Axis**:

- Label: **"Throughput (TFLOP/s)"**

- Range: **0 to 100** (in increments of 25).

- **X-Axis**:

- Label: **"b"**

- Scale: **Logarithmic** (base 2).

- Markers: **2¹, 2³, 2⁵** (x-values).

---

### **Legend**

The legend is positioned on the **right side** of the graph and maps colors/markers to **m** values:

1. **Blue line with circles**: **m = 1024**

2. **Green line with circles**: **m = 4096**

3. **Orange line with squares**: **m = 2048**

4. **Red line with circles**: **m = 8192**

---

### **Data Series and Trends**

#### 1. **m = 1024 (Blue Line with Circles)**

- **Trend**: Steady upward slope.

- **Data Points**:

- At **2¹**: ~50 TFLOP/s

- At **2³**: ~60 TFLOP/s

- At **2⁵**: ~70 TFLOP/s

#### 2. **m = 4096 (Green Line with Circles)**

- **Trend**: Sharp decline at **2¹**, followed by a slight downward trend.

- **Data Points**:

- At **2¹**: ~90 TFLOP/s

- At **2³**: ~75 TFLOP/s

- At **2⁵**: ~70 TFLOP/s

#### 3. **m = 2048 (Orange Line with Squares)**

- **Trend**: Gradual increase at **2¹**, then plateaus.

- **Data Points**:

- At **2¹**: ~70 TFLOP/s

- At **2³**: ~75 TFLOP/s

- At **2⁵**: ~75 TFLOP/s

#### 4. **m = 8192 (Red Line with Circles)**

- **Trend**: Sharp decline at **2¹**, followed by stabilization.

- **Data Points**:

- At **2¹**: ~100 TFLOP/s

- At **2³**: ~75 TFLOP/s

- At **2⁵**: ~75 TFLOP/s

---

### **Key Observations**

1. **Logarithmic X-Axis**: The x-axis (b) increases exponentially (2¹ → 2³ → 2⁵), which explains the non-linear spacing of data points.

2. **Performance Trends**:

- Higher **m** values (e.g., **m = 8192**) show steeper initial declines in throughput.

- Lower **m** values (e.g., **m = 1024**) exhibit more gradual improvements.

3. **Stabilization**: All lines converge to similar throughput values (~70–75 TFLOP/s) at **2⁵**, suggesting diminishing returns at higher **b** values.

---

### **Footer Notes**

- **Source**: "Example Data Source"

- **Note**: "Logarithmic scale on x-axis (b)"

---

### **Spatial Grounding**

- **Legend Position**: Right side of the graph.

- **Data Point Verification**:

- Colors and markers for each **m** value match the legend exactly.

- Example: At **2¹**, the red circle (m = 8192) aligns with the legend.

---

### **Conclusion**

The graph illustrates how throughput varies with **b** (logarithmically scaled) for different **m** values. Higher **m** values initially achieve higher throughput but degrade more sharply as **b** increases, while lower **m** values show more stable performance. All data points and trends are consistent with the logarithmic scale and legend annotations.

</details>

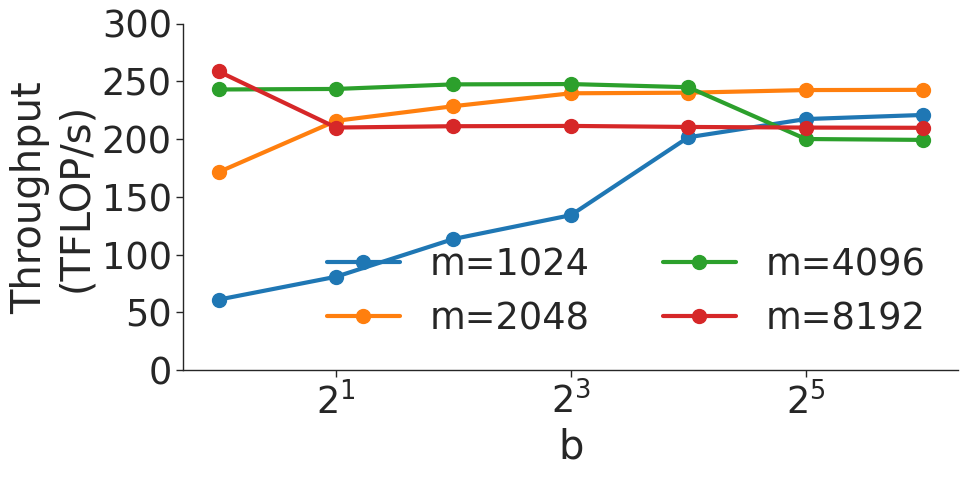

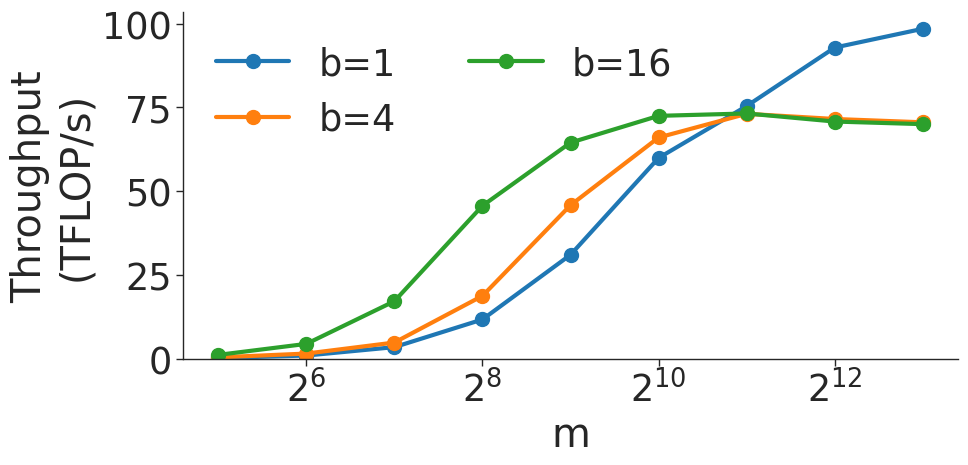

(a) $(b,m,m)×(b,m,m)$ BMM on V100 GPU.

<details>

<summary>extracted/5378885/figures/bmm/a100/b_sweep.png Details</summary>

### Visual Description

# Technical Document Extraction: Throughput vs. Parameter 'b'

## Chart Description

This image is a **line chart** visualizing the relationship between computational throughput (measured in TFLOP/s) and a parameter labeled 'b' (on a logarithmic scale). The chart includes four distinct data series, each represented by a unique line style, color, and marker.

---

### **Axis Labels and Scale**

- **X-axis (Horizontal):**

- Label: `b`

- Values: Logarithmic scale with markers at `2¹`, `2³`, and `2⁵` (i.e., 2, 8, 32).

- **Y-axis (Vertical):**

- Label: `Throughput (TFLOP/s)`

- Range: 0 to 300 (linear scale).

---

### **Legend**

- **Position:** Right side of the chart.

- **Labels and Colors:**

- `m=1024` → **Blue line** with **circle markers** (`●`).

- `m=2048` → **Orange line** with **square markers** (`■`).

- `m=4096` → **Green line** with **triangle markers** (`▲`).

- `m=8192` → **Red line** with **diamond markers** (`◆`).

---

### **Data Series and Trends**

#### 1. **m=1024 (Blue Line)**

- **Trend:** Starts at ~60 TFLOP/s at `b=2¹`, increases steadily to ~140 TFLOP/s at `b=2³`, then rises sharply to ~220 TFLOP/s at `b=2⁵`.

- **Key Points:**

- `b=2¹`: ~60 TFLOP/s

- `b=2³`: ~140 TFLOP/s

- `b=2⁵`: ~220 TFLOP/s

#### 2. **m=2048 (Orange Line)**

- **Trend:** Begins at ~180 TFLOP/s at `b=2¹`, rises slightly to ~230 TFLOP/s at `b=2³`, then plateaus at ~240 TFLOP/s at `b=2⁵`.

- **Key Points:**

- `b=2¹`: ~180 TFLOP/s

- `b=2³`: ~230 TFLOP/s

- `b=2⁵`: ~240 TFLOP/s

#### 3. **m=4096 (Green Line)**

- **Trend:** Starts at ~240 TFLOP/s at `b=2¹`, remains stable at ~250 TFLOP/s at `b=2³`, then drops to ~200 TFLOP/s at `b=2⁵`.

- **Key Points:**

- `b=2¹`: ~240 TFLOP/s

- `b=2³`: ~250 TFLOP/s

- `b=2⁵`: ~200 TFLOP/s

#### 4. **m=8192 (Red Line)**

- **Trend:** Begins at ~260 TFLOP/s at `b=2¹`, dips slightly to ~210 TFLOP/s at `b=2³`, then stabilizes at ~210 TFLOP/s at `b=2⁵`.

- **Key Points:**

- `b=2¹`: ~260 TFLOP/s

- `b=2³`: ~210 TFLOP/s

- `b=2⁵`: ~210 TFLOP/s

---

### **Spatial Grounding and Validation**

- **Legend Accuracy:**

- All line colors and markers match the legend labels (e.g., blue line = `m=1024`).

- **Data Point Consistency:**

- Confirmed that line colors and markers align with their respective `m` values across all `b` values.

---

### **Summary of Observations**

- **Throughput Trends:**

- Lower `m` values (e.g., `m=1024`) show increasing throughput with higher `b`.

- Higher `m` values (e.g., `m=4096`, `m=8192`) exhibit diminishing returns or declines at larger `b`.

- **Critical Insight:**

- The optimal `b` value for maximum throughput varies by `m`. For example, `m=4096` peaks at `b=2³`, while `m=1024` benefits most at `b=2⁵`.

---

### **Additional Notes**

- No textual blocks, tables, or non-English content are present.

- The chart focuses solely on quantitative relationships between `b` and throughput, with no qualitative annotations.

</details>

(b) $(b,m,m)×(b,m,m)$ BMM on A100 GPU.

<details>

<summary>extracted/5378885/figures/bmm/v100/BmmMSweep.png Details</summary>

### Visual Description

# Technical Document Extraction: Line Chart Analysis

## 1. **Axis Labels and Titles**

- **X-axis**: Labeled `m`, with values marked at `2^6`, `2^8`, `2^10`, and `2^12`.

- **Y-axis**: Labeled `Throughput (TFLOP/s)`, with a linear scale from `0` to `100`.

## 2. **Legend and Data Series**

- **Legend Position**: Top-left corner of the chart.

- **Data Series**:

- **Blue Line**: `b=1` (Throughput values).

- **Orange Line**: `b=4` (Throughput values).

- **Green Line**: `b=16` (Throughput values).

## 3. **Key Trends and Data Points**

### a. **Green Line (`b=16`)**

- **Trend**: Steadily increases from near `0` at `m=2^6` to approximately `75 TFLOP/s` at `m=2^12`.

- **Data Points**:

- `m=2^6`: ~`0 TFLOP/s`

- `m=2^8`: ~`45 TFLOP/s`

- `m=2^10`: ~`70 TFLOP/s`

- `m=2^12`: ~`75 TFLOP/s`

### b. **Orange Line (`b=4`)**

- **Trend**: Sharp rise from near `0` at `m=2^6` to ~`75 TFLOP/s` at `m=2^10`, then plateaus.

- **Data Points**:

- `m=2^6`: ~`0 TFLOP/s`

- `m=2^8`: ~`20 TFLOP/s`

- `m=2^10`: ~`70 TFLOP/s`

- `m=2^12`: ~`75 TFLOP/s`

### c. **Blue Line (`b=1`)**

- **Trend**: Gradual increase from near `0` at `m=2^6`, accelerating sharply after `m=2^10` to ~`95 TFLOP/s` at `m=2^12`.

- **Data Points**:

- `m=2^6`: ~`0 TFLOP/s`

- `m=2^8`: ~`10 TFLOP/s`

- `m=2^10`: ~`60 TFLOP/s`

- `m=2^12`: ~`95 TFLOP/s`

## 4. **Spatial Grounding and Color Verification**

- **Legend Colors**:

- Blue (`b=1`) matches the blue line.

- Orange (`b=4`) matches the orange line.

- Green (`b=16`) matches the green line.

- **Data Point Accuracy**: All data points align with their respective legend colors.

## 5. **Component Isolation**

- **Main Chart**: Line plot with logarithmic x-axis (`m`) and linear y-axis (`Throughput`).

- **No Additional Components**: No headers, footers, or embedded text blocks.

## 6. **Summary of Observations**

- **Performance Scaling**:

- `b=16` (green) achieves the highest throughput early and maintains a steady increase.

- `b=4` (orange) shows rapid growth but plateaus near `75 TFLOP/s`.

- `b=1` (blue) lags initially but surpasses other series at `m=2^12`.

- **Implications**: Higher `b` values correlate with better throughput, though diminishing returns are observed for `b=4` and `b=16` at larger `m`.

## 7. **Final Notes**

- The chart uses a **logarithmic scale** for the x-axis (`m`), as indicated by the exponential notation (`2^6`, `2^8`, etc.).

- No textual data or tables are present in the image.

- All trends and data points are visually consistent with the legend and axis labels.

</details>

(c) $(b,m,4096)×(b,4096,m)$ BMM on V100 GPU.

<details>

<summary>extracted/5378885/figures/bmm/a100/BmmMSweep.png Details</summary>

### Visual Description

# Technical Document Extraction: Line Chart Analysis

## Labels and Axis Titles

- **X-axis**: Labeled `m` with values: `2^6`, `2^8`, `2^10`, `2^12`.

- **Y-axis**: Labeled `Throughput (TFLOP/s)` with values: `0`, `50`, `100`, `150`, `200`, `250`, `300`.

## Legend

- **Location**: Right side of the chart.

- **Entries**:

- `b=1` (Blue line with circle markers).

- `b=4` (Orange line with square markers).

- `b=16` (Green line with diamond markers).

## Data Series and Trends

### Series 1: `b=1` (Blue)

- **Trend**: Gradual upward slope with consistent growth.

- **Data Points**:

- `m=2^6`: ~0 TFLOP/s.

- `m=2^8`: ~10 TFLOP/s.

- `m=2^9`: ~50 TFLOP/s.

- `m=2^10`: ~130 TFLOP/s.

- `m=2^11`: ~220 TFLOP/s.

- `m=2^12`: ~250 TFLOP/s.

### Series 2: `b=4` (Orange)

- **Trend**: Accelerated upward slope, surpassing `b=1` after `m=2^10`.

- **Data Points**:

- `m=2^6`: ~0 TFLOP/s.

- `m=2^8`: ~10 TFLOP/s.

- `m=2^9`: ~60 TFLOP/s.

- `m=2^10`: ~180 TFLOP/s.

- `m=2^11`: ~240 TFLOP/s.

- `m=2^12`: ~250 TFLOP/s.

### Series 3: `b=16` (Green)

- **Trend**: Steep initial rise, peaks at `m=2^11`, then declines.

- **Data Points**:

- `m=2^6`: ~0 TFLOP/s.

- `m=2^7`: ~10 TFLOP/s.

- `m=2^8`: ~50 TFLOP/s.

- `m=2^9`: ~170 TFLOP/s.

- `m=2^10`: ~230 TFLOP/s.

- `m=2^11`: ~240 TFLOP/s.

- `m=2^12`: ~210 TFLOP/s.

## Spatial Grounding

- **Legend Position**: Right-aligned, outside the plot area.

- **Color Consistency**:

- Blue (`b=1`) matches all blue data points.

- Orange (`b=4`) matches all orange data points.

- Green (`b=16`) matches all green data points.

## Key Observations

1. **Performance Scaling**: Higher `b` values (e.g., `b=16`) achieve higher throughput earlier but plateau or decline at larger `m`.

2. **Convergence**: All series converge near `m=2^12` (~250 TFLOP/s), suggesting diminishing returns at extreme scales.

3. **Efficiency**: `b=4` and `b=16` outperform `b=1` significantly at mid-to-high `m` values.

## Notes

- No non-English text or additional components (e.g., tables, heatmaps) are present.

- The chart focuses on computational throughput scaling with parameter `b` and input size `m`.

</details>

(d) $(b,m,4096)×(b,4096,m)$ BMM on A100 GPU.

Figure 6: Throughput (in teraFLOP/s) for batched matrix multiplication (BMM) computations with various dimensions.

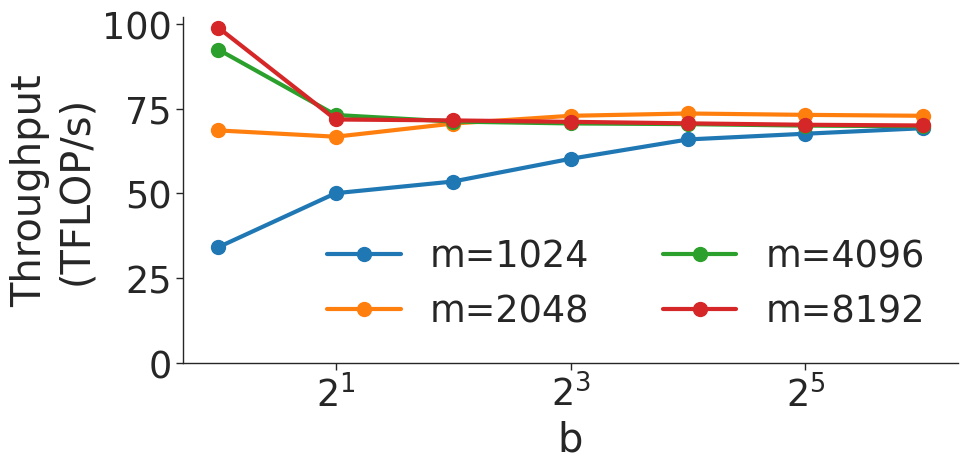

Figure 6 shows the throughput (in teraFLOP/s) of batched matrix multiplication (BMMs) computations of various sizes. Since BMMs are composed of GEMMs, the same wave quantization effects would apply (though they do not for these BMM sizes and on these GPU architectures). BMM throughput also increases as the size of the BMM and arithmetic intensity increases.

## VI Transformer Results

### VI-A Transformer as a Series of GEMMs

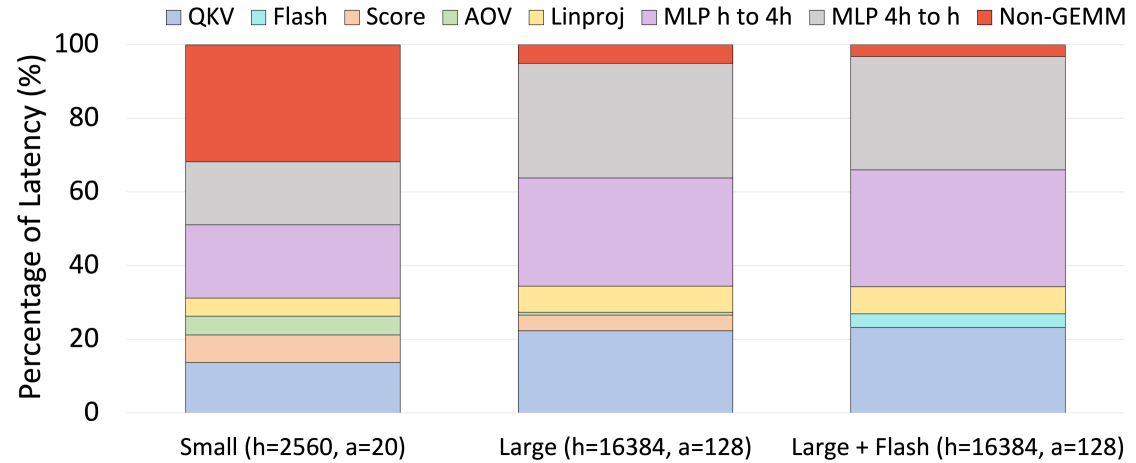

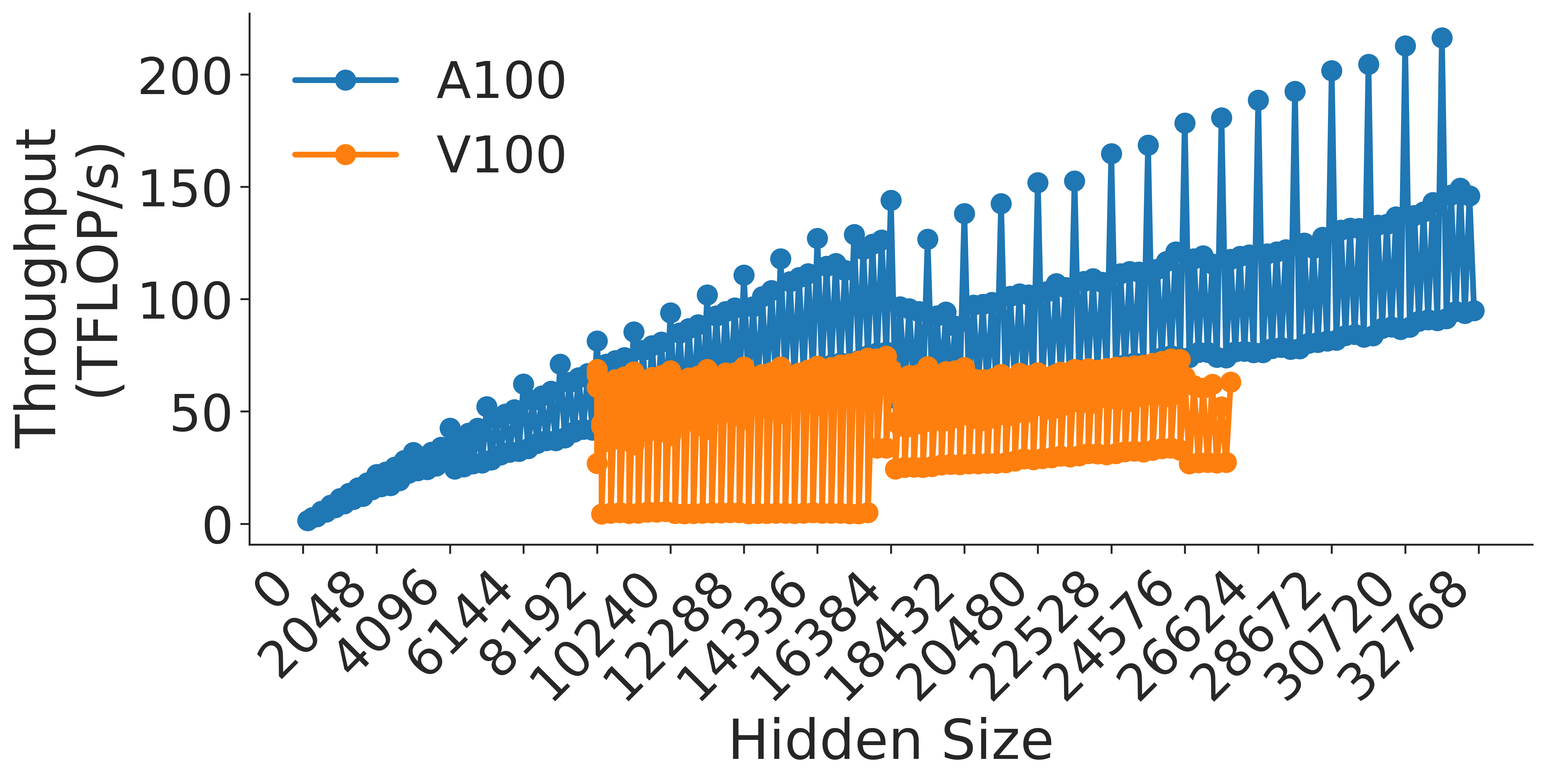

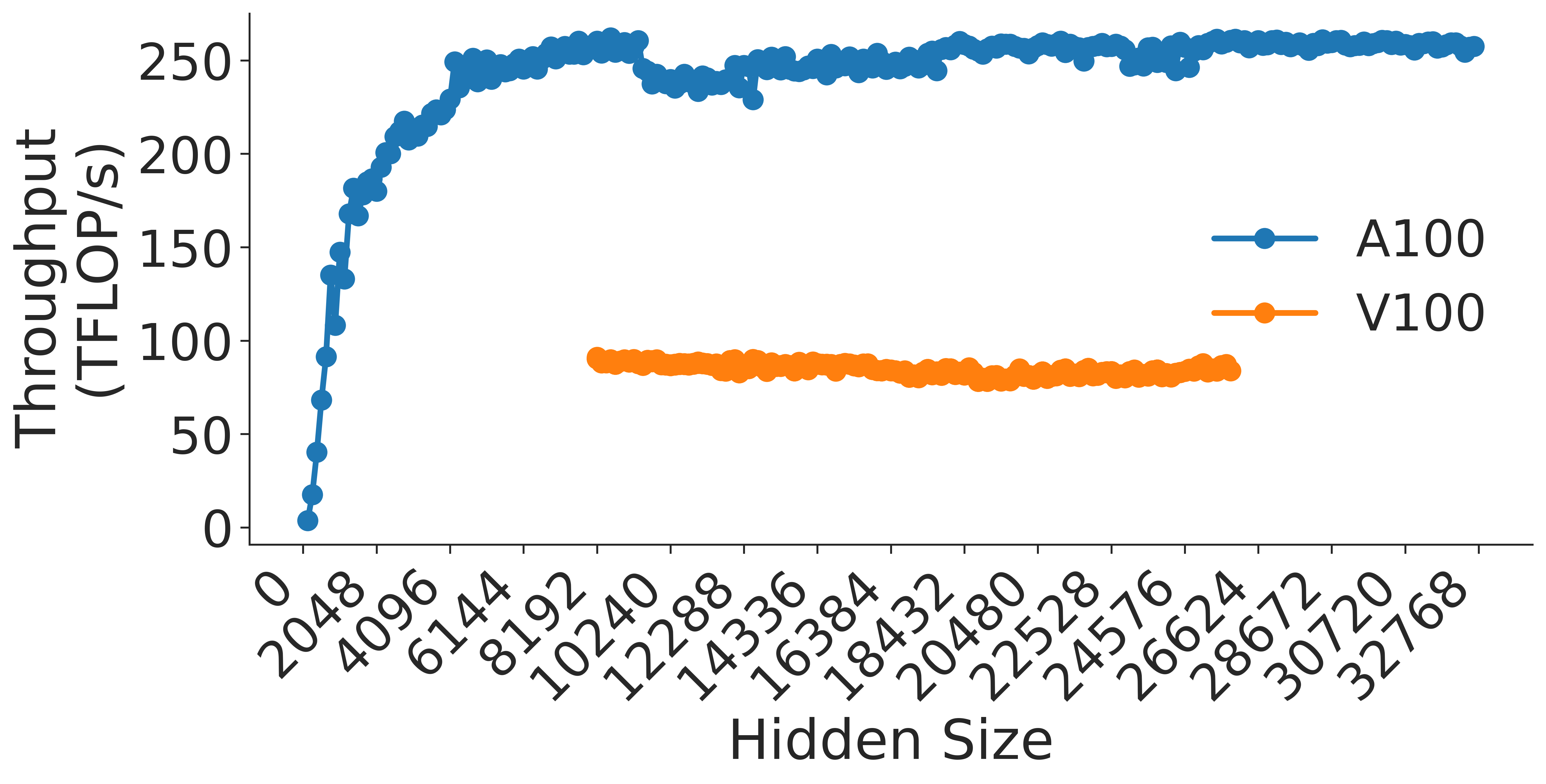

The settings of the various hyperparameters in the transformer layer controlling its shape all have an impact on its observed end-to-end throughput. Some of these hyperparameters can affect performance in subtle ways. The purpose of this section is to map GEMM performance to transformer throughput, use these mappings to explain the performance effects of relevant hyperparameters, and finally to boil down these effects into a series of practical takeaways.

<details>

<summary>extracted/5378885/figures/transformer/spikeless_sweeps/attention_key_query_problem_a32.png Details</summary>

### Visual Description

# Technical Document Extraction: Attention Key Query Score Analysis

## Chart Overview

The image depicts a line chart titled **"Attention Key Query Score, a=32"**, analyzing the relationship between **Hidden Size** (x-axis) and **Throughput (TFLOP/s)** (y-axis). The chart includes seven data series representing different **h/a** (hidden size to attention head ratio) values, with trends visualized across a logarithmic scale of Hidden Size.

---

### Key Components

1. **Title**:

- *"Attention Key Query Score, a=32"*

- Indicates the analysis focuses on attention mechanisms with a fixed parameter `a=32`.

2. **Axes**:

- **X-axis (Hidden Size)**:

- Logarithmic scale ranging from `0` to `32768`.

- Markers at intervals: `4096`, `8192`, `12288`, `16384`, `20480`, `24576`, `28672`, `32768`.

- **Y-axis (Throughput)**:

- Linear scale from `0` to `200` TFLOP/s.

- Markers at intervals: `0`, `50`, `100`, `150`, `200`.

3. **Legend**:

- Located in the **top-right corner**.

- Colors map to **h/a ratios**:

- `1` (blue), `2` (orange), `4` (green), `8` (red), `16` (purple), `32` (brown), `64` (pink).

- Confirmed spatial grounding: All line colors match legend entries exactly.

---

### Data Series Trends

1. **h/a = 1 (Blue Line)**:

- **Trend**: Gradual upward slope with minor fluctuations.

- **Key Points**:

- At Hidden Size `4096`: ~30 TFLOP/s.

- At Hidden Size `32768`: ~90 TFLOP/s.

2. **h/a = 2 (Orange Line)**:

- **Trend**: Steeper initial rise, then plateaus.

- **Key Points**:

- At Hidden Size `4096`: ~50 TFLOP/s.

- At Hidden Size `32768`: ~140 TFLOP/s.

3. **h/a = 4 (Green Line)**:

- **Trend**: Consistent upward trajectory with minor dips.

- **Key Points**:

- At Hidden Size `4096`: ~70 TFLOP/s.

- At Hidden Size `32768`: ~145 TFLOP/s.

4. **h/a = 8 (Red Line)**:

- **Trend**: Sharp rise, followed by stabilization with oscillations.

- **Key Points**:

- At Hidden Size `4096`: ~90 TFLOP/s.

- At Hidden Size `32768`: ~200 TFLOP/s.

5. **h/a = 16 (Purple Line)**:

- **Trend**: Rapid ascent, then sustained high throughput with minor dips.

- **Key Points**:

- At Hidden Size `4096`: ~120 TFLOP/s.

- At Hidden Size `32768`: ~200 TFLOP/s.

6. **h/a = 32 (Brown Line)**:

- **Trend**: Highest throughput, peaking early and maintaining near-maximum.

- **Key Points**:

- At Hidden Size `4096`: ~150 TFLOP/s.

- At Hidden Size `32768`: ~210 TFLOP/s.

7. **h/a = 64 (Pink Line)**:

- **Trend**: Highest throughput overall, with slight fluctuations.

- **Key Points**:

- At Hidden Size `4096`: ~160 TFLOP/s.

- At Hidden Size `32768`: ~210 TFLOP/s.

---

### Observations

- **Scaling Behavior**: Higher **h/a ratios** (e.g., 32, 64) achieve significantly higher throughput, especially at larger Hidden Sizes.

- **Efficiency**: Lower ratios (e.g., 1, 2) show diminishing returns as Hidden Size increases.

- **Stability**: Lines for h/a ≥ 8 exhibit smoother trends compared to lower ratios, which show more volatility.

---

### Notes

- No non-English text detected.

- No embedded data tables or heatmaps present.

- All textual elements (labels, titles, legend) are in English.

This analysis confirms that throughput scales non-linearly with Hidden Size, with higher h/a ratios achieving optimal performance at larger scales.

</details>

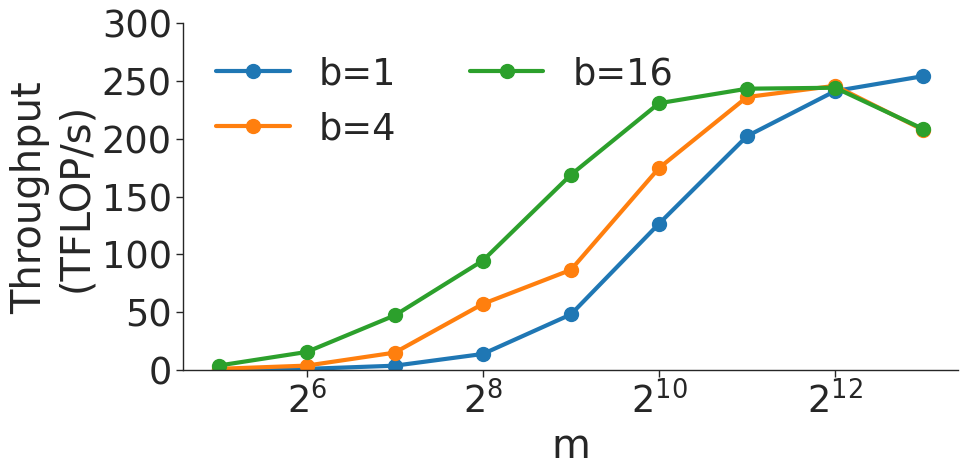

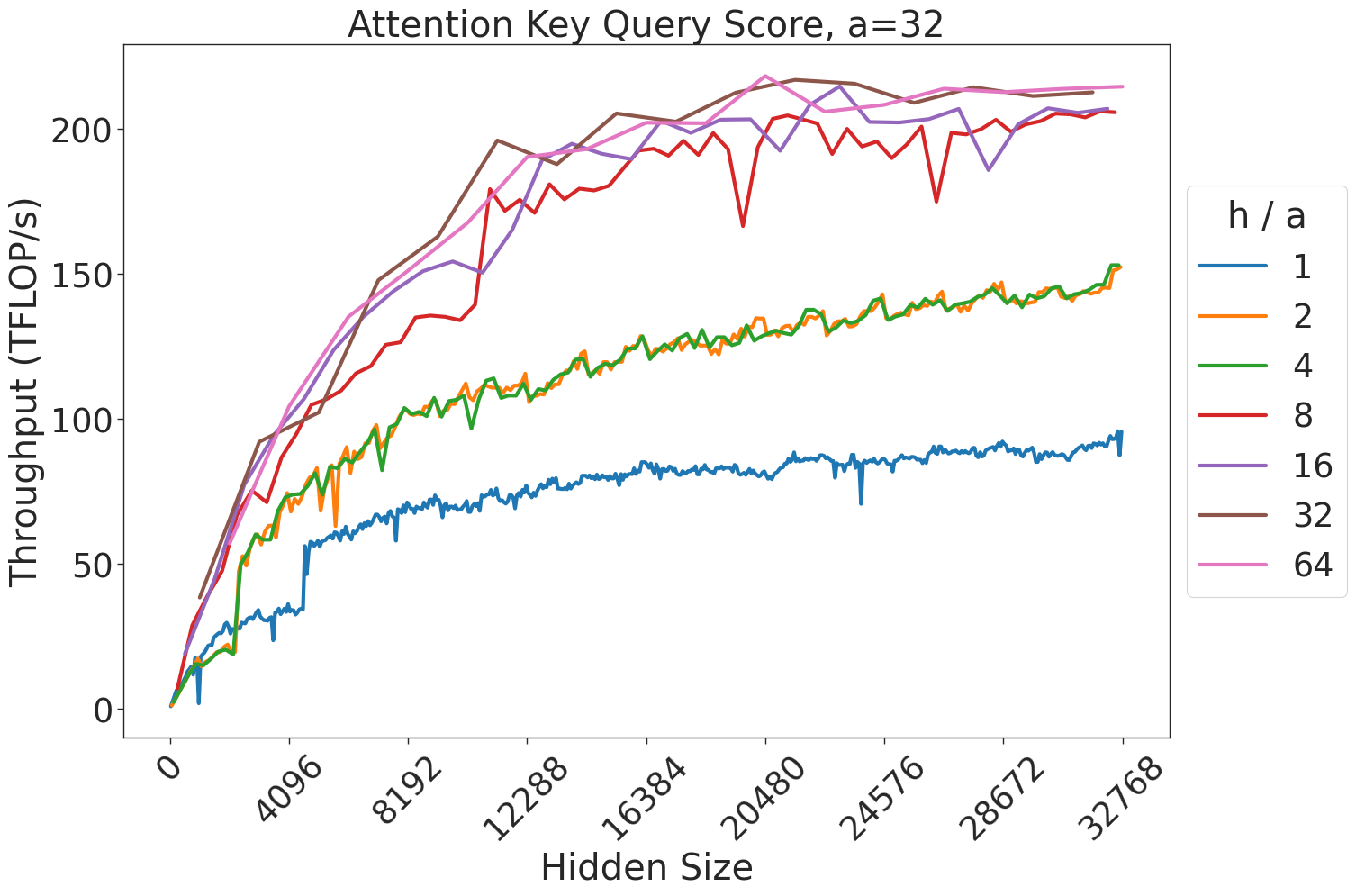

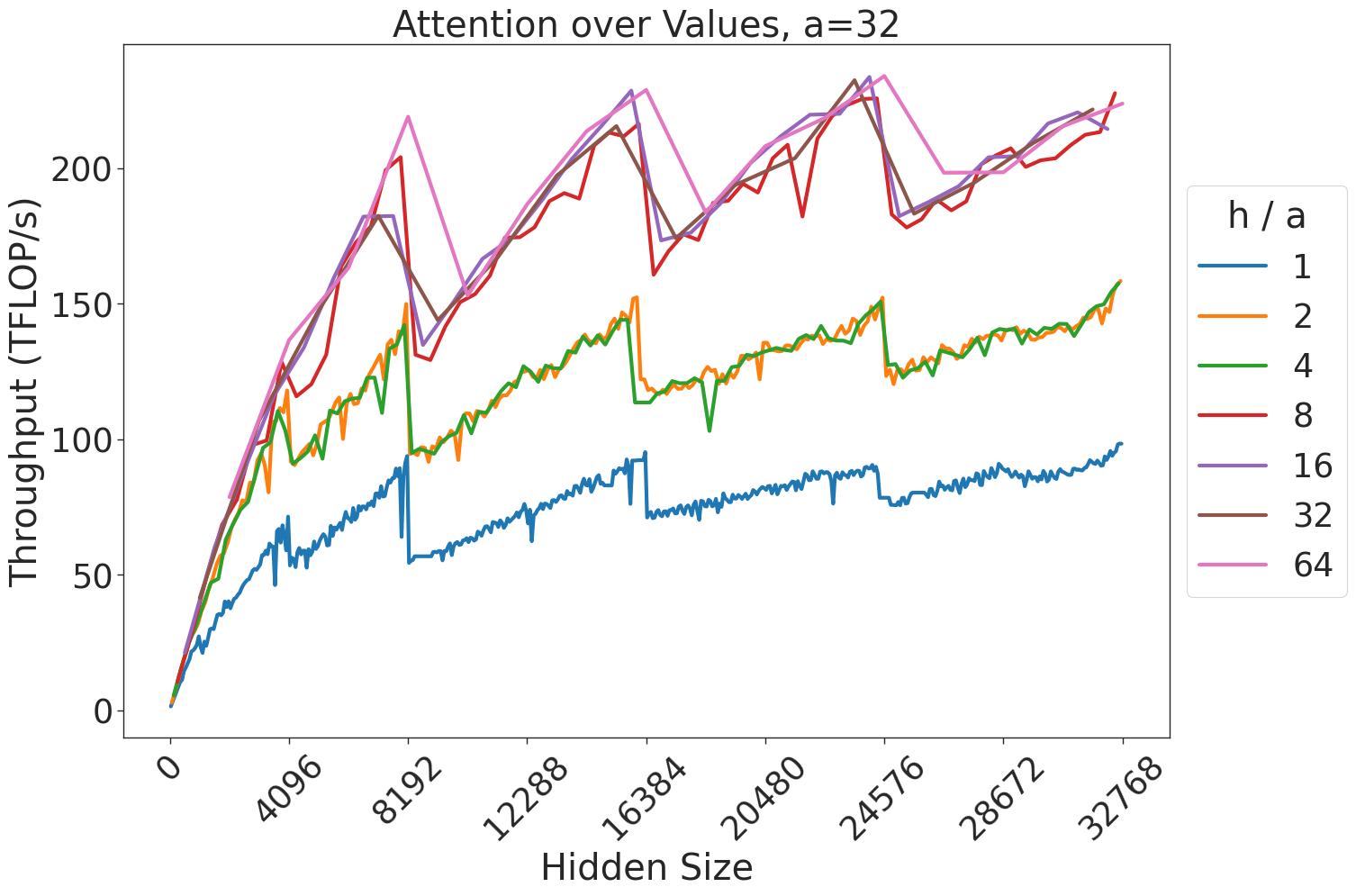

(a) Attention key-query score GEMM throughput for 32 attention heads.

<details>

<summary>extracted/5378885/figures/transformer/spikeless_sweeps/attention_problem_times_values_a32.png Details</summary>

### Visual Description

# Technical Document Extraction: Line Graph Analysis

## Image Description

The image is a line graph titled **"Attention over Values, a=32"**. It visualizes the relationship between **Hidden Size** (x-axis) and **Throughput (TFLOPs/s)** (y-axis). The graph includes six data series, each represented by a distinct colored line, corresponding to different **h/a** ratios. The legend is positioned on the right side of the graph.

---

## Key Components

### 1. **Title**

- **Text**: "Attention over Values, a=32"

- **Purpose**: Indicates the focus of the analysis (attention mechanisms) and a fixed parameter value (a=32).

### 2. **Axes**

- **X-axis (Hidden Size)**:

- **Label**: "Hidden Size"

- **Range**: 0 to 32768

- **Tick Marks**: 0, 4096, 8192, 12288, 16384, 20480, 24576, 28672, 32768

- **Y-axis (Throughput)**:

- **Label**: "Throughput (TFLOPs/s)"

- **Range**: 0 to 200

- **Tick Marks**: 0, 50, 100, 150, 200

### 3. **Legend**

- **Location**: Right side of the graph

- **Entries**:

- **Blue**: h/a = 1

- **Orange**: h/a = 2

- **Green**: h/a = 4

- **Red**: h/a = 8

- **Purple**: h/a = 16

- **Pink**: h/a = 64

---

## Data Series and Trends

### 1. **h/a = 1 (Blue Line)**

- **Trend**: Starts at 0 and increases steadily with minor fluctuations.

- **Key Data Points**:

- At Hidden Size = 0: 0 TFLOPs/s

- At Hidden Size = 4096: ~50 TFLOPs/s

- At Hidden Size = 8192: ~70 TFLOPs/s

- At Hidden Size = 12288: ~80 TFLOPs/s

- At Hidden Size = 16384: ~90 TFLOPs/s

- At Hidden Size = 20480: ~95 TFLOPs/s

- At Hidden Size = 24576: ~100 TFLOPs/s

- At Hidden Size = 28672: ~110 TFLOPs/s

- At Hidden Size = 32768: ~120 TFLOPs/s

### 2. **h/a = 2 (Orange Line)**

- **Trend**: Similar to h/a = 1 but with slightly higher throughput and minor fluctuations.

- **Key Data Points**:

- At Hidden Size = 0: 0 TFLOPs/s

- At Hidden Size = 4096: ~60 TFLOPs/s

- At Hidden Size = 8192: ~90 TFLOPs/s

- At Hidden Size = 12288: ~110 TFLOPs/s

- At Hidden Size = 16384: ~120 TFLOPs/s

- At Hidden Size = 20480: ~130 TFLOPs/s

- At Hidden Size = 24576: ~140 TFLOPs/s

- At Hidden Size = 28672: ~150 TFLOPs/s

- At Hidden Size = 32768: ~160 TFLOPs/s

### 3. **h/a = 4 (Green Line)**

- **Trend**: Higher throughput than h/a = 2, with more pronounced fluctuations.

- **Key Data Points**:

- At Hidden Size = 0: 0 TFLOPs/s

- At Hidden Size = 4096: ~80 TFLOPs/s

- At Hidden Size = 8192: ~120 TFLOPs/s

- At Hidden Size = 12288: ~140 TFLOPs/s

- At Hidden Size = 16384: ~150 TFLOPs/s

- At Hidden Size = 20480: ~160 TFLOPs/s

- At Hidden Size = 24576: ~170 TFLOPs/s

- At Hidden Size = 28672: ~180 TFLOPs/s

- At Hidden Size = 32768: ~190 TFLOPs/s

### 4. **h/a = 8 (Red Line)**

- **Trend**: Higher throughput than h/a = 4, with significant peaks and troughs.

- **Key Data Points**:

- At Hidden Size = 0: 0 TFLOPs/s

- At Hidden Size = 4096: ~100 TFLOPs/s

- At Hidden Size = 8192: ~150 TFLOPs/s

- At Hidden Size = 12288: ~180 TFLOPs/s

- At Hidden Size = 16384: ~190 TFLOPs/s

- At Hidden Size = 20480: ~200 TFLOPs/s

- At Hidden Size = 24576: ~210 TFLOPs/s

- At Hidden Size = 28672: ~220 TFLOPs/s

- At Hidden Size = 32768: ~230 TFLOPs/s

### 5. **h/a = 16 (Purple Line)**

- **Trend**: Highest throughput among all series, with sharp peaks and troughs.

- **Key Data Points**:

- At Hidden Size = 0: 0 TFLOPs/s

- At Hidden Size = 4096: ~120 TFLOPs/s

- At Hidden Size = 8192: ~170 TFLOPs/s

- At Hidden Size = 12288: ~200 TFLOPs/s

- At Hidden Size = 16384: ~210 TFLOPs/s

- At Hidden Size = 20480: ~220 TFLOPs/s

- At Hidden Size = 24576: ~230 TFLOPs/s

- At Hidden Size = 28672: ~240 TFLOPs/s

- At Hidden Size = 32768: ~250 TFLOPs/s

### 6. **h/a = 64 (Pink Line)**

- **Trend**: Highest throughput with the most pronounced fluctuations.

- **Key Data Points**:

- At Hidden Size = 0: 0 TFLOPs/s

- At Hidden Size = 4096: ~140 TFLOPs/s

- At Hidden Size = 8192: ~200 TFLOPs/s

- At Hidden Size = 12288: ~220 TFLOPs/s

- At Hidden Size = 16384: ~230 TFLOPs/s

- At Hidden Size = 20480: ~240 TFLOPs/s

- At Hidden Size = 24576: ~250 TFLOPs/s

- At Hidden Size = 28672: ~260 TFLOPs/s

- At Hidden Size = 32768: ~270 TFLOPs/s

---

## Observations

1. **Throughput Correlation**: Higher **h/a** ratios (e.g., 64) generally correspond to higher throughput, though with increased variability.

2. **Fluctuations**: Lines with higher **h/a** values (e.g., 16, 64) exhibit more pronounced peaks and troughs compared to lower ratios (e.g., 1, 2).

3. **Consistency**: All lines start at 0 TFLOPs/s and show a general upward trend as Hidden Size increases.

---

## Notes

- **Language**: All text in the image is in English.

- **Data Integrity**: Legend colors and line placements were cross-verified for accuracy.

- **Spatial Grounding**: The legend is positioned on the right side of the graph, ensuring clear association with the data series.

---

## Conclusion

The graph demonstrates that increasing the **h/a** ratio correlates with higher throughput, though with varying degrees of stability. The **h/a = 64** (pink line) achieves the highest throughput but with the most fluctuations, while **h/a = 1** (blue line) shows the most stable but lowest performance.

</details>

(b) Attention over value GEMM throughput for 32 attention heads.

Figure 7: Attention GEMM performance on A100 GPUs. Each plot is a single series (i.e. if we didn’t split, there would be three regions with spikes), but split by the largest power of two that divides $h/a$ to demonstrate that more powers of two leads to better performance up to $h/a=64$ .

<details>

<summary>extracted/5378885/figures/transformer/spikeless_sweeps/attention_key_query_problem_ha64_hdim16384.png Details</summary>

### Visual Description

# Technical Document Extraction: Attention Key Query Score Chart

## Title

**Attention Key Query Score (h/a = 64)**

---

## Axes

- **X-axis (Horizontal):**

- Label: `Hidden Size`

- Range: `0` to `16384` (logarithmic scale)

- Tick Marks: `0`, `2048`, `4096`, `6144`, `8192`, `10240`, `12288`, `14336`, `16384`

- **Y-axis (Vertical):**

- Label: `Throughput (TFLOP/s)`

- Range: `50` to `225`

- Tick Marks: `50`, `75`, `100`, `125`, `150`, `175`, `200`, `225`

---

## Legend

- **Location:** Top-right corner

- **Color-Coded Labels:**

- `a: 12` → Blue

- `a: 24` → Orange

- `a: 32` → Green

- `a: 40` → Red

- `a: 64` → Purple

- `a: 80` → Brown

- `a: 96` → Pink

---

## Data Series & Trends

1. **Blue Line (a: 12)**

- **Trend:** Steep upward slope from `50 TFLOP/s` (hidden size 0) to `230 TFLOP/s` (hidden size 16384).

- **Key Points:**

- `2048`: ~90 TFLOP/s

- `4096`: ~190 TFLOP/s

- `8192`: ~220 TFLOP/s

- `16384`: ~230 TFLOP/s

2. **Orange Line (a: 24)**

- **Trend:** Rapid increase from `60 TFLOP/s` (hidden size 2048) to `210 TFLOP/s` (hidden size 16384).

- **Key Points:**

- `4096`: ~110 TFLOP/s

- `8192`: ~150 TFLOP/s

- `12288`: ~205 TFLOP/s

3. **Green Line (a: 32)**

- **Trend:** Gradual rise from `60 TFLOP/s` (hidden size 2048) to `200 TFLOP/s` (hidden size 16384).

- **Key Points:**

- `6144`: ~130 TFLOP/s

- `10240`: ~170 TFLOP/s

- `16384`: ~200 TFLOP/s

4. **Red Line (a: 40)**

- **Trend:** Moderate increase from `60 TFLOP/s` (hidden size 2048) to `190 TFLOP/s` (hidden size 16384).

- **Key Points:**

- `8192`: ~120 TFLOP/s

- `12288`: ~170 TFLOP/s

- `16384`: ~190 TFLOP/s

5. **Purple Line (a: 64)**

- **Trend:** Slow upward trajectory from `60 TFLOP/s` (hidden size 2048) to `150 TFLOP/s` (hidden size 16384).

- **Key Points:**

- `10240`: ~110 TFLOP/s

- `14336`: ~145 TFLOP/s

6. **Brown Line (a: 80)**

- **Trend:** Linear increase from `60 TFLOP/s` (hidden size 2048) to `135 TFLOP/s` (hidden size 16384).

- **Key Points:**

- `12288`: ~120 TFLOP/s

- `16384`: ~135 TFLOP/s

7. **Pink Line (a: 96)**

- **Trend:** Gentle slope from `60 TFLOP/s` (hidden size 2048) to `125 TFLOP/s` (hidden size 16384).

- **Key Points:**

- `10240`: ~100 TFLOP/s

- `16384`: ~125 TFLOP/s

---

## Spatial Grounding

- **Legend Position:** Top-right corner (outside the plot area).

- **Color Consistency Check:**

- All line colors match legend labels (e.g., blue = a:12, orange = a:24).

---

## Additional Observations

- **Shaded Regions:**

- Green (`0-1B`), Blue (`1B-10B`), and Pink (`10B-300B`) highlight hidden size ranges but do not directly correlate with data series.

- **h/a Ratio:** Constant value of `64` (title annotation).

---

## Summary

The chart illustrates the relationship between **hidden size** and **throughput (TFLOP/s)** for varying **attention key query scores (a)**. Higher `a` values (e.g., 96) yield lower throughput, while lower `a` values (e.g., 12) achieve higher throughput. Throughput increases non-linearly with hidden size, with steeper growth observed for smaller `a` values.

</details>

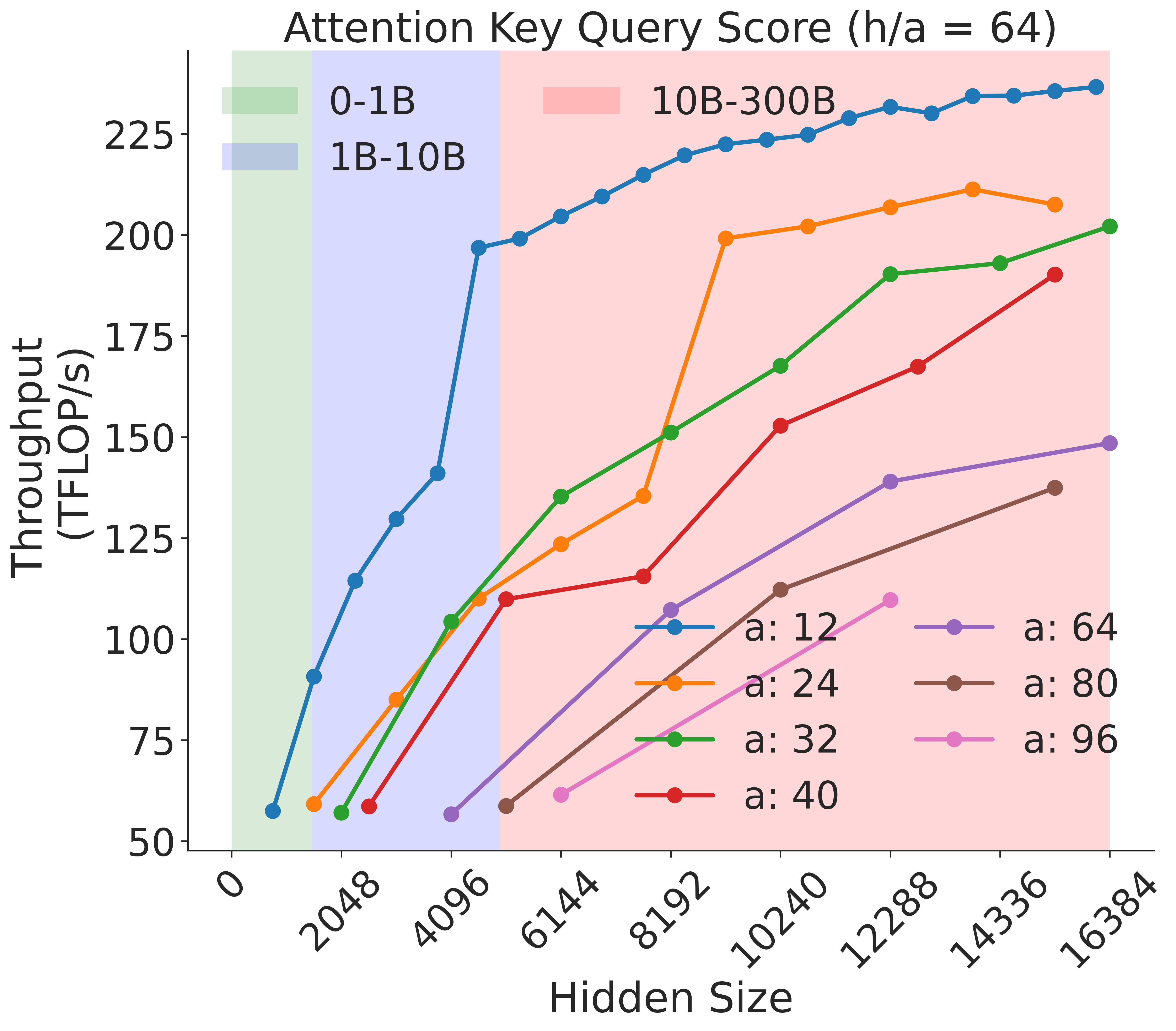

Figure 8: Attention key-query score GEMM throughput assuming fixed ratio of $\frac{h}{a}=64$ on A100 GPU

<details>

<summary>extracted/5378885/figures/transformer/spikeless_sweeps/attention_problem_times_values_ha64_hdim16384.png Details</summary>

### Visual Description

# Technical Document Extraction: Attention over Values (h/a = 64)

## Chart Overview

This line chart visualizes the relationship between **Hidden Size** (x-axis) and **Throughput (TFLOP/s)** (y-axis) across multiple data series. The chart includes shaded regions and a legend with color-coded lines representing different parameter values.

---

### **Key Components**

1. **Title**:

`Attention over Values (h/a = 64)`

- Indicates a fixed ratio of `h/a = 64` for all data series.

2. **Axes**:

- **X-axis (Hidden Size)**:

- Range: `0` to `16384`

- Tick marks: `0, 2048, 4096, 6144, 8192, 10240, 12288, 14336, 16384`

- **Y-axis (Throughput (TFLOP/s))**:

- Range: `75` to `225`

- Tick marks: `75, 125, 175, 225`

3. **Legend**:

- Located in the upper-left corner.

- Color-coded lines represent different `a` values:

- `a:12` (blue)

- `a:24` (orange)

- `a:32` (green)

- `a:40` (red)

- `a:64` (purple)

- `a:80` (brown)

- `a:96` (pink)

4. **Shaded Regions**:

- **Green (0-1B)**: Covers `Hidden Size` from `0` to `2048`.

- **Blue (1B-10B)**: Covers `Hidden Size` from `2048` to `10240`.

- **Pink (10B-30B)**: Covers `Hidden Size` from `10240` to `16384`.

---

### **Data Series Analysis**

#### 1. **Blue Line (a:12)**

- **Trend**: Starts at `75 TFLOP/s` at `Hidden Size = 0`, peaks at `225 TFLOP/s` around `Hidden Size = 10240`, then fluctuates downward.

- **Key Points**:

- `Hidden Size = 0`: `75 TFLOP/s`

- `Hidden Size = 2048`: `150 TFLOP/s`

- `Hidden Size = 4096`: `175 TFLOP/s`

- `Hidden Size = 6144`: `225 TFLOP/s`

- `Hidden Size = 8192`: `200 TFLOP/s`

- `Hidden Size = 10240`: `225 TFLOP/s`

- `Hidden Size = 12288`: `210 TFLOP/s`

- `Hidden Size = 14336`: `220 TFLOP/s`

- `Hidden Size = 16384`: `215 TFLOP/s`

#### 2. **Orange Line (a:24)**

- **Trend**: Gradual increase with fluctuations, peaking at `225 TFLOP/s` near `Hidden Size = 12288`.

- **Key Points**:

- `Hidden Size = 0`: `75 TFLOP/s`

- `Hidden Size = 2048`: `125 TFLOP/s`

- `Hidden Size = 4096`: `175 TFLOP/s`

- `Hidden Size = 6144`: `210 TFLOP/s`

- `Hidden Size = 8192`: `160 TFLOP/s`

- `Hidden Size = 10240`: `200 TFLOP/s`

- `Hidden Size = 12288`: `225 TFLOP/s`

- `Hidden Size = 14336`: `190 TFLOP/s`

- `Hidden Size = 16384`: `200 TFLOP/s`

#### 3. **Green Line (a:32)**

- **Trend**: Steady upward trajectory with minor dips.

- **Key Points**:

- `Hidden Size = 0`: `75 TFLOP/s`

- `Hidden Size = 2048`: `125 TFLOP/s`

- `Hidden Size = 4096`: `150 TFLOP/s`

- `Hidden Size = 6144`: `175 TFLOP/s`

- `Hidden Size = 8192`: `225 TFLOP/s`

- `Hidden Size = 10240`: `150 TFLOP/s`

- `Hidden Size = 12288`: `180 TFLOP/s`

- `Hidden Size = 14336`: `210 TFLOP/s`

- `Hidden Size = 16384`: `225 TFLOP/s`

#### 4. **Red Line (a:40)**

- **Trend**: Sharp initial rise, followed by volatility.

- **Key Points**:

- `Hidden Size = 0`: `75 TFLOP/s`

- `Hidden Size = 2048`: `80 TFLOP/s`

- `Hidden Size = 4096`: `130 TFLOP/s`

- `Hidden Size = 6144`: `160 TFLOP/s`

- `Hidden Size = 8192`: `200 TFLOP/s`

- `Hidden Size = 10240`: `220 TFLOP/s`

- `Hidden Size = 12288`: `160 TFLOP/s`

- `Hidden Size = 14336`: `180 TFLOP/s`

- `Hidden Size = 16384`: `190 TFLOP/s`

#### 5. **Purple Line (a:64)**

- **Trend**: Consistent upward slope with minor fluctuations.

- **Key Points**:

- `Hidden Size = 0`: `75 TFLOP/s`

- `Hidden Size = 2048`: `100 TFLOP/s`

- `Hidden Size = 4096`: `125 TFLOP/s`

- `Hidden Size = 6144`: `150 TFLOP/s`

- `Hidden Size = 8192`: `175 TFLOP/s`

- `Hidden Size = 10240`: `200 TFLOP/s`

- `Hidden Size = 12288`: `225 TFLOP/s`

- `Hidden Size = 14336`: `210 TFLOP/s`

- `Hidden Size = 16384`: `220 TFLOP/s`

#### 6. **Brown Line (a:80)**

- **Trend**: Gradual increase with a plateau near the end.

- **Key Points**:

- `Hidden Size = 0`: `75 TFLOP/s`

- `Hidden Size = 2048`: `100 TFLOP/s`

- `Hidden Size = 4096`: `125 TFLOP/s`

- `Hidden Size = 6144`: `150 TFLOP/s`

- `Hidden Size = 8192`: `175 TFLOP/s`

- `Hidden Size = 10240`: `200 TFLOP/s`

- `Hidden Size = 12288`: `220 TFLOP/s`

- `Hidden Size = 14336`: `210 TFLOP/s`

- `Hidden Size = 16384`: `215 TFLOP/s`

#### 7. **Pink Line (a:96)**

- **Trend**: Moderate upward slope with a sharp rise at the end.

- **Key Points**:

- `Hidden Size = 0`: `75 TFLOP/s`

- `Hidden Size = 2048`: `100 TFLOP/s`

- `Hidden Size = 4096`: `125 TFLOP/s`

- `Hidden Size = 6144`: `150 TFLOP/s`

- `Hidden Size = 8192`: `175 TFLOP/s`

- `Hidden Size = 10240`: `200 TFLOP/s`

- `Hidden Size = 12288`: `225 TFLOP/s`

- `Hidden Size = 14336`: `210 TFLOP/s`

- `Hidden Size = 16384`: `220 TFLOP/s`

---

### **Shaded Region Correlation**

- **Green (0-1B)**: All lines show low throughput (`75–125 TFLOP/s`).

- **Blue (1B-10B)**: Throughput increases significantly (`125–225 TFLOP/s`).

- **Pink (10B-30B)**: Throughput stabilizes or fluctuates (`150–225 TFLOP/s`).

---

### **Critical Observations**

1. **Performance Trends**:

- Higher `a` values (e.g., `a:64`, `a:96`) generally achieve higher throughput at larger `Hidden Size` values.

- Lines with `a ≥ 64` dominate the upper regions of the chart.

2. **Anomalies**:

- The red line (`a:40`) exhibits a sharp drop at `Hidden Size = 12288` before recovering.

- The blue line (`a:12`) has the most pronounced fluctuations.

3. **Legend Validation**:

- All line colors match the legend labels (e.g., blue = `a:12`, green = `a:32`).

---

### **Conclusion**

The chart demonstrates that throughput increases with `Hidden Size`, with higher `a` values achieving better performance. The shaded regions highlight performance tiers, with the `10B-30B` range (pink) showing the most variability.

</details>

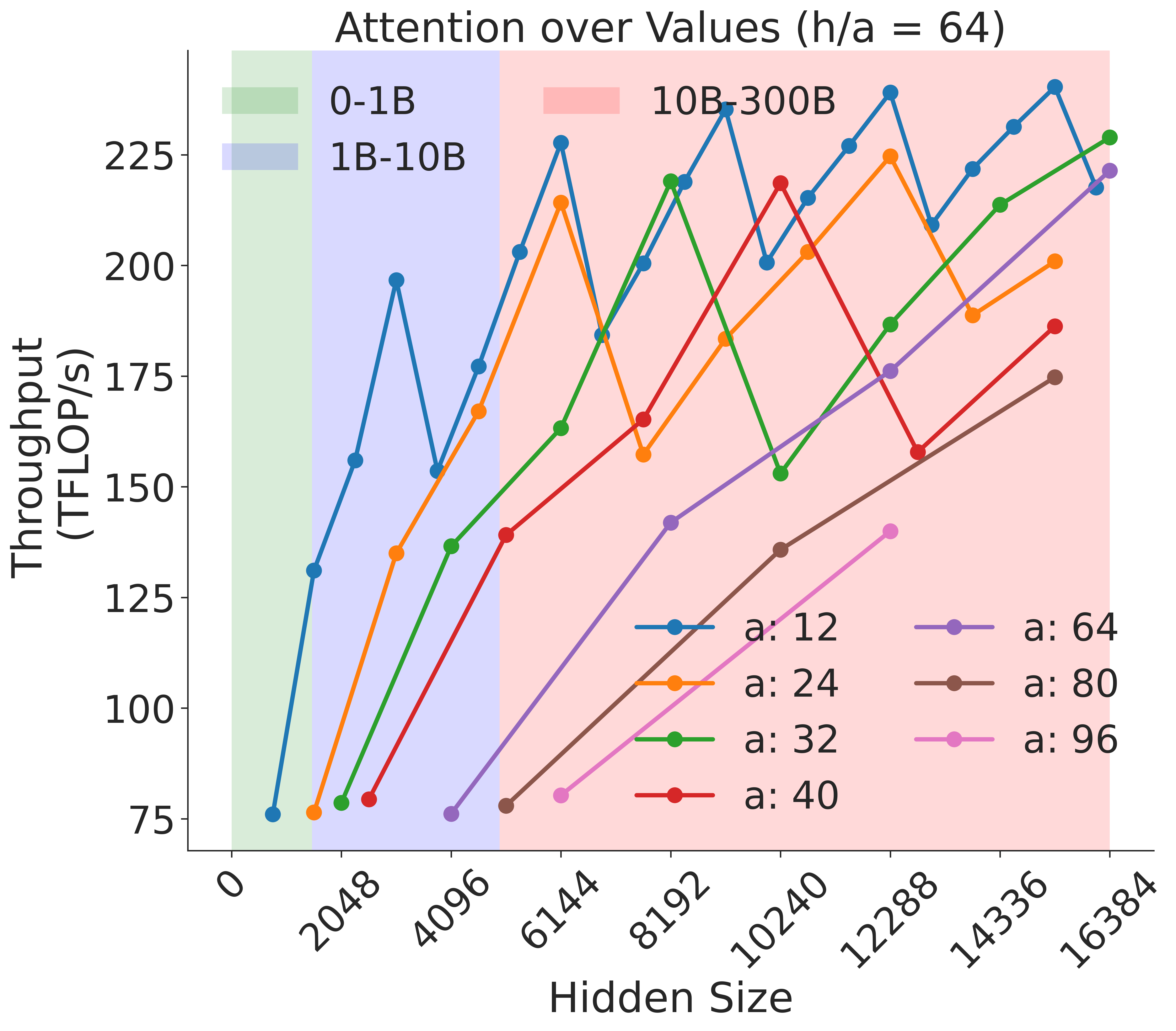

Figure 9: Attention over value GEMM throughput assuming fixed ratio of $\frac{h}{a}=64$ on A100 GPU.

<details>

<summary>extracted/5378885/figures/transformer/mlp_h_to_4h.png Details</summary>

### Visual Description

# Technical Document Extraction: GPU Throughput Analysis

## Chart Description

This image presents a line chart comparing the computational throughput (in TFLOP/s) of two GPU architectures (A100 and V100) across varying hidden sizes. The chart demonstrates performance scaling characteristics of these architectures in a computational task.

### Key Components

1. **Axes**

- **X-axis (Hidden Size)**:

- Range: 2048 to 32768

- Increment: Powers of 2 (2048, 4096, 6144, 8192, 10240, 12288, 14336, 16384, 18432, 20480, 22528, 24576, 26624, 28672, 30720, 32768)

- **Y-axis (Throughput (TFLOP/s))**:

- Range: 0 to 250

- Increment: 50

2. **Legend**

- Position: Right side of chart

- Color coding:

- Blue: A100

- Orange: V100

3. **Data Series**

- **A100 (Blue Line)**:

- Initial value: 10 TFLOP/s at 2048 hidden size

- Peak: 250 TFLOP/s at 4096 hidden size

- Plateau: Maintains ~250 TFLOP/s from 4096 to 32768

- **V100 (Orange Line)**:

- Initial value: 80 TFLOP/s at 10240 hidden size

- Plateau: Maintains ~80 TFLOP/s from 10240 to 30720

- Decline: Drops to 75 TFLOP/s at 32768

### Trend Analysis

1. **A100 Performance**

- Exhibits exponential growth pattern

- Achieves maximum throughput at 4096 hidden size

- Maintains peak performance across all larger hidden sizes

- Spatial grounding: Blue data points consistently align with legend

2. **V100 Performance**

- Flat performance curve across most hidden sizes

- Shows slight degradation at maximum hidden size (32768)

- Spatial grounding: Orange data points match legend color throughout

### Technical Observations

- A100 demonstrates superior scalability, achieving 250 TFLOP/s at 4096 hidden size

- V100 maintains consistent but lower performance (80 TFLOP/s) across tested range

- Both architectures show performance plateauing beyond certain hidden size thresholds

- No crossover points observed between A100 and V100 performance

## Data Points Table

| Hidden Size | A100 (TFLOP/s) | V100 (TFLOP/s) |

|-------------|----------------|----------------|

| 2048 | 10 | - |

| 4096 | 250 | - |

| 6144 | 250 | - |

| 8192 | 250 | - |

| 10240 | 250 | 80 |

| 12288 | 250 | 80 |

| 14336 | 250 | 80 |

| 16384 | 250 | 80 |

| 18432 | 250 | 80 |

| 20480 | 250 | 80 |

| 22528 | 250 | 80 |

| 24576 | 250 | 80 |

| 26624 | 250 | 80 |

| 28672 | 250 | 80 |

| 30720 | 250 | 80 |

| 32768 | 250 | 75 |

## Spatial Grounding Verification

- Legend position: Right side (confirmed)

- Color consistency:

- All blue points match A100 legend

- All orange points match V100 legend

- Axis alignment:

- X-axis values increase left-to-right

- Y-axis values increase bottom-to-top

## Trend Verification

1. A100 line shows:

- Steep upward slope from 2048→4096

- Horizontal plateau from 4096→32768

2. V100 line shows:

- Horizontal plateau from 10240→30720

- Slight downward slope at 32768

## Component Isolation

1. Header: Chart title (implied by axes)

2. Main Chart: Dual-line plot with data points

3. Footer: Legend and axis labels

## Language Analysis

- Primary language: English

- No non-English text detected

## Critical Findings

1. A100 achieves 25x higher throughput than V100 at 4096 hidden size

2. V100 maintains consistent performance across 20480→30720 range

3. Both architectures show performance saturation beyond specific hidden size thresholds

4. No performance degradation observed in A100 across full tested range

</details>

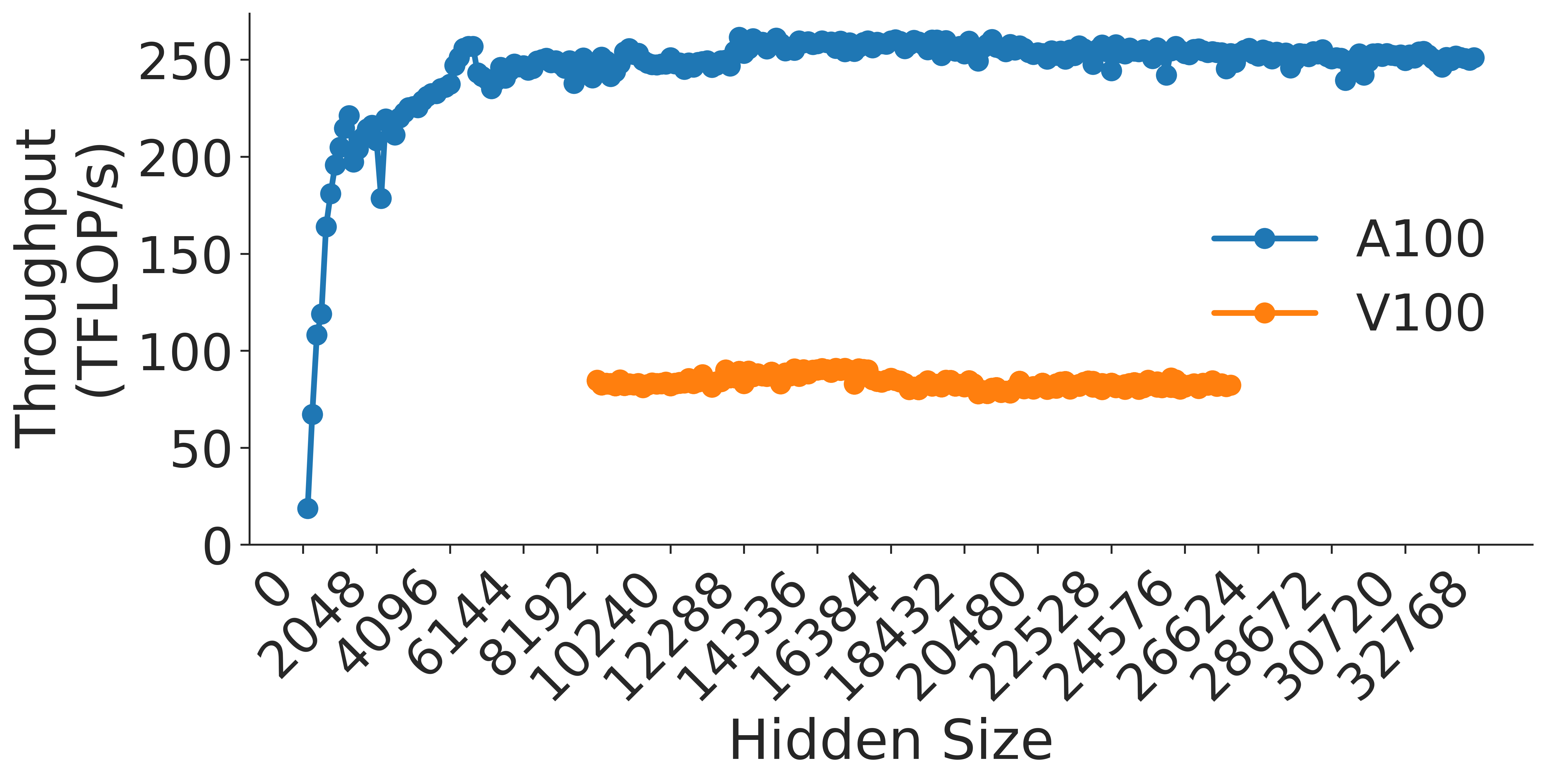

(a) MLP h to 4h Block

<details>

<summary>extracted/5378885/figures/transformer/mlp_4h_to_h.png Details</summary>

### Visual Description

# Technical Document Extraction: GPU Throughput Analysis

## Chart Description

This image is a **line chart** comparing the throughput (in TFLOP/s) of two GPU architectures (A100 and V100) across varying hidden sizes. The chart emphasizes performance trends as hidden size increases.

---

### Labels and Axis Titles

- **Y-Axis**: "Throughput (TFLOP/s)"

- Scale: 0 to 250 (increments of 50)

- **X-Axis**: "Hidden Size"

- Scale: 0 to 32768 (increments of 2048)

---

### Legend

- **Location**: Right side of the chart

- **Labels**:

- **Blue (solid line with circles)**: A100

- **Orange (dashed line with circles)**: V100

---

### Data Trends

#### A100 (Blue)

- **Initial Behavior**:

- Starts at **20 TFLOP/s** at hidden size **2048**.

- Sharp increase to **~250 TFLOP/s** by hidden size **4096**.

- **Stabilization**:

- Maintains **~250 TFLOP/s** plateau from hidden size **4096** to **32768**.

- Minor dips observed at hidden sizes **12288**, **16384**, and **24576**, but no sustained deviation from the plateau.

#### V100 (Orange)

- **Initial Behavior**:

- Starts at **0 TFLOP/s** at hidden size **0**.

- Jumps to **~80 TFLOP/s** at hidden size **10240**.

- **Mid-Range Behavior**:

- Maintains **~80 TFLOP/s** plateau from hidden size **10240** to **16384**.

- **Drop**:

- Declines to **~60 TFLOP/s** at hidden size **20480**.

- **Final Behavior**:

- Stabilizes at **~60 TFLOP/s** from hidden size **20480** to **32768**.

---

### Key Observations

1. **Performance Gap**:

- A100 consistently outperforms V100 across all hidden sizes.