# FCoReBench: Can Large Language Models Solve Challenging First-Order Combinatorial Reasoning Problems?

Abstract

Can large language models (LLMs) solve challenging first-order combinatorial reasoning problems such as graph coloring, knapsack, and cryptarithmetic? By first-order, we mean that these problems can be instantiated with a potentially infinite number of problem instances of varying sizes. They are also challenging, being NP-hard and requiring several reasoning steps to reach a solution. While existing work has focused on building datasets of hard benchmarks, there is limited work that exploits the first-order nature of the problem structure. To address this challenge, we present FCoReBench, a dataset of $40$ such challenging problems, along with scripts to generate problem instances of varying sizes and to automatically verify and generate their solutions. We first observe that LLMs, even when aided by symbolic solvers, perform rather poorly on our dataset, being unable to leverage the underlying structure of these problems. We specifically observe a drop in performance with increasing problem size. In response, we propose a new approach, SymPro-LM, which combines LLMs with both symbolic solvers and program interpreters, along with feedback from a few solved examples, to achieve huge performance gains. Our proposed approach is robust to changes in the problem size, and has the unique characteristic of not requiring any LLM call during inference time, unlike earlier approaches. As an additional experiment, we also demonstrate SymPro-LM's effectiveness on other logical reasoning benchmarks.

1 Introduction

Recent works have shown that large language models (LLMs) can reason like humans (Wei et al., 2022a), and solve diverse natural language reasoning tasks, without the need for any fine-tuning (Wei et al., 2022c; Zhou et al., 2023; Zheng et al., 2023). We note that, while impressive, these tasks are simple reasoning problems, generally requiring only a handful of reasoning steps to reach a solution.

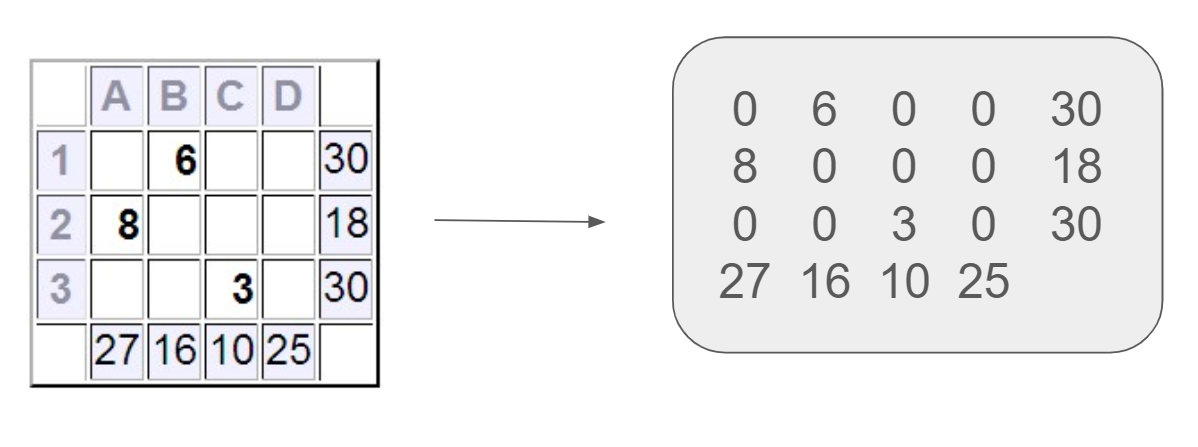

We are motivated by the goal of assessing the reasoning limits of modern-day LLMs. In this paper, we study computationally intensive, first-order combinatorial problems posed in natural language. These problems (e.g., sudoku, knapsack, graph coloring, cryptarithmetic) have long served as important testbeds to assess the intelligence of AI systems (Russell and Norvig, 2010), and strong traditional AI methods have been developed for them. Can LLMs solve these directly? If not, can they solve these with the help of symbolic AI systems like SMT solvers? To answer these questions, we release a dataset named FCoReBench, consisting of $40$ such problems (see Figure 1).

We refer to such problems as fcore (first-order combinatorial reasoning) problems. Fcore problems can be instantiated with any number of instances of varying sizes, e.g., $9×9$ and $16×16$ sudoku. Most of the problems in FCoReBench are NP-hard, and solving them requires extensive planning and search over a large number of combinations. We provide scripts to generate instances for each problem and to verify/generate their solutions. Across all problems we generate 1354 test instances of varying sizes for evaluation and also provide 596 smaller-sized solved instances as a training set. We present a detailed comparison with existing benchmarks in the related work (Section 2).

Not surprisingly, our initial experiments reveal that even the largest LLMs can solve fewer than a third of these instances. We then turn to recent approaches that augment LLMs with tools for better reasoning. Program-aided Language models (PAL) (Gao et al., 2023) use LLMs to generate programs, offloading execution to a program interpreter. Logic-LM (Pan et al., 2023) and SAT-LM (Ye et al., 2023) use LLMs to convert questions to symbolic representations, and external symbolic solvers perform the actual reasoning. Our experiments show that, by themselves, these methods do not perform strongly on FCoReBench. At the same time, the two families of methods demonstrate complementary strengths: PAL can handle first-order structures well, whereas Logic-LM is better at complex reasoning. In response, we propose a new approach named SymPro-LM, which combines the strengths of both PAL and symbolic solvers with LLMs to effectively solve fcore problems. In particular, the LLM generates an instance-agnostic program for an fcore problem that converts any problem instance to a symbolic representation. This program passes the representation to a symbolic solver, which returns a solution to the program. The program then converts the symbolic solution to the desired output representation, as per the natural language instruction. Interestingly, in contrast to LLMs with symbolic solvers, once this program is generated, inference on new fcore instances (of any size) can be done without any LLM calls.

SymPro-LM outperforms few-shot prompting by $21.61$, PAL by $3.52$ and Logic-LM by $16.83$ percentage points on FCoReBench, with GPT-4-Turbo as the LLM. Given the structured nature of fcore problems, we find that using feedback from small solved examples to correct the generated programs for just four rounds yields a further gain of $21.02$ percentage points for SymPro-LM, compared to $12.5$ points for PAL.

We further evaluate SymPro-LM on three (non-first order) logical reasoning benchmarks from the literature (Tafjord et al., 2021; bench authors, 2023; Saparov and He, 2023a). SymPro-LM consistently outperforms existing baselines by large margins on two datasets, and is competitive on the third, underscoring the value of integrating LLMs with symbolic solvers through programs. We perform additional analyses to understand the impact of hyperparameters on SymPro-LM and to characterize its errors. We release the dataset and code for further research. We summarize our contributions below:

- We formally define the task of natural language first-order combinatorial reasoning and present FCoReBench, a corresponding benchmark.

- We provide a thorough evaluation of LLM prompting techniques for fcore problems, offering new insights into existing techniques.

- We propose a novel approach, SymPro-LM, demonstrating its effectiveness on fcore problems as well as other datasets, along with an in-depth analysis of its performance.

<details>

<summary>extracted/6211530/Images/puzzle-bench.png Details</summary>

### Visual Description

## Composite Image: Mathematical and Algorithmic Problems

### Overview

The image presents a collection of different types of mathematical and algorithmic problems. These include a knapsack problem, graph coloring, a KenKen puzzle, a cryptarithmetic problem, a Shinro puzzle, and a job-shop scheduling diagram.

### Components/Axes

* **Knapsack:** An illustration of a knapsack with a capacity of 15 kg, surrounded by items with different weights and values. The items are:

* A green book labeled "$4, 12kg"

* A blue book labeled "$2, 2kg"

* A yellow book labeled "$10, 4kg"

* A peach book labeled "$1, 1kg"

* A grey book labeled "$2, 1kg"

* A question mark above the knapsack indicates the problem is to determine which items to include to maximize value without exceeding the weight limit.

* **Graph Coloring:** A graph with vertices colored red, green, and blue. The graph consists of a pentagon with an inner star.

* **KenKen:** A 3x3 grid with arithmetic constraints. The constraints are:

* Top-left cell: "3+"

* Middle-left cell: "3"

* Bottom-left cell: "5+"

* Top-middle cell: None

* Middle-middle cell: "4+"

* Bottom-middle cell: None

* Top-right cell: "?"

* Middle-right cell: None

* Bottom-right cell: None

The grid contains the numbers 1, 2, and 3.

* **Cryptarithmetic:** The equation SEND + MORE = MONEY, where each letter represents a unique digit.

* **Shinro:** A 6x6 grid with numbers 1 and 2 along the top and left edges. The grid contains circles and arrows.

* **Job-Shop Scheduling:** A Gantt chart showing the scheduling of jobs on three machines (M1, M2, M3). The x-axis is labeled "Time (min)" and ranges from 0 to 30. The y-axis represents the machines. The makespan (Cmax) is 29.

### Detailed Analysis

**Knapsack:**

* The problem is to maximize the value of items placed in the knapsack without exceeding its 15 kg capacity.

**Graph Coloring:**

* The graph is a combination of a pentagon and a star.

* The vertices are colored red, green, and blue.

**KenKen:**

* The grid is 3x3.

* The constraints are addition.

* The goal is to fill the grid with numbers 1, 2, and 3 such that each row and column contains each number exactly once, and the numbers in each heavily outlined set of cells combine (in some order) to produce the target number in the top-left corner of the set using the specified mathematical operation.

**Cryptarithmetic:**

* The problem is to assign digits to the letters S, E, N, D, M, O, R, Y such that the equation SEND + MORE = MONEY is true.

**Shinro:**

* The grid is 6x6.

* The grid contains circles and arrows.

* The numbers along the top and left edges are 1 and 2.

**Job-Shop Scheduling:**

* Three machines (M1, M2, M3) are scheduling four jobs (1, 2, 3, 4).

* The x-axis represents time in minutes, ranging from 0 to 30.

* The makespan (Cmax) is 29 minutes.

* Machine M1 processes job 3 from 0 to approximately 5 minutes, job 2 from approximately 5 to 10 minutes, job 4 from approximately 10 to 15 minutes, and job 1 from approximately 15 to 20 minutes.

* Machine M2 processes job 1 from 0 to approximately 2 minutes, job 3 from approximately 2 to 10 minutes, job 4 from approximately 10 to 15 minutes, and job 2 from approximately 15 to 25 minutes.

* Machine M3 processes job 4 from 0 to approximately 5 minutes, job 3 from approximately 5 to 15 minutes, job 2 from approximately 15 to 25 minutes, and job 1 from approximately 25 to 29 minutes.

### Key Observations

* The image presents a variety of mathematical and algorithmic problems.

* Each problem has its own unique characteristics and challenges.

* The job-shop scheduling diagram shows the scheduling of jobs on three machines, with a makespan of 29 minutes.

### Interpretation

The image serves as a visual representation of different problem-solving paradigms within mathematics and computer science. The knapsack problem exemplifies optimization, graph coloring demonstrates constraint satisfaction, KenKen combines logic and arithmetic, cryptarithmetic involves pattern recognition and deduction, Shinro tests spatial reasoning, and job-shop scheduling focuses on resource allocation and efficiency. The collection highlights the diverse range of challenges and techniques used in these fields.

</details>

Figure 1: Illustrative examples of problems in FCoReBench (represented as images for illustration).

2 Related Work

Neuro-Symbolic AI: Our work falls in the broad category of neuro-symbolic AI (Yu et al., 2023) which builds models leveraging the complementary strengths of neural and symbolic methods. Several prior works build neuro-symbolic models for solving combinatorial reasoning problems (Palm et al., 2018; Wang et al., 2019; Paulus et al., 2021; Nandwani et al., 2022a, b). These develop specialized problem-specific modules (that are typically not size-invariant), which are trained over large training datasets. In contrast, SymPro-LM uses LLMs, and bypasses problem-specific architectures, generalizes to problems of varying sizes, and is trained with very few solved instances.

Reasoning with Language Models: The earlier paradigm for reasoning was fine-tuning LLMs (Clark et al., 2021; Tafjord et al., 2021; Yang et al., 2022), but as LLMs scaled, they were found to reason well when provided with in-context examples, without any fine-tuning (Brown et al., 2020; Wei et al., 2022b). Since then, many prompting approaches have been developed that leverage in-context learning. Prominent ones include Chain of Thought (CoT) prompting (Wei et al., 2022c; Kojima et al., 2022), Least-to-Most prompting (Zhou et al., 2023), Progressive-Hint prompting (Zheng et al., 2023) and Tree-of-Thoughts (ToT) prompting (Yao et al., 2023).

Tool Augmented Language Models: Augmenting LLMs with external tools has emerged as a way to solve complex reasoning problems (Schick et al., 2023; Paranjape et al., 2023). The idea is to offload a part of the task to specialized external tools, thereby reducing error rates. Program-aided Language models (Gao et al., 2023) invoke a Python interpreter over a program generated by an LLM. Logic-LM (Pan et al., 2023) and SAT-LM (Ye et al., 2023) integrate reasoning of symbolic solvers with LLMs, which convert the natural language problem into a symbolic representation. SymPro-LM falls in this category and combines LLMs with both program interpreters and symbolic solvers.

Logical Reasoning Benchmarks: There are several reasoning benchmarks in the literature, such as LogiQA (Liu et al., 2020) for mixed reasoning, GSM8K (Cobbe et al., 2021) for arithmetic reasoning, FOLIO (Han et al., 2022) for first-order logic, PrOntoQA (Saparov and He, 2023b) and ProofWriter (Tafjord et al., 2021) for deductive reasoning, and AR-LSAT (Zhong et al., 2021) for analytical reasoning. These datasets are not first-order, i.e., each problem is accompanied by a single instance (despite the rules potentially being described in first-order logic). We propose FCoReBench, which substantially extends the complexity of these benchmarks by investigating computationally hard, first-order combinatorial reasoning problems. Among recent works, NLGraph (Wang et al., 2023) studies structured reasoning problems but is limited to graph-based problems, and has only 8 problems in its dataset. On the other hand, NPHardEval (Fan et al., 2023) studies problems through the lens of computational complexity, but works with a relatively small set of 10 problems. In contrast, we study the broader area of first-order reasoning, investigate the associated complexities of structured reasoning, and have a much larger problem set (of size 40). Specifically, all the NP-hard problems in these two datasets are also present in our benchmark.

3 Problem Setup: Natural Language First-order Combinatorial Reasoning

<details>

<summary>extracted/6211530/Images/puzzle-bench-example-sudoku.png Details</summary>

### Visual Description

## Diagram: Sudoku Rules, Input/Output Format, and Examples

### Overview

The image presents a description of Sudoku rules, input and output formats, and solved examples in textual representation. It's divided into four sections, each with a title and a list of bullet points explaining the respective topic.

### Components/Axes

* **Header 1 (Top, Orange):** "Natural Language Description of Rules (NL(C))"

* Rules for solving the Sudoku grid.

* **Header 2 (Middle-Top, Blue):** "Natural Language Description of Input Format (NL(X))"

* Description of the input format for the Sudoku grid.

* **Header 3 (Middle-Bottom, Green):** "Natural Language Description of Output Format (NL(Y))"

* Description of the output format for the Sudoku grid.

* **Header 4 (Bottom, Gray):** "Solved Examples in their Textual Representation (Dp)"

* Examples of input and output grids.

### Detailed Analysis

**1. Natural Language Description of Rules (NL(C))**

* Empty cells of the grid must be filled using numbers from 1 to n.

* Each row must have each number from 1 to n exactly once.

* Each column must have each number from 1 to n exactly once.

* Each of the n non-overlapping sub-grids of size √n × √n must have each number from 1 to n exactly once.

* n is a perfect square.

**2. Natural Language Description of Input Format (NL(X))**

* There are n rows and n columns representing the n × n unsolved grid.

* Each row represents the corresponding unsolved row of the grid.

* Each row has n space separated numbers ranging from 0 to n representing the corresponding cells in the grid.

* Empty cells are indicated by 0s.

* The other filled cells will have numbers from 1 to n.

**3. Natural Language Description of Output Format (NL(Y))**

* There are n rows and n columns representing the n × n solved grid.

* Each row represents the corresponding solved row of the grid.

* Each row has n space separated numbers ranging from 1 to n representing the corresponding cells of the solved grid.

**4. Solved Examples in their Textual Representation (Dp)**

The examples show two sets of input and output grids. Each grid is 4x4.

* **Input - 1:**

</details>

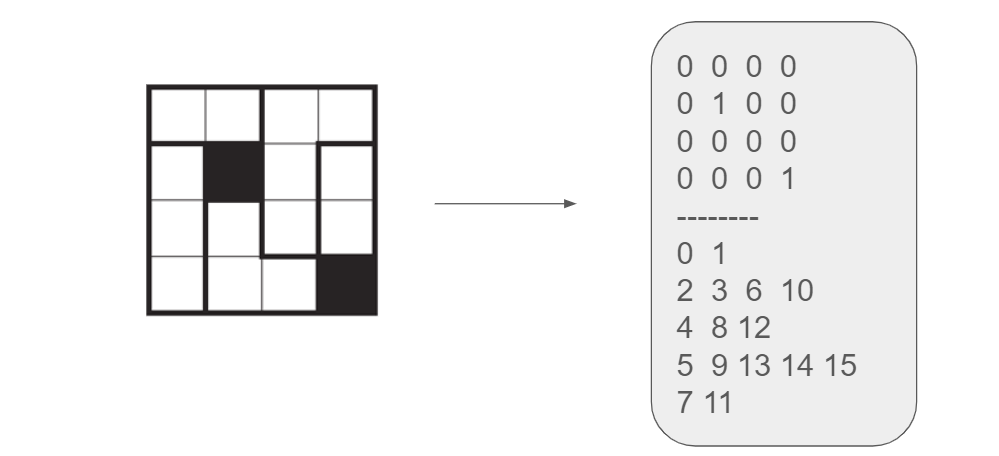

Figure 2: FCoReBench Example: Filling an $n× n$ Sudoku board along with its rules, input-output format, and a couple of sample input-output pairs.

A first-order combinatorial reasoning problem $\mathcal{P}$ has three components: a space of legal input instances ( $\mathcal{X}$ ), a space of legal outputs ( $\mathcal{Y}$ ), and a set of constraints ( $\mathcal{C}$ ) that every input-output pair must satisfy. E.g., for sudoku, $\mathcal{X}$ is the space of partially-filled grids with $n× n$ cells, $\mathcal{Y}$ is the space of fully-filled grids of the same size, and $\mathcal{C}$ comprises row, column, and box alldiff constraints, with input cell persistence. To communicate a structured problem instance (or its output) to an NLP system, it must be serialized in text. We overload $\mathcal{X}$ and $\mathcal{Y}$ to also denote the formats for these serialized input and output instances. Two instances for sudoku are shown in Figure 2 (grey box). We are also provided (serialized) training data of input-output instance pairs, $\mathcal{D}_{\mathcal{P}}$ $=\{(x^{(i)},y^{(i)})\}_{i=1}^{N}$ , where $x^{(i)}∈\mathcal{X},y^{(i)}∈\mathcal{Y}$ , such that $(x^{(i)},y^{(i)})$ honors all constraints in $\mathcal{C}$ .

Further, we verbalize all three components – input-output formats and constraints – in natural language instructions. We denote these instructions by $NL(\mathcal{X})$ , $NL(\mathcal{Y})$ , and $NL(\mathcal{C})$ , respectively. Figure 2 illustrates these for sudoku. With this notation, we summarize our setup as follows. For an fcore problem $\mathcal{P}=\langle\mathcal{X},\mathcal{Y},\mathcal{C}\rangle$ , we are provided $NL(\mathcal{X})$ , $NL(\mathcal{Y})$ , $NL(\mathcal{C})$ and training data $\mathcal{D}_{\mathcal{P}}$ , and our goal is to learn a function $\mathcal{F}$ , which maps any (serialized) $x∈\mathcal{X}$ to its corresponding (serialized) solution $y∈\mathcal{Y}$ such that $(x,y)$ honors all constraints in $\mathcal{C}$ .
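To make the notation concrete, the following minimal Python sketch instantiates the setup for sudoku: it parses a serialized instance in the format described by $NL(\mathcal{X})$ and checks whether a pair $(x,y)$ honors the constraints $\mathcal{C}$ (row, column, and box alldiff, plus input cell persistence). The function names `parse_grid` and `honors_constraints` are illustrative assumptions and are not taken from the released dataset scripts.

```python
import math

def parse_grid(text):
    """Parse the serialized format: n rows of n space-separated integers."""
    return [[int(tok) for tok in line.split()] for line in text.strip().splitlines()]

def honors_constraints(x, y):
    """Return True if the pair (x, y) satisfies the sudoku constraints C."""
    n = len(x)
    b = math.isqrt(n)  # side length of each sub-grid
    if b * b != n or len(y) != n or any(len(row) != n for row in x + y):
        return False
    # Input cell persistence: every pre-filled cell of x is unchanged in y.
    if any(x[r][c] not in (0, y[r][c]) for r in range(n) for c in range(n)):
        return False
    full = set(range(1, n + 1))
    rows = [set(row) for row in y]
    cols = [set(col) for col in zip(*y)]
    boxes = [
        {y[r][c] for r in range(br, br + b) for c in range(bc, bc + b)}
        for br in range(0, n, b) for bc in range(0, n, b)
    ]
    # Each group has n cells, so equality with {1..n} means "exactly once".
    return all(group == full for group in rows + cols + boxes)

x = parse_grid("1 0 3 0\n0 4 0 2\n2 0 4 0\n0 3 0 1")
y = parse_grid("1 2 3 4\n3 4 1 2\n2 1 4 3\n4 3 2 1")
print(honors_constraints(x, y))  # True
```

A learned function $\mathcal{F}$ is correct on $x$ exactly when such a check accepts $(x,\mathcal{F}(x))$, which is why a checker of this kind suffices for evaluation even when the instance has several valid solutions.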

4 FCoReBench: Dataset Construction

First, we shortlisted computationally challenging first-order problems from various sources. We manually scanned Wikipedia (https://en.wikipedia.org/wiki/List_of_NP-complete_problems) for NP-hard algorithmic problems and logical puzzles. We also took challenging logical puzzles from other publishing houses (e.g., Nikoli; https://www.nikoli.co.jp/en/puzzles/), and real-world problems from the operations research community and the industrial track of the annual SAT competition (https://satcompetition.github.io/). From this set, we selected problems (1) that can be described in natural language (we remove problems where some rules are inherently visual), and (2) for which the training and test datasets can be created with a reasonable programming effort. This led to $40$ fcore problems (see Table 7 for a complete list), of which 30 are known to be NP-hard and the others have unknown complexity. 10 problems are graph-based (e.g., graph coloring), 18 are grid-based (e.g., sudoku), 5 are set-based (e.g., knapsack), 5 are real-world settings (e.g., car sequencing) and 2 are miscellaneous (e.g., cryptarithmetic).

Two authors of the paper with a formal background in automated reasoning and logic then created the natural language instructions and the input-output format for each problem. First, for each problem, one author created the input-output formats and the instructions for them ( $NL(\mathcal{X})$ , $NL(\mathcal{Y})$ ). Second, the same author created the natural language rules ( $NL(\mathcal{C})$ ) by referring to the respective sources and re-writing the rules. These rules were verified by the other author, who checked that they were correct (i.e., the meaning of the problem did not change) and unambiguous. The rules were re-written to ensure that an LLM cannot easily invoke its prior knowledge about the same problem. For the same reason, the name of the problem was hidden.

In the case of errors in the natural language descriptions, feedback was given to the author who wrote the descriptions to correct them. In our case, corrections were typically not required, except for 3 problems where the descriptions were corrected within a single round of feedback. A third, independent annotator was then tasked with reading the natural language descriptions and solving the input instances in the training set. The solutions were verified to make sure that the rules were written correctly and were comprehensible to a human. The annotator was able to solve all instances correctly, indicating that the descriptions were correct. The guidelines for re-writing the rules from their respective sources were to use crisp and concise English, without technical jargon, and to avoid ambiguities. The rules were intended to be understood by any person with a reasonable comprehension of the language and did not contain any formal specifications or mathematical formulas. Appendices A.2 and A.3 have detailed examples of rules and formats, respectively.

Next, we created train/test data for each problem. These instances are generated programmatically by scripts written by the authors. For each problem, one author also wrote a solver and a verification script, and the other verified these scripts and suggested corrections if needed. In all but one case, the other author found the scripts to be correct. These scripts (after correction) were also verified through manually curated test cases, and were then used to ensure the feasibility of instances.

Since a single problem instance can potentially have multiple correct solutions (Nandwani et al., 2021), all solutions are provided for each training input. The instances in the test set are typically larger than those in training. Because of their size, test instances may have too many solutions, and computing all of them can be expensive. Instead, the verification script can be used, which reports the correctness of a candidate solution for any test instance. The scripts are part of the dataset and can be used to generate any number of instances of varying complexity for each problem, making it easy to extend the dataset. Keeping the prohibitive experimentation costs with LLMs in mind, we generate around 15 training instances and around 34 test instances on average per problem. In total, FCoReBench has 596 training instances and 1354 test instances.
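As an illustration of what a generator/verifier pair can look like, here is a minimal Python sketch for graph coloring. It is not the released script: the names `generate_instance` and `verify`, the dictionary layout, and the planted-solution trick used to guarantee feasibility are all assumptions made for this sketch (the text above states only that solver and verification scripts are used to ensure feasibility).

```python
import random

def generate_instance(num_nodes, num_colors, edge_prob, seed):
    """Sample a graph that is guaranteed to be colorable with num_colors colors."""
    rng = random.Random(seed)
    planted = [rng.randrange(num_colors) for _ in range(num_nodes)]
    edges = [
        (u, v)
        for u in range(num_nodes)
        for v in range(u + 1, num_nodes)
        # Only connect nodes whose planted colors differ, so the planted
        # coloring stays valid and the instance is feasible by construction.
        if planted[u] != planted[v] and rng.random() < edge_prob
    ]
    instance = {"num_nodes": num_nodes, "num_colors": num_colors, "edges": edges}
    return instance, planted

def verify(instance, coloring):
    """Check one candidate solution without enumerating all solutions."""
    if len(coloring) != instance["num_nodes"]:
        return False
    if any(not 0 <= c < instance["num_colors"] for c in coloring):
        return False
    return all(coloring[u] != coloring[v] for u, v in instance["edges"])

# Instances of varying sizes come from the same script by changing num_nodes.
for size in (5, 20, 50):
    instance, planted = generate_instance(size, num_colors=3, edge_prob=0.3, seed=size)
    assert verify(instance, planted)
```

Verification runs in time linear in the number of edges, which is what makes it practical for large test instances whose full solution sets would be too expensive to enumerate.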

5 SymPro-LM

Preliminaries: In the following, we assume that we have access to an LLM $\mathcal{L}$ , which can work with various prompting strategies, a program interpreter $\mathcal{I}$ , which can execute programs written in its language, and a symbolic solver $\mathcal{S}$ , which takes as input a pair of the form $(E,V)$ , where $E$ is a set of equations (constraints) specified in the language of $\mathcal{S}$ , and $V$ is a set of (free) variables in $E$ , and produces an assignment $\mathcal{A}$ to the variables in $V$ that satisfies the set of equations in $E$ . Given an fcore problem $\mathcal{P}=\langle\mathcal{X},\mathcal{Y},\mathcal{C}\rangle$ described by $NL(\mathcal{C})$ , $NL(\mathcal{X})$ , $NL(\mathcal{Y})$ and $\mathcal{D}_{\mathcal{P}}$ , we would like to make effective use of $\mathcal{L}$ , $\mathcal{I}$ and $\mathcal{S}$ to learn the mapping $\mathcal{F}$ , which takes any input $x∈\mathcal{X}$ and maps it to $y∈\mathcal{Y}$ , such that $(x,y)$ honors the constraints in $\mathcal{C}$ .

Background: We consider the following possible representations for $\mathcal{F}$ which cover existing work.

- Exclusively LLM: Many prompting strategies (Wei et al., 2022c; Zhou et al., 2023) make exclusive use of $\mathcal{L}$ to represent $\mathcal{F}$ . $\mathcal{L}$ is supplied with a prompt consisting of the description of $\mathcal{P}$ via $NL(\mathcal{C})$ , $NL(\mathcal{X})$ , $NL(\mathcal{Y})$ , the input $x$ , along with specific instructions on how to solve the problem and asked to output $y$ directly. This puts the entire burden of discovering $\mathcal{F}$ on the LLM.

- LLM $→$ Program: In strategies such as PAL (Gao et al., 2023), the LLM is prompted to output a program, which then is interpreted by $\mathcal{I}$ on the input $x$ , to produce the output $y$ .

- LLM + Solver: Strategies such as Logic-LM (Pan et al., 2023) and SAT-LM (Ye et al., 2023) make use of both the LLM $\mathcal{L}$ and the symbolic solver $\mathcal{S}$ . The primary goal of $\mathcal{L}$ is to act as an interface for translating the problem description for $\mathcal{P}$ and the input $x$ to the language of the solver $\mathcal{S}$ . The primary burden of solving the problem is on $\mathcal{S}$ , whose output is then parsed as $y$ .

5.1 Our Approach

<details>

<summary>extracted/6211530/Images/puzzle-lm.png Details</summary>

### Visual Description

## Diagram: LLM-Based Program Synthesis

### Overview

The image is a block diagram illustrating a system for program synthesis using a Large Language Model (LLM), a Symbolic Solver, a Feedback Agent, and a Python Program. The diagram shows the flow of information and interactions between these components.

### Components/Axes

* **Title:** Natural Language Description of Rules, Input-Output Format of P, NL(C), NL(X), NL(Y)

* **Components (from top to bottom, left to right):**

* LLM (Large Language Model): A yellow-orange rounded rectangle.

* Symbolic Solver: A light purple rounded rectangle with "Z3" above it.

* Feedback Agent: A light green rounded rectangle.

* Python Program: A light blue rounded rectangle with a Python logo above it.

* **Inputs/Outputs:**

* Gold Output: y

* Predicted Output: ŷ

* Solved Input: x

* **Arrows:** Arrows indicate the flow of information between components. Solid arrows indicate direct flow, while dashed arrows indicate feedback or less direct influence.

* **Annotations on Arrows:**

* (Ex, Vx): Annotation on the arrow from Python Program to Symbolic Solver.

* Ax: Annotation on the arrow from Symbolic Solver to Python Program.

* **Icons:**

* Robot icon above LLM.

* Thumbs down icon above Feedback Agent.

* Python logo above Python Program.

### Detailed Analysis

* **LLM (Large Language Model):**

* Receives input from an unspecified source (indicated by an arrow from above with a robot icon).

* Sends output to both the Python Program (solid arrow) and the Feedback Agent (dashed arrow).

* **Symbolic Solver:**

* Receives input (Ex, Vx) from the Python Program (solid arrow).

* Sends output Ax to the Python Program (solid arrow).

* **Feedback Agent:**

* Receives input from the LLM (dashed arrow).

* Receives "Gold Output" (y) from below (solid arrow).

* Receives "Predicted Output" (ŷ) from the Python Program (dashed arrow).

* Sends feedback to the LLM (dashed arrow).

* **Python Program:**

* Receives input from the LLM (solid arrow).

* Receives input Ax from the Symbolic Solver (solid arrow).

* Receives "Solved Input" (x) from below (solid arrow).

* Sends output (Ex, Vx) to the Symbolic Solver (solid arrow).

* Sends "Predicted Output" (ŷ) to the Feedback Agent (dashed arrow).

### Key Observations

* The LLM generates an initial program or solution.

* The Python Program interacts with the Symbolic Solver to refine the solution.

* The Feedback Agent compares the predicted output with the gold output and provides feedback to the LLM.

* The system appears to be an iterative process, with feedback loops between the components.

### Interpretation

The diagram illustrates a program synthesis system that leverages the strengths of both neural (LLM) and symbolic (Symbolic Solver) methods. The LLM provides an initial, potentially approximate, solution, while the Symbolic Solver provides a means to verify and refine the solution. The Feedback Agent acts as a critic, guiding the LLM towards better solutions based on a comparison with known correct outputs. The Python Program likely serves as an environment for executing and testing the generated code, as well as interfacing with the Symbolic Solver. The iterative nature of the system, with feedback loops, suggests a process of continuous improvement and refinement of the synthesized program. The use of natural language descriptions (NL(C), NL(X), NL(Y)) suggests that the system is designed to work with human-readable specifications.

</details>

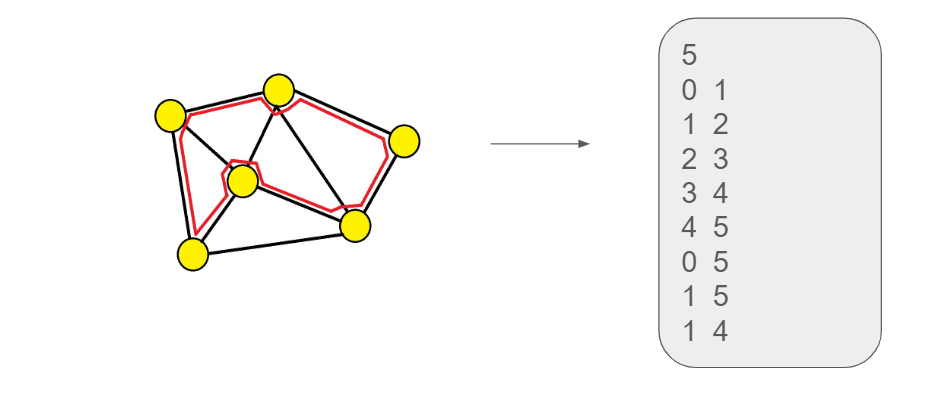

Figure 3: SymPro-LM: Solid lines indicate the main flow and dotted lines indicate feedback pathways.

Our approach can be seen as a combination of the LLM $→$ Program and LLM + Solver strategies described above. The primary role of the LLM is to interface between the natural language description of the problem $\mathcal{P}$ and the solver, while the task of actually solving the problem is delegated to the solver $\mathcal{S}$ , as in the LLM + Solver strategy. But unlike that strategy, where the LLM directly calls the solver, we now prompt it to write a program, $\psi$ , which can work with any given input $x∈\mathcal{X}$ of any size. This allows us to get rid of the LLM calls at inference time, resulting in a "lifted" implementation. The program $\psi$ internally represents the specification of the problem. It takes an input $x$ as its argument and converts it, according to the inferred specification of the problem, to a set of equations $(E_{x},V_{x})$ in the language of the solver $\mathcal{S}$ , which is then invoked to obtain the solution to the original problem. The solver $\mathcal{S}$ outputs an assignment $\mathcal{A}_{x}$ in its own representation, which is passed back to the program $\psi$ , which converts it to the desired output format specified by $\mathcal{Y}$ and produces the output $\hat{y}$ . Broadly, our pipeline consists of three components, which we describe next in detail.

- Prompting LLMs: The LLM is prompted with $NL(\mathcal{C})$ , $NL(\mathcal{X})$ , $NL(\mathcal{Y})$ (see Figure 2) to generate an input-agnostic program $\psi$ . The LLM is instructed to write $\psi$ to read an input from a file, convert it to a symbolic representation according to the inferred specification of the problem, pass the symbolic representation to the solver and then use the solution from the solver to generate the output in the desired format. The LLM is also prompted with information about the solver and its underlying language. Optionally we can also provide the LLM with a subset of $\mathcal{D}_{\mathcal{P}}$ (see Appendix B.3 for exact prompts).

- Symbolic Solver: $\psi$ can convert any input instance $x$ to $(E_{x},V_{x})$ , which it passes to the symbolic solver. The solver is agnostic to how the representation $(E_{x},V_{x})$ was created; it tries to find an assignment $\mathcal{A}_{x}$ to $V_{x}$ that satisfies $E_{x}$ , and this assignment is passed back to $\psi$ (see Appendix E.1 for sample programs generated).

- Generating the Final Output: $\psi$ then uses $\mathcal{A}_{x}$ to generate the predicted output $\hat{y}$ . This step is needed because the symbolic representation was created by $\psi$ , and $\psi$ must recover the desired output representation from $\mathcal{A}_{x}$ , which might not be straightforward for all problem representations.
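The three components above can be sketched for sudoku as follows. This is a hedged illustration of the shape of a program $\psi$, not a program produced by SymPro-LM: the function names (`encode`, `solve`, `decode`, `psi`) are hypothetical, and a small pure-Python backtracking routine stands in for the symbolic solver $\mathcal{S}$ so that the sketch has no external dependencies. In the paper's setup, $\mathcal{S}$ is Z3 and the constraints would be expressed in its language.

```python
import math

def encode(grid):
    """psi, step 1: map an instance x to its symbolic form (E_x, V_x)."""
    n = len(grid)
    b = math.isqrt(n)
    # V_x: one variable per cell; a clue cell gets a singleton domain.
    variables = {
        (r, c): [grid[r][c]] if grid[r][c] else list(range(1, n + 1))
        for r in range(n) for c in range(n)
    }
    # E_x: all-different groups over rows, columns and boxes.
    constraints = [[(r, c) for c in range(n)] for r in range(n)]
    constraints += [[(r, c) for r in range(n)] for c in range(n)]
    constraints += [
        [(r, c) for r in range(br, br + b) for c in range(bc, bc + b)]
        for br in range(0, n, b) for bc in range(0, n, b)
    ]
    return constraints, variables

def solve(constraints, variables):
    """Stand-in for the solver S: backtracking search over all-different groups."""
    peers = {v: set() for v in variables}
    for group in constraints:
        for v in group:
            peers[v].update(w for w in group if w != v)
    order = sorted(variables, key=lambda v: len(variables[v]))  # clues first
    assignment = {}

    def backtrack(i):
        if i == len(order):
            return True
        var = order[i]
        for value in variables[var]:
            if all(assignment.get(p) != value for p in peers[var]):
                assignment[var] = value
                if backtrack(i + 1):
                    return True
                del assignment[var]
        return False

    return assignment if backtrack(0) else None

def decode(assignment, n):
    """psi, step 3: map the assignment A_x back to the serialized output format."""
    return "\n".join(
        " ".join(str(assignment[(r, c)]) for c in range(n)) for r in range(n)
    )

def psi(text):
    """Instance-agnostic program: works for any n x n grid, with no LLM call."""
    grid = [[int(t) for t in line.split()] for line in text.strip().splitlines()]
    assignment = solve(*encode(grid))
    return None if assignment is None else decode(assignment, len(grid))

print(psi("1 0 3 0\n0 4 0 2\n2 0 4 0\n0 3 0 1"))
```

Note that `solve` never looks at the grid itself, only at the variables and constraints, mirroring the point that the solver is agnostic to how $(E_{x},V_{x})$ was produced.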

Refinement via Solved Examples: We make use of $\mathcal{D}_{\mathcal{P}}$ to verify and (if needed) make corrections to $\psi$ . For each $(x,y)∈\mathcal{D}_{\mathcal{P}}$ (solved input-output pair), we run $\psi$ on $x$ to generate the prediction $\hat{y}$ , during which one of the following can happen: 1) an error occurs during execution of $\psi$ ; 2) the solver is unable to find $\mathcal{A}_{x}$ within a certain time limit; 3) $\hat{y}≠ y$ , i.e., the predicted output is incorrect; 4) $\hat{y}=y$ , i.e., the predicted output is correct. If one of the first three cases occurs for any training input, we provide automated feedback to the LLM through prompts to improve and generate a new program. This process is repeated until all training examples are solved correctly or a maximum number of feedback rounds is reached. The feedback is simple in nature and includes the type of the error, the actual error from the interpreter/symbolic solver, and the input instance on which the error was generated. For example, in the case where the output does not match the gold output, we prompt the LLM with the solved example it got wrong and the expected solution. Appendix B contains details of the feedback prompts.
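The four outcomes can be sketched as a small Python routine that runs a candidate program on one solved example and builds a feedback message. Everything here is an assumption for illustration: the name `evaluate_example`, the convention that a solver time-out surfaces as a `TimeoutError`, and the message wording (the actual prompts are in Appendix B). Because training inputs may come with several gold solutions, the prediction is checked for membership in the provided solution list.

```python
def evaluate_example(program, x, gold_solutions):
    """Run a candidate program on one solved example and classify the outcome.

    Returns (outcome, feedback); feedback is None when the prediction is correct.
    """
    try:
        y_hat = program(x)
    except TimeoutError as exc:
        # Case 2: the solver did not find an assignment within the time limit.
        return "timeout", f"Solver timed out ({exc}) on input:\n{x}"
    except Exception as exc:
        # Case 1: the program itself failed while executing.
        return "runtime_error", f"{type(exc).__name__}: {exc}\nInput:\n{x}"
    if y_hat not in gold_solutions:
        # Case 3: the program ran but produced an incorrect output.
        expected = gold_solutions[0]
        return "wrong_output", (
            f"Input:\n{x}\nPredicted output:\n{y_hat}\nExpected output:\n{expected}"
        )
    # Case 4: the prediction matches a gold solution.
    return "correct", None

outcome, feedback = evaluate_example(lambda x: x.upper(), "abc", ["ABC"])
print(outcome)  # correct
```

The returned feedback string is what would be inserted into the next prompt to the LLM; it carries the error type, the raw error text, and the offending input, as described above.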

It is possible that a single run of SymPro-LM (along with feedback) is unable to generate the correct solution for all training examples – so, we restart SymPro-LM multiple times for a given problem. Given the probabilistic nature of LLMs a new program is generated at each restart and a new feedback process continues. For the final program, we pick the best program generated during these runs, as judged by the accuracy on the training set. Figure 3 describes our entire approach diagrammatically.
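The refinement and restart procedure described above can be sketched as follows. This is a minimal sketch under stated assumptions, not the authors' implementation: `generate` is a hypothetical callable standing in for an LLM call that returns a candidate program as a Python function, the time limit is modelled by the program raising `TimeoutError`, only the first failing example is used as feedback, and stopping as soon as one program solves every training example is a simplification chosen for the example.

```python
def evaluate(program, examples):
    """Run a candidate program on solved examples.

    Returns (training accuracy, first failure or None), where a failure is
    a tuple (input, kind, detail) covering the three failure cases.
    """
    correct, failure = 0, None
    for x, y in examples:
        try:
            y_hat = program(x)
        except TimeoutError as exc:          # case 2: solver/program timed out
            outcome = ("timeout", str(exc))
        except Exception as exc:             # case 1: error during execution
            outcome = ("execution_error", repr(exc))
        else:
            if y_hat == y:                   # case 4: correct output
                outcome = None
            else:                            # case 3: incorrect output
                outcome = ("wrong_output", f"expected {y!r}, got {y_hat!r}")
        if outcome is None:
            correct += 1
        elif failure is None:
            failure = (x, *outcome)
    return correct / len(examples), failure


def refine(generate, examples, max_rounds=4, max_runs=5):
    """Restart up to max_runs times; each run allows max_rounds feedback rounds.

    Returns the program with the best training accuracy seen in any run.
    """
    best_program, best_acc = None, -1.0
    for _ in range(max_runs):
        feedback = None                      # every restart begins without feedback
        for _ in range(max_rounds + 1):      # initial attempt plus feedback rounds
            program = generate(feedback)
            acc, failure = evaluate(program, examples)
            if acc > best_acc:
                best_program, best_acc = program, acc
            if failure is None:              # all training examples solved
                return best_program, best_acc
            feedback = failure
    return best_program, best_acc
```

The defaults `max_rounds=4` and `max_runs=5` mirror the settings reported in the experimental setup; any other values work the same way.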

SymPro-LM for Non-First Order Reasoning Datasets: For datasets that are not first-order in nature, a single program does not exist which can solve all problems, hence we prompt the LLM to generate a new program for each test set instance. Thus we cannot use feedback from solved examples and we only use feedback to correct syntactic mistakes (if any). The prompt contains an instruction to write a program which will use a symbolic solver to solve the problem. Additionally, we provide details about the solver to be used. The prompt also contains in-context examples demonstrating sample programs for other logical reasoning questions. The LLM should parse the logical reasoning question and extract the corresponding facts/rules which it needs to pass to the solver (via the program). Once the solver returns with an answer, it is passed back to the program to generate the final output.

6 Experimental Setup

Our experiments answer these research questions. (1) How does SymPro-LM compare with other LLM-based reasoning approaches on fcore problems? (2) How useful is using feedback from solved examples and multiple runs for fcore problems? (3) How does SymPro-LM compare with other methods on other existing (non-first order) logical reasoning benchmarks? (4) What is the nature of errors made by SymPro-LM and other baselines?

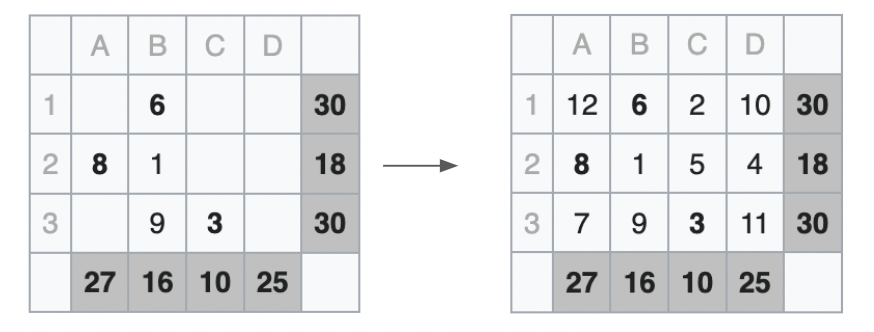

Baselines: On FCoReBench, we compare our method with 4 baselines: 1) standard LLM prompting, which leverages in-context learning to directly answer the questions; 2) Program-aided Language Models (PAL), which use imperative programs for reasoning and offload the solution step to a program interpreter; 3) Logic-LM, which offloads the reasoning to a symbolic solver; and 4) Tree-of-Thoughts (ToT) (Yao et al., 2023), which is a search-based prompting technique. Such search-based techniques (Yao et al., 2023; Hao et al., 2023) involve considerable manual effort for writing specialized prompts for each problem and are estimated to be 2-3 orders of magnitude more expensive than other baselines. We thus present a separate comparison with ToT on a subset of FCoReBench (see Appendix C.1.1 for more details regarding ToT experiments). We use Z3 (De Moura and Bjørner, 2008), an efficient SMT solver, for experiments with Logic-LM and SymPro-LM. We use the Python interpreter for experiments with PAL and SymPro-LM. We also evaluate refinement for PAL and SymPro-LM by using 5 runs, each with 4 rounds of feedback on solved examples, for each problem. We evaluate refinement for Logic-LM by providing 4 rounds of feedback to correct syntactic errors in constraints (if any) for each problem instance. We do not evaluate SAT-LM, given its conceptual similarity to Logic-LM, having been proposed concurrently.

Models: We experiment with 3 LLMs: GPT-4-Turbo (gpt-4-0125-preview) (OpenAI, 2023), which is a SOTA LLM by OpenAI; GPT-3.5-Turbo (gpt-3.5-turbo-0125), a relatively smaller LLM by OpenAI; and Mixtral 8x7B (open-mixtral-8x7b) (Jiang et al., 2024), an open-source mixture-of-experts model developed by Mistral AI. We set the temperature to $0$ for few-shot prompting and Logic-LM for reproducibility, and to $0.7$ to sample several runs for PAL and SymPro-LM.

Prompting LLMs: Each method’s prompt includes the natural language description of the problem’s rules and the input-output format, along with two solved examples. No additional intermediate supervision (e.g., SMT or Python program) is given in the prompt. For few-shot prompting, we directly prompt the LLM to solve each test set instance separately. For PAL, we prompt the LLM to write an input-agnostic Python program which reads the input from a file, reasons to solve the input and then writes the solution to another file; the generated program is then run on each test set instance. For Logic-LM, for each test set instance we prompt the LLM to convert it into its symbolic representation, which is then fed to a symbolic solver; the prompt additionally contains the description of the language of the solver. We then prompt the LLM with the solution from the solver and ask it to generate the output in the desired format (see Section 5). Prompt templates are detailed in Appendix B and other experimental details can be found in Appendix C.

Metrics: For each problem, we use the associated verification script to check the correctness of the candidate solution for each test instance. This script computes the accuracy as the fraction of test instances solved correctly, using binary marking assigning 1 to correct solutions and 0 for incorrect ones. We report the macro-average of test set accuracies across all problems in FCoReBench.
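As a small illustration of the metric, the sketch below computes per-problem accuracy from binary marks and then the macro-average across problems. The function name `macro_average_accuracy` and the dictionary layout of `results` are assumptions made for this example; the benchmark's actual verification scripts are not shown here.

```python
def macro_average_accuracy(results):
    """Compute the macro-average of per-problem accuracies.

    `results` maps a problem name to a list of booleans, one per test
    instance (True = verified correct, False = incorrect).
    Returns (macro_average, per_problem_accuracy).
    """
    per_problem = {
        name: sum(marks) / len(marks) for name, marks in results.items()
    }
    macro = sum(per_problem.values()) / len(per_problem)
    return macro, per_problem
```

Macro-averaging weights every problem equally regardless of how many test instances it has. For example, with marks `[True, True]` for one problem and `[True, False, False, False]` for another, the macro-average is `(1.0 + 0.25) / 2 = 0.625`, whereas pooling all six instances would give `0.5`.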

Additional Datasets: Apart from FCoReBench, we also evaluate SymPro-LM on 3 additional logical reasoning datasets: (1) LogicalDeduction from the BigBench (bench authors, 2023) benchmark, (2) ProofWriter (Tafjord et al., 2021) and (3) PrOntoQA (Saparov and He, 2023a). In addition to other baselines, we also compare with Chain-of-Thought (CoT) prompting (Wei et al., 2022c), as it performs significantly better than standard prompting for such datasets. Recall that these benchmarks are not first-order in nature i.e. each problem is accompanied with a single instance (despite the rules potentially being first-order) and hence we have to run SymPro-LM (and other methods) separately for each test instance (see Appendix C.2 for more details).

7 Results

Table 1 describes the main results for FCoReBench. Unsurprisingly, GPT-4-Turbo is far better than the other LLMs. Mixtral 8x7B struggles on our benchmark, often doing worse than random guessing (especially when used without refinement), indicating that smaller LLMs (even with mixture of experts) are not as effective at complex reasoning. PAL and SymPro-LM tend to perform better than other baselines, benefiting from the vast pre-training of LLMs on code (Chen et al., 2021). Logic-LM performs rather poorly with smaller LLMs, indicating that they struggle to invoke symbolic solvers directly.

Table 1: Results for FCoReBench. - / + indicate before / after refinement. Performance for random guessing is 20.13%.

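| Model | Few-shot | PAL (-) | PAL (+) | Logic-LM (-) | Logic-LM (+) | SymPro-LM (-) | SymPro-LM (+) |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Mixtral 8x7B | 25.06% | 14.98% | 36.09% | 0.21% | 2.04% | 8.08% | 30.09% |
| GPT-3.5-Turbo | 27.02% | 32.66% | 49.19% | 6.04% | 6.58% | 17.08% | 50.35% |
| GPT-4-Turbo | 29.33% | 47.42% | 66.40% | 34.11% | 38.51% | 50.94% | 83.37% |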

Hereafter, we focus primarily on GPT-4-Turbo’s performance, since it is far superior to other models. SymPro-LM outperforms few-shot prompting and Logic-LM across all problems in FCoReBench. On average, the absolute improvements are an impressive $54.04\%$ over few-shot prompting and $44.86\%$ over Logic-LM (with refinement). Few-shot prompting solves less than a third of the problems with GPT-4-Turbo, suggesting that even the largest LLMs cannot directly perform complex reasoning. While Logic-LM performs better, it still falls well short, indicating that combining LLMs with symbolic solvers is not enough for such reasoning problems.

Table 2: Logic-LM’s performance on FCoReBench evaluated with refinement.

| Outcome | GPT-3.5-Turbo | GPT-4-Turbo |
| --- | --- | --- |
| Correct Output | 6.58% | 38.51% |
| Incorrect Output | 62.11% | 52.06% |
| Timeout Error | 2.375% | 2.49% |
| Syntactic Error | 29.04% | 6.91% |

Table 3: Error analysis at a program level for GPT-4-Turbo before and after refinement for PAL and SymPro-LM. Results are averaged over all runs for a problem and further over all problems in FCoReBench.

| Error type (before / after refinement) | PAL | SymPro-LM |
| --- | --- | --- |
| Incorrect Program | 70% / 57% | 58% / 38% |
| Semantically Incorrect Program | 62% / 49.5% | 29% / 20.5% |
| Python Runtime Error | 7% / 4.5% | 13.5% / 5.5% |
| Timeout | 1% / 3% | 15.5% / 12% |

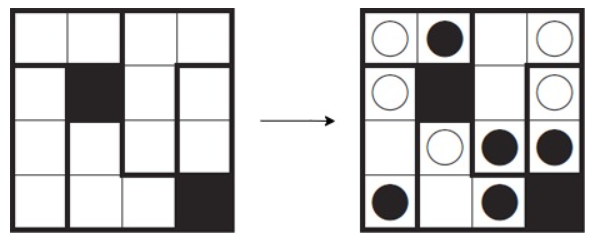

Further qualitative analysis suggests that Logic-LM gets confused in handling the structure of fcore problems. As problem instance size grows, it tends to make syntactic mistakes with smaller LLMs (Table 2). With larger LLMs, syntactic mistakes decrease, but constraints remain semantically incorrect and are not corrected through feedback.

Often this is because LLMs are error-prone when enumerating combinatorial constraints, i.e., they struggle with executing implicit for-loops and conditionals (see Appendix F). In contrast, SymPro-LM and PAL manage first order structures well, since writing code for a loop/conditional is not that hard, and the correct loop-execution is done by a program interpreter. These (size-invariant) programs then get used independently without any LLM call at inference time to solve any input instance – easily generalizing to larger instances – highlighting the benefit of using a program interpreter for such combinatorial problems.
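To illustrate why explicit enumeration is error-prone while a loop is not, the sketch below generates the pairwise "all different" constraints for the rows and columns of an $n \times n$ grid. It is a hypothetical example written for this discussion (the function name `latin_square_constraints` and the string encoding of constraints are assumptions), not output from any of the evaluated methods.

```python
from itertools import combinations


def latin_square_constraints(n):
    """Enumerate pairwise inequality constraints for rows and columns of an n x n grid."""
    constraints = []
    for i in range(n):
        for a, b in combinations(range(n), 2):
            constraints.append(f"x[{i}][{a}] != x[{i}][{b}]")  # row i
            constraints.append(f"x[{a}][{i}] != x[{b}][{i}]")  # column i
    return constraints


# The loop body is size-invariant, but the number of constraints that
# would have to be written out by hand grows as n^2 * (n - 1).
for n in (4, 9, 25):
    print(n, len(latin_square_constraints(n)))
# 4 48
# 9 648
# 25 15000
```

A method that must emit every constraint as text for each instance has to produce hundreds or thousands of lines without a single slip, whereas a program only needs the four-line loop to be correct once.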

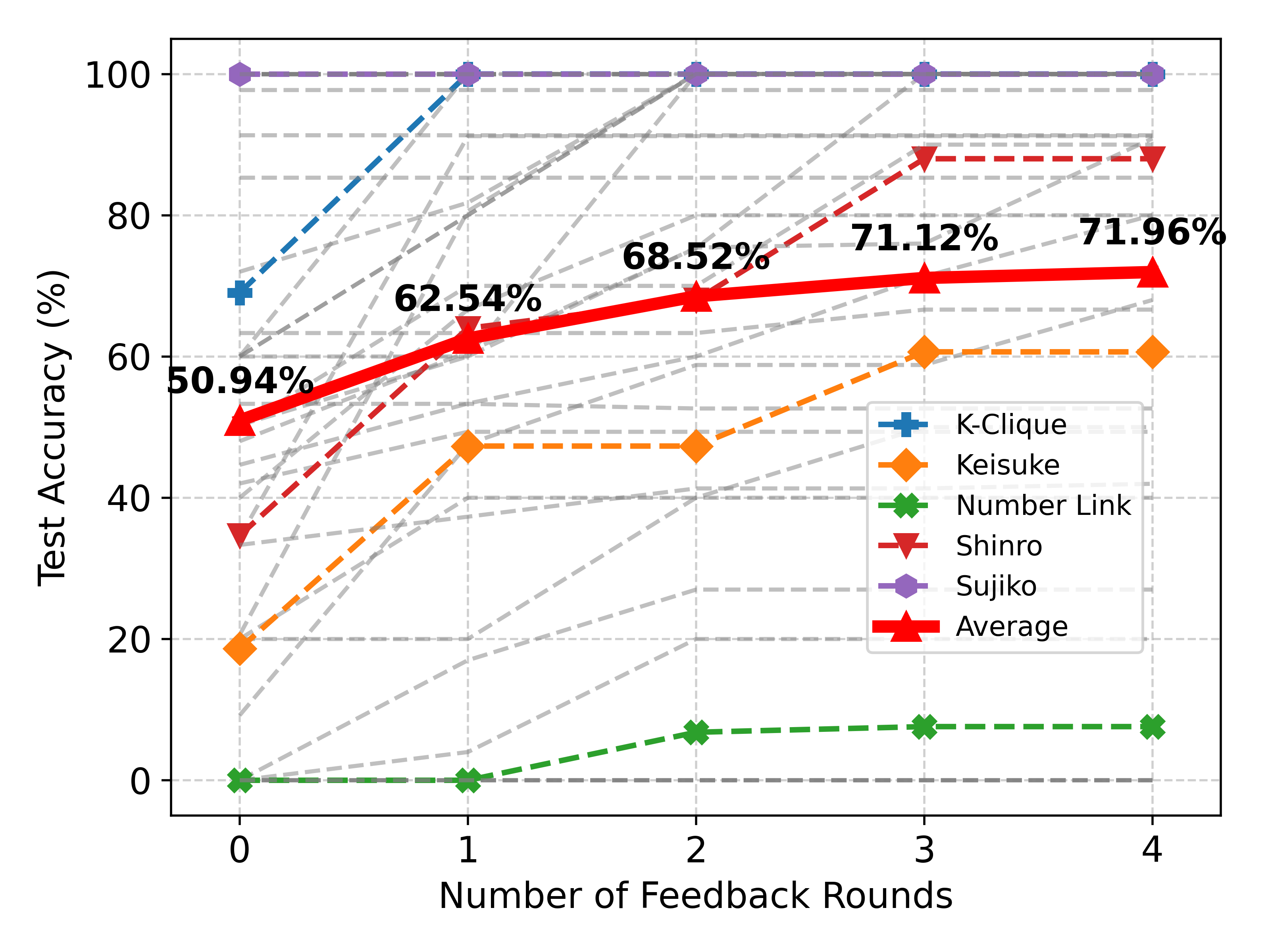

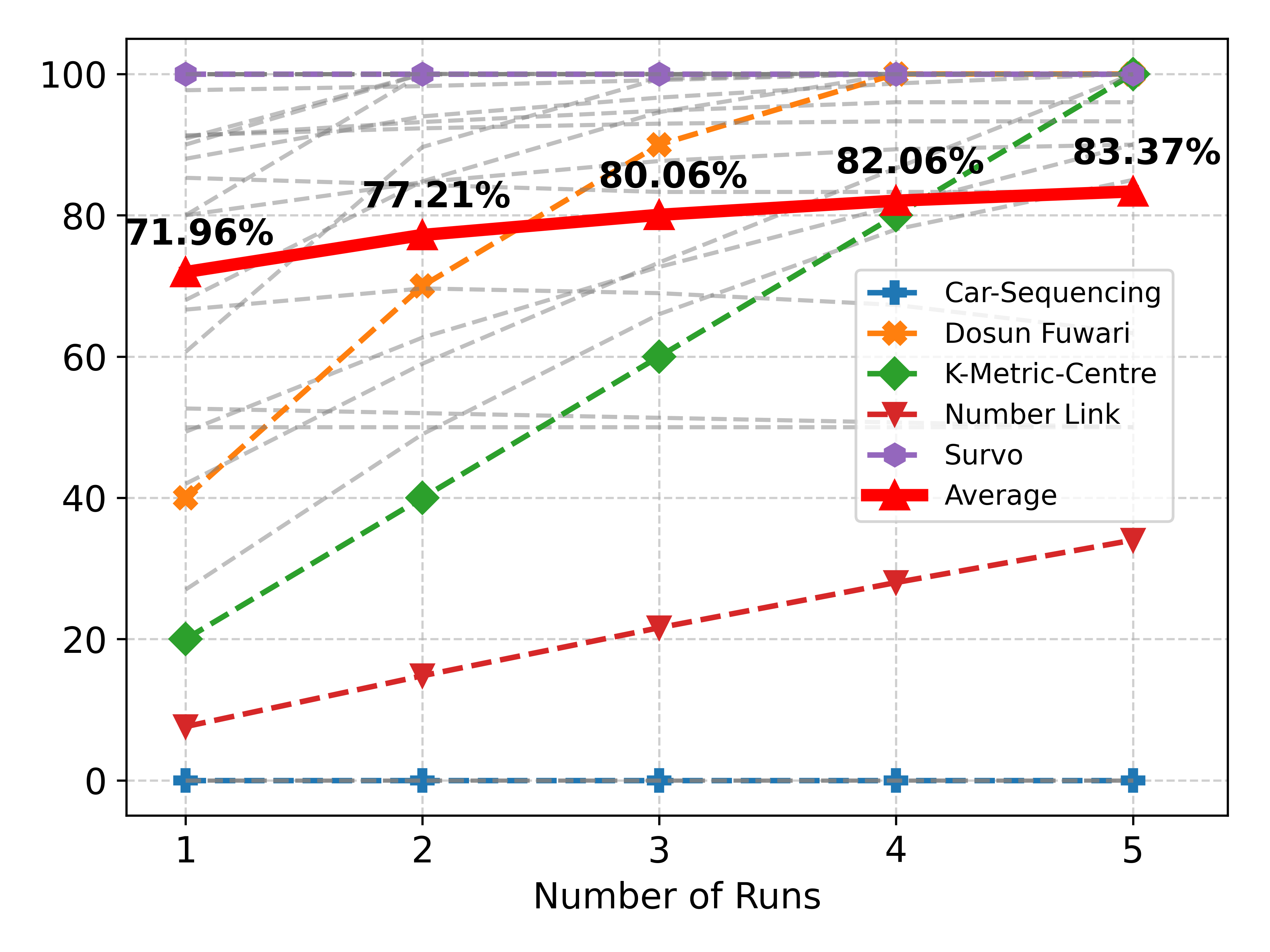

At the same time, PAL is also not as effective on FCoReBench. Table 4 compares the effect of feedback and multiple runs on PAL and SymPro-LM. SymPro-LM outperforms PAL by $16.97\%$ on FCoReBench (with refinement). When LLMs are forced to write programs for performing complicated reasoning, they tend to produce brute-force solutions that are often either incorrect or slow (see Table 8 in the appendix). This highlights the value of offloading reasoning to a symbolic solver. Interestingly, feedback from solved examples and re-runs is more effective (Table 3) for SymPro-LM, as also shown by larger gains with an increasing number of feedback rounds and runs (Table 4). We hypothesize that this is because declarative programs (generated by SymPro-LM) are easier to correct than imperative programs (produced by PAL).

Table 4: Comparative analysis between PAL and SymPro-LM on FCoReBench for GPT-4-Turbo.

| Method | 0 rounds | 1 round | 2 rounds | 3 rounds | 4 rounds |
| --- | --- | --- | --- | --- | --- |
| PAL | 47.42% | 54.00% | 57.09% | 58.82% | 59.92% |
| SymPro-LM | 50.94% ($\uparrow$ 3.52%) | 62.54% ($\uparrow$ 8.54%) | 68.52% ($\uparrow$ 11.43%) | 71.12% ($\uparrow$ 12.3%) | 71.96% ($\uparrow$ 12.04%) |

(a) Effect of feedback rounds for a single run

| Method | 1 run | 2 runs | 3 runs | 4 runs | 5 runs |
| --- | --- | --- | --- | --- | --- |
| PAL | 59.92% | 62.54% | 63.95% | 65.19% | 66.40% |
| SymPro-LM | 71.96% ($\uparrow$ 12.04%) | 77.21% ($\uparrow$ 14.67%) | 80.06% ($\uparrow$ 16.11%) | 82.06% ($\uparrow$ 16.87%) | 83.37% ($\uparrow$ 16.97%) |

(b) Effect of multiple runs each with 4 feedback rounds

Table 5: Accuracy and cost comparison between ToT prompting and SymPro-LM with GPT-4-Turbo for 3 problems in FCoReBench. Costs are per test instance for ToT and one time costs per problem for SymPro-LM.

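| Problem | Size | ToT Accuracy | ToT Cost | SymPro-LM Accuracy | SymPro-LM Cost |
| --- | --- | --- | --- | --- | --- |
| Latin Squares | 3x3 | 46.33% | \$0.1235 | 100% | \$0.02 |
| Latin Squares | 4x4 | 32.5% | \$0.5135 | 100% | \$0.02 |
| Magic Square | 3x3 | 26.25% | \$0.4325 | 100% | \$0.02 |
| Magic Square | 4x4 | 8% | \$0.881 | 100% | \$0.02 |
| Sujiko | 3x3 | 7.5% | \$0.572 | 100% | \$0.02 |
| Sujiko | 4x4 | 0% | \$1.676 | 100% | \$0.02 |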

Comparison with ToT Prompting: Table 5 compares SymPro-LM with ToT prompting on 3 problems. SymPro-LM is far superior in terms of cost and accuracy, indicating that even the largest LLMs cannot do complex reasoning on problems with large search depths and branching factors, despite being called multiple times with search-based prompting. Due to its programmatic nature, SymPro-LM generalizes even better to larger instances and is also hugely cost effective, as there is no need to call an LLM for each instance separately. We do not perform further experiments with ToT prompting, due to cost considerations.
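To make the cost gap concrete, consider a worked calculation based on the Sujiko 4x4 figures in Table 5. The test-set size $n = 100$ is a hypothetical value chosen purely for illustration; only the per-instance ToT cost and the one-time SymPro-LM cost are taken from the table.

$$
C_{\text{ToT}}(n) = 1.676\,n, \qquad C_{\text{SymPro-LM}}(n) = 0.02
$$

$$
C_{\text{ToT}}(100) = 167.60, \qquad \frac{C_{\text{ToT}}(100)}{C_{\text{SymPro-LM}}(100)} = \frac{167.60}{0.02} = 8380
$$

Since the ToT cost grows linearly with the number of test instances while the SymPro-LM cost is paid once per problem, the gap widens with every additional instance. Even for a single instance, the cheapest ToT setting in Table 5 (\$0.1235) exceeds the one-time SymPro-LM cost (\$0.02).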

<details>

<summary>extracted/6211530/Images/size-vs-algo.png Details</summary>

### Visual Description

## Line Charts: Accuracy of Different Models on Sudoku, Sujiko, and Magic-Square

### Overview

The image contains three line charts comparing the accuracy of four different models (Few-Shot, Logic-LM, PAL, and SymPro-LM) on three different puzzle types: Sudoku, Sujiko, and Magic-Square. The x-axis represents the board size, and the y-axis represents the accuracy in percentage.

### Components/Axes

* **Titles:**

* Chart 1: Sudoku

* Chart 2: Sujiko

* Chart 3: Magic-Square

* **X-Axis:** Board Size

* Sudoku: 4x4, 9x9, 16x16, 25x25

* Sujiko: 3x3, 4x4, 5x5

* Magic-Square: 3x3, 4x4, 5x5

* **Y-Axis:** Accuracy (%)

* Scale: 0 to 100, with gridlines at intervals of 20.

* **Legend (Top-Right):**

* Orange line with circle markers: Few-Shot

* Purple dashed line with square markers: Logic-LM

* Blue dash-dotted line with triangle markers: PAL

* Green dotted line with diamond markers: SymPro-LM

### Detailed Analysis

#### Sudoku Chart

* **Few-Shot (Orange):** Starts at approximately 60% accuracy for 4x4, drops to approximately 5% for 9x9, and remains at 0% for 16x16 and 25x25.

* **Logic-LM (Purple):** Starts at approximately 60% accuracy for 4x4, drops to approximately 5% for 9x9, and remains at 0% for 16x16 and 25x25.

* **PAL (Blue):** Starts at approximately 100% accuracy for 4x4, drops to approximately 60% for 25x25.

* **SymPro-LM (Green):** Maintains 100% accuracy across all board sizes (4x4, 9x9, 16x16, 25x25).

#### Sujiko Chart

* **Few-Shot (Orange):** Starts at approximately 47% accuracy for 3x3, drops to approximately 20% for 4x4, and further to approximately 2% for 5x5.

* **Logic-LM (Purple):** Starts at approximately 68% accuracy for 3x3, drops to approximately 53% for 4x4, and further to approximately 13% for 5x5.

* **PAL (Blue):** Starts at approximately 100% accuracy for 3x3, drops to approximately 80% for 5x5.

* **SymPro-LM (Green):** Maintains 100% accuracy across all board sizes (3x3, 4x4, 5x5).

#### Magic-Square Chart

* **Few-Shot (Orange):** Starts at approximately 20% accuracy for 3x3, drops to 0% for 4x4 and remains at 0% for 5x5.

* **Logic-LM (Purple):** Starts at approximately 67% accuracy for 3x3, drops to approximately 25% for 4x4, and further to approximately 7% for 5x5.

* **PAL (Blue):** Starts at approximately 100% accuracy for 3x3, drops to approximately 0% for 4x4 and remains at 0% for 5x5.

* **SymPro-LM (Green):** Maintains 100% accuracy across all board sizes (3x3, 4x4, 5x5).

### Key Observations

* SymPro-LM consistently achieves 100% accuracy across all puzzle types and board sizes.

* Few-Shot and Logic-LM generally perform worse than PAL and SymPro-LM.

* The accuracy of Few-Shot and Logic-LM decreases significantly as the board size increases for Sudoku.

* PAL's performance varies significantly across puzzle types, performing well on Sudoku but poorly on Magic-Square for larger board sizes.

### Interpretation

The data suggests that SymPro-LM is the most robust model for solving these puzzles, as its accuracy remains consistently high regardless of puzzle type or board size. PAL shows promise but is less consistent. Few-Shot and Logic-LM appear to struggle with larger board sizes, particularly in Sudoku. The relationship between board size and accuracy highlights the challenges these models face in scaling to more complex puzzles. The performance differences across puzzle types suggest that the models may be better suited for certain types of logical reasoning problems than others.

</details>

Figure 4: Effect of increasing problem instance size on baselines and SymPro-LM for GPT-4-Turbo.

Effect of Problem Instance Size: We now report the performance of SymPro-LM and other baselines against varying problem instance sizes (see Figure 4) for 3 problems in FCoReBench (sudoku, sujiko and magic-square). Increasing the problem instance size increases the number of variables, accompanying constraints and reasoning steps required to reach the solution. We observe that, being programmatic, SymPro-LM and PAL are relatively robust against increases in the size of input instances. In comparison, the performance of Logic-LM and few-shot prompting declines sharply. PAL programs are often inefficient and may see performance drop when they fail to find a solution within the time limit.

<details>

<summary>extracted/6211530/Images/feedback-effect.png Details</summary>

### Visual Description

## Line Chart: Test Accuracy vs. Number of Feedback Rounds

### Overview

The image is a line chart showing the test accuracy (%) on different problems (K-Clique, Keisuke, Number Link, Shinro, and Sujiko) against the number of feedback rounds (0 to 4). It also includes an average accuracy line. The chart uses dashed lines for each problem and a solid, thicker line for the average.

### Components/Axes

* **X-axis:** Number of Feedback Rounds, with values 0, 1, 2, 3, and 4.

* **Y-axis:** Test Accuracy (%), with values ranging from 0 to 100. Gridlines are present at intervals of 20.

* **Legend:** Located in the bottom-right corner, it identifies each problem by color and name:

* Blue: K-Clique

* Orange: Keisuke

* Green: Number Link

* Red: Shinro

* Purple: Sujiko

* Thick Red: Average

* **Data Series:** Each problem's accuracy is represented by a dashed line with a specific color and marker. The average performance is represented by a thick solid red line with triangle markers.

### Detailed Analysis

**K-Clique (Blue, dashed line with plus markers):**

* Trend: Slopes upward from round 0 to round 1, then remains relatively constant.

* Round 0: Approximately 70%

* Round 1: Approximately 98%

* Round 2: Approximately 100%

* Round 3: Approximately 100%

* Round 4: Approximately 100%

**Keisuke (Orange, dashed line with diamond markers):**

* Trend: Slopes upward from round 0 to round 3, then remains relatively constant.

* Round 0: Approximately 18%

* Round 1: Approximately 48%

* Round 2: Approximately 48%

* Round 3: Approximately 60%

* Round 4: Approximately 60%

**Number Link (Green, dashed line with x markers):**

* Trend: Remains relatively constant at a low accuracy.

* Round 0: Approximately 0%

* Round 1: Approximately 0%

* Round 2: Approximately 7%

* Round 3: Approximately 7%

* Round 4: Approximately 7%

**Shinro (Red, dashed line with triangle markers):**

* Trend: Slopes upward from round 0 to round 3, then remains relatively constant.

* Round 0: Approximately 35%

* Round 1: Approximately 60%

* Round 2: Approximately 68%

* Round 3: Approximately 88%

* Round 4: Approximately 88%

**Sujiko (Purple, dashed line with pentagon markers):**

* Trend: Remains relatively constant at a high accuracy.

* Round 0: Approximately 100%

* Round 1: Approximately 100%

* Round 2: Approximately 100%

* Round 3: Approximately 100%

* Round 4: Approximately 100%

**Average (Thick Red, solid line with triangle markers):**

* Trend: Slopes upward from round 0 to round 4.

* Round 0: 50.94%

* Round 1: 62.54%

* Round 2: 68.52%

* Round 3: 71.12%

* Round 4: 71.96%

There are also several grey dashed lines, which show results for the remaining individual problems in FCoReBench.

### Key Observations

* Sujiko consistently achieves the highest test accuracy, remaining at 100% across all feedback rounds.

* Number Link consistently performs the worst, with accuracy remaining near 0%.

* K-Clique shows a significant improvement from round 0 to round 1, then plateaus.

* The average accuracy increases with the number of feedback rounds, but the rate of increase diminishes.

### Interpretation

The chart illustrates the impact of feedback rounds on test accuracy for different problems. Sujiko is solved perfectly even without feedback, while Number Link remains largely unsolved regardless of the number of rounds. The increasing average accuracy suggests that, overall, feedback rounds improve performance, but the diminishing rate of increase implies a point of diminishing returns. The grey dashed lines show the remaining individual problems that contribute to the average.

</details>

(a) Effect of feedback

<details>

<summary>extracted/6211530/Images/effect-runs.png Details</summary>

### Visual Description

## Line Chart: Performance Comparison Across Runs

### Overview

The image is a line chart showing performance on five different problems (Car-Sequencing, Dosun Fuwari, K-Metric-Centre, Number Link, and Survo) as the number of runs increases from 1 to 5. It also includes an average performance line. The y-axis represents performance, and the x-axis represents the number of runs.

### Components/Axes

* **X-axis:** Number of Runs (labeled 1 to 5)

* **Y-axis:** Performance (labeled 0 to 100)

* **Legend:** Located on the right side of the chart, associating colors and markers with problem names:

* Blue (+): Car-Sequencing

* Orange (x): Dosun Fuwari

* Green (diamond): K-Metric-Centre

* Red (upside-down triangle): Number Link

* Purple (hexagon): Survo

* Red (triangle): Average

* **Grid:** Light gray dashed lines provide a visual grid.

### Detailed Analysis or Content Details

**1. Car-Sequencing (Blue, +):**

* Trend: The line is essentially flat at 0 across all runs.

* Values: Performance remains at approximately 0 for all runs (1 to 5).

**2. Dosun Fuwari (Orange, x):**

* Trend: The line slopes upward.

* Values:

* Run 1: ~40

* Run 2: ~70

* Run 3: ~85

* Run 4: ~95

* Run 5: ~100

**3. K-Metric-Centre (Green, diamond):**

* Trend: The line slopes upward.

* Values:

* Run 1: ~20

* Run 2: ~40

* Run 3: ~60

* Run 4: ~80

* Run 5: ~100

**4. Number Link (Red, upside-down triangle):**

* Trend: The line slopes upward.

* Values:

* Run 1: ~8

* Run 2: ~15

* Run 3: ~23

* Run 4: ~29

* Run 5: ~35

**5. Survo (Purple, hexagon):**

* Trend: The line is essentially flat at 100 across all runs.

* Values: Performance remains at approximately 100 for all runs (1 to 5).

**6. Average (Red, triangle):**

* Trend: The line slopes upward.

* Values:

* Run 1: 71.96%

* Run 2: 77.21%

* Run 3: 80.06%

* Run 4: 82.06%

* Run 5: 83.37%

**Other Lines (Gray, dashed):**

* There are multiple gray dashed lines that are not labeled in the legend; they show results for the remaining individual problems in FCoReBench.

### Key Observations

* Car-Sequencing consistently performs poorly, with a performance of 0 across all runs.

* Survo consistently performs at the maximum, with a performance of 100 across all runs.

* Dosun Fuwari and K-Metric-Centre show significant performance improvements as the number of runs increases.

* Number Link shows a gradual performance improvement as the number of runs increases, but its overall performance is significantly lower than Survo, Dosun Fuwari, and K-Metric-Centre.

* The average performance increases with the number of runs, reflecting the general upward trend on most problems.

### Interpretation

The chart illustrates how performance on different problems changes with the number of runs. Survo is solved perfectly from the first run, whereas Car-Sequencing remains unsolved even after five runs. Dosun Fuwari and K-Metric-Centre benefit substantially from additional runs. The average line shows the overall trend of improvement as the number of runs increases. The unlabeled gray lines show the remaining individual problems in FCoReBench.

</details>

(b) Effect of multiple runs

<details>

<summary>extracted/6211530/Images/solved-examples-count.png Details</summary>

### Visual Description

## Line Chart: Number of Solved Examples vs. Number of Feedback Rounds

### Overview

The image is a line chart showing the relationship between the number of solved examples and the number of feedback rounds. The chart displays five different lines, each representing a different number of solved examples (0, 1, 4, 7, and 10). The x-axis represents the number of feedback rounds (0 to 4), and the y-axis represents the percentage score.

### Components/Axes

* **Title:** There is no explicit title for the chart.

* **X-axis:**

* Label: "Number of Feedback Rounds"

* Scale: 0, 1, 2, 3, 4

* **Y-axis:**

* Label: No explicit label, but the values represent a percentage score.

* Scale: 50 to 78, with increments of 2.

* **Legend:** Located in the top-left corner.

* "Number of Solved Examples"

* Blue: 0

* Orange: 1

* Green: 4

* Red: 7

* Purple: 10

### Detailed Analysis

* **Line 0 (Blue):** This line represents 0 solved examples. It remains constant at approximately 50.94% across all feedback rounds.

* Round 0: 50.94%

* Round 1: 50.94%

* Round 2: 50.94%

* Round 3: 50.94%

* Round 4: 50.94%

* **Line 1 (Orange):** This line represents 1 solved example. It increases from round 0 to round 2, then plateaus.

* Round 0: 60.35%

* Round 1: 62.11%

* Round 2: 64.48%

* Round 3: 66.10%

* Round 4: 66.11%

* **Line 4 (Green):** This line represents 4 solved examples. It increases from round 0 to round 3, then plateaus slightly.

* Round 0: 62.11%

* Round 1: 62.54%

* Round 2: 67.90%

* Round 3: 70.22%

* Round 4: 70.62%

* **Line 7 (Red):** This line represents 7 solved examples. It increases from round 0 to round 4.

* Round 0: 62.31%

* Round 1: 68.28%

* Round 2: 70.89%

* Round 3: 71.73%

* **Line 10 (Purple):** This line represents 10 solved examples. It increases from round 0 to round 4.

* Round 0: 50.94%

* Round 1: 62.54%

* Round 2: 68.52%

* Round 3: 71.12%

* Round 4: 71.96%

### Key Observations

* The performance with 0 solved examples remains constant regardless of the number of feedback rounds.

* The performance generally increases with more solved examples.

* The lines representing 4, 7, and 10 solved examples show a similar trend of increasing performance with more feedback rounds, but the rate of increase diminishes as the number of feedback rounds increases.

* The line representing 1 solved example plateaus after 2 feedback rounds.

### Interpretation

The chart suggests that providing solved examples significantly improves performance. The more solved examples provided, the better the initial performance and the greater the improvement with feedback rounds. However, the benefit of additional feedback rounds diminishes as the number of rounds increases, especially when only one solved example is provided. The flat line for 0 solved examples indicates that feedback alone is not sufficient to improve performance without any initial examples. The data demonstrates the importance of providing a sufficient number of solved examples to facilitate learning and improvement through feedback. The outlier is the flat line for 0 solved examples, which highlights the necessity of having some initial examples for feedback to be effective.

</details>

(c) Effect of # of solved examples

Figure 5: Effect of feedback and multiple runs with GPT-4-Turbo. (a) and (b) show results with 10 solved examples for feedback, where dashed lines show results for individual problems in FCoReBench, with coloured lines highlighting specific problems and the bold red line representing the average effect across all problems. (c) shows the effect of the number of solved examples used for feedback in a single run.

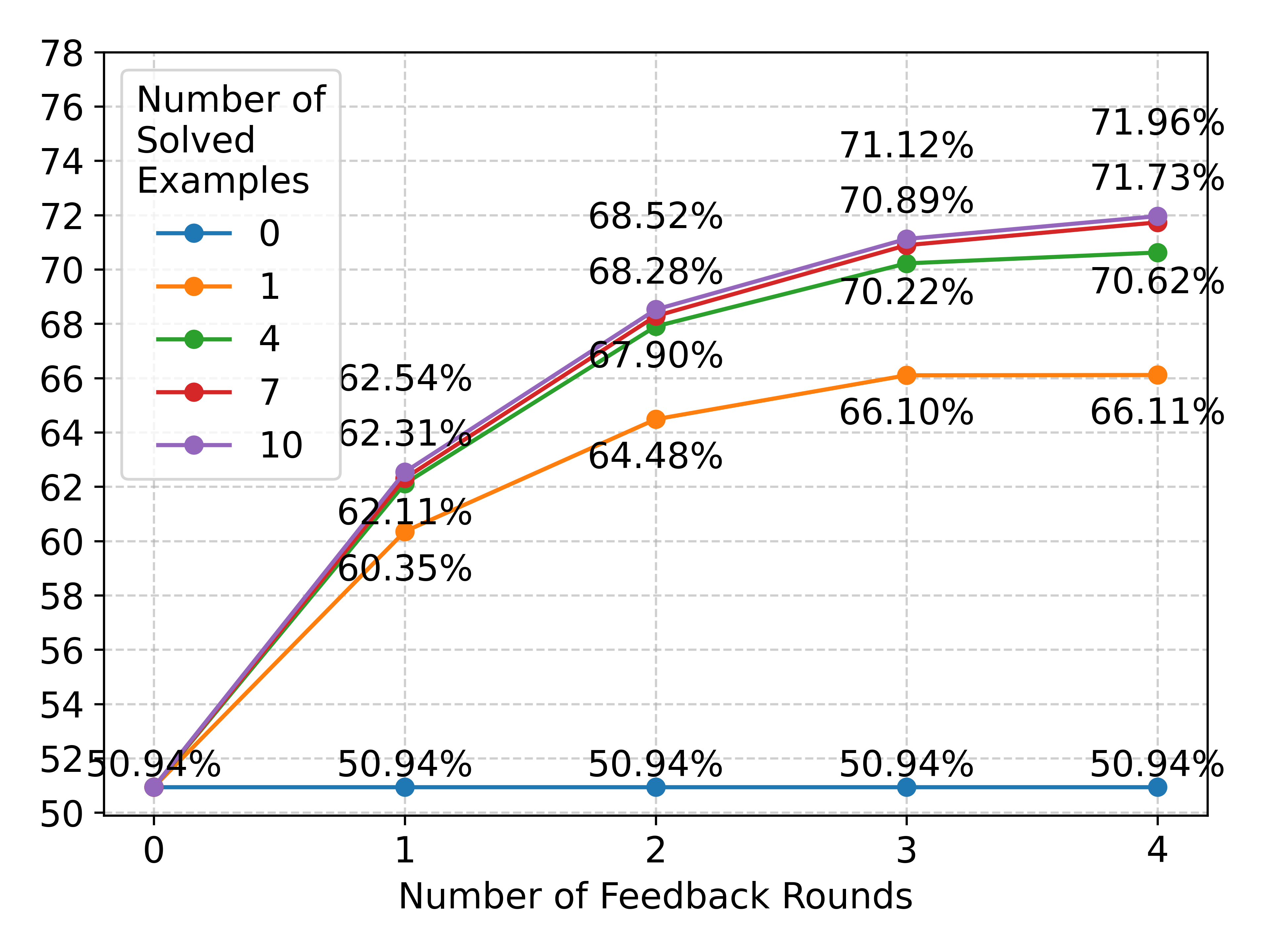

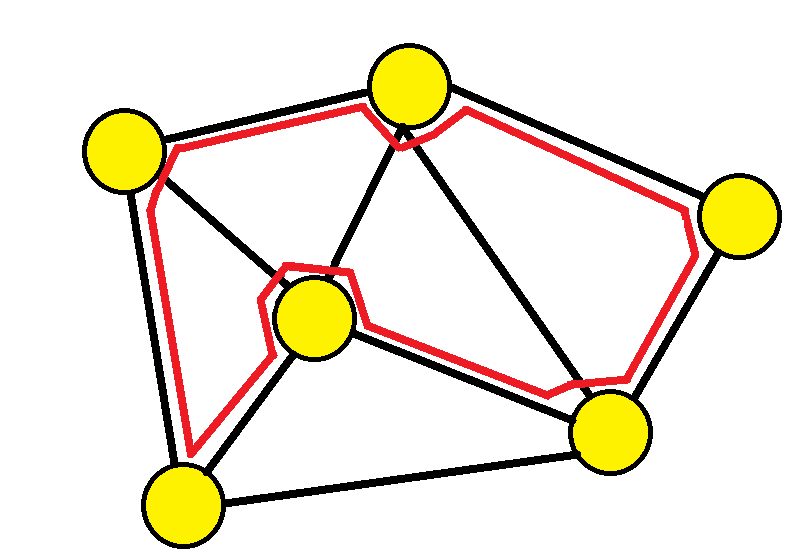

Effect of Feedback on Solved Examples: Figure 5(a) describes the effect of multiple rounds of feedback for SymPro-LM. Feedback helps performance significantly; utilizing 4 feedback rounds improves performance by $21.02\%$ . Even the largest LLMs commit errors, making it important to verify and correct their work. But feedback on its own is not enough: a single run might end up on a wrong reasoning path that feedback does not correct, making it important to utilize multiple runs for effective reasoning. Utilizing 5 runs improves the performance by an additional $11.41\%$ (Figure 5(b)), after which the gains tend to saturate. Performance also increases with an increase in the number of solved examples (Figure 5(c)). Each solved example helps in detecting and correcting different errors. However, performance tends to saturate at 7 solved examples, and no new errors are discovered or corrected even with additional training data.

7.1 Results on Other Datasets

Table 6: Results for baselines & SymPro-LM on other benchmarks. Best results with each LLM are highlighted.

| Dataset | | | | | | | | | | |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Logical Deduction | 39.66% | 50.66% | 66.33% | 71.00% | 78.00% | 65.33% | 76.00% | 81.66% | 82.67% | 94.00% |
| ProofWriter | 40.50% | 57.16% | 50.5% | 70.16% | 74.167% | 46.5% | 61.66% | 76.29% | 74.83% | 89.83% |
| PrOntoQA | 49.60% | 83.20% | 98.40% | 72.20% | 97.40% | 83.00% | 98.80% | 99.80% | 91.20% | 97.80% |

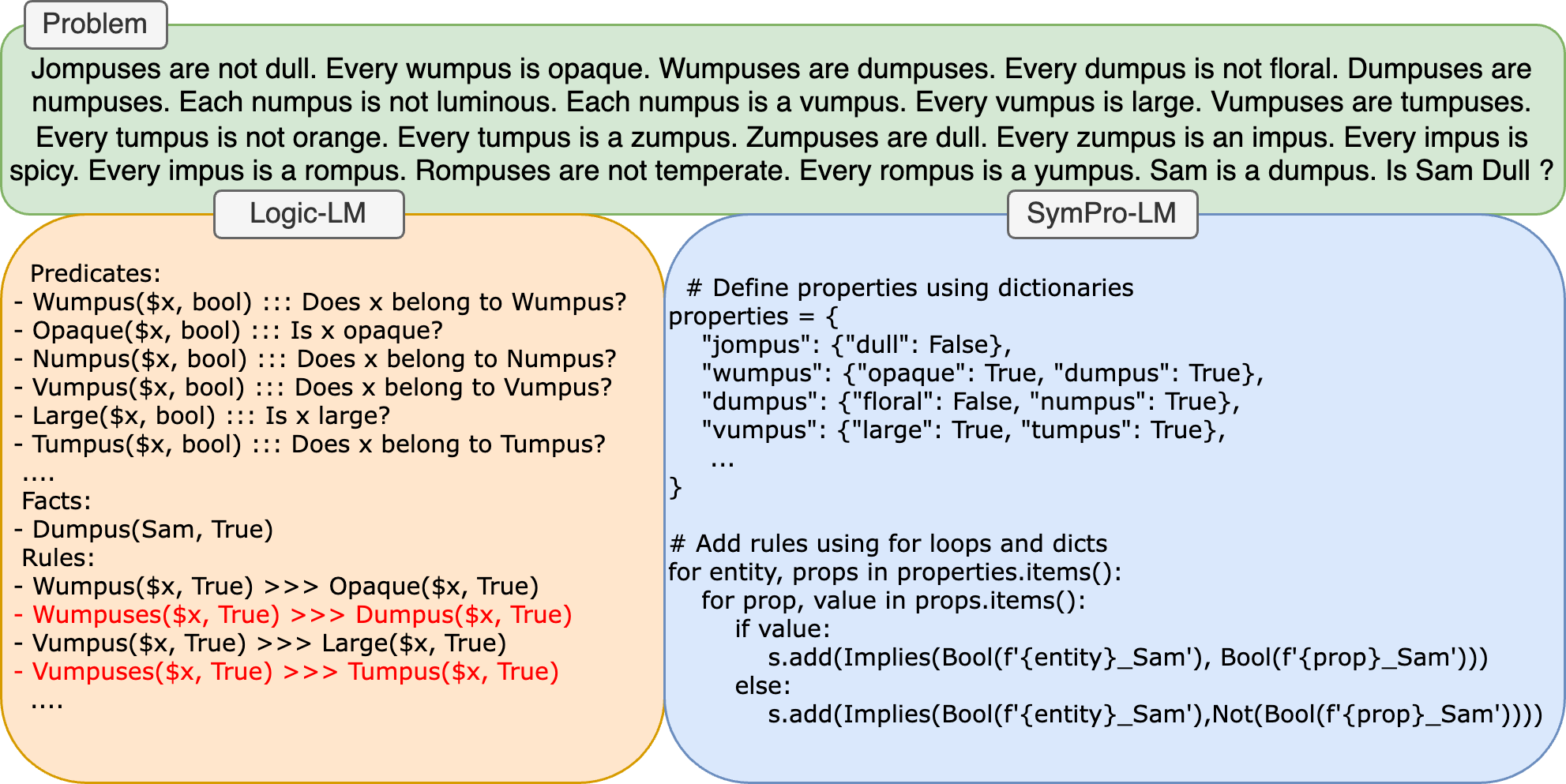

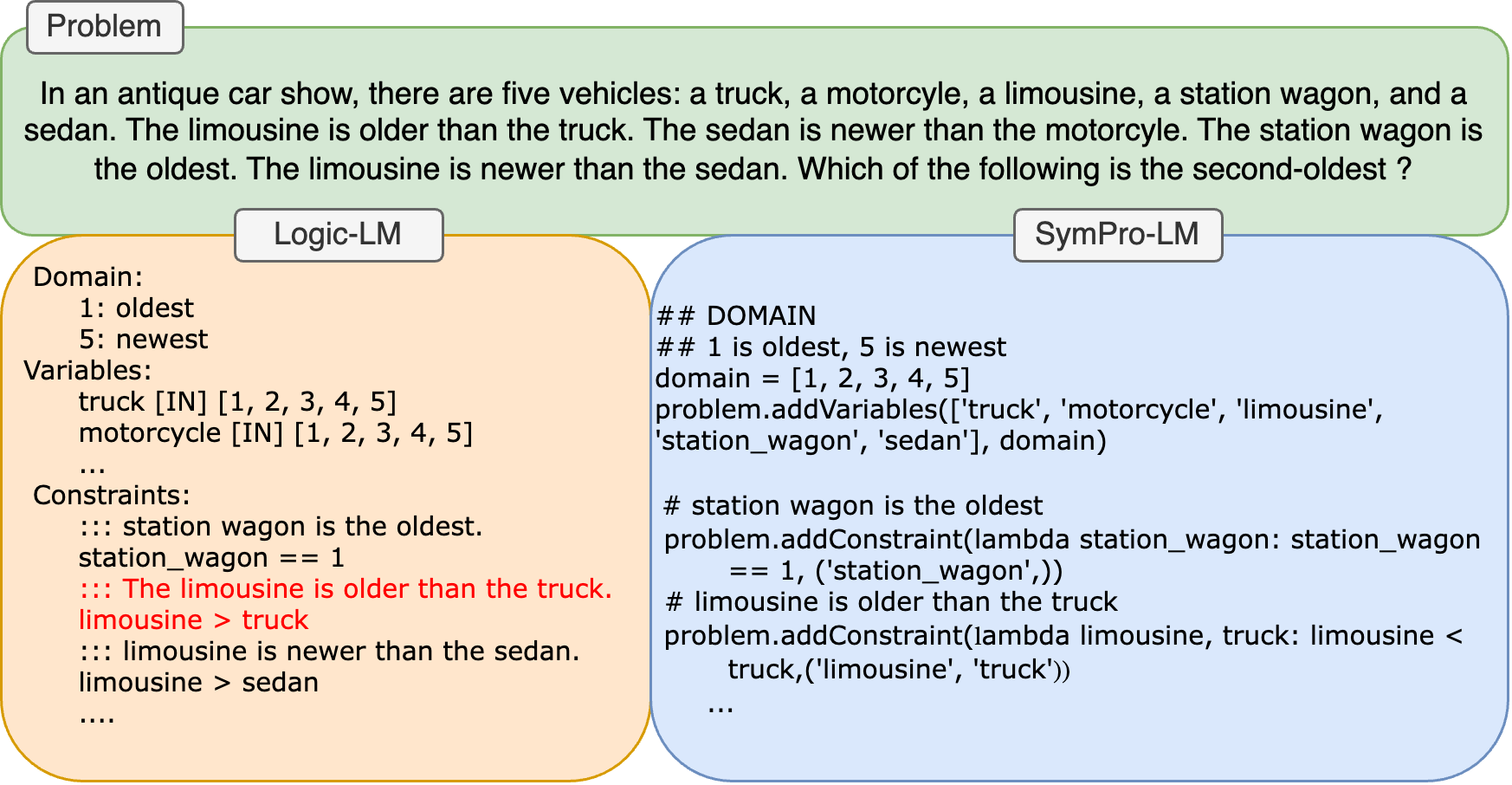

Table 6 reports the performance on non-first order datasets. SymPro-LM outperforms all other baselines on ProofWriter and LogicalDeduction, particularly Logic-LM. This showcases the value of integrating LLMs with symbolic solvers through programs, even for standard reasoning tasks. These experiments suggest that LLMs translate natural language questions into programs that use solvers much more effectively than into symbolic formulations directly. We attribute this to the vast pre-training of LLMs on code (Brown et al., 2020; Chen et al., 2021). For instance, on the LogicalDeduction benchmark, while Logic-LM does not make syntactic errors during translation, it often makes logical errors. These errors decrease significantly when LLMs are prompted to produce programs instead (Figure 6(b)). Error analysis on ProofWriter and PrOntoQA reveals that for more complex natural language questions, LLMs also start making syntactic errors during translation as the number of rules/facts increases. With SymPro-LM these errors are vastly reduced because, apart from the benefit from pre-training, LLMs also start utilizing programming constructs like dictionaries and loops to make the most of the structure in these problems (Figure 6(a)). PAL and CoT perform marginally better on PrOntoQA because the reasoning style for problems in this dataset involves forward-chain reasoning, which aligns with PAL’s and CoT’s style of reasoning. Integrating symbolic solvers is not as useful for this dataset, but SymPro-LM still achieves competitive performance.

<details>

<summary>extracted/6211530/Images/prontoQA-example.png Details</summary>

### Visual Description

## Logical Reasoning Problem and Solutions

### Overview

The image presents a logical reasoning problem and two different approaches to solving it: Logic-LM and SymPro-LM. The problem is stated at the top, followed by the Logic-LM approach on the left and the SymPro-LM approach on the right.

### Components/Axes

* **Problem Statement:** A series of statements defining relationships between different entities (Jompuses, Wumpuses, Dumpuses, etc.) and their properties (dull, opaque, floral, etc.). The final question is "Is Sam Dull?".

* **Logic-LM (Left Side):**

* **Predicates:** Defines the meaning of terms like "Wumpus(x, bool)" as "Does x belong to Wumpus?".

* **Facts:** States "Dumpus(Sam, True)", meaning Sam is a Dumpus.

* **Rules:** Defines logical implications, such as "Wumpus(x, True) >>> Opaque(x, True)", meaning if something is a Wumpus, it is opaque.

* **SymPro-LM (Right Side):**

* **Properties Dictionary:** Defines the properties of each entity using a Python dictionary. For example, "jompus": {"dull": False} means a Jompus is not dull.

* **Rule Implementation:** Python code that adds rules based on the properties dictionary using loops and conditional statements. It uses a solver `s` and adds implications using `s.add(Implies(...))`.

### Detailed Analysis

**Problem Statement:**

* Jompuses are not dull.

* Every wumpus is opaque.

* Wumpuses are dumpuses.

* Every dumpus is not floral.

* Dumpuses are numpuses.

* Each numpus is not luminous.

* Each numpus is a vumpus.

* Every vumpus is large.

* Vumpuses are tumpuses.

* Every tumpus is not orange.

* Every tumpus is a zumpus.

* Zumpuses are dull.

* Every zumpus is an impus.

* Every impus is spicy.

* Every impus is a rompus.

* Rompuses are not temperate.

* Every rompus is a yumpus.

* Sam is a dumpus.

* Is Sam Dull?

**Logic-LM:**

* **Predicates:**

* Wumpus($x, bool) ::: Does x belong to Wumpus?

* Opaque($x, bool) ::: Is x opaque?

* Numpus($x, bool) ::: Does x belong to Numpus?

* Vumpus($x, bool) ::: Does x belong to Vumpus?

* Large($x, bool) ::: Is x large?

* Tumpus($x, bool) ::: Does x belong to Tumpus?

* **Facts:**

* Dumpus(Sam, True)

* **Rules:**

* Wumpus($x, True) >>> Opaque($x, True)

* Wumpuses($x, True) >>> Dumpus($x, True)

* Vumpus($x, True) >>> Large($x, True)

* Vumpuses($x, True) >>> Tumpus($x, True)

**SymPro-LM:**

* **Properties Dictionary:**

</details>

(a) PrOntoQA

<details>

<summary>extracted/6211530/Images/logicaldeduction-example.png Details</summary>

### Visual Description

## Problem Solving Approach Comparison: Logic-LM vs. SymPro-LM

### Overview

The image presents a problem involving the relative ages of five vehicles (truck, motorcycle, limousine, station wagon, and sedan) and compares two different approaches (Logic-LM and SymPro-LM) to represent and solve the problem. The problem is stated at the top, followed by the Logic-LM approach on the left and the SymPro-LM approach on the right.

### Components/Axes

* **Problem Statement:** A textual description of the problem.

* **Logic-LM:** An approach to represent the problem using a domain, variables, and constraints.

* **Domain:** Defines the range of possible values (1 to 5), where 1 represents the oldest and 5 represents the newest.

* **Variables:** Declares the vehicles as variables, each with a possible value from the domain (1 to 5).

* **Constraints:** Defines the relationships between the vehicles' ages based on the problem statement.

* **SymPro-LM:** An approach to represent the problem using code.

* **Domain:** Defines the range of possible values (1 to 5), where 1 represents the oldest and 5 represents the newest.

* **Variables:** Declares the vehicles as variables, each with a possible value from the domain (1 to 5).

* **Constraints:** Defines the relationships between the vehicles' ages based on the problem statement using code.

### Detailed Analysis

**Problem Statement:**

"In an antique car show, there are five vehicles: a truck, a motorcyle, a limousine, a station wagon, and a sedan. The limousine is older than the truck. The sedan is newer than the motorcyle. The station wagon is the oldest. The limousine is newer than the sedan. Which of the following is the second-oldest?"

**Logic-LM:**

* **Domain:**

* 1: oldest

* 5: newest

* **Variables:**

* truck [IN] \[1, 2, 3, 4, 5]

* motorcycle [IN] \[1, 2, 3, 4, 5]

* ... (The other vehicles are implied to be defined similarly)

* **Constraints:**

* ::: station wagon is the oldest.

* station\_wagon == 1

* ::: The limousine is older than the truck.

* limousine > truck

* ::: limousine is newer than the sedan.

* limousine > sedan

* ....

**SymPro-LM:**

* `## DOMAIN`

* `## 1 is oldest, 5 is newest`

* `domain = [1, 2, 3, 4, 5]`

* `problem.addVariables(['truck', 'motorcycle', 'limousine', 'station_wagon', 'sedan'], domain)`

* `# station wagon is the oldest`

* `problem.addConstraint(lambda station_wagon: station_wagon == 1, ('station_wagon',))`

* `# limousine is older than the truck`

* `problem.addConstraint(lambda limousine, truck: limousine < truck, ('limousine', 'truck'))`

### Key Observations

* Both Logic-LM and SymPro-LM aim to represent the same problem and constraints.

* Logic-LM uses a more declarative approach, defining the domain, variables, and constraints in a structured format.

* SymPro-LM uses a more programmatic approach, defining the domain, variables, and constraints using code.

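* The constraints in Logic-LM are written in symbolic notation (e.g., `limousine > truck`), while in SymPro-LM they are written as code (e.g., `lambda limousine, truck: limousine < truck`). Since 1 denotes the oldest vehicle, "the limousine is older than the truck" requires `limousine < truck`, so the Logic-LM constraint shown has the comparison reversed, while the SymPro-LM constraint is correct.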

### Interpretation

The image illustrates two different methods for representing and solving a constraint satisfaction problem. Logic-LM provides a more abstract and human-readable representation, while SymPro-LM offers a more concrete and executable representation. The choice between these approaches depends on the specific application and the desired level of abstraction. The problem is to determine the second-oldest vehicle given the constraints. The Logic-LM and SymPro-LM sections show how the problem and constraints can be formalized for automated reasoning or solving.

</details>

(b) LogicalDeduction

Figure 6: Examples highlighting the benefits of integrating LLMs with symbolic solvers through programs.
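The effect of a single reversed comparison in the LogicalDeduction instance of Figure 6(b) can be checked with a small brute-force sketch. It makes two assumptions: `itertools.permutations` stands in for the constraint solver, and each vehicle is assumed to take a distinct rank from 1 (oldest) to 5 (newest). The helper name `solutions` is hypothetical.

```python
from itertools import permutations

VEHICLES = ["truck", "motorcycle", "limousine", "station_wagon", "sedan"]

def solutions(limo_truck):
    """Enumerate rankings (1 = oldest, 5 = newest) that satisfy all constraints."""
    found = []
    for ranks in permutations(range(1, 6)):  # permutations give distinct ranks
        age = dict(zip(VEHICLES, ranks))
        if (age["station_wagon"] == 1                 # station wagon is the oldest
                and age["sedan"] > age["motorcycle"]  # sedan is newer than motorcycle
                and age["limousine"] > age["sedan"]   # limousine is newer than sedan
                and limo_truck(age["limousine"], age["truck"])):
            found.append(age)
    return found

# "The limousine is older than the truck" means a smaller rank for the limousine.
correct = solutions(lambda limo, truck: limo < truck)
# The reversed comparison, as in the Logic-LM constraint shown in the figure.
flipped = solutions(lambda limo, truck: limo > truck)

second_oldest = [v for v, rank in correct[0].items() if rank == 2]
print(len(correct), second_oldest, len(flipped))
```

With the correct direction the ranking is unique, whereas the reversed comparison admits several rankings that disagree on the second-oldest vehicle.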

8 Discussion

We analyze FCoReBench to identify where LLMs excel and where the largest models still struggle. Based on SymPro-LM’s performance, we categorize FCoReBench problems into three broad groups.

1) Problems that SymPro-LM solved with 100% accuracy without any feedback. There are 8 such problems out of the $40$, including vertex-cover and latin-square. These problems have a one-to-one correspondence between the natural language description of the rules and the program that generates the constraints, so the LLM essentially performs a pure translation task, at which LLMs excel.

2) Problems that SymPro-LM solved with 100% accuracy, but only after feedback from solved examples. There are 20 such problems. They typically do not have a one-to-one correspondence between rule descriptions and code, and thus require some reasoning to encode the problem in the solver’s language. For example, one must define auxiliary variables and/or compose several primitives to encode a single natural language rule. GPT-4-Turbo initially misses constraints or encodes the problem incorrectly, but with feedback it can spot its mistakes and correct its programs. Examples include k-clique and binairo. In binairo, for example, GPT-4-Turbo incorrectly encodes the constraints that ensure all rows and all columns are distinct, but fixes this mistake after feedback (see Figure 17 in the appendix). LLMs can leverage their vast pre-training to discover non-trivial encodings for several interesting problems, and solved examples can help guide LLMs to correct solutions in case of mistakes.
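As a concrete view of the binairo rule mentioned above, the sketch below checks only the distinctness requirement: no two rows are identical and no two columns are identical. It is a plain-Python verifier under our own assumptions, not the program from Figure 17; the function name `rows_and_columns_distinct` is hypothetical, and the other binairo rules are deliberately left out.

```python
from itertools import combinations

def rows_and_columns_distinct(grid):
    """Return True if no two rows are identical and no two columns are identical."""
    columns = [list(col) for col in zip(*grid)]  # transpose the grid
    for lines in (grid, columns):
        for first, second in combinations(lines, 2):
            # Two lines are distinct iff they differ in at least one cell.
            if not any(a != b for a, b in zip(first, second)):
                return False
    return True

print(rows_and_columns_distinct([[0, 1, 0, 1],
                                 [1, 0, 1, 0],
                                 [0, 1, 1, 0],
                                 [1, 0, 0, 1]]))
```

The rule composes several primitives: for every pair of lines, at least one cell must differ, which in a solver encoding would become one disjunction of inequalities per pair.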

3) Problems with performance below 100% that are not corrected through feedback or multiple runs. For these 12 problems, the LLM finds it difficult to encode some natural language constraint into SMT. Examples include number-link and hamiltonian path, where GPT-4-Turbo is not able to figure out how to encode the existence of paths as SMT constraints. In our opinion, these conversions are peculiar, and may be hard even for average CS students. We hope that further analysis of these 12 domains opens up research directions for neuro-symbolic reasoning with LLMs.
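To make the difficulty concrete, one common textbook formulation of hamiltonian path assigns each vertex a distinct position in the path and requires vertices at consecutive positions to be joined by an edge. The sketch below checks that formulation by enumeration for an undirected graph. It is an illustration only: the function name `hamiltonian_path` is hypothetical, enumeration stands in for the solver, and this is not claimed to be the encoding used by the authors or attempted by GPT-4-Turbo.

```python
from itertools import permutations

def hamiltonian_path(num_vertices, edges):
    """Return a vertex order that visits every vertex once along edges, or None."""
    adjacent = {frozenset(edge) for edge in edges}  # undirected edges
    for order in permutations(range(num_vertices)):
        # order[p] is the vertex at position p; a permutation keeps positions distinct.
        if all(frozenset((order[p], order[p + 1])) in adjacent
               for p in range(num_vertices - 1)):
            return list(order)
    return None

print(hamiltonian_path(4, [(0, 1), (1, 2), (2, 3)]))
```

The natural language rule says only that a path must exist; the position assignment is an auxiliary structure that the encoder has to invent, which is what separates this group from the pure translation problems.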

9 Conclusion and Limitations

We investigate the reasoning abilities of LLMs on structured first-order combinatorial reasoning problems. We formally define the task and present FCoReBench, a novel benchmark of $40$ such problems, and find that existing tool-augmented techniques, such as Logic-LM and PAL, fare poorly. In response, we propose SymPro-LM, a new technique that aids LLMs with both program interpreters and symbolic solvers. It uses LLMs to convert text into executable code, which is then processed by interpreters to define constraints, allowing symbolic solvers to efficiently tackle the reasoning tasks. Our extensive experiments show that SymPro-LM’s integrated approach leads to superior performance on our dataset as well as on existing benchmarks. Error analysis reveals that SymPro-LM struggles on a certain class of problems where conversion to a symbolic representation is not straightforward. In such cases, simple feedback strategies do not improve reasoning; exploring methods to alleviate such problems is a promising direction for future work. Another direction for future work is to extend this dataset to include images of inputs and outputs, instead of serialized text representations, and to assess the reasoning abilities of vision-language models, such as GPT-4V.

Limitations: While we study a wide variety of fcore problems, more such problems always exist, and adding them to FCoReBench remains a direction for future work. Additionally, we assume that input instances and their outputs have a fixed, pre-defined (serialized) representation, which may not always be easy to find. Another limitation is that the encoding of many problems in the solver’s language can be complicated. Our method relies on the pre-training of LLMs to achieve this without any training/fine-tuning, and addressing this limitation is a direction for future work.

References

- bench authors [2023] BIG bench authors. Beyond the imitation game: Quantifying and extrapolating the capabilities of language models. Transactions on Machine Learning Research, 2023. ISSN 2835-8856.

- Brown et al. [2020] Tom Brown, Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared D Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry, Amanda Askell, et al. Language models are few-shot learners. Advances in neural information processing systems, 33:1877–1901, 2020.

- Chen et al. [2021] Mark Chen, Jerry Tworek, Heewoo Jun, Qiming Yuan, Henrique Pondé de Oliveira Pinto, Jared Kaplan, Harrison Edwards, Yuri Burda, Nicholas Joseph, Greg Brockman, Alex Ray, Raul Puri, Gretchen Krueger, Michael Petrov, Heidy Khlaaf, Girish Sastry, Pamela Mishkin, Brooke Chan, Scott Gray, Nick Ryder, Mikhail Pavlov, Alethea Power, Lukasz Kaiser, Mohammad Bavarian, Clemens Winter, Philippe Tillet, Felipe Petroski Such, Dave Cummings, Matthias Plappert, Fotios Chantzis, Elizabeth Barnes, Ariel Herbert-Voss, William Hebgen Guss, Alex Nichol, Alex Paino, Nikolas Tezak, Jie Tang, Igor Babuschkin, Suchir Balaji, Shantanu Jain, William Saunders, Christopher Hesse, Andrew N. Carr, Jan Leike, Joshua Achiam, Vedant Misra, Evan Morikawa, Alec Radford, Matthew Knight, Miles Brundage, Mira Murati, Katie Mayer, Peter Welinder, Bob McGrew, Dario Amodei, Sam McCandlish, Ilya Sutskever, and Wojciech Zaremba. Evaluating large language models trained on code. CoRR, abs/2107.03374, 2021.

- Clark et al. [2021] Peter Clark, Oyvind Tafjord, and Kyle Richardson. Transformers as soft reasoners over language. In Proceedings of the Twenty-Ninth International Joint Conference on Artificial Intelligence, IJCAI’20, 2021. ISBN 9780999241165.

- Cobbe et al. [2021] Karl Cobbe, Vineet Kosaraju, Mohammad Bavarian, Mark Chen, Heewoo Jun, Lukasz Kaiser, Matthias Plappert, Jerry Tworek, Jacob Hilton, Reiichiro Nakano, Christopher Hesse, and John Schulman. Training verifiers to solve math word problems. CoRR, abs/2110.14168, 2021.

- Colbourn [1984] Charles J. Colbourn. The complexity of completing partial latin squares. Discrete Applied Mathematics, 8(1):25–30, 1984. ISSN 0166-218X. doi: https://doi.org/10.1016/0166-218X(84)90075-1.

- De Biasi [2013] Marzio De Biasi. Binary puzzle is NP-complete, 07 2013.

- De Moura and Bjørner [2008] Leonardo De Moura and Nikolaj Bjørner. Z3: An efficient SMT solver. In International conference on Tools and Algorithms for the Construction and Analysis of Systems, pages 337–340. Springer, 2008.

- Demaine and Rudoy [2018] Erik D. Demaine and Mikhail Rudoy. Theoretical Computer Science, 732:80–84, 2018. ISSN 0304-3975. doi: https://doi.org/10.1016/j.tcs.2018.04.031.

- Epstein [1987] D. Epstein. On the NP-completeness of cryptarithms. ACM SIGACT News, 18(3):38–40, 1987. doi: 10.1145/24658.24662.

- Fan et al. [2023] Lizhou Fan, Wenyue Hua, Lingyao Li, Haoyang Ling, and Yongfeng Zhang. NPHardEval: Dynamic benchmark on reasoning ability of large language models via complexity classes. arXiv preprint arXiv:2312.14890, 2023.

- Gao et al. [2023] Luyu Gao, Aman Madaan, Shuyan Zhou, Uri Alon, Pengfei Liu, Yiming Yang, Jamie Callan, and Graham Neubig. PAL: Program-aided language models. In International Conference on Machine Learning, pages 10764–10799. PMLR, 2023.

- Garey et al. [1976a] M. R. Garey, D. S. Johnson, and Ravi Sethi. The complexity of flowshop and jobshop scheduling. Mathematics of Operations Research, 1(2):117–129, 1976a. doi: 10.1287/moor.1.2.117.

- Garey et al. [1976b] M. R. Garey, D. S. Johnson, and Ravi Sethi. The complexity of flowshop and jobshop scheduling. Mathematics of Operations Research, 1(2):117–129, 1976b. ISSN 0364765X, 15265471.