# Shortened LLaMA: Depth Pruning for Large Language Models with Comparison of Retraining Methods


Abstract

Structured pruning of modern large language models (LLMs) has emerged as a way of decreasing their high computational needs. Width pruning reduces the size of projection weight matrices (e.g., by removing attention heads) while maintaining the number of layers. Depth pruning, in contrast, removes entire layers or blocks, while keeping the size of the remaining weights unchanged. Most current research focuses on either width-only or a blend of width and depth pruning, with little comparative analysis between the two units (width vs. depth) concerning their impact on LLM inference efficiency. In this work, we show that simple depth pruning can effectively compress LLMs while achieving comparable or superior performance to recent width pruning studies. Our pruning method boosts inference speeds, especially under memory-constrained conditions that require limited batch sizes for running LLMs, where width pruning is ineffective. In retraining pruned models for quality recovery, continued pretraining on a large corpus markedly outperforms LoRA-based tuning, particularly at severe pruning ratios. We hope this work can help build compact yet capable LLMs.


Bo-Kyeong Kim¹\*, Geonmin Kim¹\*, Tae-Ho Kim¹†, Thibault Castells¹, Shinkook Choi¹, Junho Shin¹, Hyoung-Kyu Song²

¹Nota Inc. ²Captions

{bokyeong.kim, geonmin.kim, thkim, thibault, shinkook.choi, junho.shin}@nota.ai, kyu@captions.ai

\*Equal contribution. †Corresponding author.

1 Introduction

The advancement of large language models (LLMs) Touvron et al. (2023); OpenAI (2023); Chowdhery et al. (2022); Zhang et al. (2022); Scao et al. (2022) has brought significant improvements in language-based tasks, enabling versatile applications such as powerful chatbots Google (2023); OpenAI (2022). However, the deployment of LLMs is constrained by their intensive computational demands. To make LLMs more accessible and efficient for practical use, various optimization strategies have been actively studied over recent years (see Zhu et al. (2023); Wan et al. (2023) for surveys). This work focuses on structured pruning Fang et al. (2023); Li et al. (2017a), which removes groups of unnecessary weights and can facilitate hardware-agnostic acceleration.

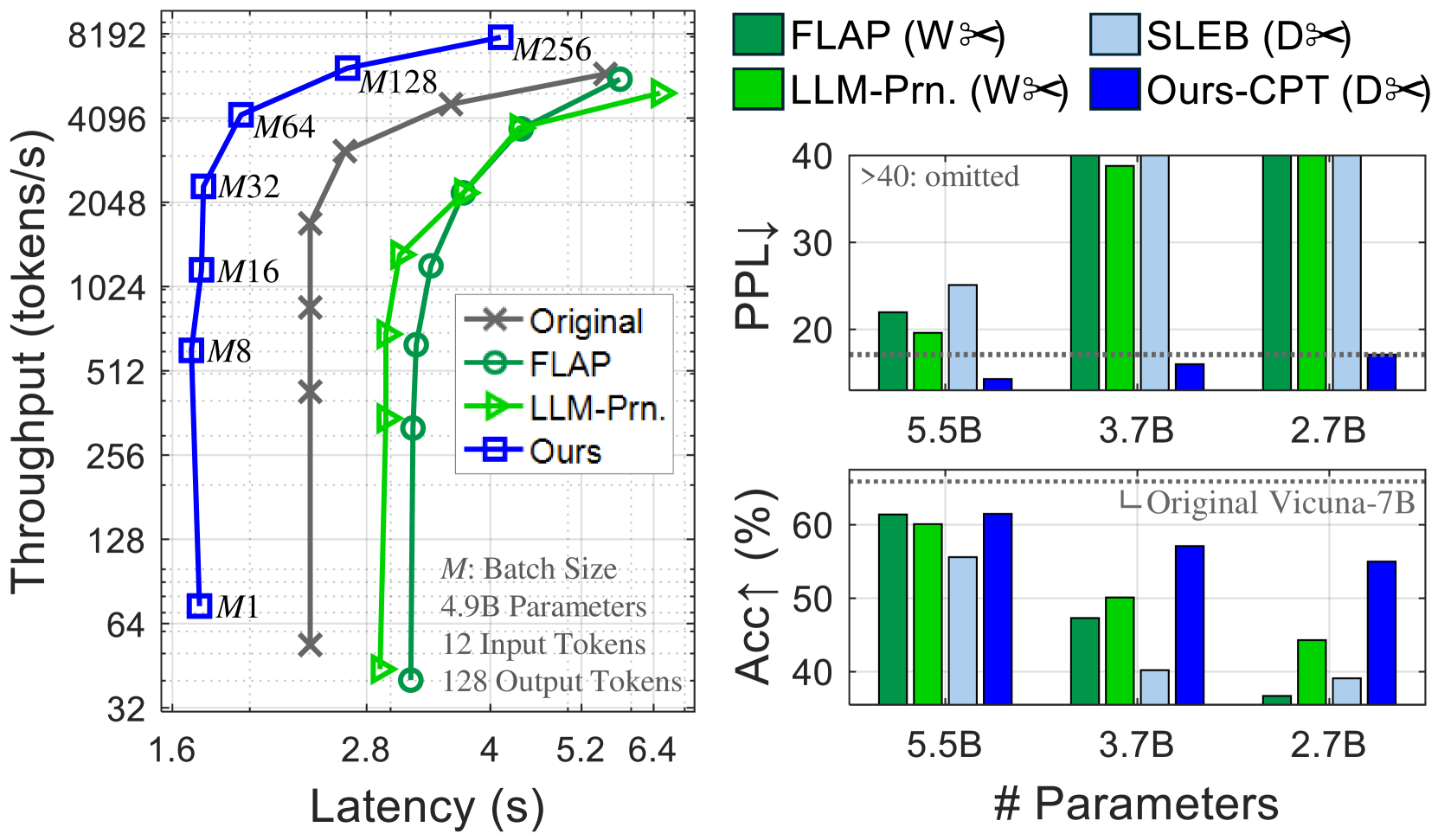

Figure 1: Inference of pruned Vicuna-7B models on an NVIDIA H100 GPU. Left: Compared to width pruning (W✂) of FLAP An et al. (2024) and LLM-Pruner Ma et al. (2023), our depth pruning (D✂) achieves faster inference. Right: Continued pretraining is crucial for restoring the quality of heavily pruned models with fewer than 3.7B parameters, enabling our method to surpass the baselines, including SLEB Song et al. (2024). See Table 3 for details.

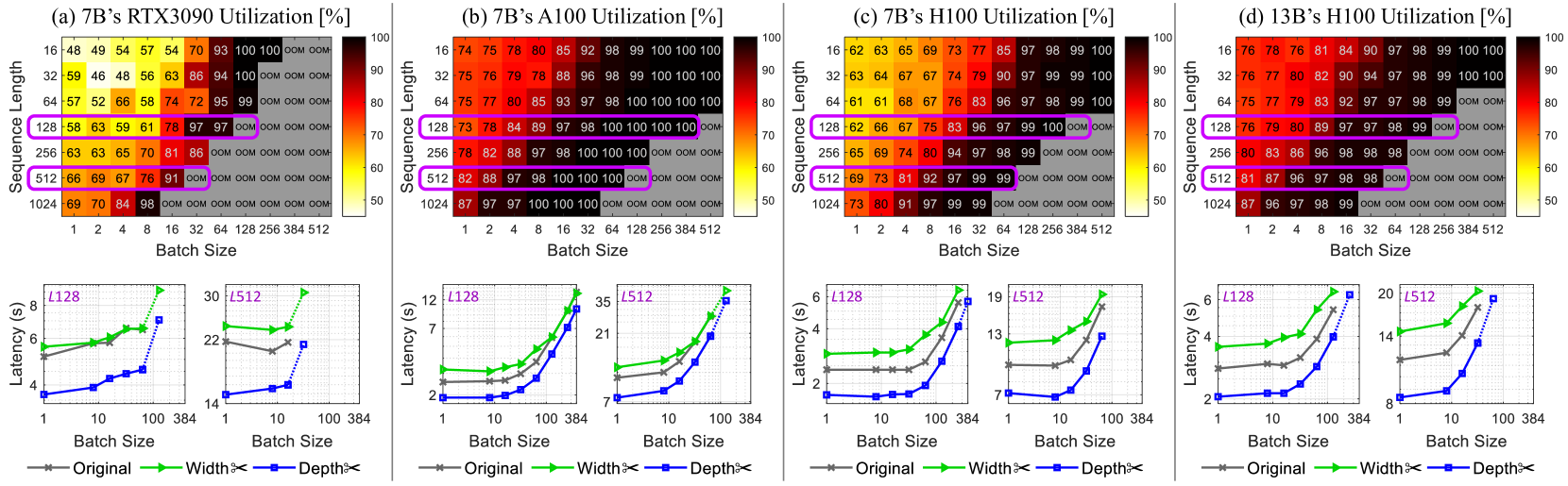

Figure 2: Top: GPU compute utilization of (a)–(c) running LLaMA-7B on different NVIDIA GPUs and that of (d) Vicuna-13B. Increasing batch sizes can enhance GPU utilization and throughput, but pushing this too far triggers OOM issues. Bottom: Latency results ( $L$ : target output length). Our depth pruning (blue lines) improves generation speeds over the original models (gray), while width pruning Ma et al. (2023) is ineffective (green). The dotted lines show that pruned models can operate with larger batch sizes that cause OOM errors for the original model. The results are obtained with pruning ratios of 27% for the 7B model and 29% for the 13B model.

In the context of compressing recent LLMs, LLM-Pruner Ma et al. (2023) and FLAP An et al. (2024) narrow the network width by pruning coupled structures (e.g., attention heads and their associated weight connections) while maintaining the number of layers. Sheared-LLaMA Xia et al. (2024) reduces not only the network width but also its depth by entirely removing some layers. Despite the existence of pruning methods Xia et al. (2022); Kurtic et al. (2023); Xia et al. (2024) that incorporate both width and depth aspects, there remains a gap in detailed analysis comparing these two factors (width vs. depth), specifically in relation to their impact on LLM inference efficiency.

In addition to substantial model sizes, LLM inference is distinguished by an autoregressive decoding mechanism, which predicts tokens one by one based on the input and the previously generated tokens. This sequential generation process often exhibits a memory-bound nature, leading to considerable underutilization of GPU compute abilities Kwon et al. (2023); Jin et al. (2023). While expanding batch sizes is a standard way to enhance GPU utilization and throughput, this approach is infeasible for low-specification GPUs with memory constraints. We aim to improve inference speeds of LLMs, especially under hardware limitations that demand small batch sizes, where we observe that width-only pruning is inadequate.

Depth pruning is often regarded as less effective than width pruning in terms of generation performance, because it eliminates larger, coarser units. Contrary to this prevailing view, this study reveals that depth pruning is a compelling option for compressing LLMs, and it can achieve comparable or superior performance to prior studies depending on the retraining setup. Our contributions are summarized as follows:

1. In scenarios with limited batch sizes, our work demonstrates that width pruning struggles to attain actual speedups in LLMs' autoregressive generation. This aspect has been underexplored in previous works.

1. We introduce a simple yet effective method for depth pruning of LLMs by exploring various design factors. Our compact LLMs, obtained by excluding several Transformer blocks, achieve actual speedups.

1. We show that under moderate pruning ratios, our depth pruning method with LoRA retraining can rival recent width pruning studies for LLMs in zero-shot capabilities. For more aggressive pruning (over 40% removal), intensive retraining with a full-parameter update is crucial for recovering performance.

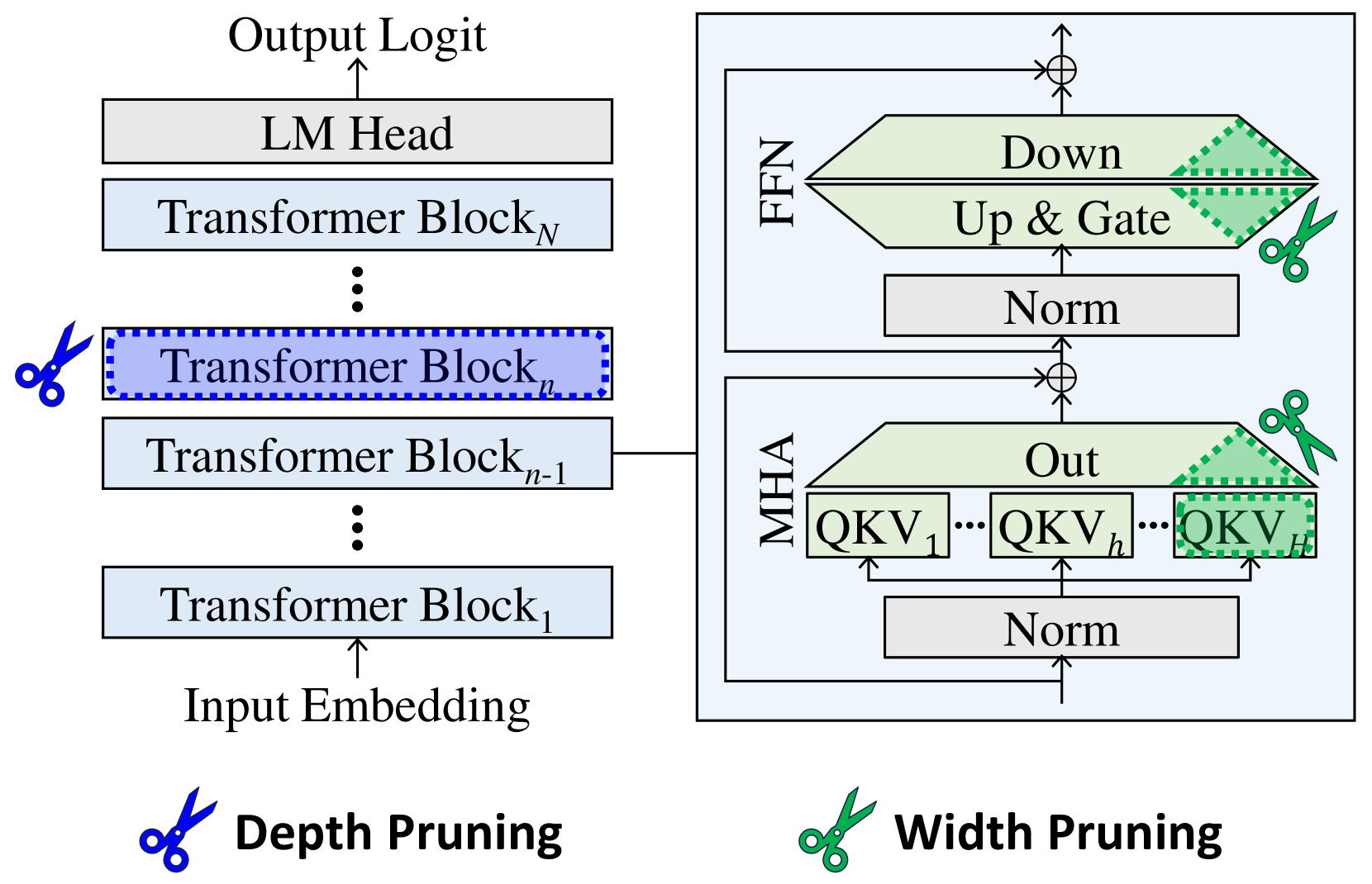

Figure 3: Comparison of pruning units. Width pruning reduces the size of projection weight matrices. Depth pruning removes Transformer blocks, or individual MHA and FFN modules.

2 Problem: Small-batch LLM Inference

Most LLMs are autoregressive models that sequentially produce tokens based on the initial prompt and the sequence of previously generated tokens. The token-by-token generation process often involves multiplying large matrices (weights) with smaller matrices or vectors (activations). The primary bottleneck for inference efficiency is then memory access rather than the speed of arithmetic (referred to as 'memory-bound'), leading to suboptimal use of GPU computing power Kwon et al. (2023). Though increasing batch sizes is a standard way to enhance GPU utilization and throughput, it poses a risk of out-of-memory (OOM) errors (see Figure 2) unless advanced system-level optimizations Kwon et al. (2023); Sheng et al. (2023) are applied.

> Figure 2 setup: Using the HF-Transformers library Wolf et al. (2020), we ran the LLMs with 12 input tokens for 20 batched runs after 10 warm-ups. Top: peak GPU compute utilization NVIDIA (2018). Bottom: mean latency over 20 runs.
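Why small batches are memory-bound can be illustrated with a simplified roofline estimate: a linear layer's arithmetic intensity (FLOPs per byte moved) grows roughly with the batch size. The sketch below assumes FP16 weights and activations (2 bytes each), a square 4096×4096 projection (LLaMA-7B's hidden size), every element moved from memory exactly once, and a hypothetical ridge point of 300 FLOP/byte; real GPUs, data types, and kernels will differ.

```python
def arithmetic_intensity(batch: int, d_in: int = 4096, d_out: int = 4096,
                         bytes_per_elem: int = 2) -> float:
    """FLOPs per byte for Y = X @ W.T with X of shape (batch, d_in), W of shape (d_out, d_in)."""
    flops = 2 * batch * d_in * d_out                   # one multiply-add counted as 2 FLOPs
    bytes_moved = bytes_per_elem * (d_in * d_out       # weight matrix
                                    + batch * d_in     # input activations
                                    + batch * d_out)   # output activations
    return flops / bytes_moved

# Hypothetical ridge point: peak FLOP/s divided by memory bandwidth (GPU-dependent).
RIDGE_POINT = 300.0

for b in (1, 8, 64, 512):
    ai = arithmetic_intensity(b)
    regime = "memory-bound" if ai < RIDGE_POINT else "compute-bound"
    print(f"batch={b:4d}  intensity={ai:7.1f} FLOP/byte  ({regime})")
```

When the batch is much smaller than the hidden size, the intensity is approximately equal to the batch size, so a batch of 1 performs about one FLOP per byte of weights read. This toy model ignores KV-cache traffic and kernel-launch overheads.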

In this study, our focus is on accelerating the inference of LLMs under small-batch conditions caused by hardware restrictions. Such situations are relevant for deploying LLMs on memory-constrained local devices, which can enhance user experience and data privacy protection. We show that (i) reducing weight shapes via width pruning does not improve generation speeds and can even degrade them when the resulting weight dimensions are unsuitable for GPU capabilities, and (ii) notable speed gains are only achievable through depth pruning that excludes a number of modules entirely.

3 Method: Block Pruning

An LLM is a stack of multiple Transformer blocks Vaswani et al. (2017), each of which contains a pair of multi-head attention (MHA) and feed-forward network (FFN) modules (see Figure 3). We choose this Transformer block as the prunable unit to prioritize reducing inference latency. Our approach is simple: after identifying unimportant blocks with straightforward metrics, we perform simple one-shot pruning.
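To make the pruning unit concrete, the toy sketch below treats a model as a list of block callables applied in sequence. The block function is a hypothetical stand-in for a real Transformer block (just a residual-style update); the point is that depth pruning deletes list entries while leaving the surviving blocks and the activation width untouched, so each token passes through fewer sequential steps.

```python
from typing import Callable, List, Sequence, Set

Vector = List[float]
Block = Callable[[Vector], Vector]

def make_toy_block(scale: float) -> Block:
    """Hypothetical stand-in for a Transformer block: a residual update x + f(x)."""
    def block(x: Vector) -> Vector:
        return [v + scale * v for v in x]
    return block

def forward(blocks: Sequence[Block], x: Vector) -> Vector:
    # Blocks run strictly in sequence, so per-token work scales with len(blocks).
    for blk in blocks:
        x = blk(x)
    return x

def depth_prune(blocks: Sequence[Block], removed: Set[int]) -> List[Block]:
    """Drop whole blocks by index; surviving blocks keep their original weights and shapes."""
    return [blk for i, blk in enumerate(blocks) if i not in removed]

blocks = [make_toy_block(0.01 * (i + 1)) for i in range(8)]
pruned = depth_prune(blocks, removed={5, 6})
print(len(blocks), len(pruned))          # 8 6
print(len(forward(pruned, [1.0, 2.0])))  # 2
```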

3.1 Evaluation of Block-level Importance

We consider the following criteria to evaluate the significance of each block, ultimately selecting the Taylor+ and PPL metrics (see Table 6). Specifically, the linear weight matrix is denoted as $\mathbf{W}^{k,n}=\left[W_{i,j}^{k,n}\right]$ with a size of $(d_{\mathrm{out}},d_{\mathrm{in}})$, where $k$ represents the type of operation (e.g., a query projection in MHA or an up projection in FFN) within the $n$-th Transformer block. The weight importance scores are calculated at the output neuron level Sun et al. (2024), followed by summing these scores to assess the block-level importance.

> In our exploration of various aggregation strategies (i.e., sum, mean, product, and max operations), summing the scores was effective across different pruning ratios.

Magnitude (Mag).

This metric Li et al. (2017b) is a fundamental baseline in the pruning literature, assuming that weights with smaller norms are less informative. For the block-level analysis, we compute $I_{\mathrm{Magnitude}}^{n}=\sum_{k}\sum_{i}\sum_{j}\left|W_{i,j}^{k,n}\right|$ .
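A minimal sketch of the block-level magnitude score, assuming each weight matrix is given as a nested list of shape $(d_{\mathrm{out}}, d_{\mathrm{in}})$ and keyed by a hypothetical operation name (e.g., `"q_proj"`). It first computes output-neuron scores (row sums of $|W_{i,j}|$) and then sums them over neurons and operations, which equals the triple sum in $I_{\mathrm{Magnitude}}^{n}$.

```python
from typing import Dict, List

Matrix = List[List[float]]  # shape (d_out, d_in)

def neuron_scores_magnitude(W: Matrix) -> List[float]:
    # Output-neuron-level score: sum of |W_ij| over the input dimension j.
    return [sum(abs(w) for w in row) for row in W]

def block_magnitude(weights: Dict[str, Matrix]) -> float:
    # I^n_Magnitude = sum_k sum_i sum_j |W^{k,n}_{ij}|, aggregated by summing neuron scores.
    return sum(sum(neuron_scores_magnitude(W)) for W in weights.values())

# Hypothetical toy block with two tiny projections.
block = {"q_proj": [[0.5, -1.0], [0.0, 2.0]], "up_proj": [[-0.25, 0.25]]}
print(block_magnitude(block))  # 4.0
```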

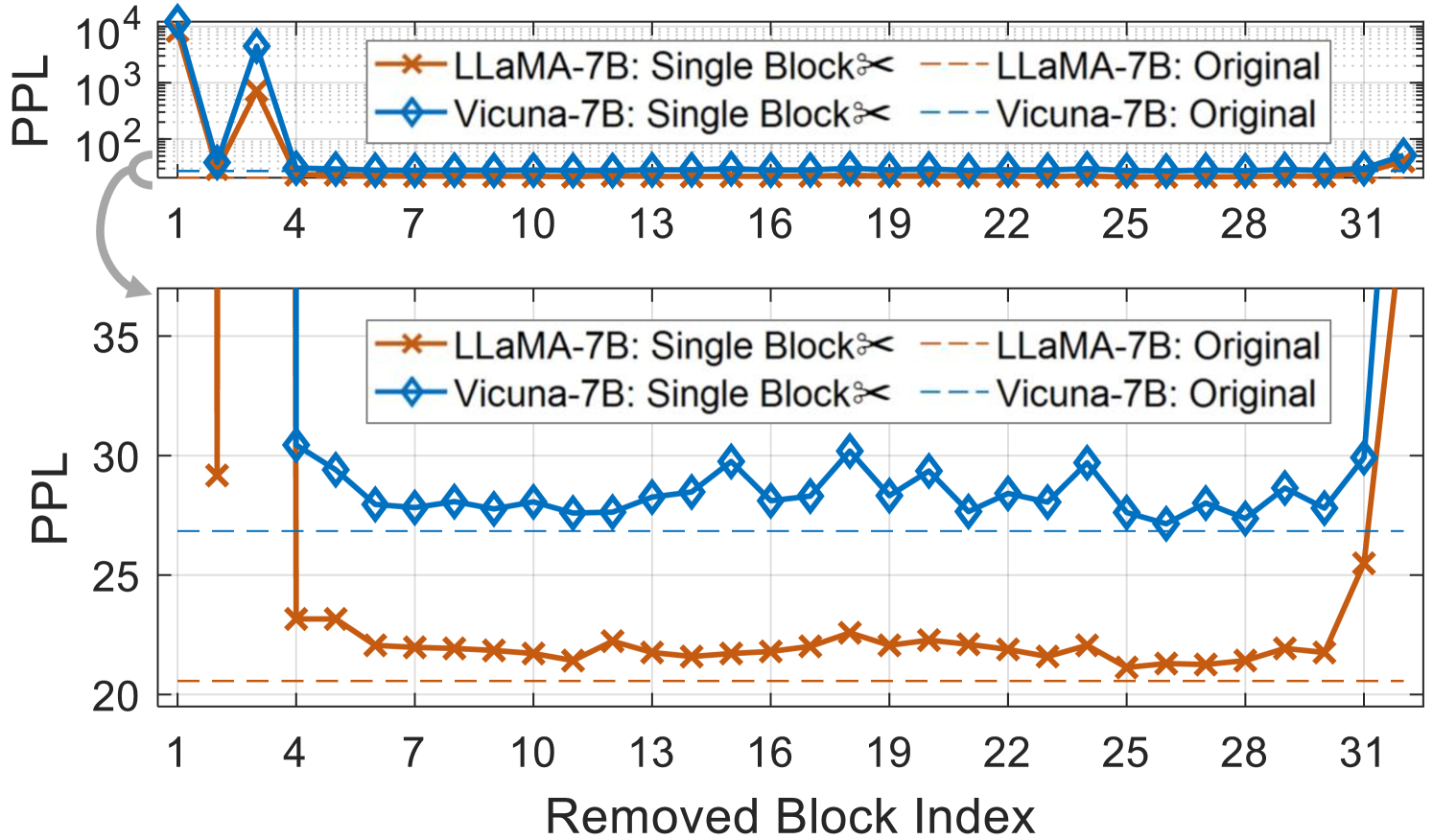

Figure 4: Estimated importance of each Transformer block on the calibration set. We prune blocks that have lower (better) PPL scores, as their removal causes less disruption to the output.

Taylor.

Assessing the error caused by the removal of a weight parameter helps in identifying its significance. For a given calibration dataset $D$, this can be expressed as the alteration in the training loss $\mathcal{L}$ LeCun et al. (1989); Molchanov et al. (2019):

$$\left|\mathcal{L}(W_{i,j}^{k,n};D)-\mathcal{L}(W_{i,j}^{k,n}=0;D)\right|\approx\left|\frac{\partial\mathcal{L}(D)}{\partial W_{i,j}^{k,n}}W_{i,j}^{k,n}\right|,$$

where we omit the second-order derivatives following Ma et al. (2023). We define the block score as

$$I_{\mathrm{Taylor}}^{n}=\sum_{k}\sum_{i}\sum_{j}\left|\frac{\partial\mathcal{L}(D)}{\partial W_{i,j}^{k,n}}W_{i,j}^{k,n}\right|.$$
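The first-order Taylor block score can be sketched as below, assuming the gradients $\partial\mathcal{L}(D)/\partial W$ have already been accumulated over the calibration set by an ordinary backward pass (not shown) and are stored alongside the weights under the same hypothetical operation names.

```python
from typing import Dict, List

Matrix = List[List[float]]  # shape (d_out, d_in)

def block_taylor(weights: Dict[str, Matrix], grads: Dict[str, Matrix]) -> float:
    """I^n_Taylor = sum_k sum_i sum_j |dL/dW_ij * W_ij| for one block.

    grads[k] is assumed to hold dL(D)/dW for weights[k], with the same shape.
    """
    total = 0.0
    for k, W in weights.items():
        G = grads[k]
        for w_row, g_row in zip(W, G):
            # First-order estimate of the loss change if each weight were set to zero.
            total += sum(abs(g * w) for g, w in zip(g_row, w_row))
    return total

# Hypothetical toy weights and gradients.
weights = {"q_proj": [[1.0, -2.0]], "down_proj": [[0.5]]}
grads = {"q_proj": [[0.5, 0.25]], "down_proj": [[-4.0]]}
print(block_taylor(weights, grads))  # 3.0
```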

Mag+ and Taylor+.

When the aforementioned metrics are used, the early blocks are scored as unimportant, yet removing them leads to severe performance drops. Following a popular heuristic Gale et al. (2019); Lee et al. (2021), we preserve the first four and the last two blocks Ma et al. (2023) by excluding them from the pruning candidates.
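The candidate-exclusion rule can be sketched as a small selection helper. It assumes a lower score means a block is less important (true for Mag, Taylor, and the PPL-after-removal score alike) and uses hypothetical toy scores to show why the plain metrics pick early blocks while the "+" variants do not.

```python
from typing import List, Sequence

def select_blocks_to_prune(scores: Sequence[float], num_remove: int,
                           keep_first: int = 4, keep_last: int = 2) -> List[int]:
    """Return indices of the num_remove lowest-scoring blocks among the candidates.

    The first keep_first and last keep_last blocks are never candidates
    (the Mag+/Taylor+ rule). A lower score means the block is treated as less important.
    """
    n = len(scores)
    candidates = list(range(keep_first, n - keep_last))
    if num_remove > len(candidates):
        raise ValueError("not enough prunable blocks for the requested removal")
    ranked = sorted(candidates, key=lambda i: scores[i])  # ascending importance
    return sorted(ranked[:num_remove])

# Toy scores for 10 blocks: early blocks look "unimportant" under a plain metric.
scores = [0.1, 0.1, 0.1, 0.1, 5.0, 1.0, 3.0, 2.0, 0.2, 0.2]
print(select_blocks_to_prune(scores, 2))                             # [5, 7]
print(select_blocks_to_prune(scores, 2, keep_first=0, keep_last=0))  # [0, 1]
```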

Perplexity (PPL).

Redundant blocks contribute less to the model's outputs, and their removal leads to smaller degradation in PPL, a commonly used metric for language modeling tasks. In this context, we remove each block from the source model in turn and monitor its influence on PPL using the calibration set $D$:

$$I_{\mathrm{PPL}}^{n}=\exp\left\{-\frac{1}{SL}\sum_{s}\sum_{l}\log p_{\theta^{n}}\left(x_{l}^{(s)}\mid x_{<l}^{(s)}\right)\right\},$$

where $\theta^{n}$ denotes the model without its $n$-th block, and $s=1,\ldots,S$ and $l=1,\ldots,L$ are the indices for sequences and tokens in $D$. The PPL can be derived from the next-token prediction loss and requires only forward-pass computation. As shown in Figure 4, several blocks are removable with only a slight effect on the PPL metric. Pruning the initial and final blocks significantly degrades performance, which necessitates keeping them unpruned.
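A minimal sketch of the PPL criterion, assuming a hypothetical callback `logprobs_without_block(n)` that runs the calibration sequences through the model with block $n$ skipped (forward pass only) and returns the per-token log-probabilities $\log p_{\theta^{n}}(x_l^{(s)} \mid x_{<l}^{(s)})$.

```python
import math
from typing import Callable, List, Sequence

LogProbs = Sequence[Sequence[float]]  # S sequences, each with L next-token log-probs

def perplexity(token_logprobs: LogProbs) -> float:
    """exp(-(1/SL) * sum_s sum_l log p(x_l | x_<l)) over all calibration tokens."""
    total, count = 0.0, 0
    for seq in token_logprobs:
        total += sum(seq)
        count += len(seq)
    return math.exp(-total / count)

def ppl_block_scores(num_blocks: int,
                     logprobs_without_block: Callable[[int], LogProbs]) -> List[float]:
    # I^n_PPL: perplexity of the model with block n removed; lower means safer to prune.
    return [perplexity(logprobs_without_block(n)) for n in range(num_blocks)]

# Toy check: if every token gets probability 1/2, the perplexity is 2.
print(perplexity([[math.log(0.5)] * 4, [math.log(0.5)] * 4]))
```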

3.2 One-shot Pruning

After sorting the block-level importance scores, we prune the less crucial blocks in a single step. Since every block has an identical configuration and it is easy to calculate the number of parameters for one block, we readily decide how many blocks should be removed to meet the target model size.
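Because every block has the same size, the number of blocks to remove follows from a single division. The sketch below assumes a LLaMA-style decoder with no biases, untied input embedding and LM head, a 32,000-token vocabulary, and the hidden/FFN sizes of LLaMA-7B (4096/11008, 32 blocks) and Vicuna-13B (5120/13824, 40 blocks); the `LlamaStyleConfig` helper and the round-to-nearest rule are illustrative assumptions. Under these assumptions the totals come out near 6.7B and 13.0B, and the resulting block counts (21 and 25) agree with Table 1.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class LlamaStyleConfig:
    """Hypothetical helper; sizes assume a LLaMA-style decoder without biases."""
    hidden: int
    ffn: int
    num_blocks: int
    vocab: int = 32000

    def block_params(self) -> int:
        attn = 4 * self.hidden * self.hidden  # q, k, v, o projections
        mlp = 3 * self.hidden * self.ffn      # gate, up, down projections
        norms = 2 * self.hidden               # two RMSNorm weight vectors
        return attn + mlp + norms

    def total_params(self, num_blocks: Optional[int] = None) -> int:
        n = self.num_blocks if num_blocks is None else num_blocks
        embed_and_head = 2 * self.vocab * self.hidden  # untied embedding + LM head
        return n * self.block_params() + embed_and_head + self.hidden  # + final norm

def blocks_to_remove(cfg: LlamaStyleConfig, target_params: float) -> int:
    # All blocks are identical, so divide the excess by one block's size and round.
    excess = cfg.total_params() - target_params
    return max(0, round(excess / cfg.block_params()))

for name, cfg, target in [
    ("LLaMA-7B", LlamaStyleConfig(hidden=4096, ffn=11008, num_blocks=32), 4.5e9),
    ("Vicuna-13B", LlamaStyleConfig(hidden=5120, ffn=13824, num_blocks=40), 8.3e9),
]:
    r = blocks_to_remove(cfg, target)
    kept = cfg.num_blocks - r
    print(f"{name}: {cfg.total_params():,} params, remove {r}, keep {kept} "
          f"-> {cfg.total_params(kept):,} params")
```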

Iterative pruning with intermediate updates of block importance can be applied as in SLEB Song et al. (2024). However, it requires much longer computing time than one-shot pruning as the number of blocks increases. Furthermore, we empirically observed that retraining strategies matter more than whether the pruning scheme is iterative or one-shot, especially under severe pruning ratios.
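The cost gap can be illustrated by counting calibration-set evaluations (e.g., one PPL measurement per candidate block). The iterative count below assumes a scheme that rescores every remaining block before each single-block removal; this is one way to realize intermediate importance updates and may not match SLEB's exact procedure.

```python
def one_shot_evals(num_blocks: int) -> int:
    # Score each block once on the calibration set, then remove the lowest-scoring ones together.
    return num_blocks

def iterative_evals(num_blocks: int, num_remove: int) -> int:
    # Assumed iterative scheme: before each single-block removal, rescore every block still present.
    return sum(num_blocks - step for step in range(num_remove))

print(one_shot_evals(32), iterative_evals(32, 11))  # 32 297
print(one_shot_evals(40), iterative_evals(40, 15))  # 40 495
```

Under this assumption the iterative cost grows roughly with the product of the block count and the number of removed blocks, whereas one-shot scoring stays linear in the block count.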

3.3 Retraining for Performance Restoration

Some recent studies suggest that structured pruning of LLMs can be retraining-free Song et al. (2024); An et al. (2024) or feasible with low retraining budgets Ma et al. (2023). However, how different types of retraining behave across pruning ratios has been underexplored. Here, we compare several retraining strategies and their implications for regaining the quality of pruned models.

Low-Rank Adaptation (LoRA).

LoRA Hu et al. (2022) enables the efficient refinement of LLMs with less computation. Ma et al. (2023) applied LoRA to enhance moderately width-pruned models (e.g., with 20% of units removed) on an instruction tuning dataset. In this work, we show that LoRA can also recover the ability of depth-pruned models; however, it does not perform well at extensive compression rates (e.g., over 50% removal) in either width or depth pruning.
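A minimal sketch of a LoRA-adapted linear layer, $y = Wx + \frac{\alpha}{r}BAx$, where the pruned weight $W$ stays frozen and only the low-rank factors $A$ ($r \times d_{\mathrm{in}}$) and $B$ ($d_{\mathrm{out}} \times r$) are trained. As in the standard LoRA setup, $B$ starts at zero so training begins from the pruned model's output. The rank of 8 used in the parameter count is a hypothetical example, not the setting used here.

```python
from typing import List

Matrix = List[List[float]]
Vector = List[float]

def matvec(M: Matrix, x: Vector) -> Vector:
    return [sum(m * v for m, v in zip(row, x)) for row in M]

def lora_forward(W: Matrix, A: Matrix, B: Matrix, x: Vector, alpha: float) -> Vector:
    """y = W x + (alpha / r) * B (A x); W is frozen, only A and B would receive updates."""
    r = len(A)
    base = matvec(W, x)
    delta = matvec(B, matvec(A, x))
    return [b + (alpha / r) * d for b, d in zip(base, delta)]

def lora_trainable_params(d_out: int, d_in: int, r: int) -> int:
    # A has r * d_in entries and B has d_out * r entries.
    return r * (d_in + d_out)

W = [[1.0, 0.0], [0.0, 1.0]]
A = [[1.0, 1.0]]          # rank r = 1
B_init = [[0.0], [0.0]]   # zero-initialized B: the adapter starts as a no-op
print(lora_forward(W, A, B_init, [1.0, 2.0], alpha=2.0))     # [1.0, 2.0]
print(lora_trainable_params(4096, 4096, r=8), 4096 * 4096)   # 65536 16777216
```

For a 4096×4096 projection, a rank-8 adapter trains about 0.4% as many parameters as the full matrix, which is why LoRA is cheap but has limited capacity to repair heavily pruned networks compared with full-parameter CPT.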

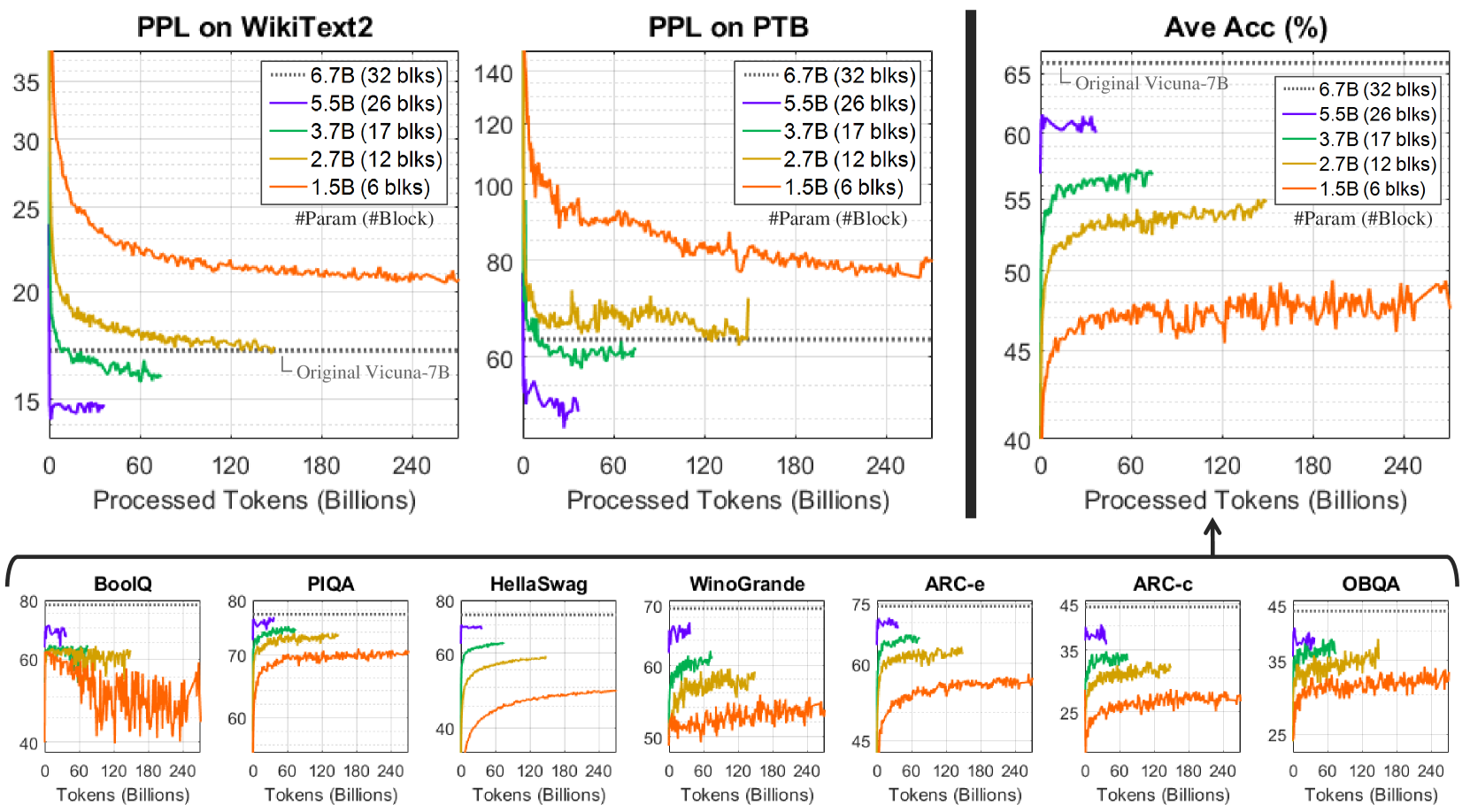

Continued Pretraining (CPT).

We leverage CPT, which involves updating all parameters, on a large-scale pretraining corpus. This powerful retraining is critical for severely depth-pruned models, extending its proven effectiveness for width- or hybrid-pruned models Xia et al. (2024). Though requiring greater resources than LoRA, CPT on pruned networks significantly accelerates learning and yields superior results compared to training the same architectures from random initialization.

CPT $\Rightarrow$ LoRA

Once CPT on the pretraining data is completed, LoRA with the instruction set is applied to observe whether further performance improvement can be achieved.

| Pruning ratio† | Model | #Param | #Block‡ | #Head‡ | FFN-D‡ |
|---|---|---|---|---|---|
| | Original 7B | 6.7B | 32 | 32 | 11008 |
| 35% | Wanda-sp | 4.5B | 32 | 21 | 7156 |
| 35% | FLAP | 4.5B | 32 | 23.0±8.8 | 6781.1±2440.6 |
| 35% | LLM-Pruner | 4.4B | 32 | 18 | 6054 |
| 35% | Ours | 4.5B | 21 | 32 | 11008 |
| | Original 13B | 13.0B | 40 | 40 | 13824 |
| 37% | Wanda-sp | 8.4B | 40 | 26 | 8710 |
| 37% | FLAP | 8.3B | 40 | 27.5±11.3 | 8326.6±2874.9 |
| 37% | LLM-Pruner | 8.2B | 40 | 22 | 7603 |
| 37% | Ours | 8.3B | 25 | 40 | 13824 |

$\dagger$ Reduction ratio for the number of parameters. $\ddagger$ #Block: #Transformer blocks; #Head: #attention heads of MHA; FFN-D: intermediate size of FFN.

Table 1: Examples of pruned architectures on 7B-parameter (top) and 13B-parameter (bottom) models. While Wanda-sp Sun et al. (2024); An et al. (2024), FLAP An et al. (2024), and LLM-Pruner Ma et al. (2023) reduce the network width, our method reduces the network depth. See Table 14 for the details.

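| #Param | Type | Method | WikiText2 PPL↓ | PTB PPL↓ | Ave Acc↑ (%) $\dagger$ | H100 80GB Latency↓ (s) $\ddagger$ | H100 80GB Throughput↑ (tokens/s) $\ddagger$ | RTX3090 24GB Latency↓ (s) $\ddagger$ | RTX3090 24GB Throughput↑ (tokens/s) $\ddagger$ |
|---|---|---|---|---|---|---|---|---|---|
| 6.7B | – | LLaMA-7B (Original) | 12.6 | 22.1 | 66.3 | 2.4 | 53.7 | 5.1 | 25.0 |
| 5.5B (20% Pruned) | W✂ | Wanda-sp | 21.4 | 47.2 | 51.8 | 3.1 | 41.7 | 7.6 | 16.7 |
| 5.5B (20% Pruned) | W✂ | FLAP | 17.0 | 30.1 | 59.5 | 3.2 | 40.5 | 7.7 | 16.5 |
| 5.5B (20% Pruned) | W✂ | LLM-Pruner | 17.6 | 30.4 | 61.8 | 3.0 | 43.2 | 6.0 | 21.4 |
| 5.5B (20% Pruned) | D✂ | SLEB | 18.5 | 31.6 | 57.6 | 1.9 | 66.0 | 4.5 | 28.4 |
| 5.5B (20% Pruned) | D✂ | Ours: Taylor+ | 20.2 | 32.3 | 63.5 | 1.9 | 66.0 | 4.5 | 28.4 |
| 5.5B (20% Pruned) | D✂ | Ours: PPL | 17.7 | 30.7 | 61.9 | 1.9 | 66.0 | 4.5 | 28.4 |
| 4.5B (35% Pruned) | W✂ | Wanda-sp | 133.6 | 210.1 | 36.9 | 3.1 | 41.6 | 8.0 | 16.1 |
| 4.5B (35% Pruned) | W✂ | FLAP | 25.6 | 44.4 | 52.7 | 3.2 | 40.5 | 8.1 | 15.8 |
| 4.5B (35% Pruned) | W✂ | LLM-Pruner | 24.2 | 40.7 | 55.5 | 2.9 | 44.4 | 6.1 | 21.1 |
| 4.5B (35% Pruned) | D✂ | SLEB | 34.2 | 49.8 | 50.1 | 1.6 | 80.1 | 3.4 | 37.8 |
| 4.5B (35% Pruned) | D✂ | Ours: Taylor+ | 33.2 | 58.5 | 55.4 | 1.6 | 80.1 | 3.4 | 37.8 |
| 4.5B (35% Pruned) | D✂ | Ours: PPL | 23.1 | 38.8 | 55.2 | 1.6 | 80.1 | 3.4 | 37.8 |

| #Param | Type | Method | WikiText2 PPL↓ | PTB PPL↓ | Ave Acc↑ (%) $\dagger$ | H100 80GB Latency↓ (s) $\ddagger$ | H100 80GB Throughput↑ (tokens/s) $\ddagger$ | RTX3090 24GB Latency↓ (s) $\ddagger$ | RTX3090 24GB Throughput↑ (tokens/s) $\ddagger$ |
|---|---|---|---|---|---|---|---|---|---|
| 13.0B | – | Vicuna-13B (Original) | 14.7 | 51.6 | 68.3 | 2.8 | 45.5 | OOM | OOM |
| 10.5B (21% Pruned) | W✂ | Wanda-sp | 19.0 | 71.8 | 63.6 | 3.8 | 34.1 | 9.8 | 12.9 |
| 10.5B (21% Pruned) | W✂ | FLAP | 18.8 | 65.3 | 63.3 | 3.9 | 32.6 | 10.2 | 12.6 |
| 10.5B (21% Pruned) | W✂ | LLM-Pruner | 16.0 | 57.0 | 65.3 | 3.8 | 34.0 | 7.5 | 17.3 |
| 10.5B (21% Pruned) | D✂ | SLEB | 20.5 | 68.7 | 60.4 | 2.3 | 55.7 | 5.4 | 23.9 |
| 10.5B (21% Pruned) | D✂ | Ours: Taylor+ | 18.1 | 61.6 | 66.7 | 2.3 | 55.7 | 5.4 | 23.9 |
| 10.5B (21% Pruned) | D✂ | Ours: PPL | 16.1 | 56.5 | 64.9 | 2.3 | 55.7 | 5.4 | 23.9 |
| 8.3B (37% Pruned) | W✂ | Wanda-sp | 36.6 | 123.5 | 52.7 | 3.8 | 33.8 | 10.5 | 12.6 |
| 8.3B (37% Pruned) | W✂ | FLAP | 28.7 | 96.2 | 58.3 | 3.9 | 32.9 | 9.7 | 13.2 |
| 8.3B (37% Pruned) | W✂ | LLM-Pruner | 22.2 | 74.0 | 59.7 | 3.6 | 35.6 | 7.1 | 18.0 |
| 8.3B (37% Pruned) | D✂ | SLEB | 41.6 | 116.5 | 49.4 | 1.8 | 69.7 | 4.0 | 31.7 |
| 8.3B (37% Pruned) | D✂ | Ours: Taylor+ | 34.2 | 90.4 | 61.4 | 1.8 | 69.7 | 4.0 | 31.7 |
| 8.3B (37% Pruned) | D✂ | Ours: PPL | 22.1 | 73.6 | 59.1 | 1.8 | 69.7 | 4.0 | 31.7 |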

$\dagger$ Average accuracy on seven commonsense reasoning tasks. $\ddagger$ Measured with 12 input tokens, 128 output tokens, and a batch size of 1 on a single GPU.

Table 2: Results with moderate-level pruning on LLaMA-7B (top) and Vicuna-13B-v1.3 (bottom). Our depth pruning (D✂) with LoRA retraining achieves similar performance to width pruning (W✂) methods Sun et al. (2024); An et al. (2024); Ma et al. (2023) and outperforms the recent SLEB Song et al. (2024), while effectively accelerating LLM inference. See Table 9 for detailed results.

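Vicuna-7B: 6.7B (Original): WikiText2 PPL 17.1, Ave Acc 65.9%, throughput 53.7 tokens/s.

| Type | Method | WikiText2 PPL↓ (5.5B $\star$) | WikiText2 PPL↓ (3.7B) | WikiText2 PPL↓ (2.7B) | WikiText2 PPL↓ (1.5B) | Ave Acc↑ (%) $\dagger$ (5.5B) | Ave Acc↑ (%) (3.7B) | Ave Acc↑ (%) (2.7B) | Ave Acc↑ (%) (1.5B) | Throughput↑ (tokens/s) $\ddagger$ (5.5B) | Throughput↑ (3.7B) | Throughput↑ (2.7B) | Throughput↑ (1.5B) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| W✂ | Wanda-sp | 24.4 | 364.5 | 1370.1 | 8969.3 | 58.5 | 36.7 | 37.0 | 35.6 | 41.7 | 40.5 | 40.7 | 43.5 |
| W✂ | FLAP | 22.0 | 63.1 | 589.3 | 28727.9 | 61.4 | 47.3 | 36.7 | 34.5 | 40.5 | 41.2 | 41.2 | 42.3 |
| W✂ | LLM-Pruner | 19.6 | 38.8 | 66.4 | 202.9 | 60.1 | 50.1 | 44.3 | 38.4 | 43.2 | 43.4 | 43.9 | 44.8 |
| D✂ | SLEB | 25.1 | 110.4 | 731.5 | 18730.8 | 55.6 | 40.2 | 39.1 | 37.4 | 66.0 | 84.0 | 107.4 | 182.5 |
| D✂ | Ours, LoRA | 18.8 | 37.0 | 68.9 | 1002.2 | 60.7 | 47.0 | 40.1 | 37.1 | 66.0 (1.2×) | 84.0 (1.6×) | 107.4 (2.0×) | 182.5 (3.4×) |
| D✂ | Ours, CPT | 14.3 | 16.0 | 17.1 | 20.5 | 61.5 | 57.1 | 55.0 | 49.2 | 66.0 (1.2×) | 84.0 (1.6×) | 107.4 (2.0×) | 182.5 (3.4×) |
| D✂ | Ours, CPT $\Rightarrow$ LoRA | 14.8 | 16.5 | 17.8 | 21.1 | 63.1 | 57.4 | 55.0 | 49.0 | 66.0 (1.2×) | 84.0 (1.6×) | 107.4 (2.0×) | 182.5 (3.4×) |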

$\star$ The pruning ratios of 20%, 45%, 60%, and 80% lead to 5.5B, 3.7B, 2.7B, and 1.5B parameters, respectively. The PPL criterion is used to obtain our models. $\dagger$ Average accuracy on seven commonsense reasoning tasks. $\ddagger$ Measured with 12 input tokens, 128 output tokens, and a batch size of 1 on an NVIDIA H100 GPU.

Table 3: Effectiveness of CPT under high compression rates on Vicuna-7B-v1.3. CPT is essential to regain the performance of extensively pruned models (e.g., fewer than 3.7B parameters), whereas retraining-free An et al. (2024); Song et al. (2024) and LoRA-based Ma et al. (2023) approaches yield unsatisfactory results.

| Model | Generated Text |
|---|---|
| Vicuna-7B | AI can create a logo in seconds! You choose the design elements you like best from pre-made templates, and then the AI generates a unique logo design just for you. This is a great way to get a professional-looking logo without hiring … |
| Wanda-sp | AI can create a logo in seconds now. Inso Ison Im Ison Im I I was able can do do do now do now do now Innowhere you you you you you you you you you you you you you you you you you you you you you you you you you you you … |
| FLAP | AI can create a logo in seconds The V Just ing A A A A A A A A A, A A A A A S Just a. : S . No An , A A the A The The… The The… A, A A A A. A A A This A The Just A A A A The A A The A A A. and The A All T A A.. A the … |
| LLM-Pruner | AI can create a logo in seconds: 10 seconds! I’ve created a logo in minutes using an AI. It has only one image in front for 8 minutes. 4 hours = 65.000 + 12 hours Words: English Speech: 100 words in 5 minutes 1. 60 hours 2. 492 … |
| SLEB | AI can create a logo in seconds while. :). I have put. I believe it . →.]. Here least →… </<erus known). See →www.giftoings . </<.next]; info. I’ve don-> .…erutex“ Here for. to “Itin.g for the next….. .0.>1260070uro.‘s- … |
| Ours, CPT | AI can create a logo in seconds. Even if you don’t have a designer who knows the best layouts to use or what colors work best together, AI is already hard at work creating the perfect combination to your artwork. AI is also capable of … |

Table 4: Generation examples from the original Vicuna-7B and the 60%-pruned models with 2.7B parameters.

4 Experimental Setup

Source Model.

Our testbed includes LLaMA-7B Touvron et al. (2023) and Vicuna-{7B, 13B}-v1.3 Chiang et al. (2023), which are widely used LLMs.

Baseline.

LLM-Pruner Ma et al. (2023), FLAP An et al. (2024), and Wanda-sp (i.e., a structured variant An et al. (2024) of Wanda Sun et al. (2024)) serve as the baselines for width pruning. Table 1 shows the pruned architectures under similar numbers of parameters. We also examine SLEB Song et al. (2024), a retraining-free block pruning method for LLMs that was introduced concurrently with our study. Section E.1 describes the baselines in detail.

Data.

Following Ma et al. (2023), we randomly select 10 samples from BookCorpus Zhu et al. (2015) to compute block-level significance during the pruning stage. We also use this calibration dataset for the baseline methods to ensure a fair comparison. In LoRA retraining, 50K samples of the refined Alpaca Taori et al. (2023) are used for instruction tuning. In CPT retraining, we leverage SlimPajama Soboleva et al. (2023), which consists of 627B tokens for LLM pretraining.

Evaluation.

Following Touvron et al. (2023), we measure zero-shot accuracy on commonsense reasoning datasets (i.e., BoolQ Clark et al. (2019), PIQA Bisk et al. (2020), HellaSwag Zellers et al. (2019), WinoGrande Sakaguchi et al. (2019), ARC-easy Clark et al. (2018), ARC-challenge Clark et al. (2018), and OpenbookQA Mihaylov et al. (2018)) using the lm-evaluation-harness package EleutherAI (2023). We also report zero-shot PPL on WikiText2 Merity et al. (2017) and PTB Marcus et al. (1993).
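For reference, perplexity is the exponential of the average per-token negative log-likelihood, $\mathrm{PPL} = \exp\big(-\frac{1}{N}\sum_{t=1}^{N}\log p(x_t \mid x_{<t})\big)$. The minimal sketch below computes it from per-token natural-log probabilities; the function names are hypothetical, and how the corpus is split into context windows is an assumption not specified here. When text is evaluated in several windows, pooling all tokens before exponentiating (rather than averaging per-window PPLs) keeps the result equal to the corpus-level definition.

```python
import math
from typing import Sequence


def perplexity(token_logprobs: Sequence[float]) -> float:
    """PPL from natural-log probabilities log p(x_t | x_<t) of each predicted token."""
    if not token_logprobs:
        raise ValueError("need at least one token")
    mean_nll = -sum(token_logprobs) / len(token_logprobs)
    return math.exp(mean_nll)


def corpus_perplexity(window_logprobs: Sequence[Sequence[float]]) -> float:
    """Pool tokens from all evaluation windows, then exponentiate the mean NLL."""
    pooled = [lp for window in window_logprobs for lp in window]
    return perplexity(pooled)
```

As a quick check, a model that is uniform over a 32,000-token vocabulary has a PPL of 32,000 (up to floating-point error).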

Latency and Throughput.

We follow Sheng et al. (2023) to measure the metrics. Given a batch size $M$ and an output sequence length $L$ (excluding the input length), the latency $T$ represents the time required to handle the given prompts and produce $ML$ output tokens. The throughput is computed as $ML/T$ . We report the average results from 20 runs after the initial 10 warm-up batches.
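The measurement protocol above can be sketched as a small timing harness. The `generate` callable is a hypothetical stand-in for batched generation that produces exactly $L$ new tokens per prompt; on a GPU it must block until the device has finished (e.g., by calling `torch.cuda.synchronize()` before returning), otherwise asynchronous kernel launches make the timer undercount. Computing throughput from the mean latency, as done here, is an assumption about how the averaging is applied.

```python
import statistics
import time
from typing import Callable, Sequence, Tuple


def measure_generation(
    generate: Callable[[Sequence[str], int], object],
    prompts: Sequence[str],
    output_len: int,
    warmup: int = 10,
    runs: int = 20,
) -> Tuple[float, float]:
    """Return (latency T in seconds, throughput M*L/T in tokens/s) for batch size M = len(prompts)."""
    for _ in range(warmup):                  # warm-up batches, excluded from the statistics
        generate(prompts, output_len)

    latencies = []
    for _ in range(runs):
        start = time.perf_counter()
        generate(prompts, output_len)        # must emit output_len new tokens per prompt
        latencies.append(time.perf_counter() - start)

    latency = statistics.mean(latencies)     # T: time to process the prompts and emit M*L tokens
    throughput = len(prompts) * output_len / latency
    return latency, throughput
```

With batch size 1 and 128 output tokens (the setting of Table 2), a latency of 2.4 s corresponds to roughly 53 tokens/s, in line with the original LLaMA-7B entry on the H100.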

Implementation.

We use Hugging Face’s Transformers library Wolf et al. (2020). Pruning and LoRA retraining are performed on an NVIDIA A100 GPU. CPT retraining uses eight NVIDIA H100 GPUs and takes less than two weeks for each model size. For inference, we use the default setup of the Transformers library. See Section E.2 for the details.

5 Results

5.1 Moderate Pruning and LoRA Retraining

Tables 2 and 9 show the zero-shot performance and inference efficiency of differently pruned models. Here, our models are obtained with a light LoRA retraining setup. The width pruning methods Ma et al. (2023); An et al. (2024); Sun et al. (2024) do not improve LLM inference efficiency. With small batch sizes, processing speed largely hinges on the frequency of memory access operations, and merely shrinking the weight matrices does little to reduce it unless entire matrices are removed. The width-pruned models are even slower than the original model because of GPU-unfriendly operation dimensions: for example, their FFN hidden sizes are often not divisible by 8 (Table 14), which hinders the effective utilization of GPU Tensor Cores Andersch et al. (2019).
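The alignment issue can be checked directly on the FFN intermediate sizes listed in Table 1. The sketch below flags sizes that are not multiples of 8, the alignment that NVIDIA's Tensor Core guidance recommends for FP16 matrix dimensions, and shows the nearest padded size. Padding is included only as an illustrative mitigation and is not part of any compared method; the helper name is hypothetical. FLAP is omitted because its widths vary across blocks.

```python
# FFN intermediate sizes (FFN-D) copied from Table 1.
FFN_DIMS = {
    "Original 7B": 11008,
    "Wanda-sp (7B)": 7156,
    "LLM-Pruner (7B)": 6054,
    "Original 13B": 13824,
    "Wanda-sp (13B)": 8710,
    "LLM-Pruner (13B)": 7603,
}


def round_up(n: int, multiple: int = 8) -> int:
    """Smallest multiple of `multiple` that is >= n."""
    return -(-n // multiple) * multiple


for name, dim in FFN_DIMS.items():
    if dim % 8 == 0:
        status = "multiple of 8"
    else:
        status = f"remainder {dim % 8}, padded size {round_up(dim)}"
    print(f"{name:<16} {dim:>6}  {status}")
```

Both original sizes are multiples of 8, whereas every width-pruned size in this list is not (e.g., 7156 leaves a remainder of 4). Depth pruning keeps the original 11008 and 13824 unchanged.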

In contrast, our depth pruning achieves speedups by completely removing several Transformer blocks, which reduces both memory accesses and matrix-level operations between activations and weights. Moreover, under the same LoRA retraining protocol as Ma et al. (2023), our models achieve zero-shot scores on par with finely width-pruned models. Although SLEB Song et al. (2024) improves inference efficiency similarly to our method, its retraining-free approach falls short in producing proficient small LLMs. See Section B for detailed results.

Figure 5 panels: PPL (left) and Ave Acc (%) (right) versus update steps, for Random Init. (purple) and Pruned Init. (blue).

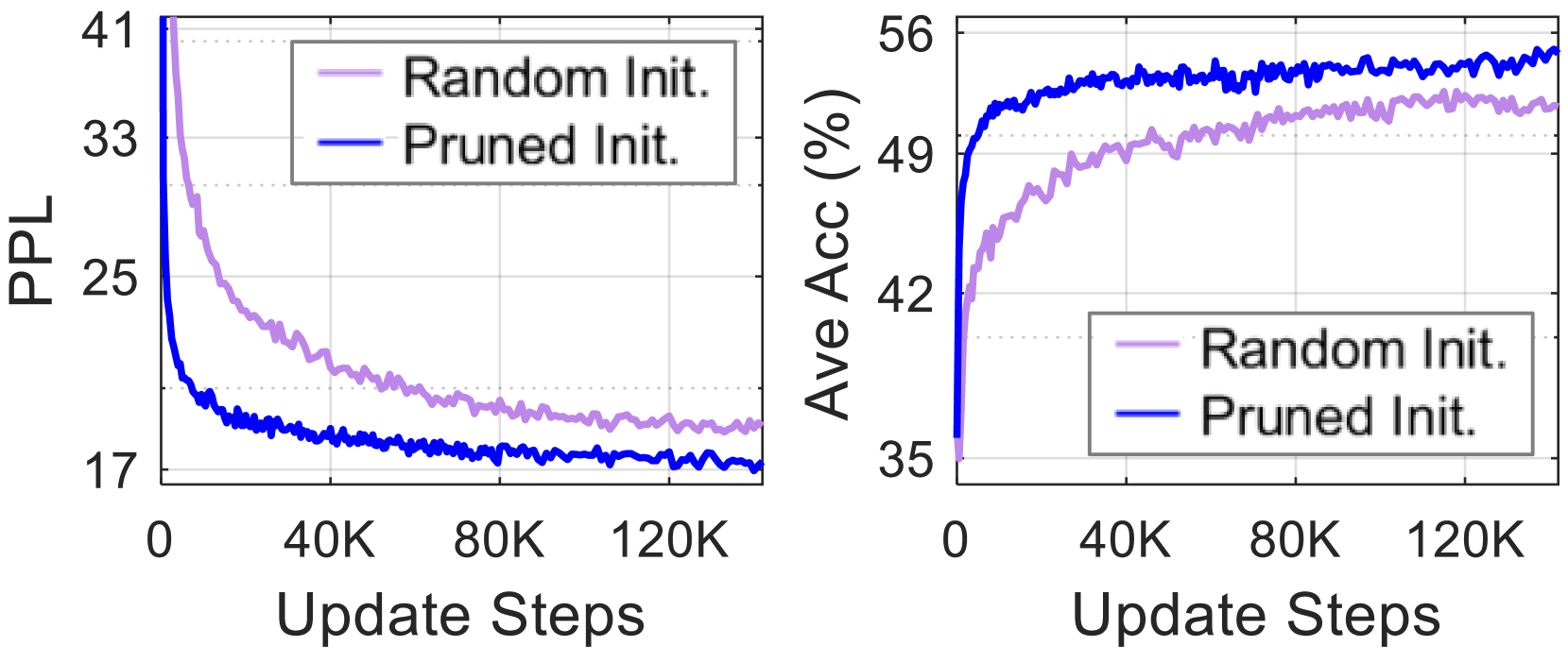

Figure 5: Zero-shot scores during the training progress of the 2.7B-parameter model from Vicuna-7B. Using the pruned network as initialization (blue lines) for CPT accelerates the learning process and yields better results than starting from scratch (purple).

5.2 Aggressive Pruning and CPT Retraining

Table 3 compares different retraining methods. Our models are obtained using the PPL criterion. Under high pruning ratios (e.g., yielding fewer than 3.7B parameters), LoRA-based tuning (LLM-Pruner Ma et al. (2023); Ours, LoRA) and retraining-free approaches (Wanda-sp Sun et al. (2024); An et al. (2024), FLAP An et al. (2024), SLEB Song et al. (2024)) fail to recover model performance. In contrast, CPT proves effective in regaining the quality of heavily pruned models. CPT $\Rightarrow$ LoRA slightly improves zero-shot accuracy for some pruning ratios, at the cost of slightly higher (worse) PPL. Table 4 presents samples produced by the 2.7B-parameter models (60% pruned). Unlike the baselines, our model generates fluent text that is appropriately aligned with the context.

Compared to LoRA retraining, the computational costs for CPT are considerably higher: LoRA can be completed within a day using just one GPU, while CPT requires about two weeks with eight GPUs in our experiments, with the option to use more if needed. However, utilizing a pruned network for initialization in CPT leads to faster learning and better results than building the same-sized models from scratch (see Figure 5), highlighting its efficacy for smaller LLMs. Section C presents the learning progress in detail.

Figure 6 panels: VRAM (GB) (left) and Ave Acc (%) (right) for models with 2.7B, 3.7B, 5.5B, and 6.7B parameters; legend: Ours, Ours + GPTQ, Original, Original + GPTQ.

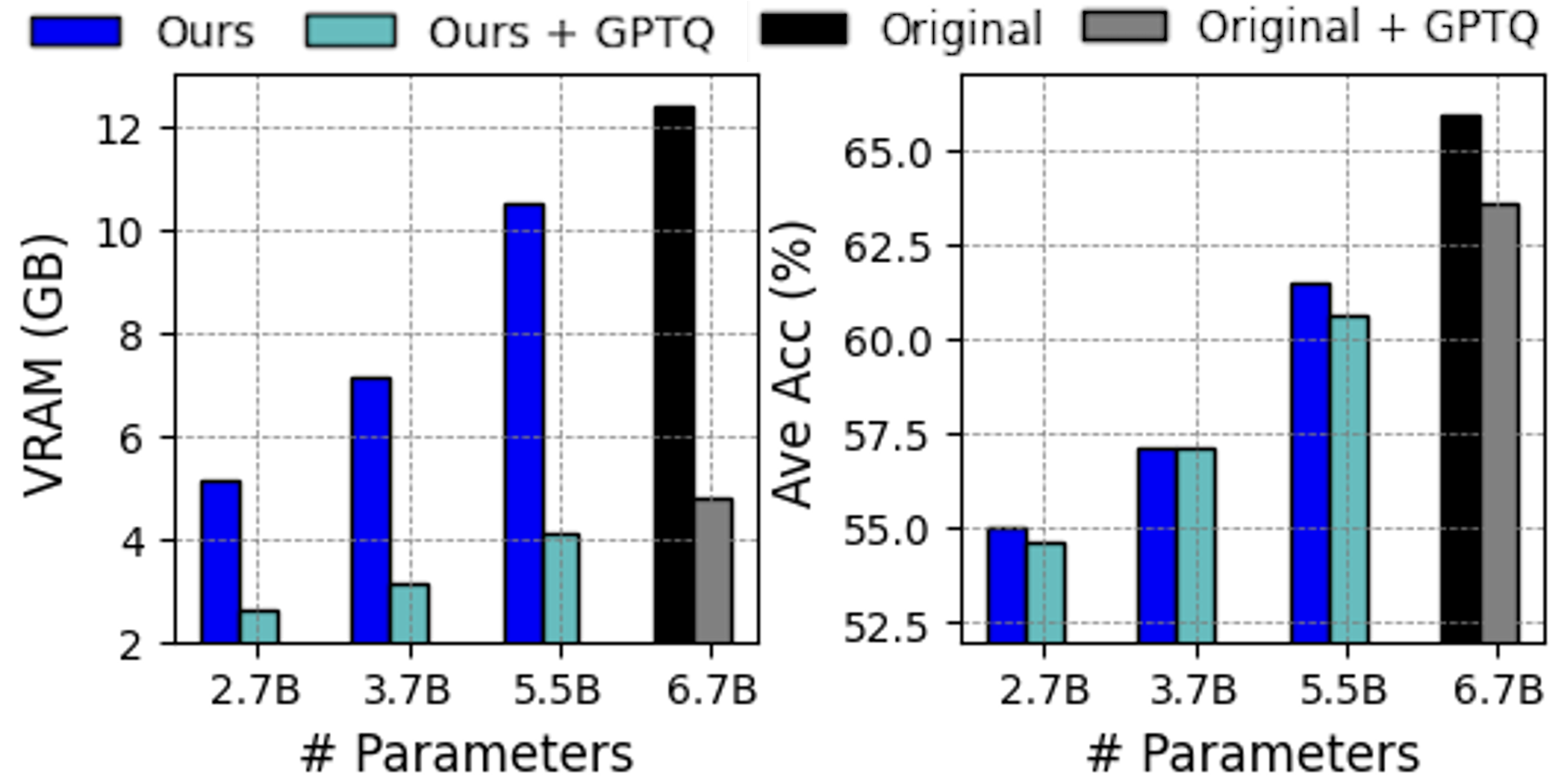

Figure 6: Further compression with GPTQ. Our pruned models following 4-bit weight quantization exhibit reduced VRAM usage without significant performance decline. The results for the original Vicuna-7B are presented for reference. See Section D for the details.

5.3 Applicability with Quantization

Leveraging post-training quantization (PTQ) effectively lowers the memory consumption for inference of LLMs. Figure 6 shows the results of applying GPTQ Frantar et al. (2023), a well-known PTQ method, to our depth-pruned models after CPT. The 4-bit weight quantization significantly reduces the VRAM demands across various model sizes without noticeable degradation in zero-shot accuracy. See Section D for further results.
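A back-of-the-envelope estimate shows why 4-bit weights shrink memory so much. The sketch below counts weight storage only, assuming FP16 baselines and, for the quantized case, one FP16 scale and one FP16 zero-point per group of 128 weights; these settings and the function name are assumptions, not the exact GPTQ configuration behind Figure 6. Measured VRAM is higher because it also includes activations, the KV cache, runtime buffers, and any layers left unquantized.

```python
from typing import Optional


def weight_memory_gb(num_params: float, bits_per_weight: float,
                     group_size: Optional[int] = None, bits_per_group: int = 32) -> float:
    """Approximate weight-only storage in GB (1 GB = 1e9 bytes)."""
    total_bits = num_params * bits_per_weight
    if group_size:
        # Per-group quantization metadata (assumed: FP16 scale + FP16 zero-point = 32 bits).
        total_bits += (num_params / group_size) * bits_per_group
    return total_bits / 8 / 1e9


for params in (6.7e9, 5.5e9, 3.7e9, 2.7e9):
    fp16 = weight_memory_gb(params, 16)
    int4 = weight_memory_gb(params, 4, group_size=128)
    print(f"{params / 1e9:.1f}B params: FP16 ~{fp16:.1f} GB, 4-bit ~{int4:.1f} GB")
```

Under these assumptions the quantized weights cost about 4.25 bits per parameter, roughly 3.8× less than FP16, independent of model size.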

5.4 Ablation Study

We explore various design factors, including the criteria for importance evaluation, the choice of units for depth pruning, and the impact of calibration data volume. The results presented in this section were obtained through LoRA retraining.

5.4.1 Importance Criteria for Block Pruning

Table 5 presents the results of block pruning using various significance criteria. The basic methods without the ‘+’ label fail to retain the essential initial blocks, causing a decline in performance. The Mag+ method, which preserves these critical blocks, partially improves the scores; however, it generally remains inferior to the other methods, indicating that weight magnitude alone may be an improper basis for pruning decisions. The Taylor+ criterion enhances accuracy in commonsense reasoning tasks, while the PPL method leads to better generation quality without relying on heuristic selection of pruning candidates.
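The criteria in Table 5 plug into the same one-shot procedure: score every candidate block once on the calibration set, then remove the lowest-scoring ones. The sketch below is illustrative and its names are hypothetical. `magnitude_score` and `taylor_score` follow common forms of these criteria (the L1 norm of a block's weights, and the sum of $|w \cdot \partial\mathcal{L}/\partial w|$ over its weights), which may differ in detail from the definitions used in this work. For the PPL criterion, `score_fn(i)` would return the calibration PPL with block `i` removed. The `protected` argument mimics the ‘+’ variants, which exclude some blocks (such as the essential initial ones) from the candidates; the exact protected set is an assumption left to the caller.

```python
from typing import Callable, Iterable, List, Sequence


def magnitude_score(weights: Sequence[float]) -> float:
    """Block importance as the L1 norm of the block's weights."""
    return sum(abs(w) for w in weights)


def taylor_score(weights: Sequence[float], grads: Sequence[float]) -> float:
    """First-order Taylor importance: sum of |w * dL/dw| over the block's weights."""
    return sum(abs(w * g) for w, g in zip(weights, grads))


def select_blocks_to_prune(
    num_blocks: int,
    score_fn: Callable[[int], float],
    num_prune: int,
    protected: Iterable[int] = (),
) -> List[int]:
    """One-shot selection: score each unprotected block once, drop the lowest-scoring ones.

    Higher score = more important. For the PPL criterion, score_fn(i) is the
    calibration PPL measured with block i removed.
    """
    protected_set = set(protected)
    candidates = [i for i in range(num_blocks) if i not in protected_set]
    if num_prune > len(candidates):
        raise ValueError("not enough unprotected blocks to prune")
    scores = {i: score_fn(i) for i in candidates}
    least_important_first = sorted(candidates, key=lambda i: scores[i])
    return sorted(least_important_first[:num_prune])
```

In a real model, `weights` and `grads` would be the flattened parameters of one Transformer block and their gradients from a backward pass on the calibration loss. For a Hugging Face LLaMA model, the selected indices could then be dropped from the decoder's layer list (e.g., `model.model.layers`) with `config.num_hidden_layers` updated; depending on the library version, per-layer cache indices may also need renumbering.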

5.4.2 Structural Unit for Depth Pruning

Pruning individual MHA and FFN modules, which are more fine-grained units than Transformer blocks, is also possible. To examine its effect, we measure the impact of removing each module on the PPL of the calibration set and selectively eliminate the unnecessary modules. The same LoRA retraining procedure is conducted.

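| #Param | Block Pruning Criterion | WikiText2 PPL↓ | PTB PPL↓ | Ave Acc↑ (%) $\dagger$ |
|---|---|---|---|---|
| 5.5B (20% Pruned) | Mag | 7720.7 | 10618.7 | 34.4 |
| 5.5B (20% Pruned) | Mag+ | 19.4 | 36.3 | 56.1 |
| 5.5B (20% Pruned) | Taylor | 3631.7 | 4327.9 | 35.5 |
| 5.5B (20% Pruned) | Taylor+ | 20.2 | 32.3 | 63.5 |
| 5.5B (20% Pruned) | PPL | 17.7 | 30.7 | 61.9 |
| 4.5B (35% Pruned) | Mag | 8490.1 | 14472.1 | 34.9 |
| 4.5B (35% Pruned) | Mag+ | 36.9 | 61.1 | 49.3 |
| 4.5B (35% Pruned) | Taylor | 7666.8 | 10913.1 | 35.3 |
| 4.5B (35% Pruned) | Taylor+ | 33.2 | 58.5 | 55.4 |
| 4.5B (35% Pruned) | PPL | 23.1 | 38.8 | 55.2 |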

$\dagger$ Average accuracy on seven commonsense reasoning tasks.

Table 5: Comparison of pruning criteria on LLaMA-7B. The Taylor+ method excels in commonsense reasoning accuracy, while the PPL criterion leads to better generation performance.

| Depth Pruning Unit | #Param | WikiText2 PPL↓ | PTB PPL↓ | Ave Acc↑ (%) $\dagger$ |
|---|---|---|---|---|
| Individual MHA & FFN | 5.7B | 20.8 | 34.8 | 63.1 |
| Transformer Block | 5.7B | 16.9 | 29.3 | 62.8 |
| Individual MHA & FFN | 5.3B | 25.2 | 41.3 | 61.1 |
| Transformer Block | 5.3B | 18.6 | 33.1 | 60.6 |
| Individual MHA & FFN | 4.6B | 38.9 | 58.7 | 52.5 |
| Transformer Block | 4.5B | 23.1 | 38.8 | 55.2 |
| Individual MHA & FFN | 4.0B | 63.2 | 88.9 | 48.3 |
| Transformer Block | 3.9B | 31.1 | 47.3 | 50.6 |

$\dagger$ Average accuracy on seven commonsense reasoning tasks.

Table 6: Comparison of depth pruning granularities on LLaMA-7B. Removing entire Transformer blocks instead of individual MHA and FFN modules generally yields better results.

Table 6 shows the results of depth pruning at different granularities. For models with more than 5B parameters, removing individual MHA and FFN modules yields better downstream task accuracy but worse PPL than removing entire Transformer blocks. For models smaller than 5B, block-level pruning achieves superior results on all the examined metrics. This differs from the common belief that removing finer units yields better performance.

Given the collaborative roles of the modules (i.e., MHA captures dependency relations Vaswani et al. (2017), while skip connections and FFN prevent the rank collapse in purely attention-driven networks Dong et al. (2021)), it may be suboptimal to treat them in isolation. Taking the 5.3B model in Table 6 as an example, module-level pruning results in consecutive FFNs in some positions, potentially impairing the model’s ability to handle word interactions. In contrast, with block-level removal, the loss of information could be compensated by neighboring blocks that serve similar functions.
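The consecutive-FFN effect can be illustrated with a toy helper that lists the surviving modules after module-level pruning and finds runs of FFNs with no attention module in between. Because an FFN acts on each token independently, no token mixing happens inside such a run. The removal sets below are hypothetical examples, not the modules actually pruned in the 5.3B model.

```python
from typing import List, Set, Tuple

Module = Tuple[str, int]  # ("MHA" or "FFN", block index)


def remaining_modules(num_blocks: int, removed: Set[Module]) -> List[Module]:
    """Module order of a decoder (MHA then FFN in every block) after pruning `removed`."""
    order = [(kind, i) for i in range(num_blocks) for kind in ("MHA", "FFN")]
    return [m for m in order if m not in removed]


def consecutive_ffn_runs(modules: List[Module], min_len: int = 2) -> List[List[int]]:
    """Block indices of runs of >= min_len FFNs with no MHA between them."""
    runs: List[List[int]] = []
    current: List[int] = []
    for kind, idx in modules:
        if kind == "FFN":
            current.append(idx)
            continue
        if len(current) >= min_len:
            runs.append(current)
        current = []
    if len(current) >= min_len:
        runs.append(current)
    return runs


# Module-level pruning of two attention modules vs. block-level pruning of the same blocks.
module_level = remaining_modules(6, {("MHA", 2), ("MHA", 3)})
block_level = remaining_modules(6, {("MHA", 2), ("FFN", 2), ("MHA", 3), ("FFN", 3)})
print(consecutive_ffn_runs(module_level))  # [[1, 2, 3]]
print(consecutive_ffn_runs(block_level))   # []
```

Dropping only the attention modules of blocks 2 and 3 leaves three FFNs in a row, whereas removing the same two blocks entirely keeps MHA and FFN alternating.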

5.4.3 Calibration Data Volume

The calibration set is used to assess the weight significance for the width pruning baselines and the block-level importance for our method during the pruning phase. Table 7 presents the results obtained by varying the number of calibration samples drawn from BookCorpus. The scores remain relatively stable across the examined methods, suggesting that 10 samples could be sufficient. However, our Taylor+ method shows a drop in downstream task accuracy with 1K samples (from 63.5% to 61.7%); we leave the exploration of calibration data characteristics to future research.

6 Related Work

Numerous techniques have been developed towards efficient LLMs, including knowledge distillation Fu et al. (2023); Hsieh et al. (2023), quantization Frantar et al. (2023); Dettmers et al. (2022), and system-level inference acceleration Dao (2023); Kwon et al. (2023). In this study, we focus on network pruning LeCun et al. (1989), which has a long-standing reputation in the model compression field. Beyond its use in relatively small-scale convolutional networks Li et al. (2017b); He et al. (2019) and Transformer models Yu et al. (2022); Xia et al. (2022); Kurtic et al. (2023), pruning has recently begun to be applied to contemporary LLMs. Several studies Frantar and Alistarh (2023); Sun et al. (2024) employ unstructured and semi-structured Aojun Zhou (2021) pruning by zeroing individual weights. SparseGPT Frantar and Alistarh (2023) addresses the layer-wise reconstruction problem for pruning by computing Hessian inverses. Wanda Sun et al. (2024) introduces a pruning criterion that multiplies weight magnitudes by input feature norms. Despite the plausible performance of pruned models using zero masks, they require specialized support for sparse matrix operations to achieve actual speedups.
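The Wanda criterion mentioned above scores each weight as $S_{ij} = |W_{ij}| \cdot \|X_j\|_2$, where $X_j$ collects the $j$-th input feature over all calibration tokens, and compares scores within each output row. The NumPy sketch below implements that unstructured form for a single linear layer; the function names and the 50% sparsity are illustrative. The structured Wanda-sp baseline applies the criterion to coarser units, which is not shown here.

```python
import numpy as np


def wanda_scores(weight: np.ndarray, inputs: np.ndarray) -> np.ndarray:
    """S_ij = |W_ij| * ||X_j||_2 for a linear layer y = x @ W.T.

    weight: (out_features, in_features); inputs: (num_tokens, in_features) calibration activations.
    """
    feature_norms = np.linalg.norm(inputs, axis=0)   # ||X_j||_2 over all tokens, shape (in,)
    return np.abs(weight) * feature_norms[None, :]


def wanda_mask(weight: np.ndarray, inputs: np.ndarray, sparsity: float = 0.5) -> np.ndarray:
    """Unstructured keep-mask that zeroes the lowest-scoring weights within each output row."""
    scores = wanda_scores(weight, inputs)
    k = int(weight.shape[1] * sparsity)              # number of weights to zero per row
    prune_idx = np.argsort(scores, axis=1)[:, :k]    # lowest scores in each row
    mask = np.ones_like(weight, dtype=bool)
    np.put_along_axis(mask, prune_idx, False, axis=1)
    return mask
```

For a row $W = [1, 4]$ whose input features have norms $[10, 1]$, a magnitude-only criterion would drop the weight 1, whereas Wanda drops the weight 4 because its input feature is much weaker.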

In contrast, structured pruning removes organized patterns, such as layers Fan et al. (2020); Jha et al. (2023), MHA’s attention heads Voita et al. (2019); Michel et al. (2022), FFN’s hidden sizes Nova et al. (2023); Santacroce et al. (2023), and some hybrid forms Lagunas et al. (2021); Xia et al. (2022); Kwon et al. (2022); Kurtic et al. (2023), thereby improving inference efficiency in a hardware-agnostic way. To compress LLMs, FLAP An et al. (2024) and LLM-Pruner Ma et al. (2023) eliminate coupled structures along the network width while retaining the number of layers. Sheared-LLaMA Xia et al. (2024) introduces a mask learning phase aimed at identifying prunable components in both the network’s width and depth. Our study explores the relatively untapped area of depth-only pruning for multi-billion parameter LLMs, which can markedly reduce latency while attaining competitive performance.

Strategies for skipping layers Schuster et al. (2022); Corro et al. (2023); Raposo et al. (2024) effectively serve to decrease computational burdens. Moreover, depth pruning approaches Song et al. (2024); Men et al. (2024); Tang et al. (2024) for LLMs have been proposed concurrently with our work, based on the architectural redundancy in LLMs.

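| Evaluation Metric | Method | 10 calibration samples | 50 | 100 | 1000 |
|---|---|---|---|---|---|
| PPL↓ on WikiText2 | Wanda-sp | 21.4 | 21.4 | 21.7 | 20.8 |
| PPL↓ on WikiText2 | FLAP | 17.0 | 17.5 | 17.5 | 17.3 |
| PPL↓ on WikiText2 | LLM-Pruner | 17.6 | 17.2 | 17.0 | OOM $\ddagger$ |
| PPL↓ on WikiText2 | Ours: Taylor+ | 20.2 | 20.2 | 19.0 | 19.6 |
| PPL↓ on WikiText2 | Ours: PPL | 17.7 | 17.2 | 17.4 | 17.4 |
| Ave Acc↑ (%) $\dagger$ | Wanda-sp | 51.8 | 52.9 | 52.0 | 53.0 |
| Ave Acc↑ (%) $\dagger$ | FLAP | 59.5 | 59.7 | 59.9 | 60.8 |
| Ave Acc↑ (%) $\dagger$ | LLM-Pruner | 61.8 | 61.6 | 61.7 | OOM $\ddagger$ |
| Ave Acc↑ (%) $\dagger$ | Ours: Taylor+ | 63.5 | 63.5 | 63.9 | 61.7 |
| Ave Acc↑ (%) $\dagger$ | Ours: PPL | 61.9 | 61.5 | 61.7 | 61.7 |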

$\dagger$ Average accuracy on seven commonsense reasoning tasks. $\ddagger$ Out-of-memory error on an A100 (80GB) using the official code.

Table 7: Impact of calibration data volume. The results of 20%-pruned LLaMA-7B are reported.

7 Conclusion

By introducing a block pruning method, we conduct an in-depth comparative analysis of the impact of network width and depth on LLM compression. Our work involves the one-shot removal of Transformer blocks. Despite its simplicity, our method with light LoRA retraining matches the zero-shot capabilities of recent width pruning techniques under moderate pruning levels. Moreover, it offers significant inference speedups in resource-constrained scenarios that require running LLMs with limited batch sizes, where width pruning falls short. When comparing retraining strategies, continued pretraining on a large-scale dataset significantly surpasses LoRA-based tuning, particularly in cases of severe pruning. We hope this study will support the development of potent small LLMs.

Limitations

First, due to constraints in computational resources, we could not test our method on LLMs exceeding 13B parameters. We plan to explore larger models in future research, given that our method can be applied to any model size. Second, we found that continued pretraining was essential for performance recovery after extensive pruning. Further exploration of different training corpora and hyperparameters could lead to additional performance improvements. Lastly, commercially available LLMs are optimized for human preferences, such as safety and helpfulness, through alignment tuning. We have yet to assess human preferences throughout the entire process of pruning, retraining, and quantization. We hope future research will address this aspect.

Acknowledgments

We thank the Microsoft Startups Founders Hub program and the AI Industrial Convergence Cluster Development project funded by the Ministry of Science and ICT (MSIT, Korea) and Gwangju Metropolitan City for their generous support of GPU resources.

References

- An et al. (2024) Yongqi An, Xu Zhao, Tao Yu, Ming Tang, and Jinqiao Wang. 2024. Fluctuation-based adaptive structured pruning for large language models. In AAAI.

- Andersch et al. (2019) Michael Andersch, Valerie Sarge, and Paulius Micikevicius. 2019. Tensor Core DL performance guide. In NVIDIA GTC.

- Aojun Zhou (2021) Aojun Zhou, Yukun Ma, Junnan Zhu, Jianbo Liu, Zhijie Zhang, Kun Yuan, Wenxiu Sun, and Hongsheng Li. 2021. Learning N:M fine-grained structured sparse neural networks from scratch. In ICLR.

- Bisk et al. (2020) Yonatan Bisk, Rowan Zellers, Ronan Le Bras, Jianfeng Gao, and Yejin Choi. 2020. PIQA: Reasoning about physical commonsense in natural language. In AAAI.

- Chiang et al. (2023) Wei-Lin Chiang, Zhuohan Li, Zi Lin, Ying Sheng, Zhanghao Wu, Hao Zhang, Lianmin Zheng, et al. 2023. Vicuna: An open-source chatbot impressing GPT-4 with 90%* ChatGPT quality.

- Chowdhery et al. (2022) Aakanksha Chowdhery, Sharan Narang, Jacob Devlin, Maarten Bosma, Gaurav Mishra, Adam Roberts, Paul Barham, Hyung Won Chung, et al. 2022. PaLM: Scaling language modeling with pathways. arXiv preprint arXiv:2204.02311.

- Clark et al. (2019) Christopher Clark, Kenton Lee, Ming-Wei Chang, Tom Kwiatkowski, Michael Collins, and Kristina Toutanova. 2019. BoolQ: Exploring the surprising difficulty of natural yes/no questions. In NAACL.

- Clark et al. (2018) Peter Clark, Isaac Cowhey, Oren Etzioni, Tushar Khot, Ashish Sabharwal, Carissa Schoenick, and Oyvind Tafjord. 2018. Think you have solved question answering? Try ARC, the AI2 reasoning challenge. arXiv preprint arXiv:1803.05457.

- Corro et al. (2023) Luciano Del Corro, Allie Del Giorno, Sahaj Agarwal, Bin Yu, Ahmed Awadallah, and Subhabrata Mukherjee. 2023. SkipDecode: Autoregressive skip decoding with batching and caching for efficient LLM inference. arXiv preprint arXiv:2307.02628.

- Dao (2023) Tri Dao. 2023. FlashAttention-2: Faster attention with better parallelism and work partitioning. arXiv preprint arXiv:2307.08691.

- Dettmers et al. (2022) Tim Dettmers, Mike Lewis, Younes Belkada, and Luke Zettlemoyer. 2022. LLM.int8(): 8-bit matrix multiplication for transformers at scale. In NeurIPS.

- Dong et al. (2021) Yihe Dong, Jean-Baptiste Cordonnier, and Andreas Loukas. 2021. Attention is not all you need: Pure attention loses rank doubly exponentially with depth. In ICML.

- EleutherAI (2023) EleutherAI. 2023. Language model evaluation harness (package version 3326c54). https://github.com/EleutherAI/lm-evaluation-harness.

- Fan et al. (2020) Angela Fan, Edouard Grave, and Armand Joulin. 2020. Reducing transformer depth on demand with structured dropout. In ICLR.

- Fang et al. (2023) Gongfan Fang, Xinyin Ma, Mingli Song, Michael Bi Mi, and Xinchao Wang. 2023. DepGraph: Towards any structural pruning. In CVPR.

- Frantar and Alistarh (2023) Elias Frantar and Dan Alistarh. 2023. SparseGPT: Massive language models can be accurately pruned in one-shot. In ICML.

- Frantar et al. (2023) Elias Frantar, Saleh Ashkboos, Torsten Hoefler, and Dan Alistarh. 2023. OPTQ: Accurate quantization for generative pre-trained transformers. In ICLR.

- Fu et al. (2023) Yao Fu, Hao Peng, Litu Ou, Ashish Sabharwal, and Tushar Khot. 2023. Specializing smaller language models towards multi-step reasoning. In ICML.

- Gale et al. (2019) Trevor Gale, Erich Elsen, and Sara Hooker. 2019. The state of sparsity in deep neural networks. In ICML Workshop.

- Google (2023) Google. 2023. An important next step on our AI journey. https://blog.google/technology/ai/bard-google-ai-search-updates/.

- He et al. (2019) Yang He, Ping Liu, Ziwei Wang, Zhilan Hu, and Yi Yang. 2019. Filter pruning via geometric median for deep convolutional neural networks acceleration. In CVPR.

- Hsieh et al. (2023) Cheng-Yu Hsieh, Chun-Liang Li, Chih-Kuan Yeh, Hootan Nakhost, et al. 2023. Distilling step-by-step! outperforming larger language models with less training data and smaller model sizes. In Findings of ACL.

- Hu et al. (2022) Edward J. Hu, Yelong Shen, Phillip Wallis, Zeyuan Allen-Zhu, Yuanzhi Li, Shean Wang, Lu Wang, and Weizhu Chen. 2022. LoRA: Low-rank adaptation of large language models. In ICLR.

- Jha et al. (2023) Ananya Harsh Jha, Tom Sherborne, Evan Pete Walsh, Dirk Groeneveld, Emma Strubell, and Iz Beltagy. 2023. How to train your (compressed) large language model. arXiv preprint arXiv:2305.14864.

- Jin et al. (2023) Yunho Jin, Chun-Feng Wu, David Brooks, and Gu-Yeon Wei. 2023. S3: Increasing GPU utilization during generative inference for higher throughput. In NeurIPS.

- Kurtic et al. (2023) Eldar Kurtic, Elias Frantar, and Dan Alistarh. 2023. ZipLM: Inference-aware structured pruning of language models. In NeurIPS.

- Kwon et al. (2022) Woosuk Kwon, Sehoon Kim, Michael W. Mahoney, Joseph Hassoun, Kurt Keutzer, and Amir Gholami. 2022. A fast post-training pruning framework for transformers. In NeurIPS.

- Kwon et al. (2023) Woosuk Kwon, Zhuohan Li, Siyuan Zhuang, Ying Sheng, Lianmin Zheng, Cody Hao Yu, Joseph E. Gonzalez, Hao Zhang, and Ion Stoica. 2023. Efficient memory management for large language model serving with PagedAttention. In SOSP.

- Lagunas et al. (2021) François Lagunas, Ella Charlaix, Victor Sanh, and Alexander M. Rush. 2021. Block pruning for faster transformers. In EMNLP.

- LeCun et al. (1989) Yann LeCun, John Denker, and Sara Solla. 1989. Optimal brain damage. In NeurIPS.

- Lee et al. (2021) Jaeho Lee, Sejun Park, Sangwoo Mo, Sungsoo Ahn, and Jinwoo Shin. 2021. Layer-adaptive sparsity for the magnitude-based pruning. In ICLR.

- Li et al. (2017a) Hao Li, Asim Kadav, Igor Durdanovic, Hanan Samet, and Hans Peter Graf. 2017a. Pruning filters for efficient convnets. In ICLR.

- Li et al. (2017b) Hao Li, Asim Kadav, Igor Durdanovic, Hanan Samet, and Hans Peter Graf. 2017b. Pruning filters for efficient convnets. In ICLR.

- Ma et al. (2023) Xinyin Ma, Gongfan Fang, and Xinchao Wang. 2023. LLM-Pruner: On the structural pruning of large language models. In NeurIPS.

- Marcus et al. (1993) Mitchell P. Marcus, Beatrice Santorini, and Mary Ann Marcinkiewicz. 1993. Building a large annotated corpus of English: The Penn Treebank. Computational Linguistics, 19(2):313–330.

- Men et al. (2024) Xin Men, Mingyu Xu, Qingyu Zhang, Bingning Wang, Hongyu Lin, Yaojie Lu, Xianpei Han, and Weipeng Chen. 2024. ShortGPT: Layers in large language models are more redundant than you expect. arXiv preprint arXiv:2403.03853.

- Merity et al. (2017) Stephen Merity, Caiming Xiong, James Bradbury, and Richard Socher. 2017. Pointer sentinel mixture models. In ICLR.

- Michel et al. (2022) Paul Michel, Omer Levy, and Graham Neubig. 2022. Are sixteen heads really better than one? In NeurIPS.

- Mihaylov et al. (2018) Todor Mihaylov, Peter Clark, Tushar Khot, and Ashish Sabharwal. 2018. Can a suit of armor conduct electricity? A new dataset for open book question answering. In EMNLP.

- Molchanov et al. (2019) Pavlo Molchanov, Arun Mallya, Stephen Tyree, Iuri Frosio, and Jan Kautz. 2019. Importance estimation for neural network pruning. In CVPR.

- Nova et al. (2023) Azade Nova, Hanjun Dai, and Dale Schuurmans. 2023. Gradient-free structured pruning with unlabeled data. In ICML.

- NVIDIA (2018) NVIDIA. 2018. Useful nvidia-smi queries. https://enterprise-support.nvidia.com/s/article/Useful-nvidia-smi-Queries-2.

- OpenAI (2022) OpenAI. 2022. Introducing ChatGPT. https://openai.com/blog/chatgpt.

- OpenAI (2023) OpenAI. 2023. GPT-4 technical report. arXiv preprint arXiv:2303.08774.

- Raffel et al. (2020) Colin Raffel, Noam Shazeer, Adam Roberts, Katherine Lee, Sharan Narang, Michael Matena, Yanqi Zhou, Wei Li, and Peter J Liu. 2020. Exploring the limits of transfer learning with a unified text-to-text transformer. JMLR, 21(140):1–67.

- Raposo et al. (2024) David Raposo, Sam Ritter, Blake Richards, Timothy Lillicrap, Peter Conway Humphreys, and Adam Santoro. 2024. Mixture-of-depths: Dynamically allocating compute in transformer-based language models. arXiv preprint arXiv:2404.02258.

- Sakaguchi et al. (2019) Keisuke Sakaguchi, Ronan Le Bras, Chandra Bhagavatula, and Yejin Choi. 2019. WinoGrande: An adversarial Winograd schema challenge at scale. arXiv preprint arXiv:1907.10641.

- Santacroce et al. (2023) Michael Santacroce, Zixin Wen, Yelong Shen, and Yuanzhi Li. 2023. What matters in the structured pruning of generative language models? arXiv preprint arXiv:2302.03773.

- Scao et al. (2022) Teven Le Scao, Angela Fan, Christopher Akiki, Ellie Pavlick, Suzana Ilić, Daniel Hesslow, et al. 2022. BLOOM: A 176B-parameter open-access multilingual language model. arXiv preprint arXiv:2211.05100.

- Schuster et al. (2022) Tal Schuster, Adam Fisch, Jai Gupta, Mostafa Dehghani, Dara Bahri, Vinh Tran, Yi Tay, and Donald Metzler. 2022. Confident adaptive language modeling. In NeurIPS.

- Sheng et al. (2023) Ying Sheng, Lianmin Zheng, Binhang Yuan, Zhuohan Li, Max Ryabinin, et al. 2023. FlexGen: High-throughput generative inference of large language models with a single GPU. In ICML.

- Soboleva et al. (2023) Daria Soboleva, Faisal Al-Khateeb, Robert Myers, Jacob R Steeves, Joel Hestness, and Nolan Dey. 2023. SlimPajama: A 627B token cleaned and deduplicated version of RedPajama. https://huggingface.co/datasets/cerebras/SlimPajama-627B.

- Song et al. (2024) Jiwon Song, Kyungseok Oh, Taesu Kim, Hyungjun Kim, Yulhwa Kim, and Jae-Joon Kim. 2024. SLEB: Streamlining LLMs through redundancy verification and elimination of transformer blocks. In ICML.

- Sun et al. (2024) Mingjie Sun, Zhuang Liu, Anna Bair, and J. Zico Kolter. 2024. A simple and effective pruning approach for large language models. In ICLR.

- Tang et al. (2024) Yehui Tang, Fangcheng Liu, Yunsheng Ni, Yuchuan Tian, Zheyuan Bai, Yi-Qi Hu, Sichao Liu, Shangling Jui, Kai Han, and Yunhe Wang. 2024. Rethinking optimization and architecture for tiny language models. arXiv preprint arXiv:2402.02791.

- Taori et al. (2023) Rohan Taori, Ishaan Gulrajani, Tianyi Zhang, Yann Dubois, Xuechen Li, Carlos Guestrin, et al. 2023. Stanford Alpaca: An Instruction-following LLaMA model. https://github.com/tatsu-lab/stanford_alpaca.

- Touvron et al. (2023) Hugo Touvron, Thibaut Lavril, Gautier Izacard, Xavier Martinet, Marie-Anne Lachaux, et al. 2023. LLaMA: Open and efficient foundation language models. arXiv preprint arXiv:2302.13971.

- Vaswani et al. (2017) Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N Gomez, Łukasz Kaiser, and Illia Polosukhin. 2017. Attention is all you need. In NeurIPS.

- Voita et al. (2019) Elena Voita, David Talbot, Fedor Moiseev, Rico Sennrich, and Ivan Titov. 2019. Analyzing multi-head self-attention: Specialized heads do the heavy lifting, the rest can be pruned. In ACL.

- Wan et al. (2023) Zhongwei Wan, Xin Wang, Che Liu, Samiul Alam, Yu Zheng, Zhongnan Qu, Shen Yan, Yi Zhu, Quanlu Zhang, Mosharaf Chowdhury, and Mi Zhang. 2023. Efficient large language models: A survey. arXiv preprint arXiv:2312.03863.

- Wolf et al. (2020) Thomas Wolf, Lysandre Debut, Victor Sanh, Julien Chaumond, Clement Delangue, et al. 2020. Transformers: State-of-the-art natural language processing. In EMNLP: System Demonstrations.

- Xia et al. (2024) Mengzhou Xia, Tianyu Gao, Zhiyuan Zeng, and Danqi Chen. 2024. Sheared LLaMA: Accelerating language model pre-training via structured pruning. In ICLR.

- Xia et al. (2022) Mengzhou Xia, Zexuan Zhong, and Danqi Chen. 2022. Structured pruning learns compact and accurate models. In ACL.

- Yu et al. (2022) Shixing Yu, Tianlong Chen, Jiayi Shen, Huan Yuan, Jianchao Tan, Sen Yang, Ji Liu, and Zhangyang Wang. 2022. Unified visual transformer compression. In ICLR.

- Zellers et al. (2019) Rowan Zellers, Ari Holtzman, Yonatan Bisk, Ali Farhadi, and Yejin Choi. 2019. HellaSwag: Can a machine really finish your sentence? In ACL.

- Zhang et al. (2022) Susan Zhang, Stephen Roller, Naman Goyal, Mikel Artetxe, Moya Chen, et al. 2022. OPT: Open pre-trained transformer language models. arXiv preprint arXiv:2205.01068.

- Zhu et al. (2023) Xunyu Zhu, Jian Li, Yong Liu, Can Ma, and Weiping Wang. 2023. A survey on model compression for large language models. arXiv preprint arXiv:2308.07633.

- Zhu et al. (2015) Yukun Zhu, Ryan Kiros, Rich Zemel, Ruslan Salakhutdinov, et al. 2015. Aligning books and movies: Towards story-like visual explanations by watching movies and reading books. In ICCV.

Appendix — Shortened LLaMA: Depth Pruning for LLMs

Appendix A Additional Results of Inference Efficiency

A.1 Latency-Throughput Trade-Off

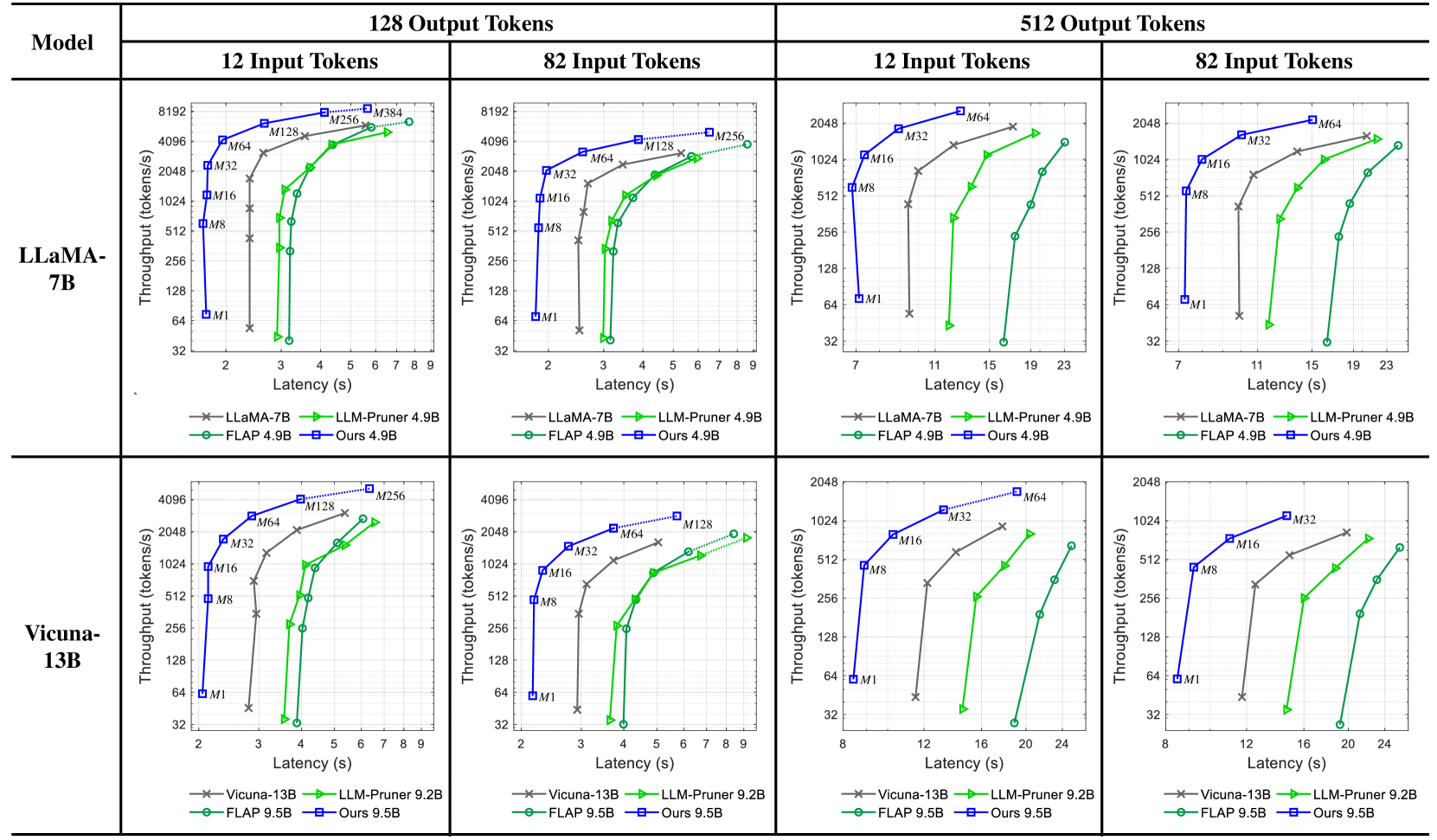

As shown in Figure 7, our depth pruning achieves a better latency-throughput trade-off across various input and output sequence lengths. In contrast, the width pruning of FLAP An et al. (2024) and LLM-Pruner Ma et al. (2023) yields worse efficiency because it produces GPU-unfriendly weight dimensions Andersch et al. (2019) (e.g., FFN hidden sizes that are often not divisible by 8). Markers labeled $M$ denote batch sizes. Dotted lines indicate that the pruned models can run with larger batch sizes at which the original model encounters out-of-memory errors.
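The divisibility issue can be illustrated with a few lines of Python. This is a minimal sketch under stated assumptions: the FFN size of 11008 is the public LLaMA-7B configuration, while the uniform 35% width-pruning ratio and the helper names (`is_multiple_of`, `width_pruned_dim`, `round_down_to_multiple`) are hypothetical and do not reproduce how FLAP or LLM-Pruner actually choose their widths.

```python
def is_multiple_of(dim: int, multiple: int = 8) -> bool:
    """True if a weight dimension is a multiple of `multiple` (8 by default)."""
    return dim % multiple == 0


def width_pruned_dim(dim: int, keep_percent: int) -> int:
    """Hypothetical uniform width pruning: keep `keep_percent`% of channels, truncated."""
    return dim * keep_percent // 100


def round_down_to_multiple(dim: int, multiple: int = 8) -> int:
    """Largest multiple of `multiple` that does not exceed `dim`."""
    return (dim // multiple) * multiple


ffn_dim = 11008  # LLaMA-7B FFN intermediate size (divisible by 8)
pruned = width_pruned_dim(ffn_dim, keep_percent=65)  # hypothetical 35% width pruning

print(ffn_dim, is_multiple_of(ffn_dim))
print(pruned, is_multiple_of(pruned))
aligned = round_down_to_multiple(pruned)
print(aligned, is_multiple_of(aligned))
```

Depth pruning sidesteps this entirely, because the remaining blocks keep their original (aligned) shapes. The round-down step is shown only to illustrate what an aligned width would look like; the source does not state that either baseline applies such rounding.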

Figure 7 panels: LLaMA-7B (top row) and Vicuna-13B (bottom row), each evaluated at four combinations of input and output sequence lengths. Every panel plots throughput (tokens/s) against latency (s) for the original model, LLM-Pruner (4.9B / 9.2B), FLAP (4.9B / 9.5B), and Ours (4.9B / 9.5B).

Figure 7: Inference efficiency of pruned models on an NVIDIA H100 GPU.
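The depth-pruned model sizes in the Figure 7 legend (4.9B and 9.5B) and the larger feasible batch sizes can be sanity-checked with back-of-the-envelope arithmetic. The sketch below assumes the public LLaMA-7B and Vicuna-13B shapes (32,000-token vocabulary, untied embeddings, no bias terms, FP16 weights and KV cache) and counts only weights and KV cache against an assumed 80 GiB budget, ignoring activations and allocator overhead. The block counts used (9 of 32 and 11 of 40) are inferred here from the parameter totals, not taken from the source, and all function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LlamaConfig:
    vocab_size: int
    hidden_size: int
    intermediate_size: int
    num_layers: int


# Public LLaMA-style shapes; untied embeddings and no bias terms are assumed.
LLAMA_7B = LlamaConfig(vocab_size=32000, hidden_size=4096, intermediate_size=11008, num_layers=32)
VICUNA_13B = LlamaConfig(vocab_size=32000, hidden_size=5120, intermediate_size=13824, num_layers=40)


def block_params(cfg: LlamaConfig) -> int:
    """Parameters in one Transformer block."""
    attn = 4 * cfg.hidden_size * cfg.hidden_size       # q, k, v, o projections
    mlp = 3 * cfg.hidden_size * cfg.intermediate_size  # gate, up, down projections
    norms = 2 * cfg.hidden_size                        # two RMSNorm weight vectors
    return attn + mlp + norms


def total_params(cfg: LlamaConfig, removed_blocks: int = 0) -> int:
    """Total parameters after removing whole blocks (depth pruning)."""
    embeddings = 2 * cfg.vocab_size * cfg.hidden_size  # input embedding + LM head
    final_norm = cfg.hidden_size
    kept = cfg.num_layers - removed_blocks
    return embeddings + final_norm + kept * block_params(cfg)


def kv_cache_bytes(cfg: LlamaConfig, removed_blocks: int, batch_size: int,
                   seq_len: int, bytes_per_elem: int = 2) -> int:
    """KV cache size: one key and one value vector per token per remaining block."""
    kept = cfg.num_layers - removed_blocks
    return 2 * kept * batch_size * seq_len * cfg.hidden_size * bytes_per_elem


def max_batch_size(cfg: LlamaConfig, removed_blocks: int, seq_len: int,
                   budget_bytes: int, bytes_per_param: int = 2) -> int:
    """Largest batch whose weights + KV cache fit in the budget (activations ignored)."""
    free = budget_bytes - total_params(cfg, removed_blocks) * bytes_per_param
    per_sequence = kv_cache_bytes(cfg, removed_blocks, 1, seq_len)
    return max(free // per_sequence, 0)


budget = 80 * 1024**3  # assumed 80 GiB of device memory
seq_len = 128 + 512    # hypothetical input + output length
for name, cfg, removed in [
    ("LLaMA-7B", LLAMA_7B, 0),
    ("LLaMA-7B, 9 blocks removed", LLAMA_7B, 9),
    ("Vicuna-13B", VICUNA_13B, 0),
    ("Vicuna-13B, 11 blocks removed", VICUNA_13B, 11),
]:
    params = total_params(cfg, removed) / 1e9
    batch = max_batch_size(cfg, removed, seq_len, budget)
    print(f"{name}: {params:.2f}B params, max batch ~{batch}")
```

Under these assumptions, removing blocks shrinks both the weight footprint and the per-token KV cache, which is consistent with the pruned models reaching batch sizes at which the original models run out of memory.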

A.2 GPU Memory Requirements