# A Survey on Transformer Compression

**Authors**: Yehui Tang, Yunhe Wang, Jianyuan Guo, Zhijun Tu, Kai Han, Hailin Hu, and Dacheng Tao

> Yehui Tang, Jianyuan Guo, Zhijun Tu, Kai Han, Hailin Hu, and Yunhe Wang are with Huawei Noah’s Ark Lab. Dacheng Tao is with the School of Computer Science, in the Faculty of Engineering, at The University of Sydney, 6 Cleveland St, Darlington, NSW 2008, Australia. Corresponding authors: Yunhe Wang and Dacheng Tao.

## Abstract

The Transformer plays a vital role in natural language processing (NLP) and computer vision (CV), especially for constructing large language models (LLMs) and large vision models (LVMs). Model compression methods reduce the memory and computational cost of Transformers, which is a necessary step for implementing large language/vision models on practical devices. Given the unique architecture of the Transformer, featuring alternating attention and feedforward neural network (FFN) modules, specific compression techniques are usually required. The efficiency of these compression methods is also paramount, as retraining large models on the entire training dataset is usually impractical. This survey provides a comprehensive review of recent compression methods, with a specific focus on their application to Transformer-based models. The compression methods are primarily categorized into pruning, quantization, knowledge distillation, and efficient architecture design (Mamba, RetNet, RWKV, etc.). In each category, we discuss compression methods for both language and vision tasks, highlighting common underlying principles. Finally, we delve into the relation between various compression methods, and discuss future directions in this domain.

Index Terms: Model Compression, Transformer, Large Language Model, Large Vision Model, LLM

## 1 Introduction

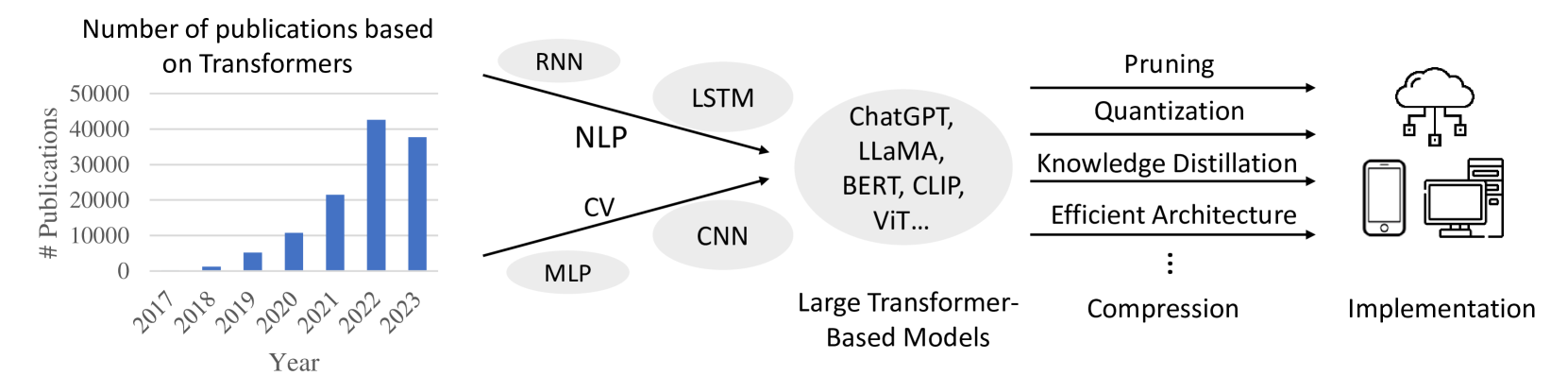

Deep neural networks have become indispensable in numerous artificial intelligence applications, with architectures encompassing diverse formulations, such as the multilayer perceptron (MLP), convolutional neural network (CNN), recurrent neural network (RNN), long short-term memory (LSTM), and Transformer. In recent times, Transformer-based models have emerged as the prevailing choice across various domains, including both natural language processing (NLP) and computer vision (CV). Owing to their strong scalability, most large models with billions of parameters are based on the Transformer architecture and are regarded as foundational elements for artificial general intelligence (AGI) [1, 2, 3, 4, 5, 6].

While large models have demonstrated significant capabilities, their exceptionally vast sizes pose challenges for practical development. For instance, the GPT-3 model has 175 billion parameters and demands about 350 GB of memory for model storage (float16). The sheer volume of parameters and the associated computational expenses necessitate devices with exceedingly high memory and computational capabilities. Directly deploying such models incurs substantial resource costs and contributes significantly to carbon dioxide emissions. Moreover, on edge devices like mobile phones, the development of these models becomes impractical due to the limited storage and computing resources of such devices.
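The 350 GB figure follows directly from the parameter count and the bytes per parameter. The short sketch below reproduces that arithmetic and shows how the weight footprint would scale at other precisions. The helper name `weight_memory_gb` is hypothetical, it assumes 1 GB = 10^9 bytes, and it counts weights only (activations, KV cache, and optimizer states are ignored).

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Memory needed to store the weights alone, in GB (assuming 1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


gpt3_params = 175e9  # parameter count quoted in the text

# Weight storage at several precisions; only the float16 row is quoted in the text,
# the other rows are the same arithmetic applied to other bit-widths.
for name, bits in [("float32", 32), ("float16", 16), ("int8", 8), ("int4", 4)]:
    print(f"{name:>8}: {weight_memory_gb(gpt3_params, bits):7.1f} GB")
```

Halving the bit-width halves the storage, which is why lower-precision representations are attractive for deployment.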

<details>

<summary>x1.png Details</summary>

### Visual Description

## Bar Chart and Flow Diagram: Publications on Transformers and Their Development

### Overview

This image presents two distinct but related sections. The left section is a bar chart showing the number of publications based on Transformers over the years from 2017 to 2023. The right section is a flow diagram illustrating the progression from various model types and applications to large Transformer-based models, followed by compression techniques, and finally, implementation on different devices.

### Components/Axes

**Bar Chart:**

* **Title:** "Number of publications based on Transformers"

* **Y-axis Title:** "# Publications"

* **Y-axis Scale:** Ranges from 0 to 50,000, with major tick marks at 0, 10,000, 20,000, 30,000, 40,000, and 50,000.

* **X-axis Title:** "Year"

* **X-axis Markers:** 2017, 2018, 2019, 2020, 2021, 2022, 2023.

**Flow Diagram:**

* **Input Categories (Left):**

* Ovals containing: "RNN", "NLP", "CV", "CNN", "MLP". Arrows point from these towards "Large Transformer-Based Models".

* **Intermediate Stage:**

* A large oval labeled "Large Transformer-Based Models" containing text: "ChatGPT, LLaMA, BERT, CLIP, ViT...".

* **Compression Techniques (Middle):**

* A list of techniques with arrows pointing from "Large Transformer-Based Models" to them, and then to "Implementation":

* "Pruning"

* "Quantization"

* "Knowledge Distillation"

* "Efficient Architecture"

* "..." (ellipsis indicating more techniques)

* "Compression" (positioned below the ellipsis, suggesting it's a broader category or a related concept).

* **Output Stage (Right):**

* Icons representing implementation:

* A cloud icon with connected squares, representing cloud-based implementation.

* A smartphone icon.

* A desktop computer icon.

* A label below these icons: "Implementation".

### Detailed Analysis or Content Details

**Bar Chart Data:**

The bar chart displays the following approximate publication counts for each year:

* **2017:** Approximately 1,000 publications.

* **2018:** Approximately 2,000 publications.

* **2019:** Approximately 8,000 publications.

* **2020:** Approximately 22,000 publications.

* **2021:** Approximately 42,000 publications.

* **2022:** Approximately 42,000 publications.

* **2023:** Approximately 38,000 publications.

**Flow Diagram Progression:**

The flow diagram illustrates a conceptual pathway:

1. **Foundation Models/Applications:** Traditional model types and application areas like RNN, NLP, CV, CNN, and MLP are shown as inputs that contribute to or are related to the development of Transformer models.

2. **Emergence of Large Transformer Models:** These foundational elements lead to the development of significant Transformer-based models, exemplified by names like ChatGPT, LLaMA, BERT, CLIP, and ViT.

3. **Optimization and Efficiency:** To make these large models practical, various compression and efficiency techniques are applied, including Pruning, Quantization, Knowledge Distillation, and the development of Efficient Architectures. The ellipsis and "Compression" label suggest this is a multifaceted area.

4. **Deployment:** The final stage is "Implementation," depicted by icons for cloud, mobile, and desktop/server environments, indicating the diverse platforms where these optimized Transformer models can be deployed.

### Key Observations

* **Exponential Growth in Publications:** The bar chart clearly shows a dramatic increase in the number of publications related to Transformers, particularly from 2019 to 2021, indicating a surge in research and development in this area.

* **Peak and Slight Decline:** While publications peaked around 2021-2022, there's a slight decrease in 2023, which could be a temporary fluctuation or an indication of a maturing research field or a shift in focus.

* **Interconnectedness of AI Fields:** The flow diagram highlights how older AI paradigms (RNN, CNN, MLP) and application domains (NLP, CV) paved the way for or are integrated with the rise of Transformer models.

* **Focus on Practicality:** The inclusion of compression techniques underscores the ongoing effort to make powerful Transformer models more efficient and deployable across various hardware and software environments.

### Interpretation

The image effectively communicates the trajectory of Transformer-based models in artificial intelligence. The bar chart quantifies the explosive growth in research interest and output concerning Transformers, demonstrating their significant impact on the AI landscape. The rapid ascent from 2019 to 2021 suggests a paradigm shift driven by the capabilities of these models.

The flow diagram provides a conceptual framework for understanding the evolution and application of Transformers. It suggests that while foundational AI concepts and models were crucial, the advent of large Transformer architectures like BERT and GPT has revolutionized the field. The subsequent emphasis on compression and efficient implementation highlights the practical challenges and ongoing innovations required to translate these powerful models into real-world applications across diverse platforms, from cloud services to personal devices. The diagram implies a progression from theoretical development and model creation to optimization and widespread deployment, reflecting the maturity and impact of Transformer technology. The slight dip in publications in 2023, while needing further context, could indicate a stabilization after rapid growth or a transition to more applied research and deployment rather than foundational exploration.

</details>

Figure 1: Transformer-based models have emerged as the predominant architectures in both natural language processing (NLP) and computer vision (CV) domains, resulting in a surge in publications. As these models tend to possess substantial dimensions, it becomes imperative to compress their parameters and streamline computational redundancies. This compression is essential for facilitating efficient implementation on practical platforms, ensuring the feasibility of deploying Transformer models in real-world applications.

Model compression is an effective strategy for mitigating the development costs associated with Transformer models. This approach, grounded in the principle of reducing redundancy, encompasses various categories, including pruning, quantization, knowledge distillation, and efficient architecture design. Network pruning directly removes redundant components, such as blocks, attention heads, FFN layers, and individual parameters. Diverse sub-models can be derived by employing different pruning granularities and pruning criteria. Quantization reduces the development cost by representing model weights and intermediate features with fewer bits. For example, when quantizing a full-precision model (float32) into 8-bit integers, the memory cost can be reduced by a factor of four. According to the computational process, quantization can be divided into post-training quantization (PTQ) and quantization-aware training (QAT); the former incurs only limited training costs and is more efficient for large models. Knowledge distillation serves as a training strategy that transfers knowledge from a large model (teacher) to a smaller model (student). The student mimics the behavior of the teacher by emulating the teacher’s output and intermediate features. Notably, for advanced models like GPT-4, which are accessible only through APIs, their generated instructions and explanations can also guide the learning of the student model [7, 8]. In addition to obtaining models from predefined large models, some methods yield efficient architectures by directly reducing the computational complexity of attention modules or FFN modules. Combining different methods enables extreme compression. For instance, Han et al. [9] combined network pruning, quantization, and Huffman coding to achieve an impressive 49$\times$ compression rate on a conventional VGGNet [10].

Regarding Transformer models, their compression strategies exhibit distinct characteristics. Unlike other architectures such as CNNs or RNNs, the Transformer features a unique design with alternating attention and FFN modules. The former captures global information by calculating the attention map over different tokens, while the latter extracts information from each token independently. This specific architecture calls for tailored compression strategies to reach optimal compression rates. Moreover, the efficiency of the compression method becomes especially important for such large models. Due to the high computational cost of large models, it is usually unaffordable to retrain the whole model on the original training set. Training-efficient methods such as post-training compression are therefore preferable.

In this survey, we aim to comprehensively investigate how to compress these Transformer models (Figure 1), and categorize the methods into quantization, knowledge distillation, pruning, efficient architecture design, etc. In each category, we investigate the compression methods for the NLP and CV domains, respectively. Table I summarizes the main compression categories and lists representative methods suitable for large Transformer models. Though NLP and CV are usually treated as very different domains, we observe that their model compression methods share similar principles. Finally, we discuss the relationship between different compression methods and outline some future research directions.

The rest of the paper is organized as follows. Section 2 introduces the fundamental concepts of the Transformer. Section 3 provides an in-depth discussion of compression methods that preserve the architecture, namely quantization and knowledge distillation. Section 4 turns to architecture-adaptive compression, including pruning and efficient architecture design. Additional Transformer compression methods are explored in Section 5. Finally, Section 6 draws conclusions on the compression methods and discusses future research directions.

## 2 Concept of Transformer

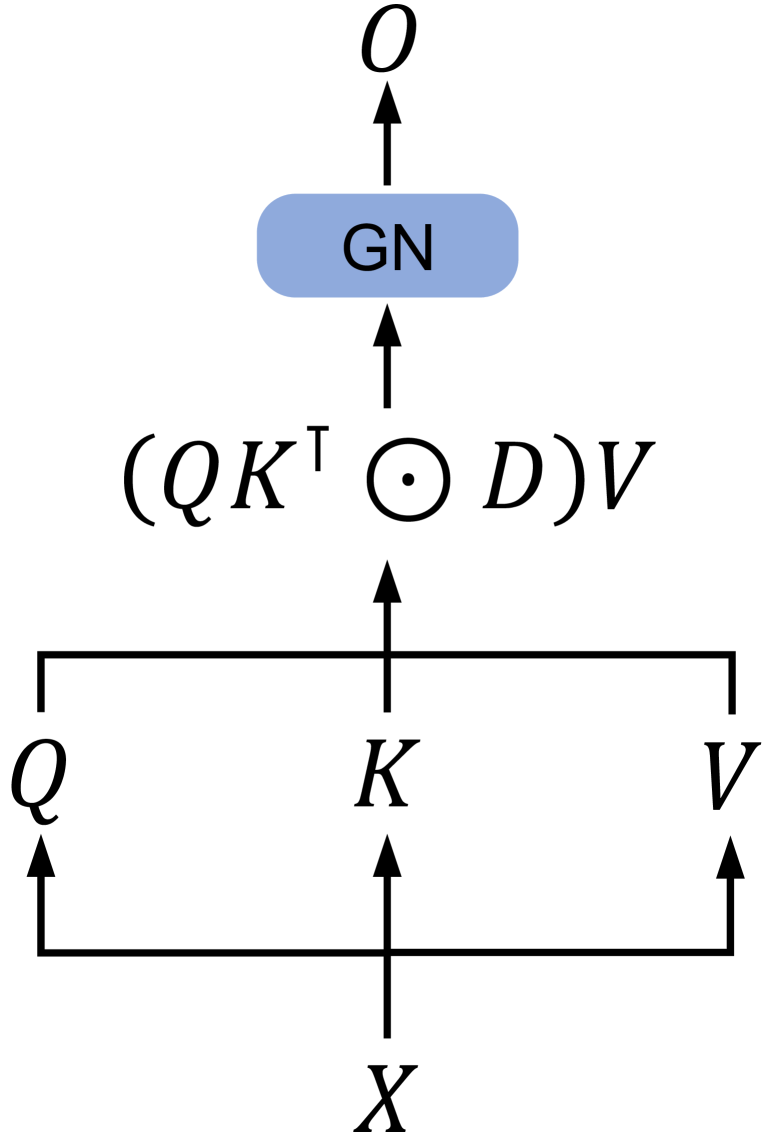

The Transformer architecture was first proposed to tackle tasks like machine translation [11]. A standard Transformer architecture contains two main blocks: multi-head attention (MHA) and feed-forward networks (FFN). The attention is formulated as

$$

\mathrm{Attention}(Q,K,V)=\mathrm{softmax}(\frac{QK^{T}}{\sqrt{d}})V, \tag{1}

$$

where $Q$, $K$, and $V$ are the query, key, and value matrices, respectively, and $d$ is the feature dimension. The multi-head attention jointly extracts information from diverse subspaces and is the concatenation of different heads,

$$
\begin{aligned}
\mathrm{MultiHead}(Q,K,V) &= \mathrm{Concat}(\mathrm{head}_{1},\ldots,\mathrm{head}_{h})W^{O}, \\
\text{where}~\mathrm{head}_{i} &= \mathrm{Attention}(QW^{Q}_{i},KW^{K}_{i},VW^{V}_{i}).
\end{aligned} \tag{2}
$$

$W^{Q}$, $W^{K}$, $W^{V}$, and $W^{O}$ are the corresponding parameter matrices. The FFN module transforms features from each token independently. It is usually constructed by stacking two FC layers with activation functions,

$$

\mathrm{FFN}(x)=\phi(xW_{1}+b_{1})W_{2}+b_{2}, \tag{3}

$$

where $x$ is the input feature and $\phi$ is the activation function (e.g., GELU). $W_{1}$, $W_{2}$, $b_{1}$, and $b_{2}$ are the weight and bias parameters of the FC layers. The MHA and FFN modules are stacked alternately to construct the whole model.
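Equations 1 to 3 can be sketched in a few lines of NumPy. This is a minimal illustration rather than a reference implementation: the function names, tensor shapes, random weights, and the tanh approximation of GELU are all assumptions made for the example, and residual connections and normalization layers are omitted. Inside each head, $d$ is taken to be the per-head dimension.

```python
import numpy as np


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def attention(Q, K, V):
    """Equation 1: softmax(Q K^T / sqrt(d)) V, with d the last dimension of Q."""
    d = Q.shape[-1]
    return softmax(Q @ K.T / np.sqrt(d)) @ V


def multi_head(Q, K, V, W_q, W_k, W_v, W_o):
    """Equation 2. W_q, W_k, W_v: (h, d_model, d_head); W_o: (h * d_head, d_model)."""
    heads = [attention(Q @ wq, K @ wk, V @ wv) for wq, wk, wv in zip(W_q, W_k, W_v)]
    return np.concatenate(heads, axis=-1) @ W_o


def gelu(x):
    # tanh approximation of GELU (an assumed choice for phi)
    return 0.5 * x * (1.0 + np.tanh(np.sqrt(2.0 / np.pi) * (x + 0.044715 * x**3)))


def ffn(x, W1, b1, W2, b2):
    """Equation 3: phi(x W1 + b1) W2 + b2, applied to every token (row) independently."""
    return gelu(x @ W1 + b1) @ W2 + b2


# Toy sizes and random weights, chosen only for illustration.
rng = np.random.default_rng(0)
n_tokens, d_model, h = 4, 8, 2
d_head, d_hidden = d_model // h, 4 * d_model
x = rng.standard_normal((n_tokens, d_model))
W_q, W_k, W_v = (rng.standard_normal((h, d_model, d_head)) for _ in range(3))
W_o = rng.standard_normal((h * d_head, d_model))
W1, b1 = rng.standard_normal((d_model, d_hidden)), np.zeros(d_hidden)
W2, b2 = rng.standard_normal((d_hidden, d_model)), np.zeros(d_model)

y_attn = multi_head(x, x, x, W_q, W_k, W_v, W_o)  # self-attention: Q = K = V = x
y_ffn = ffn(y_attn, W1, b1, W2, b2)
print(y_attn.shape, y_ffn.shape)
```

The attention output for one token depends on all tokens through the attention map, whereas the FFN output for a token depends only on that token; the two modules therefore expose different kinds of redundancy to compression.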

The Transformer architecture scales well and can therefore be used to construct extremely large models with billions or even trillions of parameters. It underpins most of the predominant large models in the NLP, CV, and multimodal domains. For example, the well-known large language models (e.g., the GPT series [4, 2], LLaMA [1], Pangu [5, 6]) are its decoder-only variants. By simply splitting an image into multiple patches, it can be used to tackle vision tasks [12, 13, 14]. Multimodal models such as CLIP [15], BLIP [16], and LLaVA [17] also use Transformers as their backbones.

TABLE I: Representative compression methods for Transformer models.

| Category | Sub-category | Method | Highlights | Publication |
| --- | --- | --- | --- | --- |
| Quantization | NLP | SmoothQuant [18] | Training-free, smooth outliers, equivalent transformation | ICML 2023 |
| | | OmniQuant [19] | Weight clipping, learnable transformation, block-wise | arXiv 2023 |
| | | QLoRA [20] | Parameter-efficient fine-tuning, memory management | arXiv 2023 |
| | CV | PTQ-ViT [21] | Self-attention preservation, mixed-precision | NeurIPS 2021 |
| | | FQ-ViT [22] | Fully-quantized, log2 quantization, power-of-two factor | IJCAI 2022 |
| | | OFQ [23] | Confidence-guided annealing, query-key reparameterization | ICML 2023 |
| Knowledge Distillation | NLP | DistilBERT [24] | Small version of BERT, trained with logits of the teacher | NeurIPS 2019 |
| | | MiniLM [25] | Mimicking attention distribution and value-relation of teacher | NeurIPS 2020 |
| | | Lion [7] | Adversarial distillation: imitation, discrimination, generation | EMNLP 2023 |
| | CV | DeiT [26] | Hard labels, novel distillation token in ViTs | ICML 2021 |
| | | TinyViT [27] | Large-scale pretraining data, encoded data augmentation | ECCV 2022 |
| | | ManifoldKD [28] | Patch-level, batch-level manifold information | NeurIPS 2022 |
| Pruning | NLP | LLM Pruner [29] | Structured, coupled-module identification | NeurIPS 2023 |
| | | Sheared LLaMA [30] | Structured, pre-defined target, dynamic data loading | NeurIPS 2023 |
| | | Dynamic Context Pruning [31] | Sigmoid-based context selection, KV-cache aware | NeurIPS 2023 |
| | CV | ViT-Slim [32] | Structured, single-shot architecture search | CVPR 2022 |
| | | Patch Slimming [33] | Top-down unimportant patch removing | CVPR 2022 |
| | | X-pruner [34] | Structured, class-aware, layer-wise fully differentiable pruning | CVPR 2023 |
| Efficient Architecture | NLP | PaLM [35] | SwiGLU activation in FFN, densely activated | JMLR 2023 |
| | | RetNet [36] | Training parallelism, low-cost inference, parallel, recurrent | arXiv 2023 |
| | | Reformer [37] | Efficient attention, locality-sensitive hashing | ICLR 2020 |
| | CV | Swin [38] | Hierarchical structures, shifted local window attention | ICCV 2021 |
| | | MetaFormer [39] | Non-parametric pooling as basic token mixing | CVPR 2022 |
| | | MLP-Mixer [40] | Architecture based exclusively on multi-layer perceptrons | NeurIPS 2021 |

## 3 Architecture Preserved Compression

### 3.1 Quantization

#### 3.1.1 Overview of Quantization

Quantization is a crucial step for deploying Transformers on various devices, especially GPUs and NPUs, which have specialized circuits for low-precision arithmetic. During the quantization process shown in Equation 4, a floating-point tensor $x$ is converted to an integer tensor $x_{int}$ using the corresponding quantization parameters (scale factor $s$ and zero point $z$). The integer tensor $x_{int}$ can then be dequantized to a floating-point tensor $x_{quant}$, which differs from the original $x$ by a quantization error,

$$
\begin{aligned}
x_{int} &= \textrm{Clamp}(\lfloor x/s\rceil+z,\,0,\,2^{b}-1), \\
x_{quant} &= s(x_{int}-z),
\end{aligned} \tag{4}
$$

where $b$ denotes the bit-width, $\lfloor\cdot\rceil$ represents the rounding function, and ‘Clamp’ clips the values that exceed the given range. For matrix multiplication, the weight $\textrm{w}$ adopts symmetric quantization with zero point $z_{w}=0$, and the input embedding tensor $e$ is quantized with asymmetric quantization, as shown in Equation 5:

$$
\begin{aligned}
y &= \textrm{MatMul}(e,\textrm{w}) \approx \textrm{MatMul}(e_{quant},\textrm{w}_{quant}) \\
&= \textrm{MatMul}(s_{e}(e_{int}-z_{e}),\,s_{w}\textrm{w}_{int}) \\
&= s_{e}s_{w}\,\textrm{MatMul}(e_{int},\textrm{w}_{int})+C,
\end{aligned} \tag{5}
$$

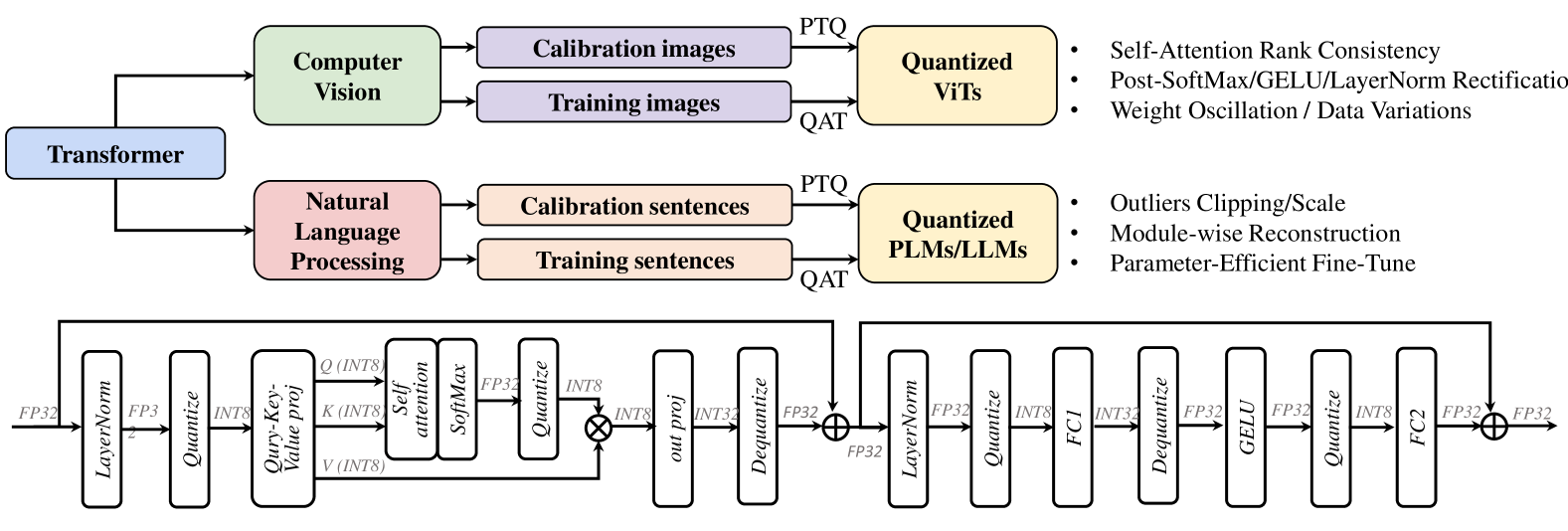

where $s_{w}$, $s_{e}$, and $z_{e}$ are the quantization parameters of the weights and input embedding, and $e_{int}$ and $\textrm{w}_{int}$ are the integer input and weights calculated by Equation 4. $C$ can be pre-computed from $s_{e}$, $z_{e}$, $s_{w}$, and $\textrm{w}_{int}$, since it does not depend on the input values. Thus the floating-point multiplication can be replaced by efficient integer multiplication at inference time. To minimize the performance degradation of quantized models, different optimization methods have been proposed, and they can be divided into two categories: (1) Post-training quantization (PTQ) [21, 41, 22, 42, 43, 44, 45], which mainly focuses on optimizing the quantization parameters of weights and activations with a small amount of unlabeled calibration data; some of the latest methods also explore adaptive rounding for weight quantization. (2) Quantization-aware training (QAT) [46, 47, 48, 49, 50, 51, 23, 52, 53, 54, 55, 56], which inserts quantization nodes into the network and trains with the complete training data, so that all the weights and quantization parameters are optimized together. In this section, we systematically introduce the research on model quantization for Transformer-based vision models and large language models, as shown in Figure 2.
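Equations 4 and 5 can be checked numerically with a small NumPy sketch. Several choices here are assumptions rather than part of the text: the min/max and abs-max calibration rules, the helper names, and in particular the signed range $[-2^{b-1}, 2^{b-1}-1]$ used for the symmetric weights (Equation 4 is written for the unsigned range $[0, 2^{b}-1]$, which would clip negative weights when $z_{w}=0$). Expanding Equation 5 gives $C=-s_{e}s_{w}z_{e}\,\textrm{MatMul}(\mathbf{1},\textrm{w}_{int})$, i.e. a per-output-column constant built from the column sums of $\textrm{w}_{int}$.

```python
import numpy as np


def quantize(x, scale, zero_point, qmin, qmax):
    """Equation 4: x_int = Clamp(round(x / s) + z, qmin, qmax)."""
    return np.clip(np.round(x / scale) + zero_point, qmin, qmax).astype(np.int64)


def dequantize(x_int, scale, zero_point):
    """Equation 4: x_quant = s * (x_int - z)."""
    return scale * (x_int - zero_point)


def asymmetric_params(x, bits=8):
    """Min/max calibration on the unsigned range [0, 2^b - 1] (assumed scheme)."""
    qmax = 2**bits - 1
    scale = (x.max() - x.min()) / qmax
    zero_point = int(np.round(-x.min() / scale))
    return scale, zero_point, 0, qmax


def symmetric_params(w, bits=8):
    """Abs-max calibration with z_w = 0 on a signed range (assumed scheme)."""
    qmax = 2 ** (bits - 1) - 1
    scale = np.abs(w).max() / qmax
    return scale, 0, -qmax - 1, qmax


rng = np.random.default_rng(0)
e = rng.standard_normal((4, 16))  # input embedding (tokens x features)
w = rng.standard_normal((16, 8))  # weight matrix

s_e, z_e, lo_e, hi_e = asymmetric_params(e)
s_w, z_w, lo_w, hi_w = symmetric_params(w)
e_int = quantize(e, s_e, z_e, lo_e, hi_e)
w_int = quantize(w, s_w, z_w, lo_w, hi_w)

# Equation 5: integer matmul plus a correction term C that is independent of e's values.
C = -s_e * s_w * z_e * w_int.sum(axis=0)  # shape (8,), broadcast over tokens
y_int_path = s_e * s_w * (e_int @ w_int) + C

# Reference: multiply the dequantized tensors in floating point.
y_ref = dequantize(e_int, s_e, z_e) @ dequantize(w_int, s_w, z_w)

print(np.abs(y_int_path - y_ref).max())  # difference between the two routes
print(np.abs(y_ref - e @ w).max())       # quantization error vs. full precision
```

The two routes agree up to floating-point rounding, so the only expensive operation left at inference time is the integer product `e_int @ w_int`; the remaining gap to `e @ w` is the quantization error that PTQ and QAT methods try to minimize.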

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: Transformer Quantization Workflow for Computer Vision and NLP

### Overview

This diagram illustrates a generalized workflow for applying quantization techniques to Transformer models in both Computer Vision (CV) and Natural Language Processing (NLP) domains. It shows how different types of input data (images and sentences) are processed through a Transformer architecture, leading to quantized models. The diagram also depicts two distinct, detailed sub-workflows representing the internal processing of a quantized Transformer block, likely for either CV or NLP tasks, showing the flow of data through various operations like normalization, quantization, attention mechanisms, and feed-forward layers.

### Components/Axes

The diagram is structured into three main sections:

**Top Section: High-Level Workflow**

* **Input Branches:**

* **Transformer (Blue Box):** A central component from which two main processing branches originate.

* **Computer Vision (Green Box):** Represents the application domain for image data.

* **Natural Language Processing (Pink Box):** Represents the application domain for text data.

* **Data Types:**

* **Calibration images (Purple Box):** Input data for CV calibration.

* **Training images (Purple Box):** Input data for CV training.

* **Calibration sentences (Orange Box):** Input data for NLP calibration.

* **Training sentences (Orange Box):** Input data for NLP training.

* **Quantization Methods:**

* **PTQ (Post-Training Quantization):** A label indicating a quantization method applied to calibration data.

* **QAT (Quantization-Aware Training):** A label indicating a quantization method applied to training data.

* **Output Models:**

* **Quantized ViTs (Yellow Box):** Represents quantized Vision Transformers.

* **Quantized PLMs/LLMs (Yellow Box):** Represents quantized Pre-trained Language Models / Large Language Models.

* **Associated Techniques/Challenges:**

* **For Quantized ViTs:**

* Self-Attention Rank Consistency

* Post-SoftMax/GELU/LayerNorm Rectification

* Weight Oscillation / Data Variations

* **For Quantized PLMs/LLMs:**

* Outliers Clipping/Scale

* Module-wise Reconstruction

* Parameter-Efficient Fine-Tune

**Bottom Section: Detailed Quantized Transformer Block (Left)**

This section depicts a detailed view of a Transformer block, likely for processing input features.

* **Input Data Type:**

* **FP32:** Indicates input data is in 32-bit floating-point format.

* **Sequential Operations (Rectangular Boxes):**

* **LayerNorm:** Layer Normalization.

* **Quantize:** Quantization operation.

* **Q (INT8), K (INT8), V (INT8):** Query, Key, and Value projections, with data quantized to 8-bit integers.

* **Self attention:** Self-attention mechanism.

* **SoftMax:** Softmax activation function.

* **FP32:** Intermediate output in 32-bit floating-point format.

* **Quantize:** Another quantization operation.

* **out proj:** Output projection.

* **INT8:** Output data quantized to 8-bit integers.

* **INT32:** Intermediate data in 32-bit integers.

* **Dequantize:** Dequantization operation.

* **FP32:** Output data in 32-bit floating-point format.

* **Operations (Symbols):**

* **⊕ (Circle with Plus):** Element-wise addition (residual connection).

**Bottom Section: Detailed Quantized Transformer Block (Right)**

This section depicts another detailed view of a Transformer block, likely a subsequent layer or a different type of block.

* **Input Data Type:**

* **FP32:** Indicates input data is in 32-bit floating-point format.

* **Sequential Operations (Rectangular Boxes):**

* **LayerNorm:** Layer Normalization.

* **Quantize:** Quantization operation.

* **INT8:** Output data quantized to 8-bit integers.

* **FC1:** First Feed-Forward layer (likely a linear transformation).

* **INT32:** Intermediate data in 32-bit integers.

* **Dequantize:** Dequantization operation.

* **FP32:** Output data in 32-bit floating-point format.

* **GELU:** Gaussian Error Linear Unit activation function.

* **Quantize:** Another quantization operation.

* **INT8:** Output data quantized to 8-bit integers.

* **FC2:** Second Feed-Forward layer (likely a linear transformation).

* **FP32:** Output data in 32-bit floating-point format.

* **Operations (Symbols):**

* **⊕ (Circle with Plus):** Element-wise addition (residual connection).

* **Loop Arrow:** Indicates a residual connection from a previous layer (or the input) to the output of the FC2 layer before the final addition.

### Detailed Analysis or Content Details

**Top Section:**

* The diagram shows that a "Transformer" architecture can be applied to both "Computer Vision" and "Natural Language Processing".

* For CV, "Calibration images" are processed via "PTQ" to yield "Quantized ViTs". "Training images" are processed via "QAT" to yield "Quantized ViTs".

* For NLP, "Calibration sentences" are processed via "PTQ" to yield "Quantized PLMs/LLMs". "Training sentences" are processed via "QAT" to yield "Quantized PLMs/LLMs".

* The associated bullet points for "Quantized ViTs" highlight potential issues like "Self-Attention Rank Consistency", "Post-SoftMax/GELU/LayerNorm Rectification", and "Weight Oscillation / Data Variations".

* The associated bullet points for "Quantized PLMs/LLMs" highlight potential issues like "Outliers Clipping/Scale", "Module-wise Reconstruction", and "Parameter-Efficient Fine-Tune".

**Bottom Section (Left Block):**

* The flow starts with **FP32** data.

* It passes through **LayerNorm**, then is **Quantized**. The diagram indicates a transition to INT8 for Q, K, V.

* This is followed by a projection into **Query (INT8)**, **Key (INT8)**, and **Value (INT8)**.

* These are fed into a **Self attention** mechanism, followed by **SoftMax**.

* The output of SoftMax is **FP32**.

* This FP32 output is then **Quantized** to **INT8**.

* This INT8 output is then processed by an **out proj** layer, resulting in **INT8** data.

* This INT8 data is then converted to **INT32** and subsequently **Dequantized** back to **FP32**.

* Finally, this FP32 output is added element-wise (residual connection) with another FP32 input (indicated by the ⊕ symbol).

**Bottom Section (Right Block):**

* The flow starts with **FP32** data.

* It passes through **LayerNorm**, then is **Quantized** to **INT8**.

* This INT8 data is processed by **FC1**, resulting in **INT32** data.

* This INT32 data is then **Dequantized** to **FP32**.

* This FP32 data passes through a **GELU** activation.

* The output of GELU is then **Quantized** to **INT8**.

* This INT8 data is processed by **FC2**, resulting in **FP32** data.

* Finally, this FP32 output is added element-wise (residual connection) with another FP32 input (indicated by the ⊕ symbol). A loop arrow indicates this residual connection originates from a point earlier in the network, likely before the LayerNorm in this block.

### Key Observations

* The diagram clearly distinguishes between Post-Training Quantization (PTQ) and Quantization-Aware Training (QAT), with PTQ using calibration data and QAT using training data.

* Both CV (ViTs) and NLP (PLMs/LLMs) models are shown to undergo similar quantization workflows.

* The detailed sub-diagrams illustrate a common pattern in Transformer blocks: normalization, quantization, attention/projection, activation, and residual connections.

* There's a significant amount of quantization and dequantization happening within the detailed blocks, suggesting a focus on mixed-precision or aggressive quantization.

* The presence of INT8 and INT32 data types indicates the use of low-precision integer representations for computational efficiency.

* The residual connections (⊕) are crucial for maintaining model performance during quantization, a standard practice in deep learning architectures.

* The specific challenges listed for ViTs and PLMs/LLMs suggest areas of active research and potential pitfalls in quantizing these models.

### Interpretation

This diagram outlines a generalized methodology for quantizing Transformer models for both computer vision and natural language processing tasks. It highlights that the choice of quantization method (PTQ vs. QAT) depends on the availability of calibration or training data, respectively. The subsequent detailed sub-diagrams provide a glimpse into the internal mechanics of how these quantized models operate, showcasing the interplay between various operations like normalization, attention, feed-forward layers, and crucially, the repeated quantization and dequantization steps.

The diagram suggests that achieving effective quantization for advanced models like Vision Transformers (ViTs) and Large Language Models (LLMs) involves addressing specific challenges. For ViTs, maintaining the integrity of the self-attention mechanism and mitigating issues like weight oscillation are key. For NLP models, handling outliers and employing module-wise reconstruction are important considerations. The emphasis on mixed precision (e.g., INT8, INT32, FP32) within the detailed blocks implies a strategy to balance computational efficiency with model accuracy. The residual connections are a fundamental architectural element that helps preserve information flow and gradients, which is particularly vital when reducing precision. Overall, the diagram serves as a conceptual blueprint for understanding the process and complexities of deploying quantized Transformer models in real-world applications.

</details>

Figure 2: The overview of quantization for Transformers. The top demonstrates the different problems that are addressed in existing works for computer vision and natural language processing, and the bottom shows a normal INT8 inference process of a standard Transformer block.

TABLE II: Comparison of different PTQ and QAT methods for Transformer-based vision models. W/A denotes the bit-widths of weights and activations, and the results are top-1 accuracy (%) on the ImageNet-1k validation set. * denotes mixed precision.

| Method of PTQ | W/A (bit) | ViT-B | DeiT-S | DeiT-B | Swin-S | Swin-B |
| --- | --- | --- | --- | --- | --- | --- |
| Full Precision | 32/32 | 84.54 | 79.85 | 81.80 | 83.23 | 85.27 |
| PTQ-ViT [21] | 8*/8* | 76.98 | 78.09 | 81.29 | - | - |
| PTQ4ViT [41] | 8/8 | 84.54 | 79.47 | 81.48 | 83.10 | 85.14 |
| FQ-ViT [22] | 8/8 | 83.31 | 79.17 | 81.20 | 82.71 | 82.97 |
| APQ-ViT [42] | 8/8 | 84.26 | 79.78 | 81.72 | 83.16 | 85.16 |
| NoiseQuant [44] | 8/8 | 84.22 | 79.51 | 81.45 | 83.13 | 85.20 |
| PTQ-ViT [21] | 6*/6* | 75.26 | 75.10 | 77.47 | - | - |
| PTQ4ViT [41] | 6/6 | 81.65 | 76.28 | 80.25 | 82.38 | 84.01 |
| APQ-ViT [42] | 6/6 | 82.21 | 77.76 | 80.42 | 84.18 | 85.60 |
| NoiseQuant [44] | 6/6 | 82.32 | 77.43 | 80.70 | 82.86 | 84.68 |
| RepQ-ViT [45] | 6/6 | 83.62 | 78.90 | 81.27 | 82.79 | 84.57 |
| APQ-ViT [42] | 4/8 | 72.63 | 77.14 | 79.55 | 80.56 | 81.94 |
| PTQ-ViT [21] | 4*/4* | - | - | 75.94 | - | - |
| APQ-ViT [42] | 4/4 | 41.41 | 43.55 | 67.48 | 83.16 | 85.16 |
| RepQ-ViT [45] | 4/4 | 68.48 | 69.03 | 75.61 | 79.45 | 78.32 |

| Method of QAT | W/A (bit) | DeiT-T | DeiT-S | DeiT-B | Swin-T | Swin-S |
| --- | --- | --- | --- | --- | --- | --- |
| Full Precision | 32/32 | 72.20 | 79.85 | 81.80 | 81.20 | 83.23 |
| I-ViT [50] | 8/8 | 72.24 | 80.12 | 81.74 | 81.50 | 83.01 |
| Q-ViT [48] | 4/4 | 72.79 | 80.11 | - | 80.59 | - |
| AFQ-ViT [48] | 4/4 | - | 80.90 | 83.00 | 82.50 | 84.40 |
| Quantformer [46] | 4/4 | 69.90 | 78.20 | 79.70 | 78.30 | 81.00 |
| OFQ [23] | 4/4 | 75.46 | 81.10 | - | 81.88 | - |
| VVTQ [56] | 4/4 | 74.71 | - | - | 82.42 | - |
| Q-ViT [48] | 3/3 | 69.62 | 78.08 | - | 79.45 | - |
| AFQ-ViT [48] | 3/3 | - | 79.00 | 81.00 | 80.90 | 82.70 |
| Quantformer [46] | 3/3 | 65.20 | 75.40 | 78.30 | 77.40 | 79.20 |
| OFQ [23] | 3/3 | 72.72 | 79.57 | - | 81.09 | - |
| AFQ-ViT [48] | 2/2 | - | 72.10 | 74.20 | 74.70 | 76.90 |
| Quantformer [46] | 2/2 | 60.70 | 65.20 | 73.80 | 74.20 | 76.60 |
| OFQ [23] | 2/2 | 64.33 | 75.72 | - | 78.52 | - |

<details>

<summary>x3.png Details</summary>

### Visual Description

## Bar Chart: Latency vs. Batch Size for FP16 and INT8

### Overview

This bar chart displays the latency in milliseconds (ms) for two different data types, FP16 and INT8, across various batch sizes. The x-axis represents the batch size, and the y-axis represents the latency. For each batch size, there are two bars: one for FP16 (light gray) and one for INT8 (dark red).

### Components/Axes

* **Y-axis Title**: "Latency(ms)"

* **Scale**: Linear, ranging from 0.0 to 50.0.

* **Tick Marks**: 0.0, 12.5, 25.0, 37.5, 50.0.

* **X-axis Title**: "Batch Size"

* **Categories**: 1, 8, 16, 32.

* **Legend**: Located in the top-left quadrant of the chart.

* **FP16**: Represented by a light gray rectangle.

* **INT8**: Represented by a dark red rectangle.

### Detailed Analysis

The chart presents latency values for batch sizes of 1, 8, 16, and 32.

**Batch Size 1:**

* **FP16**: The light gray bar reaches a height of approximately 2.24 ms.

* **INT8**: The dark red bar reaches a height of approximately 2.26 ms.

**Batch Size 8:**

* **FP16**: The light gray bar reaches a height of approximately 11.14 ms.

* **INT8**: The dark red bar reaches a height of approximately 7.93 ms.

**Batch Size 16:**

* **FP16**: The light gray bar reaches a height of approximately 21.5 ms.

* **INT8**: The dark red bar reaches a height of approximately 14.66 ms.

**Batch Size 32:**

* **FP16**: The light gray bar reaches a height of approximately 43.81 ms.

* **INT8**: The dark red bar reaches a height of approximately 29.07 ms.

### Key Observations

* **General Trend**: For both FP16 and INT8, latency generally increases as the batch size increases.

* **FP16 Trend**: The latency for FP16 shows a significant upward trend, with a substantial jump from batch size 16 to 32.

* **INT8 Trend**: The latency for INT8 also increases with batch size, but at a less dramatic rate compared to FP16, especially at larger batch sizes.

* **Comparison**: At batch size 1, the latencies are very similar. However, as batch size increases, FP16 consistently shows higher latency than INT8, with the difference becoming more pronounced at batch sizes 16 and 32.

### Interpretation

This chart demonstrates the impact of batch size on latency for different data precisions (FP16 and INT8). The data suggests that while increasing batch size generally leads to higher latency for both precisions, INT8 exhibits better scalability and lower latency at larger batch sizes compared to FP16. This implies that for applications where latency is a critical factor and large batch sizes are utilized, INT8 might be a more performant choice. The significant increase in latency for FP16 at batch size 32 could indicate a bottleneck or a point where the computational overhead of FP16 becomes more dominant.

</details>

<details>

<summary>x4.png Details</summary>

### Visual Description

## Bar Chart: Latency vs. Batch Size for FP16 and INT8

### Overview

This image is a bar chart that visualizes the latency (in milliseconds) for two different data types, FP16 and INT8, across varying batch sizes. The chart shows how latency changes as the batch size increases from 1 to 32.

### Components/Axes

* **Y-axis Title**: "Latency(ms)"

* **Scale**: Linear, ranging from 0.0 to 10.0, with major tick marks at 0.0, 2.5, 5.0, 7.5, and 10.0.

* **X-axis Title**: "Batch Size"

* **Categories**: The x-axis displays discrete batch sizes: 1, 8, 16, and 32.

* **Legend**: Located in the top-left quadrant of the chart.

* **FP16**: Represented by a light gray rectangle.

* **INT8**: Represented by a dark red rectangle.

### Detailed Analysis

The chart displays paired bars for each batch size, with the light gray bar representing FP16 latency and the dark red bar representing INT8 latency.

**Batch Size 1:**

* FP16 (light gray bar): 1.53 ms

* INT8 (dark red bar): 1.52 ms

**Batch Size 8:**

* FP16 (light gray bar): 3.03 ms

* INT8 (dark red bar): 2.38 ms

**Batch Size 16:**

* FP16 (light gray bar): 5.3 ms

* INT8 (dark red bar): 3.74 ms

**Batch Size 32:**

* FP16 (light gray bar): 10.04 ms

* INT8 (dark red bar): 6.43 ms

### Key Observations

* **General Trend**: For both FP16 and INT8, latency generally increases as the batch size increases.

* **FP16 Trend**: The latency for FP16 shows a significant upward trend, particularly between batch sizes 16 and 32.

* **INT8 Trend**: The latency for INT8 also increases with batch size, but at a slower rate compared to FP16, especially at larger batch sizes.

* **Comparison**: At batch size 1, the latencies for FP16 and INT8 are very close. However, as the batch size increases, INT8 consistently shows lower latency than FP16. The difference in latency becomes more pronounced at larger batch sizes (16 and 32).

### Interpretation

This bar chart demonstrates the performance characteristics of FP16 and INT8 data types in terms of latency under varying computational loads (batch sizes).

* **Data Suggests**: The data suggests that INT8 is generally more efficient in terms of latency than FP16, especially as the batch size grows. This is likely due to the reduced precision of INT8 requiring less computational resources and memory bandwidth.

* **Relationship**: The x-axis (Batch Size) is the independent variable, and the y-axis (Latency) is the dependent variable. The legend differentiates the two data types (FP16 and INT8) whose latencies are being measured.

* **Notable Trends/Anomalies**:

* The most striking trend is the superior performance of INT8 at larger batch sizes. While FP16 latency nearly doubles from batch size 16 to 32 (from 5.3 ms to 10.04 ms), INT8 latency increases by a smaller margin (from 3.74 ms to 6.43 ms).

* At batch size 1, the latencies are almost identical, indicating that for very small workloads, the overhead of data type conversion or other factors might dominate, making the precision difference less impactful.

* The steep increase in FP16 latency at larger batch sizes could indicate memory bandwidth limitations or increased computational complexity that scales poorly with batch size for higher precision data. Conversely, INT8 appears to scale more favorably.

In essence, the chart highlights a common trade-off in deep learning and other computational tasks: using lower precision data types like INT8 can lead to significant performance gains (lower latency) with a potentially acceptable loss in accuracy, especially for inference tasks. FP16, while offering higher precision, incurs a higher latency cost as the workload increases.

</details>

<details>

<summary>x5.png Details</summary>

### Visual Description

## Bar Chart: Latency vs. Batch Size for FP16 and INT8

### Overview

This image displays a bar chart comparing the latency (in milliseconds) for two different data types, FP16 and INT8, across varying batch sizes. The batch sizes tested are 1, 8, 16, and 32.

### Components/Axes

* **Y-axis Title**: "Latency(ms)"

* **Scale**: Linear, ranging from 0.0 to 130.0. Major tick marks are at 0.0, 32.5, 65.0, 97.5, and 130.0.

* **X-axis Title**: "Batch Size"

* **Categories**: 1, 8, 16, 32.

* **Legend**: Located in the top-left quadrant of the chart.

* **FP16**: Represented by a light gray rectangle.

* **INT8**: Represented by a dark red rectangle.

### Detailed Analysis or Content Details

The chart presents data for four different batch sizes, with two bars for each batch size representing FP16 and INT8.

**Batch Size 1:**

* **FP16**: The light gray bar reaches a height of approximately 5.36 ms.

* **INT8**: The dark red bar reaches a height of approximately 4.77 ms.

**Batch Size 8:**

* **FP16**: The light gray bar reaches a height of approximately 30.95 ms.

* **INT8**: The dark red bar reaches a height of approximately 20.25 ms.

**Batch Size 16:**

* **FP16**: The light gray bar reaches a height of approximately 60.99 ms.

* **INT8**: The dark red bar reaches a height of approximately 39.43 ms.

**Batch Size 32:**

* **FP16**: The light gray bar reaches a height of approximately 124.85 ms.

* **INT8**: The dark red bar reaches a height of approximately 80.05 ms.

### Key Observations

* **Trend**: For both FP16 and INT8, latency generally increases as the batch size increases.

* **Comparison**: Across all batch sizes, FP16 consistently exhibits higher latency than INT8.

* **Rate of Increase**: The latency increase appears to be more pronounced for FP16, especially between batch sizes 16 and 32.

### Interpretation

This bar chart demonstrates the performance characteristics of FP16 and INT8 data types in terms of latency as batch size varies. The data suggests that INT8 is a more efficient data type, resulting in lower latency compared to FP16, particularly at larger batch sizes. This is likely due to the reduced precision of INT8 requiring less computational resources and memory bandwidth. The increasing latency with batch size is a common phenomenon, indicating that processing larger batches takes more time. The significant jump in latency for FP16 at batch size 32 might suggest a performance bottleneck or saturation point for this data type under high load. This information is crucial for optimizing deep learning model inference or training, where choosing the appropriate data type and batch size can significantly impact performance and throughput.

</details>

<details>

<summary>x6.png Details</summary>

### Visual Description

## Bar Chart: Latency vs. Batch Size for FP16 and INT8

### Overview

This image is a bar chart comparing the latency (in milliseconds) for two different data types, FP16 and INT8, across various batch sizes. The chart displays four sets of paired bars, each representing a specific batch size: 1, 8, 16, and 32.

### Components/Axes

* **Y-axis Title**: "Latency(ms)"

* **Scale**: Linear, ranging from 0.0 to 30.0, with major tick marks at 0.0, 7.5, 15.0, 22.5, and 30.0.

* **X-axis Title**: "Batch Size"

* **Categories**: 1, 8, 16, 32.

* **Legend**: Located in the top-left quadrant of the chart.

* **FP16**: Represented by a light gray rectangle.

* **INT8**: Represented by a dark maroon rectangle.

### Detailed Analysis

The chart presents latency values for FP16 and INT8 at batch sizes of 1, 8, 16, and 32.

**Batch Size 1:**

* **FP16**: The light gray bar reaches a height of approximately 2.97 ms.

* **INT8**: The dark maroon bar reaches a height of approximately 2.91 ms.

**Batch Size 8:**

* **FP16**: The light gray bar reaches a height of approximately 8.09 ms.

* **INT8**: The dark maroon bar reaches a height of approximately 5.44 ms.

**Batch Size 16:**

* **FP16**: The light gray bar reaches a height of approximately 15.03 ms.

* **INT8**: The dark maroon bar reaches a height of approximately 9.23 ms.

**Batch Size 32:**

* **FP16**: The light gray bar reaches a height of approximately 29.66 ms.

* **INT8**: The dark maroon bar reaches a height of approximately 17.28 ms.

### Key Observations

* **General Trend**: For both FP16 and INT8, latency generally increases as the batch size increases.

* **FP16 Trend**: The latency for FP16 shows a significant upward trend, accelerating with larger batch sizes.

* **INT8 Trend**: The latency for INT8 also increases with batch size, but at a slower rate compared to FP16, especially at larger batch sizes.

* **Comparison**: At batch size 1, FP16 and INT8 have very similar latencies. However, as batch size increases, FP16 consistently exhibits higher latency than INT8. The difference in latency between FP16 and INT8 becomes more pronounced at batch sizes 16 and 32.

### Interpretation

This chart demonstrates the performance characteristics of FP16 and INT8 data types in terms of latency as batch size varies. The data suggests that while both data types experience increased latency with larger batch sizes, INT8 offers a more favorable latency profile, particularly for larger batch sizes. This implies that INT8 might be a more efficient choice for applications requiring high throughput and low latency when dealing with substantial amounts of data. The increasing divergence in latency between FP16 and INT8 as batch size grows could be attributed to factors like memory bandwidth, computational efficiency, or specific hardware optimizations for integer operations. The initial similarity at batch size 1 might indicate that overheads dominate at very small batch sizes, masking the underlying performance differences.

</details>

<details>

<summary>x7.png Details</summary>

### Visual Description

## Bar Chart: Latency vs. Batch Size for FP16 and w8a8

### Overview

This image is a bar chart that compares the latency (in milliseconds) for two different configurations, FP16 and w8a8, across varying batch sizes. The batch sizes tested are 128, 256, 512, and 1024.

### Components/Axes

* **Y-axis Title**: "Latency(ms)"

* **Scale**: Ranges from 0 to 200, with major tick marks at 0, 50, 100, 150, and 200.

* **X-axis Title**: "Batch Size"

* **Categories**: 128, 256, 512, 1024.

* **Legend**: Located in the top-left quadrant of the chart.

* **FP16**: Represented by a light gray rectangle.

* **w8a8**: Represented by a dark red rectangle.

### Detailed Analysis

The chart displays paired bars for each batch size, with the left bar representing FP16 and the right bar representing w8a8.

* **Batch Size 128**:

* FP16 (light gray bar): 28 ms

* w8a8 (dark red bar): 22 ms

* **Trend**: For this batch size, w8a8 has lower latency than FP16.

* **Batch Size 256**:

* FP16 (light gray bar): 44 ms

* w8a8 (dark red bar): 33 ms

* **Trend**: For this batch size, w8a8 has lower latency than FP16.

* **Batch Size 512**:

* FP16 (light gray bar): 87 ms

* w8a8 (dark red bar): 63 ms

* **Trend**: For this batch size, w8a8 has lower latency than FP16.

* **Batch Size 1024**:

* FP16 (light gray bar): 181 ms

* w8a8 (dark red bar): 125 ms

* **Trend**: For this batch size, w8a8 has lower latency than FP16.

**Overall Trend for both FP16 and w8a8**: As the batch size increases, the latency for both configurations increases significantly. The FP16 configuration consistently shows higher latency than the w8a8 configuration across all tested batch sizes.

### Key Observations

* The latency for both FP16 and w8a8 increases with increasing batch size.

* The w8a8 configuration consistently exhibits lower latency compared to the FP16 configuration for all batch sizes.

* The difference in latency between FP16 and w8a8 appears to widen as the batch size increases.

### Interpretation

This bar chart demonstrates the performance characteristics of two different configurations (FP16 and w8a8) in terms of latency as a function of batch size. The data suggests that the w8a8 configuration is more efficient, offering lower latency across all tested batch sizes. This could be due to optimizations or a more suitable data representation for the underlying hardware or software being used. The increasing latency with larger batch sizes is a common observation in many computational systems, often related to memory constraints, processing overhead, or communication bottlenecks. The widening gap in latency at larger batch sizes might indicate that FP16 scales less favorably than w8a8 under higher load. This information is crucial for system designers and engineers when choosing configurations for optimal performance, especially in scenarios where low latency is a critical requirement.

</details>

<details>

<summary>x8.png Details</summary>

### Visual Description

## Bar Chart: Latency vs. Batch Size for FP16 and w8a8

### Overview

This bar chart displays the latency in milliseconds (ms) for two different configurations, FP16 and w8a8, across varying batch sizes. The batch sizes tested are 128, 256, 512, and 1024. The chart visually represents how latency changes with increasing batch sizes for each configuration.

### Components/Axes

* **Y-axis Title:** "Latency(ms)"

* **Scale:** Linear, ranging from 0 to 400, with major tick marks at 0, 100, 200, 300, and 400.

* **X-axis Title:** "Batch Size"

* **Categories:** 128, 256, 512, 1024.

* **Legend:** Located in the top-left quadrant of the chart.

* **FP16:** Represented by a light gray rectangle.

* **w8a8:** Represented by a dark red rectangle.

### Detailed Analysis

The chart presents paired bars for each batch size, with the left bar representing FP16 and the right bar representing w8a8.

* **Batch Size 128:**

* FP16 (light gray bar): 59 ms. This bar is positioned to the left of the w8a8 bar.

* w8a8 (dark red bar): 43 ms. This bar is positioned to the right of the FP16 bar.

* **Batch Size 256:**

* FP16 (light gray bar): 98 ms. This bar is positioned to the left of the w8a8 bar.

* w8a8 (dark red bar): 65 ms. This bar is positioned to the right of the FP16 bar.

* **Batch Size 512:**

* FP16 (light gray bar): 186 ms. This bar is positioned to the left of the w8a8 bar.

* w8a8 (dark red bar): 119 ms. This bar is positioned to the right of the FP16 bar.

* **Batch Size 1024:**

* FP16 (light gray bar): 380 ms. This bar is positioned to the left of the w8a8 bar.

* w8a8 (dark red bar): 249 ms. This bar is positioned to the right of the FP16 bar.

### Key Observations

* **Trend:** For both FP16 and w8a8, latency generally increases as the batch size increases.

* **Comparison:** The w8a8 configuration consistently shows lower latency than the FP16 configuration across all tested batch sizes.

* **Magnitude of Difference:** The difference in latency between FP16 and w8a8 appears to grow with increasing batch size. For batch size 128, the difference is approximately 16 ms (59 - 43). For batch size 1024, the difference is approximately 131 ms (380 - 249).

* **Steepest Increase:** The most significant jump in latency for FP16 occurs between batch sizes 512 (186 ms) and 1024 (380 ms), an increase of 194 ms. For w8a8, the largest increase is between batch sizes 512 (119 ms) and 1024 (249 ms), an increase of 130 ms.

### Interpretation

This chart demonstrates the performance characteristics of two different data precision/quantization schemes (FP16 and w8a8) in terms of latency as a function of batch size.

The data suggests that the w8a8 configuration is more efficient, exhibiting lower latency across all batch sizes. This is likely due to its reduced precision (8-bit weights and 8-bit activations) compared to FP16 (16-bit floating-point), which can lead to faster computations and reduced memory bandwidth requirements.

The increasing latency with larger batch sizes is a common phenomenon in many computational systems, often attributed to factors like increased memory usage, cache contention, and parallel processing overhead. The fact that the latency difference between FP16 and w8a8 widens with larger batch sizes indicates that the benefits of w8a8 become more pronounced as the workload scales up. This implies that for applications requiring high throughput and processing large amounts of data (larger batch sizes), the w8a8 configuration offers a significant performance advantage. The steep increase in latency for FP16 at batch size 1024 might indicate a saturation point or a more significant bottleneck compared to w8a8 at that scale.

</details>

<details>

<summary>x9.png Details</summary>

### Visual Description

## Bar Chart: Latency vs. Batch Size for FP16 and w8a8

### Overview

This image is a bar chart that compares the latency (in milliseconds) for two different data types, FP16 and w8a8, across various batch sizes. The chart displays four sets of paired bars, each representing a specific batch size: 128, 256, 512, and 1024. For each batch size, there are two bars: a light gray bar representing FP16 and a dark red bar representing w8a8. The height of each bar corresponds to the measured latency.

### Components/Axes

* **Y-axis Title**: "Latency(ms)"

* **Scale**: The y-axis ranges from 0 to 500, with major tick marks at 0, 125, 250, 375, and 500.

* **X-axis Title**: "Batch Size"

* **Categories**: The x-axis displays four batch sizes: 128, 256, 512, and 1024.

* **Legend**: Located in the top-left quadrant of the chart.

* **FP16**: Represented by a light gray rectangle.

* **w8a8**: Represented by a dark red rectangle.

### Detailed Analysis

The chart presents the following data points:

* **Batch Size 128**:

* FP16 (light gray bar): 79 ms

* w8a8 (dark red bar): 75 ms

* *Trend*: For batch size 128, w8a8 has a slightly lower latency than FP16.

* **Batch Size 256**:

* FP16 (light gray bar): 122 ms

* w8a8 (dark red bar): 131 ms

* *Trend*: For batch size 256, FP16 has a lower latency than w8a8.

* **Batch Size 512**:

* FP16 (light gray bar): 236 ms

* w8a8 (dark red bar): 229 ms

* *Trend*: For batch size 512, w8a8 has a slightly lower latency than FP16.

* **Batch Size 1024**:

* FP16 (light gray bar): 489 ms

* w8a8 (dark red bar): 490 ms

* *Trend*: For batch size 1024, FP16 has a slightly lower latency than w8a8.

### Key Observations

* **Overall Trend**: Latency increases significantly with increasing batch size for both FP16 and w8a8.

* **Comparison**:

* At batch sizes 128 and 512, w8a8 shows a slightly lower latency compared to FP16.

* At batch sizes 256 and 1024, FP16 shows a slightly lower latency compared to w8a8.

* **Magnitude of Difference**: The latency difference between FP16 and w8a8 is relatively small across all batch sizes, ranging from 1 ms (batch size 1024) to 9 ms (batch size 256).

* **Peak Latency**: The highest latencies are observed at the largest batch size (1024), with values close to 500 ms for both data types.

### Interpretation

This bar chart demonstrates the performance characteristics of FP16 and w8a8 data types in terms of latency as batch size varies. The data suggests that:

1. **Scalability**: Both FP16 and w8a8 exhibit a clear trend of increasing latency as the batch size grows. This is a common behavior in computational tasks, as larger batches require more processing power and memory, leading to longer execution times.

2. **Data Type Performance**: The comparison between FP16 and w8a8 reveals that their performance is quite similar across different batch sizes. There isn't a consistent winner; sometimes w8a8 is slightly faster, and at other times FP16 is. This suggests that for this particular task or hardware configuration, the choice between FP16 and w8a8 might not lead to a dramatic difference in latency, especially at smaller batch sizes. The slight variations could be attributed to factors like hardware optimization, memory access patterns, or specific algorithmic implementations.

3. **Batch Size Impact**: The most significant factor influencing latency is the batch size. For FP16, latency grows by roughly 1.5x from batch size 128 to 256 (79 ms to 122 ms) and roughly doubles with each further doubling of the batch size (122 ms to 236 ms to 489 ms). This highlights the importance of selecting an appropriate batch size for optimal performance, balancing throughput with latency requirements.

4. **Near Equivalence at High Load**: At the highest batch size (1024), the latencies for FP16 and w8a8 are almost identical (489 ms vs. 490 ms). This could indicate that at such high loads, the system's resources are saturated, and both data types are experiencing similar bottlenecks, making their relative efficiency less distinguishable.

In essence, the chart provides empirical evidence for the latency behavior of these two data types under varying computational loads, indicating that while batch size is a dominant factor, the performance difference between FP16 and w8a8 is marginal and context-dependent.

</details>

<details>

<summary>x10.png Details</summary>

### Visual Description

## Bar Chart: Latency vs. Batch Size for FP16 and w8a8

### Overview

This bar chart displays the latency in milliseconds (ms) for two different configurations, FP16 and w8a8, across varying batch sizes. The x-axis represents the batch size, and the y-axis represents the latency. For each batch size, there are two bars: a light gray bar representing FP16 and a dark red bar representing w8a8.

### Components/Axes

* **Title:** Implicitly, the chart compares latency performance.

* **X-axis Title:** "Batch Size"

* **X-axis Markers:** 128, 256, 512, 1024

* **Y-axis Title:** "Latency(ms)"

* **Y-axis Markers:** 0, 225, 450, 675, 900

* **Legend:** Located in the top-left quadrant of the chart.

* **FP16:** Represented by a light gray rectangle.

* **w8a8:** Represented by a dark red rectangle.

### Detailed Analysis or Content Details

The chart presents data for four distinct batch sizes:

* **Batch Size 128:**

* FP16 (light gray bar): 139 ms

* w8a8 (dark red bar): 122 ms

* **Batch Size 256:**

* FP16 (light gray bar): 228 ms

* w8a8 (dark red bar): 194 ms

* **Batch Size 512:**

* FP16 (light gray bar): 432 ms

* w8a8 (dark red bar): 366 ms

* **Batch Size 1024:**

* FP16 (light gray bar): 848 ms

* w8a8 (dark red bar): 720 ms

### Key Observations

* **Trend:** For both FP16 and w8a8, latency generally increases as the batch size increases. This is visually evident as the bars grow taller with larger batch sizes.

* **Comparison:** In all tested batch sizes, the w8a8 configuration consistently exhibits lower latency compared to the FP16 configuration.

* **Magnitude of Difference:** The difference in latency between FP16 and w8a8 appears to grow with increasing batch size.

* At batch size 128, the difference is approximately 17 ms (139 - 122).

* At batch size 256, the difference is approximately 34 ms (228 - 194).

* At batch size 512, the difference is approximately 66 ms (432 - 366).

* At batch size 1024, the difference is approximately 128 ms (848 - 720).

### Interpretation

This bar chart demonstrates the performance trade-offs between two configurations, FP16 and w8a8, in terms of latency as a function of batch size. The data strongly suggests that the w8a8 configuration is more efficient, offering lower latency across all tested batch sizes. Furthermore, the performance advantage of w8a8 over FP16 becomes more pronounced at larger batch sizes. This implies that for applications requiring high throughput (larger batch sizes) and low latency, the w8a8 configuration would be the preferred choice. The increasing latency with batch size is a common characteristic in many computational tasks, as larger workloads can lead to increased processing time and resource contention. The consistent superiority of w8a8 indicates a potential optimization in its architecture or implementation that allows it to handle larger batches with less overhead.

</details>

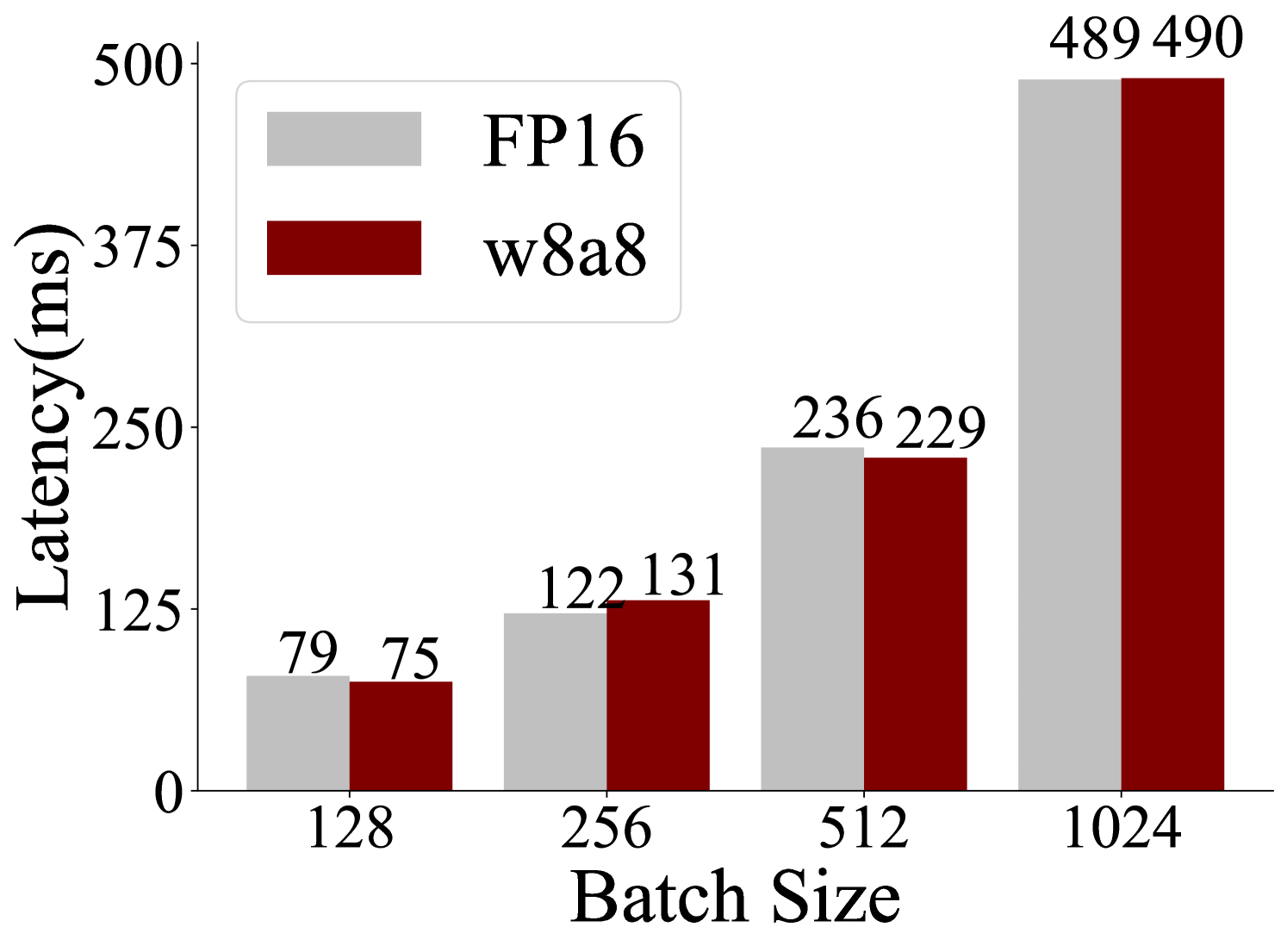

Panels: (a) ViT-B_16, (b) ViT-B_16-224, (c) ViT-L_16, (d) ViT-L_16-224, (e) OPT-13B, (f) OPT-30B, (g) OPT-66B, (h) OPT-175B.

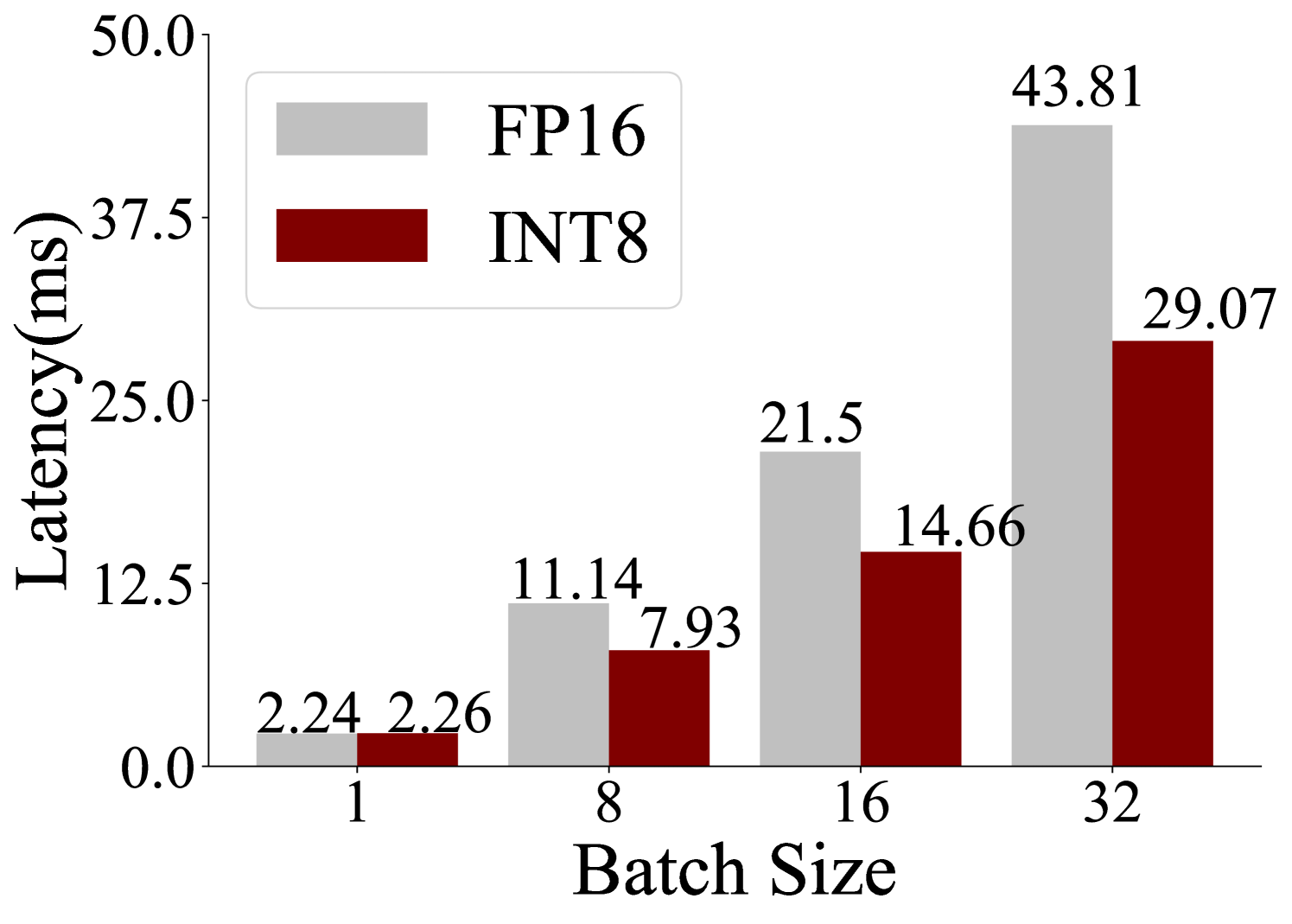

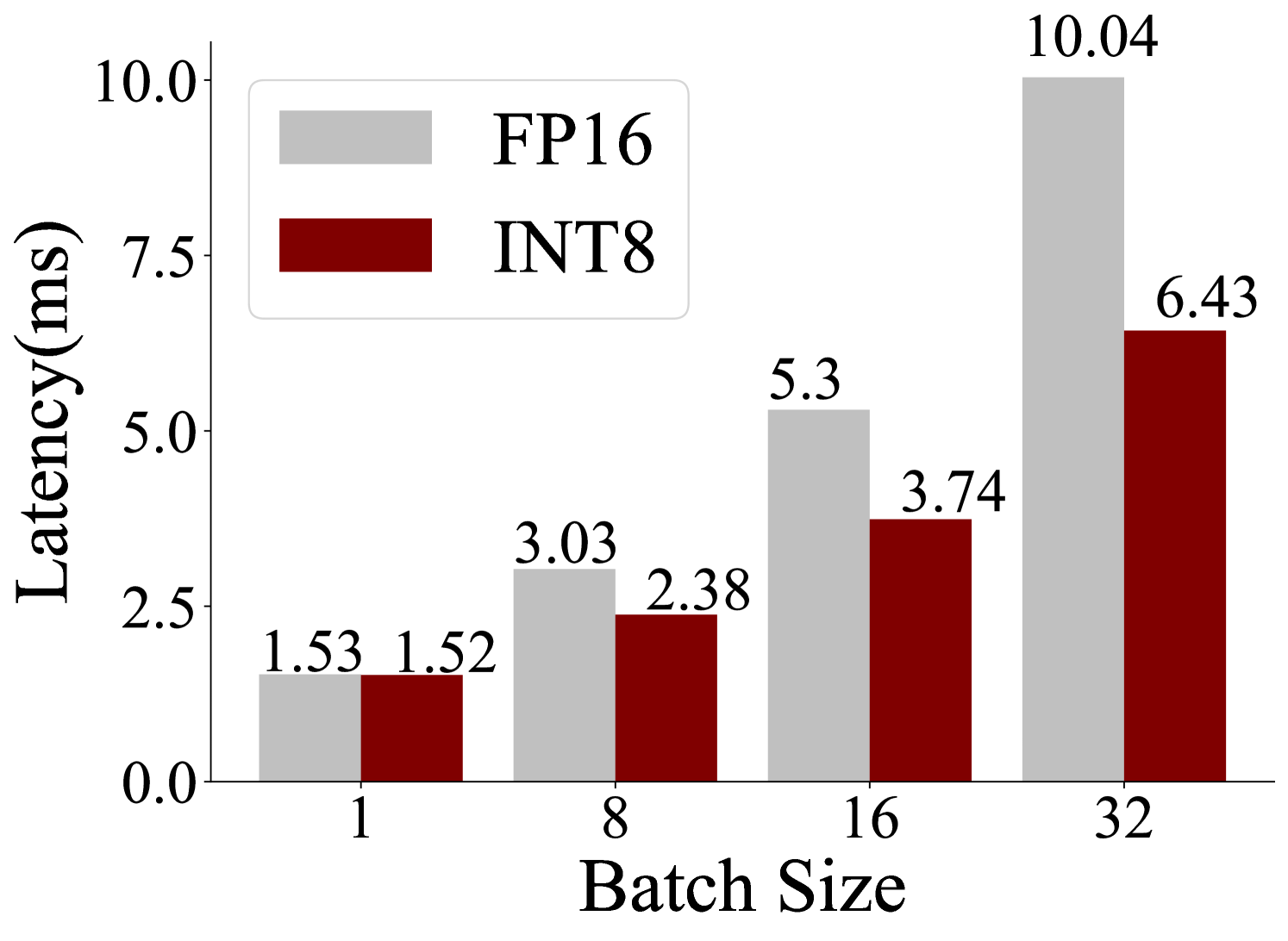

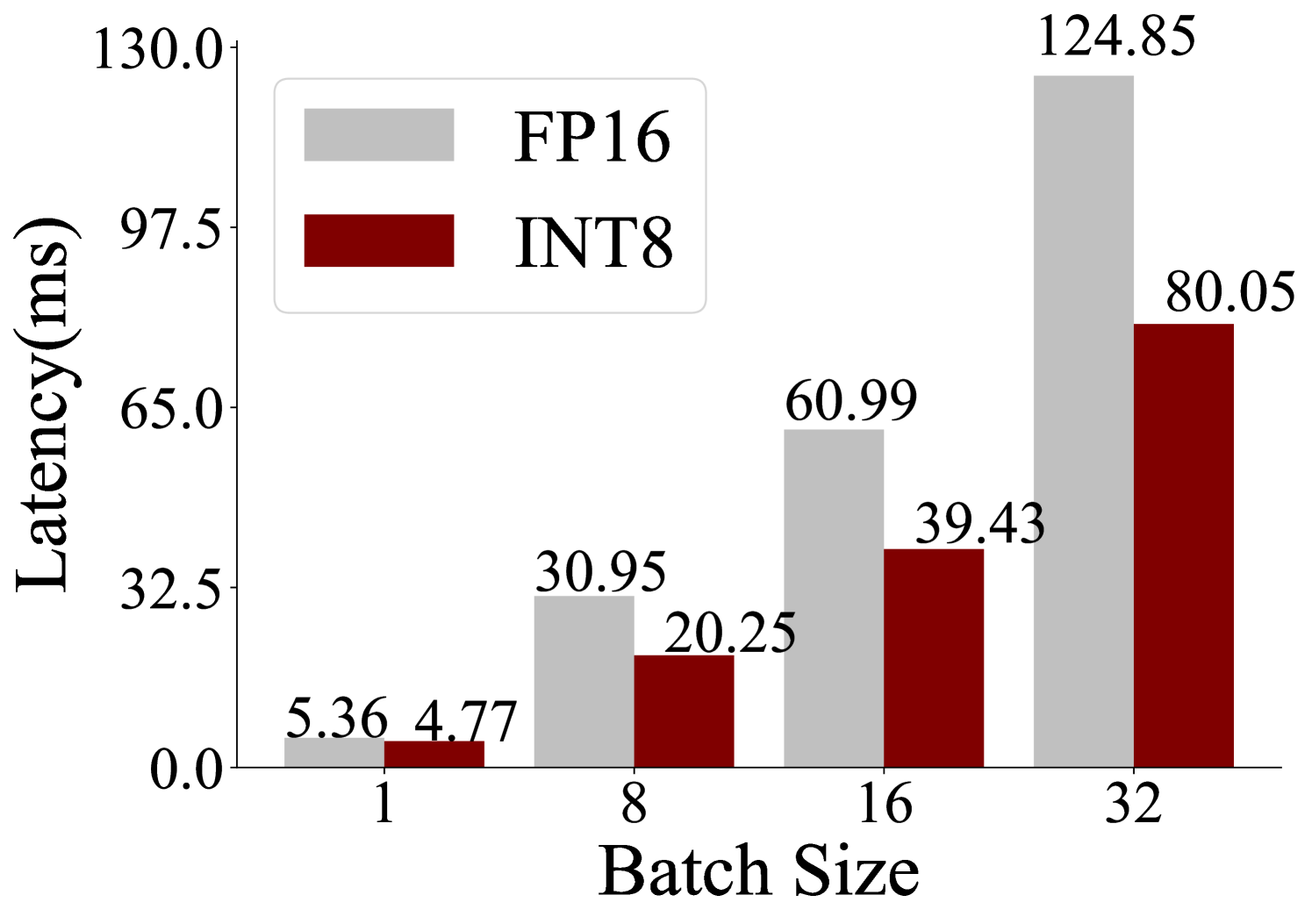

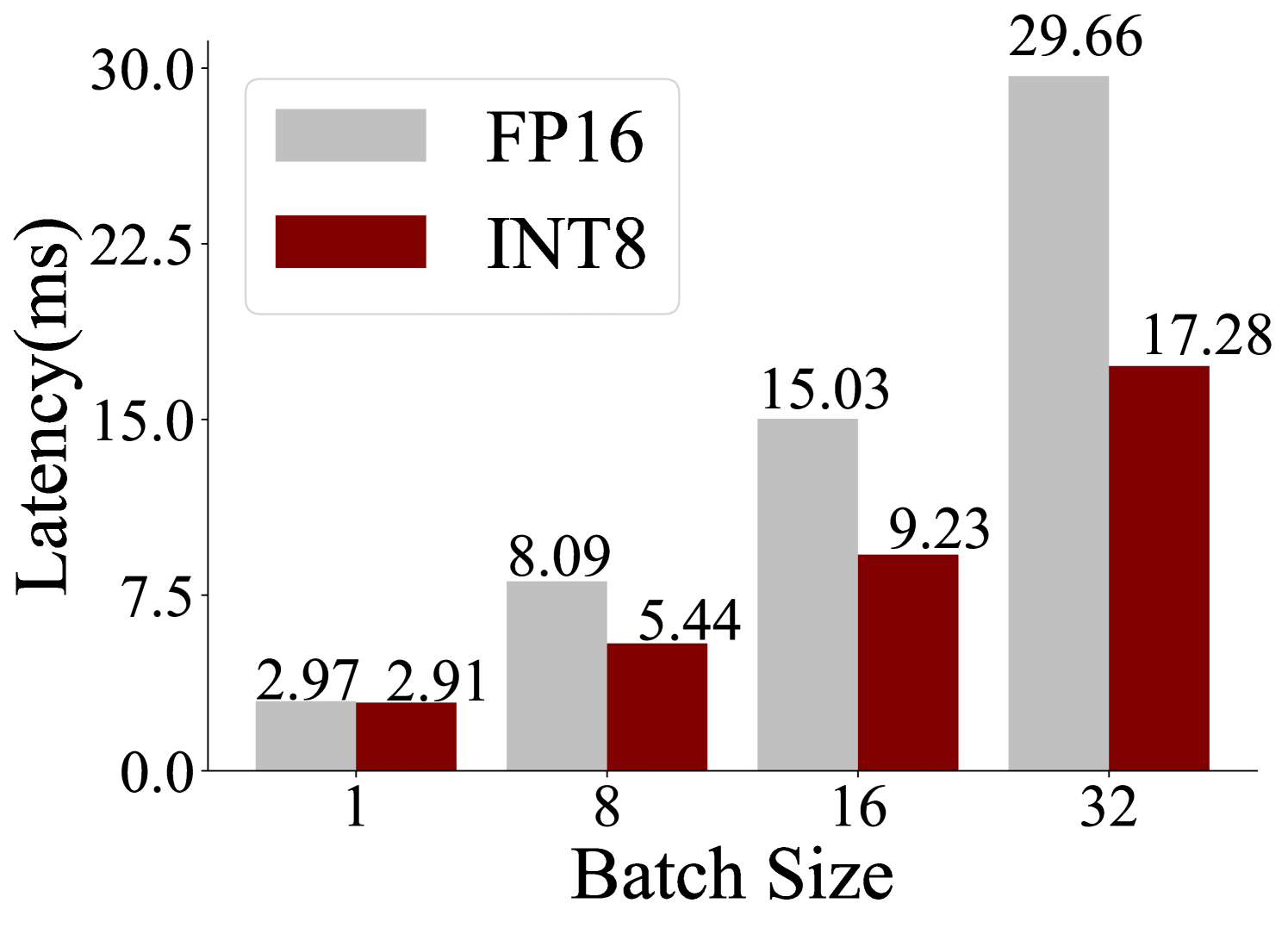

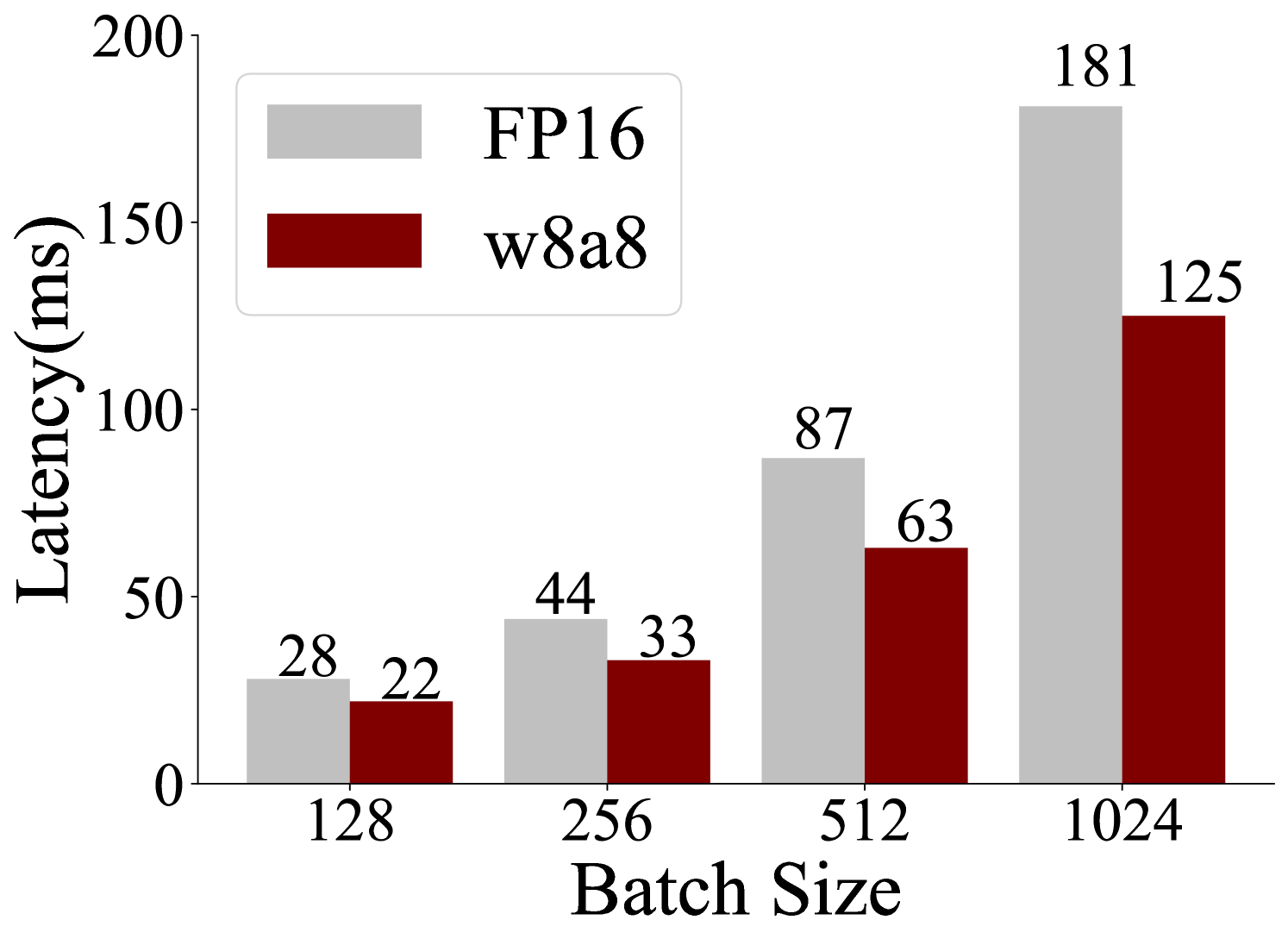

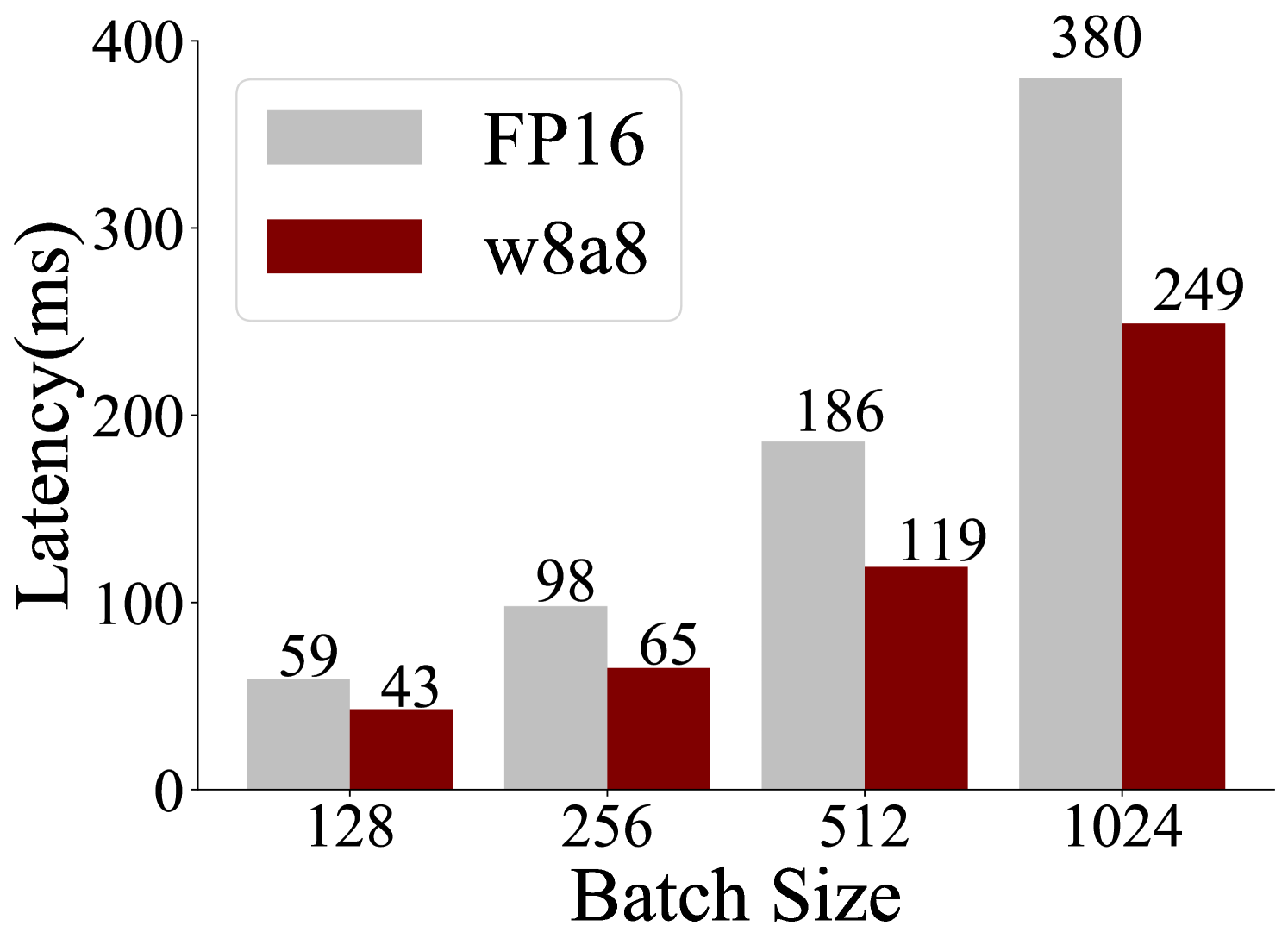

Figure 3: Inference latency of ViT and OPT models using FasterTransformer on NVIDIA A100-80GB GPUs. The OPT data are from [18].

#### 3.1.2 Quantization for Transformer-Based Large Language Models

Before 2023, the study of quantization [57, 58, 59, 60, 61, 62, 63, 64, 65, 66] for Transformer-based NLP models focused almost entirely on the BERT architecture. With the popularity of pretrained large language models [67, 1, 2], researchers [68, 69, 70] started to explore how to quantize Transformers with billions of parameters and to seek more efficient quantization schemes with limited data and computational overhead.

Post-training quantization. Based on an analysis of outliers in quantized Transformers, Outlier Suppression [71] migrates the gamma of LayerNorm to the next module, because the gamma amplifies the outliers in the output and causes large quantization error, and then clips the tokens in a coarse-to-fine procedure. MREM [59] focuses on reducing the computational cost of quantization and minimizes the output quantization error of all modules in parallel. ZeroQuant [70] attributes the performance degradation to the different dynamic ranges of tokens and weights; it adopts group-wise quantization for weights and token-wise quantization for activations, and applies knowledge distillation layer by layer.

For large Transformers with billions of parameters, outliers remain the main cause of the large accuracy degradation of quantized models. To address this, LLM.int8() [68] represents the activations and the outliers of weights in 16-bit and performs 8-bit vector-wise quantization on the weight tensor, but the acceleration is limited, and inference can even become slower, because of the irregular quantization granularity [18]. Like LLM.int8(), GPTQ [72] quantizes only the weight parameters, but it adopts a unified quantization strategy. GPTQ quantizes the weights based on approximate second-order information and thereby obtains much more accurate quantized weights within a few hours. AWQ [73] searches for the optimal per-channel scale factors by observing the distribution of activations instead of weights, allowing LLMs to retain their capabilities across different domains and modalities.

Outlier Suppression+ [74] explores more accurate outlier suppression with channel-wise shifting and scaling, which helps align the ranges of different activation channels and scale down the outliers. The shifting and scaling factors can also be merged into other weight parameters. Similarly, SmoothQuant [18] and QLLM [75] propose a mathematically equivalent per-channel scaling transformation that migrates the quantization difficulty from activations to weights. To further improve the performance of quantized LLMs, QLLM also learns low-rank parameters by minimizing the reconstruction error between the outputs of the floating-point and quantized LLMs with limited calibration data. RPTQ [76] likewise uses a reorder-based scheme that rearranges activation channels with similar ranges and quantizes them in clusters, and then migrates the scale into LayerNorm and the weights of linear layers without extra computational overhead at inference. Building on scale migration, OmniQuant [19] further proposes a learnable PTQ method that works module by module, where the weight clipping parameters and the transformation scales of activations are optimized with gradient descent.

To minimize error accumulation across adjacent blocks, CBQ [77] presents a cross-block reconstruction framework that simultaneously learns the rounding matrices of weights and the step sizes of weights and activations. The rounding matrices are learned with the LoRA technique, which adds little extra cost to PTQ. Like GPTQ, SqueezeLLM [78] focuses on weight quantization to reduce memory-bandwidth pressure and searches for the optimal bit based on second-order information. Rather than suppressing outliers and sensitive weight values, SqueezeLLM stores them in an efficient sparse format, which yields more accurate quantized LLMs. Unlike the previous methods, SignRound [79] optimizes quantized LLMs from the perspective of adaptive rounding. It designs block-wise tuning with signed gradient descent to learn the weight rounding, which greatly helps the output reconstruction of each block.
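The per-channel scaling transformation used by SmoothQuant-style methods can be illustrated with a short NumPy sketch. It is not taken from any of the cited implementations: the tensor shapes, the synthetic outlier channel, the helper names `quantize_int8` and `smooth_scales`, and the migration strength `alpha = 0.5` are assumptions chosen for illustration, and the quantizer is a simple symmetric per-tensor fake quantizer.

```python
import numpy as np

def quantize_int8(t):
    """Symmetric per-tensor INT8 fake quantization (quantize, then dequantize)."""
    scale = np.abs(t).max() / 127.0
    return np.clip(np.round(t / scale), -127, 127) * scale

def smooth_scales(x, w, alpha=0.5):
    """Per-input-channel factors s_j = max|X_j|^alpha / max|W_j|^(1 - alpha)."""
    act_max = np.abs(x).max(axis=0)  # activation range of each input channel
    w_max = np.abs(w).max(axis=1)    # weight range of each input channel (row of W)
    return act_max ** alpha / w_max ** (1.0 - alpha)

rng = np.random.default_rng(0)
x = rng.normal(size=(64, 16))  # 64 tokens, 16 input channels (illustrative)
x[:, 3] *= 50.0                # one synthetic outlier channel
w = rng.normal(size=(16, 8))   # 16 input channels, 8 output channels

s = smooth_scales(x, w)
x_s = x / s                    # activations become easier to quantize
w_s = w * s[:, None]           # weights absorb the scale

y_ref = x @ w
# In floating point the transformation leaves the layer output unchanged.
equivalent = np.allclose(x_s @ w_s, y_ref)

err_naive = np.mean((quantize_int8(x) @ quantize_int8(w) - y_ref) ** 2)
err_smooth = np.mean((quantize_int8(x_s) @ quantize_int8(w_s) - y_ref) ** 2)
print(equivalent, err_naive, err_smooth)
```

In this toy setting the outlier channel no longer dominates the activation range after scaling, so the shared quantization step is much finer for the remaining channels. As the text notes for scale-migration methods, the factors can be merged into neighboring parameters so that no extra work is needed at inference.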

TABLE III: Perplexity (PPL) comparison of different quantization methods on WikiText2. W/E denotes weights and embedding, respectively. * indicates that the perplexity was obtained with a different method; please refer to the corresponding papers.

| Method | PTQ | Bits (W/E) | LLaMA-7B | LLaMA-13B | LLaMA-30B | LLaMA-65B |
| --- | --- | --- | --- | --- | --- | --- |
| FP16 | $\times$ | 16/16 | 5.68 | 5.09 | 4.10 | 3.56 |
| SmoothQuant* [18] | ✓ | 8/8 | 11.56 | 10.08 | 7.56 | 6.20 |
| LLM-QAT* [80] | $\times$ | 8/8 | 10.30 | 9.50 | 7.10 | - |
| OS+ [74] | ✓ | 6/6 | 5.76 | 5.22 | 4.30 | 3.65 |
| OmniQuant [19] | ✓ | 6/6 | 5.96 | 5.28 | 4.38 | 3.75 |
| QLLM [75] | ✓ | 6/6 | 5.89 | 5.28 | 4.30 | 3.73 |
| SqueezeLLM [78] | ✓ | 4/16 | 5.79 | 5.18 | 4.22 | 3.76 |
| SignRound [79] | ✓ | 4/16 | 6.12 | 5.32 | 4.52 | 3.90 |
| OmniQuant [19] | ✓ | 4/16 | 5.86 | 5.21 | 4.25 | 3.71 |
| LLM-QAT* [80] | $\times$ | 4/8 | 10.90 | 10.00 | 7.50 | - |
| OS+ [74] | ✓ | 4/4 | 14.17 | 18.95 | 22.61 | 9.33 |
| OmniQuant [19] | ✓ | 4/4 | 11.26 | 10.87 | 10.33 | 9.17 |
| QLLM [75] | ✓ | 4/4 | 9.65 | 8.41 | 8.37 | 6.87 |
| PEQA [81] | $\times$ | 4/4 | 5.84 | 5.30 | 4.36 | 4.02 |
| SqueezeLLM [78] | ✓ | 3/16 | 6.32 | 5.60 | 4.66 | 4.05 |
| OmniQuant [19] | ✓ | 3/16 | 6.49 | 5.68 | 4.74 | 4.04 |
| PEQA [81] | $\times$ | 3/3 | 6.19 | 5.54 | 4.58 | 4.27 |
| OmniQuant [19] | ✓ | 2/16 | 15.47 | 13.21 | 8.71 | 7.58 |

Quantization-aware training. Q-BERT [57] is an early work that conducts quantization-aware training on Transformer-based architectures for natural language processing. Inspired by HAWQ [82], Q-BERT searches mixed-precision quantization settings based on second-order Hessian information and adopts group-wise quantization, which partitions each parameter matrix into multiple groups, to reduce accuracy degradation. I-BERT [62] not only quantizes the linear and self-attention layers, but also designs an integer-only inference scheme for the nonlinear operations (GELU, SoftMax and LayerNorm) in Transformers, which also inspired FQ-ViT and I-ViT for the quantization of vision Transformers. Specifically, I-BERT uses second-order polynomials to approximate GELU and the exponential function in SoftMax, and calculates the standard deviation in LayerNorm with Newton's method. In this way, I-BERT achieves faster inference than both the floating-point baseline and conventional quantization methods. To compensate for the disadvantages of the hand-crafted heuristics in Q-BERT, AQ-BERT [66] proposes an automatic mixed-precision quantization scheme, inspired by differentiable network architecture search, that learns the bit-width and the parameters of each layer simultaneously. AQ-BERT achieves better results than Q-BERT and is more suitable for resource-limited devices. Beyond the QAT schemes for BERT, only a few works conduct quantization-aware training on large language models because of the huge training overhead. PEQA [81] and QLoRA [20] explore parameter-efficient fine-tuning techniques to train quantized LLMs: the former optimizes only the scale factors while freezing the quantized weights, and the latter adopts the 4-bit NormalFloat data type for normally distributed weights, together with double quantization and paged optimizers, to reduce the memory footprint during training. LLM-QAT [80] proposes a data-free quantization-aware training method, in which the training data is generated by the original pretrained large language model. LLM-QAT not only quantizes all the linear layers and self-attention, but also quantizes the KV cache, using cross-entropy-based logits distillation.
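
The two integer-friendly approximations attributed to I-BERT above can be sketched as follows. This is an illustrative floating-point and integer sketch, not the I-BERT implementation: the coefficients `A` and `B` are the values usually quoted for its polynomial erf approximation and should be treated as an assumption, and all function names are hypothetical.

```python
import math

# Assumed coefficients of the second-order erf approximation
# L(x) = sgn(x) * (A * (min(|x|, -B) + B)**2 + 1).
A, B = -0.2888, -1.769


def poly_erf(x):
    """Second-order polynomial approximation of erf(x)."""
    sign = (x > 0) - (x < 0)
    clipped = min(abs(x), -B)  # beyond |x| = -B the approximation saturates at +-1
    return sign * (A * (clipped + B) ** 2 + 1.0)


def poly_gelu(x):
    """GELU(x) = x/2 * (1 + erf(x / sqrt(2))) with erf replaced by poly_erf."""
    return 0.5 * x * (1.0 + poly_erf(x / math.sqrt(2.0)))


def exact_gelu(x):
    """Reference GELU using the exact error function."""
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def int_sqrt_newton(n):
    """floor(sqrt(n)) computed with integer-only Newton iterations."""
    if n < 0:
        raise ValueError("n must be non-negative")
    if n == 0:
        return 0
    x = 1 << ((n.bit_length() + 1) // 2)  # power-of-two initial guess >= sqrt(n)
    while True:
        y = (x + n // x) >> 1             # Newton update using only add, divide, shift
        if y >= x:                        # the sequence stopped decreasing: converged
            return x
        x = y


grid = [i / 100.0 for i in range(-500, 501)]
max_err = max(abs(poly_gelu(v) - exact_gelu(v)) for v in grid)
print("max |poly_gelu - gelu| on [-5, 5]:", max_err)
print("integer sqrt of 1_000_000:", int_sqrt_newton(1_000_000))
```

The polynomial needs only additions and multiplications, and the square root needs only integer additions, divisions, and shifts, which is what makes both operations compatible with an integer-only inference pipeline.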

#### 3.1.3 Quantization for Transformer-Based Vision Models

Post-training quantization. PTQ-ViT [21] is the first to explore post-training quantization on vision Transformers, using the nuclear norm of the attention map and the output feature in each Transformer layer to determine the bit-width of that layer. To obtain more accurate quantized Transformers, PTQ-ViT further proposes a ranking loss that preserves the relative order of the self-attention values after quantization. PTQ4ViT [41] observes that the distributions of post-softmax and post-GELU activations are hard to quantize with conventional methods, and therefore introduces a twin uniform quantizer and uses a Hessian-guided metric to find the optimal scale parameters. To construct a fully quantized vision Transformer, FQ-ViT [22] not only quantizes all the linear and self-attention layers, but also quantizes LayerNorm and SoftMax with a power-of-two factor and a Log2 quantizer. APQ-ViT [42] explores a block-wise optimization scheme to determine the optimal quantizer for extremely low-bit PTQ, and uses asymmetric linear quantization on the attention map to maintain the Matthew effect of Softmax. Based on the observation that a normal uniform quantizer cannot effectively handle the heavy-tailed distribution of vision Transformer activations, NoisyQuant [44] enhances the quantizer by adding a fixed uniform noise, following its theoretical results, which refines the quantized distribution and reduces the quantization error at minimal computation cost. For the post-LayerNorm and post-SoftMax activations, which have extreme distributions, RepQ-ViT [45] applies channel-wise quantization to deal with the severe inter-channel variation of the former and uses a $\log\sqrt{2}$ quantizer to compress the power-law features of the latter. Before inference, RepQ-ViT reparameterizes the scale factors into layer-wise and log2 quantizers at negligible computational cost.
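
To see why a logarithmic quantizer suits post-SoftMax activations, the following minimal sketch compares a generic log2 quantizer with a uniform one on attention probabilities in $(0, 1]$. It is a plain illustration of the idea under a 4-bit assumption, not the integer implementation of FQ-ViT or RepQ-ViT; all function names are hypothetical.

```python
import math


def log2_quantize(p, bits=4):
    """Map a probability p in (0, 1] to the integer code round(-log2(p)), clipped to the bit range."""
    qmax = 2 ** bits - 1
    if p <= 0.0:
        return qmax  # the smallest representable value stands in for zero
    code = math.floor(-math.log2(p) + 0.5)  # round to the nearest integer exponent
    return max(0, min(qmax, code))


def log2_dequantize(code):
    """Recover the power-of-two value 2**(-code); on hardware this is a bit shift."""
    return 2.0 ** (-code)


def uniform_fake_quantize(p, bits=4):
    """Uniform quantization of [0, 1] to 2**bits levels, returned in dequantized form."""
    qmax = 2 ** bits - 1
    return math.floor(p * qmax + 0.5) / qmax


attention_row = [0.70, 0.25, 0.03, 0.01, 0.01]  # hypothetical softmax output
for p in attention_row:
    print(p, log2_dequantize(log2_quantize(p)), uniform_fake_quantize(p))
```

Most attention probabilities are small, and a uniform 4-bit grid rounds them to zero, whereas the log2 codes spend their resolution near zero and keep these entries distinguishable.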

<details>

<summary>x11.png Details</summary>

### Visual Description

## Diagram: Knowledge Distillation for Large Transformer-Based Models

### Overview

This diagram outlines the landscape of knowledge distillation techniques applied to large transformer-based models. It categorizes these techniques based on the domain (Computer Vision and Natural Language Processing) and then further subdivides them by the type of information being distilled.

### Components/Axes

The diagram is structured hierarchically:

1. **Top Level (Blue Rounded Rectangle):** "Knowledge Distillation for Large Transformer-Based Models" - This is the overarching topic.
2. **Second Level (Green and Pink Rectangles):** These represent the two primary application domains. Arrows point from the top level to these domains, indicating they are sub-categories.
   * "Computer Vision" (Green)
   * "Natural Language Processing" (Pink)
3. **Third Level (Colored Rectangles with Bold Text):** These represent different distillation approaches within each domain. Arrows point from the domain rectangles to these sub-categories.
   * **Under Computer Vision:**
     * "Logits-based" (Purple)
     * "Hint-based" (Yellow)
     * "Others" (Gray)
   * **Under Natural Language Processing:**
     * "Logits-based" (Purple)
     * "Hint-based" (Yellow)
     * "API-based" (Orange)
     * "Others" (Gray)
4. **Fourth Level (Bullet Points):** These list specific examples of models or methods within each distillation approach. Each approach has a brief description of what is being distilled.

### Detailed Analysis

**Computer Vision Domain:**

* **Logits-based (Purple):**
  * Description: Output logits
  * Examples: DeiT, TinyViT
* **Hint-based (Yellow):**
  * Description: Intermediate features
  * Examples: ViTKD, ManifoldKD
* **Others (Gray):**
  * Description: Other contexts
  * Examples: GPT4Image, BLIP

**Natural Language Processing Domain:**

* **Logits-based (Purple):**
  * Description: Output logits
  * Examples: DistilBERT, MINILLM
* **Hint-based (Yellow):**
  * Description: Intermediate features
  * Examples: MobileBERT, TinyBERT
* **API-based (Orange):**
  * Description: Generated contexts
  * Examples: PaD, Lion
* **Others (Gray):**
  * Description: Parameters
  * Examples: Bert-of-theseus, ProKT

### Key Observations

* The diagram clearly distinguishes between knowledge distillation for Computer Vision and Natural Language Processing.

* Both domains share "Logits-based" and "Hint-based" distillation approaches.

* Natural Language Processing has an additional category, "API-based," which is not present in Computer Vision.

* The "Others" category exists in both domains, suggesting a catch-all for methods that don't fit the primary classifications.

* Specific model names are provided as concrete examples for each category, offering a practical view of these distillation techniques.

### Interpretation

This diagram provides a structured taxonomy of knowledge distillation strategies for large transformer models. It illustrates that the core principles of distillation, such as leveraging output logits or intermediate features, are applicable across different AI domains. The divergence in NLP with the "API-based" category suggests that the nature of interaction with large language models (e.g., through APIs) can lead to unique distillation paradigms. The presence of an "Others" category in both domains highlights the evolving nature of research and the potential for novel distillation methods that may not fit neatly into existing frameworks. Overall, the diagram serves as a useful map for understanding the current approaches and potential research directions in making large transformer models more efficient through knowledge distillation.

</details>

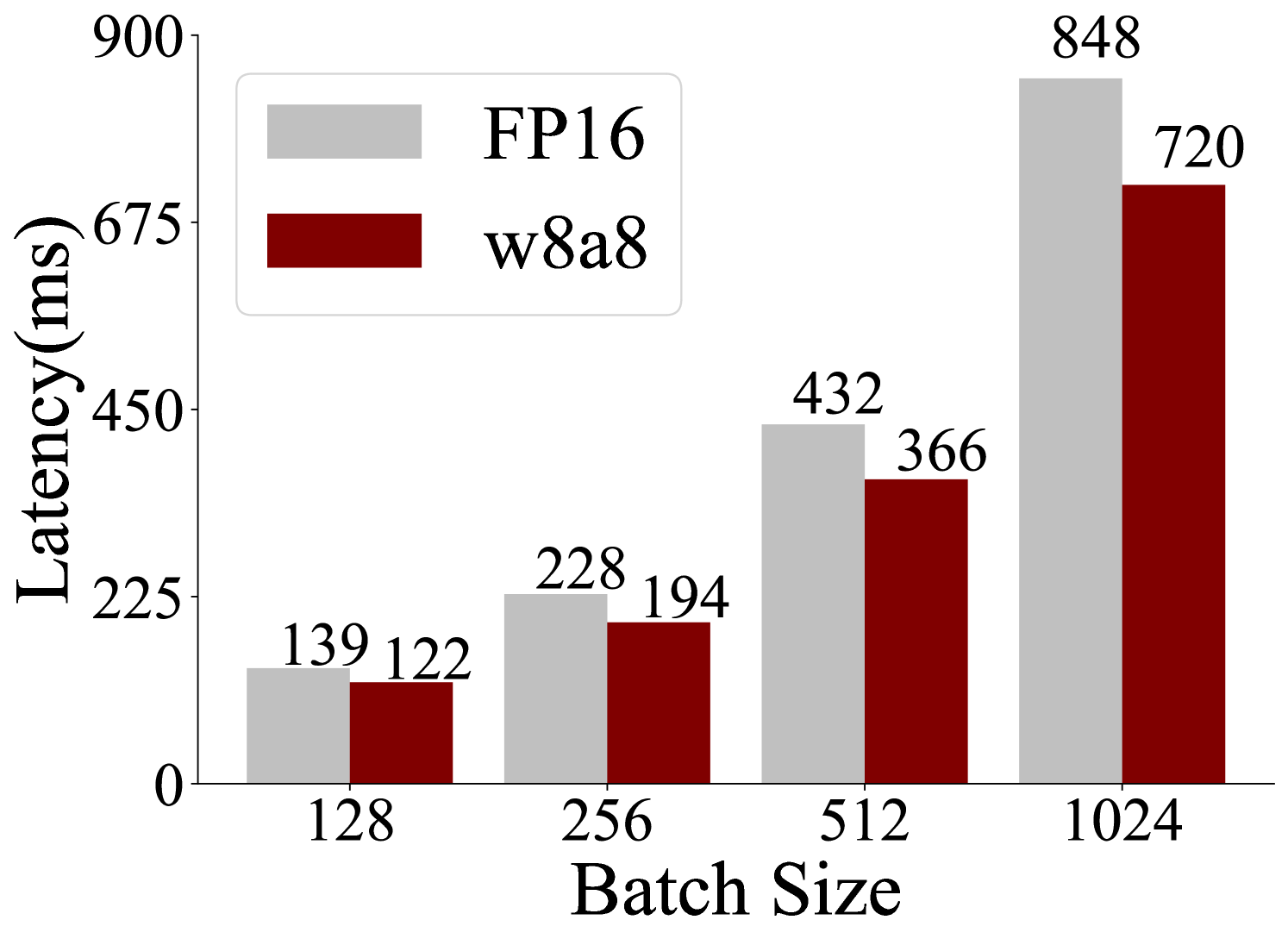

Figure 4: The taxonomy of knowledge distillation used for large Transformer-based models.

Quantization-aware training. When vision Transformers are compressed to extremely low-bit precision, PTQ cannot correct the large quantization error with only a limited set of calibration images and suffers a significant performance drop. QAT is therefore needed to obtain more accurate low-bit vision Transformers. Q-ViT [48] finds that MSA and GELU are highly sensitive to quantization, and proposes a fully differentiable quantization method that adopts head-wise bit-width and switchable scale during the quantization search. Quantformer [46] takes the rank of self-attention into consideration and proposes to maintain its consistency between quantized and full-precision vision Transformers. In addition, Quantformer presents a group-wise strategy to quantize patch features in different dimensions, where each group adopts different quantization parameters and the extra computation cost is negligible. Observing that the severe performance degradation stems from the quantized attention map, AFQ-ViT [47] designs an information rectification module and a distribution-guided distillation scheme for quantization training. The former helps recover the distribution of attention maps through information entropy maximization in the forward pass, and the latter reduces the distribution variation with an attention similarity loss in the backward pass. Similar to FQ-ViT [22], I-ViT [50] also explores an integer-only quantization scheme for ViTs. I-ViT designs Shiftmax, ShiftGELU and I-LayerNorm to replace the vanilla modules with bit-wise shift and integer matrix operations at inference, achieving $3.72\times$ to $4.11\times$ acceleration compared to the floating-point model. OFQ [23] finds that weight oscillation causes unstable quantization-aware training and leads to sub-optimal results, and that the oscillation comes from the learnable scale factor and from the quantized query and key in self-attention. To address this, OFQ proposes statistical weight quantization to improve quantization robustness, freezing the weights with high confidence and calming the oscillating weights with confidence-guided annealing. For the query and key in self-attention, OFQ presents query-key reparameterization to decouple the negative mutual influence between the oscillations of the quantized query and key weights. Similarly, VVTQ [56] analyzes ViT quantization from the perspective of variation, showing that the data variance within a mini-batch is harmful to quantization and slows down training convergence. To reduce the impact of variations in quantization-aware training, VVTQ proposes a multi-crop knowledge-distillation-based quantization methodology, and introduces module-dependent quantization and oscillation-aware regularization to enhance the optimization process.
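
The weight oscillation mentioned above can be reproduced in a toy setting. The sketch below is a generic illustration of quantization-aware training with a straight-through estimator on a single scalar weight, under assumed values for the target, learning rate, and step size. It is not the OFQ algorithm, and the function names are hypothetical.

```python
import math


def quantize(w, step=1.0):
    """Round a latent weight to the nearest multiple of the quantization step."""
    return math.floor(w / step + 0.5) * step


def simulate(w0, target, lr, steps, step=1.0):
    """Minimize 0.5 * (quantize(w) - target)**2 with a straight-through gradient.

    Returns the sequence of quantized weights seen during training.
    """
    w = w0
    history = []
    for _ in range(steps):
        q = quantize(w, step)
        history.append(q)
        grad = q - target  # straight-through estimator: d quantize(w) / d w is treated as 1
        w -= lr * grad     # update the latent full-precision weight
    return history


def count_flips(history):
    """Number of times the quantized weight changes between consecutive steps."""
    return sum(1 for a, b in zip(history, history[1:]) if a != b)


# The target lies between two grid points, so neither level has zero gradient
# and the latent weight keeps crossing the rounding threshold at 0.5.
trained = simulate(w0=0.45, target=0.4, lr=0.2, steps=20)
frozen = simulate(w0=0.45, target=0.4, lr=0.0, steps=20)  # freezing: no latent update
print("flips while training:", count_flips(trained))
print("flips when frozen:   ", count_flips(frozen))
```

In this toy case the quantized weight never settles while the latent weight keeps being updated, and freezing the latent weight removes the flips, which gives an intuition for why freezing confident weights can stabilize training.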

We summarize the results of these PTQ and QAT methods in Table II. Most schemes conduct PTQ at 8-bit and 6-bit precision, and accuracy degrades severely when models are quantized to 4-bit. With the complete training data, QAT can push ViT quantization to 4-bit and below. Some methods even outperform the floating-point models, which suggests that quantization-aware training can efficiently recover the accuracy of quantized models. Figure 3 shows the latency results of ViT models using FasterTransformer (https://github.com/NVIDIA/FasterTransformer).

#### 3.1.4 Discussion