# Self-Alignment for Factuality: Mitigating Hallucinations in LLMs via Self-Evaluation

**Authors**: Tencent AI Lab, Bellevue, {zhangxy, jyzhou, hmmeng}@se.cuhk.edu.hk

Abstract

Despite showing impressive abilities, large language models (LLMs) often struggle with factual inaccuracies, i.e., “hallucinations”, even when they hold relevant knowledge. To mitigate these hallucinations, current approaches typically necessitate high-quality human factuality annotations. In this work, we explore Self-Alignment for Factuality, where we leverage the self-evaluation capability of an LLM to provide training signals that steer the model towards factuality. Specifically, we incorporate Self-Eval, a self-evaluation component, to prompt an LLM to validate the factuality of its own generated responses solely based on its internal knowledge. Additionally, we design S̱elf-Ḵnowledge Tuning (SK-Tuning) to augment the LLM’s self-evaluation ability by improving the model’s confidence estimation and calibration. We then utilize these self-annotated responses to fine-tune the model via Direct Preference Optimization algorithm. We show that the proposed self-alignment approach substantially enhances factual accuracy over Llama family models across three key knowledge-intensive tasks on TruthfulQA and BioGEN. ∗ Work done during the internship at Tencent AI Lab. Our code is publicly available at https://github.com/zhangxy-2019/Self-Alignment-for-Factuality.

Self-Alignment for Factuality: Mitigating Hallucinations in LLMs via Self-Evaluation

Xiaoying Zhang 1∗, Baolin Peng 2, Ye Tian 2, Jingyan Zhou 1, Lifeng Jin 2, Linfeng Song 2, Haitao Mi 2, Helen Meng 1 1 The Chinese University of Hong Kong, Hong Kong 2 Tencent AI Lab, Bellevue {zhangxy, jyzhou, hmmeng}@se.cuhk.edu.hk {baolinpeng, yaptian, lifengjin, lfsong, haitaomi}@global.tencent.com

1 Introduction

<details>

<summary>x1.png Details</summary>

### Visual Description

# Technical Document Extraction: LLM Factuality Alignment Process

This document describes a technical diagram illustrating the workflow for improving the factuality of a Large Language Model (LLM) through a self-evaluation and alignment process.

## 1. Header Section

* **Prompt:** "Write a biography of Jesse Foppert."

---

## 2. Main Process Flow (Pre-Alignment)

This section is divided into two vertical stages connected by a downward-pointing gradient arrow (blue to green to red).

### Stage 1: Before Alignment

* **Actor:** LLM (represented by a blue line-art dog icon).

* **Action:** **Generation**

* **Output Text:** "Jesse Foppert is a former Major League Baseball pitcher who was born on July 20, 1980, in Los Angeles, Pennsylvania, USA ..."

* **Annotated Claims:** Specific segments of the text are highlighted and numbered:

1. **[1]** "a former Major League Baseball pitcher" (Highlighted in blue)

2. **[2]** "July 20" (Highlighted in white/grey)

3. **[3]** "Los Angeles" (Highlighted in blue)

4. **[4]** "Pennsylvania" (Highlighted in red/pink)

### Stage 2: Self-Evaluation on Factuality

* **Actor:** LLM (same icon).

* **Internal Thought (Self-Ask):** "Are the generated facts correct?"

* **Component: Confidence Scores** (Located at the right):

* A panel displays confidence values for the four numbered claims from Stage 1:

* **1: 0.87** (Accompanied by a green checkmark icon)

* **2: 0.10** (Accompanied by a red 'X' icon)

* **3: 0.08** (Accompanied by a red 'X' icon)

* **4: 0.95** (Accompanied by a green checkmark icon)

---

## 3. Post-Alignment Section

Separated by a dashed horizontal line, this section shows the result after the alignment process.

### Stage 3: After Alignment

* **Actor:** Aligned LLM (represented by a detailed, colored white dog/wolf icon).

* **Action:** **Generation**

* **Output Text:** "Jesse Foppert is a former Major League Baseball pitcher who was born on **July 10**, 1980, in **Reading**, Pennsylvania, USA ..."

* **Key Changes and Trends:**

* The model has corrected the low-confidence facts identified in Stage 2.

* **Correction 1 (Date):** "July 20" (Claim 2, low confidence) has been changed to **July 10** (Text is bolded and green).

* **Correction 2 (City):** "Los Angeles" (Claim 3, low confidence) has been changed to **Reading** (Text is bolded and green).

* **Consistency:** "Pennsylvania" (Claim 4, high confidence) remains in the text, though the background highlight is now a light red/pink. "a former Major League Baseball pitcher" (Claim 1, high confidence) remains highlighted in blue.

---

## 4. Summary of Data Transformations

| Claim ID | Original Value (Before) | Confidence | Final Value (After) | Status |

| :--- | :--- | :--- | :--- | :--- |

| 1 | a former MLB pitcher | 0.87 | a former MLB pitcher | Retained |

| 2 | July 20 | 0.10 | **July 10** | **Corrected** |

| 3 | Los Angeles | 0.08 | **Reading** | **Corrected** |

| 4 | Pennsylvania | 0.95 | Pennsylvania | Retained |

**Technical Conclusion:** The diagram demonstrates a "Self-Ask" mechanism where an LLM evaluates its own generated claims. By identifying claims with low confidence scores (0.10 and 0.08), the "Aligned LLM" is able to regenerate the text, replacing the erroneous facts with corrected data while maintaining the high-confidence factual structure.

</details>

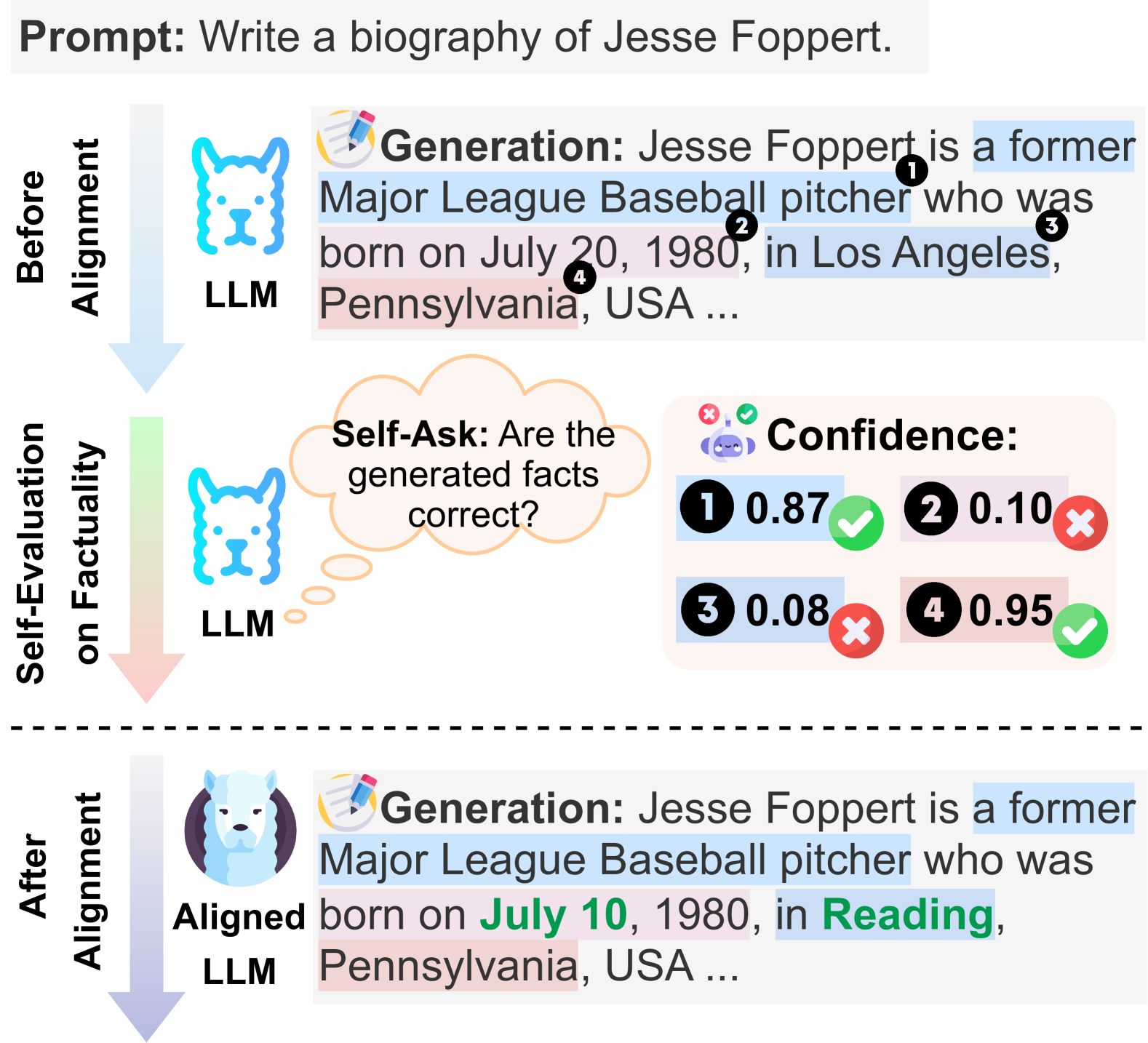

Figure 1: Illustration of Self-Alignment for Factuality. Given a prompt to write a biography, before factuality alignment, the LLM generates some facts that are not accurate. Through self-evaluation, the LLM is capable of identifying these inaccurate facts. The feedback from the self-evaluation is used as a reward signal to align the LLM towards factuality. Each fact is highlighted in distinct colors, and the corrected facts are marked with green letters.

Despite exhibiting remarkable proficiency in a diverse range of NLP tasks Wei et al. (2022); Liu et al. (2023c); Chang et al. (2023); Zhang et al. (2023a), LLMs OpenAI (2022, 2023); Touvron et al. (2023b) occasionally generate seemingly plausible yet factually incorrect statements, i.e., “hallucinations” Huang et al. (2023); Ji et al. (2023); Zhang et al. (2023c); Tonmoy et al. (2024). Such hallucinations can undermine the trustworthiness and practical applicability of LLMs in real-world scenarios, particularly when employed in high-stakes tasks Liu et al. (2023b).

In this paper, we focus on mitigating a noteworthy type of hallucination, where an LLM holds relevant knowledge in response to a query (i.e., “knows”), yet occasionally falters in conveying accurate information (i.e., “tells”) Li et al. (2023b, 2024). For instance, an LLM might generate an inaccurate response during one inference time but can provide a correct response at another time Wang et al. (2023a); Manakul et al. (2023); Dhuliawala et al. (2023). This gap between “knowing” and “telling” Saunders et al. (2022); Kadavath et al. (2022); Chen et al. (2023a) significantly undermines the potential of LLMs to accurately convey the knowledge acquired during the pre-training phase.

A few studies Li et al. (2023b); Chuang et al. (2023); Zhang et al. (2023b) edit the model’s internal representations towards “factuality” directions, using domain-specific annotated data. Meanwhile, acknowledging the inadequacy of the training objective—maximum likelihood estimation (MLE)—in accurately capturing factuality Ouyang et al. (2022); Allen-Zhu and Li (2023); Azaria and Mitchell (2023); Tian et al. (2023a), a recent study Tian et al. (2023a) introduces the LLM’s internal factuality signals as training rewards to guide the models towards factuality. Given that the origin of a LLM’s hallucinations is intrinsically linked to its confidence A lower confidence score corresponds to a greater likelihood of hallucinated facts. Huang et al. (2023), Tian et al. (2023a) employs consistency-based confidence regarding the factual correctness over the generate responses Kuhn et al. (2023); Manakul et al. (2023) as the factuality signals. Nevertheless, such consistency-based confidence remains rely on the model’s generation ability, which might be non-reflective on model’s internal knowledge. Despite the challenges faced by an LLM in directly “telling” the correct response, it has showed potential in “evaluating” its generated responses Kadavath et al. (2022); Saunders et al. (2022). As depicted in Figure 1, the LLM is capable of identifying factual inaccuracies within the responses it generates, with a reasonable prediction confidence. Such self-evaluation, i.e., directly prompting the model itself about internal knowledge awareness, might be a more effective approach to factuality estimation.

In this paper, we introduce a self-alignment framework, Self-Alignment for Factuality, which harnesses an LLM’s self-evaluation capability to mitigate hallucinations. Our approach encourages an LLM to generate prediction confidence scores pertaining to the factuality of its own generated responses through self-asking. Subsequently, these scores are utilized as reward signals to fine-tune the model using the Direct Preference Optimization (DPO) algorithm Rafailov et al. (2023). Specifically, we incorporate a factuality self-evaluation component, Self-Eval, which prompts the LLM to directly validate its responses based on its internal knowledge. To bolster the LLM’s universal self-evaluation ability, we introduce SK-Tuning to enhance the LLM’s internal knowledge awareness, i.e., prediction confidence estimation and calibration The confidence in a prediction is expected to accurately reflect the probability that the prediction is correct. Guo et al. (2017); Tian et al. (2023b), through sufficient tuning across heterogeneous knowledge-oriented tasks.

We assess the effectiveness of the proposed Self-Alignment for Factuality framework on three crucial knowledge-extensive tasks for LLMs, namely Multi-Choice Question-Answering (MCQA), short-form open-ended generation, and long-form open-ended generation, using two benchmark datasets: TruthfulQA Lin et al. (2022) and BioGEN Min et al. (2023a). The results show that, solely relying on the model’s internal knowledge, Self-Alignment for Factuality significantly enhances the factual accuracy of Llama family models Touvron et al. (2023a, b) across all three tasks, notably surpassing the representation-editing methods Chuang et al. (2023); Li et al. (2023c) and the recent work with consistency-based confidence Tian et al. (2023a).

In summary, our contributions are three-fold:

- We propose Self-Alignment for Factuality, a self-alignment strategy that leverages an LLM’s self-evaluation capability to mitigate the model’s hallucinations.

- We introduce SK-Tuning to improve an LLM’s confidence estimation and calibration, thereby enhancing its self-evaluation ability.

- We show the efficacy of Self-Alignment for Factuality on three crucial tasks using TruthfulQA and BioGEN, significantly improving factual precision over all compared methods.

2 Related work

Hallucinations in LLMs.

Hallucinations in LLMs occur when generated content, is seemingly plausible, however deviates from actual world knowledge Chen et al. (2023b); Li et al. (2023a); Zhang et al. (2023c); Tonmoy et al. (2024). In this study, we align with the perspective that an LLM’s acquired knowledge should mirror established facts Yang et al. (2023). We focus on a specific type of “unfaithful hallucination” where LLMs produce factually incorrect statements, even when possessing relevant knowledge Evans et al. (2021); Park et al. (2023); Li et al. (2023b). Rather than broadly targeting the enhancement of LLMs’ factuality Sun et al. (2023); Zhou et al. (2023a); Lightman et al. (2023); Peng et al. (2023); Li et al. (2023d); Mallen et al. (2023); Varshney et al. (2023), our goal is to align LLMs to reliably convey accurate information when they have sufficient knowledge.

<details>

<summary>x2.png Details</summary>

### Visual Description

# Technical Document: LLM Factuality Self-Evaluation and Alignment Workflow

This document describes a three-step technical process for improving the factuality of Large Language Models (LLMs) through response sampling, self-evaluation, and preference-based fine-tuning.

---

## 1. Component Isolation

The image is organized into three primary regions representing a sequential pipeline:

* **Top-Left (Step 1):** Response Sampling.

* **Bottom (Step 2):** Self-Evaluation for Factuality (The core processing stage).

* **Top-Right (Step 3):** Pairwise Preference Data Creation and Fine-tuning.

---

## 2. Detailed Process Flow

### Step 1: Response Sampling

The process begins with a user prompt provided to an LLM.

* **Prompt:** "Write a biography of Jesse Foppert."

* **Outputs:** The LLM generates multiple candidate responses:

* **Response A:** "Jesse Foppert is a former Major League Baseball pitcher who was born on July 20, 1980, in Los Angeles, Pennsylvania, USA ...."

* **Response B:** "Jesse Foppert is an American singer who ..."

### Step 2: Self-Evaluation for Factuality

This stage breaks down the responses into verifiable units to estimate their accuracy.

#### A. Claim Extraction & Question Generation

Response A is processed into "Atomic Claims" and corresponding "Atomic Questions":

| ID | Atomic Claim (C) | Atomic Question (Q) |

| :--- | :--- | :--- |

| 1 | Jesse Foppert is a former Major League Baseball pitcher. | What is Jesse Foppert's profession? |

| 2 | Jesse Foppert was born on July 20, 1980. | On what date was Jesse Foppert born? |

| 3 | Jesse Foppert was born in Los Angeles. | In what city was Jesse Foppert born? |

| 4 | Jesse Foppert was born in Pennsylvania. | In what state was Jesse Foppert born? |

#### B. Factuality Estimation

The system pairs each question with its claim (**Q&C Pairs**) and feeds them back into an LLM for verification. The LLM outputs a probability score **P(True)**:

* **Q1 + C1:** 0.87 (Marked with a Green Checkmark - **True**)

* **Q2 + C2:** 0.10 (Marked with a Red X - **False**)

* **Q3 + C3:** 0.08 (Marked with a Red X - **False**)

* **Q4 + C4:** 0.95 (Marked with a Green Checkmark - **True**)

#### C. Overall Evaluation

The individual scores are aggregated into an average probability (**Ave-P**) for each response:

* **Response A:** Ave-P = 0.82

* **Response B:** Ave-P = 0.21

### Step 3: Pairwise Preference Data Creation and Fine-tuning

The final stage uses the evaluation scores to align the model.

* **Ranking:** The system compares the responses based on their factuality scores. Since 0.82 > 0.21, **Response A** is ranked higher than **Response B** (indicated by the `>` symbol).

* Response A (Green background) is the "Winner" (Gold Trophy icon).

* Response B (Red background) is the "Loser".

* **Alignment:** This preference data is used to "Fine-tune via DPO/RL" (Direct Preference Optimization or Reinforcement Learning).

* **Outcome:** The process results in an **Aligned LLM** (represented by a white wolf/husky icon).

---

## 3. Visual and Symbolic Annotations

* **Color Coding:**

* **Blue highlights:** Used for correct or professional information (e.g., "former Major League Baseball pitcher", "Los Angeles").

* **Red/Pink highlights:** Used for incorrect or conflicting information (e.g., "Pennsylvania" in the context of Los Angeles, or low-probability claims).

* **Icons:**

* **OpenAI Logo:** Indicates the use of a model (likely GPT-based) for claim extraction and question generation.

* **Wolf/Husky Icon:** Represents the internal LLM being evaluated and aligned.

* **Check/X Marks:** Indicate the binary result of the factuality estimation threshold.

* **Directional Flow:** Blue arrows indicate the primary data flow from sampling to evaluation, then to ranking, and finally to the alignment of the model.

</details>

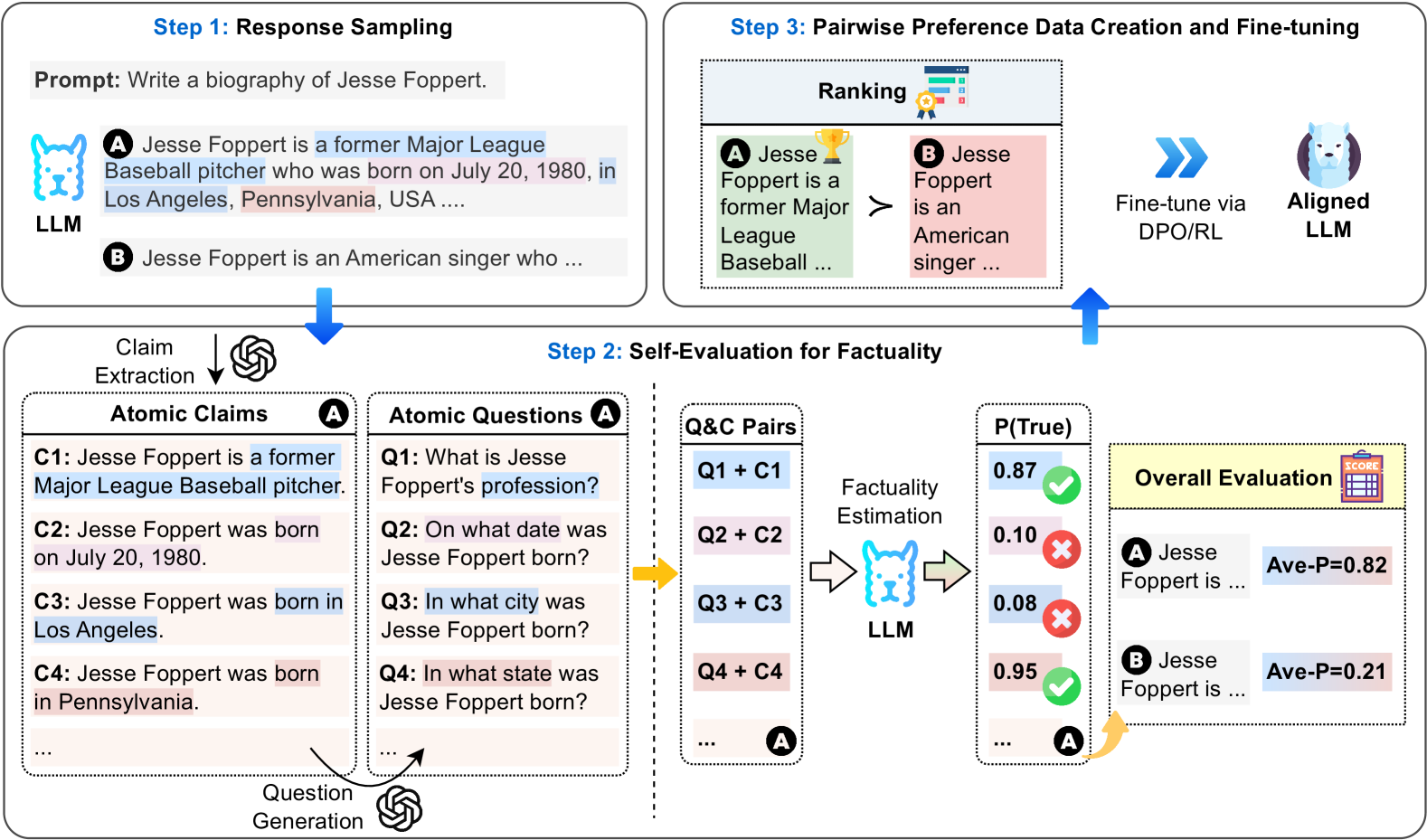

Figure 2: A diagram illustrating the three steps of our Self-Alignment for Factuality (in long-form text generation task): $(\textup{\it i})$ Step 1: Generate initial responses for preference data collection. $(\textup{\it ii})$ Step 2: Estimate the factuality of the responses through self-evaluation for preference labeling. $(\textup{\it iii})$ Step 3: Create pairwise preference data and fine-tune the LLM using DPO.

Hallucination Mitigation.

Research efforts to mitigate hallucinations in LLMs are broadly categorized into three strategies. $(\textup{\it i})$ In post-hoc correction, recent works have explored self-consistency techniques for model refinement Kadavath et al. (2022); Ren et al. (2023); Tian et al. (2023b); Madaan et al. (2023); Dhuliawala et al. (2023); Wang et al. (2023a). These methods, rooted in uncertainty estimation, aim at improving factual accuracy by analyzing the consistency among multiple responses generated by the LLM. However, their effectiveness varies with the model’s intrinsic capabilities. $(\textup{\it ii})$ Inference-time intervention approaches focus on manipulating LLMs’ internal representations to guide them towards factuality Li et al. (2023b); Chuang et al. (2023); Li et al. (2023c); Zhang et al. (2023b). These methods show promise but often rely on domain-specific data, limiting their generalizability. $(\textup{\it iii})$ Alignment training, as a third strategy, directly optimizes LLMs to produce factual statements. This involves either supervised fine-tuning with high-quality datasets Wang et al. (2023b) or reinforcement learning from human feedback (RLHF) Ouyang et al. (2022); Zhang et al. (2022b). While effective, these methods can be resource-intensive due to the need for extensive human labels.

Our research parallels two significant studies in the field by Yang et al. (2023) and Tian et al. (2023a). While Yang et al. (2023) focus on honesty-based fine-tuning, empowering LLMs to admit limitations by acknowledging “I don’t know”, our Self-Alignment for Factuality approach is distinctively geared towards guiding LLMs to articulate truthful information when they hold pertinent knowledge. In contrast to Tian et al. (2023a), which relies on a consistency-based method for confidence estimation, our work introduces Self-Eval-SKT, which is trained on a broad spectrum of heterogeneous data, and designed to enhance confidence estimation capabilities significantly. Experimental results from our study demonstrate a notable improvement in the accuracy and reliability of factual information presented by LLMs. We provide a brief summary in Appendix A.

3 Self-Alignment for Factuality

In this section, we introduce the proposed framework. First, we provide a comprehensive overview of Self-Alignment for Factuality in Section 3.1. Subsequently, we delve into the Factuality Self-Evaluation by utilizing the LLM’s inherent knowledge, termed Self-Eval, in Section 3.2. Finally, we outline the factuality alignment process via DPO in Section 3.3.

3.1 Overview

Self-Alignment for Factuality generally operates in the following three steps, as depicted in Figure 2:

Step 1: Generating Initial Responses for Preference Data Collection.

For a given prompt $x$ , we generate multiple candidate responses $\left\{y_{m}\right\}_{m=1}^{M}$ , where $M$ represents the sample size. These are produced from a base LLM guided by a policy $\pi_{\mathrm{ref}}\left(y\mid x\right)$ . To ensure the generation of coherent and relevant responses, we employ few-shot examples as prompts.

Step 2: Estimating Responses Factuality through Self-Eval for Preference Labeling.

In this step, we evaluate the factuality of generated candidate responses $\left\{y_{m}\right\}_{m=1}^{M}$ for a given prompt $x$ by leveraging the intrinsic knowledge of LLMs. In long-form response generation tasks, e.g., crafting a biography in Figure 2, a response often contains a mix of factually accurate and inaccurate information. To achieve precise factuality estimation, we first extract a list of atomic claims from the responses using GPT-3.5-turbo OpenAI (2022); Min et al. (2023a), with each claim representing a distinct piece of information Liu et al. (2023d). Subsequently, we employ GPT-3.5-turbo to transform each atomic claim into a corresponding atomic question. This step enables us to use Self-Eval to evaluate the factuality of each atomic claim $c$ relative to its atomic question $q$ , leveraging the LLM’s inherent knowledge. This process is denoted as $p(\text{True}|q,c)$ . Finally, we calculate the average of the obtained factuality scores for individual claims, resulting in a final factuality score, Avg- $p$ (True), for the candidate response.

Step 3: Creating Preference Data and Aligning LLM with DPO.

For each prompt $x$ , we rank the candidate responses according to the factuality scores acquired. Then, we select the top $\alpha$ responses as the preferred responses $y_{w}$ and the remaining responses as the dis-preferred ones $y_{l}$ , resulting in a set of preference pairs $\mathcal{D}=\left\{(x,y_{w},y_{l})\right\}$ . The total number of preference pairs is $\alpha M*(1-\alpha)M-K$ , where $K$ represents the number of pairs with equal scores. Finally, we align the LLM with these preference data via DPO.

<details>

<summary>x3.png Details</summary>

### Visual Description

# Technical Document Extraction: SK-Tuning Training Pipeline

This document describes a two-step technical process for sampling answers, verifying factuality, and creating training samples for "SK-Tuning." The image is a flow diagram divided into two primary modules.

---

## Step 1: Sampling Answers and Verifying Factuality

This module describes the initial data generation and labeling phase.

### 1.1 Input and Generation

* **Question:** "What is Westlife's first album?"

* **Process:** The question is fed into an **LLM** (represented by a blue llama icon).

* **Action:** **Multiple Sampling** is performed.

* **Output:** A stack of answer cards labeled **"x 30"**, indicating 30 samples were generated.

* **Sample Text:** "Answer: Westlife is the debut studio album by Irish boy band Westlife."

### 1.2 Factuality Verification

The generated answers undergo a **Factuality Verification** process (represented by a signpost icon with a green check and red 'x'). This results in three categorized **Answer Samples**:

| ID | Answer Text | Factuality Status | Quantity |

| :--- | :--- | :--- | :--- |

| **1** | "Westlife is the debut studio album by Irish boy band Westlife." | **True** (Green checkmark/Blue background) | x 20 |

| **2** | "Coast to Coast." | **False** (Red 'x'/Pink background) | x 4 |

| **3** | "World of Our Own is their first studio album." | **False** (Red 'x'/Pink background) | x 6 |

---

## Step 2: Creating True/False Training Samples for SK-Tuning

This module describes how the verified samples from Step 1 are converted into preference-based training examples.

### 2.1 Component Definitions

* **Q&A Prompts:** Combinations of the original question and the sampled answers.

* **R+ (Positive Predictions):** Represented by a teal box labeled **A**.

* **R- (Negative Predictions):** Represented by a pink box labeled **B**.

* **Preference Operator:** The symbol **$\succ$** (greater than/preferred to) is used to show the relationship between R+ and R-.

* **Legend:**

* **Label:** A: True / B: False

* **R+:** Positive Predictions

* **R-:** Negative Predictions

### 2.2 Training Example Construction

The system creates pairs where a "True" label is preferred over a "False" label.

| Prompt Composition | Preference Logic | Quantity |

| :--- | :--- | :--- |

| **Question** + **Answer 1** (True) | **A** (True) $\succ$ **B** (False) | x 20 |

| **Question** + **Answer 2** (False) | **B** (False) $\succ$ **A** (True) | x 4 |

| **Question** + **Answer 3** (False) | **B** (False) $\succ$ **A** (True) | x 6 |

**Note on Logic:**

* For the correct answer (1), the model is trained to predict "True" (A) over "False" (B).

* For the incorrect answers (2 and 3), the model is trained to predict "False" (B) over "True" (A).

---

## Summary of Flow

1. **Generation:** An LLM generates 30 responses to a specific factual question.

2. **Labeling:** Responses are manually or automatically verified. In this case, 20 are correct and 10 are incorrect (split 4/6).

3. **Formatting:** These are formatted into "Q&A Prompts."

4. **Optimization:** The prompts are used to create 30 training examples for SK-Tuning, where the objective is to rank the correct factuality label (True for correct statements, False for incorrect statements) higher than the incorrect label.

</details>

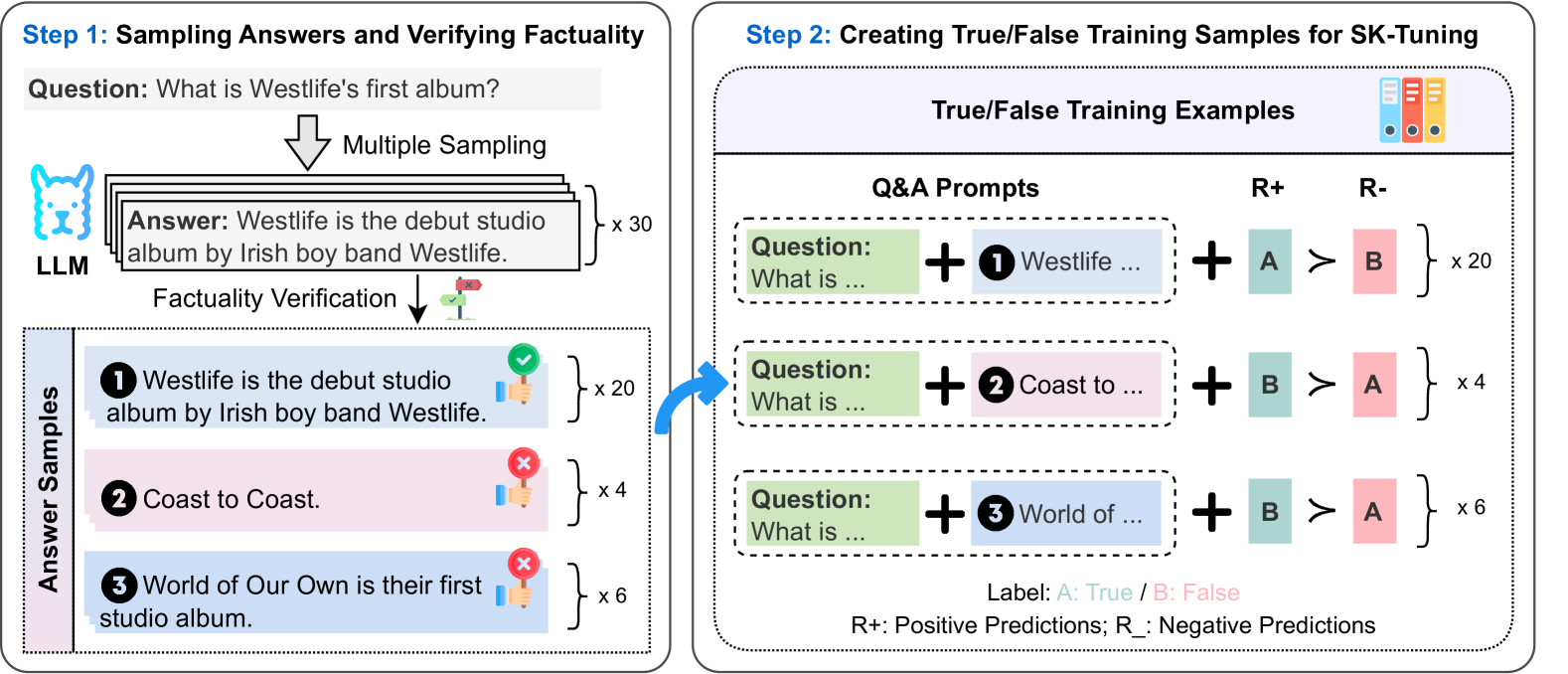

Figure 3: The process of constructing training data for SK-Tuning.

3.2 Factuality Self-Evaluation

At the core of Self-Alignment for Factuality is the design of a factuality self-evaluation component, denoted as Self-Eval. Given a prompt $q$ and a LLM $\mathcal{M}$ , Self-Eval, built on $\mathcal{M}$ , is tasked with assessing the validity of $\mathcal{M}$ ’s response $a$ , leveraging exclusively its own internal knowledge. This process is quantified as the probability $p(\text{True}|q,a)$ , which is formulated as follows:

$$

\displaystyle p(\text{True}|q,a)=f_{\mathcal{M}}(q,a) \tag{1}

$$

There exist various methodologies to parameterize $f_{\mathcal{M}}(q,a)$ . For instance, LLMs have demonstrated capabilities in discerning the extent of their knowledge Kadavath et al. (2022). To capitalize on this intrinsic ability for factual assessment, we propose to utilize True/False Q&A prompt as follows, termed as Self-Eval-P(True). This prompt facilitates the LLM’s self-evaluation of factuality based on its inherent knowledge

{mdframed}

[backgroundcolor=gray!10, leftmargin=0pt, innerleftmargin=5pt, innerrightmargin=5pt, linecolor=white] Instruction: Please evaluate the truthfulness of the proposed answer based on the given question and internal knowledge. <Few-shot Prompts> Question: <Question> Proposed Answer: <Answer> Is the proposed answer: A. True B. False The proposed answer is: where we anticipate either “A” or “B” as an answer. The probability $p$ (True) signifies the extent to which an LLM deems a generated answer (claim) valid. In line with Kadavath et al. (2022), we prepend few-shot prompts to encourage well-structured answers.

Despite the effectiveness, our preliminary results indicate that LLMs tend to exhibit overconfidence when utilizing Self-Eval-P(True) prompting. This observation is in line with the findings presented by Tian et al. (2023b). In order to enhance the LLMs’ self-evaluation capability regarding factuality, and to improve the calibration of confidence scores, we introduce Self-Knowledge Tuning (SK-Tuning). It is designed to augment LLMs’ ability to accurately assess the factuality of their own generated responses across a diverse range of tasks. Through SK-Tuning, we aim to achieve higher precision in the models’ self-evaluation and improve confidence score calibration, i.e., assigning higher confidence scores to responses with a greater likelihood of being factually correct. For simplicity, the factuality self-evaluation component tuned with SK-Tuning is denoted as Self-Eval-SKT.

SK-Tuning

The challenge of SK-Tuning with LLMs lies in creating training examples that can accurately reflect the identification of specific knowledge pieces. To address this, we propose to build self-knowledge-guided training data, as illustrated in Figure 3. Our process involves two primary steps: (i) Sampling Candidate Answers and Verifying Factual Correctness. For each question $q$ , we generate a set of candidate answers $\left\{a_{k}\right\}_{k=1}^{K}$ using few-shot prompting. We then assess the factual correctness of each answer by comparing it to the golden answer, employing the bidirectional entailment approach with the Deberta-Large-MNLI model He et al. (2021). Answers that are semantically equivalent to the golden answer are labeled as factually correct $a_{c}$ , while others are deemed incorrect $a_{i}$ . (ii) Creating True/False Training Examples. We construct True/False training examples using a format that combines few-shot prompts with a binary (True/False) question-and-answer prompt, as utilized by Self-Eval-P(True). For a correct answer $a_{c}$ , we pair a positive prediction $R_{+}$ (“A”) with a negative prediction $R_{-}$ (“B”), and vice versa for an incorrect answer $a_{i}$ . This approach results in a dataset $\mathcal{D}_{\psi}$ comprising prediction pairs, with duplicates maintained to approximate the model’s knowledge over the question, which helps improving the confidence calibration (Appendix LABEL:sec:no_dup_calib).

Following the assembly of $\mathcal{D}_{\psi}$ , we proceed to fine-tune the LLM on this pairwise prediction data. The fine-tuning aims to minimize a loss function specifically designed to enhance the model’s ability to leverage its inherent knowledge for accurate self-knowledge evaluation, as follows:

$$

\displaystyle\mathcal{L}_{\mathrm{\phi}}= \displaystyle-\mathbb{E}_{\left(q,a,r_{+},r_{-}\right)\sim\mathcal{D}_{\psi}}%

\left[\log\sigma\left(\log\pi_{\phi}\left(r_{+}\mid q,a\right)\right.\right. \displaystyle\left.\left.-\log\pi_{\phi}\left(r_{-}\mid q,a\right)\right)%

\right], \tag{2}

$$

where $\pi_{\phi}$ is the LLM trained for factuality estimation and $\sigma$ denotes the logistic function.

3.3 Alignment Tuning with DPO

After obtaining the preference data over candidate responses $\mathcal{D}=\left\{(x,y_{w},y_{l})\right\}$ , where each tuple represents a choice preference between winning and losing responses to few-shot prompts, we proceed to the stage of alignment tuning for improving factuality. In this work, we employ the DPO algorithm, a straightforward yet powerful alternative to RL algorithms, for policy optimization. Specifically, DPO employs a standard cross-entropy objective for direct policy optimization, as follows:

$$

\displaystyle\mathcal{L}_{\theta}= \displaystyle-\mathbb{E}_{\left(x,y_{w},y_{l}\right)\sim\mathcal{D}}\left[\log%

\sigma\left(\beta\log\frac{\pi_{\theta}\left(y_{w}\mid x\right)}{\pi_{\mathrm{%

ref}}\left(y_{w}\mid x\right)}\right.\right. \displaystyle\left.\left.-\beta\log\frac{\pi_{\theta}\left(y_{l}\mid x\right)}%

{\pi_{\mathrm{ref}}\left(y_{l}\mid x\right)}\right)\right], \tag{3}

$$

where the model policy $\pi_{\theta}$ is initialized from the base reference policy $\pi_{\mathrm{ref}}$ , $\beta$ is a parameter controlling the deviation from $\pi_{\mathrm{ref}}$ , and $\sigma$ denotes the logistic function.

4 Experiments

In this section, we evaluate the efficacy of our proposed framework across three distinct tasks: MCQA, short-form open-ended generation, and long-form open-ended generation. Following Touvron et al. (2023b); Li et al. (2023b); Chuang et al. (2023), the chosen tasks narrowed to knowledge-intensive tasks that necessitate the extraction of factual knowledge from an LLM to successfully complete these tasks.

4.1 Setup

Datasets and Evaluation Metrics. For the MCQA task, we utilize the TruthfulQA dataset Lin et al. (2022). For short-form open-ended generation tasks, we use generation formulation of TruthfulQA and BioGEN for the long-form one Min et al. (2023b). In evaluating performance on TruthfulQA, we report Accuracy for the MCQA task, alongside metrics of truthfulness (True), informativeness (Info), and a composite True ∗ Info score, all evaluated using a fine-tuned GPT-3 model Lin et al. (2022). For assessments on BioGEN, we present the FActScore percentage and the Respond ratio. Moreover, we quantify the correctness of generated content by reporting the number of accurate (cor) and inaccurate facts (incor) per response, following the methodology outlined by Tian et al. (2023a). Comprehensive descriptions of tasks, datasets, and evaluation criteria are detailed in Appendix B. Additionally, it is crucial to mention that for open-ended text generation tasks, self-alignment approaches only use the prompts provided in the datasets.

Baselines. We compare our methods with the following representative approaches We report the mean results of three different runs.:

- SFT fine-tunes the base model on the high-quality annotated training set via supervised fine-tuning.

- ITI Li et al. (2023b) edits internal representations by shifting model activations along learned factuality-related directions.

- DoLa Chuang et al. (2023) edits internal representations by contrasting output distributions from different layers within the model.

- FactTune-MC Tian et al. (2023a) optimizes the base model using DPO on the preference data labeled with consistency-based confidence scores.

Implementation Details. $(\textup{\it i})$ Implementation of the Self-Alignment for Factuality framework: We employ Llama-7B Touvron et al. (2023a) and Llama2-7B Touvron et al. (2023b) as the base LLMs and fine-tune these models on the constructed preference data for five epochs. More implementation details are shown in Appendix C. $(\textup{\it ii})$ Implementation of SK-Tuning: We utilize Wikipedia, which is a frequently employed pre-training data source for LLMs Zhang et al. (2022a); Touvron et al. (2023b); Shi et al. (2023), and the BIG-bench dataset Srivastava et al. (2023) in our study. Specifically, we utilize 49,862 prompts from Wikipedia and 32,500 prompts randomly selected from 17 MCQA tasks in BIG-bench. More fine-tuning details are provided in Appendix C.

4.2 Main Results

$$

\mathtt{Acc.} \mathtt{True} \mathtt{Info} \mathtt{True^{*}Info} \mathtt{Cor.} \mathtt{Incor.} \mathtt{Res.} \mathtt{FActScore} \tag{2023}

$$

Table 1: Few-shot evaluation results on three distinct tasks: 6-shot prompting results of the MCQA and short-form generation tasks on TruthfulQA, and 5-shot prompting results of the long-form generation task on BioGEN. We use the default QA prompt as in Lin et al. (2022); Li et al. (2023b); Chuang et al. (2023) on TruthfulQA and the prompt generated by GPT-4 OpenAI (2023) on BioGEN (Table 10 in Appendix C). Results on TruthfulQA marked with an asterisk are cited from Li et al. (2023b) and Chuang et al. (2023). The remaining results of DoLa and FactTune-MC are reproduced following Chuang et al. (2023) and Tian et al. (2023a).

Table 6 presents the main evaluation results across three distinct tasks. We have the following observations:

Self-alignment for factuality is effective on mitigating hallucinations. Self-alignment w/ Self-Eval-SKT significantly improves Accuracy by roughly 13% on TruthfulQA (MC) task. Moreover, self-alignment w/ Self-Eval-SKT attains the highest True ∗ Info (45.75% for Llama-7B and 53.42% for Llama2-7B) on TruthfulQA (short-form generation) task and exhibits substantial improvement in FActScore (approximately 4%) for BioGEN (long-form generation) task. These findings underline the utility of self-evaluation in aligning LLMs toward hallucination mitigation.

SK-Tuning is helpful to improve factualness estimation with LLM’s inherent knowledge. Enhancing self-evaluation capabilities through SK-Tuning enables self-alignment with Self-Eval-SKT to achieve higher factual accuracy compared to Self-Eval-P(True). In addition, Self-alignment w/ Self-Eval-SKT considerably outperforms w/ Self-Eval-P(True) regarding True ∗ Info (surpassing by $12\%$ ) and FActScore (exceeding by $4\%$ ). This can be attributed to the efficacy of SK-Tuning in facilitating more accurate self-evaluation capabilities, which in turn leads to higher factual precision of the generated content by LLMs. We provide an in-depth analysis in Section D. Moreover, self-alignment w/ Self-Eval-SKT evidently surpasses FactTune-MC It is worth noting that the discrepancy between the reported results of FactTune-MC and the results presented in Tian et al. (2023a) may be attributed to the considerably small number of training prompts in this study., emphasizing the advantages of our proposed Self-Eval-SKT for confidence estimation over the sampling-based approach. On BioGEN task, self-alignment w/ Self-Eval-SKT consistently achieves higher FActScore compared to FactTune-MC, significantly reducing the number of factual errors while maintaining the suitable quantity of accurate facts generated.

In addition, without requiring any labeled domain-specific (a.k.a. in-domain) data, self-alignment w/ Self-Eval-SKT considerably surpasses the internal representation editing methods – ITI and DoLa, by obtaining the highest True ∗ Info while exhibiting remarkable True and Info scores on TruthfulQA. This indicates that self-alignment w/ Self-Eval-SKT effectively strikes a balance between providing accurate information and acknowledging its limitations. Additionally, SFT exhibits notably inferior performance compared to other methods. This observation aligns with the findings in Li et al. (2023b); Tian et al. (2023a). A possible explanation Schulman (2023), is that directly supervised fine-tuning LLMs on high-quality data may inadvertently induce hallucinations by forcing LLMs to answer questions that exceed their knowledge limits.

4.3 Pairwise Evaluation

<details>

<summary>x4.png Details</summary>

### Visual Description

# Technical Data Extraction: Pairwise Comparisons Win Rate Chart

## 1. Document Overview

This image is a grouped bar chart illustrating the performance of a model across four distinct metrics during pairwise comparisons against two different baselines. The chart uses a color-coded system to differentiate between evaluation criteria.

## 2. Component Isolation

### A. Header/Legend

* **Location:** Top-right quadrant of the chart area.

* **Legend Items (Color-to-Label Mapping):**

* **Slate Blue:** Factuality

* **Light Blue:** Helpfulness

* **Pink:** Relevance

* **Yellow/Orange:** Naturalness

### B. Main Chart Area (Axes)

* **Y-Axis (Vertical):**

* **Title:** Win Rate (%)

* **Scale:** 40 to 100

* **Major Tick Marks:** 40, 50, 60, 70, 80, 90, 100

* **Gridlines:** Horizontal dashed lines at every 10-unit interval.

* **X-Axis (Horizontal):**

* **Title:** Pairwise Comparisons

* **Categories:**

1. vs. FactTune-MC

2. vs. w/ Self-Eval-P(True)

### C. Data Points (Bar Values)

Each bar is labeled with its specific numerical value at the top.

#### Category 1: vs. FactTune-MC

* **Factuality (Slate Blue):** 72

* **Helpfulness (Light Blue):** 66

* **Relevance (Pink):** 68

* **Naturalness (Yellow):** 67

#### Category 2: vs. w/ Self-Eval-P(True)

* **Factuality (Slate Blue):** 65

* **Helpfulness (Light Blue):** 68

* **Relevance (Pink):** 62

* **Naturalness (Yellow):** 51

---

## 3. Data Table Reconstruction

| Metric | vs. FactTune-MC (Win Rate %) | vs. w/ Self-Eval-P(True) (Win Rate %) |

| :--- | :---: | :---: |

| **Factuality** | 72 | 65 |

| **Helpfulness** | 66 | 68 |

| **Relevance** | 68 | 62 |

| **Naturalness** | 67 | 51 |

---

## 4. Trend Analysis and Observations

### Trend Verification

* **Factuality:** Shows a downward trend between the two comparisons, dropping from the highest overall value (72) to 65.

* **Helpfulness:** Shows a slight upward trend, increasing from 66 to 68. This is the only metric that improves in the second comparison.

* **Relevance:** Shows a downward trend, decreasing from 68 to 62.

* **Naturalness:** Shows a significant downward trend, dropping sharply from 67 to 51.

### Key Findings

1. **Dominant Metric:** "Factuality" is the strongest performing metric when compared against "FactTune-MC" (72%).

2. **Weakest Metric:** "Naturalness" is the lowest performing metric overall, specifically in the "vs. w/ Self-Eval-P(True)" comparison, where it barely maintains a majority win rate at 51%.

3. **Comparative Difficulty:** The baseline "w/ Self-Eval-P(True)" appears to be a more challenging opponent for the model in terms of Factuality, Relevance, and Naturalness, as the win rates are lower across those three categories compared to the "FactTune-MC" baseline.

4. **Consistency:** All win rates across all categories remain above 50%, indicating the primary model won more often than both baselines in every measured metric.

</details>

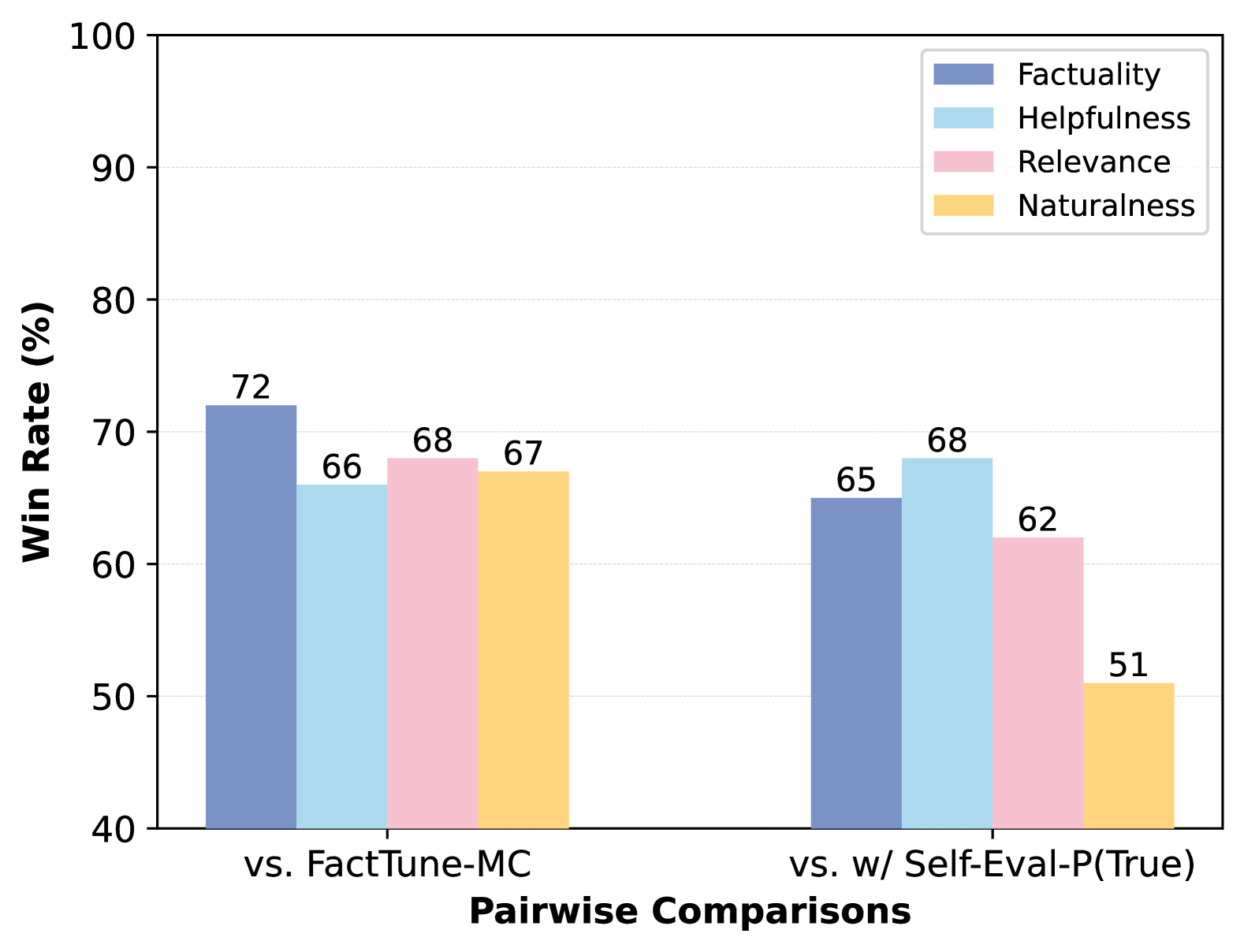

Figure 4: Results of pairwise comparisons on BioGEN across four dimensions: factuality, helpfulness, relevance and naturalness, as evaluated by GPT-4. The left and right sections present the win rates of Self-Alignment for Factuality w/ Self-Eval-SKT against FactTune-MC and Self-Alignment for Factuality w/ Self-Eval-P(True), respectively.

We conduct pairwise comparisons on the generated biographies in Section 4.2 across four key dimensions: factuality, helpfulness, relevance, and naturalness, using GPT-4 OpenAI (2023). The prompt employed can be found in Appendix E. As illustrated in Figure 4, we observe that self-alignment w/ Self-Eval-SKT significantly outperforms FactTune-MC and self-alignment w/ Self-Eval-P(True) (with Llama2-7B as the base model) with considerable winning rates across all dimensions. Examples of qualitative studies are shown in Appendix F.

4.4 Self-Alignment with Varying Factuality Estimation Methods

| Model | TruthfulQA | | | |

| --- | --- | --- | --- | --- |

| % $\mathtt{MC}$ $\mathtt{acc.}$ | % $\mathtt{True}$ | % $\mathtt{Info}$ | % $\mathtt{True^{*}Info}$ | |

| Llama-7B | 25.60 | 30.40 | 96.30 | 26.90 |

| w/ SE | 37.26 | 33.29 | 98.22 | 31.78 |

| w/ USC | 38.63 | 41.92 | 96.16 | 38.77 |

| w/ Self-Eval-SKT | 45.48 | 47.40 | 97.26 | 45.75 |

| Llama2-7B | 28.90 | 50.41 | 88.22 | 39.04 |

| w/ SE | 42.47 | 44.38 | 97.81 | 42.33 |

| w/ USC | 40.55 | 44.66 | 98.77 | 43.84 |

| w/ Self-Eval-SKT | 44.10 | 55.07 | 98.08 | 53.42 |

Table 2: Evaluation results of Self-Alignment for Factuality that employ various approaches for confidence estimation.

Setup. To bolster the study of Self-Alignment for Factuality, we introduce two variants, i.e., self-alignment w/ SE and w/ USC, which adopt Semantic Equivalence Kuhn et al. (2023) and Universal Self-Consistency Chen et al. (2023c) for confidence estimation, respectively. In particular, $(\textup{\it i})$ self-alignment w/ SE clusters the initial responses based on semantic equivalence and then uses the largest cluster of semantically equivalent responses as the preferred responses, while treating the remaining responses as dis-preferred ones. $(\textup{\it ii})$ self-alignment w/ USC adopts the response cluster containing the most consistent response among the candidate responses, as identified using GPT-3.5-turbo, as the preferred responses.

Results. Despite exhibiting lower performance than self-alignment with Self-Eval-SKT, both variants consistently improve factuality over the base models in the MCQA task and open-ended generation tasks, which further reveals the effectiveness of SK-Tuning on improving factuality estimation. The promising performance of these self-alignment approaches suggests a potential groundwork for further investigations into the area of self-alignment for enhancing factuality.

5 In-dpeth Analysis of Self-Eval

| Task | Model | Multi-choice QA Datasets | | | | |

| --- | --- | --- | --- | --- | --- | --- |

| TruthfulQA (Full) | CommonSenseQA | OpenBookQA (Closed) | MedQA | MMLU | | |

| Selection (Metric: $\mathtt{Acc.}$ ) | Llama2-7B | 25.49 | 54.30 | 55.00 | 30.71 | 44.76 |

| Self-Eval-P(True) | 32.64 | 64.95 | 65.40 | 29.69 | 43.29 | |

| Self-Eval-SKT | 43.97 | 70.43 | 67.40 | 36.37 | 49.88 | |

| Discrimination (Metric: $\mathtt{AUROC}$ ) | Self-Eval-P(True) | 51.33 | 79.76 | 71.66 | 52.75 | 59.52 |

| Self-Eval-SKT | 59.02 | 84.65 | 75.72 | 60.40 | 67.07 | |

Table 3: Following Taylor et al. (2022); Singhal et al. (2023), we report the 5-shot results on MCQA tasks. Note that the results of Llama2-7B are reported using the lettered choices format (examples are provided in Appendix D Table 6), as Kadavath et al. (2022); Rae et al. (2022) suggest that models are well-calibrated in this format The results on CommonSenseQA (7-shot), OpenBookQA (0-shot), and MMLU (5-shot) are reported as 57.8%, 58.6%, and 45.3%, respectively, in Touvron et al. (2023b)..

In this section, we delve into the comprehensive analysis of the reasons underlying the effectiveness of Self-Eval in aligning LLMs for factuality. Specifically, following Kadavath et al. (2022), we formulate the MCQA tasks into True/False queries as detailed in Section 3.2. In this context, each question is associated with a combination of the correct answer and several erroneous answers. Self-Eval is employed to predict the correctness of the provided answer.

5.1 Setup

Datasets. We employ five well-studied MCQA datasets: TruthfulQA, CommonSenseQA Talmor et al. (2019), OpenBookQA (Closed-Form) Mihaylov et al. (2018), MedQA (USMLE) Pal et al. (2022), and Massive Multitask Language Understanding (MMLU) Hendrycks et al. (2021).

Evaluation Metrics. We assess the capability on factuality estimation in $(\textup{\it i})$ selecting the correct answer among the answer options using Accuracy Kadavath et al. (2022), i.e., the probability that the correct answer has the highest confidence score among all answer options; $(\textup{\it ii})$ distinguishing the correct answer and a randomly sampled incorrect answer using Area Under the Receiver Operating Characteristic curve (AUROC) Kuhn et al. (2023), i.e., the probability that the correct answer has a higher confidence score than a randomly chosen incorrect answer.

5.2 Results

SK-Tuning shows strong efficacy in improving the model’s confidence estimation. We present the evaluation results in Table 9. Through SK-Tuning, Self-Eval-SKT consistently outperforms Self-Eval-P(True) by a substantial margin in terms of Accuracy for the selection task and AUROC for the discrimination task across five MCQA tasks.

Factuality evaluation is easier than factual generation. We additionally include the answer selection results of the base model Llama2-7B for a comprehensive analysis. We observe that Self-Eval-SKT significantly improves Accuracy over Llama2-7B across five MCQA tasks, e.g., by over 16% on CommonSenseQA and 12% on OpenBookQA (Closed-Form). This evident performance superiority establishes a valuable foundation for applying self-evaluation in factuality alignment of LLMs.

<details>

<summary>x5.png Details</summary>

### Visual Description

# Technical Document Extraction: Reliability Diagram (Calibration Curve)

## 1. Component Isolation

* **Header/Legend:** Located in the top-left quadrant. Contains two labeled data series with corresponding color-coded markers.

* **Main Chart Area:** A 2D plot featuring a dashed diagonal line, two colored line plots with circular markers, and two sets of semi-transparent histograms (bars).

* **Axes:**

* **X-axis (Horizontal):** Labeled "Confidence" ranging from 0.0 to 1.0.

* **Y-axis (Vertical):** Labeled "Frequency" ranging from 0.0 to 1.0.

---

## 2. Metadata and Labels

* **X-axis Title:** Confidence

* **Y-axis Title:** Frequency

* **X-axis Markers:** 0.0, 0.2, 0.4, 0.6, 0.8, 1.0

* **Y-axis Markers:** 0.0, 0.2, 0.4, 0.6, 0.8, 1.0

* **Legend [Top-Left]:**

* **Red Line/Circle:** `Self-P(True)`

* **Blue Line/Circle:** `Self-SKT`

* **Reference Line:** A black dashed diagonal line representing perfect calibration (where Confidence = Frequency).

---

## 3. Data Series Analysis

### Series A: Self-P(True) (Red Line & Pink Bars)

* **Trend Verification:** This series starts at a mid-range confidence (~0.55). It shows a sharp upward slope, crossing the perfect calibration line around Confidence 0.7, and ending near the top-right. It represents a model that is "under-confident" at lower values and becomes more calibrated at higher confidence levels.

* **Data Points (Approximate):**

| Confidence | Frequency |

| :--- | :--- |

| 0.55 | 0.15 |

| 0.65 | 0.48 |

| 0.75 | 0.80 |

| 0.85 | 0.89 |

* **Histogram (Pink):** Concentrated in the high confidence range (0.5 to 0.9).

### Series B: Self-SKT (Blue Line & Light Blue Bars)

* **Trend Verification:** This series spans the entire x-axis. It starts above the diagonal (over-confident/high frequency for low confidence), flattens out significantly between 0.2 and 0.6 confidence, and then slopes upward, ending below the diagonal (under-confident).

* **Data Points (Approximate):**

| Confidence | Frequency |

| :--- | :--- |

| 0.05 | 0.20 |

| 0.15 | 0.45 |

| 0.25 | 0.51 |

| 0.35 | 0.53 |

| 0.45 | 0.50 |

| 0.55 | 0.55 |

| 0.65 | 0.47 |

| 0.75 | 0.54 |

| 0.85 | 0.67 |

| 0.95 | 0.80 |

* **Histogram (Light Blue):** Distributed across the entire range from 0.0 to 1.0, with a notable peak/plateau between 0.1 and 0.6.

---

## 4. Comparative Summary

* **Calibration:** The `Self-P(True)` (Red) model is more closely aligned with the perfect calibration line at high confidence levels (0.7-0.9) compared to `Self-SKT`.

* **Confidence Distribution:** `Self-SKT` (Blue) provides predictions across the full spectrum of confidence, whereas `Self-P(True)` (Red) appears to only produce predictions with confidence scores greater than 0.5.

* **Reliability:** `Self-SKT` exhibits a "plateau" effect where increasing confidence from 0.2 to 0.6 does not result in a significant increase in actual frequency (accuracy), indicating poor calibration in that specific range.

</details>

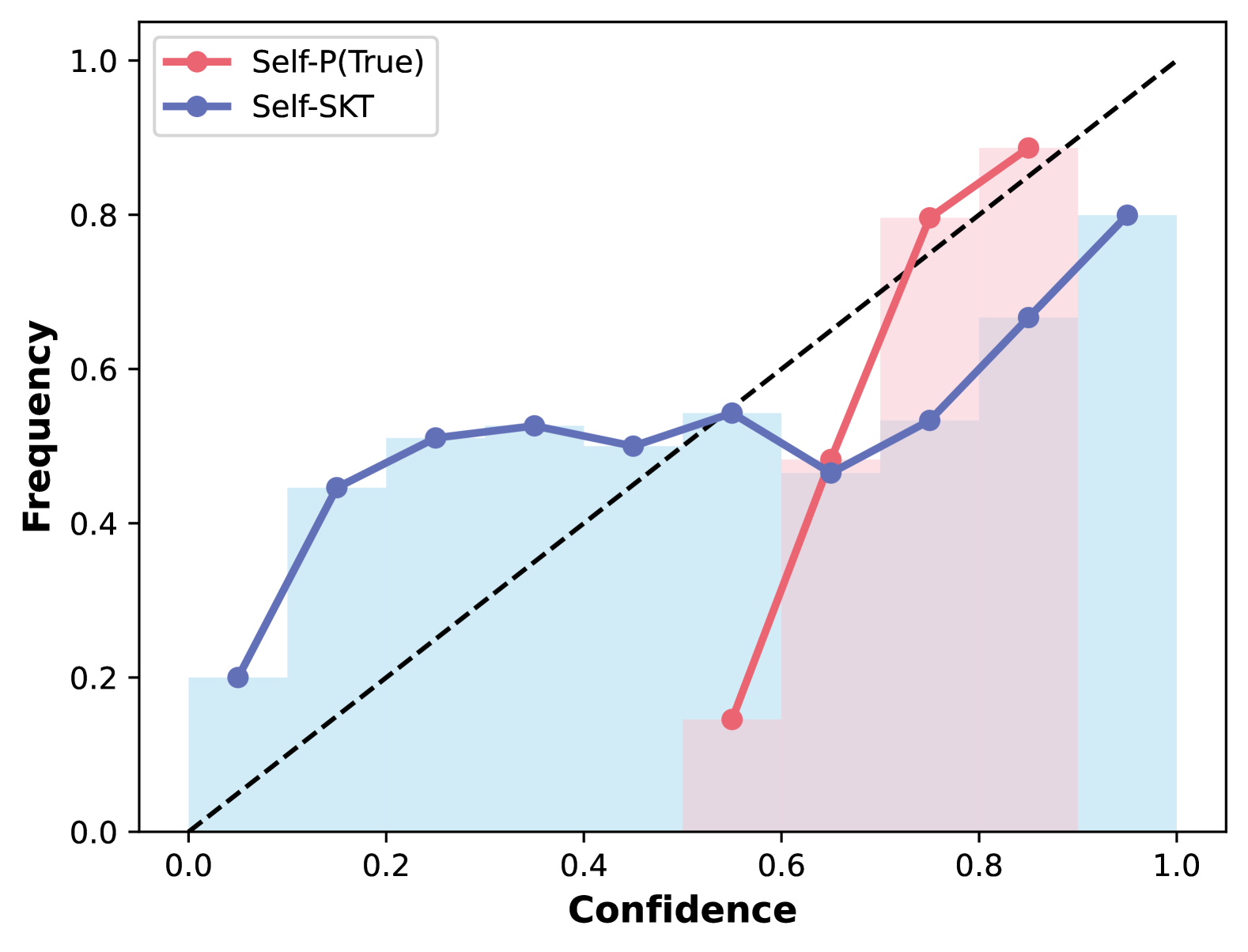

Figure 5: Calibration curves of utilizing Self-Eval-P(True) and Self-Eval-SKT on Llama2-7B in the CommonsenseQA task. Following Kadavath et al. (2022), we plot confidence vs. frequency that a prediction is correct. The dashed line indicates perfect calibration.

SK-Tuning improves the model’s confidence calibration. Following Kadavath et al. (2022); Tian et al. (2023b), we further explore the confidence calibration – a problem that investigates whether the confidence expressed in a prediction accurately reflects the frequency (or likelihood) of that prediction being correct Guo et al. (2017). In Figure 5, we present the calibration curves for utilizing Self-Eval-P(True) and Self-Eval-SKT on Llama2-7B in the CommonSenseQA task. With SK-Tuning, Self-Eval-SKT (represented by the blue line) attains superior calibration of the LLM compared to Self-Eval-P(True) (depicted by the pink line), which demonstrates substantial overconfidence. The frequency within each bin tends to fall below its corresponding confidence level.

6 Conclusion

In this paper, we introduce Self-Alignment for Factuality, a framework that capitalizes on an LLM’s self-evaluation ability to mitigate hallucinations, without the need for external knowledge or human intervention. Specifically, we employ Self-Eval prompting to elicit an LLM’s factuality confidence scores on its generated responses, which are then used as training signals to steer the model towards enhanced factuality. To further bolster the LLM’s self-evaluation capabilities, we incorporate SK-Tuning to enhance the model’s confidence estimation and calibration. Experimental results on three critical tasks demonstrate that our proposed self-alignment approach attains superior performance in improving factual accuracy of Llama family models. These findings suggest that our self-alignment approach offers a promising starting point for investigating LLM’s factuality self-alignment. Moreover, we verify the effectiveness of SK-Tuning in augmenting an LLM’s factuality estimation across five knowledge-intensive MCQA tasks. This finding suggests the potential for wider applications of the proposed framework in various domains, including legal, medical, and educational fields.

Limitations

Although we have achieved promising experimental results, we regard these findings as preliminary, given that numerous avenues remain to be explored in this area.

Combining with decoding-based strategies.

Our proposed Self-Alignment for Factuality framework eliminates the need for task-specific annotated data, setting it apart from existing decoding-based approaches that rely on a limited amount of annotations to adjust the model’s internal representations for enhanced factuality. As suggested by the results in contemporary work Tian et al. (2023a), combining our framework with high-performing approaches, such as DoLa, has the potential to yield even more accurate and factual improvements in LLMs.

Experimenting on different LLMs.

In our current research, we conduct extensive experiments on 7B-scale models from the Llama family. As the promising findings in Kadavath et al. (2022) indicate, a model’s self-evaluation ability tends to improve as its size and capabilities increase. Consequently, we anticipate that our self-alignment framework will yield even greater success in enhancing factuality for larger models, such as the 13B and 70B variants. Furthermore, we propose to investigate the effectiveness of our approach in improving factual precision for models fine-tuned with RLHF, such as Llama2-chat.

Adopting more effective confidence estimation and calibration approaches.

The comprehensive experimental results detailed in Section 4.2 and Section 4.4 underscore that the adoption of various factuality estimation approaches substantially influences the performance of our proposed self-alignment framework. Moreover, the analysis of our proposed Self-Eval-SKT in Section 3 accentuates the importance of enhancing an LLM’s confidence estimation and calibration for factuality improvement within our self-alignment framework. While our proposed SK-Tuning has proven highly effective in refining the model’s confidence estimation and calibration, future research may benefit from exploring more efficient confidence estimation and calibration methods Guo et al. (2017); Tian et al. (2023b); Zhu et al. (2023); Chen et al. (2023a); Shrivastava et al. (2023); Liu et al. (2023a).

Ethics Statement

The motivation of this research is aligned with the ethical principles, to enhance the trustworthiness and avoid LLMs from generating misleading information. Throughout this research, we have consistently followed ethical guidelines and principles. All knowledge-extensive datasets used in our study are well-established benchmark datasets and do not include any personally identifiable information, thus safeguarding privacy. In addition, the prompts employed by GPT-4 for the data collection on BioGEN tasks and model evaluation are meticulously crafted to exclude any language that discriminates against specific individuals or groups Gallegos et al. (2023); Zhou et al. (2023b). Examples of these carefully designed prompts can be found in Appendix E, H. Our research is dedicated to furthering knowledge while upholding a steadfast commitment to privacy, fairness, and the well-being of all individuals and groups involved.

References

- Allen-Zhu and Li (2023) Zeyuan Allen-Zhu and Yuanzhi Li. 2023. Physics of language models: Part 3.2, knowledge manipulation.

- Azaria and Mitchell (2023) Amos Azaria and Tom Mitchell. 2023. The internal state of an LLM knows when it’s lying. In Findings of the Association for Computational Linguistics: EMNLP 2023, pages 967–976, Singapore. Association for Computational Linguistics.

- Chang et al. (2023) Yupeng Chang, Xu Wang, Jindong Wang, Yuan Wu, Linyi Yang, Kaijie Zhu, Hao Chen, Xiaoyuan Yi, Cunxiang Wang, Yidong Wang, Wei Ye, Yue Zhang, Yi Chang, Philip S. Yu, Qiang Yang, and Xing Xie. 2023. A survey on evaluation of large language models.

- Chen et al. (2023a) Jiefeng Chen, Jinsung Yoon, Sayna Ebrahimi, Sercan O Arik, Tomas Pfister, and Somesh Jha. 2023a. Adaptation with self-evaluation to improve selective prediction in llms.

- Chen et al. (2023b) Xiang Chen, Duanzheng Song, Honghao Gui, Chengxi Wang, Ningyu Zhang, Fei Huang, Chengfei Lv, Dan Zhang, and Huajun Chen. 2023b. Unveiling the siren’s song: Towards reliable fact-conflicting hallucination detection. CoRR, abs/2310.12086.

- Chen et al. (2023c) Xinyun Chen, Renat Aksitov, Uri Alon, Jie Ren, Kefan Xiao, Pengcheng Yin, Sushant Prakash, Charles Sutton, Xuezhi Wang, and Denny Zhou. 2023c. Universal self-consistency for large language model generation.

- Chuang et al. (2023) Yung-Sung Chuang, Yujia Xie, Hongyin Luo, Yoon Kim, James Glass, and Pengcheng He. 2023. Dola: Decoding by contrasting layers improves factuality in large language models. arXiv preprint arXiv:2309.03883.

- Dhuliawala et al. (2023) Shehzaad Dhuliawala, Mojtaba Komeili, Jing Xu, Roberta Raileanu, Xian Li, Asli Celikyilmaz, and Jason Weston. 2023. Chain-of-verification reduces hallucination in large language models.

- Evans et al. (2021) Owain Evans, Owen Cotton-Barratt, Lukas Finnveden, Adam Bales, Avital Balwit, Peter Wills, Luca Righetti, and William Saunders. 2021. Truthful ai: Developing and governing ai that does not lie.

- Gallegos et al. (2023) Isabel O Gallegos, Ryan A Rossi, Joe Barrow, Md Mehrab Tanjim, Sungchul Kim, Franck Dernoncourt, Tong Yu, Ruiyi Zhang, and Nesreen K Ahmed. 2023. Bias and fairness in large language models: A survey. arXiv preprint arXiv:2309.00770.

- Guo et al. (2017) Chuan Guo, Geoff Pleiss, Yu Sun, and Kilian Q. Weinberger. 2017. On calibration of modern neural networks.

- He et al. (2021) Pengcheng He, Xiaodong Liu, Jianfeng Gao, and Weizhu Chen. 2021. Deberta: Decoding-enhanced bert with disentangled attention. In International Conference on Learning Representations.

- Hendrycks et al. (2021) Dan Hendrycks, Collin Burns, Steven Basart, Andy Zou, Mantas Mazeika, Dawn Song, and Jacob Steinhardt. 2021. Measuring massive multitask language understanding. Proceedings of the International Conference on Learning Representations (ICLR).

- Hernandez et al. (2023) Evan Hernandez, Belinda Z. Li, and Jacob Andreas. 2023. Inspecting and editing knowledge representations in language models.

- Huang et al. (2023) Lei Huang, Weijiang Yu, Weitao Ma, Weihong Zhong, Zhangyin Feng, Haotian Wang, Qianglong Chen, Weihua Peng, Xiaocheng Feng, Bing Qin, and Ting Liu. 2023. A survey on hallucination in large language models: Principles, taxonomy, challenges, and open questions.

- Ji et al. (2023) Ziwei Ji, Nayeon Lee, Rita Frieske, Tiezheng Yu, Dan Su, Yan Xu, Etsuko Ishii, Yejin Bang, Andrea Madotto, and Pascale Fung. 2023. Survey of hallucination in natural language generation. ACM Comput. Surv., 55(12):248:1–248:38.

- Kadavath et al. (2022) Saurav Kadavath, Tom Conerly, Amanda Askell, Tom Henighan, Dawn Drain, Ethan Perez, Nicholas Schiefer, Zac Hatfield-Dodds, Nova DasSarma, Eli Tran-Johnson, Scott Johnston, Sheer El-Showk, Andy Jones, Nelson Elhage, Tristan Hume, Anna Chen, Yuntao Bai, Sam Bowman, Stanislav Fort, Deep Ganguli, Danny Hernandez, Josh Jacobson, Jackson Kernion, Shauna Kravec, Liane Lovitt, Kamal Ndousse, Catherine Olsson, Sam Ringer, Dario Amodei, Tom Brown, Jack Clark, Nicholas Joseph, Ben Mann, Sam McCandlish, Chris Olah, and Jared Kaplan. 2022. Language models (mostly) know what they know.

- Kuhn et al. (2023) Lorenz Kuhn, Yarin Gal, and Sebastian Farquhar. 2023. Semantic uncertainty: Linguistic invariances for uncertainty estimation in natural language generation. In The Eleventh International Conference on Learning Representations, ICLR 2023, Kigali, Rwanda, May 1-5, 2023. OpenReview.net.

- Li et al. (2023a) Junlong Li, Shichao Sun, Weizhe Yuan, Run-Ze Fan, Hai Zhao, and Pengfei Liu. 2023a. Generative judge for evaluating alignment.

- Li et al. (2024) Junyi Li, Jie Chen, Ruiyang Ren, Xiaoxue Cheng, Wayne Xin Zhao, Jian-Yun Nie, and Ji-Rong Wen. 2024. The dawn after the dark: An empirical study on factuality hallucination in large language models. arXiv preprint arXiv:2401.03205.

- Li et al. (2023b) Kenneth Li, Oam Patel, Fernanda Viégas, Hanspeter Pfister, and Martin Wattenberg. 2023b. Inference-time intervention: Eliciting truthful answers from a language model.

- Li et al. (2023c) Xiang Lisa Li, Ari Holtzman, Daniel Fried, Percy Liang, Jason Eisner, Tatsunori Hashimoto, Luke Zettlemoyer, and Mike Lewis. 2023c. Contrastive decoding: Open-ended text generation as optimization. In Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 12286–12312, Toronto, Canada. Association for Computational Linguistics.

- Li et al. (2023d) Xingxuan Li, Ruochen Zhao, Yew Ken Chia, Bosheng Ding, Shafiq Joty, Soujanya Poria, and Lidong Bing. 2023d. Chain-of-knowledge: Grounding large language models via dynamic knowledge adapting over heterogeneous sources.

- Lightman et al. (2023) Hunter Lightman, Vineet Kosaraju, Yura Burda, Harri Edwards, Bowen Baker, Teddy Lee, Jan Leike, John Schulman, Ilya Sutskever, and Karl Cobbe. 2023. Let’s verify step by step.

- Lin et al. (2022) Stephanie Lin, Jacob Hilton, and Owain Evans. 2022. TruthfulQA: Measuring how models mimic human falsehoods. In Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 3214–3252, Dublin, Ireland. Association for Computational Linguistics.

- Liu et al. (2023a) Xin Liu, Muhammad Khalifa, and Lu Wang. 2023a. Litcab: Lightweight calibration of language models on outputs of varied lengths.

- Liu et al. (2023b) Yang Liu, Yuanshun Yao, Jean-Francois Ton, Xiaoying Zhang, Ruocheng Guo, Hao Cheng, Yegor Klochkov, Muhammad Faaiz Taufiq, and Hang Li. 2023b. Trustworthy llms: a survey and guideline for evaluating large language models’ alignment.

- Liu et al. (2023c) Yiheng Liu, Tianle Han, Siyuan Ma, Jiayue Zhang, Yuanyuan Yang, Jiaming Tian, Hao He, Antong Li, Mengshen He, Zhengliang Liu, et al. 2023c. Summary of chatgpt-related research and perspective towards the future of large language models. Meta-Radiology, page 100017.

- Liu et al. (2023d) Yixin Liu, Alex Fabbri, Pengfei Liu, Yilun Zhao, Linyong Nan, Ruilin Han, Simeng Han, Shafiq Joty, Chien-Sheng Wu, Caiming Xiong, and Dragomir Radev. 2023d. Revisiting the gold standard: Grounding summarization evaluation with robust human evaluation. In Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 4140–4170, Toronto, Canada. Association for Computational Linguistics.

- Madaan et al. (2023) Aman Madaan, Niket Tandon, Prakhar Gupta, Skyler Hallinan, Luyu Gao, Sarah Wiegreffe, Uri Alon, Nouha Dziri, Shrimai Prabhumoye, Yiming Yang, Shashank Gupta, Bodhisattwa Prasad Majumder, Katherine Hermann, Sean Welleck, Amir Yazdanbakhsh, and Peter Clark. 2023. Self-refine: Iterative refinement with self-feedback.

- Mallen et al. (2023) Alex Mallen, Akari Asai, Victor Zhong, Rajarshi Das, Daniel Khashabi, and Hannaneh Hajishirzi. 2023. When not to trust language models: Investigating effectiveness of parametric and non-parametric memories. In Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 9802–9822, Toronto, Canada. Association for Computational Linguistics.

- Manakul et al. (2023) Potsawee Manakul, Adian Liusie, and Mark J. F. Gales. 2023. Selfcheckgpt: Zero-resource black-box hallucination detection for generative large language models.

- Mihaylov et al. (2018) Todor Mihaylov, Peter Clark, Tushar Khot, and Ashish Sabharwal. 2018. Can a suit of armor conduct electricity? a new dataset for open book question answering. In Proceedings of the 2018 Conference on Empirical Methods in Natural Language Processing, pages 2381–2391, Brussels, Belgium. Association for Computational Linguistics.

- Min et al. (2023a) Sewon Min, Kalpesh Krishna, Xinxi Lyu, Mike Lewis, Wen tau Yih, Pang Wei Koh, Mohit Iyyer, Luke Zettlemoyer, and Hannaneh Hajishirzi. 2023a. Factscore: Fine-grained atomic evaluation of factual precision in long form text generation.

- Min et al. (2023b) Sewon Min, Kalpesh Krishna, Xinxi Lyu, Mike Lewis, Wen-tau Yih, Pang Koh, Mohit Iyyer, Luke Zettlemoyer, and Hannaneh Hajishirzi. 2023b. FActScore: Fine-grained atomic evaluation of factual precision in long form text generation. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing, pages 12076–12100, Singapore. Association for Computational Linguistics.

- OpenAI (2022) OpenAI. 2022. large-scale generative pre-training model for conversation. OpenAI blog.

- OpenAI (2023) OpenAI. 2023. Gpt-4 technical report.

- Ouyang et al. (2022) Long Ouyang, Jeffrey Wu, Xu Jiang, Diogo Almeida, Carroll L. Wainwright, Pamela Mishkin, Chong Zhang, Sandhini Agarwal, Katarina Slama, Alex Ray, John Schulman, Jacob Hilton, Fraser Kelton, Luke Miller, Maddie Simens, Amanda Askell, Peter Welinder, Paul F. Christiano, Jan Leike, and Ryan Lowe. 2022. Training language models to follow instructions with human feedback. In NeurIPS.

- Pal et al. (2022) Ankit Pal, Logesh Kumar Umapathi, and Malaikannan Sankarasubbu. 2022. Medmcqa: A large-scale multi-subject multi-choice dataset for medical domain question answering. In Proceedings of the Conference on Health, Inference, and Learning, volume 174 of Proceedings of Machine Learning Research, pages 248–260. PMLR.

- Park et al. (2023) Peter S. Park, Simon Goldstein, Aidan O’Gara, Michael Chen, and Dan Hendrycks. 2023. Ai deception: A survey of examples, risks, and potential solutions.

- Peng et al. (2023) Baolin Peng, Michel Galley, Pengcheng He, Hao Cheng, Yujia Xie, Yu Hu, Qiuyuan Huang, Lars Liden, Zhou Yu, Weizhu Chen, and Jianfeng Gao. 2023. Check your facts and try again: Improving large language models with external knowledge and automated feedback.

- Rae et al. (2022) Jack W. Rae, Sebastian Borgeaud, Trevor Cai, Katie Millican, Jordan Hoffmann, Francis Song, John Aslanides, Sarah Henderson, Roman Ring, Susannah Young, Eliza Rutherford, Tom Hennigan, Jacob Menick, Albin Cassirer, Richard Powell, George van den Driessche, Lisa Anne Hendricks, Maribeth Rauh, Po-Sen Huang, Amelia Glaese, Johannes Welbl, Sumanth Dathathri, Saffron Huang, Jonathan Uesato, John Mellor, Irina Higgins, Antonia Creswell, Nat McAleese, Amy Wu, Erich Elsen, Siddhant Jayakumar, Elena Buchatskaya, David Budden, Esme Sutherland, Karen Simonyan, Michela Paganini, Laurent Sifre, Lena Martens, Xiang Lorraine Li, Adhiguna Kuncoro, Aida Nematzadeh, Elena Gribovskaya, Domenic Donato, Angeliki Lazaridou, Arthur Mensch, Jean-Baptiste Lespiau, Maria Tsimpoukelli, Nikolai Grigorev, Doug Fritz, Thibault Sottiaux, Mantas Pajarskas, Toby Pohlen, Zhitao Gong, Daniel Toyama, Cyprien de Masson d’Autume, Yujia Li, Tayfun Terzi, Vladimir Mikulik, Igor Babuschkin, Aidan Clark, Diego de Las Casas, Aurelia Guy, Chris Jones, James Bradbury, Matthew Johnson, Blake Hechtman, Laura Weidinger, Iason Gabriel, William Isaac, Ed Lockhart, Simon Osindero, Laura Rimell, Chris Dyer, Oriol Vinyals, Kareem Ayoub, Jeff Stanway, Lorrayne Bennett, Demis Hassabis, Koray Kavukcuoglu, and Geoffrey Irving. 2022. Scaling language models: Methods, analysis & insights from training gopher.

- Rafailov et al. (2023) Rafael Rafailov, Archit Sharma, Eric Mitchell, Stefano Ermon, Christopher D. Manning, and Chelsea Finn. 2023. Direct preference optimization: Your language model is secretly a reward model.

- Ren et al. (2023) Jie Ren, Yao Zhao, Tu Vu, Peter J. Liu, and Balaji Lakshminarayanan. 2023. Self-evaluation improves selective generation in large language models.

- Saunders et al. (2022) William Saunders, Catherine Yeh, Jeff Wu, Steven Bills, Long Ouyang, Jonathan Ward, and Jan Leike. 2022. Self-critiquing models for assisting human evaluators.

- Schulman (2023) John Schulman. 2023. Reinforcement learning from human feedback: Progress and challenges. Berkeley EECS.

- Shi et al. (2023) Weijia Shi, Anirudh Ajith, Mengzhou Xia, Yangsibo Huang, Daogao Liu, Terra Blevins, Danqi Chen, and Luke Zettlemoyer. 2023. Detecting pretraining data from large language models.

- Shridhar et al. (2023) Kumar Shridhar, Koustuv Sinha, Andrew Cohen, Tianlu Wang, Ping Yu, Ram Pasunuru, Mrinmaya Sachan, Jason Weston, and Asli Celikyilmaz. 2023. The art of llm refinement: Ask, refine, and trust.

- Shrivastava et al. (2023) Vaishnavi Shrivastava, Percy Liang, and Ananya Kumar. 2023. Llamas know what gpts don’t show: Surrogate models for confidence estimation.

- Singhal et al. (2023) Karan Singhal, Tao Tu, Juraj Gottweis, Rory Sayres, Ellery Wulczyn, Le Hou, Kevin Clark, Stephen Pfohl, Heather Cole-Lewis, Darlene Neal, Mike Schaekermann, Amy Wang, Mohamed Amin, Sami Lachgar, Philip Mansfield, Sushant Prakash, Bradley Green, Ewa Dominowska, Blaise Aguera y Arcas, Nenad Tomasev, Yun Liu, Renee Wong, Christopher Semturs, S. Sara Mahdavi, Joelle Barral, Dale Webster, Greg S. Corrado, Yossi Matias, Shekoofeh Azizi, Alan Karthikesalingam, and Vivek Natarajan. 2023. Towards expert-level medical question answering with large language models.

- Speer and Lowry-Duda (2017) Robyn Speer and Joanna Lowry-Duda. 2017. ConceptNet at SemEval-2017 task 2: Extending word embeddings with multilingual relational knowledge. In Proceedings of the 11th International Workshop on Semantic Evaluation (SemEval-2017), pages 85–89, Vancouver, Canada. Association for Computational Linguistics.

- Srivastava et al. (2023) Aarohi Srivastava, Abhinav Rastogi, Abhishek Rao, Abu Awal Md Shoeb, Abubakar Abid, Adam Fisch, Adam R. Brown, Adam Santoro, Aditya Gupta, and Adrià Garriga-Alonso et al. 2023. Beyond the imitation game: Quantifying and extrapolating the capabilities of language models.

- Sun et al. (2023) Zhiqing Sun, Sheng Shen, Shengcao Cao, Haotian Liu, Chunyuan Li, Yikang Shen, Chuang Gan, Liang-Yan Gui, Yu-Xiong Wang, Yiming Yang, Kurt Keutzer, and Trevor Darrell. 2023. Aligning large multimodal models with factually augmented rlhf.

- Talmor et al. (2019) Alon Talmor, Jonathan Herzig, Nicholas Lourie, and Jonathan Berant. 2019. CommonsenseQA: A question answering challenge targeting commonsense knowledge. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long and Short Papers), pages 4149–4158, Minneapolis, Minnesota. Association for Computational Linguistics.

- Taylor et al. (2022) Ross Taylor, Marcin Kardas, Guillem Cucurull, Thomas Scialom, Anthony Hartshorn, Elvis Saravia, Andrew Poulton, Viktor Kerkez, and Robert Stojnic. 2022. Galactica: A large language model for science.

- Tian et al. (2023a) Katherine Tian, Eric Mitchell, Huaxiu Yao, Christopher D. Manning, and Chelsea Finn. 2023a. Fine-tuning language models for factuality.

- Tian et al. (2023b) Katherine Tian, Eric Mitchell, Allan Zhou, Archit Sharma, Rafael Rafailov, Huaxiu Yao, Chelsea Finn, and Christopher Manning. 2023b. Just ask for calibration: Strategies for eliciting calibrated confidence scores from language models fine-tuned with human feedback. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing, pages 5433–5442, Singapore. Association for Computational Linguistics.

- Tonmoy et al. (2024) S. M Towhidul Islam Tonmoy, S M Mehedi Zaman, Vinija Jain, Anku Rani, Vipula Rawte, Aman Chadha, and Amitava Das. 2024. A comprehensive survey of hallucination mitigation techniques in large language models.

- Touvron et al. (2023a) Hugo Touvron, Thibaut Lavril, Gautier Izacard, Xavier Martinet, Marie-Anne Lachaux, Timothée Lacroix, Baptiste Rozière, Naman Goyal, Eric Hambro, Faisal Azhar, Aurélien Rodriguez, Armand Joulin, Edouard Grave, and Guillaume Lample. 2023a. Llama: Open and efficient foundation language models. CoRR, abs/2302.13971.

- Touvron et al. (2023b) Hugo Touvron, Louis Martin, Kevin Stone, Peter Albert, Amjad Almahairi, Yasmine Babaei, Nikolay Bashlykov, Soumya Batra, Prajjwal Bhargava, Shruti Bhosale, Dan Bikel, Lukas Blecher, Cristian Canton Ferrer, Moya Chen, Guillem Cucurull, David Esiobu, Jude Fernandes, Jeremy Fu, Wenyin Fu, Brian Fuller, Cynthia Gao, Vedanuj Goswami, Naman Goyal, Anthony Hartshorn, Saghar Hosseini, Rui Hou, Hakan Inan, Marcin Kardas, Viktor Kerkez, Madian Khabsa, Isabel Kloumann, Artem Korenev, Punit Singh Koura, Marie-Anne Lachaux, Thibaut Lavril, Jenya Lee, Diana Liskovich, Yinghai Lu, Yuning Mao, Xavier Martinet, Todor Mihaylov, Pushkar Mishra, Igor Molybog, Yixin Nie, Andrew Poulton, Jeremy Reizenstein, Rashi Rungta, Kalyan Saladi, Alan Schelten, Ruan Silva, Eric Michael Smith, Ranjan Subramanian, Xiaoqing Ellen Tan, Binh Tang, Ross Taylor, Adina Williams, Jian Xiang Kuan, Puxin Xu, Zheng Yan, Iliyan Zarov, Yuchen Zhang, Angela Fan, Melanie Kambadur, Sharan Narang, Aurelien Rodriguez, Robert Stojnic, Sergey Edunov, and Thomas Scialom. 2023b. Llama 2: Open foundation and fine-tuned chat models.

- Varshney et al. (2023) Neeraj Varshney, Wenlin Yao, Hongming Zhang, Jianshu Chen, and Dong Yu. 2023. A stitch in time saves nine: Detecting and mitigating hallucinations of llms by validating low-confidence generation.

- Wang et al. (2023a) Xuezhi Wang, Jason Wei, Dale Schuurmans, Quoc Le, Ed Chi, Sharan Narang, Aakanksha Chowdhery, and Denny Zhou. 2023a. Self-consistency improves chain of thought reasoning in language models.

- Wang et al. (2023b) Yizhong Wang, Yeganeh Kordi, Swaroop Mishra, Alisa Liu, Noah A. Smith, Daniel Khashabi, and Hannaneh Hajishirzi. 2023b. Self-instruct: Aligning language models with self-generated instructions. In Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 13484–13508, Toronto, Canada. Association for Computational Linguistics.

- Wei et al. (2022) Jason Wei, Yi Tay, Rishi Bommasani, Colin Raffel, Barret Zoph, Sebastian Borgeaud, Dani Yogatama, Maarten Bosma, Denny Zhou, Donald Metzler, Ed H. Chi, Tatsunori Hashimoto, Oriol Vinyals, Percy Liang, Jeff Dean, and William Fedus. 2022. Emergent abilities of large language models.

- Yang et al. (2023) Yuqing Yang, Ethan Chern, Xipeng Qiu, Graham Neubig, and Pengfei Liu. 2023. Alignment for honesty.

- Zhang et al. (2022a) Susan Zhang, Stephen Roller, Naman Goyal, Mikel Artetxe, Moya Chen, Shuohui Chen, Christopher Dewan, Mona Diab, Xian Li, Xi Victoria Lin, Todor Mihaylov, Myle Ott, Sam Shleifer, Kurt Shuster, Daniel Simig, Punit Singh Koura, Anjali Sridhar, Tianlu Wang, and Luke Zettlemoyer. 2022a. Opt: Open pre-trained transformer language models.

- Zhang et al. (2022b) Xiaoying Zhang, Baolin Peng, Jianfeng Gao, and Helen Meng. 2022b. Toward self-learning end-to-end task-oriented dialog systems. In Proceedings of the 23rd Annual Meeting of the Special Interest Group on Discourse and Dialogue, pages 516–530, Edinburgh, UK. Association for Computational Linguistics.

- Zhang et al. (2023a) Xiaoying Zhang, Baolin Peng, Kun Li, Jingyan Zhou, and Helen Meng. 2023a. SGP-TOD: Building task bots effortlessly via schema-guided LLM prompting. In Findings of the Association for Computational Linguistics: EMNLP 2023, pages 13348–13369, Singapore. Association for Computational Linguistics.

- Zhang et al. (2023b) Yue Zhang, Leyang Cui, Wei Bi, and Shuming Shi. 2023b. Alleviating hallucinations of large language models through induced hallucinations.

- Zhang et al. (2023c) Yue Zhang, Yafu Li, Leyang Cui, Deng Cai, Lemao Liu, Tingchen Fu, Xinting Huang, Enbo Zhao, Yu Zhang, Yulong Chen, Longyue Wang, Anh Tuan Luu, Wei Bi, Freda Shi, and Shuming Shi. 2023c. Siren’s song in the ai ocean: A survey on hallucination in large language models.

- Zhou et al. (2023a) Chunting Zhou, Pengfei Liu, Puxin Xu, Srini Iyer, Jiao Sun, Yuning Mao, Xuezhe Ma, Avia Efrat, Ping Yu, Lili Yu, Susan Zhang, Gargi Ghosh, Mike Lewis, Luke Zettlemoyer, and Omer Levy. 2023a. Lima: Less is more for alignment.

- Zhou et al. (2023b) Jingyan Zhou, Minda Hu, Junan Li, Xiaoying Zhang, Xixin Wu, Irwin King, and Helen Meng. 2023b. Rethinking machine ethics – can llms perform moral reasoning through the lens of moral theories?

- Zhu et al. (2023) Fei Zhu, Zhen Cheng, Xu-Yao Zhang, and Cheng-Lin Liu. 2023. Rethinking confidence calibration for failure prediction.

Appendix A A brief summary of recent hallucination mitigation approaches.

In Table 4, we provide a brief summary of recent hallucination mitigation approaches that are mostly related to ours.

Table 4: A brief summary of recent hallucination mitigation approaches that are closely related to our work. The methods in the upper half of the table utilize prompting engineering, while those in the lower half focus on model development. (MCQA: multiple-choice question answering, Gen.: open-end text generation, Honesty-Tune: honesty-oriented fine-tuning.)

Appendix B Data statistics and task descriptions for main experiments.

Table 5: Task descriptions and dataset information for main experiments. Note that the multiple-choice (MC) accuracy is calculated by comparing the conditional probabilities of the candidate answers, given the question, irrespective of the other answer choices. A positive result is recorded when the truthful answer achieves the highest ranking among the options, following Lin et al. (2022); Li et al. (2023b); Chuang et al. (2023); Touvron et al. (2023b).

Specifically, we construct the BioGEN dataset with the prompts in the format: ‘‘Question: Write a biography of <Entity>.’’ where the entities are sampled from Min et al. (2023b). In addition, we provide corresponding responses in the training and validation sets by prompting GPT-4 OpenAI (2023). We provide task descriptions and detailed information about the datasets in Table 5.

Appendix C Implementation details.

1. Implementing the Self-Alignment for Factuality framework.

Taking into account the minor differences when applying Self-Alignment for Factuality to the three tasks, namely, MCQA, short-form text generation, and long-form text generation, we discuss them individually for each stage:

Step 1: Generating Initial Responses for Preference Data Collection.