# Inference to the Best Explanation in Large Language Models

**Authors**: Email: d.dalal1@universityofgalway.ie

## Abstract

While Large Language Models (LLMs) have found success in real-world applications, their underlying explanatory process is still poorly understood. This paper proposes IBE-Eval, a framework inspired by philosophical accounts on Inference to the Best Explanation (IBE) to advance the interpretation and evaluation of LLM explanations. IBE-Eval estimates the plausibility of natural language explanations through a combination of explicit logical and linguistic features including: consistency, parsimony, coherence, and uncertainty. Extensive experiments are conducted on Causal Question Answering (CQA), where IBE-Eval is tasked to select the most plausible causal explanation amongst competing ones generated by the LLM (e.g. GPT 3.5 or LLaMA 2). The experiments reveal that IBE-Eval can successfully identify the best explanation with up to 77% accuracy ( $\approx 27\$ above random), improving upon a GPT 3.5-as-a-judge baseline ( $\approx+17\$ ) while being intrinsically more efficient and interpretable. Additional analysis suggests that, despite LLM-specific variances, generated explanations tend to conform to IBE criteria and that IBE-Eval is significantly correlated with human judgment, opening up opportunities for future development of automated explanation verification tools.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Causal Reasoning and Inference to the Best Explanation (IBE) Flowchart

### Overview

This image is a technical flowchart illustrating a process for causal reasoning using a Large Language Model (LLM) and the framework of Inference to the Best Explanation (IBE). The diagram is divided into three main vertical sections (left, middle, right) connected by arrows, showing the flow from a causal question to the selection of the most plausible explanation based on defined criteria.

### Components/Axes

The diagram is structured into three primary regions:

1. **Left Section (Input & Hypotheses):**

* **Top Box:** "Causal Question" containing the text: "The balloon expanded. What was the cause?" followed by two options: "A) I blew into it. B) I pricked it."

* **Middle Box:** "Competing Hypotheses" containing two premises and conclusions:

* "Premise 1: I blew into the balloon. Conclusion: The balloon expanded." (Text in blue and purple).

* "Premise 2: I pricked the balloon. Conclusion: The balloon expanded." (Text in red and purple).

* **Bottom Box:** "Explanation Prompt" containing a detailed instruction: "For the provided scenario, identify which option is the most plausible cause of the context. Let's think step-by-step and generate an explanation for each option. Treat each option as the premise and the provided context as the conclusion. Generate a short step-by-step logical proof that explains how the premise can result in the conclusion. For each step provide an IF-THEN rule and the underlying causal or commonsense assumption."

2. **Middle Section (Processing & Explanations):**

* A central box labeled "LLM" receives inputs from the left section via arrows.

* The LLM outputs two detailed explanations, each in a separate box:

* **Explanation 1 (E1):** A green-bordered box outlining a three-step causal chain for blowing into a balloon, concluding with "Therefore, since I blew into the balloon, it caused the balloon to inflate, which resulted in its expansion."

* **Explanation 2 (E2):** A red-bordered box outlining a three-step causal chain for pricking a balloon, concluding with "Therefore, since the balloon was pricked, it may have deflated, resulting in a decrease in air pressure inside the balloon, causing the external air pressure to make the balloon expand."

3. **Right Section (Inference & Selection):**

* **Top Header:** "Inference to the Best Explanation (IBE)".

* **IBE Process:** A central box labeled "IBE" receives inputs from both E1 and E2.

* **Selection Criteria:** Two identical tables labeled "Selection Criteria" are positioned above and below the IBE box, linked to E1 and E2 respectively. Each table lists four criteria with numerical scores:

**Selection Criteria for E1:**

| Criterion | Score |

|--------------|-------|

| Consistency | 1.0 |

| Parsimony | -2.0 |

| Coherence | 0.51 |

| Uncertainty | 2.0 |

**Selection Criteria for E2:**

| Criterion | Score |

|--------------|-------|

| Consistency | 1.0 |

| Parsimony | -3.0 |

| Coherence | 0.28 |

| Uncertainty | 3.0 |

* **Output:** The IBE box has two output arrows. One points to "E1" with a green checkmark (✓). The other points to "E2" with a red cross (✗), indicating E1 is selected as the best explanation.

### Detailed Analysis

* **Flow of Logic:** The diagram maps a complete reasoning pipeline:

1. A causal question is posed.

2. Competing hypotheses are formulated as logical premises.

3. An LLM is prompted to generate step-by-step causal explanations for each hypothesis.

4. Each explanation is evaluated against a set of four selection criteria (Consistency, Parsimony, Coherence, Uncertainty), resulting in numerical scores.

5. An Inference to the Best Explanation (IBE) mechanism compares the scored explanations and selects the one with the superior profile (E1 in this case).

* **Explanation Content:**

* **E1 (Blowing):** Follows a direct, additive causal path: Blow -> Inflate -> Expand. Assumptions are straightforward commonsense physics.

* **E2 (Pricking):** Follows a more complex, counter-intuitive path: Prick -> Deflate -> Decrease Internal Pressure -> External Pressure Causes Expansion. This chain relies on the less obvious principle that a decrease in internal pressure can lead to external expansion.

* **Scoring:** The numerical scores quantify the evaluation. E1 scores better (higher) on Parsimony (-2.0 vs -3.0) and Coherence (0.51 vs 0.28), and has lower Uncertainty (2.0 vs 3.0). Both have identical Consistency (1.0).

### Key Observations

1. **Visual Coding:** The diagram uses color consistently: green for the selected hypothesis/explanation (E1) and red for the rejected one (E2). Blue and purple text highlight key logical statements in the hypotheses.

2. **Structural Separation:** The dashed vertical lines clearly demarcate the three phases of the process: Problem Formulation, Explanation Generation, and Explanation Selection.

3. **Complexity Contrast:** The explanation for the less intuitive cause (pricking leading to expansion via deflation) is notably more complex (3 steps with a counter-intuitive final step) than the explanation for the intuitive cause (blowing).

4. **Quantified Evaluation:** The application of specific numerical scores to abstract criteria like "Parsimony" and "Coherence" is a key feature, suggesting a formalized or computational approach to evaluating explanations.

### Interpretation

This diagram serves as a conceptual model for how an AI system, specifically an LLM, can be structured to perform causal reasoning in a transparent and evaluative manner. It moves beyond simple answer generation to a process that:

* **Generates Multiple Hypotheses:** Explicitly considers competing causes.

* **Articulates Causal Mechanisms:** Requires step-by-step logical proofs with underlying assumptions, making the reasoning traceable.

* **Applies Formal Evaluation:** Uses a defined set of epistemic criteria (Consistency, Parsimony, Coherence, Uncertainty) to judge explanations, introducing objectivity.

* **Makes a Justified Selection:** The IBE step synthesizes the evaluations to choose the "best" explanation, which in this case is the simpler, more coherent one (blowing).

The underlying message is that robust causal reasoning involves not just finding *an* explanation, but systematically generating, elaborating, and comparing multiple explanations against rational criteria to identify the most plausible one. The diagram illustrates a pipeline to achieve this, potentially for applications in AI explainability, scientific reasoning, or decision-support systems. The specific example of the balloon is a simple test case to demonstrate the framework's logic.

</details>

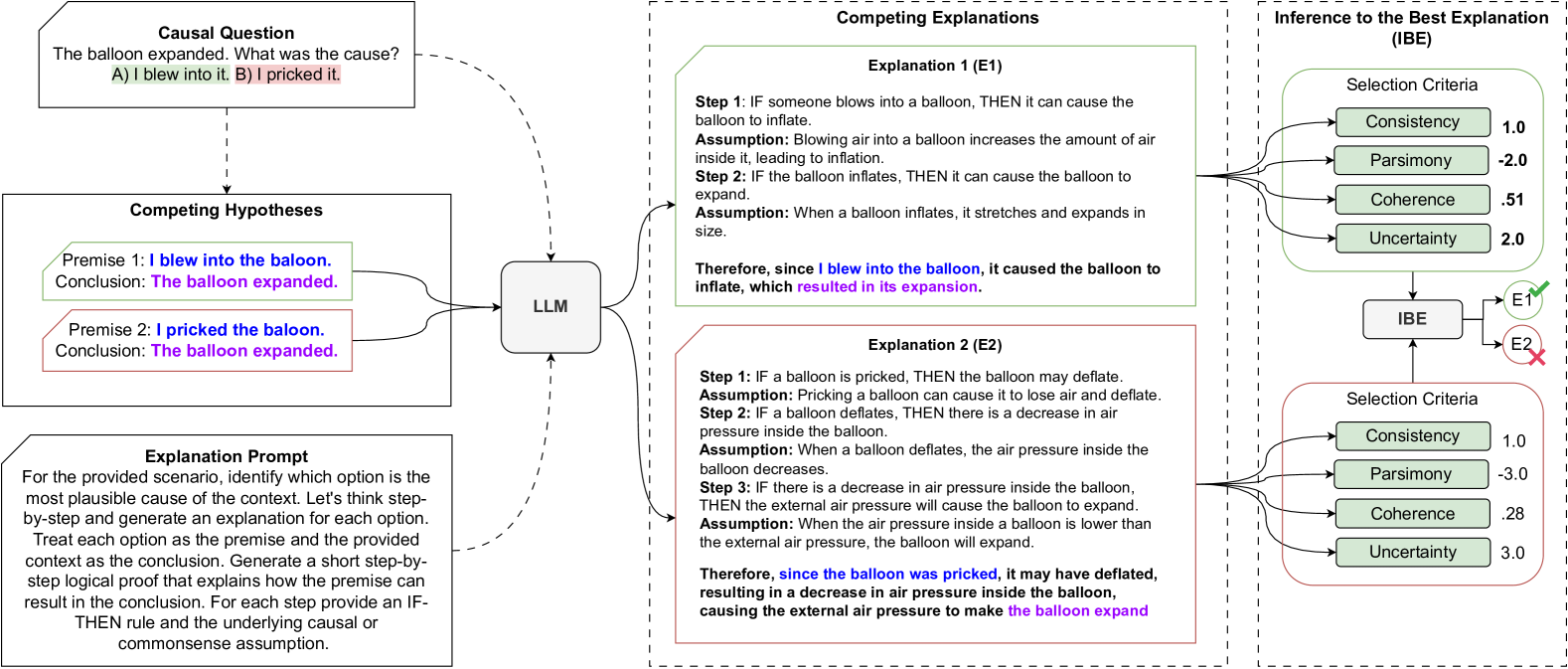

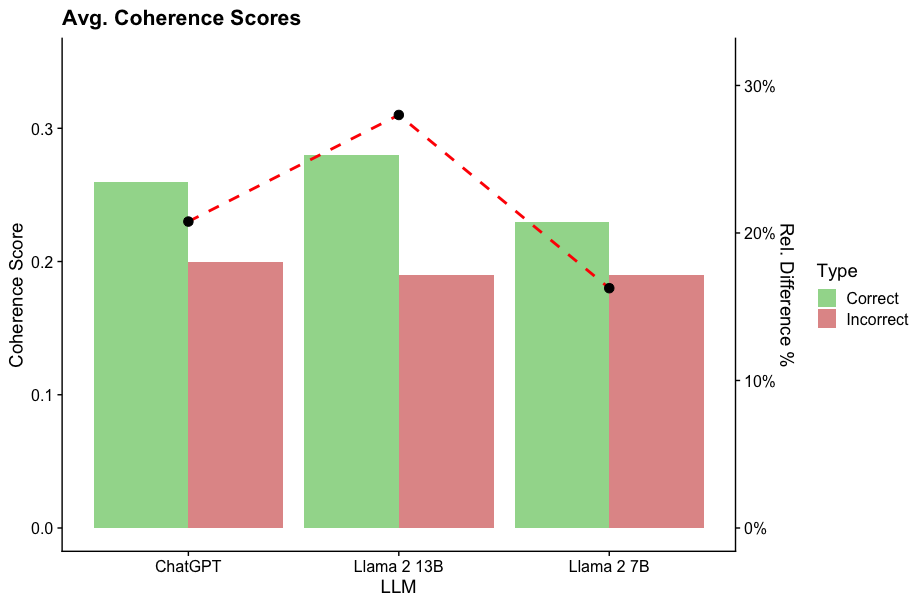

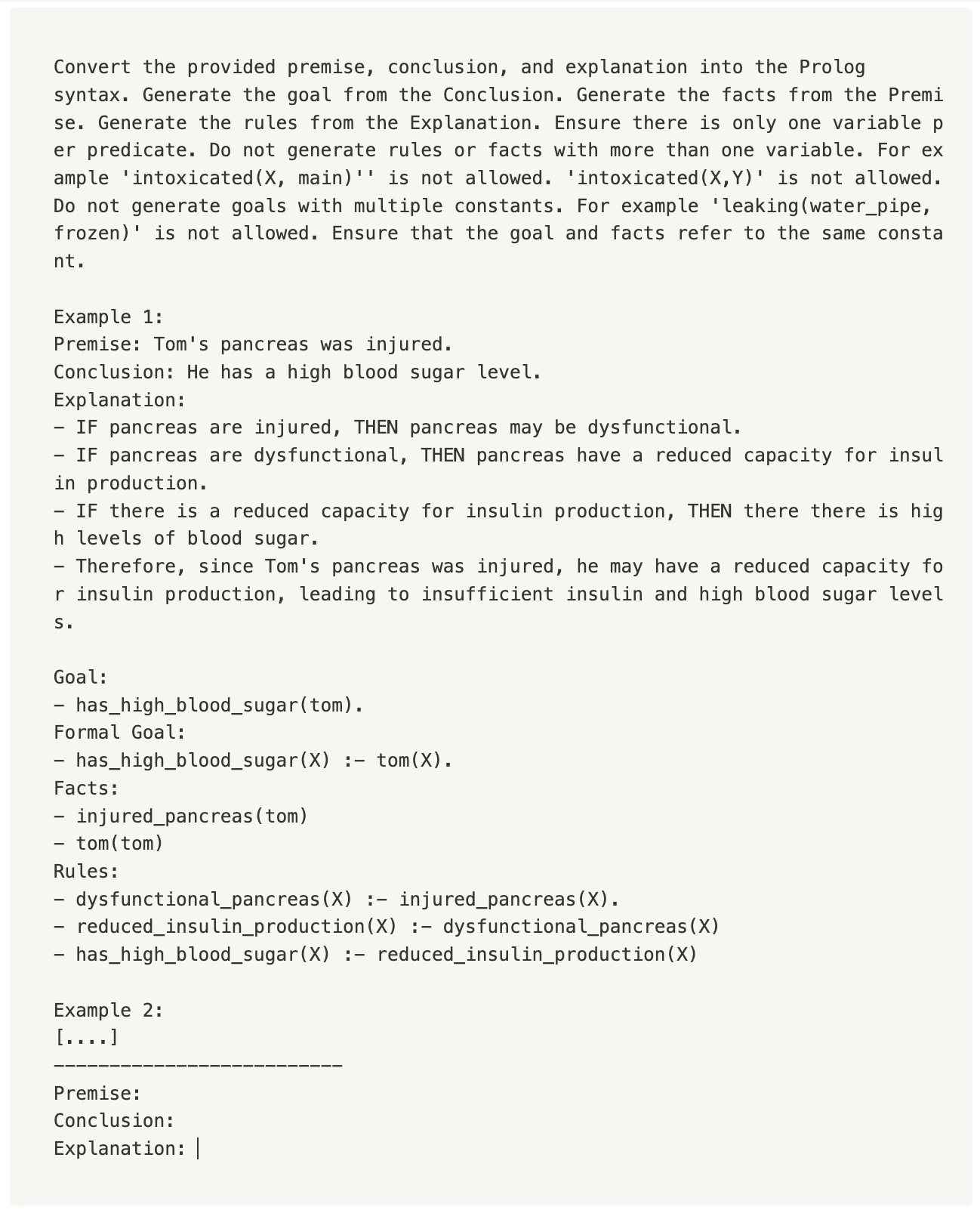

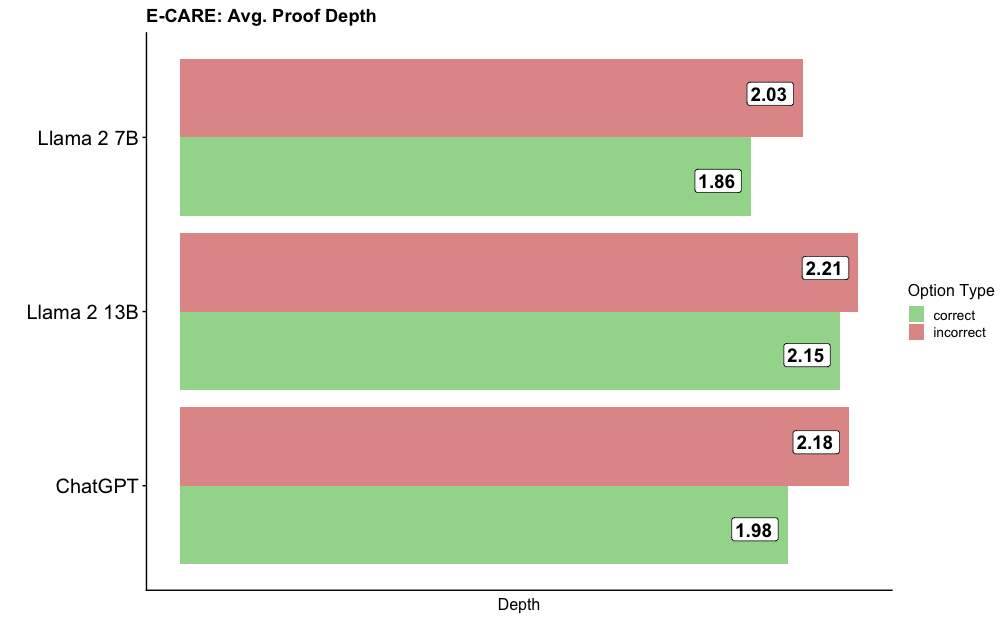

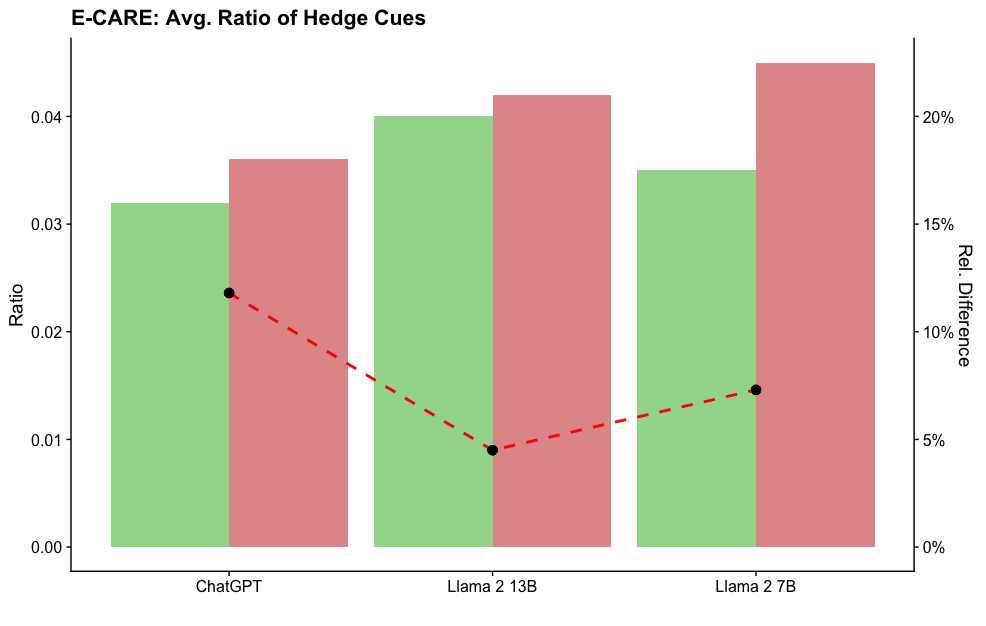

Figure 1: IBE-Eval qualifies LLM-generated explanations with a set of logical and linguistic selection criteria to identify the most plausible hypothesis. The corresponding explanation for each hypothesis is evaluated across the IBE criteria of logical consistency, parsimony, internal coherence, and linguistic uncertainty. A final plausibility score is computed across those features and the hypothesis with highest score is identified as the best explanation.

## 1 Introduction

Large Language Models (LLMs) such as OpenAI’s GPT Brown et al. (2020) and LLaMA Touvron et al. (2023) have been highly effective across a diverse range of language understanding and reasoning tasks Liang et al. (2023). While LLM performances have been thoroughly investigated across various benchmarks Wang et al. (2019); Srivastava et al. (2023); Gao et al. (2023); Touvron et al. (2023), the principles and properties behind their step-wise reasoning process are still poorly understood Valentino et al. (2021). LLMs are notoriously black-box and can be difficult to interpret Chakraborty et al. (2017); Danilevsky et al. (2020). Moreover, the commercialization of LLMs has led to strategic secrecy around model architectures and training details Xiang (2023); Knight (2023). Finally, LLMs are susceptible to hallucinations and adversarial perturbations Geirhos et al. (2020); Camburu et al. (2020), often producing plausible but factually incorrect answers Ji et al. (2023); Huang et al. (2023). As the size and complexity of LLM architectures increase, the systematic study of generated explanations becomes crucial to better interpret and validate the LLM’s internal inference and reasoning processes Wei et al. (2022b); Lampinen et al. (2022); Huang and Chang (2022).

The automatic evaluation of natural language explanations presents several challenges Atanasova et al. (2023); Camburu et al. (2020). Without resource-intensive annotation Wiegreffe and Marasovic (2021); Thayaparan et al. (2020); Dalvi et al. (2021); Camburu et al. (2018), explanation quality methods tend to rely on either weak supervision, where the identification of the correct answer is taken as evidence of explanation quality, or require the injection of domain-specific knowledge Quan et al. (2024). In this paper, we seek to better understand the LLM explanatory process through the investigation of explicit linguistic and logical properties. While explanations are hard to formalize due to their open-ended nature, we hypothesize that they can be analyzed as linguistic objects, with measurable features that can serve to define criteria for assessing their quality.

Specifically, this paper investigates the following overarching research question: “Can the linguistic and logical properties associated with LLM-generated explanations be used to qualify the models’ reasoning process?”. To this end, we propose an interpretable framework inspired by philosophical accounts of abductive inference, also known as Inference to the Best Explanation (IBE) - i.e. the process of selecting among competing explanatory theories Lipton (2017). In particular, we aim to measure the extent to which LLM-generated explanations conform to IBE expectations when attempting to identify the most plausible explanation. To this end, we present IBE-Eval, a framework designed to estimate the plausibility of natural language explanations through a set of explicit logical and linguistic features, namely: logical consistency, parsimony, coherence, and linguistic uncertainty.

To evaluate the efficacy of IBE-Eval, we conduct extensive experiments in the multiple-choice Causal Question Answering (CQA) setting. The overall results and contributions of the paper can be summarized as follows:

1. To the best of our knowledge, we are the first to propose an interpretable framework inspired by philosophical accounts on Inference to the Best Explanation (IBE) to automatically assess the quality of natural language explanations.

1. We propose IBE-Eval, a framework that can be instantiated with external tools for the automatic evaluation of LLM-generated explanations and the identification of the best explanation in a multiple-choice CQA setting.

1. We provide empirical evidence that LLM-generated explanations tend to conform to IBE expectations with varying levels of statistical significance correlated to the LLM’s size.

1. We additionally find that uncertainty, parsimony, and coherence are the best predictors of plausibility and explanation quality across all LLMs. However, we also find that the LLMs tend to be strong rationalizers and can produce logically consistent explanations even for less plausible candidates, making the consistency metric less effective in practice.

1. IBE-Eval can successfully identify the best explanation supporting the correct answers with up to 77% accuracy (+ $\approx 27\$ above random and + $\approx 17\$ over GPT 3.5-as-a-Judge baselines)

1. IBE-Eval is significantly correlated with human judgment, outperforming a GPT3.5-as-a-Judge baseline in terms of alignment with human preferences.

For reproducibility, our code is made available on Github https://github.com/dhairyadalal/IBE-eval to encourage future research in the field.

## 2 Inference to the Best Explanation (IBE)

Explanatory reasoning is a distinctive feature of human rationality underpinning problem-solving and knowledge creation in both science and everyday scenarios Lombrozo (2012); Deutsch (2011). Accepted epistemological accounts characterize the creation of an explanation as composed of two distinct phases: conjecturing and criticism Popper (2014). The explanatory process always involves a conflict between plausible explanations, which is typically resolved through the criticism phase via a selection process, where competing explanations are assessed according to a set of criteria such as parsimony, coherence, unification power, and hardness to variation Lipton (2017); Harman (1965); Mackonis (2013); Thagard (1978, 1989); Kitcher (1989); Valentino and Freitas (2022).

As LLMs become interfaces for natural language explanations, epistemological frameworks offer an opportunity for developing criticism mechanisms to understand the explanatory process underlying state-of-the-art models. To this end, this paper considers an LLM as a conjecture device producing linguistic objects that can be subject to criticism. In particular, we focus on a subset of criteria that can be computed on explicit linguistic and logical features, namely: consistency, parsimony, coherence, and uncertainty.

To assess the LLM’s alignment to such criteria, we focus on the task of selecting among competing explanations in a multiple-choice CQA setting (Figure 1). Specifically, given a set of competing hypotheses (i.e. the multiple-choice options), $H=\{h_{1},h_{2},\ldots,h_{n}\}$ , we prompt the LLM to generate plausible explanations supporting each hypothesis (Section 3). Subsequently, we adopt the proposed IBE selection criteria to assess the quality of the generated explanations (Section 4). IBE-Eval computes an explanation plausibility score derived from the linear combination of the computed selection criteria. The explanation with the highest score is selected as the predicted answer and additionally assessed as to the extent to which the observable IBE features are correlated with QA accuracy. We hypothesize that IBE-Eval will produce higher scores for the explanation associated with the correct answer and that the IBE criteria should meaningfully differentiate between competing explanations.

## 3 Explanation Generation

For the first stage, the LLM is prompted to generate competing explanations for the hypotheses using a modified Chain-of-Thought (CoT) prompt Wei et al. (2022a). Specifically, the COT prompt is modified to instruct the LLM to produce an explanation for each competing hypothesis (see Figure 1). We adopt a methodology similar to Valentino et al. (2021), where the generated explanation is constrained into an entailment form for the downstream IBE evaluation. In particular, we posit that a valid explanation should demonstrate an entailment relationship between the premise and conclusion which are derived from the question-answer pair.

To elicit logical connections between explanation steps and facilitate subsequent analysis, the LLM is constrained to use weak syllogisms expressed as If-Then statements. Additionally, the LLM is instructed to produce the associated causal or commonsense assumption underlying each explanation step. This output is then post-processed to extract the explanation steps and supporting knowledge for evaluation via the IBE selection criteria. Additional details and examples of prompts are reported in Appendix A.2.

## 4 Linguistic & Inference Criteria

To perform IBE, we investigate a set of criteria that can be automatically computed on explicit logical and linguistic features, namely: consistency, parsimony, coherence, and uncertainty.

Consistency.

Consistency aims to verify whether the explanation is logically valid. Given a hypothesis, comprised of a premise $p_{i}$ , a conclusion $c_{i}$ , and an explanation consisting of a set of If-Then statements $E={s_{1},...,s_{i}}$ , we define $E$ to be logically consistent if $p_{i}\cup E\vDash c_{i}$ . Specifically, an explanation is logically consistent if it is possible to build a deductive proof linking premise and conclusion.

To evaluate logical consistency, we leverage external symbolic solvers along with autoformalization - i.e., the translation of natural language into a formal language Wu et al. (2022). Specifically, the hypotheses and explanations are formalized into a Prolog program which will attempt to generate a deductive proof via backward chaining Weber et al. (2019).

To perform autoformalization, we leverage the translation capabilities of GPT 3.5. Specifically, we instruct GPT 3.5 to convert each IF-Then explanation step from the generated explanation into an implication rule and the premise statement into grounding atoms. On the other end, the entailment condition and the conclusion are used to create a Prolog query. The query instructs the Prolog solver to attempt to find a path through the implications rules such that the conclusion be directly connected to the premise. Further details about the autoformalization process can be found in Appendix A.3.

After autoformalization, following recent work on neuro-symbolic integration for LLM explanations Quan et al. (2024), we adopt an external Prolog solver for entailment verification https://github.com/neuro-symbolic-ai/explanation_based_ethical_reasoning. The explanation is considered consistent if the Prolog solver can satisfy the query and successfully build a deductive proof. Technical details can be found in Appendix A.5.

Parsimony.

The parsimony principle, also known as Ockham’s razor, favors the selection of the simplest explanation consisting of the fewest elements and assumptions Sober (1981). Epistemological accounts posit that an explanation with fewer assumptions tends to leave fewer statements unexplained, improving specificity and alleviating the infinite regress Thagard (1978). Further, parsimony is an essential feature of causal interpretability, as only parsimonious solutions are guaranteed to reflect causation in comparative analysis Baumgartner (2015). In this paper, we adopt two metrics as a proxy of parsimony, namely proof depth, and concept drift. Proof depth, denoted as $Depth$ , is defined as the cardinality of the set of rules, $R$ , required by the Prolog solver to connect the conclusion to the premise via backward chaining. Let $h$ be a hypothesis candidate composed of a premise $p$ and a conclusion $c$ , and let $E$ be a formalized explanation represented as a set of rules $R^{\prime}$ . The proof depth is the number of rules $|R|$ , with $R\subseteq R^{\prime}$ , traversed during backward chaining to connect the conclusion $c$ to the premise $p$ :

$$

Depth(h)=|R|

$$

Concept drift, denoted as $Drift$ , is defined as the number of additional concepts and entities, outside the ones appearing in the hypothesis (i.e., premise and conclusion), that are introduced by the LLM to support the entailment. For simplicity, we consider nouns as concepts. Let $N=\{Noun_{p},Noun_{c},Noun_{E}\}$ be the unique nouns found in the premise, conclusion, and explanation steps. Concept drift is the cardinality of the set difference between the nouns found in the explanation and the nouns in the hypothesis:

$$

Drift(h)=|Noun_{E}-(Noun_{p}\cup Noun_{c})|

$$

Intuitively, the parsimony principle would predict the most plausible hypothesis as the one supported by an explanation with the smallest observed proof depth and concept drift. Implementation details can be found in Appendix A.6.

Coherence.

Coherence attempts to measure the logical validity at the level of the specific explanation steps. An explanation can be formally consistent on the surface while still including implausible or ungrounded intermediate assumptions. Coherence evaluates the quality of each intermediate If-Then implication by measuring the entailment strength between the If and Then clauses. To this end, we employ a fine-tuned natural language inference (NLI) model. Formally, let $S$ be a set of explanation steps, where each step $s$ consists of an If-Then statement, $s=(If_{s},Then_{s})$ . For a given step $s_{i}$ , let $ES(s_{i})$ denote the entailment score obtained via the NLI model between $If_{s}$ and $Then_{s}$ clauses. The step-wise entailment score $SWE(S)$ is then calculated as the averaged sum of the entailment scores across all explanation steps $|S|$ :

$$

\text{SWE}(S)=\frac{1}{|S|}\sum_{i=1}^{|S|}\text{ES}(s_{i})

$$

We hypothesize that the LLM should generate a higher coherence score for more plausible hypotheses, as such explanations should exhibit stronger step-wise entailment. Additional details can be found in Appendix A.7.

Uncertainty.

Finally, we consider the linguistic certainty expressed in the generated explanation as a proxy for plausibility. Hedging words such as probably, might be, could be, etc typically signal ambiguity and are often used when the truth condition of a statement is unknown or improbable. Pei and Jurgens (2021) found that the strength of scientific claims in research papers is strongly correlated with the use of direct language. In contrast, they found that the use of hedging language suggested that the veracity of the claim was weaker or highly contextualized.

To measure the linguistic uncertainty ( $UC$ ) of an explanation, we consider the explanation’s underlying assumptions ( $A_{i}$ ) and the overall explanation summary ( $S$ ). The linguistic uncertainty score is extracted using the fine-tuned sentence-level RoBERTa model from Pei and Jurgens (2021). The overall linguistic uncertainty score ( $UC_{\text{overall}}$ ) is the sum of the assumption and explanation summary scores:

$$

UC_{\text{overall}}=UC(A)+UC(S)

$$

Where $UC(A)$ is the sum of the linguistic uncertainty scores ( $UC(A)$ ) across all the assumptions $|A|$ associated with each explanation step $i$ :

$$

UC(A)=\sum_{i=1}^{|A|}UC(a_{i})

$$

and linguistic uncertainty of the explanation summary $UC(S)$ . We hypothesize that the LLM will use more hedging language when explaining the weaker hypothesis resulting in a higher uncertainty score. Further details can be found in Appendix A.8.

### 4.1 Inference to Best Explanation

After the IBE criteria are computed for each competing hypothesis, they are used to generate the final explanation plausibility score. We define a simple linear regression model $\theta(\cdot)$ , which was fitted on a small set of training examples consisting of extracted IBE features to predict the probability that an explanation $E_{i}$ corresponds to the correct answer. Specifically, we employ IBE-Eval to score each generated explanation independently and then select the final answer $a$ via argmax:

$$

a=\operatorname*{argmax}_{i}[\theta(E_{1}),\ldots,\theta(E_{n})]

$$

Additional details can be found in Appendix A.9.

<details>

<summary>extracted/6246183/correlation.png Details</summary>

### Visual Description

## Heatmap Comparison: COPA vs. E-CARE Benchmark Correlations

### Overview

The image displays two side-by-side heatmaps comparing the correlation of various Large Language Model (LLM) performance metrics across two different evaluation benchmarks: **COPA** (left) and **E-CARE** (right). The heatmaps visualize the strength and direction of correlation (positive or negative) between specific LLM characteristics (Consistency, Depth, Coherence, Uncertainty, Drift) and model performance on these benchmarks. The color intensity represents the correlation value, with a shared legend indicating the scale.

### Components/Axes

* **Chart Type:** Two separate correlation heatmaps.

* **Y-Axis (Both Charts):** Labeled "LLM". Lists three models:

* LLaMA 2 7B

* LLaMA 2 13B

* GPT 3.5

* **X-Axis (Both Charts):** Lists five performance metrics:

* Consistency

* Depth

* Coherence

* Uncertainty

* Drift

* **Legend:** Positioned centrally between the two heatmaps. Titled "Corr." (Correlation). It is a vertical color bar with the following scale:

* **Top (Green):** 2.5

* **Middle (Light Yellow/White):** 0.0

* **Bottom (Red):** -2.5

* This indicates that green shades represent positive correlations, red shades represent negative correlations, and the intensity corresponds to the magnitude.

* **Data Labels:** Each cell in the heatmaps contains a numerical correlation value. Many values are followed by asterisks indicating statistical significance (e.g., `*`, `**`, `***`).

### Detailed Analysis

#### **COPA Heatmap (Left)**

* **LLaMA 2 7B:**

* Consistency: 1.37 (light green, positive)

* Depth: -2.95 ** (medium red, strong negative)

* Coherence: 1.22 (light green, positive)

* Uncertainty: -3.10 ** (medium red, strong negative)

* Drift: -0.27 (very light pink, weak negative)

* **LLaMA 2 13B:**

* Consistency: 1.36 (light green, positive)

* Depth: -1.28 (light red, negative)

* Coherence: 3.87 *** (dark green, very strong positive)

* Uncertainty: -2.17 * (medium red, negative)

* Drift: -3.33 *** (dark red, very strong negative)

* **GPT 3.5:**

* Consistency: 4.67 *** (dark green, very strong positive)

* Depth: -4.893 *** (dark red, very strong negative)

* Coherence: 3.60 *** (dark green, very strong positive)

* Uncertainty: -4.34 *** (dark red, very strong negative)

* Drift: -3.22 ** (dark red, strong negative)

#### **E-CARE Heatmap (Right)**

* **LLaMA 2 7B:**

* Consistency: 0.20 (very light green, very weak positive)

* Depth: -0.53 (very light pink, weak negative)

* Coherence: 2.18 * (light green, positive)

* Uncertainty: -2.11 ** (medium red, negative)

* Drift: -0.78 * (light pink, weak negative)

* **LLaMA 2 13B:**

* Consistency: 1.167 (light green, positive)

* Depth: -1.18 (light red, negative)

* Coherence: 1.67 * (light green, positive)

* Uncertainty: -1.52 * (light red, negative)

* Drift: -1.91 * (medium red, negative)

* **GPT 3.5:**

* Consistency: 3.10 ** (green, strong positive)

* Depth: -2.91 ** (red, strong negative)

* Coherence: 0.98 (very light green, weak positive)

* Uncertainty: -2.61 ** (red, strong negative)

* Drift: -5.14 *** (dark red, very strong negative)

### Key Observations

1. **Consistent Negative Correlation with Depth and Uncertainty:** Across both benchmarks and all three models, the "Depth" and "Uncertainty" metrics show a consistent pattern of negative correlation (red cells). This suggests that higher scores on these metrics are associated with lower performance on the COPA and E-CARE tasks.

2. **Consistency and Coherence Show Positive Correlation:** The "Consistency" and "Coherence" metrics generally show positive correlation (green cells), particularly for the larger GPT 3.5 model. This indicates these traits are beneficial for these benchmarks.

3. **Model Scaling Effect:** GPT 3.5 exhibits the most extreme correlation values (both positive and negative) in the COPA benchmark, suggesting its performance is more strongly tied to these measured characteristics compared to the LLaMA 2 models.

4. **Benchmark Differences:** The correlation patterns are broadly similar but not identical between COPA and E-CARE. For instance, the "Coherence" correlation for GPT 3.5 is very strong in COPA (3.60***) but weak in E-CARE (0.98). The "Drift" metric shows a particularly strong negative correlation for GPT 3.5 in E-CARE (-5.14***).

5. **Statistical Significance:** Most of the stronger correlations (magnitude > ~1.5) are marked with asterisks, indicating they are statistically significant. The weakest correlations (e.g., LLaMA 2 7B on COPA Drift: -0.27) lack significance markers.

### Interpretation

This visualization provides a diagnostic look at what internal model characteristics (as measured by Consistency, Depth, Coherence, Uncertainty, Drift) align with success on specific reasoning benchmarks (COPA and E-CARE).

* **What the data suggests:** The strong negative correlations for "Depth" and "Uncertainty" are the most striking finding. This could imply that for these particular tasks, models that exhibit more "depth" (perhaps in terms of reasoning steps or complexity) or higher calibrated "uncertainty" perform worse. Conversely, models that are more "consistent" and "coherent" in their outputs tend to perform better. This might indicate that COPA and E-CARE reward reliable, straightforward reasoning over more complex or hesitant deliberation.

* **Relationship between elements:** The heatmaps directly link abstract model properties (columns) to concrete benchmark performance (implied by the correlation value). The side-by-side comparison allows us to see if these relationships are benchmark-specific or general. The shared color scale enables direct visual comparison of correlation strength across both charts.

* **Notable anomalies:** The drastic difference in the "Coherence" correlation for GPT 3.5 between the two benchmarks is a key anomaly. It suggests that while coherent output is highly predictive of success on COPA, it is much less so for E-CARE. This could point to a fundamental difference in what the two benchmarks measure. Furthermore, the extremely strong negative correlation for "Drift" in GPT 3.5 on E-CARE (-5.14***) is an outlier in magnitude, highlighting "Drift" as a particularly detrimental factor for that model on that specific task.

**In summary, the image presents evidence that for the COPA and E-CARE benchmarks, model performance is positively associated with consistency and coherence, and negatively associated with depth, uncertainty, and drift. The strength of these associations varies by model and benchmark, with GPT 3.5 showing the most pronounced relationships.**

</details>

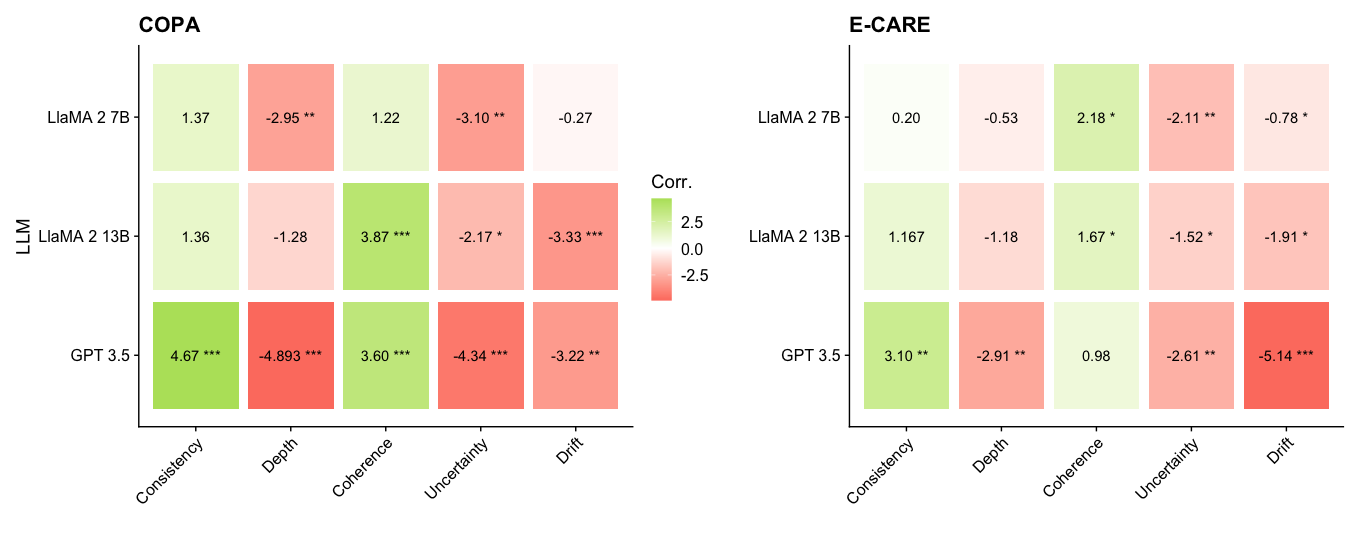

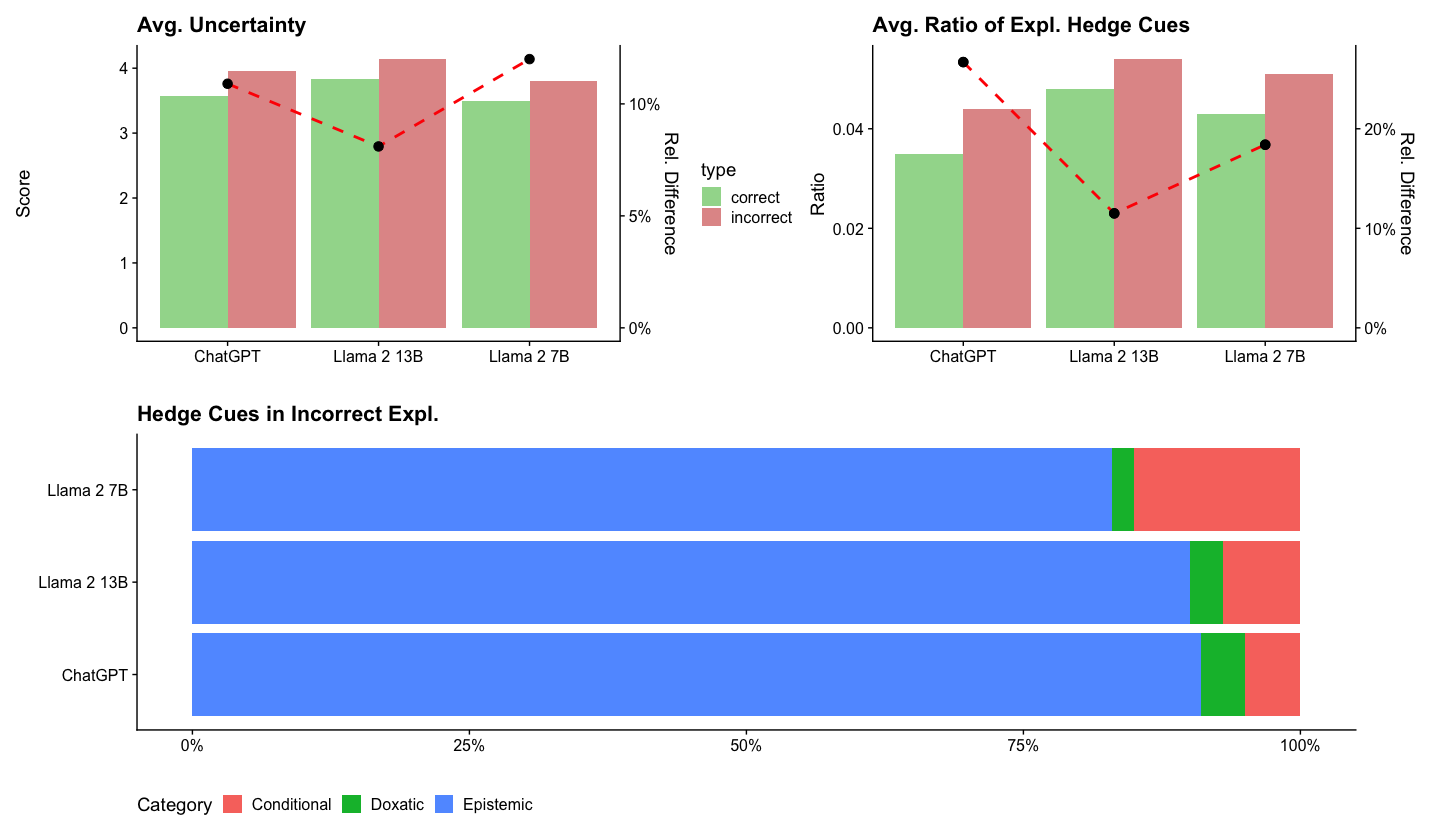

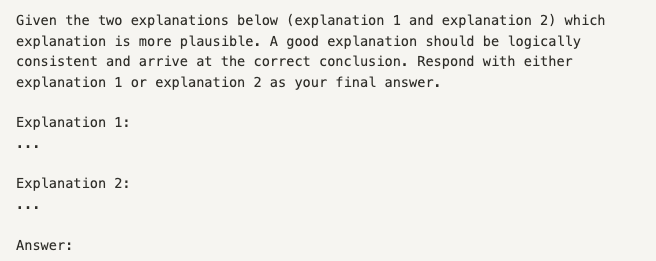

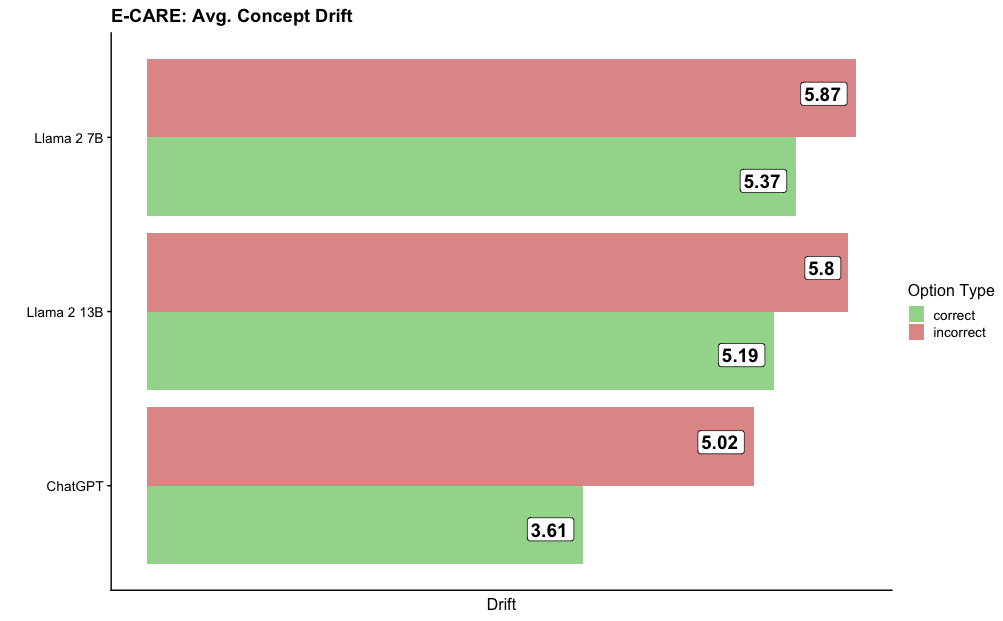

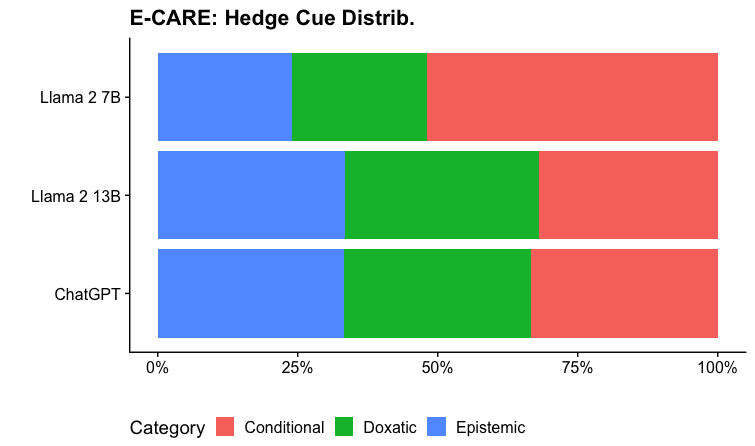

Figure 2: A regression analysis measuring the correlation between IBE criteria and question accuracy. All the LLMs tend to conform to IBE expectations with GPT 3.5 exhibiting the most consistent and significant alignment. Linguistic uncertainty is the strongest IBE predictor for explanation quality, where higher uncertainty is negatively correlated with question accuracy. Statistical significance is noted as: ‘***’ p < 0.001, ‘**’ p < 0.01 ‘*’ p < 0.05.

| Baselines | COPA GPT 3.5 | E-CARE LlaMA 2 13B | LlaMA 2 7B | GPT 3.5 | LlaMA 2 13B | LlaMA 2 7B |

| --- | --- | --- | --- | --- | --- | --- |

| GPT3.5 Judge | .59 | .47 | .63 | .43 | .61 | .52 |

| Human | .95 | 1.0 | .91 | .90 | .91 | .92 |

| IBE Features | | | | | | |

| Consistency | .51 | .52 | .55 | .54 | .54 | .54 |

| Depth (Parsimony) | .67 | .53 | .63 | .66 | .56 | .54 |

| Drift (Parsimony) | .67 | .63 | .58 | .66 | .57 | .57 |

| Coherence | .66 | .66 | .56 | .56 | .57 | .59 |

| Linguistic Uncertainty | .70 | .65 | .61 | .59 | .56 | .60 |

| Composed Model | | | | | | |

| Random | .50 | .50 | .50 | .50 | .50 | .50 |

| + Consistency | .51 | .52 | .55 | .54 | .54 | .54 |

| + Depth | .67 | .53 | .63 | .66 | .56 | .56 |

| + Drift | .70 | .65 | .65 | .72 | .66 | .65 |

| + Coherence | .73 | .71 | .69 | .73 | .68 | .69 |

| + Linguistic Uncertainty | .77 | .74 | .70 | .74 | .70 | .73 |

Table 1: An ablation study and evaluation of the IBE criteria and the composed IBE-Eval model. IBE-Eval outperforms the GPT 3.5 Judge baseline by an average of +17.5% across all all models and tasks.

## 5 Experimental Setting

Causal Question-Answering (CQA) requires reasoning about the causes and effects given an event description. We specifically consider the task of cause and effect prediction in a multiple-choice setting, where given a question and two candidate answers, the LLM must decide which is the most plausible cause or effect. Causal reasoning is a challenging task as the model must both possess commonsense knowledge about causal relationships and consider the event context which would make one option more plausible than the other. For our experiments, we use the Choice of Plausible Alternatives (COPA) Gordon et al. (2012) and E-CARE Du et al. (2022) datasets.

COPA.

COPA is a multiple-choice commonsense causal QA dataset consisting of 500 train and test examples that were manually generated. Each multiple-choice example consists of a question premise and a set of answer candidates which are potential causes or effects of the premise. COPA is a well-established causal reasoning benchmark that is both a part of SuperGlue Wang et al. (2019) and the CALM-Bench Dalal et al. (2023).

E-CARE.

E-CARE is a large-scale multiple-choice causal crowd-sourced QA dataset consisting of 15K train and 2k test examples. Similar to COPA, the task requires the selection of the most likely cause or effect provided an event description. We randomly sample 500 examples from the E-CARE test set for our experiments.

LLMs.

We consider GPT-Turbo-3.5, LLaMA 2 13B, and LLaMA 2 7B for all experiments. GPT 3.5 is a proprietary model Brown et al. (2020) and is highly effective across a wide range of natural language reasoning tasks Laskar et al. (2023). We additionally evaluate the open-source LLaMA 2 model Touvron et al. (2023). We consider both the 13B and 7B variants of Llama 2 as both are seen as viable commodity GPT alternatives and have been widely adopted by the research community for LLM benchmarking and evaluation.

Baselines.

We employ LLM-as-a-Judge Zheng et al. (2023) and human evaluators as baseline methods for the selection of the best explanation in the CQA setting. (Zheng et al., 2023) found LLMs can align with human judgment and be utilized for automated evaluation and judgment. We specifically uses GPT 3.5 as the LLM judge. For each CQA example, we present the judges with two competing explanations generated by the target LLM. The judge is asked to identify the best and most plausible explanation. Additional details about the baselines can be found in Appendix A.4.

## 6 Preliminary Analysis

We conduct a preliminary analysis as a sanity check to measure the extent to which LLMs generate self-evident or tautological explanations - i.e., explanations that simply restate the premises and conclusions. Tautological explanations present a risk for IBE-Eval as the metrics would be theoretically uninformative if the LLM adopts the tested causal relation as the explanation itself (e.g. A → B) without providing additional supporting statements.

We consider the parsimony metric to compute the percentage of explanations with proof depth equal to 1 (i.e, explanations containing only one inference step) and concept drift equal to 0 (i.e. no additional concepts other than the ones stated in premises and conclusions appear in the explanation). In such cases, the LLM is effectively generating a self-evident or tautological explanation.

We found that about 2% of the cases consist of self-evident explanations. For GPT 3.5, LLaMA 2 13B, and LLaMA 2 7B, 2% of the generated explanations exhibit a concept drift of 0, and on average 1.5% of the explanations have a proof depth of 1. We then conducted an error analysis to evaluate the cases where IBE-Eval selected a self-evident explanation as the best one. Across all LLMs, less than 0.1% of the errors were caused by the selection of such explanations. Our analysis suggests that the impact of self-evident explanations is not significant and that the IBE framework can be robustly applied to identify such cases.

## 7 Results

To assess the LLM’s alignment with the proposed IBE framework and evaluate the efficacy of IBE-Eval, we run a regression analysis and conduct a set of ablation studies to evaluate the relationship between IBE and question accuracy. The main results are presented in Figure 2 and Table 1.

Our regression analysis finds that the IBE criteria are generally consistent across the LLMs as demonstrated by similar correlation patterns found on both the COPA and E-CARE tasks (Figure 2). GPT 3.5 exhibits the strongest alignment with IBE expectations as we observe nearly all the IBE criteria have statistically significant and directionally aligned correlations across both tasks. Thus our proposed IBE criteria can serve as promising build blocks for future work on automated explanation evaluation.

In Table 1 we evaluate the accuracy of the IBE criteria and IBE-Eval in selecting the most plausible explanation in the CQA setting. We find that though independently the IBE criteria are generally limited in their ability to identify the more plausible explanation - they still outperform the GPT-3.5-as-a-judge baseline. IBE-Eval, which considers all IBE criteria, improves the ability to select the best explanation by 17% over both the GPT 3.5-as-a-judge and random baselines. We can achieve up to 77% accuracy utilizing just the extracted IBE criteria demonstrating IBE’s potential value for automatic explanation evaluation.

Next, we explore each explanation feature in further detail to better understand the variances across the IBE criteria and LLMs.

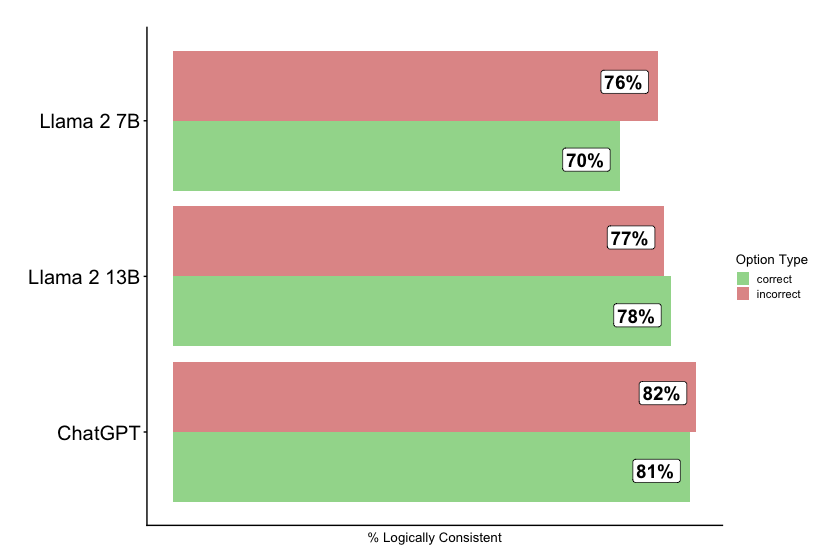

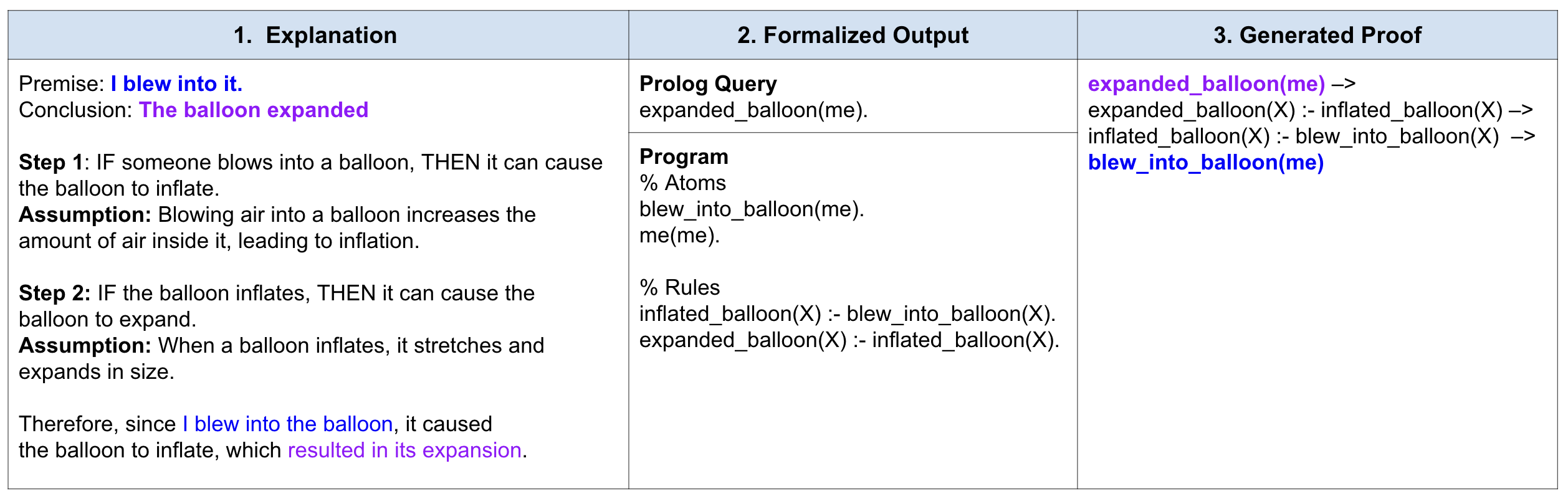

Consistency.

We find that the LLMs are surprisingly strong conjecture models. The LLMs can generate logically consistent explanations for any hypothesis as observed by similar consistency scores for correct and incorrect (Figure 3) explanations. Moreover, we observe that consistency tends to be a statistically insignificant predictor for the LLaMA models. Therefore, we conclude that evidence of logical consistency provides a limited signal for plausibility and is better understood in the context of other IBE criteria. For the incorrect candidate explanations, we find that LLMs over-rationalize and introduce additional premises to demonstrate entailment in their explanations.

<details>

<summary>extracted/6246183/consistency.png Details</summary>

### Visual Description

## Horizontal Bar Chart: Logical Consistency of AI Models

### Overview

The image is a horizontal bar chart comparing the percentage of logical consistency for three large language models: Llama 2 7B, Llama 2 13B, and ChatGPT. The chart breaks down the consistency score into two categories: "correct" and "incorrect" responses.

### Components/Axes

* **Chart Type:** Horizontal grouped bar chart.

* **Y-Axis (Vertical):** Lists the three AI models being compared. From top to bottom: "Llama 2 7B", "Llama 2 13B", "ChatGPT".

* **X-Axis (Horizontal):** Labeled "% Logically Consistent". It represents a percentage scale, though specific numerical markers on the axis are not visible. The bars extend from left to right.

* **Legend:** Positioned on the right side of the chart, titled "Option Type". It defines the two data series:

* A green square labeled "correct".

* A red/salmon square labeled "incorrect".

* **Data Labels:** Each bar segment has a white box with black text displaying its exact percentage value.

### Detailed Analysis

The chart presents two data points for each model, representing the percentage of responses deemed logically consistent within the "correct" and "incorrect" categories.

**1. Llama 2 7B (Top Group)**

* **Incorrect (Red Bar):** The top bar in this group. It extends further to the right and is labeled **76%**.

* **Correct (Green Bar):** The bottom bar in this group. It is shorter than the red bar and is labeled **70%**.

* **Trend:** The "incorrect" category has a higher logical consistency score than the "correct" category for this model.

**2. Llama 2 13B (Middle Group)**

* **Incorrect (Red Bar):** The top bar. It is labeled **77%**.

* **Correct (Green Bar):** The bottom bar. It is slightly longer than the red bar and is labeled **78%**.

* **Trend:** The scores are very close, with the "correct" category having a marginally higher logical consistency score.

**3. ChatGPT (Bottom Group)**

* **Incorrect (Red Bar):** The top bar. It is the longest red bar in the chart and is labeled **82%**.

* **Correct (Green Bar):** The bottom bar. It is slightly shorter than the red bar and is labeled **81%**.

* **Trend:** Both scores are the highest among the three models, with the "incorrect" category scoring slightly higher.

### Key Observations

1. **Performance Hierarchy:** ChatGPT demonstrates the highest logical consistency percentages in both categories (81-82%), followed by Llama 2 13B (77-78%), and then Llama 2 7B (70-76%).

2. **Category Comparison:** For the two Llama models, the relationship between "correct" and "incorrect" scores flips. Llama 2 7B's "incorrect" score is higher, while Llama 2 13B's "correct" score is higher. ChatGPT's scores are nearly equal.

3. **Narrowing Gap:** The difference between the "correct" and "incorrect" percentages narrows as model capability increases (from a 6-point gap for Llama 2 7B, to a 1-point gap for Llama 2 13B, to a 1-point gap for ChatGPT).

4. **High Baseline:** All logical consistency scores are relatively high, ranging from 70% to 82%, suggesting the evaluation metric or task may yield consistently high scores across these models.

### Interpretation

This chart likely visualizes results from a benchmark testing the logical reasoning or consistency of AI model outputs. The "correct" and "incorrect" labels probably refer to the model's final answer being right or wrong, while the "% Logically Consistent" metric evaluates the soundness of the reasoning steps provided, regardless of the final answer's correctness.

The data suggests a few key insights:

* **Model Scaling Improves Consistency:** Moving from Llama 2 7B to the larger 13B version improves logical consistency scores for both correct and incorrect answers, indicating that model scale contributes to more coherent reasoning.

* **ChatGPT Leads in Reasoning Coherence:** ChatGPT exhibits the highest level of logical consistency in its reasoning processes, whether its final answer is correct or not.

* **The "Incorrect" Paradox:** The fact that "incorrect" answers can have high logical consistency (e.g., 82% for ChatGPT) is significant. It implies that models can construct logically sound arguments that lead to wrong conclusions. This highlights a critical challenge in AI evaluation: a model can be persuasive and logically structured yet factually wrong.

* **Benchmark Design:** The high scores across the board (all >70%) may indicate that the specific benchmark used is not highly discriminative for these top-tier models, or that logical consistency is a relative strength of current LLMs. The narrowing gap between correct and incorrect consistency in more advanced models might suggest their errors become more subtle and logically defended.

</details>

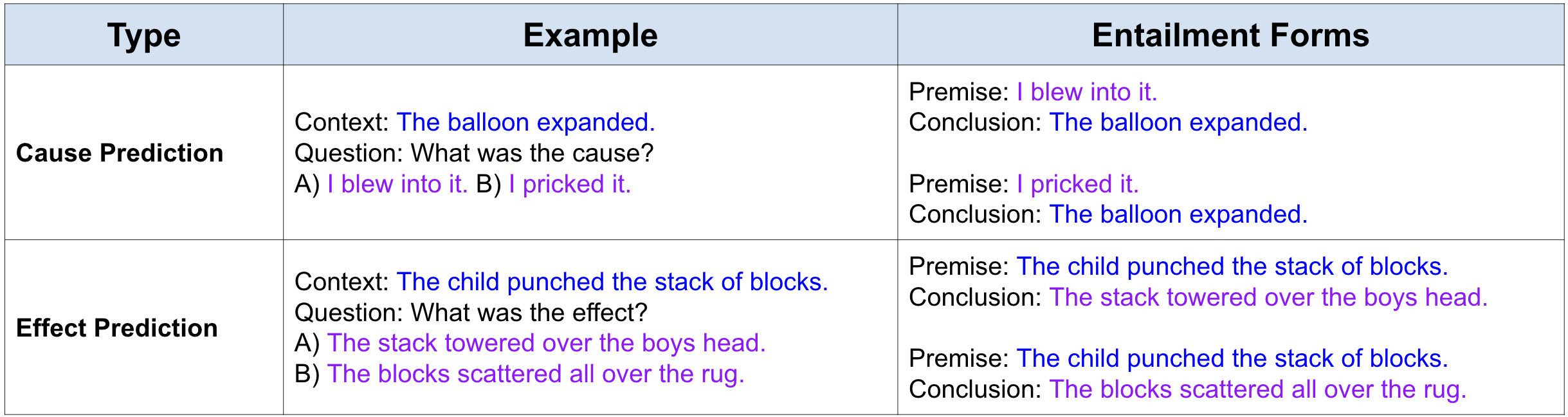

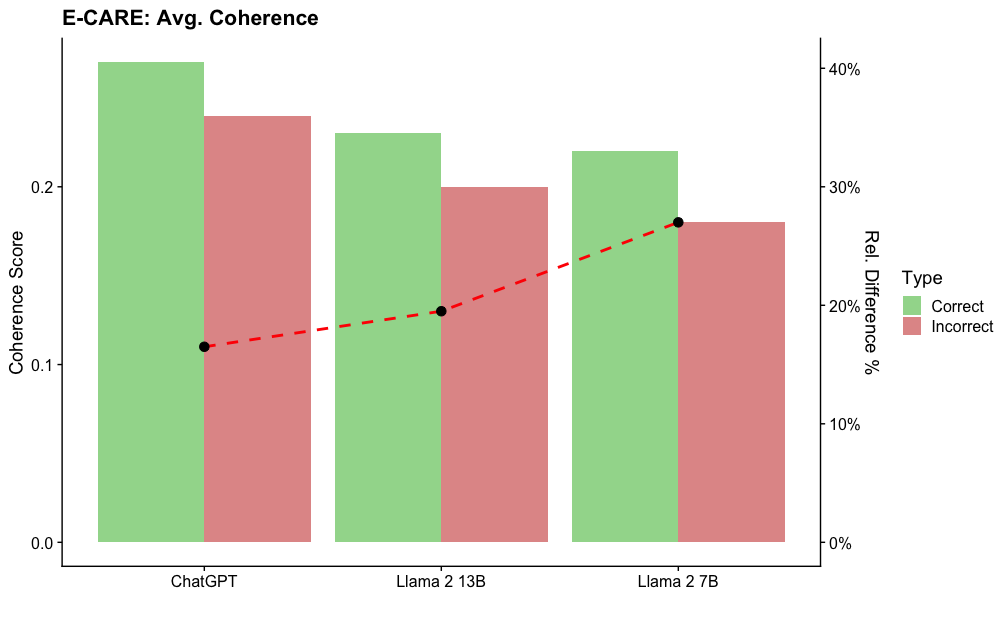

Figure 3: An evaluation of explanation consistency. LLMs are strong rationalizers and can generate logically consistent explanations at equal rates for explanations associated with both correct and incorrect answers options.

Parsimony.

<details>

<summary>extracted/6246183/parsimony.png Details</summary>

### Visual Description

## Horizontal Grouped Bar Chart: Model Performance Metrics

### Overview

The image displays two horizontal grouped bar charts comparing the performance of three large language models (LLMs) on two distinct metrics: "Avg. Proof Depth" and "Expl. Concept Drift." Each chart compares the models' performance on "correct" versus "incorrect" options or outputs. The overall design is clean, with a white background and clear numerical labels on each bar.

### Components/Axes

* **Chart 1 (Top):** Titled "Avg. Proof Depth".

* **Y-axis (Categories):** Lists three models: "Llama 2 7B", "Llama 2 13B", and "ChatGPT".

* **X-axis (Measure):** Labeled "Depth". The axis line is present, but no numerical tick marks are shown; values are provided directly on the bars.

* **Chart 2 (Bottom):** Titled "Expl. Concept Drift".

* **Y-axis (Categories):** Same three models as above.

* **X-axis (Measure):** Labeled "Drift". Similar to the first chart, no numerical ticks are present.

* **Legend:** Positioned at the bottom center of the entire image. It defines the color coding for the bars:

* **Green Square:** "correct"

* **Red Square:** "incorrect"

* **Data Labels:** Each bar has a white box at its end containing the precise numerical value for that data point.

### Detailed Analysis

#### Chart 1: Avg. Proof Depth

This chart measures the average depth of proofs or reasoning chains. For each model, the red bar (incorrect) is longer than the green bar (correct).

* **Llama 2 7B:**

* Incorrect (Red): 2.94

* Correct (Green): 2.68

* **Llama 2 13B:**

* Incorrect (Red): 3.27

* Correct (Green): 3.07

* **ChatGPT:**

* Incorrect (Red): 3.17

* Correct (Green): 2.63

**Trend Verification:** Across all three models, the "incorrect" outputs have a higher average proof depth than the "correct" outputs. The Llama 2 13B model shows the highest depth values for both categories.

#### Chart 2: Expl. Concept Drift

This chart measures the extent of "explained concept drift," likely indicating how much the model's explanation deviates from a core concept. The pattern mirrors the first chart: red bars (incorrect) are consistently longer than green bars (correct).

* **Llama 2 7B:**

* Incorrect (Red): 4.33

* Correct (Green): 3.96

* **Llama 2 13B:**

* Incorrect (Red): 4.55

* Correct (Green): 3.67

* **ChatGPT:**

* Incorrect (Red): 4.18

* Correct (Green): 2.95

**Trend Verification:** The "incorrect" outputs exhibit greater concept drift than the "correct" ones for every model. The Llama 2 13B model again shows the highest value for the "incorrect" category. ChatGPT shows the largest disparity between its correct and incorrect drift scores.

### Key Observations

1. **Consistent Pattern:** In both metrics, incorrect model outputs are associated with higher numerical values (deeper proofs, greater drift) than correct outputs.

2. **Model Comparison:** Llama 2 13B tends to have the highest values in the "incorrect" category for both metrics (3.27 depth, 4.55 drift).

3. **Largest Discrepancy:** The most significant gap between correct and incorrect performance is seen in ChatGPT's "Expl. Concept Drift" score (4.18 vs. 2.95, a difference of 1.23).

4. **Smallest Discrepancy:** The smallest gap is in Llama 2 13B's "Avg. Proof Depth" (3.27 vs. 3.07, a difference of 0.20).

### Interpretation

The data suggests a counterintuitive but potentially significant relationship: **incorrect or flawed model outputs are characterized by longer, more complex reasoning chains (higher proof depth) and explanations that deviate more from the central concept (higher concept drift).**

This could imply that when these models err, they don't simply give short, wrong answers. Instead, they may engage in more elaborate but misguided reasoning, constructing longer justifications that ultimately stray from the correct conceptual path. The higher "drift" score for incorrect answers supports this, indicating a loss of focus on the core idea.

The consistent pattern across three different models (two sizes of Llama 2 and ChatGPT) suggests this might be a general characteristic of current LLM failure modes. From a diagnostic perspective, monitoring for unusually long proof chains or high concept drift in a model's output could serve as a potential red flag for low confidence or likely incorrectness. The outlier is ChatGPT's correct concept drift (2.95), which is notably lower than its peers, possibly indicating a different internal mechanism for maintaining conceptual focus when it is correct.

</details>

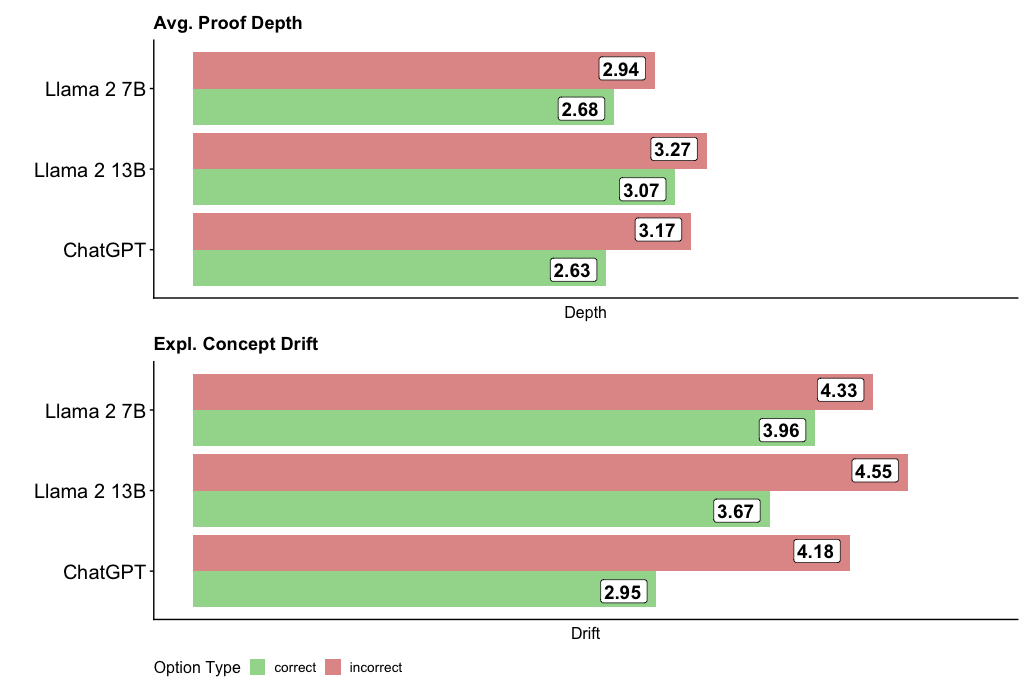

Figure 4: Explanation parsimony is evaluated using proof depth and concept drift. Both metrics are consistently lower for explanations supporting the correct answers suggesting that LLMs are able to generate efficient explanations for the more plausible hypothesis.

The results suggest that parsimony has a more consistent effect and is a better predictor of explanation quality. We observe negative correlations between proof depth, concept drift, and question-answering accuracy, suggesting that LLMs tend to introduce more concepts and explanation steps when explaining less plausible hypotheses. On average, we found the depth and drift to be 6% and 10% greater for the incorrect option across all LLMs (Figure 4). Moreover, the results suggest that as the LLM parameter size increases, the tendency to over-rationalize increases as well. This is attested by the fact that the average difference in depth and drift is the greatest in GPT 3.5, suggesting that the model tends to find the most efficient explanations for stronger hypotheses and articulates explanations for weaker candidates. Finally, we found that the LLaMA models tend to generate more complex explanations overall, with LLaMA 2 13B exhibiting the largest concept drift for less plausible hypotheses. The parsimony criterion supports the IBE predictive power with an average of 14% improvement over consistency.

Coherence.

<details>

<summary>extracted/6246183/coherence.png Details</summary>

### Visual Description

\n

## Bar Chart with Line Overlay: Average Coherence Scores by LLM and Response Type

### Overview

The image is a dual-axis chart comparing the average coherence scores of three Large Language Models (LLMs) for "Correct" versus "Incorrect" responses. It also plots the relative percentage difference between these two scores for each model. The chart uses grouped bars for the scores and a dashed line for the percentage difference.

### Components/Axes

* **Title:** "Avg. Coherence Scores" (top-left).

* **Primary Y-Axis (Left):** Labeled "Coherence Score". Scale ranges from 0.0 to approximately 0.35, with major ticks at 0.0, 0.1, 0.2, and 0.3.

* **Secondary Y-Axis (Right):** Labeled "Rel. Difference %". Scale ranges from 0% to approximately 35%, with major ticks at 0%, 10%, 20%, and 30%.

* **X-Axis:** Labeled "LLM". Three categories are listed: "ChatGPT", "Llama 2 13B", and "Llama 2 7B".

* **Legend:** Positioned on the right side of the chart, titled "Type". It defines two categories:

* **Correct:** Represented by a light green color.

* **Incorrect:** Represented by a light red/salmon color.

* **Data Series:**

1. **Grouped Bars:** For each LLM, two bars are shown side-by-side. The left (green) bar represents the average coherence score for "Correct" responses. The right (red) bar represents the average coherence score for "Incorrect" responses.

2. **Line Overlay:** A dashed red line with black circular markers connects data points representing the "Rel. Difference %" for each LLM. This line is plotted against the right-hand Y-axis.

### Detailed Analysis

**1. ChatGPT:**

* **Correct (Green Bar):** Coherence Score ≈ 0.26.

* **Incorrect (Red Bar):** Coherence Score ≈ 0.20.

* **Rel. Difference % (Line Marker):** ≈ 20%. The black dot is positioned at the 20% tick on the right axis.

**2. Llama 2 13B:**

* **Correct (Green Bar):** Coherence Score ≈ 0.28 (the highest "Correct" score on the chart).

* **Incorrect (Red Bar):** Coherence Score ≈ 0.19.

* **Rel. Difference % (Line Marker):** ≈ 30%. The black dot is positioned at the 30% tick on the right axis, representing the peak of the dashed line.

**3. Llama 2 7B:**

* **Correct (Green Bar):** Coherence Score ≈ 0.23.

* **Incorrect (Red Bar):** Coherence Score ≈ 0.19.

* **Rel. Difference % (Line Marker):** ≈ 18%. The black dot is positioned slightly below the 20% tick on the right axis.

**Trend Verification:**

* For all three LLMs, the "Correct" (green) bar is taller than the "Incorrect" (red) bar, indicating a consistent trend of higher coherence scores for correct responses.

* The dashed red line (Rel. Difference %) slopes upward from ChatGPT to Llama 2 13B, then slopes downward to Llama 2 7B, forming a peak at the 13B model.

### Key Observations

1. **Consistent Performance Gap:** Every model shows a higher average coherence score for correct responses compared to incorrect ones.

2. **Peak Difference at Llama 2 13B:** The relative difference between correct and incorrect coherence scores is largest for Llama 2 13B (~30%), significantly higher than for ChatGPT (~20%) or Llama 2 7B (~18%).

3. **Highest Absolute Score:** Llama 2 13B also achieves the highest absolute coherence score for correct responses (~0.28).

4. **Similar "Incorrect" Scores:** The coherence scores for incorrect responses are relatively similar across all three models, clustering around 0.19-0.20.

### Interpretation

This chart suggests that while all evaluated LLMs produce more coherent text when their responses are correct, the magnitude of this coherence gap varies by model. The data indicates that **Llama 2 13B exhibits the most pronounced distinction in text quality between its correct and incorrect outputs.** This could imply that this model's architecture or training leads to a sharper degradation in linguistic coherence when it fails, compared to ChatGPT or the smaller Llama 2 7B model.

The fact that the "Incorrect" coherence scores are similar across models, while the "Correct" scores vary more, suggests that the models' baseline ability to generate fluent but wrong text is comparable, but their peak performance on correct answers differs. The Llama 2 13B model appears to have a higher ceiling for coherence when it is accurate. This information is valuable for understanding model behavior beyond simple accuracy metrics, highlighting how reliability and output quality are intertwined.

</details>

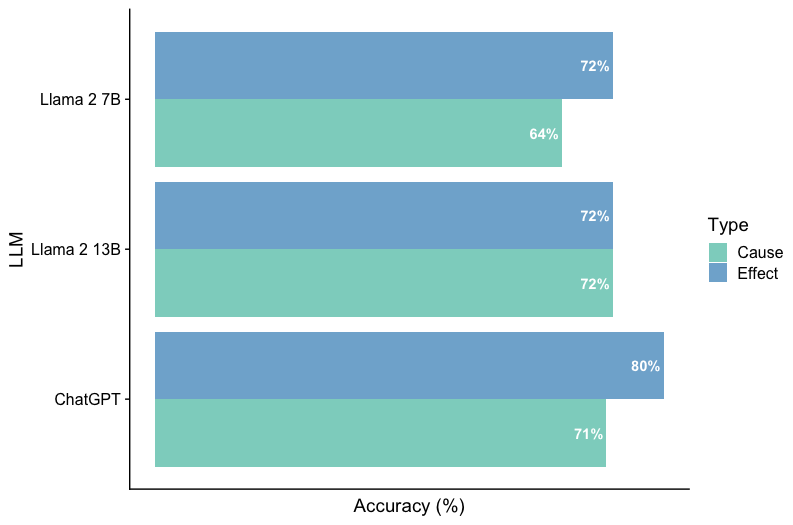

Figure 5: An evaluation of the explanation coherence and question accuracy.The average coherence score is consistently higher for explanations corresponding to the correct hypotheses across the LLMs.

Similarly to parsimony, we found coherence to be a better indicator of explanation quality being statistically significant for both GPT 3.5 and Llama 2 13B on COPA and both Llama 2 models on E-Care. We found that the average coherence score is consistently greater for the stronger hypothesis across all LLMs and datasets (see Figure 5). Both GPT and Llama 2 13B exhibit a higher relative difference between the correct and incorrect hypotheses in contrast to Llama 2 7B.

Uncertainty.

<details>

<summary>extracted/6246183/uncertainty.png Details</summary>

### Visual Description

## [Chart Set]: Analysis of Uncertainty and Hedge Cues in Language Model Explanations

### Overview

The image contains three distinct charts analyzing the behavior of three language models (ChatGPT, Llama 2 13B, Llama 2 7B) regarding uncertainty and the use of "hedge cues" in their explanations. The top section contains two dual-axis bar charts comparing "correct" vs. "incorrect" explanations. The bottom section contains a horizontal stacked bar chart detailing the types of hedge cues found specifically in incorrect explanations.

### Components/Axes

**Top-Left Chart: "Avg. Uncertainty"**

* **Primary Y-Axis (Left):** Label: "Score". Scale: 0 to 4, with increments of 1.

* **Secondary Y-Axis (Right):** Label: "Rel. Difference". Scale: 0% to 10%, with increments of 5%.

* **X-Axis:** Categories: "ChatGPT", "Llama 2 13B", "Llama 2 7B".

* **Legend:** Located between the two top charts. Title: "type". Categories: "correct" (green bar), "incorrect" (red bar).

* **Data Series:**

1. **Bar Series:** Paired green ("correct") and red ("incorrect") bars for each model.

2. **Line Series:** A red dashed line with black circular markers, plotted against the right "Rel. Difference" axis.

**Top-Right Chart: "Avg. Ratio of Expl. Hedge Cues"**

* **Primary Y-Axis (Left):** Label: "Ratio". Scale: 0.00 to 0.04, with increments of 0.02.

* **Secondary Y-Axis (Right):** Label: "Rel. Difference". Scale: 0% to 20%, with increments of 10%.

* **X-Axis:** Categories: "ChatGPT", "Llama 2 13B", "Llama 2 7B".

* **Legend:** Shared with the top-left chart (green="correct", red="incorrect").

* **Data Series:**

1. **Bar Series:** Paired green ("correct") and red ("incorrect") bars for each model.

2. **Line Series:** A red dashed line with black circular markers, plotted against the right "Rel. Difference" axis.

**Bottom Chart: "Hedge Cues in Incorrect Expl."**

* **Y-Axis:** Categories: "Llama 2 7B", "Llama 2 13B", "ChatGPT" (listed from top to bottom).

* **X-Axis:** Label: Percentage scale from 0% to 100%, with markers at 0%, 25%, 50%, 75%, 100%.

* **Legend:** Located at the bottom. Title: "Category". Categories: "Conditional" (red), "Doxastic" (green), "Epistemic" (blue).

* **Data Series:** A single horizontal stacked bar for each model, segmented by color according to the legend.

### Detailed Analysis

**1. Avg. Uncertainty Chart (Top-Left)**

* **Trend Verification:** For all three models, the red "incorrect" bar is taller than the green "correct" bar, indicating higher average uncertainty scores for incorrect explanations. The red dashed line (Rel. Difference) shows a "V" shape, dipping at Llama 2 13B.

* **Data Points (Approximate):**

* **ChatGPT:** Correct Score ≈ 3.6, Incorrect Score ≈ 3.9. Rel. Difference ≈ 8%.

* **Llama 2 13B:** Correct Score ≈ 3.8, Incorrect Score ≈ 4.1. Rel. Difference ≈ 7%.

* **Llama 2 7B:** Correct Score ≈ 3.5, Incorrect Score ≈ 3.8. Rel. Difference ≈ 11% (highest relative difference).

**2. Avg. Ratio of Expl. Hedge Cues Chart (Top-Right)**

* **Trend Verification:** For all three models, the red "incorrect" bar is taller than the green "correct" bar, indicating a higher ratio of hedge cues in incorrect explanations. The red dashed line (Rel. Difference) shows a "check mark" shape, dipping at Llama 2 13B.

* **Data Points (Approximate):**

* **ChatGPT:** Correct Ratio ≈ 0.035, Incorrect Ratio ≈ 0.043. Rel. Difference ≈ 23% (highest relative difference).

* **Llama 2 13B:** Correct Ratio ≈ 0.048, Incorrect Ratio ≈ 0.055. Rel. Difference ≈ 11%.

* **Llama 2 7B:** Correct Ratio ≈ 0.043, Incorrect Ratio ≈ 0.051. Rel. Difference ≈ 19%.

**3. Hedge Cues in Incorrect Expl. Chart (Bottom)**

* **Component Isolation:** Each horizontal bar represents 100% of the hedge cues used in that model's incorrect explanations.

* **Data Distribution (Approximate percentages):**

* **Llama 2 7B:** Epistemic (Blue) ≈ 82%, Doxastic (Green) ≈ 3%, Conditional (Red) ≈ 15%.

* **Llama 2 13B:** Epistemic (Blue) ≈ 88%, Doxastic (Green) ≈ 4%, Conditional (Red) ≈ 8%.

* **ChatGPT:** Epistemic (Blue) ≈ 90%, Doxastic (Green) ≈ 5%, Conditional (Red) ≈ 5%.

### Key Observations

1. **Consistent Pattern:** Across all models, incorrect explanations are associated with both higher average uncertainty scores and a higher ratio of hedge cues compared to correct explanations.

2. **Model Comparison:** Llama 2 13B shows the highest absolute scores and ratios for both correct and incorrect explanations. However, ChatGPT and Llama 2 7B often show a larger *relative* increase (Rel. Difference) in these metrics when moving from correct to incorrect explanations.

3. **Hedge Cue Composition:** In incorrect explanations, the vast majority of hedge cues are "Epistemic" (related to knowledge or belief) across all models. "Conditional" cues are the second most common, while "Doxastic" cues (related to opinion) are rare.

4. **Inverse Relationship in Hedge Cue Ratio:** While Llama 2 13B has the highest absolute ratio of hedge cues, it has the smallest relative difference between correct and incorrect explanations. ChatGPT has the largest relative difference.

### Interpretation

The data suggests a strong correlation between a language model's expression of uncertainty (both in self-reported scores and in linguistic hedging) and the factual correctness of its explanations. Incorrect answers are not just wrong; they are delivered with measurably more cautious, qualified, or uncertain language.

The dominance of "Epistemic" hedge cues (e.g., "I think," "It might be") in incorrect explanations indicates that models may be using language that frames knowledge as tentative or belief-based when they are less confident or incorrect. The lower use of "Doxastic" cues suggests models rarely frame incorrect answers as mere opinion.

The variation between models is notable. Llama 2 13B appears to use more hedging language overall, but the *change* in its behavior between correct and incorrect states is less pronounced than for ChatGPT or Llama 2 7B. This could imply different internal confidence calibration or different linguistic strategies for expressing uncertainty. The charts collectively provide a multi-faceted view of how model "uncertainty" manifests both as an internal score and as a communicative style, linking it directly to output quality.

</details>

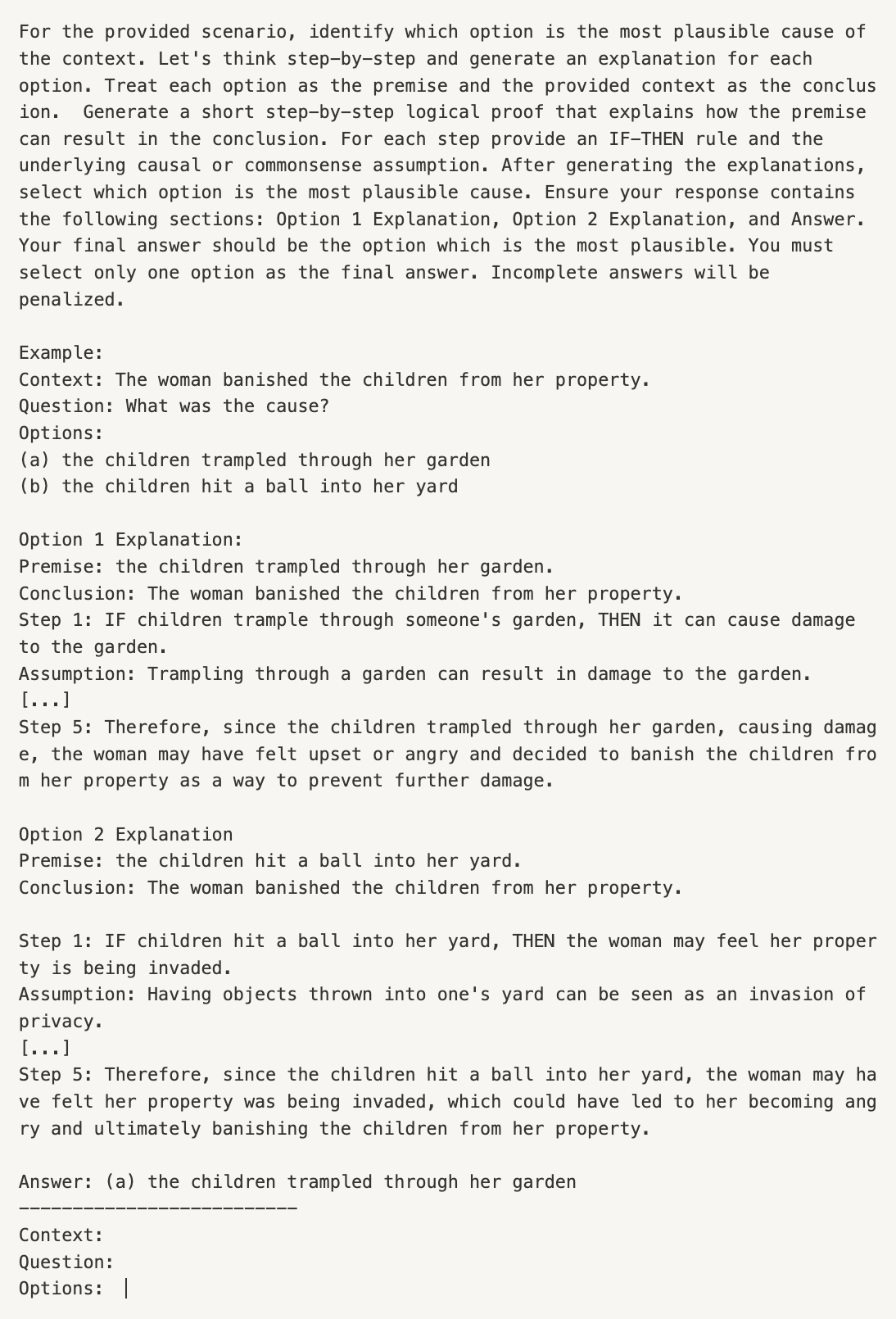

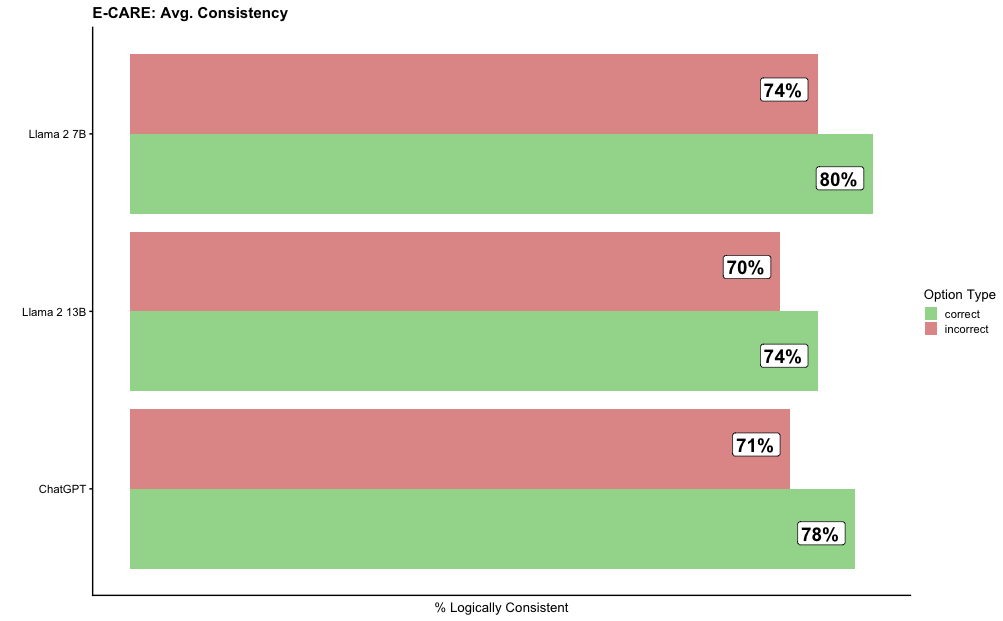

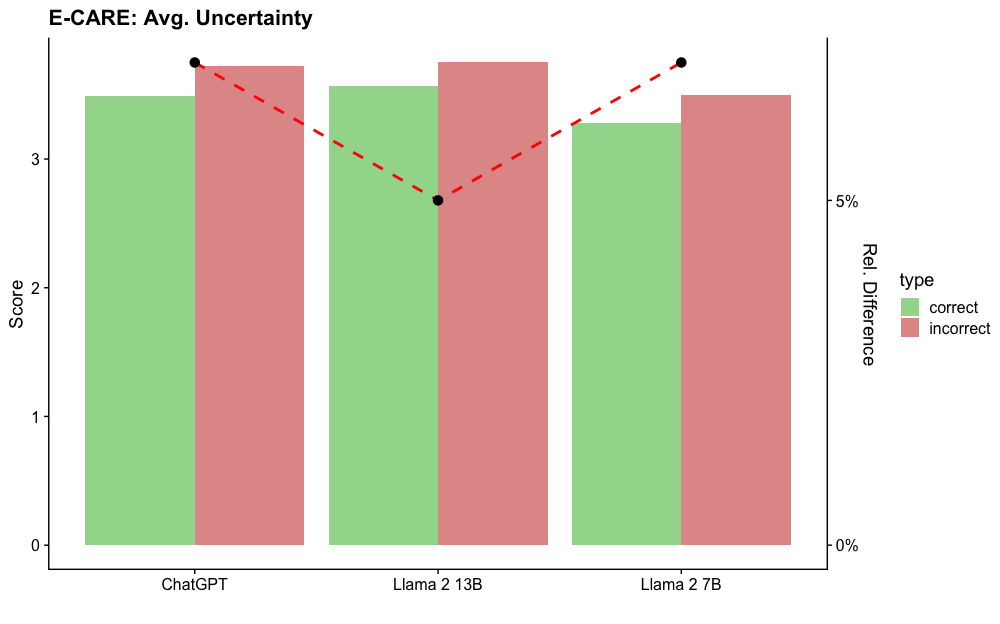

Figure 6: Evaluation of linguistic uncertainty in LLM-generated explanations. LLMs tend to use more hedging language in explanations supporting less plausible hypotheses. Across the LLMs, the hedging language is found to be predominantly epistemic A.8.

The results reveal that linguistic uncertainty is the strongest predictor of explanation quality and is a statistically significant feature for all LLMs. This suggests that LLMs use more qualifying language when explaining weaker hypotheses (see Figure 6). We found that uncertainty can improve accuracy by 13pp on COPA and 4pp on E-CARE. We also examine the uncertainty cues expressed by LLMs by analyzing both the frequency of hedge words and the types of hedge cues employed in incorrect explanations. We find the distribution of hedge cues across LLMs tends to be similar, with only minor differences between LLMs (Figure 6). Epistemic cues were most frequently used by all three models, with LLaMA 2 7B being more likely to use conditional cues. See Appendix A.8 for further details..

### 7.1 Correlation with Human Judgement.

We first sample 100 generated explanation pairs across both the COPA and E-CARE tasks and evaluated LLMs. Two human evaluators are instructed to evaluate the pair of explanations and to select which explanation is most plausible. No additional information about the original question nor the correct answer is provided to prevent biasing the judge.

The human evaluators on average were able to identify the explanation associated with the correct answer 96% (COPA) and 91% (E-Care) of time. We compute the inter-evaluator agreement score between two human evaluators and find that there is Cohen’s Kappa score of .68 suggesting there is a strong agreement between the two evaluators.

To evaluate if IBE-Eval is correlated with human judgment, we compute the Spearman’s rank correlation between GPT-3.5-as-a-judge, IBE-Eval and human judgment. We find that GPT-3.5-as-a-judge exhibits a weak and statistically insignificant correlation with human judgment (0.31). In contrast, we find that the IBE-Eval is significantly aligned with human preferences (with a Spearman’s correlation of 0.64 and p < 0.01) further suggesting the IBE’s potential for automatic explanation evaluation.

## 8 Related Work

Explorations of LLM reasoning capabilities across various domains (e.g. arithmetic, commonsense, planning, symbolic, etc) are an emerging area of interest Xu et al. (2023); Huang and Chang (2023). Prompt-based methods Wei et al. (2022b); Zhou et al. (2023); Wang et al. (2023), such as CoT, investigate strategies to elicit specific types of reasoning behavior through direct LLM interaction. Olausson et al. (2023) investigate automatic proof generation and propose a neurosymbolic framework with an LLM semantic parser and external solver. Creswell et al. (2022) propose an inference framework where the LLM acts as both a selection and inference module to produce explanations consisting of causal reasoning steps in entailment tasks. Research on LLM faithfulness Atanasova et al. (2023) investigates if LLM explanations are robust to spurious input alterations. Parcalabescu and Frank (2024) propose a self-consistency measure CC-SHAP which measures how specific alterations to a model’s input contribute to the generated explanation. This paper primarily draws inspiration from recent work on the evaluation of natural language explanations Quan et al. (2024); Valentino et al. (2021); Wiegreffe and Marasovic (2021); Thayaparan et al. (2020); Dalvi et al. (2021); Camburu et al. (2018). However, differently from previous methods that require extensive human annotations or specific domain knowledge, we are the first to propose a set of criteria that can be automatically computed on explicit linguistic and logical features.

## 9 Conclusion

This paper proposed IBE-Eval, an interpretable framework for LLM explanation evaluation inspired by philosophical accounts of Inference to the Best Explanation (IBE). IBE-Eval can identify the best explanation supporting the correct answer with up to 77% accuracy in CQA scenarios, improving upon a GPT 3.5 Judge baselines by +17%. Our regression study suggests that LLM explanations tend to conform to IBE expectations and that IBE-Eval is strongly correlated with human judgment. Linguistic uncertainty is the stronger IBE predictor for explanation quality closely followed by parsimony and coherence. However, we also found that LLMs tend to be strong conjecture models able to generate logically consistent explanations for less plausible hypotheses, suggesting limited applicability for the logical consistency criterion in isolation. We believe our findings can open new lines of research on external evaluation methods for LLMs as well as interpretability tools for understanding the LLM’s underlying explanatory process.

## 10 Limitations

IBE-Eval offers an interpretable explanation evaluation framework utilizing logical and linguistic features. Our current instantiation of the framework is primarily limited in that it does not consider grounded knowledge for factuality. We observe that LLMs can generate factually incorrect but logically consistent explanations. In some cases, the coherence metric can identify those factual errors when the step-wise entailment score is comparatively lower. However, our reliance on aggregated metrics can hide weaker internal entailment especially when the explanation is longer or the entailment strength of the surrounding explanation steps is stronger. Future work can introduce metrics to evaluate grounded knowledge or perform more granular evaluations of explanations to better weight factual inaccuracies.

Additionally, IBE-Eval currently does not support single natural language explanations and was evaluated in the limited domain of causal commonsense reasoning. Future work will explore globally calibrating IBE-Eval plausibility scores to extend evaluation to more diverse explanation generation and QA settings. Calibration efforts would allow for IBE-Eval to generate comparable scores across unrelated explanations and could be used to produce global thresholds explanation classification.

Finally, the list of criteria considered in this work is not exhaustive and can be extended in future work. However, additional criteria for IBE might not be straightforward to implement (e.g., unification power, hardness to variation) and would probably require further progress in both epistemological accounts and existing NLP technology.

## 11 Ethics Statement

The human annotators for computing the human judgment baseline are all authors of the papers and as such were not further compensated for the annotation task.

## Acknowledgements

This work was partially funded by the Swiss National Science Foundation (SNSF) project NeuMath (200021_204617), by the EPSRC grant EP/T026995/1 entitled “EnnCore: End-to-End Conceptual Guarding of Neural Architectures” under Security for all in an AI-enabled society, by the CRUK National Biomarker Centre, and supported by the Manchester Experimental Cancer Medicine Centre, the Science Foundation Ireland under grants SFI/18/CRT/6223 (Centre for Research Training in Artificial Intelligence), SFI/12/RC/2289_P2 (Insight), co-funded by the European Regional Development Fund, and the NIHR Manchester Biomedical Research Centre.

## References

- Atanasova et al. (2023) Pepa Atanasova, Oana-Maria Camburu, Christina Lioma, Thomas Lukasiewicz, Jakob Grue Simonsen, and Isabelle Augenstein. 2023. Faithfulness tests for natural language explanations. In Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 2: Short Papers), pages 283–294, Toronto, Canada. Association for Computational Linguistics.

- Baumgartner (2015) Michael Baumgartner. 2015. Parsimony and causality. Quality & Quantity, 49:839–856.

- Bowman et al. (2015) Samuel R. Bowman, Gabor Angeli, Christopher Potts, and Christopher D. Manning. 2015. A large annotated corpus for learning natural language inference. In Proceedings of the 2015 Conference on Empirical Methods in Natural Language Processing, pages 632–642, Lisbon, Portugal. Association for Computational Linguistics.

- Brown et al. (2020) Tom Brown, Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared D Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry, Amanda Askell, et al. 2020. Language models are few-shot learners. Advances in neural information processing systems, 33:1877–1901.

- Buitinck et al. (2013) Lars Buitinck, Gilles Louppe, Mathieu Blondel, Fabian Pedregosa, Andreas Mueller, Olivier Grisel, Vlad Niculae, Peter Prettenhofer, Alexandre Gramfort, Jaques Grobler, Robert Layton, Jake VanderPlas, Arnaud Joly, Brian Holt, and Gaël Varoquaux. 2013. API design for machine learning software: experiences from the scikit-learn project. In ECML PKDD Workshop: Languages for Data Mining and Machine Learning, pages 108–122.

- Camburu et al. (2018) Oana-Maria Camburu, Tim Rocktäschel, Thomas Lukasiewicz, and Phil Blunsom. 2018. e-snli: Natural language inference with natural language explanations. Advances in Neural Information Processing Systems, 31.

- Camburu et al. (2020) OM Camburu, B Shillingford, P Minervini, T Lukasiewicz, and P Blunsom. 2020. Make up your mind! adversarial generation of inconsistent natural language explanations. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, 2020. ACL Anthology.

- Chakraborty et al. (2017) Supriyo Chakraborty, Richard Tomsett, Ramya Raghavendra, Daniel Harborne, Moustafa Alzantot, Federico Cerutti, Mani Srivastava, Alun Preece, Simon Julier, Raghuveer M. Rao, Troy D. Kelley, Dave Braines, Murat Sensoy, Christopher J. Willis, and Prudhvi Gurram. 2017. Interpretability of deep learning models: A survey of results. In 2017 IEEE SmartWorld, Ubiquitous Intelligence & Computing, Advanced & Trusted Computed, Scalable Computing & Communications, Cloud & Big Data Computing, Internet of People and Smart City Innovation (SmartWorld/SCALCOM/UIC/ATC/CBDCom/IOP/SCI), pages 1–6.

- Creswell et al. (2022) Antonia Creswell, Murray Shanahan, and Irina Higgins. 2022. Selection-inference: Exploiting large language models for interpretable logical reasoning.

- Dalal et al. (2023) Dhairya Dalal, Paul Buitelaar, and Mihael Arcan. 2023. CALM-bench: A multi-task benchmark for evaluating causality-aware language models. In Findings of the Association for Computational Linguistics: EACL 2023, pages 296–311, Dubrovnik, Croatia. Association for Computational Linguistics.

- Dalvi et al. (2021) Bhavana Dalvi, Peter Jansen, Oyvind Tafjord, Zhengnan Xie, Hannah Smith, Leighanna Pipatanangkura, and Peter Clark. 2021. Explaining answers with entailment trees. In Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing, pages 7358–7370.

- Danilevsky et al. (2020) Marina Danilevsky, Kun Qian, Ranit Aharonov, Yannis Katsis, Ban Kawas, and Prithviraj Sen. 2020. A survey of the state of explainable AI for natural language processing. In Proceedings of the 1st Conference of the Asia-Pacific Chapter of the Association for Computational Linguistics and the 10th International Joint Conference on Natural Language Processing, pages 447–459, Suzhou, China. Association for Computational Linguistics.

- Deutsch (2011) David Deutsch. 2011. The beginning of infinity: Explanations that transform the world. penguin uK.

- Du et al. (2022) Li Du, Xiao Ding, Kai Xiong, Ting Liu, and Bing Qin. 2022. e-CARE: a new dataset for exploring explainable causal reasoning. In Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 432–446, Dublin, Ireland. Association for Computational Linguistics.

- Farkas et al. (2010) Richárd Farkas, Veronika Vincze, György Móra, János Csirik, and György Szarvas. 2010. The CoNLL-2010 shared task: Learning to detect hedges and their scope in natural language text. In Proceedings of the Fourteenth Conference on Computational Natural Language Learning – Shared Task, pages 1–12, Uppsala, Sweden. Association for Computational Linguistics.

- Gao et al. (2023) Leo Gao, Jonathan Tow, Baber Abbasi, Stella Biderman, Sid Black, Anthony DiPofi, Charles Foster, Laurence Golding, Jeffrey Hsu, Alain Le Noac’h, Haonan Li, Kyle McDonell, Niklas Muennighoff, Chris Ociepa, Jason Phang, Laria Reynolds, Hailey Schoelkopf, Aviya Skowron, Lintang Sutawika, Eric Tang, Anish Thite, Ben Wang, Kevin Wang, and Andy Zou. 2023. A framework for few-shot language model evaluation.

- Geirhos et al. (2020) Robert Geirhos, Jörn-Henrik Jacobsen, Claudio Michaelis, Richard Zemel, Wieland Brendel, Matthias Bethge, and Felix A Wichmann. 2020. Shortcut learning in deep neural networks. Nature Machine Intelligence, 2(11):665–673.

- Gordon et al. (2012) Andrew Gordon, Zornitsa Kozareva, and Melissa Roemmele. 2012. SemEval-2012 task 7: Choice of plausible alternatives: An evaluation of commonsense causal reasoning. In *SEM 2012: The First Joint Conference on Lexical and Computational Semantics – Volume 1: Proceedings of the main conference and the shared task, and Volume 2: Proceedings of the Sixth International Workshop on Semantic Evaluation (SemEval 2012), pages 394–398, Montréal, Canada. Association for Computational Linguistics.

- Harman (1965) Gilbert H Harman. 1965. The inference to the best explanation. The philosophical review, 74(1):88–95.

- Honnibal and Montani (2017) Matthew Honnibal and Ines Montani. 2017. spaCy 2: Natural language understanding with Bloom embeddings, convolutional neural networks and incremental parsing. To appear.

- Huang and Chang (2022) Jie Huang and Kevin Chen-Chuan Chang. 2022. Towards reasoning in large language models: A survey. arXiv preprint arXiv:2212.10403.

- Huang and Chang (2023) Jie Huang and Kevin Chen-Chuan Chang. 2023. Towards reasoning in large language models: A survey.

- Huang et al. (2023) Lei Huang, Weijiang Yu, Weitao Ma, Weihong Zhong, Zhangyin Feng, Haotian Wang, Qianglong Chen, Weihua Peng, Xiaocheng Feng, Bing Qin, and Ting Liu. 2023. A survey on hallucination in large language models: Principles, taxonomy, challenges, and open questions.

- Ji et al. (2023) Ziwei Ji, Nayeon Lee, Rita Frieske, Tiezheng Yu, Dan Su, Yan Xu, Etsuko Ishii, Ye Jin Bang, Andrea Madotto, and Pascale Fung. 2023. Survey of hallucination in natural language generation. ACM Comput. Surv., 55(12).

- Kitcher (1989) Philip Kitcher. 1989. Explanatory unification and the causal structure of the world.

- Knight (2023) Will Knight. 2023. Ai is becoming more powerful-but also more secretive.

- Lampinen et al. (2022) Andrew Lampinen, Ishita Dasgupta, Stephanie Chan, Kory Mathewson, Mh Tessler, Antonia Creswell, James McClelland, Jane Wang, and Felix Hill. 2022. Can language models learn from explanations in context? In Findings of the Association for Computational Linguistics: EMNLP 2022, pages 537–563.

- Laskar et al. (2023) Md Tahmid Rahman Laskar, M Saiful Bari, Mizanur Rahman, Md Amran Hossen Bhuiyan, Shafiq Joty, and Jimmy Xiangji Huang. 2023. A systematic study and comprehensive evaluation of chatgpt on benchmark datasets.