2402.14160

Model: gemini-2.0-flash

## Recursive Speculative Decoding: Accelerating LLM Inference via Sampling Without Replacement

Wonseok Jeon 1 Mukul Gagrani 1 Raghavv Goel 1 Junyoung Park 1 Mingu Lee * 1 Christopher Lott * 1

## Abstract

Speculative decoding is an inference-acceleration method for large language models (LLMs) where a small language model generates a draft-token sequence which is further verified by the target LLMinparallel. Recent works have advanced this method by establishing a draft-token tree, achieving superior performance over a single-sequence speculative decoding. However, those works independently generate tokens at each level of the tree, not leveraging the tree's entire diversifiability. Besides, their empirical superiority has been shown for fixed length of sequences, implicitly granting more computational resource to LLM for the tree-based methods. None of the existing works has conducted empirical studies with fixed target computational budgets despite its importance to resource-bounded devices. We present Recursive Speculative Decoding (RSD), a novel tree-based method that samples draft tokens without replacement and maximizes the diversity of the tree. During RSD's drafting, the tree is built by either Gumbel-Topk trick that draws tokens without replacement in parallel or Stochastic Beam Search that samples sequences without replacement while early-truncating unlikely draft sequences and reducing the computational cost of LLM. We empirically evaluate RSD with Llama 2 and OPT models, showing that RSD outperforms the baseline methods, consistently for fixed draft sequence length and in most cases for fixed computational budgets at LLM.

* Equal advising 1 Qualcomm AI Research. Correspondence to: Wonseok Jeon < wjeon@qti.qualcomm.com > , Mingu Lee < mingul@qti.qualcomm.com > , Christopher Lott < clott@qti.qualcomm.com > .

Qualcomm AI Research is an initiative of Qualcomm Technologies, Inc.

## 1. Introduction

Large language models (LLMs) (Touvron et al., 2023; Zhang et al., 2022; Brown et al., 2020; Achiam et al., 2023; Jiang et al., 2023) have gained popularity due to their outstanding achievements with high-quality text generation, which has drastically increased demands for faster text generation. However, auto-regressive nature of LLMs limits text generation to produce a single token at a time and often suffers from memory-bandwidth bottleneck, which leads to slower inference (Shazeer, 2019). translation (Xiao et al., 2023).

Speculative decoding (Chen et al., 2023; Leviathan et al., 2023) has emerged as a solution for LLM inference acceleration by leveraging the innate parallelizability of the transformer network (Vaswani et al., 2017). This decoding method utilizes a draft model, i.e., a smaller language model, to auto-regressively generate a sequence of draft tokens with a significantly lower cost and latency, followed by the target LLM producing the token-wise probability distributions in parallel. Rejection sampling then verifies those draft tokens, recovering the sequence distribution by auto-regressive decoding with the target model. As speculative decoding uses a single sequence of draft tokens, one needs to increase the draft-sequence length to better exploit LLM's parallelizability. However, the longer draft sequence may slow down the overall inference in practice due to the computational overhead caused by additional auto-regressive decoding steps from the draft model, possibly decelerating the target model process due to the increased number of draft tokens.

Recent works on tree-based speculative decoding (Sun et al., 2023; Miao et al., 2023) have achieved better diversity and higher acceptance rate via multiple draft-token sequences. Despite promising results, their decoding methods independently sample the draft tokens, often harming the diversity of the tree when samples overlap. Also, their experiments have been conducted for the fixed length of draft-token sequences across decoding methods, implicitly requiring more computational resource to the target model when using tree-based methods. To the best of our knowledge, no prior work has thoroughly investigated the performance of single-sequence and tree-based speculative decoding methods with fixed target computational budget, which has practical importance

for resource-bounded devices.

We propose R ecursive S peculative D ecoding (RSD), a novel tree-based speculative decoding algorithm that fully exploits the diversity of the draft-token tree by using sampling without replacement. We summarize our contributions as below:

Theoretical contribution. We propose recursive rejection sampling capable of recovering the target model's distribution with the sampling-without-replacement distribution defined by the draft model.

Algorithmic contribution. We present RSD which builds draft-token tree composed of the tokens sampled without replacement . Two tree construction methods, RSD with C onstant branching factors (RSD-C) and RSD with S tochastic Beam Search (RSD-S) (Kool et al., 2019), are proposed.

Empirical contribution. Two perspectives are considered in our experiments: ( Exp1 ) performance for fixed length of draft sequence , which is also widely considered in previous works (Sun et al., 2023; Miao et al., 2023), and ( Exp2 ) performance for fixed target computational budget , where we compared methods with given size of the draft-token tree. RSD is shown to outperform the baselines consistently in ( Exp1 ) and for the majority of experiments in ( Exp2 ) .

## 2. Background

Let us consider a sequence generation problem with a set X of tokens. We also assume that there is a target model characterized by its conditional probability q ( x i +1 | x 1: i ) := Pr { X i +1 = x i +1 | X 1: i = x 1: i } , i ∈ N for x 1: i := ( x 1 , ..., x i ) , where X 1 , ..., X i +1 ∈ X and x 1 , ..., x i +1 ∈ X are random tokens and their realizations, respectively. Given an input sequence X 1: t = x 1: t , we can auto-regressively and randomly sample an output sequence X t +1: t + i for i ∈ N , i.e., X t + i +1 ∼ q ( ·| X 1: t + i ) .

Speculative decoding. Auto-regressive sampling with modern neural network accelerators (e.g., GPU/TPU) is known to suffer from the memory-bandwidth bottleneck (Shazeer, 2019), which prevents us from utilizing the entire computing power of those accelerators. Speculative decoding (Leviathan et al., 2023; Chen et al., 2023) addresses such issue by using the target model's parallelizability. It introduces a (small) draft model which outputs p ( ˆ X i +1 | ˆ X 1: i ) := Pr { ˆ X i +1 = ˆ x i +1 | ˆ X 1: i = ˆ x 1: i } , i ∈ N . Speculative decoding accelerates the inference speed by iteratively conducting the following steps:

1) Draft token generation: For an input sequence X 1: m = x 1: m and the draft sequence length L , sample draft tokens ˆ X n +1 ∼ p ( ·| X 1: m , ˆ X m +1: n ) auto-regressively for n = m,..., m + L -1 (where ˆ X m +1: m = ∅ ).

2) Evaluation with target model: Use the target model to compute q ( ·| X 1: m , ˆ X m +1: n ) , n = m,..., m + L in parallel.

3) Verification via rejection sampling: Starting from n = m + 1 to m + L , sequentially accept the draft token ˆ X n (i.e., X n = ˆ X n ) with the probability min { 1 , q ( ˆ X n | X 1: n -1 ) p ( ˆ X n | X 1: n -1 ) } . If one of the draft tokens ˆ X n is rejected, we sample X n ∼ q res ( ·| X 1: n -1 ) , where the residual distribution is defined by

$$q _ { r e s } ( \cdot | \tau ) \colon = N o r m [ [ q ( \cdot | \tau ) - p ( \cdot | \tau ) ] ^ { + } ] ,$$

for [ f ] + := max { 0 , f ( · ) } and Norm[ f ] := f ∑ x ′ ∈X f ( x ′ ) . If all draft tokens are accepted ( X n = ˆ X n for n = m + 1 , ..., m + L ), sample an extra token X m + L +1 ∼ q ( ·| X 1: m + L ) .

Chen et al. (2023) and Leviathan et al. (2023) have shown that the target distribution can be recovered when rejection sampling is applied.

Tree-based speculative decoding. One can further improve the sequence generation speed by using multiple draft-token sequences, or equivalently, a tree of draft tokens.

SpecTr (Sun et al., 2023) is a tree-based speculative decoding algorithm motivated by the Optimal Transport (OT) (Villani et al., 2009). It generalizes speculative decoding with K i.i.d. draft tokens ˆ X ( k ) ∼ p, k = 1 , ..., K, while recovering the target distribution q . To this end, a K -sequential draft selection algorithm ( K -SEQ) was proposed, where the algorithm decides whether to accept K draft tokens ˆ X ( k ) , k = 1 , ..., K, or not with the probability min { 1 , q ( ˆ X ( k ) ) γ · p ( ˆ X ( k ) ) } , γ ∈ [1 , K ] . If all draft tokens are rejected, we use a token drawn from the residual distribution

$$\begin{array} { r l } & { \in \mathcal { X } } \\ & { \quad N o r m \left [ q - \min \left \{ p , \frac { q } { \gamma } \right \} \frac { 1 - ( 1 - \beta _ { p , q } ( \gamma ) ) ^ { K } } { \beta _ { p , q } ( \gamma ) } \right ] } \\ & { y a n d \in \mathbb { N } , \quad f o r \, \beta _ { p , q } ( \gamma ) \colon = \sum _ { x \in \mathcal { X } } \min \{ p ( x ) , q ( x ) / \gamma \} . } \end{array}$$

SpecInfer also used the draft-token tree to speed up the inference with multiple draft models p ( k ) , k = 1 , ..., K (Miao et al., 2023). During the inference of SpecInfer, all draft models generate their own draft tokwns independently and create a draft-token tree collectively through repetetion. For draft verification, multi-round rejection sampling is used to recover the target distribution, where we determine whether to accept one of the draft tokens or not with probability min { 1 , q ( k ) ( ˆ X ( k ) ) p ( k ) ( ˆ X ( k ) ) } with distributions q (1) := q and q ( k ) := Norm [ [ q ( k -1) -p ( k -1) ] + ] , k = 2 , ..., K + 1 . If all draft tokens are rejected, we sample a token Y ∼ q ( K +1) from the last residual distribution.

## 3. Recursive Speculative Decoding

In this section, we present R ecursive S peculative D ecoding (RSD), a tree-based speculative decoding method that constructs draft-token trees via sampling without replacement.

## Algorithm 1 Recursive Rejection Sampling

- 1: Input: Draft dist. p ( k ) , k = 1 , ..., K, target dist. q .

- 2: Sample ˆ X ( k ) by (1).

- 3: Compute q ( k ) ( ·| ˆ X (1: k -2) ) and Θ ( k ) by (2) and (3).

- 4: for k in { 1 , ..., K } do

- 5: Sample A ( k ) ∈ { acc , rej } with probability Θ ( k ) .

- 6: if A ( k ) = acc then return Z ← ˆ X ( k ) ; end if

- 7: end for

- 8: return Z ∼ q ( K +1) ( ·| ˆ X (1: K -1) )

We first propose recursive rejection sampling, a generalization of multi-round speculative decoding (Miao et al., 2023) that is applicable to draft distributions with dependencies, where sampling-without-replacement distribution is one instance of such distributions. Then, we use recursive rejection sampling to validate each level of the draft-token tree which can be efficiently constructed via either Gumbel-Topk trick (Vieira, 2014) and Stochastic Beam Search (Kool et al., 2019),

## 3.1. Recursive Rejection Sampling: Generalized Multi-Round Rejection Sampling

Suppose we have target distribution q ( x ) , x ∈ X . In recursive rejection sampling, we introduce random variables ˆ X (1) , ..., ˆ X ( K ) ∈ X that represent K draft tokens; these tokens will locate at the same level of the draft-token tree in Section 3.2. We aim to recover target distribution q , where

<!-- formula-not-decoded -->

for some distributions p ( k ) , k = 1 , ..., K and a sequence ˆ X (1: k -1) := ( ˆ X (1) , ..., ˆ X ( k -1) ) . Note that we assume distributions with dependencies unlike prior works such as SpecTr (Sun et al., 2023) consider independent distributions. By using p (1) , ..., p ( K ) and q , we define q (1) := q and residual distributions

$$& q ^ { ( k + 1 ) } ( \cdot | x ^ { ( 1 \colon k - 1 ) } ) \\ & \quad \vdots = N o r m \left [ [ q ^ { ( k ) } ( \cdot | x ^ { ( 1 \colon k - 2 ) } ) - p ^ { ( k ) } ( \cdot | x ^ { ( 1 \colon k - 1 ) } ) ] + \right ] \quad ( 2 )$$

for k = 1 , ..., K and x (1) , ..., x ( K +1) ∈ X , where x (1: k ′ ) = ∅ (empty sequence, i.e., no conditioning) if k ′ < 1 , or ( x (1) , ..., x ( k ′ ) ) , otherwise. Together with draft, target, and residual distributions, recursive rejection sampling introduces threshold random variables Θ (1) , ..., Θ ( K ) ∈ [0 , 1] which determines rejection criteria for each draft token ˆ X ( k ) , k = 1 , ..., K :

$$\Theta ^ { ( k ) } \colon = \min \left \{ 1 , \frac { q ^ { ( k ) } ( \hat { X } ^ { ( k ) } | \hat { X } ^ { ( 1 \colon k - 2 ) } ) } { p ^ { ( k ) } ( \hat { X } ^ { ( k ) } | \hat { X } ^ { ( 1 \colon k - 1 ) } ) } \right \} . \quad ( 3 )$$

Specifically, each Θ ( k ) can be used to define random variables A ( k ) ∈ { acc , rej } (where acc and rej indicate ac-

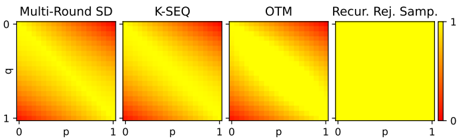

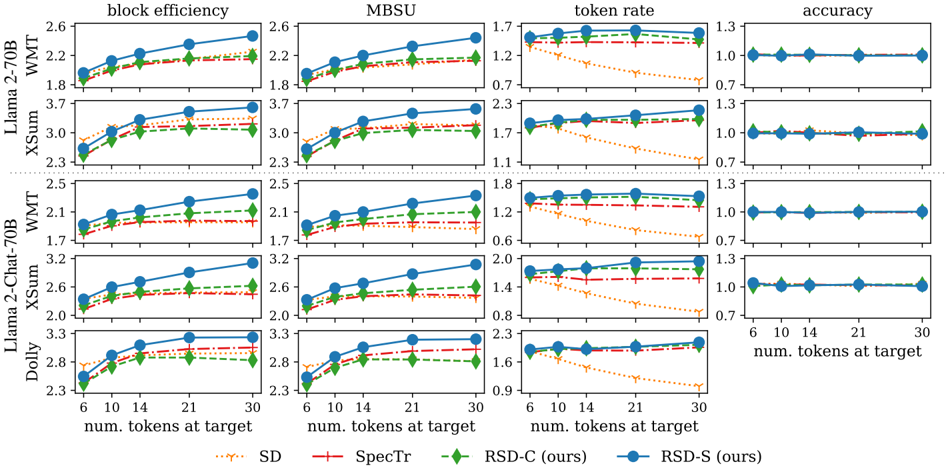

Figure 1. Acceptance rates for multi-round speculative decoding, K-SEQ, OTM and recursive rejection sampling are given when Ber( p ) and Ber( q ) are draft and target distributions, respectively, and two tokens are proposed by the draft model ( K = 2 ).

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Heatmaps: Comparison of Sampling Methods

### Overview

The image presents four heatmaps, each representing a different sampling method: Multi-Round SD, K-SEQ, OTM, and Recur. Rej. Samp. The heatmaps visualize a relationship between two variables, 'p' and 'q', both ranging from 0 to 1. The color intensity within each heatmap indicates the value at each (p, q) coordinate, with red representing lower values and yellow representing higher values.

### Components/Axes

* **Titles (Top):**

* Multi-Round SD

* K-SEQ

* OTM

* Recur. Rej. Samp.

* **X-axis:** Labeled 'p', ranging from 0 to 1.

* **Y-axis:** Labeled 'q', ranging from 0 to 1.

* **Color Scale (Right):** Ranges from 0 (red) to 1 (yellow).

### Detailed Analysis

Each heatmap is a square grid, with 'p' on the horizontal axis and 'q' on the vertical axis.

* **Multi-Round SD:** The heatmap shows a gradient. The bottom-left corner (p=0, q=1) is red, indicating a low value. The top-right corner (p=1, q=0) is yellow, indicating a high value. The color transitions smoothly between these extremes.

* **K-SEQ:** Similar to Multi-Round SD, this heatmap also shows a gradient from red (bottom-left) to yellow (top-right). The transition appears slightly less smooth than in Multi-Round SD.

* **OTM:** This heatmap also shows a gradient from red (bottom-left) to yellow (top-right). The transition appears similar to K-SEQ.

* **Recur. Rej. Samp.:** This heatmap is predominantly yellow, indicating high values across most of the (p, q) space. There is a very faint red hue in the bottom-left corner, but the overall color is significantly different from the other three heatmaps.

### Key Observations

* The Multi-Round SD, K-SEQ, and OTM methods exhibit similar patterns, with values increasing as 'p' increases and 'q' decreases.

* The Recur. Rej. Samp. method shows a distinctly different pattern, with consistently high values across the majority of the space.

### Interpretation

The heatmaps visually compare the output of four different sampling methods across a range of 'p' and 'q' values. The similar gradients in Multi-Round SD, K-SEQ, and OTM suggest that these methods produce comparable results under the given conditions. The Recur. Rej. Samp. method, however, appears to behave differently, yielding high values regardless of the 'p' and 'q' inputs. This could indicate a bias or a different sampling strategy compared to the other methods. The data suggests that Recur. Rej. Samp. might be less sensitive to the 'p' and 'q' parameters within the tested range.

</details>

ceptance and rejection of draft tokens, respectively) such that Pr { A ( k ) = acc | Θ ( k ) = θ } = θ for θ ∈ [0 , 1] .

Finally, recursive rejection sampling can be characterized by defining a random variable Z ∈ X such that

<!-- formula-not-decoded -->

where A (1: k -1) := ( A (1) , ..., A ( k -1) ) and rej k is a lengthk sequence with all of its elements equal to rej . Intuitively, we select ˆ X (1) if it is accepted ( A (1) = acc ); we select ˆ X ( k ) when all previous draft tokens ˆ X (1) , ..., ˆ X ( k -1) are rejected and ˆ X ( k ) is accepted ( A (1: k -1) = rej k -1 , A ( k ) = acc ) for each k ; we sample Y ∼ q ( K +1) ( ·| ˆ X (1: K -1) ) and select Y if all draft tokens are rejected ( A (1: K ) = rej K ). We summarize the entire process of recursive rejection sampling in Algorithm 1 . Note that the original rejection sampling (Leviathan et al., 2023; Chen et al., 2023) is a special case of our recursive rejection sampling with K = 1 . Also, it can be shown that recursive rejection sampling (4) always recovers the target distribution q :

Theorem 3.1 (Recursive rejection sampling recovers target distribution) . For the random variable Z ∈ X in (4) ,

$$\Pr \{ Z = z \} = q ( z ) , z \in \mathcal { X } .$$

$$P r o f . \, S e e \, A p p e n d i x \, A . 1 .$$

Although the proposed recursive rejection sampling is applicable to arbitrary distributions with dependencies following (1), we assume a single draft model (as in SpecTr (Sun et al., 2023) and focus on the cases where the draft model samples predictive tokens without replacement, which is an instance of (1).

Toy example. We present a didactic example with Bernoulli distributions (given by Sun et al. (2023)) to showcase the benefit of recursive rejection sampling. Suppose that Bernoulli distributions are used for both draft and target models and only K = 2 tokens are allowed for draft proposals. The acceptance rates for different methods are depicted

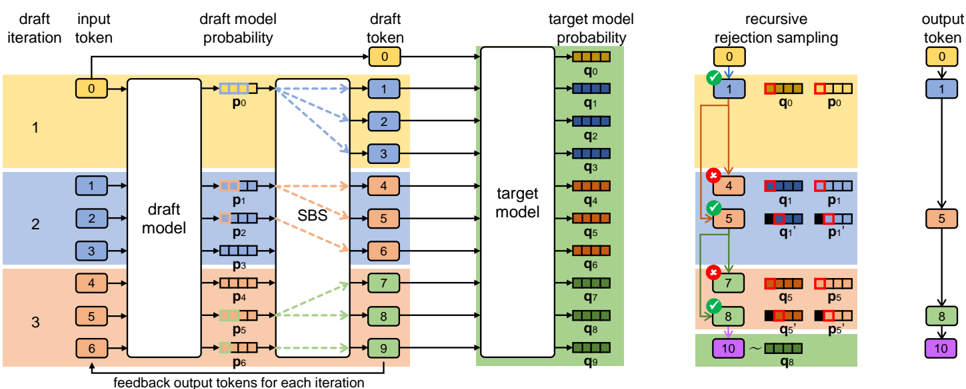

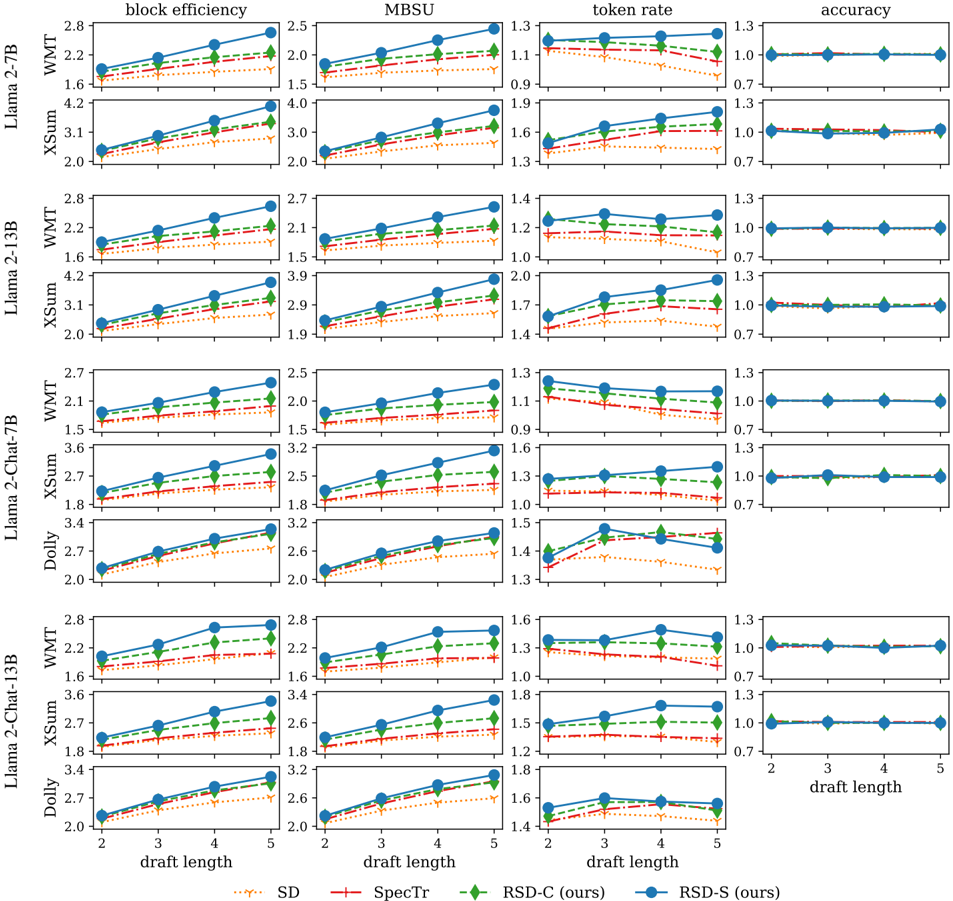

Figure 2. We describe the entire process of RSD with Stochastic Beam Search (RSD-S); the difference between RSD-S and RSD with Constant branching factors (RSD-C) lies at the method of constructing the draft-token tree. Draft tokens the tree are sampled in parallel at each level and auto-regressively across levels, while Stochastic Beam Search samples sequences without replacement at each tree level. The established draft-token tree is then processed by the target model in parallel, which lets us acquire the token-wise target model probabilities. Finally, recursive rejection sampling (for sampling-without-replacement distribution) is applied to each level of the tree, recovering the sequence generation distribution of the target model.

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Diagram: Draft Model Iteration and Rejection Sampling

### Overview

The image is a diagram illustrating a process involving a draft model, a target model, and recursive rejection sampling to generate an output token. The process is divided into iterations, with feedback loops and probability assessments at each stage.

### Components/Axes

* **Columns (Left to Right):**

* Draft Iteration & Input Token

* Draft Model & Probability

* Draft Token

* Target Model & Probability

* Recursive Rejection Sampling

* Output Token

* **Rows:** Represent iterations, visually separated by colored backgrounds (yellow, blue, orange, green).

* **Labels:**

* "draft iteration"

* "input token"

* "draft model probability"

* "draft token"

* "target model probability"

* "recursive rejection sampling"

* "output token"

* "draft model"

* "target model"

* "SBS" (located between the draft model probability and draft token columns)

* "feedback output tokens for each iteration" (located at the bottom of the draft model column)

* **Tokens:** Represented by numbered boxes (0, 1, 2, 3, 4, 5, 6, 7, 8, 9, 10).

* **Probabilities:** Represented by "p" and "q" values with subscripts (p0, p1, p2, p3, p4, p5, p6, q0, q1, q2, q3, q4, q5, q6, q7, q8, q9).

* **Acceptance/Rejection Indicators:** Green checkmarks indicate acceptance, red "X" marks indicate rejection.

### Detailed Analysis

**Column 1: Draft Iteration & Input Token**

* Iteration 1 (Yellow): Input token 0.

* Iteration 2 (Blue): Input tokens 1, 2, 3.

* Iteration 3 (Orange): Input tokens 4, 5, 6.

**Column 2: Draft Model & Probability**

* The "draft model" block processes the input tokens.

* Each input token generates a draft model probability (p0, p1, p2, p3, p4, p5, p6).

**Column 3: Draft Token**

* The "SBS" (likely standing for "Sample-Based Search") block connects the draft model probabilities to draft tokens.

* Iteration 1 (Yellow): Draft token 1.

* Iteration 2 (Blue): Draft tokens 4, 5, 6.

* Iteration 3 (Orange): Draft tokens 7, 8, 9.

**Column 4: Target Model & Probability**

* The "target model" block processes the draft tokens.

* Each draft token generates a target model probability (q0, q1, q2, q3, q4, q5, q6, q7, q8, q9).

**Column 5: Recursive Rejection Sampling**

* Iteration 1 (Yellow):

* Token 0 is accepted (green checkmark) and leads to token 1.

* Probabilities q0 and p0 are compared.

* Iteration 2 (Blue):

* Token 4 is rejected (red "X").

* Token 5 is accepted (green checkmark).

* Probabilities q1, p1, q1', and p1' are compared.

* Iteration 3 (Orange):

* Token 7 is rejected (red "X").

* Token 8 is accepted (green checkmark).

* Probabilities q5, p5, q5', and p5' are compared.

* Token 10 is sampled based on q8.

**Column 6: Output Token**

* The final output tokens are 1, 5, 8, and 10.

### Key Observations

* The diagram illustrates an iterative process where a draft model generates candidate tokens, which are then evaluated by a target model.

* Recursive rejection sampling is used to refine the token selection based on the probabilities generated by both models.

* The "SBS" block appears to be a crucial component in connecting the draft model probabilities to the draft tokens.

* The feedback loop, indicated by "feedback output tokens for each iteration," suggests that the output tokens influence subsequent iterations.

### Interpretation

The diagram depicts a sophisticated approach to token generation, likely within a natural language processing or machine learning context. The use of a draft model allows for efficient generation of candidate tokens, while the target model provides a more refined evaluation. Recursive rejection sampling ensures that the selected tokens align with the desired probability distribution. The feedback loop enables the model to learn and adapt over time, improving the quality of the generated tokens. The process aims to generate a sequence of tokens that are both plausible (according to the draft model) and desirable (according to the target model). The SBS component likely plays a role in selecting the most promising candidate tokens from the draft model's output.

</details>

in Figure 1; multi-round speculative decoding (from SpecInfer (Miao et al., 2023)), K-SEQ and Optimal Transport with Membership costs (OTM) (Sun et al., 2023), use sampling with replacement, whereas recursive rejection sampling uses sampling without replacement; note that both K-SEQ and OTM were presented in SpecTr paper (Sun et al., 2023) where OTM shows theoretically optimal acceptance rate. For all the baselines, acceptance rates decrease as the discrepancy between draft and target distribution increases, since tokens sampled from draft models become more unlikely from target models. On the other hand, recursive rejection sampling achieves 100% acceptance rate even with high draft-target-model discrepancy; once the first draft token is rejected, the second draft token is always aligned with the residual distribution. This example shows that draft distributions with dependencies, e.g., sampling-withoutreplacement distribution, leads to higher acceptance rate and becomes crucial, especially for the cases with higher distributional discrepancy between draft and target.

## 3.2. Tree-Based Speculative Decoding with Recursive Rejection Sampling

Recursive rejection sampling is applicable to tree-based speculative decoding algorithms if sampling without replacement is used to construct a draft-token tree . Two Recursive Speculative Decoding (RSD) algorithms using recursive rejection sampling are presented in this section, while they share the same pipeline for parallel target evaluation and draft tree verification after building the draft-token tree (See

Figure 2. ). We describe details about how RSD works in the following sections.

## 3.2.1. DRAFT-TOKEN TREE GENERATION

We consider two RSD algorithms: RSD with C onstant branching factors (RSD-C) and RSD with S tochastic Beam Search (RSD-S). RSD-C builds the draft-token tree having constant branching factors, which makes sequences from the tree to have the same length. RSD-S, on the other hand, builds the tree via Stochastic Beam Search (Kool et al., 2019) that samples draft sequences without replacement, while truncating sequences that are unlikely to be generated from the draft model and efficiently handling the computational cost.

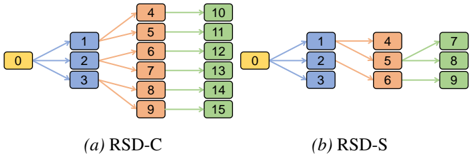

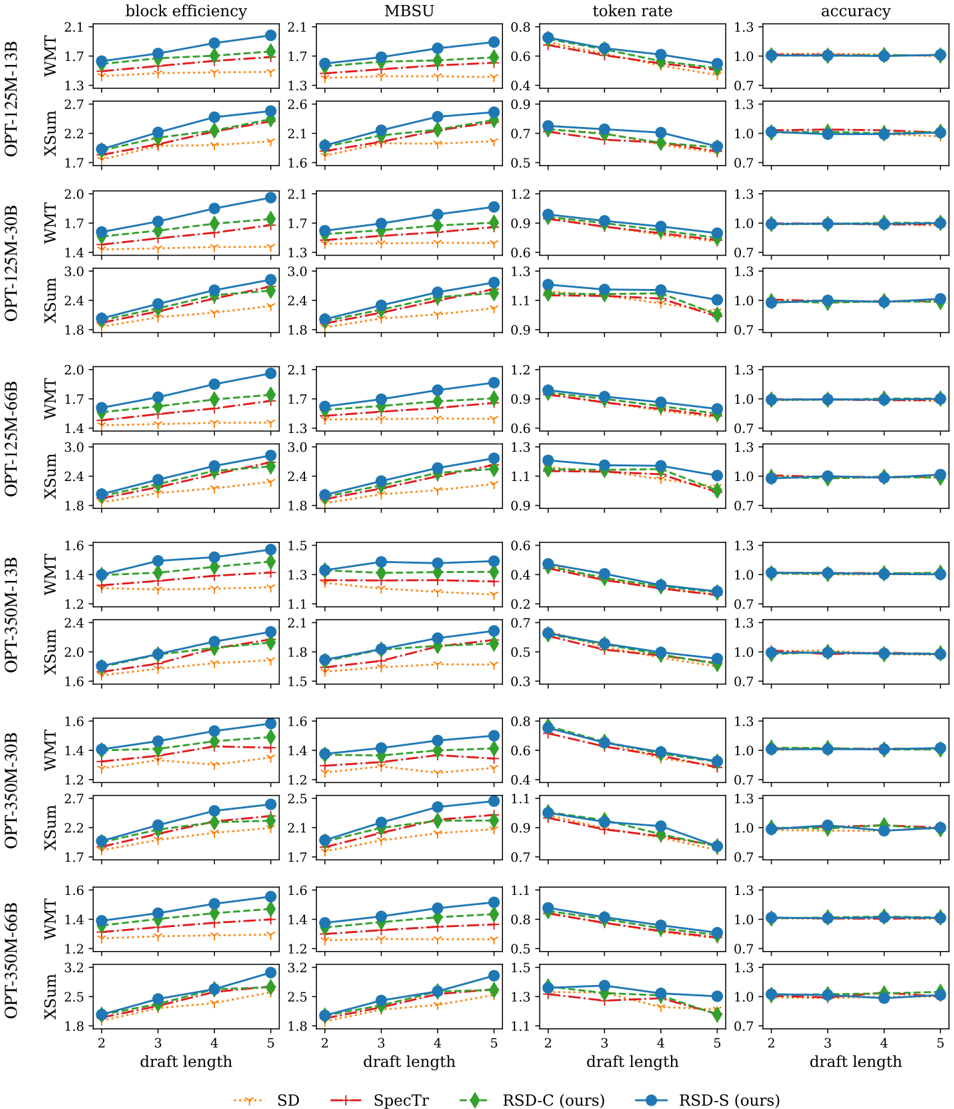

RSD with Constant branching factors (RSD-C). Let L denote the fixed length for all draft sequences, which is equivalent to the depth of the draft-token tree, and τ (1) 0 denote the input sequence of tokens. Let us assume that the tree level increases from root ( l = 0 ) to leaf ( l = L ) nodes, where each node is characterized by the (partial) sequence. We also define b := ( b 0 , ..., b L -1 ) where b l is the branching factor at the level l (See Figure 3(a) for the example with b = (3 , 2 , 1) .).

At each level l ∈ { 0 , ..., L -1 } of the draft tree, we begin with N l sequences τ ( k ) l , k = 1 , ..., N l generated from the previous level, where N 0 := 1 and N l := ∏ l -1 l ′ =0 b l ′ for l ≥ 1 . Then, we evaluate log probabilities ϕ l ( τ ( k ) l , · ) and perturbed log probabilities ˜ ϕ l ( τ ( k ) l , · ) for each k , i.e., for

Figure 3. We describe examples of constructing draft-token trees with the (maximum) draft length equal to 3; (a) The tree constructed by RSD-C with branching factors b = (3 , 2 , 1) is given; (b) we depict the tree constructed by RSD-S with beamwidth W = 3 , where edges are determined via Stochastic Beam Search.

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Diagram: RSD-C and RSD-S

### Overview

The image presents two diagrams, labeled (a) RSD-C and (b) RSD-S, illustrating different data flow processes. Both diagrams use colored rectangles to represent data points and arrows to indicate the flow of data between them.

### Components/Axes

* **Nodes:** The diagrams consist of rectangular nodes, each containing a numerical value. The nodes are colored yellow, blue, orange, and green.

* **Arrows:** Arrows indicate the direction of data flow between the nodes. The arrows are colored blue, orange, and green, matching the colors of the nodes they connect.

* **Labels:** The diagrams are labeled as "(a) RSD-C" and "(b) RSD-S" at the bottom.

### Detailed Analysis

**(a) RSD-C**

* **Initial Node:** A yellow node labeled "0" is the starting point.

* **First Layer:** The "0" node connects to three blue nodes labeled "1", "2", and "3".

* 0 connects to 1 with a blue arrow.

* 0 connects to 2 with a blue arrow.

* 0 connects to 3 with a blue arrow.

* **Second Layer:** The blue nodes connect to six orange nodes labeled "4", "5", "6", "7", "8", and "9".

* 1 connects to 4 with an orange arrow.

* 1 connects to 5 with an orange arrow.

* 2 connects to 6 with an orange arrow.

* 2 connects to 7 with an orange arrow.

* 3 connects to 8 with an orange arrow.

* 3 connects to 9 with an orange arrow.

* **Third Layer:** The orange nodes connect to six green nodes labeled "10", "11", "12", "13", "14", and "15".

* 4 connects to 10 with a green arrow.

* 5 connects to 11 with a green arrow.

* 6 connects to 12 with a green arrow.

* 7 connects to 13 with a green arrow.

* 8 connects to 14 with a green arrow.

* 9 connects to 15 with a green arrow.

**(b) RSD-S**

* **Initial Node:** A yellow node labeled "0" is the starting point.

* **First Layer:** The "0" node connects to three blue nodes labeled "1", "2", and "3".

* 0 connects to 1 with a blue arrow.

* 0 connects to 2 with a blue arrow.

* 0 connects to 3 with a blue arrow.

* **Second Layer:** The blue nodes connect to three orange nodes labeled "4", "5", and "6".

* 1 connects to 4 with an orange arrow.

* 2 connects to 5 with an orange arrow.

* 3 connects to 6 with an orange arrow.

* **Third Layer:** The orange nodes connect to three green nodes labeled "7", "8", and "9".

* 4 connects to 7 with a green arrow.

* 5 connects to 8 with a green arrow.

* 6 connects to 9 with a green arrow.

### Key Observations

* Both diagrams start with a single yellow node labeled "0".

* Both diagrams have three layers of nodes.

* RSD-C has a branching structure, with the number of nodes increasing in each layer before the final layer.

* RSD-S has a linear structure, with the number of nodes remaining constant after the first layer.

### Interpretation

The diagrams likely represent different data processing or distribution methods. RSD-C appears to be a more complex process, potentially involving parallel processing or data replication. RSD-S seems to be a simpler, more sequential process. The colors may represent different stages or types of data. The diagrams illustrate how an initial data point "0" is transformed or distributed through different stages, resulting in a final set of data points.

</details>

i.i.d. Gumbel samples G ( k ) l ( x ) , x ∈ X ,

$$\phi _ { l } ( \tau _ { l } ^ { ( k ) } , \cdot ) \colon = \log p ( \cdot | \tau _ { l } ^ { ( k ) } ) , \quad ( 5 ) \quad \begin{array} { r l r } & { ^ { l } } \\ & { ( b ) \, i d o t a n g e \, . } \end{array}$$

$$\tilde { \phi } _ { l } ( \tau _ { l } ^ { ( k ) } , \cdot ) \colon = \phi _ { l } ( \tau _ { l } ^ { ( k ) } , \cdot ) + G _ { l } ^ { ( k ) } , \quad ( 6 ) \quad t h e$$

where both log probabilities and Gumbel samples can be computed in parallel; proper positional encodings and attention masking (Cai et al., 2023; Miao et al., 2023) are required for the parallel log-probability computation when transformer architecture is used (Vaswani et al., 2017). By using Gumbel-Topk trick (Vieira, 2014; Kool et al., 2019) with perturbed log probabilities (6), one can sample topb l tokens without replacement for each sequence τ ( k ) l :

$$\hat { X } _ { l + 1 } ^ { ( ( k - 1 ) b _ { l } + 1 ) } , \dots , \hat { X } _ { l + 1 } ^ { ( ( k - 1 ) b _ { l } + b _ { l } ) } = \underset { x \in \mathcal { X } } { \arg t o p \text {-} b _ { l } } \left ( \tilde { \phi } _ { l } ( \tau _ { l } ^ { ( k ) } , x ) \right ) . \quad \text {where} \, t h i n d e r s$$

Note that the outputs ˆ X (( k -1) b l + k ′ ) l +1 , k ′ = 1 , ..., b l , in (7) are assumed to be in the decreasing order of values ˜ ϕ l ( τ ( k ) l , ˆ X (( k -1) b l + k ′ ) l +1 ) , for each k . Finally, we define

$$O _ { l + 1 } ^ { ( ( k - 1 ) b _ { l } + k ^ { \prime } ) } \colon = ( \hat { X } _ { l + 1 } ^ { ( ( k - 1 ) b _ { l } + k ^ { \prime } ) } , k ) , \quad ( 8 ) \quad \psi$$

$$\tau _ { l + 1 } ^ { ( ( k - 1 ) b _ { l } + 1 ) } \colon = ( \tau _ { l } ^ { ( k ) } , \hat { X } _ { l + 1 } ^ { ( ( k - 1 ) b _ { l } + 1 ) } ) \quad ( 9 )$$

for k ∈ 1 , ..., N l and k ′ ∈ { 1 , ..., b l } , where O (( k -1) b l + k ′ ) l +1 is a pair of draft token and parent sequence index. Those pairs in (8) are stored for all levels l = 0 , ..., L -1 and used for draft tree verification, which exploits the fact that the tokens ˆ X (( k -1) b l +1) l +1 , ..., ˆ X (( k -1) b l + b l ) l +1 follow sampling without replacement from p ( ·| τ ( k ) l ) for any given parent sequence index k .

RSD with Stochastic Beam Search (RSD-S). One caveat of RSD-C is that its constant branching factors b should be carefully determined to handle tree complexity, when the computation budget is limited; for example, if b = ( n, ..., n ) with its length L , the number of nodes in the draft tree will be ∑ L -1 l =0 n l = O ( n L -1 ) , which is computationally prohibitive for large n and L . Also, RSD-C constructs sequences at each level l by using the myopic token-wise log probabilities ϕ l in (6). RSD-S addresses both issues by using Stochastic Beam Search (Kool et al., 2019) that early-truncates unlikely sequences and utilizes far-sighted sequence log probabilities.

Let us define the maximum draft sequence length L and the beamwidth W . We also define τ (1) 0 as the input sequence similar to RSD-C. At each level l ∈ { 0 , ..., L -1 } , SBS uses beam

$$\mathcal { B } _ { l } & \colon = ( t _ { l } ^ { ( 1 ) } , \dots , t _ { l } ^ { ( W ) } ) , \\ t _ { l } ^ { ( k ) } & \colon = ( \tau _ { l } ^ { ( k ) } , \phi _ { l - 1 } ( \tau _ { l } ^ { ( k ) } ) , \psi _ { l - 1 } ( \tau _ { l } ^ { ( k ) } ) )$$

generated from the previous level l -1 1 . Here, each tuple t ( k ) l for k ∈ { 1 , ..., W } consists of (a) a sequence τ ( k ) l , (b) its sequence log probability ϕ l -1 ( τ ( k ) l ) of τ ( k ) l , and (c) the transformed (perturbed and truncated) sequence log probability ψ l -1 ( τ ( k ) l ) , respectively.

For each tuple t ( k ) l in the beam B l , we evaluate the (nextlevel) sequence log probabilities ϕ l ( τ ( k ) l , · ) and the perturbed sequence log probabilities ˜ ϕ l ( τ ( k ) l , · ) . Specifically for i.i.d. Gumbel samples G ( k ) l ( x ) , x ∈ X , we compute

$$\begin{array} { r l } & { \phi _ { l } ( \tau _ { l } ^ { ( k ) } , \cdot ) \colon = \phi _ { l - 1 } ( \tau _ { l } ^ { ( k ) } ) + \log p ( \cdot | \tau _ { l } ^ { ( k ) } ) , } \\ & { \tilde { \phi } _ { l } ( \tau _ { l } ^ { ( k ) } , \cdot ) \colon = \phi _ { l } ( \tau _ { l } ^ { ( k ) } , \cdot ) + G _ { l } ^ { ( k ) } , } \end{array}$$

where the terms τ ( k ) l and ϕ l -1 ( τ ( k ) l ) within the tuple t ( k ) l of within the beam B l are reused. Similar to RSD-C, both log probabilities and Gumbel samples can be parallelly computed with positional encodings and attention masking (Cai et al., 2023; Miao et al., 2023). In addition to the perturbed log probabilities, SBS in RSD-S transforms ˜ ϕ l ( τ ( k ) l , · ) into the truncated function

$$\begin{array} { r l r } { 8 ) } & \psi _ { l } ( \tau _ { l } ^ { ( k ) } , \cdot ) \colon = T ( \psi _ { l - 1 } ( \tau _ { l } ^ { ( k ) } ) , \tilde { \phi } _ { l } ( \tau _ { l } ^ { ( k ) } , \cdot ) ) , } & { ( 1 0 ) } \end{array}$$

$$T ( u , \phi ) \colon = - \log \left ( e ^ { - u } - e ^ { - \max \phi } + e ^ { - \phi ( \cdot ) } \right ) \quad ( 1 1 )$$

for max ϕ := max x ∈X ϕ ( x ) by reusing ψ l -1 ( τ ( k ) l ) in t ( k ) l . Note that T ( u, ϕ ) in (11) is monotonically increasing w.r.t. ϕ and transforms ϕ to the function with the upper bound u (Kool et al., 2019) 2

After evaluating ψ l ( τ ( k ) l , · ) for all parent sequences τ ( k ) l s, SBS selects topW pairs ( ˆ X l +1 , p l +1 ) of draft token and parent sequence index across the beam B l , i.e.,

$$\begin{array} { r l } { \quad O _ { l + 1 } ^ { ( 1 ) } , \cdots , O _ { l + 1 } ^ { ( W ) } \colon = \underset { ( x , k ) \in \mathcal { X } \times \mathcal { K } } { \arg t o p - W } \left ( \psi _ { l } ( \tau _ { l } ^ { ( k ) } , x ) \right ) \quad ( 1 2 ) } \end{array}$$

$$\frac { ^ { 1 } \text {For} \, l = 0 , \phi _ { - 1 } ( \tau _ { 0 } ^ { ( 1 ) } ) = \phi _ { - 1 } ( \tau _ { 0 } ^ { ( 1 ) } ) = 0 \, \text {is used with} \, \mathcal { B } _ { 0 } \colon = } { ( t _ { 0 } ^ { ( 1 ) } ) \left ( K o l e t a n c h , 2 0 9 \right ) .$$

2 In Appendix B.3 of Kool et al. (2019), a numerical stable way of evaluating the function T in (11) is provided.

for O ( k ) l +1 := ( ˆ X ( k ) l +1 , p ( k ) l +1 ) and K := { 1 , ..., W } . The output pairs O (1) l +1 , ..., O ( W ) l +1 are given by corresponding values ψ l ( τ ( k ) l , ˆ X ( k ) l +1 ) in the decreasing order . Finally, we construct the next beam

$$\mathcal { B } _ { l + 1 } & \colon = ( t ^ { ( 1 ) } _ { l + 1 } , \dots , t ^ { ( W ) } _ { l + 1 } ) , \\ t ^ { ( k ) } _ { l + 1 } & \colon = ( ( \hat { \boldsymbol \tau } ^ { ( k ) } _ { l + 1 } , \hat { \boldsymbol X } ^ { ( k ) } _ { l + 1 } ) , \phi _ { l } ( \hat { \boldsymbol \tau } ^ { ( k ) } _ { l + 1 } , \hat { \boldsymbol X } ^ { ( k ) } _ { l + 1 } ) ) & \quad \text {quen} \\$$

for k = 1 , ..., W , where ˆ τ ( k ) l +1 := τ ( p ( k ) l +1 ) l is the selected parent sequence. Intuitively, SBS at the level l evaluates scores ψ ( k ) l ( τ ( k ) l , x ) , x ∈ X , k ∈ K , by considering all child nodes from the beam B l . SBS selects W nodes among all child nodes having topW scores. Note that the above process is theoretically equivalent to sample topW length-( l +1) sequences without replacement (Kool et al., 2019) and efficiently truncates sequences that are unlikely to be generated. (See Figure 3(b) .)

We store the ordered sequence of pairs O (1) l +1 , ..., O ( W ) l +1 for all levels l = 0 , ..., L -1 , which is used for draft-tree verification. As in RSD-C, we show the following property:

Theorem 3.2 (Tokens from the same sequence follow sampling without replacement in RSD-S) . In RSD-S, any nonempty subsequence of the sequence ˆ X (1) l +1 , ..., ˆ X ( W ) l +1 of draft tokens (from O (1) l +1 , ..., O ( W ) l +1 in (12) ) such that each element of the subsequence has the same parent τ ( k ) l follows sampling without replacement from p ( ·| τ ( k ) l ) 3 .

Proof.

$$\text {of. See Appendix A.2} .$$

## 3.2.2. DRAFT-TREE EVALUATION AND VERIFICATION

Tree evaluation with target model. After the draft-tree construction, we have sequences of pairs

$$( O _ { l } ^ { ( 1 ) } , \dots , O _ { l } ^ { ( N _ { l } ) } ) , l = 1 , \dots , L ,$$

where N l = ∏ l l ′ =0 b l ′ for RSD-C and N l = W for RSD-S, respectively ( N 0 := 1 for both). Those pairs include the node-connection information of the draft tree and can be used to parallelly evaluate the draft tree via the target model by utilizing appropriate attention masking and positional encodings. From the evaluation process, we acquire the target log probabilities for all sequences τ ( k l ) l in the draft tree, i.e.,

$$q ( \cdot | \tau _ { l } ^ { ( k _ { l } ) } ) , l = 0 , \dots , L , k _ { l } = 1 , \dots , N _ { l } .$$

Verification via recursive rejection sampling. Earlier, we show that tokens in the tree having the same parent sequence

3 We define a subsequence of a sequence as any sequence acquired by removing its elements while maintaining the order in the original sequence .

τ ( k l ) l follows the sampling-without-replacement distribution from p ( ·| τ ( k l ) l ) for both RSD-C and RSD-S. Thus, one can apply recursive rejection sampling iteratively at each tree level.

Specifically, at the level l ∈ { 0 , 1 , ..., L } , we begin with a sequence τ ( k ′ l ) l where k ′ l is the index of the parent sequence accepted in the previous level ( k ′ 0 = 1 at the level l = 0 ). Within the ordered sequence ( O (1) l +1 , ..., O ( N l +1 ) l +1 ) of pairs, we find the subsequence o ( k ′ l ) l +1 having τ ( k ′ l ) l as parent, which can be validated by checking the second element of each pair O ( k ) l +1 , and the token sequence x ( k ′ l ) l +1 in o ( k ′ l ) l +1 . Earlier, we show that tokens x ( k ′ l ) l +1 follows sampling-withoutreplacement distribution in its order, so we can apply recursive rejection sampling to those tokens with draft and target distributions, p ( ·| τ ( k ′ l ) l ) and q ( ·| τ ( k ′ l ) l ) , respectively. If any token x in x ( k ′ l ) l +1 is accepted, we set k ′ l +1 that corresponds to τ ( k ′ l +1 ) l := ( τ ( k ′ l ) l , x ) , and we continue to the next-level verification if child nodes exist. If all tokens are rejected, we sample from the last residual distribution of recursiver rejection sampling. If there is no child node, we sample from the target q ( ·| τ ( k ′ l ) l ) similar to the single-sequence speculative decoding (Chen et al., 2023; Leviathan et al., 2023). We provide detailed descriptions for RSD-C ( Algorithm 2 ) and for RSD-S ( Algorithm 7 ) in Appendix B.

## 4. Related Works

Many recent works have aimed to address the inference bottleneck of LLMs caused by auto-regressive decoding. Speculative decoding methods (Leviathan et al., 2023; Chen et al., 2023; Sun et al., 2023; Miao et al., 2023) use the target model (LLM) with a draft model (a small language model), while recovering target distribution via rejection sampling. See the recent survey on speculative decoding (Xia et al., 2024) for more comprehensive understanding.

Other than speculative decoding methods, BiLD (Kim et al., 2023) is another method to accelerate inference, where it uses a fallback policy which determines when to invoke the target model and a rollback policy to review and correct draft tokens. Medusa (Cai et al., 2024) uses multiple decoding heads to predict future tokens in parallel, constructs the draft-token tree and uses a typical acceptance criteria. Lookahead decoding (Fu et al., 2023) caches the historical n -grams generated on-the-fly instead of having a draft model and performs parallel decoding using Jacobi iteration and verifies n -grams from the cache. While showing promising results with greedy sampling, these works do not guarantee target distribution recovery in contrast to speculative decoding methods.

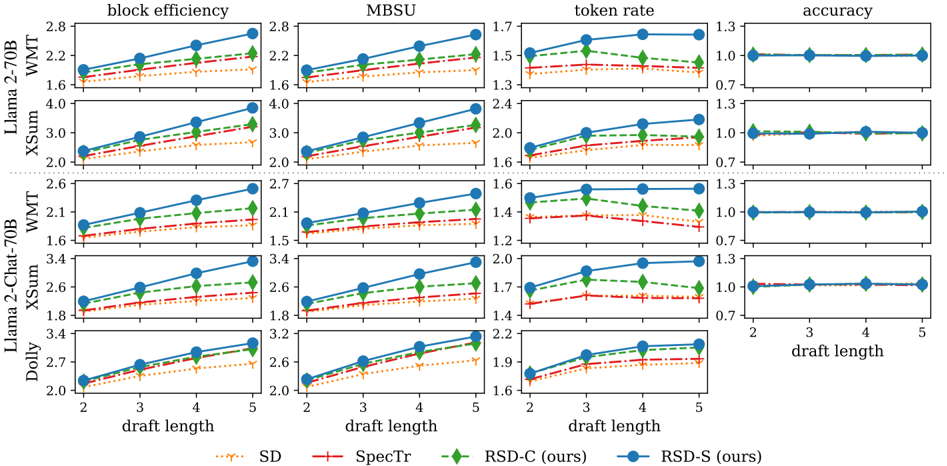

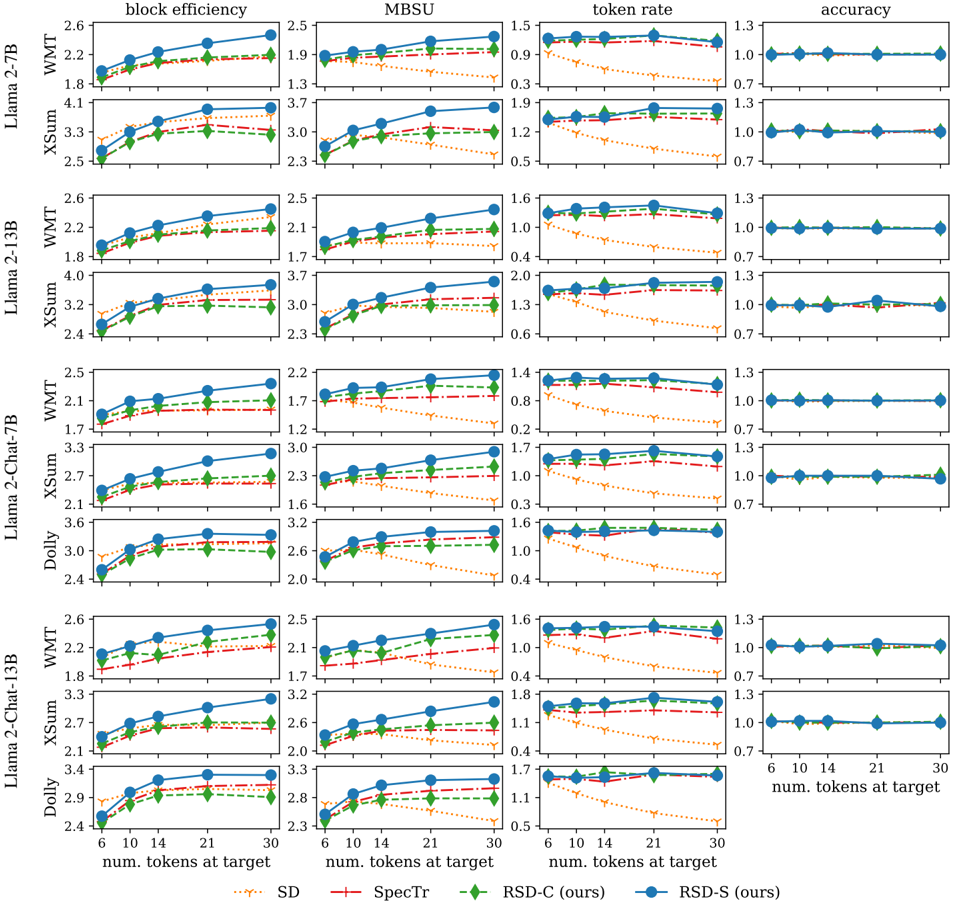

Figure 4. Block efficiency, MBSU, token rate and accuracy for various lengths ( 2 , 3 , 4 , 5 ) of draft sequences are given. We consider two target models, Llama 2-70B and Llama 2-Chat-70B, each of which has a corresponding smaller draft model for speculative decoding. All results are normalized by the corresponding numbers from auto-regressive decoding. RSD-S always outperforms SD, SpecTr and RSD-C. All methods including auto-regressive decoding show similar accuracy for WMT and XSum.

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Line Charts: Performance Metrics vs. Draft Length

### Overview

The image presents a series of line charts comparing the performance of different models (Llama 2-70B, Llama 2-Chat-70B, Dolly) on various tasks (WMT, XSum) across different metrics (block efficiency, MBSU, token rate, accuracy) as a function of draft length. Four different methods (SD, SpecTr, RSD-C (ours), RSD-S (ours)) are compared.

### Components/Axes

* **X-axis:** Draft Length, with values 2, 3, 4, and 5.

* **Y-axes:**

* Block Efficiency: Ranges from approximately 1.6 to 4.0.

* MBSU: Ranges from approximately 1.2 to 3.4.

* Token Rate: Ranges from approximately 1.2 to 2.4.

* Accuracy: Ranges from approximately 0.7 to 1.3.

* **Models (Rows):**

* Llama 2-70B, WMT

* Llama 2-70B, XSum

* Llama 2-Chat-70B, WMT

* Llama 2-Chat-70B, XSum

* Dolly

* **Metrics (Columns):** Block efficiency, MBSU, token rate, accuracy.

* **Legend (Bottom):**

* Yellow dotted line: SD

* Red dashed line with plus markers: SpecTr

* Green dashed line with diamond markers: RSD-C (ours)

* Blue solid line with circle markers: RSD-S (ours)

### Detailed Analysis

**Llama 2-70B, WMT**

* **Block Efficiency:**

* SD (Yellow): Increases from ~1.6 to ~1.8.

* SpecTr (Red): Increases from ~1.7 to ~1.9.

* RSD-C (Green): Increases from ~1.8 to ~2.1.

* RSD-S (Blue): Increases from ~1.9 to ~2.3.

* **MBSU:**

* SD (Yellow): Increases from ~1.6 to ~1.8.

* SpecTr (Red): Increases from ~1.7 to ~1.9.

* RSD-C (Green): Increases from ~1.8 to ~2.2.

* RSD-S (Blue): Increases from ~1.9 to ~2.4.

* **Token Rate:**

* SD (Yellow): Increases from ~1.3 to ~1.4.

* SpecTr (Red): Increases from ~1.4 to ~1.45.

* RSD-C (Green): Increases from ~1.5 to ~1.6.

* RSD-S (Blue): Increases from ~1.5 to ~1.7.

* **Accuracy:**

* All methods maintain a constant accuracy of approximately 1.0.

**Llama 2-70B, XSum**

* **Block Efficiency:**

* SD (Yellow): Increases from ~2.2 to ~2.8.

* SpecTr (Red): Increases from ~2.3 to ~2.9.

* RSD-C (Green): Increases from ~2.4 to ~3.3.

* RSD-S (Blue): Increases from ~2.5 to ~3.9.

* **MBSU:**

* SD (Yellow): Increases from ~2.2 to ~2.4.

* SpecTr (Red): Increases from ~2.3 to ~2.6.

* RSD-C (Green): Increases from ~2.5 to ~3.2.

* RSD-S (Blue): Increases from ~2.6 to ~3.8.

* **Token Rate:**

* SD (Yellow): Increases from ~1.6 to ~1.7.

* SpecTr (Red): Increases from ~1.7 to ~1.9.

* RSD-C (Green): Increases from ~1.9 to ~2.2.

* RSD-S (Blue): Increases from ~2.0 to ~2.3.

* **Accuracy:**

* All methods maintain a constant accuracy of approximately 1.0.

**Llama 2-Chat-70B, WMT**

* **Block Efficiency:**

* SD (Yellow): Increases from ~1.6 to ~1.7.

* SpecTr (Red): Increases from ~1.7 to ~1.9.

* RSD-C (Green): Increases from ~1.8 to ~2.1.

* RSD-S (Blue): Increases from ~1.9 to ~2.5.

* **MBSU:**

* SD (Yellow): Increases from ~1.5 to ~1.6.

* SpecTr (Red): Increases from ~1.4 to ~1.5.

* RSD-C (Green): Increases from ~1.4 to ~1.6.

* RSD-S (Blue): Increases from ~1.7 to ~2.2.

* **Token Rate:**

* SD (Yellow): Decreases from ~1.4 to ~1.3.

* SpecTr (Red): Decreases from ~1.4 to ~1.3.

* RSD-C (Green): Decreases from ~1.5 to ~1.4.

* RSD-S (Blue): Increases from ~1.5 to ~1.6.

* **Accuracy:**

* All methods maintain a constant accuracy of approximately 1.0.

**Llama 2-Chat-70B, XSum**

* **Block Efficiency:**

* SD (Yellow): Increases from ~1.8 to ~2.0.

* SpecTr (Red): Increases from ~1.9 to ~2.1.

* RSD-C (Green): Increases from ~2.0 to ~2.4.

* RSD-S (Blue): Increases from ~2.1 to ~2.6.

* **MBSU:**

* SD (Yellow): Increases from ~1.7 to ~1.8.

* SpecTr (Red): Increases from ~1.7 to ~1.8.

* RSD-C (Green): Increases from ~1.8 to ~2.0.

* RSD-S (Blue): Increases from ~1.9 to ~2.4.

* **Token Rate:**

* SD (Yellow): Increases from ~1.4 to ~1.5.

* SpecTr (Red): Increases from ~1.4 to ~1.5.

* RSD-C (Green): Increases from ~1.5 to ~1.7.

* RSD-S (Blue): Increases from ~1.7 to ~2.0.

* **Accuracy:**

* All methods maintain a constant accuracy of approximately 1.0.

**Dolly**

* **Block Efficiency:**

* SD (Yellow): Increases from ~2.0 to ~2.2.

* SpecTr (Red): Increases from ~2.1 to ~2.3.

* RSD-C (Green): Increases from ~2.2 to ~2.5.

* RSD-S (Blue): Increases from ~2.3 to ~2.7.

* **MBSU:**

* SD (Yellow): Increases from ~1.6 to ~1.8.

* SpecTr (Red): Increases from ~1.7 to ~1.8.

* RSD-C (Green): Increases from ~1.8 to ~2.0.

* RSD-S (Blue): Increases from ~1.9 to ~2.2.

* **Token Rate:**

* SD (Yellow): Increases from ~1.4 to ~1.5.

* SpecTr (Red): Increases from ~1.5 to ~1.6.

* RSD-C (Green): Increases from ~1.6 to ~1.8.

* RSD-S (Blue): Increases from ~1.7 to ~2.0.

* **Accuracy:**

* All methods maintain a constant accuracy of approximately 1.0.

### Key Observations

* RSD-S (ours) generally outperforms the other methods (SD, SpecTr, RSD-C) in terms of block efficiency, MBSU, and token rate across all models and tasks.

* Accuracy remains relatively constant across all methods and draft lengths.

* The performance gains from increasing draft length tend to diminish as the draft length increases from 4 to 5.

* The Llama 2-70B model generally achieves higher block efficiency and MBSU scores compared to the Llama 2-Chat-70B model.

### Interpretation

The data suggests that the RSD-S method is more effective at improving block efficiency, MBSU, and token rate compared to the other methods tested. The consistent accuracy across all methods indicates that these improvements are not achieved at the expense of accuracy. The diminishing returns from increasing draft length suggest that there is an optimal draft length beyond which further increases provide minimal benefit. The differences in performance between the Llama 2-70B and Llama 2-Chat-70B models may be attributed to differences in their architectures or training data.

</details>

## 5. Experiments

We evaluate RSD-C and RSD-S together with our baselines including speculative decoding (SD) (Chen et al., 2023; Leviathan et al., 2023) and SpecTr (Sun et al., 2023), where a single draft model is assumed 4 . We consider the following perspectives during our experiments: ( Exp1 ) How will the performance be affected by the length of draft sequences ? ( Exp2 ) How will the performance be affected by the target computational budget , i.e., the number of tokens processed at the target model? While ( Exp1 ) has been frequently investigated by existing tree-based speculative decoding methods (Sun et al., 2023; Miao et al., 2023), ( Exp2 ) has not been considered in prior works but has practical importance when running the target model on resource-bounded devices.

Models. We consider the following target models; Llama 2 and Llama 2-Chat (Touvron et al., 2023) with 7B , 13B and 70B parameters; OPT (Zhang et al., 2022) with 13B , 30B and 66B parameters. Each class of target models adopts corresponding draft model; see Appendix C.1. In this section, we only present Llama 2-70B and Llama 2-Chat-70B results, and other results (Llama 2 with other sizes and OPT) can be found in Appendix C.4.

Tasks. Our methods and baselines are evaluated for WMT 18-DeEn (Bojar et al., 2018, translation) and XSum (Narayan et al., 2018, summarization) for each target

4 We exclude SpecInfer (Miao et al., 2023) from our baselines since it uses multiple draft models.

model, while we report accuracy scores (BLEU for WMT and ROUGE-2 for XSum) to confirm if the target model's distribution is recovered; DatabricksDolly -15k (Conover et al., 2023, question and answering) is used only for Llama 2-Chat without accuracy evaluation. We use temperature 0.3 for both XSum and WMT and 1.0 for Dolly, where we further apply nucleus (topp ) sampling (Holtzman et al., 2019) with p = 0 . 95 for Dolly.

Performance metrics. We evaluate block efficiency (Leviathan et al., 2023), Memory-Bound Speed-Up ( MBSU ) (Zhou et al., 2023) and token rate (tokens/sec) on A100 GPUs; see Appendix C.2 for details.

## 5.1. (Exp 1) Fixed draft sequence length

We fix (maximum) draft sequence length as the value in { 2 , 3 , 4 , 5 } and evaluate our methods and baselines, which is summarized in Figure 4 . Regarding the tree structures of each decoding methods, we let both SpecTr and RSD-S always use draft-token trees, the size of which is smaller than or equal to that of RSD-C's tree; see Appendix C.3.1 for details. Our results show that tree-based methods (SpecTr, RSD-C and RSD-S) always outperform SD in terms of block efficiency and MBSU, whereas token rates for SpecTr and RSD-C can be lower than that for SD; this is since block efficiencies for both SpecTr and RSD-C are relatively low and there is additional computational overhead to process the tree. On the other hand, RSD-S strictly outperforms both SD and SpecTr for all performance metrics , showing

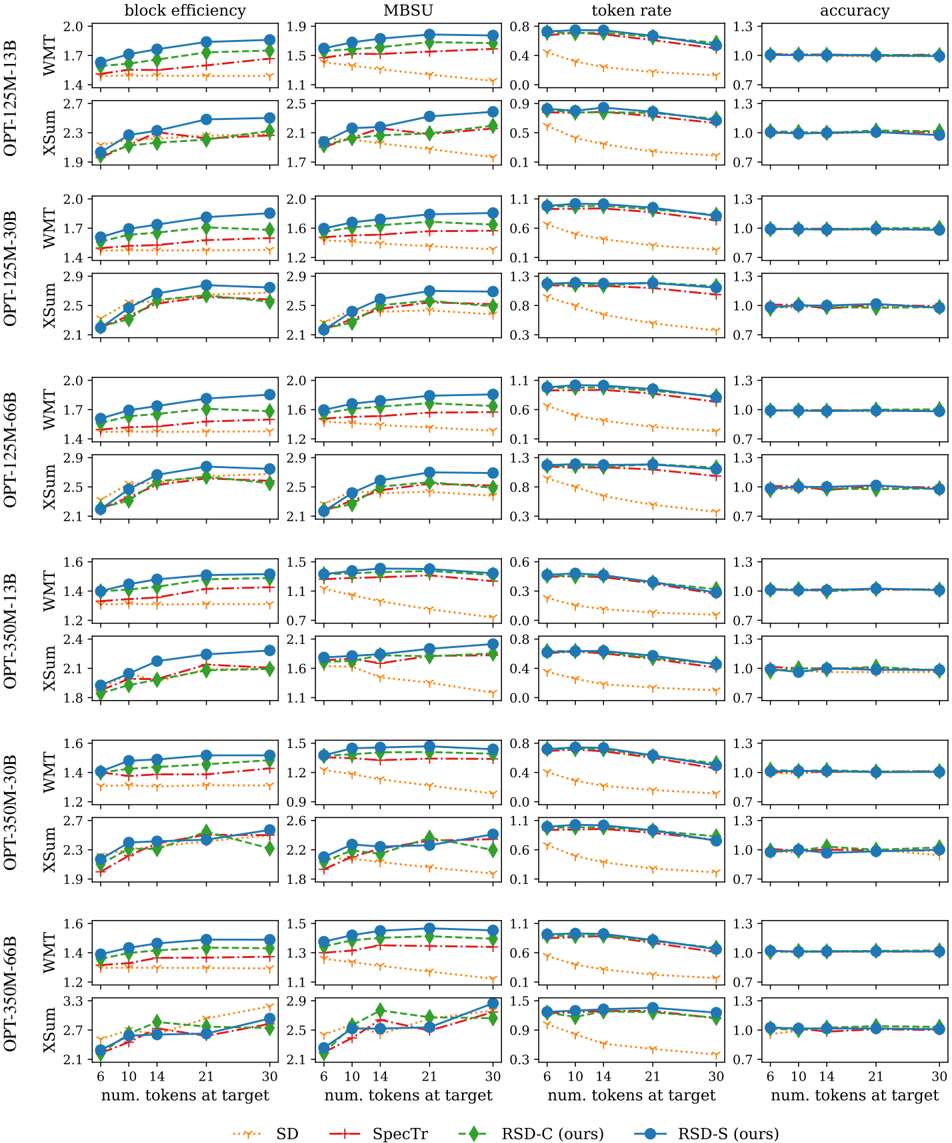

Figure 5. Block efficiency, MBSU, token rate and accuracy for various target computational budgets (the numbers 6 , 10 , 14 , 21 , 30 of draft tokens processed at the target model) are given. We consider two target models, Llama 2-70B and Llama 2-Chat-70B, each of which has a corresponding smaller draft model for speculative decoding. All results are normalized by the corresponding numbers from auto-regressive decoding. RSD-S outperforms SD, SpecTr and RSD-C in the majority of cases. All methods including auto-regressive decoding show similar accuracy for both WMT and XSum.

<details>

<summary>Image 5 Details</summary>

### Visual Description

## Chart: Model Performance Comparison

### Overview

The image presents a series of line graphs comparing the performance of different models (Llama 2-70B, Llama 2-Chat-70B, Dolly) on various tasks (WMT, XSum) across four metrics: block efficiency, MBSU, token rate, and accuracy. The x-axis represents the number of tokens at the target (6, 10, 14, 21, 30). Four different methods (SD, SpecTr, RSD-C, RSD-S) are compared within each task and metric combination.

### Components/Axes

* **Title:** Model Performance Comparison (derived from the content)

* **X-axis:** "num. tokens at target" with values 6, 10, 14, 21, 30.

* **Y-axes:**

* **Block efficiency:** Ranges from approximately 1.7 to 3.7.

* **MBSU:** Ranges from approximately 0.6 to 3.3.

* **Token rate:** Ranges from approximately 0.6 to 2.3.

* **Accuracy:** Ranges from approximately 0.7 to 1.3.

* **Models (Rows):**

* Llama 2-70B (WMT, XSum)

* Llama 2-Chat-70B (WMT, XSum)

* Dolly

* **Metrics (Columns):**

* block efficiency

* MBSU

* token rate

* accuracy

* **Legend (Bottom):**

* SD (orange dotted line with downward-pointing triangle markers)

* SpecTr (red dashed-dotted line with plus markers)

* RSD-C (ours) (green dashed line with diamond markers)

* RSD-S (ours) (blue solid line with circle markers)

### Detailed Analysis

#### Llama 2-70B - WMT

* **Block efficiency:**

* SD (orange): Increases from ~1.8 to ~2.1.

* SpecTr (red): Increases from ~1.9 to ~2.2.

* RSD-C (green): Increases from ~1.9 to ~2.3.

* RSD-S (blue): Increases from ~2.0 to ~2.5.

* **MBSU:**

* SD (orange): Increases from ~1.8 to ~2.0.

* SpecTr (red): Increases from ~1.8 to ~2.1.

* RSD-C (green): Increases from ~1.9 to ~2.2.

* RSD-S (blue): Increases from ~2.0 to ~2.4.

* **Token rate:**

* SD (orange): Decreases from ~1.7 to ~1.0.

* SpecTr (red): Remains relatively constant at ~1.7.

* RSD-C (green): Remains relatively constant at ~1.7.

* RSD-S (blue): Remains relatively constant at ~1.7.

* **Accuracy:** All methods remain constant at ~1.0.

#### Llama 2-70B - XSum

* **Block efficiency:**

* SD (orange): Increases from ~2.7 to ~3.3.

* SpecTr (red): Increases from ~2.8 to ~3.2.

* RSD-C (green): Increases from ~2.9 to ~3.3.

* RSD-S (blue): Increases from ~2.9 to ~3.6.

* **MBSU:**

* SD (orange): Increases from ~2.7 to ~3.1.

* SpecTr (red): Increases from ~2.7 to ~3.0.

* RSD-C (green): Increases from ~2.8 to ~3.1.

* RSD-S (blue): Increases from ~2.8 to ~3.6.

* **Token rate:**

* SD (orange): Decreases from ~2.3 to ~1.2.

* SpecTr (red): Remains relatively constant at ~2.1.

* RSD-C (green): Remains relatively constant at ~2.1.

* RSD-S (blue): Remains relatively constant at ~2.1.

* **Accuracy:** All methods remain constant at ~1.0.

#### Llama 2-Chat-70B - WMT

* **Block efficiency:**

* SD (orange): Increases from ~1.7 to ~1.9.

* SpecTr (red): Increases from ~1.8 to ~2.1.

* RSD-C (green): Increases from ~1.8 to ~2.2.

* RSD-S (blue): Increases from ~1.9 to ~2.4.

* **MBSU:**

* SD (orange): Increases from ~1.7 to ~1.9.

* SpecTr (red): Increases from ~1.8 to ~2.0.

* RSD-C (green): Increases from ~1.8 to ~2.2.

* RSD-S (blue): Increases from ~1.9 to ~2.4.

* **Token rate:**

* SD (orange): Decreases from ~1.7 to ~0.8.

* SpecTr (red): Remains relatively constant at ~1.6.

* RSD-C (green): Remains relatively constant at ~1.6.

* RSD-S (blue): Remains relatively constant at ~1.7.

* **Accuracy:** All methods remain constant at ~1.0.

#### Llama 2-Chat-70B - XSum

* **Block efficiency:**

* SD (orange): Increases from ~2.3 to ~2.6.

* SpecTr (red): Increases from ~2.4 to ~2.7.

* RSD-C (green): Increases from ~2.4 to ~2.8.

* RSD-S (blue): Increases from ~2.5 to ~3.1.

* **MBSU:**

* SD (orange): Increases from ~2.3 to ~2.5.

* SpecTr (red): Increases from ~2.4 to ~2.6.

* RSD-C (green): Increases from ~2.4 to ~2.7.

* RSD-S (blue): Increases from ~2.5 to ~3.2.

* **Token rate:**

* SD (orange): Decreases from ~1.9 to ~1.0.

* SpecTr (red): Remains relatively constant at ~1.8.

* RSD-C (green): Remains relatively constant at ~1.8.

* RSD-S (blue): Remains relatively constant at ~1.9.

* **Accuracy:** All methods remain constant at ~1.0.

#### Dolly

* **Block efficiency:**

* SD (orange): Increases from ~2.8 to ~3.0.

* SpecTr (red): Increases from ~2.9 to ~3.2.

* RSD-C (green): Increases from ~2.9 to ~3.2.

* RSD-S (blue): Increases from ~2.9 to ~3.3.

* **MBSU:**

* SD (orange): Increases from ~2.8 to ~3.1.

* SpecTr (red): Increases from ~2.8 to ~3.1.

* RSD-C (green): Increases from ~2.9 to ~3.1.

* RSD-S (blue): Increases from ~2.9 to ~3.3.

* **Token rate:**

* SD (orange): Decreases from ~2.3 to ~1.1.

* SpecTr (red): Remains relatively constant at ~2.2.

* RSD-C (green): Remains relatively constant at ~2.2.

* RSD-S (blue): Remains relatively constant at ~2.3.

* **Accuracy:** All methods remain constant at ~1.0.

### Key Observations

* **Block Efficiency and MBSU:** RSD-S (ours) generally achieves the highest block efficiency and MBSU across all models and tasks. All methods show an increase in block efficiency and MBSU as the number of tokens at the target increases.

* **Token Rate:** SD consistently shows a decreasing token rate as the number of tokens at the target increases. SpecTr, RSD-C, and RSD-S maintain a relatively stable token rate.

* **Accuracy:** Accuracy remains constant across all methods and tasks, suggesting it is not significantly affected by the number of tokens at the target or the different methods being compared.

* **Model Variation:** The performance varies across different models (Llama 2-70B, Llama 2-Chat-70B, Dolly) and tasks (WMT, XSum), indicating that the effectiveness of each method is dependent on the specific model and task.

### Interpretation

The data suggests that RSD-S (ours) generally outperforms the other methods (SD, SpecTr, RSD-C) in terms of block efficiency and MBSU. However, SD exhibits a decreasing token rate, which might be a trade-off for its performance in other metrics. The consistent accuracy across all methods indicates that the primary differences lie in efficiency and resource utilization. The choice of the best method would depend on the specific priorities and constraints of the application, considering the trade-offs between block efficiency, MBSU, and token rate. The "ours" denotation suggests that RSD-C and RSD-S are the focus of the study, and the results indicate their superiority in most metrics.

</details>

the superiority of RSD-S over our baselines and the importance of early-truncating unlikely draft sequences. We also observe that there is no strong correlation between MBSU and token rate; this is since A100 GPUs used to measure token rates are not memory-bound. Furthermore, token rates in many cases are shown to decrease as the length of draft-token sequence becomes higher, which is due to the increased computation overhead to execute draft models with the longer draft sequence; however, one needs to be cautious since this result may not generally hold since token rate is hugely affected by the efficiency of software implementation and the devices which we execute the methods on. Finally, in WMT and XSum, BLEU and ROUGE-2 scores are similar across different methods, respectively, which implies that all methods recover the distributions of target LLMs.

## 5.2. ( Exp2 ) Fixed target computational budget

We select target computational budget, i.e., the number of draft tokens processed at the target model in parallel for each speculative decoding iteration, among values in { 6 , 10 , 14 , 21 , 30 } and evaluate our proposed methods and baselines; we summarize the results in Figure 5 and describe tree structures in Appendix C.3.2. While RSD-S achieves higher block efficiency and MBSU than SD and SpecTr in most cases, SD beats RSD-C in the relatively low budget regime, e.g., { 6 , 10 } with Llama 2-70B and XSum, and { 6 }

with Llama 2-Chat-70B and Dolly. We believe that our draft models are well-aligned with corresponding target models for those cases (from the observation that block efficiencies of SD close to 3.0, which are significantly higher than the numbers in other cases, are achieved), and increasing the depth rather than the width of the tree could quickly increase the acceptance rate in such cases. In the high budget regime, on the other hand, RSD-S beats SD for both block efficiency and MBSU. In terms of token rate, RSD-S strictly outperforms our baselines, whereas SD's token rate severely decreases for higher target computation budgets due to the computational overhead caused by the draft model's autoregressive decoding with the longer draft sequence.

## 6. Conclusion

We present RSD algorithms, a novel tree-based speculative decoding method leveraging the full diversifiability of the draft-token tree; RSD-C efficiently samples draft tokens without replacement via Gumbel-Topk trick, while RSD-S uses Stochastic Beam Search and samples drafttoken sequences without replacement. We also propose recursive rejection sampling that can verify the tree built by the sampling-without-replacement process and recovers the exact target model distribution. We show that RSD outperforms the baselines in most cases, supporting the importance of diverse drafting when accelerating LLM inference.

## References

- Achiam, J., Adler, S., Agarwal, S., Ahmad, L., Akkaya, I., Aleman, F. L., Almeida, D., Altenschmidt, J., Altman, S., Anadkat, S., et al. GPT-4 technical report. arXiv preprint arXiv:2303.08774 , 2023.

- Bojar, O. r., Federmann, C., Fishel, M., Graham, Y., Haddow, B., Huck, M., Koehn, P., and Monz, C. Findings of the 2018 conference on machine translation (wmt18). In Proceedings of the Third Conference on Machine Translation, Volume 2: Shared Task Papers , pp. 272-307, Belgium, Brussels, October 2018. Association for Computational Linguistics. URL http://www . aclweb . org/ anthology/W18-6401 .

- Brown, T., Mann, B., Ryder, N., Subbiah, M., Kaplan, J. D., Dhariwal, P., Neelakantan, A., Shyam, P., Sastry, G., Askell, A., et al. Language models are few-shot learners. Advances in Neural Information Processing Systems (NeurIPS) , 33:1877-1901, 2020.

- Cai, T., Li, Y., Geng, Z., Peng, H., and Dao, T. Medusa: Simple framework for accelerating llm generation with multiple decoding heads. https://github . com/ FasterDecoding/Medusa , 2023.

- Cai, T., Li, Y., Geng, Z., Peng, H., Lee, J. D., Chen, D., and Dao, T. Medusa: Simple llm inference acceleration framework with multiple decoding heads. arXiv preprint arXiv:2401.10774 , 2024.

- Chen, C., Borgeaud, S., Irving, G., Lespiau, J.-B., Sifre, L., and Jumper, J. Accelerating large language model decoding with speculative sampling. arXiv preprint arXiv:2302.01318 , 2023.

- Conover, M., Hayes, M., Mathur, A., Xie, J., Wan, J., Shah, S., Ghodsi, A., Wendell, P., Zaharia, M., and Xin, R. Free dolly: Introducing the world's first truly open instruction-tuned llm, 2023. URL https://www . databricks . com/blog/2023/ 04/12/dolly-first-open-commerciallyviable-instruction-tuned-llm .

- Fu, Y., Bailis, P., Stoica, I., and Zhang, H. Breaking the sequential dependency of LLM inference using lookahead decoding, November 2023. URL https://lmsys . org/blog/2023-11-21lookahead-decoding/ .

- Holtzman, A., Buys, J., Du, L., Forbes, M., and Choi, Y. The curious case of neural text degeneration. arXiv preprint arXiv:1904.09751 , 2019.

- Jiang, A. Q., Sablayrolles, A., Mensch, A., Bamford, C., Chaplot, D. S., Casas, D. d. l., Bressand, F., Lengyel, G., Lample, G., Saulnier, L., et al. Mistral 7B. arXiv preprint arXiv:2310.06825 , 2023.

- Kim, S., Mangalam, K., Malik, J., Mahoney, M. W., Gholami, A., and Keutzer, K. Big little transformer decoder. arXiv preprint arXiv:2302.07863 , 2023.

- Kool, W., Van Hoof, H., and Welling, M. Stochastic beams and where to find them: The Gumbel-Topk trick for sampling sequences without replacement. In Proceedings of the 36th International Conference on Machine Learning (ICML) , pp. 3499-3508. PMLR, 2019.

- Leviathan, Y., Kalman, M., and Matias, Y. Fast inference from transformers via speculative decoding. In Proceedings of the 40th International Conference on Machine Learning (ICML) , 2023.

- Miao, X., Oliaro, G., Zhang, Z., Cheng, X., Wang, Z., Wong, R. Y. Y., Chen, Z., Arfeen, D., Abhyankar, R., and Jia, Z. SpecInfer: Accelerating generative LLM serving with speculative inference and token tree verification. arXiv preprint arXiv:2305.09781 , 2023.

- Narayan, S., Cohen, S. B., and Lapata, M. Don't give me the details, just the summary! Topic-aware convolutional neural networks for extreme summarization. arXiv preprint arXiv:1808.08745 , 2018.

- Shazeer, N. Fast transformer decoding: One write-head is all you need. arXiv preprint arXiv:1911.02150 , 2019.

- Sun, Z., Suresh, A. T., Ro, J. H., Beirami, A., Jain, H., and Yu, F. SpecTr: Fast speculative decoding via optimal transport. In Advances in Neural Information Processing Systems (NeurIPS) , 2023.

- Touvron, H., Martin, L., Stone, K., Albert, P., Almahairi, A., Babaei, Y., Bashlykov, N., Batra, S., Bhargava, P., Bhosale, S., et al. Llama 2: Open foundation and finetuned chat models. arXiv preprint arXiv:2307.09288 , 2023.

- Vaswani, A., Shazeer, N., Parmar, N., Uszkoreit, J., Jones, L., Gomez, A. N., Kaiser, Ł., and Polosukhin, I. Attention is all you need. Advances in Neural Information Processing Systems (NeurIPS) , 30, 2017.

- Vieira, T. Gumbel-max trick and weighted reservoir sampling. 2014. URL https: //timvieira . github . io/blog/post/2014/ 08/01/gumbel-max-trick-andweightedreservoir-sampling/ .

- Villani, C. et al. Optimal transport: old and new , volume 338. Springer, 2009.

- Xia, H., Yang, Z., Dong, Q., Wang, P., Li, Y., Ge, T., Liu, T., Li, W., and Sui, Z. Unlocking efficiency in large language model inference: A comprehensive survey of speculative decoding. arXiv preprint arXiv:2401.07851 , 2024.

- Xiao, Y., Wu, L., Guo, J., Li, J., Zhang, M., Qin, T., and Liu, T.-y. A survey on non-autoregressive generation for neural machine translation and beyond. IEEE Transactions on Pattern Analysis and Machine Intelligence , 2023.

- Zhang, S., Roller, S., Goyal, N., Artetxe, M., Chen, M., Chen, S., Dewan, C., Diab, M., Li, X., Lin, X. V., et al. OPT: Open pre-trained transformer language models. arXiv preprint arXiv:2205.01068 , 2022.

- Zhou, Y., Lyu, K., Rawat, A. S., Menon, A. K., Rostamizadeh, A., Kumar, S., Kagy, J.-F., and Agarwal, R. Distillspec: Improving speculative decoding via knowledge distillation. arXiv preprint arXiv:2310.08461 , 2023.

## A. Theorems and proofs

## A.1. Proof of Theorem 3.1

Theorem 3.1 (Recursive rejection sampling recovers target distribution) . The random variable Z ∈ X defining recursive rejection sampling rule (4) follows the target distribution q , i.e.,

$$\Pr \left \{ Z = z \right \} = q ( z ) , z \in \mathcal { X } .$$

Proof. We remain a sketch of the proof here and the formal proof is given in the next paragraph. We first consider the case where ˆ X (1) , ..., ˆ X ( K -1) are rejected and see whether we accept ˆ X ( K ) or not; we either accept ˆ X ( K ) with probability Θ ( K ) in (3) or sample a new token Y ∼ q ( K +1) ( ·| ˆ X (1: K -1) ) when all draft tokens are rejected. Since q ( K +1) is the residual distribution from q ( K ) , one can regard it as the simple sampling by Chen et al. (2023) and Leviathan et al. (2023), which recovers q ( K ) . The same idea is applied to ˆ X ( K -1) , ..., ˆ X (1) in the reversed order until we recover q = q (1) at the end.

Let us desribe the formal proof. From the definition of recursive rejection sampling (4), we have

<!-- formula-not-decoded -->

It can be shown that the following equality holds for each k :

$$\Sigma _ { 2 , k - 1 } = \Sigma _ { 1 , k } + \Sigma _ { 2 , k } .$$

Let us first consider k = K , then,

$$& \text {$\Sigma_{1,K}+ \Sigma_{2,K}$} \\ & = \sum _ { x ^ { ( 1 ) } , \dots , x ^ { ( K - 1 ) } } \Pr \left \{ \hat { X } ^ { ( 1 \colon K - 1 ) } = x ^ { ( 1 \colon K - 1 ) } \right \} \times \Pr \left \{ A ^ { ( 1 \colon K - 1 ) } = \text {rej} ^ { K - 1 } , \hat { X } ^ { ( K ) } = z , A ^ { ( K ) } = \text {acc} \Big | \hat { X } ^ { ( 1 \colon K - 1 ) } = x ^ { ( 1 \colon K - 1 ) } \right \} \\ & + \sum _ { x ^ { ( 1 ) } , \dots , x ^ { ( K ) } } \Pr \left \{ \hat { X } ^ { ( 1 \colon K ) } = x ^ { ( 1 \colon K ) } \right \} \times \Pr \left \{ A ^ { ( 1 \colon K ) } = \text {rej} ^ { K } , \hat { X } ^ { ( K + 1 ) } = z \Big | \hat { X } ^ { ( 1 \colon K ) } = x ^ { ( 1 \colon K ) } \right \} \\ & = \sum _ { x ^ { ( 1 ) } , \dots , x ^ { ( K - 1 ) } } \Pr \left \{ \hat { X } ^ { ( 1 \colon K - 1 ) } = x ^ { ( 1 \colon K - 1 ) } \right \} \Big ( \underbrace { \Pr \left \{ A ^ { ( 1 \colon K - 1 ) } = \text {rej} ^ { K - 1 } , \hat { X } ^ { ( K ) } = z , A ^ { ( K ) } = \text {acc} \Big | \hat { X } ^ { ( 1 \colon K - 1 ) } = x ^ { ( 1 \colon K - 1 ) } \right \} } _ { = \colon T _ { 2 } ( K ) } \\ & \quad + \underbrace { \sum _ { x ^ { ( K ) } } \Pr \left \{ \hat { X } ^ { ( K ) } = x ^ { ( K ) } \Big | \hat { X } ^ { ( 1 \colon K - 1 ) } = x ^ { ( 1 \colon K - 1 ) } \right \} \times \Pr \left \{ A ^ { ( 1 \colon K ) } = \text {rej} ^ { K } , \hat { X } ^ { ( K + 1 ) } = z \Big | \hat { X } ^ { ( 1 \colon K ) } = x ^ { ( 1 \colon K ) } \right \} } _ { = \colon T _ { 2 } ( K ) } \right ) .

<text><loc_66><loc_464><loc_196><loc_484>One can represent $^{T$_{1,K}$}$and$^{T$_{2,K}$}$as follows:</text>

</doctag>$$

One can represent T 1 ,K and T 2 ,K as follows:

$$T _ { 1 , K } & = \Pr \left \{ A ^ { ( 1 \colon K - 1 ) } = \text {re} j ^ { K - 1 } \Big | \hat { X } ^ { ( 1 \colon K - 1 ) } = x ^ { ( 1 \colon K - 1 ) } \right \} \\ & \quad \times \Pr \left \{ \hat { X } ^ { ( K ) } = z \Big | \hat { X } ^ { ( 1 \colon K - 1 ) } = x ^ { ( 1 \colon K - 1 ) } \right \} \times \text {Pr} \left \{ A ^ { ( K ) } = \text {acc} \Big | \hat { X } ^ { ( 1 \colon K ) } = ( x ^ { ( 1 \colon K - 1 ) } , z ) \right \} \\ & = \Pr \left \{ A ^ { ( 1 \colon K - 1 ) } = \text {re} j ^ { K - 1 } \Big | \hat { X } ^ { ( 1 \colon K - 1 ) } = x ^ { ( 1 \colon K - 1 ) } \right \} p ^ { ( K ) } ( z | x ^ { ( 1 \colon K - 1 ) } ) \min \left \{ 1 , \frac { q ^ { ( K ) } ( z | x ^ { ( 1 \colon K - 2 ) } ) } { p ^ { ( K ) } ( z | x ^ { ( 1 \colon K - 1 ) } ) } \right \} \\ & = \Pr \left \{ A ^ { ( 1 \colon K - 1 ) } = \text {re} j ^ { K - 1 } \Big | \hat { X } ^ { ( 1 \colon K - 1 ) } = x ^ { ( 1 \colon K - 1 ) } \right \} \min \left \{ p ^ { ( K ) } ( z | x ^ { ( 1 \colon K - 1 ) } ) , q ^ { ( K ) } ( z | x ^ { ( 1 \colon K - 2 ) } ) \right \} ,$$

<!-- formula-not-decoded -->

Therefore, we have

$$& T _ { 1 , K } + T _ { 2 , K } \\ & = \Pr \left \{ A ^ { ( 1 \colon K - 1 ) } = \text {re} j ^ { K - 1 } \Big | \hat { X } ^ { ( 1 \colon K - 1 ) } = x ^ { ( 1 \colon K - 1 ) } \right \} \\ & \quad \times \left ( \min \left \{ p ^ { ( K ) } ( z | x ^ { ( 1 \colon K - 1 ) } ) , q ^ { ( K ) } ( z | x ^ { ( 1 \colon K - 2 ) } ) \right \} + \max \left \{ 0 , q ^ { ( K ) } ( z | x ^ { ( 1 \colon K - 2 ) } ) - p ^ { ( K ) } ( z | x ^ { ( 1 \colon K - 1 ) } ) \right \} \right ) \\ & = \Pr \left \{ A ^ { ( 1 \colon K - 1 ) } = \text {re} j ^ { K - 1 } \Big | \hat { X } ^ { ( 1 \colon K - 1 ) } = x ^ { ( 1 \colon K - 1 ) } \right \} q ^ { ( K ) } ( z | x ^ { ( 1 \colon K - 2 ) } ) \\ & = \Pr \left \{ A ^ { ( 1 \colon K - 1 ) } = \text {re} j ^ { K - 1 } , \tilde { X } ^ { ( K ) } = z \Big | \hat { X } ^ { ( 1 \colon K - 1 ) } = x ^ { ( 1 \colon K - 1 ) } \right \} ,$$

where we define a random variable ˜ X ( K ) such that

$$P r \left \{ \tilde { X } ^ { ( K ) } = z \right | \hat { X } ^ { ( 1 \colon K - 1 ) } = x ^ { ( 1 \colon K - 1 ) } \right \} \colon = q ^ { ( K ) } ( z | x ^ { ( 1 \colon K - 1 ) } ) ,$$

which leads to

$$& \Sigma _ { 1 , K } + \Sigma _ { 2 , K } \\ & = \sum _ { x ^ { ( 1 ) } , \dots , x ^ { ( K - 1 ) } } \Pr \left \{ \hat { X } ^ { ( 1 \colon K - 1 ) } = x ^ { ( 1 \colon K - 1 ) } \right \} ( T _ { 1 , K } + T _ { 2 , K } ) \\ & = \sum _ { x ^ { ( 1 ) } , \dots , x ^ { ( K - 1 ) } } \Pr \left \{ \hat { X } ^ { ( 1 \colon K - 1 ) } = x ^ { ( 1 \colon K - 1 ) } \right \} \times \Pr \left \{ A ^ { ( 1 \colon K - 1 ) } = \text {rej} ^ { K - 1 } , \tilde { X } ^ { ( K ) } = z \Big | \hat { X } ^ { ( 1 \colon K - 1 ) } = x ^ { ( 1 \colon K - 1 ) } \right \} \\ & = \Pr \left \{ A ^ { ( 1 \colon K - 1 ) } = \text {rej} ^ { K - 1 } , \tilde { X } ^ { ( K ) } = z \right \} \\ & = \Sigma _ { 2 , K - 1 } .$$

Since the same derivation can be done for k = 2 , ..., K -1 , we have

$${ P r } \left \{ Z = z \right \} = \sum _ { k = 1 } ^ { K } \Sigma _ { 1 , k } + \Sigma _ { 2 , K } = \sum _ { k = 1 } ^ { K - 1 } \Sigma _ { 1 , k } + \Sigma _ { 2 , K - 1 } = \cdots = \Sigma _ { 1 , 1 } + \Sigma _ { 2 , 1 } = q ( z ) ,$$

where the last equality holds from the derivation of original speculative decoding by (Chen et al., 2023; Leviathan et al., 2023).

## A.2. Proof of Theorem 3.2

Theorem 3.2 (Tokens from the same sequence follow sampling without replacement in RSD-S) . In RSD-S, any non-empty subsequence of the sequence ˆ X (1) l +1 , ..., ˆ X ( W ) l +1 of draft tokens (from O (1) l +1 , ..., O ( W ) l +1 in (12) ) such that each element of the subsequence has the same parent τ ( k ) l follows sampling without replacement from p ( ·| τ ( k ) l ) .

Proof. For fixed τ ( k ) l , consider a sequence of tokens

$$\bar { X } _ { l + 1 } ^ { ( k ) } \colon = \underset { x \in \mathcal { X } } { \arg s o r t } \psi _ { l } ( \tau _ { l } ^ { ( k ) } , x ) = \underset { x \in \mathcal { X } } { \arg s o r t } \tilde { \phi } _ { l } ( \tau _ { l } ^ { ( k ) } , x ) ,$$

where the last equality holds since T in (10) is monotonically increasing w.r.t. ˜ ϕ l ( τ ( k ) l , · ) for fixed τ ( k ) l . Thus, ¯ X ( k ) l +1 can be seen as samples from p ( ·| τ ( k ) l ) without replacement.

For a lengthl k subsequence o ( k ) l +1 of ( O (1) l +1 , ..., O ( W ) l +1 ) in (12), where each element of the subsequence have τ ( k ) l as its parent, the token sequence in o ( k ) l +1 is a subsequence of ¯ X ( k ) l +1 , i.e., those tokens are topl k samples without replacement from p ( ·| τ ( k ) l ) .

## B. Algorithm

## B.1. Recursive Speculative Decoding with Constant Branching Factors (RSD-C)

## Algorithm 2 Recursive Speculative Decoding with Constant Branching Factors (RSD-C)

- 10:

- 11:

- 12:

- 13:

```

B.1. Recursive Speculative Decoding with Constant Branching Factors (RSD-C)

Algorithm 2 Recursive Speculative Decoding with Constant Branching Factors (RSD-C)

1: Input: The length L$_draft of draft sequences (depth of the draft tree), a sequence x$_input of input tokens, a list

b := [b$_0,...,b$_draft$_-1] of constant branching factors in the draft tree, the maximum length L$_output of the output

sequence.

2: // Get the length of the input sequence.

L$_input ← GetLength(x$_input).

3: // Initialize empty KV caches for draft and target models.

C$_draft ← , C$_target ← .

4: while L$_input < L$_output do

5: // (STEP 1) Create a draft tree by using the draft model.

T, x$_input, C$_draft, M, id$_position, L$_num_nodes ← CreateDraftTreeConst(x$_input, C$_draft, b, L$_draft).

6: // (STEP 2) Evaluate draft tokens by using the target model.

// - Apply M to the right below corner of attention weights.

// - The target log probability Φ$_target is a GetLength(x$_input) × N$_vocab tensor.

// - N$_vocab is the vocabulary size.

7: // Convert the log probability tensor into the list of log probabilities

for each level of the tree.

L$_log_probs_target ← SplitTensor(Φ$_target[-Sum(L$_num_nodes) :,:],L$_num_nodes, dim = 0)

8: // (STEP 3) Run Recursive Rejection Sampling for each level of the tree.

x$_accepted, x$_last, id$_accepted flat node ← RecursiveRejectionSampling(T, L$_log_probs_target)

9: // (STEP 4) Use KV caches that are accepted, and prepare for the next round.

C$_draft, C$_target ← FilterKVCache(C$_draft, C$_target, L$_input, id$_accepted flat node)

10: x$_input ← Concat([x$_input[:L$_input],x$_accepted,x$_last])

11: L$_input ← GetLength(x$_input)

12: end while

```

## Algorithm 3 CreateDraftTreeConst ( x input , C draft , b , L draft )

```

Recursive Speculative Decoding: Accelerating LLM Inference via Sampling Without Replacement

-----------------------------------------------------------------------------

Algorithm 3 CreateDraftTreeConst(xinput, Cdraft, b, Ldraft)

----------------------

1: Input: An input sequence xinput, the draft KV cache Cdraft, the branching factor b := [b0,...,bLdraft-1], the draft

length Ldraft

2: // Get the length of the input sequence.

Linput <-GetLength(xinput).

3: // Initialize lists for 1) draft log probabilities, 2) flattened node IDs, 3)

parent node ids (within each level of the draft tree), 4) draft tokens, 5)

numbers of nodes (for all levels of the tree), respectively.

Llog-probs-draft <- [], Lflat-node-ids <- [], Lparent-ids <- [], Ldraft-tokens <- [], Lnum-nodes <- [].

4: // Initialize a draft tree.

T <- (Llog-probs-draft, Lflat-node-ids, Lparent-ids, Ldraft-tokens).

5: // Set an empty attention mask, and position ids; inclusive for start and

exclusive for end.

M <- 0, id_position <- Arange(start = 0, end = Linput).

6: // Set the counter to check the number of nodes in the tree.

Ntree-prev <- 0, Ntree-curr <- 0.

7: // Set the number of nodes at the current level of the tree.

Nnodes <- 1, Lnum-nodes.append(Nnodes).

8: for ldraft = 0 to Ldraft - 1 do

9: // Apply M to the right below corner of attention weights.

10: // The draft log probability Pdraft is a GetLength(xinput) × Nvocab tensor.