# ConceptMath: A Bilingual Concept-wise Benchmark for Measuring Mathematical Reasoning of Large Language Models

Abstract

This paper introduces ConceptMath, a bilingual (English and Chinese), fine-grained benchmark that evaluates the concept-wise mathematical reasoning of Large Language Models (LLMs). Unlike traditional benchmarks that evaluate general mathematical reasoning with an average accuracy, ConceptMath systematically organizes math problems under a hierarchy of math concepts, so that mathematical reasoning can be evaluated at different granularities with concept-wise accuracies. Based on ConceptMath, we evaluate a broad range of LLMs and observe that existing LLMs, though achieving high average accuracies on traditional benchmarks, exhibit significant performance variations across different math concepts and may even fail catastrophically on the most basic ones. We also introduce an efficient fine-tuning strategy to address the weaknesses of existing LLMs. Finally, we hope ConceptMath can guide developers in understanding the fine-grained mathematical abilities of their models and facilitate the growth of foundation models. The data and code are available at https://github.com/conceptmath/conceptmath.

\* First three authors contributed equally. ${}^{\dagger}$ Corresponding author: Jiaheng Liu.

1 Introduction

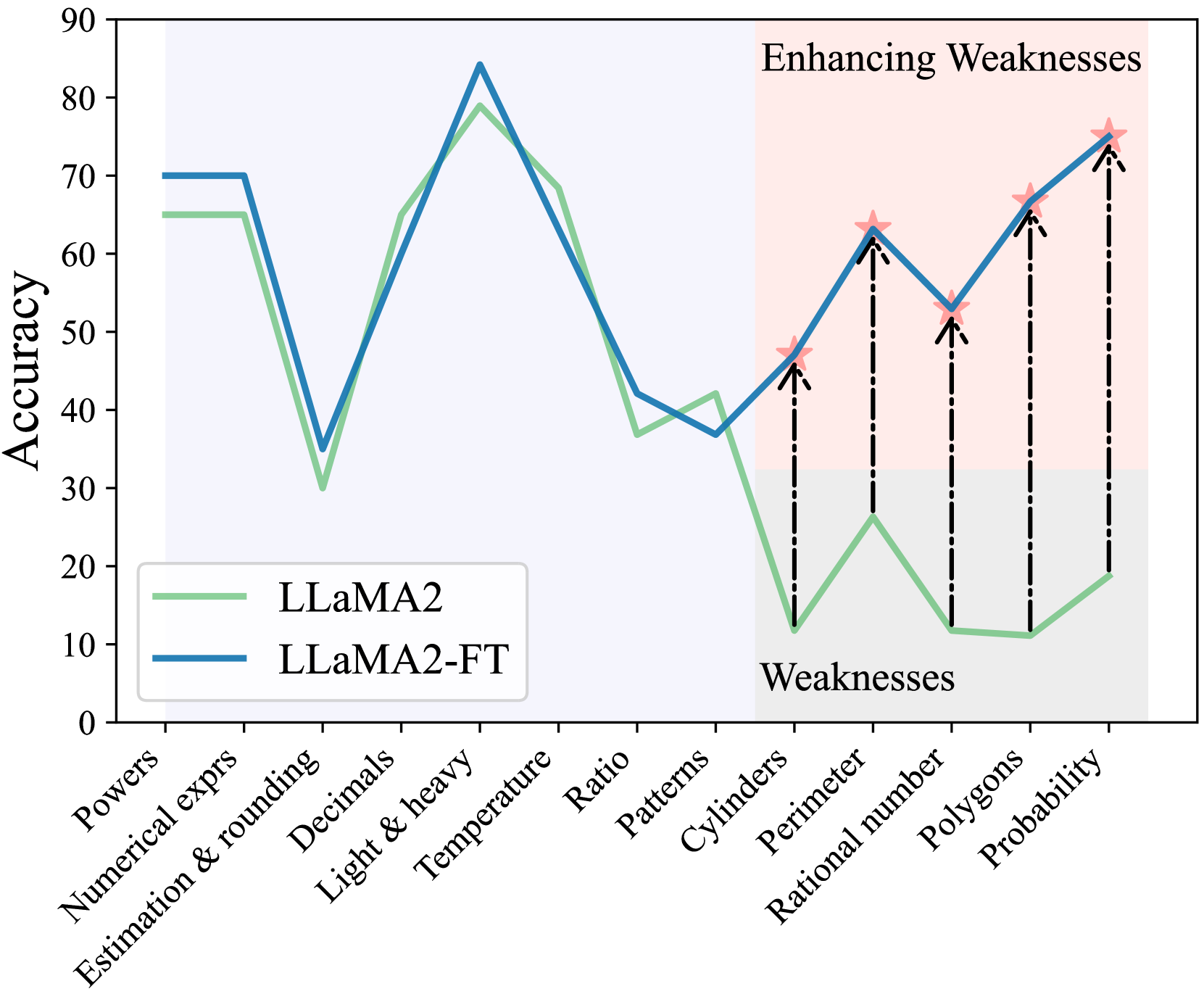

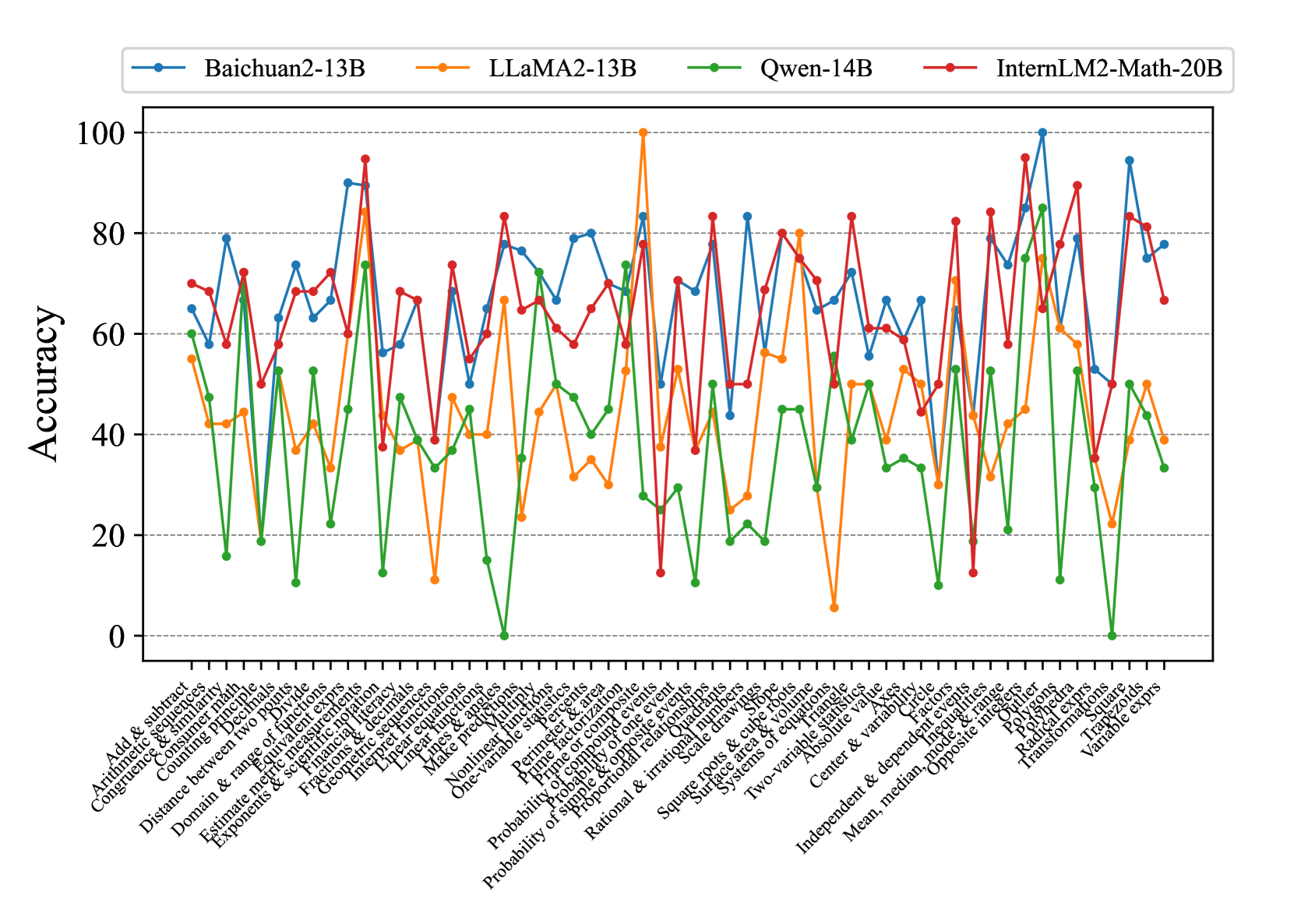

Mathematical reasoning is a crucial capability for Large Language Models (LLMs). Recent LLMs, including Anthropic (Anthropic, 2023), GPT-4 (OpenAI, 2023), and LLaMA (Touvron et al., 2023a), have demonstrated impressive mathematical reasoning on existing benchmarks, with high average accuracies on datasets like GSM8K (Cobbe et al., 2021). Although these benchmarks measure the overall mathematical reasoning capabilities of LLMs on average, they fail to probe the fine-grained failure modes of mathematical reasoning on specific mathematical concepts. For example, Fig. 1 shows that the performance of LLaMA2-13B varies significantly across different concepts and that the model fails on simple concepts like Rational number and Cylinders. Knowing these specific failure modes is crucial, especially in practical applications that depend on specific mathematical abilities. For example, for financial analysts, calculation and statistics are the concepts of most interest, while others like geometry are less important.

Moreover, the mathematics system, by its nature, is more fine-grained than holistic. It is typically organized into distinct math concepts (see https://en.wikipedia.org/wiki/Lists_of_mathematics_topics), and humans develop comprehensive mathematical capabilities through a concept-by-concept, curriculum-based learning process (Simon, 2011; Fritz et al., 2013). These issues underscore the core motivation of this paper: the need for a fine-grained benchmark that evaluates concept-wise mathematical reasoning capabilities of LLMs.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Line Chart: LLaMA2 vs. LLaMA2-FT Accuracy on Various Tasks

### Overview

The image is a line chart comparing the accuracy of two models, LLaMA2 and LLaMA2-FT, across a range of tasks. The x-axis represents different task categories, while the y-axis represents accuracy, ranging from 0 to 90. The chart highlights a region where LLaMA2 performs poorly ("Weaknesses") and shows how LLaMA2-FT enhances performance in those areas ("Enhancing Weaknesses").

### Components/Axes

* **Title:** There is no explicit title on the chart.

* **X-axis:** Task categories: Powers, Numerical exprs, Estimation & rounding, Decimals, Light & heavy, Temperature, Ratio, Patterns, Cylinders, Perimeter, Rational number, Polygons, Probability.

* **Y-axis:** Accuracy, ranging from 0 to 90 in increments of 10.

* **Legend:** Located in the bottom-left corner:

* LLaMA2 (light green line)

* LLaMA2-FT (blue line)

* **Regions:**

* A light blue shaded region spans from "Powers" to "Patterns".

* A light red shaded region spans from "Cylinders" to "Probability". This region is labeled "Enhancing Weaknesses" at the top and "Weaknesses" at the bottom.

### Detailed Analysis

* **LLaMA2 (light green line):**

* Powers: ~65

* Numerical exprs: ~65

* Estimation & rounding: ~30

* Decimals: ~65

* Light & heavy: ~80

* Temperature: ~70

* Ratio: ~40

* Patterns: ~35

* Cylinders: ~12

* Perimeter: ~25

* Rational number: ~11

* Polygons: ~11

* Probability: ~20

* **LLaMA2-FT (blue line):**

* Powers: ~70

* Numerical exprs: ~70

* Estimation & rounding: ~35

* Decimals: ~85

* Light & heavy: ~80

* Temperature: ~70

* Ratio: ~42

* Patterns: ~37

* Cylinders: ~45

* Perimeter: ~50

* Rational number: ~62

* Polygons: ~67

* Probability: ~75

* **Trends:**

* LLaMA2: Starts high, drops significantly at "Estimation & rounding", rises sharply to "Light & heavy", then declines gradually with a sharp drop at "Cylinders", then slowly rises again.

* LLaMA2-FT: Similar to LLaMA2 but generally higher, especially in the "Enhancing Weaknesses" region.

* **Enhancing Weaknesses Region:**

* Vertical dashed lines connect the LLaMA2 data points to the corresponding LLaMA2-FT data points in the "Enhancing Weaknesses" region, visually indicating the improvement in accuracy.

* Red star markers are placed on the LLaMA2-FT line at each data point within the "Enhancing Weaknesses" region.

### Key Observations

* LLaMA2-FT matches or outperforms LLaMA2 on every task shown (the two are roughly equal on "Light & heavy" and "Temperature").

* The "Enhancing Weaknesses" region clearly demonstrates the improvement achieved by LLaMA2-FT in tasks where LLaMA2 performs poorly.

* The largest performance gains are observed in "Cylinders", "Perimeter", "Rational number", "Polygons", and "Probability".

### Interpretation

The chart illustrates the effectiveness of fine-tuning (FT) LLaMA2 to improve its accuracy on specific tasks. The "Enhancing Weaknesses" region highlights the targeted improvement achieved by LLaMA2-FT in areas where the base LLaMA2 model struggles. This suggests that fine-tuning is a valuable technique for enhancing the performance of language models on specific domains or tasks. The consistent outperformance of LLaMA2-FT indicates that the fine-tuning process was successful in transferring knowledge and improving the model's ability to handle these tasks. The red stars and dashed lines emphasize the magnitude of the improvement in the "Weaknesses" area.

</details>

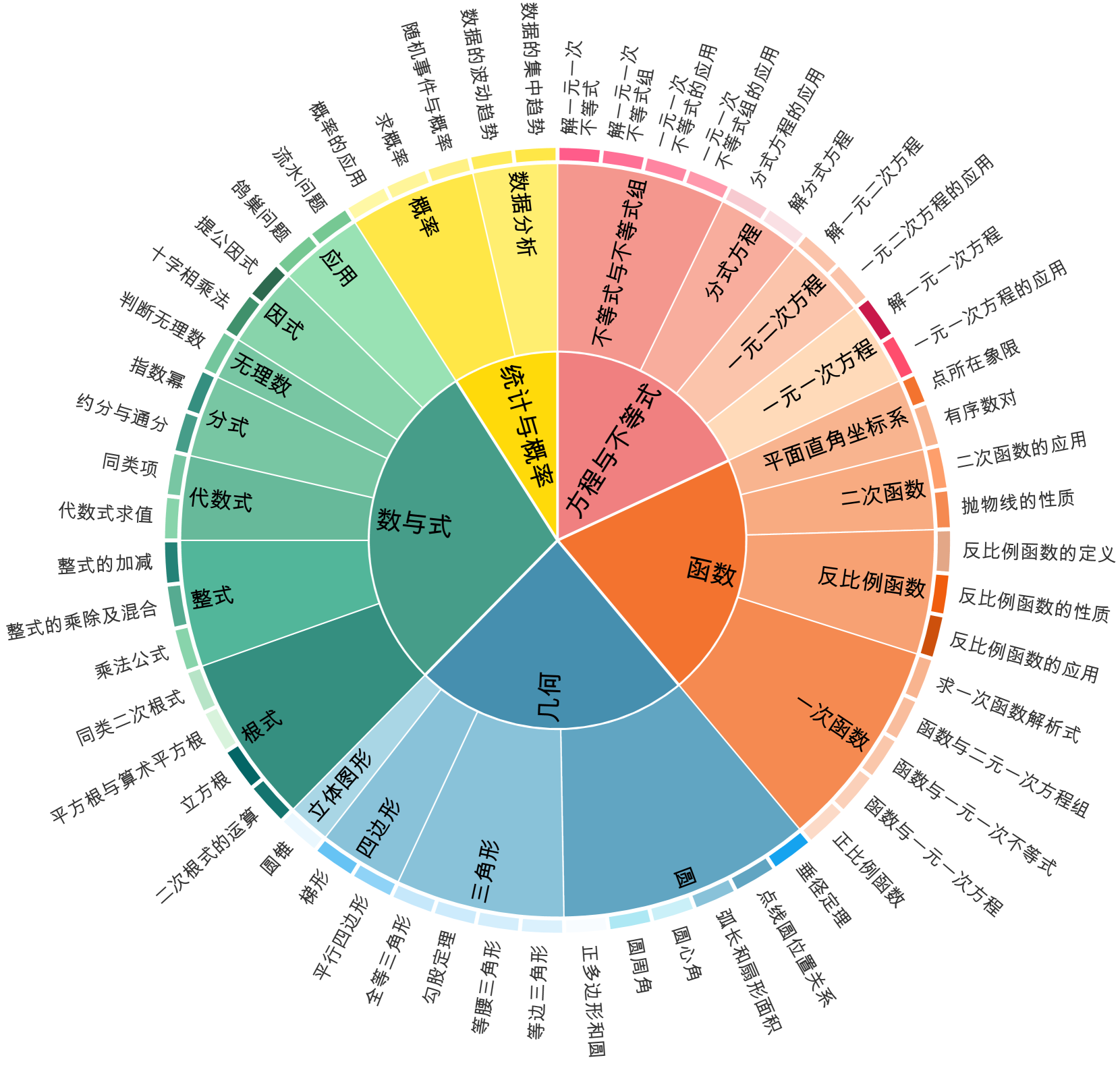

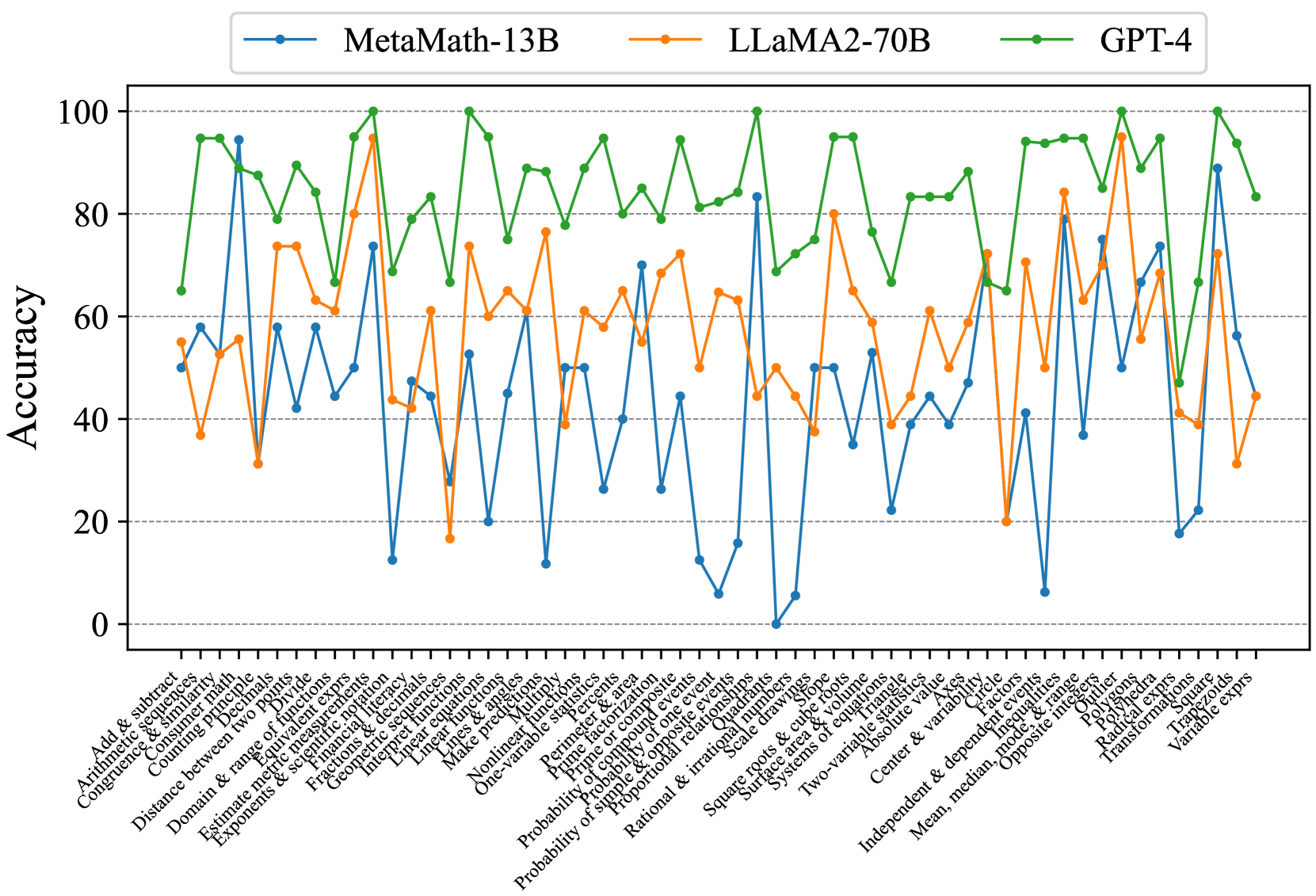

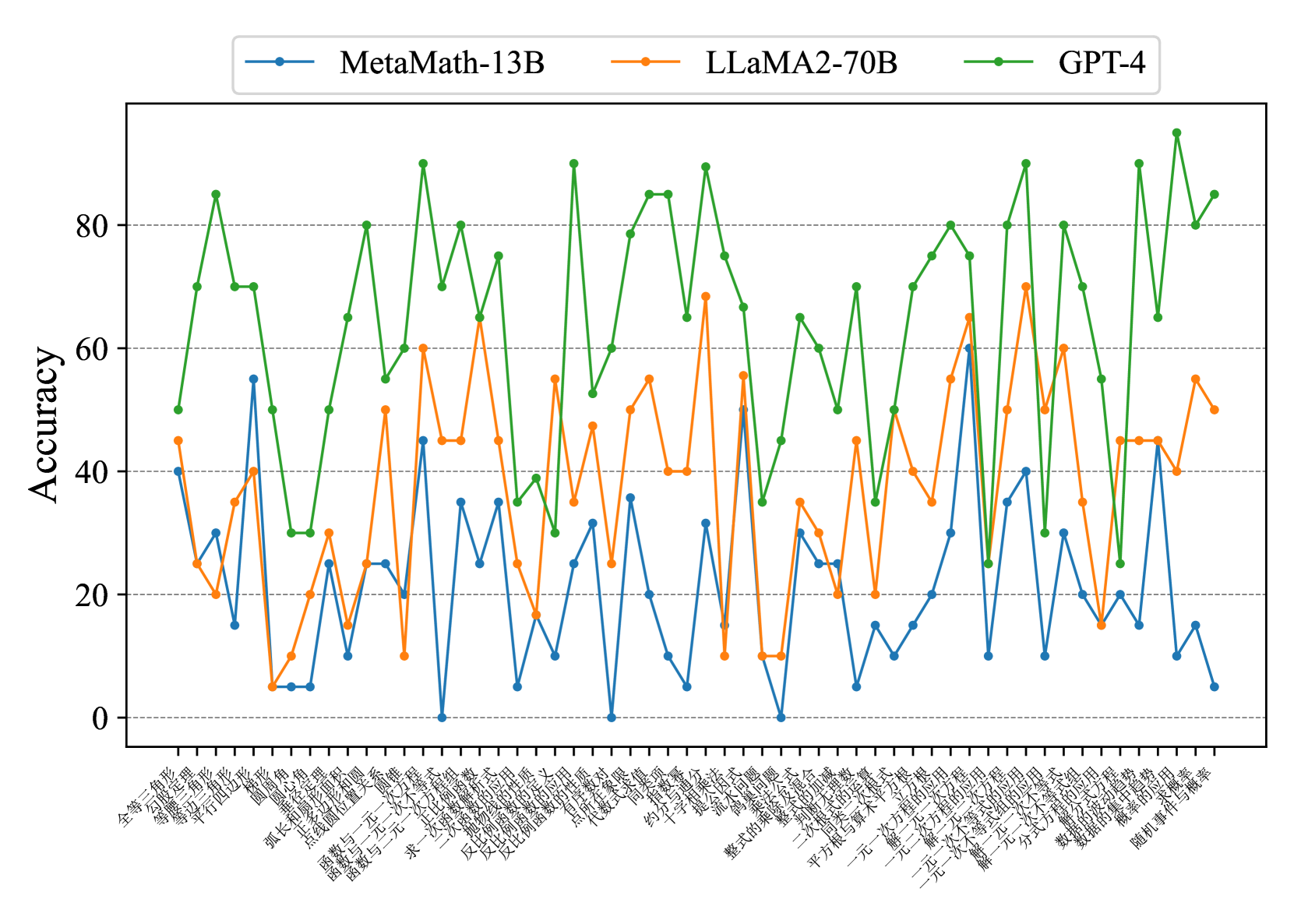

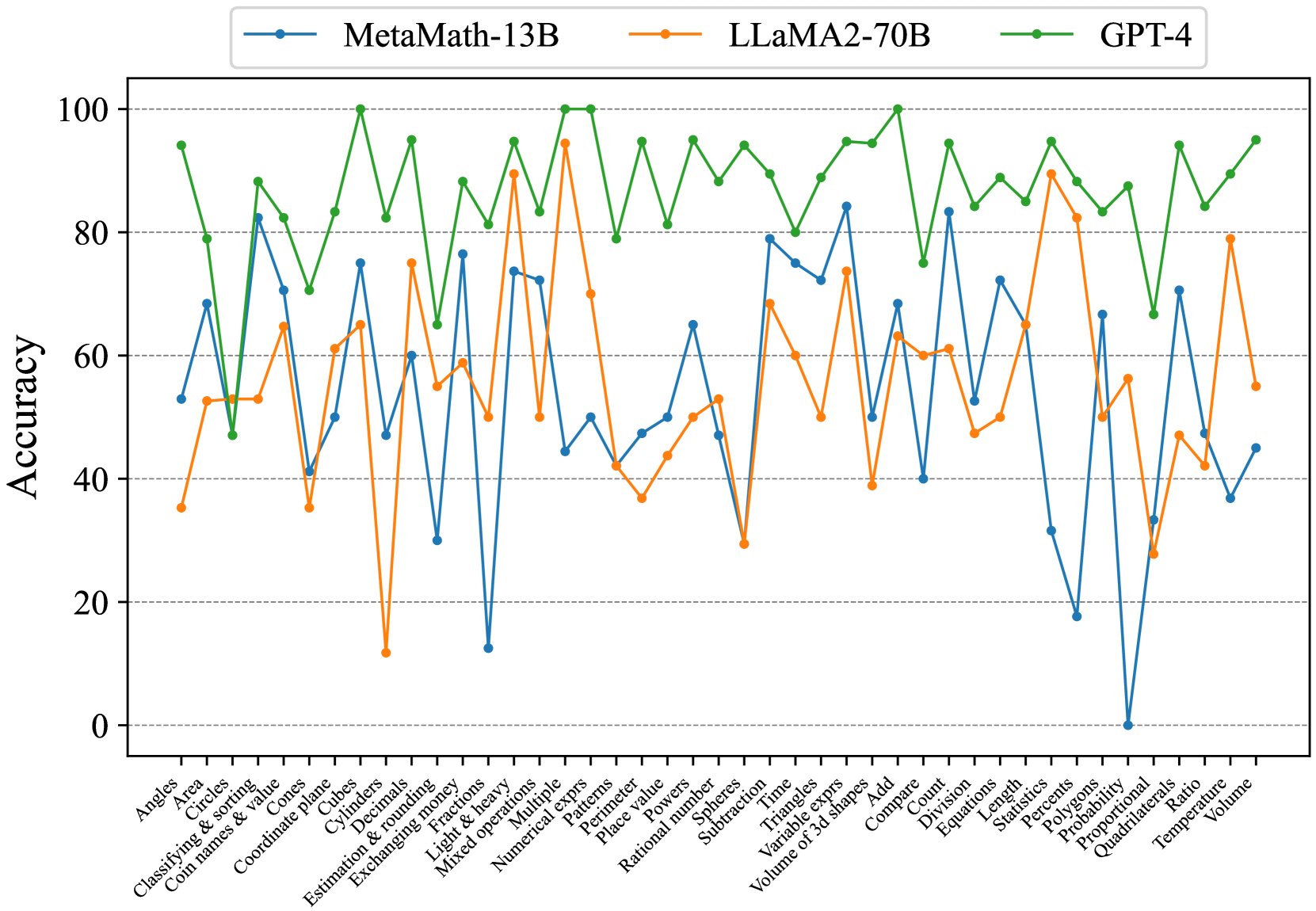

Figure 1: The concept-wise accuracies of LLaMA2-13B and the fine-tuned version based on our efficient fine-tuning method (i.e., LLaMA2-FT).

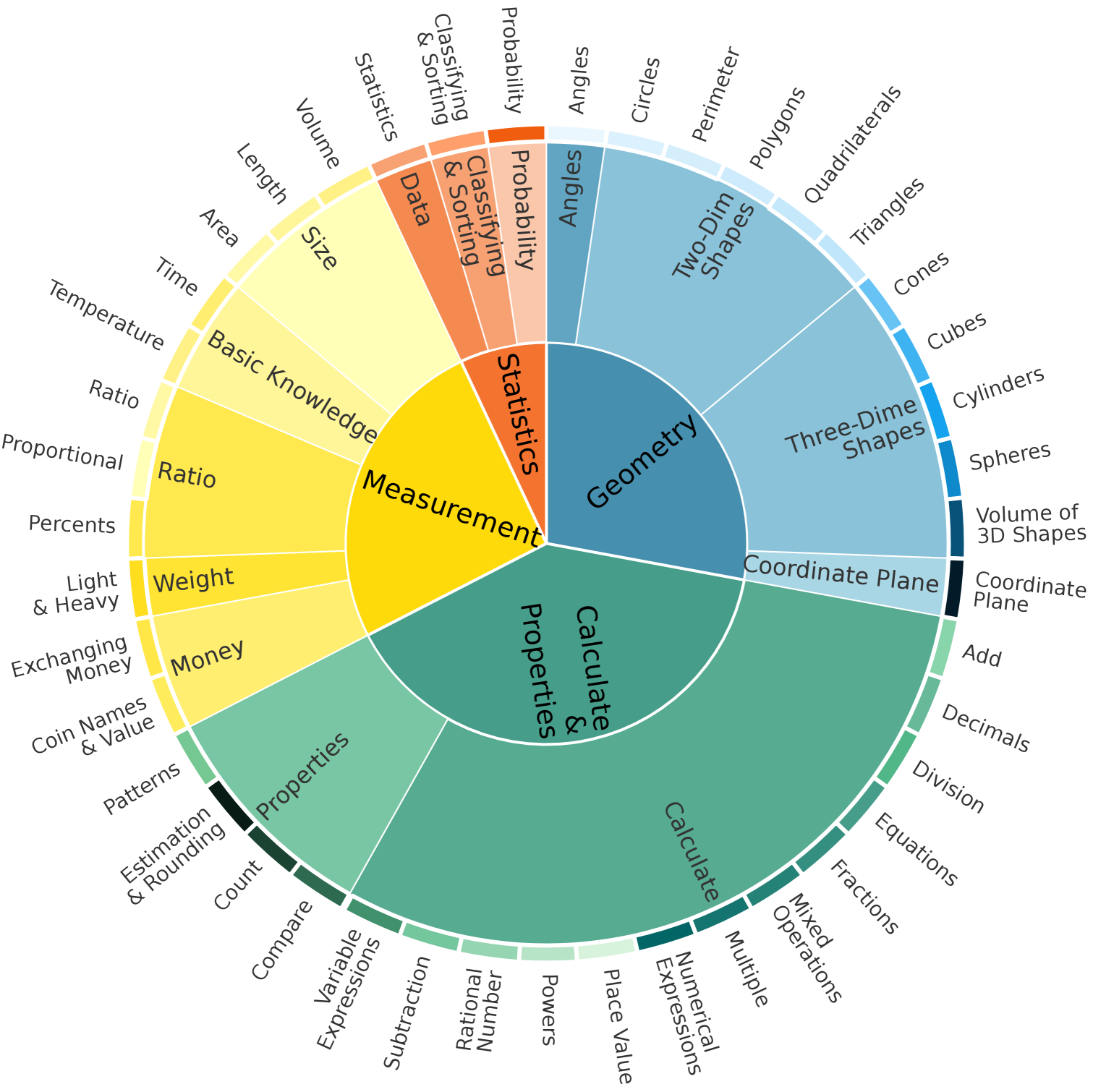

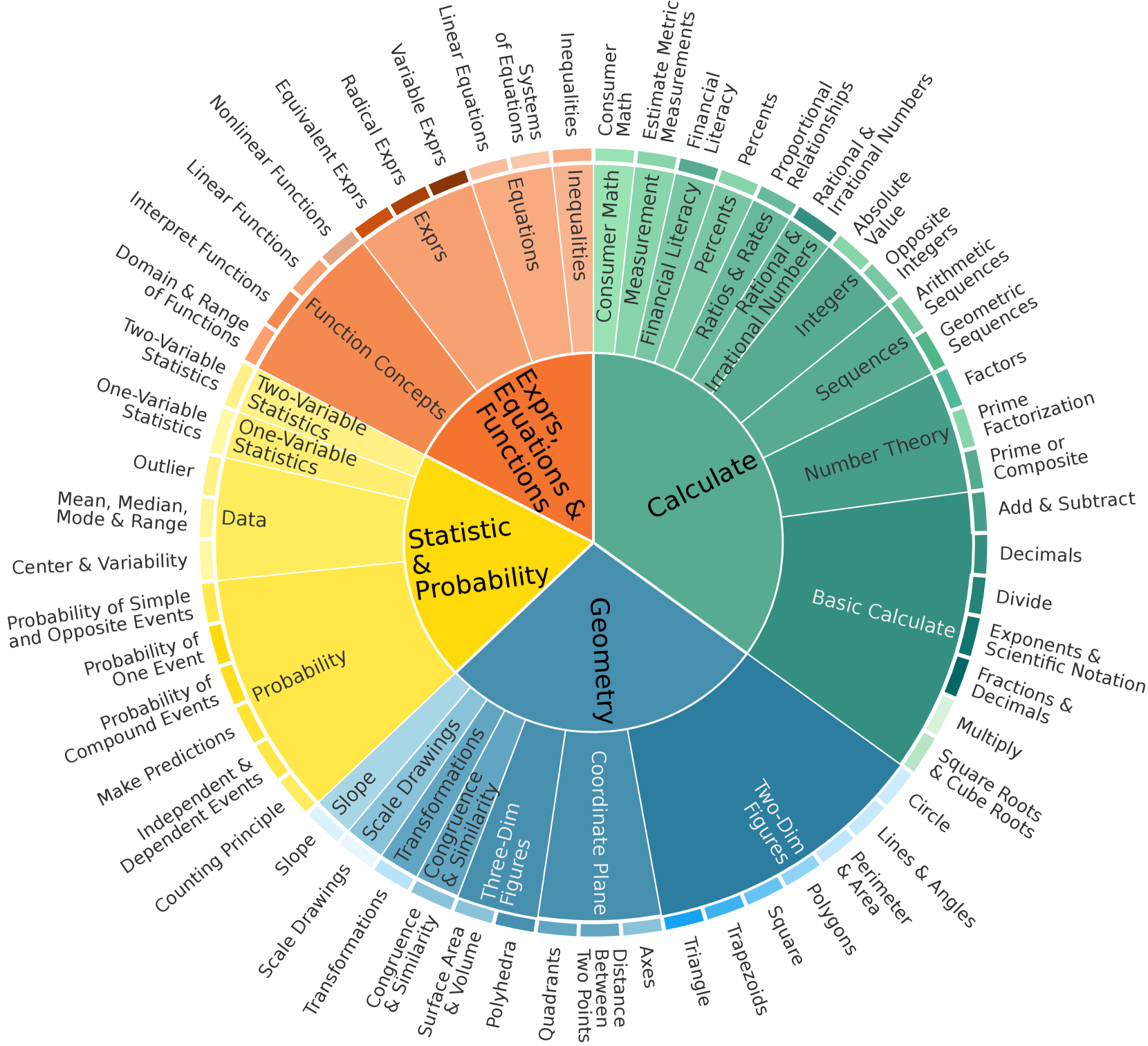

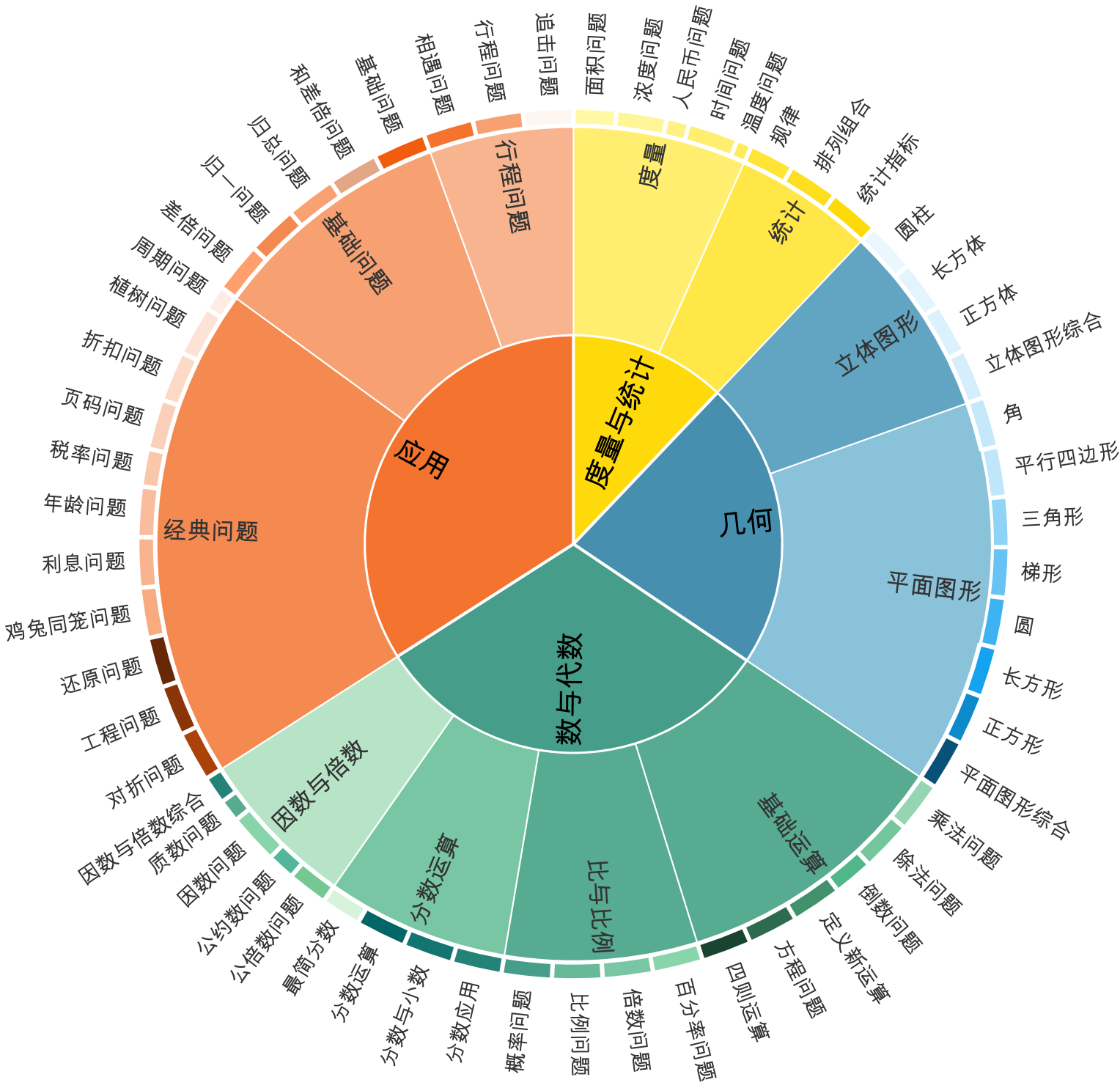

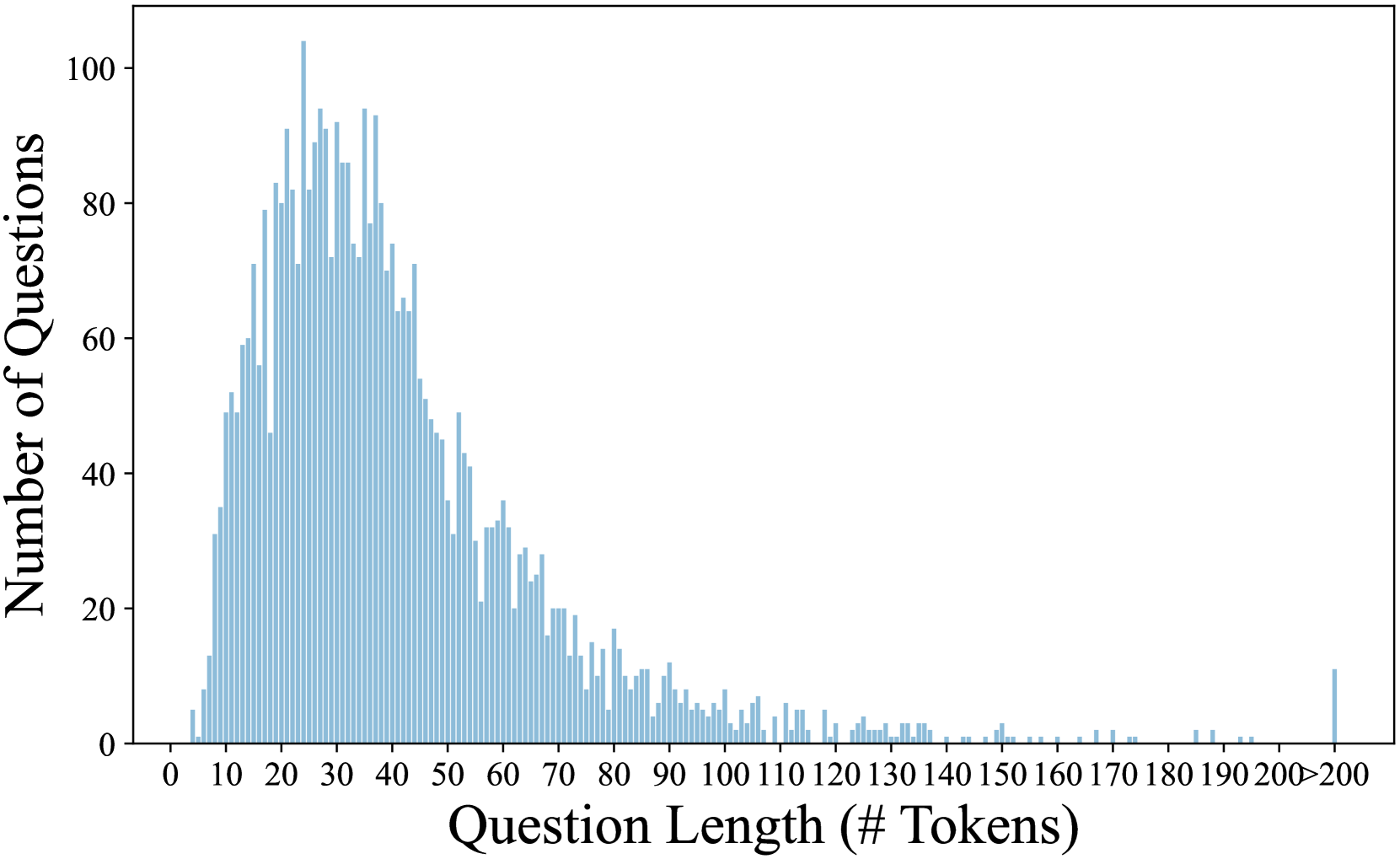

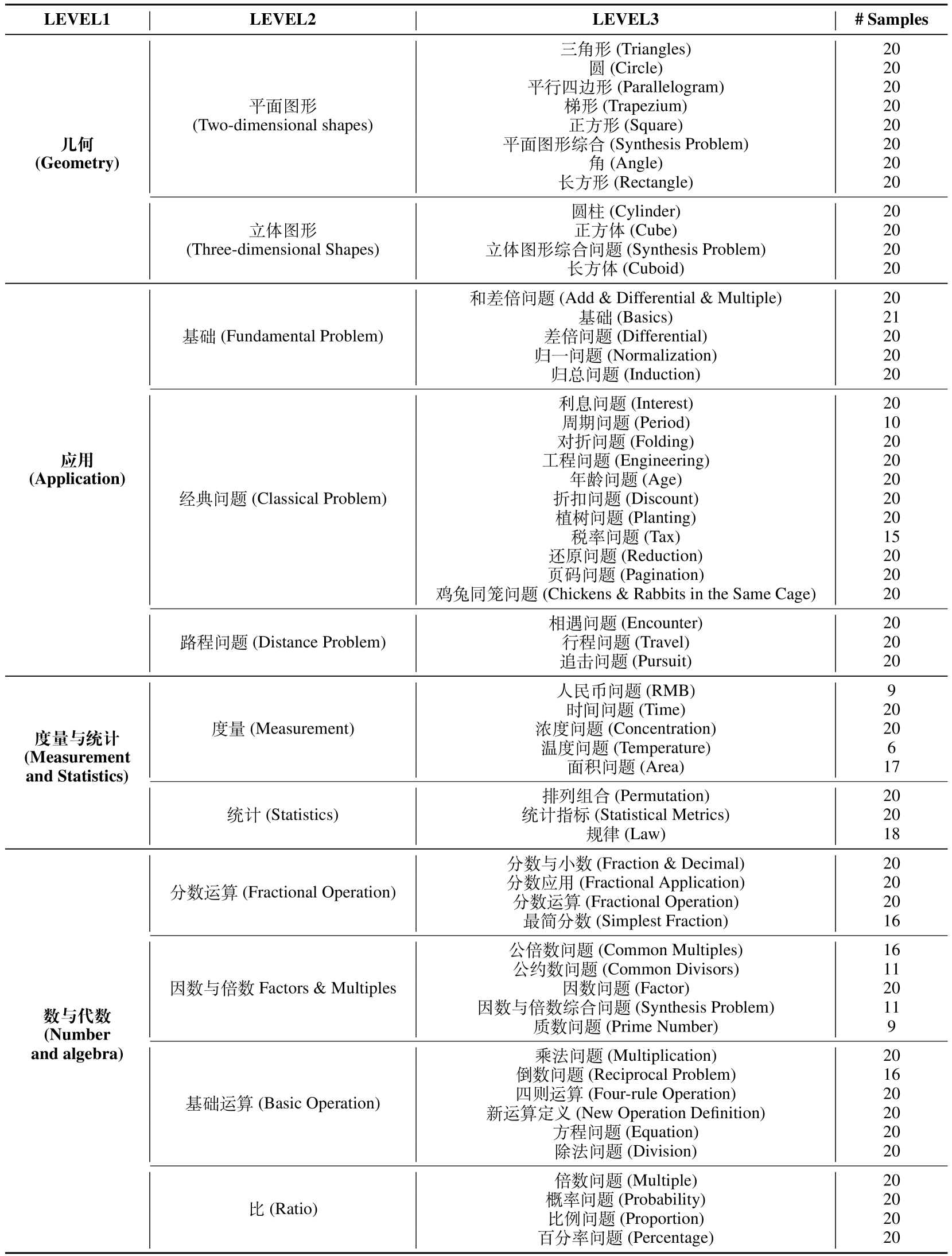

First, we introduce ConceptMath, the first bilingual (English and Chinese), concept-wise benchmark for measuring mathematical reasoning. ConceptMath gathers math concepts from four educational systems, resulting in four distinct mathematical concept systems: English Elementary, English Middle, Chinese Elementary, and Chinese Middle (abbreviated as Elementary-EN, Middle-EN, Elementary-ZH, and Middle-ZH, respectively). Each of these concept systems organizes around 50 atomic math concepts under a three-level hierarchy, and each concept includes approximately 20 mathematical problems. Overall, ConceptMath comprises 4011 math word problems across 214 math concepts; Fig. 2 gives a diagram overview of ConceptMath.

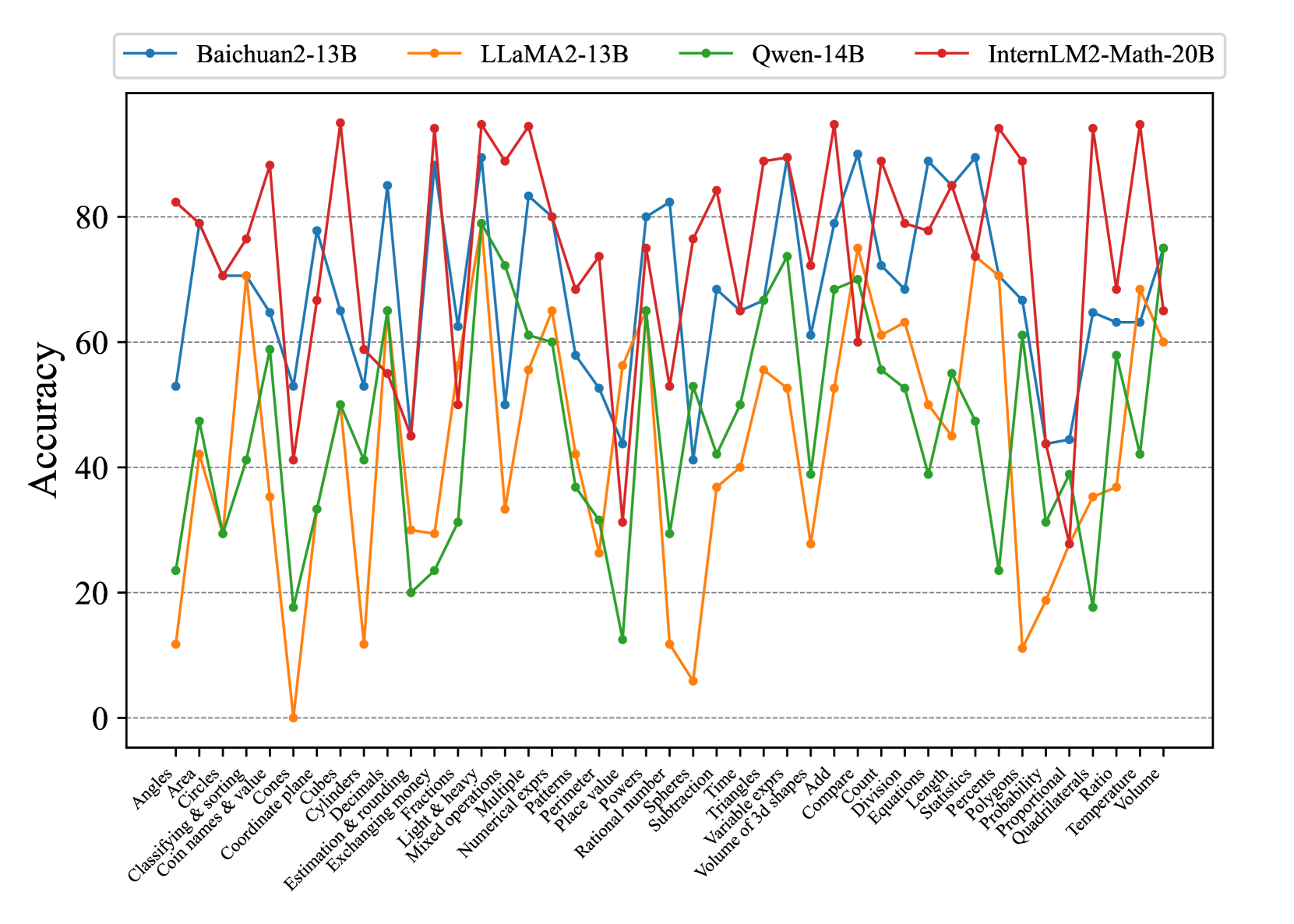

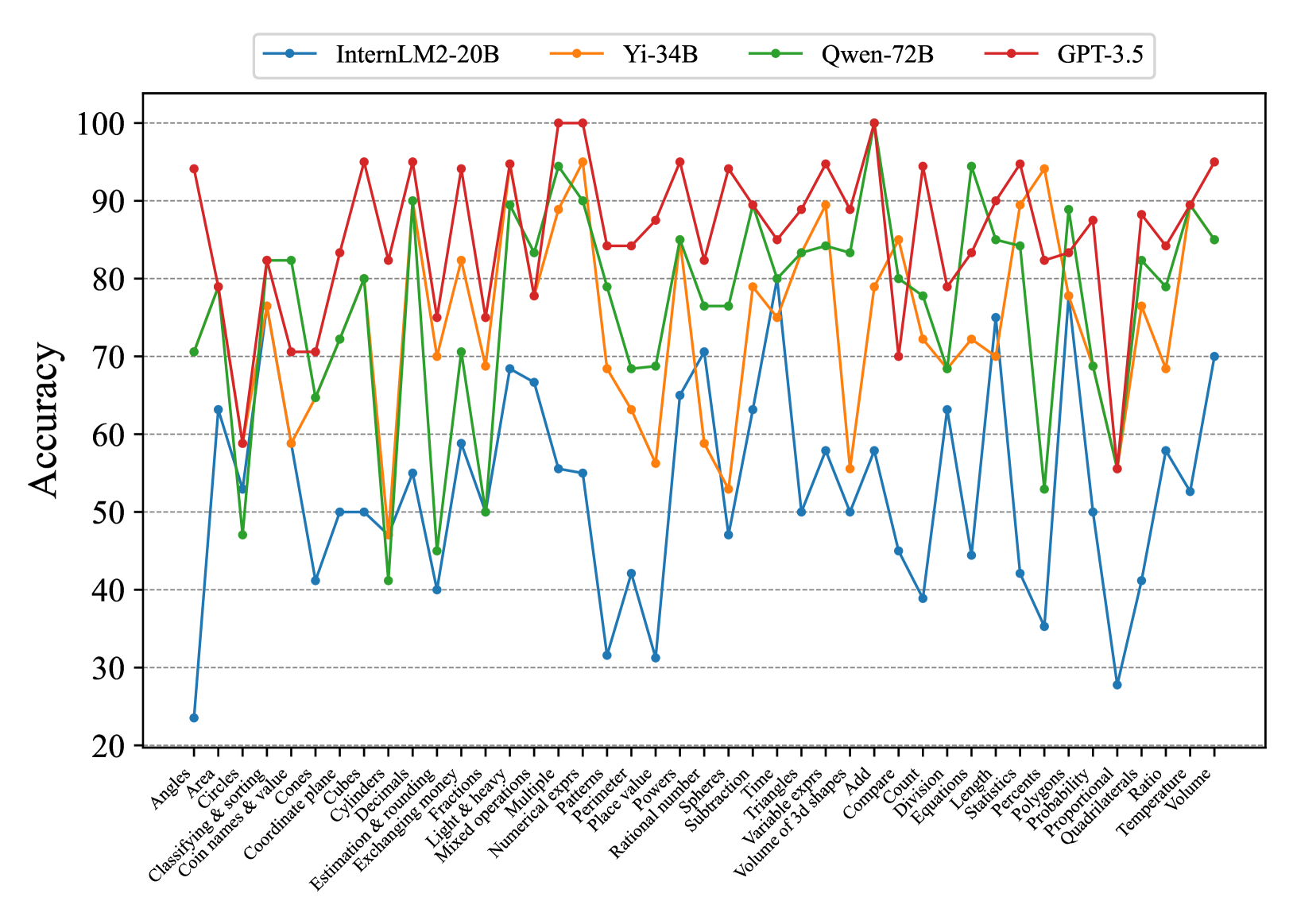

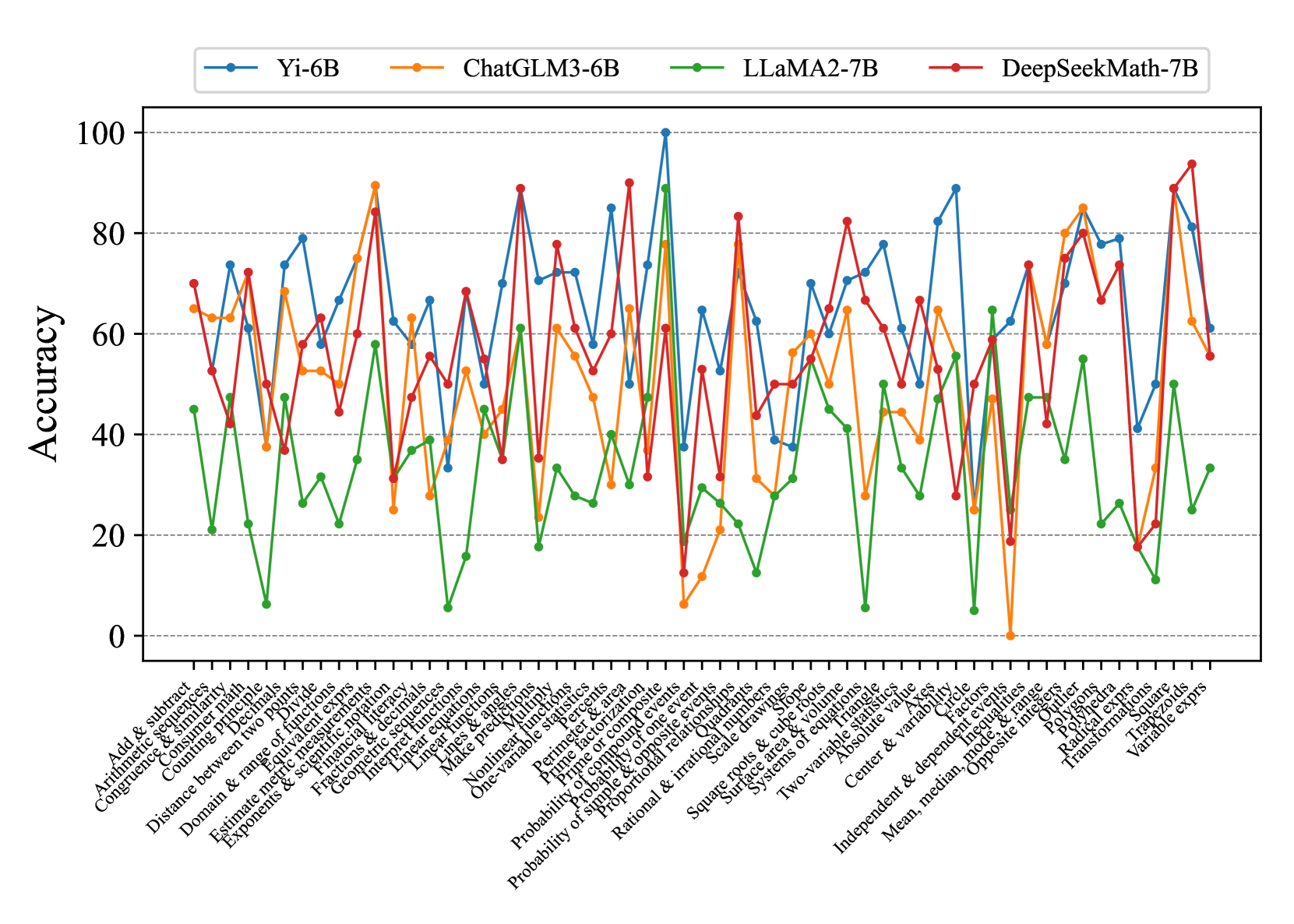

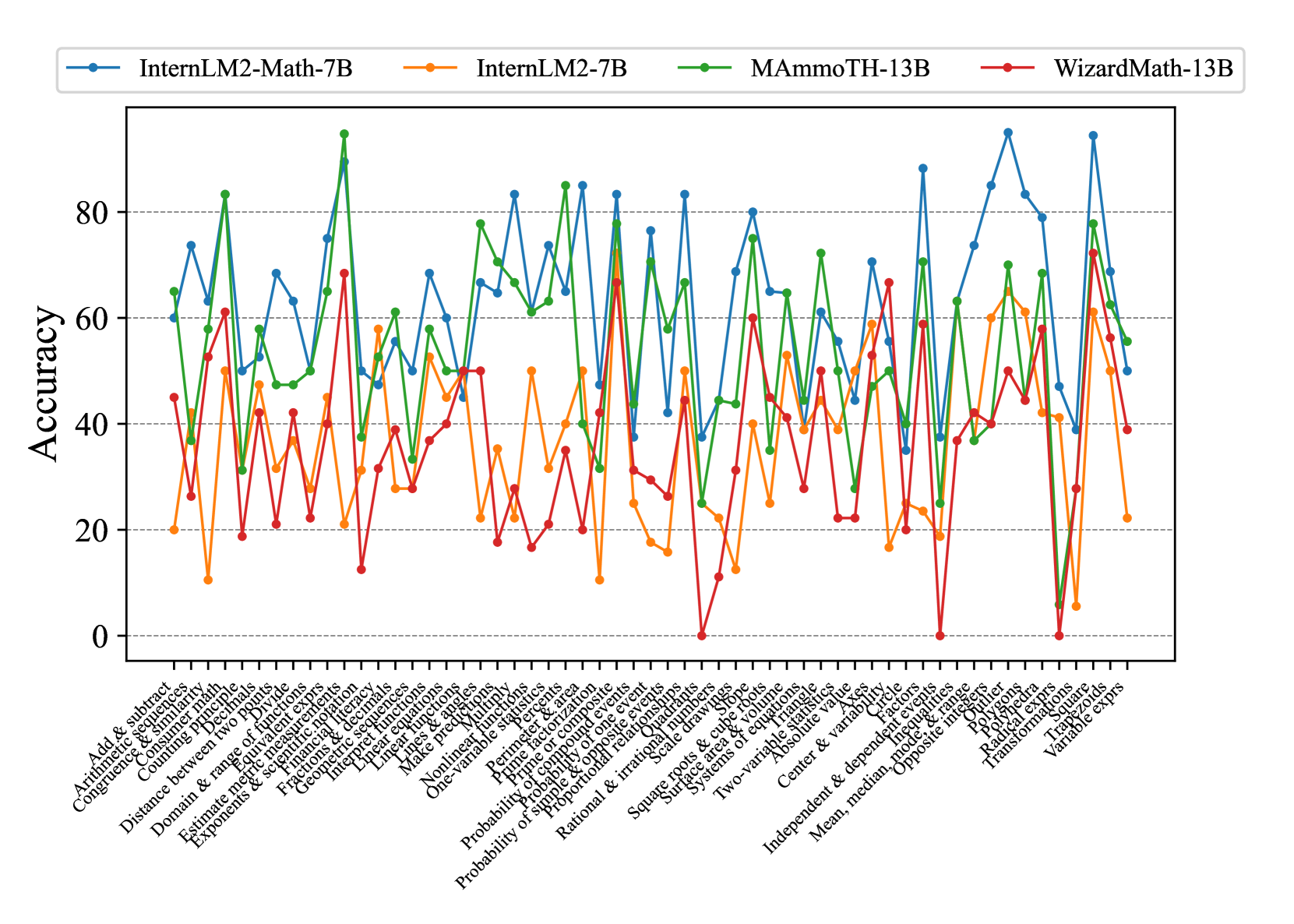

Second, based on ConceptMath, we perform extensive experiments to assess the mathematical reasoning of existing LLMs, including 2 closed-source LLMs and 17 open-source LLMs. These evaluations are performed in zero-shot, chain-of-thought (CoT), and few-shot settings. To our surprise, even though most of the evaluated LLMs report high average accuracies on traditional mathematical benchmarks (e.g., GSM8K), they fail catastrophically across a wide spectrum of mathematical concepts.

Third, to make targeted improvements on underperforming math concepts, we propose an efficient fine-tuning strategy: we first train a concept classifier and then use it to crawl a set of samples from a large open-source math dataset (Paster et al., 2023; Wang et al., 2023b) for further LLM fine-tuning. In Fig. 1, we observe that the accuracy of LLaMA2-FT on these weak concepts improves substantially after applying the efficient fine-tuning method.
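The two-step idea above (classify by concept, then keep only samples that fall under weak concepts) can be sketched in a few lines. This is a minimal illustration and not the authors' implementation: the bag-of-words "classifier", the `threshold` value, and all example texts and function names are assumptions made for the sketch.

```python
import re
from collections import Counter

def tokenize(text):
    """Lowercase alphabetic tokens only (digits and punctuation are dropped)."""
    return re.findall(r"[a-z]+", text.lower())

def train_concept_profiles(labeled_problems):
    """Build one bag-of-words profile per concept from (text, concept) pairs."""
    profiles = {}
    for text, concept in labeled_problems:
        profiles.setdefault(concept, Counter()).update(tokenize(text))
    return profiles

def classify(text, profiles):
    """Return (best_concept, score); score = fraction of tokens seen in that profile."""
    tokens = tokenize(text)
    if not tokens:
        return None, 0.0
    scores = {c: sum(t in p for t in tokens) / len(tokens) for c, p in profiles.items()}
    best = max(scores, key=scores.get)
    return best, scores[best]

def select_for_finetuning(corpus, profiles, weak_concepts, threshold=0.3):
    """Keep corpus samples confidently assigned to one of the weak concepts."""
    selected = []
    for text in corpus:
        concept, score = classify(text, profiles)
        if concept in weak_concepts and score >= threshold:
            selected.append((concept, text))
    return selected

# Hypothetical labeled benchmark problems and a hypothetical open math corpus.
labeled = [
    ("What is the volume of a cylinder with radius 2 and height 5?", "Cylinders"),
    ("Find the probability of rolling a six with a fair die.", "Probability"),
    ("Round 3.76 to the nearest tenth.", "Estimation & rounding"),
]
corpus = [
    "A cylinder has radius 3 and height 10. Compute its volume.",
    "Round 12.349 to the nearest hundredth.",
    "The stock market closed higher today.",
]

profiles = train_concept_profiles(labeled)
selected = select_for_finetuning(corpus, profiles, weak_concepts={"Cylinders"})
print(selected)
```

In practice the profile lookup would be replaced by a trained text classifier; the selection logic (filter by predicted concept and a confidence threshold) stays the same.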

In summary, our contributions are as follows:

- We introduce ConceptMath, the first bilingual, concept-wise benchmark for measuring mathematical reasoning. ConceptMath encompasses 4 concept systems, 214 math concepts, and 4011 math word problems, which can guide further improvements in the mathematical reasoning of existing models.

- Based on ConceptMath, we evaluate many LLMs and perform a comprehensive analysis of their results. For example, we observe that most of these LLMs (whether open-source or closed-source, general-purpose or math-specialized) show significant performance variations across math concepts.

- We also evaluate the contamination rate of ConceptMath and introduce a simple and efficient fine-tuning method to address the weaknesses of existing LLMs.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Circular Diagram: Math Concepts

### Overview

The image is a circular diagram illustrating the relationships between different mathematical concepts. It is structured in concentric rings, with broader categories in the inner rings and more specific subcategories in the outer rings. The diagram uses color-coding to visually group related concepts.

### Components/Axes

* **Center:** Geometry (Dark Teal)

* **Ring 2:**

* Statistics (Orange)

* Measurement (Yellow)

* Calculate & Properties (Green)

* Two-Dim Shapes (Light Blue)

* Three-Dim Shapes (Blue)

* Coordinate Plane (Dark Blue)

* **Outer Ring:**

* **Statistics:** Probability, Classifying & Sorting, Data

* **Measurement:** Volume, Length, Area, Size, Time, Temperature, Ratio, Proportional, Percents, Light & Heavy, Exchanging Money, Coin Names & Value

* **Calculate & Properties:** Patterns, Estimation & Rounding, Count, Compare, Variable Expressions, Subtraction, Rational Number, Powers, Place Value, Numerical Expressions, Multiple Operations

* **Two-Dim Shapes:** Angles, Circles, Perimeter, Polygons, Quadrilaterals, Triangles

* **Three-Dim Shapes:** Cones, Cubes, Cylinders, Spheres, Volume of 3D Shapes

* **Calculate:** Add, Decimals, Division, Equations, Fractions, Mixed Operations

* **Color Coding:**

* Dark Teal: Geometry

* Orange: Statistics

* Yellow: Measurement

* Green: Calculate & Properties

* Light Blue: Two-Dim Shapes

* Blue: Three-Dim Shapes

* Dark Blue: Coordinate Plane

### Detailed Analysis

* **Geometry:** The central concept, branching out into shapes, statistics, measurement, and calculation.

* **Statistics:** Includes Probability, Classifying & Sorting, and Data.

* **Measurement:** Covers a wide range of concepts, from Volume and Length to Time, Temperature, Ratio, Proportional, Percents, Weight (Light & Heavy), and Money (Exchanging Money, Coin Names & Value).

* **Calculate & Properties:** Encompasses Patterns, Estimation & Rounding, Count, Compare, Variable Expressions, Subtraction, Rational Number, Powers, Place Value, Numerical Expressions, and Multiple Operations.

* **Two-Dim Shapes:** Includes Angles, Circles, Perimeter, Polygons, Quadrilaterals, and Triangles.

* **Three-Dim Shapes:** Includes Cones, Cubes, Cylinders, Spheres, and Volume of 3D Shapes.

* **Coordinate Plane:** Includes Coordinate Plane.

* **Calculate:** Includes Add, Decimals, Division, Equations, Fractions, and Mixed Operations.

### Key Observations

* The diagram provides a hierarchical view of mathematical concepts, starting from the broad category of Geometry and branching out into more specific subcategories.

* The color-coding helps to visually group related concepts, making it easier to understand the relationships between them.

* The diagram covers a wide range of mathematical topics, from basic measurement and calculation to more advanced concepts like statistics and geometry.

### Interpretation

The circular diagram serves as a visual representation of how different mathematical concepts are interconnected. It highlights the central role of Geometry as a foundation for other areas of mathematics. The diagram is useful for understanding the relationships between different topics and for providing a high-level overview of the field of mathematics. The structure suggests a curriculum or a learning path, starting with basic concepts and progressing to more advanced topics. The diagram could be used as a reference tool for students or teachers to understand the scope and structure of mathematics.

</details>

(a) English Elementary (Elementary-EN)

<details>

<summary>x3.png Details</summary>

### Visual Description

## Circular Diagram: Math Concepts

### Overview

The image is a circular diagram illustrating various mathematical concepts, categorized into broader areas like "Exprs, Equations & Functions," "Statistic & Probability," "Geometry," and "Calculate." The diagram uses color-coding to visually group related concepts.

### Components/Axes

The diagram is structured as a series of concentric rings, with the central area containing the most general categories and the outer rings containing more specific sub-categories.

* **Central Categories:**

* Exprs, Equations & Functions (Orange)

* Statistic & Probability (Yellow)

* Geometry (Blue)

* Calculate (Green)

* **Outer Rings:** Contain specific mathematical concepts related to the central categories.

### Detailed Analysis

**1. Exprs, Equations & Functions (Orange):**

* **Outermost Ring (Darkest Orange to Lightest Orange):**

* Linear Equations

* Variable Exprs

* Radical Exprs

* Equivalent Exprs

* Systems of Equations

* Equations

* Inequalities

* **Middle Ring:**

* Nonlinear Functions

* Linear Functions

* Interpret Functions

* Domain & Range of Functions

* Function Concepts

* **Innermost Ring:**

* Two-Variable Statistics

* One-Variable Statistics

**2. Statistic & Probability (Yellow):**

* **Outermost Ring:**

* Outlier

* Mean, Median, Mode & Range

* Center & Variability

* Probability of Simple and Opposite Events

* Probability of One Event

* Probability of Compound Events

* Make Predictions

* Independent & Dependent Events

* Counting Principle

* **Middle Ring:**

* Data

* Probability

* **Innermost Ring:**

* Two-Variable Statistics

* One-Variable Statistics

**3. Geometry (Blue):**

* **Outermost Ring (Darkest Blue to Lightest Blue):**

* Slope

* Scale Drawings

* Transformations

* Congruence & Similarity

* Three-Dim Figures

* Surface Area & Volume

* Polyhedra

* Quadrants

* Distance Between Two Points

* Axes

* Triangle

* Trapezoids

* Square

* Polygons

* Perimeter & Area

* Lines & Angles

* Circle

* Two-Dim Figures

* **Middle Ring:**

* Coordinate Plane

**4. Calculate (Green):**

* **Outermost Ring (Darkest Green to Lightest Green):**

* Consumer Math

* Measurement

* Estimate Metric Measurements

* Financial Literacy

* Percents

* Ratios & Rates

* Proportional Relationships

* Rational & Irrational Numbers

* Integers

* Absolute Value

* Opposite Integers

* Arithmetic Sequences

* Geometric Sequences

* Factors

* Prime Factorization

* Prime or Composite

* Add & Subtract

* Decimals

* Divide

* Exponents & Scientific Notation

* Fractions & Decimals

* Multiply

* Square Roots & Cube Roots

* **Middle Ring:**

* Irrational Numbers

* Sequences

* Number Theory

* Basic Calculate

### Key Observations

* The diagram provides a hierarchical organization of mathematical concepts.

* The color-coding helps to visually group related concepts.

* The level of detail increases from the center to the outer rings.

### Interpretation

The circular diagram serves as a visual aid for understanding the relationships between different mathematical concepts. It demonstrates how specific topics like "Linear Equations" and "Slope" fit into broader categories like "Exprs, Equations & Functions" and "Geometry," respectively. The diagram is useful for students or anyone seeking a high-level overview of mathematical topics and their interconnections. The arrangement suggests a progression of learning, starting with fundamental concepts and moving towards more specialized areas.

</details>

(b) English Middle (Middle-EN)

<details>

<summary>x4.png Details</summary>

### Visual Description

## Radial Chart: Math Problem Categories

### Overview

The image is a radial chart illustrating the categorization of math problems. The chart is divided into several layers, each representing a broader category that branches into more specific subcategories. The chart is written in Chinese.

### Components/Axes

* **Center:** The innermost layer is labeled "度量与统计" (Dùliàng yǔ tǒngjì), which translates to "Measurement and Statistics."

* **Second Layer:** This layer is divided into the following categories:

* "应用" (Yìngyòng) - Application (Orange)

* "几何" (Jǐhé) - Geometry (Blue)

* "数与代数" (Shù yǔ dàishù) - Numbers and Algebra (Green)

* "度量" (Dùliàng) - Measurement (Yellow)

* "统计" (Tǒngjì) - Statistics (Yellow)

* **Outer Layers:** These layers contain subcategories of math problems, branching out from the main categories.

### Detailed Analysis

**1. 应用 (Yìngyòng) - Application (Orange):**

* "行程问题" (Xíngchéng wèntí) - Travel Problems

* "追击问题" (Zhuījī wèntí) - Pursuit Problems

* "相遇问题" (Xiāngyù wèntí) - Meeting Problems

* "基础问题" (Jīchǔ wèntí) - Basic Problems

* "和差倍问题" (Hé chā bèi wèntí) - Sum, Difference, and Multiple Problems

* "归总问题" (Guīzǒng wèntí) - Total Return Problems

* "归一问题" (Guī yī wèntí) - Unitary Method Problems

* "差倍问题" (Chā bèi wèntí) - Difference and Multiple Problems

* "周期问题" (Zhōuqī wèntí) - Period Problems

* "植树问题" (Zhí shù wèntí) - Tree Planting Problems

* "折扣问题" (Zhékòu wèntí) - Discount Problems

* "页码问题" (Yèmǎ wèntí) - Page Number Problems

* "税率问题" (Shuìlǜ wèntí) - Tax Rate Problems

* "年龄问题" (Niánlíng wèntí) - Age Problems

* "利息问题" (Lìxī wèntí) - Interest Problems

* "鸡兔同笼问题" (Jī tù tóng lóng wèntí) - Chicken and Rabbit in the Same Cage Problems

* "还原问题" (Huányuán wèntí) - Restoration Problems

* "工程问题" (Gōngchéng wèntí) - Work Problems

* "经典问题" (Jīngdiǎn wèntí) - Classic Problems

**2. 几何 (Jǐhé) - Geometry (Blue):**

* "立体图形综合" (Lìtǐ túxíng zònghé) - Comprehensive Solid Geometry

* "正方体" (Zhèngfāngtǐ) - Cube

* "长方体" (Chángfāngtǐ) - Cuboid

* "圆柱" (Yuánzhù) - Cylinder

* "立体图形" (Lìtǐ túxíng) - Solid Geometry

* "统计指标" (Tǒngjì zhǐbiāo) - Statistical Indicators

* "排列组合" (Páiliè zǔhé) - Permutations and Combinations

* "角" (Jiǎo) - Angle

* "平行四边形" (Píngxíng sìbiānxíng) - Parallelogram

* "三角形" (Sānjiǎoxíng) - Triangle

* "梯形" (Tīxíng) - Trapezoid

* "圆" (Yuán) - Circle

* "长方形" (Chángfāngxíng) - Rectangle

* "正方形" (Zhèngfāngxíng) - Square

* "平面图形综合" (Píngmiàn túxíng zònghé) - Comprehensive Plane Geometry

* "平面图形" (Píngmiàn túxíng) - Plane Geometry

**3. 数与代数 (Shù yǔ dàishù) - Numbers and Algebra (Green):**

* "比例问题" (Bǐlì wèntí) - Proportion Problems

* "倍数问题" (Bèishù wèntí) - Multiple Problems

* "百分率问题" (Bǎifēn lǜ wèntí) - Percentage Problems

* "四则运算" (Sìzé yùnsuàn) - Four Arithmetic Operations

* "方程问题" (Fāngchéng wèntí) - Equation Problems

* "定义新运算" (Dìngyì xīn yùnsuàn) - Defined New Operations

* "倒数问题" (Dàoshù wèntí) - Reciprocal Problems

* "除法问题" (Chúfǎ wèntí) - Division Problems

* "乘法问题" (Chéngfǎ wèntí) - Multiplication Problems

* "基础运算" (Jīchǔ yùnsuàn) - Basic Operations

* "比与比例" (Bǐ yǔ bǐlì) - Ratio and Proportion

* "概率问题" (Gàilǜ wèntí) - Probability Problems

* "分数应用" (Fēnshù yìngyòng) - Fraction Applications

* "分数与小数" (Fēnshù yǔ xiǎoshù) - Fractions and Decimals

* "分数运算" (Fēnshù yùnsuàn) - Fraction Operations

* "最简分数" (Zuì jiǎn fēnshù) - Simplest Fraction

* "公倍数问题" (Gōngbèishù wèntí) - Common Multiple Problems

* "公约数问题" (Gōngyuēshù wèntí) - Common Factor Problems

* "因数与倍数综合" (Yīnshù yǔ bèishù zònghé) - Comprehensive Factors and Multiples

* "质数问题" (Zhìshù wèntí) - Prime Number Problems

* "因数问题" (Yīnshù wèntí) - Factor Problems

* "因数与倍数" (Yīnshù yǔ bèishù) - Factors and Multiples

**4. 度量 (Dùliàng) - Measurement (Yellow):**

* "规律" (Guīlǜ) - Pattern

* "温度问题" (Wēndù wèntí) - Temperature Problems

* "时间问题" (Shíjiān wèntí) - Time Problems

* "人民币问题" (Rénmínbì wèntí) - RMB Problems

* "浓度问题" (Nóngdù wèntí) - Concentration Problems

* "面积问题" (Miànjī wèntí) - Area Problems

### Key Observations

* The chart provides a hierarchical breakdown of math problems, starting from broad categories and drilling down to specific types.

* The "Application" category has the most subcategories, indicating a wide range of real-world problem-solving scenarios.

* The "Geometry" category covers both solid and plane geometry concepts.

* The "Numbers and Algebra" category includes various arithmetic operations, fractions, ratios, and probability.

* The "Measurement" category focuses on practical measurement-related problems.

### Interpretation

The radial chart serves as a visual guide for categorizing and understanding different types of math problems. It highlights the relationships between broad mathematical concepts and specific problem-solving techniques. This type of chart is useful for students, teachers, and anyone interested in organizing and navigating the landscape of mathematical problems. The chart suggests that problem-solving in mathematics is highly interconnected, with various concepts and techniques building upon each other.

</details>

(c) Chinese Elementary (Elementary-ZH)

<details>

<summary>x5.png Details</summary>

### Visual Description

## Circular Chart: Mathematics Topics

### Overview

The image is a circular chart, resembling a pie chart, that visually organizes various mathematical topics. The chart is divided into several main categories, each represented by a different color and further subdivided into more specific sub-topics. All text is in Chinese, with English translations provided.

### Components/Axes

The chart is structured in concentric rings, with the main categories in the inner ring and sub-categories in the outer rings. The main categories are:

* **数与式 (Shù yǔ shì)** - Numbers and Expressions (Green)

* **统计与概率 (Tǒngjì yǔ gàilǜ)** - Statistics and Probability (Yellow)

* **方程与不等式 (Fāngchéng yǔ bù děngshì)** - Equations and Inequalities (Pink)

* **函数 (Hánshù)** - Functions (Orange)

* **几何 (Jǐhé)** - Geometry (Blue)

### Detailed Analysis

Here's a breakdown of the sub-categories within each main category:

**1. 数与式 (Shù yǔ shì) - Numbers and Expressions (Green)**

* **代数式 (Dàishùshì)** - Algebraic Expressions

* 代数式求值 (Dàishùshì qiúzhí) - Evaluating Algebraic Expressions

* 同类项 (Tónglèi xiàng) - Like Terms

* **分式 (Fēnshì)** - Fractions

* 约分与通分 (Yuē fēn yǔ tōng fēn) - Reducing and Finding Common Denominators

* **无理数 (Wúlǐshù)** - Irrational Numbers

* 指数幂 (Zhǐshù mì) - Exponential Powers

* 判断无理数 (Pànduàn wúlǐshù) - Judging Irrational Numbers

* **因式 (Yīnshì)** - Factors

* 十字相乘法 (Shízì xiāng chéng fǎ) - Cross Multiplication Method

* **整式 (Zhěngshì)** - Polynomials

* 整式的加减 (Zhěngshì de jiājiǎn) - Addition and Subtraction of Polynomials

* 整式的乘除及混合 (Zhěngshì de chéng chú jí hùnhé) - Multiplication, Division, and Mixture of Polynomials

* **根式 (Gēnshì)** - Radicals

* 乘法公式 (Chéngfǎ gōngshì) - Multiplication Formulas

* 同类二次根式 (Tónglèi èrcì gēnshì) - Like Quadratic Radicals

* 平方根与算术平方根 (Píngfāng gēn yǔ suànshù píngfāng gēn) - Square Roots and Arithmetic Square Roots

* 二次根式的运算 (Èrcì gēnshì de yùnsuàn) - Operations with Quadratic Radicals

* **立方根 (Lìfāng gēn)** - Cube Roots

**2. 统计与概率 (Tǒngjì yǔ gàilǜ) - Statistics and Probability (Yellow)**

* **概率 (Gàilǜ)** - Probability

* 求概率 (Qiú gàilǜ) - Finding Probability

* 概率的应用 (Gàilǜ de yìngyòng) - Application of Probability

* 随机事件与概率 (Suíjī shìjiàn yǔ gàilǜ) - Random Events and Probability

* 数据的波动趋势 (Shùjù de bōdòng qūshì) - Trend of Data Fluctuation

* 数据的集中趋势 (Shùjù de jízhōng qūshì) - Trend of Data Concentration

* **应用 (Yìngyòng)** - Application

* 流水问题 (Liúshuǐ wèntí) - Current Problems

* 鸽巢问题 (Gē cháo wèntí) - Pigeonhole Principle

* **数据分析 (Shùjù fēnxī)** - Data Analysis

* 提公因式 (Tí gōng yīnshì) - Factoring out the Greatest Common Factor

**3. 方程与不等式 (Fāngchéng yǔ bù děngshì) - Equations and Inequalities (Pink)**

* **不等式与不等式组 (Bù děngshì yǔ bù děngshì zǔ)** - Inequalities and Systems of Inequalities

* 解一元一次不等式 (Jiě yīyuán yīcì bù děngshì) - Solving Linear Inequalities in One Variable

* 一元一次不等式组 (Yīyuán yīcì bù děngshì zǔ) - Systems of Linear Inequalities in One Variable

* 一元一次不等式的应用 (Yīyuán yīcì bù děngshì de yìngyòng) - Applications of Linear Inequalities in One Variable

* **分式方程 (Fēnshì fāngchéng)** - Fractional Equations

* 分式方程的应用 (Fēnshì fāngchéng de yìngyòng) - Applications of Fractional Equations

* 解分式方程 (Jiě fēnshì fāngchéng) - Solving Fractional Equations

* **一元二次方程 (Yīyuán èrcì fāngchéng)** - Quadratic Equations in One Variable

* 一元二次方程的应用 (Yīyuán èrcì fāngchéng de yìngyòng) - Applications of Quadratic Equations in One Variable

* 解一元二次方程 (Jiě yīyuán èrcì fāngchéng) - Solving Quadratic Equations in One Variable

**4. 函数 (Hánshù) - Functions (Orange)**

* **一次函数 (Yīcì hánshù)** - Linear Functions

* 函数与一元一次不等式 (Hánshù yǔ yīyuán yīcì bù děngshì) - Functions and Linear Inequalities in One Variable

* 函数与一元一次方程 (Hánshù yǔ yīyuán yīcì fāngchéng) - Functions and Linear Equations in One Variable

* 函数与二元一次方程组 (Hánshù yǔ èryuán yīcì fāngchéng zǔ) - Functions and Systems of Linear Equations in Two Variables

* 求一次函数解析式 (Qiú yīcì hánshù jiěxīshì) - Finding the Analytical Expression of a Linear Function

* **反比例函数 (Fǎn bǐlì hánshù)** - Inverse Proportionality Functions

* 反比例函数的应用 (Fǎn bǐlì hánshù de yìngyòng) - Applications of Inverse Proportionality Functions

* 反比例函数的性质 (Fǎn bǐlì hánshù de xìngzhì) - Properties of Inverse Proportionality Functions

* 反比例函数的定义 (Fǎn bǐlì hánshù de dìngyì) - Definition of Inverse Proportionality Functions

* **二次函数 (Èrcì hánshù)** - Quadratic Functions

* 抛物线的性质 (Pāowùxiàn de xìngzhì) - Properties of Parabolas

* 二次函数的应用 (Èrcì hánshù de yìngyòng) - Applications of Quadratic Functions

* **平面直角坐标系 (Píngmiàn zhíjiǎo zuòbiāo xì)** - Cartesian Coordinate System

* 有序数对 (Yǒuxù shù duì) - Ordered Pairs

* 点所在象限 (Diǎn suǒzài xiàngxiàn) - Quadrant Where a Point is Located

**5. 几何 (Jǐhé) - Geometry (Blue)**

* **圆 (Yuán)** - Circle

* 圆心角 (Yuánxīn jiǎo) - Central Angle

* 圆周角 (Yuánzhōu jiǎo) - Inscribed Angle

* 正多边形和圆 (Zhèng duōbiānxíng hé yuán) - Regular Polygons and Circles

* 弧长和扇形面积 (Hú cháng hé shànxíng miànjī) - Arc Length and Sector Area

* 点线圆位置关系 (Diǎn xiàn yuán wèizhì guānxì) - Positional Relationship between Points, Lines, and Circles

* 垂径定理 (Chuí jìng dìnglǐ) - Perpendicular Bisector Theorem

* **三角形 (Sānjiǎoxíng)** - Triangle

* 等边三角形 (Děngbiān sānjiǎoxíng) - Equilateral Triangle

* 等腰三角形 (Děngyāo sānjiǎoxíng) - Isosceles Triangle

* 勾股定理 (Gōugǔ dìnglǐ) - Pythagorean Theorem

* 全等三角形 (Quán děng sānjiǎoxíng) - Congruent Triangles

* **四边形 (Sìbiānxíng)** - Quadrilateral

* 平行四边形 (Píngxíng sìbiānxíng) - Parallelogram

* 梯形 (Tīxíng) - Trapezoid

* **立体图形 (Lìtǐ túxíng)** - Solid Figures

* 圆锥 (Yuánzhuī) - Cone

### Key Observations

* The chart provides a comprehensive overview of mathematical topics, categorized into five main areas.

* Each main category is further divided into sub-topics, providing a hierarchical structure.

* The visual representation allows for easy identification of related concepts.

### Interpretation

The circular chart serves as a visual aid for organizing and understanding the relationships between different mathematical concepts. It is designed to provide a high-level overview of the subject matter, making it easier to navigate and comprehend the connections between various topics. The chart could be used for studying, curriculum planning, or as a reference tool for students and educators. The hierarchical structure allows users to drill down from broad categories to specific sub-topics, facilitating a deeper understanding of the subject matter.

</details>

(d) Chinese Middle (Middle-ZH)

Figure 2: Diagram overview of four concept systems in ConceptMath. We have provided translated Chinese concept names in English (See Appendix A).

2 ConceptMath

ConceptMath is the first bilingual, concept-wise benchmark for measuring mathematical reasoning. In this section, we describe the design principles, the dataset collection process, the dataset statistics, and an efficient fine-tuning strategy to address the weaknesses identified by ConceptMath.

2.1 Design Principle

We created ConceptMath based on the following two high-level design principles:

Concept-wise Hierarchical System.

The primary goal of ConceptMath is to evaluate the mathematical reasoning capacities of language models at different granularities. Therefore, ConceptMath organizes math problems within a three-level hierarchy of mathematical concepts (Fig. 2). This approach provides a concept-wise evaluation of the mathematical reasoning of language models and makes targeted, effective improvements possible.
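To make "evaluation at different granularities" concrete, the sketch below computes accuracy per concept at a chosen level of the three-level hierarchy and compares it with the single average accuracy that traditional benchmarks report. The record format, the function name, and the example concept paths (including the middle-level name "Basic Knowledge") are illustrative assumptions, not the benchmark's actual data schema.

```python
from collections import defaultdict

def concept_accuracies(records, level=3):
    """Accuracy (%) per concept path truncated to `level` (1 = coarsest, 3 = atomic)."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for path, is_correct in records:
        key = tuple(path[:level])
        total[key] += 1
        correct[key] += int(is_correct)
    return {key: 100.0 * correct[key] / total[key] for key in total}

# Hypothetical records: (three-level concept path, whether the model answered correctly).
records = [
    (("Geometry", "Three-Dim Shapes", "Cylinders"), False),
    (("Geometry", "Three-Dim Shapes", "Cylinders"), False),
    (("Geometry", "Two-Dim Shapes", "Perimeter"), True),
    (("Geometry", "Two-Dim Shapes", "Perimeter"), False),
    (("Measurement", "Basic Knowledge", "Temperature"), True),
    (("Measurement", "Basic Knowledge", "Temperature"), True),
]

overall = 100.0 * sum(ok for _, ok in records) / len(records)
by_top = concept_accuracies(records, level=1)
by_atomic = concept_accuracies(records, level=3)

print(f"overall: {overall:.1f}")
for path, acc in sorted(by_atomic.items(), key=lambda kv: kv[1]):
    print(" / ".join(path), f"{acc:.1f}")
```

In this toy data the overall accuracy is 50%, yet the atomic-level view shows one concept at 0% and another at 100%, which is the kind of variation an average hides.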

Bilingualism.

Most current mathematical benchmarks focus solely on English, leaving multilingual mathematical reasoning unexplored. As an early effort to explore multilingual mathematical reasoning, we evaluate mathematical reasoning in two languages: English and Chinese. Moreover, since cultures and educational systems vary across languages, common math concepts can differ substantially. Therefore, we carefully collect concepts in both languages instead of merely translating from one language to the other. For example, measurement units (e.g., for money and size) differ between English and Chinese.

2.2 Data Collection

For data collection, we take a two-step approach to operationalize the aforementioned design principles. First, we recruit experts to delineate a hierarchy of math concepts based on different education systems. Second, we collect problems for each concept from various sources or design problems manually, followed by quality assessment and data cleaning.

Math Concept System Construction.

Since the education systems provide a natural hierarchy of math concepts, we recruited four teachers from elementary and middle schools, specializing in either English or Chinese, to organize a hierarchy of math concepts for different education systems. This leads to four concept systems: Elementary-EN, Middle-EN, Elementary-ZH, and Middle-ZH, with each system consisting of a three-level hierarchy of around 50 atomic math concepts (Fig. 2).

Math Problem Construction.

We then collected math word problems (including both questions and answers) for each math concept from various sources, including educational websites, textbooks, and search engines queried with specific concepts. To keep the concepts balanced, we gathered approximately 20 problems for each math concept. Both GPT-4 (OpenAI, 2023) and human experts were then employed to verify and correct the categorization and the solution of each problem. However, we observed that for some concepts the problem count was significantly below 20. To address this issue, we manually augmented these categories to ensure a consistent collection of 20 problems for each concept. Furthermore, to broaden the diversity of the dataset and minimize the risk of data contamination, all gathered problems were paraphrased using GPT-4. The collection and annotation processes were carried out by a team of six members, each holding a university degree in an engineering discipline, to maintain a high level of technical expertise in executing these tasks.
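The balancing step described above amounts to counting problems per concept and flagging the concepts that fall short of the 20-problem target. A minimal sketch follows; the function name and the example concept labels are hypothetical, and only the target of 20 comes from the text.

```python
from collections import Counter

TARGET_PER_CONCEPT = 20  # target number of problems per concept, as stated in the text

def underfilled_concepts(problem_concepts, all_concepts, target=TARGET_PER_CONCEPT):
    """Map each concept with fewer than `target` problems to the number still missing."""
    counts = Counter(problem_concepts)
    return {c: target - counts[c] for c in all_concepts if counts[c] < target}

# Hypothetical concept labels of the problems collected so far.
labels = ["Cylinders"] * 20 + ["Polygons"] * 12 + ["Probability"] * 17
all_concepts = ["Cylinders", "Polygons", "Probability", "Perimeter"]

missing = underfilled_concepts(labels, all_concepts)
print(missing)  # concepts that still need manually written problems
```

Passing the full concept list separately ensures that concepts with zero collected problems are also reported.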

2.3 Dataset Statistics

Comparison to existing datasets. As shown in Table 1, our ConceptMath differs from related datasets in two main aspects: (1) ConceptMath is the first dataset to study fine-grained mathematical concepts, and it encompasses 4 systems, 214 math concepts, and 4011 math word problems. (2) Problems in ConceptMath are carefully annotated based on the mainstream education systems for English (EN) and Chinese (ZH).

Details on the hierarchical system. In addition to Fig. 2, Appendix A presents the hierarchical system in full detail.

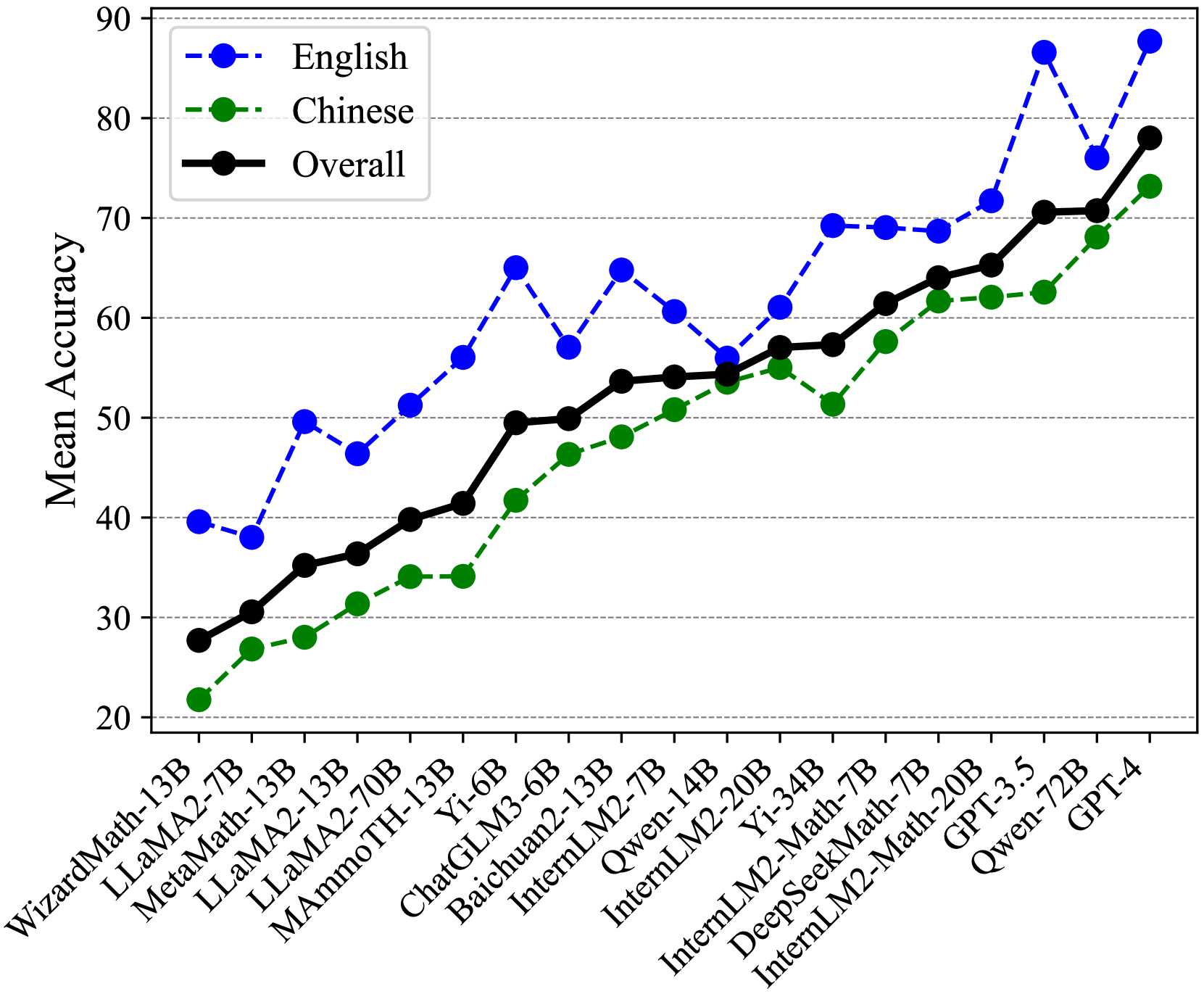

Length distribution. Fig. 3 shows the length distribution of the questions in our ConceptMath, measured in number of tokens. We use the “cl100k_base” tokenizer from https://github.com/openai/tiktoken. The minimum, average, and maximum numbers of tokens per question are 4, 41, and 309, respectively, which shows that the questions vary widely in length and are lexically rich.
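
The length statistics above can be reproduced with a few lines of code. The following is a minimal sketch, not the authors' script: the function name `length_stats` and the toy questions are hypothetical, and whitespace splitting stands in for the real tokenizer so that the example runs without extra dependencies.

```python
from statistics import mean
from typing import Callable, Iterable, Sequence


def length_stats(questions: Iterable[str], encode: Callable[[str], Sequence]) -> dict:
    """Return the min / average / max token count over a collection of questions."""
    lengths = [len(encode(q)) for q in questions]
    if not lengths:
        raise ValueError("no questions given")
    return {"min": min(lengths), "avg": mean(lengths), "max": max(lengths)}


# Hypothetical toy questions (not taken from the dataset statistics).
toy_questions = [
    "What is 3 + 4?",
    "Find the perimeter of a 16 by 18 feet rectangle.",
]

# Assumption: whitespace splitting is only a stand-in tokenizer for this demo.
stats = length_stats(toy_questions, encode=str.split)
print(stats)
```

To match the setting described in the text, one would pass `tiktoken.get_encoding("cl100k_base").encode` as the `encode` argument instead of `str.split`.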

| Benchmark | Language | Fine-grained | Size |

| --- | --- | --- | --- |

| GSM8K | EN | ✗ | 1319 |

| MATH | EN | ✗ | 5000 |

| TabMWP | EN | ✗ | 7686 |

| Dolphin18K | EN | ✗ | 1504 |

| Math23K | ZH | ✗ | 1000 |

| ASDiv | EN | ✗ | 2305 |

| SVAMP | EN | ✗ | 300 |

| SingleOp | EN | ✗ | 159 |

| MMLU-Math | EN | ✗ | 906 |

| ConceptMath | EN&ZH | ✓ | 4011 |

Table 1: A comparison of our ConceptMath with some notable mathematical datasets. Note that the size is the number of samples of the test split.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Bar Chart: Question Length Distribution

### Overview

The image is a bar chart showing the distribution of question lengths, measured in the number of tokens. The x-axis represents the question length, and the y-axis represents the number of questions. The bars are light blue.

### Components/Axes

* **X-axis:** Question Length (# Tokens). The axis is labeled from 0 to 200 in increments of 10, with an additional label ">200" at the end.

* **Y-axis:** Number of Questions. The axis is labeled from 0 to 100 in increments of 20.

* **Bars:** Light blue bars represent the number of questions for each question length.

### Detailed Analysis

The distribution is skewed to the right.

* The number of questions increases rapidly from 0 tokens to a peak around 30-40 tokens.

* The number of questions then decreases gradually as the question length increases from 40 to 200 tokens.

* There is a small spike at the ">200" token mark.

Specific data points (approximate due to bar chart resolution):

* At 10 tokens, the number of questions is approximately 50.

* At 20 tokens, the number of questions is approximately 75.

* At 30 tokens, the number of questions is approximately 90.

* At 40 tokens, the number of questions is approximately 80.

* At 50 tokens, the number of questions is approximately 50.

* At 60 tokens, the number of questions is approximately 40.

* At 70 tokens, the number of questions is approximately 30.

* At 80 tokens, the number of questions is approximately 20.

* At 90 tokens, the number of questions is approximately 15.

* At 100 tokens, the number of questions is approximately 5.

* At 150 tokens, the number of questions is approximately 2.

* At 200 tokens, the number of questions is approximately 1.

* At >200 tokens, the number of questions is approximately 12.

### Key Observations

* Most questions are between 10 and 60 tokens long.

* The number of questions decreases as the question length increases beyond 40 tokens.

* There are relatively few questions longer than 100 tokens.

* There is a small number of questions with more than 200 tokens.

### Interpretation

The data suggests that the majority of questions in the dataset are relatively short, with a peak around 30-40 tokens. The distribution indicates that longer questions are less common. The small spike at ">200" suggests that there is a small subset of questions that are significantly longer than the average. This distribution could be influenced by factors such as the nature of the questions being asked, the writing style of the question askers, or any length constraints imposed on the questions.

</details>

Figure 3: Length distributions of our ConceptMath.

2.4 Efficient Fine-Tuning

Based on our ConceptMath, we are able to identify the weaknesses in the mathematical reasoning capability of LLMs through concept-wise evaluation. In this section, we explore a straightforward approach to enhance mathematical abilities on specific concepts by first training a concept classifier and then curating a set of samples from a large open-source math dataset. Specifically, we first collect 10 extra problems per concept and use them to train a classifier capable of identifying the concept class of a given question. The backbone of this classifier is a pretrained bilingual LLM, and the classification head operates on its last hidden output feature. We then use the classifier to select, from the large open-source math dataset, the samples that belong to the target concepts, which yields a concept-specific training set. Finally, we fine-tune LLMs on this concept-specific dataset combined with an existing general math dataset, which aims to avoid overfitting on a relatively small dataset. More details are provided in Appendix B.
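
The curation step can be sketched as follows. This is only an illustration under stated assumptions: the paper's classifier is an LLM backbone with a classification head, whereas `keyword_classifier` below is a hypothetical keyword stub, and `curate_concept_specific`, `build_training_mix`, and the toy data are names and values invented for this example.

```python
import random
from typing import Callable, Iterable, List


def curate_concept_specific(pool: Iterable[dict],
                            classify: Callable[[str], str],
                            weak_concepts: Iterable[str]) -> List[dict]:
    """Keep the problems whose predicted concept is one of the weak concepts."""
    weak = set(weak_concepts)
    return [p for p in pool if classify(p["question"]) in weak]


def build_training_mix(concept_specific: List[dict],
                       general: List[dict],
                       seed: int = 0) -> List[dict]:
    """Combine concept-specific and general math data, then shuffle reproducibly."""
    mixed = list(concept_specific) + list(general)
    random.Random(seed).shuffle(mixed)
    return mixed


def keyword_classifier(question: str) -> str:
    """Hypothetical stand-in for the trained concept classifier."""
    q = question.lower()
    if "cylinder" in q:
        return "Cylinders"
    if "perimeter" in q:
        return "Perimeter"
    return "Other"


# Hypothetical samples from a large open-source math dataset.
pool = [
    {"question": "Find the volume of a cylinder with radius 2 and height 3."},
    {"question": "What is 7 times 8?"},
    {"question": "A square has side 5. What is its perimeter?"},
]
# Hypothetical general-purpose math samples.
general = [{"question": "Solve x + 2 = 5."}]

selected = curate_concept_specific(pool, keyword_classifier, ["Cylinders", "Perimeter"])
training_set = build_training_mix(selected, general)
print(len(selected), len(training_set))
```

Keeping the general data in the mix mirrors the stated goal of avoiding overfitting on the small concept-specific set; the mixing ratio is not specified here and is left to the caller.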

3 Experiments

In this section, we perform extensive experiments to demonstrate the effectiveness of our ConceptMath.

3.1 Experimental Setup

Evaluated Models.

We assess the mathematical reasoning of existing advanced LLMs on ConceptMath, including 2 closed-source LLMs (i.e., GPT-3.5/GPT-4 (OpenAI, 2023)) and 17 open-source LLMs (i.e., WizardMath-13B (Luo et al., 2023), MetaMath-13B (Yu et al., 2023), MAmmoTH-13B (Yue et al., 2023), Qwen-14B/72B (Bai et al., 2023b), Baichuan2-13B (Baichuan, 2023), ChatGLM3-6B (Du et al., 2022), InternLM2-7B/20B (Team, 2023a), InternLM2-Math-7B/20B (Ying et al., 2024), LLaMA2-7B/13B/70B (Touvron et al., 2023b), Yi-6B/34B (Team, 2023b), and DeepSeekMath-7B (Shao et al., 2024)). Note that WizardMath-13B, MetaMath-13B, and MAmmoTH-13B are specialized math language models fine-tuned from LLaMA2. InternLM2-Math and DeepSeekMath-7B are specialized math language models fine-tuned from their corresponding base language models. More details of these evaluated models can be found in Appendix C.

| Model | Elementary-EN (ZS) | Elementary-EN (ZS-COT) | Elementary-EN (FS) | Middle-EN (ZS) | Middle-EN (ZS-COT) | Middle-EN (FS) | Elementary-ZH (ZS) | Elementary-ZH (ZS-COT) | Elementary-ZH (FS) | Middle-ZH (ZS) | Middle-ZH (ZS-COT) | Middle-ZH (FS) | Avg. |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| Yi-6B | 67.94 | 67.56 | 59.03 | 65.55 | 64.59 | 56.05 | 34.33 | 31.91 | 37.86 | 36.46 | 36.19 | 36.46 | 49.49 |

| ChatGLM3-6B | 60.69 | 63.10 | 53.18 | 51.25 | 60.17 | 51.34 | 46.23 | 43.63 | 40.74 | 44.77 | 43.32 | 40.43 | 49.90 |

| DeepSeekMath-7B | 66.92 | 77.35 | 73.92 | 56.53 | 69.87 | 66.31 | 60.47 | 62.33 | 64.19 | 56.50 | 56.95 | 56.86 | 64.02 |

| InternLM2-Math-7B | 71.12 | 72.01 | 69.59 | 63.44 | 62.96 | 63.05 | 57.30 | 58.23 | 58.60 | 53.79 | 53.16 | 53.88 | 61.43 |

| InternLM2-7B | 68.83 | 69.97 | 66.67 | 37.04 | 65.83 | 55.47 | 47.63 | 49.02 | 53.02 | 45.22 | 45.40 | 44.86 | 54.08 |

| LLaMA2-7B | 36.51 | 42.62 | 38.68 | 34.26 | 39.16 | 33.69 | 15.72 | 17.67 | 17.58 | 30.87 | 32.22 | 27.80 | 30.57 |

| MAmmoTH-13B | 61.32 | 52.42 | 56.49 | 53.93 | 45.20 | 48.08 | 22.33 | 33.30 | 23.81 | 27.98 | 43.05 | 29.15 | 41.42 |

| WizardMath-13B | 41.73 | 44.78 | 34.99 | 36.85 | 37.72 | 45.11 | 10.51 | 11.26 | 18.70 | 12.36 | 15.52 | 22.92 | 27.70 |

| MetaMath-13B | 54.45 | 51.78 | 47.96 | 44.24 | 43.47 | 47.50 | 11.44 | 17.30 | 27.53 | 21.21 | 26.08 | 29.60 | 35.21 |

| Baichuan2-13B | 68.83 | 68.58 | 54.07 | 67.66 | 69.67 | 40.40 | 57.02 | 58.23 | 22.05 | 55.05 | 55.32 | 26.90 | 53.65 |

| LLaMA2-13B | 44.02 | 49.75 | 47.07 | 44.72 | 46.45 | 43.09 | 20.19 | 24.19 | 22.14 | 33.30 | 35.38 | 26.17 | 36.37 |

| Qwen-14B | 46.95 | 65.78 | 72.65 | 38.48 | 59.60 | 67.85 | 28.09 | 65.12 | 64.47 | 22.92 | 58.30 | 62.09 | 54.36 |

| InternLM2-Math-20B | 74.05 | 75.32 | 73.41 | 64.11 | 71.21 | 70.83 | 62.98 | 61.95 | 61.77 | 55.14 | 55.78 | 56.86 | 65.28 |

| InternLM2-20B | 53.31 | 72.52 | 73.28 | 45.11 | 67.47 | 56.72 | 48.19 | 55.53 | 59.81 | 45.13 | 50.63 | 56.68 | 57.03 |

| Yi-34B | 74.68 | 73.66 | 56.36 | 72.26 | 74.66 | 65.83 | 50.05 | 51.16 | 38.79 | 45.40 | 43.95 | 40.97 | 57.31 |

| LLaMA2-70B | 56.11 | 60.31 | 30.53 | 58.06 | 60.94 | 31.67 | 28.65 | 26.70 | 24.37 | 37.64 | 34.30 | 28.43 | 39.81 |

| Qwen-72B | 77.10 | 75.06 | 77.23 | 74.66 | 69.87 | 73.99 | 71.16 | 68.65 | 61.86 | 71.30 | 65.43 | 62.45 | 70.73 |

| GPT-3.5 | 85.75 | 92.37 | 84.35 | 83.88 | 90.12 | 82.73 | 56.47 | 53.21 | 56.93 | 51.90 | 53.52 | 55.69 | 70.58 |

| GPT-4 | 86.77 | 90.20 | 89.57 | 84.26 | 89.83 | 88.68 | 67.91 | 72.28 | 72.00 | 63.81 | 64.26 | 66.61 | 78.02 |

| Avg. | 63.00 | 66.59 | 61.00 | 56.65 | 62.57 | 57.28 | 41.93 | 45.35 | 43.49 | 42.67 | 45.72 | 43.41 | 52.47 |

Table 2: Results of different models on our constructed ConceptMath benchmark dataset. Note that “ZS”, “ZS-COT”, and “FS” represent “zero-shot”, “zero-shot w/ chain-of-thought”, and “few-shot”, respectively. Models are grouped roughly according to their model sizes.

Evaluation Settings.

We employ three distinct evaluation settings: zero-shot, zero-shot with chain-of-thought (CoT), and few-shot prompting. Zero-shot prompting assesses the models’ intrinsic problem-solving abilities without any prior examples. Zero-shot prompting with CoT evaluates the models’ ability to reason step by step. In the few-shot setting, the model is provided with a fixed 5-shot prompt for each system (see Appendix E), which consists of five newly created examples with concise ground-truth targets. This setting is designed to measure in-context learning abilities. Besides, following MATH (Hendrycks et al., 2021b), all questions and answers in ConceptMath have been carefully curated, and each problem is scored by exact match. Moreover, greedy decoding (temperature 0) is used.
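
To make the scoring concrete, the sketch below computes concept-wise accuracy by exact match. It is an illustration rather than the official evaluation script: the record layout, the function names, and the light normalization in `normalize` are assumptions, and the toy records are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable


def normalize(answer: str) -> str:
    """Assumed light normalization applied before exact-match comparison."""
    return answer.strip().rstrip(".").replace(",", "").lower()


def concept_accuracies(records: Iterable[dict]) -> Dict[str, float]:
    """Map each concept to its exact-match accuracy in percent.

    Each record is a dict with the keys 'concept', 'prediction', and 'answer'.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for r in records:
        total[r["concept"]] += 1
        if normalize(r["prediction"]) == normalize(r["answer"]):
            correct[r["concept"]] += 1
    return {c: 100.0 * correct[c] / total[c] for c in total}


# Hypothetical model outputs for three problems.
records = [
    {"concept": "Perimeter", "prediction": "72", "answer": "68"},
    {"concept": "Perimeter", "prediction": " 5. ", "answer": "5"},
    {"concept": "Cylinders", "prediction": "141.37", "answer": "141.37"},
]
acc = concept_accuracies(records)
print(acc)
```

Averaging over all records instead of grouping by concept would give the usual single benchmark accuracy; grouping is what exposes per-concept failures.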

3.2 Results

Overall Accuracy.

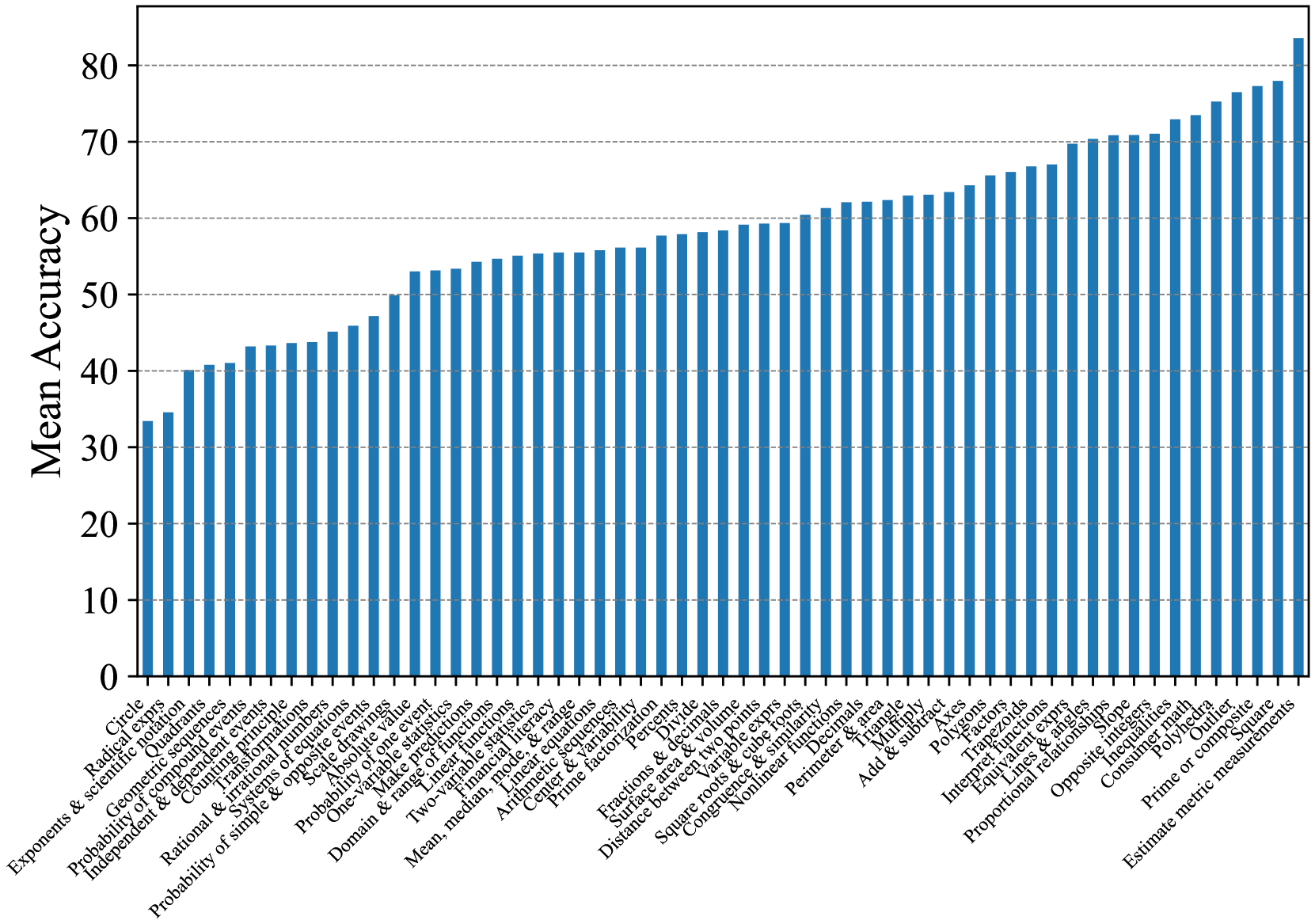

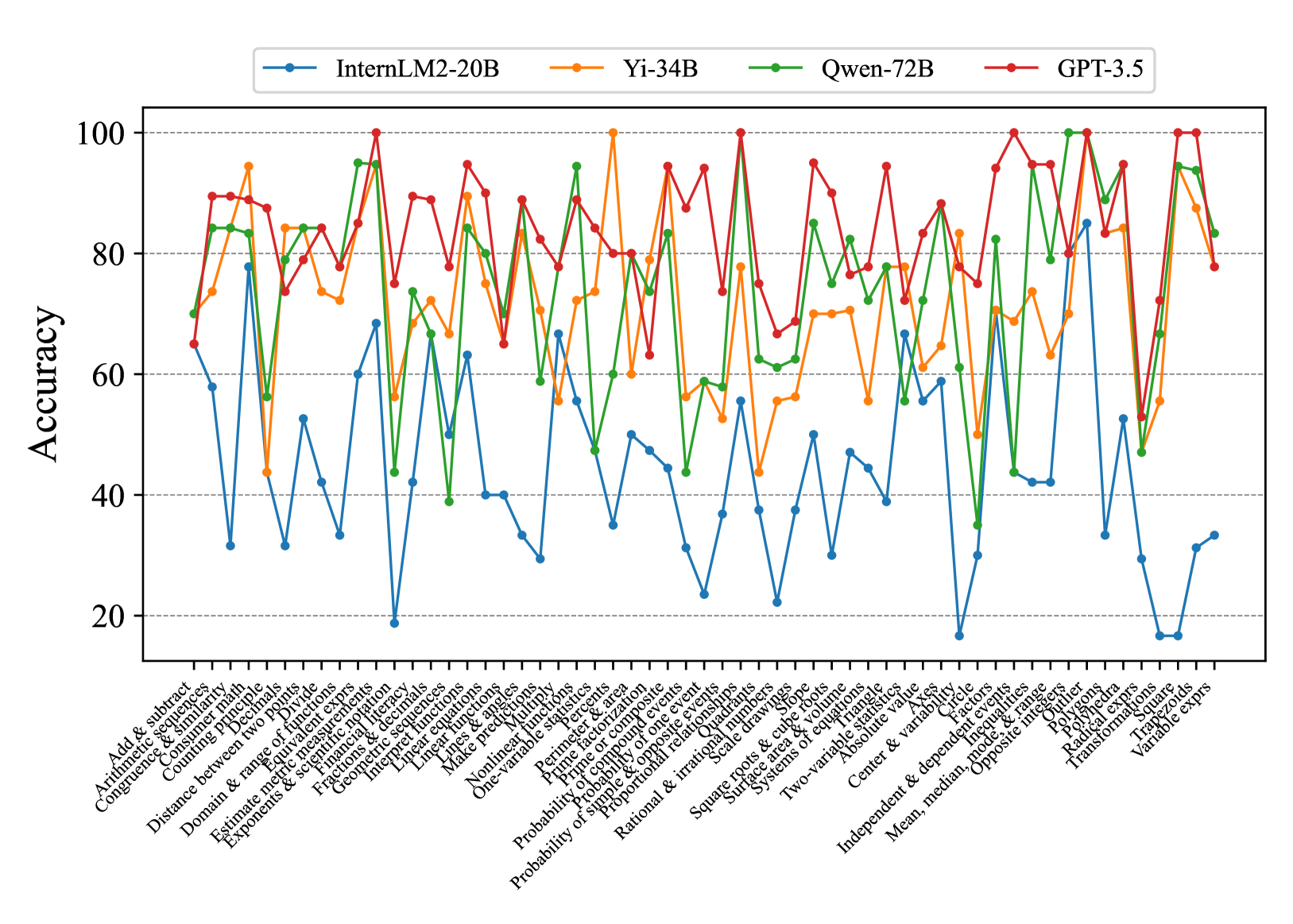

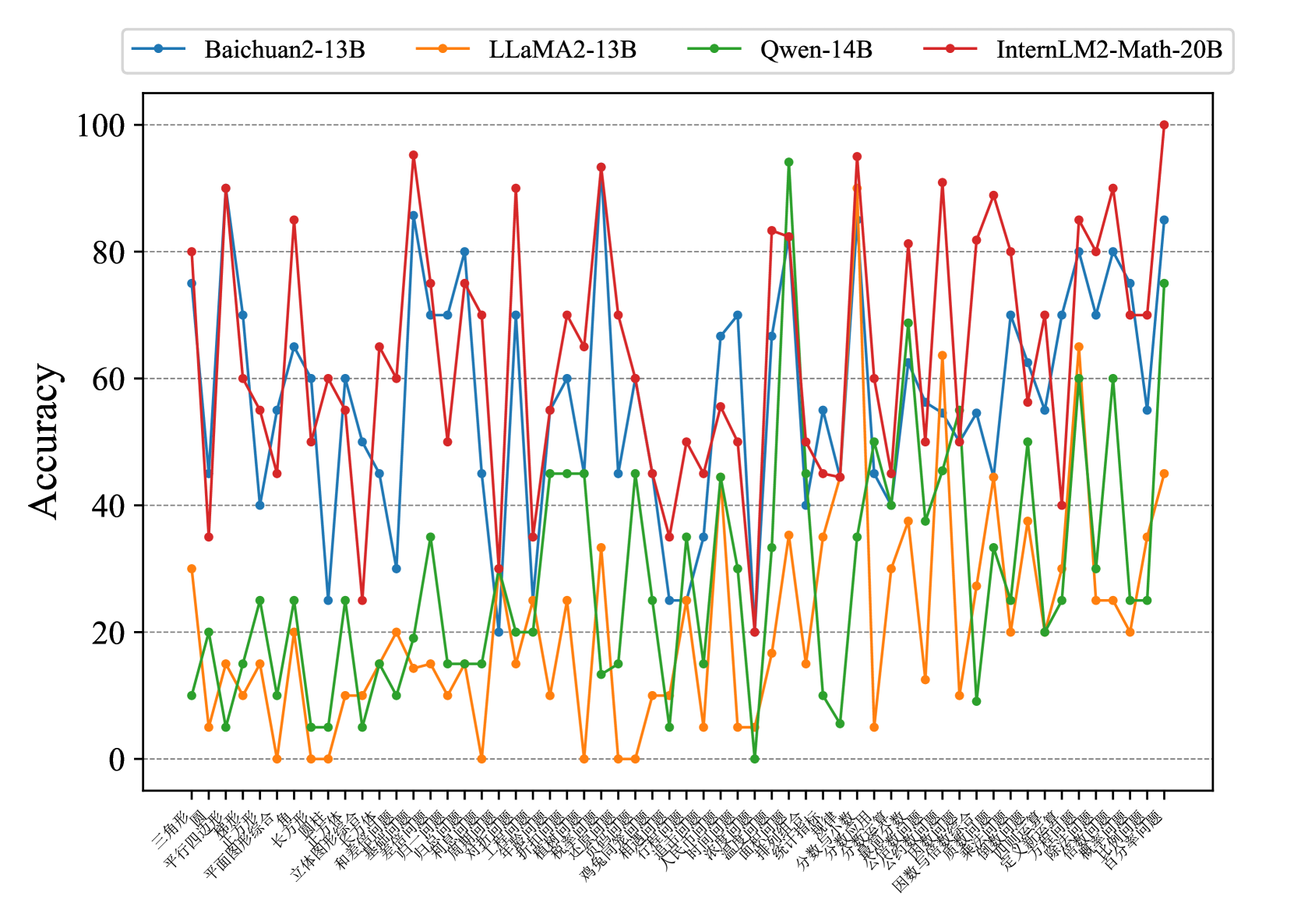

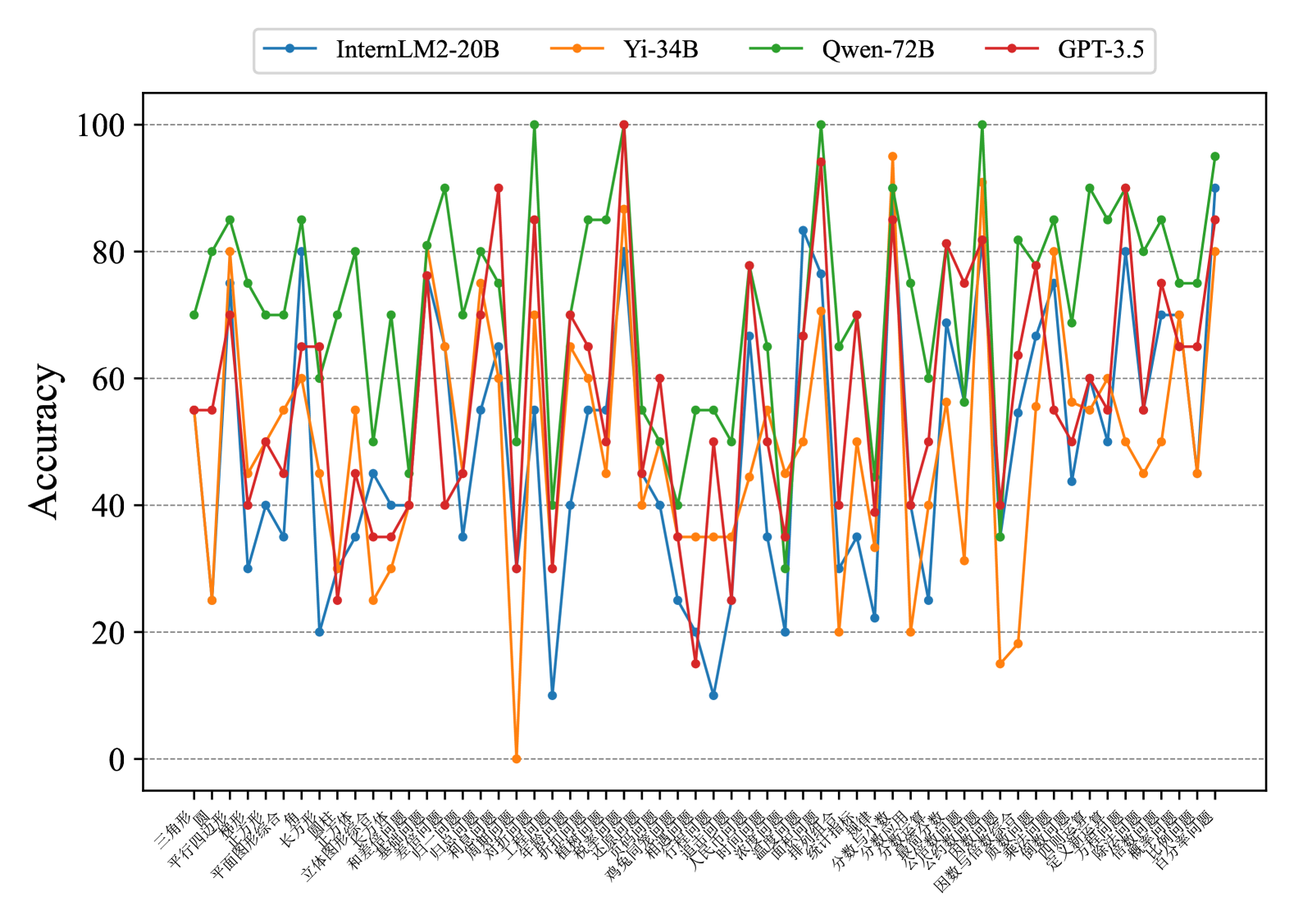

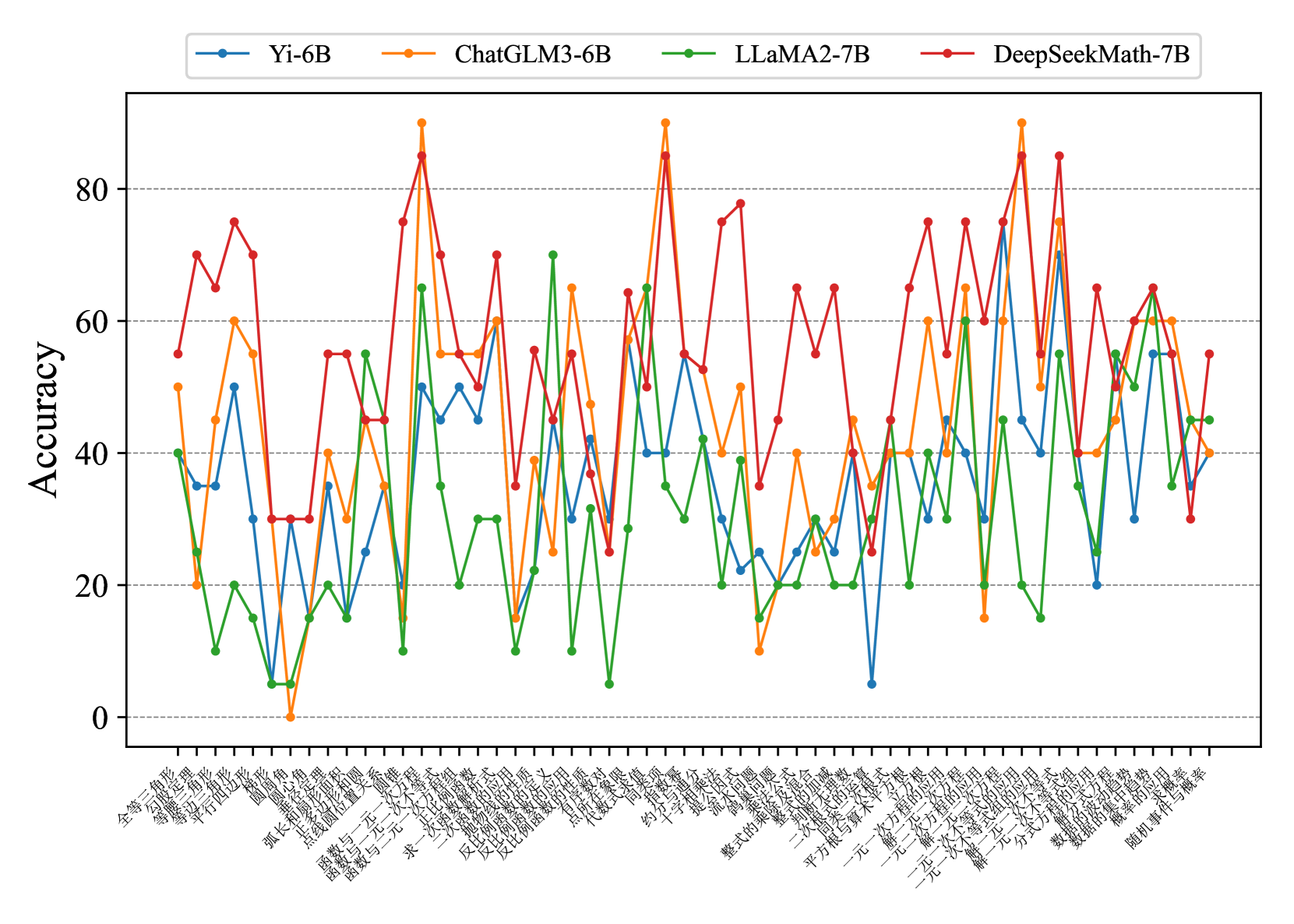

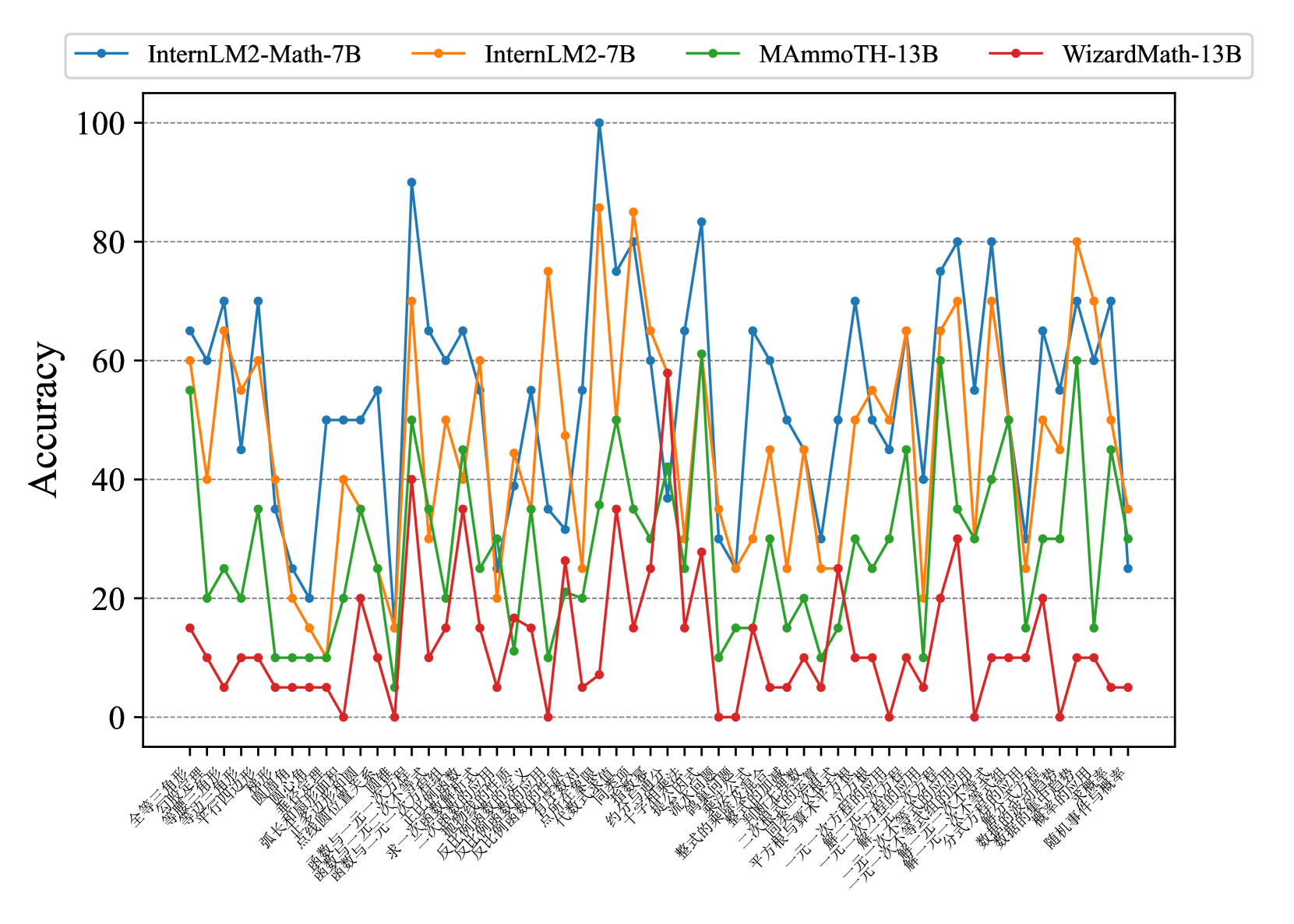

We present the overall accuracies of different LLMs on our ConceptMath benchmark under various prompt settings in Table 2, and we analyze the mathematical abilities of these LLMs in both English and Chinese in Fig. 4. Our analysis leads to the following key findings: (1) GPT-3.5/4 show the most advanced mathematical reasoning abilities among LLMs in both English and Chinese systems, and the leading open-source model, Qwen-72B, achieves performance comparable to GPT-3.5. (2) For most existing LLMs, the scores on Chinese systems are much lower than those on English systems. For example, the zero-shot accuracies of GPT-4 on Middle-ZH and Middle-EN are 63.81 and 84.26, respectively. (3) Several models (e.g., WizardMath-13B or MetaMath-13B) fine-tuned from LLaMA2-13B achieve slight improvements on English systems, but their results on Chinese systems are much lower than those of LLaMA2-13B, which indicates that domain-specific fine-tuning may degrade the generalization abilities of LLMs. (4) The mathematical models (i.e., InternLM2-Math-7B/20B and DeepSeekMath-7B), which continue pretraining on large-scale math-related data (≥100B tokens), show significant improvements over models of similar size, which indicates that large-scale pretraining is effective for improving mathematical reasoning abilities.
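
As a worked example of finding (2), the snippet below averages the GPT-4 row of Table 2 per language. The numbers are copied from Table 2. Averaging over the three prompt settings and both school levels is our assumption about how a per-language mean could be formed, and the helper `language_mean` is a hypothetical name.

```python
from statistics import mean

# GPT-4 accuracies from Table 2, ordered as (ZS, ZS-COT, FS) for each system.
gpt4 = {
    "Elementary-EN": [86.77, 90.20, 89.57],
    "Middle-EN": [84.26, 89.83, 88.68],
    "Elementary-ZH": [67.91, 72.28, 72.00],
    "Middle-ZH": [63.81, 64.26, 66.61],
}


def language_mean(row: dict, suffix: str) -> float:
    """Average all scores of the systems whose name ends with the given suffix."""
    values = [v for system, scores in row.items()
              if system.endswith(suffix) for v in scores]
    return mean(values)


english = language_mean(gpt4, "-EN")
chinese = language_mean(gpt4, "-ZH")
overall = mean(v for scores in gpt4.values() for v in scores)

print(f"English: {english:.2f}, Chinese: {chinese:.2f}, gap: {english - chinese:.2f}")
```

Under this assumption the English mean is roughly 20 points above the Chinese mean for GPT-4, and the mean over all twelve entries agrees with the Avg. column of Table 2 (78.02) up to rounding.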

<details>

<summary>x7.png Details</summary>

### Visual Description

## Line Chart: Model Accuracy Comparison

### Overview

The image is a line chart comparing the mean accuracy of various language models on English and Chinese datasets, as well as their overall performance. The x-axis represents different language models, and the y-axis represents the mean accuracy, ranging from 20 to 90. The chart includes three data series: English (blue dashed line), Chinese (green dashed line), and Overall (black solid line).

### Components/Axes

* **Title:** None

* **X-axis:** Language Models (WizardMath-13B, LLaMA2-7B, MetaMath-13B, LLaMA2-13B, LLaMA2-70B, MAmmoTH-13B, Yi-6B, ChatGLM3-6B, Baichuan2-13B, InternLM2-7B, Qwen-14B, InternLM2-20B, Yi-34B, InternLM2-Math-7B, DeepSeekMath-7B, InternLM2-Math-20B, GPT-3.5, Qwen-72B, GPT-4)

* **Y-axis:** Mean Accuracy, ranging from 20 to 90 in increments of 10.

* **Legend:** Located in the top-left corner.

* English: Blue dashed line with circular markers

* Chinese: Green dashed line with circular markers

* Overall: Black solid line with circular markers

### Detailed Analysis

**English (Blue Dashed Line):**

The English accuracy fluctuates more than the other two lines.

* WizardMath-13B: ~40

* LLaMA2-7B: ~38

* MetaMath-13B: ~50

* LLaMA2-13B: ~47

* LLaMA2-70B: ~65

* MAmmoTH-13B: ~57

* Yi-6B: ~65

* ChatGLM3-6B: Not Available

* Baichuan2-13B: Not Available

* InternLM2-7B: ~62

* Qwen-14B: ~69

* InternLM2-20B: ~69

* Yi-34B: ~70

* InternLM2-Math-7B: Not Available

* DeepSeekMath-7B: Not Available

* InternLM2-Math-20B: ~88

* GPT-3.5: ~75

* Qwen-72B: ~89

* GPT-4: Not Available

**Chinese (Green Dashed Line):**

The Chinese accuracy generally increases across the models.

* WizardMath-13B: ~22

* LLaMA2-7B: ~27

* MetaMath-13B: ~32

* LLaMA2-13B: ~34

* LLaMA2-70B: ~42

* MAmmoTH-13B: ~48

* Yi-6B: ~48

* ChatGLM3-6B: Not Available

* Baichuan2-13B: Not Available

* InternLM2-7B: ~54

* Qwen-14B: ~52

* InternLM2-20B: ~52

* Yi-34B: ~58

* InternLM2-Math-7B: Not Available

* DeepSeekMath-7B: Not Available

* InternLM2-Math-20B: ~62

* GPT-3.5: ~67

* Qwen-72B: ~70

* GPT-4: ~73

**Overall (Black Solid Line):**

The overall accuracy shows a general upward trend.

* WizardMath-13B: ~28

* LLaMA2-7B: ~31

* MetaMath-13B: ~36

* LLaMA2-13B: ~40

* LLaMA2-70B: ~50

* MAmmoTH-13B: ~50

* Yi-6B: ~54

* ChatGLM3-6B: Not Available

* Baichuan2-13B: Not Available

* InternLM2-7B: ~54

* Qwen-14B: ~57

* InternLM2-20B: ~64

* Yi-34B: ~60

* InternLM2-Math-7B: Not Available

* DeepSeekMath-7B: Not Available

* InternLM2-Math-20B: ~71

* GPT-3.5: ~71

* Qwen-72B: ~71

* GPT-4: ~78

### Key Observations

* The English accuracy fluctuates more significantly than the Chinese and Overall accuracies.

* The Overall accuracy generally increases as the models progress along the x-axis.

* The Chinese accuracy consistently lags behind the English accuracy for most models.

* The models on the right side of the chart (GPT-3.5, Qwen-72B, GPT-4) generally exhibit higher accuracy across all three metrics.

### Interpretation

The chart illustrates the performance of various language models on English and Chinese datasets. The fluctuating English accuracy suggests that some models are better suited for English tasks than others, while the consistently lower Chinese accuracy indicates a gap in model performance across the two languages. The upward trend in overall accuracy reflects the ordering of the x-axis, where models are sorted by their overall average accuracy in Table 2. GPT-3.5, Qwen-72B, and GPT-4 show the highest overall performance.

</details>

Figure 4: Mean accuracies for English, Chinese, and overall educational systems.

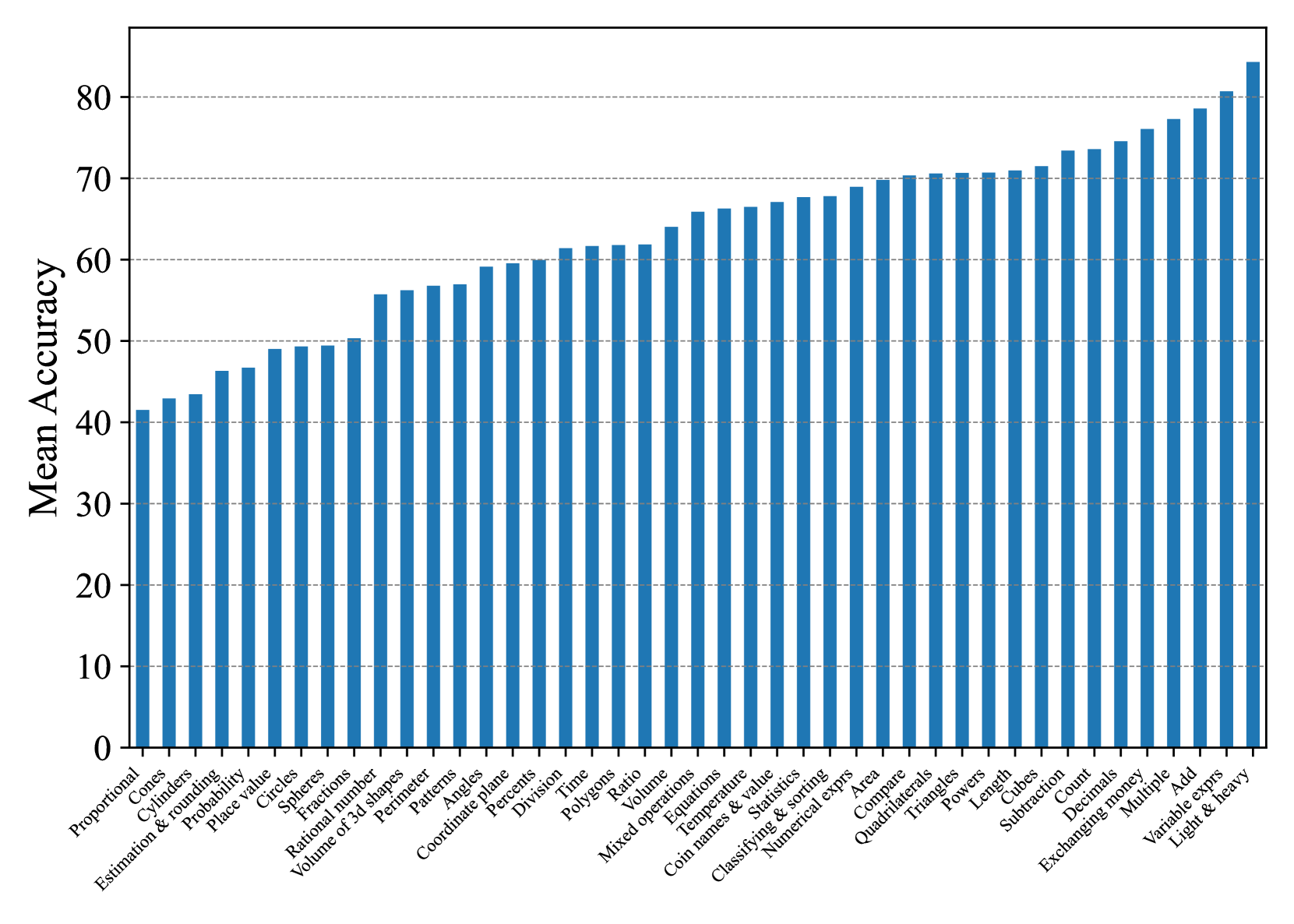

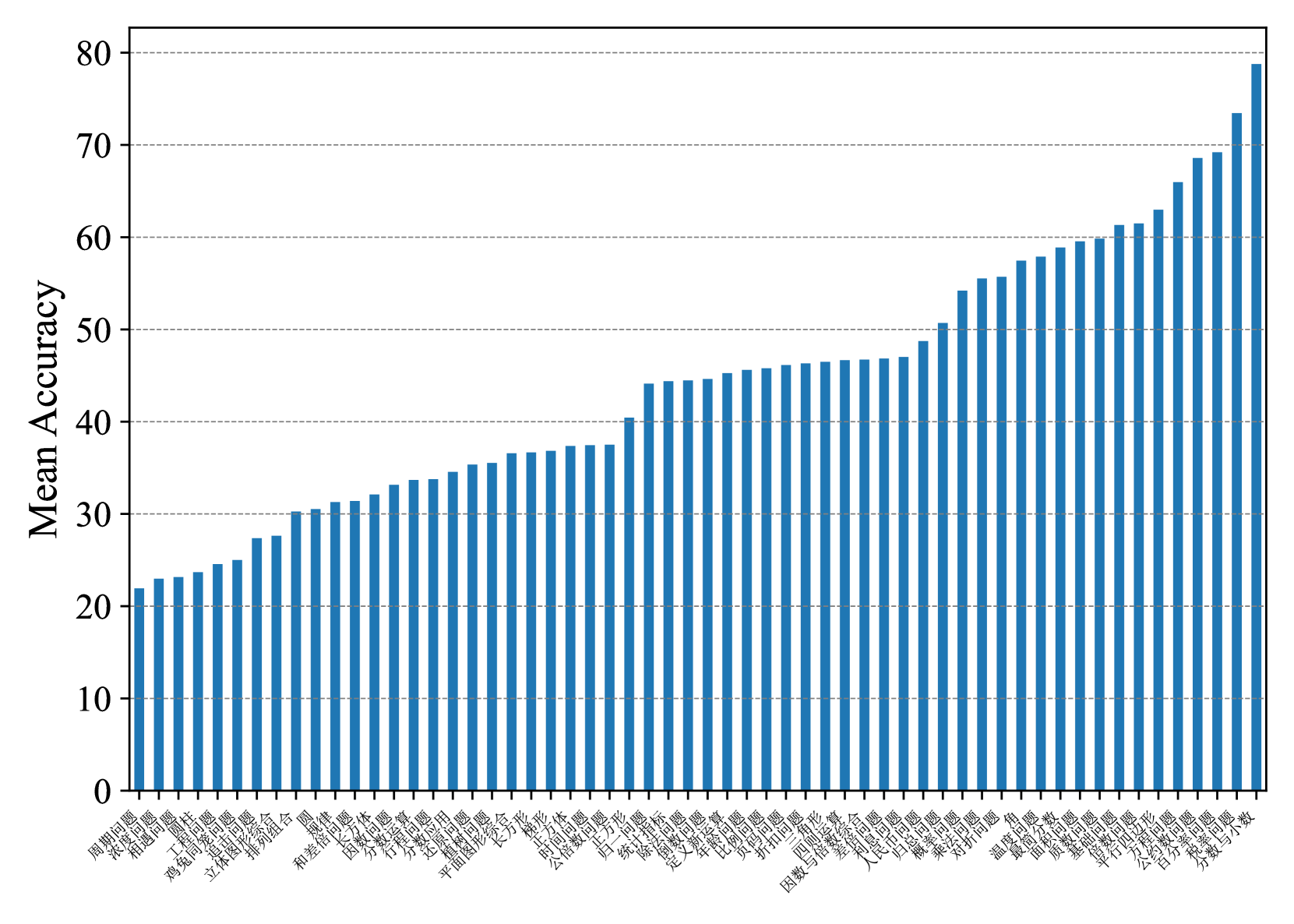

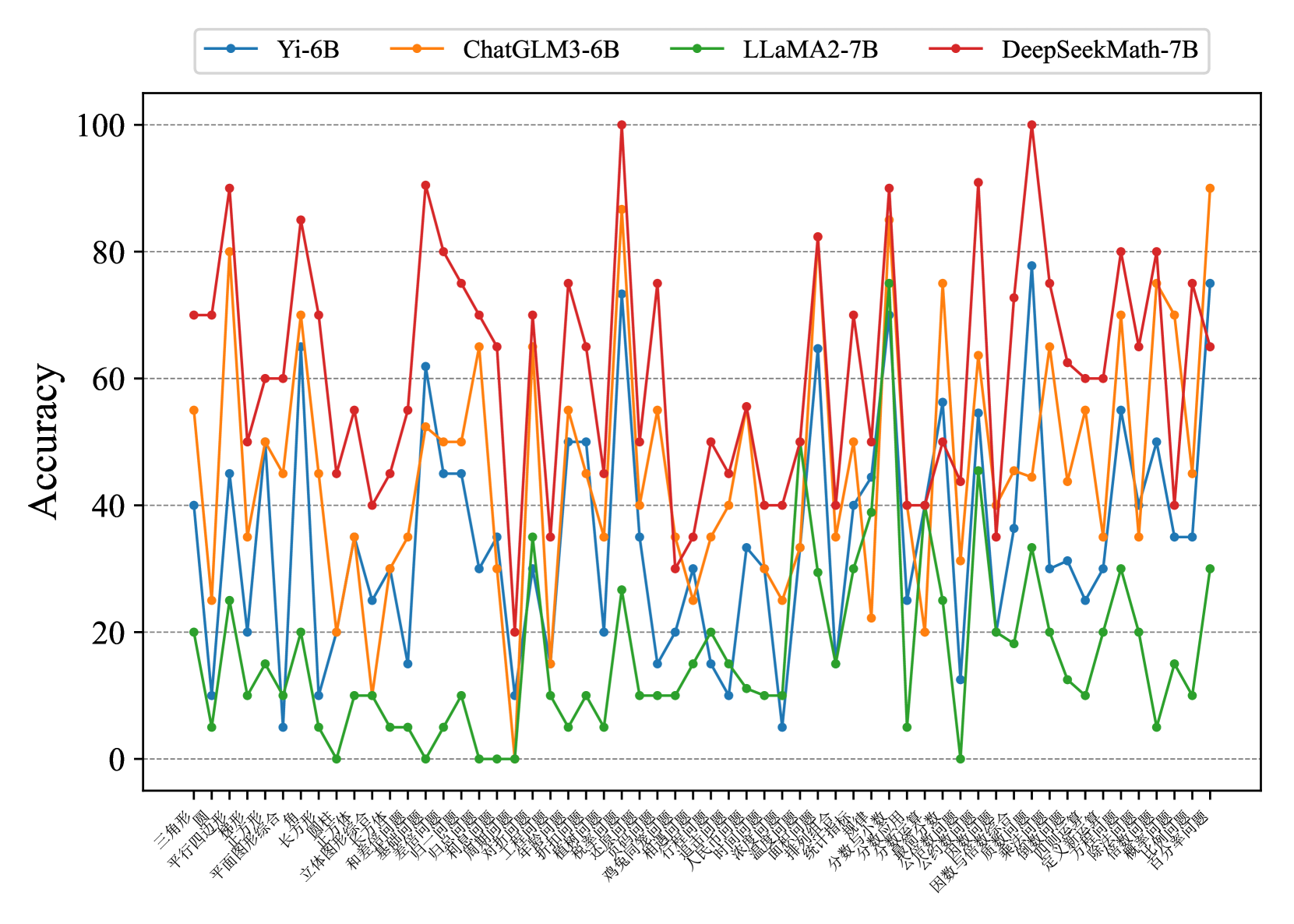

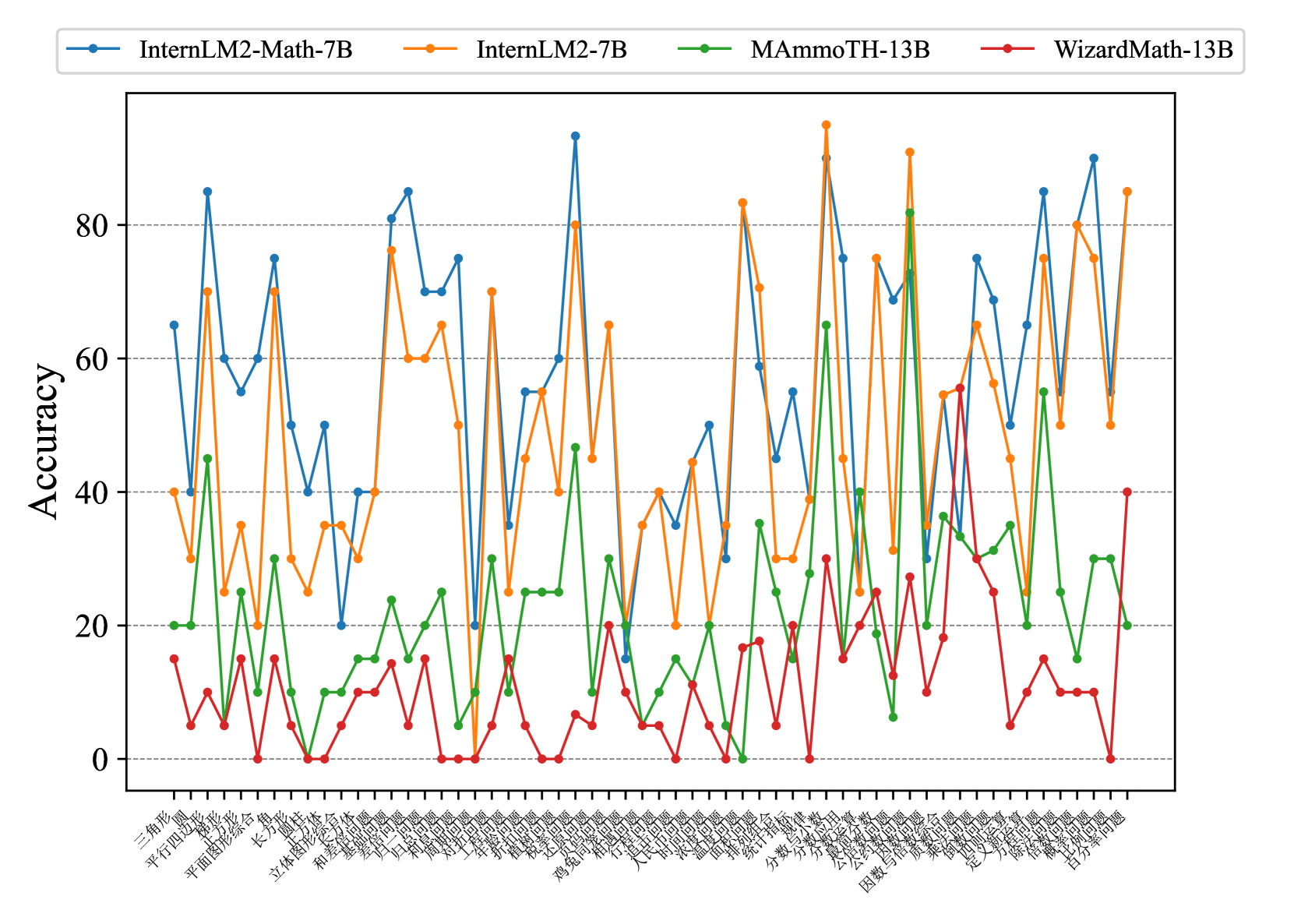

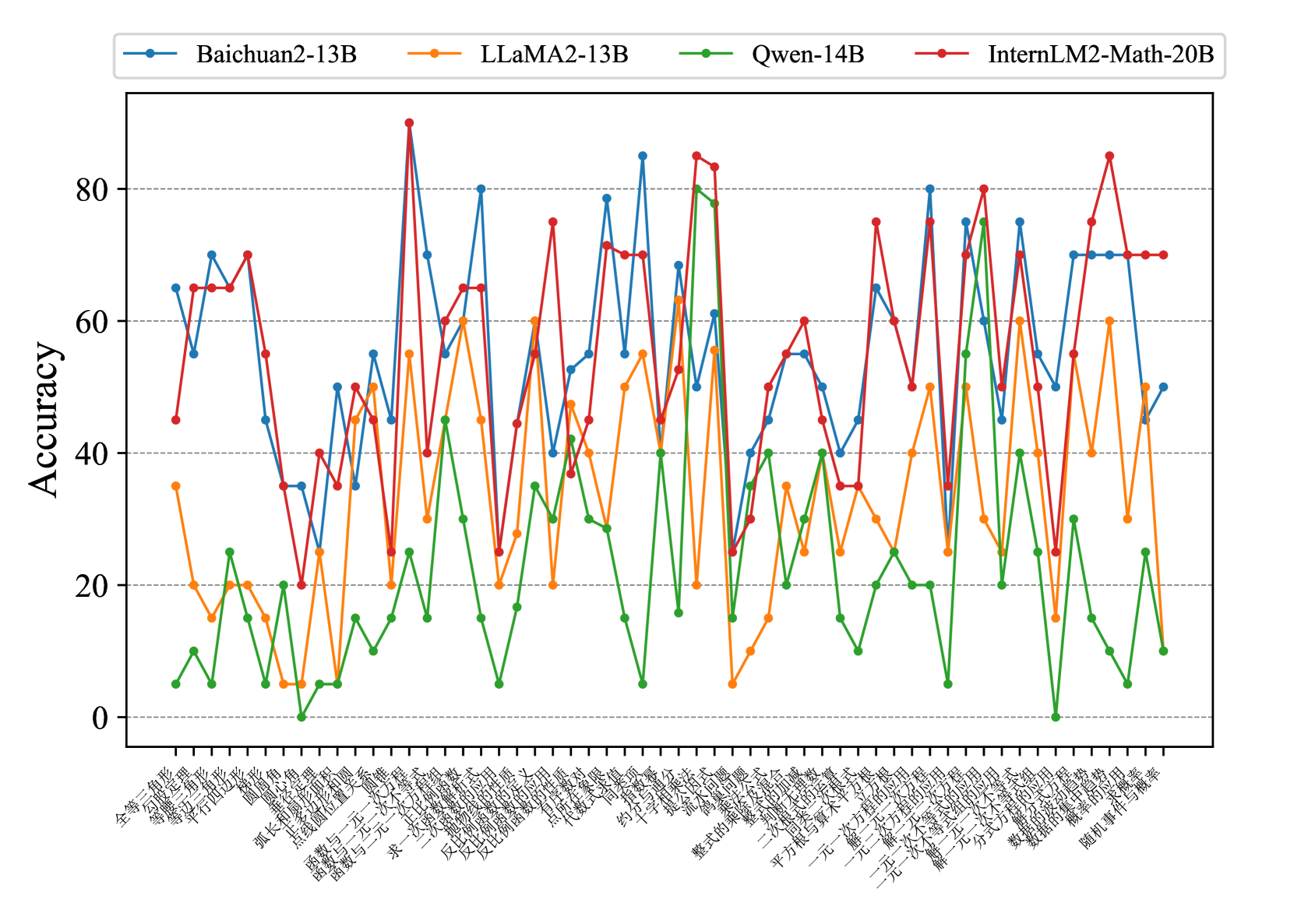

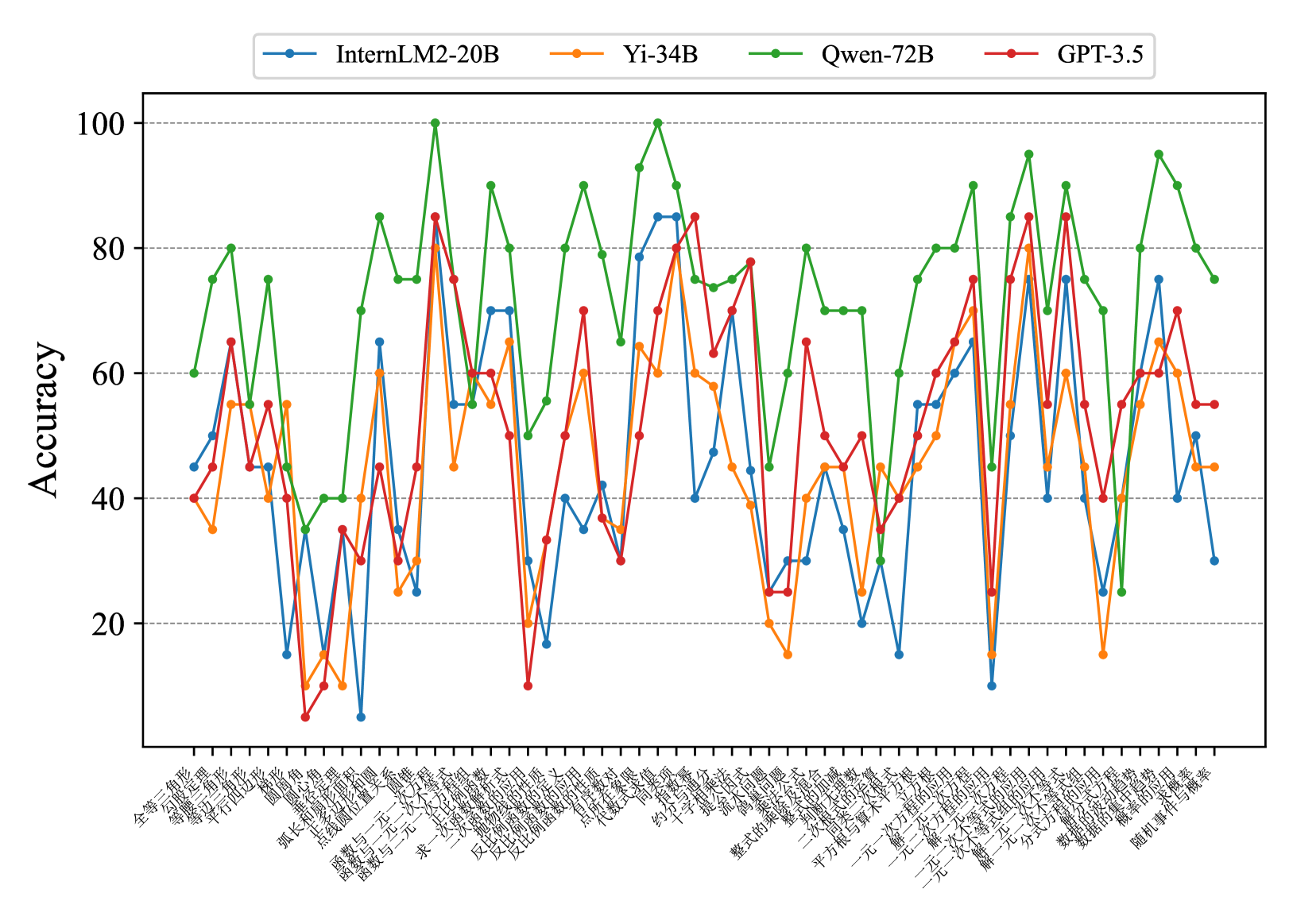

Average Concept-wise Accuracy.

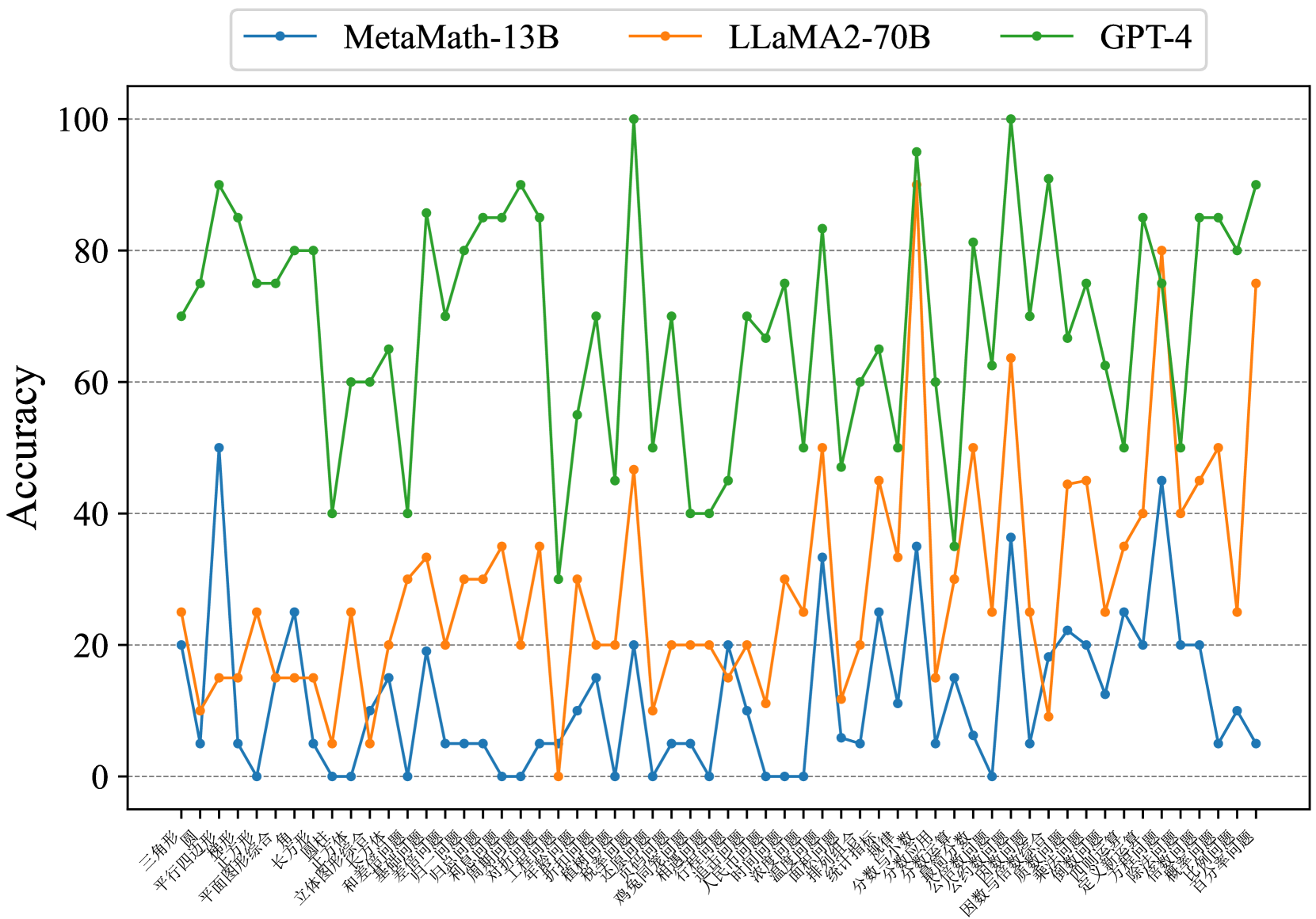

In Fig. 5 and Fig. 6, to better analyze the effectiveness of our ConceptMath, we further report the concept-wise accuracies, averaged over all evaluated models, for different mathematical concepts under zero-shot prompting on Middle-EN and Middle-ZH (see Appendix D for more results on Elementary-EN and Elementary-ZH). We observe that the accuracies of existing LLMs vary considerably across concepts. For example, for Middle-ZH in Fig. 6, around 18% of concepts have an accuracy lower than 30%. Thus, to improve the mathematical abilities of LLMs, the concepts with the largest room for improvement should be given the highest priority, which further shows the advantage of ConceptMath.
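
A statistic such as "share of concepts below 30%" can be derived from per-model concept accuracies as sketched below. The function names and the toy accuracies are hypothetical and only illustrate the computation; they are not values from Fig. 5 or Fig. 6.

```python
from statistics import mean
from typing import Dict, List, Tuple


def mean_concept_accuracy(per_model: Dict[str, Dict[str, float]]) -> Dict[str, float]:
    """Average each concept's accuracy over all models that report it."""
    concepts = set().union(*(scores.keys() for scores in per_model.values()))
    return {c: mean(s[c] for s in per_model.values() if c in s) for c in concepts}


def share_below(mean_acc: Dict[str, float], threshold: float = 30.0) -> Tuple[float, List[str]]:
    """Return the percentage of concepts under the threshold and their names, weakest first."""
    below = [c for c, a in mean_acc.items() if a < threshold]
    return 100.0 * len(below) / len(mean_acc), sorted(below, key=mean_acc.get)


# Hypothetical zero-shot concept accuracies for two models.
per_model = {
    "model_a": {"Circle": 20.0, "Slope": 80.0, "Factors": 70.0, "Quadrants": 35.0},
    "model_b": {"Circle": 30.0, "Slope": 70.0, "Factors": 60.0, "Quadrants": 45.0},
}

mean_acc = mean_concept_accuracy(per_model)
percent, weakest = share_below(mean_acc, threshold=30.0)
print(percent, weakest)
```

The returned list of weak concepts is the natural input for prioritizing which concepts to target with additional training data.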

<details>

<summary>x8.png Details</summary>

### Visual Description

## Bar Chart: Mean Accuracy by Mathematical Concept

### Overview

The image is a bar chart displaying the mean accuracy achieved on various mathematical concepts. The x-axis represents different mathematical topics, while the y-axis represents the mean accuracy, ranging from 0 to 80. The chart uses blue bars to represent the accuracy for each concept, sorted in ascending order.

### Components/Axes

* **Y-axis:** "Mean Accuracy", ranging from 0 to 80, with tick marks at intervals of 10.

* **X-axis:** Mathematical concepts, listed horizontally. The labels are somewhat overlapping due to space constraints.

* **Bars:** Blue bars representing the mean accuracy for each mathematical concept.

### Detailed Analysis

The bar chart presents the mean accuracy for a range of mathematical concepts. The concepts are listed along the x-axis, and the corresponding mean accuracy is indicated by the height of the blue bars. The bars are arranged in ascending order of accuracy.

Here's a breakdown of the approximate accuracy for some of the concepts:

* **Circle:** Approximately 34

* **Radical exprs:** Approximately 35

* **Exponents & scientific notation:** Approximately 39

* **Quadrants:** Approximately 40

* **Geometric sequences:** Approximately 41

* **Probability of compound events:** Approximately 42

* **Independent & dependent events:** Approximately 43

* **Rational & irrational numbers:** Approximately 43

* **Probability of simple & opposite events:** Approximately 44

* **Systems of equations:** Approximately 45

* **Scale drawings:** Approximately 46

* **Absolute value:** Approximately 47

* **Make predictions:** Approximately 48

* **One-variable statistics:** Approximately 49

* **Domain & range of functions:** Approximately 50

* **Two-variable functions:** Approximately 51

* **Linear functions:** Approximately 52

* **Arithmetic sequences:** Approximately 53

* **Mean, median, mode, & range:** Approximately 53

* **Financial literacy:** Approximately 54

* **Center & variability:** Approximately 55

* **Prime factorization:** Approximately 56

* **Percents:** Approximately 57

* **Divide:** Approximately 57

* **Fractions & decimals:** Approximately 58

* **Surface area & volume:** Approximately 59

* **Distance between two points:** Approximately 60

* **Square roots & cube roots:** Approximately 61

* **Congruence & similarity:** Approximately 62

* **Nonlinear functions:** Approximately 62

* **Variable exprs:** Approximately 63

* **Perimeter & area:** Approximately 64

* **Triangle:** Approximately 65

* **Add & subtract:** Approximately 66

* **Multiply:** Approximately 66

* **Decimals:** Approximately 67

* **Axes:** Approximately 68

* **Polygons:** Approximately 69

* **Factors:** Approximately 70

* **Trapezoids:** Approximately 71

* **Interpret functions:** Approximately 72

* **Lines & angles:** Approximately 73

* **Proportional relationships:** Approximately 74

* **Slope:** Approximately 75

* **Opposite integers:** Approximately 76

* **Inequalities:** Approximately 77

* **Consumer math:** Approximately 78

* **Polyhedra:** Approximately 79

* **Prime or composite:** Approximately 80

* **Square:** Approximately 81

* **Estimate metric measurements:** Approximately 82

The general trend is an upward slope, indicating increasing mean accuracy as we move from left to right along the x-axis.

### Key Observations

* The mean accuracy varies significantly across different mathematical concepts.

* "Estimate metric measurements" has the highest mean accuracy, while "Circle" has the lowest.

* The concepts are sorted by mean accuracy, making it easy to identify the easiest and most challenging topics.

### Interpretation

The bar chart provides insights into the relative difficulty of different mathematical concepts for the evaluated models. The data suggests that the models handle concepts like "Estimate metric measurements" well, while concepts like "Circle" pose a greater challenge. This information can help model developers focus on the concepts where models struggle the most. The arrangement of the bars allows for a quick visual assessment of the relative difficulty of each concept. The wide range of accuracy scores shows that a single average accuracy hides large differences between concepts.

</details>

Figure 5: Mean concept accuracies on Middle-EN.

Figure 6: Mean concept accuracies on Middle-ZH.

Concept-wise Accuracy.

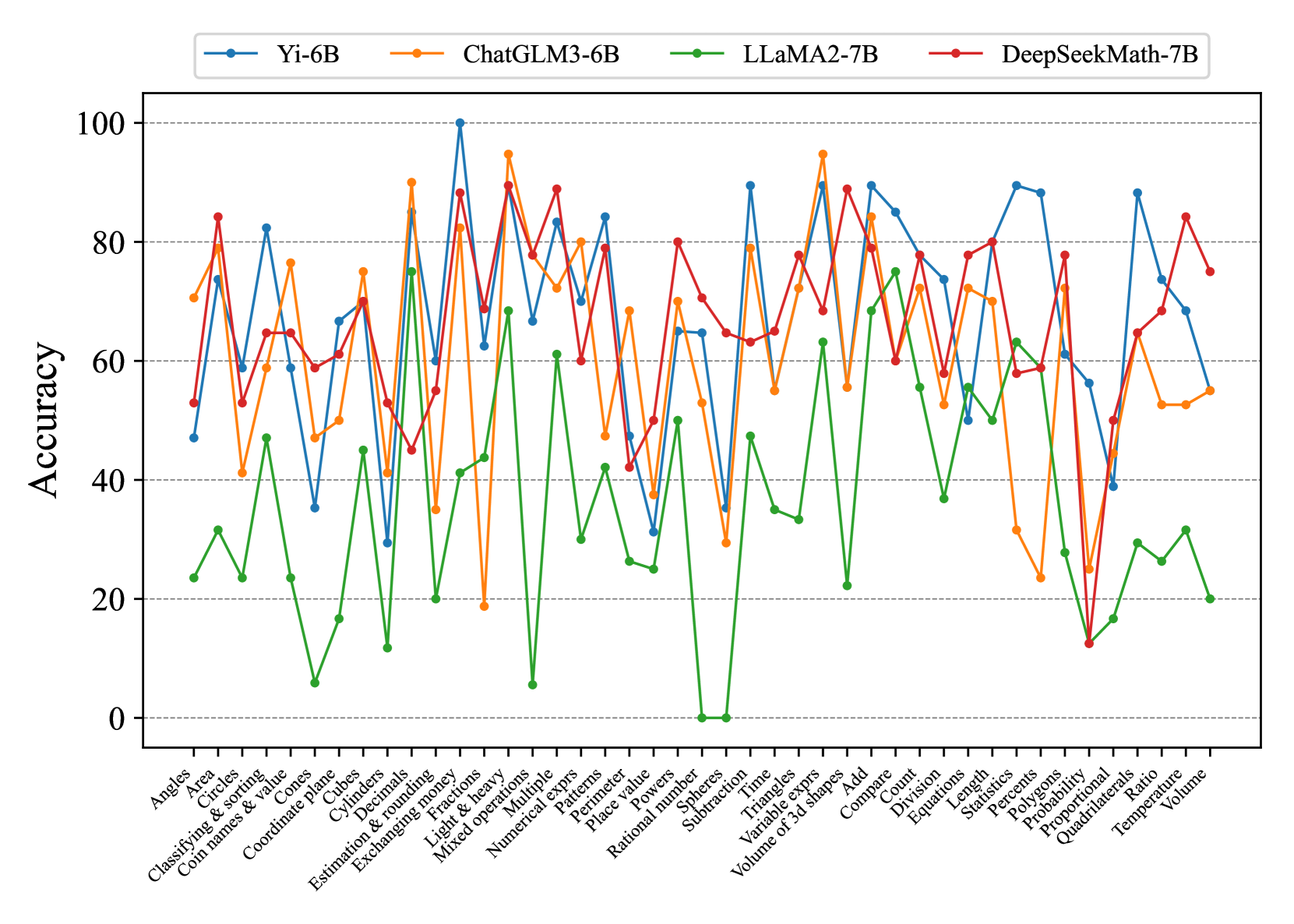

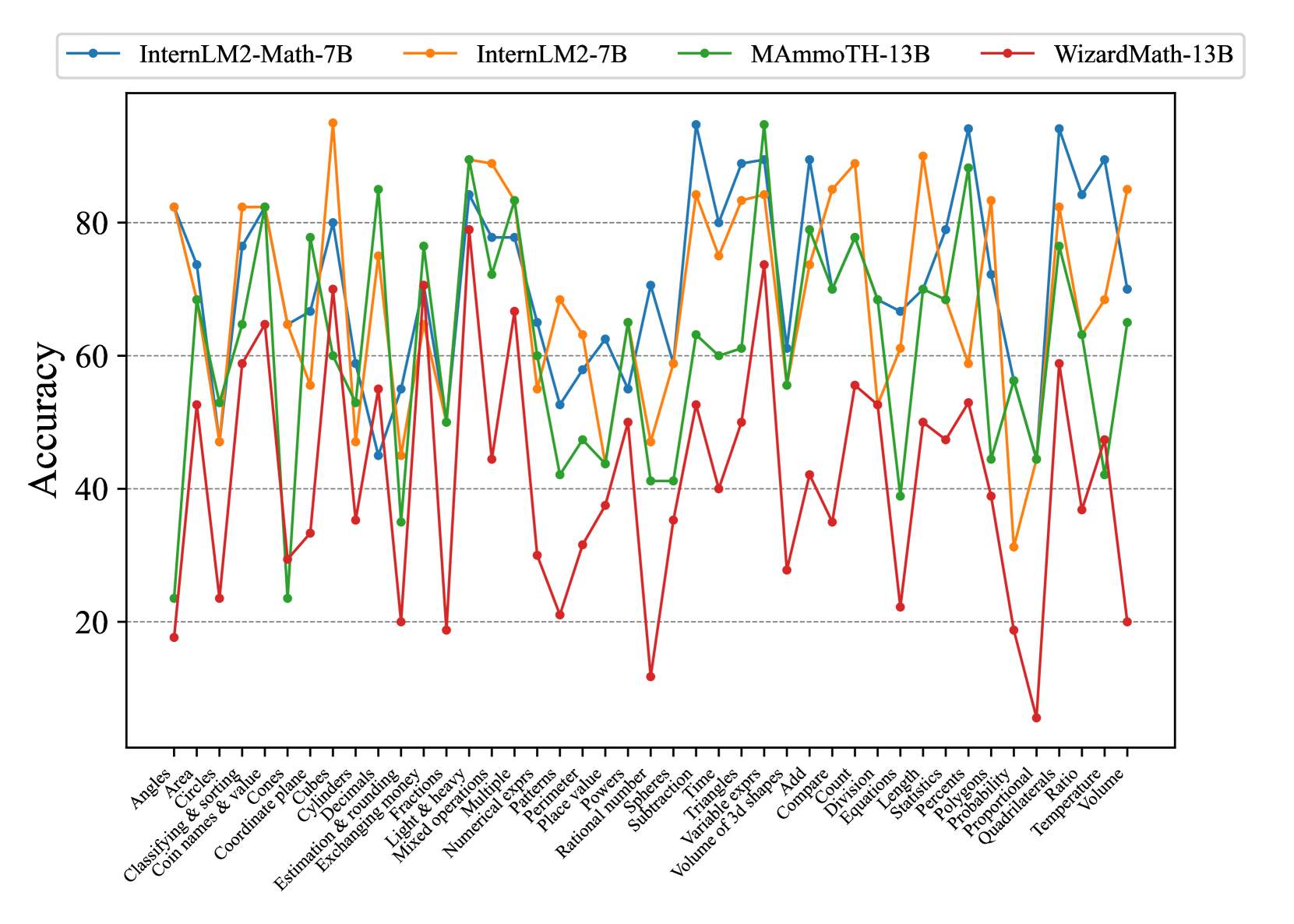

Fig. 7 and Fig. 8 show that most existing LLMs, whether open-source, closed-source, general-purpose, or math-specialized, exhibit notable differences in their concept accuracies in the zero-shot prompt setting. These disparities may stem from variations in training datasets, training strategies, and model sizes, which suggests that, apart from common weaknesses, each model has its own areas of deficiency. For brevity, we only show a subset of models on Middle-EN and Middle-ZH. The concept accuracies on the Elementary-EN and Elementary-ZH systems and the full results of all models can be found in Appendix D.

<details>

<summary>x10.png Details</summary>

### Visual Description

## Line Chart: Accuracy Comparison of Language Models on Math Problems

### Overview

The image is a line chart comparing the accuracy of three language models (MetaMath-13B, LLaMA2-70B, and GPT-4) across a range of mathematical problem types. The x-axis represents different math topics, and the y-axis represents accuracy, ranging from 0 to 100.

### Components/Axes

* **Title:** (Implicit) Accuracy Comparison of Language Models on Math Problems

* **X-axis:** Math problem types (listed below)

* **Y-axis:** Accuracy (ranging from 0 to 100) with gridlines at intervals of 20.

* **Legend:** Located at the top of the chart.

* Blue line: MetaMath-13B

* Orange line: LLaMA2-70B

* Green line: GPT-4

**X-axis Labels (Math Problem Types):**

1. Add & subtract

2. Arithmetic sequences

3. Congruence & similarity

4. Consumer math

5. Counting principles

6. Distance between two points

7. Divide

8. Domain & range of functions

9. Estimate metric measurements

10. Equivalent exprs

11. Exponents & scientific notation

12. Financial literacy

13. Fractions & decimals

14. Geometric sequences

15. Interpret functions

16. Linear equations

17. Linear functions

18. Lines & angles

19. Make predictions

20. Multiply

21. Non-linear functions

22. One-variable statistics

23. Percents

24. Perimeter & area

25. Prime factorization

26. Prime & composite

27. Probability of compound event

28. Probability of one event

29. Probability of simple & composite events

30. Probability of simple & opposite events

31. Proportional relationships

32. Quadrants

33. Rational & irrational numbers

34. Scale drawings

35. Slope

36. Square roots & cube roots

37. Square, area & volume

38. Surface area & volume

39. Systems of equations

40. Triangle

41. Two-variable statistics

42. Absolute value

43. Center & variability

44. Circle

45. Factors

46. Independent & dependent events

47. Inequalities

48. Mean, median, mode, & range

49. Opposite integers

50. Outlier

51. Polygons

52. Polyhedra

53. Radical exprs

54. Square

55. Transformations

56. Trapezoids

57. Variable exprs

### Detailed Analysis

* **MetaMath-13B (Blue):** The accuracy fluctuates significantly across different problem types. It shows very low accuracy (near 0) on "Probability of one event" and "Scale drawings". It peaks around 60-70 on "Distance between two points" and "Equivalent exprs". Overall, it has the lowest average accuracy.

* **LLaMA2-70B (Orange):** The accuracy is generally higher than MetaMath-13B but lower than GPT-4. It shows a more consistent performance across different problem types, with fewer extreme dips. It peaks around 75 on "Equivalent exprs".

* **GPT-4 (Green):** This model consistently demonstrates the highest accuracy across almost all problem types. The accuracy generally stays above 60, often reaching 80-100.

**Specific Data Points (Approximate):**

| Problem Type | MetaMath-13B | LLaMA2-70B | GPT-4 |

| :---------------------------- | :----------- | :----------- | :---- |

| Add & subtract | ~50 | ~55 | ~65 |

| Distance between two points | ~60 | ~55 | ~95 |

| Equivalent exprs | ~70 | ~75 | ~85 |

| Probability of one event | ~0 | ~20 | ~75 |

| Scale drawings | ~5 | ~45 | ~65 |

| Absolute value | ~50 | ~60 | ~90 |

### Key Observations

* GPT-4 significantly outperforms MetaMath-13B and LLaMA2-70B across most math problem types.

* MetaMath-13B has very poor performance on specific problem types like "Probability of one event" and "Scale drawings".

* LLaMA2-70B provides a more stable, and generally higher, accuracy compared to MetaMath-13B.

* All models show variability in accuracy depending on the specific math topic.

### Interpretation

The chart demonstrates the varying capabilities of different language models in solving mathematical problems. GPT-4's superior performance suggests a more robust understanding of mathematical concepts and problem-solving strategies. The weaknesses of MetaMath-13B in specific areas highlight potential gaps in its training data or architecture. LLaMA2-70B's more consistent performance indicates a more balanced but less specialized skill set. The data suggests that the choice of language model for math-related tasks should be carefully considered based on the specific types of problems being addressed. The large variance in accuracy across problem types for all models suggests that even the best models have areas for improvement.

</details>

Figure 7: Concept accuracies on Middle-EN.

<details>

<summary>x11.png Details</summary>

### Visual Description

## Line Chart: Model Accuracy Comparison

### Overview

The image is a line chart comparing the accuracy of three language models: MetaMath-13B, LLaMA2-70B, and GPT-4, across a series of mathematical problems. The x-axis represents different problem types (labeled in Chinese), and the y-axis represents accuracy, ranging from 0 to 80.

### Components/Axes

* **Title:** None visible.

* **X-axis:** Represents different mathematical problem types, labeled in Chinese. The labels are closely spaced and difficult to read individually.

* **Y-axis:** "Accuracy", ranging from 0 to 80 in increments of 20. Horizontal gridlines are present at each increment.

* **Legend:** Located at the top of the chart.

* Blue line: MetaMath-13B

* Orange line: LLaMA2-70B

* Green line: GPT-4

### Detailed Analysis

The chart displays the accuracy of each model across a range of mathematical problems. The x-axis labels are in Chinese, representing different problem types.

Here's a breakdown of each model's performance:

* **MetaMath-13B (Blue):** Generally shows the lowest accuracy among the three models. The accuracy fluctuates significantly across different problem types, ranging from approximately 0 to 60.

* **LLaMA2-70B (Orange):** Shows a moderate level of accuracy, generally higher than MetaMath-13B but lower than GPT-4. The accuracy also fluctuates, ranging from approximately 5 to 65.

* **GPT-4 (Green):** Consistently demonstrates the highest accuracy across most problem types. The accuracy fluctuates, ranging from approximately 30 to 90.

Here are some approximate data points for each model at the beginning and end of the chart:

* **MetaMath-13B:**

* First data point: Accuracy ~40

* Last data point: Accuracy ~10

* **LLaMA2-70B:**

* First data point: Accuracy ~45

* Last data point: Accuracy ~55

* **GPT-4:**

* First data point: Accuracy ~50

* Last data point: Accuracy ~90

Here is a transcription of the x-axis labels, along with their English translations:

| Chinese Label | Approximate English Translation |

|---|---|

| 全等 | Congruence |

| 等腰三角形 | Isosceles triangle |

| 等边三角形 | Equilateral triangle |

| 平行四边形 | Parallelogram |

| 弧长 | Arc length |

| 圆锥 | Cone |

| 函数与一次函数 | Function and linear function |

| 函数与二次函数 | Function and quadratic function |

| 反比例函数 | Inverse proportional function |

| 整式的加减 | Addition and subtraction of polynomials |

| 一元一次方程 | Linear equation in one variable |

| 平方根 | Square root |

| 用平方根解一元二次方程 | Solving quadratic equations in one variable using square roots |

| 用公式法解一元二次方程 | Solving quadratic equations in one variable using the quadratic formula |

| 用因式分解法解一元二次方程 | Solving quadratic equations in one variable by factoring |

| 一元二次方程的应用 | Application of quadratic equations in one variable |

| 一元一次不等式 | Linear inequality in one variable |

| 一元一次不等式的应用 | Application of linear inequalities in one variable |

| 随机事件与概率 | Random events and probability |

### Key Observations

* GPT-4 consistently outperforms MetaMath-13B and LLaMA2-70B across the majority of problem types.

* MetaMath-13B generally has the lowest accuracy.

* All three models exhibit significant fluctuations in accuracy depending on the problem type.

* The performance gap between GPT-4 and the other two models is substantial.

### Interpretation

The data suggests that GPT-4 is significantly more proficient at solving the mathematical problems represented on the x-axis compared to MetaMath-13B and LLaMA2-70B. The fluctuations in accuracy across different problem types indicate that each model has strengths and weaknesses in specific areas of mathematics. The consistent underperformance of MetaMath-13B suggests it may require further training or optimization to achieve comparable accuracy to the other models. The chart highlights the varying capabilities of different language models in tackling mathematical reasoning tasks.

</details>

Figure 8: Concept accuracies on Middle-ZH.

| Model | Elementary-EN | Middle-EN | Elementary-ZH | Middle-ZH | Avg. $\downarrow$ |

| --- | --- | --- | --- | --- | --- |

| Yi-6B | 5.30 / 1.73 | 5.21 / 1.37 | 0.04 / 0.20 | 0.36 / 0.35 | 2.73 / 0.91 |

| ChatGLM3-6B | 7.42 / 0.22 | 7.55 / 0.23 | 0.11 / 0.02 | 0.35 / 0.05 | 3.86 / 0.13 |

| InternLM2-Math-7B | 7.42 / 0.22 | 7.55 / 0.23 | 0.11 / 0.02 | 0.35 / 0.05 | 3.86 / 0.13 |

| InternLM2-7B | 5.36 / 1.03 | 5.27 / 0.84 | 0.01 / 0.37 | 0.33 / 0.49 | 2.74 / 0.68 |

| MAmmoTH-13B | 7.67 / 0.47 | 7.97 / 0.46 | 0.00 / 0.03 | 0.35 / 0.03 | 4.00 / 0.25 |

| WizardMath-13B | 8.41 / 0.35 | 8.23 / 0.34 | 0.00 / 0.02 | 0.55 / 0.02 | 4.30 / 0.18 |

| MetaMath-13B | 7.67 / 0.47 | 7.97 / 0.46 | 0.00 / 0.03 | 0.35 / 0.03 | 4.00 / 0.25 |

| Baichuan2-13B | 7.20 / 1.43 | 6.58 / 1.18 | 0.05 / 0.54 | 0.41 / 0.65 | 3.56 / 0.95 |

| LLaMA2-13B | 6.80 / 0.73 | 6.36 / 0.64 | 0.01 / 0.15 | 0.56 / 0.16 | 3.43 / 0.42 |

| Qwen-14B | 11.04 / 1.58 | 9.73 / 1.08 | 1.43 / 1.27 | 0.70 / 0.93 | 5.73 / 1.22 |

| InternLM2-Math-20B | 5.58 / 1.30 | 5.51 / 0.99 | 0.03 / 0.47 | 0.34 / 0.47 | 2.86 / 0.81 |

| InternLM2-20B | 7.20 / 1.43 | 6.58 / 1.18 | 0.05 / 0.54 | 0.41 / 0.65 | 3.56 / 0.95 |

| GPT-3.5 | 9.48 / - | 9.21 / - | 0.00 / - | 0.31 / - | 4.75 / - |

| GPT-4 | 8.68 / - | 8.24 / - | 0.15 / - | 0.68 / - | 4.44 / - |

Table 3: Data contamination rate of LLMs. We provide two different contamination detection methods. The values in the table represent “Rouge / Prob”. Note that the second method based on output probability distributions can only be applied to the open-source models.

3.3 Analysis

Contamination.

To determine whether a text is in the pretraining data of an LLM, we apply two contamination detection methods (i.e., Rouge-based and Prob-based methods) to our ConceptMath and report the results in Table 3. Specifically, for the Rouge-based method, we feed the first 50% of the question to the model and compute the Rouge-L score between the generated continuation and the ground-truth last 50% of the text, where a lower Rouge-L score means a lower contamination rate. For the Prob-based method, we follow Shi et al. (2023) and use the MIN-K% probability metric, which first obtains the probability of each token in the text, then selects the K% of tokens with the lowest probabilities and calculates their average log-likelihood. If the average log-likelihood is high, the text is likely in the pretraining data. Note that we set $K$ to 10 in our setting. In Table 3, we observe that the contamination rates on our ConceptMath are very low, which means that ConceptMath can provide a reasonable evaluation of existing LLMs.
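
Both detection scores can be sketched in plain Python. The code below is a simplified illustration, not the implementation behind Table 3: it assumes pre-tokenized text, uses the F1 form of Rouge-L based on the longest common subsequence, and takes per-token log-probabilities as given (obtaining them from a model is out of scope). All function names are hypothetical.

```python
from statistics import mean
from typing import List, Sequence, Tuple


def split_half(tokens: Sequence[str]) -> Tuple[Sequence[str], Sequence[str]]:
    """Split a token sequence into a prompt half and a reference half."""
    mid = len(tokens) // 2
    return tokens[:mid], tokens[mid:]


def lcs_length(a: Sequence[str], b: Sequence[str]) -> int:
    """Length of the longest common subsequence (row-by-row dynamic programming)."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            curr.append(prev[j - 1] + 1 if x == y else max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l_f1(candidate: Sequence[str], reference: Sequence[str]) -> float:
    """Rouge-L as the F1 of LCS-based precision and recall (assumed variant)."""
    if not candidate or not reference:
        return 0.0
    lcs = lcs_length(candidate, reference)
    if lcs == 0:
        return 0.0
    precision = lcs / len(candidate)
    recall = lcs / len(reference)
    return 2 * precision * recall / (precision + recall)


def min_k_prob(token_logprobs: List[float], k: float = 10.0) -> float:
    """Average log-likelihood of the k% of tokens with the lowest probability."""
    if not token_logprobs:
        raise ValueError("token_logprobs must not be empty")
    n = max(1, int(len(token_logprobs) * k / 100))
    return mean(sorted(token_logprobs)[:n])


prompt, reference = split_half("find the perimeter of the rectangle".split())
score = rouge_l_f1("the cat sat".split(), "the dog sat".split())
# Hypothetical per-token log-probabilities for one question.
logprobs = [-0.1, -0.2, -3.0, -0.5, -0.05, -4.0, -0.3, -0.2, -0.1, -1.0]
print(score, min_k_prob(logprobs, k=10), min_k_prob(logprobs, k=20))
```

In the Rouge-based setting, `candidate` would be the model's continuation of `prompt` and `reference` the held-out second half, so a high score signals memorization. For MIN-K%, a value closer to zero means that even the least likely tokens were predicted confidently.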

| Concept | Concept Acc | Error Type | Example |

| --- | --- | --- | --- |

| Perimeter | 26.32 | Calculation Error | Q: Find the perimeter of a 16 by 18 feet rectangle. A: The perimeter is 16 + 18 + 16 + 18 = 72 feet. |

| | | Knowledge Error | Q: The perimeter of a rectangular sticker is 14 centimeters. The sticker is 2 centimeters wide. How tall is it? A: The height equals the perimeter subtracts the width. The height of the rectangle is $14-12=12$ centimeters. |

| Polygons | 11.11 | Knowledge Error | Q: A regular polygon has an interior angle that measures 120 degrees. How many sides does this polygon have? A: Let $n$ be the number of sides of the polygon. We have that $n \times 120 = 360$. Hence: $n=3$. |

| Cylinders | 11.76 | Calculation Error | Q: How much soup can a cylinder-shaped can store, if the radius of the can is 3 inches and the height is 5 inches? A: … The volume of the can is $V = \pi \times 3^{2} \times 5 = 30\pi$. |

Table 4: Error reasons of several unmastered concepts of LLaMA2-13B.

Unmastered Concepts.

We also highlight several concepts that LLaMA2-13B has not mastered in Table 4, together with representative error cases, which shows that ConceptMath is effective in guiding further refinement of existing LLMs.
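For reference, the correct answers to the four questions quoted in Table 4 can be checked with a few lines of arithmetic. The sketch below is not part of the original evaluation; it only applies the standard formulas (rectangle perimeter, exterior-angle sum of a polygon, cylinder volume) to the numbers given in the questions.

```python
import math

# Perimeter of a 16 ft by 18 ft rectangle: P = 2 * (length + width).
perimeter = 2 * (16 + 18)  # 68 feet, whereas the model answered 72

# Sticker with perimeter 14 cm and width 2 cm: P = 2 * (width + height),
# so height = P / 2 - width.
height = 14 / 2 - 2  # 5.0 centimeters

# Regular polygon with 120-degree interior angles: each exterior angle is
# 180 - 120 = 60 degrees, and the exterior angles sum to 360 degrees.
sides = 360 // (180 - 120)  # 6 sides, whereas the model answered 3

# Cylinder with radius 3 in and height 5 in: V = pi * r^2 * h.
volume_over_pi = 3 ** 2 * 5  # 45, i.e. V = 45*pi, whereas the model gave 30*pi
volume = math.pi * volume_over_pi

print(perimeter, height, sides, volume_over_pi)
```

The perimeter and cylinder cases apply the right formula but slip in the arithmetic, while the sticker and polygon cases start from a wrong relation, which matches the "Calculation Error" and "Knowledge Error" labels in the table.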

| Models | LLaMA2 | LLaMA2 (w/ MMQA) | LLaMA2 (w/ MMQA & CS) |

| --- | --- | --- | --- |

| Cones | 0.00 | 17.65 | 23.53 |

| Spheres | 5.88 | 29.41 | 35.29 |

| Polygons | 11.11 | 61.11 | 66.67 |

| Rational Number | 11.76 | 23.53 | 52.94 |

| Cylinders | 11.76 | 35.29 | 47.06 |

| Angles | 11.76 | 47.06 | 58.82 |

| Probability | 18.75 | 25.00 | 75.00 |

| Perimeter | 26.32 | 42.11 | 63.16 |

| Volume | 27.78 | 38.89 | 66.67 |

| Proportional | 27.78 | 33.33 | 44.44 |

| Avg Acc. (over 10 concepts) | 15.29 | 36.88 | 53.36 |

| Avg Acc. (over 33 concepts) | 51.94 | 58.14 | 60.67 |

| Overall Acc. | 44.02 | 53.94 | 59.29 |

Table 5: Results of fine-tuning models. “MMQA” and “CS” denote MetaMathQA and our constructed Concept-Specific training datasets, respectively. Introducing CS data specifically for the bottom 10 concepts significantly enhances these concepts’ performance, while slightly improving the performance across the remaining 33 concepts.

Evaluation Prompting.

Whereas few-shot or CoT prompting can boost closed-source models, we find in Table 2 that zero-shot prompting is more effective for certain open-source LLMs. This disparity may arise either because these models are not powerful enough to possess mathematical CoT capabilities (Yu et al., 2023; Wei et al., 2022) or because they have already incorporated CoT data during training (Longpre et al., 2023). Consequently, to ensure a comprehensive analysis, we employ all three prompting methods for evaluation.
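To make the three prompting settings concrete, the sketch below builds one prompt per setting. The templates are assumptions for illustration only: the `Q:`/`A:` layout, the "Let's think step by step." trigger, and the `build_prompt` helper are hypothetical and are not the prompts used in the paper.

```python
# Assumed CoT trigger phrase; the paper's actual wording is not given here.
COT_TRIGGER = "Let's think step by step."


def build_prompt(question, mode="zero-shot", demos=()):
    """Build an evaluation prompt for one question.

    mode: "zero-shot", "cot", or "few-shot".
    demos: (question, answer) pairs, used only in few-shot mode.
    """
    if mode == "zero-shot":
        return f"Q: {question}\nA:"
    if mode == "cot":
        return f"Q: {question}\nA: {COT_TRIGGER}"
    if mode == "few-shot":
        shots = "".join(f"Q: {q}\nA: {a}\n\n" for q, a in demos)
        return f"{shots}Q: {question}\nA:"
    raise ValueError(f"unknown mode: {mode}")


question = "Find the perimeter of a 16 by 18 feet rectangle."
demos = [("What is 2 + 3?", "5")]
for mode in ("zero-shot", "cot", "few-shot"):
    print(build_prompt(question, mode, demos))
    print("---")
```

Keeping the question text identical across the three modes means any accuracy difference can be attributed to the prompting method and not to the wording of the problem.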

Efficient Fine-tuning.

To show the effect of efficient fine-tuning, we take LLaMA2-13B as an example in Table 5. Specifically, we first select the 10 concepts on which LLaMA2-13B has the lowest accuracies in Elementary-EN. Then, we crawl 495 samples (about 50 samples per concept) using the trained classifier as the Concept-Specific (CS) training data (see Appendix B for more details). Meanwhile, to avoid overfitting, we introduce the MetaMathQA (MMQA; Yu et al., 2023) data to preserve general mathematical abilities. After that, we fine-tune LLaMA2-13B either with MMQA only (i.e., LLaMA2 (w/ MMQA)) or with both MMQA and CS data (i.e., LLaMA2 (w/ MMQA & CS)). In Table 5, we observe that LLaMA2 (w/ MMQA & CS) achieves significant improvements on the lowest 10 concepts while preserving performance on the other 33 concepts, which shows the effect of efficient fine-tuning and the advantages of ConceptMath.
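The selection and mixing steps above can be sketched as follows. This is an illustrative outline under stated assumptions, not the authors' pipeline: samples are represented as dictionaries with a hypothetical `"concept"` key, the helper names are invented, and the accuracy values are a subset of the base LLaMA2 column of Table 5.

```python
import random


def select_weakest(concept_acc, k):
    """Return the k concepts with the lowest accuracy (ties broken by name)."""
    ranked = sorted(concept_acc.items(), key=lambda kv: (kv[1], kv[0]))
    return [name for name, _ in ranked[:k]]


def build_training_mix(general, concept_specific, weak_concepts, seed=0):
    """Mix general data with concept-specific samples for the weak concepts.

    general: list of samples that preserve overall ability (e.g. MMQA).
    concept_specific: list of dicts with a "concept" key (assumed schema).
    """
    weak = set(weak_concepts)
    picked = [s for s in concept_specific if s["concept"] in weak]
    mix = list(general) + picked
    random.Random(seed).shuffle(mix)  # fixed seed for reproducibility
    return mix


# Base LLaMA2 accuracies for a few concepts, copied from Table 5.
acc = {
    "Cones": 0.00,
    "Spheres": 5.88,
    "Polygons": 11.11,
    "Perimeter": 26.32,
    "Volume": 27.78,
}
weakest = select_weakest(acc, k=3)
print(weakest)
```

In the paper's setting `k` would be 10 and the concept-specific pool would hold the 495 crawled samples; the resulting mix would then be passed to an ordinary supervised fine-tuning loop.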

4 Related Work

Large Language Models for Mathematics.

Large Language Models (LLMs) such as GPT-3.5 and GPT-4 have exhibited promising capabilities in complex mathematical tasks. However, the proficiency of open-source alternatives like LLaMA (Touvron et al., 2023a) and LLaMA2 (Touvron et al., 2023b) remains notably inferior on such tasks, particularly in handling non-English problems. In contrast, models like Baichuan2 (Baichuan, 2023) and Qwen (Bai et al., 2023b), which are pretrained on multilingual datasets (i.e., Chinese and English), have achieved remarkable performance. Recently, many domain-specialized math language models have been proposed. For example, MetaMath (Yu et al., 2023) builds on the LLaMA2 models and fine-tunes them on the constructed MetaMathQA dataset. MAmmoTH (Yue et al., 2023) synergizes Chain-of-Thought (CoT) and Program-of-Thought (PoT) rationales.

Mathematical Reasoning Benchmarks.

Recently, many mathematical datasets (Roy and Roth, 2015; Koncel-Kedziorski et al., 2015; Lu et al., 2023; Huang et al., 2016; Miao et al., 2020; Patel et al., 2021) have been proposed. For example, SingleOp (Roy et al., 2015) expands the scope to include more complex operations like multiplication and division. Math23k (Wang et al., 2017) gathers 23,161 problems labeled with structured equations and corresponding answers. GSM8K (Cobbe et al., 2021) is a widely used dataset whose problems require a sequence of elementary calculations with basic arithmetic operations.

Fine-Grained Benchmarks.

Traditional benchmarks focus on assessing certain abilities of models on one task (Guo et al., 2023b; Wang et al., 2023a; Liu et al., 2020; Guo et al., 2022; Chai et al., 2024; Liu et al., 2024; Guo et al., 2024, 2023c; Bai et al., 2023a; Liu et al., 2022; Guo et al., 2023a; Bai et al., 2024; Liu et al., 2021), e.g., reading comprehension (Rajpurkar et al., 2018), machine translation (Bojar et al., 2014), and summarization (Narayan et al., 2018). For example, the GLUE benchmark (Wang et al., 2019) combines a collection of tasks and has witnessed superhuman performance from pretrained models (Kenton and Toutanova, 2019; Radford et al., 2019). Hendrycks et al. (2021a) introduced MMLU, a benchmark with multiple-choice questions across 57 subjects including STEM, humanities, and social sciences, for assessing performance and identifying weaknesses. Srivastava et al. (2022) proposed BIG-bench with over 200 tasks. To help enhance the mathematical capabilities of LLMs, we introduce ConceptMath, a comprehensive mathematical reasoning dataset designed to assess model performance across more than 200 diverse mathematical concepts in both Chinese and English.

5 Conclusion

We introduce a new bilingual concept-wise math reasoning dataset called ConceptMath to assess models across a diverse set of concepts. First, ConceptMath covers more than 200 concepts spanning elementary and middle school in the mainstream English and Chinese education systems. Second, we extensively evaluate existing LLMs with three prompting methods, which can guide further improvements to the mathematical abilities of these LLMs. Third, we analyze contamination rates and error cases, and provide a simple and efficient fine-tuning strategy to address the weaknesses.

Limitations.

Human effort is required to carefully design the hierarchical systems of mathematical concepts. In the future, we have three plans: (1) extend the input to multiple modalities; (2) extend the education systems to high school and college levels; (3) extend the reasoning abilities to more STEM fields.

References

- Anthropic (2023) Anthropic. 2023. Model card and evaluations for claude models.

- Bai et al. (2024) Ge Bai, Jie Liu, Xingyuan Bu, Yancheng He, Jiaheng Liu, Zhanhui Zhou, Zhuoran Lin, Wenbo Su, Tiezheng Ge, Bo Zheng, and Wanli Ouyang. 2024. Mt-bench-101: A fine-grained benchmark for evaluating large language models in multi-turn dialogues. arXiv.

- Bai et al. (2023a) Jiaqi Bai, Hongcheng Guo, Jiaheng Liu, Jian Yang, Xinnian Liang, Zhao Yan, and Zhoujun Li. 2023a. Griprank: Bridging the gap between retrieval and generation via the generative knowledge improved passage ranking. CIKM.

- Bai et al. (2023b) Jinze Bai, Shuai Bai, Yunfei Chu, Zeyu Cui, Kai Dang, Xiaodong Deng, Yang Fan, Wenbin Ge, Yu Han, Fei Huang, Binyuan Hui, Luo Ji, Mei Li, Junyang Lin, Runji Lin, Dayiheng Liu, Gao Liu, Chengqiang Lu, Keming Lu, Jianxin Ma, Rui Men, Xingzhang Ren, Xuancheng Ren, Chuanqi Tan, Sinan Tan, Jianhong Tu, Peng Wang, Shijie Wang, Wei Wang, Shengguang Wu, Benfeng Xu, Jin Xu, An Yang, Hao Yang, Jian Yang, Shusheng Yang, Yang Yao, Bowen Yu, Hongyi Yuan, Zheng Yuan, Jianwei Zhang, Xingxuan Zhang, Yichang Zhang, Zhenru Zhang, Chang Zhou, Jingren Zhou, Xiaohuan Zhou, and Tianhang Zhu. 2023b. Qwen technical report. arXiv preprint arXiv:2309.16609.

- Baichuan (2023) Baichuan. 2023. Baichuan 2: Open large-scale language models. arXiv preprint arXiv:2309.10305.

- Bojar et al. (2014) Ondřej Bojar, Christian Buck, Christian Federmann, Barry Haddow, Philipp Koehn, Johannes Leveling, Christof Monz, Pavel Pecina, Matt Post, Herve Saint-Amand, Radu Soricut, Lucia Specia, and Aleš Tamchyna. 2014. Findings of the 2014 workshop on statistical machine translation. In Proceedings of the Ninth Workshop on Statistical Machine Translation, pages 12–58, Baltimore, Maryland, USA. Association for Computational Linguistics.

- Chai et al. (2024) Linzheng Chai, Jian Yang, Tao Sun, Hongcheng Guo, Jiaheng Liu, Bing Wang, Xiannian Liang, Jiaqi Bai, Tongliang Li, Qiyao Peng, et al. 2024. xcot: Cross-lingual instruction tuning for cross-lingual chain-of-thought reasoning. arXiv preprint arXiv:2401.07037.

- Cobbe et al. (2021) Karl Cobbe, Vineet Kosaraju, Mohammad Bavarian, Mark Chen, Heewoo Jun, Lukasz Kaiser, Matthias Plappert, Jerry Tworek, Jacob Hilton, Reiichiro Nakano, Christopher Hesse, and John Schulman. 2021. Training verifiers to solve math word problems.

- Du et al. (2022) Zhengxiao Du, Yujie Qian, Xiao Liu, Ming Ding, Jiezhong Qiu, Zhilin Yang, and Jie Tang. 2022. Glm: General language model pretraining with autoregressive blank infilling. In Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 320–335.

- Srivastava et al. (2022) Aarohi Srivastava et al. 2022. Beyond the imitation game: Quantifying and extrapolating the capabilities of language models. arXiv preprint arXiv:2206.04615.

- Fritz et al. (2013) Annemarie Fritz, Antje Ehlert, and Lars Balzer. 2013. Development of mathematical concepts as basis for an elaborated mathematical understanding. South African Journal of Childhood Education, 3(1):38–67.

- Guo et al. (2022) Hongcheng Guo, Jiaheng Liu, Haoyang Huang, Jian Yang, Zhoujun Li, Dongdong Zhang, Zheng Cui, and Furu Wei. 2022. Lvp-m3: language-aware visual prompt for multilingual multimodal machine translation. EMNLP.

- Guo et al. (2023a) Hongcheng Guo, Boyang Wang, Jiaqi Bai, Jiaheng Liu, Jian Yang, and Zhoujun Li. 2023a. M2c: Towards automatic multimodal manga complement. In Findings of the Association for Computational Linguistics: EMNLP 2023, pages 9876–9882.

- Guo et al. (2024) Hongcheng Guo, Jian Yang, Jiaheng Liu, Jiaqi Bai, Boyang Wang, Zhoujun Li, Tieqiao Zheng, Bo Zhang, Qi Tian, et al. 2024. Logformer: A pre-train and tuning pipeline for log anomaly detection. AAAI.