# Tokenization Is More Than Compression

## Abstract

Tokenization is a foundational step in natural language processing (NLP) tasks, bridging raw text and language models. Existing tokenization approaches like Byte-Pair Encoding (BPE) originate from the field of data compression, and it has been suggested that the effectiveness of BPE stems from its ability to condense text into a relatively small number of tokens. We test the hypothesis that fewer tokens lead to better downstream performance by introducing PathPiece, a new tokenizer that segments a document’s text into the minimum number of tokens for a given vocabulary. Through extensive experimentation, we find that this hypothesis does not hold, casting doubt on the understanding of the reasons for effective tokenization. To examine which other factors play a role, we evaluate design decisions across all three phases of tokenization: pre-tokenization, vocabulary construction, and segmentation, offering new insights into the design of effective tokenizers. Specifically, we illustrate the importance of pre-tokenization and the benefits of using BPE to initialize vocabulary construction. We train 64 language models with varying tokenization, ranging in size from 350M to 2.4B parameters, all of which are made publicly available.


Craig W. Schmidt†, Varshini Reddy†, Haoran Zhang†,‡, Alec Alameddine†, Omri Uzan§, Yuval Pinter§, Chris Tanner†,¶

†Kensho Technologies, Cambridge, MA; ‡Harvard University, Cambridge, MA; §Ben-Gurion University, Beer Sheva, Israel; ¶MIT, Cambridge, MA

{craig.schmidt,varshini.reddy,alec.alameddine,chris.tanner}@kensho.com, haoran_zhang@g.harvard.edu, {omriuz@post,uvp@cs}.bgu.ac.il

## 1 Introduction

Tokenization is an essential step in NLP that translates human-readable text into a sequence of distinct tokens that can be subsequently used by statistical models Grefenstette (1999). Recently, a growing number of studies have researched the effects of tokenization, both in an intrinsic manner and as it affects downstream model performance Singh et al. (2019); Bostrom and Durrett (2020); Hofmann et al. (2021, 2022); Limisiewicz et al. (2023); Zouhar et al. (2023b). To rigorously inspect the impact of tokenization, we consider tokenization as three distinct, sequential stages:

1. Pre-tokenization: an optional set of initial rules that restricts or enforces the creation of certain tokens (e.g., splitting a corpus on whitespace, thus preventing any tokens from containing whitespace).

2. Vocabulary Construction: the core algorithm that, given a text corpus $\mathcal{C}$ and desired vocabulary size $m$, constructs a vocabulary of tokens $t_{k}\in\mathcal{V}$, such that $|\mathcal{V}|=m$, while adhering to the pre-tokenization rules.

3. Segmentation: given a vocabulary $\mathcal{V}$ and a document $d$, segmentation determines how to split $d$ into a series of $K_{d}$ tokens $t_{1},\dots,t_{k},\dots,t_{K_{d}}$, with all $t_{k}\in\mathcal{V}$, such that the concatenation of the tokens strictly equals $d$. Given a corpus of documents $\mathcal{C}$, we define the corpus token count (CTC) as the total number of tokens across the segmentations of all its documents, $\operatorname{CTC}(\mathcal{C})=\sum_{d\in\mathcal{C}}K_{d}$.

As an example, segmentation might decide to split the text `intractable` into “int ract able”, “in trac table”, “in tractable”, or “int r act able”.

We will refer to this step as segmentation, although in other works it is also called inference or even tokenization.

The widely used Byte-Pair Encoding (BPE) tokenizer Sennrich et al. (2016) originated in the field of data compression Gage (1994). Gallé (2019) argues that it is effective because it compresses text to a short sequence of tokens. Goldman et al. (2024) varied the number of documents in the tokenizer training data for BPE, and found a correlation between CTC and downstream performance. To investigate the hypothesis that having fewer tokens necessarily leads to better downstream performance, we design a novel tokenizer, PathPiece, that, for a given document $d$ and vocabulary $\mathcal{V}$ , finds a segmentation with the minimum possible $K_{d}$ . The PathPiece vocabulary construction routine is a top-down procedure that heuristically minimizes CTC on a training corpus. PathPiece is ideal for studying the effect of CTC on downstream performance, as we can vary decisions at each tokenization stage.

We extend these experiments to the most commonly used tokenizers, focusing on how downstream task performance is impacted by the major stages of tokenization and vocabulary sizes. Toward this aim, we conducted experiments by training 64 language models (LMs): 54 LMs with 350M parameters; 6 LMs with 1.3B parameters; and 4 LMs with 2.4B parameters. We provide open-source, public access to PathPiece (https://github.com/kensho-technologies/pathpiece) and to our trained vocabularies and LMs (https://github.com/kensho-technologies/timtc_vocabs_models).

## 2 Preliminaries

Ali et al. (2024) and Goldman et al. (2024) examined the effect of tokenization on downstream performance of LLM tasks, reaching opposite conclusions on the importance of CTC. Zouhar et al. (2023a) also find that low token count alone does not necessarily improve performance. Mielke et al. (2021) give a survey of subword tokenization.

### 2.1 Pre-tokenization Methods

Pre-tokenization is a process of breaking text into chunks, which are then tokenized independently. A token is not allowed to cross these pre-tokenization boundaries. BPE, WordPiece, and Unigram all require new chunks to begin whenever a space is encountered. If a space appears in a chunk, it must be the first character; hence, we will call this “FirstSpace”. Thus “␣New” is allowed but “New␣York” is not. Gow-Smith et al. (2022) examine treating spaces as individual tokens, which we will call “Space” pre-tokenization, while Jacobs and Pinter (2022) suggest marking spaces at the end of tokens, and Gow-Smith et al. (2024) propose dispensing with them altogether in some settings. Llama Touvron et al. (2023) popularized the idea of having each digit always be an individual token, which we call “Digit” pre-tokenization.
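The three schemes can be illustrated with a short Python sketch. The regular expressions and the function names `first_space`, `space`, and `digit` are assumptions chosen for illustration, not the rules of any particular library; in particular, splitting a leading space away from a following digit is a guess at how Digit combines with FirstSpace.

```python
import re


def first_space(text: str) -> list[str]:
    """FirstSpace: a chunk may contain a space only as its first character."""
    return re.findall(r" ?[^ ]+| ", text)


def space(text: str) -> list[str]:
    """Space: every space becomes its own chunk."""
    return re.findall(r" |[^ ]+", text)


def digit(chunks: list[str]) -> list[str]:
    """Digit: split existing chunks further so each ASCII digit stands alone."""
    out = []
    for chunk in chunks:
        out.extend(re.findall(r"[0-9]|[^0-9]+", chunk))
    return out


sample = "New York in 2024"
print(first_space(sample))         # chunks that start at each space
print(space(sample))               # spaces isolated as chunks
print(digit(first_space(sample)))  # FirstSpace followed by Digit
```

Each function is lossless: concatenating the chunks returns the input text, so any later tokenization of the chunks can still reproduce the document exactly.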

### 2.2 Vocabulary Construction

We focus on byte-level, lossless subword tokenization. Subword tokenization algorithms split text into word and subword units based on their frequency and co-occurrence patterns from their “training” data, effectively capturing morphological and semantic nuances in the tokenization process Mikolov et al. (2011).

We analyze BPE, WordPiece, and Unigram as baseline subword tokenizers, using the implementations from HuggingFace (https://github.com/huggingface/tokenizers) with ByteLevel pre-tokenization enabled. We additionally study SaGe, a context-sensitive subword tokenizer, using version 2.0 (https://github.com/MeLeLBGU/SaGe).

Byte-Pair Encoding

Sennrich et al. (2016) introduced Byte-Pair Encoding (BPE), a bottom-up method where the vocabulary construction starts with single bytes as tokens. It then merges the most commonly occurring pair of adjacent tokens in a training corpus into a single new token in the vocabulary. This process repeats until the desired vocabulary size is reached. Issues with BPE and analyses of its properties are discussed in Bostrom and Durrett (2020); Klein and Tsarfaty (2020); Gutierrez-Vasques et al. (2021); Yehezkel and Pinter (2023); Saleva and Lignos (2023); Liang et al. (2023); Lian et al. (2024); Chizhov et al. (2024); Bauwens and Delobelle (2024). Zouhar et al. (2023b) build an exact algorithm which optimizes compression for BPE-constructed vocabularies.
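The merge loop described above can be sketched in a few lines of Python. This is a deliberately naive illustration, not the HuggingFace implementation: it recounts all pairs on every iteration, treats each input string as one pre-tokenized chunk, and breaks frequency ties by comparing the byte pairs, which is an arbitrary choice made here for determinism.

```python
from collections import Counter


def train_bpe(chunks: list[str], num_merges: int) -> list[tuple[bytes, bytes]]:
    """Return the ordered list of merges learned from the given chunks."""
    # Start from single-byte tokens.
    words = [[bytes([b]) for b in chunk.encode("utf-8")] for chunk in chunks]
    merges = []
    for _ in range(num_merges):
        # Count adjacent token pairs across all chunks.
        pairs = Counter()
        for word in words:
            for left, right in zip(word, word[1:]):
                pairs[(left, right)] += 1
        if not pairs:
            break
        # Most frequent pair; ties broken by the pair itself (assumption).
        best = max(pairs, key=lambda p: (pairs[p], p))
        merges.append(best)
        merged = best[0] + best[1]
        # Replace every occurrence of the pair, scanning left to right.
        new_words = []
        for word in words:
            out, i = [], 0
            while i < len(word):
                if i + 1 < len(word) and (word[i], word[i + 1]) == best:
                    out.append(merged)
                    i += 2
                else:
                    out.append(word[i])
                    i += 1
            new_words.append(out)
        words = new_words
    return merges


print(train_bpe(["aaab", "aab"], 2))
```

The vocabulary is then the 256 single bytes plus one new token per merge, so the number of merges determines the final vocabulary size.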

WordPiece

WordPiece is similar to BPE, except that it uses Pointwise Mutual Information (PMI) Bouma (2009) as the criterion to identify candidates to merge, rather than a count Wu et al. (2016); Schuster and Nakajima (2012). PMI prioritizes merging pairs that occur together more frequently than expected, relative to the individual token frequencies.

Unigram Language Model

Unigram works in a top-down manner, starting from a large initial vocabulary and progressively pruning groups of tokens that induce the minimum likelihood decrease of the corpus Kudo (2018). This selects tokens to maximize the likelihood of the corpus, according to a simple unigram language model.

SaGe

Yehezkel and Pinter (2023) proposed SaGe, a subword tokenization algorithm incorporating contextual information into an ablation loss via a skipgram objective. SaGe also operates top-down, pruning from an initial vocabulary to a desired size.

### 2.3 Segmentation Methods

Given a tokenizer and a vocabulary of tokens, segmentation converts text into a series of tokens. We included all 256 single-byte tokens in the vocabulary of all our experiments, ensuring any text can be segmented without out-of-vocabulary issues.

Certain segmentation methods are tightly coupled to the vocabulary construction step, such as merge rules for BPE or the maximum likelihood approach for Unigram. Others, such as the WordPiece approach of greedily taking the longest prefix token in the vocabulary at each point, can be applied to any vocabulary; indeed, there is no guarantee that a vocabulary will perform best downstream with the segmentation method used to train it Uzan et al. (2024). Additional segmentation schemes include Dynamic Programming BPE He et al. (2020), BPE-Dropout Provilkov et al. (2020), and FLOTA Hofmann et al. (2022).
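As a concrete illustration of a segmentation method that works with any vocabulary, the following is a minimal sketch of greedy left-to-right longest-prefix matching. The function name, the toy `demo_vocab`, and the width limit `L=16` are assumptions made for this example.

```python
def greedy_segment(d: bytes, vocab: set, L: int = 16) -> list:
    """Repeatedly take the longest vocabulary token that prefixes the remaining text."""
    tokens, i = [], 0
    while i < len(d):
        for w in range(min(L, len(d) - i), 0, -1):  # try the widest token first
            if d[i:i + w] in vocab:
                tokens.append(d[i:i + w])
                i += w
                break
        else:
            raise ValueError("no match: the vocabulary lacks a single-byte token")
    return tokens


# Hypothetical vocabulary: all 256 single bytes plus a few longer tokens.
demo_vocab = {bytes([b]) for b in range(256)}
demo_vocab |= {b"in", b"int", b"ract", b"tract", b"able", b"tractable"}

print(greedy_segment(b"intractable", demo_vocab))
```

With this toy vocabulary the greedy choice of `int` at the first position leads to three tokens (`int`, `ract`, `able`), even though a two-token segmentation (`in`, `tractable`) exists, which shows that greedy matching does not minimize the token count in general.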

## 3 PathPiece

Several efforts over the last few years (Gallé, 2019; Zouhar et al., 2023a, inter alia) have suggested that the empirical advantage of BPE as a tokenizer in many NLP applications, despite its unawareness of language structure, can be traced to its superior compression abilities, providing models with overall shorter sequences during learning and inference. Inspired by this claim we introduce PathPiece, a lossless subword tokenizer that, given a vocabulary $\mathcal{V}$ and document $d$ , produces a segmentation minimizing the total number of tokens needed to split $d$ . We additionally provide a vocabulary construction procedure that, using this segmentation, attempts to find a $\mathcal{V}$ minimizing the corpus token count (CTC). An extended description is given in Appendix A. PathPiece provides an ideal testing laboratory for the compression hypothesis by virtue of its maximally efficient segmentation.

### 3.1 Segmentation

PathPiece requires that all single-byte tokens are included in vocabulary $\mathcal{V}$ to run correctly. PathPiece works by finding a shortest path through a directed acyclic graph (DAG), where each byte $i$ of training data forms a node in the graph, and two nodes $j$ and $i$ are connected by a directed edge if the byte segment $[j,i]$ is a token in $\mathcal{V}$. We describe PathPiece segmentation in Algorithm 1, where $L$ is a limit on the maximum width of a token in bytes, which we set to 16. It has a complexity of $O(nL)$, which follows directly from the two nested for-loops. For each byte $i$ in $d$, it computes the shortest path length $pl[i]$ in tokens up to and including byte $i$, and the width $wid[i]$ of a token with that shortest path length. In choosing $wid[i]$, ties between multiple tokens with the same shortest path length $pl[i]$ can be broken randomly, or the one with the longest $wid[i]$ can be chosen, as shown here. Random tie-breaking, which can be viewed as a form of subword regularization, is presented in Appendix A. The choice of the longest token is motivated in part by the success of FLOTA Hofmann et al. (2022). Then, a backward pass constructs the shortest possible segmentation from the $wid[i]$ values computed in the forward pass.

1: procedure PathPiece ( $d,\mathcal{V},L$ )

2: $n\leftarrow\operatorname{len}(d)$ $\triangleright$ document length

3: $pl[1:n]\leftarrow\infty$ $\triangleright$ shortest path length

4: $wid[1:n]\leftarrow 0$ $\triangleright$ shortest path tok width

5: for $e\leftarrow 1,n$ do $\triangleright$ token end

6: for $w\leftarrow 1,L$ do $\triangleright$ token width

7: $s\leftarrow e-w+1$ $\triangleright$ token start

8: if $s\geq 1$ then $\triangleright$ $s$ in range

9: if $d[s:e]\in\mathcal{V}$ then

10: if $s=1$ then $\triangleright$ 1 tok path

11: $pl[e]\leftarrow 1$

12: $wid[e]\leftarrow w$

13: else

14: $nl\leftarrow pl[s-1]+1$

15: if $nl\leq pl[e]$ then

16: $pl[e]\leftarrow nl$

17: $wid[e]\leftarrow w$

18: $T\leftarrow[\,]$ $\triangleright$ output token list

19: $e\leftarrow n$ $\triangleright$ start at end of $d$

20: while $e\geq 1$ do

21: $s\leftarrow e-wid[e]+1$ $\triangleright$ token start

22: $T.\operatorname{append}(d[s:e])$ $\triangleright$ append token

23: $e\leftarrow e-wid[e]$ $\triangleright$ back up a token

24: return $\operatorname{reversed}(T)$ $\triangleright$ reverse order

Algorithm 1 PathPiece segmentation.
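A direct Python rendering of Algorithm 1 may make the control flow easier to follow. It is a sketch rather than the released PathPiece code: it uses 0-based, half-open slices, folds the $s=1$ special case into a sentinel `pl[0] = 0`, and `demo_vocab` is a made-up vocabulary used only for the example.

```python
import math


def pathpiece_segment(d: bytes, vocab: set, L: int = 16) -> list:
    """Minimum-token segmentation of d; ties are broken toward the longest token."""
    n = len(d)
    pl = [math.inf] * (n + 1)  # pl[e]: fewest tokens covering d[:e]
    wid = [0] * (n + 1)        # wid[e]: width of the last token on that path
    pl[0] = 0
    # Forward pass: e is the (exclusive) token end, w the token width.
    for e in range(1, n + 1):
        for w in range(1, min(L, e) + 1):
            s = e - w
            if d[s:e] in vocab:
                nl = pl[s] + 1
                if nl <= pl[e]:  # "<=" with increasing w keeps the longest token
                    pl[e] = nl
                    wid[e] = w
    # Backward pass: walk the stored widths from the end of the document.
    tokens = []
    e = n
    while e > 0:
        if wid[e] == 0:
            raise ValueError("vocabulary must contain every single-byte token")
        tokens.append(d[e - wid[e]:e])
        e -= wid[e]
    tokens.reverse()
    return tokens


# Hypothetical vocabulary: all 256 single bytes plus a few longer tokens.
demo_vocab = {bytes([b]) for b in range(256)}
demo_vocab |= {b"in", b"int", b"ract", b"tract", b"able", b"tractable"}

print(pathpiece_segment(b"intractable", demo_vocab))
```

The two nested loops perform at most $nL$ vocabulary lookups, matching the stated $O(nL)$ complexity when a lookup is treated as constant time.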

### 3.2 Vocabulary Construction

PathPiece’s vocabulary is built in a top-down manner, attempting to minimize the corpus token count (CTC), by starting from a large initial vocabulary $\mathcal{V}_{0}$ and iteratively omitting batches of tokens. The $\mathcal{V}_{0}$ may be initialized from the most frequently occurring byte $n$-grams in the corpus, or from a large vocabulary trained by BPE or Unigram. We enforce that all single-byte tokens remain in the vocabulary and that all tokens are $L$ bytes or shorter.

For a PathPiece segmentation $t_{1},\dots,t_{K_{d}}$ of a document $d$ in the training corpus $\mathcal{C}$ , we would like to know the increase in the overall length of the segmentation $K_{d}$ after omitting each token $t$ from our vocabulary and then recomputing the segmentation. Tokens with a low overall increase are good candidates to remove from the vocabulary.

To avoid the very expensive $O(nL|\mathcal{V}|)$ computation of each segmentation from scratch, we make a simplifying assumption that allows us to compute these increases more efficiently: we omit a specific token $t_{k}$ , for $k\in[1,\dots,K_{d}]$ in the segmentation of a particular document $d$ , and compute the minimum increase $MI_{kd}\geq 0$ in the total tokens $K_{d}$ from not having that token $t_{k}$ in the segmentation of $d$ . We then aggregate these token count increases $MI_{kd}$ for each token $t\in\mathcal{V}$ . We can compute the $MI_{kd}$ without actually re-segmenting any documents, by reusing the shortest path information computed by Algorithm 1 during segmentation.

Any segmentation not containing $t_{k}$ must either contain a token boundary somewhere inside of $t_{k}$ breaking it in two, or it must contain a token that entirely contains $t_{k}$ as a superset. We enumerate all occurrences for these two cases, and we find the minimum increase $MI_{kd}$ among them. Let $t_{k}$ start at index $s$ and end at index $e$ , inclusive. Path length $pl[j]$ represents the number of tokens required for the shortest path up to and including byte $j$ . We also run Algorithm 1 backwards on $d$ , computing a similar vector of backwards path lengths $bpl[j]$ , representing the number of tokens on a path from the end of the data up to and including byte $j$ . The minimum length of a segmentation with a token boundary after byte $j$ is thus:

$$

K_{j}^{b}=pl[j]+bpl[j+1]. \tag{1}

$$

We have added an extra constraint on the shortest path, that there is a break at $j$ , so clearly $K_{j}^{b}\geq K_{d}$ . The minimum increase for the case of having a token boundary within $t_{k}$ is thus:

$$

MI_{kd}^{b}=\min_{j=s,\dots,e-1}{K_{j}^{b}-K_{d}}. \tag{2}

$$

The minimum increase from omitting $t_{k}$ could also be from a segmentation containing a strict superset of $t_{k}$. Let this superset token be $t_{k}^{\prime}$, with start $s^{\prime}$ and end $e^{\prime}$ inclusive. For $t_{k}^{\prime}$ to be a strict superset entirely containing $t_{k}$, either $s^{\prime}<s$ and $e^{\prime}\geq e$, or $s^{\prime}\leq s$ and $e^{\prime}>e$ must hold, subject to the constraint that the width $w^{\prime}=e^{\prime}-s^{\prime}+1\leq L$. In this case, the minimum length when using the superset token $t_{k}^{\prime}$ would be:

$$

K_{t_{k}^{\prime}}^{s}=pl[s^{\prime}-1]+bpl[e^{\prime}+1]+1, \tag{3}

$$

which is the path length to get to the byte before $t_{k}^{\prime}$ , plus the path length from the end of the data backwards to the byte after $t_{k}^{\prime}$ , plus 1 for the token $t_{k}^{\prime}$ itself.

We retain a list of the widths of the tokens ending at each byte. See the expanded explanation in Appendix A for details. The set of superset tokens $S$ can be found by examining the potential $e^{\prime}$ , and then seeing if the tokens ending at $e^{\prime}$ form a strict superset. Similar to the previous case, we can compute the minimum increase from replacing $t_{k}$ with a superset token by taking the minimum increase over the superset tokens $S$ :

$$

MI_{kd}^{s}=\min_{t_{k}^{\prime}\in S}{K_{t_{k}^{\prime}}^{s}-K_{d}}. \tag{4}

$$

We then aggregate over the documents to get the overall increase for each $t\in\mathcal{V}$ :

$$

MI_{t}=\sum_{d\in\mathcal{C}}\sum_{k=1|t_{k}=t}^{K_{d}}\min(MI_{kd}^{b},MI_{kd}^{s}). \tag{5}

$$

One iteration of this vocabulary construction procedure will have complexity $O(nL^{2})$.
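Equations (1) to (5) can be sketched for a single document as follows. This is an illustrative reading, not the released implementation: indices are 0-based with half-open slices (so `fwd[j]` plays the role of $pl[j]$ and `bwd[j]` the role of $bpl[j+1]$), superset tokens are found by brute-force enumeration rather than from stored token widths, single-byte tokens are skipped because they are never removed, and `demo_vocab` is a made-up vocabulary.

```python
import math
from collections import defaultdict


def forward_backward(d: bytes, vocab: set, L: int = 16):
    """Shortest path lengths from the start (fwd) and from the end (bwd)."""
    n = len(d)
    fwd = [math.inf] * (n + 1)  # fwd[j]: fewest tokens covering d[:j]
    bwd = [math.inf] * (n + 1)  # bwd[j]: fewest tokens covering d[j:]
    wid = [0] * (n + 1)
    fwd[0] = bwd[n] = 0
    for e in range(1, n + 1):
        for w in range(1, min(L, e) + 1):
            if d[e - w:e] in vocab and fwd[e - w] + 1 <= fwd[e]:
                fwd[e], wid[e] = fwd[e - w] + 1, w
    for s in range(n - 1, -1, -1):
        for w in range(1, min(L, n - s) + 1):
            if d[s:s + w] in vocab:
                bwd[s] = min(bwd[s], bwd[s + w] + 1)
    return fwd, bwd, wid


def token_increases(d: bytes, vocab: set, L: int = 16) -> dict:
    """Aggregate min(MI^b, MI^s) per token over one document (Eq. 5, one d)."""
    fwd, bwd, wid = forward_backward(d, vocab, L)
    n, k_d = len(d), fwd[len(d)]
    increases = defaultdict(int)
    b = n
    while b > 0:
        if wid[b] == 0:
            raise ValueError("vocabulary must contain every single-byte token")
        a = b - wid[b]  # the token occupies d[a:b]
        if b - a > 1:   # single-byte tokens always stay in the vocabulary
            # Case 1 (Eq. 1-2): force a token boundary strictly inside d[a:b].
            best = min(fwd[j] + bwd[j] for j in range(a + 1, b))
            # Case 2 (Eq. 3-4): use a strict superset token d[a2:b2], width <= L.
            for a2 in range(max(0, b - L), a + 1):
                for b2 in range(b, min(n, a2 + L) + 1):
                    if (a2, b2) != (a, b) and d[a2:b2] in vocab:
                        best = min(best, fwd[a2] + bwd[b2] + 1)
            increases[d[a:b]] += best - k_d
        b = a
    return dict(increases)


# Hypothetical vocabulary: all 256 single bytes plus a few longer tokens.
demo_vocab = {bytes([b]) for b in range(256)}
demo_vocab |= {b"in", b"int", b"ract", b"tract", b"able", b"tractable"}

print(token_increases(b"intractable", demo_vocab))
```

In this toy example the document is segmented as `in` + `tractable` (two tokens), and omitting either token costs one extra token, so neither is a free removal. Summing these per-document dictionaries over the corpus gives the $MI_{t}$ values used to rank tokens for removal.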

### 3.3 Connecting PathPiece and Unigram

We note a connection between PathPiece and Unigram. In Unigram, the probability of a segmentation $t_{1},\dots,t_{K_{d}}$ is the product of the unigram token probabilities $p(t_{k})$ :

$$

P(t_{1},\dots,t_{K_{d}})=\prod_{k=1}^{K_{d}}p(t_{k}). \tag{6}

$$

Taking the negative $\log$ of this product converts the objective from maximizing the likelihood to minimizing the sum of $-\log(p(t_{k}))$ terms. While Unigram is solved by the Viterbi (1967) algorithm, it can also be solved by a weighted version of PathPiece with weights of $-\log(p(t_{k}))$ . Conversely, a solution minimizing the number of tokens can be found in Unigram by taking all $p(t_{k}):=1/|\mathcal{V}|$ .
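The connection can be made concrete with a small weighted shortest-path sketch in Python. The function `min_cost_segment` and the toy probabilities are assumptions for illustration; the code is neither the Unigram implementation nor the PathPiece release, only the shared dynamic program with per-token costs.

```python
import math


def min_cost_segment(d: bytes, cost: dict, L: int = 16) -> list:
    """Segmentation minimizing the sum of per-token costs (weighted shortest path)."""
    n = len(d)
    best = [math.inf] * (n + 1)  # best[e]: minimum total cost covering d[:e]
    back = [0] * (n + 1)         # back[e]: width of the last token on that path
    best[0] = 0.0
    for e in range(1, n + 1):
        for w in range(1, min(L, e) + 1):
            tok = d[e - w:e]
            if tok in cost and best[e - w] + cost[tok] < best[e]:
                best[e] = best[e - w] + cost[tok]
                back[e] = w
    if math.isinf(best[n]):
        raise ValueError("document cannot be segmented with this vocabulary")
    tokens, e = [], n
    while e > 0:
        tokens.append(d[e - back[e]:e])
        e -= back[e]
    return tokens[::-1]


# Toy unigram probabilities (hypothetical values).
probs = {b"a": 0.45, b"b": 0.45, b"ab": 0.10}

# Unigram objective: cost is -log p(t), so likely tokens are cheap.
unigram_cost = {t: -math.log(p) for t, p in probs.items()}
# Uniform p(t) = 1/|V|: every token costs the same, so fewer tokens is better.
uniform_cost = {t: -math.log(1 / len(probs)) for t in probs}

print(min_cost_segment(b"ab", unigram_cost))  # prefers two likely tokens
print(min_cost_segment(b"ab", uniform_cost))  # prefers the single token
```

Under the toy probabilities, `a` followed by `b` has probability 0.2025, which beats 0.10 for `ab`, so the likelihood objective picks two tokens, while the uniform weighting reduces to minimizing the token count and picks one.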

## 4 Experiments

We used the Pile corpus Gao et al. (2020); Biderman et al. (2022) for language model pre-training, which contains 825GB of English text data from 22 high-quality datasets. We constructed the tokenizer vocabularies over the MiniPile dataset Kaddour (2023), a 6GB subset of the Pile. We use the MosaicML Pretrained Transformers (MPT) decoder-only language model architecture (https://github.com/mosaicml/llm-foundry). Appendix B gives the full set of model parameters, and Appendix D discusses model convergence.

### 4.1 Downstream Evaluation Tasks

To evaluate and analyze the performance of our tokenization process, we select 10 benchmarks from lm-evaluation-harness Gao et al. (2023) (https://github.com/EleutherAI/lm-evaluation-harness). These are all multiple-choice tasks with 2, 4, or 5 options, and were run with 5-shot prompting. We use arc_easy Clark et al. (2018), copa Brassard et al. (2022), hendrycksTests-marketing Hendrycks et al. (2021), hendrycksTests-sociology Hendrycks et al. (2021), mathqa Amini et al. (2019), piqa Bisk et al. (2019), qa4mre_2013 Peñas et al. (2013), race Lai et al. (2017), sciq Welbl et al. (2017), and wsc273 Levesque et al. (2012). Appendix C gives a full description of these tasks.

### 4.2 Tokenization Stage Variants

We conduct the 18 experimental variants listed in Table 1, each repeated at the vocabulary sizes of 32,768, 40,960, and 49,152. These sizes were selected because vocabularies in the 30k to 50k range are the most common amongst language models within the HuggingFace Transformers library (https://huggingface.co/docs/transformers/). Ali et al. (2024) recently examined the effect of vocabulary sizes and found 33k and 50k sizes performed better on English language tasks than larger sizes. For baseline vocabulary creation methods, we used BPE, Unigram, WordPiece, and SaGe. We also consider two variants of PathPiece where ties in the shortest path are broken either by the longest token (PathPieceL), or randomly (PathPieceR). For the vocabulary initialization required by PathPiece and SaGe, we experimented with the most common $n$-grams, as well as with a large initial vocabulary trained with BPE or Unigram. We also varied the pre-tokenization schemes for PathPiece and SaGe, using either no pre-tokenization or combinations of FirstSpace, Space, and Digit described in § 2.1. Tokenizers usually use the same segmentation approach used in vocabulary construction. PathPieceL’s shortest path segmentation can be used with any vocabulary, so we apply it to vocabularies trained by BPE and Unigram. We also apply a Greedy left-to-right longest-token segmentation approach to these vocabularies.

## 5 Results

Table 1 reports the downstream performance across all our experimental settings. The same table sorted by rank is in Table 10 of Appendix G. The comprehensive results for the ten downstream tasks, for each of the 350M parameter models, are given in Appendix G. A random baseline for these 10 tasks yields 32%. The Overall Avg column indicates the average results over the three vocabulary sizes. The Rank column refers to the rank of each variant with respect to the Overall Avg column (Rank 1 is best), which we will sometimes use as a succinct way to refer to a variant.

| Rank | Vocab Constr | Init Voc | Pre-tok | Segment | Overall | 32,768 | 40,960 | 49,152 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| 1 | PathPieceL | BPE | FirstSpace | PathPieceL | 49.4 | 49.3 | 49.4 | 49.4 |
| 9 | PathPieceL | Unigram | FirstSpace | PathPieceL | 48.0 | 47.0 | 48.5 | 48.4 |
| 15 | PathPieceL | $n$-gram | FirstSpDigit | PathPieceL | 44.8 | 44.6 | 44.9 | 45.0 |
| 16 | PathPieceL | $n$-gram | FirstSpace | PathPieceL | 44.7 | 44.8 | 45.5 | 43.9 |
| 2 | Unigram | | FirstSpace | Likelihood | 49.0 | 49.2 | 49.1 | 48.8 |
| 7 | Unigram | | FirstSpace | Greedy | 48.3 | 47.9 | 48.5 | 48.6 |
| 17 | Unigram | | FirstSpace | PathPieceL | 43.6 | 43.6 | 43.1 | 44.0 |
| 3 | BPE | | FirstSpace | Merge | 49.0 | 49.0 | 50.0 | 48.1 |
| 4 | BPE | | FirstSpace | Greedy | 49.0 | 48.3 | 49.1 | 49.5 |
| 13 | BPE | | FirstSpace | PathPieceL | 46.5 | 45.6 | 46.7 | 47.2 |
| 5 | WordPiece | | FirstSpace | Greedy | 48.8 | 48.5 | 49.1 | 48.8 |
| 6 | SaGe | BPE | FirstSpace | Greedy | 48.6 | 48.0 | 49.2 | 48.8 |
| 8 | SaGe | $n$-gram | FirstSpace | Greedy | 48.0 | 47.5 | 48.5 | 48.0 |
| 10 | SaGe | Unigram | FirstSpace | Greedy | 47.7 | 48.4 | 46.9 | 47.8 |
| 11 | SaGe | $n$-gram | FirstSpDigit | Greedy | 47.5 | 48.4 | 46.9 | 47.2 |
| 12 | PathPieceR | $n$-gram | SpaceDigit | PathPieceR | 46.7 | 47.5 | 45.4 | 47.3 |
| 14 | PathPieceR | $n$-gram | FirstSpDigit | PathPieceR | 45.5 | 45.3 | 45.8 | 45.5 |
| 18 | PathPieceR | $n$-gram | None | PathPieceR | 43.2 | 43.5 | 44.0 | 42.2 |
| Random | | | | | 32.0 | 32.0 | 32.0 | 32.0 |

Table 1: Summary of 350M parameter model downstream accuracy (%) across 10 tasks. The Overall column averages across the three vocabulary sizes. The Rank column refers to the Overall column, best to worst.

### 5.1 Vocabulary Size

<details>

<summary>x1.png Details</summary>

### Visual Description

## Line Chart: Average Accuracy by Rank

- **X-axis**: Rank (1–18, integer increments)

- **Y-axis**: Average Accuracy (0.40–0.52, 0.02 increments)

- **Legend**: Overall Avg (blue circles, joined by a solid blue line), 32,768 Avg (light blue diamonds), 40,960 Avg (peach squares), 49,152 Avg (red triangles)

- **Visual Elements**: Dashed vertical lines at each rank

</details>

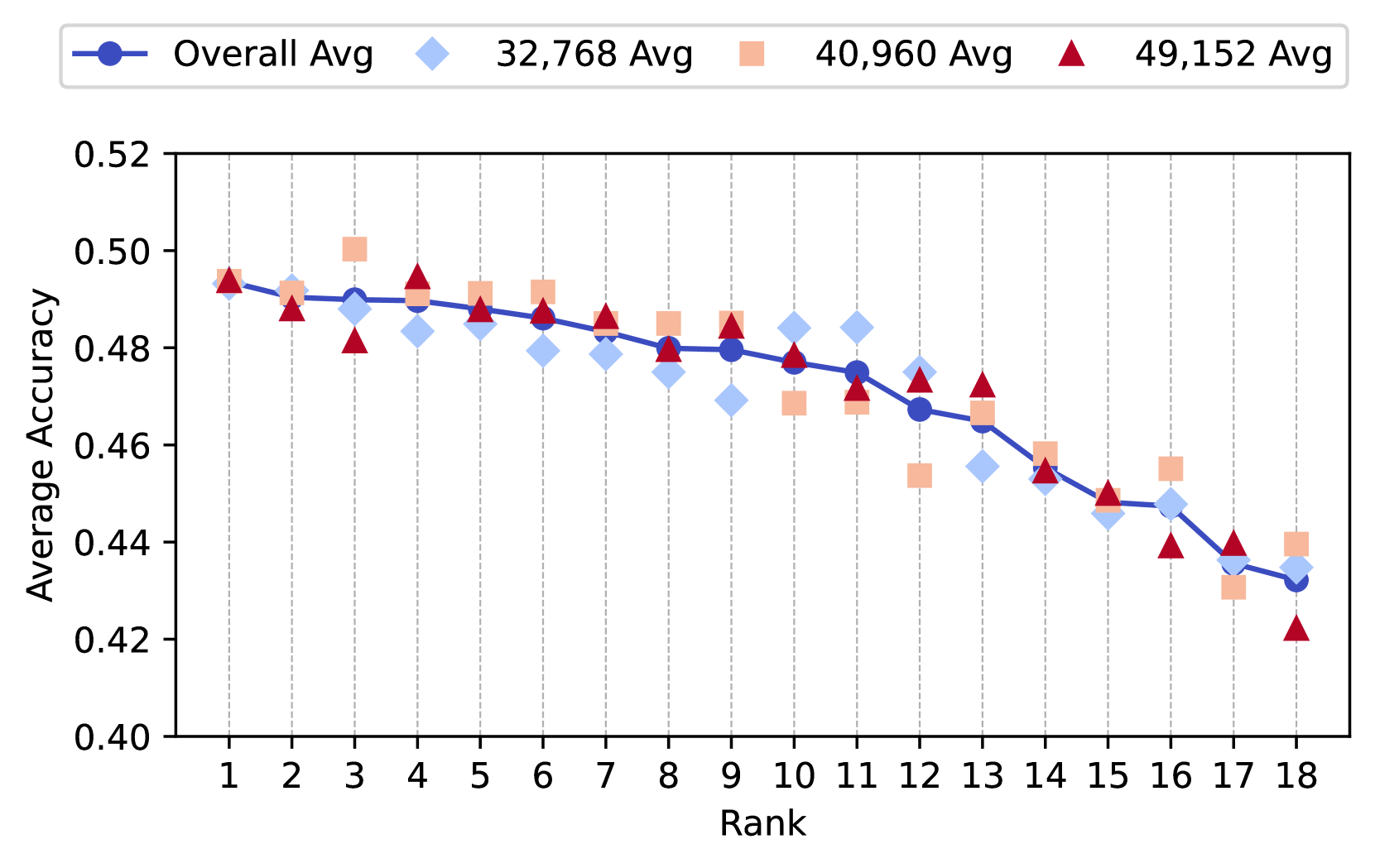

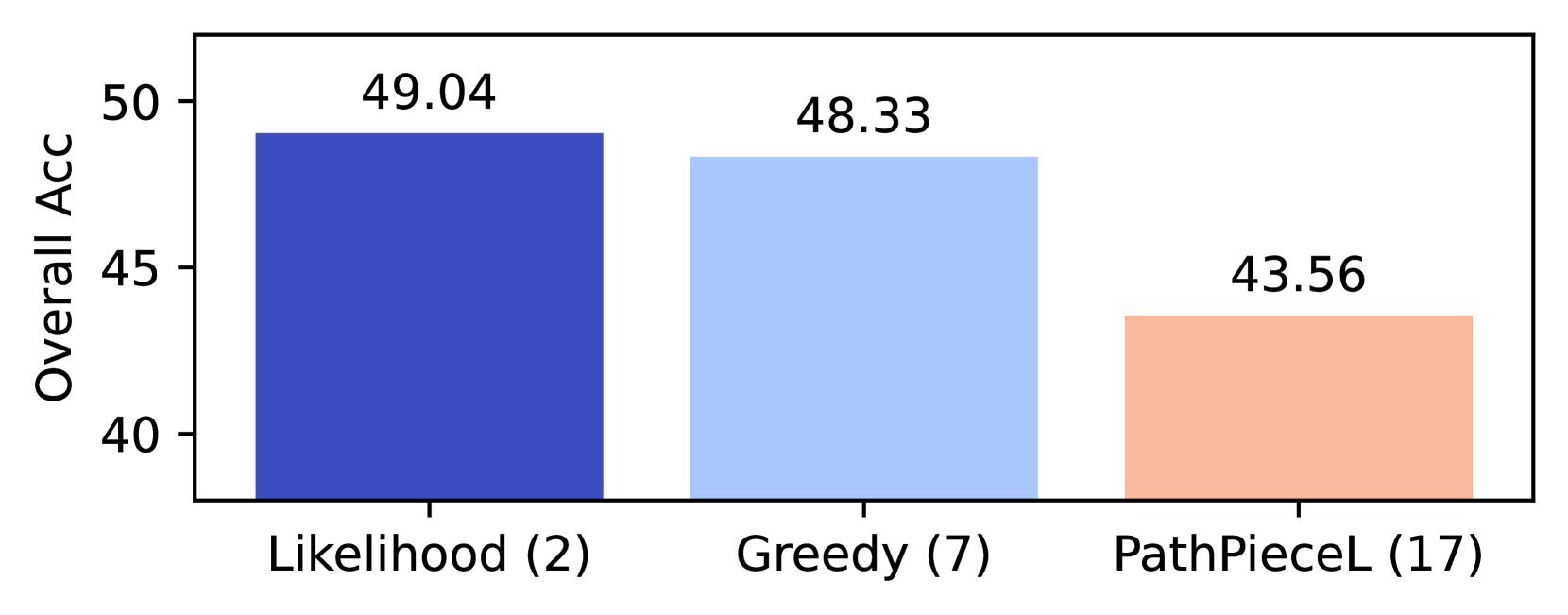

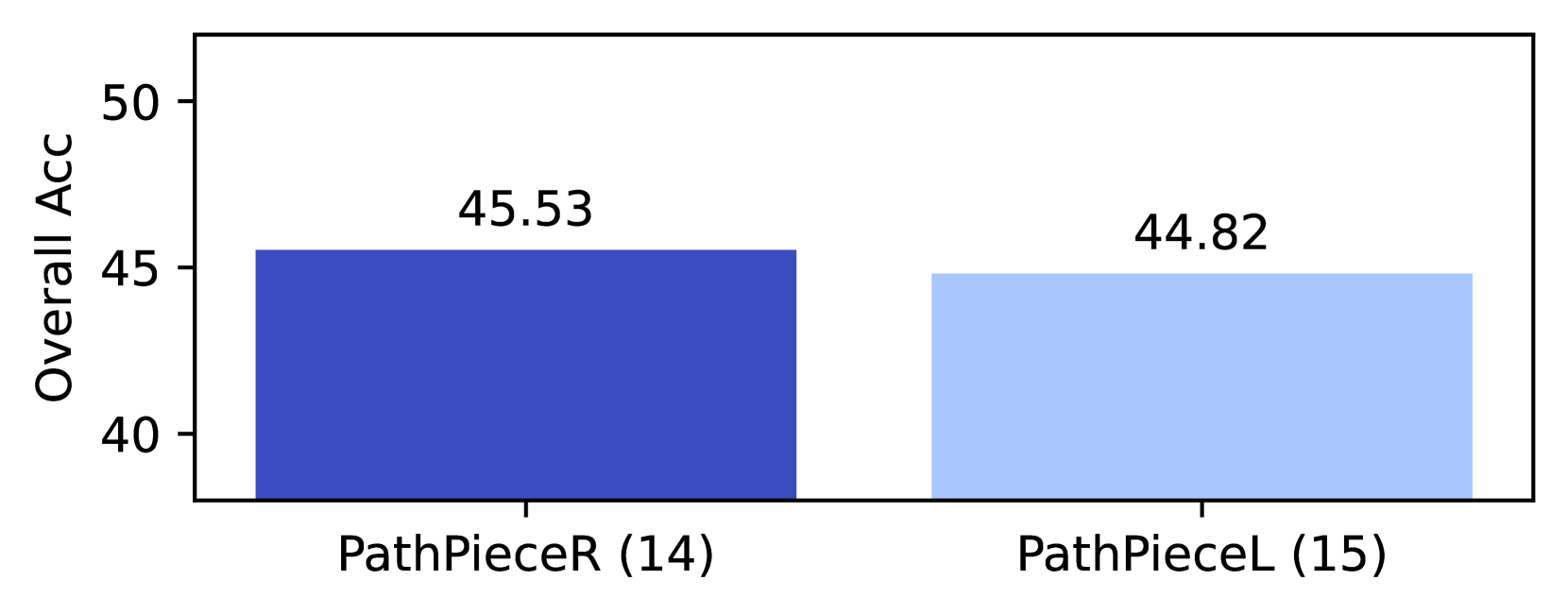

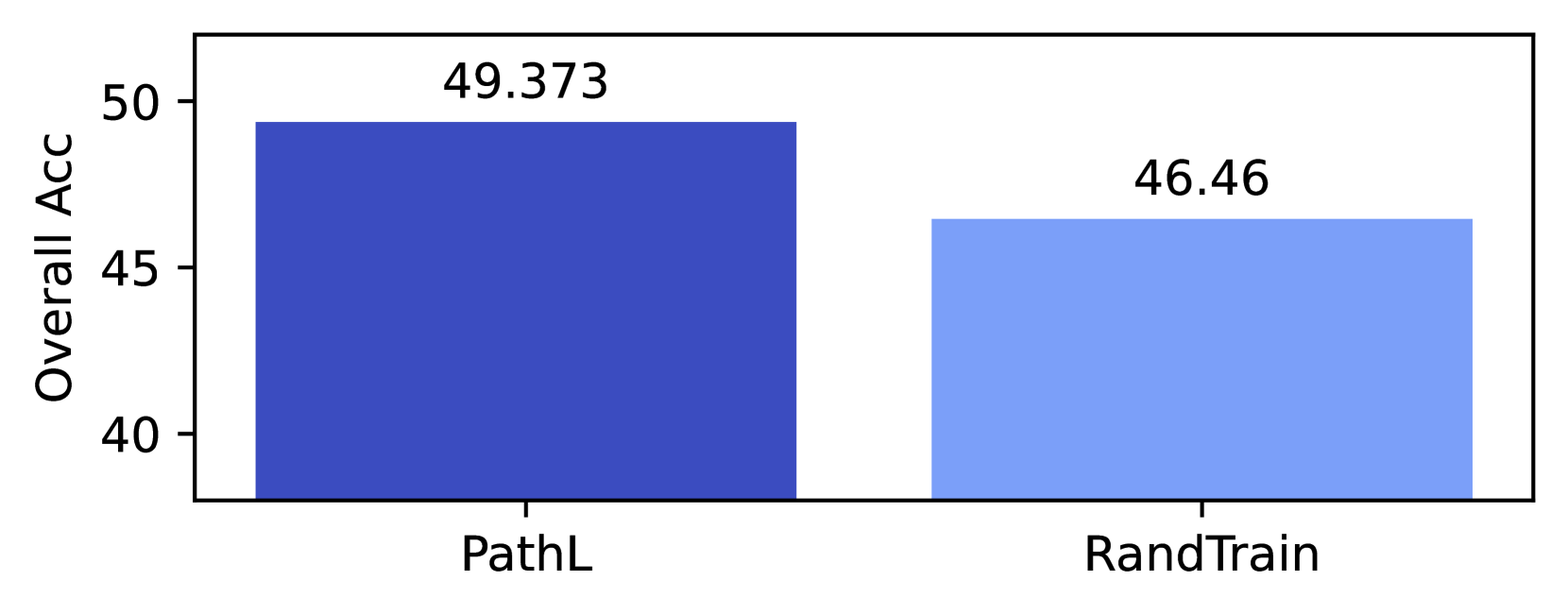

Figure 1: Effect of vocabulary size on downstream performance. For each tokenizer variant, we show the overall average, along with the three averages by vocabulary size, labeled according to the ranks in Table 1.

Figure 1 gives, for each variant, the overall average along with the individual averages at each of the three vocabulary sizes, labeled according to the rank from Table 1. We observe that there is a high correlation between downstream performance at different vocabulary sizes. The pairwise $R^{2}$ value for the accuracy of the 32,768 and 40,960 runs was 0.750; between 40,960 and 49,152 it was 0.801; and between 32,768 and 49,152 it was 0.834. This corroborates the effect shown graphically in Figure 1 that vocabulary size is not a crucial decision over this range of sizes. Given this high degree of correlation, we focus our analysis on the overall average accuracy. This averaging removes some of the variance amongst individual language model runs. Thus, unless specified otherwise, our analyses present performance averaged over vocabulary sizes.
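For readers who want to see how such a pairwise $R^{2}$ could be computed, the sketch below takes the squared Pearson correlation of the per-variant averages from Table 1, listed in rank order. Treating $R^{2}$ as the squared Pearson correlation is an assumption, and because the table values are rounded to one decimal place the results need not match the reported figures exactly.

```python
from statistics import mean


def r_squared(x: list, y: list) -> float:
    """Squared Pearson correlation between two equally long sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy * sxy / (sxx * syy)


# Average accuracy (%) per variant from Table 1, ordered by rank 1..18.
acc_32k = [49.3, 49.2, 49.0, 48.3, 48.5, 48.0, 47.9, 47.5, 47.0,
           48.4, 48.4, 47.5, 45.6, 45.3, 44.6, 44.8, 43.6, 43.5]
acc_40k = [49.4, 49.1, 50.0, 49.1, 49.1, 49.2, 48.5, 48.5, 48.5,
           46.9, 46.9, 45.4, 46.7, 45.8, 44.9, 45.5, 43.1, 44.0]
acc_49k = [49.4, 48.8, 48.1, 49.5, 48.8, 48.8, 48.6, 48.0, 48.4,
           47.8, 47.2, 47.3, 47.2, 45.5, 45.0, 43.9, 44.0, 42.2]

for name, (x, y) in {
    "32,768 vs 40,960": (acc_32k, acc_40k),
    "40,960 vs 49,152": (acc_40k, acc_49k),
    "32,768 vs 49,152": (acc_32k, acc_49k),
}.items():
    print(f"{name}: R^2 = {r_squared(x, y):.3f}")
```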

### 5.2 Overall performance

To determine which of the differences in the overall average accuracy in Table 1 are statistically significant, we conduct a one-sided Wilcoxon signed-rank test Wilcoxon (1945) on the paired differences of the 30 accuracy scores (three vocabulary sizes over ten tasks), for each pair of variants. All $p$ -values reported in this paper use this test.
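The test can be sketched in pure Python as follows. This is a simplified illustration, not the procedure used for the reported $p$-values: it drops zero differences, assigns average ranks to ties, and uses the normal approximation without tie or continuity corrections, whereas statistical libraries (for example `scipy.stats.wilcoxon` with `alternative="greater"`) may use an exact distribution.

```python
import math


def wilcoxon_greater(x: list, y: list) -> tuple:
    """One-sided signed-rank test that x tends to exceed y.

    Returns (W_plus, p), with p from the normal approximation.
    """
    diffs = [a - b for a, b in zip(x, y) if a != b]  # zero differences are dropped
    n = len(diffs)
    if n == 0:
        raise ValueError("all paired differences are zero")
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    i = 0
    while i < n:
        # Find the run of tied absolute differences starting at position i.
        j = i
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        avg_rank = (i + j) / 2 + 1  # average of the 1-based ranks i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    w_plus = sum(r for r, dv in zip(ranks, diffs) if dv > 0)
    mu = n * (n + 1) / 4
    sigma = math.sqrt(n * (n + 1) * (2 * n + 1) / 24)
    z = (w_plus - mu) / sigma
    p = 0.5 * math.erfc(z / math.sqrt(2))  # upper-tail normal probability
    return w_plus, p


# Made-up paired scores for two hypothetical variants.
better = [float(v) for v in range(1, 11)]
worse = [0.0] * 10
print(wilcoxon_greater(better, worse))
```

In the setting described above, `x` and `y` would each hold the 30 accuracy scores (three vocabulary sizes over ten tasks) of two variants, paired by task and vocabulary size.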

<details>

<summary>x2.png Details</summary>

### Visual Description

## Heatmap: Tokenizer Rank vs. p-value Distribution

- **X-axis (Horizontal)**: "Tokenizer Rank" (1 to 18)

- **Y-axis (Vertical)**: "p-value vs. Lower Ranked Tokenizers" (1 to 18)

- **Color Bar (Right)**: Labeled "p-value" with a scale from 0.00 (blue) to 1.00 (red)

- **Annotations**: No embedded text in cells

</details>

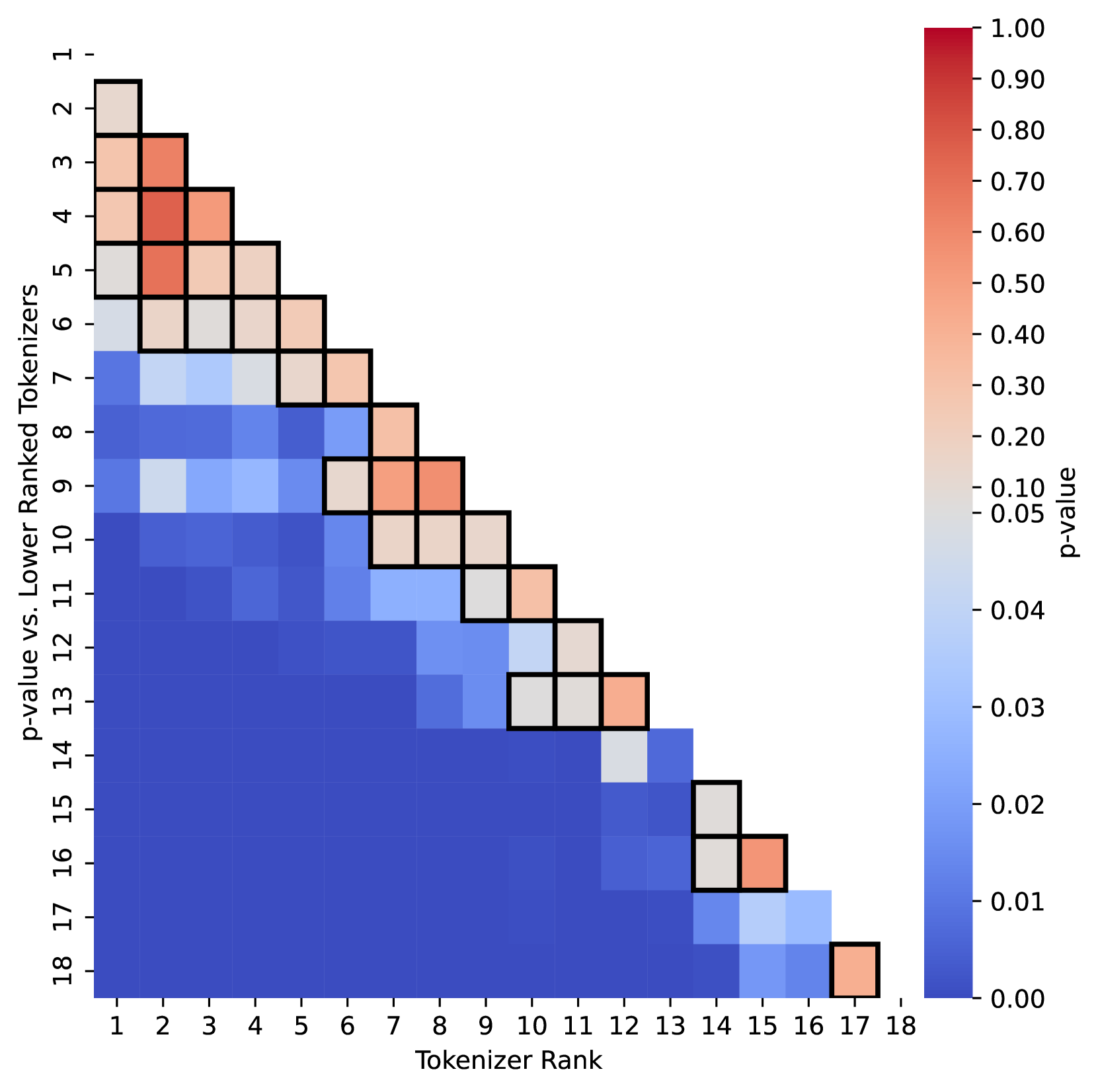

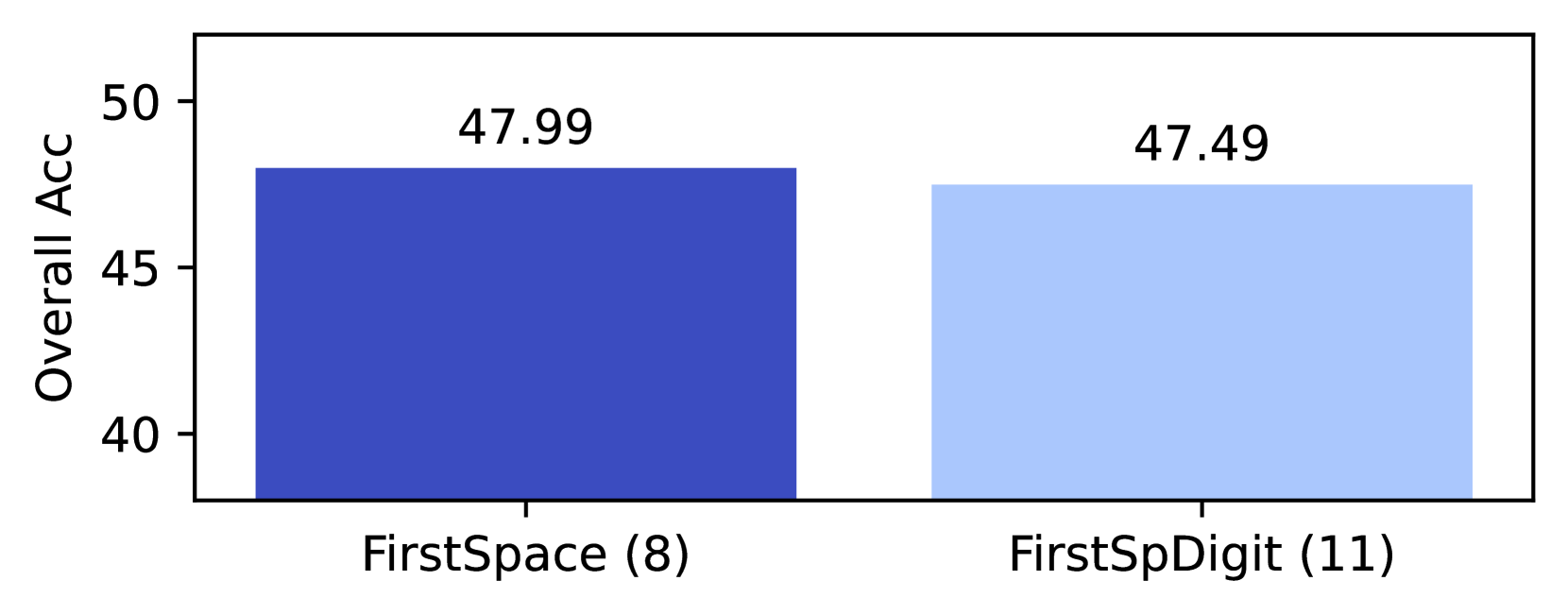

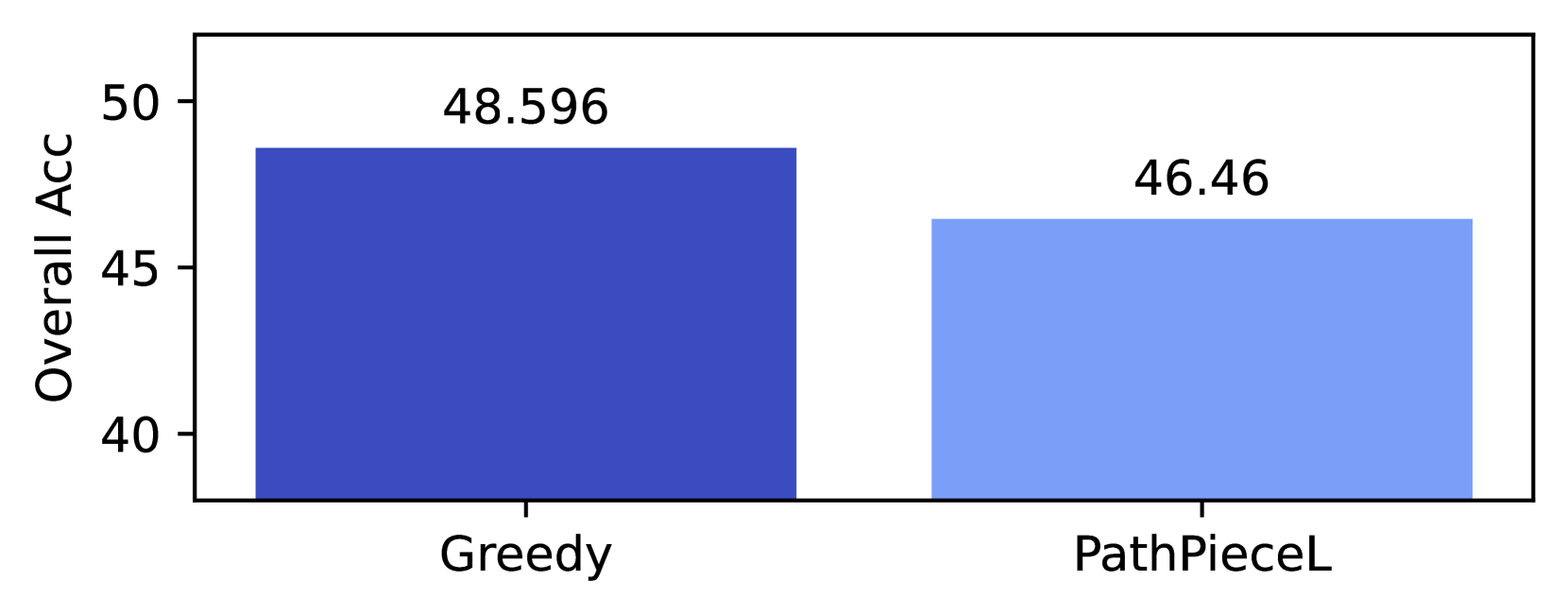

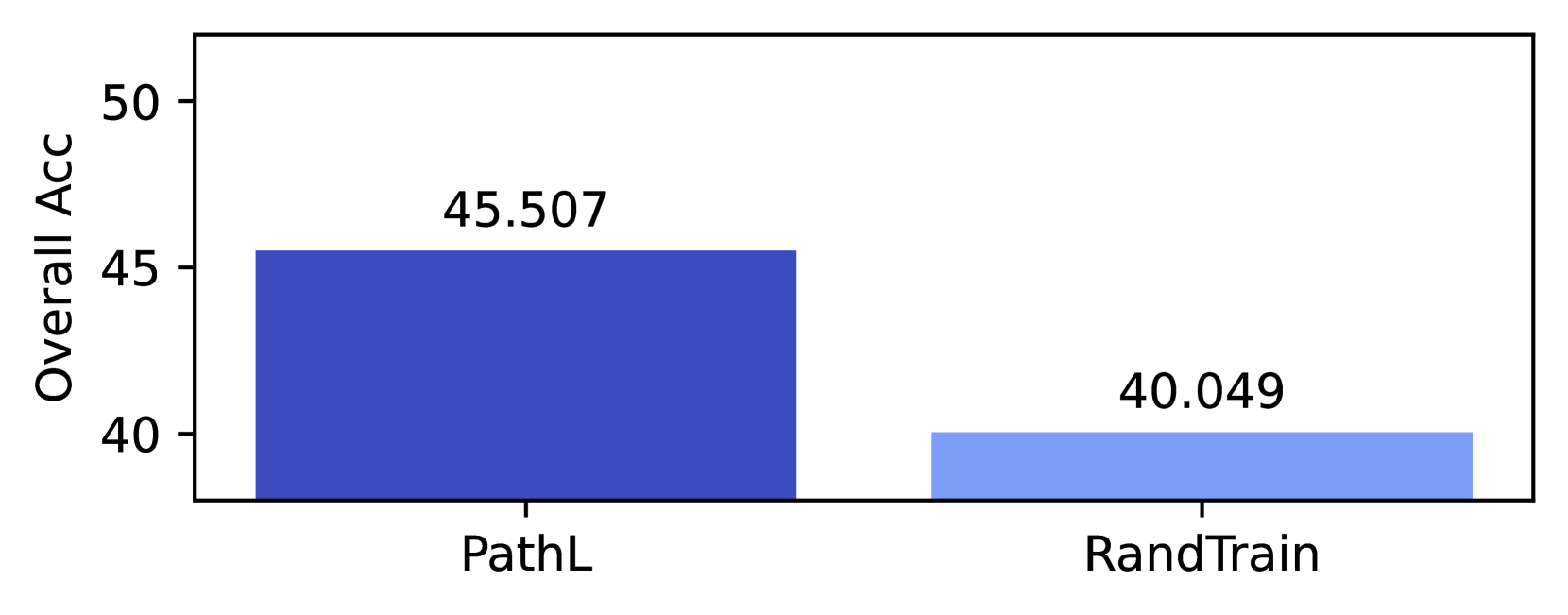

Figure 2: Pairwise $p$ -values for 350M model results. Boxes outlined in black represent $p$ > 0.05. The top 6 tokenizers are all competitive, and there is no statistically significantly best approach.

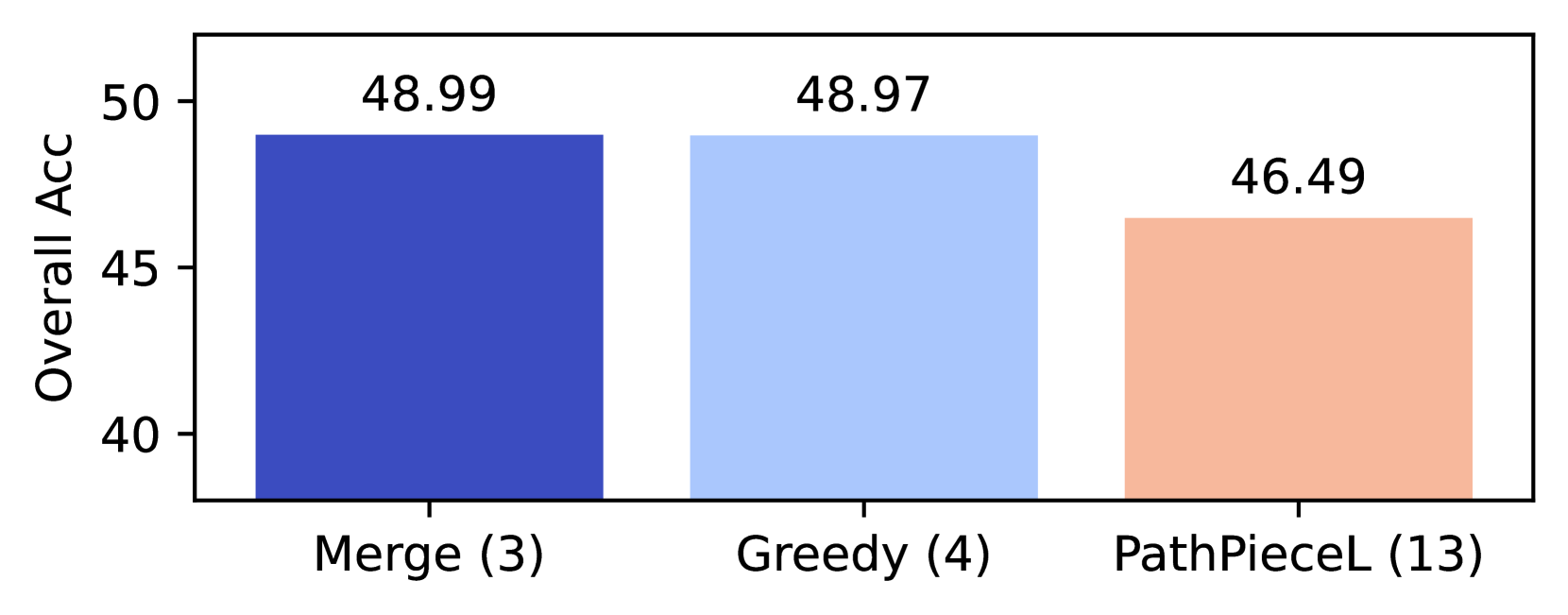

Figure 2 displays all pairwise $p$-values in a color map. Each column designates a tokenization variant by its rank in Table 1, compared to all the ranks below it. A box is outlined in black if $p>0.05$, where we cannot reject the null hypothesis. While PathPieceL-BPE had the highest overall average on these tasks, the top five tokenizers, PathPieceL-BPE, Unigram, BPE, BPE-Greedy, and WordPiece, do not have any other row in Figure 2 significantly different from them. Additionally, SaGe-BPE (rank 6) is only barely worse than PathPieceL-BPE ($p$ = 0.047), and should probably be included in the list of competitive tokenizers. Thus, our first key result is that there is no tokenizer algorithm better than all others to a statistically significant degree.

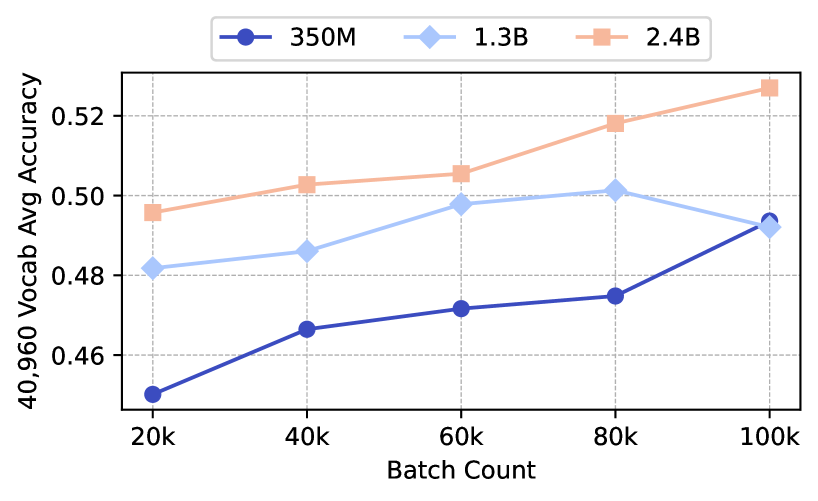

All the results reported thus far are for language models with identical architectures and 350M parameters. To examine the dependency on model size, we trained larger models of 1.3B parameters for six of our experiments, and 2.4B parameters for four of them. In the interest of computational time, these larger models were only trained with a single vocabulary size of 40,960. In Figure 6 in subsection 6.4, we report models’ average performance across 10 tasks. See Figure 7 in Appendix D for an example checkpoint graph at each model size. The main result from these models is that the relative performance of the tokenizers does vary by model size, and that there is a group of high performing tokenizers that yield comparable results. This aligns with our finding that the top six tokenizers are not statistically better than one another at the 350M model size.

### 5.3 Corpus Token Count vs Accuracy

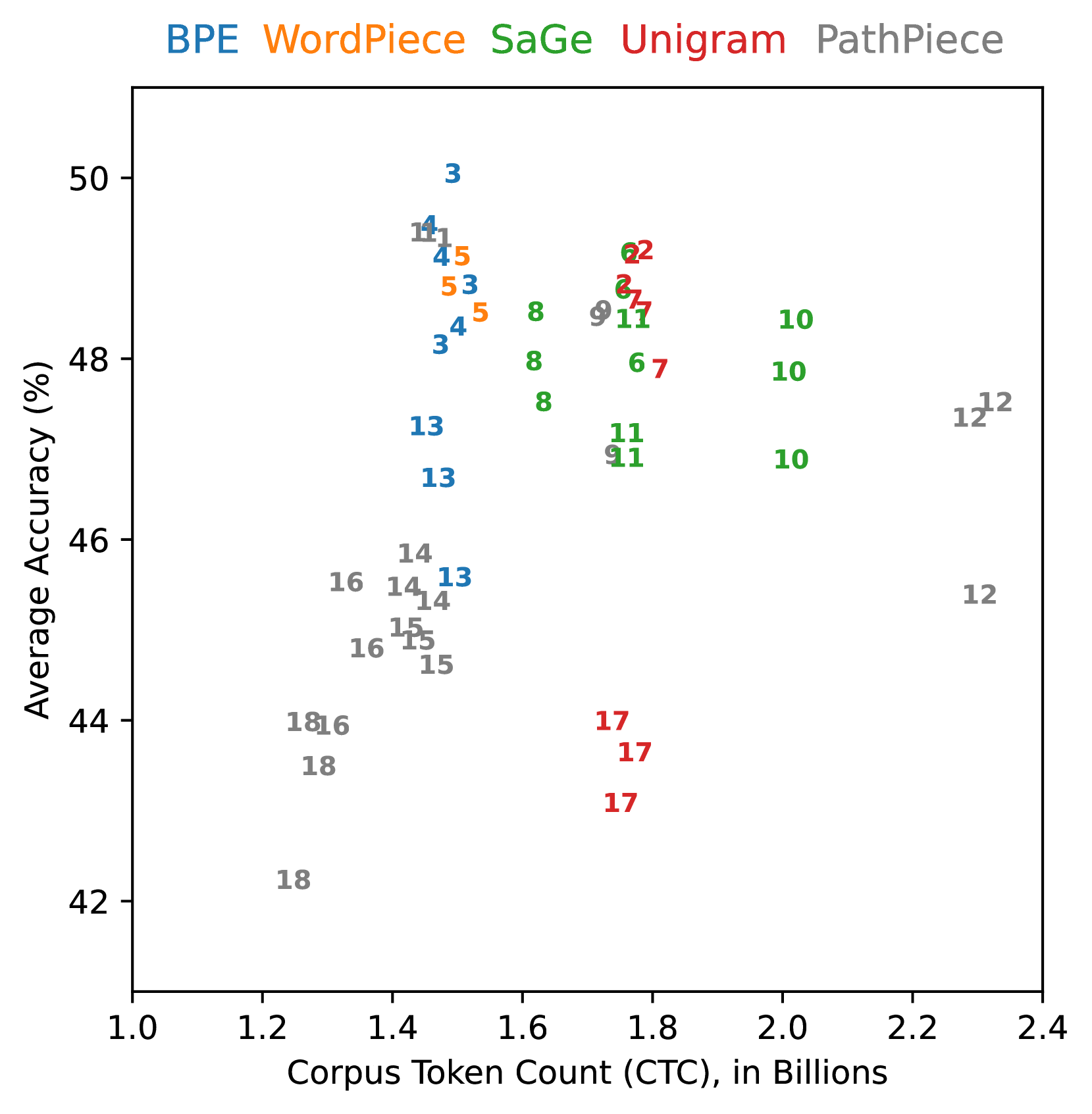

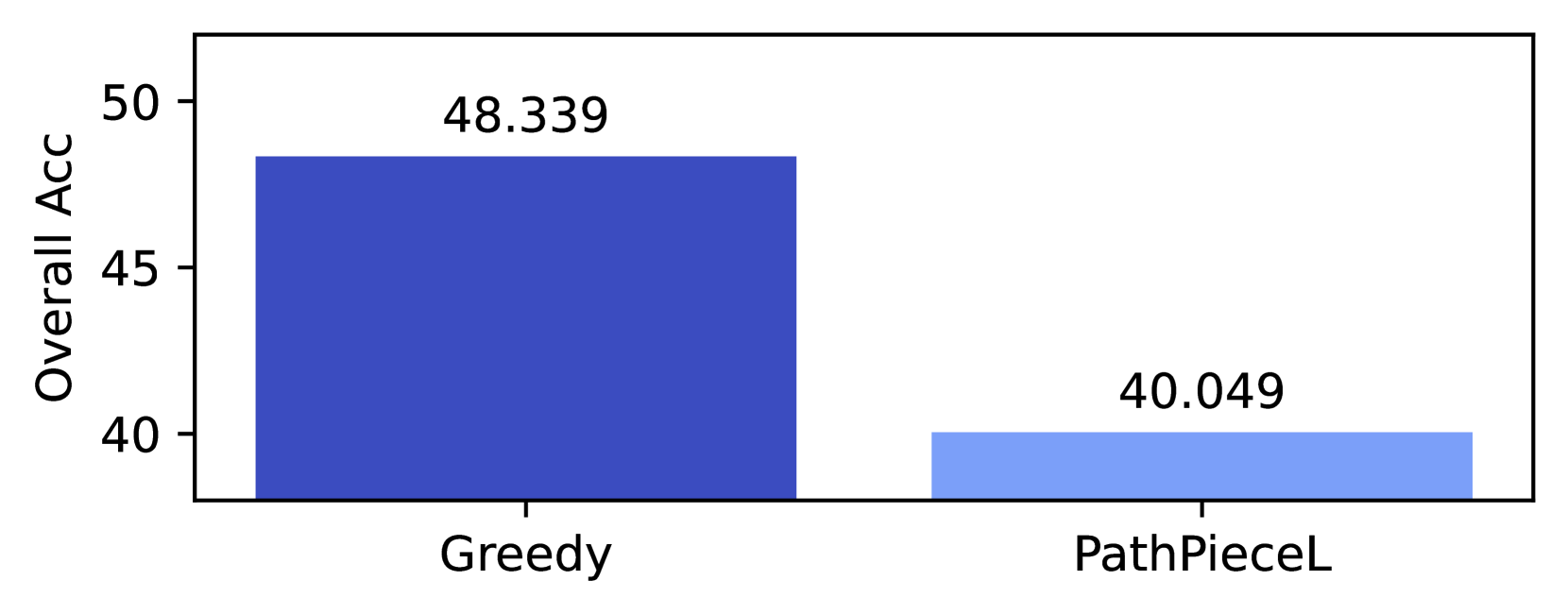

Figure 3 shows the corpus token count (CTC) versus the accuracy of each vocabulary size, given in Table 11. We do not find a straightforward relationship between the two. Ali et al. (2024) recently examined the relationship between CTC and downstream performance for three different tokenizers, and also found that the two were not correlated on English language tasks.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Scatter Plot: Average Accuracy vs. Corpus Token Count

- **X-axis**: Corpus Token Count (CTC), in Billions (range: 1.0 to 2.4)

- **Y-axis**: Average Accuracy (%), ranging from 42% to 50%

- **Legend**: Located at the top, with one color per tokenizer family: BPE (blue), WordPiece (orange), SaGe (green), Unigram (red), PathPiece (gray)

- **Data Points**: Labeled with numbers (e.g., "3", "14", "17") and colored according to the legend

</details>

Figure 3: Effect of corpus token count (CTC) vs average accuracy of individual vocabulary sizes.

The two models with the highest CTC are PathPiece with Space pre-tokenization (12), which is to be expected given each space is its own token, and SaGe with an initial Unigram vocabulary (10). The Huggingface Unigram models in Figure 3 had significantly higher CTC than the corresponding BPE models, unlike Bostrom and Durrett (2020) and Gow-Smith et al. (2022), which report a difference of only a few percent with SentencePiece Unigram. Ali et al. (2024) point out that due to differences in pre-processing, the Huggingface Unigram tokenizer behaves quite differently than the SentencePiece Unigram tokenizer, which may explain this discrepancy.

In terms of accuracy, PathPiece with no pre-tokenization (18) and Unigram with PathPiece segmentation (17) both did quite poorly. Notably, the range of CTC is quite narrow within each vocabulary construction method, even while changes in pre-tokenization and segmentation lead to significant accuracy differences. While there are confounding factors present in this chart (e.g., pre-tokenization, vocabulary initialization, and the fact that more tokens allow for additional computations by the downstream model), it is difficult to discern any trend that lower CTC leads to improved performance. If anything, there seems to be an inverted U-shaped curve with respect to the CTC and downstream performance. The Pearson correlation coefficient between CTC and average accuracy was found to be 0.241. Given that a lower CTC value signifies greater compression, this result suggests a weak negative relationship between the amount of compression and average accuracy.
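As a reminder of what the reported coefficient measures, the following is a minimal sketch of a Pearson correlation between CTC and average accuracy. The data points are hypothetical and chosen only for illustration; they are not the values from Table 11, and the helper name `pearson` is our own.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    # Covariance and variances, all unnormalized (the 1/n factors cancel).
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


# Hypothetical (CTC in billions, average accuracy in %) pairs, for illustration only.
ctc = [1.4, 1.5, 1.7, 1.9, 2.3]
acc = [43.0, 48.5, 49.0, 47.5, 45.0]
r = pearson(ctc, acc)
print(f"Pearson r between CTC and accuracy: {r:.3f}")
```

A positive coefficient means accuracy tends to rise with CTC, that is, with less compression. Note that a coefficient near zero can also arise from a non-monotonic pattern such as an inverted U, which a linear measure cannot capture.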

Zouhar et al. (2023a) introduced an information-theoretic measure based on Rényi efficiency that correlates with downstream performance for their application (except, so far, for a family of adversarially-created tokenizers; Cognetta et al., 2024). It has an order parameter $\alpha$, with a recommended value of 2.5. We present the Rényi efficiencies and CTC for all models in Table 11 in Appendix G, and summarize their Pearson correlation with average accuracy in Table 2. For the data of Figure 3, the correlations for all values of $\alpha$ also show a weak negative association. They are slightly less negative than the association for CTC, although the improvement is not nearly as large as the benefit they saw over sequence length in their application. We note the strong relationship between compression and Rényi efficiency, as the Pearson correlation of CTC and Rényi efficiency with $\alpha=2.5$ is $-0.891$.

| Quantities compared | Pearson correlation |
| --- | --- |
| CTC and Ave Acc | 0.241 |
| Rényi Eff and Ave Acc ($\alpha$=1.5) | $-$0.221 |
| Rényi Eff and Ave Acc ($\alpha$=2.0) | $-$0.169 |
| Rényi Eff and Ave Acc ($\alpha$=2.5) | $-$0.151 |
| Rényi Eff and Ave Acc ($\alpha$=3.0) | $-$0.144 |
| Rényi Eff and Ave Acc ($\alpha$=3.5) | $-$0.141 |
| CTC and Rényi Eff ($\alpha$=2.5) | $-$0.891 |

Table 2: Pearson Correlation of CTC and Average Accuracy, or Rényi efficiency for various orders $\alpha$ with Average Accuracy, or CTC and Rényi efficiency at $\alpha=2.5$ .
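For readers unfamiliar with the measure, the sketch below computes a Rényi efficiency from token counts. It assumes the common definition in which the Rényi entropy of order $\alpha$ of the unigram token distribution, $H_\alpha(p)=\frac{1}{1-\alpha}\log\sum_i p_i^\alpha$, is divided by $\log|V|$ for vocabulary size $|V|$. This is our reading of the measure rather than the reference implementation of Zouhar et al. (2023a), and the function name and toy counts are hypothetical.

```python
import math


def renyi_efficiency(token_counts, alpha=2.5, vocab_size=None):
    """Renyi entropy of the unigram token distribution divided by log(vocab size).

    token_counts maps each token to its corpus frequency. vocab_size defaults
    to the number of entries in token_counts (an assumption of this sketch).
    """
    counts = [c for c in token_counts.values() if c > 0]
    total = sum(counts)
    size = vocab_size if vocab_size is not None else len(token_counts)
    if total == 0 or size < 2:
        raise ValueError("need a non-empty corpus and a vocabulary of size >= 2")
    probs = [c / total for c in counts]
    if math.isclose(alpha, 1.0):
        # The limit alpha -> 1 recovers Shannon entropy.
        entropy = -sum(p * math.log(p) for p in probs)
    else:
        entropy = math.log(sum(p ** alpha for p in probs)) / (1.0 - alpha)
    return entropy / math.log(size)


# Toy distributions: a uniform one and a heavily skewed one.
uniform = {"a": 10, "b": 10, "c": 10, "d": 10}
skewed = {"a": 37, "b": 1, "c": 1, "d": 1}
print(renyi_efficiency(uniform), renyi_efficiency(skewed))
```

Under this definition a uniform distribution over the vocabulary attains efficiency 1, and skewed distributions score lower.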

By varying aspects of BPE, Gallé (2019) and Goldman et al. (2024) suggest we should expect downstream performance to decrease with CTC, while in contrast Ali et al. (2024) did not find a strong relation when varying the tokenizer. Our extensive results varying a number of stages of tokenization suggest it is not inherently beneficial to use fewer tokens. Rather, the particular way that the CTC is varied can lead to different conclusions.

## 6 Analysis

We now analyze the results across the various experiments in a more controlled manner. Our experiments allow us to examine changes in each stage of tokenization while holding the rest constant, revealing which design decisions make a significant difference. Appendix E contains additional analysis.

### 6.1 Pre-tokenization

For PathPieceR with an $n$-gram initial vocabulary, we can isolate pre-tokenization. PathPiece is efficient enough to process entire documents with no pre-tokenization, giving it full freedom to minimize the corpus token count (CTC).

| Rank | Pre-tokenization | Tokenization |
| --- | --- | --- |
| 12 | SpaceDigit | The ␣ valuation ␣ is ␣ estimated ␣ to ␣ be ␣ $ 2 1 3 M |
| 14 | FirstSpDigit | The ␣valuation ␣is ␣estimated ␣to ␣be ␣$ 2 1 3 M |
| 18 | None | The ␣valu ation␣is ␣estimated ␣to␣b e␣$ 2 1 3 M |

Table 3: Example PathPiece tokenizations of “The valuation is estimated to be $213M”; vocabulary size of 32,768.

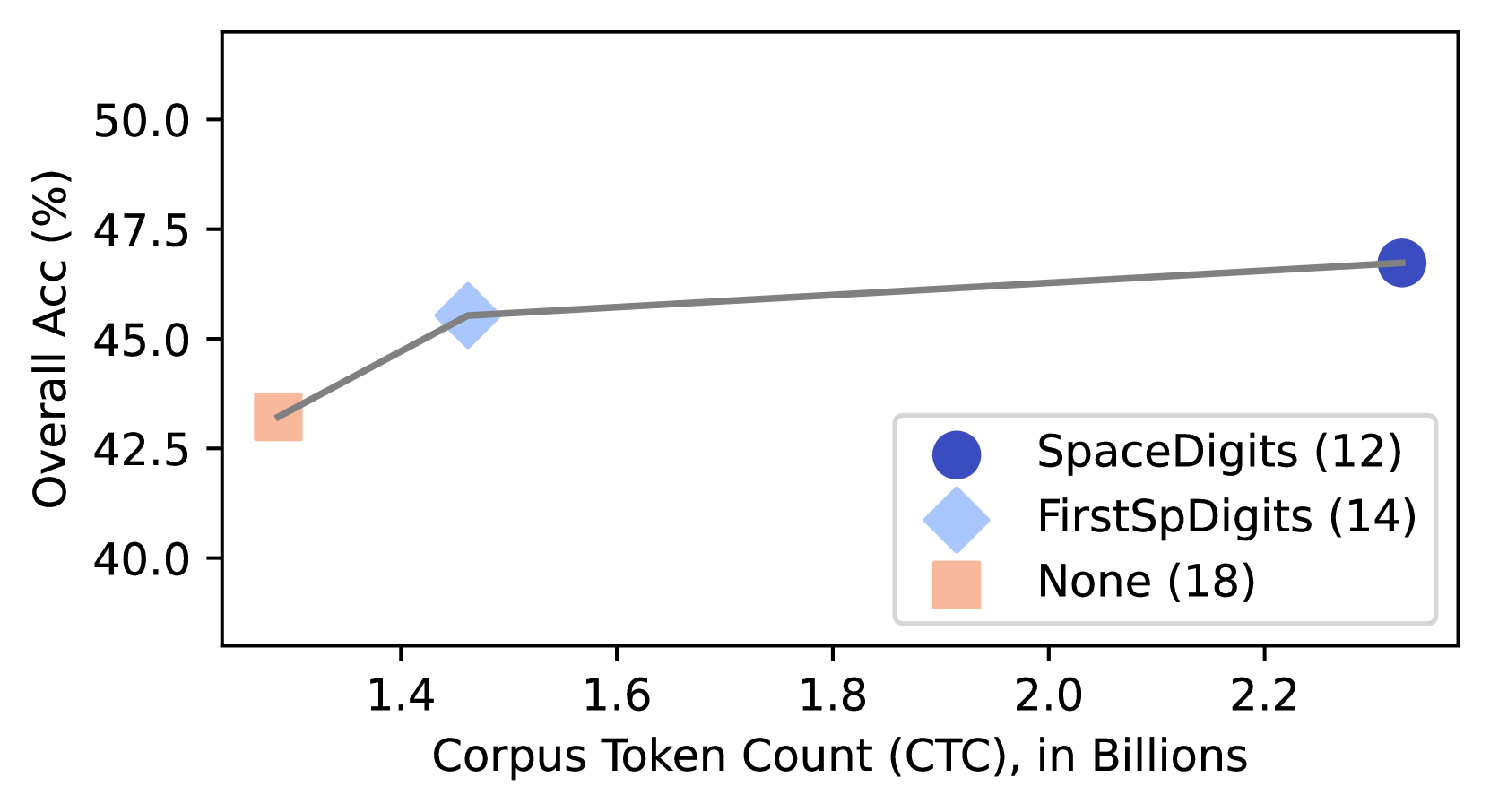

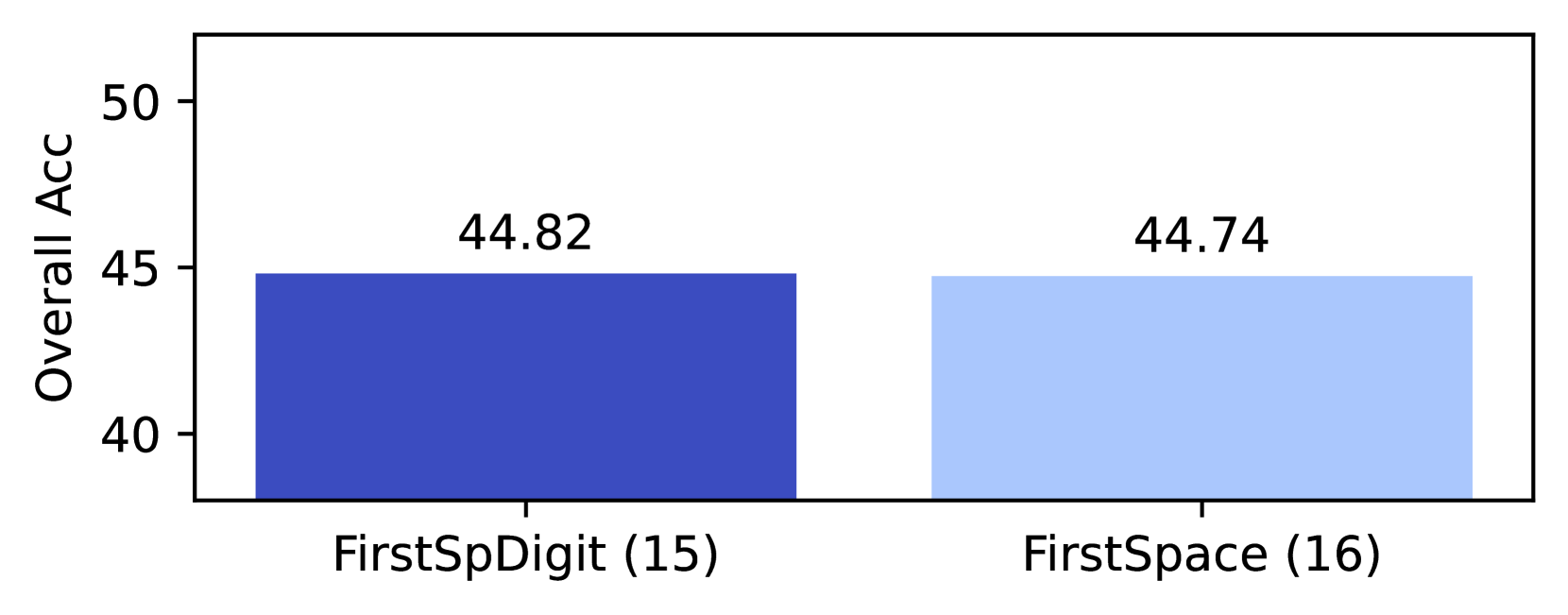

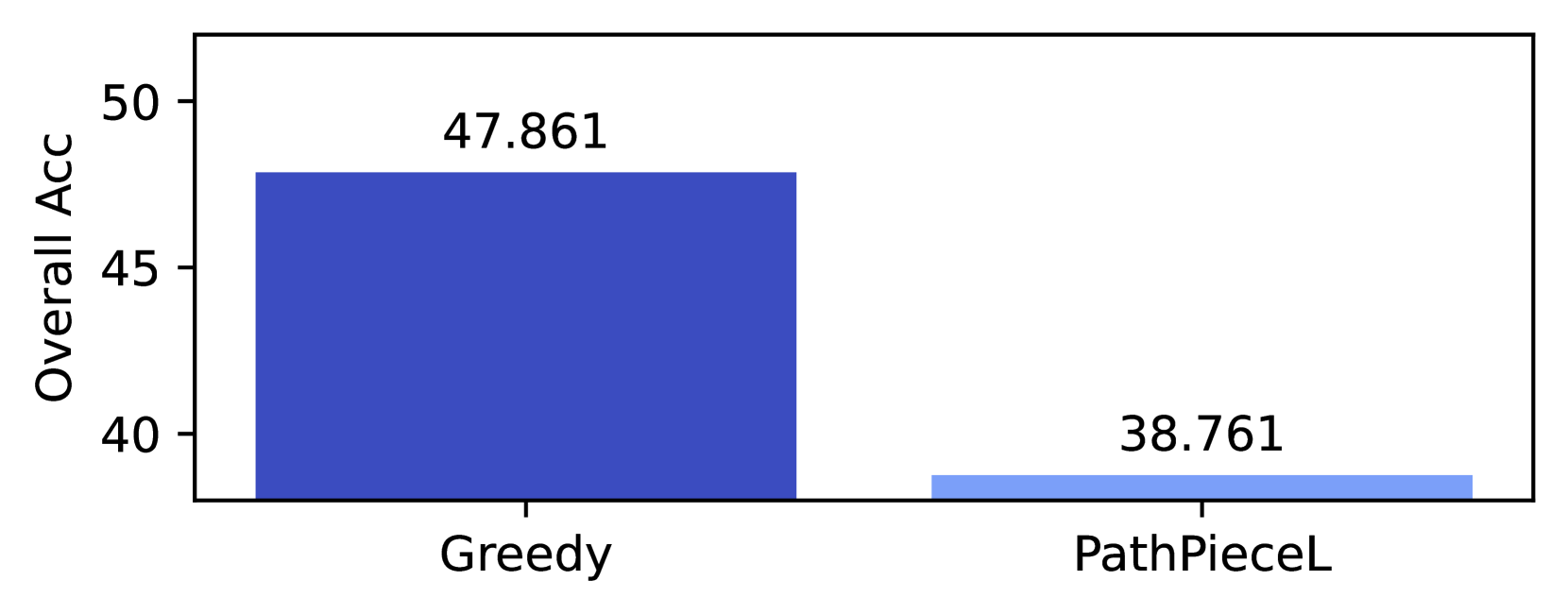

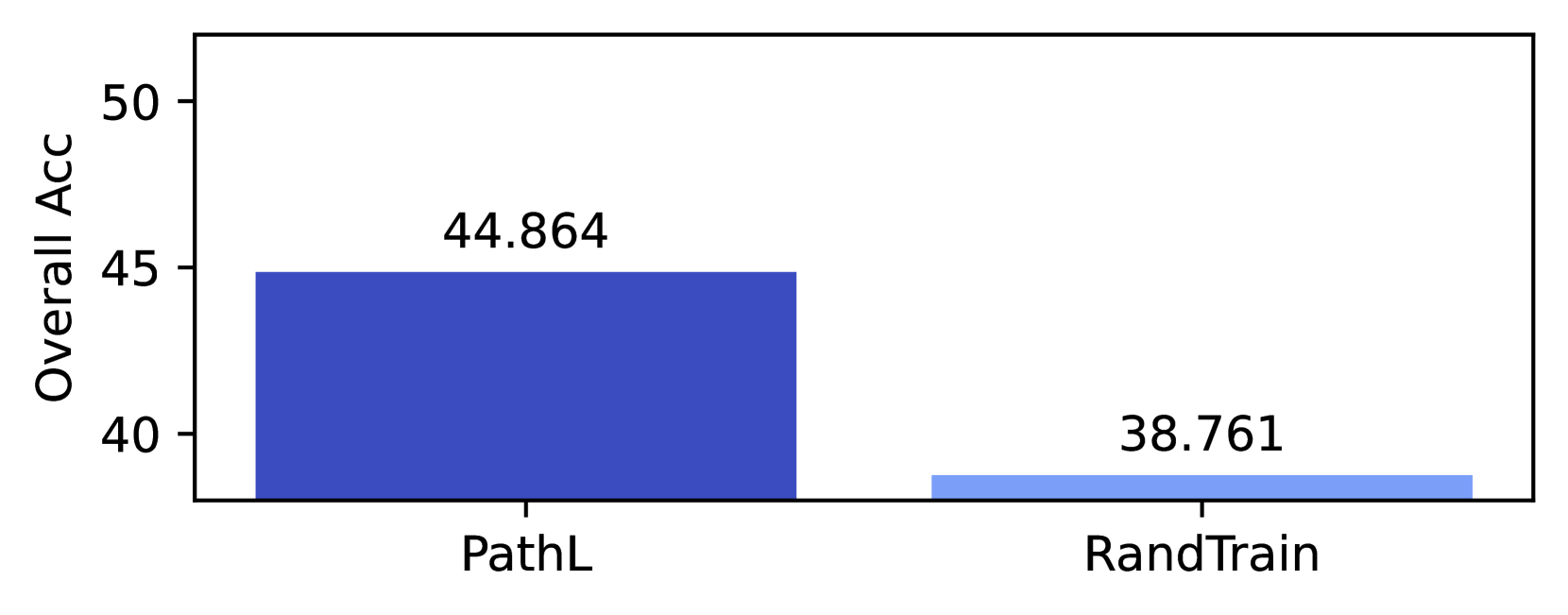

Adding pre-tokenization constrains PathPiece’s ability to minimize tokens, giving a natural way to vary the number of tokens. Figure 4 shows that PathPiece minimizes the number of tokens used over a corpus when trained with no pre-tokenization (18). The other variants restrict spaces to either be the first character of a token (14), or their own token (12). These two runs also used Digit pre-tokenization, where each digit is its own token. Consider the example PathPiece tokenization in Table 3 for the three pre-tokenization methods. The None mode uses the word-boundary-spanning tokens “ation␣is”, “␣to␣b”, and “e␣$”. The lack of morphological alignment demonstrated in this example is likely more important to downstream model performance than a simple token count.
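The interaction between minimum-token segmentation and pre-tokenization can be illustrated with a small dynamic program. This is a simplified sketch, not the PathPiece implementation: it operates on characters rather than bytes, breaks ties arbitrarily, and uses a toy vocabulary and a hypothetical regular-expression pre-tokenizer in which a space may only begin a token.

```python
import re


def min_token_segmentation(text, vocab, max_len):
    """Segment text into the fewest vocabulary tokens via dynamic programming."""
    n = len(text)
    inf = float("inf")
    best = [0] + [inf] * n   # best[i]: fewest tokens covering text[:i]
    back = [0] * (n + 1)     # back[i]: start index of the last token ending at i
    for end in range(1, n + 1):
        for width in range(1, min(max_len, end) + 1):
            start = end - width
            if text[start:end] in vocab and best[start] + 1 < best[end]:
                best[end] = best[start] + 1
                back[end] = start
    if best[n] == inf:
        raise ValueError("text cannot be segmented with this vocabulary")
    tokens, end = [], n
    while end > 0:           # walk the back-pointers from the end of the text
        tokens.append(text[back[end]:end])
        end = back[end]
    return tokens[::-1]


def segment_with_pretokenization(text, vocab, max_len):
    """Split so that a space can only start a token, then segment each piece."""
    pieces = re.findall(r" ?[^ ]+| +", text)
    return [tok for piece in pieces
            for tok in min_token_segmentation(piece, vocab, max_len)]


# Toy vocabulary: all single characters plus one boundary-spanning token.
toy_vocab = set("to be") | {"to", "to b"}
unconstrained = min_token_segmentation("to be", toy_vocab, max_len=4)
constrained = segment_with_pretokenization("to be", toy_vocab, max_len=4)
print(unconstrained, constrained)
```

In this toy case the unconstrained segmentation uses the boundary-spanning token “to b” and needs fewer tokens, while the pre-tokenized variant respects the word boundary at the cost of a higher token count.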

In Figure 4 we observe a statistically significant increase in overall accuracy for our downstream tasks, as a function of CTC. Gow-Smith et al. (2022) found that Space pre-tokenization led to worse performance, while removing the spaces entirely helps (although omitting the spaces entirely does not lead to a reversible tokenization of the kind we have been considering). Thus, this particular result may be specific to PathPieceR.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Line Graph: Overall Accuracy vs. Corpus Token Count

### Overview

The image depicts a line graph illustrating the relationship between corpus token count (in billions) and overall accuracy (in percentage). Three data points are connected by a gray line, with markers differentiated by color and shape. The graph suggests a positive correlation between token count and accuracy.

### Components/Axes

- **X-axis**: Corpus Token Count (CTC), in Billions (ranging from 1.4 to 2.3)

- **Y-axis**: Overall Accuracy (%), ranging from 40.0 to 50.0

- **Legend**: Located in the bottom-right corner, with three entries:

- **Blue Circle**: SpaceDigits (12)

- **Light Blue Diamond**: FirstSpDigits (14)

- **Peach Square**: None (18)

### Detailed Analysis

1. **Data Points**:

- **Peach Square (None, 18)**: Positioned at (1.4, 43.0)

- **Light Blue Diamond (FirstSpDigits, 14)**: Positioned at (1.5, 45.0)

- **Blue Circle (SpaceDigits, 12)**: Positioned at (2.3, 47.0)

2. **Line Trend**: A straight gray line connects the points, showing a consistent upward slope from left to right.

3. **Spatial Grounding**:

- Legend: Bottom-right corner

- Data Points: Aligned with their respective x-axis values (1.4, 1.5, 2.3)

- Line: Connects all points in order of increasing x-values

### Key Observations

- Accuracy increases linearly with token count: 43% → 45% → 47%.

- The largest token count (2.3B) corresponds to the highest accuracy (47%).

- The "None" condition (18) has the lowest token count and accuracy.

### Interpretation

The graph demonstrates that increasing corpus token count improves model performance, with the "SpaceDigits" condition achieving the best results. The linear trend implies a predictable relationship between token quantity and accuracy. The legend’s color coding confirms the association between marker types and conditions. No anomalies are observed, as all data points follow the expected upward trajectory.

</details>

Figure 4: The impact of pre-tokenization on Corpus Token Count (CTC) and Overall Accuracy. Ranks in parentheses refer to performance in Table 1.

### 6.2 Vocabulary Construction

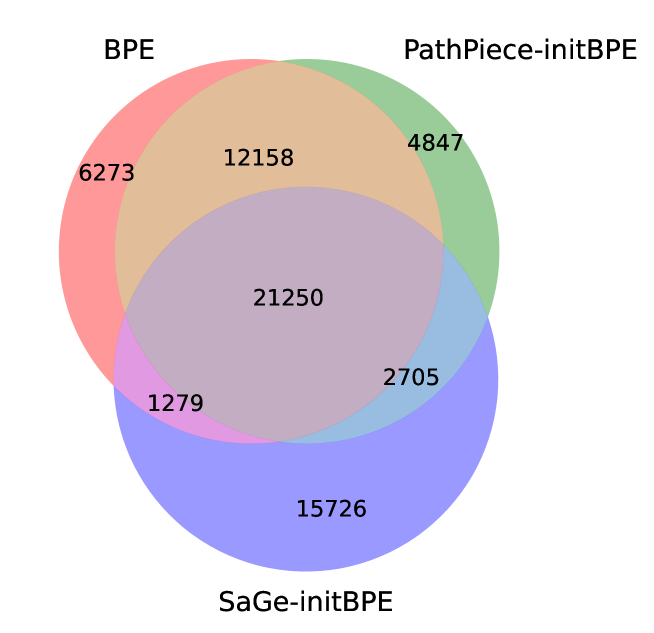

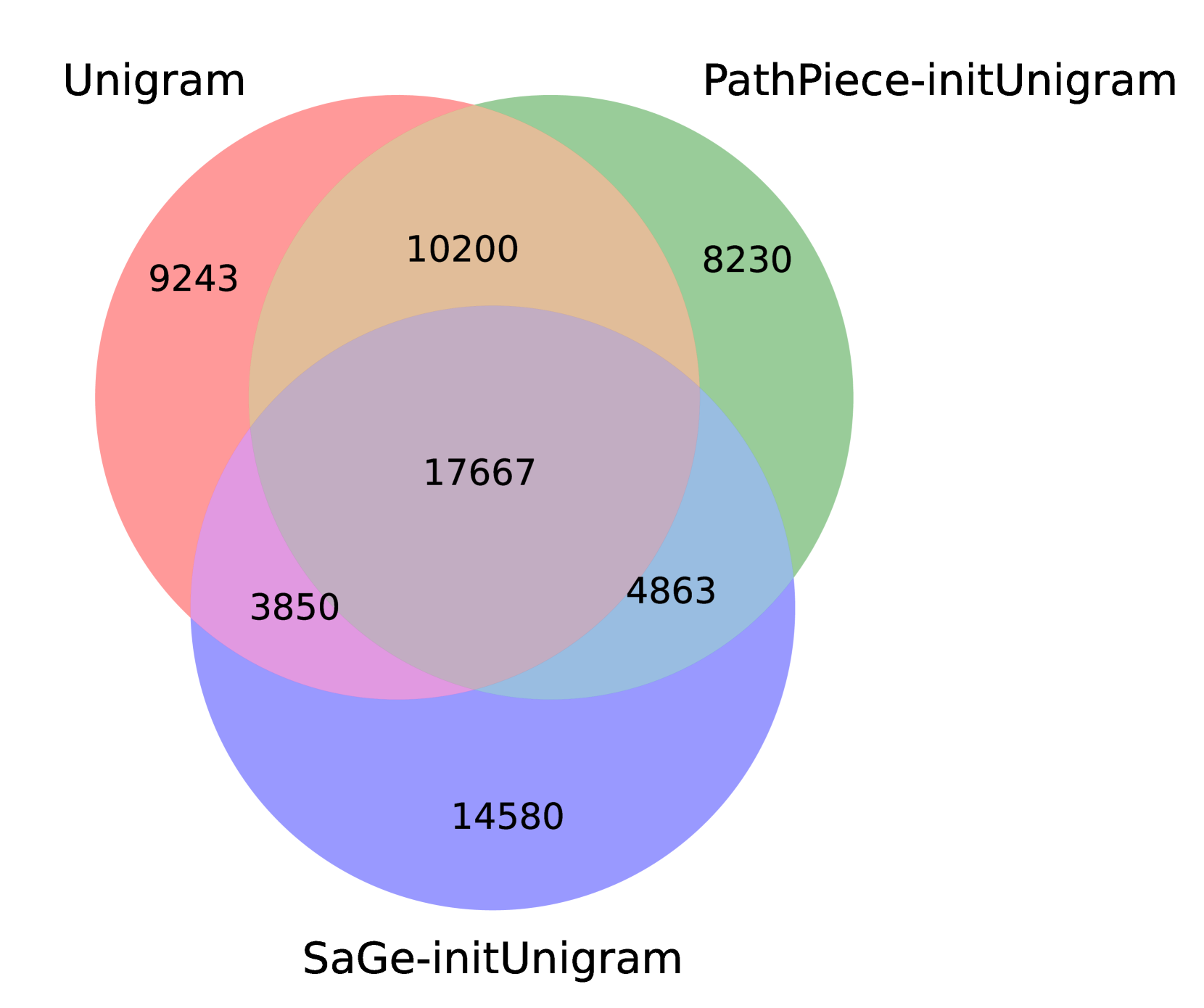

One way to examine the effects of vocabulary construction is to compare the resulting vocabularies of top-down methods trained using an initial vocabulary to the method itself. Figure 5 presents an area-proportional Venn diagram of the overlap in 40,960-sized vocabularies between BPE (3) and variants of PathPieceL (1) and SaGe (6) that were trained using an initial BPE vocabulary of size $2^{18}=262,144$. See Figure 12 in Appendix E.3 for analogous results for Unigram, which behaves similarly. While BPE and PathPieceL overlap considerably, SaGe produces a more distinct set of tokens.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Venn Diagram: Overlap Analysis of BPE, PathPiece-initBPE, and SaGe-initBPE

### Overview

The image is a three-circle Venn diagram comparing three datasets: **BPE** (red), **PathPiece-initBPE** (green), and **SaGe-initBPE** (blue). Numerical values are embedded in each region to quantify overlaps and unique elements. The diagram uses color-coded regions to represent intersections and exclusions.

### Components/Axes

- **Circles**:

- **BPE** (Red): Leftmost circle.

- **PathPiece-initBPE** (Green): Top-right circle.

- **SaGe-initBPE** (Blue): Bottom-right circle.

- **Overlap Regions**:

- **BPE ∩ PathPiece-initBPE**: 12,158 (orange).

- **PathPiece-initBPE ∩ SaGe-initBPE**: 2,705 (light blue).

- **BPE ∩ SaGe-initBPE**: 1,279 (purple).

- **BPE ∩ PathPiece-initBPE ∩ SaGe-initBPE**: 21,250 (dark purple).

- **Unique Regions**:

- **BPE-only**: 6,273 (red).

- **PathPiece-initBPE-only**: 4,847 (green).

- **SaGe-initBPE-only**: 15,726 (blue).

### Detailed Analysis

- **BPE (Red)**:

- Total: 6,273 (unique) + 12,158 (BPE ∩ PathPiece) + 1,279 (BPE ∩ SaGe) + 21,250 (triple overlap) = **40,960**.

- **PathPiece-initBPE (Green)**:

- Total: 4,847 (unique) + 12,158 (BPE ∩ PathPiece) + 2,705 (PathPiece ∩ SaGe) + 21,250 (triple overlap) = **40,960**.

- **SaGe-initBPE (Blue)**:

- Total: 15,726 (unique) + 2,705 (PathPiece ∩ SaGe) + 1,279 (BPE ∩ SaGe) + 21,250 (triple overlap) = **40,960**.

- **Triple Overlap (Dark Purple)**: 21,250 (largest shared region).

- **Smallest Overlap**: BPE ∩ SaGe-initBPE (1,279).

### Key Observations

1. **Dominant Overlap**: The triple intersection (21,250) is the largest shared region, indicating significant commonality across all three datasets.

2. **SaGe-initBPE Dominance**: SaGe-initBPE has the largest unique region (15,726), suggesting it contains the most distinct elements.

3. **Minimal BPE-SaGe Overlap**: Only 1,279 elements are shared exclusively between BPE and SaGe-initBPE, highlighting limited direct interaction between these two.

4. **Symmetry**: All three datasets have identical total sizes (40,960), implying balanced representation despite differing overlaps.

### Interpretation

The diagram reveals that **SaGe-initBPE** contributes the most unique data, while the **triple overlap** (21,250) represents the core shared functionality or features among all three. The minimal BPE-SaGe overlap (1,279) suggests potential gaps in integration or compatibility between these two. The symmetry in total sizes implies the datasets were designed to be comparable, but their distinct overlaps highlight nuanced differences in scope or methodology. This could reflect trade-offs in efficiency, accuracy, or resource usage between the approaches.

</details>

Figure 5: Venn diagram comparing 40,960 token vocabularies of BPE, PathPieceL and SaGe – the latter two were both initialized from a BPE vocabulary of 262,144.
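The region sizes of such a three-way comparison follow directly from set operations on the vocabularies. The sketch below uses tiny hypothetical vocabularies; the function name and region labels are our own.

```python
def venn_regions(a, b, c):
    """Sizes of the seven regions of a three-set Venn diagram."""
    return {
        "a_only": len(a - b - c),
        "b_only": len(b - a - c),
        "c_only": len(c - a - b),
        "a_b_only": len((a & b) - c),
        "a_c_only": len((a & c) - b),
        "b_c_only": len((b & c) - a),
        "all_three": len(a & b & c),
    }


# Hypothetical toy vocabularies standing in for three tokenizers.
vocab_a = {"the", "ing", "tion", "ed", "un"}
vocab_b = {"the", "ing", "tion", "ly", "re"}
vocab_c = {"the", "ed", "ment", "ness", "re"}
regions = venn_regions(vocab_a, vocab_b, vocab_c)
print(regions)
```

As a consistency check, the four regions that include a given vocabulary must sum to that vocabulary's size.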

### 6.3 Initial Vocabulary

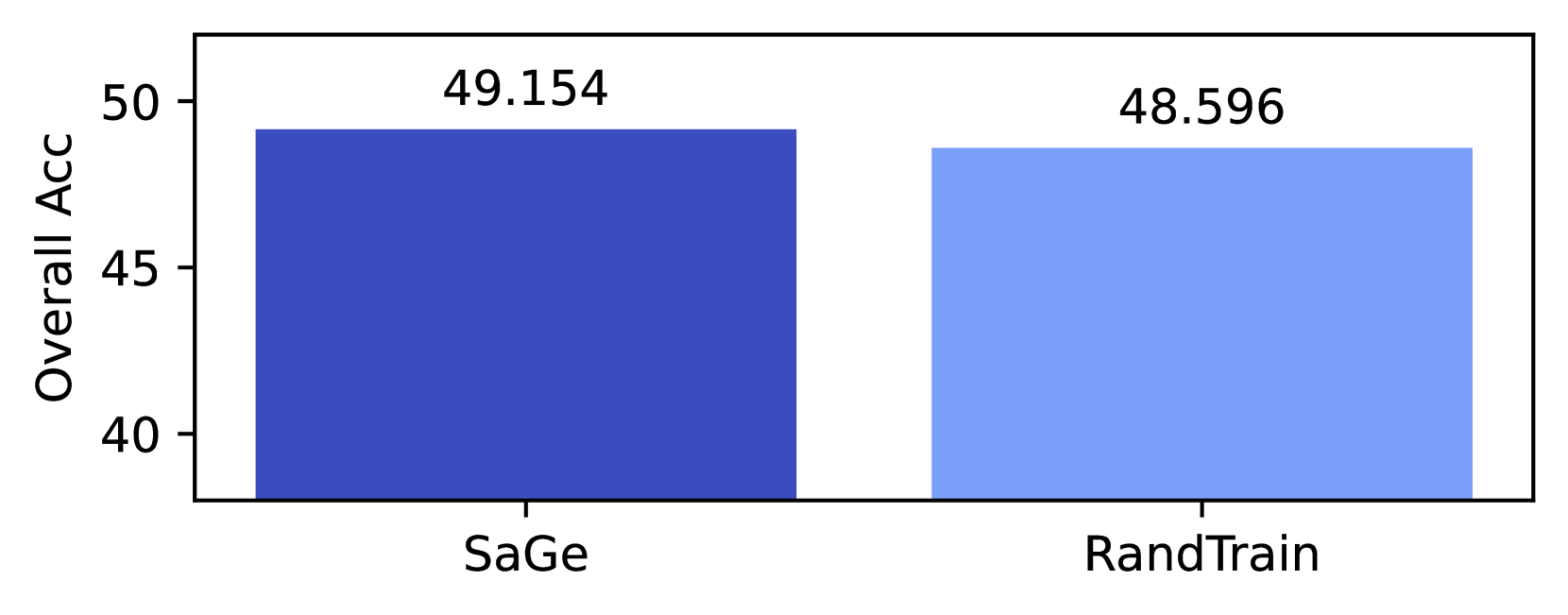

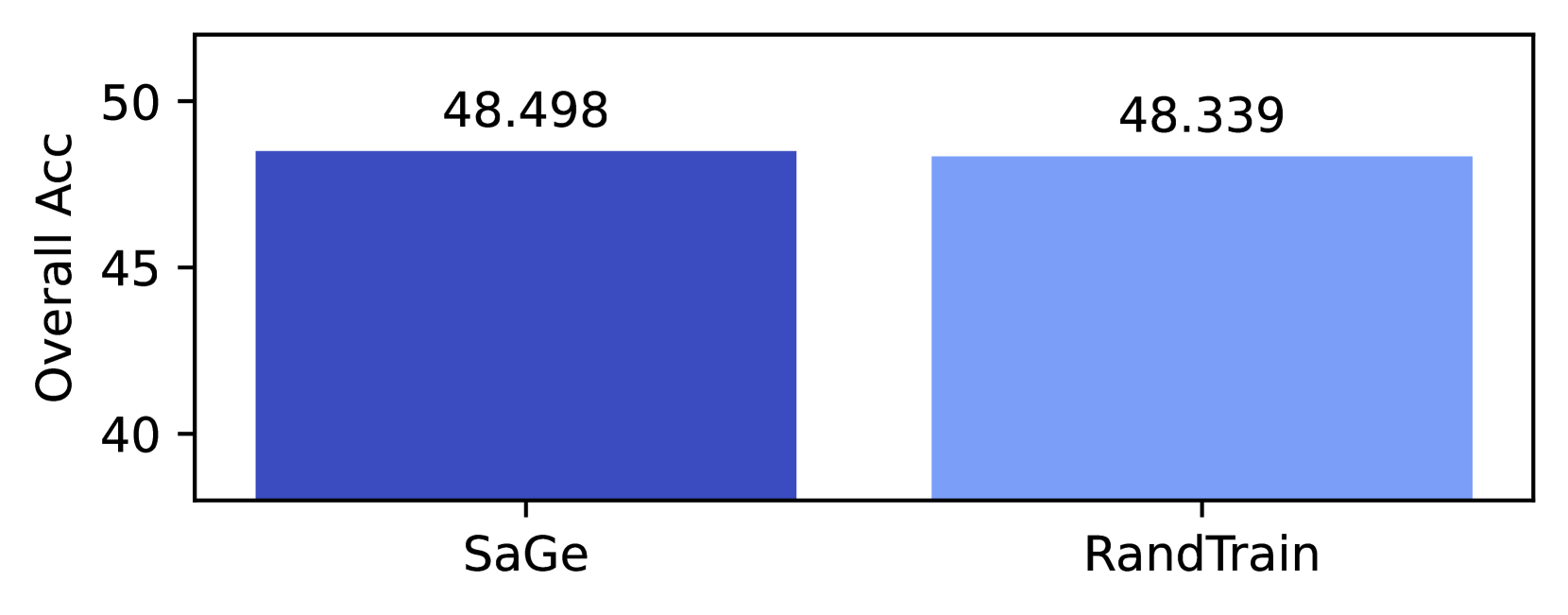

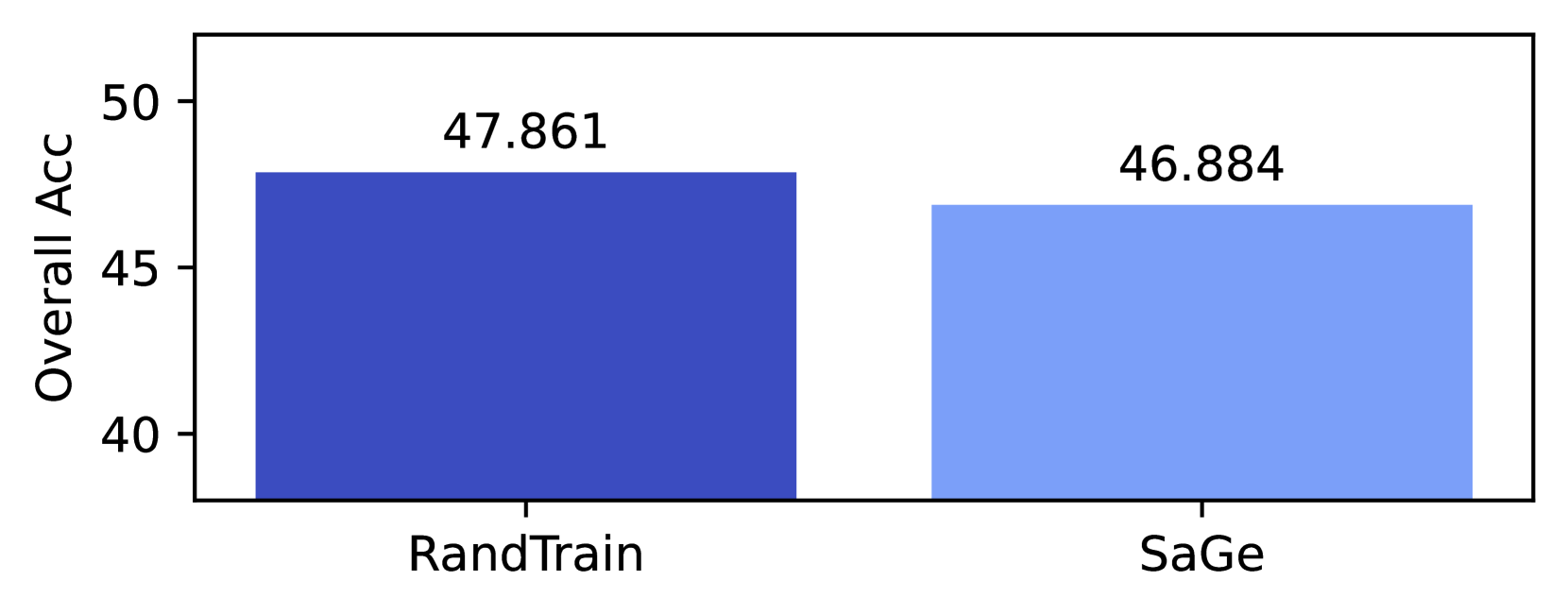

PathPiece, SaGe, and Unigram all require an initial vocabulary. The HuggingFace Unigram implementation starts with the one million top $n$-grams, but sorted according to the count times the length of the token, introducing a bias toward longer tokens. For PathPiece and SaGe, we experimented with initial vocabularies of size 262,144 constructed from either the most frequent $n$-grams, or trained using either BPE or Unigram. For PathPieceL, using a BPE initial vocabulary (1) is statistically better than both Unigram (9) and $n$-grams (16), with $p\leq 0.01$. Using an $n$-gram initial vocabulary leads to the lowest performance, with statistical significance. Comparing ranks 6, 8, and 10 reveals the same pattern for SaGe, although the difference between 8 and 10 is not significant.
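To make the length bias concrete, the sketch below ranks character $n$-grams either by raw count or by count times length. It is an illustration under simplifying assumptions (characters instead of bytes, a toy corpus, alphabetical tie-breaking) and does not reproduce the HuggingFace implementation; the function name is hypothetical.

```python
from collections import Counter


def top_ngrams(corpus, max_n, size, weight_by_length=False):
    """Return the `size` highest-scoring character n-grams of length <= max_n."""
    counts = Counter()
    for doc in corpus:
        for n in range(1, max_n + 1):
            for i in range(len(doc) - n + 1):
                counts[doc[i:i + n]] += 1

    def score(item):
        gram, count = item
        # Weighting by length favors longer n-grams at equal frequency.
        return count * len(gram) if weight_by_length else count

    # Sort by descending score, breaking ties alphabetically for determinism.
    ranked = sorted(counts.items(), key=lambda item: (-score(item), item[0]))
    return [gram for gram, _ in ranked[:size]]


toy_corpus = ["abab", "abab"]
by_count = top_ngrams(toy_corpus, max_n=4, size=3)
by_weighted = top_ngrams(toy_corpus, max_n=4, size=3, weight_by_length=True)
print(by_count, by_weighted)
```

On the toy corpus, ranking by raw count keeps the single characters, whereas the length-weighted score pushes them out in favor of longer strings.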

### 6.4 Effect of Model Size

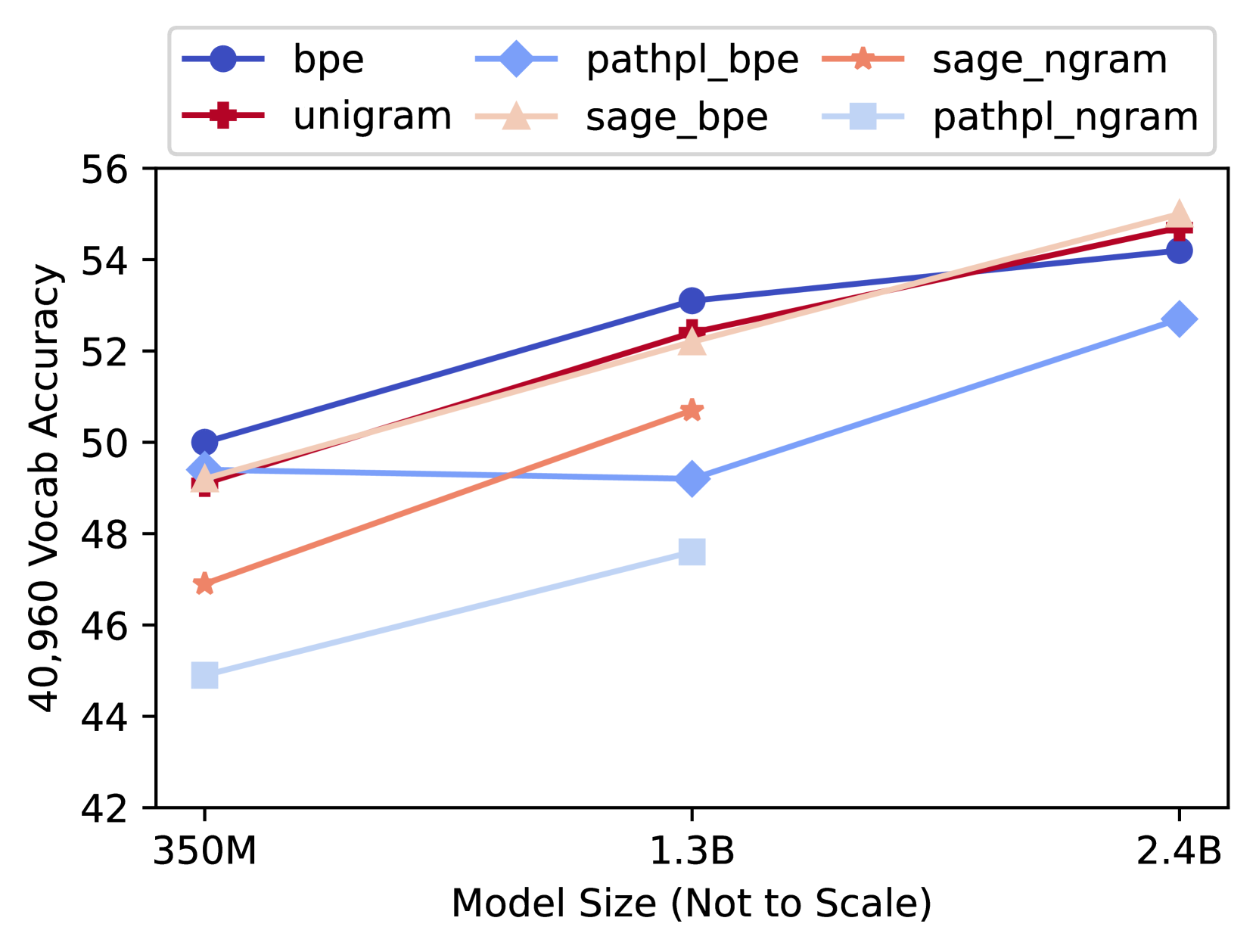

To examine the dependency on model size, we built larger models of 1.3B parameters for 6 of our experiments, and 2.4B parameters for 4 of them. These models were trained over the same 200 billion tokens. In the interest of computational time, these larger models were only run at a single vocabulary size of 40,960. The average results over the 10 task accuracies for these models are given in Figure 6. See Table 14 in Appendix G for the numerical values.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Line Chart: 40,960 Vocab Accuracy vs Model Size

### Overview

The chart compares the performance of six different model configurations (bpe, pathpl_bpe, sage_ngram, unigram, sage_bpe, pathpl_ngram) across three model sizes (350M, 1.3B, 2.4B) in terms of 40,960 Vocab Accuracy. Accuracy is measured on a scale from 42 to 56, with the three model sizes placed on an x-axis that is not to scale.

### Components/Axes

- **X-axis**: Model Size (Not to Scale)

- Markers: 350M (left), 1.3B (center), 2.4B (right)

- **Y-axis**: 40,960 Vocab Accuracy (42–56)

- **Legend**: Top-left corner with six entries:

- Blue circle: bpe

- Light blue diamond: pathpl_bpe

- Orange star: sage_ngram

- Red cross: unigram

- Pink triangle: sage_bpe

- Light pink square: pathpl_ngram

### Detailed Analysis

1. **bpe (Blue Circle)**:

- 350M: ~50.0

- 1.3B: ~53.0

- 2.4B: ~54.2

- *Trend*: Steady upward slope.

2. **pathpl_bpe (Light Blue Diamond)**:

- 350M: ~49.5

- 1.3B: ~49.2

- 2.4B: ~52.8

- *Trend*: Flat initially, then sharp increase.

3. **sage_ngram (Orange Star)**:

- 350M: ~47.0

- 1.3B: ~50.5

- 2.4B: ~54.0

- *Trend*: Steep upward slope.

4. **unigram (Red Cross)**:

- 350M: ~49.0

- 1.3B: ~52.5

- 2.4B: ~54.5

- *Trend*: Sharp upward slope.

5. **sage_bpe (Pink Triangle)**:

- 350M: ~49.2

- 1.3B: ~52.0

- 2.4B: ~55.0

- *Trend*: Consistent upward slope.

6. **pathpl_ngram (Light Pink Square)**:

- 350M: ~45.0

- 1.3B: ~47.5

- 2.4B: ~52.5

- *Trend*: Gradual upward slope.

### Key Observations

- **Highest Performance**:

- At 2.4B, **sage_bpe** (55.0) and **unigram** (54.5) achieve the highest accuracy.

- **Lowest Performance**:

- **pathpl_ngram** (light pink square) consistently lags, with ~45.0 at 350M and ~52.5 at 2.4B.

- **Model Size Impact**:

- Larger models (2.4B) outperform smaller ones across all configurations.

- **sage_ngram** and **unigram** show the steepest improvement with model size.

- **Flat Lines**:

- **bpe** and **pathpl_bpe** exhibit relatively flat trends compared to others.

### Interpretation

The data suggests that model size significantly impacts performance, with larger models (2.4B) achieving higher accuracy. The **unigram** and **sage_ngram** configurations benefit most from increased model size, showing steep upward trends. In contrast, **pathpl_ngram** underperforms across all sizes, indicating potential inefficiencies in its design. The flat lines for **bpe** and **pathpl_bpe** imply that their performance is less sensitive to model size changes. This highlights the importance of architectural choices (e.g., n-gram vs. path-based models) in determining scalability and effectiveness.

</details>

Figure 6: 40,960 vocab average accuracy at various model sizes.

It is noteworthy from the prevalence of crossing lines in Figure 6 that the relative performance of the tokenizers does vary by model size, and that there is a group of tokenizers that trade places at the top across model sizes. This aligns with our observation that the top 6 tokenizers were within the noise, and not significantly better than each other in the 350M models.

## 7 Conclusion

We investigate the hypothesis that reducing the corpus token count (CTC) would improve downstream performance, as suggested by Gallé (2019) and Goldman et al. (2024) when they varied aspects of BPE. When comparing CTC and downstream accuracy across all our experimental settings in Figure 3, we do not find a clear relationship between the two. We expand on the findings of Ali et al. (2024) who did not find a strong relation when comparing 3 tokenizers, as we run 18 experiments varying the tokenizer, initial vocabulary, pre-tokenizer, and inference method. Our results suggest compression is not a straightforward explanation of what makes a tokenizer effective.

Finally, this work makes several practical contributions: (1) vocabulary size has little impact on downstream performance over the range of sizes we examined (§ 5.1); (2) five different tokenizers all perform comparably, with none outperforming at statistical significance (§ 5.2); (3) BPE initial vocabularies work best for top-down vocabulary construction (§ 6.3). To further encourage research in this direction, we make all of our trained vocabularies publicly available, along with the model weights from our 64 language models.

## Limitations

The objective of this work is to offer a comprehensive analysis of the tokenization process. However, our findings were constrained to particular tasks and models. Given the degrees of freedom, such as choice of downstream tasks, model, vocabulary size, etc., there is a potential risk of inadvertently considering our results as universally applicable to all NLP tasks; results may not generalize to other domains of tasks.

Additionally, our experiments were exclusively with English language text, and it is not clear how these results will extend to other languages. In particular, our finding that pre-tokenization is crucial for effective downstream accuracy is not applicable to languages without space-delimited words.

We conducted experiments for three distinct vocabulary sizes, and we reported averaged results across these experiments. With additional compute resources and time, it could be beneficial to conduct further experiments to gain a better estimate of any potential noise. For example, in Figure 7 of Appendix D, the 100k checkpoint at the 1.3B model size is worse than expected, indicating that noise could be an issue.

Finally, the selection of downstream tasks can have a strong impact on results. To allow for meaningful results, we attempted to select tasks that were neither too difficult nor too easy for the 350M parameter models, but other choices could lead to different outcomes. There does not seem to be a good, objective criterion for selecting a finite set of tasks that represents global performance well.

## Ethics Statement

We have used the commonly used public dataset The Pile, which has not undergone a formal ethics review Biderman et al. (2022). Our models may include biases from the training data.

Our experimentation has used considerable energy. Each 350M parameter run took approximately 48 hours on (4) p4de nodes, each containing 8 NVIDIA A100 GPUs. We trained 62 such models, including the 8 RandTrain runs in Appendix F. The (6) 1.3B parameter models took approximately 69 hours to train on (4) p4de nodes, while the (4) 2.4B models took approximately 117 hours to train on (8) p4de nodes. In total, training required 17,304 hours of p4de usage (138,432 GPU hours).
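The totals above can be reproduced with a short calculation. The sketch assumes that the count of 62 refers to the 350M parameter runs, which is the reading under which the stated totals add up; the variable names are our own.

```python
# (model size, number of runs, hours per run, p4de nodes per run)
runs = [
    ("350M", 62, 48, 4),
    ("1.3B", 6, 69, 4),
    ("2.4B", 4, 117, 8),
]
GPUS_PER_NODE = 8  # each p4de node contains 8 NVIDIA A100 GPUs

# Node-hours: runs x hours x nodes, summed over the three model sizes.
node_hours = sum(count * hours * nodes for _, count, hours, nodes in runs)
gpu_hours = node_hours * GPUS_PER_NODE
print(node_hours, gpu_hours)  # 17304 138432
```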

## Acknowledgments

Thanks to Charles Lovering at Kensho for his insightful suggestions, and to Michael Krumdick, Mike Arov, and Brian Chen at Kensho for their help with the language model development process. This research was supported in part by the Israel Science Foundation (grant No. 1166/23). Thanks to an anonymous reviewer who pointed out the large change in CTC when comparing Huggingface BPE and Unigram, in contrast to the previous literature using the SentencePiece implementations Kudo and Richardson (2018).

## References

- Ali et al. (2024) Mehdi Ali, Michael Fromm, Klaudia Thellmann, Richard Rutmann, Max Lübbering, Johannes Leveling, Katrin Klug, Jan Ebert, Niclas Doll, Jasper Schulze Buschhoff, Charvi Jain, Alexander Arno Weber, Lena Jurkschat, Hammam Abdelwahab, Chelsea John, Pedro Ortiz Suarez, Malte Ostendorff, Samuel Weinbach, Rafet Sifa, Stefan Kesselheim, and Nicolas Flores-Herr. 2024. Tokenizer choice for llm training: Negligible or crucial?

- Amini et al. (2019) Aida Amini, Saadia Gabriel, Peter Lin, Rik Koncel-Kedziorski, Yejin Choi, and Hannaneh Hajishirzi. 2019. Mathqa: Towards interpretable math word problem solving with operation-based formalisms.

- Bauwens and Delobelle (2024) Thomas Bauwens and Pieter Delobelle. 2024. BPE-knockout: Pruning pre-existing BPE tokenisers with backwards-compatible morphological semi-supervision. In Proceedings of the 2024 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (Volume 1: Long Papers), pages 5810–5832, Mexico City, Mexico. Association for Computational Linguistics.

- Biderman et al. (2022) Stella Biderman, Kieran Bicheno, and Leo Gao. 2022. Datasheet for the pile. CoRR, abs/2201.07311.

- Bisk et al. (2019) Yonatan Bisk, Rowan Zellers, Ronan Le Bras, Jianfeng Gao, and Yejin Choi. 2019. Piqa: Reasoning about physical commonsense in natural language.

- Bostrom and Durrett (2020) Kaj Bostrom and Greg Durrett. 2020. Byte pair encoding is suboptimal for language model pretraining. In Findings of the Association for Computational Linguistics: EMNLP 2020, pages 4617–4624, Online. Association for Computational Linguistics.

- Bouma (2009) Gerlof Bouma. 2009. Normalized (pointwise) mutual information in collocation extraction. Proceedings of GSCL, 30:31–40.

- Brassard et al. (2022) Ana Brassard, Benjamin Heinzerling, Pride Kavumba, and Kentaro Inui. 2022. Copa-sse: Semi-structured explanations for commonsense reasoning.

- Chizhov et al. (2024) Pavel Chizhov, Catherine Arnett, Elizaveta Korotkova, and Ivan P. Yamshchikov. 2024. Bpe gets picky: Efficient vocabulary refinement during tokenizer training.

- Clark et al. (2018) Peter Clark, Isaac Cowhey, Oren Etzioni, Tushar Khot, Ashish Sabharwal, Carissa Schoenick, and Oyvind Tafjord. 2018. Think you have solved question answering? try arc, the ai2 reasoning challenge. ArXiv, abs/1803.05457.

- Cognetta et al. (2024) Marco Cognetta, Vilém Zouhar, Sangwhan Moon, and Naoaki Okazaki. 2024. Two counterexamples to tokenization and the noiseless channel. In Proceedings of the 2024 Joint International Conference on Computational Linguistics, Language Resources and Evaluation (LREC-COLING 2024), pages 16897–16906, Torino, Italia. ELRA and ICCL.

- Efraimidis (2010) Pavlos S. Efraimidis. 2010. Weighted random sampling over data streams. CoRR, abs/1012.0256.

- Gage (1994) Philip Gage. 1994. A new algorithm for data compression. C Users J., 12(2):23–38.

- Gallé (2019) Matthias Gallé. 2019. Investigating the effectiveness of BPE: The power of shorter sequences. In Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing and the 9th International Joint Conference on Natural Language Processing (EMNLP-IJCNLP), pages 1375–1381, Hong Kong, China. Association for Computational Linguistics.

- Gao et al. (2020) Leo Gao, Stella Biderman, Sid Black, Laurence Golding, Travis Hoppe, Charles Foster, Jason Phang, Horace He, Anish Thite, Noa Nabeshima, Shawn Presser, and Connor Leahy. 2020. The pile: An 800gb dataset of diverse text for language modeling.

- Gao et al. (2023) Leo Gao, Jonathan Tow, Baber Abbasi, Stella Biderman, Sid Black, Anthony DiPofi, Charles Foster, Laurence Golding, Jeffrey Hsu, Alain Le Noac’h, Haonan Li, Kyle McDonell, Niklas Muennighoff, Chris Ociepa, Jason Phang, Laria Reynolds, Hailey Schoelkopf, Aviya Skowron, Lintang Sutawika, Eric Tang, Anish Thite, Ben Wang, Kevin Wang, and Andy Zou. 2023. A framework for few-shot language model evaluation.

- Goldman et al. (2024) Omer Goldman, Avi Caciularu, Matan Eyal, Kris Cao, Idan Szpektor, and Reut Tsarfaty. 2024. Unpacking tokenization: Evaluating text compression and its correlation with model performance.

- Gow-Smith et al. (2024) Edward Gow-Smith, Dylan Phelps, Harish Tayyar Madabushi, Carolina Scarton, and Aline Villavicencio. 2024. Word boundary information isn’t useful for encoder language models. In Proceedings of the 9th Workshop on Representation Learning for NLP (RepL4NLP-2024), pages 118–135, Bangkok, Thailand. Association for Computational Linguistics.

- Gow-Smith et al. (2022) Edward Gow-Smith, Harish Tayyar Madabushi, Carolina Scarton, and Aline Villavicencio. 2022. Improving tokenisation by alternative treatment of spaces. In Proceedings of the 2022 Conference on Empirical Methods in Natural Language Processing, pages 11430–11443, Abu Dhabi, United Arab Emirates. Association for Computational Linguistics.

- Grefenstette (1999) Gregory Grefenstette. 1999. Tokenization, pages 117–133. Springer Netherlands, Dordrecht.

- Gutierrez-Vasques et al. (2021) Ximena Gutierrez-Vasques, Christian Bentz, Olga Sozinova, and Tanja Samardzic. 2021. From characters to words: the turning point of BPE merges. In Proceedings of the 16th Conference of the European Chapter of the Association for Computational Linguistics: Main Volume, pages 3454–3468, Online. Association for Computational Linguistics.

- He et al. (2020) Xuanli He, Gholamreza Haffari, and Mohammad Norouzi. 2020. Dynamic programming encoding for subword segmentation in neural machine translation. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, pages 3042–3051, Online. Association for Computational Linguistics.

- Hendrycks et al. (2021) Dan Hendrycks, Collin Burns, Steven Basart, Andy Zou, Mantas Mazeika, Dawn Song, and Jacob Steinhardt. 2021. Measuring massive multitask language understanding.

- Hofmann et al. (2021) Valentin Hofmann, Janet Pierrehumbert, and Hinrich Schütze. 2021. Superbizarre is not superb: Derivational morphology improves BERT’s interpretation of complex words. In Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing (Volume 1: Long Papers), pages 3594–3608, Online. Association for Computational Linguistics.

- Hofmann et al. (2022) Valentin Hofmann, Hinrich Schuetze, and Janet Pierrehumbert. 2022. An embarrassingly simple method to mitigate undesirable properties of pretrained language model tokenizers. In Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (Volume 2: Short Papers), pages 385–393, Dublin, Ireland. Association for Computational Linguistics.

- Jacobs and Pinter (2022) Cassandra L Jacobs and Yuval Pinter. 2022. Lost in space marking. arXiv preprint arXiv:2208.01561.

- Kaddour (2023) Jean Kaddour. 2023. The minipile challenge for data-efficient language models.

- Klein and Tsarfaty (2020) Stav Klein and Reut Tsarfaty. 2020. Getting the ##life out of living: How adequate are word-pieces for modelling complex morphology? In Proceedings of the 17th SIGMORPHON Workshop on Computational Research in Phonetics, Phonology, and Morphology, pages 204–209, Online. Association for Computational Linguistics.

- Kudo (2018) Taku Kudo. 2018. Subword regularization: Improving neural network translation models with multiple subword candidates. In Proceedings of the 56th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 66–75, Melbourne, Australia. Association for Computational Linguistics.

- Kudo and Richardson (2018) Taku Kudo and John Richardson. 2018. SentencePiece: A simple and language independent subword tokenizer and detokenizer for neural text processing. In Proceedings of the 2018 Conference on Empirical Methods in Natural Language Processing: System Demonstrations, pages 66–71, Brussels, Belgium. Association for Computational Linguistics.

- Lai et al. (2017) Guokun Lai, Qizhe Xie, Hanxiao Liu, Yiming Yang, and Eduard Hovy. 2017. Race: Large-scale reading comprehension dataset from examinations.

- Levesque et al. (2012) Hector J. Levesque, Ernest Davis, and Leora Morgenstern. 2012. The winograd schema challenge. In 13th International Conference on the Principles of Knowledge Representation and Reasoning, KR 2012, Proceedings of the International Conference on Knowledge Representation and Reasoning, pages 552–561. Institute of Electrical and Electronics Engineers Inc.

- Lian et al. (2024) Haoran Lian, Yizhe Xiong, Jianwei Niu, Shasha Mo, Zhenpeng Su, Zijia Lin, Peng Liu, Hui Chen, and Guiguang Ding. 2024. Scaffold-bpe: Enhancing byte pair encoding with simple and effective scaffold token removal.

- Liang et al. (2023) Davis Liang, Hila Gonen, Yuning Mao, Rui Hou, Naman Goyal, Marjan Ghazvininejad, Luke Zettlemoyer, and Madian Khabsa. 2023. XLM-V: Overcoming the vocabulary bottleneck in multilingual masked language models. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing, pages 13142–13152, Singapore. Association for Computational Linguistics.

- Limisiewicz et al. (2023) Tomasz Limisiewicz, Jiří Balhar, and David Mareček. 2023. Tokenization impacts multilingual language modeling: Assessing vocabulary allocation and overlap across languages. In Findings of the Association for Computational Linguistics: ACL 2023, pages 5661–5681, Toronto, Canada. Association for Computational Linguistics.

- Mielke et al. (2021) Sabrina J. Mielke, Zaid Alyafeai, Elizabeth Salesky, Colin Raffel, Manan Dey, Matthias Gallé, Arun Raja, Chenglei Si, Wilson Y. Lee, Benoît Sagot, and Samson Tan. 2021. Between words and characters: A brief history of open-vocabulary modeling and tokenization in nlp.

- Mikolov et al. (2011) Tomas Mikolov, Ilya Sutskever, Anoop Deoras, Hai Son Le, Stefan Kombrink, and Jan Honza Černocký. 2011. Subword language modeling with neural networks. Preprint available at: https://api.semanticscholar.org/CorpusID:46542477.

- Peñas et al. (2013) Anselmo Peñas, Eduard Hovy, Pamela Forner, Álvaro Rodrigo, Richard Sutcliffe, and Roser Morante. 2013. Qa4mre 2011-2013: Overview of question answering for machine reading evaluation. In CLEF 2013, LNCS 8138, pages 303–320.

- Provilkov et al. (2020) Ivan Provilkov, Dmitrii Emelianenko, and Elena Voita. 2020. BPE-dropout: Simple and effective subword regularization. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, pages 1882–1892, Online. Association for Computational Linguistics.

- Saleva and Lignos (2023) Jonne Saleva and Constantine Lignos. 2023. What changes when you randomly choose BPE merge operations? not much. In Proceedings of the Fourth Workshop on Insights from Negative Results in NLP, pages 59–66, Dubrovnik, Croatia. Association for Computational Linguistics.

- Schuster and Nakajima (2012) Mike Schuster and Kaisuke Nakajima. 2012. Japanese and korean voice search. In 2012 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pages 5149–5152.

- Sennrich et al. (2016) Rico Sennrich, Barry Haddow, and Alexandra Birch. 2016. Neural machine translation of rare words with subword units. In Proceedings of the 54th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 1715–1725, Berlin, Germany. Association for Computational Linguistics.

- Singh et al. (2019) Jasdeep Singh, Bryan McCann, Richard Socher, and Caiming Xiong. 2019. BERT is not an interlingua and the bias of tokenization. In Proceedings of the 2nd Workshop on Deep Learning Approaches for Low-Resource NLP (DeepLo 2019), pages 47–55, Hong Kong, China. Association for Computational Linguistics.

- Touvron et al. (2023) Hugo Touvron, Thibaut Lavril, Gautier Izacard, Xavier Martinet, Marie-Anne Lachaux, Timothée Lacroix, Baptiste Rozière, Naman Goyal, Eric Hambro, Faisal Azhar, Aurelien Rodriguez, Armand Joulin, Edouard Grave, and Guillaume Lample. 2023. Llama: Open and efficient foundation language models.

- Uzan et al. (2024) Omri Uzan, Craig W. Schmidt, Chris Tanner, and Yuval Pinter. 2024. Greed is all you need: An evaluation of tokenizer inference methods. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 2: Short Papers), pages 813–822, Bangkok, Thailand. Association for Computational Linguistics.

- Viterbi (1967) A. Viterbi. 1967. Error bounds for convolutional codes and an asymptotically optimum decoding algorithm. IEEE Transactions on Information Theory, 13(2):260–269.

- Vitter (1985) Jeffrey S. Vitter. 1985. Random sampling with a reservoir. ACM Transactions on Mathematical Software, 11(1):37–57.

- Welbl et al. (2017) Johannes Welbl, Nelson F. Liu, and Matt Gardner. 2017. Crowdsourcing multiple choice science questions. ArXiv, abs/1707.06209.

- Wilcoxon (1945) F. Wilcoxon. 1945. Individual comparisons by ranking methods. Biometrics Bulletin, 1(6):80–83.

- Wu et al. (2016) Yonghui Wu, Mike Schuster, Zhifeng Chen, Quoc V. Le, Mohammad Norouzi, Wolfgang Macherey, Maxim Krikun, Yuan Cao, Qin Gao, Klaus Macherey, Jeff Klingner, Apurva Shah, Melvin Johnson, Xiaobing Liu, Łukasz Kaiser, Stephan Gouws, Yoshikiyo Kato, Taku Kudo, Hideto Kazawa, Keith Stevens, George Kurian, Nishant Patil, Wei Wang, Cliff Young, Jason Smith, Jason Riesa, Alex Rudnick, Oriol Vinyals, Greg Corrado, Macduff Hughes, and Jeffrey Dean. 2016. Google’s neural machine translation system: Bridging the gap between human and machine translation.

- Yehezkel and Pinter (2023) Shaked Yehezkel and Yuval Pinter. 2023. Incorporating context into subword vocabularies. In Proceedings of the 17th Conference of the European Chapter of the Association for Computational Linguistics, pages 623–635, Dubrovnik, Croatia. Association for Computational Linguistics.

- Zouhar et al. (2023a) Vilém Zouhar, Clara Meister, Juan Gastaldi, Li Du, Mrinmaya Sachan, and Ryan Cotterell. 2023a. Tokenization and the noiseless channel. In Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 5184–5207, Toronto, Canada. Association for Computational Linguistics.

- Zouhar et al. (2023b) Vilém Zouhar, Clara Meister, Juan Gastaldi, Li Du, Tim Vieira, Mrinmaya Sachan, and Ryan Cotterell. 2023b. A formal perspective on byte-pair encoding. In Findings of the Association for Computational Linguistics: ACL 2023, pages 598–614, Toronto, Canada. Association for Computational Linguistics.

## Appendix A Expanded description of PathPiece

This section provides a self-contained explanation of PathPiece, expanding on the one in § 3, with additional details on the vocabulary construction and complexity.

To design an optimal vocabulary $\mathcal{V}$ , it is first necessary to know how the vocabulary will be used to tokenize. There can be no best vocabulary in the abstract. Thus, we first present a new lossless subword tokenizer, PathPiece. Its tokenization of our training corpus will provide the context to design a coherent vocabulary.

### A.1 Tokenization for a given vocabulary

We work at the byte level, and require that all 256 single byte tokens are included in any given vocabulary $\mathcal{V}$ . This avoids any out-of-vocabulary tokens by falling back to single bytes in the worst case.

Tokenization can be viewed as a compression problem, where we would like to tokenize text in as few tokens as possible. This has direct benefits, as it allows more text to fit in a given context window. A Minimum Description Length (MDL) argument can also be made that the tokenization using the fewest tokens best describes the data, although we saw in Subsection 6.1 that this may not always hold in practice.

Tokenizers such as BPE and WordPiece make greedy decisions, such as choosing which pair of current tokens to merge to create a new one, which results in tokenizations that may use more tokens than necessary. In contrast, PathPiece will find an optimal tokenization by finding a shortest path through a Directed Acyclic Graph (DAG). Informally, each byte $i$ of training data forms a node in the graph, and there is an edge ending at node $i$ for each width $w$ such that the $w$-byte sequence ending at $i$ is a token in $\mathcal{V}$ .

An implementation of PathPiece is given in Algorithm 2, where input $d$ is a text document of $n$ bytes, $\mathcal{V}$ is a given vocabulary, and $L$ is a limit on the maximum width of a token in bytes. It has complexity $O(nL)$ , following directly from the two nested for-loops. It iterates over the bytes $i$ in $d$ , computing four values for each. It computes the shortest path length $pl[i]$ in tokens up to and including byte $i$ , the width $wid[i]$ of a token with that shortest path length, and the solution count $sc[i]$ of optimal solutions found thus far with that shortest length. We also remember the valid tokens of width 2 or more ending at each location $i$ in $vt[i]$ , which will be used in the next section.

There will often be multiple tokenizations with the same optimal length, so some sort of tiebreaker is needed. The longest token or a randomly selected token are obvious choices. We have presented the random tiebreaker method here, where a random solution is selected in a single pass in lines 26-30 of the listing using an idea from reservoir sampling Vitter (1985).
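The single-pass random tiebreaker can be illustrated in isolation. The following is a minimal Python sketch of reservoir sampling with a reservoir of size one, which is the idea the listing borrows; the helper name `pick_uniform` and the candidate widths are illustrative assumptions, not part of the original algorithm.

```python
import random
from collections import Counter

def pick_uniform(candidates, rng):
    """Select one item uniformly at random in a single pass (reservoir of size 1)."""
    chosen = None
    for count, item in enumerate(candidates, start=1):
        # The count-th tied candidate replaces the current choice with
        # probability 1/count, mirroring the test against 1/sc[e].
        if rng.random() < 1.0 / count:
            chosen = item
    return chosen

# Hypothetical tied token widths ending at the same byte.
rng = random.Random(0)
tally = Counter(pick_uniform([2, 3, 5], rng) for _ in range(30_000))
print(tally)  # each width should be chosen roughly one third of the time
```

Because the first candidate is always accepted and each later one replaces it with probability $1/\text{count}$, every tied candidate ends up selected with equal probability, without storing the full list of ties.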

A backward pass through $d$ constructs the optimal tokenization from the $wid[e]$ values from the forward pass.

Algorithm 2 PathPiece segmentation.

1: procedure PathPiece ( $d,\mathcal{V},L$ )

2: $n\leftarrow\operatorname{len}(d)$ $\triangleright$ document length

3: for $i\leftarrow 1,n$ do

4: $wid[i]\leftarrow 0$ $\triangleright$ shortest path token

5: $pl[i]\leftarrow\infty$ $\triangleright$ shortest path len

6: $sc[i]\leftarrow 0$ $\triangleright$ solution count

7: $vt[i]\leftarrow[\,]$ $\triangleright$ valid token list

8: for $e\leftarrow 1,n$ do $\triangleright$ token end

9: for $w\leftarrow 1,L$ do $\triangleright$ token width

10: $s\leftarrow e-w+1$ $\triangleright$ token start

11: if $s\geq 1$ then $\triangleright$ $s$ in range

12: $t\leftarrow d[s:e]$ $\triangleright$ token

13: if $t\in\mathcal{V}$ then

14: if $s=1$ then $\triangleright$ 1 tok path

15: $wid[e]\leftarrow w$

16: $pl[e]\leftarrow 1$

17: $sc[e]\leftarrow 1$

18: else

19: if $w\geq 2$ then

20: $vt[e].\operatorname{append}(w)$

21: $nl\leftarrow pl[s-1]+1$

22: if $nl<pl[e]$ then

23: $pl[e]\leftarrow nl$

24: $wid[e]\leftarrow w$

25: $sc[e]\leftarrow 1$

26: else if $nl=pl[e]$ then

27: $sc[e]\leftarrow sc[e]+1$

28: $r\leftarrow\operatorname{rand}()$

29: if $r\leq 1/sc[e]$ then

30: $wid[e]\leftarrow w$

31: $T\leftarrow[\,]$ $\triangleright$ output token list

32: $e\leftarrow n$ $\triangleright$ start at end of $d$

33: while $e\geq 1$ do

34: $w\leftarrow wid[e]$ $\triangleright$ width of short path tok

35: $s\leftarrow e-w+1$ $\triangleright$ token start

36: $t\leftarrow d[s:e]$ $\triangleright$ token

37: $T.\operatorname{append}(t)$

38: $e\leftarrow e-w$ $\triangleright$ back up a token

39: return $\operatorname{reversed}(T)$ $\triangleright$ reverse order
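As a rough, runnable counterpart to Algorithm 2, the following Python sketch may help. It is an illustration under stated assumptions rather than the authors' released implementation: it uses 0-based prefix lengths with a sentinel `pl[0] = 0` in place of the explicit $s=1$ case, omits the $vt$ lists, and the names `path_piece` and `rng` as well as the toy vocabulary are hypothetical.

```python
import math
import random

def path_piece(d: bytes, vocab: set, L: int, rng=None) -> list:
    """Segment d into the fewest tokens from vocab, breaking ties at random.

    Assumes every single byte occurring in d is in vocab, so a path always exists.
    """
    rng = rng or random.Random(0)
    n = len(d)
    pl = [0] + [math.inf] * n   # pl[i]: fewest tokens covering d[:i]
    wid = [0] * (n + 1)         # width of the chosen last token for d[:i]
    sc = [0] * (n + 1)          # number of tied optimal choices seen so far
    for e in range(1, n + 1):                # token end (exclusive)
        for w in range(1, min(L, e) + 1):    # token width
            s = e - w                        # token start
            if d[s:e] not in vocab:
                continue
            nl = pl[s] + 1
            if nl < pl[e]:                   # strictly shorter path found
                pl[e], wid[e], sc[e] = nl, w, 1
            elif nl == pl[e]:                # tie: reservoir-style replacement
                sc[e] += 1
                if rng.random() < 1.0 / sc[e]:
                    wid[e] = w
    tokens = []
    e = n
    while e > 0:                             # backward pass over wid
        w = wid[e]
        tokens.append(d[e - w:e])
        e -= w
    return tokens[::-1]

# Toy vocabulary: all 256 single bytes plus a few multi-byte tokens.
vocab = {bytes([b]) for b in range(256)} | {b"ab", b"abc", b"cd", b"bcd"}
tokens = path_piece(b"abcd", vocab, L=4)
print(tokens, len(tokens))  # the token count is always 2 for this toy vocabulary
```

Several two-token segmentations exist for this input, so the returned tokens depend on the random seed, while the token count does not.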

### A.2 Optimal Vocabulary Construction

#### A.2.1 Vocabulary Initialization

We will build an optimal vocabulary by starting from a large initial one, and sequentially omitting batches of tokens. We start with the most frequently occurring byte $n$ -grams in a training corpus, of width 1 to $L$ , or a large vocabulary trained by BPE or Unigram. We then add any single byte tokens that were not already included, making room by dropping the tokens with the lowest counts. In our experiments we used an initial vocabulary size of $|\mathcal{V}|=2^{18}=262,144$ .
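The $n$-gram initialization can be sketched as follows. This is a minimal Python illustration, assuming the corpus fits in memory and that the tokens dropped to make room are always multi-byte ones; the function name `initial_vocab` and the toy corpus are hypothetical.

```python
from collections import Counter

def initial_vocab(corpus, L, size):
    """Build an initial vocabulary of `size` tokens from byte n-gram counts.

    All 256 single-byte tokens are always kept; the remaining slots go to
    the most frequent n-grams of width 2 to L. Requires size >= 256.
    """
    counts = Counter()
    for doc in corpus:
        for w in range(1, L + 1):
            for s in range(len(doc) - w + 1):
                counts[doc[s:s + w]] += 1
    single = {bytes([b]) for b in range(256)}
    # most_common() sorts by descending count, so slicing drops the rarest n-grams.
    multi = [t for t, _ in counts.most_common() if len(t) > 1]
    return single | set(multi[: size - 256])

corpus = [b"abababab", b"abab"]
v = initial_vocab(corpus, L=2, size=257)
print(len(v), b"ab" in v, b"ba" in v)
```

Reserving the 256 single-byte slots up front gives the same result as taking the top `size` $n$-grams and then swapping in missing bytes for the lowest-count tokens, under the assumption stated above.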

#### A.2.2 Increase from omitting a token

Given a PathPiece tokenization $t_{1},\dots,t_{K_{d}}$ of each document $d\in\mathcal{C}$ in a training corpus $\mathcal{C}$ , we would like to know the increase in the overall tokenization length $K=\sum_{d}{K_{d}}$ from omitting a given token $t$ from our vocabulary, that is, from using $\mathcal{V}\setminus\{t\}$ and recomputing the tokenization. Tokens with a low increase are good candidates to remove from the vocabulary Kudo (2018). However, doing this from scratch for each $t$ would be a very expensive $O(nL|\mathcal{V}|)$ operation.

We make a simplifying assumption that allows us to compute these increases more efficiently. We omit a specific token $t_{k}$ in the tokenization of document $d$ , and compute the minimum increase $MI_{kd}$ in $K_{d}$ from not having that token $t_{k}$ in the tokenization of $d$ . We then aggregate over the documents to get the overall increase for $t$ :

$$

MI_{t}=\sum_{d\in\mathcal{C}}\sum_{\substack{k=1\\ t_{k}=t}}^{K_{d}}MI_{kd}. \tag{7}

$$

This is similar to computing the increase from $\mathcal{V}\setminus\{t\}$ , but ignores interaction effects from having several occurrences of the same token $t$ close to each other in a given document.
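The aggregation in Eq. (7) amounts to summing per-occurrence increases by token. A minimal Python sketch, assuming the per-occurrence values $MI_{kd}$ have already been computed and are supplied as `(token, increase)` pairs per document; the function name and the sample numbers are made up for illustration.

```python
from collections import defaultdict

def aggregate_increase(per_doc):
    """Sum the per-occurrence minimum increases MI_kd into a per-token MI_t."""
    mi = defaultdict(int)
    for occurrences in per_doc:          # one list of (token, MI_kd) per document
        for token, mi_kd in occurrences:
            mi[token] += mi_kd
    return dict(mi)

# Hypothetical per-occurrence increases for two documents.
per_doc = [
    [(b"the", 2), (b"ing", 0), (b"the", 1)],
    [(b"ing", 1), (b"the", 2)],
]
mi_t = aggregate_increase(per_doc)
# Tokens with the lowest aggregate increase are the first candidates to omit.
candidates = sorted(mi_t, key=mi_t.get)
print(mi_t, candidates)
```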

With PathPiece, it turns out we can compute the minimum increase in tokenization length without actually recomputing the tokenization. Any tokenization not containing $t_{k}$ must either contain a token boundary somewhere inside of $t_{k}$ breaking it in two, or it must contain a token that entirely contains $t_{k}$ as a superset. Our approach will be to enumerate all the occurrences for these two cases, and to find the minimum increase $MI_{kd}$ overall.

Before considering these two cases, there is a shortcut that often tells us that there would be no increase due to omitting $t_{k}$ ending at index $e$ . We computed the solution count vector $sc[e]$ when running Algorithm 2. If $sc[e]>1$ for a token ending at $e$ , then the backward pass could simply select one of the alternate optimal tokens, and find an overall tokenization of the same length.

Let $t_{k}$ start at index $s$ and end at index $e$ , inclusive. Remember that path length $pl[i]$ represents the number of tokens required for the shortest path up to and including byte $i$ . We can also run Algorithm 2 backwards on $d$ , computing a similar vector of backwards path lengths $bpl[i]$ , representing the number of tokens on a shortest path from the end of the data up to and including byte $i$ . The overall minimum length of a tokenization with a token boundary after byte $j$ is thus:

$$

K_{j}^{b}=pl[j]+bpl[j+1]. \tag{8}

$$

We have added an extra constraint on the shortest path, that there is a break after byte $j$ , so clearly $K_{j}^{b}\geq pl[n]$ . The minimum increase for the case of having a token boundary within $t_{k}$ is thus:

$$

MI_{kd}^{b}=\min_{j=s,\dots,e-1}\left(K_{j}^{b}-pl[n]\right). \tag{9}

$$

Each token $t_{k}$ will have no more than $L-1$ potential internal breaks, so the complexity of computing $MI_{kd}^{b}$ is $O(L)$ .
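Equations (8) and (9) can be checked on a small example. The Python sketch below is an illustration only, covering just the internal-break case: it uses 0-based prefix and suffix lengths, so `pl[p]` and `bpl[p]` both refer to a boundary after the first `p` bytes, which correspond to $pl[j]$ and $bpl[j+1]$ in the text. All function names and the toy vocabulary are assumptions.

```python
import math

def forward_lengths(d, vocab, L):
    """pl[p]: fewest tokens covering the prefix d[:p], with pl[0] = 0."""
    n = len(d)
    pl = [0] + [math.inf] * n
    for e in range(1, n + 1):
        for w in range(1, min(L, e) + 1):
            if d[e - w:e] in vocab:
                pl[e] = min(pl[e], pl[e - w] + 1)
    return pl

def backward_lengths(d, vocab, L):
    """bpl[p]: fewest tokens covering the suffix d[p:], with bpl[n] = 0."""
    n = len(d)
    bpl = [math.inf] * n + [0]
    for s in range(n - 1, -1, -1):
        for w in range(1, min(L, n - s) + 1):
            if d[s:s + w] in vocab:
                bpl[s] = min(bpl[s], bpl[s + w] + 1)
    return bpl

def min_increase_break(pl, bpl, s, e):
    """Eq. (9) for a token occupying d[s:e] (0-based, e exclusive).

    Returns inf for a single-byte token, which has no internal break.
    """
    n = len(pl) - 1
    best = min((pl[p] + bpl[p] for p in range(s + 1, e)), default=math.inf)
    return best - pl[n]

singles = {bytes([b]) for b in range(256)}
d = b"abcd"

# With only single bytes as alternatives, breaking b"abcd" costs 3 extra tokens.
vocab1 = singles | {b"abcd"}
pl1, bpl1 = forward_lengths(d, vocab1, 4), backward_lengths(d, vocab1, 4)
print(min_increase_break(pl1, bpl1, 0, 4))

# With b"ab" and b"cd" available, breaking b"abc" costs nothing.
vocab2 = singles | {b"ab", b"abc", b"cd"}
pl2, bpl2 = forward_lengths(d, vocab2, 3), backward_lengths(d, vocab2, 3)
print(min_increase_break(pl2, bpl2, 0, 3))
```

Once `pl` and `bpl` are available, each token needs at most $L-1$ lookups, which matches the $O(L)$ cost stated above.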