# Reason from Fallacy: Enhancing Large Language Models’ Logical Reasoning through Logical Fallacy Understanding

## Abstract

Large Language Models (LLMs) have demonstrated good performance in many reasoning tasks, but they still struggle with some complicated reasoning tasks including logical reasoning. One non-negligible reason for LLMs’ suboptimal performance on logical reasoning is their overlooking of understanding logical fallacies correctly. To evaluate LLMs’ capability of logical fallacy understanding (LFU), we propose five concrete tasks from three cognitive dimensions of WHAT, WHY, and HOW in this paper. Towards these LFU tasks, we have successfully constructed a new dataset LFUD based on GPT-4 accompanied by a little human effort. Our extensive experiments justify that our LFUD can be used not only to evaluate LLMs’ LFU capability, but also to fine-tune LLMs to obtain significantly enhanced performance on logical reasoning.

Reason from Fallacy: Enhancing Large Language Models’ Logical Reasoning through Logical Fallacy Understanding

Yanda Li ${}^†$ , Dixuan Wang ${}^†$ , Jiaqing Liang ${}^{†{‡}\textrm{\Letter}}$ , Guochao Jiang ${}^†$ , Qianyu He ${}^†$ , Yanghua Xiao ${}^{†{‡}}$ , Deqing Yang ${}^{†{‡}\textrm{\Letter}}$ ${}^†$ School of Data Science, Fudan University, Shanghai, China ${}^‡$ Shanghai Key Laboratory of Data Science, Shanghai, China ${}^†$ {ydli22, dxwang23, gcjiang22, qyhe21}@m.fudan.edu.cn ${}^‡$ {liangjiaqing, shawyh, yangdeqing}@fudan.edu.cn

## 1 Introduction

As a cognitive process, logical reasoning plays an important role in many intellectual activities, such as problem solving, decision making and planning Huang and Chang (2022). Up to now, a lot of efforts have been dedicated to logical reasoning based on language models Cresswell (1973); Kowalski (1974); Iwańska (1993); Liu et al. (2020). More recently, the popularity of large language models (LLMs) such as ChatGPT Ouyang et al. (2022) and GPT-4 OpenAI (2023) stimulates the growth of research on LLM-based logical reasoning. Compared to traditional small language models, LLMs have demonstrated better performance in many reasoning tasks.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: LLM Reasoning Improvement via Fallacy Knowledge

### Overview

The image is a conceptual diagram illustrating how a Large Language Model's (LLM) reasoning can be improved by incorporating knowledge of logical fallacies. It contrasts two states: a "Before" state where the LLM makes an incorrect logical judgment, and an "After" state where, after being equipped with "Fallacy Knowledge," it correctly identifies the flawed reasoning. The diagram uses a specific example about French people and romanticism to demonstrate the concept.

### Components/Axes

The diagram is structured into three main visual regions within a light grey rounded rectangle container:

1. **Top Region (Problem Statement):**

* **Left Box (Premise):** A light blue rectangle containing the text: "Premise: My French colleague is very romantic."

* **Right Box (Hypothesis):** A light blue rectangle containing the text: "Hypothesis: I think all French people are romantic."

* **Connection:** A blue arrow points from the Premise to the Hypothesis. Above this arrow is a large red question mark ("?"), indicating the logical relationship between them is in question. Two grey dashed arrows point from the "Before" and "After" sections below up to this central question mark, showing that the LLM's assessment of this relationship is the subject of the diagram.

2. **Left Region ("Before" State):**

* **Label:** The word "**Before**" in large, bold, black italic font.

* **Icon:** A grey robot icon labeled "**LLM**" below it.

* **Output Box:** A light red rectangle with the text "**Entailment.**" in bold red font.

* **Indicator:** A red circle with a white "X" (✗) is positioned to the right of the output box, signifying an incorrect judgment.

* **Container:** This entire section is enclosed by a red dashed-line border.

3. **Central Region ("Fallacy Knowledge"):**

* **Title:** The text "**Fallacy Knowledge**" in brown font, accompanied by an icon of an open book with a lightbulb.

* **Content:** A list of abstract logical patterns written in brown text:

* "A has B → C has B"

* "A in C"

* "D in E ↔ F in D"

* "E in F"

* "......" (ellipsis indicating more patterns)

* **Connector:** A red arrow with a lightbulb icon points from this knowledge box to the "After" section's LLM, indicating the knowledge is being provided to the model.

4. **Right Region ("After" State):**

* **Label:** The word "**After**" in large, bold, black italic font.

* **Icon:** A grey robot icon labeled "**LLM**" below it.

* **Output Box:** A light green rectangle containing the text:

* "**Contradiction !**" (in bold green)

* "This is **Faulty Generalization.**" (with "Faulty Generalization" in bold green).

* **Indicator:** A green circle with a white checkmark (✓) is positioned to the right of the output box, signifying a correct judgment.

* **Container:** This entire section is enclosed by a green dashed-line border.

### Detailed Analysis

The diagram presents a before-and-after workflow for an LLM's reasoning task.

* **Task:** Evaluate the logical relationship between a specific premise ("My French colleague is very romantic") and a general hypothesis ("I think all French people are romantic").

* **"Before" Process:** The LLM, without specialized knowledge, incorrectly assesses this as **"Entailment."** This means it believes the premise logically guarantees the truth of the hypothesis. The red "X" marks this as an error.

* **Intervention:** The LLM is provided with **"Fallacy Knowledge."** This is represented as a set of abstract logical rules or patterns (e.g., "A has B → C has B") that describe common reasoning errors. The specific pattern relevant to this example is the fallacy of **hasty or faulty generalization**—drawing a broad conclusion from a single or limited instance.

* **"After" Process:** The same LLM, now equipped with this fallacy knowledge, correctly identifies the relationship as a **"Contradiction."** More precisely, it labels the reasoning from premise to hypothesis as a **"Faulty Generalization."** The green checkmark confirms this as the correct logical assessment. The diagram implies that the hypothesis does not logically follow from the premise and, in fact, represents a flawed inference.

### Key Observations

1. **Visual Coding:** The diagram uses a consistent color scheme to convey correctness: red for error ("Before" state, "Entailment," X mark) and green for correctness ("After" state, "Contradiction/Faulty Generalization," ✓ mark).

2. **Spatial Flow:** The central "Fallacy Knowledge" box acts as a bridge or catalyst between the two states. The dashed arrows from the top question mark to both states emphasize that the same logical problem is being evaluated under different conditions.

3. **Abstraction vs. Specificity:** The "Fallacy Knowledge" is presented as abstract symbolic patterns (A, B, C, D, E, F), while the applied example is concrete (French colleague, romanticism). This highlights the transfer of general logical principles to a specific case.

4. **LLM Representation:** The LLM is depicted as a simple robot icon, personifying the model as an agent that receives input (knowledge) and produces output (judgment).

### Interpretation

This diagram is a pedagogical or conceptual illustration of a key challenge and solution in AI reasoning. It argues that:

* **The Problem:** Standard LLMs may perform surface-level pattern matching or rely on biased training data, leading them to commit logical fallacies like faulty generalization. They might incorrectly "entail" a general statement from a specific one because such patterns appear frequently in text, not because the logic is sound.

* **The Solution:** Explicitly training or augmenting LLMs with knowledge of formal logical fallacies and reasoning patterns can improve their robustness. By learning the abstract structure of fallacies (e.g., "A has property P, therefore all members of category C have property P"), the model can better identify and flag flawed reasoning in novel contexts.

* **The Outcome:** An LLM enhanced with "Fallacy Knowledge" transitions from being a passive text predictor to a more active, critical reasoner. It can move beyond simple entailment judgments to provide more nuanced and accurate analyses, such as identifying contradictions and naming the specific fallacy committed. This has significant implications for developing more reliable, trustworthy, and logically consistent AI systems for tasks like argument analysis, fact-checking, and educational tutoring.

The diagram ultimately advocates for the integration of symbolic logic and critical thinking frameworks into the training paradigms of large language models.

</details>

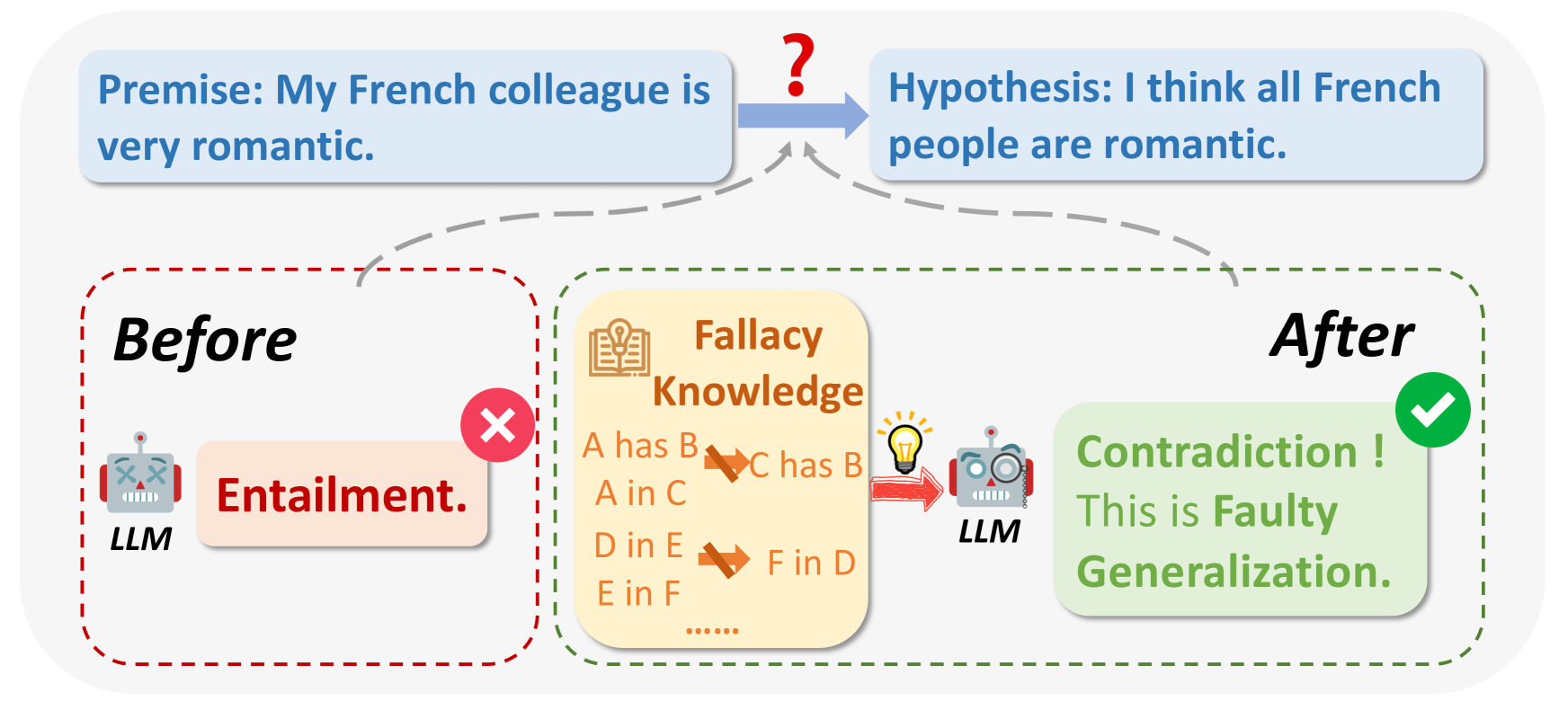

Figure 1: LLMs have deficiencies in logical reasoning. Once they understand logical fallacies, they know how to avoid logical fallacies, and thus improve their performance in various logical reasoning tasks.

However, LLMs still struggle with some more complex reasoning tasks including logical reasoning. One non-negligible reason for LLMs’ suboptimal performance on logical reasoning is their overlooking of understanding logical fallacies correctly. As early as 350 BC, Aristotle first proposed the concept of logical fallacy in his work Sophistical Refutations Aristotle (2006). Since then, logical fallacies have gradually become an important issue that should be noticed in our lives. “ Thou shalt not commit logical fallacies! ” has even become a worldwide popular idiom to remind us not to commit logical fallacies. By definition, logical fallacies refer to the errors in reasoning Tindale (2007), and they usually happen when the premises are not relevant or sufficient to draw the conclusions. Many previous works Liu et al. (2020); Yu et al. (2020); Joshi et al. (2020); Han et al. (2022) have focused on evaluating LLM logical reasoning capabilities from the perspective of deductive reasoning, natural language inference, reading comprehension, etc. However, few works focus on logical fallacies, which is in fact the major reason causing logical inconsistency in the sentences.

Chen et al. have observed that, LLMs often commit logical fallacies in logical reasoning, such as "Either protect the environment or develop the economy." (false dilemma) and "Some roses are not red because not all roses are red." (circular reasoning). It has been found that language models could avoid mistakes only when they understand what mistakes are Chen et al. (2023a); An et al. (2023), which justifies the ancient Greek philosopher Epicurus’s saying “ The mistake is the first step to save yourself. ” Based on our empirical studies, we have also found that the logical reasoning capability of LLMs is closely related to their understanding of logical fallacies.

The previous studies related to logical fallacy Jin et al. (2022); Sourati et al. (2023); bench authors (2023) only focus on logical fallacy detection, i.e., the identification and classification of logical fallacies, rather than systematically evaluating LLMs’ capability of logical fallacy understanding (LFU), not to mention improving LLMs’ LFU capability. Moreover, they have not explored the relationships between LFU and logical reasoning, which is crucial to improve LLMs’ capability of logical reasoning through enhancing their LFU capability. To address this problem, we focus on evaluating and enhancing LLMs’ LFU capability in this paper, so as to enhance their capability of logical reasoning.

Nonetheless, our work has to face several challenges as follows. First, we need to formalize the concrete tasks for LFU, since no previous studies focus on this problem. Second, we need a new dataset specific to LFU, as the previous datasets of logical fallacies Jin et al. (2022) only contain the logical fallacy types presenting in the sentences. To this end, we should propose a framework of constructing the LFU dataset towards the concrete LFU tasks, and then truthfully evaluating LLMs’ LFU capability with the dataset.

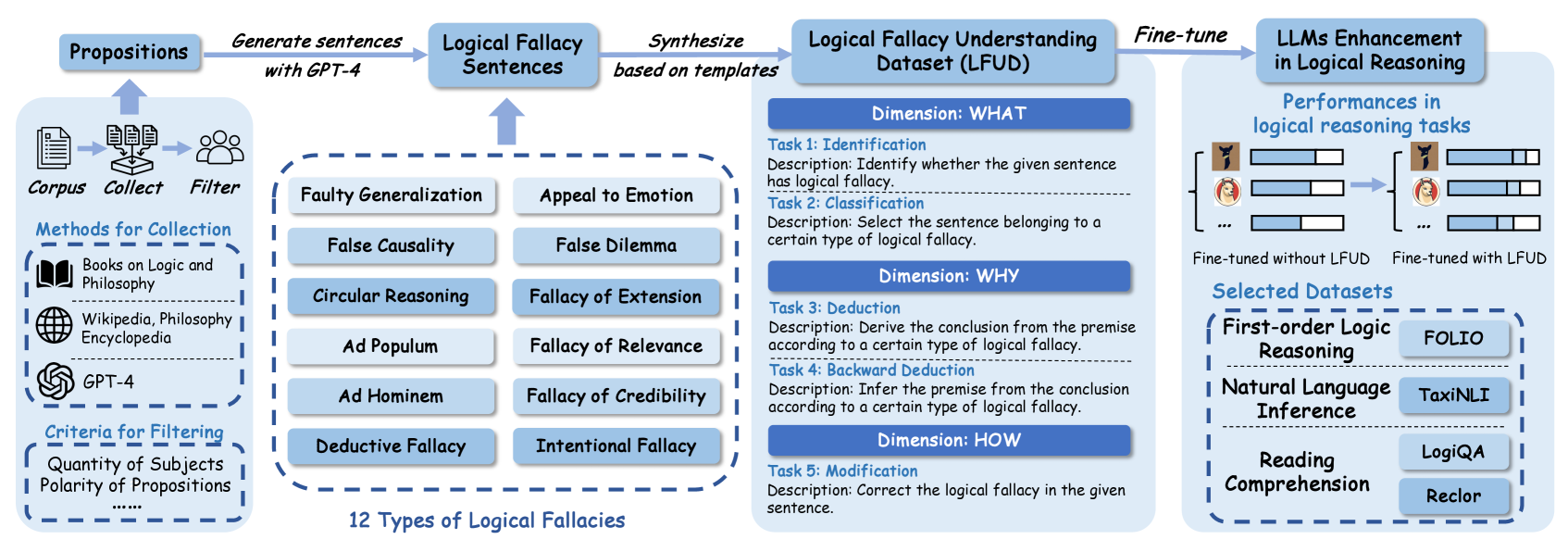

To overcome these challenges, we primarily focus on constructing a dataset for LFU in this paper, of which the samples are generated to evaluate models’ achievement on the following five LFU tasks corresponding to three cognitive dimensions of WHAT, WHY, and HOW Swanborn (2010).

1. WHAT -Identification (Task 1) and Classification (Task 2): identifying whether the given sentence contains a logical fallacy and which type of logical fallacy it is.

1. WHY -Deduction (Task 3) and Backward Deduction (Task 4): capturing the reasons causing the logical fallacy in the sentence.

1. HOW -Modification (Task 5): correcting the logical fallacy in the sentence.

Our proposed LFU tasks simulate the human understanding process of logical fallacies. Towards these tasks, we design a pipeline framework to automatically generate and synthesize a high-quality dataset, namely Logical Fallacy Understanding Dataset (LFUD), based on GPT-4 accompanied by a little human effort. Specifically, we first collect some sentences as the propositions (statements) which are the basic logic units and used to generate the sentences containing logical fallacies. Then, with the help of GPT-4, we generate sentences based on the propositions with twelve typical logical fallacy types Jin et al. (2022). And for each LFU task we propose, the instances of each fallacy type are synthesized. Then, we use our LFUD to evaluate the LFU capability of some representative LLMs. For the ultimate objective of our work, i.e., enhancing LLMs’ capability of logical reasoning, we further fine-tune these LLMs with the instances in LFUD. Our extensive experiments reveal that fine-tuning LLMs with LFUD can significantly enhance their logical reasoning capability.

In summary, our main contributions in this paper include:

1. Inspired by the three cognitive dimensions of WHAT, WHY, and HOW, we propose five concrete tasks which can truthfully evaluate LLMs’ performance on LFU.

2. Towards our proposed five LFU tasks, we devise a new framework for constructing a high-quality dataset, namely LFUD, to evaluate LLMs’ LFU capability, so as to enhance LLMs’ performance on logical reasoning.

3. The LFUD we constructed includes 4,020 instances involving 12 logical fallacy types. Our extensive experiments have demonstrated that our LFUD can not only evaluate LLMs’ LFU capability, but also improve LLMs’ capability of logical reasoning through fine-tuning LLMs with LFUD samples in terms of the LFU tasks.

## 2 Related Work

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: Logical Fallacy Dataset Creation and LLM Enhancement Pipeline

### Overview

This image is a technical flowchart illustrating a multi-stage process for creating a "Logical Fallacy Understanding Dataset (LFUD)" and using it to fine-tune Large Language Models (LLMs) to improve their performance in logical reasoning tasks. The diagram flows from left to right, detailing data sourcing, fallacy categorization, dataset construction, and final model evaluation.

### Components/Axes

The diagram is segmented into four primary vertical sections, connected by arrows indicating process flow.

**1. Left Section: Data Collection & Filtering**

* **Header:** "Corpus" with icons for documents, collection, and people.

* **Sub-header:** "Methods for Collection"

* "Books on Logic and Philosophy" (with book icon)

* "Wikipedia, Philosophy Encyclopedia" (with globe icon)

* "GPT-4" (with OpenAI logo icon)

* **Sub-header:** "Criteria for Filtering"

* "Quantity of Subjects"

* "Polarity of Propositions"

* "......" (indicating additional criteria)

* **Flow:** An arrow points from this section to the next, labeled "Generate sentences with GPT-4".

**2. Middle-Left Section: Fallacy Types & Sentence Generation**

* **Header:** "Logical Fallacy Sentences" (receives input from the previous step).

* **Central Block:** A dashed box containing a 2x6 grid of 12 logical fallacy types:

* Faulty Generalization, Appeal to Emotion

* False Causality, False Dilemma

* Circular Reasoning, Fallacy of Extension

* Ad Populum, Fallacy of Relevance

* Ad Hominem, Fallacy of Credibility

* Deductive Fallacy, Intentional Fallacy

* **Label below grid:** "12 Types of Logical Fallacies".

* **Flow:** An arrow points from this section to the next, labeled "Synthesize based on templates".

**3. Middle-Right Section: Dataset Structure (LFUD)**

* **Header:** "Logical Fallacy Understanding Dataset (LFUD)".

* **Structure:** Organized into three "Dimensions," each containing specific tasks.

* **Dimension: WHAT**

* **Task 1: Identification**

* Description: "Identify whether the given sentence has logical fallacy."

* **Task 2: Classification**

* Description: "Select the sentence belonging to a certain type of logical fallacy."

* **Dimension: WHY**

* **Task 3: Deduction**

* Description: "Derive the conclusion from the premise according to a certain type of logical fallacy."

* **Task 4: Backward Deduction**

* Description: "Infer the premise from the conclusion according to a certain type of logical fallacy."

* **Dimension: HOW**

* **Task 5: Modification**

* Description: "Correct the logical fallacy in the given sentence."

* **Flow:** An arrow points from this section to the final section, labeled "Fine-tune".

**4. Right Section: Model Enhancement & Evaluation**

* **Header:** "LLMs Enhancement in Logical Reasoning".

* **Sub-section:** "Performances in logical reasoning tasks"

* Visual comparison of two model states using bar charts (represented by icons and bars).

* Left side: "Fine-tuned without LFUD" (shows lower performance bars).

* Right side: "Fine-tuned with LFUD" (shows higher performance bars).

* **Sub-section:** "Selected Datasets" (for evaluation)

* **First-order Logic Reasoning:** "FOLIO"

* **Natural Language Inference:** "TaxiNLI"

* **Reading Comprehension:** "LogiQA", "Reclor"

### Detailed Analysis

The process is a pipeline:

1. **Input:** Raw text corpus from philosophy books, encyclopedias, and GPT-4.

2. **Processing:** The corpus is filtered based on subject quantity and proposition polarity. GPT-4 is then used to generate sentences containing logical fallacies.

3. **Categorization:** These sentences are categorized into 12 specific types of logical fallacies.

4. **Dataset Synthesis:** Using templates, the categorized sentences are synthesized into the structured LFUD dataset, which contains five distinct tasks across three conceptual dimensions (WHAT, WHY, HOW).

5. **Application:** The LFUD dataset is used to fine-tune LLMs.

6. **Evaluation:** The fine-tuned models are evaluated on their performance across four established logical reasoning benchmark datasets (FOLIO, TaxiNLI, LogiQA, Reclor), with the diagram indicating improved performance when using LFUD.

### Key Observations

* The diagram explicitly names GPT-4 as both a source for the initial corpus and the tool for generating fallacy sentences.

* The 12 fallacy types are presented in a specific grid layout, suggesting a comprehensive taxonomy.

* The LFUD dataset is not a simple list of examples; it's a multi-task learning resource designed to teach models to identify, classify, reason about, and correct fallacies.

* The evaluation section contrasts model performance "without LFUD" versus "with LFUD," visually asserting the dataset's value.

* The selected evaluation datasets cover different facets of logical reasoning: formal logic (FOLIO), natural language inference (TaxiNLI), and reading comprehension with reasoning (LogiQA, Reclor).

### Interpretation

This diagram outlines a methodology for addressing a key weakness in LLMs: robust logical reasoning. The core hypothesis is that by explicitly training models on a structured dataset of logical fallacies (LFUD), their general reasoning capabilities can be enhanced.

The process is **Peircean** in its investigative approach:

1. **Abduction:** It starts with the observation that LLMs struggle with logic and hypothesizes that teaching them fallacies (a form of "negative knowledge") will improve them.

2. **Deduction:** It logically designs a pipeline to create a training resource (LFUD) with specific tasks (Identification, Classification, Deduction, etc.) that should, in theory, impart this knowledge.

3. **Induction:** It tests the hypothesis by fine-tuning models and measuring performance on benchmark datasets, expecting to see a positive correlation between LFUD training and improved scores.

The "reading between the lines" suggests that traditional training data may lack explicit, structured logical reasoning examples. LFUD fills this gap by providing synthetic, template-based examples that isolate and teach the structure of flawed reasoning. The inclusion of a "Modification" task (Task 5) is particularly insightful, as it moves beyond passive recognition to active correction, potentially leading to deeper model understanding. The ultimate goal, as implied by the final evaluation block, is not just to recognize fallacies in isolation but to transfer this skill to broader reasoning tasks like inference and comprehension.

</details>

Figure 2: Our framework of constructing LFUD and fine-tuning LLMs with LFUD to enhance logical reasoning. At first, we collected some propositions, based on which the sentences with the logical fallacies of 12 types were generated by GPT-4. Then, for the five LFU tasks we proposed, the QA instances were synthesized based on the previous generated sentences. Finally, we fine-tuned LLMs with LFUD, revealing that fine-tuning LLMs with LFUD can significantly enhance their logical reasoning capability.

Logical Reasoning

Up to now, a lot of efforts have been dedicated to logical reasoning based on language models Cresswell (1973); Kowalski (1974); Iwańska (1993); Liu et al. (2020). In particular, how to evaluate the models’ logical reasoning capability has attracted increasing attention, including deductive reasoning Ontanon et al. (2022); Han et al. (2022), natural language inference (NLI) Yanaka et al. (2019); Joshi et al. (2020); Liu et al. (2021) and multi-choice reading comprehension (MRC) Liu et al. (2020); Yu et al. (2020); Wang et al. (2022). Recently, the power of LLMs has stimulated the research on logical reasoning with LLMs, including LLMs evaluation Yu et al. (2023); Blair-Stanek et al. (2023); Teng et al. (2023), and LLMs enhancement Zhang et al. (2023); Chen et al. (2023b). Despite these works’ achievements, enhancing LLMs’ logical reasoning capability remains a non-negligible challenge. The major reason for logical inconsistencies in many sentences is the misunderstanding of logical fallacies, which is still under-explored in the research field of logic.

Logical Fallacy

Logical fallacy is the main reason for the logical inconsistencies presenting in our life. As early as 350 BC, Aristotle first proposed the concept of logical fallacy in his work Sophistical Refutations Aristotle (2006). Since then, logical fallacies have gradually gained attention in human society. In recent years, the studies related to logical fallacies mainly focused on dataset construction Habernal et al. (2018); Martino et al. (2020); Jin et al. (2022) and fallacy classification Stab and Gurevych (2017); Goffredo et al. (2022); Jin et al. (2022); Payandeh et al. (2023). For instance, Jin et al. first proposed the task of Logical Fallacy Detection, presenting a framework of 13 logical fallacy types, and evaluated all sentence samples on a classification task. Sourati et al. proposed a Case-Based Reasoning method that classifies new cases of logical fallacy by language-modeling-driven retrieval and the adaptation of historical cases. However, there is no work to systematically evaluate LLMs’ capability of logical fallacy understanding (LFU). For the first time, our work in this paper proposes a new dataset specific to LFU represented by five concrete tasks corresponding to three cognitive dimensions of WHAT, WHY, and HOW.

Learning from Synthetic Data

Synthesizing data for model training has gradually gained popularity along with the advancements of language models. This approach is particularly beneficial for tasks that are difficult to be constructed or those with scarce data resources Møller et al. (2023). Currently, synthetic data has been applied in various tasks such as relation extraction Papanikolaou and Pierleoni (2020), text classification Chung et al. (2023), irony detection Abaskohi et al. (2022), translation Sennrich et al. (2015), and sentiment analysis Maqsud (2015). For example, Josifoski et al. proposed a strategy to design an effective synthetic data generation pipeline and applied it to closed information extraction. In addition, Li et al. conducted a series of experiments to evaluate the effectiveness of LLMs in generating synthetic data to support model training for different text classification tasks. Beyond these fundamental tasks, Eldan and Li proposed to use LLMs with synthetic data to generate short stories typically for 3 to 4-year-old only containing words. But they did not focus on logical fallacy. We are the first to focus on the data augmentation strategies in LFU.

## 3 Methodology of Dataset Construction

In this section, we present the pipeline of constructing our LFUD, of which the overall framework is depicted in Figure 2. Starting from the propositions, we detail the steps of synthesizing the samples towards five LFU tasks and the twelve representative logical fallacy types.

| Singular Proposition | 54 |

| --- | --- |

| Particular Proposition | 5 |

| Universal Proposition | 8 |

| Affirmative Proposition | 56 |

| Negative Proposition | 11 |

| Propositions without Pronouns | 58 |

| Propositions with Pronouns | 9 |

| Propositions with Human Subjects | 52 |

| Propositions with Non-Human Subjects | 15 |

Table 1: Some statistics of the 67 propositions in LFUD.

### 3.1 Acquiring Propositions

At the first step of constructing our LFUD, we collected some propositions which were subsequently used for generating the sentences presenting various logical fallacies. According to Hurley (2000), a proposition is one sentence that is either true or false. We considered several sources of proposition collection, including some authoritative books of logic and philosophy Hurley (2000); Hausman (2012), open websites such as Wikipedia and Stanford Encyclopedia of Philosophy. In addition, LLMs can be utilized to generate some propositions for enriching proposition diversity. To seek the satisfactory LLM for generating propositions, we tested some representative LLMs’ identification performance on 200 instances from the Big-Bench bench authors (2023), consisting of correct and incorrect (logical fallacy) sentences. The results showed that GPT-4 can correctly identify in over 90% of the sentences whether they have logical fallacies, despite the limited capability in directly generating complex tasks. Thus, we leveraged GPT-4 to generate more propositions and subsequent sentences presenting logical fallacies.

The considerable propositions should be simple and intuitive, but diverse. Finally, we filtered out 67 propositions and the relevant statistics are listed in Table 1. The following sentences are the proposition examples:

1. Everyone in my family has never been to Europe.

2. X accepted Y’s suggestion.

3. Michael had dinner at an Italian restaurant.

| /* Generation Instruction */ As a logician, when presented with a proposition, your objective is to simulate the way of human thinking, generating a sentence with specific type of logical fallacy. The generation should follow these instructions: 1. Generate the sentence with Faulty Generalization. Faulty Generalization occurs when … (Detailed description) 2. The sentence should have complete premise and conclusion, but try not to make it too long. /* Three demonstration examples */ Proposition 1: Neither of the classes I took at UF were interesting. Result 1: A college is not a good college if none of its classes are interesting. Neither of the classes I took at UF were interesting, so UF is not a good college. … /* Input the proposition */ Proposition: Peter visited China last year. /* GPT-4’s output */ Result: Peter visited China last year. Peter is a European. Therefore, all Europeans have been to China. |

| --- |

Table 2: A prompt case for GPT-4 to generate a sentence with the given logical fallacy type.

### 3.2 Generating Sentences with GPT-4

Given GPT-4’s capability of natural language generation and logical fallacy identification, we directly used GPT-4 to generate the sentences presenting various logical fallacies in this step. To take into account the logical fallacies existing in our life as many as possible, we refered to the thirteen typical types of logical fallacies (as listed in Table 7 and Appendix B) proposed by Jin et al. (2022).

| Dimension | Task name | Task definition |

| --- | --- | --- |

| WHAT | Task1: Identification | Identify whether the given sentence has logical fallacy. |

| Task2: Classification | Select the sentence belonging to a certain type of logical fallacy. | |

| WHY | Task3: Deduction | Derive the conclusion from the premise according to a certain type of logical fallacy. |

| Task4: Backward Deduction | Infer the premise from the conclusion according to a certain type of logical fallacy. | |

| HOW | Task5: Modification | Correct the logical fallacy in the given sentence. |

Table 3: Five LFU tasks corresponding to three cognitive dimensions.

Given a proposition and a certain logical fallacy type, we asked GPT-4 to generate a sentence of this logical fallacy type with a prompt, which contains the generation instruction and a demonstration example of the given logical fallacy type. Table 2 illustrates the prompt for GPT-4 about the type of Faulty Generalization.

Specifically, due to the rather vague definition of Equivocation provided by Jin et al. (2022), and the scarcity of such fallacy instances in real life, GPT-4 can hardly understand Equivocation and generate corresponding sentences correctly. To ensure the quality of the sentences generated by GPT-4, we neglected Equivocation fallacy type and generated the sentences for the rest twelve logical fallacy types.

To ensure that the generated sentences meet the requirements, we further manually proofread the sentences with logical fallacies generated by GPT-4. Each generated sentence was proofread with two main areas of concern: structural integrity and validity of fallacies, as described in Appendix C, to ensure that the sentences made sense and met the requirements of specific fallacy type. For each of the 67 propositions, we generated 12 sentences with GPT-4, each of which presents one logical fallacy type. Thus, we generated 804 sentences with logical fallacies in total. These sentences are used to synthesize the samples for concrete LFU tasks as follows.

### 3.3 Proposing LFU Tasks and Synthesizing Task Instances

To evaluate LLMs’ capability of LFU, we need to design concrete evaluation tasks. According to the principles of cognitive science Swanborn (2010), humans generally understand objects from three dimensions: WHAT it is, WHY it is, and HOW it operates, which are interconnected and progressive cognition levels. Inspired by these dimensions, we propose five concrete tasks which are used to verify models’ capability of LFU. Table 3 lists the definitions of the five tasks. Wherein, Task 1 and Task 2 belong to WHAT dimension, which identify whether the given sentence has the logical fallacy (of a certain type). Task 3 and Task 4 belong to WHY dimension, which verify whether the model captures the reason causing the logical fallacy in the sentence. The last Task 5 belongs to HOW dimension, which requires correcting the logical fallacy of the given type in the sentence. Specifically, we synthesized multiple-choice questions for the first four tasks, and sentence generation questions for Task 5. We further provided one toy example for each task in Appendix A.

In fact, previous studies Jin et al. (2022); bench authors (2023) have focused on the two tasks of WHAT dimension, i.e., understanding what the logical fallacy in the sentence is. To the best of our knowledge, there are no studies concerning the tasks of WHY and HOW dimensions by now. But notably, the ultimate goal of LFU is to avoid logical fallacies, which requires us to understand the reasons causing logical fallacies and correct logical fallacies. Therefore, we paid more attention to the tasks of WHY and HOW dimension in this paper.

For each sentence with one of the twelve logical fallacy types generated in the previous step, we synthesized one QA instance for every LFU task with the question templates. For each LFU task, the question stems (without question options) of all instances are generated according to some templates, as shown in Appendix A. Particularly, for Task 3 and Task 4, we need to identify the premise and conclusion for the given sentence, and further provide question options. Thus, we directly asked GPT-4 to generate the results as we needed.

To minimize the impact of instruction design when asking LLMs to achieve these tasks, we first designed some candidate question templates to constitute a template pool in fact, and then randomly chose one template from the pool to generate the question for a certain LFU task. In addtion, we also shuffled the orders of question options. Finally, our LFUD contains 4,020 (QA) instances in total, involving 5 LFU tasks and 12 logical fallacy types, which stem from the 67 propositions and 804 sentences with logical fallacies. LFUD is provided at https://github.com/YandaGo/LFUD

## 4 Evaluation

| LogiQA2.0 | Origin | 45.55 | - | 42.30 | - | 52.74 | - | 47.71 | - | 54.39 | - |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| Origin+LOGIC | 44.66 | -1.95 | 35.62 | -15.79 | 53.37 | 1.19 | 45.10 | -5.47 | 52.93 | -2.68 | |

| Origin+LFUD | 47.90 | 5.16 | 43.13 | 1.96 | 55.85 | 5.90 | 47.84 | 0.27 | 56.55 | 3.97 | |

| Reclor | Origin | 47.20 | - | 40.40 | - | 54.40 | - | 49.20 | - | 55.80 | - |

| Origin+LOGIC | 46.20 | -2.12 | 42.20 | 4.46 | 54.00 | -0.74 | 47.80 | -2.85 | 55.80 | 0.00 | |

| Origin+LFUD | 50.20 | 6.36 | 46.40 | 14.85 | 57.00 | 4.78 | 51.80 | 5.28 | 58.20 | 4.30 | |

| TaxiNLI | Origin | 68.54 | - | 62.68 | - | 78.91 | - | 77.47 | - | 82.33 | - |

| Origin+LOGIC | 40.60 | -40.76 | 58.80 | -6.19 | 77.92 | -1.25 | 76.18 | -1.67 | 82.18 | -0.18 | |

| Origin+LFUD | 73.70 | 7.53 | 67.26 | 7.31 | 79.76 | 1.08 | 77.77 | 0.39 | 84.02 | 2.05 | |

| FOLIO | Origin | 61.76 | - | 50.98 | - | 36.76 | - | 50.49 | - | 72.55 | - |

| Origin+LOGIC | 62.25 | 0.79 | 52.45 | 2.88 | 36.28 | -1.31 | 45.10 | -10.68 | 73.53 | 1.35 | |

| Origin+LFUD | 66.18 | 7.16 | 59.31 | 16.34 | 44.61 | 21.35 | 56.37 | 11.65 | 76.47 | 5.40 | |

Table 4: LLMs’ accuracy(%) on the four logical reasoning tasks (datasets) after being fine-tuned with different data. Origin represents fine-tuning the LLMs with the original training data in the logical reasoning datasets. $Δ\$ is accuracy improvement relative to Origin. The best accuracy scores are bolded and the second best scores are underlined.

### 4.1 Experiment Setup

Datasets

To evaluate LLMs’ performance on logical reasoning, we used four representative datasets including FOLIO Han et al. (2022), TaxiNLI Joshi et al. (2020), LogiQA Liu et al. (2020), and Reclor Yu et al. (2020) in our experiments.

FOLIO focuses on first-order logic reasoning (FOL) that is a classical deductive reasoning task. TaxiNLI is specific to natural language inference (NLI) that tests the logical relationship between a premise and a hypothesis. LogiQA and Reclor are the multi-choice reading comprehension (MRC) datasets, which choose the most suitable answer corresponding to the given text, could better reflect comprehensive logical reasoning abilities. The instances of the four datasets are shown in Appendix D. In addition to the training data in above four datasets and our LFUD, we also used the logical fallacy data LOGIC Jin et al. (2022) to fine-tune LLMs. LOGIC (including LOGIC-CLIMATE) contains thirteen types of logical fallacy sentences, as shown in Appendix B.

LLMs

We selected five popular LLMs in our experiments, including LLaMA2-7B, LLaMA2-13B Touvron et al. (2023), Vicuna-7B, Vicuna-13B Chiang et al. (2023) and Orca2-7B Mitra et al. (2023). When fine-tuning these LLMs, we set the learning rate to 2.5e-5 and the batch size to 8. To ensure the robustness of our results, we repeated all experiments for three times and reported the average performance (accuracy) scores.

Dataset Split

For the 4,020 synthesized instances in our LFUD, we randomly selected 3,000 instances (corresponding to 600 sentences with logical fallacies) as the training set and the remaining 1,020 instances (corresponding to 204 sentences with logical fallacies) as the test set. Given the instances of Task 1–4 (choice questions) have fixed answers, we only used the training samples (2,500 instances) of Task 1–4 to fine-tune the five LLMs. And we directly used some test samples of Task 5 to evaluate LLMs’ cross-task learning capability on LFU, as presented in Subsection 4.3. To balance the labels of logical right and fallacy in Task 1 instances, we appended 500 logically correct sentences of Big-Bench bench authors (2023), and thus collected 2,900 training samples in our LFUD in total.

### 4.2 Effectiveness on Enhancing LLMs’ Logical Reasoning

#### 4.2.1 Overall Performance

To justify the value of our LFUD instances on enhancing LLMs’ logical reasoning capability, we merged LFUD training samples with the original training samples in the four logical reasoning datasets, denoted by Origin, to fine-tune LLMs. We compared such a fine-tuning method with the method of fine-tuning LLMs only with Origin. In addition, we also compared the method of fine-tuning LLMs with Origin and some samples in LOGIC Jin et al. (2022), which have the same number as the training samples in LFUD.

Table 4 lists the accuracy(%) scores of all five LLMs on the four logical reasoning tasks (datasets) which were fine-tuned with Origin, Origin+LOGIC and Origin+LFUD, respectively. And the performance improvements of Origin+LOGIC and Origin+LFUD relative to Orign are also listed. Based on the results in this table, we have the following observations and analysis.

1. Appending the training samples in our LFUD to Origin when fine-tuning LLMs significantly enhances their performance on all logical reasoning tasks. It shows that learning the LFU tasks we proposed is indeed helpful to improve LLMs’ capability of various logical reasoning.

2. Although the samples in LOGIC are also the sentences with various logical fallacies, Origin+LOGIC cannot obtain the significant performance improvements of logical reasoning. Even worse, it degrades LLMs’ logical reasoning performance, compared with Origin in some cases. It implies that, unlike our LFU tasks from WHAT, WHY and HOW, only identifying the logical fallacy presented by the sentences in LOGIC cannot result in LLMs’ really capability of LFU. In addition, The samples in LOGIC are raw and unclean, with some examples consisting of even fallacy questions and fallacy definitions.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Line Chart: Accuracy Comparison Across Four Datasets

### Overview

The image is a line chart comparing the accuracy percentages of four different datasets or models (LogiQA2.0, Reclor, TaxiNLI, FOLIO) across five distinct points on the x-axis, labeled from 0% to 100%. The chart visualizes performance trends, with each dataset represented by a uniquely colored line and marker.

### Components/Axes

* **Y-Axis:** Labeled "Accuracy(%)". The scale runs from 40 to 80, with major tick marks at intervals of 5 (40, 45, 50, 55, 60, 65, 70, 75, 80).

* **X-Axis:** Contains five categorical labels: "0%", "25%", "50%", "75%", "100%". The axis title is not explicitly shown.

* **Legend:** Positioned in the top-left corner of the chart area. It defines four data series:

* **LogiQA2.0:** Blue line with 'x' markers.

* **Reclor:** Orange line with '+' markers.

* **TaxiNLI:** Red line with star markers.

* **FOLIO:** Green line with circle markers.

### Detailed Analysis

**Data Series and Trends:**

1. **TaxiNLI (Red line with stars):**

* **Trend:** Shows a steady, slight upward slope from left to right.

* **Data Points:**

* At 0%: 68.54%

* At 25%: 72.21%

* At 50%: 72.51%

* At 75%: 72.61%

* At 100%: 73.70%

* **Observation:** This is the highest-performing series across all points. It experiences its largest gain between 0% and 25%, then plateaus with minimal increases before a final small rise to 100%.

2. **FOLIO (Green line with circles):**

* **Trend:** Shows a consistent, gentle upward slope.

* **Data Points:**

* At 0%: 61.76%

* At 25%: 63.24%

* At 50%: 63.73%

* At 75%: 64.22%

* At 100%: 66.18%

* **Observation:** This is the second-highest performing series. It maintains a steady, linear increase, with the most significant jump occurring between 75% and 100%.

3. **Reclor (Orange line with '+'):**

* **Trend:** Shows a very gradual, almost linear upward slope.

* **Data Points:**

* At 0%: 47.20%

* At 25%: 48.20%

* At 50%: 49.00%

* At 75%: 49.80%

* At 100%: 50.20%

* **Observation:** This series is in the lower performance tier. Its growth is slow and consistent, gaining exactly 1.00% between each labeled point from 0% to 75%, with a smaller 0.40% gain to 100%.

4. **LogiQA2.0 (Blue line with 'x'):**

* **Trend:** Shows an initial increase, followed by a plateau.

* **Data Points:**

* At 0%: 45.55%

* At 25%: 47.20%

* At 50%: 47.77%

* At 75%: 47.71%

* At 100%: 47.90%

* **Observation:** This is the lowest-performing series initially. It sees a notable increase from 0% to 25%, then essentially flatlines, with values hovering around 47.7-47.9% for the remainder of the chart. There is a negligible dip between 50% and 75%.

### Key Observations

* **Performance Tiers:** The chart clearly separates the datasets into two distinct performance groups. TaxiNLI and FOLIO operate in the 60-75% accuracy range, while Reclor and LogiQA2.0 operate in the 45-50% range.

* **Growth Patterns:** All series show non-decreasing accuracy from 0% to 100%. The highest-performing series (TaxiNLI) shows the most pronounced early gain, while the lowest (LogiQA2.0) shows the most pronounced plateau.

* **Convergence/Divergence:** The gap between the top series (TaxiNLI) and the bottom series (LogiQA2.0) widens from approximately 23 percentage points at 0% to nearly 26 percentage points at 100%. The gap between the two middle series (FOLIO and Reclor) remains relatively constant at around 14-16 percentage points.

### Interpretation

The data suggests that the variable represented on the x-axis (e.g., training data percentage, model size, or some other resource) has a positive but diminishing return on accuracy for these tasks. The most significant gains for the top models occur early (0-25%), after which improvements become marginal. This indicates a potential saturation point.

The clear stratification implies that the underlying difficulty or nature of the tasks measured by these datasets is fundamentally different. TaxiNLI and FOLIO appear to be "easier" tasks for the evaluated system, achieving high accuracy, while LogiQA2.0 and Reclor represent more challenging problems where accuracy is harder to improve. The plateau in LogiQA2.0 after 25% is particularly notable, suggesting that beyond a certain point, adding more of the x-axis resource does not help solve this specific type of problem. This chart would be crucial for understanding resource allocation—showing where input yields the best returns for different task types.

</details>

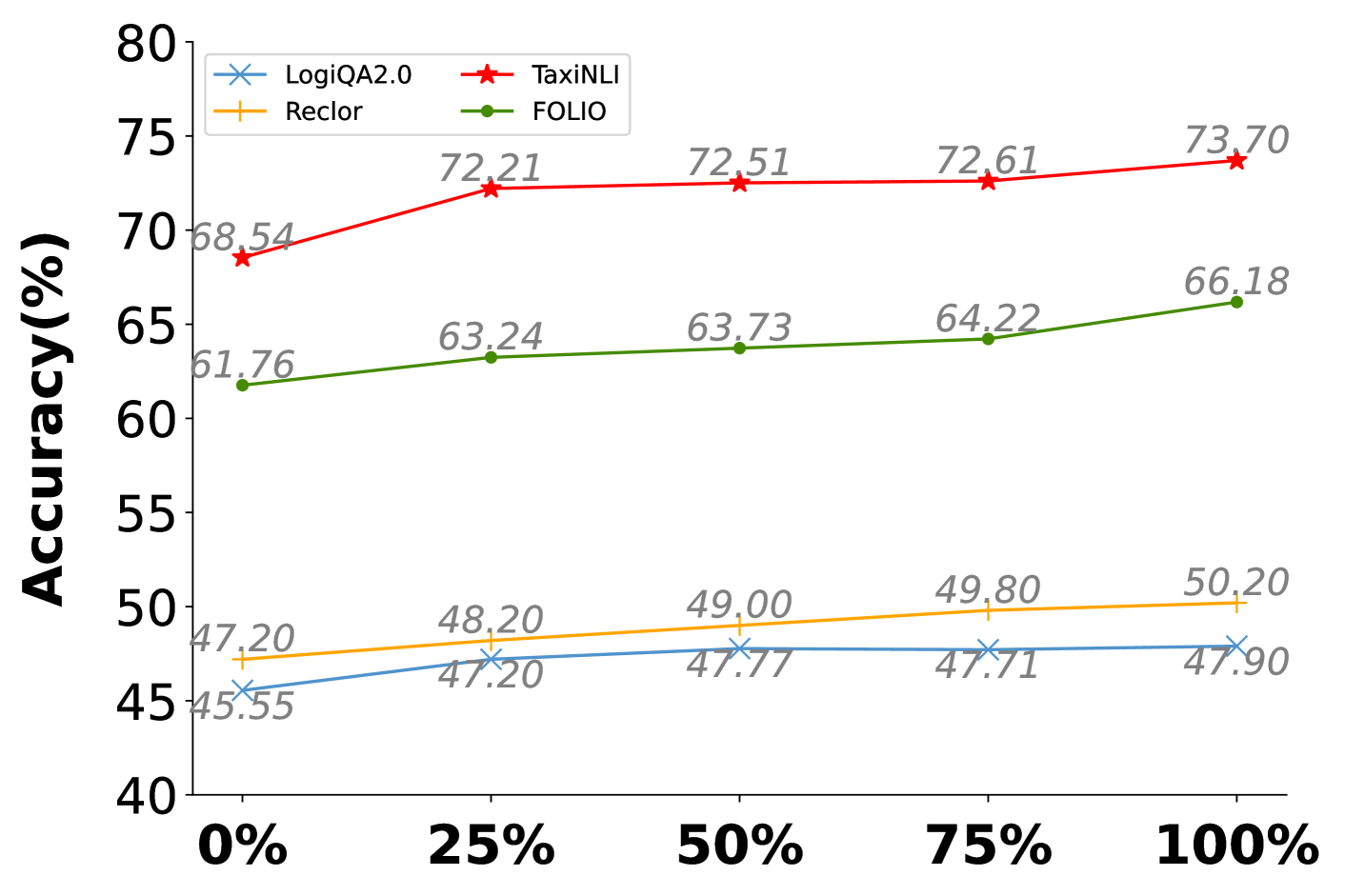

Figure 3: LLaMA2-13B’s performance on the four logical reasoning tasks with different scales of LFUD training samples.

| LLaMA2-7B | 46.84 | 47.86 | 24.75 | 29.35 | 40.00 |

| --- | --- | --- | --- | --- | --- |

| LLaMA2-13B | 55.76 | 53.61 | 52.36 | 54.48 | 50.00 |

| Vicuna-7B | 32.96 | 57.71 | 60.70 | 56.59 | 46.00 |

| Vicuna-13B | 50.76 | 58.46 | 58.33 | 61.32 | 56.00 |

| ChatGPT | 54.66 | 73.88 | 62.94 | 70.65 | 60.00 |

| GPT-4 | 86.35 | 86.19 | 78.61 | 85.70 | 88.00 |

Table 5: Six representative LLMs’ performance on our proposed five LFU tasks. To evaluate Task 5, we manually assessed LLMs’ outputs for 50 randomly selected samples.

#### 4.2.2 Impacts of Different Factors in LFUD

To further validate LFUD’s effectiveness on enhancing LLMs’ logical reasoning capability, we also investigated the impacts of different factors in LFUD, including the scale of training data, LFU tasks and logical fallacy types. Due to space limitation, we only display the results of LLaMA2-13B.

Training Data Scale

To verify the impacts of training data scale, we respectively extracted 25%, 50%, and 75% of the LFUD training data accompanied with Origin to fine-tune LLaMA2-13B, and then tested its performance on the four logical reasoning tasks. From Figure 3 we can see that, LLaMA2-13B’s performance improvement becomes more apparent as the training data scale increases, showing that even only a small part of LFUD samples is also valuable.

LFU Task

We fine-tuned LLaMA2-13B again with the training data excluding the instances of Task 1, Task 2, Task 3 and Task4, respectively. As shown in Table 6, excluding any task’s instances would lead to the performance decline of LLaMA2-13B.

| No Tasks w/o Task1 w/o Task2 | 45.55 46.69 45.74 | 47.20 49.80 48.00 | 68.54 69.53 69.88 | 61.76 63.73 65.20 |

| --- | --- | --- | --- | --- |

| w/o Task3 | 47.46 | 49.00 | 72.01 | 64.22 |

| w/o Task4 | 46.44 | 48.80 | 69.28 | 65.20 |

| All Tasks | 47.90 | 50.20 | 73.70 | 66.18 |

Table 6: LLaMA2-13B’s performance on the four logical reasoning tasks when excluding different LFU task’s training instances.

Logical Fallacy Type

Similarly, we respectively excluded the instances of each logical fallacy type from LFUD training data, and then tested LLaMA2-13B’s performance. The results in Table 7 indicate that every logical fallacy type contributes positively to LLM’s logical reasoning capability.

| No Fallacy Data w/o Faulty Generalization w/o False Causality | 45.55 46.56 46.69 | 47.20 49.80 47.60 | 68.54 71.91 72.56 | 61.76 64.71 62.75 |

| --- | --- | --- | --- | --- |

| w/o Circular Reasoning | 46.12 | 49.80 | 72.95 | 64.22 |

| w/o Ad Populum | 46.25 | 47.60 | 72.85 | 64.22 |

| w/o Ad hominem | 46.95 | 48.60 | 69.53 | 65.20 |

| w/o Deductive Fallacy | 45.87 | 49.40 | 73.78 | 62.75 |

| w/o Appeal to Emotion | 47.65 | 49.80 | 69.93 | 63.73 |

| w/o False Dilemma | 46.12 | 50.00 | 73.10 | 63.24 |

| w/o Fallacy of Extension | 45.93 | 49.40 | 72.51 | 64.71 |

| w/o Fallacy of Relevance | 47.65 | 50.20 | 70.92 | 61.27 |

| w/o Fallacy of Credibility | 47.58 | 48.60 | 72.06 | 62.75 |

| w/o Intentional Fallacy | 46.88 | 49.80 | 69.48 | 65.69 |

| All Fallacy Types | 47.90 | 50.20 | 73.70 | 66.18 |

Table 7: LLaMA2-13B’s performance on the four logical reasoning tasks when excluding different logical fallacy type’s training instances.

### 4.3 LFU Performance of LLMs

Next, we validate LLMs’ capability of LFU through evaluating their performance on the LFU tasks. We want to investigate LLMs’ inherent capability on LFU, thus we directly used all instances of each LFU task in LFUD as the test samples without fine-tuning them with the training data.

Performance on Each LFU Task

Besides the previous four LLMs, we additionally considered ChatGPT Ouyang et al. (2022) and the latest GPT-4 OpenAI (2023) (using OpenAI API with temperature 0.7) in LFU performance evaluation. To balance the labels of Task 1, we added all 654 correct sentences in Big-Bench into Task 1’s test data. Thus, we have a total of 1,458 instances for Task 1’s evaluation. In addition, as Task 5 is to generate a new sentence rather than a fixed answer, we randomly selected 50 samples from its instances and manually assessed LLMs’ outputs. All tested LLMs’ performance is listed in Table 5, showing that different LLMs’ performance varies significantly on the five LFU tasks. Among the LLMs, GPT-4 has much better performance than others on all tasks, justifying its strong capability of LFU. By contrast, LLaMA2-7B has the worst performance that is even worse than random selection.

| LLaMA-2-7B LLaMA-2-13B Vicuna-7B | 0.92 (6/654) 1.99 (13/654) 7.95 (52/654) |

| --- | --- |

| Vicuna-13B | 26.61 (174/654) |

| ChatGPT | 37.92 (248/654) |

| GPT-4 | 95.57 (625/654) |

Table 8: LLMs’ Performance on identifying 654 logically correct sentences of Task 1.

| Faulty Generalization False Causality Circular Reasoning | 76.12 61.19 34.33 | 89.55 70.15 52.24 | 59.70 67.16 55.22 | 50.75 65.67 62.69 |

| --- | --- | --- | --- | --- |

| Ad Populum | 65.67 | 80.60 | 79.10 | 79.10 |

| Ad hominem | 77.61 | 89.55 | 59.70 | 59.70 |

| Deductive Fallacy | 40.30 | 49.25 | 62.69 | 77.61 |

| Appeal to Emotion | 16.42 | 77.61 | 64.18 | 77.61 |

| False Dilemma | 29.85 | 44.78 | 62.69 | 50.75 |

| Fallacy of Extension | 53.73 | 25.37 | 41.79 | 38.81 |

| Fallacy of Relevance | 25.37 | 37.31 | 11.94 | 44.78 |

| Fallacy of Credibility | 40.30 | 53.73 | 61.19 | 64.18 |

| Intentional Fallacy | 68.66 | 31.34 | 47.76 | 64.18 |

Table 9: Vicuna-13B’s performance on Task 1–4 specific to each type of logical fallacies. The best accuracy scores are bolded and the second best scores are underlined.

Identifying Logical Correctness

To further investigate whether LLMs really understand logical fallacies, we also asked LLMs to achieve Task 1 for the 654 sentences from Big-Bench that are logically correct (without logical fallacies). Their accuracy scores are listed in Table 8, showing that only GPT-4 has the satisfactory performance for this task. In fact, the rest LLMs tended to recognize the sentences as having logical fallacies for catering to Task 1’s question. In addition, we also found these LLMs except for GPT-4 are easily influenced by the order of question options when achieving Task 1–4, indicating that they cannot well understand logical fallacies.

In Terms of Logical Fallacy Type

Besides, we evaluated LLMs’ LFU performance on Task 1–4 in terms of a specific logical fallacy type. The results listed in Table 9 show that, LLMs exhibited better performance on the tasks of Faulty Generalization, False Causality, Ad populum and Ad Hominem. These four types of logical fallacies are more distinctive and more frequently present in our life, resulting in that LLMs have encountered more sentences with these logical fallacy types during their pre-training.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Grouped Bar Chart: Model Accuracy Comparison (Original vs. Fine-tuned)

### Overview

The image displays a grouped bar chart comparing the accuracy percentages of four different large language models in their "Original" and "Fine-tuned" states. The chart demonstrates the performance improvement achieved through fine-tuning for each model variant.

### Components/Axes

* **Chart Type:** Grouped Bar Chart.

* **Y-Axis:**

* **Label:** "Accuracy(%)"

* **Scale:** Linear scale from 0 to 100.

* **Major Tick Marks:** 0, 20, 40, 60, 80, 100.

* **X-Axis:**

* **Categories (Models):** Four distinct models are listed from left to right:

1. LLaMA2-7B

2. LLaMA2-13B

3. Vicuna-7B

4. Vicuna-13B

* **Legend:**

* **Position:** Top-left corner of the chart area.

* **Items:**

* **Dark Blue Square:** Labeled "Original"

* **Light Blue Square:** Labeled "Fine-tuned"

* **Data Labels:** The exact accuracy percentage is printed directly above each bar.

### Detailed Analysis

The chart presents paired data for each model. The left bar (dark blue) represents the "Original" model's accuracy, and the right bar (light blue) represents the "Fine-tuned" model's accuracy.

| Model (X-Axis Category) | Original Accuracy (%) | Fine-tuned Accuracy (%) | Absolute Improvement (Fine-tuned - Original) |

| :--- | :--- | :--- | :--- |

| **LLaMA2-7B** | 40 | 48 | +8 |

| **LLaMA2-13B** | 50 | 62 | +12 |

| **Vicuna-7B** | 46 | 50 | +4 |

| **Vicuna-13B** | 56 | 80 | +24 |

**Trend Verification:**

* For every model pair, the light blue "Fine-tuned" bar is taller than the dark blue "Original" bar, indicating a consistent positive trend where fine-tuning improves accuracy.

* The magnitude of improvement varies significantly between models.

### Key Observations

1. **Universal Improvement:** All four models show higher accuracy after fine-tuning.

2. **Largest Gain:** The **Vicuna-13B** model exhibits the most substantial improvement, with accuracy increasing by 24 percentage points (from 56% to 80%).

3. **Smallest Gain:** The **Vicuna-7B** model shows the smallest improvement, with only a 4 percentage point increase (from 46% to 50%).

4. **Model Size Correlation:** Within each model family (LLaMA2 and Vicuna), the larger 13B parameter model achieves a greater absolute improvement from fine-tuning than its 7B counterpart.

5. **Final Performance:** After fine-tuning, **Vicuna-13B** achieves the highest overall accuracy (80%), while **LLaMA2-7B** has the lowest (48%).

### Interpretation

The data strongly suggests that the fine-tuning process applied in this context is effective for enhancing the accuracy of the tested language models. The relationship is not uniform, however.

The significant variance in improvement (from +4% to +24%) indicates that the efficacy of fine-tuning is highly dependent on the specific base model. The pattern where larger models (13B) benefit more than smaller ones (7B) could imply that larger models have a greater capacity to absorb and utilize the specialized knowledge imparted during fine-tuning.

The most striking result is the performance of **Vicuna-13B**. Its post-fine-tuning accuracy of 80% is not only the highest but also represents a transformative leap from its original state. This could suggest that the fine-tuning dataset or method was particularly well-aligned with the capabilities or pre-training data of the Vicuna-13B model, or that this model had a higher latent potential for the specific task being measured. Conversely, the minimal gain for Vicuna-7B might indicate a performance ceiling for that model size on this task, or a mismatch with the fine-tuning approach.

In summary, the chart provides clear evidence that fine-tuning boosts accuracy, but the degree of benefit is a critical variable that depends on the model's architecture and scale.

</details>

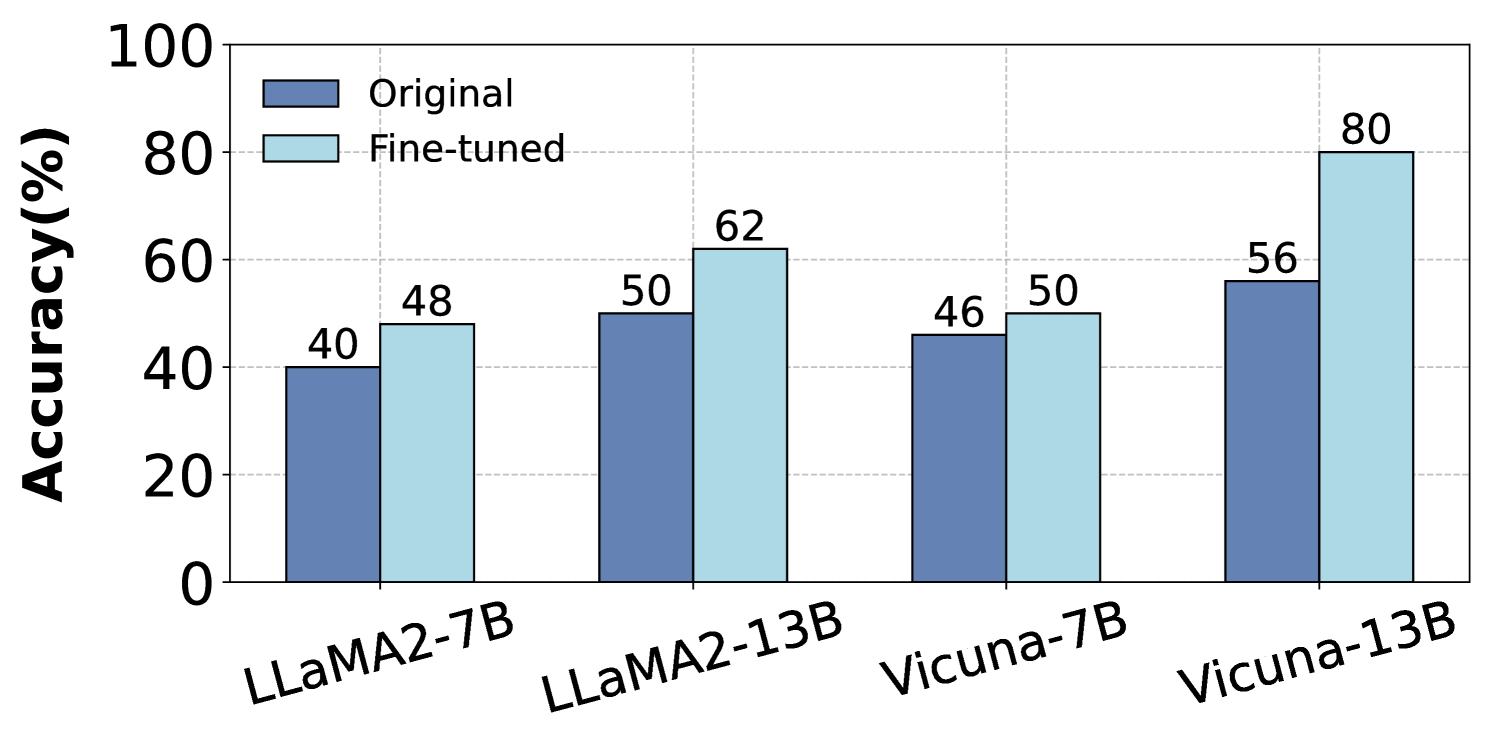

Figure 4: LLMs’ Performance on Task 5 without fine-tuning (denoted as Original) or after being fine-tuned with training data of Task 1–4.

Cross-task Learning Performance

Compared with Task 1–4, Task 5 belongs to the higher cognition dimension HOW, and is more difficult for LLMs since it requires to generate a sentence satisfying the demand. An interesting research question is that, whether LLMs can well achieve Task 5 after learning the previous four tasks? To answer this question, for each of Task 1–4 we sampled 60 instances from its training data, and mixed them with the equal amount (240) of general conversation instances from lmsys-chat-1m to fine-tune LLMs, which was used to guarantee LLMs’ generative ability. Then, we evaluated the fine-tuned LLMs’ performance on Task 5, of which the performance is depicted in Figure 4. As well, LLMs’ performance without fine-tuning, denoted as Original, is also displayed in the figure. The results indicate that, all the tested LLMs indeed enhanced their LFU performance through learning Task 1–4, also justifying their good cross-task learning capability of LFU tasks.

## 5 Conclusion

To evaluate LLMs’ LFU performance, we propose five concrete tasks from three cognition dimensions WHAT, WHY, and HOW. Towards these tasks, we constructed a high quality dataset LFUD, which has been proven helpful by our extensive experiments to enhance LLMs’ capability of logical reasoning. We hope our work in this paper is instructive and our LFUD becomes a valuable resource for further research on LFU.

## Limitations

Although we argue that enhancing LLMs’ logical reasoning capability through enabling LLMs to understand logical fallacies is language-independent, we should still acknowledge that the data and experiments of our work were only in English. As we know, LLMs might have different performance on many tasks including logical reasoning, across different languages. Therefore, the effectiveness of our solution proposed in this paper may vary when applied to other languages.

## Ethical Considerations

At first, all authors of this work abide by the provided Code of Ethics. The quality of manual proofreading for logical fallacy sentences is ensured through a double-check strategy outlined in Appendix C. We ensure that the privacy rights of all members for proofreading are respected in the process. Besides, synthetic data generated by LLMs may involve potential ethical risks regarding fairness and bias Bommasani et al. (2021); Blodgett et al. (2020), which results in further consideration when they are employed in downstream tasks. Although our dataset LFUD was built for better understanding logical fallacies, which is not intended for safety-critical applications, we still asked our members for proofreading to refine the offensive and harmful data generated by GPT-4. Despite these considerations, there may still be some unsatisfactory data that goes unnoticed in our final dataset.

## Acknowledgements

This work was supported by Chinese NSF Major Research Plan (No. 92270121), Youth Fund (No. 62102095), Shanghai Science and Technology Innovation Action Plan (No. 21511100401). The computations in this research were performed using the CFFF platform of Fudan University.

## References

- Abaskohi et al. (2022) Amirhossein Abaskohi, Arash Rasouli, Tanin Zeraati, and Behnam Bahrak. 2022. UTNLP at SemEval-2022 task 6: A comparative analysis of sarcasm detection using generative-based and mutation-based data augmentation. In Proceedings of the 16th International Workshop on Semantic Evaluation (SemEval-2022), pages 962–969, Seattle, United States. Association for Computational Linguistics.

- An et al. (2023) Shengnan An, Zexiong Ma, Zeqi Lin, Nanning Zheng, Jian-Guang Lou, and Weizhu Chen. 2023. Learning from mistakes makes llm better reasoner. arXiv preprint arXiv:2310.20689.

- Aristotle (2006) Aristotle. 2006. On sophistical refutations. ReadHowYouWant. com.

- bench authors (2023) BIG bench authors. 2023. Beyond the imitation game: Quantifying and extrapolating the capabilities of language models. Transactions on Machine Learning Research.

- Blair-Stanek et al. (2023) Andrew Blair-Stanek, Nils Holzenberger, and Benjamin Van Durme. 2023. Can gpt-3 perform statutory reasoning? arXiv preprint arXiv:2302.06100.

- Blodgett et al. (2020) Su Lin Blodgett, Solon Barocas, Hal Daumé III, and Hanna Wallach. 2020. Language (technology) is power: A critical survey of “bias” in NLP. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, pages 5454–5476, Online. Association for Computational Linguistics.

- Bommasani et al. (2021) Rishi Bommasani, Drew A Hudson, Ehsan Adeli, Russ Altman, Simran Arora, Sydney von Arx, Michael S Bernstein, Jeannette Bohg, Antoine Bosselut, Emma Brunskill, et al. 2021. On the opportunities and risks of foundation models. arXiv preprint arXiv:2108.07258.

- Chen et al. (2023a) Kai Chen, Chunwei Wang, Kuo Yang, Jianhua Han, Lanqing Hong, Fei Mi, Hang Xu, Zhengying Liu, Wenyong Huang, Zhenguo Li, et al. 2023a. Gaining wisdom from setbacks: Aligning large language models via mistake analysis. arXiv preprint arXiv:2310.10477.

- Chen et al. (2023b) Meiqi Chen, Yubo Ma, Kaitao Song, Yixin Cao, Yan Zhang, and Dongsheng Li. 2023b. Learning to teach large language models logical reasoning.

- Chiang et al. (2023) Wei-Lin Chiang, Zhuohan Li, Zi Lin, Ying Sheng, Zhanghao Wu, Hao Zhang, Lianmin Zheng, Siyuan Zhuang, Yonghao Zhuang, Joseph E Gonzalez, et al. 2023. Vicuna: An open-source chatbot impressing gpt-4 with 90%* chatgpt quality. See https://vicuna. lmsys. org (accessed 14 April 2023).

- Chung et al. (2023) John Joon Young Chung, Ece Kamar, and Saleema Amershi. 2023. Increasing diversity while maintaining accuracy: Text data generation with large language models and human interventions. arXiv preprint arXiv:2306.04140.

- Cresswell (1973) Maxwell John Cresswell. 1973. Logics and Languages. Routledge, London, England.

- Eldan and Li (2023) Ronen Eldan and Yuanzhi Li. 2023. Tinystories: How small can language models be and still speak coherent english? arXiv preprint arXiv:2305.07759.

- Goffredo et al. (2022) Pierpaolo Goffredo, Shohreh Haddadan, Vorakit Vorakitphan, Elena Cabrio, and Serena Villata. 2022. Fallacious argument classification in political debates. In Proceedings of the Thirty-First International Joint Conference on Artificial Intelligence, IJCAI, pages 4143–4149.

- Habernal et al. (2018) Ivan Habernal, Henning Wachsmuth, Iryna Gurevych, and Benno Stein. 2018. Before name-calling: Dynamics and triggers of ad hominem fallacies in web argumentation. In Proceedings of the 2018 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long Papers), pages 386–396, New Orleans, Louisiana. Association for Computational Linguistics.

- Han et al. (2022) Simeng Han, Hailey Schoelkopf, Yilun Zhao, Zhenting Qi, Martin Riddell, Luke Benson, Lucy Sun, Ekaterina Zubova, Yujie Qiao, Matthew Burtell, et al. 2022. Folio: Natural language reasoning with first-order logic. arXiv preprint arXiv:2209.00840.

- Hausman (2012) Alan Hausman. 2012. Logic and Philosophy: A Modern Introduction. Wadsworth, Cengage Learning, Boston, MA.

- Huang and Chang (2022) Jie Huang and Kevin Chen-Chuan Chang. 2022. Towards reasoning in large language models: A survey. arXiv preprint arXiv:2212.10403.

- Hurley (2000) Patrick J. Hurley. 2000. A Concise Introduction to Logic. Wadsworth, Belmont, CA.

- Iwańska (1993) Lucja Iwańska. 1993. Logical reasoning in natural language: It is all about knowledge. Minds and Machines, 3:475–510.

- Jin et al. (2022) Zhijing Jin, Abhinav Lalwani, Tejas Vaidhya, Xiaoyu Shen, Yiwen Ding, Zhiheng Lyu, Mrinmaya Sachan, Rada Mihalcea, and Bernhard Schoelkopf. 2022. Logical fallacy detection. In Findings of the Association for Computational Linguistics: EMNLP 2022, pages 7180–7198, Abu Dhabi, United Arab Emirates. Association for Computational Linguistics.

- Joshi et al. (2020) Pratik Joshi, Somak Aditya, Aalok Sathe, and Monojit Choudhury. 2020. Taxinli: Taking a ride up the nlu hill. arXiv preprint arXiv:2009.14505.

- Josifoski et al. (2023) Martin Josifoski, Marija Sakota, Maxime Peyrard, and Robert West. 2023. Exploiting asymmetry for synthetic training data generation: Synthie and the case of information extraction. arXiv preprint arXiv:2303.04132.

- Kowalski (1974) Robert Kowalski. 1974. Logic for problem solving. Department of Computational Logic, Edinburgh University.

- Li et al. (2023) Zhuoyan Li, Hangxiao Zhu, Zhuoran Lu, and Ming Yin. 2023. Synthetic data generation with large language models for text classification: Potential and limitations. arXiv preprint arXiv:2310.07849.

- Liu et al. (2021) Hanmeng Liu, Leyang Cui, Jian Liu, and Yue Zhang. 2021. Natural language inference in context-investigating contextual reasoning over long texts. In Proceedings of the AAAI Conference on Artificial Intelligence, volume 35, pages 13388–13396.

- Liu et al. (2020) Jian Liu, Leyang Cui, Hanmeng Liu, Dandan Huang, Yile Wang, and Yue Zhang. 2020. Logiqa: A challenge dataset for machine reading comprehension with logical reasoning. arXiv preprint arXiv:2007.08124.

- Maqsud (2015) Umar Maqsud. 2015. Synthetic text generation for sentiment analysis. In Proceedings of the 6th Workshop on Computational Approaches to Subjectivity, Sentiment and Social Media Analysis, pages 156–161.

- Martino et al. (2020) G Martino, Alberto Barrón-Cedeno, Henning Wachsmuth, Rostislav Petrov, and Preslav Nakov. 2020. Semeval-2020 task 11: Detection of propaganda techniques in news articles. arXiv preprint arXiv:2009.02696.

- Mitra et al. (2023) Arindam Mitra, Luciano Del Corro, Shweti Mahajan, Andres Codas, Clarisse Simoes, Sahaj Agrawal, Xuxi Chen, Anastasia Razdaibiedina, Erik Jones, Kriti Aggarwal, Hamid Palangi, Guoqing Zheng, Corby Rosset, Hamed Khanpour, and Ahmed Awadallah. 2023. Orca 2: Teaching small language models how to reason.

- Møller et al. (2023) Anders Giovanni Møller, Jacob Aarup Dalsgaard, Arianna Pera, and Luca Maria Aiello. 2023. Is a prompt and a few samples all you need? using gpt-4 for data augmentation in low-resource classification tasks. arXiv preprint arXiv:2304.13861.

- Ontanon et al. (2022) Santiago Ontanon, Joshua Ainslie, Vaclav Cvicek, and Zachary Fisher. 2022. Logicinference: A new dataset for teaching logical inference to seq2seq models. arXiv preprint arXiv:2203.15099.

- OpenAI (2023) R OpenAI. 2023. Gpt-4 technical report. arxiv 2303.08774. View in Article.

- Ouyang et al. (2022) Long Ouyang, Jeffrey Wu, Xu Jiang, Diogo Almeida, Carroll Wainwright, Pamela Mishkin, Chong Zhang, Sandhini Agarwal, Katarina Slama, Alex Ray, et al. 2022. Training language models to follow instructions with human feedback. Advances in Neural Information Processing Systems, 35:27730–27744.

- Papanikolaou and Pierleoni (2020) Yannis Papanikolaou and Andrea Pierleoni. 2020. Dare: Data augmented relation extraction with gpt-2. arXiv preprint arXiv:2004.13845.

- Payandeh et al. (2023) Amirreza Payandeh, Dan Pluth, Jordan Hosier, Xuesu Xiao, and Vijay K Gurbani. 2023. How susceptible are llms to logical fallacies? arXiv preprint arXiv:2308.09853.

- Sennrich et al. (2015) Rico Sennrich, Barry Haddow, and Alexandra Birch. 2015. Improving neural machine translation models with monolingual data. arXiv preprint arXiv:1511.06709.

- Sourati et al. (2023) Zhivar Sourati, Filip Ilievski, Hông-Ân Sandlin, and Alain Mermoud. 2023. Case-based reasoning with language models for classification of logical fallacies. arXiv preprint arXiv:2301.11879.

- Stab and Gurevych (2017) Christian Stab and Iryna Gurevych. 2017. Recognizing insufficiently supported arguments in argumentative essays. In Proceedings of the 15th Conference of the European Chapter of the Association for Computational Linguistics: Volume 1, Long Papers, pages 980–990, Valencia, Spain. Association for Computational Linguistics.

- Swanborn (2010) Peter Swanborn. 2010. Case study research: What, why and how? Case study research, pages 1–192.

- Teng et al. (2023) Zhiyang Teng, Ruoxi Ning, Jian Liu, Qiji Zhou, Yue Zhang, et al. 2023. Glore: Evaluating logical reasoning of large language models. arXiv preprint arXiv:2310.09107.

- Tindale (2007) Christopher W Tindale. 2007. Fallacies and argument appraisal. Cambridge University Press.

- Touvron et al. (2023) Hugo Touvron, Thibaut Lavril, Gautier Izacard, Xavier Martinet, Marie-Anne Lachaux, Timothée Lacroix, Baptiste Rozière, Naman Goyal, Eric Hambro, Faisal Azhar, et al. 2023. Llama: Open and efficient foundation language models. arXiv preprint arXiv:2302.13971.

- Wang et al. (2022) Siyuan Wang, Zhongkun Liu, Wanjun Zhong, Ming Zhou, Zhongyu Wei, Zhumin Chen, and Nan Duan. 2022. From lsat: The progress and challenges of complex reasoning. IEEE/ACM Transactions on Audio, Speech, and Language Processing, 30:2201–2216.

- Yanaka et al. (2019) Hitomi Yanaka, Koji Mineshima, Daisuke Bekki, Kentaro Inui, Satoshi Sekine, Lasha Abzianidze, and Johan Bos. 2019. Help: A dataset for identifying shortcomings of neural models in monotonicity reasoning. arXiv preprint arXiv:1904.12166.

- Yu et al. (2023) Fei Yu, Hongbo Zhang, and Benyou Wang. 2023. Nature language reasoning, a survey. arXiv preprint arXiv:2303.14725.

- Yu et al. (2020) Weihao Yu, Zihang Jiang, Yanfei Dong, and Jiashi Feng. 2020. Reclor: A reading comprehension dataset requiring logical reasoning. arXiv preprint arXiv:2002.04326.

- Zhang et al. (2023) Hanlin Zhang, Jiani Huang, Ziyang Li, Mayur Naik, and Eric Xing. 2023. Improved logical reasoning of language models via differentiable symbolic programming. arXiv preprint arXiv:2305.03742.

| Faulty Generalization False Causality Circular Claim | Faulty generalization occurs when a conclusion about all or many instances of a phenomenon is drawn from one or a few instances of that phenomenon. False causality occurs when an argument jumps to a conclusion implying a causal relationship without supporting evidence. Circular reasoning occurs when an argument uses the claim it is trying to prove as proof that the claim is true. | Kevin, who is a teenager, enjoys playing chess. Therefore, all teenagers must enjoy playing chess. Whenever David goes hiking in the mountains, it’s a sunny day. Clearly, David’s hiking trips cause sunny weather. Some students are not serious about their studies because they do not focus on their studies. |

| --- | --- | --- |

| Ad Populum | Ad populum occurs when an argument is based on affirming that something is real or better because the majority thinks so. | It’s widely believed that Nancy relocated to another city, so it must be true. |

| Ad Hominem | Ad hominem is an irrelevant attack towards the person or some aspect of the person who is making the argument, instead of addressing the argument or position directly. | John claims that all people should obey the rules of the road. But John has received several speeding tickets in the past. Therefore, it’s not necessary to obey the rules of the road. |

| Deductive Fallacy | Deductive fallacy occurs when there is a logical flaw in the reasoning behind the argument, such as Affirming the consequent, Denying the antecedent, Affirming a disjunct and so on. | Should Lucy feel alone, she will surely adopt a puppy. It’s evident Lucy has adopted a puppy. Therefore, it must be that Lucy is feeling lonely. |

| Appeal to Emotion | Appeal to emotion is when emotion is used in place of reason to support an argument in place of reason, such as pity, fear, anger, etc. | Jack had his wallet stolen at the concert, think about how desperate and helpless Jack is now, how can we not help him? |

| False Dilemma | False dilemma occurs when incorrect limitations are made on the possible options in a scenario when there could be other options. | Most museums will be closed on Mondays either due to low visitor turnout, or due to their disregard for public interest. |

| Equivocation | Equivocation is an argument which uses a key term or phrase in an ambiguous way, with one meaning in one portion of the argument and then another meaning in another portion of the argument. | All stars are exploding balls of gas. Miley Cyrus is a star. Therefore, Miley Cyrus is an exploding ball of gas. |

| Fallacy of Extension | Fallacy of extension is an argument that attacks an exaggerated or caricatured version of your opponent’s position. | Alex: All flowers don’t stay open forever. Jamie: So you’re saying that all flowers die instantly after they bloom? |

| Fallacy of Relevance | Fallacy of relevance, which is also known as Red Herring, occurs when the speaker attempts to divert attention from the primary argument by offering a point that does not suffice as counterpoint/supporting evidence (even if it is true). | A portion of the inhabitants of this city have a fever, but have you considered the high unemployment rate? |

| Fallacy of Credibility | Fallacy of credibility is when an appeal is made to some form of ethics, authority, or credibility. | Sharon, an acclaimed pianist with years of experience, claims that practicing every day will increase your piano skills by 50%. She’s an expert, therefore we should believe her. |

| Intentional Fallacy | Intentional fallacy is a custom category for when an argument has some element that shows the intent of a speaker to win an argument without actual supporting evidence. | Since no one can prove that Peter didn’t come to China last year, he must have. |

Table 10: Descriptions and examples of 13 logical fallacy types

## Appendix A Details of Five LFU Tasks

We list the definitions and examples of our five tasks below.

Dimension: WHAT

- Task1: Identification

- Definition: Identify whether the given sentence has logical fallacy.

- Example: Sentence: Many people believe most museums will be closed on Mondays, therefore it’s a fact. Identify if there is any logical fallacy in the sentence. A) Yes, there is a logical fallacy. B) No, there is no logical fallacy.

- Task2: Classification

- Definition: Select the sentence belonging to a certain type of logical fallacy.

- Example: Circular reasoning occurs when an argument uses the claim it is trying to prove as proof that the claim is true. Select which among the following options demonstrates the logical fallacy of circular reasoning. A) Most people believe that Rebecca doesn’t like spicy food, therefore it must be true. B) Rebecca, a renowned food critic, does not like spicy food. Hence, spicy food is not good. C) Rebecca either refrains from spicy food due to discomfort it causes her, or she lacks well-developed taste buds. D) Rebecca doesn’t like spicy food because she dislikes spicy food.

Dimension: WHY

- Task3: Deduction

- Definition: Derive the conclusion from the premise according to a certain type of logical fallacy.

- Example: Faulty generalization occurs when a conclusion about all or many instances of a phenomenon is drawn from one or a few instances of that phenomenon. The premise is known: Bob painted his house green and he is a homeowner. With which of the two conclusions can the premise be coupled to create logical fallacy of faulty generalization? A) Green is the most popular house color. B) All homeowners paint their houses green.

- Task4: Backward Deduction

- Definition: Infer the premise from the conclusion according to a certain type of logical fallacy.

- Example: Ad populum occurs when an argument is based on affirming that something is real or better because the majority thinks so. The conclusion is known: Cynthia’s painting must be a masterpiece. With which of the two premises can the conclusion be coupled to create the logical fallacy of ad populum? A) People widely agree that Cynthia made a beautiful painting. B) A famous art critic praised Cynthia’s painting.

Dimension: HOW

- Task5: Modification

- Definition: Correct the logical fallacy in the given sentence.

- Example: Original sentence: Person A: The garden needs watering. Person B: So you’re saying we should neglect everything else and just focus on the garden? Correct the logical fallacy in the original sentence and output the modified sentence without any logical fallacy.

## Appendix B Details of Logic Fallacy Types

In Table 10, we showcase the description and examples of 13 logical fallacy types.

## Appendix C Details of Manual Proofreading

The evaluation standard is strictly classified into two main categories: structural integrity and validity of fallacies. Structural integrity focuses on the correctness of grammar, the accuracy of punctuation, and the proper use of syntax. On the other hand, the validity of fallacies ensures that, under specific contexts or themes, the sentences satisfy the need for specific type of logical fallacy. Besides, any offensive and harmful data will be refined during the proofreading.

In this process, we assembled an expert team proficient in linguistics and logic. This team comprises four members, including one logician and three graduate students, who are engaged in linguistics, logic, and computer science respectively. They each have the ability to understand and classify various types of logical fallacies.

To enhance the efficiency of this process, each sentence was initially processed through Grammarly, eliminating basic grammatical and lexical errors. Subsequently, our expert team manually reviewed the content. Each sentence was assigned to two team members for review. A consensus confirmed the sentence met the requirements, but in case of disagreement, the third team member would be consulted. If three members cannot achieve consensus, the logician will make the final decision.

## Appendix D Examples of Logical Reasoning Datasets

We illustrate data examples of four logical reasoning datasets selected in our experiments, including FOLIO, TaxiNLI, LogiQA, and Reclor.

FOLIO

Premise: Beasts of Prey is either a fantasy novel or a science fiction novel. Science fiction novels are not about mythological creatures. Beasts of Prey Is about a creature known as the Shetani. Shetanis are mythological.

Conclusion: Beasts of prey isn’t a science fiction novel.

Answer: True

TaxiNLI

Premise: Even if auditors do not follow such other standards and methodologies, they may still serve as a useful source of guidance to auditors in planning their work under GAGAS.