# Better & Faster Large Language Models via Multi-token Prediction

Fabian Gloeckle\*¹,², Badr Youbi Idrissi\*¹,³, Baptiste Rozière¹, David Lopez-Paz⁺¹, Gabriel Synnaeve⁺¹

## Abstract

Large language models such as GPT and Llama are trained with a next-token prediction loss. In this work, we suggest that training language models to predict multiple future tokens at once results in higher sample efficiency. More specifically, at each position in the training corpus, we ask the model to predict the following n tokens using n independent output heads, operating on top of a shared model trunk. Considering multi-token prediction as an auxiliary training task, we measure improved downstream capabilities with no overhead in training time for both code and natural language models. The method is increasingly useful for larger model sizes, and keeps its appeal when training for multiple epochs. Gains are especially pronounced on generative benchmarks like coding, where our models consistently outperform strong baselines by several percentage points. Our 13B parameter models solve 12% more problems on HumanEval and 17% more on MBPP than comparable next-token models. Experiments on small algorithmic tasks demonstrate that multi-token prediction is favorable for the development of induction heads and algorithmic reasoning capabilities. As an additional benefit, models trained with 4-token prediction are up to 3× faster at inference, even with large batch sizes.

## 1. Introduction

Humanity has condensed its most ingenious undertakings, surprising findings and beautiful productions into text. Large Language Models (LLMs) trained on all of these corpora are able to extract impressive amounts of world knowledge, as well as basic reasoning capabilities, by implementing a simple yet powerful unsupervised learning task: next-token prediction. Despite the recent wave of impressive achievements (OpenAI, 2023), next-token prediction remains an inefficient way of acquiring language, world knowledge and reasoning capabilities. More precisely, teacher forcing with next-token prediction latches onto local patterns and overlooks 'hard' decisions. Consequently, state-of-the-art next-token predictors still require orders of magnitude more data than human children to arrive at the same level of fluency (Frank, 2023).

\* Equal contribution, ⁺ Last authors. ¹ FAIR at Meta, ² CERMICS École des Ponts ParisTech, ³ LISN Université Paris-Saclay. Correspondence to: Fabian Gloeckle <fgloeckle@meta.com>, Badr Youbi Idrissi <byoubi@meta.com>.

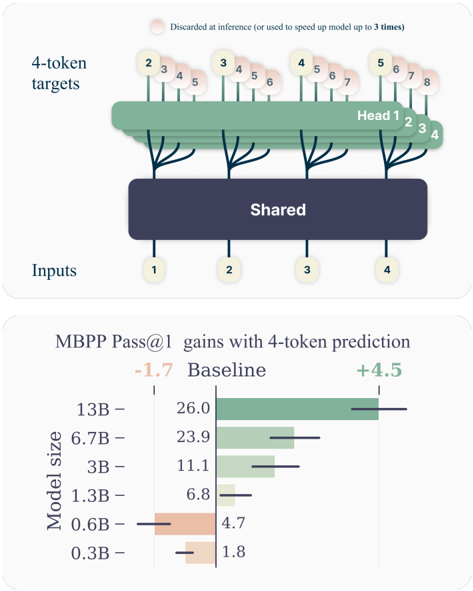

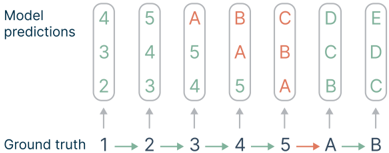

Figure 1: Overview of multi-token prediction. (Top) During training, the model predicts 4 future tokens at once, by means of a shared trunk and 4 dedicated output heads. During inference, we employ only the next-token output head. Optionally, the other three heads may be used to speed up inference. (Bottom) Multi-token prediction improves pass@1 on the MBPP code task, significantly so as model size increases. Error bars are 90% confidence intervals computed with bootstrapping over dataset samples.

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Diagram and Chart: 4-Token Prediction Architecture and Performance Gains

### Overview

The image contains two distinct but related technical illustrations. The top section is a schematic diagram of a neural network architecture designed for 4-token prediction. The bottom section is a horizontal bar chart quantifying the performance gains (in MBPP Pass@1 score) achieved by this method across different model sizes, compared to a baseline.

### Components/Axes

**Top Diagram: Architecture Schematic**

* **Inputs:** A row of four circular nodes at the bottom, labeled sequentially: `1`, `2`, `3`, `4`.

* **Shared Layer:** A large, dark blue rectangular block labeled `Shared` in white text. It receives connections from all four input nodes.

* **Heads:** Four distinct green, tree-like structures emerging from the top of the `Shared` block. They are labeled `Head 1`, `Head 2`, `Head 3`, `Head 4` from left to right.

* **4-token targets:** A row of circular nodes at the top, grouped in sets of four corresponding to each head. The groups are:

* Head 1 targets: `2`, `3`, `4`, `5`

* Head 2 targets: `3`, `4`, `5`, `6`

* Head 3 targets: `4`, `5`, `6`, `7`

* Head 4 targets: `5`, `6`, `7`, `8`

* **Annotation:** A text note at the very top reads: `Discarded at inference (or used to speed up model up to 3 times)`. A faint, pinkish circular highlight is placed over the first token (`2`) of the first target group.

**Bottom Chart: MBPP Pass@1 Gains**

* **Title:** `MBPP Pass@1 gains with 4-token prediction`

* **Y-axis (Vertical):** Labeled `Model size`. Categories from top to bottom: `13B`, `6.7B`, `3B`, `1.3B`, `0.6B`, `0.3B`.

* **X-axis (Horizontal):** Represents the MBPP Pass@1 score. A central vertical line marks the `Baseline` score for each model.

* **Legend/Key:** Located at the top of the chart area.

* `-1.7` in orange text, associated with a leftward (negative) bar.

* `Baseline` in black text, associated with the central vertical line.

* `+4.5` in green text, associated with a rightward (positive) bar.

* **Data Series:** For each model size, a horizontal bar shows the change from the baseline.

* **Baseline Values (black text, left of center line):**

* 13B: `26.0`

* 6.7B: `23.9`

* 3B: `11.1`

* 1.3B: `6.8`

* 0.6B: `4.7`

* 0.3B: `1.8`

* **Gain/Loss Bars (colored, extending from baseline):**

* **13B:** A long green bar extending to the right. The gain is approximately `+4.5` (matching the legend's maximum).

* **6.7B:** A green bar extending to the right. Gain is approximately `+2.5` to `+3.0`.

* **3B:** A green bar extending to the right. Gain is approximately `+1.5` to `+2.0`.

* **1.3B:** A very short green bar extending to the right. Gain is approximately `+0.5`.

* **0.6B:** An orange bar extending to the left. Loss is approximately `-1.0` to `-1.5`.

* **0.3B:** A very short orange bar extending to the left. Loss is approximately `-0.2` to `-0.5`.

### Detailed Analysis

**Architecture Diagram Flow:**

The diagram illustrates a "shared trunk, multiple heads" architecture for multi-token prediction. The four input tokens (`1-4`) are processed by a common `Shared` trunk. Four separate prediction heads (`Head 1-4`) are computed from this shared representation. Head i predicts the token i positions ahead at every input position, so across inputs 1-4, Head 1 targets tokens 2, 3, 4, 5, while Head 2 targets tokens 3, 4, 5, 6. According to the annotation and the caption, only the next-token head is needed for standard inference. The other heads are either discarded or used to speed up inference by a factor of up to 3.

**Chart Data Trends:**

The chart demonstrates a clear, positive correlation between model size and the effectiveness of the 4-token prediction method.

* **Trend Verification:** The green bars (gains) grow progressively longer as model size increases from 1.3B to 13B. Conversely, the smallest models (0.6B and 0.3B) show negative gains (orange bars), indicating a performance regression.

* **Key Data Points:** The largest model (13B) achieves the maximum illustrated gain of +4.5 points over its strong baseline of 26.0. The 6.7B model shows a substantial gain of ~+2.75. The method becomes detrimental for models at or below 0.6B parameters.

### Key Observations

1. **Scale-Dependent Efficacy:** The 4-token prediction technique is not universally beneficial. It gives clear gains for models of 3B parameters and above and only a marginal gain at 1.3B. It hurts the performance of very small models (0.6B and below).

2. **Prediction Pattern:** Each head predicts a single token at a fixed offset (Head i predicts the token i steps ahead) from the same shared representation. Standard next-token prediction, by contrast, predicts only the next token.

3. **Largest Gain:** The +4.5 label marks the largest gain in the chart, obtained by the 13B model.

4. **Inference Optimization:** The note about discarding the extra heads or using them for speed refers to self-speculative decoding. In this scheme, the additional heads propose tokens that the next-token head then verifies.

### Interpretation

This image presents a method to improve code generation performance (as measured by the MBPP benchmark) by modifying a language model's prediction objective. Instead of predicting one next token, the model is trained with specialized heads to predict four future tokens simultaneously from a shared representation.

One reading of the data is that this objective pushes the model toward more forward-looking representations, which larger models have the capacity to exploit. For small models, the harder objective may cost more than it helps, leading to worse performance than the simpler baseline.

The architectural diagram explains the "how," while the performance chart validates the "why" and "for whom." The combined message is that this 4-token prediction is a promising technique for scaling up the efficiency and capability of larger language models, particularly for tasks like code generation where understanding longer-range structure is crucial. The inference speed-up note further positions it as a practical optimization for deployment.

</details>

In this study, we argue that training LLMs to predict multiple tokens at once will drive these models toward better sample efficiency. As illustrated in Figure 1, multi-token prediction instructs the LLM to predict the n future tokens from each position in the training corpora, all at once and in parallel (Qi et al., 2020).

**Contributions.** While multi-token prediction has been studied in previous literature (Qi et al., 2020), the present work offers the following contributions:

1. We propose a simple multi-token prediction architecture with no train time or memory overhead (Section 2).

2. We provide experimental evidence that this training paradigm is beneficial at scale, with models up to 13B parameters solving around 15% more code problems on average (Section 3).

3. Multi-token prediction enables self-speculative decoding, making models up to 3 times faster at inference time across a wide range of batch sizes (Section 3.2).

While cost-free and simple, multi-token prediction is an effective modification to train stronger and faster transformer models. We hope that our work spurs interest in novel auxiliary losses for LLMs well beyond next-token prediction, so as to improve the performance, coherence, and reasoning abilities of these fascinating models.

## 2. Method

Standard language modeling learns about a large text corpus $x_1, \ldots, x_T$ by implementing a next-token prediction task. Formally, the learning objective is to minimize the cross-entropy loss

$$L_1 = -\sum_t \log P_\theta(x_{t+1} \mid x_{t:1}),$$

where $P_\theta$ is our large language model under training, so as to maximize the probability of $x_{t+1}$ as the next future token, given the history of past tokens $x_{t:1} = x_t, \ldots, x_1$.

In this work, we generalize the above by implementing a multi-token prediction task, where at each position of the training corpus, the model is instructed to predict n future tokens at once. This translates into the cross-entropy loss

$$L_n = -\sum_t \log P_\theta(x_{t+n:t+1} \mid x_{t:1}).$$
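The loss $L_n$ asks every position t to predict the next n tokens. The minimal sketch below builds those targets from a token sequence. The helper names are hypothetical, and skipping positions that lack n full future tokens is an assumption (masking them is an alternative). With tokens 1 to 8 and n = 4, regrouping the targets by head reproduces the targets drawn in Figure 1.

```python
from typing import List


def multi_token_targets(tokens: List[int], n: int) -> List[List[int]]:
    """For each position t, the n future tokens x_{t+1}, ..., x_{t+n}.

    Positions without n full future tokens are skipped (an assumption).
    """
    return [tokens[t + 1 : t + 1 + n] for t in range(len(tokens) - n)]


def head_targets(tokens: List[int], n: int) -> List[List[int]]:
    """Regroup the same targets by head: head i (1-indexed) sees x_{t+i} at every t."""
    per_position = multi_token_targets(tokens, n)
    return [[row[i] for row in per_position] for i in range(n)]


print(head_targets(list(range(1, 9)), 4)[0])  # [2, 3, 4, 5]
```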

To make matters tractable, we assume that our large language model $P_\theta$ employs a shared trunk to produce a latent representation $z_{t:1}$ of the observed context $x_{t:1}$. This representation is then fed into n independent heads, which predict each of the n future tokens in parallel (see Figure 1). This leads to the following factorization of the multi-token prediction cross-entropy loss:

$$
\begin{aligned}
L_n &= -\sum_t \log P_\theta(x_{t+n:t+1} \mid z_{t:1}) \cdot P_\theta(z_{t:1} \mid x_{t:1}) \\
    &= -\sum_t \sum_{i=1}^{n} \log P_\theta(x_{t+i} \mid z_{t:1}) \cdot P_\theta(z_{t:1} \mid x_{t:1}).
\end{aligned}
$$

In practice, our architecture consists of three parts: a shared transformer trunk $f_s$ producing the hidden representation $z_{t:1}$ from the observed context $x_{t:1}$, $n$ independent output heads implemented in terms of transformer layers $f_{h_i}$, and a shared unembedding matrix $f_u$. Therefore, to predict $n$ future tokens, we compute:

$$P_\theta(x_{t+i} \mid x_{t:1}) = \mathrm{softmax}\big(f_u(f_{h_i}(f_s(x_{t:1})))\big)$$

for $i = 1, \ldots, n$, where, in particular, $P_\theta(x_{t+1} \mid x_{t:1})$ is our next-token prediction head. See Appendix B for other variations of multi-token prediction architectures.
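The factorization and the per-head formula can be illustrated with a toy NumPy model. This is a sketch under simplifying assumptions, not the paper's implementation. The trunk $f_s$ is a causal running mean of token embeddings followed by one tanh layer instead of a transformer. Each head $f_{h_i}$ is a single tanh layer instead of a transformer layer. The class name `ToyMultiTokenModel` is hypothetical. The model computes $L_n$ as the sum of the n heads' cross-entropies over the positions that have n future tokens.

```python
import numpy as np


def softmax(logits: np.ndarray) -> np.ndarray:
    shifted = logits - logits.max(axis=-1, keepdims=True)  # for numerical stability
    e = np.exp(shifted)
    return e / e.sum(axis=-1, keepdims=True)


class ToyMultiTokenModel:
    """Toy stand-in: causal trunk f_s, n independent heads f_{h_i}, shared unembedding f_u."""

    def __init__(self, vocab: int, d: int, n: int, seed: int = 0):
        rng = np.random.default_rng(seed)
        self.n, self.vocab = n, vocab
        self.E = rng.normal(0.0, 0.5, (vocab, d))     # token embeddings
        self.W_s = rng.normal(0.0, 0.5, (d, d))       # trunk weights
        self.W_h = rng.normal(0.0, 0.5, (n, d, d))    # one weight matrix per head
        self.W_u = rng.normal(0.0, 0.5, (d, vocab))   # shared unembedding matrix f_u

    def trunk(self, x: np.ndarray) -> np.ndarray:
        # Causal running mean of embeddings: z_t depends only on x_1..x_t.
        h = self.E[x]
        h = np.cumsum(h, axis=0) / np.arange(1, len(x) + 1)[:, None]
        return np.tanh(h @ self.W_s)

    def head_probs(self, z: np.ndarray, i: int) -> np.ndarray:
        # P(x_{t+i} | x_{t:1}) = softmax(f_u(f_{h_i}(z_t))), with i counted from 1.
        return softmax(np.tanh(z @ self.W_h[i - 1]) @ self.W_u)

    def loss(self, tokens) -> float:
        x = np.asarray(tokens)
        T = len(x) - self.n              # positions that have n future targets
        z = self.trunk(x)[:T]            # shared latent representation z_{t:1}
        total = 0.0
        for i in range(1, self.n + 1):   # independent heads, one per future offset
            p = self.head_probs(z, i)
            total -= np.log(p[np.arange(T), x[i:i + T]]).sum()
        return float(total)
```

If the unembedding is set to zero, every head predicts the uniform distribution. $L_n$ then reduces to $n \cdot (T - n) \cdot \log V$ for a sequence of length T, which gives a quick sanity check.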

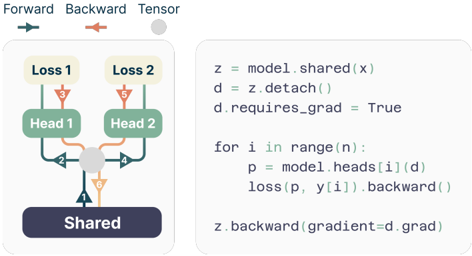

**Memory-efficient implementation.** One big challenge in training multi-token predictors is reducing their GPU memory utilization. To see why, recall that in current LLMs the vocabulary size $V$ is much larger than the dimension $d$ of the latent representation. Logit vectors therefore become the GPU memory usage bottleneck. Naive implementations of multi-token predictors materialize all logits and their gradients, both of shape $(n, V)$, which severely limits the allowable batch size and average GPU memory utilization. For these reasons, we carefully adapt the sequence of forward and backward operations in our architecture, as illustrated in Figure 2. In particular, after the forward pass through the shared trunk $f_s$, we sequentially compute the forward and backward pass of each independent output head $f_i$, accumulating gradients at the trunk. This creates logits (and their gradients) for the output head $f_i$, but these are freed before continuing to the next output head $f_{i+1}$. As a result, only the $d$-dimensional trunk gradient $\partial L_n / \partial f_s$ requires long-term storage. In sum, we reduce the peak GPU memory utilization from $O(nV + d)$ to $O(V + d)$ at no expense in runtime (Table S5).
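The sequential forward/backward order can be checked numerically. The sketch below uses NumPy with hand-written gradients rather than the PyTorch autograd code of Figure 2, and it makes several simplifying assumptions. The trunk is a single tanh layer, each head is a linear layer followed by the shared unembedding `W_u`, and the losses are summed cross-entropies. The function names are hypothetical. The sketch compares two ways of computing the trunk gradient: processing one head at a time, or materializing the logits of all n heads together.

```python
import numpy as np


def softmax(a: np.ndarray) -> np.ndarray:
    e = np.exp(a - a.max(axis=-1, keepdims=True))  # shift for numerical stability
    return e / e.sum(axis=-1, keepdims=True)


def trunk_grad_sequential(X, W_s, W_h, W_u, targets):
    """One head at a time: forward + backward, accumulating dL/dz at the trunk output.

    X: (T, d_in) trunk inputs, W_s: (d_in, d), W_h: (n, d, d), W_u: (d, V),
    targets: (n, T) ints, row i holding the token i + 1 steps ahead of each position.
    Returns (dL/dW_s, largest number of logit entries held at once).
    """
    z = np.tanh(X @ W_s)                  # trunk forward, computed once
    grad_z = np.zeros_like(z)             # long-lived buffer of size T x d only
    T, peak = z.shape[0], 0
    for i in range(W_h.shape[0]):
        logits = (z @ W_h[i]) @ W_u       # (T, V) logits of head i only
        peak = max(peak, logits.size)
        g = softmax(logits)
        g[np.arange(T), targets[i]] -= 1.0        # d(sum of cross-entropies)/d(logits)
        grad_z += (g @ W_u.T) @ W_h[i].T          # backprop through head i, accumulate at z
        del logits, g                             # released before the next head
    return X.T @ (grad_z * (1.0 - z ** 2)), peak  # single backward pass through the trunk


def trunk_grad_naive(X, W_s, W_h, W_u, targets):
    """Same gradient, but the logits of all n heads are materialized together."""
    z = np.tanh(X @ W_s)
    n, T = targets.shape
    logits = np.einsum("td,nde,ev->ntv", z, W_h, W_u)   # (n, T, V)
    g = softmax(logits)
    g[np.arange(n)[:, None], np.arange(T)[None, :], targets] -= 1.0
    grad_z = np.einsum("ntv,ev,nde->td", g, W_u, W_h)
    return X.T @ (grad_z * (1.0 - z ** 2)), logits.size
```

Both functions return the same gradient. They differ only in how many logit entries are held at once: T·V for the sequential version versus n·T·V for the naive one. This mirrors the reduction from $O(nV + d)$ to $O(V + d)$ described above.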

**Inference.** At inference time, the most basic use of the proposed architecture is vanilla next-token autoregressive prediction with the next-token prediction head $P_\theta(x_{t+1} \mid x_{t:1})$, discarding all other heads. However, the additional output heads can be leveraged to speed up decoding from the next-token prediction head with self-speculative decoding methods. One such method is blockwise parallel decoding (Stern et al., 2018), a variant of speculative decoding (Leviathan et al., 2023) that needs no additional draft model. Another is speculative decoding with Medusa-like tree attention (Cai et al., 2024).

Figure 2: Order of the forward/backward passes in an n-token prediction model with n = 2 heads. Performing the forward/backward passes on the heads in sequential order avoids materializing all unembedding layer gradients in memory simultaneously and reduces peak GPU memory usage.

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Diagram & Code Snippet: Sequential Forward/Backward over Multi-Token Prediction Heads

### Overview

The image is a composite technical illustration divided into two primary sections. On the left is a schematic diagram of a shared trunk with two output heads, that is, an n-token prediction model with n = 2. On the right is a corresponding code snippet that implements the memory-efficient training step for this architecture. The diagram uses color-coded arrows to illustrate the flow of data (forward pass) and gradients (backward pass).

### Components/Axes

**Left Diagram Components:**

1. **Legend (Top-Left):**

* `Forward` (Teal arrow pointing right)

* `Backward` (Orange arrow pointing left)

* `Tensor` (Gray filled circle)

2. **Architecture Blocks (from bottom to top):**

* `Shared`: A dark purple rectangular block at the base.

* `Head 1` & `Head 2`: Two green rectangular blocks positioned above the Shared block.

* `Loss 1` & `Loss 2`: Two light yellow rectangular blocks at the top.

3. **Connections (Arrows):**

* **Forward Pass (Teal):** Arrows flow upward from `Shared` to both `Head 1` and `Head 2`, and then from each Head to its respective `Loss`.

* **Backward Pass (Orange):** Arrows flow downward from `Loss 1` and `Loss 2` to their respective `Head`, and then converge at a gray `Tensor` circle positioned between the two heads. From this tensor, a single orange arrow points back down to the `Shared` block.

* **Tensor Node:** A gray circle acts as a junction point for the backward gradients from both heads before they are passed to the shared layer.

**Right Code Snippet:**

The code is written in PyTorch-style Python. It is presented as plain text within a light gray rounded rectangle.

### Detailed Analysis

**Diagram Flow Analysis:**

* **Forward Trend:** The data flow is strictly bottom-up and divergent. A single input `x` is processed by the `Shared` trunk. The resulting representation is then fed independently into the two prediction heads (`Head 1`, `Head 2`), each producing its own output and loss (`Loss 1`, `Loss 2`).

* **Backward Trend:** The gradient flow is convergent. Gradients from `Loss 1` and `Loss 2` are backpropagated through their respective heads and accumulate at an intermediate tensor node (the gray circle), which represents the trunk output. The summed gradient is then passed back through the `Shared` trunk in a single backward pass.

**Code Transcription:**

```python
z = model.shared(x)
d = z.detach()
d.requires_grad = True
for i in range(n):
    p = model.heads[i](d)
    loss(p, y[i]).backward()
z.backward(gradient=d.grad)
```

**Code Logic Breakdown:**

1. `z = model.shared(x)`: The shared layer processes input `x` to produce representation `z`.

2. `d = z.detach()`: A detached copy `d` of the representation `z` is created. This severs the direct computational graph link between `d` and the parameters of `model.shared`.

3. `d.requires_grad = True`: The detached tensor `d` is manually set to require gradients. This allows it to accumulate gradients from the subsequent head computations.

4. **Loop (`for i in range(n)`):** Iterates over the `n` output heads.

* `p = model.heads[i](d)`: The i-th head processes the shared representation `d`.

* `loss(p, y[i]).backward()`: The loss for head `i` is computed and backpropagated. Gradients flow through the head and accumulate in `d.grad`, but **do not** flow further back into `model.shared` because of the earlier `.detach()`. Each head's forward and backward passes complete before the next head starts, so only one head's logits and logit gradients need to be held at a time.

5. `z.backward(gradient=d.grad)`: After the loop, the accumulated gradient from all heads (`d.grad`) is backpropagated through the original, non-detached tensor `z`. This single call computes the gradients of the parameters of `model.shared`; an optimizer step then applies the update.

### Key Observations

1. **Gradient Accumulation Technique:** The core technical insight is the use of `.detach()` and manual gradient passing. Each head's loss is backpropagated only as far as the detached trunk output `d`, where the gradients from all heads accumulate. The resulting trunk gradient is identical to the one obtained by backpropagating the sum of the head losses in one pass. The difference is that the large vocabulary-sized logits and their gradients exist for only one head at a time, which lowers peak memory.

2. **Architectural vs. Procedural Representation:** The diagram shows a conceptual, simultaneous two-head setup. The code reveals a sequential implementation in which heads are processed one after another in a loop. Their gradients are summed at the trunk output before a single backward pass through the shared trunk.

3. **Spatial Grounding:** The legend is positioned top-left, clearly defining the visual language. The diagram occupies the left ~40% of the image, the code the right ~60%. The gray tensor node in the diagram is spatially centered between the two heads, visually representing its role as a gradient junction.

### Interpretation

This image illustrates the memory-efficient training step used for multi-token prediction (Section 2). A naive implementation would compute the logits of all n heads, each of vocabulary size V, and backpropagate their summed loss. This holds all n sets of logits and logit gradients in memory at once.

The depicted technique instead proceeds in three steps:

1. **Detach:** Run the shared trunk once and detach its output (`d`), so that each head's backward pass stops at the trunk output.

2. **Accumulate:** For each head in turn, compute its logits and loss and call `.backward()`. The gradients with respect to `d` add up in `d.grad`, and each head's logits can be freed before the next head starts.

3. **Backpropagate once:** Pass the accumulated gradient through the trunk with `z.backward(gradient=d.grad)`.

The result is the same gradient as the naive approach, but peak memory drops from O(nV + d) to O(V + d), as stated in Section 2. The gray tensor node in the diagram corresponds to `d`, the point where the gradients of both heads are summed.

</details>
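The self-speculative decoding described in the Inference paragraph of Section 2 can be sketched in plain Python. This is a simplified greedy version in the spirit of blockwise parallel decoding (Stern et al., 2018). It is not the xFormers-based implementation used in the experiments, and it omits Medusa-like tree attention. `next_token` stands for the next-token head and `draft_heads` for heads 2 to n. Both are hypothetical callables, replaced here by a toy rule. Head 1's token is always accepted, and each drafted token is kept only while it agrees with head 1's greedy choice, so the output is identical to plain greedy decoding.

```python
from typing import Callable, List, Tuple

Token = int
NextToken = Callable[[List[Token]], Token]          # stands in for head 1 (greedy)
DraftHeads = Callable[[List[Token]], List[Token]]   # stands in for heads 2..n


def greedy_decode(next_token: NextToken, prompt: List[Token], num_new: int) -> List[Token]:
    """Plain autoregressive greedy decoding with the next-token head only."""
    seq = list(prompt)
    for _ in range(num_new):
        seq.append(next_token(seq))
    return seq


def self_speculative_decode(
    next_token: NextToken, draft_heads: DraftHeads, prompt: List[Token], num_new: int
) -> Tuple[List[Token], int]:
    """Greedy blockwise-parallel-style decoding; returns (sequence, number of steps)."""
    seq = list(prompt)
    target_len = len(prompt) + num_new
    steps = 0
    while len(seq) < target_len:
        steps += 1
        # Predict: all heads read the same last position; head 1 gives x_{t+1},
        # the extra heads guess x_{t+2}, ..., x_{t+n}.
        proposal = [next_token(seq)] + draft_heads(seq)
        accepted = proposal[:1]  # head 1's token is exactly the greedy token
        # Verify: keep drafted tokens while they match head 1's greedy choice.
        # A real model scores all these prefixes in one batched forward pass.
        for guess in proposal[1:]:
            if next_token(seq + accepted) != guess:
                break
            accepted.append(guess)
        seq.extend(accepted[: target_len - len(seq)])
    return seq, steps


# Toy stand-ins (hypothetical): a deterministic "model" over digits 0-9.
def toy_next(seq: List[Token]) -> Token:
    return (3 * seq[-1] + 1) % 10


def toy_draft(seq: List[Token]) -> List[Token]:
    # Guesses for offsets 2, 3 and 4; the offset-4 guess is deliberately wrong.
    ctx = seq + [toy_next(seq)]
    guesses = []
    for _ in range(3):
        ctx.append(toy_next(ctx))
        guesses.append(ctx[-1])
    guesses[-1] = (guesses[-1] + 1) % 10
    return guesses


out, steps = self_speculative_decode(toy_next, toy_draft, [1], 12)
print(out == greedy_decode(toy_next, [1], 12), steps)  # True 4
```

In a real model, the verification calls inside the loop are one batched forward pass over the proposed tokens. The number of loop iterations therefore approximates the number of forward passes. In the toy example this is 4 instead of 12, because two of the three drafted tokens are accepted at every step.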

## 3. Experiments on real data

We demonstrate the efficacy of multi-token prediction losses through seven large-scale experiments. Section 3.1 shows how multi-token prediction becomes increasingly useful as model size grows. Section 3.2 shows how the additional prediction heads can speed up inference by a factor of 3× using speculative decoding. Section 3.3 demonstrates how multi-token prediction promotes learning longer-term patterns, a fact most apparent in the extreme case of byte-level tokenization. Section 3.4 shows that a 4-token predictor leads to strong gains with a tokenizer of size 32k. Section 3.5 illustrates that the benefits of multi-token prediction remain for training runs with multiple epochs. Section 3.6 showcases the rich representations promoted by pretraining with multi-token prediction losses by finetuning on the CodeContests dataset (Li et al., 2022). Section 3.7 shows that the benefits of multi-token prediction carry over to natural language models. They improve generative evaluations such as summarization without regressing significantly on standard benchmarks based on multiple-choice questions and negative log-likelihoods.

To allow fair comparisons between next-token predictors and n-token predictors, the experiments that follow always compare models with the same number of parameters. That is, when we add n − 1 layers in future prediction heads, we remove n − 1 layers from the shared model trunk. Please refer to Table S14 for the model architectures and to Table S13 for an overview of the hyperparameters we use in our experiments.

## 3.1. Benefits scale with model size

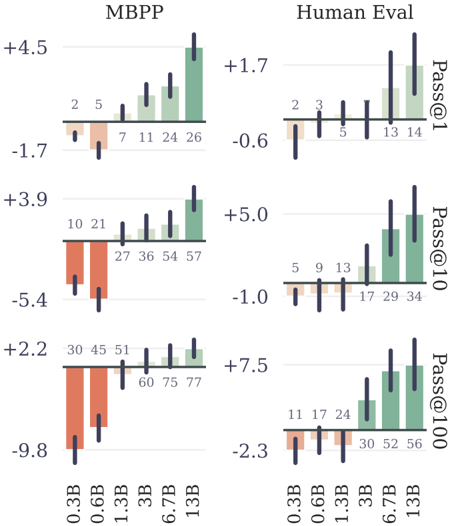

Figure 3: Results of n-token prediction models on MBPP and HumanEval by model size. We train models of six sizes in the range of 300M to 13B total parameters on code, and evaluate pass@1, pass@10 and pass@100 on the MBPP (Austin et al., 2021) and HumanEval (Chen et al., 2021) benchmarks with 1000 samples. Multi-token prediction models are worse than the baseline for small model sizes, but outperform the baseline at scale. Error bars are 90% confidence intervals computed with bootstrapping over dataset samples.

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Bar Charts: Multi-Token Prediction vs. Baseline on MBPP and HumanEval by Model Size

### Overview

The image is a 2x3 grid of bar charts for two benchmarks, **MBPP** (left column) and **HumanEval** (right column), with one row per metric: **Pass@1**, **Pass@10** and **Pass@100**. Each chart covers six model sizes from 0.3B to 13B parameters. The numbers printed above the MBPP Pass@1 bars match the rounded baseline scores shown in Figure 1. The printed labels therefore appear to be baseline scores, and the bar heights the difference between the multi-token model and the next-token baseline of the same size. Orange bars (negative differences) appear for the two smallest models (0.3B, 0.6B) and green bars (positive differences) for 1.3B and above. Black vertical lines on each bar are the error bars.

### Components/Axes

* **Charts:** Six bar charts arranged in two columns and three rows.

* **Column Headers:** "MBPP" (left column) and "Human Eval" (right column).

* **Row Labels (Right Side):** "Pass@1" (top row), "Pass@10" (middle row), "Pass@100" (bottom row).

* **X-Axis (Bottom of each column):** Model sizes `0.3B`, `0.6B`, `1.3B`, `3B`, `6.7B`, `13B`.

* **Y-Axis:** Difference to the baseline, on an independent scale per chart that spans negative and positive values.

* **Data Labels:** A number above each bar (the rounded baseline score).

* **Legend:** None. Color encodes the sign of the difference (orange negative, green positive).

### Detailed Analysis

Numbers printed above the bars (rounded baseline scores) and the y-axis range of each chart:

| Model size | MBPP Pass@1 | MBPP Pass@10 | MBPP Pass@100 | HumanEval Pass@1 | HumanEval Pass@10 | HumanEval Pass@100 |
|---|---|---|---|---|---|---|
| 0.3B | 2 | 10 | 30 | 2 | 5 | 11 |
| 0.6B | 5 | 21 | 45 | 3 | 9 | 17 |
| 1.3B | 7 | 27 | 51 | 5 | 13 | 24 |
| 3B | 11 | 36 | 60 | 13 | 17 | 30 |
| 6.7B | 24 | 54 | 75 | 14 | 29 | 52 |
| 13B | 26 | 57 | 77 | 14 | 34 | 56 |
| Y-axis range (difference) | -1.7 to +4.5 | -5.4 to +3.9 | -9.8 to +2.2 | -0.6 to +1.7 | -1.0 to +5.0 | -2.3 to +7.5 |

* **Baseline trend:** Baseline scores rise with model size in every chart. On MBPP the largest jump is between 3B and 6.7B; on HumanEval Pass@100 it is also between 3B and 6.7B (30 to 52).

* **Difference trend:** The difference is negative (orange) for 0.3B and 0.6B and positive (green) from 1.3B upward. On MBPP Pass@1 the largest gain is about +4.5 points at 13B, consistent with Figure 1.

### Key Observations

1. **Scale-Dependent Effect:** Multi-token prediction underperforms the next-token baseline at 0.3B and 0.6B parameters and outperforms it from 1.3B upward, as stated in the caption.

2. **Benchmark Difficulty:** For any given model size and `k`, baseline scores on HumanEval are lower than on MBPP.

3. **Effect of `k`:** Baseline scores rise sharply from Pass@1 to Pass@100, as expected for the pass@k metric.

4. **Baseline Plateaus:** Some baseline series flatten at the top end. For example, HumanEval Pass@1 is 14 at both 6.7B and 13B, and MBPP Pass@100 only moves from 75 to 77.

### Interpretation

The charts support the claim of Section 3.1: with the same training data and compute, replacing next-token prediction with multi-token prediction hurts small code models but helps models of 1.3B parameters and larger. The error bars are 90% bootstrap confidence intervals over dataset samples (see the caption). They therefore reflect variability across benchmark problems, not across training runs.

</details>

To study how the benefits of multi-token prediction depend on model size, we train models of six sizes in the range 300M to 13B parameters from scratch on at least 91B tokens of code. The evaluation results in Figure 3 for MBPP (Austin et al., 2021) and HumanEval (Chen et al., 2021) show that, with exactly the same computational budget and a fixed dataset, multi-token prediction extracts considerably more performance from large language models.

We believe that this usefulness only at scale is a likely reason why multi-token prediction has so far been largely overlooked as a promising training loss for large language models.
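The figure captions report 90% confidence intervals computed by bootstrapping over dataset samples. The exact procedure is not specified in this section. The following sketch assumes a paired percentile bootstrap, which resamples benchmark problems with replacement while keeping each problem's baseline and multi-token scores together. The function name and the per-problem score lists are hypothetical.

```python
import random
from typing import List, Tuple


def paired_bootstrap_ci(
    baseline: List[float],
    candidate: List[float],
    level: float = 0.90,
    num_resamples: int = 2000,
    seed: int = 0,
) -> Tuple[float, float]:
    """Percentile bootstrap CI for mean(candidate - baseline) over benchmark problems.

    Problems are resampled with replacement; each problem's two scores stay paired.
    """
    if len(baseline) != len(candidate) or not baseline:
        raise ValueError("need equally many per-problem scores for both models")
    diffs = [c - b for b, c in zip(baseline, candidate)]
    k = len(diffs)
    rng = random.Random(seed)
    means = sorted(
        sum(diffs[rng.randrange(k)] for _ in range(k)) / k
        for _ in range(num_resamples)
    )
    alpha = 1.0 - level
    lo = means[round(alpha / 2 * num_resamples)]
    hi = means[round((1 - alpha / 2) * num_resamples) - 1]
    return lo, hi
```

Pairing the two models on the same resampled problems removes the shared per-problem difficulty from the interval. This is one reason to prefer it when plotting a gain over a baseline, as in Figures 1 and 3.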

## 3.2. Faster inference

We implement greedy self-speculative decoding (Stern et al., 2018) with heterogeneous batch sizes using xFormers (Lefaudeux et al., 2022) and measure the decoding speed of our best 4-token prediction model with 7B parameters when completing prompts from a held-out test dataset of code and natural language (Table S2). We observe a speedup of 3.0× on code, with an average of 2.5 accepted tokens out of 3 suggestions, and of 2.7× on text. For an 8-byte prediction model, the inference speedup is 6.4× (Table S3). Pretraining with multi-token prediction makes the additional heads much more accurate than simply finetuning them on top of a next-token prediction model, which allows our models to unlock the full potential of self-speculative decoding.

Table 1: Multi-token prediction improves performance and unlocks efficient byte-level training. We compare models with 7B parameters trained from scratch on 200B tokens and on 314B bytes of code on the MBPP (Austin et al., 2021), HumanEval (Chen et al., 2021) and APPS (Hendrycks et al., 2021) benchmarks. Multi-token prediction largely outperforms next-token prediction in these settings. All numbers were calculated using the estimator from Chen et al. (2021) based on 200 samples per problem. The temperatures were chosen optimally for each model, dataset and pass@k (based on test scores, i.e. these are oracle temperatures) and are reported in Table S12.

| Training data | Vocabulary | n | MBPP @1 | MBPP @10 | MBPP @100 | HumanEval @1 | HumanEval @10 | HumanEval @100 | APPS/Intro @1 | APPS/Intro @10 | APPS/Intro @100 |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 314B bytes | bytes | 1 | 19.3 | 42.4 | 64.7 | 18.1 | 28.2 | 47.8 | 0.1 | 0.5 | 2.4 |
| | | 8 | 32.3 | 50.0 | 69.6 | 21.8 | 34.1 | 57.9 | 1.2 | 5.7 | 14.0 |
| | | 16 | 28.6 | 47.1 | 68.0 | 20.4 | 32.7 | 54.3 | 1.0 | 5.0 | 12.9 |
| | | 32 | 23.0 | 40.7 | 60.3 | 17.2 | 30.2 | 49.7 | 0.6 | 2.8 | 8.8 |
| 200B tokens | tokens | 1 | 30.0 | 53.8 | 73.7 | 22.8 | 36.4 | 62.0 | 2.8 | 7.8 | 17.4 |
| | | 2 | 30.3 | 55.1 | 76.2 | 22.2 | 38.5 | 62.6 | 2.1 | 9.0 | 21.7 |
| | | 4 | 33.8 | 55.9 | 76.9 | 24.0 | 40.1 | 66.1 | 1.6 | 7.1 | 19.9 |
| | | 6 | 31.9 | 53.9 | 73.1 | 20.6 | 38.4 | 63.9 | 3.5 | 10.8 | 22.7 |
| | | 8 | 30.7 | 52.2 | 73.4 | 20.0 | 36.6 | 59.6 | 3.5 | 10.4 | 22.1 |
| 1T tokens (4 epochs) | tokens | 1 | 40.7 | 65.4 | 83.4 | 31.7 | 57.6 | 83.0 | 5.4 | 17.8 | 34.1 |
| | | 4 | 43.1 | 65.9 | 83.7 | 31.6 | 57.3 | 86.2 | 4.3 | 15.6 | 33.7 |
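Greedy self-speculative decoding, as used for the speed measurements in this section, can be sketched in a few lines. This is a minimal single-sequence sketch, not the xFormers implementation with heterogeneous batches: it assumes a hypothetical model interface that returns, for every position, the greedy guesses of all n heads. Heads 2 to n draft future tokens, and the next-token head checks them during the following forward pass, so the output is identical to plain greedy decoding with the next-token head. The counting model `toy_model`, with its deliberately unreliable last head, is invented for illustration.

```python
from typing import Callable, List, Sequence, Tuple

# Hypothetical interface (an assumption for illustration): model(seq) returns,
# for every position i, n greedy guesses, where out[i][k] is head k+1's
# prediction for the token at position i + k + 1.
Model = Callable[[Sequence[int]], List[List[int]]]


def greedy_decode(model: Model, prompt: List[int], max_new: int) -> List[int]:
    """Reference decoder: one forward pass per token, next-token head only."""
    tokens = list(prompt)
    for _ in range(max_new):
        tokens.append(model(tokens)[-1][0])
    return tokens


def self_speculative_decode(
    model: Model, prompt: List[int], max_new: int
) -> Tuple[List[int], int]:
    """Greedy self-speculative decoding; returns (tokens, forward passes)."""
    tokens = list(prompt)
    target = len(prompt) + max_new
    draft: List[int] = []  # guesses from heads 2..n, not yet verified
    passes = 0
    while len(tokens) < target:
        out = model(tokens + draft)  # one pass verifies old drafts and makes new ones
        passes += 1
        base = len(tokens)
        # Keep the longest draft prefix that the next-token head agrees with.
        accepted = 0
        for j, guess in enumerate(draft):
            if out[base - 1 + j][0] != guess:
                break
            accepted += 1
        tokens.extend(draft[:accepted])
        # Predictions at this position only depend on verified tokens (causal model).
        heads = out[len(tokens) - 1]
        tokens.append(heads[0])  # next-token head: exact greedy token, always kept
        draft = heads[1:]  # heads 2..n: new speculative continuation
    return tokens[:target], passes


def toy_model(seq: Sequence[int], n: int = 4, vocab: int = 7) -> List[List[int]]:
    """Hypothetical n-head model of a counting sequence (t -> t + 1 mod vocab).

    Heads 1..n-1 are exact; head n is deliberately off by one.
    """
    out = []
    for t in seq:
        guesses = [(t + k) % vocab for k in range(1, n + 1)]
        guesses[-1] = (guesses[-1] + 1) % vocab
        out.append(guesses)
    return out
```

With this toy model, every pass after the first accepts two of the three drafted tokens plus one token from the next-token head, so `self_speculative_decode(toy_model, [0], 10)` reproduces the output of `greedy_decode(toy_model, [0], 10)` with 4 forward passes instead of 10.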

## 3.3. Learning global patterns with multi-byte prediction

To show that the next-token prediction task latches onto local patterns, we went to the extreme case of byte-level tokenization and trained a 7B parameter byte-level transformer on 314B bytes, which is equivalent to around 116B tokens. The 8-byte prediction model achieves substantial improvements over next-byte prediction, solving 67% more problems on MBPP pass@1 and 20% more problems on HumanEval pass@1.

Multi-byte prediction is therefore a very promising avenue for efficient training of byte-level models. Self-speculative decoding can achieve speedups of about 6× for the 8-byte prediction model, which would fully compensate for the cost of longer byte-level sequences at inference time and could even make it nearly two times faster than a next-token prediction model. The 8-byte prediction model is a strong byte-based model, approaching the performance of token-based models despite having been trained on 1.7× less data.
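The relative figures in this subsection follow directly from Table 1 and the stated data sizes. A worked check in Python; the helper `relative_gain` is ours, and we assume the 1.7× compares against the token-level models trained on 200B tokens.

```python
def relative_gain(new: float, old: float) -> float:
    """Relative improvement of `new` over `old`, in percent."""
    return 100.0 * (new / old - 1.0)


# pass@1 (%) of the byte-level models in Table 1: n = 8 versus n = 1.
mbpp_gain = relative_gain(32.3, 19.3)  # about 67% more problems solved
humaneval_gain = relative_gain(21.8, 18.1)  # about 20% more problems solved

# 314B bytes are stated to correspond to roughly 116B tokens.
bytes_per_token = 314 / 116  # about 2.7 bytes per token

# Assumption: the token-level comparison models saw 200B tokens (Section 3.4).
data_ratio = 200 / 116  # about 1.7x less data for the byte-level model

print(f"{mbpp_gain:.1f}% {humaneval_gain:.1f}% {bytes_per_token:.2f} {data_ratio:.2f}")
```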

## 3.4. Searching for the optimal n

To better understand the effect of the number of predicted tokens, we ran comprehensive ablations on 7B models trained on 200B tokens of code, trying n = 1, 2, 4, 6 and 8. The results in Table 1 show that training with 4 future tokens consistently outperforms all other settings on HumanEval and MBPP for pass@1, pass@10 and pass@100, improving over the next-token baseline by +3.8, +2.1 and +3.2 percentage points on MBPP and by +1.2, +3.7 and +4.1 points on HumanEval. Interestingly, on APPS/Intro, n = 6 takes the lead with +0.7, +3.0 and +5.3 points. The optimal window size likely depends on the input data distribution. For byte-level models, the optimal window size is more consistent (8 bytes) across these benchmarks.
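The gains quoted above are absolute differences, in percentage points, between the best n and the next-token baseline (n = 1). A short check over the 200B-token rows of Table 1; the scores are copied from the table, and the function name is ours.

```python
# pass@1, pass@10, pass@100 (%) for 7B models trained on 200B tokens (Table 1).
SCORES = {
    1: {"MBPP": (30.0, 53.8, 73.7), "HumanEval": (22.8, 36.4, 62.0), "APPS/Intro": (2.8, 7.8, 17.4)},
    2: {"MBPP": (30.3, 55.1, 76.2), "HumanEval": (22.2, 38.5, 62.6), "APPS/Intro": (2.1, 9.0, 21.7)},
    4: {"MBPP": (33.8, 55.9, 76.9), "HumanEval": (24.0, 40.1, 66.1), "APPS/Intro": (1.6, 7.1, 19.9)},
    6: {"MBPP": (31.9, 53.9, 73.1), "HumanEval": (20.6, 38.4, 63.9), "APPS/Intro": (3.5, 10.8, 22.7)},
    8: {"MBPP": (30.7, 52.2, 73.4), "HumanEval": (20.0, 36.6, 59.6), "APPS/Intro": (3.5, 10.4, 22.1)},
}


def best_n_and_gain(benchmark: str) -> list:
    """For pass@1, @10, @100: the best n and its gain over n = 1 in points."""
    results = []
    for i in range(3):
        # max() keeps the first n on ties (n = 6 and n = 8 tie on APPS pass@1).
        best = max(SCORES, key=lambda n: SCORES[n][benchmark][i])
        gain = round(SCORES[best][benchmark][i] - SCORES[1][benchmark][i], 1)
        results.append((best, gain))
    return results


for bench in ("MBPP", "HumanEval", "APPS/Intro"):
    print(bench, best_n_and_gain(bench))
```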

## 3.5. Training for multiple epochs

Multi-token training still maintains an edge over next-token prediction when models are trained for multiple epochs on the same data. The improvements diminish, but we still observe a +2.4 percentage point increase in pass@1 on MBPP and a +3.2 point increase in pass@100 on HumanEval, with similar performance on the remaining MBPP and HumanEval metrics. For APPS/Intro, a window size of 4 was already suboptimal with 200B tokens of training.

## 3.6. Finetuning multi-token predictors

Pretrained models with multi-token prediction loss also outperform next-token models for use in finetunings. We evaluate this by finetuning 7B parameter models from Section 3.3

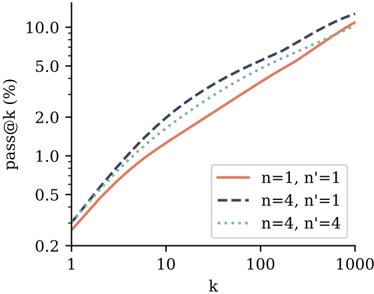

on the CodeContests dataset (Li et al., 2022). We compare the 4-token prediction model with the next-token prediction baseline, and include a setting where the 4-token prediction model is stripped off its additional prediction heads and finetuned using the classical next-token prediction target. According to the results in Figure 4, both ways of finetuning the 4-token prediction model outperform the next-token prediction model on pass@k across k . This means the models are both better at understanding and solving the task and at generating diverse answers. Note that CodeContests is the most challenging coding benchmark we evaluate in this study. Next-token prediction finetuning on top of 4-token prediction pretraining appears to be the best method overall, in line with the classical paradigm of pretraining with auxiliary tasks followed by task-specific finetuning. Please refer to Appendix F for details.

Figure 4: Comparison of finetuning performance on CodeContests. We finetune a 4-token prediction model on CodeContests (Li et al., 2022) (train split) using n′-token prediction as training loss with n′ = 4 or n′ = 1, and compare to a finetuning of the next-token prediction baseline model (n = n′ = 1). For evaluation, we generate 1000 samples per test problem for each temperature T ∈ {0.5, 0.6, 0.7, 0.8, 0.9}, and compute pass@k for each value of k and T. Shown is k ↦ max_T pass_at(k, T), i.e. we grant access to a temperature oracle. We observe that both ways of finetuning the 4-token prediction model outperform the next-token prediction baseline. Intriguingly, using next-token prediction finetuning on top of the 4-token prediction model appears to be the best method overall.

<details>

<summary>Image 4 Details</summary>

Line chart with logarithmic axes: pass@k (%) versus k (1 to 1000) for n=1, n'=1 (solid red), n=4, n'=1 (dashed black) and n=4, n'=4 (dotted green).

</details>
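The two finetuning targets compared in Figure 4 differ only in how many output heads contribute to the loss: with n′ = 4 all four heads are trained, with n′ = 1 only the next-token head is. A minimal pure-Python sketch, assuming the loss is the sum over heads of each head's mean cross-entropy; the function names, the per-head averaging and the `[head][position][vocab]` logits layout are our illustrative choices, not the paper's training code.

```python
import math
from typing import List, Sequence


def cross_entropy(logits: Sequence[float], target: int) -> float:
    """-log softmax(logits)[target], computed with the log-sum-exp trick."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return log_z - logits[target]


def multi_token_loss(
    head_logits: List[List[List[float]]],  # indexed [head][position][vocab]
    tokens: Sequence[int],
    n_prime: int,
) -> float:
    """Sum over the first n_prime heads of each head's mean cross-entropy.

    Head i (0-based) at position t is trained to predict tokens[t + i + 1];
    positions without a target that far ahead are skipped.
    """
    total = 0.0
    for i in range(n_prime):
        losses = [
            cross_entropy(head_logits[i][t], tokens[t + i + 1])
            for t in range(len(tokens) - i - 1)
        ]
        total += sum(losses) / len(losses)
    return total
```

Finetuning with n′ = 1 corresponds to `n_prime=1`: heads 2 to 4 receive no loss and can be dropped, which is the setting the Figure 4 caption reports as best overall.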

## 3.7. Multi-token prediction on natural language

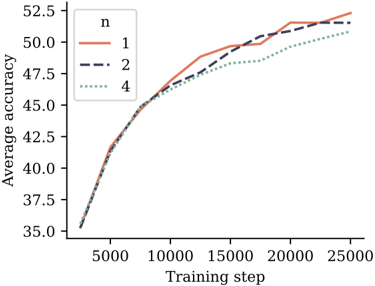

Figure 5: Multi-token training with 7B models doesn't improve performance on choice tasks. This figure shows the evolution of average accuracy on 6 standard NLP benchmarks for 7B models trained on 200B tokens of language data; detailed results are in Appendix G. The 2-future-token model performs on par with the baseline, while the 4-future-token model regresses slightly. Larger model sizes might be necessary to see improvements on these tasks.

<details>

<summary>Image 5 Details</summary>

Line chart: average accuracy versus training step (0 to 25000) for n=1 (solid orange), n=2 (dashed dark blue) and n=4 (dotted green).

</details>

To evaluate multi-token prediction training on natural language, we train models with 7B parameters on 200B tokens of natural language with a 4-token, 2-token and next-token prediction loss, respectively. In Figure 5, we evaluate the resulting checkpoints on 6 standard NLP benchmarks. On these benchmarks, the 2-future-token prediction model performs on par with the next-token prediction baseline throughout training, while the 4-future-token prediction model suffers a performance degradation. Detailed numbers are reported in Appendix G.

However, we do not believe that multiple-choice and likelihood-based benchmarks are suited to effectively discern the generative capabilities of language models. To avoid the need for human annotations of generation quality or for language model judges (which come with their own pitfalls, as pointed out by Koo et al., 2023), we conduct evaluations on summarization and natural language mathematics benchmarks and compare pretrained models with training set sizes of 200B and 500B tokens and with next-token and multi-token prediction losses, respectively.

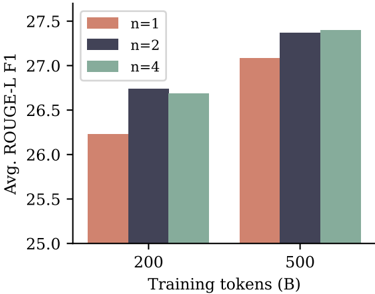

For summarization, we use eight benchmarks where ROUGE metrics (Lin, 2004) with respect to a ground-truth summary allow automatic evaluation of generated texts. We finetune each pretrained model on each benchmark's training dataset for three epochs and select the checkpoint with the highest ROUGE-L F1 score on the validation dataset. Figure 6 shows that multi-token prediction models with both n = 2 and n = 4 improve over the next-token baseline in ROUGE-L F1 scores for both training dataset sizes, with the performance gap shrinking as the dataset size grows. All metrics can be found in Appendix H.
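ROUGE-L scores a generated summary against a reference through their longest common subsequence (LCS): precision is the LCS length divided by the candidate length, recall divides by the reference length, and F1 is their harmonic mean. A minimal sketch of the sentence-level F1 variant, assuming whitespace tokenization and equal weighting of precision and recall; this is our simplification, and reference implementations (for example Google's `rouge-score` package) add text normalization options and a summary-level variant.

```python
from typing import List


def lcs_length(a: List[str], b: List[str]) -> int:
    """Length of the longest common subsequence, by dynamic programming."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            curr.append(prev[j - 1] + 1 if x == y else max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l_f1(candidate: str, reference: str) -> float:
    """Sentence-level ROUGE-L F1 with whitespace tokenization (beta = 1)."""
    cand, ref = candidate.split(), reference.split()
    lcs = lcs_length(cand, ref)
    if lcs == 0:
        return 0.0
    precision, recall = lcs / len(cand), lcs / len(ref)
    return 2 * precision * recall / (precision + recall)


print(rouge_l_f1("the cat sat on the mat", "the cat is on the mat"))
```

In the example, the LCS is "the cat on the mat" (5 of 6 tokens on each side), so precision, recall and F1 are all 5/6.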

Figure 6: Performance on abstractive text summarization. Average ROUGE-L (longest common subsequence overlap) F1 score for 7B models trained on 200B and 500B tokens of natural language on eight summarization benchmarks. We finetune the respective models on each task's training data separately for three epochs and select the checkpoints with the highest ROUGE-L F1 validation score. Both n = 2 and n = 4 multi-token prediction models have an advantage over next-token prediction models. Individual scores per dataset and more details can be found in Appendix H.

<details>

<summary>Image 6 Details</summary>

Grouped bar chart: Avg. ROUGE-L F1 (25.0 to 27.5) versus training tokens (B) (200 and 500) for n=1, n=2 and n=4.

</details>

For natural language mathematics, we evaluate the pretrained models in 8-shot mode on the GSM8K benchmark (Cobbe et al., 2021) and measure the accuracy of the final answer produced after a chain of thought elicited by the few-shot examples. As in the code evaluations, we evaluate pass@k metrics to quantify the diversity and correctness of answers, using sampling temperatures between 0.2 and 1.4. The results are depicted in Figure S13 in Appendix I. For 200B training tokens, the n = 2 model clearly outperforms the next-token prediction baseline, while the pattern reverses after 500B tokens and n = 4 is worse throughout.
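The pass@k numbers in this paper use the unbiased estimator of Chen et al. (2021): with N samples per problem of which c are correct, pass@k = 1 - C(N - c, k) / C(N, k), averaged over problems. The sketch below implements that formula together with the temperature oracle k ↦ max_T pass@k(T) described in the Figure 4 caption. We write N (`num_samples`) rather than n to avoid a clash with the number of predicted tokens; the function names and the correct-sample counts in the example are made up for illustration.

```python
from math import comb
from typing import Dict, List


def pass_at_k(num_samples: int, num_correct: int, k: int) -> float:
    """Unbiased per-problem estimate: 1 - C(N - c, k) / C(N, k)."""
    if num_samples - num_correct < k:
        return 1.0  # every subset of k samples contains a correct one
    return 1.0 - comb(num_samples - num_correct, k) / comb(num_samples, k)


def mean_pass_at_k(num_samples: int, correct: List[int], k: int) -> float:
    """Benchmark score: average of the per-problem estimates."""
    return sum(pass_at_k(num_samples, c, k) for c in correct) / len(correct)


def oracle_pass_at_k(
    num_samples: int, correct_by_temp: Dict[float, List[int]], k: int
) -> float:
    """Temperature oracle: the best benchmark pass@k over all temperatures."""
    return max(mean_pass_at_k(num_samples, c, k) for c in correct_by_temp.values())


# Made-up correct-sample counts for two problems at two temperatures.
counts = {0.2: [1, 0], 0.8: [3, 1]}
print(oracle_pass_at_k(10, counts, k=1))
```

Computing pass@k from the combinatorial formula, rather than by drawing k of the N samples at random, avoids extra sampling noise; the oracle then simply keeps the best temperature separately for each k.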

## 4. Ablations on synthetic data

What drives the improvements in downstream performance of multi-token prediction models on all of the tasks we have considered? By conducting toy experiments on controlled training datasets and evaluation tasks, we demonstrate that multi-token prediction leads to qualitative changes in model capabilities and generalization behaviors. In particular, Section 4.1 shows that for small model sizes, induction capability (as discussed by Olsson et al., 2022) either only forms when using multi-token prediction as the training loss, or is vastly improved by it. Moreover, Section 4.2 shows that multi-token prediction improves generalization on an arithmetic task, even more so than tripling the model size.

## 4.1. Induction capability

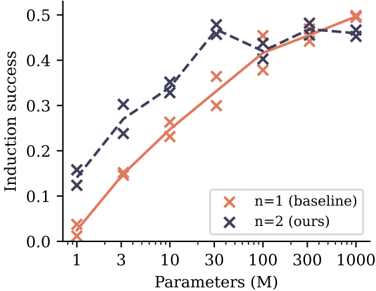

Figure 7: Induction capability of n-token prediction models. Shown is accuracy on the second token of two-token names that have already been mentioned previously, for models trained with a next-token and a 2-token prediction loss, respectively, with two independent runs each. The lines denote per-loss averages. For small model sizes, next-token prediction models learn practically no or significantly worse induction capability than 2-token prediction models, with their disadvantage disappearing at the size of 100M nonembedding parameters.

<details>

<summary>Image 7 Details</summary>

Line chart: induction success (0.0 to 0.5) versus parameters (M) on a logarithmic axis (1 to 1000) for n=1 (baseline) and n=2 (ours).

</details>

Induction describes a simple pattern of reasoning that completes partial patterns by their most recent continuation (Olsson et al., 2022). In other words, if a sentence contains 'AB' and later mentions 'A', induction is the prediction that the continuation is 'B'. We design a setup to measure induction capability in a controlled way. We train small models with 1M to 1B nonembedding parameters on a dataset of children's stories and measure induction capability with an adapted test set: in 100 stories from the original test split, we replace the character names with randomly generated names that consist of two tokens under the tokenizer we employ. Predicting the first of these two tokens is linked to the semantics of the preceding text, while predicting the second token of each name's occurrence after it has been mentioned at least once can be seen as a pure induction task. In our experiments, we train for up to 90 epochs and perform early stopping with respect to the test metric (i.e. we allow an epoch oracle). Figure 7 reports induction capability, measured as accuracy on the names' second tokens, as a function of model size for two runs with different seeds.
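The induction rule described above (after 'A B', a later 'A' predicts 'B') amounts to looking up the most recent continuation of the current token. The sketch below applies that hand-written rule to the second token of a two-token name and scores only repeat mentions, mirroring the evaluation only loosely: the paper measures the accuracy of trained models, not of this rule, and the stories, the tokens such as `##vek`, and the helper names are invented.

```python
from typing import Optional, Sequence, Tuple


def induction_predict(tokens: Sequence[str], pos: int) -> Optional[str]:
    """Guess tokens[pos] from the most recent earlier occurrence of tokens[pos - 1]:
    if the context contains 'A B' and later 'A', predict 'B'."""
    prev = tokens[pos - 1]
    for j in range(pos - 2, -1, -1):  # scan backwards for an earlier 'A'
        if tokens[j] == prev:
            return tokens[j + 1]
    return None  # 'A' has not been seen before: the rule does not apply


def second_token_accuracy(tokens: Sequence[str], name: Tuple[str, str]) -> float:
    """Accuracy of the induction rule on the name's second token, counting only
    mentions after the first one (the pure-induction part of the task)."""
    first, second = name
    hits = total = 0
    seen = False
    for i in range(1, len(tokens)):
        if tokens[i - 1] == first and tokens[i] == second:
            if seen:
                total += 1
                if induction_predict(tokens, i) == second:
                    hits += 1
            seen = True
    return hits / total if total else float("nan")


story = "Once Zor ##vek met a cat . Zor ##vek smiled . The cat ran to Zor ##vek".split()
print(second_token_accuracy(story, ("Zor", "##vek")))
```

The rule scores perfectly on this story, but it fails when another name shares the first token: in "Zor ##vek sat . Zor ##a ran . Zor ##vek", the most recent continuation of "Zor" is "##a", so the final mention is mispredicted.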

We find that 2-token prediction loss leads to a vastly improved formation of induction capability for models of size 30M nonembedding parameters and below, with its advantage disappearing for sizes of 100M nonembedding parameters and above.¹ We interpret this finding as follows: multi-token prediction losses help models learn to transfer information across sequence positions, which lends itself to the formation of induction heads and other in-context learning mechanisms. However, once induction capability has been formed, these learned features transform induction

¹ Note that a perfect score is not reachable in this benchmark, as some of the tokens in the names in the evaluation dataset never appear in the training data, and in our architecture, embedding and unembedding parameters are not linked.

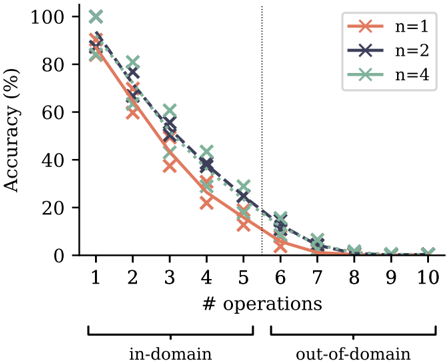

Figure 8: Accuracy on a polynomial arithmetic task with varying number of operations per expression. Training with multi-token prediction losses increases accuracy across task difficulties. In particular, it also significantly improves out-of-domain generalization performance, albeit at a low absolute level. Tripling the model size, on the other hand, has a considerably smaller effect than replacing next-token prediction with multi-token prediction loss (Figure S16). Shown are two independent runs per configuration with 100M parameter models.

<details>

<summary>Image 8 Details</summary>

### Visual Description

## Line Graph: Accuracy vs. Number of Operations for Different 'n' Values

### Overview

The image is a line graph plotting model accuracy (as a percentage) against the number of sequential operations performed. It compares three different model configurations, labeled by the parameter `n` (n=1, n=2, n=4). The graph is divided into two distinct domains: "in-domain" and "out-of-domain," separated by a vertical dotted line. The overall trend shows a sharp, consistent decline in accuracy as the number of operations increases for all configurations.

### Components/Axes

* **Y-Axis:** Labeled "Accuracy (%)". Scale runs from 0 to 100 in increments of 20 (0, 20, 40, 60, 80, 100).

* **X-Axis:** Labeled "# operations". Discrete integer markers from 1 to 10.

* **Domain Segmentation:** A vertical dotted line is positioned between x=5 and x=6. A bracket below the x-axis labels the region from 1 to 5 as "in-domain" and the region from 6 to 10 as "out-of-domain".

* **Legend:** Located in the top-right corner of the plot area. It defines three data series:

* `n=1`: Represented by an orange line with 'x' markers.

* `n=2`: Represented by a dark blue (navy) line with 'x' markers.

* `n=4`: Represented by a green line with 'x' markers.

* **Data Series:** Three lines, each connecting 'x' markers at integer x-values from 1 to 10.

### Detailed Analysis

**Trend Verification:** All three lines exhibit a strong, monotonic downward trend. The slope is steepest in the "in-domain" region (operations 1-5) and flattens as accuracy approaches zero in the "out-of-domain" region (operations 6-10).

**Data Point Extraction (Approximate Values):**

* **n=1 (Orange):**

* In-domain: Starts at ~90% (op 1), drops to ~60% (op 2), ~40% (op 3), ~25% (op 4), ~15% (op 5).

* Out-of-domain: ~5% (op 6), ~2% (op 7), ~0% (op 8), ~0% (op 9), ~0% (op 10).

* **n=2 (Dark Blue):**

* In-domain: Starts at ~95% (op 1), drops to ~70% (op 2), ~50% (op 3), ~35% (op 4), ~20% (op 5).

* Out-of-domain: ~10% (op 6), ~5% (op 7), ~0% (op 8), ~0% (op 9), ~0% (op 10).

* **n=4 (Green):**

* In-domain: Starts at ~100% (op 1), drops to ~80% (op 2), ~60% (op 3), ~40% (op 4), ~25% (op 5).

* Out-of-domain: ~15% (op 6), ~5% (op 7), ~0% (op 8), ~0% (op 9), ~0% (op 10).

**Cross-Reference & Spatial Grounding:** The legend is positioned in the top-right, clear of the data lines. The color and marker for each series are consistent throughout the plot. From operation 1 through roughly operation 7, the vertical ordering of the points is the same at every x-value: the green line (`n=4`) is highest, followed by the dark blue line (`n=2`), and then the orange line (`n=1`). From operation 8 onward, all three lines converge near zero.

### Key Observations

1. **Universal Performance Degradation:** Accuracy for all models decays rapidly as the number of operations increases. Beyond 4 operations, no configuration exceeds about 25% accuracy.

2. **Domain Shift Impact:** The transition from "in-domain" to "out-of-domain" at operation 6 coincides with all models already being at low accuracy (at most about 15%). The largest performance loss occurs *within* the in-domain region.

3. **Parameter `n` Effect:** Higher `n` values give a consistent accuracy advantage across the first ~7 operations. In-domain, the gap between n=4 and n=1 is roughly 10 to 20 percentage points (about 10 points at operation 1, about 20 points at operations 2 and 3); it narrows to a few points by operation 7 and vanishes by operation 8.

4. **Convergence to Zero:** By operation 8, all models have effectively reached 0% accuracy, and this persists through operation 10.

### Interpretation

The graph shows that accuracy drops steeply for every configuration as the number of sequential operations grows. The shape of the curves, steep at first and flattening near zero, is consistent with errors compounding across steps, where each additional operation lowers the probability of a fully correct final answer.

Here `n` is the number of future tokens each model is trained to predict, with n=1 corresponding to standard next-token prediction. Increasing `n` raises accuracy at nearly every difficulty level, including the first out-of-domain operation counts, but it does not prevent accuracy from approaching zero for the longest expressions.

The in-domain/out-of-domain split shows that most of the accuracy loss already occurs within the training range (up to 5 operations), while the out-of-domain region mainly shows all configurations approaching zero. Even so, the multi-token models (n=2, n=4) keep an advantage over n=1 through about operation 7, which is where the relative out-of-distribution gains of multi-token prediction appear despite the low absolute numbers.

</details>

into a task that can be solved locally at the current token and learned with next-token prediction alone. From this point on, multi-token prediction actually hurts on this restricted benchmark, but we surmise that there are higher forms of in-context reasoning to which it further contributes, as evidenced by the results in Section 3.1. Figure S14 provides evidence for this explanation: by replacing the children's stories dataset with a higher-quality 9:1 mix of a books dataset and the children's stories, we enforce the formation of induction capability early in training through the dataset alone. As a consequence, except for the two smallest model sizes, the advantage of multi-token prediction on the task disappears: once induction features have been learned, the task has become a pure next-token prediction task.

## 4.2. Algorithmic reasoning

Algorithmic reasoning tasks allow us to measure more involved forms of in-context reasoning than induction alone. We train and evaluate models on a polynomial arithmetic task in the ring $\mathbb{F}_7[X]/(X^5)$, with unary negation, addition, multiplication and composition of polynomials as operations. The coefficients of the operands and the operators are sampled uniformly. The task is to return the coefficients of the polynomial corresponding to the resulting expression. The number $m$ of operations contained in an expression is sampled uniformly from 1 to 5 at training time, and can be used to adjust the difficulty of both in-domain ($m \le 5$) and out-of-domain ($m > 5$) generalization evaluations. The evaluations are conducted with greedy sampling on a fixed test set of 2000 samples per number of operations. We train models of two small sizes, with 30M and 100M non-embedding parameters, respectively. This simulates the conditions of large language models trained on massive text corpora, which are likewise under-parameterized and unable to memorize their entire training datasets.
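The task construction above can be sketched in Python. This is a minimal sketch under stated assumptions, not the authors' data pipeline: polynomials are stored as 5-tuples holding the coefficients of X^0 through X^4; the expression-tree shape (remaining operations split uniformly between subtrees), the operator symbols (`+`, `*`, `o` for composition, prefix `-` for negation) and the helper names `sample_expr` and `make_split` are all hypothetical. Composition is applied to each operand's canonical representative of degree below 5.

```python
import random

P = 7  # coefficients live in the field F_7
D = 5  # quotient by X^5: keep the coefficients of X^0 .. X^4


def const(c):
    """Constant polynomial c as a 5-tuple of coefficients."""
    return (c % P,) + (0,) * (D - 1)


def neg(a):
    """Unary negation, coefficient-wise mod 7."""
    return tuple((-x) % P for x in a)


def add(a, b):
    """Coefficient-wise addition mod 7."""
    return tuple((x + y) % P for x, y in zip(a, b))


def mul(a, b):
    """Polynomial product mod 7, dropping every term of degree >= 5 (X^5 = 0)."""
    out = [0] * D
    for i, x in enumerate(a):
        for j, y in enumerate(b):
            if i + j < D:
                out[i + j] = (out[i + j] + x * y) % P
    return tuple(out)


def compose(a, b):
    """a(b(X)) via Horner's rule, using a's degree-below-5 representative."""
    out = const(0)
    for coeff in reversed(a):
        out = add(mul(out, b), const(coeff))
    return out


OPS = {"add": ("+", add), "mul": ("*", mul), "compose": ("o", compose)}


def sample_expr(m, rng):
    """Hypothetical sampler: (text, value) of a random expression with exactly m operations."""
    if m == 0:
        leaf = tuple(rng.randrange(P) for _ in range(D))  # uniform coefficients
        return str(list(leaf)), leaf
    op = rng.choice(["neg", "add", "mul", "compose"])  # uniform operator
    if op == "neg":
        text, val = sample_expr(m - 1, rng)
        return f"-{text}", neg(val)
    k = rng.randrange(m)  # assumption: remaining m-1 operations split uniformly
    left_text, left_val = sample_expr(k, rng)
    right_text, right_val = sample_expr(m - 1 - k, rng)
    sym, fn = OPS[op]
    return f"({left_text} {sym} {right_text})", fn(left_val, right_val)


def make_split(op_counts, n_per_count, seed):
    """Fixed evaluation set: n_per_count expressions for each number of operations."""
    rng = random.Random(seed)
    return {m: [sample_expr(m, rng) for _ in range(n_per_count)] for m in op_counts}
```

For training, one would draw `m = rng.randint(1, 5)` per sample; `make_split(range(1, 11), 2000, seed=0)` would then give a fixed test set of 2000 expressions per operation count, with m ≤ 5 in-domain and m = 6 to 10 out-of-domain (the range plotted in Figure 8). Fixing the seed keeps the evaluation set identical across models.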

Multi-token prediction improves algorithmic reasoning capabilities as measured by this task across task difficulties (Figure 8). In particular, it leads to impressive gains in out-of-distribution generalization, despite the low absolute numbers. Increasing the model size from 30M to 100M parameters, on the other hand, does not improve evaluation accuracy as much as replacing next-token prediction by multi-token prediction does (Figure S16). In Appendix K, we furthermore show that multi-token prediction models retain their advantage over next-token prediction models on this task when trained and evaluated with pause tokens (Goyal et al., 2023).

## 5. Why does it work? Some speculation

Why does multi-token prediction afford superior performance on coding evaluation benchmarks, and on small algorithmic reasoning tasks? Our intuition, developed in this section, is that multi-token prediction mitigates the distributional discrepancy between training-time teacher forcing and inference-time autoregressive generation. We support this view with an illustrative argument on the implicit weights multi-token prediction assigns to tokens depending on their relevance for the continuation of the text, as well as with an information-theoretic decomposition of multi-token prediction loss.

## 5.1. Lookahead reinforces choice points

Not all token decisions are equally important for generating useful text from language models (Bachmann and Nagarajan, 2024; Lin et al., 2024). While some tokens allow stylistic variations that do not constrain the remainder of the text, others represent choice points that are linked with higher-level semantic properties of the text and may decide whether an answer is perceived as useful or derailing.

Multi-token prediction implicitly assigns weights to training tokens depending on how closely they are correlated with their successors. As an illustrative example, consider the sequence depicted in Figure 9, where one transition is a hard-to-predict choice point while the other transitions are considered 'inconsequential'. Inconsequential transitions following a choice point are likewise hard to predict in advance. By marking and counting loss terms, we find that

Figure 9: Multi-token prediction loss assigns higher implicit weights to consequential tokens. Shown is a sequence in which all transitions except '5 → A' are easy to predict, alongside the corresponding prediction targets in 3-token prediction. Since the consequences of the difficult transition '5 → A' are likewise hard to predict, this transition receives a higher implicit weight in the overall loss via its correlates '3 → A', ..., '5 → C'.
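The counting in Figure 9 can be made concrete with a few lines of Python. This is a minimal sketch of one possible reading of the caption, not the authors' code: a loss term `(t, k)` asks for token `t+k` from the prefix ending at position `t`, and we assume a term involves the choice point when its span crosses the transition '5 → A'. The continuation `C D E` (taken from the figure's target columns) and the helper names `prediction_targets`, `loss_terms` and `choice_point_terms` are hypothetical.

```python
def prediction_targets(seq, n):
    """Targets of n-token prediction at each position t: the next n tokens."""
    return [seq[t + 1 : t + 1 + n] for t in range(len(seq) - 1)]


def loss_terms(seq_len, n):
    """All (t, k) pairs: predict token t+k from the prefix ending at t, k = 1..n."""
    return [(t, k) for t in range(seq_len) for k in range(1, n + 1) if t + k < seq_len]


def choice_point_terms(seq_len, n, choice):
    """Loss terms whose span from t to t+k crosses the transition choice -> choice+1."""
    return [(t, k) for (t, k) in loss_terms(seq_len, n) if t <= choice < t + k]


seq = "12345ABCDE"         # hypothetical: Figure 9 sequence continued up to E
choice = seq.index("5")    # the hard transition is '5' -> 'A'

for n in (1, 3):
    hard = choice_point_terms(len(seq), n, choice)
    total = len(loss_terms(len(seq), n))
    labels = [f"{seq[t]} → {seq[t + k]}" for t, k in hard]
    print(f"n={n}: {len(hard)} of {total} loss terms span the choice point: {labels}")
```

With n = 1 only '5 → A' spans the choice point (1 of 9 loss terms); with n = 3 the six terms '3 → A' through '5 → C' listed in the caption do (6 of 24). With enough context on both sides, n-token prediction places n(n+1)/2 loss terms across any single transition; what distinguishes the choice point is that only the terms crossing it are themselves hard to predict.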

<details>

<summary>Image 9 Details</summary>

### Visual Description

## Diagram: Sequence Prediction Model vs. Ground Truth

### Overview

This image is a technical diagram accompanying Figure 9. It shows a short ground-truth token sequence and, above each token, the targets that a 3-token prediction model is trained to predict from that position: the next, second-next and third-next tokens. In the sequence, every transition is easy to predict except '5 → A', which is a choice point.

### Components/Axes

The diagram is organized into two primary horizontal rows, with vertical alignment connecting corresponding steps.

1. **Top Row: "Model predictions"**

   * **Label:** The text "Model predictions" is positioned at the top-left.

   * **Structure:** Contains seven vertical columns (or stacks). Each column is a rounded rectangle with a light green border, containing three vertically stacked characters.

   * **Content (Left to Right):**

     * Column 1: `4`, `3`, `2` (top to bottom)

     * Column 2: `5`, `4`, `3`

     * Column 3: `A`, `5`, `4`

     * Column 4: `B`, `A`, `5`

     * Column 5: `C`, `B`, `A`

     * Column 6: `D`, `C`, `B`

     * Column 7: `E`, `D`, `C`

2. **Bottom Row: "Ground truth"**

   * **Label:** The text "Ground truth" is positioned at the bottom-left.

   * **Structure:** A horizontal sequence of seven individual elements connected by right-pointing arrows (`→`).

   * **Content (Left to Right):** `1` → `2` → `3` → `4` → `5` → `A` → `B`

   * **Color Coding:** The first five elements (`1` through `5`) are in black text. The final two elements (`A` and `B`) are in **red text**, highlighting the tokens that follow the hard-to-predict transition '5 → A'.

3. **Spatial Relationships & Connectors:**

   * Each element in the "Ground truth" sequence has a thin, gray, upward-pointing arrow (`↑`) directly above it.

   * These arrows point to the base of the corresponding column in the "Model predictions" row: each column holds the targets predicted from that ground-truth token.

   * The entire diagram flows from left to right, representing progression through a sequence.

* The entire diagram flows from left to right, representing progression through a sequence.

### Detailed Analysis

* **Prediction Targets:** At each position (steps 1 to 7), the column lists, from bottom to top, the three tokens that follow that position in the sequence, i.e. the targets of the three output heads. The stacks are training targets ordered by distance, not a ranked list of model guesses.

* **Offset Check:** The top entries form the sequence `4, 5, A, B, C, D, E`: the top entry at step `n` is the token three positions ahead, i.e. the ground-truth label at step `n+3`.

  * Step 1: Top entry `4` = Ground truth at step 4.

  * Step 2: Top entry `5` = Ground truth at step 5.

  * Step 3: Top entry `A` = Ground truth at step 6.

  * Step 4: Top entry `B` = Ground truth at step 7.

  * Steps 5-7: Top entries `C, D, E` are tokens of the sequence beyond the seven shown in the ground-truth row.

* **Overlap Between Columns:** Neighbouring positions share two of their three targets, so the middle and bottom entries of each column repeat the top and middle entries of the previous column. For example, at step 2 the targets are `5, 4, 3`, while at step 1 they are `4, 3, 2`.

### Key Observations

1. **Fixed Lookahead:** Every column reaches exactly three tokens ahead, matching 3-token prediction.

2. **Choice Point:** The hard transition '5 → A' affects six prediction targets: `A` in the columns for `3`, `4` and `5`, `B` in the columns for `4` and `5`, and `C` in the column for `5`. These are the loss terms '3 → A', ..., '5 → C' named in the Figure 9 caption.

3. **Stack Order:** Each stack is ordered by distance from the current position (bottom: next token; top: third-next token), not by model confidence.

4. **Visual Coding:** The red ground-truth labels (`A`, `B`) mark the tokens after the choice point, whose prediction depends on the difficult transition.

### Interpretation

This diagram illustrates the argument of Section 5.1 rather than the behavior of a trained model:

* **Task Nature:** Every transition in the sequence is easy to predict except '5 → A'. Once `A` is known, the following tokens are easy again, but they cannot be predicted in advance from positions before the choice point.

* **Loss Accounting:** With next-token prediction, only one loss term ('5 → A') involves the choice point. With 3-token prediction, six loss terms span it, so the difficult transition receives a higher implicit weight in the overall loss.

* **Mechanism:** The three entries per column correspond to the independent output heads on a shared trunk; they are training targets taken from the ground truth, not predictions fed back into the model.

In essence, the diagram shows how multi-token prediction concentrates training signal on consequential tokens.

</details>